ECCNN: The Electron Configuration Convolutional Neural Network for Predictive Materials Science and Drug Discovery

Abstract

This article explores the Electron Configuration Convolutional Neural Network (ECCNN), a novel machine learning framework that uses raw electron configuration data to predict material properties with exceptional accuracy and sample efficiency. We provide a comprehensive analysis for researchers and drug development professionals, covering the foundational principles of ECCNN, its methodological implementation for predicting thermodynamic stability, strategies for troubleshooting and optimizing the model, and a comparative validation against established benchmarks. The discussion highlights how ECCNN's unique approach mitigates inductive bias and accelerates the discovery of new compounds, with direct implications for developing advanced pharmaceuticals and biomaterials.

What is ECCNN? Unpacking the Electron Configuration Framework for Material Property Prediction

The accurate prediction of thermodynamic stability represents a fundamental challenge in materials science and drug discovery. Traditional machine learning approaches for this task have predominantly relied on features derived from elemental composition and atomic properties, which can introduce significant inductive biases and limit model generalizability [1]. The Electron Configuration Convolutional Neural Network (ECCNN) framework introduces a paradigm shift by using raw electron configuration data as its primary input. This approach leverages the intrinsic electronic structure of atoms—the distribution of electrons across atomic orbitals—which is the foundational basis for understanding chemical bonding and stability [1]. By organizing these configurations into structured matrices and processing them through convolutional layers, the ECCNN model captures complex, non-local interactions that are often missed by models built on pre-defined feature sets, enabling more accurate and sample-efficient predictions of compound stability [1].

This document provides detailed application notes and experimental protocols for implementing the ECCNN framework, specifically tailored for researchers exploring new compounds for pharmaceutical development.

Quantitative Performance Comparison

The following table summarizes the performance of the ECCNN-based ensemble model against other state-of-the-art composition-based models on compound stability prediction tasks.

Table 1: Performance Comparison of Composition-Based Stability Prediction Models

| Model Name | Core Input Feature | Architecture | AUC Score | Key Advantage |
|---|---|---|---|---|
| ECSG (ECCNN with Stacked Generalization) [1] | Electron Configuration Matrix | Convolutional Neural Network with Ensemble | 0.988 | High accuracy & superior sample efficiency |
| Roost [1] | Interatomic Interactions (Graph of Elements) | Graph Neural Network | Not Explicitly Reported | Captures relational structure between atoms |
| Magpie [1] | Atomic Property Statistics (e.g., radius, mass) | Gradient Boosted Regression Trees | Not Explicitly Reported | Utilizes a wide range of elemental properties |

The ECCNN framework, when combined with other models in a stacked generalization ensemble (ECSG), demonstrates remarkable sample efficiency, achieving performance equivalent to existing models using only one-seventh of the training data [1].

Experimental Protocols

Protocol 1: Input Matrix Construction from Electron Configuration

Objective: To transform the electron configuration of a chemical compound into a standardized input matrix for the ECCNN model.

Materials:

- Periodic table with electron configuration data for elements 1-118.

- Computational environment (e.g., Python) for data processing.

Methodology:

- Elemental Decomposition: Parse the chemical formula of the target compound to identify the constituent elements and their stoichiometric ratios.

- Orbital Mapping: For each element in the compound, map its electron configuration to a predefined list of 168 atomic orbitals, covering all possible energy levels and orbital types (s, p, d, f) [1].

- Population Vector Creation: For each element, generate a vector of length 168, where each entry corresponds to an orbital. The value for each orbital is the electron occupancy number for that element.

- Composition Weighting: Multiply each element's population vector by its stoichiometric fraction in the compound.

- Matrix Assembly: Create a master matrix with dimensions 118 (elements) × 168 (orbitals) × 8. The third dimension (8) is used to represent different materials properties or to stack multiple representations, forming the complete input tensor for the ECCNN [1].
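The first four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the short `SUBSHELLS` list stands in for the 168-orbital list (which is not reproduced here), occupancies are stored per subshell, and `CONFIGS`, `parse_formula`, `population_vector`, and `weighted_vectors` are hypothetical names introduced for this example.

```python
import re

# Assumed, shortened subshell ordering for illustration only; the source's
# 168-entry orbital list is not reproduced here.
SUBSHELLS = ["1s", "2s", "2p", "3s", "3p", "3d", "4s", "4p"]

# Ground-state configurations for a few light elements.
CONFIGS = {
    "H": {"1s": 1},
    "O": {"1s": 2, "2s": 2, "2p": 4},
    "Na": {"1s": 2, "2s": 2, "2p": 6, "3s": 1},
    "Cl": {"1s": 2, "2s": 2, "2p": 6, "3s": 2, "3p": 5},
}


def parse_formula(formula):
    """Elemental decomposition for simple formulas without parentheses."""
    counts = {}
    for symbol, number in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(number) if number else 1.0)
    return counts


def population_vector(element):
    """Electron occupancy of each subshell in SUBSHELLS for one element."""
    config = CONFIGS[element]
    return [float(config.get(subshell, 0)) for subshell in SUBSHELLS]


def weighted_vectors(formula):
    """Scale each element's population vector by its stoichiometric fraction."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    return {
        element: [occ * count / total for occ in population_vector(element)]
        for element, count in counts.items()
    }


if __name__ == "__main__":
    for element, vector in weighted_vectors("NaCl").items():
        print(element, vector)
```

Placing each weighted vector into its element's row of the full 118 × 168 × 8 tensor is left out, because the source does not spell out how the eight channels are populated.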

Protocol 2: ECCNN Model Architecture and Training

Objective: To construct and train the Electron Configuration Convolutional Neural Network.

Materials:

- Configured input matrices from Protocol 1.

- Deep learning framework (e.g., TensorFlow, PyTorch).

- Computational resources (GPU recommended).

- Labeled dataset of compounds with known stability (e.g., from Materials Project, JARVIS) [1].

Methodology:

- Network Architecture:

  - Input Layer: Accepts the 118×168×8 electron configuration matrix [1].

  - Convolutional Layers:

    - Apply two consecutive convolutional operations using 64 filters each with a 5×5 kernel size [1].

    - After the second convolution, apply Batch Normalization (BN) to stabilize training [1].

    - Follow with a 2×2 Max Pooling operation to reduce spatial dimensionality and give the features a degree of local translation invariance [1].

  - Flattening Layer: Flatten the output of the final pooling layer into a one-dimensional feature vector [1].

  - Fully Connected (Dense) Layers: Process the flattened features through one or more fully connected layers to produce the final stability prediction (e.g., decomposition energy, $\Delta H_d$) [1].

- Model Training:

  - Compilation: Use an appropriate optimizer (e.g., Adam) and a loss function that matches the target: Mean Squared Error when regressing $\Delta H_d$, or binary cross-entropy when classifying compounds as stable or unstable. Track metrics suited to the task (e.g., mean absolute error for regression; accuracy and AUC for classification).

  - Fitting: Train the model on the prepared dataset. Use a validation split and early stopping to prevent overfitting.

- Ensemble Integration (ECSG): Use the trained ECCNN as a base-level model within a stacked generalization framework. Combine its predictions with those from other models (e.g., Magpie, Roost) using a meta-learner to produce the final, robust prediction [1].
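The early-stopping rule mentioned in the fitting step can be illustrated independently of any deep learning framework. The sketch below assumes a patience-based criterion, which is a common choice but is not specified in the source; `early_stopping_epoch`, `patience`, and `min_delta` are hypothetical names, and the loss values are made up for the example.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses.

    Training stops once the validation loss has failed to improve by more
    than min_delta for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    # Ran out of epochs before the patience budget was exhausted.
    return len(val_losses) - 1, best_epoch


if __name__ == "__main__":
    # Made-up validation losses: the minimum is at epoch 2.
    losses = [0.90, 0.70, 0.60, 0.61, 0.62, 0.63, 0.50]
    stop, best = early_stopping_epoch(losses, patience=3)
    print(f"stop at epoch {stop}, restore weights from epoch {best}")
```

In practice the weights from `best_epoch` would be restored after stopping; both TensorFlow/Keras and common PyTorch training utilities offer callbacks that implement this pattern.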

Protocol 3: Validation with First-Principles Calculations

Objective: To validate the stability predictions of the ECCNN/ECSG model using Density Functional Theory (DFT).

Materials:

- List of candidate compounds predicted to be stable by the ECCNN model.

- DFT simulation software (e.g., VASP, Quantum ESPRESSO).

- High-performance computing (HPC) cluster.

Methodology:

- Structure Generation: For each candidate compound, generate a plausible crystal structure.

- DFT Calculation: Perform a full DFT calculation to determine the compound's ground-state energy and compute its decomposition energy ($\Delta H_d$) relative to other phases in its chemical space [1].

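- Stability Assessment: A compound is considered thermodynamically stable if it lies on the convex hull formed by the competing phases, i.e., its $\Delta H_d$ is not positive; a compound that lies above the hull is unstable or at best metastable [1].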

- Model Verification: Compare the DFT-calculated stability with the ECCNN model's prediction. The model has demonstrated remarkable accuracy in such validations, correctly identifying stable compounds such as new two-dimensional wide bandgap semiconductors and double perovskite oxides [1].
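To make the stability assessment concrete, the sketch below computes a decomposition energy for a binary A–B system. It is a simplified illustration under stated assumptions: compositions are reduced to a single fraction x of element B, the energies are made-up formation energies in eV/atom, the hull is built from the competing phases only (so a negative value means the candidate lies below them), and the function names are hypothetical. Production workflows typically rely on a phase-diagram library rather than hand-written code.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points (monotone-chain method)."""
    best = {}
    for x, energy in points:
        best[x] = min(energy, best.get(x, energy))  # keep lowest energy per x
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # hull[-1] lies on or above the chord: drop it
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            t = (x - x1) / (x2 - x1)
            return y1 + t * (y2 - y1)
    raise ValueError("composition lies outside the range covered by the hull")


def decomposition_energy(candidate, competing_phases):
    """Candidate energy minus the hull energy of the competing phases."""
    x, energy = candidate
    return energy - hull_energy(lower_hull(competing_phases), x)


if __name__ == "__main__":
    # Made-up competing phases: pure A, pure B, and an AB3-like phase.
    competing = [(0.0, 0.0), (0.75, -0.3), (1.0, 0.0)]
    for candidate in [(0.5, -0.5), (0.5, -0.1)]:
        dh = decomposition_energy(candidate, competing)
        print(candidate, "stable" if dh <= 0 else "unstable", round(dh, 3))
```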

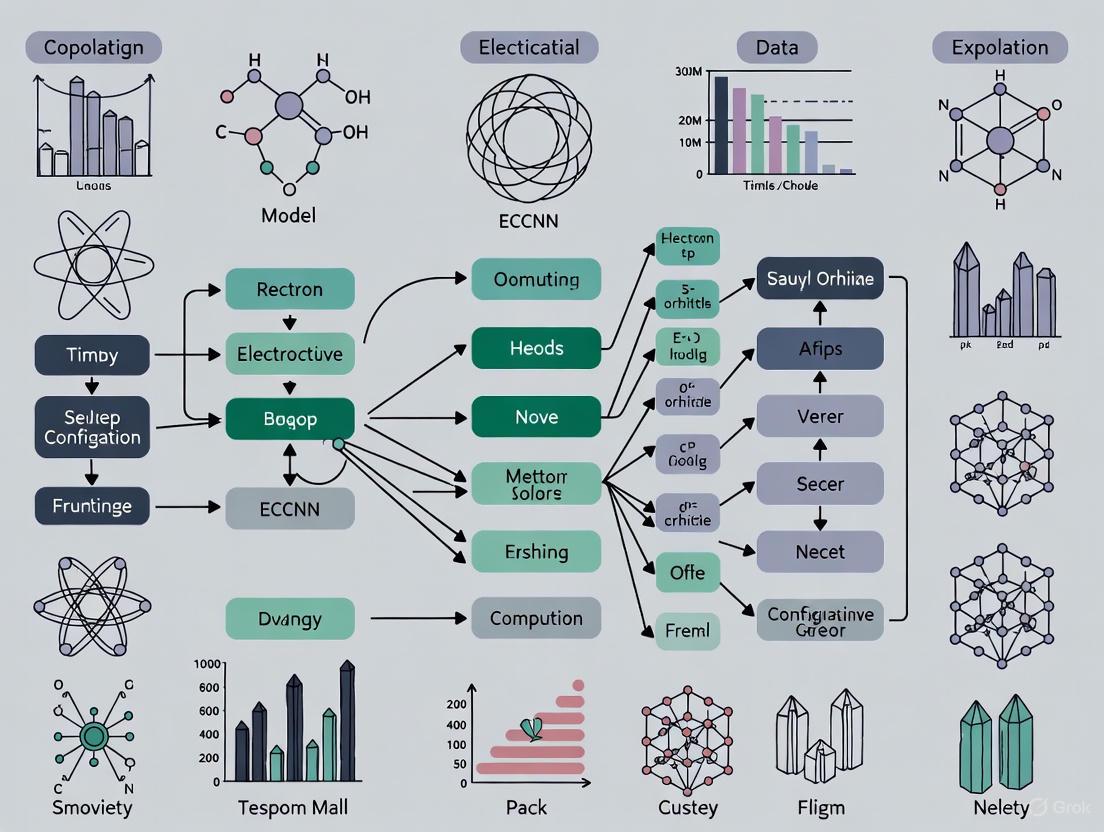

Workflow Visualization

The following diagram illustrates the complete ECCNN-based prediction workflow, from raw chemical formula to final stability assessment.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Resources for ECCNN Implementation and Validation

| Item / Resource | Function / Description | Example Sources |
|---|---|---|
| Materials Databases | Provides labeled data (formation energies, structures) for model training and benchmarking. | Materials Project (MP), Open Quantum Materials Database (OQMD), JARVIS [1] |
| Deep Learning Framework | Software library for building, training, and deploying the ECCNN model. | TensorFlow with Keras, PyTorch [2] |
| DFT Software | Performs first-principles calculations to validate model predictions and generate reference data. | VASP, Quantum ESPRESSO [1] |
| High-Performance Computing (HPC) | Provides the computational power required for training deep learning models and running DFT calculations. | Local/National Clusters, Cloud Computing Platforms |
| Stacked Generalization Library | Implements the ensemble framework that combines ECCNN with other models to improve accuracy. | Custom implementation using Scikit-learn |

In the pursuit of novel materials and therapeutics, researchers increasingly rely on machine learning (ML) models to predict key properties, such as the thermodynamic stability of compounds, from their chemical composition. A significant challenge in this endeavor is inductive bias, where the assumptions and pre-defined feature sets built into a model limit its ability to generalize to new, unexplored areas of chemical space [1]. Composition-based models are particularly susceptible, as they often rely on hand-crafted features derived from specific domain knowledge, which may not fully capture the underlying physical principles governing material behavior [1]. For instance, models that assume material properties are solely determined by elemental composition or specific interatomic interactions can introduce a large inductive bias, reducing predictive accuracy for out-of-sample compounds [1].

The Electron Configuration Convolutional Neural Network (ECCNN) model was developed to mitigate this issue by using a more fundamental representation of atoms: their electron configuration (EC) [1]. The EC describes the distribution of electrons within an atom across different energy levels and is a foundational input for first-principles quantum mechanical calculations [1]. By building a model around this intrinsic atomic property, ECCNN aims to reduce the reliance on idealized assumptions and provide a more generalizable basis for prediction. Furthermore, the ECCNN is not designed to operate in isolation. Its true power is realized when it is integrated with other models based on diverse knowledge sources through an ensemble framework called ECSG (Electron Configuration models with Stacked Generalization) [1]. This framework strategically combines ECCNN with other models to create a super learner that compensates for the individual weaknesses and biases of each component model.

Comparative Analysis of Model Architectures and Performance

The ECSG framework integrates three distinct models, each rooted in different domain knowledge, to create a robust and accurate predictor of compound stability. The following table summarizes the key characteristics of these base models.

Table 1: Foundation Models within the ECSG Ensemble Framework

| Model Name | Underlying Knowledge Domain | Core Input Features | Algorithm/Methodology | Key Strengths |
|---|---|---|---|---|
| Magpie [1] | Atomic Properties | Statistical features (mean, deviation, range) of elemental properties (e.g., atomic mass, radius) [1]. | Gradient Boosted Regression Trees (XGBoost) [1] | Provides a broad, statistical overview of elemental diversity. |
| Roost [1] | Interatomic Interactions | Chemical formula represented as a complete graph of elements [1]. | Graph Neural Network with attention mechanism [1] | Captures complex relationships and message-passing between atoms. |
| ECCNN (Proposed) [1] | Electron Configuration | Matrix encoding the electron configuration of the material's constituent elements [1]. | Convolutional Neural Network (CNN) [1] | Leverages a fundamental, intrinsic atomic property with low inductive bias. |

The performance of the resulting ECSG super learner is quantitatively superior to its individual components. The ensemble model was experimentally validated on the JARVIS database, where it achieved an exceptional Area Under the Curve (AUC) score of 0.988 in predicting compound stability [1]. A critical advantage of this approach is its remarkable sample efficiency; the ECSG model attained equivalent accuracy using only one-seventh of the data required by existing models like ElemNet [1]. This demonstrates that the framework not only achieves higher peak performance but does so more efficiently, a crucial factor when experimental or computational data is scarce and expensive to produce.

Table 2: Quantitative Performance Metrics of the ECSG Model

| Metric | ECSG Performance | Comparative Context |
|---|---|---|
| Predictive Accuracy (AUC) | 0.988 [1] | Superior to individual base models (Magpie, Roost, ECCNN). |
| Data Efficiency | Achieves target accuracy with 1/7 the data [1] | Significantly more efficient than existing models (e.g., ElemNet). |
| Application Validation | Correctly identified stable compounds validated by DFT [1] | Demonstrates practical utility and reliability in real-world discovery. |

Experimental Protocols for Model Application and Validation

Protocol 1: Data Preparation and Input Encoding for ECCNN

Objective: To transform the chemical composition of a compound into a structured matrix suitable for input into the ECCNN model.

Materials: A list of elements and their stoichiometric proportions in the compound; a reference database of atomic electron configurations.

Procedure:

- Composition Parsing: Deconstruct the chemical formula (e.g., Cs₂AgBiBr₆) into its constituent elements and their respective atomic counts.

- Electron Configuration Mapping: For each unique element in the formula, retrieve its full electron configuration (e.g., Br: [Ar] 4s² 3d¹⁰ 4p⁵).

- Matrix Encoding: Encode the electron configuration information for all elements into a unified input matrix with dimensions of 118 (elements) × 168 (features) × 8 (channels). The specific methodology for this encoding is detailed in the base-level models subsection of the source material [1].

- Data Normalization: Apply standard scaling to the input matrix to ensure numerical stability during model training.

Protocol 2: Training the ECSG Ensemble Model

Objective: To integrate the predictions of Magpie, Roost, and ECCNN into a single, high-performance super learner using stacked generalization.

Materials: Pre-processed training datasets with known stability labels (e.g., from the Materials Project or JARVIS databases); implemented Magpie, Roost, and ECCNN models.

Procedure:

- Base Model Training: Independently train the three foundation models (Magpie, Roost, ECCNN) on the same training dataset.

- Meta-Feature Generation: Use the trained base models to generate predictions (meta-features) on a held-out validation set. This prevents data leakage and ensures the meta-learner generalizes.

- Meta-Learner Training: Train a final model (the meta-learner) on the predictions from the base models. The input to this model is the vector of predictions from Magpie, Roost, and ECCNN, and the output is the final, refined stability prediction.

- Model Validation: Assess the performance of the fully assembled ECSG model on a separate, unseen test set using metrics such as AUC, precision, and recall.
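The meta-learner step can be sketched without any external library. The example below assumes the base models have already produced held-out stability probabilities, and it uses a small logistic regression trained by gradient descent as the meta-learner. The choice of meta-learner, the function names, and all numbers are assumptions for illustration; the source does not state which meta-learner ECSG uses.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def train_meta_learner(meta_features, labels, lr=0.5, steps=2000):
    """Fit logistic-regression weights on base-model predictions."""
    n_features = len(meta_features[0])
    weights, bias = [0.0] * n_features, 0.0
    n = len(labels)
    for _ in range(steps):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for x, y in zip(meta_features, labels):
            err = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) - y
            for j, xi in enumerate(x):
                grad_w[j] += err * xi
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def meta_predict(weights, bias, x):
    """Final stacked probability that a compound is stable."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


# Made-up held-out predictions, ordered as (Magpie, Roost, ECCNN).
HELD_OUT = [
    (0.90, 0.80, 0.95), (0.70, 0.90, 0.85), (0.60, 0.40, 0.90),
    (0.20, 0.30, 0.10), (0.40, 0.10, 0.20), (0.50, 0.60, 0.15),
]
LABELS = [1, 1, 1, 0, 0, 0]  # 1 = stable, 0 = unstable

if __name__ == "__main__":
    w, b = train_meta_learner(HELD_OUT, LABELS)
    print("weights:", w, "bias:", b)
    print("stacked prediction:", meta_predict(w, b, (0.55, 0.45, 0.80)))
```

Feeding the meta-learner predictions made on data the base models never saw is what keeps it from simply learning to trust whichever base model overfits most.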

Protocol 3: Virtual High-Throughput Screening for Stable Compounds

Objective: To employ the trained ECSG model for the discovery of new thermodynamically stable compounds in uncharted compositional space.

Materials: A library of candidate chemical compositions; the pre-trained ECSG model.

Procedure:

- Library Curation: Generate a list of plausible chemical compositions within a targeted space (e.g., double perovskites, two-dimensional semiconductors).

- Stability Prediction: Input each candidate composition into the ECSG model to obtain a prediction for its thermodynamic stability (often characterized by its decomposition energy, ΔH_d).

- Candidate Ranking: Rank all candidates based on their predicted stability score.

- First-Principles Validation: Select the top-ranked candidates for validation using computationally intensive but highly accurate Density Functional Theory (DFT) calculations to confirm their stability on the convex hull [1] [3].

- Experimental Proposal: The compounds that pass the DFT validation step are prime candidates for experimental synthesis and characterization.
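The ranking and shortlisting steps reduce to a sort over model outputs. The sketch below uses a lookup table as a stand-in for the trained ECSG model; `rank_candidates`, the candidate labels, and the predicted values are all hypothetical and serve only to show the control flow.

```python
def rank_candidates(candidates, predict_delta_h, top_k):
    """Return the top_k candidates with the lowest predicted ΔH_d."""
    scored = sorted((predict_delta_h(c), c) for c in candidates)
    return [(c, energy) for energy, c in scored[:top_k]]


# Stand-in for a trained model: made-up predicted ΔH_d values (eV/atom).
STUB_PREDICTIONS = {
    "cand_A": -0.12,
    "cand_B": 0.05,
    "cand_C": -0.30,
    "cand_D": -0.01,
}

if __name__ == "__main__":
    shortlist = rank_candidates(STUB_PREDICTIONS, STUB_PREDICTIONS.get, top_k=2)
    for name, energy in shortlist:
        print(f"{name}: predicted ΔH_d = {energy:+.2f} eV/atom -> send to DFT")
```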

Visualization of the ECSG Workflow and ECCNN Architecture

ECSG Ensemble Workflow

ECCNN Model Architecture

Table 3: Key Computational Tools and Datasets for ECCNN Research

| Item Name | Function/Description | Relevance to ECCNN/ECSG Research |
|---|---|---|
| JARVIS/Materials Project Databases | Extensive repositories of computed material properties and crystal structures [1]. | Provide the essential labeled datasets (formation energies, stability) required for training and benchmarking models. |
| Density Functional Theory (DFT) | A computational quantum mechanical method for modeling the electronic structure of matter [3]. | Serves as the source of high-fidelity training data and the ultimate validation tool for predicted stable compounds. |
| Graph Neural Networks (GNN) | A class of neural networks that operate on graph-structured data [1]. | The core architecture of the Roost model, which complements ECCNN by modeling interatomic interactions. |
| Stacked Generalization | An ensemble method that combines multiple models via a meta-learner [1]. | The foundational technique for integrating ECCNN with Magpie and Roost to create the high-performance ECSG super learner. |
| Python ML Frameworks (e.g., PyTorch, TensorFlow) | Open-source libraries for building and training deep learning models [4]. | Provide the programmatic environment for implementing, training, and deploying the ECCNN and ECSG models. |

The Electron Configuration Convolutional Neural Network (ECCNN) model represents a significant advancement in the application of deep learning to materials science, specifically for predicting the thermodynamic stability of inorganic compounds [5]. This framework is part of a broader research thesis exploring how domain-specific knowledge of quantum mechanics can be structurally integrated into neural network architectures to reduce inductive biases and improve predictive performance. Traditional machine learning models for material property prediction often rely on features derived from specific domain knowledge, which can introduce substantial biases and limit generalization capabilities [5]. The ECCNN framework addresses this limitation by utilizing the fundamental quantum mechanical property of electron configuration as its primary input, encoded as a multi-dimensional tensor.

The ECCNN model was developed to address the limited treatment of internal electronic structure in existing composition-based models [5]. By building upon the demonstrated success of convolutional neural networks in detecting spatial patterns [6], the ECCNN architecture applies these capabilities to the structured representation of electron configuration information. This approach is integrated into an ensemble framework known as Electron Configuration models with Stacked Generalization (ECSG), which combines ECCNN with other models based on diverse knowledge domains (Magpie and Roost) to create a super learner that mitigates the limitations of individual models [5]. Experimental results validating this approach have shown exceptional performance, achieving an Area Under the Curve score of 0.988 in predicting compound stability within the JARVIS database, with remarkable efficiency in sample utilization [5].

Theoretical Foundation of Electron Configuration

Fundamental Principles

Electron configuration describes the distribution of electrons of an atom or molecule in atomic or molecular orbitals [7]. This distribution follows well-established principles from quantum mechanics that govern how electrons occupy available energy states around an atomic nucleus. The electron configuration of an atomic species provides critical understanding of the shape and energy of its electrons, which directly influences bonding ability, magnetism, and other chemical properties [8]. In the orbital approximation, each electron occupies an orbital described by a wavefunction, characterized by a set of quantum numbers that effectively serve as an electron's "address" within the atom [8].

The arrangement of electrons follows three fundamental rules:

- Aufbau Principle: Electrons fill the lowest energy orbitals first before moving to higher energy levels [9]. The typical filling order follows: 1s < 2s < 2p < 3s < 3p < 4s < 3d < 4p < 5s < 4d < 5p < 6s < 4f < 5d < 6p < 7s < 5f < 6d < 7p [9].

- Pauli Exclusion Principle: No two electrons in an atom can share the same set of four quantum numbers. As a consequence, an orbital can hold at most two electrons, and those two must have opposite spins [9].

- Hund's Rule: Electrons will fill degenerate orbitals (orbitals with the same energy) singly before pairing up [9]. This ensures the maximum number of unpaired electrons, minimizing electron-electron repulsion.
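The three rules can be turned into a short routine that fills subshells in the order listed above. This is an idealized sketch: it applies the filling order strictly, so it does not reproduce known exceptions such as chromium (3d⁵ 4s¹) or copper (3d¹⁰ 4s¹). The function names are introduced here for illustration.

```python
# Filling order as listed in the Aufbau principle above.
FILL_ORDER = [
    "1s", "2s", "2p", "3s", "3p", "4s", "3d", "4p", "5s", "4d",
    "5p", "6s", "4f", "5d", "6p", "7s", "5f", "6d", "7p",
]

# Pauli exclusion: each orbital holds 2 electrons, and a subshell with
# angular momentum l has 2l + 1 orbitals.
CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}


def aufbau_configuration(z):
    """Idealized ground-state configuration for atomic number z."""
    if not 1 <= z <= 118:
        raise ValueError("atomic number must be between 1 and 118")
    remaining, config = z, []
    for subshell in FILL_ORDER:
        if remaining == 0:
            break
        electrons = min(remaining, CAPACITY[subshell[-1]])
        config.append((subshell, electrons))
        remaining -= electrons
    return config


def format_configuration(config):
    """Render a configuration as plain text, e.g. '1s2 2s2 2p4'."""
    return " ".join(f"{subshell}{n}" for subshell, n in config)


def unpaired_electrons(subshell_type, electrons):
    """Hund's rule: occupy each orbital singly before pairing."""
    orbitals = CAPACITY[subshell_type] // 2
    return electrons if electrons <= orbitals else 2 * orbitals - electrons


if __name__ == "__main__":
    print(format_configuration(aufbau_configuration(8)))   # oxygen
    print(format_configuration(aufbau_configuration(15)))  # phosphorus
    print(unpaired_electrons("p", 4))  # unpaired electrons in a p4 subshell
```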

Quantum Numbers and Notation

Each electron in an atom is described by four quantum numbers that emerge from the solution to the Schrödinger equation:

Table: Quantum Numbers Defining Electron States

| Quantum Number | Symbol | Role | Allowed Values |
|---|---|---|---|
| Principal | n | Indicates shell/energy level | Positive integers (1, 2, 3, ...) |
| Orbital Angular Momentum | l | Indicates subshell and orbital shape | Integers from 0 to n-1 (s=0, p=1, d=2, f=3) |
| Magnetic | m_l | Specifies orbital orientation | Integers from -l to +l |
| Spin Magnetic | m_s | Specifies electron spin direction | +1/2 or -1/2 (spin up/down) |

The standard notation for electron configuration consists of the principal quantum number, the subshell label (s, p, d, f), and a superscript indicating the number of electrons in that subshell [7]. For example, phosphorus (atomic number 15) is written as 1s² 2s² 2p⁶ 3s² 3p³ [10]. An abbreviated notation uses the previous noble gas in brackets to represent the core electrons; for phosphorus, this becomes [Ne] 3s² 3p³ [10].

Tensor Encoding Methodology

Input Schema Specification

The ECCNN model utilizes a structured tensor representation of electron configuration information with dimensions of 118 × 168 × 8 [5]. This specific dimensional schema transforms the abstract concept of electron configuration into a format amenable to convolutional neural network processing, effectively creating an "image" of quantum mechanical properties that the CNN can analyze for spatial patterns [6].

Table: ECCNN Input Tensor Dimensions

| Dimension | Size | Representation |
|---|---|---|
| First Dimension | 118 | Comprehensive coverage of all known elements (atomic numbers 1-118) |
| Second Dimension | 168 | Total available atomic orbitals across all elements |
| Third Dimension | 8 | Feature channels representing electron occupancy and spin information |

The 118 elements correspond to all known chemical elements from hydrogen (1) to oganesson (118), ensuring comprehensive coverage of the periodic table [5]. The 168 orbitals dimension encompasses the complete set of atomic orbitals available across these elements, organized by principal quantum number (n) and azimuthal quantum number (l). The 8 feature channels encode multiple aspects of electron occupancy, including presence/absence, spin states, and potentially other quantum mechanical properties relevant to material stability.

Electron Configuration to Tensor Mapping

The encoding process transforms the electron configuration of each element into a structured format within the tensor. For any given element with atomic number Z (where 1 ≤ Z ≤ 118), the encoding procedure follows these steps:

- Element Indexing: The element is positioned along the first tensor dimension at index Z-1.

- Orbital Mapping: Each possible atomic orbital (defined by n and l quantum numbers) is mapped to a specific position along the second dimension.

- Feature Population: For each orbital in the element's electron configuration, the corresponding features (occupancy, spin, etc.) are encoded along the third dimension.

For example, oxygen (atomic number 8) with electron configuration 1s² 2s² 2p⁴ would have its 1s, 2s, and 2p orbitals mapped to specific positions in the second dimension, with the third dimension encoding the occupancy counts (2, 2, and 4 respectively) and possibly spin information following Hund's rule [9].
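The oxygen example can be written out as a sparse set of tensor entries. The sketch below is heavily hedged: the column assigned to each subshell (`ORBITAL_INDEX`) and the meaning of each channel (`OCCUPANCY_CHANNEL`, `UNPAIRED_CHANNEL`) are assumptions made for illustration, since the source does not specify the exact layout of the 168 orbital positions or the 8 channels.

```python
TENSOR_SHAPE = (118, 168, 8)  # (elements, orbitals, channels), per the text

# Assumed column positions for the three subshells oxygen occupies.
ORBITAL_INDEX = {"1s": 0, "2s": 1, "2p": 2}

# Assumed channel meanings; the real channel layout is not given in the source.
OCCUPANCY_CHANNEL = 0
UNPAIRED_CHANNEL = 1


def encode_element(z, configuration, unpaired):
    """Return sparse {(row, col, channel): value} entries for one element."""
    row = z - 1  # element indexing: atomic number Z sits at index Z-1
    if not 0 <= row < TENSOR_SHAPE[0]:
        raise ValueError("atomic number outside the 1-118 range")
    entries = {}
    for subshell, occupancy in configuration.items():
        col = ORBITAL_INDEX[subshell]
        entries[(row, col, OCCUPANCY_CHANNEL)] = float(occupancy)
        entries[(row, col, UNPAIRED_CHANNEL)] = float(unpaired.get(subshell, 0))
    return entries


if __name__ == "__main__":
    # Oxygen: 1s2 2s2 2p4, with two unpaired 2p electrons by Hund's rule.
    oxygen = encode_element(8, {"1s": 2, "2s": 2, "2p": 4}, {"2p": 2})
    for index, value in sorted(oxygen.items()):
        print(index, value)
```

A sparse dictionary is used here only to keep the example small; a dense array of shape `TENSOR_SHAPE` would be the natural input to a convolutional layer.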

The following Graphviz diagram illustrates the complete tensor encoding workflow:

Orbital Mapping Schema

The systematic organization of atomic orbitals along the second dimension follows quantum mechanical principles. The mapping schema accounts for all possible orbitals up to those required for the highest atomic number (118), with the 168 value representing the cumulative count of distinct orbital types across all principal quantum levels.

Table: Orbital Classification Schema

| Principal Quantum Number (n) | Azimuthal Quantum Numbers (l) | Orbital Types | Orbital Count |
|---|---|---|---|
| 1 | 0 (s) | 1s | 1 |
| 2 | 0 (s), 1 (p) | 2s, 2p | 4 |
| 3 | 0 (s), 1 (p), 2 (d) | 3s, 3p, 3d | 9 |
| 4 | 0 (s), 1 (p), 2 (d), 3 (f) | 4s, 4p, 4d, 4f | 16 |
| 5 | 0 (s), 1 (p), 2 (d), 3 (f) | 5s, 5p, 5d, 5f | 16 |
| 6 | 0 (s), 1 (p), 2 (d), 3 (f) | 6s, 6p, 6d, 6f | 16 |
| 7 | 0 (s), 1 (p), 2 (d), 3 (f) | 7s, 7p, 7d, 7f | 16 |
| Higher orbitals | Additional types | g, h, etc. | Remaining orbitals to reach 168 |

This systematic organization ensures that the spatial relationships between orbitals in the tensor reflect their quantum mechanical relationships, enabling the CNN to detect meaningful patterns that correlate with material stability.
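The counts in the table follow from the fact that a subshell with azimuthal quantum number l contains 2l + 1 orbitals. The short check below reproduces the per-shell counts and shows how much of the 168 total the explicitly listed rows account for; it only restates the table's arithmetic and does not claim to recover the actual orbital list used by the model.

```python
def orbitals_in_shell(l_values):
    """Number of orbitals in a shell: each subshell l has 2l + 1 orbitals."""
    return sum(2 * l + 1 for l in l_values)


# Azimuthal quantum numbers listed for each shell in the table above.
TABLE_ROWS = {
    1: [0],
    2: [0, 1],
    3: [0, 1, 2],
    4: [0, 1, 2, 3],
    5: [0, 1, 2, 3],
    6: [0, 1, 2, 3],
    7: [0, 1, 2, 3],
}

COUNTS = {n: orbitals_in_shell(ls) for n, ls in TABLE_ROWS.items()}
LISTED_TOTAL = sum(COUNTS.values())
REMAINING = 168 - LISTED_TOTAL

if __name__ == "__main__":
    print("orbitals per shell:", COUNTS)
    print("listed rows total:", LISTED_TOTAL)
    print("left for the 'higher orbitals' row:", REMAINING)
```

The seven listed shells account for 78 positions, so the final "higher orbitals" row would have to cover the remaining 90.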

ECCNN Architecture and Workflow

Model Architecture

The ECCNN model processes the 118×168×8 electron configuration tensor through a structured deep learning architecture specifically designed to extract hierarchical features relevant to thermodynamic stability prediction [5]. The architecture consists of the following components:

- Input Layer: Accepts the 118×168×8 tensor representation of electron configurations.

- Convolutional Layers: Two convolutional operations, each with 64 filters of size 5×5, designed to detect local patterns in the electron configuration data.

- Batch Normalization: Applied after the second convolutional layer to stabilize training and improve convergence.

- Pooling Layer: 2×2 max pooling following batch normalization to reduce spatial dimensions while retaining important features.

- Flattening Layer: Converts the multi-dimensional feature maps into a one-dimensional vector.

- Fully Connected Layers: Process the flattened features to generate the final stability prediction.
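Tracing the tensor shape through these layers helps when sizing the first fully connected layer. The sketch below assumes the 118 × 168 axes are treated as spatial dimensions with 8 input channels, stride 1, and no padding unless stated; the source does not specify stride or padding, so both variants are shown. Function names are hypothetical.

```python
def conv2d_output(height, width, kernel, stride=1, padding=0):
    """Spatial output size of a 2-D convolution."""
    out_h = (height + 2 * padding - kernel) // stride + 1
    out_w = (width + 2 * padding - kernel) // stride + 1
    return out_h, out_w


def conv2d_params(in_channels, out_channels, kernel):
    """Trainable parameters of a convolution: weights plus one bias per filter."""
    return kernel * kernel * in_channels * out_channels + out_channels


def eccnn_shapes(height=118, width=168, channels=8, filters=64,
                 kernel=5, pool=2, padding=0):
    """Shapes after each layer of the architecture listed above."""
    shapes = [("input", (height, width, channels))]
    h, w = conv2d_output(height, width, kernel, padding=padding)
    shapes.append(("conv1", (h, w, filters)))
    h, w = conv2d_output(h, w, kernel, padding=padding)
    shapes.append(("conv2 + batch norm", (h, w, filters)))  # BN keeps the shape
    h, w = h // pool, w // pool
    shapes.append(("max pool", (h, w, filters)))
    shapes.append(("flatten", (h * w * filters,)))
    return shapes


if __name__ == "__main__":
    for label, padding in [("no padding", 0), ("padding of 2", 2)]:
        print(label)
        for name, shape in eccnn_shapes(padding=padding):
            print(f"  {name:20s} {shape}")
    print("conv1 parameters:", conv2d_params(8, 64, 5))
    print("conv2 parameters:", conv2d_params(64, 64, 5))
```

Under the no-padding assumption the flattened vector has 281,600 entries, which means the first dense layer dominates the parameter count unless further pooling or a smaller hidden width is used.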

The following Graphviz diagram illustrates the complete ECCNN model architecture within the broader ECSG framework:

Ensemble Integration via Stacked Generalization

The ECCNN model functions as a core component within the broader ECSG (Electron Configuration models with Stacked Generalization) ensemble framework [5]. This integration strategy combines three distinct models based on complementary domain knowledge:

- ECCNN: Leverages electron configuration information, representing intrinsic atomic characteristics that may introduce fewer inductive biases compared to manually crafted features [5].

- Magpie: Emphasizes statistical features derived from various elemental properties, including atomic number, atomic mass, and atomic radius, using gradient-boosted regression trees (XGBoost) [5].

- Roost: Conceptualizes the chemical formula as a complete graph of elements, employing graph neural networks with attention mechanisms to capture interatomic interactions [5].

The stacked generalization approach uses the predictions from these three base models as inputs to a meta-level model, which generates the final stability prediction. This ensemble strategy effectively mitigates the limitations and biases of individual models, creating a super learner with enhanced predictive performance [5].

Experimental Protocols and Validation

Model Training Protocol

The experimental validation of the ECCNN framework followed a rigorous protocol to ensure robust performance assessment [5]:

- Data Source and Preparation: Models were trained and validated using data from the Joint Automated Repository for Various Integrated Simulations (JARVIS) database. The dataset comprises inorganic compounds with known thermodynamic stability labels.

- Training-Test Split: The data was partitioned using standard machine learning practices, with a significant portion held out for testing to evaluate generalization performance.

- Performance Metrics: Model performance was quantified using the Area Under the Curve (AUC) score, with the ECSG framework achieving an exceptional AUC of 0.988 in predicting compound stability [5].

- Comparative Analysis: The ECCNN and ECSG models were benchmarked against existing approaches and showed substantially better sample efficiency, requiring only one-seventh of the data used by existing models to achieve equivalent performance [5].

- Validation Method: The predictive capabilities were further validated through first-principles calculations (density functional theory) on newly identified stable compounds, confirming the model's accuracy in practical applications [5].
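Since AUC is the headline metric, it is worth recalling what it measures: the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one. The sketch below computes it directly from that definition using pairwise comparisons; the labels and scores are made up, and library implementations would normally be used for large datasets.

```python
def auc_score(labels, scores):
    """Area under the ROC curve via pairwise ranking (ties count as half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


if __name__ == "__main__":
    # Made-up labels (1 = stable) and model scores for six compounds.
    labels = [1, 1, 0, 0, 1, 0]
    scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.2]
    print(f"AUC = {auc_score(labels, scores):.3f}")
```

Because AUC depends only on the ranking of scores, it is insensitive to the classification threshold, which makes it a convenient metric when the stable and unstable classes are imbalanced.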

Application Case Studies

The ECCNN framework was evaluated through two substantive case studies demonstrating its utility in materials discovery:

- Two-Dimensional Wide Bandgap Semiconductors: The model successfully identified novel two-dimensional semiconductors with wide bandgaps, which are crucial for electronic and optoelectronic applications.

- Double Perovskite Oxides: The framework facilitated the exploration of new double perovskite oxide structures, a class of materials with diverse functional properties.

In both cases, subsequent validation using density functional theory (DFT) calculations confirmed the remarkable accuracy of the model in correctly identifying stable compounds [5].

Research Reagent Solutions

Table: Essential Computational Resources for ECCNN Implementation

| Resource | Specification | Application in ECCNN Research |
|---|---|---|
| Materials Databases | JARVIS, Materials Project (MP), Open Quantum Materials Database (OQMD) | Source of training data with known stability labels for inorganic compounds [5] |
| Quantum Chemistry Codes | Density Functional Theory (DFT) Software (VASP, Quantum ESPRESSO) | Validation of predicted stable compounds through first-principles calculations [5] |
| Deep Learning Frameworks | PyTorch, TensorFlow with GPU acceleration | ECCNN model implementation and training [6] [5] |
| Electron Configuration Data | NIST Atomic Spectra Database, Computational Chemistry Resources | Source of ground-state electron configurations for encoding [10] |
| High-Performance Computing | GPU clusters (NVIDIA CUDA), High-memory nodes | Handling large-scale tensor operations and training ensemble models [5] |

Encoding electron configuration as a 118×168×8 tensor is a way of building quantum mechanical information directly into a deep learning architecture for materials science. The ECCNN framework shows that a structured representation of fundamental atomic properties can improve predictive performance while reducing the amount of training data required. By transforming abstract electron configuration information into a spatial format amenable to convolutional processing, the approach enables the detection of complex patterns correlated with material stability. Integrating ECCNN into the broader ECSG ensemble framework through stacked generalization further improves predictions by combining complementary knowledge domains. Together, these elements form a methodology for materials discovery that balances physical intuition with data-driven pattern recognition.

The Critical Role of Thermodynamic Stability in Materials Discovery and Drug Development

Thermodynamic stability is a fundamental property that dictates the viability, performance, and longevity of substances across scientific disciplines. In materials science and pharmaceutical development, understanding and controlling thermodynamic stability is crucial for transitioning from theoretical predictions to practical applications. The emergence of advanced computational models, particularly the Electron Configuration Convolutional Neural Network (ECCNN), is revolutionizing our ability to predict stability with unprecedented accuracy and efficiency. This framework integrates electron-level information with powerful machine learning, enabling researchers to navigate complex compositional spaces and accelerate the discovery of novel, stable compounds.

This document provides detailed application notes and experimental protocols for applying these advanced thermodynamic stability tools, with a specific focus on the integration and utility of the ECCNN model.

Application Notes: Thermodynamic Stability in Materials Discovery

The discovery of new functional materials is often limited by the challenge of ensuring their thermodynamic stability under operating conditions. Computational predictions are vital for prioritizing promising candidates for synthesis.

Key Concepts and Challenges

A material's thermodynamic stability is typically assessed by its decomposition energy (ΔHd), which represents the energy difference between the compound and its competing phases in a phase diagram [1]. A negative ΔHd indicates stability against decomposition. However, a critical challenge is that thermodynamic stability does not guarantee synthesizability [11]. Synthesis is a pathway-dependent kinetic process, and a stable material may be challenging to produce if all synthesis routes encounter competing, kinetically favorable phases. For instance, the synthesis of promising materials like bismuth ferrite (BiFeO₃) is often plagued by impurities because the desired phase is only stable over a narrow window of conditions [11].

Quantitative Insights from ECCNN and Ensemble Models

The ECCNN model addresses the limitations of traditional models by using intrinsic electron configuration data as input, which introduces fewer inductive biases compared to hand-crafted features [1]. When integrated into an ensemble framework like ECSG (Electron Configuration models with Stacked Generalization), its predictive power is significantly enhanced.

The table below summarizes the performance of different machine learning models in predicting inorganic compound stability, demonstrating the superior efficiency and accuracy of the ECCNN-based ensemble approach [1].

Table 1: Performance Comparison of ML Models for Stability Prediction

| Model Name | Input Features | Key Innovation | AUC Score | Data Efficiency |

|---|---|---|---|---|

| ECSG (Ensemble) | Electron Configuration, Atomic Properties, Interatomic Interactions | Stacked generalization combining multiple knowledge domains | 0.988 | Requires only 1/7 of the data to match benchmark performance |

| ECCNN | Electron Configuration Matrix (118x168x8) | Uses raw electron configuration data with convolutional layers | Part of Ensemble | High (leads to ensemble efficiency) |

| Roost | Chemical Formula (as a graph) | Graph neural network capturing interatomic interactions | Benchmark | Lower |

| Magpie | Elemental Property Statistics | Uses statistical features of atomic properties | Benchmark | Lower |

Case Study: Thermodynamics-Defying Materials for EV Batteries

Recent research has uncovered materials in a "metastable" state that exhibit flipped thermodynamic responses, such as shrinking when heated (negative thermal expansion) or expanding when crushed (negative compressibility) [12]. Because the ECCNN framework is built on electronic structure, it may be well suited to exploring such anomalous stability landscapes.

Furthermore, this metastability offers a revolutionary application: restoring aged electric vehicle (EV) batteries to their original performance. By applying a specific electrochemical driving force (voltage), the battery material can be pushed from its degraded metastable state back to its pristine stable state, effectively recovering lost driving range without physical replacement [12].

Application Notes: Thermodynamic Stability in Drug Development

In pharmaceutical science, thermodynamic stability is paramount for ensuring the safety, efficacy, and shelf-life of drug substances and products.

The Energetic Basis of Binding and Formulation

The binding affinity (Ka) of a drug to its target is governed by the Gibbs free energy change (ΔG), which is composed of both enthalpic (ΔH) and entropic (ΔS) components (ΔG = ΔH - TΔS) [13]. A comprehensive thermodynamic profile is essential because:

- Entropy-Enthalpy Compensation: Optimizing a drug candidate often improves ΔH but worsens ΔS, or vice versa, resulting in no net gain in ΔG [13].

- Hydrophobicity Limits: While increasing a drug's hydrophobicity often improves binding entropy, it can push the compound beyond its solubility limit, rendering it useless as a drug [13].
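
The relationships above can be made concrete with a short calculation. The sketch below assumes the standard relation ΔG = -RT ln Ka (with Ka expressed relative to a 1 M standard state), which the text does not state explicitly; the function names and the two example candidates are hypothetical and only illustrate how the same ΔG can arise from very different ΔH and -TΔS contributions.

```python
import math

R = 8.314462618  # gas constant in J/(mol*K)

def binding_free_energy(ka_per_molar, temperature_k=298.15):
    """Return dG in J/mol from an association constant (assumes a 1 M standard state)."""
    return -R * temperature_k * math.log(ka_per_molar)

def entropic_term(delta_g, delta_h):
    """Return -T*dS in J/mol, rearranged from dG = dH - T*dS."""
    return delta_g - delta_h

# Two hypothetical candidates with identical affinity but different enthalpy.
dg = binding_free_energy(1e9)  # Ka = 1e9 per molar
for name, dh in [("enthalpy-driven", -60_000.0), ("entropy-driven", -20_000.0)]:
    minus_t_ds = entropic_term(dg, dh)
    print(f"{name}: dG={dg / 1000:.1f} kJ/mol, dH={dh / 1000:.1f}, -TdS={minus_t_ds / 1000:.1f}")
```

Reporting ΔH and -TΔS alongside ΔG makes compensation visible: a change that improves one term while worsening the other by the same amount leaves the affinity unchanged.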

Stability of Biologics and Formulations

For complex therapeutics like proteins, stability is a major concern. A key challenge is "cold instability," where a therapeutic protein can lose stability and aggregate faster at refrigerated storage temperatures (e.g., 4°C) than at slightly higher temperatures (e.g., 8°C) [14]. This occurs because the free energy difference (ΔG) between the native and aggregation-prone states decreases at lower temperatures, increasing the population of the aggregation-prone state [14]. This phenomenon underscores why accelerated stability studies at higher temperatures do not always predict real-time stability at recommended storage conditions.

For solid dosage forms, moisture uptake is a primary driver of chemical degradation. Predictive modeling of drug product stability in blister packs must account for multiple kinetic processes: water vapor permeation through the packaging, sorption by the drug product, and water consumption due to hydrolytic degradation [15]. Advanced models interconnect these processes to predict the relative humidity inside the blister cavity and the resulting drug content over the product's shelf life [15].

Regulatory Application of Predictive Stability

Risk-Based Predictive Stability (RBPS) tools, such as the Accelerated Stability Assessment Program (ASAP), are routinely used in pharmaceutical development. These tools leverage thermodynamic principles to model degradation and predict shelf-life in a matter of weeks. Industry experience confirms that data from these models can be successfully incorporated into regulatory submissions across all phases of development [16].

Table 2: Industry Case Studies Utilizing Predictive Stability in Regulatory Submissions

| Case Study Focus | Phase | RBPS Application | Regulatory Outcome |

|---|---|---|---|

| Setting initial shelf-life for an Oral Solution | Phase 1 | ASAP used to support 6-month shelf-life at 2-8°C. | Accepted in Belgium without queries [16]. |

| Supporting a new tablet strength | Phase 1 | ASAP predicted 3-year shelf-life for a new strength of a stable formulation. | Accepted in the USA, UK, and several other countries [16]. |

| Change in capsule shell | Phase 1 | ASAP supported 12-month shelf-life for a formulation changed from gelatin to HPMC shell. | Accepted in the USA without queries [16]. |

| Shelf-life for Parenteral Product | Phase 1 | ASAP supported 12-month shelf-life for an IV solution stored at 5°C. | Initially questioned in Germany; accepted after providing initial long-term data [16]. |

Experimental Protocols

This section provides detailed methodologies for key experiments and computational workflows cited in this document.

Protocol: ECCNN Model for Predicting Inorganic Compound Stability

This protocol outlines the procedure for developing and applying the ECCNN model to predict the thermodynamic stability of inorganic compounds [1].

1. Data Preparation

- Source: Obtain formation energies and stability labels from curated databases like the Materials Project (MP) or the Open Quantum Materials Database (OQMD).

- Input Encoding: Encode the chemical composition of a compound into an electron configuration matrix of dimensions 118 (elements) x 168 (electron orbitals) x 8 (features per orbital). This matrix serves as the direct input to the ECCNN.

2. Model Architecture and Training

- Convolutional Layers: Pass the input matrix through two consecutive convolutional layers, each using 64 filters with a 5x5 kernel size.

- Feature Reduction: After the second convolution, apply Batch Normalization (BN) and a 2x2 max-pooling operation.

- Fully Connected Layers: Flatten the extracted features into a one-dimensional vector and connect to one or more fully connected (dense) layers.

- Output: The final layer provides a prediction for the target property (e.g., formation energy, stability classification).

- Training: Train the model using standard backpropagation and optimization algorithms (e.g., Adam) on a dataset of known compounds.

3. Ensemble with Stacked Generalization (ECSG)

- Base Models: Train the ECCNN alongside two other models based on different knowledge domains (e.g., Magpie for atomic properties, Roost for interatomic interactions).

- Meta-Model: Use the predictions of these base models as inputs to a final "meta-learner" (e.g., a linear model) to produce the final, refined stability prediction. This mitigates the individual biases of any single model.
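
The stacking step can be sketched in a few lines. The example below is a minimal illustration, not the published ECSG implementation: it assumes the three base models have already produced out-of-fold stability probabilities (the numbers shown are made up), and it uses a logistic-regression meta-learner trained by plain gradient descent as one possible "linear model".

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_meta_learner(base_preds, labels, lr=0.5, steps=2000):
    """Fit logistic-regression weights on base-model predictions by gradient descent."""
    n_features = len(base_preds[0])
    weights, bias = [0.0] * n_features, 0.0
    n = len(labels)
    for _ in range(steps):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for x, y in zip(base_preds, labels):
            err = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x))) - y
            grad_b += err / n
            for j, xi in enumerate(x):
                grad_w[j] += err * xi / n
        bias -= lr * grad_b
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
    return weights, bias

def predict_stable_probability(weights, bias, x):
    """Combine one compound's base-model predictions into a single probability."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))

# Hypothetical out-of-fold probabilities in the order (ECCNN, Magpie, Roost).
oof_predictions = [[0.9, 0.8, 0.7], [0.8, 0.9, 0.6], [0.2, 0.1, 0.3], [0.1, 0.3, 0.2]]
stability_labels = [1, 1, 0, 0]
weights, bias = fit_meta_learner(oof_predictions, stability_labels)
print(predict_stable_probability(weights, bias, [0.7, 0.6, 0.8]))
```

Training the meta-learner on out-of-fold predictions, rather than on predictions for data the base models have already seen, keeps the meta-learner from simply rewarding whichever base model overfits most.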

4. Validation

- Performance Metrics: Validate the model using the Area Under the Curve (AUC) score on a held-out test set from the JARVIS database. The ECSG framework achieves an AUC of 0.988 [1].

- Prospective Validation: Apply the trained model to explore uncharted composition spaces (e.g., for two-dimensional wide bandgap semiconductors) and validate top predictions using first-principles Density Functional Theory (DFT) calculations.
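
For reference, the AUC used above can be computed directly from its pairwise definition: the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one. The sketch below uses only the standard library; the labels and scores are illustrative, and for large test sets a library routine would be far more efficient than this quadratic loop.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve from pairwise comparisons (ties count as half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

# Illustrative held-out labels (1 = stable) and predicted stability scores.
print(roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1]))
```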

Protocol: Accelerated Stability Assessment Program (ASAP) for Drug Product Shelf-Life

This protocol describes the use of ASAP to rapidly predict the shelf-life of a solid oral drug product [16].

1. Sample Preparation

- For a worst-case scenario assessment, gently crush representative tablets to increase surface area exposure to humidity.

2. Forced Degradation Study

- Conditions: Expose the powdered sample to a minimum of five different temperature and humidity conditions. A typical range is from 50°C/75% RH to 80°C/10% RH.

- Duration: Conduct the study over a period of 2 to 12 weeks, pulling samples at regular intervals.

- Packaging: Perform the study in open-dish or otherwise unprotective packaging to isolate the intrinsic stability of the formulation.

3. Analytics and Identification of SLLA

- At each time point, analyze samples for assay (potency) and degradation products (e.g., via HPLC).

- Identify the Shelf-Life Limiting Attribute (SLLA), which is the degradation product that reaches its acceptance limit first.

4. Modeling and Prediction

- Model Fitting: Fit the rate of formation of the SLLA to the Arrhenius equation and a humidity model (e.g., modified Arrhenius) using the data from all stress conditions.

- Shelf-Life Prediction: Use the fitted model to extrapolate the degradation rate to the intended long-term storage condition (e.g., 25°C/60% RH). Calculate the time for the SLLA to reach its specification limit.

- Statistical Confidence: Report the prediction with a 95% confidence interval. For early-phase clinical trials, a conservative shelf-life of 1-2 years is typically assigned, even if the model predicts a longer life.
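
The fitting and extrapolation steps can be sketched as follows. This is a minimal illustration under stated assumptions: the "modified Arrhenius" model is taken to be the humidity-corrected form ln k = ln A - Ea/(RT) + B·RH, the degradant is assumed to grow linearly with time (zero-order), and the stress data are synthetic values generated from made-up parameters. Confidence intervals, which the protocol requires, are not computed here.

```python
import math

R_KJ = 0.008314462618  # gas constant in kJ/(mol*K)

def design_row(temp_c, rh_percent):
    """Regressors for ln k = ln A - Ea/(R*T) + B*RH."""
    return [1.0, -1.0 / (R_KJ * (temp_c + 273.15)), rh_percent]

def solve_linear(a, b):
    """Solve a small linear system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x

def fit_humidity_arrhenius(conditions, rates):
    """Least-squares fit; returns [ln A, Ea in kJ/mol, B per %RH]."""
    rows = [design_row(t, rh) for t, rh in conditions]
    y = [math.log(k) for k in rates]
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(3)]
    return solve_linear(xtx, xty)

def predict_rate(params, temp_c, rh_percent):
    return math.exp(sum(p * x for p, x in zip(params, design_row(temp_c, rh_percent))))

def shelf_life_days(params, temp_c, rh_percent, limit_percent):
    """Time for the SLLA to reach its limit, assuming zero-order growth."""
    return limit_percent / predict_rate(params, temp_c, rh_percent)

# Synthetic stress data (degC, %RH) generated from made-up parameters.
true_params = [30.0, 100.0, 0.03]
conditions = [(50, 75), (60, 40), (70, 75), (80, 40), (80, 10)]
rates = [predict_rate(true_params, t, rh) for t, rh in conditions]  # % per day
fitted = fit_humidity_arrhenius(conditions, rates)
print(fitted, shelf_life_days(fitted, 25, 60, limit_percent=0.5))
```

With real data the rates carry experimental error, so the fit should be accompanied by an uncertainty estimate (for example by resampling) before any shelf-life is assigned.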

5. Regulatory Submission

- Include the ASAP study report, model parameters, and predictions in the regulatory filing (e.g., an IMPD or IND).

- Commit to an ongoing stability program using traditional long-term studies to continually verify the prediction.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table details key computational and experimental resources critical for thermodynamic stability research.

Table 3: Key Research Reagent Solutions for Thermodynamic Studies

| Item Name | Function/Application | Relevance to Field |

|---|---|---|

| Electron Configuration Encoder | Software that converts a chemical formula into a 3D matrix of electron orbital data. | Provides the fundamental input for the ECCNN model, enabling stability prediction from first principles [1]. |

| Isothermal Titration Calorimetry (ITC) | Instrumentation used to directly measure the heat change (enthalpy, ΔH) of a binding interaction. | Provides a full thermodynamic profile (ΔG, ΔH, ΔS) for drug-target binding, guiding enthalpic optimization [13]. |

| Cellular Thermal Shift Assay (CETSA) | A method to confirm direct drug-target engagement in a physiologically relevant cellular environment. | Provides functional validation of binding, bridging the gap between biochemical potency and cellular efficacy [17]. |

| Chemical Denaturants (e.g., Guanidine HCl) | Agents used to progressively unfold proteins in stability studies. | Used with the Linear Extrapolation Method to determine the Gibbs free energy of protein folding (ΔG°water) at different temperatures [14]. |

| High-Barrier Blister Packaging Materials | Pharmaceutical packaging with low water vapor transmission rates. | Used in stability models as the first barrier against moisture ingress; its properties (kperm) are key model inputs [15]. |

Workflow and Pathway Visualizations

ECCNN Model Architecture and Workflow

[Diagram: flow of data and processing steps within the ECCNN model and the broader ECSG ensemble framework for predicting compound stability.]

Drug Product Stability Prediction Workflow

[Diagram: integrated experimental and modeling workflow for predicting drug product stability, from forced degradation to regulatory submission.]

Positioning ECCNN within the Broader Ecosystem of Composition-Based ML Models

In the discovery of new materials and molecules, composition-based machine learning (ML) models represent a paradigm shift, enabling rapid property prediction using only chemical formula as input. These models are critically important in early development phases where comprehensive structural data is unavailable or prohibitively expensive to obtain through experimental techniques or density functional theory (DFT) calculations [1]. Unlike structure-based models that require detailed atomic arrangements, composition-based models operate on elemental constituents alone, allowing researchers to screen vast chemical spaces efficiently [1].

The fundamental challenge in composition-based modeling lies in transforming chemical formulae into informative numerical representations that capture essential physicochemical principles. Different approaches encode composition information based on varying theoretical frameworks, each introducing specific inductive biases that influence model performance and generalizability [1]. Within this ecosystem, the Electron Configuration Convolutional Neural Network (ECCNN) emerges as a novel approach that incorporates quantum mechanical insights through explicit electron configuration representation, addressing limitations of existing methods while demonstrating remarkable predictive accuracy and data efficiency [1].

The ECCNN Model: Architecture and Implementation

Theoretical Foundation and Input Representation

The ECCNN model is grounded in the fundamental principle that electron configuration provides a quantum-mechanically rigorous description of atomic characteristics that ultimately determine molecular properties and stability [1] [18]. Where other models rely on manually crafted features or idealized assumptions about atomic interactions, ECCNN utilizes the inherent electronic structure of atoms as its primary input, potentially introducing fewer inductive biases and offering a more physically meaningful representation [1].

The input to ECCNN is a structured matrix representation of electron configurations across elements. Specifically, the model accepts input tensors of dimension 118×168×8, encoding electron occupation patterns across different energy levels and orbitals for each of the 118 elements in the periodic table [1]. This grid-based representation enables the application of convolutional neural networks, which can detect localized patterns and hierarchical features within the electron configuration space that correlate with macroscopic material properties and stability [1].

Network Architecture and Training

The ECCNN architecture employs a convolutional neural network framework specifically designed to process the electron configuration input matrix [1]. The network begins with two consecutive convolutional operations, each utilizing 64 filters with a 5×5 kernel size to detect localized patterns in the electron configuration features [1]. The second convolutional layer is followed by batch normalization, which stabilizes training and improves convergence, and a 2×2 max pooling layer that reduces spatial dimensions while retaining the most salient features [1].

Following the convolutional layers, the feature maps are flattened into a one-dimensional vector and passed through fully connected layers that ultimately produce the target property prediction [1]. This architectural choice leverages the spatial relationships within electron configuration data, allowing the model to learn chemically meaningful patterns that correlate with material stability and other properties of interest [1].

Table 1: ECCNN Architectural Specifications

| Component | Specifications | Function |

|---|---|---|

| Input Dimension | 118×168×8 | Encoded electron configuration data |

| Convolutional Layers | 2 layers, 64 filters each (5×5) | Local pattern detection in electron features |

| Pooling | 2×2 max pooling | Spatial dimension reduction |

| Normalization | Batch normalization | Training stabilization |

| Output Layers | Fully connected layers | Final property prediction |

Comparative Analysis of Composition-Based Models

The Ecosystem of Composition-Based Approaches

The landscape of composition-based ML models encompasses several distinct approaches, each with unique theoretical foundations and representation strategies. The Magpie model emphasizes comprehensive elemental property statistics, incorporating features such as atomic number, atomic mass, atomic radius, and various other physicochemical properties [1]. It calculates summary statistics (mean, mean absolute deviation, range, minimum, maximum, mode) across these properties for each compound and employs gradient-boosted regression trees (specifically XGBoost) for prediction [1].
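
A Magpie-style feature vector for one elemental property can be sketched as below. The weighting is an assumption based on the common Magpie convention: the mean and mean absolute deviation are weighted by atomic fraction, and the mode is taken as the property value of the most abundant element. The function name and the BiFeO₃ example values (atomic numbers) are illustrative.

```python
def composition_statistics(fractions, property_values):
    """Summary statistics of one elemental property over a composition.

    fractions: {element symbol: atomic fraction}, summing to 1
    property_values: {element symbol: property value}
    """
    elements = list(fractions)
    values = [property_values[e] for e in elements]
    weights = [fractions[e] for e in elements]
    mean = sum(w * v for w, v in zip(weights, values))
    avg_dev = sum(w * abs(v - mean) for w, v in zip(weights, values))
    most_abundant = max(elements, key=lambda e: fractions[e])
    return {
        "mean": mean,
        "avg_dev": avg_dev,
        "range": max(values) - min(values),
        "min": min(values),
        "max": max(values),
        "mode": property_values[most_abundant],
    }

# BiFeO3 described by atomic number only; a full feature set repeats this per property.
stats = composition_statistics(
    {"Bi": 0.2, "Fe": 0.2, "O": 0.6},
    {"Bi": 83, "Fe": 26, "O": 8},
)
print(stats)
```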

In contrast, the Roost model conceptualizes chemical formulae as complete graphs of elements, utilizing graph neural networks with attention mechanisms to capture interatomic interactions and message-passing processes between atoms [1]. This approach explicitly models relationships between constituent elements, potentially capturing synergistic effects in multi-component systems.

The ECCNN model differs fundamentally by focusing exclusively on electron configuration as its primary input feature, positing that this quantum mechanical property provides a more direct and less biased foundation for predicting material behavior [1]. This theoretical positioning suggests complementary strengths with existing approaches, each emphasizing different aspects of compositional information.

Performance Comparison and Synergistic Integration

When evaluated on thermodynamic stability prediction using the Joint Automated Repository for Various Integrated Simulations (JARVIS) database, the ECCNN-based ECSG framework achieves an Area Under the Curve (AUC) score of 0.988 [1]. It is also roughly seven times more sample-efficient than existing models, requiring only one-seventh of the data to achieve comparable performance [1]. This data efficiency is particularly valuable in materials science, where labeled data is often scarce and computationally expensive to generate.

The most powerful implementation of ECCNN comes through its integration with other models via stacked generalization. The Electron Configuration models with Stacked Generalization (ECSG) framework combines ECCNN with Magpie and Roost to create a super learner that mitigates individual model biases and leverages complementary strengths [1]. This ensemble approach consistently outperforms individual models by exploiting the unique representational strengths of each approach: Magpie's comprehensive elemental statistics, Roost's interatomic relationship modeling, and ECCNN's electron configuration focus [1].

Table 2: Performance Comparison of Composition-Based Models

| Model | Theoretical Basis | Key Features | AUC Score | Sample Efficiency |

|---|---|---|---|---|

| ECCNN | Electron configuration | Quantum mechanical representation, CNN processing | Reported as part of the ECSG ensemble [1] | High (contributes to ensemble efficiency) [1] |

| Magpie | Elemental property statistics | Summary statistics of atomic properties | Benchmark for comparison [1] | Baseline |

| Roost | Graph neural networks | Attention mechanisms, interatomic relationships | Benchmark for comparison [1] | Baseline |

| ECSG (Ensemble) | Stacked generalization | Combines ECCNN, Magpie, Roost | 0.988 [1] | Matches benchmark performance with about 1/7 of the data [1] |

Experimental Protocols and Implementation

Data Preparation and Preprocessing Protocol

Materials: The primary data source for training stability prediction models is typically large-scale materials databases such as the Materials Project (MP), Open Quantum Materials Database (OQMD), or Joint Automated Repository for Various Integrated Simulations (JARVIS) [1]. These databases provide formation energies and decomposition energies (ΔH_d) derived from DFT calculations, which serve as the target variable for stability prediction [1].

Procedure:

- Data Collection: Extract chemical compositions and corresponding formation energies from chosen materials database. The JARVIS database was utilized in the development of ECCNN [1].

- Input Encoding: Transform chemical compositions into electron configuration matrix representations. For each element in the compound, generate its complete electron configuration across all orbitals.

- Matrix Construction: Create an input tensor of dimensions 118×168×8, representing 118 elements with electron configurations encoded across 168 features with 8 channels [1].

- Data Partitioning: Split the dataset into training (70%), validation (15%), and test (15%) sets, ensuring representative distribution of compound types across partitions [1].

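- Data Augmentation (structure-based workflows only): Where atomic geometries are available, training data can be enhanced through random rotations and strains applied to those geometries to improve model robustness and transferability [19]. The ECCNN input is derived from composition alone, so this step does not alter the electron configuration tensor itself.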
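
The partitioning step can be sketched with the standard library alone. This is a simplified illustration: it performs a seeded random 70/15/15 split and does not implement the stratification by compound type that the procedure calls for; the function name and seed are arbitrary choices.

```python
import random

def split_dataset(items, seed=0):
    """Shuffle reproducibly and split into 70% train, 15% validation, 15% test."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n = len(shuffled)
    n_train = n * 70 // 100
    n_val = n * 15 // 100
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # remainder, so no record is dropped
    return train, val, test

# Placeholder record indices standing in for database entries.
train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))
```

Fixing the seed matters for the benchmarking step later in the protocol, since all models must be compared on identical splits.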

Model Training Protocol

Materials: Python-based deep learning frameworks such as TensorFlow or PyTorch; computational resources with GPU acceleration significantly reduce training time.

Procedure:

- Architecture Initialization: Construct the ECCNN model with the specified architecture: two convolutional layers (64 filters, 5×5 kernel), batch normalization, max pooling (2×2), and fully connected layers [1].

- Hyperparameter Selection: Determine optimal hyperparameters through cross-validation-based grid search. Key hyperparameters include learning rate, batch size, dropout rate, and regularization strength [18].

- Loss Function Definition: Employ mean squared error (MSE) for regression tasks or cross-entropy for classification tasks, depending on the specific prediction target.

- Model Training: Implement training with early stopping based on validation loss to prevent overfitting. Monitor convergence of both training and validation curves.

- Ensemble Integration: For ECSG implementation, train ECCNN alongside Magpie and Roost models, then apply stacked generalization to combine predictions [1].
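
The early-stopping rule in the training step is framework-independent and can be sketched as a small helper. The patience value, the `min_delta` threshold, and the class name are illustrative choices, not settings taken from the ECCNN work.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

def epochs_run(val_losses, patience):
    """Replay a sequence of validation losses; return (epochs used, best loss)."""
    stopper = EarlyStopping(patience=patience)
    for epoch, loss in enumerate(val_losses, start=1):
        if stopper.step(loss):
            return epoch, stopper.best
    return len(val_losses), stopper.best

# Illustrative validation curve that starts to rise after the second epoch.
print(epochs_run([1.0, 0.8, 0.85, 0.82, 0.9], patience=2))
```

In a real training loop the model weights from the best epoch would also be saved and restored once the stop is triggered.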

Model Validation and Interpretation Protocol

Materials: Holdout test set not used during training; external validation datasets where available; SHAP (Shapley Additive exPlanations) or similar interpretation tools for model explainability.

Procedure:

- Performance Evaluation: Calculate standard metrics including Area Under the Curve (AUC), accuracy, R², mean absolute error (MAE), and root mean square error (RMSE) on the test set [1].

- Comparative Analysis: Benchmark ECCNN performance against established baselines including Magpie and Roost models using identical train/test splits [1].

- Ablation Studies: Systematically remove components of the model architecture to quantify their contribution to overall performance.

- Feature Importance Analysis: Apply model interpretation techniques to identify which electron configuration features most strongly influence predictions.

- Transfer Testing: Evaluate model performance on structurally distinct compound classes not represented in the training data to assess generalizability.
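
For the regression metrics named in the performance evaluation step, a dependency-free sketch is shown below. The example values are illustrative, and the function name is not taken from any particular library.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return MAE, RMSE, and the coefficient of determination R^2."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1.0 - ss_res / ss_tot
    return {"mae": mae, "rmse": rmse, "r2": r2}

# Illustrative formation energies (eV/atom): reference values vs. predictions.
metrics = regression_metrics([1.0, 2.0, 3.0], [1.0, 2.0, 4.0])
print(metrics)
```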

Visualization and Workflow Diagrams

[Diagram: ECCNN model workflow]

[Diagram: ECSG ensemble framework]

Research Reagent Solutions

Table 3: Essential Research Materials and Computational Tools

| Resource | Type | Function | Access |

|---|---|---|---|

| Materials Project Database | Data Resource | Provides formation energies and structural information for training data | Public API [1] |

| JARVIS Database | Data Resource | Benchmark dataset for stability prediction performance validation | Public access [1] |

| Electron Configuration Encoder | Software Tool | Transforms chemical compositions into 118×168×8 input matrices | Custom implementation [1] |

| Deep Learning Framework | Software Tool | Model architecture implementation and training (TensorFlow/PyTorch) | Open source |

| Magpie Feature Set | Software Tool | Generates statistical features from elemental properties | Open source [1] |

| Roost Implementation | Software Tool | Graph neural network for composition-based prediction | Open source [1] |

Applications and Future Directions

The ECCNN model is particularly strong at predicting the thermodynamic stability of inorganic compounds; the ECSG ensemble built on it identifies stable compounds with an AUC of 0.988 [1]. This capability has been applied to explore new two-dimensional wide bandgap semiconductors and double perovskite oxides, with first-principles calculations confirming the model's predictive reliability [1]. The framework's sample efficiency, requiring only one-seventh of the data to achieve performance comparable to other models, makes it particularly valuable for exploring uncharted compositional spaces where data is scarce [1].

Future development directions for ECCNN and similar electron configuration-based models include integration with experimental data from pharmaceutical development pipelines, extension to dynamic property prediction under varying environmental conditions, and incorporation of transfer learning approaches to leverage related chemical domains. The success of ECCNN within the ECSG ensemble framework suggests that further hybridization with physically-informed models and attention mechanisms could enhance interpretability while maintaining high predictive accuracy [1]. As materials science and drug development increasingly embrace data-driven approaches, ECCNN represents a significant advancement in composition-based modeling that effectively bridges quantum mechanical principles with practical materials design challenges.

Building and Applying ECCNN: From Architecture Design to Real-World Discovery

The Electron Configuration Convolutional Neural Network (ECCNN) represents a specialized deep learning architecture designed to predict the thermodynamic stability of inorganic compounds directly from their electron configuration (EC) data. This model was developed to address significant limitations in existing machine learning approaches for materials science, which often rely on hand-crafted features derived from specific domain knowledge that can introduce substantial inductive biases, ultimately reducing predictive accuracy and generalization performance [1]. By using electron configuration as a fundamental input feature, the ECCNN leverages an intrinsic atomic characteristic that provides a more direct relationship to chemical properties and reactivity, potentially introducing fewer biases compared to manually engineered features [1].

The ECCNN forms a critical component of an ensemble framework known as Electron Configuration models with Stacked Generalization (ECSG), which integrates multiple models grounded in distinct domains of knowledge to create a super learner that mitigates individual model limitations and harnesses synergistic effects [1]. Within this ensemble, ECCNN specifically addresses the limited consideration of electronic internal structure in existing models, complementing other approaches that focus on interatomic interactions and atomic properties [1]. This architectural approach has demonstrated remarkable performance in predicting compound stability, achieving an Area Under the Curve (AUC) score of 0.988 on the Joint Automated Repository for Various Integrated Simulations (JARVIS) database, while exhibiting exceptional sample efficiency by requiring only one-seventh of the data used by existing models to achieve equivalent performance [1].

ECCNN Architecture Specifications

Input Representation and Preprocessing

The ECCNN architecture accepts a highly structured input representation derived from electron configuration data:

- Input Tensor Dimensions: 118 × 168 × 8 [1]

- Element Representation: The first dimension (118) corresponds to the atomic number, encompassing all elements from hydrogen (1) to oganesson (118)

- Electron Orbital Mapping: The second dimension (168) represents the possible electron orbitals across all energy levels

- Configuration Parameters: The third dimension (8) encodes electron occupancy and related configuration parameters

This structured input format enables the model to learn directly from the fundamental quantum mechanical properties of elements, bypassing the need for manually crafted features that may introduce human bias into the prediction process [1].
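
The first step of any such encoder, mapping a chemical formula onto rows of the 118-element axis, can be sketched as follows. This is a partial illustration only: the text does not specify how the 168 × 8 slice for each element is laid out, so the sketch stops at identifying which rows a compound occupies and with what atomic fraction. The `ATOMIC_NUMBER` lookup is a hypothetical four-element subset, and the simple parser does not handle parentheses or hydrates.

```python
import re

# Hypothetical subset of the periodic table; a real encoder would cover all 118 elements.
ATOMIC_NUMBER = {"H": 1, "O": 8, "Fe": 26, "Bi": 83}

def parse_formula(formula):
    """Parse a flat formula such as 'BiFeO3' into {symbol: count}."""
    counts = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    return counts

def element_rows(formula):
    """Map each element to its zero-based row on the 118-axis and its atomic fraction."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    return {ATOMIC_NUMBER[symbol] - 1: count / total for symbol, count in counts.items()}

rows = element_rows("BiFeO3")
print(rows)
```

Rows for elements absent from the compound would stay at zero, which is what makes the resulting tensor sparse along the element axis.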

Core Architectural Components

The ECCNN implements a sequential architecture with the following layer composition:

| Layer Type | Filter Size | Number of Filters | Stride | Padding | Output Shape |

|---|---|---|---|---|---|

| Input Layer | - | - | - | - | 118 × 168 × 8 |

| Convolutional 1 | 5 × 5 | 64 | 1 × 1 | Same (2 per side) | 118 × 168 × 64 |

| Convolutional 2 | 5 × 5 | 64 | 1 × 1 | Same (2 per side) | 118 × 168 × 64 |

| Batch Normalization | - | - | - | - | 118 × 168 × 64 |

| Max Pooling | 2 × 2 | - | 2 × 2 | - | 59 × 84 × 64 |

| Flatten | - | - | - | - | 317,184 |

| Fully Connected 1 | - | - | - | - | Programmer-defined |

| Fully Connected 2 | - | - | - | - | Programmer-defined |

| Output Layer | - | - | - | - | Stability Prediction |

Table 1: Detailed ECCNN architecture specifications showing the transformation of input data through successive layers [1].

Computational Workflow Visualization

Diagram 1: ECCNN computational workflow showing data transformation from input to stability prediction.

Layer-Type Deep Dive

Convolutional Layer Operations

Convolutional layers serve as the fundamental feature extraction components within the ECCNN architecture, performing localized pattern recognition across the electron configuration input tensor [20]. These layers implement a sliding window operation that processes small subsections of the input data, allowing the network to learn hierarchical features from local electron orbital patterns to global electronic structure characteristics [21].

The convolutional operation in ECCNN involves:

- Filter Application: Each of the 64 filters (size 5×5) convolves across the input dimensions, computing element-wise multiplications and summing the results to produce activation maps [20]

- Local Connectivity: Neurons in convolutional layers connect only to local regions of the input volume, significantly reducing parameter counts compared to fully connected architectures [21]

- Parameter Sharing: The same filter weights are applied across all spatial positions, so a pattern is detected wherever it occurs in the input (translation-equivariant feature detection), which further reduces model complexity [22]
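To make the effect of local connectivity and parameter sharing concrete, the following minimal sketch counts trainable parameters for the two convolutional layers in Table 1 and compares them with a hypothetical fully connected layer producing an output of the same size. The helper names `conv_params` and `dense_params` are illustrative, and the sketch assumes one bias term per filter or output unit, which the source does not specify.

```python
def conv_params(kernel_h, kernel_w, in_channels, out_channels):
    """Weights plus one bias per filter for a 2D convolutional layer."""
    return kernel_h * kernel_w * in_channels * out_channels + out_channels


def dense_params(n_inputs, n_outputs):
    """Weights plus one bias per output unit for a fully connected layer."""
    return n_inputs * n_outputs + n_outputs


# Layer sizes taken from Table 1; bias terms are an assumption of this sketch.
height, width = 118, 168
conv1 = conv_params(5, 5, in_channels=8, out_channels=64)
conv2 = conv_params(5, 5, in_channels=64, out_channels=64)

# Hypothetical dense layer mapping the 118 x 168 x 8 input
# to an output of the same size as the first convolution (118 x 168 x 64).
dense_equivalent = dense_params(height * width * 8, height * width * 64)

print(f"conv1 parameters: {conv1:,}")
print(f"conv2 parameters: {conv2:,}")
print(f"dense equivalent: {dense_equivalent:,}")
```

Under these assumptions the two convolutional layers need 12,864 and 102,464 parameters, while the dense equivalent would need on the order of 10^11, which is why shared local filters are the practical choice for an input of this size.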

The output spatial dimensions of each convolutional layer can be calculated using the standard formula:

Output Size = ⌊(Input Size - Filter Size + 2 × Padding) / Stride⌋ + 1 [23]

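For the ECCNN's first convolutional layer, with an input size of 118 × 168, 5 × 5 filters, a stride of 1, and size-preserving padding (2 on each side for a 5 × 5 filter), the formula gives (118 - 5 + 4) / 1 + 1 = 118 and (168 - 5 + 4) / 1 + 1 = 168. The output therefore keeps the 118 × 168 spatial dimensions while the depth expands to 64 feature maps [1].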
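The output-size formula can be applied layer by layer to check the shapes listed in Table 1. The sketch below is a plain-Python shape trace, not a model implementation; the function names are illustrative, and the padding of 2 is an assumption inferred from the unchanged 118 × 168 output, since the table only lists padding as "Prespecified".

```python
def conv_output_size(input_size, filter_size, padding, stride):
    """Output size = floor((input - filter + 2 * padding) / stride) + 1."""
    return (input_size - filter_size + 2 * padding) // stride + 1


def trace_shapes(height=118, width=168, filters=64):
    """Return (layer name, output shape) pairs for the Table 1 pipeline."""
    shapes = []
    # Two 5 x 5 convolutions, stride 1, assumed padding of 2 (size-preserving).
    for name in ("conv1", "conv2"):
        height = conv_output_size(height, 5, padding=2, stride=1)
        width = conv_output_size(width, 5, padding=2, stride=1)
        shapes.append((name, (height, width, filters)))
    # 2 x 2 max pooling with stride 2 and no padding halves each dimension.
    height = conv_output_size(height, 2, padding=0, stride=2)
    width = conv_output_size(width, 2, padding=0, stride=2)
    shapes.append(("max_pool", (height, width, filters)))
    # Flattening multiplies the three dimensions together.
    shapes.append(("flatten", (height * width * filters,)))
    return shapes


for layer_name, shape in trace_shapes():
    print(layer_name, shape)
```

With these settings the trace reproduces the 118 × 168 × 64, 59 × 84 × 64, and 317,184 entries of Table 1. Batch normalization is omitted from the trace because it does not change the shape.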

Batch Normalization Implementation

Batch normalization (BN) is applied following the second convolutional layer in the ECCNN architecture, serving to stabilize and accelerate training through normalization of activation distributions [24]. The BN operation transforms each feature map using mini-batch statistics:

BN(x) = γ ⊙ (x - μ̂_B) / σ̂_B + β [24]

Where:

- x: Input activation from the previous layer

- μ̂_B: Sample mean of the minibatch

- σ̂_B: Sample standard deviation of the minibatch

- γ: Learnable scale parameter

- β: Learnable shift parameter

- ⊙: Element-wise multiplication
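As a worked illustration of the formula and symbols above, the following sketch normalizes a single feature across a minibatch in plain Python. It is a simplified stand-in for a framework layer: the function name `batch_norm` is illustrative, the small constant `eps` is an assumption added for numerical stability and does not appear in the formula, and the variance is computed with the usual 1/n minibatch estimate.

```python
import math


def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature across a minibatch, then scale and shift.

    eps is an assumption of this sketch (not part of the formula in the
    text); it guards against division by zero for constant batches.
    """
    n = len(batch)
    mean = sum(batch) / n                                # minibatch mean
    variance = sum((x - mean) ** 2 for x in batch) / n   # 1/n variance
    std = math.sqrt(variance + eps)                      # minibatch std
    return [gamma * (x - mean) / std + beta for x in batch]


activations = [2.0, 4.0, 6.0, 8.0]
normalized = batch_norm(activations)
print([round(value, 3) for value in normalized])

# gamma and beta let the network rescale and recenter the normalized values.
shifted = batch_norm(activations, gamma=2.0, beta=1.0)
print([round(value, 3) for value in shifted])
```

With γ = 1 and β = 0 the output has approximately zero mean and unit variance; the learnable parameters then allow the network to recover any scale and offset that training finds useful.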

The benefits of batch normalization in ECCNN include:

- Training Acceleration: By reducing internal covariate shift, BN enables the use of higher learning rates and faster convergence [24]

- Regularization Effect: The noise introduced by mini-batch statistics calculation provides a mild regularizing effect, reducing overfitting [24]

- Gradient Flow Improvement: Normalized activations mitigate vanishing/exploding gradient problems in deep networks [22]

For ECCNN's application to electron configuration data, batch normalization ensures stable learning despite potential variations in electron distribution patterns across different elemental groups in the periodic table.

Fully Connected Network Integration

Following feature extraction through convolutional layers and spatial downsampling via pooling, the ECCNN architecture incorporates fully connected (FC) layers to perform the final stability classification [20]. The flattening operation transforms the multi-dimensional feature maps (59 × 84 × 64) into a one-dimensional vector (317,184 elements) that serves as input to the FC layers [1].

The fully connected component implements:

- Global Integration: Combining localized features detected by convolutional filters into global representations relevant for stability prediction [25]

- Non-linear Transformation: Applying activation functions to introduce non-linear decision boundaries essential for complex pattern recognition [23]

- Classification: Mapping the integrated features to final stability predictions through weighted combinations [20]

Unlike the convolutional layers that maintain spatial relationships through weight sharing and local connectivity, FC layers implement full connectivity where each input influences every output, enabling complex hierarchical decision-making based on the extracted electron configuration features [25].

Experimental Protocols and Methodologies

Model Training Protocol

The training procedure for ECCNN follows a standardized deep learning workflow with specific adaptations for electron configuration data:

| Training Phase | Hyperparameter | Value/Range | Justification |
|---|---|---|---|
| Data Preparation | Input Normalization | Per-minibatch | Enables stable convergence for electron features |
| Initialization | Weight Distribution | He Normal | Suitable for ReLU activation variants |
| Optimization | Algorithm | Adam | Adaptive learning rates for electron configuration patterns |
| Learning Rate | Schedule | Cyclical | Balances convergence speed and stability |
| Regularization | L2 Parameter | 1e-4 | Prevents overfitting to specific electron configurations |
| Early Stopping | Patience Epochs | 20 | Terminates training when validation performance plateaus |

Table 2: ECCNN training protocol specifications with hyperparameters and implementation rationale.
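Table 2 names a cyclical learning-rate schedule without giving its shape or bounds. One common variant is the triangular schedule, sketched below as an illustration only: the function name `triangular_lr` and the values of `base_lr`, `max_lr`, and `half_cycle` are assumptions chosen for the example, not settings reported for ECCNN.

```python
def triangular_lr(step, base_lr=1e-4, max_lr=1e-3, half_cycle=2000):
    """Triangular cyclical learning rate.

    The rate rises linearly from base_lr to max_lr over half_cycle steps,
    then falls back to base_lr over the next half_cycle steps, and repeats.
    All default values are hypothetical.
    """
    position = step % (2 * half_cycle)
    if position <= half_cycle:
        fraction = position / half_cycle                      # rising half
    else:
        fraction = (2 * half_cycle - position) / half_cycle   # falling half
    return base_lr + (max_lr - base_lr) * fraction


for step in (0, 1000, 2000, 3000, 4000):
    print(step, f"{triangular_lr(step):.2e}")
```

In a training loop the returned value would be assigned to the optimizer's learning rate before each parameter update.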

Step-by-Step Training Procedure:

- Input Preprocessing: Normalize electron configuration tensors using minibatch statistics

- Forward Propagation: Pass input through convolutional, batch normalization, and fully connected layers

- Loss Calculation: Compute cross-entropy loss between predictions and stability labels

- Backward Propagation: Calculate gradients with respect to all trainable parameters

- Parameter Update: Adjust weights using Adam optimizer with specified learning rate

- Validation: Evaluate model performance on holdout set after each epoch

- Checkpointing: Save model weights when validation performance improves

- Termination: Stop training when validation performance fails to improve for 20 consecutive epochs
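The validation, checkpointing, and termination steps above can be sketched independently of any deep learning framework. The function below replays a list of per-epoch validation scores and reports which epoch would be checkpointed and when training would stop; the name `run_early_stopping` and the example scores are hypothetical, and in a real run the score would come from evaluating the model on the holdout set.

```python
def run_early_stopping(val_scores, patience=20):
    """Replay per-epoch validation scores (higher is better).

    Returns (best_epoch, best_score, stop_epoch) with 1-indexed epochs.
    best_epoch is where the last checkpoint would have been saved.
    """
    best_score = float("-inf")
    best_epoch = 0
    stop_epoch = 0
    epochs_without_improvement = 0
    for epoch, score in enumerate(val_scores, start=1):
        stop_epoch = epoch
        if score > best_score:
            # Checkpointing step: model weights would be saved here.
            best_score, best_epoch = score, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # Termination step: patience exhausted.
    return best_epoch, best_score, stop_epoch


# Hypothetical validation AUC values: improvement for 3 epochs, then a plateau.
scores = [0.80, 0.85, 0.90] + [0.89] * 30
print(run_early_stopping(scores, patience=20))
```

Keeping the stopping rule in a small pure function like this makes it easy to test separately from the forward and backward passes.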

Performance Evaluation Methodology

The ECCNN model evaluation follows rigorous benchmarking protocols to ensure predictive accuracy and generalization capability:

- Dataset: Joint Automated Repository for Various Integrated Simulations (JARVIS) database [1]

- Evaluation Metric: Area Under the Curve (AUC) of Receiver Operating Characteristic curve

- Cross-Validation: k-fold cross-validation with stratified sampling across compound classes

- Baseline Comparison: Performance comparison against Magpie and Roost models [1]

- Statistical Significance: Repeated evaluations with different random seeds to ensure result stability
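For readers unfamiliar with the evaluation metric, the AUC equals the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one, with ties counted as one half. The sketch below computes it directly from that definition; the function name `roc_auc` and the toy labels and scores are illustrative, and the quadratic pairwise loop is meant for clarity rather than for large datasets.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise comparison.

    labels: 1 for the positive class (e.g. stable), 0 for the negative class.
    scores: predicted scores, higher meaning more likely positive.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0   # positive ranked above negative
            elif p == n:
                wins += 0.5   # ties count as half
    return wins / (len(positives) * len(negatives))


# Toy example with made-up scores, not ECCNN results.
toy_labels = [0, 0, 1, 1]
toy_scores = [0.1, 0.4, 0.35, 0.8]
print(roc_auc(toy_labels, toy_scores))  # 0.75
```

In the toy example three of the four positive-negative pairs are ranked correctly, which gives 0.75.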

Within the ECSG ensemble framework, ECCNN contributes to an AUC of 0.988 for thermodynamic stability prediction while requiring substantially less training data than conventional approaches [1].

Research Reagent Solutions

Essential computational tools and datasets required for ECCNN implementation and experimentation:

| Research Reagent | Specification | Application in ECCNN Research |
|---|---|---|
| Electron Configuration Data | 118×168×8 Tensor Format | Fundamental input representation for model training |
| JARVIS Database | Publicly Available Materials Data | Primary source of stability labels for supervised learning |
| Deep Learning Framework | PyTorch/TensorFlow with CUDA Support | GPU-accelerated model implementation and training |
| Computational Resources | High-Memory GPU (16GB+) | Handling large electron configuration tensors during training |
| Materials Project API | RESTful Interface | Access to complementary materials data for transfer learning |
| Hyperparameter Optimization | Bayesian Optimization Framework | Efficient search of architectural and training parameters |

Table 3: Essential research reagents and computational resources for ECCNN implementation and experimentation.

Ablation Study Framework

To evaluate the contribution of individual architectural components, a structured ablation study framework is recommended:

Diagram 2: ECCNN ablation study framework for evaluating component contributions to model performance.

Key Ablation Conditions:

- Baseline ECCNN: Complete architecture as described in Section 2.2

- Without Batch Normalization: Remove BN layer to assess training stability effects

- Single Convolutional Layer: Reduce to one convolutional layer to evaluate feature extraction depth requirements

- Alternative Classifier: Replace fully connected layers with global average pooling

- Simplified Input: Reduce electron configuration representation complexity

Each ablation condition should be evaluated across multiple metrics including AUC, training convergence speed, parameter efficiency, and inference time to comprehensively understand architectural contributions.
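One way to keep the ablation conditions comparable is to describe each as a single-field change to a baseline configuration. The sketch below does this with a frozen dataclass; the class `EccnnConfig`, its field names, and the reduced channel count used for the simplified input are all hypothetical and only mirror the conditions listed above.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class EccnnConfig:
    """Hypothetical configuration mirroring the ablation conditions."""
    n_conv_layers: int = 2
    use_batch_norm: bool = True
    classifier_head: str = "fully_connected"
    input_channels: int = 8


BASELINE = EccnnConfig()

ABLATIONS = {
    "baseline": BASELINE,
    "without_batch_norm": replace(BASELINE, use_batch_norm=False),
    "single_conv_layer": replace(BASELINE, n_conv_layers=1),
    "alternative_classifier": replace(
        BASELINE, classifier_head="global_average_pooling"
    ),
    # 4 channels is an arbitrary illustrative reduction of the 8-channel input.
    "simplified_input": replace(BASELINE, input_channels=4),
}


def changed_fields(config, baseline=BASELINE):
    """Return the fields in which config differs from the baseline."""
    return {
        f.name: getattr(config, f.name)
        for f in fields(config)
        if getattr(config, f.name) != getattr(baseline, f.name)
    }


for name, config in ABLATIONS.items():
    print(name, changed_fields(config))
```

Because every condition differs from the baseline in exactly one field, any change in AUC, convergence speed, parameter count, or inference time can be attributed to that component.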

The ECCNN architecture represents a significant advancement in computational materials science by enabling direct learning from fundamental electron configuration data without relying on hand-crafted features that introduce human bias [1]. The thoughtful integration of convolutional layers for hierarchical feature extraction, batch normalization for training stability, and fully connected networks for complex decision-making creates a powerful framework for predicting thermodynamic stability of inorganic compounds [1].

This architecture has demonstrated particular value in materials discovery applications, including the exploration of new two-dimensional wide bandgap semiconductors and double perovskite oxides, where it has successfully identified stable compounds subsequently validated through first-principles calculations [1]. The efficiency of ECCNN in sample utilization enables rapid screening of compositional spaces that would be prohibitively expensive using traditional computational approaches, accelerating the discovery of novel materials for energy and optoelectronic applications [3].

Future research directions include architectural extensions to handle temporal electron dynamics, integration with generative models for inverse materials design, and adaptation to related prediction tasks such as bandgap estimation and defect tolerance assessment. The ECCNN framework establishes a foundation for electron configuration-informed deep learning that can be extended across multiple domains of materials science research.

In computational materials science and drug development, accurate prediction of material properties and compound stability is central to accelerating the discovery of new functional materials and therapeutic agents. In Electron Configuration Convolutional Neural Network (ECCNN) research, transforming raw chemical formulas into structured, model-ready inputs is a critical first step that directly influences predictive performance [1]. This process, known as featurization or descriptor engineering, converts fundamental chemical information into numerical representations that machine learning algorithms, particularly deep learning models, can process.

The ECCNN framework leverages the electron configuration (EC) of elements as a foundational input, treating it as an intrinsic atomic property that may introduce fewer inductive biases compared to manually crafted features [1]. This approach provides significant advantages for predicting thermodynamic stability and other chemical properties, achieving state-of-the-art performance with remarkable sample efficiency. Experimental results have demonstrated that models based on electron configuration can achieve equivalent accuracy with only one-seventh of the data required by existing models [1]. This document provides detailed application notes and protocols for constructing robust data preparation pipelines tailored to ECCNN-based research, enabling researchers to standardize and optimize this crucial preprocessing phase.
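The first stage of such a pipeline is typically turning a formula string into element amounts. The sketch below is a minimal illustration, not the preprocessing used in [1]: the function names are hypothetical, and it assumes flat formulas such as Fe2O3 or NaCl, rejecting parentheses, hydrates, and charges rather than guessing at them.

```python
import re

# Element symbol (capital letter, optional lowercase) plus optional amount.
_TOKEN = re.compile(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?")
_WHOLE = re.compile(r"(?:[A-Z][a-z]?(?:\d+(?:\.\d+)?)?)+")


def parse_formula(formula):
    """Map a flat chemical formula to {element symbol: amount}."""
    if not _WHOLE.fullmatch(formula):
        raise ValueError(f"unsupported formula: {formula!r}")
    amounts = {}
    for symbol, amount in _TOKEN.findall(formula):
        # A missing amount means one atom of that element.
        amounts[symbol] = amounts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    return amounts


def atomic_fractions(formula):
    """Normalize element amounts so that they sum to 1."""
    amounts = parse_formula(formula)
    total = sum(amounts.values())
    return {symbol: amount / total for symbol, amount in amounts.items()}


print(parse_formula("Fe2O3"))
print(atomic_fractions("Fe2O3"))
```

The resulting per-element amounts are what a later step would combine with each element's electron configuration; the sketch does not check that symbols correspond to real elements.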

Conceptual Framework: Chemical Featurization Strategies

The process of transforming chemical formulas into machine-learning inputs involves multiple strategic approaches, each with distinct advantages and limitations. The choice of featurization strategy significantly impacts model performance, interpretability, and generalizability.

Electron Configuration as a Fundamental Descriptor

Electron configuration (EC) describes the distribution of electrons within an atom across atomic orbitals and energy levels. Unlike manually engineered features, EC represents an intrinsic atomic property that forms the physical basis for chemical behavior and bonding patterns [1]. In ECCNN implementations, electron configuration information is encoded as a 3D tensor with dimensions 118 × 168 × 8, corresponding to the 118 elements in the periodic table and their respective electron orbital characteristics [1]. This representation serves as direct input to convolutional neural networks capable of learning hierarchical patterns from the electronic structure.

The theoretical foundation for using EC as a primary descriptor stems from quantum mechanics, where electron configurations determine atomic properties including electronegativity, ionization potential, and atomic radius—all critical factors influencing molecular stability and reactivity. By leveraging this fundamental information, ECCNN models establish a more physically-grounded approach to materials prediction compared to methods relying solely on compositional fractions or structural features.
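To illustrate what the raw descriptor looks like for a single element, the sketch below fills subshells in Madelung (n + l) order to produce an idealized ground-state configuration. It is a textbook approximation with illustrative function names: it ignores known exceptions such as Cr and Cu, and it does not reproduce the 118 × 168 × 8 encoding, whose exact layout is not specified here.

```python
SUBSHELL_LETTERS = "spdf"


def madelung_order(max_n=7):
    """Subshells (n, l) sorted by n + l, then by n (Madelung rule)."""
    subshells = [(n, l) for n in range(1, max_n + 1) for l in range(min(n, 4))]
    return sorted(subshells, key=lambda nl: (nl[0] + nl[1], nl[0]))


def ground_state_configuration(atomic_number):
    """Idealized configuration as a list of (subshell label, electrons)."""
    remaining = atomic_number
    configuration = []
    for n, l in madelung_order():
        if remaining == 0:
            break
        capacity = 2 * (2 * l + 1)          # s: 2, p: 6, d: 10, f: 14
        electrons = min(capacity, remaining)
        configuration.append((f"{n}{SUBSHELL_LETTERS[l]}", electrons))
        remaining -= electrons
    return configuration


def format_configuration(configuration):
    """Render the configuration in the usual compact notation."""
    return " ".join(f"{label}{count}" for label, count in configuration)


print(format_configuration(ground_state_configuration(8)))   # 1s2 2s2 2p4
print(format_configuration(ground_state_configuration(26)))  # 1s2 2s2 2p6 3s2 3p6 4s2 3d6
```

A tensor encoding would store occupancies like these in a fixed orbital layout for every element, so that the convolutional layers see the same orbital at the same position across all 118 elements.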

Comparison of Featurization Approaches

Table 1: Comparison of Chemical Featurization Strategies for Machine Learning