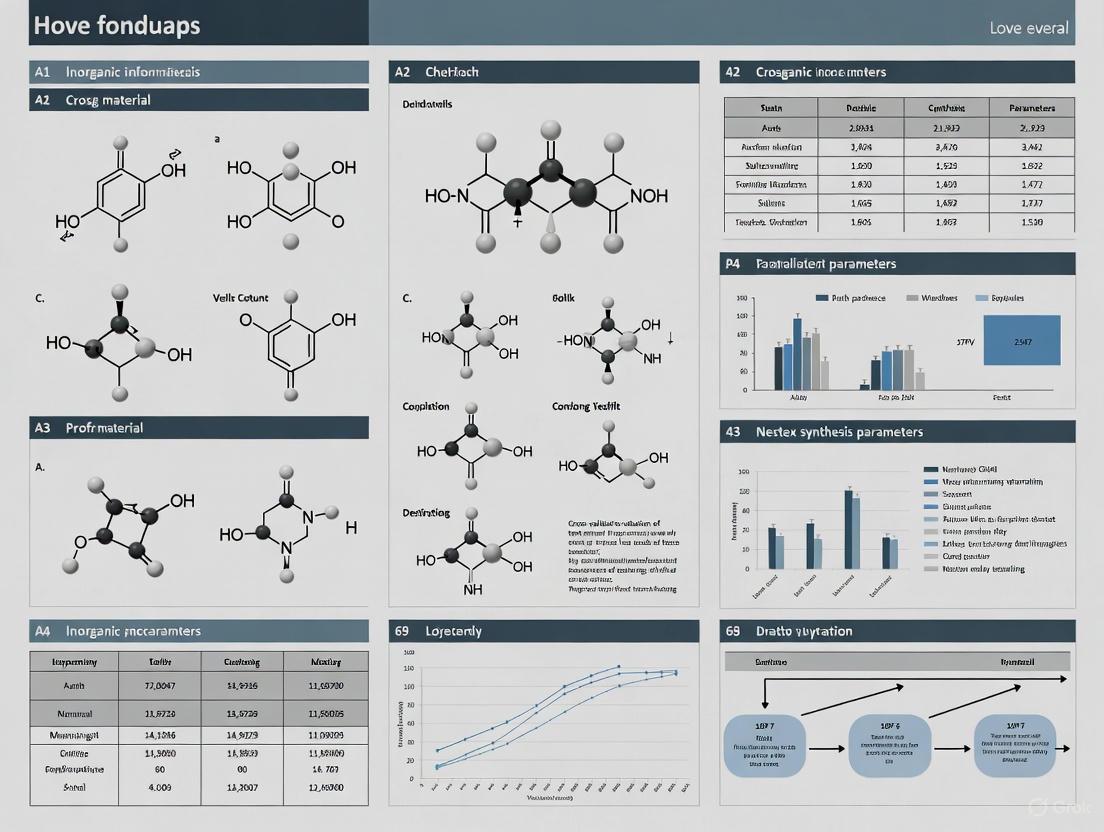

Cross-Validation of Text-Mined Synthesis Parameters: A Practical Guide for Biomedical Researchers

Abstract

This article provides a comprehensive framework for validating text-mined materials synthesis parameters, addressing a critical bottleneck in data-driven research. Tailored for researchers, scientists, and drug development professionals, it explores foundational concepts of extracting synthesis data from scientific literature using natural language processing and machine learning. The content covers practical methodological applications across domains like inorganic materials and metal-organic frameworks (MOFs), alongside critical troubleshooting strategies for common data pitfalls. Finally, it examines rigorous validation techniques and comparative performance analysis, offering actionable insights for building reliable predictive synthesis models to accelerate biomedical innovation.

Understanding Text-Mining and Cross-Validation in Synthesis Science

The Critical Need for Data-Driven Synthesis Prediction

The discovery and development of new functional molecules and materials are fundamental to addressing global challenges in healthcare, energy, and sustainability. However, traditional synthesis planning, which relies on expert intuition and trial and error, has become a critical bottleneck. In pharmaceutical research, this contributes to development costs exceeding $2 billion per approved drug and timelines of 10-15 years [1]. Similarly, in materials science, the chemical space of possible structures, which runs to millions for metal-organic frameworks (MOFs) alone, makes exhaustive experimental exploration impossible [2]. This review examines how data-driven synthesis prediction, built upon automated text mining and machine learning, is transforming these fields by converting published literature into actionable, predictive knowledge.

From Text to Data: Automated Extraction of Synthesis Protocols

The scientific literature contains a wealth of unstructured synthesis information. Automated extraction methods are essential to convert this into structured, machine-readable data.

Text Mining Evolution and Techniques

The field has evolved from manual curation to increasingly sophisticated automated approaches [3]:

- Manual Curation: Experts meticulously extract data, providing high-quality foundations for databases like the CoRE MOF database [3]. This method is reliable but not scalable.

- Rule-Based Systems: Early automation used regular expressions (RegEx) to identify specific parameters (e.g., surface area, pore volume) by searching for numerical values paired with units (e.g., m² g⁻¹) [3].

- Machine Learning-Based NLP: Models like BERT and its domain-specific variants (SciBERT, MatBERT) enable more sophisticated understanding of scientific text [3] [4]. These models can perform named entity recognition (NER) to identify and classify key concepts.

- Large Language Models (LLMs): Recent advances with models like GPT-4 and Llama3.1 offer context-aware information extraction with minimal domain-specific training, enabling more flexible and comprehensive data extraction [3].
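
The rule-based stage described above can be illustrated with a small regular expression. This is a minimal sketch, not the pattern used in any cited study: the `SURFACE_AREA` pattern and the `extract_surface_areas` helper are hypothetical names, and the pattern only covers a few common spellings of the m² g⁻¹ unit.

```python
import re

# Hypothetical pattern: a number followed by a surface-area unit written as
# "m² g⁻¹", "m2 g-1", or "m2/g". Real papers vary far more than this.
SURFACE_AREA = re.compile(
    r"(?P<value>\d+(?:\.\d+)?)\s*"
    r"m(?:2|²)\s*(?:/\s*g|g\s*(?:-1|⁻¹))"
)

def extract_surface_areas(text: str) -> list[float]:
    """Return every numeric value directly followed by a surface-area unit."""
    return [float(m.group("value")) for m in SURFACE_AREA.finditer(text)]

sample = (
    "The activated MOF showed a BET surface area of 1250 m² g⁻¹ "
    "and a pore volume of 0.54 cm³ g⁻¹."
)
print(extract_surface_areas(sample))  # [1250.0]
```

The pore volume is skipped because its unit does not match. The same brittleness cuts the other way: a value written as "1,250 m² g⁻¹" would be read as 250, the kind of failure that motivates the learned approaches listed above.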

Applied Workflows in Materials Science

MOF Synthesis Extraction: A complete machine learning workflow was developed for MOFs, involving automatic data mining from scientific literature to create the SynMOF database [2]. The process used HTML parsing, synthesis paragraph identification via a decision tree, and entity annotation using modified ChemicalTagger software. This extracted six key synthesis parameters: metal source, linker, solvent, additive, synthesis time, and temperature [2].

Gold Nanoparticle Protocol Mining: A specialized pipeline processed 4.9 million publications to identify gold nanoparticle synthesis articles [4]. This combined unsupervised filtering (regular expression queries, TF-IDF vectorization) with a supervised BERT-based classifier (MatBERT) fine-tuned to identify synthesis paragraphs. The resulting dataset codified synthesis procedures, morphologies, and size data from 7,608 synthesis paragraphs [4].

Table 1: Key Synthesis Parameters Extracted via Text Mining

| Material System | Extracted Synthesis Parameters | Data Source | Number of Records |

|---|---|---|---|

| Metal-Organic Frameworks (MOFs) | Metal source, organic linker, solvent, additive, temperature, time | Scientific literature | 983 MOF structures [2] |

| Gold Nanoparticles (AuNPs) | Precursors & amounts, synthesis actions & conditions, morphology, size, aspect ratio | Scientific literature | 5,154 articles [4] |

Cross-Validation: Ensuring Predictive Reliability

Cross-validation is a critical methodology for assessing how well predictive models generalize to independent datasets. It is used when the goal is prediction and provides an out-of-sample estimate of model performance, helping to detect overfitting [5].

Cross-Validation Techniques

- k-Fold Cross-Validation: The dataset is randomly partitioned into k equal-sized folds. Each fold serves as validation data once, while the remaining k-1 folds form the training data. The k results are averaged into a single performance estimate [5].

- Leave-One-Out Cross-Validation (LOOCV): A special case where k equals the number of observations. Each single data point serves as the validation set in turn [5].

- Stratified k-Fold Cross-Validation: Partitions are selected so the mean response value is approximately equal in all folds, often used for binary classification to maintain class proportions [5].
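
As a rough illustration of the stratified variant, the sketch below deals the indices of each class round-robin across folds so that class proportions stay similar. The function name `stratified_k_fold` and the example labels are assumptions made for this sketch; established libraries provide more careful implementations.

```python
from collections import defaultdict
import random

def stratified_k_fold(labels, k, seed=0):
    """Assign each sample index to one of k folds, keeping class proportions similar."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the shuffled indices of each class round-robin across the folds.
        for position, idx in enumerate(indices):
            folds[position % k].append(idx)
    return folds

# Hypothetical labels: 1 = synthesis paragraph, 0 = other text (20% positives).
labels = [1] * 10 + [0] * 40
folds = stratified_k_fold(labels, k=5)
for fold in folds:
    positives = sum(labels[i] for i in fold)
    print(len(fold), positives)  # each fold: 10 samples, 2 of them positive
```

When class counts are not divisible by k, this simple dealing scheme produces folds of slightly different sizes, which is usually acceptable for an illustration.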

The following workflow diagram illustrates the integration of text mining and cross-validation in a predictive modeling pipeline for synthesis parameters:

Performance Comparison: Data-Driven vs. Alternative Methods

Predictive Accuracy in MOF Synthesis

In a landmark study, machine learning models trained on the text-mined SynMOF database were directly compared to predictions from human experts [2]. The models used random forest and neural network architectures with two types of MOF structure representations: molecular fingerprints of linkers combined with metal encodings, and a recently developed MOF representation [2].

Table 2: MOF Synthesis Prediction Performance

| Prediction Method | Temperature Prediction (r²) | Time Prediction (r²) | Solvent/Additive Prediction |

|---|---|---|---|

| Machine Learning Models (Random Forest) | Positive correlation [2] | Positive correlation [2] | Via property prediction & nearest neighbor search [2] |

| Human Experts (Synthesis Survey) | Outperformed by ML [2] | Outperformed by ML [2] | Not Specified |

For solvent and additive prediction, researchers employed an innovative approach: rather than classifying specific chemicals, models predicted solvent properties (e.g., partition coefficients, boiling point), with a nearest-neighbor search identifying solvents matching these properties [2]. Additives were classified by acidity/basicity strength (acidic, basic, or none) [2].
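
The property-then-lookup idea can be sketched as a nearest-neighbor search over a small solvent table. Everything specific here is an assumption for illustration: the `SOLVENTS` table holds approximate boiling points and logP values for four common solvents, and the range scaling is one simple choice among many, not the procedure reported in [2].

```python
import math

# Approximate, illustrative property values (boiling point in °C, logP);
# a real workflow would draw these from a curated property database.
SOLVENTS = {
    "water": (100.0, -1.4),
    "methanol": (65.0, -0.8),
    "ethanol": (78.0, -0.3),
    "DMF": (153.0, -1.0),
}

def nearest_solvent(predicted, solvents=SOLVENTS):
    """Return the solvent whose range-scaled properties lie closest to the prediction."""
    dims = range(len(predicted))
    lows = [min(props[d] for props in solvents.values()) for d in dims]
    highs = [max(props[d] for props in solvents.values()) for d in dims]

    def scale(props):
        # Scale each property by its range so boiling point does not dominate.
        return [(props[d] - lows[d]) / (highs[d] - lows[d]) for d in dims]

    target = scale(predicted)
    return min(solvents, key=lambda name: math.dist(scale(solvents[name]), target))

# Hypothetical model output: boiling point 150 °C, logP -0.9.
print(nearest_solvent((150.0, -0.9)))  # DMF
```

Predicting continuous properties rather than solvent identities lets the model suggest solvents that never appeared in the training data, provided they are present in the lookup table.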

Validated Synthesis Planning in Drug Discovery

Beyond materials science, data-driven synthesis planning shows strong experimental validation in pharmaceutical contexts. A computational pipeline for generating structural analogs of parent drug molecules demonstrated robust experimental performance [6]. The method combined substructure replacement, retrosynthetic analysis, and guided forward-synthesis networks.

For Ketoprofen and Donepezil analogs, the pipeline achieved:

- 12 of 13 computer-designed analogs successfully synthesized [6]

- Six micromolar (μM) binders to COX-2 identified among the Ketoprofen analogs (one with better binding than the parent) [6]

- Five submicromolar binders to acetylcholinesterase among the Donepezil analogs [6]

However, binding affinity predictions aligned with experimental values only to within an order of magnitude, indicating that while synthesis planning is robust, property prediction remains challenging [6].

The Scientist's Toolkit: Research Reagent Solutions

This section details essential computational and experimental resources for implementing data-driven synthesis prediction.

Table 3: Essential Tools for Data-Driven Synthesis Prediction

| Tool/Resource | Type | Primary Function | Application Example |

|---|---|---|---|

| MatBERT [4] | NLP Model | Domain-specific language understanding for materials science | Pre-trained on 2 million materials science papers; classifies synthesis paragraphs [4] |

| ChemicalTagger [2] | NLP Software | Annotates chemical experimental phrases | Identifies and tags synthesis parameters in scientific text [2] |

| BERTopic [3] | Topic Modeling | Captures high-level thematic distribution in text datasets | Used in CTCL framework to model topic distributions for data synthesis [3] |

| AiZynthFinder [7] | Retrosynthesis Tool | Predicts synthetic routes for organic molecules | Generates routes compared via similarity metrics [7] |

| CTCL-Generator [8] | Synthetic Data Generator | Creates privacy-preserving synthetic text data | Generates training data while maintaining privacy guarantees [8] |

| rxnmapper [7] | Reaction Mapping Tool | Assigns atom-mapping for chemical reactions | Essential for calculating bond formation similarity in synthetic routes [7] |

Data-driven synthesis prediction represents a paradigm shift from intuition-based to algorithm-driven discovery. Experimental validations confirm that machine learning models can now outperform human experts in predicting synthesis conditions for materials like MOFs [2], while computational pipelines can successfully design synthesizable drug analogs [6]. The integration of cross-validation ensures these models generalize beyond their training data.

Future progress will likely involve multi-modal AI systems that process textual, visual, and structural information simultaneously [3], along with integration into autonomous laboratories for closed-loop design-synthesis-testing cycles. As these technologies mature, they promise to significantly accelerate the discovery of new functional molecules and materials, ultimately reducing development timelines and costs across pharmaceutical and materials industries.

The systematic design of novel compounds and materials relies on structured, actionable data. However, a vast majority of chemical knowledge exists only within the unstructured text of millions of scientific papers, creating a significant bottleneck for research acceleration [9]. For decades, the extraction of synthesis recipes from literature has been a labor-intensive, manual process, severely limiting the efficiency of large-scale data accumulation [10]. The field has progressively developed automated solutions to this problem, evolving from rigid, handcrafted rules to sophisticated neural models that can understand context and reason about chemical concepts. This guide objectively compares the performance of these technological paradigms—rule-based NLP, traditional machine learning, and modern Large Language Models (LLMs)—within the critical context of cross-validating text-mined synthesis parameters. For researchers in drug development and materials science, understanding the strengths, limitations, and optimal application of each technique is fundamental to building reliable, automated discovery pipelines.

The Evolution of NLP Techniques for Chemical Text

The journey of Natural Language Processing (NLP) began in the 1950s with rule-based systems that used handwritten, expert-defined rules to interpret language [10] [11]. These systems were narrowly focused and struggled with the diversity of natural language. The late 1980s and 1990s saw a shift to statistical and machine learning methods, which learned language patterns from large datasets [10] [11]. A true paradigm shift occurred with the introduction of the transformer architecture in 2017, which, with its attention mechanism, enabled the development of Large Language Models (LLMs) that demonstrate a remarkable grasp of language and context [10] [12]. The following diagram illustrates this technological evolution and its impact on chemical data extraction tasks.

Comparative Analysis of NLP Techniques

The following table summarizes the core characteristics, strengths, and weaknesses of the three primary NLP paradigms used for extracting synthesis information.

Table 1: Comparison of NLP Techniques for Synthesis Recipe Extraction

| Technique | Core Principle | Key Strengths | Key Weaknesses |

|---|---|---|---|

| Rule-Based NLP | Relies on handcrafted lexicons, grammar rules, and semantic logic [12]. | High precision in narrow domains; transparent and interpretable; computationally efficient. | Brittle, fails with new phrasing [13]; poor scalability across diverse tasks; requires massive expert effort to build and maintain [9]. |

| Traditional Machine Learning | Uses statistical models trained on annotated corpora to identify patterns (e.g., NER) [10] [11]. | More flexible than rule-based systems; can generalize to unseen text to some degree. | Requires large, labeled datasets for training [9]; feature engineering is complex and critical; performance is tied to the training domain. |

| Large Language Models (LLMs) | Leverages deep neural networks with billions of parameters, pre-trained on vast text corpora, to understand and generate language [10] [12]. | Exceptional flexibility with diverse language [13]; requires no task-specific training data for basic use (zero-shot) [9]; capable of complex reasoning and strategy evaluation [14]. | Can hallucinate or generate incorrect data [9]; high computational cost for training and inference; struggles with generating valid chemical representations (e.g., SMILES) [14]. |

Experimental Performance and Benchmarking

Objective benchmarking is crucial for selecting the appropriate NLP tool. Recent studies have quantitatively evaluated different LLMs against specific chemical extraction tasks, providing valuable performance data.

Table 2: Performance of Various LLMs on Chemical Data Extraction Tasks

| Task Description | Models Evaluated | Key Performance Metrics | Interpretation & Best Performer |

|---|---|---|---|

| Extracting synthesis conditions from Metal-Organic Framework (MOF) literature [15]. | GPT-4 Turbo, Claude 3 Opus, Gemini 1.5 Pro | Claude: excelled in providing complete synthesis data. Gemini: outperformed in accuracy, obedience, and proactive structuring. | Gemini and Claude achieved the highest scores in accuracy and adherence to prompts, making them suitable benchmarks. GPT-4 showed strong logical reasoning but was less effective on quantitative metrics. |

| Evaluating route-to-prompt alignment in steerable retrosynthetic planning [14]. | Claude-3.7-Sonnet, GPT-4o, DeepSeek-V3, GPT-4o-mini | Claude-3.7-Sonnet achieved the highest scores, successfully evaluating complex strategic features. Performance scaled strongly with model size; smaller models (e.g., GPT-4o-mini) performed near random. | The latest, largest models demonstrate sophisticated chemical reasoning. Smaller models lack the capacity for meaningful chemical analysis without fine-tuning. |

| Accuracy of extracting six specific synthesis conditions for MOFs using open-source models [13]. | Qwen3 Series, GLM-4.5 Series (14B to 355B parameters) | Most models achieved accuracies exceeding 90%. The largest model reached 100% accuracy. A smaller model (Qwen3-32B) achieved 94.7% accuracy. | Open-source models can match proprietary model performance for specific extraction tasks, offering a cost-effective and transparent alternative. |

Detailed Experimental Protocol: LLM-Based Synthesis Condition Extraction

The methodology for benchmarking LLMs, as conducted in the studies cited above, typically follows a structured pipeline [15] [13]:

- Data Collection and Pre-processing: Full-text scientific articles (e.g., from PDFs) are collected. In some workflows, documents are split into smaller chunks or paragraphs to identify text relevant to experimental synthesis [13].

- Model Prompting: A carefully designed prompt (prompt engineering) is constructed to instruct the LLM to extract specific entities. For example: "From the following text, extract the synthesis conditions for the metal-organic framework. Return the data in a structured JSON format with the following keys: 'temperature', 'time', 'solvent', 'linker', 'metal_precursor'."

- Constrained Decoding & Validation: To enhance reliability, domain knowledge is integrated. This can involve:

- Evaluation against Ground Truth: The model's extractions are compared against a human-annotated "gold-standard" test set. Standard metrics like Accuracy (exact match), Precision, Recall, and F1-score are calculated. In the MOF-ChemUnity benchmark, accuracy for each of the six synthesis conditions was reported [13].
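
The parsing and evaluation steps of this pipeline can be sketched without calling any model. The snippet below assumes the five JSON keys from the example prompt above; the helper names `parse_extraction` and `per_field_accuracy`, the two model replies, and the gold records are all hypothetical and stand in for real LLM output and annotations.

```python
import json

FIELDS = ["temperature", "time", "solvent", "linker", "metal_precursor"]

def parse_extraction(raw_response: str) -> dict:
    """Parse a model reply as JSON and keep only the expected keys (missing -> None)."""
    try:
        data = json.loads(raw_response)
    except json.JSONDecodeError:
        data = {}
    if not isinstance(data, dict):
        data = {}
    return {field: data.get(field) for field in FIELDS}

def per_field_accuracy(predictions: list[dict], gold: list[dict]) -> dict:
    """Exact-match accuracy for each field over paired predictions and gold records."""
    return {
        field: sum(p[field] == g[field] for p, g in zip(predictions, gold)) / len(gold)
        for field in FIELDS
    }

# Hypothetical model replies and hand-annotated gold records.
replies = [
    '{"temperature": "120 C", "time": "24 h", "solvent": "DMF", '
    '"linker": "H2BDC", "metal_precursor": "Zn(NO3)2"}',
    "Sorry, I could not find any synthesis conditions.",
]
gold = [
    {"temperature": "120 C", "time": "24 h", "solvent": "DMF",
     "linker": "H2BDC", "metal_precursor": "Zn(NO3)2"},
    {"temperature": "85 C", "time": "12 h", "solvent": "water",
     "linker": "H3BTC", "metal_precursor": "Cu(NO3)2"},
]
predictions = [parse_extraction(reply) for reply in replies]
print(per_field_accuracy(predictions, gold))  # every field scores 0.5
```

Treating an unparseable reply as an all-empty record, rather than dropping it, keeps malformed outputs counted as errors in the reported accuracy. Exact string matching is strict; a real benchmark would likely normalize units and chemical names first.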

The Scientist's Toolkit: Research Reagent Solutions

Building and validating an NLP pipeline for synthesis extraction requires a suite of software and model "reagents." The following table details key resources.

Table 3: Essential Tools for NLP-Based Chemical Data Extraction

| Tool / Model Name | Type | Primary Function in Extraction Workflow |

|---|---|---|

| spaCy [11] | Rule-Based / ML NLP Library | Provides industrial-strength, pre-trained models for foundational NLP tasks like tokenization, named entity recognition (NER), and dependency parsing, which can serve as a preprocessing step. |

| NLTK [11] | Rule-Based / ML NLP Library | A gateway library for educational purposes and prototyping, offering resources for text processing (tokenization, parsing). Less optimized for large-scale applications than spaCy. |

| GPT-4 / GPT-4o [16] | Proprietary LLM (Decoder) | A powerful, general-purpose LLM used for complex extraction and reasoning tasks. Often serves as a top-performing benchmark in studies but is a closed-source, commercial API [15] [13]. |

| Claude 3.7 Sonnet [14] | Proprietary LLM (Decoder) | Excels in providing complete data and advanced chemical reasoning, demonstrating state-of-the-art performance in evaluating complex synthetic routes [15] [14]. |

| Gemini 1.5 Pro [15] | Proprietary LLM (Decoder) | Noted for high accuracy, obedience to prompt instructions, and proactive structuring of responses, making it highly suitable for structured data extraction tasks [15]. |

| ChemDFM [17] | Domain-Specific LLM | A pioneering LLM specifically pre-trained and fine-tuned on chemical literature (34B tokens). It is designed to understand and reason with chemical knowledge in a dialogue, surpassing general-purpose open-source models on chemistry tasks. |

| LLaMA 3 / Qwen / GLM [13] | Open-Source LLM (Decoder) | A family of powerful, commercially friendly open-source models. Benchmarks show they can achieve over 90% accuracy in synthesis condition extraction, offering a transparent and cost-effective alternative to proprietary models [13]. |

Integrated Workflows and Future Outlook

The most powerful modern applications leverage LLMs not as standalone generators, but as reasoning "engines" within a larger, validated workflow. The emerging paradigm for reliable extraction and cross-validation combines the strategic understanding of LLMs with the precision of traditional tools and domain knowledge, as shown in the following workflow.

This architecture is exemplified in two advanced applications:

- Steerable Synthesis Planning: Here, an LLM evaluates potential retrosynthetic pathways generated by traditional search software. The chemist can guide the process using natural language (e.g., "avoid late-stage functional group transformations"), and the LLM acts as a judge to select the routes that best align with this strategy [14].

- Multi-Agent Experimental Systems: LLMs are integrated as the "brain" of autonomous research platforms. Frameworks like LLM-RDF employ multiple specialized agents (e.g., Literature Scouter, Experiment Designer, Result Interpreter) that work together to perform an end-to-end synthesis development cycle, from literature search to hardware execution and data analysis [16].

The future of synthesis parameter extraction lies in this synergistic approach, which mitigates the weaknesses of any single technique. The growing prowess of open-source models promises to make these powerful workflows more accessible, reproducible, and cost-effective for the entire research community [13].

In data-driven research, particularly in fields utilizing text-mined synthesis parameters for materials science and drug development, the ability to accurately predict outcomes for new, unseen data is paramount [18]. Model validation is the critical process that ensures the machine learning (ML) models powering these predictions are robust and reliable, moving beyond mere memorization of training data to genuine generalization [19]. Two foundational pillars of this validation landscape are the holdout method and k-fold cross-validation. The holdout method provides a straightforward, computationally efficient means of evaluation, while k-fold cross-validation offers a more robust, thorough assessment at a higher computational cost [19] [20].

This guide provides an objective comparison of these two core validation methods. It is framed within the practical challenges of working with text-mined scientific data, where dataset sizes may be limited, and the cost of failed experiments in the lab is high. By understanding the trade-offs between these methods, researchers can make informed decisions that enhance the credibility and impact of their predictive models.

Foundational Concepts and Definitions

The Holdout Method

The holdout method is one of the most fundamental validation techniques. It involves splitting the available dataset into two distinct parts [19]:

- Training Set: This subset is used to train the machine learning algorithm, allowing it to learn the underlying relationships in the data.

- Test Set (or Hold-out Set): This subset is set aside and used exclusively for the final, unbiased evaluation of the model's performance after training is complete [19] [18].

The primary purpose of holdout data is to act as a safeguard against overfitting—a scenario where a model performs well on its training data but fails to generalize to new, unseen data [19]. By validating on an independent holdout set, practitioners can obtain a more realistic estimate of how the model will perform in a real-world setting, such as predicting the synthesizability of a new compound [21].

K-Fold Cross-Validation

K-fold cross-validation (K-fold CV) is a more advanced resampling technique designed to provide a more comprehensive performance evaluation. The core process involves [22]:

- Randomly shuffling the dataset and dividing it into k equal-sized subsets, known as "folds."

- For each of the k iterations, one fold is designated as the validation set, and the remaining k-1 folds are combined to form the training set.

- The model is trained on the training set and evaluated on the validation set.

- After all k iterations, the performance metrics from each round are averaged to produce a single, aggregated estimate of model performance.

This method ensures that every data point in the dataset is used exactly once for validation, maximizing data utilization and providing a more stable performance estimate by averaging multiple validation rounds [18] [22].

The Three-Way Holdout Method

For complex model development involving hyperparameter tuning, a simple two-way split is often insufficient. The Three-way Holdout Method introduces a crucial third dataset [18]:

- Training Set: Used for initial model training.

- Validation Set: Used for an unbiased evaluation of the model during hyperparameter tuning and model selection.

- Test Set (Hold-out): Used for the final, independent evaluation once the model and its parameters are finalized.

This method prevents information from the test set from indirectly influencing the model development process, thus giving a truer measure of generalization error [18].

Methodological Comparison: Holdout vs. K-Fold Cross-Validation

The choice between holdout and k-fold cross-validation involves a fundamental trade-off between computational efficiency and the reliability of the performance estimate. The table below summarizes their core characteristics.

Table 1: Core Characteristics of Holdout and K-Fold Cross-Validation

| Feature | Holdout Method | K-Fold Cross-Validation |

|---|---|---|

| Core Process | Single split into training and test sets [19]. | Multiple splits; data rotated through training and validation roles [22]. |

| Data Utilization | Lower; each data point is used for either training or testing, but not both [19]. | Higher; every data point is used for validation exactly once and for training in the other k-1 iterations [22]. |

| Primary Advantage | Computational simplicity and speed; clear separation for independent testing [20]. | More reliable and robust performance estimate; reduces variance of the estimate [22]. |

| Primary Disadvantage | Performance estimate can have high variance depending on a single, potentially unlucky, data split [20]. | Significantly higher computational cost (requires training k models) [20]. |

| Best-Suited For | Very large datasets, initial model prototyping, or when a truly independent test set is required [19] [20]. | Small to medium-sized datasets, final model evaluation, and hyperparameter tuning [18]. |

The Bias-Variance Trade-off in K-Fold CV

The choice of k in k-fold cross-validation is not arbitrary; it directly involves a bias-variance trade-off [23] [22]:

- Small k (e.g., 5): Results in a larger validation set and a smaller training set in each fold. This can lead to a pessimistic bias in the performance estimate (because each model is trained on less data) but tends to give lower variance in the estimate.

- Large k (e.g., 10 or Leave-One-Out): Results in a smaller validation set and a model trained on nearly all the data in each fold. This reduces bias but can increase the variance of the performance estimate, because the training sets overlap heavily and each validation set contains few points [22].

Conventional choices like k=5 or k=10 are popular because they often provide a good balance between these two extremes [22]. However, research suggests that the optimal k can depend on both the specific dataset and the model being used, rather than convention alone [23].
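
How k changes the size of each training and validation set can be checked with simple arithmetic. The helper `fold_sizes` and the dataset size of 200 records are assumptions chosen purely for illustration.

```python
def fold_sizes(n_samples: int, k: int) -> tuple[int, int]:
    """Approximate (training size, validation size) per iteration of k-fold CV."""
    validation = n_samples // k
    return n_samples - validation, validation

n = 200  # hypothetical number of text-mined synthesis records
for k in (2, 5, 10, n):  # k == n corresponds to leave-one-out
    train, val = fold_sizes(n, k)
    print(f"k={k:>3}: train on {train}, validate on {val}")
# k=  2: train on 100, validate on 100
# k=  5: train on 160, validate on 40
# k= 10: train on 180, validate on 20
# k=200: train on 199, validate on 1
```

As k grows, each model sees more of the data, but each validation score rests on fewer points.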

Experimental Protocols and Validation Workflows

Adhering to strict experimental protocols is essential for obtaining valid and reproducible results in model validation.

Protocol for the Three-Way Holdout Method

This protocol is critical for proper model development and evaluation [18]:

- Split the Data: Partition the data into training, validation, and test sets (e.g., 60/20/20).

- Train and Tune: Train multiple models with different hyperparameters on the training set. Evaluate their performance on the validation set to select the best-performing hyperparameters.

- Final Training (Optional): Retrain the selected model with the optimal hyperparameters on the combined training and validation data to leverage all available data.

- Final Evaluation: Test the final model exactly once on the held-out test set to obtain an unbiased estimate of its generalization performance.

- Production Training: Finally, retrain the model on the entire dataset (training, validation, and test) before deployment.

A critical rule is to use the test set only for the final evaluation. Using it for iterative tuning or model selection will lead to information leakage and an optimistically biased performance estimate [18].
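
The protocol can be sketched end to end on synthetic data. This is an illustrative example under stated assumptions, not a recommended model: the one-dimensional nearest-neighbor regressor, the candidate values for `n_neighbors`, and the synthetic records are all invented for the sketch.

```python
import random

def three_way_split(records, fractions=(0.6, 0.2, 0.2), seed=0):
    """Shuffle records and split them into training, validation, and test lists."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_train = round(fractions[0] * len(shuffled))
    n_val = round(fractions[1] * len(shuffled))
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])

def knn_predict(train, x, n_neighbors):
    """Average the targets of the n_neighbors training points closest to x."""
    nearest = sorted(train, key=lambda rec: abs(rec[0] - x))[:n_neighbors]
    return sum(y for _, y in nearest) / len(nearest)

def mean_abs_error(train, eval_set, n_neighbors):
    """Mean absolute error of the nearest-neighbor predictor on eval_set."""
    errors = [abs(knn_predict(train, x, n_neighbors) - y) for x, y in eval_set]
    return sum(errors) / len(errors)

# Synthetic (feature, target) pairs standing in for text-mined records.
rng = random.Random(42)
records = [(x, 2.0 * x + rng.gauss(0, 5)) for x in range(100)]

train, val, test = three_way_split(records)
# Hyperparameter tuning uses the validation set only.
best_k = min((1, 3, 5, 9), key=lambda k: mean_abs_error(train, val, k))
# Optional retraining on train + validation, then one single test evaluation.
test_mae = mean_abs_error(train + val, test, best_k)
print(best_k, round(test_mae, 2))
```

The test set appears in exactly one call, after `best_k` has been fixed, which is the property the protocol is designed to protect.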

Protocol for K-Fold Cross-Validation

The standard workflow for k-fold CV is as follows [22]:

- Shuffle and Split: Randomly shuffle the dataset and split it into k folds. Using stratification is recommended for imbalanced datasets to preserve the class distribution in each fold [18].

- Iterative Training and Validation: For each fold i (from 1 to k):

  - Use fold i as the validation set.

  - Use the remaining k-1 folds as the training set.

  - Train the model and compute the performance metric on the validation set.

- Aggregate Results: Calculate the average and standard deviation of the k performance metrics. The average represents the expected model performance, while the standard deviation indicates its stability across different data subsets.
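
A minimal, library-free sketch of this workflow is shown below. The helper names (`k_fold_indices`, `cross_validate`), the deliberately trivial mean-predicting model, and the synthetic records are assumptions for illustration; in practice an established machine learning library would normally handle the splitting.

```python
import random
import statistics

def k_fold_indices(n_samples, k, seed=0):
    """Shuffle indices 0..n_samples-1 and deal them into k near-equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]

def cross_validate(records, k, fit, score, seed=0):
    """Run k-fold CV and return the mean and standard deviation of the fold scores."""
    folds = k_fold_indices(len(records), k, seed)
    scores = []
    for i, val_idx in enumerate(folds):
        # Training data: every fold except fold i.
        train = [records[j] for f, fold in enumerate(folds) if f != i for j in fold]
        val = [records[j] for j in val_idx]
        model = fit(train)
        scores.append(score(model, val))
    return statistics.mean(scores), statistics.stdev(scores)

def fit_mean(train):
    """Trivial stand-in model: always predict the training-set mean."""
    return statistics.mean(y for _, y in train)

def mae(model, val):
    """Mean absolute error of the constant prediction on the validation fold."""
    return statistics.mean(abs(model - y) for _, y in val)

rng = random.Random(1)
# Synthetic (feature, target) pairs; targets loosely mimic temperatures in °C.
records = [(i, 120 + rng.gauss(0, 10)) for i in range(50)]
mean_score, std_score = cross_validate(records, k=5, fit=fit_mean, score=mae)
print(f"MAE = {mean_score:.2f} ± {std_score:.2f}")
```

Passing `fit` and `score` as functions keeps the splitting logic independent of the model, so the same loop can compare different models on identical folds.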

Validation Workflow Diagram

The diagram below illustrates the logical sequence of the three-way holdout and k-fold cross-validation methods, highlighting their key differences.

Performance and Reliability Comparison

Empirical evidence and statistical theory highlight the differing reliability of these two methods. A study on bankruptcy prediction using random forest and XGBoost models found that k-fold cross-validation is, on average, a valid technique for selecting the best-performing model for new data [24]. However, it also revealed a crucial caveat: for specific train/test splits, k-fold CV can fail, selecting models with poor out-of-sample performance [24]. This underscores that the reliability of model selection depends heavily on the relationship between the training and test data, an element of irreducible uncertainty that practitioners must acknowledge [24].

The holdout method's performance estimate can be unstable, especially with smaller datasets, as it depends entirely on a single, random split of the data [20]. K-fold CV mitigates this by providing an average over multiple splits.

Table 2: Quantitative Performance Comparison in a Model Selection Task

| Model Type | Validation Method | Finding on Average | Key Risk / Variability |

|---|---|---|---|

| Random Forest & XGBoost (Bankruptcy Prediction) [24] | K-Fold Cross-Validation | A valid technique for model selection. | Can be unreliable for specific train/test splits; 67% of selection regret variability was due to the particular data split. |

| General Machine Learning Models [20] | Holdout Validation | Provides a quick, computationally cheap estimate. | The estimate can have high variance; a single unlucky split can give a misleading result. |

Practical Implementation and Best Practices

Guidance for Method Selection

Choosing the right validation method is a contextual decision. The following guidance can help researchers select the appropriate tool:

- For Large Datasets: The holdout method is often sufficient and computationally efficient. With enough data, a single random split is likely to be representative of the overall data distribution [19].

- For Small to Medium Datasets: K-fold cross-validation (with k=5 or k=10) is strongly recommended. It maximizes data usage for both training and validation, providing a more reliable performance estimate [18] [22].

- For Model Comparison and Hyperparameter Tuning: K-fold CV is the "gold standard" as it reduces the variance in performance estimates, allowing for more confident comparisons between different models or hyperparameter settings [22].

- For a Final, Independent Test: Always maintain a strict holdout test set for the final evaluation of a chosen model, even when using k-fold CV for development. This provides the best estimate of real-world performance [18].

The Scientist's Toolkit: Key Validation Concepts

This table details the essential "reagents" for any model validation experiment.

Table 3: Essential Concepts for Model Validation

| Concept / Tool | Function & Purpose |

|---|---|

| Stratification | A sampling technique used during data splitting to ensure that the distribution of a target variable (e.g., class labels) is consistent across training, validation, and test sets. This is crucial for imbalanced datasets [18]. |

| Holdout Test Set | The pristine, untouched subset of data used solely for the final performance report of a fully-trained model. It simulates the model's encounter with truly new data in production [19] [18]. |

| Nested Cross-Validation | A sophisticated technique where an inner k-fold CV loop is used for hyperparameter tuning, and an outer k-fold CV loop is used for model performance estimation. It provides an almost unbiased performance estimate but is computationally very intensive [24]. |

| Data Leakage Prevention | The practice of ensuring no information from the test set influences the training process. This includes fitting preprocessing steps such as feature scaling only on the training portion, after splitting the data and separately within each CV fold, rather than on the full dataset beforehand [18]. |
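Several of these concepts can be exercised together. The sketch below, assuming scikit-learn and a synthetic imbalanced dataset, wraps scaling and a classifier in a `Pipeline` so the scaler is refit inside every fold (leakage prevention), uses stratified folds, and nests a hyperparameter search inside an outer evaluation loop. The logistic regression model and the grid of `C` values are placeholders chosen only for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic imbalanced dataset (roughly 80/20 class split), an assumption.
X, y = make_classification(
    n_samples=300, n_features=10, weights=[0.8, 0.2], random_state=0
)

# Scaling lives inside the pipeline, so it is fit on training folds only.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("clf", LogisticRegression(max_iter=1000)),
])

# Stratified folds keep the class ratio similar in every split.
inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=0)
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=1)

# Inner loop: hyperparameter tuning. Outer loop: performance estimation.
search = GridSearchCV(
    pipe,
    param_grid={"clf__C": [0.1, 1.0, 10.0]},
    cv=inner_cv,
    scoring="balanced_accuracy",
)
nested_scores = cross_val_score(search, X, y, cv=outer_cv, scoring="balanced_accuracy")
print(f"Nested CV balanced accuracy: {nested_scores.mean():.3f}")
```

The cost is visible in the structure: each of the 5 outer folds triggers a full 3-fold grid search, which is why nested CV is described as computationally intensive.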

Application in Text-Mined Synthesis Research

The validation principles discussed are acutely relevant in the domain of text-mined synthesis research for materials and drug development. In these fields, datasets are often:

- Limited in Size: Manually curating high-quality synthesis data from literature is time-consuming, leading to smaller datasets that benefit greatly from k-fold cross-validation's efficient data use [21].

- Noisy: Automated text-mining can introduce extraction errors, making robust validation even more critical to ensure models learn true patterns rather than artifacts of the data collection process [21] [4].

- Expected to Generalize Well: The ultimate goal is to accurately predict synthesis outcomes for novel compounds. A rigorous validation protocol is the best defense against deploying an overfitted, underperforming model into the experimental workflow.

For instance, studies predicting solid-state synthesizability of ternary oxides or planning synthesis routes for gold nanoparticles rely on validated machine learning models built from text-mined data [21] [4]. The choice between holdout and k-fold validation in such contexts directly impacts the confidence researchers can have in the model's predictions before committing to costly and time-consuming lab experiments.

The field of materials science has witnessed exponential growth in research publications, creating both an invaluable knowledge resource and a significant data extraction challenge. Nowhere is this more evident than in the domains of inorganic materials and metal-organic frameworks (MOFs), where synthesis parameters critically determine material properties and functionality. Text mining has emerged as a powerful methodology to convert unstructured scientific texts into structured, machine-readable data, enabling large-scale analysis and prediction of synthesis-property relationships [25]. This comparison guide examines leading datasets and approaches in this domain, with particular emphasis on their application in cross-validating synthesis parameters for inorganic materials and MOFs.

The exponential growth of MOF literature exemplifies this challenge and opportunity. By 2022, the Cambridge Crystallographic Data Center had documented more than 110,000 MOF structures, rendering conventional trial-and-error synthesis increasingly inefficient for exploring this vast chemical space [26]. Similar challenges exist across solid-state inorganic chemistry, where synthesis recipes remain buried in unstructured experimental paragraphs. This guide systematically compares the leading resources that aim to address these challenges through automated data extraction, structuring, and validation methodologies.

Key Datasets and Their Methodologies

Comprehensive Dataset Comparison

Table 1: Comparison of Major Text-Mined Synthesis Datasets

| Dataset/System | Source Materials | Extraction Method | Key Parameters | Scale | Primary Application |

|---|---|---|---|---|---|

| CederGroup Text-Mined Dataset [27] | 95,283 solid-state synthesis paragraphs | NLP pipeline with materials entity recognition | Starting compounds, synthesis steps, conditions, chemical equations | 30,031 chemical reactions | General inorganic materials synthesis prediction |

| MOFh6 System [26] | Raw MOF articles with DOIs | Multi-agent LLM framework (GPT-4o-mini) | 14 synthesis parameters including metal precursors, organic linkers, solvent systems | 99% extraction accuracy | MOF synthesis protocol standardization |

| Yaghi et al. ChatGPT Approach [28] | 228 peer-reviewed MOF papers | ChatGPT prompt engineering | Synthesis conditions, crystallization parameters | 26,257 distinct parameters for ~800 MOFs | MOF crystallization prediction (87% accuracy) |

| CSD MOF Decomposition Dataset [29] | 28,994 3D MOFs from Cambridge Structural Database | Automated decomposition algorithm | Metal nodes, organic linkers, pore limiting diameters | 14,296 single metal-linker MOFs | Porosity prediction from components |

Experimental Protocols and Extraction Methodologies

Each dataset employs distinct methodological approaches for information extraction and validation:

The CederGroup pipeline utilizes a combination of text mining and natural language processing approaches to convert unstructured scientific paragraphs describing inorganic materials synthesis into "codified recipes" of synthesis. Their methodology involves several specialized steps: paragraphs classification to identify synthesis-related content, materials entity recognition (MER) to identify relevant chemical entities, and similarity analysis of precursors in solid-state synthesis [27]. This multi-stage approach ensures comprehensive coverage of synthesis parameters while maintaining contextual accuracy.

The MOFh6 system employs a dynamic multi-agent framework based on large language models (specifically GPT-4o-mini) that reconstructs complete semantic contexts through specialized agents for synthesis data parsing, table data processing, and chemical abbreviation resolution. A notable innovation is its dual-verification mechanism of regular expressions and LLM to resolve co-references from abbreviations to full names, addressing a significant challenge in chemical text mining [26]. The system achieves 94.1% abbreviation resolution accuracy across five major publishers and maintains a precision of 0.93 ± 0.01 in parameter extraction.

The Yaghi et al. approach leverages ChatGPT with specialized prompt engineering to process relevant sections in MOF research papers and extract, clean up, and organize synthesis data. This methodology demonstrates the capability of large language models to achieve high-accuracy extraction with minimal coding knowledge requirements [28]. The extracted data subsequently trains machine learning models that achieve 87% accuracy in predicting MOF experimental crystallization outcomes.

Table 2: Performance Metrics of Extraction Methodologies

| Methodology | Extraction Accuracy | Processing Speed | Key Innovation | Limitations |

|---|---|---|---|---|

| CederGroup NLP Pipeline | Not specified | Not specified | Materials entity recognition | Limited to solid-state synthesis |

| MOFh6 Multi-agent LLM | 99% | 9.6s per article, 36s for synthesis localization | Cross-paragraph semantic fusion | Requires institutionally authorized crawlers |

| ChatGPT Prompt Engineering | High (specific metric not provided) | Very fast (batch processing) | Minimal coding knowledge requirement | Dependent on carefully crafted prompts |

| CSD Decomposition [29] | 87.8% success rate | Not specified | Automated MOF deconstruction to components | Limited to structurally characterized MOFs |

Cross-Validation of Synthesis Parameters

Workflow for Cross-Validation

The integration of multiple text-mined datasets enables robust cross-validation of synthesis parameters, a critical requirement for ensuring data reliability in materials research. The following diagram illustrates a comprehensive workflow for cross-validating text-mined synthesis parameters across multiple datasets:
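One step of such a workflow, reconciling the same parameter as reported by two extraction sources, can be sketched in a few lines. This is a hypothetical illustration: the `Record` fields, the material labels, the numeric values, and the 5% relative tolerance are all assumptions and do not come from any of the datasets compared above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """One extracted synthesis record (hypothetical schema)."""
    material: str
    temperature_c: float
    time_h: float


def cross_check(source_a, source_b, rel_tol=0.05):
    """Label each material in source_a as agree, conflict, or unmatched."""
    b_by_material = {rec.material: rec for rec in source_b}
    report = {}
    for rec_a in source_a:
        rec_b = b_by_material.get(rec_a.material)
        if rec_b is None:
            report[rec_a.material] = "unmatched"
            continue
        pairs = [
            (rec_a.temperature_c, rec_b.temperature_c),
            (rec_a.time_h, rec_b.time_h),
        ]
        # Values agree when they differ by at most rel_tol of the larger one.
        agrees = all(
            abs(va - vb) <= rel_tol * max(abs(va), abs(vb)) for va, vb in pairs
        )
        report[rec_a.material] = "agree" if agrees else "conflict"
    return report


# Made-up records standing in for two independently mined datasets.
mined_a = [
    Record("MOF-A", 120.0, 24.0),
    Record("MOF-B", 85.0, 12.0),
    Record("MOF-C", 100.0, 48.0),
]
mined_b = [Record("MOF-A", 120.0, 24.0), Record("MOF-B", 150.0, 12.0)]

report = cross_check(mined_a, mined_b)
print(report)
```

Records flagged as conflicts are natural candidates for manual review against the source paper, while unmatched records indicate coverage gaps between datasets.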

Applications in Predictive Modeling

Cross-validated synthesis parameters serve as critical inputs for machine learning models predicting material properties and synthesis outcomes. For instance, the CSD MOF decomposition dataset enables prediction of guest accessibility with 80.5% accuracy based solely on metal and linker identities, without requiring a priori knowledge of the MOF structure [29]. This approach uses a random forest classifier trained on chemical descriptors of metal-linker combinations to predict whether resulting MOF structures will be accessible to guests (defined as having a pore limiting diameter >2.4 Å).

Similarly, the Yaghi et al. ChatGPT-mined dataset facilitates machine learning models that achieve 87% accuracy in predicting MOF experimental crystallization outcomes [28]. This demonstrates the practical utility of validated synthesis parameters in guiding experimental work and reducing trial-and-error approaches.

Another application comes from MOF-based mixed matrix membranes (MMMs) for CO2 capture, where machine learning models trained on literature data reveal optimal MOF structures with pore size >1 nm and surface area of ~800 m² g⁻¹ [30]. The experimental validation of these predictions demonstrates how cross-validated data can overcome traditional permeability-selectivity trade-offs in membrane design.

Essential Research Reagent Solutions

The experimental protocols revealed through text mining efforts rely on carefully selected reagents and synthesis conditions. The following table summarizes key "research reagent solutions" commonly identified across text-mined MOF and inorganic materials synthesis data:

Table 3: Essential Research Reagents in Text-Mined Synthesis Protocols

| Reagent Category | Specific Examples | Function in Synthesis | Prevalence in Datasets |

|---|---|---|---|

| Metal Precursors | Copper ions, Zinc nitrate, Iron chloride | Form secondary building units (SBUs) as metal nodes | Universal across MOF datasets |

| Organic Linkers | 1,3,5-benzenetricarboxylic acid (BTC), 2-methylimidazole | Connect metal nodes to form framework structures | Universal across MOF datasets |

| Solvent Systems | DMF, water, ethanol, DEF | Medium for reaction and crystal growth | >90% of MOF synthesis procedures |

| Modulators | Acetic acid, nitric acid, hydrochloric acid | Control crystal growth and morphology | ~40% of advanced MOF syntheses |

| Structure-Directing Agents | Alkyl ammonium salts, surfactants | Influence pore structure and morphology | ~25% of complex structure syntheses |

These reagent categories represent the fundamental building blocks identified through analysis of text-mined synthesis data. Their specific combinations and concentrations, along with processing parameters such as temperature, reaction time, and activation protocols, collectively determine the structural characteristics and properties of the resulting materials [31] [26].

Integration Pathways for Data-Driven Materials Discovery

The convergence of text-mined datasets with experimental validation and machine learning prediction creates a powerful framework for accelerated materials discovery. The following diagram illustrates this integrated pathway, highlighting how cross-validation enhances reliability at each stage:

This integrated approach demonstrates how cross-validated text mining transforms materials research from isolated investigations into a cumulative, data-driven science. As these methodologies mature, they enable increasingly accurate predictive models for synthesis outcomes and material properties, ultimately reducing the time and resource investments required for materials development [25] [32].

The future trajectory of this field points toward even tighter integration of text mining with experimental automation. Recent advances include the incorporation of text-mined synthesis data with autonomous laboratories and multi-agent AI systems that can process textual, visual, and structural information in a unified way [25] [32]. These developments promise to further accelerate the discovery and optimization of inorganic materials and MOFs for applications ranging from carbon capture to drug delivery.

Social and Anthropogenic Biases in Historical Synthesis Literature

The increasing reliance on data-driven methods to predict and plan inorganic material synthesis has uncovered a critical, yet long-overlooked issue: the historical literature used to train these models is not objective. It is permeated by social and anthropogenic biases—systematic skews resulting from the cumulative choices, heuristics, and social influences of human scientists. These biases can significantly hinder exploratory discovery by limiting the chemical and synthetic space that machine learning models can effectively learn from and propose. This guide compares the performance of traditional, human-selected synthesis data against emerging, bias-aware approaches, framing the comparison within the broader thesis that cross-validation of text-mined synthesis parameters is essential for robust and generalizable synthesis prediction.

Research by Jia et al. demonstrates that these biases manifest in two primary forms: reagent choice bias and reaction condition bias [33]. Their analysis of reported crystal structures revealed that amine choices in hydrothermal synthesis follow a power-law distribution, where a small fraction of amines (17%) account for the majority (79%) of reported compounds. This "rich-get-richer" distribution aligns with models of social influence, suggesting that researchers are disproportionately influenced by precedent and popularity when selecting reactants. Similarly, an analysis of unpublished laboratory notebooks showed that the selection of reaction conditions, such as temperature and time, is also highly constrained and non-random [33]. These human-selected datasets form the foundation of many predictive models, thereby encoding these limitations and perpetuating them in future research recommendations.

The following table summarizes the key characteristics and inherent biases of different sources of synthesis data, from traditional human-curated literature to modern approaches designed to mitigate bias.

Table 1: Comparison of Synthesis Data Sources and Their Anthropogenic Biases

| Data Source | Nature of Bias | Impact on Predictive Models | Exploratory Potential |

|---|---|---|---|

| Traditional Literature (Human-Selected Recipes) | Reagent popularity bias (power-law distribution) [33]; conditional bias (narrow, socially influenced parameter ranges) [33]; success-only bias (systematic omission of failed experiments) [34]. | Models are exploitative; they excel at predicting known successes but have poor failure prediction and low accuracy for unexplored spaces [33] [34]. | Limited; reinforces existing knowledge and frequently leads to local optimization rather than true discovery. |

| Text-Mined Datasets (e.g., from Solid-State Literature [35]) | Inherits all biases present in the source literature; may introduce text-mining selection biases (e.g., from paragraph classification or named entity recognition models). | Provides broad, large-scale data for analysis, but models trained on it will perpetuate and amplify historical human biases [33]. | Uncovers broad patterns in historical practice, but the exploration is confined to previously documented paths. |

| Randomized Experiments (Controlled Generation) | Minimizes anthropogenic bias by using probability density functions to select parameters [33] [34]; includes successful and failed outcomes. | Models trained on smaller randomized datasets outperform those trained on larger human-selected datasets and are more robust and better suited to exploration [33]. | High; efficiently maps the viable synthesis space and reveals previously unknown parameter windows for successful reactions. |

| High-Throughput & Automated Workflows (e.g., RAPID, ESCALATE [34]) | Reduces human decision-making at the experimental stage; captures fine-grained, standardized data, including negative results. | Enables the creation of high-quality, bias-reduced datasets ideal for training highly generalizable and exploratory models [34]. | Maximum; allows for the systematic interrogation of high-dimensional synthesis spaces that are intractable for human-guided exploration. |

Experimental Protocols for Identifying and Quantifying Bias

Protocol: Data-Mining for Reagent Popularity Bias

This methodology was used to identify the power-law distribution in reagent choices [33].

- Data Collection: Assemble a dataset of reported synthesis reactions from a specific domain (e.g., amine-templated metal oxides) using text-mining of scientific literature or analysis of crystal structure databases [33].

- Entity Recognition: Extract all reactant reagents mentioned in the synthesis paragraphs or database entries. Advanced methods may use a BiLSTM-CRF (Bidirectional Long Short-Term Memory with a Conditional Random Field) neural network or fine-tuned Large Language Models (LLMs) for precise material entity recognition [35] [36].

- Frequency Analysis: Calculate the frequency of use for each unique reagent.

- Distribution Fitting: Analyze the frequency distribution. A power-law distribution, where a small number of reagents account for a large majority of syntheses, is indicative of strong anthropogenic bias driven by precedent and social influence [33].
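The frequency analysis and distribution check in this protocol can be sketched with the standard library alone. The reagent labels and counts below are invented for illustration, and the log-log rank-frequency slope is only a rough diagnostic assumed here for brevity; it is not the fitting procedure used in [33], and a rigorous power-law test would need a dedicated statistical method.

```python
import math
from collections import Counter


def top_share(counts, top_fraction):
    """Share of all uses attributable to the most popular fraction of reagents."""
    ranked = sorted(counts.values(), reverse=True)
    n_top = max(1, round(top_fraction * len(ranked)))
    return sum(ranked[:n_top]) / sum(ranked)


def loglog_slope(counts):
    """Least-squares slope of log(frequency) against log(rank)."""
    ranked = sorted(counts.values(), reverse=True)
    xs = [math.log(rank) for rank in range(1, len(ranked) + 1)]
    ys = [math.log(freq) for freq in ranked]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den


# Hypothetical reagent mentions extracted from synthesis paragraphs.
mentions = (
    ["amine_A"] * 48 + ["amine_B"] * 24 + ["amine_C"] * 12
    + ["amine_D"] * 6 + ["amine_E"] * 3 + ["amine_F"] * 3
)
counts = Counter(mentions)

share = top_share(counts, top_fraction=0.17)
slope = loglog_slope(counts)
print(f"Top 17% of reagents account for {share:.0%} of uses")
print(f"Log-log rank-frequency slope: {slope:.2f}")
```

A strongly negative slope together with a high top-fraction share is the kind of signature that would prompt a closer look for popularity bias.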

Protocol: Evaluating Bias via Randomized Experimentation

This protocol tests the core hypothesis that human-selected reaction conditions are suboptimal for exploration and model training [33].

- Baseline Establishment: Start with a set of historical, human-selected synthesis data for a specific material system.

- Randomized Design: Generate a set of synthesis experiments where parameters (e.g., reactant concentrations, temperature, time) are chosen randomly using probability density functions, rather than by human intuition [33] [34].

- Parallel Experimentation: Conduct both the human-selected and randomly generated experiments (e.g., 548 random experiments as in the cited study [33]) and record all outcomes, including successes and failures.

- Model Training & Comparison: Train Machine Learning Model A on the large, human-selected dataset and Machine Learning Model B on the smaller, randomized experimental dataset.

- Performance Evaluation: Compare the performance of both models on a held-out test set or in predicting the outcomes of new, exploratory syntheses. The key finding reported in [33] is that Model B, trained on fewer but less-biased experiments, outperforms Model A in predictive accuracy and utility for exploration.
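The randomized design step can be illustrated with a small sampler that draws each parameter from a probability density function instead of relying on human choice. The parameter names, ranges, and the choice of uniform and log-uniform densities below are assumptions made for this sketch; only the count of 548 experiments echoes the cited study.

```python
import math
import random


def sample_experiments(n, seed=0):
    """Draw n synthesis conditions from simple probability densities."""
    rng = random.Random(seed)
    experiments = []
    for _ in range(n):
        experiments.append({
            # Uniform over a hypothetical temperature window.
            "temperature_c": rng.uniform(80.0, 200.0),
            # Log-uniform so short and long reaction times are both covered.
            "time_h": math.exp(rng.uniform(math.log(1.0), math.log(72.0))),
            # Uniform over a hypothetical amine concentration range.
            "amine_conc_m": rng.uniform(0.1, 2.0),
        })
    return experiments


experiments = sample_experiments(548)
print(experiments[0])
```

Fixing the seed makes the design reproducible, so the same randomized plan can be rerun or audited later.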

Workflow: From Text-Mining to Bias-Aware Synthesis Prediction

The diagram below illustrates a comprehensive workflow for cross-validating text-mined synthesis parameters to build bias-aware predictive models.

The Scientist's Toolkit: Key Research Reagents and Platforms

This table details essential reagents, materials, and computational platforms central to conducting research in text-mined synthesis and bias mitigation.

Table 2: Essential Research Reagents and Platforms for Synthesis Informatics

| Tool/Reagent | Type | Function in Research |

|---|---|---|

| CTAB (Cetyltrimethylammonium bromide) | Chemical Reagent | A common capping agent in seed-mediated gold nanoparticle synthesis; its presence and concentration are key text-mined parameters influencing nanoparticle morphology [36]. |

| Amine Templates (e.g., ethylenediamine) | Chemical Reagent | Common reactants in hydrothermal synthesis of metal oxides; their popularity bias is a canonical example of anthropogenic bias in literature data [33]. |

| ChemDataExtractor / OSCAR4 | Software Tool | Natural Language Processing (NLP) toolkits specifically designed for automated extraction of chemical information (materials, properties, synthesis) from scientific text [35]. |

| BiLSTM-CRF Network | Algorithm | A neural network architecture used for Material Entity Recognition (MER), identifying and classifying material names (e.g., target, precursor) in synthesis paragraphs [35]. |

| RAPID / ESCALATE | Automated Platform | High-Throughput Experimentation (HTE) systems that minimize human bias by performing many reactions robotically, generating standardized, fine-grained data for model training [34]. |

| Llama-2 / GPT | Large Language Model | Fine-tuned LLMs can perform joint Named Entity Recognition and Relation Extraction (NERRE) to build structured synthesis recipes directly from literature text [36]. |

The comparison presented in this guide indicates that historical synthesis literature, while a rich data source, carries significant anthropogenic biases that impair its utility for guiding exploratory research. The reliance on "tried and true" reagents and conditions creates a feedback loop that limits discovery. The cross-validation of text-mined parameters against data from randomized or high-throughput experiments is not merely an academic exercise; it is a necessary step for building reliable and innovative synthesis prediction tools. Models trained on smaller, less-biased datasets have been shown to outperform those trained on larger, human-selected datasets, suggesting that data quality and diversity can matter more than sheer volume [33]. The future of synthesis planning lies in integrating the scale of text-mined historical data with the rigor of bias-aware data generation, moving from exploitative to truly exploratory science.

Implementing Cross-Validation Pipelines for Text-Mined Parameters

The ever-increasing volume of academic and technical literature presents both an unprecedented opportunity and a significant challenge for researchers. In fields ranging from drug development to materials science, crucial information about experimental procedures and synthesis parameters remains locked within unstructured textual data. Text mining pipelines have emerged as essential tools for automating the extraction of structured knowledge from this data deluge, potentially accelerating research cycles and enabling data-driven discovery. However, the performance and reliability of these pipelines vary considerably based on their architectural components and validation methodologies.

This guide provides an objective comparison of text-mining approaches, with a specific focus on their application within a broader thesis context: cross-validation of text-mined synthesis parameters. For researchers and drug development professionals, selecting the appropriate pipeline components is not merely a technical exercise but a critical determinant of research validity. We present experimental data comparing algorithmic performance, detail essential methodologies, and provide a structured framework for implementing a complete pipeline from literature procurement to final "recipe" extraction, with all analysis framed against the rigorous standard of prospective validation in scientific discovery.

Pipeline Architecture: Core Components and Comparative Performance

A complete text-mining pipeline is a multi-stage system where the output of each stage feeds into the next. The choice of techniques at each stage significantly impacts the final quality of the extracted synthesis parameters. The performance of these components is not theoretical; it must be evaluated empirically, as complexity does not always guarantee superior results.

Text Preprocessing and Feature Engineering

Text preprocessing serves as the foundational filter for raw data, improving quality and relevance by removing noise and standardizing the input text [37]. This stage includes tokenization (breaking text into smaller units like words or sentences), stopword removal (eliminating common words like "the" or "and" which can reduce text size by 35-45%), and text normalization [37]. The decision to apply preprocessing is data-driven and is particularly crucial when dealing with real-world documents that often contain inconsistent formatting, misspellings, and unwanted characters [37] [38].
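As a minimal sketch of these steps, the function below lowercases a sentence, tokenizes it with a regular expression, and removes stopwords. The stopword list is a tiny illustrative subset and the tokenizer is deliberately simple (it would split a formula such as Zn(NO3)2 into fragments); production pipelines typically rely on a library tokenizer instead.

```python
import re

# Tiny illustrative stopword list (assumption); real lists are much longer.
STOPWORDS = {"the", "and", "a", "an", "of", "in", "to", "was", "were", "for", "at", "with"}


def preprocess(text):
    """Normalize case, tokenize, and drop stopwords."""
    normalized = text.lower()
    # Keep alphanumeric tokens, including decimals such as 0.5.
    tokens = re.findall(r"[a-z0-9]+(?:\.[0-9]+)?", normalized)
    return [tok for tok in tokens if tok not in STOPWORDS]


sentence = "The mixture was heated at 120 °C for 24 h in DMF."
tokens = preprocess(sentence)
print(tokens)
```

Numbers and unit fragments are kept on purpose, since in synthesis text they carry the parameters of interest rather than noise.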

Following preprocessing, feature engineering transforms the cleaned text into a numerical format that machine learning models can process.

Table 1: Comparison of Feature Extraction Techniques

| Technique | Best For | Strengths | Limitations | Reported Contextual Performance |

|---|---|---|---|---|

| Bag of Words (BoW) | Basic text classification, spam detection, initial categorization [39] | Computational simplicity, intuitive implementation, effective for simple taxonomic tasks [39] | Ignores word order and context, poor at capturing meaning [39] | Effective in procurement document classification where word presence is a strong signal [40] |

| TF-IDF | Information retrieval, document classification, highlighting distinctive terms [39] | Down-weights common terms, highlights unique and informative words in a document corpus [39] | More computationally intensive than BoW; still does not capture semantic relationships [39] | Superior to BoW in identifying key contract clauses and technical specifications [41] |

| N-grams | Sentiment analysis, phrase detection, capturing local context [39] | Captures local word order and context (e.g., "not good" vs "good") [39] | Can lead to high dimensionality and sparsity; large N can cause overfitting [39] | Improves accuracy in dependency parsing of test cases in software engineering [38] |

| Word Embeddings & Deep Learning | Complex semantic similarity, context-aware tasks [37] | Captures complex linguistic patterns and semantic meanings; state-of-the-art for many NLP tasks [37] | High computational resource requirement; risk of overfitting with limited data; less interpretable [42] [40] | Outperforms others in fine-grained Named Entity Recognition (NER) for material science concepts [43] |
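The first three techniques in the table can be compared directly with scikit-learn's text vectorizers. The three short "documents" below are invented synthesis-style sentences used only to show how the feature spaces differ; they are not drawn from any cited corpus.

```python
from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer

# Invented example sentences (assumption).
docs = [
    "zinc nitrate dissolved in dmf and heated at 120 c",
    "copper nitrate dissolved in water and stirred at room temperature",
    "zinc nitrate and 2-methylimidazole mixed in methanol",
]

# Bag of Words: raw term counts, word order ignored.
bow = CountVectorizer()
X_bow = bow.fit_transform(docs)

# TF-IDF: down-weights terms that occur in many documents.
tfidf = TfidfVectorizer()
X_tfidf = tfidf.fit_transform(docs)

# Unigrams plus bigrams: captures local phrases such as "zinc nitrate".
bigrams = CountVectorizer(ngram_range=(1, 2))
X_bigrams = bigrams.fit_transform(docs)

print("BoW features:", X_bow.shape[1])
print("Unigram+bigram features:", X_bigrams.shape[1])
print("IDF of 'and':", round(tfidf.idf_[tfidf.vocabulary_["and"]], 2))
print("IDF of 'dmf':", round(tfidf.idf_[tfidf.vocabulary_["dmf"]], 2))
```

The jump in feature count when bigrams are added illustrates the dimensionality and sparsity cost noted in the table, while the IDF values show how a term present in every document is weighted below a distinctive one.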

Entity Recognition and Relationship Extraction

This is the core "understanding" phase of the pipeline. Named Entity Recognition (NER) is used to identify and categorize key entities within the text, such as material names, chemical compounds, numerical parameters, or process names [39] [43]. In a materials science context, this could involve annotating texts with concepts from a specialized ontology, distinguishing between 179 distinct classes such as mm:ProcessingTemperature or mm:AlloyComposition [43].

The emerging paradigm of neurosymbolic AI combines the statistical power of language models with the structured, logical knowledge of ontologies. This integration allows for more interpretable and logically consistent extraction, which is crucial for validating synthesis parameters [43]. For example, an ontology can enforce that a mm:HotRolling process must act upon a mm:MetallicMaterial, providing a sanity check for the model's extractions.
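The sanity check described above can be sketched as a lookup against a small class hierarchy. Only `mm:HotRolling` and `mm:MetallicMaterial` come from the text; the other class names, the hierarchy, and the constraint table are hypothetical stand-ins for what a real ontology would supply.

```python
# Constraint from the text: hot rolling must act on a metallic material.
PROCESS_INPUT = {"mm:HotRolling": "mm:MetallicMaterial"}

# Hypothetical subclass hierarchy (child -> parent), for illustration only.
SUBCLASS_OF = {
    "mm:Steel": "mm:MetallicMaterial",
    "mm:MetallicMaterial": "mm:Material",
    "mm:Polymer": "mm:Material",
}


def is_a(cls, ancestor):
    """Walk up the hierarchy to test whether cls is ancestor or a subclass of it."""
    while cls is not None:
        if cls == ancestor:
            return True
        cls = SUBCLASS_OF.get(cls)
    return False


def validate(process_cls, material_cls):
    """Return True when an extracted (process, material) pair satisfies the constraint."""
    required = PROCESS_INPUT.get(process_cls)
    return required is None or is_a(material_cls, required)


print(validate("mm:HotRolling", "mm:Steel"))    # consistent extraction
print(validate("mm:HotRolling", "mm:Polymer"))  # flagged as inconsistent
```

Extractions that fail such a check can be routed to review rather than silently entering the recipe database.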

Cross-Validation and Prospective Validation in Text Mining

A central premise of this guide is that models performing well on conventional random-split validation can fail badly when applied to real-world discovery tasks. This is because their applicability domain is often limited to compounds or materials similar to those in the training set [44]. True validation must simulate the prospective use case: predicting genuinely novel synthesis parameters.

Beyond Random Splits: k-fold n-step Forward Cross-Validation

In real-world research, the goal is to predict the properties of novel compounds or materials that have not yet been synthesized, representing a significant challenge of out-of-distribution data [44]. The k-fold n-step forward cross-validation (SFCV) method addresses this by simulating temporal or logical progression [44].

In drug discovery, this can be implemented by sorting a dataset of compounds by a key property like LogP (hydrophobicity) and then sequentially training on earlier, less drug-like compounds to predict the properties of later, more optimized ones [44]. This method provides a more realistic assessment of a model's utility in a real discovery pipeline than conventional random splits.
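A sorted, forward-stepping split of this kind can be sketched as an index generator. This is one plausible reading of the scheme, under stated assumptions: samples are sorted in ascending order of the chosen property, divided into k contiguous bins, and each split trains on the first bins and tests on the bin `n_step` positions ahead. The exact binning in [44] may differ, and the LogP values below are invented.

```python
def step_forward_splits(sort_values, k=5, n_step=1):
    """Yield (train_indices, test_indices) pairs that step forward through sorted bins."""
    order = sorted(range(len(sort_values)), key=lambda i: sort_values[i])
    bounds = [round(j * len(order) / k) for j in range(k + 1)]
    bins = [order[bounds[j]:bounds[j + 1]] for j in range(k)]
    for i in range(1, k - n_step + 1):
        train = [idx for b in bins[:i] for idx in b]
        test = bins[i + n_step - 1]
        yield train, test


# Hypothetical LogP values for ten compounds.
logp = [3.1, 0.5, 4.2, 1.8, 2.6, 5.0, 0.9, 3.7, 2.2, 4.6]

splits = list(step_forward_splits(logp, k=5, n_step=1))
for train, test in splits:
    print(f"train on {len(train)} compounds, test on {len(test)}")
```

Unlike a random split, every test bin lies beyond the property range seen in training, so each fold probes extrapolation rather than interpolation.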

Key Metrics for Prospective Performance

When evaluating models for prospective prediction, standard metrics like accuracy are insufficient. Two critical metrics adapted from materials science are:

- Discovery Yield: Measures the model's ability to identify materials or compounds whose properties lie outside the range of the training data—for instance, predicting a higher efficacy or a more desirable release profile than previously known [44].

- Novelty Error: Assesses whether the model can generalize to new, unseen data that differs significantly from the training set, helping to define the model's true applicability domain [44].
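The two metrics are described only qualitatively here, so the sketch below shows one possible operationalization rather than the definitions used in [44]. As assumptions: discovery yield is taken as the fraction of test items whose true value exceeds the training maximum and whose prediction also exceeds it, and novelty error is taken as the mean absolute error on test items farther than a chosen distance from every training point. All values are invented.

```python
import math


def discovery_yield(y_train, y_test, y_pred):
    """Fraction of out-of-range test items that the model also predicts out of range."""
    threshold = max(y_train)
    discoveries = [i for i, y in enumerate(y_test) if y > threshold]
    if not discoveries:
        return None
    hits = sum(1 for i in discoveries if y_pred[i] > threshold)
    return hits / len(discoveries)


def novelty_error(X_train, X_test, y_test, y_pred, min_distance):
    """Mean absolute error on test items far from all training points."""
    def nearest(x):
        return min(math.dist(x, t) for t in X_train)

    novel = [i for i, x in enumerate(X_test) if nearest(x) > min_distance]
    if not novel:
        return None
    return sum(abs(y_test[i] - y_pred[i]) for i in novel) / len(novel)


# Invented toy data.
X_train = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
y_train = [1.0, 2.0, 3.0]
X_test = [(0.1, 0.1), (3.0, 3.0), (4.0, 0.0)]
y_test = [2.5, 3.5, 4.0]
y_pred = [2.4, 3.2, 2.9]

dy = discovery_yield(y_train, y_test, y_pred)
ne = novelty_error(X_train, X_test, y_test, y_pred, min_distance=1.0)
print(f"Discovery yield: {dy:.2f}, novelty error: {ne:.2f}")
```

Both functions return `None` when no test item qualifies, which avoids reporting a misleading zero for an empty subset.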

Table 2: Comparative Performance of ML Models for Prospective Property Prediction

| Model Algorithm | Best Use-Case Scenario | Key Strengths | Validation Performance (on SFCV) | Considerations for Recipe Extraction |

|---|---|---|---|---|

| Random Forest (RF) | Medium-sized, structured datasets (e.g., tabular features from text) [44] [42] | Robust to overfitting, good interpretability, can handle mixed data types [44] [42] | Good performance in bioactivity prediction with limited data (~25 trees) [44] | Ideal when features are a mix of numerical parameters and categorical entity tags. |

| Gradient Boosting (e.g., LGBM) | Tasks requiring high predictive accuracy with structured data [42] | High accuracy, can capture complex non-linear relationships [42] | Top performer in predicting drug release from polymer-based long-acting injectables [42] | Best choice when prediction accuracy of a single parameter (e.g., yield) is paramount. |

| Multi-Layer Perceptron (MLP) | Large datasets with complex non-linear patterns [44] [42] | High model capacity, can learn intricate feature interactions [42] | Risk of overfitting in low-data regimes (e.g., bioactivity prediction) [44] | Use only when a very large corpus of annotated recipes is available. |

| Rule-Based Systems | Well-structured domains with clear, consistent patterns (e.g., extracting dates, doses) [39] [40] | Easier to implement, highly interpretable, fit-for-purpose, requires no training data [40] | Highly effective in extracting structured data from multilingual procurement documents [40] | Unbeatable for extracting specific, predictable parameters from standardized document sections. |
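The rule-based row is the easiest to make concrete. The sketch below uses two regular expressions to pull temperatures and durations from a sentence; the patterns, the unit handling, and the example sentence are assumptions for illustration, and real procedures would need many more unit variants (K, days, "overnight", temperature ranges).

```python
import re

# Temperature in degrees Celsius, e.g. "120 °C" or "85.5°C".
TEMP_RE = re.compile(r"(\d+(?:\.\d+)?)\s*°\s*C")
# Duration in hours or minutes, e.g. "24 h", "2 hours", "30 min".
TIME_RE = re.compile(r"(\d+(?:\.\d+)?)\s*(hours?|h|minutes?|min)\b")


def extract_conditions(sentence):
    """Return temperatures (°C) and durations (hours) found in a sentence."""
    temps = [float(m.group(1)) for m in TEMP_RE.finditer(sentence)]
    times = []
    for m in TIME_RE.finditer(sentence):
        value, unit = float(m.group(1)), m.group(2)
        # Convert minutes to hours so all durations share one unit.
        times.append(value / 60 if unit.startswith("min") else value)
    return {"temperature_c": temps, "time_h": times}


sentence = "The solution was heated at 120 °C for 24 h, then cooled to 25 °C over 30 min."
conditions = extract_conditions(sentence)
print(conditions)
```

Because every rule is explicit, a wrong extraction can be traced to a specific pattern, which is the interpretability advantage noted in the table.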

Comparative Analysis: Pipeline Performance Across Domains

The optimal pipeline configuration is highly dependent on the specific domain and the nature of the source texts. Below, we compare experimental outcomes from three distinct fields to illustrate this dependency.

Software Engineering: Simplicity vs. Complexity

An industrial case study at ALSTOM Sweden on clustering software test cases found that the impact of algorithmic complexity on performance is nuanced. While advanced methods (e.g., neural network embeddings) can detect complex semantic relationships, their superiority is not absolute [38]. The study concluded that for many practical tasks, simpler, interpretable solutions (e.g., string distance methods) are often preferred unless accuracy is heavily compromised, highlighting the importance of balancing complexity with utility and transparency, especially in safety-critical domains [38].

Healthcare Procurement: The Power of Hybrid Approaches

A large-scale project mining millions of multilingual healthcare procurement documents demonstrated the enduring value of rule-based methods and domain lexicons in complex, real-world environments [40]. While deep learning models dominate academic literature, this industrial application successfully used a hybrid method that leveraged domain knowledge to generalize across multiple tasks and languages. The key lesson was that practitioners should focus on real needs and resource constraints rather than defaulting to the most complex algorithm [40].

Materials Science: Precision via Ontologies

Research on extracting Process-Structure-Property entities highlights the advantage of ontology-based approaches. Using the MaterioMiner dataset, which links textual entities to a Materials Mechanics Ontology, researchers achieved fine-grained Named Entity Recognition (NER) across 179 distinct classes [43]. This symbolic approach provides a structured, standardized framework for knowledge representation, ensuring that extracted entities like "solution heat treatment" or "yield strength" are unambiguous and computationally tractable, which is vital for building reliable knowledge graphs of synthesis recipes [43].

The Scientist's Toolkit: Essential Research Reagents

Implementing a text-mining pipeline requires both software and conceptual "reagents." The following table details key resources mentioned in the cited research.

Table 3: Key Research Reagent Solutions for Text-Mining Pipelines

| Item / Resource | Function / Application | Relevance to Pipeline Stage |
|---|---|---|
| RDKit [44] | An open-source toolkit for Cheminformatics; used for standardizing molecular structures (SMILES) and calculating molecular descriptors (e.g., ECFP4 fingerprints, LogP). | Featurization & Data Standardization. Critical for converting chemical names extracted from text into standardized, computable representations. |
| SpaCy / NLTK [39] | Industrial-strength natural language processing libraries. Provide pre-trained models for core tasks like Tokenization, Part-of-Speech (POS) Tagging, and Named Entity Recognition (NER). | Text Preprocessing & Entity Recognition. The foundation for parsing and initially understanding text structure and content. |
| SciBERT [40] | A pre-trained language model based on BERT but trained on a large corpus of scientific publications. | Feature Extraction & Semantic Similarity. Excels at understanding the context and language specific to academic papers, improving entity and relationship extraction. |
| Scikit-learn [44] | A core library for machine learning in Python. Offers implementations of classic algorithms (Random Forest, SVMs) and utilities for model evaluation (cross-validation, metrics). | Model Training & Evaluation. The standard toolbox for building and validating traditional ML models in the pipeline. |
| Protégé [43] | An open-source platform for building and managing ontologies. | Knowledge Representation. Used to define the formal schema (ontology) that gives structure and meaning to the extracted entities and their relationships. |
| TopicTracker [45] | A specialized software pipeline for text mining on PubMed data. It automates querying, trend analysis, and the creation of semantic network maps from scientific literature. | Literature Procurement & Trend Analysis. Useful for the initial stage of gathering and getting an overview of the relevant domain literature. |

Experimental Protocols and Workflow Visualization

Protocol for k-fold n-step Forward Cross-Validation

This protocol is adapted from bioactivity prediction studies to validate text-mined synthesis parameters [44].

- Dataset Preparation: Compile a dataset of historical synthesis data (e.g., compounds, processing conditions, and their resulting properties). Standardize all entities (e.g., chemical structures, parameter names) using tools like RDKit and an ontology.

- Data Sorting: Sort the entire dataset in a logical order that mimics real-world optimization. This could be by a key physicochemical property (e.g., LogP), by publication date, or by structural similarity.

- Fold Creation: Divide the sorted dataset into k sequential bins (e.g., 10 bins).

- Iterative Training and Testing:

  - Iteration 1: Train the model on data from Bin 1. Validate its predictions on Bin 2.

  - Iteration 2: Train the model on data from Bins 1 and 2. Validate on Bin 3.

  - Continue this process until the final iteration, which trains on Bins 1 through k-1 and tests on Bin k.

- Metric Calculation: For each iteration, calculate prospective metrics like Discovery Yield and Novelty Error in addition to standard metrics like Root Mean Square Error (RMSE). Aggregate results across all folds.
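The sorting, binning, and iterative train/test steps above can be sketched in a few lines of Python. This is a minimal illustration of the one-step-ahead case described in the protocol, not the implementation used in [44]. The function names `forward_cv_splits` and `rmse`, the toy records, and the mean-of-training-targets "model" are all assumptions made for the example. Discovery Yield and Novelty Error are omitted because their definitions are not given here.

```python
from math import sqrt
from statistics import mean


def forward_cv_splits(n_samples, k):
    """Yield (train_idx, test_idx) pairs for expanding-window forward CV.

    Assumes the data are already sorted (e.g., by publication date).
    Iteration i trains on bins 1..i and tests on bin i+1.
    """
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    # Compute bin boundaries; leftover samples go to the earliest bins.
    base, extra = divmod(n_samples, k)
    edges = [0]
    for b in range(k):
        edges.append(edges[-1] + base + (1 if b < extra else 0))
    for i in range(1, k):
        train_idx = list(range(0, edges[i]))
        test_idx = list(range(edges[i], edges[i + 1]))
        yield train_idx, test_idx


def rmse(y_true, y_pred):
    """Root mean square error between two equal-length sequences."""
    return sqrt(mean((t - p) ** 2 for t, p in zip(y_true, y_pred)))


# Toy, made-up records: (publication_year, target_value). Illustrative only.
records = [(2012, 1.0), (2010, 0.8), (2015, 1.6), (2011, 0.9), (2018, 2.1),
           (2013, 1.2), (2016, 1.7), (2014, 1.4), (2019, 2.4), (2017, 1.9)]
records.sort(key=lambda r: r[0])  # Data Sorting step (here: by year)
targets = [y for _, y in records]

fold_rmse = []
for train_idx, test_idx in forward_cv_splits(len(records), k=5):
    # Placeholder "model": predict the mean of the training targets.
    prediction = mean(targets[i] for i in train_idx)
    y_test = [targets[i] for i in test_idx]
    fold_rmse.append(rmse(y_test, [prediction] * len(y_test)))

print([round(v, 3) for v in fold_rmse], round(mean(fold_rmse), 3))
```

Because every training index precedes every test index, no iteration sees data from "later" in the sort order, which is the property that separates this scheme from randomly shuffled k-fold splitting.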

Protocol for Ontology-Based Entity Recognition

This protocol is used for creating a fine-grained, annotated dataset from materials science literature [43].

- Ontology Development: Develop or select a domain-specific ontology (e.g., the Materials Mechanics Ontology) that defines the classes and relationships of interest (e.g., mm:Processing, mm:Property, mm:Material).

- Corpus Collection: Gather a corpus of relevant scientific publications (PDFs or plain text).

- Annotation: Manually annotate text spans in the corpus, linking them to classes in the ontology. This is typically done by multiple human raters to ensure consistency.

- Curation & Adjudication: Resolve annotation disagreements between raters to create a gold-standard dataset.

- Model Fine-Tuning: Use the annotated dataset to fine-tune a pre-trained language model (e.g., SciBERT) for the NER task. This teaches the model to recognize domain-specific entities.
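Between adjudication and fine-tuning, the gold-standard span annotations usually have to be converted into per-token labels that a sequence-labeling model can consume. The sketch below shows one common encoding, BIO tagging, as an illustration. It is not taken from [43]: the helper `spans_to_bio`, the whitespace tokenization, and the mapping of the example phrases to the `mm:` classes are assumptions for the example.

```python
def spans_to_bio(tokens, spans):
    """Convert token-level span annotations to BIO tags.

    tokens: list of str
    spans:  list of (start_token, end_token_exclusive, label)
    """
    tags = ["O"] * len(tokens)
    for start, end, label in spans:
        if not 0 <= start < end <= len(tokens):
            raise ValueError(f"span out of range: {(start, end, label)}")
        if any(tag != "O" for tag in tags[start:end]):
            raise ValueError(f"overlapping span: {(start, end, label)}")
        tags[start] = f"B-{label}"          # first token of the entity
        for i in range(start + 1, end):
            tags[i] = f"I-{label}"          # continuation tokens
    return tags


# Hypothetical sentence and adjudicated annotations (illustrative only).
tokens = ("The alloy underwent solution heat treatment "
          "to raise its yield strength .").split()
spans = [
    (1, 2, "mm:Material"),     # "alloy"
    (3, 6, "mm:Processing"),   # "solution heat treatment"
    (9, 11, "mm:Property"),    # "yield strength"
]

for token, tag in zip(tokens, spans_to_bio(tokens, spans)):
    print(f"{token:10s} {tag}")
```

Rejecting overlapping spans keeps the encoding unambiguous. Nested entities, if the ontology allows them, would need a different scheme.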

Complete Text-Mining Pipeline Workflow

The following diagram illustrates the logical flow and component relationships of a complete text-mining pipeline, integrating the key stages discussed in this guide.

Diagram Title: End-to-End Text-Mining Pipeline for Recipe Extraction

Building a robust text-mining pipeline for recipe extraction is a multifaceted endeavor that requires careful, deliberate choices at every stage. As the comparative data shows, there is no single "best" algorithm or approach. The optimal configuration is dictated by the specific domain, the quality and structure of the source texts, and—critically—the required standard of validation.

For research centered on the cross-validation of text-mined synthesis parameters, the following principles are paramount. First, prospective validation strategies like k-fold n-step forward cross-validation are non-negotiable for assessing real-world utility. Second, the trade-off between complexity and interpretability must be actively managed, with simpler, rule-based methods often providing surprising value in structured domains. Finally, the integration of symbolic knowledge (via ontologies) with statistical language models represents the cutting edge for achieving both high precision and logical consistency. By adopting this structured, empirically-grounded approach, researchers can transform unstructured literature into a reliable, computable resource for accelerating scientific discovery.

Material Entity Recognition and Synthesis Operation Classification in Practice

The exponential growth of materials science literature presents both an unprecedented opportunity and a significant challenge for researchers. With millions of publications containing valuable synthesis protocols and experimental data, manual extraction of this information is becoming increasingly impractical. Automated material entity recognition and synthesis operation classification have emerged as critical technologies for converting unstructured scientific text into structured, machine-readable data that can power data-driven materials discovery [46] [25]. These natural language processing (NLP) techniques enable researchers to systematically organize experimental information from scientific papers, facilitating the creation of comprehensive knowledge bases that capture the complex relationships between synthesis parameters and material properties.

This guide provides an objective comparison of current approaches for extracting materials synthesis information from scientific literature, with a specific focus on their performance, methodological foundations, and practical applicability. The evaluation is framed within the broader context of cross-validating text-mined synthesis parameters—a crucial step toward building trustworthy data-driven workflows in experimental materials science. As text mining technologies increasingly inform experimental planning and autonomous laboratories, understanding the strengths and limitations of different extraction methods becomes essential for researchers seeking to leverage these powerful tools [21].

Performance Comparison of Material Information Extraction Systems

Quantitative Performance Metrics Across Domains

Table 1: Performance comparison of entity recognition systems in scientific domains

| System Name | Domain | Architecture | Key Entities Extracted | Performance (F1 Score) | Training Data Size |
|---|---|---|---|---|---|
| SURUS [47] | Clinical Trials | PubMedBERT | PICO elements, study design | 0.95 (in-domain), 0.84-0.90 (out-of-domain) | 39,531 labels across 400 abstracts |
| T2BR (Battery Recipes) [46] | Battery Materials | Transformer NER | Precursors, active materials, synthesis conditions | 88.18% (cathode), 94.61% (cell assembly) | 30 entities across 2,174 papers |
| Gold Nanoparticle NLP [4] | Nanomaterials | MatBERT + LDA | Morphologies, sizes, synthesis actions | Not explicitly reported | 5,154 records from 4.9M publications |
| MatSciBERT [48] | General Materials | Domain-adapted BERT | Material names, properties, synthesis parameters | SOTA on multiple materials NER tasks | 285M words from 150K papers |

Table 2: Comparison of large language model performance on scientific extraction tasks

| Model | Task | Approach | Performance | Limitations |
|---|---|---|---|---|
| GPT-4 [46] | Battery recipe extraction | Few-shot learning | Lower than fine-tuned transformers | Higher cost, potential hallucinations |
| Llama 3 [49] | Multilabel document classification | Zero-shot, instruction tuning | Micro F1-score: 0.88 | Struggles with rare labels (F1: 0.30) |
| Fine-tuned BERT variants [47] [46] | Named entity recognition | Supervised fine-tuning | F1: 0.84-0.95 | Requires annotated training data |

Cross-Domain Performance Analysis

The performance comparison reveals a consistent pattern across domains: specialized, fine-tuned transformer models generally outperform both traditional machine learning approaches and general-purpose large language models for structured information extraction tasks. The SURUS system demonstrates exceptional in-domain performance (F1: 0.95) for clinical trial data, while maintaining robust out-of-domain capability (F1: 0.84-0.90) [47]. Similarly, the T2BR protocol for battery recipes achieves notably high performance on cell assembly entity recognition (F1: 94.61%), though slightly lower on cathode material synthesis (F1: 88.18%) [46].

When comparing architectural approaches, BERT-based models fine-tuned on domain-specific corpora consistently establish state-of-the-art results. MatSciBERT, trained on 285 million words from peer-reviewed materials science publications, demonstrates superior performance over general scientific language models like SciBERT on multiple materials-specific NER tasks [48]. This performance advantage highlights the importance of domain adaptation through continued pre-training on specialized corpora.
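When reading the F1 values in the tables above, it helps to recall how the averaging method shapes the headline number. The sketch below uses made-up counts (not data from any cited study) to show how a micro-averaged F1 can stay high while a rare label performs poorly, the kind of gap noted for rare labels in Table 2. The label names and counts are hypothetical.

```python
def f1(tp, fp, fn):
    """F1 from true positives, false positives, and false negatives."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Hypothetical per-label counts: label -> (tp, fp, fn).
counts = {
    "frequent_label": (90, 10, 10),
    "rare_label": (3, 5, 9),
}

per_label = {label: f1(*c) for label, c in counts.items()}

# Micro average: pool the counts over all labels, then compute one F1.
tp, fp, fn = (sum(c[i] for c in counts.values()) for i in range(3))
micro = f1(tp, fp, fn)

# Macro average: compute F1 per label, then take the unweighted mean.
macro = sum(per_label.values()) / len(per_label)

print({k: round(v, 3) for k, v in per_label.items()})
print("micro:", round(micro, 3), "macro:", round(macro, 3))
```

With these counts the frequent label dominates the pooled totals, so the micro average sits close to its score, while the macro average is pulled down by the rare label. Reporting per-label scores alongside any single averaged figure makes such gaps visible.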

Experimental Protocols and Methodologies

Text-Mining Workflow for Synthesis Information Extraction

Detailed Methodological Approaches

Literature Collection and Preprocessing: The initial phase involves gathering relevant scientific literature through publisher APIs or existing databases. The T2BR protocol collected 5,885 papers using targeted queries via the ScienceDirect RESTful API, focusing on specific battery materials [46]. Similarly, the gold nanoparticle dataset was built by processing nearly 5 million materials science publications obtained through agreements with major scientific publishers [4]. Preprocessing typically involves converting documents to plain text, segmenting into paragraphs, and cleaning irrelevant content such as copyright notices and page headers.
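The preprocessing step (plain-text conversion, paragraph segmentation, removal of copyright notices and page headers) can be illustrated with a small standard-library sketch. The regular expression and the function `split_paragraphs` are hypothetical and deliberately crude. The cited pipelines [4] [46] do not specify their cleaning rules here, so these patterns are assumptions, not a reconstruction.

```python
import re

# Hypothetical boilerplate patterns; a real pipeline would tune these per publisher.
BOILERPLATE = re.compile(
    r"(©|copyright\b|all rights reserved|^page \d+\b)", re.IGNORECASE
)


def split_paragraphs(text):
    """Split plain text on blank lines and drop boilerplate-looking blocks."""
    paragraphs = []
    for block in re.split(r"\n\s*\n", text):
        cleaned = " ".join(block.split())  # collapse line breaks and extra spaces
        if cleaned and not BOILERPLATE.search(cleaned):
            paragraphs.append(cleaned)
    return paragraphs


# Made-up document text for illustration.
raw = (
    "Au nanorods were synthesized\nby seed-mediated growth.\n\n"
    "© 2020 Example Publisher. All rights reserved.\n\n"
    "Page 3\n\n"
    "The product was washed twice."
)
for paragraph in split_paragraphs(raw):
    print(paragraph)
```

Dropping any block that merely contains a boilerplate keyword risks discarding real content, so a production filter would likely restrict matches to short blocks or known header and footer positions.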

Domain-Specific Filtering: A critical step involves filtering the corpus to retain only publications relevant to the target domain. Multiple approaches exist for this task:

- Machine Learning Classification: The T2BR protocol employed a TF-IDF-based XGBoost classifier trained on 1,000 annotated abstracts, achieving an F1-score of 85.19% for identifying battery recipe papers [46].

- Unsupervised Topic Modeling: Both the battery recipe and gold nanoparticle pipelines utilized Latent Dirichlet Allocation (LDA) to identify paragraphs related to specific topics such as synthesis procedures and characterization results [4] [46].

- Transformer-Based Classification: The gold nanoparticle pipeline used MatBERT, a materials-specific BERT model, fine-tuned on 739 annotated paragraphs to identify synthesis-related content [4].

Named Entity Recognition Implementation: The core extraction phase employs sequence labeling models to identify and classify relevant entities:

- Architecture Selection: Modern systems typically use transformer-based architectures fine-tuned on annotated corpora. The SURUS system demonstrates the effectiveness of PubMedBERT for clinical trial data [47], while MatSciBERT shows advantages for general materials science texts [48].

- Annotation Strategy: High-quality training data is essential, typically created by domain experts following detailed annotation guidelines. The SURUS system achieved an inter-annotator agreement of 0.81 (Cohen's κ) and 0.88 (F1) through rigorous annotation protocols [47].

- Entity Schema Design: Successful systems define comprehensive entity schemas covering the target domain. The T2BR protocol extracts 30 distinct entities covering precursors, synthesis conditions, and assembly parameters [46].
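Inter-annotator agreement figures such as the Cohen's κ reported for SURUS correct raw agreement for the agreement expected by chance. The following sketch computes κ for two annotators on a made-up token sequence. The function name `cohens_kappa` and the labels are illustrative assumptions, not the procedure or data of [47].

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label sequences must be non-empty and equal in length")
    n = len(labels_a)
    # Observed agreement: fraction of items given the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical token-level labels from two annotators.
annotator_a = ["PRECURSOR", "PRECURSOR", "O", "O", "TEMP",
               "O", "O", "PRECURSOR", "O", "O"]
annotator_b = ["PRECURSOR", "O", "O", "O", "TEMP",
               "O", "O", "PRECURSOR", "O", "TEMP"]

print(round(cohens_kappa(annotator_a, annotator_b), 3))
```

In this toy case the annotators agree on 8 of 10 tokens, but κ is noticeably lower than 0.8 because much of that agreement falls on the dominant "O" label and would be expected by chance.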

Validation and Cross-Validation Protocols

Table 3: Validation methodologies for text-mined synthesis data

| Validation Approach | Implementation Examples | Advantages | Limitations |
|---|---|---|---|
| Manual Verification | Human-curated ternary oxides dataset [21] | High accuracy, identifies subtle errors | Time-consuming, not scalable |
| Cross-Dataset Validation | Comparing text-mined vs. manual synthesis records [21] | Identifies systematic extraction errors | Requires alternative data sources |