Conditional Generation for Targeted Material Properties: AI-Driven Design in Drug Discovery and Beyond


Abstract

This article explores the transformative role of conditional generative models in designing materials and molecules with precisely targeted properties. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive overview of the field, from foundational concepts to real-world applications. We delve into key methodologies like diffusion models and autoregressive architectures, highlighting their use in inverse design for pharmaceuticals and advanced materials. The content addresses critical challenges such as model guidance with non-differentiable simulators and synthetic accessibility, while also covering essential validation protocols and comparative analyses of different AI approaches. By synthesizing insights from cutting-edge research, this article serves as a guide for leveraging conditional generation to accelerate innovation in biomedicine and material science.

The Foundations of Conditional Generation: From Core Concepts to Scientific Imperatives

Conditional generation represents a paradigm shift in computational materials science, moving beyond uncontrolled synthesis to enable the targeted design of novel substances. This approach frames the discovery process as an inverse problem: instead of analyzing a given structure to determine its properties, it starts with a set of desired properties and generates atomic configurations that satisfy them [1]. In the context of materials research, this typically involves learning the conditional probability distribution p(x|y), where x represents the crystal structure (including lattice parameters, atomic coordinates, and atom types) and y represents the conditioning variables, such as chemical composition, external pressure, or target material properties [2]. This capability is fundamentally transforming the design of advanced materials, including crystalline structures and multiphase microstructures, by providing researchers with precise control over the generative process.

Key Methodological Frameworks

Flow-Based Models for Crystal Structure Prediction

CrystalFlow exemplifies the flow-based approach to conditional generation for crystalline materials. This framework utilizes Continuous Normalizing Flows (CNFs) trained with Conditional Flow Matching (CFM) to transform a simple prior distribution into the complex data distribution of crystal structures [2]. The model simultaneously generates lattice parameters, fractional coordinates, and atom types while explicitly preserving the periodic-E(3) symmetries inherent to crystalline systems through an equivariant geometric graph neural network [2]. A key advancement in CrystalFlow is its rotation-invariant lattice parameterization, which decouples rotational and structural information via polar decomposition (L = Q \exp(\sum_{i=1}^{6} k_i B_i)) [2]. This architecture enables data-efficient learning and high-quality sampling while being approximately an order of magnitude more computationally efficient than diffusion-based models because it requires fewer integration steps [2].
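
The polar-decomposition idea can be illustrated with a minimal NumPy sketch. It assumes lattice vectors are stored as the columns of L, so that a rotation R acts as R @ L, and it uses a hypothetical orthonormal basis of symmetric 3 × 3 matrices; the basis B_i actually used by CrystalFlow may differ. The helper names `symmetric_basis`, `lattice_to_k`, and `k_to_lattice` are illustrative only.

```python
import numpy as np

def symmetric_basis():
    """Hypothetical orthonormal basis of symmetric 3x3 matrices (an assumption)."""
    s = 1.0 / np.sqrt(2.0)
    basis = []
    for i in range(3):                      # three diagonal elements
        b = np.zeros((3, 3))
        b[i, i] = 1.0
        basis.append(b)
    for i, j in [(0, 1), (0, 2), (1, 2)]:   # three off-diagonal pairs
        b = np.zeros((3, 3))
        b[i, j] = b[j, i] = s
        basis.append(b)
    return np.stack(basis)                  # shape (6, 3, 3)

def lattice_to_k(L):
    """Split an invertible L into Q exp(S) and return (k, Q) with S = sum_i k_i B_i."""
    U, sing, Vt = np.linalg.svd(L)
    Q = U @ Vt                               # orthogonal factor of the polar decomposition
    S = Vt.T @ np.diag(np.log(sing)) @ Vt    # matrix log of the symmetric positive-definite factor
    k = np.einsum("ijk,jk->i", symmetric_basis(), S)  # Frobenius projections onto the basis
    return k, Q

def k_to_lattice(k, Q=np.eye(3)):
    """Rebuild L = Q exp(sum_i k_i B_i) from the six coefficients."""
    S = np.einsum("i,ijk->jk", k, symmetric_basis())
    w, V = np.linalg.eigh(S)                 # exponentiate a symmetric matrix via its eigenvalues
    return Q @ (V @ np.diag(np.exp(w)) @ V.T)
```

Because the symmetric factor depends only on LᵀL, rotating the lattice changes Q but leaves the six coefficients k unchanged, which is the sense in which the parameterization is rotation-invariant.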

Conditional Latent Diffusion Models for Microstructure Generation

For 3D multiphase heterogeneous microstructure generation, conditional Latent Diffusion Models (LDMs) have demonstrated remarkable capability. These models operate in a compressed latent space to dramatically reduce computational costs while maintaining high output fidelity [3]. The framework typically consists of three sequentially trained modules: a Variational Autoencoder (VAE) that compresses high-dimensional 3D microstructures into compact latent representations; a Feature Predictor (FP) network that predicts microstructural features and manufacturing parameters from these representations; and the conditional LDM that generates realistic microstructures guided by user specifications [3]. This approach can generate high-resolution 3D microstructures (e.g., 128 × 128 × 64 voxels, representing >10⁶ voxels) within seconds per sample, overcoming the scalability limitations of traditional simulation-based methods that often require hours or days of computation [3].

Extended Flow Matching for Enhanced Control

Extended Flow Matching (EFM) is a direct extension of flow matching that learns a "matrix field" corresponding to the continuous map from the space of conditions to the space of distributions [4]. This approach allows researchers to impose an explicit inductive bias on how the conditional distribution changes with respect to the conditions, which is particularly valuable for applications like style transfer or when minimizing the sensitivity of distributions to input conditions [4]. The MMOT-EFM variant, for instance, aims to minimize the Dirichlet energy to control distribution sensitivity [4].

Table 1: Performance Comparison of Conditional Generation Models

| Model | Architecture | Application Domain | Key Conditioning Variables | Reported Performance |
|---|---|---|---|---|
| CrystalFlow | Flow-based (CNF/CFM) | Crystalline materials | Composition, pressure, material properties | Comparable to state-of-the-art on MP-20/MPTS-52 benchmarks; ~10x faster than diffusion models [2] |
| Conditional LDM | Latent Diffusion | 3D multiphase microstructures | Volume fractions, tortuosities | Generates >10⁶ voxel structures in ~0.5 seconds; matches target descriptors [3] |
| Modifier/Generator | Diffusion/Flow Matching | Crystal structures | Formation energy, chemical features | 41% (modifier) and 82% (generator) accuracy in producing target structures [1] |

Experimental Protocols and Implementation

Conditional Crystal Structure Generation Protocol

Objective: To generate stable crystal structures with targeted properties using flow-based generative models.

Materials and Computational Resources:

- Training Data: Curated datasets such as MP-20 (45,231 structures, up to 20 atoms per unit cell) or MPTS-52 (40,476 structures, up to 52 atoms per unit cell) [2]

- Representation: Crystal structure M = (A, F, L), where A = atom types, F = fractional coordinates, L = lattice matrix [2]

- Software Framework: PyTorch or JAX with specialized libraries for geometric deep learning

- Hardware: GPU acceleration (e.g., NVIDIA A100) for efficient training and sampling

Methodology:

Data Preprocessing:

- Convert crystal structures to rotation-invariant representation using polar decomposition

- Normalize lattice parameters and fractional coordinates

- Encode atom types as categorical vectors

Model Architecture:

- Implement Continuous Normalizing Flows with Conditional Flow Matching objective

- Design equivariant graph neural network for symmetry preservation

- Parameterize time-dependent vector fields for lattice, coordinates, and atom types

Conditioning Mechanism:

- Embed conditioning variables (e.g., target properties, composition) into the network

- Utilize cross-attention or feature-wise linear modulation for condition integration

Training Procedure:

- Optimize flow matching objective with Adam or similar optimizer

- Monitor performance on validation split

- Employ early stopping based on likelihood or sample quality metrics

Sampling and Validation:

- Sample initial structures from Gaussian prior

- Solve ODE with numerical solver (e.g., Runge-Kutta, Dormand-Prince)

- Validate generated structures with DFT calculations for stability and property verification [2]
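
The training objective and sampling steps above can be sketched in a few lines of NumPy. This is a toy illustration under stated assumptions, not the CrystalFlow implementation: it uses a linear interpolation path, a fixed-step Euler solver in place of Runge-Kutta or Dormand-Prince, plain vectors instead of periodic crystal coordinates, and a hand-written `toy_velocity` standing in for a trained equivariant network. All function names are hypothetical.

```python
import numpy as np

def cfm_training_pair(x0, x1, t):
    """Linear-path conditional flow matching: a point on the path and its target velocity."""
    x_t = (1.0 - t) * x0 + t * x1
    u_t = x1 - x0
    return x_t, u_t

def cfm_loss(velocity_fn, x0, x1, t, cond):
    """Mean squared error between the predicted and target velocities at time t."""
    x_t, u_t = cfm_training_pair(x0, x1, t)
    return float(np.mean((velocity_fn(x_t, t, cond) - u_t) ** 2))

def euler_sample(velocity_fn, x0, cond, n_steps=50):
    """Integrate dx/dt = v(x, t, cond) from t = 0 to t = 1 with fixed Euler steps."""
    x = np.array(x0, dtype=float)
    dt = 1.0 / n_steps
    for step in range(n_steps):
        t = step * dt
        x = x + dt * velocity_fn(x, t, cond)
    return x

def toy_velocity(x, t, cond):
    # Stand-in for a trained network: the exact field that carries any point to `cond`.
    return (cond - x) / (1.0 - t)
```

In a real model the velocity network would be trained by minimizing `cfm_loss` over random prior samples, data samples, and times, and the conditioning variables would enter through learned embeddings rather than as the literal target point.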

Conditional 3D Microstructure Generation Protocol

Objective: To synthesize 3D multiphase microstructures with targeted morphological characteristics.

Materials and Data Sources:

- Training Data: Experimentally obtained tomography data or physics-based simulation data (e.g., Cahn-Hilliard generated structures) [3]

- Microstructural Descriptors: Volume fractions, tortuosities, phase connectivity metrics [3]

- Software: Custom LDM implementation with 3D convolutional networks

- Hardware: High-memory GPU for 3D volume processing

Methodology:

Data Preparation:

- Segment 3D tomography data into distinct phases

- Compute target descriptors (volume fraction, tortuosity) for each sample

- Preprocess volumes to standardized dimensions and voxel spacing

Model Framework:

- Train VAE to compress 3D microstructures into latent representations

- Develop feature predictor network to estimate descriptors from latent codes

- Implement conditional LDM with U-Net backbone for generative process

Conditioning Implementation:

- Concatenate conditioning vector (target volume fractions, tortuosities) with latent codes

- Integrate conditions into U-Net through cross-attention layers

- Enable interpolation in condition space for exploratory design

Training Strategy:

- Pre-train VAE and feature predictor separately

- Train LDM with denoising diffusion objective conditioned on descriptors

- Balance reconstruction quality and condition matching through multi-term loss function

Generation and Analysis:

- Sample from latent prior conditioned on target descriptors

- Execute reverse diffusion process to generate 3D volumes

- Quantitatively verify generated structures match target descriptors

- Predict manufacturing parameters (e.g., annealing conditions) for experimental realization [3]
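
The quantitative verification step can be illustrated for the simplest descriptor, the volume fraction. The sketch below assumes a generated microstructure is stored as a 3D integer array of phase labels 0..n_phases-1; the function names and the tolerance value are hypothetical, and tortuosity, which needs a transport or path-length calculation, is not covered.

```python
import numpy as np

def volume_fractions(volume, n_phases):
    """Fraction of voxels assigned to each phase label 0..n_phases-1."""
    counts = np.bincount(volume.ravel(), minlength=n_phases)
    return counts / volume.size

def matches_target(volume, target, tol=0.02):
    """True if every phase fraction is within `tol` of its target value."""
    vf = volume_fractions(volume, len(target))
    return bool(np.all(np.abs(vf - np.asarray(target)) <= tol))
```

A check of this kind would typically be run on every generated sample, with out-of-tolerance samples either discarded or used to estimate how faithfully the model follows its conditioning.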

Table 2: Essential Research Reagents and Computational Tools

| Item | Function/Application | Implementation Details |
|---|---|---|
| Equivariant GNN | Models symmetry-preserving transformations | SE(3)-equivariant layers; periodic boundary conditions [2] |
| Conditional Flow Matching | Training objective for flow models | Replaces simulation-based training; enables efficient sampling [2] |
| Latent Diffusion Model | Generates high-resolution 3D structures | Operates in compressed latent space; reduces computational demands [3] |
| Rotation-Invariant Lattice Parameterization | Represents crystal lattices | Polar decomposition L = Q \exp(\sum_{i=1}^{6} k_i B_i); decouples rotation and structure [2] |
| Microstructural Descriptors | Quantifies morphological features | Volume fraction, tortuosity; used as conditioning variables [3] |
| Descriptor Predictor Network | Estimates features from latent codes | Enables conditioning on structural characteristics [3] |

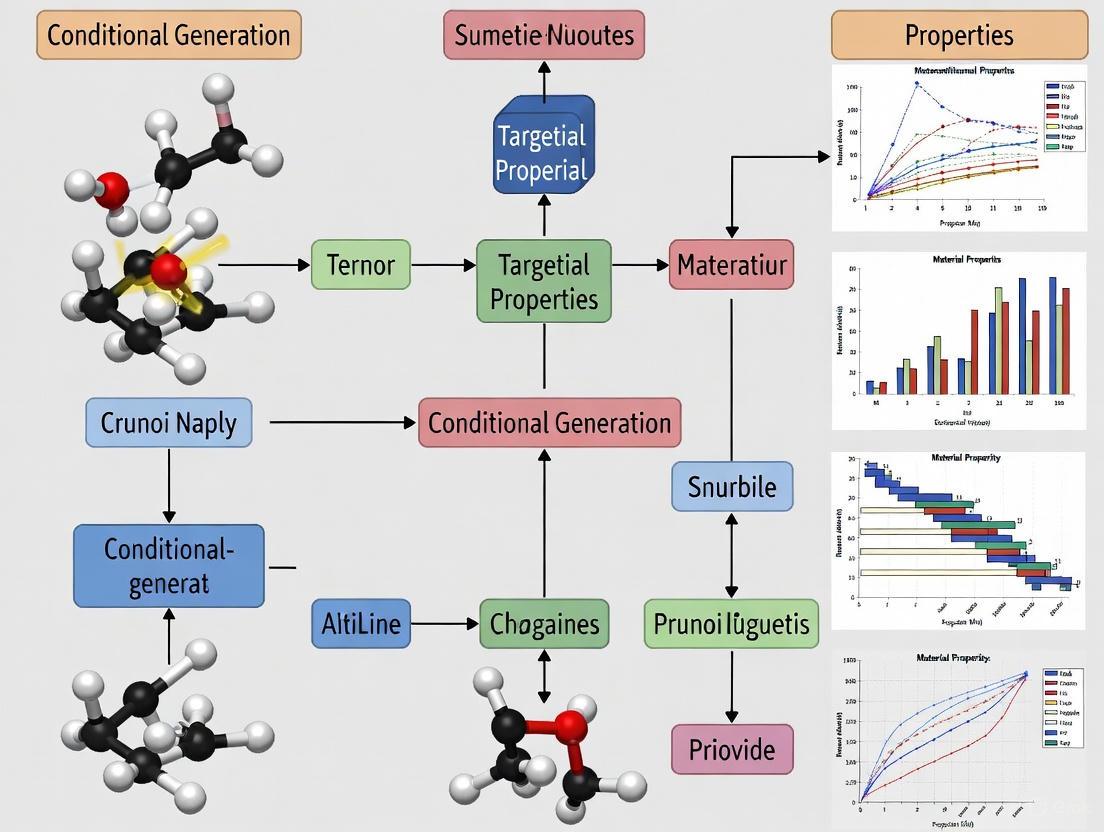

Visualization of Methodologies

Diagram Title: Conditional Crystal Structure Generation Framework

Diagram Title: Conditional 3D Microstructure Generation Workflow

Applications and Research Impact

Conditional generation methodologies are making significant contributions across multiple domains of materials research. In crystalline materials discovery, these approaches enable the prediction of stable structures under specific chemical compositions and external conditions, dramatically accelerating the identification of novel materials with tailored electronic, mechanical, or thermal properties [2] [1]. For organic photovoltaics and energy materials, conditional generation facilitates the design of microstructures with optimal phase separation and charge transport pathways by controlling volume fractions and tortuosities of donor and acceptor phases [3]. The technology also bridges the digital-design-to-experimental-realization gap by predicting manufacturing parameters likely to produce the generated microstructures, addressing the critical "manufacturability gap" in materials design [3].

The experimental protocols outlined herein provide researchers with practical frameworks for implementing these advanced generative approaches. The reported metrics point to substantial gains in computational efficiency, such as CrystalFlow's roughly tenfold speedup over diffusion-based models [2] and latent-diffusion sampling of >10⁶-voxel microstructures in seconds rather than the hours or days required by simulation [3], while remaining competitive on accuracy benchmarks. These gains enable the exploration of materials spaces that were previously inaccessible through conventional simulation or experimentation alone. As these methodologies continue to mature, they promise to shift the paradigm of materials design from serendipitous discovery to targeted, rational engineering.

The discovery and development of new functional materials and therapeutic molecules represent a fundamental bottleneck in scientific and industrial progress. Traditional methods, which often rely on exhaustive trial-and-error or the screening of predefined compound libraries, are increasingly inadequate for navigating the virtually infinite spaces of possible molecular and crystalline structures. The number of theoretically synthesizable organic compounds is estimated to be between 10³⁰ and 10⁶⁰, a scope that makes comprehensive exploration impossible through conventional means [5]. This sheer diversity, while holding immense potential, creates a critical bottleneck: the efficient identification of candidates that possess not just one, but a balanced set of desired properties for a specific application.

Targeted property design, or conditional generation, emerges as a necessary paradigm to overcome this bottleneck. Unlike general generative methods that learn the broad distribution of existing structures, conditional generative models aim to sample from a constrained distribution, focusing computational resources on regions of the chemical or materials space that are most relevant to a predefined goal [6]. This approach shifts the discovery process from one of blind search to one of intelligent, goal-directed design, significantly enhancing efficiency and the probability of success.

The Conditional Generation Framework

At its core, conditional generation is a computational strategy designed to generate novel structures (e.g., molecules, crystals) that are not only valid and novel but also possess specific, user-defined properties. The fundamental objective is to sample from the conditional distribution ( P(C|y) ), where ( C ) is a structure and ( y ) is the set of target properties, rather than from the general distribution ( P(C) ) of all known structures [6].

The PODGen framework provides a robust and transferable implementation of this principle. It reformulates the problem as sampling from the distribution ( \pi^*(C) = P^*(C)P^*(y|C) ), where:

- ( P^*(C) ) is the probability of the structure provided by a general generative model.

- ( P^*(y|C) ) is the probability of the target properties given the structure, provided by predictive models [6].

This framework integrates a generative model, predictive models, and an efficient sampling method like Markov Chain Monte Carlo (MCMC) with a Metropolis-Hastings algorithm to iteratively propose and accept new structures that satisfy the target criteria [6].

Application Note: Drug Candidate Optimization with STELLA

This protocol details the use of the STELLA (Systematic Tool for Evolutionary Lead optimization Leveraging Artificial intelligence) framework for the multi-parameter optimization of drug candidates. STELLA combines an evolutionary algorithm for fragment-based chemical space exploration with a clustering-based conformational space annealing (CSA) method for balanced exploration and exploitation [5].

Detailed Methodology

Step 1: Initialization

- Input: A single seed molecule or a user-defined pool of starting molecules.

- Process: Generate an initial molecular pool by applying the FRAGRANCE fragment-based mutation operator to the seed molecule(s) [5].

Step 2: Molecule Generation Loop (Iterative)

For each iteration, perform the following steps:

- Variant Generation: Create new molecular variants from the current pool using three operators:

  - FRAGRANCE Mutation: A fragment replacement method that enhances structural diversity [5].

  - MCS-based Crossover: Recombines molecules based on their maximum common substructure to explore new scaffolds.

  - Trimming: Removes parts of molecules to simplify structures and explore property changes.

- Scoring: Evaluate each generated molecule using a user-defined objective function. This function incorporates and weights the specific pharmacological properties to be optimized (e.g., docking score, Quantitative Estimate of Drug-likeness (QED)) [5].

- Clustering-based Selection:

  - Cluster all molecules (generated variants plus the existing pool) based on structural similarity.

  - Select the molecule with the best objective score from each cluster.

  - If the number of selected top-scoring molecules is below a target value, iteratively select the next best molecules from each cluster until the target is met.

  - Progressively reduce the distance cutoff used for clustering in each cycle, gradually shifting the selection pressure from maintaining diversity to pure objective score optimization [5].

Step 3: Termination

- The loop continues until a user-defined termination condition is met (e.g., a maximum number of iterations, a performance plateau) [5].
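
The clustering-based selection step can be sketched as follows. This is a minimal illustration under loud assumptions: molecules are represented by generic feature vectors with Euclidean distance rather than chemical fingerprints, and a simple greedy "leader" clustering stands in for whatever clustering algorithm STELLA actually uses, which the description above does not specify. The names `leader_clusters` and `select_pool` are hypothetical.

```python
import numpy as np

def leader_clusters(features, cutoff):
    """Greedy leader clustering: join the first cluster whose leader lies within `cutoff`."""
    leaders, clusters = [], []
    for idx, f in enumerate(features):
        for c, lead in enumerate(leaders):
            if np.linalg.norm(f - features[lead]) <= cutoff:
                clusters[c].append(idx)
                break
        else:                                   # no leader close enough: start a new cluster
            leaders.append(idx)
            clusters.append([idx])
    return clusters

def select_pool(features, scores, cutoff, target_size):
    """Take the best-scoring member of every cluster, then fill up to `target_size`."""
    clusters = [sorted(c, key=lambda i: -scores[i])
                for c in leader_clusters(features, cutoff)]
    selected = [c[0] for c in clusters]         # best molecule from each cluster
    rank = 1
    while len(selected) < target_size and any(rank < len(c) for c in clusters):
        for c in clusters:                      # next-best molecule from each cluster in turn
            if rank < len(c) and len(selected) < target_size:
                selected.append(c[rank])
        rank += 1
    return selected
```

Calling `select_pool` with a smaller `cutoff` in each cycle mimics the schedule described above: early cycles with a large cutoff keep one representative per broad cluster, while later cycles with a small cutoff produce many small clusters, so selection is driven more by structural spread within the pool than by cluster membership.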

Performance Data

Table 1: Comparative Performance of STELLA vs. REINVENT 4 in a PDK1 Inhibitor Case Study [5]

| Metric | REINVENT 4 | STELLA | Relative Improvement |
|---|---|---|---|
| Total Hit Compounds | 116 | 368 | +217% |
| Average Hit Rate | 1.81% per epoch | 5.75% per iteration | +218% |
| Mean Docking Score (GOLD PLP Fitness) | 73.37 | 76.80 | +4.7% |
| Mean QED | 0.75 | 0.75 | No change |
| Unique Scaffolds | Baseline | 161% more | +161% |
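
The relative improvements in the table follow from the usual formula, (new − baseline) / baseline. A short Python check, using only the values reported above (the function name is illustrative):

```python
def relative_improvement(baseline, new):
    """Percentage change of `new` relative to `baseline`."""
    return 100.0 * (new - baseline) / baseline

rows = {
    "Total hit compounds": (116, 368),
    "Average hit rate (%)": (1.81, 5.75),
    "Mean docking score": (73.37, 76.80),
}
for name, (baseline, new) in rows.items():
    print(f"{name}: {relative_improvement(baseline, new):+.1f}%")
```

Rounded, these reproduce the +217%, +218%, and +4.7% entries. Note that the hit rates are reported per epoch for REINVENT 4 and per iteration for STELLA, so that comparison assumes the two units are treated as equivalent.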

Research Reagent Solutions

Table 2: Key Computational Tools for Generative Molecular Design

| Research Reagent | Function in Protocol |
|---|---|
| STELLA Framework | Metaheuristic platform providing the evolutionary algorithm and clustering-based CSA for multi-parameter optimization. |
| FRAGRANCE Operator | Fragment replacement tool crucial for introducing structural diversity during the mutation step. |
| Docking Software (e.g., GOLD) | Predicts the binding affinity (docking score) of generated molecules to a target protein, a key parameter in the objective function. |
| Objective Function | A user-defined mathematical function that combines and weights target properties (e.g., QED, toxicity) into a single score for optimization. |

Application Note: Materials Discovery with PODGen

This protocol describes the use of the PODGen framework for the conditional generation of novel crystal structures, specifically targeting topological insulators (TIs). The framework uses predictive models to guide a general generative model toward regions of materials space that satisfy desired property criteria [6].

Detailed Methodology

Step 1: Framework Setup

- Integrate Models: Combine a pre-trained general generative model (e.g., diffusion, autoregressive) with one or more predictive models ( P(y|C) ) for the target properties (e.g., topological classification, band gap).

- Define Target: Specify the target property value ( y ) for conditional generation.

Step 2: Markov Chain Monte Carlo (MCMC) Sampling

- Initialize Chain: Start with an initial crystal structure ( C_0 ).

- Propose New Structure: For each step ( t ) in the MCMC chain, propose a new crystal structure ( C' ) based on the previous structure ( C_{t-1} ). This proposal is typically made by the underlying generative model.

- Calculate Acceptance Probability: Determine whether to accept the new structure ( C' ) with a probability given by the Metropolis-Hastings algorithm: ( A(C'|C_{t-1}) = \min\left\{1, \frac{P(C')P(y|C')}{P(C_{t-1})P(y|C_{t-1})}\right\} ), where ( P(C) ) comes from the generative model and ( P(y|C) ) comes from the predictive models [6].

- Iterate: Repeat the proposal and acceptance steps for a sufficient number of iterations to sample effectively from the target conditional distribution ( \pi^*(C) ).

Step 3: High-Throughput Validation

- Pass the generated crystals through a workflow involving structure optimization, property verification (e.g., using first-principles calculations), and deduplication to filter and confirm viable candidates [6].
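
The sampling loop in Step 2 can be illustrated on a toy problem. The sketch below is not the PODGen code: it replaces crystal structures with a handful of discrete states, uses lookup tables for P(C) and P(y|C) in place of the generative and predictive models, and draws proposals uniformly at random. The uniform proposal is symmetric, which is what allows the acceptance ratio to take the simple form given above; a non-symmetric proposal would require an additional Hastings correction factor.

```python
import random

def metropolis_hastings(p_gen, p_prop, n_steps, seed=0):
    """Sample states with probability proportional to p_gen[c] * p_prop[c].

    p_gen  : toy stand-in for P(C) from a generative model
    p_prop : toy stand-in for P(y|C) from a property predictor
    Returns the empirical visit frequency of each state.
    """
    rng = random.Random(seed)
    n_states = len(p_gen)
    current = rng.randrange(n_states)
    counts = [0] * n_states
    for _ in range(n_steps):
        proposal = rng.randrange(n_states)      # symmetric (uniform) proposal
        ratio = (p_gen[proposal] * p_prop[proposal]) / (p_gen[current] * p_prop[current])
        if rng.random() < min(1.0, ratio):      # accept with probability min(1, ratio)
            current = proposal
        counts[current] += 1                    # a rejected proposal re-counts the current state
    return [c / n_steps for c in counts]

def normalized_target(p_gen, p_prop):
    """Exact target distribution, for comparison with the sampled frequencies."""
    weights = [g * p for g, p in zip(p_gen, p_prop)]
    total = sum(weights)
    return [w / total for w in weights]
```

With enough steps, the frequencies returned by `metropolis_hastings` approach `normalized_target`, so states that are common under the generative model but unlikely to have the target property are visited rarely.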

Performance Data

Table 3: Performance of PODGen in Generating Topological Insulators [6]

| Metric | Unconstrained Generation | PODGen (Conditional) | Improvement |
|---|---|---|---|
| Success Rate for TIs | Baseline | 5.3x higher | +430% |
| Generation of Gapped TIs | Rare | Consistent success | Significant |
| Total New TIs/TCIs Generated | Not specified | 19,324 | N/A |
| Promising Stable Candidates | N/A | 5 (e.g., CsHgSb, NaLaB₁₂) | N/A |

Research Reagent Solutions

Table 4: Key Computational Tools for Conditional Materials Generation

| Research Reagent | Function in Protocol |
|---|---|
| PODGen Framework | Provides the MCMC sampling infrastructure to integrate generative and predictive models for conditional sampling. |
| Generative Model (e.g., CDVAE, CrystalFormer) | Learns the general distribution of crystal structures ( P(C) ) and proposes new candidate structures. |
| Predictive Models (e.g., Graph Neural Networks) | Approximates ( P(y \mid C) ), the probability of a target property given a crystal structure. |
| First-Principles Calculation Software (e.g., DFT) | Used for final validation of generated materials' properties, stability, and electronic structure. |

The empirical data from both drug and materials discovery domains indicate that conditional generation is an effective tool for navigating the vast design spaces that bottleneck discovery. In the PDK1 case study, STELLA generated 217% more hit candidates and 161% more unique scaffolds than the state-of-the-art deep learning model REINVENT 4, which highlights its efficacy in balancing multiple, often conflicting, objectives in drug design [5]. Similarly, PODGen's 5.3-fold increase in the success rate for generating topological insulators supports its utility in targeted materials discovery, particularly for finding rare classes of materials like gapped TIs that are elusive through unconstrained methods [6].

The underlying strength of these frameworks lies in their systematic approach to the exploration-exploitation trade-off. STELLA achieves this through its clustering-based CSA, which explicitly manages structural diversity throughout the optimization process [5]. PODGen, on the other hand, leverages the mathematical rigor of MCMC sampling to bias the generation process toward a desired property landscape [6]. Both methods move beyond simple pattern recognition to active, goal-oriented search.

In conclusion, targeted property design via conditional generation is not merely an incremental improvement but a necessary evolution in the methodology of scientific discovery. By directly addressing the bottleneck of immense search spaces, it enables researchers to focus resources efficiently, accelerating the development of novel therapeutics and advanced materials with tailored properties. The continued development and adoption of these frameworks promise to be a cornerstone of data-driven science in the coming decades.

The discovery and development of new functional materials are pivotal for technological progress, yet traditional methods often entail timelines of 10–20 years, dissuading investment and hindering innovation [7]. The inversion of structure-property relationships—designing a material with a specific set of target properties—remains a particularly formidable challenge. Conditional generative artificial intelligence (AI) has emerged as a powerful paradigm to address this inverse design problem directly. By learning the underlying probability distribution of material structures and properties, these models can generate novel, viable candidates that are optimized for desired characteristics. Among the various architectures, Diffusion Models, Autoregressive Models, and Variational Autoencoders (VAEs) have demonstrated significant potential. This document details the application of these three key architectural paradigms within the context of targeted material properties research, providing application notes, structured data, and experimental protocols for researchers and scientists.

Generative models are a class of machine learning algorithms that learn the underlying probability distribution ( P(x) ) of a dataset to generate new, similar data samples [8]. In conditional generation, this objective shifts to learning ( P(C | y) ), the probability of a crystal structure ( C ) given a target property ( y ) [6]. This enables the inverse design of materials.

Table 1: Core Characteristics of Key Generative Models in Materials Science.

| Feature | Variational Autoencoders (VAEs) | Autoregressive Models | Diffusion Models |
|---|---|---|---|
| Core Principle | Maps data to a latent (hidden) probabilistic distribution and reconstructs it [8]. | Predicts the next element in a sequence based on all previous elements [8]. | Iteratively adds noise to data and then learns to reverse this process [8] [9]. |
| Primary Strength | Stable training; provides a continuous, interpretable latent space for smooth interpolation [8] [7]. | Simple and stable training; highly effective for sequential data [8]. | High-quality, diverse output generation; more stable training than GANs [8] [9]. |
| Key Weakness | Can produce blurry or averaged outputs; may struggle with fine details [8]. | Sequential generation can be slow; error propagation in long sequences [8]. | Slow inference due to iterative sampling; computationally intensive [8] [6]. |
| Ideal Materials Use Case | Anomaly detection, representation learning, and exploring continuous property variations [8]. | Generating crystal structures or molecules as a sequence of tokens [6]. | Generating high-fidelity, complex microstructures (e.g., dendrites) and crystal structures [6] [9]. |

Application Notes in Materials Science

Variational Autoencoders (VAEs)

VAEs have established a strong foothold in molecular and material design. Their key advantage lies in their structured latent space. By encoding input data into a probabilistic distribution, VAEs learn a continuous, smooth latent representation. This allows researchers to perform meaningful operations in this latent space, such as interpolating between two material structures to discover intermediates with tailored properties or perturbing a known structure to generate novel analogues [8] [7]. This makes them particularly suitable for tasks like molecular generation and optimization, where exploring the chemical space around a known lead compound is necessary.
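
Latent-space interpolation itself is a one-line operation once two structures have been encoded. The sketch below assumes latent codes are plain vectors and shows only the interpolation; the encoder and decoder of a trained VAE are not shown, and the function name is hypothetical.

```python
import numpy as np

def interpolate_latents(z_a, z_b, n_points):
    """Evenly spaced latent codes on the straight line from z_a to z_b (inclusive)."""
    alphas = np.linspace(0.0, 1.0, n_points)[:, None]
    return (1.0 - alphas) * z_a + alphas * z_b
```

Each row of the result would then be passed through the decoder to obtain a candidate intermediate structure, whose validity and properties still need to be checked separately.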

Autoregressive Models

Autoregressive models treat a material's structure—whether a molecule represented as a SMILES string or a crystal structure represented as a sequence of tokens—as an ordered sequence. They generate new materials one unit at a time, with each step conditioned on all previously generated units. This approach is inherently well-suited for sequential data and has been successfully applied in models like CrystalFormer for crystal structure generation [6]. Their training process is typically more stable than that of adversarial methods, and they can capture complex, long-range dependencies within the data, making them powerful for de novo structure assembly.

Diffusion Models

Inspired by non-equilibrium thermodynamics, diffusion models have recently gained prominence for generating high-quality, diverse samples. These models operate through a forward process, where noise is gradually added to data until it becomes pure Gaussian noise, and a reverse process, where a neural network is trained to denoise this back into a coherent structure [8] [9]. This architecture excels at capturing complex data distributions and producing high-fidelity outputs. They are now rivaling and even surpassing GANs in quality, especially in conditional generation tasks like text-to-image synthesis and, crucially, inverse materials design, where they can generate detailed microstructures from property constraints [8] [9].
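
The forward (noising) process has a closed form in the standard DDPM formulation, x_t = sqrt(ᾱ_t) x_0 + sqrt(1 − ᾱ_t) ε, where ᾱ_t is the cumulative product of (1 − β_s). The NumPy sketch below illustrates it under assumptions not taken from the cited works: a linear β schedule with commonly used default endpoints, 1000 steps, and an arbitrary array standing in for a microstructure.

```python
import numpy as np

def linear_beta_schedule(n_steps, beta_start=1e-4, beta_end=0.02):
    """Linearly increasing noise variances (an assumed, commonly used schedule)."""
    return np.linspace(beta_start, beta_end, n_steps)

def forward_noise(x0, t, alpha_bar, rng):
    """Draw x_t directly from x_0 using the closed-form forward process."""
    eps = rng.standard_normal(x0.shape)
    x_t = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return x_t, eps              # eps is the regression target for the denoising network

betas = linear_beta_schedule(1000)
alpha_bar = np.cumprod(1.0 - betas)   # fraction of the original signal variance remaining at step t
```

During training, a network receives `x_t`, the step index `t`, and any conditioning labels, and is optimized to predict `eps`; by the final step almost no signal from `x0` remains.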

Conditional Generation for Targeted Properties

The true power of these generative models is unlocked when they are applied to conditional generation, directly targeting specific material properties. The fundamental goal is to sample from the conditional distribution ( P(C | y) ), where ( C ) is a crystal structure and ( y ) is a target property [6]. By Bayes' theorem, ( P(C | y) ) is proportional to ( P(C)P(y | C) ), so sampling from the unnormalized product ( P(C)P(y | C) ) is equivalent, which forms the basis for many conditional generation frameworks [6].
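
Written out, the Bayes' theorem step is short. The normalizing constant P(y) does not depend on the structure C, so it can be dropped when only relative probabilities are needed:

```latex
\begin{align}
P(C \mid y) &= \frac{P(y \mid C)\,P(C)}{P(y)} \\
            &\propto P(C)\,P(y \mid C)
\end{align}
```

This is why samplers that rely only on ratios of the target density, such as Metropolis-Hastings, never need to evaluate P(y): it cancels between numerator and denominator.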

Frameworks like PODGen (Predictive models to Optimize the Distribution of the Generative model) operationalize this principle. PODGen integrates a general generative model (which provides ( P(C) )) with predictive models (which provide ( P(y | C) )) and uses an efficient sampling method like Markov Chain Monte Carlo (MCMC) to guide the generation toward structures that satisfy the target conditions [6]. This approach is highly transferable and can be applied across different generative and predictive backbones.

Table 2: Representative Performance Metrics in Material Conditional Generation.

| Generative Model | Application / Target | Reported Performance / Outcome | Source / Framework |
|---|---|---|---|
| Conditional Diffusion | Inverse design of polymer microstructures for target Young's modulus and Poisson's ratio [9]. | Successfully predicts processing temperature and generates corresponding dendritic microstructure from mechanical properties. | [9] |
| PODGen (MCMC-based) | Generation of Topological Insulators (TIs). | Success rate of generating TIs was 5.3 times higher than unconstrained generation; consistently produced gapped TIs [6]. | [6] |
| VAE | Molecular discovery and optimization. | Generates novel molecules by sampling and interpolating in a continuous latent space [7]. | [7] |

Diagram 1: Workflow for the PODGen conditional generation framework.

Experimental Protocols

Protocol: Conditional Generation of Topological Insulators using PODGen

Objective: To generate novel crystal structures identified as Topological Insulators (TIs) using a conditional generation framework [6].

Research Reagents & Computational Tools:

- Generative Model: A general generative model (e.g., CDVAE, CrystalFormer) trained on a crystal database like the Materials Project. Function: Provides the base distribution of realistic crystal structures ( P(C) ) [6].

- Predictive Models: One or more pre-trained property predictors. Function: Approximates ( P(y | C) ), the probability that a generated structure ( C ) possesses the target property ( y ) (e.g., topological band structure) [6].

- Sampling Algorithm: Markov Chain Monte Carlo (MCMC) with Metropolis-Hastings algorithm. Function: Efficiently samples from the complex target distribution ( π(C) = P(C)P(y | C) ) [6].

Procedure:

1. Initialization: Define the target property ( y ) (e.g., "is a topological insulator"). Start the MCMC chain with an initial crystal structure ( C_0 ), which can be randomly sampled from the generative model.

2. Proposal: At each step ( t ), propose a new candidate structure ( C' ). This is achieved by using the generative model to produce a new structure or by perturbing the current structure ( C_{t-1} ).

3. Evaluation: Feed the proposed structure ( C' ) to the predictive model(s) to evaluate ( P(y | C') ).

4. Acceptance Calculation: Compute the acceptance ratio ( A^* = \frac{P(C')P(y | C')}{P(C_{t-1})P(y | C_{t-1})} ), the product of the generative model's probability and the predictive model's score for the proposed structure divided by the same product for the current structure. The acceptance probability is ( A = \min(1, A^*) ) [6].

5. Transition: With probability ( A ), accept the new structure and set ( C_t = C' ). Otherwise, reject it and retain the current structure, ( C_t = C_{t-1} ).

6. Iteration: Repeat steps 2-5 for a predefined number of iterations or until convergence criteria are met.

7. Validation: Validate the final accepted structures in the chain through first-principles calculations (e.g., DFT) to confirm their topological properties and dynamic stability [6].

Protocol: Inverse Prediction of Process Parameters and Microstructures using a Conditional Diffusion Model

Objective: To inversely predict the processing temperature and corresponding dendritic microstructure of a thermoplastic resin given desired mechanical properties (Young's modulus and Poisson's ratio) [9].

Research Reagents & Computational Tools:

- Training Dataset: Paired data of processing temperatures, resulting microstructures (e.g., from phase-field method simulations), and their homogenized mechanical properties [9].

- Conditional Diffusion Model: A U-Net architecture trained on the above dataset. Function: Learns the reverse denoising process conditioned on the mechanical property labels [9].

Procedure:

- Data Generation: a. Microstructure Generation: Use the phase-field method to simulate the growth of dendritic microstructures at various isothermal crystallization temperatures [9]. b. Property Calculation: Perform homogenization analysis (e.g., using the Finite Element Method) on the generated microstructures to compute their effective Young's modulus and Poisson's ratio [9]. c. Dataset Assembly: Create a final dataset where each entry is a tuple of (Mechanical Properties, Processing Temperature, Microstructure).

- Model Training: a. Train the conditional diffusion model to learn the mapping from the mechanical properties (the condition) to the joint distribution of processing temperatures and microstructures.

- Inverse Generation: a. Input: Specify the desired Young's modulus and Poisson's ratio. b. Sampling: Start from a pure noise tensor and iteratively denoise it using the trained diffusion model, guided by the input mechanical properties. c. Output: The model generates a plausible processing temperature and a high-fidelity dendritic microstructure that is predicted to yield the desired properties [9].
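The inverse-generation step can be illustrated with a minimal ancestral sampling loop of the kind used in denoising diffusion models. This is a sketch under stated assumptions, not the implementation of [9]: the trained U-Net is replaced by `eps_model`, an analytic stand-in that returns the exact noise estimate for a toy data distribution in which each condition maps to a single clean sample. The helper `toy_clean_sample`, the three-element output (a normalised temperature plus two "pixels"), the schedule, and the step count are all hypothetical.

```python
import math
import random

T = 50  # number of diffusion steps (assumption)
BETAS = [1e-4 + (0.05 - 1e-4) * t / (T - 1) for t in range(T)]  # linear noise schedule
ALPHAS = [1.0 - b for b in BETAS]
ALPHA_BARS = []
_prod = 1.0
for _a in ALPHAS:
    _prod *= _a
    ALPHA_BARS.append(_prod)


def toy_clean_sample(cond):
    """Hypothetical map from (Young's modulus, Poisson's ratio) to a clean sample."""
    youngs_modulus, poisson_ratio = cond
    return [0.1 * youngs_modulus, poisson_ratio, 1.0 - poisson_ratio]


def eps_model(x_t, t, cond):
    """Stand-in for the trained conditional denoiser (exact for point-mass data)."""
    x0 = toy_clean_sample(cond)
    signal = math.sqrt(ALPHA_BARS[t])
    noise = math.sqrt(1.0 - ALPHA_BARS[t])
    return [(x - signal * m) / noise for x, m in zip(x_t, x0)]


def sample(cond, seed=0):
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(3)]           # start from pure noise
    for t in reversed(range(T)):
        eps = eps_model(x, t, cond)                        # conditional noise estimate
        coef = BETAS[t] / math.sqrt(1.0 - ALPHA_BARS[t])
        mean = [(xi - coef * e) / math.sqrt(ALPHAS[t]) for xi, e in zip(x, eps)]
        sigma = math.sqrt(BETAS[t]) if t > 0 else 0.0      # no noise on the final step
        x = [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
    return x


generated = sample(cond=(3.0, 0.35))
```

Because the stand-in denoiser is exact for this toy distribution, the loop recovers the clean sample associated with the condition. A learned denoiser would instead produce a distribution of plausible temperature and microstructure pairs for the same target properties.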

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key computational tools and data resources for generative materials science.

| Resource Name | Type | Primary Function in Research |

|---|---|---|

| Materials Project [10] [11] | Database | A primary source of crystal structures and computed properties for training generative and predictive models. |

| Phase-Field Method [9] | Simulation | Generates realistic training data for microstructures (e.g., dendrites) resulting from specific process parameters. |

| Homogenization Analysis (XFEM/FEM) [9] | Simulation | Calculates the macroscopic mechanical properties of a generated microstructure, enabling the link between structure and property. |

| Predictive Property Models (e.g., GNNs) [6] | Machine Learning Model | Approximates ( P(y \| C) ), a critical component for guiding conditional generation frameworks like PODGen. |

| Markov Chain Monte Carlo (MCMC) [6] | Algorithm | An efficient sampling method for exploring the high-dimensional space of material structures under property constraints. |

| Density Functional Theory (DFT) [6] [7] | Simulation | Used for final, high-accuracy validation of generated material candidates' properties and stability. |

Diffusion, Autoregressive, and VAE models each offer distinct and complementary pathways for accelerating the discovery of next-generation materials. The shift from unconstrained generation to conditional generation represents a critical evolution, moving the field from mere exploration of chemical space to targeted, goal-directed design. Frameworks that intelligently combine generative models with predictive property models are already demonstrating dramatic improvements in the success rate of discovering materials with pre-specified, advanced functionalities, such as topological insulators and polymers with tailored mechanical properties. As these architectures mature and integrate more deeply with high-throughput computational validation and automated experiments, they promise to significantly compress the two-decade timeline traditionally associated with materials innovation.

The accurate computational representation of molecules is a foundational step in modern drug discovery and materials science. Translating molecular structures into a computer-readable format enables the application of artificial intelligence (AI) and deep learning (DL) to model, analyze, and predict molecular behavior and properties [12]. The choice of representation—whether as a simplified string, a graph, or a three-dimensional structure—directly influences a model's ability to navigate the vast chemical space and generate novel compounds with targeted characteristics [12]. This document details the predominant molecular representation paradigms and their experimental protocols, framed within the critical context of conditional generation, a methodology aimed at designing molecules and materials with user-defined properties.

Molecular representation serves as the bridge between chemical structures and their predicted biological, chemical, or physical properties [12]. The table below summarizes the core modalities, their advantages, and their relevance to conditional generation.

Table 1: Core Molecular Representation Modalities for Conditional Generation

| Representation Modality | Key Description | Common Formats / Models | Primary Applications in Conditional Generation |

|---|---|---|---|

| Sequence-Based | Treats molecular structure as a linear string of symbols. | SMILES, SELFIES, Transformer-based Language Models [12] | Initial lead discovery, generating syntactically valid molecules from a learned chemical "language". |

| Graph-Based | Represents atoms as nodes and bonds as edges in a graph. | Graph Neural Networks (GNNs), KA-GNN [13] | Property prediction, scaffold hopping, modeling molecular interactions without pre-defined rules. |

| 3D Structure-Based | Encodes the spatial coordinates and geometric relationships of atoms. | Molecular Graphs, Volumetric Data, MolEM framework [14] | Structure-based drug design (SBDD), generating molecules to fit specific protein pockets. |

| Hybrid & Multimodal | Combines multiple representation types to capture complementary information. | Multimodal learning, contrastive learning frameworks [12] | Improving prediction accuracy and generalization by providing a more holistic molecular view. |

Performance Benchmarking of AI-Driven Representation Frameworks

The integration of AI has led to novel frameworks that leverage these representations for generative tasks. The following table benchmarks the performance of several state-of-the-art models, highlighting their application in conditional generation.

Table 2: Performance Benchmarking of Advanced Generative Frameworks

| Model / Framework | Core Architecture | Key Conditional Generation Task | Reported Performance / Advantage |

|---|---|---|---|

| VGAN-DTI [15] | GAN + VAE + MLP | Drug-Target Interaction (DTI) Prediction | 96% accuracy, 95% precision in DTI prediction; generates diverse molecular candidates. |

| KA-GNN [13] | Graph Neural Network with Kolmogorov-Arnold Networks | Molecular Property Prediction | Consistently outperforms conventional GNNs in accuracy and computational efficiency on molecular benchmarks. |

| MolEM [14] | Variational Expectation-Maximization on 3D Graphs | 3D Molecular Graph Generation for SBDD | Significantly outperforms baselines in generating molecules with high binding affinities and realistic structures. |

| PODGen [6] | Predictive models guiding a Generative model via MCMC | Crystal Structure Generation for Target Properties | Success rate of generating target topological insulators is 5.3x higher than unconstrained generation. |

| FP-BERT [12] | Transformer-based Pre-training on Fingerprints | Molecular Property Classification & Regression | Derives high-dimensional representations from ECFP fingerprints for downstream task prediction. |

Application Notes & Experimental Protocols

Protocol 1: Conditional Generation using the PODGen Framework

The PODGen framework exemplifies a highly transferable approach for conditional generation in materials discovery, using predictive models to optimize the distribution of a generative model [6].

Application Note: This protocol is designed for the goal-directed discovery of crystalline materials, such as topological insulators. It requires a pre-trained general generative model and one or more predictive property models.

Workflow Diagram: PODGen Conditional Generation

Step-by-Step Procedure:

- Initialization: Begin with an initial crystal structure, `C_{t-1}`.

- Proposal: Use a general generative model (e.g., a diffusion or autoregressive model) to propose a new crystal structure, `C'`, by sampling from its learned distribution `P(C)`.

- Property Prediction: Pass the proposed structure `C'` through one or more predictive models to estimate the probability `P(y|C')` that it possesses the target property `y`.

- MCMC Evaluation: Calculate the acceptance ratio `A*` for the Metropolis-Hastings algorithm: `A*(C' | C_{t-1}) = [P(C') * P(y|C')] / [P(C_{t-1}) * P(y|C_{t-1})]`. Accept the proposed structure `C'` as the new state `C_t` with probability `min(1, A*)`.

- Iteration: Repeat steps 2-4 for a predefined number of iterations. The final accepted samples will be distributed according to the target conditional distribution `π(C) = P(C)P(y|C)`, effectively biasing the output toward structures with the desired property [6].
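The link between the target distribution and the acceptance ratio above can be made explicit with Bayes' rule. In the short derivation below, the proposal density q is notation introduced here for illustration and does not appear in the source. The ratio quoted in the procedure corresponds to the case where q is symmetric, so the proposal terms cancel.

```latex
\begin{align}
P(C \mid y) &= \frac{P(C)\,P(y \mid C)}{P(y)} \;\propto\; \pi(C) = P(C)\,P(y \mid C), \\
A^{*}(C' \mid C_{t-1})
  &= \frac{\pi(C')\,q(C_{t-1} \mid C')}{\pi(C_{t-1})\,q(C' \mid C_{t-1})}
  \;\overset{q\ \text{symmetric}}{=}\;
  \frac{P(C')\,P(y \mid C')}{P(C_{t-1})\,P(y \mid C_{t-1})}.
\end{align}
```

The normalising constant P(y) never has to be computed because it cancels in the ratio. For a non-symmetric proposal, the q terms would need to be kept.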

Protocol 2: 3D Molecular Graph Generation with the MolEM Framework

For structure-based drug design, the MolEM framework addresses the challenge of generating 3D molecular graphs within a protein binding pocket without relying on a pre-defined, suboptimal atom ordering [14].

Application Note: This protocol is for generating novel 3D ligand molecules conditioned on a specific protein pocket. It jointly learns the molecular graph and the generative sequence order.

Workflow Diagram: MolEM 3D Graph Generation

Step-by-Step Procedure:

- Problem Formulation: Represent the protein-ligand complex using 3D graphs. The protein pocket and the ligand molecule are represented as sets of atoms with their 3D coordinates and attributes [14].

- Variational EM Framework:

  - E-step (Inference): Fix the parameters of the molecule generator and update the ordering generator. The goal is to approximate the true posterior distribution of sequential orders `p(π | G, Pocket)` by minimizing the Kullback-Leibler (KL) divergence.

  - M-step (Learning): Fix the distribution of the sequential order `π` and update the molecule generator. The objective is to maximize the expected log-likelihood of generating the molecular graph `G` given the order `π` and the pocket [14].

- Iteration: Alternate between the E-step and M-step until convergence. This process tightens the evidence lower bound (ELBO) of the graph likelihood.

- Conformation Refinement (Optional but Recommended): Incorporate a molecular docking tool like QuickVina 2 to refine the generated ligand's binding pose. This step ensures the generation of realistic and stable conformations within the protein pocket, improving the credibility of the 3D structures [14].
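The alternation between the E-step and M-step described above follows the standard variational EM bound, written out below as a sketch. The symbols θ (molecule generator parameters), φ (ordering generator parameters), q_φ (the approximate posterior over orders), and P (the pocket) are notation assumed here for illustration. The exact parameterisation used by MolEM is given in [14].

```latex
\begin{align}
\log p_\theta(G \mid P)
  &= \log \sum_{\pi} p_\theta(G, \pi \mid P) \\
  &\ge \mathbb{E}_{q_\phi(\pi \mid G, P)}
     \bigl[\log p_\theta(G, \pi \mid P) - \log q_\phi(\pi \mid G, P)\bigr]
   \;=\; \mathrm{ELBO}(\theta, \phi), \\
\log p_\theta(G \mid P) - \mathrm{ELBO}(\theta, \phi)
  &= \mathrm{KL}\bigl(q_\phi(\pi \mid G, P)\,\|\,p_\theta(\pi \mid G, P)\bigr).
\end{align}
```

With θ fixed, the E-step shrinks the KL gap by updating φ. With φ fixed, the M-step raises the expected log-likelihood term by updating θ. Each step can only increase the ELBO, which is why the bound tightens over iterations.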

Protocol 3: Enhancing Prediction with Kolmogorov-Arnold GNNs (KA-GNN)

KA-GNNs integrate novel Kolmogorov-Arnold Networks (KANs) into GNNs to boost molecular property prediction, a key component for evaluating generated molecules [13].

Application Note: This protocol outlines how to replace standard MLP transformations in a GNN with Fourier-based KAN layers to improve expressivity, efficiency, and interpretability in property prediction tasks.

Workflow Diagram: KA-GNN Model Architecture

Step-by-Step Procedure:

- Graph Input: Represent the molecule as a graph with node features (e.g., atom type, charge) and edge features (e.g., bond type).

- KAN-based Initialization:

- Node Embedding: Pass the concatenation of a node's atomic features and the averaged features of its neighboring bonds through a Fourier-based KAN layer.

- Edge Embedding (in KA-GAT): Form edge embeddings by fusing bond features with the features of the two endpoint nodes using a KAN layer [13].

- KAN-augmented Message Passing: Perform message passing following a GCN or GAT scheme. However, update node features using residual KAN layers instead of standard MLP transformations.

- KAN-based Readout: Aggregate the final node embeddings into a graph-level representation using a readout function built with KAN layers.

- Property Prediction: The resulting graph representation is used for the final prediction of molecular properties. The model can be trained end-to-end, and the KAN layers can offer improved interpretability by highlighting chemically meaningful substructures [13].
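To make the idea of a Fourier-based KAN layer concrete, the sketch below implements one common formulation in plain Python. Each input-output edge carries a learnable univariate function expressed as a truncated Fourier series, and the outputs sum these functions over the inputs. The exact parameterisation in KA-GNN [13] may differ. The function names, the choice of harmonics 1..K, and the bias term are assumptions made for illustration.

```python
import math


def fourier_kan_layer(x, cos_coef, sin_coef, bias):
    """Apply a Fourier-series KAN layer.

    y_j = bias[j] + sum_i sum_k ( cos_coef[j][i][k] * cos((k + 1) * x[i])
                                + sin_coef[j][i][k] * sin((k + 1) * x[i]) )
    """
    outputs = []
    for j in range(len(bias)):
        total = bias[j]
        for i, xi in enumerate(x):
            for k in range(len(cos_coef[j][i])):
                freq = k + 1  # harmonics 1..K
                total += cos_coef[j][i][k] * math.cos(freq * xi)
                total += sin_coef[j][i][k] * math.sin(freq * xi)
        outputs.append(total)
    return outputs


def residual_kan_update(h, cos_coef, sin_coef, bias):
    """Residual node update h + KAN(h); requires equal input and output sizes."""
    delta = fourier_kan_layer(h, cos_coef, sin_coef, bias)
    return [hi + di for hi, di in zip(h, delta)]


# Two input features, one output, two harmonics per edge.
x = [0.0, math.pi / 2]
cos_coef = [[[1.0, 0.5], [0.0, 0.0]]]
sin_coef = [[[0.0, 0.0], [2.0, 0.0]]]
y = fourier_kan_layer(x, cos_coef, sin_coef, bias=[0.1])
```

In this example the output is 0.1 + cos(0) + 0.5·cos(0) + 2·sin(π/2) = 3.6. In a trained model the coefficients would be learned, and inspecting the per-edge functions is one route to the interpretability mentioned above.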

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Software and Computational Tools for Molecular Representation and Generation

| Item Name | Type | Function / Application |

|---|---|---|

| SMILES / SELFIES | String Representation | A standardized text-based format for representing molecular structures, serving as input for language models [12]. |

| RDKit | Cheminformatics Software | Open-source toolkit for cheminformatics, used for manipulating molecules, generating fingerprints, and canonicalizing structures. |

| Graph Neural Network (GNN) | Deep Learning Model | A neural network architecture that operates directly on graph structures, fundamental for graph-based molecular representation [12] [13]. |

| Kolmogorov-Arnold Network (KAN) | Deep Learning Model | An alternative to MLPs that uses learnable univariate functions on edges, offering improved expressivity and interpretability in models like KA-GNN [13]. |

| Variational Autoencoder (VAE) | Generative Model | A deep learning model that learns a latent representation of input data, used for generating novel molecular structures [12] [15]. |

| Generative Adversarial Network (GAN) | Generative Model | A framework where two neural networks contest to generate new, synthetic data indistinguishable from real data [15]. |

| Molecular Docking Software (e.g., QuickVina 2) | Simulation Tool | Predicts the preferred binding orientation of a small molecule (ligand) to a protein target, used for validating and refining generated structures [14]. |

| Markov Chain Monte Carlo (MCMC) | Sampling Algorithm | A computational algorithm used to sample from a probability distribution, crucial for conditional generation frameworks like PODGen [6]. |

Traditional materials discovery has long relied on empirical, trial-and-error methodologies, requiring extensive experimentation and often exceeding a decade from conception to deployment [16]. This process is fundamentally limited by the vastness of chemical space, which is estimated to exceed 10^60 drug-like molecules, making exhaustive exploration impractical [17]. Inverse design represents a paradigm shift in materials science. Instead of testing known materials for desired properties, researchers start by defining the target properties, and artificial intelligence (AI) algorithms work backward to propose novel candidate structures predicted to achieve them [18]. This approach automates ideation, explores unconventional solutions beyond human intuition, and dramatically accelerates the discovery timeline from decades to years [19].

This transition is powered by generative AI models. Unlike discriminative models that predict properties from structures (y = f(x)), generative models learn the underlying probability distribution P(x) of the data, enabling the creation of entirely new material samples [17]. A critical feature is the model's latent space, a lower-dimensional representation of the structure-property relationship. By navigating this space based on target properties, these models achieve true inverse design, directly generating stable and novel materials for applications in catalysts, electronics, and polymers [17].

Core AI Methodologies for Inverse Design

Several generative AI models have proven effective for the inverse design of materials. The table below summarizes the key model types, their principles, and applications in materials science.

Table 1: Key Generative AI Models for Materials Inverse Design

| Model Type | Core Principle | Example in Materials Science | Key Advantage |

|---|---|---|---|

| Diffusion Models [20] [17] | Generates data by iteratively denoising from a random initial state, following a learned reverse process. | MatterGen [20], SCIGEN [21] | High quality and stability of generated crystal structures. |

| Variational Autoencoders (VAEs) [17] | Learns a probabilistic latent space of data; an encoder maps inputs to this space, and a decoder generates new samples. | CDVAE [20] [6] | Provides a structured latent space for interpolation and generation. |

| Generative Flow Networks (GFlowNets) [17] | Learns a stochastic policy to sequentially build objects with probabilities proportional to a given reward. | Crystal-GFN [17] | Efficiently explores compositional spaces for diverse candidates. |

| Conditional Frameworks [6] | Integrates predictive property models with a generative model to steer generation toward a target property. | PODGen [6] | Model-agnostic; highly effective for hitting specific, rare property targets. |

Performance Comparison of Generative Models

The advancement of these models has led to significant improvements in the quality and success rate of generated materials. The following table quantifies the performance of leading models against previous state-of-the-art methods.

Table 2: Quantitative Performance of Generative Materials Models

| Model | Stable, Unique & New (SUN) Materials | Distance to DFT Relaxed Structure (RMSD) | Key Achievement |

|---|---|---|---|

| MatterGen [20] | More than doubles the percentage of SUN materials vs. prior models. | Over ten times closer to the local energy minimum than previous models. | 78% of generated structures are stable (<0.1 eV/atom from convex hull). |

| MatterGen (Fine-Tuned) [20] | Successfully generates stable, new materials with desired chemistry, symmetry, and properties. | N/A | Generated a material synthesized and measured to be within 20% of the target property. |

| SCIGEN [21] | Generated over 10 million candidate materials with target geometric patterns. | N/A | Led to the synthesis and experimental validation of two new magnetic compounds (TiPdBi, TiPbSb). |

| Conditional Generation (PODGen) [6] | Success rate for generating topological insulators was 5.3x higher than unconstrained generation. | N/A | Consistently generated gapped topological insulators, which general methods rarely produce. |

Application Notes and Protocols

The following section provides detailed methodologies for implementing AI-driven inverse design, from a general workflow to a specific protocol for conditional generation.

General Workflow for AI-Driven Inverse Design

The inverse design process can be conceptualized as a multi-stage, iterative pipeline. The diagram below outlines the key stages from objective definition to experimental validation.

Detailed Protocol: Conditional Generation with the PODGen Framework

The PODGen framework is a powerful, model-agnostic approach for conditional generation that integrates predictive and generative models. The following protocol details its implementation for discovering materials with a target property, such as a specific bandgap or magnetic density.

Protocol Title: Inverse Design of Crystals using the PODGen Conditional Generation Framework.

Objective: To generate novel, stable crystal structures that possess a user-defined target property.

Experimental Principle: The framework uses Markov Chain Monte Carlo (MCMC) sampling to steer a generative model's output. It iteratively refines candidate structures, accepting or rejecting new proposals based on the joint probability of the structure's likelihood (P(C)) and its predicted probability of having the target property (P(y|C)) [6].

Step-by-Step Procedure:

- Initialization:

  - Obtain a pre-trained generative model (`Generator`) that provides `P(C)`, the probability of a crystal structure `C`.

  - Obtain one or more pre-trained predictive models (`Predictors`) that provide `P(y|C)`, the probability of the target property `y` given a structure `C`.

  - Initialize the MCMC chain by generating an initial crystal structure `C_0` from the `Generator`.

- MCMC Iteration Loop: For a predetermined number of steps (e.g., 10,000 iterations):

  - Proposal: Use the `Generator` to propose a new candidate crystal structure `C'` based on the current structure `C_{t-1}`.

  - Evaluation: Calculate the acceptance ratio `A*`: `A*(C'|C_{t-1}) = [ P(C') * P(y|C') ] / [ P(C_{t-1}) * P(y|C_{t-1}) ]`, where `P(C')` and `P(C_{t-1})` are from the `Generator`, and `P(y|C')` and `P(y|C_{t-1})` are from the `Predictor(s)` [6].

  - Accept/Reject Decision: Generate a random number `u` from a uniform distribution between 0 and 1. If `u ≤ A*`, accept the proposed structure (`C_t = C'`). Otherwise, reject it and keep the current structure (`C_t = C_{t-1}`).

- Output: After the MCMC chain completes, the final set of accepted structures represents a sample from the target conditional distribution `P(C|y)`. These are the candidate materials predicted to have the desired property.
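In practice, the likelihood of a full crystal structure under a generative model is a product of many small terms and can underflow in floating point, so the accept/reject decision is often evaluated in log space. The sketch below shows one way to do this. It assumes the generator and predictors expose log-probabilities, which the source does not state, and the function name `accept` is hypothetical.

```python
import math
import random


def accept(log_p_new, log_pred_new, log_p_old, log_pred_old, rng):
    """Metropolis-Hastings accept/reject decision using log-probabilities.

    log A* = [log P(C') + log P(y|C')] - [log P(C_{t-1}) + log P(y|C_{t-1})]
    """
    log_ratio = (log_p_new + log_pred_new) - (log_p_old + log_pred_old)
    if log_ratio >= 0.0:
        return True                       # A* >= 1: always accept
    # exp() of a very negative number underflows to 0.0, giving a rejection.
    return rng.random() < math.exp(log_ratio)


rng = random.Random(0)
# Likelihoods around exp(-1000) cannot be represented as ordinary floats,
# but their ratio is still well defined in log space.
better = accept(-1000.0, -1.0, -1005.0, -2.0, rng)    # log A* = +6
worse = accept(-2000.0, -1.0, -1000.0, -1.0, rng)     # log A* = -1000
```

Here `better` is always accepted because the proposal has a higher target density, while `worse` is rejected because its acceptance probability is numerically zero. Computing the raw ratio directly would have produced 0/0 in both cases.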

The logical flow and key components of this protocol are visualized below.

The Scientist's Toolkit: Research Reagent Solutions

This section catalogs the essential computational tools, models, and datasets that form the modern toolkit for AI-driven inverse design.

Table 3: Essential "Reagents" for AI-Driven Inverse Design

| Tool/Resource Name | Type | Primary Function | Application Note |

|---|---|---|---|

| MatterGen [20] | Generative Model (Diffusion) | Generates stable, diverse inorganic materials across the periodic table; can be fine-tuned for property constraints. | A foundational model; demonstrated capability for inverse design on magnetism, chemistry, and symmetry. |

| SCIGEN [21] | Generative Tool (Constraint) | Applies user-defined geometric structural rules to steer existing generative models (e.g., DiffCSP). | Crucial for designing quantum materials (e.g., Kagome lattices) where specific geometry dictates properties. |

| PODGen Framework [6] | Conditional Framework | Integrates any generative and predictive models for highly efficient targeted discovery. | Ideal for optimizing the generation of materials with rare properties, like topological insulators. |

| Aethorix v1.0 [22] | Industrial Platform | Integrates generative AI, LLMs for literature mining, and machine-learned potentials for rapid property prediction. | Designed for scalable industrial R&D, incorporating operational constraints and synthesis viability. |

| Alex-MP-20 / Alex-MP-ICSD [20] | Training Dataset | Large, curated datasets of stable crystal structures from the Materials Project and Alexandria. | Used for training and benchmarking generative models. Essential for ensuring model performance. |

| Machine-Learned Interatomic Potentials (MLIPs) [17] [22] | Property Predictor | Fast, accurate surrogates for DFT calculations to assess stability and properties of generated candidates. | Enables high-throughput screening of thousands of candidates at near-DFT accuracy but lower computational cost. |

Validation and Synthesis

The ultimate test for any AI-designed material is its experimental realization and performance confirmation.

- Computational Validation: Prior to synthesis, candidate materials undergo rigorous computational checks. This typically involves Density Functional Theory (DFT) relaxation to confirm thermodynamic stability (e.g., energy above the convex hull < 0.1 eV/atom) [20] and calculation of target properties. Machine-learned potentials can accelerate this step without sacrificing significant accuracy [22].

- Experimental Synthesis and Characterization: Successful candidates are then synthesized in the lab. For example, AI-generated compounds TiPdBi and TiPbSb were synthesized in solid-state chemistry labs, and their magnetic properties were measured, with results largely aligning with model predictions [21]. In another case, a polymer designed by AI for a specific glass-transition temperature (Tg) was synthesized and measured to have a Tg within ~5% of the target [18]. This closes the loop, providing critical feedback to refine the AI models.

Methodologies and Real-World Applications in Drug and Material Design

Diffusion models have emerged as a leading generative AI framework, demonstrating significant potential to accelerate and transform the traditionally slow and costly process of drug discovery [23] [24]. These models learn to generate data by iteratively denoising random noise, a process that can be guided to create novel molecular structures with specific, desirable properties. This capability is particularly valuable for inverse design, where target properties are defined first and the molecular structure is derived accordingly [25]. Within the broader context of conditional generation for targeted material properties research, diffusion models offer a powerful paradigm for the on-demand engineering of novel therapeutics [6]. This document provides detailed application notes and protocols for applying these models to the two principal therapeutic modalities: small molecules and therapeutic peptides, highlighting the distinct challenges and methodological adaptations each requires.

Comparative Analysis of Modalities

The application of diffusion models must be tailored to the distinct molecular representations, chemical spaces, and design objectives of small molecules versus therapeutic peptides. A systematic comparison of these modalities is provided in the table below.

Table 1: Key Challenges and Design Focus for Different Therapeutic Modalities

| Feature | Small Molecules | Therapeutic Peptides |

|---|---|---|

| Primary Design Focus | Structure-based design; generating novel, pocket-fitting ligands with desired physicochemical properties [23] [24]. | Generating functional sequences and designing de novo structures [23] [24]. |

| Critical Challenges | Ensuring chemical synthesizability [23] [24]. | Achieving biological stability against proteolysis, ensuring proper folding, and minimizing immunogenicity [23] [24]. |

| Shared Hurdles | Scarcity of high-quality experimental data; need for accurate scoring functions; crucial requirement for experimental validation [23] [24]. | Scarcity of high-quality experimental data; need for accurate scoring functions; crucial requirement for experimental validation [23] [24]. |

| Future Potential | Integration into automated, closed-loop Design-Build-Test-Learn (DBTL) platforms [23] [24]. | Integration into automated, closed-loop Design-Build-Test-Learn (DBTL) platforms [23] [24]. |

The performance of generative models is quantified using a standard set of benchmarks. The following table summarizes key metrics and the performance of several state-of-the-art models on the QM9 and GEOM-Drugs datasets.

Table 2: Performance Metrics of Select 3D Molecular Diffusion Models

| Model | Dataset | Validity (Val) (%) | Uniqueness (Uniq) (%) | Novelty (%) | Molecule Stability (MS) (%) |

|---|---|---|---|---|---|

| GCDM [26] | QM9 | 96.4 | 99.9 | 59.8 | 95.3 |

| GeoLDM [26] | QM9 | 94.8 | 98.3 | ~50 | 96.1 |

| GCDM [26] | GEOM-Drugs | 71.4 | 100.0 | 100.0 | - |

| EDM [26] | GEOM-Drugs | 32.1 | 100.0 | 100.0 | - |
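The validity, uniqueness, and novelty columns in the table above are ratios over a batch of generated molecules. The sketch below shows one common way to compute them. Exact definitions vary between benchmarks, so the denominators used here are an assumption. The validity check `toy_is_valid` is a deliberately crude placeholder (balanced parentheses only) and is not a chemical validity test. A real pipeline would parse and canonicalise each structure with a cheminformatics toolkit before comparing strings. Molecule stability is not covered.

```python
def toy_is_valid(smiles):
    """Placeholder check (assumption): non-empty string with balanced parentheses."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return bool(smiles) and depth == 0


def generation_metrics(generated, training_set, is_valid):
    """Return validity, uniqueness and novelty as fractions in [0, 1]."""
    valid = [m for m in generated if is_valid(m)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,   # valid / generated
        "uniqueness": len(unique) / len(valid) if valid else 0.0,        # unique / valid
        "novelty": len(novel) / len(unique) if unique else 0.0,          # unseen / unique
    }


generated = ["CCO", "CCO", "C(C", "CCN", "c1ccccc1"]
metrics = generation_metrics(generated, training_set={"CCO"}, is_valid=toy_is_valid)
```

For this toy batch, four of five strings pass the check (validity 0.8), three of those four are distinct (uniqueness 0.75), and two of the three distinct strings are absent from the training set (novelty 2/3).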

Experimental Protocols

Protocol 1: Conditional Generation of Small Molecules using a Predictive Framework

This protocol describes the use of the PODGen (Predictive models to Optimize the Distribution of the Generative model) framework for the conditional generation of crystal materials, a method highly transferable to small molecule design [6].

Key Research Reagents & Solutions

- Generative Model: A general probabilistic generative model (e.g., diffusion, autoregressive, flow-based) that provides ( P(C) ), an approximation of the true crystal structure distribution [6].

- Predictive Models: Multiple property prediction models that provide ( P(y|C) ), the probability of a target property ( y ) given a crystal structure ( C ) [6].

- Sampling Algorithm: Markov Chain Monte Carlo (MCMC) with the Metropolis-Hastings algorithm for efficient sampling from the complex target distribution [6].

Procedure

- Framework Setup: Integrate a pre-trained generative model with one or more predictive models trained on relevant property data.

- Target Definition: Define the conditional distribution for generation as ( π(C) = P(C)P(y|C) ), where ( P(C) ) comes from the generative model and ( P(y|C) ) from the predictive models [6].

- MCMC Sampling: a. Initialize the chain with a starting crystal structure ( C_0 ). b. For each sampling step ( t ), propose a new candidate structure ( C' ) based on the previous structure ( C_{t-1} ). c. Calculate the acceptance probability: ( A(C' | C_{t-1}) = \min(1, \frac{π(C')}{π(C_{t-1})}) ) [6]. d. Accept the candidate ( C' ) with probability ( A ); otherwise keep ( C_{t-1} ).

- Output: The sequence of accepted structures, which will converge to samples from the target conditional distribution ( π(C) ), yielding structures with the desired properties.
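When several predictive models are used, their outputs have to be combined into a single ( P(y|C) ) term. The source does not specify how this is done. The sketch below shows one simple option, which is to treat the properties as conditionally independent given the structure and sum the log-scores. That independence assumption, the dictionary representation of a structure, and both toy predictors are hypothetical.

```python
import math


def joint_log_score(structure, predictors):
    """Sum of log P(y_k | C) over predictors (assumes conditional independence)."""
    total = 0.0
    for predict in predictors:
        p = predict(structure)
        if p <= 0.0:
            return float("-inf")   # any zero-probability property vetoes the structure
        total += math.log(p)
    return total


# Hypothetical predictors operating on a dict of precomputed features.
def has_band_gap(structure):
    return 0.9 if structure["gap_ev"] > 0.05 else 0.1


def is_topological(structure):
    return structure["p_topological"]


candidate = {"gap_ev": 0.2, "p_topological": 0.5}
score = joint_log_score(candidate, [has_band_gap, is_topological])
vetoed = joint_log_score({"gap_ev": 0.2, "p_topological": 0.0},
                         [has_band_gap, is_topological])
```

The resulting log-score can be added to the generative model's log-likelihood to obtain the log of ( π(C) ) used in the acceptance probability. For the candidate above, the combined probability is 0.9 × 0.5 = 0.45.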

Protocol 2: Text-Guided Multi-Property Molecular Optimization

This protocol utilizes a transformer-based diffusion language model (TransDLM) to optimize generated molecules for multiple properties while retaining their core structural scaffolds, mitigating errors from external predictors [27].

Key Research Reagents & Solutions

- Source Molecule: The initial molecule to be optimized.

- Textual Property Descriptions: Natural language descriptions of the target properties (e.g., "high solubility," "low clearance").

- Pre-trained Language Model: A model capable of encoding both molecular SMILES/nomenclature and textual descriptions into a shared latent space.

- Transformer-based Diffusion Language Model (TransDLM): The core model that performs iterative denoising.

Procedure

- Representation: a. Convert the source molecule into its standardized chemical nomenclature or a SMILES string. b. Encode the molecular representation and the textual property descriptions using the pre-trained language model to create a fused guidance signal [27].

- Noise Sampling: a. Sample initial molecular word vectors from the token embeddings of the source molecule. This biases the generation process to retain the original scaffold [27].

- Conditional Denoising: a. Apply noise to the molecular word vectors. b. Train the TransDLM to denoise the vectors, using the fused textual and molecular guidance to implicitly steer the optimization towards the desired properties, without a separate predictor [27]. c. Iterate the denoising steps until a clear molecular sequence is generated.

- Output & Validation: a. Decode the generated sequence into a molecular structure. b. Validate the output using relevant chemical and property checks.

Visualization of Workflows

Conditional Molecular Generation Workflow

The following diagram illustrates the high-level iterative process of conditional molecular generation, which forms the basis for protocols like PODGen [6].

Molecular Graph Diffusion (MG-DIFF) Process

This diagram outlines the key components of the MG-DIFF model, which employs a discrete diffusion process for molecular graph generation and optimization [28].

The Scientist's Toolkit

Table 3: Essential Computational Tools and Frameworks

| Tool/Resource | Type | Primary Function | Relevant Protocol |
|---|---|---|---|
| PODGen Framework [6] | Computational Framework | Integrates generative and predictive models for conditional generation via MCMC sampling. | Protocol 1 |
| TransDLM [27] | Deep Learning Model | Text-guided molecular optimization via a diffusion language model. | Protocol 2 |
| MG-DIFF [28] | Deep Learning Model | Molecular graph generation and optimization using a discrete mask-and-replace diffusion strategy. | - |
| Geometry-Complete Diffusion Model (GCDM) [26] | Deep Learning Model | Generates valid 3D molecules using SE(3)-equivariant networks and geometric features. | - |
| REINVENT 4 [25] | Software Framework | An open-source generative AI framework for small molecule design using RNNs, transformers, and reinforcement learning. | - |

Evolvable Conditional Diffusion represents a methodological advancement in generative AI for scientific discovery, enabling the guidance of diffusion models using black-box, non-differentiable multi-physics models. This approach formulates guidance as an optimization problem where updates to the descriptive statistic for the denoising distribution optimize a desired fitness function, derived through the lens of probabilistic evolution [29]. The resulting algorithm is analogous to gradient-based guided diffusion but operates without derivative computation, facilitating applications in domains like computational fluid dynamics and electromagnetics where differentiable proxies are unavailable [29]. This protocol details the methodology and applications for targeted material properties research.

Conditional generation aims to produce samples that satisfy specific requirements, a capability crucial for scientific domains like drug development and materials science. While guided diffusion models typically require differentiable models for gradient-based steering, most established multi-physics numerical models in scientific computing are non-differentiable black-box systems [29]. Evolvable Conditional Diffusion addresses this limitation by incorporating principles from evolutionary computation, treating the guidance process as a derivative-free optimization problem [29]. This enables researchers to leverage existing high-fidelity physics simulators without modification, facilitating autonomous scientific discovery pipelines that integrate with autonomous laboratories [29].

Background and Technical Foundations

Diffusion Models for Scientific Generation

Diffusion models are probabilistic generative models that learn data distributions through iterative denoising processes [29]. The forward process progressively adds Gaussian noise to data:

\[q(\boldsymbol{x}_t|\boldsymbol{x}_{t-1}) = \mathcal{N}(\boldsymbol{x}_t;\ \sqrt{1-\beta_t}\,\boldsymbol{x}_{t-1},\ \beta_t\boldsymbol{I})\]

while the reverse denoising process:

\[p_{\boldsymbol{\theta}}(\boldsymbol{x}_{t-1}|\boldsymbol{x}_t) = \mathcal{N}(\boldsymbol{x}_{t-1};\ \boldsymbol{\mu}_{\boldsymbol{\theta}}(\boldsymbol{x}_t),\ \boldsymbol{\Sigma}_{\boldsymbol{\theta}}(\boldsymbol{x}_t))\]

learns to reconstruct data from noise [29]. For conditional generation, guidance mechanisms steer this denoising trajectory toward regions satisfying specific objectives.
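As a rough illustration of the forward process, the sketch below applies the Gaussian noising step repeatedly to a small vector. The linear schedule for the noise variances and its endpoint values are assumptions chosen for illustration; the source does not specify a schedule. The helper names are hypothetical.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced noise variances beta_1..beta_T (assumed schedule)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def forward_step(x_prev, beta_t, rng):
    """Draw x_t ~ N(sqrt(1 - beta_t) * x_{t-1}, beta_t * I)."""
    scale = math.sqrt(1.0 - beta_t)
    sigma = math.sqrt(beta_t)
    return [scale * v + sigma * rng.gauss(0.0, 1.0) for v in x_prev]

def signal_retention(betas):
    """Cumulative product of (1 - beta_s): share of the original signal variance left after each step."""
    retained, product = [], 1.0
    for beta in betas:
        product *= 1.0 - beta
        retained.append(product)
    return retained

rng = random.Random(0)
betas = linear_beta_schedule(1000)
x = [1.0, -0.5, 2.0]               # toy "data" vector
for beta_t in betas:
    x = forward_step(x, beta_t, rng)

alpha_bar = signal_retention(betas)  # alpha_bar[-1] is close to zero: x is now almost pure noise
```

The cumulative product shrinks toward zero as steps accumulate, which is why the final sample carries almost no information about the starting vector and the reverse process has to be learned.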

Limitations of Gradient-Based Guidance

Traditional guided diffusion requires differentiable models to compute gradients for steering the generation process [29]. This presents a significant barrier in scientific domains where validated multi-physics models (e.g., computational fluid dynamics, electromagnetic simulators) are implemented as black-box, non-differentiable systems, creating a disconnect between state-of-the-art generative AI and established scientific computing infrastructure [29].

Evolvable Conditional Diffusion Methodology

Core Theoretical Framework

Evolvable Conditional Diffusion reformulates guidance as a black-box optimization problem where the probabilistic distribution from the pre-trained diffusion model evolves to favor designs maximizing specific performance criteria [29]. The method optimizes a fitness function through updates to the descriptive statistic for the denoising distribution, deriving an evolution-guided approach from first principles through probabilistic evolution [29]. Notably, the update algorithm resembles conventional gradient-based guided diffusion under specific assumptions but requires no derivative computation [29].

Derivative-Free Gradient Estimation

Instead of relying on differentiable models, the method directly estimates fitness function gradients from samples drawn from the evolved distribution, with corresponding fitness values evaluated using non-differentiable solvers [29]. This approach maintains compatibility with existing scientific computing tools while providing the guidance necessary for targeted generation.
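One common way to estimate a gradient from samples alone is the score-function (evolution-strategies) estimator, sketched below. This is a generic technique offered as an illustration under stated assumptions: the exact estimator used in [29] may differ, the search distribution is assumed to be an isotropic Gaussian, and `toy_solver` is a hypothetical stand-in for a non-differentiable simulator.

```python
import random

def estimate_fitness_gradient(mean, sigma, fitness, num_samples, rng):
    """Estimate the gradient of E[fitness(x)] w.r.t. `mean` for x ~ N(mean, sigma^2 I).

    Only fitness values are used; the fitness function is never differentiated.
    """
    dim = len(mean)
    samples = [[m + sigma * rng.gauss(0.0, 1.0) for m in mean]
               for _ in range(num_samples)]
    scores = [fitness(x) for x in samples]        # black-box solver calls
    baseline = sum(scores) / num_samples          # subtracting the mean reduces variance
    grad = [0.0] * dim
    for x, score in zip(samples, scores):
        for d in range(dim):
            grad[d] += (score - baseline) * (x[d] - mean[d]) / (sigma ** 2)
    return [g / num_samples for g in grad]

def toy_solver(x):
    """Hypothetical black-box fitness with its peak at (1, -2)."""
    return -((x[0] - 1.0) ** 2 + (x[1] + 2.0) ** 2)

rng = random.Random(0)
grad = estimate_fitness_gradient([0.0, 0.0], 0.5, toy_solver, 2000, rng)
# grad points from the current mean (0, 0) toward the peak at (1, -2).
```

The cost of this kind of estimator is the number of solver calls per update, which matters when each call is an expensive physics simulation.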

Application Protocols

Protocol 1: Fluidic Channel Topology Optimization

Objective: Generate fluidic channel designs optimizing for specific flow characteristics using non-differentiable CFD solvers.

Pre-trained Model Preparation:

- Utilize a diffusion model trained on diverse fluidic channel topologies

- Establish baseline generation capability without performance guidance

Evolutionary Guidance Setup:

- Initialization: Generate initial population from pre-trained model

- Fitness Evaluation: Process designs through black-box CFD solver

- Gradient Estimation: Calculate fitness gradients from sample population

- Distribution Update: Modify denoising distribution parameters based on estimated gradients

- Iteration: Resample from the updated distribution and repeat fitness evaluation, gradient estimation, and distribution update until convergence

Validation Metrics:

- Comparison against baseline designs from unguided model

- Physical verification using high-fidelity CFD simulation

Protocol 2: Meta-surface Design for Electromagnetic Applications

Objective: Generate meta-surface designs with target frequency response properties using non-differentiable electromagnetic solvers.

Workflow Implementation:

- Conditioning: Define target frequency response as fitness function

- Generation: Produce candidate designs through diffusion process

- Simulation: Evaluate candidates using electromagnetic solver

- Selection: Identify high-performing designs based on fitness

- Distribution Update: Evolve denoising distribution toward high performers

- Convergence Check: Repeat until target specifications met
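The generate, simulate, select, and update loop above can be sketched with a simple elite-selection scheme (in the spirit of the cross-entropy method). This is an illustrative simplification, not the algorithm from [29]: a plain Gaussian stands in for the denoising distribution, `toy_em_solver` is a hypothetical stand-in for an electromagnetic solver, and the population size, elite fraction, and shrink rate are arbitrary assumptions.

```python
import random

def toy_em_solver(design):
    """Hypothetical black-box solver: maps a 2-parameter design to a 'resonant frequency'."""
    return 1.0 + 0.5 * design[0] + 0.25 * design[1] ** 2

def fitness(design, target_freq):
    """Conditioning: higher is better, zero means the target response is matched exactly."""
    return -abs(toy_em_solver(design) - target_freq)

def evolve_toward_target(target_freq, mean, sigma, pop_size=64, elite_frac=0.25,
                         max_iters=50, tol=1e-2, seed=0):
    rng = random.Random(seed)
    mean = list(mean)
    n_elite = max(2, int(pop_size * elite_frac))
    best = (float("-inf"), mean)
    for _ in range(max_iters):
        # Generation: sample candidate designs from the current distribution.
        population = [[m + sigma * rng.gauss(0.0, 1.0) for m in mean]
                      for _ in range(pop_size)]
        # Simulation: score every candidate with the black-box solver.
        scored = sorted(((fitness(d, target_freq), d) for d in population),
                        key=lambda pair: pair[0], reverse=True)
        # Selection: keep the highest-fitness designs.
        elites = scored[:n_elite]
        if elites[0][0] > best[0]:
            best = elites[0]
        # Distribution update: move the mean toward the elites and narrow the search.
        mean = [sum(d[i] for _, d in elites) / n_elite for i in range(len(mean))]
        sigma = max(1e-3, 0.9 * sigma)
        # Convergence check: stop once the best design meets the specification.
        if -best[0] < tol:
            break
    return best[1], -best[0]

design, error = evolve_toward_target(target_freq=3.0, mean=[0.0, 0.0], sigma=1.0)
```

Here `error` is the absolute deviation of the best design's toy frequency from the target. In a real pipeline, the solver call would dominate the runtime, so the population size and iteration budget would be set by the available compute.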

Performance Validation:

- Physical measurement of fabricated designs

- Comparison against conventional optimization approaches

Comparative Analysis

Table 1: Comparison of Guidance Approaches for Diffusion Models

| Feature | Gradient-Based Guidance | Evolvable Conditional Diffusion |
|---|---|---|
| Differentiability Requirement | Requires differentiable models | Compatible with non-differentiable black-box models |
| Physics Model Compatibility | Limited to differentiable proxies | Works with established multi-physics solvers |
| Optimization Approach | Local gradient descent | Derivative-free global exploration |
| Solution Diversity | May converge to local optima | Maintains diversity through population-based approach |
| Implementation Complexity | Requires model differentiation | Gradient estimation from samples |

Table 2: Application Performance in Scientific Domains

| Application Domain | Performance Metric | Baseline Diffusion | Evolvable Conditional Diffusion |
|---|---|---|---|
| Fluidic Topology Design | Flow efficiency improvement | Reference | Significant enhancement |
| Meta-surface Design | Target frequency accuracy | Reference | Better objective satisfaction |
| Computational Requirements | Solver evaluations | N/A | Additional sampling overhead |
| Design Quality | Physical feasibility | Maintained | Maintained with performance gains |

Research Reagent Solutions

Table 3: Essential Components for Experimental Implementation

| Component | Function | Implementation Examples |
|---|---|---|
| Pre-trained Diffusion Model | Base generation capability | Models trained on domain-specific datasets (e.g., molecular structures, material topologies) |
| Multi-physics Solver | Fitness evaluation | Computational Fluid Dynamics (CFD), electromagnetic simulators, molecular dynamics packages |
| Evolutionary Optimization Framework | Derivative-free guidance | Custom implementation based on probabilistic evolution principles |
| Performance Metrics | Solution quality assessment | Domain-specific fitness functions (e.g., flow efficiency, quality factors) |
| Validation Infrastructure | Physical verification | Fabrication and testing capabilities for generated designs |

Workflow Visualization

Diagram 1: Evolutionary Guidance Workflow

Diagram 2: Method Comparison

Evolvable Conditional Diffusion provides a mathematically grounded framework for incorporating black-box, non-differentiable physics models into guided diffusion processes. By combining the distribution modeling capabilities of diffusion models with the derivative-free optimization of evolutionary algorithms, this approach enables targeted generation in scientific domains where differentiable proxies are unavailable or inaccurate. The methodology demonstrates significant promise for accelerating materials discovery and optimization while maintaining compatibility with established scientific computing infrastructure. Future work should focus on scaling the approach to higher-dimensional design spaces and integrating it with autonomous experimental systems for closed-loop discovery.

Autoregressive (AR) models have emerged as a powerful paradigm for image generation, rivaling the performance of diffusion models. However, integrating precise spatial controls for conditional generation has remained a significant challenge. Traditional approaches often require full fine-tuning of pre-trained models, which is computationally expensive and inefficient. This application note details recent breakthroughs in plug-and-play frameworks that enable efficient conditional generation for AR models, with particular relevance to material science and drug discovery research where controlled generation of molecular structures and material configurations is paramount.

Recent research has produced several innovative architectures that enable precise control over AR image generation without the need for extensive retraining. These frameworks share a common goal: to inject conditional signals such as edges, depth maps, or segmentation masks into pre-trained AR models with minimal computational overhead.

- ControlAR: Introduces a lightweight control encoder that transforms spatial inputs into control tokens and employs conditional decoding where next-token prediction is conditioned on both previous image tokens and current control tokens. This approach strengthens control capability without increasing sequence length [30] [31].

- Efficient Control Model (ECM): Features a distributed architecture with context-aware attention layers that refine conditional features using real-time generated tokens, and a shared gated feed-forward network designed to maximize utilization of limited capacity [32] [33].

- EditAR: A unified framework that takes both images and instructions as inputs, predicting edited image tokens in a standard next-token prediction paradigm. It demonstrates the potential for creating a single foundational model for various conditional generation tasks [34].

Quantitative Performance Comparison

The following tables summarize the quantitative performance and efficiency metrics of leading plug-and-play frameworks for conditional generation in AR models.

Table 1: Performance Comparison on Conditional Generation Tasks (FID Scores)

| Framework | Base AR Model | Canny Edge | Depth Map | Segmentation | Params (Control) |
|---|---|---|---|---|---|
| ControlAR | LlamaGen | 10.85 | 12.34 | 11.92 | ~58M |
| ECM | VARd30 (2B) | 9.76 | 11.05 | 10.83 | 58M |
| EditAR | LlamaGen | 11.23 | 12.87 | 12.15 | ~65M |
| Prefill Baseline | LlamaGen | 26.45 | 28.91 | 27.64 | N/A |

Table 2: Training Efficiency and Inference Speed

| Framework | Training Epochs | Training Time Reduction | Inference Speed (vs Diffusion) | Multi-Resolution Support |
|---|---|---|---|---|
| ControlAR | 30 | 40% | 2.1x | Yes |
| ECM | 15 | 55% | 2.5x | Limited |
| EditAR | 25 | 45% | 1.8x | Yes |
| Prefill Baseline | 30 | 0% | 1.2x | No |

Experimental Protocols

ControlAR Implementation Protocol

Objective: Implement conditional control in AR models using conditional decoding methodology.

Materials:

- Pre-trained AR model (e.g., LlamaGen)

- Control image dataset (edges, depth maps, etc.)

- Computing resources: 4-8 GPUs (e.g., NVIDIA A100)

Procedure:

Control Encoder Setup:

- Initialize a Vision Transformer (ViT) as control encoder

- Explore effective pre-training schemes (vanilla or self-supervised)

- Transform 2D spatial controls into sequential control tokens
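To make the last step concrete, the sketch below flattens a 2D control map into a row-major sequence of patch tokens, which is the usual first stage of a ViT-style encoder. It is a minimal illustration under assumptions: the function name `control_map_to_tokens` is hypothetical, and a real control encoder would follow this with a learned projection and transformer layers that are omitted here.

```python
def control_map_to_tokens(control_map, patch_size):
    """Split a 2D map into non-overlapping patches and return them as a row-major sequence.

    Each token is the flattened list of values inside one patch.
    """
    height, width = len(control_map), len(control_map[0])
    if height % patch_size or width % patch_size:
        raise ValueError("map dimensions must be divisible by patch_size")
    tokens = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [control_map[top + r][left + c]
                     for r in range(patch_size)
                     for c in range(patch_size)]
            tokens.append(patch)
    return tokens

# Toy 4x4 binary edge map; with 2x2 patches it becomes a sequence of 4 tokens.
edge_map = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [1, 0, 0, 0],
    [1, 0, 0, 1],
]
tokens = control_map_to_tokens(edge_map, patch_size=2)
```

The sequence length is `(height / patch_size) * (width / patch_size)`, so a larger control input at fixed patch size yields a longer control token sequence.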

Conditional Decoding Integration:

- Fuse control tokens with image tokens at intermediate layers

- Implement per-token fusion similar to positional encodings

- Maintain original AR model parameters frozen
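Per-token fusion can be pictured as adding a control vector to the hidden state at the matching position, much as positional encodings are added. The sketch below assumes simple additive fusion with an optional scalar gate; the actual fusion operator, the layers at which it is applied, and the exact position alignment in ControlAR [30] [31] may differ. The function name `fuse_control` is hypothetical.

```python
def fuse_control(hidden_states, control_tokens, gate=1.0):
    """Add the control token at position i to the image-token hidden state at position i.

    The output has the same number of positions as the input, so the sequence
    length seen by the frozen AR model does not grow.
    """
    if len(hidden_states) != len(control_tokens):
        raise ValueError("hidden states and control tokens must have the same length")
    return [[h + gate * c for h, c in zip(h_vec, c_vec)]
            for h_vec, c_vec in zip(hidden_states, control_tokens)]

# Three positions with 2-dimensional hidden states (toy values).
hidden = [[0.5, -1.0], [0.0, 2.0], [1.5, 1.5]]
control = [[0.1, 0.1], [0.0, -0.5], [1.0, 0.0]]
fused = fuse_control(hidden, control)
```

Because only the control pathway feeds this addition, the base model's parameters can stay frozen while the control encoder is trained.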

Training Configuration:

- Batch size: 64-128 depending on GPU memory

- Learning rate: 1e-4 with cosine decay

- Training duration: 25-30 epochs

- Optimizer: AdamW
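For reference, a cosine decay schedule starting from the listed learning rate of 1e-4 can be written as below. This is a generic sketch: the minimum learning rate of zero and the absence of warm-up are assumptions, since the protocol does not state them, and the total step count is illustrative.

```python
import math

def cosine_decay_lr(step, total_steps, base_lr=1e-4, min_lr=0.0):
    """Learning rate that follows half a cosine wave from base_lr down to min_lr."""
    progress = min(max(step, 0), total_steps) / total_steps
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

total_steps = 30_000  # illustrative; in practice, epochs times steps per epoch
schedule = [cosine_decay_lr(s, total_steps) for s in (0, 15_000, 30_000)]
# The three values are the starting rate, roughly half of it, and the minimum rate.
```

Most deep learning frameworks ship an equivalent scheduler, so a hand-written version like this is mainly useful for checking what rate is applied at a given step.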

Multi-Resolution Extension:

- Implement multi-scale training with varying control input sizes

- Adjust control token sequence length accordingly

- Validate on arbitrary aspect ratios

ECM Training Protocol

Objective: Achieve efficient conditional generation with scale-based AR models.

Materials:

- Scale-based AR model (e.g., VAR)

- Control conditioning data

- Computing resources: 4+ GPUs

Procedure: