Computational vs. Experimental Inorganic Crystal Structures: A Comparative Analysis for Advanced Materials Discovery

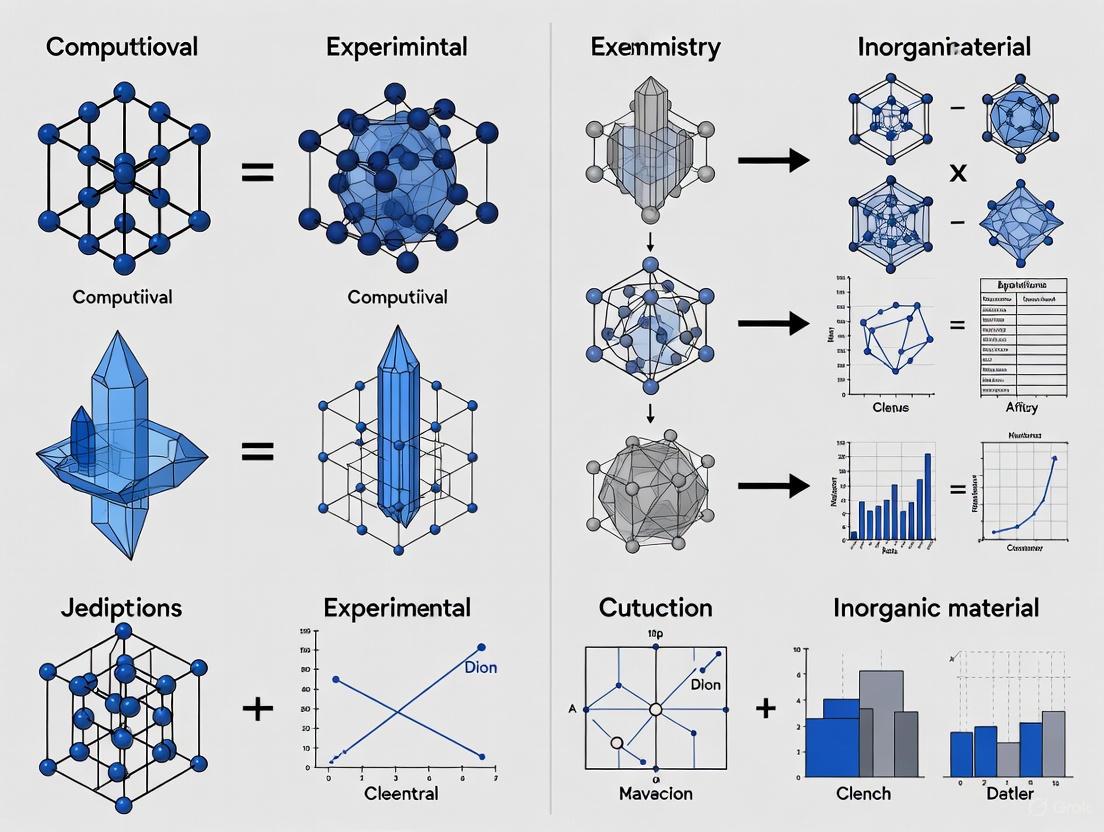


Abstract

This article provides a comprehensive comparative analysis of computational and experimental methods for determining inorganic crystal structures, a critical area for researchers in materials science and drug development. It explores the foundational principles of both approaches, examines cutting-edge methodological advances including generative AI and deep learning, addresses common challenges and optimization strategies, and establishes robust frameworks for validation. By synthesizing insights from large-scale database comparisons and recent high-impact studies, this analysis serves as a guide for leveraging the synergistic potential of computational and experimental techniques to accelerate the discovery and development of novel functional materials.

Foundations of Crystal Structure Determination: Bridging Theoretical and Experimental Approaches

The Essential Role of Crystal Structures in Materials Science and Drug Development

In the fields of materials science and drug development, the crystal structure—the ordered, repeating arrangement of atoms, ions, or molecules in a crystalline material—serves as the fundamental blueprint that dictates material properties and biological activity [1] [2]. This ordered structure arises from the intrinsic nature of constituent particles to form symmetric patterns that repeat along the principal directions of three-dimensional space [1]. The smallest repeating unit possessing the full symmetry of the crystal structure is the unit cell, characterized by its lattice parameters (the lengths of cell edges a, b, c and the angles between them α, β, γ) [1] [3]. In materials science, crystal structure determines critical properties including mechanical behavior, optical transparency, and electronic band structure [1] [4]. Similarly, in the pharmaceutical industry, the crystalline form of a drug profoundly influences its solubility, stability, dissolution rate, bioavailability, and tabletability [2]. Understanding these structures enables researchers to engineer materials and drugs with optimized performance characteristics.
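As a minimal sketch of how the six lattice parameters define the unit cell, the volume of a general (triclinic) cell can be computed from the standard relation V = abc·sqrt(1 − cos²α − cos²β − cos²γ + 2·cosα·cosβ·cosγ). The function name and the example cell below are illustrative assumptions, not values taken from the cited sources.

```python
import math


def unit_cell_volume(a, b, c, alpha_deg, beta_deg, gamma_deg):
    """Volume of a general unit cell (lengths in Å, angles in degrees)."""
    cos_a, cos_b, cos_g = (
        math.cos(math.radians(angle))
        for angle in (alpha_deg, beta_deg, gamma_deg)
    )
    # General triclinic formula; reduces to a*b*c when all angles are 90 degrees.
    factor = 1 - cos_a**2 - cos_b**2 - cos_g**2 + 2 * cos_a * cos_b * cos_g
    return a * b * c * math.sqrt(factor)


# Hypothetical cubic cell with a = b = c = 5.64 Å
print(unit_cell_volume(5.64, 5.64, 5.64, 90, 90, 90))
```

For higher-symmetry cells the same function applies unchanged, since cubic, tetragonal, and hexagonal cells are special cases of the triclinic formula.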

Comparative Analysis: Computational versus Experimental Structure Determination

The determination of crystal structures has evolved into two complementary paradigms: experimental techniques that physically measure diffraction patterns, and computational approaches that predict structures from first principles or data-driven models. The table below summarizes the core methodologies, strengths, and limitations of each approach.

Table 1: Comparison of Experimental and Computational Crystal Structure Determination Methods

| Aspect | Experimental Approaches | Computational Approaches |
|---|---|---|
| Primary Methods | X-ray diffraction (XRD), Neutron diffraction [5] | Crystal Structure Prediction (CSP), Generative AI models [6] [7] |
| Key Output | Experimental electron density map leading to an atomic model [8] | Predicted low-energy crystal structures and landscapes [7] |
| Key Strength | Direct experimental observation; High precision for heavy atoms [5] | Reveals all thermodynamically plausible polymorphs; No synthesis required [7] |
| Key Limitation | Difficulty locating light atoms (e.g., H); Requires high-quality crystals [5] | Accuracy depends on the energy model; Can be computationally expensive [6] |
| Typical Resolution | Atomic coordinates precise to a few trillionths of a meter [5] | Lattice energy differences resolvable to <1 kJ mol⁻¹ [7] |
| Throughput | Single-structure determination | High-throughput screening of thousands of candidates [7] |
| Role in Discovery | Validation and detailed analysis of synthesized materials [9] | De novo design and prioritization of candidates for synthesis [6] |

Experimental Determination Workflow

The following diagram illustrates the standard workflow for determining a crystal structure experimentally using X-ray diffraction, the most common method.

Figure 1: Experimental XRD Workflow.

The process begins with growing a high-quality single crystal of the material [9]. The crystal is exposed to a beam of X-rays, which have wavelengths comparable to interatomic distances (on the order of 10⁻¹⁰ m, or about 1 Å), causing them to diffract [5]. The angles and intensities of these diffracted beams are recorded. The core challenge is solving the "phase problem": detectors record only the intensities of the diffracted beams, not their phases, yet both are needed to convert the measured diffraction pattern into an electron density map [8] [5]. Finally, an atomic model is built into the electron density and iteratively refined against the experimental data, resulting in a validated structure that is often deposited in a public database like the Protein Data Bank (PDB) or Cambridge Structural Database (CSD) [8] [7].

Computational Prediction Workflow

The workflow for computational crystal structure prediction, particularly for novel materials, relies on exploring the energy landscape to find stable arrangements.

Figure 2: Computational CSP Workflow.

The process starts with a defined chemical composition or system. Initial candidate structures are generated using sampling algorithms like quasi-random sampling or genetic algorithms to explore the vast configurational space [7]. Each candidate undergoes lattice energy minimization using force fields or density functional theory (DFT) to find its most stable configuration [7]. The optimized structures are ranked by their calculated lattice energy, with the lowest-energy structures representing the most thermodynamically stable predicted forms [7]. The most promising candidates then have their functional properties predicted before being prioritized for experimental synthesis and validation [7].

Experimental Protocols for Structure Determination

Protocol 1: Single-Crystal X-ray Diffraction for a Small Organic Molecule

This protocol is standard for determining the precise atomic structure of a small-molecule organic compound, crucial for pharmaceutical development [2] [5].

- Crystallization: Dissolve the pure compound in a suitable solvent and allow slow evaporation or use vapor diffusion to grow a single crystal of sufficient size (typically >0.1 mm in each dimension).

- Crystal Mounting: Select a single crystal under a microscope and mount it on a thin glass fiber or loop. Center the crystal on the goniometer of the X-ray diffractometer.

- Data Collection: Cool the crystal to a low temperature (e.g., 100 K) using a cryostream to reduce thermal motion. Expose the crystal to a monochromatic X-ray beam (e.g., from a Cu or Mo source) and collect diffraction images as the crystal is rotated.

- Data Processing: Index the diffraction spots to determine the unit cell parameters. Integrate the intensities of the reflections and correct for absorption and other experimental factors.

- Structure Solution: Solve the phase problem using direct methods (for small molecules) or molecular replacement (if a similar structure is known) to generate an initial electron density map.

- Refinement and Analysis: Build an atomic model into the electron density. Refine the model (atomic coordinates, displacement parameters) against the diffraction data using least-squares algorithms. Validate the final structure using geometric and statistical criteria.
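The indexing step in the protocol above relies on the relation between diffraction angle and interplanar spacing. The protocol does not name it, so treat the following as a hedged sketch that assumes Bragg's law (nλ = 2d·sin θ) and a cubic cell. The function names are hypothetical, and the default wavelength is the commonly quoted Cu Kα1 value of about 1.5406 Å.

```python
import math

CU_K_ALPHA1 = 1.5406  # Å, commonly quoted Cu Kα1 wavelength


def d_spacing(two_theta_deg, wavelength=CU_K_ALPHA1, order=1):
    """Interplanar spacing d (Å) from a measured 2θ angle via Bragg's law."""
    theta = math.radians(two_theta_deg / 2)
    if not 0 < theta < math.pi / 2:
        raise ValueError("2θ must lie strictly between 0 and 180 degrees")
    return order * wavelength / (2 * math.sin(theta))


def cubic_lattice_parameter(d, h, k, l):
    """Cubic cell edge a (Å) from the spacing d of the (hkl) planes."""
    return d * math.sqrt(h * h + k * k + l * l)


# Hypothetical reflection at 2θ = 31.7 degrees, assumed to index as (200)
d = d_spacing(31.7)
print(d, cubic_lattice_parameter(d, 2, 0, 0))
```

In practice, diffractometer software indexes many reflections at once and handles non-cubic cells, so this only illustrates the underlying geometry.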

Protocol 2: High-Throughput Computational Crystal Structure Prediction (CSP)

This protocol, as demonstrated in a large-scale survey of over 1000 organic molecules, is used to generate crystal energy landscapes [7].

- Molecular Preparation: Obtain or compute a molecular structure. For rigid molecules, use the in-crystal conformation from a database or optimize it using quantum chemistry methods (e.g., DFT with B3LYP functional and a 6-311G basis set) [7].

- Intermolecular Potential Generation: Perform a distributed multipole analysis (DMA) on the molecular electron density to derive atom-atom intermolecular potentials (electrostatics, dispersion, repulsion) for accurate lattice energy calculation [7].

- Configuration Space Sampling: Use a global search algorithm (e.g., quasi-random sampling in GLEE package or a genetic algorithm) to generate a wide array of initial crystal packing arrangements across common space groups and variable unit cell parameters [7].

- Lattice Energy Minimization: Optimize each generated trial structure by minimizing the lattice energy with respect to the unit cell parameters and molecular rigid-body coordinates using the prepared intermolecular potential [7].

- Cluster and Rank Structures: Remove duplicate structures from the minimized set. Rank the unique, low-energy structures by their final lattice energy to create the crystal energy landscape.

- Analysis and Validation: Identify the global minimum structure and low-lying polymorphs. Compare predicted structures to known experimental forms if available. The close energy ranking (often within 1 kJ mol⁻¹) of experimentally observed structures validates the predictive accuracy of the landscape [7].
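The cluster-and-rank step can be sketched in a few lines. This is a simplified illustration, not the procedure used in the cited survey: it treats two minimized structures as duplicates when both their lattice energy and density agree within tolerances, whereas production CSP codes compare the structures themselves. The class name, tolerances, and example values are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    label: str
    lattice_energy: float  # kJ/mol, more negative means more stable
    density: float         # g/cm^3


def cluster_and_rank(candidates, e_tol=0.1, rho_tol=0.01):
    """Drop near-duplicates, then rank by energy relative to the global minimum."""
    unique = []
    for cand in sorted(candidates, key=lambda c: c.lattice_energy):
        is_duplicate = any(
            abs(cand.lattice_energy - kept.lattice_energy) <= e_tol
            and abs(cand.density - kept.density) <= rho_tol
            for kept in unique
        )
        if not is_duplicate:
            unique.append(cand)
    if not unique:
        return []
    e_min = unique[0].lattice_energy
    return [(c.label, round(c.lattice_energy - e_min, 3)) for c in unique]


# Hypothetical minimized structures; "B" is a re-discovery of "A"
landscape = cluster_and_rank([
    Candidate("A", -100.0, 1.50),
    Candidate("B", -99.95, 1.505),
    Candidate("C", -98.5, 1.42),
])
print(landscape)
```

The returned list is the crystal energy landscape in its simplest form: each unique structure paired with its energy above the global minimum.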

The following table lists key computational and experimental resources used in modern crystal structure research.

Table 2: Key Research Reagents and Resources for Crystallography

| Tool / Resource | Type | Primary Function |
|---|---|---|
| Cambridge Structural Database (CSD) | Database | Curated repository of experimentally determined organic and organometallic crystal structures for analysis and molecular replacement [7]. |
| Protein Data Bank (PDB) | Database | Repository for 3D structures of proteins, nucleic acids, and their complexes with drugs, critical for structural biology [8]. |
| X-ray Diffractometer | Instrument | Generates and measures X-ray diffraction patterns from single-crystal or powder samples for structure determination [5]. |
| Global Lattice Energy Explorer (GLEE) | Software | Performs quasi-random sampling of crystal packing space and lattice energy minimization for CSP [7]. |
| CrystaLLM | AI Model | A large language model trained on CIF files to generate plausible novel crystal structures autoregressively [10]. |
| Neutron Source | Facility | Provides a beam of neutrons for neutron diffraction experiments, which are particularly effective for locating light atoms like hydrogen [5]. |
| Quantum Chemistry Code (e.g., Gaussian) | Software | Performs ab initio calculation of molecular wavefunctions, used for deriving accurate intermolecular forces for CSP [7]. |

The determination and prediction of crystal structures stand as a cornerstone of modern materials science and drug development. While experimental techniques like X-ray diffraction provide the essential ground truth for atomic-level architecture, computational methods like CSP and generative AI are rapidly expanding the frontier by predicting stable, yet unsynthesized, structures and mapping complex energy landscapes. The most powerful approach is an integrated one, where computational predictions guide experimental synthesis, and experimental results, in turn, validate and improve computational models. As generative AI and large-scale validation studies continue to mature, this synergistic relationship promises to dramatically accelerate the discovery and rational design of next-generation functional materials and life-saving therapeutics.

This guide provides a comparative analysis of three pivotal resources—Materials Project, ICSD, and AFLOWLIB—in the context of computational and experimental inorganic crystal structures research. Understanding their distinct data origins, capabilities, and limitations is fundamental for selecting the appropriate tool in materials discovery and validation pipelines.

The landscape of materials databases is broadly divided between those housing experimentally determined structures and those containing computationally generated ones. The Inorganic Crystal Structure Database (ICSD) is the foundational repository for experimentally determined inorganic crystal structures, serving as a critical benchmark for truth in the field [11]. In contrast, The Materials Project (MP) and AFLOWLIB are large-scale, high-throughput computational databases that use density functional theory (DFT) to predict material properties [11]. They often use the ICSD as a source of initial structures for their calculations [12].

The core distinction lies in the nature of their data. ICSD provides the experimentally observed structure, while MP and AFLOW provide computationally "relaxed" structures—the final structure is a prediction based on an initial input, which may have been an experimental structure from ICSD [13]. Most data served by the Materials Project's API are computationally predicted, and a theoretical tag of False simply indicates that the representative structure is deemed the same as an experimentally obtained one within a set of tolerances [13].

Quantitative Comparison of Database Contents

The following table summarizes the key quantitative and qualitative attributes of the three databases, highlighting their primary functions and data types.

Table 1: Core Characteristics and Data Comparison

| Feature | Materials Project (MP) | AFLOWLIB (AFLOW) | Inorganic Crystal Structure Database (ICSD) |
|---|---|---|---|
| Primary Data Type | Computational (DFT) [13] | Computational (DFT) [11] | Experimental [11] |
| Data Origin | High-throughput DFT calculations; initial structures often from ICSD [12] [13] | High-throughput automated computational framework [11] | Curated experimental literature and publications [11] |
| Key Content | Calculated properties (formation energy, band structure, elasticity); crystal structures | Calculated properties, crystal structures, phase diagrams, and material descriptors | Experimentally refined crystal structures and atomic coordinates |
| Example Property: Band Gaps | Primarily GGA-level DFT, known to underestimate gaps [14] | GGA-level DFT; some universal correction schemes applied [14] | N/A (contains structures, not directly calculated properties) |
| Band Gap Accuracy (RMSE) | ~0.75-1.05 eV (vs. experiment) [14] | ~0.75-1.05 eV (vs. experiment) [14] | N/A |
| API Access | Yes (RESTful API) [13] | Yes (RESTful API) [15] | Limited (typically commercial license) |

Comparative Analysis of Methodologies and Workflows

The value and limitations of each database are rooted in their underlying methodologies. A comparative analysis of their approaches, particularly for a critical property like band gaps, reveals their respective strengths and roles in research.

Computational Workflows and Data Generation

The Materials Project and AFLOWLIB employ high-throughput density functional theory (DFT) calculations. These frameworks automatically run thousands of simulations using consistent parameters, enabling the systematic comparison of materials across a vast chemical space [11]. AFLOWLIB, for instance, is described as an "automatic framework for high-throughput materials discovery" [11]. These platforms often begin with experimental crystal prototypes from the ICSD to generate candidate structures for computation [12].

Table 2: Key "Research Reagent Solutions" in Computational Materials Science

| Resource / Tool | Function in Research |
|---|---|
| Density Functional Theory (DFT) | The foundational computational method for calculating electronic structure and properties of materials from first principles. |
| Projector Augmented-Wave (PAW) Pseudopotentials | Used in DFT codes (e.g., VASP) to represent the core electrons and nucleus, improving computational efficiency [12]. |
| Perdew-Burke-Ernzerhof (PBE) Functional | A specific and widely used approximation (GGA) for the exchange-correlation term in DFT [12] [14]. |
| Hybrid Functionals (e.g., HSE06) | A more advanced and computationally expensive class of functionals that provides greater accuracy, particularly for electronic properties like band gaps [14]. |
| Vienna Ab initio Simulation Package (VASP) | A widely used software package for performing DFT calculations, employed by many high-throughput efforts [12] [14]. |

Diagram 1: The integrated materials discovery workflow, showing the interaction between experimental and computational databases.

Experimental vs. Computational Band Gaps: A Workflow Case Study

Band gap is a critical property for semiconductors. A key limitation of standard DFT methods (GGA, like PBE) used in major computational databases is the systematic underestimation of band gaps. As noted in a study on a hybrid-functional band gap database, the root-mean-square error (RMSE) of GGA-calculated gaps compared to experiment is typically 0.75–1.05 eV for databases like MP and AFLOW [14]. This can lead to the misclassification of small-gap semiconductors as metals [14].
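To make the error metric concrete, the sketch below computes the RMSE between calculated and experimental band gaps and flags entries that a calculation would misclassify as metals (zero calculated gap, nonzero measured gap). The function names and the numbers are illustrative placeholders, not data from the cited study.

```python
import math


def rmse(calculated, experimental):
    """Root-mean-square error between paired band gaps (eV)."""
    if len(calculated) != len(experimental) or not calculated:
        raise ValueError("need two non-empty sequences of equal length")
    squared = [(c - e) ** 2 for c, e in zip(calculated, experimental)]
    return math.sqrt(sum(squared) / len(squared))


def misclassified_as_metal(calculated, experimental, tol=1e-6):
    """Indices where the calculated gap is zero but the measured gap is not."""
    return [
        i
        for i, (c, e) in enumerate(zip(calculated, experimental))
        if c <= tol and e > tol
    ]


# Placeholder values in eV, chosen only to illustrate systematic underestimation
gga_gaps = [0.0, 0.6, 1.7, 2.4]
exp_gaps = [0.4, 1.1, 2.6, 3.4]
print(rmse(gga_gaps, exp_gaps), misclassified_as_metal(gga_gaps, exp_gaps))
```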

To address this, advanced methodologies are employed. One study created a more accurate database by using a hybrid functional (HSE06) and considering stable magnetic ordering (including antiferromagnetism), achieving a significantly lower RMSE of 0.36 eV for benchmark materials [14]. This workflow, implemented in the AMP2 package, also involved careful material selection from the ICSD and filtering using data from the Materials Project to focus on semiconductors [14]. This case illustrates how computational databases are evolving and how they can be used in conjunction with experimental data for improved accuracy.

Research Applications and Integrated Use

The true power of these resources is realized when they are used in an integrated manner, as part of a larger materials discovery workflow.

High-Throughput Screening for Specific Applications: Researchers use the computationally-predicted properties in MP and AFLOWLIB to rapidly screen thousands of candidates for specific applications, such as identifying new stable metal oxide materials for electrocatalysis [11] or solid-state electrolytes for batteries [11]. This virtual screening drastically reduces the time and cost of initial discovery by prioritizing the most promising candidates for experimental synthesis.

Seed Data for Machine Learning and Active Learning: The large, structured datasets from computational databases are invaluable for training machine learning (ML) models. For example, the alexandria database of millions of DFT calculations was used to train models that predict material properties, with model error typically decreasing as training data increased [16]. Furthermore, systems like the Computational Autonomy for Materials Discovery (CAMD) use active learning, where an agent is seeded with data from the OQMD (which includes ICSD entries) to autonomously propose the next most promising crystal structures to simulate, efficiently exploring chemical space [12].

Bridging Computation and Experiment with Specialized Databases: Next-generation, AI-driven platforms are emerging to better integrate computational and experimental data. The Digital Catalysis Platform (DigCat), for instance, integrates over 800,000 experimental and computational data points, using AI-driven models to provide predictive insights [11]. Similarly, the Dynamic Database of Solid-State Electrolytes (DDSE) contains over 2,500 experimentally validated electrolytes alongside computationally predicted candidates [11]. These platforms represent a move beyond static repositories toward dynamic, predictive discovery tools.

The discovery and development of new functional materials hinge on the availability of accurate crystallographic data. For research involving inorganic crystalline materials, three databases form a cornerstone of computational and experimental studies: the Inorganic Crystal Structure Database (ICSD), Pearson's Crystal Data (PCD), and the Crystallography Open Database (COD). These repositories provide critical structural information, yet they differ significantly in content, scope, and application, influencing their utility for specific research tasks such as high-throughput virtual screening, machine learning, and experimental data validation. A comparative analysis reveals that the ICSD stands as the largest curated database of fully identified inorganic structures, PCD offers extensive data including disorder information, and the COD operates on an open-access model. This guide provides an objective comparison of these databases, supported by experimental data and methodological protocols, to inform their application in computational and experimental materials research.

The table below summarizes the core characteristics of the three databases, highlighting their primary focus and data accessibility.

Table 1: Core Characteristics of ICSD, PCD, and COD

| Database | Full Name | Primary Focus | Access Model |
|---|---|---|---|
| ICSD | Inorganic Crystal Structure Database [17] [18] | Experimental and theoretical inorganic crystal structures [17] | Commercial [17] [18] |
| PCD | Pearson's Crystal Data [19] | Inorganic compounds, including disordered structures [19] | Commercial [19] |
| COD | Crystallography Open Database [19] | Open-access collection of crystal structures [19] | Open Access [19] |

A quantitative comparison of their contents and scope provides a clearer picture of their respective coverages and common applications in materials science research.

Table 2: Quantitative Comparison of Database Contents and Scope

| Feature | ICSD | PCD | COD |
|---|---|---|---|
| Total Entries | >240,000 crystal structures (2021) [17] | 303,855 entries [19] | Not specified |
| Data Timeline | Records from 1913 to present [17] | Not specified | Not specified |
| Key Content Types | Experimental inorganic, metal-organic, and theoretical structures [17] | Ordered and disordered inorganic structures [19] | Open-access crystal structures [19] |
| Notable Features | Contains structural descriptors, bibliographic data, and keywords; high-quality curated data [17] [18] | Used for evaluating uncertainties in experimental lattice parameters [19] | Used alongside ICSD and PCD for validating computational predictions [19] |
| Typical Research Applications | Training and benchmarking machine learning models [20]; validating computational structures [19] | Benchmarking and validating computational methods [19] | Validating computational predictions [19] |

Experimental Protocols for Database Utilization

Protocol 1: Validating Computational Crystal Structures

Objective: To assess the accuracy of Density Functional Theory (DFT) calculations by comparing computed lattice parameters with experimental data from the ICSD and PCD [19].

- Data Retrieval: Extract experimental crystallographic data (lattice parameters, space group) from PCD using a Python script. Retrieve computational data from sources like the Materials Project using the pymatgen package [19].

- Data Standardization: Transform computationally derived primitive unit cells into conventional cells to enable direct comparison with experimental data [19].

- Comparison and Analysis: Calculate the relative difference for lattice parameters (a, b, c) and volume (V) between computational and experimental entries. For compounds with multiple experimental entries, use the standard deviation of the mean to evaluate experimental uncertainty [19].

- Stability Assessment: Compare the computed "E above Hull" value (a measure of thermodynamic stability) against experimental synthesizability, noting that metastable phases may exist experimentally despite positive E above Hull values [19].
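The comparison step of this protocol reduces to two small calculations: a signed relative difference between a computed and an experimental value, and the standard deviation of the mean (standard error) across repeated experimental entries. The sketch below uses only the Python standard library; the function names and lattice values are hypothetical.

```python
import math
import statistics


def relative_difference(computed, experimental):
    """Signed relative deviation of a computed value from experiment."""
    return (computed - experimental) / experimental


def mean_and_standard_error(values):
    """Mean and standard deviation of the mean for repeated measurements."""
    if len(values) < 2:
        raise ValueError("need at least two experimental entries")
    mean = statistics.mean(values)
    sem = statistics.stdev(values) / math.sqrt(len(values))
    return mean, sem


# Hypothetical lattice parameter a (Å): three experimental entries, one DFT value
a_exp_entries = [4.046, 4.050, 4.049]
a_dft = 4.104
a_exp, a_sem = mean_and_standard_error(a_exp_entries)
print(f"deviation = {100 * relative_difference(a_dft, a_exp):.2f} %")
print(f"experimental uncertainty = {100 * a_sem / a_exp:.3f} %")
```

Comparing the two printed percentages shows whether a computed deviation exceeds the spread among the experimental determinations themselves.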

Protocol 2: Training Machine Learning Models on Synthetic Data

Objective: To train a deep learning model for space group classification from powder X-ray diffractograms (XRD) using synthetically generated crystals, overcoming limitations of directly using the ICSD (e.g., limited size, class imbalance) [20].

- Synthetic Crystal Generation: Generate training crystals by randomly placing atoms on the Wyckoff positions of a given space group. The occupation probabilities and lattice parameters are drawn from kernel density estimates based on ICSD statistics [20].

- Diffractogram Simulation: Simulate the powder X-ray diffractogram for each generated synthetic crystal [20].

- Model Training: Implement a deep ResNet-like model and train it using online learning on a continuous, distributed stream of synthetic diffractograms. This approach prevents overfitting and allows training on millions of unique patterns per hour [20].

- Model Validation: Evaluate the final model's accuracy on a held-out test set of diffractograms simulated from real, unseen ICSD crystals to benchmark performance [20].
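Drawing lattice parameters from a kernel density estimate, as in the first step of this protocol, can be sketched without any external library: sampling from a Gaussian KDE is equivalent to picking one observed value at random and adding Gaussian noise with the kernel bandwidth. The bandwidth rule (a normal-reference rule of thumb), the function name, and the observations below are assumptions made for illustration. The cited work may use a different estimator.

```python
import random
import statistics


def sample_from_kde(observations, n_samples, rng, bandwidth=None):
    """Draw samples from a 1-D Gaussian KDE fitted to the observations."""
    if len(observations) < 2:
        raise ValueError("need at least two observations")
    if bandwidth is None:
        # Normal-reference rule of thumb: h = 1.06 * sigma * n^(-1/5)
        sigma = statistics.stdev(observations)
        bandwidth = 1.06 * sigma * len(observations) ** -0.2
    # Pick a kernel centre uniformly at random, then perturb it with kernel noise.
    return [
        rng.gauss(rng.choice(observations), bandwidth)
        for _ in range(n_samples)
    ]


# Hypothetical lattice parameters (Å) standing in for database statistics
observed_a = [3.9, 4.0, 4.1, 5.4, 5.6, 5.7]
rng = random.Random(42)  # seeded for reproducibility
print(sample_from_kde(observed_a, 5, rng))
```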

The following diagram illustrates the workflow for generating synthetic crystals and training the machine learning model, as described in the protocol.

Workflow for ML Model Training Using Synthetic Crystals

The table below lists key computational tools and data resources essential for working with crystallographic databases and conducting related research.

Table 3: Essential Reagents and Resources for Crystallographic Analysis

| Item Name | Function/Brief Explanation | Relevance to Databases |
|---|---|---|
| Python Materials Genomics (pymatgen) | A robust, open-source Python library for materials analysis [19]. | Enables programmatic access to and analysis of data from the Materials Project API, facilitating comparison with experimental data from ICSD/PCD [19]. |
| Density Functional Theory (DFT) | A computational method for electronic structure calculations used to predict crystal properties and perform geometry optimization [19]. | Used to generate computational crystal structures for validation against experimental databases like ICSD and PCD [19]. |
| Box-Behnken Design (BBD) | A design-of-experiment (DoE) methodology used to optimize processes by systematically exploring the relationship between multiple factors [21]. | Can be applied to optimize experimental parameters (e.g., for material synthesis) before structural characterization and database deposition [21]. |
| ResNet-like Deep Learning Model | A type of convolutional neural network (CNN) architecture effective for image pattern recognition [20]. | Can be trained on synthetic diffractograms derived from ICSD statistics to automatically classify space groups from experimental XRD patterns [20]. |

The selection of a crystallographic database is a critical step that shapes the design and outcome of materials research. The ICSD is the premier resource for curated, fully identified inorganic crystal structures and is invaluable for benchmarking and training models. PCD provides comprehensive data, including on disordered structures, useful for broad validation studies. The COD offers an open-access alternative. As computational methods, particularly generative AI and deep learning, continue to evolve, the role of these experimental databases will expand beyond mere repositories to become foundational components for validating in-silico discoveries and guiding the targeted synthesis of new materials.

In the discovery and development of new materials and pharmaceuticals, researchers navigate two distinct yet complementary worlds: the pristine, theoretical realm of 0 K idealized structures and the complex, dynamic reality of room temperature experimental data. Idealized structures, typically derived from computational methods like Density Functional Theory (DFT), represent the theoretical ground state of a perfect crystal at absolute zero temperature and without defects [19]. In contrast, real-world experimental data captured at room temperature reflect the true behavior of materials under practical conditions, complete with thermal vibrations, entropy effects, and environmental interactions [22]. This guide provides a comprehensive comparison of these two approaches, examining their fundamental differences, methodological frameworks, and implications for research outcomes across materials science and drug development.

Core Conceptual Differences and Theoretical Foundations

Fundamental Physical Distinctions

The divergence between 0 K idealized structures and room temperature experimental data stems from fundamental physical principles that govern material behavior at different energy states.

Idealized 0 K Structures represent a theoretical construct where atoms occupy precise lattice positions in a perfect crystal at absolute zero. At this temperature, the system exists in its quantum mechanical ground state with zero-point energy as the only contribution, and entropy effects are eliminated [19]. Computational models at 0 K assume complete absence of thermal vibrations and atomic displacements, resulting in perfectly symmetric unit cells with mathematically precise bond lengths and angles. These structures represent the minimum energy configuration in a potential energy landscape without kinetic energy contributions.

Room Temperature Experimental Data capture the dynamic reality of materials under ambient conditions. At approximately 298 K, atoms undergo significant thermal vibrations and experience entropy-driven disorder effects [22]. Crystal structures exhibit atomic displacement parameters (ADPs) that quantify the smearing of atomic positions around their mean locations. Real-world samples contain inherent imperfections including defects, impurities, and varied grain boundaries that influence measurable properties.
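As a small worked example of what an ADP encodes, the sketch below converts an isotropic displacement parameter U_iso (in Å²) into the equivalent B factor via the standard relation B = 8π²U, and into a root-mean-square displacement. The function names and the sample value are illustrative assumptions. Anisotropic ADPs, which are tensors, are not covered here.

```python
import math


def b_iso_from_u_iso(u_iso):
    """Isotropic B factor (Å^2) from U_iso (Å^2) using B = 8 * pi^2 * U."""
    return 8 * math.pi**2 * u_iso


def rms_displacement(u_iso):
    """Root-mean-square displacement (Å) along one direction for a given U_iso."""
    if u_iso < 0:
        raise ValueError("U_iso must be non-negative")
    return math.sqrt(u_iso)


# Hypothetical room-temperature value for a light atom
u = 0.02  # Å^2
print(b_iso_from_u_iso(u), rms_displacement(u))
```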

Methodological Frameworks and Approximations

Computational Approaches for 0 K Structures rely heavily on Density Functional Theory (DFT) with various exchange-correlation functionals. The Local Density Approximation (LDA) tends to overestimate interatomic forces, leading to contracted lattice parameters, while the Generalized Gradient Approximation (GGA) provides more accurate parameters but fails to properly describe non-local correlation forces like London dispersion forces [19]. These calculations typically employ the Perdew-Burke-Ernzerhof (PBE)-GGA functional and the projector augmented-wave (PAW) method, assuming periodic boundary conditions in a perfect crystal lattice [19].

Experimental Techniques for Room Temperature Data include X-ray diffraction (XRD), electron diffraction, and nuclear magnetic resonance (NMR) spectroscopy. These methods directly measure electron densities or atomic positions but include uncertainties from instruments, samples, and refinement procedures [19]. For organic and pharmaceutical compounds, experimental structures often reveal metastable polymorphs that would be disregarded in computational searches focused solely on global energy minima [22].

Table: Fundamental Characteristics of 0 K Idealized vs. Room Temperature Experimental Structures

| Characteristic | 0 K Idealized Structures | Room Temperature Experimental Data |
|---|---|---|
| Temperature | 0 K (absolute zero) | ~298 K (ambient conditions) |
| Thermal Energy | Negligible (zero-point only) | Significant thermal vibrations |
| Entropy Effects | Not considered | Critical for stability |
| Atomic Positions | Perfect lattice points | Probability distributions (ADPs) |
| Structural Disorder | Absent | Common (static/dynamic) |
| Energy Landscape | Global minimum search | Multiple local minima accessible |
| Experimental Validation | Indirect (computational) | Direct measurement |

Quantitative Comparison: Performance Metrics and Data Analysis

Lattice Parameter and Volume Discrepancies

Comparative studies reveal systematic differences between computational predictions and experimental measurements for inorganic compounds. When comparing over 38,000 compounds with multiple experimental entries, the average uncertainties in experimental cell volume range between 0.1% and 1%, with approximately 11% of compounds exhibiting variations exceeding 1% in cell parameters between different experimental determinations [19].

DFT calculations consistently show functional-dependent deviations from experimental values. LDA typically underestimates lattice parameters by 1-3%, while GGA approximations tend to overestimate them by 2-4% compared to room temperature experimental data [19]. These discrepancies become particularly pronounced in layered structures where van der Waals forces play a significant role, as standard DFT functionals do not properly describe these non-local correlation forces [19].
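As a minimal sketch of how such deviations are quantified, the snippet below computes signed percentage errors for the L1₀-MnAl lattice parameters quoted later in this article. Taking the midpoint of the reported experimental range as the reference is an assumption made here for illustration, and the function and variable names are hypothetical.

```python
def percent_deviation(calculated: float, experimental: float) -> float:
    """Signed relative deviation in percent; negative means underestimation."""
    return 100.0 * (calculated - experimental) / experimental


# L1_0-MnAl lattice parameters in angstrom, as quoted in this article.
# Assumption: the experimental reference is the midpoint of the quoted range.
EXPERIMENT = {"a": 2.82, "c": 3.575}
CALCULATED = {
    "LDA": {"a": 2.76, "c": 3.50},
    "GGA": {"a": 2.81, "c": 3.56},
}


def deviations(calculated, experiment):
    """Return {functional: {axis: percent deviation}} for every entry."""
    return {
        functional: {
            axis: percent_deviation(value, experiment[axis])
            for axis, value in params.items()
        }
        for functional, params in calculated.items()
    }


DEVIATIONS = deviations(CALCULATED, EXPERIMENT)
```

For this single system both functionals fall below the midpoint reference, with LDA deviating by roughly 2% and GGA by well under 1%. One compound does not establish a trend, so statistics of this kind are normally accumulated over a large set of structures.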

Table: Uncertainty Ranges in Structural Parameters

| Parameter | Computational Uncertainty (0K) | Experimental Uncertainty (298K) |
|---|---|---|
| Lattice Parameters | 1-4% (method dependent) | 0.1-1% (sample/source dependent) |
| Bond Lengths | 0.01-0.05 Å | 0.001-0.01 Å |
| Cell Volume | 2-8% | 0.1-1% |
| Angle Measurements | 1-3 degrees | 0.1-0.5 degrees |
| Energy Differences | 1-2 kJ/mol (recent advances) | N/A (directly measurable) |

Stability and Free Energy Predictions

Recent advances in free-energy calculations have significantly improved the accuracy of predicting crystal form stability under real-world conditions. For industrially relevant compounds, calculated free energies now achieve standard errors of just 1-2 kJ mol⁻¹, allowing more reliable prediction of polymorph stability relationships [22].

The "energy above hull" (Eₕₒₗₗ) metric represents the stability of a compound relative to the most stable phase or decomposition products. Computational databases like the Materials Project provide Eₕₒₗₗ values for thousands of compounds, but these often disagree with experimental observations, particularly for metastable phases that are kinetically stabilized at room temperature [19]. For pharmaceutical compounds, free energy differences of just 1-2 kJ mol⁻¹ can determine which polymorph appears under specific temperature and humidity conditions [22].
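To make the energy-above-hull idea concrete, the following sketch builds the lower convex hull of formation energy versus composition for a hypothetical binary A-B system and reports each phase's distance above it. The phase names and energies (in eV/atom) are invented for illustration, and the code is a simplified stand-in, not the procedure used by any particular database.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points (Andrew's monotone chain)."""
    hull = []
    for p in sorted(set(points)):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            # Pop the last point if it lies on or above the line hull[-2] -> p.
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2 and x2 > x1:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition lies outside the hull range")


def energy_above_hull(phases):
    """Map phase name -> formation energy minus hull energy (eV/atom)."""
    hull = lower_hull(phases.values())
    return {name: e - hull_energy(hull, x) for name, (x, e) in phases.items()}


# Hypothetical phases: name -> (fraction of B, formation energy in eV/atom).
PHASES = {
    "A": (0.00, 0.00),
    "A3B": (0.25, -0.10),
    "AB": (0.50, -0.30),
    "AB3": (0.75, -0.20),
    "B": (1.00, 0.00),
}
E_ABOVE_HULL = energy_above_hull(PHASES)
```

In this toy landscape A3B sits 0.05 eV/atom above the tie line between A and AB, so it is predicted to decompose at 0 K, while AB and AB3 lie on the hull. A phase like A3B may still be observed experimentally if it is kinetically stabilized.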

Experimental Protocols and Methodologies

Computational Methods for 0K Idealized Structures

First-Principles DFT Calculations follow a standardized protocol beginning with geometry optimization of the initial crystal structure. Researchers typically employ plane-wave basis sets with pseudopotentials to describe electron-ion interactions, using either LDA or GGA exchange-correlation functionals [19]. For improved accuracy, hybrid functionals like PBE0 that incorporate Hartree-Fock exchange are increasingly used, though at greater computational cost.

The composite PBE0 + MBD + Fvib approach combines a hybrid functional (PBE0) with many-body dispersion (MBD) energy corrections and vibrational free energy (Fvib) contributions at finite temperature [22]. Phonon calculations determine vibrational properties using density functional perturbation theory or finite-displacement methods, with imaginary frequencies indicating structural instabilities. The final output is an optimized crystal structure with precise atomic coordinates, lattice parameters, and electronic properties, representing the theoretical ground state [19].
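The vibrational term can be illustrated with the standard harmonic expression, in which each mode of frequency ν contributes hν/2 + k_B T ln(1 − exp(−hν / k_B T)). The sketch below evaluates it for a hypothetical list of phonon frequencies at a single wave vector. A production calculation would sample the whole Brillouin zone, and treating non-positive frequencies as a hard error is a convention chosen here (many codes report imaginary modes as negative numbers).

```python
import math

H_EV_S = 4.135667696e-15      # Planck constant in eV*s
KB_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def harmonic_free_energy(frequencies_thz, temperature_k):
    """Harmonic vibrational free energy in eV for a list of mode frequencies."""
    total = 0.0
    for nu in frequencies_thz:
        if nu <= 0.0:
            # Imaginary modes signal a structural instability.
            raise ValueError("non-positive frequency: structure is not a minimum")
        quantum = H_EV_S * nu * 1.0e12       # h*nu in eV
        total += 0.5 * quantum               # zero-point energy
        if temperature_k > 0.0:
            kt = KB_EV_PER_K * temperature_k
            total += kt * math.log1p(-math.exp(-quantum / kt))  # thermal term
    return total


# Hypothetical mode frequencies in THz.
MODES_THZ = [5.0, 10.0]
F_0K = harmonic_free_energy(MODES_THZ, 0.0)
F_300K = harmonic_free_energy(MODES_THZ, 300.0)
```

At 0 K only the zero-point energy survives. The thermal term is always negative, so the free energy falls as temperature rises, and it falls faster for softer modes. This is one reason polymorph rankings can change between 0 K and ambient conditions.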

Crystal Structure Prediction (CSP) protocols involve generating multiple plausible crystal packing arrangements through global lattice energy minimization. Researchers use Monte Carlo methods or genetic algorithms to explore the conformational landscape, ranking structures by their lattice energy [22]. For pharmaceutical applications, CSP typically considers multiple possible polymorphs, hydrates, and solvates that might form under different conditions.

Experimental Structure Determination at Room Temperature

X-ray Crystallography remains the gold standard for experimental structure determination. Single crystals of suitable size (0.1-0.5 mm) are mounted on a goniometer and exposed to X-ray radiation, typically from laboratory sources or synchrotrons [23]. Diffraction patterns are collected across multiple orientations, with modern detectors capturing complete datasets in hours to days.

Data reduction involves integrating reflection intensities and correcting for experimental factors like absorption, polarization, and extinction. The phase problem is solved using direct methods, Patterson methods, or molecular replacement with known structures. Researchers refine the structural model against the diffraction data using least-squares or maximum-likelihood approaches, optimizing atomic coordinates, displacement parameters, and occupancy factors [23]. The final model includes R-factors quantifying agreement between the model and experimental data.
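As an illustration of the agreement metric, the conventional residual R1 is the sum of absolute differences between observed and calculated structure-factor amplitudes divided by the sum of observed amplitudes. The sketch below assumes the two lists are already on a common scale and uses made-up amplitudes; real refinement programs also apply weighting schemes and intensity cut-offs that are omitted here.

```python
def r_factor(f_obs, f_calc):
    """Conventional R1 = sum(| |Fo| - |Fc| |) / sum(|Fo|)."""
    if len(f_obs) != len(f_calc) or not f_obs:
        raise ValueError("need two non-empty lists of equal length")
    numerator = sum(abs(abs(o) - abs(c)) for o, c in zip(f_obs, f_calc))
    denominator = sum(abs(o) for o in f_obs)
    return numerator / denominator


# Hypothetical structure-factor amplitudes on a common scale.
F_OBS = [100.0, 50.0, 25.0]
F_CALC = [95.0, 52.0, 26.0]
R1 = r_factor(F_OBS, F_CALC)
```

Here the residual is 8/175, or about 4.6%. A perfect model would give zero.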

Electron Diffraction Techniques have emerged as powerful alternatives, particularly for microcrystalline materials that cannot form large single crystals. Continuous rotation electron diffraction (cRED) collects data from nanocrystals (100 nm - 1 μm) by continuously rotating the crystal in the electron beam [23]. The method is particularly valuable for pharmaceutical polymorphs and materials that are difficult to crystallize in large form.

The recently developed ionic Scattering Factors (iSFAC) modeling method enables experimental determination of partial atomic charges through electron diffraction [23]. This approach refines the scattering factor for each atom as a combination of theoretical scattering factors for neutral and ionic forms, providing absolute values for partial charges on an individual atomic basis.

Visualization of Methodological Relationships

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Essential Computational Tools for 0K Structure Prediction

| Tool/Resource | Function | Application Context |
|---|---|---|
| VASP | DFT calculations with PAW pseudopotentials | Electronic structure, geometry optimization |
| Quantum ESPRESSO | Open-source DFT suite | Plane-wave calculations, phonon spectra |
| Gaussian | Quantum chemistry package | Molecular orbital, energy calculations |
| Materials Project | Computational database | Pre-calculated material properties, Eₕₒₗₗ values |
| CSD/Mercury | Cambridge Structural Database tools | Experimental structure visualization, analysis |
| Phoenix | CSP software | Polymorph prediction, crystal energy landscapes |

Experimental Materials and Characterization Tools

Table: Essential Experimental Resources for Room Temperature Structure Analysis

| Material/Equipment | Function | Application Context |
|---|---|---|
| Single Crystals (0.1-0.5 mm) | XRD sample requirements | High-resolution structure determination |
| Microcrystalline Powder | Electron diffraction samples | Nanocrystal structure analysis |
| Synchrotron Radiation | High-intensity X-ray source | Rapid data collection, small crystals |
| Cryostream Cooler | Temperature control (100-500K) | Variable-temperature studies |
| Mo/Kα X-ray Sources | Laboratory X-ray generation | Routine structure determination |
| CCD/Photon Counting Detectors | Diffraction pattern capture | High-sensitivity data collection |

Implications for Materials Science and Pharmaceutical Development

Practical Consequences of the Temperature Gap

The discrepancies between 0K idealized structures and room temperature experimental data have significant practical implications across multiple research domains. In pharmaceutical development, polymorph prediction remains challenging because computational methods focused on global energy minima may miss metastable forms that persist under ambient conditions [22]. The formation of hydrates and solvates—critically important for drug bioavailability—depends strongly on temperature and relative humidity factors absent in 0K calculations [22].

In energy materials research, properties like ionic conductivity in battery materials or charge transport in photovoltaic compounds exhibit strong temperature dependence that cannot be captured through ground-state calculations alone [19]. For example, lithium-ion conductivities estimated from migration barriers calculated at 0K may significantly underestimate room-temperature values because vibrational contributions to ion hopping are neglected.
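The temperature dependence of ion hopping can be sketched with a simple Arrhenius rate, attempt frequency times exp(−E_a / k_B T). In the snippet below the 0.3 eV barrier and the 10¹³ Hz attempt frequency are hypothetical placeholders. A fuller treatment would replace the static barrier with a free-energy barrier and derive the prefactor from vibrational frequencies, which is where the neglected vibrational contributions enter.

```python
import math

KB_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def hop_rate(barrier_ev, temperature_k, attempt_frequency_hz=1.0e13):
    """Arrhenius hop rate in Hz; attempt frequency is an assumed constant."""
    if temperature_k <= 0.0:
        return 0.0  # no thermally activated hops in the 0 K limit
    return attempt_frequency_hz * math.exp(
        -barrier_ev / (KB_EV_PER_K * temperature_k)
    )


# Hypothetical migration barrier of 0.3 eV at two temperatures.
RATE_100K = hop_rate(0.3, 100.0)
RATE_298K = hop_rate(0.3, 298.0)
```

With these placeholder values the rate at 298 K exceeds the rate at 100 K by many orders of magnitude, which shows how strongly transport properties depend on temperature even when the underlying barrier is fixed.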

For catalysis and surface science, reaction pathways and adsorption energies computed using idealized surfaces at 0K often disagree with experimental measurements under operating conditions, where thermal motions and surface reconstructions dramatically alter catalytic activity.

Emerging Approaches for Bridging the Divide

Recent methodological advances show promise for reconciling the gap between computational predictions and experimental observations. The development of temperature- and humidity-dependent free-energy calculations allows researchers to place both hydrate and anhydrate crystal structures on the same energy landscape with defined error bars [22]. These approaches incorporate finite-temperature corrections through quasiharmonic approximation or molecular dynamics simulations.

Experimental electron diffraction techniques now enable direct measurement of partial atomic charges through ionic Scattering Factors (iSFAC) modeling, providing quantitative validation for computational charge distribution predictions [23]. This method has been successfully applied to pharmaceutical compounds including ciprofloxacin and amino acids, revealing charge distributions consistent with quantum chemical computations.

Multi-scale modeling approaches combine the accuracy of quantum mechanical methods for local interactions with classical force fields for longer-range effects and molecular dynamics for finite-temperature properties. These hierarchical methods provide a more complete picture of material behavior across temperature regimes.

The divergence between 0K idealized structures and room temperature experimental data represents both a challenge and an opportunity for materials research. While computational methods provide fundamental insights into crystal engineering and materials design, their predictions must be validated against experimental evidence obtained under relevant conditions. The research community increasingly recognizes that complementary use of both approaches delivers the most robust understanding of material behavior.

Future progress will likely focus on improving the accuracy of finite-temperature free energy calculations, developing more sophisticated functionals that better describe dispersion forces and electron correlation, and enhancing experimental techniques for characterizing dynamic disorder and transient states. As these methodologies converge, researchers will gain unprecedented ability to predict and control material properties across the temperature spectrum from absolute zero to ambient conditions and beyond.

The accurate prediction of inorganic crystal structures from composition alone represents a fundamental challenge in materials science, with profound implications for the discovery of new functional materials. The core problem, known as Crystal Structure Prediction (CSP), seeks to determine the stable crystal structure of an inorganic material based solely on its chemical composition—a capability that would significantly accelerate the discovery of novel materials with tailored properties [24]. Despite decades of development, the field faces significant challenges in objectively evaluating the performance of different CSP algorithms, primarily due to the complex nature of structural similarity assessment and the absence of standardized quantitative metrics [25] [24]. This evaluation challenge creates substantial uncertainty when comparing results across multiple studies and methodologies, mirroring the broader difficulties in assessing variations across experimental measurements that form the focus of this article.

Traditionally, the verification of predicted crystal structures has relied heavily on manual inspection by experts, comparison with experimentally observed structures, analysis of formation enthalpies, success rate calculations, and computation of distances between structures [24]. Each of these approaches introduces its own sources of variability and uncertainty. For instance, manual structural inspection inevitably incorporates subjective judgment, while energy comparisons using Density Functional Theory (DFT) calculations are computationally intensive and may yield different results based on the specific computational parameters employed [25] [24]. The pressing need for standardized evaluation protocols in CSP mirrors the broader scientific challenge of quantifying and managing uncertainty across multiple experimental measurements, particularly when those measurements are obtained through fundamentally different methodological approaches.

Performance Comparison of Major CSP Algorithm Categories

Quantitative Benchmarking Results

The recent introduction of CSPBench, a comprehensive benchmark suite with 180 test structures, has enabled more systematic comparison of CSP algorithms [25]. The performance of 13 state-of-the-art algorithms across different methodological categories reveals significant variations in prediction accuracy, highlighting the uncertainty inherent in different computational approaches.

Table 1: Performance Comparison of Major CSP Algorithm Categories

| Algorithm Category | Representative Examples | Key Characteristics | Performance Insights |
|---|---|---|---|
| De novo DFT-based | CALYPSO [26], USPEX [24] | Combines global search with DFT energy calculations; computationally intensive | Considered leading methods but performance "far from satisfactory"; often cannot identify structures with correct space groups [25] |
| ML Potential-based | GN-OA [26], AGOX with M3GNet [27] | Uses machine learning potentials for energy prediction; faster than DFT | Achieves "competitive performance" compared to DFT-based algorithms; performance strongly depends on potential quality and optimization algorithm [25] |
| Template-based | TCSP [28], CSPML [26] | Uses element substitution on known structures followed by relaxation | Successful when similar templates exist; limited by available template structures [25] [24] |
| Open-source DFT-based | CrySPY, XtalOpt | Open-source alternatives combining search algorithms with DFT | Less established than leading closed-source options; varying success rates [25] |

Success Rates and Identification Capabilities

A critical performance metric is the ability of CSP algorithms to correctly identify known crystal structures. Benchmark results demonstrate substantial variations in success rates across methodological approaches. Template-based algorithms show success primarily when applied to test structures with similar templates available, while most other algorithms struggle to even identify structures with the correct space groups [25]. The machine learning potential-based CSP algorithms have achieved competitive performance compared to DFT-based approaches, though their effectiveness is strongly determined by both the quality of the neural potentials and the global optimization algorithms employed [25]. These performance variations underscore the measurement uncertainties inherent in different computational methodologies, where success rates can fluctuate significantly based on the specific structures being predicted and the parameter settings of each algorithm.

Experimental Protocols and Methodologies

Density Functional Theory (DFT) Protocols

DFT-based CSP methods represent the traditional computational approach, combining global search algorithms with quantum mechanical calculations. The general experimental protocol involves several standardized steps [25]:

- Initial Structure Generation: Structures within the first population are randomly generated while adhering to proper physical constraints, including interatomic distances and crystal symmetry.

- Structure Characterization and Filtering: Similar crystal structures are removed using characterization techniques such as bond characterization metrics and coordination characterization functions to streamline the search space.

- Local Energy Minimization: Once structures are established for each population, local optimizations are performed using DFT-based methods to locate local energy minima.

- Structural Evolution: New structures are generated based on information from previous generations using swarm intelligence algorithms such as particle swarm optimization or evolutionary algorithms.

For DFT calculations, structural relaxations are typically performed using the Vienna Ab initio Simulation Package (VASP) with the Perdew-Burke-Ernzerhof generalized gradient approximation for the exchange-correlation functional [25]. Due to extreme computational demands, benchmark studies often allocate a fixed number of DFT energy calculations (e.g., 3,000) across different test samples to ensure fair comparison [25].
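The generate, relax, and evolve loop described above can be sketched on a toy problem. In the example below a Lennard-Jones pair energy in reduced units stands in for the DFT call, a small non-periodic cluster stands in for a crystal, and local relaxation is a crude downhill random walk. All of these are simplifying assumptions, the duplicate-filtering step is omitted, and every function name is hypothetical.

```python
import math
import random


def lj_energy(coords):
    """Toy stand-in for a DFT energy: Lennard-Jones pairs, reduced units."""
    energy = 0.0
    for i, a in enumerate(coords):
        for b in coords[i + 1:]:
            r = math.dist(a, b)
            energy += 4.0 * (r ** -12 - r ** -6)
    return energy


def min_distance(coords):
    return min(math.dist(a, b) for i, a in enumerate(coords) for b in coords[i + 1:])


def random_structure(n_atoms, box, rng, d_min=0.8):
    """Random generation subject to a minimum interatomic distance."""
    while True:
        coords = [tuple(rng.uniform(0.0, box) for _ in range(3)) for _ in range(n_atoms)]
        if min_distance(coords) >= d_min:
            return coords


def relax(coords, rng, steps=200, step_size=0.05):
    """Crude local minimization: accept only downhill random moves."""
    best, e_best = coords, lj_energy(coords)
    for _ in range(steps):
        trial = [tuple(x + rng.gauss(0.0, step_size) for x in atom) for atom in best]
        e_trial = lj_energy(trial)
        if e_trial < e_best:
            best, e_best = trial, e_trial
    return best, e_best


def mutate(coords, rng, scale=0.3, d_min=0.8):
    """New candidate from a parent, re-drawn until the distance constraint holds."""
    while True:
        child = [tuple(x + rng.gauss(0.0, scale) for x in atom) for atom in coords]
        if min_distance(child) >= d_min:
            return child


def search(n_atoms=3, population=6, generations=5, seed=0):
    """Evolve a small population and return (coords, energy) of the best member."""
    rng = random.Random(seed)
    pop = [relax(random_structure(n_atoms, 2.5, rng), rng) for _ in range(population)]
    for _ in range(generations):
        pop.sort(key=lambda member: member[1])
        parents = pop[: population // 2]
        children = [relax(mutate(p[0], rng), rng) for p in parents]
        pop = parents + children
    return min(pop, key=lambda member: member[1])


BEST_COORDS, BEST_ENERGY = search()
```

The fixed population size and generation count play the role of the fixed budget of energy evaluations used in benchmark studies. For three Lennard-Jones atoms the energy is bounded below by −3 in reduced units, which gives a simple sanity check on the search.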

Machine Learning Potential Protocols

ML-based CSP methodologies employ significantly different experimental protocols that leverage neural network potentials trained on DFT data [28]:

- Potential Training: Neural network potentials are trained on existing DFT databases to learn the relationship between atomic configurations and energy/forces.

- Structure Sampling and Generation: Various sampling methods are employed, including quasi-random methods, genetic algorithms, particle swarm optimization, and Bayesian optimization.

- Efficient Structure Relaxation: Generated structures are optimized using the neural network potentials, enabling rapid energy evaluations and force calculations.

- Candidate Ranking and Validation: Final candidate structures are typically validated using higher-level DFT calculations to confirm stability.
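The division of labor in these steps, a cheap surrogate for screening and a costly reference method for final validation, can be sketched as follows. The one-dimensional energy landscape, the piecewise-linear "potential", and all names are hypothetical. A real workflow would train a neural network potential on DFT data and validate the shortlist with DFT.

```python
import bisect
import math
import random


def reference_energy(x):
    """Stand-in for an expensive DFT call on a one-dimensional toy landscape."""
    return (x - 1.0) ** 2 + 0.3 * math.sin(6.0 * x)


class InterpolatedPotential:
    """Toy surrogate: piecewise-linear interpolation of reference energies."""

    def __init__(self, xs, energies):
        self.xs, self.energies = list(xs), list(energies)

    def predict(self, x):
        i = bisect.bisect_left(self.xs, x)
        i = min(max(i, 1), len(self.xs) - 1)  # clamp to a valid interval
        x0, x1 = self.xs[i - 1], self.xs[i]
        e0, e1 = self.energies[i - 1], self.energies[i]
        return e0 + (e1 - e0) * (x - x0) / (x1 - x0)


def screen_and_validate(n_candidates=200, top_k=10, seed=0):
    """Rank candidates with the surrogate, then re-rank the top_k with the reference."""
    rng = random.Random(seed)
    train_x = [0.25 * i for i in range(13)]  # training grid 0.0 ... 3.0
    model = InterpolatedPotential(train_x, [reference_energy(x) for x in train_x])
    candidates = [rng.uniform(0.0, 3.0) for _ in range(n_candidates)]
    shortlist = sorted(candidates, key=model.predict)[:top_k]
    validated = sorted((reference_energy(x), x) for x in shortlist)
    return validated[0], len(shortlist)


(BEST_E, BEST_X), N_REFERENCE_CALLS = screen_and_validate()
```

Only the shortlisted candidates reach the reference method, so the number of expensive evaluations is set by `top_k`, not by the size of the candidate pool. The quality of the final answer still depends on how faithfully the surrogate ranks candidates, consistent with the sensitivity to potential quality noted in the benchmarks.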

The SPaDe-CSP workflow exemplifies a specialized ML approach for organic crystals that employs machine learning models to predict space group candidates and crystal density, using these predictions to filter randomly sampled lattice parameters before crystal structure generation [28]. This approach demonstrates how methodological variations can significantly impact computational efficiency and success rates.

Performance Metric Evaluation Protocols

The evaluation of CSP performance itself requires standardized protocols to ensure meaningful comparisons. Recent work has established methodology for assessing various performance metrics [24]:

- Perturbation Analysis: Two perturbation methods generate crystal structures with varying magnitudes from stable reference structures—random perturbations applied to each atomic site independently, and symmetric perturbations applied only to Wyckoff sites without disrupting symmetry.

- Correlation Assessment: The correlation between formation energy differences and performance metric distances is calculated relative to perturbation magnitudes.

- Multi-metric Evaluation: No single structure similarity measure can fully characterize prediction quality against ground state structures, necessitating the use of multiple complementary metrics.
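A stripped-down version of this perturbation study is sketched below. Two inversion-related sites form the reference structure, random perturbations move each site independently, symmetric perturbations move one representative and regenerate its partner by inversion, and a harmonic toy energy stands in for the formation energy. The reference coordinates, the energy model, and the choice of root-mean-square displacement as the distance metric are all assumptions made for illustration.

```python
import math
import random

# Hypothetical reference: two sites related by inversion through the origin.
REFERENCE = [(0.25, 0.25, 0.25), (-0.25, -0.25, -0.25)]


def random_perturbation(sites, magnitude, rng):
    """Displace every site independently; symmetry is generally broken."""
    return [tuple(c + rng.uniform(-magnitude, magnitude) for c in s) for s in sites]


def symmetric_perturbation(sites, magnitude, rng):
    """Displace the representative site and rebuild its inversion partner."""
    rep = tuple(c + rng.uniform(-magnitude, magnitude) for c in sites[0])
    return [rep, tuple(-c for c in rep)]


def rms_displacement(a, b):
    return math.sqrt(sum(math.dist(p, q) ** 2 for p, q in zip(a, b)) / len(a))


def toy_energy(sites, k=1.0):
    """Hypothetical harmonic energy about REFERENCE."""
    return 0.5 * k * sum(math.dist(p, q) ** 2 for p, q in zip(sites, REFERENCE))


def pearson(xs, ys):
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return cov / math.sqrt(sum((x - mx) ** 2 for x in xs) * sum((y - my) ** 2 for y in ys))


def correlation_study(perturb, magnitudes=(0.01, 0.02, 0.05, 0.1, 0.2), samples=20, seed=0):
    """Correlate metric distance with energy difference across perturbation sizes."""
    rng = random.Random(seed)
    distances, energy_differences = [], []
    for magnitude in magnitudes:
        for _ in range(samples):
            perturbed = perturb(REFERENCE, magnitude, rng)
            distances.append(rms_displacement(perturbed, REFERENCE))
            energy_differences.append(toy_energy(perturbed) - toy_energy(REFERENCE))
    return pearson(distances, energy_differences)


R_RANDOM = correlation_study(random_perturbation)
R_SYMMETRIC = correlation_study(symmetric_perturbation)
```

Because the toy energy grows monotonically with displacement, the correlation is positive by construction. With real formation energies and other distance metrics the correlation can be much weaker, which is the motivation for evaluating several complementary metrics.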

Workflow Visualization of CSP Methodologies

CSP Methodology Workflow

This workflow diagram illustrates the parallel methodological approaches in crystal structure prediction, highlighting the multiple pathways that can lead to varying results and contribute to measurement uncertainty. The process begins with chemical composition input, which branches into three distinct methodological categories, each with its own structure generation and relaxation approaches, ultimately converging on structure evaluation and benchmarking.

Research Reagent Solutions: Computational Tools for CSP

Table 2: Essential Computational Tools for Crystal Structure Prediction Research

| Tool Name | Type/Function | Key Features | Application in CSP |
|---|---|---|---|
| VASP [25] | Quantum Chemistry Software | Density Functional Theory calculations; plane-wave basis set | Gold standard for energy calculations in DFT-based CSP methods |
| CALYPSO [25] [24] | CSP Algorithm | Particle swarm optimization; symmetry handling; closed-source | Leading de novo CSP method; combines global search with DFT |
| USPEX [25] [24] | CSP Algorithm | Evolutionary algorithms; structure characterization; closed-source | Established CSP method using genetic algorithms with DFT |
| CrySPY [25] [24] | CSP Algorithm | Genetic algorithm/Bayesian optimization with DFT; open-source | Open-source alternative for DFT-based structure prediction |
| M3GNet [25] | Machine Learning Potential | Graph networks; universal potential for elements | ML potential for energy prediction in GN-OA and AGOX algorithms |
| PyXtal [28] | Structure Generation | Python library; symmetry analysis; random structure generation | Generate initial crystal structures for CSP workflows |
| CSPBench [25] | Benchmarking Suite | 180 test structures; quantitative metrics | Standardized evaluation of CSP algorithm performance |
| PFP [28] | Neural Network Potential | Pre-trained models; organic and inorganic systems | Structure relaxation in ML-based CSP workflows |

The comparative analysis of crystal structure prediction methodologies reveals significant variations in performance across different algorithmic approaches, highlighting the inherent uncertainties in computational materials science. The development of comprehensive benchmarking suites like CSPBench with 180 test structures and standardized quantitative metrics represents a crucial advancement toward more reliable evaluation [25]. Nevertheless, the observation that most current CSP algorithms cannot consistently identify structures with correct space groups, coupled with the strong dependence of ML-based methods on potential quality and optimization algorithms, underscores the ongoing challenges in the field [25].

These uncertainties mirror broader issues in experimental sciences, where methodological variations, computational parameters, and evaluation criteria significantly impact measured outcomes. The move toward multi-metric evaluation approaches, which recognize that no single similarity measure can fully characterize prediction quality, provides a framework for managing this uncertainty [24]. As the field continues to evolve, with new algorithms combining machine learning potentials with global search [28] [29], the development of robust, standardized evaluation protocols will be essential for meaningful comparison of results across studies and for advancing toward the ultimate goal of reliable crystal structure prediction from composition alone.

Advanced Methodologies: From Density Functional Theory to Generative AI

Density Functional Theory (DFT) stands as a cornerstone of computational materials science and quantum chemistry, enabling the prediction of electronic, structural, and magnetic properties of atoms, molecules, and solids. The practicality of DFT hinges on approximations for the exchange-correlation (XC) functional, which accounts for quantum mechanical electron-electron interactions. The Local Density Approximation (LDA) and Generalized Gradient Approximation (GGA) represent the most fundamental and widely used classes of these functionals. The choice between them, along with the modern necessity of including dispersion corrections for many systems, directly determines the accuracy and predictive power of computational studies. This guide provides a comparative analysis of LDA, GGA, and dispersion-corrected methods, focusing on their performance in predicting inorganic crystal structures and properties, thereby offering researchers a framework for selecting the appropriate computational tool.

Theoretical Foundations of DFT Approximations

The Local Density Approximation (LDA)

The Local Density Approximation (LDA) represents the simplest approach to defining the exchange-correlation functional. It assumes that the exchange-correlation energy per electron at a point in space is equal to that of a uniform electron gas having the same density as the local density at that point. The LDA functional is expressed as:

\[ E_{xc}^{LDA}[\rho] = \int \rho(\mathbf{r}) \, \epsilon_{xc}(\rho(\mathbf{r})) \, d\mathbf{r} \]

where \( \rho(\mathbf{r}) \) is the electron density and \( \epsilon_{xc}(\rho) \) is the exchange-correlation energy per particle of a homogeneous electron gas of density \( \rho \) [30]. Despite its simplicity, LDA often provides surprisingly good results for bond lengths and vibrational frequencies, but it systematically suffers from overbinding, leading to underestimated lattice parameters and overestimated bulk moduli and binding energies [30] [19] [31].
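The LDA integral above can be evaluated numerically for a simple case. The sketch below uses only the exchange part of the uniform-gas energy per particle, −(3/4)(3/π)^(1/3) ρ^(1/3) in Hartree atomic units, and applies it to the hydrogen 1s density in a spin-unpolarized treatment. Correlation is omitted, and the radial grid parameters are arbitrary choices made here.

```python
import math

# Dirac exchange constant for the uniform electron gas (Hartree atomic units).
C_X = 0.75 * (3.0 / math.pi) ** (1.0 / 3.0)


def eps_x_lda(rho):
    """Exchange energy per particle of a uniform gas with density rho."""
    return -C_X * rho ** (1.0 / 3.0)


def hydrogen_1s_density(r):
    """Electron density of the hydrogen ground state at radius r (bohr)."""
    return math.exp(-2.0 * r) / math.pi


def lda_exchange_energy(density, r_max=30.0, n=30000):
    """Trapezoidal radial integration of rho * eps_x over all space."""
    h = r_max / n
    total = 0.0
    for i in range(n + 1):
        r = i * h
        rho = density(r)
        integrand = 4.0 * math.pi * r * r * rho * eps_x_lda(rho)
        total += integrand * (0.5 if i in (0, n) else 1.0)
    return total * h


E_X_HYDROGEN = lda_exchange_energy(hydrogen_1s_density)
```

For this density the integral can also be done analytically and gives about −0.213 Hartree, which the numerical result reproduces. The same structure, a local function of the density integrated over space, is what makes LDA inexpensive to evaluate.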

The Generalized Gradient Approximation (GGA)

The Generalized Gradient Approximation (GGA) improves upon LDA by incorporating the gradient of the electron density \( \nabla\rho(\mathbf{r}) \) in addition to the density itself. This accounts for the non-uniformity of the real electron density, leading to a more sophisticated functional form:

\[ E_{xc}^{GGA}[\rho] = \int \rho(\mathbf{r}) \, \epsilon_{xc}(\rho(\mathbf{r}), \nabla\rho(\mathbf{r})) \, d\mathbf{r} \]

Here \( \epsilon_{xc} \) is again an energy per particle, now depending on both the local density and its gradient. Specific GGA functionals, such as the Perdew-Burke-Ernzerhof (PBE) functional, were developed to satisfy fundamental physical constraints [19]. GGA generally corrects LDA's overbinding tendency, yielding more accurate lattice parameters and bond energies [30]. For instance, GGA reduces the mean absolute error in the atomization energies of 20 simple molecules from 31.4 kcal/mol in LDA to 7.9 kcal/mol [30].

The Critical Need for Dispersion Corrections

A fundamental limitation of standard LDA and GGA functionals is their inadequate description of London dispersion forces. These are weak, non-local correlation forces arising from correlated electron motion between spatially separated fragments [19]. This omission is particularly detrimental for systems where van der Waals (vdW) interactions are crucial, such as layered materials, molecular crystals, and adsorption processes. Dispersion-corrected DFT (d-DFT) methods, such as Grimme's D3 correction, augment standard XC functionals by adding an empirical, long-range energy term to account for these forces, dramatically improving the description of vdW-bound systems [32] [33].
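The form of such a correction can be illustrated with a simplified pairwise model: an attractive −C6/r⁶ term for every atom pair, switched off at short range by a Fermi-type damping function. This follows the spirit of the older D2 scheme, not D3, which uses environment-dependent coefficients and additional terms. The C6 coefficient, cutoff radius, and coordinates below are hypothetical.

```python
import math


def damping(r, r0, d=20.0):
    """Fermi-type damping: ~0 at short range, ~1 well beyond r0."""
    return 1.0 / (1.0 + math.exp(-d * (r / r0 - 1.0)))


def dispersion_energy(coords, c6, r0, s6=1.0):
    """Sum of damped -C6/r^6 terms over all atom pairs (single atom type)."""
    energy = 0.0
    for i, a in enumerate(coords):
        for b in coords[i + 1:]:
            r = math.dist(a, b)
            energy -= s6 * c6 / r ** 6 * damping(r, r0)
    return energy


# Hypothetical parameters: one atom type, arbitrary consistent units.
E_NEAR = dispersion_energy([(0.0, 0.0, 0.0), (4.0, 0.0, 0.0)], c6=10.0, r0=3.0)
E_FAR = dispersion_energy([(0.0, 0.0, 0.0), (8.0, 0.0, 0.0)], c6=10.0, r0=3.0)
```

The correction is always attractive and decays as r⁻⁶, so it matters most at the interlayer and intermolecular separations where semi-local functionals provide little or no binding. The damping function keeps it from distorting short covalent bonds that the functional already describes.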

Performance Comparison: Accuracy Across Material Classes

The relative performance of LDA, GGA, and dispersion-corrected methods varies significantly across different classes of materials. The tables below summarize key quantitative comparisons for inorganic crystals and layered structures.

Table 1: Comparison of LDA and GGA for Bulk Inorganic Crystals

| Property | LDA Performance | GGA Performance | Example System | Quantitative Data |
|---|---|---|---|---|
| Lattice Parameters | Systematic underestimation | Generally more accurate, slight overestimation possible | L1₀-MnAl [31] | LDA: a=2.76 Å, c=3.50 Å; GGA: a=2.81 Å, c=3.56 Å; Exp: a=2.81-2.83 Å, c=3.57-3.58 Å |
| Bonding Energy | Overbinding | Improved, but can underbind | 20 Simple Molecules [30] | Mean Absolute Error: LDA=31.4 kcal/mol, GGA=7.9 kcal/mol |
| Magnetic Ground State | Can be incorrect | More reliable | Solid Iron [30] | LDA: fcc non-magnet; GGA: correct bcc ferromagnet |
| Band Gap | Underestimation | Slight improvement, but still underestimates | Semiconductors | Consistent underestimation vs. experiment [34] |

Table 2: Impact of Dispersion Corrections on Layered and Molecular Structures

| System Class | Standard GGA Performance | GGA + Dispersion Correction Performance | Quantitative Data |
|---|---|---|---|
| Layered Materials | Severely overestimates interlayer distances, fails to bind | Accurate interlayer spacing and binding | e.g., Black Phosphorus [19] |
| Organic Crystals | Poor reproduction of cell parameters and packing | High accuracy in structure reproduction | RMSD for 241 structures: 0.084 Å (ordered) [33] |
| 2D vdW Heterostructures | Unreliable interlayer distances and moiré potentials | Accurate structures, enabling property prediction | Band energy errors as low as 35 meV [32] |
| Molecular Adsorption | Weak, non-existent binding | Physically accurate binding energies | Critical for catalysis and gas storage |

Experimental Protocols for Method Validation

Benchmarking against Experimental Crystal Structures

A robust method for validating the accuracy of DFT functionals involves comparing computationally relaxed crystal structures against high-quality experimental diffraction data.

- Protocol Details: This typically involves a two-step process. First, a high-quality dataset of experimental crystal structures is curated from databases like the Inorganic Crystal Structure Database (ICSD) or the Cambridge Structural Database (CSD). Second, the experimental structures are used as input for geometry optimization calculations using different XC functionals. The computed structures (lattice parameters and atomic coordinates) are then compared against the experimental benchmark [19] [33].

- Key Metrics: Common metrics include:

  - Root-Mean-Square Cartesian Displacement (RMSD): Measures the average deviation of atomic positions after energy minimization. A study of 241 organic crystals using d-DFT reported an average RMSD of 0.084 Å for ordered structures, indicating high accuracy [33].

  - Relative Error in Lattice Parameters: The percentage error in calculated lattice constants (a, b, c) and cell volume compared to experimental values.

  - "E above Hull": A metric used in materials databases to assess a compound's thermodynamic stability relative to competing phases. Accurate computational methods should correctly identify stable compounds with low E above hull values [19].
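As a rough illustration of the displacement metric, the sketch below compares two sets of fractional coordinates in an orthorhombic cell, wraps each difference to the nearest periodic image, and converts it to a Cartesian distance. Restricting the cell to orthorhombic, using a single fixed cell for both structures, and assuming the atoms are already matched one-to-one are simplifications made here. Published protocols handle general cells and atom matching more carefully.

```python
import math


def wrapped_difference(f_a, f_b):
    """Fractional difference mapped to the nearest periodic image."""
    d = f_b - f_a
    return d - round(d)


def rmsd_orthorhombic(frac_a, frac_b, cell_lengths):
    """RMS Cartesian displacement (same units as cell_lengths) between
    two lists of matched fractional coordinates in an orthorhombic cell."""
    if len(frac_a) != len(frac_b) or not frac_a:
        raise ValueError("need two non-empty coordinate lists of equal length")
    squared = 0.0
    for site_a, site_b in zip(frac_a, frac_b):
        for f_a, f_b, length in zip(site_a, site_b, cell_lengths):
            squared += (wrapped_difference(f_a, f_b) * length) ** 2
    return math.sqrt(squared / len(frac_a))


# Hypothetical single-atom example: the atom crosses the cell boundary along a.
CELL = (10.0, 8.0, 6.0)  # a, b, c in angstrom
EXPERIMENTAL = [(0.99, 0.50, 0.50)]
RELAXED = [(0.01, 0.50, 0.50)]
RMSD = rmsd_orthorhombic(EXPERIMENTAL, RELAXED, CELL)
```

Without the periodic wrap this pair would appear to be almost a full cell length apart. With it, the displacement is the physically meaningful 0.2 Å.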

Crystal Structure Prediction (CSP) Blind Tests

Crystal Structure Prediction (CSP), particularly for organic molecules, is a stringent test for computational methods. Blind tests, where theorists predict crystal structures based only on the chemical diagram, have been instrumental in driving method development.

- Protocol Details: Participants are given the chemical structure of one or more target molecules and must predict their most stable crystalline polymorphs using any computational method. The predictions are later compared against unpublished experimental structures [35].

- Performance of d-DFT: The inclusion of dispersion corrections has been a game-changer for CSP. A 2007 study noted that a d-DFT method correctly predicted all four crystal structures in a blind test, establishing its reliability for such challenging tasks [33]. Modern workflows increasingly combine machine learning for initial structure sampling with d-DFT for final refinement and ranking, significantly improving success rates and efficiency [28] [36] [35].

Visualization of Workflows and Relationships

DFT Functional Selection Workflow

The following diagram illustrates a logical decision pathway for selecting an appropriate XC functional based on the system of study and target properties.

Machine Learning-Augmented Crystal Structure Prediction

Modern crystal structure prediction, especially for complex organic molecules, leverages machine learning to enhance the efficiency of traditional DFT-based workflows.

The Scientist's Toolkit: Essential Computational Reagents

Table 3: Key Software and Methodological "Reagents" for Computational Studies

| Tool Name | Type | Primary Function | Relevance to XC Functionals |
|---|---|---|---|
| VASP [31] [33] | Software Package | Plane-wave DFT code for periodic systems | Implements LDA, GGA (PBE), and various dispersion corrections (D2, D3). |
| Quantum ESPRESSO [34] | Software Package | Open-source suite for materials modeling | Supports LDA, GGA, and beyond for solid-state calculations. |
| Grimme's D3 Correction [32] [33] | Method | Empirical dispersion correction | Adds van der Waals interactions to standard LDA/GGA functionals. |
| Neural Network Potentials (NNPs) [28] [32] | Machine Learning Potentials | High-speed, quantum-accurate force fields | Trained on DFT data (often PBE-D3) for efficient structure relaxation in CSP. |
| Pseudopotentials / Projector Augmented-Wave (PAW) [19] [34] | Method | Treats core-valence electron interaction | Essential for plane-wave codes; pseudopotentials are often functional-specific. |
| Materials Project API [19] | Database & Tool | Access to computed properties of thousands of materials | Provides data primarily calculated with the PBE functional. |

The comparative analysis of LDA, GGA, and dispersion-corrected DFT methods reveals a clear trajectory of improvement. While LDA provides a foundational benchmark, GGA offers a systematic upgrade for structural properties and bonding energies in covalently and ionically bonded inorganic solids. However, the critical advancement for achieving quantitative accuracy across a broader range of materials, particularly those dominated by weak interactions, has been the incorporation of dispersion corrections.

The future of computational materials research lies in the intelligent integration of these methods. The rise of machine learning interatomic potentials (MLIPs) trained on DFT-D3 data promises to bridge the gap between quantum accuracy and molecular dynamics timescales [32]. Furthermore, machine learning is being directly applied to enhance the crystal structure prediction pipeline itself, using predicted space groups and packing densities to guide sampling and reduce the computational cost of finding global minima [28] [36] [35]. For researchers, the choice of functional is no longer a simple binary but a strategic decision: GGA (PBE) remains a robust standard for many inorganic crystals, but the inclusion of dispersion corrections is now essential for layered materials, molecular crystals, and any system where van der Waals forces play a non-negligible role. This nuanced understanding empowers scientists to select the most effective computational tool for their specific research challenge.
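The selection guidance above can be condensed into a small decision helper. This is a minimal sketch and not a substitute for benchmarking: the `SystemDescription` fields, the function name, and the returned labels ("PBE", "PBE-D3") are illustrative assumptions introduced here, and they encode only the rule of thumb stated in the text.

```python
from dataclasses import dataclass


@dataclass
class SystemDescription:
    """Hypothetical, minimal description of the system under study."""
    layered: bool = False            # van der Waals-bonded layers
    molecular_crystal: bool = False  # discrete molecules held by weak forces
    vdw_relevant: bool = False       # any other non-negligible dispersion role


def suggest_functional(system: SystemDescription) -> str:
    """Return a starting-point functional label following the text's guidance.

    GGA (PBE) is the default for inorganic crystals; a dispersion correction
    is added whenever weak interactions are expected to matter.
    """
    needs_dispersion = (
        system.layered or system.molecular_crystal or system.vdw_relevant
    )
    return "PBE-D3" if needs_dispersion else "PBE"
```

For a densely bonded oxide, `suggest_functional(SystemDescription())` falls back to plain PBE, while flagging `layered=True` switches the suggestion to the dispersion-corrected variant.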

The discovery of new crystalline materials is a cornerstone of innovation in fields ranging from pharmaceuticals to renewable energy. Traditional crystal structure prediction (CSP) methods, which rely on computationally expensive global optimization techniques and explicit energy calculations, are facing significant challenges in exploring the vastness of chemical space [6]. Generative artificial intelligence represents a paradigm shift, learning the underlying distribution of known crystal structures to directly propose novel and plausible candidates, dramatically accelerating the materials discovery pipeline [6]. Within this emerging field, text-guided generative AI has introduced a remarkably intuitive interface: the ability to generate crystal structures using natural language descriptions or specific chemical constraints.

This comparative analysis focuses on Chemeleon, a pioneering text-guided diffusion model for crystal structure generation, and contrasts its architecture, capabilities, and performance with other leading approaches in the computational chemistry landscape. We examine how these technologies are reshaping inorganic materials research by enabling more targeted exploration of crystal chemical space.

Model Architectures and Methodologies

Chemeleon: A Text-Guided Diffusion Model

Chemeleon employs a denoising diffusion probabilistic model to generate crystal structures conditioned on textual descriptions [37] [38]. Its architecture is built on a cross-modal learning framework that aligns text embeddings with structural representations. The model training involves two critical stages:

- Crystal CLIP Training: A Transformer-encoder-based model (BERT) is trained using contrastive learning to align text embeddings with three-dimensional structural embeddings derived from graph neural networks (GNNs). This creates a shared latent space where semantically similar text and crystal structures are positioned close together [37].

- Denoising Diffusion Model Training: A diffusion model is trained to generate crystal structures conditioned on the text embeddings produced by the Crystal CLIP model. Conditional sampling is implemented using a classifier-free guidance scheme, which enhances the relevance of generated structures to the input text [37].
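The two training ideas above can be illustrated with a short, dependency-free sketch. It is not Chemeleon's implementation: the function names, the temperature value of 0.07, and the particular guidance convention (unconditional prediction plus a scaled difference) are assumptions chosen for illustration, and embeddings are plain Python lists rather than tensors.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]


def cross_entropy_row(logits, target):
    """Cross-entropy of one row of logits against the index of the true pair."""
    m = max(logits)  # subtract the maximum for numerical stability
    log_sum = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum - logits[target]


def clip_style_loss(text_emb, struct_emb, temperature=0.07):
    """Symmetric contrastive loss: text i should match structure i in the batch."""
    t = [normalize(v) for v in text_emb]
    s = [normalize(v) for v in struct_emb]
    n = len(t)
    logits = [[dot(t[i], s[j]) / temperature for j in range(n)] for i in range(n)]
    text_to_struct = sum(cross_entropy_row(logits[i], i) for i in range(n)) / n
    struct_to_text = sum(
        cross_entropy_row([logits[i][j] for i in range(n)], j) for j in range(n)
    ) / n
    return 0.5 * (text_to_struct + struct_to_text)


def classifier_free_guidance(eps_uncond, eps_cond, guidance_scale):
    """Blend unconditional and text-conditioned noise predictions.

    Convention assumed here: scale 0 gives the unconditional prediction,
    scale 1 gives the conditional one, larger values push toward the text.
    """
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]
```

The loss is small when each text embedding is closest to its own structure embedding and large when the pairs are mismatched, which is the behavior that produces a shared latent space. At sampling time, the guidance scale trades diversity for adherence to the prompt.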

Chemeleon supports multiple input modalities: natural language prompts (e.g., "A crystal structure of LiMnO₄ with orthorhombic symmetry"), target chemical compositions, and navigation of chemical systems through element specification [37].

Alternative Architectural Approaches

Other generative models for crystals employ distinct architectural paradigms, offering different trade-offs between generation flexibility, structural validity, and conditioning mechanisms.

CrystaLLM (Autoregressive Large Language Model): This approach challenges conventional structural representations by training a decoder-only Transformer model directly on the text of Crystallographic Information Files (CIFs) [10]. Unlike Chemeleon's diffusion-based approach, CrystaLLM treats crystal structure generation as an autoregressive next-token prediction task, generating CIF file contents token-by-token. The model is trained on millions of CIF files and can be prompted with cell composition and space group information [10].

SPaDe-CSP (Machine Learning-Based Sampling): This method combines predictive machine learning models with structure relaxation for organic molecule CSP [28]. It uses two LightGBM models—a space group predictor and a packing density predictor—to constrain the initial sampling space, reducing the generation of low-density, unstable structures. This sample-then-filter strategy is followed by structure relaxation via a neural network potential (NNP) [28].
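The sample-then-filter idea can be sketched as follows. The density formula is standard (ρ = Z·M / (N_A·V)); everything else is an assumption for illustration: the predicted density and allowed space groups are passed in as plain values rather than produced by LightGBM models, and the candidate dictionary keys and the 10% relative tolerance are hypothetical.

```python
AVOGADRO = 6.02214076e23  # mol^-1


def crystal_density(molar_mass, z, cell_volume):
    """Density in g/cm^3 from molar mass (g/mol), Z, and cell volume (Å^3)."""
    volume_cm3 = cell_volume * 1e-24  # 1 Å^3 = 1e-24 cm^3
    return z * molar_mass / (AVOGADRO * volume_cm3)


def filter_candidates(candidates, molar_mass, predicted_density,
                      allowed_space_groups, tolerance=0.1):
    """Keep candidates in an allowed space group whose density is near the target.

    Each candidate is a dict with hypothetical keys 'space_group' (number),
    'z' (molecules per cell), and 'volume' (cell volume in Å^3).
    """
    kept = []
    for cand in candidates:
        if cand["space_group"] not in allowed_space_groups:
            continue
        rho = crystal_density(molar_mass, cand["z"], cand["volume"])
        if abs(rho - predicted_density) <= tolerance * predicted_density:
            kept.append(cand)
    return kept
```

Only the structures that survive this filter would be passed on to the more expensive relaxation step, which is where the reduction in wasted low-density samples comes from.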

Generative Adversarial Networks (GANs): Although the sources reviewed here do not cover GANs for inorganic materials, GANs have been applied to organic crystal generation [28]. These models train a generator network to produce realistic structures and a discriminator to distinguish them from real ones. They can be difficult to train and may be limited to specific molecular families with sufficient training data [28].

Table 1: Comparative Overview of Model Architectures

| Model | Architecture | Primary Conditioning | Representation | Generation Approach |
|---|---|---|---|---|
| Chemeleon | Denoising Diffusion Model | Text prompts, Composition | 3D structural embeddings | Iterative denoising conditioned on text embeddings |
| CrystaLLM | Autoregressive LLM | Cell composition, Space group | CIF file text | Next-token prediction of CIF syntax |
| SPaDe-CSP | ML Predictors + NNP | Molecular structure | Crystallographic parameters | Space group/density prediction + relaxation |

Workflow Visualization

The following diagram illustrates the core training and generation workflow for the Chemeleon model:

Performance Comparison and Experimental Data

Quantitative Performance Metrics

Rigorous benchmarking of generative crystal structure models remains challenging due to dataset quality issues and inadequate metrics [39]. Recent research highlights that widely used metrics often misreport performance, and common datasets suffer from inadequate splits and significant duplication [39]. With these caveats in mind, the available performance data from published studies is summarized below.

Table 2: Experimental Performance Comparison

| Model | Training Data | Success/Validity Rate | Notable Applications | Key Limitations |
|---|---|---|---|---|
| Chemeleon | MP-40 (text descriptions via OpenAI API) [37] | Reported metrics include structure matching, composition matching, crystal system matching [37] | Li-P-S-Cl quaternary space for solid-state batteries [38] | Performance depends on quality of text descriptions |
| CrystaLLM | ~2.2 million CIF files [10] | Generates plausible structures for wide range of unseen inorganic compounds [10] | Validated by ab initio simulations [10] | Limited to CIF syntax generation |
| SPaDe-CSP | Cambridge Structural Database (169k entries) [28] | 80% success rate on organic crystals (vs. 40% for random CSP) [28] | Organic molecules of varying complexity [28] | Specific to organic molecules with Z' = 1 |

Experimental Protocols and Evaluation Methodologies

Chemeleon Evaluation Protocol

Chemeleon's evaluation involves generating crystal structures based on textual descriptions in a test set, followed by comprehensive metrics assessment [37]:

- Sampling: Generate 20 structures for each textual description in the test set.

- Validation:

  - valid_samples: Count of chemically valid structures generated.

  - unique_samples: Count of unique structures after deduplication.

  - structure_matching: Percentage matching ground truth structures.

  - composition_matching: Percentage with correct composition.

  - crystal_system_matching: Percentage with correct crystal system.

- Application Testing: Demonstrate performance on targeted discovery tasks, such as generating stable phases in the Li-P-S-Cl quaternary system for solid-state battery applications [38].
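A few of the metrics above can be aggregated with plain Python once each generated sample has been labeled. The sketch below is illustrative only: the sample record layout, the function names, and the choice to express percentages relative to all generated samples are assumptions, not Chemeleon's evaluation code. Uniqueness and structure matching are omitted because they require a geometric structure comparison.

```python
from functools import reduce
from math import gcd


def reduced_composition(counts):
    """Divide element counts by their greatest common divisor."""
    divisor = reduce(gcd, counts.values())
    return {element: n // divisor for element, n in counts.items()}


def summarize_samples(samples, target_counts, target_crystal_system):
    """Aggregate metrics for the samples generated from one text prompt.

    Each sample is a dict with hypothetical keys:
      'valid' (bool), 'counts' (element -> atom count), 'crystal_system' (str).
    """
    if not samples:
        raise ValueError("at least one sample is required")
    valid = [s for s in samples if s["valid"]]
    target = reduced_composition(target_counts)
    comp_hits = sum(reduced_composition(s["counts"]) == target for s in valid)
    system_hits = sum(s["crystal_system"] == target_crystal_system for s in valid)
    total = len(samples)  # assumption: percentages over all generated samples
    return {
        "valid_samples": len(valid),
        "composition_matching": 100.0 * comp_hits / total,
        "crystal_system_matching": 100.0 * system_hits / total,
    }
```

Comparing reduced compositions means a 12-atom Li2Mn2O8 cell counts as a match for a LiMnO4 prompt, which is usually the intended behavior when the prompt specifies only the formula.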

CrystaLLM Evaluation Protocol

CrystaLLM employs a different evaluation strategy focused on structural plausibility [10]:

- Test Sets: Use of a standard test set (~10,000 CIF files) and a challenge set (70 structures, mostly unseen during training).

- Prompting: Two prompting strategies: with cell composition only, and with both cell composition and space group.

- Assessment:

  - Ab initio Validation: Generated structures are validated using density functional theory (DFT) calculations.

  - Plausibility Judgment: Experts assess whether generated structures represent plausible inorganic crystals.

- Controlled Generation: The model demonstrates capability to generate different structural phases for the same composition when provided with different space group prompts.

Successful implementation of text-guided generative AI for crystal structure research requires both computational tools and data resources.

Table 3: Essential Research Reagent Solutions

| Tool/Resource | Type | Primary Function | Relevance to Generative AI |
|---|---|---|---|
| Crystallographic Information File (CIF) | Data Format | Standardized text representation of crystal structures [10] | Native representation for CrystaLLM; parseable output for all models |
| Materials Project (MP-40) | Dataset | Curated inorganic crystal structures with <40 atoms [37] | Primary training data for Chemeleon |
| Cambridge Structural Database (CSD) | Dataset | Comprehensive organic and metal-organic crystal structures [28] | Training data for organic-focused models (e.g., SPaDe-CSP) |
| Neural Network Potentials (NNPs) | Software | Force fields with near-DFT accuracy [28] | Structure relaxation in hybrid workflows (e.g., SPaDe-CSP) |
| SMACT | Software | Chemical system exploration and filtering [37] | Validating chemical feasibility in Chemeleon composition generation |
| Pymatgen | Software | Python materials analysis library [37] | Structure matching and deduplication in generated sets |

Research Applications and Practical Implementation

Targeted Materials Discovery

Text-guided generative models excel at exploring complex multi-component chemical systems that would be prohibitively expensive to investigate through traditional CSP methods. Chemeleon has demonstrated particular utility in predicting stable phases in the Li-P-S-Cl quaternary space, which is relevant to solid-state battery electrolytes [38]. This approach enables researchers to navigate crystal chemical space using intuitive constraints—either through natural language descriptions of desired material characteristics or by specifying target elements and stoichiometries.

The following diagram illustrates a practical research workflow for using Chemeleon in a targeted discovery campaign:

Implementation Considerations

Researchers implementing these technologies should consider several practical aspects:

Input Design for Chemeleon: For optimal results, text prompts should include both composition (e.g., "LiMnO₄") and crystal system (e.g., "orthorhombic symmetry") [37]. The `--n-atoms` parameter should be consistent with the stoichiometry of the provided composition.

Quality Control: All generated structures require rigorous validation. Chemeleon's workflow includes deduplication using Pymatgen's StructureMatcher and chemical feasibility checks via SMACT [37].

Computational Requirements: Chemeleon implementation requires PyTorch (≥1.12) and GPU support for efficient training and sampling [37].
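The input-design point about `--n-atoms` can be checked before launching a sampling run. The sketch below reads "consistent with the stoichiometry" as "a whole-number multiple of the atoms in one formula unit", which is an interpretation rather than documented Chemeleon behavior. The helper names are hypothetical, and the parser handles only simple formulas written with ASCII digits (no brackets, hydrates, or Unicode subscripts).

```python
import re


def atoms_per_formula_unit(formula):
    """Count atoms in a simple formula such as 'LiMnO4'."""
    tokens = re.findall(r"([A-Z][a-z]?)(\d*)", formula)
    return sum(int(count) if count else 1 for _, count in tokens)


def n_atoms_is_consistent(formula, n_atoms):
    """True if n_atoms is a positive multiple of the formula-unit atom count."""
    per_unit = atoms_per_formula_unit(formula)
    if per_unit == 0:
        raise ValueError(f"could not parse any elements from {formula!r}")
    return n_atoms > 0 and n_atoms % per_unit == 0
```

For LiMnO4 (six atoms per formula unit), values such as 6, 12, or 24 pass the check, while 10 does not and would force the model to generate an off-stoichiometry cell.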

Text-guided generative AI represents a transformative advancement in computational materials science, offering a more intuitive and efficient pathway for crystal structure discovery. Chemeleon's diffusion-based approach provides flexible conditioning capabilities through natural language prompts, while alternative architectures like CrystaLLM demonstrate the viability of treating crystal generation as a text modeling problem.

The field continues to face challenges in standardized benchmarking, with recent research highlighting issues in dataset quality and evaluation metrics [39]. Future developments will likely focus on improved conditioning mechanisms, integration with property prediction models, and more robust validation methodologies. As these technologies mature, they promise to significantly accelerate the discovery of novel materials for energy storage, electronics, and other advanced applications by enabling more targeted and efficient exploration of crystal chemical space.