Computational Prediction of Solid-State Reactions: From Fundamentals to AI-Driven Synthesis


Abstract

This article provides a comprehensive review of computational methods accelerating the prediction and optimization of solid-state reaction synthesis. Aimed at researchers and scientists, it explores the foundational challenges that make synthesis prediction difficult, details the rise of high-throughput and machine learning approaches, and examines advanced algorithms for troubleshooting failed reactions. The content further compares the performance of different computational strategies against experimental validation, highlighting how these tools are closing the gap between theoretical materials design and practical synthesis, with significant implications for the accelerated discovery of functional materials.

The Solid-State Synthesis Challenge: Why Predicting Reactions is Difficult

Solid-state synthesis is a fundamental methodology for producing a wide range of solid materials, most prominently inorganic compounds, without employing solvents as the primary reaction medium. Solid precursors react through atomic diffusion, typically driven by heat treatment at elevated temperatures, to yield materials with specific crystalline structures and functional properties. The core principle is the transformation of powdered solid reactants into new compounds via diffusion-controlled mechanisms at temperatures below their melting points. In modern materials science, this method is indispensable for creating novel compounds with tailored characteristics for technological applications, including energy storage, electronics, and pharmaceuticals.

The industrial significance of solid-state synthesis has grown substantially due to its critical role in manufacturing next-generation materials. It enables precise control over composition, crystal structure, and physical properties, making it particularly valuable for producing functional ceramics, intermetallics, framework structures, and advanced battery materials. As industries increasingly demand materials with higher performance, safety, and sustainability profiles, solid-state synthesis provides a pathway to meet these requirements through controlled material architectures and simplified processing workflows.

Fundamental Principles and Methodologies

Core Mechanisms and Reaction Pathways

Solid-state reactions proceed through distinct mechanistic stages that differentiate them from solution-based synthesis. The process initiates with the formation of nucleation sites at points of contact between reactant particles, followed by atomic interdiffusion across these interfaces to create a primary product layer. As the reaction progresses, ionic migration through this product layer becomes rate-limiting, with subsequent crystal growth and microstructural development determining the final material properties. Key parameters governing these reactions include particle size and morphology, interfacial contact area, diffusion coefficients, and thermodynamic driving forces.
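As a rough quantitative companion to this picture, the classic diffusion-controlled rate laws can be written as below. This is a textbook idealization, not a model taken from the cited works: it assumes a planar product layer of thickness $x$ that thickens parabolically with time $t$ and, for powders, the Jander model of uniform spherical particles of radius $r$, where $\alpha$ is the fraction of reactant converted and $D$ an effective diffusion coefficient:

$$
x^2 = k_p\,t, \qquad \left[1 - (1 - \alpha)^{1/3}\right]^2 = k_J\,t, \qquad k_J \propto \frac{D}{r^2}
$$

The $1/r^2$ scaling of $k_J$ is one reason why reducing precursor particle size and improving mixing accelerate solid-state reactions.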

The overall experimental workflow is summarized in Figure 1.

Figure 1: Generalized workflow for solid-state synthesis of inorganic materials, highlighting key processing stages from precursor preparation to final product characterization.

Experimental Protocols for Oxide Materials Synthesis

Protocol: Solid-State Synthesis of Ternary Oxide Ceramics

This protocol outlines the standardized procedure for synthesizing ternary oxide compounds via solid-state reaction routes, adapted from methodologies employed for battery materials and advanced ceramics [1] [2].

Materials and Equipment:

- High-purity precursor powders (typically carbonates, oxides, or hydroxides)

- Mortar and pestle (agate preferred) or ball milling apparatus

- Hydraulic press for pelletization

- High-temperature furnace with programmable controller

- Alumina or platinum crucibles

- Glove box for moisture-sensitive materials (if required)

Procedure:

- Precursor Preparation: Weigh starting materials in stoichiometric proportions according to the target composition. To compensate for possible volatilization (e.g., of lithium), add a 3-5% excess of the volatile component.

- Mixing and Grinding: Combine powders and grind thoroughly for 30-45 minutes using mechanical mixing (ball milling) or manual grinding with mortar and pestle. For hygroscopic materials, perform this step in an inert atmosphere.

- Calcination: Transfer the mixed powder to an appropriate crucible and heat it in a furnace using a controlled temperature program. Typical calcination conditions include:

  - Heating rate: 2-5°C/min to the target temperature (800-1100°C for most oxides)

  - Dwell time: 6-12 hours at the maximum temperature

  - Cooling rate: 1-3°C/min to room temperature

  - Atmosphere: ambient air, oxygen, or inert gas as required

- Intermediate Grinding: Remove the calcined material, regrind it thoroughly to homogenize it and break up aggregates, then press it into pellets using the hydraulic press.

- Sintering: Subject the pellets to a final heat treatment at higher temperatures (1000-1400°C) for 12-48 hours to achieve desired crystallinity and density.

- Characterization: Analyze the final product using X-ray diffraction, electron microscopy, and electrochemical methods as required.
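The stoichiometric weighing in the Precursor Preparation step, including the excess of a volatile component, is simple arithmetic that is easy to script. The sketch below is illustrative only: the helper names (`molar_mass`, `precursor_masses`), the rounded atomic weights, and the example batch of LiCoO₂ made from Li₂CO₃ and Co₃O₄ with a 5% Li₂CO₃ excess are assumptions, not part of the cited protocols.

```python
# Rounded standard atomic weights (g/mol) for the elements used in the example
ATOMIC_WEIGHT = {"Li": 6.94, "C": 12.011, "O": 15.999, "Co": 58.933}


def molar_mass(composition):
    """Molar mass (g/mol) from a composition dict, e.g. {"Li": 2, "C": 1, "O": 3}."""
    return sum(ATOMIC_WEIGHT[el] * n for el, n in composition.items())


def precursor_masses(target_moles, recipe, volatile_excess=None):
    """Masses (g) of each precursor needed for `target_moles` of product.

    recipe: {name: (composition dict, moles of precursor per mole of target)}
    volatile_excess: {name: fractional excess}, e.g. {"Li2CO3": 0.05} for 5 %
    """
    volatile_excess = volatile_excess or {}
    masses = {}
    for name, (composition, ratio) in recipe.items():
        moles = target_moles * ratio * (1.0 + volatile_excess.get(name, 0.0))
        masses[name] = moles * molar_mass(composition)
    return masses


# Hypothetical batch: 0.05 mol LiCoO2 via 6 Li2CO3 + 4 Co3O4 + O2 -> 12 LiCoO2 + 6 CO2
recipe = {
    "Li2CO3": ({"Li": 2, "C": 1, "O": 3}, 0.5),
    "Co3O4": ({"Co": 3, "O": 4}, 1.0 / 3.0),
}
masses = precursor_masses(0.05, recipe, volatile_excess={"Li2CO3": 0.05})
for name, grams in masses.items():
    print(f"{name}: {grams:.4f} g")
```

For this batch the sketch gives about 1.94 g of Li₂CO₃ and 4.01 g of Co₃O₄; only the lithium source is scaled up, so the cobalt stoichiometry is unchanged.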

Critical Parameters:

- Particle size distribution of precursors significantly impacts reaction kinetics

- Heating rates affect nucleation density and microstructure

- Atmosphere control prevents undesirable oxidation states or composition deviations
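Because the heating and cooling rates set how long each firing takes, it can help to compute the total furnace time when planning a calcination or sintering schedule. A minimal sketch, assuming a simple ramp-and-dwell program and ignoring furnace thermal lag; `furnace_program_hours` is a hypothetical helper, and the example uses values inside the ranges given in the procedure:

```python
def furnace_program_hours(segments, start_temp_c=25.0):
    """Total duration (hours) of a ramp/dwell furnace program.

    segments: list of (target_temp_c, ramp_rate_c_per_min, dwell_h) tuples.
    A cooling ramp is simply a segment with a lower target temperature.
    """
    total_h = 0.0
    current_c = start_temp_c
    for target_c, rate_c_per_min, dwell_h in segments:
        if rate_c_per_min <= 0:
            raise ValueError("ramp rate must be positive")
        total_h += abs(target_c - current_c) / rate_c_per_min / 60.0  # ramp time
        total_h += dwell_h  # hold time at the target temperature
        current_c = target_c
    return total_h


# 5 °C/min to 900 °C, 10 h dwell, then cool at 2 °C/min back to 25 °C
calcination = [(900.0, 5.0, 10.0), (25.0, 2.0, 0.0)]
print(f"Total program time: {furnace_program_hours(calcination):.1f} h")
```

This example program takes about 20.2 h, of which roughly 7.3 h is controlled cooling; choosing slower ramps to influence nucleation density and microstructure lengthens each firing accordingly.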

Computational Prediction of Synthesizability

Data-Driven Approaches for Synthesis Planning

The integration of computational methods with solid-state synthesis has emerged as a transformative approach for predicting synthesizability and optimizing reaction conditions. Machine learning models trained on literature-derived synthesis data can effectively identify promising candidate materials and appropriate processing parameters before experimental attempts [1]. These approaches address the fundamental challenge in materials discovery: while high-throughput computational screening can generate thousands of hypothetical compounds with promising properties, experimental validation remains a significant bottleneck due to the time-intensive nature of synthesis optimization.

Positive-unlabeled (PU) learning frameworks have demonstrated particular utility for predicting solid-state synthesizability, especially given the scarcity of documented failed synthesis attempts in the scientific literature [1]. These methods leverage manually curated datasets of successfully synthesized materials to train classifiers that can distinguish between potentially synthesizable and non-synthesizable compositions. For instance, applying PU learning to 4,103 ternary oxides from the Materials Project database led to 134 of 4,312 hypothetical compositions being predicted as synthesizable [1].
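The core idea of PU learning fits in a few lines. The sketch below implements one classic PU variant, the Elkan and Noto (2008) approach, which is not necessarily the method used in [1]: a classifier g(x) is trained to separate labeled (reported as synthesized) from unlabeled examples, and its output is rescaled by the label frequency c = P(labeled | synthesizable), estimated on held-out positives. It assumes labeled examples are a random sample of all synthesizable ones, uses plain logistic regression in pure Python, and replaces real composition descriptors with toy two-dimensional features; all function names are illustrative.

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def fit_logistic(X, s, lr=0.5, epochs=300):
    """Fit g(x) ~ P(labeled | x) by batch gradient descent on the log-loss."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, si in zip(X, s):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - si
            grad_w = [gw + err * xj for gw, xj in zip(grad_w, xi)]
            grad_b += err
        w = [wj - lr * gw / n for wj, gw in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def pu_scorer(positives, unlabeled, holdout_frac=0.2, seed=0):
    """Elkan-Noto PU learning; returns a function x -> estimated P(positive | x)."""
    pos = list(positives)
    random.Random(seed).shuffle(pos)
    n_hold = max(1, int(len(pos) * holdout_frac))
    held_out, train_pos = pos[:n_hold], pos[n_hold:]
    X = train_pos + list(unlabeled)
    s = [1] * len(train_pos) + [0] * len(unlabeled)
    w, b = fit_logistic(X, s)

    def g(x):  # classifier output: probability that x carries a label
        return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)

    # Label frequency c = P(labeled | positive), estimated on held-out positives
    c = sum(g(x) for x in held_out) / len(held_out)

    def score(x):  # rescaled to P(positive | x) under the random-labeling assumption
        return min(1.0, g(x) / c)

    return score


# Toy 2-D features standing in for composition descriptors
rng = random.Random(1)
known = [(rng.gauss(1, 0.3), rng.gauss(1, 0.3)) for _ in range(40)]        # labeled
hidden = [(rng.gauss(1, 0.3), rng.gauss(1, 0.3)) for _ in range(20)]       # unlabeled, synthesizable
negatives = [(rng.gauss(-1, 0.3), rng.gauss(-1, 0.3)) for _ in range(40)]  # unlabeled, not synthesizable
score = pu_scorer(known, hidden + negatives)
print(f"near known compounds: {score((1.0, 1.0)):.2f}; far away: {score((-1.0, -1.0)):.2f}")
```

The rescaling by c is what turns a "labeled versus unlabeled" classifier into a synthesizability estimate; in practice one would use real descriptors and a stronger classifier.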

Table 1: Computational Metrics for Solid-State Synthesizability Prediction

| Method | Data Source | Key Features | Reported Performance | Limitations |
|---|---|---|---|---|
| Positive-Unlabeled Learning [1] | Human-curated literature data (4,103 ternary oxides) | Uses only positive and unlabeled examples; accounts for kinetic factors | Predicted 134 of 4,312 hypothetical compositions to be synthesizable | Limited by quality of underlying datasets; difficult to estimate false positives |
| Energy Above Convex Hull (Ehull) | Materials Project database | Thermodynamic stability metric; energy difference between a compound and its decomposition products | Widely used in high-throughput screening | Insufficient alone; neglects kinetic barriers and synthesis conditions |
| Text-Mined Synthesis Planning [1] | Automated extraction from scientific literature (64,000+ articles) | Natural language processing of synthesis parameters | 51% overall accuracy of extracted synthesis conditions | Low data quality; limited by information completeness in source literature |
| Tolerance Factor Approaches [1] | Crystal structure databases (e.g., ICSD) | Structural stability indicators for specific crystal families | Improved performance over traditional tolerance factors for perovskites | Limited to specific structural families; does not account for processing effects |

Integration of Computational and Experimental Workflows

The synergy between computational prediction and experimental validation creates an iterative materials discovery cycle. Computational models suggest promising compositions and initial synthesis parameters, which are then tested experimentally. The results feed back into the models to improve their predictive accuracy. This approach is particularly valuable for emerging material systems such as solid-state battery electrolytes, where traditional trial-and-error methods would be prohibitively time-consuming and resource-intensive.

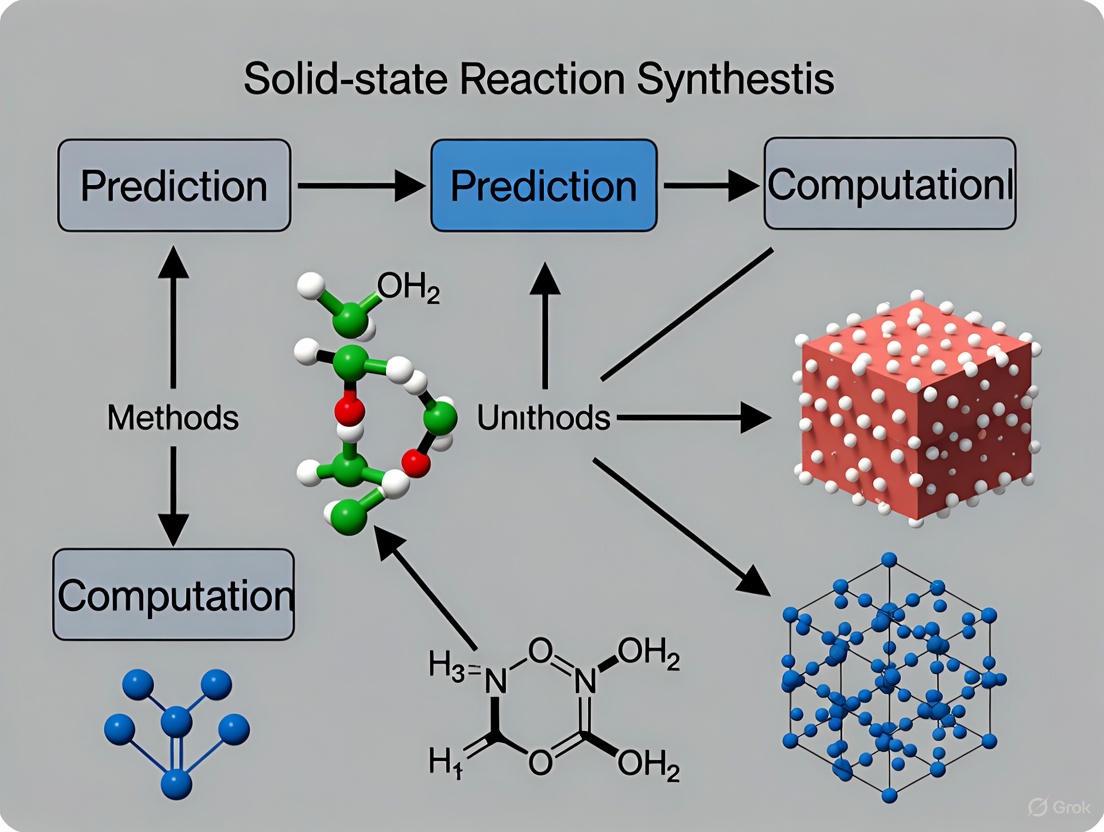

The relationship between computational prediction and experimental synthesis can be visualized as follows:

Figure 2: Integrated computational-experimental workflow for predicting and validating solid-state synthesizability, highlighting the iterative feedback loop between prediction and experimentation.

Industrial Applications and Market Impact

Energy Storage and Solid-State Batteries

Solid-state synthesis has found particularly significant application in the development of next-generation energy storage technologies, especially solid-state batteries (SSBs). These batteries replace the liquid electrolyte found in traditional lithium-ion batteries with solid electrolytes, enabling higher energy density, enhanced safety, faster charging, and longer cycle life [3] [4] [5]. The global solid-state battery market is projected to grow from $899.1 million in 2025 to $14.46 billion by 2034, representing a compound annual growth rate of 36.1% [6].
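As a consistency check on these market figures, the compound annual growth rate over the nine yearly periods from 2025 to 2034 follows from the standard formula (values in billions of US dollars):

$$
\mathrm{CAGR} = \left(\frac{V_{2034}}{V_{2025}}\right)^{1/9} - 1 = \left(\frac{14.46}{0.8991}\right)^{1/9} - 1 \approx 16.08^{1/9} - 1 \approx 0.362
$$

That is about 36.2% per year, in line with the reported 36.1% [6].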

The industrial adoption of solid-state batteries is advancing through progressive commercialization milestones. Several major manufacturers have announced ambitious timelines for mass production, with initial applications focusing on electric vehicles and high-end consumer electronics. The table below summarizes key developments and projections:

Table 2: Solid-State Battery Commercialization Timeline and Market Projections

| Company/Institution | Technology Focus | Commercialization Status | Key Metrics | Target Applications |
|---|---|---|---|---|
| Toyota [5] | Sulfide electrolytes | Mass production planned for 2027-2028 | Partnership with Idemitsu Kosan ($142M investment) | Electric vehicles |
| Samsung SDI [4] [5] | Sulfide-based electrolytes | Mass production planned for 2027 | 500 Wh/kg energy density; 900 Wh/L volumetric density | EVs, luxury vehicles |
| Panasonic [4] | Small-format cells | Mass production 2025-2029 | 3-minute charge to 80%; >30,000 cycle life | Drones, consumer electronics |
| QuantumScape [5] | Ceramic separators | Pilot production (Murata partnership) | Cobra separator process | Electric vehicles |
| CATL, BYD, Others [3] [4] | Multiple approaches (sulfide, oxide, polymer) | Industrialization verification (2024-2026) | Chinese government $6B R&D initiative | EVs, energy storage |

These applications draw on three main electrolyte classes: oxide-based ceramics (e.g., garnets, NASICON), sulfide glasses and crystals, and solid polymer electrolytes [2]. Solid-state synthesis is central to the first two, whereas polymer electrolytes are more often prepared by solution casting or melt processing. Each material system requires specific synthesis approaches and faces distinct challenges in scaling toward industrial production.

Pharmaceutical and Biotechnology Applications

In the pharmaceutical sector, solid-phase peptide synthesis (SPPS) is a closely related solid-supported technique that has revolutionized peptide-based drug development. Introduced by Robert Bruce Merrifield in the 1960s, it assembles peptides stepwise on an insoluble solid support, dramatically improving efficiency compared to solution-phase methods [7]. Unlike the solvent-free reactions described above, SPPS couplings take place in solution, but because the growing chain remains anchored to the resin, excess reagents and byproducts can be removed simply by washing.

Protocol: Solid-Phase Peptide Synthesis (SPPS)

This protocol outlines the standard procedure for synthesizing peptides using solid-phase methodology, widely employed in pharmaceutical research and development.

Materials and Equipment:

- Resin support (e.g., polystyrene-based with appropriate linker)

- Protected amino acids (Fmoc or Boc protection strategies)

- Coupling reagents (HATU, HBTU, DIC, etc.)

- Deprotection reagents (piperidine for Fmoc; TFA for Boc)

- Cleavage cocktail (typically TFA with scavengers)

- Solvents (DMF, DCM, NMP)

- Automated peptide synthesizer or manual reaction vessel

Procedure:

- Resin Swelling: Place the resin in a reaction vessel and swell with an appropriate solvent (e.g., DMF) for 15-30 minutes.

- Deprotection: Remove the N-α protecting group (e.g., 20% piperidine in DMF for Fmoc chemistry) with agitation for 5-20 minutes.

- Washing: Wash the resin multiple times with DMF to remove deprotection reagents.

- Coupling: Add the next Fmoc-protected amino acid (3-5 equivalents) with coupling reagents (e.g., HATU/HOAt with DIEA) in DMF. Agitate for 30-90 minutes.

- Washing: Wash thoroughly with DMF after coupling completion.

- Repetition: Repeat the deprotection, washing, coupling, and washing steps for each additional amino acid in the sequence.

- Final Deprotection: Remove the N-terminal protecting group after sequence completion.

- Cleavage: Treat the resin-bound peptide with cleavage cocktail (e.g., TFA/water/triisopropylsilane 95:2.5:2.5) for 2-4 hours to release the peptide and remove side-chain protecting groups.

- Precipitation and Purification: Precipitate the crude peptide in cold ether, then purify by preparative HPLC and characterize by mass spectrometry.

Critical Parameters:

- Coupling efficiency must exceed 99.5% per cycle for reasonable yields of longer peptides

- Aggregation-prone sequences may require special strategies (e.g., backbone protection, alternative solvents)

- Final crude peptide purity depends on sequence length and complexity
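The 99.5% figure follows from compounding: if every coupling has the same efficiency p, the fraction of chains that reach full length after n - 1 couplings is p^(n - 1). A minimal sketch, assuming the first residue is pre-loaded on the resin and ignoring deprotection failures, side reactions, and cleavage losses; `full_length_fraction` is a hypothetical helper name:

```python
def full_length_fraction(coupling_efficiency, n_residues):
    """Fraction of chains reaching full length if every coupling has the same efficiency.

    Assumes the first residue is pre-loaded on the resin (n_residues - 1 couplings)
    and ignores incomplete deprotection, side reactions, and cleavage losses.
    """
    if not 0.0 < coupling_efficiency <= 1.0:
        raise ValueError("coupling_efficiency must be in (0, 1]")
    return coupling_efficiency ** max(0, n_residues - 1)


for eff in (0.99, 0.995, 0.999):
    fractions = [round(full_length_fraction(eff, n), 2) for n in (20, 50, 70)]
    print(f"{eff:.3f} per step -> 20/50/70-mers: {fractions}")
```

For a 50-residue peptide, 99.5% per-step coupling leaves roughly 78% full-length chains, compared with roughly 61% at 99%, which is why small per-cycle losses matter so much for longer sequences.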

The versatility of SPPS has enabled the development of over 100 approved peptide therapeutics, with hundreds more in clinical trials for conditions including diabetes, cancer, and rare diseases [7]. The methodology supports incorporation of non-natural amino acids, post-translational modifications, and various conjugation strategies, making it indispensable for modern peptide-based drug discovery.

Research Reagent Solutions and Essential Materials

Successful implementation of solid-state synthesis methodologies requires specific materials and reagents tailored to each application. The following table outlines key research reagents and their functions across different solid-state synthesis domains:

Table 3: Essential Research Reagents for Solid-State Synthesis Applications

| Reagent/Material | Function | Application Examples | Critical Parameters |
|---|---|---|---|
| Sulfide Solid Electrolytes [4] | Li-ion conduction in solid-state batteries | Li₁₀GeP₂S₁₂ (LGPS), argyrodites | Ionic conductivity (>10 mS/cm); air stability; compatibility with electrodes |
| Oxide Solid Electrolytes [3] [2] | Li-ion conduction; thermal/electrochemical stability | Garnets (LLZO), perovskites (LLTO), NASICON | Sintering behavior; interfacial stability; mechanical properties |
| Polymer Electrolytes [4] | Flexible ion conduction membranes | PEO-based composites; fluorine-containing polyethers | Ionic conductivity; electrochemical window; mechanical strength |
| Amino Acid Derivatives [7] | Building blocks for peptide synthesis | Fmoc- or Boc-protected amino acids | Purity (>99%); optical purity; compatibility with resin chemistry |
| Coupling Reagents [7] | Activate carboxyl groups for amide bond formation | HATU, HBTU, TBTU, DIC | Coupling efficiency; racemization minimization; byproduct formation |
| Specialized Resins [7] | Solid support for peptide assembly | Wang resin, Rink amide resin, CTC resin | Loading capacity; swelling properties; cleavage characteristics |
| Precursor Oxides/Carbonates [1] [2] | Starting materials for ceramic synthesis | Li₂CO₃, TiO₂, Co₃O₄, MnO₂ | Purity (>99.9%); particle size distribution; reactivity |
| Dopant Compounds [2] | Modify electrical/structural properties | Al₂O₃ (for LLZO), MgO (for cathodes) | Solubility limits; distribution homogeneity; charge compensation |

Challenges and Future Perspectives

Despite significant advances, solid-state synthesis faces several persistent challenges that limit its broader implementation. For inorganic materials, interfacial instability between components, high manufacturing costs, and scalability issues remain substantial barriers to commercialization [4] [2]. In battery applications, solid electrolytes face challenges related to interfacial resistance, limited power density at room temperature, and mechanical stability during cycling. For peptide synthesis, length limitations (typically <50 amino acids) and significant solvent consumption present ongoing constraints [7].

Future developments will likely focus on overcoming these limitations through several key strategies:

Advanced Computational Guidance: Improved machine learning models incorporating synthesis pathway prediction and real-time experimental feedback will accelerate materials optimization and reduce development cycles [1].

Process Innovation: Novel manufacturing approaches such as dry electrode processing, vapor deposition techniques, and continuous flow systems may address scalability challenges in solid-state battery production [4] [2].

Interface Engineering: Tailored interfacial layers and architecture designs will mitigate compatibility issues between solid-state battery components [2].

Hybrid Material Systems: Multifunctional composites combining different electrolyte classes (e.g., polymer-ceramic hybrids) may provide optimized performance profiles unattainable with single-phase materials.

Sustainable Synthesis: Reduced solvent consumption, improved atom economy, and greener reagent alternatives will address environmental concerns, particularly in pharmaceutical applications [7].

As these advances mature, solid-state synthesis will continue to enable new materials across the energy, healthcare, and electronics sectors, and its industrial importance is likely to grow over the coming decades.

The predictive synthesis of inorganic solid-state materials represents a critical bottleneck in the computational materials discovery pipeline. While high-throughput calculations can rapidly identify promising new compounds with targeted properties, transforming these digital designs into physical reality remains challenging due to three interconnected hurdles: kinetic barriers that control reaction rates, intermediate phases that dictate reaction pathways, and metastability considerations that determine phase accessibility. Solid-state reactions are complex processes governed by diffusion-limited transformations where precursors must mix at the atomic scale to form new crystalline phases. The inherent complexity of these processes arises from the concerted displacements and interactions among many species over extended distances, making them difficult to model compared to molecular transformations [8]. Understanding and navigating these hurdles is essential for developing reliable computational methods that can predict viable synthesis routes for both stable and metastable materials, thereby accelerating the discovery of new functional materials for energy, electronics, and beyond.

Theoretical Frameworks and Computational Descriptors

Thermodynamic versus Kinetic Control in Solid-State Reactions

The competition between thermodynamic and kinetic factors fundamentally governs solid-state synthesis outcomes. Thermodynamic stability, typically evaluated through convex-hull analysis using density functional theory (DFT) calculations, indicates whether a material is stable or metastable relative to competing phases [9]. However, thermodynamic stability alone does not guarantee synthesizability, as kinetic barriers can prevent the formation of even highly stable compounds [10] [8].

Kinetic control becomes particularly important for accessing metastable materials, which are invaluable for numerous technologies including photovoltaics and structural alloys [11] [8]. The potential energy surface schematic below illustrates the relationship between stable and metastable states:

Figure 3: Potential energy landscape of solid-state reactions, illustrating multiple pathways with different activation barriers (ΔG‡) leading to intermediate phases, metastable phases, and the ground state.
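Kinetic control can be illustrated with the Arrhenius relation k = A exp(-Ea / kBT). The sketch below assumes equal prefactors and uses hypothetical barriers of 1.2 eV and 1.5 eV (not values from the cited works): a metastable phase reached over a lower barrier forms faster than the ground state, and this kinetic preference shrinks as temperature rises, which is one reason lower-temperature processing is often used to trap metastable phases. It deliberately ignores the later transformation of the metastable phase toward the ground state.

```python
import math

K_B_EV = 8.617333262e-5  # Boltzmann constant, eV/K


def rate_ratio(ea_metastable_ev, ea_stable_ev, temperature_k, prefactor_ratio=1.0):
    """k_metastable / k_stable for two competing Arrhenius processes.

    k = A * exp(-Ea / (kB * T)); prefactor_ratio = A_metastable / A_stable.
    """
    return prefactor_ratio * math.exp(
        (ea_stable_ev - ea_metastable_ev) / (K_B_EV * temperature_k)
    )


# Hypothetical barriers: 1.2 eV to the metastable phase, 1.5 eV to the ground state
for t_k in (600, 900, 1200):
    print(f"T = {t_k} K: k_meta / k_stable = {rate_ratio(1.2, 1.5, t_k):.0f}")
```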

Key Computational Descriptors for Predicting Synthesizability

Computational models rely on specific descriptors to predict synthesis outcomes. The following table summarizes key descriptors used in computational synthesis prediction:

Table 4: Computational Descriptors for Solid-State Synthesis Prediction

| Descriptor Category | Specific Descriptors | Computational Method | Predictive Utility |
|---|---|---|---|
| Thermodynamic | Energy above hull (ΔEhull), Formation energy (ΔHf), Reaction energy (ΔGrxn) | DFT (e.g., PBEsol, PBE, SCAN) | Phase stability, Driving force for reactions [11] [8] [9] |
| Kinetic | Activation energy barriers (Ea), Diffusion coefficients, Nucleation barriers | DFT, Phase-field modeling, Kinetic Monte Carlo | Reaction rates, Intermediate phase stability [12] [13] |
| Structural | Phase fraction evolution, Ionic concentration profiles, Interface mobility | Phase-field modeling with charge neutrality constraints | Microstructural evolution, Reaction progression [12] |
| Metastability | Metastability threshold (ΔGms), Energy landscape local minima | Metastable phase diagrams, DFT calculations | Accessibility of metastable phases [11] |

These descriptors enable the development of predictive models for synthesis outcomes. For example, the metastability threshold (ΔGms)—defined as the excess energy stored in a metastable phase relative to the ground state—helps determine which metastable phases can form under specific conditions [11]. Similarly, reaction energies (ΔGrxn) calculated using DFT provide insight into the thermodynamic driving force for reactions, with more negative values generally favoring faster kinetics, though this can be complicated by intermediate phase formation [8].
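The reaction-energy descriptor is straightforward to compute once formation energies are available. A minimal sketch, assuming formation energies per formula unit referenced to the elements (so elemental reactants contribute zero); DFT gives 0 K energies, which are commonly used as a proxy for ΔGrxn. The oxides `AO`, `BO2`, and `ABO3` and their energies are hypothetical placeholders, not database values:

```python
def reaction_energy_per_atom(reactants, products, formation_energy, atoms_per_fu):
    """Reaction energy in eV/atom.

    reactants, products: {formula: stoichiometric coefficient}
    formation_energy: {formula: formation energy in eV per formula unit}
    atoms_per_fu: {formula: number of atoms per formula unit}
    """
    def total(side, table):
        return sum(coeff * table[f] for f, coeff in side.items())

    n_react = total(reactants, atoms_per_fu)
    n_prod = total(products, atoms_per_fu)
    if abs(n_react - n_prod) > 1e-9:  # coarse check; does not verify each element
        raise ValueError("reaction does not conserve the number of atoms")
    delta_e = total(products, formation_energy) - total(reactants, formation_energy)
    return delta_e / n_prod


# Hypothetical ternary formation: AO + BO2 -> ABO3
formation_energy = {"AO": -6.0, "BO2": -9.0, "ABO3": -15.6}  # eV per formula unit
atoms_per_fu = {"AO": 2, "BO2": 3, "ABO3": 5}
de = reaction_energy_per_atom({"AO": 1, "BO2": 1}, {"ABO3": 1}, formation_energy, atoms_per_fu)
print(f"Reaction energy: {de:.3f} eV/atom")
```

A negative value indicates a thermodynamic driving force; as noted above, intermediates that form first can consume much of it.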

Application Notes: Computational Protocols

Protocol: Phase-Field Modeling of Solid-State Metathesis Reactions

Application: Modeling the evolution of ionic concentrations and phase fractions during diffusion-limited solid-state metathesis reactions.

Computational Methodology:

- Governing Equations: Implement evolution equations for the mole fraction of each ion based on free energy reduction, with energy landscapes containing local minima at stable product compositions [12].

- Constraints: Apply two Lagrange multipliers to enforce electroneutrality and the requirement that the mole fractions sum to one [12].

- Numerical Implementation: Solve the derived partial differential equations for cation and anion dynamics using finite element or finite difference methods.

- Mobility Parameters: Define effective mobilities for cations and anions based on experimental diffusion coefficients or DFT calculations [12].

- Validation: Compare predictions against experimental phase evolution data, such as TEM observations of FeS2 synthesis reactions [12].

Key Parameters:

- Characteristic mobility (sum of effective ionic mobilities) controls reaction rate

- Mobility ratio (anions/cations) influences the manner in which the reaction progresses

- Initial phase fractions and boundary conditions

Interpretation: The model predicts nonplanar phase evolution patterns observed in thin-film reactions, providing insight into how ion mobilities affect reaction kinetics and pathway selection [12].
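The numerical-implementation step can be illustrated with a deliberately reduced model. The cited phase-field model [12] evolves separate cation and anion mole fractions with two Lagrange multipliers; the sketch below instead assumes a 1:1 ion pair and collapses both into a single effective (ambipolar) diffusivity, a standard consequence of electroneutrality in which the slower ion controls transport. It then solves the one-dimensional diffusion equation with an explicit, mass-conserving finite-volume scheme. The diffusivities, grid spacing, and function names are hypothetical.

```python
def effective_diffusivity(d_cation, d_anion):
    """Ambipolar (Nernst-Hartley) diffusivity for a 1:1 ion pair, in the same units.

    Electroneutrality forces the ions to move together, so the slower one dominates.
    """
    return 2.0 * d_cation * d_anion / (d_cation + d_anion)


def diffuse_1d(profile, diffusivity, dx, dt, n_steps):
    """Explicit finite-volume solution of dc/dt = D d2c/dx2 with zero-flux walls."""
    r = diffusivity * dt / dx ** 2
    if r > 0.5:
        raise ValueError(f"explicit scheme unstable: D*dt/dx^2 = {r:.3f} > 0.5")
    c = list(profile)
    for _ in range(n_steps):
        # amount exchanged between neighbouring cells i and i+1 (from old values)
        transfer = [r * (c[i + 1] - c[i]) for i in range(len(c) - 1)]
        for i, t in enumerate(transfer):
            c[i] += t
            c[i + 1] -= t
    return c


# Hypothetical values: fast cation, slow anion (m^2/s), 10 nm cells
d_eff = effective_diffusivity(1e-14, 1e-16)
dx = 1e-8
dt = 0.4 * dx ** 2 / d_eff  # inside the explicit stability limit
initial = [1.0] * 20 + [0.0] * 20  # step in mole fraction across an interface
final = diffuse_1d(initial, d_eff, dx, dt, n_steps=500)
print(f"D_eff = {d_eff:.2e} m^2/s; end values {final[0]:.3f} .. {final[-1]:.3f}")
```

Both highlighted parameters enter through D_eff: lowering either ionic mobility slows the front, and the slower species sets the overall rate.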

Protocol: Metastable Phase Diagram Construction

Application: Predicting which metastable phases can form under non-equilibrium conditions and their stability ranges.

Computational Methodology:

- Structure Selection: Identify all known polymorphs for the material system of interest (e.g., A, B, C, H, and X phases for Ln2O3) [11].

- DFT Calculations: Perform full structural relaxations using appropriate functionals (e.g., PBEsol for Ln2O3) that correctly reproduce known phase stabilities [11].

- Energy Benchmarking: Calculate energies of all phases relative to the ground state for each composition.

- Diagram Construction: Generate phase diagrams as a function of both composition and metastability threshold (ΔGms) [11].

- Threshold Determination: Identify the metastability threshold for each phase—the energy at which it becomes competitive with the ground state [11].

Key Parameters:

- DFT functional selection critical for accuracy

- Metastability threshold (ΔGms) determines phase accessibility

- Chemical trends across compositional series

Interpretation: Metastable phase diagrams successfully predicted the sequence of irradiation-induced phase transformations in Lu2O3, forming three metastable phases with increasing fluence [11].
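At a single composition, the threshold step reduces to comparing each polymorph's energy above the ground state with ΔGms. A minimal sketch, assuming energies per formula unit from consistent DFT calculations; the full diagrams in [11] also span composition, and the phase names and energies below are hypothetical, not the Ln₂O₃ results:

```python
def accessible_phases(phase_energies, threshold):
    """Phases whose energy above the ground state does not exceed `threshold`.

    phase_energies: {phase: total energy per formula unit in eV, common reference}
    threshold: metastability threshold dG_ms in the same units
    """
    e_ground = min(phase_energies.values())
    within = [p for p, e in phase_energies.items() if e - e_ground <= threshold]
    return sorted(within, key=phase_energies.get)


def activation_sequence(phase_energies):
    """(threshold, phase) pairs: the order in which phases become accessible."""
    e_ground = min(phase_energies.values())
    return sorted((e - e_ground, p) for p, e in phase_energies.items())


# Hypothetical polymorph energies relative to an arbitrary reference (eV/f.u.)
energies = {"phase_a": 0.00, "phase_b": 0.05, "phase_c": 0.12, "phase_d": 0.30}
print(accessible_phases(energies, threshold=0.10))
for dg_ms, phase in activation_sequence(energies):
    print(f"{phase} accessible for dG_ms >= {dg_ms:.2f} eV/f.u.")
```

Raising the threshold admits additional phases in order of increasing energy above the ground state.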

Protocol: ARROWS3 for Precursor Selection

Application: Autonomous optimization of precursor selection for solid-state synthesis through active learning from experimental outcomes.

Computational Methodology:

- Initial Ranking: Generate precursor sets stoichiometrically balanced for the target and rank by thermodynamic driving force (ΔG) to form the target using Materials Project data [8].

- Experimental Testing: Test the highest-ranked precursor sets at multiple temperatures to map their reaction pathways [8].

- Intermediate Analysis: Identify intermediates formed at each step using XRD with machine-learned analysis [8].

- Pathway Determination: Determine which pairwise reactions led to each observed intermediate phase [8].

- Driving Force Update: Prioritize precursor sets that maintain large driving force (ΔG') at the target-forming step after accounting for intermediate formation [8].

- Iterative Optimization: Repeat until target is obtained with sufficient yield or all precursor sets exhausted.

Key Parameters:

- Thermodynamic driving force (ΔG) for initial ranking

- Remaining driving force (ΔG') after intermediate formation

- Temperature progression for pathway mapping

Interpretation: ARROWS3 successfully identified all effective synthesis routes for YBa2Cu3O6.5 from 188 experiments while requiring fewer iterations than black-box optimization methods, and also guided synthesis of metastable Na2Te3Mo3O16 and LiTiOPO4 [8].
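The ranking logic behind the Initial Ranking and Driving Force Update steps can be sketched compactly. This is not the ARROWS3 implementation but a simplified illustration under stated assumptions: precursor sets are ranked by ΔG (most negative first), and once an experiment reveals a pairwise reaction, the energy it releases is subtracted from ΔG for every precursor set containing that pair. This is a Hess's-law estimate of ΔG' that assumes per-atom energies simply add. Precursor names and energies are hypothetical.

```python
def remaining_driving_force(precursors, dg_target, learned_pairs):
    """Estimate dG' (eV/atom): driving force left after known pairwise reactions.

    learned_pairs: {frozenset({p1, p2}): energy released by that pair's reaction}
    (negative values). Simplifying assumption: released energies are additive.
    """
    consumed = sum(dg for pair, dg in learned_pairs.items() if pair <= precursors)
    return dg_target - consumed


def rank_precursor_sets(candidates, learned_pairs):
    """Sort precursor sets from most to least negative (estimated) dG'."""
    scored = [
        (remaining_driving_force(p, dg, learned_pairs), sorted(p))
        for p, dg in candidates.items()
    ]
    return sorted(scored)


# Hypothetical precursor sets and dG to form the target (eV/atom)
candidates = {
    frozenset({"P1", "P2"}): -0.30,
    frozenset({"P1", "P3"}): -0.20,
    frozenset({"P3", "P4"}): -0.15,
}
print("initial:", [names for _, names in rank_precursor_sets(candidates, {})])

# Suppose XRD shows P1 + P2 form a stable intermediate releasing -0.25 eV/atom
learned = {frozenset({"P1", "P2"}): -0.25}
print("updated:", [names for _, names in rank_precursor_sets(candidates, learned)])
```

The initially favored set drops to last once its intermediate is known to consume most of the driving force, which is the behavior the Driving Force Update step is designed to capture.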

Experimental Validation and Case Studies

Case Study: Metastable Phases in Ln2O3 System

The lanthanide sesquioxides (Ln2O3) system provides an excellent case study for metastable phase formation due to its rich polymorphism. Computational predictions using metastable phase diagrams successfully guided experimental observation of multiple phase transitions under irradiation in Lu2O3 [11]. The sequence of transformations—forming three distinct metastable phases with increasing irradiation fluence—demonstrated how first-principles thermodynamics can interpret and even predict metastability under non-equilibrium conditions.

The workflow for this integrated computational-experimental approach is illustrated below:

Figure 4: Computational-experimental workflow for metastable phase prediction, showing the integrated approach from first-principles calculations to experimental validation.

Case Study: Intermediate Phase Engineering in Perovskite Solar Cells

Intermediate phases play a crucial role in the crystallization of perovskite solar cells (PSCs), where they act as thermodynamic templates that regulate crystal growth kinetics. The two-step (2S) nucleation theory provides a framework for understanding these processes, showing that intermediate phases have lower nucleation energy barriers compared to the final crystalline phase [14]. This explains why precursor-to-perovskite transitions often proceed through metastable intermediate structures that reduce defect densities and enhance film uniformity.

Table 5: Quantitative Comparison of Nucleation Barriers

| Nucleation Type | Energy Barrier | Formation Rate | Intermediate Stability | Application in PSCs |
|---|---|---|---|---|
| Classical One-Step | High (ΔG1) | Slow | No intermediates | Poor film quality, high defects [14] |
| Two-Step with Intermediate | Lower (ΔG2) | Faster | Metastable intermediates | Improved crystallization, reduced defects [14] |

The quantitative comparison reveals why intermediate phase engineering has become pivotal for advancing perovskite photovoltaics, enabling power conversion efficiencies approaching 27% [14].
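Why a lower-barrier pathway wins can be illustrated with the classical nucleation theory (CNT) barrier for a spherical nucleus, ΔG* = 16πγ³ / (3ΔGv²), where γ is the interfacial energy and ΔGv the (negative) free-energy change per unit volume. This is plain CNT, not the two-step theory of [14], and the γ and ΔGv values for the "final" and "intermediate" phases are hypothetical. The point is only that, because ΔG* scales as γ³, a phase with a lower interfacial energy can have a lower barrier even with a smaller driving force.

```python
import math


def cnt_barrier(gamma, dg_v):
    """Homogeneous CNT nucleation barrier (J) for a spherical nucleus.

    gamma: interfacial energy (J/m^2); dg_v: bulk free-energy change (J/m^3, < 0).
    """
    if dg_v >= 0:
        raise ValueError("nucleation needs a negative volumetric driving force")
    return 16.0 * math.pi * gamma ** 3 / (3.0 * dg_v ** 2)


def critical_radius(gamma, dg_v):
    """Radius (m) at which the nucleus free energy reaches its maximum."""
    return -2.0 * gamma / dg_v


K_B = 1.380649e-23  # Boltzmann constant, J/K
T = 373.0  # hypothetical annealing temperature, K

final = cnt_barrier(gamma=0.10, dg_v=-1.0e8)         # direct nucleation of the final phase
intermediate = cnt_barrier(gamma=0.05, dg_v=-6.0e7)  # lower-gamma intermediate
print(f"barrier ratio final/intermediate: {final / intermediate:.2f}")
print(f"ln(rate ratio), equal prefactors: {(final - intermediate) / (K_B * T):.0f}")
```

With these illustrative numbers the intermediate's barrier is about 2.9 times lower despite a 40% smaller driving force, and because the barrier enters the rate exponentially, the resulting difference in nucleation rate is very large.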

The Scientist's Toolkit: Research Resources

Table 6: Key Research Resources for Solid-State Synthesis Prediction

| Resource Category | Specific Tools/Resources | Function | Access Method |
|---|---|---|---|
| DFT Codes | VASP, Quantum ESPRESSO | First-principles energy calculations | Academic licenses, Open source [11] |
| Materials Databases | Materials Project, OQMD | Thermodynamic data for stability analysis | Web API, Public access [8] [9] |
| Phase-Field Modeling | Custom implementations, MOOSE | Microstructural evolution prediction | Open source, Custom development [12] |
| Text-Mined Synthesis Data | Natural language processing pipelines | Historical synthesis recipe analysis | Custom implementation [9] |
| Active Learning Algorithms | ARROWS3, Bayesian optimization | Autonomous experimental optimization | Custom implementation [8] |

Experimental Reagents and Synthesis Considerations

Precursor Selection Heuristics:

- Reaction Energy: Precursors with large negative ΔG values generally provide stronger driving forces but may form stable intermediates that consume this driving force [8].

- Decomposition Temperature: Precursors with matching decomposition temperatures promote synchronous reactions that minimize intermediate formation [8].

- Ionic Mobility: Consider relative mobilities of cations and anions—systems with balanced mobilities often exhibit more uniform reaction progression [12].

- Intermediate Reactivity: Select precursors that form reactive intermediates rather than inert byproducts that halt the reaction [8].

Synthesis Condition Optimization:

- Temperature Profiling: Use stepwise temperature increases to map reaction pathways and identify intermediate formation stages [8].

- Atmosphere Control: Include volatile gases exchanged with the atmosphere (e.g., O2, CO2) when balancing reactions, and control the furnace atmosphere as needed to maintain stoichiometry [9].

- Time Considerations: Choose dwell times long enough for the reaction to reach completion but short enough to avoid transformation into undesired metastable or stable competing phases.

The computational prediction of solid-state synthesis outcomes has advanced significantly through frameworks that address kinetic barriers, intermediate phases, and metastability in an integrated manner. Phase-field models incorporating charge neutrality constraints [12], metastable phase diagrams derived from first-principles thermodynamics [11], and active learning algorithms that optimize precursor selection [8] represent powerful approaches to overcoming these fundamental hurdles.

Looking forward, several emerging trends promise to further accelerate progress. The integration of artificial intelligence with intermediate phase engineering shows particular potential, where machine learning models can accelerate the design of molecular additives and predict low-dimensional intermediate structures [14]. Additionally, theory-guided data science approaches combined with advanced in situ characterization techniques should enable tighter closed-loop feedback between computational prediction and experimental validation [10]. However, challenges remain in fully capturing the complexity of solid-state reaction mechanisms and in expanding computational models to encompass the vast diversity of inorganic materials systems.

As these methods mature, they will gradually transform materials synthesis from an empirical art to a predictive science, ultimately enabling the rapid realization of computationally designed materials with tailored properties and functionalities. This paradigm shift will be essential for addressing pressing technological needs in energy storage, electronics, and sustainable materials design.


The Limitations of Traditional Thermodynamic Metrics (e.g., Energy Above Hull)

Traditional thermodynamic metrics, most notably the energy above hull (Ehull), serve as a foundational tool for predicting the synthesizability of solid-state materials. While this metric provides a crucial first-pass assessment of thermodynamic stability by measuring a compound's energy distance to the convex hull of stable phases, it possesses significant limitations that can critically misguide experimental synthesis efforts. This application note details these constraints through quantitative data, outlines advanced computational protocols to complement Ehull analysis, and provides a visualization-driven toolkit to help researchers navigate the complex landscape of solid-state reaction prediction. By integrating dynamic stability assessments, finite-temperature effects, and synthesis pathway analysis, we present a multifaceted strategy to move beyond the binary classification of "stable" or "unstable" and towards a probabilistic, actionable prediction of synthesizability.

In computational materials discovery, the energy above hull (Ehull) is a primary metric for assessing a compound's thermodynamic stability. It is the energy difference, per atom, between a given compound and the combination of stable phases on the convex hull that has the same overall composition, i.e., its most stable set of decomposition products [15]. A compound with an Ehull of 0 meV/atom lies on the hull and is thermodynamically stable (at 0 K). A positive value indicates a driving force to decompose into that combination of more stable phases.

However, the metric's simplicity is also its primary weakness. It is a T = 0 K ground-state property that does not account for kinetic barriers, finite-temperature effects, or configurational entropy, which are often decisive in real-world synthesis. Consequently, many metastable compounds (Ehull > 0) are successfully synthesized, while some computed-stable compounds prove elusive. Relying solely on Ehull can lead to the dismissal of promising metastable materials or the futile pursuit of stable-yet-kinetically-inaccessible phases.

Key Limitations and Supporting Quantitative Data

The limitations of Ehull can be categorized and illustrated with specific examples. The following table summarizes the core challenges and their practical implications for synthesis prediction.

Table 1: Key Limitations of the Energy Above Hull Metric

| Limitation | Description | Impact on Synthesis Prediction |
|---|---|---|
| Static, T = 0 K Nature | Calculated from DFT energies at 0 K, ignoring temperature-dependent free-energy contributions (vibrational, configurational, and electronic entropy). | Fails to predict stability crossovers at synthesis temperatures; inaccurate for entropy-stabilized phases. |
| Neglect of Kinetics | Provides no information on the energy barriers of formation or decomposition reactions. | Cannot distinguish a rapidly decomposing phase from a metastable phase with high kinetic persistence. |
| Dependence on Reference Data | Accuracy is contingent on the completeness and quality of the known phases used to construct the convex hull. | Missing competing phases can make an unstable phase appear stable (a false positive). Errors in reference energies can push a truly stable phase above the hull (a false negative). |
| Dimensionality Complexity | Interpretation becomes geometrically and computationally more challenging as the number of chemical species grows (e.g., ternary, quaternary) [15]. | Increases the risk of misidentifying the correct decomposition pathway and its energy. |
| Synthesizability Blindness | A negative energy for one proposed synthesis reaction does not guarantee the compound's overall stability [15]. | May suggest a compound is synthesizable when it is, in fact, unstable with respect to other, unconsidered competing phases. |

A concrete example illustrates this synthesizability blindness. For the oxynitride BaTaNO₂, the energy of a proposed precursor reaction might be negative, suggesting a spontaneous reaction. However, its computed Ehull is +32 meV/atom, indicating metastability, because its true decomposition products are a mixture of Ba₄Ta₂O₉, Ba(TaN₂)₂, and Ta₃N₅ [15]. This shows that Ehull, while imperfect, gives a more complete stability picture than a single, pre-defined reaction energy. Ehull tests the compound against every competing phase on the hull rather than against one chosen set of reactants.
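The BaTaNO₂ decomposition can be checked by element balance alone, as a worked example. The sketch below uses plain Python with exact fractions; the helper names are illustrative and are not pymatgen functions. It solves for how many formula units of each product one BaTaNO₂ unit yields, then verifies the element that was not used in the solve. Finally it converts the result to atomic fractions, which is consistent with the atom-fraction amounts that pymatgen-style decompositions report. It checks mass balance only; the +32 meV/atom value itself requires DFT energies.

```python
from fractions import Fraction

# Element counts per formula unit (Ba(TaN2)2 is written as BaTa2N4)
TARGET = {"Ba": 1, "Ta": 1, "N": 1, "O": 2}  # BaTaNO2
PRODUCTS = {
    "Ba4Ta2O9": {"Ba": 4, "Ta": 2, "O": 9},
    "BaTa2N4": {"Ba": 1, "Ta": 2, "N": 4},
    "Ta3N5": {"Ta": 3, "N": 5},
}


def solve(matrix, rhs):
    """Gauss-Jordan elimination with exact Fractions (square, non-singular system)."""
    n = len(matrix)
    a = [[Fraction(v) for v in row] + [Fraction(b)] for row, b in zip(matrix, rhs)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if a[r][col] != 0)
        a[col], a[pivot] = a[pivot], a[col]
        pv = a[col][col]
        a[col] = [v / pv for v in a[col]]
        for r in range(n):
            if r != col and a[r][col] != 0:
                f = a[r][col]
                a[r] = [vr - f * vc for vr, vc in zip(a[r], a[col])]
    return [row[-1] for row in a]


def decompose(target, products, elements):
    """Formula units of each product per formula unit of target, balancing `elements`."""
    names = list(products)
    matrix = [[products[p].get(el, 0) for p in names] for el in elements]
    rhs = [target.get(el, 0) for el in elements]
    return dict(zip(names, solve(matrix, rhs)))


# Three products, so balance three elements and keep the fourth (Ta) as a check
per_fu = decompose(TARGET, PRODUCTS, ["Ba", "N", "O"])
assert sum(n * PRODUCTS[p].get("Ta", 0) for p, n in per_fu.items()) == TARGET["Ta"]

# Atomic fractions: atoms contributed by each product / atoms in one BaTaNO2 unit
n_atoms = sum(TARGET.values())
atom_frac = {p: n * sum(PRODUCTS[p].values()) / n_atoms for p, n in per_fu.items()}

for p in PRODUCTS:
    print(f"{p}: {per_fu[p]} per formula unit, atom fraction {atom_frac[p]}")
```

Running it prints `Ba4Ta2O9: 2/9 per formula unit, atom fraction 2/3`, `BaTa2N4: 1/9 per formula unit, atom fraction 7/45` and `Ta3N5: 1/9 per formula unit, atom fraction 8/45`. The atom fractions match the 2/3 + 7/45 + 8/45 decomposition quoted in the hull protocol.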

Experimental and Computational Protocols

To mitigate the limitations of Ehull, a multi-pronged computational protocol is recommended. The workflow below integrates stability analysis with dynamic and kinetic assessments.

Diagram 1: Protocol for integrated synthesizability assessment.

Protocol for Hull Construction and Ehull Calculation

This protocol outlines the steps for a robust stability assessment using the convex hull.

- Software Requirements: Density Functional Theory (DFT) code (e.g., VASP, Quantum ESPRESSO), Python Materials Genomics (pymatgen) library, access to materials database (e.g., Materials Project).

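- Step 1: Data Acquisition. For the A-B-N-O chemical system of your target (e.g., ABO₂N), obtain the relaxed DFT energies (eV/atom) for all known phases in this system from your own calculations and from reference databases. All energies must be computed with compatible settings (functional, pseudopotentials, and any energy corrections) so they can be placed on the same hull.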

- Step 2: Hull Construction. Using the pymatgen phase diagram module, input the list of phases and their energies. The algorithm computes the lower convex envelope (the hull) of formation energy versus composition.

- Step 3: Ehull Calculation. For your target phase, Ehull is the vertical energy distance to this hull. Pymatgen automatically determines the decomposition products and their amounts, expressed as atomic fractions, and calculates the energy difference [15]. For BaTaNO₂ the decomposition is 2/3 Ba₄Ta₂O₉ + 7/45 Ba(TaN₂)₂ + 8/45 Ta₃N₅. Per formula unit this corresponds to 2/9 Ba₄Ta₂O₉ + 1/9 Ba(TaN₂)₂ + 1/9 Ta₃N₅.

- Step 4: Interpretation. An Ehull < 20-30 meV/atom often suggests a material may be synthesizable as a metastable phase, though this threshold is system-dependent.
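Steps 2 to 4 can be sketched as a self-contained toy for a binary A-B system, where the hull is a 2D lower convex envelope of formation energy against composition. This is an illustration under stated assumptions, not pymatgen's algorithm. In practice pymatgen's `PhaseDiagram` (e.g., `get_e_above_hull`) handles any number of elements. All phases and energies below are invented for illustration.

```python
def lower_hull(points):
    """Lower convex hull of (x, E_f) points; x = fraction of B, E_f in eV/atom.

    Keeps only the lowest-energy polymorph at each composition, then applies
    Andrew's monotone-chain algorithm to the points sorted by composition."""
    best = {}
    for x, e in points:
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        # Drop the last vertex while it does not make a strict left (convex) turn
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            if (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1) <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linear interpolation of the hull at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull")


def e_above_hull(phases, x, e):
    """Energy above hull (eV/atom) of a candidate (x, e) given reference phases."""
    hull = lower_hull(phases + [(x, e)])
    return e - hull_energy(hull, x)


# Hypothetical references: elements A (x=0) and B (x=1), compounds AB and AB3
phases = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.40), (0.75, -0.25)]
print(round(e_above_hull(phases, 0.25, -0.17) * 1000, 1))  # hypothetical A3B, meV/atom
```

In this toy, the hypothetical A3B lies 30.0 meV/atom above the A-AB tie line. That is just above the 20 to 30 meV/atom rule of thumb in Step 4. A candidate that lies below the existing hull becomes a new vertex and gets Ehull = 0.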

Protocol for Dynamic Stability Assessment

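Beyond thermodynamic stability, a candidate structure should also be dynamically stable, i.e., show no imaginary phonon frequencies in the harmonic approximation. Some high-temperature phases show imaginary modes at 0 K yet are stabilized by anharmonic effects at finite temperature. The check is therefore most conclusive for phases expected to persist at low temperature.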

- Software Requirements: DFT code with phonopy or similar phonon calculation package.

- Step 1: Structure Relaxation. Fully relax the target crystal structure to its ground state using DFT, ensuring accurate forces and stresses.

- Step 2: Supercell Creation. Generate a suitable supercell of the relaxed structure for finite-displacement phonon calculations.

- Step 3: Force Calculation. Calculate the Hellmann-Feynman forces for a set of displaced supercells.

- Step 4: Phonon Dispersion. Post-process the force constants to plot the phonon dispersion spectrum along high-symmetry paths in the Brillouin zone.

- Step 5: Analysis. The absence of significant imaginary (negative) frequencies confirms dynamic stability. Their presence indicates lattice instability, a critical red flag beyond a positive Ehull.
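Steps 2 to 4 depend on phonopy and DFT forces, which cannot be reproduced in a few lines. As a hedged toy stand-in, the sketch below applies the Step 5 test to a 1D monatomic chain with nearest-neighbour Lennard-Jones interactions. It uses the analytic force constant V''(a) in place of finite-displacement forces. Following a common convention (phonopy output, for example, does this), imaginary frequencies are reported as negative numbers. A small tolerance absorbs numerical noise such as the near-zero acoustic mode at Gamma.

```python
import math


def lj_force_constant(a, eps=1.0, sigma=1.0):
    """Analytic second derivative V''(a) of a Lennard-Jones pair potential (reduced units)."""
    return 4 * eps * (156 * sigma**12 / a**14 - 42 * sigma**6 / a**8)


def chain_frequencies(c, a, mass=1.0, nk=21):
    """Signed phonon frequencies of a 1D monatomic chain for k = 0 .. pi/a.

    omega^2(k) = (4c/m) sin^2(ka/2) for nearest-neighbour force constant c.
    Imaginary modes (omega^2 < 0) are returned as negative numbers."""
    freqs = []
    for i in range(nk):
        k = (math.pi / a) * i / (nk - 1)
        w2 = 4 * c / mass * math.sin(k * a / 2) ** 2
        freqs.append(math.copysign(math.sqrt(abs(w2)), w2))
    return freqs


def is_dynamically_stable(freqs, tol=1e-6):
    """True if no frequency is significantly imaginary."""
    return min(freqs) > -tol


for a in (1.12, 1.30):  # near-equilibrium vs. over-stretched spacing, in units of sigma
    c = lj_force_constant(a)
    freqs = chain_frequencies(c, a)
    print(f"a = {a}: force constant {c:+.2f}, dynamically stable: {is_dynamically_stable(freqs)}")
```

The near-equilibrium chain passes. The stretched chain fails because V''(a) turns negative beyond the potential's inflection point (about 1.24σ), so ω² < 0 across the zone. This is the 1D analogue of the lattice instability described in Step 5.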

The Scientist's Toolkit: Research Reagent Solutions

The following table lists essential computational "reagents" and resources for conducting the analyses described in this note.

Table 2: Essential Computational Tools for Solid-State Stability Analysis

| Tool / Resource | Type | Function in Analysis |
|---|---|---|
| VASP | Software | Performs first-principles DFT calculations to determine the total energy of a crystal structure, the foundational data for Ehull [15]. |
| pymatgen | Python Library | Provides robust algorithms for constructing phase diagrams, calculating Ehull, and determining decomposition pathways [15]. |
| Phonopy | Software | Calculates phonon spectra and thermodynamic properties from DFT forces, used to assess dynamic stability. |
| Materials Project | Database | A repository of computed DFT energies for thousands of known and predicted materials, providing essential reference data for hull construction [15]. |
| AutoDock Vina | Docking Software | An example of a tool from a related field (drug discovery) that faces analogous scoring-function challenges, highlighting the universality of the problem [16] [17]. |
| Machine Learning Potentials (e.g., CHGNet) | Software/Model | Machine-learned interatomic potentials trained on DFT data approximate energies and dynamics much faster than full DFT, which makes them useful for rapid screening [15]. |

Visualizing the Decomposition Pathway

A key output of the convex hull analysis is the identification of the exact decomposition reaction for a metastable phase. The following diagram illustrates this relationship and the central role of Ehull.

Diagram 2: Relationship between a metastable phase, its decomposition products, and Ehull.

The energy above hull is an indispensable but fundamentally limited metric in the computational prediction of solid-state synthesis. Its status as a zero-temperature, thermodynamic ground-state property renders it blind to the kinetic and finite-temperature realities of the laboratory. By integrating Ehull analysis with assessments of dynamic stability via phonons, phase persistence at temperature via molecular dynamics, and a careful mapping of synthesis pathways, researchers can construct a more reliable and nuanced predictive framework. The future of synthesis prediction lies not in discarding Ehull, but in augmenting it with a suite of complementary computational protocols that bridge the gap between thermodynamic potential and experimental achievability.


Application Notes

Multiscale modeling represents a paradigm shift in computational materials science, enabling the integration of data across vastly different spatial and temporal scales to predict and optimize synthesis outcomes. This approach seamlessly connects quantum-level interactions to macroscopic reactor performance, providing a comprehensive framework for rational synthesis design [18]. The core value of these methods lies in their ability to replace traditional trial-and-error experimentation with physics-informed, data-driven prediction, significantly accelerating development cycles in materials science and heterogeneous catalysis [19].

Key Concepts and Workflow Integration

The multiscale approach is fundamentally structured in a hierarchical manner:

Atomistic Scale (Quantum Mechanics): Density Functional Theory (DFT) and other ab initio methods provide foundational data on adsorption energies, reaction barriers, and electronic structures. These calculations offer unparalleled insight into reaction mechanisms but are typically limited to small system sizes and short timescales [19] [20].

Mesoscopic Scale (Microkinetics & Surface Models): Kinetic parameters derived from atomistic calculations are integrated into microkinetic models that describe the time evolution of surface species and reaction rates under specific conditions. This scale bridges quantum mechanics and macroscopic phenomena [20].

Macroscopic Scale (Reactor Engineering): Computational Fluid Dynamics (CFD) incorporates reaction kinetics with transport phenomena (mass, heat, and momentum transfer) to predict the overall performance of full-scale reactors [18] [21].

Automated workflows like AMUSE (Automated MUltiscale Simulation Environment) demonstrate the practical integration of these scales, beginning with DFT data, proceeding through automated reaction network analysis and microkinetic modeling, and culminating in CFD simulations of reactor performance [20].

Applications in Synthesis and Catalysis

Multiscale modeling has demonstrated particular utility in several domains:

Heterogeneous Catalysis: Modeling of catalytic ammonia synthesis and decomposition reveals how atomic-scale interactions impact overall reactor efficiency, enabling the design of catalysts that operate under milder conditions than the conventional Haber-Bosch process [18].

Area-Selective Atomic Layer Deposition (ASALD): CFD modeling guides reactor design and operating conditions for bottom-up nanopatterning, addressing misalignment issues in semiconductor manufacturing by ensuring effective reagent separation and homogeneous exposure across substrates [21].

Solid-State Materials Synthesis: Text-mining of historical synthesis recipes from literature, while challenging for direct machine learning prediction, has enabled the identification of anomalous synthesis procedures that inspired new mechanistic hypotheses about how materials form [9].

Table 1: Computational Methods Across Scales in Synthesis

| Scale | Computational Method | Key Outputs | Typical System Size | Limitations |
|---|---|---|---|---|
| Atomistic | Density Functional Theory (DFT) | Reaction energies, activation barriers, electronic structure | ~100-1000 atoms | High computational cost; limited to short timescales |
| Atomistic/Mesoscopic | Kinetic Monte Carlo (kMC) | Temporal evolution of surface processes, growth rates | Micrometers | Requires pre-defined reaction network; stiffness issues |
| Mesoscopic | Microkinetic Modeling | Surface coverages, reaction rates, selectivity | Reactor segment | Assumes mean-field approximation; neglects spatial correlations |
| Macroscopic | Computational Fluid Dynamics (CFD) | Temperature, pressure, concentration profiles in reactors | Full reactor system | Requires simplified kinetics; high computational cost for complex chemistry |

Protocols

Protocol: Automated Multiscale Workflow for Catalytic Reaction Engineering

This protocol outlines the procedure for implementing the AMUSE workflow to model catalytic reactions from first principles to reactor performance prediction [20].

Step 1: DFT Calculations and Energy Profile Generation

- Objective: Obtain accurate energetics for reaction intermediates and transition states.

- Procedure:

- System Setup: Select appropriate catalytic surface model (e.g., slab model with periodic boundary conditions).

- Computational Parameters: Choose DFT functional (e.g., RPBE, BEEF-vdW), plane-wave cutoff, and k-point sampling suitable for the system.

- Geometry Optimization: Optimize structures of all proposed reaction intermediates and transition states.

- Frequency Calculations: Perform vibrational analysis to confirm transition states (one imaginary frequency) and obtain thermodynamic corrections.

- Energy Extraction: Extract electronic energies and compute Gibbs free energy corrections.

- Quality Control: Verify transition states connect appropriate intermediates through nudged elastic band (NEB) calculations. Confirm convergence of key parameters (energy, forces).
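The frequency-analysis and free-energy steps above can be sketched as follows, assuming the harmonic approximation commonly applied to adsorbates and transition states. The sketch counts imaginary modes and sums the zero-point energy and vibrational free energy of the real modes, F_vib = Σ[hν/2 + k_BT ln(1 − e^(−hν/k_BT))]. The 10 cm⁻¹ noise threshold for spurious small imaginary modes is an assumption, as are the example frequencies. ASE's `ase.thermochemistry.HarmonicThermo` offers a production implementation of the same approximation.

```python
import math

KB = 8.617333262e-5   # Boltzmann constant, eV/K
HC = 1.239841984e-4   # Planck constant x speed of light, eV*cm (converts cm^-1 to eV)


def n_imaginary(freqs_cm, tol=10.0):
    """Count imaginary modes (reported as negative wavenumbers).

    Modes with |f| below tol (assumed 10 cm^-1) are treated as numerical noise."""
    return sum(1 for f in freqs_cm if f < -tol)


def harmonic_free_energy(freqs_cm, T):
    """ZPE + vibrational free energy (eV) of the real modes, harmonic approximation, T > 0.

    F_vib = sum_i [ h*nu_i / 2 + kB*T * ln(1 - exp(-h*nu_i / (kB*T))) ]"""
    f_vib = 0.0
    for f in freqs_cm:
        if f <= 0:
            continue  # imaginary (reaction-coordinate) modes are excluded
        e = HC * f    # vibrational quantum h*nu in eV
        f_vib += 0.5 * e + KB * T * math.log1p(-math.exp(-e / (KB * T)))
    return f_vib


ts_freqs = [-612.0, 85.0, 340.0, 1210.0]  # hypothetical transition-state modes, cm^-1
print("valid transition state:", n_imaginary(ts_freqs) == 1)
print(f"F_vib at 500 K: {harmonic_free_energy(ts_freqs, 500.0):.4f} eV")
```

The correction is then added to the electronic energy from the Energy Extraction step. At very low temperature it reduces to the zero-point energy.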

Step 2: Automated Reaction Network Analysis with AutoProfLib

- Objective: Systematically identify reaction mechanisms from DFT output [20].

- Procedure:

- Input Preparation: Provide CONTCAR and OUTCAR files from VASP calculations, or equivalent structural and energy data from other DFT codes.

- Structure Processing: The PreProcessor class extracts atomic coordinates and energies, with optional thermodynamic corrections using the ASE library.

- Molecular Graph Construction: Transform 3D structures into molecular graphs using NetworkX library, generating adjacency matrices based on atomic connectivity.

- Reaction Path Identification: Apply chemically allowed transformations (e.g., bond formation/breaking, adsorption/desorption) to establish connectivity between intermediates.

- Network Generation: Output complete reaction network with associated energy profiles for all identified pathways.

- Quality Control: Manually verify critical reaction steps. Check for missing pathways by comparing with established mechanistic knowledge.
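The molecular-graph step can be illustrated with a dependency-free sketch. AutoProfLib's own implementation is not reproduced here. The sketch builds a distance-based adjacency matrix and extracts connected fragments, i.e., the distinct molecules or adsorbates in a structure. The covalent radii are approximate literature values, the 1.2 scaling factor is an assumption, and the CO/OH snapshot is hypothetical. A real workflow would also handle periodic images and surface atoms, and could pass the adjacency matrix to NetworkX.

```python
import math
from itertools import combinations

# Approximate single-bond covalent radii (angstrom) for a few light elements
COVALENT_RADII = {"H": 0.31, "C": 0.76, "N": 0.71, "O": 0.66}


def adjacency_matrix(symbols, coords, scale=1.2):
    """Bond i-j if their distance <= scale * (r_i + r_j); scale is a tunable assumption."""
    n = len(symbols)
    adj = [[0] * n for _ in range(n)]
    for i, j in combinations(range(n), 2):
        cutoff = scale * (COVALENT_RADII[symbols[i]] + COVALENT_RADII[symbols[j]])
        if math.dist(coords[i], coords[j]) <= cutoff:
            adj[i][j] = adj[j][i] = 1
    return adj


def fragments(adj):
    """Connected components of the bond graph, found by depth-first search."""
    seen, comps = set(), []
    for start in range(len(adj)):
        if start in seen:
            continue
        seen.add(start)
        stack, comp = [start], []
        while stack:
            i = stack.pop()
            comp.append(i)
            for j, bonded in enumerate(adj[i]):
                if bonded and j not in seen:
                    seen.add(j)
                    stack.append(j)
        comps.append(sorted(comp))
    return comps


# Hypothetical snapshot: a CO molecule and an OH fragment about 3 angstrom apart
symbols = ["C", "O", "O", "H"]
coords = [(0.0, 0.0, 0.0), (0.0, 0.0, 1.13), (3.0, 0.0, 0.0), (3.0, 0.0, 0.97)]
print(fragments(adjacency_matrix(symbols, coords)))  # [[0, 1], [2, 3]]
```

Comparing the fragment lists of two intermediates is one simple way to detect which bonds formed or broke between them.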

Step 3: Microkinetic Modeling with PyMKM

- Objective: Predict reaction rates and surface coverages under operational conditions [20].

- Procedure:

- Parameter Input: Import reaction network from AutoProfLib. Input operational conditions (temperature, pressure, gas-phase concentrations).

- Rate Constant Calculation: Compute forward and reverse rate constants for elementary steps using transition state theory.

- Equation System Setup: Formulate ordinary differential equations describing the time evolution of surface species concentrations.

- Steady-State Solution: Numerically solve ODE system to obtain steady-state surface coverages and reaction rates.

- Sensitivity Analysis: Identify rate-determining steps and critical surface intermediates.

- Quality Control: Verify mass balance conservation. Check for numerical stability across temperature and pressure ranges.
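PyMKM's API is not reproduced here. As a hedged sketch of Step 3, the code sets up a single-site Langmuir mechanism: A(g) + * ⇌ A*, then A* → B(g) + *. It derives rate constants from transition state theory via the Eyring equation and integrates the coverage ODE to steady state. The integrator is an implicit (backward-Euler) step, which suits the stiffness typical of microkinetic models. Species A and B, the barriers, and the adsorption rate are hypothetical. Because this model is linear, its steady state θ = r_ads/(r_ads + k_des + k_rxn) is known exactly and serves as a numerical check.

```python
import math

KB = 8.617333262e-5   # Boltzmann constant, eV/K
H = 4.135667696e-15   # Planck constant, eV*s


def eyring(barrier_ev, T):
    """Transition-state-theory rate constant k = (kB*T/h) * exp(-Ea / (kB*T)), in 1/s."""
    return KB * T / H * math.exp(-barrier_ev / (KB * T))


def steady_state_coverage(r_ads, k_des, k_rxn, dt=1e-7, tol=1e-12, max_steps=1_000_000):
    """Integrate d(theta)/dt = r_ads*(1 - theta) - (k_des + k_rxn)*theta to steady state.

    Backward Euler keeps the stiff system stable. The free-site fraction is
    1 - theta, so the site balance is conserved by construction."""
    theta = 0.0
    for _ in range(max_steps):
        new = (theta + dt * r_ads) / (1.0 + dt * (r_ads + k_des + k_rxn))
        if abs(new - theta) < tol:
            return new
        theta = new
    raise RuntimeError("no steady state reached")


T = 600.0                  # K
r_ads = 1.0e5              # hypothetical adsorption rate k_ads * P_A, 1/s
k_des = eyring(1.0, T)     # hypothetical desorption barrier, eV
k_rxn = eyring(0.9, T)     # hypothetical surface-reaction barrier, eV
theta = steady_state_coverage(r_ads, k_des, k_rxn)
print(f"coverage {theta:.4f}, turnover frequency {k_rxn * theta:.3g} 1/s")
```

For larger networks the same structure generalizes to a vector of coverages and a stiff ODE solver. Sensitivity analysis then perturbs individual rate constants and tracks the change in turnover frequency.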

Step 4: Reactor-Scale Integration via CFD

- Objective: Incorporate microkinetics into reactor-scale transport phenomena [20].

- Procedure:

- Reactor Geometry: Create or import 3D reactor geometry in CFD preprocessor.

- Mesh Generation: Generate computational mesh with appropriate refinement near catalytic surfaces.

- Physics Setup: Define fluid properties, boundary conditions, and transport models.

- Reaction Integration: Implement microkinetic model as source terms in species transport equations.

- Solution: Solve coupled Navier-Stokes and reaction-transport equations to obtain spatial distributions of velocity, temperature, and species concentrations.

- Performance Analysis: Extract overall conversion, selectivity, and yield metrics.

- Quality Control: Perform mesh independence study. Validate against experimental data when available.
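A full CFD case does not fit in a short snippet. The mesh-independence check can still be illustrated with a 1D plug-flow reactor as a stand-in. The sketch assumes the microkinetics are lumped into a single pseudo-first-order rate constant and that the flow is a uniform, steady plug flow; the parameter values are hypothetical. Because this model has an analytic solution, X = 1 − exp(−kL/u), the discretization error can be measured directly as the mesh is refined.

```python
import math


def outlet_conversion(k, u, length, n_cells):
    """Steady 1D plug flow, u dc/dz = -k c, on a uniform mesh of n_cells.

    First-order upwind finite-volume scheme with the reaction term treated implicitly:
    u (c_i - c_{i-1}) / dz = -k c_i  ->  c_i = c_{i-1} / (1 + k dz / u)."""
    dz = length / n_cells
    c = 1.0  # inlet concentration normalised to 1
    for _ in range(n_cells):
        c /= 1.0 + k * dz / u
    return 1.0 - c


k, u, length = 2.0, 0.5, 1.0   # hypothetical rate (1/s), velocity (m/s), length (m)
exact = 1.0 - math.exp(-k * length / u)
for n in (10, 100, 1000):
    x = outlet_conversion(k, u, length, n)
    print(f"{n:5d} cells: conversion {x:.5f}, error vs analytic {abs(x - exact):.1e}")
```

The error falls roughly tenfold per tenfold refinement, as expected for a first-order scheme. In a real CFD study the same logic applies: refine until the quantities of interest (conversion, selectivity, yield) stop changing within a chosen tolerance.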

Protocol: Text-Mining Historical Synthesis Data for Mechanistic Insight

This protocol describes an approach for extracting and analyzing solid-state synthesis recipes from scientific literature, adapted from methodologies applied to 31,782 text-mined solid-state synthesis recipes [9].

Step 1: Literature Procurement and Preprocessing

- Objective: Acquire and prepare digital scientific literature for synthesis information extraction.

- Procedure:

- Source Identification: Obtain full-text permissions from scientific publishers (e.g., RSC, Wiley, Elsevier, ACS).

- Document Collection: Download publications in HTML/XML format (post-2000 for easier parsing).

- Paragraph Classification: Identify synthesis paragraphs using probabilistic keyword matching of terms associated with materials synthesis.

- Text Normalization: Standardize chemical nomenclature and abbreviations.

Step 2: Materials Extraction and Role Assignment

- Objective: Identify and classify chemical compounds mentioned in synthesis procedures.

- Procedure:

- Chemical Entity Recognition: Replace all chemical compounds with a generalized placeholder tag.

- Contextual Analysis: Apply a BiLSTM-CRF (Bidirectional Long Short-Term Memory with Conditional Random Field) neural network to classify materials as targets, precursors, or other roles based on sentence context.

- Stoichiometric Balancing: Attempt to reconstruct balanced chemical reactions from identified precursors and targets.

- Data Compilation: Assemble extracted information into structured database format (e.g., JSON).

Step 3: Synthesis Operation Classification

- Objective: Categorize and parameterize synthesis operations and conditions.

- Procedure:

- Keyword Clustering: Apply Latent Dirichlet Allocation (LDA) to cluster synonymous process descriptors (e.g., "calcined," "fired," "heated").

- Operation Classification: Label sentences into operation categories (mixing, heating, drying, shaping, quenching).

- Parameter Extraction: Associate and extract relevant parameters (times, temperatures, atmospheres) with each operation type.

- Process Reconstruction: Build Markov chain representations to reconstruct synthesis flowcharts.
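The Markov-chain reconstruction can be sketched as a first-order chain fitted by counting transitions between classified operations. This is not the original authors' implementation. The operation labels, the added START/END states, and the greedy "most likely flowchart" walk are illustrative assumptions, and the three recipes are hypothetical.

```python
from collections import Counter, defaultdict


def transition_probabilities(recipes):
    """First-order Markov transition probabilities between operation types.

    Each recipe is an ordered list of operation labels; START and END states are
    added so the chain also captures how recipes begin and finish."""
    counts = defaultdict(Counter)
    for ops in recipes:
        seq = ["START", *ops, "END"]
        for a, b in zip(seq, seq[1:]):
            counts[a][b] += 1
    return {a: {b: n / sum(c.values()) for b, n in c.items()} for a, c in counts.items()}


def most_likely_flowchart(probs, max_steps=20):
    """Greedy walk from START, always taking the most probable next operation."""
    path, state = [], "START"
    for _ in range(max_steps):
        state = max(probs[state], key=probs[state].get)
        if state == "END":
            break
        path.append(state)
    return path


recipes = [  # hypothetical operation sequences after Step 3 classification
    ["mixing", "heating", "mixing", "heating"],
    ["mixing", "drying", "heating"],
    ["mixing", "heating", "quenching"],
]
probs = transition_probabilities(recipes)
print(probs["mixing"])               # {'heating': 0.75, 'drying': 0.25}
print(most_likely_flowchart(probs))  # ['mixing', 'heating']
```

Low-probability transitions in such a chain are natural candidates for the anomaly search in Step 4.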

Step 4: Data Analysis and Anomaly Detection

- Objective: Identify unusual synthesis patterns that may reveal novel mechanistic insights.

- Procedure:

- Statistical Analysis: Characterize datasets against the "4 Vs" of data science (Volume, Variety, Veracity, Velocity).

- Pattern Recognition: Identify synthesis procedures that deviate significantly from conventional practices.

- Hypothesis Generation: Manually examine anomalous recipes to formulate new mechanistic hypotheses.

- Experimental Validation: Design targeted experiments to test hypotheses derived from text-mined anomalies.

Table 2: Key Reagent Solutions for Multiscale Synthesis Studies

| Reagent/Category | Function in Synthesis | Example Applications | Notes & Considerations |
|---|---|---|---|
| Precursor Compounds | Source of target material constituents | Solid-state synthesis of oxides, nanoparticles | Selection impacts reaction kinetics & thermodynamics; influences precursor conversion pathways [9] |
| Surface Inhibitors | Passivate non-growth areas in selective deposition | Area-selective atomic layer deposition (ASALD) | Includes polymeric inhibitors (ODTS, PMMA) and small molecule inhibitors (acetylacetone); critical for bottom-up patterning [21] |
| Contrast Agents | Modify electron density for scattering experiments | Contrast Variation SAXS of protein-nucleic acid complexes | Enables component-specific visualization in multi-component systems; must be inert to system being studied [22] |
| Computational Reagents (DFT Functionals) | Describe electron exchange-correlation in quantum calculations | Catalyst screening, reaction mechanism studies | Range-separated and double-hybrid functionals improve accuracy for non-covalent interactions and transition states [19] |
| Reactor Design Elements | Control transport phenomena and reagent separation | Spatial ALD reactors, catalytic reactors | Annular reaction zones and asymmetrical inlets enhance uniformity and minimize reagent intermixing [21] |

Workflow Diagrams

Multiscale Modeling Workflow

Text Mining Synthesis Protocols

The Critical Need for Computational Guidance in Materials Discovery

The transition from a theoretical material structure to a synthesized, characterized compound represents one of the most significant challenges in materials science. Traditional experimental approaches, often reliant on trial-and-error, struggle with the immense complexity of modern materials systems, where compositions, structures, and processing parameters create a virtually infinite design space [23]. This bottleneck is particularly acute in solid-state reaction synthesis, where reaction pathways are complex, intermediates are difficult to characterize, and outcomes are heavily influenced by kinetic and thermodynamic factors [24]. The critical need for computational guidance stems from this fundamental limitation: without predictive models, the discovery of novel functional materials for applications in energy storage, electronics, and medicine remains slow, costly, and largely serendipitous.

Computational methods have evolved from supplementary tools to central components of the materials discovery pipeline. The "fourth paradigm" of materials science harnesses accumulated data and machine learning (ML) to significantly accelerate discovery by predicting properties rapidly and accurately [25]. This shift is transforming research workflows, enabling researchers to prioritize the most promising synthetic targets before ever entering the laboratory.

Computational Methods Landscape

The computational toolkit for materials discovery spans multiple scales, from electronic structure calculations to macroscopic property prediction. Each method offers distinct capabilities that address specific aspects of the discovery pipeline.

Table 1: Key Computational Methods in Materials Discovery

| Method Category | Representative Techniques | Primary Applications | Scale Limitations |
|---|---|---|---|
| Quantum Chemistry | Density Functional Theory (DFT), Coupled Cluster (CC), Hartree-Fock (HF) [19] | Electronic structure prediction, reaction mechanism elucidation, transition state characterization [19] | Computationally expensive for large systems (>1000 atoms) |
| Molecular Mechanics | Classical force fields, Molecular Dynamics (MD) [19] | Large-scale structural modeling, conformational sampling, thermodynamic properties [19] | Accuracy dependent on force field parameterization |
| Machine Learning | Graph Neural Networks (GNNs), Large Language Models (LLMs), Bayesian Optimization [23] [25] | Property prediction, synthesizability classification, inverse design [23] [25] | Requires large, high-quality datasets for training |
| Multiscale Modeling | QM/MM, Bayesian experimental autonomous researchers [26] [27] | Bridging atomic-scale interactions with macroscopic behavior [26] | Integration challenges between scale-dependent physics |

Quantum Mechanical Foundations

Quantum chemistry provides the theoretical foundation for computational materials science, offering a rigorous framework for understanding molecular structure, reactivity, and properties at the atomic level [19]. Density Functional Theory (DFT) has become particularly influential due to its favorable balance between computational cost and accuracy, making it suitable for calculating ground-state properties of medium to large molecular systems [19]. Recent enhancements, including range-separated and double-hybrid functionals coupled with empirical dispersion corrections (DFT-D3, DFT-D4), have extended DFT's applicability to non-covalent systems, transition states, and electronically excited configurations relevant to catalysis and materials design [19].

For highest accuracy, post-Hartree-Fock methods like Coupled Cluster with Single, Double, and perturbative Triple excitations (CCSD(T)) remain the gold standard, though their steep computational cost limits application to smaller systems [19]. Fragment-based approaches such as the Fragment Molecular Orbital (FMO) method and ONIOM provide practical strategies for extending quantum treatments to larger systems by focusing computational resources on chemically relevant regions [19].

Data-Driven and Machine Learning Approaches

Machine learning has revolutionized materials discovery by enabling rapid property prediction and pattern recognition in high-dimensional spaces. Graph Neural Networks (GNNs) excel at modeling crystal structures by treating atoms as nodes and bonds as edges, naturally capturing topological relationships that influence material properties [25]. For synthesizability prediction, Large Language Models (LLMs) fine-tuned on crystal structure databases have demonstrated remarkable capabilities, with the Crystal Synthesis LLM (CSLLM) framework achieving 98.6% accuracy in distinguishing synthesizable from non-synthesizable structures—significantly outperforming traditional thermodynamic and kinetic stability metrics [25].

The integration of ML with physical models creates particularly powerful hybrid approaches. Machine-learning force fields can approach the accuracy of ab initio methods while dramatically reducing computational costs, enabling large-scale molecular dynamics simulations previously considered infeasible [23]. Similarly, Bayesian optimization frameworks like the MAMA BEAR system have demonstrated autonomous experimental capability, conducting over 25,000 experiments with minimal human oversight to discover record-breaking energy-absorbing materials [27].

Experimental Protocols: Computational Guidance in Action

Protocol 1: Predicting Solid-State Synthesizability with CSLLM

Purpose: To assess the synthesizability of proposed crystal structures and identify appropriate synthetic routes and precursors using the Crystal Synthesis Large Language Models (CSLLM) framework [25].

Input Requirements: Crystallographic information file (CIF) or POSCAR format containing lattice parameters, space group, atomic coordinates, and composition.

Procedure:

- Data Preparation: Convert crystal structure to "material string" representation, which integrates essential crystal information in a compact text format [25].

- Synthesizability Assessment:

  - Input material string into Synthesizability LLM

  - Model returns binary classification (synthesizable/non-synthesizable) with probability score

  - Accuracy: 98.6% on testing data, outperforming energy-above-hull (74.1%) and phonon stability (82.2%) methods [25]

- Synthetic Method Classification:

  - For synthesizable structures, input to Method LLM

  - Model classifies as solid-state or solution synthesis (91.0% accuracy) [25]

- Precursor Identification:

  - For solid-state reactions, input to Precursor LLM

  - Model suggests appropriate precursor combinations (80.2% success rate) [25]

- Validation: Cross-reference suggested precursors with reaction energy calculations and combinatorial analysis

Output: Synthesizability probability, recommended synthetic method, candidate precursors, and confidence metrics.

Protocol 2: Autonomous Materials Discovery with Self-Driving Labs

Purpose: To accelerate materials discovery through autonomous experimentation systems that combine robotics, AI, and real-time characterization [27].

System Components:

- Robotics Platform: Automated systems for sample handling, processing, and characterization

- AI Controller: Bayesian optimization algorithms for experimental design and decision-making

- Characterization Suite: Integrated analytical techniques (XRD, spectroscopy, etc.)

- Data Pipeline: Automated data processing and feature extraction

Workflow:

- Objective Definition: Specify target properties (e.g., maximize energy absorption, optimize conductivity)

- Initial Design Space: Define parameter ranges (composition, processing conditions, etc.)

- Autonomous Experimentation Cycle:

  - AI selects most informative experiments based on current knowledge

  - Robotics platform executes experiments (synthesis, processing, characterization)

  - Data is automatically processed and fed back to AI controller

  - Model updates understanding of parameter-property relationships

- Termination: Process continues until performance targets are met or computational budget is exhausted
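The autonomous cycle above can be sketched as a generic Bayesian-optimization loop. This is not the MAMA BEAR implementation, whose surrogate and acquisition details are not given here. The sketch assumes:

- a zero-mean Gaussian-process surrogate with a squared-exponential kernel;
- an upper-confidence-bound (UCB) acquisition function;
- a single normalised design parameter searched on a grid;
- a hypothetical `toy_experiment` function standing in for the robotic synthesis-and-characterization step.

```python
import math
import random


def rbf(x1, x2, length=0.15):
    """Squared-exponential kernel with unit prior variance."""
    return math.exp(-0.5 * ((x1 - x2) / length) ** 2)


def cholesky(a):
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = a[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            low[i][j] = math.sqrt(s) if i == j else s / low[j][j]
    return low


def forward_solve(low, b):
    """Solve low @ y = b for lower-triangular low."""
    y = []
    for i, bi in enumerate(b):
        y.append((bi - sum(low[i][k] * y[k] for k in range(i))) / low[i][i])
    return y


def gp_fit(xs, ys, noise=1e-6):
    """Zero-mean GP fit; the small noise term doubles as numerical jitter."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    low = cholesky(K)
    return list(xs), low, forward_solve(low, ys)


def gp_predict(model, x):
    """Posterior mean k*^T K^-1 y and standard deviation at x."""
    xs, low, w = model
    v = forward_solve(low, [rbf(a, x) for a in xs])
    mean = sum(vi * wi for vi, wi in zip(v, w))
    var = rbf(x, x) - sum(vi * vi for vi in v)
    return mean, math.sqrt(max(var, 0.0))


def run_campaign(experiment, n_init=3, budget=15, beta=2.0, seed=0):
    """Closed loop: fit surrogate, pick the UCB-maximising setting, run it, repeat."""
    rng = random.Random(seed)
    grid = [i / 200 for i in range(201)]  # candidate values of one normalised parameter
    xs = [rng.random() for _ in range(n_init)]
    ys = [experiment(x) for x in xs]
    while len(xs) < budget:  # termination: experimental budget exhausted
        model = gp_fit(xs, ys)

        def ucb(x):
            m, s = gp_predict(model, x)
            return m + beta * s  # favour high predicted value or high uncertainty

        x_next = max(grid, key=ucb)
        xs.append(x_next)
        ys.append(experiment(x_next))  # in a self-driving lab, the robot runs this step
    best = max(range(len(xs)), key=ys.__getitem__)
    return xs[best], ys[best]


def toy_experiment(x):
    """Hypothetical property landscape with a main peak near x = 0.7."""
    return math.exp(-((x - 0.7) / 0.1) ** 2) + 0.6 * math.exp(-((x - 0.2) / 0.1) ** 2)


print(run_campaign(toy_experiment))
```

The `beta` parameter sets the exploration-exploitation balance. A target-based stopping rule could replace the fixed budget to mirror the Termination step.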

Validation: The MAMA BEAR system conducted over 25,000 autonomous experiments, discovering polymeric materials with unprecedented energy absorption (75.2% efficiency, doubling previous benchmarks) [27].

Protocol 3: Multiscale Modeling of Carbon Nanotube Synthesis

Purpose: To simulate carbon nanotube (CNT) growth mechanisms across multiple temporal and spatial scales, from atomic interactions to reactor-level phenomena [26].

Computational Framework:

- Atomistic Simulations:

- Machine Learning Integration:

- Reactor-Scale Modeling:

Application: Reveals dynamic behavior of catalyst nanoparticles, chirality-controlled growth processes, and the influence of etching agents on CNT quality [26].

Visualization of Workflows

Computational-Experimental Feedback Loop

Solid-State Synthesis Prediction

Research Reagent Solutions: Computational Tools

Table 2: Essential Computational Tools for Materials Discovery

| Tool/Platform | Type | Primary Function | Application Example |
|---|---|---|---|
| CAMD (Toyota) | Cloud Computing Platform | Accelerates materials discovery using AI to prioritize simulations [28] | Identified ~30,000 new likely synthesizable compounds [28] |
| CSLLM Framework | Large Language Model | Predicts synthesizability, methods, and precursors for crystals [25] | 98.6% accuracy in synthesizability prediction for 3D crystals [25] |
| MAMA BEAR | Autonomous Research System | Bayesian optimization for materials experimentation [27] | Discovered record-breaking energy-absorbing material (75.2% efficiency) [27] |
| Piro | Synthesis Planning | Recommends synthesis routes using ML and physical models [28] | Predicts reactant combinations for target crystalline compounds [28] |
| DFT Software | Quantum Chemistry | Calculates electronic structure and properties from first principles [19] | Models reaction mechanisms and catalytic processes in solid-state synthesis [26] |

Integrating computational guidance into materials discovery is changing how materials science is practiced. By combining quantum-mechanical accuracy with data-driven efficiency and autonomous experimentation, researchers can explore the vast materials design space far more systematically than by trial and error alone. The protocols and tools outlined here, from CSLLM's synthesizability prediction to the autonomous discovery performed by self-driving labs, show that computational guidance has become a central part of developing functional materials. As these technologies mature and become more accessible, they are expected to accelerate the development of materials for energy, healthcare, and sustainability. Their role is not to replace researchers but to equip them with computational tools that amplify human creativity and intuition.

Computational Toolbox: High-Throughput and Machine Learning Methods

Density Functional Theory (DFT) for Calculating Reaction Energetics and Descriptors

Density Functional Theory (DFT) is a computational quantum-mechanical modelling method used extensively in physics, chemistry, and materials science to investigate the electronic structure of many-body systems, particularly their ground state [29]. It determines the properties of many-electron systems through functionals (functions that take another function as input and return a single real number), here functionals of the spatially dependent electron density [29]. In solid-state reaction synthesis prediction, DFT lets researchers calculate the thermodynamic properties that govern synthesis feasibility and pathway selection. Its versatility and computational efficiency have made it a cornerstone of computational materials science and chemistry, enabling prediction of material behavior from quantum-mechanical first principles without empirical parameters for many properties [29].

The application of DFT to solid-state synthesis problems represents a significant advancement over traditional trial-and-error experimental approaches. By computing energetics and identifying key descriptors, researchers can now pre-screen synthesis routes, predict intermediate formations, and optimize precursor selection before undertaking costly laboratory experiments. This computational guidance is particularly valuable for targeting metastable materials, which often require precise kinetic control to avoid thermodynamically favored byproducts [30]. The following sections detail the theoretical foundation, practical protocols, and specific applications of DFT in calculating reaction energetics and descriptors critical to solid-state synthesis prediction.

Theoretical Background

Fundamental Principles of DFT

The theoretical foundation of DFT rests on the Hohenberg-Kohn theorems, which formally justify using the electron density as the fundamental variable describing many-electron systems [29]. The first Hohenberg-Kohn theorem establishes that the ground-state properties of a many-electron system are uniquely determined by its electron density, a function of only three spatial coordinates. This reduces the many-body problem from 3N variables (for N electrons, ignoring spin) to three, a substantial computational simplification [29].
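A quick count makes the reduction concrete. The sketch below assumes a uniform real-space grid with the same number of points along each axis and ignores spin; it compares how many values are needed to tabulate an N-electron wavefunction (3N coordinates) with the number needed for the electron density (3 coordinates). `grid_values` is a hypothetical helper.

```python
def grid_values(points_per_axis: int, n_electrons: int) -> tuple[int, int]:
    """Return (wavefunction values, density values) on a uniform grid, ignoring spin."""
    # The many-electron wavefunction depends on 3 coordinates per electron
    wavefunction_values = points_per_axis ** (3 * n_electrons)
    # The electron density depends on only 3 spatial coordinates
    density_values = points_per_axis ** 3
    return wavefunction_values, density_values

wf, rho = grid_values(points_per_axis=10, n_electrons=10)
print(f"wavefunction: {wf:.1e} values, density: {rho} values")  # 1.0e+30 vs 1000
```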

The second Hohenberg-Kohn theorem defines an energy functional for the system and demonstrates that the ground-state electron density minimizes this functional. The total energy functional can be expressed as:

$$ E[n] = T[n] + U[n] + \int V(\mathbf{r})\,n(\mathbf{r})\,\mathrm{d}^{3}\mathbf{r} $$

where $T[n]$ represents the kinetic energy functional, $U[n]$ the electron-electron interaction functional, and the final term describes the interaction with an external potential $V(\mathbf{r})$ [29]. The challenge in practical implementations arises because the exact forms of $T[n]$ and $U[n]$ remain unknown and must be approximated.

The Kohn-Sham formulation, developed by Walter Kohn and Lu Jeu Sham, provides a practical framework for applying DFT by replacing the original interacting system with an auxiliary non-interacting system that reproduces the same electron density [29]. This approach leads to the Kohn-Sham equations:

$$ \left[-\frac{\hbar^2}{2m}\nabla^2 + V_{\text{eff}}(\mathbf{r})\right] \psi_i(\mathbf{r}) = \epsilon_i \psi_i(\mathbf{r}) $$

where $V_{\text{eff}}$ is an effective potential that includes external, Hartree, and exchange-correlation contributions, and $\psi_i$ and $\epsilon_i$ are the Kohn-Sham orbitals and their eigenvalues [29]. The accuracy of DFT calculations depends critically on the approximation used for the exchange-correlation functional, and the development of improved functionals remains a major research area in computational chemistry and materials science.
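Because the effective potential depends on the density that the orbitals themselves produce, the Kohn-Sham equations are solved self-consistently. The sketch below illustrates that loop on a one-dimensional toy model in atomic units; it is not a production DFT code. The harmonic external potential, the finite-difference grid, the linear density mixing, and especially the local term `coupling * density`, which stands in for the Hartree and exchange-correlation contributions, are simplifying assumptions, and the electrons are treated as spinless.

```python
import numpy as np

def toy_kohn_sham_scf(n_electrons=4, n_grid=200, box=10.0, coupling=1.0,
                      mix=0.3, tol=1e-8, max_iter=500):
    # Interior grid points of a 1-D box with hard walls at x = 0 and x = box
    x = np.linspace(0.0, box, n_grid + 2)[1:-1]
    h = x[1] - x[0]
    v_ext = 0.5 * (x - box / 2) ** 2  # harmonic external potential (assumed)
    # Kinetic operator -1/2 d^2/dx^2 from a second-order finite-difference Laplacian
    off = np.ones(n_grid - 1)
    laplacian = (np.diag(np.full(n_grid, -2.0)) + np.diag(off, 1) + np.diag(off, -1)) / h**2
    kinetic = -0.5 * laplacian
    density = np.zeros(n_grid)
    for iteration in range(1, max_iter + 1):
        # Toy effective potential: external term plus a local density-dependent term
        v_eff = v_ext + coupling * density
        eigvals, eigvecs = np.linalg.eigh(kinetic + np.diag(v_eff))
        orbitals = eigvecs / np.sqrt(h)  # normalize so that sum(|psi|^2) * h = 1
        # Occupy the lowest n_electrons orbitals (spinless electrons)
        new_density = np.sum(orbitals[:, :n_electrons] ** 2, axis=1)
        if np.max(np.abs(new_density - density)) < tol:
            return x, eigvals[:n_electrons], new_density, iteration
        # Linear mixing damps oscillations between iterations
        density = (1.0 - mix) * density + mix * new_density
    raise RuntimeError("SCF loop did not converge")

x, levels, rho, n_iter = toy_kohn_sham_scf()
print(f"converged in {n_iter} iterations; orbital energies: {np.round(levels, 3)}")
```

Real codes replace the toy term with the Hartree potential and an approximate exchange-correlation potential, and usually use more sophisticated mixing schemes, but the structure of the loop is the same.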

DFT in Solid-State Synthesis Prediction

For solid-state synthesis prediction, DFT calculations primarily provide access to the thermodynamic quantities that govern reaction feasibility and the competition between pathways. A compound's formation energy measures its stability relative to its constituent elements, while its energy relative to competing phases determines whether it is stable against decomposition [30]. More importantly, the reaction free energy $\Delta G$ between a candidate precursor set and the target sets the thermodynamic driving force for that pathway, with more negative values generally favoring product formation [30]; in practice it is often approximated by the 0 K DFT reaction energy $\Delta E$.

Despite its power, DFT has recognized limitations in accurately describing certain phenomena critical to solid-state synthesis. These include intermolecular interactions (particularly van der Waals forces), charge transfer excitations, transition states, global potential energy surfaces, and strongly correlated systems [29]. The incomplete treatment of dispersion interactions can adversely affect accuracy in systems dominated by these forces or where they compete significantly with other effects [29]. Ongoing methodological developments continue to address these limitations through improved functionals and correction schemes.
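One widely used family of correction schemes adds an empirical pairwise dispersion term to the DFT total energy. The sketch below follows the functional form of Grimme's D2 correction: a damped $-C_6/r^6$ sum with a geometric-mean combination rule, damping steepness $d = 20$, and $s_6 = 0.75$ as commonly paired with PBE. The per-atom $C_6$ coefficients and radii in the usage example are placeholder values, not the published parameters. Units are assumed to be eV·Å⁶ and Å. The sum also runs over a finite set of atoms with no periodic images, which a real solid-state calculation would need.

```python
import math

def d2_dispersion_energy(positions, c6, r0, s6=0.75, d=20.0):
    """Damped pairwise -C6/r^6 dispersion energy in the form of Grimme's D2 correction.

    positions: list of (x, y, z) coordinates in Å
    c6: per-atom C6 coefficients (assumed eV·Å^6)
    r0: per-atom van der Waals radii in Å
    """
    energy = 0.0
    for i in range(len(positions)):
        for j in range(i + 1, len(positions)):
            r = math.dist(positions[i], positions[j])
            c6_ij = math.sqrt(c6[i] * c6[j])  # geometric-mean combination rule
            r0_ij = r0[i] + r0[j]
            # Fermi-type damping switches the correction off at short range
            damping = 1.0 / (1.0 + math.exp(-d * (r / r0_ij - 1.0)))
            energy -= s6 * c6_ij / r**6 * damping
    return energy

# Placeholder parameters for two identical atoms (not published D2 values)
params = dict(c6=[10.0, 10.0], r0=[1.5, 1.5])
for separation in (3.0, 4.0, 6.0):
    e = d2_dispersion_energy([(0.0, 0.0, 0.0), (separation, 0.0, 0.0)], **params)
    print(f"r = {separation} Å: E_disp = {e:.5f} eV")
```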

Computational Protocols

General Workflow for Reaction Energetics

A standardized computational workflow ensures consistent and reliable prediction of reaction energetics for solid-state synthesis. The following protocol outlines the key steps from initial setup to final analysis:

Step 1: System Definition and Precursor Selection

- Define the target material's composition and crystal structure

- Identify potential precursor compounds from experimental databases

- Ensure stoichiometric balance for each precursor set to yield the target composition

Step 2: Computational Parameters Selection

- Select appropriate exchange-correlation functional (see Table 1 for guidance)

- Choose basis sets/pseudopotentials for all elements (see Table 2 for recommendations)

- Determine k-point mesh for Brillouin zone sampling

- Set energy and force convergence criteria (typically $10^{-5}$ eV for energy and 0.01 eV/Å for forces)
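For the k-point mesh, one common heuristic fixes a maximum spacing between k-points in reciprocal space and derives the number of subdivisions along each reciprocal lattice vector from it. VASP exposes a rule of this kind through its KSPACING tag. The sketch below assumes an orthogonal (cubic, tetragonal, or orthorhombic) cell, so that $|\mathbf{b}_i| = 2\pi / a_i$. The function name and the default spacing are illustrative assumptions, and the resulting mesh should still be checked by converging the total energy with respect to it.

```python
import math

def kpoint_mesh(lattice_lengths, kspacing=0.25):
    """Subdivisions (n1, n2, n3) so that the k-point spacing is at most `kspacing` (1/Å, 2π included).

    Assumes an orthogonal cell, where |b_i| = 2π / a_i.
    """
    return tuple(max(1, math.ceil(2 * math.pi / (a * kspacing))) for a in lattice_lengths)

print(kpoint_mesh((3.905, 3.905, 3.905)))  # (7, 7, 7) for a cubic cell with a = 3.905 Å
print(kpoint_mesh((5.0, 5.0, 20.0)))       # (6, 6, 2): the long axis needs fewer subdivisions
```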

Step 3: Structure Optimization

- Obtain or generate initial crystal structures for all compounds (target, precursors, potential intermediates)

- Perform geometry optimization for all structures using selected parameters

- Verify convergence and confirm structures represent local minima through frequency calculations where feasible
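The convergence criteria from Step 2 can be applied to a relaxation trajectory with a small check like the one below. This is a sketch under stated assumptions. Energies are total energies (eV) after successive ionic steps, and forces are per-atom Cartesian vectors (eV/Å). The energy tolerance is applied between ionic steps, whereas DFT codes often apply a tolerance of this size to the electronic self-consistency loop instead. `relaxation_converged` is a hypothetical helper name.

```python
import math

def relaxation_converged(energies, forces, e_tol=1e-5, f_tol=0.01):
    """Return True when both the energy change and the largest force are below tolerance.

    energies: total energies in eV after each ionic step
    forces: per-atom force vectors (fx, fy, fz) in eV/Å for the latest step
    """
    if len(energies) < 2:
        return False  # need two steps to measure an energy change
    energy_ok = abs(energies[-1] - energies[-2]) < e_tol
    max_force = max(math.sqrt(fx**2 + fy**2 + fz**2) for fx, fy, fz in forces)
    return energy_ok and max_force < f_tol

print(relaxation_converged([-45.120300, -45.120304], [(0.0, 0.004, 0.003)]))  # True
print(relaxation_converged([-45.120300, -45.120304], [(0.02, 0.0, 0.0)]))     # False: force too large
```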

Step 4: Energy Calculations

- Compute total energies for all optimized structures

- Account for relevant environmental conditions (temperature, pressure) through thermodynamic corrections where necessary

Step 5: Reaction Energy Calculation

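- Calculate formation energies: $E_f = E_{\text{compound}} - \sum_i n_i E_i$, where $n_i$ is the number of atoms of element $i$ in the compound and $E_i$ is the energy per atom of that element in its reference phase

- Compute reaction energies: $\Delta E_{\text{rxn}} = \sum E_{\text{products}} - \sum E_{\text{reactants}}$, weighting each compound's energy by its stoichiometric coefficient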

- Consider competing reactions and potential byproducts
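Step 5 can be sketched in a few lines. The energies below are hypothetical placeholder values in eV per formula unit, not DFT results. The element reference phases (Ba and Ti metal, the O₂ molecule) are assumptions. The `reaction_energy` helper also rejects reactions that are not stoichiometrically balanced, which guards against bookkeeping errors when comparing precursor sets.

```python
from collections import Counter

# Atom counts per formula unit
COMPOSITIONS = {
    "Ba": {"Ba": 1}, "Ti": {"Ti": 1}, "O2": {"O": 2},
    "BaO": {"Ba": 1, "O": 1}, "TiO2": {"Ti": 1, "O": 2},
    "BaTiO3": {"Ba": 1, "Ti": 1, "O": 3},
}
# Hypothetical total energies in eV per formula unit (placeholders, not DFT results)
ENERGIES = {
    "Ba": -1.9, "Ti": -7.8, "O2": -9.8,
    "BaO": -12.0, "TiO2": -26.5, "BaTiO3": -40.0,
}
# Elemental reference phase for each element (assumed)
REFERENCES = {"Ba": "Ba", "Ti": "Ti", "O": "O2"}

def element_counts(side):
    """Total atoms of each element on one side of a reaction {formula: coefficient}."""
    totals = Counter()
    for formula, coeff in side.items():
        for element, n in COMPOSITIONS[formula].items():
            totals[element] += coeff * n
    return totals

def formation_energy(formula):
    """E_f = E_compound - sum_i n_i * E_i, with E_i the per-atom reference energy."""
    e_refs = 0.0
    for element, n in COMPOSITIONS[formula].items():
        ref = REFERENCES[element]
        e_refs += n * ENERGIES[ref] / COMPOSITIONS[ref][element]
    return ENERGIES[formula] - e_refs

def reaction_energy(reactants, products):
    """Delta E_rxn = products minus reactants, each energy weighted by its coefficient."""
    if element_counts(reactants) != element_counts(products):
        raise ValueError("reaction is not stoichiometrically balanced")
    e_products = sum(c * ENERGIES[f] for f, c in products.items())
    e_reactants = sum(c * ENERGIES[f] for f, c in reactants.items())
    return e_products - e_reactants

print(f"E_f(BaTiO3) = {formation_energy('BaTiO3'):.2f} eV/f.u.")  # -15.60
print(f"BaO + TiO2 -> BaTiO3: {reaction_energy({'BaO': 1, 'TiO2': 1}, {'BaTiO3': 1}):.2f} eV")  # -1.50
```

Competing reactions can be screened by calling `reaction_energy` for each alternative precursor set and comparing the results.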

Step 6: Descriptor Extraction

- Compute electronic structure descriptors (band gaps, density of states, etc.)

- Calculate structural descriptors (bond lengths, coordination environments, etc.)

- Derive thermodynamic descriptors (driving force, stability relative to competing phases)
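Stability relative to competing phases is usually expressed as the energy above the convex hull of formation energies. For a binary A-B system the hull can be evaluated directly, as in the sketch below. The compositions and formation energies are hypothetical, and multicomponent systems require a general convex-hull construction rather than this pairwise search.

```python
def hull_energy(x, phases):
    """Lower convex hull of formation energy (eV/atom) at B fraction x.

    phases: (x_B, E_f per atom) for every computed phase, including the
    elemental end members at (0, 0) and (1, 0).
    """
    best = float("inf")
    for x1, e1 in phases:
        for x2, e2 in phases:
            if x1 <= x <= x2:
                # On a 1-D composition axis, the hull is the lowest chord between bracketing phases
                e = e1 if x2 == x1 else e1 + (e2 - e1) * (x - x1) / (x2 - x1)
                best = min(best, e)
    return best

def energy_above_hull(x, e_form, phases):
    """Zero for phases on the hull, positive for phases that can lower their energy by decomposing."""
    return e_form - hull_energy(x, phases)

# Hypothetical binary system: the two elements plus two compounds
phases = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.40), (0.25, -0.15)]
print(f"{energy_above_hull(0.5, -0.40, phases):.3f}")   # 0.000: on the hull
print(f"{energy_above_hull(0.25, -0.15, phases):.3f}")  # 0.050: above the hull
```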

This workflow provides a robust framework for assessing synthesis feasibility, with particular attention to identifying potential intermediate compounds that might hinder target formation [30].

Protocol for Gold(III) Complexes Study

A recent investigation of gold(III) complexes established a specialized protocol for kinetic properties, highlighting the critical importance of methodological selection [31]. The study employed 154 distinct computational protocols with nonrelativistic Hamiltonians, systematically evaluating 31 basis sets for gold, 52 basis sets for ligand atoms, and 71 levels of theory (including HF, MP2, and 69 DFT functionals) [31]. Additionally, seven protocols with relativistic Hamiltonians using all-electron basis sets for Au were assessed [31].

The findings revealed that structural predictions remained relatively insensitive to the computational protocol. In contrast, the activation Gibbs free energy ($\Delta G^\ddagger$) exhibited pronounced functional dependence, with variations exceeding 100 kJ/mol across different methods [31]. This sensitivity underscores the necessity for careful method validation when studying reaction kinetics and transition states.
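Transition-state theory shows why such spreads matter. In the Eyring equation, $k = (k_B T / h)\exp(-\Delta G^\ddagger / RT)$, the rate constant depends exponentially on the barrier, so the ratio of rates predicted by two methods is $\exp(\Delta\Delta G^\ddagger / RT)$. The sketch below assumes a transmission coefficient of 1 and a temperature of 298.15 K. The barrier values are illustrative, not results from the cited study.

```python
import math

K_B = 1.380649e-23     # Boltzmann constant, J/K
H = 6.62607015e-34     # Planck constant, J s
R = 8.314462618        # gas constant, J/(mol K)

def eyring_rate(dg_act_kj_mol, temperature=298.15):
    """Transition-state-theory rate constant in s^-1 (transmission coefficient taken as 1)."""
    return K_B * temperature / H * math.exp(-dg_act_kj_mol * 1e3 / (R * temperature))

def rate_ratio(dg_low_kj_mol, dg_high_kj_mol, temperature=298.15):
    """How many times faster the lower-barrier prediction is than the higher-barrier one."""
    return eyring_rate(dg_low_kj_mol, temperature) / eyring_rate(dg_high_kj_mol, temperature)

print(f"10 kJ/mol apart:  {rate_ratio(80.0, 90.0):.1f}x")
print(f"100 kJ/mol apart: {rate_ratio(60.0, 160.0):.1e}x")
```

At room temperature a 10 kJ/mol disagreement already changes the predicted rate by a factor of roughly 50, and a 100 kJ/mol disagreement changes it by more than 17 orders of magnitude.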

Table 1: Key DFT Functionals and Their Applications in Solid-State Chemistry

| Functional | Type | Strengths | Limitations | Representative Applications |
|---|---|---|---|---|
| PBE [32] | GGA | Reasonable accuracy for solids, computational efficiency | Underestimates band gaps, poor for dispersion | General solid-state calculations, preliminary screening |
| SCAN [32] | meta-GGA | Improved accuracy for diverse bonding environments | Higher computational cost | Complex oxides, materials with mixed bonding |
| HSE06 [32] | Hybrid | Accurate band gaps, improved electronic structure | Significant computational cost | Electronic properties, defect calculations |
| B3LYP [31] | Hybrid | Good performance for molecular systems | Parameterized for molecules, less reliable for solids | Molecular complexes, cluster models |
| RPBE [31] | GGA | Improved surface energies | Variable performance for bulk properties | Surface reactions, catalysis |

Table 2: Recommended Basis Sets for Selected Elements in Solid-State Synthesis

| Element | Basis Set / Pseudopotential | Application Notes | References |
|---|---|---|---|
| Gold | def2-TZVP with relativistic corrections | Essential for Au(III) complexes; all-electron relativistic for accuracy | [31] |
| Transition Metals | PAW pseudopotentials with high cutoff | Balance of accuracy and efficiency for oxides | [32] [30] |
| O, N, C | 6-311G(d), or plane waves with a 500-600 eV cutoff | Standard for organic ligands; plane-wave for solids | [31] [33] |
| Alkali Metals | Standard pseudopotentials with semicore states | Adequate for ionic compounds | [30] |

Workflow for Descriptor Calculation in Perovskite Oxides

For multicomponent perovskite oxides, a specialized protocol has been developed to predict cation ordering, a critical factor influencing material properties [32]. This approach combines DFT calculations with data-driven descriptor identification to achieve accurate prediction of experimental ordering patterns.

Step 1: Structure Generation

- Generate multiple candidate structures with different cation arrangements

- Ensure comprehensive sampling of possible configurations
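Candidate arrangements can be generated combinatorially. The sketch below lists every way to place two B-site cation species on a small set of sites. It deliberately skips symmetry reduction, which production workflows apply to discard equivalent configurations. The site count and the labels `B1`/`B2` are illustrative assumptions.

```python
from itertools import combinations
from math import comb

def b_site_arrangements(n_sites, n_b1):
    """Yield every assignment of n_b1 'B1' cations and (n_sites - n_b1) 'B2' cations to the B sites.

    No symmetry reduction: configurations related by lattice symmetry are listed separately.
    """
    for occupied in combinations(range(n_sites), n_b1):
        chosen = set(occupied)
        yield tuple("B1" if site in chosen else "B2" for site in range(n_sites))

configs = list(b_site_arrangements(n_sites=8, n_b1=4))
print(len(configs), comb(8, 4))  # 70 70
```

The count grows combinatorially with cell size, which is why symmetry reduction matters for larger supercells.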

Step 2: DFT Calculations

- Perform geometry optimization using consistent parameters (typically PBE functional)

- Compute total energies for all configurations

- Calculate electronic structure properties

Step 3: Descriptor Computation

- Compute energy descriptors: formation energy, ordering energy ($\Delta E_{\text{ord}} = E_{\text{ordered}} - E_{\text{disordered}}$)

- Calculate structural descriptors: ionic radii mismatch, tolerance factor, bond length variance

- Derive electronic descriptors: Madelung energy, Bader charges, density of states features
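Two of the structural descriptors listed above have simple closed forms. The Goldschmidt tolerance factor is $t = (r_A + r_O) / [\sqrt{2}\,(r_B + r_O)]$. For a double perovskite with two B-site cations, a common choice, assumed here, is to use their mean radius in $t$ and their absolute difference as the size-mismatch descriptor. The radii in the usage example are approximate Shannon ionic radii (Sr²⁺ in 12-fold coordination, Ti⁴⁺ and O²⁻ in 6-fold coordination) and should be checked against the reference table before use.

```python
import math

def tolerance_factor(r_a, r_b, r_o=1.40):
    """Goldschmidt tolerance factor from ionic radii in Å."""
    return (r_a + r_o) / (math.sqrt(2) * (r_b + r_o))

def double_perovskite_descriptors(r_a, r_b1, r_b2, r_o=1.40):
    """Tolerance factor with the mean B-site radius, plus the B-site radius mismatch."""
    r_b_mean = 0.5 * (r_b1 + r_b2)
    return tolerance_factor(r_a, r_b_mean, r_o), abs(r_b1 - r_b2)

# Approximate Shannon radii (Å): Sr2+ (XII) 1.44, Ti4+ (VI) 0.605
print(f"t(SrTiO3) = {tolerance_factor(1.44, 0.605):.3f}")  # ≈ 1.002, close to the ideal value of 1
```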

Step 4: Model Building

- Correlate DFT-calculated descriptors with experimental ordering behavior

- Develop predictive models using machine learning or simple classification schemes

- Validate models against experimental datasets
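A minimal version of the simple-classification option is a single threshold on the DFT ordering energy, fitted to labelled compositions. The sketch below is an illustrative stand-in, not the cited study's model. The data are hypothetical and only $\Delta E_{\text{ord}}$ is used. A real validation should also score the threshold on compositions held out from fitting.

```python
def predict_ordering(delta_e_ord, threshold):
    """Predict 'ordered' when E_ordered - E_disordered (eV/atom) falls below the threshold."""
    return "ordered" if delta_e_ord < threshold else "disordered"

def fit_threshold(samples):
    """Pick the threshold that maximizes accuracy on (delta_e_ord, label) pairs."""
    values = sorted(d for d, _ in samples)
    # Candidate thresholds: midpoints between neighbours plus one point beyond each end
    candidates = [values[0] - 1.0] + [(a + b) / 2 for a, b in zip(values, values[1:])] + [values[-1] + 1.0]

    def accuracy(threshold):
        hits = sum(predict_ordering(d, threshold) == label for d, label in samples)
        return hits / len(samples)

    best = max(candidates, key=accuracy)
    return best, accuracy(best)

# Hypothetical labelled data: (Delta E_ord in eV/atom, experimental label)
data = [(-0.12, "ordered"), (-0.08, "ordered"), (-0.05, "ordered"),
        (-0.03, "disordered"), (-0.01, "disordered"), (0.02, "disordered")]
threshold, acc = fit_threshold(data)
print(f"threshold = {threshold:.3f} eV/atom, training accuracy = {acc:.0%}")
```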

This protocol successfully identified descriptors that correctly ranked up to 93% of compositions in an experimental dataset of 190 perovskite oxides, distinguishing between cation ordered and disordered structures [32]. The descriptors enabled high-throughput virtual screening of multicomponent oxides by predicting dominant ordering prior to experimental verification.

The following diagram illustrates the complete computational workflow for solid-state synthesis prediction, integrating both reaction energetics and descriptor calculation:

Diagram 1: Computational workflow for solid-state synthesis prediction using DFT, illustrating the sequential steps from target definition to feasibility assessment.