Computational Discovery of Thermodynamically Stable Compounds: Machine Learning and High-Throughput Strategies for Materials and Drug Development

Abstract

This article explores the transformative role of computational methods, particularly machine learning (ML) and high-throughput (HTP) density functional theory (DFT), in predicting the thermodynamic stability of new compounds. It covers foundational concepts like decomposition energy and the convex hull, then details advanced methodologies from ensemble ML frameworks to automated free energy calculations. The content addresses critical challenges such as model bias and data efficiency, while providing a comparative analysis of different approaches. Validated by case studies across material classes and successful integration into drug discovery pipelines, this review serves as a comprehensive guide for researchers and scientists aiming to accelerate the discovery of stable functional materials and pharmaceuticals.

The Foundation of Stability: Understanding Thermodynamic Properties and Computational Screening

This technical guide provides a comprehensive framework for assessing the thermodynamic stability of inorganic compounds through the principles of decomposition energy and the convex hull construction. Intended for researchers engaged in the computational discovery of materials, this whitepaper details the theoretical foundations, computational methodologies, and advanced data-driven approaches essential for predicting compound stability. By synthesizing current literature and computational protocols, we establish a rigorous workflow for stability assessment that integrates first-principles calculations, convex hull analysis, and machine learning techniques. The protocols outlined herein serve as critical components for high-throughput screening of thermodynamically stable compounds in advanced materials research.

The discovery of new functional materials necessitates efficient computational methods to assess thermodynamic stability, a fundamental property determining a compound's synthesizability and persistence under operational conditions. Traditional experimental approaches to stability determination are resource-intensive and low-throughput, creating a critical bottleneck in materials development pipelines. Computational materials science addresses this challenge through first-principles calculations and data-driven approaches that predict stability from fundamental physical laws. Central to these methods is the concept of the convex hull of stability, a geometric construction in energy-composition space that identifies the most thermodynamically favorable phases at given compositions. Compounds lying on this hull are stable against decomposition into other phases, while those above the hull are metastable or unstable, with their decomposition energy quantifying their thermodynamic instability.

Theoretical Foundations: From Formation Energy to Decomposition Energy

Beyond Formation Enthalpy

The thermodynamic stability of compounds has traditionally been discussed in terms of formation enthalpy (ΔHf), which represents the energy change when a compound forms from its constituent elements in their standard states. However, this metric provides an incomplete picture of thermodynamic stability, as a compound competes thermodynamically not only with elemental phases but also with all other compounds in the same chemical space [1]. The more relevant quantity for stability assessment is the decomposition enthalpy (ΔHd), which represents the energy difference between a compound and the most stable combination of other compounds (and sometimes elements) with the same overall composition [1] [2].

Decomposition Reaction Classification

Decomposition reactions determining compound stability fall into three distinct types, each with different implications for thermodynamic stability and synthesizability:

Table 1: Classification of Decomposition Reactions

| Reaction Type | Description | Prevalence* | Implications for Synthesis |
|---|---|---|---|
| Type 1 | Decomposition products are exclusively elemental phases (ΔHd = ΔHf) | ~3% (81% of these are binaries) | Stability can be modulated by adjusting elemental chemical potentials |
| Type 2 | Decomposition products are exclusively other compounds | ~63% | Insensitive to adjustments in elemental chemical potentials |
| Type 3 | Decomposition products include both compounds and elements | ~34% | Partial sensitivity to elemental chemical potential adjustments |

*Prevalence based on an analysis of 56,791 compounds in the Materials Project database [1]

Analysis of 56,791 compounds reveals that Type 2 decompositions are most prevalent, especially for non-binary compounds, where less than 1% compete for stability exclusively with elements [1]. This distribution underscores why benchmarking computational methods solely against experimental formation enthalpies provides limited insight, as ΔHd rarely equals ΔHf, particularly for multicomponent systems.
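Because the reaction type depends only on whether each decomposition product is an element or a compound, the classification in Table 1 reduces to a short rule. The sketch below is illustrative: the function names are hypothetical, product formulas are assumed to be plain strings, and any formula containing a single element symbol (such as O2) is treated as an elemental phase.

```python
import re


def n_elements(formula):
    """Count distinct element symbols in a simple formula such as 'Ba4Ta2O9'."""
    return len(set(re.findall(r"[A-Z][a-z]?", formula)))


def decomposition_type(products):
    """Return 1, 2 or 3 following the Type 1/2/3 definitions of Table 1."""
    is_element = [n_elements(p) == 1 for p in products]
    if all(is_element):
        return 1  # only elemental phases, so ΔHd equals ΔHf
    if not any(is_element):
        return 2  # only compounds
    return 3      # a mix of compounds and elements


# Products of the BaTaNO2 example discussed below: compounds only.
print(decomposition_type(["Ba4Ta2O9", "Ba(TaN2)2", "Ta3N5"]))  # 2
```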

The Convex Hull: Geometric Construction of Stability

Fundamental Principles

The convex hull of stability represents the lowest energy surface in composition space for a given chemical system, constructed by connecting the energies of the most thermodynamically stable compounds at each composition [3] [4]. For a multi-component system, the convex hull is constructed in (M-1)-dimensional composition space, where M represents the number of elements in the system, with energy per atom as the vertical axis [4].

The mathematical construction involves computing the convex hull of a set of points in (composition, energy) space, which yields the minimum-energy "envelope" representing the most thermodynamically favorable configuration for any composition within the system. Materials lying precisely on this hull are stable against decomposition into other phases, while those above the hull are unstable, with the vertical distance to the hull quantifying their thermodynamic instability [3] [2].
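For a binary A-B system the construction reduces to a two-dimensional lower convex hull. The sketch below is an illustrative pure-Python version, not the Materials Project or pymatgen implementation: it builds the lower hull of (composition, formation energy) points with Andrew's monotone chain and then measures the vertical distance from a point down to the hull. The energies are made-up numbers, and the elemental end members are assumed to be included at zero formation energy.

```python
# Binary A-B system: x is the atomic fraction of B and e the formation energy
# in eV/atom. The elemental end members (x = 0 and x = 1, e = 0) must be included.


def cross(o, a, b):
    """z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points):
    """Lower convex hull via the lower half of Andrew's monotone chain."""
    hull = []
    for p in sorted(set(points)):
        # Drop the last vertex while it lies on or above the chord to p.
        while len(hull) >= 2 and cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def e_above_hull(x, e, hull):
    """Vertical distance (eV/atom) from (x, e) down to the lower hull."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1 and x1 > x0:
            return e - (e0 + (e1 - e0) * (x - x0) / (x1 - x0))
    raise ValueError("x is outside the composition range of the hull")


# Hypothetical formation energies, not data for any real system.
points = [(0.0, 0.0), (0.25, -0.30), (0.5, -0.40), (0.75, -0.10), (1.0, 0.0)]
hull = lower_hull(points)
print(hull)                                       # (0.75, -0.10) is not a vertex
print(round(e_above_hull(0.75, -0.10, hull), 3))  # 0.1
```

Points that survive as hull vertices are the stable phases; every other point gets a positive energy above hull, here 0.1 eV/atom for the phase at x = 0.75.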

Energy Above Hull Calculation

The energy above hull (Ehull) represents the decomposition energy of a compound and is calculated as the energy difference between the compound and the linear combination of competing phases that minimizes the combined energy at the same average composition [2]. For a compound ABC, this is expressed as:

$$\Delta H_d = E_{\text{ABC}} - E_{\text{A-B-C}}$$

where $E_{\text{A-B-C}}$ represents the minimum energy of all possible combinations of competing compounds and elements in the A-B-C system with the same average composition as ABC [1].

The practical calculation requires normalized energies (eV/atom) and careful balancing of stoichiometric coefficients to maintain composition equality. For example, for BaTaNO₂ with decomposition products 2/3 Ba₄Ta₂O₉ + 7/45 Ba(TaN₂)₂ + 8/45 Ta₃N₅, the energy above hull is calculated as [2]:

$$E_{\text{hull}} = E(\mathrm{BaTaNO_2}) - \left[\tfrac{2}{3}E(\mathrm{Ba_4Ta_2O_9}) + \tfrac{7}{45}E(\mathrm{Ba(TaN_2)_2}) + \tfrac{8}{45}E(\mathrm{Ta_3N_5})\right]$$

This calculation ensures the same average composition on both sides of the reaction when using normalized (eV/atom) energies [2].
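Because the coefficients in this expression are per-atom phase fractions, they must sum to one and must reproduce the atomic fractions of BaTaNO₂. The sketch below checks both conditions exactly with Python's fractions module and then evaluates the expression; the energies are hypothetical placeholders chosen only to exercise the formula, not Materials Project values.

```python
from fractions import Fraction

# Atom counts per formula unit for the target and its decomposition products.
COUNTS = {
    "BaTaNO2": {"Ba": 1, "Ta": 1, "N": 1, "O": 2},
    "Ba4Ta2O9": {"Ba": 4, "Ta": 2, "O": 9},
    "Ba(TaN2)2": {"Ba": 1, "Ta": 2, "N": 4},
    "Ta3N5": {"Ta": 3, "N": 5},
}
# Per-atom phase fractions from the decomposition reaction in the text.
WEIGHTS = {"Ba4Ta2O9": Fraction(2, 3), "Ba(TaN2)2": Fraction(7, 45), "Ta3N5": Fraction(8, 45)}


def atomic_fractions(counts):
    n_atoms = sum(counts.values())
    return {el: Fraction(n, n_atoms) for el, n in counts.items()}


def mixture_fractions(weights):
    """Atomic fractions of a mixture described by per-atom phase fractions."""
    total = {}
    for phase, w in weights.items():
        for el, frac in atomic_fractions(COUNTS[phase]).items():
            total[el] = total.get(el, 0) + w * frac
    return total


def energy_above_hull(e_target, energies, weights):
    """E_hull (eV/atom) = E(target) - sum_k w_k E(product_k), all in eV/atom."""
    return e_target - sum(float(w) * energies[p] for p, w in weights.items())


assert sum(WEIGHTS.values()) == 1
assert mixture_fractions(WEIGHTS) == atomic_fractions(COUNTS["BaTaNO2"])

# Hypothetical per-atom energies (eV/atom), chosen only to exercise the formula.
energies = {"Ba4Ta2O9": -8.55, "Ba(TaN2)2": -9.00, "Ta3N5": -9.45}
print(round(energy_above_hull(-8.75, energies, WEIGHTS), 3))  # 0.03
```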

Computational Methodologies and Protocols

Density Functional Theory Approaches

Density Functional Theory (DFT) serves as the foundational computational method for calculating compound energies required for convex hull construction. The accuracy of stability predictions depends critically on the choice of exchange-correlation functional:

Table 2: Performance of DFT Functionals for Stability Prediction

| Functional | Type | Mean Absolute Difference (ΔHf) | Mean Absolute Difference (ΔHd) | Systematic Errors |
|---|---|---|---|---|
| PBE | GGA | 196 meV/atom | 70 meV/atom (all reaction types) | Understabilizes compounds relative to elements |
| SCAN | meta-GGA | 88 meV/atom | 59 meV/atom (all reaction types) | Reduced systematic error |
| PBE (Type 2 reactions only) | GGA | - | 35 meV/atom | - |
| SCAN (Type 2 reactions only) | meta-GGA | - | 35 meV/atom | - |

Performance data based on comparison with experimental formation enthalpies for 1,012 compounds [1]

For decomposition reactions involving only compounds (Type 2), both PBE and SCAN functionals achieve accuracy within ~35 meV/atom, comparable to experimental uncertainty [1]. This highlights the importance of selecting appropriate validation metrics when assessing computational methods.

Phase Diagram Construction Protocol

The Materials Project implements a standardized methodology for constructing phase diagrams from DFT-calculated energies:

- Energy Collection: Obtain DFT-calculated energies for all known compounds within the chemical system of interest [4]

- Formation Energy Calculation: For each compound, calculate the formation energy per atom from the constituent elements, $\Delta E_f = \left(E - \sum_i n_i \mu_i\right) / \sum_i n_i$, where $E$ is the total energy of the compound, $n_i$ is the number of atoms (moles) of element $i$ in the formula unit, and $\mu_i$ is the energy per atom of element $i$ [4]

- Convex Hull Construction: Compute the convex hull of all points in (composition, energy) space using algorithms such as QuickHull [5] [4]

- Stability Assessment: For each compound, calculate the energy above hull (decomposition energy) as the vertical distance to the convex hull surface [4]
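The formation-energy step is where per-atom normalization enters: the raw difference E − Σ nᵢμᵢ is a per-formula-unit quantity and must be divided by the number of atoms before different compounds can share one hull diagram. A minimal sketch, assuming a Li-O example with hypothetical reference energies μᵢ and a hypothetical Li₂O total energy (real values would come from DFT):

```python
# Hypothetical elemental reference energies mu_i (eV/atom) and a hypothetical
# total energy for one Li2O formula unit; real values come from DFT.
MU = {"Li": -1.90, "O": -4.95}


def formation_energy_per_atom(counts, total_energy, mu):
    """Delta E_f per atom = (E - sum_i n_i * mu_i) / sum_i n_i."""
    n_atoms = sum(counts.values())
    reference = sum(n * mu[el] for el, n in counts.items())
    return (total_energy - reference) / n_atoms


print(formation_energy_per_atom({"Li": 2, "O": 1}, -14.75, MU))  # -2.0
```

An elemental phase evaluated against its own reference gives zero, which is why the elements sit at the corners of the hull with zero formation energy.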

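This methodology is implemented in the pymatgen package, where the PhaseDiagram class (module pymatgen.analysis.phase_diagram) builds the hull from a set of computed entries and reports the energy above hull for each entry.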

Machine Learning Enhancement

Machine learning approaches offer accelerated stability predictions by leveraging existing DFT data. Recent advances include ensemble methods that combine models based on different domain knowledge:

- ECCNN (Electron Configuration Convolutional Neural Network): Utilizes electron configuration information as intrinsic atomic features [6]

- Roost: Models chemical formulas as complete graphs of elements using message-passing graph neural networks [6]

- Magpie: Incorporates statistical features of various elemental properties [6]

The ECSG (Electron Configuration models with Stacked Generalization) framework integrates these approaches, achieving an Area Under the Curve score of 0.988 for stability prediction while requiring only one-seventh of the data used by existing models to achieve comparable performance [6].

Advanced Applications and Case Studies

Convex Hull-Aware Active Learning

Convex Hull-Aware Active Learning (CAL) represents a novel Bayesian approach that optimizes the exploration of compositional space by directly reasoning about uncertainty in the convex hull [5]. Unlike traditional active learning that focuses on reducing uncertainty in energy surfaces, CAL selects composition-phase pairs that minimize the entropy of the probabilistic convex hull, dramatically reducing the number of energy evaluations needed to determine phase stability [5].

The CAL algorithm:

- Models energy surfaces with Gaussian process regressions

- Generates posterior samples of possible convex hulls

- Computes the expected information gain for potential observations

- Selects compositions that maximize information about the hull [5]

This approach is particularly valuable for complex systems where DFT calculations are computationally expensive, such as high-entropy materials, liquids, glasses, and highly correlated systems [5].
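The idea of reasoning over sampled hulls can be illustrated with a deliberately simplified sketch. It is not the CAL algorithm of [5]: independent Gaussian beliefs per candidate stand in for the Gaussian-process posterior, and the query rule simply picks the candidate whose on-hull status is most uncertain (highest binary entropy) rather than maximizing the expected information gain about the whole hull. All candidate compositions and energies are hypothetical.

```python
import math
import random


def lower_hull_indices(points):
    """Indices of points on the lower convex hull (Andrew's monotone chain)."""
    hull = []
    for i in sorted(range(len(points)), key=lambda k: points[k]):
        while len(hull) >= 2:
            (x0, e0), (x1, e1), (x2, e2) = points[hull[-2]], points[hull[-1]], points[i]
            if (x1 - x0) * (e2 - e0) - (e1 - e0) * (x2 - x0) <= 0:
                hull.pop()  # middle point is on or above the chord
            else:
                break
        hull.append(i)
    return set(hull)


def stability_probabilities(candidates, n_samples=2000, seed=0):
    """Fraction of sampled energy landscapes in which each candidate is on the hull.
    candidates: (x, mean, std) with independent Gaussian beliefs (a GP stand-in)."""
    rng = random.Random(seed)
    hits = [0] * len(candidates)
    for _ in range(n_samples):
        sample = [(x, rng.gauss(mu, sd)) for x, mu, sd in candidates]
        for i in lower_hull_indices(sample):
            hits[i] += 1
    return [h / n_samples for h in hits]


def binary_entropy(p):
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log(p) - (1 - p) * math.log(1 - p)


def next_query(candidates, **kwargs):
    """Pick the candidate whose on-hull status is most uncertain."""
    probs = stability_probabilities(candidates, **kwargs)
    return max(range(len(candidates)), key=lambda i: binary_entropy(probs[i]))


# Hypothetical binary system: (fraction of B, mean E_f in eV/atom, std in eV/atom).
CANDIDATES = [
    (0.0, 0.0, 0.0),      # element A, known exactly
    (0.25, -0.30, 0.02),  # well characterized and clearly stable
    (0.5, -0.22, 0.10),   # close to the hull and poorly constrained
    (0.75, 0.20, 0.02),   # clearly above the hull
    (1.0, 0.0, 0.0),      # element B, known exactly
]
print(stability_probabilities(CANDIDATES))
print(next_query(CANDIDATES))  # index of the next composition to compute
```

A faithful implementation would replace the independent Gaussians with Gaussian-process posteriors and the per-candidate entropy with the expected information gain about the hull, as described in [5].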

Technetium Carbides System Investigation

A hybrid DFT-machine learning study of technetium carbides (Tc-C) demonstrates the application of convex hull analysis for nuclear materials [7]. Researchers employed a data-driven approach to explore the complete compositional/configurational space of carbon interstitial defects in hexagonal and cubic technetium lattices:

- Generated 320,149 hexagonal and 11,937 cubic Tc-C structures

- Used machine learning to predict formation energies across the configurational space

- Constructed convex hulls identifying the most stable ordered phases at 0 K

- Accounted for configurational and vibrational entropy to predict finite-temperature stability [7]

This approach reconciled long-standing discrepancies between theoretical predictions and experimental observations, revealing how ordered ground-state configurations transform into disordered solid solutions at elevated temperatures [7].
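As a rough illustration of why an ordered ground state can give way to a solid solution on heating, consider the ideal-mixing configurational entropy of carbon on its interstitial sublattice. This back-of-the-envelope sketch is not the method of [7]: it assumes no short-range order, ignores the vibrational entropy that [7] included, and uses a made-up enthalpy penalty of 30 meV per site for the disordered arrangement.

```python
import math

K_B = 8.617333262e-5  # Boltzmann constant, eV/K


def ideal_config_entropy(c):
    """Ideal-mixing configurational entropy (eV/K per interstitial site)
    for site occupancy c, with no short-range order."""
    if c <= 0.0 or c >= 1.0:
        return 0.0
    return -K_B * (c * math.log(c) + (1.0 - c) * math.log(1.0 - c))


def order_disorder_estimate(delta_h, c):
    """Temperature (K) at which T * S_conf offsets an enthalpy penalty delta_h
    (eV per site) of the disordered arrangement relative to the ordered one,
    treating the ordered phase as having zero configurational entropy."""
    return delta_h / ideal_config_entropy(c)


# Hypothetical 30 meV/site penalty at half occupancy of the interstitial sites.
print(round(order_disorder_estimate(0.030, 0.5)))  # 502
```

Ideal mixing overestimates the entropy of the disordered phase, so this kind of estimate tends to underestimate the transition temperature; cluster-expansion or Monte Carlo treatments are the usual refinement.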

MXene Discovery for Battery Applications

Computational discovery of a novel double transition metal nitride MXene (Nb2TiN2) demonstrates the role of stability assessment in materials design [8]. Researchers employed DFT calculations to:

- Assess thermodynamic stability of the MAX phase precursor (Nb2TiAlN2)

- Confirm exfoliation feasibility to produce the MXene

- Evaluate functionalized Nb2TiN2S2 as an anchoring material for Li-Se batteries

- Analyze binding affinity with lithium polyselenides and reaction kinetics [8]

Stability analysis confirmed the compound's viability for energy storage applications, highlighting how convex hull calculations guide the discovery of functional materials.

Table 3: Computational Resources for Stability Assessment

| Resource | Type | Function | Access |
|---|---|---|---|

| Materials Project API | Database | Provides DFT-computed energies for inorganic compounds (source of the 56,791 compounds analyzed in [1]) | https://materialsproject.org |
| pymatgen | Software Library | Phase diagram construction and analysis | Python package |
| VASP | DFT Code | First-principles energy calculations | Commercial license |
| CHGNet | Machine Learning | Neural network potential trained on Materials Project data | Open source |
| AFLOW | Database | Automated high-throughput calculations | https://aflow.org |

The computational assessment of thermodynamic stability through decomposition energy and convex hull analysis has matured into an essential capability for materials discovery. The integration of first-principles calculations with machine learning approaches and active learning strategies continues to enhance the efficiency and accuracy of stability predictions. As these methods evolve, they will enable more comprehensive exploration of complex compositional spaces, including high-entropy systems, disordered materials, and multi-component phases. The standardization of computational protocols and the growing availability of materials data infrastructure will further accelerate the discovery of novel functional compounds with tailored properties for energy, electronic, and quantum applications.

The discovery of new, thermodynamically stable compounds is a fundamental driver of innovation across industries, from pharmaceutical development to renewable energy materials. For decades, computational methods, particularly Density Functional Theory (DFT), have served as essential tools for predicting compound stability and properties prior to costly experimental synthesis, while traditional experimental screening has been the workhorse for empirical validation. However, both approaches face severe limitations that leave a significant gap in predictive power for the efficient discovery of novel compounds. DFT, while revolutionary, is hampered by well-documented accuracy-performance trade-offs and steep computational scaling that limits system size [9] [10]. These constraints feed what has been described as a "quiet crisis" in modern R&D: one survey found that 94% of research teams had abandoned at least one promising project because simulations were too slow or resource-intensive [11]. This whitepaper provides an in-depth analysis of these computational bottlenecks, presents quantitative data on their impact, and explores emerging computational strategies that are beginning to bridge this gap in the pursuit of thermodynamically stable compounds.

Fundamental Limitations of Traditional Density Functional Theory

The Accuracy Challenge: The Exchange-Correlation Functional Problem

DFT achieves its computational tractability by reformulating the many-electron Schrödinger equation into a problem of electron density, with a crucial but unknown term called the exchange-correlation (XC) functional [9]. The accuracy of any DFT calculation depends entirely on the approximation used for this functional. Despite its proven utility, this fundamental compromise means that traditional DFT often fails to achieve chemical accuracy (approximately 1 kcal/mol error relative to experiment), with errors typically 3 to 30 times larger than this threshold [9]. This accuracy gap prevents computational models from reliably predicting experimental outcomes, forcing researchers to still rely heavily on laboratory testing.
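To relate chemical accuracy to the electron-volt scale used elsewhere in this article, the conversion follows from dividing by the Faraday constant:

$$1\ \text{kcal/mol} = \frac{4184\ \text{J/mol}}{96\,485\ \text{C/mol}} \approx 0.0434\ \text{eV} \approx 43\ \text{meV}$$

An error 3 to 30 times larger than this threshold therefore corresponds to roughly 0.13 to 1.3 eV per molecule or reaction. These are per-molecule (per-reaction) energies, whereas the solid-state errors quoted earlier are normalized per atom, so the two scales are not directly interchangeable.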

The pursuit of better XC functionals has been described as a search for the "Divine Functional" [9]. For over two decades, progress through traditional approaches has stagnated, with even machine learning attempts initially staying within the conventional paradigm of hand-designed density descriptors rather than embracing true deep learning [9].

The Scaling Problem: Computational Cost Versus System Size

The standard DFT algorithm scales as O(N³), where N is the number of electrons in the system [10] [12]. This cubic scaling creates a fundamental limitation that restricts routine DFT calculations to systems comprising only a few hundred atoms. While this has proven sufficient for many applications, it renders DFT infeasible for the large, complex systems relevant to many modern materials science challenges, including disordered systems, complex interfaces, and materials with large unit cells.

Table 1: Computational Scaling and Limitations of Traditional DFT

| Aspect | Limitation | Impact on Research |
|---|---|---|
| Algorithmic Scaling | O(N³) with system size [10] [12] | Limits studies to small systems (typically <500 atoms) |
| Accuracy Error | 3-30× larger than chemical accuracy [9] | Unable to reliably predict experimental outcomes |
| Functional Transferability | Limited across different chemical spaces [9] | Requires re-parameterization for different material classes |

Attempts to overcome this scaling limitation through linear-scaling DFT methods or orbital-free DFT have not yielded a general solution applicable to all materials systems [12]. This cubic bottleneck means that as system size increases, computational requirements quickly become prohibitive. For example, a system of 131,072 atoms would be entirely infeasible to study with conventional DFT, requiring what would amount to "centuries of computing time" [12].
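The cubic law can be applied directly to see why the cost explodes. This sketch assumes cost grows exactly as Nᵅ with a fixed prefactor, and the 256-atom reference calculation is a hypothetical choice for illustration (for a fixed chemistry, electron count scales with atom count).

```python
def relative_cost(n_atoms, n_ref, exponent=3.0):
    """Cost on n_atoms relative to a reference run on n_ref atoms, assuming
    cost grows as N**exponent with a fixed prefactor (3 for conventional DFT)."""
    return (n_atoms / n_ref) ** exponent


# Hypothetical 256-atom reference calculation scaled to 131,072 atoms.
cubic = relative_cost(131_072, 256)          # 512**3 = 134,217,728
linear = relative_cost(131_072, 256, 1.0)    # 512
print(f"cubic: {cubic:.3g}x, linear: {linear:.0f}x")
```

If the reference calculation took one minute, the cubic estimate implies about 1.3 × 10⁸ minutes (roughly 255 years), the same order of magnitude as the "centuries" quoted in [12], whereas an ideal linear-scaling method would need about 512 minutes.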

Bottlenecks in Experimental Discovery and Validation

The limitations of computational methods inevitably shift burden onto experimental workflows, which face their own profound constraints. Traditional experimental approaches to discovering stable compounds often rely on trial-and-error methodologies that are inherently slow, resource-intensive, and limited in their ability to explore vast compositional spaces.

A recent survey of 300 materials science and engineering professionals revealed the extent of this problem, with 94% of R&D teams reporting they had to abandon at least one project in the past year due to time or computing resource constraints [11]. This represents what industry leaders describe as "the quiet crisis of modern R&D: the experiments that never happen" [11] – promising research directions that are never pursued due to methodological limitations.

Despite these challenges, organizations report saving approximately $100,000 per project on average by leveraging computational simulation instead of purely physical experiments [11]. This demonstrated return on investment highlights the economic incentive for overcoming the current bottlenecks. Furthermore, researchers show willingness to trade a small amount of accuracy for dramatic speed improvements, with 73% of respondents indicating they would accept slightly reduced precision for a 100-fold increase in simulation speed [11].

Emerging Solutions: Machine Learning and Advanced Algorithms

Machine Learning-Augmented DFT

Several promising approaches are emerging to address DFT's fundamental limitations. Microsoft Research has developed Skala, a deep learning-based XC functional that reaches the accuracy needed to reliably predict experimental outcomes within its trained chemical space [9]. This approach circumvented the traditional "Jacob's ladder" hierarchy of hand-designed density descriptors by using a scalable deep-learning approach trained on an unprecedented quantity of diverse, highly accurate data [9].

For the scaling problem, machine learning frameworks like Materials Learning Algorithms (MALA) demonstrate how neural networks can predict electronic structures at previously inaccessible scales [12]. This approach leverages the nearsightedness of electronic structure – the principle that electronic effects decay rapidly with distance – to create models that make local predictions based on atomic environments [12]. This method has demonstrated the ability to handle systems of over 100,000 atoms with computational costs orders of magnitude lower than conventional DFT.

Table 2: Performance Comparison of Traditional vs. ML-Augmented Computational Methods

| Method | Computational Scaling | Maximum Practical System Size | Key Advantage |
|---|---|---|---|
| Traditional DFT | O(N³) [10] | Hundreds of atoms | Established, transferable |
| Linear-Scaling DFT | O(N) (in theory) [10] | Thousands of atoms | Better scaling for large systems |
| ML Electronic Structure (MALA) | ~O(N) [12] | 100,000+ atoms | Enables previously impossible simulations |
| ML-XC Functionals (Skala) | O(N³) (same as DFT) [9] | Standard system sizes | Reaches experimental accuracy |

Ensemble Machine Learning for Stability Prediction

Beyond improving DFT itself, machine learning approaches are being applied directly to predict thermodynamic stability. Recent research demonstrates that ensemble models based on stacked generalization can accurately predict compound stability while achieving remarkable data efficiency [6]. The Electron Configuration models with Stacked Generalization (ECSG) framework achieves an Area Under the Curve (AUC) score of 0.988 in predicting compound stability and requires only one-seventh of the data used by existing models to achieve equivalent performance [6].

This approach integrates three models based on different domain knowledge – Magpie (atomic properties), Roost (interatomic interactions), and ECCNN (electron configuration) – to mitigate the inductive biases that plague single-model approaches [6]. By combining knowledge across different scales, the model more effectively navigates unexplored composition spaces to identify novel stable compounds.

Workflow Automation and Scalable Screening

Advanced computational pipelines are also addressing the synthesis prediction challenge. Researchers at the University of Chicago developed a computational tool that predicts which metal-organic frameworks (MOFs) will be most stable for a given application [13]. Their approach uses thermodynamic integration (often called "computational alchemy") to convert candidate MOFs into simpler systems with known thermodynamic stability, enabling large-scale screening of synthesizable materials [13].

This tool successfully predicted a new iron-sulfur MOF that was subsequently synthesized and characterized, validating both the prediction and the structure [13]. Such approaches accelerate the discovery process by focusing experimental efforts on the most promising candidates.

Experimental Protocols and Methodologies

High-Accuracy Data Generation for ML-XC Functionals

The development of accurate machine-learned XC functionals requires extensive training data from high-accuracy wavefunction methods. The protocol used for Microsoft's Skala functional involved:

- Pipeline Construction: Building a scalable pipeline to generate highly diverse molecular structures [9]

- Expert Collaboration: Partnering with domain experts (e.g., Prof. Amir Karton) who applied high-accuracy wavefunction methods to compute energy labels [9]

- Substantial Computing Resources: Leveraging Azure compute resources via Microsoft's Accelerating Foundation Models Research program [9]

- Dataset Creation: Generating a dataset two orders of magnitude larger than previous efforts, containing approximately 150,000 accurate energy differences for molecules and atoms of main-group (s- and p-block) elements [9]

This methodology illustrates the substantial upfront investment needed to create training data for accurate ML-based functionals; the return is a functional that can be applied over the long term across numerous industrial domains.

Ensemble Model Development for Stability Prediction

The ECSG framework for stability prediction employs a sophisticated ensemble approach:

- Base Model Selection: Integrating three foundational models (Magpie, Roost, ECCNN) based on complementary domain knowledge [6]

- Feature Engineering: Magpie computes statistical features from elemental properties; Roost represents chemical formulas as graphs; ECCNN encodes electron configurations as convolutional inputs [6]

- Stacked Generalization: Using base model outputs as inputs to a meta-level model that produces final predictions [6]

- Validation: Rigorous testing on benchmark datasets and prospective prediction of novel compounds with validation against DFT calculations [6]

This methodology effectively reduces inductive bias by combining models grounded in different theoretical frameworks, resulting in improved generalization and sample efficiency.
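Stacked generalization itself is compact once the base models exist. The sketch below shows only the meta-level step: each column stands for the out-of-fold stability probability that one base model (in ECSG, Magpie, Roost and ECCNN) would produce, but the numbers are invented and the logistic-regression meta-learner is an assumption, not necessarily the meta-model used in [6].

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_meta_learner(base_preds, labels, lr=1.0, epochs=5000):
    """Fit a logistic-regression meta-learner by full-batch gradient descent.
    base_preds: one row per compound, one column per base model (P(stable)).
    labels: 1 for stable, 0 for unstable."""
    n, m = len(labels), len(base_preds[0])
    w, b = [0.0] * m, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * m, 0.0
        for x, y in zip(base_preds, labels):
            err = predict(w, b, x) - y  # gradient of the log loss w.r.t. the logit
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    """Meta-level probability that a compound is stable."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


# Invented out-of-fold probabilities from three base models (columns) for
# eight compounds; in ECSG these would come from Magpie, Roost and ECCNN.
X = [
    [0.9, 0.8, 0.7], [0.8, 0.6, 0.9], [0.7, 0.9, 0.4], [0.6, 0.7, 0.8],
    [0.2, 0.3, 0.6], [0.3, 0.1, 0.2], [0.4, 0.2, 0.7], [0.1, 0.4, 0.3],
]
Y = [1, 1, 1, 1, 0, 0, 0, 0]

w, b = fit_meta_learner(X, Y)
print([round(predict(w, b, x), 2) for x in X])
```

Training the meta-learner on out-of-fold rather than in-sample base predictions matters: otherwise it learns to trust whichever base model overfits most.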

Thermodynamic Integration for Synthesizability Prediction

The computational tool for predicting MOF stability employs thermodynamic integration:

- Pathway Definition: Converting the MOF into a simpler system with known thermodynamic stability on the computer [13]

- Work Calculation: Measuring the work done along this transformation pathway [13]

- Stability Calculation: Calculating the stability of the original MOF from the integration results [13]

- Classical Approximation: Using classical physics approximations of quantum mechanics to reduce computational cost from "centuries" to approximately one day [13]

- Experimental Validation: Synthesizing and characterizing top candidates to validate predictions [13]

This approach enables high-throughput screening of potential MOFs by attaching stability predictions to candidate designs before experimental attempts.
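The identity behind the pathway and work steps is ΔF = ∫₀¹ ⟨∂U/∂λ⟩_λ dλ. The toy below applies it to a one-dimensional harmonic "system" whose spring constant is switched from K0 to K1, where the exact answer (k_BT/2)·ln(K1/K0) is known, so the numerical result can be checked. It illustrates the technique only; the MOF tool in [13] works with far more complex classical models of real frameworks, and all parameters here are arbitrary reduced units.

```python
import math
import random

KT = 1.0           # thermal energy k_B*T in reduced units (assumption)
K0, K1 = 1.0, 4.0  # spring constants of the reference and target states


def spring_constant(lam):
    return (1.0 - lam) * K0 + lam * K1


def mean_dU_dlambda(lam, n_samples, rng):
    """Estimate <dU/dlambda>_lambda = 0.5*(K1-K0)*<x^2> by sampling the exact
    Boltzmann distribution of U = 0.5*k(lambda)*x^2, a Gaussian of variance KT/k."""
    sigma = math.sqrt(KT / spring_constant(lam))
    mean_x2 = sum(rng.gauss(0.0, sigma) ** 2 for _ in range(n_samples)) / n_samples
    return 0.5 * (K1 - K0) * mean_x2


def free_energy_difference(n_windows=20, n_samples=20_000, seed=1):
    """Thermodynamic integration over lambda with the trapezoidal rule."""
    rng = random.Random(seed)
    lams = [i / n_windows for i in range(n_windows + 1)]
    vals = [mean_dU_dlambda(lam, n_samples, rng) for lam in lams]
    h = 1.0 / n_windows
    return h * (sum(vals) - 0.5 * (vals[0] + vals[-1]))


exact = 0.5 * KT * math.log(K1 / K0)
print(free_energy_difference(), exact)
```

In a production workflow the Gaussian sampling would be replaced by molecular dynamics or Monte Carlo on the real potential at each λ window, which is where nearly all of the computational cost lies.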

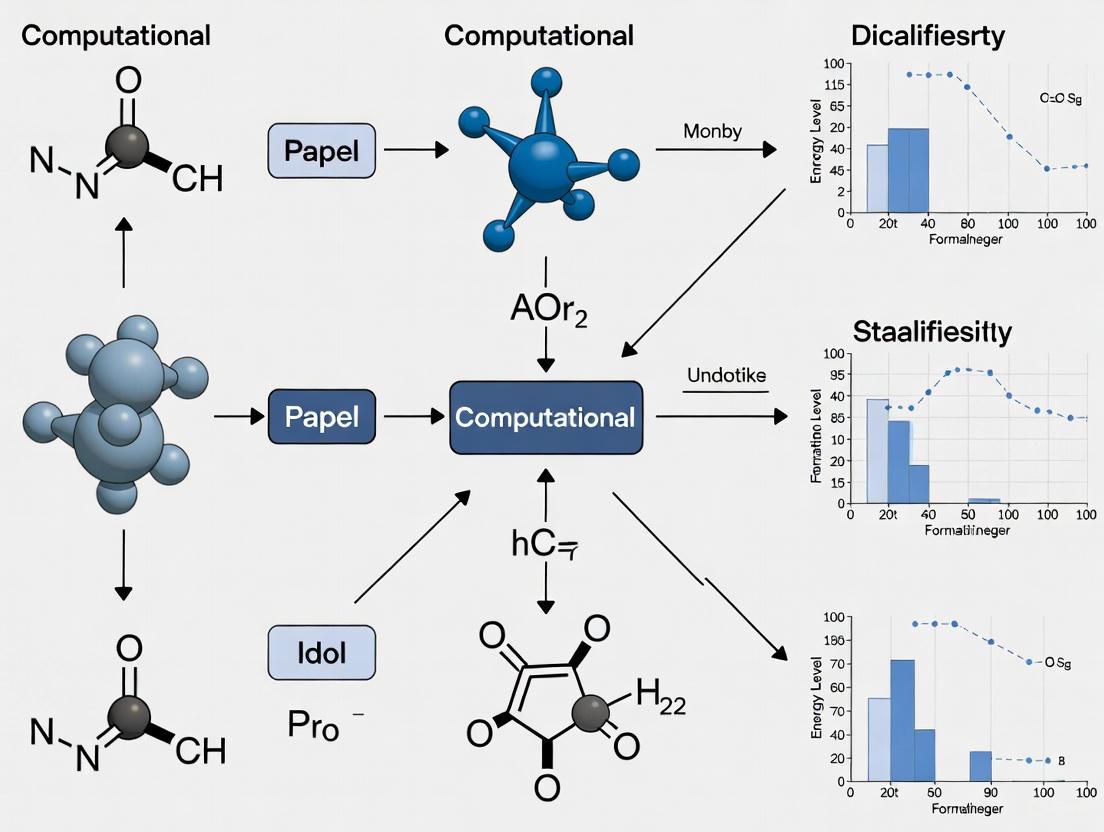

Visualization of Workflows and Methodologies

Figure: ML-Enhanced Electronic Structure Prediction Workflow

Figure: Ensemble Model Framework for Stability Prediction

Essential Computational Tools and Resources

Table 3: Key Computational Tools and Resources for Stability Prediction

| Tool/Resource | Type | Primary Function | Application in Research |
|---|---|---|---|
| Skala [9] | ML-XC Functional | Reaches chemical accuracy for DFT | Predicting experimental outcomes in silico |
| MALA [12] | ML Electronic Structure | Predicts electronic structure at scale | Large-scale simulations (100,000+ atoms) |
| ECSG Framework [6] | Ensemble ML Model | Predicts thermodynamic stability | High-efficiency screening of novel compounds |
| Computational Alchemy [13] | Screening Pipeline | Predicts MOF synthesizability | Accelerating discovery of stable frameworks |
| Materials Project [6] | Database | Curated materials properties | Training data for ML models |
| Quantum ESPRESSO [12] | DFT Code | First-principles calculations | Generating reference data for ML training |

The limitations of traditional DFT and experimental methods have long constrained the pace of discovery for thermodynamically stable compounds. The fundamental accuracy challenge of the exchange-correlation functional and the computational scaling barrier have rendered many important problems intractable. However, emerging approaches combining deep learning with physical principles are beginning to overcome these limitations. Machine-learned XC functionals like Skala demonstrate that experimental accuracy is achievable within defined chemical spaces [9]. Frameworks like MALA show that the scaling bottleneck can be circumvented to enable simulations at previously impossible scales [12]. Ensemble methods like ECSG prove that data-efficient stability prediction is feasible, requiring only fractions of the data needed by traditional approaches [6].

As these technologies mature and integrate into research workflows, they promise to shift the balance from laboratory-driven to computation-driven discovery, potentially compressing development timelines exponentially [14]. For researchers pursuing thermodynamically stable compounds, the evolving computational toolkit offers increasingly powerful means to navigate the vast compositional space and identify promising candidates with unprecedented efficiency and accuracy. The computational bottleneck, while still present, is becoming increasingly permeable to innovative methodologies that combine physical insight with data-driven learning.

The discovery of new materials, particularly thermodynamically stable compounds, has traditionally been a painstakingly slow process guided by intuition and trial-and-error experimentation. This paradigm has undergone a seismic shift with the emergence of materials databases as the foundational enabler of artificial intelligence (AI) in materials science. These curated repositories of computed and experimental properties provide the essential training data that fuels machine learning models, transforming the discovery pipeline from an artisanal craft into a high-throughput computational science. The integration of these databases with AI has created a powerful synergy, enabling researchers to navigate the vast combinatorial space of potential materials with unprecedented efficiency and precision.

The critical importance of this data foundation becomes evident when considering the challenge of predicting thermodynamic stability—a fundamental requirement for synthesizing practical materials. While AI can rapidly generate thousands of candidate structures with desired properties, the accuracy of these predictions hinges entirely on the quality and scope of the underlying training data [15]. Materials databases have thus become the bedrock upon which computational discovery research is built, serving not merely as archival repositories but as active instruments driving scientific progress.

The Ecosystem of Materials Databases

The landscape of materials databases has evolved significantly since the 2011 Materials Genome Initiative spurred the development of computational materials databases using quantum mechanical modeling approaches like density functional theory (DFT) [15]. Today's ecosystem comprises multiple complementary resources that collectively provide comprehensive coverage of inorganic compounds and their properties.

Major Computational Databases

Leading computational databases have pioneered the large-scale systematic characterization of materials properties through high-throughput DFT calculations. These initiatives share the common goal of accelerating materials design but employ distinct methodologies and focus areas.

Table 1: Major Open-Access Computational Materials Databases

| Database Name | Primary Focus | Notable Features | Key Applications |
|---|---|---|---|
| Materials Project (MP) | Quantum-mechanical properties of inorganic compounds | ~200,000 entries; REST API for data access [15] [16] | Stability prediction, property screening [6] |
| Open Quantum Materials Database (OQMD) | Thermodynamic stability of inorganic crystals | DFT-calculated formation energies, phase diagrams | Stability analysis, materials discovery [6] |
| AFLOW | High-throughput computational materials science | Automated calculation workflows; extensive property data | Crystal structure prediction, property analysis [16] |
| GNoME (Graph Networks for Materials Exploration) | Novel stable crystal structures | 2.2 million predicted structures including 380,000 stable candidates [17] | Expansion of known chemical space, stability prediction [18] |

Specialized and Experimental Databases

Beyond comprehensive computational repositories, specialized databases have emerged to address specific material classes or incorporate experimental data. The Northeast Materials Database (NEMAD), for instance, focuses specifically on magnetic materials, containing 67,573 entries with detailed structural and magnetic properties extracted from scientific literature using large language models [19]. This database includes critical experimental properties such as Curie temperatures, coercivity, and magnetization, enabling machine learning models to predict magnetic behavior with significantly higher accuracy than possible with DFT alone [19].

The movement toward database integration and standardization represents another critical advancement. The OPTIMADE consortium has developed a standardized API that provides unified access to multiple materials databases, addressing the previous fragmentation in the ecosystem [16]. This initiative brings together major databases including Materials Project, AFLOW, OQMD, and the Crystallography Open Database, creating a federated network that dramatically improves accessibility for researchers [16].
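The sketch below shows what a shared interface makes possible. It builds an OPTIMADE `/v1/structures` query using the specification's filter language (`elements HAS ALL ...`, `nelements`) and its `page_limit` parameter. The base URL is an assumption for illustration only, because each provider publishes its own endpoint. In principle, the same filter string can be sent unchanged to any compliant provider. The URL is only constructed here, not requested.

```python
from urllib.parse import parse_qs, urlencode, urlparse

# Assumption: illustrative base URL; check each provider's OPTIMADE landing page.
BASE_URL = "https://optimade.materialsproject.org"

def build_structures_query(elements, nelements, base_url=BASE_URL, page_limit=10):
    """Build an OPTIMADE /v1/structures URL for entries that contain all of
    `elements` and exactly `nelements` distinct elements."""
    quoted = ", ".join(f'"{el}"' for el in elements)
    filter_str = f"elements HAS ALL {quoted} AND nelements={nelements}"
    params = urlencode({"filter": filter_str, "page_limit": page_limit})
    return f"{base_url}/v1/structures?{params}"

# Example: ternary Li-Zr-H entries, e.g. as training data for a hydride model.
url = build_structures_query(["Li", "Zr", "H"], 3)
print(url)
```

To query several databases, swap `base_url`. The filter expression is the part the standard keeps portable across providers.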

Database-Driven AI Methodologies for Stability Prediction

The integration of materials databases with AI has enabled sophisticated methodologies for predicting thermodynamic stability—a crucial filter in materials discovery. The following experimental protocol exemplifies how researchers leverage these data resources to identify novel stable compounds.

Ensemble Machine Learning Framework for Stability Prediction

Recent advances have demonstrated the power of ensemble approaches that combine multiple models to mitigate individual biases and improve prediction accuracy. The Electron Configuration models with Stacked Generalization (ECSG) framework exemplifies this methodology [6].

Table 2: Machine Learning Models in the ECSG Ensemble Framework

| Model | Domain Knowledge Basis | Architecture | Strengths |
|---|---|---|---|
| Magpie | Atomic properties (atomic number, mass, radius) | Gradient-boosted regression trees (XGBoost) | Captures elemental diversity through statistical features [6] |
| Roost | Interatomic interactions | Graph neural networks with attention mechanism | Learns relationships between the elements of a chemical formula, treated as a complete graph; no crystal structure required [6] |
| ECCNN (Electron Configuration CNN) | Electron configuration | Convolutional neural network | Incorporates fundamental electronic structure with minimal bias [6] |

Experimental Protocol: Ensemble Prediction of Thermodynamic Stability

Data Acquisition and Preprocessing: Extract formation energies and structural information for stable and unstable compounds from the Materials Project, OQMD, or JARVIS databases. The dataset must include decomposition energies (ΔH_d) calculated with reference to the convex hull of stable phases [6].

Feature Engineering:

- For Magpie: Compute statistical features (mean, variance, range, etc.) across elemental properties for each compound [6].

- For Roost: Represent each chemical formula as a complete graph whose nodes are its constituent elements, weighted by their fractional abundance, and pass messages between every pair of nodes [6].

- For ECCNN: Encode electron configuration information as a 118×168×8 matrix representing the electron distribution across energy levels for each element [6].

Model Training and Stacked Generalization:

- Independently train each base model (Magpie, Roost, ECCNN) on the training dataset.

- Use the predictions from these base models as input features for a meta-learner (typically a simpler model like logistic regression or shallow neural network) [6].

- Train the meta-learner to produce final stability predictions, effectively learning how to weight the contributions of each base model.

Validation and Performance Assessment: Evaluate model performance using the area under the receiver operating characteristic curve (ROC AUC). The ECSG implementation reported an AUC of 0.988 [6]. Use cross-validation, evaluation on held-out test sets, and testing against external databases to assess generalizability.

This ensemble approach demonstrates remarkable data efficiency, requiring only one-seventh of the data needed by single models to achieve comparable performance—a significant advantage when exploring uncharted compositional spaces [6].
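The sketch below computes the ROC AUC used in the validation step without any dependencies. It uses the Mann-Whitney U statistic, which equals the probability that a randomly chosen stable compound (label 1) receives a higher predicted score than a randomly chosen unstable one. The labels and scores are made-up toy values. In practice, `sklearn.metrics.roc_auc_score` computes the same quantity.

```python
def roc_auc(labels, scores):
    """ROC AUC via the Mann-Whitney U statistic; tied scores get average ranks."""
    pairs = sorted(zip(scores, labels))
    ranks = [0.0] * len(pairs)
    i = 0
    while i < len(pairs):
        j = i
        while j + 1 < len(pairs) and pairs[j + 1][0] == pairs[i][0]:
            j += 1
        avg_rank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[k] = avg_rank
        i = j + 1
    n_pos = sum(1 for _, y in pairs if y == 1)
    n_neg = len(pairs) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUC needs both stable and unstable examples")
    rank_sum_pos = sum(r for r, (_, y) in zip(ranks, pairs) if y == 1)
    u = rank_sum_pos - n_pos * (n_pos + 1) / 2
    return u / (n_pos * n_neg)

# Toy example: 1 = stable, scores = predicted probability of stability.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.4, 0.1]
print(roc_auc(labels, scores))  # 8 of 9 stable/unstable pairs ranked correctly
```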

Figure: Database-Driven AI Discovery Workflow

Case Study: Discovering Stable Superconducting Hydrides

The power of this database-AI synergy is exemplified by the search for thermodynamically stable ambient-pressure superconducting hydrides. Researchers recently screened the GNoME database, which contains thousands of predicted stable hydrides, using a multi-stage computational workflow [18]:

Initial Filtering: 851 cubic hydrides with fewer than 40 atoms per primitive cell were selected from the GNoME database based on structural simplicity and potential synthesizability [18].

DFT Pre-screening: Spin-polarized DFT calculations identified 261 nonmagnetic metallic hydrides from the initial set, as metallic behavior is a prerequisite for conventional superconductivity [18].

Machine Learning Prioritization: An Atomistic Line Graph Neural Network (ALIGNN) model predicted superconducting critical temperatures (Tc), prioritizing compounds with Tc ≥ 5 K for further analysis [18].

High-Fidelity Validation: Density functional perturbation theory (DFPT) calculations with the Allen-Dynes formula provided reliable Tc estimates, ultimately identifying 22 thermodynamically stable cubic hydrides with Tc exceeding 4.2 K [18].

This methodology successfully identified promising candidates like cubic LiZrH₆Ru, a vacancy-ordered double perovskite structure with a predicted T_c of 17 K at ambient pressure—making it both stable and potentially technologically relevant [18].
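The staged funnel above can be sketched as successive filters over candidate records. Everything here is hypothetical:

- The `Candidate` fields stand in for quantities from the database (symmetry, cell size), from spin-polarized DFT (metallicity, magnetism), and from an ALIGNN-type surrogate (predicted T_c).
- The candidate names and values are invented toy data.
- The final DFPT/Allen-Dynes validation stage is not reproduced.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str                # hypothetical identifier
    crystal_system: str
    atoms_per_primitive_cell: int
    is_metallic: bool        # e.g. from a DFT band structure (assumed precomputed)
    is_magnetic: bool        # e.g. from spin-polarized DFT (assumed precomputed)
    predicted_tc_k: float    # e.g. from an ALIGNN-type surrogate (assumed precomputed)

def staged_screen(candidates, max_atoms=40, min_predicted_tc=5.0):
    """Apply the filters in order; return survivors and the count after each stage."""
    stages = [
        ("cubic, small cell",
         lambda c: c.crystal_system == "cubic" and c.atoms_per_primitive_cell < max_atoms),
        ("nonmagnetic metal",
         lambda c: c.is_metallic and not c.is_magnetic),
        ("predicted T_c above threshold",
         lambda c: c.predicted_tc_k >= min_predicted_tc),
    ]
    survivors = list(candidates)
    report = []
    for label, keep in stages:
        survivors = [c for c in survivors if keep(c)]
        report.append((label, len(survivors)))
    return survivors, report

# Invented toy pool; each comment notes which stage removes the entry.
pool = [
    Candidate("cand-A", "cubic", 12, True, False, 9.0),
    Candidate("cand-B", "cubic", 48, True, False, 20.0),       # cell too large
    Candidate("cand-C", "cubic", 8, False, False, 0.0),        # not metallic
    Candidate("cand-D", "tetragonal", 10, True, False, 30.0),  # not cubic
    Candidate("cand-E", "cubic", 16, True, True, 12.0),        # magnetic
    Candidate("cand-F", "cubic", 20, True, False, 3.0),        # predicted T_c too low
]
final, report = staged_screen(pool)
for stage, n in report:
    print(f"{stage}: {n}")
```

Ordering the cheap filters first mirrors the workflow's logic: expensive calculations are spent only on the shrinking shortlist.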

Researchers working at the intersection of AI and materials science rely on a toolkit of computational resources, databases, and software frameworks. The table below summarizes key resources for research on computationally discovered, thermodynamically stable compounds.

Table 3: Essential Research Tools and Resources for AI-Driven Materials Discovery

| Tool/Resource | Type | Primary Function | Application in Stability Research |
|---|---|---|---|
| OPTIMADE API | Standardized API | Unified interface for querying multiple materials databases [16] | Accessing consistent materials data across different repositories for model training |
| Density Functional Theory (DFT) | Computational Method | First-principles calculation of electronic structure and energy [6] | Determining formation energies and decomposition energies for stability assessment |
| GNoME Database | Materials Database | Repository of millions of predicted crystal structures [18] [17] | Source of novel candidate materials for stability screening and validation |
| Stacked Generalization Framework | ML Methodology | Ensemble machine learning combining multiple models [6] | Improving stability prediction accuracy by reducing individual model biases |
| ALIGNN Model | Machine Learning Model | Graph neural network for materials property prediction [18] | Predicting superconducting critical temperatures and other properties for screening |
| High-Throughput Virtual Screening | Computational Workflow | Automated rapid assessment of material properties [20] | Efficiently evaluating thousands of candidates from databases before experimental synthesis |

Challenges and Future Directions

Despite significant progress, substantial challenges remain in fully leveraging materials databases for AI-driven discovery. The synthesis bottleneck represents perhaps the most significant hurdle; while AI can predict thousands of stable compounds, most remain computationally discovered but experimentally unrealized [15]. This challenge stems from the critical distinction between thermodynamic stability and synthesizability—a material may be stable but lack a viable kinetic pathway for its formation under practical conditions [15].

The problem is fundamentally one of data scarcity and bias. Existing databases predominantly contain successful synthesis outcomes, while failed attempts—crucial negative training data—are rarely published or systematically recorded [15]. This limitation is particularly acute for synthesis optimization, where the relevant experimental parameters (precursor quality, heating rates, atmospheric conditions) operate across vast temporal and spatial scales that challenge both simulation and data collection [15].

Future progress depends on addressing several key frontiers:

Comprehensive Synthesis Databases: Developing standardized databases that capture both successful and failed synthesis attempts, including detailed procedural parameters [15].

Autonomous Laboratories: Implementing self-driving laboratories that integrate AI-guided prediction with robotic synthesis and characterization, creating closed-loop discovery systems [21].

Explainable AI: Developing interpretable models that provide physical insights alongside predictions, building researcher trust and enabling scientific discovery rather than black-box optimization [21].

Improved Multi-scale Modeling: Advancing simulation capabilities to bridge the vast gap between atomic-scale predictions and experimental synthesis conditions [15].

Future Vision: Closed-Loop Materials Discovery

Materials databases have fundamentally transformed the landscape of materials discovery, evolving from passive repositories into active engines of AI-enabled innovation. By supplying the data foundation for machine learning models, they let researchers navigate the vast combinatorial space of potential materials far more efficiently, particularly when searching for thermodynamically stable compounds. Continued integration of computational databases with experimental characterization, synthesis protocols, and automated laboratories promises to accelerate this transformation further, ultimately closing the loop between prediction and realization. As these resources grow in scope and sophistication, they are likely to open new frontiers in advanced materials for sustainable technologies, energy solutions, and next-generation electronics, establishing data as a central catalyst of the modern materials revolution.

The discovery of new, thermodynamically stable compounds is a fundamental objective in materials science and drug development. The vastness of possible chemical spaces makes experimental trial-and-error approaches prohibitively expensive and time-consuming. Computational models have therefore become indispensable for constraining this exploration space and identifying the most promising candidates for synthesis [6]. These models primarily fall into two categories: composition-based models and structure-based models. The distinction lies in the type of input data they utilize; composition-based models predict properties using only the chemical formula, whereas structure-based models additionally require the three-dimensional atomic arrangement. Within the context of discovering stable compounds, thermodynamic stability is typically assessed through the decomposition energy (ΔHd), which represents the energy difference between a compound and its most stable competing phases on the convex hull of a phase diagram [6]. This whitepaper provides an in-depth technical guide to these two modeling paradigms, detailing their underlying principles, methodologies, and applications in computational discovery research.

Defining the Modeling Paradigms

Composition-Based Models

Composition-based models predict material properties, such as formation energy or thermodynamic stability, based solely on the chemical formula. They do not require any information about the atomic-scale structure of the material.

- Input Data: The primary input is the elemental composition (e.g., NaCl, Fe₂O₃). This information is then transformed into a machine-readable format using features derived from domain knowledge [6].

- Core Principle: These models operate on the assumption that the chemical composition is the primary determinant of a material's properties. A significant advantage is that the composition of a novel material can be known a priori and used for screening before any structural information is available [6].

- Common Feature Sets: To overcome the limited information in a raw formula, hand-crafted features are used. These can include:

  - Elemental Properties: Statistical summaries (mean, range, mode, etc.) of properties like atomic radius, electronegativity, and valence electron count for the elements in the compound [6].

  - Electron Configuration (EC): The distribution of electrons in atomic energy levels, which is a fundamental atomic characteristic used in first-principles calculations [6].

Structure-Based Models

Structure-based models incorporate the three-dimensional atomic coordinates of a compound, providing a more complete physical description by accounting for atomic bonding and geometric arrangement.

- Input Data: These models require a crystal structure, including the unit cell, atomic positions, and the species of each atom.

- Core Principle: The stability and properties of a material are determined not only by its constituent elements but also by how those atoms are arranged and bonded in space. The atomic structure directly influences the potential energy surface of the system [22].

- Common Structural Representations:

  - Graph-Based Representations: The crystal structure is treated as a graph, where atoms are nodes and chemical bonds are edges. Graph Neural Networks (GNNs) can then learn from this representation by passing messages between connected atoms [22].

  - Coordinate-Based Inputs: Atomic coordinates are used directly, often in conjunction with interatomic potentials, to calculate the system's total energy and forces [22].

The choice between composition-based and structure-based approaches involves trade-offs between computational cost, data requirements, and predictive accuracy. The table below summarizes the core distinctions between these two paradigms.

Table 1: Key Differences Between Composition-Based and Structure-Based Models

| Feature | Composition-Based Models | Structure-Based Models |
|---|---|---|
| Primary Input | Chemical formula | Atomic coordinates and crystal structure |
| Information Completeness | Lower | Higher |
| Typical Applications | High-throughput screening of compositional space, initial stability prediction [6] | Accurate property prediction, crystal structure prediction, studying defect properties [22] |
| Computational Cost | Very low (post-training) | High (requires energy calculations or complex graph processing) |
| Data Dependency | Can work with only composition data | Requires structural data, which can be scarce for novel materials |
| Handling of Novel Materials | Directly applicable to any chemical formula | Structural information for unexplored compounds is often unknown |
| Example Techniques | Magpie (elemental statistics), Roost (graph from formula), ECCNN (electron configuration) [6] | Graph Neural Networks (GNNs) for formation energy, empirical potential functions [22] |

A critical performance metric for classification models predicting stability is the Area Under the Curve (AUC). Advanced ensemble composition-based models have demonstrated an AUC of 0.988 on benchmark datasets, indicating a very high ability to distinguish stable from unstable compounds [6]. Furthermore, such models can achieve high accuracy with significantly less data than earlier models, requiring only about one-seventh of the data to match the performance of their predecessors [6].

Methodologies and Experimental Protocols

This section outlines standard protocols for developing and applying both types of models, drawing from recent research.

Protocol for Composition-Based Stability Prediction

The following workflow is adapted from state-of-the-art ensemble machine learning frameworks for predicting thermodynamic stability [6].

Data Collection and Curation

- Source: Extract compounds and their labeled stability (e.g., stable/unstable or formation energy) from large materials databases such as the Materials Project (MP) or the Open Quantum Materials Database (OQMD) [6].

- Preprocessing: Standardize chemical formulas and resolve data inconsistencies.

Feature Engineering

- Generate a diverse set of features from the chemical composition to create a rich input vector. Common approaches include:

  - Magpie Features: Calculate statistical features (mean, standard deviation, range, etc.) for a suite of elemental properties for all elements in the compound [6].

  - Electron Configuration (EC) Encoding: Encode the electron configuration of each element present into a fixed-size matrix, which can then be processed by a convolutional neural network (CNN) [6].


Model Training and Ensemble Construction

- Train multiple base models, each leveraging different feature sets or algorithms to ensure diversity and reduce individual model bias:

  - Model 1: Train a model like Magpie using gradient-boosted regression trees on elemental property statistics.

  - Model 2: Train a graph-based model like Roost, which represents the formula as a graph of elements.

  - Model 3: Train a custom model like ECCNN on electron configuration matrices.

- Use a stacked generalization technique to combine these base models. The outputs of the base models become the input features for a meta-learner (e.g., a linear model or another classifier), which produces the final stability prediction [6].


Validation

- Validate the model's performance on held-out test sets using metrics like AUC. Apply the trained model to unexplored composition spaces and validate top predictions with first-principles calculations like Density Functional Theory (DFT) [6].

Protocol for Structure-Based Stability Assessment

This protocol describes an approach that combines machine learning with empirical potentials for stable crystal structure prediction [22].

Data Preparation

- Acquire crystal structures from databases like the Cambridge Structural Database (CSD) or the MP.

- For each structure, calculate or retrieve target properties such as formation energy.

Formation Energy Prediction with a Graph Neural Network (GNN)

- Representation: Convert each crystal structure into a graph where atoms are nodes and edges represent interatomic bonds or proximity.

- Model Training: Train a GNN to predict the formation energy of the crystal structure directly from its graph representation. The GNN learns to aggregate information from neighboring atoms to make an accurate prediction [22].
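The sketch below illustrates the graph-construction step. It assumes edges are defined by a simple radius cutoff, which is one common choice; the cited work [22] may use a different rule. The function returns directed edges between atoms, including periodic images, for a CsCl-type cell with an arbitrary illustrative lattice parameter. Node features (element embeddings) and the GNN itself are omitted.

```python
import itertools
import numpy as np

def crystal_graph_edges(lattice, frac_coords, cutoff):
    """Return directed edges (i, j, image, distance) for atom pairs within `cutoff`.

    Rows of `lattice` are lattice vectors. Only periodic images with offsets in
    {-1, 0, 1} are searched: a simplification that suffices for the small cubic
    cell below, but real code should size the image search from the cell geometry.
    """
    lattice = np.asarray(lattice, dtype=float)
    cart = np.asarray(frac_coords, dtype=float) @ lattice  # fractional -> Cartesian
    edges = []
    for image in itertools.product((-1, 0, 1), repeat=3):
        shift = np.array(image, dtype=float) @ lattice
        for i, j in itertools.product(range(len(cart)), repeat=2):
            if i == j and image == (0, 0, 0):
                continue  # skip an atom's interaction with itself in the home cell
            d = float(np.linalg.norm(cart[j] + shift - cart[i]))
            if d <= cutoff:
                edges.append((i, j, image, d))
    return edges

# CsCl-type cell: atom 0 at the corner, atom 1 at the body centre (a = 4.0, arbitrary).
a = 4.0
edges = crystal_graph_edges(a * np.eye(3), [[0, 0, 0], [0.5, 0.5, 0.5]], cutoff=3.6)
print(len(edges))  # each atom links to its 8 nearest neighbours of the other type
```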

Stability Assessment using Empirical Potentials

- Calculate the Lennard-Jones (L-J) potential or other relevant empirical potentials for the crystal structure. The L-J potential models van der Waals-type pair interactions. In the cited workflow it is used as an indicator of the structure's dynamic stability, and a value approaching zero is treated as indicative of a stable configuration [22].

- Contact Map Analysis: Analyze the bonding situation between atoms in the crystal using a contact map to screen for structurally sound and stable materials [22].
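The 12-6 Lennard-Jones pair energy is V(r) = 4ε[(σ/r)¹² − (σ/r)⁶]. It is zero at r = σ and reaches its minimum of −ε at r = 2^(1/6)σ. The sketch below evaluates it in reduced units (ε = σ = 1) as a plain pair sum over a finite cluster. The cited work [22] does not specify here how it parameterizes, normalizes, or periodically sums the potential for a crystal. This is therefore an illustration under those assumptions, not the cited criterion.

```python
import itertools
import math

def lj_pair(r, epsilon=1.0, sigma=1.0):
    """12-6 Lennard-Jones pair energy V(r) = 4*eps*((sigma/r)**12 - (sigma/r)**6)."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)

def lj_total(positions, epsilon=1.0, sigma=1.0, cutoff=None):
    """Sum the LJ energy over unique atom pairs of a finite cluster (no periodic images)."""
    total = 0.0
    for a, b in itertools.combinations(positions, 2):
        r = math.dist(a, b)
        if cutoff is None or r <= cutoff:
            total += lj_pair(r, epsilon, sigma)
    return total

# Equilateral triangle with every side at the pair minimum: three bonds of -epsilon.
r0 = 2 ** (1 / 6)
triangle = [(0.0, 0.0, 0.0), (r0, 0.0, 0.0), (r0 / 2, r0 * math.sqrt(3) / 2, 0.0)]
print(lj_total(triangle))
```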

Structure Search and Optimization

- Employ a Bayesian optimization algorithm to search for crystal structures that simultaneously exhibit a low (negative) GNN-predicted formation energy and a Lennard-Jones potential near zero [22]. This dual criterion targets both thermodynamic and dynamic stability. A negative formation energy alone, however, does not guarantee that a structure lies on the convex hull of its competing phases.
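The sketch below shows a toy Bayesian-optimization loop. It uses a Gaussian-process surrogate with an RBF kernel and a lower-confidence-bound (LCB) acquisition to minimize a one-dimensional stand-in objective over a grid. In the cited setting, the search space would be crystal structures. The objective would combine the GNN-predicted formation energy with a penalty on the Lennard-Jones potential. The 1D objective, kernel length scale, and κ are arbitrary assumptions. LCB is swapped in for whatever acquisition function [22] actually uses.

```python
import numpy as np

def rbf_kernel(a, b, length=0.15, variance=1.0):
    """Squared-exponential kernel between two 1D arrays of inputs."""
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / length) ** 2)

def gp_posterior(x_train, y_train, x_query, noise=1e-6):
    """GP posterior mean and std at x_query, using the sample mean as prior mean."""
    y_mean = y_train.mean()
    K = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_star = rbf_kernel(x_train, x_query)
    mean = y_mean + k_star.T @ np.linalg.solve(K, y_train - y_mean)
    var = 1.0 - np.sum(k_star * np.linalg.solve(K, k_star), axis=0)
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def bayes_opt_minimize(objective, n_init=3, n_iter=10, kappa=2.0):
    """Minimize `objective` on [0, 1] with GP-LCB; returns the best (x, f(x)) seen."""
    grid = np.linspace(0.0, 1.0, 201)
    x = np.linspace(0.0, 1.0, n_init)
    y = np.array([objective(v) for v in x])
    for _ in range(n_iter):
        mean, std = gp_posterior(x, y, grid)
        x_next = grid[np.argmin(mean - kappa * std)]  # optimistic lower bound
        x = np.append(x, x_next)
        y = np.append(y, objective(x_next))
    best = np.argmin(y)
    return x[best], y[best]

# Hypothetical stand-in objective with its minimum at x = 0.3.
best_x, best_y = bayes_opt_minimize(lambda v: (v - 0.3) ** 2)
print(best_x, best_y)
```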

Visualization of Workflows

The following diagrams illustrate the logical flow and key components of the two modeling approaches.

Figure: Composition-Based Model Workflow

Figure: Structure-Based Model Workflow

The following table details key computational tools, databases, and algorithms used in the development and application of stability prediction models.

Table 2: Key Resources for Computational Stability Prediction

| Resource Name | Type | Function in Research |
|---|---|---|
| Materials Project (MP) [6] | Database | Provides a vast repository of computed crystal structures and their properties, including formation energy and stability, for training and benchmarking models. |
| Open Quantum Materials Database (OQMD) [6] | Database | Another comprehensive source of calculated thermodynamic and structural data for inorganic crystals, used as a training data source. |
| Graph Neural Network (GNN) [22] | Algorithm | A type of neural network that operates directly on graph-structured data, ideal for learning from crystal structures by modeling atomic interactions. |
| Stacked Generalization [6] | Machine Learning Technique | An ensemble method that combines multiple base models (learners) through a meta-learner to improve overall predictive accuracy and reduce bias. |
| Density Functional Theory (DFT) [6] | Computational Method | Used as a high-accuracy (but computationally expensive) benchmark to validate the stability predictions made by machine learning models. |
| Bayesian Optimization [22] | Algorithm | An efficient strategy for global optimization of black-box functions, used to search for crystal structures with optimal stability properties. |
| Lennard-Jones Potential [22] | Empirical Potential | A simple model describing the potential energy of interaction between a pair of atoms, used to assess the dynamic stability of a predicted crystal structure. |

Advanced Computational Methodologies: From Ensemble ML to High-Throughput Workflows

The discovery of new, thermodynamically stable compounds is a fundamental challenge in materials science and drug development. The compositional space of potential inorganic materials alone is estimated to be on the order of 10¹⁰ quaternary compositions, while known stable solids number only in the hundreds of thousands, creating a proverbial "needle-in-a-haystack" discovery problem [23]. Assessing thermodynamic stability with density functional theory (DFT) is accurate but computationally expensive, which makes exhaustive exploration of new compounds inefficient [6].

Machine learning (ML) offers a promising avenue for expediting this discovery process by rapidly predicting thermodynamic stability. However, most existing ML models are built on specific domain knowledge or idealized scenarios, potentially introducing significant inductive biases that limit their predictive performance and generalization capabilities [6]. For instance, models that assume material performance is determined solely by elemental composition may introduce a large inductive bias that reduces their effectiveness in predicting stability [6].

This technical guide explores the Electron Configuration models with Stacked Generalization (ECSG) framework, an ensemble machine learning approach that addresses these limitations by amalgamating models rooted in distinct domains of knowledge. By mitigating individual model biases through stacked generalization, the ECSG framework demonstrates exceptional accuracy and sample efficiency in predicting compound stability, opening new avenues for accelerated materials discovery and optimization in pharmaceutical and energy applications.

The Thermodynamic Stability Prediction Challenge

Defining Thermodynamic Stability

The thermodynamic stability of a material is quantitatively defined by its decomposition enthalpy (ΔHd), which represents the total energy difference between a given compound and competing compounds in a specific chemical space [6]. This metric is determined through a convex hull construction in formation enthalpy (ΔHf)-composition space, where stable compositions lie on the lower convex enthalpy envelope (the convex hull), and unstable compositions lie above it [23].

The critical distinction between formation energy (ΔHf) and decomposition energy (ΔHd) is essential for understanding the prediction challenge. While ΔHf quantifies the energy of compound formation from its elements, ΔHd arises from competition between ΔHf values for all compounds within a chemical space and typically spans a much smaller energy range (0.06 ± 0.12 eV/atom) compared to ΔHf (-1.42 ± 0.95 eV/atom) [23]. This makes ΔH_d a more sensitive and subtle quantity to predict, despite being the ultimate determinant of thermodynamic stability.
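The sketch below works through a hypothetical binary A-B system to show how ΔHd follows from the convex hull. Each compound is compared against the lower hull built from all *other* entries, including the pure elements at ΔHf = 0. Stable compounds therefore come out with ΔHd < 0, and unstable ones with ΔHd > 0, equal to their energy above the hull. The compositions and energies are invented for illustration. Multicomponent systems would normally be handled by a library such as pymatgen's `PhaseDiagram` class.

```python
ELEMENT_REFS = [(0.0, 0.0), (1.0, 0.0)]  # pure A (x_B = 0) and pure B (x_B = 1), ΔH_f = 0

def lower_hull(points):
    """Lower convex hull of (x, energy) points via Andrew's monotone chain."""
    best = {}
    for x, e in points:  # keep only the lowest energy at each composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            # cross <= 0: hull[-1] lies on or above the line hull[-2] -> p, so drop it
            if (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1) <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull

def hull_energy(hull, x):
    """Linearly interpolate the lower hull at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside hull range")

def decomposition_energies(compounds):
    """Map name -> ΔH_d (eV/atom) for compounds given as name -> (x_B, ΔH_f)."""
    result = {}
    for name, (x, e_f) in compounds.items():
        competing = ELEMENT_REFS + [v for k, v in compounds.items() if k != name]
        result[name] = e_f - hull_energy(lower_hull(competing), x)
    return result

# Hypothetical formation energies (eV/atom).
dh = decomposition_energies({"A3B": (0.25, -0.30), "AB": (0.50, -0.50), "AB3": (0.75, -0.20)})
print(dh)  # A3B and AB negative (stable); AB3 positive (0.05 eV/atom above the hull)
```

Note that AB3 has a clearly negative ΔHf but is still unstable. This is exactly the distinction between ΔHf and ΔHd drawn above.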

Limitations of Current Machine Learning Approaches

Current ML approaches for stability prediction face several significant limitations:

Compositional Model Deficiencies: Composition-based models that rely solely on the chemical formula, without structural information, often perform poorly at predicting compound stability, making them considerably less useful than DFT for the discovery and design of new solids [23].

Single-Model Bias: Models built on a single hypothesis or idealized scenario may introduce large inductive biases, as the ground truth may lie outside the model's parameter space [6].

Error Propagation: While ML models can predict formation energies with accuracy approaching DFT error, they lack the systematic error cancellation that benefits DFT when making stability predictions [23].

Data Imbalance Challenges: The extreme imbalance between stable and unstable compositions in chemical space leads to biased models that struggle to identify the rare stable compounds [24].

Ensemble Learning Foundations

Ensemble learning is a methodological framework that combines multiple models to produce better predictive performance than could be obtained from any individual constituent model. The core principle is that by aggregating predictions from diverse models, the ensemble can reduce variance, minimize bias, and improve generalization [25].

The ECSG framework employs stacked generalization, an advanced ensemble technique that combines multiple different models (often of different types) by using their predictions as inputs to a final meta-model. This meta-model learns how to best combine the base models' predictions, aiming for better performance than any individual model [25]. The theoretical foundation rests on the concept that models grounded in different knowledge domains or assumptions will exhibit different error distributions, and a learned combination can capitalize on their complementary strengths.

The ECSG Framework: Architecture and Implementation

The ECSG framework employs a stacked generalization architecture that integrates three base models rooted in distinct domains of knowledge: Magpie, Roost, and ECCNN. This multi-scale approach ensures complementarity by incorporating domain knowledge from interatomic interactions, atomic properties, and electron configurations [6].

Base Model Specifications

Magpie: Atomic Property Statistics

The Magpie model emphasizes statistical features derived from various elemental properties, including atomic number, atomic mass, atomic radius, electronegativity, and valence states [6]. For each elemental property, Magpie calculates six statistical measures across the composition:

- Mean: Average value of the property across elements

- Mean Absolute Deviation: Average absolute difference from the mean

- Range: Difference between maximum and minimum values

- Minimum: Smallest value in the composition

- Maximum: Largest value in the composition

- Mode: Property value of the most abundant element in the composition

These feature vectors are then processed using gradient-boosted regression trees (XGBoost) to predict stability [6]. This approach captures the diversity of elemental characteristics within materials, providing broad descriptive information for thermodynamic property prediction.
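The six statistics can be computed for a single property as sketched below. Following the Magpie convention, the mean and mean absolute deviation are weighted by atomic fraction, and the mode is the property of the most abundant element. Only Pauling electronegativity is used, for an illustrative subset of elements. The real Magpie set spans many more properties; matminer's `ElementProperty.from_preset("magpie")` featurizer is one existing implementation. The formula parser is a simplified assumption that handles neither parentheses nor hydrates.

```python
import re

# Pauling electronegativities for an illustrative subset of elements.
ELECTRONEGATIVITY = {"Na": 0.93, "Cl": 3.16, "Fe": 1.83, "O": 3.44}

def parse_formula(formula):
    """Parse a simple formula such as 'Fe2O3' into {element: atomic fraction}."""
    counts = {}
    for element, amount in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[element] = counts.get(element, 0.0) + (float(amount) if amount else 1.0)
    total = sum(counts.values())
    return {el: n / total for el, n in counts.items()}

def magpie_style_stats(formula, prop=ELECTRONEGATIVITY):
    """Fraction-weighted statistics of one elemental property over a composition."""
    fractions = parse_formula(formula)
    values = {el: prop[el] for el in fractions}
    mean = sum(fractions[el] * v for el, v in values.items())
    mad = sum(fractions[el] * abs(v - mean) for el, v in values.items())
    most_abundant = max(fractions, key=fractions.get)  # ties: first element listed
    return {
        "mean": mean,
        "mean_abs_dev": mad,
        "range": max(values.values()) - min(values.values()),
        "min": min(values.values()),
        "max": max(values.values()),
        "mode": values[most_abundant],
    }

print(magpie_style_stats("Fe2O3"))
```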

Roost: Graph Neural Networks for Interatomic Interactions

Roost (Representation Learning from Stoichiometry) conceptualizes the chemical formula as a complete graph of elements, employing message-passing graph neural networks to learn relationships among atoms [6]. The architecture incorporates an attention mechanism to capture the varying strengths of interatomic interactions that critically determine thermodynamic stability.

Key implementation details:

- Graph Representation: Each element in the formula is a node, weighted by its fractional abundance, and every pair of nodes is connected by an edge representing a possible interaction

- Message Passing: Information exchange between nodes updates feature representations

- Attention Mechanism: Learns relative importance of different atomic interactions

- Compositional Readout: Aggregates node features into a compositional representation

This approach avoids manual feature engineering by directly learning relevant representations from stoichiometric information [23].
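The sketch below is a simplified NumPy version of one attention-weighted message-passing step on the complete graph of elements, followed by a fraction-weighted readout. It is not the reference Roost implementation. The projection matrices `W_gate` and `W_msg` are hypothetical stand-ins for learned parameters and are drawn at random here. The attention weights are scaled by each neighbor's fractional abundance, so abundant elements contribute more to the messages.

```python
import numpy as np

def roost_style_update(h, weights, W_gate, W_msg):
    """One weighted soft-attention message-passing step on a complete graph.

    h       : (n, d) node embeddings, one row per element in the formula
    weights : (n,) fractional abundances of the elements (sum to 1)
    W_gate  : (2d,) hypothetical projection giving a score per ordered pair
    W_msg   : (2d, d) hypothetical projection giving a message per ordered pair
    """
    n, _ = h.shape
    new_h = h.copy()
    for i in range(n):
        others = [j for j in range(n) if j != i]
        if not others:
            continue  # a single-element formula has no neighbours
        pairs = np.stack([np.concatenate([h[i], h[j]]) for j in others])  # (n-1, 2d)
        scores = pairs @ W_gate
        w = weights[others] * np.exp(scores - scores.max())  # abundance-weighted softmax
        attn = w / w.sum()
        messages = np.tanh(pairs @ W_msg)                    # (n-1, d)
        new_h[i] = h[i] + attn @ messages                    # residual update
    return new_h

def readout(h, weights):
    """Fraction-weighted sum of node embeddings -> one composition vector."""
    return weights @ h

rng = np.random.default_rng(0)
d = 4
h = rng.normal(size=(2, d))      # e.g. Fe and O embeddings (random placeholders)
weights = np.array([0.4, 0.6])   # atomic fractions in Fe2O3
W_gate = rng.normal(size=2 * d)
W_msg = rng.normal(size=(2 * d, d))
h_new = roost_style_update(h, weights, W_gate, W_msg)
composition_vector = readout(h_new, weights)
print(composition_vector.shape)
```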

ECCNN: Electron Configuration Convolutional Neural Network

The Electron Configuration Convolutional Neural Network (ECCNN) is a novel architecture developed specifically for the ECSG framework to address the limited treatment of electronic structure in existing models. The model input is a matrix of dimensions 118 × 168 × 8, encoded from the electron configuration of materials [6].

Table: ECCNN Architecture Specifications

| Layer Type | Parameters | Activation | Output Shape |
|---|---|---|---|
| Input Layer | 118×168×8 electron configuration matrix | - | 118×168×8 |
| 2D Convolution | 64 filters, 5×5 kernel | ReLU | 118×168×64 |
| 2D Convolution | 64 filters, 5×5 kernel | ReLU | 118×168×64 |
| Batch Normalization | - | - | 118×168×64 |
| Max Pooling | 2×2 pool size | - | 59×84×64 |
| Flatten | - | - | 317,184 |
| Fully Connected | 256 units | ReLU | 256 |
| Output Layer | 1 unit (stability prediction) | Linear | 1 |

The electron configuration input delineates the distribution of electrons within an atom, encompassing energy levels and electron counts at each level. This information is crucial for understanding chemical properties and reaction dynamics, and serves as a fundamental input for first-principles calculations [6].
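The layer output shapes of the ECCNN architecture can be reproduced with a few lines of arithmetic. The sketch assumes "same" padding (kernel // 2) for the stride-1 convolutions, as the unchanged 118×168 spatial size after each convolution implies. It also assumes a stride-2 pooling window. Batch normalization does not change the shape, so it is skipped. The sketch only checks shapes and does not implement the network.

```python
def conv2d_out(size, kernel, stride=1, padding=0):
    """Output length along one spatial axis for a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1

def eccnn_shapes(h=118, w=168, channels=8, filters=64, kernel=5, pool=2):
    """Trace (H, W, C) through two 'same'-padded convolutions and one max-pool."""
    pad = kernel // 2  # 'same' padding for an odd kernel at stride 1
    shapes = [("input", (h, w, channels))]
    for name in ("conv1", "conv2"):
        h, w = conv2d_out(h, kernel, padding=pad), conv2d_out(w, kernel, padding=pad)
        shapes.append((name, (h, w, filters)))
    h, w = conv2d_out(h, pool, stride=pool), conv2d_out(w, pool, stride=pool)
    shapes.append(("maxpool", (h, w, filters)))
    shapes.append(("flatten", (h * w * filters,)))
    return shapes

for name, shape in eccnn_shapes():
    print(name, shape)
```

With "valid" (zero) padding instead, each 5×5 convolution would shrink both spatial dimensions by 4. The flattened vector would then be much smaller, so the padding choice matters when sizing the first fully connected layer.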

Meta-Model and Training Protocol

The meta-model in the ECSG framework employs stacked generalization to combine predictions from the three base models. The training process follows a two-stage procedure:

Stage 1: Base Model Training

- Train each base model (Magpie, Roost, ECCNN) independently on the training dataset

- Use k-fold cross-validation to generate out-of-fold predictions for the meta-training set

- Preserve model weights and architectures for final ensemble

Stage 2: Meta-Model Training

- Use base model predictions as input features for the meta-model

- Train meta-model (typically a linear model or simple neural network) to learn optimal combination

- Validate on a holdout set to detect overfitting

The complete framework is implemented in Python using PyTorch or TensorFlow for deep learning components and scikit-learn for traditional ML components.
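The sketch below implements the two-stage procedure in NumPy only. Out-of-fold base-model probabilities become the meta-learner's input features, and the base models are then refit on all training data for inference. To keep it self-contained, the three base learners are simple logistic regressions on disjoint blocks of synthetic features. These blocks are hypothetical stand-ins for Magpie, Roost and ECCNN, which are not implemented here, and the data are random. scikit-learn's `StackingClassifier` (with its `cv` parameter) packages the same pattern.

```python
import numpy as np

def fit_logreg(X, y, lr=0.1, epochs=500):
    """Logistic regression by batch gradient descent; returns (weights, bias)."""
    w, b = np.zeros(X.shape[1]), 0.0
    for _ in range(epochs):
        grad = 1.0 / (1.0 + np.exp(-(X @ w + b))) - y
        w -= lr * X.T @ grad / len(y)
        b -= lr * grad.mean()
    return w, b

def predict_logreg(model, X):
    w, b = model
    return 1.0 / (1.0 + np.exp(-(X @ w + b)))

# Hypothetical "views": each base model sees a different block of features.
VIEWS = [slice(0, 2), slice(2, 4), slice(4, 6)]

def out_of_fold_predictions(X, y, k=5, seed=0):
    """Stage 1: k-fold out-of-fold probabilities from every base model."""
    idx = np.random.default_rng(seed).permutation(len(y))
    oof = np.zeros((len(y), len(VIEWS)))
    for fold in np.array_split(idx, k):
        train = np.setdiff1d(idx, fold)
        for j, view in enumerate(VIEWS):
            model = fit_logreg(X[train][:, view], y[train])
            oof[fold, j] = predict_logreg(model, X[fold][:, view])
    return oof

def fit_stack(X, y, k=5):
    """Stage 2: meta-learner on OOF predictions; base models refit on all data."""
    meta = fit_logreg(out_of_fold_predictions(X, y, k), y)
    bases = [fit_logreg(X[:, v], y) for v in VIEWS]
    return bases, meta

def predict_stack(stack, X):
    bases, meta = stack
    Z = np.column_stack([predict_logreg(m, X[:, v]) for m, v in zip(bases, VIEWS)])
    return predict_logreg(meta, Z)

# Synthetic labels that depend on features from all three views.
rng = np.random.default_rng(42)
X = rng.normal(size=(400, 6))
y = (X[:, 0] - X[:, 3] + 0.5 * X[:, 5] + 0.3 * rng.normal(size=400) > 0).astype(float)
stack = fit_stack(X[:300], y[:300])
probs = predict_stack(stack, X[300:])
accuracy = ((probs > 0.5) == y[300:]).mean()
print(f"held-out accuracy: {accuracy:.2f}")
```

Training the meta-learner on out-of-fold rather than in-sample predictions matters. Otherwise it would learn to trust base models according to how well they fit their own training data.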

Experimental Results and Performance Analysis

Quantitative Performance Metrics

The ECSG framework was rigorously evaluated against individual base models and existing approaches using datasets from the Materials Project and JARVIS database [6]. Performance was assessed using multiple metrics with a focus on stability prediction accuracy.

Table: Comparative Performance of Stability Prediction Models

| Model | AUC Score | MAE (eV/atom) | Data Efficiency | Stable Compound Identification Accuracy |
|---|---|---|---|---|
| ECSG (Ensemble) | 0.988 | 0.06 | 1/7 of data for same performance | 94.2% |
| ECCNN Only | 0.972 | 0.08 | Baseline | 89.5% |
| Roost Only | 0.961 | 0.09 | 1/2 of data for same performance | 85.7% |
| Magpie Only | 0.947 | 0.11 | 1/3 of data for same performance | 82.3% |
| ElemNet | 0.932 | 0.14 | Requires full dataset | 78.6% |
| Traditional DFT | N/A | 0.02-0.05 | N/A | 99.9% |

The ECSG framework demonstrated exceptional sample efficiency, achieving equivalent accuracy with only one-seventh of the data required by existing models [6]. This has significant implications for exploring novel chemical spaces where data is scarce.
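One way to quantify a claim such as "equivalent accuracy with one-seventh of the data" is to compare learning curves. Each model is trained on increasing fractions of the training data, and the analysis finds the smallest fraction at which the model reaches a target AUC. The sketch below assumes synthetic data and two generic scikit-learn classifiers. It also assumes a target defined as the AUC that the weaker model reaches with all of its training data. None of these choices come from the ECSG study.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import learning_curve

# Synthetic stand-in for a labelled stability dataset
X, y = make_classification(n_samples=1000, n_features=20, n_informative=6, random_state=1)
FRACTIONS = np.array([0.1, 0.2, 0.4, 0.7, 1.0])  # fractions of each CV training split


def auc_curve(model):
    """Mean 5-fold validation AUC at each training-set fraction."""
    _, _, val_scores = learning_curve(
        model, X, y, train_sizes=FRACTIONS, cv=5,
        scoring="roc_auc", shuffle=True, random_state=0,
    )
    return val_scores.mean(axis=1)


def fraction_needed(curve, target):
    """Smallest tested fraction whose mean AUC reaches the target, or None."""
    hits = np.nonzero(curve >= target)[0]
    return float(FRACTIONS[hits[0]]) if hits.size else None


curves = {
    "logistic": auc_curve(LogisticRegression(max_iter=1000)),
    "forest": auc_curve(RandomForestClassifier(n_estimators=100, random_state=0)),
}
# Target: the AUC the weaker model reaches when trained on all of its data
target = min(curve[-1] for curve in curves.values())
for name, curve in curves.items():
    print(name, np.round(curve, 3), "fraction needed:", fraction_needed(curve, target))
```

The ratio of the fractions the two models need is one possible data-efficiency measure. With only five tested fractions it is coarse, so resolving a ratio such as 1/7 would require a finer grid.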

Case Study: Two-Dimensional Wide Bandgap Semiconductors

In an application to two-dimensional wide bandgap semiconductors, the ECSG framework flagged 17 previously unreported compounds as stable out of a candidate set of 2,348 compositions. Subsequent DFT calculations confirmed the stability of 15 of the 17 predictions, a precision of about 88% [6].

The workflow for this case study followed a systematic approach:

- Candidate Generation: Enumerate possible compositions within defined elemental constraints

- Stability Screening: Apply ECSG framework to predict decomposition enthalpy

- Candidate Selection: Filter compositions predicted to be stable (ΔH_d < 0.05 eV/atom)

- DFT Validation: Perform first-principles calculations to confirm stability

This approach reduced the computational cost of screening by approximately 90% compared to pure DFT-based discovery while maintaining high predictive accuracy.
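The four-step workflow can be sketched as a small screening pipeline. This toy version rests on several assumptions. Candidates are reduced binary formulas A_xB_y with subscripts up to 3, built from a hypothetical element list. `predict_decomposition_enthalpy` is a hypothetical placeholder for a trained ECSG model and returns made-up values. The DFT step is represented only by the list of selected compositions. The 0.05 eV/atom cutoff is the only value taken from the text.

```python
from itertools import combinations, product
from math import gcd

THRESHOLD = 0.05  # eV/atom, the selection cutoff from step 3

# Hypothetical per-element numbers used only by the toy predictor (not physical data)
TOY_VALUES = {"Ga": 0.02, "N": -0.03, "Zn": 0.04, "O": -0.05, "S": 0.01}


def enumerate_candidates(elements, max_subscript=3):
    """Step 1: reduced binary formulas A_x B_y with 1 <= x, y <= max_subscript."""
    for a, b in combinations(elements, 2):
        for x, y in product(range(1, max_subscript + 1), repeat=2):
            if gcd(x, y) == 1:  # skip duplicates such as A2B2, which equals AB
                yield (a, x), (b, y)


def predict_decomposition_enthalpy(formula):
    """Step 2 placeholder for a trained ensemble model; returns a toy value in eV/atom."""
    (a, x), (b, y) = formula
    return (x * TOY_VALUES[a] + y * TOY_VALUES[b]) / (x + y) + 0.03


def screen(elements):
    candidates = list(enumerate_candidates(elements))
    # Step 3: keep compositions predicted below the cutoff; only these go to DFT (step 4)
    selected = [f for f in candidates if predict_decomposition_enthalpy(f) < THRESHOLD]
    return candidates, selected


candidates, selected = screen(list(TOY_VALUES))
print(f"{len(candidates)} candidates -> {len(selected)} queued for DFT validation")
print(f"Share of DFT calculations avoided: {1 - len(selected) / len(candidates):.0%}")
```

The printed share counts only avoided DFT calls. A full cost comparison would also include model inference and the DFT data needed to train the model in the first place.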

Case Study: Double Perovskite Oxides

In an exploration of double perovskite oxides for photovoltaic applications, the ECSG framework screened over 5,000 candidate compositions and identified 43 promising stable materials. DFT calculations confirmed 38 of these (about 88%) as thermodynamically stable, a significant expansion of the known stable double perovskite phases [6].

The framework particularly excelled in identifying stability trends related to B-site cation ordering and oxygen octahedral distortions, demonstrating its ability to capture subtle structural-compositional relationships without explicit structural input.

Research Reagent Solutions

Implementing the ECSG framework requires both computational tools and data resources. The following table outlines the essential components for reproducing and applying the framework.

Table: Essential Research Reagents for ECSG Implementation

| Resource Category | Specific Tools/Resources | Function/Purpose | Implementation Notes |
|---|---|---|---|
| Computational Frameworks | PyTorch, TensorFlow | Deep learning model implementation | ECCNN and Roost implementation |
| | scikit-learn | Traditional ML algorithms | Magpie model and meta-model |
| | XGBoost | Gradient boosted trees | Magpie model training |
| Data Resources | Materials Project (MP) Database | Training data and validation | ~85,000 inorganic crystals with DFT calculations [23] |
| | JARVIS Database | Benchmarking and validation | Includes stability data for evaluation [6] |
| | OQMD Database | Additional training data | Expands compositional diversity |
| Feature Engineering | pymatgen | Materials analysis | Electron configuration featurization |
| | Magpie feature sets | Atomic property descriptors | 145 composition-level attributes derived from statistics of elemental properties [6] |
| Validation Tools | DFT codes (VASP, Quantum ESPRESSO) | First-principles validation | Ground truth stability assessment [6] |
| | Phonopy | Lattice dynamics | Dynamic stability assessment |

Methodological Protocols

Data Preprocessing and Feature Engineering

The electron configuration encoding for the ECCNN model follows a specific protocol to transform compositional information into the input matrix:

Elemental Electron Configuration Representation:

- For each element, generate a complete electron configuration notation

- Map orbital occupations to a standardized feature vector

- Account for all possible orbitals up to n=7 with s, p, d, f subshells

Compositional Encoding:

- For a given composition, calculate weighted electron configurations based on stoichiometry

- Generate a 118×168×8 tensor representing the complete electron configuration landscape

- Apply normalization to account for compositional variations
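A deliberately simplified encoder can illustrate the two encoding steps. It is a sketch and does not reproduce the ECCNN's 118×168×8 tensor, whose exact layout is not specified here. Each element is mapped to a 28-slot occupancy vector (n = 1 to 7, crossed with s, p, d, f), filled in Madelung order: by n + l first, then by n. A composition is then encoded as the mole-fraction-weighted sum of its elements' vectors. The idealized filling ignores real exceptions such as Cr and Cu, and the function names are hypothetical.

```python
import numpy as np

L_LABELS = "spdf"
SLOTS = [(n, l) for n in range(1, 8) for l in range(4)]  # 28 fixed (n, l) slots
FILL_ORDER = sorted((s for s in SLOTS if s[1] < s[0]),   # physical subshells only
                    key=lambda s: (s[0] + s[1], s[0]))   # Madelung (n + l, n) rule


def element_vector(z):
    """Occupancy of each (n, l) slot for atomic number z under idealized Aufbau filling."""
    vec = np.zeros(len(SLOTS))
    remaining = z
    for n, l in FILL_ORDER:
        if remaining == 0:
            break
        take = min(remaining, 2 * (2 * l + 1))  # subshell capacity 2(2l + 1)
        vec[SLOTS.index((n, l))] = take
        remaining -= take
    return vec


def composition_vector(composition):
    """Mole-fraction-weighted sum of elemental vectors; keys are atomic numbers."""
    total = sum(composition.values())
    return sum(amount / total * element_vector(z) for z, amount in composition.items())


oxygen = element_vector(8)
fe2o3 = composition_vector({26: 2, 8: 3})  # Fe2O3
print({f"{n}{L_LABELS[l]}": int(oxygen[i]) for i, (n, l) in enumerate(SLOTS) if oxygen[i]})
# {'1s': 2, '2s': 2, '2p': 4}
```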

Feature Scaling:

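- Use MinMaxScaler to normalize each feature to the [0, 1] interval: x_norm = (x − x_min)/(x_max − x_min), where x_min and x_max are that feature's minimum and maximum over the training data [26]

- Put all features on a common scale so that no feature dominates the learned weights merely because of its units or magnitude

- Improve training stability and efficiency, and often model performance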
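The scaling rule takes only a few lines of NumPy. This sketch mirrors the behaviour of scikit-learn's `MinMaxScaler` with its default `feature_range=(0, 1)`. The minimum and range are learned from the training data only and then reused for new data. Constant features receive a range of 1 to avoid division by zero. The function names are hypothetical.

```python
import numpy as np


def fit_min_max(X_train):
    """Learn the per-feature minimum and range from the training data only."""
    x_min = X_train.min(axis=0)
    x_range = X_train.max(axis=0) - x_min
    x_range[x_range == 0] = 1.0  # constant features map to 0 instead of dividing by zero
    return x_min, x_range


def transform_min_max(X, x_min, x_range):
    """x_norm = (x - min) / (max - min); unseen data may fall outside [0, 1]."""
    return (X - x_min) / x_range


X_train = np.array([[1.0, 200.0, 5.0], [3.0, 400.0, 5.0], [2.0, 300.0, 5.0]])
X_test = np.array([[4.0, 250.0, 5.0]])
x_min, x_range = fit_min_max(X_train)
print(transform_min_max(X_train, x_min, x_range))
print(transform_min_max(X_test, x_min, x_range))  # first feature exceeds 1.0
```

Fitting the scaler on the training split alone keeps information from validation or test compositions from leaking into preprocessing.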

Model Training and Optimization

The training protocol for the complete ECSG framework involves coordinated optimization of multiple components:

Hyperparameter Optimization Strategy:

- ECCNN: Learning rate (1e-4 to 1e-3), filter sizes (3×3 to 7×7), number of filters (32-128)

- Roost: Message-passing steps (3-10), hidden dimension (64-256), attention heads (4-16)

- Magpie: Tree depth (3-10), learning rate (0.01-0.3), number of estimators (100-1000)

- Meta-Model: Regularization strength, combination weights

Training uses 5-fold cross-validation to select hyperparameters and to obtain robust performance estimates, which reduces the risk of overfitting to any single train/validation split.
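The search ranges listed above can be encoded as a configuration dictionary and sampled randomly, one candidate configuration at a time. In this sketch, the sampling distributions are assumptions not stated in the text. Learning rates are drawn log-uniformly and integer ranges uniformly. Filter sizes come from the odd square kernels 3, 5 and 7, and hidden dimensions and attention-head counts from powers of two within the stated ranges. The meta-model settings are omitted. The names `SEARCH_SPACE` and `random_configs` are hypothetical.

```python
import math
import random

# Ranges from the list above; the sampling distributions are assumptions
SEARCH_SPACE = {
    "eccnn": {"learning_rate": ("log", 1e-4, 1e-3), "filter_size": ("choice", [3, 5, 7]),
              "n_filters": ("int", 32, 128)},
    "roost": {"message_passing_steps": ("int", 3, 10), "hidden_dim": ("choice", [64, 128, 256]),
              "attention_heads": ("choice", [4, 8, 16])},
    "magpie": {"max_depth": ("int", 3, 10), "learning_rate": ("log", 0.01, 0.3),
               "n_estimators": ("int", 100, 1000)},
}


def sample(spec, rng):
    """Draw one value from a ('log' | 'int' | 'choice', ...) specification."""
    kind = spec[0]
    if kind == "log":
        return math.exp(rng.uniform(math.log(spec[1]), math.log(spec[2])))
    if kind == "int":
        return rng.randint(spec[1], spec[2])  # inclusive bounds
    return rng.choice(spec[1])


def random_configs(model, n, seed=0):
    """n reproducible random configurations for one base model."""
    rng = random.Random(seed)
    space = SEARCH_SPACE[model]
    return [{name: sample(spec, rng) for name, spec in space.items()} for _ in range(n)]


for config in random_configs("magpie", 3):
    print(config)  # each configuration would then be scored with 5-fold cross-validation
```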

Validation and Interpretation Protocols

Model validation follows a multi-tiered approach to ensure predictive reliability:

- Holdout Validation: Reserve 20% of data for final performance assessment

- Cross-Validation: 5-fold cross-validation for hyperparameter tuning

- External Dataset Validation: Application to novel chemical spaces not represented in training data

- DFT Validation: First-principles calculations for promising candidates
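The first two validation tiers can be combined as follows. A stratified 20% holdout is set aside first. Then 5-fold cross-validation on the remaining 80% selects hyperparameters, and the holdout is scored exactly once at the end. This sketch uses synthetic data, a deliberately small grid, and `GradientBoostingClassifier` as an assumed stand-in for the Magpie model. The external-dataset and DFT tiers are not shown.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, train_test_split

X, y = make_classification(n_samples=500, n_features=15, n_informative=5, random_state=2)

# Tier 1: lock away 20% of the data; it is not touched until the final assessment
X_dev, X_hold, y_dev, y_hold = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=2)

# Tier 2: 5-fold cross-validation on the remaining 80% to choose hyperparameters
search = GridSearchCV(
    GradientBoostingClassifier(random_state=0),
    param_grid={"max_depth": [3, 5], "learning_rate": [0.05, 0.1], "n_estimators": [100, 200]},
    cv=5,
    scoring="roc_auc",
)
search.fit(X_dev, y_dev)  # refits the best configuration on all development data

# Final assessment: a single evaluation of the refit model on the holdout set
holdout_auc = roc_auc_score(y_hold, search.predict_proba(X_hold)[:, 1])
print("best params:", search.best_params_)
print(f"CV AUC {search.best_score_:.3f} | holdout AUC {holdout_auc:.3f}")
```

A large gap between the cross-validated AUC and the holdout AUC would suggest that hyperparameter selection has overfit the development data.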

For model interpretation, the framework employs SHapley Additive exPlanations (SHAP) to identify the features that most strongly govern stability predictions [26]. In perovskite stability analysis, for instance, the third ionization energy of the B-site element and the electron affinity of the X-site ions emerge as critically important features [26].
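A brute-force computation on a tiny model makes the idea behind SHAP concrete. The sketch below computes exact Shapley values by enumerating every feature subset and replacing "absent" features with baseline values. It is an illustrative stand-in, not the `shap` library. Practical SHAP explainers approximate this sum or exploit model structure, because the number of subsets grows as 2^d. The toy model and its feature names are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(f, x, baseline):
    """Exact Shapley values of f at x; features outside a coalition take baseline values."""
    d = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(d)]
        return f(z)

    phi = []
    for i in range(d):
        others = [j for j in range(d) if j != i]
        total = 0.0
        for size in range(d):
            weight = factorial(size) * factorial(d - size - 1) / factorial(d)
            for subset in combinations(others, size):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))  # marginal contribution of i
        phi.append(total)
    return phi


# Hypothetical toy "stability score" with an interaction between features 0 and 2
def toy_model(z):
    ionization, affinity, radius = z
    return 0.5 * ionization - 0.2 * affinity + 0.1 * ionization * radius


x = [3.0, 1.0, 2.0]
baseline = [1.0, 1.0, 1.0]
phi = shapley_values(toy_model, x, baseline)
print([round(p, 4) for p in phi], round(toy_model(x) - toy_model(baseline), 4))
```

The attributions sum to the difference between the prediction and the baseline prediction, which is the efficiency property SHAP relies on. The interaction term is split between the two features that form it.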

The ECSG framework represents a significant advancement in computational prediction of thermodynamically stable compounds through its innovative use of ensemble machine learning and stacked generalization. By integrating models grounded in diverse knowledge domains—atomic properties (Magpie), interatomic interactions (Roost), and electron configurations (ECCNN)—the framework effectively mitigates individual model biases while capitalizing on complementary strengths.

With an AUC of 0.988 for stability prediction, and equivalent performance using only one-seventh of the data required by existing models [6], the ECSG framework substantially improves data efficiency in materials discovery. Its application to identifying new two-dimensional wide bandgap semiconductors and double perovskite oxides, with predictions validated by first-principles calculations, demonstrates both its practical utility and its predictive accuracy.

For researchers and drug development professionals, this framework provides a powerful tool for navigating unexplored composition spaces, significantly accelerating the discovery of stable compounds for pharmaceutical, energy, and electronic applications. The reduced computational cost and enhanced predictive accuracy open new possibilities for high-throughput materials design and optimization, potentially transforming approaches to computational materials discovery.

The discovery of new, thermodynamically stable compounds is a fundamental challenge in materials science and drug development. Traditional experimental approaches and even first-principles computational methods like Density Functional Theory (DFT) consume substantial resources, yielding low efficiency in exploring vast compositional spaces [6]. Within this context, electron configuration represents an intrinsic atomic property that provides a foundational descriptor for predicting material stability and properties without introducing significant inductive biases. Electron configurations describe the arrangement of electrons around an atomic nucleus, summarizing where electrons are located within specific orbital shells and subshells [27]. This configuration is crucial because it determines an element's chemical behavior, including how it forms bonds and the stability of the resulting compounds.

The valence electrons, located in the outermost shell, are the primary determinant of an element's chemistry [28]. Because the electron configuration dictates how atoms interact and form chemical bonds, configurations that lead to lower-energy, more stable arrangements can be expected to correlate with the thermodynamic stability of compounds. The role of stable electron configurations in governing the properties of chemical elements and compounds has been recognized for decades [29]. What has recently transformed the field is the ability to incorporate these fundamental atomic descriptors into machine learning frameworks for accelerated computational discovery, yielding predictive tools that combine physical principles with statistical learning.

Theoretical Foundation of Electron Configurations

Fundamental Principles and Notation

The electron configuration of an atom represents the distribution of electrons among the orbital shells and subshells [28]. This arrangement follows specific quantum mechanical principles that dictate how electrons occupy available energy states:

- Aufbau Principle: Electrons fill orbitals in order of increasing energy, starting with the lowest energy orbitals first. The typical filling order follows: 1s, 2s, 2p, 3s, 3p, 4s, 3d, 4p, 5s, 4d, 5p, 6s, 4f, 5d, 6p, 7s, 5f, 6d, and 7p [28].

- Pauli Exclusion Principle: No two electrons in an atom can have the same set of four quantum numbers, so each orbital can hold at most two electrons, which must have opposite spins [28].

- Hund's Rule: When filling degenerate orbitals (orbitals of equal energy), electrons will occupy empty orbitals singly before pairing up [27].

The notation for writing electron configurations begins with the shell number (n) followed by the type of orbital (s, p, d, or f), with a superscript indicating the number of electrons in that orbital. For example, oxygen with 8 electrons has the configuration: 1s²2s²2p⁴ [27]. For heavier elements, a shorthand notation uses the previous noble gas to represent the core electrons. For instance, phosphorus (15 electrons) can be written as [Ne] 3s²3p³, where [Ne] represents the electron configuration of neon (1s²2s²2p⁶) [30].
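The filling order and notation rules above can be turned into a small generator. This sketch applies idealized Aufbau filling. It reproduces the oxygen and phosphorus examples but not known exceptions such as chromium ([Ar] 3d⁵4s¹) and copper ([Ar] 3d¹⁰4s¹). It also lists subshells in filling order, so iron prints as [Ar] 4s²3d⁶ rather than the conventional [Ar] 3d⁶4s². Subshell capacities follow 2(2l + 1), the values listed in Table 1. The function names are hypothetical.

```python
AUFBAU_ORDER = ["1s", "2s", "2p", "3s", "3p", "4s", "3d", "4p", "5s", "4d",
                "5p", "6s", "4f", "5d", "6p", "7s", "5f", "6d", "7p"]
CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}  # 2(2l + 1) electrons per subshell
NOBLE_GASES = [(86, "Rn"), (54, "Xe"), (36, "Kr"), (18, "Ar"), (10, "Ne"), (2, "He")]
SUPERSCRIPT = str.maketrans("0123456789", "⁰¹²³⁴⁵⁶⁷⁸⁹")


def configuration(z):
    """(subshell, electrons) pairs for atomic number z under idealized Aufbau filling."""
    filled, remaining = [], z
    for subshell in AUFBAU_ORDER:
        if remaining == 0:
            break
        n_e = min(remaining, CAPACITY[subshell[-1]])
        filled.append((subshell, n_e))
        remaining -= n_e
    return filled


def notation(z, shorthand=False):
    """Full notation, or shorthand that replaces the preceding noble-gas core by [X]."""
    config = configuration(z)
    prefix = ""
    if shorthand:
        core_z, symbol = next(((cz, s) for cz, s in NOBLE_GASES if cz < z), (0, ""))
        config = config[len(configuration(core_z)):]  # drop the core subshells
        prefix = f"[{symbol}] " if symbol else ""
    return prefix + "".join(f"{sub}{str(n).translate(SUPERSCRIPT)}" for sub, n in config)


print(notation(8))                   # oxygen: 1s²2s²2p⁴
print(notation(15, shorthand=True))  # phosphorus: [Ne] 3s²3p³
```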

Relationship to Periodic Properties

Electron configurations directly determine periodic properties that influence chemical behavior and compound stability:

- Atomic Size: Atoms become larger down a group of the periodic table as additional electron shells are added. Across a period, atomic size decreases because the effective nuclear charge increases and pulls electrons closer to the nucleus. A simple estimate is Z_eff ≈ Z − S, where Z is the number of protons and S is the number of core electrons [27].

- Electronegativity: This property, measuring an atom's ability to attract electrons, increases from left to right and bottom to top in the periodic table (excluding noble gases), with fluorine being the most electronegative element [27].

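- Ionization Energy: The energy required to remove an electron broadly follows the same trend as electronegativity, increasing from left to right and from bottom to top of the periodic table [27]. Unlike electronegativity, however, it peaks at the noble gases, and helium has the highest first ionization energy of all elements.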
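The effective nuclear charge estimate can be evaluated directly. This sketch takes the core electrons to be those of the preceding noble gas. That count is a crude approximation suited to main-group elements, and Slater's rules give a more refined screening estimate. The helper names are hypothetical.

```python
NOBLE_GAS_Z = [2, 10, 18, 36, 54, 86]


def core_electrons(z):
    """Electrons in the preceding noble-gas core, used as a crude shielding count S."""
    return max((g for g in NOBLE_GAS_Z if g < z), default=0)


def z_eff(z):
    """Z_eff ≈ Z - S with S taken as the number of core electrons."""
    return z - core_electrons(z)


period_3 = {"Na": 11, "Mg": 12, "Al": 13, "Si": 14, "P": 15, "S": 16, "Cl": 17, "Ar": 18}
for symbol, z in period_3.items():
    print(symbol, z_eff(z))  # rises from 1 (Na) to 8 (Ar) across the period
```

The steady rise in Z_eff across period 3 is consistent with the shrinking atomic size described above.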

Table 1: Electron Capacity of Orbital Types

| Orbital Type | Number of Orbitals | Maximum Electron Capacity |
|---|---|---|
| s | 1 | 2 |
| p | 3 | 6 |
| d | 5 | 10 |
| f | 7 | 14 |

These periodic properties, derived from electron configurations, provide crucial insights into how elements will interact and form stable compounds, making them invaluable descriptors for predictive modeling in materials discovery.

Electron Configuration as a Descriptor for Thermodynamic Stability

Advantages Over Traditional Approaches

Traditional methods for determining the thermodynamic stability of compounds rely on constructing convex hulls from formation energies obtained experimentally or from DFT calculations [6]. While valuable, these energy calculations consume substantial computational resources, which limits their efficiency and efficacy in exploring new compounds [6]. Machine learning approaches trained on existing materials databases have emerged as a promising alternative, enabling rapid and cost-effective predictions of compound stability [6].

However, many existing machine learning models introduce significant biases through their assumptions about material composition and structure. For instance, models that rely solely on elemental composition or assume specific structural relationships may introduce large inductive biases that limit their predictive accuracy and generalizability [6]. Electron configuration offers a distinct advantage here. Unlike manually crafted features, it is an intrinsic atomic characteristic that underlies the chemical behavior of elements and may therefore introduce less inductive bias [6]. By capturing the electronic structure that governs atomic interactions, electron configuration provides a more physically grounded foundation for predicting compound stability.

Mechanistic Rationale for Stability Prediction