Composition vs. Structure: A Comparative Guide to Stability Models in Drug Development

Abstract

This article provides a comprehensive comparison of composition-based and structure-based models for predicting molecular stability, a critical factor in drug discovery and development. Tailored for researchers, scientists, and drug development professionals, we explore the foundational principles, methodological approaches, and practical applications of both paradigms. The content delves into troubleshooting common challenges, optimizing model performance, and validating predictions through case studies and performance benchmarks. By synthesizing insights from current literature, this guide aims to equip practitioners with the knowledge to select and implement the most effective stability modeling strategies for their specific projects, ultimately accelerating the development of stable and effective therapeutics.

Core Principles: Understanding the Basis of Composition and Structure Models

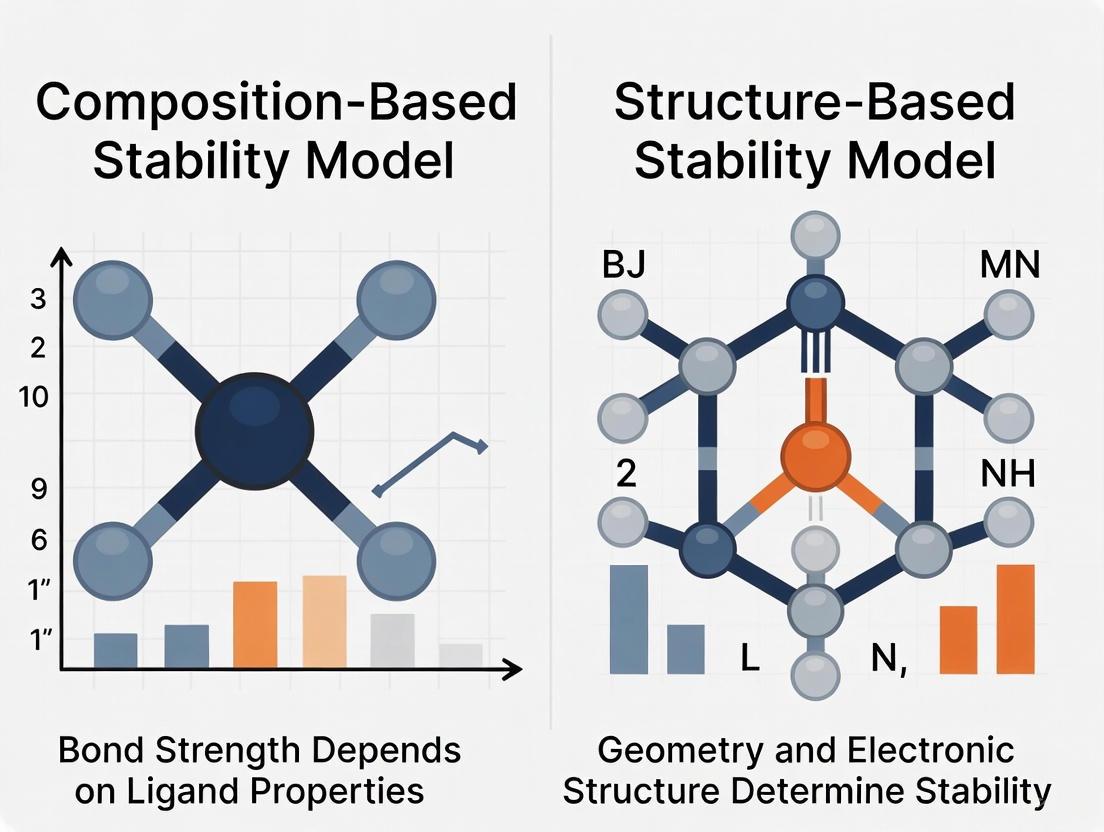

The accelerating discovery of new materials relies heavily on computational models to predict key properties, and these models fall into two primary approaches: composition-based and structure-based. Composition-based models predict material properties using only information derived from the chemical formula, such as elemental components and their ratios, without any knowledge of the atomic arrangement in three-dimensional space [1]. In contrast, structure-based models require detailed crystallographic data, including atomic coordinates and bonding information, to make their predictions [2]. This distinction is particularly crucial for exploring uncharted regions of chemical space, where structural information remains unknown and composition-based approaches provide the only feasible path for initial screening [1] [2]. The inputs for composition-based models generally fall into two categories: direct chemical formula representations and engineered features derived from elemental properties. Recent advances in deep learning blur the line between these categories by enabling models to learn relevant features automatically from minimal input data [3].

Experimental Protocols for Model Evaluation

Benchmarking Datasets and Validation Methodologies

To ensure fair and meaningful comparisons between different modeling approaches, researchers typically employ standardized benchmarking datasets and validation protocols. Key datasets used for evaluating stability prediction models include experimentally synthesized compounds from the Inorganic Crystal Structure Database (ICSD) and hypothetical materials from computational databases such as the Materials Project (MP), Open Quantum Materials Database (OQMD), and JARVIS-DFT [4] [2] [3]. For composition-based models specifically, the training process involves using chemical formulas and associated properties from these databases, with careful segregation of training, validation, and test sets to prevent data leakage and ensure generalizability [5].
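
One way to enforce that segregation is to split by composition rather than by database entry, so that polymorphs or duplicate entries of the same formula never land on both sides of the split. The sketch below is a minimal, stdlib-only illustration under stated assumptions: `parse_formula`, `composition_key`, and `grouped_split` are hypothetical helpers, the parser ignores parentheses and hydrates, and the formulas are made up.

```python
import random
import re
from collections import defaultdict


def parse_formula(formula):
    """Parse a simple formula such as 'Fe2O3' into {'Fe': 2.0, 'O': 3.0}.

    Parentheses, charges, and hydrate dots are not handled in this sketch.
    """
    counts = defaultdict(float)
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] += float(amount) if amount else 1.0
    return dict(counts)


def composition_key(formula):
    """Normalize to atomic fractions so that Fe2O3 and Fe4O6 map to the same key."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    return tuple(sorted((el, round(n / total, 6)) for el, n in counts.items()))


def grouped_split(formulas, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) with every entry of a composition on one side."""
    groups = defaultdict(list)
    for i, formula in enumerate(formulas):
        groups[composition_key(formula)].append(i)
    keys = sorted(groups)
    random.Random(seed).shuffle(keys)
    n_test = max(1, round(test_fraction * len(keys)))
    test_keys = set(keys[:n_test])
    train_idx = sorted(i for k in keys if k not in test_keys for i in groups[k])
    test_idx = sorted(i for k in keys if k in test_keys for i in groups[k])
    return train_idx, test_idx


formulas = ["Fe2O3", "Fe4O6", "TiO2", "TiO2", "NaCl", "LiFePO4", "Li2O", "MgO"]
train_idx, test_idx = grouped_split(formulas, test_fraction=0.25, seed=1)
```

Grouping by normalized atomic fractions is a deliberately strict choice; a looser split by database ID would let two TiO2 entries leak across the boundary and inflate test scores.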

The most common validation approach is k-fold cross-validation, where the dataset is partitioned into k subsets, with each subset serving as a test set while the remaining k-1 subsets are used for training [3]. For stability prediction, models are typically evaluated on their ability to classify compounds as stable or unstable, with stability often defined by the energy above the convex hull (Ehull)—a computational measure of thermodynamic stability derived from DFT calculations [2]. Performance metrics include mean absolute error (MAE) for regression tasks (e.g., formation energy prediction) and area under the curve (AUC) for classification tasks (e.g., stable/unstable classification), with the latter being particularly important for assessing the model's ability to distinguish between stable and unstable compounds in high-throughput screening scenarios [1].
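
The evaluation loop described above can be sketched in a few lines of standard-library Python. This is an illustrative sketch, not any cited paper's code: the helper names are hypothetical, the E_hull values are invented, the stability threshold is an assumed modeling choice, and a training-set-mean baseline stands in for a real model. AUC is computed through its rank interpretation (the probability that a randomly chosen stable compound scores higher than a randomly chosen unstable one).

```python
import random


def k_fold_indices(n, k, seed=0):
    """Yield (train_idx, test_idx); every index appears in exactly one test fold."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        train = sorted(j for f, fold in enumerate(folds) if f != i for j in fold)
        yield train, sorted(folds[i])


def mae(y_true, y_pred):
    """Mean absolute error, e.g. in eV/atom for formation energies."""
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)


def roc_auc(labels, scores):
    """ROC AUC as the probability that a random positive outscores a random negative."""
    pos = [s for lab, s in zip(labels, scores) if lab == 1]
    neg = [s for lab, s in zip(labels, scores) if lab == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


def stability_labels(e_hull, threshold=0.0):
    """1 = 'stable' if E_hull <= threshold (eV/atom); the threshold is a modeling choice."""
    return [1 if e <= threshold else 0 for e in e_hull]


# Toy usage: a training-set-mean baseline stands in for a real regression model.
e_hull = [0.0, 0.12, 0.03, 0.40, 0.0, 0.25, 0.08, 0.0, 0.31, 0.05]
fold_mae = []
for train, test in k_fold_indices(len(e_hull), k=5):
    baseline = sum(e_hull[i] for i in train) / len(train)
    fold_mae.append(mae([e_hull[i] for i in test], [baseline] * len(test)))
```

Averaging `fold_mae` gives the cross-validated MAE. For the classification view, a model's predicted stability scores would be passed to `roc_auc` together with `stability_labels(e_hull)`.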

Composition-Based Model Architectures and Training

Table 1: Overview of Composition-Based Model Architectures

| Model Type | Key Input Features | Representative Algorithms | Primary Applications |
|---|---|---|---|
| Element-Fraction Models | Elemental composition percentages | ElemNet [3], Fully Connected DNNs | Formation energy prediction, stability classification |
| Feature-Engineered Models | Statistical features of elemental properties | Magpie [1], Roost [1] | Thermodynamic stability prediction, property screening |
| Language Model-Based Approaches | Tokenized element sequences | BERTOS [5], MatBERT [4] | Oxidation state prediction, cross-modal knowledge transfer |
| Ensemble/Hybrid Models | Multiple feature representations | ECSG [1], Multimodal transfer learning [4] | High-accuracy stability prediction, exploration of novel compositions |

The experimental workflow for developing composition-based models begins with data preparation and featurization. For simple element-fraction models, this involves representing each compound as a vector of elemental percentages, typically using a one-hot encoding or atomic fraction representation across the periodic table [3]. More advanced feature-engineered approaches calculate statistical metrics (mean, variance, range, etc.) for various elemental properties such as atomic radius, electronegativity, valence electron configuration, and other physicochemical characteristics [1].
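
Both featurization styles can be illustrated with a small sketch. The property table covers only five elements (atomic number and Pauling electronegativity) and is an assumption for illustration. The statistics (fraction-weighted mean, mean absolute deviation, min, max, range) mirror the kind used by Magpie-style featurizers, but the exact Magpie feature list is not reproduced, and the function names are hypothetical.

```python
import re
from collections import defaultdict

# Illustrative subset: atomic number Z and Pauling electronegativity.
# A real featurizer would cover the whole periodic table and many more properties.
ELEMENT_PROPS = {
    "Li": {"Z": 3, "electronegativity": 0.98},
    "O": {"Z": 8, "electronegativity": 3.44},
    "Na": {"Z": 11, "electronegativity": 0.93},
    "Cl": {"Z": 17, "electronegativity": 3.16},
    "Fe": {"Z": 26, "electronegativity": 1.83},
}


def parse_formula(formula):
    """Parse a simple formula (no parentheses) into element counts."""
    counts = defaultdict(float)
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] += float(amount) if amount else 1.0
    return dict(counts)


def element_fractions(formula, elements):
    """Atomic-fraction vector over a fixed element list (ElemNet-style input)."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    return [counts.get(el, 0.0) / total for el in elements]


def property_statistics(formula, props=ELEMENT_PROPS):
    """Fraction-weighted mean and mean absolute deviation, plus min, max, range."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    fracs = {el: n / total for el, n in counts.items()}
    features = {}
    for name in next(iter(props.values())):
        values = {el: props[el][name] for el in fracs}
        mean = sum(fracs[el] * v for el, v in values.items())
        features[f"{name}_mean"] = mean
        features[f"{name}_avg_dev"] = sum(
            fracs[el] * abs(v - mean) for el, v in values.items()
        )
        features[f"{name}_min"] = min(values.values())
        features[f"{name}_max"] = max(values.values())
        features[f"{name}_range"] = max(values.values()) - min(values.values())
    return features


vector = element_fractions("Li2O", ["Li", "O", "Na"])
stats = property_statistics("Li2O")
```

For Li2O the fraction vector is [2/3, 1/3, 0] over the chosen element list, and `stats["Z_mean"]` is 14/3. A fixed-length vector like this is what a downstream regressor or classifier consumes.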

The ECCNN model takes a different featurization approach: it encodes electron configurations as a multidimensional input of shape 118×168×8 that captures how electrons are distributed across energy levels [1]. This grid-like representation lets convolutional neural networks detect patterns in electronic structure that correlate with material stability and other properties.
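
The exact ECCNN encoding is not reproduced here. As a much simpler stand-in, the sketch below builds an idealized per-subshell occupancy vector from the Madelung (Aufbau) filling order and stacks one row per element, weighted by atomic fraction. The function names and the weighting scheme are assumptions, and real ground-state configurations deviate from this filling order for some elements (for example Cr and Cu).

```python
# Madelung (n + l) filling order covering Z = 1..118.
SUBSHELLS = ["1s", "2s", "2p", "3s", "3p", "4s", "3d", "4p", "5s", "4d",
             "5p", "6s", "4f", "5d", "6p", "7s", "5f", "6d", "7p"]
CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}


def aufbau_occupancy(z):
    """Electrons per subshell for atomic number z under idealized Aufbau filling."""
    if not 1 <= z <= 118:
        raise ValueError("z must be between 1 and 118")
    remaining, occupancy = z, []
    for sub in SUBSHELLS:
        n = min(remaining, CAPACITY[sub[-1]])
        occupancy.append(n)
        remaining -= n
    return occupancy


def configuration_matrix(counts, atomic_numbers):
    """One row per element (sorted by symbol), scaled by the element's atomic fraction."""
    total = sum(counts.values())
    return [
        [counts[el] / total * occ for occ in aufbau_occupancy(atomic_numbers[el])]
        for el in sorted(counts)
    ]


matrix = configuration_matrix({"Fe": 2, "O": 3}, {"Fe": 26, "O": 8})
```

The resulting 2D array (elements by subshells) is the kind of grid a convolutional layer can scan; ECCNN's actual representation is larger and more detailed.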

For transformer-based language models like BERTOS, chemical formulas are tokenized into sequences of element symbols sorted by electronegativity and processed through self-attention mechanisms to predict properties such as oxidation states for all elements in the compound [5]. These models are typically pretrained on large unlabeled datasets of chemical formulas followed by fine-tuning on specific property prediction tasks.
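
The tokenization step can be sketched as follows. BERTOS's exact preprocessing may differ. This sketch assumes one token per atom (each element repeated by its integer count), BERT-style `[CLS]`/`[SEP]`/`[PAD]` special tokens, and a toy vocabulary built from a six-element electronegativity table. A transformer would then predict an oxidation-state class for each element token.

```python
import re

# Pauling electronegativities for an illustrative subset of elements.
ELECTRONEGATIVITY = {"Li": 0.98, "Na": 0.93, "Fe": 1.83, "P": 2.19, "O": 3.44, "Cl": 3.16}
SPECIAL_TOKENS = ["[PAD]", "[CLS]", "[SEP]"]
VOCAB = {tok: i for i, tok in enumerate(SPECIAL_TOKENS + sorted(ELECTRONEGATIVITY))}


def tokenize(formula, max_len=16):
    """Expand a formula into per-atom element tokens sorted by electronegativity."""
    counts = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[symbol] = counts.get(symbol, 0) + (int(amount) if amount else 1)
    symbols = []
    for el in sorted(counts, key=lambda e: ELECTRONEGATIVITY[e]):
        symbols.extend([el] * counts[el])
    tokens = ["[CLS]"] + symbols[: max_len - 2] + ["[SEP]"]
    tokens += ["[PAD]"] * (max_len - len(tokens))
    return tokens, [VOCAB[t] for t in tokens]


tokens, ids = tokenize("LiFePO4")
# tokens -> ['[CLS]', 'Li', 'Fe', 'P', 'O', 'O', 'O', 'O', '[SEP]', '[PAD]', ...]
```

Sorting by electronegativity gives every formula a canonical order, so "LiFePO4" and "FeLiPO4" produce identical token sequences.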

Performance Comparison: Composition-Based vs. Structure-Based Models

Quantitative Benchmarking on Stability Prediction

Table 2: Performance Comparison of Composition-Based and Structure-Based Models on Stability and Property Prediction Tasks

| Model Category | Specific Model | Test Dataset | Performance Metric | Result | Key Advantage |
|---|---|---|---|---|---|
| Composition-Based (Deep Learning) | ElemNet [3] | OQMD (275,759 compositions) | MAE (Formation Enthalpy) | 0.050 eV/atom | No manual feature engineering required |
| Composition-Based (Ensemble) | ECSG [1] | JARVIS | AUC (Stability Classification) | 0.988 | Exceptional data efficiency |
| Composition-Based (Language Model) | BERTOS [5] | ICSD (52,147 samples) | Accuracy (Oxidation State) | 96.82% | Composition-only input for structure-agnostic prediction |
| Structure-Based (Graph Neural Network) | CGCNN [2] | NRELMatDB (15,500 structures) | MAE (Total Energy) | 0.041 eV/atom | Incorporates spatial arrangement information |
| Cross-Modal Transfer | imKT@ModernBERT [4] | LLM4Mat-Bench (20 tasks) | Average MAE Improvement | 15.7% | Leverages knowledge from multiple modalities |

The performance data reveals several key insights about the relative strengths of different modeling approaches. Composition-based models consistently demonstrate strong predictive accuracy while operating with significantly less input information than their structure-based counterparts [1] [3]. The ECSG ensemble framework achieves remarkable data efficiency, requiring only one-seventh of the training data to match the performance of existing models, which is particularly valuable for exploring novel compositional spaces where data is scarce [1].

For specific applications such as oxidation state prediction, composition-based language models like BERTOS achieve exceptional accuracy (96.82% for all elements, 97.61% for oxides) while requiring only chemical formulas as input [5]. This capability is particularly valuable for high-throughput screening of hypothetical material compositions where structural data is unavailable.

Structure-based models, particularly crystal graph neural networks, maintain an advantage for properties strongly dependent on spatial arrangement, with MAEs of approximately 0.04 eV/atom for total energy prediction [2]. However, recent cross-modal knowledge transfer approaches have narrowed this gap by implicitly incorporating structural knowledge into composition-based models through techniques like pretraining chemical language models on multimodal embeddings [4].

Trade-offs in Practical Applications

The choice between composition-based and structure-based modeling involves several practical considerations beyond pure predictive accuracy. Composition-based models enable rapid screening of vast chemical spaces—evaluating billions of potential compositions—which is computationally intractable for structure-based approaches that require explicit atomic coordinates [6] [3]. This capability makes them invaluable for the initial stages of materials discovery when structural information is unavailable.
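
To make the scale argument concrete, the sketch below counts distinct reduced formulas that can be built from a pool of elements. The pool size (80 elements) and maximum coefficient (8) are arbitrary assumptions. Charge balance and the other chemical filters that real screening pipelines apply are ignored, so the counts are upper bounds.

```python
from functools import reduce
from itertools import product
from math import comb, gcd


def reduced_stoichiometries(n_elements, max_coeff):
    """Count coefficient tuples in 1..max_coeff whose gcd is 1 (distinct reduced formulas)."""
    return sum(
        1
        for coeffs in product(range(1, max_coeff + 1), repeat=n_elements)
        if reduce(gcd, coeffs) == 1
    )


def count_candidates(n_pool, n_elements, max_coeff):
    """Number of distinct formulas with exactly n_elements drawn from a pool of n_pool."""
    return comb(n_pool, n_elements) * reduced_stoichiometries(n_elements, max_coeff)


for k in (2, 3, 4):
    print(k, count_candidates(80, k, 8))
```

Under these assumptions, quaternary compositions alone come to about 6 × 10^9 candidates. A composition-only model can score that many inputs directly, whereas a structure-based model would first need a crystal structure for each one.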

However, structure-based models provide more physically interpretable insights into structure-property relationships, capturing how specific bonding environments and spatial arrangements influence material behavior [2]. They generally achieve higher accuracy for properties strongly dependent on crystal structure, such as mechanical properties and electronic band structure [4].

Emerging cross-modal approaches attempt to bridge this divide by transferring knowledge from structure-aware models to composition-based predictors, either implicitly through aligned embedding spaces or explicitly by generating probable crystal structures from compositions [4]. These hybrid approaches have demonstrated state-of-the-art performance on multiple benchmarks, achieving the best results in 25 out of 32 tasks on the LLM4Mat-Bench and MatBench datasets [4].

Research Reagent Solutions: Computational Tools for Materials Discovery

Table 3: Essential Computational Resources for Composition-Based Modeling

| Resource Name | Type | Primary Function | Relevance to Composition-Based Models |
|---|---|---|---|
| OQMD [3] | Materials Database | DFT-computed formation enthalpies | Training data for stability prediction models |
| Materials Project [2] | Materials Database | Crystal structures and computed properties | Benchmarking and transfer learning |
| ICSD [5] | Experimental Database | Experimentally characterized crystal structures | Source of ground-truth oxidation states and stability data |
| JARVIS-DFT [4] | Materials Database | DFT-computed properties for bulk and 2D materials | Evaluation of model generalizability |
| CALPHAD [6] | Thermodynamic Modeling | Phase diagram calculation | Feature generation and model training |
| Pymatgen [5] | Python Library | Materials analysis | Feature extraction and data preprocessing |

Workflow and Signaling Pathways in Composition-Based Modeling

The following diagram illustrates the typical workflow for developing and applying composition-based models for stability prediction, highlighting the key decision points and methodological approaches:

Composition-Based Modeling Workflow

The conceptual "signaling pathway" in composition-based models illustrates how information flows from chemical composition to property prediction. For feature-engineered models, this pathway involves transforming elemental compositions into statistical representations of atomic properties, which are then processed by machine learning algorithms to identify complex correlations with material stability [1]. In deep learning approaches like ElemNet, the model automatically learns relevant features through multiple hidden layers, effectively creating an optimized pathway from elemental inputs to property predictions without manual feature engineering [3]. For cross-modal transfer learning, the pathway becomes more complex, incorporating knowledge distilled from structure-based models either implicitly through aligned embedding spaces or explicitly through structure generation, thereby enriching the compositional representation with structural insights without requiring explicit structural inputs [4].

The comparison between composition-based and structure-based models reveals a complementary relationship rather than a strict hierarchy. Composition-based models excel in exploratory research phases where structural information is unavailable, enabling rapid screening of vast compositional spaces with increasingly competitive accuracy [1] [3]. Their efficiency advantage is particularly pronounced for applications requiring the evaluation of millions of potential compounds, such as in the discovery of new battery materials, catalysts, or high-temperature alloys [6].

Structure-based models remain essential for detailed property prediction and understanding structure-property relationships in known materials systems [2]. However, the emerging paradigm of cross-modal knowledge transfer suggests a future where the boundaries between these approaches become increasingly blurred, with composition-based models incorporating structural insights without requiring explicit atomic coordinates [4].

For researchers and development professionals, the selection between these approaches should be guided by specific research objectives: composition-based models for initial exploration and screening of novel chemical spaces, structure-based models for detailed investigation of promising candidates, and hybrid approaches for maximizing predictive accuracy across diverse materials classes. As both methodologies continue to advance, their strategic integration is likely to accelerate the discovery and development of novel materials with tailored properties.

In the field of computational research, predicting the stability of molecules and materials is a fundamental task. Two dominant paradigms have emerged: composition-based models, which rely solely on chemical formulas, and structure-based models, which use the precise three-dimensional (3D) atomic coordinates and conformations. This guide provides a detailed comparison of these approaches, focusing on their underlying principles, performance, and practical applications for researchers and drug development professionals.

Core Concepts: Composition-Based vs. Structure-Based Models

The primary distinction between these model classes lies in their input data and the type of information they capture.

- Composition-Based Models: These models predict properties based on the elemental composition of a compound (e.g., its chemical formula). They do not require or use any information about the spatial arrangement of atoms.
  - Inputs: Chemical formula and derived features (e.g., statistical properties of constituent elements, electron configurations).
  - Advantage: Highly efficient and applicable when structural data is unavailable.
  - Limitation: Cannot capture properties arising from 3D geometry, such as stereochemistry or binding pose, which can introduce predictive bias [1].

- Structure-Based Models: These models explicitly use the 3D atomic coordinates and molecular conformation as input to predict properties and stability.
  - Inputs: The 3D spatial coordinates (x, y, z) of atoms and their types, which define the molecule's conformation.
  - Advantage: Can directly compute geometric and physical interactions (e.g., van der Waals forces, hydrogen bonding), leading to higher accuracy for tasks like binding affinity prediction and stability assessment [7] [8].
  - Limitation: More computationally intensive and requires accurate 3D structures, which may not always be available.

The following diagram illustrates the fundamental logical relationship between these two approaches and their reliance on different types of input data.

Performance Comparison: Key Metrics and Experimental Data

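Experimental data from recent studies illustrate the distinct strengths of each approach, particularly structure-based models. The table below summarizes reported results; because each model was evaluated on a different task and metric, the numbers show what each approach does well rather than supporting a direct head-to-head ranking.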

Table 1: Performance comparison of composition-based and structure-based models

| Model / Framework | Primary Input Type | Key Performance Metric | Reported Result | Key Advantage / Application |
|---|---|---|---|---|
| ECSG (Ensemble) [1] | Composition | AUC for Stability Prediction | 0.988 | High sample efficiency; requires only 1/7 of data to match other models' performance. |
| GNN for Crystals [7] | Structure (3D Graphs) | Accuracy in Energy Ordering | Correctly ranks polymorphic structures | Accurately predicts total energy for both ground-state and high-energy crystals. |
| DiffGui [8] | Structure (3D Coordinates) | PoseBusters (PB) Validity | ~90% (approximate) | Generates molecules with high binding affinity, rational 3D structure, and desired drug-like properties. |
| GIE-RC Autoencoder [9] | Structure (Relative Coords) | Reconstruction RMSD under Noise | ~0.19 Å (for 5% noise on 24-atom system) | Robust conformation generation; less sensitive to error than Cartesian coordinates. |

Analysis of Comparative Data

- Accuracy and Robustness: Among structure-based representations, the GIE-RC autoencoder shows superior robustness in 3D structure reconstruction. With 5% noise added, it achieved an RMSD of only 0.19 Å for a 24-atom system, compared with 1.15 Å for a model based on Cartesian coordinates [9]. This resilience to input perturbations is critical for reliable predictions.

- Task-Specific Superiority: For applications where 3D geometry is paramount, such as structure-based drug design (SBDD), structure-based models are indispensable. DiffGui excels at generating molecules with high binding affinity and, crucially, with valid 3D geometries (high PB-validity), a common challenge for generative models that ignore structural feasibility [8].

- Efficiency of Composition-Based Models: The ECSG ensemble model demonstrates that composition-based approaches can be highly effective for specific tasks like thermodynamic stability prediction, achieving an AUC of 0.988. Their major advantage is sample efficiency, requiring less data to achieve strong performance, making them ideal for rapid, large-scale screening when structures are unknown [1].

Experimental Protocols and Methodologies

The performance data presented above is derived from rigorous experimental protocols. Below is a detailed workflow for a typical structure-based modeling experiment, illustrating the key steps from data preparation to model evaluation.

Detailed Protocol Breakdown

1. Data Preparation

- Sources: High-quality 3D structures are sourced from public databases like the Protein Data Bank (PDB) for biomacromolecules, the Cambridge Structural Database (CSD) for small molecules, or generated through Molecular Dynamics (MD) simulations for conformational sampling [9] [10].

- Curation: Datasets are carefully curated to remove erroneous structures and annotated with target properties (e.g., formation energy, binding affinity).

2. Feature Representation

- 3D Graph Representation: Atoms are treated as nodes, and chemical bonds or spatial proximities are treated as edges. This is a common and powerful representation for Graph Neural Networks (GNNs) [7] [8].

- Invariant Coordinate Systems: Methods like Graph Information-Embedded Relative Coordinate (GIE-RC) are used instead of standard Cartesian coordinates. GIE-RC is translationally and rotationally invariant, making the model less sensitive to the initial orientation of the molecule and more robust to errors [9].

- Internal Coordinates: Some models use bond lengths, angles, and dihedrals, which are also inherently invariant to rotation and translation [9].
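
A quick numerical check shows why invariant representations help. The sketch applies a rigid rotation and translation to a set of random points and compares how much the Cartesian coordinates change against how much the interatomic distances change. Pairwise distances serve here as a simple invariant stand-in; this is not the GIE-RC construction, which is not reproduced, and the six "atoms" are hypothetical.

```python
import math
import random


def rotate_z(coords, angle):
    """Rigidly rotate 3D points about the z axis by `angle` radians."""
    c, s = math.cos(angle), math.sin(angle)
    return [(c * x - s * y, s * x + c * y, z) for x, y, z in coords]


def translate(coords, shift):
    """Rigidly translate 3D points by the vector `shift`."""
    dx, dy, dz = shift
    return [(x + dx, y + dy, z + dz) for x, y, z in coords]


def pairwise_distances(coords):
    """Upper-triangle interatomic distances; unchanged by rotation and translation."""
    return [math.dist(a, b) for i, a in enumerate(coords) for b in coords[i + 1:]]


rng = random.Random(0)
mol = [tuple(rng.uniform(-2.0, 2.0) for _ in range(3)) for _ in range(6)]  # hypothetical atoms
moved = translate(rotate_z(mol, math.pi / 3), (1.0, 2.0, 3.0))

# Cartesian coordinates change a lot under a rigid motion ...
cartesian_change = max(math.dist(p, q) for p, q in zip(mol, moved))
# ... while the distance-based description does not (up to rounding).
distance_change = max(
    abs(a - b) for a, b in zip(pairwise_distances(mol), pairwise_distances(moved))
)
```

A model fed raw Cartesian coordinates would have to learn that `mol` and `moved` are the same conformation. An invariant input encodes that fact by construction.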

3. Model Architecture

- Equivariant Graph Neural Networks (EGNNs): These are state-of-the-art for 3D molecular data. They respect the symmetries of 3D space: scalar predictions such as energy stay the same when the molecule is rotated or translated (invariance), while vector or coordinate outputs transform consistently with the molecule (E(3)-equivariance) [8].

- Diffusion Models: Used for generative tasks, such as creating new 3D molecules. They work by progressively adding noise to a structure and then training a network to reverse this process, learning to generate realistic structures from noise [8].

- Autoencoders (AEs): Used to learn a compressed, informative representation (latent space) of molecular conformations, which can then be used for efficient sampling and generation [9] [11].
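
The diffusion-model idea can be sketched with the generic DDPM-style forward (noising) process and the standard noise-prediction loss, applied to flattened coordinates. This is not DiffGui's formulation, which also handles atom and bond types. The linear schedule values are common defaults assumed here, and the zero predictor is a placeholder for a trained (for example, equivariant) network.

```python
import math
import random


def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Noise variances beta_t increasing linearly over num_steps (num_steps >= 2)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + step * t for t in range(num_steps)]


def cumulative_alphas(betas):
    """alpha_bar_t = prod_{s <= t} (1 - beta_s)."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out


def q_sample(x0, t, alpha_bar, rng):
    """Draw x_t ~ N(sqrt(alpha_bar_t) x0, (1 - alpha_bar_t) I) in one shot; return (x_t, eps)."""
    a = alpha_bar[t]
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    return x_t, eps


def denoising_loss(predict_eps, x0, alpha_bar, rng):
    """Mean squared error between true and predicted noise at a random timestep."""
    t = rng.randrange(len(alpha_bar))
    x_t, eps = q_sample(x0, t, alpha_bar, rng)
    pred = predict_eps(x_t, t)
    return sum((p - e) ** 2 for p, e in zip(pred, eps)) / len(eps)


alpha_bar = cumulative_alphas(linear_beta_schedule(1000))
coords = [0.0, 0.0, 0.0, 1.1, 0.0, 0.0]  # two atoms, flattened x, y, z (hypothetical)
loss = denoising_loss(lambda x_t, t: [0.0] * len(x_t), coords, alpha_bar, random.Random(0))
```

Training minimizes this loss over many structures and timesteps. Generation then runs the learned denoiser backwards, starting from pure noise, to produce new coordinates.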

4. Training Objective

- Energy Prediction: Models are trained to predict the total energy or formation energy of a structure, often using Density Functional Theory (DFT) calculations as the ground truth [7].

- Reconstruction Loss: In autoencoders, the model is trained to accurately reconstruct its input 3D structure from the latent representation [9].

- Property Prediction: Many models are trained with multi-task objectives to predict not just stability, but also other key properties like drug-likeness (QED) and synthetic accessibility (SA) [8].

5. Evaluation Benchmarking

- Binding Affinity: Estimated using scoring functions like Vina Score, a standard for evaluating protein-ligand interactions [8].

- Geometric Accuracy: Measured by Root-Mean-Square Deviation (RMSD) between predicted and ground-truth atomic positions. Lower RMSD indicates higher geometric fidelity [9] [8].

- Drug-Likeness and Validity: Assessed using metrics like Quantitative Estimate of Drug-likeness (QED), Synthetic Accessibility (SA), PoseBusters (PB) Validity (checks for physical realism and chemical correctness), and RDKit validity (checks for chemical sanity) [8].
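
The RMSD metric used for geometric accuracy reduces to a short calculation over matched atoms. The sketch below assumes both structures list atoms in the same order and applies no superposition. That matches the usual treatment of docked poses, which are compared in the receptor's frame. Comparing free conformations normally requires optimal alignment first (for example with the Kabsch algorithm), and symmetry-equivalent atom mappings should also be considered; both are omitted here. A pose within about 2 Å of the reference is a commonly used success criterion in docking benchmarks.

```python
import math


def rmsd(coords_a, coords_b):
    """RMSD between matched atoms (same order), with no superposition applied."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("expected two non-empty coordinate lists of equal length")
    squared = sum(math.dist(p, q) ** 2 for p, q in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))


reference = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
predicted = [(x + 1.0, y, z) for x, y, z in reference]  # every atom shifted by 1 Angstrom
print(rmsd(reference, predicted))  # 1.0
```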

Table 2: Key software and databases for structure-based modeling

| Resource Name | Type | Primary Function | Relevance to Structure-Based Models |
|---|---|---|---|
| Protein Data Bank (PDB) | Database | Repository for 3D structural data of proteins and nucleic acids. | The primary source of experimental 3D structures for training and benchmarking models of biomolecules [10]. |
| Cambridge Structural Database (CSD) | Database | Repository for experimentally determined organic and metal-organic crystal structures. | The primary source of 3D structures for small molecules and periodic materials [7]. |
| AlphaFold2/3 | Software | AI system that predicts 3D protein structures from amino acid sequences. | Provides highly accurate protein structures for SBDD when experimental structures are unavailable [10] [8]. |
| RDKit | Software | Open-source toolkit for Cheminformatics and Machine Learning. | Used for processing molecules, calculating molecular descriptors (QED, LogP), and checking chemical validity [8]. |
| OpenBabel | Software | Chemical toolbox designed to speak many languages of chemical data. | Often used to convert file formats and assign bond types based on atomic coordinates in generative workflows [8]. |
| AutoDock Vina | Software | Molecular docking and virtual screening program. | The standard tool for rapid estimation of binding affinity, used to evaluate generated molecules in SBDD [8]. |
| PDBbind | Dataset | A curated database of experimentally measured binding affinities for protein-ligand complexes in the PDB. | A critical benchmark dataset for training and evaluating models that predict protein-ligand binding [8]. |

The choice between composition-based and structure-based models is not a matter of one being universally superior, but rather of selecting the right tool for the scientific question at hand.

- Composition-based models offer unparalleled speed and utility for the initial exploration of vast chemical spaces, especially when structural information is absent. Their high sample efficiency makes them ideal for prioritizing candidates for further study [1].

- Structure-based models are essential when the 3D conformation dictates function. They provide the accuracy and geometric realism required for rational drug design, materials stability prediction, and understanding conformational dynamics [9] [7] [8].

The future of computational stability prediction lies in the intelligent integration of both approaches, leveraging the scalability of composition-based screening to feed into high-fidelity, structure-based validation and optimization.

The Role of Thermodynamic Stability in Drug Development

Thermodynamic stability is a critical quality attribute in drug development, governing the shelf life, efficacy, and safety of pharmaceutical products. At its core, thermodynamic stability describes the energetic balance of a drug molecule and its interactions with biological targets, excipients, and solvent systems. Unlike kinetic stability, which concerns the rate of change, thermodynamic stability determines the final state a system reaches at equilibrium. It therefore sets fundamental parameters such as equilibrium solubility and binding affinity, which in turn influence bioavailability [12]. A comprehensive understanding of thermodynamic principles allows researchers to select optimal solid forms, predict shelf life, and design molecules with improved binding characteristics, ultimately accelerating the development of effective therapeutics.
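
The link between binding affinity and thermodynamics can be made concrete with two standard relations: ΔG° = RT ln K_d (equivalently -RT ln K_a, relative to a 1 M standard state) and ΔG = ΔH - TΔS, which is how the enthalpy measured by isothermal titration calorimetry is split into enthalpic and entropic contributions. The sketch below is illustrative; the K_d of 10 nM and the enthalpy of -60 kJ/mol are hypothetical inputs, not data from the cited work.

```python
import math

R = 8.314462618  # gas constant, J mol^-1 K^-1


def binding_free_energy(kd_molar, temperature_k=298.15):
    """Standard binding free energy (J/mol) from Kd, relative to a 1 M standard state."""
    return R * temperature_k * math.log(kd_molar)


def minus_t_delta_s(delta_g, delta_h):
    """Entropic term -T*dS (J/mol) from dG = dH - T*dS."""
    return delta_g - delta_h


dg = binding_free_energy(10e-9)          # hypothetical Kd of 10 nM
tds = minus_t_delta_s(dg, -60_000.0)     # hypothetical ITC enthalpy of -60 kJ/mol
print(f"dG = {dg / 1000:.1f} kJ/mol, -TdS = {tds / 1000:.1f} kJ/mol")
# dG = -45.7 kJ/mol, -TdS = 14.3 kJ/mol
```

In this made-up case, binding is enthalpy-driven and partly offset by an unfavorable entropic term. That kind of decomposition is what thermodynamic profiling aims to reveal.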

The drug development landscape is increasingly leveraging two complementary approaches for stability assessment: composition-based models that utilize chemical formula information to predict properties, and structure-based models that incorporate detailed atomic arrangements and geometric relationships [1]. Composition-based models offer advantages in early discovery when structural data may be unavailable, while structure-based models provide deeper mechanistic insights but require more extensive characterization. This guide objectively compares these approaches through the lens of thermodynamic stability, providing researchers with experimental data and methodologies to inform their development strategies.

Composition-Based vs. Structure-Based Stability Models: A Comparative Framework

Table 1: Comparison of Composition-Based and Structure-Based Stability Models

| Feature | Composition-Based Models | Structure-Based Models |
|---|---|---|
| Primary Input Data | Elemental composition, stoichiometry [1] | Atomic coordinates, bond lengths, spatial relationships [7] |
| Information Content | Lower (elemental proportions only) [1] | Higher (complete geometric arrangement) [7] |
| Computational Demand | Lower | Higher (requires structural optimization) |
| Applicability Stage | Early discovery, unexplored chemical spaces [1] | Late discovery, optimization phases |
| Key Strengths | Rapid screening of vast compositional spaces [1] | Accurate energy ranking of polymorphic structures [7] |
| Main Limitations | Cannot distinguish between structural isomers [1] | Requires known or predicted crystal structures [1] |
| Sample Efficiency | High (achieves performance with less data) [1] | Lower (requires substantial training data) |

The fundamental distinction between these modeling approaches lies in their input data requirements and information content. Composition-based models utilize statistical features derived from elemental properties such as atomic number, mass, and radius, or even electron configuration information [1]. These models are particularly valuable when exploring uncharted chemical territories where structural information is unavailable. In contrast, structure-based models, particularly graph neural networks (GNNs), represent crystals as graphs of atoms connected by bonds, enabling them to learn complex relationships in atomic arrangements and accurately rank polymorphic structures by their energy [7]. This capability is crucial for predicting thermodynamic stability, as the most stable polymorph typically has the lowest energy [7].
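
Energy ranking connects directly to the energy above the convex hull (E_hull), the stability label that composition-based classifiers are trained on. The sketch below computes E_hull for a hypothetical binary A-B system using Andrew's monotone-chain lower hull. The phases and formation energies are invented for illustration, and the two entries at x = 0.5 play the role of polymorphs. Production workflows handle multicomponent systems with dedicated tools (for example pymatgen's phase-diagram module); this pure-Python version only shows the geometry.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points using Andrew's monotone chain."""
    best = {}
    for x, e in points:  # keep only the lowest energy at each composition
        best[x] = min(e, best.get(x, e))
    hull = []
    for p in sorted(best.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # hull[-1] lies on or above the chord hull[-2] -> p
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull range")


def energy_above_hull(phases):
    """phases: name -> (fraction of B, formation energy in eV/atom); returns name -> E_hull."""
    points = [(0.0, 0.0), (1.0, 0.0)] + list(phases.values())  # elemental references
    hull = lower_hull(points)
    return {name: e - hull_energy(hull, x) for name, (x, e) in phases.items()}


# Hypothetical A-B system with two polymorphs of AB.
phases = {
    "A3B": (0.25, -0.15),
    "AB": (0.50, -0.40),
    "AB (polymorph)": (0.50, -0.35),
    "AB3": (0.75, -0.25),
}
e_hull = energy_above_hull(phases)
```

Here AB and AB3 sit on the hull (E_hull = 0), while the higher-energy AB polymorph and A3B lie 0.05 eV/atom above it. A composition-only model sees both AB entries as the same input, which is why distinguishing polymorphs requires structural information.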

Experimental Approaches for Thermodynamic Stability Assessment

Thermodynamic Profiling of Amorphous and Coamorphous Systems

Table 2: Experimental Thermodynamic Parameters of Azelnidipine Solid Forms

| Solid Form | Glass Transition Temperature (Tg/K) | Transition Temperature to β-Crystal (T/K) | Activation Energy for Decomposition (Ea/kJ mol−1) |
|---|---|---|---|
| α-Amorphous Phase (α-AP) | 365.5 | 237.7 | 133.0 |
| β-Amorphous Phase (β-AP) | 358.9 | 400.3 | 114.2 |
| Azelnidipine-Piperazine Coamorphous (CAP) | 347.6 | 231.4 | 131.6 |

Experimental assessment of thermodynamic stability employs both solid-state and solution-based methods. A comprehensive study on azelnidipine, a calcium channel blocker, demonstrates how different solid forms exhibit distinct thermodynamic profiles [13] [14]. The preparation of two amorphous phases (α-AP and β-AP) from different crystalline polymorphs, along with a coamorphous phase (CAP) with piperazine, revealed that no general relationship exists between solid physical stability and solution chemical stability [13] [14]. For instance, while α-AP showed the highest glass transition temperature (indicating better solid-state physical stability), β-AP proved to be the most thermodynamically stable form in solution at room temperature [13] [14].

Key Experimental Protocols

Protocol 1: Preparation and Characterization of Amorphous and Coamorphous Phases

- Preparation of Amorphous Phases: Crystalline drug substance is heated to 10-20°C above its melting point (150°C for α-azelnidipine; 240°C for β-azelnidipine) and maintained for 3 minutes. The melt is rapidly quenched using liquid nitrogen and subsequently milled under cryogenic conditions [13].

- Preparation of Coamorphous Phases: Drug and coformer (e.g., piperazine) are combined in molar ratios (1:2 for azelnidipine:piperazine) and ground for 30 minutes using an oscillatory disc mill. The process includes periodic stops every 10 minutes to scrape jar walls and disperse heat [13].

- Characterization: Samples are analyzed using Powder X-ray Diffraction (PXRD) to confirm amorphous nature, Fourier-Transform Infrared Spectroscopy (FTIR) to identify molecular interactions, and Temperature-Modulated Differential Scanning Calorimetry (TMDSC) to determine glass transition temperatures [13].

Protocol 2: Solubility-Based Thermodynamic Stability Assessment

- Solubility Measurements: Excess solid form is added to 0.01 M HCl medium and equilibrated at multiple temperatures (298, 304, 310, 316, and 322 K) with constant agitation [13] [14].

- Sample Analysis: Suspensions are filtered and concentrations determined via HPLC or UV-Vis spectroscopy [13].

- Data Analysis: Solubility values are used to calculate transition temperatures between amorphous and crystalline forms and thermodynamic parameters including free energy, enthalpy, and entropy of transition [13] [14].
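
The data-analysis step can be sketched with a van't Hoff treatment: fit ln S = c + m/T for each solid form, take the temperature where the two fitted curves cross as the transition temperature, and approximate the free energy of converting form A to form B as RT ln(S_B/S_A). This rests on assumed ideal-solution behavior (solubility ratio standing in for the activity ratio) and linearity over a narrow temperature range. The cited study's exact procedure may differ, and the solubilities below are invented, noise-free values generated at the protocol temperatures.

```python
import math

R = 8.314462618  # J mol^-1 K^-1


def fit_vant_hoff(temps_k, solubilities):
    """Least-squares fit of ln S = c + m / T; returns (c, m)."""
    xs = [1.0 / t for t in temps_k]
    ys = [math.log(s) for s in solubilities]
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    m = sxy / sxx
    return y_mean - m * x_mean, m


def transition_temperature(fit_a, fit_b):
    """Temperature (K) at which the two fitted solubility curves intersect."""
    (c_a, m_a), (c_b, m_b) = fit_a, fit_b
    return (m_a - m_b) / (c_b - c_a)


def transition_free_energy(fit_a, fit_b, temp_k):
    """Approximate dG (J/mol) for form A -> form B as R*T*ln(S_B / S_A)."""
    (c_a, m_a), (c_b, m_b) = fit_a, fit_b
    return R * temp_k * ((c_b + m_b / temp_k) - (c_a + m_a / temp_k))


# Hypothetical, noise-free solubilities at the protocol temperatures (arbitrary units).
temps = [298, 304, 310, 316, 322]
sol_a = [math.exp(5.0 - 3000.0 / t) for t in temps]
sol_b = [math.exp(3.0 - 2400.0 / t) for t in temps]
fit_a, fit_b = fit_vant_hoff(temps, sol_a), fit_vant_hoff(temps, sol_b)
print(transition_temperature(fit_a, fit_b))  # close to 300 K for these made-up curves
```

The sign of the free energy tells which form is stable: with these invented curves, form B is less soluble and therefore more stable above about 300 K, and form A is more stable below it. The fitted slope m also estimates -ΔH_sol/R for each form.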

Protocol 3: Machine Learning Model Training for Stability Prediction

- Data Collection: Large-scale datasets from materials databases (Materials Project, OQMD, JARVIS) provide formation energies and decomposition energies for training [1].

- Feature Engineering: For composition-based models, elemental properties are converted to statistical features; for structure-based models, crystal structures are represented as graphs [1] [7].

- Model Training: Ensemble approaches combine models based on different principles (e.g., Magpie, Roost, ECCNN) using stacked generalization to reduce bias and improve prediction accuracy of thermodynamic stability [1].
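
Stacked generalization can be sketched as follows. Each base model (standing in for Magpie-, Roost-, or ECCNN-style learners) is first run in cross-validation to produce out-of-fold probabilities, and a meta-learner is then trained on those probabilities. Using logistic regression fitted by plain gradient descent as the meta-learner is an assumption made for this sketch; ECSG's actual meta-model may differ. Only two base-model columns are used for brevity, and the probabilities are hypothetical.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_meta_learner(base_probs, labels, lr=0.5, epochs=2000):
    """Fit logistic-regression weights on base-model probabilities by gradient descent."""
    k, n = len(base_probs[0]), len(labels)
    w, b = [0.0] * k, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * k, 0.0
        for x, y in zip(base_probs, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for j in range(k):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict_stacked(w, b, x):
    """Meta-learner probability that a compound is stable."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


# Hypothetical out-of-fold probabilities: column 0 tracks the labels, column 1 does not.
oof = [[0.9, 0.4], [0.8, 0.6], [0.85, 0.4], [0.7, 0.6],
       [0.2, 0.4], [0.1, 0.6], [0.3, 0.6], [0.15, 0.4]]
labels = [1, 1, 1, 1, 0, 0, 0, 0]
w, b = fit_meta_learner(oof, labels)
```

The meta-learner assigns most of its weight to the informative column. Training it on out-of-fold rather than in-sample predictions keeps it from simply rewarding whichever base model overfits most.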

Research Reagent Solutions: Essential Materials for Thermodynamic Studies

Table 3: Essential Research Reagents and Materials for Thermodynamic Stability Assessment

| Reagent/Material | Function | Application Example |
|---|---|---|
| Differential Scanning Calorimeter (DSC) | Measures thermal transitions (Tg, melting point, decomposition) | Determining glass transition temperatures of amorphous phases [13] |
| Isothermal Titration Calorimeter (ITC) | Directly measures binding thermodynamics | Determining ΔH, ΔS, and Ka for drug-target interactions [12] |
| Powder X-ray Diffractometer | Identifies solid-state form and amorphous character | Confirming successful preparation of amorphous phases [13] |
| High-Performance Liquid Chromatography | Quantifies drug concentration and degradation products | Analyzing solubility and chemical stability in solution studies [13] |
| Oscillatory Ball Mill | Prepares coamorphous systems by mechanical grinding | Manufacturing coamorphous systems without solvents [13] |
| Fluorescence-Based Thermal Shift Assay | Medium-throughput screening of thermal denaturation | Prescreening compounds for thermodynamic profiling [15] |
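ITC measures the association constant Ka and the binding enthalpy ΔH directly. The remaining parameters follow from ΔG = -RT ln Ka and ΔG = ΔH - TΔS. A minimal sketch of that arithmetic is shown below. The input values are hypothetical, and Ka is taken relative to a 1 M standard state.

```python
import math

R = 8.314  # gas constant, J/(mol*K)


def binding_thermodynamics(ka_per_molar, delta_h, temp_k=298.15):
    """Derive dG (J/mol) and dS (J/(mol*K)) from ITC-measured Ka (1/M) and dH (J/mol)."""
    delta_g = -R * temp_k * math.log(ka_per_molar)  # dG = -RT ln Ka
    delta_s = (delta_h - delta_g) / temp_k           # rearranged from dG = dH - T dS
    return delta_g, delta_s


# Hypothetical ITC result: Ka = 1e6 1/M (Kd = 1 uM), dH = -40 kJ/mol
dg, ds = binding_thermodynamics(1.0e6, -40_000.0)
print(f"dG = {dg / 1000:.1f} kJ/mol, -TdS = {-298.15 * ds / 1000:.1f} kJ/mol")
```

For these inputs ΔG is about -34.2 kJ/mol and the entropic term -TΔS is about +5.8 kJ/mol. The binding is therefore enthalpy-driven and partly offset by entropy. This is the kind of decomposition that thermodynamic profiling relies on.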

Visualization of Workflows and Model Architectures

Experimental Workflow for Thermodynamic Stability Assessment

Ensemble Machine Learning Framework for Stability Prediction

Thermodynamic stability assessment provides fundamental insights that bridge drug discovery and development. The complementary approaches of composition-based and structure-based modeling offer distinct advantages at different stages of the pharmaceutical pipeline, with composition-based methods enabling rapid exploration of chemical space and structure-based methods providing accurate ranking of stable forms for lead optimization [1] [7]. Experimental validation remains crucial, as demonstrated by the complex relationship between solid-state and solution stability observed in amorphous azelnidipine systems [13] [14].

Future directions in thermodynamic stability assessment include the integration of artificial intelligence with high-throughput experimental validation, the development of standardized protocols for biologics stability assessment [16], and the application of novel thermodynamic principles such as metastable materials with negative thermal expansion [17]. As the field advances, the systematic application of thermodynamic principles will continue to enable more efficient drug development, reducing late-stage failures and accelerating the delivery of effective therapies to patients.

Stability is a paramount property in both pharmaceutical and materials science, though its definition and assessment differ significantly between these fields. In drug development, stability refers to a substance's capacity to retain its chemical identity, potency, and purity over time under the influence of various environmental factors. In materials science, particularly for inorganic compounds, thermodynamic stability is typically represented by the decomposition energy (ΔH_d), defined as the total energy difference between a given compound and its competing compounds in a specific chemical space [1]. Accurate stability prediction is crucial because it determines the feasible synthesis pathways for new materials and the shelf life and efficacy of pharmaceutical products.
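The decomposition-energy idea can be made concrete for a hypothetical binary A-B system. Formation energies per atom are plotted against the fraction of B. The stable phases form the lower convex hull, and a compound's energy above the hull is its vertical distance to that hull. For an unstable compound, this distance equals the decomposition energy described above. For a compound on the hull, the decomposition energy is instead measured against a hull built without that compound, which this minimal sketch does not compute. The energies are invented for illustration. Production workflows typically use DFT data and phase-diagram utilities in libraries such as pymatgen.

```python
def lower_hull(points):
    """Lower convex hull of (x_B, E_f) points using Andrew's monotone chain."""
    hull = []
    for p in sorted(set(points)):
        # Pop the last vertex while it lies on or above the segment hull[-2] -> p
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(x, e_form, hull):
    """Vertical distance (eV/atom) from a compound to the lower hull at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return e_form - (y1 + (y2 - y1) * (x - x1) / (x2 - x1))
    raise ValueError("composition lies outside the hull")


# Hypothetical A-B system: (fraction of B, formation energy in eV/atom);
# the pure elements sit at 0 eV/atom by definition.
phases = {"A": (0.0, 0.0), "A3B": (0.25, -0.20), "AB": (0.5, -0.50),
          "AB3": (0.75, -0.30), "B": (1.0, 0.0)}
hull = lower_hull(list(phases.values()))
for name, (x, e) in phases.items():
    print(name, round(energy_above_hull(x, e, hull), 3))
```

Here A3B lies 0.05 eV/atom above the line joining A and AB, so it would be predicted to decompose into those two phases. AB and AB3 lie on the hull.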

A critical framework for understanding these applications is the comparison between composition-based and structure-based models for stability prediction. Composition-based models predict properties using only the chemical formula of a compound, without geometric structural information. In contrast, structure-based models incorporate detailed structural data, including the proportions of each element and the geometric arrangements of atoms [1]. This guide objectively compares the performance, experimental protocols, and applications of these modeling approaches across the diverse domains of small molecules, biologics, and inorganic materials.

Fundamental Differences: Small Molecules vs. Biologics

Small molecule drugs and biologics represent two distinct classes of pharmaceuticals, each with unique stability profiles and testing requirements.

Small molecule drugs are medications with a low molecular weight, consisting of chemically synthesized compounds with straightforward structures. They are generally shelf-stable, relatively easy to manufacture, and are typically administered orally in pill form [18]. Their small size allows them to be easily absorbed into the bloodstream and interact with specific molecules within cells [18].

Biologics, or large molecule drugs, have a high molecular weight and are complex proteins manufactured or extracted from living organisms. They are inherently less stable than small molecules, costly to produce, and typically require administration via injection or infusion [18]. Their complex structure makes them sensitive to environmental stresses such as agitation, temperature fluctuations, and interactions with container surfaces [19].

Table 1: Fundamental Characteristics and Stability Testing of Small Molecules vs. Biologics

| Characteristic | Small Molecules | Biologics |
|---|---|---|
| Molecular Size | Low molecular weight [18] | High molecular weight [18] |
| Structural Complexity | Simple, chemically defined structure [18] | Complex, heterogeneous protein structure [18] |
| Inherent Stability | Generally high; shelf-stable [18] | Generally low; less stable [18] |
| Typical Administration Route | Oral (pill) [18] | Injection or infusion [18] |
| Primary Stability Concern | Chemical degradation | Physical (e.g., aggregation, denaturation) and chemical degradation [19] |
| Common Storage Condition | 25°C / 60% Relative Humidity [19] | 2-8°C (refrigerated) or frozen [19] |
| Special Stability Testing | Standard temperature/humidity | Agitation, freeze-thaw cycling, container orientation, surface interaction [19] |

These fundamental differences necessitate distinct stability testing protocols. For biologics, additional studies are required to evaluate sensitivity to freeze-thaw cycles, which can cause protein damage and concentration inconsistencies, and interactions with packaging materials, which can lead to aggregation or leaching [19]. The basic design of a stability study, however, shares similarities: both involve a written protocol, storage under controlled conditions, and testing at specified intervals (e.g., 1, 3, 6, 9, 12, 18, and 24 months) to establish a shelf-life [19].

Composition-Based vs. Structure-Based Stability Models

The prediction of stability, particularly in materials science, relies on two fundamental modeling paradigms. The choice between them involves a trade-off between computational efficiency and informational depth.

Composition-based models use the chemical formula of a compound as input. A key advantage is their applicability in the early stages of material discovery when the precise atomic structure is unknown. As structural information often requires complex experimental techniques or computationally expensive simulations, composition-based models allow for rapid high-throughput screening of new chemical spaces [1]. However, a potential drawback is that by ignoring structural information, they may lack accuracy for certain properties [1].

Structure-based models incorporate detailed structural data, including the geometric arrangements of atoms in a crystal lattice (crystallographic data). These models, such as Crystal Graph Neural Networks (GNNs), typically contain more extensive information and can be more accurate for modeling experimentally synthesized compounds [4]. Their primary limitation is their reliance on known crystal structures, making them unsuitable for predicting the stability of entirely new, uncharacterized materials where the structure is not yet known [1] [4].

Table 2: Comparison of Composition-Based and Structure-Based Models for Stability Prediction

| Feature | Composition-Based Models | Structure-Based Models |
|---|---|---|
| Primary Input Data | Chemical formula (elemental composition) [1] | Crystallographic data (atomic structure) [1] [4] |
| Information Depth | Limited to elemental stoichiometry | Includes atomic geometry and bonding [1] |
| Computational Cost | Lower | Higher |
| Applicability to Novel Materials | High; ideal for exploring uncharted chemical space [1] | Low; requires known crystal structure [1] [4] |
| Key Advantage | High-throughput screening without a priori structure knowledge [1] | Richer feature set, often higher accuracy for known structures [1] |
| Common Algorithms | ElemNet, Roost, Magpie, Chemical Language Models (CLMs) [1] [4] | Crystal Graph Neural Networks (GNNs) [4] |
| Example Performance | AUC of 0.988 (ECSG model on JARVIS database) [1] | State-of-the-art for synthesized compounds [4] |

Recent research has focused on bridging the gap between these two paradigms. For instance, cross-modal knowledge transfer seeks to enhance composition-based models by leveraging information from the structural domain. This can be done implicitly, by pretraining chemical language models on multimodal embeddings, or explicitly, by using a large language model to generate predicted crystal structures, which are then analyzed by a structure-aware predictor [4].

Experimental Protocols and Modeling Workflows

Experimental Stability Protocol for Biologics

The stability testing of biologics follows a rigorous, standardized protocol to ensure product safety and efficacy [19].

- Protocol Development: A detailed, QA-reviewed stability protocol is written, defining the study's scope and methods.

- Storage Condition Setup: Samples are placed in stability chambers under conditions matching intended storage (typically 5°C or frozen for biologics). Humidity control is not generally applied for refrigerated or frozen conditions.

- Sample Orientation & Agitation: Unlike small molecules, biologics may be tested in different orientations (upright, inverted) and subjected to agitation to assess sensitivity to physical forces.

- Sampling and Testing: Samples are tested at baseline (time zero) and at predefined intervals (e.g., 1, 3, 6, 9, 12, 18, and 24 months). Tests assess purity, potency, and safety.

- Data Analysis and Shelf-Life Estimation: The data collected over time is analyzed to estimate the product's shelf-life. Accelerated stability studies at harsher conditions may be used for initial shelf-life estimation, but must be followed by real-time condition testing [19].
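One common way to perform this analysis is described in ICH Q1E. A stability-indicating attribute is regressed against time, and the shelf life is the earliest time at which the one-sided 95% confidence bound on the mean crosses the acceptance criterion. The sketch below makes several assumptions: linear (zero-order) loss of potency, a single batch, and hypothetical data. The t critical value is passed in rather than computed, because the Python standard library has no Student t distribution. The function name is illustrative.

```python
import math


def shelf_life(times, values, lower_spec, t_crit, horizon=60.0, step=0.01):
    """Earliest time at which the one-sided lower confidence bound on the mean
    regression line falls below lower_spec; None if it stays within spec up to horizon.

    Assumes a linear (zero-order) decrease and a single batch. t_crit is the
    one-sided 95% Student t quantile for n - 2 degrees of freedom, supplied by the caller.
    """
    n = len(times)
    mt, mv = sum(times) / n, sum(values) / n
    sxx = sum((t - mt) ** 2 for t in times)
    slope = sum((t - mt) * (v - mv) for t, v in zip(times, values)) / sxx
    intercept = mv - slope * mt
    sse = sum((v - (intercept + slope * t)) ** 2 for t, v in zip(times, values))
    s = math.sqrt(sse / (n - 2))  # residual standard deviation
    for i in range(int(round(horizon / step)) + 1):
        t = i * step
        mean = intercept + slope * t
        se_mean = s * math.sqrt(1.0 / n + (t - mt) ** 2 / sxx)  # SE of the fitted mean
        if mean - t_crit * se_mean < lower_spec:
            return t
    return None


# Hypothetical potency data (% of label claim) at baseline and later pull points
months = [0, 3, 6, 9, 12, 18, 24]
potency = [100.1, 99.4, 98.9, 98.2, 97.6, 96.3, 95.2]
estimate = shelf_life(months, potency, lower_spec=90.0, t_crit=2.015)  # t(0.95, df=5)
print(f"Lower 95% bound reaches 90% at about {estimate:.1f} months")
```

Because the confidence bound always lies below the fitted line, this estimate comes earlier than the time at which the fitted line itself reaches the limit. Regulatory guidance also restricts how far beyond the available real-time data such an extrapolated shelf life may be claimed.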

Workflow for the ECSG Ensemble Machine Learning Model

The ECSG (Electron Configuration models with Stacked Generalization) framework is a state-of-the-art approach for predicting the thermodynamic stability of inorganic compounds. Its workflow is designed to mitigate the inductive bias inherent in single-model approaches [1].

Diagram 1: ECSG model workflow

The ECSG framework integrates three base models, each founded on distinct domains of knowledge, to create a more robust "super learner" [1]:

- Input Encoding: The chemical composition is encoded into three different input representations.

- Base Model Prediction:

- Magpie: Uses statistical features (mean, deviation, range) of elemental properties (e.g., atomic radius, electronegativity) and is trained with gradient-boosted trees [1].

- Roost: Represents the formula as a graph of elements, using a graph neural network with an attention mechanism to model interatomic interactions [1].

- ECCNN (Electron Configuration Convolutional Neural Network): A novel model that uses the electron configuration of constituent atoms as input, processed through convolutional layers to capture intrinsic electronic structure [1].

- Stacked Generalization: The predictions from these three base models are used as input features for a meta-level model (the "super learner"), which produces the final, more accurate stability prediction [1].
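The stacked-generalization step is sketched below under one assumption: each base model has already produced out-of-fold stability probabilities, so the meta-learner never trains on predictions a base model made for its own training data. In a full pipeline, these rows come from k-fold cross-validation of Magpie, Roost, and ECCNN. The meta-learner here is a plain logistic regression fitted by gradient descent. That is an illustrative choice, not necessarily the meta-model used in ECSG [1], and the probabilities and labels are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_meta_learner(base_preds, labels, lr=0.5, epochs=2000):
    """Logistic-regression meta-learner trained by batch gradient descent.

    base_preds: one row per compound, e.g. [p_magpie, p_roost, p_eccnn], holding
    out-of-fold probabilities that the compound is stable. labels: 1 stable, 0 not.
    """
    n, d = len(labels), len(base_preds[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, y in zip(base_preds, labels):
            err = sigmoid(b + sum(wi * xi for wi, xi in zip(w, x))) - y
            for j in range(d):
                grad_w[j] += err * x[j] / n
            grad_b += err / n
        w = [wi - lr * g for wi, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict_stable(w, b, x):
    """Final (stacked) probability that a compound is stable."""
    return sigmoid(b + sum(wi * xi for wi, xi in zip(w, x)))


# Hypothetical out-of-fold base-model probabilities and DFT-derived labels
X = [[0.9, 0.8, 0.95], [0.7, 0.9, 0.85], [0.8, 0.6, 0.9], [0.6, 0.75, 0.8],
     [0.2, 0.3, 0.1], [0.4, 0.2, 0.3], [0.3, 0.45, 0.2], [0.1, 0.2, 0.25]]
y = [1, 1, 1, 1, 0, 0, 0, 0]

w, b = fit_meta_learner(X, y)
stable_p = predict_stable(w, b, [0.85, 0.85, 0.9])
unstable_p = predict_stable(w, b, [0.15, 0.2, 0.2])
print(round(stable_p, 3), round(unstable_p, 3))
```

The learned weights indicate how much the meta-learner trusts each base model. This is how stacking can offset the inductive bias of any single representation.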

Performance Data and Comparison

The performance of stability models can be evaluated quantitatively. The following tables summarize key experimental data for machine learning models in materials science and predictive modeling in pharmaceutical development.

Table 3: Performance of Machine Learning Models for Predicting Material Thermodynamic Stability

| Model Name | Model Type | Key Input Features | Reported Performance | Data Efficiency |
|---|---|---|---|---|
| ECSG (Ensemble) [1] | Composition-based Ensemble | Electron Configuration, Elemental Statistics, Interatomic Interactions | AUC: 0.988 (on JARVIS database) [1] | Requires only 1/7 of the data to match performance of existing models [1] |
| ECCNN [1] | Composition-based (CNN) | Electron Configuration Matrix | High (part of ensemble) | Not reported separately |
| Roost [1] | Composition-based (GNN) | Elemental Graph with Attention | High (part of ensemble) | Not reported separately |
| Cross-Modal imKT (e.g., imKT@ModernBERT) [4] | Composition-based (CLM) | Chemical Formula (pretrained on multimodal embeddings) | MAE of 0.1172 for Total Energy prediction (39.6% improvement) [4] | Improved via knowledge transfer |

Table 4: Performance of Cross-Modal Knowledge Transfer on Material Property Prediction Tasks

| Predictive Task | Previous SOTA Model | SOTA with Cross-Modal Transfer | Performance Improvement (MAE Reduction) |
|---|---|---|---|
| Formation Energy per Atom (FEPA) | MatBERT-109M (MAE: 0.126) [4] | imKT@ModernBERT (MAE: 0.11488) [4] | +8.8% [4] |
| Total Energy | MatBERT-109M (MAE: 0.194) [4] | imKT@ModernBERT (MAE: 0.1172) [4] | +39.6% [4] |
| Band Gap (MBJ) | MatBERT-109M (MAE: 0.491) [4] | imKT@ModernBERT (MAE: 0.3773) [4] | +23.2% [4] |
| Exfoliation Energy | MatBERT-109M (MAE: 37.445) [4] | imKT@RoFormer (MAE: 29.5) [4] | +21.2% [4] |

In pharmaceutical stability, predictive modeling using Accelerated Stability Assessment Procedure (ASAP), kinetic modeling, and Machine Learning (ML) is gaining confidence. These science-based approaches can compensate for incomplete real-time data in regulatory submissions, potentially accelerating patient access to new medicines. This applies to both synthetic small molecules and complex biologics, where prior knowledge can be used to build robust prediction models [20].
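ASAP-type studies commonly model degradation rates with a humidity-corrected Arrhenius equation, ln k = ln A - Ea/(RT) + B·RH. The equation is fitted to rates measured under several short, harsh temperature/humidity conditions and then extrapolated to the labeled storage condition. The sketch below fits it by ordinary least squares and makes several assumptions. The rates are generated from invented parameters, and zero-order degradant growth is assumed when converting a rate to a time. The function names are illustrative rather than taken from any ASAP software.

```python
import math

R = 8.314  # gas constant, J/(mol*K)


def fit_humidity_arrhenius(conditions, rates):
    """Fit ln k = ln A - Ea/(R T) + B * RH by least squares.

    conditions: list of (temperature in K, relative humidity in %); rates: k values.
    Returns (ln_a, ea_j_per_mol, b_per_percent_rh).
    """
    u = [1.0 / t for t, _ in conditions]
    v = [rh for _, rh in conditions]
    y = [math.log(k) for k in rates]
    n = len(y)
    mu, mv, my = sum(u) / n, sum(v) / n, sum(y) / n
    # Centering decouples the intercept and keeps the 2x2 system well conditioned
    du, dv, dy = [x - mu for x in u], [x - mv for x in v], [x - my for x in y]
    suu = sum(a * a for a in du)
    svv = sum(a * a for a in dv)
    suv = sum(a * b for a, b in zip(du, dv))
    suy = sum(a * b for a, b in zip(du, dy))
    svy = sum(a * b for a, b in zip(dv, dy))
    det = suu * svv - suv * suv
    slope_u = (suy * svv - svy * suv) / det  # coefficient of 1/T, equals -Ea/R
    slope_v = (svy * suu - suy * suv) / det  # humidity sensitivity B
    ln_a = my - slope_u * mu - slope_v * mv
    return ln_a, -slope_u * R, slope_v


def predict_rate(ln_a, ea, b, temp_k, rh):
    return math.exp(ln_a - ea / (R * temp_k) + b * rh)


# Hypothetical rates (% degradant per day) generated from invented parameters
true_params = (30.0, 100_000.0, 0.04)
conditions = [(323.15, 75.0), (333.15, 40.0), (343.15, 75.0), (353.15, 10.0), (343.15, 10.0)]
rates = [predict_rate(*true_params, t, rh) for t, rh in conditions]

ln_a, ea, b = fit_humidity_arrhenius(conditions, rates)
k_25_60 = predict_rate(ln_a, ea, b, 298.15, 60.0)
print(f"Ea = {ea / 1000:.1f} kJ/mol, B = {b:.3f}, days to 0.5% degradant at 25C/60%RH: {0.5 / k_25_60:.0f}")
```

Varying humidity independently of temperature across the conditions is what makes B identifiable. If RH were held constant, the humidity term could not be separated from ln A.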

The Scientist's Toolkit: Essential Research Reagents and Materials

This section details key reagents, computational tools, and datasets essential for conducting stability research in the featured fields.

Table 5: Key Resources for Stability Research and Modeling

| Tool/Resource | Category | Function and Application |
|---|---|---|
| Stability Chambers | Laboratory Equipment | Provides controlled environments (temperature, humidity) for long-term and accelerated stability studies of pharmaceutical products [19]. |
| JARVIS Database [1] | Computational Database | A comprehensive materials database used for training and benchmarking machine learning models for property prediction, including stability [1]. |
| Materials Project (MP) Database [1] | Computational Database | A widely used database of computed materials properties, including formation energies and crystal structures, essential for structure-based modeling [1]. |
| Graph Neural Network (GNN) Libraries | Software/Toolkit | Enables the development of structure-based models (e.g., Crystal GNNs) that learn from the graph representation of crystal structures [4]. |
| Chemical Language Models (CLMs) | Software/Algorithm | A type of composition-based model that treats chemical formulas as sequences, enabling property prediction and exploration of chemical space [4]. |
| XGBoost / LightGBM | Software/Algorithm | Gradient boosting algorithms used for building predictive models, such as the Magpie model, which uses elemental features [1] [21]. |
| Electron Configuration Data | Fundamental Data | The distribution of electrons in atomic orbitals; used as a fundamental, low-bias input feature for models like ECCNN [1]. |

The comparative analysis of stability applications across small molecules, biologics, and materials reveals both stark contrasts and unifying themes. While small molecules and biologics demand distinct stability testing protocols due to their inherent physicochemical differences, the underlying principles of scientific rigor and predictive accuracy remain constant. In materials science, the dichotomy between composition-based and structure-based models highlights a fundamental trade-off between exploration speed and predictive detail.

The emergence of advanced computational strategies, such as ensemble methods like ECSG and cross-modal knowledge transfer, is pushing the boundaries of predictive stability science. These approaches synergistically combine the strengths of different models and data modalities, leading to significant improvements in accuracy and data efficiency. As these methodologies continue to mature and gain regulatory acceptance, they hold the promise of dramatically accelerating the discovery of stable new materials and the development of safe, effective, and accessible pharmaceutical products for patients worldwide.

Advantages and Inherent Limitations of Each Approach

Predicting the stability of materials and biologics is a critical task in both drug development and materials science. Two fundamentally different computational approaches have emerged: composition-based models that predict stability directly from chemical formulas or sequences, and structure-based models that rely on three-dimensional atomic coordinates. Composition-based methods leverage machine learning (ML) on large datasets of chemical compositions to rapidly screen for stable candidates, prioritizing speed and breadth. In contrast, structure-based methods employ physics-based simulations or deep learning on structural data to understand the energetic and physical principles governing stability, prioritizing mechanistic insight and accuracy. This guide provides an objective comparison of these paradigms, supported by experimental data and detailed methodologies, to inform researchers and scientists in selecting the appropriate tool for their stability challenges.

Comparative Analysis of Composition-Based and Structure-Based Stability Models

The table below summarizes the core characteristics, advantages, and inherent limitations of composition-based and structure-based stability modeling approaches.

Table 1: Comparative overview of composition-based and structure-based stability models.

| Aspect | Composition-Based Models | Structure-Based Models |
|---|---|---|
| Fundamental Principle | Learns stability from statistical patterns in chemical composition or sequence data [22] [23]. | Predicts stability from 3D atomic coordinates using physical energy functions or deep learning on structures [24] [25]. |
| Primary Input | Chemical formula, SMILES string, elemental descriptors [23] [26]. | 3D structure from PDB, AlphaFold2, or molecular dynamics simulations [24] [27]. |
| Typical Output | Stability classification (stable/unstable) or regression of energy above hull (Eh) [22] [23]. | Change in Gibbs free energy (ΔΔG) upon mutation or perturbation [24]. |
| Key Advantages | High throughput: can screen millions of candidates rapidly [23] [26]; low computational cost [23]; effective when structures are unknown or unreliable [26]. | Mechanistic insight: reveals atomic-level causes of instability [24]; high accuracy for localized changes when a reliable structure is available [24] [25]; generalizable across different mutations on the same structure. |
| Inherent Limitations | Black box: limited insight into root causes of instability [22]; data dependency: performance hinges on quality and size of training data [23] [26]; struggles with novelty: poor performance on chemistries outside the training distribution [23]. | Structure dependency: accuracy is limited by the quality of the input 3D model [24] [25]; high computational cost, limiting throughput [24] [28]; challenging for large conformational changes [27] [25]. |

Experimental Protocols for Benchmarking Stability Models

To objectively evaluate the performance of stability prediction models, controlled benchmarking experiments are essential. The following protocols detail standard methodologies for assessing both composition-based and structure-based approaches.

Protocol for Composition-Based Model Benchmarking

This protocol is designed to evaluate the performance of machine learning models in predicting the thermodynamic stability of inorganic crystals, a common application in materials discovery [23].

Table 2: Key reagents and computational tools for composition-based model benchmarking.

| Reagent / Tool | Function in the Protocol |
|---|---|
| Matbench Discovery | A Python package and framework for benchmarking ML energy models as pre-filters in a high-throughput search for stable inorganic crystals [23]. |
| Random Forest Classifier | A tree-based ML algorithm used as a baseline or benchmark model for stability classification tasks [22] [23]. |
| Gradient Boosting Tree (GBT) | An ensemble ML method often used in composition-stability models, known for high performance [22]. |
| Formation Energy & Energy Above Hull (Eₕ) | The key stability metrics. Eₕ is a material's energy relative to the convex hull of the phase diagram; Eₕ ≤ 0 eV/atom (on or below the hull) indicates thermodynamic stability [23]. |
| Materials Project Database | A source of high-throughput density functional theory (DFT) data used for training and testing models [23]. |

Procedure:

- Dataset Curation: Obtain a large dataset of known and hypothetical materials with associated stability labels (e.g., Eₕ from DFT). The training set should be retrospective, while the test set should be prospectively generated to simulate a real discovery campaign and ensure a realistic covariate shift [23].

- Model Training: Train the composition-based ML models (e.g., Random Forest, Gradient Boosting Trees) on the retrospective training data. Inputs are typically composition-based feature vectors [22] [23].

- Performance Evaluation: Apply the trained models to the prospective test set. Critical evaluation metrics include:

- False Positive Rate (FPR): The proportion of unstable materials incorrectly predicted as stable. This is a key cost-saving metric in discovery [23].

- True Positive Rate (TPR): The proportion of stable materials correctly identified.

- Precision: The fraction of predicted stable materials that are truly stable.

- Accuracy: The overall correctness of the model.

- Analysis: Compare the metrics across different ML methodologies. Note that accurate regressors (low mean absolute error) can still have high FPR if predictions near the decision boundary (0 eV/atom) are misclassified, highlighting the need for classification metrics over pure regression accuracy [23].
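The point about regressors near the decision boundary is easy to demonstrate. The minimal sketch below converts predicted and reference Eₕ values into the classification metrics listed above. It assumes Eₕ ≤ 0 eV/atom counts as stable, and all values are hypothetical.

```python
def stability_metrics(e_hull_true, e_hull_pred, threshold=0.0):
    """Confusion-matrix metrics plus MAE, treating E_hull <= threshold (eV/atom) as stable."""
    tp = fp = tn = fn = 0
    for true, pred in zip(e_hull_true, e_hull_pred):
        is_stable, called_stable = true <= threshold, pred <= threshold
        if called_stable and is_stable:
            tp += 1
        elif called_stable:
            fp += 1  # unstable material predicted stable: wasted DFT/synthesis effort
        elif is_stable:
            fn += 1  # stable material missed
        else:
            tn += 1

    def ratio(num, den):
        return num / den if den else float("nan")

    n = len(e_hull_true)
    mae = sum(abs(t - p) for t, p in zip(e_hull_true, e_hull_pred)) / n
    return {
        "TPR": ratio(tp, tp + fn),
        "FPR": ratio(fp, fp + tn),
        "precision": ratio(tp, tp + fp),
        "accuracy": ratio(tp + tn, n),
        "MAE": mae,
    }


# Hypothetical DFT (reference) and model (predicted) E_hull values, eV/atom
true_e = [-0.01, 0.02, 0.01, 0.30, -0.05]
pred_e = [0.01, -0.01, -0.02, 0.28, -0.04]
print(stability_metrics(true_e, pred_e))
```

In this toy set the MAE is only 0.022 eV/atom, yet the FPR is 2/3 and the precision 1/3. Three of the five small errors happen to straddle the 0 eV/atom boundary.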

Protocol for Structure-Based Model Benchmarking

This protocol assesses the accuracy of structure-based tools in predicting the change in protein stability due to missense mutations, a critical task in variant interpretation and protein engineering [24].

Procedure:

- Structure Preparation: Obtain a high-resolution (preferably < 2.0 Å) experimental structure of the protein from the Protein Data Bank (PDB); X-ray crystallography structures are preferred for their high resolution [24]. Alternatively, a high-confidence AlphaFold2 model can be used, paying close attention to per-residue pLDDT confidence scores and favoring regions with pLDDT > 70 [24] [25].

- Stability Calculation: Use a structure-based stability prediction tool like FoldX. FoldX uses an energy function that includes van der Waals, solvation, hydrogen bonding, electrostatics, and entropy effects to compute the change in Gibbs free energy (ΔΔG) between the native and variant structures [24].

- Experimental Validation: Compare the computational predictions with experimentally determined stability measures, such as:

- Melting temperature (Tₘ) shifts.

- Changes in free energy of unfolding (ΔG) measured by techniques like circular dichroism (CD) or differential scanning calorimetry (DSC).

- Performance Evaluation: Quantify performance using:

- Correlation coefficient between predicted ΔΔG and experimental ΔΔG or Tₘ shifts.

- Root Mean Square Error (RMSE) of the predictions.

- Classification accuracy for distinguishing stabilizing from destabilizing mutations.
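The three evaluation metrics above can be computed in a few lines. This minimal sketch assumes that predicted and experimental ΔΔG share one sign convention (here, positive means destabilizing, as FoldX reports) and one unit (kcal/mol). All values are invented.

```python
import math


def evaluate_ddg(pred, expt, cutoff=0.0):
    """Pearson r, RMSE, and sign agreement between predicted and experimental ddG.

    Both lists must use the same units (kcal/mol) and sign convention; values above
    cutoff are treated as destabilizing.
    """
    n = len(pred)
    mp, me = sum(pred) / n, sum(expt) / n
    cov = sum((p - mp) * (e - me) for p, e in zip(pred, expt))
    sd_p = math.sqrt(sum((p - mp) ** 2 for p in pred))
    sd_e = math.sqrt(sum((e - me) ** 2 for e in expt))
    rmse = math.sqrt(sum((p - e) ** 2 for p, e in zip(pred, expt)) / n)
    # Fraction of mutations classified the same way (stabilizing vs destabilizing)
    agree = sum((p > cutoff) == (e > cutoff) for p, e in zip(pred, expt))
    return {"pearson_r": cov / (sd_p * sd_e), "rmse": rmse, "sign_accuracy": agree / n}


# Hypothetical predictions vs. invented experimental values (kcal/mol)
predicted = [1.2, -0.5, 2.0, 0.3]
measured = [1.0, -0.3, 2.5, -0.2]
print(evaluate_ddg(predicted, measured))
```

A high correlation can coexist with sign errors for near-neutral mutations, as the fourth pair shows. This is why correlation and classification accuracy are reported together.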

Key Considerations:

- The accuracy of the structure-based prediction is highly sensitive to the quality and resolution of the input protein structure [24].

- Performance can vary significantly between different protein domains and structural contexts [24].

- AlphaFold2 models, while highly accurate for many targets, can contain errors in domain orientations or dynamic regions, which may impact stability calculations [25].

Research Reagent Solutions

The following table lists essential tools and databases used in the development and application of stability models.

Table 3: Key research reagents and tools for stability modeling.

| Category | Tool / Reagent | Function |
|---|---|---|
| Databases | Protein Data Bank (PDB) | Repository for experimentally determined 3D structures of proteins and nucleic acids, crucial for structure-based modeling [24]. |
| | AlphaFold Protein Structure Database | Provides over 200 million predicted protein structures, enabling structure-based approaches for proteins without experimental structures [27] [25]. |
| | Materials Project / Cambridge Structural Database (CSD) | Sources of computational and experimental materials data for training and validating composition-based models [26]. |
| Software & Algorithms | FoldX | Widely used, well-established software for predicting the effect of mutations on protein stability from a 3D structure [24]. |
| | AlphaFold2 (AF2) | Deep learning system for highly accurate protein structure prediction from amino acid sequences [27] [25]. |
| | Matbench Discovery | Python package providing an evaluation framework for benchmarking ML models on materials stability prediction tasks [23]. |
| Experimental Data Types | Cross-linking Mass Spectrometry (XL-MS) | Provides distance constraints that can be integrated into tools like AlphaLink to guide and improve structure prediction [27]. |
| | NMR Data (NOEs, RDCs) | Provides experimental restraints on distances and orientations for validating and refining predicted protein structures and ensembles [27] [25]. |

Workflow and Logical Relationships

The following diagram illustrates the logical relationship and typical workflow between composition-based and structure-based modeling approaches, highlighting their complementary roles.

Stability Model Selection and Integration Workflow

The diagram visualizes the two parallel modeling pathways. The composition-based approach (green) is optimized for high-throughput screening of large chemical spaces, making it ideal for the initial phase of discovery. The structure-based approach (red) provides deep mechanistic insight and accurate quantification of stability for a smaller number of candidates. Crucially, the workflows are not isolated; promising candidates identified by composition-based screening can be analyzed in detail using structure-based methods. Furthermore, the high-fidelity results from structure-based analysis can be used to augment training datasets, thereby improving the performance of the faster composition-based models in an iterative feedback loop [23] [26].

Methodologies in Action: Techniques and Tools for Stability Prediction

The discovery and development of new functional materials are crucial for technological progress, yet traditional experimental and computational methods remain time-consuming and resource-intensive. In this landscape, machine learning (ML) has emerged as a powerful tool for accelerating materials discovery, particularly through techniques that predict material properties and stability directly from chemical composition. Composition-based ML models offer a significant advantage by enabling the screening of vast compositional spaces without requiring precise structural data, which is often unavailable for novel, unsynthesized materials [1]. These methods can be broadly categorized into those utilizing elemental composition data and those incorporating electron configuration information, each with distinct approaches for representing and learning from chemical data.

This guide provides an objective comparison of leading composition-based techniques, evaluating their performance, data efficiency, and applicability against traditional structure-based models and manual feature engineering. We focus on methodologies that have demonstrated state-of-the-art performance in predicting key material properties, with special attention to thermodynamic stability—a critical filter in materials design.

Comparative Analysis of Composition-Based Models

Key Models and Performance Metrics

Table 1: Comparison of leading composition-based machine learning models for materials property prediction.

| Model Name | Input Representation | Core Methodology | Key Performance Metrics | Reported Advantages |
|---|---|---|---|---|
| ECSG [1] | Electron configuration matrices | Ensemble learning with stacked generalization (Magpie, Roost, ECCNN) | AUC: 0.988 for stability prediction; 7x data efficiency over benchmarks | Mitigates inductive bias; exceptional sample efficiency |
| ElemNet [29] | Elemental composition fractions | Deep neural network (17 layers) | MAE: 0.050 ± 0.0007 eV/atom (9% of MAD); 30% more accurate than conventional ML | Automatic feature learning; no domain knowledge required |
| Cross-Modal Transfer [4] | Multimodal embeddings (composition → structure) | Chemical language models with implicit/explicit knowledge transfer | MAE reduced by 15.7% on average across 18 JARVIS-DFT tasks | State-of-the-art on 25/32 benchmark tasks; enhances interpretability |
| Ensemble of Experts [30] | Tokenized SMILES strings | Ensemble of pre-trained models on related properties | Outperforms standard ANNs under severe data scarcity | Effective in data-limited scenarios; captures complex molecular interactions |
| Bilinear Transduction [31] | Stoichiometry-based representations | Transductive learning of property value differences | 1.8× better extrapolative precision for materials; 3× boost in OOD recall | Superior out-of-distribution extrapolation capability |
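ElemNet [29] needs no hand-crafted descriptors, because its input is simply the vector of atomic fractions of each element in the formula. The minimal sketch below builds such a vector from a formula string. It assumes simple formulas without parentheses or hydrates and a short, hypothetical element ordering; ElemNet itself uses a fixed, much longer element list.

```python
import re

# Hypothetical, truncated element ordering; ElemNet uses a much longer fixed list
ELEMENT_ORDER = ["H", "Li", "C", "N", "O", "F", "Na", "P", "S", "Fe"]


def parse_formula(formula):
    """Count atoms in a simple formula such as 'Fe2O3' (no parentheses or hydrates)."""
    counts = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    return counts


def fraction_vector(formula, order=ELEMENT_ORDER):
    """Atomic-fraction vector aligned to a fixed element order."""
    counts = parse_formula(formula)
    unknown = set(counts) - set(order)
    if unknown:
        raise ValueError(f"elements not in ordering: {sorted(unknown)}")
    total = sum(counts.values())
    return [counts.get(el, 0.0) / total for el in order]


print([round(f, 3) for f in fraction_vector("LiFePO4")])
```

Because every formula maps to the same fixed-length vector, a plain feed-forward network can consume it directly. The network then learns chemically meaningful interactions from data rather than from engineered descriptors.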

Quantitative Performance Comparison

Table 2: Detailed performance metrics across different material property prediction tasks.

| Property/Task | Dataset | Best Performing Model | Performance Metric | Comparison to Baseline |
|---|---|---|---|---|
| Formation Energy Prediction | OQMD [29] | ElemNet | MAE: 0.050 ± 0.0007 eV/atom | 30% more accurate than physical-attributes-based ML |
| Thermodynamic Stability | JARVIS [1] | ECSG | AUC: 0.988 | Superior to single-model approaches |
| Formation Energy | MatBench [31] | Bilinear Transduction | Lower OOD MAE | Improved extrapolation beyond training distribution |
| Total Energy | JARVIS-DFT [4] | imKT@ModernBERT | MAE: 0.1172 ± 0.0005 | 39.6% improvement over MatBERT-109M |
| Band Gap (MBJ) | JARVIS-DFT [4] | imKT@ModernBERT | MAE: 0.3773 ± 0.0030 | 23.2% improvement over MatBERT-109M |
| Glass Transition Temperature | Polymer Systems [30] | Ensemble of Experts | Higher predictive accuracy | Significantly outperforms standard ANNs under data scarcity |

Experimental Protocols and Methodologies

Ensemble Framework with Stacked Generalization (ECSG)

The ECSG framework addresses limitations of single-model approaches by combining three distinct models based on different knowledge domains [1]:

Magpie: Utilizes statistical features (mean, variance, range, etc.) of elemental properties like atomic number, mass, and radius, implemented with gradient-boosted regression trees (XGBoost).

Roost: Represents chemical formulas as complete graphs of elements, employing graph neural networks with attention mechanisms to capture interatomic interactions.

ECCNN (Electron Configuration Convolutional Neural Network): Processes electron configuration data through convolutional layers to capture electronic structure information crucial for stability prediction.

The electron configuration input is encoded as a 118×168×8 tensor representing electron distributions across energy levels [1]. The ensemble uses stacked generalization: the base models' predictions serve as inputs to a meta-learner that produces the final prediction, which mitigates the inductive biases inherent in any single model.
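Stacked generalization is easy to sketch independently of the specific base models. The pure-Python example below is a minimal illustration under stated assumptions: `fit_linear_1d` and `fit_knn` are hypothetical stand-ins for Magpie, Roost, and ECCNN, and the linear meta-learner (`fit_meta`) is an assumption, since the meta-learner used in [1] is not described here. The essential detail is that the meta-learner is trained on out-of-fold base predictions, so it never sees a base model's predictions on that model's own training data.

```python
from statistics import mean
from typing import Callable, List, Sequence

Row = List[float]
Predictor = Callable[[Row], float]
Fitter = Callable[[Sequence[Row], Sequence[float]], Predictor]


def fit_linear_1d(X: Sequence[Row], y: Sequence[float]) -> Predictor:
    """Hypothetical base model: least-squares line on the first feature."""
    xs = [row[0] for row in X]
    mx, my = mean(xs), mean(y)
    sxx = sum((a - mx) ** 2 for a in xs)
    slope = sum((a - mx) * (b - my) for a, b in zip(xs, y)) / sxx if sxx else 0.0
    return lambda x: my + slope * (x[0] - mx)


def fit_knn(X: Sequence[Row], y: Sequence[float], k: int = 2) -> Predictor:
    """Hypothetical base model: average target of the k nearest training rows."""
    data = list(zip(X, y))

    def predict(x: Row) -> float:
        by_dist = sorted(data, key=lambda p: sum((a - b) ** 2 for a, b in zip(p[0], x)))
        return mean(target for _, target in by_dist[:k])

    return predict


def solve(A: List[Row], b: Row) -> Row:
    """Solve A w = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    M = [list(row) + [bi] for row, bi in zip(A, b)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(n):
            if r != c:
                f = M[r][c] / M[c][c]
                M[r] = [a - f * m for a, m in zip(M[r], M[c])]
    return [M[i][n] / M[i][i] for i in range(n)]


def fit_meta(F: Sequence[Row], y: Sequence[float], lam: float = 1e-8) -> Predictor:
    """Assumed meta-learner: linear model with intercept and a tiny ridge term."""
    rows = [[1.0] + list(f) for f in F]
    d = len(rows[0])
    A = [[sum(r[i] * r[j] for r in rows) + (lam if i == j else 0.0) for j in range(d)]
         for i in range(d)]
    b = [sum(r[i] * t for r, t in zip(rows, y)) for i in range(d)]
    w = solve(A, b)
    return lambda f: w[0] + sum(wi * fi for wi, fi in zip(w[1:], f))


def fit_stacked(X: Sequence[Row], y: Sequence[float], fitters: List[Fitter],
                n_folds: int = 3) -> Predictor:
    """Train the meta-learner on out-of-fold base predictions, then refit the bases."""
    n = len(X)
    oof = [[0.0] * len(fitters) for _ in range(n)]
    for fold in range(n_folds):
        train = [i for i in range(n) if i % n_folds != fold]
        for m, fit in enumerate(fitters):
            base = fit([X[i] for i in train], [y[i] for i in train])
            for i in range(fold, n, n_folds):  # held-out rows of this fold
                oof[i][m] = base(X[i])
    meta = fit_meta(oof, y)
    bases = [fit(X, y) for fit in fitters]  # refit every base model on all data
    return lambda x: meta([base(x) for base in bases])


if __name__ == "__main__":
    X = [[float(i)] for i in range(12)]
    y = [2.0 * row[0] + 1.0 for row in X]
    model = fit_stacked(X, y, [fit_linear_1d, fit_knn])
    print(model([20.0]))  # the meta-learner relies on the linear base when extrapolating
```

In a realistic pipeline each fitter would wrap a trained composition model, and the out-of-fold matrix `oof` would hold its predicted stability scores.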

ECSG Ensemble Architecture: Illustrates the stacked generalization approach integrating Magpie, Roost, and ECCNN models.

Cross-Modal Knowledge Transfer

Recent work uses cross-modal learning to bridge the composition-based and structure-based paradigms [4]:

Implicit Knowledge Transfer (imKT): Aligns chemical language model embeddings with those from multimodal foundation models trained on crystal structure, electronic states, charge density, and text.

Explicit Knowledge Transfer (exKT): Generates crystal structures from composition using large language models like CrystaLLM, then applies structure-aware graph neural networks for property prediction.

This approach lets composition-based models exploit structural knowledge without requiring structural data for new compositions. On JARVIS-DFT, for example, imKT@ModernBERT reduced the total-energy MAE by 39.6% and the MBJ band-gap MAE by 23.2% relative to MatBERT-109M [4].
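The core idea of implicit transfer, pulling composition embeddings toward structure-aware embeddings, can be illustrated with a deliberately simple sketch. Everything here is an assumption rather than the imKT method of [4]: the composition encoder is replaced by fixed vectors, the alignment is a hypothetical linear projection `W`, and the objective is mean squared error (real systems may use neural encoders and cosine or contrastive losses).

```python
from typing import List, Sequence, Tuple

Vec = List[float]
Matrix = List[List[float]]


def project(W: Matrix, x: Sequence[float]) -> Vec:
    """Linearly map a composition embedding into the structure-embedding space."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def alignment_loss(W: Matrix, pairs: Sequence[Tuple[Vec, Vec]]) -> float:
    """Mean squared distance between projected composition and structure embeddings."""
    total = 0.0
    for comp, struct in pairs:
        total += sum((p - s) ** 2 for p, s in zip(project(W, comp), struct))
    return total / len(pairs)


def train_projection(pairs: Sequence[Tuple[Vec, Vec]], d_in: int, d_out: int,
                     lr: float = 0.1, epochs: int = 500) -> Matrix:
    """Full-batch gradient descent on alignment_loss, starting from W = 0."""
    W = [[0.0] * d_in for _ in range(d_out)]
    n = len(pairs)
    for _ in range(epochs):
        grad = [[0.0] * d_in for _ in range(d_out)]
        for comp, struct in pairs:
            pred = project(W, comp)
            for i in range(d_out):
                err = pred[i] - struct[i]
                for j in range(d_in):
                    grad[i][j] += 2.0 * err * comp[j] / n
        W = [[w - lr * g for w, g in zip(w_row, g_row)] for w_row, g_row in zip(W, grad)]
    return W


if __name__ == "__main__":
    # Toy paired embeddings generated from a hidden linear map (hypothetical data).
    hidden = [[2.0, 0.0], [1.0, -1.0], [0.0, 3.0]]
    comps = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    pairs = [(c, project(hidden, c)) for c in comps]
    W = train_projection(pairs, d_in=2, d_out=3)
    print(alignment_loss(W, pairs))  # close to zero after training
```

Once aligned, the projected composition embedding can be fed to a property head for compositions that have no known structure, which is the practical benefit described above.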

Data Scarcity Solutions

The Ensemble of Experts (EE) framework addresses data scarcity through [30]:

- Pre-training experts on large datasets of related physical properties

- Tokenizing SMILES strings for improved chemical structure interpretation

- Combining expert knowledge through ensemble methods for target properties with limited data

This approach is particularly effective for complex properties such as the glass transition temperature and Flory-Huggins interaction parameters of polymer systems, for which experimental data are traditionally scarce.
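The SMILES tokenization step in the list above can be sketched with a regular expression of the kind widely used in chemical language models. This is an assumption: the tokenizer used in [30] is not specified here and may differ. The sketch splits a string into bracket atoms, two-letter halogens, single atoms, bonds, branches, and ring closures, and rejects strings it cannot fully cover.

```python
import re
from typing import List

# Atom-level SMILES regex (assumed; [30] may tokenize differently).
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize_smiles(smiles: str) -> List[str]:
    """Split a SMILES string into atom, bond, branch, and ring-closure tokens."""
    tokens = SMILES_TOKEN.findall(smiles)
    # findall silently skips characters it cannot match, so verify full coverage.
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens


if __name__ == "__main__":
    # '*' marks polymer connection points in repeat-unit SMILES.
    print(tokenize_smiles("*CC(*)c1ccccc1"))
```

Keeping `Cl` and `Br` as single tokens matters: a character-level split would turn chlorine into a carbon followed by a stray `l`.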

Composition-Based vs. Structure-Based Approaches

Relative Advantages and Limitations

Table 3: Comparison between composition-based and structure-based prediction models.

| Aspect | Composition-Based Models | Structure-Based Models |
|---|---|---|
| Input Requirements | Only chemical composition [1] | Complete crystal structure data [32] |
| Applicability Domain | Unexplored compositional spaces [1] | Compounds with known structures [32] |
| Data Efficiency | High (ECSG matches existing models' performance with one-seventh of the training data) [1] | Lower (requires extensive structural data) |
| Extrapolation Capability | Limited for OOD property values [31] | Better for structural analogs |
| Implementation Complexity | Lower (simpler inputs) | Higher (requires structural representation) |
| Performance | Competitive for many properties [29] | Generally higher when structures are available |

Integration Approaches

Hybrid methodologies are emerging that combine the strengths of both approaches:

Materials Maps [32]: Graph-based representations that integrate structural information with composition-based property predictions, enabling visualization of material relationships.

Cross-Modal Transfer [4]: Transfers knowledge from structure-based models to enhance composition-based predictors, achieving state-of-the-art performance on multiple benchmarks.

Cross-Modal Knowledge Transfer: Shows how structural data enhances composition-based models through embedding alignment.

The Scientist's Toolkit

Table 4: Key databases and resources for composition-based materials informatics.

| Resource Name | Type | Key Features | Application in Composition-Based ML |
|---|---|---|---|
| OQMD [29] | Computational Database | DFT-computed formation enthalpies, 275,778 unique compositions | Primary training data for formation energy prediction |
| Materials Project [1] | Computational Database | Extensive crystallographic and energetic data | Source of formation energies for stability determination |
| JARVIS [1] | Computational Database | Diverse quantum mechanical properties | Benchmarking stability prediction models |
| StarryData2 [32] | Experimental Database | Curated experimental data from 7,000+ papers | Integrating experimental observations with computational data |
| MatBench [31] | Benchmarking Suite | Standardized tasks for materials ML | Comparative model evaluation |

Representation and Encoding Methods

Element Fractions: Raw compositional data representing proportions of constituent elements [29]

Electron Configuration Matrices: 118×168×8 tensor representing electron distributions across energy levels [1]

SMILES Strings: Tokenized molecular representations enhancing chemical structure interpretation [30]

Magpie Features: Statistical summaries (mean, variance, range, etc.) of elemental properties [1]

Graph Representations: Elemental relationships modeled as complete graphs with attention mechanisms [1]
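The first and fourth representations above, element fractions and Magpie-style statistics, can be computed directly from a formula. The sketch below is illustrative only: the three-element `ELEMENT_PROPS` table is hypothetical, the parser handles only simple formulas without parentheses, and just a few statistics (fraction-weighted mean and variance, min, max, range) are shown rather than the full feature set used in [1].

```python
import re
from typing import Dict

# Hypothetical property table; real Magpie features draw on many more properties.
ELEMENT_PROPS: Dict[str, Dict[str, float]] = {
    "Li": {"Z": 3.0, "mass": 6.94},
    "O": {"Z": 8.0, "mass": 15.999},
    "Fe": {"Z": 26.0, "mass": 55.845},
}


def parse_formula(formula: str) -> Dict[str, float]:
    """Parse a simple formula such as 'Fe2O3' (no parentheses or hydrates)."""
    counts: Dict[str, float] = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    return counts


def element_fractions(formula: str) -> Dict[str, float]:
    """Element fractions: stoichiometric amounts normalised to sum to one."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    return {el: n / total for el, n in counts.items()}


def magpie_style_stats(formula: str, prop: str) -> Dict[str, float]:
    """Fraction-weighted mean and variance plus min, max, and range of one property."""
    fracs = element_fractions(formula)
    vals = {el: ELEMENT_PROPS[el][prop] for el in fracs}
    avg = sum(f * vals[el] for el, f in fracs.items())
    var = sum(f * (vals[el] - avg) ** 2 for el, f in fracs.items())
    lo, hi = min(vals.values()), max(vals.values())
    return {"mean": avg, "variance": var, "min": lo, "max": hi, "range": hi - lo}


if __name__ == "__main__":
    print(element_fractions("Fe2O3"))
    print(magpie_style_stats("Fe2O3", "Z"))
```

Because these features depend only on the formula, they can be computed for hypothetical compounds whose crystal structures are unknown, which is the premise of composition-based screening.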

Composition-based machine learning has evolved from simple elemental-proportion models to sophisticated frameworks that incorporate electron configurations, cross-modal transfer, and ensemble methods. The ECSG framework shows how combining diverse knowledge domains through stacked generalization can deliver high predictive accuracy (AUC 0.988 on JARVIS) together with strong data efficiency, while cross-modal approaches bridge the gap between the composition-based and structure-based paradigms.

For researchers and development professionals, the choice between composition-based and structure-based approaches depends on specific application constraints. Composition-based models excel in exploring novel compositional spaces where structural data is unavailable, while structure-based models remain valuable when complete crystallographic information is accessible. Emerging hybrid approaches that transfer knowledge between these paradigms offer promising directions for future development.

As these technologies mature, composition-based techniques will play an increasingly vital role in accelerating materials discovery, particularly when integrated with experimental validation and high-throughput computational screening. The continued development of multimodal learning strategies and interpretable models will further enhance their utility across diverse materials science applications.

The prediction of three-dimensional protein structures from amino acid sequences is a fundamental challenge in computational biology and structural bioinformatics. For decades, three primary structure-based techniques have been developed and refined to address this challenge: homology modeling, threading, and ab initio folding [33] [34]. These methods differ fundamentally in their reliance on existing structural templates, their underlying principles, and their applicability to various protein classes. Homology modeling, also known as comparative modeling, predicts protein structure based on its alignment to one or more related protein structures with known experimental configurations [34]. Threading, or fold recognition, operates on the premise that the number of unique protein folds in nature is limited, allowing a target sequence to be aligned to structural templates even in the absence of significant sequence similarity [35]. In contrast, ab initio folding attempts to predict protein structure from sequence alone using physical principles and statistical potentials without explicit reliance on structural templates [33] [36]. Understanding the performance characteristics, methodological foundations, and limitations of these approaches is essential for researchers selecting appropriate tools for protein structure prediction in biological and pharmaceutical research.

Performance Comparison of Structure Prediction Techniques

Key Performance Metrics Across Methods

The performance of structure prediction methods is typically evaluated using metrics such as RMSD (Root Mean Square Deviation), TM-score (Template Modeling Score), and CPU time requirements. Different methods exhibit distinct performance profiles across these metrics, making them suitable for different applications.
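The two accuracy metrics can be written down concretely. For N paired residues at distances d_i after superposition, RMSD = sqrt((1/N) Σ d_i²), and TM-score = (1/L) Σ 1/(1 + (d_i/d0)²), where L is the target length and d0(L) = 1.24·(L − 15)^(1/3) − 1.8. The sketch below assumes the structures are already superposed with a known one-to-one residue pairing; the real TM-score maximizes over superpositions, so `tm_score_fixed` gives a lower bound. Flooring d0 at 0.5 Å follows common implementations and is an assumption here.

```python
import math
from typing import Sequence, Tuple

Coord = Tuple[float, float, float]


def rmsd(model: Sequence[Coord], native: Sequence[Coord]) -> float:
    """Root mean square deviation over paired atoms (no superposition performed)."""
    if len(model) != len(native) or not native:
        raise ValueError("need two equal-length, non-empty coordinate lists")
    return math.sqrt(sum(math.dist(p, q) ** 2 for p, q in zip(model, native)) / len(native))


def tm_d0(length: int) -> float:
    """TM-score distance scale d0(L) = 1.24 * (L - 15)^(1/3) - 1.8, floored at 0.5 A."""
    if length <= 15:
        return 0.5
    return max(1.24 * (length - 15) ** (1.0 / 3.0) - 1.8, 0.5)


def tm_score_fixed(model: Sequence[Coord], native: Sequence[Coord]) -> float:
    """TM-score for one fixed superposition and residue pairing (a lower bound)."""
    L = len(native)
    d0 = tm_d0(L)
    return sum(1.0 / (1.0 + (math.dist(p, q) / d0) ** 2) for p, q in zip(model, native)) / L


if __name__ == "__main__":
    native = [(4.0 * i, 0.0, 0.0) for i in range(20)]
    shifted = [(x + 1.0, y, z) for x, y, z in native]
    print(rmsd(shifted, native), tm_score_fixed(shifted, native))
```

Unlike RMSD, the TM-score is normalized by target length and down-weights large local deviations, which is why it is preferred for comparing predictions of different sizes.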

Table 1: Performance Comparison of Structure Prediction Techniques

| Method | Typical RMSD Range (Å) | Key Strengths | Primary Limitations | Optimal Use Cases |
|---|---|---|---|---|
| Homology Modeling | 1-5 (high-similarity templates) | High accuracy when >30% sequence identity to template; fast execution | Requires identifiable homologous templates; accuracy decreases sharply below 30% identity | Proteins with clear homologs in PDB; high-throughput applications |
| Threading | 3-8 | Can detect distant homologs missed by sequence alignment; identifies structural analogs | Struggles with novel folds; alignment accuracy depends on template quality | Proteins with known folds but low sequence similarity; fold recognition |
| Ab Initio | 3-10+ | No template required; can theoretically predict novel folds | Computationally intensive; lower accuracy for larger proteins | Small proteins (<120 residues); novel folds without templates |
| Deep Learning (e.g., AlphaFold) | 1-3 (backbone) | Near-experimental accuracy for many targets; integrated approach | Limited performance on orphan proteins; challenges with dynamic regions | General-purpose prediction; complex structures |

Quantitative Performance Data

Published results show substantial differences in capability between prediction algorithms. In comparative studies of ab initio prediction algorithms, average normalized RMSD scores ranged from 3.48 to 11.17 Å, and I-TASSER was identified as a top performer when both RMSD and CPU time were considered [33]. Specific algorithmic settings also mattered: the choice of protein representation had a definite positive influence on running time, and fragment assembly had a definite positive influence on predicted structure quality [33].

Recent evaluations on short peptides have revealed complementary strengths between approaches. For more hydrophobic peptides, AlphaFold and threading tend to complement each other, whereas for more hydrophilic peptides, PEP-FOLD and homology modeling work well together [37]. PEP-FOLD produced both compact structures and stable dynamics for most peptides, while AlphaFold produced compact structures for the majority of test cases [37].

Methodological Foundations and Experimental Protocols

Homology Modeling Workflow

Homology modeling relies on the fundamental observation that protein structure is more conserved than sequence during evolution. The methodology follows a systematic multi-step process:

Template Identification: The target sequence is compared against protein structure databases (primarily the PDB) using sequence search tools such as BLAST, PSI-BLAST, or HHsearch to identify potential templates with significant sequence similarity [34].

Target-Template Alignment: A sequence alignment is constructed between the target and the selected template(s). This is the step that most strongly determines final model quality.

Backbone Generation: Coordinates from the template structure are copied to the aligned regions of the target sequence.

Loop Modeling: Unaligned regions (insertions/deletions) are modeled using database search or ab initio methods.

Side-Chain Placement: Side chains are added using rotamer libraries that capture preferred amino acid side-chain conformations.

Model Refinement: Energy minimization and molecular dynamics are applied to remove steric clashes and optimize geometry.
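The backbone-generation step can be illustrated at its simplest: walk the target-template alignment and copy one template coordinate per aligned target residue. This sketch assumes a single representative atom per residue (for example C-alpha) and a gapped alignment in which `-` marks gaps; `copy_backbone` is a hypothetical helper, and real modeling packages do considerably more (full backbone atoms, restraints, refinement).

```python
from typing import List, Optional, Tuple

Coord = Tuple[float, float, float]


def copy_backbone(target_aln: str, template_aln: str,
                  template_coords: List[Coord]) -> List[Optional[Coord]]:
    """Transfer one template coordinate to each aligned target residue.

    Target residues aligned to a template gap get None and are left for loop modeling.
    """
    if len(target_aln) != len(template_aln):
        raise ValueError("aligned sequences must have equal length")
    if len(template_coords) != sum(ch != "-" for ch in template_aln):
        raise ValueError("need one coordinate per template residue")
    model: List[Optional[Coord]] = []
    t_idx = 0  # position in the ungapped template sequence
    for t_res, tpl_res in zip(target_aln, template_aln):
        if t_res != "-":
            model.append(template_coords[t_idx] if tpl_res != "-" else None)
        if tpl_res != "-":
            t_idx += 1
    return model


if __name__ == "__main__":
    coords = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (11.4, 0.0, 0.0)]
    # Target residue 'E' faces a template gap, so it is returned as None.
    print(copy_backbone("AC-DE", "ACGD-", coords))
```

The `None` entries correspond exactly to the insertions that the loop-modeling step must fill.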

The quality of the resulting model is highly dependent on the sequence identity between target and template. Above 50% sequence identity, models are typically highly reliable; between 30-50%, the core region is generally accurate but errors may occur in loops and side chains; below 30%, homology modeling becomes challenging and often unreliable [34].
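These thresholds can be applied mechanically once sequence identity is computed. Definitions of percent identity vary (the denominator may be aligned columns, the shorter sequence, or the full alignment length); the sketch below assumes identical residues divided by gap-free aligned columns, and `reliability_band` simply encodes the bands described in the text.

```python
def percent_identity(target_aln: str, template_aln: str) -> float:
    """Identical residues as a percentage of gap-free aligned columns (one common definition)."""
    cols = [(a, b) for a, b in zip(target_aln, template_aln) if a != "-" and b != "-"]
    if not cols:
        raise ValueError("alignment has no gap-free columns")
    return 100.0 * sum(a == b for a, b in cols) / len(cols)


def reliability_band(identity: float) -> str:
    """Map sequence identity to the reliability bands for homology models [34]."""
    if identity > 50.0:
        return "typically highly reliable"
    if identity >= 30.0:
        return "core accurate; loops and side chains may contain errors"
    return "challenging and often unreliable"


if __name__ == "__main__":
    pid = percent_identity("ACDEFGHIKL", "ACDEFWWWWW")
    print(pid, reliability_band(pid))
```

Because the denominator choice can shift the computed identity by several points near a threshold, it is worth reporting which definition was used.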

Homology Modeling Workflow: Illustrates the steps from template identification through model refinement.

Threading Methodology

Threading methods address the case in which sequence similarity is too low for conventional search tools to detect, yet structural similarity may still exist. The core algorithm involves:

Fold Library Screening: The target sequence is systematically tested against a library of protein folds or structural motifs.

Scoring Function Evaluation: Each potential sequence-structure alignment is evaluated using knowledge-based potentials that capture residue-residue interactions, solvation effects, and secondary structure compatibility.

Alignment Optimization: An optimal alignment is sought between the sequence and each candidate structural template, typically using algorithms such as Monte Carlo search, dynamic programming, or integer linear programming to cope with the NP-complete nature of the problem [35].

The success of threading depends critically on the quality of the scoring function and the diversity of the fold library. Modern threading approaches incorporate machine learning to improve fold recognition and alignment accuracy.
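The three threading steps can be sketched end to end with a toy scoring function. Everything here is hypothetical: each template is reduced to a string of buried (`B`) and exposed (`E`) positions, `compatibility` is a crude hydrophobic/polar score rather than a real knowledge-based potential, and the alignment uses global dynamic programming. Dynamic programming is exact here only because the score depends on one residue and one template position at a time; once pairwise residue-residue contact terms are added, this simple recursion no longer applies, which is where the NP-completeness noted above arises.

```python
from typing import Dict, List, Tuple

HYDROPHOBIC = set("AVILMFWC")  # hypothetical grouping for this toy score


def compatibility(residue: str, env: str) -> float:
    """Toy environment score: +1 for hydrophobic-in-buried or polar-in-exposed, else -1."""
    return 1.0 if (residue in HYDROPHOBIC) == (env == "B") else -1.0


def thread_score(seq: str, envs: str, gap: float = -2.0) -> float:
    """Global DP alignment score of a sequence onto a template's environment string."""
    n, m = len(seq), len(envs)
    S = [[0.0] * (m + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        S[i][0] = i * gap
    for j in range(1, m + 1):
        S[0][j] = j * gap
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            S[i][j] = max(S[i - 1][j - 1] + compatibility(seq[i - 1], envs[j - 1]),
                          S[i - 1][j] + gap,  # residue left unaligned
                          S[i][j - 1] + gap)  # template position skipped
    return S[n][m]


def rank_folds(seq: str, library: Dict[str, str]) -> List[Tuple[float, str]]:
    """Fold library screening: score the sequence against every template, best first."""
    return sorted(((thread_score(seq, env), name) for name, env in library.items()),
                  reverse=True)


if __name__ == "__main__":
    library = {"fold_A": "BBBEEE", "fold_B": "EEEBBB"}  # hypothetical templates
    print(rank_folds("LVIKDE", library))
```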

Threading Methodology Workflow: Illustrates fold library screening, scoring function evaluation, and alignment optimization.

Ab Initio Folding Protocols

Ab initio protein structure prediction aims to build models from physical principles and statistical potentials without relying on a global template from a known structure. The fundamental approach involves:

Conformational Sampling: Generating a large ensemble of possible protein conformations through techniques like fragment assembly, replica exchange Monte Carlo, or molecular dynamics simulations.

Energy Evaluation: Scoring each conformation using force fields that may include physics-based terms (van der Waals, electrostatics, solvation) and knowledge-based statistical potentials derived from known protein structures.