Composition vs. Structure: A Comparative Analysis of Synthesizability Models in Drug and Materials Discovery

Abstract

Predicting the synthesizability of novel chemical compounds is a critical challenge in drug and materials discovery. This article provides a comprehensive comparison of two dominant computational approaches: composition-based models, which rely solely on chemical formulas, and structure-based models, which incorporate three-dimensional atomic arrangements. We explore the foundational principles, methodological workflows, and practical applications of each paradigm, drawing on recent advances in machine learning and retrosynthesis tools. The analysis addresses key challenges such as data scarcity and computational cost, while presenting validation studies that benchmark model performance against experimental outcomes. Aimed at researchers and development professionals, this review synthesizes strategic insights for selecting and optimizing synthesizability prediction tools to accelerate the design of viable therapeutic candidates and functional materials.

Defining the Battle: Core Principles of Composition-Based and Structure-Based Predictors

In the accelerated discovery of new materials and drug candidates, predicting whether a proposed compound can actually be synthesized is a critical bottleneck. Computational models for assessing synthesizability have largely evolved into two distinct paradigms: composition-based and structure-based approaches. Composition-based models predict synthesizability using only the chemical formula of a material, analyzing elemental combinations and stoichiometries. In contrast, structure-based models require detailed three-dimensional atomic coordinates, leveraging the complete crystallographic information to make predictions [1].

This division is fundamental, as each approach operates on different input data, captures distinct aspects of chemistry and physics, and offers unique advantages and limitations. Understanding this dichotomy is essential for researchers selecting appropriate tools for specific discovery pipelines. This guide provides an objective comparison of these methodologies, supported by experimental data and implementation protocols, to inform their application in scientific research and drug development.

Core Principles and Technical Implementation

Composition-Based Models: Learning from Elemental Relationships

Composition-based models treat a chemical formula as their sole input, completely disregarding how atoms are arranged in space. The foundational premise is that the synthesizability of a compound is implicitly encoded in the identity and proportion of its constituent elements [2]. These models convert stoichiometries into machine-readable numerical vectors (features) using properties such as atomic radius, electronegativity, ionization energy, and valence electron counts, often combined through weighted averages, maximum/minimum values, or other statistical aggregations [1].

Common featurizers like MAGPIE and JARVIS implement this approach, generating hundreds of descriptors from elemental properties [1]. For example, a composition-based model might represent a material like TiO₂ by creating features from the atomic radius and electronegativity of titanium and oxygen, their stoichiometric ratio, and the composition-weighted Mendeleev numbers of the constituent elements. These models are particularly valuable in the early stages of exploration, when thousands of candidate compositions must be screened rapidly and structural data is unavailable [2].
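The aggregation step described above can be sketched in a few lines of Python. This is a minimal illustration, not MAGPIE, JARVIS, or CAF: the property table is a hypothetical five-element subset (Pauling electronegativity and atomic number), the parser handles only simple formulas without parentheses, and the statistics chosen (fraction-weighted mean and variance, min, max, range) are one common set among many.

```python
import re

# Hypothetical, deliberately tiny property table for illustration only.
# Values: (Pauling electronegativity, atomic number).
ELEMENT_PROPS = {
    "O": (3.44, 8),
    "Na": (0.93, 11),
    "Cl": (3.16, 17),
    "Ca": (1.00, 20),
    "Ti": (1.54, 22),
}
PROP_NAMES = ("en", "z")


def parse_formula(formula: str) -> dict[str, float]:
    """Parse a simple formula such as 'TiO2' (no parentheses or hydrates)."""
    counts: dict[str, float] = {}
    for symbol, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(amount) if amount else 1.0)
    return counts


def featurize(formula: str) -> dict[str, float]:
    """Aggregate elemental properties into fixed-length composition features."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    elements = list(counts)
    weights = [counts[el] / total for el in elements]  # atomic fractions
    features: dict[str, float] = {}
    for idx, name in enumerate(PROP_NAMES):
        values = [ELEMENT_PROPS[el][idx] for el in elements]
        mean = sum(w * v for w, v in zip(weights, values))
        features[f"{name}_mean"] = mean
        features[f"{name}_min"] = min(values)
        features[f"{name}_max"] = max(values)
        features[f"{name}_range"] = max(values) - min(values)
        # Fraction-weighted variance around the weighted mean
        features[f"{name}_var"] = sum(w * (v - mean) ** 2 for w, v in zip(weights, values))
    return features


print(featurize("TiO2"))
```

For TiO₂ the weighted electronegativity mean is (1.54 + 2 × 3.44) / 3 ≈ 2.81. Real featurizers apply the same pattern to dozens of elemental properties, which is how they reach hundreds of descriptors.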

Structure-Based Models: Decoding Atomic Arrangements

Structure-based models operate on the principle that atomic-level structure—including bonding networks, coordination environments, and symmetry—is a primary determinant of a material's stability and synthesizability. These models require a full description of the crystal structure, typically from a CIF or POSCAR file, which includes lattice parameters, atomic coordinates, and space group information [3] [4].

These models employ sophisticated representations to encode periodic crystal structures. The Crystal Graph Convolutional Neural Network (CGCNN) creates a graph where atoms are nodes and edges connect neighboring atoms (bonded or within a cutoff distance), capturing local connectivity [5]. The Smooth Overlap of Atomic Positions (SOAP) descriptor quantifies the local chemical environments around each atom [1]. Recent advancements include the Fourier-Transformed Crystal Properties (FTCP) representation, which incorporates information from both real and reciprocal space to better describe periodicity [5]. Large Language Models (LLMs) have also been adapted for this purpose by converting crystal structures into specialized text sequences ("material strings") that can be processed like natural-language text [4].
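To make the crystal-graph idea concrete, the sketch below builds a CGCNN-style neighbor list: every pair of atoms closer than a cutoff, including pairs that cross the periodic cell boundary, becomes a directed edge. It is a simplified illustration under stated assumptions (pure Python, a fixed distance cutoff, and a search over only the adjacent periodic images), not the CGCNN reference code, which also encodes distances as edge features.

```python
import itertools
import math


def frac_to_cart(frac, lattice):
    """Fractional -> Cartesian coordinates; lattice rows are the vectors a, b, c."""
    return [sum(frac[k] * lattice[k][d] for k in range(3)) for d in range(3)]


def crystal_graph_edges(lattice, frac_coords, cutoff):
    """Directed edges (i, j, distance) between atoms closer than `cutoff`.

    Periodic images are searched only in the home cell and its 26 neighbors,
    which is adequate only when the cutoff is shorter than the cell dimensions.
    """
    edges = []
    for i, fi in enumerate(frac_coords):
        ci = frac_to_cart(fi, lattice)
        for j, fj in enumerate(frac_coords):
            for shift in itertools.product((-1, 0, 1), repeat=3):
                if i == j and shift == (0, 0, 0):
                    continue  # an atom is not its own neighbor
                cj = frac_to_cart([fj[k] + shift[k] for k in range(3)], lattice)
                dist = math.dist(ci, cj)
                if dist <= cutoff:
                    edges.append((i, j, dist))
    return edges


# CsCl-type cell (a = 4.12 Å): Cs at the corner, Cl at the body center.
a = 4.12
lattice = [[a, 0, 0], [0, a, 0], [0, 0, a]]
species = ["Cs", "Cl"]
frac_coords = [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]]
edges = crystal_graph_edges(lattice, frac_coords, cutoff=3.7)
for i, j, d in edges[:2]:
    print(species[i], "->", species[j], round(d, 3))
```

With a 3.7 Å cutoff, each ion gets its eight nearest counter-ions at a√3/2 ≈ 3.57 Å, while the Cs-Cs and Cl-Cl contacts at 4.12 Å are excluded.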

Performance Comparison: A Quantitative Analysis

Benchmark results reported in recent studies reveal distinct performance profiles for composition- and structure-based models. The following table summarizes key performance metrics.

Table 1: Comparative Performance of Composition and Structure-Based Models

| Model Type | Representative Approach | Reported Performance | Key Strengths | Principal Limitations |
|---|---|---|---|---|
| Composition-Based | Semi-supervised learning on stoichiometry [2] | Recall: 83.4%, Precision: 83.6% | Rapid screening, high throughput, applicable when structures are unknown | Cannot distinguish polymorphs, misses structural stability cues |
| Structure-Based | Crystal Graph Convolutional Neural Network (CGCNN) [5] | Precision/Recall: ~82% | Accounts for polymorphs, captures bonding and coordination | Requires full 3D structure, computationally more intensive |
| Structure-Based | Fourier-Transformed Crystal Properties (FTCP) with Deep Learning [5] | Precision: 82.6%, Recall: 80.6% | Incorporates reciprocal-space information, high fidelity | Complex feature calculation, requires structural data |
| Structure-Based | Crystal Synthesis Large Language Models (CSLLM) [4] | Accuracy: 98.6% | State-of-the-art accuracy, generalizes to complex structures | Requires extensive data curation and computational resources |

These figures come from different studies, datasets, and metrics, so they are not directly comparable; notably, the composition-based model's reported precision and recall are on par with the CGCNN and FTCP results. The clearest accuracy advantage belongs to the CSLLM framework, which reports 98.6% accuracy on its test data and significantly outperforms traditional thermodynamic and kinetic stability metrics [4]. Composition-based models remain valuable for high-throughput initial screening due to their computational efficiency and applicability when structural data is unavailable.
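The caution about comparing metrics can be made concrete. Accuracy, precision, and recall answer different questions, and on an imbalanced test set they can diverge sharply. The confusion-matrix counts below are hypothetical, chosen only to show the effect:

```python
def classification_metrics(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """Standard binary-classification metrics from confusion-matrix counts."""
    precision = tp / (tp + fp)  # of predicted synthesizable, fraction truly so
    recall = tp / (tp + fn)     # of truly synthesizable, fraction recovered
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    return {"precision": precision, "recall": recall, "accuracy": accuracy}


# Hypothetical counts for an imbalanced test set (100 positives, 900 negatives).
print(classification_metrics(tp=80, fp=20, tn=880, fn=20))
# {'precision': 0.8, 'recall': 0.8, 'accuracy': 0.96}
```

Here accuracy is 96% while precision and recall are both 80%, so an accuracy figure from one study and a precision/recall pair from another cannot be ranked against each other without knowing the class balance.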

Table 2: Hybrid Model Performance in Experimental Validation

| Study Focus | Model Architecture | Experimental Validation Result |
|---|---|---|
| Synthesizability-guided pipeline for materials discovery [6] | Rank-average ensemble of composition and structure encoders | Successfully synthesized 7 out of 16 targeted compounds (44% success rate) within 3 days |
| Machine-Learning-Assisted prediction of synthesizable structures [3] | Symmetry-guided structure derivation with Wyckoff encode-based ML | Identified 92,310 potentially synthesizable structures from 554,054 GNoME candidates |

Methodologies: Experimental Protocols and Workflows

Protocol for Composition-Based Synthesizability Prediction

Data Curation: Collect a dataset of known synthesizable and non-synthesizable compositions from databases like the Materials Project (MP) [6]. Label a composition as synthesizable if any polymorph has an associated experimental entry in the Inorganic Crystal Structure Database (ICSD). Compositions where all polymorphs are flagged as theoretical are considered non-synthesizable [6].
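The labeling rule above reduces polymorph-level records to one label per composition. A minimal sketch, assuming the records have already been exported as (formula, has-ICSD-entry) pairs; the formulas are placeholders, not database entries:

```python
from collections import defaultdict


def label_compositions(entries):
    """Composition-level labels from polymorph-level records.

    `entries` holds (reduced_formula, has_icsd_entry) pairs, one per polymorph.
    A composition is labeled 1 (synthesizable) if any polymorph has an
    experimental ICSD entry, otherwise 0 (all polymorphs theoretical).
    """
    has_experimental = defaultdict(bool)
    for formula, has_icsd in entries:
        has_experimental[formula] = has_experimental[formula] or bool(has_icsd)
    return {formula: int(flag) for formula, flag in has_experimental.items()}


# Hypothetical polymorph records; formulas are placeholders, not real data.
records = [("AB2", True), ("AB2", False), ("A3B", False), ("A3B", False)]
print(label_compositions(records))  # {'AB2': 1, 'A3B': 0}
```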

Feature Generation: Use featurizers such as Composition Analyzer Featurizer (CAF) or mat2vec to convert chemical formulae into numerical feature vectors. These typically include stoichiometric attributes, elemental property statistics (average, range, variance), and electron orbital characteristics [1].

Model Training: Implement a classifier such as XGBoost or a fine-tuned transformer model (e.g., MTEncoder). For data with limited negative examples, apply Positive-Unlabeled (PU) learning techniques, which treat unlabeled data as a mixture of positive and negative samples [7] [2].
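One common PU strategy is bagging: repeatedly draw a random subset of the unlabeled data, treat it as provisional negatives, train a classifier against the positives, and average the out-of-bag scores. The sketch below follows that scheme but, as an assumption for brevity, swaps XGBoost or a transformer for a nearest-centroid classifier on toy 2D features; the data and the parameters `n_rounds` and `seed` are illustrative.

```python
import math
import random


def centroid(points):
    """Coordinate-wise mean of a list of equal-length points."""
    return [sum(p[d] for p in points) / len(points) for d in range(len(points[0]))]


def pu_bagging_scores(positives, unlabeled, n_rounds=50, seed=0):
    """Bagging-style PU learning with a nearest-centroid base classifier.

    Each round draws a bootstrap sample of the unlabeled set (same size as the
    positive set), treats it as provisional negatives, and scores the
    out-of-bag unlabeled points. Scores are averaged over rounds.
    """
    rng = random.Random(seed)
    pos_center = centroid(positives)
    totals = [0.0] * len(unlabeled)
    counts = [0] * len(unlabeled)
    for _ in range(n_rounds):
        bag = rng.choices(range(len(unlabeled)), k=len(positives))
        neg_center = centroid([unlabeled[i] for i in bag])
        in_bag = set(bag)
        for i, x in enumerate(unlabeled):
            if i in in_bag:
                continue  # score only points this round's classifier did not train on
            margin = math.dist(x, neg_center) - math.dist(x, pos_center)
            totals[i] += 1.0 / (1.0 + math.exp(-margin))  # sigmoid -> (0, 1)
            counts[i] += 1
    return [t / c if c else None for t, c in zip(totals, counts)]


# Toy 2D descriptors: positives cluster near the origin; the unlabeled set
# mixes three hidden positives (near origin) with five far-away points.
positives = [(0.0, 0.0), (0.2, 0.0), (0.0, 0.2), (0.2, 0.2)]
unlabeled = [(0.1, 0.1), (0.3, 0.1), (0.1, 0.3),
             (5.0, 5.0), (5.2, 5.0), (5.0, 5.2), (5.2, 5.2), (4.8, 5.0)]
scores = pu_bagging_scores(positives, unlabeled)
print([round(s, 2) for s in scores])
```

Hidden positives inside the unlabeled set receive higher scores than the distant points, which is the behavior PU learning relies on when confirmed negatives are missing.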

Validation: Evaluate using time-split validation, training on data before a specific date and testing on compositions added afterward, to simulate real-world discovery progression [5].
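A time split only needs a date per record. In the sketch below the `added` field, the cutoff date, and the records themselves are hypothetical placeholders:

```python
from datetime import date


def time_split(records, cutoff):
    """Train on entries added before `cutoff`; test on entries added on or after it."""
    train = [r for r in records if r["added"] < cutoff]
    test = [r for r in records if r["added"] >= cutoff]
    return train, test


# Hypothetical records; formulas, dates, and labels are placeholders.
records = [
    {"formula": "AB", "added": date(2015, 3, 1), "label": 1},
    {"formula": "AB2", "added": date(2018, 7, 9), "label": 0},
    {"formula": "A2B3", "added": date(2021, 1, 15), "label": 1},
]
train, test = time_split(records, cutoff=date(2019, 1, 1))
print([r["formula"] for r in train], [r["formula"] for r in test])
# ['AB', 'AB2'] ['A2B3']
```

Unlike a random split, this never lets the model see compositions reported after the compounds it is tested on.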

Protocol for Structure-Based Synthesizability Prediction

Data Preparation: Obtain crystal structures in CIF or POSCAR format. For synthesizable examples, use experimentally confirmed structures from ICSD. For non-synthesizable examples, use theoretical structures with low "crystal-likeness" scores from computational databases [4].

Structure Representation: Convert crystals into a model-ready format. Options include:

- Crystal Graphs for CGCNN [5]

- Material Strings for LLM-based approaches (e.g., "SP | a, b, c, α, β, γ | (AS1-WS1[WP1])...") [4]

- Smooth Overlap of Atomic Positions (SOAP) descriptors [1]
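The material-string format is only partly specified above (the pattern ends in an ellipsis), so the sketch below rests on assumptions: it reads SP as the space-group number, AS as the atomic symbol, WS as the Wyckoff site, and WP as the site's fractional coordinates, and it separates sites with `; `. It illustrates the idea of listing only symmetry-distinct sites, not the exact CSLLM syntax. Rock-salt NaCl (space group 225, a ≈ 5.64 Å, Na on 4a, Cl on 4b) serves as the example.

```python
def fmt(x: float) -> str:
    """Compact number formatting: 90 -> '90', 0.5 -> '0.5'."""
    return f"{x:g}"


def material_string(space_group, lattice_params, sites):
    """Build a compact text encoding of a crystal (hypothetical format).

    space_group: international space-group number.
    lattice_params: (a, b, c, alpha, beta, gamma).
    sites: (element, wyckoff_symbol, (x, y, z)) for each symmetry-distinct site.
    """
    lattice = ", ".join(fmt(v) for v in lattice_params)
    site_parts = []
    for element, wyckoff, xyz in sites:
        coords = " ".join(fmt(c) for c in xyz)
        site_parts.append(f"({element}-{wyckoff}[{coords}])")
    return f"{space_group} | {lattice} | " + "; ".join(site_parts)


s = material_string(
    225,
    (5.64, 5.64, 5.64, 90, 90, 90),
    [("Na", "4a", (0, 0, 0)), ("Cl", "4b", (0.5, 0.5, 0.5))],
)
print(s)  # 225 | 5.64, 5.64, 5.64, 90, 90, 90 | (Na-4a[0 0 0]); (Cl-4b[0.5 0.5 0.5])
```

Because symmetry generates the remaining atoms, the eight-atom conventional cell collapses to two site entries, which is what keeps such strings short.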

Model Training: Fine-tune a Graph Neural Network (e.g., one initialized from the pretrained JMP model) or a Large Language Model (e.g., LLaMA) on the structured data. For LLMs, this involves domain-specific adaptation to align linguistic features with crystallographic concepts [6] [4].

Evaluation: Assess model performance on hold-out test sets containing diverse crystal systems and compositions, including complex structures with large unit cells to evaluate generalization capability [4].

Integrated Hybrid Workflow

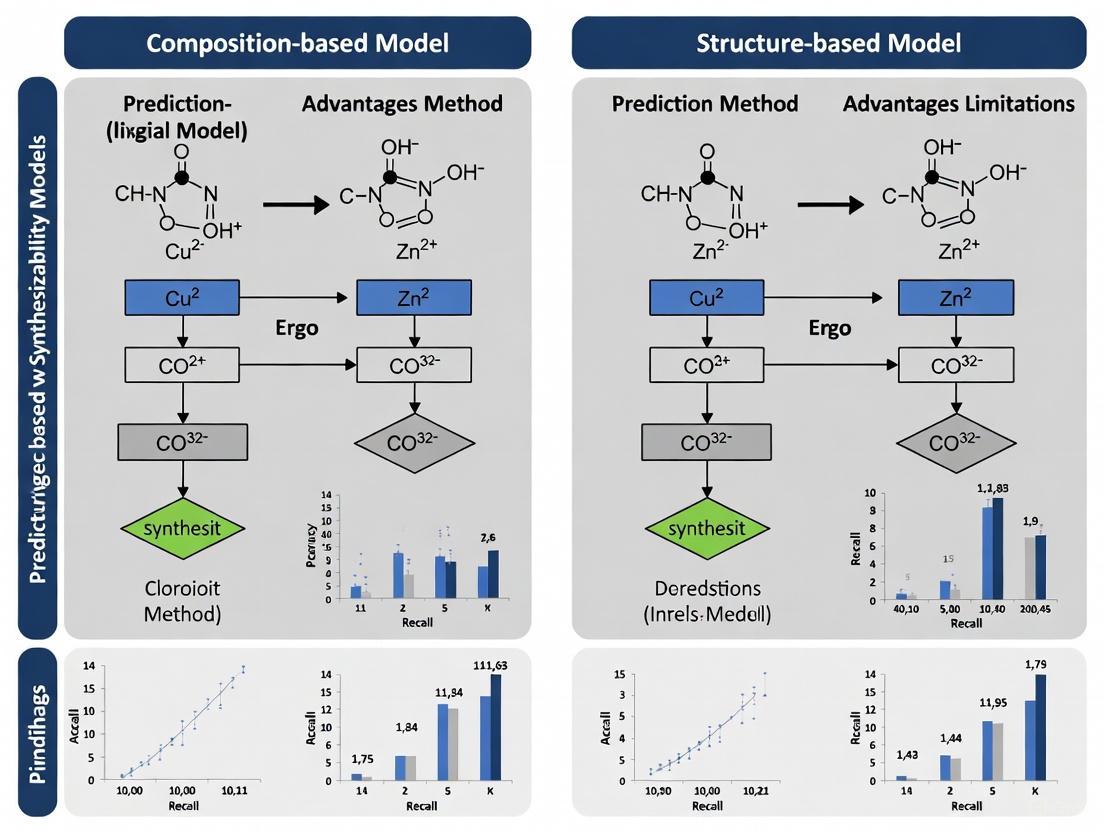

Leading-edge research increasingly combines both approaches. The following diagram illustrates a synthesizability-driven crystal structure prediction (CSP) framework that integrates both methodologies:

Synthesizability-Driven Crystal Structure Prediction Workflow
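Hybrid pipelines such as the one in [6] combine composition and structure signals with a rank-average ensemble, which avoids calibrating the two models' scores against each other. A minimal sketch of generic rank averaging, assuming each model outputs one score per candidate (higher means more synthesizable); the scores are hypothetical and ties are broken by input order rather than averaged:

```python
def normalized_ranks(scores):
    """Map scores to ranks in (0, 1]; the highest score gets rank 1.0."""
    n = len(scores)
    order = sorted(range(n), key=lambda i: scores[i])
    ranks = [0.0] * n
    for position, idx in enumerate(order, start=1):
        ranks[idx] = position / n
    return ranks


def rank_average(*score_lists):
    """Average each candidate's normalized rank across several models."""
    per_model = [normalized_ranks(s) for s in score_lists]
    n = len(score_lists[0])
    return [sum(r[i] for r in per_model) / len(per_model) for i in range(n)]


# Hypothetical scores for three candidates from two models.
composition_scores = [0.9, 0.2, 0.6]
structure_scores = [0.7, 0.8, 0.1]
combined = rank_average(composition_scores, structure_scores)
ranking = sorted(range(len(combined)), key=lambda i: combined[i], reverse=True)
print(ranking)  # [0, 1, 2]
```

Averaging ranks rather than raw scores means a model whose scores cluster near 1.0 cannot dominate one whose scores are spread across the full range.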

Essential Research Reagents and Computational Tools

Table 3: Key Research Resources for Synthesizability Prediction

| Resource Name | Type | Primary Function | Access/Implementation |
|---|---|---|---|
| Materials Project (MP) Database [3] [5] | Data Repository | Source of computed material properties and structures; provides training data and benchmark candidates | Public database (https://materialsproject.org/) |
| Inorganic Crystal Structure Database (ICSD) [7] [4] | Data Repository | Curated collection of experimentally synthesized crystal structures; serves as ground truth for synthesizable materials | Licensed database |
| Composition Analyzer Featurizer (CAF) [1] | Software Tool | Generates numerical compositional features from chemical formulas for ML model input | Open-source Python program |
| Structure Analyzer Featurizer (SAF) [1] | Software Tool | Extracts numerical structural features from CIF files by generating supercells | Open-source Python program |
| AiZynthFinder [8] [9] | Software Tool | Computer-Aided Synthesis Planning (CASP) tool; used for retrosynthesis analysis and route prediction | Open-source toolkit |
| Positive-Unlabeled (PU) Learning [7] [2] | Methodology | Enables training classification models when only positive (synthesizable) and unlabeled examples are available | Algorithmic implementation |

The fundamental divide between composition-based and structure-based models represents a trade-off between computational efficiency and predictive accuracy. Composition-based approaches provide rapid, high-throughput screening capabilities essential for exploring vast compositional spaces, while structure-based methods deliver superior accuracy by accounting for the critical role of atomic arrangement in determining synthesizability.

The emerging trend toward hybrid models that integrate both compositional and structural signals shows promising results: a rank-average ensemble of composition and structure encoders guided the successful synthesis of 7 of 16 targeted compounds [6]. Furthermore, the application of large language models to synthesizability prediction marks a shift in approach, with CSLLM reporting 98.6% accuracy by processing textual representations of crystal structures [4].

For researchers and drug development professionals, the selection of an appropriate model depends critically on the discovery context: composition-based screening for initial exploration of large chemical spaces, structure-based evaluation for prioritizing candidates with known structures, and hybrid approaches for maximizing experimental success rates in resource-constrained environments. As these methodologies continue to evolve, they promise to significantly narrow the gap between computational materials design and experimental realization, accelerating the discovery of novel functional materials and therapeutic agents.

The accuracy of machine learning models in predicting material synthesizability is fundamentally governed by the type of input data they utilize. The field is currently divided between two principal paradigms: composition-based models that rely solely on chemical formulas, and structure-based models that require full 3D atomic coordinates. Composition-based approaches offer simplicity and computational efficiency, operating on readily available stoichiometric data. In contrast, structure-based models demand more complex, computationally derived crystal structure information but capture richer geometric and topological features. This guide objectively compares the performance, data requirements, and experimental validation of these competing approaches, examining how each data type influences predictive accuracy, practical utility, and ultimately, success in guiding experimental synthesis.

Comparative Performance Analysis: Composition vs. Structure-Based Models

Quantitative Performance Metrics

Table 1: Performance comparison of synthesizability prediction models based on input data type

| Model Category | Specific Model | Key Input Data | Accuracy/Performance | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Composition-Based | StoiGPT-FT [10] | Stoichiometric formula only | Outperforms structure-based GPT on polymorph-level synthesizability [10] | Computational efficiency; works without structural data [10] | Cannot distinguish between different polymorphs of the same composition [10] |
| Structure-Based | StructGPT-FT [10] | Text description of crystal structure | High accuracy; slightly outperforms graph-based models (PU-CGCNN) [10] | Distinguishes between polymorphs; captures spatial relationships [4] [10] | Requires full crystal structure; computationally intensive [10] |
| Structure-Based | PU-GPT-embedding [10] | Text-embedding representation of structure | Superior to both StructGPT-FT and PU-CGCNN [10] | LLM embeddings outperform traditional graph representations [10] | Depends on quality of structural description and conversion [10] |
| Structure-Based | Crystal Synthesis LLM (CSLLM) [4] | Text representation ("material string") of 3D crystal structure | 98.6% accuracy in synthesizability classification [4] | Outperforms thermodynamic (74.1%) and kinetic (82.2%) stability methods [4] | Requires comprehensive structural data representation [4] |
| Integrated Approach | Unified Composition+Structure [6] | Combined composition and crystal structure data | Successfully synthesized 7 of 16 predicted candidates experimentally [6] | Rank-average ensemble leverages strengths of both data types [6] | Increased model complexity and data requirements [6] |

Experimental Validation and Real-World Performance

Recent experimental studies provide critical validation of these computational approaches. A synthesizability-guided pipeline that integrated both compositional and structural signals identified 24 highly synthesizable candidates from a pool of 4.4 million computational structures [6]. Through automated laboratory synthesis, researchers characterized 16 targets and confirmed that 7 matched the predicted crystal structure, including one novel and one previously unreported compound [6]. This demonstrates that models using structural data can move beyond computational ranking to guide successful laboratory synthesis.

The performance advantage of structure-based models is particularly evident in their ability to overcome limitations of traditional stability metrics. The CSLLM framework achieves 98.6% accuracy in synthesizability classification, significantly outperforming traditional thermodynamic screening based on energy above hull (74.1%) and kinetic stability assessment via phonon spectrum analysis (82.2%) [4]. This substantial performance gap highlights how data-driven structural approaches capture synthesizability factors beyond pure thermodynamic considerations.

Experimental Protocols and Methodologies

Data Curation and Preprocessing Protocols

Table 2: Data preparation methodologies for synthesizability prediction models

| Experimental Step | Composition-Based Protocols | Structure-Based Protocols | Integrated Approach Protocols |
|---|---|---|---|
| Positive Sample Collection | Use known synthesized compositions from databases like Materials Project [10] | Extract experimentally validated crystal structures from ICSD or COD [4] [11] | Combine both compositional and structural databases; label based on experimental confirmation [6] |
| Negative Sample Generation | Treat compositions with no synthesized polymorphs as unsynthesizable [10] | Use PU learning models (CLscore <0.1) to identify non-synthesizable structures [4] | Apply rank-average ensemble methods to combine signals from both data types [6] |
| Data Representation | Direct use of stoichiometric formulas [10] | Convert CIF files to text descriptions using tools like Robocrystallographer [10] | Use separate encoders for composition (transformer) and structure (graph neural network) [6] |
| Model Training | Fine-tune LLMs on composition-only data [10] | Fine-tune LLMs on text descriptions of crystal structures [4] [10] | End-to-end fine-tuning of both encoders with binary cross-entropy loss [6] |
| Validation Approach | Hold-out test sampling of positive and unlabeled data [10] | α-estimation for precision and false positive rates in PU learning [10] | Experimental synthesis validation of top-ranked candidates [6] |

Structural Representation Methods

A critical methodological challenge for structure-based models is converting 3D atomic coordinates into machine-readable formats. Several advanced representation techniques have emerged:

- Material Strings: CSLLM uses a specialized text representation that integrates space group, lattice parameters, and atomic coordinates in Wyckoff positions, efficiently capturing essential crystal information in a compact format [4].

- Text Descriptions: Robocrystallographer generates human-readable text descriptions of crystal structures, which can be processed by LLMs to create embedding representations [10].

- 3D Pixel-wise Images: Some deep learning models represent crystals as 3D color-coded images, enabling convolutional neural networks to learn hidden structural and chemical patterns [11].

- Graph Representations: Crystal structures can be represented as graphs with atoms as nodes and bonds as edges, processed by graph neural networks [10].

- Atom Pair Maps (APM): This numerical matrix representation encodes physicochemical properties of all atom pairs and their interatomic distances, capturing 3D shape information for both compounds and protein binding pockets [12].

Data Processing Pathways for Synthesizability Models

Table 3: Key databases, tools, and computational resources for synthesizability prediction research

| Resource Name | Type/Function | Specific Application in Synthesizability Research |
|---|---|---|
| Inorganic Crystal Structure Database (ICSD) [4] | Experimental crystal structure database | Source of synthesizable (positive) crystal structures for model training [4] |
| Materials Project [4] [10] | Computational materials database | Provides both synthesized and hypothetical structures; source of composition and structure data [4] [10] |
| Crystallographic Open Database (COD) [11] | Open-access crystal structure database | Source of experimentally synthesized crystalline materials for training data [11] |
| Robocrystallographer [10] | Text description generator | Converts CIF-formatted crystal structures into textual descriptions for LLM processing [10] |
| Atom Pair Map (APM) [12] | Molecular representation tool | Generates numerical matrices encoding 3D spatial arrangement of atoms for structure-based screening [12] |
| Positive-Unlabeled (PU) Learning [4] [10] | Machine learning framework | Addresses the challenge of lacking true negative samples in synthesizability prediction [4] [10] |
| Retro-Rank-In [6] | Precursor-suggestion model | Generates ranked lists of viable solid-state precursors for target compounds after synthesizability assessment [6] |

The comparative analysis reveals that the choice between composition-based and structure-based models involves fundamental trade-offs between computational efficiency and predictive precision. Composition-based models offer practical advantages for high-throughput screening of large chemical spaces where structural data is unavailable or computationally prohibitive. However, structure-based models demonstrate superior accuracy in distinguishing synthesizable materials, particularly for polymorph prediction, and have proven capable of guiding successful experimental synthesis campaigns. The emerging trend toward integrated approaches that combine both compositional and structural signals represents a promising direction, leveraging the strengths of both data types while mitigating their individual limitations. As experimental validation continues to benchmark computational predictions, the field appears to be evolving toward context-dependent model selection, where the optimal data input type is determined by specific research goals, available computational resources, and the desired balance between screening throughput and prediction accuracy.

The acceleration of computational materials discovery has created a fundamental bottleneck: the experimental synthesis of predicted compounds. For years, thermodynamic stability metrics, particularly energy above the convex hull (Ehull), served as the primary proxy for synthesizability. However, this composition-centric approach has proven insufficient, as many compounds with favorable formation energies remain unsynthesized, while various metastable structures are experimentally realized [4]. This limitation has spurred the development of a new generation of predictive models that leverage structural data—the precise three-dimensional atomic arrangements within crystal structures—to achieve a more accurate assessment of synthesizability.
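Energy above hull is the vertical distance between a compound's formation energy and the lower convex hull of formation energies in its chemical system; compounds on the hull have Ehull = 0. A minimal sketch for a binary A-B system, using made-up formation energies and Andrew's monotone-chain algorithm for the lower hull. For multicomponent systems, a library such as pymatgen (its `PhaseDiagram` class) is the usual tool.

```python
def cross(o, a, b):
    """z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points):
    """Lower convex hull (Andrew's monotone chain) of (x, energy) points."""
    hull = []
    for p in sorted(points):
        while len(hull) >= 2 and cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(x, energy, hull):
    """Vertical distance from (x, energy) to the hull, by linear interpolation."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            e_hull = e0 + (x - x0) / (x1 - x0) * (e1 - e0)
            return energy - e_hull
    raise ValueError("x outside the hull's composition range")


# Hypothetical binary A(1-x)B(x) system: formation energies in eV/atom.
# The pure elements (x = 0 and x = 1) define the zero reference.
compounds = {"A3B": (0.25, -0.3), "AB": (0.5, -1.0), "AB3": (0.75, -0.4)}
hull = lower_hull([(0.0, 0.0), (1.0, 0.0), *compounds.values()])
for name, (x, e) in compounds.items():
    print(name, round(energy_above_hull(x, e, hull), 3))
```

A3B and AB3 lie 0.2 and 0.1 eV/atom above the hull, so a strict Ehull = 0 filter would discard both, even though metastable compounds like these are sometimes synthesized; this is the gap that motivates structure-aware models.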

This guide provides an objective comparison of these two methodological paradigms: traditional composition-based models versus emerging structure-based approaches. By examining their underlying protocols, performance metrics, and practical applications, we aim to delineate the specific advantages that structural information provides in bridging the gap between theoretical prediction and experimental realization in materials science and drug discovery.

Performance Comparison: Composition-Based vs. Structure-Based Models

Quantitative comparisons across recent studies consistently demonstrate that models incorporating structural data significantly outperform those relying solely on composition. The table below summarizes key performance metrics from several investigations.

Table 1: Performance Comparison of Synthesizability Prediction Models

| Model / Framework | Input Data Type | Key Performance Metric | Result | Reference |
|---|---|---|---|---|
| Thermodynamic (Ehull) | Composition | Accuracy | 74.1% | [4] |
| Kinetic (Phonon) | Structure | Accuracy | 82.2% | [4] |
| CSLLM (Synthesizability LLM) | Structure (Textualized) | Accuracy | 98.6% | [4] |
| FTCP + Deep Learning | Structure (FTCP) | Overall Accuracy | 82.6% | [5] |
| Compositional MTEncoder | Composition | AUPRC (rank-based) | Used within an ensemble | [6] |
| PU Learning (Jang et al.) | Structure | Recall | 86.2% | [5] |
| Human-Curated PU Learning | Structure and Synthesis Data | Synthesizable compositions identified (ternary oxides) | 134 | [7] |

The performance advantage of structure-based models is multifaceted. The Crystal Synthesis Large Language Model (CSLLM) framework not only achieves state-of-the-art accuracy in binary classification but also extends its capability to predict viable synthetic methods and appropriate precursors with over 90% and 80% accuracy, respectively [4]. Furthermore, structure-based models demonstrate superior generalization ability, accurately predicting the synthesizability of complex experimental structures that far exceed the complexity of their training data [4].

In drug design, the integration of structural data is equally critical. Frameworks like DiffSBDD and Rag2Mol leverage 3D structural information from protein pockets to generate novel drug candidates with superior binding affinities and drug-like properties, directly addressing the synthesizability and practicality challenges that plague composition-only or simple graph-based approaches [13] [14].

Experimental Protocols and Methodologies

The fundamental difference between the two classes of models lies in their input representation and data processing. The protocol details below illustrate these distinctions.

Composition-Based Model Protocol

Composition-based models primarily operate on the stoichiometric chemical formula of a material.

Step-by-Step Protocol:

- Input: A chemical composition (e.g., NaCl, CaTiO₃).

- Featurization: The composition is converted into a numerical vector. Common methods include:

- One-Hot Encoding: A 94-dimensional vector representing presence/absence of each element in the periodic table [5].

- Stoichiometric Features: Properties derived from the elemental ratios.

- Elemental Property Vectors: Using properties like electronegativity, atomic radius, etc., averaged over the composition.

- Model Training: A classifier (e.g., a neural network or tree-based model) is trained on a dataset where labels ("synthesizable" or "non-synthesizable") are assigned based on database records (e.g., presence in the ICSD for positive labels) [6] [5].

- Output: A synthesizability score or a binary classification.
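The 94-dimensional one-hot encoding is simple enough to write out in full. This sketch follows the presence/absence definition above (a common variant stores atomic fractions instead) and, as an assumption, covers H through Pu in atomic-number order with a parser that only recognizes element symbols:

```python
import re

# Elements H (Z = 1) through Pu (Z = 94), in atomic-number order.
ELEMENTS = (
    "H He Li Be B C N O F Ne Na Mg Al Si P S Cl Ar K Ca "
    "Sc Ti V Cr Mn Fe Co Ni Cu Zn Ga Ge As Se Br Kr Rb Sr Y Zr "
    "Nb Mo Tc Ru Rh Pd Ag Cd In Sn Sb Te I Xe Cs Ba La Ce Pr Nd "
    "Pm Sm Eu Gd Tb Dy Ho Er Tm Yb Lu Hf Ta W Re Os Ir Pt Au Hg "
    "Tl Pb Bi Po At Rn Fr Ra Ac Th Pa U Np Pu"
).split()
INDEX = {symbol: i for i, symbol in enumerate(ELEMENTS)}


def one_hot(formula: str) -> list[int]:
    """94-dimensional presence/absence vector for the elements in `formula`."""
    vector = [0] * len(ELEMENTS)
    for symbol in re.findall(r"[A-Z][a-z]?", formula):
        vector[INDEX[symbol]] = 1
    return vector


v = one_hot("CaTiO3")
print(sum(v), [ELEMENTS[i] for i, bit in enumerate(v) if bit])  # 3 ['O', 'Ca', 'Ti']
```

The encoding discards stoichiometry entirely (CaTiO₃ and Ca₂TiO₄ map to the same vector), which is why it is usually combined with the stoichiometric and elemental-property features listed above.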

Structure-Based Model Protocol

Structure-based models use the full crystallographic information, capturing atomic coordinates, lattice parameters, and symmetry.

Step-by-Step Protocol:

- Input: A crystal structure file (e.g., CIF or POSCAR) containing lattice parameters, atomic species, and fractional coordinates.

- Structure Representation: The 3D structure is transformed into a computationally usable format. Key methods include:

- Crystal Graph: A graph where nodes are atoms and edges represent bonds or spatial proximity. This is used by Graph Neural Networks (GNNs) like CGCNN [5].

- Material String: A specialized text representation developed for LLMs, condensing symmetry information (space group, Wyckoff positions) into a compact string format, avoiding redundant coordinate listings [4].

- Fourier-Transformed Crystal Properties (FTCP): A representation that incorporates both real-space and reciprocal-space features to capture crystal periodicity and elemental properties [5].

- 3D Point Cloud: Used in structure-based drug design (SBDD), representing the protein pocket and ligand as 3D coordinates and atom types [13].

- Model Training: Specialized architectures process these representations:

- Graph Neural Networks (GNNs): Operate directly on crystal graphs [6] [5].

- Large Language Models (LLMs): Fine-tuned on material strings to predict synthesizability and synthesis pathways [4].

- Equivariant Diffusion Models: Used in SBDD to generate molecules in 3D space conditioned on protein pockets [13].

- Output: A comprehensive synthesis report, often including a synthesizability score, recommended synthesis method (e.g., solid-state or solution), and a list of potential precursors.
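Reciprocal-space representations such as FTCP start from the reciprocal lattice. The sketch below computes it from the real-space lattice vectors with the physics convention a_i · b_j = 2π δ_ij; it illustrates only that building block, not the FTCP featurizer, and the hexagonal-type cell is arbitrary.

```python
import math


def cross(u, v):
    """Cross product of two 3-vectors."""
    return [u[1] * v[2] - u[2] * v[1], u[2] * v[0] - u[0] * v[2], u[0] * v[1] - u[1] * v[0]]


def dot(u, v):
    """Dot product of two 3-vectors."""
    return sum(a * b for a, b in zip(u, v))


def reciprocal_lattice(a1, a2, a3):
    """Reciprocal vectors b_i with a_i . b_j = 2*pi*delta_ij (physics convention)."""
    volume = dot(a1, cross(a2, a3))  # signed cell volume
    scale = 2 * math.pi / volume
    b1 = [scale * c for c in cross(a2, a3)]
    b2 = [scale * c for c in cross(a3, a1)]
    b3 = [scale * c for c in cross(a1, a2)]
    return b1, b2, b3


# Hexagonal-type cell: a = 1, c = 3, gamma = 120 degrees (arbitrary units).
a1 = [1.0, 0.0, 0.0]
a2 = [-0.5, math.sqrt(3) / 2, 0.0]
a3 = [0.0, 0.0, 3.0]
b = reciprocal_lattice(a1, a2, a3)
for ai in (a1, a2, a3):
    print([round(dot(ai, bj) / (2 * math.pi), 6) for bj in b])
```

Each printed row should be one row of the identity matrix (up to floating-point sign on the zeros), confirming the defining relation between the two lattices.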

The protocols above rely on a suite of computational tools and data resources. The following table details the key components of the modern synthesizability predictor's toolkit.

Table 2: Key Research Reagents and Resources for Synthesizability Prediction

| Item Name | Type | Primary Function | Relevance |
|---|---|---|---|
| ICSD (Inorganic Crystal Structure Database) | Database | Source of experimentally confirmed, synthesizable crystal structures for model training. | Serves as the primary source of positive examples in supervised learning [4] [7] [5]. |
| Materials Project (MP) | Database | Provides a large repository of DFT-calculated structures (both theoretical and experimental). | Used to construct balanced datasets and for large-scale screening of candidate materials [4] [6] [5]. |
| Pymatgen | Software Library | Python library for materials analysis; enables manipulation of crystal structures and parsing of CIF/POSCAR files. | Crucial for featurization, data preprocessing, and accessing databases via the Materials API [5]. |
| CrabNet | Model | Composition-based model using self-attention to capture elemental interactions. | A high-performing baseline for composition-only approaches [5]. |
| CGCNN | Model | Graph Neural Network that operates on crystal graphs. | A foundational architecture for structure-based property prediction [5]. |
| FTCP | Featurization Method | Generates a Fourier-transformed representation of crystal properties. | Captures periodicity and elemental features in both real and reciprocal space [5]. |
| Positive-Unlabeled (PU) Learning | Algorithm | A semi-supervised learning technique for when only positive (synthesizable) and unlabeled data are available. | Addresses the critical lack of confirmed negative samples (non-synthesizable structures) [7] [5]. |
| Robocrystallographer | Software | Generates text-based summaries of crystal structures from CIF files. | Can be used to create descriptive text for fine-tuning LLMs on structural data [3]. |

The evidence from recent research presents a clear and compelling case: structural data provides a decisive information advantage over composition alone in predicting material synthesizability. While composition-based models offer a valuable and computationally lightweight first pass, their accuracy is fundamentally limited because they cannot discern polymorphs or account for the kinetic and spatial factors that govern real-world synthesis.

Structure-based models, through representations like crystal graphs, material strings, and FTCP descriptors, capture the essential atomic-level interactions and symmetry constraints that determine whether a theoretical structure can be realized in the laboratory. This is evidenced by their dramatic performance improvements, with accuracy reaching up to 98.6% in the most advanced frameworks [4]. The paradigm is shifting from merely identifying stable compositions to holistically evaluating synthesizable structures, complete with actionable guidance on methods and precursors. For researchers and drug development professionals, this means that prioritizing structural data and the models that leverage it is no longer an optimization—it is a necessity for efficient and successful discovery.

In the field of computational materials science and drug development, predicting whether a theoretical chemical structure can be successfully synthesized in the laboratory remains a fundamental challenge. The journey of materials design has evolved through multiple paradigms, from trial-and-error experiments to the current data-driven approaches that leverage machine learning (ML) and artificial intelligence [15]. Synthesizability prediction – determining the probability that a proposed material or compound can be experimentally realized – sits at the critical junction between computational prediction and practical application. This capability is essential for transforming theoretical innovations into real-world technologies, from novel pharmaceuticals to advanced energy materials.

The central methodological divide in synthesizability prediction lies between composition-based approaches that analyze chemical formulas and elemental properties, and structure-based methods that incorporate spatial arrangement and bonding information. Compositional models offer computational efficiency but lack structural insights, while structural models provide richer information at greater computational cost. Hybrid frameworks that integrate both compositional and structural features represent an emerging trend that aims to balance comprehensiveness with efficiency. This review provides a systematic comparison of representative tools and featurizers across these categories, with particular focus on their experimental performance in predicting synthesizability.

Composition and Structure Analyzer/Featurizer (CAF/SAF)

The Composition Analyzer Featurizer (CAF) and Structure Analyzer Featurizer (SAF) are open-source Python tools designed to generate explainable features for machine learning models in materials science [1]. CAF operates on chemical formulas provided in Excel files, generating 133 numerical compositional features derived from elemental properties and stoichiometric relationships. SAF processes crystal structure files (.cif format), creating supercells and extracting 94 numerical structural features that describe spatial arrangements and coordination environments.

A key innovation of the CAF/SAF framework is its emphasis on human-interpretable features that maintain physical significance, contrasting with "black box" representations that dominate some deep learning approaches. The featurizers implement sophisticated chemical sorting algorithms, using principles like electronegativity ordering or Mendeleev numbers to ensure consistent representation of chemical formulas [1]. This interpretability enables researchers to understand which physical and chemical factors drive synthesizability predictions, making the tools particularly valuable for scientific discovery rather than mere prediction.
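The following Python sketch makes interpretable, consistently ordered compositional features concrete. It parses a simple formula, orders its elements by Pauling electronegativity, and computes a few stoichiometry-weighted features. It is not CAF's implementation or feature set. The function names, the feature names, and the small electronegativity table are hypothetical choices for illustration.

```python
import re

# Pauling electronegativities for a small, illustrative subset of elements (hypothetical table).
PAULING_EN = {
    "Li": 0.98, "Na": 0.93, "Mg": 1.31, "Al": 1.61, "Si": 1.90,
    "Fe": 1.83, "Ni": 1.91, "Cu": 1.90, "Zn": 1.65,
    "O": 3.44, "S": 2.58, "Se": 2.55, "Cl": 3.16,
}

def parse_formula(formula: str) -> dict:
    """Parse a flat formula such as 'Fe2O3' into {element: count}; parentheses are not handled."""
    counts = {}
    for element, amount in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[element] = counts.get(element, 0.0) + (float(amount) if amount else 1.0)
    return counts

def ordered_elements(counts: dict) -> list:
    """Order elements by increasing electronegativity so equivalent formulas share one canonical form."""
    return sorted(counts, key=lambda el: PAULING_EN[el])

def composition_features(formula: str) -> dict:
    """A handful of interpretable, stoichiometry-weighted features (hypothetical names)."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    fractions = {el: n / total for el, n in counts.items()}
    ens = [PAULING_EN[el] for el in counts]
    return {
        "ordered_elements": ordered_elements(counts),
        "num_elements": len(counts),
        "mean_electronegativity": sum(fractions[el] * PAULING_EN[el] for el in counts),
        "electronegativity_range": max(ens) - min(ens),
        "max_atomic_fraction": max(fractions.values()),
    }
```

For example, `composition_features("Fe2O3")` orders the elements as Fe, O. It also gives a fraction-weighted mean electronegativity of 0.4 × 1.83 + 0.6 × 3.44 ≈ 2.80. Each output traces back to a named elemental property, which is the kind of interpretability described above.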

Graph-Based Encoding Methods

Graph-based encodings represent materials as mathematical graphs where atoms correspond to nodes and chemical bonds form edges. The Crystal Graph Convolutional Neural Network (CGCNN) framework processes these graphs to learn material properties directly from atomic connections and coordinates [1]. Unlike predefined feature sets, CGCNN automatically learns relevant representations through graph convolution operations that propagate information across bonded atoms.
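The graph-convolution idea can be sketched in plain Python. The update below follows the gated form described in the original CGCNN paper. Each atom vector v_i is updated by summing over its neighbours j. Each term is a sigmoid gate multiplied by a softplus-transformed message, both computed from the concatenation of v_i, v_j, and the edge feature u_ij. This is a simplified, hypothetical sketch: batch normalization, the distance expansion, and training are omitted, and the weight layout is an assumption.

```python
import math

def sigmoid(x: float) -> float:
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

def softplus(x: float) -> float:
    # Numerically stable log(1 + e^x).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def affine(z, W, b):
    """Return z @ W + b, with W stored as len(z) rows of len(b) columns."""
    return [sum(z[k] * W[k][o] for k in range(len(z))) + b[o] for o in range(len(b))]

def cgcnn_conv(node_feats, edges, W_f, b_f, W_s, b_s):
    """One gated convolution step in the style of CGCNN (batch normalization omitted).

    node_feats: list of per-atom feature vectors v_i (Python lists).
    edges: list of directed (i, j, u_ij) neighbour pairs; u_ij is an edge feature vector,
           for example an expanded interatomic distance.
    """
    updated = [list(v) for v in node_feats]  # residual connection: start from v_i
    for i, j, u_ij in edges:
        z = node_feats[i] + node_feats[j] + u_ij  # list concatenation v_i ⊕ v_j ⊕ u_ij
        gate = [sigmoid(x) for x in affine(z, W_f, b_f)]   # how strongly neighbour j counts
        core = [softplus(x) for x in affine(z, W_s, b_s)]  # what neighbour j contributes
        for d in range(len(updated[i])):
            updated[i][d] += gate[d] * core[d]
    return updated
```

With all weights and biases set to zero, every gate equals 0.5 and every message equals ln 2. Each atom's features therefore grow by 0.5 ln 2 per neighbour. Trained weights instead let the network learn which neighbours and distances matter.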

For large language models (LLMs), specialized graph encoding techniques have been developed to represent graph structures as text. A study from Google Research identifies three critical factors in graph encoding for LLMs: node encoding (how individual nodes are represented), edge encoding (how relationships are described), and the structural characteristics of the graph itself [16]. The study introduced the "incident" encoding method, which significantly improved LLM performance on graph reasoning tasks, in some cases by up to 60% compared with other encoding schemes [16]. This approach enables LLMs to reason about connectivity patterns, detect cycles, and calculate network properties, extending their capabilities beyond traditional natural language processing.
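The difference between encodings is easiest to see side by side. The sketch below renders the same undirected graph in two styles. The first is a plain edge list. The second is an incident style, in which each node is followed by the nodes it connects to. The sentence templates are approximations written for this example, not the exact wording used in the cited study [16].

```python
from collections import defaultdict

def edge_list_encoding(num_nodes, edges):
    """Baseline style: list every edge as a pair."""
    pairs = ", ".join(f"({u}, {v})" for u, v in edges)
    return f"G describes a graph among nodes 0 to {num_nodes - 1}. The edges in G are: {pairs}."

def incident_encoding(num_nodes, edges):
    """Incident style: for every node, list the nodes it is connected to."""
    neighbours = defaultdict(set)
    for u, v in edges:
        neighbours[u].add(v)
        neighbours[v].add(u)
    lines = [f"G describes a graph among nodes 0 to {num_nodes - 1}.", "In this graph:"]
    for node in range(num_nodes):
        if neighbours[node]:
            listed = ", ".join(str(m) for m in sorted(neighbours[node]))
            lines.append(f"Node {node} is connected to nodes {listed}.")
    return "\n".join(lines)
```

The incident form groups each node's neighbours into a single sentence. A question such as a node's degree can then be answered from one line rather than by scanning every edge, which is one plausible reason this style helps LLMs.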

Crystal Synthesis Large Language Models (CSLLM)

The Crystal Synthesis Large Language Models (CSLLM) framework represents a specialized application of LLMs to synthesizability prediction [15]. CSLLM employs three distinct models: a Synthesizability LLM that predicts whether a structure can be synthesized, a Method LLM that recommends synthesis approaches (solid-state or solution), and a Precursor LLM that identifies suitable chemical precursors. The system uses a novel "material string" representation that encodes essential crystal information in a compact text format, enabling efficient fine-tuning of LLMs on crystallographic data.

Table 1: Overview of Representative Featurizers and Their Capabilities

| Featurizer | Type | Input Format | Number of Features | Key Capabilities |
|---|---|---|---|---|
| CAF | Compositional | Chemical formula (Excel) | 133 | Elemental properties, stoichiometric ratios |
| SAF | Structural | Crystal structure (.cif) | 94 | Spatial arrangements, coordination environments |
| CGCNN | Graph-based | Crystal structure | N/A (learned representations) | Automatic feature learning from atomic connections |
| CSLLM | Hybrid (LLM) | Material string text | N/A (embedding dimensions) | Synthesizability prediction, method recommendation, precursor identification |
| Incident Encoding | Graph-to-text | Graph structure | Varies | LLM-compatible graph representation |

Experimental Performance Comparison

Synthesizability Prediction Accuracy

Recent research demonstrates substantial performance differences between featurization approaches for synthesizability prediction. The CSLLM framework achieved a remarkable 98.6% accuracy in predicting the synthesizability of 3D crystal structures. This significantly outperforms traditional screening methods based on thermodynamic stability (74.1% accuracy) and kinetic stability (82.2% accuracy) [15]. The advantage persisted even for complex structures with large unit cells, where CSLLM maintained 97.9% accuracy, indicating strong generalization.

Alternative machine learning approaches also show promising results. A synthesizability-guided pipeline integrated compositional and structural signals through a rank-average ensemble, and it identified synthesizable candidates from millions of simulated structures [6]. In experimental validation, 7 of 16 target materials were successfully synthesized. The entire experimental process was completed in just three days, demonstrating the practical utility of accurate synthesizability prediction [6].

Table 2: Synthesizability Prediction Performance Across Methods

| Prediction Method | Accuracy | Advantages | Limitations |
|---|---|---|---|
| Thermodynamic (Energy above hull) | 74.1% | Physical interpretability, well-established | Misses synthesizable metastable phases |
| Kinetic (Phonon spectrum) | 82.2% | Accounts for dynamic stability | Computationally expensive, limited predictive value |
| CSLLM Framework | 98.6% | High accuracy, suggests methods and precursors | Requires substantial training data |
| Composition-Structure Ensemble | High experimental success (7/16 targets) | Balanced approach, practical validation | Complex implementation |

Classification Performance for Material Structures

In crystal structure classification tasks, the combined SAF+CAF feature set demonstrated competitive performance against established featurizers. When classifying nine structure types in equiatomic AB intermetallics, SAF+CAF achieved F1 scores of 0.983 (XGBoost), 0.978 (SVM), and 0.94 (PLS-DA). These results are comparable to those obtained with the JARVIS, MAGPIE, mat2vec, and OLED feature sets [1]. The Smooth Overlap of Atomic Positions (SOAP) featurizer achieved similar performance (F1 scores: 0.983 XGBoost, 0.978 SVM, 0.94 PLS-DA). However, it required 6,633 features, making it significantly more computationally expensive than the more interpretable SAF+CAF approach [1].

The performance of graph-based encodings in LLMs varies substantially based on task complexity. For basic graph tasks like edge existence detection, LLMs performed only marginally better than random guessing in some configurations, but the optimal "incident" encoding provided dramatic improvements [16]. Model scale generally correlated with performance on graph reasoning tasks, though even the largest models struggled with certain challenges like cycle detection in path graphs [16].

Experimental Protocols and Methodologies

CSLLM Training and Evaluation

The CSLLM framework was trained on a balanced dataset comprising 70,120 synthesizable crystal structures from the Inorganic Crystal Structure Database (ICSD) and 80,000 non-synthesizable structures identified from 1,401,562 theoretical structures using positive-unlabeled learning [15]. The training approach involved:

- Data Curation: Selecting crystal structures with ≤40 atoms and ≤7 different elements, excluding disordered structures

- Negative Sample Identification: Using a pre-trained PU learning model with CLscore threshold <0.1 to identify non-synthesizable examples

- Model Architecture: Employing multiple specialized LLMs fine-tuned on material-specific data

- Evaluation: Rigorous testing on held-out datasets including complex structures with large unit cells

This comprehensive training strategy enabled the CSLLM to learn the subtle relationships between crystal features and synthesizability, achieving state-of-the-art performance through domain-specific fine-tuning that aligned the LLMs' attention mechanisms with materials science principles [15].
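The curation and negative-selection steps above can be expressed as two simple filters. The sketch below assumes that each entry already carries four pieces of information: its atom count, its element set, an ordered/disordered flag, and, for theoretical structures, a CLscore from a pre-trained PU model. The `Entry` class, the field names, and the choice to apply the same size filters to theoretical structures are hypothetical assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

MAX_ATOMS = 40            # at most 40 atoms per structure
MAX_ELEMENTS = 7          # at most 7 distinct elements
CLSCORE_THRESHOLD = 0.1   # CLscore below this marks a theoretical structure as non-synthesizable

@dataclass
class Entry:  # hypothetical record type
    material_id: str
    num_atoms: int
    elements: frozenset
    is_ordered: bool                 # False for structures with disordered/partial occupancy
    clscore: Optional[float] = None  # PU-model score; only needed for theoretical entries

def passes_curation(e: Entry) -> bool:
    return e.num_atoms <= MAX_ATOMS and len(e.elements) <= MAX_ELEMENTS and e.is_ordered

def build_dataset(icsd: List[Entry], theoretical: List[Entry]) -> List[Tuple[str, int]]:
    """Label curated ICSD entries 1 and low-CLscore theoretical entries 0."""
    positives = [(e.material_id, 1) for e in icsd if passes_curation(e)]
    negatives = [
        (e.material_id, 0)
        for e in theoretical
        if passes_curation(e) and e.clscore is not None and e.clscore < CLSCORE_THRESHOLD
    ]
    return positives + negatives
```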

CAF/SAF Feature Generation and Validation

The CAF and SAF featurizers were validated through systematic comparison with established feature sets on standardized classification tasks [1]. The experimental protocol included:

- Feature Generation: CAF processed chemical formulas to generate 133 compositional features; SAF analyzed .cif files to produce 94 structural features

- Model Training: Using PLS-DA, SVM, and XGBoost algorithms on the generated features

- Performance Benchmarking: Comparing classification accuracy against JARVIS, MAGPIE, mat2vec, and OLED feature sets

- Interpretability Analysis: Identifying the most statistically significant features contributing to classification accuracy

This methodology demonstrated that the CAF/SAF feature set provided a cost-efficient and reliable solution for structure classification, with the advantage of human-interpretable features that facilitate scientific insight rather than functioning as black-box predictors [1].

Graph Encoding for LLMs

The experimental evaluation of graph encoding methods employed the GraphQA benchmark specifically designed to evaluate LLMs on graph reasoning tasks [16]. The methodology encompassed:

- Graph Generation: Creating diverse graph types using Erdős-Rényi, scale-free, Barabási-Albert, and stochastic block models

- Encoding Variations: Testing different node encodings (integers, names, letters) and edge encodings (parenthesis notation, phrases, symbolic representations)

- Prompting Strategies: Comparing zero-shot, few-shot, chain-of-thought, and specialized graph prompting approaches

- Task Evaluation: Assessing performance on edge existence, node degree calculation, connectivity checks, and cycle detection

This systematic approach identified the critical importance of encoding selection, with the "incident" encoding consistently outperforming other methods across most graph reasoning tasks [16].
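Ground-truth answers for tasks like these are cheap to compute, which makes benchmarks of this kind easy to generate at scale. The sketch below samples an Erdős-Rényi G(n, p) graph and answers edge-existence, node-degree, and cycle-detection questions. It is a hypothetical illustration, not GraphQA's code.

```python
import random

def erdos_renyi(n: int, p: float, seed: int = 0):
    """G(n, p): include each of the n*(n-1)/2 possible undirected edges independently with probability p."""
    rng = random.Random(seed)
    return [(u, v) for u in range(n) for v in range(u + 1, n) if rng.random() < p]

def edge_exists(edges, u, v) -> bool:
    return (u, v) in edges or (v, u) in edges

def node_degree(edges, node) -> int:
    return sum(node in edge for edge in edges)

def has_cycle(n: int, edges) -> bool:
    """Union-find: a simple undirected graph has a cycle iff an edge joins two already-connected nodes."""
    parent = list(range(n))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for u, v in edges:
        root_u, root_v = find(u), find(v)
        if root_u == root_v:
            return True
        parent[root_u] = root_v
    return False
```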

Workflow

Figure: Featurization and Prediction Workflow for Material Synthesizability

Research Reagent Solutions

Table 3: Essential Computational Tools for Synthesizability Prediction

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| Materials Project Database | Data Resource | Provides computed material properties | Source of training data and benchmarking |
| Inorganic Crystal Structure Database (ICSD) | Data Resource | Experimentally confirmed crystal structures | Source of synthesizable positive examples |
| Derwent Innovations Index | Data Resource | Patent information and technological applications | Tracking innovation in specific domains like sustainable aviation fuels [17] |
| scikit-learn and XGBoost (Python) | Software Libraries | Machine learning algorithms (SVM and PLS regression in scikit-learn; gradient-boosted trees in XGBoost) | Model training and evaluation |
| Matminer | Featurization Toolkit | Composition and structure feature generation | Benchmarking and comparison of feature sets |
| PyTorch/TensorFlow | Deep Learning Frameworks | Neural network implementation | Graph neural networks and transformer models |
| JMP/MTEncoder | Pre-trained Models | Graph neural network (JMP) and compositional transformer (MTEncoder) for materials | Base models for fine-tuning synthesizability predictors |

The comparative analysis of representative featurizers reveals distinct performance advantages for different synthesizability prediction scenarios. Composition-based approaches like CAF offer computational efficiency and interpretability, while structure-based methods like SAF and graph encodings capture essential spatial relationships that significantly enhance prediction accuracy. Hybrid frameworks that integrate multiple feature types, particularly the CSLLM approach achieving 98.6% accuracy, demonstrate the profound benefits of combining compositional and structural information.

For researchers and drug development professionals, selection criteria should balance accuracy requirements with interpretability needs and computational resources. The emerging generation of large language models fine-tuned on materials science data represents a transformative development, offering unprecedented accuracy while additionally providing synthesis method recommendations and precursor identification. As these tools continue to evolve, their integration into automated discovery pipelines promises to accelerate the transformation of theoretical predictions into synthesized materials, ultimately bridging the critical gap between computational design and experimental realization.

Inside the Models: Methodologies, Workflows, and Real-World Application Scenarios

The accelerated discovery of new materials and molecules through computational methods has unveiled a significant bottleneck: many theoretically predicted candidates are not synthetically accessible. Synthesizability prediction has thus emerged as a critical frontier in materials science and drug discovery, aiming to bridge the gap between in-silico design and experimental realization. Current machine learning approaches for this challenge can be broadly categorized into two paradigms: composition-based models, which rely solely on chemical formulas, and structure-based models, which utilize full crystallographic or structural information. Composition-based methods offer the advantage of applicability even when atomic arrangements are unknown, making them suitable for the earliest stages of discovery where countless compositions are screened. In contrast, structure-based methods can differentiate between polymorphs of the same composition, capturing essential physics that governs synthetic accessibility but requiring detailed structural data that may not be available for truly novel materials. This article provides a comparative analysis of these competing architectures, examining their underlying algorithms, performance metrics, and practical utility in guiding experimental synthesis, with a focus on providing researchers with actionable insights for selecting and implementing these powerful tools.

Comparative Analysis of Model Architectures and Performance

Composition-Based Models: Learning from Chemical Formulas Alone

Composition-based models operate on the principle that a material's synthesizability can be inferred from its elemental components and their stoichiometric relationships, without requiring knowledge of its atomic structure. These models are particularly valuable for high-throughput screening of vast compositional spaces where structural data is unavailable or computationally prohibitive to generate.

SynthNN: This deep learning model employs an atom2vec representation, which learns optimal embeddings for each element directly from the distribution of synthesized materials in databases like the Inorganic Crystal Structure Database (ICSD). By treating synthesizability prediction as a positive-unlabeled (PU) learning problem, SynthNN addresses the fundamental challenge that most unsynthesized materials are merely unlabeled rather than definitively unsynthesizable. In benchmark tests, SynthNN demonstrated a remarkable 7× higher precision at identifying synthesizable materials compared to traditional density functional theory (DFT) formation energy calculations, and outperformed 20 expert materials scientists with 1.5× higher precision while completing tasks five orders of magnitude faster. The model autonomously learned fundamental chemical principles including charge-balancing, chemical family relationships, and ionicity from the data alone, without explicit programming of these concepts [18].
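A learned element representation of this kind can be pictured as a lookup table of element vectors combined according to stoichiometry. The sketch below assumes the formula is encoded as a fraction-weighted sum of per-element vectors, which then feeds a downstream classifier. SynthNN's exact architecture, embedding size, and vocabulary are not reproduced here. The random initial vectors and the tiny element list are hypothetical stand-ins for trained values.

```python
import random

EMBED_DIM = 4
ELEMENTS = ["Li", "Na", "Fe", "O", "Cl"]  # tiny hypothetical vocabulary

_rng = random.Random(42)
# One learnable vector per element; random here, trained jointly with the classifier in practice.
ELEMENT_EMBEDDINGS = {el: [_rng.gauss(0.0, 0.1) for _ in range(EMBED_DIM)] for el in ELEMENTS}

def embed_composition(counts: dict) -> list:
    """Stoichiometry-weighted sum of element embeddings.

    The result depends only on atomic fractions, so 'Fe2O3' and 'Fe4O6' map to the same
    vector, and the order of elements in the formula does not matter.
    """
    total = sum(counts.values())
    vector = [0.0] * EMBED_DIM
    for element, amount in counts.items():
        weight = amount / total
        for d in range(EMBED_DIM):
            vector[d] += weight * ELEMENT_EMBEDDINGS[element][d]
    return vector
```

Because the element vectors are trained rather than hand-specified, chemically similar elements can end up close together. That is one route by which regularities such as charge balancing can emerge from data alone.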

Compositional MTEncoder: This approach adapts the transformer architecture, originally designed for natural language processing, to interpret chemical formulas as sequential data. Fine-tuned specifically for synthesizability prediction, it captures complex, long-range dependencies between elements in a stoichiometry. In a combined pipeline with structural models, it contributes to a rank-average ensemble that identified hundreds of highly synthesizable candidates from millions of computed structures [6].

Table 1: Key Performance Metrics of Composition-Based Models

| Model Name | Architecture | Key Advantage | Reported Performance | Primary Application |
|---|---|---|---|---|
| SynthNN | Atom2Vec + Deep Neural Network | No structural data required; high throughput | 7× higher precision than DFT-based screening | Inorganic crystalline materials discovery |
| Compositional MTEncoder | Fine-tuned Transformer | Captures long-range elemental dependencies | Effective in rank-average ensembles with structural models | Broad inorganic crystal screening |
| CSLLM (Compositional Component) | Fine-tuned Large Language Model | Exceptional accuracy on balanced datasets | 98.6% accuracy on test set [4] | 3D crystal structure synthesizability |

Structure-Based Models: Leveraging Atomic Arrangements for Accurate Predictions

Structure-based synthesizability models utilize the full three-dimensional atomic configuration of materials, enabling them to capture polymorph-specific synthetic accessibility and local coordination environments that composition-based approaches cannot discern.

Crystal Graph Neural Networks: These models represent crystal structures as graphs where nodes correspond to atoms and edges represent interatomic interactions within a specified cutoff radius. The JMP model, fine-tuned for synthesizability prediction, processes these graphs to learn both local coordination environments and global crystal symmetry patterns. This approach directly addresses the limitation of composition-only models that cannot distinguish between different structural polymorphs of the same composition, such as diamond and graphite, which have dramatically different synthetic pathways and accessibility [6] [3].
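A crystal graph is built from a periodic structure, so neighbours must also be searched across periodic images. The sketch below collects all atom pairs within a cutoff radius, including neighbours in adjacent cells. It is a hypothetical, unoptimized illustration; production code typically relies on a materials library's neighbour search. It also assumes the cutoff is small relative to the cell.

```python
import itertools
import math

def frac_to_cart(frac, lattice):
    """Fractional -> Cartesian coordinates; lattice rows are the vectors a, b, c (in Å)."""
    return [sum(frac[k] * lattice[k][d] for k in range(3)) for d in range(3)]

def radius_graph(lattice, frac_coords, cutoff):
    """Directed edges (i, j, image_shift, distance) for all neighbours within `cutoff`.

    Only the 27 nearest periodic images are searched, which is adequate only when
    `cutoff` is shorter than the cell's perpendicular widths (an assumption of this sketch).
    """
    carts = [frac_to_cart(f, lattice) for f in frac_coords]
    edges = []
    for i, j in itertools.product(range(len(carts)), repeat=2):
        for shift in itertools.product((-1, 0, 1), repeat=3):
            offset = frac_to_cart(shift, lattice)
            image = [carts[j][d] + offset[d] for d in range(3)]
            dist = math.dist(carts[i], image)
            if 0.0 < dist <= cutoff:  # skip the atom itself (distance 0)
                edges.append((i, j, shift, dist))
    return edges
```

Take a simple cubic cell with a = 3 Å and a cutoff of 3.1 Å. The single atom gets six neighbours, all of them periodic images of itself. Two polymorphs with the same formula produce different edge lists, which is why a graph model can separate them while a composition-only model cannot.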

Crystal Synthesis Large Language Models (CSLLM): This innovative framework adapts large language models (LLMs) to process crystal structures by converting them into specialized "material strings"—a text representation that integrates space group, lattice parameters, and essential atomic coordinates while eliminating redundant information. The structural component of CSLLM achieved state-of-the-art accuracy (98.6%) in synthesizability classification, significantly outperforming traditional thermodynamic (74.1%) and kinetic (82.2%) stability metrics. The model also demonstrated exceptional generalization capability when tested on complex structures with large unit cells that considerably exceeded the complexity of its training data [4].

Wyckoff Encode-Based Models: These approaches leverage the mathematical language of crystallography by encoding the Wyckoff positions—the set of equivalent positions in a space group—that atoms occupy in a crystal structure. This representation captures essential symmetry information that governs synthetic accessibility. Integrated within a synthesizability-driven crystal structure prediction framework, this method enabled the identification of 92,310 potentially synthesizable structures from 554,054 candidates in the GNoME database and successfully reproduced 13 experimentally known XSe (X = Sc, Ti, Mn, Fe, Ni, Cu, Zn) structures [3].
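Grouping structures by their Wyckoff occupation can be illustrated with a very small encoding. The sketch below keys each structure by its space-group number and the sorted Wyckoff letters of its symmetry-inequivalent sites. The exact encoding used in [3] is not specified here. This key format and the choice to ignore element identity are hypothetical assumptions.

```python
from collections import defaultdict

def wyckoff_encode(space_group: int, sites) -> str:
    """Key = space group plus the sorted Wyckoff letters of the occupied sites (hypothetical format).

    `sites` is a list of (element, wyckoff_letter) for symmetry-inequivalent sites. Element
    identity is dropped, so chemically different structures sharing one symmetry skeleton
    land in the same configuration subspace (an assumption of this sketch).
    """
    letters = "".join(sorted(letter for _, letter in sites))
    return f"{space_group}-{letters}"

def group_by_subspace(structures):
    groups = defaultdict(list)
    for name, space_group, sites in structures:
        groups[wyckoff_encode(space_group, sites)].append(name)
    return dict(groups)

# Standard-setting Wyckoff sites: rock salt (Fm-3m, No. 225) and zinc blende (F-43m, No. 216).
candidates = [
    ("NaCl (rock salt)", 225, [("Na", "a"), ("Cl", "b")]),
    ("MgO (rock salt)", 225, [("Mg", "a"), ("O", "b")]),
    ("ZnS (zinc blende)", 216, [("Zn", "a"), ("S", "c")]),
]
```

Rock-salt NaCl and MgO (space group 225, sites 4a and 4b) share the key `225-ab`. Zinc-blende ZnS (space group 216, sites 4a and 4c) falls into `216-ac`.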

Table 2: Key Performance Metrics of Structure-Based Models

| Model Name | Architecture | Structural Representation | Reported Performance | Notable Application |
|---|---|---|---|---|
| Crystal Graph Neural Network | Graph Neural Network | Crystal structure graphs | Part of ensemble identifying 7/16 successful syntheses [6] | Metal oxide screening |
| CSLLM | Fine-tuned Large Language Model | "Material string" text representation | 98.6% accuracy; outperforms stability metrics [4] | General 3D crystal structures |
| Wyckoff Encode-Based Model | Custom ML Model | Wyckoff position encoding | Identified 92k synthesizable candidates from GNoME [3] | Chalcogenide materials discovery |

Hybrid and Ensemble Approaches: Combining Multiple Signals

Recognizing the complementary strengths of composition and structure-based approaches, several research groups have developed hybrid models that integrate both signals for enhanced synthesizability assessment.

Rank-Average Ensemble: This approach combines predictions from separate composition and structure models through a Borda fusion method, which converts probabilities to ranks and averages them across both models. This ensemble technique was applied to screen over 4.4 million computational structures, identifying approximately 500 high-priority candidates after applying practical filters (removing platinoid elements, non-oxides, and toxic compounds). Experimental validation of 16 targets resulted in 7 successful syntheses that matched the target structure, including one completely novel and one previously unreported compound. The entire experimental process from screening to characterization was completed in just three days, demonstrating the remarkable acceleration enabled by these integrated ML approaches [6].
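The fusion step can be written in a few lines. The sketch below converts each model's probabilities to ranks, averages the two ranks, and rescales the result to [0, 1]. The function names and the tie-breaking rule are illustrative assumptions, not the pipeline's published code.

```python
def to_ranks(scores):
    """Rank of each item, 0 = lowest score (ties broken by input order for simplicity)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0] * len(scores)
    for position, index in enumerate(order):
        ranks[index] = position
    return ranks

def rank_average(comp_probs, struct_probs):
    """Borda-style fusion: average the two models' ranks, rescaled to [0, 1]."""
    n = len(comp_probs)
    rc, rs = to_ranks(comp_probs), to_ranks(struct_probs)
    scale = max(n - 1, 1)  # avoid division by zero for a single candidate
    return [(rc[i] + rs[i]) / (2 * scale) for i in range(n)]

def top_k(fused, k):
    """Indices of the k candidates with the highest fused score."""
    return sorted(range(len(fused)), key=lambda i: fused[i], reverse=True)[:k]
```

Only the ordering of each model's scores enters the fused value. Rescaling one model's probabilities therefore leaves the final ranking unchanged, for example when a structure model is systematically less confident. This insensitivity to calibration is the main reason to average ranks rather than raw probabilities.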

Synthesizability-Driven Crystal Structure Prediction: This framework combines symmetry-guided structure derivation from known prototypes with machine learning-based synthesizability evaluation. By generating candidate structures through group-subgroup relations from synthesized prototypes rather than random generation, this method ensures that sampled structures retain atomic spatial arrangements of experimentally realizable materials. The resulting structures are classified into configuration subspaces using Wyckoff encodes and filtered by synthesizability probability before final evaluation, creating a more efficient search path for synthesizable candidates [3].

Experimental Protocols and Validation Methodologies

Data Curation and Training Strategies

The performance of synthesizability models heavily depends on their training data and learning frameworks. Key considerations include:

Positive and Negative Sample Selection: Most models use experimentally synthesized structures from databases like the Inorganic Crystal Structure Database (ICSD) as positive examples. The critical challenge lies in constructing reliable negative sets of unsynthesizable materials, often addressed through positive-unlabeled (PU) learning approaches. For instance, one method applies a pre-trained PU learning model to assign CLscores to theoretical structures, with scores below 0.1 indicating non-synthesizability [4] [18].

Data Balancing and Representation: The CSLLM framework utilized a balanced dataset containing 70,120 synthesizable crystal structures from ICSD and 80,000 non-synthesizable structures screened from over 1.4 million theoretical structures. This balanced approach prevents model bias toward either class and enhances generalization [4].

Text-Based Crystal Representations: For LLM-based approaches, converting crystal structures into efficient text formats is essential. The "material string" representation condenses essential crystallographic information (space group, lattice parameters, atomic coordinates) while eliminating redundancy by leveraging symmetry information rather than listing all atomic positions [4].

Experimental Validation and Performance Metrics

Rigorous experimental validation remains the gold standard for assessing synthesizability model performance:

Experimental Synthesis Success Rates: The most compelling validation comes from actual synthesis attempts of model-predicted candidates. In one notable study, a combined compositional and structural synthesizability score was used to evaluate structures from the Materials Project, GNoME, and Alexandria databases, identifying several hundred highly synthesizable candidates. Subsequent experimental synthesis across 16 targets successfully yielded 7 matches to the predicted structures, with the entire process completed in just three days [6].

Comparison to Traditional Methods: Models are typically benchmarked against traditional synthesizability proxies like formation energy calculations and charge-balancing criteria. The CSLLM framework significantly outperformed both thermodynamic (energy above hull ≥0.1 eV/atom) and kinetic (lowest phonon frequency ≥ -0.1 THz) stability metrics, achieving 98.6% accuracy compared to 74.1% and 82.2%, respectively [4].
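The two baseline criteria reduce to simple threshold rules. The sketch below interprets them as predicting "synthesizable" in two cases: when the energy above hull is below 0.1 eV/atom, and when the lowest phonon frequency is at least -0.1 THz. It then scores each rule's accuracy. The four records are invented toy values, not data from the cited study.

```python
def thermodynamic_prediction(e_above_hull_ev_per_atom, threshold=0.1):
    """1 = predicted synthesizable if the energy above the convex hull is below the threshold."""
    return 1 if e_above_hull_ev_per_atom < threshold else 0

def kinetic_prediction(lowest_phonon_thz, threshold=-0.1):
    """1 = predicted synthesizable if no phonon mode falls below the threshold (no significant imaginary modes)."""
    return 1 if lowest_phonon_thz >= threshold else 0

def accuracy(predictions, labels):
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)

# Toy records: (E_hull in eV/atom, lowest phonon frequency in THz, true label). Invented values.
records = [
    (0.00, 0.50, 1),
    (0.15, 0.20, 1),   # metastable but synthesized: missed by the hull criterion
    (0.30, -2.00, 0),
    (0.05, -1.00, 0),  # close to the hull but dynamically unstable
]
labels = [y for *_, y in records]
thermo_acc = accuracy([thermodynamic_prediction(e) for e, _, _ in records], labels)
kinetic_acc = accuracy([kinetic_prediction(f) for _, f, _ in records], labels)
```

Each rule looks at a single physical quantity, so each misses cases that the other catches. A learned model can combine many such signals at once.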

Retrospective Prediction Accuracy: Models are frequently tested on their ability to correctly classify known synthesized and non-synthesized materials. SynthNN demonstrated 7× higher precision than DFT-calculated formation energies at identifying synthesizable materials and outperformed all 20 expert materials scientists in a head-to-head comparison [18].

Essential Research Reagents and Computational Tools

Table 3: Key Research Reagents and Computational Tools for Synthesizability Prediction

| Resource Name | Type | Primary Function | Access Information |
|---|---|---|---|
| Materials Project | Database | Source of computed material structures and properties | https://materialsproject.org/ |
| Inorganic Crystal Structure Database (ICSD) | Database | Curated experimental crystal structures for training | https://icsd.fiz-karlsruhe.de/ |
| AiZynthFinder | Software Tool | Retrosynthesis planning for synthesizability assessment | Open-source (GitHub) |
| GNoME Database | Database | Source of predicted crystal structures for screening | https://github.com/google-deepmind/materials_discovery |
| DeepSA | Web Tool | Deep learning predictor for compound synthesis accessibility | https://bailab.siais.shanghaitech.edu.cn/services/deepsa/ |
| Retro* | Algorithm | Neural-based A*-like algorithm for synthetic route finding | Implementation dependent |
| JMP Model | Pre-trained Model | Graph neural network for crystal structure property prediction | https://github.com/facebookresearch/jmp |

The comparative analysis of composition-based and structure-based synthesizability prediction models reveals a complementary relationship rather than a clear superiority of one approach over the other. Composition-based models like SynthNN offer unparalleled screening throughput and applicability to early discovery stages where structural data is unavailable. In contrast, structure-based approaches such as crystal graph networks and CSLLM provide higher resolution predictions that account for polymorph-specific synthesizability, albeit with increased computational requirements and data dependencies. The most promising results emerge from hybrid approaches that leverage both compositional and structural signals through ensemble methods, as demonstrated by the successful experimental synthesis of 7 out of 16 predicted candidates.

Future research directions should address critical challenges such as data bias in training sets [19], domain adaptation for specialized material classes, and integration of synthesis route planning directly into the prediction pipeline. As these models continue to mature, they will play an increasingly vital role in accelerating the discovery of functional materials and therapeutic compounds by ensuring that computationally designed candidates are not only theoretically promising but also experimentally accessible.

The discovery of new inorganic crystalline materials is a fundamental driver of technological innovation. However, a significant bottleneck exists in translating computationally predicted materials into experimentally realized compounds. The central challenge lies in accurately predicting synthesizability—whether a proposed material can be synthesized in a laboratory using current methods. Traditionally, this task has relied on the expertise of solid-state chemists or computational proxies like thermodynamic stability, but these approaches are either slow, subjective, or inaccurate [18]. The failure to account for kinetic stabilization, precursor availability, and complex human factors means that many materials predicted to be stable are, in practice, unsynthesizable [5].

To address this, machine learning models have emerged as powerful tools for predicting synthesizability. These models largely fall into two categories: composition-based models, which use only the chemical formula as input, and structure-based models, which require full crystal structure information. Composition-based models like SynthNN are exceptionally well-suited for the initial, high-throughput screening of vast chemical spaces where structures are unknown. This guide provides an objective comparison of these approaches, detailing their performance, methodologies, and ideal use cases to inform researchers and drug development professionals in their materials discovery pipelines.

Model Performance Comparison

The performance of synthesizability prediction models varies significantly based on their input data and design. The table below summarizes key performance metrics for prominent models as reported in the literature.

Table 1: Performance Comparison of Selected Synthesizability Prediction Models

| Model Name | Input Type | Key Performance Metric | Reported Result | Key Advantage |
|---|---|---|---|---|
| SynthNN [18] | Composition | Precision | 7× higher than DFT formation energy | High-speed screening of compositional space |
| CSLLM [4] | Structure | Accuracy | 98.6% | State-of-the-art accuracy; predicts methods & precursors |
| FTCP-based Model [5] | Structure | Precision/Recall | 82.6%/80.6% | Uses Fourier-transformed crystal properties |
| PU-CGCNN [10] | Structure | True Positive Rate (Recall) | ~83% (estimated from a figure) | Traditional graph-based structure model |
| PU-GPT-embedding [10] | Structure (Text Embedding) | True Positive Rate (Recall) | ~87% (estimated from a figure) | Combines LLM embeddings with PU learning |

Quantitative benchmarks show a clear performance-efficiency trade-off. Structure-based models like the Crystal Synthesis Large Language Model (CSLLM) achieve top-tier accuracy (98.6%) by leveraging rich structural information [4]. In a direct material discovery challenge, the composition-based SynthNN outperformed 20 expert material scientists, achieving 1.5× higher precision and completing the task five orders of magnitude faster than the best human expert [18]. This highlights the primary strength of composition-based models: unparalleled efficiency for initial screening.

Detailed Experimental Protocols

Understanding the experimental setup and training methodologies is crucial for interpreting model performance claims.

Protocol for Composition-Based Models (e.g., SynthNN)

Composition-based models are trained to distinguish synthesizable compositions from a background of hypothetical ones.

- Data Curation: The standard practice involves using the Inorganic Crystal Structure Database (ICSD) as a source of positive examples (synthesized materials) [18] [2] [10]. A critical challenge is the lack of a definitive set of unsynthesizable materials. To address this, researchers often use Positive-Unlabeled (PU) learning, which treats hypothetical materials from databases like the Materials Project (MP) as unlabeled data, probabilistically reweighting them according to their likelihood of being synthesizable [18] [10].

- Model Input & Architecture: These models, including SynthNN, often use learned vector representations (embeddings) for each atom in the periodic table. These embeddings are optimized alongside a deep neural network that processes the chemical formula [18]. This allows the model to learn chemical principles like charge-balancing and ionicity directly from data without explicit human guidance.

- Training Objective: The model is trained as a binary classifier, learning to output a synthesizability score or probability. The loss function is adjusted to account for the PU learning framework, preventing the model from simply labeling all unobserved materials as unsynthesizable [18] [2].
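The PU training idea above can be illustrated with a deliberately small stand-in. The sketch below is not SynthNN: instead of learned multi-dimensional atom embeddings feeding a deep network, it uses a logistic classifier on element fractions, where the weight on each element acts as a learned one-dimensional embedding. It also replaces per-example probabilistic reweighting with a single fixed weight `alpha`, an assumed probability that an unlabeled composition is synthesizable. All function names, the element vocabulary, and the toy formulas are hypothetical.

```python
import math
import re


def parse_formula(formula):
    """Parse a flat formula such as 'Li2O' into {element: amount}.
    Parentheses, hydrates and charges are not handled in this sketch."""
    counts = {}
    for element, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[element] = counts.get(element, 0.0) + (float(amount) if amount else 1.0)
    return counts


def featurize(formula, vocab):
    """Element-fraction vector over a fixed element vocabulary."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    return [counts.get(el, 0.0) / total for el in vocab]


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_pu(positives, unlabeled, vocab, alpha=0.1, lr=0.5, epochs=500):
    """Weighted logistic regression in a PU setting.
    Positives get label 1 with weight 1. Each unlabeled formula is counted twice:
    label 1 with weight alpha and label 0 with weight 1 - alpha (alpha is an
    assumed, hypothetical prior, not a value from the cited work)."""
    examples = [(featurize(f, vocab), 1.0, 1.0) for f in positives]
    for f in unlabeled:
        x = featurize(f, vocab)
        examples += [(x, 1.0, alpha), (x, 0.0, 1.0 - alpha)]
    total_weight = sum(weight for _, _, weight in examples)
    w, b = [0.0] * len(vocab), 0.0
    for _ in range(epochs):  # full-batch gradient descent on the weighted log-loss
        grad_w, grad_b = [0.0] * len(vocab), 0.0
        for x, y, weight in examples:
            err = weight * (sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y)
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        w = [wi - lr * g / total_weight for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / total_weight
    return w, b


def score(formula, vocab, w, b):
    """Synthesizability-like score in (0, 1) for a formula."""
    x = featurize(formula, vocab)
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


# Toy data: stand-ins for ICSD positives and hypothetical (unlabeled) compositions.
vocab = ["Li", "Na", "K", "He", "Ne", "F", "Cl"]
positives = ["NaCl", "KCl", "LiF", "NaF"]
unlabeled = ["HeCl", "NeF"]
w, b = train_pu(positives, unlabeled, vocab)
print(score("KF", vocab, w, b), score("HeF", vocab, w, b))
```

Because unlabeled examples are only partially counted as negatives, the model is not forced to drive every unobserved composition to zero, which is the failure mode the PU adjustment is meant to prevent.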

Protocol for Structure-Based Models (e.g., CSLLM, PU-CGCNN)

Structure-based models predict synthesizability from the atomic arrangement of a crystal structure.

- Data Curation: These models also use the ICSD for positive examples. For negative examples, a common method is to use a pre-trained PU learning model to screen large databases of theoretical structures (e.g., from MP) and select those with the lowest "crystal-likeness" scores as non-synthesizable examples, creating a balanced dataset [4].

- Structure Representation: A key differentiator is how the 3D crystal structure is converted into a model-readable input. Methods include:

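- Crystal Graphs (CGCNN): Represents the crystal as a graph with atoms as nodes and edges connecting each atom to its neighbors within a cutoff distance, including neighbors in periodic images of the unit cell, thereby capturing periodicity and local environments [5] [10].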

- Text Descriptions (CSLLM): A more recent approach uses a "material string" or tools like Robocrystallographer to generate a human-readable text description of the structure, which is then fed into a fine-tuned Large Language Model (LLM) [4] [10].

- LLM Embeddings: The text description of a structure can be converted into a numerical vector (embedding) using a pre-trained LLM, which is then used as input to a standard classifier [10].

- Training Objective: Similar to composition models, the goal is binary classification. The CSLLM framework fine-tunes three specialized LLMs not only for synthesizability classification but also for predicting synthetic methods and suitable precursors [4].
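The crystal-graph representation listed above can be sketched in a few lines. The helpers below are a minimal, hypothetical construction, not the CGCNN reference code: nodes are atoms with one-hot species features, directed edges join an atom to every atom (or periodic image) within a cutoff radius, and each edge distance can be expanded on a Gaussian basis, as CGCNN does for its edge features. The toy CsCl-type cell with a 4.0 Å edge and the 3.6 Å cutoff are illustrative choices, and only adjacent cells are searched, which assumes the cutoff is shorter than the cell dimensions.

```python
import itertools
import math


def to_cartesian(frac, lattice):
    """Fractional -> Cartesian coordinates; rows of `lattice` are the a, b, c vectors."""
    return [sum(frac[k] * lattice[k][d] for k in range(3)) for d in range(3)]


def crystal_graph(lattice, frac_coords, cutoff):
    """Directed edges (i, j, image, distance) from atom i to atom j shifted by `image`
    (an offset in adjacent cells only) whenever the distance is within `cutoff`."""
    edges = []
    for i, fi in enumerate(frac_coords):
        ri = to_cartesian(fi, lattice)
        for j, fj in enumerate(frac_coords):
            for image in itertools.product((-1, 0, 1), repeat=3):
                rj = to_cartesian([fj[k] + image[k] for k in range(3)], lattice)
                d = math.dist(ri, rj)
                if 1e-8 < d <= cutoff:  # skip the atom itself
                    edges.append((i, j, image, d))
    return edges


def node_features(species, vocab):
    """One-hot encoding of each atom's element."""
    return [[1.0 if s == el else 0.0 for el in vocab] for s in species]


def gaussian_expand(d, centers, width):
    """CGCNN-style edge feature: distance expanded on a Gaussian basis."""
    return [math.exp(-((d - mu) ** 2) / width ** 2) for mu in centers]


# Toy CsCl-type cell: Cs at the corner, Cl at the body centre.
lattice = [[4.0, 0.0, 0.0], [0.0, 4.0, 0.0], [0.0, 0.0, 4.0]]
species = ["Cs", "Cl"]
frac_coords = [[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]]
edges = crystal_graph(lattice, frac_coords, cutoff=3.6)
nodes = node_features(species, vocab=["Cl", "Cs"])
edge_feats = [gaussian_expand(d, centers=[2.0, 3.0, 4.0], width=0.5) for *_, d in edges]
```

Each atom here picks up its eight body-diagonal neighbors of the other species, so the graph encodes the coordination environment without any explicit bond list.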

Workflow Visualization

The following diagram illustrates the contrasting workflows for composition-based and structure-based synthesizability prediction, highlighting their different inputs, processes, and primary applications.

Successful synthesizability prediction and materials discovery rely on an ecosystem of computational tools and data resources.

Table 2: Key Resources for Synthesizability Research

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| Inorganic Crystal Structure Database (ICSD) [18] [4] | Materials Database | The authoritative source of experimentally synthesized inorganic crystal structures; serves as the primary source of positive training data. |
| Materials Project (MP) [5] [10] | Materials Database | A large repository of DFT-calculated material structures and properties; a common source of hypothetical/unlabeled data for training. |
| Positive-Unlabeled (PU) Learning [18] [2] [10] | Machine Learning Framework | A semi-supervised learning technique critical for training models where only positive (synthesized) examples are definitively known. |
| CrabNet [5] | Machine Learning Model | A composition-based model using self-attention mechanisms; often used as a benchmark for composition-only property prediction. |
| CGCNN [5] [10] | Machine Learning Model | A pioneering model that uses graph neural networks on crystal structures; a standard baseline for structure-based prediction. |
| Robocrystallographer [10] | Software Tool | Generates text descriptions of crystal structures, enabling the use of LLMs for structure-based tasks. |

Composition-based and structure-based models for synthesizability prediction are not mutually exclusive but are complementary tools that address different stages of the materials discovery pipeline. Composition-based models like SynthNN are the workhorses for initial exploration, capable of rapidly filtering millions of potential formulas down to a manageable set of promising candidates based on chemical composition alone [18]. Their speed and efficiency are unmatched for surveying vast, uncharted chemical spaces.

In contrast, structure-based models like CSLLM provide a powerful tool for detailed validation and synthesis planning, offering higher accuracy and the ability to predict not just if a material can be made, but how and from what [4]. The emerging trend of using LLMs and their embeddings shows significant promise for both improving performance and providing explainable insights [10].

The most effective future research pipelines will likely leverage a hybrid approach: using composition-based models for the initial wide net and applying more computationally intensive structure-based models to the resulting shortlist for final prioritization and experimental guidance. As these models continue to evolve, integrating them directly with automated synthesis platforms will further close the loop between computational prediction and experimental realization, dramatically accelerating the discovery of new functional materials [6].

The accelerating discovery of novel materials and molecules through computational methods has unveiled a significant bottleneck: many theoretically predicted structures are not experimentally realizable. This challenge has propelled the development of synthesizability models, which aim to prioritize candidates that can be practically fabricated. These models largely fall into two competing paradigms: those based solely on chemical composition and those that incorporate detailed three-dimensional structural information. Composition-based models leverage elemental stoichiometry and properties to estimate synthesizability, offering computational speed and applicability early in the design process when structural data may be unavailable. In contrast, structure-based models utilize atomic coordinates, bonding networks, and symmetry information to make more nuanced predictions that account for kinetic accessibility and synthetic pathways. This guide objectively compares the performance of these approaches, examining their underlying methodologies, predictive accuracy, and practical utility in guiding experimental synthesis across materials science and drug discovery. The emergence of sophisticated techniques like retrosynthesis planning and 3D conditional generation represents a pivotal advancement, enabling a more integrated strategy that bridges the historic divide between compositional and structural analysis for targeted design.

Comparative Performance: Composition-Based vs. Structure-Based Models

Table 1: Performance Metrics of Representative Synthesizability Models

| Model Name | Model Type | Key Features / Representation | Reported Accuracy / Performance | Key Advantages | Limitations |
|---|---|---|---|---|---|
| CSLLM (Synthesizability LLM) [4] | Structure-based | Fine-tuned LLM using "material string" text representation | 98.6% accuracy | Exceptional generalization to complex structures; predicts methods & precursors | Requires structured crystal data; computationally intensive |
| Integrative Model [6] | Hybrid (Composition & Structure) | Ensemble of composition transformer + structure GNN | High synthesizability ranking (7/16 targets successfully synthesized) | Combines complementary signals; demonstrated experimental success | Complex training procedure; requires both composition and structure data |
| FTCP Deep Learning Model [5] | Structure-based | Fourier-Transformed Crystal Properties (real & reciprocal space) | 82.6% precision, 80.6% recall for ternary crystals | Captures crystal periodicity; faster than DFT | Performance varies by material system |
| CLscore (Jang et al.) [4] | Structure-based | Positive-unlabeled learning on crystal structures | 87.9% accuracy for 3D crystals | Effective with limited negative data | Accuracy constrained by training data quality |
| Composition-only MTEncoder [6] | Composition-based | Fine-tuned transformer on elemental stoichiometry | Provides baseline synthesizability probability | Fast prediction; applicable when structure unknown | Lacks structural nuance; generally lower accuracy than structure-aware models |
| SynthNN [4] | Composition-based | Composition embeddings from elemental properties | Moderate accuracy (specific metrics not provided) | Simple and fast for initial screening | Cannot distinguish polymorphs |

The quantitative comparison reveals a consistent performance advantage for structure-based models, which achieve notably higher accuracy in predicting synthesizability across diverse material systems. The CSLLM framework exemplifies this superior performance, achieving 98.6% accuracy on testing data by leveraging a comprehensive text representation of crystal structures that encodes lattice parameters, space groups, and Wyckoff positions [4]. This significantly outperforms traditional thermodynamic and kinetic stability metrics, which achieve only 74.1% and 82.2% accuracy, respectively, as primary synthesizability filters [4]. Structure-based approaches fundamentally excel because they account for atomic arrangements, coordination environments, and symmetry elements that directly influence synthetic accessibility—factors completely absent in composition-only analysis.

However, composition-based models remain useful for high-throughput initial screening when structural data is unavailable, and for prioritizing elemental combinations for further exploration. Their principal limitation is the inability to distinguish between different polymorphs of the same composition, such as diamond versus graphite, which exhibit dramatically different synthesizability and properties [3]. The integrative model demonstrates the power of hybrid approaches: by combining compositional and structural signals through a rank-average ensemble, it guided experimental synthesis that yielded seven characterized novel compounds from a prioritized list of 16 targets [6].
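The rank-average ensemble mentioned above can be sketched as follows. The cited work [6] is summarized here only as using a rank-average ensemble, so this is one plausible reading rather than its implementation: each model's scores are converted to ranks (1 = most synthesizable, ties share their average rank), the two ranks are averaged per candidate, and candidates are sorted by that average. The candidate names and scores are hypothetical.

```python
def ranks(scores):
    """Rank candidates so the highest score gets rank 1; tied scores share their average rank."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    result = [0.0] * len(scores)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and scores[order[end + 1]] == scores[order[pos]]:
            end += 1
        avg_rank = (pos + end) / 2 + 1
        for k in range(pos, end + 1):
            result[order[k]] = avg_rank
        pos = end + 1
    return result


def rank_average(candidates, comp_scores, struct_scores):
    """Average the composition-model and structure-model ranks; lower is better."""
    comp_r, struct_r = ranks(comp_scores), ranks(struct_scores)
    combined = [(c, (a + b) / 2) for c, a, b in zip(candidates, comp_r, struct_r)]
    return sorted(combined, key=lambda item: item[1])


# Hypothetical scores from a composition model and a structure model.
shortlist = rank_average(["A", "B", "C"], [0.9, 0.2, 0.8], [0.5, 0.6, 0.9])
print(shortlist)
```

Averaging ranks rather than raw probabilities sidesteps the fact that the two models' scores need not be calibrated on the same scale; candidate C wins here because both models place it near the top, even though neither ranks it first by a wide margin.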

Methodological Approaches: Experimental Protocols and Workflows

Structure-Based Synthesizability Prediction with CSLLM

The Crystal Synthesis Large Language Model (CSLLM) framework employs a sophisticated methodology for predicting synthesizability, synthetic methods, and suitable precursors [4]. The experimental protocol involves several critical stages:

Data Curation and Balanced Dataset Construction: Researchers compiled a dataset of 70,120 synthesizable crystal structures from the Inorganic Crystal Structure Database (ICSD), ensuring experimental validity by excluding disordered structures and limiting to compositions with ≤40 atoms and ≤7 elements. For negative examples, they applied a pre-trained positive-unlabeled (PU) learning model to screen 1.4 million theoretical structures from computational databases, selecting 80,000 with the lowest crystal-likeness scores (CLscore <0.1) as non-synthesizable examples. This balanced dataset encompasses seven crystal systems and elements spanning atomic numbers 1-94 [4].
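The curation rules above translate into a pair of filters. The sketch below assumes each record is a plain dictionary with hypothetical fields (`id`, `disordered`, `n_atoms`, `elements`, `clscore`); it applies the stated limits (ordered structures, at most 40 atoms and 7 elements) to ICSD entries and keeps the lowest-CLscore theoretical structures below 0.1 as negatives, capped at the requested count. It is not the authors' pipeline, and the toy records are invented.

```python
def keep_positive(entry, max_atoms=40, max_elements=7):
    """ICSD filter: ordered structures with at most 40 atoms and at most 7 distinct elements."""
    return (not entry["disordered"]
            and entry["n_atoms"] <= max_atoms
            and len(set(entry["elements"])) <= max_elements)


def select_negatives(theoretical, n_select, threshold=0.1):
    """Theoretical structures with CLscore below `threshold`, lowest scores first."""
    candidates = sorted((e for e in theoretical if e["clscore"] < threshold),
                        key=lambda e: e["clscore"])
    return candidates[:n_select]


def build_dataset(icsd, theoretical, n_negatives):
    """Label 1 for filtered ICSD entries, 0 for selected low-CLscore theoretical entries."""
    positives = [(e["id"], 1) for e in icsd if keep_positive(e)]
    negatives = [(e["id"], 0) for e in select_negatives(theoretical, n_negatives)]
    return positives + negatives


# Hypothetical toy records.
icsd = [
    {"id": "icsd-1", "disordered": False, "n_atoms": 8, "elements": ["Na", "Cl"]},
    {"id": "icsd-2", "disordered": True, "n_atoms": 12, "elements": ["Li", "Co", "O"]},
    {"id": "icsd-3", "disordered": False, "n_atoms": 64, "elements": ["Si", "O"]},
]
theoretical = [
    {"id": "hyp-1", "clscore": 0.05},
    {"id": "hyp-2", "clscore": 0.45},
    {"id": "hyp-3", "clscore": 0.01},
]
dataset = build_dataset(icsd, theoretical, n_negatives=80_000)
print(dataset)
```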

Text Representation via Material String: A crucial innovation involves converting crystal structures into a condensed text representation called "material string" to efficiently fine-tune LLMs. This representation follows the format:

SP | a, b, c, α, β, γ | (AS1-WS1[WP1-x1,y1,z1]; AS2-WS2[WP2-x2,y2,z2]; ...)

where SP is the space group, a/b/c/α/β/γ are lattice parameters, and AS-WS[WP-x,y,z] represents the atomic symbol, Wyckoff site symbol, and Wyckoff position coordinates. This format eliminates redundancy in CIF files while preserving essential crystallographic information [4].

Model Architecture and Fine-Tuning: The framework employs three specialized LLMs fine-tuned on the material string representations: a Synthesizability LLM for binary classification, a Method LLM for classifying solid-state vs. solution synthesis, and a Precursor LLM for identifying suitable precursor compounds. Domain-focused fine-tuning aligns the LLMs' linguistic capabilities with material-specific features, refining attention mechanisms and reducing hallucinations [4].

Validation and Generalization Testing: The model underwent rigorous testing on holdout datasets and demonstrated 97.9% accuracy on complex structures with large unit cells, significantly exceeding thermodynamic (energy above hull ≥0.1 eV/atom) and kinetic (lowest phonon frequency ≥ -0.1 THz) screening methods [4].
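The two stability baselines can be read as simple threshold rules. The sketch below assumes that a structure is predicted synthesizable when its energy above hull is below 0.1 eV/atom, or when its lowest phonon frequency is at least -0.1 THz (imaginary modes reported as negative frequencies). The direction of each rule, the function names, and the toy records are assumptions for illustration; the accuracies printed here say nothing about the values reported in [4].

```python
def thermo_predict(e_above_hull, threshold=0.1):
    """Assumed rule: energy above hull (eV/atom) below threshold -> predicted synthesizable."""
    return e_above_hull < threshold


def kinetic_predict(lowest_phonon_thz, threshold=-0.1):
    """Assumed rule: lowest phonon frequency (THz, imaginary modes negative)
    at or above threshold -> predicted synthesizable."""
    return lowest_phonon_thz >= threshold


def accuracy(predictions, labels):
    """Fraction of predictions that match the labels."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


# Hypothetical toy records: (energy above hull, lowest phonon frequency, is_synthesized)
records = [(0.00, 0.00, True), (0.05, -0.50, True), (0.20, -0.05, True), (0.15, -1.00, False)]
labels = [r[2] for r in records]
thermo_acc = accuracy([thermo_predict(r[0]) for r in records], labels)
kinetic_acc = accuracy([kinetic_predict(r[1]) for r in records], labels)
print(thermo_acc, kinetic_acc)
```

The third record shows why such filters miss cases: a metastable structure above the hull can still be synthesized, which a single energy threshold cannot capture.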

Retrosynthesis Planning with GDiffRetro

GDiffRetro introduces a dual-graph enhanced molecular representation and 3D diffusion generation for retrosynthesis prediction, addressing limitations in existing semi-template methods [20] [21]. The experimental methodology comprises:

Dual Graph Reaction Center Identification: The approach represents molecular structures using both the original molecular graph and its corresponding dual graph, where each node corresponds to a face in the original graph. This integration enables the model to capture face information critical for identifying stable structural motifs (e.g., benzene rings) that are unlikely to serve as reaction centers. Given a product molecule $\mathcal{M} = \{\mathbf{A}, \mathbf{X}\}$ with adjacency matrix $\mathbf{A}$ and node features $\mathbf{X}$, the model processes both representations to predict bond breakage probabilities for reaction center identification [20].
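The face information described above can be illustrated with a small ring-based approximation of a dual graph. This is not GDiffRetro's implementation: the sketch assumes rings have already been perceived (for example as the smallest set of smallest rings from a cheminformatics toolkit), treats each ring as a face, omits the unbounded outer face, and joins two faces when their rings share a bond. It also derives a per-bond feature counting how many faces contain each bond, the kind of signal that marks ring bonds as unlikely reaction centers. All helper names are hypothetical.

```python
def ring_bonds(ring):
    """Bonds (frozensets of two atom indices) around a ring given as an ordered atom cycle."""
    return {frozenset((ring[k], ring[(k + 1) % len(ring)])) for k in range(len(ring))}


def dual_graph(rings):
    """Dual-graph edges: node i is ring (face) i; rings sharing a bond are joined."""
    bond_sets = [ring_bonds(r) for r in rings]
    return [(i, j)
            for i in range(len(rings))
            for j in range(i + 1, len(rings))
            if bond_sets[i] & bond_sets[j]]


def face_counts(bonds, rings):
    """Per-bond feature: number of ring faces containing the bond (0 = acyclic bond)."""
    bond_sets = [ring_bonds(r) for r in rings]
    return {bond: sum(frozenset(bond) in s for s in bond_sets) for bond in bonds}


# Naphthalene carbon skeleton (atoms 0-9) plus one substituent atom 10 on atom 0.
ring_a = [0, 1, 2, 3, 4, 5]
ring_b = [5, 4, 9, 8, 7, 6]
bonds = sorted({tuple(sorted(b)) for r in (ring_a, ring_b) for b in ring_bonds(r)}) + [(0, 10)]
faces = dual_graph([ring_a, ring_b])
counts = face_counts(bonds, [ring_a, ring_b])
print(faces, counts[(4, 5)], counts[(0, 10)])
```

The fused bond (4, 5) belongs to two faces, ordinary ring bonds to one, and the substituent bond to none, so a model reading these features can separate ring-internal bonds from exocyclic bonds that are more plausible disconnection sites.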