Combating Overfitting: Practical Strategies for Robust Machine Learning on Small Materials Science Datasets

Abstract

This article provides a comprehensive guide for researchers and professionals in materials science and drug development on mitigating overfitting in machine learning models when working with limited datasets. It explores the fundamental causes and consequences of overfitting specific to small data regimes, details practical methodologies from data-centric approaches to advanced algorithms, offers troubleshooting and optimization techniques for model refinement, and establishes frameworks for rigorous validation and benchmarking. By synthesizing current research and real-world applications, this guide aims to equip scientists with the knowledge to build more generalizable and reliable predictive models, thereby accelerating materials discovery and development.

Understanding the Small Data Dilemma: Why Overfitting Plagues Materials Science ML

Troubleshooting Guides

Why is my model performing perfectly on training data but failing on new experimental materials?

Problem: You have developed a machine learning model that achieves high accuracy on your training data but shows poor predictive performance when applied to new, unseen material compositions or structures. This is the classic sign of overfitting [1] [2].

Explanation: In materials science, overfitting occurs when your model learns not only the underlying patterns in your limited dataset but also the noise and random fluctuations specific to that data [1]. An overfitted model is excessively complex, containing more parameters than can be justified by the available data [1]. In the context of small materials data, this often happens because the model essentially "memorizes" the training examples rather than learning generalizable relationships between material descriptors and target properties [3].

Troubleshooting Steps:

- Compare Training and Validation Performance: Check if your model's accuracy or R² score on the training data is significantly higher (e.g., >15-20%) than on your validation or test set [4] [2].

- Evaluate Model Complexity: Assess if your model has too many parameters (e.g., features, network layers) relative to your number of data points. A good rule of thumb for regression is to have at least 10-15 observations per independent variable [1].

- Analyze Feature Selection: Determine if irrelevant or redundant material descriptors have been included in the model, which can cause the model to learn spurious correlations [3].
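
The first two checks above can be sketched in a few lines of NumPy. This is a minimal illustration rather than a definitive diagnostic: the helper name `overfitting_report`, the default thresholds (a 0.15 gap in R² and 10 observations per feature, loosely mirroring the rules of thumb above), and the synthetic data are all assumptions made for the example.

```python
import numpy as np

def r2_score(y_true, y_pred):
    """Coefficient of determination, R^2 = 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

def overfitting_report(X_train, y_train, X_val, y_val, predict,
                       gap_threshold=0.15, min_obs_per_feature=10):
    """Flag a large train/validation gap and a low observations-per-feature ratio.

    The thresholds are illustrative defaults, not universal constants.
    """
    r2_train = r2_score(y_train, predict(X_train))
    r2_val = r2_score(y_val, predict(X_val))
    obs_per_feature = X_train.shape[0] / X_train.shape[1]
    return {
        "r2_train": r2_train,
        "r2_val": r2_val,
        "gap": r2_train - r2_val,
        "large_gap": (r2_train - r2_val) > gap_threshold,
        "obs_per_feature": obs_per_feature,
        "too_few_observations": obs_per_feature < min_obs_per_feature,
    }

# Synthetic stand-in for a small dataset: 30 samples, 25 descriptors,
# of which only the first one actually drives the target property.
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 25))
y = 2.0 * X[:, 0] + rng.normal(scale=0.5, size=30)
X_train, y_train, X_val, y_val = X[:20], y[:20], X[20:], y[20:]

# Unregularized least squares with more features (25) than training rows (20).
coef, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)
report = overfitting_report(X_train, y_train, X_val, y_val, lambda A: A @ coef)
print(report)
```

Because the model has more coefficients than training rows, it can reproduce the training targets almost exactly, which is the situation the checklist is meant to catch.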

How can I detect potential overfitting when working with my small dataset?

Early detection of overfitting is crucial for developing reliable predictive models in materials science. The following table summarizes key indicators and diagnostic methods.

Table: Diagnostic Indicators of Overfitting in Small Data Regimes

| Indicator | Description | Diagnostic Method |

|---|---|---|

| Large Performance Gap | A significant difference between model performance on training data versus validation/test data [4] [2]. | Calculate and compare metrics (e.g., RMSE, MAE, R²) between training and hold-out sets. |

| High Model Variance | Model predictions change drastically when trained on different subsets of the available data [2]. | Use resampling techniques like bootstrapping or repeated cross-validation to assess prediction stability [5]. |

| Sensitivity to Noise | The model learns random fluctuations in the training data that do not represent the true structure-property relationship [1]. | Introduce small perturbations to input features and observe the magnitude of change in predictions. |

| Overly Complex Model | The model has more parameters than can be reliably estimated from the number of available observations [1]. | Compare the number of model parameters/features to the number of data samples. |
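
As one way to make the "High Model Variance" row concrete, the sketch below refits a model on bootstrap resamples and reports how much its predictions move. The function names, the polynomial models, and the synthetic one-descriptor dataset are assumptions chosen for illustration; any fit routine that returns a prediction function could be substituted.

```python
import numpy as np

def bootstrap_prediction_spread(X, y, X_query, fit, n_boot=200, seed=0):
    """Standard deviation of predictions at X_query across bootstrap refits."""
    rng = np.random.default_rng(seed)
    n = len(y)
    preds = np.empty((n_boot, len(X_query)))
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)      # resample rows with replacement
        predict = fit(X[idx], y[idx])
        preds[b] = predict(X_query)
    return preds.std(axis=0)

def make_poly_fit(degree):
    """Return a fit function for a polynomial in the first (only) descriptor."""
    def fit(X, y):
        coefs = np.polyfit(X[:, 0], y, degree)
        return lambda Xq: np.polyval(coefs, Xq[:, 0])
    return fit

# Synthetic data: a linear trend plus noise, 25 samples.
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(25, 1))
y = 3.0 * X[:, 0] + rng.normal(scale=0.3, size=25)
X_query = np.linspace(0.05, 0.95, 10).reshape(-1, 1)

spread_simple = bootstrap_prediction_spread(X, y, X_query, make_poly_fit(1))
spread_complex = bootstrap_prediction_spread(X, y, X_query, make_poly_fit(6))
print("mean spread, degree 1:", spread_simple.mean())
print("mean spread, degree 6:", spread_complex.mean())
```

A markedly larger spread for the more flexible model suggests that its predictions depend on which particular samples happened to be in the training set.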

Experimental Protocols for Mitigating Overfitting

Protocol: Cross-Validation for Robust Performance Estimation

Purpose: To reliably estimate how your model will generalize to unseen material data and tune hyperparameters without requiring a separate large test set [4] [2].

Procedure:

- Data Partitioning: Randomly shuffle your dataset and split it into k equally sized folds (typically k=5 or k=10).

- Iterative Training and Validation: For each unique fold:

- Designate the current fold as the validation set.

- Designate the remaining k-1 folds as the training set.

- Train your model on the training set.

- Evaluate the model on the validation set and record the performance metric.

- Performance Calculation: Calculate the average performance across all k validation folds. This average is a more robust estimate of generalization error than a single train-test split [2].

Considerations for Materials Data: Ensure that the splitting strategy accounts for any inherent data clustering (e.g., by material family) to avoid over-optimistic estimates.
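
A group-aware split of the kind described in the consideration above might look like the following NumPy sketch. The function `group_k_fold` and the material-family labels are hypothetical; scikit-learn's `GroupKFold` offers a maintained implementation of the same idea.

```python
import numpy as np

def group_k_fold(groups, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs so that no group spans both sets.

    Whole groups are assigned to folds, so folds may differ in sample count.
    """
    groups = np.asarray(groups)
    unique = np.unique(groups)
    rng = np.random.default_rng(seed)
    rng.shuffle(unique)                       # shuffle groups, not samples
    for fold_groups in np.array_split(unique, k):
        val_mask = np.isin(groups, fold_groups)
        yield np.flatnonzero(~val_mask), np.flatnonzero(val_mask)

# Hypothetical family label for each of 24 samples.
families = np.array(["perovskite"] * 6 + ["spinel"] * 5 + ["garnet"] * 4
                    + ["olivine"] * 3 + ["wurtzite"] * 4 + ["rutile"] * 2)

splits = list(group_k_fold(families, k=3))
for train_idx, val_idx in splits:
    print(sorted(set(families[val_idx])), len(train_idx), len(val_idx))
```

Holding out entire families gives a harsher but more honest estimate when the model is expected to predict properties for material classes it has never seen.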

Protocol: Regularization for Controlling Model Complexity

Purpose: To prevent the model from becoming overly complex by adding a penalty to the loss function, thereby discouraging it from relying too heavily on any single feature or parameter [1] [4].

Procedure:

- Select a Regularization Technique:

- L1 (Lasso) Regularization: Adds a penalty equal to the absolute value of the magnitude of coefficients. This can drive some coefficients to zero, effectively performing feature selection [4].

- L2 (Ridge) Regularization: Adds a penalty equal to the square of the magnitude of coefficients. This shrinks coefficients but does not force them to zero [4].

- ElasticNet: Combines both L1 and L2 penalties [4].

- Hyperparameter Tuning: Use cross-validation (as described in the cross-validation protocol above) to find the optimal value for the regularization strength parameter (often denoted as λ or alpha). This balances the trade-off between fitting the data and keeping the model simple.
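
The tuning step can be illustrated with ridge regression, whose solution has a closed form. The sketch below is a simplified example under stated assumptions: there is no intercept (the synthetic descriptors are zero-mean), the candidate grid of λ values is arbitrary, and the helper names are invented for this example.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge solution (X^T X + lam * I)^-1 X^T y, no intercept."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

def cv_mse(X, y, lam, k=5, seed=0):
    """Mean validation MSE of ridge regression over k shuffled folds."""
    idx = np.random.default_rng(seed).permutation(len(y))
    errors = []
    for val_idx in np.array_split(idx, k):
        train_idx = np.setdiff1d(idx, val_idx)
        w = ridge_fit(X[train_idx], y[train_idx], lam)
        errors.append(np.mean((X[val_idx] @ w - y[val_idx]) ** 2))
    return float(np.mean(errors))

# Synthetic small-data problem: 40 samples, 30 descriptors, 3 of them relevant.
rng = np.random.default_rng(1)
X = rng.normal(size=(40, 30))
true_w = np.zeros(30)
true_w[:3] = [1.5, -2.0, 1.0]
y = X @ true_w + rng.normal(scale=1.0, size=40)

lambdas = [1e-4, 1e-2, 1e-1, 1.0, 10.0, 100.0]   # illustrative grid
scores = {lam: cv_mse(X, y, lam) for lam in lambdas}
best_lam = min(scores, key=scores.get)
for lam, mse in scores.items():
    print(f"lambda={lam:g}  CV MSE={mse:.3f}")
print("selected lambda:", best_lam)
```

The same loop applies to L1 or ElasticNet penalties, although those require an iterative solver instead of the closed-form expression used here.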

Protocol: Data Augmentation Based on Physical Models

Purpose: To artificially increase the size and diversity of your training dataset by creating new, realistic data points from existing ones, leveraging domain knowledge in materials science [3] [6].

Procedure:

- Identify Augmentable Features: Determine which material descriptors or input conditions can be varied in a physically meaningful way (e.g., elemental composition ratios, synthetic condition parameters like temperature or pressure).

- Define Transformation Rules: Establish the physical laws or empirical rules that govern how these features can be changed. For instance, use phase diagram information or known scaling laws to generate new, plausible virtual samples [6].

- Generate Synthetic Data: Apply these transformations to your original dataset to create new data points. This can be done through interpolation between known data points or by adding small, realistic noise to existing samples.

- Validate Augmented Data: Ensure that the newly generated data adheres to known physical constraints and property ranges.
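
The generate-then-validate steps could be sketched as follows for composition data. Two loud assumptions are built in: the target property is taken to vary linearly between two existing compositions (a simplification that will not hold for many systems), and the property range used in the validity check is invented for the synthetic example.

```python
import numpy as np

def augment_by_interpolation(X, y, n_new, seed=0):
    """Create virtual samples as convex combinations of random sample pairs."""
    rng = np.random.default_rng(seed)
    i = rng.integers(0, len(X), size=n_new)
    j = rng.integers(0, len(X), size=n_new)
    t = rng.uniform(0.0, 1.0, size=n_new)
    X_new = (1 - t)[:, None] * X[i] + t[:, None] * X[j]
    y_new = (1 - t) * y[i] + t * y[j]         # ASSUMPTION: linear mixing rule
    return X_new, y_new

def is_physically_valid(X, y, y_min, y_max, tol=1e-9):
    """Fractions lie in [0, 1] and sum to 1; property lies in a known range."""
    fractions_ok = np.all((X >= -tol) & (X <= 1 + tol), axis=1)
    sums_ok = np.abs(X.sum(axis=1) - 1.0) < 1e-6
    range_ok = (y >= y_min) & (y <= y_max)
    return fractions_ok & sums_ok & range_ok

# Synthetic ternary compositions (rows sum to 1) and a made-up property.
rng = np.random.default_rng(0)
X = rng.dirichlet(alpha=[1.0, 1.0, 1.0], size=12)
y = 100.0 + 50.0 * X[:, 0] - 20.0 * X[:, 1]

X_new, y_new = augment_by_interpolation(X, y, n_new=50)
valid = is_physically_valid(X_new, y_new, y_min=80.0, y_max=150.0)
X_aug = np.vstack([X, X_new[valid]])
y_aug = np.concatenate([y, y_new[valid]])
print(X_aug.shape, int(valid.sum()), "virtual samples kept")
```

In a real project the interpolation rule and the validity check would come from phase diagrams, scaling laws, or other domain knowledge rather than from the placeholders used here.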

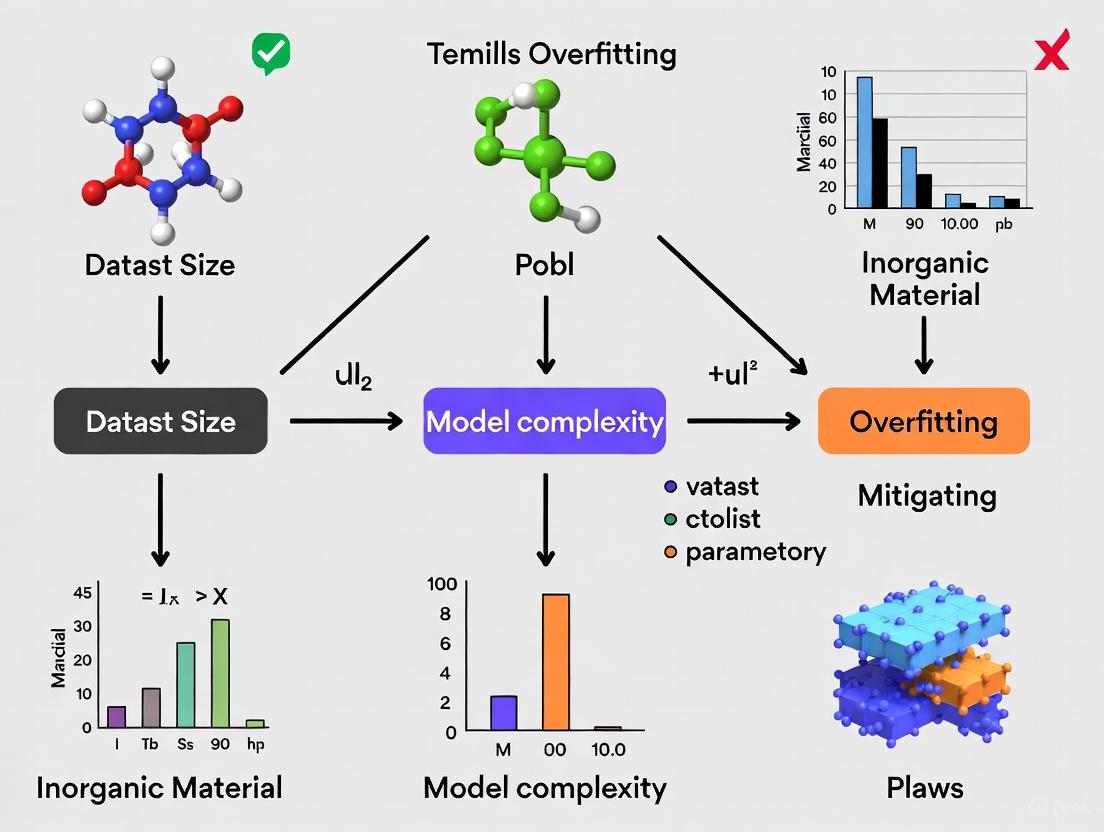

Workflow Visualization for Overfitting Management

The following diagram illustrates a logical workflow for diagnosing and mitigating overfitting when working with small datasets in materials science.

Diagram: A workflow for diagnosing and mitigating overfitting in small data regimes.

The Scientist's Toolkit: Research Reagent Solutions

This table outlines key computational and strategic "reagents" essential for combating overfitting in materials informatics projects.

Table: Essential Solutions for Managing Overfitting

| Tool / Technique | Category | Primary Function | Application Context |

|---|---|---|---|

| k-Fold Cross-Validation [4] [2] | Model Evaluation | Provides a robust estimate of model generalization error by rotating data through training and validation splits. | Essential for all model development and hyperparameter tuning with limited data. |

| L1 & L2 Regularization [1] [4] | Algorithmic Strategy | Penalizes model complexity within the algorithm itself to prevent over-reliance on specific features. | Applied during the training of linear models, neural networks, and other algorithms. |

| Physical Model-Based Data Augmentation [3] [6] | Data Strategy | Increases effective dataset size by generating new, physically plausible data points from existing ones. | Used when domain knowledge is strong but experimental data is scarce and costly to produce. |

| Transfer Learning [3] [7] [6] | Machine Learning Strategy | Leverages knowledge from a model pre-trained on a large, related dataset to boost performance on a small target dataset. | Ideal when a large dataset exists for a related property or material system, but the target dataset is small. |

| Active Learning [3] [6] | Machine Learning Strategy | Iteratively selects the most informative data points to be experimentally measured next, optimizing resource use. | Applied in high-throughput experimentation or computational screening to guide the most efficient data acquisition. |

| Feature Selection Algorithms (Filter, Wrapper, Embedded) [3] | Data Preprocessing | Identifies and retains the most relevant material descriptors, reducing dimensionality and noise. | Used when a large number of features (e.g., from DFT calculations or descriptor software) have been generated. |

Frequently Asked Questions (FAQs)

What is the fundamental difference between overfitting and underfitting?

Overfitting occurs when a model is too complex and learns both the underlying pattern and the noise in the training data, resulting in low error on training data but high error on unseen data. Underfitting occurs when a model is too simple to capture the underlying trend in the data, resulting in high error on both training and test data [1] [2]. The goal is to find a balance between the two, achieving a "well-fitted" model that generalizes well [2].

My materials dataset is unavoidably small. Which algorithms are best suited for this scenario?

With small data, preference should be given to simpler, less flexible models that are less prone to overfitting. These include:

- Linear models with strong regularization (Lasso, Ridge, ElasticNet) [4].

- Random Forests, which have built-in mechanisms to reduce variance [6].

- K-Nearest Neighbors, which can be effective for small, dense datasets [6].

- Support Vector Machines with linear kernels [6].

Avoid very complex models like large deep neural networks unless you are using strategies like transfer learning, where the model is first pre-trained on a large, related dataset [3] [6].

Can overfitting occur even if I don't have a large number of features?

Yes. While a high number of features relative to data points is a common cause, overfitting can also occur if the model itself is too complex (e.g., a very deep decision tree) for the amount of data available, or if it learns spurious correlations present in the small sample [1] [8]. The core issue is the ratio of model complexity to effective information in the data.

How does transfer learning help prevent overfitting in materials science?

Transfer learning addresses the small data problem at its root. Instead of training a model from scratch on your small dataset, you start with a model that has already been pre-trained on a large, general materials dataset (e.g., for predicting formation energies). This model has already learned fundamental relationships between chemical composition, structure, and properties. You then fine-tune this model on your small, specific dataset. This process requires less data to achieve high performance and significantly reduces the risk of overfitting because the model is not learning from a blank slate [3] [7] [6].

What are the ethical implications of overfitting in drug development research?

Overfitting can have serious real-world consequences. In drug development, an overfitted model might:

- Misguide Research Directions: Falsely identify a molecular compound as promising, leading to wasted resources and delays in finding effective treatments [5].

- Create False Positives: Predict non-existent efficacy or safety profiles, potentially putting clinical trial participants at risk [5].

- Erode Trust: Undermine trust in data-driven approaches and hinder the adoption of machine learning in the field. Therefore, rigorously validating models and being transparent about the risk of overfitting is an ethical imperative [5].

Technical Support Center: FAQs on Data Scarcity & Overfitting

FAQ 1: Why is overfitting a particularly critical problem in materials science research?

Overfitting occurs when a model learns not only the underlying patterns in the training data but also the noise and random fluctuations, leading to poor performance on new, unseen data [9]. In materials science, where datasets are often small and high-dimensional (many features but few samples), this is a fundamental challenge [10]. An overfit model can generate misleading predictions for new material properties, such as catalytic activity or battery performance, directing experimental validation down costly and unproductive paths and ultimately hindering scientific discovery [10].

FAQ 2: What are the primary causes of data scarcity and fragmentation in scientific datasets?

Data scarcity in fields like materials science stems from a combination of technical and social-institutional barriers [10]:

- High Cost of Data Generation: Experimental data, such as from clinical trials or materials testing, is often limited, costly, or difficult to access [11] [12].

- Data Fragmentation: Research organizations often manage over 100 distinct data sources, with data hoarded in proprietary or incompatible formats [10].

- Misaligned Incentives: Academic and research institutions frequently undervalue and fail to reward the tedious work of data curation, sharing, and the development of sustainable infrastructure [10].

FAQ 3: How can I detect if my model is overfitting?

The primary indicator of overfitting is a significant performance gap between training and validation metrics [9]. For example, your model may show 99% accuracy on training data but only 70% on a held-out test set [9]. Monitoring the loss during training is also key; if the training loss continues to decrease while the validation loss stops improving or begins to increase, the model is likely overfitting to the training data [13].

FAQ 4: Can synthetic data truly be trusted for rigorous scientific research?

Yes, when applied correctly. Synthetic data is artificially generated information that mimics the statistical properties of real-world data [12]. It has proven valuable in simulating rare events or edge cases and for privacy-preserving data sharing [12]. Its reliability depends on the quality of the generation method (e.g., GANs, VAEs, Diffusion Models) and rigorous validation to ensure it faithfully represents the physical or chemical principles of the system under study [14] [12].

Troubleshooting Guides for Small Datasets

Guide 1: Mitigating Overfitting in a Standard Machine Learning Workflow

- Problem: Model performance is excellent on training data but poor on validation/test data.

- Solution: Implement a systematic combination of techniques tailored for small datasets.

Workflow Diagram: Overfitting Mitigation Pathway

Detailed Steps:

- Simplify the Model: Begin with a less complex model architecture (e.g., fewer layers or parameters). If data is extremely limited, classical machine learning algorithms like SVM or XGBoost can outperform deep neural networks [15] [13].

- Apply Regularization: Introduce constraints to prevent the model from becoming overly complex.

- L1 (Lasso): Adds a penalty equal to the absolute value of coefficients, which can zero out less important features (feature selection) [16].

- L2 (Ridge): Adds a penalty equal to the squared value of coefficients, which shrinks all weights toward zero without eliminating any of them [16] [9].

- Dropout: For neural networks, randomly deactivate neurons during training to force robust learning [15] [13].

- Augment Data: Artificially expand your dataset using domain-specific techniques. For non-image data, this could involve synthetic data generation [15] [12].

- Use Transfer Learning: Leverage a model pre-trained on a large, general dataset (even from a different domain) and fine-tune only the final layers on your specific small dataset [15] [13]. This capitalizes on general features learned from big data.

- Rigorous Validation: Use K-fold cross-validation to make maximal use of limited data for performance estimation and apply early stopping to halt training as soon as validation performance degrades [16] [13].

Guide 2: Implementing a Synthetic Data Generation Protocol

- Problem: Insufficient real-world data for training a robust model.

- Solution: Generate high-quality synthetic data to augment the training set.

Workflow Diagram: Synthetic Data Generation and Validation

Detailed Steps:

- Select a Generation Method: Choose an algorithm based on your data type and needs.

- Generative Adversarial Networks (GANs): Use two competing neural networks (a generator and a discriminator) to produce highly realistic data. Effective for time series and image data [12] [17].

- Variational Autoencoders (VAEs): Compress and reconstruct data, good for generating structured data with controlled variations [12].

- Diffusion Models: Iteratively refine random noise to generate high-fidelity data, known for fine-grained realism [12].

- Monte Carlo Simulations: Rule-based methods that generate numerical data based on predefined distributions and probabilistic models [12].

- Generate the Data: Produce a synthetic dataset. This can be fully synthetic (created from scratch) or partially synthetic (where only sensitive fields in a real dataset are replaced) [12].

- Validate Rigorously: It is crucial to ensure the synthetic data retains the statistical properties of the real data without replicating sensitive information. Use statistical tests and, where possible, domain expert evaluation [12].

- Augment the Training Set: Combine the validated synthetic data with your original real data to create a larger, more diverse training set [17].
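
A very small instance of this protocol, using the Monte Carlo option and a statistical check, is sketched below. The "real" measurements are themselves simulated, the normal distribution is an assumed generator, and the deliberately shifted sample exists only to show what a failed validation looks like. The two-sample Kolmogorov-Smirnov statistic is implemented by hand to keep the example dependency-free; `scipy.stats.ks_2samp` provides the same statistic together with a p-value.

```python
import numpy as np

def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between the
    empirical CDFs of samples a and b (0 = identical, 1 = disjoint)."""
    a, b = np.sort(a), np.sort(b)
    grid = np.concatenate([a, b])
    cdf_a = np.searchsorted(a, grid, side="right") / len(a)
    cdf_b = np.searchsorted(b, grid, side="right") / len(b)
    return float(np.max(np.abs(cdf_a - cdf_b)))

rng = np.random.default_rng(0)

# Stand-in for 60 real measurements of some property (hypothetical values).
real = rng.normal(loc=5.0, scale=0.4, size=60)

# Monte Carlo generation: sample from a distribution fitted to the real data.
synthetic = rng.normal(real.mean(), real.std(ddof=1), size=500)

# A deliberately biased generator, for comparison only.
shifted = synthetic + 1.0

ks_good = ks_statistic(real, synthetic)
ks_bad = ks_statistic(real, shifted)
print(f"KS real vs synthetic: {ks_good:.3f}")
print(f"KS real vs shifted:   {ks_bad:.3f}")

# Augment the training set only with data that passed validation.
augmented = np.concatenate([real, synthetic])
```

A one-dimensional distribution check is only a starting point; multivariate data also calls for checks on correlations between features and on known physical constraints.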

Quantitative Data on Techniques

Table 1: Comparison of Regularization Techniques for Small Datasets

| Technique | Mechanism | Effect on Weights | Best Use-Case in Materials Science |

|---|---|---|---|

| L1 (Lasso) [16] [9] | Adds penalty based on absolute value of coefficients. | Can zero out weights, performing feature selection. | When you have many material features (e.g., elemental descriptors) and suspect many are irrelevant. |

| L2 (Ridge) [16] [9] | Adds penalty based on squared value of coefficients. | Shrinks all weights toward zero but does not zero them. | When many features are correlated (e.g., various spectroscopic intensities). |

| Dropout [15] [13] | Randomly drops neurons during training. | Reduces reliance on any single neuron, encouraging redundancy. | In deep neural networks for predicting material properties from complex patterns. |

| Early Stopping [13] [9] | Halts training when validation performance degrades. | Prevents the model from over-optimizing on training data noise. | A universal tactic for all iterative training processes with limited data. |

Table 2: Overview of Synthetic Data Generation Methods

| Method | Key Principle | Strengths | Common Applications in Science |

|---|---|---|---|

| GANs [12] [17] | Adversarial training between generator and discriminator networks. | High-fidelity, realistic data generation. | Generating synthetic time-series data (e.g., VIX), molecular structures, sensor data. |

| VAEs [12] | Compression and probabilistic reconstruction of data. | Controlled variations; good for structured data. | Creating variations of molecular representations; generating material spectra. |

| Diffusion Models [12] | Iterative denoising from random noise. | State-of-the-art output quality and fine-grained control. | Generating high-resolution material microstructures or synthetic images. |

| Monte Carlo [12] | Random sampling based on defined probabilities. | Interpretable, rule-based; good for simulating processes. | Simulating experimental outcomes, risk modeling in drug development. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Mitigating Data Scarcity

| Tool / Technique | Function | Relevance to Materials Science |

|---|---|---|

| Transfer Learning [15] [13] | Leverages knowledge from pre-trained models, reducing the need for vast labeled datasets. | Fine-tune models pre-trained on large chemical databases for specific property prediction tasks. |

| Data Augmentation [15] [12] | Artificially expands the training set by creating modified versions of existing samples. | Applying symmetries, adding noise, or using generative models to create plausible new material data points. |

| K-Fold Cross-Validation [16] [13] | Robust validation technique that maximizes the use of limited data for performance estimation. | Provides a more reliable estimate of model performance on small materials datasets than a single train-test split. |

| Feature Selection (e.g., L1) [16] [13] | Identifies and retains the most informative features, reducing dimensionality and noise. | Isolates key elemental or structural descriptors that drive a material property, improving model interpretability. |

| Foundation Models (e.g., SymTime) [14] | A pre-trained model developed on a massive, diverse dataset (including synthetic data) for a broad domain. | Can be fine-tuned for various downstream tasks like prediction and classification with minimal task-specific data. |

FAQ: Understanding and Mitigating Overfitting

What is overfitting and why is it a critical issue in research?

Overfitting occurs when a machine learning model learns the training data too well, including its noise and random fluctuations, instead of the underlying pattern. This results in a model that performs exceptionally on training data but poorly on any new, unseen data [18] [19] [20].

In the context of research, this is critical because an overfitted model cannot generalize. Its predictions and conclusions are valid only for the specific dataset it was trained on, making its findings unreliable and misleading for real-world applications or scientific discovery [21] [20].

How can I quickly diagnose if my model is overfitting?

You can diagnose overfitting by monitoring key performance metrics during your experiment. The most common indicators are [18] [22] [23]:

- Performance Discrepancy: A significant gap between high performance (e.g., accuracy) on training data and low performance on validation or test data.

- Loss Curves: Training loss continues to decrease, but validation loss begins to increase after a certain point.

- Over-Confidence: The model is highly confident in its incorrect predictions on new data, indicating it memorized details rather than understanding patterns.

For a quick check, reserve a portion of your data as a test set from the beginning. If your model's error rate is low on the training set but high on this unseen test set, it signals overfitting [19] [20].

What are the consequences of overfitting in scientific studies?

The consequences of overfitting in research extend beyond poor model performance:

- False Discoveries: Overfit models can detect subtle, spurious patterns that do not represent true underlying relationships. This can lead to high-profile false discoveries that cannot be replicated, as witnessed in fields like high-energy physics [21].

- Wasted Resources: Basing further research, experiments, or drug development on the false leads from an overfitted model wastes significant time, funding, and scientific effort [21].

- Reduced Predictive Power: The model fails in its primary purpose: to make accurate predictions on new data. This renders it useless for practical applications, such as diagnosing diseases from new medical images or predicting material properties [19] [23].

- Lack of Generalizability: The model's results are tailored to the specific sample set and cannot be applied to the broader population, undermining the scientific goal of finding general truths [21].

What is "overhyping" and how does it relate to overfitting?

Overhyping is a specific, often unintentional, form of overfitting that occurs when a researcher adjusts analysis hyperparameters to improve results for a specific dataset [21].

Hyperparameters include choices like feature selection, data pre-processing settings, or classifier parameters. When these are tuned and re-tuned based on performance on a single dataset, the model becomes tailored to that data's noise. The same hyperparameters will likely not work on a new dataset, leading to non-replicable results. This is a major barrier to replicability in scientific literature [21].

Troubleshooting Guide: Diagnosing and Fixing Overfitting

Diagnostic Protocols

Use these methodologies to systematically identify overfitting in your experiments.

Protocol 1: Monitoring Training Dynamics with k-Fold Cross-Validation

This protocol provides a robust estimate of your model's ability to generalize.

- Objective: To obtain an unbiased performance estimate and detect overfitting.

- Procedure:

- Randomly split your entire dataset into k equally sized subsets (or "folds"). Common choices are k=5 or k=10 [19].

- For each fold i (where i=1 to k):

- Set aside fold i as the validation set.

- Use the remaining k-1 folds as the training set.

- Train your model on the training set.

- Evaluate the model on the validation set and record the performance score (e.g., error rate).

- Calculate the average performance across all k validation runs. This is your cross-validation score [19] [20].

- Interpretation: A high average error on the validation folds, especially when compared to a low error on the training folds, is a clear indicator of overfitting [19].
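
The interpretation step relies on having both training-fold and validation-fold errors, so the sketch below records both. The polynomial model, the sine-shaped synthetic data, and the helper name `k_fold_errors` are assumptions for illustration only.

```python
import numpy as np

def k_fold_errors(x, y, degree, k=5, seed=0):
    """Mean training and validation RMSE of a polynomial fit over k folds."""
    idx = np.random.default_rng(seed).permutation(len(x))
    train_rmse, val_rmse = [], []
    for val_idx in np.array_split(idx, k):
        train_idx = np.setdiff1d(idx, val_idx)
        coefs = np.polyfit(x[train_idx], y[train_idx], degree)
        for part, store in ((train_idx, train_rmse), (val_idx, val_rmse)):
            resid = np.polyval(coefs, x[part]) - y[part]
            store.append(np.sqrt(np.mean(resid ** 2)))
    return float(np.mean(train_rmse)), float(np.mean(val_rmse))

# Synthetic data: 20 noisy samples of a smooth nonlinear trend.
rng = np.random.default_rng(0)
x = np.linspace(0.0, 1.0, 20)
y = np.sin(2 * np.pi * x) + rng.normal(scale=0.2, size=20)

results = {degree: k_fold_errors(x, y, degree) for degree in (3, 9)}
for degree, (tr, va) in results.items():
    print(f"degree {degree}: train RMSE={tr:.3f}, validation RMSE={va:.3f}")
```

The higher-degree polynomial always fits the training folds at least as well as the cubic, so a validation error that rises while training error falls points to overfitting rather than to a better model.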

Protocol 2: Analyzing Training and Validation Curves

This visual method helps you identify the point at which your model begins to overfit.

- Objective: To visually identify the divergence between training and validation performance.

- Procedure:

- During the model training process, record the loss (or accuracy) for both the training and validation sets at every epoch.

- Plot these values on the same graph: epochs on the x-axis and loss/accuracy on the y-axis.

- Analyze the curves for divergence [18].

- Interpretation: An ideal fit shows both curves improving together. Overfitting is indicated when the training loss continues to decrease while the validation loss stops improving and starts to increase [18].
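
Alongside plotting, the divergence point can be located programmatically. The following is a rough heuristic, not a standard algorithm: it reports the epoch of the lowest validation loss, provided that training loss kept falling for `patience` further epochs. The loss values are invented to mimic a typical overfitting run.

```python
import numpy as np

def overfitting_onset(train_loss, val_loss, patience=3):
    """Return the epoch index of the best validation loss if at least
    `patience` later epochs exist and training loss kept falling over them;
    return None otherwise."""
    train_loss = np.asarray(train_loss, dtype=float)
    val_loss = np.asarray(val_loss, dtype=float)
    best = int(np.argmin(val_loss))
    if len(val_loss) - (best + 1) < patience:
        return None                     # too few epochs after the minimum
    train_still_falling = train_loss[best + patience] < train_loss[best]
    return best if train_still_falling else None

# Hypothetical per-epoch losses recorded during training.
train = [1.00, 0.70, 0.50, 0.40, 0.30, 0.22, 0.15, 0.10]
val_overfit = [1.10, 0.80, 0.65, 0.60, 0.62, 0.68, 0.75, 0.85]
val_healthy = [1.10, 0.80, 0.65, 0.55, 0.48, 0.43, 0.40, 0.38]

onset = overfitting_onset(train, val_overfit)
no_onset = overfitting_onset(train, val_healthy)
print(onset)      # 3
print(no_onset)   # None
```

Epoch indices here start at zero, so an onset of 3 corresponds to the fourth recorded epoch.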

Table: Key Metrics for Diagnosing Model Fit

| Metric | Underfitting | Good Fit | Overfitting |

|---|---|---|---|

| Training Accuracy | Low | High | Very High |

| Validation Accuracy | Low | High | Low |

| Training Loss | High | Low | Very Low |

| Validation Loss | High | Low | High |

| Primary Indicator | High Bias [22] | Balanced Bias/Variance [22] | High Variance [22] |

Mitigation Strategies

Implement these solutions to prevent and correct overfitting in your models.

Solution 1: Applying Regularization

Regularization techniques penalize model complexity to prevent the model from becoming too sensitive to noise.

- L1 Regularization (Lasso): Adds a penalty equal to the absolute value of the model's coefficients. This can shrink some coefficients to zero, effectively performing feature selection [18] [22].

- Cost Function: \( J(\theta) = \text{Loss}(\theta) + \lambda \sum_{j=1}^{n} |\theta_j| \) [18]

- L2 Regularization (Ridge): Adds a penalty equal to the square of the coefficients. This forces weights to be small but rarely zero [18] [22].

- Cost Function: \( J(\theta) = \text{Loss}(\theta) + \lambda \sum_{j=1}^{n} \theta_j^2 \) [18]

- Dropout (for Neural Networks): Randomly "drops out" a percentage of neurons during each training step. This prevents the network from becoming over-reliant on any single neuron and forces it to learn more robust features [23] [24].
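
To show how the L1 and L2 cost functions are evaluated, the short example below computes both for a toy linear model. Mean squared error is used as the unpenalized loss, which is an assumption; the source formulas leave the loss unspecified.

```python
import numpy as np

def mse_loss(theta, X, y):
    """Unpenalized loss: mean squared error of the linear model X @ theta."""
    return float(np.mean((X @ theta - y) ** 2))

def l1_cost(theta, X, y, lam):
    """Loss plus lam times the sum of absolute coefficient values (Lasso)."""
    return mse_loss(theta, X, y) + lam * float(np.sum(np.abs(theta)))

def l2_cost(theta, X, y, lam):
    """Loss plus lam times the sum of squared coefficient values (Ridge)."""
    return mse_loss(theta, X, y) + lam * float(np.sum(theta ** 2))

X = np.array([[1.0, 0.0], [0.0, 1.0]])
y = np.array([1.0, 2.0])
theta = np.array([1.0, -2.0])

print(mse_loss(theta, X, y))           # 8.0  (residuals are 0 and -4)
print(l1_cost(theta, X, y, lam=0.5))   # 9.5  (8.0 + 0.5 * (1 + 2))
print(l2_cost(theta, X, y, lam=0.5))   # 10.5 (8.0 + 0.5 * (1 + 4))
```

The L2 term grows faster for large coefficients, which is why it discourages a few dominant weights, while the L1 term penalizes every unit of coefficient magnitude equally and can therefore push small coefficients all the way to zero.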

Table: Regularization Techniques at a Glance

| Technique | Best For | Key Mechanism | Typical Hyperparameter Values |

|---|---|---|---|

| L1 (Lasso) | Feature-rich datasets where you suspect redundancy [22]. | Shrinks coefficients, can set some to zero [18]. | \(\lambda\): 1e-3 to 1e-6 [24] |

| L2 (Ridge) | General-purpose use; when you want to retain all features [22]. | Shrinks all coefficients toward zero without setting any exactly to zero [18]. | \(\lambda\): 1e-3 to 1e-6 [24] |

| Dropout | Neural Networks of all kinds [23]. | Randomly disables neurons during training [23]. | Dropout Rate: 0.2 to 0.5 [24] |

Solution 2: Data Augmentation and Expansion

Increasing the amount and diversity of your training data is one of the most effective ways to combat overfitting [23].

- Data Augmentation: Artificially expand your dataset by creating modified versions of existing data. For materials science datasets, this could include [18] [23]:

- Image Data: Applying rotations, flips, cropping, adjusting brightness/contrast to micrograph images.

- Numerical Data: Adding small amounts of random noise to input variables (if physically meaningful).

- Synthetic Data Generation: When real data is scarce, use computer models to generate realistic, synthetic data that fills gaps in your training set [23].

Solution 3: Implementing Early Stopping

Stop the training process before the model begins to overfit.

- Objective: To halt training when performance on the validation set stops improving.

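- Procedure:

- Hold out a validation set and evaluate the validation loss at regular intervals during training (e.g., after every epoch).

- Stop training once the validation loss has not improved for a set number of consecutive epochs (the patience).

- Keep the model state from the epoch with the best validation loss.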

- Benefit: Prevents the model from over-optimizing on the training data and saves computation time [18].
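
A minimal early-stopping loop, assuming a linear model trained by full-batch gradient descent, is sketched below. The learning rate, patience value, and synthetic data are illustrative choices, and the function name is hypothetical; deep learning frameworks ship their own callbacks for the same purpose.

```python
import numpy as np

def train_with_early_stopping(X_tr, y_tr, X_val, y_val,
                              lr=0.05, max_epochs=5000, patience=20):
    """Gradient descent on MSE that stops when validation loss stalls.

    Returns the best weights, the best validation loss, the epoch at which
    it was reached, and the epoch at which training stopped.
    """
    w = np.zeros(X_tr.shape[1])
    best_w, best_val, best_epoch = w.copy(), np.inf, 0
    for epoch in range(max_epochs):
        grad = 2.0 / len(y_tr) * X_tr.T @ (X_tr @ w - y_tr)
        w -= lr * grad
        val_loss = np.mean((X_val @ w - y_val) ** 2)
        if val_loss < best_val:
            best_w, best_val, best_epoch = w.copy(), val_loss, epoch
        elif epoch - best_epoch >= patience:
            break                       # no improvement for `patience` epochs
    return best_w, float(best_val), best_epoch, epoch

# Synthetic small-data problem: 30 training rows, 25 descriptors.
rng = np.random.default_rng(0)
X = rng.normal(size=(45, 25))
w_true = np.zeros(25)
w_true[:3] = [1.5, -2.0, 1.0]
y = X @ w_true + rng.normal(scale=1.0, size=45)
X_tr, y_tr, X_val, y_val = X[:30], y[:30], X[30:], y[30:]

best_w, best_val, best_epoch, stopped_epoch = train_with_early_stopping(
    X_tr, y_tr, X_val, y_val)
print(f"best validation MSE {best_val:.3f} at epoch {best_epoch}, "
      f"stopped at epoch {stopped_epoch}")
```

Returning the weights from the best epoch, rather than the last one, matters because the final `patience` epochs are by construction no better on the validation set.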

Workflow Diagrams

Diagram 1: Overfitting Diagnosis and Mitigation Workflow

Diagram 2: The Bias-Variance Tradeoff and Model Complexity

The Scientist's Toolkit: Essential Research Reagents for Mitigating Overfitting

Table: Key "Reagent Solutions" for Robust Model Development

| Reagent / Tool | Function / Purpose | Considerations for Small Datasets |

|---|---|---|

| k-Fold Cross-Validation | Robust performance estimation by partitioning data into k subsets for iterative training/validation [19] [20]. | With very small datasets, use higher k (e.g., Leave-One-Out) but be aware of unstable estimates [21]. |

| L1 & L2 Regularization | Penalizes model complexity to prevent over-reliance on specific features; L1 can perform feature selection [18] [24]. | Start with small λ values (e.g., 1e-4) to avoid introducing excessive bias (underfitting). |

| Data Augmentation | Artificially expands training set by creating plausible variations of existing data [18] [23]. | Critically important for small datasets. Ensure transformations are physically meaningful for your materials science domain. |

| Early Stopping | Halts training when validation performance degrades, preventing the model from learning noise [18] [20]. | Set a low patience parameter to stop quickly, as small datasets can overfit in fewer epochs. |

| Pre-trained Models (Transfer Learning) | Leverages features learned from large, general datasets as a starting point for a new task [23]. | Highly effective when domain-related pre-trained models exist; requires less data to achieve good performance. |

| Simplified Model Architectures | Reduces the number of model parameters (e.g., layers, neurons), lowering capacity to memorize [18] [23]. | Prefer simpler models (linear models, shallow trees) as a baseline before trying complex architectures. |

The Bias-Variance Tradeoff Explained in the Context of Materials Datasets

In materials science, the journey from data to discovery is often paved with small, expensive-to-acquire datasets. Whether designing new alloys or optimizing drug formulations, researchers must build predictive models that are both accurate and reliable. The bias-variance tradeoff is a fundamental machine learning concept that describes the tension between a model's simplicity and its complexity, directly impacting its ability to generalize from training data to new, unseen data. For materials scientists, mastering this tradeoff is not merely academic; it is essential for mitigating overfitting and ensuring that models yield trustworthy, actionable insights. This guide provides targeted troubleshooting advice to help you navigate these challenges.

FAQs & Troubleshooting Guides

FAQ 1: What exactly are bias and variance, and how do they manifest in materials data?

Question: I often hear that my model might have "high bias" or "high variance." What do these terms mean in the practical context of predicting material properties?

Answer: Bias and variance are two sources of error that contribute to a model's prediction inaccuracy. In simple terms, bias is the error from overly simplistic assumptions, while variance is the error from excessive sensitivity to the training data's noise.

Symptoms and Diagnosis Table:

| Error Type | What It Is | Common Symptoms in Materials Data | Visual Model Behavior |

|---|---|---|---|

| High Bias (Underfitting) | The model is too simple to capture underlying patterns (e.g., using a linear model for a complex relationship) [25] [26]. | High error on both training and validation/test data. Poor performance even on data it was trained on [25]. | A straight line fit to data with a clear non-linear trend (e.g., predicting polymer strength from chain length). |

| High Variance (Overfitting) | The model is too complex and learns the noise in the training data, not just the true signal [27] [28]. | Low error on training data, but high error on validation/test data [25] [26]. The model is not generalizable. | A complex, "wiggly" curve that passes through every training data point but fails on new data (e.g., predicting perovskite efficiency). |

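The expected squared prediction error of a model can be decomposed into three parts: Bias² + Variance + Irreducible Error. The irreducible error comes from noise in the data and cannot be removed by any model, so the goal is to find the model complexity that minimizes the sum of the squared bias and the variance [27] [26].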
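For squared-error loss, the decomposition above can be written out explicitly. The notation is an assumption introduced here for illustration: $f$ is the true relationship between descriptors and property, $\hat{f}$ is the model fitted on a randomly drawn training set, measurements follow $y = f(x) + \varepsilon$ with zero-mean noise of variance $\sigma^2$, and the expectation is taken over training sets and noise.

```latex
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{Bias}^2}
  + \underbrace{\mathbb{E}\big[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\big]}_{\text{Variance}}
  + \underbrace{\sigma^2}_{\text{Irreducible error}}
```

Only the first two terms depend on the choice of model, which is why tuning model complexity trades one against the other.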

FAQ 2: Why is the bias-variance tradeoff particularly critical for small materials datasets?

Question: My dataset has only ~100 samples due to high experimental costs. Why is overfitting a more severe threat in this scenario?

Answer: Smaller datasets are less likely to accurately represent the full complexity and diversity of the population you are studying. This makes them inherently more prone to overfitting, as a complex model can easily "memorize" the limited samples instead of learning a generalizable rule [29] [3]. In such cases, the model's performance on its training data will be deceptively high, but it will fail catastrophically when presented with new data from a slightly different synthesis condition or composition [29].

Experimental Protocol: Diagnosing the Tradeoff with Learning Curves

- Partition Data: Split your data into training and validation sets (e.g., 80/20).

- Train Incrementally: Train your model on progressively larger subsets of the training data (e.g., 10%, 20%, ..., 100%).

- Record Metrics: For each subset, calculate and record the model's error on both the training subset and the validation set.

- Plot and Analyze: Generate a plot with the dataset size on the x-axis and error on the y-axis.

- High Bias Indication: Both training and validation errors converge to a high value.

- High Variance Indication: A large gap exists between a low training error and a high validation error.
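The protocol above can be sketched in a few lines of scikit-learn. This is a minimal illustration, not a prescribed implementation: the synthetic data, the choice of Ridge regression, and the MAE metric are all assumptions standing in for your own dataset and model.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split

# Assumption: synthetic stand-in for a small dataset (100 samples, 5 descriptors).
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5))
y = 2.0 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(scale=0.3, size=100)

# Partition data: 80/20 training/validation split.
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Train incrementally and record both errors for each subset size.
fractions = np.linspace(0.1, 1.0, 10)
train_errors, val_errors = [], []
for frac in fractions:
    n = max(2, int(round(frac * len(X_train))))
    model = Ridge(alpha=1.0).fit(X_train[:n], y_train[:n])
    train_errors.append(mean_absolute_error(y_train[:n], model.predict(X_train[:n])))
    val_errors.append(mean_absolute_error(y_val, model.predict(X_val)))

# Analyze: print the curve values (plot them with matplotlib if preferred).
for frac, tr, va in zip(fractions, train_errors, val_errors):
    print(f"{frac:4.0%} of training data: train MAE={tr:.3f}, val MAE={va:.3f}")
```

A persistent gap between the two columns at 100% points to high variance; two curves that meet at a high error point to high bias.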

FAQ 3: What are the most effective strategies to control for high variance and overfitting?

Question: My model shows a big gap between training and test error. What concrete steps can I take to reduce variance?

Answer: Mitigating high variance involves simplifying the model or reducing its capacity to learn noise. Here are proven methodologies:

Troubleshooting Guide Table:

| Strategy | Methodology | Application Context in Materials Science |

|---|---|---|

| Regularization [25] | Add a penalty to the model's loss function for large coefficients. L1 (Lasso) can force some feature weights to zero, performing feature selection. L2 (Ridge) shrinks all weights. | Use L1 regularization to identify the most critical elemental descriptors (e.g., which atomic radii or electronegativities truly drive catalytic activity). |

| Cross-Validation [29] | Use techniques like repeated k-fold cross-validation to evaluate model performance more reliably and guide hyperparameter tuning. | In a study with n=146 samples, using 5-fold cross-validation provides a more realistic performance estimate than a single train-test split [29]. |

| Ensemble Methods [25] | Combine predictions from multiple models (e.g., Random Forests via bagging) to average out their individual variances. | Predict the thermal stability of a new polymer composite by aggregating predictions from hundreds of decision trees, each trained on a slightly different data bootstrap. |

| Hyperparameter Tuning [29] [25] | Systematically search for optimal model settings (e.g., regularization strength, tree depth) that balance bias and variance. | Use a grid search to find the optimal polynomial degree for relating processing temperature to battery material conductivity, avoiding overly complex functions. |
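Two rows of the table, L1 regularization and repeated k-fold cross-validation, can be combined in one short sketch. The data here are synthetic and hypothetical (20 descriptors, of which only the first three influence the target), and the penalty strength `alpha=0.1` is an arbitrary illustrative value rather than a recommendation.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.model_selection import RepeatedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Assumption: synthetic data where only descriptors 0, 1 and 2 matter.
rng = np.random.default_rng(1)
n_samples, n_features = 146, 20
X = rng.normal(size=(n_samples, n_features))
y = (3.0 * X[:, 0] - 2.0 * X[:, 1] + 1.5 * X[:, 2]
     + rng.normal(scale=0.5, size=n_samples))

# Scaling lives inside the pipeline so it is refitted on every training fold.
model = make_pipeline(StandardScaler(), Lasso(alpha=0.1))

# Repeated 5-fold CV: 10 repeats x 5 folds = 50 validation scores.
cv = RepeatedKFold(n_splits=5, n_repeats=10, random_state=1)
scores = cross_val_score(model, X, y, cv=cv, scoring="r2")
print(f"R2 = {scores.mean():.3f} +/- {scores.std():.3f} over {len(scores)} folds")

# Fit once on all data to see which descriptors the L1 penalty keeps.
model.fit(X, y)
coefs = model.named_steps["lasso"].coef_
selected = np.flatnonzero(coefs)
print("Descriptors with non-zero weight:", selected)
```

The spread of the 50 scores is as informative as their mean: on small datasets a wide spread signals that any single train-test split could be misleading.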

FAQ 4: How can I address high bias and underfitting in my models?

Question: My model is performing poorly on all data. How can I reduce its bias and capture more complex relationships?

Answer: High bias indicates your model is not powerful enough for the problem. To address it:

- Increase Model Complexity: Move from linear models to more complex algorithms like Support Vector Machines with non-linear kernels, ensemble methods (e.g., Gradient Boosting), or carefully designed neural networks [28].

- Feature Engineering: Create new, more informative descriptors. This is where domain knowledge is critical.

- Protocol: Use tools like Dragon or RDKit to generate a vast pool of structural descriptors [3]. Then, employ feature selection methods (filter, wrapper, or embedded) to identify the most predictive subset. Alternatively, create domain-knowledge-based descriptors (e.g., combining atomic radius and electronegativity into a new feature).

- Reduce Regularization: If you are applying strong regularization, slightly reducing its strength can allow the model to fit the data more closely.

FAQ 5: How can I build trust in my model's predictions, especially when it's complex?

Question: My neural network model is accurate but acts as a "black box." How can I explain its predictions to my colleagues?

Answer: The field of Explainable AI (XAI) addresses this exact issue. For complex models, you can use post-hoc explanation techniques to understand which features the model deemed most important for a specific prediction [30].

Protocol: Using SHAP for Model Interpretation

- Train Model: Train your best-performing complex model (e.g., a gradient boosting machine or neural network).

- Calculate SHAP Values: Use the SHAP (SHapley Additive exPlanations) Python library. For a given prediction, SHAP calculates the contribution of each feature to the final output.

- Visualize: Create a SHAP summary plot or force plot to see the global and local importance of your material descriptors (e.g., it might reveal that valence electron count is the dominant factor in predicting alloy hardness). This bridges the gap between a black-box model and actionable scientific insight [30].

The Scientist's Toolkit: Key Research Reagents & Computational Solutions

Table 1: Essential "Reagents" for Robust Materials Informatics

| Tool / Solution | Type | Primary Function | Example in Materials Research |

|---|---|---|---|

| Cross-Validation (e.g., k-Fold) | Statistical Method | Provides a robust estimate of model performance and exposes overfitting by rotating training/validation splits [29]. | Evaluating the true predictive power of a model for drug solubility with limited experimental data points. |

| L1 / L2 Regularization | Algorithmic Technique | Shrinks model coefficients to prevent overcomplexity and can perform automatic feature selection (L1) [25]. | Identifying the key process parameters (e.g., annealing time, temperature) that affect semiconductor crystal quality. |

| Random Forest / XGBoost | Ensemble Algorithm | Reduces prediction variance by averaging many decision trees (Random Forest, bagging) [25]; XGBoost instead builds trees sequentially (boosting) and relies on shrinkage and tree-depth limits to control overfitting. | Predicting the bandgap of novel perovskite compounds based on elemental and structural features. |

| SHAP / LIME | Explainable AI (XAI) Library | Explains individual predictions from any complex model, increasing trust and interpretability [30]. | Understanding why a model predicts a specific polymer formulation will have high tensile strength. |

| Training History Analysis | Diagnostic Tool | Monitoring loss curves helps detect overfitting (diverging train/validation loss) and can signal the optimal stopping point [31]. | Stopping the training of a deep learning model on spectral data before it starts to memorize noise. |

Troubleshooting Guides

Why is my model's performance excellent on training data but poor on new experimental data?

Problem: This is a classic sign of overfitting, where a model memorizes the noise and specific patterns in the training data instead of learning the underlying generalizable relationships [9]. This is particularly common in materials science where datasets are small and feature-rich [3] [8].

Solution:

- Action 1: Simplify your model. Reduce model complexity by using models with fewer parameters. For example, choose a linear model with regularization over a deep neural network, or limit the depth of a decision tree [13] [32] [9].

- Action 2: Apply regularization. Add L1 (Lasso) or L2 (Ridge) regularization to your model's loss function. This penalizes overly complex models and discourages the model from relying too heavily on any single feature [32] [9].

- Action 3: Implement rigorous validation. Use k-fold cross-validation (e.g., 5-fold) to validate your model. This ensures the model is evaluated on different data splits, providing a more reliable estimate of its performance on unseen data [13] [33] [9].

How can I build a reliable predictive model with only 50 data points?

Problem: With a very small dataset, there is a high risk of the model learning spurious correlations that do not generalize, leading to overfitting [3] [8].

Solution:

- Action 1: Employ feature selection. Reduce the number of input descriptors by using feature selection methods (e.g., filter, wrapper, or embedded methods) to retain only the most relevant features. This reduces the dimensionality and helps the model focus on meaningful signals [3] [13] [32].

- Action 2: Utilize data augmentation. Artificially expand your dataset by creating modified versions of existing samples. For non-image data, this could involve adding small amounts of noise or generating synthetic data points based on domain knowledge [13] [32].

- Action 3: Leverage transfer learning. Use a pre-trained model from a related materials domain or a large public database and fine-tune its last few layers on your small dataset. This allows you to benefit from general features learned on a larger corpus of data [3] [13].
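As one possible reading of Action 2, the sketch below jitters each sample with small Gaussian noise. The function name `augment_with_noise`, the noise level of 2% of each feature's standard deviation, and the toy data are all hypothetical; an appropriate noise scale should reflect the real measurement uncertainty of your descriptors.

```python
import numpy as np

def augment_with_noise(X, y, n_copies=3, noise_scale=0.02, seed=0):
    """Return (X, y) extended with n_copies jittered copies of every sample.

    Noise is Gaussian with a per-feature standard deviation equal to
    noise_scale times that feature's standard deviation. Targets are
    copied unchanged, which assumes the property is locally insensitive
    to perturbations of this size.
    """
    rng = np.random.default_rng(seed)
    feature_std = X.std(axis=0, keepdims=True)
    X_parts, y_parts = [X], [y]
    for _ in range(n_copies):
        noise = rng.normal(size=X.shape) * noise_scale * feature_std
        X_parts.append(X + noise)
        y_parts.append(y)
    return np.vstack(X_parts), np.concatenate(y_parts)

# Assumption: a toy dataset with 50 samples and 4 descriptors.
rng = np.random.default_rng(42)
X_small = rng.uniform(size=(50, 4))
y_small = X_small @ np.array([1.0, -0.5, 2.0, 0.0])

X_aug, y_aug = augment_with_noise(X_small, y_small)
print(X_aug.shape, y_aug.shape)  # (200, 4) (200,)
```

Augmentation of this kind should be applied only to the training portion after splitting; jittered copies of a sample that land in the validation set would leak information and inflate the score.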

My active learning loop seems to be stuck, not finding better materials. What should I check?

Problem: The sampling strategy in your active learning cycle may be inefficient, or the surrogate model itself may be overfitted, leading to poor decisions about which experiment to perform next [34] [35].

Solution:

- Action 1: Verify your utility function. In active learning, the utility (or acquisition) function dictates which sample to query next. If using an uncertainty-based method, ensure that the model's uncertainty estimates are well-calibrated. Consider switching to or hybridizing with a diversity-based strategy to better explore the search space [34] [35].

- Action 2: Re-assess the surrogate model. An overfitted surrogate model will provide misleading predictions and uncertainties. Incorporate regularization and cross-validation into the training of the surrogate model itself to ensure its robustness [34] [35].

- Action 3: Incorporate hybrid strategies. Instead of relying on a single principle (e.g., only uncertainty), use hybrid strategies that balance exploration (sampling uncertain or diverse regions) and exploitation (sampling where the model predicts the best property values), as these have been shown to perform well in early stages of data acquisition [35].

Frequently Asked Questions (FAQs)

What are the most effective methods to prevent overfitting in small datasets?

The most effective methods combine data-centric, model-centric, and strategic approaches [3] [13] [9]:

- Model-Centric Techniques:

- Regularization (L1/L2): Adds a penalty to the model's loss function to discourage complexity [32] [9].

- Dropout: Randomly ignores a subset of neurons during training in neural networks to prevent co-adaptation [13] [32].

- Early Stopping: Halts training when performance on a validation set stops improving, preventing the model from over-optimizing to the training noise [13] [32] [9].

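- Data-Centric Techniques:

- Feature Selection: Reduces the number of input descriptors so the model focuses on meaningful signals [3] [13] [32].

- Data Augmentation: Expands the training set with physically plausible variations of existing samples [13] [32].

- Strategic Techniques:

- Transfer Learning: Fine-tunes a model pre-trained on a larger, related dataset [3] [13].

- Cross-Validation with a Hold-out Test Set: Gives a more reliable estimate of performance on unseen data [13] [33] [9].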

How do I know if my materials model is overfitted?

You can detect overfitting by looking for the following indicators [33] [9]:

- A significant performance gap: The model's performance (e.g., R², MAE) is much better on the training data than on the validation or test data. This is the most telling sign.

- Unrealistically high training accuracy: The model achieves near-perfect performance on the training set, which is often implausible for real-world materials data.

- Poor performance on an independent test set: The model performs poorly on a completely held-out dataset that was never used during training or validation. A robust validation protocol is essential for this [33].

Can using domain knowledge really help with small data problems?

Yes, integrating domain knowledge is a powerful strategy to combat overfitting in small data regimes [3] [36]. It helps in several ways:

- Informed Feature Engineering: Domain knowledge can guide the creation of physically meaningful descriptors, which allows the model to focus on relevant patterns instead of spurious correlations [3].

- Constraining Models: Physical principles can be embedded into the model's architecture or loss function, ensuring that predictions are physically plausible and reducing the hypothesis space the model needs to explore [36].

- Guiding Prior Distributions: In Bayesian methods, domain knowledge can be used to set informative prior distributions, which is particularly valuable when data is scarce [36].

Is cross-validation sufficient to ensure my model will generalize?

While necessary, cross-validation alone is not always sufficient. Its effectiveness depends on correct implementation [33]:

- Preventing Data Leakage: It is critical that information from the validation set does not leak into the training process during feature selection or hyperparameter tuning. The validation set must remain a truly independent simulation of unseen data [33].

- Representative Splits: The data splits must be representative of the overall data distribution. For materials data with inherent groupings (e.g., from different synthesis batches), stratified or group-based cross-validation may be necessary.

- Final Hold-out Test: A best practice is to still reserve a final, completely untouched test set for a final evaluation of the model's generalization after the entire model development and cross-validation cycle is complete [33].
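The first two points can be illustrated with scikit-learn. In this sketch, feature selection sits inside a `Pipeline` so it is refitted on each training fold only, and `GroupKFold` keeps all samples from one batch in the same fold. The batch labels, the synthetic data, and the choice of `SelectKBest` with `k=5` are assumptions made for illustration.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import GroupKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Assumption: 120 samples, 30 descriptors, 12 hypothetical synthesis batches.
rng = np.random.default_rng(0)
n_samples = 120
X = rng.normal(size=(n_samples, 30))
y = X[:, 0] + 0.5 * X[:, 1] + rng.normal(scale=0.3, size=n_samples)
batches = np.repeat(np.arange(12), 10)

# Every data-dependent step is part of the pipeline, so none of them
# ever sees the validation fold during fitting.
pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectKBest(score_func=f_regression, k=5)),
    ("model", Ridge(alpha=1.0)),
])

# GroupKFold never splits one batch across training and validation.
cv = GroupKFold(n_splits=4)
scores = cross_val_score(pipeline, X, y, cv=cv, groups=batches, scoring="r2")
print("R2 per fold:", np.round(scores, 3))
```

Running feature selection on the full dataset before cross-validation is the most common form of leakage on small, feature-rich datasets; wrapping it in the pipeline removes that risk by construction.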

Table 1: Benchmarking of Active Learning Strategies for Small-Sample Regression in Materials Science [35]

| Strategy Category | Example Methods | Performance in Early Stages (Data-Scarce) | Performance in Later Stages (Data-Rich) |

|---|---|---|---|

| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperforms random sampling | Converges with other methods |

| Diversity-Hybrid | RD-GS | Clearly outperforms random sampling | Converges with other methods |

| Geometry-Only | GSx, EGAL | Performance closer to random sampling | Converges with other methods |

| Random Sampling | (Baseline) | (Baseline for comparison) | (Baseline for comparison) |

Table 2: Common Overfitting Detection Metrics and Their Interpretation

| Metric | Comparison | Indicator of Overfitting |

|---|---|---|

| MAE / RMSE | Training value << Validation value | Yes |

| R² Score | Training value >> Validation value | Yes |

| Loss Function | Training loss << Validation loss | Yes |

Experimental Protocols & Workflows

Protocol: Rigorous Validation for Small Materials Datasets

Objective: To reliably detect overfitting and estimate model performance on unseen data when the total dataset is small (N < 200).

Materials: A single, curated materials dataset with features and target property.

Methodology:

- Initial Split: First, split the data into a hold-out test set (e.g., 20%) and a working set (80%). The hold-out test set is put aside and not used until the very end.

- Cross-Validation: On the working set, perform k-fold cross-validation (k=5 or 10 is common). This involves: a. Shuffling the working set and splitting it into k equal-sized folds. b. For each unique fold as the validation set, train the model on the remaining k-1 folds. c. Calculate the performance metrics on the validation fold.

- Hyperparameter Tuning: Use the cross-validation process to tune model hyperparameters. The average performance across the k folds guides the selection of the best parameters.

- Final Assessment: Train the final model on the entire working set using the best-found hyperparameters. Evaluate this model once on the untouched hold-out test set to obtain an unbiased estimate of its generalization error [33].
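The four steps above map closely onto scikit-learn's `train_test_split` and `GridSearchCV`. The sketch below assumes synthetic data, a Ridge model, and a small illustrative grid of `alpha` values; substitute your own estimator and search space.

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.metrics import mean_absolute_error, r2_score
from sklearn.model_selection import GridSearchCV, KFold, train_test_split

# Assumption: synthetic stand-in for a dataset with N = 150 samples.
rng = np.random.default_rng(7)
X = rng.normal(size=(150, 8))
y = X[:, 0] - 2.0 * X[:, 3] + rng.normal(scale=0.4, size=150)

# Initial split: set 20% aside as the hold-out test set.
X_work, X_test, y_work, y_test = train_test_split(
    X, y, test_size=0.2, random_state=7
)

# Cross-validation and hyperparameter tuning on the working set only.
search = GridSearchCV(
    estimator=Ridge(),
    param_grid={"alpha": [1e-3, 1e-2, 1e-1, 1.0, 10.0]},
    cv=KFold(n_splits=5, shuffle=True, random_state=7),
    scoring="neg_mean_absolute_error",
)
# With the default refit=True, the best setting is retrained on the
# entire working set after the search finishes.
search.fit(X_work, y_work)

# Final assessment: a single evaluation on the untouched test set.
y_pred = search.predict(X_test)
print("Best alpha:", search.best_params_["alpha"])
print(f"Test MAE = {mean_absolute_error(y_test, y_pred):.3f}")
print(f"Test R2  = {r2_score(y_test, y_pred):.3f}")
```

If the test score is then used to go back and change the model, the test set is no longer untouched and a fresh hold-out set is needed for an unbiased estimate.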

Protocol: Implementing an Active Learning Cycle

Objective: To efficiently guide experiments or computations towards materials with desired properties, minimizing the number of required samples.

Materials: A large pool of unlabeled candidate materials (e.g., from combinatorial space) and a method to label them (computation or experiment).

Methodology:

- Initialization: Start with a small, randomly selected set of labeled data (L).

- Model Training: Train a surrogate model (e.g., a regression model) on the current labeled set L.

- Query Selection: Use a utility function (e.g., an uncertainty-based or hybrid strategy) to select the most informative sample(s) from the unlabeled pool (U).

- Labeling & Update: Obtain the label (property value) for the selected sample(s) through experiment or computation. Add this newly labeled data to L and remove it from U.

- Iteration: Repeat steps 2-4 until a stopping criterion is met (e.g., a performance target is achieved or the experimental budget is exhausted) [34] [35].
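A minimal sketch of this cycle, under several stated assumptions: the function `run_experiment` is a hypothetical stand-in for the real labeling step, the surrogate is a random forest, and uncertainty is approximated by the spread of per-tree predictions. This is one simple uncertainty-based utility function, not the specific strategies benchmarked in [35].

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

def run_experiment(X):
    """Hypothetical stand-in for an experiment or simulation that labels candidates."""
    return np.sin(3.0 * X[:, 0]) + X[:, 1] ** 2

rng = np.random.default_rng(0)
pool_X = rng.uniform(-1.0, 1.0, size=(300, 2))  # candidate pool
y_pool = np.full(len(pool_X), np.nan)           # labels, unknown until queried

# Initialization: label a small random subset L; the rest forms U.
labeled_idx = [int(i) for i in rng.choice(len(pool_X), size=10, replace=False)]
y_pool[labeled_idx] = run_experiment(pool_X[labeled_idx])
unlabeled_idx = [i for i in range(len(pool_X)) if i not in labeled_idx]

budget = 15  # stopping criterion: number of additional experiments allowed
for _ in range(budget):
    # Model training on the current labeled set L.
    model = RandomForestRegressor(n_estimators=50, random_state=0)
    model.fit(pool_X[labeled_idx], y_pool[labeled_idx])

    # Query selection: pick the candidate where the trees disagree most.
    candidates = pool_X[unlabeled_idx]
    per_tree = np.stack([tree.predict(candidates) for tree in model.estimators_])
    uncertainty = per_tree.std(axis=0)
    query = unlabeled_idx.pop(int(np.argmax(uncertainty)))

    # Labeling and update: run the experiment, move the sample from U to L.
    y_pool[query] = run_experiment(pool_X[[query]])[0]
    labeled_idx.append(query)

print(f"Labeled {len(labeled_idx)} of {len(pool_X)} candidates")
```

Replacing the `argmax` over uncertainty with a score that also rewards distance from already labeled points would turn this into a simple hybrid strategy.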

Diagram 1: Active learning workflow for targeted materials design.

Diagram 2: Rigorous validation protocol to prevent overfitting.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational & Experimental Tools for Small-Data Materials Research

| Tool / Solution | Function | Relevance to Small Data & Overfitting |

|---|---|---|

| AutoML Frameworks | Automates the process of model selection and hyperparameter tuning [35]. | Reduces manual tuning bias and efficiently finds a robust model architecture, saving time and resources. |

| L1/L2 Regularization | A technique that adds a penalty to the loss function to constrain model complexity [32] [9]. | Directly prevents overfitting by discouraging the model from relying too heavily on any single feature. |

| Monte Carlo Dropout | A variant of dropout used during inference to estimate model uncertainty [35]. | Provides uncertainty estimates for predictions, which is crucial for active learning and assessing model reliability. |

| Data Augmentation Tools | Software for generating synthetic data points from existing data (e.g., via transformations or noise addition) [13]. | Artificially increases the effective size of the training set, helping models learn more generalizable patterns. |

| Domain Knowledge Descriptors | Physically meaningful features generated from scientific principles [3] [36]. | Guides the model to learn correct underlying relationships, reducing the risk of learning spurious correlations. |

A Practical Toolkit: Data, Algorithmic, and Strategic Solutions for Small Datasets

Frequently Asked Questions (FAQs)

Q1: How can generative models like TVAE and CTGAN help mitigate overfitting in my materials science research with small datasets? These models address overfitting by tackling the root cause: limited data. They learn the underlying distribution and complex relationships within your original, small dataset of material properties. By generating high-quality, synthetic data that mirrors the statistical characteristics of the real data, they artificially expand your training set. This provides the machine learning model with a more comprehensive feature space to learn from, preventing it from memorizing noise and specific patterns that do not generalize. In practice, training predictive models on a mix of real and synthetic data has been shown to significantly improve performance on unseen test data, a key indicator of reduced overfitting [37].

Q2: My dataset has a mix of continuous (e.g., yield strength) and categorical (e.g., crystal structure) data. Which model is most suitable? Both CTGAN and TVAE were specifically designed to handle this challenge. They use advanced techniques to model mixed data types simultaneously. CTGAN uses a conditional generator and training-by-sampling to effectively deal with imbalanced categorical columns [38]. TVAE uses a variational autoencoder architecture that also incorporates special treatments for mixed data types through its data transformation layers [39].

Q3: What are the key steps to validate that my synthetic materials data is high-quality and useful? Validation is a multi-step process:

- Statistical Similarity: Compare the distributions (via histograms) and basic statistics (mean, standard deviation, min, max) of the synthetic and real features. The synthetic data should recreate these closely [37].

- Relationship Preservation: Check that correlations between variables (e.g., between defect depth and residual strength) are maintained in the synthetic data. Pearson correlation coefficient analysis is a common method for this [37].

- Physical Plausibility: Manually inspect the synthetic data for physically impossible values (e.g., a defect depth greater than the wall thickness) and filter them out [37].

- Utility Test: The ultimate test is to use the synthetic data in your downstream task. Train your predictive model on an augmented dataset (real + synthetic) and evaluate its performance on a held-out set of real data. An improvement in metrics like R² signifies successful augmentation [37].

Q4: I am concerned about the computational cost. How do these models compare in terms of training time and resources? As deep learning models, TVAE and CTGAN can be computationally intensive. The training time depends on your dataset size, the number of epochs, and your hardware.

- CUDA Support: Both models in the SDV implementation support using CUDA for faster training on GPUs, which can significantly speed up the process [39].

- Epochs: You can control the training duration via the epochs parameter. Starting with the default (e.g., 300) and monitoring the loss values can help you find a good balance between time and quality [39].

- Comparative Resource Use: The specific computational demands of TVAE versus CTGAN can vary based on the dataset and hyperparameters. It is advisable to run pilot tests on a subset of your data.

Q5: After training a synthesizer, the sampled data contains some unrealistic outliers. How can I fix this? This is a common issue. You can employ a two-pronged approach:

- Post-sampling Filtering: Implement a rule-based filter to remove synthetic samples that violate known physical constraints (e.g., a synthetic material with a negative density or a tensile strength outside a possible range) [37].

- Adjust Sampling Parameters: Some synthesizers offer parameters like enforce_min_max_values and enforce_rounding, which can help ensure generated numerical values stay within the observed boundaries of the real data [39].

Troubleshooting Guides

Problem: The machine learning model trained on synthetic data shows no improvement or performs worse. This indicates that the synthetic data may not be capturing the true patterns of your materials data.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Poor quality synthetic data. The generative model did not learn the real data distribution effectively. | Check statistical similarity and correlation preservation between real and synthetic data [37]. | Increase the number of epochs during training [39]. Experiment with different models (TVAE vs. CTGAN) [37]. Adjust the generative model's hyperparameters (e.g., batch_size, learning_rate) [39]. |

| Data preprocessing errors. The real data was not in the correct format for the synthesizer. | Ensure continuous data is represented as floats and discrete data as integers or strings. Check for and handle any missing values before training [40]. | Preprocess the real data to meet the model's requirements. For the SDV library, this is often handled automatically, but it's a critical step when using the standalone CTGAN library [40]. |

| Excessive synthetic data. Using too much synthetic data can drown out the signal from the limited real data. | Experiment with different ratios of real to synthetic data in the training set (e.g., 50%-50%, 70%-30%). | Reduce the amount of synthetic data used for augmentation. The goal is to complement the real data, not replace it entirely. |

Problem: The training process for the generative model is unstable or fails to converge. This is often observed as highly fluctuating or non-decreasing loss values.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Inappropriate learning rate. A learning rate that is too high can prevent convergence. | Enable verbose training to monitor the loss and see if it oscillates wildly [39]. | Decrease the learning rate. For CTGAN, a typical learning rate is 2e-4 [41]. |

| Issues with the training data. The dataset may be too small or have severe imbalances. | Analyze your dataset for severe class imbalances in categorical columns. | For CTGAN, the conditional generator and training-by-sampling are designed to handle this. For TVAE, ensure your dataset is as balanced as possible [38]. |

| Model-specific instability. GANs like CTGAN are known to be tricky to train. | Use the get_loss_values() method to track the loss and see if it is converging [39]. | Consider using the TVAE model, which may offer more stable training due to its variational autoencoder foundation compared to the adversarial training of GANs [39] [38]. Train for more epochs. |

Experimental Protocols & Data

Summary of Model Performance in a Materials Study

The following table summarizes the quantitative results from a study that used TVAE, CopulaGAN, and CTGAN to augment data for predicting the residual strength of corroded pipelines. The performance was measured by the improvement in the R² score of a LightGBM model on a held-out test set [37].

Table 1: Comparison of Data Augmentation Models on a Materials Dataset

| Generative Model | Base Model | Key Improvement (R² Score Increase) |

|---|---|---|

| TVAE | LightGBM | +3.12% |

| CopulaGAN | LightGBM | +4.46% |

| CTGAN | LightGBM | +3.60% |

Source: Adapted from "Advancing LightGBM with data augmentation for predicting the residual strength of corroded pipelines" [37].

Detailed Methodology for a Materials Data Augmentation Experiment

Data Collection and Preprocessing:

- Gather the limited original materials dataset (e.g., from experiments or finite element simulations).

- Preprocess the data: handle missing values, and ensure continuous features are represented as floats and categorical features as strings or integers. The SDV library can automate much of this [40].

- Clean the data by removing any physically impossible outliers (e.g., a defect depth larger than the wall thickness) [37].

Synthesizer Training:

- Initialize Model: Choose a synthesizer (e.g., TVAESynthesizer or CTGAN) and initialize it with the dataset's metadata and desired parameters.

- Set Parameters: Common parameters include epochs (number of training cycles, start with 300-500), verbose=True to monitor progress, and cuda=True to use GPU acceleration if available [39].

- Fit Model: Train the synthesizer on the entire preprocessed real dataset using the .fit(data) method [39].

Synthetic Data Generation and Validation:

- Sample Data: Use the .sample(num_rows) method to generate a synthetic dataset. The size can be a multiple of your original dataset (e.g., 5x or 10x) [39].

- Validate Quality:

- Compare the distributions of individual features (e.g., wall thickness, yield strength) between real and synthetic data using histograms [37].

- Compare the Pearson correlation matrices to ensure relationships between variables are preserved [37].

- Filter out any synthetic samples that violate domain knowledge.

Downstream Task Evaluation:

- Create an augmented training set by combining the original data with a portion of the validated synthetic data.

- Train a downstream machine learning model (e.g., a regressor to predict material strength) on this augmented set.

- Evaluate the model's performance on a completely held-out test set that contains only real, unseen data. Compare metrics like R² or Mean Absolute Error against a model trained only on the original small dataset [37].
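The validation checks in this methodology (summary statistics, correlation preservation, and physical plausibility) can be sketched with NumPy alone. No synthesizer is trained here: the `synthetic` array is a hypothetical stand-in, built by resampling and jittering the real rows, for whatever your synthesizer's sampling call returns. The two columns and their value ranges are likewise assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Assumption: "real" data with columns [wall_thickness_mm, defect_depth_mm].
wall = rng.uniform(8.0, 12.0, size=200)
depth = wall * rng.uniform(0.1, 0.8, size=200)
real = np.column_stack([wall, depth])

# Stand-in for synthesizer output (5x the real data): jittered resamples.
rows = rng.integers(0, len(real), size=1000)
synthetic = real[rows] + rng.normal(scale=0.5, size=(1000, 2))

# Statistical similarity: compare per-feature means and standard deviations.
mean_gap = np.abs(real.mean(axis=0) - synthetic.mean(axis=0))
std_gap = np.abs(real.std(axis=0) - synthetic.std(axis=0))

# Relationship preservation: largest difference between Pearson matrices.
corr_gap = np.abs(
    np.corrcoef(real, rowvar=False) - np.corrcoef(synthetic, rowvar=False)
).max()

# Physical plausibility: thickness and depth must be positive, and a
# defect cannot be deeper than the wall is thick.
plausible = (
    (synthetic[:, 0] > 0)
    & (synthetic[:, 1] > 0)
    & (synthetic[:, 1] <= synthetic[:, 0])
)
synthetic_clean = synthetic[plausible]

print("Mean gap per feature:", np.round(mean_gap, 3))
print("Std gap per feature: ", np.round(std_gap, 3))
print(f"Max correlation gap:  {corr_gap:.3f}")
print(f"Kept {len(synthetic_clean)} of {len(synthetic)} synthetic rows")
```

What counts as an acceptable gap is problem-specific; the downstream utility test on real held-out data remains the deciding check.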

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a Generative Modeling Experiment

| Item / Solution | Function in the Experiment |

|---|---|

| Original Materials Dataset | The small, high-value dataset of real measurements (e.g., from mechanical tests, characterizations). Serves as the ground truth for training the generative model. |

| SDV (Synthetic Data Vault) Library | The primary software toolkit providing user-friendly, high-level APIs for implementing TVAESynthesizer and CTGANSynthesizer, handling data transformation and modeling [39] [40]. |

| Computational Resources (GPU) | A graphics processing unit to accelerate the training of deep learning-based synthesizers via CUDA, significantly reducing computation time [39]. |

| Validation Framework (e.g., Jupyter Notebook with Pandas, Matplotlib) | An environment for analyzing and comparing the statistical properties (distributions, correlations) of the real and synthetic datasets to ensure quality [37]. |

| Downstream Predictive Model (e.g., LightGBM, Random Forest) | A machine learning model used for the ultimate scientific task (e.g., predicting strength). Its performance on a test set is the final measure of the synthetic data's utility [37]. |

Workflow Visualization

Below is a workflow diagram illustrating the complete pipeline for leveraging generative models to combat overfitting in materials science.

Synthetic Data Generation and Validation Workflow

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental limitation of the standard SMOTE algorithm that can lead to overfitting in small materials datasets?

The standard SMOTE algorithm generates new synthetic samples through simple linear interpolation between a minority class instance and one of its k-nearest neighbors [42]. This mechanism has two key limitations that can lead to overfitting, particularly in small datasets common in materials science:

- Density Over-amplification: In high-density regions of minority class samples, SMOTE may produce an excessive number of synthetic instances, causing the model to overfit to these localized areas [42].

- Distributional Distortion: The linearly interpolated samples may not conform to the original underlying data distribution, potentially creating a distorted representation of the minority class and generating unrealistic or noisy samples that cross into the majority class space [42] [43].
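To make the interpolation mechanism concrete, here is a simplified sketch of the core SMOTE step. It is not the reference implementation: the function name `smote_sketch` is hypothetical, only continuous features are handled, and the minority samples are random toy data.

```python
import numpy as np
from sklearn.neighbors import NearestNeighbors

def smote_sketch(X_minority, n_new, k=5, seed=0):
    """Generate n_new points by linear interpolation between a minority
    sample and one of its k nearest minority neighbors."""
    rng = np.random.default_rng(seed)
    # Ask for k + 1 neighbors because each point is returned as its own
    # nearest neighbor (column 0), which is skipped below.
    nn = NearestNeighbors(n_neighbors=k + 1).fit(X_minority)
    _, neighbor_idx = nn.kneighbors(X_minority)

    base = rng.integers(0, len(X_minority), size=n_new)
    chosen = neighbor_idx[base, rng.integers(1, k + 1, size=n_new)]
    gap = rng.uniform(size=(n_new, 1))  # position along the line segment
    return X_minority[base] + gap * (X_minority[chosen] - X_minority[base])

# Assumption: 20 minority-class samples described by 3 features.
rng = np.random.default_rng(3)
X_min = rng.normal(size=(20, 3))
X_new = smote_sketch(X_min, n_new=40)
print(X_new.shape)  # (40, 3)
```

Every generated point lies on a straight segment between two existing samples, so dense clusters receive many near-duplicate points and no sample can fall outside the region already covered. This is the behavior behind both limitations listed above.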

FAQ 2: Which SMOTE variants are specifically designed to mitigate overfitting by generating more realistic synthetic samples?

Several advanced variants modify the data generation mechanism to create samples that better preserve the original data distribution, thereby reducing the risk of overfitting [42].

- ISMOTE (Improved SMOTE): This method adaptively expands the synthetic sample generation space. Instead of generating samples only between two original samples, it creates new samples around them using a random quantity based on the Euclidean distance. This alleviates distortions in local data distribution and density [42].

- Borderline-SMOTE: This technique focuses oversampling on the "borderline" minority instances—those that are closer to the decision boundary and thus more critical for classification. This avoids generating samples in already well-defined minority regions [44] [45].

- K-Means SMOTE: This algorithm first clusters the data using K-means. It then targets oversampling to clusters with a high proportion of minority classes and a sparse distribution of minority samples, ensuring generated samples align with the natural data structure [44].

- Counterfactual SMOTE: A novel approach that combines SMOTE with a counterfactual generation framework, intrinsically performing oversampling near the decision boundary but within a "safe region" to generate informative yet non-noisy minority samples [46].
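To make the idea of "borderline" instances concrete, the sketch below flags minority samples whose neighborhood is dominated, but not entirely occupied, by the majority class. It assumes the commonly described rule (at least half, but not all, of the m nearest neighbors belong to the majority class); the function name `borderline_mask` and the toy data are hypothetical.

```python
import numpy as np

def borderline_mask(X, y, minority_label=1, m=5):
    """Return a boolean mask of minority samples considered 'borderline':
    at least half, but not all, of their m nearest neighbors are majority."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    np.fill_diagonal(dists, np.inf)  # exclude each point from its own neighbors
    neighbours = np.argsort(dists, axis=1)[:, :m]

    # Count majority-class neighbors for every sample
    n_major = np.sum(y[neighbours] != minority_label, axis=1)
    return (y == minority_label) & (n_major >= m / 2) & (n_major < m)

# Hypothetical 1-D descriptor: majority on the left, minority on the right
X = np.array([[0.0], [1.0], [2.0], [3.0], [3.6], [4.5], [5.5], [6.5], [7.5]])
y = np.array([0, 0, 0, 0, 1, 1, 1, 1, 1])
mask = borderline_mask(X, y, minority_label=1, m=3)
borderline_indices = np.flatnonzero(mask)  # only the sample at 3.6 is flagged
```

Only the flagged samples would then be used as seeds for interpolation, which concentrates synthetic data where the classifier is most uncertain.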

FAQ 3: Should the test set be resampled when using SMOTE in an experimental protocol?

No. The test set must never be resampled [47]. The core purpose of a test set is to provide an unbiased evaluation of the model's performance on real-world, imbalanced data. Resampling the test set would create an unrealistic scenario and invalidate performance metrics. Resampling techniques like SMOTE should be applied only to the training data, and any associated hyperparameter tuning (like the k for nearest neighbors) should be performed using a separate validation set derived from the training data [47].
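The ordering matters more than the particular resampler, so the sketch below uses plain random duplication as a stand-in for SMOTE. The dataset, the 80/20 split, and all variable names are hypothetical; the point is only that the split happens first and the test indices are never touched afterwards.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical imbalanced dataset: 90 majority (0) and 10 minority (1) samples
X = rng.normal(size=(100, 3))
y = np.array([0] * 90 + [1] * 10)

# 1) Hold out a stratified 20% test set BEFORE any resampling
test_idx = np.concatenate([
    rng.choice(np.flatnonzero(y == c), size=np.sum(y == c) // 5, replace=False)
    for c in (0, 1)
])
train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
X_train, y_train = X[train_idx], y[train_idx]
X_test, y_test = X[test_idx], y[test_idx]  # keeps the original 9:1 imbalance

# 2) Resample the training portion only
#    (random duplication here; a SMOTE variant would slot in at this step)
minority = np.flatnonzero(y_train == 1)
n_extra = np.sum(y_train == 0) - len(minority)
extra = rng.choice(minority, size=n_extra, replace=True)
X_bal = np.vstack([X_train, X_train[extra]])
y_bal = np.concatenate([y_train, y_train[extra]])
```

A model would then be fitted on `X_bal`, `y_bal` and scored on `X_test`, `y_test`. When cross-validation is used for tuning, the same rule applies inside each fold: resample the training part of the fold, never the held-out part.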

FAQ 4: How does data complexity, such as noise and class overlap, affect the choice of a resampling method?

Data complexity factors like noise (minority samples in majority regions) and overlap (where classes cannot be linearly separated) significantly aggravate the class imbalance problem [48] [45] [49].

- Problem: Standard SMOTE can amplify noise by generating synthetic samples around these outlier points. In regions of high class overlap, it can create more ambiguity by generating synthetic minority samples in the majority class domain, a problem known as overgeneralization [45].

- Solutions:

- Use Hybrid Methods: Integrate SMOTE with data cleaning techniques. For example, SMOTE-ENN and SMOTE-Tomek Links apply SMOTE first and then use undersampling to remove noisy or overlapping samples from both classes [48] [45].

- Select Robust Variants: Choose variants like Safe-Level-SMOTE or Borderline-SMOTE that are designed to avoid generating samples in unsafe, noisy regions [44].
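The cleaning step in hybrid methods such as SMOTE-ENN can be illustrated with a small Edited Nearest Neighbours sketch. It assumes a majority-vote rule over k = 3 neighbors and brute-force distances; library implementations may use stricter rules or different defaults, and the function name `enn_clean` is hypothetical.

```python
import numpy as np

def enn_clean(X, y, k=3):
    """Drop every sample whose label disagrees with the majority label
    of its k nearest neighbors (applied to both classes)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    dists = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    np.fill_diagonal(dists, np.inf)  # exclude each point from its own neighbors
    neighbours = np.argsort(dists, axis=1)[:, :k]

    keep = np.empty(len(y), dtype=bool)
    for i, nb in enumerate(neighbours):
        labels, counts = np.unique(y[nb], return_counts=True)
        keep[i] = labels[np.argmax(counts)] == y[i]
    return X[keep], y[keep]

# Hypothetical data: two tight clusters plus one minority point (label 1)
# sitting in the middle of the majority cluster
X = np.array([
    [0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [0.1, 0.1],   # majority cluster
    [5.0, 5.0], [5.1, 5.0], [5.0, 5.1], [5.1, 5.1],   # minority cluster
    [0.05, 0.05],                                     # noisy minority sample
])
y = np.array([0, 0, 0, 0, 1, 1, 1, 1, 1])
X_clean, y_clean = enn_clean(X, y, k=3)  # the noisy sample is removed
```

In a hybrid pipeline this filter would run on the training data after oversampling, so that synthetic points created around noisy seeds are removed as well.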

Troubleshooting Guides

Issue 1: Model performance degrades after applying SMOTE, showing high accuracy on the majority class but poor recall on the minority class.

This is a classic sign of overgeneralization or the introduction of noise by the resampling method [45].

- Possible Causes:

- SMOTE is generating synthetic samples in the majority class region.

- The dataset has a high degree of noise or class overlap.

- The parameter k for nearest neighbors is too small, leading to overfitting to local clusters, or too large, introducing irrelevant neighbors.

- Recommended Solutions:

- Apply a Filter: Use a hybrid method like SMOTE-ENN to remove noisy samples after oversampling [45].

- Switch Variants: Implement Borderline-SMOTE or Safe-Level-SMOTE to focus on safer, more informative regions for sample generation [44].

- Tune Hyperparameters: Systematically optimize the k parameter using cross-validation on the training set. Consider using a larger k for sparse datasets.

Issue 2: The synthetic data generated by SMOTE does not appear to reflect the true distribution of the minority class in my materials data.

This concern is valid, as theoretical analysis shows SMOTE-generated patterns do not necessarily conform to the original minority class distribution [43].

- Possible Causes:

- The linear interpolation of SMOTE is too simplistic for the potentially complex, non-linear manifold of your materials data.

- The minority class may consist of several sub-concepts or "small disjuncts" that SMOTE fails to capture.

- Recommended Solutions:

- Use Cluster-based SMOTE: Apply K-Means SMOTE, which first identifies natural clusters and then performs oversampling within them, better preserving the overall data structure [44].

- Explore Advanced Variants: Consider ISMOTE, which expands the generation space to create a more realistic distribution [42], or FLEX-SMOTE, which is designed to flexibly adjust to different minority class distributions [50].

The following table summarizes key performance metrics from recent studies comparing various SMOTE variants. These metrics are crucial for evaluating the effectiveness of these techniques in mitigating overfitting and improving model robustness.

Table 1: Comparative Performance of SMOTE Variants on Public Datasets

| Algorithm | Key Improvement | Reported Performance Improvement (Relative) | Best Suited For |

|---|---|---|---|

| ISMOTE [42] | Expands sample generation space to alleviate density distortion. | F1-score: +13.07%; G-mean: +16.55%; AUC: +7.94% | Datasets where standard SMOTE causes overfitting in high-density regions. |

| Borderline-SMOTE [44] | Focuses oversampling on borderline minority instances. | (Widely reported to improve precision and recall at the decision boundary) | Problems where the boundary between classes is critical. |

| K-Means SMOTE [44] | Uses clustering to oversample in appropriate regions. | (Improves data representation by considering cluster structure) | Datasets with inherent sub-concepts within the minority class. |

| SMOTE-ENN [45] | Combines oversampling with cleaning of both classes. | (Effective at improving G-mean and AUC in complex, noisy data) | Complex datasets with significant noise or class overlap. |
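For readers less familiar with the metrics in Table 1, the following sketch computes F1-score and G-mean from confusion-matrix counts, treating the minority class as the positive class. The counts are invented for illustration, and the helper name `imbalance_metrics` is hypothetical. AUC is omitted because it requires predicted scores rather than counts.

```python
import math

def imbalance_metrics(tp, fp, tn, fn):
    """F1-score and G-mean from confusion-matrix counts
    (positive class = minority class). Assumes no zero denominators."""
    recall = tp / (tp + fn)          # sensitivity on the minority class
    specificity = tn / (tn + fp)     # recall on the majority class
    precision = tp / (tp + fp)
    f1 = 2 * precision * recall / (precision + recall)
    g_mean = math.sqrt(recall * specificity)
    return {"f1": f1, "g_mean": g_mean}

# Hypothetical test set: 10 minority and 90 majority samples
metrics = imbalance_metrics(tp=8, fp=4, tn=86, fn=2)
# f1 is about 0.727 and g_mean about 0.874
```

Unlike plain accuracy, both metrics drop sharply when the minority class is ignored, which is why they are preferred for comparing resampling strategies.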

Experimental Protocols for Key SMOTE Variants

Protocol 1: Implementing and Evaluating the ISMOTE Algorithm

This protocol is based on the improved SMOTE algorithm designed to generate a more realistic data distribution [42].

- Input: Original imbalanced training set \( U = U_L \cup U_M \), where \( U_L \) is the minority class and \( U_M \) is the majority class.

- Synthetic Sample Generation:

- For a given minority instance \( x_i \), select one of its k-nearest neighbors, \( x_{zi} \).

- Generate a base sample on the line segment between \( x_i \) and \( x_{zi} \).

- Calculate the Euclidean distance \( d \) between \( x_i \) and \( x_{zi} \).

- Multiply \( d \) by a random number \( \delta \) between 0 and 1 to get a random quantity \( r = \delta \times d \).

- The new synthetic sample \( x_{new} \) is generated by adding or subtracting this random quantity \( r \) from the base sample's position vector, ensuring it is created around the line connecting \( x_i \) and \( x_{zi} \).

- Output: A balanced training set with synthetic minority samples that better reflect the expanded sample space.

- Validation: Always validate the model, trained on the resampled data, on a pristine, untouched test set that reflects the original imbalance [47].
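The generation steps of Protocol 1 can be sketched as below. This is one possible reading of the description, not the published algorithm: the protocol does not say in which direction the random quantity r is applied, so the sketch assumes a random unit direction with a random sign. The function name `ismote_like_sample` is hypothetical.

```python
import numpy as np

def ismote_like_sample(x_i, x_z, rng):
    """Generate one synthetic sample around the segment x_i -> x_z.
    Returns (x_new, base) so the offset can be inspected."""
    x_i = np.asarray(x_i, dtype=float)
    x_z = np.asarray(x_z, dtype=float)

    # Base sample on the line segment between x_i and its neighbor
    base = x_i + rng.random() * (x_z - x_i)

    # Random quantity r = delta * d, with delta in [0, 1)
    d = np.linalg.norm(x_z - x_i)
    r = rng.random() * d

    # ASSUMPTION: apply r along a random unit direction, added or subtracted
    direction = rng.normal(size=x_i.shape)
    direction /= np.linalg.norm(direction)
    sign = rng.choice([-1.0, 1.0])

    x_new = base + sign * r * direction
    return x_new, base

rng = np.random.default_rng(0)
x_i = np.array([0.0, 0.0])   # hypothetical minority instance
x_z = np.array([1.0, 0.0])   # one of its nearest minority neighbors
x_new, base = ismote_like_sample(x_i, x_z, rng)
```

Under this reading, the synthetic sample never lies farther from the base sample than the distance d between the two original points, so the generation space is expanded but stays local.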

Protocol 2: A Standard Workflow for Applying SMOTE Variants in Materials Science

The diagram below illustrates a robust experimental workflow for applying SMOTE variants in a materials science research project, incorporating best practices to mitigate overfitting.

Diagram 1: SMOTE Application Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools and Algorithms

| Item / Algorithm | Function / Purpose | Key Application in Materials Science |

|---|---|---|

| Standard SMOTE [42] | Generates synthetic minority samples via linear interpolation to balance class distribution. | Baseline oversampling for initial attempts to handle imbalance in datasets like catalyst screening [51]. |

| ISMOTE [42] | Expands the sample generation space to create more realistic distributions and reduce overfitting. | For advanced applications where standard SMOTE leads to distribution distortion, e.g., in polymer property prediction. |

| Borderline-SMOTE [45] | Selectively oversamples minority instances near the decision boundary. | Improving model accuracy in critical classification tasks, such as distinguishing between high/low-performance materials. |

| K-Means SMOTE [44] | Uses clustering to identify sparse minority regions for targeted oversampling. | Handling complex materials data with multiple distinct sub-classes (e.g., different crystal structure phases). |

| SMOTE-ENN [45] | A hybrid method that oversamples the minority class and then cleans both classes by removing noisy samples. | Deploying on noisy experimental data with significant class overlap to create a clearer decision boundary. |

| XGBoost Classifier [51] | A powerful ensemble learning algorithm often used with resampled data for final prediction. | Building high-performance prediction models for tasks like mechanical property prediction or catalyst design [51]. |

Frequently Asked Questions

1. My model performs well on training data but poorly on validation data. What is happening? This is a classic sign of overfitting. Your model has likely learned the noise and specific details of your training data, rather than the underlying pattern that generalizes to new data. This is a common risk with complex, non-linear models like Gradient Boosted Trees or large Neural Networks, especially when the training dataset is small [52].

2. I have a very small dataset. Should I even consider using non-linear models? Yes, but with extreme caution and strategic modifications. While small datasets increase the risk of overfitting for non-linear models, their ability to capture complex, non-linear relationships can still be crucial [53] [3]. The key is to use strong regularization, prefer models with built-in feature selection, and employ techniques like data augmentation or transfer learning to effectively "increase" your data size [3] [54].

3. Is there a trade-off between model interpretability and performance? Not necessarily. A common misconception is that only black-box models can achieve high accuracy. Recent research shows that a new generation of Generalized Additive Models (GAMs) can provide high performance while remaining fully interpretable. These models capture non-linear relationships for each feature in an additive manner, making them both powerful and transparent [55].

4. How can I identify if my dataset contains non-linear relationships? A good first step is to use non-linear feature selection methods. Linear methods often fail to identify these patterns. If non-linear methods consistently select a different set of features as important, it is a strong indicator that your data has non-linear dependencies that a linear model would miss [53].

Troubleshooting Guides

Problem: Diagnosing High Bias (Underfitting) or High Variance (Overfitting)

Symptoms and Diagnosis:

| | High Bias (Underfitting) | High Variance (Overfitting) |

|---|---|---|

| Training Error | High | Very Low |

| Validation Error | High | High |

| Model Behavior | Oversimplifies the problem, fails to capture underlying trends [25]. | Memorizes the training data, including its noise [25]. |

| Common in | Linear models on complex problems [25]. | Complex models (deep trees, NNs) on small datasets [52] [25]. |
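The diagnostic table can be turned into a simple rule of thumb. The sketch below is only illustrative: the thresholds `acceptable_error` and `gap_tolerance` are hypothetical values that would need to be chosen for each problem, for example from experimental measurement uncertainty.

```python
def diagnose_fit(train_error, val_error, acceptable_error, gap_tolerance):
    """Rough bias/variance label from training and validation error."""
    if train_error > acceptable_error:
        # The model cannot even fit the data it was trained on
        return "high bias (underfitting)"
    if val_error - train_error > gap_tolerance:
        # Fits the training data well but does not generalize
        return "high variance (overfitting)"
    return "no clear problem"

# Hypothetical error values (e.g., normalized RMSE)
case_a = diagnose_fit(train_error=0.30, val_error=0.32,
                      acceptable_error=0.10, gap_tolerance=0.05)
case_b = diagnose_fit(train_error=0.01, val_error=0.25,
                      acceptable_error=0.10, gap_tolerance=0.05)
```

With small datasets, validation error itself is noisy, so it is safer to feed this kind of check with errors averaged over several cross-validation folds than with a single split.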

Mitigation Strategies:

- If your model has High Bias (Underfitting):

- Increase Model Complexity: Switch from a linear model to a non-linear one like Random Forest or a shallow Neural Network.

- Feature Engineering: Add more informative features or create new features through combination (e.g., using SISSO in materials science [3]).

- Reduce Regularization: Lower the strength of L1 or L2 regularization [25].

- If your model has High Variance (Overfitting):

- Gather More Data: This is one of the most effective solutions [56]. If impossible, use data augmentation techniques (e.g., bootstrapping, SMOTE, generative models [54]).

- Increase Regularization: Tune hyperparameters like L2 regularization in regression, max_depth in trees, or dropout in Neural Networks [52] [25].

- Simplify the Model: Use a simpler algorithm (e.g., Linear Model instead of NN) or reduce the number of parameters [25].
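As a small illustration of the "Increase Regularization" strategy, the sketch below fits L2-regularized (ridge) linear regression in closed form and shows how the coefficient vector shrinks as the penalty grows. The dataset is synthetic and the helper `ridge_fit` is hypothetical; no intercept is fitted to keep the example short.

```python
import numpy as np

def ridge_fit(X, y, alpha):
    """Closed-form ridge regression: solve (X^T X + alpha * I) w = X^T y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ y)

rng = np.random.default_rng(0)

# Hypothetical small dataset: 15 samples, 10 descriptors,
# where only the first descriptor actually drives the target
X = rng.normal(size=(15, 10))
y = X[:, 0] + 0.1 * rng.normal(size=15)

alphas = (0.01, 1.0, 100.0)
coef_norms = [np.linalg.norm(ridge_fit(X, y, a)) for a in alphas]
# coef_norms decreases as alpha increases: stronger penalty, smaller weights
```

In practice the penalty strength would be selected by cross-validation on the training data, trading a small increase in training error for lower variance on unseen materials.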