Closing the Loop in Computational Materials Design: AI, Autonomous Labs, and the Future of Accelerated Discovery

Abstract

This article explores the paradigm of 'closing the loop' in computational materials design, a transformative approach that integrates AI-driven prediction, automated synthesis, and high-throughput characterization into a rapid, iterative cycle. Tailored for researchers, scientists, and drug development professionals, we examine the foundational principles of this data-centric philosophy, detail the methodologies powering autonomous discovery platforms, and address critical challenges in data integrity and model reliability. By highlighting validated success stories and comparative analyses, this review provides a comprehensive framework for implementing closed-loop systems to drastically shorten the materials development timeline, with significant implications for pharmaceutical development and biomedical innovation.

The Foundations of Closed-Loop Design: From Linear Research to an Integrated Discovery Engine

Defining the 'Closed-Loop' Paradigm in Materials Science

The 'closed-loop' paradigm in materials science represents a fundamental shift from traditional, linear research and development toward an integrated, iterative, and autonomous system. It tightly couples computational prediction, experimental validation, and data-driven learning to accelerate the discovery and development of new materials. The core objective is a self-optimizing system in which data from each cycle directly informs and improves the next, significantly reducing the time and cost associated with traditional methods [1] [2]. More than a technological upgrade, the approach merges materials data infrastructure, artificial intelligence (AI), and robotics to foster a more sustainable and efficient research ecosystem [2].

The urgency for adopting this paradigm is amplified by global sustainability challenges. The transition towards a circular economy has made the principles of resource efficiency, end-of-life recovery, and minimized environmental footprints operational imperatives for engineering and design teams [3]. The closed-loop paradigm provides the technological backbone to actualize these principles, enabling the design of materials with predefined lifecycle trajectories, including disassembly and reuse.

Core Principles and Technological Framework

The closed-loop paradigm is built upon several interdependent pillars that work in concert to create a continuous cycle of innovation.

The Autonomous Discovery Cycle

At the heart of the closed-loop paradigm is the concept of the self-driving laboratory (SDL). An SDL is an automated experimental platform that integrates machine learning algorithms and robotics to execute experimental cycles with minimal human intervention [1]. This represents a tangible realization of the closed-loop, moving from a human-led, sequential process to an autonomous, iterative one.

Integrated Data Infrastructure

A foundational element is the materials data infrastructure, which serves as the central nervous system for the entire operation. It is responsible for the collection, storage, and curation of data generated from both simulations and experiments. This infrastructure ensures that data is FAIR (Findable, Accessible, Interoperable, and Reusable), providing the high-quality, structured datasets necessary to train robust machine learning models [2].

Multi-Scale Computational Design

Closing the loop requires computational design to operate across all scales of materials development, from atomic structure to functional component. The "Scales of Design" framework articulates this comprehensive view [4]:

- Micro: Material-level behavior, phase interactions, and structure-property relationships.

- Meso: Architected materials and engineered geometries like metamaterials and lattices.

- Macro: Component, product, and system-level design, including topology optimization.

This multi-scale perspective ensures that insights from one scale can directly influence decisions at another, creating a cohesive design and development pipeline.

Circularity by Design

Finally, the paradigm incorporates the principles of circular design directly into the materials discovery process. This includes designing for disassembly, modularity, and the use of material passports that detail composition and recycling pathways [3]. By integrating these considerations at the computational design stage, the resulting materials are inherently more sustainable and easier to reintegrate into the production loop at the end of their life.

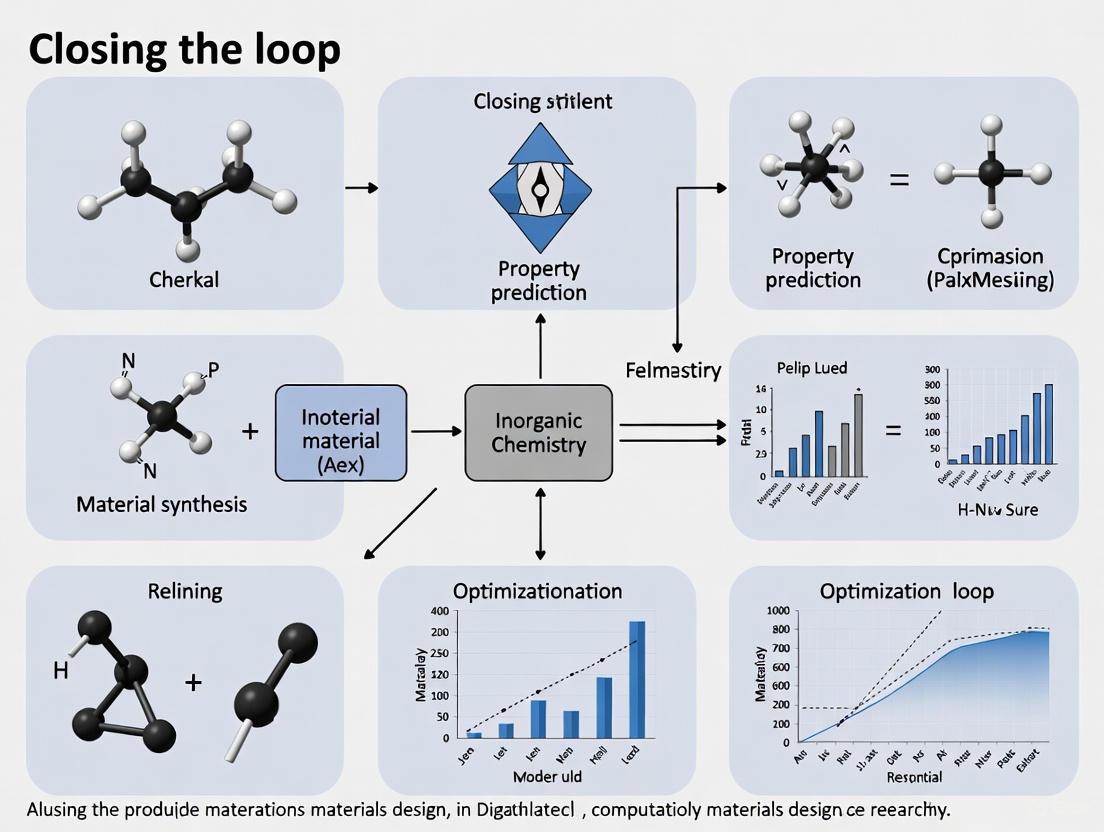

The following diagram illustrates the logical workflow and the continuous, iterative nature of this closed-loop system.

Quantitative Landscape of Sustainable Materials

The adoption of closed-loop principles is accelerating the development and application of sustainable materials. The quantitative data below summarizes the market growth and adoption rates of key material classes that are central to the circular economy, as of 2025 [3].

Table 1: Adoption Metrics for Key Sustainable Materials (2025)

| Material Class | Global Production Capacity / Adoption Rate | Key Applications | Primary Drivers |
|---|---|---|---|
| Bioplastics | Exceeded 4.5 million tonnes [3] | Packaging, consumer electronics, automotive components [3] | Improved processing tech, compostability, supply chain traceability [3] |
| Mycelium-Based Composites | Adoption has doubled since 2022 [3] | Furniture, packaging, building insulation [3] | Low embodied energy, end-of-life compostability, scalability [3] |
| Recycled Construction Materials | Constitute >30% of new builds in several G7 economies [3] | Modular construction, building components [3] | Building codes, procurement frameworks, digital material passports [3] |
| Overall Sustainable Materials Market | CAGR of 18% (2022-2025) [3] | Cross-industry | Legislative mandates, consumer preferences, corporate commitments [3] |

Experimental Methodologies for Closed-Loop Research

Implementing the closed-loop paradigm requires specific experimental and computational protocols. This section details a general methodology for an SDL and a specific protocol for reaction optimization, a common application.

Generalized Workflow for a Self-Driving Laboratory

The operation of an SDL can be broken down into a repeatable, automated workflow. The following diagram outlines the key stages involved in a single cycle of experimentation and learning.

Step-by-Step Protocol:

- Hypothesis Generation (A): An AI model, typically using a Bayesian optimization algorithm, analyzes all existing data from previous cycles and proposes the next set of experimental conditions that are most likely to improve the target objective (e.g., yield, conductivity) [1]. This step identifies the most informative experiments, not just random trials.

- Experimental Proposal (B): The algorithm outputs a specific, machine-readable set of parameters (e.g., temperature, concentration, stoichiometry) for the robotic systems to execute.

- Automated Execution (C): Robotic platforms and automated systems (e.g., liquid handlers, synthesis robots) perform the physical experiments without human intervention, ensuring high precision and reproducibility [1].

- In-Line Characterization (D): Integrated analytical instruments (e.g., spectrometers, chromatographs) automatically characterize the products of the reaction or synthesis in real-time, generating raw performance data.

- Data Processing (E): The raw data is automatically processed to extract relevant performance metrics (e.g., conversion efficiency, material property measurements) and formatted for the AI model.

- Model Update (F): The AI model is retrained with the new experimental data, updating its understanding of the complex relationship between input parameters and outcomes. This updated model then returns to Step A to generate a new, more informed hypothesis, closing the loop [1].
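The six stages above can be sketched as a single loop. This is a minimal illustration under stated assumptions, not the implementation described in [1]: `run_experiment` is a hypothetical stand-in for robotic execution and in-line characterization (stages C to E), a quadratic least-squares fit stands in for the AI model, and greedy maximization of that fit replaces Bayesian optimization to keep the example short. The single input, called temperature here, and its optimum at 350 are invented for the demonstration.

```python
import numpy as np

rng = np.random.default_rng(0)

def run_experiment(temperature):
    """Hypothetical stand-in for stages C-E (execute, characterize, process).
    The synthetic response peaks at 350; the noise mimics measurement error."""
    return -((temperature - 350.0) / 50.0) ** 2 + rng.normal(0.0, 0.01)

def fit_model(x, y):
    """Stage F: refit a quadratic surrogate to all data collected so far."""
    return np.polyfit(x, y, deg=2)

def propose(coeffs, low, high):
    """Stages A-B: pick the condition the surrogate predicts to be best."""
    grid = np.linspace(low, high, 501)
    return float(grid[np.argmax(np.polyval(coeffs, grid))])

def closed_loop(n_cycles=5, low=250.0, high=450.0):
    x = [260.0, 300.0, 440.0]                 # initial (historical) conditions
    y = [run_experiment(t) for t in x]
    for _ in range(n_cycles):
        coeffs = fit_model(np.array(x), np.array(y))   # learn from all data
        t_next = propose(coeffs, low, high)            # next experiment
        x.append(t_next)
        y.append(run_experiment(t_next))               # new data closes the loop
    best = int(np.argmax(y))
    return x[best], y[best]

best_t, best_y = closed_loop()
print(f"best condition found: {best_t:.1f}, response: {best_y:.3f}")
```

In a real SDL the surrogate would also report uncertainty, so that the proposal step can trade off exploring unknown regions against exploiting known good ones.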

Specific Protocol: Closed-Loop Optimization of a Photocatalytic Reaction

This protocol applies the general SDL workflow to the optimization of a photocatalytic reaction, an application reported for SDLs [1].

Objective: Maximize the hydrogen evolution rate (HER) from a water-splitting photocatalytic system.

AI Model: Bayesian Optimization with Expected Improvement as the acquisition function.

Experimental Setup: A high-throughput photoreactor array equipped with automated liquid handling for catalyst precursor injection and a gas chromatograph (GC) for in-line hydrogen quantification.

Procedure:

1. Initialize Database: Populate the AI model with an initial dataset of 10-20 historical data points linking catalyst composition (e.g., metal ratios), synthesis conditions (e.g., calcination temperature), and measured HER.

2. Run Optimization Cycle:

   - The AI model processes the current data and suggests the next 5 catalyst compositions and synthesis conditions to test.

   - A robotic arm dispenses precursor solutions into well plates according to the suggested compositions.

   - An automated synthesis system processes the plates (e.g., heating, drying, calcining).

   - The synthesized catalyst libraries are automatically transferred to the photoreactor array, which is illuminated under a standard light source.

   - The GC system samples the headspace of each reactor at regular intervals, measuring the hydrogen production rate.

3. Data Integration: The measured HER for each experiment is automatically tagged with its corresponding input parameters and added to the central database.

4. Iterate: Steps 2-3 are repeated until a predefined performance threshold is met (e.g., HER > X mmol/g/h) or a set number of cycles is completed.
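The suggestion step of this protocol can be illustrated with a small Gaussian-process surrogate and the Expected Improvement (EI) acquisition function. The sketch below is an assumption-laden illustration, not the method of [1]: the two inputs are taken to be a metal ratio and a calcination temperature, both rescaled to [0, 1]; the historical HER values are synthetic; the kernel length scale is arbitrary; and choosing the five highest-EI candidates is a simple batch heuristic rather than a dedicated batch acquisition strategy.

```python
import math
import numpy as np

def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel between point sets a (n, d) and b (m, d)."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * d2 / length_scale**2)

def gp_posterior(x_train, y_train, x_query, noise=1e-6):
    """Posterior mean and std of a zero-mean GP with unit prior variance."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_tq = rbf_kernel(x_train, x_query)
    sol = np.linalg.solve(k_tt, np.column_stack([y_train, k_tq]))
    alpha, v = sol[:, 0], sol[:, 1:]
    mean = k_tq.T @ alpha
    var = 1.0 - np.einsum("ij,ij->j", k_tq, v)
    return mean, np.sqrt(np.clip(var, 1e-12, None))

def expected_improvement(mean, std, best_so_far):
    """EI for maximization: E[max(f - best, 0)] under a Gaussian posterior."""
    z = (mean - best_so_far) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best_so_far) * cdf + std * pdf

def suggest_batch(x_train, y_train, candidates, batch_size=5):
    """Return the batch_size candidates with the highest EI, best first."""
    mean, std = gp_posterior(x_train, y_train, candidates)
    ei = expected_improvement(mean, std, y_train.max())
    top = np.argsort(ei)[::-1][:batch_size]
    return candidates[top], ei[top]

# Synthetic stand-in for 12 historical experiments (HER in arbitrary units).
rng = np.random.default_rng(1)
x_hist = rng.uniform(0.0, 1.0, size=(12, 2))
y_hist = np.exp(-8.0 * ((x_hist - 0.6) ** 2).sum(axis=1))
candidates = rng.uniform(0.0, 1.0, size=(200, 2))

batch, ei_values = suggest_batch(x_hist, y_hist, candidates)
print(batch)
```

Each suggested row would then be converted back to physical units and passed to the liquid handler and synthesis system.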

The Researcher's Toolkit: Essential Reagents and Materials

The practical implementation of the closed-loop paradigm, particularly in fields like polymer science or catalysis, relies on a set of key materials and software tools.

Table 2: Essential Research Reagents and Computational Tools

| Item | Function / Rationale |
|---|---|
| Catalyst Precursors | Metal salts or organometallic compounds used to explore vast composition spaces for catalytic activity, a common target for SDLs [1]. |
| Monomer Libraries | A collection of diverse building blocks (e.g., for bioplastics like PLA and PHA) for high-throughput synthesis and screening of polymers with tailored properties [3]. |
| Mycelium Strains | The vegetative part of fungi, used as a feedstock for growing lightweight, strong, and biodegradable composites, aligning with circular design principles [3]. |
| Post-Consumer Recyclate | Processed waste materials (e.g., recycled PET, reclaimed steel) used as feedstock for designing new materials with high recycled content [3]. |
| MedeA Software Environment | A computational platform for atomistic simulation, property prediction, and data management, facilitating the computational design pillar of the closed-loop [5]. |
| VASP (Vienna Ab initio Simulation Package) | A powerful package for first-principles quantum mechanical calculations, fundamental to predicting material properties at the atomic scale [5]. |
| GRACE Interatomic Potentials | Foundational machine-learning interatomic potentials used for highly accurate and efficient molecular dynamics simulations [5]. |

The Shift from Traditional Linear Workflows to Iterative Cycles

The field of computational materials science is undergoing a profound transformation, moving away from traditional, sequential research and development (R&D) processes toward integrated, iterative cycles that dramatically accelerate discovery and design. This paradigm shift, often termed "closing the loop," represents a fundamental reimagining of how materials research is conducted. Traditional approaches to materials development have primarily been driven by experience, intuition, and trial-and-error methodologies, often resulting in processes that are time-consuming, labor-intensive, and marked by low success rates [6]. These linear workflows typically involve discrete, disconnected stages: hypothesis generation, computational modeling, experimental synthesis, and characterization, with minimal feedback between phases.

In contrast, modern iterative cycles implement automation and machine learning surrogatization within closed-loop computational workflows, creating a continuous, integrated process where each stage informs and optimizes the others [7]. This approach is particularly transformative for materials science, where the chemical space is virtually infinite, and traditional methods struggle to identify promising target molecules from tens of millions of possible structures [6]. The implementation of closed-loop frameworks is revolutionizing how we discover and apply new knowledge, potentially unlocking advanced materials required for more efficient solar cells, higher-capacity batteries, and critical carbon capture technologies – accelerating our path to carbon neutrality [6].

Quantitative Acceleration: Measuring the Impact of Iteration

The acceleration achievable through closed-loop frameworks is not merely theoretical but has been rigorously quantified across multiple dimensions of the materials discovery process. Recent research has identified four distinct sources of speedup that contribute to the overall efficiency gains when shifting from linear to iterative workflows [7].

Table 3: Quantified Acceleration from Closed-Loop Framework Components in Computational Materials Discovery

| Acceleration Source | Speedup Factor | Contribution to Overall Efficiency |
|---|---|---|
| Task Automation | Component of overall reduction | Eliminates manual intervention in routine calculations and data processing |
| Calculation Runtime Improvements | Component of overall reduction | Optimizes computational performance through hardware and algorithm advances |
| Sequential Learning-Driven Search | Component of overall reduction | Guides exploration toward promising regions of materials space |
| Combined Effect of Above Three Sources | ~10× (over 90% time reduction) | Enables rapid hypothesis evaluation without surrogate models |
| Surrogatization with Machine Learning | ~15-20× (over 95% time reduction) | Replaces expensive simulations with instant property predictions |

From a combination of the first three sources of acceleration – task automation, calculation runtime improvements, and sequential learning-driven design space search – researchers estimate that overall hypothesis evaluation time can be reduced by over 90%, achieving a speedup of approximately 10× [7]. Further, by introducing surrogatization into the loop, where expensive simulations are replaced with machine learning models, the design time can be reduced by over 95%, achieving a speedup of approximately 15-20× [7]. These quantitative improvements present a clear value proposition for utilizing closed-loop approaches for accelerating materials discovery.
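The link between a fractional time reduction and a speedup factor is simple arithmetic: if a fraction r of the original time is saved, the speedup is 1 / (1 - r). The short sketch below makes the conversion explicit. The function names are illustrative, and the only inputs are the percentages and factors quoted above. Note that a 95% reduction corresponds to 20×, while 15× corresponds to a reduction of about 93%, so the quoted "~15-20×" range is best read as approximate.

```python
def speedup_from_reduction(fraction_saved):
    """Speedup factor implied by saving `fraction_saved` of the original time."""
    if not 0.0 <= fraction_saved < 1.0:
        raise ValueError("fraction_saved must be in [0, 1)")
    return 1.0 / (1.0 - fraction_saved)

def reduction_from_speedup(speedup):
    """Fraction of the original time saved at a given speedup factor."""
    if speedup < 1.0:
        raise ValueError("speedup must be at least 1")
    return 1.0 - 1.0 / speedup

for r in (0.90, 0.95):
    print(f"{r:.0%} reduction -> {speedup_from_reduction(r):.1f}x speedup")
for s in (10, 15, 20):
    print(f"{s}x speedup -> {reduction_from_speedup(s):.1%} reduction")
```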

Core Components of Modern Iterative Workflows

Generative AI and Machine Learning Integration

Generative artificial intelligence is emerging as a powerful tool for advancing the design of functional materials such as metal-organic frameworks (MOFs), covalent-organic frameworks (COFs), and zeolites [8]. These models suggest new candidate materials with specific targeted properties, significantly accelerating the material discovery process by identifying promising candidates early in the research cycle [8]. Several generative AI approaches have demonstrated particular promise in designing nanoporous materials:

- Generative Adversarial Networks (GANs): Used for generating diverse and high-quality material designs, such as the ZeoGAN model for designing pure silica zeolites with specific methane adsorption properties [8].

- Variational Autoencoders (VAEs): Effective for exploring continuous latent spaces of materials structures, as demonstrated by the Supramolecular VAE (SmVAE) for designing MOFs for carbon dioxide separation from natural gas [8].

- Diffusion Models: Emerging as powerful tools for generating molecular structures, such as DiffLinker for designing MOF linkers for CO2 capture applications [8].

- Genetic Algorithms (GAs): Excel at optimizing materials with desired properties through evolutionary operations [8].

- Reinforcement Learning (RL): Effective for exploring large design spaces through reward-driven optimization [8].

These generative models enable more efficient exploration of the vast material space with reduced sampling requirements, facilitating material design where desired properties directly guide the generation of suitable material structures [8]. This approach is particularly compelling for porous frameworks, given their modular nature, which allows for precise tuning of building blocks to achieve targeted properties.

Molecular Dynamics Simulations in Iterative Cycles

Molecular dynamics (MD) simulations have become an integral component of closed-loop materials design, providing atomic-level insights that bridge computational predictions and experimental validation. MD simulations predict how every atom in a protein or other molecular system will move over time, based on a general model of the physics governing interatomic interactions [9]. These simulations capture the behavior of proteins and other biomolecules in full atomic detail and at very fine temporal resolution, revealing positions of all atoms at femtosecond resolution [9].

The value of MD simulations in iterative workflows is particularly evident in applications such as vaccine development, where simulations can predict physical properties of engineered protein particles fused with antigens, including self-assembly capability, hydrophobicity, and overall stability [10]. For example, in developing hepatitis B core (HBc) protein-based virus-like particle vaccines, large partial VLP models containing 17 chains for HBc chimeric model vaccines were constructed based on wild-type HBc assembly templates [10]. Findings from simulation analysis demonstrated good consistency with experimental results pertaining to surface hydrophobicity and overall stability of chimeric vaccine candidates, enabling an MD-guided design approach that minimizes design failures and provides guidance for downstream processing [10].

Table 4: Research Reagent Solutions in Closed-Loop Materials Discovery

| Research Reagent/Resource | Function in Iterative Workflows | Application Examples |
|---|---|---|
| Molecular Dynamics Software | Predicts atomic-level trajectories and properties | GROMACS, AMBER, NAMD for simulating biomolecules and materials |
| Generative AI Models | Creates novel molecular structures with targeted properties | GANs, VAEs, Diffusion Models for de novo materials design |
| High-Throughput Computational Screening | Rapidly evaluates thousands of candidate materials | Materials Project for calculating properties across 150,000 materials |
| Machine Learning Potentials | Accelerates molecular simulations with quantum accuracy | Orbital Materials' "Orb" and DP Technology's "DPA-2" |
| Automated Experimentation Platforms | Enables rapid experimental validation of computational predictions | High-throughput synthesis and characterization systems |

The effectiveness of iterative cycles in materials design fundamentally depends on access to vast amounts of high-quality data and robust computational infrastructure. Platforms like the Materials Project have become critical enablers of this new paradigm by providing free, public resources with data on approximately 150,000 materials, including information on electronic structure, phonon and thermal properties, elastic/mechanical properties, and more [11]. Powered by hundreds of millions of CPU-hours invested into high-quality calculations, such infrastructure provides the foundational data necessary for training machine learning models and validating computational predictions [11].

The Materials Project exemplifies how community resources can accelerate closed-loop design, with demonstrated successes in multiple domains:

- Thermoelectrics Discovery: Screening of tens of thousands of materials with predicted electron transport properties revealed a family of promising XYZ2 candidates, leading to the experimental realization of materials including YCuTe2 (zT = 0.75) and TmAgTe2 (zT = 0.47, 1.8 theoretical) [11].

- Transparent Conductors: Predictions identified materials with s-p hybridized valence bands, leading to the synthesis of Ba2BiTaO6, which shows excellent transparency and can be readily doped with potassium [11].

- Phosphors Design: Statistical analysis of existing materials followed by structure prediction led to the discovery of the first known Sr-Li-Al-N quaternary, showing green-yellow/blue emission with quantum efficiency of 25-55% [11].

These successes demonstrate how integrated data infrastructure enables the iterative design cycle, where computational predictions inform experimental synthesis, which in turn validates and improves computational models.

Experimental Protocols in Closed-Loop Frameworks

Protocol for Generative AI-Driven Materials Discovery

The application of generative AI in nanoporous materials design follows a structured methodology that integrates computational generation with validation [8]:

Problem Formulation: Define target properties and constraints based on application requirements (e.g., CO2 uptake > 2 mmol g−1 at 0.1 bar for carbon capture materials).

Model Selection and Training: Choose appropriate generative architecture (GAN, VAE, Diffusion, etc.) and train on relevant materials datasets (e.g., 78,238 MOFs from the hMOF dataset for linker design).

Candidate Generation: Deploy trained model to generate novel structures with targeted properties, typically producing thousands to millions of candidate materials.

Structural Validation: Implement multiple validation checks including:

- Interatomic distance analysis to ensure physical realism

- Chemical stability assessment using molecular dynamics simulations

- Synthesizability evaluation using metrics like SAscore and SCscore

Property Validation: Conduct high-fidelity simulations (e.g., Grand Canonical Monte Carlo for gas adsorption, MD for stability) to verify predicted properties.

Experimental Synthesis and Testing: Prioritize top candidates for experimental realization, completing the loop between computation and experiment.
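As an illustration of the structural validation step, the interatomic distance check can be reduced to a minimum pairwise distance test. The sketch below is a simplification under stated assumptions: coordinates are Cartesian and in angstroms, periodic boundary conditions are ignored (a real framework material would need minimum-image distances), and the 0.7 Å threshold is an illustrative value, not one taken from [8].

```python
import numpy as np

def min_interatomic_distance(positions):
    """Smallest distance between any two distinct atoms (non-periodic)."""
    pos = np.asarray(positions, dtype=float)
    diff = pos[:, None, :] - pos[None, :, :]
    dist = np.sqrt((diff**2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)      # ignore each atom's distance to itself
    return float(dist.min())

def passes_distance_check(positions, threshold=0.7):
    """True if no pair of atoms is closer than `threshold` (assumed, in angstroms)."""
    return min_interatomic_distance(positions) >= threshold

# A water-like geometry passes; two nearly overlapping atoms do not.
water_like = [[0.0, 0.0, 0.0], [0.96, 0.0, 0.0], [-0.24, 0.93, 0.0]]
overlapping = [[0.0, 0.0, 0.0], [0.3, 0.0, 0.0]]
print(passes_distance_check(water_like), passes_distance_check(overlapping))
```

Generated candidates that fail such a cheap geometric filter can be discarded before any expensive simulation is run.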

Protocol for Molecular Dynamics-Guided Vaccine Design

The integration of MD simulations within vaccine development workflows follows a distinct iterative protocol [10]:

System Preparation: Construct atomic-level models of vaccine candidates based on experimental templates (e.g., building 17-chain partial VLP models of HBc chimeric vaccines from wild-type HBc assembly templates).

Simulation Parameters: Employ an appropriate, up-to-date force field and simulation conditions that reflect the physiological environment.

Equilibration and Production: Perform extensive equilibration followed by microsecond-scale production runs to sample relevant conformational states.

Property Analysis: Calculate key properties including:

- Surface hydrophobicity via residue contact analysis

- Structural stability through root-mean-square deviation and fluctuation

- Self-assembly propensity via interface interaction energies

Experimental Correlation: Compare simulation predictions with experimental measurements of stability, hydrophobicity, and immunogenicity.

Design Iteration: Use simulation insights to guide subsequent design modifications, focusing on stabilizing mutations or optimized epitope insertion strategies.
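The structural stability metrics named in the property analysis step have compact definitions. The sketch below computes root-mean-square deviation (RMSD) of one frame from a reference and per-atom root-mean-square fluctuation (RMSF) over a trajectory. It assumes the frames have already been superposed on the reference (production analyses typically align first, for example with the Kabsch algorithm), and the array layout of (frames, atoms, 3) is a convention chosen for this example rather than anything specified in [10].

```python
import numpy as np

def rmsd(coords, reference):
    """RMSD between two pre-aligned (atoms, 3) coordinate arrays."""
    diff = np.asarray(coords, dtype=float) - np.asarray(reference, dtype=float)
    return float(np.sqrt((diff**2).sum(axis=1).mean()))

def rmsf(trajectory):
    """Per-atom RMSF for a pre-aligned (frames, atoms, 3) trajectory."""
    traj = np.asarray(trajectory, dtype=float)
    mean_pos = traj.mean(axis=0)                       # average structure
    return np.sqrt(((traj - mean_pos) ** 2).sum(axis=2).mean(axis=0))

# Toy data: four atoms shifted by 1 unit along x relative to the reference.
reference = np.zeros((4, 3))
shifted = reference + np.array([1.0, 0.0, 0.0])
print(rmsd(shifted, reference))

# One atom oscillating between x = +1 and x = -1 over two frames.
toy_traj = np.array([[[1.0, 0.0, 0.0]], [[-1.0, 0.0, 0.0]]])
print(rmsf(toy_traj))
```

A candidate whose RMSD keeps drifting upward during the production run, or whose insertion region shows unusually high RMSF, would be a natural target for the design iteration step.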

Workflow Visualization: Linear vs. Iterative Approaches

The fundamental differences between traditional linear workflows and modern iterative cycles can be visualized through the following workflow diagrams:

Linear Research Workflow - Traditional sequential process with minimal feedback between stages.

Iterative Research Workflow - Modern closed-loop process with continuous AI-enabled feedback.

Closed-Loop Framework - Integrated system with AI orchestration connecting all components.

Challenges and Future Prospects

Despite the significant promise of iterative cycles in computational materials design, several challenges remain before widespread industrial implementation can be achieved [6]:

- Data Limitations: The effectiveness of AI models fundamentally depends on access to vast amounts of high-quality experimental data, yet materials development datasets often suffer from incompleteness, inconsistency, and inaccuracy.

- Complex Production Environments: Materials performance characteristics vary significantly across different application contexts and manufacturing conditions, challenging AI models' generalization capabilities beyond controlled laboratory settings.

- High Development Costs: The field demands substantial capital investment and continuous technical iteration, requiring multidisciplinary teams with diverse expertise spanning materials science, chemistry, AI algorithms, and practical implementation experience.

To overcome these obstacles, businesses and research institutions are increasingly forging collaborative partnerships to create universal or domain-specific datasets, while continuously improving algorithms through iterative development cycles [6]. Crucially, integrating purely data-driven approaches with first principles methodologies enhances extensibility – while deep learning algorithms excel at fitting available data, first principles and domain knowledge can effectively extrapolate to areas with limited or no empirical data [6].

The future of closed-loop materials design will likely involve increasingly sophisticated integration of generative AI, high-throughput computing, and automated experimentation. As these technologies mature and become more accessible, they promise to transform materials science from a discovery-driven discipline to a design-oriented field, delivering the breakthroughs necessary for a more sustainable and technologically advanced future.

The discovery and development of new materials are fundamental to technological progress, impacting sectors from energy storage to quantum computing. Traditionally, materials discovery has been a slow process, heavily reliant on serendipitous findings and Edisonian trial-and-error approaches [12]. However, a transformative paradigm has emerged: closed-loop computational materials design. This framework integrates theoretical models, computational tools, and experimental validation into an iterative, self-improving cycle, dramatically accelerating the pace of discovery.

This paradigm shifts materials science from a linear, sequential process to an integrated, cyclical one. By treating materials databases not as static snapshots but as evolving systems, and by using machine learning to guide which experiment or simulation to perform next, researchers can systematically explore vast materials spaces with unprecedented efficiency [13]. This guide details the core components and methodologies of this integrated approach, providing researchers with the technical foundation for implementing closed-loop strategies in their own materials discovery workflows.

The Closed-Loop Framework in Practice

The closed-loop framework for materials discovery is an iterative process where each cycle refines the understanding and direction of the search. A generalized workflow for this framework can be visualized as follows:

Figure 1: The iterative closed-loop framework for materials discovery, showing how theoretical models, computation, and experimentation interact in a cyclical process that continuously improves predictive models.

As illustrated in Figure 1, the process begins with initial training data from existing knowledge, which informs theoretical and physical models. These models drive computational screening to generate candidate materials, which are then prioritized for experimental synthesis and characterization. The results from these experiments are fed back as new data points, refining the models and initiating the next cycle. This iterative process of prediction, validation, and learning enables researchers to rapidly converge on materials with desired properties [13].

The power of this framework lies in its ability to actively address the out-of-distribution generalization problem, where machine learning models perform poorly on data that differs significantly from their training set [13]. By intentionally seeking out and testing materials that are distinct from known examples, and then incorporating the results back into the training data, the model's predictive capability across a broader chemical space is systematically enhanced.

Core Component 1: Theoretical and Physical Models

Theoretical models form the foundational layer of the closed-loop framework, providing the physical principles and constraints that guide the entire discovery process. These models range from quantum mechanical calculations to mesoscale phenomenological theories.

Density Functional Theory (DFT) and Electronic Structure Calculations

At the atomic scale, Density Functional Theory (DFT) serves as a cornerstone for computational materials science. DFT enables the calculation of electronic structures and properties of materials from first principles, providing key parameters such as formation energy, band gap, and density of states [14]. In the context of superconducting materials discovery, for instance, DFT calculations can help identify compounds with electronic structures conducive to Cooper pair formation, even if the exact mechanism of high-temperature superconductivity remains elusive.

Mesoscale and Phenomenological Models

For properties emergent at larger scales, mesoscale models are indispensable. A prime example is the Time-Dependent Ginzburg-Landau (TDGL) theory for shape memory alloys. This phase-field model captures the underlying physics of martensitic transformations, simulating microstructure evolution and resulting stress-strain behavior under applied loads [15]. The model employs symmetry-adapted strain components (e₂, e₃) as order parameters, with the total system energy described by a Landau polynomial expansion. Such models can simulate the hysteretic behavior of materials, with the enclosed area of the stress-strain loop quantifying energy dissipation—a key property for applications requiring low hysteresis [15].
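The dissipation measure mentioned above, the area enclosed by a stress-strain loop, can be computed directly from sampled loop points. The sketch below uses the shoelace formula for the area of a closed polygon. It is an illustration only: it assumes the points trace one full loading and unloading cycle in order, and the rectangular toy loop and its units (strain dimensionless, stress in MPa, so the area is in MJ/m³) are invented for the example rather than taken from [15].

```python
import numpy as np

def loop_area(strain, stress):
    """Area enclosed by an ordered, closed stress-strain loop (shoelace formula)."""
    x = np.asarray(strain, dtype=float)
    y = np.asarray(stress, dtype=float)
    return 0.5 * abs(np.dot(x, np.roll(y, -1)) - np.dot(y, np.roll(x, -1)))

# Idealized rectangular loop: strain 0 to 0.02, stress between 100 and 300 MPa.
strain = [0.00, 0.02, 0.02, 0.00]
stress = [100.0, 100.0, 300.0, 300.0]
dissipated = loop_area(strain, stress)
print(f"energy dissipated per cycle: {dissipated:.2f} MJ/m^3")
```

In a design campaign this scalar would be the objective to minimize when searching for low-hysteresis alloys.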

Core Component 2: Computational Methods and Machine Learning

Computational tools act as the engine of the closed-loop framework, translating theoretical models into specific predictions and prioritizing candidates for experimental testing.

High-Throughput Computational Screening

High-throughput computation enables the rapid virtual screening of thousands of compounds. Projects like the Materials Project and the Open Quantum Materials Database (OQMD) have created massive repositories of calculated materials properties, serving as initial search spaces for discovery campaigns [13] [12]. For example, in the search for new superconductors, these databases provided hundreds of thousands of candidate compositions that were initially filtered using machine learning models before experimental validation [13].

Machine Learning for Property Prediction

Machine learning models are trained on existing materials data to predict properties of unexplored compounds. In superconducting materials discovery, the Representation learning from Stoichiometry (RooSt) model has been successfully employed to predict critical temperature (Tc) using only chemical composition [13]. This approach is particularly valuable when crystal structure information is unavailable for candidate materials.

A critical aspect of ML-guided discovery is uncertainty quantification. The Mean Objective Cost of Uncertainty (MOCU) is an objective-based uncertainty quantification scheme that measures the deterioration in performance of a designed operator due to model uncertainty [15]. MOCU-based experimental design recommends the next experiment that maximally reduces the uncertainty impacting the materials property of interest, leading to more efficient discovery.

Active Learning and Sequential Learning

Active learning strategies enable the iterative selection of the most informative data points to improve the model. In practice, this involves selecting materials that are both predicted to have high performance (e.g., high Tc) and are sufficiently distinct from known materials in the training data [13]. This balance between exploitation (testing promising candidates) and exploration (testing candidates in underrepresented regions of materials space) is crucial for effective discovery.
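
One simple way to express this exploitation-exploration balance in code is to score each candidate by its predicted property plus a bonus for its distance from the training data. The sketch below assumes candidates are described by numeric feature vectors; the additive scoring rule, the `explore_weight` and `min_novelty` parameters, and the toy predictor are illustrative assumptions, not the selection criterion used in [13].

```python
import math


def min_distance(x, training_set):
    """Euclidean distance from x to its nearest neighbour in the training set."""
    return min(math.dist(x, t) for t in training_set)


def rank_candidates(candidates, predict, training_set,
                    explore_weight=1.0, min_novelty=0.0):
    """Rank candidate names by predicted value plus a novelty bonus.

    candidates: dict mapping name -> feature vector
    predict: callable returning the predicted property for a feature vector
    explore_weight: sets the trade-off between prediction and novelty
    min_novelty: candidates closer than this to known data are skipped
    """
    scored = []
    for name, x in candidates.items():
        novelty = min_distance(x, training_set)
        if novelty < min_novelty:
            continue  # too similar to materials already in the training data
        scored.append((predict(x) + explore_weight * novelty, name))
    return [name for _, name in sorted(scored, reverse=True)]


def toy_predict(x):
    """Hypothetical surrogate model; stands in for a trained predictor."""
    return 10.0 - (x[0] - 0.5) ** 2 - (x[1] - 2.0) ** 2


training = [(0.0, 0.0), (1.0, 0.0)]
candidates = {
    "near_known": (0.1, 0.0),
    "novel_good": (0.5, 2.0),
    "novel_poor": (3.0, 3.0),
}
ranking = rank_candidates(candidates, toy_predict, training, min_novelty=0.5)
print(ranking)
```

Here `near_known` is dropped for being too close to existing data, and the remaining candidates are ordered by the combined score.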

Table 1: Performance Comparison of Materials Discovery Approaches

| Methodology | Discovery Acceleration Factor | Key Features | Limitations |
|---|---|---|---|
| Traditional Edisonian | 1x (baseline) | Relies on intuition and serendipity | High cost, time-consuming, many dead ends |
| High-Throughput Screening | 2-5x | Systematic testing of large arrays | Still tests many unpromising candidates |
| Closed-Loop ML | 10-25x [16] | Iterative refinement with experimental feedback | Requires initial dataset, complex infrastructure |

Core Component 3: Experimental Validation and Feedback

Experimental validation serves as the ground truth for computational predictions and provides critical feedback to improve the models in the closed-loop cycle.

Synthesis of Predicted Materials

The transition from virtual prediction to tangible material requires careful synthesis planning. Key considerations include:

- Stability Prioritization: Candidates calculated to be thermodynamically stable (e.g., with an energy above the convex hull of E_hull = 0.00 eV/atom) or nearly stable (E_hull < 0.05 eV/atom) are prioritized to increase synthesis likelihood [13].

- Metallicity Consideration: For superconductivity searches, metals and easily-doped materials are favored, as large band gap insulators are generally incapable of superconductivity without doping [13].

- Compositional Exploration: Due to sensitivity to disorder and lattice parameters, researchers often explore several compositions near each prediction [13].
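
The first two considerations above amount to a filter over a candidate table. A minimal sketch follows, assuming each candidate carries an energy above hull, a band gap, and a predicted Tc. The 0.05 eV/atom cutoff is the one quoted in the text; the `Candidate` class, the band-gap cutoff, and the example entries are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    formula: str
    e_above_hull: float  # eV/atom
    band_gap: float      # eV
    predicted_tc: float  # K


def shortlist(candidates, max_e_above_hull=0.05, max_band_gap=0.0):
    """Keep stable or nearly stable candidates with a small enough band gap,
    ordered by predicted Tc (highest first).

    max_band_gap=0.0 keeps metals only; a looser value (an assumption to be
    tuned) would also admit small-gap materials that may be easy to dope.
    """
    kept = [
        c for c in candidates
        if c.e_above_hull < max_e_above_hull and c.band_gap <= max_band_gap
    ]
    return sorted(kept, key=lambda c: c.predicted_tc, reverse=True)


# Placeholder entries, not real compounds or real predictions.
pool = [
    Candidate("X1", 0.00, 0.0, 8.0),
    Candidate("X2", 0.03, 0.0, 12.0),
    Candidate("X3", 0.12, 0.0, 20.0),  # too far above the hull
    Candidate("X4", 0.00, 3.1, 15.0),  # large-gap insulator
]
selected = shortlist(pool)
print([c.formula for c in selected])
```

The third consideration, exploring compositions near each prediction, would then be applied to the shortlisted entries at the synthesis stage.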

Characterization and Property Verification

Robust characterization is essential to confirm successful synthesis and measure target properties. Standard protocols include:

- Structural Characterization: Powder X-ray diffraction (XRD) is used to verify phase purity and crystal structure of synthesized materials [13].

- Superconductivity Testing: Temperature-dependent AC magnetic susceptibility measurements identify superconductors through their perfect diamagnetism below Tc [13].

- Property-Processing Relationships: As material properties are highly sensitive to processing conditions, systematic investigation of synthesis parameters is crucial. For instance, A3B compounds (including A15 family superconductors) exhibit significant variations in properties with slight compositional changes [13].

Case Studies in Integrated Materials Discovery

Superconducting Materials Discovery

In a landmark demonstration of the closed-loop framework, researchers discovered a new superconductor in the Zr-In-Ni system and re-discovered five others unknown to the initial training data through four iterative cycles [13]. The methodology employed:

- Initial Training: An ensemble of RooSt models was trained on the SuperCon database containing known superconductors.

- Prediction and Filtering: The models predicted Tc for compounds in the MP and OQMD databases, followed by filtering based on stability and distance from known superconductors.

- Experimental Validation: Selected candidates were synthesized and tested for superconductivity.

- Model Refinement: Both positive and negative results were incorporated into the training set, refining subsequent predictions.

This approach more than doubled the success rate for superconductor discovery compared to conventional methods [13].

Sustainable Cement Design

The closed-loop framework has also been successfully applied to sustainable materials design. Researchers used machine learning to accelerate the development of green cements incorporating algal biomatter [17]. The process involved:

- Multi-Objective Optimization: Simultaneously minimizing global warming potential (GWP) while maintaining compressive strength requirements.

- Accelerated Testing: Implementing early-stopping criteria to rapidly screen promising formulations.

- Life-Cycle Assessment Integration: Incorporating LCA directly into the design objective.

This approach achieved a cement formulation with a 21% reduction in GWP while meeting strength requirements, using only 28 days of experiment time and attaining 93% of the achievable improvement [17].
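
The constrained trade-off described here, lowering GWP while holding a strength requirement, can be sketched as a Pareto filter followed by a feasibility check. The code below is a generic illustration with invented mixes and units; it is not the optimization procedure or the data of [17].

```python
def pareto_front(points):
    """Names of non-dominated points when minimizing GWP and maximizing strength.

    points: list of (name, gwp, strength) tuples
    """
    front = []
    for name, gwp, strength in points:
        dominated = any(
            g <= gwp and s >= strength and (g < gwp or s > strength)
            for _, g, s in points
        )
        if not dominated:
            front.append(name)
    return front


def best_feasible(points, min_strength):
    """Lowest-GWP point that still meets the strength requirement, or None."""
    feasible = [p for p in points if p[2] >= min_strength]
    return min(feasible, key=lambda p: p[1]) if feasible else None


# Hypothetical formulations: (name, relative GWP, compressive strength in MPa).
mixes = [
    ("baseline", 100.0, 40.0),
    ("mix_a", 85.0, 42.0),
    ("mix_b", 80.0, 36.0),
    ("mix_c", 70.0, 28.0),
    ("mix_d", 90.0, 38.0),
]
front = pareto_front(mixes)
best = best_feasible(mixes, min_strength=35.0)
reduction = (mixes[0][1] - best[1]) / mixes[0][1]
print(front, best[0], f"{reduction:.0%}")
```

Treating strength as a constraint rather than a second objective reduces the problem to a single ranking, which is often easier to act on when the requirement is fixed by a standard.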

Implementing a closed-loop materials discovery pipeline requires leveraging specific databases, software tools, and experimental resources. The following table details key components of the research toolkit:

Table 2: Essential Resources for Closed-Loop Materials Discovery

| Resource Category | Specific Tools/Examples | Function and Application |
|---|---|---|
| Materials Databases | Materials Project (MP) [13] [12], Open Quantum Materials Database (OQMD) [13], SuperCon [13] | Provide initial training data and candidate search spaces for virtual screening |
| Computational Resources | National Energy Research Scientific Computing Center (NERSC) [12], High-performance computing clusters [12] | Enable high-throughput calculations and machine learning model training |
| Machine Learning Frameworks | Representation learning from Stoichiometry (RooSt) [13], Gaussian process models [17] | Predict material properties and quantify uncertainty for experimental design |
| Experimental Characterization | Powder X-ray diffraction (XRD) [13], Temperature-dependent AC magnetic susceptibility [13] | Verify synthesis success and measure functional properties |
| Experimental Design Methods | Mean Objective Cost of Uncertainty (MOCU) [15], Active learning [13] | Identify the most informative experiments to perform next |

Implementation Protocols

Protocol 1: Machine Learning-Guided Discovery of Functional Materials

This protocol outlines the general methodology for implementing a closed-loop discovery campaign for functional materials such as superconductors.

Data Curation and Preprocessing

Model Training and Prediction

- Train ensemble of machine learning models (e.g., RooSt) on known materials data

- Apply trained models to candidate databases (MP, OQMD) to predict target properties

- Filter predictions based on stability, synthesizability, and dissimilarity to training data

Candidate Selection and Experimental Design

- Prioritize candidates using MOCU or other criteria that balance exploitation and exploration

- Consider practical constraints: ease of synthesis, safety, and characterization feasibility

Experimental Validation and Feedback

- Synthesize prioritized candidates using standard solid-state reactions

- Characterize structural properties (XRD) and functional properties (e.g., superconductivity)

- Incorporate both successful and failed predictions into training data

- Retrain models and iterate the process
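
The steps of Protocol 1 can be sketched as a loop in which a surrogate ranks untested candidates, a batch is "measured", and every result is fed back before the next cycle. Everything in the sketch below is a stand-in: `measure` replaces synthesis and characterization with a made-up function, the inverse-distance surrogate replaces a trained model ensemble, and the one-dimensional candidate grid replaces a real materials database.

```python
import math


def measure(x):
    """Stand-in for synthesis plus characterization (hypothetical ground truth)."""
    return math.exp(-((x - 0.7) ** 2) / 0.02)


def predict(x, data):
    """Toy surrogate: inverse-distance-weighted average of measured values."""
    weights = [1.0 / (abs(x - xi) + 1e-6) for xi, _ in data]
    return sum(w * yi for w, (_, yi) in zip(weights, data)) / sum(weights)


def novelty(x, data):
    """Distance from x to the nearest point that has already been tested."""
    return min(abs(x - xi) for xi, _ in data)


def run_campaign(candidates, seed_points, n_cycles=4, batch_size=3,
                 explore_weight=0.5):
    """Run a fixed number of predict-select-measure-update cycles."""
    data = [(x, measure(x)) for x in seed_points]
    untested = [x for x in candidates if x not in seed_points]
    for _ in range(n_cycles):
        # Rank untested candidates by predicted value plus a novelty bonus.
        ranked = sorted(
            untested,
            key=lambda x: predict(x, data) + explore_weight * novelty(x, data),
            reverse=True,
        )
        for x in ranked[:batch_size]:
            data.append((x, measure(x)))  # keep successes and failures alike
            untested.remove(x)
        # The surrogate reads from `data`, so it is "retrained" implicitly.
    return data


candidates = [i / 20 for i in range(21)]
results = run_campaign(candidates, seed_points=[0.0, 1.0])
best_x, best_y = max(results, key=lambda point: point[1])
print(len(results), best_x, round(best_y, 3))
```

In a real campaign the filtering and practical-constraint checks from the protocol would sit between ranking and batch selection, and retraining would be an explicit step.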

Protocol 2: MOCU-Based Experimental Design for Shape Memory Alloys

This specific protocol details the implementation of MOCU for designing shape memory alloys with minimal energy dissipation [15].

Define Uncertainty Class

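- Let model parameters be $\theta = [\theta_1, \theta_2, \ldots, \theta_k]$ with prior distribution $f(\theta)$

- For SMAs, parameters may include dopant identity and concentration

Compute Robust Material

- Identify the material with minimal expected dissipation over the uncertainty class: $\zeta_{\mathrm{robust}} = \arg\min_{\zeta} E_{\theta}[J(\zeta, \theta)]$

- Compute its expected cost: $E_{\theta}[J(\zeta_{\mathrm{robust}}, \theta)]$

Calculate MOCU

- $\mathrm{MOCU} = E_{\theta}[J(\zeta_{\mathrm{robust}}, \theta) - J(\zeta_{\theta}, \theta)]$

- Here $\zeta_{\theta} = \arg\min_{\zeta} J(\zeta, \theta)$ is the ideal material if the true parameters $\theta$ were known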

Select Optimal Experiment

- For each candidate experiment ξ, compute posterior expected MOCU for each possible outcome

- Select experiment ξ that minimizes the expected MOCU remaining after measurement

This methodology has been shown to identify optimal dopants and concentrations for SMAs with significantly higher efficiency than random selection or pure exploitation strategies [15].
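
For a finite uncertainty class, the protocol reduces to a few sums. The sketch below takes MOCU to be the expected cost gap between the robust material (best on average over the prior) and the material that would be ideal for each parameter value, then picks the experiment with the smallest expected MOCU after a Bayesian update. The cost table, the prior, and the two experiments are invented for illustration; [15] works with a TDGL-based cost rather than a lookup table.

```python
def mocu(prior, cost):
    """MOCU for a discrete uncertainty class.

    prior: list of probabilities over parameter values theta_k
    cost: cost[m][k] = J(material m, theta_k)
    """
    expected = [sum(p * row[k] for k, p in enumerate(prior)) for row in cost]
    robust_cost = min(expected)  # expected cost of the robust material
    ideal_cost = sum(
        p * min(row[k] for row in cost) for k, p in enumerate(prior)
    )  # expected cost if theta were known before choosing the material
    return robust_cost - ideal_cost


def expected_remaining_mocu(prior, cost, likelihood):
    """Expected MOCU after one experiment.

    likelihood[o][k] = P(outcome o | theta_k) for that experiment.
    """
    total = 0.0
    for row in likelihood:
        p_outcome = sum(l * p for l, p in zip(row, prior))
        if p_outcome == 0.0:
            continue  # this outcome cannot occur under the prior
        posterior = [l * p / p_outcome for l, p in zip(row, prior)]
        total += p_outcome * mocu(posterior, cost)
    return total


def select_experiment(prior, cost, experiments):
    """Name of the experiment that minimizes the expected remaining MOCU."""
    return min(
        experiments,
        key=lambda name: expected_remaining_mocu(prior, cost, experiments[name]),
    )


# Hypothetical problem: two parameter values, three candidate materials.
prior = [0.5, 0.5]
cost = [[1.0, 3.0], [3.0, 1.0], [1.8, 1.8]]
experiments = {
    "informative": [[0.9, 0.1], [0.1, 0.9]],
    "uninformative": [[0.5, 0.5], [0.5, 0.5]],
}
print(mocu(prior, cost))
print(select_experiment(prior, cost, experiments))
```

The uninformative experiment leaves the prior unchanged and so leaves MOCU unchanged, while the informative one shifts the posterior enough to change which material is robust; the criterion rewards exactly that kind of objective-relevant information.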

The integration of theory, computation, and experimentation within a closed-loop framework represents a paradigm shift in materials science. By combining physical models with machine learning and iterative experimental feedback, researchers can systematically explore vast materials spaces with unprecedented efficiency, achieving acceleration factors of 10-25x compared to traditional approaches [16]. This guide has detailed the core components, methodologies, and practical implementations of this approach, providing researchers with the technical foundation to advance materials discovery for applications ranging from superconductivity to sustainable construction. As computational power grows and algorithms become more sophisticated, this integrated framework promises to dramatically accelerate the design of next-generation materials addressing critical global challenges.

The Role of the Materials Genome Initiative (MGI) in Driving This Shift

The Materials Genome Initiative (MGI), launched in 2011, represents a fundamental transformation in the philosophy of materials research and development (R&D). Established as a multi-agency initiative by the White House, the MGI was designed to deploy advanced materials twice as fast and at a fraction of the cost compared to traditional methods, thereby enhancing U.S. global competitiveness [18] [19] [20]. This initiative emerged in response to the recognition that discovering new materials and manufacturing commercial products from them typically required a long, iterative, and expensive developmental cycle that could span several decades [18]. The MGI introduced a new paradigm that strategically integrates computation, experimental tools, and digital data into a cohesive Materials Innovation Infrastructure (MII) to accelerate every stage of the materials development continuum [18] [20].

The core thesis of the MGI aligns precisely with the concept of "closing the loop" in computational materials design. It promotes a research philosophy that replaces traditional linear development with an integrated, iterative process where computation guides experiment, experimental observation informs theory, and data flows seamlessly between all stages [18] [21] [22]. This closed-loop approach enables researchers to navigate the complex landscape of material composition, structure, and properties with unprecedented efficiency, significantly compressing the timeline from discovery to deployment.

The Conceptual Framework: Closing the Materials Development Loop

The Materials Innovation Infrastructure (MII)

The MGI introduced the foundational concept of the Materials Innovation Infrastructure (MII), a framework that combines experimental tools, digital data, and computational modeling with artificial intelligence and machine learning (AI/ML) [18]. This infrastructure enables researchers to predict a material's composition and processing requirements to achieve desired physical properties for specific applications. The MII serves as the technological backbone that makes closed-loop materials design possible, providing the tools, data, and computational power necessary for rapid iteration and optimization.

From Linear Progression to Integrated Iteration

A fundamental conceptual shift promoted by the MGI involves transforming the traditional Materials Development Continuum (MDC). Where the MDC previously represented a multi-stage, linear process from discovery through development, optimization, and deployment, the MGI paradigm promotes integration and iteration across all stages [18]. This re-envisioning enables seamless information flow and feedback loops that greatly accelerate materials deployment while reducing costs. The paradigm emphasizes that to accelerate materials discovery, design, manufacture, and deployment, computation, data, and experiment must be brought together in a tightly integrated manner [20].

Figure 1: The Closed-Loop Materials Design Workflow Enabled by MGI

Core Methodologies: Implementing the Closed-Loop Approach

The Integrated Computational-Experimental Workflow

The implementation of closed-loop materials design relies on specific methodological frameworks that enable tight integration between computational prediction and experimental validation. The core workflow typically follows these stages:

- Computational Guidance: Theoretical models and AI-driven simulations propose promising material compositions or structures with desired properties.

- Robotic Synthesis: Advanced robotics and automated systems physically create the proposed materials.

- High-Throughput Characterization: Automated characterization tools rapidly measure key properties of the synthesized materials.

- Data Integration and Model Refinement: Experimental results feed back into computational models to improve their predictive accuracy.

- Iterative Optimization: The cycle repeats, with each iteration refining the material design toward the target specifications.

This methodology has been successfully applied across diverse material systems, from metallic alloys for aerospace applications to organic molecules for OLED displays and advanced ceramics for high-temperature actuators [23] [24] [22].

Self-Driving Laboratories (SDLs)

A pinnacle achievement of the MGI approach is the development of Self-Driving Laboratories (SDLs), which fully automate the closed-loop materials discovery process [18]. SDLs represent the most complete implementation of the MGI philosophy, integrating AI, autonomous experimentation (AE), and robotics in a closed-loop manner that can design experiments, synthesize materials, characterize functional properties, and iteratively refine models without human intervention [18]. This capability enables thousands of experiments in rapid succession, converging on optimal solutions far more efficiently than traditional approaches.

Table 1: Key Computational Methods in Closed-Loop Materials Design

| Method | Length Scale | Key Applications | Role in Closed-Loop Design |
|---|---|---|---|
| Density Functional Theory (DFT) | Electronic/Atomic (Ångström) | Prediction of fundamental material properties from quantum mechanics [25] [26] | Provides foundational data for AI/ML models; predicts ground-state properties [25] |
| Molecular Dynamics | Nano/Microscale | Study of larger-scale phenomena, assembly of nanoparticles [25] | Bridges quantum and continuum scales; simulates thermodynamic forces [27] |
| Multiscale Modeling | Multiple Scales | Determination of engineering-scale properties without sacrificing atomic-scale accuracy [25] | Enables prediction of directly applicable engineering properties [25] [27] |
| Machine Learning Potentials | Atomic/Continuum | Development of surrogate models with quantum accuracy at lower computational cost [24] | Accelerates screening of candidate materials; replaces physics-based models [18] |
| CALPHAD | Micro/Macroscale | Thermodynamic modeling of metallic alloy systems [20] | Provides industrial-ready thermodynamic data for alloy design [20] |

Research Reagent Solutions for Closed-Loop Materials Design

Implementing closed-loop materials design requires specific computational and experimental "reagents" – essential tools and platforms that enable the iterative design process.

Table 2: Essential Research Reagent Solutions for Closed-Loop Materials Design

| Tool Category | Specific Solutions | Function in Workflow |
|---|---|---|
| Computational Frameworks | Density Functional Theory (DFT) codes (Quantum ESPRESSO, VASP) [26] | Predict fundamental electronic, optical, and transport properties from quantum mechanics [25] [26] |
| AI/ML Platforms | Generative AI multi-agent frameworks [23] | Enable closed-loop design of advanced materials like copper-based alloys for extreme environments [23] |
| Autonomous Experimentation | Self-driving laboratories (SDLs) with robotic synthesis [18] | Integrate AI, autonomous experimentation, and robotics for continuous operation without human intervention [18] |
| Data Infrastructure | Centralized materials databases with standardized formats [19] [22] | Enable data sharing and collaboration; provide training data for AI/ML models [19] |
| Characterization Tools | Autonomous electron microscopy, scanning probe microscopy [18] | Provide high-throughput structural and property characterization with minimal human operation [18] |

Enabling Infrastructure and Tools

The Digital Backbone: Data Standards and Sharing

A critical enabler of the MGI's closed-loop approach is the development of robust data infrastructure [19]. The initiative has focused significant effort on establishing mature, consistent data libraries and repositories that adhere to the FAIR principles (Findable, Accessible, Interoperable, and Reusable) [18] [22]. This digital backbone allows researchers to build upon previous work, compare results across different systems and institutions, and train accurate machine learning models on comprehensive datasets. The MGI's 2021 Strategic Plan specifically identifies "Harnessing the power of materials data" as one of its three primary goals, recognizing that high-quality, accessible data is essential for accelerating materials innovation [19].

Key Federal Programs and Initiatives

Several flagship federal programs provide the institutional and funding framework that supports the MGI's mission:

DMREF (Designing Materials to Revolutionize and Engineer our Future): The National Science Foundation's principal program responsive to the MGI, DMREF fosters the design, discovery, and development of materials by harnessing data and computational tools in concert with experiment and theory [21]. The program requires collaborative, interdisciplinary teams that engage in a "closed-loop" research process where theory guides computation, computation guides experiments, and experiments inform theory [21].

Materials Innovation Platforms (MIP): NSF-developed ecosystems that include in-house research scientists, external users, and other contributors who share tools, codes, samples, data, and knowledge to strengthen collaborations and accelerate materials development [20].

NIST Materials Genome Program: A broad effort to support the MGI through intramural research and targeted grants, with core focus areas on data and model dissemination, data and model quality, and data-driven materials R&D [20].

Energy Materials Network: A DOE-established community of practice focused on advancing critical energy technologies through state-of-the-art materials R&D that integrates all phases from discovery to scale-up and qualification [20].

Table 3: Major MGI-Aligned Programs and Their Contributions to Closed-Loop Design

| Program | Lead Agency | Key Focus Areas | Role in Advancing Closed-Loop Design |
|---|---|---|---|
| DMREF | NSF (with multiple federal partners) [21] | All materials research topics [21] | Principal program requiring integrated teams and closed-loop research [21] |
| Materials Innovation Platforms (MIP) | NSF | Specific domains: semiconductors, biomaterials [20] | Creates self-sustaining ecosystems for materials discovery with shared tools and data [20] |
| NIST Materials Genome Program | NIST | Data quality, dissemination, and data-driven R&D [20] | Provides critical data infrastructure and standards for the materials community [20] |
| Energy Materials Network | DOE | Critical energy technologies [20] | Accelerates energy materials development through integrated R&D from discovery to qualification [20] |
| AFRL Autonomous Research Facilities | DOD | Defense-related materials and manufacturing [20] | Develops autonomous material characterization, fabrication, and data repositories [20] |

Case Studies and Applications

Accelerated Development of Structural Alloys

The MGI approach has demonstrated remarkable success in accelerating the development of advanced structural alloys for demanding applications. One notable example involves the integrated design of materials for Rotating Detonation Engines (RDEs), an emerging propulsion technology that can deliver satellites to precise orbits with less fuel consumption and reduced emissions [23]. Through a DMREF-funded project, researchers are developing a generative AI multi-agent framework that enables closed-loop design of copper-based alloys for extreme dynamic environments [23]. This approach integrates experimental research, simulations, and AI tools to efficiently improve advanced structural alloys crucial for next-generation propulsion systems.

Another DMREF project, "Structural Alloys for Fatigue Endurance (SAFE)," addresses the critical challenge of understanding fatigue behavior in additively manufactured alloys [23]. The research team is creating an integrated database and knowledge map that correlates material processing parameters, microstructure, mechanical characterization, and computational experiments to understand and predict fatigue behavior in Ti-6Al-4V, an alloy widely used in aerospace applications [23]. This data-driven approach exemplifies how the MGI paradigm can address complex, multi-scale materials challenges that have traditionally limited material performance and reliability.

Discovery of Functional Materials

The closed-loop approach has proven particularly powerful for discovering new functional materials with tailored electronic and optical properties. In one groundbreaking example, researchers applied quantum mechanical simulations to design, in silico, a room-temperature polar metal exhibiting unexpected stability, and then successfully synthesized this material using high-precision pulsed laser deposition [22]. This theory-guided experimental effort revealed a new member of an exceedingly rare class of materials that could enable technologies requiring unusual ferroelectric behavior.

In the domain of organic electronics, researchers have utilized high-throughput virtual screening combining theory, quantum chemistry, machine learning, cheminformatics, and experimental characterization to explore a space of 1.6 million organic light-emitting diode (OLED) molecules [22]. This tightly integrated approach resulted in a set of experimentally synthesized molecules with state-of-the-art external quantum efficiencies, demonstrating how the MGI paradigm can efficiently navigate vast chemical spaces to identify optimal materials for specific applications.

Challenges and Future Directions

Persistent Challenges in Implementation

Despite significant progress, substantial challenges remain in fully realizing the goals of the MGI. The greatest successes to date have occurred in areas where both the theories of the materials and the software to translate those theories into practical engineering decisions are most developed, such as metallic systems using the CALPHAD modeling approach [20]. However, significant barriers exist when research must "stray far from current systems" or when "physics-informed models are unavailable or not mature enough for immediate engineering use," as is often the case in polymer systems [20].

Other impediments include the extensive domain knowledge required for implementation, the need for in-house modeling capacity, and the associated costs, which may make it difficult for small enterprises with limited expertise and resources to undertake significant integrated computational materials engineering campaigns [20]. Additionally, cultural and incentive-related challenges persist, particularly around data and software sharing, as "there is little academic or industrial reward for publishing data and software, despite broad recognition of the value of data sharing in principle" [20].

The Path Forward: AI and Autonomous Experimentation

Future advancements in closed-loop materials design will be increasingly driven by more sophisticated AI approaches and expanded capabilities in autonomous experimentation. The MGI community is focusing on developing next-generation physics-based models enabled by advances in computing, creating surrogate models that provide AI-driven approximations to physics-based models (resulting in materials digital twins), and advancing autonomous experimentation tools and self-driving laboratories across different functional areas [18]. These developments promise to further reduce the need for human intervention in the design loop, accelerating the pace of materials discovery while potentially discovering novel materials and phenomena that might not be intuitively predicted by human researchers.

The MGI continues to evolve its strategic focus, with recent emphasis on unifying the materials innovation infrastructure, harnessing the power of materials data, and educating, training, and connecting the materials R&D workforce [19]. These priorities acknowledge that technological advancements must be accompanied by corresponding developments in infrastructure and human capital to fully realize the potential of closed-loop materials design. As these integrated approaches mature, they promise to transform how advanced materials are discovered, designed, developed, and fabricated into devices and products, with profound implications for national security, economic security, and human well-being [18].

The field of computational materials design is undergoing a fundamental paradigm shift, moving from traditional, linear research methods to integrated, autonomous closed-loop systems. This new paradigm, often called "closed-loop computational materials design," represents a transformative approach where artificial intelligence (AI), robust data infrastructures, and high-throughput automation converge to create continuous, self-optimizing research systems [7]. These systems are designed to accelerate the discovery and development of novel materials—a process historically hampered by lengthy, resource-intensive trial-and-error methods—thereby addressing critical needs in decarbonization, healthcare, and advanced technology [6] [28].

The core of this shift lies in creating a tightly coupled workflow where computational models propose new material candidates, automated systems synthesize and test them, and AI algorithms analyze the results to propose the next best experiment. This creates a virtuous cycle of learning and discovery, drastically compressing development timelines. This whitepaper provides an in-depth technical examination of the three key drivers—AI advancements, data infrastructures, and high-throughput automation—that enable this closed-loop framework, offering detailed methodologies and quantitative insights for researchers, scientists, and drug development professionals.

Core Driver 1: AI and Machine Learning Algorithms

Artificial intelligence serves as the computational brain of the closed-loop system, enabling rapid prediction, generation, and optimization of new materials. The evolution has progressed from forward-screening methods to sophisticated inverse design and generative models.

From Forward Screening to Inverse Design

Traditional forward screening follows a "generate-and-test" paradigm, computationally creating or sourcing candidate materials from databases and then filtering them based on target properties using simulations or machine learning surrogates [29]. Frameworks like AFLOW and Atomate automate high-throughput density functional theory (DFT) calculations for this purpose [29]. While effective, this approach struggles with the astronomically large materials design space, making it inefficient for identifying optimal candidates [29].

In contrast, inverse design inverts this workflow, starting from the desired properties and asking AI to generate candidate materials that meet those specifications [29]. This is a more direct path to solving application-specific challenges. Early inverse design employed classical optimization algorithms, but these often lacked the flexibility and power for vast, complex search spaces.

Table 1: Evolution of Key AI/ML Methods in Materials Design

| Method Category | Example Algorithms | Original Year | Essence & Best Use Cases |
|---|---|---|---|
| Evolutionary Algorithms | Genetic Algorithm (GA), Particle Swarm Optimization (PSO) | 1970s-1990s | Intuitive, robust optimization inspired by natural selection/swarm behavior. Effective for continuous and multi-modal problems. [29] [30] |
| Adaptive Learning | Bayesian Optimization (BO), Deep Reinforcement Learning (RL) | 1978, 2013 | Data-efficient global optimization of black-box functions (BO) and learning complex policies from raw data (RL). [31] [29] |
| Deep Generative Models | Variational Autoencoder (VAE), Generative Adversarial Network (GAN), Diffusion Models | 2013-2020 | Direct generation of novel molecular structures. Powerful for inverse design by learning complex structure-property relationships. [32] [29] |
| Generalist Models | Large Language Models (LLMs), Graph Neural Networks (GNNs) | 2017 onward | LLMs reason across diverse data types (text, figures); GNNs excel at representing atomistic systems for property prediction. [31] [32] [29] |

Advanced AI Techniques in Practice

Bayesian Optimization (BO) is a cornerstone of active learning in closed-loop systems. It functions as a sophisticated experimental recommender system, using past results to model the landscape of material performance and intelligently propose the next experiment to find the global optimum [33]. At Boston University, the MAMA BEAR system used BO to conduct over 25,000 experiments autonomously, discovering a record-breaking energy-absorbing material [31].
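
To make the "experimental recommender" idea concrete, the sketch below implements a generic Bayesian optimization step: a zero-mean Gaussian process with an RBF kernel models past results, and an upper-confidence-bound rule proposes the next candidate. This is a textbook construction in plain Python, not the MAMA BEAR implementation, whose internals are not described here; the kernel length scale, noise level, `kappa`, and the toy objective are all assumptions.

```python
import math


def rbf(a, b, length_scale=0.2):
    """Squared-exponential kernel between two scalar inputs."""
    return math.exp(-0.5 * ((a - b) / length_scale) ** 2)


def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x


def gp_posterior(x_new, xs, ys, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    gram = [[rbf(a, b) + (noise if i == j else 0.0)
             for j, b in enumerate(xs)] for i, a in enumerate(xs)]
    k_star = [rbf(x_new, a) for a in xs]
    mean = sum(k * a for k, a in zip(k_star, solve(gram, ys)))
    reduction = sum(k * v for k, v in zip(k_star, solve(gram, k_star)))
    variance = max(rbf(x_new, x_new) - reduction, 0.0)  # clamp round-off
    return mean, math.sqrt(variance)


def propose_next(candidates, xs, ys, kappa=2.0):
    """Untested candidate with the highest upper confidence bound."""
    def ucb(x):
        mean, std = gp_posterior(x, xs, ys)
        return mean + kappa * std
    return max((x for x in candidates if x not in xs), key=ucb)


def objective(x):
    """Hypothetical experiment outcome; stands in for a real measurement."""
    return math.exp(-((x - 0.3) ** 2) / 0.02)


candidates = [i / 20 for i in range(21)]
xs = [0.0, 1.0]
ys = [objective(x) for x in xs]
for _ in range(8):  # eight sequential "experiments"
    x_next = propose_next(candidates, xs, ys)
    xs.append(x_next)
    ys.append(objective(x_next))
print(xs[ys.index(max(ys))], round(max(ys), 3))
```

A larger `kappa` favors exploring uncertain regions, while a smaller one favors refining around the current best result; production systems typically rely on a tested GP library rather than a hand-written solver.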

Generative Models are revolutionizing inverse design. For instance, Diffusion Models and Physics-Informed VAEs can generate novel, chemically realistic crystal structures by embedding fundamental principles like crystallographic symmetry and periodicity directly into their learning process [32] [29]. Cornell researchers have developed such models to ensure generated materials are scientifically meaningful, moving beyond simple trial-and-error [32].

Knowledge Distillation is another key technique, where large, complex models are compressed into smaller, faster versions. Cornell researchers have shown these distilled models can run more efficiently and sometimes even outperform their larger counterparts, making them ideal for high-throughput molecular screening on limited computational hardware [32].

Core Driver 2: Data Infrastructures and Management

The performance of AI models in materials science is fundamentally constrained by the availability of high-quality, large-scale data. Robust data infrastructure is therefore not just supportive but essential for closing the loop.

FAIR Data Principles and Public Databases

Adhering to the FAIR (Findable, Accessible, Interoperable, and Reusable) data principles is a critical best practice. The Boston University self-driving lab project, for example, made its dataset publicly available through BU Libraries, embracing this standard to enhance collaborative science [31]. Key public databases include:

- The High-Throughput Experimental Materials Database (HTEM-DB): Hosted by NREL, this open database contains over 100,000 unique compositions and processing conditions, featuring web-based search tools and an API for machine learning applications [34].

- Other Computational Databases: Large-scale, computationally generated datasets, such as those from the Materials Project and Google DeepMind's GNoME (which predicted 2.2 million new crystal structures), provide an invaluable starting point for training AI models and initial screening [6] [29] [28].

End-to-End Data Management Platforms

Integrated software platforms are necessary to manage the data deluge from high-throughput experiments. NREL's COMBIgor is a prominent open-source software package designed specifically for loading, storing, processing, and visualizing combinatorial materials science data [34]. Such infrastructures implement automated data harvesting, processing, and alignment from deposition and characterization instruments, creating a centralized data warehouse that feeds directly into AI models and visualization tools [34].

Core Driver 3: High-Throughput and Automated Experimentation

Automation provides the physical hands of the closed-loop system, translating digital designs into tangible samples and data points at a scale and speed impossible for human researchers alone.

The Rise of Self-Driving Labs (SDLs)

Self-Driving Laboratories (SDLs) are integrated research systems that combine robotics, AI, and autonomous experimentation to run and analyze thousands of experiments in real time [31]. They represent the fullest expression of high-throughput automation to date. A leading vision is to evolve SDLs from isolated, lab-centric tools into shared, community-driven experimental platforms, akin to cloud computing resources for the research community [31]. Such sharing would democratize access to cutting-edge experimentation capabilities.

Integrated Workflows in Action: The MIT CRESt System

The CRESt (Copilot for Real-world Experimental Scientists) platform developed at MIT is a state-of-the-art example of a fully integrated closed-loop system [33]. Its workflow, which led to the discovery of a record-performance fuel cell catalyst, exemplifies the synergy between the three core drivers.

Diagram 1: Closed Loop Workflow of MIT CRESt System

The Scientist's Toolkit: Research Reagent Solutions for an SDL

Table 2: Essential Components of a Modern Self-Driving Lab for Materials Science

| Item / Reagent Solution | Function in the Workflow |
|---|---|
| Liquid-Handling Robot | Automates the precise dispensing and mixing of precursor solutions to create material samples with high reproducibility. [33] |
| High-Throughput Synthesis System (e.g., Carbothermal Shock) | Enables rapid synthesis of vast material libraries (e.g., 900+ chemistries) by quickly processing samples under controlled conditions. [33] |
| Automated Electrochemical Workstation | Performs high-throughput functional testing (e.g., 3,500 tests) of material properties, such as catalytic activity for fuel cells. [33] |
| Automated Characterization Equipment (e.g., SEM) | Provides rapid structural and compositional analysis of synthesized materials through automated electron microscopy and other techniques. [33] |
| Computer Vision System | Monitors experiments via cameras and Vision Language Models to detect issues (e.g., sample misplacement) and suggest corrections, improving reproducibility. [33] |
| Cloud-Based Data Platform | Centralizes and manages the vast quantities of generated data, making it FAIR and accessible for AI analysis and modeling. [34] [35] |

Quantitative Acceleration and Performance Metrics

The ultimate validation of the closed-loop framework lies in its measurable acceleration of the R&D process. Recent studies have rigorously quantified this speedup.

Table 3: Measured Acceleration from Closed-Loop Frameworks in Materials Discovery

| Source of Speedup | Description of Acceleration | Quantitative Impact |
|---|---|---|
| Task Automation | Removal of manual intervention in routine processes. | Contributes to an overall ~10x speedup (over 90% reduction in time) when combined with runtime improvements and sequential learning. [7] |
| Calculation Runtime Improvements | Use of faster simulations and ML surrogate models. | Included in the combined ~10x speedup reported for task automation, runtime improvements, and sequential learning. [7] |
| Sequential Learning (e.g., BO) | AI reduces the number of experiments needed to find an optimum. | Included in the combined ~10x speedup reported for task automation, runtime improvements, and sequential learning. [7] |
| Surrogatization | Replacing expensive, high-fidelity simulations with instant ML predictions. | Adds to the above, achieving a total ~15-20x speedup (over 95% reduction in design time). [7] |
| End-to-End Workflow (e.g., CRESt) | Integrated discovery of a novel catalyst from a vast search space. | Explored 900+ chemistries and conducted 3,500 tests in three months, achieving a 9.3-fold improvement in power density per dollar. [33] |
| Pharmaceutical Discovery | Compression of early-stage discovery and preclinical timelines. | AI platforms report design cycles ~70% faster and requiring 10x fewer synthesized compounds than industry norms. [35] |
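The speedup factors and percentage reductions quoted in Table 3 are two views of the same quantity: a speedup of S means the task takes 1/S of the baseline time, so the time saved is 1 - 1/S. The short sketch below makes that conversion explicit. The function names are illustrative and do not come from the cited studies.

```python
def time_reduction(speedup):
    """Fraction of baseline time saved for a given speedup factor."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return 1.0 - 1.0 / speedup

def speedup_from_reduction(reduction):
    """Speedup factor implied by a fractional time reduction (0 <= r < 1)."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return 1.0 / (1.0 - reduction)

if __name__ == "__main__":
    for s in (10, 15, 20):
        print(f"{s}x speedup -> {time_reduction(s):.1%} less time")
    # 10x speedup -> 90.0% less time
    # 15x speedup -> 93.3% less time
    # 20x speedup -> 95.0% less time
```

Read this way, a 15-20x speedup corresponds to roughly a 93-95% reduction, so the percentages in the table are best treated as rounded figures.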

The following diagram illustrates the core optimization logic that enables this acceleration, using Bayesian Optimization as a prime example.

Diagram 2: Bayesian Optimization Loop for Experiment Design

Experimental Protocols for Closed-Loop Materials Discovery

This section outlines a generalized experimental protocol based on successful implementations like the MIT CRESt system [33], providing a template researchers can adapt.

Protocol: Autonomous Discovery of a Multielement Fuel Cell Catalyst

Objective: To autonomously discover a high-performance, low-cost multielement catalyst for a direct formate fuel cell.

Primary AI Driver: Multimodal Active Learning integrating literature knowledge, experimental data, and human feedback.

Automation Core: Robotic arms, liquid handlers, and automated characterization tools.

Step-by-Step Procedure:

1. Problem Formulation & Initialization:

   - Researcher Input: Define the primary objective (e.g., "maximize power density per dollar") and specify constraints (e.g., limit precious metal content).

   - AI Setup: The system (e.g., CRESt) is initialized. It ingests relevant scientific literature and existing materials databases to build a foundational knowledge base.

2. Search Space Definition & Reduction:

   - The AI creates a high-dimensional representation of potential recipes based on its knowledge base.

   - Principal Component Analysis (PCA) is performed on this knowledge embedding to identify a reduced search space that captures most of the performance variability. This step prevents the AI from getting lost in a vast parameter space.

3. Iterative Closed-Loop Cycle:

   - a) AI Proposal: The Bayesian Optimization algorithm, operating in the reduced search space, proposes a batch of promising material recipes (chemical compositions and processing parameters).

   - b) Robotic Synthesis: A liquid-handling robot precisely dispenses precursor solutions. A carbothermal shock system or other high-throughput synthesizer rapidly processes the samples to create the target material libraries.

   - c) Automated Characterization & Testing: The sample library is transferred to an automated electron microscope for structural analysis and an electrochemical workstation for functional testing (e.g., measuring catalytic activity and durability).

   - d) Multimodal Data Integration & Learning:

     - Results from characterization and testing are fed back into the AI's model.

     - Computer vision models analyze micrograph images to check for synthesis issues.

     - The system can incorporate human feedback via natural language (e.g., "focus on compositions with higher iron content").

     - The knowledge base is updated, and the reduced search space is redefined for the next cycle.

4. Termination and Validation:

   - The loop continues until a performance target is met or a set number of cycles is completed.

   - The final, AI-predicted optimal material is validated in a real-world device (e.g., a working fuel cell) to confirm its record performance, as was done with the 8-element catalyst discovered by CRESt.
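The control flow of this protocol can be sketched in a few dozen lines. The code below is a simplified illustration under stated assumptions, not the CRESt implementation. The knowledge embedding is synthetic (`demo_knowledge`), the `measure` function is a hypothetical stand-in for robotic synthesis and testing, and proposals are random perturbations within the PCA-reduced subspace rather than a full Bayesian Optimization step.

```python
import numpy as np

def principal_axes(knowledge, variance_kept=0.9):
    """Top principal directions that together explain `variance_kept`."""
    centered = knowledge - knowledge.mean(axis=0)
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    explained = np.cumsum(singular_values ** 2) / np.sum(singular_values ** 2)
    n_axes = int(np.searchsorted(explained, variance_kept)) + 1
    return vt[:n_axes]

def measure(recipe):
    """Hypothetical stand-in for synthesis plus testing (higher is better)."""
    target = np.linspace(0.2, 0.9, recipe.size)
    return float(-np.sum((recipe - target) ** 2))

def demo_knowledge(seed=1):
    """Synthetic 6-D recipe embedding with mostly 2-D variation (assumed)."""
    rng = np.random.default_rng(seed)
    latent = rng.normal(size=(200, 2))
    mixing = rng.normal(size=(2, 6))
    return 0.5 + 0.2 * latent @ mixing + 0.01 * rng.normal(size=(200, 6))

def closed_loop(knowledge, target_score=-0.05, max_cycles=30,
                batch_size=8, seed=0):
    """Run propose -> make/test -> learn cycles until a stop condition."""
    rng = np.random.default_rng(seed)
    best_recipe = knowledge.mean(axis=0)
    best_score = measure(best_recipe)
    history = [best_score]
    for cycle in range(1, max_cycles + 1):
        # Step 2: (re)define the reduced search space from current knowledge.
        axes = principal_axes(knowledge)
        # Step 3a: propose a batch by stepping within the reduced subspace.
        steps = rng.normal(scale=0.2, size=(batch_size, axes.shape[0]))
        batch = best_recipe + steps @ axes
        # Steps 3b-3c: "synthesize" and "test" each proposed recipe.
        scores = np.array([measure(recipe) for recipe in batch])
        # Step 3d: feed results back into the knowledge base.
        knowledge = np.vstack([knowledge, batch])
        top = int(np.argmax(scores))
        if scores[top] > best_score:
            best_recipe, best_score = batch[top], float(scores[top])
        history.append(best_score)
        # Step 4: stop once the performance target is met.
        if best_score >= target_score:
            break
    return best_recipe, best_score, cycle, history

if __name__ == "__main__":
    recipe, score, cycles, _ = closed_loop(demo_knowledge())
    print(f"best score {score:.3f} after {cycles} cycles")
```

Because the search is confined to the reduced subspace, the target may be unreachable if the best recipe lies outside it. That is one reason the protocol redefines the reduced space after every cycle.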

The integration of AI advancements, robust data infrastructures, and high-throughput automation is fundamentally closing the loop in computational materials design. This synergy creates a new, accelerated paradigm for research and development, moving from artisanal, hypothesis-driven approaches to industrial-scale, AI-guided discovery engines [28]. As these technologies mature and become more accessible, evolving into shared community resources, they have the potential to unlock breakthroughs in energy storage, carbon capture, drug discovery, and quantum computing, accelerating the path to a more sustainable and technologically advanced future [6] [31].

Methodologies in Action: AI, Robotics, and Real-World Applications

Machine Learning for Property Prediction and Inverse Materials Design

The discovery and development of new materials are fundamental to technological progress. Traditional materials design relies on empirical methods and trial-and-error experimentation, which are often time-consuming, resource-intensive, and costly [36]. The emerging paradigm of computational materials design seeks to overcome these limitations by creating a closed-loop process, integrating high-throughput computation, data-driven modeling, and inverse design. This loop begins with the generation of large datasets, either computationally or experimentally, from which machine learning (ML) models learn the complex relationships between a material's composition/structure and its properties. These models can then predict properties for new, unseen materials or, more powerfully, invert the process to design materials with user-specified, optimal properties. By iterating between prediction, design, and experimental validation, this framework aims to dramatically accelerate the discovery of novel materials for applications ranging from energy storage to pharmaceuticals [37]. This article provides an in-depth technical guide to the machine learning methods enabling this vision, focusing on property prediction and inverse design.

Machine Learning for Materials Property Prediction

Property prediction is a foundational task in computational materials science. Accurate predictors are essential for both virtual screening of large candidate databases and as objective functions for subsequent inverse design.

Challenges in Predictive Modeling

A significant challenge in real-world property prediction is generalizing to out-of-distribution (OOD) data, particularly when seeking materials with exceptional, extreme properties that fall outside the range of the training data. Classical ML models often struggle with extrapolation in the property value range [38]. Furthermore, many domains of practical interest, such as pharmaceuticals and sustainable energy carriers, suffer from a scarcity of reliable, high-quality labeled data, which impedes the development of robust predictors [39].

Advanced Methods for Enhanced Prediction

Recent research has introduced innovative methods to address these challenges.

Transductive Approaches for OOD Extrapolation: As detailed in npj Computational Materials, a bilinear transduction method has been developed for zero-shot extrapolation to higher property value ranges [38]. This method reparameterizes the prediction problem: instead of predicting a property value for a new candidate material directly, it learns how property values change as a function of material differences in representation space. During inference, a property value for a new sample is predicted based on a chosen training example and the representation-space difference between that training example and the new sample. This approach has been shown to improve extrapolative precision by 1.8× for materials and 1.5× for molecules, and it can boost the recall of high-performing candidates by up to 3× compared to baseline methods like Ridge Regression, MODNet, and CrabNet [38].
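One way to read this reparameterization is sketched below on a one-dimensional toy problem. The model learns how the property changes between pairs of training samples. That change is written as a bilinear form between an embedding of the representation difference and an embedding of the anchor sample. The model then predicts a new sample's property as the anchor's value plus the predicted change. The published method learns its embeddings; the fixed polynomial embeddings, the nearest-neighbor anchor choice, and all function names here are assumptions made to keep the example short.

```python
import numpy as np

def embed_difference(delta):
    """Fixed polynomial embedding of the difference (stand-in for a learned one)."""
    return np.stack([np.ones_like(delta), delta, delta ** 2], axis=-1)

def embed_anchor(x_anchor):
    """Fixed linear embedding of the anchor sample (also an assumption)."""
    return np.stack([np.ones_like(x_anchor), x_anchor], axis=-1)

def pair_features(x_new, x_anchor):
    """Flattened outer product of the two embeddings for each pair."""
    f = embed_difference(x_new - x_anchor)
    g = embed_anchor(x_anchor)
    return (f[:, :, None] * g[:, None, :]).reshape(len(f), -1)

def fit(x_train, y_train):
    """Least-squares fit of property differences over all training pairs."""
    n = len(x_train)
    i, j = np.meshgrid(np.arange(n), np.arange(n), indexing="ij")
    i, j = i.ravel(), j.ravel()
    features = pair_features(x_train[i], x_train[j])
    targets = y_train[i] - y_train[j]
    weights, *_ = np.linalg.lstsq(features, targets, rcond=None)
    return weights

def predict(weights, x_new, x_train, y_train):
    """Anchor each query on its nearest training sample, then add the change."""
    nearest = np.abs(x_train[None, :] - x_new[:, None]).argmin(axis=1)
    anchors = x_train[nearest]
    return y_train[nearest] + pair_features(x_new, anchors) @ weights

if __name__ == "__main__":
    x_train = np.linspace(0.0, 1.0, 11)      # training range: [0, 1]
    y_train = x_train ** 2                   # toy "property"
    weights = fit(x_train, y_train)
    print(predict(weights, np.array([2.0]), x_train, y_train))
```

In this toy case the query at x = 2 lies well outside the training range, yet the prediction is essentially exact. That happens only because the quadratic target lies within the span of the chosen embeddings, which should not be expected on real materials data.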

Mitigating Negative Transfer in Multi-Task Learning (MTL): Multi-task learning leverages correlations among related properties to improve data efficiency. However, it is often undermined by negative transfer (NT), where updates from one task degrade performance on another, a problem exacerbated by severe task imbalance [39]. To address this, Adaptive Checkpointing with Specialization (ACS) has been proposed. This training scheme for multi-task graph neural networks uses a shared, task-agnostic backbone with task-specific heads. It adaptively checkpoints model parameters whenever a task's validation loss reaches a new minimum, preserving the best-performing model for each task. This strategy effectively mitigates NT while preserving the benefits of inductive transfer, enabling accurate property predictions with as few as 29 labeled samples [39].
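The checkpointing rule itself is simple enough to sketch without a neural network. In the toy example below, the "model" is a dictionary holding a shared backbone and per-task heads, and validation losses are scripted so that one task starts to degrade while the other keeps improving. Everything here (`toy_train_epoch`, `toy_validate`, the task names, and the loss values) is hypothetical and serves only to show when each task's checkpoint is taken.

```python
import copy

def adaptive_checkpointing(model_state, train_epoch, validate, tasks, n_epochs):
    """Keep, per task, the backbone and head from that task's best epoch."""
    best_loss = {task: float("inf") for task in tasks}
    checkpoints = {}
    for epoch in range(n_epochs):
        train_epoch(model_state, epoch)          # one shared update step
        losses = validate(model_state)           # per-task validation loss
        for task in tasks:
            if losses[task] < best_loss[task]:   # new minimum for this task
                best_loss[task] = losses[task]
                checkpoints[task] = {
                    "epoch": epoch,
                    "backbone": copy.deepcopy(model_state["backbone"]),
                    "head": copy.deepcopy(model_state["heads"][task]),
                }
    return checkpoints, best_loss

# Scripted validation losses: "tox" degrades after epoch 2 (negative
# transfer), while "sol" keeps improving through epoch 4.
SCRIPTED = {"tox": [0.9, 0.6, 0.5, 0.7, 0.8],
            "sol": [1.0, 0.9, 0.8, 0.6, 0.5]}

def toy_train_epoch(state, epoch):
    state["backbone"][0] = epoch + 1             # pretend the weights changed

def toy_validate(state):
    epoch = state["backbone"][0] - 1
    return {task: SCRIPTED[task][epoch] for task in SCRIPTED}

if __name__ == "__main__":
    state = {"backbone": [0], "heads": {"tox": [0.0], "sol": [0.0]}}
    ckpts, best = adaptive_checkpointing(
        state, toy_train_epoch, toy_validate, ["tox", "sol"], n_epochs=5)
    for task in ckpts:
        print(task, ckpts[task]["epoch"], best[task])
    # tox 2 0.5
    # sol 4 0.5
```

A single global checkpoint would have to pick one epoch for both tasks. Per-task checkpoints let the task that peaked early keep its earlier parameters.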

Table 1: Performance Comparison of Property Prediction Methods on Solid-State Materials Benchmarks (Mean Absolute Error) [38]

| Property | Dataset | Ridge Regression | CrabNet | Bilinear Transduction |
|---|---|---|---|---|
| Bulk Modulus | AFLOW | 12.1 GPa | 10.8 GPa | 9.5 GPa |
| Shear Modulus | AFLOW | 9.7 GPa | 8.9 GPa | 8.2 GPa |
| Debye Temperature | AFLOW | 51.2 K | 49.8 K | 45.1 K |
| Formation Energy | Matbench | 0.12 eV | 0.09 eV | 0.08 eV |

Table 2: Performance of Multi-Task Learning with ACS on Molecular Property Benchmarks (Average ROC-AUC) [39]

| Method | ClinTox | SIDER | Tox21 |
|---|---|---|---|
| Single-Task Learning (STL) | 0.823 | 0.635 | 0.801 |
| Multi-Task Learning (MTL) | 0.839 | 0.652 | 0.815 |
| MTL with Global Loss Checkpointing | 0.841 | 0.658 | 0.819 |
| ACS (Proposed) | 0.862 | 0.665 | 0.828 |

Inverse Materials Design Frameworks