Bridging Theory and Lab: AI-Driven Methods for Predicting Synthesis of Novel Crystal Structures

Abstract

The acceleration of computational materials discovery has created a pressing challenge: determining which theoretically predicted crystal structures can be successfully synthesized in the laboratory. This article provides a comprehensive overview of the latest computational frameworks, particularly advanced large language models and generative AI, that are revolutionizing the prediction of synthesizability, synthetic methods, and suitable precursors. We explore the foundational principles of crystal structure prediction, detail cutting-edge methodological applications for inverse design, address critical troubleshooting and optimization challenges, and present rigorous validation benchmarks. Aimed at researchers and development professionals in materials science and pharmaceuticals, this review synthesizes key insights to guide the efficient transition of in-silico discoveries into tangible, synthesizable materials for advanced applications.

The Synthesizability Challenge: From Computational Prediction to Experimental Realization

The Critical Gap Between Thermodynamic Stability and Experimental Synthesizability

Application Notes: Quantifying and Bridging the Synthesis Gap

The pursuit of novel functional materials, particularly in pharmaceutical and energy applications, is increasingly powered by computational design. While thermodynamic stability calculated at 0 K is a foundational metric for predicting viable compounds, it is insufficient for guaranteeing that a material can be experimentally realized [1]. This application note details the critical metrics and methodologies for evaluating synthesizability, providing researchers with a framework to prioritize candidate materials for laboratory investigation.

Table 1: Key Metrics for Assessing Synthesizability

| Metric Category | Specific Metric | Description | Typical Quantitative Threshold | Interpretation |
|---|---|---|---|---|
| Thermodynamic | Formation Energy (ΔH_f) | Enthalpy change on forming the compound from its constituent elements; a proxy for stability. | ΔH_f < 0 (exothermic) [1] | Negative values indicate stability relative to the elements, but not relative to other competing phases. |
| | Energy Above Hull (E_ah) | Energy difference between a compound and the most stable combination of decomposition products on the convex hull [1]. | E_ah < 50-100 meV/atom [1] | A primary metric; lower values indicate higher thermodynamic stability and a higher likelihood of synthesizability. |
| Chemical Heuristics | Charge Neutrality | The net charge of the crystal structure must be zero. | Net charge = 0 | A fundamental rule of thumb; violations indicate an unrealistic structure. |
| | Electronegativity Balance | Difference in electronegativity between cation and anion. | Varies by system | Guides the prediction of stable binary and ternary compounds. |
| Data-Driven | Synthesizability Score | Output of machine learning models trained on known synthesized materials. | Probability (e.g., 0.0 to 1.0) | Higher scores indicate greater similarity to known synthesizable materials in feature space. |
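
For a binary A-B system, E_ah can be obtained from the lower convex hull of formation energy per atom plotted against composition. The sketch below is a minimal, standard-library illustration, assuming formation energies (eV/atom) are already available and that both pure elements are included at x = 0 and x = 1 with ΔH_f = 0. The function names are hypothetical and the numbers in the example are illustrative, not real data. Multicomponent systems are normally handled with dedicated tools such as pymatgen's `PhaseDiagram`.

```python
from typing import Dict, List, Tuple

# (x, E_f): fraction of element B and formation energy in eV/atom
Point = Tuple[float, float]


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull of (x, E_f) points via Andrew's monotone chain."""
    # Keep only the lowest-energy entry at each composition
    best: Dict[float, float] = {}
    for x, e in points:
        best[x] = min(e, best.get(x, e))
    hull: List[Point] = []
    for p in sorted(best.items()):
        # Remove the last vertex unless hull[-2] -> hull[-1] -> p turns strictly left
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(x: float, e_f: float, hull: List[Point]) -> float:
    """Height (eV/atom) of a phase above the hull segment spanning its composition."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            e_hull = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
            return e_f - e_hull
    raise ValueError("composition outside the hull; include both elements at x=0 and x=1")
```

For example, with hull vertices at (0, 0), (0.5, -0.40), (0.75, -0.25) and (1, 0), a phase at x = 0.25 with ΔH_f = -0.15 eV/atom sits 0.05 eV/atom (50 meV/atom) above the hull, at the lower edge of the typical threshold in Table 1.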

Experimental Protocols

Protocol 1: Computational Workflow for Synthesizability Screening

Objective: To systematically screen thousands of theoretical crystal structures and rank them by their potential for experimental synthesis.

Materials and Software:

- Input: Database of candidate crystal structures (e.g., from the Materials Project, OQMD).

- Software: Density Functional Theory (DFT) code (e.g., VASP, Quantum ESPRESSO), phonon calculation software, Python/R for data analysis, machine learning libraries.

Methodology:

- Phase Stability Analysis: For each candidate structure, calculate the total energy and determine its energy above hull (E_ah). Compounds with E_ah < 50 meV/atom should be prioritized for subsequent analysis [1].

- Finite-Temperature Free Energy: Calculate the Gibbs free energy (G = H - TS) including vibrational (phonon) contributions, for example within the harmonic or quasi-harmonic approximation. This assesses stability under realistic reaction conditions, moving beyond the 0 K approximation [1].

- Reaction Driving Force Calculation: Identify all competing phases and calculate the energy landscape for possible decomposition pathways. A significant negative driving force for the target compound's formation is critical.

- Data-Driven Prioritization: Input structural and electronic descriptors (e.g., symmetry, density, elemental fractions) into a pre-trained synthesizability classifier [1]. This model, often trained using positive-unlabeled learning techniques, scores candidates based on their resemblance to known synthesized materials.

- Final Triage: Integrate the E_ah, Gibbs free energy, and synthesizability score to generate a final ranked list of candidate materials for experimental validation.
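
The final triage step can be sketched as a filter-then-rank function. This is a hypothetical illustration, not the method of [1]: it assumes each candidate already carries its E_ah, a finite-temperature reaction free energy against competing phases (`delta_g_rxn`, negative meaning favourable), and a classifier score between 0 and 1, and it applies the 50 meV/atom cutoff from the Phase Stability Analysis step. Real pipelines may weight these quantities differently.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    name: str
    e_above_hull: float  # eV/atom, from the phase-stability step
    delta_g_rxn: float   # eV/atom at synthesis temperature; negative = favourable (hypothetical field)
    synth_score: float   # 0-1 output of a synthesizability classifier


def triage(candidates: List[Candidate], e_ah_max: float = 0.050) -> List[Candidate]:
    """Filter on thermodynamics, then rank by classifier score (hypothetical scheme)."""
    # 1. Keep candidates within the E_ah cutoff (50 meV/atom in the protocol above)
    near_hull = [c for c in candidates if c.e_above_hull < e_ah_max]
    # 2. Drop candidates whose finite-temperature reaction free energy is unfavourable
    favourable = [c for c in near_hull if c.delta_g_rxn <= 0.0]
    # 3. Highest classifier score first; ties broken by the lower E_ah
    return sorted(favourable, key=lambda c: (-c.synth_score, c.e_above_hull))
```

A hard filter followed by a single ranking key keeps the result easy to audit; a weighted composite score is an equally valid alternative if the team prefers to trade stability against classifier confidence.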

Protocol 2: Meta-Analysis for Synthesis Condition Guidance

Objective: To quantitatively synthesize data from published literature on the synthesis of analogous materials to infer successful reaction conditions.

Methodology (Adapted from Quantitative Evidence Synthesis) [2]:

- Systematic Literature Review: Conduct a systematic search of scientific databases for experimental synthesis reports of chemically similar compounds.

- Data Extraction: Extract relevant data from each study, including: precursor materials, synthesis method (e.g., solid-state, sol-gel), temperature, pressure, duration, and reported successful outcome (e.g., phase purity).

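- Effect Size Calculation: A useful effect measure in this context is the success rate for a given synthesis parameter (e.g., a temperature range). For each study and parameter setting, record the number of attempts and successes so that success rates and their sampling variances can be computed.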

- Multilevel Meta-Analytic Modeling: Employ a multilevel meta-regression model to account for the non-independence of multiple observations from the same study. This model explains heterogeneity in success rates based on different synthesis parameters [2].

- Interpretation: The model output will identify which synthesis parameters (e.g., a specific temperature window or the use of a particular precursor) are most statistically associated with successful synthesis, providing direct guidance for experimental design.
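
The pooling step can be illustrated with a simpler stand-in for the multilevel model: a DerSimonian-Laird random-effects meta-analysis of logit-transformed success rates. This sketch treats every observation as an independent study, which is exactly the assumption the multilevel model in metafor (`rma.mv`) relaxes, so it should be read as a first approximation only. The function names and the study counts in the example are hypothetical.

```python
import math
from typing import Sequence, Tuple


def logit_effect(successes: int, trials: int) -> Tuple[float, float]:
    """Logit success rate and its approximate sampling variance (0.5 continuity correction)."""
    s = successes + 0.5
    f = trials - successes + 0.5
    return math.log(s / f), 1.0 / s + 1.0 / f


def dersimonian_laird(effects: Sequence[Tuple[float, float]]) -> Tuple[float, float, float]:
    """Random-effects pooled estimate, its standard error, and tau^2 (between-study variance)."""
    if len(effects) < 2:
        raise ValueError("need at least two studies")
    y = [e for e, _ in effects]
    v = [var for _, var in effects]
    w = [1.0 / vi for vi in v]
    sw = sum(w)
    y_fixed = sum(wi * yi for wi, yi in zip(w, y)) / sw
    q = sum(wi * (yi - y_fixed) ** 2 for wi, yi in zip(w, y))  # Cochran's Q
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - (len(y) - 1)) / c)
    w_re = [1.0 / (vi + tau2) for vi in v]
    mu = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    return mu, math.sqrt(1.0 / sum(w_re)), tau2


def pooled_success_rate(studies: Sequence[Tuple[int, int]]) -> float:
    """Pool (successes, trials) pairs and back-transform the logit to a probability."""
    mu, _, _ = dersimonian_laird([logit_effect(s, n) for s, n in studies])
    return 1.0 / (1.0 + math.exp(-mu))
```

Comparing `pooled_success_rate` across parameter bins (for example, two temperature windows) gives a crude version of the meta-regression contrast; the multilevel model adds the study-level nesting and formal moderator tests.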

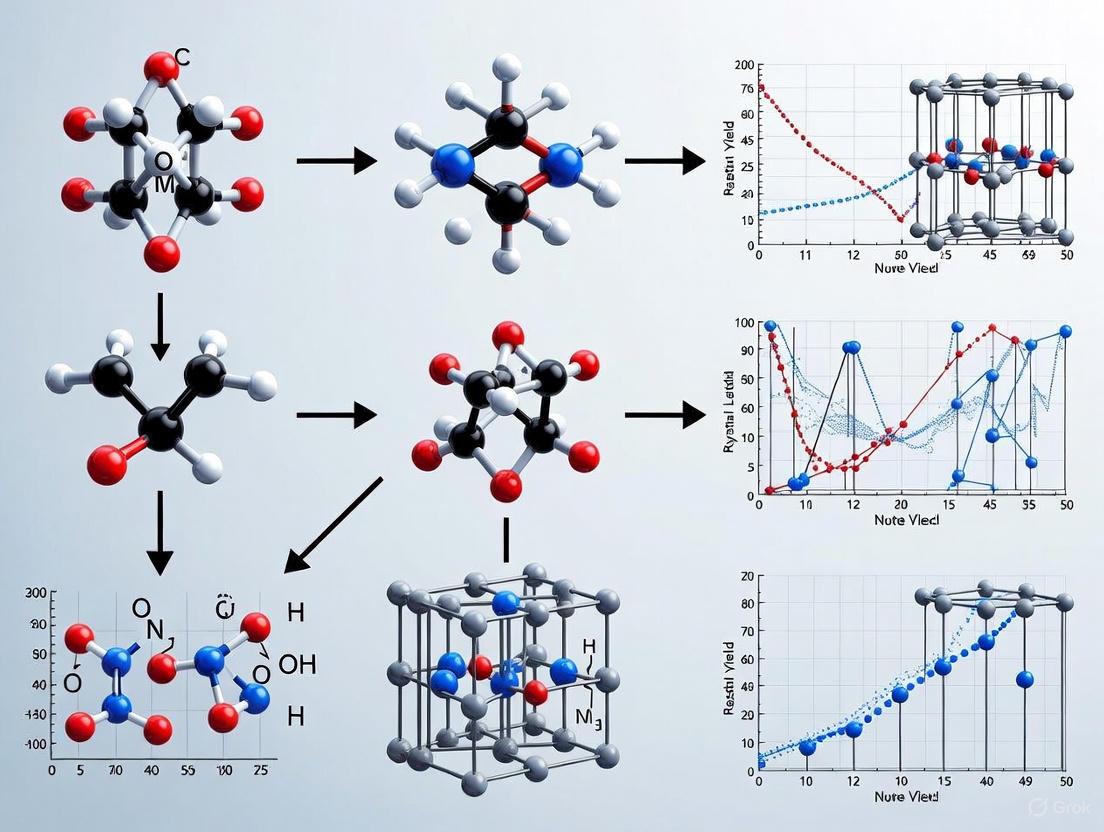

Workflow Visualization

Diagram 1: Computational screening workflow for synthesizability.

Diagram 2: Meta-analysis protocol for synthesis guidance.

The Scientist's Toolkit: Research Reagent Solutions

| Resource Name | Type | Function/Benefit |
|---|---|---|
| Reaxys [3] | Chemical Database | Provides extensive data on chemical reactions and substances, useful for building training sets for ML models and finding analogous synthesis routes. |
| SciFinder [3] | Chemical Database | A comprehensive source of chemical literature, substance data, and reaction information, enabling in-depth background research. |
| Science of Synthesis [3] | Synthetic Methods Resource | Provides critically evaluated data on synthetic organic and organometallic methods, useful for establishing heuristic rules. |
| Inorganic Syntheses [3] | Synthetic Methods Resource | Offers reproducible and tested methods for the synthesis of inorganic compounds; a key source of reliable experimental data. |
| metafor (R Package) [2] | Statistical Software | Implements multilevel meta-analysis and meta-regression models for synthesizing literature data. |
| WebAIM Contrast Checker [4] | Accessibility Tool | Checks that color choices in data visualizations meet WCAG contrast guidelines, helping ensure legibility for all researchers [5] [6] [7]. |

Historical Evolution of Crystal Structure Prediction (CSP) Paradigms

The evolution of Crystal Structure Prediction (CSP) represents a fundamental paradigm shift in materials science, transitioning from reliance on serendipitous discovery to the proactive computational design of functional materials. This evolution has been characterized by four distinct paradigms, as outlined in recent comprehensive reviews: the first and second paradigms built foundations through trial-and-error experiments and scientific theories, respectively; the third leveraged computational methods like density functional theory (DFT); while the current fourth paradigm harnesses accumulated data and machine learning (ML) to significantly accelerate materials discovery [8] [9]. The critical challenge has remained bridging the gap between theoretical predictions and practical synthesis, as numerous structures with favorable formation energies have yet to be synthesized, while various metastable structures are successfully synthesized despite less favorable formation energies [8]. This application note details the experimental protocols and methodological frameworks that have emerged across these evolutionary stages, with particular emphasis on their application within synthetic methods research for theoretical crystal structures.

Quantitative Evolution of CSP Methodologies

Table 1: Historical Timeline of Major CSP Paradigms and Their Capabilities

| Era | Dominant Paradigm | Key Methodologies | Primary Limitations | Synthesizability Guidance |
|---|---|---|---|---|
| Pre-2000s | Empirical & Theoretical Foundations | Trial-and-error experiments, theory-guided synthesis [9] | Time-consuming, labor-intensive, resource-heavy [10] | Minimal direct computational guidance |
| 2000-2015 | Computational Screening & Global Optimization | DFT calculations, genetic algorithms (USPEX), particle swarm optimization (CALYPSO) [9] [10] | Exponential growth of the search space with atom count; computational cost of DFT [10] | Thermodynamic (formation energy) and kinetic (phonon) stability [8] |
| 2015-2022 | Early Machine Learning Integration | ML force fields, graph neural networks, positive-unlabeled learning for synthesizability [8] [10] | Limited transferability of ML potentials; scarcity of molecular crystal datasets [11] | ML synthesizability scores (e.g., CLscore < 0.1 for non-synthesizable) [8] |
| 2022-Present | Generative AI & Large Language Models | Diffusion models, conditional generation, fine-tuned LLMs (CSLLM), generative adversarial networks [8] [9] [10] | "Hallucination" in generated structures; need for effective text representations [8] | Direct prediction of synthetic methods (>90% accuracy) and precursors (80.2% success) [8] |

Table 2: Performance Comparison of Modern CSP and Synthesizability Prediction Methods

| Method/Model | Prediction Accuracy | Synthesizability Metric | Precursor Prediction Accuracy | Computational Efficiency |
|---|---|---|---|---|
| Thermodynamic Stability | 74.1% [8] | Energy above hull ≥ 0.1 eV/atom [8] | Not capable | Low (requires DFT calculations) |
| Kinetic Stability | 82.2% [8] | Lowest phonon frequency ≥ -0.1 THz [8] | Not capable | Very Low (requires phonon calculations) |
| PU Learning (CLscore) | 87.9% [8] | CLscore threshold (e.g., < 0.1 for non-synthesizable) [8] | Not capable | Medium |
| Teacher-Student Network | 92.9% [8] | Binary classification [8] | Not capable | Medium |
| CSLLM Framework | 98.6% [8] | Multi-task classification [8] | 80.2% success for binary/ternary compounds [8] | High (after initial training) |

Protocol 1: Traditional CSP via Global Optimization Algorithms

Background and Principles

Traditional CSP methods, dominant in the third paradigm, focus on identifying global energy minima on high-dimensional potential energy surfaces. The fundamental challenge lies in the exponential growth of the number of possible structures with increasing atoms per unit cell, estimated as $C \approx \exp(a \cdot d)$, where $d = 3N + 3$ is the number of degrees of freedom for $N$ atoms in the cell and $a$ is a system-dependent constant [10]. These methods combine global search algorithms with energy evaluation using DFT or empirical potentials.
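
Because $a$ depends on the system, the estimate is most informative in ratio form, where $a$ can stay symbolic. Comparing two cell sizes $N_1 < N_2$ shows how quickly the search space grows:

$$
\frac{C(N_2)}{C(N_1)} \approx \frac{\exp\!\left[a\,(3N_2 + 3)\right]}{\exp\!\left[a\,(3N_1 + 3)\right]} = \exp\!\left[3a\,(N_2 - N_1)\right]
$$

For example, going from $N_1 = 10$ to $N_2 = 20$ atoms raises $d$ from 33 to 63 and multiplies the estimated number of structures by $e^{30a}$; the growth factor depends only on the number of added atoms, not on the starting cell size.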

Step-by-Step Experimental Protocol

Step 1: Initial Structure Generation

- Generate random initial structures with symmetry constraints (space groups) and physical distance constraints (minimum interatomic distances) [10].

- For molecular crystals, use algorithms like Rigid Press in Genarris 3.0 to achieve maximally close-packed structures based on geometric considerations [11].

- Determine target unit cell volumes using machine-learned models or empirical relationships [11].
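
Random initial-structure generation under a minimum-distance constraint can be sketched with rejection sampling. This minimal example assumes a cubic cell and ignores space-group symmetry, which production generators also impose; the function names, cell size, and distance cutoff are hypothetical.

```python
import math
import random
from typing import List, Optional, Tuple

Frac = Tuple[float, float, float]  # fractional coordinates


def min_image_distance(f1: Frac, f2: Frac, a: float) -> float:
    """Distance (Å) between two sites in a cubic cell of edge a, using the nearest periodic image."""
    d2 = 0.0
    for u, v in zip(f1, f2):
        du = (u - v) - round(u - v)  # wrap the separation into [-0.5, 0.5]
        d2 += (du * a) ** 2
    return math.sqrt(d2)


def random_structure(n_atoms: int, a: float, d_min: float,
                     max_tries: int = 10_000, seed: Optional[int] = None) -> Optional[List[Frac]]:
    """Place atoms at random, rejecting any trial site closer than d_min to an accepted one."""
    rng = random.Random(seed)
    sites: List[Frac] = []
    for _ in range(max_tries):
        if len(sites) == n_atoms:
            break
        trial = (rng.random(), rng.random(), rng.random())
        if all(min_image_distance(trial, s, a) >= d_min for s in sites):
            sites.append(trial)
    # None signals that the cell is too crowded for this d_min within max_tries
    return sites if len(sites) == n_atoms else None
```

Returning `None` rather than looping forever makes overly dense requests (too many atoms for the target volume) fail fast, which matters when volumes come from an imperfect estimate.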

Step 2: Structure Relaxation and Energy Evaluation

- Perform local optimization using DFT with dispersion corrections for accurate intermolecular interactions [11].

- Alternative: Use classical force fields for larger systems, acknowledging potential accuracy limitations [10].

- Calculate formation energies and energy above the convex hull to assess thermodynamic stability [8].

Step 3: Structural Evolution via Global Optimization

- Genetic Algorithm (USPEX): Apply selection, crossover, and mutation operators to generate new candidate structures, prioritizing those with lower energies [10].

- Particle Swarm Optimization (CALYPSO): Update particle positions (structures) based on individual and swarm best positions using fingerprinting to eliminate duplicates [10].

- Random Search (AIRSS): Generate numerous random structures with constraints for extensive exploration, particularly effective for high-pressure phases [10].

Step 4: Convergence and Validation

- Iterate Steps 2-3 until energy convergence is achieved (typically < 0.1 meV/atom change between iterations).

- Validate dynamic stability through phonon spectrum calculations, identifying imaginary frequencies that indicate instability [8].

- For synthesizability assessment, use thermodynamic metrics (formation energy < 0) or kinetic metrics (no imaginary phonon frequencies), despite their noted limitations [8].
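
The convergence test and the dynamic-stability check above reduce to simple numerical criteria. A minimal sketch, assuming energies are tracked in eV/atom (so 0.1 meV/atom is `1e-4`), that convergence is judged over a hypothetical window of the last few iterations, and that imaginary phonon modes are stored as negative frequencies in THz, a common convention in phonon codes. The -0.1 THz tolerance matches the kinetic-stability criterion in Table 2.

```python
from typing import Sequence


def search_converged(best_energies: Sequence[float], tol: float = 1e-4, patience: int = 3) -> bool:
    """True if the best energy (eV/atom) varied by less than tol over the last `patience` changes."""
    if len(best_energies) <= patience:
        return False
    recent = best_energies[-(patience + 1):]
    return max(recent) - min(recent) < tol


def dynamically_stable(freqs_thz: Sequence[float], threshold: float = -0.1) -> bool:
    """True if no phonon frequency (THz, imaginary modes as negative) falls below threshold."""
    return min(freqs_thz) >= threshold
```

The small negative tolerance absorbs numerical noise near the acoustic modes at Γ, which often come out slightly below zero even for stable structures.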

Protocol 2: Machine Learning-Assisted CSP Workflows

Background and Principles

ML-assisted CSP addresses the computational bottlenecks of traditional methods by leveraging pattern recognition in existing crystallographic databases. This approach encompasses multiple applications: using ML force fields for faster energy evaluations, implementing deep learning models for direct property predictions, and applying generative models for inverse design [10].

Step-by-Step Experimental Protocol

Step 1: Data Preparation and Representation

- Curate training datasets from crystallographic databases (ICSD, Materials Project, CCDC) [9] [10].

- Convert crystal structures into numerical representations:

  - Graph Representations: Atoms as nodes, bonds as edges for Graph Neural Networks [10].

  - Text Representations: Develop "material strings" integrating lattice parameters, space groups, and Wyckoff positions for LLM processing [8].

  - Volumetric Representations: Electron density or atomic density grids for CNN-based models [9].
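
A crystal graph of the kind described above can be built from fractional coordinates with a distance cutoff. This sketch assumes an orthorhombic cell and keeps only the nearest periodic image of each atom pair; crystal-graph models in practice usually include multiple periodic images and self-image edges. The function name and inputs are illustrative.

```python
import math
from itertools import combinations
from typing import List, Sequence, Tuple


def crystal_graph(frac_coords: Sequence[Tuple[float, float, float]],
                  species: Sequence[str],
                  cell_lengths: Tuple[float, float, float],
                  cutoff: float) -> Tuple[List[str], List[Tuple[int, int, float]]]:
    """Nodes are atoms; an edge (i, j, distance) joins atoms whose nearest images lie within cutoff (Å)."""
    edges: List[Tuple[int, int, float]] = []
    for i, j in combinations(range(len(frac_coords)), 2):
        d2 = 0.0
        for u, v, length in zip(frac_coords[i], frac_coords[j], cell_lengths):
            du = (u - v) - round(u - v)  # nearest-image separation, valid for orthorhombic cells
            d2 += (du * length) ** 2
        d = math.sqrt(d2)
        if d <= cutoff:
            edges.append((i, j, d))
    return list(species), edges
```

The edge distance is kept as a feature because graph neural networks typically expand it into a radial basis rather than using a bare bond/no-bond flag.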

Step 2: Model Selection and Training

- For Force Fields: Train MLIPs (MACE, AIMNet) on DFT data for specific chemical systems, ensuring adequate coverage of configuration space [11].

- For Property Prediction: Implement GNNs with message-passing layers to learn structure-property relationships from databases [8] [10].

- For Synthesizability: Train binary classifiers using positive samples (ICSD) and negative samples (low-CLscore theoretical structures) [8].

Step 3: Structure Generation and Optimization

- ML-Relaxation: Use MLIPs for rapid geometry optimization of candidate structures before DFT verification [11].

- Active Learning: Iteratively improve MLIPs by incorporating DFT calculations of promising candidates [10].

- Down-Selection: Apply clustering and ranking pipelines to reduce thousands of generated candidates to manageable numbers for high-fidelity calculation [11].
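
One common way to choose which candidates receive new DFT calculations during active learning is query by committee: train several MLIPs and send the candidates on which they disagree most. The sketch below is a hypothetical variant that first keeps only candidates whose mean predicted energy lies within an assumed window of the best one (the "promising" part) and then ranks them by committee spread; the window value and function name are assumptions.

```python
from statistics import mean, pstdev
from typing import List, Sequence


def select_for_dft(candidates: Sequence[str],
                   committee_energies: Sequence[Sequence[float]],
                   k: int, window: float = 0.1) -> List[str]:
    """Query by committee among low-energy candidates (hypothetical selection rule).

    committee_energies[i] holds the energies (eV/atom) predicted for
    candidate i by each MLIP in the ensemble.
    """
    means = [mean(e) for e in committee_energies]
    best = min(means)
    # Keep "promising" candidates within `window` eV/atom of the lowest predicted energy
    pool = [(pstdev(e), c) for c, e, m in zip(candidates, committee_energies, means)
            if m - best <= window]
    # Largest ensemble disagreement first: the MLIPs are least certain about these
    pool.sort(key=lambda t: t[0], reverse=True)
    return [c for _, c in pool[:k]]
```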

Step 4: Validation and Synthesis Guidance

- Perform final DFT validation on top-ranked candidates to confirm stability [11].

- Predict synthesizability using specialized models like SynthNN or PU learning models [8].

- Identify potential synthesis routes through analogy-based recommendation systems [8].

Protocol 3: LLM-Based Synthesis Prediction Framework (CSLLM)

Background and Principles

The Crystal Synthesis Large Language Models (CSLLM) framework represents the cutting edge of the fourth paradigm, addressing the critical synthesizability gap through specialized LLMs fine-tuned on comprehensive crystallographic data. This approach transforms CSP from purely stability-based assessment to direct synthesis planning, achieving 98.6% accuracy in synthesizability prediction and >90% accuracy in synthetic method classification [8].

Step-by-Step Experimental Protocol

Step 1: Dataset Curation for LLM Fine-Tuning

- Positive Examples: Curate 70,120 synthesizable crystal structures from ICSD, excluding disordered structures and limiting to ≤40 atoms and ≤7 elements [8].

- Negative Examples: Select 80,000 non-synthesizable structures from 1.4M theoretical structures using PU learning model (CLscore < 0.1 threshold) [8].

- Balance Considerations: Ensure the dataset spans all seven crystal systems and elements with atomic numbers 1-94 (excluding 85 and 87) [8].

Step 2: Crystal Structure Representation for LLMs

- Develop "material string" text representation: SP | a, b, c, α, β, γ | (AS1-WS1[WP1...]) [8].

- This representation integrates space group (SP), lattice parameters, and atomic species with Wyckoff positions, eliminating redundant coordinate information [8].

- Convert CIF/POSCAR files to material string format for LLM processing.
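
The conversion to a material string can be sketched once the symmetry analysis is done. This example assumes the space group number, lattice parameters, and one representative position per occupied Wyckoff site are already known (in practice from a symmetry tool such as pymatgen's `SpacegroupAnalyzer`). The exact delimiters and rounding used by CSLLM may differ, so the output format and the function name here are assumptions.

```python
from typing import Sequence, Tuple

# (element, Wyckoff label, representative fractional position)
Site = Tuple[str, str, Tuple[float, float, float]]


def material_string(space_group: int,
                    lattice: Sequence[float],
                    sites: Sequence[Site],
                    ndigits: int = 3) -> str:
    """Encode space group | a, b, c, alpha, beta, gamma | one token per Wyckoff site.

    Symmetry-equivalent copies of each site are omitted, which is what
    makes the representation compact compared with a full CIF.
    """
    def fmt(value: float) -> str:
        return f"{round(value, ndigits):g}"

    lat = ", ".join(fmt(v) for v in lattice)
    site_tokens = [f"({el}-{wy}[{','.join(fmt(c) for c in xyz)}])" for el, wy, xyz in sites]
    return f"{space_group} | {lat} | {' '.join(site_tokens)}"
```

For rock-salt NaCl (space group 225, a ≈ 5.64 Å, Na on 4a, Cl on 4b) this yields `225 | 5.64, 5.64, 5.64, 90, 90, 90 | (Na-4a[0,0,0]) (Cl-4b[0.5,0.5,0.5])`: two site tokens in place of the eight atomic positions of the conventional cell.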

Step 3: Three-Tiered LLM Framework Implementation

- Synthesizability LLM: Fine-tune on binary classification task (synthesizable vs. non-synthesizable) using balanced dataset [8].

- Method LLM: Train on multi-class classification of synthetic methods (solid-state, solution, etc.) using experimental literature data [8].

- Precursor LLM: Develop for precursor identification through text-based reasoning on synthesis literature [8].

Step 4: Prediction and Validation Pipeline

- Input theoretical crystal structures in material string format.

- Sequential processing through the three specialized LLMs:

  - Synthesizability classification (98.6% accuracy)

  - Method recommendation (91.0% accuracy)

  - Precursor suggestion (80.2% success rate) [8]

- Calculate reaction energies and perform combinatorial analysis to suggest additional potential precursors [8].

- Deploy user-friendly interface for automated prediction from uploaded crystal structure files [8].
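
The reaction-energy and combinatorial step can be sketched for the simplest case of two solid precursors. This is not the CSLLM procedure itself but a hypothetical illustration: it enumerates precursor pairs, keeps those that balance the target composition exactly with positive coefficients (no gaseous by-products), and ranks them by reaction energy computed from formation energies per formula unit. The elements A and B and all energies in the test are placeholders, not real data.

```python
from fractions import Fraction
from itertools import combinations
from typing import Dict, List, Optional, Tuple

Composition = Dict[str, int]  # element -> atoms per formula unit


def balance_pair(target: Composition, p1: Composition,
                 p2: Composition) -> Optional[Tuple[Fraction, Fraction]]:
    """Positive (x, y) with x*p1 + y*p2 == target for every element, or None."""
    elements = sorted(set(target) | set(p1) | set(p2))
    for e1, e2 in combinations(elements, 2):
        a, b, r = p1.get(e1, 0), p2.get(e1, 0), target.get(e1, 0)
        c, d, s = p1.get(e2, 0), p2.get(e2, 0), target.get(e2, 0)
        det = a * d - b * c
        if det == 0:
            continue
        # Cramer's rule on two element balances; any valid solution must equal this one
        x = Fraction(r * d - b * s, det)
        y = Fraction(a * s - c * r, det)
        balanced = all(x * p1.get(e, 0) + y * p2.get(e, 0) == target.get(e, 0) for e in elements)
        return (x, y) if balanced and x > 0 and y > 0 else None
    return None


def rank_precursor_pairs(target: Composition, e_target: float,
                         precursors: Dict[str, Tuple[Composition, float]]
                         ) -> List[Tuple[float, str, str, Fraction, Fraction]]:
    """Balanced precursor pairs sorted by reaction energy (eV per target formula unit), most negative first."""
    results = []
    for (n1, (c1, e1)), (n2, (c2, e2)) in combinations(precursors.items(), 2):
        coeffs = balance_pair(target, c1, c2)
        if coeffs is None:
            continue
        x, y = coeffs
        # Reaction energy from formation energies: product minus reactants
        de = e_target - float(x) * e1 - float(y) * e2
        results.append((de, n1, n2, x, y))
    return sorted(results)
```

Exact rational arithmetic avoids accepting a reaction that only balances to within floating-point error; extending the search to three precursors or to gaseous products (O2, CO2) would require solving a general linear system instead of a 2 x 2 one.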

Table 3: Key Research Reagents and Computational Resources for Modern CSP

| Category | Item/Resource | Specification/Purpose | Application Context |
|---|---|---|---|
| Computational Software | VASP [8] [10] | DFT calculations for energy evaluation | Structure relaxation, energy above hull calculations |
| | CALYPSO [10] | Particle swarm optimization for CSP | Global structure search, particularly under pressure |
| | USPEX [9] [10] | Genetic algorithm for CSP | Evolutionary structure search and prediction |
| | Genarris 3.0 [11] | Random molecular crystal generation | Polymorph sampling, initial structure generation |
| Data Resources | ICSD [8] | Database of experimentally confirmed structures | Positive samples for synthesizability training |
| | Materials Project [8] | Database of theoretical calculations | Source of candidate structures, property data |
| | CCDC [11] | Cambridge Structural Database | Molecular crystal data for ML training |
| ML Frameworks | CSLLM [8] | Fine-tuned LLM for synthesis prediction | Synthesizability, method and precursor prediction |
| | GNN Models [8] [10] | Graph neural networks for property prediction | Rapid property screening without DFT |
| | MLIPs (MACE, AIMNet) [11] | Machine learning interatomic potentials | Accelerated structure relaxation and sampling |
| Representation Methods | Material String [8] | Text representation for crystal structures | LLM processing of structural information |
| | CIF Format [8] | Crystallographic Information File | Standard structural representation |
| | Graph Representations [10] | Atoms as nodes, bonds as edges | GNN-based property prediction |

Advanced Applications and Specialized Methodologies

Molecular Crystal Prediction with Genarris 3.0

Molecular crystals present unique challenges due to their flexibility and weak intermolecular interactions. The Genarris 3.0 package addresses these through specialized protocols:

Rigid Press Algorithm Implementation:

- Apply regularized hard-sphere potential to achieve maximally close-packed structures based on purely geometric considerations [11].

- Generate random structures in all space groups compatible with molecular symmetry [11].

- Interface with MLIPs (MACE-OFF23) for accelerated energy evaluations and geometry relaxations [11].

Polymorph Landscape Mapping:

- Implement clustering and down-selection workflows to manage thousands of generated candidates [11].

- Use dispersion-inclusive DFT for final ranking to achieve chemical accuracy [11].

- Demonstrated successful prediction of complex targets, including aspirin, HMX, and CL-20, with varying Z' values [11].

Phase Transition Analysis Through Advanced Simulation

Understanding solid-solid phase transitions is crucial for materials processing and stability:

Multi-Particle Simulation Approach:

- Design models with tunable particles (4,000 to 100,000 particles) to explore general transformation behavior [12].

- Capture classical mechanisms (Bain, Kurdjumov-Sachs, Nishiyama-Wassermann) and discover new pathways [12].

- Reveal coordinated multiunit shearing motions not previously predicted [12].

Pathway Determination Protocol:

- Link transformation pathways to particle interaction shapes rather than before/after configuration comparisons [12].

- Provide simulated templates for interpreting experimental data on invisible transitions [12].

- Enable experimental design through particle interaction tuning to control transition pathways [12].

The historical evolution of CSP paradigms has progressively narrowed the gap between computational prediction and experimental realization. While early paradigms established fundamental principles and computational frameworks, the current fourth paradigm directly addresses the synthesizability challenge through specialized AI systems. The CSLLM framework exemplifies this progression, achieving unprecedented accuracy in predicting not just stability but viable synthesis routes and precursors. As CSP continues to evolve, integration across paradigms—combining the physical rigor of DFT with the pattern recognition capabilities of ML and the reasoning capacity of LLMs—will further accelerate the discovery and realization of functional materials. The protocols detailed herein provide researchers with comprehensive methodologies spanning this evolutionary spectrum, enabling more efficient translation of theoretical crystal structures into synthetic targets.

A central challenge in modern materials discovery lies in bridging the gap between computationally predicted crystal structures and their experimental realization. The accurate classification of a theoretical structure as synthesizable or non-synthesizable is a critical bottleneck. This process is fundamentally constrained by the quality, scope, and curation of the underlying data used to train predictive models. Traditional screening methods that rely solely on thermodynamic stability, such as formation energy or energy above the convex hull, often fail to account for kinetic and synthetic accessibility, leading to significant false positives and negatives [8]. This application note details robust data curation strategies essential for constructing reliable datasets that enable accurate synthesizability prediction, directly supporting research aimed at identifying viable synthetic pathways for theoretical crystals.

Data Curation Foundation

The foundation of any synthesizability model is a comprehensive and well-curated dataset. Reliable data curation transforms raw structural information into a structured knowledge base that is Findable, Accessible, Interoperable, and Reusable (FAIR) [13].

- Primary Data Repositories: Experimental crystal structures are primarily sourced from curated databases such as the Inorganic Crystal Structure Database (ICSD) [8] and the Cambridge Structural Database (CSD). The CSD, for instance, houses over 1.2 million experimental organic and metal-organic structures, each subjected to a multi-stage curation process [13].

- Theoretical Structure Sources: Hypothetical or calculated structures can be sourced from the Materials Project (MP), the Open Quantum Materials Database (OQMD), and the JARVIS database [8]. These provide a pool of candidate structures considered "non-synthesized," though careful processing is required to label them as non-synthesizable.

- The Curation Pipeline: As practiced by the CCDC for the CSD, effective curation involves both automated checks and human expert review. This includes checks for data accuracy, chemical connectivity assignment, bond type validation, and consistency of metadata, ensuring the data is of high quality for subsequent analysis [13] [14].

The Critical Challenge of Negative Data

A unique and significant challenge in synthesizability prediction is the definition and procurement of reliable negative samples—confirmed non-synthesizable structures. Unlike synthesizable structures, which are documented in experimental databases, non-synthesizable structures are rarely reported. The following protocol outlines the established methodologies to address this challenge.

Protocols for Curating a Balanced Synthesizability Dataset

This protocol describes the construction of a dataset for training machine learning models to classify synthesizable versus non-synthesizable inorganic crystal structures.

Table 1: Key Research Reagent Solutions for Data Curation

| Item Name | Function/Description | Key Features |
|---|---|---|
| ICSD Database | Primary source of synthesizable (positive) crystal structures. | Contains experimentally validated, curated structures [8]. |
| Materials Project (MP) | Source of hypothetical, non-synthesized (unlabeled) crystal structures. | Provides a large repository of computationally generated structures [8] [15]. |
| PU Learning Model | A pre-trained machine learning model used to score and identify likely non-synthesizable structures from a pool of hypotheticals. | Generates a "CLscore"; low scores (< 0.1) indicate high confidence of non-synthesizability [8]. |
| Robocrystallographer | An open-source toolkit that converts CIF-formatted crystal structures into human-readable text descriptions. | Enables the use of structural data by Large Language Models (LLMs) by creating a text representation [15]. |
| CIF (Crystallographic Information File) | Standard text file format representing crystallographic information. | The common starting point for data processing; contains lattice parameters, atomic coordinates, and symmetry [13]. |

Step-by-Step Procedure

Step 1: Curating Positive (Synthesizable) Samples

- Source: Download crystal structures from the ICSD.

- Filtering: Apply filters to ensure data quality and manageability.

  - Exclude structures with disorder.

  - Limit structures to those with ≤ 40 atoms per unit cell and ≤ 7 different elements to reduce complexity [8].

- Output: A curated set of confirmed synthesizable structures (e.g., 70,120 structures).
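
The positive-sample filters can be expressed as a short function. A minimal sketch, assuming each ICSD entry has already been parsed into a composition (atoms per unit cell) and a disorder flag, for example from partial site occupancies in the CIF; the `Entry` fields and the function name are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass
class Entry:
    entry_id: str
    composition: Dict[str, int]  # element -> number of atoms in the unit cell
    has_disorder: bool           # True if any site has partial or mixed occupancy


def select_positives(entries: Iterable[Entry], max_atoms: int = 40,
                     max_elements: int = 7) -> List[Entry]:
    """Apply the disorder, cell-size, and element-count filters from Step 1."""
    kept: List[Entry] = []
    for e in entries:
        n_atoms = sum(e.composition.values())
        if e.has_disorder or n_atoms > max_atoms or len(e.composition) > max_elements:
            continue
        kept.append(e)
    return kept
```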

Step 2: Sourcing and Labeling Negative (Non-Synthesizable) Samples

This is the most critical and non-trivial step. The following workflow uses Positive-Unlabeled (PU) Learning.

- Source Unlabeled Data: Aggregate a large collection of theoretical structures from MP, OQMD, and JARVIS (e.g., ~1.4 million structures) [8].

- Apply Pre-Trained PU Model: Use a pre-trained model (e.g., the model from Jang et al.) to calculate a synthesizability confidence score (CLscore) for every theoretical structure [8].

- Define Negative Class: Select structures with the lowest CLscores as high-confidence negative samples. A common threshold is CLscore < 0.1 [8].

- Validation: Verify the threshold by calculating CLscores for the known positive set from the ICSD. A high percentage (e.g., >98%) should have CLscores > 0.1, validating the threshold choice [8].

- Output: A curated set of high-confidence non-synthesizable structures (e.g., 80,000 structures).
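
Selecting negatives and checking the threshold are equally compact once CLscores are available. In this sketch the pre-trained PU model is not reimplemented; the CLscores are assumed to be given as a mapping from structure ID to score, and the function names are hypothetical.

```python
from typing import Dict, List, Optional, Sequence


def select_negatives(clscores: Dict[str, float], threshold: float = 0.1,
                     max_count: Optional[int] = None) -> List[str]:
    """IDs of theoretical structures with CLscore below threshold, lowest score first."""
    below = sorted((score, sid) for sid, score in clscores.items() if score < threshold)
    ids = [sid for _, sid in below]
    return ids if max_count is None else ids[:max_count]


def fraction_above_threshold(positive_scores: Sequence[float], threshold: float = 0.1) -> float:
    """Share of known synthesized (ICSD) structures scoring above threshold; should be high (e.g. > 0.98)."""
    return sum(score > threshold for score in positive_scores) / len(positive_scores)
```

Sorting by score before truncating means that, when `max_count` caps the negative set (for example at 80,000), the structures kept are the ones the PU model is most confident are non-synthesizable.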

Step 3: Data Representation for Modeling

For use with modern LLMs, crystal structures must be converted into a text-based format.

- Input: Use the CIF or POSCAR files of the curated positive and negative sets.

- Conversion: Utilize the Robocrystallographer tool to generate a text description of each crystal structure. This description includes information on crystal system, lattice parameters, atomic sites, and local coordination environments [15].

- Alternative - Material String: For a more compact representation, create a "material string" that condenses essential crystal information: Space Group | Lattice Parameters | (Element-Site-Wyckoff Position[Atomic Coordinates]), etc. [8].

- Output: A final dataset where each crystal structure is represented by a text string, labeled as synthesizable or non-synthesizable.

The following diagram illustrates the logical workflow of the complete data curation process.

Advanced Curation: From Structure to Explanation

Beyond basic classification, data curation enables explainable synthesizability predictions. Using the text-represented dataset, Large Language Models can be fine-tuned not only to predict synthesizability but also to generate human-readable explanations for their decisions [15]. This involves creating a training dataset where the input is the material string or text description, and the output is the classification and/or the reasoning behind it (e.g., "this structure is non-synthesizable due to unrealistically short bond lengths and a high-energy polyhedral arrangement").

Table 2: Comparison of Synthesizability Prediction Models and Their Data Foundations

| Model / Method | Data Foundation | Key Performance Metric | Advantages / Disadvantages |
|---|---|---|---|
| Thermodynamic (Energy above Hull) | DFT-calculated formation energies. | ~74.1% accuracy [8]. | Adv: Physically intuitive. Disadv: Misses metastable & kinetically accessible phases. |
| CSLLM (Synthesizability LLM) | 150,120 structures (curated ICSD + PU-selected negatives) represented as "material strings" [8]. | 98.6% accuracy [8]. | Adv: High accuracy & generalizability; can predict methods & precursors. |
| PU-GPT-Embedding Classifier | Text embeddings from Robocrystallographer descriptions of MP structures [15]. | Outperforms graph-based models [15]. | Adv: High performance & cost-effective; enables explainability. |

Robust data curation is the cornerstone of accurate synthesizability prediction. The strategies outlined—leveraging established experimental databases, applying PU learning to intelligently label negative samples, and converting structural data into text representations—create a powerful, reliable dataset. This rigorously curated data enables the training of advanced models like CSLLM, which significantly outperform traditional stability-based screening methods. By implementing these protocols, researchers can build a solid data foundation to effectively bridge the gap between theoretical crystal structures and their practical synthesis, accelerating the discovery of novel functional materials.

The Role of Major Crystal Structure Databases (ICSD, MP) in Training Predictive Models

The discovery and synthesis of new inorganic crystalline materials are pivotal for advancements in various technological fields, including batteries, catalysts, and photovoltaics. While computational power and methods for virtual materials design have advanced significantly, the actual synthesis of predicted materials often remains a slow, empirical process of trial and error [16]. Major crystal structure databases serve as the foundational data repositories that bridge this gap between computational prediction and experimental realization. The Inorganic Crystal Structure Database (ICSD) and the Materials Project (MP) are two preeminent resources in this domain. This application note details how these databases are critically employed to train and validate predictive models for crystal structure and synthesis pathway prediction, providing detailed protocols for researchers engaged in identifying synthetic methods for theoretical crystal structures.

The Inorganic Crystal Structure Database (ICSD)

The ICSD is a comprehensive collection of experimentally determined inorganic crystal structures. The database, accessible via FIZ Karlsruhe and NIST, contains over 210,000 entries from the scientific literature dating back to 1913 [17]. It is the primary source of experimentally validated inorganic structures for the research community.

Key Features and Access Methods:

- Content: Over 210,000 curated inorganic crystal structures [17].

- Access Options: ICSD Web (browser-based), ICSD Desktop (Windows-based local installation), and a RESTful API Service for direct data access [18].

- Updates: The database is updated twice a year, typically in April and October [18].

- Functionality: All versions provide advanced search capabilities across more than 70 characteristics, crystal structure visualization, and powder pattern simulation tools [18].

The Materials Project (MP)

The Materials Project is an open-access database that leverages high-throughput density functional theory (DFT) calculations to compute the properties of both known and predicted materials. It serves as a massive repository of computationally derived material properties.

Key Features:

- Content: Contains hundreds of thousands of structures, with properties derived from DFT calculations. A significant portion of its data is cross-referenced with ICSD IDs, linking computational predictions to experimentally known structures [19].

- Access: A free graphical user interface (GUI) is available, with full API access offered for automated data retrieval and integration into data mining workflows [19] [20].

- Methodology: The newer data in MP utilizes the r²SCAN meta-GGA functional, which provides improved accuracy for magnetic moments, thermodynamic stability, and magnetic ordering in oxides compared to the previously used PBE functional [21].

Table 1: Comparison of the ICSD and Materials Project Databases

| Feature | ICSD | Materials Project (MP) |
|---|---|---|
| Data Origin | Experimental (X-ray, neutron diffraction) | Computational (Density Functional Theory) |
| Primary Content | Over 210,000 curated inorganic structures [17] | Hundreds of thousands of calculated structures & properties [19] |
| Key Use Case | Source of experimental ground truth; training on empirical data | Virtual screening & property prediction; data mining [22] |
| Access | Subscription-based (with demo options) [18] | Free, open access (web GUI; API access with a free API key) [20] |
| Notable Features | Twice-yearly updates; advanced search & visualization [18] | Cross-referenced ICSD IDs; r²SCAN functional for improved accuracy [19] [21] |

Database-Driven Predictive Modeling: Applications and Protocols

Crystal structure databases are not merely archival; they are the training grounds for sophisticated machine learning (ML) models. The following sections outline key modeling paradigms and provide detailed protocols for their implementation.

Crystal Structure Prediction (CSP)

The challenge of CSP involves predicting the stable atomic arrangement of a crystal given only its chemical composition. ML models trained on databases like the MP and the Open Quantum Materials Database (OQMD) have dramatically reduced the computational cost of this process.

Case Study: A Graph Network and Optimization Algorithm Framework

A landmark study demonstrated a flexible framework for CSP combining a materials database, a graph network (GN) model, and an optimization algorithm (OA) [22].

- Database: The model was trained on two distinct databases: the OQMD and the Matbench formation energy dataset (MatB), which is derived from the Materials Project [22].

- Model: A graph network (MEGNet) was used to represent crystal structures and establish a correlation between the crystal graph and its formation enthalpy [22].

- Result: The GN(MatB) model combined with Bayesian Optimization (BO) successfully predicted the crystal structures of 29 binary compounds with a computational cost three orders of magnitude lower than conventional DFT-based screening methods [22].

Experimental Protocol: Crystal Structure Prediction using a Pre-Trained GN Model

Objective: To predict the ground-state crystal structure of a target composition (e.g., the ternary perovskite CsPbI₃) using a database-trained model.

Materials: Python environment with pymatgen, TensorFlow or PyTorch, and a pre-trained MEGNet model.

Procedure:

- Data Preparation: The chemical composition (e.g., "CsPbI₃") is defined. Any known structural constraints (e.g., desired number of atoms per cell) should be specified.

- Model Loading: Import the pre-trained GN model (e.g., MEGNet formation enthalpy model) that was trained on a large database (OQMD or MatB).

- Optimization Loop: a. The optimization algorithm (e.g., Bayesian Optimization) proposes a candidate crystal structure (atomic coordinates and lattice vectors). b. The candidate structure is converted into a crystal graph representation. c. The GN model predicts the formation enthalpy (ΔH) for the candidate. d. The optimization algorithm uses the predicted ΔH to propose a new, lower-energy candidate structure.

- Termination: The loop iterates until a convergence criterion is met (e.g., minimal change in predicted ΔH over several iterations).

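- Validation: Validate the final predicted structure with a follow-up DFT calculation (e.g., a structural relaxation) to confirm its stability, because the GN model's formation enthalpy is only an approximation of the DFT value.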
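The optimization loop in the procedure above can be sketched as follows. This is a minimal, hypothetical illustration. `toy_formation_enthalpy` is an analytic stand-in for a trained GN model such as MEGNet. The search covers a single cubic lattice parameter rather than full atomic coordinates and lattice vectors. A simple (1+1) evolution strategy with an adaptive step size replaces Bayesian Optimization so that the example stays dependency-free.

```python
import math
import random
from typing import Callable, Tuple


def toy_formation_enthalpy(a: float) -> float:
    """Hypothetical stand-in for a trained GN model: predicted formation enthalpy
    (eV/atom) of a cubic cell with lattice parameter a (Å). A Morse-like well
    with its minimum of -0.5 eV/atom at a = 6.3 Å; not a physical model."""
    a0, depth, width = 6.3, 0.5, 1.2
    return depth * ((1.0 - math.exp(-width * (a - a0))) ** 2 - 1.0)


def optimize_structure(
    energy_fn: Callable[[float], float],
    bounds: Tuple[float, float] = (5.5, 7.5),
    n_iter: int = 300,
    patience: int = 40,
    tol: float = 1e-6,
    seed: int = 0,
) -> Tuple[float, float]:
    """Propose -> predict -> update loop. A (1+1) evolution strategy with an
    adaptive step size stands in for Bayesian Optimization."""
    rng = random.Random(seed)
    lo, hi = bounds
    best_a = rng.uniform(lo, hi)   # initial candidate
    best_e = energy_fn(best_a)     # its predicted ΔH
    step, stall = 0.5, 0
    for _ in range(n_iter):
        # (a) propose a candidate near the current best, clipped to the bounds
        cand = min(hi, max(lo, best_a + rng.gauss(0.0, step)))
        # (b)+(c) a real workflow would build a crystal graph and call the GN model
        e = energy_fn(cand)
        # (d) accept only if the predicted ΔH drops; adapt the step size
        if e < best_e - tol:
            best_a, best_e, stall = cand, e, 0
            step = min(step * 1.5, 1.0)
        else:
            stall += 1
            step = max(step * 0.9, 1e-3)
        # termination: no improvement for `patience` consecutive proposals
        if stall >= patience:
            break
    return best_a, best_e


a_opt, e_opt = optimize_structure(toy_formation_enthalpy)
print(f"a = {a_opt:.3f} Å, predicted ΔH = {e_opt:.3f} eV/atom")
```

In a real CSP run, the returned structure is the candidate that would then be passed to the DFT validation step.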

Synthesis Pathway Prediction

Predicting how a target material can be synthesized is a critical step toward its experimental realization. Data-driven models use historical synthesis data from databases to recommend precursor materials and conditions.

Case Study: ElemwiseRetro for Inorganic Synthesis Recipe Prediction

The ElemwiseRetro model is a graph neural network designed specifically for inorganic retrosynthesis [16]. Its formulation treats the problem as selecting appropriate precursor "templates" for "source elements" in the target material.

- Training Data: The model was trained on a text-mined inorganic reaction database of 13,477 curated synthesis recipes [16].

- Method: The target composition is encoded as a graph. The model first identifies "source elements" (e.g., Li, La, Zr in Li₇La₃Zr₂O₁₂) that must be provided by precursors, and "non-source elements" that may come from the environment. It then selects a precursor template (e.g., "Li₂CO₃", "La₂O₃", "ZrO₂") for each source element from a library of 60 commonly used precursors [16].

- Result: ElemwiseRetro achieved a top-1 exact-match accuracy of 78.6% and a top-5 accuracy of 96.1%, significantly outperforming a popularity-based baseline model. The model outputs a probability score for each predicted recipe. This score correlates with prediction accuracy, so it can serve as a confidence measure for prioritizing experiments [16].

Experimental Protocol: Predicting Synthesis Recipes with ElemwiseRetro

Objective: To predict a set of solid-state precursors for a target inorganic material (e.g., Li₇La₃Zr₂O₁₂).

Materials: Access to the ElemwiseRetro model (code and weights); a list of source elements and precursor templates.

Procedure:

- Input: Encode the target composition (e.g., Li₇La₃Zr₂O₁₂) as a graph using a pre-trained material representation.

- Element Masking: Apply a source element mask to identify elements that must be provided by precursors (Li, La, Zr) versus those that can be environmental (O).

- Precursor Classification: For each source element, the model's precursor classifier selects the most probable precursor compound from its template library.

- Recipe Scoring: The joint probability of the complete set of precursors is calculated to generate a ranked list of synthesis recipes.

- Output: The model returns the top-k (e.g., k=5) precursor sets, each with a probability score to guide experimental prioritization.
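The masking, classification, and scoring steps above can be sketched as follows. Everything here is hypothetical. The per-element probabilities stand in for the outputs of ElemwiseRetro's precursor classifier. The non-source set contains only O for this oxide example. The joint score is taken as the product of per-element probabilities, which assumes the choices are independent; the actual model's scoring may differ.

```python
from itertools import product
from math import prod
from typing import Dict, List, Tuple

# Hypothetical: elements treated as "non-source" (supplied by the environment).
NON_SOURCE = {"O"}

# Hypothetical per-element precursor probabilities standing in for the
# outputs of a trained precursor classifier (illustrative numbers only).
PRECURSOR_PROBS: Dict[str, Dict[str, float]] = {
    "Li": {"Li2CO3": 0.70, "LiOH": 0.25, "Li2O": 0.05},
    "La": {"La2O3": 0.80, "La(NO3)3": 0.20},
    "Zr": {"ZrO2": 0.90, "ZrCl4": 0.10},
}


def source_elements(composition: Dict[str, float]) -> List[str]:
    """Element mask: keep only elements that must be supplied by a precursor."""
    return [el for el in composition if el not in NON_SOURCE]


def rank_recipes(
    composition: Dict[str, float],
    probs: Dict[str, Dict[str, float]],
    k: int = 5,
) -> List[Tuple[Tuple[str, ...], float]]:
    """Enumerate one precursor per source element and rank by joint probability."""
    elements = source_elements(composition)
    options = [list(probs[el].items()) for el in elements]
    scored = []
    for combo in product(*options):
        names = tuple(name for name, _ in combo)
        score = prod(p for _, p in combo)  # joint probability (independence assumed)
        scored.append((names, score))
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


target = {"Li": 7, "La": 3, "Zr": 2, "O": 12}
for recipe, score in rank_recipes(target, PRECURSOR_PROBS):
    print(f"{score:.3f}  {' + '.join(recipe)}")
```

Exhaustive enumeration is fine for a handful of source elements and a ~60-entry template library per element. Larger searches would typically use beam search over the per-element candidates instead.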

Symmetry-Aware Generative Design

Generative models represent a proactive approach to materials discovery, creating novel, stable crystal structures that meet specific property targets. Incorporating symmetry constraints is vital for generating physically realistic crystals.

Case Study: WyCryst Framework

The WyCryst framework addresses the critical need for symmetry compliance in generative AI models [23].

- Representation: It uses a Wyckoff position-based representation for inorganic crystals, which inherently respects the symmetry constraints of allowed space groups [23].

- Model: A property-directed variational autoencoder (VAE) is trained on database structures to generate new, symmetry-compliant crystal structures [23].

- Validation: The framework successfully reproduced known materials like CaTiO₃ and CsPbI₃ and discovered eight new, dynamically stable ternary compounds, which were validated using an automated DFT workflow [23].

Table 2: Key Research Reagent Solutions for Predictive Modeling

| Item / Resource | Function / Description | Relevance to Predictive Modeling |
|---|---|---|
| ICSD API Service [18] | A RESTful API for direct programmatic access to the ICSD. | Enables large-scale data mining projects by allowing batch retrieval of crystal structures and associated data outside the standard GUI. |
| MPRester Python Client [19] | The official Python client for interacting with the Materials Project API. | Facilitates querying material IDs, properties, and cross-referenced ICSD IDs directly within a Python script or Jupyter notebook for model training. |
| pymatgen Library [21] | A robust, open-source Python library for materials analysis. | Provides critical tools for manipulating crystal structures (e.g., converting to primitive/conventional cells), analysis, and file I/O, which are essential for data pre-processing. |
| Precursor Template Library [16] | A curated list of ~60 common inorganic precursor chemicals (e.g., carbonates, oxides). | Serves as the predefined "vocabulary" for synthesis prediction models like ElemwiseRetro, ensuring predicted precursors are chemically realistic and commercially available. |
| Automated DFT Workflow [23] | A computational pipeline for high-throughput first-principles calculations. | Used for the final validation and refinement of AI-predicted crystal structures, confirming their thermodynamic and dynamic stability. |

Workflow Visualization

The following diagrams summarize the logical relationships and workflows described in this application note.

Diagram 1: The overall workflow from database to prediction and validation.

Diagram 2: The ElemwiseRetro synthesis prediction workflow [16].

The ICSD and Materials Project are indispensable infrastructure in modern materials science, providing the high-quality, large-scale data required to power next-generation predictive models. As demonstrated by the featured case studies, these databases enable a range of applications from direct crystal structure prediction to the complex task of suggesting viable synthesis pathways. The integration of these data-driven models with robust experimental protocols and validation workflows, as outlined in this note, creates a powerful pipeline for accelerating the discovery and synthesis of novel functional materials.

The accurate prediction of crystal stability is a cornerstone of computational materials science, directly influencing the targeted synthesis of novel compounds in fields ranging from electronics to pharmaceutical development. Two metrics have become foundational for these assessments: the Energy Above Hull (E_hull) and phonon analysis. E_hull provides a thermodynamic measure of a compound's stability relative to competing phases, while phonon analysis probes dynamic stability by assessing vibrational properties. However, when the goal is to identify viable synthetic pathways for theoretical crystal structures, a nuanced understanding of their specific limitations is paramount. Over-reliance on these metrics without acknowledging their constraints can lead to the dismissal of synthesizable materials or, conversely, the pursuit of fundamentally unstable candidates. This application note details these limitations and provides best-practice protocols to guide researchers toward more robust stability evaluations.

Critical Limitations of the Energy Above Hull (E_hull)

The Energy Above Hull is a thermodynamic metric that quantifies the stability of a compound by calculating its energy difference from the convex hull formed by the most stable phases in a given chemical space. A compound with an E_hull of 0 eV/atom is thermodynamically stable, while a positive value indicates a metastable or unstable phase.
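For a binary system A-B, the definition above can be computed directly. Build the lower convex hull of formation energy versus composition, then measure each phase's vertical distance to it. The sketch below does this in pure Python using illustrative (made-up) energies and hypothetical function names. For real multi-component systems, pymatgen's `PhaseDiagram` class and its `get_e_above_hull` method are the usual tools.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (fraction of B, formation energy in eV/atom)


def _cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); <= 0 means a is not below segment o->b."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull of (composition, energy) points via the monotone chain."""
    lowest: Dict[float, float] = {}
    for x, e in points:  # keep only the lowest-energy phase at each composition
        lowest[x] = min(e, lowest.get(x, float("inf")))
    hull: List[Point] = []
    for p in sorted(lowest.items()):
        # drop the previous vertex while it lies on or above the segment hull[-2] -> p
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def e_above_hull(x: float, energy: float, points: List[Point]) -> float:
    """Vertical distance (eV/atom) from a phase to the hull at its composition."""
    hull = lower_hull(points)
    for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            e_hull = e1 + (e2 - e1) * (x - x1) / (x2 - x1)  # linear interpolation
            return energy - e_hull
    raise ValueError("composition outside the range spanned by the hull")


# Illustrative phases: pure A and B at 0, one stable compound at x = 0.5.
phases = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.4), (0.25, -0.1), (0.75, -0.15)]
for x, e in phases:
    print(f"x_B = {x:.2f}: E_hull = {e_above_hull(x, e, phases):.3f} eV/atom")
```

The phases at x = 0.25 and x = 0.75 lie 0.1 and 0.05 eV/atom above the hull, so they are metastable. This also shows the reference-state sensitivity noted below: adding or removing a hull phase changes every E_hull value.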

Table 1: Key Limitations of the Energy Above Hull Metric

| Limitation | Underlying Cause | Practical Consequence |
|---|---|---|
| Zero-Kelvin Thermodynamics | E_hull is typically calculated at 0 K, considering only enthalpy (H) and ignoring entropy (S) [24]. | Fails to predict temperature-dependent phase stability, potentially misclassifying high-temperature stable phases as unstable. |
| No Synthesis Pathway | E_hull indicates a compound's relative stability but provides no information on the kinetic pathway or barrier to form it [25]. | A low-E_hull compound may be impossible to synthesize if the energy barrier for its formation is insurmountable under practical conditions. |
| Metastability Misinterpretation | A positive E_hull signifies metastability, but does not inherently predict synthesizability [24]. | Promising metastable phases (e.g., diamond) may be incorrectly dismissed based on E_hull alone. |
| Sensitivity to Reference States | The calculated value depends entirely on the set of competing phases used to construct the convex hull [25]. | An incomplete or inaccurate set of reference phases leads to an erroneous E_hull, compromising its predictive power. |

A critical, yet often overlooked, limitation is the exclusion of temperature effects. As noted in community discussions, E_hull "is just a reflection of the enthalpy term in the Gibbs free energy (G = H - TS)" [24]. Consequently, a phase B with a higher E_hull than phase A can become more stable than A at elevated temperatures if B has a higher entropy. This explains why B might form at higher temperatures than A, a trend that a standard 0 K convex hull analysis cannot capture [24]. Furthermore, numerous metastable phases with positive E_hull values are routinely synthesized. Their successful formation is often dictated by kinetic stabilization or by specific environmental conditions (e.g., high pressure) that locally stabilize the phase, moving it below the energy of amorphous or other competing transitional states [24].
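The entropy argument can be made concrete. Suppose phase B lies ΔH = H_B - H_A above phase A at 0 K and has an entropy that is higher by ΔS = S_B - S_A. Then G = H - TS gives G_B - G_A = ΔH - TΔS, so B becomes favored above T* = ΔH/ΔS. The sketch assumes that ΔH and ΔS are temperature-independent, which real systems only approximate. The function names and numbers are illustrative.

```python
def crossover_temperature(delta_h: float, delta_s: float) -> float:
    """Temperature (K) above which phase B has a lower Gibbs energy than phase A.

    delta_h = H_B - H_A in eV/atom (> 0: B lies above A at 0 K)
    delta_s = S_B - S_A in eV/atom/K
    Assumes both differences are temperature-independent.
    """
    if delta_s <= 0:
        raise ValueError("with delta_h > 0, B is never favored unless it has higher entropy")
    return delta_h / delta_s


def delta_g(delta_h: float, delta_s: float, temperature: float) -> float:
    """G_B - G_A at a given temperature, from G = H - TS."""
    return delta_h - temperature * delta_s


# Illustrative: B is 20 meV/atom above A but 0.02 meV/atom/K higher in entropy.
t_star = crossover_temperature(0.02, 2e-5)
print(f"B becomes favored above ~{t_star:.0f} K")
```

At 300 K, ΔG is still positive, so A is favored. At 1500 K, ΔG is negative, which is the situation a 0 K hull cannot represent.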

Critical Limitations of Traditional Phonon Analysis

Phonon calculations determine the dynamic stability of a crystal structure by computing its vibrational spectrum. A dynamically stable structure has only real phonon frequencies across the entire Brillouin zone (BZ); the three acoustic modes go to zero at the zone center (Γ). Imaginary frequencies, conventionally plotted as negative values, indicate a dynamic instability: the structure sits at a saddle point of the energy surface and will distort along the unstable mode toward a lower-energy configuration.
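Phonon codes typically obtain ω² as eigenvalues of the dynamical matrix and report an imaginary frequency as a negative number. The sketch below shows that convention and a stability test that uses a small negative tolerance to absorb numerical noise near the acoustic modes. The -0.1 THz default mirrors the threshold used in the kinetic-stability benchmark. The unit choice (ω² in rad²/ps², so ν = ω/2π is in THz) and the function names are assumptions.

```python
import math
from typing import Iterable, List


def signed_frequencies_thz(omega_squared: Iterable[float]) -> List[float]:
    """Convert dynamical-matrix eigenvalues ω² (rad²/ps²) to frequencies ν (THz).

    A negative ω² is an imaginary frequency, reported by convention as a negative ν.
    """
    return [math.copysign(math.sqrt(abs(w2)), w2) / (2 * math.pi) for w2 in omega_squared]


def is_dynamically_stable(freqs_thz: Iterable[float], tol_thz: float = -0.1) -> bool:
    """Stable if no frequency falls below a small negative tolerance."""
    return min(freqs_thz) >= tol_thz


freqs = signed_frequencies_thz([0.0, (2 * math.pi * 3.0) ** 2, -(2 * math.pi * 0.5) ** 2])
print(freqs, is_dynamically_stable(freqs))
```

The tolerance is a trade-off. If it is too strict, numerical noise is flagged as instability. If it is too loose, genuinely soft modes are masked, which is the false-positive/false-negative risk described in the table below.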

Table 2: Key Limitations of Traditional Phonon Analysis

| Limitation | Underlying Cause | Practical Consequence |
|---|---|---|
| Computational Cost | Phonon calculations, especially for large unit cells, require expensive supercell-based force calculations [26] [27]. | Becomes prohibitively time-consuming for high-throughput screening or complex molecular crystals, limiting its practical application. |
| Sensitivity to Numerical Parameters | Weak intermolecular forces in molecular crystals require extremely stringent numerical accuracy for reliable force constants [26]. | Small errors in energy or force calculations can artificially introduce or mask imaginary frequencies, leading to false positives/negatives. |
| Limited q-Point / Supercell Sampling | A common simplification is to test phonons only at the BZ center (Γ-point) or with a small supercell (e.g., 2x2) [27]. | May miss instabilities that require a larger periodicity (supercell) to manifest, resulting in false positives for stability [27]. |
| Harmonic Approximation | Standard calculations assume a perfectly harmonic crystal potential [28]. | Can fail at finite temperatures where anharmonic effects dominate, limiting the real-world predictive accuracy for thermal properties and phase transitions. |

The "Legoland approach" to studying 2D materials relies on idealized computational models, which can compound these issues and lead to false predictions [25]. A further challenge is that full phonon band-structure calculations are so time-consuming that they are often avoided in large-scale discovery studies [27]. To address this, the Center and Boundary Phonon (CBP) protocol was developed. It tests stability using a 2x2 supercell, effectively evaluating phonons at the center and boundary of the BZ. Although this method is more efficient, it can still miss unstable modes that only appear in larger supercells, highlighting an inherent trade-off between computational cost and completeness [27].

Best-Practice Experimental Protocols

To overcome the limitations of individual metrics, an integrated and cautious approach is required. The following protocols outline a robust workflow for stability assessment.

Protocol 4.1: Integrated Stability Assessment Workflow

This workflow combines thermodynamic and dynamic stability checks with a pathway to resolve instabilities.

Diagram Title: Integrated Stability Assessment Workflow
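The decision logic of such a workflow can be sketched as a small routing function. The thresholds (0.1 eV/atom for E_hull and -0.1 THz for the lowest phonon frequency) and the class and function names are assumptions for illustration. Dynamic instability is checked first because a structure with imaginary modes is not a local minimum. Its E_hull should therefore be re-evaluated after the distortion-and-relaxation step.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    e_hull: float        # energy above hull at 0 K, eV/atom
    min_freq_thz: float  # lowest phonon frequency (negative = imaginary)


def triage(c: Candidate, e_hull_max: float = 0.1, freq_tol: float = -0.1) -> str:
    """Route a candidate through the integrated checks (hypothetical thresholds)."""
    if c.min_freq_thz < freq_tol:
        return "dynamically unstable: displace along unstable mode and relax (Protocol 4.3)"
    if c.e_hull > e_hull_max:
        return "metastable at 0 K: check finite-temperature hull (Protocol 4.2)"
    return "passes both checks: prioritize for synthesis planning"


for cand in (Candidate("X", 0.00, 2.0), Candidate("Y", 0.05, -0.5), Candidate("Z", 0.20, 1.0)):
    print(cand.name, "->", triage(cand))
```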

Protocol 4.2: Finite-Temperature Hull Analysis

- Objective: To account for the effect of temperature on thermodynamic stability.

- Procedure: a. Access Tool: Utilize the "finite temperature estimation" feature available in phase diagram applications like the one provided by the Materials Project [24]. b. Set Parameters: Input the chemical system and the temperature range of interest for synthesis (e.g., 300 K to 1500 K). c. Analyze Shift: Observe how the convex hull and the E_hull of specific phases change with temperature. A phase moving closer to or onto the hull at higher temperatures indicates entropic stabilization.

- Interpretation: A phase that is metastable at 0 K but becomes stable at a higher, experimentally relevant temperature is a promising synthetic target. This explains why phase 'B' with a higher 0 K E_hull might form at higher temperatures than phase 'A' [24].

Protocol 4.3: Resolving Dynamic Instabilities via the CBP Protocol

- Objective: To generate a dynamically stable structure from an initially unstable one [27].

- Procedure: a. Identify Unstable Mode: For the unstable material, compute the Hessian matrix (force constants) for a 2x2 supercell. Diagonalize it to find the eigenvector (phonon mode) with a negative eigenvalue (imaginary frequency) [27]. b. Displace Atoms: Displace all atoms in the supercell along the direction of the unstable eigenvector. The displacement amplitude should be small, typically chosen so that the maximum atomic displacement is 0.1 Å [27]. c. Relax Structure: Perform a full structural relaxation (both atomic positions and cell vectors) of the displaced configuration without any symmetry constraints. d. Validate Stability: Perform a new phonon calculation on the final relaxed structure to confirm the absence of imaginary frequencies.

- Note: If multiple unstable modes exist, start with the mode with the most negative eigenvalue. This procedure can successfully yield dynamically stable crystals, as demonstrated for 49 out of 137 unstable 2D materials, often with significantly altered electronic properties [27].
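Steps (a) and (b) of the procedure above can be sketched with NumPy. The sketch assumes that a pre-computed force-constant (Hessian) matrix for the 2x2 supercell is already available, and the function name is hypothetical. The sketch diagonalizes the raw Hessian rather than the mass-weighted dynamical matrix that a production workflow would use. The two matrices are congruent, so they have the same number of negative eigenvalues, but their mode shapes differ. The sketch also omits the symmetry-free relaxation of step (c) and the phonon re-check of step (d). Because the sign of an eigenvector is arbitrary, trying both the +mode and -mode displacements is a reasonable precaution.

```python
import numpy as np


def displace_along_softest_mode(positions, hessian, max_disp=0.1):
    """Displace atoms along the most negative eigenmode of a force-constant matrix.

    positions: (N, 3) Cartesian coordinates of the supercell atoms, in Å
    hessian:   (3N, 3N) symmetric force constants, in eV/Å² (not mass-weighted)
    max_disp:  largest single-atom displacement, in Å
    Returns (new_positions, lowest_eigenvalue).
    """
    positions = np.asarray(positions, dtype=float)
    evals, evecs = np.linalg.eigh(np.asarray(hessian, dtype=float))  # ascending order
    lowest = float(evals[0])
    if lowest >= 0.0:
        return positions.copy(), lowest  # no unstable mode: nothing to displace
    mode = evecs[:, 0].reshape(-1, 3)  # per-atom displacement pattern
    scale = max_disp / np.linalg.norm(mode, axis=1).max()
    return positions + scale * mode, lowest


# Toy 2-atom example with one unstable direction (x of atom 0).
pos = np.zeros((2, 3))
H = np.diag([-0.5, 1.0, 1.0, 1.0, 1.0, 1.0])
new_pos, lam = displace_along_softest_mode(pos, H)
print(lam, new_pos)
```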

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Computational Tools and Methods for Stability Analysis

| Tool / Method | Function | Key Consideration |
|---|---|---|
| Density Functional Theory (DFT) | A first-principles computational method for calculating total energy, electronic structure, and interatomic forces. | Essential for computing E_hull and force constants for phonons. Requires careful selection of the exchange-correlation functional, especially for dispersive forces [25] [26]. |
| Finite-Temperature Phase Diagram | A tool that estimates the Gibbs free energy at non-zero temperatures, allowing for entropy-driven effects. | Crucial for moving beyond 0 K thermodynamics and predicting temperature-dependent phase stability [24]. |
| CBP (Center & Boundary Phonon) Protocol | A stability test that evaluates phonons at the Brillouin zone center and boundary using a 2x2 supercell. | A computationally efficient screening tool, but may miss instabilities requiring larger supercells [27]. |
| Minimal Molecular Displacement (MMD) | A computational method that uses molecular coordinates to reduce the cost of phonon calculations in molecular crystals. | Can reduce computational cost by up to a factor of 10 while maintaining accuracy, particularly in the low-frequency region [26]. |
| Machine Learning Potentials | Models trained on DFT data to provide accurate forces and energies at a fraction of the computational cost. | A promising route for complex systems, but they require extensive training data, and their accuracy for low-frequency phonons is still under development [26]. |

The Energy Above Hull and phonon analysis are indispensable but imperfect tools. E_hull is a ground-state thermodynamic metric blind to temperature and kinetics, while traditional phonon analysis is often hampered by computational cost and methodological approximations. The path to reliable synthetic predictions lies not in abandoning these metrics but in applying them judiciously within an integrated workflow. This involves complementing E_hull with finite-temperature analysis and employing efficient protocols like CBP to probe and correct dynamic instabilities. By acknowledging and actively addressing these limitations, researchers can significantly narrow the gap between theoretical prediction and experimental realization, accelerating the discovery and synthesis of novel functional materials.

AI and Inverse Design: Cutting-Edge Frameworks for Synthesis Prediction

The discovery of functional materials has long been hindered by a critical bottleneck: the significant gap between computationally predicted crystal structures and their actual synthesizability in laboratory settings. While high-throughput computational screening and generative models have identified millions of theoretical materials with promising properties, most remain theoretical constructs because traditional synthesizability assessments based on thermodynamic formation energies or kinetic stability provide incomplete guidance for experimental realization [8]. This synthesis barrier represents a fundamental challenge in materials science, particularly for researchers and drug development professionals who require physically realizable compounds with specific functional characteristics.

The Crystal Synthesis Large Language Model (CSLLM) framework has been proposed as a solution to this long-standing problem. By leveraging specialized large language models (LLMs) fine-tuned on comprehensive materials data, CSLLM addresses three critical aspects of materials synthesis: predicting whether a crystal structure can be synthesized, determining appropriate synthetic methods, and identifying suitable chemical precursors [8] [29]. This shifts the emphasis from stability-based screening to direct synthesizability prediction and could accelerate the translation of theoretical material designs into tangible compounds for scientific and pharmaceutical applications.

CSLLM Architecture and Core Components

The CSLLM framework employs a modular architecture consisting of three specialized LLMs, each dedicated to a specific aspect of the synthesis prediction pipeline. This division of labor allows for targeted expertise while maintaining interoperability between components.

Table 1: Core Components of the CSLLM Framework

| Component Name | Primary Function | Key Performance Metrics | Methodology |
|---|---|---|---|
| Synthesizability LLM | Binary classification of synthesizability | 98.6% accuracy, outperforms traditional methods by 106.1% (thermodynamic) and 44.5% (kinetic) [8] [29] | Fine-tuned transformer architecture on 150,120 crystal structures |
| Method LLM | Classification of synthetic approaches | 91.02% accuracy in classifying solid-state vs. solution methods [8] [29] | Multi-class classification using material string representations |
| Precursor LLM | Identification of suitable chemical precursors | 80.2% success rate for binary and ternary compounds [8] [29] | Sequence generation with combinatorial analysis |

The architectural innovation of CSLLM lies in its domain-specific fine-tuning approach. Rather than using general-purpose LLMs as-is, the framework adapts transformer-based models to the specialized domain of inorganic crystal structures through comprehensive training on balanced datasets of synthesizable and non-synthesizable materials [8]. The component models share a common foundation in processing text-based representations of crystal structures, but they differ in their prediction tasks and output formats.

The Material String: A Novel Text Representation for Crystals

A fundamental innovation enabling the CSLLM framework is the development of the "material string" representation, which transforms complex crystal structure data into a format amenable to LLM processing. Traditional representations like CIF files contain significant redundancy, while POSCAR formats lack symmetry information. The material string overcomes these limitations through a compact, reversible text encoding that preserves essential structural information [8].

The material string format follows this general structure:

SP | a, b, c, α, β, γ | (AS1-WS1[WP1-x1,y1,z1]), (AS2-WS2[WP2-x2,y2,z2]), ... | SG

Where:

- SP denotes the crystal system (cubic, hexagonal, etc.)
- a, b, c, α, β, γ represent the lattice parameters
- AS indicates the atomic species
- WS specifies the Wyckoff site symbol
- WP provides the Wyckoff position coordinates
- SG denotes the space group

This efficient representation eliminates redundant atomic coordinates while preserving complete crystallographic information, enabling effective fine-tuning of LLMs without overwhelming sequence lengths.

Experimental Protocols and Implementation

Dataset Curation and Preparation Protocol

The performance of CSLLM stems from its comprehensive training on carefully curated datasets. The following protocol details the dataset construction process:

Materials and Data Sources:

- Positive Examples: 70,120 synthesizable crystal structures from the Inorganic Crystal Structure Database (ICSD) [8]

- Negative Examples: 80,000 non-synthesizable structures identified from 1,401,562 theoretical structures across multiple databases (Materials Project, Computational Material Database, OQMD, JARVIS) [8]

- Selection Criterion: CLscore < 0.1 from pre-trained PU learning model for negative examples [8]

Procedure:

- Filter ICSD entries to include only ordered crystal structures with ≤40 atoms and ≤7 distinct elements

- Exclude disordered structures to maintain focus on well-defined crystals

- Compute CLscores for all candidate structures using the pre-trained PU learning model

- Select structures with CLscore < 0.1 as negative examples (98.3% of positive examples had CLscore > 0.1, validating this threshold)

- Apply material string conversion to all selected structures

- Partition dataset into training, validation, and test sets with stratified sampling

This protocol yields a balanced dataset encompassing seven crystal systems and elements with atomic numbers 1-94 (excluding 85 and 87), providing comprehensive coverage of inorganic crystal chemical space [8].
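The curation steps above can be sketched as a filter-and-split routine. The record fields (`ordered`, `n_atoms`, `n_elements`, `clscore`) and the function names are hypothetical, and the CLscores are assumed to be precomputed by the PU learning model. The split is stratified on the class label only, with 80/10/10 fractions. The fractions are an assumption, since the text does not give the proportions.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def keep_icsd_entry(rec: Dict, max_atoms: int = 40, max_elements: int = 7) -> bool:
    """Positive-example filter: ordered, <= 40 atoms, <= 7 distinct elements."""
    return rec["ordered"] and rec["n_atoms"] <= max_atoms and rec["n_elements"] <= max_elements


def build_dataset(icsd: Sequence[Dict], theoretical: Sequence[Dict], cl_threshold: float = 0.1) -> List[Dict]:
    positives = [dict(r, label=1) for r in icsd if keep_icsd_entry(r)]
    negatives = [dict(r, label=0) for r in theoretical if r["clscore"] < cl_threshold]
    return positives + negatives


def stratified_split(
    records: Sequence[Dict], fractions: Tuple[float, float, float] = (0.8, 0.1, 0.1),
    key: str = "label", seed: int = 0,
) -> Tuple[List[Dict], List[Dict], List[Dict]]:
    """Shuffle each class separately so every split keeps the class ratio."""
    rng = random.Random(seed)
    groups: Dict[object, List[Dict]] = defaultdict(list)
    for r in records:
        groups[r[key]].append(r)
    train, val, test = [], [], []
    for members in groups.values():
        rng.shuffle(members)
        n_train = round(fractions[0] * len(members))
        n_val = round(fractions[1] * len(members))
        train += members[:n_train]
        val += members[n_train:n_train + n_val]
        test += members[n_train + n_val:]
    return train, val, test


icsd = [
    {"id": "a", "ordered": True, "n_atoms": 10, "n_elements": 3},
    {"id": "b", "ordered": False, "n_atoms": 10, "n_elements": 3},  # disordered: dropped
    {"id": "c", "ordered": True, "n_atoms": 50, "n_elements": 3},   # too many atoms: dropped
]
theoretical = [{"id": "d", "clscore": 0.05}, {"id": "e", "clscore": 0.5}]
print([r["id"] for r in build_dataset(icsd, theoretical)])
```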

Model Training and Fine-Tuning Methodology

Research Reagent Solutions

Table 2: Essential Computational Resources for CSLLM Implementation

| Resource Category | Specific Tools/Platforms | Application in CSLLM |
|---|---|---|
| Base LLM Architectures | LLaMA, ChatGPT variants [8] | Foundation models for fine-tuning |
| Materials Databases | ICSD, Materials Project, OQMD, JARVIS [8] | Source of training and evaluation data |
| Computational Frameworks | PyTorch, Transformers, MatDeepLearn | Model implementation and training |
| Evaluation Metrics | Accuracy, Precision, Recall, F1-score | Performance quantification |
| High-Performance Computing | GPU clusters (NVIDIA A100/V100) | Accelerated model training |

The fine-tuning process follows a multi-stage protocol:

Stage 1: Preprocessing and Tokenization

- Convert all crystal structures to material string format

- Implement appropriate tokenization for the specific base LLM

- Create sequence batches with balanced class representation
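Balanced batching can be sketched in a few lines. The function name is hypothetical, and the choice to truncate the larger class (rather than oversample the smaller one) is an assumption. Truncation discards little here, because the curated dataset of 70,120 positives and 80,000 negatives is already close to balanced.

```python
import random
from typing import Iterator, List, Sequence, Tuple


def balanced_batches(
    positives: Sequence[str], negatives: Sequence[str], batch_size: int, seed: int = 0
) -> Iterator[List[Tuple[str, int]]]:
    """Yield shuffled batches of (material_string, label) with equal class counts.

    The larger class is truncated so that every batch is exactly balanced.
    """
    if batch_size % 2:
        raise ValueError("batch_size must be even")
    rng = random.Random(seed)
    pos, neg = list(positives), list(negatives)
    rng.shuffle(pos)
    rng.shuffle(neg)
    half = batch_size // 2
    for i in range(min(len(pos), len(neg)) // half):
        batch = [(s, 1) for s in pos[i * half:(i + 1) * half]]
        batch += [(s, 0) for s in neg[i * half:(i + 1) * half]]
        rng.shuffle(batch)  # avoid a fixed positive-then-negative order
        yield batch


for b in balanced_batches([f"p{i}" for i in range(10)], [f"n{i}" for i in range(7)], batch_size=4):
    print(b)
```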

Stage 2: Model Configuration

- Adapt transformer architecture with domain-specific vocabulary

- Configure attention mechanisms for crystallographic patterns

- Implement task-specific output heads for each LLM component

Stage 3: Training Procedure

- Employ gradual unfreezing of transformer layers

- Utilize discriminative learning rates across layers

- Implement early stopping based on validation performance

- Apply regularization techniques to mitigate overfitting
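Stage 3 names generic fine-tuning techniques without giving CSLLM's settings. The sketch below shows one common way to realize them, following the ULMFiT recipe: discriminative learning rates that shrink by a constant factor for each lower layer, gradual unfreezing of one additional top layer per epoch, and patience-based early stopping on validation loss. The decay factor of 2.6 (borrowed from ULMFiT), the patience value, and all names are assumptions. In PyTorch, the per-layer rates would typically be passed to the optimizer as parameter groups, each with its own `lr`.

```python
from typing import List


def discriminative_lrs(base_lr: float, n_layers: int, decay: float = 2.6) -> List[float]:
    """Per-layer learning rates (index 0 = bottom layer); the top layer gets base_lr."""
    return [base_lr / decay ** (n_layers - 1 - i) for i in range(n_layers)]


def trainable_layers(epoch: int, n_layers: int) -> List[int]:
    """Gradual unfreezing: one more layer (from the top) becomes trainable per epoch."""
    n_unfrozen = min(n_layers, epoch + 1)
    return list(range(n_layers - n_unfrozen, n_layers))


class EarlyStopping:
    """Stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience: int = 3, min_delta: float = 0.0):
        self.patience, self.min_delta = patience, min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.bad_epochs = val_loss, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


print(discriminative_lrs(1e-4, 4))
print([trainable_layers(e, 4) for e in range(5)])
```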

Stage 4: Validation and Testing

- Evaluate model performance on held-out test sets

- Assess generalization on complex structures with large unit cells

- Compare against traditional thermodynamic and kinetic stability metrics

With this protocol, the Synthesizability LLM achieves 98.6% accuracy on test data, substantially outperforming traditional methods based on energy above hull (74.1%) or phonon spectrum analysis (82.2%) [8].
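Accuracy, precision, recall, and F1-score can all be computed from a confusion matrix, as sketched below, with label 1 meaning synthesizable. Accuracy is a reasonable headline figure here partly because the training data are close to balanced. Precision and recall show whether the remaining errors fall mainly on synthesizable or on non-synthesizable structures. The function name is hypothetical.

```python
from typing import Dict, Sequence


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall, and F1 for labels 1 (synthesizable) / 0."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(pairs), "precision": precision, "recall": recall, "f1": f1}


print(binary_metrics([1, 1, 1, 0, 0, 0, 0, 1], [1, 1, 0, 0, 0, 1, 0, 1]))
```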

Advanced Applications and Workflow Integration

High-Throughput Screening of Theoretical Structures

The CSLLM framework enables automated synthesizability assessment at scales previously unattainable. The following application note details a protocol for screening theoretical material databases:

Application Context: Identification of synthesizable candidates from generative design outputs or high-throughput computational screening [30]

Procedure:

- Input Preparation: Convert theoretical crystal structures to material string format

- Batch Processing: Utilize CSLLM's user-friendly interface for bulk upload and prediction [8] [29]

- Synthesizability Filtering: Apply Synthesizability LLM to identify promising candidates

- Method Assignment: Classify synthetic approaches for prioritization

- Precursor Identification: Generate potential precursor combinations

- Property Prediction: Integrate with graph neural networks for multi-property assessment [8]

Performance Metrics: In a demonstration application, CSLLM successfully screened 105,321 theoretical structures and identified 45,632 as synthesizable, with subsequent property prediction for 23 key material characteristics [8].
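The screening procedure amounts to a filter-then-annotate pipeline. In the sketch below, the three callables are hypothetical placeholders for the Synthesizability, Method, and Precursor LLMs, because the CSLLM interface itself is not described here. The property-prediction step is omitted. One design choice is worth noting: the method and precursor models are called only for structures that pass the synthesizability filter, so rejected candidates never incur the downstream predictions.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Sequence, Tuple


@dataclass
class ScreeningResult:
    structure_id: str
    synthesizable: bool
    method: Optional[str] = None
    precursors: List[str] = field(default_factory=list)


def screen(
    candidates: Sequence[Tuple[str, str]],           # (structure_id, material_string)
    is_synthesizable: Callable[[str], bool],         # placeholder for Synthesizability LLM
    predict_method: Callable[[str], str],            # placeholder for Method LLM
    predict_precursors: Callable[[str], List[str]],  # placeholder for Precursor LLM
) -> List[ScreeningResult]:
    results = []
    for sid, mat_str in candidates:
        if not is_synthesizable(mat_str):
            results.append(ScreeningResult(sid, False))
            continue
        results.append(ScreeningResult(sid, True, predict_method(mat_str), predict_precursors(mat_str)))
    return results


# Toy stand-ins so that the pipeline runs end to end.
demo = [("m1", "cubic | ..."), ("m2", "triclinic | ..."), ("m3", "cubic | ...")]
res = screen(demo, lambda s: s.startswith("cubic"), lambda s: "solid-state", lambda s: ["A", "B"])
print(sum(r.synthesizable for r in res), "of", len(res), "passed")
```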

Precursor Identification and Reaction Analysis

For the Precursor LLM component, the framework implements a sophisticated protocol for precursor recommendation:

Input: Crystal structure of the target compound (binary or ternary)

Processing:

- Encode target structure as material string

- Generate candidate precursors through sequence generation

- Apply combinatorial analysis to evaluate precursor combinations

- Calculate reaction energies for thermochemical assessment [8]

Output: Ranked list of precursor combinations with associated feasibility metrics

The Precursor LLM achieves an 80.2% success rate in identifying appropriate solid-state synthesis precursors for common binary and ternary compounds, providing critical guidance for experimental planning [8].
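The reaction-energy step can be illustrated with a small calculation. This is not the CSLLM implementation. For a balanced reaction, ΔE is the sum of the product formation energies minus the sum of the reactant formation energies; here it is normalized per reactant atom. The BaTiO₃ example and its energies are made-up placeholders, not DFT data. Ranking by ΔE alone (more negative meaning a larger thermodynamic driving force) is a simplifying assumption that ignores kinetics and gas release.

```python
from typing import Dict

# Illustrative placeholder formation energies (eV per formula unit), NOT DFT values.
E_FORM: Dict[str, float] = {"BaO": -5.0, "TiO2": -9.0, "BaCO3": -12.0, "CO2": -4.0, "BaTiO3": -15.0}
ATOMS_PER_FU: Dict[str, int] = {"BaO": 2, "TiO2": 3, "BaCO3": 5, "CO2": 3, "BaTiO3": 5}


def reaction_energy_per_atom(reaction: Dict[str, float]) -> float:
    """ΔE per reactant atom; coefficients < 0 are reactants, > 0 are products.

    The reaction is assumed to be balanced, so elemental references cancel.
    """
    delta = sum(coef * E_FORM[s] for s, coef in reaction.items())
    n_atoms = sum(-coef * ATOMS_PER_FU[s] for s, coef in reaction.items() if coef < 0)
    return delta / n_atoms


# Hypothetical precursor sets for the target BaTiO3.
candidates = {
    ("BaO", "TiO2"): {"BaO": -1, "TiO2": -1, "BaTiO3": 1},
    ("BaCO3", "TiO2"): {"BaCO3": -1, "TiO2": -1, "BaTiO3": 1, "CO2": 1},
}
ranked = sorted(candidates, key=lambda k: reaction_energy_per_atom(candidates[k]))
for combo in ranked:
    print(" + ".join(combo), f"{reaction_energy_per_atom(candidates[combo]):+.3f} eV/atom")
```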

Validation Framework and Performance Metrics

Comparative Performance Assessment

CSLLM has been benchmarked against traditional synthesizability assessment methods:

Table 3: Performance Comparison of Synthesizability Assessment Methods

| Assessment Method | Underlying Principle | Accuracy | Limitations |
|---|---|---|---|
| CSLLM Framework | Pattern recognition in experimental data | 98.6% [8] [29] | Requires comprehensive training data |
| Thermodynamic Stability | Energy above convex hull (0.1 eV/atom threshold) | 74.1% [8] | Many metastable compounds are synthesizable |
| Kinetic Stability | Phonon spectrum analysis (-0.1 THz threshold) | 82.2% [8] | Computationally expensive; false negatives |
| PU Learning Models | Positive-unlabeled learning (CLscore) | 87.9% [8] | Limited to specific material systems |
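The two stability baselines in Table 3 are threshold rules, and their accuracy is the fraction of structures whose predicted label matches the experimental one. The sketch below assumes the usual reading of the thresholds (synthesizable if energy above hull is at most 0.1 eV/atom, or if the lowest phonon frequency is at least -0.1 THz); the toy data are made up to show how metastable or dynamically unstable yet synthesized compounds turn into false negatives.

```python
E_HULL_MAX = 0.1   # eV/atom; assumed rule: synthesizable if E_hull <= threshold
PHONON_MIN = -0.1  # THz; assumed rule: synthesizable if lowest frequency >= threshold


def thermo_label(e_hull: float) -> bool:
    return e_hull <= E_HULL_MAX


def kinetic_label(min_freq: float) -> bool:
    return min_freq >= PHONON_MIN


def accuracy(predicted, actual) -> float:
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need equal-length, non-empty label lists")
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


# Made-up toy set: (E_hull in eV/atom, lowest phonon frequency in THz, synthesized?)
toy = [
    (0.00, 0.5, True),
    (0.25, 0.0, True),    # metastable but synthesized: thermodynamic false negative
    (0.05, -2.0, False),  # near the hull but never made: thermodynamic false positive
    (0.40, -1.0, False),
    (0.02, -0.5, True),   # imaginary modes yet synthesized: kinetic false negative
]
truth = [y for _, _, y in toy]
print(accuracy([thermo_label(e) for e, _, _ in toy], truth))   # 0.6
print(accuracy([kinetic_label(f) for _, f, _ in toy], truth))  # 0.8
```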

Generalization Testing Protocol

To validate robustness beyond standard test conditions, the CSLLM framework was subjected to rigorous generalization testing:

Test Set Composition: Structures with complexity significantly exceeding training data, particularly large-unit-cell compounds [8]

Procedure:

- Curate independent set of complex crystal structures

- Apply Synthesizability LLM without additional fine-tuning

- Compare predictions against experimental synthesizability data

- Quantify performance degradation relative to standard test set

Results: The Synthesizability LLM maintained 97.9% accuracy on complex structures, demonstrating exceptional generalization capability beyond its training distribution [8].
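Step 4 of the protocol can be made concrete with the two reported accuracies. The helper below is a minimal sketch (its name and output keys are illustrative); it reports both the absolute accuracy drop and the ratio of error rates, since a small drop in accuracy can still correspond to a sizeable relative increase in errors.

```python
def degradation(acc_standard: float, acc_shifted: float) -> dict:
    """Compare accuracies (in %) on the standard and the harder test set."""
    err_std, err_shift = 100.0 - acc_standard, 100.0 - acc_shifted
    return {
        "accuracy_drop_pp": round(acc_standard - acc_shifted, 2),
        "error_rate_ratio": round(err_shift / err_std, 2),
    }


print(degradation(98.6, 97.9))
# {'accuracy_drop_pp': 0.7, 'error_rate_ratio': 1.5}
```

With the reported figures, accuracy falls by 0.7 percentage points, while the error rate rises from 1.4% to 2.1%, i.e. by a factor of 1.5.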

Integration with Materials Discovery Pipeline

The CSLLM framework represents a critical bridge between computational materials design and experimental realization. Its modular architecture allows seamless integration with existing materials informatics workflows:

Upstream Integration:

- Accepts output from generative design models [30]

- Processes candidates from high-throughput DFT screenings

- Incorporates structures from substitution-based prediction

Downstream Applications:

- Guides experimental synthesis planning

- Prioritizes resource allocation for promising candidates

- Informs rational precursor selection

- Accelerates functional materials development

This integration effectively addresses the critical bottleneck in materials discovery, enabling researchers to focus experimental efforts on theoretically designed compounds with high probability of successful synthesis. The framework's user-friendly interface further enhances accessibility, allowing experimental researchers to upload crystal structure files and receive synthesizability assessments and precursor recommendations without deep computational expertise [8] [29].

The Crystal Synthesis Large Language Model framework represents a paradigm shift in materials synthesizability prediction. By leveraging domain-adapted LLMs trained on comprehensive crystallographic data, CSLLM achieves high accuracy in predicting synthesizability (98.6%), classifying synthetic methods (91.0%), and identifying appropriate precursors (80.2%). Its performance significantly surpasses traditional stability-based assessments while providing actionable guidance for experimental synthesis.

The framework's modular architecture, innovative material string representation, and robust validation protocols establish a new standard for data-driven synthesis prediction. As the field advances, future iterations may incorporate additional capabilities such as reaction condition optimization, yield prediction, and integration with robotic synthesis platforms. For researchers and drug development professionals, CSLLM offers a powerful tool to bridge the gap between theoretical design and experimental realization, accelerating the discovery of novel functional materials for diverse applications.

Specialized LLMs for Synthesizability, Method Classification, and Precursor Identification

Performance Benchmarks of Crystal Synthesis Large Language Models (CSLLM)

The Crystal Synthesis Large Language Models (CSLLM) framework utilizes three specialized, fine-tuned models to address the core challenges in transitioning from theoretical crystal structures to experimental synthesis. The performance of these models, validated on comprehensive datasets, significantly surpasses traditional computational screening methods [8] [29].

Table 1: Performance Metrics of the CSLLM Framework Components

| CSLLM Component | Primary Function | Key Performance Metric | Reported Accuracy | Comparative Traditional Method Performance |
|---|---|---|---|---|
| Synthesizability LLM | Predicts whether an arbitrary 3D crystal structure is synthesizable [8]. | Accuracy on testing data [8] [29]. | 98.6% [8] [29] | Energy above hull (0.1 eV/atom): 74.1% [8]. Phonon spectrum stability (-0.1 THz): 82.2% [8]. |
| Method LLM | Classifies the appropriate synthetic method (e.g., solid-state or solution) [8]. | Classification accuracy [8] [29]. | 91.0% [8] [29] | Not Applicable (Traditional methods lack this specific classification capability). |
| Precursor LLM | Identifies suitable solid-state synthesis precursors for binary and ternary compounds [8]. | Precursor prediction success rate [8] [29]. | 80.2% [8] [29] | Not Applicable (Traditional methods lack this specific prediction capability). |

Experimental Protocols for CSLLM Implementation

Data Curation and Dataset Construction

A critical foundation for the CSLLM framework is a robust and balanced dataset for model training and validation [8].

- Positive Samples (Synthesizable Crystals): Curate 70,120 experimentally confirmed synthesizable crystal structures from the Inorganic Crystal Structure Database (ICSD) [8].

- Negative Samples (Non-Synthesizable Crystals): Select 80,000 non-synthesizable examples from a pool of 1,401,562 theoretical structures sourced from databases like the Materials Project (MP) and the Open Quantum Materials Database (OQMD), using the CLscore assigned by a pre-trained PU learning model to identify high-confidence negatives (CLscore below 0.1) [8].

- Validation: Check the threshold against the positive set. In the reported dataset, 98.3% of the ICSD positive samples have a CLscore above 0.1, supporting the use of this threshold to define the negative set [8].
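The curation steps above can be sketched as follows. This is a minimal illustration under assumptions: the CLscores are taken as given (in practice they come from the pre-trained PU learning model), and choosing the lowest-scoring structures below the threshold, capped at `n_negative`, is an assumed selection rule; `build_dataset` and `fraction_above` are hypothetical helper names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    structure_id: str
    label: int  # 1 = synthesizable (ICSD), 0 = non-synthesizable


def build_dataset(icsd_ids, clscores, n_negative, threshold=0.1):
    """Positives: experimentally confirmed ICSD structures.
    Negatives (assumed selection rule): the theoretical structures with the
    lowest CLscore below `threshold`, capped at `n_negative`."""
    positives = [Sample(i, 1) for i in icsd_ids]
    below = sorted((score, sid) for sid, score in clscores.items() if score < threshold)
    negatives = [Sample(sid, 0) for _, sid in below[:n_negative]]
    return positives + negatives


def fraction_above(scores, threshold=0.1):
    """Sanity check: share of known-synthesizable structures scored above threshold."""
    return sum(s > threshold for s in scores) / len(scores)


# Made-up identifiers and scores for illustration only
toy_clscores = {"theo-1": 0.05, "theo-2": 0.02, "theo-3": 0.60, "theo-4": 0.08}
data = build_dataset(["exp-1", "exp-2"], toy_clscores, n_negative=2)
print([(s.structure_id, s.label) for s in data])
# [('exp-1', 1), ('exp-2', 1), ('theo-2', 0), ('theo-1', 0)]
print(fraction_above([0.9, 0.5, 0.05, 0.3]))  # 0.75
```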

Crystal Structure Text Representation (Material String)

To enable LLMs to process crystal structures efficiently, a concise and information-dense text representation is required [8] [31].

- Objective: Create a reversible text format that comprehensively encodes lattice parameters, composition, atomic coordinates, and symmetry without the redundancy of CIF or POSCAR files [8].

- Protocol:

  - Record Space Group (SP): Note the crystal's space group symbol or number.

  - Record Lattice Parameters: List the six lattice parameters in order: a, b, c, α, β, γ.

  - Record Atomic Species and Positions: For each unique atomic site, record the atomic species (AS), Wyckoff site (WS), and the specific Wyckoff position (WP) [8].

- Output: The final "material string" follows the format [8]:

  SP | a, b, c, α, β, γ | (AS1-WS1[WP1]), (AS2-WS2[WP2]), ...

  This representation allows for the complete mathematical reconstruction of a material's primitive cell [31].
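A reversible encoder and decoder for this format can be sketched in a few lines. The `SP | lattice | (AS-WS[WP])` layout follows the format above; the exact number formatting (comma-separated fractional coordinates inside the brackets, Python's shortest round-trip `repr` for floats) is an assumption, and the class names are hypothetical.

```python
import re
from dataclasses import dataclass

SITE_RE = re.compile(r"\((\w+)-(\w+)\[([^\]]*)\]\)")  # (AS-WS[WP])


def _num(x) -> str:
    # repr() gives the shortest string that parses back to the same float,
    # so encode/decode round-trips exactly
    return repr(float(x))


@dataclass(frozen=True)
class Site:
    species: str      # AS, e.g. "Na"
    wyckoff: str      # WS, e.g. "4a"
    position: tuple   # WP, fractional coordinates (x, y, z)


@dataclass(frozen=True)
class MaterialString:
    space_group: str  # SP, symbol or number
    lattice: tuple    # (a, b, c, alpha, beta, gamma)
    sites: tuple      # one Site per Wyckoff site

    def encode(self) -> str:
        lat = ", ".join(_num(x) for x in self.lattice)
        sites = ", ".join(
            f"({s.species}-{s.wyckoff}[{','.join(_num(c) for c in s.position)}])"
            for s in self.sites)
        return f"{self.space_group} | {lat} | {sites}"

    @classmethod
    def decode(cls, text: str) -> "MaterialString":
        sp, lat, sites = (part.strip() for part in text.split("|"))
        lattice = tuple(float(x) for x in lat.split(","))
        parsed = tuple(
            Site(species, wyckoff, tuple(float(c) for c in pos.split(",")))
            for species, wyckoff, pos in SITE_RE.findall(sites))
        return cls(sp, lattice, parsed)


# Rock-salt NaCl: space group Fm-3m (No. 225), Na on 4a, Cl on 4b
nacl = MaterialString("225", (5.64, 5.64, 5.64, 90.0, 90.0, 90.0),
                      (Site("Na", "4a", (0.0, 0.0, 0.0)),
                       Site("Cl", "4b", (0.5, 0.5, 0.5))))
text = nacl.encode()
print(text)
# 225 | 5.64, 5.64, 5.64, 90.0, 90.0, 90.0 | (Na-4a[0.0,0.0,0.0]), (Cl-4b[0.5,0.5,0.5])
print(MaterialString.decode(text) == nacl)  # True
```

Only one representative position per Wyckoff site is stored; the remaining equivalent atoms follow from the space-group symmetry operations, which is what keeps the string much shorter than a CIF or POSCAR file.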

Model Fine-Tuning and Workflow Execution

The core of the CSLLM framework involves fine-tuning large language models for specialized tasks [8] [31].

- Model Selection: Base models such as LLaMA can be used as a starting point for domain-specific fine-tuning [31].

- Fine-Tuning Technique: Employ efficient fine-tuning methods like Low-Rank Adaptation (LoRA). A typical configuration uses a rank of 32, which can be combined with 4-bit quantization to reduce computational demands [31].

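- Execution Workflow: In operation, a candidate structure is first converted to a material string and scored by the Synthesizability LLM; structures predicted to be synthesizable are then passed to the Method LLM for synthetic-route classification and to the Precursor LLM for precursor suggestions.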
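The efficiency argument for the LoRA configuration mentioned above (rank 32, optionally with 4-bit quantization) can be checked with simple arithmetic. The sketch assumes a single 4096 × 4096 projection matrix, the hidden size of LLaMA-7B-class models, and ignores the per-block overhead of quantization constants; the function names are illustrative.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when fine-tuning a d_out x d_in weight directly."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """LoRA freezes W and trains B (d_out x r) and A (r x d_in):
    W' = W + (alpha / r) * B @ A."""
    return rank * (d_in + d_out)


def weight_mib(n_params: int, bits: int) -> float:
    """Storage for the frozen weights, ignoring quantization-constant overhead."""
    return n_params * bits / 8 / 2**20


d = 4096  # hidden size of a LLaMA-7B-class model (assumed target layer)
full, lora = full_params(d, d), lora_params(d, d, rank=32)
print(f"trainable: {lora:,} of {full:,} ({lora / full:.4%})")
# trainable: 262,144 of 16,777,216 (1.5625%)
print(f"frozen weight: {weight_mib(full, 16):.0f} MiB fp16, {weight_mib(full, 4):.0f} MiB 4-bit")
# frozen weight: 32 MiB fp16, 8 MiB 4-bit
```

Training only about 1.6% of each adapted matrix, while storing the frozen base weights at a quarter of their fp16 size, is what makes fine-tuning feasible on modest hardware.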

Successful implementation of the CSLLM framework relies on several key data and software resources.

Table 2: Key Research Reagents and Computational Tools

| Resource Name | Type | Primary Function in CSLLM Workflow |
|---|---|---|
| Inorganic Crystal Structure Database (ICSD) [8] | Database | Source of experimentally verified, synthesizable crystal structures used as positive training examples. |
| Materials Project (MP) [8] | Database | Source of theoretical crystal structures used for generating non-synthesizable (negative) training examples. |
| Pre-trained PU Learning Model [8] | Computational Model | Used to assign a CLscore to theoretical structures, enabling the identification of high-confidence non-synthesizable samples. |
| Material String Format [8] [31] | Data Representation | A concise text-based representation of crystal structures that enables efficient fine-tuning and querying of LLMs. |
| Open-Source LLMs (e.g., LLaMA, Qwen) [31] | Base Model | Foundational large language models that can be fine-tuned on specialized datasets to create the specialized CSLLM components. |
| AiZynthFinder | Software Tool | An open-source toolkit for computer-aided synthesis planning (CASP) of organic molecules, useful for validating precursor suggestions for molecular targets [32]. |

Generative AI Models for Inverse Design of Experimentally Synthesizable Crystals

The discovery of new crystalline materials is a cornerstone of technological advancement, impacting fields from drug development to energy storage. Traditional crystal structure prediction (CSP) methods, while effective, are computationally intensive as they require explicit energy calculations for each candidate structure during a search process [9]. Generative artificial intelligence (AI) represents a paradigm shift, learning the underlying probability distribution of known crystal structures to directly propose novel, plausible candidates, thereby accelerating the initial stages of materials discovery [9]. This document provides application notes and detailed protocols for leveraging state-of-the-art generative AI models in the inverse design of crystals, with a focus on their pathway to experimental synthesis. The content is framed within a broader thesis on identifying synthetic methods for theoretical crystal structures.

Current Generative AI Models for Crystals

Several generative architectures have been adapted for crystal structure generation. Diffusion models gradually refine noise into a structured crystal lattice, often producing high-quality, stable structures [33] [34]. Autoregressive large language models (LLMs), such as CrystaLLM, treat crystal representations as text sequences, generating structures token-by-token [35]. Generative Adversarial Networks (GANs) pit two neural networks against each other to produce realistic crystal images from a latent space [36], while Variational Autoencoders (VAEs) learn a compressed, continuous representation of crystals that can be sampled from to generate new structures [9].

The table below summarizes the key characteristics and performance metrics of prominent models.

Table 1: Performance Comparison of Key Generative AI Models for Crystals

| Model Name | Architecture | Key Representation | Stability Rate (↑) | Novelty Rate (↑) | Notable Features |
|---|---|---|---|---|---|
| MatterGen [33] | Diffusion | Atom types, fractional coordinates, lattice vectors | 78.0% (below 0.1 eV/atom) | 61.0% | High stability, broad conditioning, physically-informed diffusion |
| CrystaLLM [35] | Autoregressive Transformer | CIF text file tokens | N/A | N/A | Generates valid CIF syntax; can be guided by Monte Carlo tree search (MCTS) |
| SLICES [37] | String-based/VAE | Invertible and invariant string | N/A | N/A | 94.95% reconstruction rate; high invertibility; guarantees crystallographic invariances |
| CCDCGAN [36] | GAN | Reversible crystal image | N/A | 90.7% (unreported structures) | Optimizes formation energy in latent space |
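The stability and novelty rates in this table are fractions over a batch of generated structures. The sketch below shows one way to compute them. Definitions differ between publications, so the rules here are assumptions: "stable" means energy above hull below 0.1 eV/atom after relaxation, and structure identity is approximated by a string key (for example, reduced formula plus space group). Real benchmarks use proper structure matching, such as pymatgen's `StructureMatcher`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Generated:
    key: str       # proxy identity (assumed: reduced formula + space group)
    e_hull: float  # eV/atom after relaxation


def generation_metrics(samples, known_keys, e_hull_max=0.1):
    """Stable, unique and novel rates over all generated samples."""
    n = len(samples)
    if n == 0:
        raise ValueError("no samples")
    return {
        "stable_rate": sum(s.e_hull < e_hull_max for s in samples) / n,
        "unique_rate": len({s.key for s in samples}) / n,
        "novel_rate": sum(s.key not in known_keys for s in samples) / n,
    }


# Made-up keys and energies for illustration only
toy = [Generated("A|225", 0.00), Generated("A|225", 0.00),
       Generated("AB2|166", 0.05), Generated("A2B|194", 0.30)]
print(generation_metrics(toy, known_keys={"A|225"}))
# {'stable_rate': 0.75, 'unique_rate': 0.75, 'novel_rate': 0.5}
```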

Protocols for Inverse Design Using Generative AI

This section outlines a generalized workflow and specific protocols for using generative AI in crystal inverse design.

The following diagram illustrates the end-to-end workflow for the generative inverse design of crystals, from model selection to experimental validation.

Protocol 1: Generating Stable, Diverse Crystals with MatterGen

Application: This protocol uses the MatterGen diffusion model to generate a diverse set of stable inorganic crystals without specific property constraints, serving as a starting point for exploration [33].

- Step 1: Model Setup. Initialize the pretrained MatterGen base model, which has been trained on the diverse Alex-MP-20 dataset comprising over 600,000 stable structures [33].

- Step 2: Unconditional Generation. Execute the model's reverse diffusion process. The model will iteratively refine random noise into complete crystal structures defined by their atom types (A), fractional coordinates (X), and periodic lattice (L) [33].

- Step 3: Structure Relaxation. Pass the generated structures through a machine learning force field (MLFF) for initial relaxation. This step brings the crystals closer to their local energy minimum without the cost of DFT [33].

- Step 4: Stability and Uniqueness Check. Analyze the relaxed structures.