Bridging the Virtual and Real: A Comparative Guide to Computational and Experimental Materials Properties for Biomedical Innovation

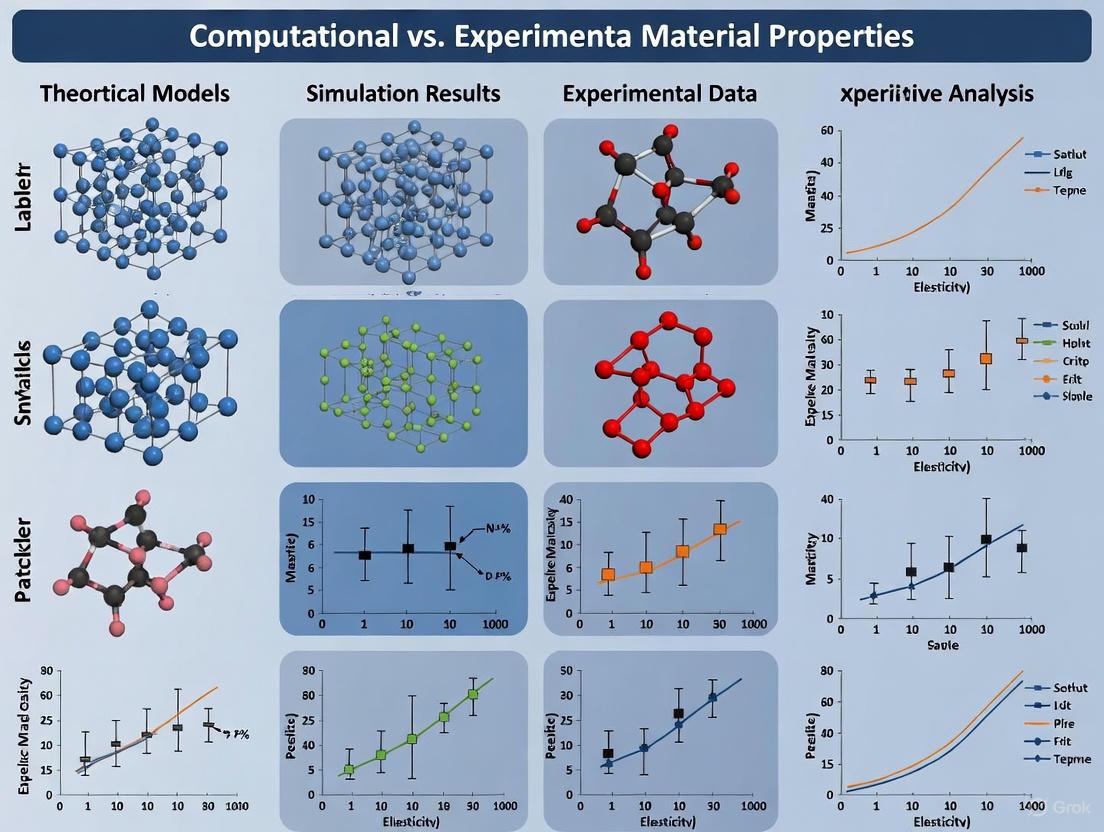

Abstract

This article provides a comprehensive analysis for researchers and drug development professionals on the integrated use of computational and experimental methods in materials science. It explores the foundational principles connecting atomic-scale simulations to macroscopic properties, details the application of high-throughput virtual screening and AI-driven design for accelerated discovery, and addresses key challenges in reproducibility and data integration. Through comparative case studies across polymers, ceramics, and nanomaterials, it validates the synergistic power of combined approaches for predicting material behavior, with specific implications for pharmaceutical development, drug delivery systems, and biomedical devices.

From Atoms to Applications: The Fundamental Bridge Between Computation and Experiment

The fundamental properties of all materials—be it the exceptional strength of a jet engine turbine or the high ionic conductivity of a flexible battery—are governed by a complex hierarchy of interactions that span from the quantum behavior of electrons to the collective behavior of atoms and microstructures. Understanding this multiscale nature is paramount for designing next-generation materials with tailored properties. This guide provides a comparative analysis of the computational and experimental methodologies employed to probe these relationships, objectively evaluating their respective capabilities, limitations, and synergistic potential.

The core challenge in materials science lies in connecting phenomena across vast spatial and temporal scales. Electronic structure at the sub-nanometer level dictates atomic bonding, which in turn influences nanoscale phenomena like dislocation motion and phase nucleation. These nanoscale events collectively define microstructural evolution, which finally determines the macroscopic bulk properties measured in the laboratory [1]. No single experimental or computational technique can seamlessly traverse this entire spectrum, necessitating a combined approach where methods validate and inform one another.

Comparative Analysis of Research Methodologies

Quantitative Comparison of Computational and Experimental Approaches

The following table summarizes the primary techniques used in multiscale materials research, highlighting their respective outputs and roles in connecting material behavior across different scales.

Table 1: Comparison of Computational and Experimental Methods in Multiscale Materials Research

| Method Category | Specific Technique | Primary Scale of Focus | Key Outputs/Measurables | Role in Connecting Properties |
|---|---|---|---|---|
| Computational / Ab Initio | Density Functional Theory (DFT) | Electronic / Atomic (Å - nm) | Electronic structure, bonding, elastic constants, stacking fault energy [1] [2] | Links electron interactions to fundamental atomic-scale properties. |
| Computational / Atomistic | Molecular Dynamics (MD) | Nano - Micro (nm - µm) | Diffusion coefficients, dislocation dynamics, phase transformation pathways [3] | Bridges atomic interactions to nanoscale mechanisms and kinetics. |
| Computational / Microstructural | CALPHAD & Machine Learning (ML) | Micro - Macro (µm - mm) | Phase stability, phase fractions, thermodynamic properties [3] | Connects thermodynamics to microstructural formation. |
| Experimental / Microscopy | Scanning Electron Microscopy (SEM), TEM | Micro - Nano (µm - nm) | Microstructure imaging, phase distribution, chemical analysis [4] | Visually characterizes the microstructure resulting from lower-scale phenomena. |
| Experimental / Diffraction | X-ray Diffraction (XRD) | Atomic / Crystalline (Å - nm) | Crystal structure, phase identification, lattice parameters [4] [2] | Quantifies crystal structure and phase, validating computational predictions. |
| Experimental / Property Measurement | ZEM-3, Laser Flash Analysis | Macro (mm - cm) | Seebeck coefficient, electrical conductivity, thermal conductivity [4] | Measures macroscopic functional properties for application validation. |

Accuracy, Reliability, and Implementation Requirements

A critical assessment of these methodologies must consider their practical implementation, including the computational resources, costs, and inherent limitations.

Table 2: Practical Implementation and Limitations of Key Methods

| Method | Typical Implementation Workflow | Computational/Experimental Cost | Key Limitations & Uncertainties |
|---|---|---|---|
| Density Functional Theory (DFT) | 1. Structure selection & initialization. 2. Choice of exchange-correlation functional (e.g., GGA-PBE) [2]. 3. Self-consistent field calculation. 4. Property extraction from results. | High computational cost; limited to ~100-1000 atoms. Requires high-performance computing. | Accuracy depends on xc-functional; cannot simulate dynamics at realistic timescales; zero-Kelvin approximation. |
| CALPHAD & ML Screening | 1. Generate large composition datasets (e.g., 150,000 compositions) [3]. 2. Train ML models on thermodynamic data. 3. High-throughput screening of billions of compositions [3]. 4. Down-select candidates for detailed study. | Moderate to high computational cost for database generation and ML training. | Relies on the quality and completeness of the underlying thermodynamic database; ML model accuracy is data-dependent. |
| X-ray Diffraction (XRD) | 1. Sample preparation (powder or solid). 2. Measurement with Cu-Kα radiation [4]. 3. Peak identification and analysis. 4. Phase quantification via Rietveld refinement. | Moderate equipment cost; relatively fast measurement time. | Detection limit of ~5 wt% for minor phases [4]; surface-sensitive; requires crystalline samples. |
| Thermoelectric Property Measurement | 1. Fabricate dense pellet (e.g., via Vacuum Hot Pressing). 2. Measure Seebeck coefficient & electrical conductivity (e.g., ZEM-3) [4]. 3. Measure thermal diffusivity (e.g., laser flash). 4. Calculate ZT and power factor. | High equipment cost; requires careful sample preparation and calibration. | Challenging for high-resistivity materials; contact resistance can affect accuracy; indirect measurement of thermal conductivity. |

Detailed Experimental and Computational Protocols

Protocol: High-Throughput Computational Screening of Alloy Compositions

This Integrated Computational Materials Engineering (ICME) protocol, as demonstrated for Ni-based superalloys, links thermodynamics to target microstructures [3].

Step 1: Dataset Generation

- Define compositional ranges for each element based on known alloys (e.g., Cr: 10-25 wt%, Ni: balance, etc.).

- Generate a vast set of random compositions (e.g., 150,000) within these ranges, using element-specific step sizes (e.g., 1 wt% for Cr, 0.01 wt% for C).

- Use thermodynamic software (e.g., Thermo-Calc with TCNI12 database) to calculate key properties (solidus/liquidus temperatures, γ/γ' phase fractions, TCP phase stability) for each composition at multiple temperatures, creating a dataset of ~750,000 points [3].
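The sampling part of Step 1 can be sketched in a few lines of Python. This is a minimal illustration, not the workflow of [3]: only the Cr range and the Cr and C step sizes come from the text, while the Al entry, the C range, and the temperature list are hypothetical placeholders. The thermodynamic calculation itself (e.g., a Thermo-Calc call) is deliberately left out.

```python
import random

# (low, high, step) in wt%. Only the Cr range and the Cr and C step sizes
# are taken from the text; the Al entry and the C range are placeholders.
RANGES = {
    "Cr": (10.0, 25.0, 1.0),
    "Al": (1.0, 6.0, 0.1),     # assumed for illustration
    "C": (0.01, 0.15, 0.01),   # range assumed, step from the text
}

def random_composition(rng):
    """Draw one composition on the element-specific grid; Ni takes the balance."""
    comp = {}
    for element, (low, high, step) in RANGES.items():
        n_steps = round((high - low) / step)
        comp[element] = round(low + rng.randint(0, n_steps) * step, 4)
    comp["Ni"] = round(100.0 - sum(comp.values()), 4)
    return comp

def generate_dataset(n, seed=0):
    """Return n random compositions, reproducible through the seed."""
    rng = random.Random(seed)
    return [random_composition(rng) for _ in range(n)]

compositions = generate_dataset(1000)
temperatures_K = [1073, 1173, 1273, 1373, 1473]  # assumed temperature grid
# One thermodynamic data point per (composition, temperature) pair.
n_points = len(compositions) * len(temperatures_K)
```

With 150,000 compositions, a grid of five temperatures would give 750,000 points, which is consistent with the dataset size quoted above; the actual temperature grid used in [3] is not stated here.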

Step 2: Machine Learning Model Training

- Train separate ML models (e.g., regression for phase fractions, classification for phase stability) on the CALPHAD-generated dataset.

- Validate models on a hold-out test set. Reported accuracies can be high, e.g., 99.3% for γ single-phase classification and 96.0% for TCP phase prediction [3].

Step 3: High-Throughput Screening

- Apply the trained ML models to screen a massive composition space (e.g., two billion alloys) based on criteria like narrow solidification range, high γ' phase fraction, and absence of detrimental TCP phases.

- This step rapidly narrows the candidate pool from billions to thousands.
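As a rough sketch of how such a screen can be expressed, the filter below applies the three criteria named above to a stream of model predictions. The dictionary keys and the numeric thresholds are hypothetical; the text does not give the cut-off values used in [3].

```python
def passes_screen(pred, max_freezing_range_K=60.0, min_gamma_prime=0.40):
    """Return True if a predicted alloy meets all three screening criteria.

    `pred` is a dict of ML-predicted properties; the key names and the
    default thresholds are illustrative assumptions.
    """
    freezing_range = pred["liquidus_K"] - pred["solidus_K"]
    return (
        freezing_range <= max_freezing_range_K             # narrow solidification range
        and pred["gamma_prime_fraction"] >= min_gamma_prime  # high gamma-prime fraction
        and not pred["tcp_present"]                         # no detrimental TCP phases
    )

def screen(predictions):
    """Lazily yield only the candidates that pass, so huge spaces can be streamed."""
    return (p for p in predictions if passes_screen(p))

example_predictions = [
    {"id": "A", "liquidus_K": 1650, "solidus_K": 1600,
     "gamma_prime_fraction": 0.55, "tcp_present": False},
    {"id": "B", "liquidus_K": 1680, "solidus_K": 1580,
     "gamma_prime_fraction": 0.60, "tcp_present": False},
    {"id": "C", "liquidus_K": 1640, "solidus_K": 1600,
     "gamma_prime_fraction": 0.50, "tcp_present": True},
]
selected_ids = [p["id"] for p in screen(example_predictions)]
```

A generator is used so that the candidate list never has to be held in memory at once, which matters when the space runs to billions of compositions.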

Step 4: Advanced Screening with Physical Descriptors

- Incorporate atomistic simulations to calculate nanoscale physical descriptors such as lattice misfit (for interfacial energy), atomic mobility of key elements (for precipitate coarsening), and lattice distortion (for recrystallization behavior) [3].

- Select final candidate compositions (e.g., 12) predicted to achieve the target microstructure, such as fine intragranular γ' precipitates within coarse γ grains.

Step 5: Experimental Validation

- Synthesize the top-ranked candidate alloy.

- Characterize the resulting microstructure using techniques like scanning electron microscopy (SEM) to validate the predictions of precipitate size, distribution, and phase morphology [3].

Protocol: Synthesis and Characterization of Bulk Thermoelectric Materials

This protocol outlines the experimental process for fabricating and evaluating a bulk thermoelectric intermetallic compound, such as AlSb [4].

Step 1: Controlled Melting for Synthesis

- Weigh high-purity elemental shots (e.g., Al 99.9%, Sb 99.999%) in an inert-atmosphere glove box to prevent oxidation. A small excess of one element (e.g., 2-3 at% Al) may be required to compensate for processing losses [4].

- Load the mixture into a boron nitride-coated graphite crucible within a vacuum furnace.

- Melt the constituents at high temperature (e.g., 1273 K) under an argon atmosphere to prevent sublimation and oxidation.

Step 2: Pulverization and Consolidation

- Pulverize the resulting brittle ingot using a mortar and pestle or a high-energy vibratory mill.

- Sieve the powder to achieve a uniform particle size (e.g., using a 325-mesh sieve).

- Consolidate the powder into a highly dense pellet using Vacuum Hot Pressing (VHP). Example parameters: 80 MPa pressure, 1173 K temperature, for 6 hours [4].

Step 3: Phase and Microstructural Characterization

- Perform X-ray Diffraction (XRD) with Cu-Kα radiation to confirm the formation of a single phase and identify any secondary phases.

- Use Scanning Electron Microscopy (SEM) to examine surface morphology, particle size, and the presence of voids. A relative density of ~99% can be achieved with optimized milling [4].
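For the XRD step, peak positions are converted to interplanar spacings through Bragg's law, nλ = 2d sin θ. The sketch below assumes the Cu-Kα1 wavelength of 1.5406 Å and, for the lattice-parameter helper, a cubic unit cell; the function names are illustrative and are not taken from any particular analysis package.

```python
import math

CU_K_ALPHA1_ANGSTROM = 1.5406  # Cu K-alpha1 wavelength in angstroms

def d_spacing(two_theta_deg, wavelength=CU_K_ALPHA1_ANGSTROM, order=1):
    """Interplanar spacing d (angstroms) from a peak position 2-theta (degrees)."""
    theta = math.radians(two_theta_deg / 2.0)
    return order * wavelength / (2.0 * math.sin(theta))

def cubic_lattice_parameter(two_theta_deg, hkl, wavelength=CU_K_ALPHA1_ANGSTROM):
    """Lattice parameter a (angstroms), assuming a cubic cell: a = d * sqrt(h^2 + k^2 + l^2)."""
    h, k, l = hkl
    return d_spacing(two_theta_deg, wavelength) * math.sqrt(h * h + k * k + l * l)
```

Comparing the lattice parameter obtained from several indexed peaks is a quick consistency check before a full Rietveld refinement.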

Step 4: Measurement of Thermoelectric Properties

- Cut a bar-shaped sample (e.g., 3 x 3 x 10 mm³).

- Measure the Seebeck coefficient (S) and electrical conductivity (σ) simultaneously using an instrument such as the ZEM-3, which employs a four-probe method, over a temperature range (e.g., 300-873 K).

- Measure thermal diffusivity (d) using the laser flash method.

- Calculate the thermal conductivity (κ) using the formula: κ = ρ × Cp × d, where ρ is density (from Archimedes' principle) and Cp is specific heat capacity.

- Compute the dimensionless figure of merit: ZT = (S²σ/κ)T.
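The last two calculations in Step 4 can be written out directly. The following sketch uses SI units throughout; the numerical inputs are illustrative values, not measurements from [4].

```python
def thermal_conductivity(density_kg_m3, cp_J_kgK, diffusivity_m2_s):
    """kappa = rho * Cp * d, in W/(m K)."""
    return density_kg_m3 * cp_J_kgK * diffusivity_m2_s

def power_factor(seebeck_V_K, sigma_S_m):
    """Power factor S^2 * sigma, in W/(m K^2)."""
    return seebeck_V_K ** 2 * sigma_S_m

def figure_of_merit(seebeck_V_K, sigma_S_m, kappa_W_mK, temperature_K):
    """Dimensionless ZT = (S^2 * sigma / kappa) * T."""
    return power_factor(seebeck_V_K, sigma_S_m) * temperature_K / kappa_W_mK

# Illustrative inputs only (not data from the cited study).
kappa = thermal_conductivity(density_kg_m3=5000.0, cp_J_kgK=400.0,
                             diffusivity_m2_s=1.0e-6)
zt = figure_of_merit(seebeck_V_K=200e-6, sigma_S_m=5.0e4,
                     kappa_W_mK=kappa, temperature_K=500.0)
```

Because κ is derived from three separately measured quantities, the uncertainties in density, specific heat, and diffusivity all propagate into ZT, which is why the table above lists thermal conductivity as an indirect measurement.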

Workflow Visualization: An Integrated Multiscale Approach

The following diagram illustrates the interconnected workflow of a multiscale materials design project, integrating both computational and experimental streams.

Diagram Title: Integrated Multiscale Materials Design Workflow

This workflow demonstrates the synergy between methods. The computational stream generates predictive models and candidate materials, which are then synthesized and characterized in the experimental stream. The experimental results feed back to validate and refine the computational models, creating a closed-loop design process [3] [1].

The Scientist's Toolkit: Essential Research Reagents and Materials

This section details key materials and software solutions commonly employed in the field.

Table 3: Essential Research Reagents and Computational Tools

| Category / Item | Specific Example(s) | Function / Application | Key Characteristics / Notes |

|---|---|---|---|

| High-Purity Elements | Aluminum (99.9%), Antimony (99.999%) shots [4] | Starting materials for synthesis of intermetallic compounds (e.g., AlSb). | High purity is critical to avoid unintended secondary phases that can degrade properties. |

| Computational Databases | TCNI12 (for Ni-alloys) [3] | Provide critically assessed thermodynamic data for CALPHAD calculations and ML training. | Database quality directly limits the accuracy and predictive capability of ICME frameworks. |

| Specialized Consolidation Equipment | Vacuum Hot Press (VHP) | Simultaneously applies heat and pressure to powder samples to create dense, near-net-shape bulk materials for property testing [4]. | Enables production of samples with >99% relative density, crucial for accurate property measurement. |

| Property Measurement Systems | ZEM-3 (ULVAC-RIKO) [4] | Measures Seebeck coefficient and electrical conductivity of solids using a four-probe method. | Standard tool for characterizing thermoelectric performance. Challenging for high-resistivity materials. |

| Quantum Mechanics Software | CASTEP [2] | A commercial software package for performing DFT calculations to determine electronic, optical, elastic, and thermal properties. | Uses plane-wave pseudopotential methods; requires significant computational resources for large systems. |

The journey from electron interactions to bulk material properties is a quintessential multiscale problem. As this guide has illustrated, neither computational nor experimental approaches exist in isolation; the most powerful insights emerge from their strategic integration. Computational models, grounded in ab initio principles and scaled up through ML and ICME, provide unprecedented speed in exploring vast material spaces and predicting novel compositions [3] [5]. Experimental techniques remain the indispensable anchor of truth, providing critical validation and revealing the complex, real-world microstructural features that models must capture.

The future of materials research lies in further deepening this integration. This includes the development of more accurate and efficient machine learning interatomic potentials [3], the creation of more comprehensive materials property databases for training, and the establishment of standardized benchmark challenges [6] to objectively compare and improve predictive models across the community. By continuing to refine this multiscale, multi-method toolkit, researchers can systematically dismantle the barriers between quantum mechanics and macroscopic performance, accelerating the design of materials that meet the demanding challenges of future technologies.

This guide provides an objective comparison of three core computational paradigms—Density Functional Theory (DFT), Molecular Dynamics (MD), and Finite Element Analysis (FEA)—in materials property research. The following table summarizes their fundamental characteristics, primary applications, and representative performance data from recent studies.

Table 1: Core Computational Paradigms at a Glance

| Paradigm | Fundamental Principle | Spatial Scale | Temporal Scale | Key Outputs | Representative Accuracy vs. Experiment |

|---|---|---|---|---|---|

| Density Functional Theory (DFT) | Quantum mechanics; uses electron density to solve for system energy and structure [7]. | Ångströms to nanometers (Electrons/Atomistic) | Picoseconds (Electronic Ground State) | Electronic band structure, formation energies, atomic forces [7]. | Bandgap of Silicon: ~0.6% error; Elastic constants of Copper: ~2.3% error [7]. |

| Molecular Dynamics (MD) | Classical mechanics; numerically integrates Newton's laws of motion for atoms [8] [9]. | Nanometers to hundreds of nanometers (Atomistic) | Nanoseconds to microseconds | Trajectories, diffusion coefficients, phase transition mechanisms, mechanical properties [8] [10]. | Lattice parameters/Elastic constants of Ti: Sub-1% error achievable with advanced ML potentials [11]. |

| Finite Element Analysis (FEA) | Continuum mechanics; solves partial differential equations for stress, heat, etc., over a discretized domain [12]. | Micrometers to meters (Continuum) | Milliseconds to seconds | Stress/strain distributions, temperature fields, deformation, vibration modes [10] [12]. | Biomechanical implant performance: Clinically valid predictions of range of motion and stress [12]. |

In-Depth Methodological Comparison

Density Functional Theory (DFT)

DFT is a first-principles quantum mechanical approach used to investigate the electronic structure of many-body systems.

- Computational Protocol for Material Property Prediction: A standard workflow involves:

  - Crystal Structure Initialization: Creating an initial atomic configuration of the material.

  - Geometry Optimization: Iteratively solving the Kohn-Sham equations to find the ground-state electron density and energy, followed by adjustment of atomic positions to find the most stable structure [13] [14].

  - Property Calculation: Using the optimized structure to compute target properties. For example, the stress-strain response is calculated by applying small deformations to the crystal lattice and recomputing the energy and stress tensor for each step.

- Performance Data: DFT-FE, a massively parallel finite-element-based DFT code, demonstrates strong performance, outperforming plane-wave codes by 5-10 fold in systems with more than 10,000 electrons and scaling efficiently up to 192,000 MPI tasks [14].
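One common way to turn the small-deformation calculations into an elastic constant is to fit the total energy to a quadratic in strain, E(ε) ≈ E₀ + ½·V₀·C·ε², where V₀ is the equilibrium cell volume and C is the effective elastic constant for the chosen strain mode. The sketch below assumes energies in eV and volume in Å³ and uses a plain least-squares fit; it is an illustration of the idea, not the procedure of any specific DFT code.

```python
EV_PER_A3_TO_GPA = 160.21766208  # 1 eV/angstrom^3 expressed in GPa

def fit_elastic_constant(strains, energies_eV, volume_A3):
    """Effective elastic constant (GPa) from an energy-strain curve.

    Fits E = a + b * strain^2 by ordinary least squares in the variable
    x = strain^2, then uses C = 2 * b / V0.
    """
    xs = [e * e for e in strains]
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two strain points")
    mean_x = sum(xs) / n
    mean_y = sum(energies_eV) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, energies_eV))
    b = sxy / sxx  # curvature coefficient in eV
    return 2.0 * b / volume_A3 * EV_PER_A3_TO_GPA
```

Which elastic constant C represents (for example C₁₁ or a combination of constants) depends on the deformation that was applied, so the strain mode has to be recorded alongside the energies.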

Molecular Dynamics (MD)

MD simulates the physical movements of atoms and molecules over time, based on forces derived from interatomic potentials.

- Computational Protocol for a Tensile Test: A typical simulation to determine mechanical properties involves:

  - System Preparation: Constructing a simulation box with atoms in the desired crystal phase (e.g., B2 austenite for NiTi shape memory alloys) and equilibrating it at the target temperature and pressure [15].

  - Deformation: Applying a constant strain rate (e.g., 10¹⁰ s⁻¹) to the simulation box along a specific crystallographic direction while controlling temperature.

  - Data Collection: Monitoring the virial stress as a function of applied strain to generate a stress-strain curve, from which properties like elastic modulus, transformation stress, and hysteresis can be extracted [15].

- Performance Data: The EMFF-2025 neural network potential (NNP) for high-energy materials achieves DFT-level accuracy with mean absolute errors (MAE) for forces predominantly within ± 2 eV/Å, enabling high-fidelity prediction of structure and decomposition mechanisms [9].
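The data-collection step can be illustrated with a short post-processing sketch. It assumes the stress-strain pairs have already been exported from the MD run (the export format is not specified here) and estimates the elastic modulus as the least-squares slope of the small-strain region; the 1% strain cut-off is an arbitrary illustrative choice.

```python
def strain_at(time_ps, strain_rate_per_s):
    """Engineering strain reached after time_ps at a constant strain rate."""
    return strain_rate_per_s * time_ps * 1e-12

def elastic_modulus_GPa(strains, stresses_GPa, max_strain=0.01):
    """Slope of the stress-strain curve for strains up to max_strain."""
    pts = [(e, s) for e, s in zip(strains, stresses_GPa) if e <= max_strain]
    if len(pts) < 2:
        raise ValueError("need at least two points in the elastic region")
    n = len(pts)
    mean_e = sum(e for e, _ in pts) / n
    mean_s = sum(s for _, s in pts) / n
    sxx = sum((e - mean_e) ** 2 for e, _ in pts)
    sxy = sum((e - mean_e) * (s - mean_s) for e, s in pts)
    return sxy / sxx
```

At the example rate of 10¹⁰ s⁻¹, one picosecond of simulated time corresponds to 1% strain, which shows how far MD strain rates are from laboratory tensile tests and why rate effects need to be considered when comparing the two.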

Finite Element Analysis (FEA)

FEA is a numerical method for solving engineering and mathematical physics problems governed by partial differential equations, such as solid mechanics and heat transfer.

- Computational Protocol for an Implant Biomechanics Study:

  - Model Reconstruction: Creating a 3D geometric model from medical scan data (e.g., CT or MRI) [12].

  - Meshing: Discretizing the geometry into smaller, simple elements (e.g., tetrahedra). The material properties (e.g., Young's modulus, Poisson's ratio) are assigned to each element [12].

  - Applying Loads and Boundary Conditions: Simulating physiological loads and moments, and constraining the model appropriately [12].

  - Solving and Post-processing: Computing resultant parameters like von Mises stress, strain energy, and range of motion for comparison with an intact biological state or other designs [12].
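The same four stages (mesh, material assignment, loads and constraints, solve and post-process) appear even in the simplest finite element problem. The sketch below is a one-dimensional axial bar fixed at one end and loaded at the other, with a Young's modulus assigned per element. It is a teaching example only: real implant studies use 3D meshes and commercial solvers, and the moduli in the usage lines are rough illustrative values for a metal segment and a bone-like segment.

```python
def solve_bar(youngs_moduli_Pa, element_length_m, area_m2, tip_force_N):
    """Solve a 1D bar fixed at node 0 with an axial force on the last node.

    Returns (nodal displacements in m, element axial stresses in Pa).
    """
    n_el = len(youngs_moduli_Pa)
    n_nodes = n_el + 1
    # Assemble the global stiffness matrix from 2x2 element matrices.
    K = [[0.0] * n_nodes for _ in range(n_nodes)]
    for e, E in enumerate(youngs_moduli_Pa):
        k = E * area_m2 / element_length_m
        K[e][e] += k
        K[e][e + 1] -= k
        K[e + 1][e] -= k
        K[e + 1][e + 1] += k
    f = [0.0] * n_nodes
    f[-1] = tip_force_N
    # Boundary condition: node 0 is fixed, so drop its row and column.
    A = [row[1:] for row in K[1:]]
    b = f[1:]
    m = len(b)
    # Gaussian elimination without pivoting (reduced matrix is positive definite).
    for i in range(m):
        for j in range(i + 1, m):
            factor = A[j][i] / A[i][i]
            for c in range(i, m):
                A[j][c] -= factor * A[i][c]
            b[j] -= factor * b[i]
    u = [0.0] * m
    for i in reversed(range(m)):
        s = sum(A[i][c] * u[c] for c in range(i + 1, m))
        u[i] = (b[i] - s) / A[i][i]
    displacements = [0.0] + u
    # Post-processing: axial stress in each element, sigma = E * strain.
    stresses = [
        E * (displacements[e + 1] - displacements[e]) / element_length_m
        for e, E in enumerate(youngs_moduli_Pa)
    ]
    return displacements, stresses

# Two stiff elements followed by two compliant ones (illustrative moduli).
moduli = [110e9, 110e9, 17e9, 17e9]
disp, stress = solve_bar(moduli, element_length_m=0.01, area_m2=1e-4,
                         tip_force_N=1000.0)
```

In this statically determinate case every element carries the same axial stress F/A, while most of the displacement accumulates in the compliant elements. In 3D models the post-processing step reports quantities such as von Mises stress instead of a single axial component.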

Integrated Multi-Scale Workflows

A powerful trend in computational materials science is the integration of these paradigms to bridge scales and overcome individual limitations.

Diagram: A Multi-Scale Simulation Workflow for Material Design

- DFT-Informed MD: Machine-learning interatomic potentials (MLIPs) are trained on DFT data, enabling large-scale MD simulations with near-DFT accuracy. For instance, the MACE-MP-0 foundation model can be fine-tuned with minimal data to achieve sub-kJ mol⁻¹ accuracy for complex properties like sublimation enthalpies of molecular crystals [16].

- MD-Informed FEA: Parameters extracted from MD simulations, such as solidification rates and temperature gradients from rapid cooling processes, are used to inform macroscopic FEA models. This coupling has been successfully applied to model residual stress in additively manufactured AlSi alloys [10].

- Experimental Data Fusion: Experimental data can be directly integrated into the training of computational models. A notable example is training a machine learning force field for titanium concurrently on DFT data and experimental elastic constants, resulting in a model that satisfies all target objectives with higher accuracy than models trained on a single data source [11].

Essential Research Reagent Solutions

This table details key software and computational "reagents" essential for modern computational materials science research.

Table 2: Key Computational Tools and Resources

| Tool Name | Paradigm | Primary Function | Key Application Example |
|---|---|---|---|
| DFT-FE [13] [14] | DFT | Massively parallel real-space DFT code using finite-element discretization. | Large-scale pseudopotential and all-electron calculations for systems with up to ~100,000 electrons. |
| LAMMPS [15] | MD | A classical molecular dynamics simulator highly versatile for a wide range of materials. | Studying superelasticity, phase transformations (e.g., in NiTi), and thermal decomposition. |
| ABAQUS [10] [12] | FEA | A comprehensive software suite for finite element analysis and computer-aided engineering. | Modeling biomechanical implants [12] and process-induced stresses in additive manufacturing [10]. |
| MACE [16] | ML/DFT/MD | A machine learning interatomic potential architecture using higher body-order messages. | Data-efficient fine-tuning for first-principles quality properties of molecular crystals. |
| EMFF-2025 [9] | ML/MD | A general neural network potential for C, H, N, O-based high-energy materials. | Predicting mechanical properties and high-temperature decomposition mechanisms of energetic materials. |
| DiffTRe [11] | ML/MD | A method for training ML potentials directly on experimental data. | Fusing experimental mechanical properties and lattice parameters with DFT data for accurate force fields. |

The accurate characterization of material properties forms the foundational pillar of research and development across diverse scientific disciplines, including materials science, chemistry, and drug discovery. Understanding the intricate relationships between a material's structure and its resulting properties enables researchers to design novel compounds with tailored functionalities. In modern scientific practice, characterization methodologies span three fundamental domains: structural analysis examining atomic and molecular arrangements, microstructural investigation probing morphological features at larger length scales, and electrochemical characterization exploring redox behavior and electron transfer processes. Each domain provides complementary insights that collectively paint a comprehensive picture of material behavior.

The integration of computational predictions with experimental validation has emerged as a powerful paradigm in accelerated materials discovery and drug development. While computational approaches like AlphaFold 2 for protein structure prediction and density functional theory (DFT) for material properties offer unprecedented speed and theoretical insights, their predictions require rigorous experimental verification to establish real-world relevance [17] [18]. This comparison guide objectively examines the capabilities, limitations, and appropriate applications of key characterization techniques across these three cornerstone domains, providing researchers with a framework for selecting optimal methodologies for their specific research objectives.

Structural Characterization

Structural characterization elucidates the atomic and molecular arrangement of materials, providing fundamental insights into their intrinsic properties and functions. This domain is crucial for understanding protein-ligand interactions in drug discovery, crystallographic features in materials science, and molecular conformations in chemical research.

Comparative Analysis: Experimental vs. Computational Approaches

Table 1: Comparison of Structural Characterization Techniques

| Technique | Resolution | Sample Requirements | Key Applications | Limitations |
|---|---|---|---|---|
| X-ray Diffraction (XRD) | Atomic level | Crystalline solid | Crystal structure determination, phase identification | Limited to crystalline materials; bulk analysis |
| Cryo-Electron Microscopy | Near-atomic (2-4 Å) | Frozen-hydrated samples | Membrane protein structures, large complexes | Expensive instrumentation; sample preparation challenges |
| AlphaFold 2 Prediction | Residue level | Protein sequence | Protein structure prediction, model generation | Limited conformational diversity; underestimated binding pockets |
| Nuclear Magnetic Resonance | Atomic level | Soluble proteins, small molecules | Solution-state structures, dynamics | Molecular size limitations; complex data interpretation |

Experimental Protocols and Methodologies

X-ray Diffraction (XRD): In high-speed characterization setups, XRD employs a polychromatic X-ray beam (first harmonic energy of ~24 keV) directed toward the specimen. Diffracted X-rays form Debye-Scherrer rings captured by a scintillator-coupled high-speed camera at rates of 1 MHz or higher. Rietveld refinement of the diffraction patterns provides quantitative crystal structure information, including lattice parameters and atomic positions [19].

Cryo-Electron Microscopy: For structural biology applications, samples are vitrified in liquid ethane and imaged under cryogenic conditions. Recent studies of human sweet taste receptors (TAS1R2-TAS1R3 heterodimer) achieved resolutions of 2.5-3.5 Å using 300 kV cryo-EM instruments, enabling visualization of sucralose binding exclusively to the Venus flytrap domain of TAS1R2 [20].

AlphaFold 2 Protocol: The AI-based prediction system uses multiple sequence alignments and template structures from the Protein Data Bank (mostly from releases prior to April 30, 2018). Prediction accuracy is assessed using the predicted local distance difference test (pLDDT) score, where values >90 indicate high confidence, 70-90 indicate good backbone prediction, and <50 suggest unstructured regions [17].
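The pLDDT thresholds above translate naturally into a small per-residue classifier. In the sketch below, the >90, 70-90, and <50 bands follow the text; the 50-70 band is not described above and is labeled "low confidence" following the convention used by the AlphaFold Protein Structure Database. The function names are illustrative.

```python
def plddt_band(score):
    """Map a per-residue pLDDT score (0-100) to a confidence band."""
    if not 0.0 <= score <= 100.0:
        raise ValueError("pLDDT must lie between 0 and 100")
    if score > 90.0:
        return "high confidence"
    if score >= 70.0:
        return "good backbone prediction"
    if score >= 50.0:
        # Band not described in the text; AlphaFold database convention.
        return "low confidence"
    return "likely unstructured"

def confident_fraction(scores, threshold=70.0):
    """Fraction of residues with pLDDT at or above the threshold."""
    if not scores:
        raise ValueError("no scores given")
    return sum(1 for s in scores if s >= threshold) / len(scores)
```

A per-residue summary of this kind is a useful first filter before comparing a predicted ligand-binding pocket against an experimental structure, since low-confidence residues around the pocket make any volume comparison unreliable.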

Performance Comparison: Computational vs. Experimental Structures

Table 2: AlphaFold 2 Performance vs. Experimental Structures for Nuclear Receptors

| Parameter | AlphaFold 2 Performance | Experimental Structures | Biological Implications |
|---|---|---|---|
| Overall RMSD | High accuracy for stable conformations | Reference standard | Proper stereochemistry achieved |
| Ligand-binding pocket volume | Systematically underestimates by 8.4% on average | Accurate volume representation | Impacts drug binding predictions |
| Domain variability | LBDs (CV=29.3%) more variable than DBDs (CV=17.7%) | Similar trend observed | Functional implications for flexibility |
| Conformational diversity | Captures single state | Multiple biologically relevant states | Misses functional asymmetry in homodimers |

The comparative analysis of AlphaFold 2 predictions against experimental nuclear receptor structures reveals both remarkable achievements and significant limitations. While AF2 achieves high accuracy in predicting stable conformations with proper stereochemistry, it shows limitations in capturing the full spectrum of biologically relevant states, particularly in flexible regions and ligand-binding pockets [17]. This systematic underestimation of binding pocket volumes (8.4% on average) has direct implications for structure-based drug design, where accurate pocket geometry is crucial for predicting ligand binding affinities.

Microstructural Characterization

Microstructural characterization investigates the morphological features, phase distribution, and compositional variations that influence macroscopic material properties. This domain bridges the gap between atomic-scale structure and bulk behavior.

Integrated Characterization Workflow

Microstructural Analysis Workflow: Integrating complementary techniques for comprehensive characterization.

Multi-Technique Experimental Approach

Advanced microstructural characterization increasingly relies on integrating multiple complementary techniques. A novel experimental method synchronizes full-ring XRD, stereographic digital image correlation (stereo-DIC), and phase-contrast imaging (PCI) to characterize polycrystalline metals at frame rates of 1 MHz or higher during dynamic loading [19]. This simultaneous approach captures both the continuum response and the microstructural evolution, enabling researchers to correlate mechanical properties with underlying structural changes in real time.

For synthesized materials like CeO₂ ceramics, combined computational and experimental approaches provide insights into structure-property relationships. First-principles computational studies reveal volume optimization and electronic structure, while experimental techniques validate these predictions and measure functional properties [18]. The integration of computational and experimental data through graph-based machine learning, as demonstrated in materials informatics approaches, creates comprehensive materials maps that visualize relationships between structural features and properties [21] [22].

Case Study: CeO₂ Ceramics Characterization

Table 3: Comparative Microstructural Properties of Synthesized vs. Commercial CeO₂

| Property | Synthesized CeO₂ (CS) | Commercial CeO₂ (CP) | Characterization Technique |
|---|---|---|---|
| Band gap | 2.4-2.5 eV | 2.4-2.5 eV | UV-Vis Spectroscopy |
| Oxygen content | Higher | Lower | Elemental Analysis |
| Oxygen vacancy concentration | Higher | Lower | Raman Spectroscopy |
| Grain boundary blocking factor | 0.42 | 0.62 | Electrical Impedance Spectroscopy |
| Ionic conductivity | Higher | Lower | Electrical Impedance Spectroscopy |
| Biocompatibility (IC₅₀) | 65.94 µg/ml | 86.88 µg/ml | Cytotoxicity Testing |

The comparative study of synthesized (CS) and commercially procured (CP) CeO₂ samples demonstrates how synthesis methods critically impact functional properties. While both samples exhibited the characteristic fluorite structure confirmed by XRD and showed similar band gaps (2.4-2.5 eV), the synthesized CeO₂ displayed higher oxygen content, enhanced electronic density near the Fermi level, and superior ionic conductivity [18]. These microstructural differences translated directly to functional advantages, including better performance as solid electrolytes in intermediate-temperature solid oxide fuel cells (IT-SOFCs) and, according to the study, improved biocompatibility together with higher inhibitory efficacy against cancer cell lines. The latter is indicated by the lower IC₅₀ of the synthesized sample (≈ 65.94 µg/ml for CS vs. ≈ 86.88 µg/ml for CP), since a lower IC₅₀ means that less material is needed to reach 50% inhibition.

Electrochemical Characterization

Electrochemical characterization techniques probe electron transfer processes, redox behavior, and interfacial phenomena that underpin energy storage, corrosion resistance, and catalytic activity.

Advanced Scanning Electrochemical Microscopy (SECM)

SECM Operational Modes: Diverse approaches for electrochemical characterization.

Emerging SECM Techniques and Applications

Scanning Electrochemical Microscopy (SECM) has evolved beyond traditional feedback and generation/collection modes to include sophisticated operational techniques with enhanced capabilities. The surface interrogation (SI) mode enables quantification of active site densities and reaction kinetics by electrochemically titrating adsorbed species with a redox mediator generated at the ultramicroelectrode (UME) tip [23]. This approach has proven particularly valuable for studying electrocatalytic reactions where adsorbates play crucial roles in reaction mechanisms.

Recent innovations in SECM methodology have addressed longstanding limitations. Novel approach curves based on shear-force and capacitance principles enable probe positioning near non-flat surfaces, expanding SECM applications to rough catalyst-coated substrates and solid/gas interfaces [23]. Sequential voltammetric SECM (SV-SECM) permits simultaneous identification of numerous species under complex working conditions and enables selective mapping of facet-dependent products. These advancements have extended SECM to challenging electrocatalytic reactions, including the N₂ reduction reaction (NRR), NO₃⁻ reduction reaction (NO3RR), and CO₂ reduction reaction (CO2RR), as well as more complicated electrolysis systems such as gas diffusion electrodes [23].

Alternative Electrochemical Methods

For non-conductive media and insoluble compounds, researchers have developed innovative approaches like the charging-discharging method for investigating water-insoluble azo dyes. This technique serves as an alternative means of registering the initial oxidation event required for HOMO-LUMO transition in non-conductive media like DMSO [24]. When combined with quantum chemical calculations at the B3LYP/6-311++G theoretical level, this approach provides both experimental and theoretical insights into electrochemical behavior of challenging compounds.

Electrical impedance spectroscopy (EIS) remains a powerful technique for characterizing electrical properties of materials, as demonstrated in CeO₂ ceramic studies. EIS revealed higher ionic conductivity in synthesized CeO₂, with a lower grain boundary blocking factor (αgb = 0.42) compared to commercial CeO₂ (αgb = 0.62), directly linking microstructural features to electrochemical performance [18].
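The grain boundary blocking factor is commonly defined as the share of the total resistance contributed by grain boundaries, α_gb = R_gb / (R_g + R_gb), with both resistances read from the two arcs of the impedance spectrum. The sketch below assumes that definition, since the formula used in [18] is not stated here. The resistances are made-up values chosen only to reproduce the quoted ratios.

```python
def blocking_factor(r_grain, r_gb):
    """Fraction of total resistance due to grain boundaries (assumed definition)."""
    return r_gb / (r_grain + r_gb)


def total_conductivity(r_grain, r_gb, thickness_cm, area_cm2):
    """Total conductivity in S/cm from sigma = L / (R_total * A)."""
    return thickness_cm / ((r_grain + r_gb) * area_cm2)


# Hypothetical resistances in ohm; not measured data from the cited study.
samples = {"synthesized (CS)": (58.0, 42.0), "commercial (CP)": (38.0, 62.0)}
for name, (r_g, r_gb) in samples.items():
    print(name, round(blocking_factor(r_g, r_gb), 2))
```

A lower blocking factor means that grain boundaries contribute less of the total resistance, which is consistent with the higher ionic conductivity reported for the synthesized sample.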

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions for Advanced Characterization

| Reagent/Material | Function | Application Examples | Key Characteristics |
|---|---|---|---|
| Ammonium cerium nitrate ((NH₄)₂Ce(NO₃)₆) | Cerium precursor | Sol-gel synthesis of CeO₂ nanoparticles | 99% extra pure AR grade |
| Redox mediators (Ferrocene derivatives) | Electron transfer mediators | SECM experiments | Reversible electrochemistry, tunable potentials |
| Sisal fibers | Natural reinforcement | Bio-composite materials | High cellulose content, low density; 5-20 wt% fiber loading |
| Polyester resin | Polymer matrix | Fiber-reinforced composites | Economic viability, moderate mechanical properties |
| Dulbecco's Modified Eagle Medium (DMEM) | Cell culture medium | Biocompatibility testing | Supports growth of various cell lines |
| LSO:Ce scintillator | X-ray detection | High-speed XRD | 2.4 mm thickness, 77.4 mm diameter |
| Ammonium hydroxide (NH₄OH) | Precipitation agent | Nanoparticle synthesis | 25% extra pure AR grade for pH control |

The comparative analysis of structural, microstructural, and electrochemical characterization techniques reveals a complex landscape where computational and experimental approaches offer complementary strengths. Computational methods like AlphaFold 2 and DFT calculations provide unprecedented speed and theoretical insights but systematically miss important biological states and material properties [17] [18]. Experimental techniques deliver ground-truth validation but face limitations in temporal resolution, sample requirements, and operational complexity.

The integration of multiple characterization methodologies through frameworks like graph-based machine learning creates powerful synergies for materials discovery [21] [22]. Similarly, the development of novel operational modes in established techniques like SECM continuously expands the boundaries of what can be characterized [23]. For researchers navigating this complex landscape, the selection of appropriate characterization strategies must be guided by specific research questions, material systems, and the critical balance between throughput and accuracy.

As characterization technologies continue to advance, particularly with the integration of AI-driven analysis and high-throughput experimental platforms, the fundamental relationship between structure and function will become increasingly predictable. However, this analysis demonstrates that experimental validation remains an indispensable cornerstone of materials research and drug development, ensuring that computational predictions translate to real-world performance.

The foundational principle of materials science, the structure-property paradigm, establishes that a material's macroscopic behavior is fundamentally dictated by its atomic-scale arrangement. Understanding the precise relationship between how atoms are organized and the resulting material properties enables the targeted design of new materials for specific applications, from lightweight alloys to sustainable energy technologies. Traditionally, elucidating these relationships relied heavily on experimental trial and error. Today, however, a powerful synergy has emerged between computational prediction and experimental validation, creating an accelerated path for materials innovation [25] [26].

This guide compares the key methodologies—computational modeling and experimental characterization—used to decode the structure-property paradigm. It objectively evaluates their performance in predicting and verifying material behavior, providing researchers with a clear framework for selecting the right tool for their specific development goals.

Computational and Experimental Approaches: A Comparative Workflow

The process of linking atomic structure to macroscopic properties follows a defined pathway, which can be executed through computational, experimental, or integrated workflows. The diagram below illustrates the logical relationships and key decision points in this research process.

Figure 1: Research pathways for investigating the structure-property paradigm, showing computational, experimental, and integrated AI-driven approaches with their interactions.

Quantitative Comparison of Methodologies

Performance Metrics for Computational vs. Experimental Approaches

The choice between computational and experimental methods involves trade-offs in accuracy, cost, and throughput. The following table summarizes quantitative comparisons based on current research.

Table 1: Performance comparison of computational and experimental methods for materials research

| Metric | Computational Methods | Experimental Methods | Integrated AI Approaches |
|---|---|---|---|
| Accuracy | DFT: Moderate [27]; CCSD(T): Chemical accuracy [27] | High for measured properties [27] | Improves with data volume; follows scaling laws [28] [29] |
| Throughput | High-throughput screening of thousands of candidates [30] | Limited by synthesis/measurement time [30] | 900+ chemistries explored in 3 months [29] |
| Cost per Sample | Low after initial setup [26] | High (equipment, materials, labor) [26] | Moderate (automation reduces labor) [29] |
| Data Output | Electronic structure, bonding, properties [25] [27] | Empirical performance data, microstructures [31] | Multimodal data (images, spectra, properties) [29] |
| Time to Solution | Days to weeks for screening [30] | Months to years for development [30] | Significant acceleration (3-10x) [29] |
| Limitations | Accuracy trade-offs, model transferability [25] [32] | Resource-intensive, slower iteration [30] | Initial setup complexity, data requirements [29] |

Comparison of Computational Techniques

Within computational materials science, different techniques offer varying levels of accuracy and computational expense, making them suitable for different research questions.

Table 2: Comparison of computational chemistry techniques for atomic-scale modeling

| Method | Accuracy Level | System Size Limit | Computational Cost | Key Outputs |
|---|---|---|---|---|
| Density Functional Theory (DFT) | Moderate [27] | Hundreds of atoms [27] | Moderate [27] | Total energy, electronic structure [25] [27] |
| Coupled-Cluster Theory (CCSD(T)) | Chemical accuracy (gold standard) [27] | Tens of atoms (traditional) [27] | Very high [27] | High-accuracy energies, excited states [27] |
| Molecular Dynamics (MD) | Varies with force field | Thousands of atoms [28] | Low to moderate [28] | Atom trajectories, thermodynamic properties [28] |
| Machine Learning Potentials | Near-CCSD(T) for trained systems [27] | Thousands of atoms [27] | Low (after training) [27] | Multiple properties from single model [27] |

Detailed Experimental and Computational Protocols

Computational Workflow for Atomic-Level Prediction

Objective: To predict how elemental doping affects the mechanical properties of Mg-Li alloys through atomic-scale simulation [25].

Methodology:

- Model Construction: Create initial crystal structure models of Mg-Li alloy unit cells using known lattice parameters. Generate doped structures by substituting specific atomic sites with alloying elements (e.g., Al, Zn, Y) [25].

- Electronic Structure Calculation: Employ Density Functional Theory (DFT) calculations to solve the quantum mechanical equations for the system. Typical parameters include:

- Exchange-Correlation Functional: PBE or similar GGA functional

- Basis Set: Plane-wave basis set with pseudopotentials

- Cut-off Energy: 520 eV for plane-wave basis

- k-point Sampling: Monkhorst-Pack grid with spacing of 0.03 Å⁻¹

- Convergence Criteria: Energy tolerance of 10⁻⁶ eV/atom, force tolerance of 0.01 eV/Å [25]

- Property Extraction: Calculate mechanical properties including elastic constants (C₁₁, C₁₂, C₄₄), bulk modulus, and shear modulus from the strain-energy relationship. Derive thermodynamic stability from formation energy calculations [25].

- Electronic Analysis: Examine electronic density of states (DOS), charge density distribution, and chemical bonding patterns to identify strengthening mechanisms at the electronic level [25].

Validation: Compare calculated lattice parameters and elastic moduli with available experimental data to assess predictive accuracy [25].
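The property-extraction step can be illustrated with a minimal sketch that turns the three independent elastic constants of a cubic crystal into polycrystalline moduli using the Voigt-Reuss-Hill averages, and checks the Born stability criteria. Cubic symmetry is an assumption suggested by the constants listed above (C₁₁, C₁₂, C₄₄); the function names and the input values in the usage line are illustrative and are not results from [25].

```python
def cubic_moduli(c11, c12, c44):
    """Voigt-Reuss-Hill moduli for a cubic crystal (same units as the inputs)."""
    bulk = (c11 + 2.0 * c12) / 3.0                      # Voigt = Reuss for cubic
    g_voigt = (c11 - c12 + 3.0 * c44) / 5.0             # upper bound on shear
    g_reuss = 5.0 * (c11 - c12) * c44 / (4.0 * c44 + 3.0 * (c11 - c12))  # lower bound
    shear = 0.5 * (g_voigt + g_reuss)                   # Hill average
    young = 9.0 * bulk * shear / (3.0 * bulk + shear)
    poisson = (3.0 * bulk - 2.0 * shear) / (2.0 * (3.0 * bulk + shear))
    return {"B": bulk, "G": shear, "E": young, "nu": poisson, "B/G": bulk / shear}


def is_mechanically_stable(c11, c12, c44):
    """Born criteria for a cubic lattice."""
    return c11 - c12 > 0.0 and c11 + 2.0 * c12 > 0.0 and c44 > 0.0


# Illustrative constants in GPa, not values from the cited study.
print(cubic_moduli(60.0, 25.0, 20.0), is_mechanically_stable(60.0, 25.0, 20.0))
```

The B/G ratio (Pugh's ratio) is often used as a quick ductility indicator when comparing dopants, which is why it is returned alongside the moduli.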

Integrated AI-Driven Experimental Protocol

Objective: To autonomously discover high-performance fuel cell catalyst materials through robotic experimentation and machine learning [29].

Methodology:

- Automated Synthesis:

- High-Throughput Characterization:

- Multimodal Data Integration:

- Active Learning Loop:

- Train machine learning models on accumulated experimental data and literature knowledge [29].

- Use Bayesian optimization in a reduced search space to propose new promising material compositions for subsequent testing rounds [29].

- Iterate the process by feeding new experimental results back into the model to refine predictions [29].

Output: Identification of optimal catalyst composition achieving record power density in direct formate fuel cells [29].
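The active learning loop can be sketched in a few lines of standard-library Python. This is a toy illustration under stated assumptions, not the pipeline of [29]: the "experiment" is a hypothetical one-dimensional function, the surrogate is a zero-mean Gaussian process with an RBF kernel, and the acquisition rule is an upper confidence bound. All function names and hyperparameters are placeholders.

```python
import math


def rbf(a, b, length=0.15):
    """Squared-exponential kernel between two scalar compositions."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - s) / aug[i][i]
    return x


def gp_posterior(xs, ys, x_new, noise=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    k = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [rbf(a, x_new) for a in xs]
    mean = sum(w * ks for w, ks in zip(solve(k, ys), k_star))
    var = rbf(x_new, x_new) - sum(v * ks for v, ks in zip(solve(k, k_star), k_star))
    return mean, math.sqrt(max(var, 0.0))


def run_experiment(x):
    """Hypothetical stand-in for a robotic measurement (peak near x = 0.7)."""
    return math.exp(-((x - 0.7) / 0.2) ** 2)


def active_learning(n_rounds=6, kappa=2.0):
    """Propose, measure, and refit for n_rounds; return the best (x, y) seen."""
    candidates = [i / 50 for i in range(51)]   # reduced, discretized search space
    xs = [0.0, 0.5, 1.0]                       # initial experiments
    ys = [run_experiment(x) for x in xs]
    for _ in range(n_rounds):
        def ucb(c):
            mean, std = gp_posterior(xs, ys, c)
            return mean + kappa * std
        x_next = max((c for c in candidates if c not in xs), key=ucb)
        xs.append(x_next)                      # feed the new result back
        ys.append(run_experiment(x_next))
    best = max(range(len(xs)), key=lambda i: ys[i])
    return xs[best], ys[best]


print(active_learning())
```

In a real campaign `run_experiment` would be replaced by synthesis and characterization, and the one-dimensional grid by a reduced composition space.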

Sim2Real Transfer Learning Protocol

Objective: To bridge computational databases and limited experimental data for predicting real-world material properties [28].

Methodology:

- Pretraining Phase:

- Fine-Tuning Phase:

- Scaling Law Analysis:

- Quantify how prediction error decreases with increasing computational database size according to the power law: prediction error = D·n^(-α) + C, where n is the database size, D is a fitted scale coefficient, α is the decay rate, and C is the transfer gap [28].

- Use scaling behavior to estimate data requirements for target accuracy and performance limits [28].
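The scaling-law analysis can be sketched as a small fitting routine. For a fixed transfer gap C, the relation error = D·n^(-α) + C becomes linear in log-log space, so the sketch scans a list of candidate C values and keeps the best least-squares fit. The candidate list, function names, and synthetic data are assumptions for illustration; [28] may use a different fitting procedure.

```python
import math


def fit_log_linear(ns, errs, c):
    """Fit log(err - c) = log(D) - alpha * log(n) for a fixed transfer gap c."""
    xs = [math.log(n) for n in ns]
    ys = [math.log(e - c) for e in errs]
    k = len(xs)
    mx, my = sum(xs) / k, sum(ys) / k
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    d, alpha = math.exp(my - slope * mx), -slope
    sse = sum((d * n ** (-alpha) + c - e) ** 2 for n, e in zip(ns, errs))
    return d, alpha, sse


def fit_scaling_law(ns, errs, c_candidates):
    """Return (D, alpha, C) with the lowest squared error over the candidates."""
    best = None
    for c in c_candidates:
        if c >= min(errs):          # log(err - c) would be undefined
            continue
        d, alpha, sse = fit_log_linear(ns, errs, c)
        if best is None or sse < best[3]:
            best = (d, alpha, c, sse)
    if best is None:
        raise ValueError("no candidate transfer gap lies below the smallest error")
    return best[:3]


def required_size(d, alpha, c, target):
    """Database size n at which the fitted law reaches the target error."""
    if target <= c:
        raise ValueError("target error is at or below the transfer gap C")
    return (d / (target - c)) ** (1.0 / alpha)


# Synthetic data generated from D = 2, alpha = 0.5, C = 0.1 (not real results).
ns = [100, 1000, 10000, 100000]
errs = [2.0 * n ** -0.5 + 0.1 for n in ns]
print(fit_scaling_law(ns, errs, [0.0, 0.05, 0.1]))
```

Because the error can never fall below C, `required_size` rejects targets at or below the transfer gap, which is the performance limit referred to in the last step.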

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key computational and experimental resources for structure-property research

| Tool/Resource | Type | Primary Function | Application Example |
|---|---|---|---|
| VASP | Software | Quantum mechanical DFT calculations | Predicting formation energies of alloys [25] |
| Materials Project | Database | Computational materials data repository | Providing training data for machine learning models [31] [28] |
| MatDeepLearn | Software Framework | Graph-based deep learning for materials | Creating materials maps and property prediction [31] |
| StarryData2 | Database | Curated experimental data from publications | Accessing experimental thermoelectric properties [31] |
| CRESt Platform | Integrated System | AI-guided robotic experimentation | Autonomous discovery of fuel cell catalysts [29] |
| RadonPy | Software | Automated molecular dynamics simulations | Building polymer properties database [28] |
| MEHnet | Neural Network | Multi-task electronic property prediction | Calculating multiple molecular properties simultaneously [27] |

The comparison between computational and experimental approaches to the structure-property paradigm reveals a clear evolution from isolated methodologies to integrated, AI-driven frameworks. Computational methods provide unprecedented atomic-scale insights and high-throughput screening capabilities, while experimental techniques deliver essential validation and discovery of complex real-world behavior. The most powerful emerging approach combines these strengths through transfer learning [28] and autonomous experimentation [29], effectively bridging the gap between theoretical prediction and practical application.

For researchers, the optimal strategy involves selecting computational methods for initial screening and atomic-scale mechanism understanding, followed by targeted experimental validation of promising candidates. As scaling laws [28] continue to improve predictive accuracy and automated platforms [29] reduce experimental bottlenecks, this integrated approach will dramatically accelerate the design of next-generation materials for sustainability [26], energy applications [30], and beyond.

Granular materials, encompassing substances from pharmaceutical powders to geotechnical sands, exhibit a complex range of behaviors—solid-like, fluid-like, and gas-like—that emerge from particle-scale interactions. Understanding these materials requires bridging the gap between microscopic particle dynamics and macroscopic continuum behavior, a fundamental challenge across scientific and engineering disciplines. In pharmaceutical development, granular materials constitute the primary components of solid dosage forms, where their processing behavior and final performance are dictated by intricate interactions between active pharmaceutical ingredients (APIs) and excipients during manufacturing processes such as die-compaction and granulation [33]. Despite their prevalence, predicting granular behavior remains notoriously difficult due to inherent nonlinearity, discontinuity, and heterogeneity that characterize these systems [34].

The investigation of granular materials spans both computational and experimental paradigms, each with distinct advantages and limitations. Computational approaches enable particle-scale insight into mechanisms otherwise unobservable, while experimental methods provide essential validation benchmarks for model verification. This guide systematically compares the predominant methodologies—computational granular mechanics and experimental characterization—framed within the context of material properties research, to provide researchers with a clear framework for selecting appropriate investigative strategies based on their specific research objectives and constraints.

Comparative Analysis of Methodologies: Capabilities and Limitations

Table 1: Comparison of Computational and Experimental Approaches to Granular Materials Research

| Methodology | Key Applications | Spatial Resolution | Temporal Coverage | Key Strengths | Primary Limitations |
|---|---|---|---|---|---|
| Discrete Element Method (DEM) | Particle-particle interactions, granular flow [34] | Particle-scale | Full process simulation | Captures discrete nature; Provides detailed particle-scale data | Computationally expensive for large systems; Challenging parameter calibration [35] |
| Machine Learning-Accelerated Models | Surrogate modeling, parameter calibration, pattern recognition [35] | Varies with training data | Varies with training data | Rapid prediction once trained; Can discover hidden patterns | Requires extensive training data; Black-box nature; Limited interpretability [36] |
| Continuum Methods (FEM) | Macroscale boundary value problems [35] | Continuum level | Full process simulation | Efficient for engineering-scale problems; Well-established | Loses particle-scale information; Requires phenomenological constitutive laws [36] |
| X-Ray CT Imaging | 3D internal structure visualization, strain mapping [37] | Micron-scale | Single time points or slow processes | Non-destructive; Provides actual internal structure | Limited temporal resolution; Complex data processing |
| Split Hopkinson Bar (SHB) | High-rate loading behavior (~10² s⁻¹) [38] | Bulk response | Milliseconds | Characterizes dynamic strength; Controlled strain rates | Challenging specimen preparation; Boundary effects |
| Plate Impact Tests | Shock compression (~10⁵ s⁻¹) [38] | Bulk response | Microseconds | Extreme condition data; Shock wave propagation | Specialized equipment required; Complex analysis |

Experimental Protocols for Granular Material Characterization

Benchmark Granular Material Preparation Using 3D Printing

The verification of numerical simulations requires benchmarks based on real material behavior. Recent advances utilize 3D printing technology to create artificial particles with controlled characteristics for discrete element method (DEM) verification [37].

- Particle Fabrication: Artificial particles are prepared using a 3D printer, effectively reducing errors in particle modeling for DEM analysis

- Particle Characterization: The printed particles undergo comprehensive measurement of their characteristics including:

- Size distribution using standardized sieving or imaging techniques

- Frictional properties through direct shear measurements between particle surfaces

- Coefficients of restitution via drop tests or pendulum impact tests

- Elastic stiffness using nanoindentation or compression testing

- Angle of Repose Testing: The flow properties of the printed particles are quantified through:

- Cubical container tests where material is drained from one compartment to another

- Cylindrical container methods where material is released from a rotating cylinder

- Internal Visualization: The internal structure of samples in repose state is examined using X-ray CT analysis, providing:

- Strain distribution inside the samples

- Displacement levels and vectors within the packed material

This protocol provides a comprehensive benchmark dataset encompassing both individual particle properties and collective bulk behavior, essential for validating computational models [37].
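Two of the quantities in this protocol reduce to short calculations once the raw measurements are available. The sketch below assumes that the free surface of the heap has been digitized as (x, z) points, for example from a side-view image, and that restitution is estimated from drop and rebound heights with air drag neglected. The function names and inputs are illustrative and are not taken from [37].

```python
import math


def slope_angle_deg(xs, zs):
    """Angle of repose from a least-squares line through free-surface points."""
    n = len(xs)
    mx, mz = sum(xs) / n, sum(zs) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxz = sum((x - mx) * (z - mz) for x, z in zip(xs, zs))
    return math.degrees(math.atan(abs(sxz / sxx)))


def restitution_from_drop(drop_height, rebound_height):
    """Coefficient of restitution e = sqrt(h_rebound / h_drop) for a drop test."""
    return math.sqrt(rebound_height / drop_height)


# Illustrative inputs only (lengths in mm).
print(slope_angle_deg([0.0, 10.0, 20.0, 30.0], [17.0, 11.5, 5.8, 0.0]))
print(restitution_from_drop(100.0, 49.0))
```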

High-Rate Mechanical Characterization

Understanding granular material behavior under dynamic loading conditions requires specialized experimental configurations capable of capturing high-rate phenomena.

- Specimen Preparation: Two soil types—fine cohesive soil (Soil1) and coarse cohesionless soil (Soil2)—are prepared under constant humidity conditions, maintaining their "as-delivered" state rather than pure dry conditions [38]

- Quasi-Static Testing:

- Static compression behavior under uniaxial strain is derived from oedometer tests following DIN 18135 standard

- Quasi-static shear strength properties are determined in triaxial tests following DIN 18137 standard

- Dynamic Triaxial Testing:

- Employ a Split-Hopkinson Bar (SHB) with triaxial pressure cell

- Use specimen diameter of 75 mm with reduced length of 25 mm to ensure dynamic stress equilibrium

- Implement five strain gauges to record stress wave propagation through bars

- Incorporate force foils (F.F.) for direct stress derivation and optical extensometer (O.E.) for strain measurement

- Conduct tests under unconsolidated-undrained (UU) conditions with constant confinement pressure

- Shock Compression Testing:

- Utilize a newly developed plate impact capsule for shock compression studies

- Achieve strain rates up to 10⁵ s⁻¹ to investigate shock wave propagation

- Measure shock-particle velocity relationships and stress-wave profiles

This multi-rate experimental approach enables the characterization of granular materials across a wide spectrum of loading conditions, from quasi-static to shock compression [38].
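Shock velocity and particle velocity pairs from plate impact tests are often reduced with the Rankine-Hugoniot jump conditions. The sketch below assumes a linear Us = c0 + s·up relation, which is a common approximation and may not hold for porous soils over the whole pressure range, and it neglects the initial stress. The numbers in the usage lines are synthetic and are not data from [38].

```python
def fit_linear_hugoniot(up, us):
    """Least-squares fit of Us = c0 + s * up; returns (c0, s)."""
    n = len(up)
    mean_up, mean_us = sum(up) / n, sum(us) / n
    sxx = sum((u - mean_up) ** 2 for u in up)
    sxy = sum((u - mean_up) * (w - mean_us) for u, w in zip(up, us))
    s = sxy / sxx
    return mean_us - s * mean_up, s


def hugoniot_stress(rho0, us, up):
    """Momentum jump condition: sigma = rho0 * Us * up (initial stress neglected)."""
    return rho0 * us * up


def compressed_density(rho0, us, up):
    """Mass jump condition: rho = rho0 * Us / (Us - up)."""
    return rho0 * us / (us - up)


# Synthetic velocities in m/s and an illustrative initial density in kg/m^3.
up = [100.0, 200.0, 300.0, 400.0]
us = [650.0, 800.0, 950.0, 1100.0]
c0, s = fit_linear_hugoniot(up, us)
print(c0, s, hugoniot_stress(1600.0, us[1], up[1]))
```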

Computational Modeling Approaches

Particle-Based Computational Methods

Computational granular mechanics employs diverse numerical techniques, each with specific advantages for capturing different aspects of granular behavior.

- Discrete Element Method (DEM): Models particles as discrete elements interacting through contact forces; ideal for capturing particle-scale phenomena and interactions [35]

- Material Point Method (MPM): Particularly effective for granular flow and phase transition problems, handling large deformations effectively [34]

- Smoothed Particle Hydrodynamics (SPH): Meshfree method suitable for fluid-granular interactions and flow behavior simulation [34] [35]

- Particle Finite Element Method (PFEM): Effective for granular deformation and failure analysis, combining particle tracking with finite element analysis [34]

- Peridynamics (PD): Suitable for modeling particle crushing and fracturing in granular solids, especially effective for cemented granular materials [34] [35]

- Molecular Dynamics (MD): Models granular materials at the microscale, capturing atomic-scale interactions relevant to particle behavior [34]

- Lattice-Boltzmann Method (LBM): Specialized for fluid-particle interactions in granular media, particularly pore-scale flow phenomena [34]
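To make the DEM entry concrete, the following sketch simulates a head-on collision of two equal spheres in one dimension with a linear spring-dashpot normal contact law and a semi-implicit Euler time step. The contact model, stiffness, damping, and particle values are illustrative assumptions. Production DEM codes add tangential forces, rotation, and neighbor search.

```python
def normal_force(x1, x2, v1, v2, r1, r2, k, c):
    """Force on particle 1 (1D, x1 < x2) from a linear spring-dashpot contact."""
    overlap = (r1 + r2) - (x2 - x1)
    if overlap <= 0.0:
        return 0.0                        # particles are not touching
    approach_speed = v1 - v2              # positive while the particles approach
    magnitude = max(0.0, k * overlap + c * approach_speed)  # no attractive force
    return -magnitude                     # pushes particle 1 toward -x


def collide(k=1.0e4, c=0.0, m=1.0e-3, r=1.0e-3, v0=0.1, dt=1.0e-6, steps=20000):
    """Integrate a head-on collision and return the final velocities (v1, v2)."""
    x1, x2 = -1.5 * r, 1.5 * r            # initial surface gap equals one radius
    v1, v2 = v0, -v0
    for _ in range(steps):
        f1 = normal_force(x1, x2, v1, v2, r, r, k, c)
        v1 += f1 / m * dt                 # update velocities first ...
        v2 -= f1 / m * dt                 # ... with equal and opposite forces
        x1 += v1 * dt                     # then positions (semi-implicit Euler)
        x2 += v2 * dt
    return v1, v2


print(collide(c=0.0))   # undamped contact: velocities approximately swap sign
print(collide(c=1.0))   # damped contact: rebound speed is reduced
```

The ratio of rebound speed to approach speed in the damped case plays the role of the coefficient of restitution, which is one of the parameters that has to be calibrated against experiments.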

Machine Learning Integration in Granular Mechanics

Artificial intelligence approaches are transforming computational granular mechanics through three primary pathways [35]:

- ML-Accelerated Simulations: Machine learning enables faster computational modeling through:

- Multiscale and constitutive modeling of granular soils

- MLP-based contact laws in DEM simulations

- CNN-based intrusive reduced-order modeling of DEM

- RNN and GNN-based non-intrusive reduced-order modeling

- ML-Enabled Pattern Recognition: Machine learning facilitates knowledge discovery through:

- Particle shape and size analysis from imaging data

- 3D reconstruction of granular materials from limited data

- Force-chain network prediction and analysis

- Characterization of macroscopic behavior from particle-scale data

- ML-Assisted Inverse Analysis: Machine learning addresses inverse problems through:

- Parameter calibration in DEM simulations

- Simulation-based optimization tasks

- Uncertainty quantification in granular systems
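Parameter calibration is an inverse problem: find the model input whose simulated response matches a measurement. The sketch below shows only that structure, with a golden-section search and no machine learning. The "simulator" is a hypothetical stand-in based on the ideal Coulomb relation tan(θ) = μ. In practice it would be replaced by a DEM run or by a trained surrogate, which is where the ML acceleration described in [35] enters.

```python
import math


def simulated_angle_deg(mu):
    """Hypothetical stand-in for a DEM run: ideal Coulomb relation tan(theta) = mu."""
    return math.degrees(math.atan(mu))


def calibrate(target_deg, simulate, lo=0.0, hi=2.0, tol=1e-6):
    """Golden-section search for the parameter that minimizes the squared mismatch."""
    inv_phi = (math.sqrt(5.0) - 1.0) / 2.0

    def loss(p):
        return (simulate(p) - target_deg) ** 2

    a, b = lo, hi
    while b - a > tol:
        c = b - inv_phi * (b - a)         # interior points with c < d
        d = a + inv_phi * (b - a)
        if loss(c) < loss(d):
            b = d                         # minimum lies in [a, d]
        else:
            a = c                         # minimum lies in [c, b]
    return 0.5 * (a + b)


# Calibrate a friction coefficient to an illustrative measured angle of 30 degrees.
print(calibrate(30.0, simulated_angle_deg))
```

Golden-section search assumes a single minimum inside the bracket. Multi-parameter DEM calibration usually needs a global or Bayesian optimizer instead.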

Figure 1: Machine Learning Workflow for Granular Materials. This diagram illustrates the integration of machine learning approaches in computational granular mechanics, from data processing to model deployment.

The Scientist's Toolkit: Essential Research Solutions

Table 2: Essential Research Reagents and Materials for Granular Materials Research

| Research Solution | Function | Application Context | Key Characteristics |
|---|---|---|---|
| 3D Printed Granular Proxies | Benchmark material for DEM verification [37] | Experimental calibration of computational models | Controlled geometry; Reproducible properties; Customizable shapes |
| Pneumatic Dry Granulation (PDG) System | Dry granulation via roller compaction with air classification [39] | Pharmaceutical powder processing | Minimal heat generation; Suitable for heat-sensitive materials; High drug loading capacity (70-100%) |
| Moisture-Activated Dry Granulation (MADG) | Wet granulation using 1-4% water with moisture-absorbing materials [39] | Pharmaceutical formulation | No drying process; Shorter process time; Continuous processing capability |
| Foam Granulation Binders | Wet granulation using foam as binder [39] | Pharmaceutical production | Uniform binder distribution; Reduced water requirement; No spray nozzle clogging |
| Hyperelastic Model Frameworks | Constitutive modeling of granular materials [40] | Computational mechanics | Power law dependencies of elastic moduli on mean stress; Stress distribution prediction |
| X-Ray CT Visualization System | Non-destructive internal structure analysis [37] | Experimental characterization | 3D internal visualization; Strain distribution mapping; Particle arrangement analysis |

Integrated Research Workflow: Bridging Computation and Experiment

Successful granular materials research requires the integration of computational and experimental approaches through a systematic workflow that leverages the strengths of both paradigms.

Figure 2: Integrated Computational-Experimental Research Workflow. This diagram outlines a systematic approach for granular materials research that combines experimental characterization with computational modeling.

The investigation of granular materials demands careful selection of appropriate methodologies based on specific research goals, with computational and experimental approaches offering complementary insights. For particle-scale mechanism discovery, Discrete Element Method combined with X-ray CT visualization provides unparalleled resolution of discrete interactions. For engineering-scale prediction of bulk behavior, continuum methods enhanced with machine learning surrogates offer practical efficiency. In pharmaceutical applications, advanced granulation technologies enable precise control of material properties for optimized product performance. The emerging integration of artificial intelligence with traditional computational mechanics represents a paradigm shift, offering accelerated discovery while maintaining physical fidelity. By strategically selecting and integrating these diverse methodologies, researchers can effectively navigate the complex multiscale behavior of granular systems across disciplines from geotechnics to pharmaceutical development.

High-Throughput Design and AI-Driven Discovery: Accelerating Materials Innovation

The Materials Project and Large-Scale Computational Repositories

The field of materials science has been transformed by the emergence of large-scale computational repositories that enable data-driven discovery. These platforms shift the traditional "Edisonian" paradigm of materials research—characterized by extensive trial-and-error experimentation—toward a more strategic "materials by design" approach [41]. By leveraging high-performance computing and quantum mechanical calculations, researchers can now virtually screen thousands of materials to identify promising candidates before ever entering a laboratory [41] [42]. The Materials Project stands at the forefront of this revolution, providing open access to calculated properties of both known and hypothetical materials. This guide objectively compares The Materials Project with other prominent platforms, examining their distinct approaches to computational and experimental data integration within the broader context of materials research methodologies.

Comparative Analysis of Major Platforms

The table below provides a systematic comparison of four major platforms in the computational materials science landscape, highlighting their distinct approaches, data types, and primary functionalities.

Table 1: Comparative analysis of major computational materials repositories

| Platform | Primary Data Type | Core Methodology | Data Sources | Key Features | Access Method |
|---|---|---|---|---|---|
| The Materials Project | Computational (primarily) | High-throughput DFT calculations [42] | ICSD, computationally predicted structures [43] | ~140,000 inorganic compounds; property prediction tools [44]; synthesizability skylines [41] | REST API; web interface [44] |
| Materials Cloud | Computational (with provenance) | AiiDA workflow manager [45] | User submissions with full provenance | Focus on reproducibility and provenance tracking; interactive workflow exploration [45] | Archive submission; explore section [45] |
| HTEM-DB | Experimental | High-throughput combinatorial experiments [46] | Controlled deposition and characterization | Inorganic thin-film experimental data; integrated with laboratory instrumentation [46] | Web interface; API [46] |
| AFLOWlib | Computational | High-throughput DFT (aflow) [45] | ICSD, calculated structures | Automated calculation workflows; standardized data generation [45] | Web access; data downloads |

Methodological Approaches: Computational versus Experimental

Computational Workflows in The Materials Project

The Materials Project employs a sophisticated computational framework centered on density functional theory (DFT) calculations executed across multiple supercomputing facilities [41] [42]. The workflow begins with defining a chemical space of interest, followed by high-throughput DFT calculations using standardized parameters. The computed properties—including electronic structure, thermodynamic stability, and optical characteristics—are stored in a structured database [42]. A critical innovation is the "synthesizability skyline" approach, which identifies materials that cannot be synthesized by comparing energies of crystalline and amorphous phases, thereby helping experimentalists avoid dead ends [41].
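The idea behind the synthesizability skyline can be expressed as a simple energy filter: a crystalline polymorph whose computed energy lies above that of the amorphous phase of the same composition is treated as unlikely to be synthesized. The sketch below is an illustration of that comparison only. The function name and the energies are hypothetical and do not come from the Materials Project.

```python
def apply_skyline(polymorph_energies, amorphous_energy):
    """Split polymorphs by whether their energy is at or below the amorphous limit.

    Energies are in eV/atom relative to the ground state of the composition.
    """
    accessible, excluded = [], []
    for name, energy in sorted(polymorph_energies.items(), key=lambda kv: kv[1]):
        (accessible if energy <= amorphous_energy else excluded).append(name)
    return accessible, excluded


# Hypothetical energies above the ground state (eV/atom), for illustration only.
polymorphs = {"phase_A": 0.00, "phase_B": 0.08, "phase_C": 0.31}
print(apply_skyline(polymorphs, amorphous_energy=0.25))
```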

Experimental Data Generation in HTEM-DB

The High-Throughput Experimental Materials Database (HTEM-DB) employs a fundamentally different approach centered on combinatorial experiments. This methodology involves depositing material libraries through controlled vapor deposition techniques, followed by automated characterization of composition, structure, and optoelectronic properties [46]. The Research Data Infrastructure (RDI) integrates directly with experimental instruments, establishing a communication pipeline between researchers and data systems to ensure comprehensive metadata collection [46]. This experimental focus provides ground-truth validation data that complements computational predictions from platforms like The Materials Project.

Data Characteristics and Applications

Data Types and Fidelity

A critical distinction between these platforms lies in their data characteristics and optimal applications. The Materials Project primarily provides computationally derived properties based on DFT, which offers broad coverage of chemical space but with known accuracy limitations for certain material classes [42]. In contrast, HTEM-DB offers experimental measurements with inherent real-world variability but more limited compositional coverage due to practical synthesis constraints [46]. Materials Cloud occupies a unique position by capturing complete computational provenance, enabling both the reuse of data and the reproduction of entire simulation workflows [45].

Table 2: Data characteristics and research applications across platforms

| Platform | Primary Data Characteristics | Accuracy Considerations | Optimal Research Applications |
|---|---|---|---|
| The Materials Project | Uniform, high-volume computed properties [42] | DFT limitations for correlated electrons; requires validation [42] | Initial screening; trend analysis; novel material prediction [41] [42] |
| Materials Cloud | Full provenance computational data [45] | Depends on original calculation methods | Reproducible research; workflow development; educational purposes [45] |
| HTEM-DB | Experimental measurements with real-world variability [46] | Experimental uncertainty; synthesis condition dependencies | Experimental validation; machine learning on empirical data [46] |
| AFLOWlib | Standardized computational properties [45] | Consistent but subject to DFT limitations | High-throughput screening; materials informatics [45] |

Complementary Strengths and Research Synergies

The most powerful research strategies leverage the complementary strengths of both computational and experimental repositories. Computational platforms excel at rapidly exploring vast chemical spaces and predicting novel materials, while experimental databases provide crucial validation and address synthesis realities [41] [46]. This synergy enables "closed-loop" materials discovery, where computational predictions guide experimental synthesis, and experimental results refine computational models [46]. The multifidelity approach combines different levels of computational accuracy with experimental validation to balance resource constraints with scientific rigor [42].

Essential Research Toolkit

Table 3: Essential tools and resources for computational materials research

| Tool/Resource | Type | Primary Function | Platform Association |
|---|---|---|---|
| Pymatgen | Software library | Materials analysis [42] | Materials Project [42] |
| AiiDA | Workflow manager | Provenance tracking & automation [45] | Materials Cloud [45] |
| FireWorks | Workflow manager | Computational workflow management [42] | Materials Project [42] |
| COMBIgor | Data analysis | Combinatorial experimental data analysis [46] | HTEM-DB [46] |
| DFT Codes | Simulation software | Electronic structure calculations [42] | Multiple platforms |
| REST APIs | Data access | Programmatic data retrieval [44] | Multiple platforms |

The Materials Project, Materials Cloud, and HTEM-DB represent complementary paradigms in modern materials research, each with distinct strengths and applications. The Materials Project provides unparalleled breadth in computational materials screening, while Materials Cloud offers unique capabilities for reproducible computational science through comprehensive provenance tracking. HTEM-DB contributes essential experimental data that anchors computational predictions in empirical reality. Researchers are increasingly adopting hybrid strategies that leverage the strengths of each platform, combining high-throughput computation with experimental validation to accelerate materials discovery. This integrated approach represents the future of materials research, where computational and experimental methodologies converge to create a more efficient, predictive pathway from material concept to functional implementation.

The integration of artificial intelligence (AI) and machine learning (ML) is fundamentally reshaping materials science. By acting as powerful surrogates for computationally intensive methods like density functional theory (DFT), tools such as ChatGPT Materials Explorer (CME) and AtomGPT are accelerating the prediction of material properties and the design of novel substances [47] [48] [49]. This guide provides a comparative analysis of these emerging AI tools, framing them within the ongoing dialogue between computational prediction and experimental validation.

The AI Revolution in Materials Informatics

The traditional materials discovery pipeline, heavily reliant on DFT calculations, faces significant challenges due to its high computational cost and cubic scaling with system size, which severely limits high-throughput screening [49]. AI models, particularly graph neural networks (GNNs) and large language models (LLMs), are overcoming these barriers by learning complex structure-property relationships from existing datasets, enabling property prediction and inverse design thousands of times faster than traditional methods [47] [49].
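To make the cubic-scaling argument concrete, the short sketch below estimates how the relative cost of a calculation grows with atom count under an idealized N³ model. The function name `relative_cost` and the reference size of 10 atoms are illustrative assumptions; real DFT codes have prefactors and lower-order terms that this deliberately ignores.

```python
def relative_cost(n_atoms: int, n_ref: int, exponent: float = 3.0) -> float:
    """Cost of an n_atoms calculation relative to an n_ref calculation,
    assuming cost scales as N**exponent (idealized; ignores prefactors)."""
    if n_atoms <= 0 or n_ref <= 0:
        raise ValueError("atom counts must be positive")
    return (n_atoms / n_ref) ** exponent


if __name__ == "__main__":
    # Compare several system sizes against a hypothetical 10-atom reference cell.
    for n in (10, 20, 100, 1000):
        print(f"{n:>5} atoms: {relative_cost(n, 10):,.0f}x the cost of 10 atoms")
```

Under this model, doubling the number of atoms multiplies the cost by eight, which is why surrogate models that avoid the electronic-structure step become attractive for large screening campaigns.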

A critical challenge with general-purpose AI models is their tendency to produce hallucinations or factually incorrect information, with estimated rates between 10% and 39% [50] [51]. This is often due to training on generic sources like Wikipedia, which contain biases toward "hot" materials already frequently studied in literature, leading to a narrow exploration of chemical space [52]. Specialized tools like CME and AtomGPT address this by integrating directly with curated materials databases and employing physics-informed models, significantly improving accuracy and reliability for scientific applications [50] [47] [52].

Tool-Specific Profiles & Mechanisms

ChatGPT Materials Explorer (CME)

CME is a custom GPT assistant built on the OpenAI GPT-4o architecture and specifically tailored for materials science applications [47]. Its primary innovation lies in connecting a powerful language model to specialized databases and predictive models, functioning like a dedicated research assistant that can dig through vast datasets, predict material behavior without physical testing, and assist with scientific writing [50] [51].

Core Architecture & Workflow: CME was developed using the GPT Builder "Create" interface, configuring its behavior through natural language and connecting it to external APIs [47]. Its capabilities are powered by integration with several core resources:

- Databases: National Institute of Standards and Technology-Joint Automated Repository for Various Integrated Simulations (NIST-JARVIS), Materials Project, Open Databases Integration for Materials Design (OPTIMADE), and National Institutes of Health-Chemistry Agent Connecting Tool Usage to Science (NIH-CACTUS) [50] [51] [47].

- Predictive Models: Integrated with the Atomistic Line Graph Neural Network (ALIGNN) model to predict key properties like formation energy, bandgap, and total energy directly from atomic structure [47].

- Literature Search: Utilizes the arXiv API to find relevant recent scientific papers [47].
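As a rough illustration of what the external lookups listed above might involve, the sketch below builds (but does not send) two query URLs: one for the public arXiv API and one for an OPTIMADE-compliant structures endpoint. This is not CME's actual implementation, which is not described in detail here. The helper names and the `https://example.org/optimade` base URL are placeholders; only the arXiv endpoint and the OPTIMADE `elements HAS ALL` filter syntax are taken from the respective public specifications.

```python
from urllib.parse import urlencode

ARXIV_API = "http://export.arxiv.org/api/query"  # public arXiv API endpoint


def arxiv_query_url(terms: str, max_results: int = 5) -> str:
    """Build an arXiv API search URL for free-text terms (no request is sent)."""
    params = {
        "search_query": f"all:{terms}",
        "start": 0,
        "max_results": max_results,
    }
    return f"{ARXIV_API}?{urlencode(params)}"


def optimade_elements_filter(elements: list[str]) -> str:
    """OPTIMADE filter matching structures that contain all given elements."""
    quoted = ", ".join(f'"{el}"' for el in elements)
    return f"elements HAS ALL {quoted}"


def optimade_structures_url(base_url: str, elements: list[str]) -> str:
    """Build a /v1/structures query URL for a (hypothetical) OPTIMADE provider."""
    query = urlencode({"filter": optimade_elements_filter(elements)})
    return f"{base_url.rstrip('/')}/v1/structures?{query}"


if __name__ == "__main__":
    print(arxiv_query_url("perovskite solar cell"))
    # The base URL below is a placeholder, not a real provider.
    print(optimade_structures_url("https://example.org/optimade", ["Si", "C"]))
```

Keeping URL construction separate from the network call makes the query logic easy to inspect and test before any request reaches a live database.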

AtomGPT

AtomGPT is a generative pre-trained transformer specifically developed for atomistic materials design. It demonstrates capabilities for both forward property prediction and inverse structure generation [48]. Unlike CME, which leverages an existing LLM, AtomGPT is a model trained from the ground up on materials data.

Core Architecture & Workflow: AtomGPT tokenizes atomic species, lattice parameters, and fractional coordinates into sequences, treating crystal generation as a language modeling task [49]. Its self-attention mechanisms capture long-range chemical dependencies, enabling it to:

- Predict Material Properties: Using a combination of chemical and structural text descriptions, it predicts formation energies, electronic band gaps, and superconducting transition temperatures with accuracy comparable to GNN models [48].

- Generate Novel Structures: It can perform inverse design, generating candidate atomic structures for target properties, such as new superconductors [48] [49].
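The idea of treating a crystal as a token sequence can be sketched as follows. The token layout used here (marker tokens such as `<lattice>` and `<atoms>`, two-decimal rounding, and the ordering of fields) is a hypothetical format chosen for illustration, not AtomGPT's actual tokenizer.

```python
def crystal_to_tokens(lattice, species, frac_coords, decimals=2):
    """Flatten a crystal into a list of string tokens.

    lattice:     (a, b, c, alpha, beta, gamma) in angstroms and degrees
    species:     element symbols, one per atom
    frac_coords: fractional (x, y, z) coordinates, one triple per atom
    The marker tokens and rounding are illustrative assumptions.
    """
    if len(lattice) != 6:
        raise ValueError("lattice must have six parameters")
    if len(species) != len(frac_coords):
        raise ValueError("species and frac_coords must have equal length")
    tokens = ["<lattice>"]
    tokens += [f"{x:.{decimals}f}" for x in lattice]
    tokens.append("<atoms>")
    for symbol, xyz in zip(species, frac_coords):
        tokens.append(symbol)
        tokens += [f"{x:.{decimals}f}" for x in xyz]
    tokens.append("<end>")
    return tokens


if __name__ == "__main__":
    # Illustrative two-atom cubic cell; the numbers are approximate.
    tokens = crystal_to_tokens(
        lattice=(4.12, 4.12, 4.12, 90, 90, 90),
        species=["Cs", "Cl"],
        frac_coords=[(0.0, 0.0, 0.0), (0.5, 0.5, 0.5)],
    )
    print(" ".join(tokens))
```

Once a structure is serialized this way, generating a new crystal reduces to predicting the next token, and conditioning on a target property amounts to prepending that property to the sequence.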

Other Notable Models

The field is rapidly expanding with other specialized models demonstrating significant capabilities:

- AlloyGPT: A generative, alloy-specific language model that performs concurrent forward property prediction and inverse design for additively manufacturable alloys, demonstrating high predictive accuracy (R² = 0.86-0.99) [53].

- Generative Model Benchmarks: A systematic benchmark (AtomBench) compared AtomGPT against other architectures like the Crystal Diffusion Variational Autoencoder (CDVAE) and FlowMM on tasks of reconstructing crystal structures. The study found CDVAE performed most favorably, followed by AtomGPT, and then FlowMM [49].
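For reference, the R² values quoted for AlloyGPT above are coefficients of determination. A minimal sketch of the standard definition, R² = 1 − SS_res / SS_tot, is shown below; the function name is an illustrative choice and the code is not taken from any of the cited tools.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when all true values are identical")
    return 1.0 - ss_res / ss_tot
```

A value of 1 means the predictions match the reference values exactly, while a value of 0 means the model does no better than always predicting the mean.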

Performance Comparison & Experimental Data

Accuracy and Hallucination Resistance

A key test for specialized AI tools is their accuracy compared to general-purpose models. In a controlled evaluation, CME was tested against GPT-4o and ChemCrow (a chemistry-focused AI agent) on eight materials science questions, ranging from simple molecular formulas to interpreting phase diagrams [50] [51].

Table 1: Performance Comparison on Domain-Specific Questions

| AI Model | Correct Answers (Out of 8) | Key Strengths and Weaknesses |
|---|---|---|
| ChatGPT Materials Explorer (CME) | 8 | Correctly answered all questions, including database queries and property predictions [50] [51]. |
| GPT-4o | 5 | Provided generic, incomplete, or sometimes misleading responses to domain-specific queries [50] [47]. |
| ChemCrow | 5 | Struggled with accurate database interactions and property predictions [47]. |

This experiment highlights how domain-specific grounding mitigates the hallucination problem. CME's perfect score is attributed to its direct access to authoritative databases and integration with physics-based models like ALIGNN [50] [47].

Inverse Design and Benchmarking

For inverse design, the performance of generative models is measured by how accurately they can reconstruct known crystal structures based on target properties. The AtomBench benchmark provides a rigorous comparison using metrics like Kullback-Leibler (KL) divergence and mean absolute error (MAE) on datasets from JARVIS Supercon 3D and Alexandria [49].

Table 2: Inverse Design Model Benchmarking on Superconductivity Datasets

| Model | Architecture | Key Performance Metrics (KL Divergence & MAE) |
|---|---|---|
| CDVAE | Diffusion Variational Autoencoder | Most favorable performance in reconstructing crystal structures [49]. |
| AtomGPT | Transformer (Generative Pretrained) | Intermediate performance, behind CDVAE but ahead of FlowMM [49]. |
| FlowMM | Riemannian Flow Matching | Least favorable performance among the three models benchmarked [49]. |

This benchmarking demonstrates that while AtomGPT is a powerful tool, the choice of model architecture can significantly impact performance for specific inverse design tasks.

Experimental Protocols & Methodologies

Protocol: Benchmarking Hallucination Resistance

This protocol is based on the methodology used to evaluate CME against other AI models [50] [51] [47].

- Question Set Curation: Develop a set of 8 diverse materials science questions. These should cover:

  - Factual recall (e.g., molecular formula of common compounds like aspirin or ibuprofen).

  - Data retrieval (e.g., "list all materials containing silicon and carbon from JARVIS-DFT").

  - Interpretation (e.g., explaining a phase diagram).

- Model Testing: Submit each question in the set to the models under evaluation (e.g., CME, GPT-4o, ChemCrow).

- Response Validation: Manually check all model responses against ground-truth data from authoritative sources like the connected databases (NIST-JARVIS, Materials Project) or established scientific knowledge.

- Scoring: Score each response as correct or incorrect based on factual accuracy and completeness. The model with the highest number of fully correct answers demonstrates superior hallucination resistance.
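The scoring step of this protocol can be sketched as a small tallying routine. The class and function names are illustrative assumptions. Because this guide reports only the per-model totals (8, 5, and 5 out of 8), the per-question verdicts generated by `placeholder_responses` are placeholders that reproduce those totals and do not indicate which specific questions each model missed.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class GradedResponse:
    model: str
    question_id: int
    correct: bool  # verdict assigned by a human reviewer against ground truth


def tally(responses):
    """Return {model: (n_correct, n_total)} from manually graded responses."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for r in responses:
        total[r.model] += 1
        correct[r.model] += int(r.correct)
    return {m: (correct[m], total[m]) for m in total}


def rank(scores):
    """Sort model names by number of correct answers, highest first."""
    return sorted(scores, key=lambda m: scores[m][0], reverse=True)


def placeholder_responses(model, n_correct, n_total=8):
    """Placeholder verdicts that only reproduce a reported total."""
    return [GradedResponse(model, q, q < n_correct) for q in range(n_total)]


if __name__ == "__main__":
    graded = (
        placeholder_responses("CME", 8)
        + placeholder_responses("GPT-4o", 5)
        + placeholder_responses("ChemCrow", 5)
    )
    scores = tally(graded)
    for model in rank(scores):
        n_correct, n_total = scores[model]
        print(f"{model}: {n_correct}/{n_total}")
```

Recording one verdict per model and question, rather than only totals, keeps the evaluation auditable: a second reviewer can re-grade individual answers without repeating the whole experiment.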

Protocol: Inverse Design Benchmarking

This protocol is derived from the AtomBench benchmark for generative atomic structure models [49].

- Dataset Selection: Select appropriate DFT-relaxed crystal structure datasets for training and testing (e.g., JARVIS Supercon 3D, Alexandria DS-A/B).

- Model Training & Configuration: Train the generative models (AtomGPT, CDVAE, FlowMM) on a subset of the data. Use consistent hyperparameters and training procedures appropriate for each architecture.

- Task Definition: Task each model with reconstructing crystal structures from the test set. In a conditional generation setting, provide target properties (e.g., superconducting critical temperature) as input.

- Evaluation:

  - Distribution-level Metrics: Calculate the Kullback-Leibler (KL) divergence between the predicted and reference distributions of lattice parameters. A lower KL divergence indicates a better match to the true data distribution.

  - Per-structure Metrics: Calculate the Mean Absolute Error (MAE) of individual lattice constants (a, b, c) between the generated structures and the ground-truth DFT structures.

- Ranking: Rank models based on their aggregate performance across these metrics to determine their inverse design accuracy.
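The two evaluation metrics in this protocol can be sketched with the standard library alone. This is a minimal illustration, not the AtomBench implementation: the binning scheme, the smoothing constant `eps`, and the direction of the divergence (reference relative to prediction) are all assumptions made for the example.

```python
import math


def histogram(values, bin_edges):
    """Count values per bin; bins are [lo, hi) except the last, which
    also includes its upper edge. Out-of-range values are ignored."""
    counts = [0] * (len(bin_edges) - 1)
    last = len(counts) - 1
    for v in values:
        for i in range(len(counts)):
            upper_ok = v < bin_edges[i + 1] or (i == last and v == bin_edges[-1])
            if bin_edges[i] <= v and upper_ok:
                counts[i] += 1
                break
    return counts


def kl_divergence(ref_counts, pred_counts, eps=1e-10):
    """KL(ref || pred) in nats between two histograms over the same bins.
    eps smooths empty bins so the logarithm stays finite (an assumption)."""
    if len(ref_counts) != len(pred_counts):
        raise ValueError("histograms must share the same bins")
    n = len(ref_counts)
    p_norm = sum(ref_counts) + eps * n
    q_norm = sum(pred_counts) + eps * n
    total = 0.0
    for p, q in zip(ref_counts, pred_counts):
        p_i = (p + eps) / p_norm
        q_i = (q + eps) / q_norm
        total += p_i * math.log(p_i / q_i)
    return total


def mean_absolute_error(predicted, reference):
    """Average absolute difference between paired values."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(predicted)
```

In practice, one histogram would be built per lattice parameter for the reference and generated sets, with `kl_divergence` applied to each pair, while `mean_absolute_error` would be applied to matched lattice constants of individual structures.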

[Figure: Workflow diagram of the key steps for training and benchmarking inverse design models.]

The Scientist's Toolkit: Essential Research Reagents

In the context of AI-driven materials informatics, "research reagents" refer to the fundamental software, data, and computational resources required to develop and deploy tools like CME and AtomGPT.

Table 3: Key Reagents for AI-Driven Materials Discovery

| Reagent Solution | Function | Example Databases/Tools |
|---|---|---|
| Curated Materials Databases | Provide structured, high-quality data for training AI models and grounding responses. Essential for reducing AI hallucinations. | JARVIS-DFT [47] [49], Materials Project [50] [47], OQMD [47] [52], AFLOW [47] |
| Graph Neural Network (GNN) Models | Act as fast, accurate surrogates for DFT calculations, predicting material properties from atomic structures. | ALIGNN (for formation energy, bandgap) [47], Other GNNs for property prediction [49] |
| Generative Model Architectures | Enable inverse design by learning the conditional probability distribution of crystal structures given target properties. | Transformer (AtomGPT) [48] [49], Diffusion Models (CDVAE) [49], Flow Matching (FlowMM) [49] |
| Benchmarking Frameworks | Provide standardized datasets and metrics to objectively compare the performance of different AI models. | AtomBench [49] |

The emergence of specialized AI tools like ChatGPT Materials Explorer and AtomGPT marks a significant leap forward for computational materials research. By integrating with curated databases and physics-based models, they offer a powerful, efficient, and increasingly reliable means to predict properties and design new materials, thereby accelerating the entire discovery pipeline. The choice between tool types depends on the research goal: chatbot-style assistants like CME lower the barrier to entry for data retrieval and analysis, while generative models like AtomGPT and AlloyGPT offer direct, programmatic pathways for inverse design. As benchmarking efforts like AtomBench mature, they will provide critical guidance for researchers selecting the most appropriate tool, ensuring that AI-driven discoveries are both innovative and robust.