Bridging Quantum and Data: Machine Learning Revolutionizes Density Functional Theory for Biomedical Innovation

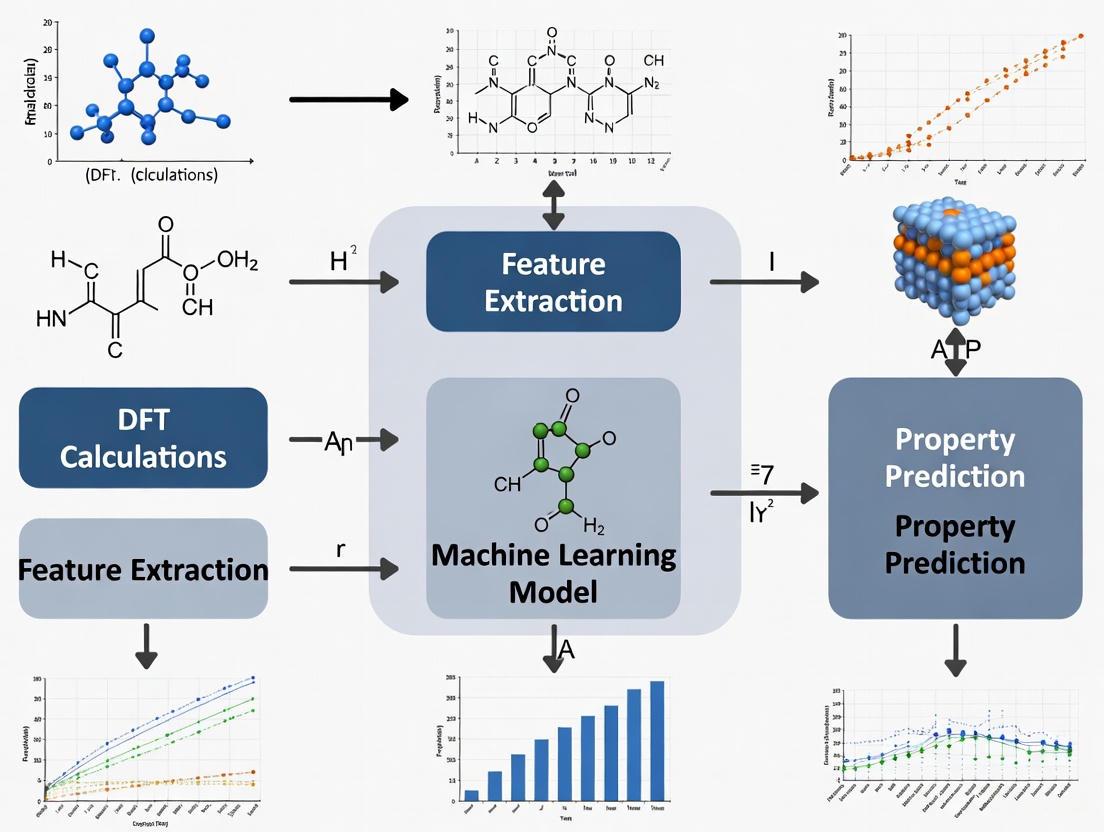

This article explores the transformative integration of Machine Learning (ML) with Density Functional Theory (DFT), a pivotal shift in computational science for biomedical and materials research.

Abstract

This article explores the transformative integration of Machine Learning (ML) with Density Functional Theory (DFT), a pivotal shift in computational science for biomedical and materials research. It covers the foundational principles of using ML to bypass the computational bottlenecks of the Kohn-Sham equations, detailed methodologies for emulating electronic properties and learning exchange-correlation functionals, strategies for troubleshooting transferability and data quality, and rigorous validation against high-accuracy benchmarks. Aimed at researchers and drug development professionals, this review synthesizes how these ML-accelerated workflows are enabling the rapid, accurate discovery of novel materials and therapeutic compounds, fundamentally reshaping predictive modeling in the life sciences.

The Quantum-Mechanical Bridge: How ML is Redefining the Foundations of DFT

Density Functional Theory (DFT) has established itself as a cornerstone of computational materials science and drug discovery, enabling the study of electronic structures from first principles. At its core lies the Kohn-Sham (KS) equation, which transforms the intractable many-electron problem into an effective single-electron problem. While this reformulation makes calculations feasible, the computational process of solving these equations—typically through an iterative self-consistent field (SCF) procedure—creates a fundamental bottleneck. This "Kohn-Sham bottleneck" manifests as the high computational cost required to: (1) determine the KS wavefunctions, (2) construct the associated electron density, and (3) solve for the eigenvalues that describe the system's electronic states. The challenge escalates dramatically with system size and complexity, limiting the practical application of DFT to large molecular systems or long-time-scale molecular dynamics simulations relevant to drug development.

Machine learning (ML) presents a paradigm shift for overcoming this bottleneck. By learning the complex mappings between atomic structure and electronic properties directly from data, ML models can emulate key parts of the DFT workflow, bypassing the need for computationally expensive iterative solutions. This article details the latest ML methodologies and protocols designed to overcome the KS bottleneck, enabling accurate and efficient electronic structure calculations for research and development.

Machine Learning Approaches to the Kohn-Sham Challenge

Several distinct ML strategies have emerged to address different aspects of the KS bottleneck. The table below summarizes the primary approaches, their specific targets, and their performance.

Table 1: Machine Learning Approaches for Overcoming the Kohn-Sham Bottleneck

| ML Approach | Computational Target | Key Innovation | Reported Performance & System |
|---|---|---|---|
| Unsupervised Representation Learning [1] | KS Wavefunctions | Uses a Variational Autoencoder (VAE) to compress high-dimensional KS wavefunctions into a low-dimensional latent space ($10^{3}$ to $10^{4}$ times smaller) [1]. | MAE of 0.11 eV for GW quasiparticle energies of 2D metals/semiconductors [1]. |
| End-to-End DFT Emulation [2] | Entire DFT Workflow | Maps atomic structure directly to electron density, then predicts energies, forces, and other properties, bypassing the explicit KS solution [2]. | Chemical accuracy achieved for organic molecules and polymers; orders of magnitude speedup [2]. |
| Learned Exchange-Correlation (XC) Functional [3] [4] | XC Functional | Employs deep learning to create a non-local XC functional (e.g., Skala) trained on high-accuracy quantum data [3]. | Reaches chemical accuracy (<1 kcal/mol) for atomization energies at semi-local DFT cost [3]. |
| On-the-Fly Machine-Learned Force Fields (MLFF) [5] | Forces and Energies for MD | Uses a Gaussian Multipole (GMP) descriptor for efficient, element-count-independent force field learning during molecular dynamics [5]. | Stable, >20 ps MD simulations for multi-element alloys (up to 6 elements) [5]. |

Detailed Experimental Protocols

This section provides detailed methodologies for implementing the key ML approaches described above, providing a practical guide for researchers.

Protocol: Unsupervised Learning of Kohn-Sham States with a VAE

This protocol outlines the procedure for compressing KS wavefunctions using a Variational Autoencoder (VAE) as described in Nature Communications (2024) [1]. The primary goal is to learn a low-dimensional representation that retains the essential physical information of the original, high-dimensional wavefunctions.

Primary Research Application: Creating a compressed, generative representation of electronic structure for use in downstream tasks, such as predicting quasiparticle band structures within the GW formalism.

Materials/Software Requirements:

- Input Data: Kohn-Sham wavefunction moduli, $|\phi_{n\mathbf{k}}(\mathbf{r})|$, represented on a real-space grid of dimensions $R_x \times R_y \times R_z$, indexed by band ($n$) and k-point ($\mathbf{k}$).

- Computational Framework: A deep learning environment (e.g., Python with TensorFlow or PyTorch) capable of building and training convolutional neural networks (CNNs) and VAEs.

- Reference Data: For downstream supervised learning (e.g., GW bandstructures), corresponding quasiparticle energies are required.

Step-by-Step Procedure:

- Data Preparation: Collect the KS wavefunctions from a set of DFT calculations for a range of materials of interest. The wavefunctions should be formatted as 2D or 3D arrays (depending on the system dimensionality).

- VAE Architecture Definition:
  - Encoder ($e_{\theta}$): Design a network that maps the input wavefunction to a latent distribution.
    - Layers 1-2: Implement 2D/3D Convolutional Neural Networks (CNNs) to capture local spatial patterns in the wavefunction.
    - Symmetry Enforcement: Use circular padding in CNNs to respect the periodic boundary conditions of the crystal [1].
    - Invariance Layer: Insert a Global Average Pooling (GAP) layer after the CNNs to enforce translational invariance with respect to the unit cell's origin [1].
    - Output: The encoder outputs two vectors in the latent space: the mean ($\boldsymbol{\mu} \in \mathbb{R}^{p}$) and the logarithm of the variance ($\log \boldsymbol{\sigma}^{2}$), which define the posterior distribution.
  - Decoder ($d_{\theta}$): Mirror the encoder structure to reconstruct the input wavefunction from a latent vector sampled from the distribution defined by $\boldsymbol{\mu}$ and $\boldsymbol{\sigma}$.

- Loss Function and Training:
  - Define the total loss function, $\mathscr{L}$, as a weighted sum of a reconstruction loss and a regularization term:

    $$\mathscr{L} = \frac{1}{T}\sum_{n\mathbf{k}}^{T} \left\| |\phi_{n\mathbf{k}}| - d_{\theta}\left(e_{\theta}(|\phi_{n\mathbf{k}}|)\right) \right\|^{2} + \beta \cdot D_{KL}\left( q(z \mid |\phi_{n\mathbf{k}}|) \,\|\, \mathcal{N}(0, \mathrm{I}) \right)$$

  - The first term is the Mean Squared Error (MSE) between the input and reconstructed wavefunction.
  - The second term is the Kullback-Leibler (KL) Divergence, which forces the latent space distribution to approximate a standard normal distribution. The parameter $\beta$ controls the strength of this regularization.
  - Train the VAE using an optimizer (e.g., Adam) by minimizing $\mathscr{L}$ over the training set of KS states.

Downstream Application: The trained encoder can be used to convert new KS wavefunctions into their latent representations. These compact vectors can then serve as input to a separate, supervised neural network trained to predict properties like GW quasiparticle energies.
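The loss described in the protocol above can be sketched in plain Python to make its two terms concrete. This is a minimal illustration, not the implementation from [1]: the function names (`sample_latent`, `kl_to_standard_normal`, `beta_vae_loss`) and the toy numbers are assumptions, the encoder and decoder networks are left out, and the KL term is averaged over the states together with the reconstruction term, which is one possible reading of the formula.

```python
import math
import random

def sample_latent(mu, log_var, rng=random):
    """Reparameterization trick: z = mu + sigma * eps with eps ~ N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """Closed-form KL divergence between N(mu, diag(sigma^2)) and N(0, I)."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, log_var))

def beta_vae_loss(inputs, reconstructions, mus, log_vars, beta=1.0):
    """Mean over the T states of squared reconstruction error plus beta * KL."""
    total = 0.0
    for x, x_hat, mu, lv in zip(inputs, reconstructions, mus, log_vars):
        reconstruction = sum((a - b) ** 2 for a, b in zip(x, x_hat))
        total += reconstruction + beta * kl_to_standard_normal(mu, lv)
    return total / len(inputs)

# Toy usage (hypothetical numbers): two flattened wavefunction moduli and a
# 2-dimensional latent space. In practice these come from the encoder/decoder.
inputs = [[0.1, 0.4, 0.2], [0.3, 0.3, 0.1]]
reconstructions = [[0.1, 0.5, 0.2], [0.2, 0.3, 0.1]]
mus = [[0.0, 0.5], [0.1, -0.2]]
log_vars = [[0.0, -0.1], [0.2, 0.0]]
loss = beta_vae_loss(inputs, reconstructions, mus, log_vars, beta=0.5)
```

Raising `beta` weights the KL term more heavily, which pulls the latent distribution toward the standard normal at the expense of reconstruction accuracy.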

Troubleshooting Tips:

- If reconstruction quality is poor, consider increasing the dimensionality of the latent space ($p$) or adjusting the $\beta$ parameter.

- To improve the physical smoothness of the latent space, ensure the training data includes KS states from closely spaced k-points.
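The invariance argument behind the encoder design (circular padding followed by global average pooling) can be checked on a one-dimensional toy signal. The sketch below is an assumption-laden illustration rather than the architecture of [1]: it uses a single hand-written periodic convolution, a ReLU, and a mean, and all names and numbers are hypothetical. In PyTorch, the same periodic padding is available through the `padding_mode="circular"` option of the convolution layers.

```python
import math

def circular_conv1d(signal, kernel):
    """1D convolution with periodic (circular) boundary conditions."""
    n, half = len(signal), len(kernel) // 2
    return [
        sum(kernel[j] * signal[(i + j - half) % n] for j in range(len(kernel)))
        for i in range(n)
    ]

def global_average_pool(features):
    """Collapse the spatial axis to a single number."""
    return sum(features) / len(features)

def encode(signal, kernel):
    """Circular convolution, ReLU, then global average pooling."""
    activated = [max(0.0, v) for v in circular_conv1d(signal, kernel)]
    return global_average_pool(activated)

# A periodic toy "wavefunction modulus" and the same signal with a shifted origin.
signal = [0.0, 0.2, 0.9, 0.4, 0.1, 0.0, 0.0, 0.3]
kernel = [0.25, 0.5, -0.25]
shifted = signal[3:] + signal[:3]

original_code = encode(signal, kernel)
shifted_code = encode(shifted, kernel)
```

Because the convolution wraps around the cell boundary, shifting the input only shifts the feature map, and the pooled value is unchanged up to floating-point rounding.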

Protocol: On-the-Fly Machine-Learned Force Field Molecular Dynamics

This protocol describes the implementation of an on-the-fly ML force field based on the Normalized Gaussian Multipole (GMP) descriptor, as published in Journal of Chemical Theory and Computation (2024) [5]. This method is particularly powerful for molecular dynamics (MD) simulations of systems with high chemical complexity.

Primary Research Application: Performing stable, long-time-scale MD simulations without the need for pre-training, automatically generating a force field that scales efficiently with the number of chemical elements.

Materials/Software Requirements:

Step-by-Step Procedure:

- Initialization: Start an MD simulation (e.g., in the NVT or NVK ensemble) using the DFT code. The on-the-fly MLFF will be inactive at this stage.

- Descriptor Calculation (GMP): For each new atomic configuration encountered during the MD trajectory, compute the GMP descriptor for every atom.

- The GMP descriptor represents the atomic environment using a fixed-length vector based on a Gaussian representation of atomic valence densities, independent of the number of chemical elements [5].

- Uncertainty Quantification & Active Learning:

- The ML model (e.g., Bayesian linear regression) uses the GMP features to predict atomic forces and energy and provides a Bayesian uncertainty estimate for its predictions.

- Decision Point: For each MD step, compare the model's uncertainty to a pre-defined threshold.

- Low Uncertainty: Use the MLFF-predicted forces to propagate the dynamics. This is the "fast" path that bypasses the KS bottleneck.

- High Uncertainty: Trigger a full DFT calculation on the current atomic configuration. This new data point is added to the training set, and the ML model is updated. The DFT-calculated forces are used for the MD step.

- Model Update: Retrain the regression model on the updated training set after incorporating new data from high-uncertainty steps.
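The decision logic in the steps above can be sketched on a one-dimensional toy problem. Everything here is a simplifying assumption rather than the method of [5]: `reference_force` is a hypothetical stand-in for a DFT call (a harmonic spring), the features are `[1, x]` instead of GMP descriptors, and the Bayesian linear regression uses a hand-inverted 2x2 posterior so the block needs only the standard library.

```python
import math

def reference_force(x, k=1.0):
    """Hypothetical stand-in for an expensive DFT force call (harmonic spring)."""
    return -k * x

class BayesianLinearForce:
    """Bayesian linear regression on features [1, x] with a Gaussian prior."""

    def __init__(self, prior_precision=1e-2, noise_precision=1e4):
        self.alpha, self.beta = prior_precision, noise_precision
        self.xs, self.fs = [], []
        self._fit()

    def _fit(self):
        # Posterior precision A = alpha*I + beta*Phi^T Phi, inverted by hand (2x2).
        a11 = self.alpha + self.beta * len(self.xs)
        a12 = self.beta * sum(self.xs)
        a22 = self.alpha + self.beta * sum(x * x for x in self.xs)
        det = a11 * a22 - a12 * a12
        self.S = ((a22 / det, -a12 / det), (-a12 / det, a11 / det))
        b1 = self.beta * sum(self.fs)
        b2 = self.beta * sum(x * f for x, f in zip(self.xs, self.fs))
        self.m = (self.S[0][0] * b1 + self.S[0][1] * b2,
                  self.S[1][0] * b1 + self.S[1][1] * b2)

    def add(self, x, f):
        self.xs.append(x)
        self.fs.append(f)
        self._fit()

    def predict(self, x):
        """Return the posterior mean force and its (epistemic) standard deviation."""
        mean = self.m[0] + self.m[1] * x
        var = self.S[0][0] + 2.0 * self.S[0][1] * x + self.S[1][1] * x * x
        return mean, math.sqrt(max(var, 0.0))

def run_on_the_fly_md(n_steps=200, dt=0.05, threshold=0.05):
    """Velocity-Verlet MD (unit mass) that calls the reference only when uncertain."""
    model = BayesianLinearForce()
    dft_calls = 0

    def force(pos):
        nonlocal dft_calls
        mean, std = model.predict(pos)
        if std > threshold:            # high uncertainty: fall back to the reference
            f = reference_force(pos)
            model.add(pos, f)          # grow the training set and refit
            dft_calls += 1
            return f
        return mean                    # low uncertainty: use the fast ML force

    x, v = 1.0, 0.0
    f = force(x)
    for _ in range(n_steps):
        x += v * dt + 0.5 * f * dt * dt
        f_new = force(x)
        v += 0.5 * (f + f_new) * dt
        f = f_new
    return x, v, dft_calls

final_x, final_v, n_dft_calls = run_on_the_fly_md()
```

In this toy run the reference calls cluster at the start of the trajectory, while the model is still extrapolating, and stop once the sampled positions cover the range the oscillator visits.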

Validation and Analysis:

- Stability Check: Monitor the total variation distance (TVD) of the pair correlation functions (PCFs) between the MLFF-MD and a reference AIMD trajectory to ensure the model's stability and accuracy [5].

- Property Calculation: Once a stable trajectory is obtained, compute the desired thermodynamic or dynamic properties (e.g., diffusion coefficients, free energies).

Troubleshooting Tips:

- If the number of DFT calls does not decrease over time, the uncertainty threshold may be set too low, or the system may be exploring many novel configurations.

- For systems with severe element segregation, the model may require more data to learn all the unique chemical interactions.

The logical workflow and decision points for this on-the-fly protocol are summarized in the diagram below.

On-the-Fly MLFF Workflow: This diagram illustrates the decision-making process during an on-the-fly machine-learned force field molecular dynamics simulation, showing how the model selectively invokes DFT calculations based on uncertainty.

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational "reagents"—software, descriptors, and datasets—essential for implementing the ML-driven DFT workflows discussed in this article.

Table 2: Essential "Research Reagents" for Machine Learning-Enhanced DFT

| Reagent Name/Type | Function/Purpose | Key Features / Relevance to KS Bottleneck |
|---|---|---|
| Variational Autoencoder (VAE) [1] | Compresses high-dimensional KS wavefunctions into a low-dimensional, smooth latent space. | Enables generative modeling of electronic structure; representation is $10^{3}$ to $10^{4}$ times smaller than input [1]. |
| Gaussian Multipole (GMP) Descriptor [5] | Describes an atom's chemical environment for force prediction. | Fixed-length descriptor; scales efficiently with number of chemical elements, unlike SOAP [5]. |
| Skala Functional [3] | A deep learning-based exchange-correlation (XC) functional. | Learns non-local representations; targets chemical accuracy at the computational cost of semi-local DFT [3]. |
| AGNI Fingerprints [2] | Atomic descriptors representing the structural and chemical environment. | Translation, permutation, and rotation invariant; used as input for predicting charge density and other properties [2]. |
| MSR-ACC/TAE25 Dataset [3] | A high-accuracy dataset of total atomization energies. | Used for training and validating ML-based XC functionals like Skala on chemically accurate data [3]. |
| SPARC / VASP / CASTEP [2] [5] | DFT software packages. | Provide the electronic structure engine for generating reference data and are often the platform for integrating on-the-fly MLFFs [2] [5]. |

The Kohn-Sham bottleneck, long a fundamental constraint in computational chemistry and materials physics, is now being decisively addressed by a new generation of machine learning workflows. The protocols detailed herein—ranging from unsupervised wavefunction learning and end-to-end DFT emulation to robust on-the-fly force fields—demonstrate that it is possible to achieve the accuracy of high-level electronic structure theory at a fraction of the computational cost. For researchers and drug development professionals, these tools unlock new possibilities: screening vast libraries of molecular candidates with chemical accuracy, simulating complex biological processes at atomic resolution, and exploring reaction mechanisms that were previously beyond computational reach. As these ML-driven methodologies continue to mature and integrate, they promise to make predictive, first-principles modeling a routine tool in the quest for new drugs and advanced materials.

Density Functional Theory (DFT) stands as a cornerstone of modern computational chemistry and materials science, enabling the prediction of electronic structure and properties from first principles. The fundamental theorem of DFT states that all ground-state properties of a many-electron system are uniquely determined by its electron density. However, the practical accuracy of DFT calculations hinges on the exchange-correlation (XC) functional, which accounts for quantum mechanical effects not captured in simple electrostatic models. The pursuit of a "universal functional" that delivers chemical accuracy across diverse systems and elements represents a grand challenge in the field.

Traditional approaches to developing XC functionals have followed a heuristics-based paradigm, systematically climbing "Jacob's ladder" by incorporating increasingly complex physical ingredients—from local density (LDA) to generalized gradient approximations (GGA) and hybrid functionals. While this progression has yielded significant improvements, each rung on the ladder introduces greater computational cost without guaranteeing proportional gains in accuracy. Moreover, these functionals still struggle with quantitative prediction of formation enthalpies, band gaps, and reaction barriers, limiting their predictive power for materials discovery and drug development.

The integration of Machine Learning (ML) with DFT has emerged as a transformative pathway to transcend these limitations. By leveraging data-driven approaches, researchers can now develop more accurate and efficient approximations to the universal functional, create machine learning interatomic potentials (MLIPs) that preserve quantum accuracy at reduced computational cost, and establish robust structure-property relationships for accelerated materials design. This Application Note details the protocols and methodologies underpinning these advances, providing researchers with practical frameworks for implementation.

ML-Enhanced XC Functionals: Protocols and Performance

Core Methodology: Energy and Potential Learning

Recent breakthroughs in ML-accelerated DFT have demonstrated that incorporating both energies and quantum potentials during training enables the development of more accurate and transferable XC functionals. This approach, pioneered by Gavini and colleagues, leverages the fact that potentials provide a stronger training foundation than energies alone, as they more sensitively capture subtle electronic variations across chemical systems [4].

Experimental Protocol: ML-XC Functional Development

Data Acquisition: Obtain high-quality quantum many-body (QMB) reference data for small systems (atoms, diatomic molecules) where exact solutions are computationally feasible. The training set should include:

- Total energies from coupled-cluster or quantum Monte Carlo calculations

- Electronic potentials and their spatial variations

- Systems with diverse bonding characteristics (metallic, covalent, ionic)

Feature Engineering: Represent the electron density and its gradients using:

- Local density approximations at grid points

- Density gradient components (∇ρ)

- Kinetic energy density (τ)

- Hartree-Fock exchange fractions for hybrid functionals
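As an illustration of the feature engineering step, the sketch below builds semi-local input features on a radial grid for the hydrogen 1s density, chosen here only because its density and gradient are known in closed form. The function names are hypothetical, the reduced gradient $s$ and the LDA exchange energy density use the standard textbook expressions in Hartree atomic units, and the kinetic energy density is the von Weizsäcker form, which is exact only for a single-orbital density such as this one.

```python
import math

# LDA exchange constant C_x = (3/4) * (3/pi)^(1/3), Hartree atomic units.
C_X = 0.75 * (3.0 / math.pi) ** (1.0 / 3.0)

def hydrogen_1s_density(r):
    """Ground-state hydrogen density rho(r) = exp(-2r) / pi."""
    return math.exp(-2.0 * r) / math.pi

def density_features(r):
    """Semi-local features at one grid point: rho, |grad rho|, s, tau, e_x^LDA."""
    rho = hydrogen_1s_density(r)
    grad_norm = 2.0 * rho  # |d rho / dr| for the 1s density
    k_factor = 2.0 * (3.0 * math.pi ** 2) ** (1.0 / 3.0)
    return {
        "rho": rho,
        "grad_norm": grad_norm,
        "s": grad_norm / (k_factor * rho ** (4.0 / 3.0)),  # reduced density gradient
        "tau": grad_norm ** 2 / (8.0 * rho),                # von Weizsaecker form
        "e_x_lda": -C_X * rho ** (4.0 / 3.0),               # LDA exchange energy density
    }

def radial_integral(values, radii):
    """Trapezoidal integral of 4*pi*r^2*f(r) over a radial grid."""
    total = 0.0
    for i in range(len(radii) - 1):
        f0 = 4.0 * math.pi * radii[i] ** 2 * values[i]
        f1 = 4.0 * math.pi * radii[i + 1] ** 2 * values[i + 1]
        total += 0.5 * (f0 + f1) * (radii[i + 1] - radii[i])
    return total

radii = [0.005 * i for i in range(4001)]  # 0 to 20 bohr
features = [density_features(r) for r in radii]
n_electrons = radial_integral([f["rho"] for f in features], radii)
e_x_lda_total = radial_integral([f["e_x_lda"] for f in features], radii)
```

Integrating the density back to one electron is a cheap sanity check on the grid before the features are passed to a model.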

Model Architecture: Implement a multi-layer perceptron (MLP) or deep neural network with:

- 3-5 hidden layers with 50-200 neurons per layer

- Swish or ReLU activation functions

- Residual connections to facilitate training depth

- Physical constraints to ensure functional derivatives satisfy sum rules

Training Protocol:

- Use leave-one-out cross-validation to assess transferability

- Apply k-fold cross-validation (k=5-10) to detect overfitting

- Utilize physically-informed regularization to maintain functional convexity

- Optimize with adaptive moment estimation (Adam) or L-BFGS algorithms

Validation: Benchmark against experimental data for:

- Formation enthalpies of binary and ternary alloys

- Band gaps of semiconductors and insulators

- Reaction energies and barrier heights

- Structural parameters (bond lengths, angles)

Performance Metrics and Comparative Analysis

Table 1: Performance Comparison of Traditional vs. ML-Enhanced DFT Approaches for Formation Enthalpy Prediction

| Method | Training Data | MAE (eV/atom) | Computational Cost | Transferability |
|---|---|---|---|---|
| LDA | N/A | 0.15-0.25 | Low | Moderate |
| GGA (PBE) | N/A | 0.10-0.20 | Low | Moderate |
| Hybrid (HSE06) | N/A | 0.05-0.15 | High | Good |
| ML-XC (Linear Correction) | 50-100 binary alloys | 0.08-0.12 | Low | Limited |
| ML-XC (Neural Network) | 100-200 binary/ternary alloys | 0.03-0.06 | Medium | Good |
| ML-XC (Potential-Enhanced) | 5-10 atoms + simple molecules | 0.02-0.04 | Medium | Excellent |

The ML-enhanced approach demonstrates particular strength in predicting formation enthalpies for ternary systems like Al-Ni-Pd and Al-Ni-Ti, which are crucial for high-temperature applications in aerospace and protective coatings [6]. By learning the systematic errors of traditional DFT, the ML-corrected functionals achieve accuracy approaching that of high-level quantum chemistry methods at a fraction of the computational cost.

Machine Learning Interatomic Potentials: Bridging Accuracy and Efficiency

Development Workflow for General NNPs

Machine Learning Interatomic Potentials (MLIPs) represent a powerful alternative to traditional force fields, offering DFT-level accuracy for molecular dynamics simulations of large systems and extended timescales. The EMFF-2025 potential for C, H, N, O-based high-energy materials exemplifies this approach, achieving accurate predictions of structures, mechanical properties, and decomposition characteristics across 20 different molecular systems [7].

Experimental Protocol: MLIP Development via Transfer Learning

Base Model Preparation:

- Start with a pre-trained neural network potential (e.g., Deep Potential framework)

- Ensure the base model captures diverse bonding environments and elemental interactions

- The DP-CHNO-2024 model serves as an effective foundation for energetic materials

Target System Data Generation:

- Perform targeted DFT calculations on new molecular systems not in the original training set

- Sample diverse configurations including:

- Equilibrium crystal structures

- Perturbed geometries (±0.05-0.1 Å atomic displacements)

- Reaction pathways with bond breaking/forming

- High-temperature configurations from ab initio MD

Transfer Learning Implementation:

- Freeze early layers of the neural network preserving general chemical knowledge

- Retrain final layers with new system-specific data (typically 100-500 configurations)

- Use a reduced learning rate (10-50% of original) to fine-tune parameters

- Employ a combined loss function balancing energy and force accuracy
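The transfer learning steps above can be illustrated with a framework-agnostic toy. This is not the Deep Potential or EMFF-2025 implementation: the `Layer` container, the layer names, the gradients, and the loss weights are all hypothetical, and the sketch only shows the three mechanics named in the list (freezing early layers, updating the remaining ones with a reduced learning rate, and combining energy and force errors in one loss).

```python
from dataclasses import dataclass

@dataclass
class Layer:
    name: str
    weights: list
    trainable: bool = True

def freeze_early_layers(layers, n_frozen):
    """Mark the first n_frozen layers as fixed so fine-tuning leaves them untouched."""
    for layer in layers[:n_frozen]:
        layer.trainable = False

def sgd_step(layers, gradients, learning_rate):
    """Plain gradient-descent update applied to trainable layers only."""
    for layer in layers:
        if layer.trainable:
            grads = gradients[layer.name]
            layer.weights = [w - learning_rate * g for w, g in zip(layer.weights, grads)]

def energy_force_loss(pred_e, ref_e, pred_f, ref_f, w_energy=1.0, w_force=1.0):
    """Weighted sum of mean squared per-atom energy error and force-component error."""
    e_term = sum((p - r) ** 2 for p, r in zip(pred_e, ref_e)) / len(ref_e)
    f_term = sum((p - r) ** 2 for p, r in zip(pred_f, ref_f)) / len(ref_f)
    return w_energy * e_term + w_force * f_term

# Toy model: two "general chemistry" layers and one system-specific output layer.
layers = [
    Layer("embedding", [0.5, -0.3]),
    Layer("hidden", [0.1, 0.2]),
    Layer("output", [1.0, -1.0]),
]
freeze_early_layers(layers, n_frozen=2)

base_learning_rate = 1e-3
fine_tune_rate = 0.2 * base_learning_rate  # within the 10-50% range given above
gradients = {"embedding": [0.4, 0.4], "hidden": [0.1, 0.1], "output": [0.5, -0.5]}
sgd_step(layers, gradients, fine_tune_rate)

loss = energy_force_loss([-3.10, -2.95], [-3.00, -3.00], [0.1, -0.2, 0.0], [0.0, -0.1, 0.1])
```

The relative size of `w_energy` and `w_force` is a tuning choice; forces supply many more labels per configuration than energies, so the two terms are usually balanced explicitly.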

Validation and Deployment:

- Validate against held-out DFT calculations for energies (target MAE < 0.1 eV/atom) and forces (target MAE < 2 eV/Å)

- Test transferability to related chemical systems not included in training

- Deploy for large-scale molecular dynamics simulations (10,000-1,000,000 atoms)

Performance Benchmarking

The EMFF-2025 framework demonstrates that transfer learning with minimal additional data enables the development of highly accurate potentials. Quantitative assessment shows mean absolute errors predominantly within ±0.1 eV/atom for energies and ±2 eV/Å for forces across a wide temperature range [7]. This accuracy permits reliable investigation of thermal decomposition mechanisms and mechanical properties previously inaccessible through conventional force fields or direct DFT-MD.

Descriptor Strategies for Electrocatalyst Design

Descriptor Classification and Selection Guidelines

In ML-accelerated electrocatalyst discovery, descriptors serve as quantitative representations of material features that determine catalytic performance. Three fundamental descriptor classes enable efficient structure-property mapping across different phases of the discovery pipeline [8].

Table 2: Electrocatalysis Descriptor Classes and Their Applications

| Descriptor Class | Key Examples | Data Requirements | Computational Cost | Primary Use Cases |
|---|---|---|---|---|
| Intrinsic Statistical | Magpie features (132 elemental attributes), atomic number, valence electron count | Low (elemental data only) | Very Low | High-throughput initial screening, binary classification (active/inactive) |
| Electronic Structure | d-band center, orbital occupation, spin magnetic moment, Bader charges | Medium (requires DFT) | Medium | Mechanism interpretation, activity trend analysis, fine screening |
| Geometric/Microenvironment | Coordination number, interatomic distances, local strain, site symmetry | High (requires optimized structures) | High | Complex environments (alloys, SACs, DACs), support effect quantification |

Experimental Protocol: Hierarchical Descriptor Implementation

Phase 1: Initial Screening with Intrinsic Descriptors

- Compute 132 Magpie features for candidate elements

- Apply feature selection (variance threshold, correlation analysis)

- Train gradient boosting regressor (GBR) or random forest (RF) models

- Screen 10,000+ compositions in coarse-filter approach

Phase 2: Electronic Descriptor Analysis

- Perform DFT optimization on top 5-10% candidates from Phase 1

- Calculate electronic descriptors: d-band center (εd), projected density of states (PDOS)

- Develop customized composite descriptors (e.g., ARSC for dual-atom catalysts)

- Apply recursive feature elimination to identify minimal predictive descriptor set
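Two pieces of Phase 2 lend themselves to a short sketch: passing only the top fraction of Phase 1 candidates on to DFT, and computing the d-band center as the first moment of the d-projected density of states, $\varepsilon_d = \int E\,\rho_d(E)\,dE \,/ \int \rho_d(E)\,dE$. The function names, the candidate scores, and the Gaussian model PDOS below are illustrative assumptions; real PDOS data would come from a DFT code, with energies referenced to the Fermi level.

```python
import math

def trapezoid(y, x):
    """Trapezoidal integration of samples y over grid x."""
    return sum(0.5 * (y[i] + y[i + 1]) * (x[i + 1] - x[i]) for i in range(len(x) - 1))

def d_band_center(energies, d_pdos):
    """First moment of the d-projected DOS (energies relative to the Fermi level)."""
    weighted = [e * d for e, d in zip(energies, d_pdos)]
    return trapezoid(weighted, energies) / trapezoid(d_pdos, energies)

def select_top_fraction(scores, fraction):
    """Keep the best-scoring candidates (assumes a higher score is better)."""
    n_keep = max(1, round(len(scores) * fraction))
    return sorted(scores, key=scores.get, reverse=True)[:n_keep]

# Hypothetical Phase 1 scores for four candidate compositions.
phase1_scores = {"A": 0.9, "B": 0.1, "C": 0.5, "D": 0.7}
shortlist = select_top_fraction(phase1_scores, fraction=0.5)

# Model PDOS: a Gaussian d band centered 2 eV below the Fermi level.
energies = [-10.0 + 0.01 * i for i in range(1601)]  # -10 eV to 6 eV
d_pdos = [math.exp(-0.5 * (e + 2.0) ** 2) for e in energies]
center = d_band_center(energies, d_pdos)
```

For the symmetric model band the computed center recovers the position of the Gaussian; for real, asymmetric PDOS the integration window affects the result and should be reported alongside the value.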

Phase 3: Microenvironment Refinement

- For most promising candidates, analyze local coordination environments

- Quantify metal-support interactions, strain effects, solvation models

- Validate descriptor transferability across material classes

Customized Composite Descriptors: The ARSC Framework

For complex catalytic systems like dual-atom catalysts (DACs), customized composite descriptors integrate multiple physical effects into compact, interpretable expressions. The ARSC descriptor framework exemplifies this approach, combining:

- Atomic property effects via d-band shape parameters (ϕxx)

- Reactant-based screening for heteronuclear systems (ϕopt)

- Synergistic effects through physics-guided feature engineering (ϕxy)

- Coordination effects with experimental verification (Φ)

This methodology achieved accuracy comparable to ~50,000 DFT calculations while training on fewer than 4,500 data points, dramatically accelerating the exploration of 840 transition metal DACs for ORR, OER, CO2RR, and NRR [8].

Research Reagent Solutions: Essential Computational Tools

Table 3: Key Software and Methodological "Reagents" for ML-DFT Workflows

| Research Reagent | Type | Primary Function | Application Context |
|---|---|---|---|
| Deep Potential (DP) | MLIP Framework | Generates neural network potentials from DFT data | Large-scale MD with quantum accuracy [7] |
| DP-GEN | Automated Workflow | Implements active learning for MLIP development | Adaptive sampling of configuration space [7] |
| EMTO-CPA | DFT Code | Exact muffin-tin orbital method with coherent potential approximation | Alloy formation enthalpy calculations [6] |
| Skala Functional | ML-XC Functional | Deep-learning-powered exchange-correlation functional | High-accuracy DFT without Jacob's ladder trade-offs [9] |
| ARSC Descriptor | Composite Descriptor | Encodes atomic, reactant, synergistic, and coordination effects | Rapid screening of dual-atom catalysts [8] |
| TDDFT-GPU | GPU Implementation | Time-dependent DFT on massively parallel GPUs | Excited-state calculations for large systems [10] |

Integrated Workflow for Materials Discovery

The most impactful applications of ML-DFT integration combine multiple methodologies into cohesive discovery pipelines. The following workflow exemplifies this integrated approach, synthesizing elements from across the protocols detailed in this document.

This integrated workflow enables researchers to efficiently navigate vast chemical spaces, from initial screening of thousands of candidates to detailed investigation of selected leads with DFT-level accuracy at molecular dynamics scale. The continuous feedback loop ensures iterative improvement of both models and descriptors, accelerating the discovery cycle for advanced materials targeting specific application requirements.

In the framework of density functional theory (DFT), the electron density, denoted as ρ(r), is the fundamental variable that uniquely determines all ground-state properties of an interacting electron system, as established by the Hohenberg-Kohn theorems [11] [12]. This foundational principle enables the replacement of the complex many-body wavefunction with the electron density as the central quantity of interest, significantly simplifying computational approaches. The Kohn-Sham equations transform this theoretical framework into a practical tool by mapping the system of interacting electrons onto a fictitious system of non-interacting electrons moving within an effective potential [13]. This effective potential comprises the external potential (from atomic nuclei), the Hartree potential (electron-electron repulsion), and the exchange-correlation potential, which encapsulates all many-body effects not captured by the other terms [11].
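For reference, the mapping described above can be written out explicitly. The following is the standard textbook form of the Kohn-Sham equations in Hartree atomic units, with $\phi_i$ and $\varepsilon_i$ denoting the Kohn-Sham orbitals and eigenvalues and $E_{\mathrm{xc}}$ the exchange-correlation energy functional:

$$\left[-\tfrac{1}{2}\nabla^{2} + v_{\mathrm{eff}}(\mathbf{r})\right]\phi_i(\mathbf{r}) = \varepsilon_i\,\phi_i(\mathbf{r}), \qquad \rho(\mathbf{r}) = \sum_{i}^{\mathrm{occ}} |\phi_i(\mathbf{r})|^{2}$$

$$v_{\mathrm{eff}}(\mathbf{r}) = v_{\mathrm{ext}}(\mathbf{r}) + \int \frac{\rho(\mathbf{r}')}{|\mathbf{r}-\mathbf{r}'|}\,d^{3}r' + \frac{\delta E_{\mathrm{xc}}[\rho]}{\delta \rho(\mathbf{r})}$$

Because $v_{\mathrm{eff}}$ depends on $\rho$, which in turn depends on the orbitals, the equations have to be solved self-consistently.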

The accuracy of DFT calculations critically depends on the approximations used for the exchange-correlation functional, which remains unknown in its exact form [13]. The hierarchy of approximations ranges from the Local Density Approximation (LDA), which depends only on the local electron density, to Generalized Gradient Approximations (GGA) that incorporate density gradients, meta-GGAs that additionally include the kinetic energy density, and hybrid functionals that mix a portion of exact Hartree-Fock exchange with DFT exchange [12] [13]. The pursuit of more accurate functionals represents an active research frontier, directly impacting the reliability of predicted material properties, reaction mechanisms, and electronic behaviors in computational materials science and drug development [14] [15].

Table 1: Hierarchy of Exchange-Correlation Functionals in DFT

| Functional Type | Dependence | Key Characteristics | Example Functionals |
|---|---|---|---|
| Local Density Approximation (LDA) | Local electron density ρ(r) | Simple, efficient, often over-binds | SVWN [13] |
| Generalized Gradient Approximation (GGA) | ρ(r), ∇ρ(r) | Improved molecular geometries & energies | PBE, BLYP [13] |
| meta-GGA | ρ(r), ∇ρ(r), kinetic energy density | Better for properties sensitive to orbital shapes | SCAN [12] |
| Hybrid | ρ(r), ∇ρ(r), exact exchange | Mixes DFT & Hartree-Fock exchange | B3LYP, PBE0 [12] [13] |

Machine Learning Charge Density Prediction with Δ-SAED Protocol

Background and Principle

The accurate prediction of electron density is paramount in DFT, as it forms the basis for calculating all other ground-state properties. Traditional machine learning approaches for charge density prediction have targeted the total charge density (TCD) directly [16]. However, the Δ-SAED method introduces a paradigm shift by leveraging physical prior knowledge. Instead of learning the total charge density from scratch, Δ-SAED learns the difference charge density (DCD), defined as the difference between the TCD and the superposition of atomic electron densities (SAED) [16]. This approach effectively incorporates the physical ansatz that the electron density of a molecular or solid-state system can be reasonably initialized as a sum of isolated atomic densities, with the machine learning model capturing the complex redistribution due to chemical bonding.

This Δ-learning strategy has demonstrated robust improvements in prediction accuracy across diverse benchmark datasets, including organic molecules (QM9), battery cathode materials (NMC), and inorganic crystals (Materials Project) [16]. By reducing the complexity of the function that the neural network must approximate, Δ-SAED enhances data efficiency and model transferability, which is particularly valuable when training data is limited or when applying models to unseen chemical spaces in high-throughput screening applications.

Detailed Δ-SAED Protocol

Objective: To accurately predict the ground-state electron density of a molecular or solid-state system using a machine learning model trained on difference charge density.

Workflow Overview: The diagram below illustrates the integrated machine learning and DFT workflow for the Δ-SAED protocol:

Step-by-Step Procedures:

Reference Data Generation via DFT:

- Perform DFT calculations using established codes (Quantum ESPRESSO, VASP, CP2K) for a diverse set of atomic structures relevant to your research domain [17].

- Critical Step: During the calculation setup, ensure the output includes not only the final self-consistent total charge density (ρ(t)) but also the initial superposition of atomic electron densities (ρ(a)) used to start the calculation.

- Compute the difference charge density (DCD, ρ(d)) for each structure according to: ρ(d)(r) = ρ(t)(r) - ρ(a)(r). This ρ(d) becomes the target for machine learning training [16].

Model Training:

- Architecture Selection: Employ an E(3)-equivariant neural network architecture, such as Charge3Net, which incorporates high-order messages to accurately capture complex chemical environments [16]. Grid-based methods are recommended over basis-based methods for their higher accuracy, despite increased computational cost [16].

- Training Target: Optimize model parameters by minimizing the loss function between predicted DCD (ρ̂(d)) and true DCD (ρ(d)) from DFT. The mean absolute error (MAE) metric is commonly used [16]:

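ε_mae = [∫_Ω d³r |ρ(d)(r) - ρ̂(d)(r)|] / [∫_Ω d³r ρ(t)(r)] × 100%

Because the same SAED ρ(a) is added to both the reference and the predicted DCD, this error equals the error in the total charge density, normalized by the total number of electrons.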
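On a uniform real-space grid the integrals in this metric reduce to sums over grid points. The following is a minimal, dependency-free sketch of that evaluation; the function name `normalized_mae` and the toy density values are assumptions made for illustration and are not taken from [16]. In practice the densities would be NumPy arrays read from DFT output files.

```python
def normalized_mae(rho_true, rho_pred, rho_total, voxel_volume=1.0):
    """Percent error: integral of |rho_true - rho_pred| divided by integral of rho_total.

    All inputs are flattened densities sampled on the same uniform grid.
    """
    if not (len(rho_true) == len(rho_pred) == len(rho_total)):
        raise ValueError("all grids must have the same number of points")
    # Riemann sums; the voxel volume cancels in the ratio but is kept for clarity
    abs_err = sum(abs(t - p) for t, p in zip(rho_true, rho_pred)) * voxel_volume
    norm = sum(rho_total) * voxel_volume
    return 100.0 * abs_err / norm


# Toy flattened grid (illustrative numbers, not DFT output)
rho_t = [0.50, 0.30, 0.15, 0.05]                  # total charge density
rho_a = [0.45, 0.35, 0.15, 0.05]                  # superposition of atomic densities
rho_d = [t - a for t, a in zip(rho_t, rho_a)]     # difference charge density (target)
rho_d_pred = [0.04, -0.05, 0.01, 0.00]            # hypothetical model output

error_percent = normalized_mae(rho_d, rho_d_pred, rho_t)
print(f"normalized MAE = {error_percent:.2f}%")
```

Normalizing by the total density rather than by the DCD itself keeps the metric comparable with models that predict the TCD directly.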

Prediction and Reconstruction:

- For a new atomic structure, first compute its SAED (ρ(a)) using the same atomic densities as in training.

- Pass the structure through the trained model to obtain the predicted DCD (ρ̂(d)).

- Reconstruct the predicted total charge density by adding the SAED to the predicted difference: ρ̂(t)(r) = ρ(a)(r) + ρ̂(d)(r) [16].
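The target construction and the reconstruction step are mirror images of each other, which the sketch below makes explicit. It also includes a simple sanity check: if the SAED and the TCD carry the same number of electrons, the DCD must integrate to zero. The helper names and the toy values are hypothetical, and plain Python lists stand in for real-space grids.

```python
def difference_density(rho_total, rho_atomic):
    """Training target: rho_d = rho_t - rho_a, point by point."""
    return [t - a for t, a in zip(rho_total, rho_atomic)]


def reconstruct_total(rho_atomic, rho_diff_pred):
    """Inference: rho_t_hat = rho_a + rho_d_hat, point by point."""
    return [a + d for a, d in zip(rho_atomic, rho_diff_pred)]


def integrate(rho, voxel_volume):
    """Riemann-sum integral of a density on a uniform grid."""
    return sum(rho) * voxel_volume


voxel = 2.0                                  # illustrative voxel volume
rho_atomic = [0.45, 0.35, 0.15, 0.05]        # SAED on a toy grid
rho_total = [0.50, 0.30, 0.15, 0.05]         # self-consistent density on the same grid

rho_diff = difference_density(rho_total, rho_atomic)

# Both densities hold the same charge here, so the DCD integrates to ~0
net_charge_shift = integrate(rho_diff, voxel)

# A perfect model would return rho_diff, and reconstruction recovers rho_total
rho_rebuilt = reconstruct_total(rho_atomic, rho_diff)
```

A non-zero `net_charge_shift` in real data usually signals a mismatch between the atomic densities used for the SAED and those used in the DFT run, which is why the protocol insists on using the same atomic densities at training and prediction time.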

Validation and Quality Control:

- Benchmark the predicted charge densities against held-out DFT calculations.

- For non-self-consistent (single-shot) DFT calculations using the ML-predicted density, verify that derived properties (e.g., formation energies, band structures) achieve chemical accuracy compared to fully self-consistent DFT results [16].

- Monitor the radial distribution of prediction errors to ensure the model correctly captures both short-range (near nuclei) and long-range (bonding regions) electronic interactions.

Table 2: Performance of Δ-SAED vs Traditional TCD Learning on Benchmark Datasets

| Dataset | System Type | MAE Reduction with Δ-SAED | Structures with Improved Accuracy |
|---|---|---|---|
| QM9 | Organic Molecules | Significant reduction [16] | >99% [16] |
| NMC | Battery Cathode Materials | Significant reduction [16] | >99% [16] |
| Materials Project | Inorganic Crystals | Significant reduction [16] | ~90% [16] |
| Si Allotropy | Silicon Polymorphs | Enables transferability to chemical accuracy for derived properties [16] | Nearly 100% for non-self-consistent calculations [16] |

Automated DFT Workflows for High-Throughput Screening

Workflow Architecture and Design Principles

Automated DFT workflows are computational frameworks designed to manage, execute, and document high volumes of DFT calculations with minimal manual intervention [17]. These workflows are essential for robust and reproducible computational research, particularly in machine learning where large, consistent datasets are required. The architecture is typically layered and modular, often implemented in Python and built on workflow engines like AiiDA, JARVIS-Tools, or pyiron [17]. Key design principles include engine-agnostic interfaces (compatible with multiple DFT codes like VASP, Quantum ESPRESSO, CP2K), protocol-driven calculations (using standardized "fast," "moderate," and "precise" settings), and comprehensive provenance tracking to ensure full reproducibility [17].

A representative automated workflow for high-throughput screening might encompass structure generation, parameter convergence, job submission, error handling, and post-processing analysis. The diagram below illustrates such a workflow:

Protocol for High-Throughput Screening

Objective: To systematically screen a large number of materials structures for target properties (e.g., band gaps, adsorption energies, formation energies) using automated DFT workflows.

Step-by-Step Procedures:

Structure Generation and Input Preparation:

- Structure Sources: Import structures from databases (Materials Project, COD) or generate them using algorithms for defect enumeration, surface slab creation, or substitutional doping [17].

- Input Parameter Convergence: Automatically converge critical parameters including k-point mesh density and plane-wave cutoff energy. The workflow should iteratively refine these parameters until threshold changes in total energy (e.g., < 1 meV/atom) are achieved [17].

- Standardization: Utilize standardized input objects (e.g., ASE Atoms class, OPTIMADE format) to ensure consistency across different DFT codes [17].
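The parameter-convergence step can be sketched as a loop that raises a setting until the energy stops changing by more than the threshold. This is an illustrative sketch only: `converge_parameter` and `mock_energy` are hypothetical names, and the mock energy function is a stand-in for a real DFT single-point calculation launched by the workflow engine.

```python
import math


def converge_parameter(energy_per_atom, candidates, tol_ev=1e-3):
    """Return the smallest candidate that is converged to within tol_ev.

    A candidate counts as converged when moving to the next, more expensive
    candidate changes the energy per atom by less than tol_ev (1 meV/atom by
    default). Returns None if no candidate meets the criterion.
    """
    previous_value = None
    previous_energy = None
    for value in candidates:
        energy = energy_per_atom(value)
        if previous_energy is not None and abs(energy - previous_energy) < tol_ev:
            return previous_value
        previous_value, previous_energy = value, energy
    return None


def mock_energy(cutoff_ev):
    """Stand-in for a DFT run: energy per atom (eV) decaying toward -5.0 eV."""
    return -5.0 + 10.0 * math.exp(-cutoff_ev / 50.0)


converged_cutoff = converge_parameter(mock_energy, [300, 400, 500, 600, 700])
print(converged_cutoff)
```

The same function can be reused for k-point density by passing a different callable and candidate list; real workflows typically converge the two parameters in sequence.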

Job Execution and Error Handling:

- HPC Integration: Interface natively with HPC schedulers (SLURM, PBS) for job submission, monitoring, and resubmission [17].

- Robust Error Handling: Implement automated handlers for common DFT errors:

  - SCF non-convergence: Increase electronic step limits, switch the density-mixing scheme or the eigensolver (e.g., from RMM-DIIS to blocked Davidson), or modify mixing parameters [17].

  - Geometry optimizer stalls: Change optimization algorithms (e.g., from BFGS to FIRE) or adjust step sizes [17].

  - Wall-time failures: Implement automatic checkpointing and restarts [17].
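One way to organize such handlers is a function that maps a detected failure type to a modified copy of the calculation settings. The sketch below is a hedged illustration: the error labels and every settings key (`max_scf_steps`, `mixing_beta`, `optimizer`, `restart_from_checkpoint`) are invented for this example and do not correspond to the input schema of any particular DFT code or workflow engine.

```python
def remediate(settings, error):
    """Return a new settings dict adjusted for the given failure type.

    The input dict is left untouched so the original attempt stays on record.
    """
    updated = dict(settings)
    if error == "scf_not_converged":
        # Allow more electronic steps and damp the density mixing
        updated["max_scf_steps"] = settings["max_scf_steps"] * 2
        updated["mixing_beta"] = settings["mixing_beta"] * 0.5
    elif error == "optimizer_stalled":
        # Fall back from a quasi-Newton optimizer to FIRE
        if settings["optimizer"] == "BFGS":
            updated["optimizer"] = "FIRE"
    elif error == "walltime_exceeded":
        # Resume from the last checkpoint instead of starting over
        updated["restart_from_checkpoint"] = True
    else:
        raise ValueError(f"no handler registered for error type: {error}")
    return updated


defaults = {
    "max_scf_steps": 60,
    "mixing_beta": 0.4,
    "optimizer": "BFGS",
    "restart_from_checkpoint": False,
}
retry_settings = remediate(defaults, "scf_not_converged")
```

Returning a new dict rather than mutating in place keeps each attempt distinct, which fits naturally with the provenance tracking described later in this protocol.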

Post-processing and Property Extraction:

- Property Computation: Automatically calculate target properties such as band structures, density of states (DOS), elastic constants, Bader charges, and formation energies [17].

- Thermodynamic Analysis: Perform energy-volume equation of state fitting (e.g., using Birch-Murnaghan formalism) and compute thermodynamic properties under the quasi-harmonic approximation (QHA) if needed [17].

- Validation: Implement automated validation checks comparing calculated properties to experimental data or high-fidelity references where available [17].
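For the equation-of-state step, the third-order Birch-Murnaghan energy expression is the function that gets fitted to the computed energy-volume points. The sketch below only evaluates that expression; the parameter values are placeholders chosen for illustration, and a production workflow would fit E0, V0, B0, and B0' with a least-squares routine rather than assume them.

```python
def birch_murnaghan_energy(volume, e0, v0, b0, b0_prime):
    """Third-order Birch-Murnaghan E(V).

    With energies in eV and volumes in cubic angstrom, b0 is in eV/A^3.
    """
    eta = (v0 / volume) ** (2.0 / 3.0)
    strain = eta - 1.0
    return e0 + (9.0 * v0 * b0 / 16.0) * (
        strain ** 3 * b0_prime + strain ** 2 * (6.0 - 4.0 * eta)
    )


# Placeholder parameters (not fitted to any real material)
E0, V0, B0, B0_PRIME = -10.0, 20.0, 0.6, 4.0

# Sample the curve at +/- 5% volume strain around the equilibrium volume
energies = {
    scale: birch_murnaghan_energy(scale * V0, E0, V0, B0, B0_PRIME)
    for scale in (0.95, 1.00, 1.05)
}
```

At `volume == v0` both strain terms vanish and the expression returns `e0`, so the equilibrium volume is the minimum of the curve by construction.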

Data Management and Provenance:

- Structured Storage: Deposit all inputs, outputs, and calculated properties into structured databases (SQL, NoSQL, HDF5) with comprehensive metadata indexing [17].

- Provenance Tracking: Ensure full reproducibility by recording a complete provenance graph of all calculations, including code versions, parameters, and intermediate results [17].
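A provenance record can be as simple as a content-addressed entry: the code, its version, the parameters, and the identifiers of parent calculations are serialized canonically and hashed. The sketch below uses only the Python standard library; the field names and example values are assumptions for illustration and do not reproduce the data model of AiiDA, pyiron, or JARVIS-Tools.

```python
import hashlib
import json


def provenance_record(code, version, parameters, parent_ids=()):
    """Build a record whose id is a SHA-256 hash of its canonical JSON form."""
    payload = {
        "code": code,
        "version": version,
        "parameters": parameters,
        "parents": sorted(parent_ids),
    }
    # sort_keys makes the serialization independent of dict insertion order
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    payload["id"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return payload


# Placeholder code name, version, and parameters
relax = provenance_record("dft-code", "1.0", {"cutoff_ev": 500, "kpoints": [4, 4, 4]})
same = provenance_record("dft-code", "1.0", {"kpoints": [4, 4, 4], "cutoff_ev": 500})
bands = provenance_record("dft-code", "1.0", {"cutoff_ev": 500}, parent_ids=[relax["id"]])
```

Because each record stores the ids of its parents, following the `parents` field from any result walks the provenance graph back to the original inputs.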

Table 3: Key Computational Tools and Resources for DFT-ML Research

| Tool Category | Specific Examples | Function and Application |
|---|---|---|
| DFT Codes | VASP, Quantum ESPRESSO, CP2K, CASTEP | Perform the core quantum mechanical calculations to generate reference electronic structure data and properties [17]. |
| Workflow Managers | AiiDA, pyiron, JARVIS-Tools | Automate calculation workflows, ensure reproducibility, and manage computational provenance [17]. |
| ML Charge Density Models | Charge3Net, Δ-SAED method | Predict accurate electron densities for structures, enabling rapid non-self-consistent property calculations [16]. |
| Exchange-Correlation Functionals | PBE (GGA), SCAN (meta-GGA), B3LYP (Hybrid) | Define the approximation for the exchange-correlation energy, critically determining calculation accuracy [12] [13]. |
| Basis Sets | Plane Waves, Gaussian Basis Sets (cc-pVTZ, pc-n) | Represent the Kohn-Sham orbitals; choice affects convergence and accuracy, with large sets needed for accurate densities [12]. |
| Structure Manipulation | Pymatgen, ASE | Create, manipulate, and analyze atomic structures; crucial for input preparation and post-processing [17]. |

Applications in Nanomaterials and Drug Development Research

The integration of DFT with machine learning is particularly impactful in the field of nanomaterials research and drug development. ML-driven charge density models and automated workflows enable the rapid screening and design of novel nanomaterials with tailored electronic, catalytic, and optical properties [15]. Specific applications include predicting band gaps for optoelectronic materials, calculating adsorption energies for catalytic applications, and elucidating reaction mechanisms at nanomaterial surfaces [15].

In drug development contexts, DFT-based molecular dynamics (AIMD) simulations provide insights into drug-receptor interactions, solvation effects, and reaction pathways in complex biomolecular systems [14]. The combination of these accurate but expensive simulations with machine learning potentials has opened new possibilities for simulating larger systems and longer timescales, directly impacting rational drug design [14] [15]. Furthermore, the calculation of NMR and EPR parameters using relativistic DFT provides crucial spectroscopic information that can be directly compared with experimental results, aiding in compound characterization and verification [14].

Why Now? The Convergence of Big Quantum Data and Advanced Algorithms

The integration of quantum computing into machine learning workflows, particularly for domains like Density Functional Theory (DFT), is transitioning from theoretical exploration to practical application. This shift is driven by a critical convergence of three factors: the emergence of large-scale, high-quality quantum data from advanced hardware; significant algorithmic breakthroughs that leverage this data; and the maturation of the software and control systems needed to run these workflows efficiently and reliably. For researchers in drug development and materials science, this creates an unprecedented opportunity to tackle computational problems that have historically been intractable for classical computers alone, such as highly accurate molecular simulations. The following application notes and protocols detail the quantitative evidence, experimental methodologies, and essential tools enabling this transition.

Quantitative Landscape: Market and Performance Data

The following tables summarize key quantitative data that underscores the rapid advancement and commercial potential of quantum technologies in scientific domains.

Table 1: Quantum Technology Market Projections and Investment (2024-2035)

| Metric | 2024 Value | 2035 Projection | Key Context & Sources |
|---|---|---|---|
| Total Quantum Tech (QT) Market | Not Specified | Up to $97B [18] | Encompasses computing, sensing, and communication. |
| Quantum Computing Market | ~$4B [18] | $28B - $72B [18] | Captures the bulk of the QT revenue. |
| Quantum Sensing Market | Not Specified | $7B - $10B [18] | |
| Value in Life Sciences | Not Specified | $200B - $500B [19] | Specific to quantum computing in pharma R&D. |
| Annual QT Start-up Funding | ~$2.0B [18] | N/A | 50% increase from $1.3B in 2023 [18]. |
| Public QT Funding (Gov't) | $1.8B (announced) [18] | N/A | Japan announced a further $7.4B in 2025 [18]. |

Table 2: Documented Quantum Application Performance (2024-2025)

| Application Area | Organization | Quantum System Used | Reported Performance / Milestone |
|---|---|---|---|
| Financial Trading | HSBC | IBM Heron [20] | 34% improvement in bond trading predictions vs. classical alone [20]. |
| Engineering Simulation | Ansys | IonQ [20] | 12% speedup in fluid interaction analysis for medical devices [20]. |
| Production Logistics | Ford Otosan | D-Wave [20] | Reduced scheduling times from 30 minutes to under 5 minutes; deployed in production [20]. |
| Chemical Simulation | IBM & RIKEN | IBM Heron + Fugaku Supercomputer [20] | Simulated molecules "beyond the ability of classical computers alone" at utility scale [20]. |
| Computer Calibration | Quantum Machines | QUAlibrate Framework [21] | Reduced calibration of superconducting qubits from hours to 140 seconds [21]. |
| Algorithm Speed | Google Quantum AI | DQI Algorithm (Theoretical) [22] | Certain optimization problems require ~million quantum ops vs. >10^23 classical ops [22]. |

Experimental Protocols for Quantum-Enhanced DFT and ML Workflows

This section provides detailed methodologies for key experiments and workflows that integrate quantum computing with machine learning for molecular simulation.

Protocol 1: Quantum-Accelerated Computational Chemistry Workflow

This protocol is adapted from industry collaborations, such as that between AstraZeneca, Amazon Web Services, and IonQ, to demonstrate a quantum-accelerated workflow for studying chemical reactions relevant to drug synthesis [19].

- Objective: To model the energy profile of a chemical reaction using a hybrid quantum-classical computational chemistry workflow.

Materials & Prerequisites:

- Classical Computing Resources: High-performance computing (HPC) cluster for pre- and post-processing.

- Quantum Computing Access: Cloud access to a quantum processing unit (QPU) or advanced simulator (e.g., via Amazon Braket, IBM Cloud).

- Software Stack: Python with libraries like Qiskit or PennyLane for quantum circuit definition, and classical computational chemistry software (e.g., PySCF).

- Target Molecule: A small molecule or reaction intermediate (e.g., for a Suzuki–Miyaura cross-coupling reaction).

Procedure:

- System Preparation (Classical):

  - Define the molecular geometry of reactants and products.

  - Use classical methods (e.g., Hartree-Fock) to obtain the reference state and the molecular orbitals from which the molecular Hamiltonian is built.

  - Active Space Selection: Identify a subset of molecular orbitals and electrons most relevant to the chemical process (e.g., using a classical Complete Active Space SCF calculation).

- Hamiltonian Mapping (Classical):

  - Transform the electronic Hamiltonian of the selected active space into a qubit representation using a fermion-to-qubit mapping (e.g., Jordan-Wigner or Bravyi-Kitaev).

- Quantum Circuit Execution (Hybrid):

  - Algorithm Selection: Employ the Variational Quantum Eigensolver (VQE) algorithm.

  - Ansatz Design: Construct a parameterized quantum circuit (ansatz) suitable for chemical systems, such as the Unitary Coupled Cluster (UCC) ansatz.

  - Parameter Optimization: On the classical computer, use an optimizer (e.g., COBYLA, SPSA) to variationally minimize the energy expectation value. Each optimization step requires multiple executions of the parameterized quantum circuit on the QPU to estimate the energy.

- Result Analysis (Classical):

  - Use the optimized parameters to compute the final, high-accuracy ground state energy.

  - Compare the quantum-computed reaction energy profile with results from classical methods like Density Functional Theory (DFT) and experimental data.

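The variational loop at the heart of this procedure can be illustrated with a toy problem that runs entirely on a classical machine. The sketch below is not the workflow from [19]: it uses a made-up single-qubit Hamiltonian H = Z + 0.5 X, a one-parameter RY ansatz instead of UCC, exact state-vector expectation values instead of QPU sampling, and plain gradient descent with the parameter-shift rule in place of COBYLA or SPSA.

```python
import math

# Toy Hamiltonian H = Z + 0.5 X in the computational basis (illustrative only)
H = [[1.0, 0.5],
     [0.5, -1.0]]


def ansatz_state(theta):
    """State prepared by RY(theta) acting on |0>: [cos(theta/2), sin(theta/2)]."""
    return [math.cos(theta / 2.0), math.sin(theta / 2.0)]


def energy(theta):
    """Expectation value <psi(theta)|H|psi(theta)> (amplitudes are real here)."""
    psi = ansatz_state(theta)
    return sum(psi[i] * H[i][j] * psi[j] for i in range(2) for j in range(2))


def parameter_shift_gradient(theta):
    """Exact gradient for a single rotation gate, from two shifted evaluations."""
    return 0.5 * (energy(theta + math.pi / 2.0) - energy(theta - math.pi / 2.0))


def run_vqe(theta=0.1, learning_rate=0.4, steps=200):
    """Classical outer loop: update theta to lower the measured energy."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, energy(theta)


theta_opt, e_min = run_vqe()
exact_ground_energy = -math.sqrt(1.0 + 0.5 ** 2)   # lowest eigenvalue of H
```

On hardware, each call to `energy` would be replaced by repeated circuit executions and measurement averaging, which is why every optimizer step costs many QPU runs.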

Protocol 2: Quantum Machine Learning for Molecular Property Prediction

This protocol outlines a hybrid quantum-classical machine learning approach for predicting molecular properties, leveraging methodologies explored by companies like Merck KGaA and Amgen in collaboration with QuEra [19].

- Objective: To train a Quantum Neural Network (QNN) to predict the biological activity of a drug candidate based on its molecular descriptors.

Materials & Prerequisites:

- Dataset: A curated set of molecules with known biological activity (e.g., IC50 values). Molecular descriptors or fingerprints must be precomputed.

- Quantum Computing Access: Cloud-based QPU or simulator with machine learning libraries (e.g., TensorFlow Quantum, PennyLane).

- Software: Python with scikit-learn for classical ML baseline, and a QML library.

Procedure:

- Data Preprocessing (Classical):

  - Standardize the molecular descriptor data (e.g., normalize features, handle missing values).

  - Split the dataset into training, validation, and test sets.

- Data Encoding (Quantum):

  - Design a feature map to encode classical molecular descriptor data (vector $x$) into a quantum state $|\phi(x)\rangle$. This can be achieved using gates like Pauli rotations (RX, RY, RZ).

- Model Construction (Hybrid):

  - Construct a Variational Quantum Circuit (VQC), also known as a Quantum Neural Network.

  - The circuit typically consists of the fixed feature map followed by a parameterized circuit (e.g., composed of layers of rotational gates and entangling gates).

- Model Training (Hybrid):

  - Define a cost function (e.g., mean squared error for regression, cross-entropy for classification).

  - Use a classical optimizer to tune the parameters of the VQC. The quantum device evaluates the cost function for given parameters, and the classical optimizer suggests updates.

- Model Evaluation (Classical):

  - Use the trained model to make predictions on the held-out test set.

  - Benchmark the performance (e.g., R² score, ROC-AUC) against classical machine learning models like Random Forests or Gradient Boosting.

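The encode, parameterize, measure, and update cycle above can be shown with the smallest possible model: one qubit, one feature, one trainable angle, simulated classically. Everything in this sketch is an assumption made for illustration: the data are synthetic rather than molecular descriptors or IC50 values, the feature map is a single RY rotation, and the optimizer is plain gradient descent with the parameter-shift rule.

```python
import math


def apply_ry(state, angle):
    """Apply the RY(angle) rotation to a real single-qubit state vector."""
    c, s = math.cos(angle / 2.0), math.sin(angle / 2.0)
    return [c * state[0] - s * state[1], s * state[0] + c * state[1]]


def model(x, theta):
    """Feature map RY(x), then trainable layer RY(theta), then measure <Z>."""
    state = apply_ry([1.0, 0.0], x)       # data encoding
    state = apply_ry(state, theta)        # variational layer
    return state[0] ** 2 - state[1] ** 2  # expectation value of Pauli Z


def mse(theta, xs, ys):
    return sum((model(x, theta) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def mse_gradient(theta, xs, ys):
    """Chain rule, with the circuit derivative taken by the parameter-shift rule."""
    total = 0.0
    for x, y in zip(xs, ys):
        shift = 0.5 * (model(x, theta + math.pi / 2.0) - model(x, theta - math.pi / 2.0))
        total += 2.0 * (model(x, theta) - y) * shift
    return total / len(xs)


# Synthetic regression data generated by the same circuit with theta = 0.7
xs = [0.0, 0.5, 1.0, 1.5, 2.0]
ys = [model(x, 0.7) for x in xs]

theta = 0.0
for _ in range(200):
    theta -= 0.5 * mse_gradient(theta, xs, ys)

final_loss = mse(theta, xs, ys)
```

Because the targets were produced by the same circuit, the fit is exactly recoverable here; with real descriptor data the classical baselines named in the evaluation step remain the reference point for judging whether the quantum model adds value.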

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Tools for Quantum-Enhanced DFT and ML Research

| Item / Solution | Category | Function & Application |
|---|---|---|
| QUAlibrate [21] | Control Software | An open-source framework that automates and drastically reduces quantum computer calibration time, essential for maintaining QPU performance for long-running chemistry simulations [21]. |
| Qiskit [23] [24] | Software SDK | An open-source full-stack SDK for creating, simulating, and running quantum circuits on IBM hardware or simulators. Includes Qiskit Machine Learning for building QML models [23]. |
| TensorFlow Quantum [24] | Software Library | A library for prototyping hybrid quantum-classical ML models. Enables the integration of quantum circuits and models with the classical TensorFlow ecosystem [24]. |
| PennyLane [23] [24] | Software Library | A cross-platform Python library for differentiable quantum computing, allowing seamless training of quantum circuits using classical automatic differentiation, ideal for VQE and QNNs [23]. |
| Amazon Braket / IBM Cloud | Cloud Platform | Provide cloud-based access to simulators and various QPU backends (e.g., from Rigetti, OQC, IonQ, IBM), lowering the barrier to entry for experimental workflows [23]. |
| Variational Quantum Eigensolver (VQE) | Algorithm | A leading hybrid quantum-classical algorithm for finding approximate eigenvalues of molecular Hamiltonians, making it a cornerstone for near-term quantum chemistry [25]. |
| Quantum Support Vector Machine (QSVM) | Algorithm | A quantum-enhanced kernel method for classification that can efficiently handle high-dimensional feature spaces, potentially useful for classifying molecular properties [23] [25]. |
| Error Suppression & Mitigation | Software/Technique | Techniques (e.g., those developed by Q-CTRL, or embedded in vendor SDKs) to reduce the impact of noise on current-generation "noisy" quantum processors, improving result fidelity [18]. |

Critical Pathways: Error Correction and Quantum Control

The viability of complex simulations hinges on the stability and accuracy of quantum computations. Recent breakthroughs in quantum error correction (QEC) and control are therefore central to the question of why this transition is happening now.

- The Problem: Physical qubits are sensitive to environmental noise, leading to high error rates that corrupt calculations [20].

- The Solution - QEC: QEC uses multiple physical qubits to form one more-stable logical qubit. By continuously measuring syndromes to detect errors and applying real-time corrections, the integrity of the logical qubit is maintained [18] [20].

- Recent Progress (2024-2025): In 2024, Google's Willow chip demonstrated below-threshold error correction, with the logical error rate decreasing as the size of the error-correcting code was increased [18]. Companies including IBM, Quantinuum, and QuEra have all announced new QEC architectures and logical processors, suggesting that building a fault-tolerant quantum computer is increasingly an engineering challenge [18] [20]. This progress directly enables the longer, more complex computations required for accurate DFT simulations.
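The idea of spreading one logical unit of information over several physical carriers and correcting from syndromes can be illustrated with a classical analogue of the three-qubit bit-flip code. This sketch is deliberately simplified and is not a description of any vendor's QEC scheme: real quantum codes extract syndromes through ancilla qubits without reading the data qubits directly, and they must also handle phase errors.

```python
def encode(bit):
    """Repetition code: one logical bit stored in three physical bits."""
    return [bit, bit, bit]


def syndrome(word):
    """Two parity checks; they reveal where a flip occurred, not the stored value."""
    return (word[0] ^ word[1], word[1] ^ word[2])


# Which physical bit to flip back for each syndrome (None means no error seen)
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(word):
    fixed = list(word)
    index = CORRECTION[syndrome(word)]
    if index is not None:
        fixed[index] ^= 1
    return fixed


def decode(word):
    return correct(word)[0]


def logical_error_rate(p):
    """Failure probability when each bit flips independently with probability p.

    Decoding fails only if two or three bits flip: 3 p^2 (1 - p) + p^3.
    """
    return 3.0 * p ** 2 * (1.0 - p) + p ** 3


physical_rate = 0.01
logical_rate = logical_error_rate(physical_rate)
```

For a physical error rate of 1%, the logical error rate drops to roughly 0.03%, which is the basic trade that QEC makes: more physical resources in exchange for a more reliable logical unit.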

From Theory to Practice: A Guide to ML-DFT Workflows and Their Biomedical Applications

Density Functional Theory (DFT) has established itself as the cornerstone of computational materials science and drug discovery, providing essential insights into electronic structures that govern material and molecular properties. The integration of machine learning (ML) with DFT has emerged as a transformative approach, overcoming DFT's traditional computational bottlenecks and enabling investigations at unprecedented scales. This application note details protocols for constructing end-to-end ML-driven DFT emulation frameworks, validated through case studies in energetic materials and pharmaceutical design. We present quantitative performance benchmarks, standardized workflows for system mapping and property prediction, and a comprehensive toolkit for researchers. The documented methodologies achieve up to three orders of magnitude speedup while maintaining DFT-level accuracy, opening new frontiers in predictive materials modeling and rational drug design.

Density Functional Theory revolutionized computational chemistry and materials science by formulating electronic structure calculations in terms of electron density rather than complex wavefunctions [26]. This fundamental principle enables the prediction of material and molecular properties from first principles, making DFT an indispensable tool across scientific disciplines. In pharmaceutical research, DFT provides quantum mechanical precision for studying drug-receptor interactions, molecular reactivity, and material properties at electronic scales [27] [28]. However, conventional DFT calculations face significant computational constraints due to their cubic scaling with system size, typically limiting routine applications to systems of a few hundred atoms [26].

Machine learning frameworks now circumvent these limitations through local environment mapping and neural network surrogates. By leveraging the "nearsightedness" of electronic interactions—where local electronic structure depends primarily on nearby atomic environments—ML models can predict electronic properties with DFT-level accuracy while achieving linear scaling [26]. This paradigm shift enables electronic structure calculations for systems containing hundreds of thousands of atoms, bridging atomic-scale interactions with macroscopic material behaviors.

Computational Frameworks for DFT Emulation

Foundational ML-DFT Architectures

Local Density of States (LDOS) Learning Framework: The Materials Learning Algorithms (MALA) package implements an end-to-end workflow where bispectrum coefficients encode atomic positions relative to each point in real space, and neural networks map these descriptors to the local density of states [26]. This approach separates the problem into local mappings, enabling parallel processing and system-size independence. The LDOS encodes the local electronic structure and serves as the fundamental quantity from which observables like electronic density, density of states, and total free energy are derived [26].

Neural Network Potentials (NNPs) for Energetic Materials: The EMFF-2025 framework demonstrates a specialized approach for C, H, N, O-based high-energy materials (HEMs), leveraging transfer learning to achieve DFT-level accuracy in predicting structures, mechanical properties, and decomposition characteristics [7]. Built upon the Deep Potential (DP) scheme, this model combines high accuracy with computational efficiency, enabling large-scale molecular dynamics simulations of complex reactive processes [7].

Active Learning Integration: Simple Active Learning (SAL) workflows implement on-the-fly training of ML potentials during molecular dynamics simulations, continuously improving model accuracy through targeted DFT calculations [29]. This approach combines the efficiency of ML potentials with the accuracy of reference DFT calculations, automatically identifying configurations where the model requires refinement and retraining accordingly [29].

Quantitative Performance Benchmarks

Table 1: Accuracy Benchmarks for ML-DFT Frameworks

| Framework | System Type | Energy MAE | Force MAE | Property Predictions | Reference |
|---|---|---|---|---|---|
| EMFF-2025 | C,H,N,O HEMs | < 0.1 eV/atom | < 2.0 eV/Å | Mechanical properties, decomposition pathways | [7] |
| MALA (Beryllium) | Metallic systems | - | - | Electronic density, free energy, forces | [26] |
| B3LYP Functional | Molecular drugs | ~2.2 kcal/mol (atomization) | - | Geometries, transition barriers, ionization | [28] |

Table 2: Computational Efficiency Comparisons

| Method | System Size | Calculation Time | Scaling Behavior | Hardware Requirements |
|---|---|---|---|---|
| Conventional DFT | 256 atoms | Reference | ~N³ | High-performance computing |
| MALA ML-DFT | 131,072 atoms | 48 minutes | ~N | 150 standard CPUs |
| EMFF-2025 | 20 HEMs | Efficient screening | - | - |
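The gap between the two scaling behaviors in this table is easier to appreciate with a short calculation. The sketch below assumes ideal asymptotic scaling with unchanged prefactors, which real codes do not follow exactly, so the numbers are order-of-magnitude guides rather than timing predictions.

```python
small_system = 256         # atoms, conventional DFT reference size from the table
large_system = 131_072     # atoms, MALA demonstration size from the table

size_ratio = large_system // small_system   # how many times more atoms

# Cost growth when moving from the small to the large system
cubic_cost_factor = size_ratio ** 3         # ~N^3 conventional DFT
linear_cost_factor = size_ratio             # ~N ML surrogate

print(f"size ratio:         {size_ratio}")
print(f"cubic cost growth:  {cubic_cost_factor:,}")
print(f"linear cost growth: {linear_cost_factor:,}")
```

Under these assumptions, a 512-fold increase in atom count raises the cost of a cubic-scaling method by a factor of about 1.3 × 10⁸, compared with 512 for a linear-scaling surrogate.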

Protocol: End-to-End DFT Emulation Workflow

System Preparation and Descriptor Calculation

Step 1: Atomic Configuration Preprocessing

- Generate initial atomic configurations through molecular dynamics or experimental coordinates

- Apply periodic boundary conditions appropriate for the target system

- For crystalline materials, include defect structures and surfaces relevant to properties of interest

Step 2: Descriptor Computation

- Calculate bispectrum coefficients for each point in real space using LAMMPS integration [26]

- Set cutoff radius consistent with the nearsightedness principle (typically 4-6 Å)

- Encode atomic density within local environments using order parameters that respect physical symmetries

Neural Network Training and Validation

Step 3: Initial Model Training

- Implement feed-forward neural networks using PyTorch framework [26]

- Train on diverse reference systems (256 atoms for bulk materials)

- Use the LDOS from reference DFT calculations as the training target for LDOS models [26]; use DFT energies and forces as targets when training neural network potentials

- Employ stratified sampling to ensure adequate representation of different bonding environments

Step 4: Active Learning Implementation

- Integrate Simple Active Learning workflow for molecular dynamics simulations [29]

- Configure triggering criteria for reference DFT calculations (uncertainty thresholds, regular intervals)

- Implement rewind mechanism to continue from last accurate simulation point

- Set convergence criteria for model accuracy and property prediction stability
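The control flow of such an on-the-fly scheme, including the rewind step, can be sketched with user-supplied callables standing in for the MD engine, the uncertainty estimate, and the DFT-plus-retraining step. This is a schematic under stated assumptions and not the SAL implementation from [29]: the function name, its arguments, and the mock model below are all hypothetical.

```python
def run_active_learning(initial_state, n_steps, advance, uncertainty,
                        label_and_retrain, threshold, check_every=10,
                        max_retrains=20):
    """Run MD with an ML potential, retraining and rewinding when it is unreliable."""
    state = initial_state
    checkpoint = (0, initial_state)    # last point where the model was trusted
    step = 0
    retrains = 0
    while step < n_steps:
        state = advance(state)         # one MD step with the current ML potential
        step += 1
        if step % check_every == 0 or step == n_steps:
            if uncertainty(state) > threshold:
                if retrains >= max_retrains:
                    raise RuntimeError("model did not reach the requested accuracy")
                label_and_retrain(state)    # reference DFT calculation + retraining
                retrains += 1
                step, state = checkpoint    # rewind to the last trusted point
            else:
                checkpoint = (step, state)
    return state, retrains


# Mock components: the "state" is a step counter, and the model's uncertainty
# shrinks each time a new reference calculation is added to its training set.
mock_model = {"n_train": 0}


def mock_advance(state):
    return state + 1


def mock_uncertainty(state):
    return 0.5 / (1 + mock_model["n_train"])


def mock_retrain(state):
    mock_model["n_train"] += 1


final_state, n_retrains = run_active_learning(
    0, 30, mock_advance, mock_uncertainty, mock_retrain, threshold=0.2
)
```

Rewinding to the last trusted checkpoint means the trajectory segment generated by the unreliable model is discarded rather than propagated, at the cost of recomputing those steps with the improved model.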

Property Prediction and Validation

Step 5: Electronic Structure Analysis

- Compute electronic density and density of states from predicted LDOS [26]

- Derive total free energy and atomic forces for molecular dynamics simulations

- Calculate band structures and density of states for crystalline materials

Step 6: Experimental Validation

- Compare predicted crystal structures and mechanical properties with experimental data [7]

- Validate thermal decomposition pathways against experimental observations

- Benchmark electronic properties against spectroscopic measurements

Application in Pharmaceutical Sciences

Drug Design and Optimization

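DFT calculations provide critical insights for pharmaceutical development by elucidating electronic interactions between drug molecules and biological targets. DFT can resolve energies to a reported precision of approximately 0.1 kcal/mol [27], which enables detailed reconstruction of molecular orbital interactions and facilitates rational drug design. Errors against reference data depend on the chosen functional, however: for B3LYP, atomization energies deviate by about 2.2 kcal/mol [28].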

Table 3: DFT Applications in Drug Development

| Application Area | DFT Methodology | Key Parameters | Impact on Drug Development |
|---|---|---|---|
| API-Excipient Compatibility | Fukui function analysis | Reactive site identification | Guided stability-oriented co-crystal design [27] |
| Nanodrug Delivery Systems | van der Waals & π-π stacking calculations | Interaction energies | Optimized carrier surface distribution [27] |
| Solubility & Release Kinetics | COSMO solvation models | ΔG solvation | Controlled-release formulation design [27] |
| Reaction Mechanism Elucidation | Transition state modeling | Activation energies | Enzyme inhibition optimization [28] |

COVID-19 Research Applications

DFT has played a critical role in pandemic response through rapid screening of therapeutic candidates. Researchers have employed DFT to study amino acids as immunity boosters, identifying arginine as particularly effective, and to analyze tetrazole derivatives for anti-COVID-19 activity [28]. These applications demonstrate DFT's versatility in addressing emergent health challenges through electronic structure analysis.

The Scientist's Toolkit: Essential Research Reagents

Table 4: Computational Tools for DFT Emulation

| Tool/Category | Specific Implementation | Function | Application Context |
|---|---|---|---|
| DFT Engines | Quantum ESPRESSO, ADF, BAND | Reference calculations | Provide training data for ML potentials [26] [29] |
| ML Potential Frameworks | MALA, EMFF-2025, DP-GEN | Surrogate model training | Large-scale property prediction [7] [26] |
| Descriptor Calculators | LAMMPS | Atomic environment encoding | Convert atomic positions to feature vectors [26] |
| Active Learning Workflows | Simple Active Learning (SAL) | On-the-fly training | Self-improving MD simulations [29] |
| Force Field Integrators | ONIOM, M3GNet | Multiscale simulations | Hybrid QM/MM calculations [27] |
| Analysis Packages | PyTorch, scikit-learn | Model evaluation | Performance validation [26] |

Future Perspectives and Development

The integration of machine learning with DFT continues to evolve, with several emerging trends shaping future developments. Hybrid methodologies that combine ML efficiency with DFT accuracy are expanding to more complex systems, including heterogeneous interfaces and disordered materials. Deep learning approaches are being applied directly to approximate kinetic energy density functionals, potentially overcoming fundamental limitations of traditional exchange-correlation functionals [27]. The development of transferable potential frameworks, demonstrated by EMFF-2025's application across multiple high-energy materials, points toward more generalized models that maintain accuracy across diverse chemical spaces [7].

As these methodologies mature, ML-enhanced DFT emulation will increasingly serve as the foundation for predictive materials science and pharmaceutical development, enabling first-principles accuracy at scales previously inaccessible to computational investigation. This paradigm shift promises to accelerate the design cycle for functional materials and therapeutic compounds, fundamentally transforming computational discovery processes.

Learning the Exchange-Correlation Functional with Neural Networks

Density Functional Theory (DFT) is a cornerstone computational method for solving the many-body Schrödinger equation, with unparalleled impact across quantum chemistry, materials science, and drug discovery [30] [31]. Its practical success hinges entirely on the exchange-correlation (XC) functional, which encapsulates complex electron interactions. The exact form of this functional remains unknown, forcing approximations and limiting accuracy, particularly for systems with strong electron correlations [32].

Traditional approaches to developing XC functionals, like the Local Density Approximation (LDA) and Generalized Gradient Approximation (GGA), rely on heuristic rules and analytic solutions to specific limits. These forms are inherently inflexible, making it difficult to incorporate new numerical data from high-level quantum theories [30]. Machine learning (ML), particularly neural networks (NNs), offers a path beyond these constraints. NN XC functionals provide a universal, highly flexible framework for interpolation and approximation, capable of learning directly from data generated by quantum Monte Carlo or post-Hartree-Fock methods, promising a new frontier of accuracy in DFT [30] [31].

This document details the application notes and protocols for constructing, training, and implementing neural network-based exchange-correlation functionals, contextualized within a broader research thesis on machine learning-enhanced DFT workflows.

Neural Network Architectures for XC Functionals

The design of the neural network architecture is critical for accurately representing the physical relationship between the electron density and the XC functional. The following table compares the primary architectures explored in recent literature.

Table 1: Comparison of Neural Network Architectures for XC Functionals

| Architecture Name | Input Features | Output(s) | Key Features & Advantages | Example System Tested |
|---|---|---|---|---|
| Point-to-Point (LDA-like) [30] | Electron density ((n)) at a single point in space. | XC potential ((v_{xc})) or energy density ((\epsilon_{xc})) at that point. | Simple, fully-connected network; mirrors locality of LDA. | 3D inhomogeneous electron gas in a harmonic oscillator potential. |
| Region-to-Point (GGA-like) [30] | Electron density in a 5×5×5 cube surrounding a point (enables gradient calculation). | XC potential ((v_{xc})) or energy density ((\epsilon_{xc})) at the central point. | Captures inhomogeneity; learns gradients without explicit feature engineering. | 3D inhomogeneous electron gas; crystalline silicon. |
| Two-Component (NN-E & NN-V) [31] | NN-E: (n), (\sigma) (squared gradient). NN-V: (\epsilon_{xc}), (n), (\sigma), (\gamma), (\nabla^2 n). | NN-E: (\epsilon_{xc}). NN-V: (v_{xc}). | Separates energy and potential; ensures correct physical link; "economical" for memory. | Crystalline silicon, benzene, ammonia, atoms/molecules from IP13/03 dataset. |
| Differentiable Neural Functional (Grad DFT) [32] | Features for a given approximation (e.g., for GGA: (n), (\sigma)). | Exchange-correlation energy ((E_{xc})). | Fully differentiable library (JAX); enables end-to-end training against energies and properties. | Experimental dissociation energies of dimers, including transition metals. |
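To make the difference between the point-to-point and region-to-point inputs in Table 1 concrete, the following is a minimal NumPy sketch of how the two input arrays could be built from a density grid. The function name `region_to_point_inputs`, the random test density, and the periodic padding at the box edges are assumptions made for illustration; they are not taken from [30].

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def region_to_point_inputs(density, half_width=2):
    """Return one flattened cubic density patch per grid point.

    half_width=2 gives the 5x5x5 cube of the region-to-point architecture.
    """
    # Assumption: periodic boundaries. A finite system would pad with zeros instead.
    padded = np.pad(density, half_width, mode="wrap")
    size = 2 * half_width + 1
    # Shape (Nx, Ny, Nz, size, size, size): one cube centered on every grid point.
    patches = sliding_window_view(padded, (size, size, size))
    return patches.reshape(-1, size ** 3)


rng = np.random.default_rng(0)
density = rng.random((8, 8, 8))                  # hypothetical density grid

point_inputs = density.reshape(-1, 1)            # LDA-like: one value per point
region_inputs = region_to_point_inputs(density)  # GGA-like: 125 values per point
```

Each row of `region_inputs` holds the 125 density values around one grid point, with the central value at flat index 62, so the network can infer gradient information without hand-built derivative features.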

The logical flow and data transformation within a Two-Component NN architecture can be visualized as follows:

The workflow for developing and deploying an NN XC functional, from data generation to self-consistent calculation, is outlined below:

Performance and Validation

Trained NN XC functionals must be quantitatively evaluated against traditional functionals and reference data. The following table summarizes key performance metrics from documented experiments.

Table 2: Quantitative Performance of NN XC Functionals on Test Systems

| NN Functional Type | Training System | Test System | Key Metric: MAE (vs. Reference) | Performance Summary |
|---|---|---|---|---|
| NN LDA [30] | 3D electron gas (harmonic potential) | Crystalline Silicon (diamond) | (v_{xc}) MAE: 0.6 mHa × Bohr³ | Excellent agreement with reference Octopus data. |
| NN GGA [30] | 3D electron gas (harmonic potential) | Crystalline Silicon (diamond) | (v_{xc}) MAE: 18.1 mHa × Bohr³ | Reasonable agreement; errors in high-density-variation regions. |
| Two-Component NN [31] | Crystalline Si, Benzene, NH3 (PBE) | Atoms/Molecules (IP13/03 dataset) | Total Energy Relative Error: ~0.01% | Small error on unseen data; functional works in self-consistent cycle. |

Experimental Protocols

Protocol 1: Data Generation for Training

Objective: To generate a high-quality dataset of electron densities and their corresponding XC potentials/energies for training NN models.

Materials:

- Software: A real-space DFT code like Octopus [30] [31].

- XC Functional: A standard functional (e.g., PBE [31] or LDA [30]) to generate target data.

- System Selection: A diverse set of systems (e.g., 3D electron gases in harmonic potentials [30], simple atoms, molecules like benzene and ammonia, and crystalline solids like silicon [31]).

Procedure:

- Define Calculation Parameters:

- For each system, define the simulation box (e.g., a 40 × 40 × 40 Bohr parallelepiped).

- Set a real-space grid (e.g., 32 × 32 × 32 points, corresponding to a ~1.25 Bohr spacing).

- Run DFT Calculations:

- Perform a self-consistent field (SCF) calculation for each system using the chosen standard XC functional.

- Upon convergence, output the final electron density ((n(\mathbf{r}))) and the corresponding XC potential ((v_{xc}(\mathbf{r}))) for every grid point in the system.

- Calculate Additional Features (for GGA and beyond):

- From the electron density grid, compute its derivatives:

- (\sigma = |\nabla n|^2) (squared gradient)

- (\gamma = \langle \nabla \sigma, \nabla n \rangle)

- (\nabla^2 n) (Laplacian)

- Dataset Assembly:
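The derivative features listed in the procedure above can be sketched with finite differences on the real-space grid. This is a minimal illustration that assumes a uniform grid with the example spacing of 40/32 = 1.25 Bohr and a Gaussian test density. The function name `density_features` and the per-point row layout `[n, sigma, gamma, laplacian]` are hypothetical choices, not a format prescribed by [30] or [31].

```python
import numpy as np


def density_features(n, spacing):
    """Compute sigma, gamma and the Laplacian of n on a uniform 3D grid."""
    # Gradient components along x, y, z (second-order accurate at the edges too).
    grad_n = np.gradient(n, spacing, edge_order=2)
    sigma = sum(g ** 2 for g in grad_n)                          # |grad n|^2
    grad_sigma = np.gradient(sigma, spacing, edge_order=2)
    gamma = sum(gs * gn for gs, gn in zip(grad_sigma, grad_n))   # <grad sigma, grad n>
    laplacian = sum(
        np.gradient(g, spacing, axis=ax, edge_order=2)
        for ax, g in enumerate(grad_n)
    )
    return sigma, gamma, laplacian


spacing = 40.0 / 32                                # 1.25 Bohr, as in the example grid
axis = (np.arange(32) - 15.5) * spacing
x, y, z = np.meshgrid(axis, axis, axis, indexing="ij")
n = np.exp(-(x ** 2 + y ** 2 + z ** 2) / 50.0)     # hypothetical test density

sigma, gamma, laplacian = density_features(n, spacing)

# Assumed layout: one row per grid point, columns [n, sigma, gamma, laplacian].
features = np.stack([a.ravel() for a in (n, sigma, gamma, laplacian)], axis=1)
```

A production workflow would more likely take these derivatives from the DFT code itself, so that they are consistent with the stencil used in the self-consistent calculation.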

Protocol 2: Two-Component NN Training

Objective: To train a two-component neural network that separately predicts the XC energy density and the XC potential while preserving their physical relationship [31].

Materials:

- Software: A deep learning framework like TensorFlow/PyTorch.

- Dataset: Generated from Protocol 1.

Procedure:

Stage 1: Pre-train the NN-V Component

- Fix NN-E: Do not train NN-E at this stage. Use the reference (\epsilon_{xc}^{PBE}) from your dataset as input to NN-V.

- Configure NN-V Inputs: For each point, feed the following features into NN-V: reference (\epsilon_{xc}), (n), (\sigma), (\gamma), and (\nabla^2 n).

- Train NN-V: Minimize a loss function (e.g., Mean Squared Error) between the NN-V output ((v_{xc}^{predicted})) and the reference potential ((v_{xc}^{PBE})) from the dataset. The goal is to teach NN-V the precise mapping from energy density to potential.

- Freeze NN-V: Once NN-V is trained, freeze its weights.

Stage 2: Train the NN-E Component

- Connect the Network: The output of NN-E ((\epsilon_{xc}^{predicted})) is now fed as input to the frozen NN-V.

- Define the Composite Loss Function: The total loss for training NN-E is a weighted sum of:

- Potential Loss: ((v_{xc}^{PBE} - v_{xc}^{predicted})^2). This ensures the final potential is accurate.

- Boundary Condition Losses:

- ((\epsilon_{xc}^{predicted}(n \to 0) - 0)^2): Ensures energy density vanishes as density vanishes.

- ((\epsilon_{xc}^{predicted}(\sigma \to 0) - \epsilon_{xc}^{LDA}(n))^2): Ensures the functional reduces to LDA where the density is uniform.

- Train NN-E: Minimize the composite loss function by updating only the weights of NN-E. This trains NN-E to produce an energy density that, when passed through the physically-grounded NN-V, yields a correct potential and obeys key physical constraints.
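The composite loss of Stage 2 can be written down compactly. The sketch below uses plain NumPy to show how the three terms combine. The weights `w_potential`, `w_vanish`, and `w_lda` are hypothetical hyperparameters, and the LDA reference is simplified to exchange only (the standard per-particle expression in Hartree atomic units), so correlation is omitted here. In actual training the same expression would be written in an automatic-differentiation framework so that gradients flow through the frozen NN-V into NN-E.

```python
import numpy as np


def lda_exchange_energy_density(n):
    """LDA exchange energy per particle; a simplified stand-in for eps_xc^LDA."""
    return -0.75 * (3.0 / np.pi) ** (1.0 / 3.0) * np.cbrt(n)


def composite_loss(v_pred, v_ref, eps_at_zero_density, eps_at_zero_gradient,
                   n_uniform, w_potential=1.0, w_vanish=1.0, w_lda=1.0):
    """Weighted sum of the potential loss and the two boundary-condition losses."""
    # Potential loss: predicted v_xc (from frozen NN-V) against the reference.
    potential_loss = np.mean((v_ref - v_pred) ** 2)
    # NN-E output evaluated at n -> 0 should vanish.
    vanish_loss = np.mean(eps_at_zero_density ** 2)
    # NN-E output evaluated at sigma -> 0 should match the LDA value at density n.
    lda_loss = np.mean(
        (eps_at_zero_gradient - lda_exchange_energy_density(n_uniform)) ** 2
    )
    return w_potential * potential_loss + w_vanish * vanish_loss + w_lda * lda_loss


# Hypothetical batch: a perfect model gives zero loss.
n_uniform = np.array([0.1, 0.5, 1.0])
v_ref = np.array([-0.4, -0.7, -0.9])
perfect = composite_loss(v_ref, v_ref, np.zeros(3),
                         lda_exchange_energy_density(n_uniform), n_uniform)
shifted = composite_loss(v_ref + 0.1, v_ref, np.zeros(3),
                         lda_exchange_energy_density(n_uniform), n_uniform)
```

Only the potential term depends on NN-V. The two boundary terms act directly on NN-E and keep it anchored to known limits even where the training data are sparse.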

Protocol 3: Self-Consistent Implementation and Testing

Objective: To integrate a trained NN XC functional into a DFT code and run self-consistent calculations to validate its performance and transferability.

Materials:

- DFT Platform: A code that allows for user-defined XC functionals, such as Octopus.

- Trained NN Model: The final model from Protocol 2.

Procedure:

- Integration:

- Implement a wrapper routine in the DFT code that, given an electron density grid (n(\mathbf{r})), calls the trained NN model to compute (v_{xc}(\mathbf{r})) at every point.

- Self-Consistent Cycle:

- Initialize the electron density (e.g., from superposition of atomic densities).

- Begin the Kohn-Sham cycle. Instead of calling a standard LibXC functional, use the NN wrapper to compute the XC potential.

- Construct the Kohn-Sham Hamiltonian, solve for the orbitals, and compute a new electron density.

- Repeat until the density or total energy converges.

- Validation:

- Energy Accuracy: Compare the total energy and XC energy from the NN-driven calculation against results from high-level theories or experimental data (e.g., dissociation energies of dimers [32]).

- Transferability: Test the NN functional on systems not included in the training set (e.g., new molecules or materials) to assess its generalizability [30] [31].

- Property Prediction: Evaluate the model's performance on predicting properties beyond total energy, such as forces, dipole moments, and electronic eigenvalues.
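The self-consistent cycle in the procedure above can be illustrated with a deliberately small toy model. The sketch below is not an Octopus integration: it assumes a one-dimensional grid, a harmonic external potential, two electrons in the lowest orbital, and no Hartree term. The function `toy_xc_potential` is a hypothetical stand-in for the trained NN wrapper, and any callable mapping a density array to a potential array could be passed in its place.

```python
import numpy as np


def toy_xc_potential(n):
    """Stand-in for the NN wrapper: a local, LDA-exchange-like potential."""
    return -np.cbrt(3.0 * n / np.pi)


def run_scf(xc_potential, n_points=201, box=16.0, n_electrons=2,
            mixing=0.3, tol=1e-8, max_iter=500):
    x = np.linspace(-box / 2, box / 2, n_points)
    h = x[1] - x[0]
    # Finite-difference kinetic operator, -1/2 d^2/dx^2.
    kinetic = (np.diag(np.full(n_points, 1.0 / h ** 2))
               - np.diag(np.full(n_points - 1, 0.5 / h ** 2), 1)
               - np.diag(np.full(n_points - 1, 0.5 / h ** 2), -1))
    v_ext = 0.5 * x ** 2
    # Initial guess: a Gaussian density normalized to the electron count.
    n = np.exp(-x ** 2)
    n *= n_electrons / (n.sum() * h)
    for iteration in range(1, max_iter + 1):
        # Build the Kohn-Sham Hamiltonian with the XC potential from the callback.
        hamiltonian = kinetic + np.diag(v_ext + xc_potential(n))
        energies, orbitals = np.linalg.eigh(hamiltonian)
        phi0 = orbitals[:, 0] / np.sqrt(h)       # grid-normalized lowest orbital
        n_new = n_electrons * phi0 ** 2          # both electrons occupy phi0
        residual = np.max(np.abs(n_new - n))
        n = (1.0 - mixing) * n + mixing * n_new  # linear density mixing
        if residual < tol:
            return x, n, energies[0], iteration, True
    return x, n, energies[0], max_iter, False


x, density, lowest_eigenvalue, n_iter, converged = run_scf(toy_xc_potential)
```

The key design point carries over to a real code: the SCF driver only needs a function from density to XC potential, so swapping a LibXC call for an NN model is confined to that one callback.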

The Scientist's Toolkit

Table 3: Essential Software and Data Resources for NN XC Functional Development

| Resource Name | Type | Primary Function | Relevant Citation |

|---|---|---|---|

| Octopus | Software | Real-space DFT code used for generating training data and implementing NN XC functionals in self-consistent field calculations. | [30] [31] |

| Grad DFT | Software | A fully differentiable, JAX-based library for quick prototyping and training of machine learning-enhanced XC energy functionals. | [32] |

| LibXC | Software | A standard library of exchange-correlation functionals; used to generate target data for pre-training stages and for benchmark comparisons. | [31] |

| TensorFlow / PyTorch | Software | Deep learning frameworks used for constructing, training, and deploying neural network models for the XC functional. | [30] |

| Quantum Monte Carlo / Post-HF Data | Data | High-precision data from advanced electronic structure methods, serving as the ultimate target for training highly accurate NN functionals. | [30] [31] |

| Quantum Chemical Databases (e.g., IP13/03) | Data | Curated datasets of molecular properties (e.g., energies) for validating the transferability and accuracy of developed NN functionals. | [31] |

Atom-Centered Fingerprints and Density Descriptors for Model Input

In machine learning for chemistry and materials science, descriptors transform raw atomic Cartesian coordinates into a numerical representation that encodes essential invariances and physical properties. The accuracy, speed, and reliability of machine learning interatomic potentials (MLIPs) depend strongly on this choice of input representation. Effective descriptors must be invariant to fundamental symmetries: translation and rotation of the entire system, and permutation of like atoms. Atom-centered fingerprints and electronic density descriptors have emerged as powerful classes of representations that fulfill these requirements while capturing the local chemical environment or global electronic structure critical for predicting material properties.

Atom-centered descriptors typically encode the local atomic environment around a central atom using a structural representation, while electronic density descriptors capture features related to the electron density distribution. These representations enable machine learning models to bypass the explicit, computationally expensive solution of the Kohn-Sham equations in Density Functional Theory (DFT), achieving orders of magnitude speedup while maintaining chemical accuracy. This protocol details the application of these descriptors within DFT-machine learning workflows.

Atom-Centered Symmetry Functions and Fingerprints

Definition and Purpose

Atom-centered fingerprints are fixed-length numerical vectors that describe the chemical environment surrounding each atom in a structure. They serve as input for machine learning models that predict atomic-scale properties, effectively replacing the explicit calculation of electronic structure in DFT. Their primary function is to convert the atomic configuration into a machine-readable format that respects physical symmetries.

Key Methodologies and Protocols

Automated Fingerprint Selection Protocol: A critical advancement is the automated selection of optimal fingerprints from a large pool of candidates. The following protocol, adapted from Imbalzano et al., streamlines the construction of neural network potentials [33]:

- Generate a Large Candidate Pool: Create an extensive initial set of symmetry functions or fingerprint candidates. These often include radial functions (describing the density of neighboring atoms at various distances) and angular functions (describing the angular distribution of atom triplets).

- Compute Correlation Matrix: Calculate the intrinsic correlations between all fingerprint candidates across the training dataset of atomic configurations.

- Select Informative Subset: Identify a minimal subset of fingerprints that maintains low mutual correlation while maximally spanning the variability in the training data. This prevents redundancy and overfitting.

- Validate and Balance: Evaluate the selected subset on a validation set to ensure the resulting ML potential strikes the desired balance between computational efficiency and predictive accuracy.
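The correlation and selection steps above can be sketched as follows. This is a simplified illustration: a greedy threshold rule on the Pearson correlation matrix stands in for the selection strategy of [33], whose details are not reproduced here. The function name `select_low_correlation`, the threshold `max_corr`, and the synthetic candidate pool are all assumptions.

```python
import numpy as np


def select_low_correlation(features, max_corr=0.9):
    """Greedily keep candidates whose |correlation| with all kept ones is below max_corr.

    features: array of shape (n_environments, n_candidates).
    """
    corr = np.abs(np.corrcoef(features, rowvar=False))
    # Visit candidates from highest to lowest variance so the most variable come first.
    order = np.argsort(-features.var(axis=0))
    selected = []
    for idx in order:
        if all(corr[idx, kept] < max_corr for kept in selected):
            selected.append(int(idx))
    return sorted(selected)


# Synthetic candidate pool: column 1 is nearly a rescaled copy of column 0.
rng = np.random.default_rng(1)
a, b, c = rng.normal(size=(3, 500))
pool = np.stack([a, 2.0 * a + 0.01 * rng.normal(size=500), b, c], axis=1)

selected = select_low_correlation(pool)
```

In this synthetic pool only one of the two redundant columns survives, which is the behavior the protocol relies on to remove redundant candidates.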

AGNI Fingerprints Workflow: AGNI (adaptive, generalizable, and neighborhood-informed) fingerprints are a specific implementation widely used for creating ML force fields [2]. The protocol for their application in an ML-DFT framework is as follows:

- Input: Atomic configuration of the system.

- Descriptor Calculation: For each atom `i`, the fingerprint is computed by summing Gaussian functions centered on neighboring atoms `j` within a cutoff radius. The functions incorporate interatomic distances `R_ij` and can be extended to include angular information via three-body terms involving atoms `j` and `k`.

- Output: A translation-, permutation-, and rotation-invariant vector for each atom `i`, describing its local chemical environment.
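As an illustration of the descriptor calculation described above, the sketch below computes a purely radial fingerprint: for each atom `i`, a sum of Gaussians of `R_ij` over neighbors `j`, damped by a smooth cutoff. The cosine cutoff form, the Gaussian widths, and the absence of periodic boundary conditions are assumptions made for this example. It is not the exact AGNI functional form from [2], and the three-body angular terms are omitted.

```python
import numpy as np


def radial_fingerprints(positions, widths, cutoff):
    """Return an (n_atoms, n_widths) array of Gaussian sums over neighbors."""
    diffs = positions[:, None, :] - positions[None, :, :]
    r = np.linalg.norm(diffs, axis=-1)                    # R_ij distance matrix
    # Smooth cosine cutoff: 1 at r = 0, 0 at and beyond the cutoff radius.
    f_cut = np.where(r < cutoff, 0.5 * (np.cos(np.pi * r / cutoff) + 1.0), 0.0)
    np.fill_diagonal(f_cut, 0.0)                          # an atom is not its own neighbor
    # One Gaussian per width; shape (n_atoms, n_atoms, n_widths).
    gauss = np.exp(-(r[:, :, None] / widths[None, None, :]) ** 2)
    return np.sum(gauss * f_cut[:, :, None], axis=1)      # sum over neighbors j


rng = np.random.default_rng(2)
positions = rng.random((6, 3)) * 4.0                      # hypothetical 6-atom cluster
widths = np.array([0.5, 1.0, 2.0])
fingerprints = radial_fingerprints(positions, widths, cutoff=3.0)

# Rotate about z, shift, and reorder the atoms to probe the invariances.
theta = 0.7
rotation = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                     [np.sin(theta), np.cos(theta), 0.0],
                     [0.0, 0.0, 1.0]])
permutation = np.array([3, 0, 5, 1, 4, 2])
moved = (positions @ rotation.T + np.array([1.0, -2.0, 0.5]))[permutation]
fingerprints_moved = radial_fingerprints(moved, widths, cutoff=3.0)
```

Because the fingerprint depends only on interatomic distances and sums over neighbors, `fingerprints_moved` equals `fingerprints[permutation]` up to floating-point error, which is the invariance the output step requires.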

Application Notes

Automated fingerprint selection can greatly simplify the construction of neural network potentials and accelerate the evaluation of Gaussian approximation potentials (GAP) based on the smooth overlap of atomic positions (SOAP) kernel [33]. These fingerprints have been successfully applied to diverse systems, including water, Al-Mg-Si alloys, and small organic molecules [33] [2].

Electronic Density-Based Descriptors

Density of States (DOS) Similarity Descriptor

The DOS provides a comprehensive summary of a material's electronic structure. A tailored fingerprint has been developed to facilitate quantitative comparison of DOS spectra across different materials [34].

Protocol: Constructing a DOS Fingerprint

This protocol transforms a continuous DOS, ρ(ε), into a binary-valued 2D map [34].

- Energy Reference Shift: Shift the energy spectrum so that the reference energy (e.g., the Fermi level) is at `ε_ref = 0`.

- Non-Uniform Energy Discretization: Integrate the DOS over `N_ε` intervals of variable width, `Δε_i`, to create a histogram `{ρ_i}`. The interval widths are defined to provide finer discretization around the feature region (`|ε| < W`, where `W` is a tunable parameter) and coarser discretization away from it. This focuses the descriptor on physically relevant energy regions. The widths are given by `Δε_i = n(ε_i, W, N) * Δε_min`, where `n` is an integer-valued function that increases from 1 to a maximum `N` as `|ε|` increases beyond `W`.

- Histogram Intensity Discretization: Discretize each column