Beyond Thermodynamics: The Critical Role of Convex-Hull Stability in Predicting Synthesizable Materials and Pharmaceuticals

Abstract

This article explores the pivotal role of convex-hull stability analysis in predicting the synthesizability of new materials and pharmaceutical polymorphs. We cover foundational principles, from defining the energy above hull as a key metric to its interpretation for thermodynamic stability. The piece delves into advanced computational methodologies, including machine learning and active learning, that are revolutionizing stability prediction. It also addresses critical challenges like vibrational instability and the overprediction problem, offering troubleshooting strategies and optimization techniques. Finally, we provide a framework for validating predictions, comparing different model performances, and translating computational results into successful experimental synthesis, with specific implications for drug development and biomedical research.

The Bedrock of Stability: Understanding Convex-Hull Fundamentals and Thermodynamic Principles

Defining the Convex Hull and Energy Above Hull in Phase Diagrams

In the pursuit of novel materials and compounds, a fundamental challenge for researchers is predicting whether a target phase is thermodynamically stable—and therefore likely synthesizable—or metastable. The convex hull, derived from thermodynamic potentials, provides the definitive mathematical framework for answering this question. Within materials science and drug development, the convex hull of a phase diagram identifies the set of stable phases at specific compositions, while the "energy above hull" (often denoted Ehull) quantifies the degree of metastability for any phase not on this hull [1] [2]. This guide details the theory, computation, and application of these concepts, framing them within the emerging research paradigm that uses quantitative stability metrics to rationally guide synthesis efforts.

Core Theoretical Concepts

The Convex Hull: A Geometric Foundation for Thermodynamic Stability

The convex hull of a set of points is the smallest convex set that contains all points [3]. In thermodynamics, these "points" are the Gibbs free energies of various phases across a composition space. The convex hull is the lower envelope of these energies, defining the minimum possible energy for any given composition [1] [4].

Formation Energy Precursor: The construction of a compositional phase diagram begins with calculating the formation energy ($\Delta E_f$) for every known compound in a chemical system. For a phase composed of $N$ components, this is given by $\Delta E_f = E - \sum_{i=1}^{N} n_i \mu_i$, where $E$ is the total energy of the phase, $n_i$ is the amount of component $i$ in the phase, and $\mu_i$ is the reference energy of pure component $i$ in matching units (e.g., energy per atom of the elemental reference when $n_i$ counts atoms) [1]. This energy is typically normalized per atom.
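As a minimal sketch of the formation-energy expression above, the function below subtracts the composition-weighted reference energies from a total energy and normalizes the result per atom. The function name, the element labels "A" and "B", and every numeric value are hypothetical placeholders rather than DFT results; the sketch assumes the composition counts atoms per formula unit and the references are given per atom.

```python
def formation_energy_per_atom(total_energy, composition, reference_energies):
    """Return the formation energy per atom of one phase.

    total_energy: total energy E of the formula unit (eV).
    composition: mapping element -> number of atoms n_i in the formula unit.
    reference_energies: mapping element -> reference energy mu_i (eV/atom).
    """
    n_atoms = sum(composition.values())
    reference_sum = sum(n * reference_energies[el] for el, n in composition.items())
    return (total_energy - reference_sum) / n_atoms


# Hypothetical inputs for illustration only (not computed values).
mu = {"A": -2.0, "B": -4.0}
delta_e_f = formation_energy_per_atom(-11.5, {"A": 2, "B": 1}, mu)
print(f"{delta_e_f:.4f} eV/atom")
```

A negative result means the compound is lower in energy than the unmixed references; whether it is stable still depends on the competing compounds, which is what the hull construction decides.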

The Hull Construction: The convex hull is taken over the set of points in (energy, composition) space [1]. Graphically, for a binary system, one can imagine stretching a rubber band below all the (composition, energy) data points; the shape formed by the rubber band is the convex hull [4]. Phases whose energies lie directly on this hull are considered thermodynamically stable at 0 K, meaning they have no driving force to decompose into other phases [1] [2].

Energy Above Hull: Quantifying Metastability

The energy above hull (Ehull) is a critical metric defined as the vertical energy distance from a phase's formation energy to the convex hull at its specific composition [5]. It represents the decomposition energy—the energy released (per atom) when a metastable phase decomposes into a combination of the stable phases on the hull [1] [5].

A phase with Ehull = 0 meV/atom is stable, while a positive Ehull indicates a metastable phase. The magnitude of Ehull reflects the energy penalty associated with metastability; a higher value generally implies a greater driving force for decomposition and, thus, potentially greater synthetic challenge [6]. However, phases with positive Ehull can often be synthesized, as kinetics and other factors play a significant role [6].

Table: Interpretation of Energy Above Hull Values

| Ehull (meV/atom) | Thermodynamic Stability | Synthesizability Implication |
|---|---|---|
| 0 | Stable | Synthesizable under equilibrium conditions |
| 0 < Ehull ≤ ~25 | Metastable | Often synthesizable (kinetic stabilization) |
| ~25 < Ehull ≤ ~100 | Metastable | Challenging to synthesize |
| > ~100 | Highly Unstable | Unlikely to be synthesizable via conventional means |
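The bins in the table can be written as a small lookup for use in a screening script. This is only a sketch: it treats the approximate boundaries (~25 and ~100 meV/atom) as sharp cut-offs, which the table itself does not claim, and the function name and return labels are illustrative.

```python
def classify_e_hull(e_hull_mev, tol=1e-9):
    """Map an energy above hull (meV/atom) to the qualitative bins of the table."""
    if e_hull_mev < -tol:
        raise ValueError("energy above hull cannot be negative")
    if e_hull_mev <= tol:
        return "stable"
    if e_hull_mev <= 25:
        return "metastable, often synthesizable"
    if e_hull_mev <= 100:
        return "metastable, challenging to synthesize"
    return "highly unstable"


labels = [classify_e_hull(value) for value in (0.0, 12.0, 32.0, 250.0)]
print(labels)
```

Because the thresholds are rules of thumb, a borderline value is better read as a ranking signal than as a verdict.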

Computational Methodology and Workflows

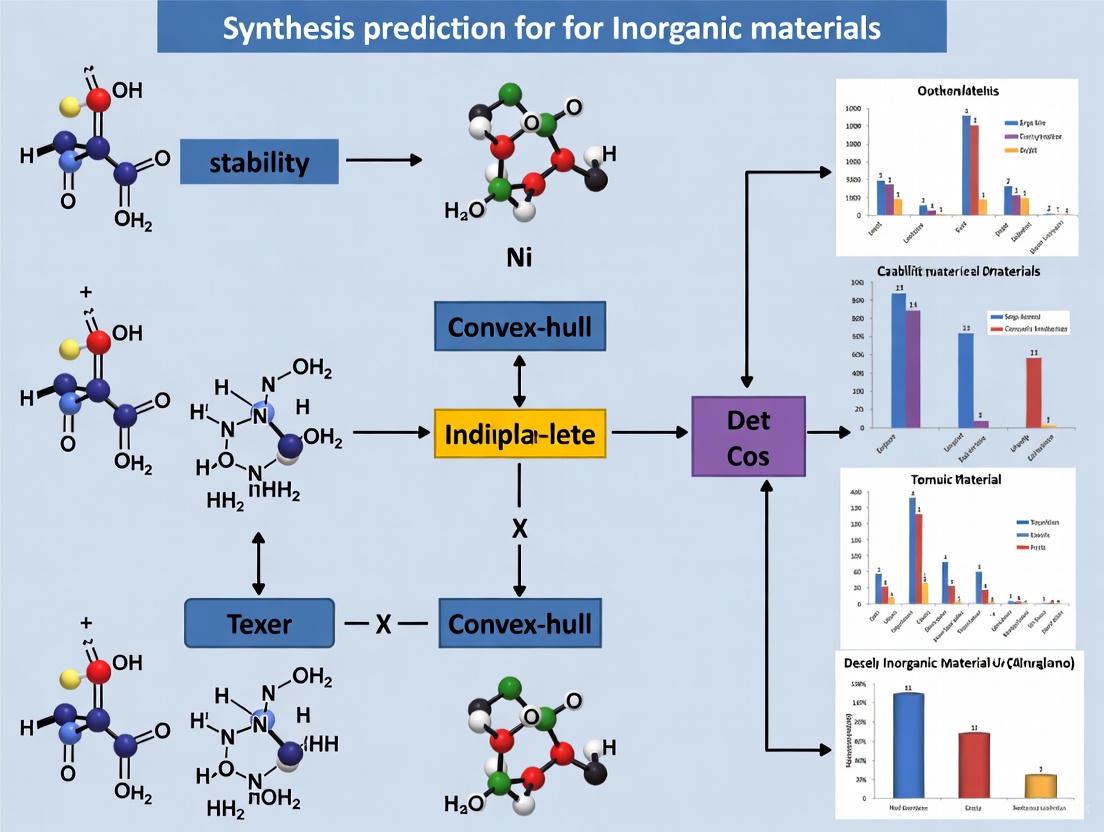

The following diagram illustrates the logical workflow for constructing a phase diagram and determining phase stability using the convex hull method.

Standard Convex Hull Construction

The foundational methodology involves density functional theory (DFT) calculations to compute the formation energies of all known compounds in a chemical system. The convex hull is then constructed from these energies, typically at 0 K and 0 atm, to determine stable phases and decomposition energies [1]. The pymatgen code snippet below demonstrates this standard approach:

Code: Standard phase diagram construction using pymatgen and the Materials Project API [1].
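The sketch below is a dependency-free illustration of the same construction for a binary system; it is not the pymatgen and Materials Project listing that the caption refers to. It builds the lower hull with a monotone-chain scan (the rubber-band picture described earlier) and reads off the energy above hull by linear interpolation. The phase labels and formation energies are hypothetical.

```python
def _cross(o, a, b):
    """2D cross product of the vectors o->a and o->b."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points):
    """Lower convex hull of (composition, formation energy) points."""
    lowest = {}
    for x, energy in points:
        # Only the lowest-energy phase at each composition can touch the hull.
        if x not in lowest or energy < lowest[x]:
            lowest[x] = energy
    hull = []
    for p in sorted(lowest.items()):
        # Drop the previous vertex if it lies on or above the chord hull[-2] -> p.
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def hull_energy_at(hull, x):
    """Hull energy at composition x by linear interpolation between vertices."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            return e0 + (x - x0) / (x1 - x0) * (e1 - e0)
    raise ValueError("composition lies outside the range spanned by the hull")


# Hypothetical binary A-B system: name -> (fraction of B, formation energy in eV/atom).
phases = {
    "A": (0.0, 0.0),
    "A3B": (0.25, -0.30),
    "AB": (0.5, -0.40),
    "AB3": (0.75, -0.15),
    "B": (1.0, 0.0),
}
hull = lower_hull(phases.values())
e_hull = {name: e - hull_energy_at(hull, x) for name, (x, e) in phases.items()}
stable = [name for name, value in e_hull.items() if abs(value) < 1e-12]
print(stable, round(e_hull["AB3"] * 1000), "meV/atom")
```

In this toy system AB3 sits above the tie-line between AB and B, so its energy above hull is the vertical distance to that line. Systems with more than two components need a general hull routine, which is what dedicated libraries provide.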

Advanced Protocol: Convex Hull-Aware Active Learning (CAL)

For complex systems where exhaustive energy calculation is prohibitively expensive, a novel Bayesian active learning algorithm can be employed. Convex Hull-Aware Active Learning (CAL) directly minimizes the uncertainty in the convex hull itself, rather than in the entire energy landscape, leading to greater efficiency [7] [8].

Detailed CAL Methodology:

- Probabilistic Modeling: Model the energy surface of each phase using a Gaussian Process (GP), which provides a posterior distribution over possible energy surfaces given initial observations [7] [8].

- Hull Sampling: Sample from the GP posterior to generate an ensemble of possible energy surfaces. Compute the convex hull for each sampled surface using a standard algorithm like QuickHull [7].

- Uncertainty Quantification: This ensemble of hulls represents the epistemic uncertainty about the true convex hull. The probability of a composition being stable is estimated by the fraction of sampled hulls on which it lies [7].

- Optimal Data Selection: The next composition to observe (via expensive DFT calculation) is chosen as the one that provides the maximum expected information gain about the convex hull, dramatically reducing the number of calculations needed to resolve it [7] [8].
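The hull-sampling and uncertainty-quantification steps can be illustrated with a deliberately simplified Monte Carlo sketch. It is not the CAL algorithm: the Gaussian Process posterior is replaced by independent Gaussian draws at a fixed set of compositions, the hull is a plain binary lower hull rather than QuickHull, and the information-gain criterion is replaced by a crude stand-in (picking the composition whose stability probability is closest to 0.5). All means and standard deviations are hypothetical.

```python
import random


def _cross(o, a, b):
    """2D cross product of the vectors o->a and o->b."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points):
    """Lower convex hull of (composition, energy) points with distinct compositions."""
    hull = []
    for p in sorted(points):
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def stability_probabilities(means, sigmas, n_samples=2000, seed=0):
    """Fraction of sampled energy landscapes in which each composition is on the hull."""
    rng = random.Random(seed)
    counts = {x: 0 for x in means}
    for _ in range(n_samples):
        # One draw per composition stands in for one sample of the energy surface.
        sample = [(x, rng.gauss(means[x], sigmas[x])) for x in means]
        for x, _energy in lower_hull(sample):
            counts[x] += 1
    return {x: counts[x] / n_samples for x in means}


# Hypothetical beliefs: composition -> mean energy and uncertainty (eV/atom).
means = {0.0: 0.0, 0.25: -0.30, 0.5: -0.40, 0.75: -0.15, 1.0: 0.0}
sigmas = {0.0: 0.0, 0.25: 0.03, 0.5: 0.03, 0.75: 0.03, 1.0: 0.0}

p_stable = stability_probabilities(means, sigmas)
# Crude acquisition stand-in: query where hull membership is least certain.
next_query = min(p_stable, key=lambda x: abs(p_stable[x] - 0.5))
print(p_stable, next_query)
```

The point of the sketch is the bookkeeping: uncertainty is tracked on hull membership rather than on the energies themselves, so compositions that are clearly on or clearly off the hull attract no further calculations.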

Table: Essential Research Reagents & Computational Tools

| Item / Software | Function in Stability Research |
|---|---|
| VASP / Quantum ESPRESSO | First-Principles DFT code for calculating accurate formation energies. |
| pymatgen | Python library for phase diagram construction, analysis, and materials data. |
| Materials Project API | Source of pre-computed formation energies for a vast array of compounds. |
| Gaussian Process Regression | Core statistical model for Bayesian uncertainty quantification in CAL. |
| QuickHull Algorithm | Standard computational geometry algorithm for efficient convex hull calculation. |

Advanced Analysis and Research Applications

Determining Decomposition Products and Pathways

A phase not on the convex hull will decompose into the set of stable phases that define the hull at its composition. The decomposition reaction is found by determining the linear combination of stable phases that minimizes the energy at the target composition. For example, the oxynitride BaTaNO₂ is calculated to be 32 meV/atom above the hull, with decomposition products: ⅔ Ba₄Ta₂O₉ + ⁷⁄₄₅ Ba(TaN₂)₂ + ⁸⁄₄₅ Ta₃N₅ [5]. The energy above hull is calculated using the normalized (eV/atom) energies of these phases with their respective coefficients [5].
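The quoted coefficients can be checked with exact arithmetic. The sketch below reads them as the fraction of atoms contributed by each product phase (an interpretation, supported by the fact that they sum to 1) and confirms that the mixture reproduces the BaTaNO₂ composition. The helper names are illustrative, and no phase energies are assumed.

```python
from fractions import Fraction as F

target = {"Ba": 1, "Ta": 1, "N": 1, "O": 2}  # BaTaNO2
products = [
    (F(2, 3), {"Ba": 4, "Ta": 2, "O": 9}),   # Ba4Ta2O9
    (F(7, 45), {"Ba": 1, "Ta": 2, "N": 4}),  # Ba(TaN2)2
    (F(8, 45), {"Ta": 3, "N": 5}),           # Ta3N5
]


def atomic_fractions(formula):
    """Convert atom counts into exact atomic fractions."""
    total = sum(formula.values())
    return {el: F(n, total) for el, n in formula.items()}


def mix(weighted_phases):
    """Atomic fractions of a mixture of phases weighted by atom fraction."""
    mixed = {}
    for coeff, formula in weighted_phases:
        for el, frac in atomic_fractions(formula).items():
            mixed[el] = mixed.get(el, 0) + coeff * frac
    return mixed


def energy_above_hull(e_phase, coeffs, product_energies):
    """E_hull = E_phase - sum_j c_j * E_j, with all energies in eV/atom."""
    return e_phase - sum(c * e for c, e in zip(coeffs, product_energies))


coeff_sum = sum(coeff for coeff, _ in products)
balanced = mix(products) == atomic_fractions(target)
print(coeff_sum, balanced)
```

With per-atom energies for the target and the three products, `energy_above_hull` evaluates the weighted difference that the text describes.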

Beyond 0 K: The Entropy Challenge and Finite-Temperature Estimates

A critical limitation of standard convex hull analysis is its basis in internal energy at 0 K. Temperature-dependent entropic effects (vibrational, configurational, electronic) can significantly alter phase stability [6]. While Materials Project data is primarily at 0 K, its Phase Diagram app includes a finite-temperature estimation feature that uses machine-learned descriptors for the Gibbs free energy, providing a crucial, though approximate, view of temperature-dependent stability [6].

The convex hull and energy above hull provide an unambiguous thermodynamic foundation for predicting phase stability. The Ehull value serves as a powerful, quantitative descriptor for high-throughput screening of material databases, flagging promising candidate materials for synthesis [6]. Research now focuses on integrating these thermodynamic metrics with kinetic factors to build more comprehensive synthesizability models [6].

Future research directions include the wider adoption of Convex Hull-Aware Active Learning (CAL) and other Bayesian methods for efficient exploration of complex chemical spaces, particularly for high-entropy materials, liquids, and correlated systems [7] [8]. These approaches promise to deliver not only a predicted hull but also a quantitative measure of uncertainty, enabling end-to-end uncertainty quantification in the emerging paradigm of computational materials design and accelerating the discovery of novel, synthesizable materials.

The discovery and synthesis of new inorganic materials represent a central pursuit in solid-state chemistry, capable of driving significant scientific and technological advancements. While high-throughput computational methods now generate millions of candidate crystal structures, determining which are experimentally accessible remains a critical bottleneck. This whitepaper examines the central role of convex hull stability in synthesis prediction research, contrasting its thermodynamic completeness with its kinetic limitations. We demonstrate that while thermodynamic stability assessed through convex hull construction provides an essential first-principles filter for material viability, it often fails to account for the experimental reality of kinetic trapping, in which metastable phases with favorable formation pathways can be synthesized despite thermodynamic instability. Through analysis of current synthesizability prediction models, experimental validation studies, and emerging network-based approaches, we provide a framework for integrating both thermodynamic and kinetic considerations into a unified synthesizability assessment pipeline, ultimately bridging the gap between computational prediction and experimental realization.

The combinatorics of materials discovery present an immense challenge. Considering just combinations of four elements from approximately 80 technologically relevant elements yields roughly 1.6 million quaternary chemical spaces to explore, before even considering stoichiometric variations or crystal structure possibilities [9]. While computational materials discovery methods have reached maturity, generating vast databases of predicted candidate structures through active learning and density functional theory (DFT) calculations, the number of proposed inorganic crystals now exceeds experimentally synthesized compounds by more than an order of magnitude [10].
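The quaternary count can be verified directly. The snippet assumes exactly 80 elements, whereas the text says "approximately 80", so the result is an order-of-magnitude check rather than a precise figure.

```python
import math

# Number of distinct four-element chemical systems drawn from 80 elements.
n_quaternary = math.comb(80, 4)
print(n_quaternary)  # 1581580, i.e. roughly 1.6 million
```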

The central challenge in computational materials discovery has shifted from generating candidate structures to predicting synthesizability, that is, determining which computationally predicted materials can actually be fabricated in laboratory settings. The principal limitation of current approaches lies in their fundamental reliance on thermodynamic stability assessed through convex hull construction at zero Kelvin, which inherently overlooks the finite-temperature effects, entropic factors, and kinetic considerations that govern synthetic accessibility in experimental environments [10] [11]. This theoretical-experimental gap manifests clearly in materials databases: for example, the Materials Project lists 21 SiO₂ structures within 0.01 eV of the convex hull, yet cristobalite, the second most common SiO₂ phase, is not among these 21 theoretically "stable" structures [10].

This whitepaper examines the critical intersection between thermodynamic stability and kinetic trapping within materials synthesis prediction research. We explore how convex hull stability provides necessary but insufficient conditions for experimental synthesis, survey emerging computational frameworks that integrate kinetic and synthetic accessibility metrics, and provide methodological guidance for researchers navigating the transition from computational prediction to experimental realization.

The Convex Hull: Thermodynamic Foundation

Fundamental Principles and Construction

In computational materials science, the convex hull represents the multidimensional surface formed by the lowest energy combination of all phases in a chemical space [12]. The construction of this hull begins with the calculation of formation enthalpies (ΔHf) for all known and hypothetical compounds within a specific chemical system using density functional theory (DFT). When these energies are plotted against composition, the convex hull emerges as the lower convex envelope lying beneath all points in the composition space [9].

Compositions lying directly on this hull are considered thermodynamically stable, meaning they possess lower formation energies than any combination of other phases at the same overall composition. The vertical distance between a compound's formation energy and the convex hull defines its energy above hull (ΔHd), which quantifies its thermodynamic instability relative to competing phases [9]. This energy difference, typically on the order of 0.06 ± 0.12 eV/atom (much smaller than the typical formation energy range of -1.42 ± 0.95 eV/atom), represents the subtle energetic quantity that ultimately determines thermodynamic stability [9].

Table 1: Key Energy Metrics in Stability Assessment

| Metric | Definition | Typical Range | Interpretation |
|---|---|---|---|
| Formation Energy (ΔHf) | Energy to form compound from elements | -1.42 ± 0.95 eV/atom | Tendency to form from elements |
| Decomposition Enthalpy (ΔHd) | Distance to convex hull | 0.06 ± 0.12 eV/atom | Thermodynamic stability |
| Energy Above Hull | Positive ΔHd values | 0-0.5 eV/atom (typical) | Degree of instability |

The convex hull construction generates what can be conceptualized as a materials stability network—a scale-free network where stable materials represent nodes connected by tie-lines (edges) representing two-phase equilibria [12]. This network perspective reveals that certain phases act as "hubs" with significantly more connections than others, with O₂, Cu, H₂O, H₂, C, and Ge emerging as the most highly connected species in the current stability network [12].

Algorithmic Implementation and Active Learning

Recent advances have addressed the computational challenge of convex hull determination through novel algorithms like Convex Hull-Aware Active Learning (CAL). This Bayesian algorithm selects experiments to minimize uncertainty in the convex hull construction, prioritizing compositions near the hull while leaving significant uncertainty in compositions quickly determined to be hull-irrelevant [13]. This approach allows the convex hull to be predicted with significantly fewer energy calculations than methods focusing solely on energy prediction [13].

[Diagram: Conceptual relationship between formation energy, the convex hull, and material stability.]

Beyond Thermodynamics: The Kinetic Trapping Phenomenon

Theoretical Framework

Kinetic trapping represents the experimental reality that metastable materials—those with positive energy above hull—can often be synthesized and persist indefinitely under appropriate conditions. This phenomenon occurs when kinetic barriers prevent the system from reaching the thermodynamic ground state, effectively trapping it in a local energy minimum [10] [11].

The relationship between thermodynamic stability and kinetic accessibility manifests through several mechanisms:

- Precursor Selection: The choice of starting materials can create favorable local energy landscapes that direct synthesis toward metastable products through low-energy nucleation pathways [11].

- Reaction Kinetics: Finite-temperature synthesis conditions introduce entropic and kinetic factors that may favor metastable phases with lower activation energies for formation, despite their thermodynamic instability [10].

- Synthetic Technique: Advanced synthesis methods (e.g., thin-film deposition, solution-based techniques) can access metastable phases unreachable through conventional solid-state reactions [11].

[Diagram: Experimental workflow showing how synthesizability prediction integrates both thermodynamic and kinetic considerations.]

Network-Based Synthesizability Prediction

An innovative approach to synthesizability prediction emerges from analyzing the temporal dynamics of the materials stability network. By combining convex hull data with historical discovery timelines extracted from citation records, researchers have shown that the likelihood of a hypothetical material being synthesized correlates with its position within the evolving network [12].

This network-based analysis reveals that the materials stability network has evolved into a scale-free topology with a degree distribution following a power law ($p(k) \sim k^{-\gamma}$, with $\gamma \approx 2.6 \pm 0.1$ after the 1980s), similar to other complex networks such as the World Wide Web or social networks [12]. This network topology indicates the presence of highly connected "hub" materials that disproportionately influence synthesizability, with oxygen-bearing materials historically acting as dominant hubs [12].

Six key network properties have been identified as predictive features for synthesizability [12], including:

- Degree centrality: Number of tie-lines connected to a material

- Eigenvector centrality: Influence of a material in the network

- Mean shortest path length: Average distance to all other materials

- Mean degree of neighbors: Connectivity of neighboring materials

- Clustering coefficient: Interconnectedness of a material's neighbors
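Several of these features can be computed with a few lines of graph code. The sketch below uses a toy undirected graph whose nodes stand for stable phases and whose edges stand for tie-lines; the node labels and edges are hypothetical, eigenvector centrality is left out for brevity, and the normalizations (for example, degree centrality as degree divided by n − 1) are common conventions assumed here rather than taken from [12].

```python
from collections import deque
from itertools import combinations


def build_graph(edges):
    """Adjacency sets for an undirected graph given as (u, v) pairs."""
    graph = {}
    for u, v in edges:
        graph.setdefault(u, set()).add(v)
        graph.setdefault(v, set()).add(u)
    return graph


def degree_centrality(graph, node):
    """Degree normalized by the maximum possible degree, n - 1."""
    return len(graph[node]) / (len(graph) - 1)


def mean_neighbor_degree(graph, node):
    """Average degree of the node's direct neighbors."""
    neighbors = graph[node]
    return sum(len(graph[n]) for n in neighbors) / len(neighbors)


def clustering_coefficient(graph, node):
    """Fraction of neighbor pairs that are themselves connected."""
    neighbors = graph[node]
    if len(neighbors) < 2:
        return 0.0
    linked = sum(1 for u, v in combinations(neighbors, 2) if v in graph[u])
    return linked / (len(neighbors) * (len(neighbors) - 1) / 2)


def mean_shortest_path(graph, source):
    """Mean breadth-first-search distance from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in graph[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    others = [d for node, d in dist.items() if node != source]
    return sum(others) / len(others)


# Hypothetical tie-lines: one hub phase connected to four others, plus one extra edge.
tie_lines = [("hub", "a"), ("hub", "b"), ("hub", "c"), ("hub", "d"), ("a", "b")]
g = build_graph(tie_lines)
features = {
    node: (
        degree_centrality(g, node),
        mean_neighbor_degree(g, node),
        clustering_coefficient(g, node),
        mean_shortest_path(g, node),
    )
    for node in g
}
print(features["hub"], features["c"])
```

Even in this toy graph the hub stands apart: it has the highest degree centrality and the shortest mean path to every other phase, which is the kind of signal the network features are meant to capture.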

Computational Frameworks for Synthesizability Prediction

Integrated Compositional and Structural Models

Current state-of-the-art approaches for synthesizability prediction integrate complementary signals from both composition and crystal structure through multi-modal machine learning frameworks [10]. These models address the fundamental limitation of purely thermodynamic assessments by learning the complex relationship between material characteristics and experimental accessibility.

The integrated model developed in recent research uses two specialized encoders [10]:

- Compositional Encoder: A fine-tuned compositional MTEncoder transformer ($f_c$) that processes stoichiometric information and elemental properties

- Structural Encoder: A graph neural network fine-tuned from the JMP model ($f_s$) that analyzes crystal structure graphs

These encoders transform inputs into latent representations ($z_c = f_c(x_c; \theta_c)$ and $z_s = f_s(x_s; \theta_s)$), which then feed separate multi-layer perceptron heads that output synthesizability scores. The models are trained end-to-end on binary classification tasks using data from the Materials Project, with labels determined by the presence or absence of experimental entries in the Inorganic Crystal Structure Database (ICSD) [10].

Table 2: Machine Learning Approaches for Stability and Synthesizability Prediction

| Model Type | Key Features | Strengths | Limitations |
|---|---|---|---|
| Compositional Models | Elemental stoichiometry and properties [9] | Fast screening of unknown compositions [9] | Poor stability prediction (cannot distinguish polymorphs) [9] |
| Structural Models | Crystal structure graphs [10] [9] | Accurate stability assessment [9] | Requires known crystal structure [9] |
| Integrated Models | Composition + structure [10] | State-of-the-art synthesizability prediction [10] | Computational complexity |
| Network Models | Position in stability network [12] | Captures historical discovery patterns [12] | Limited to explored chemical spaces [12] |

Performance Assessment and Validation

When evaluating machine learning models for materials discovery, it is crucial to distinguish between formation energy prediction and stability prediction. While numerous models approach DFT accuracy for formation energy prediction, they often perform poorly on stability prediction because DFT benefits from systematic error cancellation when comparing energies of chemically similar compounds, while ML models typically do not [9].

This performance gap has significant practical implications: compositional ML models exhibit high rates of false positives, predicting many materials as stable that DFT calculations indicate are unstable [9]. This limitation substantially impedes their utility for efficient materials discovery, particularly in sparse chemical spaces where few stoichiometries have stable compounds [9].
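The false-positive problem is easiest to see when stability is scored as a classification task, with DFT hull membership as the reference label. The sketch below is one way to do that; the label lists are invented for illustration and do not come from any benchmark.

```python
def confusion_counts(dft_stable, ml_stable):
    """Count (tp, fp, tn, fn) with DFT stability as the reference label."""
    tp = fp = tn = fn = 0
    for truth, predicted in zip(dft_stable, ml_stable):
        if predicted and truth:
            tp += 1
        elif predicted and not truth:
            fp += 1
        elif not predicted and not truth:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


# Hypothetical labels for eight candidate compounds in a sparse chemical space.
dft_stable = [True, False, False, False, True, False, False, False]
ml_stable = [True, True, False, True, True, False, True, False]

tp, fp, tn, fn = confusion_counts(dft_stable, ml_stable)
false_positive_rate = fp / (fp + tn)  # unstable compounds wrongly flagged as stable
precision = tp / (tp + fp)            # share of "stable" predictions that hold up
print(false_positive_rate, precision)
```

In a sparse space most candidates are unstable, so even a moderate false-positive rate can leave the list of predicted-stable compounds dominated by misses, which is why precision is the more telling number for discovery campaigns.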

Experimental Validation and Case Studies

Synthesizability-Guided Discovery Pipeline

Recent research has demonstrated an integrated synthesizability-guided pipeline that successfully bridged the computational-experimental gap [10]. This approach screened 4.4 million computational structures through a multi-stage process:

- Initial Screening: 1.3 million structures calculated to be synthesizable through convex hull analysis

- High-Synthesizability Filter: Selection of candidates with >0.95 rank-average synthesizability score

- Compositional Filtering: Removal of platinoid-group elements, non-oxides, and toxic compounds

- Retrosynthetic Planning: Application of precursor-suggestion models (Retro-Rank-In) and temperature prediction models (SyntMTE) trained on literature-mined synthesis recipes

This pipeline yielded approximately 500 high-priority candidates, from which 24 targets were selected for experimental validation [10]. Through high-throughput automated synthesis, 16 samples were successfully characterized, with 7 matching the target structure—including one completely novel compound and one previously unreported structure [10]. The entire experimental process from computational selection to characterization was completed in just three days, demonstrating the practical efficiency of synthesizability-guided approaches [10].

The Critical Role of Synthesis Planning

A significant limitation of convex hull stability emerges in its inability to provide guidance on synthesis parameters—which precursors to use, what reaction temperatures and times are optimal, or which atmospheric conditions are required [11]. This has prompted research into text-mining synthesis recipes from literature to build predictive models for synthesis planning.

However, critical analysis of text-mined synthesis data has revealed substantial challenges in the "4 Vs" of data science [11]:

- Volume: 31,782 solid-state recipes represent limited coverage of chemical space

- Variety: Significant biases in researched material families

- Veracity: Extraction errors and reporting inconsistencies

- Velocity: Historical data lacks information on novel synthesis techniques

Despite these limitations, the most valuable insights from text-mined data have come from anomalous recipes that defy conventional synthetic intuition, leading to new mechanistic hypotheses about how solid-state reactions proceed [11].

Research Reagent Solutions

Table 3: Essential Computational and Experimental Resources

| Resource Category | Specific Tools | Function | Access |
|---|---|---|---|
| Computational Databases | Materials Project [10], GNoME [10], Alexandria [10], OQMD [12] | Source of DFT-calculated structures and energies | Public web platforms |
| Synthesis Databases | Text-mined synthesis recipes [11] | Training data for synthesis prediction models | Research access |
| Stability Analysis | Convex hull construction algorithms [13] [12] | Determine thermodynamic stability | Integrated in databases |
| Synthesizability Models | Compositional & structural ML models [10] | Predict experimental accessibility | Research implementations |
| Synthesis Planning | Retro-Rank-In [10], SyntMTE [10] | Predict precursors and conditions | Research implementations |
| Experimental Validation | High-throughput robotics [10], Automated XRD [10] | Rapid synthesis and characterization | Specialist facilities |

The convex hull remains an essential foundation for materials stability assessment, providing critical thermodynamic constraints on synthesizability. However, the experimental reality of kinetic trapping necessitates moving beyond purely thermodynamic considerations to integrated models that account for synthetic accessibility, precursor selection, and kinetic pathways.

The most promising approaches combine compositional and structural information with historical synthesis data and network-based analysis to create synthesizability scores that better align with experimental outcomes. As these models mature, they increasingly bridge the gap between computational prediction and experimental realization, as demonstrated by recent success in synthesizing novel compounds identified through synthesizability-guided pipelines.

Future progress will require improved synthesis databases that address current limitations in volume, variety, veracity, and velocity, alongside more sophisticated models that explicitly incorporate kinetic barriers and synthetic pathway analysis. Through these advances, the research community can increasingly leverage the power of computational materials discovery while navigating the complex interplay between thermodynamic stability and kinetic trapping that ultimately determines synthetic success.

The Overprediction Problem in Crystal Structure Prediction

Computational materials discovery has generated millions of hypothetical crystal structures through databases like the Materials Project, GNoME, and Alexandria, surpassing the number of experimentally synthesized compounds by more than an order of magnitude [10]. The central challenge in this field is the overprediction problem – the tendency of computational methods to generate far more plausible crystal structures than are actually experimentally accessible. This problem creates significant inefficiencies in materials discovery, as researchers may waste resources attempting to synthesize theoretically favored structures that cannot be practically realized [14].

The prevailing approach to screening candidate materials has relied heavily on density functional theory (DFT) calculations of thermodynamic stability, typically using the convex-hull distance (energy above the convex hull) as the primary filter [10]. While this method constitutes a useful first filter, it typically overlooks finite-temperature effects, namely entropic and kinetic factors, that govern synthetic accessibility [10]. This fundamental limitation has created a pressing need for more accurate synthesizability assessments to efficiently steer scientists toward compounds that are readily accessible in the laboratory [10].

Root Causes of Overprediction

Limitations of Thermodynamic Stability Metrics

The convex-hull stability approach operates effectively at 0 Kelvin but fails to account for critical factors that determine synthesizability at experimental conditions:

- Neglect of kinetic factors: Energy barriers between polymorphs can prevent access to thermodynamically favored structures [14]

- Entropic effects: Finite-temperature free energy landscapes differ significantly from 0 K potential energy surfaces [14]

- Synthetic pathway complexity: The availability of suitable precursors and reaction pathways fundamentally determines synthesizability [15]

The disconnect is evident in real systems: the Materials Project lists 21 SiO₂ structures within 0.01 eV of the convex hull, yet the second most common phase (cristobalite) is not among them [10]. Similarly, numerous structures with favorable formation energies remain unsynthesized, while various metastable structures with less favorable formation energies are successfully synthesized [15].

Finite-Temperature Effects on Energy Landscapes

At the heart of the overprediction problem lies the discrepancy between potential energy surfaces (used in conventional CSP) and free energy surfaces (relevant to experimental crystallization):

- Potential energy surfaces are much rougher than free energy surfaces [14]

- Thermal energy allows minima separated by small energy barriers (on the order of kT) to coalesce into single free energy basins [14]

- Free energy basins typically correspond not to a single potential energy minimum but rather an ensemble of minima [14]

This coalescence effect means that conventional CSP methods that treat each local minimum as a potentially observable polymorph inevitably overpredict the number of accessible structures.

Computational Approaches to Reduce Overprediction

Synthesizability-Guided Frameworks

Recent advances integrate complementary signals from composition and crystal structure to predict synthesizability more accurately:

Table 4: Performance Comparison of Synthesizability Prediction Methods

| Method | Approach | Accuracy/Performance | Key Features |
|---|---|---|---|
| Combined Compositional & Structural Score [10] | Integrated ML model with composition & structure encoders | Successfully synthesized 7 of 16 predicted targets | Rank-average ensemble of composition and structure predictions |
| Crystal Synthesis LLM (CSLLM) [15] | Fine-tuned large language models on crystal structures | 98.6% accuracy on test data | Predicts synthesizability, synthetic methods, and precursors |
| Threshold Clustering [14] | Monte Carlo sampling with energy thresholds | Significant reduction in predicted polymorphs for benzene, acrylic acid, resorcinol | Groups minima into finite-temperature basins |
| Positive-Unlabeled Learning [15] | Semi-supervised ML on known and theoretical structures | 87.9% accuracy for 3D crystals | Identifies non-synthesizable structures from large theoretical databases |

Unified Synthesizability Model Architecture

A state-of-the-art synthesizability model integrates compositional and structural information through a dual-encoder architecture [10]:

- Compositional encoder: Fine-tuned MTEncoder transformer operating on stoichiometry and elemental properties

- Structural encoder: Graph neural network fine-tuned from the JMP model processing crystal structure graphs

- Rank-average ensemble: Combines predictions from both encoders via Borda fusion for enhanced ranking

This approach screened 4.4 million computational structures, identified 1.3 million as synthesizable, and ultimately led to successful experimental synthesis of 7 out of 16 characterized targets within just three days [10].
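A rank-average ensemble can be sketched in a few lines. The version below converts each model's scores to ranks scaled to [0, 1] and averages them; the exact normalization and tie handling used in [10] are not given here, so those details, along with the candidate names and scores, are assumptions made for illustration.

```python
def rank_fractions(scores):
    """Map each candidate to its rank scaled to [0, 1]; the top score gets 1.0.

    Ties are not treated specially: tied candidates receive adjacent ranks.
    """
    order = sorted(scores, key=scores.get)
    n = len(order)
    return {name: i / (n - 1) for i, name in enumerate(order)}


def rank_average(comp_scores, struct_scores):
    """Average the scaled ranks from the composition and structure models."""
    rc = rank_fractions(comp_scores)
    rs = rank_fractions(struct_scores)
    return {name: (rc[name] + rs[name]) / 2 for name in comp_scores}


# Hypothetical raw scores from the two encoders for four candidates.
comp_scores = {"c1": 0.90, "c2": 0.20, "c3": 0.60, "c4": 0.40}
struct_scores = {"c1": 0.95, "c2": 0.10, "c3": 0.90, "c4": 0.30}

fused = rank_average(comp_scores, struct_scores)
shortlist = [name for name, score in fused.items() if score > 0.95]
print(fused, shortlist)
```

Averaging ranks rather than raw scores makes the fusion insensitive to how each model calibrates its outputs, which matters when the two heads are trained separately.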

Large Language Models for Synthesizability Prediction

The Crystal Synthesis Large Language Model (CSLLM) framework demonstrates how domain-adapted LLMs can transform synthesizability prediction [15]:

- Specialized components: Three dedicated LLMs for synthesizability prediction, synthetic method classification, and precursor identification

- Material string representation: Efficient text representation integrating essential crystal information (space group, lattice parameters, atomic coordinates)

- Balanced dataset: 70,120 synthesizable structures from ICSD + 80,000 non-synthesizable structures identified via PU learning

The CSLLM framework significantly outperforms traditional stability-based screening, achieving 98.6% accuracy compared to 74.1% for energy-above-hull (≥0.1 eV/atom) and 82.2% for phonon stability thresholds [15].

Finite-Temperature Clustering Methods

Threshold Clustering Workflow

The threshold algorithm addresses overprediction by accounting for the coalescence of potential energy minima at finite temperatures [14]:

Diagram 1: Threshold clustering workflow for reducing CSP overprediction. This method groups minima separated by small energy barriers into finite-temperature basins.

The threshold clustering workflow operates on the original CSP energy surface, eliminating ambiguity regarding the connection between the reduced structure set and the original landscape [14]. This method has demonstrated significant reductions in predicted polymorphs for model systems including benzene, acrylic acid, and resorcinol.
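The grouping step can be sketched as a union-find merge over pairs of minima. This is a simplified illustration rather than the published workflow [14]: it assumes the barriers between pairs of minima have already been estimated (for example by threshold Monte Carlo runs), and the energies, barriers, and cutoff below are hypothetical values.

```python
def cluster_minima(energies, barriers, threshold):
    """Group minima into basins by merging pairs whose barrier <= threshold.

    energies  : lattice energy of each minimum (kJ/mol)
    barriers  : (i, j, barrier) tuples in kJ/mol, assumed to be precomputed
    threshold : barriers at or below this value are treated as crossable
    Returns a dict mapping the lowest-energy minimum of each basin to its members.
    """
    parent = list(range(len(energies)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    for i, j, barrier in barriers:
        if barrier <= threshold:
            root_i, root_j = find(i), find(j)
            if root_i != root_j:
                parent[root_j] = root_i

    groups = {}
    for i in range(len(energies)):
        groups.setdefault(find(i), []).append(i)

    basins = {}
    for members in groups.values():
        representative = min(members, key=lambda m: energies[m])
        basins[representative] = sorted(members)
    return basins


# Hypothetical landscape: five minima and four estimated barriers.
energies = [-52.1, -51.8, -50.4, -49.9, -49.7]
barriers = [(0, 1, 1.5), (1, 2, 9.0), (2, 3, 2.0), (3, 4, 12.0)]
basins = cluster_minima(energies, barriers, threshold=2.5)
```

With a 2.5 kJ/mol cutoff, five minima collapse into three basins, each represented by its lowest-energy member.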

Molecular Dynamics for Free Energy Clustering

Alternative approaches use molecular dynamics and enhanced sampling simulations to group CSP structures into free energy clusters [14]. While physically rigorous, these methods have not been widely adopted due to their complexity in both simulation and analysis, and limitations in using common MD force fields rather than the more accurate energy models typically required for CSP [14].

Topological and Physical Descriptor Approaches

For molecular crystals, the CrystalMath approach demonstrates how mathematical principles can predict stable structures without reliance on interatomic interaction models [16]:

- Principal axis alignment: Molecules orient such that principal inertial axes align with specific crystallographic directions

- Ring plane vector alignment: Normal vectors to chemically rigid subgraphs align with crystallographic planes

- Geometric order parameters: Heavy atoms occupy positions corresponding to minima of geometric order parameters

This topological approach, combined with filtering based on van der Waals free volume and intermolecular close contact distributions from the Cambridge Structural Database, enables prediction of stable structures entirely mathematically without force field dependencies [16].

Experimental Protocols and Validation

High-Throughput Experimental Validation

Rigorous validation of synthesizability predictions requires high-throughput experimental workflows:

Table 2: Experimental Synthesis and Characterization Methods

| Method | Application | Implementation Details | Output Metrics |

|---|---|---|---|

| Solid-State Synthesis [10] | Inorganic crystal synthesis | Precursor grinding and calcination in muffle furnace | Phase purity, crystallinity |

| X-ray Diffraction [10] | Phase identification | Automated XRD characterization | Structure matching, phase identification |

| Retrosynthetic Planning [10] | Synthesis pathway prediction | Retro-Rank-In precursor suggestion + SyntMTE temperature prediction | Viable precursor pairs, calcination temperatures |

Retrosynthetic Planning Protocol

The synthesis planning workflow combines two specialized models [10]:

- Precursor suggestion: Retro-Rank-In model produces ranked lists of viable solid-state precursors

- Temperature prediction: SyntMTE predicts calcination temperatures required to form target phases

- Reaction balancing: Automatic balancing of chemical reactions and computation of precursor quantities

Both models are trained on literature-mined corpora of solid-state synthesis recipes, ensuring practical relevance [10].
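The bookkeeping behind the reaction-balancing step can be sketched as follows. The sketch checks a given set of coefficients rather than solving for them, and the BaCO3 + TiO2 reaction is an illustrative choice, not an example from the cited pipeline [10]. The helper names are hypothetical.

```python
ATOMIC_MASS = {"Ba": 137.327, "Ti": 47.867, "C": 12.011, "O": 15.999}  # g/mol


def molar_mass(formula):
    """Molar mass (g/mol) of a formula given as {element: count}."""
    return sum(ATOMIC_MASS[el] * n for el, n in formula.items())


def element_totals(side):
    """Total atoms of each element on one side of a reaction."""
    totals = {}
    for formula, coeff in side:
        for el, n in formula.items():
            totals[el] = totals.get(el, 0) + coeff * n
    return totals


def precursor_masses(target, target_coeff, precursors, target_mass_g):
    """Mass (g) of each precursor needed to make target_mass_g of the target."""
    moles_target = target_mass_g / molar_mass(target)
    return {
        name: coeff / target_coeff * moles_target * molar_mass(formula)
        for name, formula, coeff in precursors
    }


BaCO3 = {"Ba": 1, "C": 1, "O": 3}
TiO2 = {"Ti": 1, "O": 2}
BaTiO3 = {"Ba": 1, "Ti": 1, "O": 3}
CO2 = {"C": 1, "O": 2}

# BaCO3 + TiO2 -> BaTiO3 + CO2
reactants = [(BaCO3, 1), (TiO2, 1)]
products = [(BaTiO3, 1), (CO2, 1)]
is_balanced = element_totals(reactants) == element_totals(products)

masses = precursor_masses(
    BaTiO3, 1, [("BaCO3", BaCO3, 1), ("TiO2", TiO2, 1)], target_mass_g=10.0
)
```

The precursor masses sum to more than the target mass because CO2 is released during calcination.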

Target Selection and Batch Processing

To optimize experimental efficiency [10]:

- Web-assisted novelty assessment: LLM-based searching to identify previously synthesized compounds

- Expert validation: Removal of targets with unrealistic oxidation states or well-explored compositions

- Recipe-similarity batching: Automatic selection of targets that can be synthesized simultaneously in furnace batches

This approach enabled efficient experimental validation of 24 targets across two batches, with 8 samples lost to crucible bonding issues and 16 successfully characterized [10].

Large-Scale Method Validation

Comprehensive validation across diverse molecular systems is essential for assessing CSP method performance:

- Multi-tier benchmark sets: 66 molecules with 137 experimentally known polymorphs spanning rigid molecules to flexible drug-like compounds [17]

- Clustering analysis: Removal of trivial duplicates through RMSD-based clustering (e.g., RMSD₁₅ < 1.2 Å) [17]

- Free energy calculations: Temperature-dependent stability assessment through free energy calculations [17]

This rigorous validation framework demonstrated that proper clustering can significantly improve ranking of experimentally observed polymorphs, addressing one aspect of the overprediction problem [17].
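The duplicate-removal step can be sketched as a greedy pass over structures sorted by energy. The sketch assumes the pairwise RMSD values have already been computed by a packing-similarity tool. The matrix and energies below are hypothetical, and the greedy scheme is one simple choice rather than the procedure used in [17].

```python
def deduplicate(energies, rmsd, cutoff=1.2):
    """Greedy duplicate removal that keeps the lowest-energy member of each cluster.

    energies : energy of each structure
    rmsd     : symmetric matrix (list of lists) of pairwise RMSD values in Å
    cutoff   : a structure closer than this to a kept representative is a duplicate
    Returns the indices of the retained representatives, lowest energy first.
    """
    kept = []
    for i in sorted(range(len(energies)), key=lambda k: energies[k]):
        if all(rmsd[i][rep] >= cutoff for rep in kept):
            kept.append(i)
    return kept


# Hypothetical landscape with two near-duplicate pairs.
energies = [-101.2, -101.1, -99.5, -99.4]
rmsd = [
    [0.0, 0.3, 2.8, 3.1],
    [0.3, 0.0, 2.9, 3.0],
    [2.8, 2.9, 0.0, 0.9],
    [3.1, 3.0, 0.9, 0.0],
]
unique = deduplicate(energies, rmsd)
```

Processing structures in energy order means each cluster is represented by its most stable member, so the energy ranking of the reduced set is preserved.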

Research Reagent Solutions: Computational Tools for CSP

Table 3: Essential Computational Tools for Crystal Structure Prediction

| Tool/Resource | Type | Function | Application Context |

|---|---|---|---|

| Matbench Discovery [18] | Evaluation framework | Benchmarks ML energy models as DFT pre-filters | Prospective materials discovery |

| UBEM Approach [19] | Graph neural network | Predicts volume-relaxed energies from unrelaxed structures | High-throughput stability screening |

| GLEE Program [14] | CSP software | Generates initial crystal structure landscapes | Polymorph sampling |

| CSLLM Framework [15] | Fine-tuned LLMs | Predicts synthesizability, methods, and precursors | Synthesis-aware candidate screening |

| Threshold Algorithm [14] | Monte Carlo method | Estimates energy barriers between minima | Finite-temperature basin identification |

The overprediction problem in crystal structure prediction represents a fundamental challenge in computational materials science. While convex-hull stability remains a valuable initial filter, overcoming overprediction requires moving beyond thermodynamic stability to incorporate kinetic accessibility, synthetic pathway feasibility, and finite-temperature effects.

The most promising approaches share a common theme: integrating multiple complementary signals – compositional, structural, and synthetic – to provide a more realistic assessment of synthesizability. As these methods continue to mature, they promise to significantly accelerate the discovery of novel functional materials by focusing experimental resources on genuinely accessible candidates.

The integration of synthesizability prediction directly into materials discovery pipelines, as demonstrated by the successful experimental validation of computationally predicted targets, marks a critical step toward bridging the gap between theoretical prediction and practical synthesis in materials science.

The prediction of synthesizable materials has long been dominated by the analysis of thermodynamic stability, primarily through the computation of the energy above the convex hull. While this approach identifies structurally plausible compounds, it frequently fails to predict which materials can be experimentally realized, as it overlooks critical kinetic and synthetic factors. This whitepaper examines how porosity, solvent inclusion, and other stabilization mechanisms control synthetic accessibility, moving beyond a purely thermodynamic framework. We present recent advances in computational models, including synthesizability-guided pipelines and large language models, that achieve unprecedented accuracy in predicting experimental outcomes by integrating these features. Furthermore, we provide detailed protocols and a curated toolkit to empower researchers in deploying these strategies for accelerated materials and drug development.

In computational materials discovery, density functional theory (DFT) methods have been extensively used to evaluate candidate structures, with thermodynamic stability judgments typically based on a material's energy above the convex hull [10]. This approach, while accurate for identifying ground-state structures at zero Kelvin, often favors low-energy configurations that are not experimentally accessible, creating a significant gap between prediction and synthesis [10] [15].

The principal shortcoming of convex-hull analysis lies in its neglect of finite-temperature effects, entropic contributions, and kinetic factors that govern synthetic accessibility in real laboratory conditions [10]. For instance, the Materials Project lists 21 SiO₂ structures within 0.01 eV of the convex hull, yet the common cristobalite phase is not among them [10]. This demonstrates that thermodynamic stability, while necessary, is insufficient for predicting synthesizability.

Key Stabilization Mechanisms Beyond Thermodynamics

Porosity and Framework Stabilization

Porous materials can achieve stabilization through specific structural mechanisms that defy simple thermodynamic predictions. In crystalline systems, framework stability often depends on the balanced interplay of different bonding types and pore stabilization mechanisms.

- Carbon-Induced Porosity: In hydrogen storage materials like MgH₂, doping with Ni/C nano-catalysts creates a porous structure that grows during cycling and maintains high capacity. This carbon-induced porosity keeps the share of the rapid hydrogen absorption process stable across cycles, directly enhancing cycling performance [20].

- Non-Metal Organic Frameworks: Porous ammonium halide salts demonstrate how ionic clustering can direct crystallization into predictable porous frameworks. The charged nodes in these salt frameworks create tight ionic clusters that enable the formation of isoreticular series of porous structures, analogous to metal-organic frameworks but without directional coordinate covalent bonding [21].

- Cohesive Granular Systems: In powder systems, porous aggregates can be stabilized through a balance between cohesion strength and external pressure. The final porosity depends on the scaled cohesion force and the lateral distance between branches of ballistic deposits, with Coulomb friction alone capable of stabilizing pores, particularly for non-spherical particles [22].

Solvent Inclusion and Templating Effects

Solvent interactions play a crucial role in stabilizing metastable phases and in directing synthesis pathways through both thermodynamic and kinetic effects.

- Solvent-Assisted Bio-oil Stabilization: The stabilization of bio-oil organic phases demonstrates how solvent polarity and hydrogen-donating capability significantly impact stabilization efficiency. Highly polar solvents like methanol and ethanol yield higher oil fractions (64% and 62% respectively) by effectively reducing polymerization and coking during mild hydrotreatment processes [23].

- Solvent Templating in Porous Salts: For porous organic salts, solvent inclusion during crystallization can template specific porous frameworks. Computational crystal structure prediction (CSP) reveals that certain ammonium halide salts form stable, predictable porous structures with channel sizes and geometries that can be designed a priori, enabled by solvent-directed crystallization [21].

Table 1: Quantitative Comparison of Stabilization Mechanisms

| Mechanism | Material System | Key Parameters | Performance Impact | Reference |

|---|---|---|---|---|

| Carbon-Induced Porosity | MgH₂ Ni/C for H₂ storage | Porous structure growth during cycling | Maintains 98.8% capacity after 50 cycles vs. 85.2% for undoped MgH₂ | [20] |

| Solvent Polarity Stabilization | Bio-oil organic phase | Solvent polarity, hydrogen-donating capability | Methanol: 74.8% efficiency; Ethyl ether: 63.6% efficiency | [23] |

| Ionic Cluster Direction | Porous ammonium halide salts | Ionic charge density, linker rigidity | Iodine capture exceeding most MOFs | [21] |

| Cohesive Pore Stabilization | Cohesive granular powders | Cohesion force, external pressure, particle shape | Stronger effect for nonspherical particles vs. round particles | [22] |

Computational Advances in Synthesizability Prediction

Integrated Synthesizability Models

Recent approaches have moved beyond standalone thermodynamic assessments to integrated models that simultaneously evaluate composition and structure.

Synthesizability Prediction Workflow

A synthesizability-guided pipeline for materials discovery combines compositional and structural signals through a dual-encoder architecture [10]. The model uses a fine-tuned compositional MTEncoder transformer for stoichiometric analysis and a graph neural network (GNN) for crystal structure evaluation, with outputs combined via rank-average ensemble (Borda fusion) to prioritize candidates [10]. This approach identified several hundred highly synthesizable candidates from over 4.4 million computational structures, with experimental validation successfully synthesizing 7 of 16 targets within just three days [10].

Large Language Models for Synthesis Prediction

The Crystal Synthesis Large Language Models (CSLLM) framework represents a breakthrough in synthesizability prediction, achieving 98.6% accuracy by specializing LLMs for three distinct tasks: synthesizability classification, synthetic method recommendation, and precursor identification [15]. This significantly outperforms traditional methods based on energy above hull (74.1% accuracy) or phonon spectrum analysis (82.2% accuracy) [15].

The framework uses a novel text representation called "material string" that efficiently encodes crystal information for LLM processing, enabling the identification of 45,632 synthesizable materials from 105,321 theoretical structures [15].

Machine Learning for Complex Chemical Spaces

For complex material classes like Zintl phases, graph neural networks with upper bound energy minimization (UBEM) have demonstrated remarkable efficiency in discovering stable compounds [19]. This approach screened over 90,000 hypothetical Zintl phases and identified 1,810 new thermodynamically stable phases with 90% precision validated by DFT calculations, more than double the 40% precision of existing models such as M3GNet on the same dataset [19].

Table 2: Computational Methods for Synthesizability Prediction

| Method | Approach | Accuracy/Performance | Advantages | Limitations |

|---|---|---|---|---|

| Integrated Synthesizability Model | Combines composition (transformer) and structure (GNN) encoders | Successfully synthesized 7/16 predicted targets in 3 days | Integrates complementary signals from composition and structure | Requires curated training data from experimental databases |

| CSLLM Framework | Three specialized LLMs for synthesizability, method, and precursors | 98.6% synthesizability accuracy; >90% method classification | Outstanding generalization to complex structures beyond training data | Dependent on comprehensive dataset for fine-tuning |

| UBEM-GNN for Zintl Phases | Scale-invariant GNN predicting volume-relaxed energy from unrelaxed structures | 90% precision in predicting DFT-stable phases; 27 meV/atom MAE | 2× more accurate than M3GNet; avoids full DFT relaxation | Limited to volume relaxation only |

| Bayesian Optimization for ESF Maps | Parallel multi-objective Bayesian optimization for energy-structure-function maps | 100× acceleration; saved >500,000 CPU hours | Dramatically reduces computational cost for porous materials screening | Complex implementation for multi-objective optimization |

Experimental Protocols and Methodologies

Synthesizability-Guided Experimental Pipeline

The experimental validation of computationally predicted materials requires a systematic approach to candidate selection and synthesis.

Prioritization Protocol:

- Initial Screening: Begin with a large pool of computational structures (e.g., 4.4 million) and apply a synthesizability score threshold (e.g., rank-average >0.95) [10].

- Composition Filtering: Remove compounds that contain platinoid group elements, that are not oxides, or that are toxic [10].

- Literature Validation: Use web-searching LLMs and expert judgment to exclude previously synthesized candidates and unrealistic oxidation states [10].

- Synthesis Planning: Apply precursor-suggestion models (e.g., Retro-Rank-In) and temperature prediction models (e.g., SyntMTE) to generate viable synthesis routes [10].
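The first two steps of this protocol can be sketched as a simple filter. The element sets, the "contains oxygen" test for oxides, and the candidate scores below are all illustrative assumptions; the source does not specify the exact exclusion lists [10].

```python
# Illustrative element sets; the source does not list the exact exclusions.
PLATINOID = {"Ru", "Rh", "Pd", "Os", "Ir", "Pt"}
TOXIC = {"As", "Be", "Cd", "Hg", "Pb", "Tl"}


def prioritize(candidates, threshold=0.95):
    """Return candidates passing the score and composition filters, best first."""
    selected = []
    for cand in candidates:
        elements = set(cand["elements"])
        if cand["score"] <= threshold:
            continue  # below the synthesizability cutoff
        if elements & PLATINOID:
            continue  # contains a platinoid group element
        if "O" not in elements:
            continue  # crude stand-in for "non-oxide"
        if elements & TOXIC:
            continue  # contains an element flagged as toxic
        selected.append(cand)
    return sorted(selected, key=lambda c: c["score"], reverse=True)


# Hypothetical rank-average scores attached to familiar formulas.
candidates = [
    {"formula": "BaTiO3", "elements": ["Ba", "Ti", "O"], "score": 0.97},
    {"formula": "SrRuO3", "elements": ["Sr", "Ru", "O"], "score": 0.99},
    {"formula": "NaCl", "elements": ["Na", "Cl"], "score": 0.98},
    {"formula": "PbTiO3", "elements": ["Pb", "Ti", "O"], "score": 0.96},
    {"formula": "CaZrO3", "elements": ["Ca", "Zr", "O"], "score": 0.90},
    {"formula": "LaAlO3", "elements": ["La", "Al", "O"], "score": 0.99},
]
shortlist = [c["formula"] for c in prioritize(candidates)]
```

Literature validation and synthesis planning would then operate on the shortlist only, which keeps the expensive steps restricted to a small candidate set.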

High-Throughput Synthesis:

- Use automated solid-state laboratory systems with benchtop muffle furnaces

- Employ parallel processing with batches of 12 samples simultaneously

- Characterize products automatically by X-ray diffraction (XRD)

- Address crucible bonding issues through appropriate material selection [10]

Solvent-Assisted Stabilization Protocol

For solvent-dependent stabilization systems like bio-oil upgrading:

Feed Preparation:

- Blend 80% by-weight bio-oil organic phase (BOP) with 20% by-weight solvent [23]

- Test solvents with varying polarity: methanol (polar), ethanol (polar-protic), isopropyl alcohol (less polar), ethyl ether (nonpolar) [23]

Stabilization Procedure:

- Load reactor with Ru/C catalyst (5 wt% metal loading, 686.55 m²/g BET surface area) [23]

- Process under mild hydrodeoxygenation conditions (subcritical up to 200°C) [23]

- Analyze products for density, viscosity, total acid number, elemental composition

- Calculate degree of deoxygenation, dehydration, and energy efficiency [23]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents for Stabilization Studies

| Reagent/Material | Function/Application | Example Specifications | Reference |

|---|---|---|---|

| Ru/C Catalyst | Bio-oil stabilization via mild hydrodeoxygenation | 5 wt% metal loading, 686.55 m²/g BET, 3.3 nm pore diameter | [23] |

| Ni/C Nano-catalyst | Hydrogen storage material doping for porosity induction | Creates growing porous structure in MgH₂ during cycling | [20] |

| DEHPA Extractant | Solvent-impregnated resin for metal ion separation | Di-2-ethylhexyl phosphoric acid; requires capacity stabilization | [24] |

| Amberlite XAD-2 | Macroporous support for solvent-impregnated resins | Styrene-divinylbenzene copolymer for extractant immobilization | [24] |

| Tetrahedral Amine Linkers | Building blocks for porous organic salt frameworks | Tetrakis-(4-aminophenyl)methane (TAPM) for ammonium halide salts | [21] |

| Trigonal Triamine Linkers | Rigid components for predictable porous salt formation | TT, TTBT, TAPT for isoreticular frameworks with halide anions | [21] |

The reliance on convex-hull stability as the primary metric for synthesizability prediction represents an oversimplified approach that fails to capture the complex kinetic and synthetic factors governing experimental realization. Porosity mechanisms, solvent inclusion, and framework stabilization effects play decisive roles in determining which computationally predicted materials can be successfully synthesized. The integration of these factors into machine learning models, particularly through synthesizability-guided pipelines and specialized large language models, has demonstrated remarkable improvements in prediction accuracy and experimental success rates. As these computational approaches continue to evolve, incorporating increasingly sophisticated representations of stabilization mechanisms, they promise to significantly accelerate the discovery and development of novel materials for advanced technological applications.

In computational materials discovery, the prediction of a material's synthesizability is a critical bottleneck. While high-throughput simulations can generate millions of candidate structures, most prove inaccessible in the laboratory. Within this challenge, convex-hull stability has emerged as a foundational metric for prioritizing candidates for experimental synthesis [10] [11]. The hull distance, or energy above hull, quantifies a compound's thermodynamic stability relative to competing phases in its chemical space. A material on the convex hull (0 eV/atom hull distance) is thermodynamically stable at 0 K, while a positive value indicates metastability or instability [1] [9].

However, hull distance interpretation is nuanced. Traditional density functional theory (DFT) methods, while accurate at zero Kelvin, often favor low-energy structures that are not experimentally accessible, overlooking finite-temperature effects and kinetic barriers [10]. This limitation has spurred the development of synthesizability scores that integrate hull stability with compositional and structural features [10], as well as advanced active learning approaches that directly minimize uncertainty in the convex hull itself [7] [8]. This technical guide details the interpretation of hull distances across system complexities, framed within the broader thesis that accurate stability assessment is indispensable for—but not the sole determinant of—successful synthesis prediction.

Core Concepts: The Fundamentals of Convex Hull Construction

Thermodynamic Foundation

The construction of a phase diagram begins with the calculation of formation energies. For a phase composed of N components, the formation energy per atom, ΔEƒ, is calculated as:

ΔEƒ = E - ∑ᵢᴺnᵢμᵢ

where E is the total energy of the phase, nᵢ is the number of moles of component i, and μᵢ is the chemical potential (energy) of component i [1]. For solid-state systems at 0 K and 0 atm pressure, the relevant thermodynamic potential simplifies to the internal energy, E [1].
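As a worked illustration of this formula, the sketch below normalizes by the number of atoms in the formula unit to obtain a per-atom value. The compound A2B, its total energy, and the elemental reference energies are hypothetical numbers chosen for easy arithmetic.

```python
def formation_energy_per_atom(total_energy, composition, chemical_potentials):
    """Formation energy per atom: (E - sum_i n_i * mu_i) / N_atoms.

    total_energy        : energy of one formula unit (eV)
    composition         : {element: atoms per formula unit}
    chemical_potentials : {element: reference energy per atom (eV)}
    """
    n_atoms = sum(composition.values())
    reference = sum(n * chemical_potentials[el] for el, n in composition.items())
    return (total_energy - reference) / n_atoms


# Hypothetical values for a compound A2B.
mu = {"A": -3.0, "B": -5.0}
e_f = formation_energy_per_atom(-12.5, {"A": 2, "B": 1}, mu)
```

Here the elemental reference is 2(-3.0) + (-5.0) = -11.0 eV, so the formation energy is (-12.5 + 11.0) / 3 = -0.5 eV/atom.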

The Convex Hull Algorithm

The convex hull is constructed by taking the lower convex envelope of the formation energies of all known compounds in a chemical system. The hull represents the set of stable phase-composition pairs with the lowest possible energy at their respective compositions [1] [7]. In a binary A-B system, the hull is a piecewise-linear curve; in a ternary A-B-C system, it becomes a faceted surface; and in higher-dimensional systems, it is a hyper-surface [1].

Table 1: Key Mathematical Definitions in Hull Analysis

| Term | Symbol | Definition | Interpretation |

|---|---|---|---|

| Formation Energy | ΔEƒ | E - ∑ᵢᴺnᵢμᵢ | Energy to form a compound from its constituent elements. |

| Hull Distance | ΔEd | ΔEƒ,compound - ΔEƒ,hull | Decomposition energy to the most stable phases. |

| Stable Compound | ΔEd ≤ 0 | Lies on the convex hull. | Thermodynamically stable at 0 K. |

| Metastable Compound | ΔEd > 0 | Lies above the convex hull. | May be synthesizable kinetically. |

The following diagram illustrates the convex hull construction process and the determination of the hull distance in a binary system:

Diagram Title: Convex Hull Construction and Hull Distance Calculation
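For a binary system, the construction and the hull distance can be sketched in a few lines of plain Python. The phase names and formation energies are hypothetical, and the monotone-chain routine is a generic lower-envelope algorithm, not the implementation used by any particular materials database.

```python
def lower_hull(points):
    """Lower convex envelope of (x, energy) points via a monotone-chain scan."""
    lowest = {}
    for x, e in points:  # keep only the lowest energy at each composition
        if x not in lowest or e < lowest[x]:
            lowest[x] = e
    hull = []
    for p in sorted(lowest.items()):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:  # last vertex lies on or above the new chord
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(hull, x):
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (x - x1) / (x2 - x1) * (y2 - y1)
    raise ValueError("composition lies outside the hull range")


# Hypothetical A-B system: x is the fraction of B, energies in eV/atom.
phases = {
    "A": (0.0, 0.0),
    "A3B": (0.25, -0.20),
    "AB": (0.5, -0.60),
    "AB3": (0.75, -0.25),
    "B": (1.0, 0.0),
}
hull = lower_hull(phases.values())
hull_distance = {
    name: e - hull_energy(hull, x) for name, (x, e) in phases.items()
}
```

In this toy system only A, AB, and B are hull vertices. A3B and AB3 have negative formation energies yet sit about 0.10 and 0.05 eV/atom above the hull, which shows why a negative formation energy alone does not imply stability.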

Computational Methodologies: From DFT to Machine Learning

Density Functional Theory Workflows

DFT provides the foundational energy calculations for hull construction. The Materials Project methodology involves:

- Energy Calculations: Performing DFT calculations with appropriate exchange-correlation functionals (GGA/GGA+U/R2SCAN) for all known compounds in a chemical system [1].

- Mixing Schemes: Applying energy corrections to ensure consistency across different calculation methods, particularly when mixing GGA/GGA+U and R2SCAN results [1].

- Hull Construction: Using the pymatgen code to build the phase diagram and calculate hull distances [1].

Table 2: Computational Methods for Hull Analysis

| Method | Key Principle | Application in Hull Analysis | Key Researchers |

|---|---|---|---|

| Standard DFT | First-principles energy calculation. | Provides formation energies for hull construction. | Materials Project [1] |

| Convex Hull-Aware Active Learning (CAL) | Bayesian optimization targeting hull uncertainty. | Minimizes experiments needed to resolve the hull; provides uncertainty quantification. | Novick et al. [7] [8] |

| Machine-Learned Formation Energies | Statistical models trained on DFT data. | Rapid screening; limited by poor stability prediction accuracy. | Multiple models [9] |

Advanced Approaches: Convex Hull-Aware Active Learning

The Convex Hull-Aware Active Learning (CAL) algorithm represents a significant advancement for efficiently mapping phase diagrams. CAL uses Gaussian process regressions to model energy surfaces and produces a posterior distribution over possible convex hulls [7] [8]. The algorithm's policy selects the next composition to observe based on expected information gain for the hull itself, not just the energy surface. This allows the convex hull to be predicted with significantly fewer observations than brute-force approaches [8].
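The idea of choosing observations by their effect on the hull, rather than on the energy surface, can be illustrated with a much-simplified stand-in. The sketch below is not the CAL algorithm of [7] [8]: it replaces the Gaussian process with independent Gaussian beliefs per composition, and it replaces expected information gain with the entropy of each composition's estimated probability of being a hull vertex. All names and numbers are hypothetical.

```python
import math
import random


def lower_hull(points):
    """Lower convex envelope of (x, energy) points with distinct x values."""
    hull = []
    for p in sorted(points):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            if (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1) <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def on_hull_probability(beliefs, n_samples=2000, seed=0):
    """Monte Carlo estimate of P(composition is a hull vertex).

    beliefs maps composition x -> (mean energy, standard deviation).
    """
    rng = random.Random(seed)
    counts = {x: 0 for x in beliefs}
    for _ in range(n_samples):
        sample = [(x, rng.gauss(mu, sigma)) for x, (mu, sigma) in beliefs.items()]
        for x, _energy in lower_hull(sample):
            counts[x] += 1
    return {x: c / n_samples for x, c in counts.items()}


def binary_entropy(p):
    """Entropy in bits of a yes/no outcome with probability p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def next_composition(beliefs, n_samples=2000, seed=0):
    """Pick the composition whose hull membership is most uncertain."""
    probs = on_hull_probability(beliefs, n_samples=n_samples, seed=seed)
    return max(probs, key=lambda x: binary_entropy(probs[x])), probs


# Hypothetical beliefs: end members known exactly, x = 0.5 measured precisely.
beliefs = {
    0.0: (0.0, 0.0),
    0.25: (-0.30, 0.05),  # sits right on the chord, so membership is uncertain
    0.5: (-0.60, 0.01),
    0.75: (-0.10, 0.05),  # far above the chord, so almost surely off the hull
    1.0: (0.0, 0.0),
}
chosen, probs = next_composition(beliefs)
```

Both x = 0.25 and x = 0.75 have the same energy uncertainty, but only x = 0.25 is selected, because only its energy could change which phases lie on the hull. A policy that targeted uncertainty in the energy surface alone would not distinguish between them.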

Diagram Title: Convex Hull-Aware Active Learning Workflow

Experimental Protocols: From Computation to Synthesis

Synthesizability-Guided Pipeline

A recently developed synthesizability-guided pipeline demonstrates the integration of hull analysis with experimental synthesis. The methodology involves:

- Candidate Screening: Screening 4.4 million computational structures from databases (Materials Project, GNoME, Alexandria) using a combined compositional and structural synthesizability score [10].

- Stability Filtering: Applying a rank-average ensemble of composition and structure-based models to prioritize highly synthesizable candidates, followed by filtering out platinoid elements, non-oxides, and toxic compounds [10].

- Synthesis Planning: Using Retro-Rank-In for precursor suggestion and SyntMTE for calcination temperature prediction, both trained on literature-mined solid-state synthesis corpora [10].

- High-Throughput Validation: Executing syntheses in an automated solid-state laboratory with characterization via X-ray diffraction (XRD) [10].

This pipeline successfully synthesized 7 of 16 target materials, with the entire experimental process completed in just three days, highlighting the practical utility of synthesizability assessment beyond hull stability alone [10].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Computational Tools for Hull Analysis

| Reagent/Tool | Function/Role | Application Context |

|---|---|---|

| Thermo Scientific Thermolyne Muffle Furnace | High-temperature calcination of solid-state precursors. | Experimental synthesis of predicted materials [10]. |

| X-ray Diffractometer (XRD) | Phase identification and structure verification of synthesis products. | Experimental validation of synthesized materials [10]. |

| pymatgen (Python) | Open-source library for phase diagram construction and materials analysis. | Computational hull construction and analysis [1]. |

| Gaussian Process Regression | Bayesian modeling of energy surfaces with uncertainty quantification. | Core component of CAL algorithm for probabilistic hulls [7] [8]. |

| Retro-Rank-In & SyntMTE | Machine learning models for precursor and synthesis condition prediction. | Synthesis planning for computationally identified candidates [10]. |

Advanced Concepts: Stability Prediction Challenges and Synthesizability

The Critical Distinction: Formation Energy vs. Stability

A crucial limitation of machine-learned formation energies has been identified: while models can predict formation energy with reasonable accuracy, they perform poorly at predicting compound stability [9]. This occurs because:

- Different Energy Scales: Formation energies (ΔEƒ) typically span several eV/atom, while hull distances (ΔEd) are 1-2 orders of magnitude smaller (mean ± deviation = 0.06 ± 0.12 eV/atom) [9].

- System-Dependent Nature: Hull distance is a relative measure within a chemical system, not an intrinsic property, making it difficult to learn from compositional features alone [9].

- Lack of Error Cancellation: DFT benefits from systematic error cancellation when comparing energies of chemically similar compounds, while ML models do not necessarily preserve these relative rankings [9].

Beyond Thermodynamic Stability: The Synthesizability Score

The recognition that hull stability alone is insufficient for synthesis prediction has led to the development of unified synthesizability models. These integrate:

- Compositional Signals: Elemental chemistry, precursor availability, redox and volatility constraints.

- Structural Signals: Local coordination, motif stability, and packing environments [10].

Such models demonstrate that accurately predicting which compounds can be fabricated requires moving beyond the 0 K thermodynamic stability provided by the hull distance to include additional chemical and structural insights [10].

The interpretation of hull distances forms a necessary but insufficient component of synthesizability prediction. While the convex hull provides a fundamental thermodynamic filter at 0 K, successful experimental synthesis depends on additional factors including finite-temperature effects, kinetic barriers, and precursor accessibility. The emerging paradigm integrates hull stability within broader synthesizability frameworks that leverage both compositional and structural descriptors, along with literature-mined synthesis knowledge for pathway planning [10]. Furthermore, advanced computational approaches like convex hull-aware active learning are increasing the efficiency of stability mapping itself [7] [8]. The integration of these methodologies—combining accurate hull analysis with synthesizability prediction and experimental validation—represents the most promising path toward overcoming the predictive synthesis bottleneck in computational materials discovery.

Computational Advances: Machine Learning and Active Learning for Hull Construction

The discovery of new functional materials is a central goal of solid-state chemistry and materials science, capable of driving significant scientific and technological advancements. For decades, density functional theory (DFT) has served as the computational cornerstone for predicting material stability, predominantly through the calculation of formation enthalpies and convex-hull analysis [25]. This approach determines a compound's thermodynamic stability relative to competing phases in a chemical space, with structures on or near the convex hull considered potentially stable [19]. However, traditional DFT-driven stability assessment faces profound challenges that limit its predictive power for experimental synthesis. The method suffers from intrinsic energy resolution errors in exchange-correlation functionals, often rendering it unreliable for quantitatively predicting formation enthalpies and phase diagrams, particularly for complex ternary systems [25]. Furthermore, DFT calculations typically consider zero-temperature thermodynamics, overlooking finite-temperature effects, entropic contributions, and kinetic factors that govern synthetic accessibility in laboratory settings [10].

The fundamental disconnect between thermodynamic stability predicted by DFT and practical synthesizability has created a critical bottleneck in materials discovery pipelines. While high-throughput DFT calculations have generated millions of putative crystal structures in databases like the Materials Project, GNoME, and Alexandria, the number of proposed inorganic crystals now exceeds experimentally synthesized compounds by more than an order of magnitude [10]. This disparity highlights the pressing need for more sophisticated approaches that can accurately distinguish theoretically stable structures from those truly synthesizable in laboratory conditions. As computational materials design increasingly relies on these vast digital repositories, bridging the gap between DFT-based stability prediction and experimental synthesizability has emerged as one of the most significant challenges in the field.

The DFT Foundation: Limitations and Fundamental Gaps

Density functional theory provides the fundamental framework for understanding phase stability through total energy calculations. The standard approach involves computing the enthalpy of formation (H_f) for a compound relative to its constituent elements in their ground states according to the equation:

\begin{equation} H_f(A_{x_A}B_{x_B}C_{x_C}\cdots) = H(A_{x_A}B_{x_B}C_{x_C}\cdots) - x_A H(A) - x_B H(B) - x_C H(C) - \cdots \end{equation}

where \(H(A_{x_A}B_{x_B}C_{x_C}\cdots)\) represents the enthalpy per atom of the intermetallic compound or alloy, and \(H(A)\), \(H(B)\), and \(H(C)\) are the enthalpies per atom of elements A, B and C in their ground-state structures [25]. Structures with negative formation energies are considered potentially stable, with those lying on the convex hull deemed thermodynamically stable at zero temperature.
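As a minimal sketch of the formation-enthalpy expression above, the following Python function subtracts the concentration-weighted elemental reference enthalpies from the compound enthalpy. The function name and the numerical energies are hypothetical, chosen only for illustration; all quantities are assumed to be per atom, with atomic fractions summing to one.

```python
def formation_enthalpy_per_atom(h_compound, fractions, h_elements):
    """H_f = H(compound) - sum_i x_i * H(element_i), all in eV/atom."""
    total = sum(fractions.values())
    if abs(total - 1.0) > 1e-8:
        raise ValueError("atomic fractions must sum to 1")
    # Concentration-weighted enthalpy of the elemental ground states.
    reference = sum(x * h_elements[el] for el, x in fractions.items())
    return h_compound - reference


# Hypothetical enthalpies (eV/atom) for elements A and B and the compound AB.
h_elements = {"A": -3.0, "B": -5.0}
h_f = formation_enthalpy_per_atom(-4.5, {"A": 0.5, "B": 0.5}, h_elements)
print(h_f)  # negative value: AB is lower in enthalpy than the separated elements
```

A negative result only indicates stability against the elements; stability against other compounds in the same chemical space still requires the convex-hull construction.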

Despite its theoretical foundation, DFT exhibits systematic errors that limit its predictive accuracy for phase stability. The exchange-correlation functionals used in these calculations lack the energy resolution needed for reliable phase diagram prediction, particularly for ternary systems [25]. These errors are often negligible when comparing similar structures, because they largely cancel, but they become critical when assessing the absolute stability of competing phases in complex alloys. As a result, direct predictions of phase diagrams using uncorrected DFT frequently fail to match experimental observations, especially in multicomponent systems relevant to advanced technological applications.

The limitation of conventional stability prediction extends beyond technical accuracy to fundamental conceptual gaps. Traditional convex-hull analysis assumes that synthesizability is primarily governed by thermodynamic stability at zero temperature. However, experimental evidence consistently demonstrates that metastable structures (those above the convex hull) are routinely synthesized, while many thermodynamically stable structures prove challenging to realize in practice [15]. This paradox underscores the critical influence of kinetic factors, precursor availability, and synthetic pathway accessibility in determining practical synthesizability—factors largely absent from standard DFT stability assessments.
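To make the notion of a phase "above the convex hull" concrete, the sketch below builds the lower convex hull for a binary A-B system and reports the energy above hull of each phase. It is an illustration under stated assumptions, not a production implementation: the function names, the phase labels, and all formation enthalpies (eV/atom) are hypothetical, and the composition axis is taken to be the atomic fraction of B.

```python
def lower_hull(points):
    """Return the lower convex hull of (x, H_f) points, sorted by composition x."""
    lowest = {}
    for x, h in points:  # keep only the lowest-enthalpy phase at each composition
        if x not in lowest or h < lowest[x]:
            lowest[x] = h
    hull = []
    for p in sorted(lowest.items()):
        # Drop the last vertex while it lies on or above the chord hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def hull_energy(x, hull):
    """Linearly interpolate the hull enthalpy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the hull range")


# Hypothetical phases: (atomic fraction of B, formation enthalpy in eV/atom).
phases = {"A": (0.0, 0.0), "B": (1.0, 0.0), "AB": (0.5, -0.5), "A3B": (0.25, -0.2)}
hull = lower_hull(phases.values())
e_above_hull = {name: h - hull_energy(x, hull) for name, (x, h) in phases.items()}
print(e_above_hull)
```

In this toy system A3B has a negative formation enthalpy yet sits about 0.05 eV/atom above the tie-line between A and AB, so it is metastable with respect to decomposition into those two phases. Whether such a phase can be made in practice is exactly the question that hull distance alone does not answer.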

Table 1: Key Limitations of DFT in Stability Prediction

| Limitation Category | Specific Challenge | Impact on Prediction Accuracy |
|---|---|---|
| Functional Accuracy | Intrinsic energy resolution errors in exchange-correlation functionals | Systematic errors in formation enthalpies, especially for ternary systems |
| Thermodynamic Scope | Focus on zero-temperature, equilibrium conditions | Overlooks finite-temperature effects, entropic contributions, and kinetic factors |
| Synthesizability Gap | Inability to account for experimental accessibility | Fails to distinguish theoretically stable versus practically synthesizable compounds |
| Computational Cost | High computational expense for complex systems | Limits exploration of complex compositions and structural diversity |

Machine Learning Solutions for Enhanced Stability Prediction

Machine learning approaches have emerged as powerful tools to address the limitations of DFT-based stability prediction, operating through two primary paradigms: correcting DFT calculations and direct stability prediction. These methods leverage patterns in existing materials data to enhance predictive accuracy while reducing computational cost.

Correcting DFT Errors with Machine Learning

One innovative approach involves using machine learning to systematically correct errors in DFT-calculated formation enthalpies. Researchers have developed neural network models that predict the discrepancy between DFT-calculated and experimentally measured enthalpies for binary and ternary alloys and compounds [25]. These models utilize a structured feature set comprising elemental concentrations, atomic numbers, and interaction terms to capture key chemical and structural effects. Implementation typically involves a multi-layer perceptron regressor with multiple hidden layers, optimized through leave-one-out cross-validation and k-fold cross-validation to prevent overfitting [25]. This hybrid DFT-ML approach maintains the physical foundation of DFT while significantly improving its quantitative accuracy for formation energy prediction.
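The feature set described above can be sketched in a few lines of Python. This is only an assumed layout, not the construction used in [25]: the function names, the choice of concentration-weighted atomic numbers, and the use of pairwise concentration products as interaction terms are all illustrative assumptions. A small leave-one-out split generator is included to show how each sample is held out once during validation.

```python
from itertools import combinations


def alloy_features(composition, atomic_numbers):
    """Build [concentrations, x_i * Z_i, pairwise x_i * x_j] for one alloy."""
    elements = sorted(composition)  # fixed element order for reproducible features
    conc = [composition[el] for el in elements]
    weighted_z = [composition[el] * atomic_numbers[el] for el in elements]
    interactions = [composition[a] * composition[b]
                    for a, b in combinations(elements, 2)]
    return conc + weighted_z + interactions


def leave_one_out_splits(n_samples):
    """Yield (training indices, held-out index) for each sample in turn."""
    for i in range(n_samples):
        yield [j for j in range(n_samples) if j != i], i


# Atomic numbers of Al, Ni and Ti; the composition itself is hypothetical.
Z = {"Al": 13, "Ni": 28, "Ti": 22}
features = alloy_features({"Al": 0.5, "Ni": 0.25, "Ti": 0.25}, Z)
splits = list(leave_one_out_splits(3))
print(features)
print(splits)
```

A regressor trained on such vectors would learn the difference between DFT and experimental enthalpies, and the predicted difference would then be applied to new DFT values; the sign convention of that correction depends on how the discrepancy is defined.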

Direct Stability Prediction with Graph Neural Networks

Beyond correcting DFT, graph neural networks (GNNs) can predict thermodynamic stability directly from crystal structures. The Upper Bound Energy Minimization (UBEM) approach is one such implementation, using a scale-invariant GNN model to predict volume-relaxed energies from unrelaxed crystal structures [19]. This method identified 1,810 new thermodynamically stable Zintl phases from a search space of over 90,000 hypothetical structures, with 90% precision when validated against DFT calculations [19]. By comparison, the machine-learned interatomic potential (MLIP) M3GNet achieved only 40% precision on the same dataset.

Advanced Synthesizability Prediction with Large Language Models

Recent work has adapted large language models (LLMs) for synthesizability prediction, framing crystal structures as text sequences using specialized representations like "material strings" [15]. The Crystal Synthesis Large Language Models (CSLLM) framework employs three specialized LLMs to predict synthesizability, synthetic methods, and suitable precursors, respectively [15]. The Synthesizability LLM is reported to reach 98.6% accuracy, well above traditional thermodynamic screening based on energy above hull (74.1% accuracy) and kinetic screening based on phonon spectrum analysis (82.2% accuracy) [15].

Table 2: Machine Learning Approaches for Stability and Synthesizability Prediction

| Method Category | Key Innovation | Reported Performance | Applications |
|---|---|---|---|
| DFT Error Correction | Neural networks predicting discrepancy between DFT and experimental enthalpies | Improved accuracy in formation enthalpy prediction for ternary systems | Al-Ni-Pd and Al-Ni-Ti systems for high-temperature applications |
| Graph Neural Networks | Upper Bound Energy Minimization (UBEM) using unrelaxed structures | 90% precision in identifying stable Zintl phases; 27 meV/atom MAE | Discovery of novel Zintl phases from >90,000 candidates |
| Large Language Models | CSLLM framework using text representations of crystal structures | 98.6% accuracy in synthesizability prediction | Screening of 105,321 theoretical structures, identifying 45,632 as synthesizable |
| Similarity-Based Methods | Generalized Convex Hull with adapted SOAP kernel | Improved lattice energy prediction with Gaussian process regression | Molecular crystal structure prediction and stabilizable structure identification |

Integrated Workflows: From Prediction to Synthesis

The most advanced computational pipelines integrate stability prediction with synthesis planning, creating end-to-end frameworks for materials discovery. These workflows typically begin with large-scale candidate generation, proceed through successive filtering stages, and culminate in synthesis pathway prediction.

Synthesizability-Guided Discovery Pipeline

A comprehensive synthesizability-guided pipeline for materials discovery demonstrates this integrated approach [10]. The process initiates with a pool of 4.4 million computational structures, which undergo successive filtering using a combined compositional and structural synthesizability score. This score integrates complementary signals from composition and crystal structure through two encoders: a fine-tuned compositional MTEncoder transformer for stoichiometric information and a graph neural network fine-tuned from the JMP model for structural information [10]. The model is trained on data from the Materials Project, with labels assigned according to whether experimental entries exist in the ICSD for given structures.

The screening process employs a rank-average ensemble method that aggregates predictions from both composition and structure models:

\begin{equation}
\mathrm{RankAvg}(i)=\frac{1}{2N}\sum_{m\in\{c,s\}}\left(1+\sum_{j=1}^{N}\mathbf{1}\!\big[s_{m}(j)<s_{m}(i)\big]\right)
\end{equation}

where \(s_{m}(i)\) represents the synthesizability probability predicted by model \(m\) (composition \(c\) or structure \(s\)) for candidate \(i\), and \(N\) is the number of candidates [10]. This approach prioritizes candidates based on their relative synthesizability ranking rather than applying absolute probability thresholds.
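The rank-average ensemble can be sketched as follows, assuming that the rank of candidate i under a given model is one plus the number of candidates with a strictly lower score from that model, and that the ranks are averaged over the models and normalized by the number of candidates. The function name and the scores are hypothetical.

```python
def rank_average(scores_by_model):
    """Average the normalized per-model ranks of each candidate.

    scores_by_model maps a model name to a list of scores, one per candidate.
    Rank = 1 + number of candidates with a strictly lower score (assumption).
    """
    score_lists = list(scores_by_model.values())
    n = len(score_lists[0])
    if any(len(s) != n for s in score_lists):
        raise ValueError("every model must score the same candidates")
    averaged = []
    for i in range(n):
        rank_sum = sum(1 + sum(1 for j in range(n) if s[j] < s[i])
                       for s in score_lists)
        averaged.append(rank_sum / (len(score_lists) * n))
    return averaged


# Hypothetical synthesizability probabilities for three candidates.
scores = {
    "composition": [0.9, 0.2, 0.6],
    "structure": [0.8, 0.1, 0.7],
}
rank_avg = rank_average(scores)
order = sorted(range(len(rank_avg)), key=lambda i: rank_avg[i], reverse=True)
print(rank_avg, order)
```

Because only the ordering of scores within each model matters, the two models do not need to be calibrated against each other, which is the practical advantage of ranking over thresholding raw probabilities.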

Following synthesizability screening, successful pipelines incorporate retrosynthetic planning to generate feasible synthesis routes. This involves applying precursor-suggestion models like Retro-Rank-In to produce ranked lists of viable solid-state precursors, followed by synthesis condition prediction using models like SyntMTE to determine appropriate calcination temperatures [10]. These models are trained on literature-mined corpora of solid-state synthesis, encoding collective experimental knowledge from published literature.

Experimental Validation and Performance

The ultimate test of these integrated workflows lies in experimental validation. In one implemented pipeline, researchers selected 24 targets across two batches of 12 based on recipe similarity, enabling parallel synthesis in a high-throughput laboratory setting [10]. The samples were weighed, ground, and calcined in a Thermo Scientific Thermolyne Benchtop Muffle Furnace, with subsequent characterization by X-ray diffraction. Of the 16 successfully characterized samples, seven matched the target structure, including one completely novel and one previously unreported structure [10]. This successful experimental validation, completed in just three days, demonstrates the practical utility of synthesizability-guided discovery pipelines in accelerating materials development.

Implementing effective stability and synthesizability prediction requires specialized computational tools and resources. The following table summarizes key components of the modern computational materials scientist's toolkit.

Table 3: Essential Resources for Stability and Synthesizability Prediction

| Tool Category | Specific Tools/Models | Function | Application Context |
|---|---|---|---|
| Materials Databases | Materials Project, OQMD, AFLOW, JARVIS, ICSD | Provide training data and reference structures for stability assessment | Source of known stable and theoretical compounds for model training |
| ML Models for Stability | GNNs with UBEM approach, M3GNet, MatFormer | Predict thermodynamic stability from composition or structure | High-throughput screening of hypothetical compounds |
| Synthesizability Models | CSLLM Framework, SynthNN, Composition/Structure Integrative Models | Predict experimental accessibility beyond thermodynamic stability | Prioritizing candidates for experimental synthesis |
| Synthesis Planning | Retro-Rank-In, SyntMTE, Precursor LLMs | Suggest precursors and synthesis conditions | Transitioning from predicted structures to viable synthesis recipes |
| Descriptors & Kernels | SOAP, Material String, Voronoi Tessellations | Represent crystal structures for ML processing | Featurization for various machine learning algorithms |
| Validation Tools | Phonon spectrum calculation, XRD simulation, Elastic constant computation | Confirm dynamic and mechanical stability | Final verification before experimental synthesis |

The integration of machine learning with traditional quantum mechanical calculations represents a paradigm shift in stability prediction for materials discovery. By addressing fundamental limitations of DFT through data-driven approaches, these methods provide more accurate assessment of thermodynamic stability while simultaneously incorporating practical synthesizability considerations. The most successful frameworks combine compositional and structural information through ensemble methods, leverage retrosynthetic planning for pathway prediction, and validate predictions through high-throughput experimental synthesis.

As these methodologies continue to mature, several emerging trends promise to further enhance their capabilities. The development of more sophisticated text representations for crystal structures will improve the performance of LLM-based approaches, while larger and more diverse training datasets will enhance model generalizability across chemical spaces. Additionally, increased integration of kinetic and thermodynamic factors in synthesizability assessment will better capture the complexities of real-world synthesis.

These computational advances are progressively bridging the gap between theoretical prediction and experimental realization in materials science. By providing more reliable assessment of which computationally predicted materials can be successfully synthesized in the laboratory, integrated stability-synthesizability pipelines are accelerating the discovery of novel functional materials and transforming the approach to materials design across scientific and industrial domains.