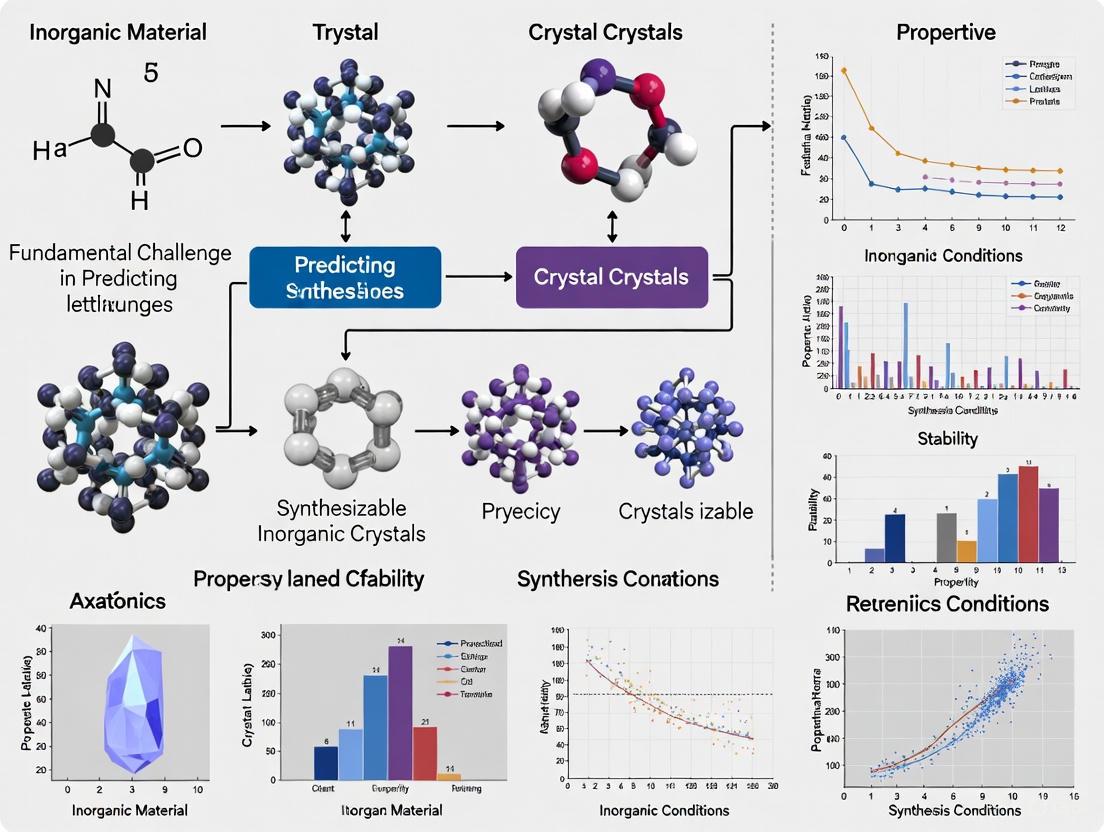

Beyond Thermodynamics: Tackling the Fundamental Challenges in Predicting Synthesizable Inorganic Crystals


Abstract

The acceleration of computational materials design has starkly contrasted with the slow, empirical nature of experimental synthesis, creating a critical bottleneck in materials discovery. This article explores the fundamental challenges in predicting the synthesizability of inorganic crystals, moving beyond traditional proxies like thermodynamic stability. We examine the limitations of conventional methods and the rise of advanced machine learning solutions, including deep learning models and large language models, which learn synthesizability rules directly from experimental data. The scope covers foundational concepts, methodological innovations for practical application, strategies to overcome data and model limitations, and rigorous validation of these new approaches. For researchers and development professionals, this synthesis provides a crucial guide to navigating the transition from virtual candidate to synthetically accessible material, a transformation with profound implications for the development of new functional materials.

Why Synthesizability is a Grand Challenge in Materials Informatics

Advancements in computational chemistry and materials science, particularly in crystal structure prediction (CSP), have revolutionized the virtual design of new materials with targeted properties [1]. High-throughput computational screening and AI-powered generative models can now propose millions of hypothetical candidate materials. However, a critical bottleneck persists: the vast majority of these computationally designed materials, despite being thermodynamically stable, are not synthesizable [1] [2]. This creates a fundamental gap between theoretical predictions and experimental realization, severely limiting the impact and throughput of materials discovery pipelines.

The core challenge lies in the complex nature of synthesizability itself. Unlike thermodynamic stability, which can be reasonably estimated from first principles, synthesizability encompasses kinetic factors, precursor selection, reaction pathways, and specific experimental conditions—most of which cannot be fully predicted based on thermodynamic or kinetic constraints alone [1] [3]. This complexity is compounded by the fact that experimental synthesis reports (positive data) are documented in scientific literature, while failed synthesis attempts (negative data) are rarely reported, creating a fundamental data limitation for machine learning models [3] [2]. This article examines the fundamental challenges in predicting synthesizable inorganic crystals and explores the integrated computational and experimental strategies being developed to overcome the synthesis bottleneck.

Current Computational Paradigms for Predicting Synthesizability

Thermodynamic Stability and Its Limitations

Traditional computational materials discovery has relied heavily on thermodynamic energy-based stability predictions as a proxy for synthesizability. Density Functional Theory (DFT) calculations are used to determine a material's formation energy and assess whether it is stable against decomposition into competing phases [3]. While necessary for identifying stable materials, this approach has proven insufficient for predicting synthesizability. A significant limitation is that many thermodynamically stable materials remain unsynthesized, while many metastable materials (those not at the global energy minimum) can be successfully synthesized through kinetically controlled pathways [1] [2].

The performance of formation energy calculations as a synthesizability filter is quantitatively limited; they capture only approximately 50% of synthesized inorganic crystalline materials [3]. Similarly, the commonly employed charge-balancing criterion—filtering materials based on net neutral ionic charge—also shows poor performance, with only 37% of known synthesized materials satisfying this constraint [3]. These findings underscore that synthesizability depends on factors beyond simple thermodynamics or charge neutrality.
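As a rough illustration of why a charge-balancing filter rejects many known compounds, the sketch below tests whether a composition can be made neutral by assigning a single oxidation state to each element. The oxidation-state table is a small, hand-picked assumption for this example rather than the list used in [3], and `is_charge_balanced` is a hypothetical helper name.

```python
from itertools import product

# Illustrative, incomplete table of common oxidation states (an assumption
# for this sketch, not the table used in the cited work).
COMMON_OXIDATION_STATES = {
    "Li": [1], "Na": [1], "K": [1], "Cs": [1],
    "Mg": [2], "Ca": [2], "Ba": [2],
    "Al": [3], "Ti": [2, 3, 4], "Fe": [2, 3], "Cu": [1, 2], "Zn": [2],
    "O": [-2], "S": [-2], "Se": [-2], "F": [-1], "Cl": [-1], "N": [-3],
}


def is_charge_balanced(composition):
    """Return True if one oxidation state per element can sum to zero charge.

    `composition` maps element symbols to integer counts, e.g. {"Fe": 2, "O": 3}.
    """
    elements = list(composition)
    try:
        choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    except KeyError as exc:
        raise ValueError(f"no oxidation states listed for {exc.args[0]}") from None

    # Try every combination of one oxidation state per element.
    for states in product(*choices):
        total = sum(q * composition[el] for q, el in zip(states, elements))
        if total == 0:
            return True
    return False


print(is_charge_balanced({"Fe": 2, "O": 3}))  # Fe(III) oxide
print(is_charge_balanced({"Fe": 3, "O": 4}))  # mixed-valence magnetite
print(is_charge_balanced({"Cu": 1, "Zn": 1}))  # intermetallic, no anion
```

Under this one-state-per-element assumption, Fe3O4 (a well-known mixed-valence oxide) and an intermetallic such as CuZn are both rejected, which hints at why such a filter recovers only a fraction of synthesized materials.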

Data-Driven Machine Learning Approaches

Machine learning (ML) models trained on databases of known materials have emerged as powerful tools for synthesizability prediction. These approaches can be broadly categorized by their input data type and methodological framework.

Table 1: Comparison of Machine Learning Approaches for Synthesizability Prediction

| Model Type | Input Data | Key Advantages | Limitations | Representative Models |
|---|---|---|---|---|
| Composition-Based | Chemical formula only | Applicable when structure is unknown; fast screening | Cannot differentiate between polymorphs | SynthNN [3] |
| Structure-Based | Crystal structure | Accounts for structural polymorphs; higher accuracy | Requires predicted structure | PU-CGCNN [2], Synthesizability-driven CSP [1] |
| Positive-Unlabeled (PU) Learning | Composition or structure | Handles lack of negative data; realistic for materials space | Complex training and evaluation | Various implementations [3] [2] |
| LLM-Based | Textual structure descriptions | Human-interpretable; explainable predictions | Dependent on description quality | StructGPT, PU-GPT-embedding [2] |

Composition-based models like SynthNN (Synthesizability Neural Network) operate solely on chemical formulas, making them applicable for screening materials where atomic structure is unknown. These models learn chemical principles directly from data distributions, implicitly capturing relationships like charge-balancing, chemical family tendencies, and ionicity without explicit programming [3]. Remarkably, in benchmark tests, SynthNN achieved 1.5× higher precision in identifying synthesizable materials compared to the best human experts and completed the task five orders of magnitude faster [3].
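To make the notion of a composition-only input concrete, the following sketch turns a chemical formula into a vector of atomic fractions. This is a deliberately simple encoding chosen for illustration; it is not the learned representation used by SynthNN, and `parse_formula` and `composition_vector` are hypothetical helper names. The parser assumes simple formulas without parentheses.

```python
import re


def parse_formula(formula):
    """Parse a simple formula such as 'Fe2O3' into {element: amount}."""
    tokens = re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula)
    # Reject anything the pattern could not fully account for (e.g. parentheses).
    if not tokens or "".join(el + amt for el, amt in tokens) != formula:
        raise ValueError(f"unsupported formula: {formula!r}")
    counts = {}
    for el, amt in tokens:
        counts[el] = counts.get(el, 0.0) + (float(amt) if amt else 1.0)
    return counts


def composition_vector(formula, element_order):
    """Return atomic fractions in a fixed element order."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    return [counts.get(el, 0.0) / total for el in element_order]


print(composition_vector("Fe2O3", ["Fe", "O", "Na"]))
# Diamond and graphite share the formula "C", so they map to the same vector.
print(composition_vector("C", ["C"]))
```

Because the vector depends only on the formula, two polymorphs of one composition are indistinguishable to any model built on it, which is the limitation listed for composition-based models in Table 1.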

Structure-based models leverage the atomic arrangement of crystal structures, enabling them to differentiate between polymorphs of the same composition—a critical capability given the prevalence of polymorphic materials like diamond and graphite [1]. These models utilize various structure representations, including graph-based encodings, Fourier-transformed crystal features, and Wyckoff position encodings [1] [2].

The Positive-Unlabeled (PU) learning framework addresses a fundamental data challenge in synthesizability prediction. Since only synthesized materials (positive examples) are definitively known, while unsynthesized materials constitute an unlabeled set (which may contain both synthesizable and non-synthesizable materials), PU learning provides appropriate mathematical foundations for model training [3] [2].
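One common way to train on positive and unlabeled data is bagging: repeatedly draw a random subset of the unlabeled pool as provisional negatives, fit a classifier, and score only the samples left out of that draw. The sketch below shows the idea with a toy nearest-centroid classifier in pure Python. It is an assumption-laden illustration, not the training procedure of the models cited in [2] or [3]; the function names and the two-dimensional toy features are hypothetical.

```python
import random


def centroid(rows):
    """Component-wise mean of a list of feature vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def pu_bagging_scores(positives, unlabeled, n_bags=50, seed=0):
    """Fraction of out-of-bag rounds in which each unlabeled sample looks positive."""
    rng = random.Random(seed)
    votes = [0] * len(unlabeled)
    counts = [0] * len(unlabeled)
    pos_center = centroid(positives)
    bag_size = min(len(positives), len(unlabeled) - 1)

    for _ in range(n_bags):
        # Treat a random subset of the unlabeled pool as provisional negatives.
        bag = set(rng.sample(range(len(unlabeled)), bag_size))
        neg_center = centroid([unlabeled[i] for i in bag])
        for i, x in enumerate(unlabeled):
            if i in bag:
                continue  # score only samples not used as pseudo-negatives
            counts[i] += 1
            votes[i] += sq_dist(x, pos_center) < sq_dist(x, neg_center)

    return [v / c if c else None for v, c in zip(votes, counts)]


positives = [[1.0, 1.0], [0.9, 1.1], [1.1, 0.9]]
unlabeled = [[1.0, 0.95], [0.0, 0.0], [0.1, -0.1], [-0.1, 0.1], [0.05, 0.0]]
print(pu_bagging_scores(positives, unlabeled))
```

The averaged score plays the role of a synthesizability likelihood for each unlabeled sample. In practice the toy classifier would be replaced by a composition-based or structure-based model.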

Recent advances incorporate Large Language Models (LLMs) fine-tuned on text descriptions of crystal structures generated by tools like Robocrystallographer. These models not only achieve performance comparable to traditional graph-neural networks but also provide human-readable explanations for their predictions, enhancing interpretability [2]. The LLM-based workflow can generate explanations for the factors governing synthesizability and extract underlying physical rules to guide chemists in modifying non-synthesizable hypothetical structures [2].

Performance Benchmarking of Computational Approaches

Table 2: Performance Comparison of Synthesizability Prediction Methods

| Method | True Positive Rate (Recall) | Approximated Precision | Key Strengths | Test Conditions |
|---|---|---|---|---|
| DFT Formation Energy [3] | ~50% | Not specified | Strong theoretical foundation | Captured % of known synthesized materials |
| Charge-Balancing [3] | 37% (all inorganics), 23% (Cs compounds) | Not specified | Chemically intuitive, fast | Percentage of known materials that are charge-balanced |
| SynthNN (Composition-Based) [3] | Not specified | 7× higher than DFT | High throughput; learns chemical principles | Comparison against human experts |
| PU-CGCNN (Structure-Based) [2] | Baseline | Baseline | Established structure-based benchmark | MP30 dataset with α-estimation |
| StructGPT (LLM-Based) [2] | Comparable to PU-CGCNN | Comparable to PU-CGCNN | Explainable predictions | Same as above with GPT-4o-mini |
| PU-GPT-Embedding [2] | Highest among compared methods | Highest among compared methods | Combines LLM representations with PU-classifier | Same as above |

The performance advantages of machine learning approaches are evident in these comparisons. The integration of structural information typically improves prediction quality over composition-only models, while LLM-based approaches offer additional benefits in interpretability and potential cost efficiency [2].

Experimental Validation and Synthesis Planning

Autonomous Synthesis Platforms

The translation of computational predictions to synthesized materials is increasingly being automated through autonomous synthesis platforms. These systems integrate robotics, real-time analytics, and synthesis planning algorithms to execute multi-step synthesis of inorganic materials with minimal human intervention.

The hardware infrastructure for such platforms typically includes:

- Liquid handling robots and robotic grippers for precise transfer of reagents and vessels

- Computer-controlled heater/shaker blocks for reaction execution

- Automated purification systems for product isolation

- Analytical instrumentation (e.g., LC/MS, NMR) for product verification and quantification [4]

A significant engineering challenge involves developing universally applicable purification strategies that can be automated. While specialized approaches like Burke's iterative MIDA-boronate coupling platform use catch-and-release methods for specific reactions, a general purification strategy for diverse inorganic materials remains elusive [4].

Synthesis Route Prediction

Predicting viable synthesis routes represents a complementary approach to synthesizability assessment. Recent work has developed element-wise graph neural networks to predict inorganic synthesis recipes from target compositions [5]. These models outperform popularity-based statistical baselines and demonstrate temporal validity—when trained on data until 2016, they successfully predict synthetic precursors for materials synthesized after 2016 [5].

The probability scores generated by these models correlate with prediction accuracy, serving as useful confidence metrics that enable experimentalists to prioritize synthesis attempts [5]. This capability is particularly valuable for resource-intensive solid-state synthesis experiments.

Integrated Workflows and Case Studies

Synthesizability-Driven Crystal Structure Prediction

A promising framework for bridging the synthesis gap integrates synthesizability evaluation directly into the crystal structure prediction process. This synthesizability-driven CSP approach combines symmetry-guided structure generation with machine learning-based synthesizability assessment [1].

The methodology involves three key stages.

This workflow successfully reproduced 13 experimentally known XSe (X = Sc, Ti, Mn, Fe, Ni, Cu, Zn) structures and identified 92,310 potentially synthesizable structures from the 554,054 candidates initially predicted by the GNoME (Graph Networks for Materials Exploration) project [1]. The approach also predicted three novel HfV₂O₇ phases with low formation energies and high synthesizability, demonstrating its potential for discovering viable synthesis targets [1].

Explainable AI for Synthesis Guidance

A significant advancement in synthesizability prediction is the development of explainable AI approaches that not only predict synthesizability but also provide human-understandable reasons for the predictions. LLM-based workflows can generate both structural descriptions and synthesizability explanations.

This explainable AI framework helps materials scientists understand the structural or chemical features that contribute to synthesizability challenges, enabling more informed design of synthesizable materials [2].

Computational and Data Resources

- Materials Project (MP) Database: Contains computational and experimental data for over 150,000 materials, including crystal structures, formation energies, and band structures. Essential for training ML models and benchmarking predictions [1] [2].

- Inorganic Crystal Structure Database (ICSD): Comprehensive collection of experimentally determined inorganic crystal structures. Serves as the primary source of "positive" examples for synthesizability models [3].

- Robocrystallographer: Open-source toolkit for generating text-based descriptions of crystal structures. Converts CIF files into natural language descriptions for LLM-based prediction approaches [2].

- Open Reaction Database: Emerging source of standardized chemical reaction data, including synthesis procedures and outcomes. Addresses critical data limitations in synthesis planning [4].

Experimental Infrastructure

- Automated Synthesis Platforms: Integrated systems like the Chemputer or Eli Lilly's automated synthesis platform that translate digital synthesis recipes into physical operations. Enable high-throughput experimental validation [4].

- Advanced Characterization Suite: Combination of analytical techniques including LC/MS for separation and identification, NMR for structural elucidation, and charged aerosol detection (CAD) for universal quantitation without standards [4].

- Chemical Inventory Management: Automated storage and retrieval systems for maintaining extensive collections of precursors and building blocks. Critical for enabling diverse synthesis campaigns [4].

The synthesis bottleneck represents a fundamental challenge in materials discovery that intersects computational prediction, experimental synthesis, and data science. While significant progress has been made in developing computational models that surpass human expert performance in identifying synthesizable materials, fully bridging the gap between virtual design and real-world materials requires continued advancement in several key areas.

The integration of explainable AI approaches will be crucial for building trust in predictive models and providing actionable insights for materials design. Furthermore, the development of universal purification strategies and more sophisticated synthesis route prediction algorithms will enhance the feasibility of autonomous materials synthesis. As these technologies mature, the vision of fully autonomous materials discovery pipelines—from computational design to synthesized and characterized materials—comes closer to reality, promising to accelerate the development of next-generation materials for energy, electronics, and beyond.

The convergence of synthesizability-driven CSP, explainable AI, and autonomous synthesis platforms represents a paradigm shift in materials discovery, one that ultimately transforms the synthesis bottleneck from a formidable barrier into a manageable engineering challenge.

The discovery of new inorganic crystalline materials has been revolutionized by computational methods, particularly density functional theory (DFT), which can screen millions of hypothetical compounds for desirable properties. However, a critical bottleneck persists: the significant disparity between computationally predicted materials and those that can be successfully synthesized in the laboratory. For decades, thermodynamic stability, typically assessed through formation energy and energy above the convex hull, has served as the primary proxy for synthesizability. This paradigm operates on the assumption that compounds with favorable formation energies are synthetically accessible, while those with unfavorable energies are not. Yet, this assumption fails to account for the complex kinetic and experimental factors that govern actual synthesis outcomes. This whitepaper examines the fundamental limitations of relying solely on thermodynamic stability for synthesizability prediction and explores the emerging data-driven approaches that are bridging this critical gap.

The inadequacy of traditional metrics is quantitatively evident. While thermodynamic stability methods can identify compounds unlikely to decompose, they incorrectly label many metastable-yet-synthesizable materials as non-synthesizable while also missing numerous energetically favorable compounds that have never been synthesized. This discrepancy arises because synthesis is governed not only by thermodynamic driving forces but also by kinetic pathways, precursor selection, reaction conditions, and experimental feasibility constraints that transcend simple thermodynamic considerations [6]. The development of accurate synthesizability predictors therefore represents a fundamental challenge in the field of computational materials design, one that must account for the complex interplay of multiple physical and chemical factors beyond bulk thermodynamic stability.

The Quantitative Shortcomings of Thermodynamic Stability Metrics

Traditional approaches for identifying promising synthesizable materials typically involve assessing thermodynamic formation energies or energy above convex hull via DFT calculations. The underlying premise is that materials with negative formation energies and zero or small positive energies above the convex hull are thermodynamically stable or metastable and thus potentially synthesizable. However, numerous structures with favorable formation energies have yet to be synthesized, while various metastable structures with less favorable formation energies are successfully synthesized [7]. This fundamental disconnect reveals the limitations of thermodynamic stability as a comprehensive synthesizability metric.
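The energy above the convex hull can be illustrated with a minimal sketch for a binary system A(1-x)B(x). It assumes formation energies per atom are already known and that the elemental end members sit at zero formation energy. The function names and the numerical values are hypothetical, and production workflows would normally rely on an established phase-diagram library rather than this hand-rolled hull.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points sorted by x (monotone chain)."""
    hull = []
    for px, py in points:
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            # Keep the last vertex only if it makes a counter-clockwise turn.
            cross = (x2 - x1) * (py - y1) - (y2 - y1) * (px - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append((px, py))
    return hull


def energies_above_hull(entries):
    """Energy above hull for each (x, formation_energy_per_atom) entry, 0 <= x <= 1."""
    # Elemental references define zero formation energy at x = 0 and x = 1.
    lowest = {0.0: 0.0, 1.0: 0.0}
    for x, e in entries:
        lowest[x] = min(e, lowest.get(x, e))
    hull = lower_hull(sorted(lowest.items()))

    above = []
    for x, e in entries:
        for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
            if x1 <= x <= x2:
                # Linear interpolation of the hull energy at composition x.
                above.append(e - (y1 + (y2 - y1) * (x - x1) / (x2 - x1)))
                break
    return above


# Hypothetical formation energies in eV/atom.
entries = [(0.5, -1.0), (0.25, -0.3), (0.75, -0.6)]
for (x, e), e_hull in zip(entries, energies_above_hull(entries)):
    print(f"x={x:.2f}  E_f={e:+.2f}  E_above_hull={e_hull:.2f}")
```

In this toy system the x = 0.25 phase has a negative formation energy yet lies about 0.2 eV/atom above the hull, so a purely thermodynamic screen would discard it regardless of whether a kinetically controlled route exists.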

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Prediction Method | Key Metric | Accuracy/Performance | Principal Limitation |
|---|---|---|---|
| Thermodynamic Stability (Formation Energy) | Energy above hull ≥0.1 eV/atom | 74.1% accuracy [7] | Misses metastable phases; ignores kinetic factors |
| Kinetic Stability (Phonon Spectrum) | Lowest frequency ≥ -0.1 THz | 82.2% accuracy [7] | Computationally expensive; imaginary frequencies don't preclude synthesis |
| Charge Balancing | Net ionic charge neutrality | 37% of known compounds charge-balanced [3] | Overly simplistic; fails for metallic/covalent systems |
| SynthNN (Composition-based ML) | Precision in discovery | 7× higher precision than formation energy [3] | Lacks structural information |
| CSLLM Framework (Structure-based LLM) | Overall accuracy | 98.6% accuracy [7] | Requires structural input; training data limitations |

The performance gap between thermodynamic metrics and modern machine learning approaches is striking. Large Language Models (LLMs) fine-tuned for synthesizability prediction, such as the Crystal Synthesis LLM (CSLLM) framework, achieve 98.6% accuracy in testing, significantly outperforming traditional thermodynamic screening based on energy above hull (74.1%) and kinetic stability assessment via phonon spectrum analysis (82.2%) [7]. Similarly, the SynthNN model demonstrates 7× higher precision in identifying synthesizable materials compared to DFT-calculated formation energies [3]. These quantitative comparisons underscore the severe limitations of relying solely on thermodynamic stability for synthesizability assessment.

Fundamental Limitations of the Thermodynamic Paradigm

The Metastability Challenge

Thermodynamic stability metrics fundamentally fail to account for metastable materials that can be synthesized through kinetic control. Many experimentally realized compounds are metastable under standard conditions but become accessible through specific synthesis pathways that bypass thermodynamic equilibrium. For instance, in the La-Si-P ternary system, computational insights reveal that thermodynamic stability alone cannot explain the synthetic challenges encountered for predicted ternary phases (La₂SiP, La₅SiP₃, and La₂SiP₃). Molecular dynamics simulations using machine learning interatomic potentials indicate that the rapid formation of a Si-substituted LaP crystalline phase creates a kinetic barrier to synthesizing the predicted ternary compounds, despite their computed thermodynamic stability [6]. This exemplifies how kinetic competition between phases, rather than thermodynamic stability alone, governs synthetic accessibility.

Beyond Bulk Energetics: The Role of Synthesis Environment

Traditional thermodynamic approaches typically consider only the bulk energetics of the starting materials and final products, ignoring the complex reaction pathways and environmental factors that dictate experimental synthesis. The synthesis process is influenced by numerous factors beyond bulk thermodynamics, including precursor selection, reaction kinetics, temperature profiles, pressure conditions, and the presence of catalysts or flux agents. These factors collectively determine whether a thermodynamically favorable compound can actually be synthesized [6]. Phase diagrams offer a more direct correlation with synthesizability as they delineate stable phases under varying temperatures, pressures, and compositions. However, constructing the free energy surface for all possible phases as a function of these variables is computationally impractical for high-throughput screening [7].

Emerging Approaches Beyond Thermodynamic Stability

Machine Learning with Positive-Unlabeled Learning

Machine learning approaches, particularly those employing Positive-Unlabeled (PU) learning frameworks, have emerged as powerful alternatives to thermodynamic stability metrics. These methods treat synthesizability prediction as a classification problem where experimentally reported structures serve as positive examples, while hypothetical structures from computational databases are treated as unlabeled (rather than definitively negative) examples. This paradigm acknowledges that most unsynthesized materials are not inherently unsynthesizable but simply not yet synthesized.

The SynthNN model exemplifies this approach, utilizing a deep learning architecture that learns an optimal representation of chemical formulas directly from the distribution of previously synthesized materials without requiring assumptions about charge balancing or thermodynamic stability [3]. Remarkably, without any prior chemical knowledge, SynthNN learns the chemical principles of charge-balancing, chemical family relationships, and ionicity, utilizing these principles to generate synthesizability predictions [3]. In a head-to-head material discovery comparison against 20 expert material scientists, SynthNN outperformed all experts, achieving 1.5× higher precision and completing the task five orders of magnitude faster than the best human expert [3].

Large Language Models for Crystal Structure Synthesis Prediction

Recent advances have demonstrated the exceptional capability of Large Language Models (LLMs) in predicting synthesizability by learning from text representations of crystal structures. The Crystal Synthesis Large Language Models (CSLLM) framework utilizes three specialized LLMs to predict the synthesizability of arbitrary 3D crystal structures, possible synthetic methods, and suitable precursors, respectively [7]. To enable LLM processing, crystal structures are converted into a text representation termed "material string" that integrates essential crystal information including space group, lattice parameters, and Wyckoff positions in a compact format [7].
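The idea of a compact text encoding can be sketched as follows. The exact layout of the "material string" in [7] is not reproduced here; the separator characters, field order, and the `to_material_string` name are assumptions made purely for illustration.

```python
def to_material_string(space_group, lattice, sites):
    """Serialize a crystal into one line of text.

    space_group: international space group number
    lattice: (a, b, c, alpha, beta, gamma) in angstroms and degrees
    sites: list of (element, wyckoff_label, (x, y, z)) tuples
    """
    cell = ", ".join(f"{value:g}" for value in lattice)
    atoms = "; ".join(
        f"{element}-{wyckoff}[{x:g},{y:g},{z:g}]"
        for element, wyckoff, (x, y, z) in sites
    )
    return f"SG {space_group} | {cell} | {atoms}"


# Rock-salt NaCl: space group 225, Na on 4a and Cl on 4b.
nacl = to_material_string(
    225,
    (5.64, 5.64, 5.64, 90, 90, 90),
    [("Na", "4a", (0.0, 0.0, 0.0)), ("Cl", "4b", (0.5, 0.5, 0.5))],
)
print(nacl)
```

Listing one representative site per Wyckoff position, instead of every atom in the unit cell, is what keeps such a string short enough to serve as LLM input.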

Table 2: Performance of CSLLM Framework Components

| CSLLM Component | Prediction Task | Performance | Key Innovation |
|---|---|---|---|
| Synthesizability LLM | Binary classification (synthesizable/non-synthesizable) | 98.6% accuracy [7] | Outperforms energy (74.1%) and phonon (82.2%) methods |
| Method LLM | Synthetic route classification (solid-state/solution) | 91.0% accuracy [7] | Predicts appropriate synthesis approach |
| Precursor LLM | Suitable precursor identification | 80.2% success rate [7] | Suggests chemical precursors for synthesis |

The exceptional performance of LLM-based approaches stems from their ability to learn complex patterns from comprehensive datasets of known materials. These models leverage not only structural and compositional features but also implicit knowledge about synthetic accessibility learned from the entire corpus of reported inorganic crystals. Furthermore, fine-tuned LLMs provide explainable synthesizability predictions, generating human-readable explanations for the factors governing synthesizability and extracting underlying physical rules [2].

Experimental Protocols and Methodologies

Dataset Construction for Synthesizability Prediction

Robust synthesizability prediction requires carefully curated datasets with balanced positive and negative examples. The following protocol outlines the dataset construction process used in state-of-the-art models:

- Positive Example Selection: Extract experimentally confirmed crystal structures from the Inorganic Crystal Structure Database (ICSD). Apply filtering to include only ordered structures with limited elemental diversity (e.g., ≤7 different elements) and reasonable unit cell size (e.g., ≤40 atoms) to ensure tractability [7].

- Negative Example Generation: Calculate CLscore (a synthesizability metric) for a large pool of theoretical structures from materials databases (Materials Project, Computational Materials Database, Open Quantum Materials Database, JARVIS) using pre-trained Positive-Unlabeled learning models. Select structures with the lowest CLscores (e.g., <0.1) as negative examples of non-synthesizable materials [7].

- Composition Balancing: Ensure balanced representation across different crystal systems (cubic, hexagonal, tetragonal, orthorhombic, monoclinic, triclinic, and trigonal) and element combinations to prevent algorithmic bias [7].

- Text Representation: Convert crystal structures to text format using either the "material string" representation (integrating space group, lattice parameters, and Wyckoff positions) or tools like Robocrystallographer that generate natural language descriptions of crystal structures [7] [2].
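The selection steps above can be sketched as simple filters over structure records. The `Candidate` record, its field names, and the helper functions are hypothetical; the thresholds mirror the example values quoted in the protocol (at most 7 elements, at most 40 atoms, CLscore below 0.1), and the CLscore values are assumed to have been computed elsewhere.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class Candidate:
    identifier: str
    elements: FrozenSet[str]
    n_atoms: int
    is_ordered: bool = True
    clscore: Optional[float] = None  # precomputed by a PU model, if available


def select_positives(experimental, max_elements=7, max_atoms=40):
    """Keep ordered experimental structures within the size limits."""
    return [
        s for s in experimental
        if s.is_ordered and len(s.elements) <= max_elements and s.n_atoms <= max_atoms
    ]


def select_negatives(theoretical, clscore_cutoff=0.1, limit=None):
    """Keep theoretical structures with the lowest CLscores as negatives."""
    low = sorted(
        (s for s in theoretical if s.clscore is not None and s.clscore < clscore_cutoff),
        key=lambda s: s.clscore,
    )
    return low if limit is None else low[:limit]


experimental = [
    Candidate("exp-1", frozenset({"Na", "Cl"}), 8),
    Candidate("exp-2", frozenset({"Fe", "O"}), 56),                # too large
    Candidate("exp-3", frozenset({"Li", "Co", "O"}), 12, False),   # disordered
]
theoretical = [
    Candidate("hyp-1", frozenset({"Hf", "V", "O"}), 20, clscore=0.05),
    Candidate("hyp-2", frozenset({"Sc", "Se"}), 4, clscore=0.80),
    Candidate("hyp-3", frozenset({"Ti", "Se"}), 4, clscore=0.01),
]
print([s.identifier for s in select_positives(experimental)])
print([s.identifier for s in select_negatives(theoretical)])
```

Balancing across crystal systems and element combinations would be a further resampling step on top of these two lists and is not shown.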

LLM Fine-Tuning Protocol

For LLM-based synthesizability prediction, the following fine-tuning protocol has proven effective:

- Model Selection: Utilize foundation models like GPT-4o-mini as base architectures, which have demonstrated superior performance compared to earlier models like GPT-3.5 [2].

- Input Formatting: Structure input prompts to include both stoichiometric information and structural descriptions. Experiments show that models incorporating structural information (StructGPT) outperform composition-only models (StoiGPT) for polymorph-specific synthesizability assessment [2].

- Fine-Tuning Strategy: Employ supervised fine-tuning on the curated dataset of positive and negative synthesizability examples. Use appropriate batch sizes and learning rates to maintain the model's general knowledge while adapting it to the specific synthesizability prediction task.

- Embedding Alternative: For cost-efficient deployment, consider extracting embedding representations from the LLM and training separate classifier networks, which can reduce inference costs by 57% compared to full LLM inference [2].

Validation and Benchmarking

Rigorous validation of synthesizability models requires specialized protocols:

- Hold-Out Testing: Reserve 20% of positive and unlabeled data as a hold-out test dataset for assessing model performance [2].

- PU-Learning Metrics: Employ PU-learning specific evaluation metrics including true positive rate (recall) and α-estimation for approximating precision and false positive rates, acknowledging the absence of true negative examples [2].

- Comparative Benchmarking: Compare performance against multiple baselines including random guessing, charge-balancing approaches, formation energy thresholds, and human expert performance [3].

- Generalization Testing: Validate model performance on structurally complex materials with large unit cells that exceed the complexity of training data to assess generalization capability [7].
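The PU-specific metrics can be illustrated with a short calculation. Recall is measured directly on held-out positives. Precision cannot be measured without true negatives, but Bayes' rule gives precision = α × recall / P(predicted positive), where α is the assumed fraction of truly synthesizable entries in the unlabeled pool. Whether [2] uses exactly this estimator is not confirmed here; the function names and the numbers are hypothetical.

```python
def true_positive_rate(predictions_on_positives):
    """Recall: share of held-out known positives predicted as positive (1)."""
    return sum(predictions_on_positives) / len(predictions_on_positives)


def approximate_precision(tpr, predicted_positive_rate, alpha):
    """Bayes-rule precision estimate under an assumed class prior alpha.

    Assumes the labeled positives are representative of the synthesizable
    materials hidden in the unlabeled pool.
    """
    if predicted_positive_rate == 0:
        return float("nan")  # the model predicted no positives at all
    return min(1.0, alpha * tpr / predicted_positive_rate)


# Toy predictions (1 = predicted synthesizable).
held_out_positives = [1, 1, 1, 0]
unlabeled_pool = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0]

tpr = true_positive_rate(held_out_positives)
rate = sum(unlabeled_pool) / len(unlabeled_pool)
print(f"recall = {tpr:.2f}")
print(f"approx. precision (alpha = 0.2) = {approximate_precision(tpr, rate, 0.2):.2f}")
```

The estimate is only as good as the value of α, which is why the class prior has to be estimated carefully before such numbers are compared across models.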

Essential Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Tools for Synthesizability Research

| Resource/Tool | Type | Function/Purpose | Access |
|---|---|---|---|
| ICSD (Inorganic Crystal Structure Database) | Database | Primary source of experimentally confirmed crystal structures for positive examples | Commercial |
| Materials Project | Database | Source of hypothetical structures for negative example generation | Public |
| Robocrystallographer | Software Tool | Generates text-based descriptions of crystal structures for LLM input | Open Source |
| CLscore Model | Computational Model | Generates synthesizability scores for theoretical structures | Research Implementation |
| Material String Representation | Data Format | Compact text representation of crystal structure for LLM processing | Research Implementation |
| PU-CGCNN | Computational Model | Graph neural network for synthesizability prediction; baseline comparator | Research Implementation |
| VASP | Software Package | DFT calculations for traditional thermodynamic stability assessment | Commercial |
| Fine-Tuned LLMs (e.g., StructGPT) | Computational Model | LLM specialized for synthesizability prediction | Research Implementation |

The limitations of thermodynamic stability as a synthesizability proxy are both quantitative and fundamental. With accuracy rates of approximately 74-82% compared to 92-99% for advanced machine learning approaches, thermodynamic metrics alone are insufficient for reliable synthesizability assessment in computational materials discovery. The emerging paradigm of data-driven synthesizability prediction, particularly through LLMs and specialized machine learning models, represents a transformative advancement that directly addresses the complex interplay of thermodynamic, kinetic, and experimental factors governing materials synthesis.

The implications for materials research are profound. By integrating accurate synthesizability predictors into computational screening workflows, researchers can prioritize experimental efforts on genuinely accessible materials with high potential for successful synthesis. Furthermore, the ability to predict not just synthesizability but also appropriate synthetic methods and potential precursors represents a crucial step toward autonomous materials discovery pipelines. As these models continue to evolve, incorporating richer experimental data and more sophisticated representations of synthetic pathways, they will increasingly narrow the gap between computational prediction and experimental realization, accelerating the discovery of novel functional materials for energy, electronics, and beyond.

Within the high-throughput computational search for novel synthesizable inorganic crystals, the principle of charge-balancing stands as a foundational heuristic. This whitepaper examines its role as a critical, yet ultimately limited, filter in materials discovery pipelines. While charge neutrality is a non-negotiable requirement for stable crystalline compounds, an over-reliance on this single metric constitutes the "Charge-Balancing Fallacy"—the assumption that charge balance alone is a sufficient predictor of synthesizability and thermodynamic stability. Through a critical analysis of contemporary literature and emerging benchmarking frameworks, this article delineates the boundaries of charge-balancing's utility. It argues that for computational materials science to overcome its major discovery challenges, it must move beyond this and other isolated heuristics and adopt integrated, quantitative, and probabilistic assessment models that account for the complex multi-dimensional parameter space governing crystal formation.

The discovery of novel inorganic crystalline materials is a cornerstone of technological advancement, underpinning progress in domains from clean energy to biomedicine [8]. However, the traditional experimental discovery process is slow, often averaging two decades from initial research to commercialization, and is inherently limited in its ability to explore the vastness of chemical space [8]. This space is astronomically large; for just quaternary materials, conservative estimates suggest over 10^10 compositions are possible when considering only electronegativity and charge-balancing rules [9].

In response, computational materials science has emerged as a powerful tool for accelerating discovery. The paradigm has shifted from post-rationalizing experimental observations to truly predictive workflows where theory leads experimentation [8]. Central to these high-throughput computational pipelines are filters—computational expressions of human domain knowledge and scientific principles used to screen millions of hypothetical candidate compounds and weed out those that are likely unsynthesizable or unstable [10]. These filters can be categorized as "hard" or "soft":

- Hard Filters encode non-negotiable physical laws. A prime example is charge neutrality, a fundamental requirement for any stable crystalline compound.

- Soft Filters encode empirical rules of thumb, such as the Hume-Rothery rules for solid solubility, which are frequently broken but still provide useful guidance [10].

While indispensable, an over-reliance on any single heuristic, including the foundational principle of charge balance, can become a fallacy that limits discovery. This article examines the precise nature of this Charge-Balancing Fallacy and explores the path toward more robust, integrated discovery frameworks.

The Charge-Balancing Heuristic: Utility and Limitations

The Fundamental Necessity of Charge Balance

The charge-balancing heuristic is rooted in the unequivocal requirement that a stable crystalline compound must be electrically neutral overall. In the context of inorganic crystals, this typically involves ensuring that the positive charges from cations balance the negative charges from anions within a given composition. This principle is so fundamental that it is often one of the first and most strictly applied filters in a materials screening pipeline. Its application drastically reduces the combinatorial search space, making computational surveys of hypothetical materials tractable [10] [9].
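A charge-balance filter of the kind described above can be sketched in a few lines. This is a minimal illustration, not the implementation used in any cited pipeline: the oxidation-state table is a small hand-picked assumption, and the function names are hypothetical. It also assumes each element adopts a single oxidation state within a formula.

```python
from itertools import product

# Illustrative oxidation states only (an assumption for this sketch,
# not an authoritative table).
COMMON_OXIDATION_STATES = {
    "Na": [1],
    "Cl": [-1],
    "Fe": [2, 3],
    "O": [-2],
}


def neutral_assignments(composition):
    """Return every oxidation-state assignment that makes the formula neutral.

    composition maps element symbol -> integer count, e.g. {"Fe": 2, "O": 3}.
    Each element is assumed to take one oxidation state throughout.
    """
    elements = sorted(composition)
    choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    neutral = []
    for states in product(*choices):
        total = sum(q * composition[el] for el, q in zip(elements, states))
        if total == 0:
            neutral.append(dict(zip(elements, states)))
    return neutral


def is_charge_balanced(composition):
    """True if at least one neutral oxidation-state assignment exists."""
    return len(neutral_assignments(composition)) > 0


fe2o3 = {"Fe": 2, "O": 3}
fe3o4 = {"Fe": 3, "O": 4}
print(is_charge_balanced(fe2o3))  # Fe(+3) with O(-2) sums to zero
print(is_charge_balanced(fe3o4))  # no single Fe state balances four O(-2)
```

Under the single-state assumption the check rejects Fe3O4, a well-known mixed-valence oxide, which shows how a rigid implementation of the heuristic can discard real compounds.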

The Fallacy: Sufficiency versus Necessity

The "Charge-Balancing Fallacy" arises not from a misunderstanding of its necessity, but from the incorrect assumption of its sufficiency. A charge-balanced composition is a necessary condition for stability, but it is far from a sufficient predictor of actual synthesizability or thermodynamic persistence. This fallacy manifests in several critical ways:

Neglect of Structural Stability: A composition can be charge-balanced yet correspond to numerous potential atomic arrangements (polymorphs), most of which may be energetically unfavorable. The accurate prediction of the most stable crystal structure—the ground-state configuration—remains one of the most significant challenges in computational materials science [8] [11]. The solid-state packing arrangement is a key driver of a material's properties, and small changes can drastically alter its stability and functionality [8].

Oversimplification of Thermodynamics: Thermodynamic stability is not determined by a compound's formation energy in isolation, but by its energetic competition with all other phases in its chemical space, quantified by its energy above the convex hull (Ehull) [9]. A charge-balanced compound can easily have a positive Ehull, indicating that it is at best metastable and has a thermodynamic driving force to decompose into a mixture of more stable compounds.

Exclusion of Kinetic and Synthetic Factors: Synthesizability is influenced by kinetic barriers, reaction pathways, and processing conditions, which are not captured by a simple charge-balancing check. A charge-balanced compound may be thermodynamically stable but impossible to synthesize under practical conditions, or it may form undesirable, metastable polymorphs [12].
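To make the energy-above-hull idea concrete, the sketch below computes it for a binary A-B system. It is a simplified illustration under stated assumptions: compositions are given as the fraction of B, energies are formation energies per atom with both elements at zero, and all numbers are invented. Real workflows handle multi-component systems with dedicated phase-diagram tools.

```python
def lower_hull(points):
    """Lower convex hull of (x, energy) points, ordered by x (monotone chain)."""
    lowest = {}
    for x, e in points:
        lowest[x] = min(e, lowest.get(x, e))  # keep the lowest energy per composition
    hull = []
    for p in sorted(lowest.items()):
        # Drop the last vertex while it lies on or above the line hull[-2] -> p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def energy_above_hull(x, energy, hull):
    """Energy of a phase relative to the hull at the same composition."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            hull_energy = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
            return energy - hull_energy
    raise ValueError("composition lies outside the range spanned by the hull")


# Invented formation energies (eV/atom) for a hypothetical A-B system.
phases = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.4), (0.25, -0.1), (0.75, -0.3)]
hull = lower_hull(phases)
e_hull_quarter = energy_above_hull(0.25, -0.1, hull)
print(hull)
print(round(e_hull_quarter, 3))
```

In this toy system the phase at x = 0.25 has a negative formation energy yet still sits above the hull, because a mixture of the element A and the phase at x = 0.5 is lower in energy.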

The following table summarizes key heuristics beyond charge balance that are critical for assessing synthesizability and stability.

Table 1: Key Heuristics and Metrics for Predicting Crystal Stability and Synthesizability

| Heuristic/Metric | Type | Description | Limitations |
|---|---|---|---|
| Charge Neutrality [10] | Hard Filter | Ensures the compound's overall charge is zero. | Necessary but insufficient; does not guarantee stability. |
| Energy Above Hull (E_hull) [9] | Quantitative Metric | Energy difference between a compound and the most stable mixture of other phases from the convex hull phase diagram; the primary indicator of thermodynamic stability. | Requires accurate energy calculations for the compound and its competing phases. |
| Structure Prediction Accuracy [11] | Quantitative Challenge | The ability to computationally predict the correct ground-state crystal structure from a composition. | Computationally expensive; accuracy is tied to the method's cost. |
| Electronegativity Balance [10] | Soft Filter | Checks for reasonable electronegativity differences between elements. | An empirical rule that is frequently violated in known stable compounds. |

Quantitative Frameworks for Moving Beyond the Fallacy

To overcome the limitations of isolated heuristics, the field is moving toward standardized, quantitative evaluation frameworks that integrate multiple stability criteria.

Benchmarking Crystal Structure Prediction

The performance of Crystal Structure Prediction (CSP) algorithms is critical, as accurate structure prediction is a prerequisite for reliable property calculation. Historically, evaluating CSP algorithms relied heavily on manual inspection and comparison of formation energies [11]. This lack of standardized metrics made it difficult to compare different methods objectively.

Recent work has focused on developing quantitative CSP performance metrics that automatically determine the quality of predicted structures against known ground truths. These include a suite of structure similarity metrics that, when combined, capture key aspects of structural fidelity, even when predicted structures have different spatial symmetries than the target [11]. The move toward such automated, quantitative benchmarking is essential for rigorously evaluating and improving the computational tools that underpin modern materials discovery.

Prospective vs. Retrospective Evaluation

A major disconnect in the field has been between retrospective benchmarking on known, stable materials and prospective performance in a genuine discovery campaign targeting unknown materials. To address this, frameworks like Matbench Discovery have been developed to simulate real-world discovery [9].

This framework highlights a critical misalignment: a model can exhibit excellent regression performance (e.g., low Mean Absolute Error in formation energy) but still have a high false-positive rate for stable materials if its predictions lie close to the stability decision boundary (0 eV/atom Ehull). This underscores why evaluation must be based on task-relevant classification metrics (e.g., precision and recall for stability) rather than regression accuracy alone [9]. The following table contrasts different model evaluation approaches.

Table 2: Comparison of Model Evaluation Paradigms in Materials Discovery

| Evaluation Aspect | Traditional/Restricted Approach | Advanced/Prospective Approach | Implication |
|---|---|---|---|
| Primary Metric | Regression accuracy (e.g., MAE of formation energy) [9] | Classification performance (e.g., F1-score for stability) [9] | Focuses on correct decision-making, not just numerical accuracy. |
| Data Splitting | Random split on known materials [9] | Time-split or cluster-based split to test generalizability [9] | Better simulates the challenge of finding truly novel materials outside the training distribution. |
| Stability Target | Formation energy [9] | Energy above the convex hull (Ehull) [9] | Directly measures thermodynamic stability against phase decomposition. |
| Structure Handling | Using relaxed DFT structures as input [9] | Predicting from unrelaxed (initial) structures [9] | Avoids circular logic and increases practical utility for screening new candidates. |
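The gap between regression accuracy and classification performance can be illustrated with a small numerical sketch. All values below are invented for illustration, and the convention that a material counts as "stable" when its energy relative to the reference hull is at or below 0 eV/atom is an assumption consistent with the decision boundary mentioned above.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between true and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def stability_precision_recall(y_true, y_pred, threshold=0.0):
    """Precision and recall when 'stable' means energy <= threshold."""
    tp = fp = fn = 0
    for t, p in zip(y_true, y_pred):
        true_stable = t <= threshold
        pred_stable = p <= threshold
        if pred_stable and true_stable:
            tp += 1
        elif pred_stable and not true_stable:
            fp += 1
        elif true_stable and not pred_stable:
            fn += 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Invented energies relative to the reference hull (eV/atom).
true_e = [0.02, 0.01, 0.03, -0.01, 0.015, -0.02]
pred_e = [-0.01, -0.02, 0.0, -0.03, -0.005, -0.04]

mae = mean_absolute_error(true_e, pred_e)
precision, recall = stability_precision_recall(true_e, pred_e)
print(round(mae, 3), round(precision, 3), round(recall, 3))
```

Every prediction here is within 0.03 eV/atom of the truth, yet four of the six materials flagged as stable are false positives, because the small errors all push values across the decision boundary.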

Experimental Protocols and the Scientist's Toolkit

Integrated Workflow for Stable Crystal Discovery

A modern, robust pipeline for discovering synthesizable inorganic crystals integrates high-throughput computation, machine learning, and high-fidelity validation. The following diagram visualizes this multi-stage workflow, highlighting how heuristics like charge balancing are embedded within a broader, more rigorous process.

Workflow for Discovering Stable Crystals

The Scientist's Computational Toolkit

The following table details key computational "reagents" and resources essential for executing the workflow described above.

Table 3: Essential Computational Tools for Predicting Synthesizable Crystals

| Tool/Resource | Category | Function | Example Tools |
|---|---|---|---|
| Hypothetical Databases | Data | Large datasets of enumerated hypothetical compounds for screening. | Synthetic datasets from various sources [10] |
| CSP Algorithms | Software | Predicts the stable crystal structure of a given composition. | USPEX, CALYPSO, AIRSS [11] |
| Universal Interatomic Potentials (UIPs) | Model | Machine learning force fields for fast, accurate energy and force predictions. | UIPs highlighted in Matbench Discovery [9] |
| First-Principles Methods | Software | High-fidelity quantum mechanical calculations for validation. | Density Functional Theory (DFT) with VASP [11] [9] |
| Stability Metrics | Metric | Quantifies thermodynamic stability. | Energy above convex hull (E_hull) [9] |
| Benchmarking Platforms | Framework | Standardized evaluation of ML and CSP algorithm performance. | Matbench Discovery [9], CSPBenchMetrics [11] |

Free-Energy Calculation and Error Quantification Protocol

For the highest level of confidence, particularly in industrial applications like pharmaceutical polymorph selection, advanced free-energy calculation protocols have been developed. A state-of-the-art method, as demonstrated in recent studies, involves a composite approach [12]:

- Composite Energy Calculation: Combine a hybrid density functional (PBE0) with many-body dispersion (MBD) corrections and vibrational free energy (Fvib) contributions to achieve high accuracy.

- Error Quantification: Critically, establish a reliable experimental benchmark for solid-solid free-energy differences. From this, derive transferable error estimates, typically expressed as a standard error per atom (e.g., ~0.191 kJ mol⁻¹) and per water molecule for hydrates (~0.641 kJ mol⁻¹) [12].

- Phase Diagram Construction: Use the computed free energies with their associated errors to place anhydrate and hydrate crystal structures on the same energy landscape as a function of temperature and relative humidity, enabling predictive risk assessment of phase transitions.

This methodology transforms CSP from a qualitative tool into a quantitative, actionable procedure where predictions come with defined error bars, allowing for robust decision-making [12].

The charge-balancing heuristic is a necessary first gatekeeper in the computational search for new inorganic materials, but falling into the "Charge-Balancing Fallacy" by treating it as a sufficient condition for synthesizability severely limits discovery potential. The path forward lies in embracing integrated and probabilistic workflows that synthesize multiple lines of evidence.

The future of materials discovery will be driven by:

- Multi-Filter Pipelines: Embedding charge balance alongside other hard and soft filters (e.g., electronegativity, structural motifs) within a systematic screening environment [10].

- Advanced ML and UIPs: Leveraging universal interatomic potentials and other machine learning models that have been prospectively benchmarked for their ability to accurately classify stability, not just regress formation energies [9].

- Quantified Uncertainty: Adopting frameworks that provide error estimates for computed free energies, enabling true risk assessment and prioritization of experimental targets [12].

- Closing the Loop with Experiment: Using computation to guide synthesis and, in turn, using experimental results to refine and validate computational models prospectively.

By moving beyond the charge-balancing fallacy and other isolated heuristics, the field can fully exploit the enormous potential materials space and systematically discover the novel functional materials urgently needed to address global challenges.

The discovery of novel inorganic crystalline materials is a fundamental driver of technological progress, from developing more efficient batteries to designing new pharmaceuticals. While computational models can rapidly generate millions of hypothetical crystal structures with desirable properties, a critical bottleneck persists: the overwhelming majority of these virtual candidates cannot be synthesized in a laboratory [13] [3]. This discrepancy creates a significant gap between theoretical prediction and experimental realization, slowing the entire discovery cycle.

The core of this problem is a fundamental data scarcity issue. In a typical supervised machine learning (ML) classification task, a model is trained on a balanced set of both positive examples (synthesizable crystals) and negative examples (non-synthesizable crystals). However, in materials science, while vast databases of successfully synthesized materials exist (e.g., the Materials Project (MP) and the Inorganic Crystal Structure Database (ICSD)), there are no definitive repositories of "unsynthesizable" materials [3] [2]. Failed synthesis attempts are rarely reported in the scientific literature, and the space of impossible compounds is astronomically large and undefined [14] [3]. This lack of verified negative data renders standard binary classification ML models ineffective for predicting synthesizability.

To overcome this "data hurdle," researchers have turned to Positive-Unlabeled (PU) Learning, a class of semi-supervised machine learning techniques designed to learn exclusively from positive and unlabeled data [2] [15]. This whitepaper provides an in-depth technical guide to the PU learning problem, its methodologies, and its application as an essential framework for predicting the synthesizability of inorganic crystals.

The PU Learning Paradigm: Core Concepts and Formulations

Problem Formulation and Key Assumptions

Positive-Unlabeled (PU) learning formalizes the synthesizability prediction problem by redefining the available data. The set of all experimentally synthesized crystals, typically sourced from the ICSD or MP, is treated as the Positive (P) set. The vast space of hypothetical, computer-generated crystals for which synthesis has not been attempted or confirmed is treated not as negative, but as the Unlabeled (U) set. The key insight is that the unlabeled set is a mixture of both actually positive (synthesizable but yet-to-be-made) and actually negative (unsynthesizable) examples; the learner's task is to identify the hidden negative examples within this unlabeled set [3] [2]. The success of PU learning relies on two foundational assumptions:

- Selected Completely at Random (SCAR) Assumption: The probability that a positive example is labeled (i.e., included in the known synthesized database) is constant and independent of its attributes [3].

- Positive Examples are "Typical": The known positive examples in the labeled set are representative of the entire population of synthesizable materials. If the labeled positives are a biased subset (e.g., only containing certain crystal systems), the model's ability to generalize will be compromised.
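The SCAR assumption has a compact probabilistic form that is standard in the PU learning literature. The notation is introduced here for illustration and is not taken from the cited works: $x$ is a material's feature vector, $y \in \{0, 1\}$ is its true (unobserved) synthesizability, $s \in \{0, 1\}$ indicates whether it appears in the synthesized database, and $c$ is the constant labeling probability.

$$
p(s = 1 \mid x, y = 0) = 0, \qquad p(s = 1 \mid x, y = 1) = c
$$

$$
p(s = 1 \mid x) = p(s = 1 \mid x, y = 1)\, p(y = 1 \mid x) = c\, p(y = 1 \mid x)
\quad \Longrightarrow \quad
p(y = 1 \mid x) = \frac{p(s = 1 \mid x)}{c}
$$

Under these assumptions, a classifier trained only to separate labeled from unlabeled materials recovers the synthesizability probability up to the constant factor $1/c$, so the ranking of candidates is preserved even when $c$ is unknown.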

A Comparative View of Traditional and PU-Based Approaches

The table below contrasts traditional stability metrics and standard ML with the PU learning approach, highlighting why PU learning is necessary for this domain.

Table 1: Comparison of Synthesizability Assessment Methods

| Method Category | Core Principle | Key Advantage | Fundamental Limitation |
|---|---|---|---|
| Thermodynamic Stability | Uses Energy Above Hull (E$_{\text{hull}}$) as a proxy for stability. | Physically intuitive; easily calculated via Density Functional Theory (DFT). | Fails to account for kinetics and synthesis conditions; many metastable materials exist [14] [7]. |
| Charge Balancing | Filters compositions based on net-neutral ionic charge. | Computationally inexpensive; chemically motivated. | Inflexible; fails for metallic/covalent materials; poor empirical accuracy (e.g., only 37% of ICSD materials are charge-balanced) [3]. |
| Standard Supervised ML | Trains a binary classifier on known positive and negative examples. | Powerful if representative negative examples are available. | Inapplicable due to the complete lack of true negative data [3] [2]. |
| Positive-Unlabeled (PU) Learning | Learns from synthesized (P) and hypothetical (U) materials, treating U as a mixture. | Directly addresses the core data scarcity problem; does not require negative examples. | Performance evaluation is challenging; relies on the SCAR assumption, which may not always hold perfectly [13] [2]. |

Contemporary Methodologies and Experimental Protocols

Recent research has produced several sophisticated PU learning frameworks for synthesizability prediction. The table below summarizes the architecture, input data, and key performance metrics of several state-of-the-art models.

Table 2: Summary of Contemporary PU Learning Models for Synthesizability

| Model Name | Architecture & Input | Key Innovation | Reported Performance |
|---|---|---|---|
| CPUL (Contrastive Positive Unlabeled Learning) [13] | Two-stage model: 1) Contrastive learning for feature extraction, 2) MLP classifier with PU learning. | Uses contrastive learning to pre-train features, improving robustness and reducing PU training time. | True Positive Rate (TPR): 93.95% on MP test set; 88.89% on Fe-containing materials. |
| SynthNN [3] | Deep learning model using atom2vec composition embeddings. | Learns synthesizability directly from the distribution of all known compositions; requires no crystal structure. | 7x higher precision than formation energy; outperformed human experts in discovery tasks. |
| CSLLM (Synthesizability LLM) [7] | Fine-tuned Large Language Model (LLM) using a novel "material string" text representation of crystal structure. | Achieves high accuracy by leveraging LLMs' pattern recognition on a balanced, structure-based dataset. | Accuracy: 98.6% on testing data, significantly outperforming E$_{\text{hull}}$ (74.1%) and phonon stability (82.2%). |
| PU-GPT-Embedding [2] | Pipeline: 1) Text description of crystal structure → 2) GPT text embeddings → 3) Neural network PU classifier. | Combines the rich representation of LLM embeddings with a dedicated PU classifier, offering high performance and cost efficiency. | Outperforms both graph-based models (PU-CGCNN) and fine-tuned LLMs (StructGPT) in prediction quality. |

Detailed Experimental Protocol: A Representative Workflow

The following protocol outlines the key steps for developing and validating a PU learning model for crystal synthesizability, synthesizing common elements from the cited research.

Data Curation and Preprocessing:

- Positive Set (P): Extract crystal structures and their compositions from a database of experimentally synthesized materials, such as the ICSD or the synthesized entries in the Materials Project (MP). A typical dataset may include ~38,000 positive samples [13] [2].

- Unlabeled Set (U): Compile a set of hypothetical crystal structures from generative models or stability screenings (e.g., from MP, OQMD, JARVIS). This set is typically much larger, often containing over 100,000 entries [13] [7].

- Feature Extraction: Convert the raw crystal data into a numerical representation suitable for ML.

  - For Composition-based Models (e.g., SynthNN): Use learned composition embeddings like atom2vec [3].

  - For Structure-based Models (e.g., CPUL, CSLLM): Generate features using crystal graphs (CGCNN), contrastive learning, or text-based representations like "material strings" or Robocrystallographer descriptions [13] [7] [2].

Model Training with PU Learning:

- Algorithm Selection: Choose a PU learning algorithm. A common and effective choice is a "bagging SVM" approach, which involves:

  - Randomly sub-sampling potential negative examples from the unlabeled set.

  - Training an ensemble of classifiers (e.g., Support Vector Machines) on the positive set and each sub-sample.

  - Iteratively re-weighting the unlabeled samples and averaging the scores from all classifiers to produce a final Crystal-Likeness Score (CLscore) between 0 and 1 [13] [15].

- Loss Function: The model is trained with a loss function that penalizes misclassification of known positive examples while cautiously learning from the unlabeled set. The cost-sensitive binary cross-entropy loss is often used for neural network-based PU learners [3] [2].
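The bagging idea in the algorithm-selection step can be sketched without any ML library. This is a simplified illustration, not the cited implementation: a nearest-centroid rule stands in for the SVM, the iterative re-weighting step is omitted, and each unlabeled sample is scored only by the rounds in which it was not drawn as a provisional negative (out-of-bag averaging). All names and data are hypothetical.

```python
import random


def centroid(rows):
    """Component-wise mean of a list of equal-length feature vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def bagging_pu_scores(positives, unlabeled, n_rounds=50, seed=0):
    """Average out-of-bag 'positive' votes for every unlabeled sample.

    Each round draws a sub-sample of the unlabeled set as provisional
    negatives, fits a nearest-centroid classifier (a stand-in for the SVM),
    and votes on the unlabeled samples left out of that round.
    """
    rng = random.Random(seed)
    k = min(len(positives), len(unlabeled) - 1)
    pos_center = centroid(positives)
    votes = [0] * len(unlabeled)
    counts = [0] * len(unlabeled)
    for _ in range(n_rounds):
        bag = set(rng.sample(range(len(unlabeled)), k))
        neg_center = centroid([unlabeled[i] for i in bag])
        for i, x in enumerate(unlabeled):
            if i in bag:
                continue
            counts[i] += 1
            if sq_dist(x, pos_center) < sq_dist(x, neg_center):
                votes[i] += 1
    # Samples never left out of a bag get a neutral score of 0.5.
    return [v / c if c else 0.5 for v, c in zip(votes, counts)]


# Toy 2-D features: the first two unlabeled points resemble the positives.
positives = [(1.0, 1.0), (1.1, 0.9), (0.9, 1.1)]
unlabeled = [(1.0, 0.9), (0.9, 1.0),
             (-1.0, -1.0), (-1.1, -0.9), (-0.9, -1.1),
             (-1.2, -1.0), (-1.0, -1.2), (-0.8, -0.9)]
scores = bagging_pu_scores(positives, unlabeled)
print([round(s, 2) for s in scores])
```

The averaged score plays the role of the CLscore described above: unlabeled samples that look like known positives in most rounds receive values near 1, while the rest receive values near 0.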

Validation and Performance Assessment:

- Hold-out Test: Reserve a portion of the known positive data (e.g., 10,000 materials) as a test set. The model's True Positive Rate (TPR) or recall on this set is a primary metric, as it measures the ability to correctly identify synthesizable materials [13] [2].

- α-Estimation: Because true negatives are unavailable, precision and false positive rates cannot be directly calculated. Methods like α-estimation are used to approximate these metrics by estimating the fraction of positive examples in the unlabeled set [2].

- Case Study Validation: Apply the trained model to a specific, challenging subset of materials (e.g., all Fe-containing compounds or a novel chemical system) to demonstrate generalizability beyond the training distribution [13] [14].
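One common way to approximate the fraction of positives hidden in the unlabeled set is an estimator from the general PU learning literature that relies on the SCAR assumption. The sketch below is an assumption-laden illustration and may differ from the α-estimation procedure used in the cited work. Here `g` denotes the score of a classifier trained to separate labeled from unlabeled samples, and `c` is the estimated probability that a positive is labeled; both names are introduced for this example.

```python
def estimate_label_frequency(heldout_positive_scores):
    """Estimate c as the mean classifier score on held-out labeled positives."""
    return sum(heldout_positive_scores) / len(heldout_positive_scores)


def estimate_positive_fraction(unlabeled_scores, c):
    """Estimate the fraction of unlabeled samples that are actually positive.

    Each unlabeled sample gets the weight w = ((1 - c) / c) * (g / (1 - g)),
    its estimated probability of being positive given that it is unlabeled.
    """
    if c >= 1.0:
        return 0.0  # every positive is already labeled
    if c <= 0.0:
        raise ValueError("label frequency c must be positive")
    weights = []
    for g in unlabeled_scores:
        g = min(max(g, 0.0), c)  # under SCAR, g cannot exceed c
        weights.append(((1.0 - c) / c) * (g / (1.0 - g)))
    return sum(weights) / len(weights)


# Invented classifier scores for illustration.
heldout_positive_scores = [0.5, 0.6, 0.4, 0.5]
unlabeled_scores = [0.5, 0.25, 0.0, 0.1]

c = estimate_label_frequency(heldout_positive_scores)
alpha = estimate_positive_fraction(unlabeled_scores, c)
print(round(c, 3), round(alpha, 3))
```

With an estimate of this fraction in hand, approximate precision and false positive rates can be derived, though their quality depends on how well the SCAR assumption holds for the database in question.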

Visualization of Methodologies and Workflows

High-Level Workflow for Synthesizability Prediction

The following diagram illustrates the end-to-end workflow for building and applying a PU learning model for synthesizability prediction.

Architecture of a Contrastive PU Learning (CPUL) Model

This diagram details the two-stage architecture of a specific advanced model, CPUL, which combines contrastive learning with PU learning.

Table 3: Essential Research Reagents and Computational Tools

| Item / Resource | Function / Description | Relevance to PU Learning Experiments |
|---|---|---|
| Materials Project (MP) Database [13] [2] | A repository of computed and experimentally known crystal structures and properties. | Primary source for both positive (synthesized) and unlabeled (hypothetical) data. |
| Inorganic Crystal Structure Database (ICSD) [3] [7] | The world's largest database of fully determined inorganic crystal structures. | The definitive source for high-quality, curated positive examples. |
| Python Materials Genomics (pymatgen) [13] | A robust, open-source Python library for materials analysis. | Used for parsing CIF/POSCAR files, manipulating crystal structures, and computing features. |
| Robocrystallographer [2] | A tool that generates text descriptions of crystal structures from CIF files. | Converts structural data into human-readable text for fine-tuning LLMs or creating embeddings. |
| Crystal-Likeness Score (CLscore) [13] [7] | A probabilistic score (0-1) representing a model's confidence that a material is synthesizable. | The key output metric for ranking and screening candidate materials. |
| Bagging SVM / Iterative Classifier | A specific PU learning algorithm that ensembles multiple classifiers. | The core engine for many PU models, enabling robust learning from unlabeled data [13] [15]. |

The integration of Positive-Unlabeled learning has fundamentally shifted the paradigm of synthesizability prediction in computational materials science. By directly confronting the "data hurdle" of negative example scarcity, PU learning provides a principled and effective framework for prioritizing hypothetical crystals for experimental synthesis. The field is rapidly advancing, with current research trends focusing on enhancing model explainability, integrating multimodal data (e.g., synthesis recipes from text-mined literature), and leveraging the power of large foundation models [14] [7] [2]. The development of accurate synthesizability predictors is no longer a distant goal but an active research area, poised to dramatically accelerate the design and discovery of the next generation of functional materials.

The discovery of novel functional materials is a primary driver of technological innovation across fields ranging from energy storage to electronics. A persistent challenge in computational materials science is the significant gap between theoretically predicted materials and those that can be experimentally realized in the laboratory. This challenge necessitates a precise framework for categorizing materials based on their synthesis status and potential. Within the context of predicting synthesizable inorganic crystals, we define three critical classifications: synthesized materials (those experimentally realized and reported in literature), synthesizable materials (those theoretically predicted to be synthetically accessible but not yet synthesized), and unsynthesized materials (a broader category including both synthesizable and fundamentally unsynthesizable compounds). The core research problem lies in accurately distinguishing synthesizable candidates from the vast chemical space of unsynthesized materials, thereby bridging the divide between computational prediction and experimental realization.

Defining the Synthesizability Landscape

Conceptual Framework and Terminology

The relationship between synthesized, synthesizable, and unsynthesized materials can be visualized as a series of intersecting and non-intersecting sets within the total chemical space, each defined by specific criteria related to experimental realization and theoretical potential.

As illustrated in Figure 1, the relationship between these categories is dynamic and evolutionary. The synthesizable set contains materials that meet specific computational or theoretical criteria indicating synthetic accessibility, while the synthesized set represents the subset that has been experimentally realized. Materials may transition from synthesizable to synthesized through experimental effort, while theoretical advances may reclassify certain unsynthesizable materials as synthesizable.

Quantifying the Known and Unknown

Table 1: Representative Data Sources for Synthesized and Hypothetical Materials

| Database/Resource | Content Type | Size/Scale | Primary Use in Synthesizability Research |
|---|---|---|---|
| Inorganic Crystal Structure Database (ICSD) [3] [16] | Experimentally synthesized inorganic crystals | ~70,120 curated structures (example dataset) [17] | Primary source of positive examples (synthesized materials) |
| Materials Project (MP) [16] [13] | DFT-calculated structures (mixed synthesized and hypothetical) | >126,000 materials [16] | Source of both positive and unlabeled examples |
| MatSyn25 (2D Materials) [18] | Synthesis process information from literature | 163,240 synthesis processes from 85,160 articles [18] | Training data for synthesis route prediction |
| OQMD/AFLOW [17] | High-throughput computational data | Millions of calculated structures [17] | Source of hypothetical/unlabeled materials |

Computational Approaches for Synthesizability Prediction

Machine Learning Methodologies

Positive-Unlabeled Learning Frameworks

A significant challenge in training synthesizability prediction models is the lack of definitive negative examples (truly unsynthesizable materials). Positive-unlabeled learning addresses this by treating unsynthesized materials as unlabeled rather than negative examples.

SynthNN Implementation: This deep learning model leverages atom2vec representations to learn optimal chemical formula representations directly from data without prior chemical knowledge. Remarkably, it learns chemical principles like charge-balancing and ionicity without explicit programming [3]. The model architecture uses a semi-supervised approach that probabilistically reweights unlabeled examples according to their likelihood of being synthesizable.

Contrastive Positive-Unlabeled Learning (CPUL): This hybrid approach combines contrastive learning with PU learning to predict crystal-likeness scores (CLscore). The framework first employs crystal graph contrastive learning to extract structural and synthetic features, followed by a multilayer perceptron classifier with PU learning to predict CLscore [13]. This approach achieves a true positive rate of 88.89% on Fe-containing materials from the Materials Project database [13].

Large Language Models for Synthesizability Assessment

The Crystal Synthesis Large Language Models (CSLLM) framework represents a breakthrough in synthesizability prediction, utilizing three specialized LLMs to predict synthesizability, synthetic methods, and suitable precursors, respectively [17]. Key innovations include:

- Material String Representation: A text-based representation of crystal structures that integrates essential crystal information for efficient LLM processing

- Balanced Dataset Curation: 70,120 synthesizable structures from ICSD paired with 80,000 non-synthesizable structures identified through CLscore thresholding

- Domain-Focused Fine-Tuning: Alignment of linguistic features with material features critical to synthesizability

The Synthesizability LLM achieves 98.6% accuracy, significantly outperforming traditional thermodynamic (74.1%) and kinetic (82.2%) stability metrics [17].

Network Science Approaches

The materials stability network approach constructs a scale-free network from the convex free-energy surface of inorganic materials combined with experimental discovery timelines [19]. This network evolves over time, with a degree distribution that follows a power law, $p(k) \sim k^{-\gamma}$ with $\gamma = 2.6 \pm 0.1$, after the 1980s [19].

Key network properties used for prediction include:

- Degree and eigenvector centralities

- Mean shortest path length

- Mean degree of neighbors

- Clustering coefficient
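Several of the node-level properties listed above are simple to compute from an adjacency structure. The sketch below uses plain Python on a toy undirected graph. The node labels and edges are placeholders, and how nodes and edges map onto materials in the cited network is not reproduced here.

```python
def build_adjacency(edges):
    """Build an undirected adjacency map: node -> set of neighbours."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    return adj


def degree(adj, node):
    """Number of neighbours of a node."""
    return len(adj[node])


def mean_neighbor_degree(adj, node):
    """Average degree over the neighbours of a node."""
    nbrs = adj[node]
    return sum(len(adj[n]) for n in nbrs) / len(nbrs) if nbrs else 0.0


def clustering_coefficient(adj, node):
    """Fraction of neighbour pairs that are themselves connected."""
    nbrs = list(adj[node])
    k = len(nbrs)
    if k < 2:
        return 0.0
    links = sum(
        1
        for i in range(k)
        for j in range(i + 1, k)
        if nbrs[j] in adj[nbrs[i]]
    )
    return 2.0 * links / (k * (k - 1))


# Toy graph with placeholder node labels.
edges = [("A", "B"), ("A", "C"), ("B", "C"), ("C", "D")]
adj = build_adjacency(edges)
for node in sorted(adj):
    print(node, degree(adj, node),
          round(mean_neighbor_degree(adj, node), 3),
          round(clustering_coefficient(adj, node), 3))
```

Features like these can be assembled per node into a vector and passed to a downstream classifier. Eigenvector centrality and shortest-path lengths need iterative or search-based algorithms and are left out of this sketch.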

This approach implicitly captures circumstantial factors beyond thermodynamics that influence discovery, including scientific and non-scientific effects such as availability of kinetically favorable pathways, development of new synthesis techniques, and shifts in research focus [19].

Table 2: Performance Comparison of Synthesizability Prediction Methods

| Method/Model | Approach Type | Key Metrics | Advantages | Limitations |
|---|---|---|---|---|
| SynthNN [3] | Deep Learning (Atom2Vec) | 7× higher precision than formation energy; Outperforms human experts | Requires no prior chemical knowledge; Learns optimal descriptors from data | Composition-based only (no structure) |
| FTCP + DL [16] | Fourier-Transformed Crystal Properties | 82.6% precision, 80.6% recall for ternary crystals | Incorporates both real and reciprocal space information | Requires structural information |
| CPUL [13] | Contrastive + PU Learning | 93.95% true positive rate on MP test set | Short training time; High accuracy on limited knowledge | Two-stage process more complex |
| CSLLM [17] | Large Language Model | 98.6% synthesizability accuracy; >90% method/precursor accuracy | Highest accuracy; Predicts methods and precursors | Requires extensive fine-tuning |
| Stability Network [19] | Network Science | Captures discovery dynamics | Incorporates historical discovery patterns | Indirect synthesizability assessment |

Experimental Validation and Synthesis Planning

Retrosynthesis Prediction for Inorganic Solids

Predicting synthesis pathways represents a critical step beyond binary synthesizability classification. The ElemwiseRetro model formulates inorganic retrosynthesis problems by dividing chemical elements into "source elements" (must be provided as precursors) and "non-source elements" (can come from reaction environments) [20].

Element-wise Graph Neural Network Architecture:

- Target composition encoded as a graph with node features from pretrained representations

- Source element mask discriminates source elements from given compositions

- Precursor classifier predicts precursors from a template library

- Joint probability calculation ranks precursor sets

This approach achieves 78.6% top-1 and 96.1% top-5 exact match accuracy, significantly outperforming popularity-based baseline models (50.4% top-1 accuracy) [20]. The probability score strongly correlates with prediction accuracy, providing a confidence metric for experimental prioritization.
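The final ranking step, in which a joint probability orders candidate precursor sets, can be illustrated with a small sketch. It assumes the classifier outputs one probability distribution over precursor templates per source element and that the joint score is the product of those probabilities (an independence assumption made here for illustration, not confirmed by [20]). The function name and the probability values are hypothetical.

```python
from itertools import product
from math import prod


def rank_precursor_sets(per_element_probs, top_k=5):
    """Rank precursor sets by joint probability.

    per_element_probs: {source_element: {precursor: probability}}
    Returns a list of (precursor_tuple, joint_probability), best first.
    """
    elements = sorted(per_element_probs)
    candidates = []
    # Enumerate one precursor choice per source element
    for combo in product(*(per_element_probs[e].items() for e in elements)):
        precursors = tuple(name for name, _ in combo)
        joint = prod(p for _, p in combo)  # product of per-element probabilities
        candidates.append((precursors, joint))
    candidates.sort(key=lambda c: c[1], reverse=True)
    return candidates[:top_k]


# Illustrative (made-up) classifier outputs for a LiCoO2 target:
# Li and Co are source elements; O is treated as a non-source element.
example_probs = {
    "Li": {"Li2CO3": 0.7, "LiOH": 0.3},
    "Co": {"Co3O4": 0.6, "CoO": 0.4},
}
ranked = rank_precursor_sets(example_probs, top_k=3)
```

Because the joint score is a probability, it can double as the confidence value used to prioritize experiments.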

Integration of Thermodynamic and Kinetic Factors

While thermodynamic stability (formation energy, energy above convex hull) provides a foundational synthesizability filter, it is insufficient alone. Simple chemical heuristics fall short as well: only 37% of synthesized inorganic materials are charge-balanced according to common oxidation states, and even among typically ionic binary cesium compounds, only 23% are charge-balanced [3]. Such simplistic heuristics therefore cannot serve as standalone predictors of synthesizability.
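To make the charge-balancing heuristic concrete, the sketch below checks whether any assignment of one common oxidation state per element gives a net charge of zero. The oxidation-state table is a small hand-written excerpt for illustration only, and the one-state-per-element rule is an assumption of this sketch rather than the exact procedure used in [3].

```python
from itertools import product

# Illustrative excerpt of common oxidation states (not exhaustive)
COMMON_OXIDATION_STATES = {
    "Na": [1],
    "Cl": [-1],
    "O": [-2],
    "Fe": [2, 3],
}


def is_charge_balanced(composition, states=COMMON_OXIDATION_STATES):
    """Return True if some choice of one oxidation state per element sums to zero.

    composition: {element: count}, e.g. {"Fe": 2, "O": 3}
    """
    elements = list(composition)
    for combo in product(*(states[e] for e in elements)):
        net = sum(q * composition[e] for q, e in zip(combo, elements))
        if net == 0:
            return True
    return False


nacl_ok = is_charge_balanced({"Na": 1, "Cl": 1})
fe2o3_ok = is_charge_balanced({"Fe": 2, "O": 3})
# Fe3O4 is a well-known synthesized oxide with mixed Fe(II)/Fe(III) valence,
# so a single oxidation state per element cannot balance it
fe3o4_ok = is_charge_balanced({"Fe": 3, "O": 4})
```

The Fe3O4 case shows how a rigid rule can reject a material that is routinely made, which is the kind of failure behind the low charge-balanced fractions quoted above.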

Successful synthesizability models integrate multiple stability criteria:

- Energy above hull (Ehull) thresholds (e.g., <0.08 eV/atom) [16]

- Phonon stability (absence of imaginary frequencies)

- Phase competition metrics

- Decomposition pathway analysis
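A screen that combines the first two criteria might look like the following sketch. The 0.08 eV/atom threshold comes from the text above; the `Candidate` class, the phonon tolerance, and the example values are hypothetical, and phase competition and decomposition analysis are left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    formula: str
    e_hull: float           # energy above hull, eV/atom
    min_phonon_freq: float  # lowest phonon frequency, THz (negative = imaginary mode)


def passes_stability_screen(c, e_hull_max=0.08, phonon_tol=0.0):
    """Keep candidates that are near the hull and have no imaginary phonon modes.

    phonon_tol is an assumed tolerance: frequencies below -phonon_tol count as imaginary.
    """
    near_hull = c.e_hull < e_hull_max
    dynamically_stable = c.min_phonon_freq >= -phonon_tol
    return near_hull and dynamically_stable


# Made-up example values for illustration only
pool = [
    Candidate("A2BX4", e_hull=0.02, min_phonon_freq=0.5),
    Candidate("AB3", e_hull=0.15, min_phonon_freq=0.8),    # too far above the hull
    Candidate("ABX3", e_hull=0.01, min_phonon_freq=-1.2),  # imaginary mode
]
shortlist = [c.formula for c in pool if passes_stability_screen(c)]
```

Keeping each criterion as a separate boolean makes it easy to log which filter rejected a candidate.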

Essential Research Tools and Protocols

Table 3: Essential Research Resources for Synthesizability Prediction

| Resource/Reagent | Type | Function/Role | Example Applications |
|---|---|---|---|
| ICSD Database [3] [17] | Experimental Database | Primary source of synthesized material structures; Ground truth for model training | Positive examples for supervised learning; Benchmarking |
| Materials Project API [16] [13] | Computational Database | Access to DFT-calculated properties and structures | Feature extraction; Training data generation |
| PyMatGen [16] [13] | Python Library | Materials analysis and processing | Structure manipulation; Feature generation |
| CrabNet [16] | Attention-based Network | Compositional property prediction | Baseline model comparison |
| CGCNN [16] [13] | Graph Neural Network | Structure-property prediction | Crystal representation learning |
| FTCP Representation [16] | Crystal Representation | Encodes periodicity and elemental properties | Input for deep learning models |

Experimental Workflow for Synthesizability Assessment

The precise definition of the synthesizable space represents a critical advancement in materials discovery, addressing the fundamental challenge of bridging computational prediction and experimental realization. The development of sophisticated machine learning approaches—from positive-unlabeled learning to large language models—has dramatically improved our ability to distinguish synthesizable materials within the vast chemical space of unsynthesized compounds. These computational tools, integrated with experimental validation workflows, provide researchers with a systematic framework for prioritizing synthesis efforts.

Future advancements will likely focus on several key areas: (1) improved integration of kinetic and processing factors into synthesizability predictions, (2) development of standardized material representations for more effective knowledge transfer across domains, and (3) creation of larger, more comprehensive synthesis databases to support data-driven approaches. As these methodologies mature, the systematic identification of synthesizable materials will accelerate the discovery and deployment of novel functional materials, ultimately reducing the time from conceptual design to practical implementation.

AI-Driven Solutions: From Compositional Models to Precursor Prediction

The discovery of new inorganic crystalline materials is fundamental to technological advancement, yet a significant bottleneck persists: the synthesizability gap. This refers to the challenge of predicting whether a computationally designed material can be successfully synthesized in a laboratory. Traditional proxies for synthesizability, such as thermodynamic stability calculated via Density Functional Theory (DFT) or simple charge-balancing rules, often prove inadequate as they fail to capture the complex kinetic and chemical factors governing real-world synthesis [3]. This section explores the fundamental challenges in predicting synthesizable inorganic crystals and details how deep learning models, particularly synthesizability classification models like SynthNN (Synthesizability Neural Network), are addressing this core problem. We provide an in-depth examination of SynthNN's architecture, training methodology, and performance, while also situating it within the broader landscape of emerging deep learning approaches, including large language models (LLMs) that are pushing the boundaries of accuracy and explainability in synthesizability prediction [3] [17] [2].

The journey of materials discovery has evolved through several paradigms, from empirical trial-and-error to computational simulation and now into a data-driven era. High-throughput computational screening and generative models can propose millions of candidate materials with desirable properties [21] [22]. However, the vast majority of these theoretically "stable" candidates may not be synthetically accessible, creating a critical bottleneck. The central challenge lies in the fact that synthesizability is a multifaceted concept influenced by:

- Thermodynamic and Kinetic Factors: While a negative formation energy is often a prerequisite, it is not sufficient. Kinetic barriers, nucleation rates, and finite-temperature effects play decisive roles [22].

- Chemical Intuition and Rules: Heuristics like charge-balancing, while chemically motivated, are inflexible. Remarkably, only about 37% of known synthesized inorganic materials in the Inorganic Crystal Structure Database (ICSD) are charge-balanced according to common oxidation states, highlighting the limitation of this approach [3].

- Non-Physical Constraints: Practical synthesizability is also governed by external factors such as precursor cost, equipment availability, and human-perceived importance of the target material [3].

This complex interplay of factors makes synthesizability prediction an ideal candidate for data-driven machine learning approaches. By learning directly from the vast and growing database of known synthesized materials, deep learning models can internalize the complex, often implicit, "rules" of inorganic synthesis without relying on potentially incomplete human-defined physical principles.

Core Architecture of SynthNN

SynthNN represents a pioneering deep learning framework that reformulates material discovery as a synthesizability classification task. Its architecture is designed to leverage the entire space of known inorganic chemical compositions to make predictions without requiring prior crystal structure information [3] [23].

Model Input and Atom2Vec Representation

A key innovation of SynthNN is its use of the atom2vec representation. Instead of using pre-defined chemical descriptors, SynthNN represents each chemical formula by a learned atom embedding matrix that is optimized alongside all other parameters of the neural network [3].

- Input: A chemical formula (e.g., "NaCl").

- Representation Learning: The model learns a continuous, dense vector representation (embedding) for each element in the periodic table. The dimensionality of this embedding is a tunable hyperparameter.

- Advantage: This approach allows SynthNN to discover the optimal representation of chemical formulas directly from the distribution of synthesized materials, without requiring assumptions about which factors (e.g., electronegativity, ionic radius) are most important for synthesizability. Experiments suggest that through this process, SynthNN autonomously learns fundamental chemical principles such as charge-balancing, chemical family relationships, and ionicity [3].
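The idea of a learned per-element embedding can be sketched as follows. The formula parser, the random initialization, and the fraction-weighted pooling are assumptions made for illustration; in an actual model the embedding values would be updated by gradient descent together with the rest of the network, and the pooling used in [3] may differ.

```python
import random
import re


def parse_formula(formula):
    """Parse a simple formula such as 'Fe2O3' into {element: count} (no parentheses)."""
    counts = {}
    for symbol, num in re.findall(r"([A-Z][a-z]?)(\d+(?:\.\d+)?)?", formula):
        counts[symbol] = counts.get(symbol, 0.0) + (float(num) if num else 1.0)
    return counts


def init_embeddings(elements, dim, seed=0):
    """One trainable vector per element, randomly initialized here."""
    rng = random.Random(seed)
    return {el: [rng.gauss(0.0, 0.1) for _ in range(dim)] for el in elements}


def featurize(formula, embeddings):
    """Pool element embeddings weighted by atomic fraction (assumed pooling scheme)."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    dim = len(next(iter(embeddings.values())))
    vec = [0.0] * dim
    for el, n in counts.items():
        frac = n / total
        for i, x in enumerate(embeddings[el]):
            vec[i] += frac * x
    return vec


emb = init_embeddings(["Na", "Cl", "Fe", "O"], dim=4)
nacl_vec = featurize("NaCl", emb)
```

The embedding dimension (`dim`) corresponds to the tunable hyperparameter mentioned above.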

Neural Network Architecture and Training Protocol

The core of SynthNN is a deep neural network trained using a Positive-Unlabeled (PU) Learning strategy, which is crucial for addressing the inherent data limitations in this field.

- Architecture: The learned atom embeddings are fed into a deep residual neural network (ResNet). The ResNet architecture, with its skip connections, facilitates the training of very deep networks, enabling the model to learn complex, hierarchical features from the compositional data [3] [24].

- PU Learning Formulation:

  - Positive (P) Data: Experimentally synthesized materials are sourced from the Inorganic Crystal Structure Database (ICSD). These are reliable positive examples.

  - Unlabeled (U) Data: A critical challenge is the lack of confirmed "unsynthesizable" materials. SynthNN addresses this by generating a large set of artificial chemical formulas that are not present in the ICSD. These are treated as unlabeled data, as some may be synthesizable but just undiscovered.

  - Training Objective: The model is trained to classify synthesized materials as positive while probabilistically reweighting the unlabeled examples according to their likelihood of being synthesizable. This semi-supervised approach robustly handles the incomplete data labeling [3] [2].

- Hyperparameters: The model tuning involves optimizing key parameters such as the dimensionality of the atom embeddings and the ratio of artificially generated formulas to synthesized formulas used in training (denoted as $N_{synth}$) [3].
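The reweighting of unlabeled examples can be illustrated with a simple weighted cross-entropy. This is one common PU-learning formulation, shown as a sketch; it is not confirmed to be the exact loss used by SynthNN, and the function and argument names are hypothetical.

```python
import math


def pu_weighted_bce(pos_scores, unl_scores, unl_pos_probs):
    """Weighted binary cross-entropy for positive-unlabeled data.

    pos_scores:    model probabilities for known synthesized materials
    unl_scores:    model probabilities for unlabeled (artificial) formulas
    unl_pos_probs: estimated likelihood that each unlabeled formula is
                   actually synthesizable (0 = treat as a pure negative)
    """
    eps = 1e-12
    # Known positives contribute the usual -log(p)
    loss = -sum(math.log(max(p, eps)) for p in pos_scores)
    # Each unlabeled example counts partly as a positive and partly as a negative
    for p, q in zip(unl_scores, unl_pos_probs):
        loss -= q * math.log(max(p, eps)) + (1.0 - q) * math.log(max(1.0 - p, eps))
    return loss / (len(pos_scores) + len(unl_scores))


# An unlabeled formula scored 0.8 is penalized less when it is believed
# to be plausibly synthesizable (q = 0.5) than when treated as a negative (q = 0)
loss_hard_negative = pu_weighted_bce([0.9], [0.8], [0.0])
loss_soft_label = pu_weighted_bce([0.9], [0.8], [0.5])
```

Setting every entry of `unl_pos_probs` to zero recovers ordinary binary cross-entropy with the unlabeled set treated as negatives.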

The following diagram illustrates the SynthNN training workflow and architecture.

Performance Benchmarking and Experimental Protocols

The performance of SynthNN has been rigorously benchmarked against both traditional computational methods and human experts, demonstrating its significant advantages.

Quantitative Performance Comparison

SynthNN's performance is quantitatively superior to traditional methods. The table below summarizes its performance against key baselines as reported in the original study [3].

Table 1: Performance comparison of SynthNN against traditional methods for synthesizability classification.

| Method | Key Principle | Precision | Key Limitations |
|---|---|---|---|
| SynthNN | Data-driven classification on compositions | 7x higher than DFT formation energy | Requires large dataset; "Black-box" nature |
| DFT Formation Energy | Thermodynamic stability (energy above convex hull) | Baseline (1x) | Captures only ~50% of synthesized materials; Computationally expensive |
| Charge-Balancing | Net neutral ionic charge from common oxidation states | Lower than SynthNN | Inflexible; Only 37% of known materials are charge-balanced |
| Random Guessing | Random weighted by class imbalance | Lowest | Not a viable strategy |

In a head-to-head material discovery challenge against 20 expert material scientists, SynthNN outperformed all human experts, achieving 1.5x higher precision and completing the task five orders of magnitude faster than the best-performing human [3]. This demonstrates the model's potential to dramatically accelerate the materials discovery cycle.

Experimental Protocol for Model Validation

The standard protocol for training and validating a model like SynthNN involves several key steps, which are detailed in the table below.

Table 2: Experimental protocol for developing and validating a synthesizability classification model.

| Stage | Protocol Description | Key Datasets/Tools |
|---|---|---|
| 1. Data Curation | Extract synthesized inorganic crystalline materials from ICSD. Filter for quality and remove disordered structures. | Inorganic Crystal Structure Database (ICSD) [3] [17] |
| 2. Generating Unlabeled Data | Create a large set of hypothetical chemical formulas not present in ICSD. These serve as the unlabeled set in PU learning. | Combinatorial formula generation; Previous databases of hypothetical materials [3] |
| 3. Data Representation | Convert chemical formulas into a machine-learnable format. No structural information is required. | atom2vec embeddings; Stoichiometric features [3] |
| 4. Model Training (PU Learning) | Train a deep neural network (e.g., ResNet) using a PU learning loss function that distinguishes positive examples from the unlabeled set. | Deep Learning Frameworks (e.g., TensorFlow, PyTorch); Positive-Unlabeled learning algorithms [3] [2] |
| 5. Model Evaluation | Evaluate on a hold-out test set. Use α-estimation to approximate precision and false positive rate due to the lack of true negatives. | Standard ML metrics (Precision, Recall, F1-score); α-estimation for PU learning [2] |
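The evaluation step in stage 5 can be made concrete with a short calculation. The sketch below assumes that α denotes the estimated fraction of truly synthesizable materials in the unlabeled set and that the labeled positives are a representative sample of all positives; under those assumptions, precision and false positive rate follow from recall on held-out positives and the predicted-positive rate on unlabeled data. The function name and the example numbers are hypothetical, and how α itself is estimated is outside this sketch.

```python
def pu_precision_and_fpr(recall, unl_positive_rate, alpha):
    """Approximate precision and false positive rate without true negatives.

    recall:            fraction of held-out known positives predicted positive
    unl_positive_rate: fraction of unlabeled examples predicted positive
    alpha:             estimated fraction of unlabeled examples that are truly positive
    """
    if not 0.0 <= alpha < 1.0:
        raise ValueError("alpha must lie in [0, 1)")
    if unl_positive_rate <= 0.0:
        raise ValueError("no unlabeled examples were predicted positive")
    # Share of the unlabeled set that is both truly positive and predicted positive
    true_pos_share = alpha * recall
    # Predicted positives on unlabeled data = true positives + false positives
    false_pos_share = max(unl_positive_rate - true_pos_share, 0.0)
    precision = min(true_pos_share / unl_positive_rate, 1.0)
    fpr = false_pos_share / (1.0 - alpha)
    return precision, fpr


# Made-up numbers for illustration
precision_est, fpr_est = pu_precision_and_fpr(
    recall=0.9, unl_positive_rate=0.15, alpha=0.1
)
```

Both estimates are only as reliable as the value of α, so reporting them across a range of plausible α values is a reasonable precaution.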

The Evolving Landscape: Beyond SynthNN

While SynthNN operates solely on composition, recent advances have expanded the field to include crystal structure-based predictions and the application of large language models (LLMs), leading to substantial gains in accuracy and explainability.

Structure-Aware and Large Language Models