Beyond Thermodynamics: Evaluating Next-Gen AI for Predicting Synthesis of Complex Crystal Structures

Abstract

Accurately predicting which computationally designed crystal structures can be experimentally synthesized is a critical bottleneck in materials discovery, particularly for complex systems relevant to pharmaceutical development. This article provides a comprehensive evaluation of modern synthesizability models, moving beyond traditional stability metrics. We explore the foundational principles of synthesizability, detail cutting-edge methodologies from compositional transformers to structure-aware graph networks, and address key challenges like data scarcity and error propagation. By comparing model performance on complex structures and validating predictions with experimental case studies, this review offers researchers and drug development professionals a practical framework for integrating reliable synthesizability assessment into their discovery pipelines, ultimately accelerating the transition from in-silico design to real-world materials.

Defining Synthesizability: Why Stability Metrics Aren't Enough for Complex Crystals

The accelerating use of computational tools has identified millions of hypothetical materials with promising properties, yet only a tiny fraction have been successfully synthesized in the laboratory. This disparity defines the synthesizability gap, a critical bottleneck in materials discovery. For decades, formation energy and phonon stability have served as the foundational, first-principles metrics for predicting whether a theoretical material can be experimentally realized. Thermodynamic stability, typically assessed through a material's energy above the convex hull (Ehull), indicates whether a compound is stable relative to its potential decomposition products at 0 K [1]. Kinetic stability, often evaluated via phonon dispersion calculations to check for the absence of imaginary frequencies, confirms whether a structure is dynamically stable against small atomic displacements [2].

However, a material's actual synthesizability is influenced by a far more complex set of factors that these traditional metrics cannot capture. Synthesis is governed not only by thermodynamic and kinetic stability but also by experimental feasibility, including precursor availability, feasible reaction pathways, appropriate solvents, and specific temperature and pressure conditions [2] [3]. Consequently, numerous structures with favorable formation energies remain unsynthesized, while various metastable structures with less favorable energies are routinely synthesized in laboratories [2]. This article provides a comparative analysis of the limitations inherent to traditional stability metrics and evaluates emerging data-driven approaches that are bridging the synthesizability gap for complex crystal structures and drug molecules.

Limitations of Traditional Stability Metrics

Traditional computational assessments of synthesizability rely heavily on two principal metrics derived from density functional theory (DFT). While necessary, they are insufficient conditions for predicting successful synthesis.

Formation Energy and Energy Above Hull

The formation energy and energy above hull are thermodynamic measures that evaluate a material's stability relative to its competing phases.

- Fundamental Principle: A negative formation energy indicates that a compound is stable with respect to its constituent elements, while an Ehull value of zero signifies that the material is on the convex hull and is thermodynamically stable at 0 K [1]. In practice, a threshold for Ehull (e.g., < 0.08 eV/atom) is often used as a crude filter for synthesizability [1].

- Key Limitations: These energy-based predictions are calculated for perfect crystals at 0 K, ignoring real-world factors such as defects, configurational entropy, and other finite-temperature effects [1] [4]. They also fail to account for the availability of precursors, the earth abundance of starting materials, and the accessibility of the required high-temperature or high-pressure conditions [1]. Crucially, they cannot distinguish between polymorphs with similar energies, a common occurrence in materials like perovskites [1] [4].
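
To make the Ehull filter concrete, the following minimal sketch computes the energy above the convex hull for a binary A-B system and applies the 0.08 eV/atom cutoff quoted above. The phase names and formation energies are invented for illustration, and the helper functions are hypothetical; a production workflow would take DFT energies from a database and typically use an established phase-diagram library instead.

```python
from typing import Dict, List, Tuple

Phase = Tuple[str, float, float]  # (name, atomic fraction of B, formation energy in eV/atom)

def lower_hull(points: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Lower convex hull of (x, energy) points (Andrew's monotone chain)."""
    hull: List[Tuple[float, float]] = []
    for px, py in sorted(points):
        # Drop the last vertex while it lies on or above the chord to the new point.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            if (x2 - x1) * (py - y1) - (y2 - y1) * (px - x1) <= 0:
                hull.pop()
            else:
                break
        hull.append((px, py))
    return hull

def hull_energy(hull: List[Tuple[float, float]], x: float) -> float:
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition outside the 0..1 range")

def energy_above_hull(phases: List[Phase]) -> Dict[str, float]:
    # Keep the lowest-energy phase per composition; pure elements sit at 0 eV/atom.
    lowest: Dict[float, float] = {0.0: 0.0, 1.0: 0.0}
    for _, x, e in phases:
        lowest[x] = min(e, lowest.get(x, e))
    hull = lower_hull(list(lowest.items()))
    return {name: e - hull_energy(hull, x) for name, x, e in phases}

# Hypothetical phases in an A-B system (energies invented for illustration).
PHASES: List[Phase] = [
    ("A3B", 0.25, -0.10),
    ("AB", 0.50, -0.40),
    ("AB_alt", 0.50, -0.35),  # higher-energy polymorph of AB
    ("AB3", 0.75, -0.30),
]
EHULL_CUTOFF = 0.08  # eV/atom, the crude filter value quoted above

e_hull = energy_above_hull(PHASES)
passes = {name: value < EHULL_CUTOFF for name, value in e_hull.items()}
```

In this toy system the metastable polymorph AB_alt passes the cutoff while A3B does not, yet the filter carries no information about precursors or reaction conditions for either phase, which is the limitation discussed above.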

Phonon Stability and Dynamical Simulations

Phonon dispersion calculations and Ab Initio Molecular Dynamics (AIMD) are used to assess kinetic and thermal stability.

- Fundamental Principle: Phonon dispersion curves with no imaginary frequencies (soft modes) confirm a structure's dynamic stability [2] [5]. AIMD simulations, which model atomic motion over time at finite temperatures, can probe thermal stability, as demonstrated in studies of XZnH3 perovskites where LiZnH3 showed instability despite negative phonon frequencies [5].

- Key Limitations: Materials with imaginary phonon frequencies can still be synthesized, as kinetics and non-equilibrium pathways can stabilize metastable phases [2]. Computational phonon analysis is notoriously expensive for large unit cells, limiting its use in high-throughput screening [2].

Table 1: Quantitative Comparison of Traditional Synthesizability Metrics

| Metric | Computational Cost | Primary Limitation | Reported Accuracy as a Synthesizability Predictor |

|---|---|---|---|

| Formation Energy/Energy Above Hull | Moderate to High (DFT) | Fails for metastable phases; ignores experimental conditions. | ~74.1% (True Positive Rate) [2] |

| Phonon Dispersion | High (DFT + post-processing) | Cannot account for kinetic stabilization pathways. | ~82.2% (True Positive Rate) [2] |

| AIMD Simulations | Very High | Limited timescales (ps-ns) compared to real synthesis. | Qualitative stability assessment [5] |

Emerging Data-Driven Synthesizability Models

To overcome the limitations of traditional metrics, machine learning (ML) and large language models (LLMs) are being deployed to learn the complex patterns underlying successful synthesis from existing experimental data.

Machine Learning and Positive-Unlabeled Learning

A significant challenge in training synthesizability models is the lack of confirmed negative examples; scientific literature primarily reports successful syntheses (positives). Positive-Unlabeled (PU) Learning has emerged as a powerful semi-supervised technique to address this.

- Core Methodology: PU learning algorithms treat the vast number of hypothetical, unsynthesized structures in databases as "unlabeled" rather than definitively "negative." The model learns from the known positive examples and estimates the likelihood of synthesis for unlabeled candidates based on their similarity to the positive set and other material descriptors [4].

- Model Implementations: Early models used graph-based representations of crystals, such as Crystal Graph Convolutional Neural Networks (CGCNN), as inputs for PU classifiers [1] [4]. More recent approaches use advanced representations like Fourier-transformed crystal properties (FTCP), which encode information in both real and reciprocal space, leading to a model with >82% precision/recall for ternary crystals [1].
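
The core PU idea can be sketched with a bagging-style scheme, one common way to implement it: repeatedly draw a random subset of the unlabeled pool as provisional negatives, fit a classifier against the known positives, and average the predictions each unlabeled sample receives when it is left out of the draw. The sketch below is an assumption-laden toy, not the pipeline of any cited study. It uses a nearest-centroid rule as a stand-in for CGCNN or any other real classifier, and the two-dimensional feature vectors are invented.

```python
import random
from typing import List, Sequence

Vector = Sequence[float]

def centroid(rows: List[Vector]) -> List[float]:
    return [sum(col) / len(rows) for col in zip(*rows)]

def sq_dist(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))

def pu_bagging_scores(positives: List[Vector], unlabeled: List[Vector],
                      rounds: int = 50, seed: int = 0) -> List[float]:
    """Fraction of out-of-bag rounds in which each unlabeled sample looks positive."""
    rng = random.Random(seed)
    votes = [0] * len(unlabeled)
    seen = [0] * len(unlabeled)
    bag_size = min(len(positives), len(unlabeled) - 1)
    pos_center = centroid(positives)
    for _ in range(rounds):
        # Provisional negatives: a random draw from the unlabeled pool.
        bag = set(rng.sample(range(len(unlabeled)), bag_size))
        neg_center = centroid([unlabeled[i] for i in bag])
        for i, x in enumerate(unlabeled):
            if i in bag:
                continue  # score only samples left out of this draw
            seen[i] += 1
            if sq_dist(x, pos_center) < sq_dist(x, neg_center):
                votes[i] += 1
    return [v / s if s else float("nan") for v, s in zip(votes, seen)]

# Invented descriptors: the first unlabeled point resembles the positives.
positives = [(1.0, 1.0), (1.2, 0.9), (0.9, 1.1)]
unlabeled = [(1.1, 1.0), (-1.0, -1.0), (-1.2, -0.8),
             (-0.9, -1.1), (-1.1, -1.0), (-0.8, -1.2)]
scores = pu_bagging_scores(positives, unlabeled)
```

The averaged out-of-bag score plays the role of a synthesizability likelihood: unlabeled candidates that keep landing on the positive side across many random negative draws are the ones most similar to known synthesized materials.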

Large Language Models (LLMs) for Crystal Synthesis

The application of Large Language Models (LLMs) represents a paradigm shift, leveraging their ability to process natural language and complex patterns.

- Text-Based Representations: Crystal structures are converted into text strings for LLM processing. The Crystal Synthesis LLM (CSLLM) framework uses a "material string" that condenses space group, lattice parameters, and representative atomic coordinates, omitting redundant information [2]. An alternative method uses Robocrystallographer to generate human-readable text descriptions of crystal structures from CIF files [6].

- Model Architecture and Performance: The CSLLM framework employs three specialized LLMs for predicting synthesizability, synthetic methods, and suitable precursors [2]. This approach has demonstrated state-of-the-art performance, achieving 98.6% accuracy on testing data, significantly outperforming traditional thermodynamic (74.1%) and kinetic (82.2%) methods [2]. Another study fine-tuned GPT-4o-mini on text descriptions and found that an LLM-embedding-based PU classifier outperformed both a fine-tuned LLM and a traditional CGCNN model [6].
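
As an illustration of what a condensed text representation might look like, the sketch below serializes a space group, lattice parameters, and one representative site per Wyckoff position into a single line. The field order, separators, and the `CrystalSummary` and `to_material_string` names are assumptions made for this example; the exact material string format used by CSLLM is not reproduced here.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class CrystalSummary:
    space_group: int
    lattice: Tuple[float, float, float, float, float, float]  # a, b, c, alpha, beta, gamma
    # One representative site per Wyckoff position: (element, Wyckoff label, fractional coords)
    sites: List[Tuple[str, str, Tuple[float, float, float]]]

def to_material_string(crystal: CrystalSummary) -> str:
    """Serialize a crystal into a compact, single-line text form (hypothetical format)."""
    lattice = ", ".join(f"{value:g}" for value in crystal.lattice)
    sites = "; ".join(
        f"{element}-{wyckoff}[{x:.3f} {y:.3f} {z:.3f}]"
        for element, wyckoff, (x, y, z) in crystal.sites
    )
    return f"{crystal.space_group} | {lattice} | {sites}"

# Rock-salt NaCl: space group 225, with symmetry-equivalent atoms omitted.
nacl = CrystalSummary(
    space_group=225,
    lattice=(5.64, 5.64, 5.64, 90.0, 90.0, 90.0),
    sites=[("Na", "4a", (0.0, 0.0, 0.0)), ("Cl", "4b", (0.5, 0.5, 0.5))],
)
material_string = to_material_string(nacl)
```

Because the space group and Wyckoff labels imply the remaining atoms, two sites are enough to describe the eight-atom conventional cell, which keeps the prompt short for the LLM.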

Table 2: Comparison of Data-Driven Synthesizability Prediction Models

| Model / Approach | Input Data | Key Advantage | Reported Performance |

|---|---|---|---|

| PU-CGCNN [6] [4] | Crystal Graph | Effective use of structural information with PU learning. | Baseline performance, lower than LLM-based methods [6]. |

| FTCP Representation [1] | Fourier-transformed crystal features | Captures periodicity and elemental properties in reciprocal space. | 82.6% Precision, 80.6% Recall (Ternary Crystals) [1]. |

| CSLLM Framework [2] | Material String (Text) | High accuracy, can also predict methods and precursors. | 98.6% Accuracy, >90% Precursor/Method Accuracy [2]. |

| LLM-Embedding + PU [6] | Text Embedding from Structure Description | Balances high performance with lower computational cost. | Outperforms both StructGPT-FT and PU-CGCNN [6]. |

Experimental Protocols and Workflows

The transition from theoretical prediction to experimental realization requires robust and well-defined computational workflows.

Workflow for LLM-Based Synthesizability Prediction

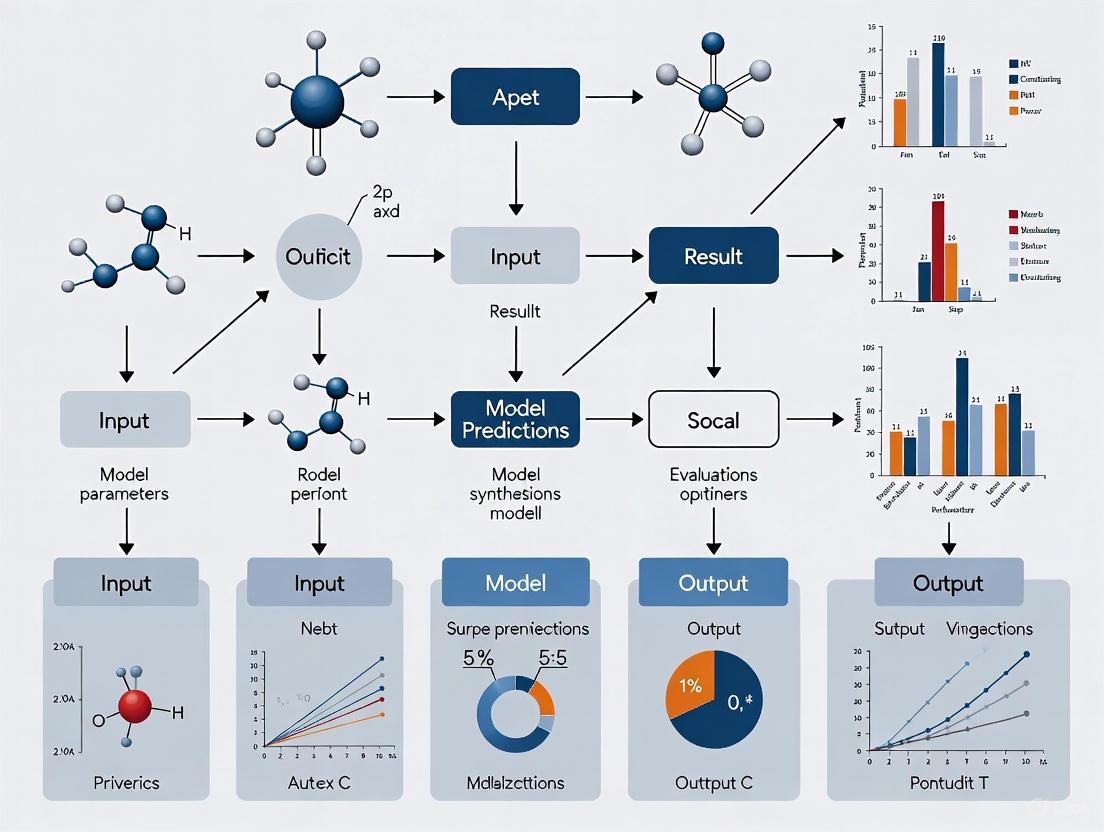

The following diagram illustrates the integrated workflow for predicting synthesizability and precursors using fine-tuned Large Language Models.

Workflow for Retrosynthetic Molecule Evaluation

In drug discovery, evaluating synthesizability requires a different approach centered on retrosynthetic analysis and route validation, as shown below.

Key Experimental and Computational Reagents

This table details essential resources, datasets, and software tools that form the foundation for modern synthesizability prediction research.

Table 3: Research Reagent Solutions for Synthesizability Studies

| Resource / Tool | Type | Primary Function in Research |

|---|---|---|

| Materials Project (MP) Database [1] [6] | Computational Database | Source of DFT-calculated structures and properties for thousands of hypothetical and known materials. |

| Inorganic Crystal Structure Database (ICSD) [1] [2] | Experimental Database | Curated source of experimentally synthesized crystal structures, used as positive labels for model training. |

| AiZynthFinder [7] [8] | Software Tool | Open-source tool for retrosynthetic planning, used to find synthetic routes for target molecules. |

| ZINC Database [7] [8] | Chemical Database | Database of commercially available compounds, used as a source of potential building blocks for synthesis planning. |

| Robocrystallographer [6] | Software Tool | Generates text descriptions of crystal structures from CIF files, enabling the use of LLMs. |

| Positive-Unlabeled (PU) Learning | Machine Learning Technique | Enables training of classifiers when only positive and unlabeled data are available. |

The limitations of traditional metrics like formation energy and phonon stability are clear: they provide necessary but insufficient conditions for synthesizability. The emergence of data-driven models, particularly those using PU learning and LLMs fine-tuned on comprehensive experimental data, is dramatically narrowing the synthesizability gap. These models integrate structural, compositional, and implicit experimental knowledge to achieve predictive accuracies exceeding 98%, far beyond the capabilities of energy-based or kinetic stability criteria alone [2]. For the research community, the critical path forward involves the continued development and adoption of these tools, the creation of standardized benchmarks, and the integration of synthesizability prediction directly into generative materials and drug design workflows. This will finally bridge the long-standing gap between computational prediction and experimental realization.

Accurately predicting which computationally designed crystal structures can be successfully synthesized in the laboratory remains a pressing challenge in materials science. The performance of any machine learning (ML) model for synthesizability prediction is fundamentally constrained by the quality and composition of its training data. This guide provides a comprehensive comparison of methodologies for constructing the foundational datasets for these models, specifically through the curation of positive samples from experimental databases like the Inorganic Crystal Structure Database (ICSD) and negative samples from theoretical repositories. The strategic selection of these samples directly impacts model accuracy, generalization capability, and ultimately, the successful translation of theoretical predictions into experimentally realized materials.

Data Source Profiles and Key Characteristics

The primary sources for building synthesizability datasets are the ICSD for positive samples and large-scale computational databases for negative candidates. The table below summarizes their core characteristics.

Table 1: Key Data Sources for Positive and Negative Samples

| Data Source | Sample Type | Content & Scope | Key Characteristics & Usage |

|---|---|---|---|

| Inorganic Crystal Structure Database (ICSD) [9] [10] [11] | Positive | Roughly 210,000 to 240,000 experimentally identified inorganic crystal structures (the reported count varies by source), with records from 1913 to present [9] [10] [11]. | Considered the "gold standard" for experimentally synthesized materials. Contains fully characterized structures with atomic coordinates. Data undergoes thorough quality checks [9]. |

| Theoretical Databases (e.g., Materials Project, OQMD, AFLOW, JARVIS) [1] [2] [12] | Negative (Potential) | Millions of DFT-calculated crystal structures (e.g., ~1.4 million structures from multiple sources were screened in one study) [2]. | Contain structures that are computationally generated but not necessarily synthesized. Include thermodynamic stability metrics (e.g., energy above hull). The label "theoretical" is often used as a proxy for being unsynthesized [12]. |

Comparative Analysis of Data Curation Methodologies

Different experimental designs for curating negative samples from theoretical databases lead to significant variations in dataset quality and subsequent model performance. The following table compares three prominent methodologies.

Table 2: Comparison of Negative Sample Curation Methodologies

| Curation Methodology | Core Principle | Protocol Description | Reported Performance Outcomes |

|---|---|---|---|

| Positive and Unlabeled (PU) Learning [13] | Treats all theoretical structures as "unlabeled"; some are randomly labeled as negative during training. | 1. Training: A model (e.g., decision tree) is trained on known positive (ICSD) samples and randomly selected negative samples from the unlabeled data. 2. Iteration: The process repeats with different random negative sets (bootstrapping). 3. Prediction: The model learns to identify positive samples from the unlabeled pool [13]. | Achieved a 91% True Positive Rate for identifying synthesized materials across the Materials Project database [13]. |

| Crystal-Likeness Score (CLscore) Filtering [2] | Uses a pre-trained PU learning model to assign a synthesizability score (CLscore), with low scores indicating non-synthesizability. | 1. Scoring: A pre-trained model generates a CLscore for every theoretical structure. 2. Selection: Structures with scores below a strict threshold (e.g., CLscore < 0.1) are selected as high-confidence negative samples [2]. | Used to create a balanced dataset of 80,000 non-synthesizable structures. 98.3% of ICSD positives had a CLscore > 0.1, validating the threshold [2]. |

| Theoretical Flag & Composition-Based Labeling [12] | Labels a composition as unsynthesizable only if all its polymorphs in the database are flagged as "theoretical." | 1. Query: Extract compositions and their "theoretical" flags from databases like the Materials Project. 2. Labeling: A composition is labeled negative (y=0) only if no known synthesized polymorph exists (i.e., all are theoretical) [12]. | This conservative approach avoids mislabeling synthesizable compositions and was used to create a dataset with 129,306 unsynthesizable compositions [12]. |
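
The composition-based labeling rule in the last row of the table reduces to a group-by over polymorphs. The sketch below assumes each database entry has already been reduced to a (formula, is_theoretical) pair; the function name and the example entries are hypothetical.

```python
from typing import Dict, Iterable, Tuple

def label_compositions(entries: Iterable[Tuple[str, bool]]) -> Dict[str, int]:
    """Label a composition 0 only if every one of its polymorphs is flagged theoretical."""
    all_theoretical: Dict[str, bool] = {}
    for formula, is_theoretical in entries:
        all_theoretical[formula] = all_theoretical.get(formula, True) and is_theoretical
    return {formula: 0 if flag else 1 for formula, flag in all_theoretical.items()}

# Invented entries: one polymorph per tuple, flagged theoretical or not.
entries = [
    ("TiO2", False),   # an experimentally reported polymorph
    ("TiO2", True),    # a purely computed polymorph of the same composition
    ("A2BX4", True),   # placeholder composition with only computed polymorphs
    ("A2BX4", True),
]
labels = label_compositions(entries)
```

A single experimentally reported polymorph is enough to make the whole composition positive, which is what makes the scheme conservative about assigning negative labels.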

Experimental Protocols for Dataset Construction and Model Training

Protocol for Balanced Dataset Construction via CLscore

A state-of-the-art protocol for constructing a high-quality, balanced dataset for training synthesizability models involves leveraging the CLscore [2].

- Positive Sample Collection: Collect experimentally confirmed crystal structures from the ICSD. A typical selection might involve ~70,000 structures, excluding disordered ones and applying filters like a maximum of 40 atoms and 7 different elements per structure [2].

- Raw Theoretical Pool Assembly: Aggregate a massive pool of theoretical crystal structures from multiple computational databases, such as the Materials Project (MP), Computational Materials Database (CMDB), Open Quantum Materials Database (OQMD), and JARVIS. One study combined over 1.4 million such structures [2].

- CLscore Calculation: Use a pre-trained PU learning model to calculate a Crystal-Likeness Score (CLscore) for every structure in the theoretical pool and for the collected positive samples [2].

- Negative Sample Selection: Apply a low CLscore threshold to select high-confidence negative samples. For example, selecting the 80,000 structures with the lowest CLscores (e.g., < 0.1) creates a balanced set against ~70,000 positives. The validity of this threshold is confirmed by verifying that over 98% of the known positive samples have a CLscore above it [2].

- Dataset Validation: Use visualization techniques like t-SNE to ensure the final dataset of positive and negative samples comprehensively covers diverse crystal systems, element combinations, and atomic numbers [2].
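
The negative-selection and threshold-check steps above can be sketched in a few lines. The CLscores here are invented, and the function names are hypothetical; in practice the scores would come from the pre-trained PU model.

```python
from typing import Dict, List, Optional, Sequence

def select_negatives(scores: Dict[str, float], threshold: float = 0.1,
                     max_count: Optional[int] = None) -> List[str]:
    """IDs of theoretical structures with CLscore below the threshold, lowest first."""
    low = sorted((score, sid) for sid, score in scores.items() if score < threshold)
    ids = [sid for _, sid in low]
    return ids if max_count is None else ids[:max_count]

def positive_coverage(positive_scores: Sequence[float], threshold: float = 0.1) -> float:
    """Fraction of known positives scoring above the threshold (sanity check)."""
    return sum(score > threshold for score in positive_scores) / len(positive_scores)

# Invented CLscores for a handful of theoretical and ICSD structures.
theoretical = {"t1": 0.03, "t2": 0.45, "t3": 0.08, "t4": 0.92, "t5": 0.01}
icsd_scores = [0.97, 0.88, 0.75, 0.05, 0.64]

negatives = select_negatives(theoretical, threshold=0.1, max_count=2)
coverage = positive_coverage(icsd_scores, threshold=0.1)
```

In the protocol described above, `max_count` would be 80,000 and the coverage check is the step that reported over 98% of positives above the threshold; the toy numbers here only demonstrate the mechanics.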

Protocol for Integrated Compositional and Structural Model Training

Once a dataset is curated, it can be used to train advanced models that integrate both compositional and structural signals [12].

- Data Representation: Each material is represented by its composition x_c and its crystal structure x_s, which serve as the inputs to the two encoders below.

- Model Architecture:

  - Compositional Encoder (f_c): A fine-tuned transformer model (e.g., MTEncoder) processes the composition x_c into a latent vector z_c [12].

  - Structural Encoder (f_s): A Graph Neural Network (GNN) processes the crystal structure x_s into a latent vector z_s [12].

  - MLP Heads: Each encoder feeds a separate Multi-Layer Perceptron (MLP) head that outputs a synthesizability score. The model is trained end-to-end by minimizing binary cross-entropy loss [12].

- Inference and Screening: During screening, the synthesizability probabilities from both the composition and structure models are aggregated using a rank-average ensemble (Borda fusion) to produce a robust final ranking of candidate materials [12].
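
A rank-average ensemble of the kind mentioned in the screening step can be sketched as follows. Each model's scores are converted to ranks (1 = most likely synthesizable), and the two ranks are averaged per candidate. The tie handling (shared average rank) and the equal weighting are assumptions for this example; the exact fusion details used in [12] are not given here.

```python
from typing import List

def ranks(scores: List[float]) -> List[float]:
    """Rank 1 = highest score; tied scores share the average of their ranks."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    result = [0.0] * len(scores)
    pos = 0
    while pos < len(order):
        end = pos
        while end + 1 < len(order) and scores[order[end + 1]] == scores[order[pos]]:
            end += 1
        shared = (pos + end) / 2 + 1
        for j in range(pos, end + 1):
            result[order[j]] = shared
        pos = end + 1
    return result

def rank_average(comp_scores: List[float], struct_scores: List[float]) -> List[float]:
    """Average the per-model ranks for each candidate (lower is better)."""
    return [(c + s) / 2 for c, s in zip(ranks(comp_scores), ranks(struct_scores))]

# Invented probabilities from the composition and structure models.
candidates = ["cand_a", "cand_b", "cand_c"]
comp_scores = [0.9, 0.2, 0.6]
struct_scores = [0.7, 0.8, 0.1]

fused = rank_average(comp_scores, struct_scores)
final_order = [name for _, name in sorted(zip(fused, candidates))]
```

Working with ranks rather than raw probabilities avoids having to calibrate the two models against each other: a candidate only needs to rank well under both to stay near the top.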

Workflow Visualization of Data Curation and Model Application

The following diagram illustrates the end-to-end workflow for curating data and applying a synthesizability prediction model, integrating the key protocols described above.

Diagram 1: Workflow for data curation and synthesizability modeling.

Table 3: Essential Computational Tools and Databases for Synthesizability Research

| Tool/Resource | Type | Primary Function in Research |

|---|---|---|

| ICSD [9] [10] [11] | Database | The definitive source for experimentally verified inorganic crystal structures, used as the ground truth for positive samples. |

| Materials Project (MP) [1] [12] | Database | A primary source for theoretical, DFT-calculated crystal structures and stability data, used for curating negative samples. |

| PU Learning Models [13] [2] | Software/Method | A semi-supervised learning framework to handle datasets where only positive samples are reliably labeled. |

| Fourier-Transformed Crystal Properties (FTCP) [1] | Crystal Representation | A technique to represent crystal structures in both real and reciprocal space for machine learning input. |

| Graph Neural Networks (GNNs) [12] | Model Architecture | Deep learning models that operate directly on graph representations of crystal structures to encode structural features. |

| CLscore [2] | Metric | A synthesizability score generated by a PU model, enabling the filtering of high-confidence negative samples from theoretical databases. |

The discovery of new functional materials is fundamental to technological progress, from developing better batteries to novel pharmaceuticals. However, the combinatorial explosion of possible atomic arrangements presents a formidable challenge, particularly for complex crystal structures featuring large unit cells or numerous elemental components. Traditional computational methods for materials discovery, such as density functional theory (DFT), scale poorly with system size, often limiting practical crystal structure prediction to systems containing 20–30 atoms [14]. This limitation creates a significant bottleneck, as many promising materials—such as complex metal-organic frameworks or multi-element catalysts—far exceed this scale. Artificial intelligence is no longer merely a useful tool but has become an essential solution for navigating this vast and complex chemical space, enabling researchers to tackle problems that were previously computationally infeasible.

Performance Benchmark: AI Models vs. Traditional Methods

To objectively evaluate the advancement AI brings, the table below compares the performance of modern AI-based synthesizability prediction models against traditional stability-based screening methods.

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Method / Model Name | Underlying Approach | Reported Accuracy / Performance | Key Strengths / Limitations |

|---|---|---|---|

| CSLLM (Synthesizability LLM) [2] | Fine-tuned Large Language Model | 98.6% accuracy | Outperforms traditional methods; predicts methods & precursors. |

| PU-GPT-embedding [6] | LLM embeddings + PU-learning classifier | High accuracy; cost-effective | Better performance than graph-based models; 57% lower inference cost than fine-tuned LLM. |

| StructGPT-FT [6] | Fine-tuned LLM on text structure descriptions | Comparable to PU-CGCNN | Demonstrates the value of including structural information. |

| Thermodynamic Stability [2] | Energy above convex hull (e.g., ≥0.1 eV/atom) | 74.1% accuracy | Misses metastable synthesizable materials. |

| Kinetic Stability [2] | Phonon spectrum analysis (e.g., ≥ -0.1 THz) | 82.2% accuracy | Computationally expensive; structures with imaginary frequencies can be synthesized. |

The data reveals a clear performance gap. Traditional thermodynamic and kinetic stability checks, long used as proxies for synthesizability, achieve significantly lower accuracy (74.1% and 82.2%, respectively) because they do not fully capture the complex kinetic and experimental factors that determine whether a material can be made [2]. In contrast, AI models like the Crystal Synthesis Large Language Model (CSLLM) leverage patterns learned from vast datasets of known synthesized and hypothetical structures, achieving 98.6% accuracy in distinguishing synthesizable crystals [2].

Inside the Experiments: How AI Models Are Built and Validated

The superior performance of AI models stems from innovative methodologies for data handling, model architecture, and experimental validation.

Data Curation and Representation

A critical first step is converting crystal structures into a format that AI models can process effectively.

- The PU-Learning Challenge: A major hurdle is the lack of confirmed "negative" examples (non-synthesizable structures). Researchers address this using Positive-Unlabeled (PU) learning, treating known synthesized structures from databases like the Inorganic Crystal Structure Database (ICSD) as "positives" and treating hypothetical structures from computational databases (Materials Project, OQMD) as "unlabeled." A pre-trained model then assigns a low synthesizability score (e.g., CLscore <0.1) to a subset of these hypothetical structures to serve as robust "negative" examples [2] [15].

- Text-Based Crystal Representation: To leverage the power of LLMs, crystal structures are converted into text strings. The "material string" is a concise format that includes space group, lattice parameters, and a list of atoms with their Wyckoff positions, omitting redundant coordinate information [2]. Tools like Robocrystallographer can also generate human-readable text descriptions of crystal structures for model training [6].

Model Architectures and Training

Different AI architectures are employed, each with distinct advantages:

- Specialized LLMs (CSLLM): This framework uses three separate LLMs, each fine-tuned for a specific task: predicting synthesizability, suggesting a synthetic method (solid-state or solution), and identifying suitable precursors. This modular approach allows for targeted, high-accuracy predictions [2].

- Multimodal Generative AI (Chemeleon): This model uses cross-modal contrastive learning (Crystal CLIP) to align text embeddings with graph embeddings of crystal structures from equivariant Graph Neural Networks (GNNs). A subsequent diffusion model then generates novel crystal structures based on text prompts, enabling the exploration of complex multi-component systems like the Li-P-S-Cl quaternary space for solid-state batteries [16].

- Closed-Loop AI Systems (CRESt): Moving beyond pure prediction, systems like MIT's CRESt combine multimodal AI (processing literature, experimental data, images) with robotic high-throughput experimentation. The AI plans experiments, a robotic system executes synthesis and testing, and the results are fed back to the AI to optimize future trials, creating a closed-loop discovery engine [17].
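
The cross-modal contrastive alignment described for Chemeleon above can be illustrated with a CLIP-style symmetric loss: matching text and graph embeddings should be more similar to each other than to any other pair in the batch. The sketch below is a generic version of that idea in plain Python. The temperature value, the function names, and the two-dimensional embeddings are assumptions, and this is not claimed to be the exact objective used by Crystal CLIP.

```python
import math
from typing import List

def normalize(vector: List[float]) -> List[float]:
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]

def cross_entropy_diagonal(logits: List[List[float]]) -> float:
    """Mean cross-entropy where row i's correct class is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        top = max(row)
        log_z = top + math.log(sum(math.exp(x - top) for x in row))
        total += log_z - row[i]
    return total / len(logits)

def clip_style_loss(text_emb: List[List[float]], graph_emb: List[List[float]],
                    temperature: float = 0.07) -> float:
    """Symmetric contrastive loss over a batch of paired text/graph embeddings."""
    t = [normalize(v) for v in text_emb]
    g = [normalize(v) for v in graph_emb]
    # Cosine similarity of every text embedding with every graph embedding.
    logits = [[sum(a * b for a, b in zip(ti, gj)) / temperature for gj in g] for ti in t]
    transposed = [list(col) for col in zip(*logits)]
    return 0.5 * (cross_entropy_diagonal(logits) + cross_entropy_diagonal(transposed))

# Invented embeddings: pair i of text matches pair i of graph in the aligned case.
text = [[1.0, 0.0], [0.0, 1.0]]
graph_aligned = [[0.9, 0.1], [0.1, 0.9]]
graph_shuffled = [[0.1, 0.9], [0.9, 0.1]]

loss_aligned = clip_style_loss(text, graph_aligned)
loss_shuffled = clip_style_loss(text, graph_shuffled)
```

Minimizing such a loss pulls each structure's graph embedding toward its own text description, which is what lets a downstream generator be conditioned on text prompts.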

Validation and Workflow

Robust validation is key to establishing model credibility. The standard protocol involves:

- Train-Test Split: Models are trained on a large dataset (e.g., 150,120 structures [2]) and tested on a held-out chronological split of structures added to databases after a certain date, ensuring they are evaluated on truly "unseen" data [16].

- Experimental Validation: The ultimate test is the synthesis of AI-predicted materials. For instance, the CRESt system was used to explore over 900 chemistries, leading to the discovery of a record-performance eight-element fuel cell catalyst [17]. Similarly, an active learning AI guided the discovery of four new high-performing battery electrolytes from an initial set of just 58 data points [18].
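
A chronological hold-out of the kind described in the train-test step above can be sketched as a simple date cutoff. The record fields, IDs, dates, and cutoff below are hypothetical.

```python
from datetime import date
from typing import List, NamedTuple, Tuple

class Record(NamedTuple):
    structure_id: str
    added: date   # date the structure entered the database
    label: int    # 1 = synthesized, 0 = treated as non-synthesizable

def chronological_split(records: List[Record],
                        cutoff: date) -> Tuple[List[Record], List[Record]]:
    """Train on everything added before the cutoff; test on everything after."""
    train = [r for r in records if r.added < cutoff]
    test = [r for r in records if r.added >= cutoff]
    return train, test

# Invented records and cutoff date.
records = [
    Record("s1", date(2015, 3, 1), 1),
    Record("s2", date(2018, 7, 15), 0),
    Record("s3", date(2021, 1, 10), 1),
    Record("s4", date(2022, 11, 5), 0),
]
train, test = chronological_split(records, cutoff=date(2020, 1, 1))
```

Unlike a random split, this prevents the model from being tested on structures that were reported alongside, or earlier than, near-duplicates in its training set.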

The workflow of a multimodal, robotic-assisted discovery platform can be visualized as follows:

The Researcher's Toolkit: Essential AI and Experimental Reagents

Navigating complex material spaces requires a suite of computational and experimental tools.

Table 2: Key Research Reagent Solutions for AI-Driven Materials Discovery

| Category | Reagent / Tool | Function in Research |

|---|---|---|

| Computational Models | Generative AI (Chemeleon) [16] | Generates novel crystal compositions and structures from text descriptions. |

| Computational Models | Synthesizability Predictor (CSLLM) [2] | Accurately predicts whether a hypothetical crystal structure can be synthesized. |

| Computational Models | Force Field AI (Allegro-FM) [19] | Simulates billions of atoms with quantum mechanical accuracy to study material properties. |

| Data Resources | Crystallographic Databases (ICSD, MP) [2] | Provide structured data on known and hypothetical crystals for model training. |

| Data Resources | Text Representation (Material String) [2] | A concise text format for representing crystal structures for LLM processing. |

| Data Resources | Robocrystallographer [6] | Automatically generates human-readable text descriptions of crystal structures. |

| Experimental Systems | High-Throughput Robotics [17] | Automates synthesis and electrochemical testing to rapidly validate AI predictions. |

| Experimental Systems | Computer Vision for Monitoring [17] | Monitors experiments via cameras to detect issues and improve reproducibility. |

The evidence is clear: the complexity of large-unit-cell and multi-element systems is not merely an inconvenience but a fundamental challenge that mandates an AI-driven approach. Traditional methods are outperformed by AI models in both the accuracy of synthesizability prediction and the sheer scale of systems that can be studied, as demonstrated by AI models that simulate billions of atoms [19] or discover complex multi-element catalysts [17]. The future of materials discovery lies in the continued development of multimodal and explainable AI, the tighter integration of AI with robotic laboratories for autonomous discovery, and the expansion of these methods to even more complex chemical spaces, ultimately accelerating the journey from theoretical prediction to synthesized material.

The acceleration of computational materials design has created a critical challenge: bridging the gap between theoretical predictions and experimental synthesis. While advanced algorithms can generate millions of candidate crystal structures with promising properties, most remain hypothetical because their synthesizability cannot be guaranteed. This bottleneck has driven the emergence of specialized machine learning models to predict which theoretically proposed structures can be successfully synthesized in laboratory conditions. Evaluating these models requires moving beyond conventional machine learning metrics to specialized Key Performance Indicators (KPIs) that reflect the complex, multi-faceted nature of materials synthesis.

The assessment of synthesizability prediction models demands a rigorous framework centered on three core KPIs: Accuracy, which measures overall correctness; Precision, which quantifies the reliability of positive predictions; and Generalizability, which evaluates performance on structurally novel or more complex materials than those seen during training. These KPIs provide the essential compass for tracking model health and guiding the iterative process of model improvement, ultimately determining whether a predictive model can transition from academic research to practical application in materials discovery pipelines.
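
The first two KPIs follow directly from the confusion matrix, and the true positive rate (recall) quoted for several methods in this article comes from the same counts. The sketch below uses invented labels and a hypothetical function name. Generalizability has no single formula; one simple proxy, assumed here, is to compute the same metrics separately on a held-out subset of more complex structures.

```python
from typing import Dict, Sequence

def classification_kpis(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, and recall for binary labels (1 = synthesizable)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": tp / (tp + fp) if tp + fp else float("nan"),
        "recall": tp / (tp + fn) if tp + fn else float("nan"),
    }

# Invented ground truth and predictions for eight structures.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 1, 1, 1, 0]
kpis = classification_kpis(y_true, y_pred)
```

In this toy case accuracy is 0.625 while precision is 0.6, a reminder that a model's headline accuracy and the reliability of its positive calls can differ, which matters when each positive prediction may trigger a synthesis attempt.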

Comparative Analysis of Synthesizability Prediction Models

Quantitative Performance Comparison

Recent research has produced several distinct approaches for predicting crystal structure synthesizability, each with characteristic strengths and limitations. The following table summarizes the quantitative performance of leading models based on rigorous benchmarking studies.

Table 1: Performance Comparison of Synthesizability Prediction Models

| Model Type | Core Methodology | Accuracy (%) | Precision (%) | Generalizability Assessment | Key Limitations |

|---|---|---|---|---|---|

| CSLLM Framework [2] | Three specialized LLMs for synthesizability, method, and precursor prediction | 98.6 | Not explicitly stated | 97.9% accuracy on complex structures with large unit cells | Requires comprehensive dataset construction; computational cost |

| PU-GPT-Embedding [6] | GPT embeddings fed into PU-learning classifier | ~98 (estimated from performance curves) | ~90 (estimated from performance curves) | Outperforms graph-based representations on novel structures | Depends on quality of text descriptions |

| StructGPT-FT [6] | Fine-tuned LLM using structural descriptions | ~96 (estimated from performance curves) | ~85 (estimated from performance curves) | Good generalization to diverse crystal systems | Performance limited compared to embedding approach |

| PU-CGCNN [6] | Graph neural network with PU-learning | ~94 (estimated from performance curves) | ~80 (estimated from performance curves) | Limited by graph construction heuristics | Omits geometric angles in representations |

| Thermodynamic Stability [2] | Energy above convex hull (≥0.1 eV/atom) | 74.1 | Not applicable | Poor for metastable phases | Misses many synthesizable materials |

| Kinetic Stability [2] | Phonon spectrum analysis (≥ -0.1 THz) | 82.2 | Not applicable | Limited predictive value | Structures with imaginary frequencies can be synthesized |
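
The two stability baselines in Table 1 reduce to simple threshold rules. The sketch below is a minimal illustration, assuming the thresholds are read as follows: an energy above hull of 0.1 eV/atom or more marks a structure as non-synthesizable, and a lowest phonon frequency of at least -0.1 THz marks it as synthesizable. The function names are illustrative and do not come from any cited code base.

```python
def thermo_synthesizable(e_hull_ev_per_atom: float, threshold: float = 0.1) -> bool:
    """Thermodynamic baseline: below the hull-energy threshold counts as synthesizable.

    Assumption: the threshold direction is inferred from the table entry.
    """
    return e_hull_ev_per_atom < threshold


def kinetic_synthesizable(min_phonon_freq_thz: float, threshold: float = -0.1) -> bool:
    """Kinetic baseline: no phonon mode more negative than the threshold."""
    return min_phonon_freq_thz >= threshold


if __name__ == "__main__":
    # A metastable phase 0.15 eV/atom above the hull is rejected by the first rule,
    # which is how such a baseline can miss materials that are made in practice.
    print(thermo_synthesizable(0.15), kinetic_synthesizable(-0.02))
```

Both rules depend on a single scalar, which is consistent with the limitations listed in the table: they cannot account for synthesis route, precursors, or metastable phases.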

Specialized Model Capabilities

Beyond core synthesizability prediction, specialized models have emerged to address specific aspects of the materials discovery pipeline. The CSLLM framework exemplifies this trend with components targeting different stages of experimental planning.

Table 2: Specialized Model Capabilities in the CSLLM Framework [2]

| Model Component | Primary Function | Performance | Application Context |

|---|---|---|---|

| Synthesizability LLM | Predicts whether a crystal structure can be synthesized | 98.6% accuracy | Initial screening of theoretical structures |

| Method LLM | Classifies appropriate synthesis method (solid-state or solution) | 91.0% accuracy | Experimental planning |

| Precursor LLM | Identifies suitable chemical precursors | 80.2% success rate | Reaction design and optimization |

Experimental Protocols and Methodologies

Dataset Construction and Curation

The foundation of reliable synthesizability prediction lies in rigorous dataset construction. The most effective contemporary approaches utilize balanced datasets containing both synthesizable and non-synthesizable crystal structures. The protocol established by the CSLLM framework exemplifies best practices [2]:

- Positive Examples: 70,120 experimentally verified crystal structures from the Inorganic Crystal Structure Database (ICSD), filtered to include only ordered structures with ≤40 atoms and ≤7 different elements [2].

- Negative Examples: 80,000 non-synthesizable structures identified from 1,401,562 theoretical structures using a pre-trained Positive-Unlabeled (PU) learning model, selecting those with CLscore <0.1 (where CLscore <0.5 indicates non-synthesizability) [2].

- Structural Diversity: Comprehensive coverage of seven crystal systems (cubic, hexagonal, tetragonal, orthorhombic, monoclinic, triclinic, and trigonal) with elemental diversity spanning atomic numbers 1-94 (excluding 85 and 87) [2].

- Text Representation: Conversion of crystal structures to "material string" format—a simplified text representation containing space group, lattice parameters, and atomic coordinates with Wyckoff positions to eliminate redundancy [2].
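
The positive and negative selection rules above can be sketched as two filters. This is a minimal illustration under stated assumptions: the `Candidate` record and its field names are hypothetical rather than the CSLLM schema, and sorting negatives by ascending CLscore before truncating to 80,000 is an assumption about how the cap is applied.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    """Hypothetical structure record; field names are illustrative only."""
    formula: str
    n_atoms: int
    n_elements: int
    is_ordered: bool = True
    cl_score: Optional[float] = None  # PU-model score, set for theoretical structures


def select_positives(icsd_entries: List[Candidate]) -> List[Candidate]:
    """Keep ordered experimental structures with <=40 atoms and <=7 elements."""
    return [c for c in icsd_entries
            if c.is_ordered and c.n_atoms <= 40 and c.n_elements <= 7]


def select_negatives(theoretical: List[Candidate],
                     cl_cutoff: float = 0.1,
                     limit: int = 80_000) -> List[Candidate]:
    """Keep theoretical structures with CLscore below the cutoff.

    Assumption: the lowest-scoring structures are taken first when the cap applies.
    """
    scored = [c for c in theoretical
              if c.cl_score is not None and c.cl_score < cl_cutoff]
    return sorted(scored, key=lambda c: c.cl_score)[:limit]
```

Using a cutoff of 0.1 rather than the 0.5 decision boundary keeps only the most confidently non-synthesizable structures, which reduces label noise in the negative class at the cost of discarding borderline cases.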

Model Training and Evaluation Methodology

The training protocols for high-performing synthesizability models share several common elements while differing in their core architectural approaches:

LLM-Based Models (CSLLM, StructGPT) [2] [6]:

- Utilize fine-tuned transformer architectures (e.g., GPT-4o-mini) on crystal structure descriptions

- Implement domain-specific fine-tuning with learning rate 5e-5 for 3 epochs

- Employ maximum sequence length of 4096 tokens to accommodate structural descriptions

- Use temperature setting of 0.7 for generation tasks

Embedding-Based Models (PU-GPT-Embedding) [6]:

- Generate 3072-dimensional vector representations using text-embedding-3-large model

- Train binary PU-classifier neural networks on the embedding representations

- Utilize hierarchical embedding approach, where earlier dimensions represent coarse structural features and later dimensions capture fine-grained details

- Implement α-estimation for precision and false positive rate calculation due to lack of true negative data

Evaluation Protocol [6]:

- Hold-out testing with 20% of data reserved for evaluation

- Assessment of generalization on structures with complexity exceeding training data (e.g., larger unit cells)

- Calculation of True Positive Rate (recall) as primary metric, with estimated precision via α-estimation

- Benchmarking against traditional methods (thermodynamic and kinetic stability)
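
Because no true negatives exist in a PU setting, precision has to be estimated rather than counted. The sketch below shows one standard way to do this from a class prior α (the assumed fraction of synthesizable structures hidden in the unlabeled set). Whether reference [6] uses exactly this estimator is an assumption, and the function and parameter names are illustrative.

```python
def pu_precision_and_fpr(alpha: float, tpr: float, pred_pos_rate_unlabeled: float):
    """Estimate precision and false positive rate from positive and unlabeled data.

    alpha: assumed fraction of true positives within the unlabeled set.
    tpr: recall measured on held-out labeled positives.
    pred_pos_rate_unlabeled: fraction of unlabeled samples predicted positive.
    """
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must lie strictly between 0 and 1")
    if pred_pos_rate_unlabeled <= 0.0:
        raise ValueError("no unlabeled sample was predicted positive")
    # Share of the unlabeled set that is both truly positive and predicted positive.
    true_pos_share = alpha * tpr
    precision = min(1.0, true_pos_share / pred_pos_rate_unlabeled)
    fpr = max(0.0, (pred_pos_rate_unlabeled - true_pos_share) / (1.0 - alpha))
    return precision, fpr


if __name__ == "__main__":
    # Hypothetical numbers: 10% hidden positives, 90% recall, 15% predicted positive.
    print(pu_precision_and_fpr(alpha=0.10, tpr=0.90, pred_pos_rate_unlabeled=0.15))
```

The estimate is only as good as α, so reported precision values from PU models should be read together with the prior that was assumed.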

Workflow Visualization

[Figure: Synthesizability Model Evaluation Workflow]

Table 3: Essential Resources for Synthesizability Prediction Research

| Resource Category | Specific Tools & Databases | Primary Function | Access Considerations |

|---|---|---|---|

| Crystal Structure Databases | Inorganic Crystal Structure Database (ICSD) [2], Materials Project [6] | Sources of experimentally verified structures for training and benchmarking | Subscription required for ICSD; Materials Project is publicly accessible |

| Computational Frameworks | CSLLM Framework [2], PU-CGCNN [6], Robocrystallographer [6] | Specialized software for model training, inference, and structure description | CSLLM requires significant computational resources; Robocrystallographer is open-source |

| Text Representation Tools | Material String format [2], Robocrystallographer [6] | Convert crystal structures to machine-readable text representations | Custom implementation required for material strings |

| Language Models | GPT-4o-mini [6], text-embedding-3-large [6] | Core model architectures for fine-tuning and embedding generation | API costs scale with dataset size; local deployment alternatives available |

| Evaluation Metrics | True Positive Rate (Recall), α-estimation [6], Discovery Yield [20] | Quantify model performance beyond conventional metrics | α-estimation required for precision calculation in PU-learning context |

| Benchmarking Datasets | MP30 dataset [6], Custom balanced datasets [2] | Standardized testing grounds for model comparison | Dataset construction requires significant curation effort |

The systematic evaluation of synthesizability prediction models through the KPIs of Accuracy, Precision, and Generalizability reveals a rapidly evolving landscape in which LLM-based approaches are setting new performance standards. The CSLLM framework and related embedding methods show that combining structural information with advanced language models yields reported accuracies of roughly 98% or higher, well above the 74.1% and 82.2% of the thermodynamic and kinetic stability baselines and the ~94% estimated for the PU-CGCNN graph neural network.

These performance advances come with important practical considerations. The computational cost and data requirements of fine-tuned LLMs present significant barriers to entry, while the specialized text representations needed for crystal structures add implementation complexity. Furthermore, as research by Borg et al. highlights, traditional static error metrics must be complemented by discovery-focused measures like Discovery Yield and Discovery Probability to fully capture a model's value in practical materials discovery workflows [20].

For researchers and drug development professionals, the emerging generation of synthesizability prediction models offers powerful new capabilities for prioritizing candidate materials. However, successful implementation requires careful attention to dataset construction, model selection appropriate to specific discovery contexts, and comprehensive evaluation using the KPIs outlined in this analysis. As these models continue to evolve, their integration into automated materials discovery pipelines promises to significantly accelerate the translation of computational predictions into synthesized materials with tailored properties.

AI Architectures for Synthesizability: From LLMs to Graph Neural Networks

CSLLM Framework and Material String Representations

The accurate prediction of crystal structure synthesizability is a critical bottleneck in accelerating materials discovery. This guide compares the performance of the specialized Crystal Synthesis Large Language Models (CSLLM) framework against other emerging LLM-based approaches. Performance is evaluated on core tasks of synthesizability classification, synthetic method recommendation, and precursor identification, with a focus on each method's robustness when handling complex crystal structures. The adoption of efficient text-based crystal representations, such as the "material string," is a pivotal development enabling these advancements.

Performance Benchmarking

The table below summarizes the quantitative performance of the CSLLM framework and other relevant LLM-based models on key tasks in computational materials science.

Table 1: Performance Comparison of LLM-Based Models in Materials Science

| Model / Framework | Primary Task | Reported Accuracy | Key Strength | Structural Representation |

|---|---|---|---|---|

| CSLLM (Synthesizability LLM) [2] | Synthesizability Prediction | 98.6% (Test Set) | State-of-the-art accuracy & generalization on complex structures | Material String |

| CSLLM (Method LLM) [2] | Synthetic Method Classification | 91.0% | Classifying solid-state vs. solution methods | Material String |

| CSLLM (Precursor LLM) [2] | Precursor Identification | 80.2% | Identifying suitable precursors for binary/ternary compounds | Material String |

| L2M3 (finetuned GPT-4o) [21] | Synthesis Condition Prediction | 82% (Similarity Score) | Recommending synthesis conditions from precursors | Textual Formula |

| Finetuned Open-source Models (e.g., GLM-4.5-Air) [21] | Synthesis Condition Prediction | Matched GPT-4o Performance | Cost-effective, transparent alternative to closed-source models | Textual Formula |

| CrystaLLM [22] | Crystal Structure Generation | N/A (Qualitative Assessment) | Generating plausible, unseen crystal structures from text prompts | CIF File Tokenization |

Experimental Protocols and Workflows

The CSLLM Framework Workflow

The CSLLM framework employs a multi-model, sequential workflow to comprehensively address the synthesis prediction pipeline. [2]

CSLLM Workflow Breakdown:

- Input and Representation: A crystal structure in a standard format (CIF or POSCAR) is converted into a condensed "material string." [2] This representation integrates space group information, lattice parameters (a, b, c, α, β, γ), and a concise list of atomic species with their Wyckoff positions, eliminating redundant coordinate information present in source files. [2]

- Synthesizability Prediction: The material string is processed by the Synthesizability LLM, a model fine-tuned on a balanced dataset of 70,120 synthesizable structures from the Inorganic Crystal Structure Database (ICSD) and 80,000 non-synthesizable structures identified via a positive-unlabeled (PU) learning model. [2] This model performs a binary classification to determine synthesizability.

- Method and Precursor Identification: For structures deemed synthesizable, the workflow proceeds sequentially. The Method LLM classifies the likely synthetic pathway (e.g., solid-state or solution). Subsequently, the Precursor LLM identifies one or more suitable chemical precursors for the synthesis. [2]

- Output: The framework produces a comprehensive report detailing the synthesizability verdict, recommended synthetic method, and proposed precursors.
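
The sequential gating described above can be sketched as a small pipeline. This is an illustration only: the three models are passed in as plain callables, and `SynthesisReport` and `run_pipeline` are hypothetical names, not part of the CSLLM code.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class SynthesisReport:
    """Hypothetical container for the three pipeline outputs."""
    synthesizable: bool
    method: Optional[str] = None
    precursors: Optional[List[str]] = None


def run_pipeline(material_string: str,
                 synth_model: Callable[[str], bool],
                 method_model: Callable[[str], str],
                 precursor_model: Callable[[str], List[str]]) -> SynthesisReport:
    """Chain the three models; later stages run only for synthesizable inputs."""
    if not synth_model(material_string):
        return SynthesisReport(synthesizable=False)
    return SynthesisReport(
        synthesizable=True,
        method=method_model(material_string),
        precursors=precursor_model(material_string),
    )


if __name__ == "__main__":
    # Stub models stand in for the fine-tuned LLMs.
    report = run_pipeline(
        "placeholder-material-string",
        synth_model=lambda s: True,
        method_model=lambda s: "solid-state",
        precursor_model=lambda s: ["precursor A", "precursor B"],
    )
    print(report)
```

One consequence of this design is error propagation: a false negative at the first stage means the method and precursor models are never consulted for that structure.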

The CrystaLLM Generation Workflow

CrystaLLM represents an alternative approach that uses autoregressive generation to create novel crystal structures. [22]

CrystaLLM Workflow Breakdown:

- Model Pre-training: A decoder-only Transformer model is trained autoregressively on a corpus of 2.2 million tokenized Crystallographic Information Files (CIFs). [22] The learning objective is to predict the next token in the sequence, where tokens include atoms, space groups, and numeric digits representing lattice parameters and coordinates.

- Conditional Generation: To generate a new structure, the model is prompted with a starting sequence, typically a cell composition or space group symbol. [22]

- Autoregressive Decoding: The model generates tokens one at a time, each conditioned on all previous tokens, until a complete and syntactically valid CIF is produced. [22] By treating crystal structures purely as text, this approach challenges the assumption that domain-specific representations are required.
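
The prompt-then-decode loop described in this workflow can be sketched generically. The example below is not CrystaLLM's implementation: `next_token` stands in for the trained Transformer, the end-of-sequence marker is an assumed convention, and the scripted stub simply replays a fixed token list so the loop can be run without a model.

```python
from typing import Callable, List


def generate(prompt: List[str],
             next_token: Callable[[List[str]], str],
             eos: str = "<eos>",
             max_tokens: int = 256) -> List[str]:
    """Append one token at a time, each chosen from the full context so far."""
    tokens = list(prompt)
    for _ in range(max_tokens):
        token = next_token(tokens)
        if token == eos:  # assumed end-of-sequence marker
            break
        tokens.append(token)
    return tokens


def scripted_model(script: List[str]) -> Callable[[List[str]], str]:
    """Stub model that replays a fixed script, ignoring the context."""
    remaining = iter(script)
    return lambda context: next(remaining, "<eos>")


if __name__ == "__main__":
    model = scripted_model(["_cell_length_a", "5", ".", "6", "4", "<eos>"])
    print(generate(["data_", "Na", "Cl"], model))
```

A real model would sample each token from a predicted probability distribution; the `max_tokens` cap guards against sequences that never emit the end marker.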

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item / Resource | Function in Research | Relevance to Experiment |

|---|---|---|

| Inorganic Crystal Structure Database (ICSD) [2] | A comprehensive collection of experimentally validated inorganic crystal structures. | Source of ground-truth, synthesizable crystal structures for model training and benchmarking. |

| Material String [2] | A condensed text representation of a crystal structure that includes symmetry, lattice, and atomic position information. | Enables efficient fine-tuning of LLMs by providing a non-redundant, information-dense input format. |

| Crystallographic Information File (CIF) [22] | A standard text file format for encapsulating crystallographic data. | Serves as the foundational data source and direct training data for structure generation models like CrystaLLM. |

| Positive-Unlabeled (PU) Learning Model [2] | A machine learning technique used to learn from datasets where only positive labels are confirmed. | Critical for constructing a high-quality dataset of non-synthesizable crystal structures to train robust classifiers. |

| Low-Rank Adaptation (LoRA) [21] | A parameter-efficient fine-tuning (PEFT) method that reduces computational overhead. | Allows for effective fine-tuning of large LLMs on domain-specific tasks with reduced resource requirements. |

The discovery of new inorganic crystalline materials is a fundamental driver of technological innovation. A critical bottleneck in this process is predicting synthesizability—whether a proposed chemical composition can be experimentally realized. Traditionally, this has relied on expert knowledge and computational proxies like thermodynamic stability, but these methods are often slow, limited in scope, or inaccurate [23] [1]. The advent of deep learning has introduced powerful data-driven approaches to this challenge. This guide focuses on two pivotal composition-based deep learning methods: SynthNN, a model designed explicitly for synthesizability classification, and Atom2Vec, an unsupervised technique for learning fundamental representations of atoms that can be used to build predictive models. Framed within a broader thesis on evaluating synthesizability model performance, this article provides a comparative analysis of their methodologies, performance, and practical applications, equipping researchers with the knowledge to select and utilize these tools effectively.

SynthNN: A Deep Learning Synthesizability Classifier

SynthNN is a deep learning model conceived to directly predict the synthesizability of inorganic chemical formulas without requiring structural information. It operates as a classification model, learning the complex patterns that distinguish synthesizable materials from non-synthesizable ones directly from the vast landscape of known chemical compositions. Its development was motivated by the limitations of traditional proxies like charge-balancing and formation energy, which fail to capture the full spectrum of factors influencing synthetic accessibility [23].

A key innovation of SynthNN is its use of a framework that learns an optimal representation of chemical formulas through an atom embedding matrix that is optimized alongside all other parameters of the neural network. This means the model does not rely on pre-defined chemical knowledge or descriptors; instead, it learns the relevant chemical principles—such as charge-balancing, chemical family relationships, and ionicity—directly from the data of experimentally realized materials [23]. Furthermore, SynthNN is trained using a semi-supervised learning approach known as Positive-Unlabeled (PU) learning. This is crucial because, while databases of successfully synthesized materials (positive examples) are available, definitive data on unsynthesizable materials (negative examples) are not. The model is trained on data from the Inorganic Crystal Structure Database (ICSD) augmented with artificially generated unsynthesized materials, treating the latter as unlabeled data and probabilistically reweighting them [23] [24].
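
The idea of probabilistically reweighting unlabeled examples can be illustrated with a weighted cross-entropy loss. This is a generic PU-learning sketch, not SynthNN's actual loss: the single weight `unlabeled_pos_weight` (the assumed probability that an unlabeled formula is in fact synthesizable) and the function name are assumptions made for illustration.

```python
import math
from typing import Sequence


def pu_weighted_loss(probs: Sequence[float],
                     labeled_positive: Sequence[bool],
                     unlabeled_pos_weight: float) -> float:
    """Mean cross-entropy in which unlabeled items count partly as positives.

    probs: predicted synthesizability probabilities.
    labeled_positive: True for known synthesized materials, False for unlabeled ones.
    unlabeled_pos_weight: assumed chance w that an unlabeled item is synthesizable.
    """
    eps = 1e-12
    w = unlabeled_pos_weight
    total = 0.0
    for p, is_positive in zip(probs, labeled_positive):
        p = min(max(p, eps), 1.0 - eps)  # clamp to keep the logarithms finite
        if is_positive:
            total += -math.log(p)
        else:
            # Split the unlabeled item between the positive and negative classes.
            total += w * -math.log(p) + (1.0 - w) * -math.log(1.0 - p)
    return total / len(probs)
```

With `unlabeled_pos_weight=0` this reduces to ordinary binary cross-entropy that treats every generated formula as a true negative; raising the weight softens the penalty for scoring an unlabeled formula highly.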

Atom2Vec: Unsupervised Atom Representation Learning

Atom2Vec takes a fundamentally different, more foundational approach. Its primary objective is not to predict synthesizability directly, but to learn the basic properties of atoms in an unsupervised manner from a massive database of known compounds. Inspired by advances in natural language processing, Atom2Vec is based on the core idea that the properties of an atom can be inferred from the "environments" in which it appears across many different materials, analogous to how the meaning of a word can be derived from its context in sentences [25] [26].

The model works by processing known compounds to generate atom-environment pairs. For a compound like Bi₂Se₃, it generates one pair per atom type: for Bi, the environment is (2)Se3, and for Se, the environment is (3)Bi2. These pairs are used to construct an atom-environment matrix. A model-free approach based on Singular Value Decomposition (SVD) is then applied to this matrix to distill high-level concepts, so that each atom is represented by a high-dimensional vector [25] [26]. When these vectors are clustered, atoms fall into categories that closely match the groups of the periodic table, indicating that the method has learned fundamental chemical properties without any human labeling [25]. The learned atom vectors can then serve as general-purpose input features for other machine learning models that predict specific material properties, including formation energy, a common proxy for synthesizability [25].
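
The pair-generation step can be sketched directly from the Bi₂Se₃ example. The code below covers only that first stage (pairs and co-occurrence counts), assuming simple formulas without parentheses or fractional subscripts; the function names are illustrative and the SVD step is not shown.

```python
import re
from collections import Counter
from typing import List, Tuple


def atom_environment_pairs(formula: str) -> List[Tuple[str, str]]:
    """Split a formula into (atom, environment) pairs, one per element.

    The environment is the atom's own count in parentheses followed by the
    rest of the formula, e.g. Bi in Bi2Se3 -> "(2)Se3".
    """
    parts = [(el, int(n) if n else 1)
             for el, n in re.findall(r"([A-Z][a-z]?)(\d*)", formula)]
    pairs = []
    for i, (element, count) in enumerate(parts):
        rest = "".join(f"{e}{m}" for j, (e, m) in enumerate(parts) if j != i)
        pairs.append((element, f"({count}){rest}"))
    return pairs


def cooccurrence_counts(formulas: List[str]) -> Counter:
    """Count how often each (atom, environment) pair occurs across compounds."""
    counts: Counter = Counter()
    for formula in formulas:
        counts.update(atom_environment_pairs(formula))
    return counts


if __name__ == "__main__":
    print(atom_environment_pairs("Bi2Se3"))
    print(cooccurrence_counts(["Bi2Se3", "Sb2Se3"]))
```

In the two-compound example, Bi and Sb both appear in the environment "(2)Se3"; shared environments of this kind are what place chemically similar atoms close together once the matrix is factorized.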

Table 1: Core Architectural Comparison between SynthNN and Atom2Vec

| Feature | SynthNN | Atom2Vec |

|---|---|---|

| Primary Objective | Direct synthesizability classification | Unsupervised atom representation learning |

| Core Methodology | Semi-supervised PU learning on compositions | Unsupervised learning from atom environments |

| Input Requirement | Chemical composition | Chemical composition of known compounds |

| Key Output | Synthesizability probability score | High-dimensional vector representation for each element |

| Learning Principle | Learns chemistry of synthesizability from data | Infers atom properties from contextual environments |

Workflow Comparison: From Input to Prediction

The following diagrams illustrate the fundamental differences in how SynthNN and Atom2Vec process information to generate their respective outputs.

[Figure: SynthNN Prediction Workflow]

[Figure: Atom2Vec Learning and Application Workflow]

Experimental Protocols and Performance Benchmarking

Key Experimental Setups and Training Methodologies

SynthNN Training Protocol: The model was developed using a dataset of synthesized materials from the Inorganic Crystal Structure Database (ICSD), which serve as positive examples. To address the lack of confirmed negative examples, the training dataset was augmented with a large number of artificially generated chemical formulas, which are treated as unsynthesized (unlabeled) data. The model is trained with a Positive-Unlabeled (PU) learning approach, which probabilistically reweights the unlabeled examples during training to account for the likelihood that some of them might actually be synthesizable. The core of the model uses an Atom2Vec-inspired embedding layer that learns vector representations for each element directly from the synthesizability data, followed by a deep neural network for classification [23] [24].

Atom2Vec Training Protocol: Atom2Vec is trained in a fully unsupervised fashion. It processes a large database of known compounds, generating atom-environment pairs for each compound and compiling them into an atom-environment co-occurrence matrix. Singular Value Decomposition (SVD), a model-free technique, is then applied to this matrix to distill the underlying patterns and represent each atom as a dense vector (e.g., 100 dimensions). The vectors are validated by checking whether clustering algorithms group them in a way that reflects the periodic table, which the reported results confirm [25] [26].

Benchmarking Metrics: The performance of synthesizability models is typically evaluated using standard classification metrics:

- Precision: The proportion of predicted synthesizable materials that are truly synthesizable.

- Recall: The proportion of actually synthesizable materials that are correctly identified by the model.

- Accuracy: The overall proportion of correct predictions (both synthesizable and non-synthesizable).

- F1-score: The harmonic mean of precision and recall, providing a single metric for model balance [23] [1].
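
The four metrics above follow directly from confusion-matrix counts. A minimal sketch, with an illustrative function name and hypothetical counts in the usage example:

```python
def classification_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute precision, recall, accuracy, and F1 from confusion-matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"precision": precision, "recall": recall,
            "accuracy": accuracy, "f1": f1}


if __name__ == "__main__":
    # Hypothetical imbalanced test set: 13 synthesizable, 87 non-synthesizable.
    print(classification_metrics(tp=8, fp=2, tn=85, fn=5))
```

On imbalanced data such as this, accuracy is dominated by the majority class, so precision and recall on the synthesizable class are usually the more informative numbers.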

Comparative Performance Data

Quantitative benchmarking reveals the distinct strengths and operational profiles of these models.

Table 2: Synthesizability Prediction Performance Comparison

| Model / Metric | Reported Precision | Reported Recall | Reported Accuracy | Key Benchmark Against |

|---|---|---|---|---|

| SynthNN | 7x higher than formation energy [23] | - | - | DFT-calculated formation energy, Human experts |

| Synthesizability Score (SC) Model [1] | 82.6% | 80.6% | - | Ternary crystals from MP/ICSD |

| Crystal Synthesis LLM (CSLLM) [27] | - | - | 98.6% | Structures with ≤40 atoms |

SynthNN has demonstrated superior performance in head-to-head comparisons. It was shown to identify synthesizable materials with 7 times higher precision than using DFT-calculated formation energy as a proxy. In a unique benchmark against human expertise, SynthNN was pitted against 20 expert materials scientists. The model outperformed all experts, achieving 1.5 times higher precision and completing the task five orders of magnitude faster than the best human performer [23].

It is important to note that SynthNN's precision and recall are highly dependent on the decision threshold chosen for classification. The following table provides specific performance data at different thresholds on a dataset with a 20:1 ratio of unsynthesized to synthesized examples [24]:

Table 3: SynthNN Performance vs. Decision Threshold

| Decision Threshold | Precision | Recall |

|---|---|---|

| 0.10 | 0.239 | 0.859 |

| 0.30 | 0.419 | 0.721 |

| 0.50 | 0.563 | 0.604 |

| 0.70 | 0.702 | 0.483 |

| 0.90 | 0.851 | 0.294 |
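
The trade-off in Table 3 can be made concrete by computing an F1-score per threshold and by picking the lowest threshold that meets a target precision. The values below are copied from the table; the helper names and the selection criteria are illustrative choices, not part of SynthNN.

```python
from typing import List, Optional, Tuple

# (decision threshold, precision, recall) rows from Table 3.
SYNTHNN_ROWS: List[Tuple[float, float, float]] = [
    (0.10, 0.239, 0.859),
    (0.30, 0.419, 0.721),
    (0.50, 0.563, 0.604),
    (0.70, 0.702, 0.483),
    (0.90, 0.851, 0.294),
]


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def best_f1_threshold(rows: List[Tuple[float, float, float]]) -> float:
    """Threshold whose precision/recall pair gives the highest F1."""
    return max(rows, key=lambda row: f1_score(row[1], row[2]))[0]


def lowest_threshold_meeting_precision(rows: List[Tuple[float, float, float]],
                                       min_precision: float) -> Optional[float]:
    """Smallest tabulated threshold with at least the requested precision."""
    for threshold, precision, _recall in sorted(rows):
        if precision >= min_precision:
            return threshold
    return None


if __name__ == "__main__":
    for threshold, precision, recall in SYNTHNN_ROWS:
        print(f"{threshold:.2f}  F1={f1_score(precision, recall):.3f}")
```

A screening campaign with expensive experiments would typically favor the precision-constrained choice, while an exploratory search that must not miss candidates would favor a lower threshold.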

For Atom2Vec, its efficacy is demonstrated in downstream prediction tasks. When the learned atom vectors were used as input features for a neural network predicting the formation energies of elpasolite crystals, the model achieved significantly higher accuracy compared to the same model using traditional, human-engineered features based on atomic properties from the periodic table [25].

Discussion: Strategic Selection for Research Applications

Choosing between SynthNN and Atom2Vec is not a matter of identifying a superior model, but rather of selecting the right tool for a specific research objective and context.

For Direct, High-Throughput Synthesizability Screening: SynthNN is the specialized tool. Its end-to-end design, high speed, and reported advantage over both formation-energy proxies and human experts make it well suited to rapidly filtering millions of candidate compositions in an inverse design or materials screening workflow [23]. Researchers can use its pre-trained model directly to obtain a synthesizability score for a novel composition, adjusting the decision threshold according to whether they prioritize high recall (lower threshold) or high precision (higher threshold) [24].

For Foundational Research and Custom Property Prediction: Atom2Vec provides a foundational advantage. Its unsupervised learning of atom vectors offers a powerful, general-purpose feature set for building custom machine learning models for a wide range of material properties, not just synthesizability. Its ability to learn chemical intuition from data without human bias is a significant breakthrough. Researchers seeking to develop novel predictive models or gain deeper, transferable insights into material representations would benefit from using Atom2Vec as a feature engine [25] [26].

Considerations and Limitations: Both models are composition-based, meaning they do not explicitly utilize crystal structure information, which can be a limitation for materials where polymorphism is a critical factor. Furthermore, the performance of SynthNN is intrinsically linked to the quality and scope of the ICSD, and its PU-learning approach must contend with the inherent ambiguity of "unsynthesized" data. The field continues to evolve, with newer models like the Crystal Synthesis Large Language Models (CSLLM) emerging, which can process full structural information and achieve accuracies as high as 98.6%, albeit with different input requirements and architectural complexity [27].

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational "reagents" and resources essential for working with and evaluating composition-based deep learning models for synthesizability prediction.

Table 4: Essential Research Reagents and Resources

| Resource Name | Type | Function in Research |

|---|---|---|

| Inorganic Crystal Structure Database (ICSD) | Database | The primary source of positive examples (synthesized materials) for training and benchmarking models like SynthNN [23] [1]. |

| Materials Project (MP) Database | Database | Provides a large collection of DFT-calculated material structures and properties, often used for training and testing ML models [1] [27]. |

| Positive-Unlabeled (PU) Learning | Algorithmic Framework | A semi-supervised learning technique critical for handling the lack of confirmed negative data in synthesizability prediction [23] [27]. |

| Fourier-Transformed Crystal Properties (FTCP) | Crystal Representation | A method for representing crystal structures in both real and reciprocal space, used as input for some alternative synthesizability models [1]. |

| Atom Vectors (from Atom2Vec) | Data/Feature Set | The learned, high-dimensional representations of elements that serve as powerful input features for various property prediction models [25]. |

| Formation Energy (ΔEf) | Thermodynamic Property | A common DFT-calculated proxy for stability, used as a baseline for benchmarking the performance of synthesizability models [23] [1]. |

| Energy Above Hull (Ehull) | Thermodynamic Property | Another stability metric indicating the energy difference to the most stable decomposition products; used for benchmarking [1]. |

In the critical endeavor to predict material synthesizability, both SynthNN and Atom2Vec represent significant leaps beyond traditional methods. SynthNN stands out as a highly specialized and powerful classifier, offering researchers a ready-to-use tool for high-throughput screening with demonstrated superiority over human experts and thermodynamic proxies. In contrast, Atom2Vec operates at a more foundational level, providing a robust, unsupervised method for learning atomic representations that can empower the development of a new generation of property-specific predictive models. The choice between them hinges on the researcher's immediate goal: direct, efficient synthesizability filtering versus building a versatile, foundational understanding of materials chemistry for broader applications. As the field progresses, these composition-based deep learning tools are poised to become indispensable components of the materials discovery pipeline, dramatically increasing the reliability and pace of identifying novel, synthetically accessible materials.

The accurate prediction of crystal properties is a cornerstone of modern materials science, accelerating the discovery of new functional materials for applications in semiconductors, batteries, and catalysis. Central to this endeavor is the effective computational representation of crystalline structures. Graph Neural Networks (GNNs) have emerged as a powerful framework for this task, naturally modeling crystals as graphs where atoms constitute nodes and chemical bonds form edges. Unlike simpler models, structure-aware GNNs explicitly incorporate higher-order geometrical information—such as bond angles, local coordination environments, and periodic invariance—to create richer, more discriminative representations. This guide provides a comparative analysis of leading structure-aware GNNs, evaluating their performance, architectural innovations, and applicability for predicting synthesizability and other key properties of complex crystal structures.

Comparative Analysis of Model Performance

The following table summarizes the performance of various structure-aware GNNs on standard benchmark datasets, highlighting their predictive accuracy for different material properties.

Table 1: Performance Comparison of Structure-Aware GNN Models on Material Property Prediction Tasks

| Model | Key Architectural Feature | Benchmark Dataset(s) | Target Property(s) | Performance Metric & Result |

|---|---|---|---|---|

| ALIGNN [28] [29] | Incorporates bond angles using a line graph of the atomic bond graph. | JARVIS-DFT [28] | Various electronic and mechanical properties | State-of-the-art results at time of publication; improves upon CGCNN and MEGNet [29]. |

| Matformer [30] | Periodic attention mechanism with periodic invariance. | JARVIS-DFT, Materials Project [30] | Formation energy, Band gap, etc. | Outperforms CGCNN, SchNet, and MEGNet on multiple tasks [30]. |

| Gformer [30] | Periodic encoding and a global feature extraction module for elemental composition. | JARVIS-DFT, Materials Project [30] | Six property prediction tasks | Achieves outstanding performance, outperforming CGCNN, SchNet, MEGNet, GATGNN, and ALIGNN [30]. |

| MatGNet [28] | Mat2vec node encoding and angular features via line graphs. | JARVIS-DFT [28] | 12 different-scale properties | Excels in prediction accuracy, surpassing models like Matformer and PST [28]. |

| CHGCNN [31] | Hypergraph representation incorporating triplets and local motifs. | MatBench [31] | Various material properties | Improved performance over models using only pair-wise edges, demonstrating the efficacy of hypergraphs [31]. |

| DenseGNN [32] | Dense Connectivity, Residual Networks, and Local Structure Order Parameters. | JARVIS-DFT, Materials Project, QM9 [32] | Universal property prediction | Achieves state-of-the-art performance, enables deeper architectures, and approaches X-ray diffraction accuracy in structure distinction [32]. |

Detailed Experimental Protocols and Methodologies

To ensure fair and reproducible comparisons, models are typically evaluated on publicly available datasets using standardized splits. Below is a detailed breakdown of the common experimental workflow and the specific protocols for several key models.

Common Benchmarking Framework

The experimental pipeline for evaluating crystal graph GNNs follows several consistent stages [28] [30]:

- Dataset Selection: Models are trained and evaluated on large, curated materials databases such as JARVIS-DFT (JARVIS Density Functional Theory) and the Materials Project (MP). These datasets contain thousands of crystal structures with corresponding DFT-calculated properties.

- Data Splitting: Datasets are split into training, validation, and test sets, often using a predefined random or composition-based split to prevent data leakage and ensure the model generalizes to unseen compositions.

- Graph Construction: Crystal structures are converted into graph representations. A common method, as used in CGCNN, defines nodes as atoms and edges as bonds between atoms within a cutoff distance [1].

- Training and Evaluation: Models are trained to minimize the error between their predictions and the DFT-calculated ground-truth values. Performance is most commonly reported as the Mean Absolute Error (MAE) on the test set for regression tasks (e.g., predicting formation energy) or Accuracy for classification tasks [28].
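
Two of the stages above, cutoff-based graph construction and MAE evaluation, can be sketched in a few lines. This is a simplified illustration, not the CGCNN implementation: it searches only the 27 neighboring cell images, which assumes the cutoff does not exceed the shortest width of the unit cell, and the function names are illustrative.

```python
import itertools
import math
from typing import List, Sequence, Tuple


def build_edges(lattice: Sequence[Sequence[float]],
                frac_coords: Sequence[Sequence[float]],
                cutoff: float) -> List[Tuple[int, int, float]]:
    """Return directed edges (i, j, distance) for atoms within the cutoff.

    lattice: three lattice vectors as rows (in Angstrom).
    frac_coords: fractional coordinates of the atoms in the unit cell.
    """
    def to_cartesian(frac):
        return [sum(frac[k] * lattice[k][d] for k in range(3)) for d in range(3)]

    edges = []
    for i, frac_i in enumerate(frac_coords):
        pos_i = to_cartesian(frac_i)
        for j, frac_j in enumerate(frac_coords):
            for shift in itertools.product((-1, 0, 1), repeat=3):
                if i == j and shift == (0, 0, 0):
                    continue  # skip the atom itself, keep its periodic images
                pos_j = to_cartesian([frac_j[k] + shift[k] for k in range(3)])
                distance = math.dist(pos_i, pos_j)
                if distance <= cutoff:
                    edges.append((i, j, distance))
    return edges


def mean_absolute_error(predicted: Sequence[float], target: Sequence[float]) -> float:
    """Average absolute difference between predictions and reference values."""
    return sum(abs(p - t) for p, t in zip(predicted, target)) / len(target)


if __name__ == "__main__":
    cubic = [[3.0, 0.0, 0.0], [0.0, 3.0, 0.0], [0.0, 0.0, 3.0]]
    print(len(build_edges(cubic, [[0.0, 0.0, 0.0]], cutoff=3.1)))
```

Including periodic images is what distinguishes a crystal graph from a molecular graph: a single atom in a simple cubic cell still has six nearest neighbors, all of them copies of itself.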

Protocols for Specific Models

- Gformer [30]: The model was evaluated on the JARVIS-DFT and Materials Project databases for six crystal property prediction tasks. The results were compared against seven previous methods (CFID, CGCNN, SchNet, MEGNET, GATGNN, ALIGNN, and Matformer) using the same training, validation, and testing splits as provided in the Matformer publication to ensure a direct and fair comparison.

- Crystal Hypergraph Convolutional Networks (CHGCNN) [31]: The model's performance was assessed on various datasets from MatBench. The experiments specifically focused on comparing models that incorporate different types of hyperedges (e.g., triplets vs. motifs) to demonstrate the importance of higher-order geometrical information. The results showed that models with hyperedges outperformed those with only pair-wise edges.

- Defect-Informed Equivariant GNN (DefiNet) [33]: This model was specifically designed for defect structures and was evaluated on a dedicated database for 2D material defects (2DMD). The primary evaluation metric was the coordinate Mean Absolute Error (MAE) between the ML-relaxed and DFT-relaxed structures. To precisely assess performance near defects, a localized MAE for atoms within a 3-6 Å radius of defect sites was also used.
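
The global and localized coordinate MAE described for DefiNet can be illustrated as follows. This sketch assumes Cartesian coordinates, averages the absolute error over x, y, and z components, and ignores periodic boundary conditions. The exact definition used in [33] may differ, and the function name `coordinate_mae` is hypothetical.

```python
import math


def coordinate_mae(pred, ref, defect_sites=None, radius=None):
    """Mean absolute coordinate error between two relaxed structures.

    If `defect_sites` and `radius` are both given, only atoms whose reference
    position lies within `radius` of at least one defect site are included.
    """
    selected = []
    for p, r in zip(pred, ref):
        if defect_sites is not None and radius is not None:
            if not any(math.dist(r, site) <= radius for site in defect_sites):
                continue
        selected.append((p, r))
    if not selected:
        raise ValueError("no atoms selected for the MAE")
    total = sum(abs(pc - rc) for p, r in selected for pc, rc in zip(p, r))
    return total / (3 * len(selected))


ref = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
pred = [(0.3, 0.0, 0.0), (1.0, 0.3, 0.0), (10.0, 0.0, 0.0)]
global_mae = coordinate_mae(pred, ref)
local_mae = coordinate_mae(pred, ref, defect_sites=[(0.0, 0.0, 0.0)], radius=3.0)
```

The localized value is larger here because the well-predicted atom far from the defect no longer dilutes the average, which is the motivation for reporting both numbers.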

Architectural Workflows and Signaling Pathways

The enhanced predictive power of structure-aware GNNs stems from their internal workflows for processing crystal information. The following diagram visualizes this multi-pathway process.

This workflow illustrates how modern GNNs process a crystal structure. The initial graph representation is simultaneously processed by multiple specialized modules, each designed to capture a specific type of structural information. These extracted features are then fused and updated through message-passing layers before a final output layer generates the property prediction.
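
The message-passing and readout steps in this workflow can be reduced to a toy example. The sketch below uses a fixed distance weighting and sum aggregation instead of learned functions, so it shows only the data flow, not any of the cited architectures. The function names are hypothetical.

```python
import math


def message_passing_step(node_feats, edges):
    """One sum-aggregation update: h_i <- h_i + sum_j w(d_ij) * h_j.

    edges: (i, j, distance) tuples, read as a message from atom j to atom i.
    """
    updated = [list(h) for h in node_feats]
    for i, j, dist in edges:
        weight = math.exp(-dist)  # fixed weighting; a trained model learns this
        for k, value in enumerate(node_feats[j]):
            updated[i][k] += weight * value
    return updated


def readout(node_feats):
    """Mean-pool node features into a single crystal-level vector."""
    n = len(node_feats)
    return [sum(h[k] for h in node_feats) / n for k in range(len(node_feats[0]))]


feats = [[1.0, 0.0], [0.0, 2.0]]
feats = message_passing_step(feats, [(0, 1, 0.0), (1, 0, 0.0)])
crystal_vector = readout(feats)
```

Stacking several such steps lets information travel beyond nearest neighbors; structure-aware models add further inputs, such as bond angles, to the messages.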

Successful development and application of structure-aware GNNs rely on a suite of computational tools and datasets. The following table details the key "research reagents" in this field.

Table 2: Essential Resources for Crystal Graph GNN Research

| Resource Name | Type | Function in Research | Relevance to Synthesizability |
|---|---|---|---|
| JARVIS-DFT [28] [30] | Dataset | A comprehensive collection of DFT-calculated properties for 3D materials, used for training and benchmarking models. | Provides foundational property data (e.g., formation energy) that is a key proxy for thermodynamic synthesizability. |
| Materials Project (MP) [30] [1] | Dataset | A large, open database of computed crystal structures and properties, often used alongside JARVIS-DFT. | Contains energy above hull (Ehull) data, a critical metric for thermodynamic stability and synthesizability screening [1]. |
| Inorganic Crystal Structure Database (ICSD) [2] [1] | Dataset | A curated database of experimentally synthesized crystal structures, used as a source of positive examples for synthesizability models. | Serves as the ground truth for training supervised machine learning models to distinguish synthesizable from non-synthesizable materials [2]. |
| pymatgen [1] | Software Library | A robust Python library for materials analysis; essential for parsing CIF files, manipulating crystal structures, and generating inputs for models. | Facilitates the pre-processing of crystal structures into graph representations and the extraction of relevant features for prediction. |
| CGCNN Crystal Graph [1] | Representation | A foundational method for converting a crystal structure into a graph with atoms as nodes and bonds as edges. | The baseline graph construction method upon which many more advanced, structure-aware models are built. |
| Fourier-Transformed Crystal Properties (FTCP) [1] | Representation | A crystal representation that incorporates information in both real and reciprocal space, capturing periodicity. | An alternative to graph-based representations, used in some synthesizability classification models for its comprehensive feature set. |
| Local Structure Order Parameters (LSOPs) [31] | Feature Descriptor | Quantitative measures that describe the 3D local coordination environment of an atom in a structure. | Used as features in hypergraph models (CHGCNN) to distinguish between geometrically distinct but compositionally similar structures, a crucial factor in polymorph synthesizability. |

Structure-aware GNNs represent a significant evolution beyond basic crystal graphs. By integrating critical geometric and chemical information, such as angular relationships, periodicity, and local environments, models like ALIGNN, Gformer, Matformer, and CHGCNN have achieved superior performance in predicting key material properties. The choice of model depends on the specific research goal: for universal property prediction, DenseGNN and Gformer show broad efficacy; for capturing fine-grained angular information, ALIGNN is a strong choice; and for complex defect structures or highly distorted local environments, DefiNet and CHGCNN offer specialized inductive biases. As the field progresses, the integration of these advanced GNNs with large-scale experimental validation and synthesizability filters like CSLLM [2] will be crucial for closing the loop between computational prediction and experimental realization of novel materials.

Positive-Unlabeled (PU) learning is a specialized branch of machine learning that addresses a critical challenge: training accurate binary classification models when only positive and unlabeled examples are available, with no confirmed negative samples. This approach has become central to synthesizability prediction in materials science because it works around the fundamental limitation of missing negative data, a problem that persists because unsuccessful synthesis attempts are rarely published or systematically documented [34]. In the context of crystal structure research, PU learning reframes the synthesizability prediction problem, treating experimentally confirmed structures as positive examples and hypothetical, computationally generated structures as unlabeled data that may contain both synthesizable and non-synthesizable materials [35].

The significance of PU learning extends beyond mere technical convenience. Theoretical analyses have demonstrated that under certain conditions, PU learning can potentially outperform traditional positive-negative (PN) learning, even when the latter has access to confirmed negative examples [36]. This counterintuitive finding underscores the importance of specialized approaches for data scenarios that violate standard supervised learning assumptions. In materials informatics, this capability is particularly valuable for high-throughput screening of virtual crystals, where accurately identifying synthesizable candidates accelerates the discovery of functional materials for applications ranging from photovoltaics to biomedical devices [35].
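
To make the positive-and-unlabeled setting concrete, the sketch below implements one common strategy, bagging-style PU learning: random subsets of the unlabeled pool are repeatedly treated as provisional negatives, and each unlabeled item is scored only in rounds where it was left out. The nearest-centroid rule is a deliberately simple stand-in for a real classifier, and this is not presented as the method of any framework discussed in this article. All names are hypothetical.

```python
import random


def centroid(points):
    n = len(points)
    return [sum(p[k] for p in points) / n for k in range(len(points[0]))]


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def pu_bagging_scores(positives, unlabeled, n_rounds=50, seed=0):
    """Average out-of-bag votes; values near 1.0 mean 'looks like a positive'."""
    if len(unlabeled) < 2:
        raise ValueError("need at least two unlabeled examples")
    rng = random.Random(seed)
    votes = [0] * len(unlabeled)
    counts = [0] * len(unlabeled)
    bag_size = min(len(positives), len(unlabeled) - 1)
    pos_center = centroid(positives)
    for _ in range(n_rounds):
        # Treat a random bag of unlabeled items as provisional negatives.
        bag = set(rng.sample(range(len(unlabeled)), bag_size))
        neg_center = centroid([unlabeled[i] for i in bag])
        for i, x in enumerate(unlabeled):
            if i in bag:
                continue  # only score items the round did not train on
            counts[i] += 1
            votes[i] += sq_dist(x, pos_center) < sq_dist(x, neg_center)
    return [v / c if c else None for v, c in zip(votes, counts)]


positives = [[1.0, 1.0], [1.1, 0.9], [0.9, 1.1]]
unlabeled = [[1.0, 1.05], [0.95, 1.0], [-1.0, -1.0],
             [-1.1, -0.9], [-0.9, -1.1], [-1.0, -0.95]]
scores = pu_bagging_scores(positives, unlabeled)
```

Averaging over many bags is what keeps hidden positives in the unlabeled pool from being permanently mislabeled as negatives.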

Performance Comparison of PU Learning Frameworks

Multiple PU learning approaches have been developed specifically for crystal synthesizability prediction, each with distinct architectural innovations and performance characteristics. The table below summarizes the key performance metrics of major frameworks as reported in recent literature.

Table 1: Performance Comparison of PU Learning Frameworks for Synthesizability Prediction

| Framework | Key Methodology | Reported Accuracy/Performance | Application Scope |
|---|---|---|---|
| CSLLM [2] | Three specialized Large Language Models (LLMs) | 98.6% accuracy on test set | Arbitrary 3D crystal structures |
| SynCoTrain [34] | Dual classifier co-training (ALIGNN + SchNet) | High recall on internal and leave-out test sets | Oxide crystals |
| CPUL [35] | Contrastive learning + PU learning | 93.95% true positive rate on MP database | Virtual crystals across multiple families |
| PU-CGCNN [6] | Graph convolutional neural networks | Benchmark for comparison with LLM approaches | General inorganic crystals |
| PU-GPT-embedding [6] | LLM-embeddings + PU classifier | Outperforms PU-CGCNN and fine-tuned LLMs | General inorganic crystals with textual descriptions |

These frameworks demonstrate the evolution from traditional graph-based approaches to more sophisticated architectures incorporating language models and co-training strategies. The CSLLM framework exemplifies this advancement, significantly outperforming traditional synthesizability screening methods based on thermodynamic stability (74.1% accuracy) and kinetic stability (82.2% accuracy) [2]. This performance gap highlights the limitations of physics-based proxies that ignore synthesis kinetics and technological constraints affecting real-world synthesizability [34].
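
The table mixes two kinds of metric: accuracy, which needs labeled negatives, and true positive rate (recall), which can be measured on held-out positives alone. The short sketch below shows the difference on made-up labels; the numbers are illustrative only and are unrelated to the reported results.

```python
def accuracy(y_true, y_pred):
    """Fraction of all examples classified correctly (needs both classes)."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def true_positive_rate(y_true, y_pred):
    """Fraction of known positives recovered; computable without negatives."""
    positive_preds = [p for t, p in zip(y_true, y_pred) if t == 1]
    return sum(positive_preds) / len(positive_preds)


# 1 = synthesizable, 0 = non-synthesizable (illustrative labels)
y_true = [1, 1, 1, 0]
y_pred = [1, 1, 0, 0]
acc = accuracy(y_true, y_pred)
tpr = true_positive_rate(y_true, y_pred)
```

Because the two metrics answer different questions, figures reported as accuracy and figures reported as true positive rate are not directly comparable across frameworks.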

Table 2: Advantages and Limitations of Different PU Learning Approaches

| Approach | Advantages | Limitations |
|---|---|---|
| Dual Classifier Co-training [34] | Reduces model bias, improves generalization | Requires careful architecture selection |
| LLM-based Frameworks [2] | High accuracy, generalizes to complex structures | Computationally intensive, requires fine-tuning |
| Contrastive PU Learning [35] | Efficient feature extraction, shorter training time | Multi-stage pipeline increases complexity |
| Evolutionary Multitasking [37] | Discovers more reliable positive samples | Complex implementation, emerging methodology |

Experimental Protocols and Methodologies

Dataset Construction and Curation

The foundation of effective PU learning in synthesizability prediction lies in careful dataset construction. The standard protocol involves collecting experimentally verified crystal structures from authoritative databases like the Inorganic Crystal Structure Database (ICSD) as positive examples [2]. For instance, one comprehensive study selected 70,120 crystal structures from ICSD with no more than 40 atoms and seven different elements, explicitly excluding disordered structures to focus on ordered crystal structures [2].

The unlabeled set typically combines hypothetical structures from multiple sources, including the Materials Project (MP), Computational Material Database, Open Quantum Materials Database, and JARVIS databases [2]. To create a balanced dataset, researchers often employ pre-trained PU learning models to identify likely non-synthesizable structures; one approach selected 80,000 structures with the lowest crystal-likeness scores (CLscore <0.1) from a pool of 1,401,562 theoretical structures as non-synthesizable examples [2]. This curation process ensures the dataset encompasses diverse crystal systems (cubic, hexagonal, tetragonal, orthorhombic, monoclinic, triclinic, and trigonal) and elements across the periodic table (atomic numbers 1-94, excluding 85 and 87) [2].
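
The selection rules quoted above translate directly into two filters. The sketch below assumes each database entry has already been reduced to a small record. The `Entry` fields and function names are hypothetical, and the CLscore is assumed to come from a pre-trained PU model that is not shown.

```python
from dataclasses import dataclass


@dataclass
class Entry:
    structure_id: str
    n_atoms: int
    n_elements: int
    is_ordered: bool
    clscore: float = 0.0  # only meaningful for theoretical structures


def select_positives(icsd_entries, max_atoms=40, max_elements=7):
    """Keep ordered experimental structures within the size limits."""
    return [e for e in icsd_entries
            if e.is_ordered
            and e.n_atoms <= max_atoms
            and e.n_elements <= max_elements]


def select_negatives(theoretical_entries, n_select=80_000, clscore_max=0.1):
    """Keep the `n_select` lowest-CLscore structures below the threshold."""
    candidates = [e for e in theoretical_entries if e.clscore < clscore_max]
    candidates.sort(key=lambda e: e.clscore)
    return candidates[:n_select]


icsd = [Entry("a", 8, 2, True), Entry("b", 52, 3, True), Entry("c", 10, 2, False)]
theory = [Entry("x", 4, 2, True, 0.05), Entry("y", 4, 2, True, 0.40),
          Entry("z", 6, 3, True, 0.01)]
positives = select_positives(icsd)
negatives = select_negatives(theory, n_select=2)
```

Note that the negatives chosen this way are model-inferred rather than experimentally confirmed, so any error in the CLscore model carries over into the training labels.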

The SynCoTrain Co-training Protocol

SynCoTrain implements a sophisticated dual-classifier co-training framework to address generalization challenges in synthesizability prediction. The methodology proceeds through these critical stages:

- Architecture Selection: Two graph neural networks with complementary inductive biases are selected: ALIGNN (Atomistic Line Graph Neural Network), which encodes atomic bonds and bond angles, and SchNet (implemented in SchNetPack), which uses continuous-filter convolutions suited to atomic structures [34].

- Initial Training: Both classifiers are initially trained on labeled positive data (experimentally confirmed structures) and a subset of unlabeled data.

- Iterative Co-training: The classifiers iteratively exchange predictions on the unlabeled data. Each classifier identifies high-confidence positive examples from the unlabeled set, which are then incorporated into the other classifier's training process [34].

- Prediction Reconciliation: Final labels are determined based on the averaged predictions from both classifiers, reducing individual model biases and improving overall reliability [34].
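
The exchange-and-average logic of these stages can be sketched with toy classifiers. In the example below each "classifier" sees only one feature and is fit on positives alone, which is a simplification of the training described above. `SingleViewScorer`, `co_train`, and the threshold value are hypothetical stand-ins, not SynCoTrain's actual components.

```python
class SingleViewScorer:
    """Toy stand-in for a GNN classifier: looks at one feature ("view") only."""

    def __init__(self, feature_index):
        self.feature_index = feature_index
        self.center = 0.0

    def fit(self, positives):
        values = [x[self.feature_index] for x in positives]
        self.center = sum(values) / len(values)

    def score(self, x):
        # 1.0 at the positive centroid, decaying toward 0 with distance
        return 1.0 / (1.0 + abs(x[self.feature_index] - self.center))


def co_train(clf_a, clf_b, positives, unlabeled, n_iters=3, threshold=0.8):
    """Return averaged scores for `unlabeled` after iterative label exchange."""
    pos_a, pos_b = list(positives), list(positives)
    for _ in range(n_iters):
        clf_a.fit(pos_a)
        clf_b.fit(pos_b)
        confident_a = [x for x in unlabeled if clf_a.score(x) >= threshold]
        confident_b = [x for x in unlabeled if clf_b.score(x) >= threshold]
        # Exchange: each model's confident picks extend the other's positives.
        pos_a = list(positives) + confident_b
        pos_b = list(positives) + confident_a
    # Reconciliation: average the two models' final scores.
    return [(clf_a.score(x) + clf_b.score(x)) / 2 for x in unlabeled]


positives = [(1.0, 1.0), (1.2, 0.8)]
unlabeled = [(1.1, 0.9), (5.0, 5.0)]
scores = co_train(SingleViewScorer(0), SingleViewScorer(1), positives, unlabeled)
```

Feeding each model the other's picks, rather than its own, is the design choice that limits self-reinforcing errors.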

This collaborative approach enables the model to effectively leverage the unlabeled data while mitigating the risk of confirmation bias that might occur with a single classifier architecture.

CSLLM Framework and Material String Representation

The Crystal Synthesis Large Language Models (CSLLM) framework introduces a novel text representation for crystal structures to facilitate training of specialized large language models. The methodology encompasses:

- Material String Formulation: Creating a simplified text representation that integrates space group information, lattice parameters (a, b, c, α, β, γ), and atomic site information in a compact format that excludes redundant coordinate data [2].

- Multi-LLM Architecture: Deploying three specialized LLMs dedicated to (i) synthesizability prediction, (ii) synthetic method classification, and (iii) precursor identification [2].

- Fine-tuning Strategy: Domain-specific fine-tuning of foundation LLMs on the curated dataset of synthesizable and non-synthesizable structures, aligning the models' linguistic capabilities with crystallographic domain knowledge [2].
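
As an illustration of the material string idea, the sketch below joins a space group number, the six lattice parameters, and a list of element and Wyckoff-label pairs into one line of text. The delimiter, field order, and site notation are assumptions made for this example and should not be read as the exact format defined in [2].

```python
def material_string(space_group, lattice, sites):
    """Build a compact text description of a crystal (hypothetical format).

    space_group: international space group number
    lattice: (a, b, c, alpha, beta, gamma) in angstroms and degrees
    sites: (element, wyckoff_label) pairs, one per symmetry-distinct site
    """
    lattice_part = ", ".join(f"{value:g}" for value in lattice)
    site_part = ", ".join(f"({element}-{wyckoff})" for element, wyckoff in sites)
    return f"{space_group} | {lattice_part} | {site_part}"


# Rock-salt NaCl: space group 225, Na on 4a and Cl on 4b.
nacl = material_string(225, (5.64, 5.64, 5.64, 90.0, 90.0, 90.0),
                       [("Na", "4a"), ("Cl", "4b")])
print(nacl)  # 225 | 5.64, 5.64, 5.64, 90, 90, 90 | (Na-4a), (Cl-4b)
```

Listing only symmetry-distinct sites is what removes the redundant coordinates: the space group regenerates every equivalent atom position.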

This approach demonstrates how adapting general-purpose LLMs to specialized scientific domains can achieve state-of-the-art performance in synthesizability prediction while providing additional capabilities such as synthetic route recommendation.

Workflow Visualization: PU Learning for Synthesizability Prediction

The following diagram illustrates the generalized workflow for applying PU learning to crystal synthesizability prediction, integrating elements from the major frameworks discussed:

PU Learning Workflow for Synthesizability Prediction

This workflow illustrates the standard pipeline for applying PU learning to crystal synthesizability prediction, highlighting the transformation of raw data from experimental and theoretical databases into actionable predictions through specialized machine learning approaches.