Beyond Stability: Decoding Kinetic Synthesizability and Thermodynamic Control in Crystal Engineering for Advanced Therapeutics

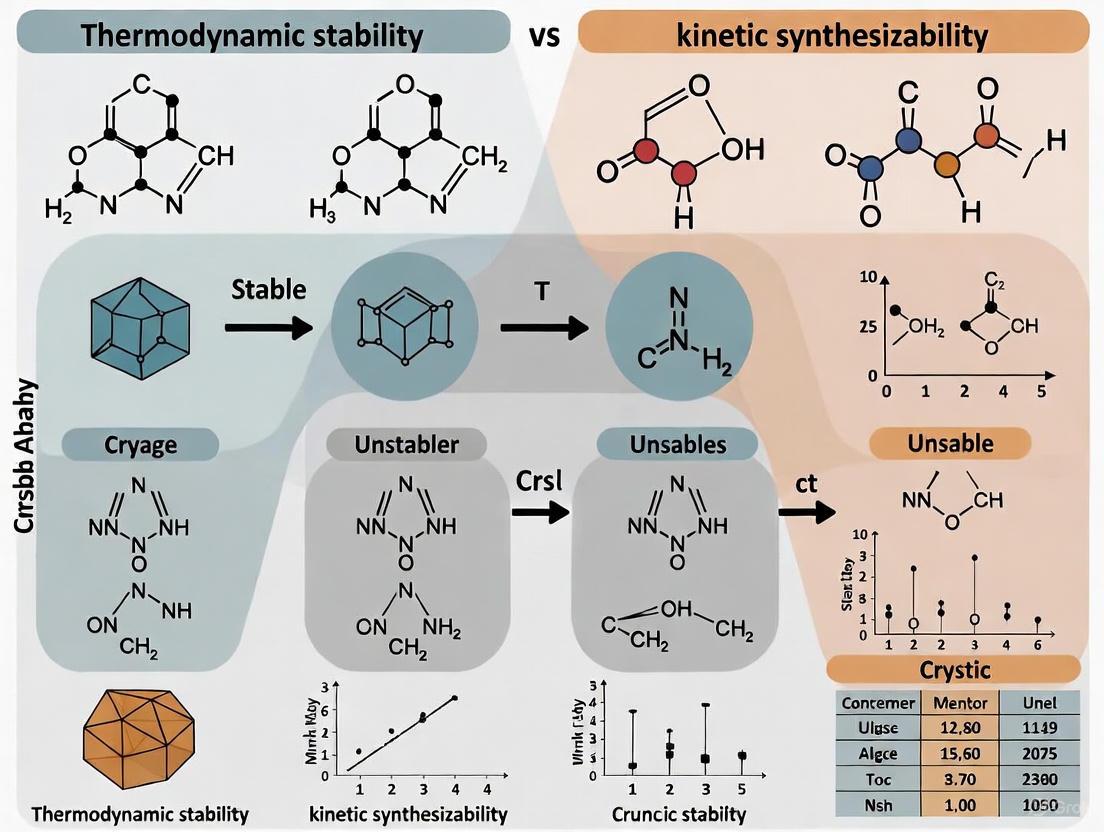

Abstract

This article explores the critical interplay between thermodynamic stability and kinetic synthesizability in crystal formation, a cornerstone for developing effective pharmaceuticals and materials. We first establish the foundational principles distinguishing these concepts and their respective roles in determining a crystal's end-state and formation pathway. The discussion then progresses to advanced computational and experimental methodologies, including machine learning and molecular dynamics, that predict and control these properties. We address common challenges in polymorphic systems and metastable phase synthesis, offering troubleshooting and optimization strategies. Finally, we present a comparative analysis of emerging validation frameworks, highlighting how integrating thermodynamic and kinetic perspectives accelerates the reliable discovery of synthesizable, high-performance materials for biomedical applications.

The Fundamental Duality: Understanding Thermodynamic Stability and Kinetic Synthesizability in Crystal Engineering

The discovery and development of new crystalline materials, pivotal to advancements in pharmaceuticals and technology, are governed by two fundamental but distinct concepts: thermodynamic stability and kinetic synthesizability. Thermodynamic stability defines the equilibrium state of lowest free energy, while kinetic synthesizability describes the accessibility of a material via specific synthesis pathways, which is dependent on activation energies and reaction rates. This whitepaper delineates these core principles for a research-oriented audience, providing the theoretical framework, quantitative data, experimental protocols for measurement, and modern computational tools essential for navigating the complex landscape of material design. Framed within the context of crystalline materials research, this guide underscores that the most stable structure is not always the one that is synthesized, and that successful material prediction must account for both equilibrium and out-of-equilibrium processes.

Theoretical Foundations: Stability vs. Synthesizability

The interplay between thermodynamic stability and kinetic synthesizability is a central paradigm in materials science, determining which phase of a material is observed under given experimental conditions.

Equilibrium Thermodynamic Stability

Thermodynamic stability refers to the state of a material with the lowest Gibbs free energy (G) under a given set of conditions (e.g., temperature and pressure). A material is considered thermodynamically stable if it cannot spontaneously lower its free energy by transforming into another phase or by decomposing into other compounds or its constituent elements. The driving force for forming a thermodynamically stable product is the negative change in free energy (ΔG < 0) associated with the reaction [1]. In a system with multiple possible products, the thermodynamic product is the globally most stable one; in the organic-chemistry examples discussed below, it typically carries the more substituted, internal double bond, which lowers its energy [2].

Pathway-Dependent Kinetic Synthesizability

Kinetic synthesizability, in contrast, is concerned with the rate at which a material forms and the pathway it takes during synthesis. It is governed by the activation energy (Eₐ) of the rate-determining step in the reaction pathway [1]. A high activation energy creates a significant energy barrier, making the reaction slow and potentially allowing metastable products to be isolated. The kinetic product is the one that forms fastest because its pathway has the lower activation energy, even if it is not the most stable product overall [3] [2]. This concept explains why many materials, including glasses and metastable crystal polymorphs, can persist for very long times despite not being the thermodynamic ground state: they are kinetically trapped in a local minimum on the free energy landscape [1] [4].
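Because rate constants depend exponentially on Eₐ, even a modest barrier difference produces a strong preference for the kinetic product. The following is a minimal sketch, assuming Arrhenius kinetics with identical pre-exponential factors for both pathways (so they cancel) and irreversible steps; the function name and barrier values are hypothetical.

```python
import math

R = 8.314462618  # gas constant, J/(mol*K)


def kinetic_selectivity(ea_fast, ea_slow, temp_k):
    """Ratio k_fast / k_slow for two irreversible, competing pathways.

    Assumes k = A * exp(-Ea / RT) with the same pre-exponential factor A for
    both pathways, so A cancels. Activation energies are in J/mol.
    """
    return math.exp((ea_slow - ea_fast) / (R * temp_k))


# Hypothetical barriers: the kinetic pathway is 10 kJ/mol lower.
ratio = kinetic_selectivity(ea_fast=50_000.0, ea_slow=60_000.0, temp_k=298.15)
print(f"k_fast / k_slow at 298.15 K: {ratio:.1f}")
```

A 10 kJ/mol difference yields a rate ratio of roughly 56 at room temperature; because the exponent scales as 1/RT, the same difference gives a smaller ratio at higher temperature.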

Table 1: Core Characteristics of Thermodynamic and Kinetic Concepts

| Feature | Thermodynamic Stability | Kinetic Synthesizability |
|---|---|---|
| Governing Principle | Global minimization of Gibbs Free Energy (ΔG) | Minimization of Activation Energy (Eₐ) and maximization of formation rate |
| Controls | Equilibrium state of the system | Pathway and rate of the synthesis reaction |
| Product Type | Thermodynamic product (more stable) | Kinetic product (forms faster) |
| Key Metric | Free energy difference between products and reactants | Height of the energy barrier along the reaction coordinate |
| Dependence | State function; independent of reaction pathway | Pathway-dependent; sensitive to reaction conditions |
| Analogy | Depth of the valley on a potential energy surface | Height of the hill that must be climbed to exit a valley [1] |

Quantitative Differentiation: The Case of 1,3-Butadiene

The classic reaction of conjugated dienes, such as the electrophilic addition of hydrogen bromide (HBr) to 1,3-butadiene, provides a clear quantitative demonstration of kinetic versus thermodynamic control [3] [2]. This reaction can yield two distinct products: a 1,2-addition product (kinetic) and a 1,4-addition product (thermodynamic). The product ratio is exquisitely sensitive to temperature, as shown in the data below.

Table 2: Temperature-Dependent Product Distribution in the Reaction of 1,3-Butadiene with HBr [3]

| Temperature (°C) | Control Regime | 1,2-adduct (Kinetic) (%) | 1,4-adduct (Thermodynamic) (%) |
|---|---|---|---|
| -15 | Kinetic | 70 | 30 |
| 0 | Kinetic | 60 | 40 |
| 40 | Thermodynamic | 15 | 85 |
| 60 | Thermodynamic | 10 | 90 |

The underlying reason for this temperature-dependent product distribution is visualized in the reaction coordinate diagram below. Both products form from the same allylic carbocation intermediate. The kinetic product (1,2-adduct) forms faster because the pathway leading to it has the lower activation energy. However, the addition is reversible. At low temperatures, the system lacks the thermal energy to cross the reverse barrier from the 1,2-adduct back to the intermediate, so the product ratio reflects the relative rates of formation. At higher temperatures, this reversal becomes fast, the products interconvert, and the system reaches an equilibrium dominated by the more stable thermodynamic product (1,4-adduct) [3] [2].
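The switch between the two regimes can be illustrated with a crude two-limit model. It is a sketch under stated assumptions, not a fit to Table 2. If the 1,2-adduct cannot revert within the reaction time, the ratio equals the ratio of formation rates. Otherwise the products equilibrate to a Boltzmann ratio. All energies, the common prefactor, the one-hour reaction time, and the function name are hypothetical.

```python
import math

R = 8.314462618  # gas constant, J/(mol*K)

# Hypothetical energies (J/mol), chosen only to reproduce the qualitative trend
# of Table 2; they are not fitted to the butadiene + HBr data.
EA_TO_12 = 20_000.0        # allylic cation -> 1,2-adduct (lower barrier)
EA_TO_14 = 22_000.0        # allylic cation -> 1,4-adduct (higher barrier)
EA_12_REVERSAL = 90_000.0  # 1,2-adduct -> allylic cation (reverse barrier)
G14_MINUS_G12 = -6_000.0   # the 1,4-adduct is 6 kJ/mol more stable
PREFACTOR = 1.0e13         # s^-1, assumed identical for every step


def predict_outcome(temp_k, reaction_time_s=3600.0):
    """Return (regime, fraction of 1,2-adduct) in a two-limit model."""
    rt = R * temp_k
    k_reversal = PREFACTOR * math.exp(-EA_12_REVERSAL / rt)
    # Fewer than ~1 reversal event per molecule during the run: effectively irreversible.
    if k_reversal * reaction_time_s < 1.0:
        regime = "kinetic"
        ratio_12_to_14 = math.exp((EA_TO_14 - EA_TO_12) / rt)  # ratio of formation rates
    else:
        regime = "thermodynamic"
        ratio_12_to_14 = math.exp(G14_MINUS_G12 / rt)  # Boltzmann ratio at equilibrium
    return regime, ratio_12_to_14 / (1.0 + ratio_12_to_14)


for t_c in (-15, 0, 40, 60):
    regime, frac_12 = predict_outcome(t_c + 273.15)
    print(f"{t_c:>4} °C  {regime:<13} 1,2-adduct: {100 * frac_12:.0f}%")
```

The model reproduces the switch from a 1,2-rich to a 1,4-rich mixture but not the exact percentages; a real system also passes through an intermediate regime in which partial reversal blurs the two limits.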

Experimental Methodologies for Probing Stability and Synthesizability

A suite of experimental techniques is employed to measure the thermodynamic and kinetic parameters of materials. The following table summarizes key methods, which are detailed further in the subsequent protocols.

Table 3: The Scientist's Toolkit: Key Experimental Methods

| Technique | Primary Function | Key Measurable Parameters | Application Note |
|---|---|---|---|
| Differential Scanning Calorimetry (DSC) | Measures heat flow associated with phase transitions [5] [6]. | Melting point (Tₘ) | Gold standard for thermodynamic stability; requires well-prepared samples [5]. |
| Thermogravimetric Analysis (TGA) | Measures mass change as a function of temperature or time [6]. | Decomposition temperature, thermal stability, composition. | Ideal for studying dehydration, decomposition, and combustion [6]. |
| Differential Scanning Fluorimetry (DSF) | Uses fluorescent dyes to monitor protein unfolding or denaturation [5]. | Melting temperature (Tₘ) | Medium-throughput method for stability screening, common in biochemistry [5]. |
| Simultaneous Thermal Analysis (STA) | Combines TGA and DSC in a single experiment [6]. | Mass change and heat flow simultaneously. | Correlates mass loss with energetic events; enhances data interpretability [6]. |
| X-ray Absorption Spectroscopy (XAS) | Probes local geometric and electronic structure [6]. | Oxidation state, coordination environment. | Element-specific technique for speciation and local structure analysis [6]. |

Detailed Protocol: Measuring Protein Thermal Stability via DSC

Principle: DSC directly measures the heat capacity of a protein solution as it is heated, detecting the endothermic peak associated with unfolding [5].

Procedure:

- Sample Preparation: Prepare a highly purified protein solution in an appropriate buffer. Dialyze the protein extensively against the buffer to ensure exact matching with the reference solution. Concentrate the sample to a typical range of 0.1-1.0 mg/mL. Centrifuge to remove any aggregates.

- Instrument Calibration: Perform a baseline run with both sample and reference cells filled with buffer. Calibrate the instrument for temperature and enthalpy using standard references (e.g., indium).

- Experimental Run: Load the protein solution into the sample cell and an equal volume of dialysis buffer into the reference cell. Seal the cells to prevent evaporation. Run a temperature ramp from, for example, 10°C to 100°C at a controlled scan rate (e.g., 1°C/min).

- Data Analysis: Subtract the buffer-buffer baseline from the sample data. Identify the melting temperature (Tₘ), which is the temperature at the maximum of the endothermic unfolding peak. Integrate the area under the peak, after normalizing the signal to the molar amount of protein in the cell, to determine the enthalpy change (ΔH) of unfolding.
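The data-analysis step can be sketched in a few lines of Python. This is a minimal illustration, assuming both scans are on a common temperature grid and already normalized per mole of protein; real thermograms usually also need a progress baseline between the folded and unfolded heat capacities, which is omitted here. The function name and the synthetic peak are hypothetical.

```python
import math


def analyze_dsc(temps_c, sample_cp, baseline_cp):
    """Return (Tm, dH) from a DSC scan.

    temps_c: ascending temperatures (°C). sample_cp, baseline_cp: heat capacity
    from the protein scan and the buffer-buffer scan, assumed already
    normalized to kJ/(mol*K) per mole of protein.
    """
    excess = [s - b for s, b in zip(sample_cp, baseline_cp)]
    # Tm: temperature at the maximum of the endothermic unfolding peak.
    i_peak = max(range(len(excess)), key=lambda i: excess[i])
    tm = temps_c[i_peak]
    # Calorimetric enthalpy: trapezoidal area under the excess heat capacity.
    dh = sum(
        0.5 * (excess[i] + excess[i + 1]) * (temps_c[i + 1] - temps_c[i])
        for i in range(len(excess) - 1)
    )
    return tm, dh


# Synthetic scan (hypothetical): Gaussian peak of area 400 kJ/mol centered at
# 62 °C, on a sloping instrumental baseline that also appears in the buffer scan.
temps = [10.0 + 0.1 * i for i in range(901)]  # 10 °C to 100 °C
baseline = [0.5 + 0.01 * t for t in temps]
sigma, area, center = 3.0, 400.0, 62.0
peak = [area / (sigma * math.sqrt(2 * math.pi)) * math.exp(-0.5 * ((t - center) / sigma) ** 2)
        for t in temps]
sample = [b + p for b, p in zip(baseline, peak)]

tm, dh = analyze_dsc(temps, sample, baseline)
print(f"Tm = {tm:.1f} °C, dH = {dh:.0f} kJ/mol")
```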

Detailed Protocol: Assessing Material Stability via TGA

Principle: TGA monitors the mass of a sample as the temperature is increased, identifying events such as dehydration, decomposition, and oxidation [6].

Procedure:

- Sample Loading: Tare a high-temperature-stable platinum or alumina crucible. Accurately weigh the solid sample (typically 5-20 mg) into the crucible.

- Method Definition: Set up a temperature program in the instrument software. A typical method might involve an isotherm at 30°C for 5 minutes, followed by a ramp to 800°C at a rate of 10°C/min, under a nitrogen (inert) or air (oxidizing) atmosphere with a controlled flow rate.

- Measurement: Start the analysis. The instrument records mass as a function of time and temperature.

- Data Interpretation: Plot mass (%) versus temperature. Each mass-loss step indicates a thermal event. The onset temperature of a step marks the beginning of that event (e.g., dehydration or decomposition). The percentage mass loss at each step can be used to determine composition, such as the water or solvent content of a hydrate or solvate.
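The hydrate calculation in the last step reduces to one ratio of moles: water lost over anhydrous residue remaining. The following is a minimal sketch, assuming the entire mass loss is water and the residue is the anhydrous salt; the function name is hypothetical. Copper(II) sulfate pentahydrate serves as a check, and it dehydrates in more than one step, so the losses of all water-loss steps are summed.

```python
M_WATER = 18.015  # g/mol


def waters_per_formula_unit(mass_loss_percent, anhydrous_molar_mass):
    """Moles of water lost per formula unit of the anhydrous residue.

    Water moles = f * m0 / M_water; residue moles = (1 - f) * m0 / M_anhydrous,
    where f is the fractional mass loss and m0 the initial sample mass.
    """
    f = mass_loss_percent / 100.0
    return (f / (1.0 - f)) * anhydrous_molar_mass / M_WATER


# CuSO4·5H2O: theoretical total loss = 5 * 18.015 / (159.60 + 5 * 18.015), about 36.1 %.
n_water = waters_per_formula_unit(36.1, anhydrous_molar_mass=159.60)
print(f"{n_water:.1f} H2O per CuSO4")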

Beyond Simple Stability: The Synthesizability Challenge in Material Design

For crystalline inorganic materials, thermodynamic stability is an insufficient predictor of whether a material can be synthesized. This is the central challenge of synthesizability.

Limitations of Traditional Proxies

- Charge-Balancing: A commonly used heuristic for ionic compounds is that a material must be charge-balanced. However, an analysis of known materials reveals that only 37% of synthesized inorganic crystalline materials in databases are charge-balanced according to common oxidation states. For binary cesium compounds, this figure is a mere 23%, indicating that bonding environments (metallic, covalent, etc.) often defy this simple rule [7].

- Formation Energy from DFT: Density-functional theory (DFT) calculations can predict whether a material is thermodynamically stable against decomposition into other phases. However, this criterion is neither sufficient nor strictly necessary for synthesis. Many synthesizable materials are metastable, meaning they lie in a local free energy minimum and are protected from decomposition by kinetic barriers. Relying solely on formation energy captures only about 50% of known synthesized materials [7].
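The charge-balancing heuristic in the first item can be made concrete. The following is a minimal sketch, assuming a small, illustrative table of "common" oxidation states (not the table used in [7]) and allowing atoms of one element to take different states (mixed valence). The function names are hypothetical. CsAu is a real compound (cesium auride) in which gold carries a formal -1 charge, so a rule restricted to the listed states wrongly rejects it.

```python
# Illustrative subset of common oxidation states (an assumption for this sketch).
COMMON_OXIDATION_STATES = {
    "Na": (1,), "Cs": (1,), "Cl": (-1,), "O": (-2,),
    "Fe": (2, 3), "Au": (1, 3),
}


def achievable_totals(states, count):
    """All total charges reachable by `count` atoms, each taking any listed state."""
    totals = {0}
    for _ in range(count):
        totals = {t + s for t in totals for s in states}
    return totals


def is_charge_balanced(composition, oxidation_states=COMMON_OXIDATION_STATES):
    """True if some assignment of listed oxidation states gives zero net charge.

    `composition` maps element symbols to atom counts, e.g. {"Fe": 3, "O": 4}.
    """
    totals = {0}
    for element, count in composition.items():
        element_totals = achievable_totals(oxidation_states[element], count)
        totals = {t + e for t in totals for e in element_totals}
    return 0 in totals


print(is_charge_balanced({"Na": 1, "Cl": 1}))  # True: simple ionic salt
print(is_charge_balanced({"Fe": 3, "O": 4}))   # True: mixed Fe(II)/Fe(III)
print(is_charge_balanced({"Cs": 1, "Au": 1}))  # False, although CsAu exists
```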

The Machine Learning Approach: Predicting Synthesizability

To address these limitations, modern research has turned to data-driven machine learning models. SynthNN is an example of a deep learning model trained on the entire space of known synthesized inorganic materials from the Inorganic Crystal Structure Database (ICSD) [7].

Methodology:

- Positive-Unlabeled Learning: The model is trained using a "Positive-Unlabeled" (PU) framework. The "positive" data are known synthesized materials from ICSD. The "unlabeled" data are a vast set of artificially generated chemical compositions that are presumed to be mostly unsynthesizable, with the acknowledgment that some may be synthesizable but not yet discovered [7].

- Atom2Vec Representation: The model uses an atom embedding technique (inspired by word2vec in natural language processing) to represent chemical formulas. This allows the model to learn the "chemical language" of synthesizability directly from the data, without relying on pre-defined rules like charge-balancing [7].

- Performance: In benchmarks, SynthNN identified synthesizable materials with 7x higher precision than using DFT-calculated formation energies alone. In a head-to-head discovery challenge against human experts, SynthNN achieved 1.5x higher precision and was five orders of magnitude faster [7].
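The data side of the Positive-Unlabeled framework can be sketched as follows. This covers only dataset assembly, not the Atom2Vec embedding or SynthNN's training and reweighting of unlabeled examples. The sketch assumes compositions are reduced by their greatest common divisor and that unlabeled candidates are random binary or ternary formulas. All names and the toy inputs are hypothetical.

```python
import random
from functools import reduce
from math import gcd


def canonical(composition):
    """Reduce {element: count} by the GCD of its counts; return a sorted, hashable tuple."""
    divisor = reduce(gcd, composition.values())
    return tuple(sorted((el, n // divisor) for el, n in composition.items()))


def build_pu_dataset(positives, elements, n_unlabeled, max_count=4, seed=0):
    """Return [(composition, label)] with 1 = synthesized and 0 = unlabeled.

    Unlabeled entries are random binary or ternary compositions absent from the
    positives. They are presumed mostly unsynthesizable, not known to be so.
    The loop assumes n_unlabeled is well below the number of possible candidates.
    """
    rng = random.Random(seed)
    positive_set = {canonical(c) for c in positives}
    unlabeled = set()
    while len(unlabeled) < n_unlabeled:
        chosen = rng.sample(elements, rng.choice((2, 3)))
        candidate = canonical({el: rng.randint(1, max_count) for el in chosen})
        if candidate not in positive_set:
            unlabeled.add(candidate)
    return [(c, 1) for c in sorted(positive_set)] + [(c, 0) for c in sorted(unlabeled)]


# Hypothetical toy inputs; a real model would draw positives from the ICSD.
known = [{"Na": 1, "Cl": 1}, {"Mg": 1, "O": 1}, {"Fe": 2, "O": 3}]
dataset = build_pu_dataset(known, ["Na", "Mg", "Fe", "O", "Cl", "S"], n_unlabeled=20)
print(len(dataset), dataset[0])
```

The label 0 means "unlabeled", not "proven unsynthesizable"; PU methods account for the fact that some of these candidates may in fact be synthesizable.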

This workflow, from prediction to synthesis, is summarized below.

The distinction between equilibrium thermodynamic stability and pathway-dependent kinetic synthesizability is fundamental to the targeted design of new materials, including crystalline polymorphs critical to pharmaceutical development. Thermodynamic stability defines the ultimate endpoint of a material, while kinetic factors control the accessible pathways to reach it. As the field advances, the integration of traditional experimental techniques—like DSC and TGA—with powerful machine learning models that learn the complex rules of synthesizability directly from data, represents the frontier of materials research. Acknowledging and leveraging the interplay between these two concepts is key to transitioning from serendipitous discovery to rational design of novel, functional materials.

In the research and development of new materials and therapeutics, a fundamental challenge lies in predicting and ensuring stability. This challenge is framed by a critical dichotomy: a material must be both thermodynamically stable (i.e., its chemical composition and structure represent a low-energy state) and kinetically synthesizable (i.e., it can be formed within a practical timeframe). While kinetics dictates the pathway and rate of formation, thermodynamics determines the final, stable state. The core thermodynamic potential governing this stability at constant temperature and pressure is the Gibbs Free Energy (G). A system at phase and chemical equilibrium is characterized by the minimum possible value of its Gibbs free energy [8]. This principle is the cornerstone of predicting whether a material, once synthesized, will remain stable or transform into a different, more stable phase over time. For researchers developing crystalline materials or solid-state biologic formulations, accurately modeling this energy minimization is therefore paramount. This guide details the core concepts, computational methodologies, and practical tools for applying these principles to predict material stability.

Core Theoretical Concepts

The Role of Gibbs Free Energy

For an isothermal, isobaric, closed system, the relevant thermodynamic potential is the Gibbs free energy. It can be expressed as a Legendre transform of the enthalpy, H, and internal energy, E, as: G = H - TS = E + PV - TS where T is temperature, S is entropy, P is pressure, and V is volume [9]. For systems comprising primarily condensed phases (e.g., solids), the PV term is often neglected. Furthermore, at 0 K, the expression for G simplifies to just the internal energy, E [9]. The normalization of G (or E at 0 K) with respect to the total number of particles in the system yields the energy per atom, Ē, which is the fundamental quantity used in stability analysis.
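A quick calculation shows why the PV term can be dropped for solids at ambient pressure. This is a minimal sketch, assuming a typical molar volume of about 10 cm³ per mole of atoms (a round illustrative value); the function name is hypothetical.

```python
AVOGADRO = 6.02214076e23   # 1/mol
EV_IN_J = 1.602176634e-19  # J per eV


def pv_per_atom_ev(pressure_pa, molar_volume_m3_per_mol_atoms):
    """PV contribution per atom in eV for a given pressure and molar volume."""
    return pressure_pa * molar_volume_m3_per_mol_atoms / AVOGADRO / EV_IN_J


# Assumed molar volume: 10 cm^3 per mole of atoms = 1e-5 m^3/mol.
print(f"{pv_per_atom_ev(101_325, 1e-5):.2e} eV/atom at 1 atm")
print(f"{pv_per_atom_ev(1e10, 1e-5):.2f} eV/atom at 10 GPa (ignoring compression)")
```

At 1 atm the term is about 10⁻⁵ eV/atom, far below formation energies of tenths of an eV per atom or more. At gigapascal pressures it reaches the order of 1 eV/atom and can no longer be neglected.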

Phase Stability and the Convex Hull

The thermodynamic stability of a material is not determined by its formation energy alone, but by its energy relative to all other competing compounds in the relevant chemical space [10]. This is quantified by its decomposition enthalpy, ΔH_d, which is approximated from computational data as the total energy difference between a given compound and the most stable combination of other compounds in the system.

The tool for finding this most stable combination is the convex hull construction [10]. In composition space, the formation energies (or Gibbs free energies) of all known compounds are plotted. The convex hull is the smallest convex set containing all these points; only its lower boundary matters for stability. Graphically, for a binary system, one can imagine pulling a string taut beneath the points on the energy-composition plot; the shape formed by the string is the (lower) convex hull [11].

- Stable Compositions: Compounds whose formation energies lie on the convex hull are thermodynamically stable. Their ΔH_d, measured against the hull built from all other compounds, is negative (or zero for a compound lying exactly on a tie-line between two others).

- Unstable/Metastable Compositions: Compounds whose formation energies lie above the convex hull are thermodynamically unstable, although kinetic barriers may let them persist as metastable phases. The vertical distance from a point to the hull is its decomposition energy, a positive value of ΔH_d: the energy released (the thermodynamic driving force) when the compound decomposes into the stable phases on the hull [9] [10].

This construction elegantly captures the common tangent method, where the conditions for phase equilibrium (equal temperature, pressure, and chemical potentials) are satisfied by the straight-line segments of the hull [11]. The convex hull method is a general approach that automatically generates both the number and types of phases present at equilibrium, provided thermodynamic data for all possible phases are included [8].

From Hulls to Phase Diagrams

The convex hull construction at a single temperature and pressure provides one isothermal, isobaric slice of a phase diagram (a horizontal line across a temperature-composition diagram). By calculating the convex hull across a range of temperatures (which requires incorporating the entropy contribution, -TS, into the Gibbs free energy), one can construct the familiar temperature-composition phase diagrams [11]. As temperature changes, the relative stability of phases shifts, changing the hull's geometry; these changes appear as different phase fields (e.g., solid, liquid, two-phase regions) in the diagram.
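At a single composition, the hull reduces to selecting the phase with the lowest G, so the effect of the -TS term is easiest to see for two polymorphs. The following is a minimal sketch, assuming temperature-independent H and S (an approximation) and hypothetical values for two polymorphs labeled alpha and beta.

```python
def gibbs(h, s, temp_k):
    """G = H - T*S (J/mol) for a phase with temperature-independent H and S."""
    return h - temp_k * s


def crossover_temperature(h1, s1, h2, s2):
    """Temperature at which two phases have equal G (requires s1 != s2)."""
    return (h2 - h1) / (s2 - s1)


# Hypothetical polymorphs of one composition (values relative to alpha).
alpha = {"h": 0.0, "s": 0.0}
beta = {"h": 2_000.0, "s": 5.0}  # higher enthalpy, but higher entropy

t_c = crossover_temperature(alpha["h"], alpha["s"], beta["h"], beta["s"])
for temp in (300.0, t_c, 500.0):
    g_a = gibbs(alpha["h"], alpha["s"], temp)
    g_b = gibbs(beta["h"], beta["s"], temp)
    stable = "alpha" if g_a < g_b else "beta" if g_b < g_a else "coexistence"
    print(f"T = {temp:.0f} K: {stable}")
```

With these numbers the low-enthalpy alpha form is stable below 400 K, and the high-entropy beta form is stable above it. In a full phase diagram, the same competition plays out at every composition.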

Computational Methods and Protocols

Density Functional Theory (DFT) for Energy Calculation

The primary source of energy data for computational stability prediction is Density Functional Theory (DFT). DFT provides a quantum mechanical method to calculate the total energy of a specific crystal structure.

Protocol: Calculating Formation Energy via DFT

- Structure Selection: Obtain or generate crystal structure files (e.g., in CIF format) for all known compounds in the chemical system of interest (e.g., Li-Fe-O) and for the pure elemental phases of the constituent elements.

- Energy Calculation: Perform a DFT calculation for each structure to obtain its total energy, E_total. These calculations are typically done at 0 K, neglecting zero-point energy.

- Formation Energy Calculation: The formation energy per atom, ΔE_f, for a compound is calculated as: ΔE_f = [E_total(compound) - Σ_i n_i E_total(element_i)] / N where E_total(compound) is the total energy of one formula unit, n_i is the number of atoms of element i in that formula unit, E_total(element_i) is the energy per atom of element i in its standard reference state, and N = Σ_i n_i is the total number of atoms in the formula unit [9].

- Data Correction: For increased accuracy, apply any necessary correction schemes (e.g., Materials Project's GGA/GGA+U mixing scheme) to ensure consistent energy comparisons across different types of calculations [9].
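The formation-energy step translates directly into code. The following is a minimal sketch with hypothetical names and energies (not Materials Project values). It assumes the compound energy is for one formula unit, so a DFT cell containing Z formula units must first be divided by Z. It also assumes the elemental energies are per atom of each reference phase.

```python
def formation_energy_per_atom(e_compound, composition, elemental_energy_per_atom):
    """Formation energy per atom (eV/atom).

    e_compound: total DFT energy of one formula unit (eV).
    composition: {element: atoms per formula unit}.
    elemental_energy_per_atom: {element: energy per atom of its reference phase} (eV/atom).
    """
    n_atoms = sum(composition.values())
    reference = sum(n * elemental_energy_per_atom[el] for el, n in composition.items())
    return (e_compound - reference) / n_atoms


# Hypothetical energies for illustration only.
mu = {"Li": -1.90, "O": -4.90}
print(f"{formation_energy_per_atom(-14.30, {'Li': 2, 'O': 1}, mu):.3f} eV/atom")
```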

Convex Hull Construction Protocol

Protocol: Constructing a T=0 K Phase Diagram

- Data Collection: Collect the computed formation energies (ΔE_f) for all compounds in a given chemical system.

- Hull Calculation: Input the list of compositions and their corresponding ΔE_f into a convex hull algorithm. The pymatgen code snippet below demonstrates this process.

- Stability Analysis: The algorithm outputs:

- The set of stable compounds (those on the hull).

- The decomposition pathway and energy for unstable compounds (those above the hull).

Code Example using pymatgen (from the Materials Project)

Source: Adapted from the Materials Project methodology [9]
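In practice, pymatgen's `PhaseDiagram` class (with methods such as `get_e_above_hull`) performs this construction for any number of elements. The core idea can be sketched without dependencies for a binary system. The sketch builds the lower hull with Andrew's monotone-chain algorithm (our choice of algorithm, not necessarily the one pymatgen uses), then interpolates along hull segments to get each compound's energy above the hull and its decomposition products. The formation energies for the Li-O example are hypothetical, and x is the O fraction.

```python
def _cross(o, a, b):
    """z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(entries):
    """Lower convex hull of {name: (x, e_form)} as a list of (x, e, name) vertices."""
    lowest = {}
    for name, (x, e) in entries.items():  # keep only the lowest energy at each x
        if x not in lowest or e < lowest[x][1]:
            lowest[x] = (x, e, name)
    hull = []
    for p in sorted(lowest.values()):
        # Drop points that do not make a counter-clockwise turn (collinear ones too).
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def e_above_hull(x, e, hull):
    """Energy above the hull and the two hull phases bracketing composition x."""
    for (x0, e0, n0), (x1, e1, n1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            e_hull = e0 + (e1 - e0) * (x - x0) / (x1 - x0)
            return e - e_hull, (n0, n1)
    raise ValueError("composition outside the hull's range")


# Hypothetical formation energies (eV/atom) versus O fraction.
entries = {"Li": (0.0, 0.0), "Li2O": (1 / 3, -2.07), "Li2O2": (0.5, -1.60),
           "LiO2": (2 / 3, -0.90), "O2": (1.0, 0.0)}
hull = lower_hull(entries)
print("Hull vertices:", [name for _, _, name in hull])
for name, (x, e) in entries.items():
    dist, partners = e_above_hull(x, e, hull)
    if dist > 1e-9:
        print(f"{name}: {dist:.3f} eV/atom above hull -> {partners[0]} + {partners[1]}")
```

A compound lying exactly on a tie-line has zero energy above the hull but is not listed as a vertex, mirroring the degenerate case noted for ΔH_d.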

Advanced Methods: Machine Learning and Active Learning

Given the combinatorial vastness of composition space, exhaustive DFT calculation is impractical. Machine learning (ML) models have been trained to predict formation energies directly from composition or structure [10]. However, a critical caveat is that an accurate prediction of formation energy does not guarantee an accurate prediction of stability (ΔH_d), due to the lack of systematic error cancellation when comparing energies of different compounds [10].

A more sophisticated approach is Convex hull-aware Active Learning (CAL). This Bayesian algorithm uses Gaussian process regressions to model energy surfaces and directly reasons about the uncertainty in the convex hull itself. It iteratively selects the next composition to simulate (e.g., via DFT) based on which data point is expected to maximally reduce the uncertainty in the hull, dramatically improving efficiency over brute-force methods [12] [13].

Visualization of Workflows

Workflow for Thermodynamic Stability Prediction

The following diagram illustrates the integrated workflow for predicting thermodynamic stability, combining high-throughput computation and active learning.

The Scientist's Toolkit: Research Reagents and Computational Solutions

The following table details key resources and their functions in computational thermodynamic stability analysis.

| Research Reagent / Solution | Function in Research |
|---|---|
| DFT Codes (VASP, Quantum ESPRESSO) | Software packages that perform quantum mechanical calculations to determine the total energy of a crystal structure from first principles. |
| Materials Project (MP) Database | A vast open database of pre-computed DFT energies for thousands of inorganic compounds, providing essential data for hull construction [9]. |
| pymatgen Library | A robust Python library for materials analysis. Its PhaseDiagram class is a widely used tool for constructing convex hulls from computed data [9]. |
| Machine Learning Models (ElemNet, Roost) | Deep learning models that predict formation energies directly from a material's composition, enabling rapid screening of large compositional spaces [10]. |
| Convex Hull-Aware Active Learning (CAL) | A Bayesian algorithm that selects which compositions to simulate next so as to minimize uncertainty in the convex hull with few computations [12] [13]. |
| ICSD (Inorganic Crystal Structure Database) | A comprehensive collection of known crystal structures, used as a source of initial structural models for DFT calculations. |

Data Presentation and Analysis

Performance of Machine Learning Models for Stability Prediction

A critical examination of ML models reveals the distinction between predicting formation energy and predicting stability. The table below summarizes the performance of various compositional ML models on a test set of 85,014 compounds from the Materials Project [10]. While MAE for formation energy is relatively low, the high FPR for stability highlights the challenge.

| Model Type | Model Name | Formation Energy MAE (eV/atom) | Stability FPR (%) |
|---|---|---|---|
| Baseline | ElFrac | 0.49 | 45.3 |
| Compositional (Feature-Based) | Meredig | 0.36 | 21.1 |
| Compositional (Feature-Based) | Magpie | 0.29 | 17.6 |
| Compositional (Deep Learning) | ElemNet | 0.13 | 18.0 |
| Compositional (Graph Network) | Roost | 0.10 | 15.7 |
| Structural | Structural Model | - | 5.3 |

Source: Adapted from "A critical examination of compound stability predictions..." [10]. MAE: Mean Absolute Error; FPR: False Positive Rate (percentage of unstable compounds incorrectly predicted as stable).
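The two metrics can be computed as defined in the table note. The following is a minimal sketch, assuming a compound is classified as stable when its decomposition energy is at or below zero. The data are hypothetical and chosen to show how a small MAE can still produce false positives when errors straddle the hull.

```python
def mae(true, pred):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(t - p) for t, p in zip(true, pred)) / len(true)


def stability_fpr(true_dh, pred_dh, threshold=0.0):
    """Fraction of truly unstable compounds (dH_d > threshold) predicted stable."""
    unstable = [(t, p) for t, p in zip(true_dh, pred_dh) if t > threshold]
    false_pos = sum(1 for _, p in unstable if p <= threshold)
    return false_pos / len(unstable)


# Hypothetical decomposition energies (eV/atom): small errors, one sign flip.
true_dh = [-0.05, 0.02, 0.10, 0.30, -0.01]
pred_dh = [-0.02, -0.03, 0.05, 0.25, 0.04]
print(f"MAE = {mae(true_dh, pred_dh):.3f} eV/atom, "
      f"FPR = {stability_fpr(true_dh, pred_dh):.0%}")
```

An error of only 0.05 eV/atom on a compound lying 0.02 eV/atom above the hull is enough to misclassify it, which is why low energy MAE does not guarantee a low FPR.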

The computational framework built upon Gibbs free energy minimization and convex hull construction provides a powerful and rigorous foundation for predicting the thermodynamic stability of crystals. Methodologies ranging from high-throughput DFT using databases like the Materials Project to advanced Convex hull-aware Active Learning algorithms have made it possible to map phase stability with unprecedented speed and efficiency. A key insight for researchers is that accurate formation energy prediction is necessary but not sufficient for reliable stability classification, a challenge that structural models and active learning are beginning to solve.

This robust prediction of thermodynamic stability sets the stage for addressing the second part of the core research dilemma: kinetic synthesizability. A material on the convex hull is a thermodynamic sink, but synthesizing it requires navigating kinetic barriers. The convergence of these two paradigms—precise thermodynamic stability mapping and an understanding of kinetic pathways—will ultimately empower researchers to not only identify which crystals can exist but also to devise the strategies to make them.

The pursuit of new functional materials, particularly in pharmaceutical and advanced materials science, has traditionally relied on thermodynamic stability as the primary indicator of synthesizability. This paradigm assumes that the most thermodynamically stable crystal structure, characterized by the global minimum in free energy, will preferentially form under given conditions. However, this perspective fails to explain the pervasive observation of metastable crystalline states—structures that persist in local free energy minima despite not being the most stable configuration. These metastable states often possess technologically desirable properties unattainable by their stable counterparts, making their controlled synthesis a critical goal. The challenge is exemplified by the documented difficulty in synthesizing computationally predicted ternary compounds like La₂SiP, La₅SiP₃, and La₂SiP₃, where kinetic barriers, specifically the rapid formation of a Si-substituted LaP phase, prevent the target phases from forming, despite their predicted existence [14].

This guide articulates the kinetic perspective that governs the formation and persistence of such metastable crystals. The formation of any crystalline phase, stable or metastable, must be understood as a kinetically driven process where the system must navigate a complex energy landscape with multiple minima, rather than simply finding the deepest well. The central thesis posits that while thermodynamic stability determines which states can exist, kinetic factors—specifically energy barriers, nucleation rates, and the manipulation of metastable states—dictate which states will be observed and isolated under realistic synthetic conditions. This framework is essential for rationalizing and overcoming synthesis challenges, transforming materials discovery from a thermodynamic screening exercise into a deliberate kinetic design process.

Theoretical Foundations of Kinetics in Crystallization

The Energy Landscape of Crystallization

The journey from a disordered phase (solution, melt, or vapor) to an ordered crystal occurs on a potential energy surface characterized by multiple minima and maxima. A metastable state is defined as a dynamical configuration that persists in a local free energy minimum that is not the global minimum [15]. Its persistence is not due to inherent stability but to the kinetic barriers that separate it from more stable states. These barriers, with a height denoted as ΔG‡, are determined by enthalpy (ΔH‡) and entropy (-TΔS‡) changes along the reaction coordinate: ΔG‡ = ΔH‡ - TΔS‡ [15]. The system's escape rate from a metastable well is governed by Kramers' theory, which in the overdamped regime is given by:

r = (ω₀ω_b / 2πγ) exp(-ΔG‡ / kT)

where ω₀ and ω_b are the angular frequencies associated with the curvatures at the metastable minimum and barrier top, respectively, γ is the friction coefficient, k is Boltzmann's constant, and T is temperature [15]. This mathematical description highlights that the lifetime of a metastable state depends exponentially on the barrier height and is modulated by dissipative effects in the system.
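The overdamped Kramers expression can be evaluated directly to show its two sensitivities: exponential in the barrier and inverse in the friction. This is a minimal sketch in SI units. The function name and parameter values are hypothetical, and the friction symbol here is unrelated to the interfacial energy γ of nucleation theory.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def kramers_rate(omega_well, omega_barrier, friction, barrier_j, temp_k):
    """Overdamped Kramers escape rate, r = (w0 * wb / (2 pi gamma)) exp(-dG / kT).

    omega_well, omega_barrier: angular frequencies at the minimum and barrier top (rad/s).
    friction: damping rate gamma (1/s). barrier_j: barrier height dG (J). temp_k: K.
    """
    prefactor = omega_well * omega_barrier / (2.0 * math.pi * friction)
    return prefactor * math.exp(-barrier_j / (K_B * temp_k))


# Hypothetical parameters (the overdamped limit assumes friction >> omega_barrier).
T = 300.0
kT = K_B * T
base = kramers_rate(1e12, 1e12, 1e13, 20 * kT, T)
higher_barrier = kramers_rate(1e12, 1e12, 1e13, 20 * kT + kT * math.log(10), T)
more_friction = kramers_rate(1e12, 1e12, 2e13, 20 * kT, T)
print(f"lifetime 1/r = {1 / base:.3g} s")
print(f"barrier raised by kT ln 10: rate x {higher_barrier / base:.2f}")
print(f"friction doubled: rate x {more_friction / base:.2f}")
```

Raising the barrier by kT·ln 10 slows escape tenfold, while doubling the friction only halves the rate, which is why barrier height dominates metastable lifetimes.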

Table 1: Key Characteristics of Stable and Metastable Crystalline States

| Characteristic | Stable State | Metastable State |
|---|---|---|
| Thermodynamic Status | Global free energy minimum | Local free energy minimum |
| Persistence | Indefinite, barring external energy input | Finite, but potentially long duration |
| Governing Factor | Thermodynamic driving force (ΔG) | Kinetic barrier height (ΔG‡) |
| Formation Pathway | May be bypassed due to high kinetic barriers | Often forms first according to Ostwald's Rule |
| Synthesizability Prediction | Poorly predicted by formation energy alone | Requires kinetic and thermodynamic analysis |

Classical Nucleation Theory (CNT) and Its Kinetic Framework

Classical Nucleation Theory provides a quantitative framework for describing the initial, rate-limiting step of phase transitions, including crystallization. CNT posits that the formation of a new phase proceeds through the stochastic formation of small clusters that must surpass a critical size to become stable and grow spontaneously [16]. The free energy change (ΔG) associated with forming a spherical cluster of radius r is given by:

ΔG = - (4/3)πr³ |Δμ| / v_m + 4πr²γ

where Δμ is the chemical potential difference driving the phase change (positive in supersaturated conditions), v_m is the molecular volume, and γ is the interfacial free energy between the cluster and parent phase [16]. The first term represents the volumetric free energy gain, which favors cluster growth, while the second term represents the surface energy penalty, which destabilizes small clusters.

This relationship results in an energy barrier, ΔG*, at a critical radius r*. Setting dΔG/dr = 0 gives the critical radius, and substituting it back into ΔG gives the barrier height:

r* = 2γv_m / |Δμ| and ΔG* = (16πγ³v_m²) / (3(Δμ)²)

Clusters smaller than r* tend to dissolve, while those larger than r* are likely to grow into macroscopic crystals [16]. The nucleation rate J, representing the number of stable nuclei formed per unit volume per unit time, is then:

J = Z β* N_s exp(-ΔG* / kT)

where Z is the Zeldovich factor (accounting for the curvature of the free energy barrier near its top, i.e., the probability that a critical nucleus grows rather than redissolves), β* is the attachment rate of molecules to the critical nucleus, and N_s is the number density of sites at which nuclei can form, so that N_s exp(-ΔG*/kT) is the equilibrium concentration of critical nuclei [16]. This equation highlights the profound sensitivity of the nucleation rate to the energy barrier, which itself depends on supersaturation and interfacial energy.
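The CNT expressions above can be checked numerically. The following is a minimal sketch in SI units with hypothetical parameters for a small organic molecule in solution. It lumps the whole kinetic prefactor of the rate equation into one hypothetical constant A and assumes an ideal solution, so Δμ = kT ln S with supersaturation ratio S = c/c_eq.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def cluster_free_energy(r, gamma, v_m, d_mu):
    """dG(r) = -(4/3) pi r^3 |d_mu| / v_m + 4 pi r^2 gamma."""
    return -(4.0 / 3.0) * math.pi * r**3 * abs(d_mu) / v_m + 4.0 * math.pi * r**2 * gamma


def critical_radius(gamma, v_m, d_mu):
    return 2.0 * gamma * v_m / abs(d_mu)


def barrier_height(gamma, v_m, d_mu):
    return 16.0 * math.pi * gamma**3 * v_m**2 / (3.0 * d_mu**2)


def nucleation_rate(prefactor, gamma, v_m, supersaturation, temp_k):
    """J = A exp(-dG*/kT) with d_mu = kT ln S (ideal-solution assumption)."""
    d_mu = K_B * temp_k * math.log(supersaturation)
    return prefactor * math.exp(-barrier_height(gamma, v_m, d_mu) / (K_B * temp_k))


# Hypothetical parameters: gamma (J/m^2), v_m (m^3), lumped prefactor A (m^-3 s^-1).
T, GAMMA, V_M, A = 298.15, 0.01, 1.8e-28, 1e30
for S in (1.5, 2.0, 3.0):
    d_mu = K_B * T * math.log(S)
    print(f"S = {S}: r* = {critical_radius(GAMMA, V_M, d_mu) * 1e9:.2f} nm, "
          f"dG*/kT = {barrier_height(GAMMA, V_M, d_mu) / (K_B * T):.1f}, "
          f"J = {nucleation_rate(A, GAMMA, V_M, S, T):.2e} m^-3 s^-1")
```

Evaluating ΔG(r) at r* reproduces ΔG*, a quick consistency check on the two closed-form expressions. Doubling S from 1.5 to 3 lowers the barrier several-fold and raises J by many orders of magnitude.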

Quantitative Analysis of Kinetic Parameters

The practical application of kinetic theory requires quantification of key parameters that govern nucleation and growth behavior. Experimental measurements across diverse systems have yielded valuable comparative data.

Table 2: Experimentally Determined Nucleation Parameters for Selected Systems

| System | Nucleation Rate, J (m⁻³s⁻¹) | Critical Barrier Height, ΔG*/kT | Induction Time Range | Primary Measurement Technique |
|---|---|---|---|---|
| Ascorbic Acid in Water | Increases with supersaturation | Derived from J vs S plot | Up to 5 hours | Isothermal transmissivity (Crystal16) [17] |
| Ascorbic Acid in Water-Ethanol | Decreases with higher ethanol fraction | Varies with solvent composition | Up to 5 hours | Isothermal transmissivity (Crystal16) [17] |
| La–Si–P Ternary Compounds | Effectively zero for target phases | Barrier from competing LaP phase | N/A | Molecular Dynamics simulation [14] |
| Membrane Distillation Crystallization | Modifiable via supersaturation rate | Reduced at high supersaturation | Controllable | Nývlt-like model linking parameters to rate [18] |

The data reveal several critical trends. First, where it has been measured, the nucleation rate increases with supersaturation, as predicted by CNT. Second, the solvent environment profoundly affects kinetics: for ascorbic acid, increasing the ethanol fraction reduces the nucleation rate, likely due to changes in interfacial energy or molecular mobility [17]. Third, in complex multi-component systems such as La-Si-P, nucleation of the target phases can be completely inhibited by kinetic competition from intervening phases, making them effectively unsynthesizable despite their thermodynamic accessibility [14].

The Metastable Zone Width (MSZW)

An essential practical concept is the Metastable Zone Width (MSZW), defined as the region in the phase diagram between the solubility curve and the spontaneous nucleation boundary where the system remains metastable [18]. The MSZW is not a fixed thermodynamic property but depends on kinetic factors including cooling rate, agitation, and presence of impurities. A Nývlt-like relationship can relate multiple conditional parameters to nucleation rate and supersaturation in complex processes like membrane distillation crystallization [18]. Parameters such as membrane area, vapor flux, temperature difference, and crystallizer volume can be independently modified to control the supersaturation rate, which directly affects induction time and MSZW. Increasing supersaturation rate generally reduces induction time and broadens the MSZW, favoring bulk homogeneous nucleation over surface-mediated heterogeneous nucleation [18].
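The membrane-distillation-specific Nývlt-like relation of [18] is not reproduced here. As an assumed stand-in, the sketch below fits the classical Nývlt power law for cooling crystallization, b = K·ΔT_max^m, where b is the cooling rate and ΔT_max is the measured MSZW. The apparent nucleation order m is the slope of a log-log fit. The function name and the example data are hypothetical.

```python
import numpy as np


def fit_nyvlt(cooling_rates, mszw):
    """Fit the classical Nyvlt law b = K * dTmax**m in log-log form.

    ln(b) = ln(K) + m * ln(dTmax): a straight-line fit of ln(b) against
    ln(dTmax) gives the apparent nucleation order m (slope) and K.
    cooling_rates: b (e.g. K/min); mszw: dTmax (K), same length.
    """
    x = np.log(np.asarray(mszw, dtype=float))
    y = np.log(np.asarray(cooling_rates, dtype=float))
    m, ln_K = np.polyfit(x, y, 1)  # polyfit returns the highest power first
    return m, np.exp(ln_K)


if __name__ == "__main__":
    b = np.array([0.1, 0.2, 0.5, 1.0])      # hypothetical cooling rates, K/min
    dT = (b / 0.05) ** (1.0 / 2.5)          # synthetic MSZW data generated with m = 2.5
    print(fit_nyvlt(b, dT))
```

A positive m reproduces the trend described above: a faster supersaturation (cooling) rate gives a wider MSZW.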

Experimental Protocols for Kinetic Analysis

Protocol 1: Isothermal Induction Time Measurement for Nucleation Kinetics

This protocol determines nucleation kinetics by measuring the stochastic induction time at various constant supersaturation levels, as automated in systems like Crystal16 [17].

Materials and Equipment:

- Crystallization reactor with accurate temperature control (±0.1°C)

- In-situ transmissivity probe or turbidity sensor

- Data acquisition system for continuous monitoring

- Thermostated bath or Peltier-controlled wells

- Filtered solutions of the target compound in selected solvents

Procedure:

- Prepare a saturated solution at a known equilibrium temperature (T_eq).

- Heat the solution to 20°C above T_eq at a controlled rate of 0.3°C/min to ensure complete dissolution of all crystals.

- Rapidly cool the solution to the target supersaturation temperature (e.g., 20°C below T_eq) at a fast cooling rate (20°C/min) to establish supersaturation almost instantly.

- Hold the temperature constant (±0.1°C) and monitor transmissivity continuously.

- Record the induction time (tᵢ) as the interval between reaching the target temperature (i.e., achieving supersaturation) and the drop in transmissivity that signals nucleation.

- Repeat measurements 80-100 times at each supersaturation to account for the stochastic nature of nucleation.

- Repeat the full sequence above for different supersaturation levels and solvent compositions.
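The sketch below shows one way to implement the detection step. Crystal16 performs this internally. The helper here is a hypothetical illustration that flags nucleation at the first point where transmissivity falls below an assumed 90% of the clear-solution baseline after the hold temperature is reached.

```python
import numpy as np


def induction_time(t, transmissivity, t_sat, threshold=0.9):
    """Induction time (s) from a single isothermal transmissivity trace (hypothetical helper).

    t: sample times (s); transmissivity: signal (%) at those times;
    t_sat: time at which the hold temperature (target supersaturation) is reached;
    threshold: fraction of the clear-solution baseline taken as the nucleation signal.
    Returns None when the signal never drops, i.e. no nucleation in the run.
    """
    t = np.asarray(t, dtype=float)
    signal = np.asarray(transmissivity, dtype=float)
    after = t >= t_sat
    if not after.any():
        raise ValueError("trace ends before supersaturation is reached")
    baseline = signal[after][0]  # clear, supersaturated solution
    drops = np.nonzero(after & (signal < threshold * baseline))[0]
    if drops.size == 0:
        return None
    return float(t[drops[0]] - t_sat)
```

Applying this helper to each of the 80-100 repeat traces yields the set of induction times used in the analysis below.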

Data Analysis:

- Construct cumulative probability distributions of induction times at each supersaturation.

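- Fit each distribution by non-linear least squares to the Poisson-based cumulative probability function P(t) = 1 − exp[−JV(t − t_g)], where V is the solution volume. This extracts the nucleation rate (J) and the growth time (t_g), the time needed for a nucleus to grow to a detectable size.

- Rearrange J = AS exp(−B/ln²S) to ln(J/S) = ln A − B/ln²S and plot ln(J/S) versus 1/ln²S. The intercept gives ln A, where A is a kinetic parameter related to molecular attachment frequency. The slope gives −B, where B is a thermodynamic parameter related to the activation energy [17].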
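The sketch below covers both fitting steps. It assumes that every run nucleated within the observation window, so the empirical cumulative probability is built from all n induction times using the (i − 0.5)/n plotting position (an assumption). It also assumes that J follows J = AS exp(−B/ln²S). The linearized form −ln(1 − P) = JV(t − t_g) is used only to seed the non-linear fit done with `scipy.optimize.curve_fit`. Function names are hypothetical.

```python
import numpy as np
from scipy.optimize import curve_fit


def cumulative_probability(t, rate, t_g):
    """Poisson model P(t) = 1 - exp(-rate*(t - t_g)), with rate = J*V (1/s)."""
    return 1.0 - np.exp(-rate * np.clip(t - t_g, 0.0, None))


def fit_nucleation_rate(induction_times, volume):
    """Return (J in m^-3 s^-1, t_g in s) from induction times measured in `volume` (m^3)."""
    t = np.sort(np.asarray(induction_times, dtype=float))
    n = t.size
    P = (np.arange(1, n + 1) - 0.5) / n  # empirical cumulative probability
    # Linearised model -ln(1 - P) = rate*t - rate*t_g supplies starting values
    slope, intercept = np.polyfit(t, -np.log(1.0 - P), 1)
    p0 = [slope, -intercept / slope]
    (rate, t_g), _ = curve_fit(cumulative_probability, t, P, p0=p0)
    return rate / volume, t_g


def fit_cnt(S, J):
    """Fit ln(J/S) = ln(A) - B/ln(S)^2; returns A (from the intercept) and B (from -slope)."""
    S = np.asarray(S, dtype=float)
    x = 1.0 / np.log(S) ** 2
    y = np.log(np.asarray(J, dtype=float) / S)
    slope, intercept = np.polyfit(x, y, 1)
    return np.exp(intercept), -slope
```

Fitting the rate JV instead of J directly keeps the two fitted parameters on comparable numerical scales.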

Protocol 2: Molecular Dynamics Simulation of Phase Competition

This computational protocol investigates synthetic challenges when multiple competing phases exist, as demonstrated for La-Si-P systems [14].

Computational Resources:

- High-performance computing cluster

- Molecular dynamics software (e.g., LAMMPS, GROMACS)

- Accurate interatomic potential (e.g., artificial neural network machine learning potential)

- Structure visualization software

Procedure:

- System Setup: Construct simulation cells containing the atomic species of interest in proportions corresponding to target and competing phases.

- Potential Development: Train or select an artificial neural network machine learning interatomic potential that accurately reproduces known structural and energetic properties of related compounds.

- Equilibration: Bring the system to equilibrium at the target synthesis temperature using NPT or NVT ensembles.

- Growth Simulation: Simulate crystal growth from melt or solution interfaces, monitoring the emergence of different crystalline phases.

- Free Energy Calculation: Compute free energy profiles for the formation of target and competing phases using enhanced sampling methods (e.g., metadynamics, umbrella sampling).

- Kinetic Analysis: Determine energy barriers for phase transformations and quantify growth velocities of different phases.

Data Analysis:

- Identify the rapidly forming crystalline phases that act as kinetic barriers to target phase formation.

- Calculate the relative growth kinetics of competing phases from solid-liquid interfaces.

- Determine the narrow temperature windows where target phase growth may be favored over competing phases [14].

- Validate simulation predictions against experimental attempts at synthesis.
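The sketch below post-processes the data-analysis steps. The interface velocity of each phase is taken as the slope of a linear fit of interface position against simulation time. The favored window is simply the set of simulated temperatures at which the target phase grows faster than its competitor. It assumes interface positions have already been extracted from the trajectories (for example via order parameters). The helper names and inputs are hypothetical.

```python
import numpy as np


def growth_velocity(times_ps, interface_positions_nm):
    """Interface velocity in m/s from a linear fit of position (nm) vs time (ps)."""
    slope, _ = np.polyfit(np.asarray(times_ps, dtype=float),
                          np.asarray(interface_positions_nm, dtype=float), 1)
    return slope * 1.0e3  # 1 nm/ps = 1e-9 m / 1e-12 s = 1000 m/s


def favored_temperatures(temperatures, v_target, v_competitor):
    """Temperatures at which the target phase outgrows the competing phase."""
    T = np.asarray(temperatures, dtype=float)
    return T[np.asarray(v_target, dtype=float) > np.asarray(v_competitor, dtype=float)]
```

With several competing phases, the same comparison would be made against the fastest competitor at each temperature.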

Diagram 1: Isothermal Induction Time Measurement Workflow

Advanced Synthesis: Exploiting Metastability in Material Design

Controlled Transitions Between Metastable States

In self-assembling systems, multiple metastable states often coexist for a fixed number of particles, each with different symmetrical features. Controlled transitions between these states can be achieved through external fields, as demonstrated in 2D magnetocapillary crystals [19]. For instance, applying a horizontal magnetic field component (B_x or B_y) to a crystal under a constant vertical field (B_z) modifies the pairwise interaction potential according to:

u_ij = −K₀(x_ij) + (M_c / x_ij³)(1 + β² − 3β² cos² θ_ij)

where K₀ is the modified Bessel function of the second kind of order zero (the capillary attraction term), β = B_x/B_z, x_ij is the normalized distance, and θ_ij is the angle between the inter-particle vector and the x-axis [19]. Following specific cycles in the horizontal field plane (B_x, B_y) deforms and reorganizes the entire crystal. On returning to the initial field conditions, the crystal may relax into a different metastable state. This approach enables navigation between different symmetrical configurations of the same number of particles, a key capability for functionalizing self-assembled structures.
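The interaction law can be evaluated directly. In the minimal sketch below, `scipy.special.k0` supplies K₀, and x_ij, θ_ij, β, and M_c are passed in as the dimensionless quantities of the equation above. The source gives no numerical value for M_c, so the example value is arbitrary. The sketch shows how tilting the field (β > 0) makes the dipolar term anisotropic.

```python
import numpy as np
from scipy.special import k0


def pair_potential(x, theta, beta, Mc):
    """Dimensionless magnetocapillary pair energy u_ij.

    x: normalized separation x_ij (> 0); theta: angle (rad) between the pair
    vector and the x-axis; beta = B_x / B_z; Mc: dipolar prefactor M_c.
    First term: capillary attraction -K0(x). Second term: dipole-dipole
    interaction, which becomes angle-dependent once beta != 0.
    """
    x = np.asarray(x, dtype=float)
    anisotropy = 1.0 + beta**2 - 3.0 * beta**2 * np.cos(theta) ** 2
    return -k0(x) + Mc / x**3 * anisotropy


if __name__ == "__main__":
    for theta in (0.0, np.pi / 2):
        print(theta, pair_potential(2.0, theta, beta=0.5, Mc=1.0))
```

Along the field tilt (θ = 0), the dipolar factor reduces to 1 − 2β², which turns negative for β > 1/√2. Perpendicular to the tilt (θ = π/2), it is 1 + β².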

Machine Learning for Predicting Kinetic Synthesizability

Traditional synthesizability assessment based solely on thermodynamic formation energy fails to account for kinetic accessibility. Recent advances employ machine learning to predict synthesizability directly from compositional or structural data. The Crystal Synthesis Large Language Model (CSLLM) framework uses three specialized LLMs to predict the synthesizability of arbitrary 3D crystal structures, suggest synthetic methods, and identify suitable precursors [20]. This approach achieves 98.6% accuracy in synthesizability prediction, significantly outperforming traditional methods based on energy above convex hull (74.1% accuracy) or phonon stability (82.2% accuracy) [20]. Similarly, SynthNN, a deep learning synthesizability model, leverages the entire space of synthesized inorganic chemical compositions and outperforms both DFT-based methods and human experts in identifying synthesizable materials [7]. SynthNN in particular learns chemical principles such as charge-balancing and ionicity directly from the data, without prior chemical knowledge being built in. This enables more reliable prediction of kinetically accessible materials [7].
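Charge balancing, one of the principles SynthNN is reported to learn, can also be written as an explicit rule-based filter, a common simple baseline for synthesizability. The minimal sketch below assumes a small hand-picked oxidation-state table (illustrative, not exhaustive) and allows only a single oxidation state per element.

```python
from itertools import product

# Hypothetical, hand-picked oxidation-state table (illustrative only)
OXIDATION_STATES = {
    "Na": (1,), "K": (1,), "Mg": (2,), "Ca": (2,), "Al": (3,),
    "Fe": (2, 3), "Mn": (2, 3, 4), "Ti": (2, 3, 4), "La": (3,),
    "O": (-2,), "S": (-2,), "Cl": (-1,), "F": (-1,), "P": (-3,),
}


def is_charge_balanced(composition):
    """True if some assignment of listed oxidation states gives zero net charge.

    composition maps element symbols to integer counts, e.g. {"Fe": 2, "O": 3}.
    Each element takes one oxidation state (mixed valence is not allowed).
    """
    elements = list(composition)
    if any(el not in OXIDATION_STATES for el in elements):
        return False  # unknown element: cannot be checked, treat as unbalanced
    for states in product(*(OXIDATION_STATES[el] for el in elements)):
        if sum(q * composition[el] for q, el in zip(states, elements)) == 0:
            return True
    return False


if __name__ == "__main__":
    for comp in ({"Na": 1, "Cl": 1}, {"Fe": 2, "O": 3}, {"Fe": 3, "O": 4}):
        print(comp, is_charge_balanced(comp))
```

Magnetite (Fe₃O₄) is rejected because the sketch forbids mixed valence. This is a simple example of why fixed rules can miss real, synthesizable compounds that a learned model may still recognize.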

Table 3: Key Research Tools for Kinetic Studies of Crystallization

| Tool/Resource | Function/Application | Key Features |
|---|---|---|
| Crystal16 | Automated parallel crystallization screening | Measures induction times via transmissivity; built-in CNT analysis [17] |
| Artificial Neural Network (ANN) Potentials | Molecular dynamics simulations of complex systems | Accurate and efficient interatomic potentials for studying phase competition [14] |
| Electrostatic Levitator | Containerless study of supercooled liquids | Enables studies of metastable liquids at extreme temperatures (> 3000 K) [21] |
| CSLLM Framework | Predicting synthesizability and precursors | Three LLMs for synthesizability, methods, and precursors; >90% accuracy [20] |
| Helmholtz Coil System | Controlled magnetic field application | Tri-axial system for imposing arbitrary magnetic fields to trigger state transitions [19] |

Diagram 2: Energy Landscape and Kinetic Pathways in Crystallization

The kinetic perspective reveals that the synthesizability of crystalline materials is not determined solely by thermodynamic stability but by the complex interplay of energy barriers, nucleation rates, and the strategic manipulation of metastable states. This understanding transforms materials design from a search for global minima into a deliberate navigation of energy landscapes. Key principles emerge: metastable states often form first according to Ostwald's Rule, controlled by kinetic accessibility rather than thermodynamic stability; nucleation kinetics can be quantitatively predicted and manipulated through supersaturation control, interface engineering, and external fields; and synthetic outcomes depend critically on managing phase competition through understanding relative growth kinetics.

The integration of advanced computational methods—from machine-learned interatomic potentials predicting phase competition to large language models assessing synthesizability—with precise experimental protocols for kinetic analysis represents a powerful framework for future materials discovery. This kinetic-centric approach enables researchers to not only explain why certain predicted materials resist synthesis but to design strategies to overcome these barriers, opening pathways to previously inaccessible functional materials with tailored properties for pharmaceutical, energy, and advanced technological applications.

The solid-state form of an Active Pharmaceutical Ingredient (API)—whether crystalline, amorphous, or as a cocrystal—is a fundamental Critical Quality Attribute (CQA) that dictates its real-world therapeutic and manufacturable potential [22] [23]. A drug candidate must not only demonstrate potent interaction with its biological target but must also be capable of being consistently synthesized, formulated into a stable dosage form, and maintain its integrity throughout its shelf life to deliver the intended therapeutic effect [24]. This creates a complex interplay between the thermodynamic stability of the solid form, which governs its intrinsic solubility and dissolution rate, and its kinetic synthesizability, which determines the feasibility of manufacturing it on a practical scale [25] [22].

This guide examines the critical relationships between a drug's solid-state properties and its key development outcomes. It details how thermodynamic and kinetic principles provide a predictive framework for understanding a drug's aqueous solubility (and hence its bioavailability), its chemical stability over time (shelf-life), and the very feasibility of its synthesis. Furthermore, we explore how advanced computational and experimental methods are used to de-risk drug development by providing atomistic insights and quantitative predictions of these properties early in the discovery pipeline [26] [22] [27].

Core Concepts: Thermodynamic Stability vs. Kinetic Synthesizability

Defining the Paradigm

In pharmaceutical development, thermodynamic stability and kinetic synthesizability represent two distinct but equally critical axes for evaluating a drug candidate.

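- Thermodynamic Stability refers to the state of lowest free energy under a given set of conditions (e.g., temperature, pressure). For crystals, the most stable polymorph under those conditions is the least soluble. In monotropic systems it also has the highest melting point. In enantiotropic systems, the stability order reverses at a transition temperature below the melting points. This stability is advantageous for shelf-life, but the accompanying low solubility can be detrimental to bioavailability [27].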

- Kinetic Synthesizability refers to the feasibility and rate at which a particular solid form (including metastable polymorphs) can be produced. A structure may be thermodynamically metastable but kinetically persistent and readily synthesizable, making it a viable development candidate [25].

The following table summarizes the key distinctions and their pharmaceutical impacts.

Table 1: Contrasting Thermodynamic Stability and Kinetic Synthesizability in Drug Development

| Feature | Thermodynamic Stability | Kinetic Synthesizability |
|---|---|---|
| Core Principle | Governed by global minimum in free energy (e.g., Gibbs free energy). | Governed by the energy pathway and activation barriers of formation. |
| Primary Pharmaceutical Impact | Determines intrinsic solubility, dissolution rate, and ultimate bioavailability. | Determines the feasibility of manufacturing a consistent solid form at scale. |
| Relationship to Polymorphism | The most stable polymorph has the lowest solubility and highest lattice energy. | Metastable polymorphs, which may have higher solubility, can be kinetically trapped. |
| Computational Prediction | Modeled via crystal structure prediction (CSP) and lattice energy minimization [22] [27]. | Assessed via complex models (e.g., CSLLM, basin hypervolume) beyond simple energy-above-hull [25]. |
| Risk Factor | Low solubility leading to poor efficacy. | Failure to consistently crystallize the desired form; unexpected phase transitions during storage. |

The Thermodynamic-Kinetic Nexus in Drug Properties

The interplay between these concepts directly influences critical drug properties. For instance, a metastable polymorph offers a kinetic advantage of higher solubility and faster dissolution, but carries the thermodynamic risk of converting to a more stable, less soluble form over time, compromising product performance [22]. Similarly, synthesizability is not merely a matter of whether a crystal can form, but also which form appears fastest under given reaction conditions. A compound like ABT-072 exhibited diverse polymorphism due to its molecular flexibility, presenting a significant kinetic challenge in isolating a single pure form, whereas the more rigid ABT-333 had a much simpler polymorph landscape [22].

Impact on Drug Solubility and Efficacy

The Solubility Challenge and Thermodynamic Drivers

Poor aqueous solubility is a predominant hurdle in modern drug development, as it directly limits the amount of drug available for absorption into the bloodstream (bioavailability). The thermodynamic basis of solubility is elegantly described by a solubility thermodynamic cycle, which decomposes the process into two steps [27]:

- Sublimation: Breaking apart the crystalline lattice to bring a molecule into the gas phase. The free energy for this step, ( \Delta G_{sub}^o ), is a direct measure of the crystal lattice stability.

- Solvation: Transferring the gas-phase molecule into the aqueous solution. The free energy for this step is ( \Delta G_{solv}^o ).

The overall standard-state solubility free energy is the sum ( \Delta G_{solubility}^o = \Delta G_{sub}^o + \Delta G_{solv}^o ) [27]. This equation highlights that a high lattice energy (a larger, more positive ( \Delta G_{sub}^o )) opposes solubility, while favorable interactions with water (a more negative ( \Delta G_{solv}^o )) promote it.
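The worked sketch below applies this cycle. It assumes both free energies (kJ·mol⁻¹) refer to consistent standard states, so that ΔG°_solubility = −RT ln(S/c°) with c° = 1 mol·L⁻¹. The input values are hypothetical.

```python
import math

R = 8.314462618e-3  # gas constant, kJ/(mol K)


def solubility_from_cycle(dG_sub, dG_solv, T=298.15, c_standard=1.0):
    """Molar solubility (mol/L) from sublimation and solvation free energies (kJ/mol)."""
    dG_solubility = dG_sub + dG_solv  # cycle: crystal -> gas -> aqueous solution
    return c_standard * math.exp(-dG_solubility / (R * T))


if __name__ == "__main__":
    base = solubility_from_cycle(dG_sub=100.0, dG_solv=-90.0)
    stiffer = solubility_from_cycle(dG_sub=105.7, dG_solv=-90.0)  # more stable lattice
    print(f"{base:.3g} mol/L -> {stiffer:.3g} mol/L")
```

At 298 K, RT ln 10 ≈ 5.7 kJ·mol⁻¹. Each additional ~5.7 kJ·mol⁻¹ of lattice stability in ( \Delta G_{sub}^o ), with solvation unchanged, therefore lowers the predicted solubility about tenfold.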

Table 2: Key Thermodynamic and Kinetic Parameters in Solubility and Synthesis

| Parameter | Description | Direct Pharmaceutical Implication |
|---|---|---|
| Dissociation Constant (K_d) | Equilibrium constant for drug-target complex dissociation, ( K_d = k_{off}/k_{on} ) [26] | Lower K_d indicates higher binding affinity and potency. |
| Residence Time | Reciprocal of the dissociation rate constant, ( \tau = 1/k_{off} ) [26] | Longer residence time often correlates with prolonged efficacy in vivo. |
| Sublimation Enthalpy (( \Delta H_{sub} )) | Energy required to transfer one mole of a solid to its gas phase [28]. | Directly correlates with lattice energy; higher ( \Delta H_{sub} ) typically means lower solubility. |
| Energy Above Hull | Measure of a compound's thermodynamic stability relative to its competing phases [25]. | Standard metric for synthesizability screening; a positive value indicates metastability. |
| CLscore | A machine-learning-based score predicting the synthesizability of a crystal structure [25]. | Scores below 0.1 indicate non-synthesizable structures; used to generate negative training data. |
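The kinetic and equilibrium binding quantities in the first two rows are linked by simple arithmetic. The minimal sketch below uses hypothetical rate constants, and its free-energy conversion assumes a 1 M standard state.

```python
import math

R = 8.314462618e-3  # gas constant, kJ/(mol K)


def binding_summary(k_on, k_off, T=298.15):
    """Return K_d (M), residence time tau (s), and binding free energy (kJ/mol).

    k_on in 1/(M s), k_off in 1/s; dG_bind = RT ln(K_d / 1 M).
    """
    K_d = k_off / k_on
    tau = 1.0 / k_off
    dG_bind = R * T * math.log(K_d / 1.0)
    return K_d, tau, dG_bind


if __name__ == "__main__":
    print(binding_summary(k_on=1.0e6, k_off=1.0e-3))  # hypothetical inhibitor
```

Two compounds with the same K_d can differ greatly in residence time if k_on and k_off change together. This is why both quantities are tracked.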

Lattice Energy and Solubility: A Case Study

The inverse relationship between crystal stability and solubility is clearly demonstrated in cocrystals of the antitubercular drug isoniazid. Research showed that the sublimation enthalpy (( \Delta H_{sub} )), a proxy for lattice energy, was defined by the coformer molecule. For isoniazid cocrystals with aliphatic dicarboxylic acids, ( \Delta H_{sub} ) ranged from 185 to 200 kJ·mol⁻¹, and a direct linear correlation was established: increased stability (higher ( \Delta H_{sub} )) resulted in decreased solubility [28]. This provides a quantitative design rule for formulating soluble yet stable cocrystals.

Conformational Flexibility and Polymorphism

Molecular conformation in the solid state significantly impacts packing efficiency and stability. A comparative study of HCV drugs ABT-072 and ABT-333 illustrated this. ABT-072, with a flexible trans-olefin group, adopted various conformations to stabilize crystal packing, leading to a diverse and complex polymorph landscape. In contrast, the more rigid ABT-333, with a naphthyl group, exhibited a much simpler polymorph landscape with only one dominant low-energy structure [22]. This conformational flexibility, while enabling multiple polymorphs, introduces significant development risk regarding which form—and therefore which solubility profile—will be consistently manufactured.

Implications for Drug Shelf-Life and Stability

Kinetic Modeling of Drug Degradation

A drug's shelf-life is determined by its chemical and physical stability under storage conditions. Degradation kinetic studies are essential to ascertain the rate at which a drug degrades under various environmental stressors (e.g., temperature, humidity, pH) and to predict its expiration date [29]. The order of the degradation reaction is a critical characteristic determined through these studies.

Table 3: Common Orders of Drug Degradation Reactions and Associated Kinetics

| Reaction Order | Rate Law | Integrated Rate Equation | Half-Life (t₁/₂) Equation | Pharmaceutical Examples |
|---|---|---|---|---|
| Zero-Order | ( r = k_0 ) | ( A_t = A_0 - k_0 t ) | ( t_{1/2} = A_0 / (2k_0) ) | Degradation of atorvastatin under basic hydrolysis [29]. |
| First-Order | ( r = k_1 [A] ) | ( \ln A_t = \ln A_0 - k_1 t ) | ( t_{1/2} = \ln 2 / k_1 ) | Degradation of imidapril hydrochloride (hydrolytic) and meropenem (thermal) [29]. |
| Pseudo-First-Order | ( r = k' [A] ) | ( \ln A_t = \ln A_0 - k' t ) | ( t_{1/2} = \ln 2 / k' ) | Common in solid dosage forms and suspensions where the concentration of one reactant remains constant [29]. |
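The half-life expressions in Table 3 can be evaluated as in the sketch below, together with t_90, the time to 10% loss. For each order, t_90 follows from setting A_t = 0.9A_0 in the integrated equation. The inputs are hypothetical.

```python
import math


def zero_order_times(A0, k0):
    """(t_1/2, t_90) for A_t = A0 - k0*t; t_90 is the time to 10 % loss."""
    return A0 / (2.0 * k0), 0.1 * A0 / k0


def first_order_times(k1):
    """(t_1/2, t_90) for ln A_t = ln A0 - k1*t; independent of A0."""
    return math.log(2.0) / k1, math.log(1.0 / 0.9) / k1


if __name__ == "__main__":
    print(zero_order_times(A0=100.0, k0=2.0))  # A0 in % label claim, k0 in %/month (hypothetical)
    print(first_order_times(k1=0.01))          # k1 in 1/month (hypothetical)
```

The zero-order half-life scales with A_0, whereas the first-order value depends only on k_1. Establishing the reaction order therefore matters before any shelf-life is quoted.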

Zero-order kinetics are also applied beyond pharmaceuticals. For example, quality loss in dried coconut chips (color, texture, and rancidity) has been successfully modeled using zero-order kinetics [30]. For this product, the change in peroxide value (PV) was a key indicator of rancidity. With an activation energy (( E_a )) of 11.83 kJ·mol⁻¹, shelf-life could be predicted at different storage temperatures [30].

Solid-State Decomposition Kinetics

For solid APIs, decomposition often follows complex pathways. A kinetic study of the redox therapeutic MnTE-2-PyPCl₅ investigated its primary solid-state degradation pathway: loss of ethyl chloride via N-dealkylation. Using isoconversional models and artificial neural network analysis, the study determined an average activation energy (( E_a )) of ~90 kJ·mol⁻¹. By modeling the isothermal decomposition data, the shelf-life for 10% decomposition (( t_{90\%} )) at 25°C was estimated to be approximately 17 years, providing critical data for its formulation, handling, and storage [31].

Predicting Synthesizability and Stability

Computational Crystal Structure Prediction

Crystal Structure Prediction (CSP) has become an indispensable tool for mapping the polymorphic landscape of an API. Modern CSP workflows use dispersion-corrected density functional theory (DFT-D) to rank predicted crystal packings by their lattice energy, identifying the global minimum (most thermodynamically stable form) and low-lying metastable structures [22] [27]. This provides atomistic insights into the relationship between molecular structure, intermolecular interactions, and observed solid-state properties.

To address the challenge of hydrate formation, algorithms like the Mapping Approach for Crystalline Hydrates (MACH) have been developed. MACH efficiently predicts stable hydrate structures by inserting water molecules into anhydrous crystal frameworks based on topological analysis, enabling early assessment of a critical solubility and stability risk [22].

Machine Learning and Large Language Models

Traditional synthesizability screening relied on thermodynamic metrics like energy above hull, which fails to account for kinetic barriers to synthesis. A groundbreaking approach, the Crystal Synthesis Large Language Model (CSLLM) framework, uses fine-tuned LLMs to predict the synthesizability of arbitrary 3D crystal structures. The model achieved a state-of-the-art accuracy of 98.6%, significantly outperforming screening based on energy above hull (74.1%) or phonon stability (82.2%) [25]. This demonstrates the power of data-driven models to learn complex synthesizability rules beyond simple thermodynamic stability.

Free Energy Perturbation for Solubility Prediction

Physics-based simulations are now capable of quantitative solubility prediction. By combining CSP with Molecular Dynamics (MD) and Free Energy Perturbation (FEP) methods, researchers can predict the aqueous solubility of crystalline compounds from first principles. The process involves using FEP to compute the free energy change for transferring a molecule from the crystal into solution, effectively capturing the contributions of crystal packing and solvation [22]. This approach was successfully applied to a series of n-alkylamides, with calculated solubility free energies accurate to within 1.1 kcal/mol on average [27].

The Scientist's Toolkit: Essential Reagents and Methods

Table 4: Key Research Reagent Solutions and Experimental Methods

| Tool / Reagent | Category | Primary Function in R&D |
|---|---|---|
| Copovidone (PVP-VA64) | Polymer / Excipient | A common water-soluble polymer carrier used in Hot-Melt Extrusion (HME) to form amorphous solid dispersions, enhancing API solubility [23]. |
| Isothermal Titration Calorimetry (ITC) | Biophysical Instrument | Measures the heat change associated with molecular binding, providing direct measurement of binding affinity (K_d), stoichiometry (n), and thermodynamic parameters (ΔH, ΔS) [26]. |
| Surface Plasmon Resonance (SPR) | Biophysical Instrument | A label-free technique for monitoring biomolecular interactions in real time, used to determine binding kinetics (k_on, k_off) and affinity (K_d) [26]. |
| Differential Scanning Calorimetry (DSC) | Thermal Analysis | Determines melting point, glass transition temperature, and polymorphic transitions. Used to construct temperature-composition phase diagrams for HME [23]. |
| X-Ray Powder Diffraction (XRPD) | Structural Analysis | The definitive technique for identifying crystalline phases, quantifying polymorphism, and monitoring solid-form transformations in situ [23]. |
| Thermogravimetric Analysis (TGA) | Thermal Analysis | Measures weight loss as a function of temperature, used to study dehydration, desolvation, and thermal decomposition kinetics of APIs [31]. |

Experimental Protocols

Protocol for In-Situ Monitoring of API Dissolution Kinetics in a Polymer Matrix

This protocol is used to guide the design of Hot-Melt Extrusion (HME) processes by quantifying the dissolution rate of a crystalline API into a polymer [23].

- Sample Preparation: Prepare physical mixtures of the crystalline API (e.g., Acetaminophen or Indomethacin) and the polymer (e.g., Copovidone) at the desired drug load (e.g., 25% w/w). Different API particle size distributions (PSDs) may be used to study the impact of surface area.

- In-Situ XRPD Measurement:

  - Load the sample into a high-temperature XRPD stage.

  - Ramp the temperature to the target isothermal experimental temperature (e.g., 130°C) at a controlled rate.

  - Once the temperature stabilizes, collect successive XRPD patterns over time.

  - Monitor the intensity of a characteristic crystalline API peak as a function of time.

- Data Analysis (XRPD): Plot the normalized intensity of the chosen API peak against time. The time required for the peak to disappear indicates complete dissolution of the crystalline API into the amorphous polymer matrix at that temperature and drug load.

- Complementary DSC Measurement:

  - For faster dissolution processes that are difficult to capture with XRPD, use a standard DSC.

  - Seal the physical mixture in a DSC pan and heat it to the target temperature at a very high heating rate (e.g., 50 K/min) to minimize dissolution during the heat-up phase.

  - Hold the sample isothermally for varying times (t), then quench it to room temperature.

  - Analyze the quenched sample by DSC to measure the residual enthalpy of fusion (( \Delta H_{t} )) of the API.

- Data Analysis (DSC): Plot the residual enthalpy (( \Delta H_{t} )) against the isothermal hold time. The dissolution progress is ( \alpha = 1 - \Delta H_{t} / \Delta H_{0} ), where ( \Delta H_{0} ) is the API enthalpy of fusion measured for the initial physical mixture (no isothermal hold). These data can be fitted to a kinetic model (e.g., model-free kinetics) to obtain a dissolution rate constant.
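The sketch below covers the DSC analysis step. The protocol suggests model-free kinetics. Purely for illustration, this sketch instead assumes a first-order model, α(t) = 1 − exp(−kt), fitted with `scipy.optimize.curve_fit`. Enthalpy values and hold times are hypothetical.

```python
import numpy as np
from scipy.optimize import curve_fit


def dissolution_progress(dH_t, dH_0):
    """alpha = 1 - dH_t / dH_0 from residual API enthalpies of fusion (same units)."""
    return 1.0 - np.asarray(dH_t, dtype=float) / dH_0


def first_order_alpha(t, k):
    """Assumed first-order dissolution model alpha(t) = 1 - exp(-k t)."""
    return 1.0 - np.exp(-k * t)


def fit_dissolution_rate(hold_times, alpha):
    """Least-squares estimate of k (units: 1 / time unit of hold_times)."""
    t = np.asarray(hold_times, dtype=float)
    (k,), _ = curve_fit(first_order_alpha, t, np.asarray(alpha, dtype=float), p0=[1.0 / t.mean()])
    return k


if __name__ == "__main__":
    t = np.array([1.0, 2.0, 5.0, 10.0, 20.0])  # hold times, min (hypothetical)
    dH_t = 50.0 * np.exp(-0.15 * t)              # synthetic residual enthalpies, J/g
    print(fit_dissolution_rate(t, dissolution_progress(dH_t, 50.0)))
```

Repeating the fit at several temperatures gives rate constants that can feed an Arrhenius analysis like the one used for degradation below.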

Protocol for Determining Solid-State Degradation Kinetics and Shelf-Life

This protocol outlines the steps for determining the kinetic parameters of API decomposition and estimating shelf-life using accelerated stability testing [31].

- Isothermal TGA Experiment:

  - Using a thermogravimetric analyzer, heat multiple samples of the API (e.g., MnTE-2-PyPCl₅) rapidly to different isothermal temperatures (e.g., 158, 160, 162, and 164°C).

  - Hold the samples at each temperature for a fixed period (e.g., 60 minutes) and record the mass loss as a function of time.

  - Calculate the decomposition progress (( \alpha )) from the mass loss data.

- Kinetic Model Fitting:

  - Fit the isothermal ( \alpha ) vs. time data to various solid-state kinetic models (e.g., the R1 and R2 contracting-geometry models).

  - Modern approaches may use an artificial neural network (Multilayer Perceptron, MLP) to evaluate multiple models simultaneously and identify the best-fitting mechanism(s).

- Arrhenius Plot and Activation Energy:

  - For each temperature, determine the rate constant (( k )) from the best-fit model.

  - Construct an Arrhenius plot of ( \ln k ) against the reciprocal absolute temperature (( 1/T )).

  - The slope of the linear fit is ( -E_a / R ), from which the activation energy (( E_a )) is calculated.

- Shelf-Life Extrapolation:

  - Using the Arrhenius equation, extrapolate the degradation rate constant to the desired storage temperature (e.g., ( k_{25} ) at 25°C).

  - Apply the appropriate kinetic model equation (e.g., first-order, or the solid-state model identified above, such as R1) to calculate the time for 10% degradation (( t_{90\%} )), a common shelf-life marker.
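The Arrhenius and extrapolation steps can be sketched as follows, assuming the rate constants have already been extracted at each isothermal temperature. The R1 expression (α = kt) and the first-order expression are the two example models named above, and t_90 follows from setting α = 0.1. The test values are hypothetical. Extrapolating far below the measured range assumes the decomposition mechanism does not change.

```python
import numpy as np

R = 8.314462618  # gas constant, J/(mol K)


def arrhenius_fit(T_celsius, k):
    """Fit ln k = ln A - Ea/(R T); returns (Ea in J/mol, A in the units of k)."""
    inv_T = 1.0 / (np.asarray(T_celsius, dtype=float) + 273.15)
    slope, intercept = np.polyfit(inv_T, np.log(np.asarray(k, dtype=float)), 1)
    return -slope * R, np.exp(intercept)


def t90(Ea, A, T_celsius=25.0, model="R1"):
    """Time to 10 % decomposition at T_celsius from the extrapolated rate constant.

    model="R1":    alpha = k t           -> t90 = 0.1 / k
    model="first": -ln(1 - alpha) = k t  -> t90 = ln(1/0.9) / k
    The result is in the time unit of 1/k (e.g. minutes if k is per minute).
    """
    k_T = A * np.exp(-Ea / (R * (T_celsius + 273.15)))
    if model == "R1":
        return 0.1 / k_T
    if model == "first":
        return np.log(1.0 / 0.9) / k_T
    raise ValueError("model must be 'R1' or 'first'")
```

Because the fitted temperatures span only a few degrees, small errors in the slope are amplified at 25°C. Reported t_90 values should therefore carry the uncertainty of ( E_a ).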

The journey from a potent molecule to a safe and effective medicine is paved with the complex realities of its solid state. A deep understanding of the interplay between thermodynamic stability and kinetic synthesizability is no longer a niche concern but a central pillar of successful drug development. As computational power and algorithms advance, the ability to predict solubility, polymorphism, and stability from a molecule's structure continues to improve. Frameworks like CSLLM for synthesizability prediction and end-to-end physics-based modeling combining CSP, FEP, and MD for solubility profiling are revolutionizing the field, allowing scientists to de-risk candidates earlier and design better drugs with a higher probability of technical and therapeutic success. The future of drug development lies in the continued integration of these sophisticated computational and experimental tools to navigate the intricate energy landscapes of crystalline materials, ensuring that promising therapeutic candidates can be reliably manufactured as stable, bioavailable, and long-lasting medicines.

This case study examines the challenge of establishing a thermodynamic stability relationship between two polymorphs of a developmental active pharmaceutical ingredient (API) highly prone to solvate formation. The compound, a sodium salt of an anthranilic acid amide targeting the Kv1.5 potassium channel, presented unusual crystallization behavior, forming solvates from virtually all organic solvents tested. Through the integrated application of thermal, solution, and eutectic melting analysis, this investigation demonstrates a methodological framework for polymorph stability determination when conventional slurry experiments are precluded by solvate formation tendencies. The findings offer significant implications for solid-form selection strategies within pharmaceutical development, particularly for compounds exhibiting similar crystallization challenges.

In pharmaceutical development, crystal polymorphism—the ability of a compound to exist in multiple crystalline structures—profoundly impacts API properties including solubility, stability, and bioavailability [32]. The thermodynamic stability relationship between polymorphs is a critical selection criterion to minimize the risk of solid-form transitions during manufacturing and storage [33]. While competitive slurry experiments typically establish this relationship, certain compounds present exceptional challenges that render standard methodologies ineffective.

The subject of this case study, an anthranilic acid amide derivative (hereafter referred to as API), exhibited a pronounced tendency toward solvate formation from all tested organic solvents, with solvent-free polymorphs obtainable only through controlled desolvation processes. This behavior necessitated alternative approaches to determine the thermodynamic stability of two resulting solvent-free polymorphs. The study exemplifies the broader scientific tension between thermodynamic stability, which dictates the ultimate equilibrium state, and kinetic synthesizability, which often determines the initially obtained solid form [1].

Theoretical Framework: Thermodynamic vs. Kinetic Stability

Fundamental Concepts

Thermodynamic stability refers to the state of lowest free energy (G) under given conditions. For polymorphic systems, the thermodynamically stable form has the lowest chemical potential and is therefore the least soluble among its polymorphs under fixed temperature and pressure conditions [33] [1]. In contrast, kinetic stability describes the persistence of a metastable state due to energy barriers that impede transformation to the thermodynamic minimum. This metastability arises from activation energy barriers that must be overcome for molecular reorganization to occur [1].

The relationship between these stability types can be visualized through energy landscapes, where local minima represent metastable forms and the global minimum corresponds to the thermodynamically stable form [1]. The following diagram illustrates this conceptual framework for polymorphic systems:

Diagram: Energy landscape illustrating kinetic vs. thermodynamic stability in polymorphic systems. Kinetic products form first due to lower activation barriers (EA1), while thermodynamic products are more stable but require higher energy pathways (EA2) for formation.
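The Arrhenius law shows how modest barrier differences translate into large rate advantages for the kinetic product. The minimal sketch below assumes equal pre-exponential factors and uses hypothetical barrier values.

```python
import math

R = 8.314462618  # gas constant, J/(mol K)


def kinetic_rate_ratio(Ea1, Ea2, T, A1=1.0, A2=1.0):
    """Rate ratio k1/k2 for two competing pathways (Arrhenius; Ea in J/mol, T in K)."""
    return (A1 / A2) * math.exp(-(Ea1 - Ea2) / (R * T))


if __name__ == "__main__":
    # Kinetic form: EA1 = 60 kJ/mol; thermodynamic form: EA2 = 70 kJ/mol (hypothetical)
    for T in (278.15, 298.15, 348.15):
        print(T, round(kinetic_rate_ratio(60e3, 70e3, T), 1))
```

With EA1 < EA2, the ratio exceeds 1 and shrinks as temperature rises. Differences in the pre-exponential factors, which this sketch sets equal, can shift the balance further.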

Implications for Pharmaceutical Development

The kinetic versus thermodynamic stability relationship directly impacts pharmaceutical development strategies. While kinetically stabilized forms often crystallize first due to lower activation energy barriers, they pose conversion risks during processing or storage [34]. Consequently, regulatory authorities typically prefer thermodynamically stable forms for drug products due to their predictable long-term behavior, necessitating robust analytical methods to identify these forms early in development [33].

Experimental System and Challenges

Compound Characteristics

The API investigated is the sodium salt of 5-Fluoro-N-[(S)-1-phenyl-propyl]-2-(quinoline-8-sulfonylamino)-benzamide, a potassium channel blocker intended for the treatment of atrial arrhythmia. Initial crystallization screening revealed an exceptional propensity for solvate formation: solvates crystallized from all tested organic solvents. The only exception was water, in which the API displayed apparently infinite solubility [33].

Solid Form Landscape

Two solvent-free polymorphs were isolated through careful drying of specific solvate precursors:

- Polymorph 1: Lower-melting form (melts 35°C below Polymorph 2)

- Polymorph 2: Higher-melting form

The solvate formation tendency precluded standard slurry conversion experiments, as both polymorphs consistently converted to solvates in solvent-mediated environments. This limitation necessitated alternative approaches to establish their thermodynamic relationship [33].

Methodological Approaches

Experimental Workflow

The investigation employed multiple complementary techniques to overcome the solvate formation challenge, following the integrated workflow illustrated below:

Diagram: Experimental workflow for polymorph stability determination when slurry experiments are precluded by solvate formation.

Research Reagents and Materials

Table: Key Research Reagents and Experimental Materials

| Reagent/Material | Specification | Function/Application |
|---|---|---|
| API Samples | Chemical purity >99%, phase pure | Ensure reliable thermal and solution measurements |
| Benzanilide | Purity >99.5% | Reference compound for thermomicroscopy calibration |
| Differential Scanning Calorimeter (DSC) | - | Determine melting points and enthalpies of fusion |
| Solution Calorimeter | - | Measure heats of solution for polymorphs |
| X-ray Powder Diffractometer (XRPD) | Transmission geometry with Cu Kα radiation | Verify phase purity and monitor structural changes |

Detailed Experimental Protocols

Thermal Analysis Protocol

Objective: Determine thermodynamic stability relationship from melting data.

- Sample Preparation: Load 2-5 mg of each polymorph into sealed DSC pans with pinhole lids

- Heating Protocol: Heat samples at 0.5-2°C/min under nitrogen purge (50 mL/min)

- Data Collection: Record melting onset temperatures (Tm) and enthalpies of fusion (ΔHf)

- Analysis: Apply the heat-of-fusion rule of Burger and Ramberger. If the higher-melting form has the lower heat of fusion, the forms are usually enantiotropically related; otherwise they are monotropically related
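The decision step of this protocol can be written as a small function. This is a minimal sketch of the heat-of-fusion rule as stated above. The optional `tolerance_kj` parameter is an added assumption: it flags enthalpy differences that fall within measurement uncertainty, which is where this empirical rule is weakest. The class and function names and all values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MeltingData:
    name: str
    tm_c: float    # melting onset temperature, degC
    dhf_kj: float  # enthalpy of fusion, kJ/mol


def heat_of_fusion_rule(a: MeltingData, b: MeltingData, tolerance_kj: float = 0.0) -> str:
    """Classify a pair of polymorphs with the heat-of-fusion rule.

    Returns 'enantiotropic' if the higher-melting form has the lower enthalpy of
    fusion, 'monotropic' if it has the higher one, and 'inconclusive' if the two
    enthalpies differ by no more than tolerance_kj (e.g. the measurement uncertainty).
    """
    high, low = (a, b) if a.tm_c >= b.tm_c else (b, a)
    diff = high.dhf_kj - low.dhf_kj
    if abs(diff) <= tolerance_kj:
        return "inconclusive"
    return "monotropic" if diff > 0 else "enantiotropic"


# Hypothetical melting data, not the values measured in the case study.
form_1 = MeltingData("Polymorph 1", tm_c=200.0, dhf_kj=40.0)
form_2 = MeltingData("Polymorph 2", tm_c=235.0, dhf_kj=42.0)
print(heat_of_fusion_rule(form_1, form_2))                    # strict rule
print(heat_of_fusion_rule(form_1, form_2, tolerance_kj=3.0))  # within uncertainty
```

The second call shows why "similar" heats of fusion call for corroboration by independent methods, such as the solution calorimetry and eutectic melting protocols described here.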

Results Interpretation: Polymorph 2 showed a substantially higher Tm (ΔTm = 35°C) but a similar ΔHf. This suggests a monotropic relationship, with Polymorph 2 as the thermodynamically stable form at all temperatures below melting [33].

Solution Calorimetry Protocol

Objective: Directly measure enthalpy differences between polymorphs in solution.

- Solvent Selection: Identify appropriate solvent where both forms dissolve without degradation or transformation

- Calibration: Perform electrical calibration of calorimeter

- Measurement: Dissolve precisely weighed samples (5-20 mg) in selected solvent at constant temperature (25°C)

- Replication: Minimum triplicate measurements for each polymorph

- Calculation: Compare mean heats of solution (ΔHsoln)

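Results Interpretation: The more stable form has the lower enthalpy (higher lattice energy), so dissolving it absorbs more heat. The form with the more endothermic (less exothermic) heat of solution is therefore enthalpically more stable, and the difference between the two mean values equals the enthalpy of transition between the forms. The solution calorimetry data identified Polymorph 2 as the lower-enthalpy form, confirming its thermodynamic stability [33].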
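The calculation step follows from Hess's law. Both forms dissolve to the same final solution, so the transition enthalpy is ΔH_trans(1→2) = ΔHsoln(1) − ΔHsoln(2), and a negative value means form 2 has the lower enthalpy. The sketch below assumes replicate values in kJ/mol with endothermic taken as positive. It combines the standard errors of the two means as a simple uncertainty estimate; the function name and the numbers are hypothetical.

```python
import statistics
from typing import Sequence, Tuple


def transition_enthalpy(dh_soln_1: Sequence[float],
                        dh_soln_2: Sequence[float]) -> Tuple[float, float]:
    """Return (dH_trans, standard uncertainty) for the transition form 1 -> form 2.

    dH_trans(1->2) = mean dH_soln(1) - mean dH_soln(2), valid when both forms are
    dissolved in the same solvent, at the same temperature, to the same final
    concentration. A negative value means form 2 has the lower enthalpy.
    """
    m1, m2 = statistics.mean(dh_soln_1), statistics.mean(dh_soln_2)
    se1 = statistics.stdev(dh_soln_1) / len(dh_soln_1) ** 0.5
    se2 = statistics.stdev(dh_soln_2) / len(dh_soln_2) ** 0.5
    return m1 - m2, (se1 ** 2 + se2 ** 2) ** 0.5


# Hypothetical triplicate heats of solution in kJ/mol (endothermic = positive);
# illustrative numbers only, not measurements from the case study.
form_1 = [20.1, 20.4, 19.9]
form_2 = [26.0, 25.7, 26.2]
dh_trans, u = transition_enthalpy(form_1, form_2)
print(f"dH_trans(1->2) = {dh_trans:.2f} +/- {u:.2f} kJ/mol")
```

Triplicate measurements give only a rough uncertainty. A formal test, such as a two-sample t-test, would be the natural next step before calling the difference statistically significant.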

Eutectic Melting Determination Protocol

Objective: Apply the eutectic melting method for stability ranking.

- Mixture Preparation: Prepare intimate physical mixtures of each polymorph with the same reference compound (e.g., 50:50 w/w)

- Thermomicroscopy: Observe melting behavior under a hot-stage microscope

- Eutectic Temperature: For each mixture, identify the temperature at which the first liquid phase appears (eutectic melting)

- Phase Comparison: Compare the eutectic temperatures obtained with the two polymorphs

Results Interpretation: The more stable polymorph has the lower chemical potential, so it is less soluble in the eutectic melt and gives the higher eutectic temperature. Consistent with the other methods, the eutectic data identified Polymorph 2 as the more stable form [33].
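The ranking principle can be checked numerically. In the eutectic melting method as usually practised, each polymorph is mixed with a common second component. This sketch assumes ideal liquid mixing and the Schröder-van Laar liquidus equation, and it neglects heat-capacity terms. It solves for the temperature at which the two liquidus curves meet (x_A + x_B = 1). The melting points and enthalpies below are hypothetical.

```python
import math

R = 8.314462618  # gas constant, J/(mol*K)


def ideal_liquidus_x(t_k: float, tm_k: float, dhf_j: float) -> float:
    """Ideal (Schroeder-van Laar) mole fraction of a component in the melt at T."""
    return math.exp(-dhf_j / R * (1.0 / t_k - 1.0 / tm_k))


def eutectic_temperature(tm_a: float, dhf_a: float, tm_b: float, dhf_b: float) -> float:
    """Temperature (K) where x_A(T) + x_B(T) = 1, found by bisection."""
    def excess(t: float) -> float:
        return ideal_liquidus_x(t, tm_a, dhf_a) + ideal_liquidus_x(t, tm_b, dhf_b) - 1.0

    lo, hi = 1.0, min(tm_a, tm_b)  # excess(lo) < 0 and excess(hi) > 0
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if excess(mid) > 0:
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)


# Hypothetical data: two polymorphs mixed with the same reference compound.
REF_TM, REF_DHF = 440.0, 30e3
te_1 = eutectic_temperature(473.0, 40e3, REF_TM, REF_DHF)  # lower-melting form
te_2 = eutectic_temperature(508.0, 40e3, REF_TM, REF_DHF)  # higher-melting form
print(f"eutectic with form 1: {te_1:.1f} K, with form 2: {te_2:.1f} K")
```

Under these assumptions the higher-melting, less soluble form gives the higher eutectic temperature. Real analyses also use the measured eutectic composition and enthalpy to estimate the free-energy difference itself.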

Results and Comparative Analysis

Comprehensive Stability Assessment

Table: Comparative Thermodynamic Data for Polymorph Stability Assessment

| Method | Polymorph 1 Results | Polymorph 2 Results | Stability Assignment | Key Observations |
|---|---|---|---|---|
| Melting Data | Tm ≈ 200°C | Tm ≈ 235°C | Polymorph 2 stable (monotropic) | Unusually large ΔTm (35°C) with similar ΔHf |
| Solution Calorimetry | Less endothermic ΔHsoln (higher enthalpy) | More endothermic ΔHsoln (lower enthalpy) | Polymorph 2 stable | Statistically significant Δ(ΔHsoln) |
| Eutectic Melting | Lower eutectic temperature with reference compound | Higher eutectic temperature with reference compound | Polymorph 2 stable | Consistent with thermal and solution data |
| Temperature-Resolved XRPD | No solid-solid transition | No solid-solid transition | Polymorph 2 stable at high temperature | Lattice constants of Polymorph 2 more temperature-sensitive |

The convergent results from multiple independent methods established Polymorph 2 as the thermodynamically stable form across the temperature range studied, despite its kinetic inaccessibility through direct crystallization from common solvents.

Discussion

Methodological Considerations

This case study demonstrates that traditional slurry experiments, while preferred for establishing polymorph stability, present limitations for solvate-prone compounds. The integrated approach described herein provides a robust alternative, with solution calorimetry offering particularly decisive evidence through direct measurement of enthalpy differences [33].

The agreement between thermal, solution, and eutectic methods reinforces the reliability of this multimethod approach. While melting data alone suggested monotropy, the combination with solution calorimetry provided comprehensive thermodynamic understanding without requiring potentially problematic solvent-mediated transformations.

Implications for Solid Form Selection

For the subject API, the stability assignment justified development efforts focused on Polymorph 2, despite challenges in its direct crystallization. This approach mitigates the risk of solvent-mediated transformation during drug product manufacturing or storage, ensuring consistent product quality [34] [33].

The methodological framework demonstrates that solvate formation propensity need not preclude thermodynamic stability determination, but rather necessitates sophisticated analytical strategies that circumvent solvent-mediated pathways.

This case study successfully established the thermodynamic stability relationship between two polymorphs of a highly solvate-prone pharmaceutical compound through integrated application of thermal, solution, and eutectic melting analysis. The methodological approach provides a template for addressing similar challenges in pharmaceutical development, particularly for compounds where conventional slurry experiments are precluded by solvate formation tendencies.

The findings reinforce that kinetic accessibility and thermodynamic stability represent distinct considerations in polymorph selection, with the latter proving essential for robust pharmaceutical development. Future methodological advances may further enhance our ability to characterize complex solid-form landscapes, particularly for compounds exhibiting challenging crystallization behaviors.

Computational and Experimental Tools for Predicting Stability and Synthesizability

The formation energy of a material is a fundamental thermodynamic property: the enthalpy change when a compound forms from its constituent elements in their standard states. It is a key indicator of a material's inherent stability. A negative formation energy means the compound is thermodynamically stable relative to its elements [35] [36], although not necessarily relative to competing compounds; that is assessed by its energy above the convex hull of all known phases. For crystalline materials, accurately predicting this property is the first step in assessing thermodynamic stability, which is often used as a preliminary proxy for synthesizability, the likelihood that a material can be experimentally realized [7] [36].
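Both quantities can be computed from DFT total energies. This is a minimal sketch that assumes per-atom reference energies for each element, computed with the same settings as the compound. It also assumes a binary A-B system, for which the lower convex hull is built with Andrew's monotone-chain algorithm. All names and energies are hypothetical.

```python
from typing import Dict, List, Tuple


def formation_energy_per_atom(e_total: float, composition: Dict[str, int],
                              mu_elements: Dict[str, float]) -> float:
    """E_f = (E_total - sum_i n_i * mu_i) / N in eV/atom.

    e_total: DFT total energy of the compound cell (eV); composition: atom counts
    in that cell; mu_elements: energy per atom of each element in its reference
    phase, computed with the same DFT settings.
    """
    n_atoms = sum(composition.values())
    e_ref = sum(n * mu_elements[el] for el, n in composition.items())
    return (e_total - e_ref) / n_atoms


def energy_above_hull(points: List[Tuple[float, float]], x: float, e_f: float) -> float:
    """Energy above the lower convex hull of a binary A-B system (eV/atom).

    points: (x_B, E_f) pairs of all known phases, including the pure elements at
    (0, 0) and (1, 0). x, e_f: composition and formation energy of the candidate.
    """
    hull: List[Tuple[float, float]] = []
    for p in sorted(set(points)):
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            # Non-left turn: hull[-1] lies on or above the chord to p, so drop it.
            if (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1) <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return e_f - (y1 + (y2 - y1) * (x - x1) / (x2 - x1))
    raise ValueError("x lies outside the composition range of the hull")


# Hypothetical energies (eV): compound AB in a 2-atom cell.
ef_ab = formation_energy_per_atom(-9.0, {"A": 1, "B": 1}, {"A": -3.0, "B": -5.0})
phases = [(0.0, 0.0), (1.0, 0.0), (0.5, ef_ab), (0.25, -0.1)]
print(ef_ab, energy_above_hull(phases, 0.25, -0.1))
```

In this example the phase at x_B = 0.25 has a negative formation energy yet lies 0.15 eV/atom above the hull, so it would tend to decompose into A and AB.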

Density Functional Theory (DFT) has become the foremost tool for calculating formation energies from first principles. As a quantum mechanical modeling method, DFT determines the electronic structure of a many-body system, and hence the total energy of a crystal structure. This makes it indispensable for predicting formation energies without fitting to experimental thermochemical data [37]. DFT rests on the Hohenberg-Kohn theorem, which establishes that the ground-state energy of a system is a unique functional of its electron density. This reduces the many-body problem to a far more tractable one posed in terms of the density alone [38] [39].

Theoretical Foundations and Computational Methodology

Core Principles of DFT

DFT operates by solving the Kohn-Sham equations, which map the system of interacting electrons onto a fictitious system of non-interacting electrons with the same ground-state density. The total energy functional in the Kohn-Sham formulation can be expressed as:

E[n] = T_s[n] + E_ext[n] + E_H[n] + E_XC[n]

Here, T_s[n] is the kinetic energy of the non-interacting electrons, E_ext[n] is the external potential energy (typically from the nuclei), and E_H[n] is the Hartree energy representing classical electron-electron repulsion. E_XC[n] is the exchange-correlation energy, which collects all remaining many-body effects: exchange, correlation, and the difference between the true and non-interacting kinetic energies [38] [37].
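For reference, the individual terms take the following standard forms in atomic units, written in terms of the occupied Kohn-Sham orbitals φ_i. Minimizing E[n] with respect to these orbitals yields the self-consistent Kohn-Sham equations. For the total energy of a crystal, the nucleus-nucleus repulsion is added as a constant for fixed ionic positions.

```latex
\begin{aligned}
T_s[n] &= \sum_{i}^{\mathrm{occ}} \int \phi_i^{*}(\mathbf{r}) \left(-\tfrac{1}{2}\nabla^{2}\right) \phi_i(\mathbf{r})\, d\mathbf{r},
\qquad n(\mathbf{r}) = \sum_{i}^{\mathrm{occ}} \lvert \phi_i(\mathbf{r}) \rvert^{2} \\
E_{\mathrm{ext}}[n] &= \int v_{\mathrm{ext}}(\mathbf{r})\, n(\mathbf{r})\, d\mathbf{r},
\qquad E_{\mathrm{H}}[n] = \frac{1}{2} \iint \frac{n(\mathbf{r})\, n(\mathbf{r}')}{\lvert \mathbf{r}-\mathbf{r}' \rvert}\, d\mathbf{r}\, d\mathbf{r}' \\
\hat{h}_{\mathrm{KS}}\, \phi_i(\mathbf{r}) &= \varepsilon_i\, \phi_i(\mathbf{r}),
\qquad \hat{h}_{\mathrm{KS}} = -\tfrac{1}{2}\nabla^{2} + v_{\mathrm{ext}}(\mathbf{r}) + v_{\mathrm{H}}(\mathbf{r}) + v_{\mathrm{XC}}(\mathbf{r}) \\
v_{\mathrm{H}}(\mathbf{r}) &= \int \frac{n(\mathbf{r}')}{\lvert \mathbf{r}-\mathbf{r}' \rvert}\, d\mathbf{r}',
\qquad v_{\mathrm{XC}}(\mathbf{r}) = \frac{\delta E_{\mathrm{XC}}[n]}{\delta n(\mathbf{r})}
\end{aligned}
```

Because v_H and v_XC depend on n, which in turn depends on the orbitals, the equations are solved iteratively. This is the self-consistent field loop whose termination is controlled by the energy convergence criterion discussed below.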

The critical challenge in DFT implementations lies in the approximation of the exchange-correlation functional (E_XC[n]). Several classes of functionals have been developed with varying degrees of accuracy and computational cost:

- Generalized Gradient Approximation (GGA): This widely used class of functionals, which includes the Perdew-Burke-Ernzerhof (PBE) functional, depends on both the local electron density and its gradient. GGA offers improved accuracy over the local density approximation (LDA) for many material properties and is frequently employed in formation energy calculations of solids [40] [35].

- Hybrid Functionals: These incorporate a portion of exact exchange from Hartree-Fock theory with DFT exchange-correlation, generally providing improved accuracy but at significantly increased computational cost [38].

- Meta-GGA Functionals: These include the kinetic energy density in addition to the electron density and its gradient, offering further refinement for certain material systems [38].
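The difference between LDA and GGA can be seen in the exchange part of PBE, which scales the LDA exchange energy density by an enhancement factor that depends on the reduced density gradient s. This sketch covers only the spin-unpolarized exchange term in Hartree atomic units and omits PBE correlation. It illustrates the functional form and is not a usable DFT implementation; the function names are hypothetical.

```python
import math

KAPPA = 0.804              # PBE exchange parameter
MU = 0.2195149727645171    # PBE exchange parameter (mu = beta * pi^2 / 3)


def lda_exchange_energy_density(n: float) -> float:
    """Dirac exchange energy per unit volume, -(3/4)(3/pi)^(1/3) n^(4/3)."""
    return -0.75 * (3.0 / math.pi) ** (1.0 / 3.0) * n ** (4.0 / 3.0)


def reduced_gradient(n: float, grad_n: float) -> float:
    """s = |grad n| / (2 (3 pi^2)^(1/3) n^(4/3)), dimensionless."""
    return grad_n / (2.0 * (3.0 * math.pi ** 2) ** (1.0 / 3.0) * n ** (4.0 / 3.0))


def pbe_enhancement(s: float) -> float:
    """PBE exchange enhancement factor F_x(s) = 1 + kappa - kappa / (1 + mu s^2 / kappa)."""
    return 1.0 + KAPPA - KAPPA / (1.0 + MU * s * s / KAPPA)


def pbe_exchange_energy_density(n: float, grad_n: float) -> float:
    """GGA exchange: LDA exchange energy density scaled by F_x(s)."""
    return lda_exchange_energy_density(n) * pbe_enhancement(reduced_gradient(n, grad_n))


# A uniform density (zero gradient) recovers LDA; a gradient lowers the exchange energy.
print(lda_exchange_energy_density(0.1), pbe_exchange_energy_density(0.1, 0.05))
```

The factor F_x runs from 1 (the uniform electron gas) to a bound of 1 + κ = 1.804 for large gradients. This is how a GGA corrects LDA in regions where the density varies rapidly, such as near bonds and surfaces.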

Direct Calculation of Solid-Phase Formation Enthalpy

Traditional approaches to calculating solid-phase formation enthalpy (ΔH_f,solid) often rely on indirect methods, such as deriving it from gas-phase formation enthalpy (ΔH_f,gas) and sublimation enthalpy (ΔH_sub). However, a novel "isocoordinated reaction" method enables direct computation of ΔH_f,solid from DFT [41].

In this approach, reference states are selected based on the coordination numbers of all atoms in the material, creating a reaction where the coordination number of each atom remains unchanged in the reactants and products. For example [41]:

- H (coordination number = 1): Reference molecule = H₂

- O (coordination number = 1 or 2): Reference molecules = O₂ or H₂O

- N (coordination number = 1, 2, or 3): Reference molecules = N₂, N₂H₂, or NH₃

- C (coordination number = 2, 3, or 4): Reference molecules = C₂H₂, C₂H₄, or CH₄
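The reference selection above amounts to counting bonded neighbours and looking up a molecule. This is a minimal sketch under several stated assumptions. Bonds are detected with approximate single-bond covalent radii and a hypothetical 1.2 tolerance factor. Distances are taken within an isolated molecule, whereas a periodic crystal would need minimum-image distances. Ethylene (C₂H₄) is used as the closed-shell reference for three-coordinate carbon. The names are illustrative only.

```python
import math
from typing import List, Tuple

# Approximate single-bond covalent radii in angstrom; TOLERANCE is an assumption.
COVALENT_RADII = {"H": 0.31, "C": 0.76, "N": 0.71, "O": 0.66}
TOLERANCE = 1.2

# Reference molecule for each (element, coordination number) pair.
REFERENCES = {
    ("H", 1): "H2",
    ("O", 1): "O2", ("O", 2): "H2O",
    ("N", 1): "N2", ("N", 2): "N2H2", ("N", 3): "NH3",
    ("C", 2): "C2H2", ("C", 3): "C2H4", ("C", 4): "CH4",
}

Atom = Tuple[str, Tuple[float, float, float]]


def coordination_numbers(atoms: List[Atom]) -> List[int]:
    """Count neighbours closer than TOLERANCE * (r_i + r_j) in a non-periodic molecule."""
    cns = [0] * len(atoms)
    for i in range(len(atoms)):
        for j in range(i + 1, len(atoms)):
            (el_i, pos_i), (el_j, pos_j) = atoms[i], atoms[j]
            cutoff = TOLERANCE * (COVALENT_RADII[el_i] + COVALENT_RADII[el_j])
            if math.dist(pos_i, pos_j) < cutoff:
                cns[i] += 1
                cns[j] += 1
    return cns


def reference_molecules(atoms: List[Atom]) -> List[str]:
    """Reference molecule for every atom, chosen by element and coordination number."""
    return [REFERENCES[(el, cn)] for (el, _), cn in zip(atoms, coordination_numbers(atoms))]


water = [("O", (0.0, 0.0, 0.0)), ("H", (0.757, 0.586, 0.0)), ("H", (-0.757, 0.586, 0.0))]
print(reference_molecules(water))
```

A lookup table keeps the mapping explicit. If an atom's environment has no entry, for example a five-coordinate nitrogen, the code raises a `KeyError` instead of silently choosing an unsuitable reference.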

By preserving the coordination environment of every atom, this method reduces the errors DFT makes in energy differences between chemically dissimilar systems. The error cancellation is analogous to that in isodesmic reactions but extends to solid-phase systems [41].
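Once the reaction is defined, the target formation enthalpy follows from Hess's law: ΔH_rxn = Σ ν_i ΔH_f,i, solved for the target species. This is a generic sketch and not necessarily the exact procedure of [41]. It assumes that the reaction enthalpy is approximated by the DFT reaction energy, neglecting zero-point and thermal corrections, and that formation enthalpies of all reference species are known. Species names and numbers are hypothetical.

```python
from typing import Dict


def formation_enthalpy_from_reaction(
    stoich: Dict[str, float],
    energies: Dict[str, float],
    known_dhf: Dict[str, float],
    target: str,
) -> float:
    """Solve Hess's law for the formation enthalpy of `target`.

    stoich: stoichiometric coefficients, negative for reactants, positive for products.
    energies: DFT energies of every species (same units, per formula unit), used to
              approximate the reaction enthalpy as dH_rxn ~ sum_i nu_i * E_i.
    known_dhf: formation enthalpies of all species except the target (same units).
    """
    dh_rxn = sum(nu * energies[s] for s, nu in stoich.items())
    known = sum(nu * known_dhf[s] for s, nu in stoich.items() if s != target)
    # dH_rxn = nu_target * dHf_target + known  ->  isolate the target term
    return (dh_rxn - known) / stoich[target]


# Hypothetical reaction T(s) + R1 -> 2 R2, all values in kJ/mol.
dhf_t = formation_enthalpy_from_reaction(
    stoich={"T": -1, "R1": -1, "R2": 2},
    energies={"T": -100.0, "R1": -20.0, "R2": -70.0},
    known_dhf={"R1": 0.0, "R2": -30.0},
    target="T",
)
print(f"dHf(T) = {dhf_t:.1f} kJ/mol")
```

DFT energies in eV per formula unit must be converted to kJ/mol (1 eV ≈ 96.485 kJ/mol) before they are combined with tabulated formation enthalpies.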

Practical Implementation and Workflow

Computational Setup and Parameters

Successful DFT calculation of formation energies requires careful attention to computational parameters. The following setup, derived from studies on perovskite hydrides and energetic materials, represents a typical robust configuration [40] [41]:

Table 1: Typical DFT Computational Parameters for Formation Energy Calculations

| Parameter | Typical Setting | Function and Importance |
|---|---|---|
| Software Package | CASTEP, VASP | Provides DFT implementation with plane-wave basis sets and pseudopotentials |
| Exchange-Correlation Functional | GGA-PBE | Balances accuracy and computational efficiency for solids |
| Pseudopotential | Ultrasoft, PAW | Describes electron-ion interactions while reducing computational cost |
| Plane-Wave Cutoff Energy | 500-600 eV | Determines basis set size; affects accuracy and computational demand |
| k-point Sampling | Γ-centered Monkhorst-Pack | Ensures adequate sampling of the Brillouin zone; critical for convergence |
| Convergence Criteria | Energy: 10⁻⁵ eV/atom; Force: 0.01-0.03 eV/Å | Determines when self-consistent field iterations and geometry optimization terminate |
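A common way to choose the k-point mesh in the table is to fix a target spacing in reciprocal space and derive the number of subdivisions along each reciprocal lattice vector. This sketch assumes the spacing includes the factor 2π (i.e. |b_i| = 2π/a_i for an orthogonal cell); some codes define it without that factor. It generates an unreduced Γ-centred grid. The function names and the 0.2 Å⁻¹ spacing are hypothetical.

```python
import math
from typing import List, Sequence

Vec = Sequence[float]


def _cross(a: Vec, b: Vec) -> List[float]:
    return [a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]]


def _dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def reciprocal_lengths(a1: Vec, a2: Vec, a3: Vec) -> List[float]:
    """|b_i| in 1/angstrom, with b1 = 2*pi (a2 x a3) / V and cyclic permutations."""
    volume = abs(_dot(a1, _cross(a2, a3)))
    crosses = [_cross(a2, a3), _cross(a3, a1), _cross(a1, a2)]
    return [2.0 * math.pi * math.sqrt(_dot(c, c)) / volume for c in crosses]


def kpoint_mesh(a1: Vec, a2: Vec, a3: Vec, spacing: float) -> List[int]:
    """Subdivisions N_i = ceil(|b_i| / spacing), at least 1 along each direction."""
    return [max(1, math.ceil(b / spacing)) for b in reciprocal_lengths(a1, a2, a3)]


def gamma_centred_points(mesh: Sequence[int]) -> List[List[float]]:
    """Fractional k-points n_i / N_i; the grid always contains Gamma (0, 0, 0)."""
    return [[i / mesh[0], j / mesh[1], k / mesh[2]]
            for i in range(mesh[0]) for j in range(mesh[1]) for k in range(mesh[2])]


# Hypothetical cubic cell with a = 4 angstrom.
mesh = kpoint_mesh([4.0, 0.0, 0.0], [0.0, 4.0, 0.0], [0.0, 0.0, 4.0], spacing=0.2)
print(mesh, len(gamma_centred_points(mesh)))
```

Tying the mesh to a spacing rather than to fixed integers keeps the sampling density comparable across the compound and its elemental references. This matters for formation energies, because errors cancel only when all structures are treated at similar convergence.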

For the "isocoordinated reaction" method, additional steps include [41]:

- Determining coordination numbers of each atom in the crystal structure

- Selecting appropriate reference molecules for each element based on coordination environment