Beyond Classical Theory: How Atomistic Modeling is Redefining Nucleation Prediction in Drug Development

Abstract

This article explores the critical validation of atomistic computational models against the long-standing Classical Nucleation Theory (CNT) in pharmaceutical research. For drug development professionals, we dissect the fundamental principles of both approaches, detail cutting-edge simulation methodologies like molecular dynamics, and address their application in troubleshooting crystallization challenges. A comparative analysis highlights where atomistic models excel in predictive accuracy and where CNT remains a valuable conceptual framework, concluding with the transformative implications of these advanced tools for predicting polymorph stability, optimizing formulations, and de-risking the solid form selection process.

The Pillars of Prediction: Revisiting Classical Nucleation Theory and the Rise of Atomistic Models

Core Tenets and Capillarity Assumption of Classical Nucleation Theory (CNT)

Classical Nucleation Theory (CNT) is the most common theoretical framework used to quantitatively study the kinetics of nucleation, the first step in the spontaneous formation of a new thermodynamic phase or structure from a metastable state [1]. Developed in the 1920s and 1930s by Volmer and Weber and by Becker and Döring, with conceptual roots in Gibbs' thermodynamics, CNT provides a mathematical description of how stable nuclei of a new phase emerge from a supersaturated parent phase [2]. Its central objective is to explain and quantify the immense variation in observed nucleation times, which range from negligible to far beyond experimental time scales [1]. Despite known limitations and frequent quantitative discrepancies with experimental data, CNT remains widely used because of its relative simplicity, robustness, and ability to handle diverse nucleation phenomena across disciplines including materials science, pharmaceutical development, atmospheric chemistry, and electrodeposition [2] [3] [4].

The theory's primary output is a prediction of the nucleation rate (R), the number of stable nuclei formed per unit time (per unit volume when (N_S) is expressed per unit volume). The CNT expression for the nucleation rate is:

[ R = N_S Z j \exp\left(-\frac{\Delta G^*}{k_B T}\right) ]

where (\Delta G^*) is the free energy barrier to nucleation, (k_B) is Boltzmann's constant, (T) is temperature, (N_S) is the number of potential nucleation sites, (j) is the rate at which atoms or molecules attach to the nucleus, and (Z) is the Zeldovich factor, the probability that a nucleus at the top of the barrier goes on to grow rather than dissolve [1]. The equation is the product of a thermodynamic term, the exponential Boltzmann factor for reaching the barrier, and a kinetic prefactor ((N_S Z j)) that sets how quickly molecules are added to clusters at the critical size.
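Because (\Delta G^*) enters through an exponential, numerical work with this formula is safest in log space. The following minimal Python sketch evaluates (\log_{10} R) under the assumption that the barrier is supplied in units of (k_B T); the function name `log10_cnt_rate` and all input values are hypothetical, chosen only to illustrate the scaling:

```python
import math


def log10_cnt_rate(n_sites: float, zeldovich: float, attach_rate: float,
                   barrier_kT: float) -> float:
    """Return log10 of R = N_S * Z * j * exp(-dG*/(kB*T)).

    barrier_kT is the barrier dG* expressed in units of kB*T, so the
    Boltzmann factor is exp(-barrier_kT). Working in log10 avoids
    floating-point underflow when the barrier is large.
    """
    return (math.log10(n_sites) + math.log10(zeldovich)
            + math.log10(attach_rate) - barrier_kT / math.log(10))


# Hypothetical inputs: one site, Z = 1e-3, attachment rate j = 1e11 s^-1.
base = log10_cnt_rate(1.0, 1e-3, 1e11, barrier_kT=60.0)
lowered = log10_cnt_rate(1.0, 1e-3, 1e11, barrier_kT=50.0)

# Orders of magnitude gained by lowering the barrier by 10 kBT.
print(f"{lowered - base:.2f}")
```

Lowering the barrier by 10 (k_B T) raises the rate by a factor of (e^{10} \approx 2.2 \times 10^4), about 4.3 orders of magnitude, which is why small errors in an estimated barrier become large errors in a predicted rate.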

Core Tenets of CNT

Fundamental Principles

CNT rests on several fundamental principles that together form a self-contained framework for predicting nucleation behavior. First, the theory conceptualizes nucleation as a stepwise process in which atoms or molecules in a supersaturated medium randomly associate to form clusters of the new phase. These clusters are treated as distinct spherical entities with well-defined interfaces separating them from the parent phase [1] [2]. The theory further assumes that these clusters grow or shrink through the sequential addition or loss of single molecules (monomers), with the probability of growth increasing with cluster size [2].

A central concept in CNT is the critical nucleus - a cluster of specific size that represents the maximum of the free energy landscape. Clusters smaller than this critical size (known as embryos) are statistically more likely to dissolve than grow, while those larger than critical (stable nuclei) are more likely to grow [1] [2]. The critical radius (r_c) is derived as:

[ r_c = \frac{2\sigma}{|\Delta g_v|} ]

where (\sigma) is the interfacial tension and (\Delta g_v) is the bulk free energy change per unit volume on forming the new phase, which is negative under supersaturation [1]. The height of the nucleation barrier (\Delta G^*) for homogeneous nucleation is then:

[ \Delta G^* = \frac{16\pi\sigma^3}{3|\Delta g_v|^2} ]

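This expression reveals why nucleation can be extremely sensitive to small changes in conditions: the barrier scales with the cube of the interfacial tension and inversely with the square of the volumetric free energy change, and because it enters the rate through an exponential, modest shifts in either quantity can change the nucleation rate by orders of magnitude [1].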
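A minimal Python sketch of the two closed-form results makes the scaling concrete. The input values are hypothetical and chosen only for illustration (SI units, with (\Delta g_v < 0)); the function names are likewise illustrative:

```python
import math


def critical_radius(sigma: float, dg_v: float) -> float:
    """r_c = 2*sigma/|dg_v|, with sigma in J/m^2 and dg_v in J/m^3."""
    return 2.0 * sigma / abs(dg_v)


def homogeneous_barrier(sigma: float, dg_v: float) -> float:
    """dG* = 16*pi*sigma^3 / (3*|dg_v|^2), in joules."""
    return 16.0 * math.pi * sigma**3 / (3.0 * dg_v**2)


# Hypothetical illustrative inputs (not taken from the cited studies).
sigma, dg_v = 0.03, -5.0e7          # J/m^2, J/m^3 (dg_v < 0 when supersaturated)
r_c = critical_radius(sigma, dg_v)  # about 1.2e-9 m for these inputs
barrier = homogeneous_barrier(sigma, dg_v)

# Sensitivity: +10 % in sigma multiplies the barrier by 1.1**3 (about 1.33),
# while +10 % in |dg_v| multiplies it by 1/1.1**2 (about 0.83).
up_sigma = homogeneous_barrier(1.1 * sigma, dg_v) / barrier
up_drive = homogeneous_barrier(sigma, 1.1 * dg_v) / barrier
print(f"r_c = {r_c:.2e} m, barrier = {barrier:.2e} J")
print(f"x{up_sigma:.3f} for +10% sigma, x{up_drive:.3f} for +10% |dg_v|")
```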

Homogeneous vs. Heterogeneous Nucleation

CNT distinguishes between two primary nucleation pathways: homogeneous and heterogeneous. Homogeneous nucleation occurs spontaneously throughout the bulk parent phase without preferential nucleation sites, while heterogeneous nucleation takes place on surfaces, impurities, or other imperfections that catalyze the nucleation process [1].

The free energy barrier for heterogeneous nucleation is significantly lower than for homogeneous nucleation because the catalytic substrate reduces the surface area of the nucleus exposed to the parent phase. The relationship between the two barriers is expressed as:

[ \Delta G^*_{het} = f(\theta)\,\Delta G^*_{hom} ]

where (f(\theta)) is a function of the contact angle (\theta) between the nucleus and the substrate:

[ f(\theta) = \frac{2 - 3\cos\theta + \cos^3\theta}{4} ]

This reduction explains why heterogeneous nucleation is vastly more common than homogeneous nucleation in real-world systems [1]. The contact angle reflects the balance of interfacial energies between the nucleus, parent phase, and substrate, with smaller contact angles resulting in greater reduction of the nucleation barrier.
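The contact-angle factor is easy to tabulate. A short Python sketch (the function name is illustrative) evaluating (f(\theta)) at a few angles:

```python
import math


def contact_angle_factor(theta_deg: float) -> float:
    """f(theta) = (2 - 3 cos(theta) + cos(theta)^3) / 4, with theta in degrees."""
    c = math.cos(math.radians(theta_deg))
    return (2.0 - 3.0 * c + c**3) / 4.0


for theta in (0, 30, 60, 90, 120, 180):
    print(f"theta = {theta:3d} deg -> f = {contact_angle_factor(theta):.3f}")
```

The factor rises from 0 for complete wetting ((\theta = 0)), where the barrier vanishes, through 0.5 at (\theta = 90^\circ), to 1 at (\theta = 180^\circ), where the substrate offers no advantage over homogeneous nucleation.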

Table 1: Key Parameters in Classical Nucleation Theory

| Parameter | Symbol | Definition | Role in CNT |
|---|---|---|---|
| Critical Radius | (r_c) | Size where growth becomes favored | Determines minimum stable nucleus size |
| Nucleation Barrier | (\Delta G^*) | Free energy maximum | Controls exponential term in rate equation |
| Interfacial Tension | (\sigma) | Energy per unit area of interface | Primary resistance to nucleation |
| Supersaturation | (S) | Ratio of actual to equilibrium concentration | Driving force for nucleation |
| Zeldovich Factor | (Z) | Kinetic correction factor | Accounts for cluster dissolution probability |

The Capillarity Assumption

Definition and Theoretical Basis

The capillarity assumption represents both a fundamental cornerstone and a significant limitation of CNT. It treats nascent nuclei as microscopic droplets that share the bulk density and structure of the macroscopic new phase and whose interfacial tension equals that of a macroscopic, flat interface [2] [5]. In essence, CNT assumes that the interface between a cluster of the new phase and the parent phase is sharp and that its properties are size-independent, even for clusters containing only a few atoms or molecules [2].

This approximation allows CNT to express the free energy of formation (\Delta G) for a spherical nucleus of radius (r) using a simple two-term expression:

[ \Delta G = \frac{4}{3}\pi r^3 \Delta g_v + 4\pi r^2 \sigma ]

where the first term represents the favorable bulk free energy change (volume term) and the second term represents the unfavorable free energy cost of creating a new interface (surface term) [1] [2]. The capillarity assumption provides the theoretical justification for using macroscopic interfacial tension values in calculating the properties of microscopic clusters, thereby enabling the development of closed-form analytical expressions for the nucleation rate.
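The closed-form results for (r_c) and (\Delta G^*) follow from locating the maximum of this two-term expression. As a sanity check, the Python sketch below scans (\Delta G(r)) on a fine grid and compares the numerical maximum with (r_c = 2\sigma/|\Delta g_v|) and (\Delta G^* = 16\pi\sigma^3/(3|\Delta g_v|^2)); the parameter values are hypothetical:

```python
import math


def delta_g(r: float, sigma: float, dg_v: float) -> float:
    """Capillarity-approximation free energy of a spherical cluster of radius r."""
    return (4.0 / 3.0) * math.pi * r**3 * dg_v + 4.0 * math.pi * r**2 * sigma


# Hypothetical parameters in J/m^2 and J/m^3; dg_v < 0 under supersaturation.
sigma, dg_v = 0.03, -5.0e7

# Brute-force scan for the maximum of Delta G(r) from 1 pm to 5 nm.
radii = [i * 1e-12 for i in range(1, 5001)]
r_peak = max(radii, key=lambda r: delta_g(r, sigma, dg_v))

# Analytical results for comparison.
r_c = 2.0 * sigma / abs(dg_v)
barrier = 16.0 * math.pi * sigma**3 / (3.0 * dg_v**2)
print(f"grid maximum at {r_peak:.3e} m, analytical r_c = {r_c:.3e} m")
print(f"grid barrier {delta_g(r_peak, sigma, dg_v):.4e} J, analytical {barrier:.4e} J")
```

Below (r_c) the surface term dominates and (\Delta G) rises with (r); above it the negative volume term takes over, which is why the maximum defines the critical nucleus.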

Implications and Limitations

The capillarity assumption has profound implications for CNT's predictive capability and practical utility. By treating all clusters as miniature versions of the bulk phase, CNT disregards the impact of atomic structure, discrete molecular effects, and the potential existence of intermediate states that might differ significantly from the final stable phase [2]. This simplification becomes particularly problematic for very small clusters where a significant fraction of molecules reside at the interface, and where the concept of a well-defined surface tension becomes physically questionable [2] [6].
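To make the point about small clusters concrete, the sketch below uses a deliberately crude toy model that is not drawn from the cited work: spherical clusters cut from a simple cubic lattice, with any site missing one of its six nearest neighbors counted as surface. The function name `surface_fraction` and the lattice itself are assumptions for illustration only:

```python
import itertools


def surface_fraction(radius):
    """Return (number of sites, fraction of sites on the surface) for a
    spherical cluster cut from a simple cubic lattice with unit spacing.

    A site counts as 'surface' if any of its 6 nearest neighbors lies
    outside the cluster (toy model, not a real molecular crystal).
    """
    rmax = int(radius) + 1
    sites = {
        (x, y, z)
        for x, y, z in itertools.product(range(-rmax, rmax + 1), repeat=3)
        if x * x + y * y + z * z <= radius * radius
    }
    steps = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
    surface = sum(
        1 for (x, y, z) in sites
        if any((x + dx, y + dy, z + dz) not in sites for dx, dy, dz in steps)
    )
    return len(sites), surface / len(sites)


for r in (1.5, 3.0, 6.0, 12.0):
    n, frac = surface_fraction(r)
    print(f"R = {r:4.1f}  n = {n:5d}  surface fraction = {frac:.2f}")
```

In this toy model a 19-site cluster is almost entirely surface, and even clusters of several thousand sites keep a sizeable share of their sites at the interface, the regime where a macroscopic (\sigma) is hardest to justify.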

Recent research has highlighted specific failures of the capillarity assumption. A 2025 falsifiability test demonstrated that even when different crystal polymorphs have identical bulk and interfacial properties according to the capillarity approximation, they can exhibit remarkably different nucleation properties in molecular simulations [6]. This contradiction points to a fundamental limitation of CNT: its neglect of structural fluctuations within the liquid phase and the potential for non-classical nucleation pathways that proceed through intermediate states rather than direct assembly of the stable phase [6].

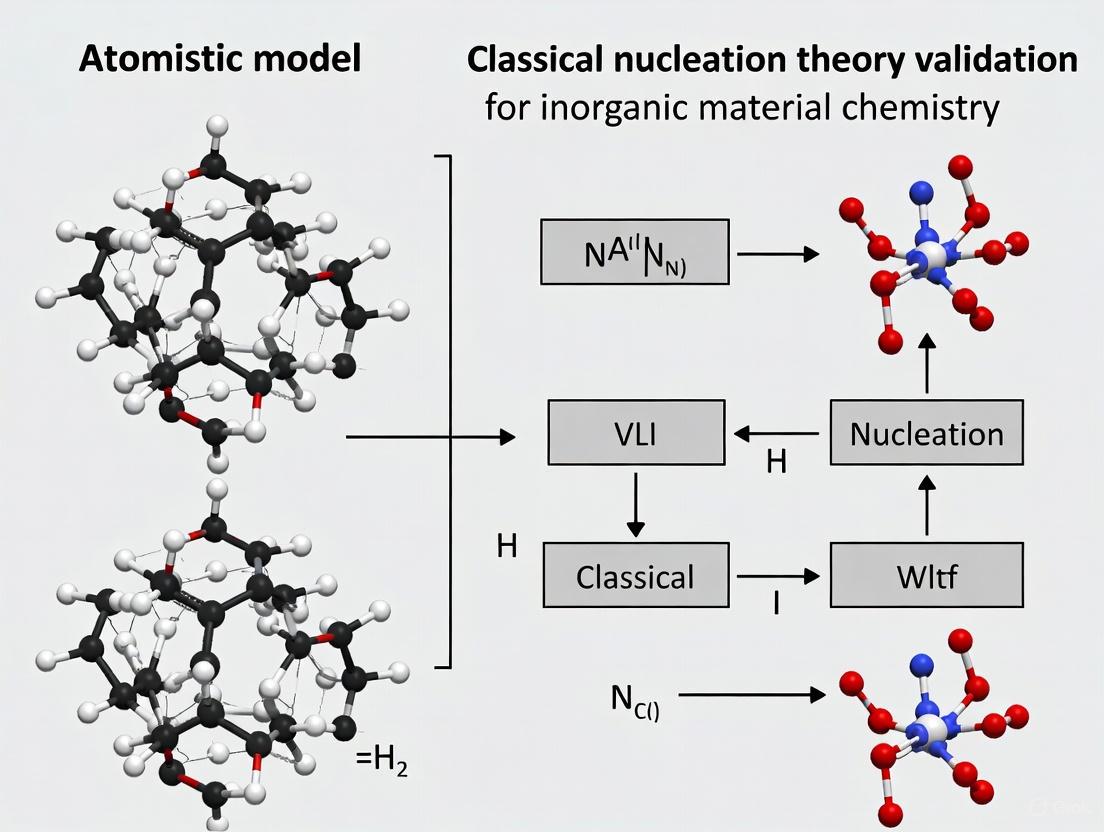

The following diagram illustrates the CNT concept of the nucleation barrier and the capillarity assumption:

Comparative Analysis: CNT vs Alternative Approaches

Quantitative Comparison of Predictive Performance

Table 2: Comparison of CNT Predictions with Experimental Observations

| System | CNT Prediction | Experimental Observation | Discrepancy |
|---|---|---|---|
| Ice nucleation in water (TIP4P/2005 model) | (R = 10^{-83} \text{s}^{-1}) at 19.5°C supercooling | Significantly higher nucleation rates observed | Massive underestimation (~80 orders of magnitude) [1] |
| Crystal nucleation from solution | Quantitative rate predictions | Often inaccurate rate magnitudes and temperature dependencies | 1-10 orders of magnitude error common [2] |
| Polymorph selection | No difference predicted for polymorphs with same bulk properties | Different nucleation rates observed for different polymorphs | Contradicts capillarity assumption [6] |
| Electrodeposition of metals | Barrier heights and nucleation rates | Varies with atomic structure and electrode interface | Misses atomic-scale effects [4] |

Non-Classical Nucleation Pathways

Growing experimental and computational evidence has revealed several non-classical nucleation pathways that deviate fundamentally from CNT assumptions. The prenucleation cluster (PNC) pathway, often discussed alongside two-step nucleation mechanisms, proposes that nucleation proceeds through thermodynamically stable clusters that lack a definite phase interface [2]. These PNCs are dynamic solute species rather than miniature crystals, and they undergo a structural transition to form phase-separated nanodroplets once a specific ion activity product is reached [2].

Another non-classical mechanism is cluster aggregation, where pre-nucleation clusters or subcritical nuclei collide and coalesce to form stable aggregates, effectively "tunneling" through the high energy barrier predicted by CNT [2]. This pathway is particularly relevant in systems like calcium carbonate, where the sudden size increase upon aggregation enables bypassing of the classical nucleation barrier [2].

Table 3: Classical vs. Non-Classical Nucleation Mechanisms

| Aspect | Classical (CNT) | Prenucleation Cluster Pathway | Cluster Aggregation |
|---|---|---|---|
| Fundamental Units | Monomers | Stable prenucleation clusters | Pre-critical nuclei |
| Interface Definition | Sharp interface from smallest clusters | No initial interface | Interfaces form upon aggregation |
| Thermodynamics | All subcritical clusters unstable | Prenucleation clusters are stable | Mixed stability landscape |
| Rate Determination | Crossing of single energy barrier | Liquid-liquid binodal transition | Collision frequency vs dissolution rate |
| Structural Progression | Direct to final crystal structure | Amorphous precursors common | Various intermediate structures |

Experimental Methodologies for CNT Validation

Pharmaceutical Precipitation Studies

In pharmaceutical research, CNT has been applied to simulate the precipitation of poorly soluble compounds in gastrointestinal fluids to predict oral absorption. The experimental protocol typically involves infusion-precipitation experiments in which a drug solution is infused into a simulated intestinal fluid while concentration and precipitation kinetics are monitored [3] [7]. Key measurements include the critical supersaturation concentration (C_{cssc}) and the precipitation rate, which are then used to fit CNT parameters such as the surface tension (γ) and a pre-exponential factor (β) [3].

The CNT equation implemented in these studies is:

[ \frac{dN_{nc}}{dt} = \beta D_{mono} (N_A C_{aq})^2 \sqrt{\frac{k_B T}{\gamma}} \frac{1}{\sqrt{\ln(C_{aq}/S_{aq})}} \exp\left(-\frac{16\pi}{3} \frac{\gamma^3}{(k_B T)^3} \frac{v_m^2}{(\ln(C_{aq}/S_{aq}))^2}\right) ]

where (N_{nc}) is the number of nuclei per unit volume, (D_{mono}) is the monomer diffusion coefficient, (N_A) is Avogadro's number, (C_{aq}) is the aqueous concentration, (S_{aq}) is the solubility, and (v_m) is the molecular volume [3]. This approach has been shown to reproduce precipitation characteristics such as the increase in precipitation rate with increasing infusion rate and the relative insensitivity of the maximum concentration to changes in infusion rate [3] [7].
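The expression can be evaluated directly. The sketch below is a minimal Python version assuming SI units throughout and body temperature (310.15 K) as a default. The function name `nucleation_rate` and every numerical input in the usage loop are hypothetical placeholders, not fitted values from [3] or [7]. The exponent is written with (\gamma^3), which follows from the homogeneous barrier (\Delta G^* = 16\pi\sigma^3/(3|\Delta g_v|^2)) with (|\Delta g_v| = k_B T \ln(C_{aq}/S_{aq})/v_m), and which is required for the exponent to be dimensionless:

```python
import math

K_B = 1.380649e-23      # Boltzmann constant, J/K
N_A = 6.02214076e23     # Avogadro constant, 1/mol


def nucleation_rate(c_aq, s_aq, beta, d_mono, gamma, v_m, temp=310.15):
    """dN_nc/dt from the CNT precipitation expression (SI units).

    c_aq, s_aq : aqueous concentration and solubility, mol/m^3
    beta       : dimensionless fitted pre-exponential factor
    d_mono     : monomer diffusion coefficient, m^2/s
    gamma      : fitted surface (interfacial) tension, J/m^2
    v_m        : molecular volume, m^3
    temp       : temperature, K (assumed default: 37 degC)
    Returns nuclei per m^3 per s; zero when the solution is not supersaturated.
    """
    if c_aq <= s_aq:
        return 0.0
    ln_s = math.log(c_aq / s_aq)
    kt = K_B * temp
    prefactor = (beta * d_mono * (N_A * c_aq) ** 2
                 * math.sqrt(kt / gamma) / math.sqrt(ln_s))
    exponent = -(16.0 * math.pi / 3.0) * gamma**3 * v_m**2 / (kt**3 * ln_s**2)
    return prefactor * math.exp(exponent)


# Hypothetical inputs: beta = 1, D = 5e-10 m^2/s, gamma = 0.01 J/m^2,
# v_m = 5e-28 m^3 (0.5 nm^3), solubility 0.01 mol/m^3.
for s in (2.0, 5.0, 10.0, 20.0):
    r = nucleation_rate(s * 0.01, 0.01, beta=1.0, d_mono=5e-10,
                        gamma=0.01, v_m=5e-28)
    print(f"S = {s:4.1f}: {r:.2e} nuclei m^-3 s^-1")
```

The steep rise of the rate with supersaturation comes almost entirely from the (1/\ln^2(C_{aq}/S_{aq})) dependence in the exponent, which is what gives simulated precipitation its characteristic threshold-like onset.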

Molecular Simulation Approaches

Advanced molecular simulations provide a powerful tool for testing CNT predictions at the atomic scale. The typical workflow involves:

- System Preparation: Creating simulation boxes containing thousands of molecules of the parent phase under controlled supersaturation conditions [1] [6]

- Free Energy Calculation: Using enhanced sampling techniques like umbrella sampling or metadynamics to compute the free energy landscape as a function of cluster size [6]

- Nucleation Rate Determination: Running multiple long-timescale molecular dynamics simulations and counting nucleation events to determine rates [1]

- Interface Analysis: Characterizing the structure and properties of nascent nuclei using order parameters and density profiles [6]
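For the rate-determination step, one common estimator (an assumption here; the cited studies may use others, such as mean first-passage time analysis) treats nucleation in each independent run as a Poisson process. The maximum-likelihood rate constant is then the number of observed events divided by the total simulated time, including runs that ended without nucleating, and dividing by the box volume gives (R). A Python sketch with hypothetical run data and an illustrative function name:

```python
def rate_from_runs(first_times, t_max, volume):
    """Estimate a nucleation rate (events per unit volume per unit time).

    first_times: time of the first nucleation event in each independent run,
                 or None for runs that reached t_max without nucleating.
    Assumes a Poisson process and that each run stops at its first event,
    so the maximum-likelihood rate constant is events / total observed time.
    """
    events = sum(1 for t in first_times if t is not None)
    if events == 0:
        raise ValueError("no events observed; only an upper bound on R is available")
    observed_time = sum(t_max if t is None else t for t in first_times)
    return events / (observed_time * volume)


# Hypothetical data: five runs of up to 50 ns in a 100 nm^3 box.
times_ns = [12.0, 30.5, None, 8.2, None]
rate = rate_from_runs(times_ns, t_max=50.0, volume=100.0)   # nm^-3 ns^-1
rate_si = rate * 1e27 * 1e9                                  # m^-3 s^-1
print(f"R ~ {rate_si:.2e} m^-3 s^-1")
```

Counting the censored runs in the denominator matters: dropping them would bias the estimate upward, since only the faster-nucleating runs would contribute.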

For example, in a study of ice nucleation using the TIP4P/2005 water model, researchers computed a free energy barrier of (\Delta G^* = 275k_B T) at 19.5°C supercooling, with attachment rate (j = 10^{11} \text{s}^{-1}) and Zeldovich factor (Z = 10^{-3}), yielding a nucleation rate of (R = 10^{-83} \text{s}^{-1}) [1]. The massive discrepancy between this prediction and experimental observations highlights the limitations of CNT even for well-studied systems.
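The arithmetic behind this example can be checked directly. The text does not state (N_S), so the sketch below backs out the number of sites implied by the quoted (R) and compares it with the number of molecules in one cubic meter of liquid water (taken here, as an assumption, at 1000 kg m⁻³ and 18.015 g mol⁻¹):

```python
import math

# Values quoted in the text for TIP4P/2005 ice at 19.5 degC supercooling.
barrier_kT = 275.0        # dG*/(kB T)
j = 1e11                  # attachment rate, s^-1
Z = 1e-3                  # Zeldovich factor
log10_R_quoted = -83.0    # quoted nucleation rate R = 1e-83 s^-1

# Rate contribution of a single nucleation site: Z * j * exp(-dG*/kBT).
log10_per_site = math.log10(Z * j) - barrier_kT / math.log(10)

# Number of sites N_S implied by the quoted R (not stated in the text).
log10_NS_implied = log10_R_quoted - log10_per_site

# For comparison: molecules in 1 m^3 of liquid water (assumed 1000 kg, 18.015 g/mol).
molecules_per_m3 = 1000.0 / 0.018015 * 6.02214076e23

print(f"log10(rate per site)           = {log10_per_site:.1f}")
print(f"implied log10(N_S)             = {log10_NS_implied:.1f}")
print(f"log10(water molecules per m^3) = {math.log10(molecules_per_m3):.1f}")
```

The implied (N_S \approx 10^{28.4}) is close to the roughly (3.3 \times 10^{28}) molecules in a cubic meter of water, which suggests, though the source does not say so explicitly, that the quoted rate corresponds to a macroscopic volume of order 1 m³ with every molecule counted as a potential site.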

Figure: Comparative experimental workflow for validating CNT.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Materials and Methods for Nucleation Research

| Tool/Reagent | Function | Application Context |
|---|---|---|
| Simulated Intestinal Fluids | Biorelevant media for precipitation studies | Pharmaceutical absorption prediction [3] |
| Molecular Dynamics Software | Atomistic simulation of nucleation events | Testing CNT predictions [1] [6] |
| Enhanced Sampling Algorithms | Accelerate rare nucleation events in simulation | Free energy landscape calculation [6] |
| Order Parameters | Quantify degree of crystallinity in clusters | Distinguish parent and product phases [6] |
| Nephelometry/Light Scattering | Detect nucleation events in real time | Experimental rate measurement [3] |
| Electrochemical Cells | Control supersaturation via potential | Electrodeposition studies [4] |
| High-Resolution Microscopy | Visualize nascent nuclei | Critical size determination [4] |

Classical Nucleation Theory, built around the capillarity assumption, provides a foundational framework for understanding phase transformation kinetics across diverse scientific disciplines. Its core tenet, that nucleation proceeds through the formation of critical nuclei resembling microscopic droplets of the bulk phase, offers valuable intuitive insight and a mathematical simplicity that have maintained its relevance for nearly a century. However, comprehensive validation studies consistently reveal quantitative discrepancies between CNT predictions and experimental observations, particularly in systems where non-classical pathways such as prenucleation clusters or cluster aggregation operate.

The ongoing tension between CNT and emerging atomistic models reflects a broader scientific evolution from phenomenological descriptions toward mechanistic understanding based on molecular-level interactions. While CNT remains useful for qualitative predictions and system screening, particularly in applied contexts like pharmaceutical development, its limitations in predictive accuracy continue to drive the development of more sophisticated theoretical frameworks. The future of nucleation research lies in integrating insights from both approaches - leveraging the intuitive power of CNT while incorporating the molecular realism of atomistic simulations - to achieve true predictive control over nucleation processes in materials design, drug development, and industrial manufacturing.

Carbon nanotubes (CNTs) are celebrated for their exceptional nanoscale properties, which suggest transformative potential for applications from aerospace to biomedicine. However, the transition from individual nanotubes to functional macroscale systems is fraught with challenges that create significant quantitative gaps between theoretical potential and realized performance. This guide objectively compares the performance of CNTs against conventional and emerging alternatives, framing these disparities within the fundamental scientific challenge of bridging atomistic models with classical continuum theories. By synthesizing current experimental data and methodologies, we provide researchers with a clear overview of the performance limitations, the critical role of experimental protocol, and the material solutions essential for advancing CNT-based technologies.

Carbon nanotubes, essentially rolled-up graphene sheets, exist as single-walled (SWCNT) or multi-walled (MWCNT) structures and are renowned for their extraordinary electrical, thermal, and mechanical properties at the nanoscale [8]. Theoretical predictions and measurements on individual, defect-free nanotubes suggest unparalleled performance: electrical resistivity as low as 7.7 × 10⁻⁷ Ω cm, current densities exceeding 10¹⁰ A cm⁻², thermal conductivity up to 3500 W m⁻¹ K⁻¹, and tensile strength reaching 100 GPa [8]. These figures far surpass those of conventional materials such as copper and steel, positioning CNTs as ideal candidates for next-generation electronics, lightweight composites, and advanced thermal management systems.

Nevertheless, translating these properties into commercial macroscale applications has been persistently disappointing [8]. The inherent limitations stem from the profound difficulties in controlling nanoscopic systems—including impurities, structural dispersity, and interfacial interactions—which collectively create a substantial performance gap. This challenge resonates with the core theme of validating atomistic versus classical nucleation theories, where predicting the behavior of a system from its fundamental constituents remains a formidable task. This guide quantitatively explores these gaps, providing a data-driven comparison with alternative materials and detailing the experimental protocols essential for rigorous validation.

Quantitative Performance Gaps: CNTs vs. Alternatives

The following tables synthesize experimental data to highlight the performance disparities between theoretical CNT potential, practical CNT macro-structures, and incumbent materials.

Table 1: Electrical Conductivity and Density Comparison

| Material | Theoretical/Best Reported Resistivity (Ω cm) | Macroscale Achieved Resistivity (Ω cm) | Current Density (A cm⁻²) | Density (g cm⁻³) |
|---|---|---|---|---|
| Metallic SWCNT (Individual) | 7.7 × 10⁻⁷ [8] | - | ~10¹⁰ [8] | ~1.3 [8] |
| MWCNT (Individual) | 5.0 × 10⁻⁶ [8] | - | - | ~2.1 [8] |
| CNT Yarns/Fibers | - | Specific conductivity approaching Cu [8] | - | - |
| Copper (Annealed) | 1.7 × 10⁻⁶ [8] | 1.7 × 10⁻⁶ | ~10⁶ [8] | 8.96 [8] |
| Aluminum | 2.7 × 10⁻⁶ | 2.7 × 10⁻⁶ | - | 2.7 [8] |
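Assuming "specific conductivity" has its usual meaning in the CNT-wire literature, conductivity divided by mass density, the copper and aluminum rows can be compared on that basis. A short Python sketch (the function name is illustrative):

```python
def specific_conductivity(resistivity_ohm_cm: float, density_g_cm3: float) -> float:
    """Electrical conductivity per unit mass density, in S cm^2 g^-1."""
    return (1.0 / resistivity_ohm_cm) / density_g_cm3


# Resistivities and densities from Table 1.
copper = specific_conductivity(1.7e-6, 8.96)
aluminum = specific_conductivity(2.7e-6, 2.7)
print(f"Cu: {copper:.2e}  Al: {aluminum:.2e}  Al/Cu: {aluminum / copper:.2f}")
```

Aluminum's per-mass conductivity is about twice copper's despite its higher resistivity, which is why low-density conductors such as CNT yarns are benchmarked on a specific basis.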

Table 2: Mechanical and Thermal Properties Comparison

| Material | Tensile Strength (GPa) | Specific Strength (N·tex⁻¹) | Young's Modulus (TPa) | Thermal Conductivity (W m⁻¹ K⁻¹) |
|---|---|---|---|---|
| Individual SWCNT | 10 - 100 [8] | - | ~1 [8] | 3500 (Axial) [8] |
| Individual MWCNT | 10 - 100 [8] | - | ~1 [8] | 3000 (Axial) [8] |
| High-Performance CNT Fiber | - | 4.10 ± 0.17 [9] | - | 400 [9] |
| T1100 Carbon Fiber | - | ~2.8 (for comparison) [9] | - | <13 [9] |
| Steel | ~0.5 - 2 | - | ~0.2 | ~50 |

Key Insights from Quantitative Data:

- The electrical performance gap is stark. While individual metallic SWCNTs can outperform copper in current-carrying capacity, translating this into macroscopic wires or yarns is challenging. The conductivity of bulk CNT assemblies, though promising, still struggles to consistently surpass that of annealed copper [8].

- Mechanical performance tells a more encouraging story. Assemblies still fall far short of individual nanotubes, but the specific strength of state-of-the-art CNT fibers (4.1 N·tex⁻¹) now exceeds that of top-grade carbon fibers such as T1100 [9]. This demonstrates significant progress in bridging the nanoscopic-to-macroscopic gap for structural applications, particularly in weight-sensitive sectors such as aerospace [10].

- The thermal conductivity of individual CNTs is exceptional, rivaling diamond. In macroscopic fibers, however, the realized value (400 W m⁻¹ K⁻¹), although more than an order of magnitude higher than that of T1100 carbon fiber, is only about 11% of the single-nanotube value, limited by phonon scattering at tube-tube junctions and structural defects [8] [9].
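Specific strength in N·tex⁻¹ converts directly to engineering terms: numerically it equals tensile strength in GPa divided by density in g cm⁻³. The sketch below encodes that identity; the steel density of 7.85 g cm⁻³ is an assumed typical value, and the function names are illustrative:

```python
def specific_strength(strength_gpa: float, density_g_cm3: float) -> float:
    """Specific strength in N/tex from strength (GPa) and density (g/cm^3).

    1 GPa / (1 g/cm^3) = 1e9 N m^-2 / 1e6 g m^-3 = 1e3 N m/g, and
    1 N/tex = 1 N / (1 g/km) = 1e3 N m/g, so the two are numerically equal.
    """
    return strength_gpa / density_g_cm3


def strength_from_specific(n_per_tex: float, density_g_cm3: float) -> float:
    """Inverse conversion: tensile strength (GPa) implied by a specific strength."""
    return n_per_tex * density_g_cm3


steel = specific_strength(2.0, 7.85)   # upper end of the steel range in Table 2
print(f"steel: {steel:.2f} N/tex; CNT fiber advantage: {4.10 / steel:.0f}x")
```

Even at the top of its strength range, steel reaches only about a quarter of a newton per tex, roughly one-sixteenth of the CNT fiber figure, which is the comparison that matters in weight-limited designs.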

Experimental Protocols for Assessing CNT Performance

The following section details key methodologies cited in CNT research, which are critical for understanding and validating the data presented in the previous section.

Fabrication of High-Strength CNT Fibers

Objective: To produce continuous carbon nanotube fibers (CNTFs) with superior mechanical and functional properties by optimizing nanotube alignment and interfacial engineering [9].

Detailed Protocol:

- CNT Aerogel Synthesis: A mixed carbon-source strategy is employed during chemical vapor deposition (CVD) to engineer CNT aerogels with optimally aligned and controlled-entanglement CNT bundles. This foundational step ensures structural uniformity in the initial CNT assembly.

- Acid-Assisted Stretching & Densification: The CNT aerogel is densified into a highly oriented architecture using chlorosulfonic acid (CSA) as a superacid solvent. The CSA acts as a protonating agent and dispersant, enabling the application of mechanical stretching. This process simultaneously aligns the nanotubes and dramatically improves the packing density and inter-tube interactions within the fiber.

- Fiber Spinning & Winding: The aligned and densified CNT structure is continuously spun and collected on a drum, enabling the production of kilometer-scale continuous fibers.

- Characterization: The mechanical (tensile strength, modulus), electrical, and thermal properties of the fiber are characterized at multiple points along its length to confirm uniformity and performance. Knot-strength tests are performed to assess flexibility and robustness.

Purification of As-Produced CNTs

Objective: To remove metallic and carbonaceous impurities from synthesized CNTs without introducing defects that degrade their intrinsic properties [8].

Detailed Protocol:

- Impurity Analysis: The as-produced CNT powder is first analyzed using Thermogravimetric Analysis (TGA) to determine the metal catalyst content and overall thermal stability.

- Acid Treatment (Oxidizing): For removing metallic impurities (Fe, Ni, Co), CNTs are treated with a mixture of nitric (HNO₃) and sulfuric (H₂SO₄) acids, often assisted by ultrasonication and heating. Caution: This aggressive method can create defects, shorten tube length, and introduce oxygen-containing surface groups.

- Alternative Acid Treatment (Milder): A less damaging method uses a mixture of hydrogen peroxide (H₂O₂) and a non-oxidizing acid such as hydrochloric acid (HCl). This is effective for dissolving catalyst residues and some amorphous carbon with minimal alteration of the CNT surface chemistry.

- Gas-Phase Purification: As an alternative to wet chemistry, annealing in a controlled atmosphere (e.g., air, O₂, CO₂, or Cl₂/O₂ mixture) can be implemented to selectively oxidize carbonaceous impurities, which are typically less stable than the crystalline CNT structure.

- High-Temperature Annealing: For the highest purity and defect healing, CNTs are heated in a vacuum or inert gas atmosphere at temperatures exceeding 1600°C. This step removes residual impurities and allows carbon atoms to rearrange, reducing structural defects, albeit at high energy cost and with potential yield loss.
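The TGA step in this protocol is often reduced to a simple stoichiometric conversion. As a sketch, assume the sample is burned in air, all carbon is removed, and the residue is entirely Fe₂O₃. Real residues can contain mixed oxides, Ni or Co, or unburned carbon, so this is only a first estimate. Under those assumptions the iron mass fraction follows from the residue mass fraction; the function name and the 10 wt% residue are hypothetical:

```python
# Molar masses, g/mol
M_FE = 55.845
M_O = 15.999
M_FE2O3 = 2 * M_FE + 3 * M_O   # about 159.69


def iron_content_from_tga(residue_mass_fraction: float) -> float:
    """Estimate the Fe mass fraction of a CNT sample from its TGA residue in air.

    Assumes all carbon burns off and the residue is entirely Fe2O3, so the
    iron mass is the residue mass times 2*M_Fe/M_Fe2O3 (about 0.70).
    """
    return residue_mass_fraction * (2 * M_FE / M_FE2O3)


print(f"{iron_content_from_tga(0.10):.3f}")   # 10 wt% residue -> about 7 wt% Fe
```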

Assessing Nanoparticle Biodistribution (PBPK-QSAR Modeling)

Objective: To predict the biodistribution and pharmacokinetics of nanoparticles, including CNTs, in biological systems based solely on their physicochemical properties, reducing reliance on animal testing [11].

Detailed Protocol:

- Data Curation: Compile a dataset of biodistribution experiments from published literature, focusing on healthy mice. Extract key nanoparticle properties: core material, shape, surface coating, hydrodynamic size, and zeta potential.

- PBPK Model Fitting: Use a generalized Physiologically Based Pharmacokinetic (PBPK) model. Employ Bayesian analysis with Markov Chain Monte Carlo (MCMC) simulation to fit the model to the experimental biodistribution data (e.g., concentration in organs over time). This generates kinetic parameters (e.g., uptake/release rate constants) for each nanoparticle.

- Multivariate Linear Regression (MLR): Build an MLR model to establish a quantitative relationship between the nanoparticle physicochemical properties (independent variables) and the kinetic parameters derived from the PBPK fitting (dependent variables).

- Model Validation & Prediction: The resulting MLR-PBPK framework is used to predict the biodistribution of new nanoparticles based solely on their input properties. The model's accuracy is validated against hold-out experimental data.
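The MLR step amounts to an ordinary least-squares fit of each PBPK kinetic parameter on the nanoparticle descriptors. The pure-Python sketch below solves the normal equations for an intercept plus slopes. The descriptors (hydrodynamic size in nm, zeta potential in mV) and the synthetic response are hypothetical, and categorical properties such as core material or shape would first need dummy coding:

```python
def fit_mlr(X, y):
    """Ordinary least squares with intercept via the normal equations.

    X : list of rows of predictor values (e.g. size, zeta potential)
    y : list of responses (e.g. a fitted PBPK uptake rate constant)
    Returns [intercept, b1, b2, ...].
    """
    rows = [[1.0] + [float(v) for v in row] for row in X]
    p = len(rows[0])
    # Build X^T X and X^T y.
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(p)]
    # Solve (X^T X) b = X^T y by Gauss-Jordan elimination with partial pivoting.
    a = [row[:] + [rhs] for row, rhs in zip(xtx, xty)]
    for col in range(p):
        pivot = max(range(col, p), key=lambda k: abs(a[k][col]))
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(p):
            if r != col:
                factor = a[r][col] / a[col][col]
                a[r] = [v - factor * w for v, w in zip(a[r], a[col])]
    return [a[i][p] / a[i][i] for i in range(p)]


# Hypothetical descriptors for five nanoparticles.
sizes = [20, 50, 100, 150, 200]
zetas = [-30, -10, 0, 15, 25]
# Synthetic, noise-free response generated from known coefficients.
uptake = [0.5 + 0.01 * s - 0.02 * z for s, z in zip(sizes, zetas)]
coef = fit_mlr(list(zip(sizes, zetas)), uptake)
print([round(c, 4) for c in coef])
```

With noise-free synthetic data the fit recovers the generating coefficients; with real biodistribution data the hold-out validation described above is what tests whether such a linear relationship actually generalizes.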

Visualizing the CNT Challenge Pathway

Figure: Challenges creating the CNT performance gap, showing the interconnected challenges in translating CNT properties from the nanoscale to the macroscale.

The Scientist's Toolkit: Key Research Reagents and Materials

This table lists essential materials and reagents used in CNT research and development, as cited in the experimental protocols.

Table 3: Essential Research Reagents for CNT Experiments

| Item | Function/Brief Explanation | Key Application Context |
|---|---|---|
| Chlorosulfonic Acid (CSA) | A superacid solvent that acts as a protonating agent and dispersant for CNTs, enabling their alignment and densification during fiber spinning. | Fabrication of high-performance CNT fibers [9]. |
| Nitric Acid (HNO₃) & Sulfuric Acid (H₂SO₄) | Oxidizing acid mixture used to remove metallic catalyst impurities from as-synthesized CNTs. | CNT purification protocols [8]. |
| Hydrogen Peroxide (H₂O₂) & Hydrochloric Acid (HCl) | A milder alternative purification mixture that removes impurities with less damage to the CNT structure. | CNT purification protocols [8]. |
| Polyethylene Glycol (PEG) | A polymer coating used to functionalize the surface of nanoparticles, improving biocompatibility and stability in biological fluids. | Surface functionalization for biodistribution studies [11]. |
| Catalysts (Fe, Ni, Co) | Metal nanoparticles essential for catalyzing the growth of CNTs via Chemical Vapor Deposition (CVD). | CNT synthesis [8]. |

The journey to harness the full potential of carbon nanotubes is a quintessential example of the challenge inherent in nanoscopic systems: the properties of the individual unit are not easily translated to the collective ensemble. Quantitative data confirms that while the performance gaps in electrical and thermal conductivity remain significant, remarkable progress has been made in closing the gap in mechanical properties, as evidenced by the specific strength of advanced CNT fibers. The path forward hinges on a deeper understanding and control of the interfaces between nanotubes and their environment, whether a metal matrix, a polymer, or a biological system. This endeavor requires the continued integration of sophisticated experimental protocols—from superacid-assisted fiber spinning to PBPK-QSAR modeling—that are grounded in a fundamental understanding of both atomistic interactions and classical materials science. For researchers and drug development professionals, acknowledging these inherent limitations and quantitative gaps is the first step toward systematically overcoming them.

The study of nucleation, the fundamental process where a new thermodynamic phase begins to form, has been revolutionized by atomistic computational modeling. While Classical Nucleation Theory (CNT) has long provided a macroscopic framework based on bulk thermodynamics, it often fails to capture the intricate molecular-scale details that govern nucleation pathways. This guide compares the atomistic modeling paradigm with CNT, examining their performance across diverse material systems including biominerals, metallic crystals, and ices. We present quantitative data on nucleation barriers, kinetics, and structures, supported by experimental validation from advanced techniques like in situ liquid-cell TEM and hyperpolarized NMR. The analysis demonstrates how atomistic simulations serve as a computational microscope, revealing non-classical pathways such as pre-nucleation clusters and surface-directed nucleation that challenge and complement traditional CNT frameworks.

Classical Nucleation Theory has served as the foundational framework for understanding phase transitions for decades. CNT treats nucleation as a stochastic process in which atoms or molecules assemble into a critical nucleus, spherical in the homogeneous case or a spherical cap on a substrate, characterized by a single free energy barrier set by the balance between surface and bulk energies [12]. This model depends heavily on macroscopic parameters such as interfacial tension and assumes a single, well-defined pathway from the disordered to the ordered phase.

The atomistic paradigm challenges these simplifications by providing direct access to the molecular events preceding phase formation. Through techniques like molecular dynamics (MD) and metadynamics, researchers can now observe nucleation in silico, capturing transient intermediates, multiple pathways, and structural evolutions that occur at timescales from picoseconds to microseconds and length scales from angstroms to nanometers. This computational microscope has revealed that nucleation is often more complex than CNT predicts, involving non-classical pathways such as pre-nucleation clusters, two-step nucleation through dense liquid phases, and the critical influence of spatial confinement and epitaxial matching.

Methodological Comparison: CNT vs. Atomistic Modeling

Fundamental Principles and Approaches

Table 1: Core Methodological Differences Between CNT and Atomistic Modeling

| Aspect | Classical Nucleation Theory (CNT) | Atomistic Modeling Paradigm |
|---|---|---|
| Theoretical Basis | Macroscopic thermodynamics, capillary approximation | First-principles quantum mechanics, empirical force fields |
| Nucleus Description | Structureless spherical cap with sharp interface | Atomistically resolved with chemical specificity |
| Key Parameters | Interfacial tension, contact angle, supersaturation | Interatomic potentials, coordination numbers, bond angles |
| Barrier Calculation | Analytical expression: ΔG* = 16πσ³/(3|Δgᵥ|²) | Free energy landscapes via enhanced sampling |
| Timescale Resolution | Mean-first-passage time from kinetic theory | Femtosecond to microsecond trajectory analysis |
| Spatial Resolution | Continuum (no atomic details) | Angstrom to nanometer scale |
| Experimental Validation | Bulk kinetic measurements, scattering data | In situ microscopy, spectroscopy, hyperpolarized NMR |

Atomistic Simulation Methodologies

Atomistic approaches employ diverse computational techniques to capture nucleation phenomena:

Molecular Dynamics (MD) simulations integrate Newton's equations of motion for all atoms in the system, generating trajectories that can reveal spontaneous nucleation events under sufficiently deep supercooling or supersaturation. Enhanced sampling methods such as metadynamics and umbrella sampling make rare events like nucleation tractable by biasing the simulation along collective variables, enabling quantitative free energy barrier calculations [13].
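The core of any MD engine is a time integrator. The minimal sketch below uses velocity Verlet with a Lennard-Jones pair potential in reduced units (an assumed toy system, not one of the force fields discussed here) and derives the forces as the negative gradient of the energy, so total energy and momentum should be conserved up to integration error.

```python
import numpy as np

def lj_energy_forces(pos, eps=1.0, sigma=1.0):
    """Lennard-Jones energy and conservative forces (F = -dU/dx) summed over all pairs."""
    n = len(pos)
    energy = 0.0
    forces = np.zeros_like(pos)
    for i in range(n):
        for j in range(i + 1, n):
            rij = pos[i] - pos[j]
            r2 = rij @ rij
            sr6 = (sigma**2 / r2) ** 3
            energy += 4.0 * eps * (sr6**2 - sr6)
            # -dU/dr divided by r, so multiplying by rij gives the force vector on atom i
            f_over_r = 24.0 * eps * (2.0 * sr6**2 - sr6) / r2
            forces[i] += f_over_r * rij
            forces[j] -= f_over_r * rij
    return energy, forces

def velocity_verlet(pos, vel, mass, dt, n_steps):
    """Integrate Newton's equations; returns the final state and the total-energy trace."""
    _, f = lj_energy_forces(pos)
    energies = []
    for _ in range(n_steps):
        vel = vel + 0.5 * dt * f / mass      # half kick
        pos = pos + dt * vel                 # drift
        u, f = lj_energy_forces(pos)         # forces at the new positions
        vel = vel + 0.5 * dt * f / mass      # second half kick
        energies.append(u + 0.5 * mass * np.sum(vel**2))
    return pos, vel, np.array(energies)

# Three atoms starting at rest (reduced units); an illustrative toy cluster
pos = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [0.0, 1.4, 0.0]])
vel = np.zeros_like(pos)
pos, vel, E = velocity_verlet(pos, vel, mass=1.0, dt=0.001, n_steps=5000)
print(f"relative energy fluctuation: {(E.max() - E.min()) / abs(E[0]):.2e}")
```

Production engines add neighbor lists, periodic boundaries, and thermostats, but nucleation studies rely on the same integrator; the small energy fluctuation is a quick sanity check that the forces are consistent with the potential.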

Force Fields form the foundation of these simulations, with choices ranging from:

- All-atom models like TIP4P/Ice for water, which include explicit hydrogen atoms and electrostatic interactions [12]

- Coarse-grained models like mW (monatomic water), which represent multiple atoms with single interaction sites for accelerated sampling [12]

- Reactive force fields that can handle bond formation and breaking during crystallization processes

Quantum Mechanical Calculations, particularly Density Functional Theory (DFT), provide first-principles reference data for force field validation and enable detailed characterization of electronic structure changes during nucleation [14] [15].

Quantitative Performance Comparison Across Material Systems

Nucleation Barriers and Kinetics

Table 2: Comparison of Nucleation Barriers and Kinetics Across Material Systems

| Material System | CNT Prediction | Atomistic Prediction | Experimental Validation | Key Discrepancy |
|---|---|---|---|---|
| Ice Nucleation on AgI [12] | Barrier depends solely on supercooling & contact angle | Enhancement in slits matching ice bilayer thickness (2.5-3.5 k_BT reduction) | Cryo-electron microscopy | CNT misses structural matching & confinement effects |
| Dislocation Nucleation in Ni [13] | Not directly addressed | ΔF = 1.65 eV (pristine GB), reduces exponentially with void size | Nanoindentation experiments | Atomistics quantifies defect-mediated nucleation |
| CaP Prenucleation Clusters [14] | Assumes direct ion attachment to critical nucleus | Identifies stable PNCs with Ca/Pi ≈ 1, constant 3.0-3.6 Å Ca-P distances | dDNP-enhanced NMR spectroscopy | CNT misses persistent pre-nucleation species |
| Pt on Pd Nanocubes [15] | Uniform surface energy minimization | Corner nucleation (0.08 nm/s) until 1.6 nm threshold, then diffusion | In situ LC-TEM with atomic resolution | CNT cannot predict site-specific nucleation barriers |
| Mixed Inorganic Salts in SCW [16] | Homogeneous rate based on supersaturation | Ion-pairing dominated nucleation, rate decreases with temperature (34.96 to 1.65) | Crystallization tests in SCWG reactors | CNT overestimates rate at high T due to density neglect |

Structural Predictions and Mechanisms

Table 3: Structural Insights Beyond CNT Capabilities

| Structural Aspect | CNT Limitation | Atomistic Revelation | Experimental Corroboration |
|---|---|---|---|
| Cluster Structure | Featureless spherical cap | pH-independent Ca-P distances in PNCs matching brushite/OCP [14] | dDNP-NMR fingerprint matching |
| Surface Templating | Macroscopic contact angle | Atomic lattice matching (AgI to ice Ih basal plane) enhances nucleation [12] | Electron diffraction patterns |
| Defect Effects | Not incorporated | Exponential reduction in dislocation nucleation barrier with void size [13] | TEM of deformed nanocrystalline metals |
| Growth Pathways | Uniform radial growth | Directional diffusion from corners to edges to faces [15] | In situ LC-TEM trajectory analysis |
| Polymorphism | Single stable phase | Multiple competing polymorphs (α, β', β in TAGs) with transformation pathways [17] | XRD and DSC thermal analysis |

Experimental Validation Protocols

Advanced In Situ Characterization

Liquid-Cell Transmission Electron Microscopy (LC-TEM) Protocol for Pt-on-Pd Nanocube Validation [15]:

- Sample Preparation: 20 nm Pd cubic seeds mixed with K₂PtCl₄ (0.015-0.200 mM) in aqueous solution sealed between SiN windows

- Imaging Conditions: 300 keV electrons serving dual role as imaging probe and reducing agent, room temperature to slow diffusion

- Data Acquisition: Continuous imaging at 1-2 frames/second with sufficient electron dose for atomic resolution but minimal beam effects

- Trajectory Analysis: Track nucleation site preference, diffusion pathways, and growth rates with sub-nanometer precision

- Ex Situ Validation: Quench reaction by beam removal and perform HAADF-STEM for atomic-scale structural analysis

Key Findings: Pt preferentially nucleates at the corners, growing at 0.08 nm/s until it reaches a 1.6 nm threshold, and then diffuses to the edges and faces to form uniform shells, contradicting CNT's premise of uniform surface-energy minimization.

Hyperpolarized NMR Spectroscopy

Protocol for Prenucleation Cluster Detection [14]:

- Dynamic Nuclear Polarization: Dissolution DNP at 1.4 K and 6.7 T for 2 hours with TEMPOL radical polarizer

- Sample Injection: Rapid dissolution and transfer to the NMR spectrometer, injecting 300 μL of hyperpolarized sample within 1 second

- NMR Detection: ³¹P NMR on Bruker NEO 500 MHz spectrometer with BBFO Prodigy cryogenic probe, 8° flip angles at 1 s⁻¹ repetition

- Computational Integration: MD simulations with CHARMM36 force field followed by quantum mechanical chemical shift calculations

- Spectral Matching: Compare experimental NMR "fingerprints" with computed spectra from candidate PNC structures

Key Findings: Identification of stable CaP PNCs with Ca/Pi ≈ 1.0 and constant Ca-P distances (3.0, 3.6 Å) across pH 6-8, demonstrating pH-independent local coordination environments.
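The small 8° flip angle in this protocol matters because hyperpolarized magnetization is not replenished: each pulse converts a fraction sin θ of the remaining longitudinal magnetization into signal and leaves cos θ behind. The sketch below computes the relative signal per scan under that standard model; the optional T1 decay and the pulse counts are illustrative assumptions, not parameters reported in [14].

```python
import numpy as np

def hyperpolarized_signal(flip_deg, n_pulses, tr_s=1.0, t1_s=None):
    """Relative transverse signal per pulse for a non-renewable hyperpolarized pool.

    Before pulse k the longitudinal magnetization is cos(theta)**k (times an optional
    T1 decay exp(-k*TR/T1)); the detected signal is sin(theta) times that value.
    Recovery toward thermal polarization is neglected.
    """
    theta = np.deg2rad(flip_deg)
    k = np.arange(n_pulses)
    remaining = np.cos(theta) ** k
    if t1_s is not None:
        remaining = remaining * np.exp(-k * tr_s / t1_s)
    return np.sin(theta) * remaining

sig = hyperpolarized_signal(flip_deg=8.0, n_pulses=60)
left = np.cos(np.deg2rad(8.0)) ** 60
print(f"first-scan signal: {sig[0]:.3f} of M0; longitudinal pool left after 60 pulses: {left:.2f}")
```

A 90° pulse would give the largest single scan but consume the entire hyperpolarized pool at once; small flip angles trade per-scan signal for the ability to follow the sample over many scans at 1 s intervals.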

Enhanced Sampling and Free Energy Calculations

Protocol for Dislocation Nucleation Barriers [13]:

- System Setup: Ni bicrystal with a Σ3 grain boundary containing pre-existing voids of varying radii (0.5-2.0 nm)

- MD Simulations: LAMMPS with Foiles-Hoyt EAM potential for Ni, uniaxial tension perpendicular to interface

- Nudged Elastic Band (NEB): Identify minimum energy path and transition state for dislocation nucleation

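- Activation Analysis: Extract ΔG via the Kocks form, ΔG = ΔF[1 - (σ/σ₀)^p]^q, where ΔF is the stress-free activation energy, σ₀ the athermal nucleation stress, and p and q fitted exponents, with temperature-dependent validation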

- Phenomenological Modeling: Exponential relationship between activation energy and void size for crystal plasticity models

Key Findings: Activation energy for dislocation nucleation decreases exponentially with void radius, quantifying defect-mediated nucleation inaccessible to CNT.
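The sketch below combines the two relationships in this protocol: a Kocks-type stress dependence of the barrier and an exponential decrease of the stress-free barrier with void radius, followed by an Arrhenius rate. Only ΔF = 1.65 eV for the pristine boundary comes from the data above; σ₀, p, q, the decay length r_c, and the attempt frequency ν₀ are hypothetical placeholders, and σ₀ is held fixed across void sizes purely for illustration.

```python
import numpy as np

K_B_EV = 8.617333262e-5  # Boltzmann constant (eV/K)

def kocks_barrier(sigma, dF, sigma0, p, q):
    """Kocks-type barrier dG = dF * [1 - (sigma/sigma0)**p]**q, clipped to 0 <= sigma <= sigma0."""
    x = np.clip(np.asarray(sigma, dtype=float) / sigma0, 0.0, 1.0)
    return dF * (1.0 - x**p) ** q

def void_scaled_barrier(radius_nm, dF0, r_c_nm):
    """Hypothetical exponential decay of the stress-free barrier with void radius."""
    return dF0 * np.exp(-np.asarray(radius_nm, dtype=float) / r_c_nm)

def nucleation_rate(dG_eV, T, nu0=1e13):
    """Arrhenius rate with an assumed attempt frequency nu0 (1/s)."""
    return nu0 * np.exp(-dG_eV / (K_B_EV * T))

radii = np.array([0.0, 0.5, 1.0, 2.0])                       # void radii (nm)
dF = void_scaled_barrier(radii, dF0=1.65, r_c_nm=1.0)        # r_c is hypothetical
dG = kocks_barrier(3.0, dF, sigma0=5.0, p=0.5, q=1.5)        # stresses in GPa, hypothetical
for r, g in zip(radii, dG):
    print(f"void radius {r:.1f} nm: dG = {g:.3f} eV, rate(300 K) = {nucleation_rate(g, 300.0):.2e} 1/s")
```

In a crystal-plasticity model, a function like `void_scaled_barrier` would be replaced by the exponential fit extracted from the NEB data rather than the placeholder decay length used here.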

Visualization of Atomistic Workflows and Pathways

Diagram 1: Integrated Workflow for Atomistic Nucleation Studies illustrating the multi-scale approach combining computational modeling with experimental validation.

Diagram 2: Paradigm Shift from CNT to Atomistic Modeling showing how atomistic approaches address fundamental limitations of classical theory.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Computational Tools for Nucleation Studies

| Category | Specific Tools/Reagents | Function in Nucleation Research |
|---|---|---|
| Simulation Software | LAMMPS [13] [16], GROMACS [14] | MD simulation engines for trajectory calculation and analysis |
| Force Fields | CHARMM36 [14], TIP4P/Ice [12], mW [12], EAM Foiles-Hoyt [13] | Define interatomic potentials for specific material systems |
| Enhanced Sampling | Nudged Elastic Band [13], Metadynamics, Umbrella Sampling | Calculate free energy barriers and transition paths for rare events |
| Quantum Mechanics | Density Functional Theory [15] | Electronic structure calculations for force field validation |
| In Situ Microscopy | Liquid-Cell TEM [15], Cryo-EM | Direct visualization of nucleation events with near-atomic resolution |
| Advanced Spectroscopy | Dissolution DNP-NMR [14], ²⁷Al/²⁹Si NMR [18] | Detection of transient species and local chemical environments |
| System-Specific Reagents | K₂PtCl₄/Pd nanocubes [15], AgI surfaces [12], Ca/Pi solutions [14] | Well-characterized experimental systems for validation |

The atomistic paradigm has fundamentally transformed our understanding of nucleation, serving as a computational microscope that reveals molecular details inaccessible to both experimental observation and classical theoretical frameworks. Across material systems—from biominerals and metals to ices and complex organic crystals—atomistic modeling has consistently demonstrated capabilities beyond CNT, including predicting non-classical pathways, quantifying defect-mediated nucleation, and revealing epitaxial template effects.

The convergence of enhanced sampling algorithms, exponentially growing computational resources, and increasingly sophisticated in situ experimental validation suggests an emerging era of predictive nucleation design. Future developments will likely focus on multi-scale frameworks that seamlessly connect electronic structure calculations to mesoscopic crystallization models, machine learning potentials that accelerate accurate sampling, and integrated digital workflows that combine simulation with robotic experimentation.

For researchers in pharmaceutical development, these advances translate to improved control over polymorph selection, crystal habit, and particle size distribution—critical parameters in drug bioavailability and processing. The atomistic paradigm provides not just explanatory power but a genuine path toward predictive materials design, enabling rational engineering of crystallization processes rather than empirical optimization.

The long-standing scientific debate between classical nucleation theory (CNT) and atomistic modeling approaches represents a fundamental conflict in how we understand the initial stages of phase transitions. For nearly a century, CNT has provided a valuable phenomenological framework for describing nucleation processes through continuum thermodynamics, representing critical nuclei as small droplets of the bulk phase with macroscopic properties like surface tension. However, this simplified view has faced persistent challenges in quantitatively predicting experimental results, particularly for systems where nanoscale clusters exhibit molecular-specific behavior not captured by continuum approximations. The convergence of algorithmic advances, rich datasets, and powerful computing architectures is now fundamentally transforming this research landscape, enabling unprecedented direct validation of these competing theoretical frameworks and resolving long-standing discrepancies between theory and experiment.

Recent breakthroughs in machine learning interatomic potentials have been particularly transformative, bridging the accuracy gap between quantum-mechanical calculations and molecular dynamics simulations. As demonstrated in groundbreaking aluminum crystallization studies, these ML-driven models trained exclusively on liquid-phase DFT configurations can accurately reproduce key thermodynamic and structural properties without prior knowledge of solid phases, creating a "crystal-unbiased" approach that effectively eliminates model bias from nucleation simulations [19]. Concurrently, heterogeneous computing architectures and specialized supercomputers like the KISTI-6 system are providing the computational resources necessary to simulate systems of sufficient scale to study spontaneous nucleation events with quantum accuracy [20]. These technological drivers are complemented by the emergence of rich experimental datasets that enable more rigorous validation of theoretical predictions, particularly through advanced characterization techniques that provide structural and kinetic information across multiple scales.

Performance Comparison: Classical vs. Atomistic Approaches

The table below summarizes the core characteristics, methodological strengths, and limitations of Classical Nucleation Theory versus modern atomistic approaches, highlighting how key drivers have addressed historical challenges in nucleation research.

Table 1: Performance Comparison Between Classical Nucleation Theory and Atomistic Approaches

| Aspect | Classical Nucleation Theory (CNT) | Modern Atomistic Approaches |
|---|---|---|
| Theoretical Foundation | Continuum thermodynamics; macroscopic material properties [21] | Quantum-mechanical calculations; machine learning interatomic potentials [19] |
| Cluster Representation | Small droplets of the bulk phase with a sharp interface [21] | Distinct molecular species with size-dependent properties [22] |
| Surface Tension Treatment | Constant macroscopic value [21] | Curvature-dependent (e.g., Tolman correction) [23] |
| Free Energy Landscape | Smooth function of cluster size with a single maximum at the critical nucleus [22] | Multimodal function reflecting molecular complexity [22] |
| Computational Demand | Low; analytical expressions | Extremely high; requires supercomputing resources [20] [19] |
| Transferability | General framework but system-dependent parameters | High for ML potentials trained on diverse DFT data [19] |
| Quantitative Accuracy | Often underestimates rates by orders of magnitude; requires fitting [21] | Quantum-accurate; validated against experimental measurements [19] |

The performance disparities between these approaches are particularly evident in their treatment of nanoscale clusters. CNT assumes that clusters as small as a few molecules can be described using macroscopic interfacial properties, an approximation that becomes increasingly invalid at the nanoscale. In contrast, atomistic approaches explicitly capture molecular-specific interactions and structure, revealing free energy landscapes that are both "quantitatively and qualitatively different than in CNT" [22]. For instance, studies of water clusters up to size 10 and aluminum clusters up to size 60 demonstrate that the free energy of cluster formation exhibits multimodal behavior as a function of cluster size, reflecting structural transitions that are completely absent from the classical picture [22].

Recent validation studies highlight the improving predictive capability of modern approaches. In a comprehensive molecular dynamics study of aluminum crystallization using machine learning potentials, researchers found "excellent agreement between theoretical predictions and direct MD-derived values of J [nucleation rate], corroborating the validity of CNT" when properly parameterized with simulation-derived properties [19]. This suggests that CNT's phenomenological framework remains valuable when informed by accurate atomistic data, creating a potential synthesis between these seemingly opposed approaches.
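The comparison described in [19] sets a rate measured directly from MD against a CNT rate built from simulation-derived properties. The sketch below shows both estimators in their simplest textbook forms: a direct rate J = 1/(V⟨τ⟩) from first-nucleation waiting times, and a CNT rate J = ρ Z f⁺ exp(−ΔG*/kT) with the Zeldovich factor for ΔG(n) = −nΔμ + a n^(2/3). The waiting times are synthetic and all parameters are illustrative reduced-unit values, not the study's data or its exact formulation.

```python
import numpy as np

def rate_from_waiting_times(tau, volume):
    """Direct MD estimate J = 1 / (V * <tau>), assuming independent (Poisson) nucleation events."""
    return 1.0 / (volume * np.mean(tau))

def cnt_rate(rho, dmu, a, f_plus, kT):
    """CNT rate J = rho * Z * f+ * exp(-dG*/kT) for dG(n) = -n*dmu + a*n**(2/3).

    Returns (J, n_star, dG_star); dmu, a and kT must share the same energy unit.
    """
    n_star = (2.0 * a / (3.0 * dmu)) ** 3
    dG_star = 4.0 * a**3 / (27.0 * dmu**2)
    zeldovich = np.sqrt(dmu / (6.0 * np.pi * kT * n_star))
    return rho * zeldovich * f_plus * np.exp(-dG_star / kT), n_star, dG_star

# Synthetic, illustrative inputs in reduced units (not data from [19])
rng = np.random.default_rng(0)
tau = rng.exponential(scale=2.0e4, size=30)   # hypothetical first-nucleation times
J_md = rate_from_waiting_times(tau, volume=1.0e4)
J_cnt, n_star, dG_star = cnt_rate(rho=0.9, dmu=0.35, a=2.6, f_plus=5.0, kT=1.0)
print(f"J_MD = {J_md:.2e}, J_CNT = {J_cnt:.2e}, n* = {n_star:.0f}, dG* = {dG_star:.1f} kT")
```

In a real validation, Δμ, the interfacial term a, and the attachment rate f⁺ would all be measured from the same simulations, so that agreement between the two estimates is a test of CNT rather than of a fit.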

Experimental Protocols and Methodologies

Machine Learning-Driven Molecular Dynamics for Nucleation Validation

A recent computational protocol combines machine learning interatomic potentials with large-scale molecular dynamics simulations to test theoretical predictions of nucleation behavior directly. The methodology employed in aluminum crystallization studies illustrates how these drivers are integrated [19]:

Liquid-Phase Training Strategy: Unlike traditional empirical potentials, the ML model is trained exclusively on liquid-phase DFT configurations "without any prior knowledge of solid properties and structures," creating a crystal-unbiased approach that eliminates model predisposition toward specific crystalline phases [19].

Pair Entropy Fingerprint (PEF) Method: Crystalline clusters are identified using the PEF method, which detects emergent crystalline structures "independent of predefined crystal patterns," removing analytical bias from the characterization process [19].

Direct Nucleation Rate Calculation: The homogeneous nucleation rate, J, is calculated both through direct observation of nucleation events in MD simulations and theoretically via CNT equations "using MD-derived properties, without any fitting parameters," enabling direct validation of theoretical predictions [19].

Multi-Temperature Validation: Simulations span temperature ranges of T=500–540 K for spontaneous crystallization and T=600–790 K for seeded crystallization, providing comprehensive data across different nucleation regimes [19].
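Entropy-based fingerprints of this kind build on the two-body excess entropy, s₂ = −2πρk_B ∫ [g(r) ln g(r) − g(r) + 1] r² dr, which becomes more negative as the radial distribution function g(r) becomes more structured. The sketch below evaluates the global version of this integral for toy RDFs; per-atom fingerprints instead use a locally smoothed g_i(r) around each atom, and the exact PEF definition used in [19] may differ, so treat this as an illustration of the underlying quantity only. The `toy_rdf` helper is hypothetical.

```python
import numpy as np

def pair_entropy(r, g, rho, k_b=1.0):
    """Two-body excess entropy s2 = -2*pi*rho*k_B * integral of [g ln g - g + 1] r^2 dr."""
    r = np.asarray(r, dtype=float)
    g = np.asarray(g, dtype=float)
    safe_g = np.where(g > 0.0, g, 1.0)                  # avoid log(0); g ln g -> 0 as g -> 0
    g_log_g = np.where(g > 0.0, g * np.log(safe_g), 0.0)
    integrand = (g_log_g - g + 1.0) * r**2
    # Trapezoidal rule written out explicitly
    integral = np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(r))
    return -2.0 * np.pi * rho * k_b * integral

def toy_rdf(r, width):
    """Hypothetical RDF: excluded core below r = 0.9 plus one Gaussian shell of fixed area."""
    height = 0.5 / width                                # keep the shell area constant
    shell = height * np.exp(-((r - 1.05) ** 2) / (2.0 * width**2))
    return np.where(r < 0.9, 0.0, 1.0 + shell)

r = np.linspace(0.01, 3.0, 3000)
s_ideal = pair_entropy(r, np.ones_like(r), rho=1.0)
s_broad = pair_entropy(r, toy_rdf(r, 0.15), rho=1.0)   # liquid-like shell
s_sharp = pair_entropy(r, toy_rdf(r, 0.05), rho=1.0)   # more ordered shell
print(f"ideal: {s_ideal:.3f}, broad: {s_broad:.3f}, sharp: {s_sharp:.3f} (units of k_B)")
```

Because the integrand is non-negative and grows with the sharpness of the coordination shells, the fingerprint separates ordered from disordered environments without reference to any particular crystal lattice.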

This protocol represents a significant advance over earlier approaches that struggled with transferability and oversimplification of complex atomic interactions. The research demonstrates that "an ML-driven, crystal-unbiased model can accurately capture the kinetics and thermodynamics of crystallization, validating two classical phenomenological theories at the atomic scale" [19].

Quantitative Experimental Data Interpretation Framework

For experimental systems where direct simulation is computationally prohibitive, such as protein nucleation, a rigorous framework for interpreting quantitative experimental data using CNT has been developed [21]:

Pre-exponential Factor Analysis: Experimental nucleation rates are analyzed to extract the pre-exponential factor, which is then compared against "physically reasonable bounds for homogeneous nucleation" to distinguish between homogeneous and heterogeneous mechanisms [21].

Barrier Height Distribution Assessment: The functional form of the nucleation rate is examined for evidence of "a distribution of barrier heights," which is "likely for heterogeneous nucleation but not possible for homogeneous nucleation" [21].

Hybrid Atomistic-Continuum Approach: Researchers suggest constructing a "master table" of free energies of cluster formation based on "a hybrid of atomistic data, experimental values inferred by means of the nucleation theorem, and extrapolations to larger cluster sizes based on CNT" [22].

This framework is particularly valuable for complex molecular systems like lysozyme, where application to experimental data revealed values for the pre-exponential factor "outside the physically reasonable bounds for homogeneous nucleation but consistent with heterogeneous nucleation" [21], resolving long-standing interpretation challenges.
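A common way to extract the pre-exponential factor from experimental data is to linearize the CNT rate for solution nucleation, ln J = ln A − B/(ln S)², where S is the supersaturation. For this rate form the nucleation theorem then gives the critical size approximately as n* ≈ d ln J/d ln S = 2B/(ln S)³. The sketch below fits synthetic data generated from assumed values A = 10⁶ and B = 40; it shows the mechanics only and is not the analysis pipeline of [21] or [22].

```python
import numpy as np

def fit_cnt_rate(S, J):
    """Fit ln J = ln A - B / (ln S)**2 by linear least squares; returns (A, B)."""
    x = 1.0 / np.log(S) ** 2
    slope, intercept = np.polyfit(x, np.log(J), 1)
    return np.exp(intercept), -slope

def critical_size_from_theorem(S, B):
    """Nucleation-theorem estimate n* ~ d ln J / d ln S = 2B / (ln S)**3 for this rate form."""
    return 2.0 * B / np.log(S) ** 3

# Synthetic data from assumed A = 1e6 and B = 40 with 5% log-normal scatter
rng = np.random.default_rng(1)
S = np.linspace(2.0, 4.0, 8)
J = 1.0e6 * np.exp(-40.0 / np.log(S) ** 2) * np.exp(rng.normal(0.0, 0.05, S.size))

A_fit, B_fit = fit_cnt_rate(S, J)
print(f"A = {A_fit:.2e}, B = {B_fit:.1f}")
print("n* estimates:", np.round(critical_size_from_theorem(S, B_fit), 1))
```

The fitted A is the quantity that would then be compared with theoretical bounds for homogeneous nucleation; an A far outside those bounds points toward a heterogeneous mechanism.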

Diagram 1: Integrated Research Workflow for Nucleation Validation

The Scientist's Toolkit: Essential Research Reagent Solutions

Modern nucleation research requires a sophisticated combination of computational tools, theoretical frameworks, and experimental systems. The table below details key "research reagent solutions" essential for cutting-edge investigations in this field.

Table 2: Essential Research Reagents and Tools for Nucleation Research

| Research Tool | Function/Application | Key Advances |
|---|---|---|
| Machine Learning Interatomic Potentials | Bridges accuracy of quantum calculations with scale of MD simulations [19] | Trained exclusively on liquid-phase DFT; crystal-unbiased [19] |
| Pair Entropy Fingerprint (PEF) Method | Identifies emergent crystalline clusters without predefined patterns [19] | Pattern-independent structure detection [19] |
| Classical Nucleation Theory with Extensions | Baseline phenomenological framework for nucleation interpretation [21] [23] | Tolman correction for curvature effects; real-gas behavior [23] |
| Heterogeneous Computing Architectures | Provides computational resources for quantum-accurate large-scale simulations [20] | KISTI-6 supercomputer specialized for nuclear theory calculations [20] |
| Lysozyme Protein System | Model experimental system for studying protein nucleation [21] | Enables distinction between homogeneous and heterogeneous nucleation [21] |
| Advanced Free Energy Formulations | Corrects nucleation work calculations with self-consistency correction [19] | Accounts for W₁ ≡ ΔG(n=1) term significant for good glass-formers [19] |

The integration of these tools has created new possibilities for resolving long-standing questions in nucleation research. For instance, the combination of ML potentials with PEF analysis has enabled researchers to "investigate spontaneous and seeded crystallization" in aluminum while avoiding the biases that plagued earlier simulation approaches [19]. Similarly, advanced CNT formulations that incorporate "curvature-dependent surface tension (Tolman correction) and real-gas behavior (Van der Waals correction)" are extending classical theory into the nanoscale domain where atomistic effects dominate [23].
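The Tolman correction replaces the constant interfacial tension with γ(r) = γ∞/(1 + 2δ/r), where δ is the Tolman length. The sketch below locates the maximum of ΔG(r) = 4πr²γ(r) − (4/3)πr³ΔGᵥ numerically with and without the correction. The values of γ∞, ΔGᵥ, and δ are illustrative assumptions; the sign and magnitude of δ are system-specific, and a negative δ (which raises γ at small r) would require restricting the grid to r > 2|δ|.

```python
import numpy as np

def tolman_gamma(r, gamma_inf, delta):
    """Curvature-dependent surface tension gamma(r) = gamma_inf / (1 + 2*delta/r)."""
    return gamma_inf / (1.0 + 2.0 * delta / r)

def barrier(gamma_inf, dg_v, delta=0.0, n_grid=200_000):
    """Locate the maximum of dG(r) = 4 pi r^2 gamma(r) - (4/3) pi r^3 dg_v on a grid.

    dg_v is the positive bulk free-energy gain per unit volume; delta = 0 recovers plain CNT.
    """
    r_flat = 2.0 * gamma_inf / dg_v            # uncorrected critical radius, used as a length scale
    r = np.linspace(1e-3 * r_flat, 5.0 * r_flat, n_grid)
    dG = (4.0 * np.pi * r**2 * tolman_gamma(r, gamma_inf, delta)
          - (4.0 / 3.0) * np.pi * r**3 * dg_v)
    i = np.argmax(dG)
    return r[i], dG[i]

gamma_inf, dg_v = 0.03, 5.0e7                       # J/m^2 and J/m^3, illustrative values
r0, g0 = barrier(gamma_inf, dg_v)                   # plain CNT (delta = 0)
r1, g1 = barrier(gamma_inf, dg_v, delta=0.2e-9)     # hypothetical Tolman length of 0.2 nm
print(f"r*: {r0 * 1e9:.2f} -> {r1 * 1e9:.2f} nm, barrier ratio {g1 / g0:.2f}")
```

Even a sub-nanometer Tolman length changes the barrier noticeably, and because the rate depends exponentially on ΔG*, the effect on predicted nucleation rates is much larger than on the barrier itself.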

Integration Pathways and Logical Relationships

The interplay between algorithmic advances, rich datasets, and powerful computing architectures follows a sophisticated logical structure that enables continuous improvement in nucleation prediction capabilities. The diagram below visualizes these key relationships and their synergistic effects on research outcomes.

Diagram 2: Integration Pathways Between Key Research Drivers

The synergistic relationship between these drivers creates a virtuous cycle of improvement: Rich datasets from both experimental measurements and quantum calculations train more accurate algorithmic approaches, which in turn demand more powerful computing architectures for implementation, enabling the generation of even richer validation data. This integrated framework is rapidly transforming nucleation research from a field dominated by phenomenological approximations to one grounded in molecular-level predictions with quantifiable accuracy.

For the specific case of distinguishing between homogeneous and heterogeneous nucleation mechanisms, a longstanding challenge in experimental interpretation, this integration enables modeling where "the functional form of the rate suggests that there is a distribution of barrier heights," with such a distribution being "likely for heterogeneous nucleation but not possible for homogeneous nucleation" [21]. The convergence of these key drivers thus helps address fundamental questions that have persisted since the inception of nucleation theory nearly a century ago.

Understanding drug action requires a comprehensive framework that connects atomic-level interactions to the resulting cellular phenotypes. This process inherently involves bridging vast scales: a drug molecule, measuring on the order of nanometers, must bind its specific biological target, such as a protein receptor, to initiate a cascade of signals that ultimately alter cellular function. The central challenge in modern drug development lies in accurately predicting and validating this entire pathway. Two distinct but potentially complementary computational approaches have emerged to address this challenge. Atomistic models aim to simulate the precise interactions at the molecular level, calculating the forces and binding energies between drugs and their targets with high fidelity. In parallel, classical nucleation theory (CNT) and its extensions provide a macroscopic framework for understanding collective cellular phenomena, such as the formation of protein aggregates or membrane domains, which can be crucial for drug effects. This guide objectively compares the performance, applicability, and validation of these two paradigms in the context of pharmacological research.

Computational Approaches: Atomistic Models vs. Classical Nucleation Theory

Fundamental Principles and Scope

Atomistic Models operate at the molecular and atomic scale. Their primary goal is to compute the potential energy surface of molecular systems, providing a detailed view of interactions. For drug action, this translates to simulating the binding of a small molecule to a protein target, the conformational changes induced, and the precise biochemical interactions that occur [24]. The advent of machine learning interatomic potentials (MLIPs), such as those trained on massive datasets like Meta's Open Molecules 2025 (OMol25), has dramatically accelerated these simulations by offering near-quantum mechanical accuracy at a fraction of the computational cost [24].

Classical Nucleation Theory (CNT) is a thermodynamic framework that describes how the first seeds of a new phase (e.g., a protein cluster or a bubble) form within a metastable parent phase (e.g., the cytoplasm). It focuses on the free energy balance between the bulk of a nascent cluster and its surface. CNT is particularly relevant for drug action when cellular effects involve collective phenomena, such as the aggregation of proteins in neurodegenerative diseases or the formation of signaling complexes on the cell membrane [25] [23]. Its strength lies in predicting rates and critical sizes for these transitions.

The following table summarizes their core attributes for easy comparison.

Table 1: Fundamental Comparison of Atomistic Models and Classical Nucleation Theory

| Feature | Atomistic Models | Classical Nucleation Theory (CNT) |
|---|---|---|
| Primary Scale | Atomic / Molecular (Nanoscale) | Macroscopic / Continuum (Microscale and above) |
| Core Function | Calculates molecular potential energy surfaces; simulates binding dynamics and conformational changes [24]. | Predicts nucleation rates and critical cluster size for phase transitions [25] [23]. |
| Key Inputs | Atomic coordinates, force fields, neural network potentials, electronic structure data [24] [26]. | Interfacial surface tension, supersaturation, thermodynamic driving force [23] [27]. |
| Typical Outputs | Binding energies, protein-ligand poses, reaction pathways, atomic forces [24]. | Nucleation rate, free energy barrier, critical nucleus size [27]. |
| Temporal Scale | Picoseconds to microseconds [24]. | Milliseconds to seconds (or longer). |
| Applicability in Drug Action | Direct: Molecular docking, lead optimization, understanding binding affinity and specificity [24]. Indirect: Informing parameters for coarser-grained models. | Indirect: Modeling cellular phenomena driven by aggregation, such as protein aggregation in disease or formation of signaling platforms [25]. |

Performance and Validation in Predictive Accuracy

Quantitative benchmarks are essential for evaluating the performance of these models. The validation criteria differ significantly due to their disparate scales and objectives.

Atomistic Models are validated against quantum mechanical calculations and experimental structural data. Performance is measured by the accuracy of predicted molecular energies, forces, and the resulting geometries. Recent universal models trained on expansive datasets have set new standards for accuracy.

Table 2: Performance Benchmarking of Atomistic Models and CNT

| Validation Metric | Atomistic Models (e.g., OMol25-trained models) | Classical Nucleation Theory |
|---|---|---|
| Energy Accuracy | Near-quantum mechanical accuracy; outperform affordable DFT levels on benchmarks like GMTKN55 and Wiggle150 [24]. | Not directly applicable to molecular energies. |
| Force Accuracy | High accuracy for interatomic forces, crucial for stable dynamics simulations; conservative-force models outperform direct-force prediction [24]. | Not applicable. |
| Transferability | High across diverse chemical spaces (biomolecules, electrolytes, metal complexes) due to training on massive, diverse datasets [24] [26]. | Limited; parameters are often system-specific and require calibration [27]. |
| Limitations | Computationally expensive for large systems or long timescales; accuracy depends on training data quality and coverage [24]. | Breaks down for small clusters (few hundred particles); relies on macroscopic parameters that may not hold at nanoscale [27]. |
| Key Validation | Internal benchmarks against high-accuracy DFT; user reports of enabling previously impossible computations on large systems [24]. | Comparison with molecular dynamics simulations for model systems (e.g., Lennard-Jones, water) [27]. |

For atomistic models, benchmarks show that models like Meta's eSEN and UMA, trained on the OMol25 dataset, achieve "essentially perfect performance" on standard molecular energy benchmarks, with users reporting they provide "much better energies than the DFT level of theory I can afford" [24]. The shift to conservative-force models is critical for obtaining physically realistic and stable molecular dynamics simulations [24].

Classical Nucleation Theory, in contrast, is validated by its ability to predict nucleation rates and critical sizes. However, its performance is highly system-dependent. Recent research using advanced simulation techniques has rigorously tested CNT's limits, finding that it breaks down for very small clusters containing only a few dozen to a few hundred particles [27]. For larger clusters, CNT can be a valid approximation, and its predictive power has been improved by extensions that incorporate curvature-dependent surface tension (Tolman correction) and real-gas behavior (Van der Waals correction), making it more applicable to nanoscale nuclei relevant in biological contexts [23].

Experimental Protocols for Model Validation

Workflow for Validating an Atomistic Drug-Target Model

The following diagram illustrates a robust, iterative workflow for developing and validating an atomistic model of drug action, leveraging modern datasets and neural network potentials.

Diagram 1: Workflow for atomistic model validation. The process is iterative, relying on high-quality data and multi-faceted validation against quantum mechanics and experiment.

Protocol Steps:

- System Definition: Clearly define the drug molecule, protein target, and the biological environment (e.g., solvation, membrane). Obtain initial 3D structures from databases like the RCSB PDB [24].

- Data Selection and Curation: This critical step has been revolutionized by large-scale, publicly available datasets. For comprehensive coverage, utilize datasets like:

  - OMol25: Contains over 100 million calculations on diverse structures, including biomolecules from the PDB, electrolytes, and metal complexes, all computed at a consistent, high-level ωB97M-V theory [24].

  - MAD Dataset: A more compact universal dataset designed for massive atomic diversity, useful for training robust models that handle both organic and inorganic components and non-equilibrium configurations [26].

- Model Training & Optimization: Select a modern neural network potential architecture (e.g., eSEN, UMA) and train it on the curated data. The UMA (Universal Model for Atoms) architecture, for instance, uses a Mixture of Linear Experts (MoLE) to effectively learn from multiple, dissimilar datasets, improving knowledge transfer [24]. The training often involves a two-phase strategy: pre-training a direct-force model followed by fine-tuning for conservative forces, which enhances stability and reduces training time [24].

- Model Validation: Validate the model's predictions against data not seen during training.

  - Quantum Chemical Benchmarks: Compare predicted molecular energies and forces against high-accuracy DFT calculations on standardized benchmarks like GMTKN55 [24].

  - Structural Benchmarks: Validate the model's ability to reproduce known protein-ligand binding poses and interactions from high-resolution crystal structures.

- Production Simulation & Analysis: Use the validated model to run molecular dynamics simulations or geometry optimizations of the drug-target complex. Analyze the results to determine binding affinities, key interaction residues, and induced conformational changes. These molecular-level insights are then used to form hypotheses about the subsequent cellular effects.
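The quantum chemical benchmark step usually reduces to a few error metrics on a held-out set. The sketch below computes a per-atom energy MAE and force-component MAE and RMSE, handling structures with different atom counts; the array layout and the eV and eV/Å units are assumptions for illustration, and published benchmarks such as GMTKN55 define their own, different scoring schemes.

```python
import numpy as np

def energy_force_errors(e_pred, e_ref, f_pred, f_ref, n_atoms):
    """Per-atom energy MAE and force-component MAE/RMSE against reference (e.g. DFT) data.

    e_pred, e_ref : total energies per structure (assumed eV)
    f_pred, f_ref : lists of (n_i, 3) force arrays, one per structure (assumed eV/Angstrom)
    n_atoms       : number of atoms in each structure
    """
    e_pred = np.asarray(e_pred, dtype=float)
    e_ref = np.asarray(e_ref, dtype=float)
    n_atoms = np.asarray(n_atoms, dtype=float)
    e_mae = np.mean(np.abs((e_pred - e_ref) / n_atoms))
    # Flatten every force component from every structure into one error vector
    df = np.concatenate([np.ravel(p) - np.ravel(r) for p, r in zip(f_pred, f_ref)])
    return {
        "energy_mae_per_atom": e_mae,
        "force_mae": np.mean(np.abs(df)),
        "force_rmse": np.sqrt(np.mean(df**2)),
    }

# Hypothetical held-out data for two structures with 2 and 4 atoms
e_ref = np.array([-10.00, -20.50])
e_pred = np.array([-10.02, -20.46])
f_ref = [np.zeros((2, 3)), np.zeros((4, 3))]
f_pred = [np.full((2, 3), 0.05), np.full((4, 3), -0.02)]
print(energy_force_errors(e_pred, e_ref, f_pred, f_ref, n_atoms=[2, 4]))
```

Normalizing energies per atom keeps large biomolecular complexes from dominating the energy metric, while force errors are pooled per component because they govern MD stability.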

Workflow for Applying CNT to a Cellular Drug Effect

This protocol outlines how to apply and validate CNT for modeling a drug-induced cellular aggregation phenomenon.

Protocol Steps:

- Phenomenon Identification: Identify a cellular drug effect that involves a phase transition or aggregation, such as drug-induced protein aggregation or the formation of specific membrane lipid domains (lipid rafts) that concentrate signaling molecules.

- Parameterization: This is the most challenging step. Determine the key macroscopic parameters for the CNT equation:

- Interfacial Surface Tension (γ): Obtain from literature or estimate from molecular dynamics simulations of the interface between the two phases.

- Supersaturation (S) or Driving Force (Δμ): Estimate the cellular concentration c of the aggregating species (e.g., protein) and its equilibrium solubility c_eq. Under dilute, ideal-solution assumptions, S = c/c_eq and Δμ = k_BT ln S.

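- Corrections: For nanoscale clusters, consider corrections such as the Tolman correction for curvature-dependent surface tension and, where the nucleus contains a gas or vapor, the Van der Waals (real-gas) correction for non-ideal behavior; both have been tested against MD for cavitation bubbles [23].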

- Model Application: Use the parameterized CNT model to calculate the nucleation free-energy barrier (ΔG*) and the critical nucleus size (n*), from which the nucleation rate (J) can be estimated.

- Validation with Molecular Dynamics (MD): Compare the CNT predictions with direct molecular dynamics simulations, which serve as a "computational experiment."

- Complement direct MD with advanced sampling techniques such as aggregation-volume-bias Monte Carlo (AVBMC) to compute the free energy profile of cluster formation as a function of cluster size [27].

- Compare the critical cluster size and free-energy barrier height obtained from these simulations with the CNT predictions. Reported comparisons show that CNT agrees well with simulation for large clusters (hundreds of particles) but fails for small clusters [27].

- Bridging to Cellular Phenotype: If the CNT model is successfully validated against MD, its predictions for nucleation rates under different drug concentrations can be linked to the observed cellular phenotype (e.g., the rate and extent of protein aggregation).
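The parameterization, model-application, and validation steps can be sketched in a few lines. Assuming a spherical nucleus of n molecules, each occupying volume v, CNT writes ΔG(n) = -nΔμ + γ(36π)^(1/3) v^(2/3) n^(2/3), which gives n* = 32πγ³v²/(3Δμ³) and ΔG* = 16πγ³v²/(3Δμ²), with the rate J = J₀ exp(-ΔG*/k_BT). The kinetic prefactor J₀ is left as a user-supplied assumption, and `fit_effective_gamma` is a hypothetical helper illustrating the validation step: it fits an effective γ to a simulated ΔG(n) profile (for example from AVBMC) while holding Δμ fixed.

```python
import math
from typing import Sequence

def cnt_surface_coeff(gamma: float, v: float) -> float:
    """Coefficient a in dG(n) = -n*dmu + a*n**(2/3) for a spherical nucleus."""
    return gamma * (36.0 * math.pi) ** (1.0 / 3.0) * v ** (2.0 / 3.0)

def cnt_barrier(gamma: float, v: float, dmu: float) -> tuple[float, float]:
    """Return (n*, dG*) from classical nucleation theory; requires dmu > 0.

    Units must be consistent, e.g. gamma in kT/nm^2, v in nm^3, dmu in kT.
    """
    n_star = 32.0 * math.pi * gamma**3 * v**2 / (3.0 * dmu**3)
    dg_star = 16.0 * math.pi * gamma**3 * v**2 / (3.0 * dmu**2)
    return n_star, dg_star

def cnt_rate(j0: float, dg_star: float, kT: float) -> float:
    """Steady-state rate J = J0 * exp(-dG*/kT); j0 is an assumed kinetic prefactor."""
    return j0 * math.exp(-dg_star / kT)

def fit_effective_gamma(n: Sequence[float], dg: Sequence[float], dmu: float, v: float) -> float:
    """Least-squares effective gamma from a simulated profile dG(n), dmu held fixed.

    Rearranged model dG(n) + n*dmu = a*n**(2/3) is linear in a (no intercept).
    """
    x = [ni ** (2.0 / 3.0) for ni in n]
    y = [gi + ni * dmu for gi, ni in zip(dg, n)]
    a = sum(xi * yi for xi, yi in zip(x, y)) / sum(xi * xi for xi in x)
    return a / ((36.0 * math.pi) ** (1.0 / 3.0) * v ** (2.0 / 3.0))
```

If the fitted γ_eff shifts noticeably when the fit is restricted to the smallest clusters, that is the signature of the small-cluster breakdown of the capillarity approximation described in the validation step.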

Successful research bridging molecular and cellular scales relies on a suite of computational and data resources. The following table details key solutions for atomistic simulation and validation.

Table 3: Research Reagent Solutions for Atomistic and Cellular Modeling

| Resource / Solution | Type | Primary Function | Relevance to Scale Bridging |
|---|---|---|---|
| OMol25 Dataset [24] | Dataset | Provides 100M+ high-accuracy quantum chemical calculations for training and benchmarking. | Foundational data for developing universal atomistic models of drug-target interactions. |
| Universal Model for Atoms (UMA) [24] | Pre-trained Model | A neural network potential trained on OMol25 and other datasets for accurate energy/force prediction. | Enables rapid, accurate simulations of diverse molecular systems without re-training. |
| eSEN Models [24] | Pre-trained Model | Equivariant neural network potentials; available in direct and conservative-force variants. | Conservative-force models provide stable MD trajectories for studying binding dynamics. |
| MAD Dataset [26] | Dataset | A compact dataset designed for massive atomic diversity, including non-equilibrium structures. | Improves model robustness for simulating distorted states encountered during binding. |
| Aggregation-Volume-Bias Monte Carlo [27] | Algorithm | An advanced sampling technique to compute free energies of cluster formation. | Validates and parameterizes CNT models by providing "ground truth" free energy profiles. |
| Molecular Dynamics (MD) Software | Tool | Simulates the physical movements of atoms and molecules over time. | Serves as the primary tool for both atomistic simulation and validation of coarse theories like CNT. |

Integrated View: Connecting Molecular Binding to Cellular Phenotype

The true power of computational modeling is realized when atomistic insights and macroscopic theories are woven together to explain a complete drug action pathway. The following diagram illustrates this integrated view, using a G-protein coupled receptor (GPCR) as an example target.

Diagram 2: Integrated drug action pathway. Atomistic simulations explain the molecular initiation event, while theories like CNT can model subsequent collective cellular signaling.

Pathway Explanation:

- Molecular Initiation: An atomistic simulation, powered by a universal NNP, reveals the precise binding of a drug molecule to its GPCR target. The model calculates the binding energy and shows the specific conformational change induced in the receptor [24].

- Signal Nucleation: The activated receptor conformation recruits intracellular signaling proteins (e.g., G-proteins). This creates a local, high-concentration environment near the membrane. This step represents a shift in scale, where the behavior is no longer about single molecules but about a collective. The formation of a stable signaling cluster can be modeled as a nucleation event [25]. The rate and stability of this cluster formation can be described by a CNT-based model, parameterized with interaction strengths informed by atomistic simulations.

- Cellular Phenotype: Once a critical signaling cluster (the nucleus) forms, it triggers a powerful and sustained downstream signal amplification cascade (e.g., second messenger production), ultimately leading to a measurable cellular response, such as changes in gene expression or cell metabolism.

This integrated view demonstrates that atomistic models and macroscopic theories are not competitors but essential partners. Atomistic models provide the "why" at the molecular level—the precise mechanism of the initial interaction. Theories like CNT, when carefully applied and validated, can describe the "how" at the cellular level—how that molecular event is amplified into a macroscopic cellular effect through collective phenomena. The ongoing validation of both approaches, using high-quality data and rigorous cross-testing with molecular simulations, is key to reliably bridging these scales and accelerating rational drug design.

The Atomistic Toolkit: Methodologies and Direct Pharmaceutical Applications

Molecular dynamics (MD) simulations have emerged as a powerful computational tool for capturing time-dependent phenomena across diverse scientific fields, from materials science to drug discovery. By numerically integrating Newton's equations of motion for all atoms in a system, MD resolves dynamic processes with atomic spatial resolution and femtosecond-scale temporal resolution. This section compares MD with alternative computational methods for modeling time-dependent behavior, highlighting its ability to capture complex phenomena such as protein conformational changes, material deformation, and nucleation events. The analysis is framed within the broader context of validating atomistic models against classical theoretical frameworks, showing how MD serves as a bridge between theory and experiment.

Understanding time-dependent phenomena is fundamental to predicting material properties, drug interactions, and chemical processes. While experimental techniques often provide snapshots of these processes, MD simulations offer a continuous view of system evolution at atomic resolution. Technological advancements have transformed MD from a limited technique simulating small peptides for nanoseconds to a robust method capable of modeling complex systems for microseconds or longer, enabling the study of large conformational changes and rare events [28].

The validation of atomistic models against classical theories like Classical Nucleation Theory (CNT) represents a critical application of MD. CNT provides a thermodynamic framework for describing phase transitions but relies on simplifying assumptions about nucleus structure and growth kinetics. MD simulations serve as a computational experiment to test these assumptions directly, revealing limitations and providing pathways for theoretical refinement [23] [29]. This comparative analysis examines MD's evolving role in capturing time-dependent phenomena across scientific domains, with particular emphasis on its synergistic relationship with established theoretical frameworks.

Methodological Approaches: MD and Complementary Techniques

Molecular Dynamics Fundamentals

MD simulations model system evolution by numerically integrating Newton's equations of motion for all atoms, typically using force fields to describe interatomic interactions. Modern implementations leverage GPU acceleration to reach microsecond timescales for systems of hundreds of thousands of atoms, capturing large conformational changes and state transitions [28]. For processes in which the electrons themselves evolve in time, first-principles approaches instead solve the time-dependent Kohn-Sham equation (real-time time-dependent density functional theory), which enables modeling of perturbative and non-perturbative electron dynamics in materials [30].
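The numerical integration at the heart of classical MD is commonly done with a time-reversible, symplectic scheme such as velocity Verlet. The sketch below applies it to a single Lennard-Jones pair in reduced units (ε = σ = m = 1, so the reduced mass is 0.5); it is a minimal illustration only, and production MD engines add neighbor lists, periodic boundaries, thermostats, and many-body force fields, none of which are shown.

```python
from dataclasses import dataclass

@dataclass
class Pair:
    r: float          # interparticle separation (reduced units)
    v: float          # relative velocity dr/dt
    mu: float = 0.5   # reduced mass of two unit-mass particles

def lj_energy(r: float) -> float:
    """Lennard-Jones potential U(r) = 4 (r^-12 - r^-6)."""
    return 4.0 * (r**-12 - r**-6)

def lj_force(r: float) -> float:
    """Force F(r) = -dU/dr = 24 (2 r^-13 - r^-7)."""
    return 24.0 * (2.0 * r**-13 - r**-7)

def velocity_verlet(p: Pair, dt: float, n_steps: int) -> list[float]:
    """Advance the pair in place and return the total energy after each step."""
    energies = []
    a = lj_force(p.r) / p.mu
    for _ in range(n_steps):
        p.v += 0.5 * dt * a        # half-kick with the current force
        p.r += dt * p.v            # drift to the new position
        a = lj_force(p.r) / p.mu   # force at the updated position
        p.v += 0.5 * dt * a        # second half-kick
        energies.append(0.5 * p.mu * p.v**2 + lj_energy(p.r))
    return energies
```

Monitoring total-energy drift, as the returned list allows, is a standard sanity check on the chosen time step.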

Advanced sampling techniques address the challenge of simulating rare events:

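- Metadynamics: Deposits a history-dependent bias potential (typically a sum of Gaussians) along chosen collective variables, discouraging the system from revisiting already sampled regions; this nonequilibrium sampling is then used to reconstruct equilibrium free-energy landscapes [28]

- Umbrella Sampling: Restrains the system with a series of harmonic bias potentials (windows) along predefined collective variables; the biased distributions are reweighted (e.g., with WHAM) to recover the equilibrium distribution of states and the free-energy profile [28]

- Markov State Modeling (MSM): Combines many short, distributed MD trajectories by featurizing and dimensionally reducing them, clustering configurations into discrete states, and estimating a transition probability matrix that captures long-timescale kinetic behavior [28]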
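The core of the MSM estimation step can be illustrated on an already-discretized trajectory. The sketch below assumes featurization and clustering have been done, so each frame is an integer state label; it counts transitions at a lag time τ, row-normalizes them into a transition matrix, and, for a two-state model, converts the non-unit eigenvalue λ₂ = 1 - p₀₁ - p₁₀ into the implied relaxation timescale t = -τ/ln λ₂. Established MSM packages (e.g., PyEMMA or deeptime) additionally enforce detailed balance and handle many states; that is omitted here.

```python
import math
from typing import Sequence

def transition_matrix(dtraj: Sequence[int], n_states: int, lag: int) -> list[list[float]]:
    """Row-stochastic transition matrix estimated from transition counts at `lag` frames."""
    if lag < 1:
        raise ValueError("lag must be at least one frame")
    counts = [[0.0] * n_states for _ in range(n_states)]
    # Count every transition i -> j separated by `lag` frames (sliding window).
    for i, j in zip(dtraj[:-lag], dtraj[lag:]):
        counts[i][j] += 1.0
    T = []
    for row in counts:
        total = sum(row)
        T.append([c / total for c in row] if total > 0 else [0.0] * n_states)
    return T

def two_state_timescale(T: list[list[float]], lag: float) -> float:
    """Implied timescale of a 2x2 stochastic matrix, in the same units as `lag`."""
    lam2 = 1.0 - T[0][1] - T[1][0]  # eigenvalues of a 2x2 stochastic matrix: 1 and this
    if not 0.0 < lam2 < 1.0:
        raise ValueError("lambda_2 must lie in (0, 1) for a finite relaxation time")
    return -lag / math.log(lam2)
```

In practice the implied timescale is recomputed for several lag times; a plateau indicates that the discretized dynamics are approximately Markovian.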

Complementary Computational Methods

While MD excels at capturing temporal evolution, other techniques offer complementary strengths for specific applications:

Table 1: Comparison of Computational Methods for Time-Dependent Phenomena

| Method | Spatial Scale | Temporal Scale | Key Applications | Limitations |
|---|---|---|---|---|
| Molecular Dynamics (MD) | Atoms to small macromolecules | Nanoseconds to microseconds | Protein conformational changes, nucleation events, diffusion | Limited by force field accuracy; computationally expensive for large systems |
| Discrete Element Method (DEM) | Micron-scale particles | Seconds to hours | Granular material flow, solid propellant creep, powder mechanics | Requires coarse-graining of molecular details |
| Classical Nucleation Theory (CNT) | Macroscopic (continuum thermodynamics) | N/A (quasi-equilibrium rate theory) | Phase transitions, bubble formation, precipitation | Limited to near-solubility limit; assumes simplified nucleus morphology [29] |
| Phase Field (PF) Method | Mesoscale microstructure | Minutes to days | Microstructure evolution, spinodal decomposition, precipitate growth | Relies on phenomenological parameters; lacks atomic detail |
| Time-Dependent Density Functional Theory (TDDFT) | Electronic structure | Attoseconds to picoseconds | Electron dynamics, optical properties, high-harmonic generation | Limited to small systems and short timescales [30] |

Comparative Performance Analysis

Capturing Complex Temporal Evolution

MD simulations can capture all stages of time-dependent processes, as demonstrated in creep studies of solid propellants. Whereas traditional models often fail to reproduce the accelerating strain rate of tertiary creep, MD combined with rate process theory replicated the primary, secondary, and tertiary creep stages in close agreement with experimental data [31]. This ability to capture nonlinear, multi-stage temporal evolution distinguishes MD from more limited analytical approaches.

In protein systems, large-scale MD investigations have revealed unexpected "breathing" motions of G protein-coupled receptors (GPCRs) on nanosecond-to-microsecond timescales, providing access to numerous previously unexplored conformational states [32]. These spontaneous transitions between closed, intermediate, and open states occur even in the absence of ligands, and MD quantifies their kinetics (0.5 μs for closed-to-intermediate and 7.8 μs for closed-to-open transitions in apo receptors) [32]. Such detailed kinetic information is difficult to obtain from static experimental structures or from simplified theoretical models.

Validation Against Classical Theories

MD serves as a crucial validation tool for classical theories like CNT, revealing both consistencies and limitations. In cavitation studies, MD simulations have validated extended CNT frameworks that incorporate curvature-dependent surface tension (Tolman correction) and real-gas behavior (Van der Waals correction), showing that the modified theory accurately predicts lower cavitation pressures than the Blake threshold [23]. The simulations specifically demonstrated that the Tolman correction is most relevant for nuclei below approximately 10 nm, while for larger nuclei, its effect becomes negligible [23].
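The size dependence behind this result can be illustrated with the first-order Tolman form γ(R) = γ∞ / (1 + 2δ/R). The sketch below assumes γ∞ = 0.072 N/m (roughly water near room temperature) and a hypothetical Tolman length δ = 0.2 nm chosen purely for illustration; the sign and magnitude of δ are system-specific, so the radius below which the correction matters shifts with that choice. It reports how much the corrected Laplace pressure 2γ(R)/R falls below the uncorrected value.

```python
def tolman_gamma(gamma_inf: float, delta: float, radius: float) -> float:
    """Curvature-corrected surface tension (first-order Tolman form)."""
    return gamma_inf / (1.0 + 2.0 * delta / radius)

def laplace_pressure(gamma: float, radius: float) -> float:
    """Pressure jump across a spherical interface, dp = 2*gamma/R (Pa in SI units)."""
    return 2.0 * gamma / radius

GAMMA_INF = 0.072  # N/m, assumed bulk surface tension (approximately water at ~25 C)
DELTA = 0.2e-9     # m, hypothetical Tolman length, for illustration only

def relative_correction(radius: float) -> float:
    """Fractional reduction of the Laplace pressure caused by the Tolman correction."""
    flat = laplace_pressure(GAMMA_INF, radius)
    curved = laplace_pressure(tolman_gamma(GAMMA_INF, DELTA, radius), radius)
    return 1.0 - curved / flat

for r_nm in (1, 2, 5, 10, 50, 100):
    print(f"R = {r_nm:>3} nm: Laplace pressure reduced by {100 * relative_correction(r_nm * 1e-9):.1f}%")
```

With these assumed values the reduction is roughly 29% at 1 nm, about 4% at 10 nm, and under 1% at 50 nm, consistent in trend with the finding that the correction matters mainly for small nuclei.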

Similarly, in FeCr alloys, MD and phase field approaches showed that CNT is reliable only near the solubility limit, a region where experimental validation is challenging [29]. The atomistic modeling indicated that CNT cannot adequately describe critical precipitates in nucleation-growth regions far from the solubility limit, which motivated a self-consistent phase field approach that bypasses CNT by describing decomposition kinetics with an effective Hamiltonian [29].
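For contrast with CNT's sharp-interface nucleus, a phase field model evolves a continuous composition field. The sketch below is a generic one-dimensional Cahn-Hilliard equation, ∂c/∂t = M ∂²/∂x² [f'(c) - κ ∂²c/∂x²], with a double-well bulk free energy f(c) = c²(1 - c)²; it is not the self-consistent effective-Hamiltonian method of [29]. The mobility, gradient coefficient, grid, and time step are arbitrary reduced-unit assumptions, and the explicit Euler step is only stable for small dt.

```python
import random

def laplacian(u: list[float], dx: float) -> list[float]:
    """Central-difference second derivative with periodic boundaries (u[-1] wraps)."""
    n = len(u)
    return [(u[(i + 1) % n] - 2.0 * u[i] + u[i - 1]) / dx**2 for i in range(n)]

def dfdc(c: float) -> float:
    """Derivative of the double-well bulk free energy f(c) = c^2 (1 - c)^2."""
    return 2.0 * c * (1.0 - c) * (1.0 - 2.0 * c)

def cahn_hilliard_1d(n: int = 64, steps: int = 5000, dt: float = 0.01, dx: float = 1.0,
                     mobility: float = 1.0, kappa: float = 1.0, seed: int = 0) -> list[float]:
    rng = random.Random(seed)
    # Start inside the spinodal region (c = 0.5) with small random fluctuations.
    c = [0.5 + 0.01 * (rng.random() - 0.5) for _ in range(n)]
    for _ in range(steps):
        lap_c = laplacian(c, dx)
        # Chemical potential: mu = f'(c) - kappa * d2c/dx2
        mu = [dfdc(ci) - kappa * li for ci, li in zip(c, lap_c)]
        # Conserved dynamics: dc/dt = M * d2mu/dx2, advanced by one explicit Euler step.
        lap_mu = laplacian(mu, dx)
        c = [ci + dt * mobility * lm for ci, lm in zip(c, lap_mu)]
    return c
```

Because the update is a discrete divergence on a periodic grid, the mean composition is conserved while the field separates into c ≈ 0 and c ≈ 1 domains without any critical nucleus being assumed.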

Quantitative Performance Metrics

Table 2: Quantitative Comparison of Method Performance for Time-Dependent Phenomena

| Method | Temporal Resolution | System Size Limitations | Computational Cost | Validation Status |
|---|---|---|---|---|
| MD (Classical) | Femtosecond integration | ~1 million atoms | High (GPU-accelerated) | Excellent agreement with experiment for protein dynamics [32] |
| MD (QM/MM) | Femtosecond to picosecond | ~100,000 atoms | Very High | Good for reaction mechanisms, limited by QM method accuracy [28] |
| DEM | Millisecond to second | Billions of particles | Moderate to High | Validated for granular flows and propellant creep [31] |
| CNT | N/A (quasi-equilibrium rate theory) | Macroscopic | Low | Limited to near-solubility limit [29] |
| Phase Field | Second to hour | Centimeter scale | Low to Moderate | Good agreement with atom probe tomography [29] |