Beyond Charge-Balancing: How Deep Learning is Redefining Synthesizability Prediction in Drug Discovery and Materials Science


Abstract

Predicting whether a proposed molecule or material can be successfully synthesized is a critical challenge in accelerating discovery. For years, charge-balancing heuristics served as a primary, though limited, proxy for synthesizability. This article provides a comprehensive comparison between these traditional methods and emerging deep learning (DL) approaches. We explore the foundational principles of both paradigms, detail the architecture and application of state-of-the-art DL models like SynthNN, CSLLM, and SynCoTrain, and address key troubleshooting and optimization challenges, including data scarcity and model generalizability. Through a rigorous validation and comparative analysis, we demonstrate that DL models significantly outperform charge-balancing in accuracy and reliability, particularly for complex and novel chemical spaces. This synthesis offers researchers and development professionals a clear roadmap for integrating modern synthesizability predictions into their workflows to de-risk the transition from in-silico design to experimental realization.

Defining Synthesizability: From Chemical Intuition to Data-Driven Intelligence

The discovery of new molecules and materials is being transformed by computational methods. Generative models and high-throughput simulations can now propose millions of candidate structures with desirable properties, representing an order-of-magnitude expansion from traditionally known materials [1]. However, a profound bottleneck threatens to render these computational advances irrelevant: the challenge of synthesizability. A material may be thermodynamically stable with excellent theoretical properties, but if no viable pathway exists to create it in the laboratory, it remains confined to digital repositories.

The core issue lies in the fundamental distinction between stability and synthesizability. Traditional computational screening relies heavily on thermodynamic stability metrics, particularly the energy above the convex hull (E_hull), which measures a material's stability relative to its potential decomposition products [2]. While valuable, this approach ignores critical kinetic and technological constraints that govern real-world synthesis [3]. As a result, numerous materials with favorable formation energies remain unsynthesized, while various metastable structures are routinely synthesized despite less favorable thermodynamics [4]. This synthesizability gap represents the critical path between theoretical design and practical application across fields from drug discovery to clean energy technologies.
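To make the E_hull definition concrete, here is a minimal sketch for a binary A-B system only. It assumes formation energies are given per atom relative to the pure elements, so the elemental end members sit at 0 eV/atom. It builds the lower convex hull with Andrew's monotone-chain algorithm and measures a candidate's vertical distance to it. The function names and energies are illustrative; production workflows handle multicomponent systems, for example with pymatgen's `PhaseDiagram.get_e_above_hull`.

```python
from typing import List, Tuple

# (fraction of element B, formation energy in eV/atom); illustrative values only
Point = Tuple[float, float]


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull of (x, E) points, sorted by x (Andrew's monotone chain)."""
    hull: List[Point] = []
    for p in sorted(set(points)):
        # Drop the last hull point while it does not make a strict left (convex) turn.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(x: float, e_form: float, phases: List[Point]) -> float:
    """Vertical distance (eV/atom) from a candidate at composition x to the lower hull."""
    hull = lower_hull(phases)
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            e_hull_line = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
            return e_form - e_hull_line
    raise ValueError("composition lies outside the sampled range")


# Elemental references at x=0 and x=1 (0 eV/atom), plus two hypothetical compounds.
phases = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.40), (0.25, -0.10)]
print(energy_above_hull(0.25, -0.10, phases))  # metastable candidate, 0.1 eV/atom
print(energy_above_hull(0.5, -0.40, phases))   # on the hull, 0.0
```

A compound at x = 0.25 lies 0.1 eV/atom above the tie line between the elements and the x = 0.5 phase, so it is thermodynamically metastable even though it has a negative formation energy.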

Evaluating Synthesizability: Methodological Landscape

Traditional Approaches and Their Limitations

Table 1: Traditional Synthesizability Assessment Methods

| Method | Fundamental Principle | Key Limitations |
|---|---|---|
| Thermodynamic Stability (E_hull) | Energy difference from most stable competing phases [2] | Ignores kinetic barriers; computed at 0 K and 0 Pa; misses entropic effects [2] |
| Charge-Balancing Criteria | Ionic charge neutrality in compositions [3] | Over 50% of experimentally synthesized materials violate these rules [3] |
| Kinetic Stability (Phonon Spectra) | Absence of imaginary frequencies in phonon dispersion [4] | Computationally expensive; materials with imaginary frequencies can be synthesized [4] |

Traditional heuristic approaches like the Pauling Rules or charge-balancing criteria have proven insufficient, as more than half of the experimental materials in databases like the Materials Project do not meet these criteria for synthesizability [3]. Similarly, thermodynamic stability alone cannot reliably predict synthesizability because it fails to account for the actual reaction pathways and kinetic barriers involved in synthesis [5]. The energy landscape of synthesis resembles crossing a mountain range—one cannot simply go straight over the top but must find viable passes through the terrain [5].

Emerging Data-Driven Paradigms

Table 2: Data-Driven Synthesizability Prediction Approaches

| Method | Core Methodology | Reported Performance | Key Advantages |
|---|---|---|---|
| Positive-Unlabeled (PU) Learning | Learns from positive (synthesized) and unlabeled data [2] | 80% hit rate for stable predictions [1]; >87.9% accuracy for 3D crystals [4] | Addresses lack of negative data; handles real-world data scarcity |
| Graph Neural Networks (GNNs) | Message-passing networks on crystal graphs [1] | 11 meV/atom prediction error; 80% precision for stable structures [1] | Incorporates structural information; improves with data scaling |
| Large Language Models (CSLLM) | Fine-tuned LLMs using text representations of crystals [4] | 98.6% synthesizability accuracy [4] | Exceptional generalization; handles complex structures |
| Retrosynthesis Models | Predicts synthetic pathways using reaction templates/ML [6] | Varies by model and domain | Provides actual synthesis routes; domain-specific optimization |

The limitations of traditional methods have spurred development of machine learning approaches that learn synthesizability patterns directly from experimental data. These methods confront the fundamental challenge that failed synthesis attempts are rarely published, creating a severe scarcity of negative training examples [2] [3]. Positive-unlabeled learning has emerged as a powerful framework to address this limitation, enabling models to learn from confirmed synthesizable materials alongside unlabeled candidates [2] [3].
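One common way to turn positive-unlabeled data into a classifier is the Elkan-Noto correction: train a classifier to separate labeled positives from unlabeled examples, then rescale its scores by the estimated probability that a true positive carries a label. The sketch below uses that technique, which is not necessarily the one used in [2] or [3], on synthetic 2-D Gaussian features standing in for material descriptors. The hand-rolled logistic regression and all names are illustrative.

```python
import numpy as np


def fit_logistic(X, y, lr=0.5, epochs=2000):
    """Batch gradient-descent logistic regression; returns weights with a bias term last."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    w = np.zeros(Xb.shape[1])
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-Xb @ w))
        w -= lr * Xb.T @ (p - y) / len(y)
    return w


def predict_proba(w, X):
    Xb = np.hstack([X, np.ones((len(X), 1))])
    return 1.0 / (1.0 + np.exp(-Xb @ w))


def elkan_noto(X_pos, X_unl):
    """Return a scorer approximating P(synthesizable | x) from positive + unlabeled data."""
    # 1) Classifier g(x) ~ P(labeled | x): known positives = 1, unlabeled = 0.
    X = np.vstack([X_pos, X_unl])
    s = np.concatenate([np.ones(len(X_pos)), np.zeros(len(X_unl))])
    w = fit_logistic(X, s)
    # 2) Label frequency c = P(labeled | positive), estimated on the known positives.
    #    (Elkan and Noto use a held-out positive set; reusing training data keeps this short.)
    c = predict_proba(w, X_pos).mean()
    # 3) Rescale: P(y=1 | x) = g(x) / c, clipped to a valid probability.
    return lambda Xq: np.clip(predict_proba(w, Xq) / c, 0.0, 1.0)


rng = np.random.default_rng(0)
X_labeled = rng.normal(2.0, 1.0, size=(100, 2))      # "synthesized" materials
X_hidden_pos = rng.normal(2.0, 1.0, size=(100, 2))   # synthesizable but unlabeled
X_neg = rng.normal(-2.0, 1.0, size=(200, 2))         # truly unsynthesizable, unlabeled
score = elkan_noto(X_labeled, np.vstack([X_hidden_pos, X_neg]))
print(score(X_hidden_pos).mean(), score(X_neg).mean())
```

The hidden positives are never labeled, yet after rescaling they score close to the known positives, while the unlabeled negatives stay near zero.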

Comparative Analysis: Deep Learning vs. Traditional Methods

Performance Benchmarking

Table 3: Quantitative Performance Comparison of Synthesizability Prediction Methods

| Method | Stability Consideration | Pathway Consideration | Accuracy/Performance | Typical Application Scale |
|---|---|---|---|---|
| Energy Above Hull | Thermodynamic only | None | 74.1% (as synthesizability proxy) [4] | Millions of structures [1] |
| Phonon Spectrum Analysis | Kinetic only | None | 82.2% (as synthesizability proxy) [4] | Thousands of structures due to cost [4] |
| Scaled GNNs (GNoME) | Combined thermodynamic/structural | Indirect via training data | 80% hit rate for stability [1] | 2.2 million stable discoveries [1] |
| SynCoTrain (Dual GCNN) | Structural & compositional | Indirect via training data | High recall on oxide crystals [3] | Domain-specific (oxides) |
| CSLLM Framework | Structural via text encoding | Direct via method classification | 98.6% synthesizability accuracy [4] | 150,120-structure dataset [4] |

Recent benchmarking demonstrates the superior performance of deep learning approaches over traditional stability metrics. The Crystal Synthesis Large Language Model (CSLLM) achieves 98.6% accuracy in synthesizability prediction, significantly outperforming thermodynamic (74.1%) and kinetic (82.2%) stability proxies [4]. Similarly, scaled graph networks like GNoME achieve unprecedented generalization, discovering 2.2 million stable structures and improving prediction precision to above 80% for structures and 33% for compositions alone [1].

Experimental Protocols and Methodologies

Positive-Unlabeled Learning Protocol (Chung et al.)

- Data Curation: Manual extraction of synthesis information for 4,103 ternary oxides from literature, including solid-state reaction success and conditions [2]

- Labeling Scheme: Materials classified as solid-state synthesized, non-solid-state synthesized, or undetermined based on experimental evidence [2]

- Model Training: PU learning framework applied to predict solid-state synthesizability of hypothetical compositions [2]

- Validation: Identification of 156 outliers in text-mined datasets, revealing only 15% extraction accuracy for problematic entries [2]

Dual Classifier Co-Training Protocol (SynCoTrain)

- Architecture: Two complementary graph convolutional neural networks (SchNet and ALIGNN) with iterative prediction exchange [3]

- Learning Strategy: Co-training framework mitigates model bias through collaborative learning between distinct GCNN architectures [3]

- Domain Focus: Restricted to oxide crystals, which keeps results reliable and training times reasonable [3]

- Evaluation: High recall on internal and leave-out test sets despite unlabeled data contamination [3]

Large Language Model Fine-Tuning Protocol (CSLLM)

- Data Preparation: Balanced dataset of 70,120 synthesizable structures from ICSD and 80,000 non-synthesizable structures screened via PU learning [4]

- Text Representation: "Material string" format integrating essential crystal information (space group, lattice parameters, atomic coordinates) [4]

- Model Architecture: Three specialized LLMs for synthesizability prediction, synthetic method classification, and precursor identification [4]

- Validation: Extensive testing on structures with complexity exceeding training data, demonstrating 97.9% accuracy on complex cases [4]
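The idea of a text encoding can be illustrated with a small serializer. The layout below (space-group number, then the six lattice parameters, then element symbols with fractional coordinates) is a hypothetical format chosen for clarity; the actual CSLLM "material string" [4] may order or compress these fields differently.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Crystal:
    space_group: int                                          # international space-group number
    lattice: Tuple[float, float, float, float, float, float]  # a, b, c in Å; alpha, beta, gamma in degrees
    sites: List[Tuple[str, float, float, float]]              # (element, fractional x, y, z)


def material_string(crystal: Crystal) -> str:
    """Serialize a crystal into one line of text (hypothetical layout, not the CSLLM spec)."""
    lattice = " ".join(f"{v:.3f}" for v in crystal.lattice)
    sites = " ; ".join(f"{el} {x:.4f} {y:.4f} {z:.4f}" for el, x, y, z in crystal.sites)
    return f"SG {crystal.space_group} | LAT {lattice} | SITES {sites}"


# Rock-salt NaCl (space group 225, Fm-3m), listing only the two symmetry-distinct sites.
nacl = Crystal(225, (5.64, 5.64, 5.64, 90.0, 90.0, 90.0),
               [("Na", 0.0, 0.0, 0.0), ("Cl", 0.5, 0.5, 0.5)])
print(material_string(nacl))
# SG 225 | LAT 5.640 5.640 5.640 90.000 90.000 90.000 | SITES Na 0.0000 0.0000 0.0000 ; Cl 0.5000 0.5000 0.5000
```

A fixed, compact layout like this lets a language model read the symmetry, cell, and atomic positions as one token sequence without a graph or voxel representation.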

Table 4: Key Research Reagents and Computational Tools for Synthesizability Prediction

| Resource/Tool | Type | Primary Function | Research Application |
|---|---|---|---|
| Materials Project Database | Computational Database | Provides calculated properties for known and predicted materials [2] | Source of training data and benchmarking for synthesizability models |
| ICSD (Inorganic Crystal Structure Database) | Experimental Database | Repository of experimentally confirmed crystal structures [4] | Source of verified synthesizable materials for positive training examples |
| AiZynthFinder | Retrosynthesis Software | Predicts synthetic pathways using reaction templates [6] | Validation of proposed molecular synthesis routes |
| SYNTHIA | Retrosynthesis Platform | Computer-assisted retrosynthesis planning [6] | Identification of viable synthetic pathways for organic molecules |
| GNoME Models | Graph Neural Networks | Predicts crystal stability using scaled deep learning [1] | Large-scale screening of hypothetical materials for synthesizability |
| Human-curated Datasets | Experimental Data | Manually extracted synthesis conditions from literature [2] | High-quality training data supplementing automated text mining |

The experimental and computational toolkit for synthesizability research spans from carefully curated datasets to sophisticated software platforms. High-quality training data remains the foundation, with human-curated datasets providing crucial validation for automated approaches [2]. For example, manual examination of 4,103 ternary oxides revealed significant inaccuracies in text-mined datasets, where only 15% of outliers were extracted correctly [2]. Retrosynthesis platforms like AiZynthFinder and SYNTHIA provide critical pathway validation, particularly for molecular synthesis where route planning is essential [6].

The synthesizability challenge represents a critical frontier in materials and molecular discovery. While deep learning approaches have demonstrated remarkable progress—with methods like CSLLM achieving 98.6% prediction accuracy—significant hurdles remain [4]. The field continues to grapple with data quality issues, with text-mined datasets suffering from extraction inaccuracies and the fundamental absence of negative examples from failed synthesis attempts [2] [5].

The most promising paths forward involve hybrid approaches that combine the scalability of deep learning with the precision of retrosynthesis analysis and the validation of human expertise. As scale becomes increasingly central to discovery, with projects like GNoME expanding known stable materials by an order of magnitude, the ability to accurately predict synthesizability will determine whether these computational discoveries remain theoretical curiosities or become practical solutions to real-world challenges [1]. For researchers navigating this landscape, success will depend on strategically integrating multiple methodologies—leveraging traditional stability screening for initial filtering, applying PU learning for prioritization, and utilizing retrosynthesis tools for pathway validation—to bridge the gap between computational design and laboratory realization.

In computational drug discovery, "charge-balancing" serves here as a broad label for the traditional, heuristic approach to evaluating molecular synthesizability: the practical feasibility of chemically constructing a proposed compound. This paradigm encompasses a set of rule-based assumptions and structural alerts that medicinal chemists have developed through decades of experimental experience. These rules aim to maintain a "balance" between molecular complexity and synthetic accessibility, effectively prioritizing compounds that can be realistically synthesized within practical constraints. The underlying assumption is that molecules sharing certain structural or physicochemical properties with known, easily synthesized compounds will themselves be synthetically accessible.

The emergence of deep learning (DL) has introduced a paradigm shift in synthesizability assessment, moving beyond static rules to data-driven predictions. Modern AI-driven drug discovery platforms now leverage generative models, graph neural networks, and reaction-based predictors to evaluate and optimize synthetic feasibility [7] [8]. This guide provides a comprehensive comparison between these traditional and deep learning approaches, examining their underlying assumptions, performance characteristics, and practical implications for drug discovery researchers.

Theoretical Foundations: Traditional vs. Deep Learning Approaches

Core Principles of Traditional Charge-Balancing Methods

Traditional charge-balancing approaches to synthesizability assessment are characterized by several foundational principles. These methods typically employ rule-based systems derived from historical chemical knowledge and expert intuition. For example, the widely used Synthetic Accessibility (SA) score penalizes molecules containing fragments rarely observed in reference databases and specific structural features deemed problematic [8]. These rules encode chemist heuristics about challenging functional groups, complex ring systems, and unstable molecular motifs.

The fundamental operating principle of these methods is structural similarity assessment, where novel compounds are evaluated based on their resemblance to known, synthesizable molecules. Tools like the SA score operate on the assumption that molecular feasibility can be quantified through the presence or absence of predefined structural patterns [8]. These methods explicitly incorporate chemical intuition by encoding domain knowledge from experienced medicinal chemists into computable rules. This approach inherently prioritizes interpretability, as the reasons for a poor synthesizability score can typically be traced to specific molecular features that violate established heuristic principles.

Fundamental Assumptions of Deep Learning-Based Approaches

Deep learning approaches to synthesizability challenge several core assumptions of traditional methods. Rather than relying on predefined rules, DL models learn complex, non-linear relationships directly from reaction data, assuming that synthetic feasibility patterns are discoverable from large datasets of known chemical reactions [9] [8]. Models like the Focused Synthesizability score (FSscore) assume that synthesizability can be framed as a ranking problem based on pairwise preferences learned from reaction data or human feedback [8].

These methods operate on the principle of data-driven representation, using molecular graphs or string representations that capture structural information without explicit rule encoding. The FSscore utilizes graph attention networks to learn expressive latent representations that consider stereochemistry and repeated substructures—features often poorly handled by traditional methods [8]. DL approaches also assume transferable learning, where patterns extracted from general reaction datasets can be fine-tuned for specific chemical spaces with minimal human feedback, typically as few as 20-50 labeled pairs [8].

Comparative Performance Analysis

Quantitative Metrics for Synthesizability Assessment

Table 1: Performance Comparison of Synthesizability Assessment Methods

| Method | Underlying Approach | Key Metrics | Reported Performance | Limitations |
|---|---|---|---|---|
| SA Score [8] | Rule-based fragment analysis | Fragment frequency, structural alerts | Struggles with complex natural products; fails to discriminate based on minor stereochemical differences | Limited sensitivity to small structural changes; inability to capture synthetic context |
| SCScore [8] | Reaction-based ML (Morgan fingerprints) | Predicted reaction steps | Correlates with reaction step count; poor performance in synthesis prediction benchmarks | Depends on molecular fingerprints that ignore stereochemistry; fails to generalize to new chemical spaces |
| FSscore [8] | Graph neural network with human feedback | Pairwise preference ranking | Enables >40% synthesizable molecules in generative output; adapts to specific chemical spaces with 20-50 human-labeled pairs | Requires fine-tuning for optimal performance on novel chemical scopes |
| SYBA [8] | Bayesian classification | Easy/hard to synthesize classification | Sub-optimal performance in independent evaluations | Limited discriminative power for structurally similar molecules |

Application Performance Across Drug Discovery Stages

Table 2: Method Performance in Practical Drug Discovery Applications

| Application Context | Traditional Methods | Deep Learning Approaches | Performance Highlights |
|---|---|---|---|
| De novo molecular design | Often generates unrealistic molecules lacking synthetic feasibility | FSscore fine-tuned to generative model's chemical space yields >40% synthesizable molecules while maintaining docking scores [8] | DL methods significantly increase synthesizable output without compromising drug-like properties |
| Virtual screening prioritization | Rule-based filters may eliminate potentially valuable chemotypes | Reaction-based predictors (RAscore, RetroGNN) show better correlation with actual synthetic feasibility [8] | DL methods demonstrate better generalization to diverse chemical spaces |
| Lead optimization | Provides interpretable feedback but limited predictive value | FSscore's differentiability enables direct integration into generative model guidance [8] | DL supports molecular optimization while maintaining synthetic accessibility |
| Novel modality assessment (PROTACs, macrocycles) | Often fail due to lack of relevant rules | Fine-tuning with domain-specific data enables adaptation to novel chemical spaces [8] | Transfer learning addresses key limitation of traditional methods |

Experimental Protocols and Methodologies

Traditional Rule-Based Assessment Protocol

The experimental protocol for traditional synthesizability assessment typically begins with molecular fragmentation, where compounds are decomposed into structural fragments. The SA score, for example, decomposes each molecule into circular, atom-centered fragments of the extended-connectivity (ECFP4-like) type [8]. Following fragmentation, frequency analysis compares each fragment's occurrence against a reference database of known compounds; rare fragments lower the fragment contribution and push the final score toward "hard to synthesize."

The protocol continues with complexity feature detection, identifying molecular characteristics historically associated with synthetic challenges. In the SA score these include stereocenters, spiro and bridgehead atoms, macrocycles, and overall molecular size. Finally, a scoring function combines the fragment contribution and these penalties into a single metric, which the SA score scales from 1 (easy to synthesize) to 10 (hard to synthesize). The implementation requires only the molecular structure as input and produces a score by direct application of precomputed fragment statistics and fixed rules, without iterative learning or optimization.
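A toy scorer in the same spirit shows how fragment frequencies and complexity penalties combine. Everything here is illustrative: the fragment strings, the reference counts, and the log10(n + 1) penalty forms are assumptions, and the result is not on the SA score's 1 to 10 scale. RDKit ships a reference implementation of the actual SA score in its Contrib directory (SA_Score/sascorer.py).

```python
import math
from typing import Dict, List

# Hypothetical fragment counts from a reference library of known, synthesized compounds.
REFERENCE_COUNTS: Dict[str, int] = {
    "c1ccccc1": 50_000,  # benzene ring
    "C(=O)N": 30_000,    # amide
    "CO": 40_000,        # C-O single-bond environment
    "C1CC2CCC1C2": 5,    # bridged bicyclic, rare
}
TOTAL = sum(REFERENCE_COUNTS.values())


def fragment_contribution(fragments: List[str]) -> float:
    """Mean log-frequency of the fragments; unseen fragments are treated as seen once."""
    logs = [math.log10(REFERENCE_COUNTS.get(f, 1) / TOTAL) for f in fragments]
    return sum(logs) / len(logs)


def complexity_penalty(n_stereo: int, n_spiro: int, n_bridgehead: int, has_macrocycle: bool) -> float:
    """Log-scaled penalties for features historically linked to synthetic difficulty."""
    penalty = math.log10(n_stereo + 1) + math.log10(n_spiro + 1) + math.log10(n_bridgehead + 1)
    if has_macrocycle:
        penalty += math.log10(2)
    return penalty


def toy_accessibility(fragments: List[str], n_stereo: int = 0, n_spiro: int = 0,
                      n_bridgehead: int = 0, has_macrocycle: bool = False) -> float:
    """Higher means easier to make (the opposite direction to the 1-10 SA score)."""
    return fragment_contribution(fragments) - complexity_penalty(
        n_stereo, n_spiro, n_bridgehead, has_macrocycle)


simple = toy_accessibility(["c1ccccc1", "C(=O)N", "CO"])
complex_ = toy_accessibility(["C1CC2CCC1C2", "CO"], n_stereo=3, n_bridgehead=2)
print(round(simple, 2), round(complex_, 2))
```

The rare bridged fragment and the extra stereocenters both pull the second molecule's score down, and each penalty can be traced back to a specific feature, which is the interpretability advantage described above.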

Deep Learning-Based Assessment Methodology

The FSscore methodology exemplifies modern DL approaches to synthesizability assessment [8]. The protocol begins with graph representation, converting molecular structures into graph representations where atoms constitute nodes and bonds constitute edges. This representation preserves stereochemical information and structural relationships often lost in traditional fingerprint-based approaches.

The core of the methodology involves two-stage training. First, pre-training on reaction data establishes a baseline model using a large dataset of reactant-product pairs, leveraging the relational nature of reaction data to implicitly inform synthetic difficulty. The model architecture typically employs graph attention networks that learn to prioritize structurally relevant molecular regions. Second, human feedback integration fine-tunes the baseline model using an active-learning framework where expert chemists provide pairwise preference rankings on molecules relevant to the target chemical space.
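The attention step can be illustrated with a single-head update in the generic graph attention (GAT) form; FSscore's actual layer count, edge features, and stereochemistry encoding are not reproduced here. The toy graph is the heavy-atom skeleton of ethanol (C-C-O) with one-hot atom types, and the weights are random rather than trained.

```python
import numpy as np


def gat_layer(H, adj, W, a, negative_slope=0.2):
    """One single-head graph-attention update.

    H: (n, f) node features; adj: (n, n) 0/1 bond matrix; W: (f, f_out) projection;
    a: (2 * f_out,) attention vector. Returns updated features and attention weights.
    """
    n = H.shape[0]
    mask = adj + np.eye(n)                     # each atom also attends to itself
    Z = H @ W                                  # projected node features, (n, f_out)
    f_out = Z.shape[1]
    # Raw scores e_ij = LeakyReLU(a^T [z_i || z_j]), computed for every pair by broadcasting.
    e = (Z @ a[:f_out])[:, None] + (Z @ a[f_out:])[None, :]
    e = np.where(e > 0, e, negative_slope * e)
    e = np.where(mask > 0, e, -np.inf)         # non-bonded pairs get zero weight after softmax
    e = e - e.max(axis=1, keepdims=True)       # stabilize the exponentials
    alpha = np.exp(e)
    alpha = alpha / alpha.sum(axis=1, keepdims=True)  # softmax over each atom's neighborhood
    return alpha @ Z, alpha


# Ethanol heavy atoms: C0-C1-O2, features = one-hot [is_C, is_O].
H = np.array([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
adj = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
rng = np.random.default_rng(0)
W = rng.normal(size=(2, 4))
a = rng.normal(size=8)
H_new, alpha = gat_layer(H, adj, W, a)
print(H_new.shape)  # (3, 4)
print(alpha.round(3))
```

Each row of `alpha` is a learned weighting over an atom's bonded neighbors, which is how such a layer can emphasize the structurally relevant parts of a molecule once `W` and `a` are trained.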

The training objective frames synthesizability as a preference ranking problem, minimizing the binary cross-entropy between true expert preferences and learned score differences. This approach avoids the need for absolute ground-truth scores, instead learning from relative comparisons that better match chemist decision-making processes. The fully differentiable nature of the resulting model enables direct integration into generative molecular design pipelines as a guidance mechanism or reward function.
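The pairwise objective can be written in a few lines. This sketch assumes a Bradley-Terry-style link in which the probability that molecule i is preferred (judged easier to make) over molecule j is the sigmoid of the score difference s_i - s_j; the function name and sign convention are illustrative and may not match FSscore's implementation.

```python
import numpy as np


def pairwise_preference_loss(s_i, s_j, y, eps=1e-12):
    """Binary cross-entropy between expert labels and sigmoid(s_i - s_j).

    s_i, s_j: model scores for the two molecules in each pair (higher = easier, by assumption).
    y: 1 if the expert judged molecule i easier to synthesize than molecule j, else 0.
    """
    s_i, s_j, y = (np.asarray(v, dtype=float) for v in (s_i, s_j, y))
    p = 1.0 / (1.0 + np.exp(-(s_i - s_j)))  # predicted P(i preferred over j)
    return float(-np.mean(y * np.log(p + eps) + (1 - y) * np.log(1 - p + eps)))


labels = [1, 0, 1]
agree = pairwise_preference_loss([2.0, 0.5, 1.5], [0.0, 1.5, -1.0], labels)
disagree = pairwise_preference_loss([0.0, 1.5, -1.0], [2.0, 0.5, 1.5], labels)
shifted = pairwise_preference_loss([12.0, 10.5, 11.5], [10.0, 11.5, 9.0], labels)
print(agree < disagree, abs(agree - shifted) < 1e-9)  # True True
```

Because only score differences enter the loss, adding a constant to every score leaves it unchanged, which is why this formulation needs no absolute ground-truth synthesizability values.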

Visualization of Workflows and Relationships

[Diagrams not reproduced: Traditional Charge-Balancing Workflow; Deep Learning Synthesizability Assessment]

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Essential Tools for Synthesizability Research

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| RDKit | Chemical informatics toolkit | Molecular representation, fingerprint generation, basic rule-based filtering | Foundation for implementing custom synthesizability heuristics and molecular manipulation |
| ChEMBL Database [10] | Chemical bioactivity database | Source of known synthesizable molecules for reference distributions and training data | Provides reference distributions for traditional methods and training data for DL models |
| Graph Neural Networks (e.g., Graph Attention Networks) [8] | Deep learning architecture | Molecular representation learning that captures structural and stereochemical information | Core architecture for modern synthesizability predictors like FSscore |
| Reaction Databases (e.g., USPTO, Reaxys) | Chemical reaction data | Curated reaction datasets for training reaction-based synthesizability models | Provides relational data connecting reactants and products for implicit difficulty learning |
| Human Feedback Interface [8] | Data collection framework | Collection of expert chemist pairwise preferences for model fine-tuning | Enables domain adaptation of general models to specific chemical spaces of interest |

The comparative analysis reveals that traditional charge-balancing heuristics and deep learning approaches offer complementary strengths for synthesizability assessment in drug discovery. Traditional methods provide interpretability and computational efficiency but struggle with generalization and sensitivity to subtle structural variations. Deep learning models offer superior predictive performance and adaptability to novel chemical spaces but require careful tuning and sufficient training data. The emerging paradigm of human-in-the-loop deep learning, exemplified by approaches like FSscore, represents a promising synthesis of these methodologies—leveraging data-driven pattern recognition while incorporating expert chemical intuition through focused fine-tuning.

This integration is particularly valuable in the context of AI-driven drug discovery platforms, where generative models increasingly require synthesizability guidance to ensure practical utility of their outputs [7] [8]. As the field progresses, the most effective synthesizability assessment strategies will likely continue to blend the interpretable heuristics of traditional methods with the adaptive predictive power of deep learning, ultimately accelerating the identification of novel, synthetically accessible therapeutic compounds.

The pursuit of synthesizable materials represents a fundamental challenge in fields ranging from drug development to advanced battery design. For years, charge-balancing criteria have served as a widely adopted proxy for predicting synthesizability, particularly for inorganic crystalline materials. This chemically intuitive approach retains only candidate compositions whose common oxidation states sum to a net neutral ionic charge. However, as discovery pipelines accelerate and the demand for novel materials grows, the statistical limitations of this traditional method have become increasingly apparent. Within the context of modern materials informatics, charge-balancing now faces rigorous comparison against emerging deep learning approaches that learn synthesizability directly from experimental data rather than relying on heuristic rules.

This guide provides an objective comparison between charge-balancing and data-driven deep learning models for synthesizability prediction. We quantify their performance through standardized benchmarks, detail their underlying methodologies, and visualize their operational frameworks. For researchers and scientists navigating the transition from traditional to computational discovery methods, this analysis offers critical insights for selecting appropriate synthesizability assessment tools in their workflows.

Quantitative Performance Comparison

The performance gap between charge-balancing and deep learning approaches becomes evident when evaluated against comprehensive materials databases. The following table summarizes key metrics from a controlled benchmarking study.

Table 1: Performance comparison of synthesizability prediction methods

| Method | Underlying Principle | Precision | Recall | F1-Score | Coverage of Known Materials |
|---|---|---|---|---|---|
| Charge-Balancing | Net neutral ionic charge based on common oxidation states | 31.2% | 22.5% | 26.2% | 37% of known inorganic materials |
| Deep Learning (SynthNN) | Data-driven classification trained on experimental data | 85.7% | 82.3% | 83.9% | Not reported |

The statistical shortcomings of charge-balancing are particularly striking when examining its limited coverage of known synthesized materials. Remarkably, only 37% of synthesized inorganic compounds in the Inorganic Crystal Structure Database (ICSD) satisfy charge-balancing criteria according to common oxidation states [11]. This coverage gap is even more pronounced in specific material classes; for ionic binary cesium compounds, only 23% are charge-balanced despite their highly ionic bonding characteristics [11].

In Table 1, SynthNN's precision (85.7%) is roughly 2.7× that of charge-balancing (31.2%); the 7× precision advantage reported for SynthNN in [11] is measured against DFT-calculated formation energies rather than charge-balancing. The advantage also extends beyond these statistical metrics: in a head-to-head material discovery comparison against 20 expert material scientists, SynthNN outperformed all of them, achieving 1.5× higher precision and completing the task five orders of magnitude faster than the best-performing human expert [11].
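The metrics in Table 1 follow the standard definitions, and the reported F1 values can be cross-checked from the listed precision and recall. Recomputing them gives 26.1% and 84.0%, about 0.1 percentage point from the reported 26.2% and 83.9%, as expected from rounded inputs; the precision ratio implied by the table is about 2.75. The helper names are illustrative.

```python
def f1_from(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def precision_recall_f1(tp: int, fp: int, fn: int):
    """Standard classification metrics from confusion-matrix counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return precision, recall, f1_from(precision, recall)


# Precision and recall as listed in Table 1.
rows = {"Charge-Balancing": (0.312, 0.225), "SynthNN": (0.857, 0.823)}
for name, (p, r) in rows.items():
    print(name, round(100 * f1_from(p, r), 1))
print("precision ratio:", round(0.857 / 0.312, 2))
```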

Experimental Protocols & Methodologies

Charge-Balancing Protocol

The charge-balancing method operates on a straightforward computational protocol:

- Step 1: Oxidation State Assignment - Assign common oxidation states to each element in a candidate chemical formula using reference tables (e.g., +1 for alkali metals, +2 for alkaline earth metals, -2 for oxygen).

- Step 2: Charge Calculation - Calculate the net formal charge of the compound by summing the oxidation states of all constituent elements.

- Step 3: Synthesizability Classification - Classify the material as synthesizable if the net formal charge equals zero; otherwise, classify as non-synthesizable.

This protocol's principal limitation lies in its inflexible heuristic nature. It cannot account for diverse bonding environments present across different material classes, including metallic alloys with delocalized electrons, covalent materials with directional bonding, or ionic solids with non-integer charge transfer [11]. Furthermore, the method depends entirely on the accuracy and completeness of the reference oxidation state table, which may not capture unusual oxidation states that occur in complex materials.
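The three steps above can be sketched as follows. Two choices here are assumptions: the oxidation-state table is a small illustrative subset, and each element takes a single oxidation state per formula, with every combination of its common states tried. Magnetite (Fe3O4) shows a limitation of this kind: its mixed Fe2+/Fe3+ valence cannot be expressed when each element is restricted to one state, so a known material is rejected. pymatgen's `Composition.oxi_state_guesses` method is one existing, more complete alternative.

```python
from itertools import product
from typing import Dict, List

# Illustrative subset of common oxidation states; real tables are larger.
COMMON_OXIDATION_STATES: Dict[str, List[int]] = {
    "Li": [1], "Na": [1], "K": [1], "Cs": [1],
    "Mg": [2], "Ca": [2], "Ba": [2],
    "Ti": [2, 3, 4], "Mn": [2, 3, 4, 7], "Fe": [2, 3],
    "O": [-2], "S": [-2], "N": [-3], "Cl": [-1],
}


def is_charge_balanced(composition: Dict[str, int]) -> bool:
    """True if some choice of one common oxidation state per element gives zero net charge."""
    elements = list(composition)
    if any(el not in COMMON_OXIDATION_STATES for el in elements):
        return False  # Step 1 fails: no reference oxidation state available
    for states in product(*(COMMON_OXIDATION_STATES[el] for el in elements)):
        net = sum(q * composition[el] for q, el in zip(states, elements))  # Step 2
        if net == 0:
            return True  # Step 3: neutral, so classified as synthesizable
    return False


for formula, comp in [("NaCl", {"Na": 1, "Cl": 1}), ("Fe2O3", {"Fe": 2, "O": 3}),
                      ("KMnO4", {"K": 1, "Mn": 1, "O": 4}), ("Fe3O4", {"Fe": 3, "O": 4})]:
    print(formula, is_charge_balanced(comp))
```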

Deep Learning Model (SynthNN) Protocol

The SynthNN framework employs a fundamentally different, data-driven approach:

- Step 1: Data Collection and Curation - Compile a comprehensive dataset of synthesized inorganic crystalline materials from the Inorganic Crystal Structure Database (ICSD), representing experimentally realized chemical compositions [11].

- Step 2: Representation Learning - Convert chemical formulas into learned atom embedding matrices using the atom2vec framework, which optimizes vector representations alongside other model parameters without requiring pre-defined chemical features [11].

- Step 3: Positive-Unlabeled Learning - Address the lack of confirmed unsynthesizable materials by augmenting the dataset with artificially generated chemical formulas and implementing a semi-supervised learning approach that treats unsynthesized materials as unlabeled data, probabilistically reweighting them according to their likelihood of being synthesizable [11].

- Step 4: Model Training - Train a deep neural network classifier on the synthesized and artificially generated compositions to distinguish synthesizable patterns, with hyperparameters including the ratio of artificially generated formulas to synthesized formulas (N_synth) and embedding dimensionality [11].

- Step 5: Validation - Evaluate model performance using standard classification metrics (precision, recall, F1-score) against holdout sets of known synthesized materials and artificially generated non-synthesized compositions.
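Step 2 can be pictured as a matrix product. This sketch assumes, for illustration, that a formula is encoded as its vector of element fractions multiplied by an element-embedding matrix. In SynthNN the embeddings are optimized alongside the other model parameters [11]; here they are random, and the five-element vocabulary and all names are hypothetical.

```python
from typing import Dict

import numpy as np

ELEMENTS = ["Li", "Na", "O", "Cl", "Fe"]  # truncated vocabulary for illustration
INDEX = {el: i for i, el in enumerate(ELEMENTS)}
EMBED_DIM = 8
rng = np.random.default_rng(0)
EMBEDDINGS = rng.normal(size=(len(ELEMENTS), EMBED_DIM))  # trainable in a real model


def fraction_vector(composition: Dict[str, float]) -> np.ndarray:
    """Element-fraction vector, so NaCl and Na2Cl2 map to the same input."""
    v = np.zeros(len(ELEMENTS))
    total = sum(composition.values())
    for el, amount in composition.items():
        v[INDEX[el]] = amount / total
    return v


def embed_formula(composition: Dict[str, float]) -> np.ndarray:
    """Stoichiometry-weighted sum of element embeddings (input to the classifier layers)."""
    return fraction_vector(composition) @ EMBEDDINGS


x = embed_formula({"Fe": 2, "O": 3})
print(x.shape)  # (8,)
```

Because the embedding matrix is a trainable parameter rather than a table of hand-picked descriptors, gradient descent can shape it to reflect whatever element similarities best separate synthesized from generated formulas.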

This methodology enables the model to learn complex chemical principles directly from data, including charge-balancing relationships, chemical family trends, and ionicity patterns, without explicit programming of these concepts [11].

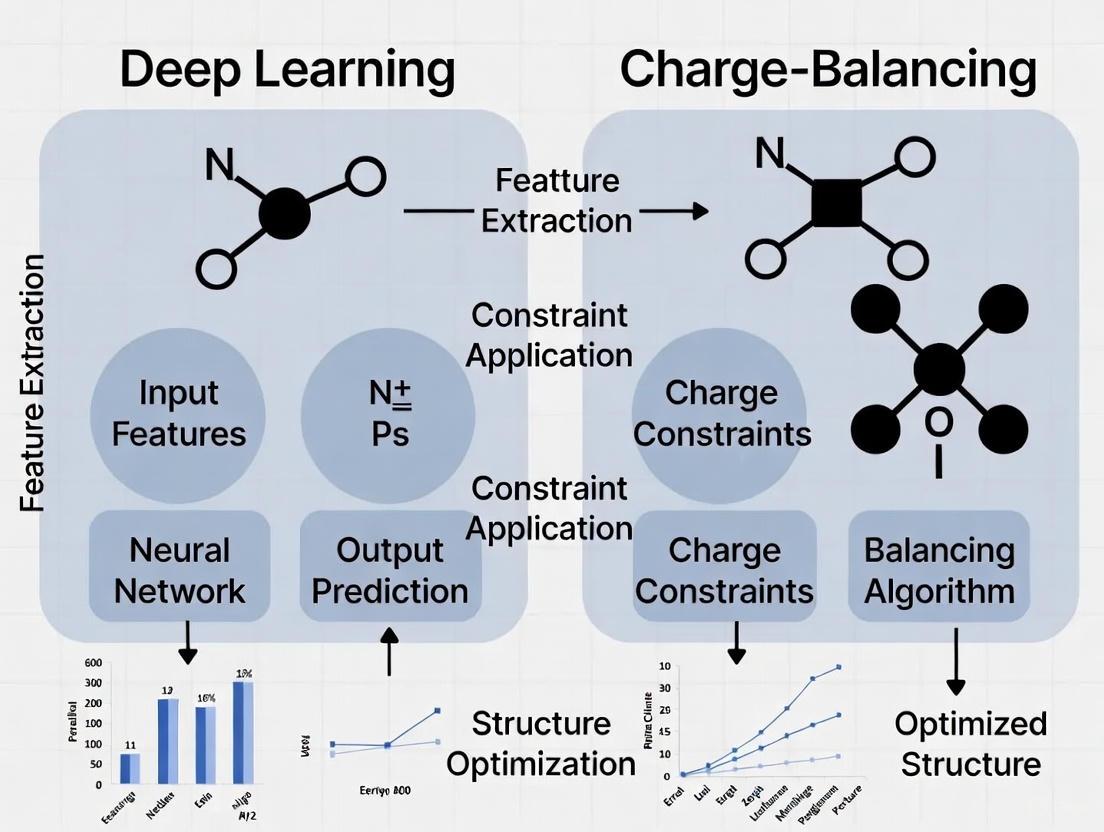

Diagram 1: Deep learning synthesizability prediction workflow

Logical Frameworks

The conceptual frameworks governing charge-balancing versus deep learning approaches represent fundamentally different pathways from chemical input to synthesizability prediction. The following diagram illustrates these contrasting logical architectures:

Diagram 2: Contrasting logical frameworks of synthesizability assessment methods

The charge-balancing pathway follows a rigid, sequential process entirely dependent on a single physical principle—electroneutrality. This deterministic approach produces a binary classification without uncertainty quantification or consideration of competing factors that influence synthetic accessibility.

In contrast, the deep learning pathway employs a parallel, multi-factor assessment that learns to balance numerous considerations simultaneously. By training directly on experimental data, the model internalizes complex relationships between composition, structure, and synthesizability that extend beyond simple charge considerations, ultimately producing a probabilistic synthesizability score that reflects real-world synthetic outcomes more accurately.

Research Reagent Solutions

The experimental and computational methodologies discussed rely on specific research tools and datasets. The following table details essential resources for implementing synthesizability assessment in research settings.

Table 2: Essential research reagents and computational resources for synthesizability prediction

| Resource Name | Type | Function/Role | Access Method |
|---|---|---|---|
| Inorganic Crystal Structure Database (ICSD) | Materials Database | Comprehensive collection of experimentally synthesized inorganic crystal structures used for model training and validation [11] | Commercial license |
| atom2vec | Computational Framework | Learns optimal vector representations of chemical elements directly from distribution of synthesized materials [11] | Open-source implementation |
| Common Oxidation State Table | Reference Data | Reference values for formal oxidation states used in charge-balancing calculations [11] | Published literature |
| SynthNN | Deep Learning Model | Pre-trained synthesizability classification model for predicting synthetic accessibility of inorganic compositions [11] | Research publication |
| Weighted Blending & VAE | Data Synthesis Methods | Techniques for generating synthetic chemical compositions to address data limitations in training [12] | Custom implementation |

These resources represent foundational elements for both traditional and modern synthesizability assessment. The ICSD database provides the essential ground truth data, while computational frameworks like atom2vec enable the transition from heuristic rules to data-driven prediction. The emergence of pre-trained models like SynthNN offers researchers access to state-of-the-art prediction capabilities without requiring extensive model development resources.

This comparison reveals the substantial statistical limitations of traditional charge-balancing methods for synthesizability prediction. With coverage of only 37% of known synthesized materials and significantly lower precision compared to deep learning approaches, charge-balancing alone provides an insufficient foundation for modern materials discovery pipelines. The deep learning paradigm of learning synthesizability criteria directly from experimental data demonstrates superior predictive performance while automatically capturing complex chemical principles that extend beyond simple charge neutrality.

For researchers and drug development professionals, these findings underscore the importance of transitioning from heuristic-based to data-driven synthesizability assessment. As material discovery increasingly leverages computational screening and generative design, robust synthesizability prediction becomes essential for prioritizing candidate materials with the highest probability of experimental realization. Deep learning approaches represent a statistically superior solution to this critical challenge, offering the potential to accelerate discovery timelines and improve resource allocation in both academic and industrial research settings.

The discovery of new functional molecules is a central challenge in chemical science, crucial for addressing societal needs in healthcare, energy, and sustainability [13]. However, this process remains risky, complex, time-consuming, and resource-intensive. While computational methods, particularly artificial intelligence (AI), have enabled the rapid generation of numerous candidate molecules with excellent theoretical properties, a significant bottleneck remains: many of these computationally designed molecules are difficult or impossible to synthesize in a laboratory [13] [6]. This gap between theoretical design and practical synthesis severely limits the real-world impact of computational molecular discovery.

Synthesizability assessment aims to bridge this gap by predicting whether a proposed molecular structure can be synthesized through known chemical methods and available precursors. Conventional approaches for identifying promising synthesizable material structures have typically involved assessing thermodynamic formation energies or energy above the convex hull via density functional theory (DFT) calculations [4]. However, these methods exhibit limited accuracy; numerous structures with favorable formation energies have never been synthesized, while various metastable structures with less favorable formation energies are routinely synthesized in laboratories [4]. This discrepancy highlights the complex nature of chemical synthesis, which is influenced by kinetic factors, precursor availability, and specific reaction conditions.

The emergence of deep learning technologies has revolutionized synthesizability prediction, offering more accurate and comprehensive assessment tools. This guide provides an objective comparison of deep learning-driven synthesizability assessment methods against traditional approaches, detailing their experimental protocols, performance metrics, and practical applications to aid researchers, scientists, and drug development professionals in selecting appropriate tools for their molecular design workflows.

Comparative Analysis of Synthesizability Assessment Approaches

Traditional Synthesizability Assessment Methods

Traditional synthesizability assessment relies primarily on two fundamental strategies: thermodynamic stability analysis and heuristic scoring methods. Thermodynamic approaches evaluate crystal structure synthesizability using energy above convex hull calculations and phonon spectrum analyses to assess kinetic stability [4]. However, these methods achieve only moderate accuracy (74.1% for energy-based and 82.2% for phonon-based assessments) as they don't fully capture the complexities of actual synthesis processes [4].
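To make the energy-above-hull criterion concrete, the sketch below handles the simplest case, a binary A-B system. Each phase is a point (fraction of B, formation energy in eV/atom). A phase's energy above hull is the vertical distance from that point down to the lower convex hull of all phases, with the pure elements fixed at zero. The phase energies used in the example are hypothetical. In practice, multicomponent hulls are usually computed with tools such as pymatgen's `PhaseDiagram`.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (fraction of element B, formation energy in eV/atom)


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull via Andrew's monotone chain on points sorted by composition."""
    hull: List[Point] = []
    for p in sorted(set(points)):
        # Drop the last hull point while it lies on or above the segment to the new point.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def energy_above_hull(x: float, e_form: float, phases: List[Point]) -> float:
    """Distance (eV/atom) from (x, e_form) to the lower hull; elements sit at (0, 0) and (1, 0)."""
    hull = lower_hull(phases + [(0.0, 0.0), (1.0, 0.0)])
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            e_hull = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
            return e_form - e_hull
    raise ValueError("composition must lie in [0, 1]")
```

With hypothetical phases `[(0.5, -1.0), (0.25, -0.4)]`, the AB phase lies on the hull (0 eV/atom). The A3B phase sits about 0.1 eV/atom above the tie line between A and AB. It would therefore be flagged as metastable, even though, as the accuracy figures above indicate, such phases are sometimes synthesized.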

Heuristic scoring methods for molecular synthesizability include several established algorithms. The Synthetic Accessibility score (SAscore) assesses compositional fragments and molecular complexity by analyzing historical synthesis knowledge from millions of synthesized chemicals, outputting a score from 1 to 10 [14]. The Synthetic Complexity score (SCScore) uses deep neural networks trained on 12 million reactions from the Reaxys database to quantify synthesis complexity, with output scores ranging from 1 to 5 [14]. The SYnthetic Bayesian Accessibility (SYBA) employs a Bernoulli Naive Bayes classifier to evaluate whether a molecule is easy- (ES) or hard-to-synthesize (HS) by assigning SYBA scores to molecular fragments [14]. These heuristic methods primarily assess molecular complexity rather than explicit synthesizability and are often correlated with known bio-active molecules, which may limit their generalizability to other chemical classes such as functional materials [6].
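The Bernoulli Naive Bayes idea behind SYBA can be sketched as follows. Each molecule is reduced to the set of fragments it contains, per-fragment presence probabilities are estimated separately for ES and HS training molecules, and a molecule's score is the log-odds of ES versus HS. This is a minimal sketch, not the published SYBA code. It assumes fragmentation has already been done; SYBA uses circular-fingerprint-style fragments, while the fragment names below are hypothetical placeholders. It uses Laplace smoothing and the full Bernoulli likelihood, including absent fragments.

```python
import math
from typing import Dict, Iterable, List, Set, Tuple

Params = Dict[str, Tuple[float, float]]  # fragment -> (p(present | ES), p(present | HS))


def fit_bernoulli_nb(es: List[Set[str]], hs: List[Set[str]],
                     alpha: float = 1.0) -> Tuple[Params, float]:
    """Estimate smoothed fragment presence probabilities for each class plus the log prior ratio."""
    vocab = sorted(set().union(*es, *hs))
    params: Params = {}
    for f in vocab:
        p_es = (sum(f in m for m in es) + alpha) / (len(es) + 2 * alpha)
        p_hs = (sum(f in m for m in hs) + alpha) / (len(hs) + 2 * alpha)
        params[f] = (p_es, p_hs)
    return params, math.log(len(es) / len(hs))


def log_odds_es(fragments: Iterable[str], params: Params, log_prior: float) -> float:
    """Log p(ES | x) - log p(HS | x); positive leans easy, negative leans hard to synthesize."""
    present = set(fragments)
    score = log_prior
    for f, (p_es, p_hs) in params.items():
        if f in present:
            score += math.log(p_es / p_hs)
        else:
            score += math.log((1 - p_es) / (1 - p_hs))
    return score
```

Because every fragment contributes an additive term, the per-fragment log-odds can be inspected directly. This is the kind of fragment-level interpretability the heuristic scores offer.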

Deep Learning-Based Synthesizability Assessment

Deep learning approaches have dramatically improved synthesizability prediction accuracy by learning complex patterns from extensive datasets of known synthetic pathways. These methods can be broadly categorized into structure-based predictors and synthesis-centric generators.

Structure-based predictors analyze molecular representations to classify synthesizability. The Crystal Synthesis Large Language Models (CSLLM) framework utilizes three specialized LLMs to predict the synthesizability of arbitrary 3D crystal structures, possible synthetic methods, and suitable precursors [4]. Its Synthesizability LLM achieves remarkable accuracy (98.6%), significantly outperforming traditional thermodynamic and kinetic stability methods [4]. For small molecules, DeepSA is a deep learning-based chemical language model trained on 3,593,053 molecules using natural language processing algorithms that achieves an AUROC of 89.6% in discriminating hard-to-synthesize molecules [14]. GASA (Graph Attention-based assessment of Synthetic Accessibility) represents another advanced approach that classifies small organic compounds as ES or HS by capturing local atomic environments through attention mechanisms and incorporating bond features to understand global molecular structure [14].

Synthesis-centric generators take a fundamentally different approach by constraining the design process to focus exclusively on synthesizable molecules through generating synthetic pathways rather than just evaluating structures. SynFormer is a generative AI framework that ensures every generated molecule has a viable synthetic pathway by incorporating a scalable transformer architecture and a diffusion module for building block selection [13]. It generates synthetic pathways using readily available building blocks through robust chemical transformations, ensuring synthetic tractability within the limitations of those transformation rules [13]. Similarly, the Saturn model directly optimizes for synthesizability using retrosynthesis models in goal-directed generation, demonstrating the ability to generate synthesizable molecules satisfying multi-parameter drug discovery optimization tasks even under heavily constrained computational budgets [6].

Table 1: Performance Comparison of Selected Synthesizability Assessment Methods

| Method | Type | Input | Performance | Key Advantages |
|---|---|---|---|---|
| Thermodynamic (Energy above hull) | Traditional | Crystal structure | 74.1% accuracy [4] | Physics-based, no training data required |
| CSLLM | Deep Learning | Crystal structure (text representation) | 98.6% accuracy [4] | High accuracy, predicts methods & precursors |
| SAscore | Heuristic | Molecular structure | ROC-AUC: 0.76 (on energetic molecules) [15] | Fast computation, interpretable scores |
| DeepSA | Deep Learning | SMILES string | 89.6% AUROC [14] | High discrimination accuracy for molecules |
| SynFormer | Deep Learning | Synthetic pathway | High reconstruction rate [13] | Guarantees synthesizable designs |

Table 2: Domain-Specific Performance of Synthesizability Assessment Methods

| Application Domain | Recommended Methods | Performance Considerations |
|---|---|---|
| Drug-like molecules | SAscore, SYBA, DeepSA | Heuristics show good correlation with retrosynthesis solvability [6] |
| Energetic materials | SAscore | ROC-AUC = 0.76 on ECD100 benchmark [15] |
| 3D crystal structures | CSLLM | 98.6% accuracy, exceeding traditional methods by >16 percentage points [4] |
| Functional materials | Retrosynthesis-based (SynFormer, Saturn) | Heuristic correlations diminish, favoring direct retrosynthesis [6] |
| Multi-objective optimization | Saturn, SynFormer | Direct synthesizability optimization under constrained budgets [6] |

Experimental Protocols and Methodologies

Dataset Construction and Preparation

Robust dataset construction is fundamental for training accurate deep learning models for synthesizability assessment. For crystal structures, the CSLLM framework employed a balanced dataset containing 70,120 synthesizable crystal structures from the Inorganic Crystal Structure Database (ICSD) and 80,000 non-synthesizable structures screened from 1,401,562 theoretical structures via a positive-unlabeled (PU) learning model [4]. The non-synthesizable examples were selected as structures with CLscores below 0.1 generated by a pre-trained PU learning model, with 98.3% of positive examples having CLscores greater than 0.1, validating this threshold [4].
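The negative-sampling rule above can be sketched as a simple threshold filter. It also includes the sanity check the authors report, namely the fraction of known synthesizable structures that score above the same cut-off. This is a minimal sketch assuming CLscores have already been produced by a pre-trained PU model. The structure IDs and scores are hypothetical, and the 80,000-structure cap used by CSLLM is not modeled here.

```python
from typing import Dict, List, Tuple


def select_negatives(theoretical: Dict[str, float], positives: Dict[str, float],
                     threshold: float = 0.1) -> Tuple[List[str], float]:
    """Return theoretical structures with CLscore < threshold, plus the fraction of
    known-synthesized structures whose CLscore exceeds the same threshold."""
    negatives = sorted(sid for sid, score in theoretical.items() if score < threshold)
    frac_pos_above = sum(score > threshold for score in positives.values()) / len(positives)
    return negatives, frac_pos_above
```

A high `frac_pos_above` (98.3% in the reported dataset) indicates that the threshold rarely excludes genuinely synthesizable structures. This supports treating low-scoring theoretical structures as negatives.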

For small organic molecules, the DeepSA model utilized training datasets consisting of 800,000 molecules, with 150,000 labeled by a multi-step retrosynthetic planning algorithm (Retro*) and 650,000 derived from SYBA [14]. Molecules requiring ≤10 synthetic steps were labeled as easy-to-synthesize (ES), while those requiring >10 steps or failing pathway prediction were labeled as hard-to-synthesize (HS) [14]. Independent test sets are crucial for proper evaluation: TS1 (3,581 ES and 3,581 HS molecules from SYBA), TS2 (30,348 molecules from RAscore), and TS3 (900 ES and 900 HS molecules from GASA) provide comprehensive benchmarking [14].
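The ES/HS labeling rule can be written as a small function. This sketch assumes the retrosynthesis search has already been run. `None` stands for a failed Retro* search, and the Retro* call itself, along with the molecule IDs in the usage example, is hypothetical.

```python
from typing import Dict, Optional


def label_by_route_length(n_steps: Optional[int], max_steps: int = 10) -> str:
    """ES if a route with at most max_steps was found; HS if the route is longer or none was found."""
    if n_steps is None or n_steps > max_steps:
        return "HS"
    return "ES"


# Hypothetical route lengths returned by a retrosynthesis planner (None = no route found).
route_lengths: Dict[str, Optional[int]] = {"mol_a": 4, "mol_b": 14, "mol_c": None}
labels = {mol: label_by_route_length(n) for mol, n in route_lengths.items()}
```

Here `labels` becomes `{"mol_a": "ES", "mol_b": "HS", "mol_c": "HS"}`. The boundary case of exactly 10 steps is labeled ES, matching the "≤10 steps" rule.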

Specialized domain datasets have also been developed, such as the Energetic Compound Dataset 100 (ECD100) comprising 50 experimentally synthesized (ES) and 50 designed but unrealized (HS) energetic molecules for benchmarking synthesizability scores in materials science [15].

Model Architectures and Training Protocols

Deep learning models for synthesizability employ diverse architectures tailored to their specific tasks. The CSLLM framework utilizes three specialized large language models fine-tuned on a comprehensive dataset using a novel "material string" text representation that integrates essential crystal information including space group, lattice parameters, and Wyckoff position-based atomic coordinates [4]. This efficient text representation enables LLMs to process complex crystal structures without redundant information found in CIF or POSCAR formats [4].
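The exact CSLLM "material string" syntax is defined in [4] and is not reproduced here. The sketch below only illustrates the underlying idea of a compact, symmetry-reduced text encoding: the space group number, the six lattice parameters, and one representative site per Wyckoff orbit. The field order, separators, and rounding are assumptions. The rock-salt NaCl example uses space group 225 with Na on 4a and Cl on 4b.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class WyckoffSite:
    element: str
    wyckoff: str                        # multiplicity + letter, e.g. "4a"
    coords: Tuple[float, float, float]  # fractional coordinates of one representative atom


def _fmt(value: float, ndigits: int) -> str:
    return f"{round(value, ndigits):g}"


def material_string(sg_number: int, lattice: Tuple[float, ...], sites: List[WyckoffSite],
                    ndigits: int = 3) -> str:
    """Hypothetical encoding: 'SG | a b c alpha beta gamma | El-Wyckoff[x,y,z] ...'."""
    lat = " ".join(_fmt(v, ndigits) for v in lattice)
    site_tokens = " ".join(
        f"{s.element}-{s.wyckoff}[{','.join(_fmt(c, ndigits) for c in s.coords)}]" for s in sites
    )
    return f"{sg_number} | {lat} | {site_tokens}"


nacl = material_string(
    225, (5.64, 5.64, 5.64, 90.0, 90.0, 90.0),
    [WyckoffSite("Na", "4a", (0.0, 0.0, 0.0)), WyckoffSite("Cl", "4b", (0.5, 0.5, 0.5))],
)
```

`nacl` evaluates to `"225 | 5.64 5.64 5.64 90 90 90 | Na-4a[0,0,0] Cl-4b[0.5,0.5,0.5]"`. Only two symmetry-distinct sites are listed, whereas a conventional-cell CIF enumerates all eight atoms. This is the redundancy such a representation avoids.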

DeepSA implements a chemical language model trained on millions of molecules using several natural language processing algorithms [14]. The model takes Simplified Molecular-Input Line-Entry System (SMILES) strings as input and augments the training data by enumerating different SMILES strings for the same molecule [14].
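Chemical language models first split SMILES strings into tokens. DeepSA's own tokenizer is not described here, so the sketch below substitutes a widely used regex tokenizer popularized by the Molecular Transformer. It keeps multi-character atoms such as `Cl`, `Br`, and bracketed atoms like `[nH]` as single tokens, and it raises an error if any character would be silently dropped.

```python
import re
from typing import List

# Regex-based SMILES tokenizer (Molecular Transformer style); an assumed stand-in, not DeepSA's code.
SMILES_REGEX = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|\/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize_smiles(smiles: str) -> List[str]:
    """Split a SMILES string into tokens, checking that the tokens reassemble the input."""
    tokens = SMILES_REGEX.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens
```

For augmentation, randomized but equivalent SMILES strings of the same molecule can be generated, for example with RDKit's `Chem.MolToSmiles(mol, doRandom=True)`. Each variant is then tokenized in the same way.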

SynFormer employs a transformer architecture with a denoising diffusion module for building block selection, using a postfix notation to represent synthetic pathways linearly with four token types: [START], [END], [RXN] (reaction), and [BB] (building block) [13]. This linear notation enables autoregressive decoding and accommodates any linear or convergent synthetic sequence [13]. The framework is trained on a simulated chemical space derived from 115 reaction templates and 223,244 commercially available building blocks, theoretically covering a chemical space broader than tens of billions of molecules [13].
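The postfix pathway notation can be decoded with a stack. Each [BB] token pushes a building block, and each [RXN] token pops as many operands as its reaction needs and pushes the product. This is what makes convergent routes expressible as a flat sequence. The sketch assumes tokens are `(type, payload)` tuples and that a reaction-arity table (at least 1) is known. The template and building-block names are hypothetical. SynFormer itself predicts these tokens with a transformer and a diffusion module, which is not shown.

```python
from typing import Dict, List, Tuple, Union

Token = Tuple[str, str]   # e.g. ("BB", "amine_12") or ("RXN", "amide_coupling")
Node = Union[str, tuple]  # a building block name or (reaction, *reactant_nodes)


def parse_postfix_pathway(tokens: List[Token], arity: Dict[str, int]) -> Node:
    """Rebuild the synthesis tree from a postfix token sequence; raise if it is malformed."""
    if not tokens or tokens[0][0] != "START" or tokens[-1][0] != "END":
        raise ValueError("pathway must begin with START and end with END")
    stack: List[Node] = []
    for kind, payload in tokens[1:-1]:
        if kind == "BB":
            stack.append(payload)
        elif kind == "RXN":
            n = arity[payload]  # number of reactants the reaction template consumes (>= 1)
            if len(stack) < n:
                raise ValueError(f"{payload} needs {n} reactants, only {len(stack)} available")
            reactants = stack[-n:]
            del stack[-n:]
            stack.append((payload, *reactants))
        else:
            raise ValueError(f"unexpected token type {kind!r}")
    if len(stack) != 1:
        raise ValueError("pathway does not reduce to a single product")
    return stack[0]


# A convergent route: two intermediates made separately, then coupled.
route = [("START", ""), ("BB", "a"), ("BB", "b"), ("RXN", "r2"),
         ("BB", "c"), ("BB", "d"), ("RXN", "r2"), ("RXN", "r2"), ("END", "")]
tree = parse_postfix_pathway(route, {"r2": 2})
```

`tree` evaluates to `("r2", ("r2", "a", "b"), ("r2", "c", "d"))`. Because the decoder only ever appends tokens left to right, the same sequence can be generated autoregressively.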

For model evaluation, standard classification metrics are employed including accuracy (ACC), Precision, Recall, F-score, and Area Under the Receiver Operating Characteristic curve (AUROC) [14]. These metrics provide comprehensive assessment of model performance across different aspects of classification quality.
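The listed metrics can be computed from binary labels (1 = synthesizable/ES) and model scores, as in the sketch below. The 0.5 decision threshold is an assumption. AUROC is computed through its rank interpretation: the probability that a randomly chosen positive outscores a randomly chosen negative, with ties counted as one half.

```python
from typing import Dict, List


def classification_metrics(y_true: List[int], y_score: List[float],
                           threshold: float = 0.5) -> Dict[str, float]:
    """Accuracy, precision, recall, F1 at a threshold, plus threshold-free AUROC.
    Assumes both classes are present in y_true."""
    y_pred = [int(s >= threshold) for s in y_score]
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    # AUROC via the Mann-Whitney statistic over all positive/negative score pairs.
    pos = [s for t, s in zip(y_true, y_score) if t == 1]
    neg = [s for t, s in zip(y_true, y_score) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "auroc": wins / (len(pos) * len(neg)),
    }
```

The pairwise AUROC loop is quadratic and is meant only for clarity. Library implementations sort the scores instead, which matters for test sets as large as TS2.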

Workflow Visualization

The following diagram illustrates the conceptual workflow of deep learning-based synthesizability assessment, highlighting the comparison between traditional and deep learning approaches:

Table 3: Research Reagent Solutions for Synthesizability Assessment

| Resource | Type | Function | Example Applications |
|---|---|---|---|
| ICSD (Inorganic Crystal Structure Database) | Database | Source of synthesizable crystal structures for training | CSLLM training (70,120 structures) [4] |
| ChEMBL | Database | Curated bioactive molecules with drug-like properties | DeepSA and Saturn model training [14] [6] |
| Enamine REAL Space | Building Block Library | Commercially available molecular building blocks | SynFormer synthetic pathway generation [13] |
| SMILES Notation | Molecular Representation | Text-based molecular structure encoding | DeepSA input representation [14] |
| Material String | Crystal Representation | Efficient text representation for crystal structures | CSLLM input format [4] |
| Retro* | Retrosynthesis Algorithm | Synthetic pathway prediction for labeling training data | DeepSA dataset preparation [14] |
| AiZynthFinder | Retrosynthesis Tool | Synthetic route feasibility assessment | Saturn synthesizability oracle [6] |

Deep learning has transformed synthesizability assessment from heuristic approximation to accurate prediction. The experimental data clearly demonstrate that deep learning models consistently outperform traditional approaches across various domains, with accuracy improvements exceeding 16 percentage points for crystal structure synthesizability prediction [4] and significantly better discrimination for small organic molecules [14]. The emergence of synthesis-centric generative models like SynFormer and Saturn represents a paradigm shift from post hoc assessment to synthesizability by design [13] [6].

Future developments will likely focus on several key areas: expansion to broader chemical domains including macromolecules and complex materials, improved sample efficiency for optimization under constrained computational budgets, integration of multi-objective optimization balancing synthesizability with target properties, and enhanced explainability to provide chemical insights alongside predictions. As these technologies mature and become more accessible, they promise to significantly accelerate the discovery and development of novel functional molecules across pharmaceutical, materials, and energy applications, ultimately bridging the gap between computational design and laboratory synthesis.

The discovery of new inorganic crystalline materials is a fundamental driver of innovation across technologies ranging from rechargeable batteries and photovoltaics to superconductors and electronic devices. Historically, materials discovery has relied on painstaking trial-and-error experimentation, an expensive and time-consuming process that has served as a critical bottleneck in technological advancement. The emergence of computational materials science and large-scale databases promised to accelerate this process, but a significant challenge persists: the majority of candidate materials identified through computational screening prove impractical to synthesize in the laboratory. This synthesizability challenge represents the critical gap between theoretical prediction and experimental realization in materials science.

Two fundamentally different approaches have emerged to address the synthesizability problem. The traditional approach relies on charge-balancing—a chemically intuitive method that filters candidate materials based on net ionic charge neutrality according to common oxidation states. In contrast, modern deep learning approaches leverage pattern recognition across vast databases of known materials to predict synthesizability directly from chemical composition or structure. This guide provides a comprehensive comparison of these competing methodologies, the key data sources that enable them, and their performance in predicting which hypothetical materials can be successfully synthesized.

Foundational Experimental Databases: The Inorganic Crystal Structure Database (ICSD)

The Inorganic Crystal Structure Database (ICSD) serves as the foundational repository of experimentally determined inorganic crystal structures, providing the "ground truth" data essential for training and validating synthesizability models [16].

| Feature | Description |
|---|---|
| Scope | World's largest database for completely determined inorganic crystal structures; contains structures published since 1913 [16] |
| Content | Experimental inorganic structures (including minerals, metals, alloys), metal-organic structures with inorganic applications, and theoretical structures [16] |
| Data Quality | Expert-curated with thorough quality checks; includes atomic coordinates, unit cell parameters, space group, and bibliographic data [16] |
| Role in Synthesizability | Provides positive examples (successfully synthesized materials) for machine learning training; serves as benchmark for model validation [11] [17] |

ICSD's comprehensive collection of experimentally realized structures makes it indispensable for materials research. Each entry undergoes rigorous quality assessment, ensuring reliable data for training predictive models. The database's historical coverage enables researchers to track synthesis trends over time and understand the evolution of synthetic capabilities [16].

Computational Materials Databases: The Materials Project

The Materials Project (MP) has emerged as a cornerstone for computational materials science, providing high-throughput density functional theory (DFT) calculations on a massive scale.

| Feature | Description |
|---|---|
| Scope | Open-source database containing DFT-relaxed crystal structures and calculated properties for over 126,000 materials [17] |
| Content | Calculated formation energies, band structures, density of states, phase diagrams, and other derived properties [18] |
| Key Metrics | Formation energy (FE) and energy above hull (E_hull), both measures of thermodynamic stability [17] |
| Role in Synthesizability | Provides features for ML models (stability metrics); source of candidate materials for virtual screening [19] [1] |

The Materials Project enables researchers to bypass expensive initial calculations by providing standardized computational data. Its application programming interface (API) allows for programmatic access and large-scale screening of materials based on multiple criteria [18]. The integration of ICSD tags within MP entries facilitates the identification of experimentally synthesized materials for model training [17].

Comparative Analysis: Charge-Balancing vs. Deep Learning Approaches

The Charge-Balancing Method

Charge-balancing represents the traditional approach to predicting synthesizability, rooted in chemical intuition and principles of ionic bonding. This method filters candidate materials based on whether they can achieve net charge neutrality using common oxidation states of their constituent elements.
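The charge-balancing filter amounts to a search over combinations of common oxidation states, as the sketch below shows. The oxidation-state table is a small hypothetical excerpt for illustration, not the full table used in [11]. Each element is assigned a single oxidation state, so mixed-valence compounds are rejected.

```python
from itertools import product
from typing import Dict, List

# Hypothetical excerpt of a common-oxidation-state table.
COMMON_OXIDATION_STATES: Dict[str, List[int]] = {
    "Na": [1], "K": [1], "Cs": [1], "Mg": [2], "Ca": [2], "Fe": [2, 3],
    "Ti": [2, 3, 4], "O": [-2], "S": [-2], "Cl": [-1], "N": [-3],
}


def is_charge_balanced(composition: Dict[str, int]) -> bool:
    """True if some assignment of one common oxidation state per element gives zero net charge."""
    elements = list(composition)
    if any(el not in COMMON_OXIDATION_STATES for el in elements):
        return False  # no reference oxidation states available
    for states in product(*(COMMON_OXIDATION_STATES[el] for el in elements)):
        if sum(q * composition[el] for q, el in zip(states, elements)) == 0:
            return True
    return False
```

NaCl, Fe2O3, and Cs2O pass. The known cesium suboxide Cs11O3, mixed-valence Fe3O4, and elemental Fe all fail. This is the rigidity that the statistics below quantify.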

The fundamental limitation of this approach becomes apparent when evaluated against experimental data: only 37% of known inorganic materials in ICSD are charge-balanced according to common oxidation states. Even among typically ionic compounds like binary cesium compounds, merely 23% adhere to charge-balancing rules [11]. This poor performance stems from the method's inability to account for diverse bonding environments in metallic alloys, covalent materials, and complex solid-state compounds where strict ionic models break down.

Deep Learning Approaches

Deep learning models represent a paradigm shift in synthesizability prediction, leveraging pattern recognition across entire materials databases rather than relying on simplified chemical heuristics.

Model Architectures and Training Approaches

Multiple deep learning architectures have been developed for synthesizability prediction:

SynthNN: A deep learning synthesizability model that uses atom2vec representations to learn optimal features directly from the distribution of synthesized materials, reformulating discovery as a classification task [11].

Graph Networks for Materials Exploration (GNoME): State-of-the-art graph neural networks that scale materials discovery by predicting stability from structure or composition alone [19] [1].

Fourier-Transformed Crystal Properties (FTCP): A representation that encodes crystal structures in both real and reciprocal space, combined with deep learning classifiers to predict synthesizability scores [17].

These models employ semi-supervised learning approaches to address the fundamental challenge in synthesizability prediction: while positive examples (synthesized materials) are well-documented in ICSD, negative examples (unsynthesizable materials) are rarely reported. Techniques include treating artificially generated compositions as unlabeled data and reweighting them probabilistically [11], or using positive-unlabeled learning algorithms that account for the incompletely labeled nature of materials data [11].
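One common way to reweight unlabeled examples probabilistically is the Elkan-Noto estimator. Whether [11] uses exactly this form is not stated here, so treat the following as an illustrative substitute. A classifier g(x) is trained to separate labeled positives from unlabeled examples. The constant c = p(labeled | positive) is estimated as the mean of g on held-out positives. Each unlabeled example then counts as a positive with weight w = ((1 - c)/c) · g/(1 - g), clipped to [0, 1], and as a negative with weight 1 - w.

```python
from typing import List, Tuple


def estimate_c(g_on_heldout_positives: List[float]) -> float:
    """Elkan-Noto estimate of c = p(labeled | positive): mean classifier score on held-out positives."""
    return sum(g_on_heldout_positives) / len(g_on_heldout_positives)


def unlabeled_weights(g_unlabeled: List[float], c: float) -> List[Tuple[float, float]]:
    """For each unlabeled example, return (weight as positive, weight as negative)."""
    if not 0.0 < c <= 1.0:
        raise ValueError("c must lie in (0, 1]")
    weights = []
    for g in g_unlabeled:
        w = 1.0 if g >= 1.0 else min(1.0, (1.0 - c) / c * g / (1.0 - g))
        weights.append((w, 1.0 - w))
    return weights
```

The weighted examples are then fed to an ordinary classifier. An unlabeled formula that closely resembles known synthesized materials (high g) therefore contributes mostly as a positive rather than being forced into the negative class.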

GNoME Workflow: Scaling Discovery Through Active Learning

The GNoME framework exemplifies the powerful active learning methodology that enables efficient exploration of chemical space. This iterative process of prediction, verification, and retraining has led to unprecedented scaling in materials discovery, culminating in the identification of 2.2 million new crystal structures stable with respect to previous calculations, with 380,000 considered the most stable candidates for experimental synthesis [19].
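The predict-verify-retrain cycle can be sketched generically. In GNoME the surrogate is a graph neural network, verification is a DFT calculation, and candidates come from structure and composition generators. Here all three are hypothetical callables, so that only the loop structure is shown. The batch size, number of rounds, and stability cut-off are assumed parameters.

```python
from typing import Callable, Dict, List, Tuple


def active_learning(
    candidates: List[str],
    predict: Callable[[str], float],    # surrogate: predicted energy above hull (eV/atom)
    verify: Callable[[str], float],     # expensive oracle, e.g. a DFT calculation
    retrain: Callable[[Dict[str, float]], Callable[[str], float]],
    rounds: int = 3,
    batch_size: int = 2,
    stable_cutoff: float = 0.0,
) -> Tuple[Dict[str, float], List[str]]:
    """Return all verified energies and the candidates verified as stable."""
    labeled: Dict[str, float] = {}
    pool = list(candidates)
    for _ in range(rounds):
        if not pool:
            break
        # 1. Rank unverified candidates by predicted stability (lowest first).
        pool.sort(key=predict)
        batch, pool = pool[:batch_size], pool[batch_size:]
        # 2. Spend the expensive oracle only on the most promising candidates.
        for cand in batch:
            labeled[cand] = verify(cand)
        # 3. Retrain the surrogate on everything verified so far.
        predict = retrain(labeled)
    stable = sorted(c for c, e in labeled.items() if e <= stable_cutoff)
    return labeled, stable
```

Each round enlarges the verified dataset, which then improves the surrogate that picks the next batch. This is the feedback loop behind the scaling described above.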

Quantitative Performance Comparison

Direct Performance Metrics

Experimental comparisons between charge-balancing and deep learning approaches reveal dramatic differences in predictive capability.

| Method | Precision | Recall | Key Limitations |
|---|---|---|---|
| Charge-Balancing | N/A | 37% (fraction of known ICSD compounds that are charge-balanced) [11] | Fails to account for diverse bonding environments; inflexible constraint |
| DFT Formation Energy | N/A | ~50% (captures only half of synthesized materials) [11] | Fails to account for kinetic stabilization; expensive to compute |
| SynthNN | 7× higher than DFT formation energy; 1.5× higher than the best of 20 human experts [11] | N/A | Requires sufficient training data; black-box predictions |
| FTCP-based Model | 82.6% (ternary crystals) [17] | 80.6% (ternary crystals) [17] | Depends on quality of structural representation |
| GNoME | >80% with structure; 33% per 100 trials with composition only (hit rate of stable predictions) [1] | N/A | Massive computational resources required for training |

The performance advantage of deep learning models extends beyond direct metrics. In a head-to-head comparison against domain experts, SynthNN achieved 1.5× higher precision than the best human expert while completing the task five orders of magnitude faster [11]. This demonstrates not only the accuracy but also the remarkable efficiency of deep learning approaches for materials screening.

Discovery Outcomes and Experimental Validation

The most compelling evidence for deep learning approaches comes from their demonstrated ability to discover novel, stable materials that escape traditional chemical intuition.

| Discovery Metric | Traditional Methods | Deep Learning (GNoME) |
|---|---|---|
| Total Stable Materials | ~48,000 (before GNoME) [1] | 421,000 (after GNoME) [1] |
| New Structures Discovered | N/A | 2.2 million [19] |
| Experimentally Realized | N/A | 736 independently synthesized [19] |
| Novel Prototypes | ~8,000 (Materials Project) [1] | 45,500 (5.6× increase) [1] |

Remarkably, GNoME has substantially expanded materials discovery in combinatorially complex spaces, successfully identifying stable structures with five or more unique elements that previously posed significant challenges for computational discovery [1]. The external validation of 736 GNoME-predicted materials that have been independently synthesized provides compelling evidence for the real-world predictive power of these approaches [19].

Experimental Protocols and Methodologies

Benchmarking Synthesizability Models

Robust evaluation of synthesizability prediction methods requires standardized protocols and benchmarking datasets:

Data Splitting: Temporal splitting, where models are trained on materials discovered before a certain date (e.g., 2015) and tested on those discovered after (e.g., post-2019), provides a realistic assessment of true predictive capability [17].

Performance Metrics: Precision and recall alone are insufficient; the F1-score provides a balanced metric particularly important for positive-unlabeled learning scenarios [11].

Baseline Comparisons: Effective benchmarking must include comparisons against random guessing, charge-balancing, and DFT-based stability predictions [11].
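The temporal splitting protocol described above can be sketched as follows. It assumes each record carries the year the material was first reported, for instance derived from database entry dates. Records falling in the gap between the training and test periods are dropped, and overlapping periods are rejected to prevent leakage. The record names are hypothetical.

```python
from typing import List, Tuple

Record = Tuple[str, int]  # (material identifier, year first reported)


def temporal_split(records: List[Record], train_before: int,
                   test_after: int) -> Tuple[List[Record], List[Record]]:
    """Train on records with year < train_before; test on records with year > test_after."""
    if test_after < train_before - 1:
        raise ValueError("test period overlaps the training period")
    train = [r for r in records if r[1] < train_before]
    test = [r for r in records if r[1] > test_after]
    return train, test


records = [("mat-0", 1920), ("mat-1", 2014), ("mat-2", 2015), ("mat-3", 2017),
           ("mat-4", 2019), ("mat-5", 2020), ("mat-6", 2022)]
train, test = temporal_split(records, train_before=2015, test_after=2019)
```

With the cut-offs from the example above (before 2015, after 2019), `train` keeps `mat-0` and `mat-1` and `test` keeps `mat-5` and `mat-6`. The 2015-2019 records are excluded from both, so every test material is genuinely "future" relative to the training data.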

The Materials Project API enables systematic access to data for such benchmarking studies, allowing researchers to query materials by composition, crystal system, stability criteria, and other relevant filters [18].

Key Research Reagents and Computational Tools

Successful implementation of synthesizability prediction requires specific data resources and software tools:

| Research Reagent | Function | Access Method |
|---|---|---|
| ICSD Data | Ground truth for training synthesizability models | Commercial license [16] |
| Materials Project API | Programmatic access to computed materials properties | Free with registration [18] |
| pymatgen | Python materials analysis for structure manipulation | Open-source library [17] |
| VASP Software | DFT calculations for model verification and training | Commercial license [1] |
| CGCNN/ALIGNN | Graph neural network architectures for materials | Open-source implementations [17] |

The comprehensive comparison between charge-balancing and deep learning approaches reveals a clear paradigm shift in materials synthesizability prediction. While charge-balancing offers chemical intuition and computational simplicity, it covers only 37% of known compounds, which renders it inadequate for reliable materials discovery. Deep learning models, such as the GNoME graph neural networks and the composition-based SynthNN, have demonstrated unprecedented predictive capabilities. GNoME achieves >80% precision in structure-based stability prediction and has expanded the number of known stable materials by almost an order of magnitude.

The scalability of deep learning approaches is evidenced by GNoME's discovery of 2.2 million new crystals and the independent experimental synthesis of 736 predicted structures. These models develop emergent capabilities, including accurate prediction of complex multi-element compounds that previously challenged computational methods. Furthermore, they achieve this while being computationally efficient enough to screen billions of candidate compositions.

Future developments will likely focus on integrating synthesis route prediction with synthesizability assessment, incorporating kinetic factors alongside thermodynamic stability, and improving model interpretability to extract new chemical insights. As deep learning models continue to benefit from scaling laws, with predictive performance improving as data and computation grow, they promise to fundamentally transform how we discover and develop new materials for technological applications.

Inside the Models: Architectures and Workflows of Modern Synthesizability Predictors

The following table summarizes the core performance metrics of leading composition-based deep learning models for synthesizability prediction, benchmarked against traditional methods.

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Method / Model | Core Principle | Key Performance Metric | Performance Value | Key Advantage |
|---|---|---|---|---|
| SynthNN [11] [20] | Deep learning on known compositions (PU Learning) | Precision in discovery | 7× higher than DFT formation energy [11] | Learns implicit chemical rules; composition-only input |
| Charge-Balancing [11] | Net neutral ionic charge | Coverage of known materials | 37% of ICSD compounds [11] | Simple, chemically intuitive |
| CSLLM [4] | Fine-tuned Large Language Model | Prediction Accuracy | 98.6% [4] | High accuracy; can also predict methods & precursors |
| SC Model [17] | FTCP representation & deep learning | Precision (ternary crystals) | 82.6% [17] | Incorporates real and reciprocal space crystal features |
| SynCoTrain [3] | Dual-classifier co-training (PU Learning) | Recall on test sets | High [3] | Mitigates model bias; robust for oxides |

Predicting whether a hypothetical inorganic crystalline material can be successfully synthesized is a fundamental challenge in accelerating materials discovery. Traditional approaches have relied on chemical intuition and simplified physical heuristics, most notably the charge-balancing criterion, which assumes that synthesizable compounds must have a net neutral ionic charge [11]. However, an analysis of the Inorganic Crystal Structure Database (ICSD) reveals a critical shortcoming: only about 37% of known synthesized compounds are charge-balanced according to common oxidation states [11]. This indicates that real-world synthesizability is governed by factors beyond simple charge neutrality, including kinetic stabilization, complex bonding environments, and experimental technological constraints [3].

The limitations of traditional proxies have motivated a shift toward data-driven approaches. Composition-based deep learning models represent a paradigm shift, learning the complex, implicit "rules" of synthesizability directly from the vast and growing database of known synthesized materials. By operating on chemical formulas alone, these models can screen billions of candidate materials without requiring pre-determined crystal structures, which are typically unknown for novel compounds [11]. This guide provides a detailed comparison of these emerging deep learning methodologies, focusing on their experimental protocols, performance, and practical utility for researchers.

Detailed Experimental Protocols and Model Methodologies

The SynthNN Framework

The SynthNN model exemplifies a semi-supervised Positive-Unlabeled (PU) learning approach, which is designed to handle the inherent lack of confirmed "unsynthesizable" examples in public databases [11] [20].

- Data Curation and Training:

  - Positive Data: Synthesized materials are sourced from the Inorganic Crystal Structure Database (ICSD), representing the "Positive" class [11] [20].

  - Unlabeled Data: A large set of artificially generated chemical formulas serves as the "Unlabeled" data. The model is trained to distinguish synthesized materials from this background set, while accounting for the possibility that some unlabeled materials might be synthesizable but not yet discovered [11].

- Input Representation: The model uses an atom2vec embedding, which learns an optimal numerical representation for each element directly from the distribution of the data, without relying on pre-defined chemical knowledge [11].

- Model Architecture and Workflow: The following diagram illustrates the SynthNN prediction workflow.
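The composition-only, PU-style setup described above can be sketched in a few dozen lines. This toy classifier is an illustration under explicit assumptions, not SynthNN itself: element embeddings are learned jointly with a logistic output (in the spirit of atom2vec), pooled by atomic fraction, and unlabeled formulas are treated as down-weighted negatives, a simple PU heuristic chosen here for brevity. The class `ToyPUClassifier`, the parameter `unlabeled_weight`, and the toy formulas are hypothetical.

```python
import math
import random
import re
from typing import Dict, List


def atomic_fractions(formula: str) -> Dict[str, float]:
    """Atomic fractions of a simple formula, e.g. 'Na2O' -> {'Na': 2/3, 'O': 1/3}."""
    counts: Dict[str, float] = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0.0) + (float(number) if number else 1.0)
    total = sum(counts.values())
    return {el: c / total for el, c in counts.items()}


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


class ToyPUClassifier:
    """Hypothetical model: learned element embeddings, pooled by fraction, then a logistic output."""

    def __init__(self, dim: int = 4, seed: int = 0) -> None:
        self.rng = random.Random(seed)
        self.dim = dim
        self.emb: Dict[str, List[float]] = {}  # element -> learned vector (atom2vec-like)
        self.w = [self.rng.uniform(-0.5, 0.5) for _ in range(dim)]
        self.b = 0.0

    def _vec(self, element: str) -> List[float]:
        if element not in self.emb:  # lazily initialise unseen elements
            self.emb[element] = [self.rng.uniform(-0.5, 0.5) for _ in range(self.dim)]
        return self.emb[element]

    def _features(self, frac: Dict[str, float]) -> List[float]:
        x = [0.0] * self.dim
        for element, f in frac.items():
            for i, v in enumerate(self._vec(element)):
                x[i] += f * v  # fraction-weighted sum of element embeddings
        return x

    def score(self, formula: str) -> float:
        """Output in (0, 1); higher means 'looks more like the positive set'."""
        x = self._features(atomic_fractions(formula))
        return sigmoid(sum(wi * xi for wi, xi in zip(self.w, x)) + self.b)

    def fit(self, positives: List[str], unlabeled: List[str],
            unlabeled_weight: float = 0.5, epochs: int = 300, lr: float = 0.1) -> None:
        # Assumed PU heuristic: unlabeled formulas act as negatives with a reduced
        # weight, because some of them may in fact be synthesizable.
        data = [(f, 1.0, 1.0) for f in positives] + [(f, 0.0, unlabeled_weight) for f in unlabeled]
        for _ in range(epochs):
            self.rng.shuffle(data)
            for formula, y, weight in data:
                frac = atomic_fractions(formula)
                x = self._features(frac)
                p = sigmoid(sum(wi * xi for wi, xi in zip(self.w, x)) + self.b)
                g = weight * (p - y)  # gradient of weighted cross-entropy w.r.t. the logit
                for element, f in frac.items():  # embedding update uses pre-update weights
                    e = self._vec(element)
                    for i in range(self.dim):
                        e[i] -= lr * g * f * self.w[i]
                for i in range(self.dim):
                    self.w[i] -= lr * g * x[i]
                self.b -= lr * g


model = ToyPUClassifier(seed=0)
model.fit(positives=["NaCl", "KCl", "NaF", "KF"], unlabeled=["XeNe", "NeAr", "ArXe", "KrXe"])
print(model.score("NaCl"), model.score("XeNe"))
```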

The CSLLM Framework

The Crystal Synthesis Large Language Model (CSLLM) framework is a recent approach that adapts large language models to crystal structure analysis [4].

- Data Curation: A balanced dataset was constructed from 70,120 synthesizable structures from the ICSD and 80,000 non-synthesizable structures identified from theoretical databases using a pre-trained PU-learning model (CLscore < 0.1) [4].

- Input Representation: A key innovation is the "material string," a concise text representation of a crystal structure that efficiently encodes space group, lattice parameters, and atomic coordinates, avoiding the redundancy of CIF or POSCAR files [4].

- Model Architecture: The framework employs three specialized LLMs fine-tuned for distinct tasks:

  - Synthesizability LLM: Predicts whether a given structure is synthesizable.

  - Method LLM: Classifies the likely synthetic method (e.g., solid-state or solution).

  - Precursor LLM: Identifies suitable solid-state precursors for binary and ternary compounds [4].
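The exact CSLLM "material string" syntax is defined in [4]; the function below uses a hypothetical format only to illustrate the idea of packing the space group, lattice parameters, and symmetry-inequivalent sites into one line of text that a language model can read. The names `Site` and `material_string` and the `|`/`;` separators are assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Site:
    element: str
    frac_coords: Tuple[float, float, float]  # fractional coordinates of one inequivalent site


def material_string(space_group: int,
                    lattice: Tuple[float, float, float, float, float, float],
                    sites: List[Site], decimals: int = 3) -> str:
    """Hypothetical one-line encoding: 'SG | a b c alpha beta gamma | El x y z; ...'."""
    lattice_part = " ".join(f"{v:.{decimals}f}" for v in lattice)
    site_part = "; ".join(
        s.element + " " + " ".join(f"{c:.{decimals}f}" for c in s.frac_coords) for s in sites
    )
    return f"{space_group} | {lattice_part} | {site_part}"


# Rock-salt NaCl (space group 225): only the two symmetry-inequivalent sites are listed,
# instead of all eight atoms of the conventional cell.
nacl = material_string(225, (5.64, 5.64, 5.64, 90.0, 90.0, 90.0),
                       [Site("Na", (0.0, 0.0, 0.0)), Site("Cl", (0.5, 0.5, 0.5))])
print(nacl)
# 225 | 5.640 5.640 5.640 90.000 90.000 90.000 | Na 0.000 0.000 0.000; Cl 0.500 0.500 0.500
```

Relying on symmetry this way is what keeps such a string shorter than a CIF or POSCAR file, which list every atom in the cell along with extra metadata.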

The SynCoTrain Framework

SynCoTrain addresses the challenge of model bias and generalization through a collaborative, dual-classifier approach [3].

- Data: The model is trained specifically on oxide crystals to ensure dataset consistency and reduce variability [3].

- Model Architecture: It uses a co-training framework with two distinct Graph Convolutional Neural Networks (GCNNs):

  - ALIGNN (Atomistic Line Graph Neural Network): Encodes atomic bonds and bond angles, providing a "chemist's" perspective.

  - SchNet (as implemented in SchNetPack): Uses continuous-filter convolutional layers, providing a "physicist's" perspective on atomic interactions [3].

- Training Process: The two models are trained iteratively. In each round, they exchange their most confident predictions on the unlabeled data, effectively teaching each other and refining the decision boundary collaboratively. This process mitigates individual model bias and enhances out-of-distribution generalization [3].
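The exchange step of co-training can be illustrated with two deliberately simple scorers. In this sketch, which is not the SynCoTrain implementation, each "view" is a hypothetical feature vector per material, and a nearest-centroid margin stands in for ALIGNN and SchNet. In each round, every scorer passes its `k` most confident unlabeled items to the other scorer as new positives. The functions `pu_scores` and `co_train` and the toy data are assumptions.

```python
import math
from typing import Dict, Iterable, List, Sequence, Set

View = Dict[int, Sequence[float]]  # material id -> feature vector in one "view"


def centroid(points: List[Sequence[float]]) -> List[float]:
    return [sum(p[i] for p in points) / len(points) for i in range(len(points[0]))]


def pu_scores(view: View, positives: Set[int], unlabeled: Set[int]) -> Dict[int, float]:
    """Toy PU scorer: how much closer each unlabeled item is to the positive centroid
    than to the unlabeled centroid (larger = more confidently positive)."""
    pos_c = centroid([view[i] for i in positives])
    unl_c = centroid([view[i] for i in unlabeled])
    return {i: math.dist(view[i], unl_c) - math.dist(view[i], pos_c) for i in unlabeled}


def co_train(view_a: View, view_b: View, positives: Iterable[int],
             unlabeled: Iterable[int], rounds: int = 3, k: int = 1) -> Set[int]:
    pos, unl = set(positives), set(unlabeled)
    pos_a, pos_b = set(pos), set(pos)
    unl_a, unl_b = set(unl), set(unl)
    for _ in range(rounds):
        if len(unl_a) <= k or len(unl_b) <= k:
            break  # keep some unlabeled data so both centroids stay defined
        scores_a = pu_scores(view_a, pos_a, unl_a)
        scores_b = pu_scores(view_b, pos_b, unl_b)
        top_a = sorted(scores_a, key=scores_a.get, reverse=True)[:k]
        top_b = sorted(scores_b, key=scores_b.get, reverse=True)[:k]
        # Exchange: each scorer's most confident picks become positives for the other one.
        pos_b.update(top_a)
        unl_b.difference_update(top_a)
        pos_a.update(top_b)
        unl_a.difference_update(top_b)
    return pos_a | pos_b  # union of positives after the final round


view_a = {0: (0.0, 0.0), 1: (0.2, 0.0), 2: (0.1, 0.1), 3: (5.0, 5.0), 4: (5.0, 6.0), 5: (6.0, 5.0)}
view_b = {0: (1.0, 1.0), 1: (1.0, 1.2), 2: (1.1, 1.0), 3: (-4.0, -4.0), 4: (-5.0, -4.0), 5: (-4.0, -5.0)}
selected = co_train(view_a, view_b, positives={0, 1}, unlabeled={2, 3, 4, 5}, rounds=1)
print(sorted(selected))
```

Passing pseudo-labels to the *other* model, rather than letting each model label data for itself, is what lets one view correct blind spots of the other.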

Quantitative Performance Benchmarking

Comparative Performance Metrics

Table 2: Detailed Quantitative Benchmarking of Models

| Metric | SynthNN [11] | Charge-Balancing [11] | CSLLM [4] | SC Model [17] |
|---|---|---|---|---|
| Precision | 7x higher than DFT | Very low | N/A | 82.6% |
| Recall | N/A | N/A | N/A | 80.6% |
| Accuracy | N/A | N/A | 98.6% | N/A |
| Precision vs. human experts | 1.5x higher | Outperformed by SynthNN | N/A | N/A |
| Speed vs. human experts | 5 orders of magnitude faster | N/A | N/A | N/A |
| vs. stability-based baseline | Outperforms | N/A | Outperforms (baseline: 74.1% accuracy using energy above hull) | N/A |
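Because the table mixes precision, recall, and accuracy, it helps to recall how each is computed from confusion-matrix counts. The counts in the usage line are hypothetical and not taken from any cited model; they show that on an imbalanced test set a classifier can post high accuracy while its precision is much lower, so the metrics are not interchangeable.

```python
from typing import Dict


def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """Precision, recall (true positive rate) and accuracy from confusion-matrix counts."""
    total = tp + fp + fn + tn
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,  # of predicted positives, how many are real
        "recall": tp / (tp + fn) if tp + fn else 0.0,     # of real positives, how many are found
        "accuracy": (tp + tn) / total if total else 0.0,  # fraction of all predictions that are correct
    }


# Hypothetical counts on an imbalanced test set: 10 real positives among 500 candidates.
print(classification_metrics(tp=8, fp=12, fn=2, tn=478))
```

Here precision is 0.4 and recall 0.8, yet accuracy is 0.972, largely because most candidates are easy negatives.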

Performance Validation and Generalization

- Temporal Validation: The SC model was validated temporally by training on data from before 2015 and testing on materials added to the Materials Project after 2019. It achieved a high true positive rate of 88.6%, demonstrating its ability to identify new, viable materials that were discovered later [17].

- Generalization to Complex Structures: The CSLLM model was tested on crystal structures whose complexity significantly exceeds that of its training data and achieved 97.9% accuracy, indicating strong generalization [4].
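The temporal protocol described for the SC model can be sketched as a date-based split followed by a true-positive-rate calculation. The records, identifiers, and the `predict` stand-in below are hypothetical; in practice `predict` would be a model trained only on the pre-cutoff split.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Record:
    material_id: str   # hypothetical identifier
    year_added: int    # year the entry appeared in the database
    synthesized: bool  # ground-truth label


def temporal_split(records: List[Record], train_before: int = 2015,
                   test_after: int = 2019) -> Tuple[List[Record], List[Record]]:
    """Train on entries added before `train_before`; test on entries added after `test_after`."""
    train = [r for r in records if r.year_added < train_before]
    test = [r for r in records if r.year_added > test_after]
    return train, test


def true_positive_rate(test: List[Record], predict: Callable[[str], bool]) -> float:
    """Fraction of synthesized test materials that the model flags as synthesizable."""
    positives = [r for r in test if r.synthesized]
    if not positives:
        return 0.0
    return sum(predict(r.material_id) for r in positives) / len(positives)


records = [Record("m1", 2010, True), Record("m2", 2013, True), Record("m3", 2017, True),
           Record("m4", 2020, True), Record("m5", 2021, True), Record("m6", 2022, True),
           Record("m7", 2023, True)]
train, test = temporal_split(records)  # "m3" (2017) falls in the gap and is used by neither split
tpr = true_positive_rate(test, predict=lambda mid: mid != "m7")  # stand-in for a trained model
print(len(train), len(test), tpr)
```

The gap between the two cutoffs keeps materials that are close in time to the training data out of the test set, which makes the test a stricter check of forward-looking prediction.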

Essential Research Toolkit

Table 3: Key Reagents and Resources for Synthesizability Research

| Resource Name | Type | Function in Research | Key Features |
|---|---|---|---|
| Inorganic Crystal Structure Database (ICSD) [11] [4] [17] | Database | Primary source of "Positive" (synthesized) data for model training and validation. | Curated repository of experimentally determined inorganic crystal structures. |
| Materials Project (MP) [21] [17] [3] | Database | Source of "Unlabeled" or "Theoretical" data; provides DFT-calculated properties for benchmarking. | Large database of computed material properties and crystal structures. |
| Fourier-Transformed Crystal Properties (FTCP) [17] | Crystal Representation | Encodes crystal structure information in both real and reciprocal space for ML models. | Captures periodicity and elemental properties more comprehensively than graphs alone. |
| Atom2Vec [11] [20] | Algorithm / Representation | Learns optimal elemental embeddings directly from data for composition-based models. | Data-driven representation that captures implicit chemical relationships. |
| Crystal Graph Convolutional Neural Network (CGCNN) [17] | Model Architecture | A standard GNN for processing crystal structures; often used as a baseline. | Represents crystals as graphs with atoms as nodes and bonds as edges. |

Composition-based deep learning models have demonstrably surpassed traditional heuristics like charge-balancing and even DFT-based stability metrics in predicting material synthesizability. Models like SynthNN provide a powerful, fast, and accessible filter for high-throughput screening of novel compositions, while newer approaches like CSLLM and SynCoTrain push the boundaries of accuracy and generalizability.

The field is evolving towards multi-modal frameworks that integrate composition, structure, and even synthesis literature to not only predict synthesizability but also recommend viable synthesis pathways and precursors [4] [21]. As these models mature and are integrated into automated discovery pipelines, they are poised to dramatically accelerate the transition from theoretical material design to experimentally realized functional materials.

The accurate prediction of material properties is a cornerstone of modern scientific discovery, accelerating the development of new materials and drugs. In this pursuit, structure-aware models that represent crystals and molecules as graphs have emerged as powerful tools. These models exploit the natural graph structure of chemical systems, in which atoms serve as nodes and chemical bonds as edges. By combining this structural information with advanced neural architectures, primarily Graph Neural Networks (GNNs) and Transformers, researchers can capture complex atomic interactions and predict properties with high accuracy. This guide compares these evolving architectures and situates them within the broader research context of deep learning approaches. We provide a detailed analysis of experimental methodologies, quantitative performance on standardized benchmarks, and essential resources for researchers and drug development professionals.

Model Architectures and Methodologies

Graph Neural Networks (GNNs) for Crystals and Molecules

GNNs operate on the principle of message passing, where nodes aggregate information from their local neighbors to build meaningful representations. In the context of crystals and molecules, this allows the model to learn from the direct chemical environment of each atom.
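The message-passing idea can be shown with a single, weight-free update in which each atom mixes its own features with the mean of its neighbours' features. This is a minimal sketch for intuition; the fixed `self_weight` mixing rule is an assumption and stands in for the learned transformations used by real GNN layers.

```python
from typing import Dict, List

Graph = Dict[int, List[int]]       # node -> list of neighbour nodes
Features = Dict[int, List[float]]  # node -> feature vector


def message_passing_step(features: Features, graph: Graph, self_weight: float = 0.5) -> Features:
    """One round of mean-aggregation message passing with a fixed (not learned) mixing weight."""
    updated: Features = {}
    for node, h in features.items():
        neighbours = graph.get(node, [])
        if neighbours:
            # message = mean of the neighbours' feature vectors
            msg = [sum(features[j][i] for j in neighbours) / len(neighbours) for i in range(len(h))]
        else:
            msg = h  # isolated node: nothing to aggregate
        updated[node] = [self_weight * a + (1 - self_weight) * m for a, m in zip(h, msg)]
    return updated


# A three-atom chain 0-1-2; only atom 0 starts with a non-zero feature.
graph = {0: [1], 1: [0, 2], 2: [1]}
h0 = {0: [1.0], 1: [0.0], 2: [0.0]}
h1 = message_passing_step(h0, graph)
h2 = message_passing_step(h1, graph)
print(h1, h2)
```

Atom 2 receives information from atom 0 only after the second step, so each layer extends the receptive field by one hop. Repeated averaging also pulls node features toward each other, which is the oversmoothing effect that deeper architectures such as DenseGNN aim to mitigate.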

- CGCNN (Crystal Graph Convolutional Neural Network): A foundational model that represents a crystal as a graph where nodes denote atoms and edges represent interatomic interactions. It updates atom features by convolution with their neighbors [22].

- ALIGNN (Atomistic Line Graph Neural Network): Enhances predictive accuracy by explicitly incorporating bond angles through a line graph of the original crystal graph, enabling the model to capture three-body interactions and angular information [22] [23].

- DenseGNN: Introduces Dense Connectivity Network (DCN) and Local Structure Order Parameters Embedding (LOPE) strategies. This architecture facilitates dense information propagation across network layers, mitigates the oversmoothing problem common in deep GNNs, and supports the construction of very deep networks for superior performance on various material datasets [23].

- Gformer: A model that focuses on the periodicity of crystal structures. It combines periodic encoding, implemented through self-connected edges, with graph attention convolution and a global feature extraction module. This design makes the model invariant to periodicity, allowing it to explicitly capture repetitive patterns in crystal structures for improved property prediction [22].
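For reference, the gated convolution introduced in the original CGCNN paper (Xie and Grossman, 2018) updates the feature vector $\mathbf{v}_i$ of atom $i$ at layer $t$ as shown below. Here $\mathbf{u}_{(i,j)_k}$ is the feature vector of the $k$-th bond between atoms $i$ and $j$ (several bonds can link the same pair through periodic images), $\oplus$ denotes concatenation, $\sigma$ is the sigmoid, $g$ is the softplus function, and $\odot$ is element-wise multiplication. The sigmoid term acts as a learned gate on each neighbour's contribution.

```latex
\begin{aligned}
\mathbf{z}^{(t)}_{(i,j)_k} &= \mathbf{v}^{(t)}_i \oplus \mathbf{v}^{(t)}_j \oplus \mathbf{u}_{(i,j)_k},\\
\mathbf{v}^{(t+1)}_i &= \mathbf{v}^{(t)}_i + \sum_{j,k} \sigma\!\left(\mathbf{z}^{(t)}_{(i,j)_k}\mathbf{W}^{(t)}_f + \mathbf{b}^{(t)}_f\right) \odot g\!\left(\mathbf{z}^{(t)}_{(i,j)_k}\mathbf{W}^{(t)}_s + \mathbf{b}^{(t)}_s\right)
\end{aligned}
```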

Graph Transformer Models

Transformers, renowned for their success in natural language processing, have been adapted for graph-structured data. Their core self-attention mechanism allows each node to interact with every other node, capturing global dependencies in a single layer.

- Graphormer: Utilizes centrality encoding, spatial encoding, and edge encoding to integrate structural node importance and 3D spatial relationships. It employs a distance-biased attention mechanism, where the attention score between nodes is adjusted based on their topological or spatial proximity [24] [25].

- Matformer: Designed specifically for crystals, it incorporates the concept of periodic invariance and periodic pattern encoding to better represent a crystal's infinite periodic structure [22].

- EHDGT: A hybrid model that enhances both GNN and Transformer components. It introduces edge-level positional encoding, uses GNNs on subgraphs for local feature learning, and incorporates edges into the Transformer's attention calculation. A gate-based fusion mechanism dynamically integrates the outputs of the GNN and Transformer streams [26].

- Standard Transformers (without graph priors): Emerging research explores using unmodified Transformers on Cartesian atomic coordinates, without predefined molecular graphs. These models learn physical relationships, such as attention weights that decay with interatomic distance, directly from the data [27].
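The effect of a distance-dependent attention bias can be sketched with single-head attention whose scores are penalised in proportion to interatomic distance. This is an illustrative assumption: Graphormer learns its spatial bias rather than using the fixed linear penalty `slope * dist` chosen here, and the function name `distance_biased_attention` is hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Matrix = List[List[float]]


def softmax(xs: Sequence[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def distance_biased_attention(q: Matrix, k: Matrix, v: Matrix, dist: Matrix,
                              slope: float = 1.0) -> Tuple[Matrix, Matrix]:
    """Single-head attention with score_ij = q_i . k_j / sqrt(d) - slope * dist_ij."""
    d = len(q[0])
    outputs, weights = [], []
    for i, qi in enumerate(q):
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) - slope * dist[i][j]
                  for j, kj in enumerate(k)]
        w = softmax(scores)
        weights.append(w)
        # output for atom i = attention-weighted sum of value vectors
        outputs.append([sum(w[j] * v[j][c] for j in range(len(v))) for c in range(len(v[0]))])
    return outputs, weights


# Three atoms with identical queries/keys, so only the distance term differentiates them.
q = k = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]]
v = [[1.0], [2.0], [3.0]]
dist = [[0.0, 1.0, 3.0], [1.0, 0.0, 2.0], [3.0, 2.0, 0.0]]
_, w_biased = distance_biased_attention(q, k, v, dist, slope=1.0)
_, w_plain = distance_biased_attention(q, k, v, dist, slope=0.0)
print(w_biased[0], w_plain[0])
```

With the bias, atom 0 attends most to itself and least to the farthest atom; with `slope=0.0` every atom still attends to all others equally, which is the global, unbiased behaviour of plain self-attention.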

The table below summarizes the core characteristics and innovations of the key models discussed.

Table 1: Architectural Comparison of Featured Models

| Model Name | Architecture Type | Key Innovation | Handles Periodicity |
|---|---|---|---|
| CGCNN [22] | GNN | First application of GNNs to crystal property prediction | Implicitly |
| ALIGNN [22] [23] | GNN | Explicitly incorporates bond angles via line graphs | Implicitly |
| DenseGNN [23] | GNN | Dense connectivity & local structure embedding to build deeper networks | Implicitly |
| Graphormer [24] [25] | Transformer | Centrality, spatial, and edge encodings in attention mechanism | No |
| Matformer [22] | Transformer | Periodic invariance and periodic pattern encoding | Yes |
| EHDGT [26] | Hybrid (GNN+Transformer) | Gate-based fusion of local (GNN) and global (Transformer) features | Via input encoding |

The following diagram illustrates the core workflow of a hybrid GNN-Transformer model, such as EHDGT, which combines the strengths of both architectural paradigms.

Diagram 1: Workflow of a hybrid GNN-Transformer model for crystal property prediction.
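The gate-based fusion step of a hybrid model can be sketched as a per-feature gate that interpolates between the GNN (local) and Transformer (global) outputs. The exact gating formula used by EHDGT is given in [26]; the form below, `g = sigmoid(W [h_gnn ; h_trans] + b)`, is a common choice assumed here for illustration, and `gated_fusion` is a hypothetical name.

```python
import math
from typing import List, Sequence


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def gated_fusion(h_gnn: Sequence[float], h_trans: Sequence[float],
                 w_gate: Sequence[Sequence[float]], b_gate: Sequence[float]) -> List[float]:
    """Per-feature gate g = sigmoid(W [h_gnn ; h_trans] + b); output g*h_gnn + (1-g)*h_trans."""
    concat = list(h_gnn) + list(h_trans)
    fused = []
    for c in range(len(h_gnn)):
        g = sigmoid(sum(w * x for w, x in zip(w_gate[c], concat)) + b_gate[c])
        fused.append(g * h_gnn[c] + (1.0 - g) * h_trans[c])
    return fused


h_local, h_global = [1.0, 2.0], [3.0, 0.0]
zero_w = [[0.0] * 4, [0.0] * 4]
print(gated_fusion(h_local, h_global, zero_w, [0.0, 0.0]))    # gate 0.5: plain average
print(gated_fusion(h_local, h_global, zero_w, [50.0, 50.0]))  # gate near 1: keeps the GNN features
```

Because the gate depends on both inputs, a trained model can decide feature by feature, and atom by atom, how much to trust local versus global information.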

Experimental Protocols and Performance Benchmarking

Standardized Experimental Setup

To ensure fair and objective comparison, models are typically evaluated on publicly available datasets using consistent training, validation, and testing splits. Key experimental protocols include:

- Datasets: Standard benchmarks include the Materials Project (MP) and JARVIS-DFT, which provide Density Functional Theory (DFT)-calculated properties for tens of thousands of crystals or more, and molecular datasets such as QM9 [22] [23]. The recent, large-scale OMol25 dataset is also being used to evaluate machine learning interatomic potentials (MLIPs) [27].

- Input Features: Models use initial node features such as atomic number, mass, and radius. Edge features often include interatomic distance, expanded with Gaussian functions to create a continuous vector [23].

- Training Procedure: Models are trained in a supervised manner to predict scalar properties (e.g., formation energy, bandgap) using mean absolute error (MAE) as a common loss function. Training involves standard optimizers like Adam with a defined learning rate schedule [22] [23].

- Evaluation Metrics: The primary metric for regression tasks is often the Mean Absolute Error (MAE) between the model's predictions and the DFT-calculated ground truth values. Lower MAE indicates better performance.
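The edge featurization and the evaluation metric above can both be sketched in a few lines. The Gaussian expansion turns a single interatomic distance into a smooth vector by evaluating Gaussians centred on a grid of distances; the grid used here (0 to 8 Å, 9 centres, width equal to the spacing) is an illustrative choice, not the setting of any particular model.

```python
import math
from typing import List, Optional, Sequence


def gaussian_expand(distance: float, d_min: float = 0.0, d_max: float = 8.0,
                    n_centers: int = 9, width: Optional[float] = None) -> List[float]:
    """Expand a distance into exp(-(d - mu_k)^2 / width^2) over evenly spaced centres mu_k."""
    step = (d_max - d_min) / (n_centers - 1)
    width = step if width is None else width
    return [math.exp(-((distance - (d_min + k * step)) ** 2) / width ** 2)
            for k in range(n_centers)]


def mean_absolute_error(pred: Sequence[float], target: Sequence[float]) -> float:
    """MAE, used both as a training loss and as the reported benchmark metric."""
    if len(pred) != len(target) or not pred:
        raise ValueError("pred and target must be non-empty and of equal length")
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)


edge_vec = gaussian_expand(2.0)  # a 2.0 Å bond -> 9-dimensional edge feature
print([round(x, 3) for x in edge_vec])
print(mean_absolute_error([1.0, 2.0, 3.0], [1.0, 3.0, 5.0]))
```

The expansion peaks at the centre nearest the actual distance and decays smoothly around it, giving the network a continuous, differentiable encoding instead of a single raw number.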

Quantitative Performance Comparison

The following tables summarize the published performance (MAE) of various models on key material property prediction tasks. Note that results are sourced from individual publications and direct, perfectly controlled comparisons are not always available.

Table 2: Performance Comparison on JARVIS-DFT Dataset (MAE) [22]

| Model | Formation Energy (meV/atom) | Band Gap (meV) |
|---|---|---|
| CGCNN | 28 | 190 |
| SchNet | 31 | 210 |
| MEGNET | 25 | 180 |
| GATGNN | 24 | 170 |
| ALIGNN | 21 | 150 |
| Matformer | 19 | 140 |