Beyond Charge Neutrality: The Evolving Role of Charge Balancing in Predicting Inorganic Material Synthesizability


Abstract

This article examines the critical yet complex role of charge balancing in predicting the synthesizability of inorganic crystalline materials, a topic of central importance for researchers in solid-state chemistry and materials discovery. We first establish the foundational chemical principle of charge neutrality and its historical use as a proxy for stability. We then survey modern computational methodologies, including machine learning models such as SynthNN and human-knowledge-guided filter pipelines, which go beyond traditional rules. We address the significant limitations of the charge-balancing rule, evidenced by the surprisingly low fraction of known compounds that satisfy it. Finally, we compare these data-driven approaches with traditional methods such as DFT-based formation energy calculations and expert intuition, highlighting their higher precision and their potential for accelerating the discovery of novel, synthetically accessible materials for biomedical and clinical applications.

The Chemical Principle of Charge Balancing: A Foundational Rule for Material Stability

Defining Charge Neutrality in Inorganic Crystal Chemistry

Core Principles and Mathematical Formulation

Charge neutrality is a foundational principle in inorganic crystal chemistry, asserting that the sum of positive and negative charges from constituent ions in a compound must equal zero, resulting in a net neutral charge for the overall material [1]. This principle is paramount for assessing the thermodynamic stability and synthesizability of inorganic crystalline materials [2].

The formal charge neutrality condition for a compound with stoichiometry \(A_wB_xC_yD_z\) is expressed in Equation 1 [1]:

\[ w q_A + x q_B + y q_C + z q_D = 0 \tag{1} \]

Here, \(w, x, y, z\) are the stoichiometric coefficients, and \(q_A, q_B, q_C, q_D\) are the formal oxidation states of species A, B, C, and D, respectively [1]. This equation provides the foundational rule for evaluating potential inorganic compounds.
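As a brief worked illustration of Equation 1, consider two compositions with the usual assignments Al = +3, O = -2, Na = +1, and Cl = -1. The choice of examples is ours and is not taken from the cited sources:

\[ \mathrm{Al_2O_3}:\quad 2(+3) + 3(-2) = 0 \qquad\qquad \mathrm{NaCl_2}:\quad 1(+1) + 2(-1) = -1 \neq 0 \]

The first composition satisfies the condition. The second does not under these assignments, so a filter based on Equation 1 would reject it.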

This electron-counting rule is applicable to a wide range of inorganic materials, particularly those characterized by ionic and covalent bonding [1]. However, its utility as a sole predictor of synthesizability is limited for metallic alloys, intermetallic compounds, and non-stoichiometric phases, where different bonding and electron-counting principles apply [1] [3]. For instance, an analysis of known inorganic materials reveals that only about 37% of synthesized compounds in databases like the ICSD adhere to this simple charge-balancing rule, with the figure dropping to just 23% for binary cesium compounds [3].

Practical Implementation and Methodologies

Chemical Filtering Workflow

The charge neutrality principle is practically implemented as a "hard filter" in high-throughput computational pipelines for screening hypothetical inorganic materials [2]. Its application involves a sequence of steps to evaluate the viability of a proposed chemical composition.

Protocol: Applying the Charge Neutrality Filter

- Assign Oxidation States: For each element in the proposed composition, assign plausible oxidation states based on its known chemistry (e.g., O = -2, Na = +1, Ca = +2, Al = +3) [1] [2].

- Calculate Total Formal Charge: Multiply each assigned oxidation state by its stoichiometric coefficient and sum these products for all elements in the compound [1].

- Evaluate Neutrality: If the sum equals zero, the composition is considered "charge-neutral" and passes this filter. Compositions resulting in a non-zero sum are typically classified as "forbidden" [1].

This filter is often used alongside other chemical rules, such as the electronegativity balance filter, which requires that the most electronegative ion in the compound also carries the most negative formal charge [1] [2].
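The protocol and the electronegativity rule above can be sketched in a few lines of Python. This is a minimal illustration, not the SMACT implementation: the two lookup tables are deliberately tiny, the function names are hypothetical, and the sketch assumes each element takes a single oxidation state within a compound.

```python
from itertools import product

# Illustrative, deliberately incomplete lookup tables (assumptions for this sketch).
COMMON_OXIDATION_STATES = {
    "Na": (1,), "Ca": (2,), "Al": (3,), "Fe": (2, 3), "Cu": (1, 2),
    "O": (-2,), "S": (-2,), "Cl": (-1,),
}
PAULING_ELECTRONEGATIVITY = {
    "Na": 0.93, "Ca": 1.00, "Al": 1.61, "Fe": 1.83, "Cu": 1.90,
    "O": 3.44, "S": 2.58, "Cl": 3.16,
}


def neutral_assignments(composition):
    """Return every one-state-per-element assignment whose weighted sum is zero.

    `composition` maps element symbol -> stoichiometric coefficient.
    """
    elements = list(composition)
    choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    found = []
    for states in product(*choices):
        net_charge = sum(q * composition[el] for el, q in zip(elements, states))
        if net_charge == 0:
            found.append(dict(zip(elements, states)))
    return found


def electronegativity_balanced(assignment):
    """True if the most electronegative element carries the most negative charge."""
    most_en = max(assignment, key=PAULING_ELECTRONEGATIVITY.get)
    return assignment[most_en] == min(assignment.values())


def passes_filters(composition):
    """Charge neutrality first, then electronegativity balance."""
    return any(electronegativity_balanced(a) for a in neutral_assignments(composition))


print(passes_filters({"Al": 2, "O": 3}))   # True
print(passes_filters({"Na": 1, "Cl": 2}))  # False
print(passes_filters({"Fe": 3, "O": 4}))   # False
```

The last call is instructive: Fe₃O₄ is a well-known mixed-valence oxide, yet it fails here because the sketch allows only one oxidation state per element. A stricter or looser assignment rule changes which known compounds end up labeled "forbidden".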

Quantitative Performance of Chemical Filters

The effectiveness of the charge neutrality filter, both in isolation and as part of a broader filtering strategy, is quantified by its ability to categorize vast combinatorial chemical spaces. The following table summarizes the distribution of binary, ternary, and quaternary compounds after applying charge neutrality and electronegativity balance filters, cross-referenced with their presence in the Materials Project database [1].

Table 1: Categorization of Enumerated Inorganic Compounds after Chemical Filtering

| System | Total Unique Combinations | Standard (Allowed, Known) | Missing (Allowed, Unknown) | Interesting (Forbidden, Known) | Unlikely (Forbidden, Unknown) |
|---|---|---|---|---|---|
| Binary \(A_wB_x\) | 225,879 | 3,627 (1.6%) | 9,837 (4.4%) | 6,354 (2.8%) | 206,061 (91.2%) |
| Ternary \(A_wB_xC_y\) | 77,637,589 | 24,713 (0.03%) | 10,754,728 (13.9%) | 12,153 (0.01%) | 66,845,995 (86.1%) |
| Quaternary \(A_wB_xC_yD_z\) | 16,902,534,325 | 16,455 (~0.00%) | 2,909,418,527 (17.2%) | 962 (~0.00%) | 13,993,098,381 (82.8%) |

Data sourced from Park et al. (2025) Faraday Discuss. [1]

The data reveals that the chemical space is sparsely populated. While the charge neutrality filter is effective in drastically reducing the candidate space (e.g., only ~6% of binary compounds are "Allowed"), the "Missing" category represents a significant reservoir of potentially synthesizable materials that have not yet been realized in databases [1].
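The "Allowed" shares quoted above follow directly from the table counts. The short sketch below reproduces that arithmetic; the dictionary layout and function names are our own. It also computes a second ratio, the share of database-known compositions that pass the filters, which is derived from the same table and is not a figure reported by the source.

```python
# Counts copied from Table 1 (allowed/forbidden crossed with known/unknown).
TABLE_1 = {
    "binary": {"standard": 3_627, "missing": 9_837,
               "interesting": 6_354, "unlikely": 206_061},
    "ternary": {"standard": 24_713, "missing": 10_754_728,
                "interesting": 12_153, "unlikely": 66_845_995},
    "quaternary": {"standard": 16_455, "missing": 2_909_418_527,
                   "interesting": 962, "unlikely": 13_993_098_381},
}


def allowed_fraction(counts):
    """Share of all enumerated compositions that pass the filters."""
    return (counts["standard"] + counts["missing"]) / sum(counts.values())


def known_allowed_fraction(counts):
    """Among compositions present in the database, the share that pass."""
    known = counts["standard"] + counts["interesting"]
    return counts["standard"] / known


for system, counts in TABLE_1.items():
    print(f"{system:>10}: allowed = {allowed_fraction(counts):.2%}, "
          f"known that are allowed = {known_allowed_fraction(counts):.2%}")
```

For the binary row this gives an allowed share of about 6.0%, matching the "~6%" quoted in the text.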

Successful research involving charge balancing and inorganic material synthesis relies on a suite of computational and experimental resources.

Table 2: Essential Resources for Charge and Synthesizability Research

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| SMACT (Semiconducting Materials from Analogy and Chemical Theory) | Software Library | Enables rapid screening over vast combinatorial chemical spaces with integrated chemical filters like charge neutrality [1]. |
| Oxidation State Tables | Reference Data | Provide common oxidation states for elements, which are essential for assigning formal charges in the neutrality calculation [3] [2]. |
| Pauling Electronegativity Scale | Reference Data | Used to apply the electronegativity balance filter, ensuring the most electronegative atom has the most negative charge [1]. |
| iSFAC (Ionic Scattering Factors) | Experimental Method | Determines partial atomic charges experimentally via electron diffraction, allowing direct measurement of charge distribution in a crystal [4]. |
| Pymatgen | Software Library | Aids in materials analysis, including processing crystal structures and accessing database entries for validation [2]. |

Charge Balancing in Modern Synthesizability Research

The role of charge neutrality has evolved from a standalone heuristic to a component integrated with advanced computational models. While foundational, charge balancing alone is an incomplete proxy for synthesizability [3]. Modern research focuses on supplementing this basic filter with other chemical rules and data-driven approaches.

Protocol: A Multi-Filter Screening Pipeline

A representative pipeline for identifying novel "perovskite-inspired" materials demonstrates the integration of charge neutrality with other filters [2]:

- Initial Enumeration: Define elemental spaces for A-site (e.g., Cs, K, Na), B-site (e.g., Bi, In, Pb), and X-site (e.g., I, Cl, Br) cations and anions to generate hypothetical ternary compositions AiBjXk [2].

- Apply Hard Filters:

- Apply Soft Filters:

- Validation: Cross-reference the filtered list with experimental databases (e.g., ICSD, Materials Project) to confirm novelty and identify candidates for experimental synthesis [2].

This integrated approach can reduce a pool of over 100,000 initial candidates to a few dozen high-priority targets for further investigation [2].
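The enumeration step and the first hard filter can be sketched as follows. The element lists come from the example above; the single oxidation state per element, the coefficient cap `MAX_COEFF = 4`, and the function names are assumptions made for illustration, and the cited pipeline may use different settings.

```python
from itertools import product
from math import gcd

# One assumed oxidation state per element (illustrative simplification).
A_SITE = {"Cs": 1, "K": 1, "Na": 1}
B_SITE = {"Bi": 3, "In": 3, "Pb": 2}
X_SITE = {"I": -1, "Cl": -1, "Br": -1}
MAX_COEFF = 4  # hypothetical upper bound on each stoichiometric coefficient


def enumerate_compositions(max_coeff=MAX_COEFF):
    """Yield reduced formulas A_i B_j X_k as (A, i, B, j, X, k) tuples."""
    coeffs = range(1, max_coeff + 1)
    for a, b, x in product(A_SITE, B_SITE, X_SITE):
        for i, j, k in product(coeffs, repeat=3):
            if gcd(gcd(i, j), k) == 1:  # skip multiples of a smaller formula
                yield (a, i, b, j, x, k)


def is_charge_neutral(entry):
    """Hard filter: the weighted sum of oxidation states must be zero."""
    a, i, b, j, x, k = entry
    return i * A_SITE[a] + j * B_SITE[b] + k * X_SITE[x] == 0


candidates = list(enumerate_compositions())
neutral = [c for c in candidates if is_charge_neutral(c)]
print(len(candidates), len(neutral))
```

With these settings the sketch enumerates 1,485 reduced formulas, of which 36 are charge-neutral, including CsPbI₃ as `("Cs", 1, "Pb", 1, "I", 3)`. Raising `MAX_COEFF` admits formulas with larger coefficients at the cost of a rapidly growing candidate pool.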

The Rise of Machine Learning Models

Machine learning models now leverage the entire space of known synthesized materials to predict synthesizability, learning underlying chemical principles—including charge-balancing and ionicity—directly from the data [3]. For example:

- SynthNN: A deep learning model that uses compositional embeddings (atom2vec) to predict synthesizability with higher precision than traditional formation energy calculations or charge-balancing criteria alone [3].

- Crystal Synthesis LLMs (CSLLM): A framework of fine-tuned Large Language Models that can predict the synthesizability of arbitrary 3D crystal structures with 98.6% accuracy, significantly outperforming methods based solely on thermodynamic stability or simple chemical rules [5]. These models can also suggest synthetic methods and suitable precursors [5].

This evolution underscores a key trend: charge neutrality remains a critical, chemically intuitive starting point, but its true power is unlocked when combined with other knowledge and data-driven models to navigate the complex landscape of inorganic material synthesis.

For decades, the principle of charge balancing has served as a foundational heuristic in the prediction and rationalization of inorganic material synthesizability. This concept, rooted in the fundamental chemical intuition that stable compounds tend toward net neutral ionic charge, has provided synthetic chemists with a powerful initial filter for screening hypothetical materials. The underlying premise is elegantly simple: for a compound to be synthetically accessible, the sum of charges from its constituent ions, based on their common oxidation states, should approximate zero. This approach operates on the assumption that significantly charge-imbalanced compositions would inherently lack thermodynamic stability, making them unlikely synthetic targets. Within the context of modern materials research, understanding this traditional proxy is crucial, as it continues to inform contemporary computational screening pipelines and machine learning models, even as its limitations become increasingly quantified [2] [3].

The persistence of charge balancing as a screening tool is understandable given its direct relationship to ionic bonding models taught in introductory chemistry. When generating hypothetical compounds, researchers can quickly compute formal oxidation states and apply the charge neutrality principle before undertaking more computationally intensive density functional theory (DFT) calculations. This pre-screening step efficiently reduces the vast space of possible chemical compositions to a more manageable subset of seemingly plausible candidates. However, as research into synthesizability prediction has evolved, the performance of charge balancing as a standalone predictor has been systematically evaluated, revealing significant gaps between its theoretical ideal and experimental reality [3].

Quantitative Performance Assessment of Charge Balancing

Recent large-scale analyses have quantified the effectiveness of the charge-balancing approach for predicting synthesizability. The performance is measured by calculating the percentage of known, synthesized inorganic materials that also satisfy the charge neutrality condition according to common oxidation states. The results reveal substantial limitations in this traditional heuristic as a comprehensive synthesizability filter.

Table 1: Performance of Charge Balancing as a Synthesizability Predictor

| Material Category | Charge-Balanced Synthesized Materials | Key Findings |
|---|---|---|
| All Inorganic Crystalline Materials | 37% [3] | Majority (63%) of known synthesized compounds are not charge-balanced |
| Binary Cesium Compounds | 23% [3] | Poor performance even in typically ionic systems |
| General Ionic Solids | Limited accuracy [3] | Inflexible constraint cannot account for different bonding environments |

The data demonstrates that charge balancing alone is insufficient for reliable synthesizability prediction. While chemically intuitive, this approach incorrectly classifies a majority of known synthesizable materials as "unsynthesizable." Its inflexibility fails to account for diverse bonding environments in metallic alloys, covalent materials, and many ionic solids that deviate from ideal charge-balanced stoichiometries [3].
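In classification terms, the 37% figure is the recall of the charge-balancing rule on known synthesized materials: the fraction of true positives it recovers. The sketch below makes that explicit. The helper names are ours, and the counts are simply the reported percentage expressed per 100 compounds.

```python
def recall(true_positives, false_negatives):
    """Fraction of actual positives that the rule labels positive."""
    return true_positives / (true_positives + false_negatives)


def precision(true_positives, false_positives):
    """Fraction of predicted positives that are actual positives."""
    return true_positives / (true_positives + false_positives)


# Per 100 known synthesized materials, 37 are charge-balanced [3].
rule_recall = recall(true_positives=37, false_negatives=63)
print(f"recall of the charge-balancing rule: {rule_recall:.0%}")
```

Precision cannot be computed from this figure alone, because it also requires the number of charge-balanced compositions that are not synthesizable.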

Methodological Framework: Implementing Charge Balance Screening

Core Algorithm and Workflow

The technical implementation of charge balance screening follows a standardized methodology applicable to any hypothetical inorganic composition. The procedure involves assigning oxidation states based on established chemical rules and verifying net neutrality.

Experimental Protocol: Charge Balance Verification

Oxidation State Assignment: For a target composition \(A_xB_yC_z\), assign probable oxidation states to each element using reference tables of common values (e.g., O = -2, alkali metals = +1, alkaline earth metals = +2, halogens = -1).

Charge Calculation: Multiply each element's oxidation state by its stoichiometric coefficient and sum across all elements: Total Charge = (x × oxidation state of A) + (y × oxidation state of B) + (z × oxidation state of C).

Neutrality Check: If Total Charge = 0, the compound is classified as "charge-balanced" and passes this synthesizability filter. Non-zero results lead to classification as "non-charge-balanced."

This algorithm is frequently implemented as the initial filter in multi-stage screening pipelines for computational materials discovery [2].

Integrated Screening Pipeline

In modern practice, charge balancing is rarely used alone. It is typically embedded within a larger framework of complementary filters that incorporate additional chemical principles. A representative pipeline demonstrates how charge balancing integrates with other knowledge-driven filters:

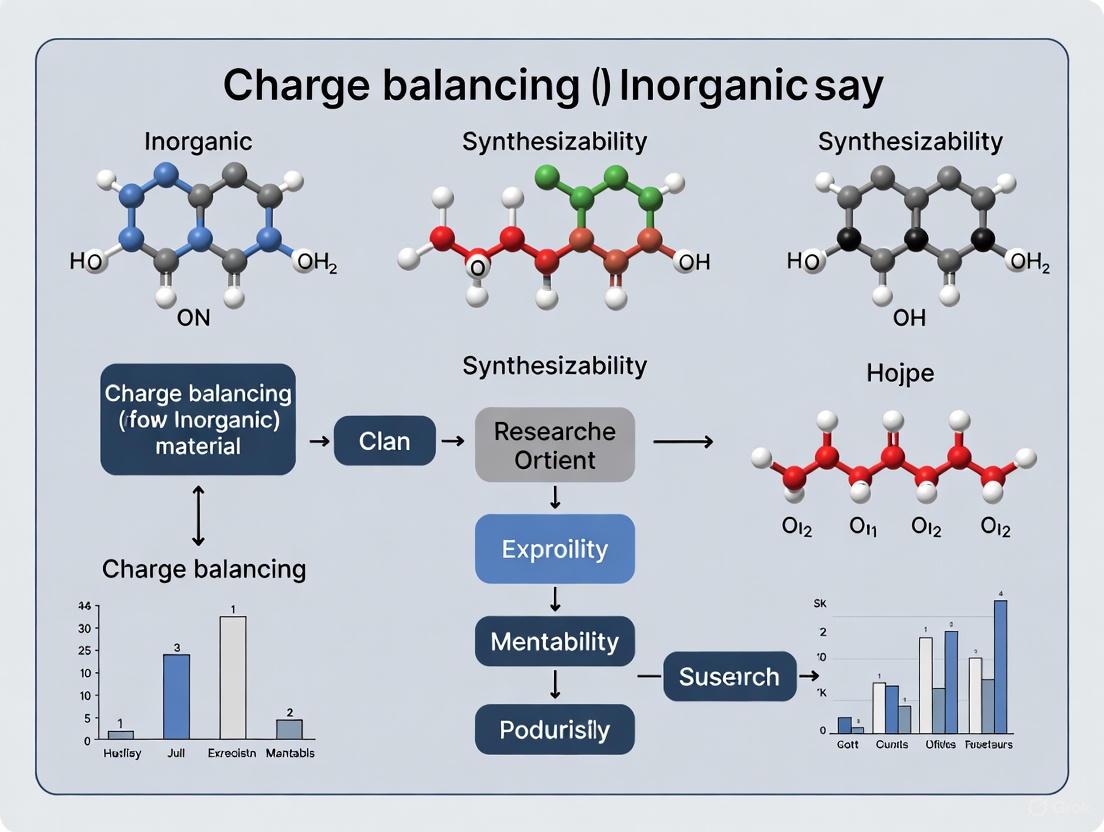

Diagram 1: Multi-stage screening pipeline. This workflow shows how charge balancing acts as an initial filter in a larger sequence of human-knowledge-driven rules for identifying synthesizable materials [2].

The Scientist's Toolkit: Research Reagents and Materials

Table 2: Essential Computational and Experimental Resources

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| Oxidation State Tables | Reference Data | Provides common oxidation states for elements | Assigning formal charges for charge balance calculations [2] |
| Materials Project Database | Computational Database | Repository of known and DFT-calculated materials structures | Source of known synthesizable materials for validation and training [2] [3] |
| Inorganic Crystal Structure Database (ICSD) | Experimental Database | Comprehensive collection of experimentally characterized inorganic crystal structures | Ground truth dataset for benchmarking synthesizability predictors [3] |
| pymatgen | Software Library | Python materials analysis | Automating oxidation state assignment and charge balance checks [2] |

Contemporary Context and Evolution Beyond Traditional Proxy

The quantified limitations of charge balancing have catalyzed the development of more sophisticated, data-driven synthesizability predictors. Modern approaches directly address the shortcomings of the traditional proxy by learning complex patterns from extensive databases of synthesized materials.

Machine learning models, such as SynthNN (Synthesizability Neural Network), represent a paradigm shift. These models are trained on the entire space of synthesized inorganic chemical compositions from databases like the ICSD, learning the subtle chemical principles that govern synthesizability—including but not limited to charge balancing. Remarkably, even without explicit programming of chemical rules, models like SynthNN learn the importance of charge-balancing, chemical family relationships, and ionicity directly from the data distribution of realized materials [3].

These advanced models demonstrate superior performance compared to the charge-balancing heuristic. In direct benchmarking, SynthNN identified synthesizable materials with 7× higher precision than using DFT-calculated formation energies alone and significantly outperformed the charge-balancing baseline. Furthermore, in a head-to-head discovery comparison, SynthNN achieved 1.5× higher precision than the best human expert and completed the task five orders of magnitude faster, demonstrating the powerful synergy between human chemical intuition encoded in rules like charge balancing and data-driven pattern recognition [3].

The most effective modern pipelines for materials discovery now combine these approaches. They may use charge balancing as an initial, computationally inexpensive filter to reduce the candidate pool, subsequently applying more powerful ML-based synthesizability classifiers and DFT stability calculations to prioritize the most promising candidates for experimental synthesis [6]. This integrated strategy leverages the chemical intuition of traditional proxies while overcoming their limitations through data-driven validation.

The pursuit of novel inorganic materials is fundamentally constrained by synthesizability. While computational methods can generate millions of hypothetical compounds, reliably predicting which ones can be experimentally realized remains a central challenge. This guide explores the foundational role of ionic charge balance as a primary filter for thermodynamic favorability, a critical determinant in the synthesizability of inorganic crystalline materials. Charge balancing serves as a computationally inexpensive, chemically intuitive proxy for stability, predicated on the principle that compounds with a net neutral ionic charge, based on common oxidation states, are more likely to be synthetically accessible [2] [3]. Within the context of a broader thesis on material discovery, this principle is not merely a rule of thumb but a gateway to understanding the complex thermodynamic landscape that governs solid-state synthesis. This document provides researchers and drug development professionals with a rigorous technical framework, integrating quantitative data, experimental protocols, and visualization tools to elucidate the logical pathway from ionic charge to thermodynamic favorability and, ultimately, to synthesizability.

Theoretical Foundations: From Charge to Thermodynamics

The Principle of Ionic Charge Balance

The principle of ionic charge balance posits that stable, synthesizable inorganic compounds tend to have a net neutral charge when their constituent elements are considered in their common oxidation states [2]. This is a "hard" filter in many screening pipelines; it is difficult to envision creating a stable compound that violates this rule of charge neutrality [2]. The underlying logic is rooted in electrostatics: a significantly charged compound would experience immense Coulombic repulsion, making its formation energetically unfavorable.

However, this principle has limitations. An analysis of known materials reveals that only 37% of synthesized inorganic materials in databases can be charge-balanced using common oxidation states. This figure drops to a mere 23% for known binary cesium compounds [3]. This indicates that while charge balancing is a valuable initial filter, it is an inflexible constraint that cannot fully account for the diverse bonding environments in metallic alloys, covalent materials, or complex ionic solids where other stabilization mechanisms are at play [3].

Thermodynamic Favorability and Gibbs Free Energy

The thermodynamic driving force for a chemical reaction, including the formation of a solid-state compound, is the Gibbs Free Energy change, ΔG [7]. A reaction is considered thermodynamically favored (or spontaneous) when ΔG is negative (ΔG < 0) [7].

The relationship is given by the fundamental equation:

ΔG = ΔH - TΔS

where ΔH is the enthalpy change, T is the absolute temperature, and ΔS is the entropy change [7].

The following table summarizes how the signs of ΔH and ΔS dictate the temperature dependence of a reaction's favorability [7]:

| ΔH | ΔS | ΔG < 0 (favored) at | Reaction Character |
|---|---|---|---|
| < 0 (Exothermic) | > 0 | All temperatures | Always thermodynamically favored |
| > 0 (Endothermic) | < 0 | No temperatures | Always thermodynamically unfavored |
| > 0 (Endothermic) | > 0 | High temperatures | Favored as temperature increases |
| < 0 (Exothermic) | < 0 | Low temperatures | Unfavored as temperature increases |
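The four cases in the table can be checked numerically. The following sketch evaluates ΔG = ΔH - TΔS and locates the temperature at which ΔG changes sign. It assumes that ΔH and ΔS are nonzero and independent of temperature; the function names and the example values are hypothetical.

```python
def gibbs_free_energy(delta_h, delta_s, temperature):
    """Return ΔG = ΔH - TΔS, with ΔH in J/mol, ΔS in J/(mol K), T in K."""
    return delta_h - temperature * delta_s


def crossover_temperature(delta_h, delta_s):
    """Temperature at which ΔG = 0, or None if ΔG never changes sign."""
    if delta_h * delta_s <= 0:
        return None
    return delta_h / delta_s


def favorable_regime(delta_h, delta_s):
    """Describe where ΔG < 0 for nonzero, temperature-independent ΔH and ΔS."""
    if delta_h == 0 or delta_s == 0:
        raise ValueError("this sketch assumes nonzero ΔH and ΔS")
    if delta_h < 0 and delta_s > 0:
        return "all temperatures"
    if delta_h > 0 and delta_s < 0:
        return "no temperatures"
    t_cross = crossover_temperature(delta_h, delta_s)
    if delta_h > 0:
        return f"above {t_cross:.0f} K"
    return f"below {t_cross:.0f} K"


# Hypothetical endothermic, entropy-driven reaction.
print(favorable_regime(100_000, 200))        # above 500 K
print(gibbs_free_energy(100_000, 200, 600))  # -20000
```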

For a formation reaction, a negative ΔG (ΔG_f) suggests a compound is stable with respect to its elements. A more robust metric is the energy above hull, which is the energy difference between a compound and the most stable phase or phases in its chemical space. A compound with an energy above hull of zero is on the convex hull and is considered thermodynamically stable at 0 K [2].
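For a two-element system, the energy above hull can be illustrated with a small lower-convex-hull calculation. This is a sketch under stated assumptions: the formation energies below are invented for illustration, the function names are ours, and production workflows typically rely on a phase-diagram tool from a library such as pymatgen rather than hand-written geometry.

```python
def _cross(o, a, b):
    """z-component of the cross product of vectors o->a and o->b."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(entries):
    """Lower convex hull of (x, energy) pairs via Andrew's monotone chain.

    x is the fraction of element B; energy is a formation energy per atom.
    """
    best = {}
    for x, energy in entries:
        best[x] = min(energy, best.get(x, energy))  # keep the lowest polymorph
    hull = []
    for point in sorted(best.items()):
        while len(hull) >= 2 and _cross(hull[-2], hull[-1], point) <= 0:
            hull.pop()  # previous vertex lies on or above the new segment
        hull.append(point)
    return hull


def energy_above_hull(x, energy, hull):
    """Vertical distance from (x, energy) down to the hull."""
    for (x0, e0), (x1, e1) in zip(hull, hull[1:]):
        if x0 <= x <= x1:
            hull_energy = e0 + (e1 - e0) * (x - x0) / (x1 - x0)
            return energy - hull_energy
    raise ValueError("x lies outside the composition range of the hull")


# Hypothetical A-B system: elements at 0 eV/atom plus three compounds.
ENTRIES = [(0.0, 0.0), (1.0, 0.0), (0.25, -0.3), (0.5, -1.0), (0.75, -0.6)]
HULL = lower_hull(ENTRIES)
print(HULL)
print(round(energy_above_hull(0.25, -0.3, HULL), 3))  # 0.2
```

In this toy system the compound at x = 0.25 has a negative formation energy yet sits 0.2 eV/atom above the hull, because a mixture of pure A and the compound at x = 0.5 is lower in energy. This is why the energy above hull is the more robust metric.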

The Logical Pathway from Ionic Charge to Synthesizability

Ionic charge balance is a strong initial indicator of thermodynamic favorability because it implicitly addresses the electrostatic (enthalpic, ΔH) component of the Gibbs free energy. A charge-neutral arrangement minimizes Coulombic repulsion, which is a major contributor to the lattice energy in ionic solids, thereby favoring a more negative ΔH and, consequently, a more negative ΔG. However, the ultimate synthesizability of a material is not governed by thermodynamics alone; kinetic barriers, synthetic pathway availability, and non-physical considerations like reactant cost and equipment availability also play critical roles [3].

Quantitative Data and Experimental Validation

Stability Constants of Coordination Complexes

The stability of metal-ligand complexes in solution provides a quantitative measure of thermodynamic favorability that is directly accessible through experiment. The formation constant (K or β) is the equilibrium constant for the complexation reaction [8]. A larger formation constant signifies a more thermodynamically stable product complex compared to its reactants [8].

For example, the stepwise formation of the tetraamminecopper(II) complex from the aqueous copper ion is characterized by the following equilibrium constants [8]:

| Step | Reaction | Stepwise Constant (K) |
|---|---|---|
| 1 | [Cu(OH₂)₆]²⁺ + NH₃ ⇌ [Cu(NH₃)(OH₂)₅]²⁺ + H₂O | K₁ = 1.9 × 10⁴ |
| 2 | [Cu(NH₃)(OH₂)₅]²⁺ + NH₃ ⇌ [Cu(NH₃)₂(OH₂)₄]²⁺ + H₂O | K₂ = 3.9 × 10³ |
| 3 | [Cu(NH₃)₂(OH₂)₄]²⁺ + NH₃ ⇌ [Cu(NH₃)₃(OH₂)₃]²⁺ + H₂O | K₃ = 1.0 × 10³ |
| 4 | [Cu(NH₃)₃(OH₂)₃]²⁺ + NH₃ ⇌ [Cu(NH₃)₄(OH₂)₂]²⁺ + H₂O | K₄ = 1.5 × 10² |

The overall formation constant for [Cu(NH₃)₄(OH₂)₂]²⁺ is the product of the stepwise constants [8]:

β₄ = K₁ × K₂ × K₃ × K₄ = (1.9 × 10⁴)(3.9 × 10³)(1.0 × 10³)(1.5 × 10²) = 1.1 × 10¹³

This exceptionally high value indicates a powerful thermodynamic drive for the formation of the tetraammine complex.
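The product above, and its connection to the Gibbs free energy discussed earlier, can be verified with a few lines of Python. The sketch uses the standard relation ΔG° = -RT ln K. The temperature of 298.15 K is our assumption, since the source does not state the conditions under which the constants were measured.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol K)
STEPWISE_K = [1.9e4, 3.9e3, 1.0e3, 1.5e2]  # K1..K4 from the table above

# Overall formation constant: product of the stepwise constants.
beta_4 = math.prod(STEPWISE_K)
# Equivalently, logarithms of stepwise constants add.
log10_beta_4 = sum(math.log10(k) for k in STEPWISE_K)


def standard_gibbs_energy(equilibrium_constant, temperature=298.15):
    """ΔG° = -RT ln K in J/mol (temperature in K, assumed 298.15 K by default)."""
    return -R * temperature * math.log(equilibrium_constant)


print(f"beta_4 = {beta_4:.2e}")
print(f"log10(beta_4) = {log10_beta_4:.2f}")
print(f"ΔG° = {standard_gibbs_energy(beta_4) / 1000:.1f} kJ/mol")
```

Under the assumed temperature, the overall constant corresponds to a standard Gibbs energy change of roughly -74.5 kJ/mol.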

The Effect of Ionic Environment: Activity and Ionic Strength

Thermodynamic measurements, including formation constants, are sensitive to the ionic environment of the solution. The observed stability of a complex can decrease in the presence of inert ions, a phenomenon explained by the concept of ionic strength and its effect on ionic activity [9].

Ionic strength (μ) is calculated as:

μ = 1/2 Σ c_i z_i²

where c_i is the concentration of the i-th ion and z_i is its charge [9].
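A minimal sketch of this formula follows. It assumes complete dissociation of each salt, and the two example solutions are ours rather than the source's.

```python
def ionic_strength(ions):
    """μ = 1/2 Σ c_i z_i² for an iterable of (concentration in mol/L, charge) pairs."""
    return 0.5 * sum(c * z**2 for c, z in ions)


# 0.10 M KNO3 -> 0.10 M K+ and 0.10 M NO3-
kno3 = [(0.10, +1), (0.10, -1)]
# 0.10 M CaCl2 -> 0.10 M Ca2+ and 0.20 M Cl-
cacl2 = [(0.10, +2), (0.20, -1)]

print(f"KNO3:  {ionic_strength(kno3):.2f} M")
print(f"CaCl2: {ionic_strength(cacl2):.2f} M")
```

Because the charge enters squared, the 2:1 electrolyte contributes three times the ionic strength of the 1:1 electrolyte at the same formal concentration.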

For instance, the stability of the Fe(SCN)²⁺ complex decreases when an inert salt like KNO₃ is added. This occurs because each ion is surrounded by an ionic atmosphere of opposite charge, which screens the ions and reduces their effective charge, thereby weakening the force of attraction and making complex formation less favorable [9].

Experimental Protocol: Demonstrating the Ionic Strength Effect

- Objective: To observe the decrease in stability of the Fe(SCN)²⁺ complex with increasing ionic strength.

- Materials: 1.0 mM FeCl₃ solution, 1.5 mM KSCN solution, solid KNO₃.

- Procedure:

  - Mix equal volumes of 1.0 mM FeCl₃ and 1.5 mM KSCN. A reddish-orange color due to Fe(SCN)²⁺ formation will be observed.

  - Add approximately 10 g of KNO₃ to the solution and stir until completely dissolved.

  - Observe the visible lightening of the solution's color.

- Analysis: The color lightening indicates a decrease in the concentration of Fe(SCN)²⁺, signifying a shift in the equilibrium position to the left (Le Chatelier's principle) and a corresponding decrease in the apparent formation constant due to increased ionic strength [9].

Computational Screening and Advanced Synthesizability Prediction

The Materials Discovery Pipeline and Human-Knowledge Filters

In modern computational materials science, generative algorithms can produce millions of hypothetical compounds. A critical downselection step is required to identify the most promising, synthesizable candidates. Here, chemical knowledge is embedded as "filters" within an automated screening pipeline [2].

A typical pipeline for "perovskite-inspired" materials might involve the sequential application of these filters [2]:

- Charge Neutrality Filter: A "hard" filter that removes compositions that cannot achieve net neutral charge with common oxidation states.

- Electronegativity Balance Filter: Ensures the most electronegative ion in a compound also has the most negative charge.

- Unique Oxidation State Filter: Excludes compounds with multiple possible oxidation states per element.

- Oxidation State Frequency Filter: Removes compounds containing elements in uncommon oxidation states.

- Stoichiometric Variation Filters ("Intra" and "Cross"): Compare proposed compounds to known stoichiometries within and across related chemical phase diagrams.

One study applying this approach started with over 100,000 novel compounds and, after applying this filter cascade, identified just 27 that met all criteria [2].
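The bookkeeping of such a cascade, that is, applying filters in sequence and recording how many candidates survive each stage, can be sketched as below. Only two of the filters are implemented; the candidate list, the `COMMON_STATES` table, and all function names are hypothetical and do not reproduce the cited study.

```python
# Hypothetical table of "common" oxidation states for the frequency filter.
COMMON_STATES = {"Cs": {1}, "Pb": {2}, "I": {-1}}


def charge_neutral(candidate):
    """Hard filter: the weighted sum of oxidation states is zero."""
    states = candidate["oxidation_states"]
    return sum(n * states[el] for el, n in candidate["composition"].items()) == 0


def common_oxidation_states(candidate):
    """Frequency filter: every element is in a state listed as common."""
    return all(q in COMMON_STATES.get(el, set())
               for el, q in candidate["oxidation_states"].items())


def run_cascade(candidates, filters):
    """Apply (name, predicate) filters in order; return survivors and a stage log."""
    survivors = list(candidates)
    log = [("start", len(survivors))]
    for name, keep in filters:
        survivors = [c for c in survivors if keep(c)]
        log.append((name, len(survivors)))
    return survivors, log


CANDIDATES = [
    {"formula": "CsPbI3", "composition": {"Cs": 1, "Pb": 1, "I": 3},
     "oxidation_states": {"Cs": 1, "Pb": 2, "I": -1}},
    {"formula": "CsPbI4", "composition": {"Cs": 1, "Pb": 1, "I": 4},
     "oxidation_states": {"Cs": 1, "Pb": 2, "I": -1}},
    {"formula": "CsPbI5", "composition": {"Cs": 1, "Pb": 1, "I": 5},
     "oxidation_states": {"Cs": 1, "Pb": 4, "I": -1}},
]
FILTERS = [
    ("charge neutrality", charge_neutral),
    ("oxidation state frequency", common_oxidation_states),
]

survivors, log = run_cascade(CANDIDATES, FILTERS)
print(log)
print([c["formula"] for c in survivors])
```

Ordering the cheapest and strictest filters first keeps later, more expensive checks from running on candidates that would be discarded anyway.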

Data-Driven Approaches: SynthNN and Beyond

Given the limitations of rule-based filters, machine learning models trained directly on the database of all known synthesized materials have emerged as powerful tools. SynthNN is a deep learning model that leverages the entire space of synthesized inorganic chemical compositions from the Inorganic Crystal Structure Database (ICSD) to predict synthesizability [3].

Remarkably, without being explicitly programmed with chemical rules, SynthNN learns the principles of charge-balancing, chemical family relationships, and ionicity directly from the data [3]. It reformulates material discovery as a synthesizability classification task and has been shown to:

- Identify synthesizable materials with 7x higher precision than using DFT-calculated formation energies alone.

- Outperform 20 expert material scientists in a head-to-head discovery challenge, achieving 1.5x higher precision and completing the task five orders of magnitude faster [3].

This demonstrates that the "underlying logic" of ionic charge is so fundamental that it is a latent feature discoverable from the distribution of real material data, and it can be combined with other complex patterns to create a highly effective predictor of synthesizability.

The Scientist's Toolkit: Key Research Reagents and Materials

The following table details essential materials and resources used in the experimental and computational protocols cited in this field.

| Item | Function / Relevance |
|---|---|
| Aqueous Metal Ions (e.g., [Cu(OH₂)₆]²⁺) | The starting reactant for complexation studies in solution, representing the solvated metal cation [8]. |
| Ligands (e.g., NH₃, SCN⁻) | Molecules or ions that bind to the metal center to form coordination complexes, enabling the measurement of formation constants [8]. |
| Inert Salts (e.g., KNO₃) | Used to modulate the ionic strength of a solution to study activity effects on equilibrium constants [9]. |
| Inorganic Crystal Structure Database (ICSD) | A comprehensive database of experimentally reported crystalline inorganic structures, serving as the primary source of "synthesized" materials for training models like SynthNN [3]. |
| Materials Project Database | A large database of computed material properties using DFT, used for cross-referencing and identifying "known" versus "hypothetical" compounds in screening pipelines [2]. |
| pymatgen | A robust, open-source Python library for materials analysis, essential for implementing computational screening filters and workflows [2]. |

The principle of charge balance, a cornerstone of traditional chemical reasoning, posits that stable inorganic compounds must exhibit a net charge of zero, achieved through well-defined oxidation states that balance precisely. While this rule provides a powerful heuristic for predicting compound stability, its rigid application fails to account for a significant class of materials with technologically compelling properties. This review examines the limitations of the charge-balance paradigm through the lens of metallic phases, covalent metals, and non-stoichiometric compounds. We demonstrate how electron-deficient covalent bonding, metallic conductivity with formal charge imbalance, and vacancy-stabilized phases defy conventional oxidation state formalism. Supported by quantitative data and computational evidence, this analysis argues for a more nuanced understanding of chemical bonding and stability, which is critical for advancing synthesizability prediction and accelerating the discovery of novel inorganic materials.

In computational materials discovery, the initial screening of hypothetical compounds relies heavily on foundational chemical rules to prioritize candidates for synthesis. Among these, the principle of charge neutrality is perhaps the most fundamental, acting as a primary "filter" to separate plausible compositions from those deemed unstable [2]. This heuristic is rooted in classical ionic bonding models, where the attractive forces between cations and anions of opposite charge lead to a stable, neutral compound. Consequently, violation of this rule is often considered a reliable indicator of non-synthesizability.

However, the rigorous application of this rigid rule overlooks entire categories of materials where stability emerges from mechanisms that transcend simple electrostatic balance. The growing availability of large-scale computational databases has revealed a significant number of predicted "stable" materials that appear charge-unbalanced when traditional oxidation states are assigned [10] [2]. This observation points to a critical gap in our understanding. This review deconstructs the limitations of the charge-balance rule by examining three specific domains: materials exhibiting metallic bonding, phases with electron-deficient covalent bonding, and non-stoichiometric compounds. We synthesize recent research to illustrate that while charge balance is a valuable initial filter, its dogmatic application can falsely exclude promising, synthesizable materials with unique electronic properties.

Metallic Bonding and Electron Delocalization

In metallic systems, the presence of a delocalized electron sea fundamentally alters the rules of chemical bonding. The stability of these phases is not governed by localized, integer electron transfers between atoms but by the overall energy of the collective electron system and the resulting band structure.

The Case of MAX Phase Ceramics

MAX phases, with the general formula \(M_{n+1}AX_n\), are a classic example of materials that combine metallic and ceramic properties. First-principles calculations on arsenic-based \(M_2AsX\) (M = Nb, Mo; X = C, N) phases confirm their metallic nature through band structure and density of states analyses [11]. Despite this metallic character, these compounds are thermodynamically and mechanically stable, as evidenced by negative formation enthalpies and satisfaction of the Born stability criteria.

Table 1: Stability and Electronic Properties of Selected Metallic MAX Phases

| Compound | Formation Enthalpy (eV/atom) | Band Gap (eV) | Mechanical Stability (Born Criteria) |

|---|---|---|---|

| Nb₂AsC | Negative | Metallic | Yes |

| Nb₂AsN | Negative | Metallic | Yes |

| Mo₂AsC | Negative | Metallic | Yes |

| Mo₂AsN | Negative | Metallic | Yes |

Their stability is attributed to a complex interplay of bonding types: strong ionic and covalent interactions within the M-X layers, and weaker metallic bonding between the M-X and A layers [11]. This multi-faceted bonding picture cannot be captured by a simple charge-balance check, as the concept of integer oxidation states becomes ambiguous in such a delocalized electronic environment.

Electron-Deficient Covalent Metals

A particularly striking violation of the charge-balance rule is found in a class of materials known as covalent metals. These compounds feature directional covalent bonding and low coordination numbers, typical of semiconductors, yet exhibit metallic conductivity and Pauli paramagnetism [10].

Copper Chalcogenides: A Paradigm of Electron Deficiency

Ternary copper sulfides and selenides, such as NaCu₄S₃, NaCu₄Se₃, and CsCu₄Se₃, demonstrate that metallic conductivity can coexist with a formally charge-unbalanced composition [10]. Under the conventional oxidation state assignment (Na⁺, Cu⁺, S²⁻), the formal charges of NaCu₄S₃ sum to (+1) + 4(+1) + 3(−2) = −1, so the composition is charge-unbalanced. Nevertheless, these phases are stable and exhibit p-type metallic conductivity.
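The formal-charge bookkeeping behind this statement is simple enough to check directly. The following is a minimal sketch; the function name `formal_charge` and the dictionary layout are illustrative choices, not part of any cited work.

```python
def formal_charge(composition, oxidation_states):
    """Sum of (atom count x assumed oxidation state) over all elements."""
    return sum(count * oxidation_states[el] for el, count in composition.items())

# NaCu4S3 with the conventional assignment Na+, Cu+, S2-
nacu4s3 = {"Na": 1, "Cu": 4, "S": 3}
conventional = {"Na": +1, "Cu": +1, "S": -2}

net = formal_charge(nacu4s3, conventional)
print(net)  # -1: one electron short of a closed-shell ionic picture
```

A nonzero result under every single-valence assignment is what a charge-balance filter would read as "implausible", even though the phase is experimentally known.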

The origin of this behavior is a delocalized electron deficiency, or "holes," within the covalent framework. Density functional theory (DFT) studies on covellite (CuS) confirm the absence of a bandgap and the presence of holes in the valence band [10]. This deficiency arises from a mismatch between the number of available molecular orbitals and the number of valence electrons to fill them. The holes are delocalized over structural units like Cu₃S₃ blocks, leading to metallic conductivity without requiring mixed copper valence states. This phenomenon results in slightly higher positive charges on copper and less negative charges on sulfur, a picture that is inconsistent with integer oxidation states.

Table 2: Properties of Selected Electron-Deficient Copper Chalcogenides

| Compound | Conductivity Type | Magnetic Behavior | Formal Charge Status | Key Experimental Evidence |

|---|---|---|---|---|

| CuS (Covellite) | p-type metallic | Pauli paramagnetic | Electron-deficient | DFT: Holes in valence band, no bandgap [10] |

| NaCu₄S₃ | p-type metallic | Pauli paramagnetic | Formally unbalanced | Metallic conductivity, paramagnetism [10] |

| NaCu₄Se₄ | p-type metallic | Pauli paramagnetic | Formally unbalanced | Metallic conductivity, paramagnetism [10] |

Experimental Synthesis Protocols

The synthesis of these ternary copper chalcogenides often employs alkali polychalcogenide flux methods [10]. The following represents a generalized protocol:

- Preparation of Polychalcogenide Flux: In an inert atmosphere glovebox, alkali metal (e.g., Na) is reacted with chalcogen (S or Se) in a stoichiometric ratio yielding a polychalcogenide (e.g., Na₂Sₓ). Alternatively, pre-formed alkali chalcogenides (Na₂S) are mixed with elemental chalcogen.

- Charge Preparation: The copper source (elemental copper or a pre-made copper chalcogenide like CuS) is mixed with the polychalcogenide flux in a sealed ampoule (quartz or Pyrex) under vacuum.

- Reaction Cycle: The sealed ampoule is heated in a muffle furnace to a temperature between 350–1100 °C, held for a period (hours to days), and then slowly cooled to promote crystal growth.

- Product Isolation: After cooling, the resulting solid is removed, and the excess water-soluble flux is washed away with deionized water and solvents like DMF. The remaining product contains crystals of the target phase.

Non-Stoichiometric and Defect-Stabilized Phases

Another significant limitation of the rigid charge-balance rule is its failure to account for the stability of non-stoichiometric compounds. These materials possess a variable composition range due to the presence of point defects, such as vacancies, which can stabilize the crystal structure.

Electronic Structure of Titanium Carbides and Nitrides

Stoichiometric titanium carbide (TiC) and nitride (TiN) exhibit a mix of metallic, ionic, and covalent bonding [12]. However, their substoichiometric variants (TiCₓ, TiNₓ, where x < 1) are also stable and well-studied. The presence of vacancies on the non-metal sublattice sites introduces significant changes in the electronic structure.

Augmented plane wave (APW) and Korringa-Kohn-Rostoker coherent potential approximation (KKR-CPA) band structure calculations reveal that carbon or nitrogen vacancies in these compounds create additional peaks in the density of states, known as "vacancy peaks" [12]. These vacancy-induced states can participate in bonding and stabilize the defective structure. For example, in TiCₓ, the vacancies affect the covalent bonds involving Ti 3d orbitals, altering the material's properties compared to its stoichiometric counterpart. The stability is thus not a matter of perfect charge balance, but of overall energy minimization, which includes the contribution of defect states.

Computational Frameworks for Synthesizability Prediction

The limitations of simple chemical rules have driven the development of more sophisticated, data-driven approaches to predict material synthesizability. These methods aim to embed human domain knowledge into a computational pipeline to better identify viable novel compounds.

A Pipeline of Human-Knowledge Filters

One approach involves applying a sequence of "filters" to screen hypothetical compounds [2]. This pipeline starts with hard rules and progresses to softer, more nuanced heuristics:

- Charge Neutrality Filter: A hard filter that removes compositions with a net formal charge.

- Electronegativity Balance Filter: Ensures the most electronegative ion carries the most negative charge.

- Oxidation State Filters: Removes compounds with uncommon or multiple oxidation states for a single element.

- Stoichiometry Filters: Assesses the prevalence of a compound's stoichiometric ratios both within its own phase diagram and across adjacent diagrams.

This staged process, as applied to over 100,000 hypothetical "perovskite-inspired" materials, successfully narrowed the list to 27 high-priority candidates, demonstrating the value of combining rigid rules with contextual chemical intuition [2].
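A staged pipeline of this kind can be sketched as a list of named predicates applied in order. The example below is a minimal illustration, not the implementation from [2]: the `COMMON_STATES` table is a small hand-picked subset, and `neutral_assignments`, `charge_neutrality_filter`, and `run_pipeline` are hypothetical names. Only the charge neutrality stage is implemented; softer filters would be appended to the same list.

```python
from itertools import product

# Illustrative subset of common oxidation states (an assumption for this sketch).
COMMON_STATES = {
    "Na": [1], "Ba": [2], "Cu": [1, 2], "Ti": [4, 3],
    "O": [-2], "S": [-2], "Cl": [-1],
}

def neutral_assignments(composition):
    """Yield each one-state-per-element assignment whose net charge is zero."""
    elements = list(composition)
    for states in product(*(COMMON_STATES[el] for el in elements)):
        if sum(composition[el] * q for el, q in zip(elements, states)) == 0:
            yield dict(zip(elements, states))

def charge_neutrality_filter(composition):
    """Hard filter: keep a composition only if some neutral assignment exists."""
    return next(neutral_assignments(composition), None) is not None

def run_pipeline(candidates, filters):
    """Apply filters in order and record how many candidates survive each stage."""
    survivors = list(candidates)
    history = [("initial", len(survivors))]
    for name, keep in filters:
        survivors = [c for c in survivors if keep(c)]
        history.append((name, len(survivors)))
    return survivors, history

candidates = [
    {"Ba": 1, "Ti": 1, "O": 3},  # BaTiO3: Ba2+, Ti4+, O2- is neutral
    {"Na": 1, "Cl": 1},          # NaCl: neutral
    {"Na": 1, "Cu": 4, "S": 3},  # NaCu4S3: no neutral single-valence assignment
    {"Na": 1, "Cl": 2},          # NaCl2: not neutral
]
survivors, history = run_pipeline(
    candidates, [("charge neutrality", charge_neutrality_filter)]
)
print(history)
```

Note that this hard filter also discards NaCu₄S₃, a known compound discussed earlier, which is exactly the kind of false exclusion that motivates the softer, data-driven approaches described next.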

Integrated Synthesizability Models

A more advanced framework moves beyond sequential filters to a unified model that simultaneously evaluates composition and crystal structure [6]. The model defines synthesizability as the probability a compound can be prepared in a laboratory. It integrates two complementary data streams:

- Compositional Encoder (f_c): A transformer model fine-tuned on stoichiometry and engineered compositional descriptors.

- Structural Encoder (f_s): A graph neural network fine-tuned on crystal structure graphs.

These encoders output separate synthesizability scores, which are aggregated via a rank-average ensemble (Borda fusion) to prioritize candidates. This integrated model, when applied to 4.4 million computational structures, successfully identified synthesizable targets, seven of which were experimentally validated in a high-throughput laboratory [6]. This demonstrates the superior predictive power of models that learn complex stability criteria directly from data, rather than relying on predefined, rigid rules.
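The rank-average step can be illustrated with a few lines of Python. This is a sketch under stated assumptions: ranks are normalized to [0, 1] with the best candidate at 1, ties are broken by input order, and the function name `rank_average` is hypothetical. The exact normalization used in [6] may differ.

```python
def rank_average(comp_scores, struct_scores):
    """Fuse two score lists by averaging normalized ranks (higher is better)."""
    n = len(comp_scores)

    def normalized_ranks(scores):
        # Sort indices from worst to best score; ties keep input order.
        order = sorted(range(n), key=lambda i: scores[i])
        ranks = [0.0] * n
        for position, idx in enumerate(order):
            ranks[idx] = position / (n - 1) if n > 1 else 1.0
        return ranks

    rc = normalized_ranks(comp_scores)
    rs = normalized_ranks(struct_scores)
    return [(a + b) / 2 for a, b in zip(rc, rs)]

# Made-up scores for four candidates from the two encoders.
comp = [0.9, 0.2, 0.6, 0.4]
struct = [0.9, 0.1, 0.8, 0.3]
fused = rank_average(comp, struct)
print(fused)
```

Working with ranks rather than raw probabilities means the two encoders do not need to be calibrated against each other, which is one plausible reason for preferring this kind of fusion.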

The Scientist's Toolkit: Key Reagents and Methods

Table 3: Essential Research Reagents and Methods for Synthesizing Complex Phases

| Reagent / Method | Function in Synthesis | Example Use Case |

|---|---|---|

| Alkali Polychalcogenide Flux | Low-melting solvent and reactant; promotes crystal growth of chalcogenides. | Synthesis of NaCu₄S₃, CsCu₄Se₃ [10]. |

| Hydrothermal/Solvothermal Synthesis | Enables reactions in aqueous or organic solvents at elevated T&P; good for metastable phases. | Synthesis of CsCu₄Se₃ [10]. |

| Boron-Chalcogen Mixture (BCM) | Reduces metal oxides in situ to form chalcogenides; useful for air-sensitive elements. | Synthesis of NaCuUS₃ from U₃O₈ [10]. |

| Solid-State Precursor Model (Retro-Rank-In) | Computational model for suggesting viable solid-state precursor combinations. | Predicting precursors for novel targets [6]. |

| Synthesis Condition Predictor (SyntMTE) | Computational model for predicting calcination temperatures. | Predicting reaction parameters for novel targets [6]. |

The empirical and computational evidence surveyed in this review compellingly argues that a rigid adherence to the charge-balance rule is an untenable constraint in modern inorganic materials discovery. The stable existence of metallic MAX phases, electron-deficient covalent metals like copper chalcogenides, and non-stoichiometric refractory compounds demonstrates that stability can emerge from complex, delocalized bonding and defect engineering that simple oxidation-state arithmetic cannot capture. As the field progresses, the integration of human chemical intuition—encoded as sophisticated filters—with data-driven models that holistically assess composition and structure represents the most promising path forward. Embracing this nuanced view of chemical stability is essential for unlocking the next generation of functional inorganic materials.

The principle of charge balancing, which posits that synthesizable inorganic crystalline materials should exhibit net neutral ionic charge based on common oxidation states, has long served as a foundational heuristic in materials discovery. This technical analysis demonstrates a profound statistical reality: the majority of known inorganic materials defy this conventional criterion. Comprehensive data from the Inorganic Crystal Structure Database (ICSD) reveals that only approximately 37% of synthesized inorganic compounds are charge-balanceable according to standard oxidation states, with the figure dropping to a mere 23% for binary cesium compounds typically considered highly ionic [3]. This paper examines the quantitative evidence for this prevalence, explores advanced synthesizability models that outperform charge-balancing proxies, details experimental protocols for synthesizability-guided discovery, and provides a research toolkit for modern materials research. The findings necessitate a paradigm shift from rigid charge-balancing rules toward data-driven, multi-factor synthesizability assessment frameworks that more accurately capture the complex chemistry of experimentally accessible materials.

The targeted synthesis of crystalline inorganic materials presents formidable challenges due to poorly understood reaction mechanisms and the influence of kinetic factors alongside thermodynamic stability [3]. In the absence of universal synthesizability principles, computational materials discovery has frequently relied on charge-balancing as a computationally inexpensive proxy for synthesizability. This approach filters candidate materials by requiring a net neutral ionic charge calculated from commonly accepted oxidation states for all constituent elements [3].

While chemically intuitive, this paradigm rests on a critical assumption that most synthesized materials adhere to this charge-balancing principle. Recent evidence fundamentally challenges this assumption, revealing that charge-balancing fails to describe the majority of experimentally realized inorganic crystals. The development of machine learning models trained directly on synthesis data has demonstrated that synthesizability depends on a complex array of factors beyond simple charge neutrality, including chemical family relationships, ionicity, and non-physical considerations such as reactant cost and equipment availability [3].

Quantitative Evidence: Statistical Prevalence of Non-Charge-Balanced Materials

Analysis of comprehensive materials databases provides definitive statistical evidence for the surprising prevalence of non-charge-balanced known materials.

Large-scale analysis of the Inorganic Crystal Structure Database (ICSD), which represents a nearly complete history of synthesized and structurally characterized inorganic crystalline materials, reveals that charge-balancing is the exception rather than the rule [3].

Table 1: Charge-Balancing Statistics Across Material Categories

| Material Category | Percentage Charge-Balanced | Data Source | Sample Size |

|---|---|---|---|

| All inorganic crystalline materials | 37% | ICSD | >100,000 entries |

| Binary cesium compounds | 23% | ICSD | Not specified |

| Synthesizable materials identified by SynthNN | 93% (precision) | Computational screening | 4.4 million candidates |

The statistical evidence demonstrates that approximately 63% of all known inorganic materials defy charge-balancing expectations according to common oxidation states [3]. This prevalence challenges the fundamental validity of charge-balancing as a universal synthesizability filter.

Performance Comparison of Synthesizability Assessment Methods

The poor performance of charge-balancing as a synthesizability proxy becomes evident when compared with modern assessment methods.

Table 2: Performance Metrics of Synthesizability Assessment Methods

| Assessment Method | Precision | Recall | Key Limitations / Notes |

|---|---|---|---|

| Charge-balancing filter | Low (inferred) | Low (inferred) | Inflexible to different bonding environments; only 37% coverage of known materials |

| DFT-calculated formation energy | 50% | 50% | Fails to account for kinetic stabilization and finite-temperature effects |

| SynthNN (composition-based) | 7× higher than charge-balancing | Not specified | Requires no prior chemical knowledge |

| Unified composition-structure model | 7 of 16 attempted syntheses matched the target structure (experimental validation) [6] | Not specified | Integrates signals from composition and crystal structure |

The precision advantage of SynthNN over charge-balancing is particularly significant – it identifies synthesizable materials with 7 times higher precision than the charge-balancing approach [3]. In head-to-head comparisons against human experts, this deep learning model achieved 1.5 times higher precision and completed synthesizability assessment tasks five orders of magnitude faster than the best-performing human expert [3].

Beyond Charge-Balancing: Modern Synthesizability Assessment Frameworks

The limitations of charge-balancing have catalyzed the development of more sophisticated synthesizability assessment frameworks that leverage machine learning and integrated composition-structure analysis.

Composition-Based Deep Learning Models

The SynthNN model represents a fundamental shift from rule-based filtering to data-driven synthesizability classification [3]. This approach leverages the entire space of synthesized inorganic chemical compositions through the following methodological framework:

- Model Architecture: Implements a deep learning synthesizability model that utilizes atom2vec, representing each chemical formula by a learned atom embedding matrix optimized alongside all other neural network parameters [3].

- Training Data: Trains on chemical formulas from the ICSD, augmented with artificially generated unsynthesized materials to address the lack of reported unsuccessful syntheses [3].

- Learning Capabilities: Without explicit programming of chemical principles, SynthNN autonomously learns charge-balancing relationships, chemical family patterns, and ionicity principles from the distribution of synthesized materials [3].

- PU Learning Framework: Employs positive-unlabeled learning algorithms that treat unsynthesized materials as unlabeled data, probabilistically reweighting them according to their likelihood of being synthesizable [3].

This composition-based approach enables rapid screening across billions of candidate materials without requiring structural information, making it particularly valuable for early-stage discovery workflows [3].
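The reweighting idea behind positive-unlabeled learning can be made concrete with a per-example loss. The sketch below shows one common scheme, in which an unlabeled example counts as positive with weight `w_unlabeled` and as negative with the remaining weight. It is an illustration of the general technique, not the specific loss used by SynthNN, and the name `pu_log_loss` is hypothetical.

```python
import math

def pu_log_loss(p_pred, is_positive, w_unlabeled):
    """Cross-entropy for one example under positive-unlabeled reweighting.

    p_pred      : predicted probability that the material is synthesizable
    is_positive : True for a known synthesized material
    w_unlabeled : assumed probability that an unlabeled material is synthesizable
    """
    eps = 1e-12
    p = min(max(p_pred, eps), 1 - eps)  # guard against log(0)
    loss_as_positive = -math.log(p)
    loss_as_negative = -math.log(1 - p)
    if is_positive:
        return loss_as_positive
    return w_unlabeled * loss_as_positive + (1 - w_unlabeled) * loss_as_negative

# An unlabeled formula scored at 0.9 is penalized less when it is
# considered likely to be synthesizable after all.
print(pu_log_loss(0.9, False, 0.0))
print(pu_log_loss(0.9, False, 0.5))
```

With `w_unlabeled = 0` the loss reduces to ordinary binary cross-entropy that treats every unlabeled formula as a true negative.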

Unified Composition and Structure Synthesizability Models

Recent advances have integrated compositional and structural signals to generate more accurate synthesizability predictions. The unified model demonstrated in a 2025 synthesizability-guided pipeline combines both approaches [6]:

- Problem Formulation: Represents each candidate material by both its composition (xc) and relaxed crystal structure (xs), with the goal of learning a synthesizability score s(x) ∈ [0,1] that estimates experimental accessibility [6].

- Model Architecture: Employs dual encoders – a compositional MTEncoder transformer for composition data and a graph neural network fine-tuned from the JMP model for crystal structure analysis [6].

- Rank-Average Ensemble: Converts probabilities from both composition and structure models to ranks, then aggregates them via Borda fusion to generate enhanced synthesizability rankings across candidate materials [6].

- Experimental Validation: This unified approach successfully identified synthesizable candidates from a pool of 4.4 million computational structures, with experimental characterization confirming seven matched target structures out of sixteen attempted syntheses [6].

Figure: Synthesizability Assessment Workflow

Experimental Protocols: Synthesizability-Guided Materials Discovery

The transition from theoretical prediction to experimental validation requires robust experimental protocols. The following methodology outlines a synthesizability-guided pipeline for materials discovery.

Candidate Screening and Prioritization Protocol

- Input Pool Curation: Begin with computational structure databases (Materials Project, GNoME, Alexandria) containing DFT-relaxed crystal structures [6].

- Synthesizability Scoring: Apply unified composition-structure model to calculate synthesizability scores for all candidates (0-1 scale) [6].

- Ranking and Filtering:

  - Retain only highly synthesizable candidates (RankAvg ≥ 0.95)

  - Apply practical filters: remove platinoid-containing compounds, non-oxides, and toxic materials

  - Web-scale literature search via LLM to exclude previously synthesized compounds

  - Expert review to eliminate targets with unrealistic oxidation states [6]

- Output: Generate prioritized candidate list (~500 structures from initial 4.4 million) [6].
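The ranking-and-filtering step above can be sketched as a single pass over scored candidates. Everything in this example is illustrative: the `Candidate` fields, the `is_toxic` and `already_reported` flags (stand-ins for the toxicity screen and the literature search), the reading of "platinoid" as the platinum-group metals, and the made-up scores. The expert review step is not modeled.

```python
from dataclasses import dataclass

# Platinum-group metals, taken here as the meaning of "platinoid".
PLATINOIDS = frozenset({"Ru", "Rh", "Pd", "Os", "Ir", "Pt"})

@dataclass(frozen=True)
class Candidate:
    label: str
    elements: frozenset
    rank_avg: float                 # fused synthesizability rank in [0, 1]
    is_toxic: bool = False          # hypothetical flag
    already_reported: bool = False  # hypothetical literature-search outcome

def prioritize(candidates, threshold=0.95):
    """Keep high-ranked, platinoid-free, oxygen-containing, non-toxic, unreported candidates."""
    kept = [
        c for c in candidates
        if c.rank_avg >= threshold
        and not (c.elements & PLATINOIDS)
        and "O" in c.elements          # crude stand-in for "is an oxide"
        and not c.is_toxic
        and not c.already_reported
    ]
    return sorted(kept, key=lambda c: c.rank_avg, reverse=True)

pool = [
    Candidate("A", frozenset({"Li", "Mn", "O"}), 0.98),
    Candidate("B", frozenset({"Sr", "Pt", "O"}), 0.99),   # contains a platinoid
    Candidate("C", frozenset({"Zn", "S"}), 0.97),         # not an oxide
    Candidate("D", frozenset({"Ca", "Fe", "O"}), 0.90),   # below threshold
    Candidate("E", frozenset({"Ba", "Nb", "O"}), 0.96, already_reported=True),
    Candidate("F", frozenset({"Mg", "V", "O"}), 0.995),
]
shortlist = [c.label for c in prioritize(pool)]
print(shortlist)  # ['F', 'A']
```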

Synthesis Planning and Execution Protocol

- Precursor Selection: Apply Retro-Rank-In precursor-suggestion model to generate ranked list of viable solid-state precursors for each target [6].

- Parameter Prediction: Use SyntMTE model trained on literature-mined solid-state synthesis corpora to predict calcination temperatures required for target phase formation [6].

- Reaction Balancing: Balance chemical reactions and compute corresponding precursor quantities [6].

- High-Throughput Synthesis: Execute syntheses in automated solid-state laboratory platform [6].

- Characterization: Verify products automatically via X-ray diffraction (XRD) with structure matching [6].
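The reaction-balancing step reduces to stoichiometric arithmetic once a balanced reaction is known. The sketch below uses the textbook solid-state reaction BaCO₃ + TiO₂ → BaTiO₃ + CO₂ purely as an illustration; it is not one of the targets from [6], and the helper names `molar_mass` and `precursor_masses` are hypothetical.

```python
# Standard atomic masses in g/mol for the elements used in this example.
ATOMIC_MASS = {"Ba": 137.327, "Ti": 47.867, "C": 12.011, "O": 15.999}

def molar_mass(composition):
    """Molar mass in g/mol of a composition given as {element: count}."""
    return sum(ATOMIC_MASS[el] * n for el, n in composition.items())

def precursor_masses(target, precursors, target_mass_g):
    """Mass of each precursor (g) needed for a given mass of target.

    precursors: list of (name, composition, moles of precursor per mole of target)
    """
    n_target = target_mass_g / molar_mass(target)
    return {
        name: coeff * n_target * molar_mass(comp)
        for name, comp, coeff in precursors
    }

batio3 = {"Ba": 1, "Ti": 1, "O": 3}
masses = precursor_masses(
    batio3,
    [
        ("BaCO3", {"Ba": 1, "C": 1, "O": 3}, 1),
        ("TiO2", {"Ti": 1, "O": 2}, 1),
    ],
    target_mass_g=1.0,
)
for name, grams in masses.items():
    print(f"{name}: {grams:.4f} g")
```

The precursor masses add up to more than the target mass because CO₂ leaves the system during calcination.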

This complete experimental process – from computational screening to characterized products – has been demonstrated to require only three days for execution, highlighting the efficiency gains enabled by synthesizability-guided approaches [6].

Figure: Experimental Synthesis Workflow

Modern synthesizability research requires specialized computational tools and experimental resources. The following table details key solutions for implementing synthesizability-guided materials discovery.

Table 3: Essential Research Toolkit for Synthesizability Studies

| Tool/Resource | Type | Function/Purpose | Key Features |

|---|---|---|---|

| Inorganic Crystal Structure Database (ICSD) | Data Resource | Provides comprehensive repository of synthesized inorganic crystals for training and validation | Contains synthesis details for historically reported materials; enables benchmarking against known compounds [3] |

| SynthNN | Computational Model | Composition-based synthesizability classification without structural information | 7× higher precision than charge-balancing; five orders of magnitude faster than human experts [3] |

| MTEncoder Transformer | Computational Tool | Composition encoder for chemical formulas in unified synthesizability models | Generates optimal representation of chemical formulas directly from distribution of synthesized materials [6] |

| Graph Neural Network (JMP model) | Computational Tool | Structure encoder for crystal structures in unified synthesizability models | Processes crystal structure graphs to extract structural synthesizability signals [6] |

| Retro-Rank-In | Computational Tool | Precursor-suggestion model for synthesis planning | Generates ranked list of viable solid-state precursors for target materials [6] |

| SyntMTE | Computational Tool | Synthesis parameter prediction model | Predicts calcination temperatures from literature-mined synthesis data [6] |

| Automated Laboratory Platform | Experimental System | High-throughput synthesis execution | Enables rapid experimental validation of computationally predicted materials [6] |

The statistical reality that nearly two-thirds of known inorganic materials defy conventional charge-balancing principles necessitates a fundamental re-evaluation of synthesizability assessment in materials discovery. The demonstrated prevalence of non-charge-balanced compounds, coupled with the superior performance of data-driven synthesizability models, underscores the limitations of relying on simplistic chemical heuristics for predicting synthetic accessibility.

Modern frameworks that integrate compositional and structural information through machine learning offer substantially improved precision in identifying synthesizable materials, as validated by experimental synthesis of novel compounds. These approaches successfully learn complex chemical relationships – including charge-balancing patterns as one factor among many – directly from the empirical data of synthesized materials, without requiring explicit programming of chemical rules.

The research toolkit and experimental protocols detailed in this analysis provide a pathway for implementing synthesizability-guided discovery that transcends the limitations of charge-balancing filters. As materials research increasingly leverages computational screening of massive candidate spaces, embracing these sophisticated synthesizability assessment methods will be essential for efficiently bridging the gap between theoretical prediction and experimental realization.

From Rule to Algorithm: Modern Methods for Predicting Synthesizability

In the field of computational materials science, the efficient discovery of novel, synthesizable inorganic materials remains a significant challenge. While generative algorithms can now produce millions of hypothetical compounds, the majority often prove unsynthesizable in laboratory conditions [2]. This disconnect highlights a critical bottleneck in the materials discovery pipeline: effectively weeding out unstable or difficult-to-synthesize candidates before committing valuable experimental resources.

Within this context, charge balancing principles have emerged as foundational elements for constructing effective screening filters. These principles allow researchers to embed fundamental chemical knowledge directly into computational workflows, creating a crucial bridge between human expertise and automated discovery systems [2]. The application of charge neutrality and electronegativity balance rules represents a powerful methodology for prioritizing candidate materials with enhanced potential for experimental synthesis, thereby accelerating the overall discovery process [2] [13].

Theoretical Foundation: Core Chemical Principles as Screening Filters

The screening pipeline operates on a hierarchy of chemical principles, classified as either "hard" or "soft" filters based on their permissiveness and reliability in predicting synthesizability.

Hard Filters: Non-Negotiable Chemical Laws

Charge Neutrality Filter: This filter mandates that all stable chemical compounds must be electrically neutral overall [2]. It is considered a "hard" filter because violating this principle makes compound formation virtually unimaginable. The filter operates by ensuring the sum of cationic charges equals the sum of anionic charges in a proposed composition [13].

Electronegativity Balance Filter: This principle suggests that the most electronegative ion in a compound should also carry the most negative charge [2]. This filter helps identify compounds with plausible charge distributions based on the relative electronegativities of their constituent elements.

Soft Filters: Empirical Rules of Thumb

Additional filters incorporate empirical knowledge with recognized exceptions:

Unique Oxidation State Filter: Prioritizes compounds where elements appear in common, stable oxidation states [2].

Oxidation State Frequency Filter: Favors oxidation states that appear frequently in known stable compounds [2].

Stoichiometric Variation Filters: Include both "intra-phase diagram" analysis (comparing stoichiometries within the same ternary system) and "cross-phase diagram" analysis (identifying common stoichiometries across related chemical systems) [2].

Table 1: Classification and Description of Chemical Knowledge Filters

| Filter Type | Filter Name | Chemical Principle | Classification |

|---|---|---|---|

| Hard Filters | Charge Neutrality | Total cationic charges must equal total anionic charges | Non-conditional |

| Hard Filters | Electronegativity Balance | Most electronegative ion carries the most negative charge | Non-conditional |

| Soft Filters | Unique Oxidation State | Elements should exhibit common, stable oxidation states | Conditional |

| Soft Filters | Oxidation State Frequency | Preferred oxidation states are those frequent in known compounds | Conditional |

| Soft Filters | Stoichiometric Variation | Stoichiometries should align with patterns in known systems | Conditional |

Implementation Framework: From Theory to Computational Practice

Workflow Integration of Human Knowledge Filters

The screening process applies the filters sequentially, progressively distilling the raw candidate list into high-probability synthesis targets.

Computational Methodologies and Protocols

Charge Balance Calculation Procedure

The implementation of charge neutrality filters requires conversion of elemental concentrations to charge equivalents. The standard methodology follows these computational steps [13]:

Convert to Weight Fraction: Transform raw ion concentration data (mg/L) to weight fraction relative to dry sample mass: \( w_i = \frac{c_i V_w}{m_s} \), where \( w_i \) is the weight fraction of ion \( i \), \( c_i \) is its concentration (mg/L), \( V_w \) is the water volume (L), and \( m_s \) is the dry sample mass (mg).

Calculate Charge Equivalents: Convert weight fractions to equivalents per kilogram (Eq/kg) to enable direct charge comparison: \( e_i = \frac{w_i |z_i|}{M_i} \), where \( e_i \) is the charge equivalent of ion \( i \) per kilogram, \( z_i \) is the ion charge, and \( M_i \) is the molar mass of ion \( i \) (kg/mol).

Determine Charge Imbalance: Calculate the charge excess between total cations and anions: \( \Delta e = e_{\text{cat}} - e_{\text{ani}} \), where \( e_{\text{cat}} \) and \( e_{\text{ani}} \) are the sums of \( e_i \) over all cations and all anions, respectively.

Apply Correction Pathways:

- Pathway I: For charge excess ≤2%, apply equal adjustment to all ions (attributed to analytical uncertainty)

- Pathway II: For charge excess >2%, identify and quantify cations associated with undetected anions (typically carbonates, bicarbonates, or hydroxides)
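The procedure above can be sketched in a few functions. The weight fraction, charge equivalent, and imbalance follow the definitions given in the steps. How the 2% threshold is normalized is not stated, so the relative excess |Δe| / (e_cat + e_ani) used here is an assumption, as are all function names and the sample data.

```python
def weight_fraction(c_mg_per_l, v_w_l, m_s_mg):
    """w_i = c_i * V_w / m_s (dimensionless)."""
    return c_mg_per_l * v_w_l / m_s_mg

def charge_equivalents(w, z, molar_mass_kg_per_mol):
    """e_i = w_i * |z_i| / M_i, in equivalents per kilogram."""
    return w * abs(z) / molar_mass_kg_per_mol

def charge_imbalance(ions, v_w_l, m_s_mg, threshold=0.02):
    """Return (delta_e, relative_excess, pathway) for a list of ions.

    ions: list of (name, concentration in mg/L, charge z, molar mass in kg/mol)
    """
    e_cat = e_ani = 0.0
    for _name, c, z, m in ions:
        e = charge_equivalents(weight_fraction(c, v_w_l, m_s_mg), z, m)
        if z > 0:
            e_cat += e
        else:
            e_ani += e
    delta_e = e_cat - e_ani
    # Assumption: the percentage threshold refers to this relative excess.
    relative = abs(delta_e) / (e_cat + e_ani)
    pathway = "I" if relative <= threshold else "II"
    return delta_e, relative, pathway

# Made-up measurement: near-equimolar Na+ and Cl- in 0.1 L of water, 1000 mg dry sample.
balanced = [("Na+", 23.0, +1, 0.02299), ("Cl-", 35.45, -1, 0.03545)]
print(charge_imbalance(balanced, v_w_l=0.1, m_s_mg=1000.0))

# Doubling the sodium concentration leaves cation charge unaccounted for.
excess = [("Na+", 46.0, +1, 0.02299), ("Cl-", 35.45, -1, 0.03545)]
print(charge_imbalance(excess, v_w_l=0.1, m_s_mg=1000.0))
```

In the second case the cation surplus would be attributed to anions that were not measured, which is what Pathway II is meant to resolve.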

Electronegativity Balance Implementation

The electronegativity filter algorithm follows this sequence [2]:

- Calculate probable oxidation states for all elements in the proposed compound

- Determine partial charges using appropriate electronegativity equalization methods

- Verify that the most electronegative element carries the most negative partial charge

- Flag compounds violating this principle for exclusion or lower prioritization
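A simplified version of this check is sketched below. It deliberately skips the partial-charge step and compares formal oxidation states directly, which is an assumption of this sketch rather than the procedure in [2]. The electronegativities are Pauling values for a handful of elements, and the function name is hypothetical.

```python
# Pauling electronegativities for a few elements.
PAULING = {
    "Ba": 0.89, "Na": 0.93, "Ti": 1.54, "Cu": 1.90,
    "S": 2.58, "Cl": 3.16, "O": 3.44, "F": 3.98,
}

def passes_electronegativity_balance(oxidation_states):
    """True if the most electronegative element holds the most negative state.

    oxidation_states: {element: assigned oxidation state}
    """
    most_electronegative = max(oxidation_states, key=PAULING.__getitem__)
    lowest_state = min(oxidation_states.values())
    return oxidation_states[most_electronegative] == lowest_state

print(passes_electronegativity_balance({"Ba": 2, "Ti": 4, "O": -2}))  # True
print(passes_electronegativity_balance({"O": 2, "F": -1}))            # True
print(passes_electronegativity_balance({"Cl": -1, "O": 2}))           # False
```

The last assignment is flagged because oxygen, the more electronegative element, is given a positive state while chlorine is given the negative one.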

Table 2: Performance Metrics of Human-Knowledge Screening Pipeline

| Screening Stage | Compounds Remaining | Reduction (%) | Key Filter Action |

|---|---|---|---|

| Initial Candidates | >100,000 | - | Raw generative algorithm output |

| After Charge & Electronegativity Filters | ~50,000 | ~50% | Removal of electrostatically implausible compounds |

| After Oxidation State Filters | ~1,400 | ~97% | Exclusion of uncommon oxidation states |

| After Stoichiometric Filters | 27 | ~98% | Alignment with observed stoichiometric patterns |

Successful implementation of knowledge-guided screening pipelines requires both computational tools and chemical databases.

Table 3: Essential Resources for Material Screening Pipelines

| Resource Name | Type | Primary Function | Application in Screening |

|---|---|---|---|

| Materials Project Database | Computational Database | Provides DFT-calculated properties for known compounds | Reference data for stability assessment and novelty determination |

| pymatgen | Python Library | Materials analysis and phase diagram construction | Core computational engine for filter implementation |

| ICSD | Experimental Database | Curated experimental crystal structures | Ground truth for filter validation and novelty assessment |

| Electronegativity Scales | Chemical Reference | Quantitative element electronegativity values | Electronegativity balance calculations |

| Oxidation State Tables | Chemical Reference | Common oxidation states by element | Oxidation state filter rules |

Integration with Modern Data-Driven Approaches

While human knowledge filters provide crucial chemical intuition, they are most powerful when integrated with computational approaches. Machine learning models, particularly semi-supervised ones, have shown promising results in predicting synthesizability, with one model achieving 83.4% recall and 83.6% estimated precision on test data [14]. Furthermore, universal interatomic potentials have advanced sufficiently to effectively pre-screen thermodynamically stable hypothetical materials [15].

The relationship between these approaches is synergistic rather than competitive. Human knowledge filters excel at providing rapid, chemically intuitive initial screening, while ML methods offer more nuanced, pattern-based predictions.

The embedding of human chemical knowledge—particularly charge neutrality and electronegativity balance principles—as computational filters represents a powerful paradigm in materials discovery. By translating fundamental chemical principles into automated screening criteria, researchers can dramatically improve the efficiency of materials discovery pipelines, bridging the gap between computational prediction and experimental realization. As the field advances, the integration of these knowledge-based approaches with emerging machine learning methodologies promises to further accelerate the discovery of novel functional materials for energy, electronics, and beyond.

The discovery of new inorganic crystalline materials is a fundamental driver of technological innovation. However, a significant bottleneck exists: determining which computationally predicted materials are synthetically accessible in a laboratory. For years, charge-balancing—ensuring a net neutral ionic charge based on common oxidation states—has been a widely used heuristic in synthesizability research [16] [2]. This principle is rooted in chemical intuition, as it filters out compositions that appear electrostatically improbable. Nevertheless, this method presents a major limitation; an analysis of the Inorganic Crystal Structure Database (ICSD) reveals that only about 37% of known synthesized inorganic compounds actually satisfy this charge-balancing criterion [16]. In specific classes of materials, such as binary cesium compounds, this figure drops to a mere 23% [16]. This stark disparity underscores that synthesizability is governed by a more complex set of factors than charge balance alone, including kinetic stabilization, precursor availability, and human-driven experimental choices [17].

The inability of simple rules to reliably predict synthesizability has created a critical need for more sophisticated, data-driven approaches. This section introduces SynthNN, a deep learning model that represents a paradigm shift in predicting the synthesizability of crystalline inorganic materials directly from their chemical compositions [16]. By learning from the entire corpus of known synthesized materials, SynthNN moves beyond the limitations of rigid, human-defined rules and captures the subtle, complex patterns that truly dictate whether a material can be made.

SynthNN is a deep learning classification model designed to predict the synthesizability of inorganic chemical formulas without requiring any prior structural information [16] [18]. Its primary goal is to integrate synthesizability constraints directly into computational material screening workflows, thereby increasing the reliability of identifying synthetically accessible candidates [16].

Core Architecture and atom2vec

A key innovation of SynthNN is its use of a framework called atom2vec [16]. This approach represents each chemical element in a composition via a learned embedding matrix that is optimized alongside all other parameters of the neural network during training.

- Input: A chemical formula (e.g., NaCl, CaTiO₃).

- Process: The formula is broken down into its constituent elements. Each element is converted into a dense, real-valued vector (an embedding) from the atom2vec embedding matrix.

- Learning: The model learns the values of these embeddings directly from the distribution of synthesized materials in the training data, with the embedding dimensionality treated as a tunable hyperparameter. This allows SynthNN to infer complex chemical relationships, such as chemical family trends and effective ionicity, without being explicitly programmed with such rules [16].
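The input and process steps above can be sketched as follows. This is an illustration only: the element list, the embedding size, and the choice to combine element vectors by atom fraction are assumptions made for the example, since the text does not specify how SynthNN pools element embeddings. In a real model the matrix entries would be updated by gradient descent rather than left at their random initial values.

```python
import random
import re

EMBEDDING_DIM = 4  # hypothetical size chosen for this sketch
ELEMENTS = ["Na", "Cl", "Ca", "Ti", "O"]  # illustrative subset

rng = random.Random(0)
# One row per element, randomly initialised; training would optimise these.
embedding_matrix = {
    el: [rng.uniform(-1.0, 1.0) for _ in range(EMBEDDING_DIM)] for el in ELEMENTS
}


def parse_formula(formula):
    """Parse a flat formula such as 'CaTiO3' into {element: count}.

    Handles only ASCII integer subscripts and no parentheses.
    """
    counts = {}
    for symbol, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[symbol] = counts.get(symbol, 0) + (int(number) if number else 1)
    return counts


def featurize(formula):
    """Return the atom-fraction-weighted sum of element embeddings."""
    counts = parse_formula(formula)
    total = sum(counts.values())
    vector = [0.0] * EMBEDDING_DIM
    for el, n in counts.items():
        for k, value in enumerate(embedding_matrix[el]):
            vector[k] += (n / total) * value
    return vector


print(parse_formula("CaTiO3"))
print(featurize("NaCl"))
```

The resulting fixed-length vector is what a downstream classifier would consume, so formulas with different numbers of elements share one input shape.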

The following diagram illustrates the flow of information through the SynthNN architecture and its training ecosystem:

The Positive-Unlabeled (PU) Learning Framework

A major challenge in training synthesizability models is the lack of confirmed negative examples (definitively unsynthesizable materials) in scientific databases. SynthNN addresses this through a Positive-Unlabeled (PU) learning approach [16].

- Positive Examples: These are known synthesized materials, sourced from the Inorganic Crystal Structure Database (ICSD) [16].

- Unlabeled Examples: A large set of artificially generated chemical formulas that are not present in the ICSD. The model treats these as likely unsynthesizable, but acknowledges that some may be synthesizable materials that simply haven't been discovered or reported yet [16].

- Probabilistic Reweighting: The PU learning framework probabilistically reweights the unlabeled examples during training based on their likelihood of being synthesizable. This prevents the model from over-penalizing potentially viable materials that are absent from the database [16].
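One way to picture the reweighting idea is the loss sketch below. It is not SynthNN's actual loss: the text does not state how the weights are computed, so the scheme here (splitting each unlabeled example into a positive part with weight `w` and a negative part with weight `1 - w`) is a generic PU-learning assumption, and the names `bce` and `pu_loss` are hypothetical.

```python
import math


def bce(p_pred, label):
    """Binary cross-entropy for one prediction, clipped for numerical safety."""
    p = min(max(p_pred, 1e-7), 1.0 - 1e-7)
    return -math.log(p) if label == 1 else -math.log(1.0 - p)


def pu_loss(predictions, is_positive, prob_synthesizable):
    """Average PU-weighted loss over a batch.

    predictions        : model outputs in (0, 1)
    is_positive        : True for known synthesized examples
    prob_synthesizable : for unlabeled examples, an estimated probability
                         that the formula is actually synthesizable
                         (ignored for positives)
    """
    total = 0.0
    for p_pred, positive, w in zip(predictions, is_positive, prob_synthesizable):
        if positive:
            total += bce(p_pred, 1)
        else:
            # Unlabeled: treated as positive with weight w, negative with 1 - w.
            total += w * bce(p_pred, 1) + (1.0 - w) * bce(p_pred, 0)
    return total / len(predictions)


# A confident "synthesizable" prediction on an unlabeled formula is penalised
# less when that formula is judged likely to be synthesizable.
print(pu_loss([0.9], [False], [0.0]))
print(pu_loss([0.9], [False], [0.5]))
```

With `w = 0` the unlabeled example behaves as a hard negative. Raising `w` softens the penalty, which is the effect the reweighting is meant to achieve.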

Experimental Protocols and Model Performance

Data Curation and Training Methodology

The development and evaluation of SynthNN followed a rigorous experimental protocol.

- Data Source: The positive data was extracted from the ICSD, representing a comprehensive history of synthesized and structurally characterized inorganic crystalline materials [16].

- Artificial Negatives: The training dataset was augmented with a large number of artificially generated chemical formulas not found in the ICSD. The ratio of these artificial formulas to synthesized formulas, N_synth, is a key model hyperparameter [16].

- Benchmarking: SynthNN's performance was benchmarked against two baselines: 1) random guessing (weighted by class imbalance), and 2) the traditional charge-balancing criterion [16].

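- Performance Metrics: Model performance was evaluated using standard classification metrics, including precision and recall. Because some unlabeled formulas predicted as synthesizable may in fact be synthesizable, they are counted as false positives and precision can be underestimated. For this reason, the F1-score is also a critical metric for evaluation [16].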
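The artificial-negative step in the protocol above could look roughly like the sketch below. The sampling procedure, the element list, and the function name `generate_unlabeled` are assumptions for illustration; the text says only that formulas absent from the ICSD are generated at a ratio set by N_synth. Known formulas are assumed to be given in reduced form.

```python
import random
from functools import reduce
from math import gcd

ELEMENTS = ["Li", "Na", "K", "Mg", "Ca", "Ti", "Fe", "O", "S", "Cl"]  # illustrative


def generate_unlabeled(known_formulas, n_synth, max_elements=3, max_count=4, seed=0):
    """Sample n_synth artificial formulas per known formula, excluding known ones.

    Formulas are canonicalised as sorted tuples of (element, count), with
    counts divided by their greatest common divisor, so duplicates and known
    compositions can be detected by set membership.
    """
    rng = random.Random(seed)
    known = {tuple(sorted(f.items())) for f in known_formulas}
    target = n_synth * len(known)
    unlabeled = set()
    while len(unlabeled) < target:
        chosen = rng.sample(ELEMENTS, rng.randint(2, max_elements))
        counts = [rng.randint(1, max_count) for _ in chosen]
        divisor = reduce(gcd, counts)
        candidate = tuple(sorted((el, c // divisor) for el, c in zip(chosen, counts)))
        if candidate not in known:
            unlabeled.add(candidate)
    return [dict(c) for c in unlabeled]


known = [{"Na": 1, "Cl": 1}, {"Ca": 1, "Ti": 1, "O": 3}]
unlabeled = generate_unlabeled(known, n_synth=20)
print(len(unlabeled))
```

Uniform random sampling is the simplest choice. A production generator would likely bias sampling toward chemically plausible element combinations.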

Quantitative Performance Comparison

The table below summarizes the performance of SynthNN against traditional baseline methods, demonstrating its superior predictive power.

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Method | Key Principle | Reported Performance | Key Limitation |
|---|---|---|---|
| SynthNN | Deep learning on known compositions [16] | ~56.3% precision (at 0.5 threshold) [18] | Requires large datasets; "black box" nature |
| Charge-Balancing | Net neutral ionic charge [16] | Precision significantly lower than SynthNN [16] | Only 37% of known materials comply [16] |
| DFT Formation Energy | Thermodynamic stability [16] | Precision 7x lower than SynthNN [19] | Fails to account for kinetic stabilization [16] |
| CSLLM (2025) | Fine-tuned Large Language Model on crystal structures [5] | 98.6% accuracy [5] | Requires full crystal structure as input [5] |

SynthNN's performance was further validated in a unique head-to-head competition against 20 expert material scientists. In this test, SynthNN not only completed the material discovery task five orders of magnitude faster than the best human expert but also achieved 1.5 times higher precision [16]. This demonstrates that the model effectively internalizes and generalizes the complex chemical intuition of expert chemists.

Detailed SynthNN Performance Metrics

For researchers seeking to apply SynthNN, the choice of classification threshold allows for a trade-off between precision and recall. The following table provides detailed performance metrics across different decision thresholds on a dataset with a 20:1 ratio of unsynthesized to synthesized examples [18].

Table 2: SynthNN Performance Metrics at Different Decision Thresholds

| Decision Threshold | Precision | Recall |
|---|---|---|
| 0.10 | 0.239 | 0.859 |
| 0.20 | 0.337 | 0.783 |
| 0.30 | 0.419 | 0.721 |
| 0.40 | 0.491 | 0.658 |
| 0.50 | 0.563 | 0.604 |
| 0.60 | 0.628 | 0.545 |
| 0.70 | 0.702 | 0.483 |
| 0.80 | 0.765 | 0.404 |
| 0.90 | 0.851 | 0.294 |
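As a worked example of the precision-recall trade-off, the sketch below computes the F1-score for each row of Table 2 and picks a threshold for a required precision. The rows are copied from the table; the helper names are hypothetical.

```python
# (threshold, precision, recall) rows copied from Table 2.
TABLE_2 = [
    (0.10, 0.239, 0.859),
    (0.20, 0.337, 0.783),
    (0.30, 0.419, 0.721),
    (0.40, 0.491, 0.658),
    (0.50, 0.563, 0.604),
    (0.60, 0.628, 0.545),
    (0.70, 0.702, 0.483),
    (0.80, 0.765, 0.404),
    (0.90, 0.851, 0.294),
]


def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def best_f1_threshold(rows):
    """Return the threshold whose row has the highest F1-score."""
    return max(rows, key=lambda r: f1_score(r[1], r[2]))[0]


def lowest_threshold_for_precision(rows, min_precision):
    """Return the smallest threshold meeting min_precision, or None."""
    for threshold, precision, _ in sorted(rows):
        if precision >= min_precision:
            return threshold
    return None


for threshold, precision, recall in TABLE_2:
    print(f"{threshold:.2f}  F1 = {f1_score(precision, recall):.3f}")
print(best_f1_threshold(TABLE_2))
print(lowest_threshold_for_precision(TABLE_2, 0.70))
```

On these rows the F1-score is nearly flat at about 0.58 for thresholds 0.50 and 0.60, so the choice between them depends mainly on whether a screening campaign values fewer false leads or fewer missed candidates.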

Implementing and utilizing a model like SynthNN requires a specific set of data and computational resources. The following table details the key components of the research pipeline.

Table 3: Essential Research Reagents and Resources for SynthNN

| Item / Resource | Function / Description | Source / Example |
|---|---|---|
| ICSD Database | Primary source of positive (synthesized) training examples and validation data [16]. | Inorganic Crystal Structure Database (licensed) [16] |
| Artificial Composition Generator | Creates hypothetical chemical formulas to serve as unlabeled/negative examples during model training [16]. | Custom algorithms |
| Pre-trained Atom Embeddings (atom2vec) | Provides numerical representation of elements, capturing chemical properties from data [16]. | SynthNN model weights [18] |
| High-Performance Computing (HPC) Cluster | Enables efficient training of deep learning models and large-scale screening of candidate materials [6]. | GPU/CPU clusters (e.g., NVIDIA) [6] |
| Synthesizability Screening Pipeline | Integrated workflow that combines SynthNN with other filters (e.g., charge neutrality) for candidate prioritization [2]. | Custom software pipelines [2] [6] |

Integration with Material Discovery Workflows

The true power of SynthNN is realized when it is seamlessly integrated into a larger computational materials discovery pipeline. The flowchart below depicts a synthesizability-guided discovery workflow, from candidate generation to experimental synthesis.

This integrated approach is highly effective. For instance, one study screened over 4.4 million computational structures with a synthesizability model to identify 24 high-priority candidates. Subsequent experimental efforts successfully synthesized 7 out of 16 characterized targets, including one completely novel structure, with the entire process taking only three days [6].
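A synthesizability-guided screening step of this kind can be sketched as a short filter-then-rank function. Everything here is a placeholder: `score_fn` stands in for a trained model such as SynthNN, `passes_rule` for a fast knowledge-based filter, and the threshold and `top_k` values are arbitrary. The cited study's actual pipeline is not described in enough detail in the text to reproduce.

```python
def screen_candidates(candidates, score_fn, passes_rule, threshold=0.5, top_k=10):
    """Filter candidates with a cheap rule, score survivors, return the top_k.

    score_fn    : hypothetical callable returning a synthesizability
                  score in [0, 1] for one candidate
    passes_rule : hypothetical callable implementing a fast filter
                  (for example a charge-neutrality check)
    """
    survivors = [c for c in candidates if passes_rule(c)]
    scored = [(score_fn(c), c) for c in survivors]
    kept = [(s, c) for s, c in scored if s >= threshold]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in kept[:top_k]]


# Toy usage with stub scores in place of a trained model.
stub_scores = {"A": 0.9, "B": 0.4, "C": 0.7, "D": 0.95}
shortlist = screen_candidates(
    ["A", "B", "C", "D"],
    score_fn=stub_scores.get,
    passes_rule=lambda c: c != "D",  # pretend "D" fails the rule-based filter
    threshold=0.5,
    top_k=2,
)
print(shortlist)
```

Running the cheap filter first keeps the number of model evaluations down, though a hard rule-based filter can also discard viable candidates, as the charge-balancing statistics suggest.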

SynthNN represents a transformative advancement in the field of computational materials discovery. By leveraging deep learning on the vast dataset of known materials, it successfully captures the complex, multi-faceted nature of synthesizability that eludes simpler, rule-based heuristics like charge balancing. While charge balancing remains a useful foundational concept, its limitations are clear. SynthNN moves the field forward by learning the underlying chemical principles directly from data, achieving a level of precision and efficiency that surpasses both traditional computational methods and human experts.

The integration of SynthNN and its next-generation successors into automated discovery pipelines is paving the way for a more rapid and reliable transition from theoretical prediction to synthesized material. This will undoubtedly accelerate the discovery and development of new functional materials to address pressing technological challenges.

The targeted discovery of new inorganic crystalline materials is a cornerstone for developing next-generation technologies in areas like energy storage, catalysis, and electronics. A central, long-standing challenge in this field has been the reliable prediction of whether a hypothetical material is synthesizable. For decades, charge balancing (the principle that a stable ionic compound should have a net neutral charge based on the common oxidation states of its constituents) has been a fundamental chemical rule used as a proxy for synthesizability in computational screening [2] [3]. However, empirical evidence increasingly reveals the limitations of this heuristic. Analysis of known materials shows that only about 37% of synthesized inorganic compounds strictly adhere to this rule, a figure that drops to a mere 23% for binary cesium compounds [3]. This indicates that while charge balancing is a contributing factor, successful syntheses, and the experts who design them, depend on a far more complex and nuanced set of chemical considerations.

Machine learning (ML), particularly deep learning, offers a paradigm shift. By training on vast databases of known materials, ML models can move beyond rigid, human-defined rules. They learn implicit patterns and relationships, effectively internalizing chemical principles like charge balancing, electronegativity, and ionicity directly from the data, and often discovering novel, successful chemical patterns that defy conventional wisdom [3]. This technical guide explores the mechanisms through which this internalization occurs, detailing the methodologies, workflows, and evidence that demonstrate how data-driven models are advancing the frontier of inorganic materials research.

Quantitative Comparison: Traditional Rules vs. Machine Learning

The performance gap between traditional heuristic-based screening and modern data-driven approaches is substantial. The table below summarizes key quantitative benchmarks that highlight the superior precision of machine learning models in identifying synthesizable materials.

Table 1: Performance Comparison of Synthesizability Prediction Methods

| Method | Core Principle | Key Performance Metric | Value | Reference |
|---|---|---|---|---|
| Charge Balancing | Net ionic charge neutrality | Share of known synthesized materials that satisfy the rule | ~37% | [3] |
| DFT Formation Energy | Thermodynamic stability | Recall of synthesized materials | ~50% | [3] |
| SynthNN (ML Model) | Data-driven pattern recognition | Precision vs. human experts | 1.5x higher | [3] |
| MatterGen (Gen. Model) | Diffusion-based structure generation | Generation of new, stable materials | >2x higher than prior models | [20] |

These comparisons show that ML models not only outperform simple chemical rules but also exceed the capabilities of computationally expensive physics-based simulations like DFT in specific predictive tasks. In a head-to-head discovery challenge, the SynthNN model achieved 1.5 times higher precision than the best-performing human expert and completed the task 100,000 times faster, demonstrating a significant leap in efficiency and accuracy [3].

Internalization Mechanisms: How Models Learn Chemistry

Machine learning models internalize chemical principles through several key mechanisms, which move from relying on human-engineered input to learning directly from the raw data of material compositions and structures.

Learned Compositional Representations

Models can be designed to discover the most relevant features for predicting synthesizability directly from the data. The SynthNN model, for instance, uses an atom2vec representation. This approach represents each chemical element with a vector of numbers (an embedding) that is initially random and is progressively optimized during model training [3]. The model learns these representations by analyzing the distribution of all previously synthesized materials in the Inorganic Crystal Structure Database (ICSD). Analyses indicate that, without being explicitly programmed with rules, SynthNN learns the importance of charge balancing, recognizes relationships between chemical families, and grasps concepts of ionicity [3]. It effectively deduces the underlying "chemistry" of synthesizability from the collective record of experimental success.

Learning from Human Knowledge Pipelines