Beyond Charge Balancing: Critical Limitations and Advanced Models for Accurate Synthesizability Prediction in Drug Development


Abstract

This article critically examines the limitations of using charge balancing as a proxy for predicting material synthesizability, a crucial challenge in pharmaceutical development. It explores why this traditional heuristic fails to account for kinetic factors, technological constraints, and the complex reality of synthesized materials, with evidence showing it incorrectly labels most known compounds. The content delves into modern, data-driven solutions, including Positive-Unlabeled (PU) learning, graph neural networks, and large language models, which offer superior accuracy by learning synthesizability directly from experimental data. Aimed at researchers and drug development professionals, this review provides a comparative analysis of these advanced methodologies, discusses optimization strategies for integration into discovery pipelines, and outlines future directions for deploying reliable synthesizability filters to accelerate the creation of novel therapeutics.

Why Charge Balancing Fails as a Synthesizability Proxy: Uncovering the Fundamental Flaws

The Historical Role of Charge Balancing in Materials Assessment

Charge balancing has long served as a foundational heuristic in materials science for predicting synthesizability and stability. This section examines its historical application in materials assessment, tracing its evolution from simple empirical rules to its integration within modern, data-driven machine learning models. Physico-chemical heuristics such as the Pauling Rules and charge-balancing criteria provided an initial framework for evaluating hypothetical compounds, but their limitations have become increasingly apparent. The section details the quantitative shortcomings of these traditional methods, presents experimental protocols for validating new synthesizability models, and outlines the evolving workflow in materials discovery. It concludes that charge balancing laid crucial groundwork but is now being superseded by computational approaches that better account for kinetic factors and synthetic accessibility, which makes it a historical stepping stone rather than a definitive predictive tool in synthesizability research.

The prediction of which hypothetical materials can be successfully synthesized has long relied on principles of charge balancing derived from fundamental chemistry. Historically, physico-chemical based heuristics such as the Pauling Rules and charge-balancing criteria provided materials scientists with practical tools to assess crystal stability and synthesizability prior to experimental investment [1]. These rules emerged from intuitive chemical principles suggesting that compounds with properly balanced ionic charges would naturally form more stable structures with lower energy states, making them synthetically accessible.

For decades, these heuristics served as the primary screening mechanism in computational materials discovery pipelines. The underlying assumption was straightforward: materials that achieved sufficient electrostatic equilibrium would preferentially form under typical laboratory conditions. This perspective treated synthesizability predominantly as a function of thermodynamic stability, largely ignoring the complex kinetic and technological factors that ultimately determine successful synthesis outcomes [1]. The historical dominance of this charge-balancing paradigm established a conceptual framework that continues to influence materials assessment methodologies, even as its limitations become increasingly evident through systematic analysis of experimental materials databases.

Quantitative Limitations of Traditional Charge Balancing

Statistical Failure Rates in Modern Databases

Rigorous analysis of experimental materials databases reveals significant quantitative shortcomings in traditional charge-balancing approaches. When evaluated against the Materials Project database, a comprehensive repository of experimentally characterized and computationally predicted materials, these historical heuristics demonstrate substantial failure rates.

Table 1: Performance of Traditional Heuristics Against Experimental Data

| Heuristic Method | Reported Failure Rate | Database Evaluated | Key Limitation |
|---|---|---|---|
| Pauling Rules | >50% of synthesized materials fail rules [1] | Materials Project | Oversimplified structural assumptions |
| Charge-Balancing Criteria | >50% of synthesized materials fail criteria [1] | Materials Project | Ignores kinetic stabilization |
| Formation Energy/Convex Hull | Fails for metastable materials [1] | Multiple databases | Purely thermodynamic perspective |

The startling statistic that more than half of all experimentally synthesized materials in the Materials Project database violate these established heuristics underscores a fundamental disconnect between traditional charge-balancing principles and practical synthesizability [1]. This discrepancy indicates that while these rules may capture certain thermodynamic preferences, they fail to account for the diverse synthetic pathways and kinetic factors that enable the existence of many real-world materials.

The Metastability Challenge

A core limitation of charge-balancing approaches lies in their inability to account for metastable materials that persist despite thermodynamic instability. These materials, which constitute a significant portion of functional compounds, remain synthetically accessible through kinetic stabilization pathways that traditional heuristics cannot capture [1].

Materials that are kinetically trapped in metastable states often exhibit remarkable persistence after their initial formation, even when their formation energies deviate significantly from the ground state [1]. These materials may become the ground state under alternative thermodynamic conditions (e.g., high pressure), and remain stable once those conditions are removed. Furthermore, technological constraints play a crucial role, where novel synthesis methods like the Carbothermal Shock (CTS) method enable access to previously "unsynthesizable" materials with homogeneous components and uniform structures [1]. Charge balancing alone cannot predict which metastable phases might be accessible through such advanced synthetic techniques, highlighting a fundamental gap in its predictive capability.

Evolution Beyond Heuristics: Computational and Machine Learning Approaches

Integrated Synthesizability Models

The limitations of traditional approaches have catalyzed the development of sophisticated computational models that integrate multiple data modalities for synthesizability prediction. Modern frameworks combine compositional signals (elemental chemistry, precursor availability, redox constraints) with structural signals (local coordination, motif stability, packing) to generate more accurate synthesizability scores [2].

Table 2: Modern Synthesizability Prediction Approaches

| Model/Approach | Methodology | Data Inputs | Performance |
|---|---|---|---|
| SynCoTrain [1] | Dual-classifier PU-learning with co-training | Crystal structures (GCNNs) | High recall on oxide test sets |
| Unified Synthesizability Model [2] | Composition + structure ensemble | Composition descriptors & crystal graphs | 7/16 successful experimental syntheses |
| Composition-Only Models [2] | MTEncoder transformer | Stoichiometry & elemental descriptors | Limited by structural ignorance |
| Structure-Aware Models [2] | Graph Neural Networks (GNN) | Crystal structure graphs | Enhanced but computationally intensive |

The unified model employs a rank-average ensemble method (Borda fusion) to combine predictions from complementary composition and structure encoders, achieving state-of-the-art performance in prioritizing synthesizable candidates from millions of hypothetical structures [2]. This integrated approach demonstrates how moving beyond simple charge balancing enables more nuanced synthesizability assessments.
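The rank-average idea can be illustrated with a minimal sketch. The source only states that composition and structure predictions are combined by rank averaging (Borda fusion); the normalization of ranks to the interval [0, 1], the function names, and the toy scores below are assumptions made for illustration.

```python
def rank_scores(scores):
    """Map each candidate to a normalized rank in [0, 1]; 1.0 marks the highest score.

    Ties are broken by insertion order, which is a simplification.
    """
    ordered = sorted(scores, key=scores.get)  # ascending by score
    n = len(ordered)
    if n == 1:
        return {ordered[0]: 1.0}
    return {name: i / (n - 1) for i, name in enumerate(ordered)}


def rank_average(composition_scores, structure_scores):
    """Average the normalized ranks from two models (a Borda-style fusion)."""
    comp_rank = rank_scores(composition_scores)
    struct_rank = rank_scores(structure_scores)
    return {
        name: 0.5 * (comp_rank[name] + struct_rank[name])
        for name in composition_scores
    }


# Hypothetical scores for three hypothetical candidates.
composition = {"A": 0.9, "B": 0.2, "C": 0.6}
structure = {"A": 0.7, "B": 0.8, "C": 0.1}
fused = rank_average(composition, structure)
print(fused)  # {'A': 0.75, 'B': 0.5, 'C': 0.25}
```

Because only ranks are averaged, the two models do not need calibrated or comparable score scales, which is one plausible reason to prefer rank fusion over averaging raw probabilities.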

PU-Learning and the Negative Data Challenge

A fundamental innovation in modern synthesizability prediction is the application of Positive and Unlabeled (PU) learning to address the scarcity of confirmed negative examples (verified unsynthesizable materials) [1]. Unlike traditional classification tasks, synthesizability prediction suffers from a pronounced negative data deficiency, as failed synthesis attempts are rarely published or systematically cataloged [1].

The SynCoTrain framework implements a semi-supervised co-training approach in which two complementary graph convolutional neural networks, ALIGNN (encoding atomic bonds and angles) and SchNet (using continuous convolution filters), iteratively exchange predictions on unlabeled data [1]. This methodology mitigates model bias while progressively refining synthesizability classifications through collaborative learning. By leveraging PU-learning, these models avoid the dependence on curated negative datasets that conventional supervised classifiers require.
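The exchange of predictions between two classifiers can be sketched with a deliberately small toy. This is not the SynCoTrain implementation: the two "views" are single hypothetical features standing in for ALIGNN and SchNet, the classifier is a one-dimensional threshold, and the `margin` and `rounds` parameters are assumptions chosen only to show the control flow.

```python
from statistics import mean


def fit_threshold(values, labels):
    """Toy classifier: threshold halfway between the positive mean and the unlabeled mean."""
    pos = [v for v, y in zip(values, labels) if y == 1]
    rest = [v for v, y in zip(values, labels) if y == 0]
    return 0.5 * (mean(pos) + mean(rest))


def co_train(view_a, view_b, labels, rounds=3, margin=0.3):
    """labels: 1 = known positive, 0 = unlabeled. Returns the refined labels for each classifier."""
    labels_a, labels_b = list(labels), list(labels)
    for _ in range(rounds):
        if 0 not in labels_a or 0 not in labels_b:
            break  # nothing unlabeled is left to learn from
        thr_a = fit_threshold(view_a, labels_a)
        thr_b = fit_threshold(view_b, labels_b)
        # Classifier A hands its confident positives to classifier B ...
        for i, value in enumerate(view_a):
            if value > thr_a + margin:
                labels_b[i] = 1
        # ... and classifier B hands its confident positives to classifier A.
        for i, value in enumerate(view_b):
            if value > thr_b + margin:
                labels_a[i] = 1
    return labels_a, labels_b


# Hypothetical data: two known positives, four unlabeled samples.
view_a = [2.0, 2.2, 1.9, 2.1, 0.1, 0.0]
view_b = [1.8, 2.0, 2.3, 2.1, 0.2, -0.1]
labels = [1, 1, 0, 0, 0, 0]
refined_a, refined_b = co_train(view_a, view_b, labels)
print(refined_a, refined_b)
```

In this toy run both classifiers end up treating the third and fourth samples as positive while leaving the last two unlabeled, which mirrors the intent of letting each model's confident predictions refine the other's training labels.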

Experimental Protocols for Validating Synthesizability Predictions

High-Throughput Experimental Validation

Rigorous experimental validation is essential for assessing the performance of synthesizability prediction methods. Contemporary protocols employ automated, high-throughput synthesis pipelines to test computationally prioritized candidates under realistic laboratory conditions.

Workflow Implementation:

- Candidate Screening: Filter large computational databases (e.g., 4.4 million structures) using synthesizability scores to identify high-priority candidates (e.g., rank-average >0.95) [2].

- Composition Filtering: Remove compounds containing rare or expensive elements (e.g., platinum-group metals) as well as toxic compounds, to focus on practically relevant materials [2].

- Retrosynthetic Planning: Apply precursor-suggestion models (e.g., Retro-Rank-In) to generate viable solid-state precursor pairs [2].

- Process Parameter Prediction: Use literature-mined synthesis models (e.g., SyntMTE) to predict calcination temperatures and balance precursor quantities [2].

- Automated Synthesis: Execute predicted synthesis routes using high-throughput laboratory platforms with robotic material handling [2].

- Characterization: Employ automated X-ray diffraction (XRD) for phase identification and structure verification of synthesis products [2].
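The first two workflow steps above (score-based screening and composition filtering) can be sketched as a simple filter. The 0.95 rank-average threshold comes from the text; the candidate records, their identifiers, and the toxic-element list are hypothetical placeholders, and the source does not specify which elements were excluded beyond the platinum group.

```python
# Platinum-group metals; the toxic-element set is a hypothetical example.
PLATINUM_GROUP = {"Ru", "Rh", "Pd", "Os", "Ir", "Pt"}
EXCLUDED_TOXIC = {"Hg", "Cd", "Tl"}


def screen(candidates, threshold=0.95):
    """Keep candidates above the score threshold that contain no excluded element."""
    excluded = PLATINUM_GROUP | EXCLUDED_TOXIC
    kept = [
        c for c in candidates
        if c["rank_average"] > threshold and not (set(c["elements"]) & excluded)
    ]
    # Highest-priority candidates first.
    return sorted(kept, key=lambda c: c["rank_average"], reverse=True)


# Hypothetical candidate records.
candidates = [
    {"id": "cand-1", "elements": ["Li", "Fe", "O"], "rank_average": 0.97},
    {"id": "cand-2", "elements": ["Pt", "O"], "rank_average": 0.99},
    {"id": "cand-3", "elements": ["Na", "Cl"], "rank_average": 0.90},
    {"id": "cand-4", "elements": ["Mg", "Ti", "O"], "rank_average": 0.98},
]
shortlist = [c["id"] for c in screen(candidates)]
print(shortlist)  # ['cand-4', 'cand-1']
```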

In a recent implementation, this protocol carried 16 target compounds through synthesis and characterization within just three days; 7 of them matched the predicted structures, including one novel compound and one previously unreported phase [2]. This illustrates how modern synthesizability prediction can accelerate materials discovery compared with screening by charge balancing alone.

Performance Metrics and Benchmarking

Quantitative evaluation of synthesizability models requires specialized metrics adapted to the materials science context:

- Recall-Oriented Assessment: Given the practical focus on identifying synthesizable materials rather than comprehensively rejecting unsynthesizable ones, high recall rates are prioritized, particularly for internal and leave-out test sets [1].

- Stability Prediction Contrast: Models are additionally evaluated on a stability-prediction task as a reliability check on the recall obtained from PU learning. Performance on stability is expected to be poorer because the unlabeled data are heavily contaminated [1].

- Experimental Success Rate: The ultimate validation metric measuring the percentage of computationally prioritized candidates that successfully synthesize into target phases under predicted conditions [2].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Experimental Resources for Synthesizability Research

| Research Reagent/Resource | Function/Application | Technical Specifications |
|---|---|---|
| Graph Convolutional Neural Networks (GCNNs) [1] | Encode crystal structure information for machine learning | ALIGNN (bond-angle encoding), SchNet (continuous filters) |
| High-Throughput Synthesis Platform [2] | Automated execution of predicted synthesis routes | Robotic handling, precise temperature control |
| Automated XRD Characterization [2] | Rapid phase identification and structure verification | High-throughput sample processing |
| Materials Databases [1] [2] | Source of labeled training data and candidate structures | Materials Project, GNoME, Alexandria |
| Precursor Suggestion Models [2] | Recommend viable solid-state precursor combinations | Retro-Rank-In algorithm |
| Synthesis Condition Predictors [2] | Predict calcination temperatures and parameters | SyntMTE model trained on literature corpora |

Charge balancing heuristics have played a historically significant but ultimately limited role in materials synthesizability assessment. While providing an intuitive initial framework for evaluating hypothetical compounds, these approaches demonstrate critical failures when confronted with systematic experimental validation. The emergence of sophisticated machine learning models that integrate compositional and structural features while addressing fundamental data challenges through PU-learning represents a paradigm shift in synthesizability prediction. These modern approaches acknowledge the multifaceted nature of synthetic accessibility, incorporating kinetic, technological, and thermodynamic considerations that extend far beyond simple electrostatic balancing. The historical role of charge balancing thus remains as an important conceptual foundation that has been progressively superseded by more comprehensive, data-driven methodologies capable of navigating the complex reality of materials synthesis.

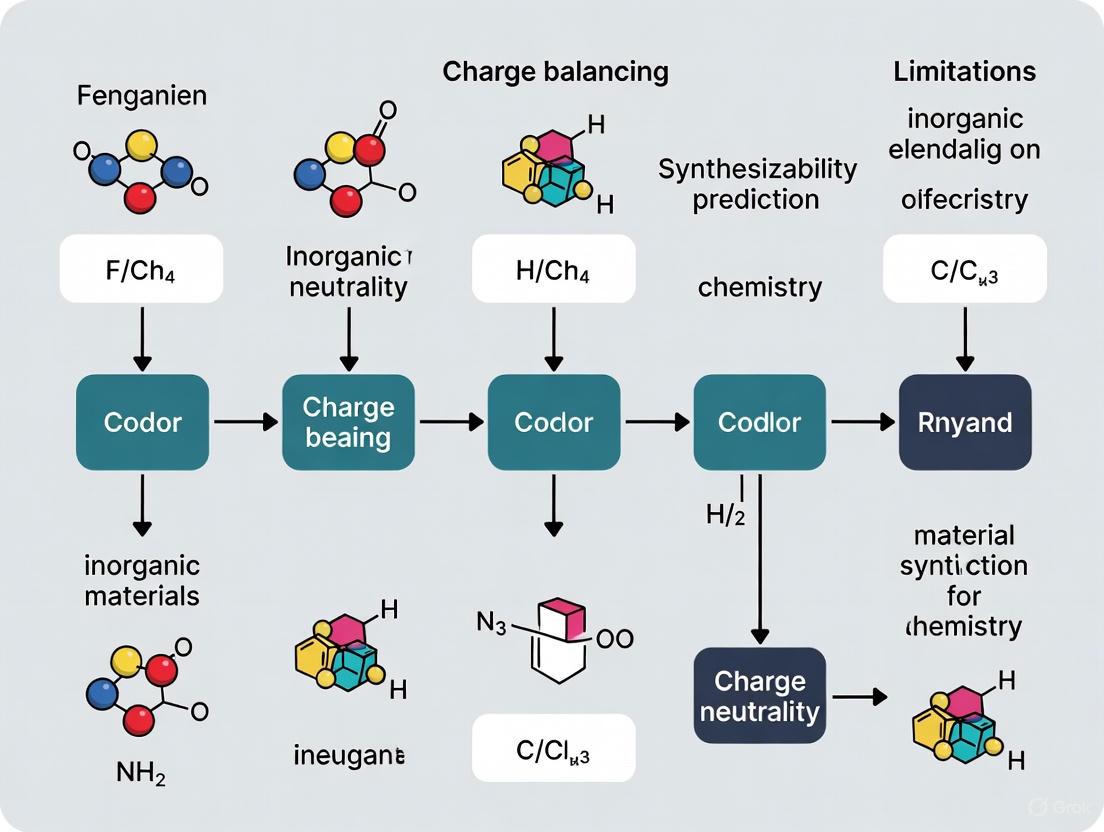

Diagrams and Workflows

Historical vs. Modern Assessment Workflow

SynCoTrain Co-Training Methodology

The prediction of which hypothetical materials can be successfully synthesized is a fundamental challenge in materials science. For decades, charge-balancing criteria have served as a widely used heuristic for this purpose, grounded in the chemically intuitive principle that synthesizable ionic compounds should exhibit a net neutral charge based on common oxidation states. However, a growing body of empirical evidence reveals that this traditional approach has significant limitations. This technical guide examines the quantitative evidence demonstrating the low success rate of charge balancing in predicting synthesizability, explores the methodological frameworks used to generate this evidence, and discusses advanced machine learning approaches that are surpassing this traditional method.
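To make the heuristic concrete, the following minimal sketch checks whether any assignment of common oxidation states gives a net charge of zero. The oxidation-state table is a small illustrative subset chosen for this example, the function name is hypothetical, and assigning a single oxidation state per element (no mixed valence) is a simplifying assumption rather than the procedure of any cited study.

```python
from itertools import product

# Illustrative, deliberately incomplete table of common oxidation states.
COMMON_OXIDATION_STATES = {
    "Na": [1],
    "Cs": [1],
    "Fe": [2, 3],
    "Ti": [2, 3, 4],
    "Au": [1, 3],
    "O": [-2],
    "Cl": [-1],
}


def is_charge_balanced(composition):
    """Return True if one common oxidation state per element can sum to zero.

    composition maps element symbols to integer counts, e.g. {"Fe": 2, "O": 3}.
    """
    elements = list(composition)
    choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    for states in product(*choices):
        total = sum(state * composition[el] for state, el in zip(states, elements))
        if total == 0:
            return True
    return False


print(is_charge_balanced({"Na": 1, "Cl": 1}))  # True
print(is_charge_balanced({"Fe": 2, "O": 3}))   # True (Fe in the +3 state)
print(is_charge_balanced({"Cs": 1, "Au": 1}))  # False with this table
```

The last case hints at why the rule is inflexible: CsAu is a known compound in which gold behaves as an anion, a bonding situation that a fixed table of common oxidation states does not cover.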

Quantitative Evidence: The Limited Predictive Power of Charge Balancing

Recent research has systematically evaluated the performance of charge-balancing criteria against comprehensive materials databases. The findings consistently demonstrate that charge balancing alone is an insufficient predictor of synthesizability.

Table 1: Empirical Performance of Charge-Balancing Criteria

| Study | Dataset | Charge-Balancing Success Rate | Key Findings |
|---|---|---|---|
| SynthNN (2023) [3] | Inorganic crystalline materials from ICSD | 37% of synthesized materials were charge-balanced | Only 23% of known binary cesium compounds were charge-balanced despite highly ionic bonds |
| SynCoTrain (2025) [1] | Materials Project database | <50% of experimental materials met criteria | Traditional heuristics like Pauling Rules and charge-balancing proved insufficient |

The performance gap becomes even more apparent when comparing charge balancing against modern machine learning approaches. In head-to-head comparisons, machine learning models have demonstrated significantly higher precision in identifying synthesizable materials [3]. These findings fundamentally challenge the long-standing assumption that charge neutrality is a reliable proxy for synthesizability.

Experimental Methodologies for Evaluating Synthesizability Predictors

Data Curation and Preprocessing

Establishing robust benchmarks for synthesizability prediction requires carefully curated datasets and methodological rigor:

- Positive Example Sourcing: Studies typically extract synthesized inorganic materials from the Inorganic Crystal Structure Database (ICSD), which represents a nearly complete history of crystalline inorganic materials reported in scientific literature [3].

- Handling Negative Examples: A significant challenge is the lack of confirmed "unsynthesizable" materials in databases, as unsuccessful synthesis attempts are rarely published. Research addresses this through Positive-Unlabeled (PU) learning, in which artificially generated or otherwise unlabeled materials stand in for the missing negatives [3] [1].

- Data Stratification: To ensure representative evaluation, datasets are typically stratified into train/validation/test splits, with careful attention to maintaining similar distributions of chemical families across splits [2].
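The stratification step can be sketched as a per-family split. The 80/10/10 fractions, the function names, and the toy records are assumptions made for illustration; the source does not state the split ratios or how chemical families were assigned.

```python
import random
from collections import defaultdict


def stratified_split(items, family_of, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split items into train/validation/test while keeping each family's share similar."""
    rng = random.Random(seed)
    by_family = defaultdict(list)
    for item in items:
        by_family[family_of(item)].append(item)

    train, val, test = [], [], []
    for family in sorted(by_family):  # sorted for reproducibility
        members = by_family[family]
        rng.shuffle(members)
        n_train = round(fractions[0] * len(members))
        n_val = round(fractions[1] * len(members))
        train += members[:n_train]
        val += members[n_train:n_train + n_val]
        test += members[n_train + n_val:]  # remainder goes to the test split
    return train, val, test


# Hypothetical records: (identifier, chemical family).
records = [(f"oxide-{i}", "oxide") for i in range(10)]
records += [(f"halide-{i}", "halide") for i in range(10)]
train, val, test = stratified_split(records, family_of=lambda r: r[1])
print(len(train), len(val), len(test))  # 16 2 2
```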

Performance Evaluation Metrics

Researchers employ multiple quantitative metrics to evaluate synthesizability predictors:

- Precision and Recall: Standard classification metrics measuring accuracy in identifying synthesizable materials [3] [1]

- F1-Score: Harmonic mean of precision and recall, particularly important for PU learning algorithms [3]

- Area Under the Precision-Recall Curve (AUPRC): Used for model selection during training with early stopping [2]
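For reference, the first two metrics in the list above can be computed as follows. The labels are hypothetical, and in a PU setting the "negative" class is really unlabeled data, so precision computed this way is only an estimate.

```python
def precision_recall_f1(y_true, y_pred):
    """Compute precision, recall, and F1 for binary labels (1 = synthesizable)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean
    return precision, recall, f1


# Hypothetical labels: 3 true positives, 1 false positive, 1 false negative.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 1, 0, 0, 0, 1]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(precision, recall, f1)
```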

Table 2: Comparison of Synthesizability Prediction Approaches

| Method | Principles | Advantages | Limitations |
|---|---|---|---|
| Charge-Balancing | Net neutral ionic charge based on common oxidation states [3] | Chemically intuitive; computationally inexpensive | Inflexible; cannot account for different bonding environments [3] |
| DFT-based Stability | Formation energy calculations relative to convex hull [3] [1] | Accounts for thermodynamic factors | Overlooks kinetic stabilization and finite-temperature effects [1] [2] |
| Composition-Based ML | Machine learning trained on chemical formulas of known materials [3] [2] | No structural information required; fast screening | Cannot differentiate between polymorphs [2] |
| Structure-Aware ML | Graph neural networks using crystal structure graphs [1] [2] | Captures local coordination and motif stability | Requires structural information, which may be unknown [2] |

Advanced Synthesizability Prediction Frameworks

SynthNN: A Deep Learning Approach

The SynthNN framework addresses charge balancing limitations through a deep learning synthesizability model that leverages the entire space of synthesized inorganic chemical compositions [3]:

- Architecture: Employs the atom2vec framework, representing each chemical formula by a learned atom embedding matrix optimized alongside all other neural network parameters [3]

- Training Data: Combines synthesized materials from ICSD with artificially generated unsynthesized materials in a Positive-Unlabeled (PU) learning approach [3]

- Performance: Demonstrates 7× higher precision than charge balancing and outperforms human experts in material discovery tasks [3]

SynCoTrain: A Dual-Classifier Co-Training Framework

SynCoTrain implements a semi-supervised classification model specifically designed to address the lack of negative data [1]:

- Dual-Classifier Architecture: Employs two complementary graph convolutional neural networks (SchNet and ALIGNN) to mitigate individual model bias [1]

- Co-Training Process: Iteratively exchanges predictions between classifiers to refine labels and enhance generalizability [1]

- Domain Specialization: Initially focused on oxide crystals, a well-studied material family with extensive experimental data [1]

Unified Composition-Structure Models

Recent approaches integrate both compositional and structural information to improve synthesizability predictions [2]:

- Multi-Encoder Architecture: Combines a compositional transformer encoder with a structural graph neural network [2]

- Rank-Average Ensemble: Aggregates predictions from both composition and structure models via Borda fusion to enhance candidate ranking [2]

- Experimental Validation: Successfully synthesized 7 of 16 predicted candidates, demonstrating practical utility [2]

[Figure: Workflow of an advanced synthesizability prediction pipeline that integrates both compositional and structural information]

Table 3: Key Research Reagents and Computational Tools for Synthesizability Research

| Resource | Type | Function | Access |
|---|---|---|---|
| Inorganic Crystal Structure Database (ICSD) [3] | Materials Database | Primary source of experimentally synthesized crystalline structures | Commercial |
| Materials Project [1] [2] | Computational Database | DFT-calculated material properties and structures | Public |
| Retro-Rank-In [2] | Computational Tool | Precursor suggestion model for synthesis planning | Research |
| Atomistic Line Graph Neural Network (ALIGNN) [1] | ML Model | Graph convolutional network encoding bonds and angles | Open Source |
| SchNetPack [1] | ML Model | Graph neural network using continuous convolution filters | Open Source |
| Rayyan [4] | Screening Tool | Semi-automated literature screening application | Web Application |

The empirical evidence shows that traditional charge-balancing criteria identify only a minority of synthesizable materials: 37% of synthesized ICSD materials in one study [3] and fewer than half of the experimental Materials Project entries in another [1]. This limited performance stems from the method's inability to account for diverse bonding environments, kinetic stabilization effects, and the complex array of factors that influence synthetic accessibility. Modern machine learning approaches that learn synthesizability patterns directly from comprehensive materials data have demonstrated substantially superior performance, achieving up to 7× higher precision than charge balancing. These advanced frameworks integrate compositional and structural information while addressing the fundamental challenge of limited negative data through Positive-Unlabeled learning techniques. As synthesizability prediction continues to evolve, the integration of these data-driven approaches with experimental validation promises to significantly accelerate the discovery and development of novel functional materials.

The prediction and realization of novel functional materials and therapeutic agents represent a cornerstone of modern scientific advancement. For decades, thermodynamic stability, often quantified through formation energy and energy above the convex hull, has served as the primary screening metric for predicting synthesizability. However, this thermodynamic paradigm presents a critical limitation: numerous structures with favorable formation energies remain unsynthesized, while various metastable structures with less favorable formation energies are successfully synthesized in laboratories. This discrepancy highlights that thermodynamic stability is a necessary but insufficient condition for material synthesis, as it overlooks the critical roles of kinetic stabilization and synthesis pathway feasibility. Similarly, in drug discovery, the traditional reliance on equilibrium binding parameters (e.g., IC50 values) fails to fully account for time-dependent target engagement in dynamic physiological environments where drug concentrations fluctuate. This whitepaper examines how moving beyond thermodynamic considerations to incorporate kinetic stabilization and synthesis technology addresses fundamental limitations in both materials science and drug development, enabling more accurate predictions and successful experimental realization.

Kinetic Stabilization: Fundamental Concepts and Mechanisms

Theoretical Foundations of Kinetic Stabilization

Kinetic stabilization describes phenomena where a system remains in a metastable state because energy barriers slow its transition to the thermodynamic ground state. Unlike thermodynamic stability, which concerns the final state of a system, kinetic stabilization concerns the pathway and rate of transformation. It takes different forms across domains:

- Drug-Target Complexes: The time-dependent target occupancy is a function of both drug and target concentration as well as the thermodynamic and kinetic parameters that describe the binding reaction coordinate. Sustained target occupancy can be achieved through structural modifications that increase target (re)binding and/or decrease the rate of drug dissociation [5].

- Organic Radicals: For organic radicals, kinetic stabilization often arises from steric effects that inhibit radical dimerization or reaction with solvent molecules. The incorporation of branched substituents such as t-butyl groups close to the radical center provides steric protection, as exemplified by stable radicals like (2,2,6,6-tetramethylpiperidin-1-yl)oxyl (TEMPO) [6].

- Material Systems: In materials science, kinetic stabilization enables the synthesis of metastable phases that would be considered non-synthesizable based solely on thermodynamic criteria. These materials remain in their metastable states due to energy barriers that prevent rearrangement to more stable configurations [7].

Quantitative Descriptors for Kinetic Stabilization

Quantifying kinetic stabilization requires descriptors that capture both electronic and structural features influencing transformation barriers.

Table 1: Quantitative Descriptors for Kinetic Stabilization Across Domains

| Domain | Descriptor | Definition | Interpretation |
|---|---|---|---|
| Organic Radicals | Percent Buried Volume | The occupied percentage of the total volume of a sphere with a defined radius centered around the radical center [6] | Higher values indicate greater steric protection around the reactive center, slowing dimerization and other bimolecular reactions |
| Drug-Target Interactions | Target Residence Time | 1/koff, where koff is the rate constant for drug-target complex dissociation [5] | Longer residence times enable sustained target engagement even after systemic drug concentration declines |
| Material Synthesizability | CLscore | A machine-learning-derived score predicting synthesizability based on structural features beyond thermodynamic stability [7] | Scores <0.1 predict non-synthesizability; scores >0.1 predict synthesizability with 98.3% accuracy for known materials |

For organic radicals, the combination of maximum spin density (reflecting thermodynamic stabilization via delocalization) and percent buried volume (reflecting kinetic persistence) creates a stability map where long-lived radicals occupy a distinct region characterized by both substantial spin delocalization and significant steric protection [6].

Limitations of Thermodynamic-Only Approaches

The Synthesizability Gap in Materials Discovery

Traditional materials discovery has relied heavily on thermodynamic stability metrics, particularly energy above the convex hull, to predict synthesizability. However, significant limitations emerge from this approach:

- Metastable Materials Synthesis: Various metastable structures with less favorable formation energies are successfully synthesized, while numerous structures with favorable formation energies remain unsynthesized [7]. For example, the Materials Project lists 21 SiO₂ structures within 0.01 eV of the convex hull, yet the second most common phase (cristobalite) is not among these [2].

- Failure of Phonon Analysis: Material structures with imaginary phonon frequencies (indicating kinetic instability) can still be synthesized, demonstrating the limitations of phonon stability analyses as synthesizability predictors [7].

- Insufficient Predictive Accuracy: Thermodynamic methods based on energy above hull (≥0.1 eV/atom) achieve only 74.1% accuracy in synthesizability prediction, while kinetic methods based on phonon spectra (lowest frequency ≥ -0.1 THz) reach 82.2% accuracy. Both are substantially outperformed by machine learning approaches that capture additional factors [7].

Kinetic Selectivity in Drug Discovery

In drug discovery, the reliance on equilibrium binding parameters (e.g., IC₅₀) presents analogous limitations:

- Misleading Selectivity Assessment: Compounds with similar IC₅₀ values for target and off-target proteins appear to lack selectivity based on thermodynamic parameters alone. However, if the kon and koff values differ between targets, kinetic selectivity can exist even in the absence of thermodynamic selectivity [5].

- Time-Dependent Occupancy Discrepancies: Equilibrium parameters cannot fully account for time-dependent changes in target engagement in dynamic physiological environments where drug concentrations fluctuate. Simulations demonstrate that compounds with identical Kd values but different kinetic parameters show dramatically different temporal occupancy profiles, particularly with rapidly-cleared drugs [5].

- Potency-Kinetics Disconnect: Structural modifications that increase drug potency (decrease Kd) do not necessarily decrease koff. Examples include quinazoline-based inhibitors gefitinib and lapatinib, which have similar affinities for EGFR (0.4 and 3 nM) but vastly different residence times (<14 min vs. 430 min) [5].
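The practical weight of the residence times quoted above can be illustrated with a minimal sketch. It assumes simple first-order dissociation with no rebinding after free drug is removed, which is a simplification introduced here and not a model taken from the cited work; the function name is hypothetical, and the gefitinib value uses 14 min as an upper bound because the source reports "<14 min".

```python
import math


def occupancy_after_washout(residence_time_min, elapsed_min):
    """Fraction of initially bound target still occupied after free drug is removed.

    Assumes first-order dissociation (k_off = 1 / residence time) and no rebinding.
    """
    k_off = 1.0 / residence_time_min
    return math.exp(-k_off * elapsed_min)


# Residence times from the text; 14 min is an upper bound for gefitinib.
gefitinib_1h = occupancy_after_washout(14.0, 60.0)
lapatinib_1h = occupancy_after_washout(430.0, 60.0)
print(f"gefitinib (upper bound): {gefitinib_1h:.3f}")
print(f"lapatinib: {lapatinib_1h:.3f}")
```

Under these assumptions, less than 2% of the gefitinib-bound target remains occupied one hour after washout, against roughly 87% for lapatinib, even though the two affinities are within an order of magnitude of each other.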

Predictive Technologies Incorporating Kinetic Factors

Machine Learning for Synthesizability Prediction

Advanced computational approaches now integrate multiple factors beyond thermodynamic stability to improve synthesizability predictions:

Table 2: Machine Learning Approaches for Synthesizability Prediction

| Model/Framework | Approach | Key Features | Performance |
|---|---|---|---|
| CSLLM [7] | Three specialized LLMs fine-tuned on crystal structures | Predicts synthesizability, synthetic methods, and suitable precursors | 98.6% accuracy in synthesizability prediction; >90% accuracy in method classification and precursor identification |
| SynCoTrain [8] | Dual classifier PU-learning with SchNet and ALIGNN networks | Uses Positive and Unlabeled learning to address scarcity of negative data | High recall on internal and leave-out test sets; balances dataset variability and computational efficiency |
| Composition-Structure Ensemble [2] | Rank-average ensemble of compositional and structural models | Integrates composition signals (elemental chemistry, precursor availability) with structural signals (local coordination, packing) | Successfully identified synthesizable candidates from 4.4 million structures; experimental synthesis confirmed 7 of 16 targets |

These approaches demonstrate that synthesizability prediction requires considering both compositional features (governed by elemental chemistry, precursor availability, redox and volatility constraints) and structural features (capturing local coordination, motif stability, and packing) [2].

Quantitative Flux Analysis in Metabolic Pathways

For biological systems, quantitative flux analysis using isotope tracers provides kinetic insights beyond static concentration measurements:

- NAD Metabolism Mapping: Isotope-tracer methods using [²H]NAM or [¹³C]tryptophan enable quantitation of NAD synthesis and breakdown fluxes in cell lines and tissues, revealing tissue-specific NAD metabolism that cannot be deduced from concentration measurements alone [9].

- Dynamic Pathway Analysis: In T47D breast cancer cells, flux analysis revealed that NAD was made from nicotinamide and consumed largely by PARPs and sirtuins, with intracellular NAM equilibration (t₁/₂ 20 min) being much faster than NAD biosynthesis (t₁/₂ 9 h) [9].

- Pharmacokinetic Insights: Flux analysis demonstrated that intravenous administration of nicotinamide riboside or mononucleotide delivers these molecules intact to multiple tissues, whereas oral administration results in hepatic metabolism to nicotinamide, revealing critical bioavailability constraints for nutraceutical development [9].

Experimental Methodologies for Kinetic Analysis

High-Throughput Kinetic Screening in Drug Discovery

Technical advances now enable detailed kinetic characterization earlier in the drug discovery process:

- High-Pressure Automated Lag Time Apparatus (HP-ALTA): This methodology enables quantitative assessment of kinetic hydrate inhibitors (KHIs) by making numerous formation measurements rapidly, overcoming the stochasticity limitations of conventional techniques [10].

- Formation Probability Distributions: HP-ALTA data is analyzed by constructing formation probability density histograms with uniform temperature bin widths, enabling operations like numerical integration and subtraction without regressing data to model analytic functions [10].

- Memory Effect Quantification: The method enables quantification of the memory effect phenomenon (where previously-formed hydrate structures facilitate reformation), revealing that the memory effect reduces expected subcoolings of poor-performing KHIs by 8-14 K, while only reducing expected subcoolings of the best performers by 1-2 K [10].
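The histogram-based analysis described in the bullets above can be illustrated with synthetic data. This is a sketch under stated assumptions: the formation temperatures are randomly generated placeholders rather than HP-ALTA measurements, and `formation_density` is a hypothetical helper name. It shows why a shared, uniform bin width makes integration and subtraction of two distributions straightforward.

```python
import numpy as np

def formation_density(temps_k, lo, hi, bin_width):
    """Histogram formation temperatures into uniform bins, normalized to a density."""
    n_bins = int(round((hi - lo) / bin_width))
    edges = np.linspace(lo, hi, n_bins + 1)
    density, _ = np.histogram(temps_k, bins=edges, density=True)
    return density, edges

# Synthetic, hypothetical formation temperatures (K) for two sets of runs.
rng = np.random.default_rng(0)
fresh = rng.normal(275.0, 1.0, size=200)   # no hydrate history
memory = rng.normal(277.0, 1.0, size=200)  # previously formed hydrate

width = 0.5
d_fresh, edges = formation_density(fresh, 265.0, 287.0, width)
d_memory, _ = formation_density(memory, 265.0, 287.0, width)
centers = 0.5 * (edges[:-1] + edges[1:])

# Numerical integration: cumulative formation probability across the bins.
cumulative_fresh = np.cumsum(d_fresh * width)

# Subtraction on the shared grid, then the shift in expected formation temperature.
difference = d_memory - d_fresh
shift_k = np.sum(centers * difference * width)
print(round(float(shift_k), 2))
```

Because both histograms share the same bin edges, no analytic distribution has to be fitted before the two runs are compared.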

Target-Guided Synthesis in Drug Discovery

Kinetic target-guided synthesis approaches represent a paradigm shift in ligand discovery:

- In Situ Click Chemistry: This method uses biological targets to assemble their own inhibitors from complementary building blocks via in situ click chemistry, with the protein selectively synthesizing the tightest-binding ligand from a library of potential fragments [11].

- Warhead Design Considerations: Successful kinetic target-guided synthesis requires careful warhead selection and design, with protein supply remaining a key success factor. Miniaturization efforts are expanding the scope of this strategy as a fully-fledged drug discovery tool [11].

Isotopic Tracer Protocols for Metabolic Flux Analysis

Detailed methodology for quantifying NAD synthesis and breakdown fluxes [9]:

Protocol Steps:

- Preparation of Isotope-Labeled Medium: DMEM medium with 10% dialyzed serum is prepared with exclusively isotopic NAM (32 μM, the standard DMEM concentration) or labeled tryptophan [9].

- Cell Culture Incubation: T47D breast cancer cells are switched to the labeled medium and incubated for predetermined time periods.

- Sample Collection and Metabolite Extraction: Cells are collected at various time points (e.g., 0, 15, 30 minutes, 1, 2, 4, 8, 24 hours) and metabolites extracted using 80% methanol at -80°C.

- Mass Spectrometry Analysis: Liquid chromatography-mass spectrometry (LC-MS) is used to quantify both unlabeled and labeled forms of NAD-related metabolites.

- Flux Modeling: Mathematical modeling of the labeling kinetics determines the NAD synthesis flux ($f_{in}$) and breakdown flux ($f_{out}$), accounting for dilution by cell growth ($f_{growth}$).
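The flux-modeling step can be sketched with a one-pool model. The assumptions here are simplifications introduced for illustration and are not taken from the cited protocol: a single well-mixed NAD pool at steady state per cell, fed only by fully labeled precursor, so that the labeled fraction follows L(t) = 1 - exp(-k t) with k = f_in / pool and f_in = f_out + f_growth. The pool size, doubling time, and time course are hypothetical.

```python
import numpy as np

def fit_turnover_rate(times_h, labeled_fraction):
    """Fit k in L(t) = 1 - exp(-k t) by least squares on -log(1 - L), through the origin."""
    t = np.asarray(times_h, dtype=float)
    unlabeled = 1.0 - np.asarray(labeled_fraction, dtype=float)
    mask = (t > 0) & (unlabeled > 0)
    y = -np.log(unlabeled[mask])
    return float(np.sum(t[mask] * y) / np.sum(t[mask] ** 2))

# Synthetic time course (hours) generated with a 9 h half-life.
t = np.array([0, 0.25, 0.5, 1, 2, 4, 8, 24])
k_true = np.log(2) / 9.0
labeled = 1 - np.exp(-k_true * t)

k = fit_turnover_rate(t, labeled)
half_life_h = np.log(2) / k

pool = 1.0                      # hypothetical NAD pool size, arbitrary units
growth_rate = np.log(2) / 30.0  # hypothetical 30 h doubling time
f_in = k * pool                 # synthesis flux
f_growth = growth_rate * pool   # dilution by cell growth
f_out = f_in - f_growth         # breakdown flux
print(round(half_life_h, 2), round(f_in, 4), round(f_out, 4))
```

Real labeling data would also need to account for incomplete precursor labeling and measurement noise, which this sketch ignores.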

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Kinetic Stabilization Studies

| Reagent/Material | Function/Application | Experimental Context |

|---|---|---|

| [2,4,5,6-²H]Nicotinamide (NAM) | Stable isotope tracer for NAD flux measurements | Enables quantification of NAD synthesis and breakdown fluxes in cells and tissues [9] |

| High-Pressure Automated Lag Time Apparatus (HP-ALTA) | High-throughput measurement of hydrate formation probability distributions | Enables quantitative ranking of kinetic hydrate inhibitor performance [10] |

| Kinetic Hydrate Inhibitors (KHIs) | Delay hydrate nucleation and/or growth | Test compounds for evaluating kinetic inhibition performance; typically used at 0.5-1 wt% concentration [10] |

| Azide-Alkyne Warheads | Complementary reactive groups for in situ click chemistry | Enable target-guided synthesis of inhibitors via copper-free click chemistry [11] |

| Graph Neural Networks (GNNs) | Machine learning models for structure-property prediction | Predict material synthesizability from crystal structure graphs; examples: SchNet, ALIGNN [8] [2] |

| Large Language Models (LLMs) | Text-based prediction of synthesizability and synthesis parameters | Fine-tuned models (CSLLM) predict synthesizability, methods, and precursors from text-based crystal structure representations [7] |

The integration of kinetic stabilization principles and synthesis technology represents a paradigm shift in both materials science and drug discovery. Thermodynamic stability, while providing a valuable initial screening parameter, fails to accurately predict synthesizability and biological activity due to its neglect of kinetic barriers and synthesis pathway feasibility. Machine learning approaches that integrate compositional and structural features significantly outperform thermodynamic-only methods in synthesizability prediction. Similarly, in drug discovery, kinetic parameters ($k_{on}$, $k_{off}$, residence time) provide critical insights into time-dependent target engagement that equilibrium binding constants cannot reveal. Experimental methodologies including high-throughput kinetic screening, target-guided synthesis, and isotopic flux analysis provide the empirical foundation for understanding and exploiting kinetic stabilization across scientific domains. As these kinetic-aware approaches continue to mature, they promise to bridge the gap between computational prediction and experimental realization, accelerating the discovery of novel functional materials and therapeutic agents.

The Critical Distinction Between Thermodynamic Stability and Practical Synthesizability

The discovery of new inorganic crystalline materials is a fundamental driver of technological advancement, fueling innovations across sectors from renewable energy to biomedical devices. A central paradox, however, often impedes progress: computational methods regularly predict thousands of thermodynamically stable compounds with promising properties, yet the vast majority remain synthetically inaccessible in the laboratory. This discrepancy highlights the critical distinction between a material's thermodynamic stability (its inherent energetic favorability at equilibrium) and its practical synthesizability (the feasibility of actually making it under realistic laboratory constraints). For decades, heuristic rules like charge-balancing have served as initial synthesizability filters, but their limitations are increasingly apparent in contemporary research. This review examines why thermodynamic proxies are insufficient and explores the data-driven methodologies that are redefining how researchers identify genuinely accessible materials, thereby bridging the gap between computational prediction and experimental realization.

The charge-balancing approach, which filters candidate materials based on net neutral ionic charge using common oxidation states, represents an intuitively appealing but fundamentally limited strategy. Quantitative analysis reveals its severe shortcomings: among all synthesized inorganic materials, only approximately 37% actually satisfy charge-balancing criteria, and even for typically ionic systems like binary cesium compounds, the proportion drops to just 23% [3]. This poor performance stems from the model's inability to account for diverse bonding environments in metallic alloys, covalent materials, and other non-idealized systems. Consequently, while charge-balancing offers computational simplicity, it fails as a comprehensive synthesizability metric, necessitating more sophisticated approaches that capture the complex physical and chemical factors governing synthetic accessibility.
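A charge-balancing filter of the kind described above can be sketched as follows. The oxidation-state table is deliberately tiny and hypothetical, and the rule that each element takes a single oxidation state per formula is a simplifying assumption of this sketch; the cited analysis may use a different table or rule.

```python
from itertools import product

# Hypothetical, deliberately small table of common oxidation states.
COMMON_OXIDATION_STATES = {
    "Na": [1], "Cs": [1], "Fe": [2, 3], "Ti": [2, 3, 4],
    "O": [-2], "Cl": [-1], "Au": [1, 3],
}

def is_charge_balanced(composition):
    """Return True if some assignment of common oxidation states sums to zero.

    composition maps element symbol -> integer count, e.g. {"Fe": 2, "O": 3}.
    Each element is given one oxidation state (no mixed valence).
    """
    elements = list(composition)
    try:
        choices = [COMMON_OXIDATION_STATES[el] for el in elements]
    except KeyError:
        return False  # element missing from the table
    for states in product(*choices):
        if sum(q * composition[el] for q, el in zip(states, elements)) == 0:
            return True
    return False

print(is_charge_balanced({"Na": 1, "Cl": 1}))  # NaCl
print(is_charge_balanced({"Fe": 2, "O": 3}))   # Fe2O3
print(is_charge_balanced({"Fe": 3, "O": 4}))   # Fe3O4 (mixed valence)
print(is_charge_balanced({"Cs": 1, "Au": 1}))  # CsAu (auride anion)
```

Fe3O4 and CsAu are both well-known compounds, yet this filter rejects them: the first needs mixed Fe(II)/Fe(III) valence and the second contains gold in a negative oxidation state that is absent from the "common" list. This is the failure mode that the low pass rate for known materials reflects.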

Beyond Heuristics: The Multifactorial Nature of Synthesizability

The Insufficiency of Thermodynamic Stability as a Sole Proxy

Traditional materials discovery has heavily relied on density functional theory (DFT) calculations to assess thermodynamic stability through formation energy (FE) and energy above the convex hull ($E_{\text{hull}}$). Materials with negative formation energies and $E_{\text{hull}}$ values close to zero are considered thermodynamically stable and thus presumed synthesizable. However, this approach provides an incomplete picture of synthesizability for several reasons. First, thermodynamic stability calculations typically consider perfect crystals at 0 K, ignoring real-world factors like defects, finite temperature effects, and kinetic barriers that dominate actual synthesis outcomes [12]. Second, numerous metastable materials with less favorable formation energies are routinely synthesized through kinetic stabilization, while many theoretically stable compounds remain unsynthesized due to high activation energy barriers or the absence of viable synthesis pathways [1].

The practical limitations of thermodynamic proxies are quantitatively demonstrated in large-scale benchmarking studies. When assessing synthesizability, conventional stability thresholds (e.g., $E_{\text{hull}} < 0.08$ eV/atom) achieve only approximately 50% accuracy in distinguishing synthesizable materials, performing barely better than random guessing [3]. Furthermore, an analysis of well-explored chemical spaces reveals numerous hypothetical materials with favorable formation energies that have never been synthesized, underscoring that thermodynamics alone cannot predict experimental accessibility [1]. These limitations necessitate a paradigm shift toward multifactorial synthesizability assessment that incorporates kinetic, experimental, and compositional considerations alongside thermodynamic factors.

Key Factors Governing Practical Synthesizability

Practical synthesizability emerges from the complex interplay of multiple physical and experimental factors:

Kinetic Stabilization: Metastable materials can be synthesized when kinetic barriers prevent their transformation to more stable phases, effectively trapping them in local energy minima [1]. This explains the synthesis of numerous materials with positive formation energies or significant distances from the convex hull.

Synthetic Pathway Accessibility: The existence of feasible reaction pathways with manageable activation energies critically determines whether a material can be synthesized, independent of its final thermodynamic stability [1].

Precursor Availability and Reactivity: The choice of starting materials significantly influences synthesis outcomes, as precursors must provide appropriate thermodynamic driving forces or kinetic pathways to the target material [13] [2].

Experimental Conditions and Methodology: Synthesis success depends heavily on laboratory-accessible parameters including temperature, pressure, and available equipment [1]. Some materials require extreme conditions (e.g., high pressures) that may not be practically feasible.

Technological Constraints: Practical considerations such as reactant costs, equipment availability, and human resource limitations inevitably influence which materials are targeted for synthesis [3].

Table 1: Quantitative Comparison of Synthesizability Prediction Methods

| Method | Key Metric | Reported Accuracy/Performance | Key Limitations |

|---|---|---|---|

| Charge-Balancing | Net neutral ionic charge | 37% of synthesized materials are charge-balanced [3] | Cannot account for diverse bonding environments; oversimplified |

| DFT Thermodynamic Stability | Energy above convex hull ($E_{\text{hull}}$) | ~50% accuracy in identifying synthesizable materials [3] | Ignores kinetic factors and experimental constraints |

| Machine Learning (SynthNN) | Composition-based classification | 7× higher precision than DFT stability [3] | Requires large training datasets; limited to composition-based features |

| Deep Learning (FTCP) | Structural synthesizability score | 82.6% precision, 80.6% recall for ternary crystals [12] | Dependent on structural data quality and representation |

| Large Language Models (CSLLM) | Structure-based synthesizability classification | 98.6% accuracy on testing data [13] | Requires extensive fine-tuning; potential "hallucination" issues |

Experimental and Computational Methodologies

Data Curation and Representation Strategies

Accurate synthesizability prediction begins with robust data curation and effective material representation. The standard approach utilizes the Inorganic Crystal Structure Database (ICSD) as a source of synthesizable ("positive") examples, containing experimentally validated structures reported in the literature [12] [3]. A significant challenge arises from the lack of confirmed non-synthesizable ("negative") examples, as failed synthesis attempts are rarely published. Researchers address this through Positive-Unlabeled (PU) learning approaches, in which artificially generated compounds or theoretical structures absent from experimental databases are treated as unlabeled examples rather than as confirmed negatives [1] [3].

Material representation strategies vary based on available data:

Composition-only representations (e.g., atom2vec) learn optimal feature representations directly from the distribution of synthesized materials without requiring structural information [3].

Structural representations include Fourier-Transformed Crystal Properties (FTCP), which captures crystal periodicity in both real and reciprocal space [12], and graph-based representations like crystal graph convolutional neural networks (CGCNN) that encode atomic properties and bonding information [12].

Integrated representations combine both compositional and structural information, with models like the unified approach by Prein et al. using separate encoders for composition (transformer-based) and structure (graph neural network) [2].

Table 2: Key Research Reagents and Computational Resources for Synthesizability Research

| Resource/Solution | Function/Role | Application in Synthesizability Research |

|---|---|---|

| ICSD Database | Source of experimentally verified crystal structures | Provides ground truth data for training synthesizability models [12] [3] |

| Materials Project API | Access to DFT-calculated material properties | Enables comparison between computational predictions and experimental synthesizability [12] |

| PU Learning Algorithms | Handle absence of confirmed negative examples | Allows training classification models without definitively non-synthesizable examples [1] [3] |

| Graph Neural Networks (ALIGNN, SchNet) | Process crystal structure graphs | Encode structural information for synthesizability prediction [1] |

| Solid-State Precursors | Reactants for experimental validation | Used to verify synthesizability predictions through laboratory synthesis [2] |

Machine Learning Architectures and Training Protocols

Modern synthesizability prediction employs diverse machine learning architectures, each with specialized training methodologies:

Composition-Based Models (SynthNN): These models utilize neural networks with atom embedding matrices (atom2vec) that learn optimal representations of chemical formulas directly from the distribution of synthesized materials [3]. The training process involves minimizing binary cross-entropy loss on datasets containing both ICSD compounds (positive examples) and artificially generated compositions (treated as negative examples). The critical hyperparameter $N_{\text{synth}}$ controls the ratio of artificial to synthesized formulas in training, significantly impacting model performance [3].

Structure-Aware Deep Learning Models: These approaches process crystal structures represented as FTCP or crystal graphs using deep neural networks. The typical protocol involves training on ternary and quaternary compounds from materials databases, with careful train-test separation based on discovery timeline (e.g., pre-2015 training and post-2019 testing) to evaluate true predictive capability [12]. Models output a synthesizability score (SC) between 0 and 1, with classification thresholds optimized for precision-recall balance.

Dual-Classifier PU Learning (SynCoTrain): This sophisticated approach employs co-training with two complementary graph convolutional neural networks (SchNet and ALIGNN) that iteratively exchange predictions to mitigate individual model biases [1]. The training protocol involves iterative refinement where each classifier labels the most confident positive examples from the unlabeled data, which are then added to the other classifier's training set. This collaborative approach enhances generalization, particularly for out-of-distribution predictions [1].

Large Language Models (CSLLM): For crystal structure synthesizability prediction, researchers fine-tune LLMs on specialized text representations of crystal structures ("material strings") containing essential crystallographic information [13]. The fine-tuning process uses balanced datasets of synthesizable (ICSD) and non-synthesizable (low CLscore) structures, with careful prompt engineering to reduce hallucinations and improve accuracy [13].
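The discovery-timeline split mentioned for the structure-aware models is simple to express in code. The sketch below assumes each entry carries the year its structure was first reported; the `Entry` class, the strict inequalities used for "pre-2015" and "post-2019", and the placeholder records are illustrative assumptions rather than details from the cited study.

```python
from dataclasses import dataclass

@dataclass
class Entry:
    name: str
    year: int  # year the structure was first reported

def temporal_split(entries, train_before=2015, test_after=2019):
    """Train on entries reported before one year, test on those reported after another."""
    train = [e for e in entries if e.year < train_before]
    test = [e for e in entries if e.year > test_after]
    return train, test

# Hypothetical placeholder records, not real database entries.
entries = [
    Entry("compound_a", 1998),
    Entry("compound_b", 2012),
    Entry("compound_c", 2017),  # falls in the gap and is used for neither set
    Entry("compound_d", 2021),
]

train, test = temporal_split(entries)
print([e.name for e in train], [e.name for e in test])
```

Leaving a gap between the two cutoffs reduces the chance that near-duplicates of training structures leak into the test set, which is what makes the reported post-2019 true positive rate a meaningful test of generalization.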

Comparative Analysis of Predictive Performance

Quantitative Benchmarking Across Methodologies

Rigorous benchmarking reveals significant performance differences across synthesizability prediction approaches. Traditional methods show limited efficacy: charge-balancing achieves precision barely above random guessing, while DFT-based thermodynamic stability ($E_{\text{hull}} < 0.1$ eV/atom) reaches approximately 74.1% accuracy [13]. Advanced machine learning methods substantially outperform these baselines. The SynthNN model demonstrates 7× higher precision than DFT-calculated formation energies in identifying synthesizable materials [3]. In a direct competition against human experts, SynthNN achieved 1.5× higher precision and completed the assessment task five orders of magnitude faster than the best-performing materials scientist [3].

Structure-based deep learning models show particularly strong performance for ternary compounds, with FTCP-based approaches achieving 82.6% precision and 80.6% recall [12]. When tested temporally on materials discovered after training data collection, these models maintained high true positive rates (88.60% for post-2019 discoveries), demonstrating effective generalization to novel chemical spaces [12]. The most advanced approaches, including large language models fine-tuned on crystal structures (CSLLM), report remarkable 98.6% accuracy on testing data, significantly outperforming both thermodynamic and kinetic (phonon spectrum) stability metrics [13].

Practical Validation Through Experimental Synthesis

The ultimate validation of synthesizability prediction models comes from experimental synthesis of recommended candidates. Recent research demonstrates promising results in this domain. In one pipeline implementation, researchers applied a combined compositional and structural synthesizability score to screen over 4.4 million computational structures, identifying approximately 500 high-priority candidates [2]. Through retrosynthetic planning and automated laboratory synthesis, they successfully characterized 16 targets, with 7 matching the predicted structures—including one completely novel compound and one previously unreported phase [2]. This successful experimental validation, completed within just three days, highlights the practical utility of modern synthesizability prediction in accelerating genuine materials discovery.

Table 3: Experimental Workflow for Synthesizability Validation

| Stage | Protocol Description | Key Outcomes |

|---|---|---|

| Candidate Screening | Apply synthesizability score to computational databases (e.g., Materials Project, GNoME) | Filter millions of candidates to hundreds of high-priority targets [2] |

| Retrosynthetic Planning | Use precursor-suggestion models (e.g., Retro-Rank-In) to identify viable solid-state precursors | Generate ranked lists of precursor pairs with corresponding reaction balances [2] |

| Synthesis Parameter Prediction | Apply models (e.g., SyntMTE) to predict calcination temperatures and conditions | Determine optimal synthesis parameters for target phase formation [2] |

| High-Throughput Synthesis | Execute predicted synthesis routes in automated laboratory platforms | Produce target materials for characterization [2] |

| Structural Characterization | Verify products via X-ray diffraction (XRD) analysis | Confirm successful synthesis and structural match to predictions [2] |

Visualizing Synthesizability Prediction Workflows

[Figure: Machine Learning Approach for Synthesizability Prediction]

[Figure: Integrated Composition and Structure Model Architecture]

The distinction between thermodynamic stability and practical synthesizability represents a fundamental consideration in modern materials discovery. While thermodynamic calculations provide valuable insights into a material's inherent stability, they capture only one dimension of the complex synthesizability landscape. The limitations of traditional heuristics like charge-balancing have motivated the development of sophisticated data-driven approaches that learn synthesizability patterns directly from experimental data. Contemporary machine learning models, particularly those integrating both compositional and structural information through PU learning frameworks, demonstrate remarkable predictive accuracy, substantially outperforming both human experts and traditional computational methods.

Looking forward, synthesizability prediction will increasingly focus on pathway-specific assessment—not merely determining if a material can be synthesized, but under what conditions and through what routes. The integration of large language models capable of predicting synthetic methods and precursors represents a promising direction, potentially offering complete synthesis planning alongside synthesizability evaluation [13]. As these models continue to evolve, incorporating more comprehensive considerations of kinetic factors, precursor economics, and experimental constraints, they will dramatically accelerate the translation of computational materials predictions into laboratory realities, ultimately fulfilling the promise of materials design and discovery.

Modern Computational Approaches: From Machine Learning to LLMs for Synthesizability

In multiple scientific domains, particularly in materials science and drug development, a fundamental challenge persists: the critical lack of definitively labeled negative data. This data scarcity problem is particularly acute in synthesizability prediction research, where the goal is to identify novel, synthesizable materials from vast chemical spaces. Traditional supervised machine learning approaches require both positive examples (successfully synthesized materials) and negative examples (verified unsynthesizable materials) to train accurate classifiers. However, negative examples are exceptionally rare in scientific databases; failed synthesis attempts are systematically underrepresented in the literature due to publication bias, while "unsynthesizable" is often a temporally contingent label that depends on evolving synthetic capabilities [1] [3].

For years, researchers have relied on computational proxies to overcome this data limitation, with charge-balancing emerging as a particularly prevalent heuristic in synthesizability prediction. This approach filters candidate materials based on net ionic charge neutrality, assuming that synthesizable materials must satisfy this basic chemical principle. However, quantitative analyses reveal severe limitations in this approach. Studies of known materials show that only approximately 37% of synthesized inorganic crystals in databases are charge-balanced according to common oxidation states, with the figure dropping to just 23% for binary cesium compounds [3]. This demonstrates that while charge-balancing may capture one facet of synthesizability, it fails to account for the complex array of kinetic, thermodynamic, and technological factors that ultimately determine whether a material can be synthesized.

Positive-Unlabeled (PU) learning represents a paradigm shift in how we approach this fundamental data scarcity problem. By reformulating the classification task to learn from only positive and unlabeled examples, PU learning algorithms can directly address the reality of scientific databases where negative examples are either missing or unreliable [14]. This article provides a comprehensive technical introduction to PU learning methodologies, with specific application to overcoming the limitations of charge-balancing in synthesizability prediction research.

Theoretical Foundations of PU Learning

Problem Formulation and Key Assumptions

PU learning addresses a specialized binary classification problem where the training data consists of:

- Labeled positive examples (P): Instances confirmed to belong to the target class

- Unlabeled examples (U): Instances that may belong to either the positive or negative class [14]

Formally, we consider a dataset of triplets (x, y, s) where x represents feature vectors, y ∈ {0,1} the true class (unobserved for some examples), and s ∈ {0,1} indicates whether an example is labeled. The critical constraint is that only positive examples can be labeled: Pr(y=1|s=1)=1 [14]. Two common scenarios for PU data generation include:

- Single-training-set scenario: Both positive and unlabeled examples come from the same dataset, with positive examples being labeled according to a probabilistic labeling mechanism characterized by propensity score e(x) = Pr(s=1|y=1,x) [14]

- Case-control scenario: Positive and unlabeled examples come from independently drawn datasets [14]

Successful PU learning typically relies on several key assumptions:

- Selected Completely At Random (SCAR): Labeled positive examples are randomly selected from the entire positive set, meaning e(x) is constant

- Separability: Positive and negative examples are perfectly separable in the feature space

- Positive subdomain prior: The positive class forms a coherent cluster in the feature space [14]
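The practical value of the SCAR assumption is easiest to see in a short derivation. Using the notation above, and writing $c$ for the constant propensity score $e(x)$, the probability that an example is labeled factorizes so that a classifier trained to separate labeled from unlabeled examples recovers the true class posterior up to a constant. This is the standard result usually attributed to Elkan and Noto; it is given here as background and is not specific to any model discussed in this article.

```latex
\begin{aligned}
\Pr(s=1 \mid x)
  &= \Pr(s=1 \mid y=1, x)\,\Pr(y=1 \mid x)
   + \Pr(s=1 \mid y=0, x)\,\Pr(y=0 \mid x) \\
  &= c \cdot \Pr(y=1 \mid x) + 0 \cdot \Pr(y=0 \mid x) \\
\Longrightarrow \quad
\Pr(y=1 \mid x) &= \frac{\Pr(s=1 \mid x)}{c}
\end{aligned}
```

The second term vanishes because only positive examples can be labeled. In practice $c$ has to be estimated, for example as the average predicted $\Pr(s=1 \mid x)$ over held-out labeled positives, and errors in that estimate carry over directly to the recovered posterior.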

Comparison of PU Learning Approaches

Table 1: Comparison of Major PU Learning Approaches

| Approach Category | Key Methodology | Advantages | Limitations | Representative Algorithms |

|---|---|---|---|---|

| Two-Step Techniques | Identifies reliable negative examples from unlabeled data, then applies supervised learning | Simple conceptual framework; Leverages existing supervised algorithms | Performance degrades if reliable negative identification fails | Spy-EM, Roc-SVM [15] [14] |

| Biased Learning | Treats all unlabeled examples as negative with smaller weights | Straightforward implementation; No complex identification step | Poor performance when unlabeled set contains many positives | [15] |

| Unbiased Risk Estimation | Derives unbiased estimators of classification risk using PU data | Theoretical soundness; Direct risk minimization | Relies on accurate class prior estimation; May require linear-odd loss functions | uPU, nnPU, PUSB [15] |
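The unbiased risk estimation row can be made concrete with the estimators behind uPU and nnPU. The sketch below assumes a sigmoid loss and a known class prior; the scores and the prior of 0.5 are hypothetical values chosen to show what happens when a flexible model separates the labeled positives from every unlabeled example.

```python
import numpy as np

def sigmoid_loss(margin):
    """Sigmoid loss l(z) = 1 / (1 + exp(z)); small when the margin z is large."""
    return 1.0 / (1.0 + np.exp(margin))

def pu_risks(scores_pos, scores_unl, prior):
    """Return (uPU risk, nnPU risk) for classifier scores g(x)."""
    scores_pos = np.asarray(scores_pos, dtype=float)
    scores_unl = np.asarray(scores_unl, dtype=float)
    r_p_plus = sigmoid_loss(scores_pos).mean()    # labeled positives as positive
    r_p_minus = sigmoid_loss(-scores_pos).mean()  # labeled positives as negative
    r_u_minus = sigmoid_loss(-scores_unl).mean()  # unlabeled as negative
    negative_part = r_u_minus - prior * r_p_minus
    upu = prior * r_p_plus + negative_part
    nnpu = prior * r_p_plus + max(0.0, negative_part)  # clip at zero
    return upu, nnpu

# Hypothetical overfit model: every positive scored +5, every unlabeled example -5.
upu, nnpu = pu_risks([5.0, 5.0], [-5.0, -5.0, -5.0], prior=0.5)
print(round(upu, 4), round(nnpu, 4))
```

With a prior of 0.5, roughly half of the unlabeled examples should look positive, so scoring all of them as negative drives the unbiased estimate below zero. The non-negative variant clips that term, which is the mechanism that discourages this kind of overfitting.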

Advanced PU Learning Frameworks

Noise-Insensitive PU Learning (Pin-LFCS)

Recent advances in PU learning have addressed critical challenges like feature noise and model robustness. The Pinball Loss Factorization and Centroid Smoothing (Pin-LFCS) method represents one such advancement, specifically designed to handle noisy data scenarios common in real-world scientific applications [15].

Pin-LFCS employs a robust optimization framework through two key innovations:

- Pinball loss factorization: Decomposes the noise-insensitive pinball loss into label-independent and label-dependent terms

- Centroid smoothing: Eliminates adverse effects of label noise by focusing on the label-dependent term [15]
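For readers unfamiliar with the pinball loss, the following sketch shows the form commonly used for classification, L(u) = u for u >= 0 and -tau * u otherwise, with u = 1 - y * f(x). Whether Pin-LFCS uses exactly this parameterization is an assumption here; the factorization and centroid-smoothing steps are not reproduced.

```python
import numpy as np

def pinball_loss(y, score, tau=0.5):
    """Pinball loss on u = 1 - y * score; tau = 0 recovers the hinge loss."""
    u = 1.0 - np.asarray(y, dtype=float) * np.asarray(score, dtype=float)
    return np.where(u >= 0, u, -tau * u)

print(float(pinball_loss(1, 0.0)))            # on the decision boundary
print(float(pinball_loss(1, 3.0, tau=0.5)))   # confidently correct, still penalized
print(float(pinball_loss(1, 3.0, tau=0.0)))   # hinge loss ignores this point
print(float(pinball_loss(-1, 3.0)))           # confidently wrong
```

Unlike the hinge loss, the pinball loss keeps a small penalty on points far on the correct side of the margin, so the decision boundary depends on how the scores are distributed as a whole rather than on the few points nearest the boundary. That property is the usual argument for its insensitivity to feature noise.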

The kernelized version (Pin-KLFCS) extends this approach to nonlinear classification problems while maintaining theoretical guarantees including noise insensitivity, unbiasedness, and generalization error bounds [15]. Experimental validation across 14 benchmark datasets with varying noise levels demonstrates that these methods outperform existing approaches, particularly in noisy conditions prevalent in scientific data collection [15].

SynCoTrain: A Dual-Classifier Framework for Synthesizability Prediction

The SynCoTrain framework exemplifies the application of advanced PU learning to synthesizability prediction, specifically addressing the limitations of charge-balancing approaches [1]. This method employs a co-training paradigm with two complementary graph convolutional neural networks:

- ALIGNN (Atomistic Line Graph Neural Network): Encodes both atomic bonds and bond angles, aligning with chemical intuition

- SchNetPack: Utilizes continuous-filter convolutional layers, representing a physics-based perspective [1]

Table 2: Reported Performance of Baselines and PU-Learning Models

| Evaluation Metric | Charge-Balancing | DFT Formation Energy | PU-Learning Models |

|---|---|---|---|

| Precision | Low (only 37% of known synthesized materials pass the filter) [3] | 1.0x (baseline) | SynthNN: 7x higher than DFT [3] |

| Recall | Not reported | Not reported | SynCoTrain: high on internal and leave-out test sets [1] |

| Human Expert Comparison | Not applicable | Not applicable | SynthNN: 1.5x higher precision than best human expert [3] |

The co-training process iteratively exchanges predictions between classifiers, mitigating individual model bias and enhancing generalizability. Each iteration employs the PU learning method introduced by Mordelet and Vert, which treats synthesizable crystals as positive examples and all others as unlabeled [1]. This approach directly addresses the scarcity of negative data that limits conventional supervised classifiers, without falling back on heuristic filters such as charge balancing.
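The bagging-style PU method of Mordelet and Vert can be sketched independently of the graph networks. In the version below, a toy nearest-centroid scorer stands in for the base learner (the original method uses SVMs and SynCoTrain uses SchNet and ALIGNN), and the two-dimensional data are synthetic. Each round treats a bootstrap sample of the unlabeled set as provisional negatives and scores only the out-of-bag unlabeled examples.

```python
import numpy as np

def centroid_scorer(X_pos, X_neg):
    """Toy base learner: distance to negative centroid minus distance to positive centroid."""
    mu_p, mu_n = X_pos.mean(axis=0), X_neg.mean(axis=0)
    return lambda X: np.linalg.norm(X - mu_n, axis=1) - np.linalg.norm(X - mu_p, axis=1)

def bagging_pu_scores(X_pos, X_unl, n_rounds=50, seed=0):
    """Average out-of-bag scores for every unlabeled example."""
    rng = np.random.default_rng(seed)
    n_unl = len(X_unl)
    sample_size = min(len(X_pos), n_unl)
    score_sum = np.zeros(n_unl)
    counts = np.zeros(n_unl)
    for _ in range(n_rounds):
        bag = rng.choice(n_unl, size=sample_size, replace=True)
        oob = np.setdiff1d(np.arange(n_unl), bag)
        scorer = centroid_scorer(X_pos, X_unl[bag])  # bag = provisional negatives
        score_sum[oob] += scorer(X_unl[oob])
        counts[oob] += 1
    return score_sum / np.maximum(counts, 1)

# Synthetic data: 30 labeled positives; unlabeled = 20 hidden positives + 60 negatives.
rng = np.random.default_rng(1)
X_pos = rng.normal(2.0, 0.5, size=(30, 2))
hidden_pos = rng.normal(2.0, 0.5, size=(20, 2))
negatives = rng.normal(-2.0, 0.5, size=(60, 2))
X_unl = np.vstack([hidden_pos, negatives])

scores = bagging_pu_scores(X_pos, X_unl)
print(scores[:20].mean(), scores[20:].mean())
```

Averaging over many bootstrap rounds dampens the effect of the hidden positives that inevitably contaminate each provisional negative set. In a co-training setup, the highest-scoring unlabeled examples from one model would then be passed to the other as additional positives.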

Experimental Protocols and Methodologies

[Figure: PU Learning Experimental Workflow]

Detailed Experimental Protocol for Synthesizability Prediction

Data Collection and Preparation:

- Positive Examples: Extract confirmed synthesizable materials from experimental databases (e.g., ICSD, or Materials Project entries that are linked to experimentally reported ICSD structures)

- Unlabeled Examples: Generate hypothetical materials through:

  - Chemical element substitution in known structures

  - Structural enumeration in compositionally similar spaces

  - Random stoichiometry generation within chemical constraints [3]

Feature Engineering:

- Compositional Features: Elemental properties (electronegativity, atomic radius, valence electron count)

- Structural Features (if available): Symmetry information, coordination environments, packing patterns

- Charge-Balancing Metrics: Include as one feature among many rather than as a filtering criterion [3]

Model Training and Validation:

- Class Prior Estimation: Estimate the proportion of positive examples in the unlabeled set using non-traditional supervised learners or maximum likelihood estimation

- Algorithm Selection: Choose appropriate PU learning method based on data characteristics and noise tolerance requirements

- Validation Strategy: Employ hold-out validation with careful adjustment of metrics to account for unlabeled positives in test set [16]

Performance Assessment:

- Standard Metrics: Compute precision, recall, and F1-score with adjustments for PU setting

- Comparison Baselines: Evaluate against charge-balancing, formation energy thresholds, and human expert performance

- Ablation Studies: Isolate contributions of different feature sets and algorithmic components [3]
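Because the test set also contains unlabeled positives, precision cannot be computed directly. One common workaround, sketched below under the SCAR assumption, estimates recall from held-out labeled positives and uses the quantity recall² / Pr(ŷ = 1), which equals precision × recall divided by the class prior and can therefore rank models without negative labels. The function name, the inputs, and the class prior of 0.25 are hypothetical.

```python
import numpy as np

def pu_metrics(pred_labeled_pos, pred_population, class_prior=None):
    """PU-adjusted metrics from binary predictions (1 = predicted synthesizable).

    pred_labeled_pos: predictions on held-out labeled positives.
    pred_population:  predictions on a sample representative of all candidates.
    """
    recall = float(np.mean(pred_labeled_pos))
    pred_rate = float(np.mean(pred_population))  # estimate of Pr(y_hat = 1)
    pu_score = recall ** 2 / pred_rate if pred_rate > 0 else 0.0
    precision = None
    if class_prior is not None and pred_rate > 0:
        # precision = recall * Pr(y = 1) / Pr(y_hat = 1), capped at 1
        precision = min(1.0, recall * class_prior / pred_rate)
    return {"recall": recall, "pu_score": pu_score, "precision": precision}

metrics = pu_metrics(
    pred_labeled_pos=[1, 1, 1, 0],
    pred_population=[1, 0, 0, 1, 0, 0, 0, 1, 0, 0],
    class_prior=0.25,  # hypothetical estimate of the positive fraction
)
print(metrics)
```

The precision estimate is only as good as the class prior fed into it, which is why class prior estimation appears as its own step in the protocol.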

Implementation Considerations

The Scientist's Computational Toolkit

Table 3: Essential Research Reagents for PU Learning Experiments

| Tool/Category | Specific Examples | Function/Role | Application Context |
|---|---|---|---|
| Graph Neural Networks | ALIGNN, SchNetPack | Encode crystal structure information for synthesizability prediction | Materials science applications [1] |
| Class Prior Estimation | EN-ALE, KM1, KM2 | Estimate proportion of positive examples in unlabeled set | Critical for unbiased risk estimation methods [15] [14] |
| Loss Functions | Pinball loss, Sigmoid loss, Ramp loss | Provide noise insensitivity and theoretical guarantees | Robust PU learning implementations [15] |
| Benchmark Datasets | UCI datasets, Material databases (ICSD, OQMD) | Algorithm validation and performance comparison | General benchmarking and method development [15] [3] |

Model Selection and Evaluation Framework

PU learning represents a fundamental advancement in how we approach classification problems under the realistic data constraints faced by scientific researchers. By moving beyond the limitations of heuristic proxies like charge-balancing, PU learning enables direct learning from the actual distribution of experimentally realized materials. Frameworks like SynCoTrain demonstrate that PU learning not only outperforms traditional computational proxies but can surpass human expert performance in predicting synthesizability, achieving up to 7× higher precision than formation energy-based approaches and 1.5× higher precision than the best human experts [3].

The continued development of robust PU learning methods, particularly those resistant to feature noise and capable of handling complex scientific data, holds significant promise for accelerating materials discovery and drug development. As benchmark frameworks become more standardized and accessible [17], these methods are likely to become essential tools in the computational scientist's toolkit, enabling more reliable identification of synthesizable materials and bioactive compounds despite the scarcity of negative data.

The prediction of material properties and synthesizability is a cornerstone of modern materials science and drug development. Traditional methods that rely on charge balancing and thermodynamic stability metrics often provide incomplete insights, as they fail to fully account for the complex quantum interactions and kinetic factors that determine whether a material can actually be synthesized [18]. Graph Neural Networks (GNNs) have emerged as a powerful solution to this challenge by directly learning from atomic-scale structures.

GNNs are uniquely suited for modeling crystal structures because they represent materials as graphs, where atoms serve as nodes and chemical bonds as edges [19]. This representation allows GNNs to capture both the elemental composition and the spatial arrangement of atoms in a system. For crystallographic applications, GNNs must satisfy fundamental physical constraints including rotational invariance (energy predictions should not change if the crystal is rotated) and translational invariance (predictions should not change if the crystal is translated) [20]. Furthermore, models predicting forces must demonstrate equivariance, meaning forces rotate appropriately with the crystal structure [21].

The limitations of traditional charge-balancing approaches for synthesizability prediction have become increasingly apparent. These methods often rely on simplified heuristics and fail to account for kinetic barriers and technological constraints that ultimately determine synthesis outcomes [18] [22]. GNNs offer a more comprehensive approach by learning directly from atomic structures and their relationships, enabling them to capture complex patterns that elude traditional methods.

Technical Architecture of Key GNN Models

SchNet: Quantum-Accurate Predictions with Continuous-Filter Convolutions

SchNet (Schütt Neural Network) is a deep neural network framework specifically designed for quantum-accurate prediction of properties and dynamics in atomistic systems [20]. Its architecture systematically incorporates physical principles to ensure predictions obey fundamental scientific constraints.

Core Architectural Components:

Continuous-Filter Convolutions (cfconv): SchNet generalizes convolutional operations to non-gridded atomic positions by using continuous-filter convolutions that operate directly on interatomic distances. The cfconv layer is computed as:

(x_i^{(l+1)} = x_i^{(l)} + \sum_{j=1}^n x_j^{(l)} \circ W^{(l)}(r_i - r_j))

where (x_i^{(l)}) represents the feature vector of atom (i) at layer (l), (\circ) denotes element-wise multiplication, and (W^{(l)}) is a filter-generating network [20].
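The update above can be sketched in plain Python. This is a toy illustration rather than the SchNetPack implementation: the filter generator is a fixed Gaussian of the interatomic distance instead of a trained network, the sum skips the self term j = i, and the names `toy_filter` and `cfconv_update` are hypothetical.

```python
import math


def toy_filter(r_i, r_j, centers=(0.0, 1.0)):
    """Stand-in for the filter-generating network W: one weight per feature channel.

    Depends only on the distance |r_i - r_j| (assumption: fixed Gaussians, not learned).
    """
    d = math.dist(r_i, r_j)
    return [math.exp(-(d - mu) ** 2) for mu in centers]


def cfconv_update(features, positions, filter_fn=toy_filter):
    """Apply x_i <- x_i + sum_j x_j * W(r_i - r_j) with element-wise products."""
    n = len(features)
    updated = []
    for i in range(n):
        new_x = list(features[i])  # residual term x_i^{(l)}
        for j in range(n):
            if j == i:
                continue  # assumption: neighbours exclude the atom itself
            w = filter_fn(positions[i], positions[j])
            for k in range(len(new_x)):
                new_x[k] += features[j][k] * w[k]
        updated.append(new_x)
    return updated


features = [[1.0, 0.0], [0.0, 1.0]]
positions = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
out = cfconv_update(features, positions)
```

Because the toy filter sees only distances, translating every atom by the same vector leaves the output unchanged.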

Representation Invariance: To ensure rotational and translational invariance, SchNet's filter-generating network (W) uses only interatomic distances (d_{ij} = \|r_i - r_j\|), expanded using Gaussian radial basis functions:

(e_k(d_{ij}) = \exp\left[ -\gamma (d_{ij} - \mu_k)^2 \right])

for (k=1,...,K), where (\mu_k) are distance centers and (\gamma) controls the width [20].
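A short sketch of this expansion follows, together with a check that rotating both atoms leaves the expanded features unchanged. The centers, the value of gamma, and the helper names are illustrative assumptions.

```python
import math


def rbf_expand(d_ij, centers, gamma):
    """Expand a scalar distance into Gaussian radial basis features e_k(d_ij)."""
    return [math.exp(-gamma * (d_ij - mu) ** 2) for mu in centers]


def rotate_z(point, angle):
    """Rotate a 3D point about the z axis by the given angle in radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x - s * y, s * x + c * y, z)


centers = [0.0, 1.0, 2.0]  # hypothetical distance centers
gamma = 10.0               # hypothetical width parameter

r_i, r_j = (0.2, 0.1, 0.0), (1.0, 0.7, 0.3)
before = rbf_expand(math.dist(r_i, r_j), centers, gamma)
after = rbf_expand(math.dist(rotate_z(r_i, 0.7), rotate_z(r_j, 0.7)), centers, gamma)
```

Here `before` and `after` agree up to floating-point rounding, which is the invariance the text describes: the filter never sees absolute positions or orientations.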

Activation Functions: SchNet employs shifted softplus activations (\text{ssp}(x) = \ln(0.5e^x + 0.5)) throughout the network, ensuring smooth, infinitely differentiable functions that are crucial for analytical force calculations [20].
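The shifted softplus is equal to the ordinary softplus minus ln 2, so it passes through zero at the origin and its derivative is the logistic sigmoid. The sketch below uses a numerically stable form. The function names are chosen here for illustration.

```python
import math

LOG2 = math.log(2.0)


def shifted_softplus(x):
    """ssp(x) = ln(0.5*exp(x) + 0.5), written as softplus(x) - ln 2 to avoid overflow."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x))) - LOG2


def shifted_softplus_grad(x):
    """Derivative of ssp: the logistic sigmoid, which is smooth everywhere."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)
```

The smooth derivative matters because forces are obtained by differentiating the energy, so any kink in the activation would show up as a discontinuity in the predicted forces.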

Physical Property Prediction: After processing through multiple interaction blocks, SchNet generates atomic energy contributions (E_i) from atomic feature vectors (x_i^{(L)}), then sums these to obtain the total potential energy: (E = \sum_{i=1}^n E_i). Forces are derived analytically as gradients of this energy: (F_i = -\nabla_{r_i} E), guaranteeing energy conservation [20].
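The relationship between atomic energies, total energy, and forces can be checked on a toy model. In the sketch below a harmonic pair term stands in for the learned atomic energies (an assumption made purely for illustration; SchNet obtains the gradient by automatic differentiation of the network), and the analytic forces are compared against central finite differences.

```python
import math


def atomic_energies(positions):
    """Toy per-atom energies E_i: half of each harmonic pair term (d_ij - 1)^2."""
    n = len(positions)
    energies = []
    for i in range(n):
        e_i = 0.0
        for j in range(n):
            if j != i:
                d = math.dist(positions[i], positions[j])
                e_i += 0.5 * (d - 1.0) ** 2
        energies.append(e_i)
    return energies


def total_energy(positions):
    """E = sum_i E_i."""
    return sum(atomic_energies(positions))


def analytic_forces(positions):
    """F_i = -dE/dr_i for the toy energy above."""
    n = len(positions)
    forces = []
    for i in range(n):
        f = [0.0, 0.0, 0.0]
        for j in range(n):
            if j != i:
                d = math.dist(positions[i], positions[j])
                for k in range(3):
                    f[k] -= 2.0 * (d - 1.0) * (positions[i][k] - positions[j][k]) / d
        forces.append(f)
    return forces


def numerical_forces(positions, h=1e-5):
    """Central finite-difference estimate of -dE/dr_i, used only as a check."""
    forces = []
    for i in range(len(positions)):
        f = []
        for k in range(3):
            plus = [list(p) for p in positions]
            minus = [list(p) for p in positions]
            plus[i][k] += h
            minus[i][k] -= h
            f.append(-(total_energy(plus) - total_energy(minus)) / (2 * h))
        forces.append(f)
    return forces


positions = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (0.0, 2.0, 0.0)]
f_analytic = analytic_forces(positions)
f_numeric = numerical_forces(positions)
```

Because the energy depends only on interatomic distances, the forces on all atoms sum to zero, which is a quick sanity check for any energy-derived force model.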

ALIGNN: Explicit Modeling of Angular Relationships

The Atomistic Line Graph Neural Network (ALIGNN) addresses a key limitation in early GNNs by explicitly modeling both two-body (pairwise) and three-body (angular) interactions in atomistic systems [23].

Architectural Innovation:

Dual Graph Structure: ALIGNN operates on two interrelated graphs: the original atomistic bond graph (representing atoms and bonds) and its corresponding line graph (representing bond pairs and angles between them) [23].

Edge-Gated Graph Convolution: The model employs edge-gated graph convolution layers that first process the line graph to capture angular information, then apply this information to update the original bond graph [23].

Hierarchical Feature Integration: By composing convolution layers across both graph types, ALIGNN effectively captures many-body interactions that are crucial for accurately modeling complex chemical environments [23].

This explicit modeling of angular relationships enables ALIGNN to overcome the limitations of purely distance-based models, which can struggle to distinguish structures with identical bond lengths but different overall configurations [21].
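To make the line graph idea concrete, the following sketch builds line-graph edges from a list of bonds and attaches the bond angle to each edge. It is a simplified illustration, not the ALIGNN code: the helper names and the two three-atom test geometries are hypothetical.

```python
import math
from itertools import combinations


def bond_angle_deg(positions, center, end_a, end_b):
    """Angle in degrees between bonds center-end_a and center-end_b."""
    v_a = [positions[end_a][k] - positions[center][k] for k in range(3)]
    v_b = [positions[end_b][k] - positions[center][k] for k in range(3)]
    dot = sum(p * q for p, q in zip(v_a, v_b))
    norm = math.hypot(*v_a) * math.hypot(*v_b)
    cos_theta = max(-1.0, min(1.0, dot / norm))  # guard against rounding
    return math.degrees(math.acos(cos_theta))


def build_line_graph(positions, bonds):
    """Return (bond_a, bond_b, angle) for every pair of bonds sharing exactly one atom.

    Nodes of the line graph are bonds; its edges carry the angle between them.
    """
    line_edges = []
    for a, b in combinations(range(len(bonds)), 2):
        shared = set(bonds[a]) & set(bonds[b])
        if len(shared) != 1:
            continue
        center = next(iter(shared))
        end_a = bonds[a][0] if bonds[a][1] == center else bonds[a][1]
        end_b = bonds[b][0] if bonds[b][1] == center else bonds[b][1]
        line_edges.append((a, b, bond_angle_deg(positions, center, end_a, end_b)))
    return line_edges


bonds = [(0, 1), (0, 2)]
bent = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
linear = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (-1.0, 0.0, 0.0)]
bent_edges = build_line_graph(bent, bonds)
linear_edges = build_line_graph(linear, bonds)
```

Both geometries have identical bond lengths of 1.0, so features built only from the listed bond lengths cannot tell them apart. The line-graph edge separates them through the angle.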

Experimental Protocols and Performance Benchmarking

Training Methodologies for Crystallographic GNNs

Data Preparation and Representation:

- Structure Formatting: Atomic structures are typically represented in standard formats (POSCAR, .cif, .xyz, or .pdb) and converted into graph representations with nodes (atoms) and edges (bonds) [23].

- Graph Construction: For periodic crystals, edges connect atoms within a specified cutoff distance, typically 5 to 8 Å, with periodic boundary conditions applied [24].

- Feature Initialization: Node features are initialized using element-specific embeddings, while edge features encode interatomic distances expanded using Gaussian radial basis functions [20] [23].
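The graph construction step can be sketched as a brute-force neighbor search over periodic images. The function name, the row-vector lattice convention, and the fixed number of image shells are assumptions. Production codes typically use dedicated neighbor-list routines instead.

```python
import math
from itertools import product


def periodic_edges(frac_coords, lattice, cutoff, n_images=1):
    """Return (i, j, image_shift, distance) for atom pairs within the cutoff.

    frac_coords: fractional coordinates of atoms in the unit cell.
    lattice: three lattice vectors as rows, in angstroms.
    n_images: number of image shells searched in each direction (assumption:
    the caller picks it large enough to cover the cutoff).
    """
    def to_cart(frac, shift):
        return [
            sum((frac[a] + shift[a]) * lattice[a][k] for a in range(3))
            for k in range(3)
        ]

    edges = []
    shifts = list(product(range(-n_images, n_images + 1), repeat=3))
    for i, f_i in enumerate(frac_coords):
        r_i = to_cart(f_i, (0, 0, 0))
        for j, f_j in enumerate(frac_coords):
            for shift in shifts:
                if i == j and shift == (0, 0, 0):
                    continue  # skip the atom itself, but keep its periodic images
                d = math.dist(r_i, to_cart(f_j, shift))
                if d <= cutoff:
                    edges.append((i, j, shift, d))
    return edges


# Simple cubic cell with a = 3.0 angstroms and one atom at the origin.
cubic = [[3.0, 0.0, 0.0], [0.0, 3.0, 0.0], [0.0, 0.0, 3.0]]
nearest = periodic_edges([(0.0, 0.0, 0.0)], cubic, cutoff=3.5)
wider = periodic_edges([(0.0, 0.0, 0.0)], cubic, cutoff=5.0, n_images=2)
```

For this cell the 3.5 Å cutoff captures the six nearest images, and raising it to 5.0 Å adds the twelve second-shell images, which shows how the cutoff choice controls graph connectivity.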

Loss Functions and Optimization: GNNs for material property prediction typically employ combined loss functions that optimize for both energy and force accuracy:

(\mathcal{L} = \rho \| E - \hat{E} \|^2 + \frac{1}{n} \sum_{i=1}^n \| F_i - \hat{F}_i \|^2)

where (\rho) (typically 0.01-0.1) balances the relative contribution of energy and force terms [20]. Models are generally trained using the Adam optimizer with learning rate decay and early stopping based on validation performance [20].
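For a single structure, the combined loss can be written out directly. This sketch assumes scalar energies and per-atom force vectors stored as nested lists. The function name is illustrative, and a real training loop would compute this on batched tensors.

```python
def energy_force_loss(e_true, e_pred, f_true, f_pred, rho=0.01):
    """Weighted squared energy error plus mean squared force error per atom."""
    energy_term = rho * (e_true - e_pred) ** 2
    n_atoms = len(f_true)
    force_term = sum(
        sum((ft - fp) ** 2 for ft, fp in zip(fi_true, fi_pred))
        for fi_true, fi_pred in zip(f_true, f_pred)
    ) / n_atoms
    return energy_term + force_term


loss = energy_force_loss(
    e_true=1.0,
    e_pred=0.0,
    f_true=[[1.0, 0.0, 0.0], [0.0, 0.0, 0.0]],
    f_pred=[[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]],
    rho=0.1,
)
```

A small value of rho keeps the single energy label from dominating the 3n force components, which carry most of the information about the local shape of the energy surface.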

Advanced Training Schemes: For challenging scenarios with limited labeled data, specialized training approaches have been developed. Adaptive Checkpointing with Specialization (ACS) employs a shared backbone with task-specific heads, checkpointing model parameters when validation loss for a task reaches a new minimum [25]. This approach has demonstrated effectiveness in ultra-low data regimes, achieving accurate predictions with as few as 29 labeled samples [25].
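The checkpointing rule described above can be sketched as a small bookkeeping class. This covers only the "save when a task reaches a new validation minimum" step and not the full ACS training procedure. The class name, the parameter dictionaries, and the task names are hypothetical.

```python
import copy


class TaskCheckpointer:
    """Keep, per task, the parameters seen at that task's best validation loss."""

    def __init__(self, task_names):
        self.best_loss = {task: float("inf") for task in task_names}
        self.best_state = {task: None for task in task_names}

    def update(self, task, val_loss, backbone_params, head_params):
        """Snapshot shared backbone and task head if val_loss is a new minimum."""
        if val_loss < self.best_loss[task]:
            self.best_loss[task] = val_loss
            # Deep copy so later training steps do not overwrite the snapshot.
            self.best_state[task] = copy.deepcopy(
                {"backbone": backbone_params, "head": head_params}
            )
            return True
        return False


ckpt = TaskCheckpointer(["solubility", "toxicity"])  # hypothetical tasks
backbone = {"w": [0.1, 0.2]}
saved_first = ckpt.update("solubility", 0.9, backbone, {"b": 0.0})
backbone["w"][0] = 0.5  # training continues and mutates the shared backbone
saved_worse = ckpt.update("solubility", 1.2, backbone, {"b": 0.1})
```

Each task ends up with its own snapshot of the shared backbone, so a task whose validation loss later degrades can still be served from the parameters that suited it best.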

Quantitative Performance Comparison

Table 1: Benchmark Performance of GNN Models on Material Property Prediction

| Model | Architecture Type | QM9 Energy MAE (kcal/mol) | MD17 Force RMSE (kcal/mol/Å) | Materials Project Formation Energy MAE (eV/atom) |
|---|---|---|---|---|
| SchNet | Invariant (Distance-based) | 0.31 [20] | <0.33 [20] | 0.035 [20] |
| ALIGNN | Invariant (Angle-aware) | - | - | 0.026 (estimated) [23] |
| E2GNN | Equivariant (Scalar-Vector) | - | - | - |
| CGCNN | Invariant (Crystal Graph) | - | - | 0.039 [24] |
| MEGNet | Invariant (Multi-scale) | - | - | 0.033 [26] |

Table 2: Synthesizability Prediction Performance (Oxide Crystals)

| Method | Model Components | Recall (%) | Key Innovation |
|---|---|---|---|
| SynCoTrain | SchNet + ALIGNN [18] | 95-97 [18] | Dual classifier with PU Learning |
| Traditional | Stability Metrics [18] | <80 (estimated) | Charge balancing heuristics |

The benchmarking data reveals several important trends. First, models that incorporate more sophisticated structural representations (such as ALIGNN's angular information) generally outperform simpler architectures [23]. Second, the application of GNNs to synthesizability prediction demonstrates remarkable effectiveness, with the SynCoTrain framework achieving 95-97% recall in identifying synthesizable oxide materials [18]. This represents a significant improvement over traditional stability-metric approaches.

Advanced Applications and Research Directions

Beyond Basic Property Prediction

Synthesizability Prediction: The SynCoTrain framework exemplifies how GNNs can address the synthesizability prediction challenge. It employs a co-training scheme in which two complementary GNNs (SchNet and ALIGNN) iteratively exchange predictions to reduce model bias and enhance generalizability [18] [22]. Critically, it uses Positive and Unlabeled (PU) Learning to address the scarcity of negative data, since failed synthesis attempts are rarely published [18].

Crystal Structure Prediction: GNNs have been successfully applied to the inverse problem of crystal structure prediction, that is, determining stable atomic arrangements given only a chemical composition. One approach combines graph networks with optimization algorithms such as Bayesian optimization to search for structures with minimal formation enthalpy [26]. This method has been reported to predict crystal structures at computational costs three orders of magnitude lower than conventional DFT-based approaches [26].

Machine Learning Force Fields: Both SchNet and ALIGNN have been extended into machine learning force fields. ALIGNN-FF, for example, can model diverse systems with any combination of 89 elements [23]. These force fields enable molecular dynamics simulations at near quantum-mechanical accuracy with significantly reduced computational cost, supporting applications including structural optimization and phonon property calculation [23].

Limitations and Future Directions

Despite their impressive capabilities, current GNN approaches face several important limitations:

Data Requirements: GNNs typically require substantial training data (thousands of structures) to achieve high accuracy, presenting challenges for novel material systems with limited examples [24].

Many-Body Interactions: SchNet's original formulation, being limited to radial filters, may struggle with strongly directional bonding environments that require explicit angular terms [20].

Transferability: Models trained on one class of materials (e.g., oxides) may not generalize well to other material families without retraining or fine-tuning [18].

Interpretability: While GNNs achieve high predictive accuracy, extracting chemically intuitive insights from their learned representations remains challenging [20].

Future research directions include developing more sample-efficient architectures, incorporating explicit physical constraints, improving uncertainty quantification, and enhancing model interpretability. Equivariant models that more rigorously encode geometric symmetries represent a particularly promising avenue for future development [21].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Crystal Structure GNN Research

| Tool/Resource | Type | Function | Availability |
|---|---|---|---|
| SchNetPack | Software Platform | Model building, training, and deployment for SchNet-based models [20] | Open source |
| ALIGNN | Software Platform | Implementation of ALIGNN model for property prediction and force fields [23] | Open source |
| MatDeepLearn | Benchmarking Platform | Reproducible assessment and comparison of GNNs on materials datasets [24] | Open source |
| OQMD | Materials Database | Formation energies and structures for ~320,000 materials [26] | Public |
| Materials Project | Materials Database | Crystal structures and properties for ~132,000 materials [26] | Public |
| JARVIS-DFT | Materials Database | ~75,000 materials with 4 million energy-force entries [23] | Public |
| DGL/PyTorch | Deep Learning Framework | Graph neural network implementation and training [23] | Open source |