Benchmarking Roost, Magpie, and ECCNN: A Comparative Analysis of Machine Learning Models for Thermodynamic Stability Prediction in Materials Science and Drug Development

Abstract

This article provides a comprehensive benchmark analysis of three prominent machine learning models—Roost, Magpie, and ECCNN—for predicting thermodynamic stability of inorganic compounds, with specific relevance to biomedical and materials research. We explore the foundational principles of each model, detail their methodological applications for composition-based prediction, analyze performance optimization strategies, and present rigorous comparative validation. By synthesizing performance metrics and identifying optimal use-case scenarios, this resource equips researchers and drug development professionals with the knowledge to efficiently select and implement these cutting-edge tools for accelerating materials discovery and development pipelines.

Understanding the Core Principles of Roost, Magpie, and ECCNN for Stability Prediction

The Critical Role of Thermodynamic Stability Prediction in Materials Science and Drug Development

Accurately predicting thermodynamic stability is a fundamental challenge that dictates the pace of discovery in both materials science and pharmaceutical development. In materials science, stability determines whether a hypothetical compound can be synthesized and persist under operating conditions, separating promising candidates from those that will decompose [1]. In drug development, the thermodynamic stability of proteins and the solubility of small-molecule active pharmaceutical ingredients (APIs) directly influence efficacy, safety, and manufacturability [2]. Traditional methods like density functional theory (DFT) calculations or alchemical free energy simulations, while accurate, are computationally prohibitive for screening vast chemical spaces [1] [3]. This has driven the adoption of machine learning (ML) and advanced simulation techniques as efficient pre-filters or alternatives that accelerate the identification of viable targets. This guide benchmarks contemporary stability prediction methods, focusing on the ensemble ML model ECSG, which integrates Magpie, Roost, and ECCNN, and compares it with alternative ML approaches and with state-of-the-art biophysical simulations [1] [4].

Comparative Analysis of Stability Prediction Methodologies

The following table compares the core architectural approaches, advantages, and limitations of prominent stability prediction techniques.

Table 1: Comparison of Thermodynamic Stability Prediction Methodologies

| Methodology | Core Approach | Primary Application | Key Advantages | Major Limitations |
|---|---|---|---|---|
| Ensemble ML (ECSG) | Stacked generalization combining Magpie, Roost, and ECCNN models [1]. | Inorganic crystal stability. | High accuracy (AUC=0.988), superior data efficiency, reduces inductive bias [1]. | Requires training data; performance depends on training domain coverage. |
| Universal Interatomic Potentials (UIPs) | ML-trained potentials for energy and force prediction [4]. | Crystal stability from unrelaxed structures. | Can screen unrelaxed structures; strong prospective performance [4]. | High computational cost per prediction compared to composition-based models. |
| λ-Dynamics with Competitive Screening (CS) | Alchemical free energy simulation with biasing to sample favorable mutations [3]. | Protein point mutation stability. | Computes dozens of mutants in one simulation; high accuracy for surface/buried sites [3]. | Computationally intensive; requires expert setup and significant sampling. |
| Traditional Alchemical Free Energy (FEP/FEP+) | Pairwise free energy perturbation calculations [3]. | Protein stability & ligand binding. | High accuracy (~1 kcal/mol error); well-established [3]. | Cost scales linearly with mutations; inefficient for large combinatorial spaces [3]. |
| Density Functional Theory (DFT) | First-principles quantum mechanical calculation [1] [4]. | Formation energy & convex hull stability. | Considered a high-accuracy benchmark; physics-based [1]. | Extremely computationally expensive; intractable for high-throughput screening [4]. |

Benchmarking Performance: Experimental Data and Validation

Independent benchmarking frameworks like Matbench Discovery provide critical performance metrics for ML models on a realistic, prospective materials discovery task [4]. For the protein stability methods relevant to drug development, accuracy is measured by correlation with experimentally measured stability changes.

Table 2: Experimental Validation Results for Key Methodologies

| Method (Study) | Key Performance Metric | Result | Benchmark Context / Validation |
|---|---|---|---|
| ECSG Ensemble Model [1] | Area Under the Curve (AUC) | 0.988 | Stability classification on JARVIS database [1]. |
| ECSG Ensemble Model [1] | Data Efficiency | 1/7 of the data | Matched the accuracy of existing models using one-seventh of their training data [1]. |
| λ-Dynamics (CS) [3] | Pearson Correlation (R) vs. Experiment | 0.84 (surface sites), 0.78 (buried sites) | Protein G mutation stability; aggregate of four sites [3]. |
| λ-Dynamics (CS) [3] | Root-Mean-Square Error (RMSE) | 0.89 kcal/mol (surface), 1.43 kcal/mol (buried) | Compared to experimental unfolding free energies [3]. |
| Matbench Discovery Leaderboard [4] | WBM Accuracy (Top Model) | ~89% | Prospective discovery task for stable inorganic crystals [4]. |
| Universal Interatomic Potentials [4] | Performance vs. Other ML | State-of-the-Art | Led the initial Matbench Discovery leaderboard across metrics [4]. |

Experimental Protocols for Key Methodologies

1. Protocol for Training and Validating the ECSG Ensemble Model [1]

- Objective: To predict the thermodynamic stability (stable/unstable) of inorganic compounds.

- Data Preparation: Input is chemical composition. For the ECCNN branch, encode the composition into a 118×168×8 tensor representing electron configuration features. For Magpie and Roost, use standard composition-based featurization and a graph representation, respectively [1].

- Base Model Training: Independently train three base models: (i) Magpie: Use gradient-boosted trees on stoichiometric and elemental property statistics [1]. (ii) Roost: Train a graph neural network on the complete graph of elements in the formula [1]. (iii) ECCNN: Train a convolutional neural network on the electron configuration tensor [1].

- Stacked Generalization: Use the predictions of the three base models as input features to train a meta-learner (e.g., a linear model or another shallow network) to produce the final stability classification [1].

- Validation: Perform cross-validation on databases like JARVIS or Materials Project. The primary metric is the AUC for classifying stable vs. unstable compounds. Validate prospective predictions with DFT calculations [1].
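The stacking step can be illustrated with a minimal, standard-library Python sketch. It is not the ECSG implementation: two toy nearest-centroid scorers (the hypothetical `centroid_model`) stand in for Magpie, Roost, and ECCNN, the data come from a made-up generator (`make_toy_data`), and the meta-learner is assumed to be a plain logistic regression. What it does show is the defining detail of stacked generalization: the meta-learner is trained only on out-of-fold predictions, so it never sees a base-model score for a sample that base model was trained on.

```python
import math
import random

SHARPNESS = 10.0  # hypothetical scale that spreads the toy base-model scores


def make_toy_data(n, seed):
    """Hypothetical data: label 1 ("stable") when the two features sum past 1."""
    rng = random.Random(seed)
    X = [(rng.random(), rng.random()) for _ in range(n)]
    y = [1 if a + b > 1 else 0 for a, b in X]
    return X, y


def centroid_model(feature_idx):
    """Toy base learner: nearest class centroid on a single feature."""
    def fit(X, y):
        pos = [x[feature_idx] for x, t in zip(X, y) if t == 1]
        neg = [x[feature_idx] for x, t in zip(X, y) if t == 0]
        return sum(pos) / len(pos), sum(neg) / len(neg)

    def predict(params, x):
        mu_pos, mu_neg = params
        v = x[feature_idx]
        # Score above 0.5 when v lies closer to the positive centroid.
        return 1.0 / (1.0 + math.exp(SHARPNESS * (abs(v - mu_pos) - abs(v - mu_neg))))

    return fit, predict


def out_of_fold(model, X, y, k=5):
    """Score every sample with a copy of the model that never saw it."""
    fit, predict = model
    oof = [0.0] * len(X)
    for fold in range(k):
        train = [i for i in range(len(X)) if i % k != fold]
        params = fit([X[i] for i in train], [y[i] for i in train])
        for i in range(fold, len(X), k):
            oof[i] = predict(params, X[i])
    return oof


def fit_logistic(Z, y, lr=0.5, epochs=500):
    """Meta-learner: logistic regression trained by batch gradient descent."""
    w = [0.0] * (len(Z[0]) + 1)  # last entry is the bias
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for z, t in zip(Z, y):
            s = sum(wi * zi for wi, zi in zip(w, z)) + w[-1]
            err = 1.0 / (1.0 + math.exp(-s)) - t
            for j, zj in enumerate(z):
                grad[j] += err * zj
            grad[-1] += err
        w = [wi - lr * g / len(Z) for wi, g in zip(w, grad)]
    return w


def stack(X, y, base_models, k=5):
    """Stacked generalization: meta-features are out-of-fold base predictions."""
    columns = [out_of_fold(m, X, y, k) for m in base_models]
    Z = [list(row) for row in zip(*columns)]
    meta_w = fit_logistic(Z, y)
    # Refit each base model on all training data for use at prediction time.
    fitted = [(predict, fit(X, y)) for fit, predict in base_models]

    def predict_stable(x):
        z = [predict(params, x) for predict, params in fitted]
        s = sum(wi * zi for wi, zi in zip(meta_w, z)) + meta_w[-1]
        return 1.0 / (1.0 + math.exp(-s))

    return predict_stable


X, y = make_toy_data(200, seed=0)
model = stack(X, y, [centroid_model(0), centroid_model(1)])
print(round(model((0.9, 0.8)), 3), round(model((0.1, 0.2)), 3))
```

Refitting the base models on the full training set after building the meta-features mirrors common stacking practice; averaging the k fold models instead is an equally valid choice.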

2. Protocol for λ-Dynamics with Competitive Screening (CS) for Protein Stability [3]

- Objective: To calculate the relative change in unfolding free energy (ΔΔG) for all 20 amino acid mutations at a single residue.

- System Setup: Prepare atomic coordinates for the folded protein (e.g., Protein G B1 domain) and an unfolded peptide reference state. Parameterize all amino acid mutations at the target site using a dual-topology approach within the CHARMM force field [3].

- Bias Training (Unfolded Ensemble): Run λ-dynamics simulations for the unfolded peptide ensemble using Adaptive Landscape Flattening (ALF) to train a bias potential that ensures equal sampling of all mutant states [3].

- Competitive Screening (Folded Ensemble): Transfer the bias potential trained in the unfolded state to the simulation of the folded protein. This biases sampling toward mutants that are more stable in the folded state relative to the unfolded reference [3].

- Free Energy Calculation: The relative free energy for each mutant is calculated from the difference in alchemical free energies between the folded and unfolded ensembles. Perform multiple independent trials (e.g., 5 trials with 5 replicas each) for error estimation via bootstrapping [3].

- Validation: Compare calculated ΔΔG values to experimentally measured unfolding free energies. Report Pearson correlation (R) and RMSE for surface and buried mutation sites separately [3].
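The free energy and validation arithmetic in this protocol can be sketched in a few lines of Python. The function names are hypothetical, and the sign convention is an assumption stated in the comments: closing the thermodynamic cycle gives ΔΔG_unfold = ΔG_alch(unfolded) − ΔG_alch(folded), so positive values mean the mutation stabilizes the fold. The bootstrap resamples independent trial estimates, a simplification of resampling over trials and replicas.

```python
import math
import random
import statistics


def ddg_unfolding(dg_alch_folded, dg_alch_unfolded):
    """Relative unfolding free energy of a mutant from the thermodynamic cycle.

    Assumed convention: ddG = dG_alch(unfolded) - dG_alch(folded), so a mutation
    that is more favorable in the folded state gives a positive (stabilizing) value.
    """
    return dg_alch_unfolded - dg_alch_folded


def bootstrap_sem(trial_values, n_boot=2000, seed=0):
    """Standard error of the mean estimated by resampling independent trials."""
    rng = random.Random(seed)
    means = [statistics.fmean(rng.choices(trial_values, k=len(trial_values)))
             for _ in range(n_boot)]
    return statistics.stdev(means)


def pearson_r(x, y):
    """Pearson correlation between calculated and experimental ddG values."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def rmse(pred, ref):
    """Root-mean-square error in the units of the inputs (e.g. kcal/mol)."""
    return math.sqrt(statistics.fmean([(p - r) ** 2 for p, r in zip(pred, ref)]))


# Hypothetical per-trial alchemical free energies (kcal/mol) for one mutant, five trials.
pairs = [(-3.1, -1.0), (-2.9, -1.1), (-3.0, -0.9), (-3.2, -1.0), (-2.8, -1.2)]
trials = [ddg_unfolding(f, u) for f, u in pairs]
print(statistics.fmean(trials), bootstrap_sem(trials))
```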

Visualization of Methodologies and Workflows

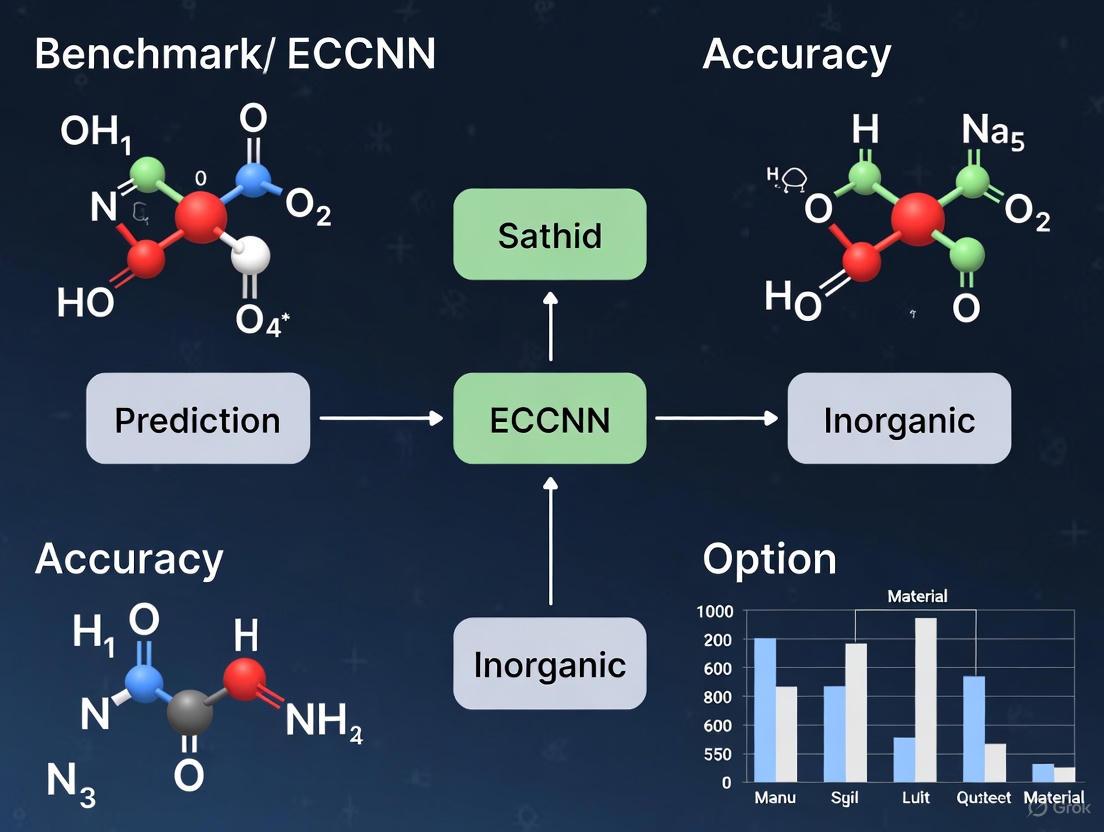

ECSG Ensemble Model Workflow [1]

Matbench Discovery Evaluation Logic [4]

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Stability Prediction

| Item | Function & Application | Key Consideration |
|---|---|---|
| Curated Materials Databases (MP, OQMD, JARVIS) [1] [4] | Provide labeled datasets (formation energy, stability) for training and benchmarking ML models. | Data quality, scope of chemistries, and accessibility of the convex hull data are critical. |
| Simulation and ML Packages (ALF, CHGNet, M3GNet) [3] [4] | Software implementing specific algorithms such as λ-dynamics bias training (ALF) or universal interatomic potentials (CHGNet, M3GNet). | Integration with simulation software (CHARMM, LAMMPS, VASP) and ease of use are vital. |
| Validated Force Fields (CHARMM36, AMBER) [3] | Parameter sets defining energy terms for atoms in biomolecular simulations like λ-dynamics. | Appropriate for the system (proteins, water, ions); impacts accuracy of free energy estimates. |
| High-Throughput DFT Workflow Tools (AFLOW, pymatgen) [4] | Automate the process of running and analyzing thousands of DFT calculations for validation. | Robust error handling and integration with supercomputing queues are necessary. |
| Benchmarking Suites (Matbench Discovery) [4] | Provide standardized tasks, datasets, and metrics to objectively compare model performance. | Ensures fair comparison and highlights a model's prospective utility in real discovery campaigns. |

The discovery of novel functional materials is a cornerstone of technological advancement, from clean energy solutions to next-generation pharmaceuticals. Central to this pursuit is the accurate prediction of a material's thermodynamic stability, a prerequisite for successful synthesis and application [1]. Computational models have emerged as powerful tools to navigate the vast chemical space, traditionally dominated by resource-intensive density functional theory (DFT) calculations and experimental trial-and-error [5]. Two dominant paradigms have crystallized in this field: composition-based models and structure-based models. Composition-based models predict properties using only the chemical formula, while structure-based models require additional information on the geometric arrangement of atoms within a crystal lattice [1].

This comparison guide is framed within a critical research context: the benchmarking of advanced stability prediction models, specifically the Roost, Magpie, and ECCNN frameworks. Research has shown that individually, these models possess inherent biases—Roost assumes strong interatomic interactions in a complete graph, Magpie relies on statistical summaries of elemental properties, and ECCNN introduces a novel focus on electron configuration [1]. The drive to overcome the limitations of single-model approaches has led to the development of ensemble methods like the Electron Configuration models with Stacked Generalization (ECSG), which integrates these three distinct models to mitigate inductive bias and achieve superior predictive performance [1]. The subsequent sections will objectively dissect the fundamental advantages and limitations of composition and structure-based approaches, supported by experimental data, to illuminate their respective roles in accelerating the discovery pipeline for researchers and drug development professionals.

Comparative Analysis: Advantages, Limitations, and Performance Data

The choice between composition-based and structure-based modeling is pivotal, dictated by the stage of discovery, data availability, and the specific property of interest. The table below summarizes their core characteristics, advantages, and limitations.

Table 1: Core Comparison of Composition-Based and Structure-Based Models

| Aspect | Composition-Based Models | Structure-Based Models |
|---|---|---|
| Primary Input | Chemical formula (elemental stoichiometry). | Crystalline structure (atomic coordinates, lattice parameters, space group). |
| Key Advantage | Enable ultra-high-throughput screening of vast, unexplored compositional spaces where structure is unknown [1]. | Capture the fundamental physics of atomic interactions, leading to high accuracy and the ability to model structure-sensitive properties [6]. |
| Major Limitation | Cannot distinguish between polymorphs (different structures with the same composition) and may miss properties dictated by geometry [1]. | Require a known or hypothesized crystal structure, which is often unavailable for novel materials and costly to obtain via DFT or experiment [1]. |
| Data Efficiency | Can achieve high performance with less data; the ECSG ensemble matched benchmarks using one-seventh the data of a prior model [1]. | Typically require large, high-quality structural datasets for training but exhibit strong scaling laws with increasing data [6]. |
| Computational Cost (Inference) | Extremely low, allowing for the screening of millions of candidates in minutes. | Higher than composition-based, but still orders of magnitude faster than DFT. |
| Generalizability | Can extrapolate to new compositions but may struggle with elements not seen during training without careful feature design [1]. | Excellent generalization within known structural families; emergent generalization to novel structural types (e.g., 5+ element crystals) has been demonstrated at scale [6]. |
| Representative Models | Magpie, Roost, ECCNN, CrabNet [1] [7]. | Crystal Graph CNN (CGCNN), MEGNet, Graph Networks for Materials Exploration (GNoME) [6] [8]. |
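The polymorph limitation in the table follows directly from what a composition-based model sees. This sketch reduces a formula to element fractions; the parser is a hypothetical helper that handles only simple formulas without parentheses or hydrates. Rutile and anatase, both TiO2, yield the identical input, and so does a doubled formula unit such as Ti2O4, so any model operating on this representation must assign them the same prediction.

```python
import re
from collections import defaultdict

TOKEN = re.compile(r"([A-Z][a-z]?)(\d*\.?\d*)")


def fractional_composition(formula):
    """Map a simple formula such as 'Fe2O3' to element fractions summing to 1."""
    tokens = TOKEN.findall(formula)
    # Reject anything this simple grammar cannot represent, e.g. '(OH)2'.
    if "".join(el + amount for el, amount in tokens) != formula:
        raise ValueError(f"unsupported formula: {formula!r}")
    counts = defaultdict(float)
    for element, amount in tokens:
        counts[element] += float(amount) if amount else 1.0
    total = sum(counts.values())
    return {el: n / total for el, n in counts.items()}


# Two polymorphs (rutile, anatase) share the formula TiO2, so their inputs coincide.
rutile = fractional_composition("TiO2")
anatase = fractional_composition("TiO2")
print(rutile == anatase, rutile == fractional_composition("Ti2O4"))  # True True
```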

The two paradigms are usually evaluated with different metrics, so their headline numbers are not directly comparable. The ECSG ensemble, a leading composition-based framework, achieved an Area Under the Curve (AUC) score of 0.988 for stability prediction on the JARVIS database [1]. Large-scale structure-based models like GNoME, in contrast, have identified over 2.2 million potentially stable crystal structures, an order-of-magnitude expansion of known stable materials, with a precision (hit rate) for stable predictions exceeding 80% when structural information is available [6].

Table 2: Quantitative Performance Benchmarking

| Model / Framework | Model Type | Key Performance Metric | Result | Context / Dataset |
|---|---|---|---|---|
| ECSG Ensemble [1] | Composition-Based (Ensemble) | Area Under the Curve (AUC) | 0.988 | Stability prediction on JARVIS database. |
| ECSG Ensemble [1] | Composition-Based (Ensemble) | Sample Efficiency | Used 1/7 of the data | To achieve accuracy equivalent to a benchmark model. |
| GNoME [6] | Structure-Based (GNN) | Discovery Hit Rate | > 80% | Precision of stable predictions when structure is provided. |
| GNoME [6] | Structure-Based (GNN) | Stable Materials Discovered | 2.2 million structures | Number of new predictions stable w.r.t. prior convex hull. |
| Bilinear Transduction [7] | Hybrid/OOD Method | Extrapolative Precision Boost | 1.8x for materials | Improvement in recalling high-performing, out-of-distribution candidates. |

A critical challenge for both approaches is Out-of-Distribution (OOD) generalization—predicting properties for materials or property values outside the training domain [7]. While structure-based models show emergent OOD capabilities with scale [6], novel methods like Bilinear Transduction, which learns to predict based on differences between materials rather than absolute representations, have shown promise. This method improved extrapolative precision for solid-state materials by 1.8x and boosted recall of top OOD candidates by up to 3x [7].

Experimental Protocols and Methodologies

Protocol for Composition-Based Ensemble Modeling (ECSG Framework)

The ECSG framework exemplifies a rigorous methodology to overcome the limitations of single composition-based models [1] [9].

- Base Model Training: Three distinct base models are trained independently on labeled stability data (e.g., decomposition energy, ∆H_d):

- ECCNN: A chemical formula is encoded into a 3D tensor (118 × 168 × 8) representing the electron configuration of constituent atoms. This input passes through two convolutional layers (64 filters, 5×5 kernel), batch normalization, max-pooling, and fully connected layers [1].

- Magpie: For a given composition, 22 elemental properties (e.g., atomic number, radius) are used to calculate statistical features (mean, deviation, range, etc.). These features train a gradient-boosted regression tree (XGBoost) model [1].

- Roost: The formula is represented as a complete graph of elements. A graph neural network with an attention mechanism learns message-passing between atoms to model interatomic interactions [1].

- Meta-Dataset Construction via k-Fold Cross-Validation: Each base model generates "out-of-sample" predictions on the training set using k-fold cross-validation. These predictions become the meta-features.

- Stacked Generalization (Meta-Learning): A new dataset is constructed where inputs are the meta-features (predictions from ECCNN, Magpie, Roost) and the target is the true stability label. A final meta-learner (e.g., a linear model) is trained on this dataset to optimally combine the base models' strengths [1].
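The exact layout of ECCNN's 118 × 168 × 8 tensor is not reproduced here. As a rough illustration of the idea only, the sketch below builds a simplified, hypothetical electron-configuration encoding: subshell occupancies from idealized Madelung-rule filling (real exceptions such as Cr and Cu are ignored), placed in a 118-row grid indexed by atomic number and scaled by each element's fraction in the formula. A convolutional network would then learn filters over such a grid.

```python
# Subshells in Madelung (n + l) filling order; capacities sum to 118 electrons.
SUBSHELLS = ["1s", "2s", "2p", "3s", "3p", "4s", "3d", "4p", "5s", "4d",
             "5p", "6s", "4f", "5d", "6p", "7s", "5f", "6d", "7p"]
CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}


def occupancy(z):
    """Idealized Madelung-rule filling; real exceptions (e.g. Cr, Cu) are ignored."""
    if not 1 <= z <= 118:
        raise ValueError("atomic number must be in 1..118")
    remaining, occ = z, []
    for shell in SUBSHELLS:
        n = min(remaining, CAPACITY[shell[-1]])
        occ.append(n)
        remaining -= n
    return occ


def ec_matrix(fractions_by_z):
    """Hypothetical 118 x 19 grid: row Z-1 holds occupancies scaled by the element's fraction."""
    grid = [[0.0] * len(SUBSHELLS) for _ in range(118)]
    for z, frac in fractions_by_z.items():
        grid[z - 1] = [frac * n for n in occupancy(z)]
    return grid


grid = ec_matrix({26: 0.4, 8: 0.6})  # Fe2O3 expressed by atomic number
print(occupancy(26)[:7], grid[7][:3])
```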

Protocol for Structure-Based Discovery with Active Learning (GNoME-Like)

This protocol outlines the iterative active learning cycle used for large-scale structural discovery [6].

- Candidate Generation:
  - Generate diverse candidate crystal structures using methods like symmetry-aware partial substitutions (SAPS) or ab initio random structure searching (AIRSS).

- Model-Based Filtration:
  - Use a pre-trained graph neural network (GNN) to predict the energy and stability of each candidate. The GNN represents the crystal as a graph with atoms as nodes and bonds as edges, passing messages to capture atomic interactions [6].
  - Filter candidates based on predicted stability (e.g., decomposition energy below a threshold), prioritizing those most likely to be stable.

- First-Principles Validation:
  - Perform DFT calculations on the top-filtered candidates to obtain accurate energies and relax the structures.

- Active Learning Loop:
  - Add the newly computed DFT data (both stable and unstable outcomes) to the training database.
  - Re-train or fine-tune the GNN model on the expanded dataset. This iterative loop progressively improves the model's accuracy and discovery "hit rate" [6].
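The loop above can be mimicked with a toy, standard-library Python sketch. Everything in it is hypothetical: a one-dimensional number stands in for a candidate crystal, a quadratic function stands in for DFT, and a k-nearest-neighbor average stands in for the GNN (so "retraining" simply means adding the new labels to its data). It shows only the control flow (generate, filter with the surrogate, validate with the expensive oracle, keep both stable and unstable results, repeat) and how a per-round hit rate is recorded.

```python
import random


def true_energy(x):
    """Hypothetical stand-in for a DFT decomposition energy (eV/atom)."""
    return (x - 0.7) ** 2 - 0.05


def knn_predict(train, x, k=3):
    """Hypothetical surrogate: mean energy of the k nearest labelled candidates."""
    nearest = sorted(train, key=lambda pair: abs(pair[0] - x))[:k]
    return sum(e for _, e in nearest) / len(nearest)


def active_learning(n_rounds=5, batch=10, threshold=0.0, seed=0):
    rng = random.Random(seed)
    pool = [rng.random() for _ in range(500)]           # candidate generation
    train = [(x, true_energy(x)) for x in pool[:5]]     # small seed dataset
    pool = pool[5:]
    hit_rates = []
    for _ in range(n_rounds):
        # Model-based filtration: rank the pool by predicted energy.
        ranked = sorted(pool, key=lambda x: knn_predict(train, x))
        chosen, pool = ranked[:batch], ranked[batch:]
        # "First-principles" validation of the shortlisted candidates.
        labelled = [(x, true_energy(x)) for x in chosen]
        hit_rates.append(sum(e < threshold for _, e in labelled) / batch)
        # Stable and unstable outcomes both enlarge the training set.
        train.extend(labelled)
    return hit_rates, train


hit_rates, train = active_learning()
print(hit_rates, len(train))
```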

Validation Protocol for Novel Predictions

- First-Principles Confirmation: Any novel composition or structure predicted to be stable by an ML model must be validated by high-fidelity DFT calculations to confirm its energy is on or near the convex hull of stable phases [1] [6].

- Experimental Realization: The ultimate validation is synthesis. Promising candidates confirmed by DFT are targeted for experimental synthesis (e.g., solid-state reaction, vapor deposition). Techniques like X-ray diffraction are then used to confirm the predicted crystal structure [6].
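Being "on or near the convex hull" can be made concrete for a binary A-B system. This sketch, a set of hypothetical helpers in plain Python, builds the lower convex hull of formation energy versus composition with Andrew's monotone-chain algorithm and reports a phase's energy above the hull; a phase is then typically labeled stable when that value is zero or within a small tolerance. Production work would use established tooling (for example pymatgen's phase-diagram analysis module) and handle multicomponent systems. The formation energies in the usage example are made up.

```python
def lower_hull(points):
    """Lower convex hull of (x, formation_energy) points, Andrew's monotone chain."""
    hull = []
    for p in sorted(set(points)):
        # Drop the last hull point while it lies on or above the segment to p.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return hull


def energy_above_hull(x, energy, hull):
    """Energy (eV/atom) of a phase above the hull at B fraction x (0 means on the hull)."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            if x2 == x1:
                return energy - min(y1, y2)
            e_hull = y1 + (y2 - y1) * (x - x1) / (x2 - x1)
            return energy - e_hull
    raise ValueError("composition outside the hull's range")


# Hypothetical A-B system: elemental end members at 0 eV/atom plus three compounds.
points = [(0.0, 0.0), (1.0, 0.0), (0.5, -0.4), (0.25, -0.1), (0.75, -0.3)]
hull = lower_hull(points)
print(hull)  # [(0.0, 0.0), (0.5, -0.4), (0.75, -0.3), (1.0, 0.0)]
print(round(energy_above_hull(0.25, -0.1, hull), 3))  # 0.1
```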

Table 3: Key Computational Tools, Databases, and Resources

| Item / Resource | Primary Function | Relevance to Model Development |
|---|---|---|
| Materials Project (MP) [1] [6] | Open database of computed properties for known and predicted inorganic materials. | Primary source of training data (formation energies, band gaps, structures) for both composition and structure-based models. |
| Open Quantum Materials Database (OQMD) [1] | Database of calculated thermodynamic and structural properties of materials. | Alternative/complementary source of high-throughput DFT data for training and benchmarking. |
| JARVIS Database [1] | Database incorporating DFT, classical force-field, and experimental data. | Used for benchmarking model performance on properties like stability. |
| MatDeepLearn (MDL) Framework [10] | A Python toolkit for developing graph-based deep learning models for materials. | Provides implementations of CGCNN, MEGNet, MPNN, and other GNN architectures for structure-based modeling. |
| Ensemble/Committee Models [9] | A technique using multiple models to make a collective prediction. | Used to quantify prediction uncertainty, which is critical for guiding active learning and identifying unreliable predictions. |
| Density Functional Theory (DFT) Codes (VASP, Quantum ESPRESSO) [6] | First-principles computational method for electronic structure calculation. | The "ground truth" generator for training data and the essential validator for ML model predictions. |

The trajectory of computational materials discovery points toward a synthesis of the two paradigms. Hybrid models that combine the convenience of compositional inputs with structural fidelity represent a key frontier. For instance, the TSGNN model uses a dual-stream architecture, processing topological information via a GNN and spatial information via a CNN, leading to enhanced property prediction [8]. Similarly, the Bilinear Transduction method offers a novel way to improve extrapolation for both composition and structure-based inputs [7]. Furthermore, integrating active learning with autonomous robotic laboratories (self-driving labs) creates a closed-loop discovery engine in which ML models propose candidates, robots synthesize them, and characterization data feed back to improve the models in real time [5].

In conclusion, composition-based and structure-based models are complementary engines for materials discovery. Composition-based models like the ECSG ensemble provide unmatched speed for exploratory screening of uncharted chemical spaces. In contrast, structure-based models like GNoME offer high-fidelity predictions and are indispensable for detailed property analysis and discovery in domains where structural hypotheses can be formulated. The ongoing benchmarking of frameworks like Roost, Magpie, and ECCNN underscores a critical lesson: leveraging the strengths of multiple approaches through ensemble or hybrid methods is a powerful strategy to mitigate individual model limitations, enhance predictive stability, and ultimately accelerate the path to novel functional materials.

Visualizing the Workflows and Model Architectures

Discovery Workflow: Composition vs. Structure-Based Pathways

Diagram 1: Material Discovery Model Pathways

Architecture of the ECSG Ensemble Model

Diagram 2: ECSG Ensemble Model Architecture

The accurate prediction of a material's thermodynamic stability from its composition is a fundamental challenge in accelerating the discovery of new inorganic compounds and, by extension, novel drug substances or delivery systems [1]. Traditional methods like density functional theory (DFT) are accurate but computationally prohibitive for screening vast compositional spaces [1]. This has spurred the development of machine learning (ML) models that use only chemical formulas as input. Among these, the Magpie (Materials-Agnostic Platform for Informatics and Exploration) framework established a robust baseline by deriving rich statistical features from tabulated elemental properties [1]. Its performance is now critically evaluated against next-generation models like the graph-based Roost and the electron-convolutional ECCNN within ensemble frameworks [1]. This guide provides a comparative analysis of these approaches, grounded in experimental benchmarking data, to inform researchers and drug development professionals on selecting and implementing these tools for stability-driven materials discovery.

Core Methodologies & Experimental Protocols

The benchmark is defined by a head-to-head comparison within an ensemble learning framework designed to mitigate the inductive bias inherent in any single modeling approach [1]. The following protocols detail the implementation and evaluation of the key models.

2.1 Model Architectures and Training Protocols

- Magpie: The framework generates a fixed-length feature vector for any chemical composition. It calculates statistical moments (mean, standard deviation, range, etc.) across 22 fundamental elemental properties (e.g., atomic number, radius, electronegativity) for all elements in a compound [1] [11]. These engineered features are typically used to train a supervised learner, such as Gradient Boosted Regression Trees (e.g., XGBoost) [1]. The protocol involves loading composition data, generating attributes via built-in generators, and training the model [11].

- Roost (Representation Learning from Stoichiometry): This model represents a composition as a fully connected graph, where nodes are elements and edges represent interactions [1]. It uses a message-passing graph neural network to learn a compositional embedding, directly learning the relationships between elements from data rather than relying on pre-defined statistics [1].

- ECCNN (Electron Configuration Convolutional Neural Network): This novel model uses the electron configuration of constituent atoms as its primary input [1]. The configuration for each of the 118 elements is encoded into a matrix, which is then processed by convolutional layers to extract patterns related to stability [1]. This approach incorporates quantum-mechanical information without expensive calculation.

- Ensemble Framework (ECSG): The benchmarking study employed a stacked generalization ensemble. The predictions from Magpie, Roost, and ECCNN serve as input features to a meta-learner (a super learner), which produces the final stability prediction [1]. This combines domain knowledge at different scales—atomic properties, interatomic interactions, and electronic structure.
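A Magpie-style feature vector can be sketched in plain Python. This is not the Magpie code base: the hypothetical property table below holds only two illustrative properties (atomic number and Pauling electronegativity) for four elements instead of Magpie's 22 properties across the periodic table, and the statistics (fraction-weighted mean, average deviation, minimum, maximum, range, and the value for the most abundant element) are assumed to follow the usual Magpie definitions.

```python
# Hypothetical, deliberately tiny property table: element -> (atomic number, Pauling electronegativity).
ELEMENT_PROPERTIES = {
    "O": (8, 3.44),
    "Na": (11, 0.93),
    "Cl": (17, 3.16),
    "Fe": (26, 1.83),
}
PROPERTY_NAMES = ("Number", "Electronegativity")


def magpie_like_features(fractions):
    """Fraction-weighted statistics of each elemental property for one composition."""
    features = {}
    for k, name in enumerate(PROPERTY_NAMES):
        values = {el: ELEMENT_PROPERTIES[el][k] for el in fractions}
        mean = sum(fractions[el] * values[el] for el in fractions)
        features[f"mean_{name}"] = mean
        features[f"avg_dev_{name}"] = sum(fractions[el] * abs(values[el] - mean) for el in fractions)
        features[f"min_{name}"] = min(values.values())
        features[f"max_{name}"] = max(values.values())
        features[f"range_{name}"] = max(values.values()) - min(values.values())
        # Mode: property value of the most abundant element.
        features[f"mode_{name}"] = values[max(fractions, key=fractions.get)]
    return features


features = magpie_like_features({"Fe": 0.4, "O": 0.6})  # Fe2O3
print(features["mean_Number"], features["range_Electronegativity"])
```

The resulting fixed-length dictionary (here 12 entries) would then be passed to a conventional learner such as gradient-boosted trees.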

2.2 Benchmarking Datasets and Validation

Experiments were conducted using data from the Joint Automated Repository for Various Integrated Simulations (JARVIS) database [1]. The primary task was binary classification of compound stability, defined by its position relative to the convex hull of formation energies. Standard metrics include Area Under the Receiver Operating Characteristic Curve (AUC), F1-score, and precision. A critical additional metric is sample efficiency, measured by the amount of training data required to achieve a target performance level [1]. Validation included applying the best model to explore new families of materials, such as double perovskite oxides, with subsequent validation via first-principles DFT calculations [1].
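The classification metrics named above can be computed from first principles. This sketch (plain Python, hypothetical function names) computes ROC AUC as the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one, which equals the area under the ROC curve, together with precision, recall, and F1 at an assumed 0.5 decision threshold. The pairwise AUC loop is O(n²) and meant for illustration; libraries such as scikit-learn provide efficient rank-based implementations (`roc_auc_score`).

```python
def roc_auc(labels, scores):
    """P(score of a random stable compound > score of a random unstable one); ties count 1/2."""
    pos = [s for s, t in zip(scores, labels) if t == 1]
    neg = [s for s, t in zip(scores, labels) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def precision_recall_f1(labels, scores, threshold=0.5):
    """Threshold the scores, then count true positives, false positives, false negatives."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for p, t in zip(preds, labels) if p == 1 and t == 1)
    fp = sum(1 for p, t in zip(preds, labels) if p == 1 and t == 0)
    fn = sum(1 for p, t in zip(preds, labels) if p == 0 and t == 1)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


labels = [1, 1, 0, 0]          # 1 = stable, 0 = unstable (toy labels)
scores = [0.9, 0.4, 0.6, 0.1]  # toy model scores
print(roc_auc(labels, scores))              # 0.75
print(precision_recall_f1(labels, scores))  # (0.5, 0.5, 0.5)
```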

The Magpie Feature Engineering Workflow

Performance Comparison: Quantitative Benchmarking

The following tables summarize the key performance metrics from the comparative study, highlighting the strengths and trade-offs of each approach [1].

Table 1: Core Performance Metrics on JARVIS Stability Classification Task

| Model | Primary Architecture | Key Input Representation | AUC Score | Precision | F1-Score | Interpretability |
|---|---|---|---|---|---|---|
| Magpie | Gradient Boosted Trees | Statistical Features from Elemental Properties | 0.942 | 0.891 | 0.901 | High (Explicit features) |
| Roost | Graph Neural Network | Composition as Complete Graph | 0.961 | 0.908 | 0.917 | Medium (Learned embeddings) |
| ECCNN | Convolutional Neural Network | Electron Configuration Matrices | 0.950 | 0.899 | 0.908 | Low (Patterns in EC space) |
| ECSG (Ensemble) | Stacked Generalization | Outputs of Magpie, Roost, ECCNN | 0.988 | 0.941 | 0.943 | Medium (Meta-model dependent) |

Table 2: Sample Efficiency and Computational Considerations

| Model | Relative Sample Efficiency* | Training Speed | Inference Speed | Data Dependency | Primary Advantage |
|---|---|---|---|---|---|
| Magpie | Baseline (1x) | Fast | Very Fast | Low | Speed, interpretability, robustness |
| Roost | ~3x | Medium | Fast | High | Captures complex element interactions |
| ECCNN | ~2x | Slow | Medium | Medium | Incorporates quantum-mechanical insight |
| ECSG (Ensemble) | ~7x | Very Slow | Slow | Very High | Maximum predictive accuracy |

*Sample efficiency denotes the amount of training data required to achieve a performance target. An efficiency of 7x indicates the ensemble needed only 1/7th the data of a baseline model to achieve the same AUC [1].
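The footnote's definition can be expressed as a ratio read off two learning curves. This sketch assumes each curve is a list of (training-set size, score) pairs sorted by size, interpolates linearly between measured points to find where the target score is first reached, and divides the baseline's requirement by the model's. The function names are hypothetical, and the curves in the usage example are invented solely to produce a 7x result; they are not the study's data.

```python
def data_needed(curve, target):
    """Smallest training-set size reaching `target`, interpolating linearly between points.

    curve: list of (n_train, score) pairs sorted by n_train.
    Returns None if the curve never reaches the target.
    """
    prev = None
    for n, score in curve:
        if score >= target:
            if prev is None:
                return float(n)
            n0, s0 = prev
            return n0 + (target - s0) * (n - n0) / (score - s0)
        prev = (n, score)
    return None


def relative_sample_efficiency(baseline_curve, model_curve, target):
    """How many times less data the model needs than the baseline to hit `target`."""
    nb = data_needed(baseline_curve, target)
    nm = data_needed(model_curve, target)
    if nb is None or nm is None:
        raise ValueError("target not reached by both curves")
    return nb / nm


baseline = [(1000, 0.80), (7000, 0.90), (20000, 0.93)]  # invented learning curve
ensemble = [(1000, 0.90), (7000, 0.95)]                 # invented learning curve
print(relative_sample_efficiency(baseline, ensemble, target=0.90))  # ~7.0
```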

Table 3: Key Software and Data Resources for Stability Prediction

| Item Name | Type | Function/Benefit | Reference/Access |
|---|---|---|---|
| Magpie Python Module | Software Library | Provides attribute generators to compute statistical features from compositions for use in ML pipelines. | [12] |
| JARVIS, Materials Project, OQMD | Materials Database | Curated repositories of DFT-calculated formation energies and properties for training and validation. | [1] |
| Elemental Property Lookup Tables | Data File | Essential for Magpie. Contains values for properties like atomic radius and electronegativity for all elements. | Bundled with Magpie [11] |
| Weka / scikit-learn | ML Library | Integrated with Magpie for building final regression or classification models on the generated features. | [11] |
| CompositionEntry Class | Data Structure (Magpie) | Standardized object to handle and parse chemical formulas within the Magpie framework. | [12] |

Integrated View: The Ensemble Pathway to Robust Prediction

The ensemble framework (ECSG) demonstrates that combining diverse modeling philosophies yields superior results [1]. The following diagram illustrates how the three benchmarked models contribute complementary knowledge to the final prediction.

The ECSG Ensemble Framework for Stability Prediction

Discussion and Strategic Recommendations

The benchmarking data reveal a clear trade-off between model simplicity and predictive power. Magpie remains an excellent choice for initial screening and interpretable studies due to its speed and the direct physical meaning of its features [1] [11]. Its main limitation is the ceiling imposed by manually engineered features. Roost and ECCNN show higher potential accuracy by learning more complex representations, but at the cost of reduced interpretability and greater data requirements [1].

For mission-critical applications where accuracy is paramount, such as prioritizing compounds for experimental synthesis in drug development, the ensemble (ECSG) approach is recommended. Its dramatically higher sample efficiency means reliable models can be built with smaller datasets, a significant advantage in exploring novel chemical spaces [1]. The choice of tool should align with the project's stage: use Magpie for rapid, interpretable prototyping, and advance to ensemble methods for final candidate selection and validation.

The discovery and development of novel materials and drug candidates are fundamentally constrained by the vastness of chemical space. Conventional methods for assessing thermodynamic stability, such as density functional theory (DFT) calculations, are computationally intensive, creating a significant bottleneck in research and development pipelines [9]. Machine learning (ML) offers a transformative paradigm by enabling rapid, accurate predictions of material stability directly from chemical composition, dramatically accelerating the identification of viable candidates [9]. Within this ML landscape, graph neural networks (GNNs) have emerged as a particularly powerful architecture for modeling atomic systems. By representing atoms as nodes and bonds as edges, GNNs naturally capture the relational and topological information critical to understanding material properties [13]. This comparison guide objectively evaluates the Roost (Representation Learning from Stoichiometry) architecture against other prominent models—Magpie and ECCNN—within the context of an ensemble framework for predicting inorganic compound stability. The analysis is framed within a broader thesis on benchmarking prediction accuracy, providing researchers and drug development professionals with a clear, data-driven assessment of these tools [9].

Model Comparison: Architectural Approaches to Composition-Based Prediction

The performance of ML models in predicting material stability is deeply influenced by their underlying architectural philosophy and how they represent chemical information. The following table details the core characteristics of the three primary models within the Electron Configuration models with Stacked Generalization (ECSG) ensemble framework [9].

Table 1: Architectural Comparison of Roost, Magpie, and ECCNN Models

| Model Name | Core Architectural Principle | Input Feature Representation | Domain Knowledge Leveraged | Primary Algorithm |
|---|---|---|---|---|
| Roost [9] | Relational Learning via Graph Attention | A complete graph where nodes are elements and edges represent interactions. | Interatomic interactions and bonding relationships. | Graph Neural Network (GNN) with attention mechanism. |
| Magpie [9] | Statistical Feature Engineering | Statistical features (mean, deviation, range, etc.) of 22 fundamental elemental properties. | Intrinsic atomic properties (mass, radius, electronegativity, etc.). | Gradient-Boosted Regression Trees (XGBoost). |
| ECCNN [9] | Spatial Feature Extraction via Convolutions | A 3D tensor (118 × 168 × 8) encoding the electron configuration of constituent atoms. | Quantum-mechanical electron configuration. | Convolutional Neural Network (CNN). |

Roost operates on a graph representation of the chemical formula. Its key innovation is the use of an attention-based message-passing mechanism, which allows the model to dynamically learn and weigh the significance of interactions between different element types within a compound [9]. This enables it to capture complex, non-linear relationships that simple statistics might miss. In contrast, Magpie relies on carefully crafted statistical summaries of elemental properties, making it a robust, interpretable, and computationally efficient model derived from domain expertise [9]. ECCNN takes a more fundamental quantum mechanical approach by directly processing electron orbital information through convolutional filters, aiming to learn stability patterns from first-principles electronic structure data [9].
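To make the Magpie-style approach concrete, the sketch below computes fraction-weighted statistics of elemental properties for a single formula. It is a minimal sketch under stated assumptions: the two-property table `ELEMENT_PROPS` (Pauling electronegativity and atomic number for four elements) and the function name `magpie_style_features` are hypothetical, whereas Magpie itself draws on 22 elemental properties.

```python
from typing import Dict

# Hypothetical property table for illustration only; Magpie uses 22 elemental properties.
ELEMENT_PROPS: Dict[str, Dict[str, float]] = {
    "Na": {"electronegativity": 0.93, "atomic_number": 11.0},
    "Cl": {"electronegativity": 3.16, "atomic_number": 17.0},
    "Fe": {"electronegativity": 1.83, "atomic_number": 26.0},
    "O": {"electronegativity": 3.44, "atomic_number": 8.0},
}
PROPERTY_NAMES = ("electronegativity", "atomic_number")


def magpie_style_features(composition: Dict[str, float]) -> Dict[str, float]:
    """Fraction-weighted statistics of elemental properties for one formula."""
    total = sum(composition.values())
    fractions = {el: amount / total for el, amount in composition.items()}
    features: Dict[str, float] = {}
    for prop in PROPERTY_NAMES:
        values = {el: ELEMENT_PROPS[el][prop] for el in fractions}
        mean = sum(fractions[el] * values[el] for el in fractions)
        # Magpie-style "average deviation": fraction-weighted mean absolute deviation.
        avg_dev = sum(fractions[el] * abs(values[el] - mean) for el in fractions)
        features[f"mean_{prop}"] = mean
        features[f"avg_dev_{prop}"] = avg_dev
        features[f"min_{prop}"] = min(values.values())
        features[f"max_{prop}"] = max(values.values())
        features[f"range_{prop}"] = max(values.values()) - min(values.values())
    return features


nacl = magpie_style_features({"Na": 1, "Cl": 1})
print(nacl)
```

For NaCl the mean electronegativity is (0.93 + 3.16) / 2 = 2.045; a tree ensemble such as XGBoost would then be trained on fixed-length vectors of this kind.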

Experimental Protocols & Benchmarking Methodology

The comparative performance of Roost, Magpie, and ECCNN is best understood within the ECSG ensemble framework, which employs a stacked generalization protocol to mitigate individual model bias and enhance predictive performance [9].

The ECSG Ensemble Framework Protocol

The ECSG framework integrates the three base models in a two-level structure [9]:

- Base-Level Model Training: The Roost, Magpie, and ECCNN models are independently trained on the same dataset of known stable and unstable compounds.

- Cross-Validation Prediction Generation: A k-fold cross-validation strategy is run on the training set. The predictions from each base model on the held-out validation folds are collected. These "out-of-sample" predictions form a new set of features, called meta-features.

- Meta-Dataset Construction: A new dataset is created where each sample's input features are the three meta-features (predictions from Roost, Magpie, and ECCNN), and the target is the true stability label.

- Meta-Model Training: A final "meta-learner" (e.g., a linear model or another XGBoost model) is trained on this new dataset to learn the optimal way to combine the predictions of the three base models [9].
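The cross-validation step is the part of stacking that is easiest to get wrong, so a minimal pure-Python sketch of out-of-fold meta-feature generation follows. The base "models" are hypothetical one-feature centroid classifiers standing in for Roost, Magpie, and ECCNN, the toy dataset is invented, and assigning folds by index modulo k is an illustrative choice rather than the ECSG implementation.

```python
import math
from typing import Callable, List, Sequence

Predictor = Callable[[Sequence[float]], float]
FitFn = Callable[[List[Sequence[float]], List[int]], Predictor]


def make_centroid_model(j: int) -> FitFn:
    """Hypothetical stand-in base model that looks at feature j only."""
    def fit(X: List[Sequence[float]], y: List[int]) -> Predictor:
        pos = [x[j] for x, t in zip(X, y) if t == 1]
        neg = [x[j] for x, t in zip(X, y) if t == 0]
        mu_pos, mu_neg = sum(pos) / len(pos), sum(neg) / len(neg)

        def predict(x: Sequence[float]) -> float:
            # Closer to the stable-class centroid gives a probability above 0.5.
            d = abs(x[j] - mu_neg) - abs(x[j] - mu_pos)
            return 1.0 / (1.0 + math.exp(-d))
        return predict
    return fit


def out_of_fold_predictions(X: List[Sequence[float]], y: List[int],
                            fit_fns: List[FitFn], k: int = 5) -> List[List[float]]:
    """Each sample's meta-features come only from models that never saw it."""
    n = len(X)
    meta = [[0.0] * len(fit_fns) for _ in range(n)]
    for fold in range(k):
        held_out = [i for i in range(n) if i % k == fold]
        train = [i for i in range(n) if i % k != fold]
        X_tr, y_tr = [X[i] for i in train], [y[i] for i in train]
        for m, fit in enumerate(fit_fns):
            predict = fit(X_tr, y_tr)  # refit on the k-1 training folds
            for i in held_out:
                meta[i][m] = predict(X[i])
    return meta


# Invented toy data: feature 0 is informative, feature 1 is mostly noise.
X = [[-1.0, 0.2], [0.9, -0.3], [-0.8, 0.1], [1.1, 0.4], [-1.2, -0.2],
     [1.0, 0.0], [-0.9, 0.3], [0.8, -0.1], [-1.1, 0.0], [1.2, 0.2]]
y = [0, 1, 0, 1, 0, 1, 0, 1, 0, 1]
meta = out_of_fold_predictions(X, y, [make_centroid_model(0), make_centroid_model(1)])
print(meta)
```

Each row of `meta` has one column per base model; paired with the true labels, these rows form the meta-dataset on which the meta-learner is trained.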

Validation and Benchmarking Protocol

Model performance was rigorously validated using established computational materials databases [9]. The protocol involves:

- Training Data Source: Models are trained on formation energy and stability data from large-scale DFT-calculated databases such as the Materials Project (MP) and the Open Quantum Materials Database (OQMD) [9].

- Benchmarking Dataset: Performance metrics are evaluated on curated datasets from resources like the JARVIS database [9].

- Accuracy Metric: The primary metric for comparison is the Area Under the Curve (AUC) of the Receiver Operating Characteristic (ROC) curve, which evaluates the model's ability to discriminate between stable and unstable compounds [9].

- First-Principles Corroboration: Top predictions for novel stable compounds are validated through follow-up high-fidelity DFT calculations to confirm their thermodynamic stability (placement on the convex hull) [9].
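AUC-ROC has a useful probabilistic reading: it is the chance that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one. The pure-Python sketch below computes it directly from that definition, counting ties as one half; the quadratic pair loop is chosen for clarity, and a library routine such as scikit-learn's `roc_auc_score` would be the practical choice on large datasets.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random stable compound (label 1) outscores a random
    unstable one (label 0); tied scores count as half a win."""
    pos = [s for s, t in zip(scores, labels) if t == 1]
    neg = [s for s, t in zip(scores, labels) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one stable and one unstable example")
    wins = 0.0
    for p in pos:
        for q in neg:
            wins += 1.0 if p > q else 0.5 if p == q else 0.0
    return wins / (len(pos) * len(neg))


print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75
```

An AUC of 0.988 therefore means that, for almost every stable/unstable pair, the model ranks the stable compound higher.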

Diagram 1: ECSG Ensemble Model Training Workflow

Performance Data & Comparative Analysis

Benchmark Performance and Sample Efficiency

The integrated ECSG ensemble, which leverages the strengths of Roost, Magpie, and ECCNN, achieves state-of-the-art performance. Quantitative benchmarks highlight both the accuracy and the sample efficiency of the ensemble approach [9].

Table 2: Quantitative Performance Benchmark of the ECSG Ensemble

| Performance Metric | ECSG Ensemble Result | Context & Comparative Advantage | Evaluation Dataset |
|---|---|---|---|
| Area Under Curve (AUC) | 0.988 [9] | Demonstrates exceptional discriminative accuracy between stable/unstable compounds. | JARVIS Database [9] |
| Sample Efficiency | Achieves equivalent accuracy using ~1/7 of the data [9] | The ensemble requires significantly less training data than a single model to reach the same accuracy. | JARVIS Database [9] |

Performance on Standard ML-IAP Benchmark Tasks

Beyond stability prediction, graph neural networks, the architecture family to which Roost belongs, are also foundational for Machine Learning Interatomic Potentials (ML-IAPs), which predict energies and forces for molecular dynamics. Roost itself takes only composition as input and does not predict forces, so the benchmarks below indicate the general capability of GNNs in modeling atomic interactions rather than Roost's own performance [14].

Table 3: Model Performance on Common ML-IAP Benchmark Datasets

| Dataset | Description | Typical State-of-the-Art Performance | Relevance to Stability Prediction |
|---|---|---|---|
| QM9 [14] | 134k small organic molecules (C, H, O, N, F) at equilibrium geometries. | Energy MAE: < 1 meV/atom for top models [14]. QM9 provides no force labels, so force accuracy is not benchmarked on it. | Tests model accuracy on diverse, quantum-mechanical ground-truth data. |
| MD17 / MD22 [14] | Molecular dynamics trajectories for molecules. | Force MAE can be as low as 2-5 meV/Å for models like GemNet [14]. | Validates model ability to capture forces and dynamics on a learned potential energy surface. |

Diagram 2: Roost's GNN Architecture with Attention

Implementing and leveraging models like Roost requires access to specific computational tools and databases. The following table lists critical resources for researchers in this field [9].

Table 4: Essential Computational Tools & Databases for ML-Driven Discovery

| Item / Resource | Primary Function / Application | Key Features for Stability Prediction |
|---|---|---|
| Materials Project (MP) [9] | Database for acquiring training data on formation energies and compound stability. | Contains extensive DFT-calculated data for hundreds of thousands of inorganic compounds. |
| Open Quantum Materials Database (OQMD) [9] | Database for acquiring training data on formation energies and compound stability. | A large repository of calculated thermodynamic and structural properties. |
| JARVIS Database [9] | Database used for benchmarking model performance. | Includes a wide range of computed properties for materials, useful for validation. |
| Ensemble/Committee Model [9] | A technique for quantifying prediction uncertainty. | Uses predictions from multiple models to estimate confidence, crucial for guiding active learning. |
| DeePMD-kit [14] | Software for training and running deep potential molecular dynamics. | Exemplifies scalable ML-IAP implementation; relevant for extending Roost-like models to force field development. |
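The committee idea in Table 4 can be sketched in a few lines: the spread of several models' predictions for the same compound serves as an uncertainty estimate, and the most disputed candidates are natural picks for the next round of DFT labeling in an active-learning loop. The candidate names and probabilities below are invented, and the population standard deviation is one reasonable spread measure among several.

```python
import statistics
from typing import Dict, List


def committee_prediction(predictions: List[float]) -> Dict[str, float]:
    """Consensus (mean) and disagreement (population std) across committee members."""
    return {
        "mean": statistics.fmean(predictions),
        "std": statistics.pstdev(predictions),
    }


def rank_by_disagreement(committee: Dict[str, List[float]], top_n: int) -> List[str]:
    """Candidates whose committee members disagree most come first."""
    spread = {name: committee_prediction(preds)["std"] for name, preds in committee.items()}
    return sorted(spread, key=spread.get, reverse=True)[:top_n]


# Invented stability probabilities from three models for three hypothetical candidates.
COMMITTEE = {
    "cand_A": [0.91, 0.88, 0.93],
    "cand_B": [0.20, 0.75, 0.55],
    "cand_C": [0.05, 0.10, 0.07],
}
print(rank_by_disagreement(COMMITTEE, top_n=1))  # ['cand_B']
```

Here `cand_B`, where the models disagree most, would be sent for DFT verification first, while the confidently stable `cand_A` and confidently unstable `cand_C` add little new information.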

In the critical task of predicting inorganic compound stability, the Roost architecture provides a powerful, relationally aware complement to the feature-driven Magpie and the electronic-structure-focused ECCNN. While Roost's graph-based, attention-driven approach excels at modeling complex interatomic interactions, its greatest predictive power is realized within ensemble frameworks like ECSG, which synthesize the strengths of diverse modeling philosophies to achieve superior accuracy and data efficiency [9].

For drug development professionals, this translates to a tangible acceleration of the discovery pipeline. The ability to rapidly and accurately screen vast compositional spaces for stable compounds can drastically reduce the time and cost associated with identifying promising inorganic candidates for applications such as contrast agents, drug delivery vehicles, or bioactive implants. Future advancements will likely involve the tighter integration of GNN-based stability predictors with automated experimental synthesis platforms and the extension of these models to predict not just stability but also functional properties critical for biomedical application, heralding a new era of AI-driven rational design in materials and drug development.

The accurate prediction of thermodynamic stability is a cornerstone for the efficient discovery of novel inorganic compounds and functional materials. This task, central to a broader thesis on benchmarking prediction accuracy, presents a significant challenge due to the combinatorial vastness of chemical space and the subtle energy differences that determine stability [15]. Traditional methods, such as Density Functional Theory (DFT), provide accuracy but are computationally prohibitive for high-throughput screening [1]. Consequently, machine learning (ML) models that use only chemical composition as input have emerged as promising, rapid alternatives [16].

However, a critical examination reveals that many compositional models achieve low error in predicting formation energy but perform poorly on the definitive metric of stability (decomposition energy, ΔH_d), which requires precise relative energy comparisons within a chemical space [15]. This performance gap underscores a key thesis: model performance is intrinsically linked to the fundamental physical principles embedded within its input representation. Many existing models rely on hand-crafted features (e.g., Magpie) or learned stoichiometric relationships (e.g., Roost), which may introduce inductive biases or lack direct electronic-structure insight [1].

The Electron Configuration Convolutional Neural Network (ECCNN) introduces a paradigm shift by using the raw electron configuration (EC) of constituent elements as its foundational input [1]. This approach grounds the model in a first-principles physical descriptor—the distribution of electrons in atomic orbitals—which is directly linked to chemical bonding and stability. This article presents a comparative guide evaluating the ECCNN framework against established benchmarks like Roost and Magpie, assessing its performance in stability prediction within a rigorous benchmarking thesis focused on accuracy, data efficiency, and generalizability.

Model Architectures and Methodological Comparison

The models discussed here are composition-based, requiring only a chemical formula, making them applicable for screening hypothetical compounds where atomic structure is unknown [1]. Their core differences lie in how they transform a chemical formula into a numerical representation for the learning algorithm.

Table 1: Comparison of Core Model Architectures and Input Representations

| Model | Core Input Representation | Underlying Architecture | Key Principle / Inductive Bias |
|---|---|---|---|
| Magpie [1] [15] | Statistical features (mean, deviation, etc.) of 22 elemental properties (e.g., electronegativity, radius). | Gradient Boosted Regression Trees (XGBoost). | Material properties can be captured via statistical aggregates of classical atomic properties. |
| Roost [1] [16] | Learned embeddings for each element, initialized from sources like Matscholar embeddings [16]. | Graph Neural Network (GNN) with weighted attention pooling. | A composition is a fully connected graph of atoms; message passing captures interatomic interactions. |
| ECCNN [1] | Fundamental Electron Configuration (EC) matrix for the composition. | Convolutional Neural Network (CNN) with pooling and fully connected layers. | Stability is governed by the quantum-mechanical electronic structure of constituent atoms. |
| ECSG (Ensemble) [1] | Combines the predictions of Magpie, Roost, and ECCNN as meta-features. | Stacked Generalization (a meta-learner, often linear). | Ensemble diversifies knowledge sources (atomic stats, interatomic interactions, electronic structure) to reduce bias. |
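The "weighted attention pooling" entry for Roost in Table 1 can be made concrete with a small sketch. It follows the general form of a fraction-weighted softmax, in which each element's attention weight is proportional to its stoichiometric fraction raised to a power, times the exponential of a gate score. The element embeddings, the linear gate vector, and the power are fixed, invented values rather than learned parameters, and the message-passing layers that precede pooling in Roost are omitted.

```python
import math
from typing import Dict, List


def weighted_attention_pool(
    embeddings: Dict[str, List[float]],
    fractions: Dict[str, float],
    gate: List[float],
    power: float = 1.0,
) -> List[float]:
    """Pool element embeddings with weights proportional to fraction**power * exp(gate score)."""
    elements = list(embeddings)
    # Gate score per element: a learned network in Roost, a fixed dot product here.
    scores = [sum(g * h for g, h in zip(gate, embeddings[el])) for el in elements]
    top = max(scores)  # subtract the max before exp() for numerical stability
    raw = [fractions[el] ** power * math.exp(s - top) for el, s in zip(elements, scores)]
    total = sum(raw)
    alphas = [r / total for r in raw]
    dim = len(embeddings[elements[0]])
    return [sum(a * embeddings[el][d] for a, el in zip(alphas, elements)) for d in range(dim)]


# Invented 2-dimensional embeddings for Fe2O3 (fractions 0.4 and 0.6).
EMB = {"Fe": [1.0, 0.0], "O": [0.0, 1.0]}
FRAC = {"Fe": 0.4, "O": 0.6}
print(weighted_attention_pool(EMB, FRAC, gate=[0.0, 0.0]))
print(weighted_attention_pool(EMB, FRAC, gate=[2.0, 0.0]))
```

With a zero gate vector and `power=1`, the pooled vector reduces to the fraction-weighted mean of the embeddings, which is a convenient sanity check; a nonzero gate lets the model up-weight elements that matter more for the target property.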

Electron Configuration Encoding in ECCNN: The ECCNN model encodes a material's composition into a 118×168×8 tensor [1]. This is constructed by mapping each of the 118 elements to a fixed vector representing its electron configuration across atomic orbitals. For a given compound, a weighted combination (by stoichiometric fraction) of these elemental EC vectors forms the input matrix, which is then processed by convolutional layers to extract patterns relevant to stability.
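The idea of an electron-configuration input can be illustrated with a deliberately simplified sketch. It builds a 2D (element × subshell) occupancy array using naive Madelung filling, scaled per element by stoichiometric fraction; it is not the 118 × 168 × 8 tensor described for ECCNN, whose exact layout is not reproduced here. The names `occupancy_vector`, `composition_matrix`, and the small `ATOMIC_NUMBER` table are hypothetical.

```python
from typing import Dict, List, Tuple

CAPACITY = {0: 2, 1: 6, 2: 10, 3: 14}  # electrons per s, p, d, f subshell

# Madelung order (sort by n + l, then n) for subshells up to 7p: 19 subshells, 118 electrons.
ORBITALS: List[Tuple[int, int]] = sorted(
    [(n, l) for n in range(1, 8) for l in range(min(n, 4)) if n + l <= 8],
    key=lambda nl: (nl[0] + nl[1], nl[0]),
)
ORBITAL_LABELS = [f"{n}{'spdf'[l]}" for n, l in ORBITALS]

# Small illustrative subset of the periodic table.
ATOMIC_NUMBER = {"H": 1, "O": 8, "Na": 11, "Cl": 17, "Fe": 26}


def occupancy_vector(z: int) -> List[int]:
    """Naive aufbau filling; ignores real-world exceptions such as Cr and Cu."""
    occupancy = []
    remaining = z
    for _, l in ORBITALS:
        electrons = min(CAPACITY[l], remaining)
        occupancy.append(electrons)
        remaining -= electrons
    return occupancy


def composition_matrix(composition: Dict[str, float]) -> List[List[float]]:
    """One row per element: its occupancy vector scaled by its stoichiometric fraction."""
    total = sum(composition.values())
    return [
        [composition[el] / total * e for e in occupancy_vector(ATOMIC_NUMBER[el])]
        for el in composition
    ]


print(dict(zip(ORBITAL_LABELS, occupancy_vector(26))))
print(composition_matrix({"Fe": 2, "O": 3}))
```

A convolutional network can then slide filters along the subshell axis, which is the kind of local structure the ECCNN design assumes is informative.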

Diagram 1: ECCNN Model Architecture Flow

Performance Benchmarking on Stability Prediction

Benchmarking on the JARVIS-DFT database demonstrates that ECSG, the ensemble model that integrates ECCNN, achieves top-tier performance in distinguishing stable from unstable compounds [1].

Table 2: Quantitative Performance Comparison on Stability Prediction

| Model | AUC-ROC | Key Strengths | Notable Limitations |
|---|---|---|---|
| Magpie [1] [15] | ~0.92-0.95 (reported in prior studies) | Interpretable features, fast training, strong baseline. | Relies on pre-defined feature engineering; may not capture complex quantum interactions. |
| Roost [1] [16] | High (specific AUC not isolated in source) | Learns composition relationships directly; flexible representation. | Performance can depend on pretraining data; may overfit to specific compositional patterns [16]. |
| ECCNN (Base) [1] | Very High (contributes to 0.988 ensemble) | Physically grounded input; key contributor to the ensemble's data efficiency (ECSG reaches comparable performance with ~1/7 of the data). | Computationally more intensive than Magpie; requires EC data for all elements. |
| ECSG (Ensemble) [1] | 0.988 | Highest overall accuracy. Mitigates individual model bias via knowledge fusion. | Increased complexity; requires training multiple base models. |

Data Efficiency: A pivotal finding concerns sample efficiency. The ECSG ensemble, in which ECCNN supplies the electron-configuration perspective, achieved accuracy comparable to state-of-the-art alternatives using only one-seventh of the training data [1]. This is attributed in part to the fundamental, information-rich nature of electron configuration data, which provides a strong physical prior and reduces the amount of data needed for the model to generalize effectively.
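Sample-efficiency claims of this kind are usually checked with a learning curve: the same model is retrained on progressively smaller training subsets and its test AUC is plotted against subset size. The helper below is a hypothetical sketch of the subset-generation step only; nesting the subsets (each smaller set contained in the larger ones) and fixing the random seed are design choices that make points along the curve directly comparable, and the training and scoring calls are left out.

```python
import random
from typing import List, Sequence


def nested_subsets(n_samples: int, fractions: Sequence[float], seed: int = 0) -> List[List[int]]:
    """Return training-index subsets, largest first, each contained in the previous one."""
    order = list(range(n_samples))
    random.Random(seed).shuffle(order)  # one fixed shuffle shared by all subset sizes
    sizes = [max(1, round(f * n_samples)) for f in sorted(fractions, reverse=True)]
    return [sorted(order[:size]) for size in sizes]


subsets = nested_subsets(70, [1.0, 0.5, 1 / 7])
print([len(s) for s in subsets])  # [70, 35, 10]
```

Training each model on every subset and evaluating on one fixed test set would then show, for example, whether a model trained on the 1/7 subset matches a baseline trained on the full set.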

Out-of-Distribution (OOD) Generalization: Although OOD performance was not explicitly tested for ECCNN, related research underscores the importance of input encoding for OOD performance. Studies show that models using physical property encodings (closer in spirit to ECCNN's philosophy) generalize better to OOD samples defined by unseen elements or property ranges than models using simpler one-hot encodings [17]. This suggests that ECCNN's physically grounded input is a promising strategy for robust predictions in unexplored chemical spaces.

Experimental Protocols and Workflow

The validation of stability prediction models follows a rigorous workflow, from data sourcing to final DFT verification of novel candidates.

Table 3: Key Experimental Protocol for Benchmarking Stability Models

| Protocol Stage | Description | Common Sources/Tools |
|---|---|---|
| 1. Data Curation | Collecting formation energies (ΔH_f) and associated stable/unstable labels for diverse inorganic compounds. | Materials Project (MP) [15], JARVIS-DFT [1], Open Quantum Materials Database (OQMD) [1] [16]. |
| 2. Stability Label Derivation | Calculating decomposition energy (ΔH_d) via convex hull construction for each composition in a chemical space. | Pymatgen for phase diagram analysis [15]. |
| 3. Dataset Splitting | Partitioning data into training, validation, and test sets. For OOD tests, splitting by element presence or property value [17]. | Random splits for standard benchmarks; strategic splits for OOD evaluation (e.g., remove all Ca-containing samples) [17]. |
| 4. Model Training & Validation | Training models on ΔH_f or stability labels; tuning hyperparameters via cross-validation on the training set or on a held-out validation set. | Frameworks: TensorFlow/PyTorch. Metrics: Mean Absolute Error (MAE) for energy, AUC-ROC for binary stability classification [1]. |
| 5. Novel Discovery Screening | Using the trained model to screen vast hypothetical compositions (e.g., double perovskites, 2D semiconductors) and ranking candidates by predicted stability [1]. | High-throughput scripting to generate composition lists and feed them to the model. |
| 6. First-Principles Verification | Performing DFT calculations on top-ranked novel candidates to confirm their thermodynamic stability (negative ΔH_d). | DFT codes (VASP, Quantum ESPRESSO) with standard exchange-correlation functionals (PBE, HSE) [1] [18]. |
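Stage 2 of the protocol (deriving ΔH_d from a convex hull) is the step that turns formation energies into stability labels. In practice Pymatgen's phase-diagram tools would handle multicomponent systems; the self-contained sketch below covers only a binary A-B system with one phase per composition, and the phase names and energies are invented. Following the sign convention in the table, ΔH_d is measured against the hull of all competing phases, so a negative value indicates a stable compound.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (fraction of B, formation energy in eV/atom)


def lower_hull(points: List[Point]) -> List[Point]:
    """Lower convex hull of (x, energy) points via Andrew's monotone chain."""
    hull: List[Point] = []
    for p in sorted(set(points)):
        # Drop the last hull point while it does not make a counter-clockwise turn.
        while len(hull) >= 2:
            (x1, y1), (x2, y2) = hull[-2], hull[-1]
            cross = (x2 - x1) * (p[1] - y1) - (y2 - y1) * (p[0] - x1)
            if cross > 0:
                break
            hull.pop()
        hull.append(p)
    return hull


def hull_energy(hull: List[Point], x: float) -> float:
    """Linearly interpolate the hull energy at composition x."""
    for (x1, y1), (x2, y2) in zip(hull, hull[1:]):
        if x1 <= x <= x2:
            return y1 + (y2 - y1) * (x - x1) / (x2 - x1)
    raise ValueError("composition lies outside the hull")


def decomposition_energy(phases: Dict[str, Point], name: str) -> float:
    """ΔH_d of `name` relative to the hull of all other phases; negative means stable."""
    x, energy = phases[name]
    competing = [p for other, p in phases.items() if other != name]
    return energy - hull_energy(lower_hull(competing), x)


# Invented binary A-B system; the elemental end members define zero formation energy.
PHASES: Dict[str, Point] = {
    "A": (0.0, 0.0),
    "B": (1.0, 0.0),
    "AB": (0.5, -0.4),
    "AB3": (0.75, -0.1),
}
print(round(decomposition_energy(PHASES, "AB"), 3))   # -0.333 (stable)
print(round(decomposition_energy(PHASES, "AB3"), 3))  # 0.1 (unstable)
```

AB3 has a negative formation energy yet is unstable, because a mixture of AB and B at the same overall composition is lower in energy; this is why formation-energy accuracy alone does not guarantee good stability predictions.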

Diagram 2: Stability Prediction and Discovery Workflow

The Scientist's Toolkit: Research Reagent Solutions

Implementing and evaluating these models requires a suite of software and data resources.

Table 4: Essential Research Tools and Resources for Stability Prediction

| Tool/Resource Name | Type | Primary Function in Research | Key Reference/Availability |
|---|---|---|---|
| Materials Project (MP) | Database | Primary source of DFT-calculated formation energies, crystal structures, and pre-computed phase diagrams for hundreds of thousands of materials. | [1] [15] |
| JARVIS-DFT | Database | A comprehensive collection of DFT calculations for materials, used as a benchmark dataset for stability prediction models. | [1] |
| Pymatgen | Software Library | Python library for materials analysis; essential for parsing CIF files, generating composition features, and performing convex hull analyses to determine stability. | [15] |
| Matbench | Benchmarking Suite | A standardized benchmark suite for evaluating ML models on various materials property prediction tasks, allowing fair comparison. | [16] [17] |
| Roost Code | Model Implementation | Open-source implementation of the Roost (Representation Learning from Stoichiometry) graph neural network model. | [16] |
| Magpie Feature Set | Feature Generator | A well-defined set of heuristic, composition-based feature descriptors derived from elemental properties. | [1] [15] |
| Electron Configuration Data | Fundamental Data | Tabulated electron configurations for elements, required as the raw input for the ECCNN model. | Standard periodic table references. |
| VASP/Quantum ESPRESSO | Simulation Software | First-principles DFT codes used for the final verification of predicted stable materials, providing the ground-truth energy assessment. | [1] [18] |

Comparative Analysis of Theoretical Foundations and Domain Knowledge Integration

The accurate prediction of stability—whether in materials, geological structures, or financial systems—is a cornerstone of advancement across scientific and industrial domains. Traditional methods often rely on costly physical experiments or computationally intensive simulations, creating a bottleneck for discovery and optimization. Machine learning (ML) has emerged as a transformative tool, offering pathways to rapid and resource-efficient predictions. However, the performance and generalizability of these models are fundamentally governed by their theoretical foundations and the manner in which domain-specific knowledge is integrated into their architecture. This comparative analysis examines prominent ML frameworks, including the Electron Configuration Convolutional Neural Network (ECCNN) and its ensemble variant (ECSG), Roost, and Magpie, within the context of benchmarking stability prediction accuracy. The analysis is grounded in experimental data and methodologies, focusing on how different inductive biases and knowledge integrations impact predictive performance, sample efficiency, and practical utility in fields such as materials science and geomechanics [1] [19].

The predictive power of a model is not merely a function of its algorithm but is deeply rooted in its core theoretical assumptions and how expert knowledge of the field is encoded. The following table summarizes the foundational principles of key models used for stability prediction.

Table 1: Comparison of Theoretical Foundations in Stability Prediction Models

| Model | Core Theoretical Foundation | Method of Domain Knowledge Integration | Primary Inductive Bias | Typical Application Domain |
|---|---|---|---|---|
| ECCNN (Electron Configuration CNN) | Electron configuration determines chemical bonding and material properties [1]. | Direct input of raw electron configuration matrices, minimizing hand-crafted features [1]. | Assumes spatial locality in electron configuration data suitable for CNN processing [1]. | Thermodynamic stability of inorganic compounds [1]. |
| Roost | Compositions as dense graphs; properties emerge from message passing between the elements of a formula [1]. | Chemical formula represented as a complete graph; attention mechanisms model interatomic interactions [1]. | Assumes all atoms in a unit cell significantly interact [1]. | Formation energy and stability of crystalline materials [1]. |
| Magpie | Statistical aggregation of elemental properties correlates with macro-scale material behavior [1]. | Uses statistical features (mean, deviation, range) of elemental properties like electronegativity, atomic radius [1]. | Assumes material properties can be statistically summarized from tabulated elemental traits [1]. | General materials property prediction [1]. |
| ECSG (Ensemble) | Stacked generalization mitigates individual model bias [1]. | Feeds predictions from ECCNN, Roost, and Magpie into a meta-learner [1]. | Learns a weighted combination of diverse foundational assumptions so that individual biases partially cancel [1]. | Exploration of novel composition spaces (e.g., perovskites, 2D semiconductors) [1]. |
| CNN-BiLSTM-Attention Hybrids | Spatiotemporal patterns in sequential data are hierarchical and require localized and long-range modeling [20] [21]. | CNN extracts spatial/local features, BiLSTM captures bidirectional temporal dependencies, Attention highlights critical points [20]. | Assumes data has both spatial (or feature-based) and sequential structure with key informative periods [21]. | Wind power forecasting [20], power load prediction [21]. |

Performance Benchmarking and Quantitative Analysis

Empirical validation is critical for assessing the real-world efficacy of theoretical frameworks. The following data, primarily drawn from a landmark study on thermodynamic stability prediction, provides a direct comparison of model performance [1].

Table 2: Benchmarking Performance on Thermodynamic Stability Prediction (JARVIS Database) [1]

| Model | AUC-ROC | Key Performance Advantage | Sample Efficiency | Notable Application Outcome |
|---|---|---|---|---|
| ECSG (Ensemble) | 0.988 | Highest overall accuracy and robustness [1]. | Achieves same accuracy as baselines using only 1/7 of the data [1]. | Identified novel stable double perovskite oxides and 2D semiconductors, validated by DFT [1]. |
| ECCNN | 0.975 (Approx. from ensemble components) | Introduces novel electron configuration perspective, less reliant on crafted features [1]. | High; benefits from efficient CNN parameter use [1]. | Provides complementary insights to property-based and graph-based models [1]. |
| Roost | N/A (Component Model) | Effectively models interatomic interactions via attention [1]. | Moderate; requires sufficient data to learn graph relationships [1]. | Strong performer in formation energy prediction tasks [1]. |
| Magpie | N/A (Component Model) | Fast, interpretable via feature importance [1]. | High; works with small datasets due to simple feature space [1]. | Serves as a robust baseline for composition-based property prediction [1]. |
| ElemNet (Reference Baseline) | Lower than ECSG [1] | Deep learning on elemental fractions only [1]. | Low; requires large datasets and suffers from significant bias [1]. | Highlights limitations of models without explicit domain knowledge integration [1]. |

The superiority of the ECSG ensemble is evident, demonstrating that synthesizing diverse knowledge bases (electronic, graph-based, and statistical) yields a model that is both more accurate and dramatically more data-efficient. This principle of hybrid integration for enhanced performance is echoed in other domains. For instance, in wind power forecasting, a hybrid OPESC-CNN-BiLSTM-SA model reduced RMSE by 30.07% and MAE by 34.51% compared to baselines [20]. Similarly, in power load forecasting, a CNN-BiLSTM-Attention model achieved MAPE values as low as 1.08% across seasons, outperforming standalone models [21].

Experimental Protocols and Methodologies

A detailed understanding of experimental design is essential for interpreting results and reproducing benchmarks.

4.1 Protocol for Ensemble Model Development and Validation (ECSG Study) [1]

- Data Sourcing: Models were trained and tested using stability data from the Joint Automated Repository for Various Integrated Simulations (JARVIS) database, which contains DFT-calculated formation energies and decomposition enthalpies.

- Input Representation:

  - ECCNN: Elemental compositions were encoded into a fixed-size 3D tensor (118 elements × 168 electron orbital slots × 8 quantum numbers) representing the electron configuration.

  - Roost: Compositions were represented as a complete weighted graph, with nodes as the constituent elements weighted by their stoichiometric fractions and edges connecting every pair of elements.

  - Magpie: A feature vector was generated by calculating statistical moments (mean, variance, min, max, etc.) of a suite of elemental properties.

- Model Training: The three base models (ECCNN, Roost, Magpie) were trained independently to predict thermodynamic stability (a classification task). Their architectures were: a 2-layer CNN for ECCNN; a message-passing graph neural network for Roost; and gradient-boosted trees (XGBoost) for Magpie.

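- Stacked Generalization: Out-of-fold predictions (class probabilities) from the three base models, generated by k-fold cross-validation on the training set, were used as input features to train a meta-learner (a logistic regression model). Using out-of-fold rather than in-sample predictions prevents the meta-learner from favoring base models that merely overfit the training data. This meta-model learned the optimal way to combine the base predictions.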

- Performance Evaluation: The final ECSG ensemble was evaluated on a held-out test set using the Area Under the Receiver Operating Characteristic Curve (AUC-ROC). Sample efficiency was tested by training on progressively smaller subsets of data.

- Discovery Validation: Proposed stable compounds from the model were validated using first-principles Density Functional Theory (DFT) calculations to confirm their negative decomposition energy.
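The meta-learner step can be sketched without any ML library. The code below fits a logistic regression by batch gradient descent on the mean log-loss, taking as input out-of-fold probabilities from three base models, as in the k-fold protocol described earlier for ECSG; the column order (Magpie, Roost, ECCNN) is an assumption. The meta-feature values are invented for illustration, and the learning rate and epoch count are arbitrary choices, not settings from the ECSG study.

```python
import math
from typing import List, Sequence


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_logistic(X: Sequence[Sequence[float]], y: Sequence[int],
                 lr: float = 1.0, epochs: int = 10000) -> List[float]:
    """Batch gradient descent on the mean log-loss; returns [bias, w_1, ..., w_m]."""
    n, m = len(X), len(X[0])
    w = [0.0] * (m + 1)
    for _ in range(epochs):
        grad = [0.0] * (m + 1)
        for xi, yi in zip(X, y):
            err = sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], xi))) - yi
            grad[0] += err
            for j in range(m):
                grad[j + 1] += err * xi[j]
        w = [wj - lr * g / n for wj, g in zip(w, grad)]
    return w


def predict_stacked(w: Sequence[float], meta_row: Sequence[float]) -> float:
    """Meta-learner probability that a compound is stable."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], meta_row)))


# Invented out-of-fold probabilities; columns assumed to be [Magpie, Roost, ECCNN].
META = [
    [0.92, 0.85, 0.95],
    [0.80, 0.70, 0.90],
    [0.65, 0.90, 0.75],
    [0.30, 0.20, 0.10],
    [0.40, 0.55, 0.20],
    [0.15, 0.35, 0.25],
]
LABELS = [1, 1, 1, 0, 0, 0]
weights = fit_logistic(META, LABELS)
print([round(predict_stacked(weights, row), 2) for row in META])
```

The learned weights indicate how much the meta-learner trusts each base model; a production pipeline would typically add regularization and rely on a library solver instead of hand-written gradient descent.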

4.2 Protocol for Hybrid Spatiotemporal Model (CNN-BiLSTM-Attention) [20] [21]

- Data Preprocessing: For non-stationary sequences (e.g., wind speed, power load), data is often decomposed using techniques like Variational Mode Decomposition (VMD) or CEEMDAN to isolate distinct frequency components [21].

- Feature Engineering: Relevant spatial/contextual features (e.g., weather data, temporal markers) are organized into a feature matrix.

- Model Architecture:

  - CNN Layer: Processes the input matrix to extract local spatial patterns and inter-feature correlations.

  - BiLSTM Layer: Takes the CNN's output and processes the sequence both forward and backward to capture long-term temporal dependencies.

  - Attention Layer: Dynamically assigns higher weight to hidden states from the BiLSTM that are more critical for the specific prediction point.

- Hyperparameter Optimization: Critical parameters (learning rate, hidden units, etc.) are often tuned using optimization algorithms (e.g., OPESC, genetic algorithms) to prevent overfitting and improve generalization [20].

- Evaluation: Models are evaluated using regression metrics like Root Mean Square Error (RMSE), Mean Absolute Error (MAE), and Mean Absolute Percentage Error (MAPE) on test datasets representing future, unseen periods.
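The regression metrics named above, and relative-reduction figures such as an RMSE reduced by 30.07%, follow from short formulas. The sketch below implements them in plain Python; the toy load values are invented, and MAPE is treated as undefined for zero targets, which is a known weakness of that metric.

```python
import math
from typing import Sequence


def rmse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Root mean square error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def mape(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean absolute percentage error in %; undefined if any true value is zero."""
    if any(t == 0 for t in y_true):
        raise ValueError("MAPE is undefined when a true value is zero")
    return 100.0 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / len(y_true)


def relative_reduction(baseline: float, improved: float) -> float:
    """Percentage by which `improved` lowers an error metric relative to `baseline`."""
    return 100.0 * (baseline - improved) / baseline


# Invented hourly load values (MW) and forecasts.
actual = [100.0, 200.0, 300.0]
forecast = [110.0, 190.0, 300.0]
print(mae(actual, forecast), rmse(actual, forecast), mape(actual, forecast))
print(relative_reduction(10.0, 7.0))  # 30.0
```

RMSE penalizes large errors more heavily than MAE, while MAPE expresses error relative to the magnitude of each target, which is why forecasting studies commonly report all three.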

Visualizing Architectures and Workflows

Theoretical Framework Integration Diagram

ECCNN Model Architecture Diagram

Ensemble Model Experimental Workflow

Table 3: Key Research Reagent Solutions for ML-based Stability Prediction

| Resource / Tool | Type | Primary Function | Relevance to Benchmarking |
|---|---|---|---|
| JARVIS (Joint Automated Repository for Various Integrated Simulations) | Database | Provides DFT-calculated formation energies, band gaps, and other properties for a vast range of materials, serving as a ground-truth source for training and testing [1]. | Essential for benchmarking models like ECCNN and ECSG on thermodynamic stability tasks [1]. |
| Materials Project (MP) / Open Quantum Materials Database (OQMD) | Database | Large-scale materials databases similar to JARVIS, offering another source of consistent, computed property data for model development [1]. | Used to train and compare baseline models (e.g., Roost, Magpie) and ensure generalizability across data sources. |
| Density Functional Theory (DFT) Software (VASP, Quantum ESPRESSO) | Simulation Tool | Provides first-principles validation of model predictions. Critical for confirming the stability of newly proposed compounds identified by ML models [1]. | The ultimate validation step in the discovery pipeline; used to verify ML-predicted stable compounds. |
| SHapley Additive exPlanations (SHAP) | Analysis Library | Explains the output of ML models by assigning importance values to each input feature, enhancing interpretability [19]. | Used in comparative studies (e.g., stope stability) to understand feature importance and model logic, aiding in bias analysis [19]. |
| Variational Mode Decomposition (VMD) / CEEMDAN | Signal Processing Algorithm | Decomposes non-stationary time-series data (e.g., load, wind speed) into simpler, quasi-stationary modes for easier and more accurate modeling [20] [21]. | A critical preprocessing step in hybrid spatiotemporal models for energy forecasting, directly impacting final accuracy [21]. |
| Automated ESR Analyzer (e.g., TEST1) | Laboratory Instrument | Provides standardized, high-throughput measurement of clinical stability metrics like erythrocyte sedimentation rate (ESR) for validation studies [22]. | Highlights the role of standardized experimental validation in benchmarking, even in non-ML contexts (correlation r=0.902 with Westergren method) [22]. |

Implementing Ensemble Strategies and Practical Applications for Enhanced Prediction

The discovery of novel, thermodynamically stable inorganic compounds is a foundational task in materials science and drug development, pivotal for creating next-generation semiconductors, catalysts, and pharmaceutical agents. The primary challenge lies in the astronomical size of the compositional space, which makes exhaustive experimental or first-principles computational screening impractical and inefficient [1]. Machine learning (ML) has emerged as a transformative tool to predict compound stability, typically represented by decomposition energy (ΔH_d), directly from chemical composition [1]. However, prevalent ML models are often constructed on specific, narrow domains of knowledge (such as elemental statistics or assumed graph interactions), which introduces significant inductive bias. This bias limits model generalizability and accuracy when exploring uncharted compositional territories [1].

To overcome these limitations, the Electron Configuration models with Stacked Generalization (ECSG) framework was developed. ECSG is an ensemble methodology that strategically integrates three distinct base models—Magpie, Roost, and ECCNN—each rooted in complementary physical and chemical knowledge domains [1]. The framework employs a stacked generalization (or stacking) meta-learning strategy, where a high-level "super learner" model learns to optimally combine the predictions of the diverse base models [1] [23]. This approach is designed to mitigate the individual biases of each constituent model, harness synergistic effects, and yield predictions with superior accuracy, robustness, and sample efficiency compared to any single model or traditional benchmark [1].

This comparison guide objectively evaluates the performance of the ECSG framework against its constituent models and other alternatives, within the context of ongoing research focused on benchmarking stability prediction accuracy. The analysis is supported by experimental data, detailed protocols, and visualizations of the underlying architecture.

Performance Comparison: ECSG vs. Constituent and Alternative Models

The efficacy of the ECSG framework is quantitatively demonstrated through rigorous benchmarking on materials databases. The following tables summarize its performance against key alternatives.

Table 1: Core Performance Metrics on Thermodynamic Stability Prediction

| Model | Core Approach / Domain Knowledge | Reported AUC | Key Strength | Primary Limitation / Inductive Bias |
|---|---|---|---|---|
| ECSG (Ensemble) | Stacked Generalization of Magpie, Roost & ECCNN | 0.988 [1] | High accuracy & sample efficiency; mitigates individual model bias | Increased computational complexity in training |
| ECCNN (Base Model) | Electron Configuration Convolutional Neural Network | Not singly reported | Leverages fundamental electron structure data | Performance depends on the quality of the encoding |
| Roost (Base Model) | Graph Neural Network with message-passing | Not singly reported | Captures interatomic interactions within a formula | Assumes a complete graph of atomic interactions [1] |
| Magpie (Base Model) | Statistical features of elemental properties | Not singly reported | Computationally efficient; uses rich elemental descriptors | Relies on hand-crafted, domain-specific features [1] |
| ElemNet (Alternative) | Deep learning on elemental composition only | Lower than ECSG [1] | Simple, composition-based input | Strong bias from assuming composition alone determines properties [1] |

Table 2: Comparative Analysis of Efficiency and Generalizability

| Evaluation Dimension | ECSG Framework Performance | Typical Single-Model Performance | Implication for Research |
|---|---|---|---|
| Sample Efficiency | Achieves equivalent accuracy using only 1/7 of the training data required by existing models [1]. | Requires significantly larger, labeled datasets for comparable performance [1]. | Dramatically reduces dependency on large, computationally expensive DFT databases. |
| Exploration of Novel Spaces | Successfully identified new, DFT-validated 2D semiconductors and double perovskite oxides [1]. | Performance can degrade in uncharted compositional spaces due to bias [1]. | Enables more reliable and confident navigation of unexplored chemical spaces for discovery. |
| Bias Mitigation | Integrates complementary knowledge (atomic, interactive, electronic) to cancel out individual model biases [1]. | Each model contains bias from its foundational assumptions (e.g., Roost's complete-graph assumption) [1]. | Produces more generalizable and robust predictions, crucial for high-throughput virtual screening. |

Experimental Protocols and Methodologies

The ECSG Framework: A Detailed Workflow

The ECSG framework operates on a two-level architecture: a base level containing three diverse models and a meta-level that combines their predictions [1] [9]. The following diagram illustrates the complete workflow, from input encoding to final prediction.

Diagram Title: ECSG Framework Workflow from Input to Prediction

Protocol for Base-Level Model Training and Feature Generation

The power of ECSG stems from the deliberate diversity of its base models. Their individual training protocols are detailed below [1] [9].

Table 3: Base-Level Model Specifications and Training Protocols

| Model | Domain Knowledge | Input Feature Generation Protocol | Model Architecture & Training Protocol |
|---|---|---|---|
| ECCNN | Fundamental electron configurations of atoms. | 1. Map each element in the formula to its electron configuration. 2. Encode into a 3D tensor of dimensions 118 (elements) × 168 × 8 representing occupied states [1]. | Architecture: Two convolutional layers (64 filters, 5×5), batch normalization, max-pooling, flattened dense layers [1]. Training: Trained via backpropagation (e.g., Adam optimizer) using stability labels. |
| Magpie | Statistical patterns of 22 intrinsic elemental properties (e.g., atomic radius, electronegativity). | For a given composition, calculate the mean, mean absolute deviation, range, min, max, and mode for each of the 22 properties across all constituent atoms [1]. | Architecture: Gradient-boosted regression trees (XGBoost) [1]. Training: XGBoost algorithm trained on the vector of statistical features. |
| Roost | Interatomic interactions and bonding within a chemical formula. | Represent the chemical formula as a complete graph. Nodes are elements (with feature vectors), and edges represent all possible pairwise interactions [1]. | Architecture: Graph Neural Network (GNN) with an attention-based message-passing mechanism [1]. Training: GNN learns to aggregate information from neighboring nodes to predict global compound stability. |
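The Magpie row above reduces a composition to statistics of elemental properties. The sketch below computes those statistics for a single property, assuming the fraction-weighted mean and average deviation used by Magpie-style featurizers and taking "mode" as the property value of the most abundant element. The one-property table (Pauling electronegativity) is a hypothetical stand-in for Magpie's 22 tabulated properties. Repeating the calculation for each property and concatenating the results yields the statistical feature vector described in the table.

```python
from typing import Dict

# Hypothetical single-property table: Pauling electronegativity.
ELECTRONEGATIVITY: Dict[str, float] = {"Fe": 1.83, "O": 3.44}


def magpie_stats(composition: Dict[str, float], prop: Dict[str, float]) -> Dict[str, float]:
    """Fraction-weighted statistics of one elemental property for one composition."""
    total = sum(composition.values())
    fracs = {el: amt / total for el, amt in composition.items()}  # atomic fractions
    values = {el: prop[el] for el in fracs}
    mean = sum(fracs[el] * values[el] for el in fracs)
    return {
        "mean": mean,
        # Mean absolute deviation, weighted by atomic fraction.
        "avg_dev": sum(fracs[el] * abs(values[el] - mean) for el in fracs),
        "min": min(values.values()),
        "max": max(values.values()),
        "range": max(values.values()) - min(values.values()),
        # Mode: property value of the most abundant element.
        "mode": values[max(fracs, key=fracs.get)],
    }


stats = magpie_stats({"Fe": 2, "O": 3}, ELECTRONEGATIVITY)  # Fe2O3
print(stats["mean"])  # ≈ 2.796 (0.4 * 1.83 + 0.6 * 3.44)
```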

Protocol for Stacked Generalization (Meta-Learning)

The stacked generalization procedure is critical for bias reduction. It must be performed carefully to prevent data leakage and overfitting [1] [23].

- Base Model Cross-Validation: Split the training set into k folds. For each fold, train each base model (ECCNN, Magpie, Roost) on the remaining k−1 folds and generate predictions on the held-out fold. This yields an out-of-sample prediction from every base model for every compound in the training set. Separately, train each base model on the full training set; these fully trained models are the ones used at inference time [1].

- Meta-Dataset Construction: Create a new dataset (the meta-dataset) where:
  - The input features for each compound are its three out-of-sample prediction values: [Pred_ECCNN, Pred_Magpie, Pred_Roost].
  - The target is the original true stability label for that compound.

- Meta-Model Training: Train a relatively simple meta-learner (e.g., a linear model, ridge regression, or a shallow XGBoost model) on this meta-dataset. It learns how to weight and combine the base-model predictions so as to minimize the final prediction error [1] [9].

- Final Inference: For prediction on new, unseen compounds, the fully trained base models first generate their predictions. These three predictions are then fed as a feature vector into the trained meta-model, which produces the final, refined stability prediction.
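The four steps above can be sketched with NumPy. This is a minimal sketch under stated assumptions, not the ECSG implementation. The three base models are replaced by hypothetical least-squares regressors, each of which sees a different subset of input columns (standing in for the distinct Magpie, Roost, and ECCNN knowledge domains). The meta-learner is ridge regression, one of the options listed above. The target is treated as a continuous value (e.g., ΔHd) rather than a class label.

```python
import numpy as np
from typing import Callable, List

# A learner takes (X, y) and returns a prediction function X -> y_hat.
Predictor = Callable[[np.ndarray], np.ndarray]


def least_squares(X: np.ndarray, y: np.ndarray) -> Predictor:
    """Stand-in base learner: ordinary least squares with an intercept."""
    w, *_ = np.linalg.lstsq(np.column_stack([X, np.ones(len(X))]), y, rcond=None)
    return lambda Xn: np.column_stack([Xn, np.ones(len(Xn))]) @ w


def ridge(X: np.ndarray, y: np.ndarray, alpha: float = 1e-3) -> Predictor:
    """Stand-in meta-learner: closed-form ridge regression with an intercept column."""
    A = np.column_stack([X, np.ones(len(X))])
    w = np.linalg.solve(A.T @ A + alpha * np.eye(A.shape[1]), A.T @ y)
    return lambda Xn: np.column_stack([Xn, np.ones(len(Xn))]) @ w


def out_of_fold(X: np.ndarray, y: np.ndarray, folds: List[np.ndarray]) -> np.ndarray:
    """Step 1: an out-of-sample base prediction for every training row."""
    oof = np.empty(len(X))
    all_idx = np.arange(len(X))
    for held_out in folds:
        train = np.setdiff1d(all_idx, held_out)
        oof[held_out] = least_squares(X[train], y[train])(X[held_out])
    return oof


def fit_stack(views: List[np.ndarray], X: np.ndarray, y: np.ndarray,
              k: int = 5, seed: int = 0) -> Predictor:
    """views: one array of column indices per base model (its 'domain')."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(X)), k)  # same folds for every base model
    # Step 2: meta-dataset with one column of out-of-fold predictions per base model.
    meta_X = np.column_stack([out_of_fold(X[:, v], y, folds) for v in views])
    # Step 3: meta-learner sees only out-of-fold predictions, avoiding leakage.
    meta = ridge(meta_X, y)
    # Base models refit on the full training set for inference.
    bases = [least_squares(X[:, v], y) for v in views]

    def predict(X_new: np.ndarray) -> np.ndarray:
        # Step 4: base predictions form the meta-model's input feature vector.
        return meta(np.column_stack([b(X_new[:, v]) for b, v in zip(bases, views)]))

    return predict


rng = np.random.default_rng(42)
X = rng.normal(size=(600, 3))
y = X.sum(axis=1) + 0.1 * rng.normal(size=600)  # synthetic target
views = [np.array([0]), np.array([1]), np.array([2])]
model = fit_stack(views, X[:500], y[:500])
print(f"stacked test MSE: {np.mean((model(X[500:]) - y[500:]) ** 2):.3f}")
```

Each base model here sees only one column, so on its own it explains roughly a third of the target's variance. The meta-learner recovers the combination, which mirrors, in a deliberately idealized way, how complementary base models can offset one another's blind spots.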

Complementary Knowledge Domains and Bias Reduction Mechanism

The selection of base models in ECSG is not arbitrary; it is designed to cover orthogonal and complementary scales of material description. This design is the core of its bias reduction capability. The following diagram conceptualizes how the different knowledge domains interact.

Diagram Title: Complementary Knowledge Domains Integrated by ECSG

- Magpie (Atomic Scale): Operates on tabulated elemental properties. Its bias stems from relying on human-selected features and their statistical aggregations, which may not fully capture complex, non-linear interactions [1].

- Roost (Interactive Scale): Operates on a graph representation of the formula. Its bias originates from the assumption that all atoms in a formula interact equally (complete graph), which may not reflect true chemical bonding environments [1].

- ECCNN (Electronic Scale): Operates on fundamental electron configuration data. While more fundamental, its bias is tied to the specific encoding scheme of electron states into a tensor and the convolutional neural network's inductive biases [1].
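The complete-graph assumption behind Roost can be made concrete. The NumPy sketch below treats every distinct element in the formula as a node and every ordered pair of distinct elements as an edge. Each node then aggregates messages from all the others using softmax attention weights that are scaled by the neighbours' atomic fractions, in the spirit of Roost's weighted soft attention but simplified relative to the published model. The embeddings and weight matrices are random, hypothetical placeholders. Whether self-pairs are included is an implementation choice that this article does not settle.

```python
import numpy as np
from typing import Dict, List, Tuple


def complete_graph(composition: Dict[str, float]) -> Tuple[List[str], np.ndarray, np.ndarray]:
    """Nodes = distinct elements; edges = all ordered pairs (i, j) with i != j."""
    elements = list(composition)
    total = sum(composition.values())
    weights = np.array([composition[e] / total for e in elements])  # atomic fractions
    n = len(elements)
    edges = np.array([(i, j) for i in range(n) for j in range(n) if i != j],
                     dtype=int).reshape(-1, 2)
    return elements, weights, edges


def weighted_attention_step(h: np.ndarray, weights: np.ndarray, edges: np.ndarray,
                            w_att: np.ndarray, w_msg: np.ndarray):
    """One message-passing update: a_ij = w_j exp(e_ij) / sum_k w_k exp(e_ik)."""
    n = len(h)
    new_h = h.copy()
    alphas = np.zeros((n, n))
    for i in range(n):
        nbrs = edges[edges[:, 0] == i, 1]
        if len(nbrs) == 0:  # single-element formula: nothing to aggregate
            continue
        pair = np.concatenate([np.repeat(h[i][None], len(nbrs), 0), h[nbrs]], axis=1)
        scores = pair @ w_att                                  # one score per edge
        a = weights[nbrs] * np.exp(scores - scores.max())      # fraction-weighted softmax
        a /= a.sum()
        alphas[i, nbrs] = a
        new_h[i] = h[i] + (a[:, None] * (pair @ w_msg)).sum(axis=0)  # residual update
    return new_h, alphas


rng = np.random.default_rng(0)
elements, w, edges = complete_graph({"Fe": 2, "O": 3, "Li": 1})
d = 4
h0 = rng.normal(size=(len(elements), d))  # hypothetical element embeddings
h1, alphas = weighted_attention_step(h0, w, edges, rng.normal(size=2 * d),
                                     rng.normal(size=(2 * d, d)))
print(len(edges))  # 6 ordered pairs for 3 elements
```

The edge count grows as n(n−1) with the number of distinct elements regardless of chemistry, which is exactly the bias described above. Only the learned attention weights can make some pairs matter more than others.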

Rather than simply averaging the three base-model predictions, the meta-learner in the ECSG framework learns from data how to combine them. A linear meta-learner learns a fixed weighting. A non-linear one (e.g., a shallow tree model) can also learn which patterns of base-model outputs indicate that one model is more reliable than the others, and can down-weight a model in regimes where its domain bias tends to produce errors. Because the meta-learner sees only the base-model outputs, this context is inferred from those outputs rather than from the composition directly. The net effect is a reduction in the overall inductive bias of the system [1] [23].

Implementing and utilizing the ECSG framework effectively requires access to specific datasets, software tools, and computational resources.

Table 4: Research Reagent Solutions for ML-Driven Stability Prediction

| Category | Item / Resource | Function / Application in ECSG Workflow |
|---|---|---|
| Data Sources | Materials Project (MP) | Primary database for acquiring labeled training data on formation energies and computed stability for thousands of inorganic compounds [1] [9]. |
| | Open Quantum Materials Database (OQMD) | Another extensive repository of calculated thermodynamic properties used for training and benchmarking models [1]. |
| | JARVIS Database | Used in the referenced study for benchmarking the final performance of the ECSG model [1]. |
| Software & Libraries | XGBoost / LightGBM | Libraries for implementing gradient-boosted trees, used in the Magpie base model and potentially as the meta-learner [1] [9]. |
| | PyTorch / TensorFlow | Deep learning frameworks essential for building and training the ECCNN (CNN) and Roost (GNN) models [1]. |
| | Deep Graph Library (DGL) / PyTorch Geometric | Specialized graph neural network libraries that can be used to implement the Roost model [1]. |
| Validation & Deployment | Density Functional Theory (DFT) Codes (VASP, Quantum ESPRESSO) | Critical for validation. Final predictions of novel stable compounds must be validated by high-fidelity DFT calculations to confirm formation energy and stability on the convex hull [1] [24]. |
| | Active Learning Pipelines | Frameworks to iteratively select the most informative candidates for DFT validation, optimizing the discovery loop [9]. |
| Experimental Follow-Up | High-Throughput Synthesis & Characterization | For experimentally validating the DFT-confirmed, ML-predicted novel compounds (e.g., via automated synthesis robots, XRD, SEM) [9]. |
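The active-learning entry above can be illustrated with query-by-committee, one common selection strategy; this article does not say which strategy the cited pipelines use. The idea is that candidates on which the base models disagree most are sent to DFT first. The sketch assumes each base model outputs a probability of stability, and the candidate names and probabilities are hypothetical.

```python
from statistics import pvariance
from typing import Dict, List


def select_for_dft(committee_preds: Dict[str, List[float]], budget: int) -> List[str]:
    """Rank candidates by the variance of base-model predictions (disagreement)
    and return the `budget` most contested ones for DFT validation."""
    ranked = sorted(committee_preds,
                    key=lambda c: pvariance(committee_preds[c]), reverse=True)
    return ranked[:budget]


# Hypothetical predicted P(stable) from [ECCNN, Magpie, Roost] for three candidates.
preds = {
    "candidate_A": [0.91, 0.88, 0.93],  # models agree: little to learn from DFT
    "candidate_B": [0.15, 0.85, 0.50],  # strong disagreement
    "candidate_C": [0.40, 0.60, 0.55],  # mild disagreement
}
print(select_for_dft(preds, budget=2))  # ['candidate_B', 'candidate_C']
```

After the selected candidates are computed with DFT, their labels are added to the training set and the models are retrained. That feedback cycle is the iterative loop the table refers to.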

Data Preparation and Feature Engineering for Composition-Based Model Input

This comparison guide provides an objective evaluation of three prominent composition-based machine learning models—Roost, Magpie, and ECCNN—within the framework of the Electron Configuration models with Stacked Generalization (ECSG) ensemble approach for thermodynamic stability prediction. Benchmarking analysis reveals that the integrated ECSG framework achieves superior performance (AUC: 0.988) by leveraging complementary domain knowledge from its constituent models, while demonstrating exceptional sample efficiency, requiring only one-seventh of the data used by existing models to achieve comparable accuracy [1]. This analysis is contextualized within broader research on accelerating materials discovery through computational methods, providing drug development professionals and researchers with validated methodologies for stability prediction of inorganic compounds.

Quantitative Performance Comparison of Composition-Based Models

Table 1: Core Performance Metrics for Stability Prediction Models on the JARVIS Database

| Model | AUC Score | Key Input Features | Sample Efficiency | Primary Algorithm |
|---|---|---|---|---|
| ECSG (Ensemble) | 0.988 [1] | Stacked predictions from base models | Highest (1/7 data for equivalent performance) [1] | Stacked Generalization |
| ECCNN | 0.978 [1] | Electron configuration matrices (118×168×8) | High | Convolutional Neural Network |
| Roost | 0.962 [1] | Complete graph of elements with attention | Medium | Graph Neural Network |
| Magpie | 0.954 [1] | Statistical features from elemental properties | Medium | Gradient Boosted Trees (XGBoost) |
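The AUC values above measure how well each model ranks stable compounds above unstable ones. The sketch below illustrates what the metric measures; it is not the evaluation code behind the reported numbers. ROC AUC equals the probability that a randomly chosen stable compound receives a higher score than a randomly chosen unstable one, with ties counting one half. The pairwise form below computes that probability directly.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Pairwise (Mann-Whitney) ROC AUC; labels: 1 = stable, 0 = unstable.

    O(P*N) for clarity; rank-based formulas scale better on large datasets.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


print(roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.6, 0.1]))  # 0.75
```

Read this way, an AUC of 0.988 means a stable compound outscores an unstable one in about 98.8% of such pairs.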

Table 2: Feature Engineering Approaches and Domain Knowledge Integration

| Model | Feature Engineering Strategy | Domain Knowledge Source | Dimensionality | Key Advantages |
|---|---|---|---|---|
| ECCNN | Direct electron configuration encoding [1] | Quantum mechanical principles | High (118×168×8 matrix) | Minimal inductive bias, intrinsic atomic characteristics |
| Roost | Graph representation with message passing [1] | Interatomic interactions | Variable (based on composition) | Captures relational information between atoms |
| Magpie | Statistical aggregation of elemental properties [1] | Empirical materials science knowledge | Moderate (handcrafted features) | Interpretable features, wide property coverage |
| Traditional ML | Handcrafted features based on specific assumptions [1] | Limited domain theories | Variable | Simpler implementation, faster training |
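The "direct electron configuration encoding" listed for ECCNN can be illustrated with a simplified scheme. The sketch is hypothetical and is not the 118 × 168 × 8 ECCNN encoding. It fills subshells in Madelung (aufbau) order, ignores known exceptions such as Cr and Cu, and builds a 118 × 19 matrix in which row Z−1 holds the subshell occupancies of element Z, scaled by that element's atomic fraction in the composition.

```python
from typing import Dict, List

# Subshells in Madelung filling order; their capacities sum to exactly 118 electrons.
SUBSHELLS = ["1s", "2s", "2p", "3s", "3p", "4s", "3d", "4p", "5s", "4d",
             "5p", "6s", "4f", "5d", "6p", "7s", "5f", "6d", "7p"]
CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}


def aufbau_occupancy(z: int) -> List[int]:
    """Electrons per subshell for atomic number z (idealized; no exceptions)."""
    if not 1 <= z <= 118:
        raise ValueError("atomic number must be in 1..118")
    occ, remaining = [], z
    for sub in SUBSHELLS:
        n_e = min(CAPACITY[sub[-1]], remaining)
        occ.append(n_e)
        remaining -= n_e
    return occ


def encode_composition(comp: Dict[int, float], n_elements: int = 118) -> List[List[float]]:
    """Rows indexed by Z-1; each occupied row = atomic fraction * subshell occupancy."""
    total = sum(comp.values())
    matrix = [[0.0] * len(SUBSHELLS) for _ in range(n_elements)]
    for z, amount in comp.items():
        frac = amount / total
        matrix[z - 1] = [frac * e for e in aufbau_occupancy(z)]
    return matrix


m = encode_composition({26: 2, 8: 3})  # Fe2O3, keyed by atomic number
print(m[25][:7])  # Fe row: 0.4 * [2, 2, 6, 2, 6, 2, 6]
```

Because the rows are indexed by atomic number, every composition maps to a fixed-size array that a convolutional network can consume, whatever the number of constituent elements.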

Experimental Protocols for Model Benchmarking

Dataset Curation and Preprocessing Methodology