Benchmarking Machine Learning Architectures for Molecular Synthesizability: A New Paradigm for Drug Discovery

Abstract

The critical challenge in computational drug discovery is the generation of molecules with optimal pharmacological properties that are also synthesizable in the laboratory. This article provides a comprehensive benchmark of contemporary machine learning architectures for predicting and optimizing molecular synthesizability. We explore the foundational shift from traditional scores like Synthetic Accessibility (SA) to data-driven, retrosynthesis-based metrics such as the round-trip score. The analysis covers a range of methodologies, from graph neural networks and transformers for molecular representation to the application of innovative frameworks like SDDBench for unified evaluation. We address key troubleshooting aspects, including data limitations, model overfitting, and generalization to novel chemical spaces. Finally, we present a comparative validation of model performance, establishing a new standard for evaluating synthesizability in generative drug design. This work is tailored for researchers, scientists, and development professionals aiming to bridge the gap between in silico predictions and practical chemical synthesis.

Defining Synthesizability: From Chemical Intuition to Data-Driven Metrics

The drug discovery landscape is undergoing a transformative shift, driven by artificial intelligence (AI) and machine learning (ML) that can generate millions of novel molecular structures with computationally predicted therapeutic properties. However, a significant and persistent challenge, known as the "generation-synthesis gap," undermines this potential: the vast majority of these AI-designed molecules cannot be successfully synthesized in a laboratory setting [1]. This gap represents a critical bottleneck, transforming a theoretically promising pipeline into a practical roadblock. The fundamental issue is that generative models optimize for biological activity and drug-like properties, often without the chemical logic and constraints required for practical synthesis. Consequently, promising computational designs become chemical dead-ends that cannot be physically realized for experimental testing.

The core of the problem lies in the complex interplay between thermodynamic stability, kinetic accessibility, and experimental feasibility. While traditional computational screening often relies on density functional theory (DFT) to calculate a compound's thermodynamic stability, this zero-kelvin assessment is an incomplete picture of synthesizability [2]. Not all stable compounds have been synthesized, and conversely, not all unstable compounds are unsynthesizable; these are categorized as "uncorrelated" materials, whose synthesizability cannot be determined by stability alone [2]. This limitation has spurred the development of specialized ML architectures designed specifically to predict synthesizability, moving beyond simple stability metrics to capture the nuanced patterns of successful chemical synthesis.

Benchmarking Machine Learning Architectures for Synthesizability Prediction

To bridge the generation-synthesis gap, researchers have developed several sophisticated ML approaches. The table below provides a structured comparison of four distinct architectures, highlighting their core methodologies, performance, and ideal use cases.

Table 1: Benchmarking ML Architectures for Synthesizability Prediction

| Model/Architecture | Core Approach | Reported Performance | Key Advantage | Limitation / Consideration |
|---|---|---|---|---|
| SynFrag [1] | Fragment assembly & autoregressive generation | Consistent performance across clinical drugs & AI-generated molecules | Sub-second predictions; interpretable attention mechanisms | Performance is tied to learned fragment assembly patterns |
| CSLLM (Synthesizability LLM) [3] | Fine-tuned Large Language Model on "material string" representation | 98.6% accuracy on test set; 97.9% on complex structures | Exceptional generalization to structurally complex materials | Requires curated text representation (CIF/POSCAR) of crystal structures |
| DFT + ML Model [2] | Machine learning combined with DFT-calculated stability (e.g., Ehull) | 0.82 precision & recall for Half-Heuslers | Identifies synthesizable unstable & unsynthesizable stable compounds | Computationally expensive due to DFT requirement |
| Semi-Supervised (PU Learning) [4] | Positive-Unlabeled learning on material stoichiometries | 83.4% recall, 83.6% estimated precision | Effective for guiding discovery of new inorganic phases (e.g., Cu₄FeV₃O₁₃) | Does not specify synthesis method or precursors |

Experimental Protocols and Workflows

The performance of these models hinges on their unique experimental designs and data curation protocols.

SynFrag's Training and Validation: This model employs self-supervised pretraining on millions of unlabeled molecules to learn dynamic fragment assembly patterns. This approach goes beyond simple fragment statistics or reaction annotations, capturing connectivity relationships that lead to "synthetic difficulty cliffs," where minor structural changes cause major synthesizability shifts. Its evaluation across public benchmarks, clinical drugs with intermediates, and AI-generated molecules demonstrates its robustness across diverse chemical spaces [1].

CSLLM Framework and Data Curation: The Crystal Synthesis Large Language Model framework utilizes three specialized LLMs for predicting synthesizability, synthetic methods, and precursors. Its high accuracy stems from a balanced and comprehensive dataset of 70,120 synthesizable crystal structures from the Inorganic Crystal Structure Database (ICSD) and 80,000 non-synthesizable structures identified via a pre-trained PU learning model. A key innovation is the "material string," an efficient text representation for crystal structures that includes lattice parameters, composition, atomic coordinates, and symmetry, enabling effective fine-tuning of LLMs [3].

Semi-Supervised Learning for Stoichiometries: The model detailed in [4] employs Positive-Unlabeled (PU) learning to predict the synthesizability of inorganic material stoichiometries. This method is particularly valuable because it learns the hidden features of synthesizable compositions from limited labeled data, allowing for the construction of continuous synthesizability phase maps. Its experimental validation involved guiding the exploration of a quaternary oxide system (CuO, Fe₂O₃, and V₂O₅), leading to the discovery of a new phase, Cu₄FeV₃O₁₃ [4].
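
To make the positive-unlabeled idea concrete, the sketch below uses the Elkan-Noto calibration trick, a standard PU technique that is swapped in here for illustration and is not necessarily the method used in [4]. It assumes a classifier has already been trained to separate labeled (synthesized) from unlabeled compositions; the scores, composition names, and function names are all hypothetical.

```python
from statistics import mean

def estimate_label_frequency(scores_on_known_positives):
    """Estimate c = P(labeled | positive) as the mean classifier score
    on held-out examples that are known to be positive."""
    return mean(scores_on_known_positives)

def calibrate(score, c):
    """Convert an estimate of P(labeled | x) into an estimate of
    P(positive | x) by dividing by c, capped at 1.0."""
    return min(1.0, score / c)

# Hypothetical classifier scores on held-out synthesized compositions.
held_out_positive_scores = [0.62, 0.58, 0.70, 0.50]
c = estimate_label_frequency(held_out_positive_scores)

# Hypothetical classifier scores on unlabeled candidate compositions.
unlabeled_scores = {
    "composition_a": 0.54,
    "composition_b": 0.12,
    "composition_c": 0.30,
}
synthesizability = {
    name: calibrate(score, c) for name, score in unlabeled_scores.items()
}

for name, prob in synthesizability.items():
    print(f"{name}: estimated synthesizability {prob:.2f}")
```

Because true negatives are never observed in this setting, any precision figure derived from such a model is itself an estimate, which is consistent with the "estimated precision" wording in Table 1.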

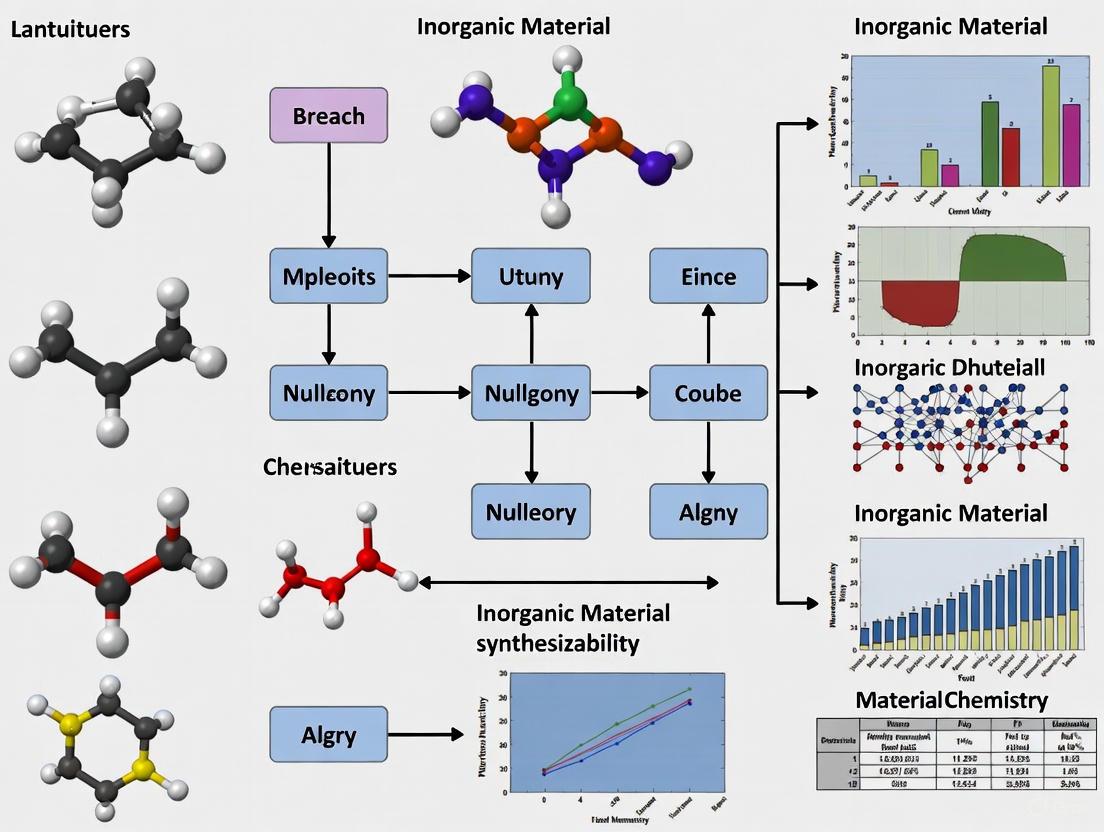

Visualizing the Synthesis Gap and Solution Workflows

The following diagrams, generated with Graphviz, illustrate the core problem and the integrative workflows of advanced prediction models:

- The Synthesis Gap in Drug Discovery

- SynFrag Fragment Assembly Workflow

- CSLLM Multi-Model Prediction Framework

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful synthesizability research relies on a suite of computational tools, datasets, and platforms. The table below details key resources for building and validating predictive models.

Table 2: Key Research Reagent Solutions for Synthesizability Research

| Tool / Resource | Type | Primary Function | Relevance to Synthesizability |
|---|---|---|---|
| SynFrag Platform [1] | Web Platform / Code | Predicts synthetic accessibility (SA) | Provides rapid, interpretable SA scoring for large-scale molecular screening in drug discovery. |
| Polaris Hub [5] | Benchmarking Platform | Centralized repository for datasets & benchmarks | Offers standardized datasets and evaluation frameworks for comparing ML models in drug discovery. |
| DFT Software (e.g., VASP) [2] | Computational Tool | Calculates thermodynamic stability (Ehull) | Provides foundational stability data (Ehull) for training and validating ML models on material synthesizability. |
| ICSD [3] | Database | Repository of experimentally synthesizable crystal structures | Serves as the primary source of confirmed positive examples (synthesizable materials) for model training. |
| Material String Representation [3] | Data Representation | Text-based encoding of crystal structures | Enables efficient fine-tuning of Large Language Models (LLMs) for crystal structure analysis and prediction. |
| PU Learning Model [4] | Algorithm / Method | Identifies negative samples from unlabeled data | Critical for constructing balanced datasets by reliably identifying non-synthesizable material structures. |

The benchmarking of these diverse machine learning architectures reveals a clear trajectory for the future of synthesizability research. The most promising frameworks, such as CSLLM and SynFrag, are those that move beyond single-score predictions to offer interpretable, multi-faceted assessments. CSLLM's ability to not only predict feasibility but also suggest synthetic methods and precursors represents a paradigm shift from passive assessment to active design guidance.

For researchers and drug development professionals, the implication is that integrating these specialized synthesizability predictors early in the molecular generation pipeline is no longer optional but essential. By leveraging tools that combine the computational efficiency of ML with the chemical logic of synthesis, the industry can begin to close the generation-synthesis gap. This will compress discovery timelines, reduce costly experimental failure, and ultimately accelerate the delivery of novel therapeutics to patients. The future lies not in replacing expert intuition but in augmenting it with predictive, interpretable, and actionable computational intelligence.

Limitations of Traditional Synthetic Accessibility (SA) Scores and Fragment-Based Methods

In the fields of medicinal chemistry and computer-assisted drug discovery, accurately predicting the ease with which a molecule can be synthesized—its synthetic accessibility (SA)—is a fundamental challenge. For researchers employing generative AI and fragment-based methods, unreliable SA predictions can lead to wasted resources on molecules that are impractical or prohibitively expensive to produce [6] [7]. Traditional SA scores and established fragment-based drug discovery (FBDD) approaches have provided valuable frameworks for assessing synthesizability. However, they possess significant limitations that can hinder their effectiveness in modern, high-throughput research environments, particularly when benchmarking machine learning architectures for synthesizability research [6] [7] [8]. This guide objectively compares the performance of traditional methods, highlighting their core shortcomings through structured data and experimental protocols to inform the selection and development of more robust alternatives.

A Critical Look at Traditional Synthetic Accessibility Scores

Traditional SA scores are widely used as fast filters to prioritize molecules for synthesis. They can be broadly categorized into structure-based and retrosynthesis-based approaches, each with distinct weaknesses [7].

Quantitative Limitations of Popular SA Scores

Benchmarking studies reveal varying performance of common SA scores when tested against the outcomes of a full retrosynthetic analysis using tools like AiZynthFinder.

Table 1: Performance Metrics of Selected SA Scores on a Standardized Benchmark [7]

| SA Score | Underlying Approach | Key Performance Shortcoming |
|---|---|---|
| SAscore | Structure-based (Fragment Frequency + Complexity Penalty) | Lower accuracy in discriminating feasible from infeasible molecules compared to retrosynthesis-based scores. |
| SYBA | Structure-based (Bayesian Classifier on Easy/Hard-to-Synthesize Sets) | Performance is dependent on the quality and representativeness of the generated "hard-to-synthesize" set. |
| SCScore | Retrosynthesis-based (Neural Network on Reaction Data) | Better discrimination than structure-based methods, but can be slow and depends on Reaxys data coverage. |
| RAscore | Retrosynthesis-based (Gradient Boosting on AiZynthFinder Outcomes) | Designed specifically for one CASP tool; generalizability to other synthesis planners can be limited. |

Core Experimental Protocol for Benchmarking SA Scores

The following methodology is adapted from studies that critically assess SA scores against CASP tools [7]:

- Dataset Curation: A diverse set of target molecules is compiled from sources like ChEMBL or PubChem, ensuring a range of structural complexity.

- Ground Truth Establishment: Each target molecule is processed through a CASP tool (e.g., AiZynthFinder) with a defined computational budget (e.g., maximum search time or expansion depth). A molecule is labeled "synthesizable" if at least one complete route to commercially available building blocks is found.

- SA Score Calculation: Traditional SA scores (SAscore, SYBA, SCScore, RAscore) are computed for all molecules in the dataset.

- Performance Evaluation: The accuracy of each SA score in predicting the ground-truth "synthesizable" label is measured using metrics like the Area Under the Receiver Operating Characteristic Curve (AUC-ROC). The ability of the scores to reduce the search space and computation time in CASP is also analyzed.
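
The performance-evaluation step above can be sketched in a few lines. The example below computes AUC-ROC with the rank-based (Mann-Whitney) formulation using only the standard library; the labels and scores are made-up illustration data, not results from [7]. It assumes an SAscore-style scale where lower values mean easier synthesis, so the scores are negated before ranking.

```python
from typing import Sequence

def auc_roc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC-ROC as the probability that a randomly chosen positive
    receives a higher score than a randomly chosen negative
    (ties count as half a win)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical ground truth: 1 if the CASP tool found a complete route.
casp_solved = [1, 1, 1, 0, 0, 0]
# Hypothetical SA scores for the same molecules (lower = easier).
sa_scores = [2.1, 3.0, 4.5, 3.8, 6.2, 7.0]

# Negate so that a higher value means "more likely synthesizable".
auc = auc_roc(casp_solved, [-s for s in sa_scores])
print(f"AUC-ROC: {auc:.3f}")
```

The pairwise loop is quadratic in dataset size, which is fine for a sketch; a library routine such as scikit-learn's `roc_auc_score` would be the usual choice for real benchmarks.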

Fundamental Limitations of Traditional SA Scoring

- Lack of Cost Awareness: Most scores output an arbitrary number (e.g., 1-10) that does not translate to real-world economic viability. A molecule labeled "easy" by a score might still be incredibly expensive to synthesize due to rare starting materials or costly reaction steps [6].

- Ignoring Purchasability: Traditional scores do not cross-reference with chemical supplier databases. A molecule might be classified as "hard-to-synthesize" by an algorithm, yet be readily available for purchase from a proprietary supplier, leading to a false negative [6].

- Dependence on Underlying CASP Accuracy: Retrosynthesis-based scores are only as reliable as the CASP tool they are trained on or designed for. Flaws in the template set, search algorithm, or stocklist of the CASP tool are inherently baked into the score [7].

- Poor Generalizability: Many SA scores are trained on specific datasets (e.g., drug-like molecules from ZINC or ChEMBL) and may perform poorly when applied to different regions of chemical space, such as natural products or macrocycles [6] [7].

Diagram 1: Core limitations of traditional SA scores and their ultimate consequence in a research workflow.

Inherent Challenges in Fragment-Based Drug Design

FBDD is a powerful strategy for tackling difficult targets, but its workflow contains steps prone to inefficiency and failure [9] [10].

The Fragment Screening and Optimization Workflow

The standard FBDD pipeline involves several critical stages where limitations can manifest.

Diagram 2: The FBDD workflow and its associated limitations at each key stage.

Key Limitations of the FBDD Methodology

- The Deconstruction-Reconstruction Paradox: A fundamental assumption in FBDD is that the binding mode of a fragment will be conserved as it is grown into a larger molecule. However, experimental studies have shown that this is not always true. When a known inhibitor is deconstructed into its component fragments, these fragments often bind in different orientations or locations than they occupy in the full parent molecule [9]. This invalidates the rational structure-based design that is central to FBDD and can lead optimization efforts down unproductive paths.

- Rapidly Escalating Synthetic Complexity: While initial fragments are typically small and synthetically tractable, the process of growing, linking, or merging them to improve potency and selectivity often results in lead compounds with significantly increased molecular complexity. This can render the final lead difficult or expensive to synthesize on a practical scale, a problem not captured by initial fragment SA assessments [9] [11].

- High Resource Demands for Screening: Identifying initial fragment hits requires highly sensitive biophysical techniques like NMR, SPR, or X-ray crystallography. These methods are often low- to medium-throughput, require significant amounts of high-purity protein, and rely on expensive instrumentation and specialized expertise, creating a barrier to entry for some research groups [9] [10].

- Limitations of Rule-Based Fragment Libraries: While guidelines like the "Rule of Three" are useful for ensuring fragment solubility and initial synthetic tractability, they can also restrict the chemical space explored. Privileged sub-structures or three-dimensional fragments that are slightly larger might be excluded, potentially missing valuable starting points for drug discovery [9] [10].
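
The last limitation can be illustrated with a small filter. The sketch below applies the commonly quoted "Rule of Three" thresholds (molecular weight up to 300 Da, at most 3 hydrogen-bond donors, at most 3 acceptors, cLogP at most 3) to precomputed descriptors. The `Fragment` class, the fragment names, and the descriptor values are hypothetical; in practice the descriptors would come from a cheminformatics toolkit.

```python
from dataclasses import dataclass

@dataclass
class Fragment:
    name: str
    mol_weight: float   # Da
    h_donors: int
    h_acceptors: int
    clogp: float

def passes_rule_of_three(frag: Fragment, mw_max: float = 300.0,
                         hbd_max: int = 3, hba_max: int = 3,
                         clogp_max: float = 3.0) -> bool:
    """Return True if the fragment satisfies all four thresholds."""
    return (frag.mol_weight <= mw_max
            and frag.h_donors <= hbd_max
            and frag.h_acceptors <= hba_max
            and frag.clogp <= clogp_max)

# Hypothetical library entries with made-up descriptor values.
flat_fragment = Fragment("frag_flat", 180.2, 1, 2, 1.3)
three_d_fragment = Fragment("frag_3d", 312.4, 2, 3, 2.1)

for frag in (flat_fragment, three_d_fragment):
    strict = passes_rule_of_three(frag)
    relaxed = passes_rule_of_three(frag, mw_max=325.0)
    print(f"{frag.name}: strict={strict}, relaxed_mw={relaxed}")
```

Exposing the thresholds as parameters makes the trade-off explicit: the slightly larger three-dimensional fragment is rejected by the strict rule but retained once the molecular-weight cutoff is loosened.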

The Scientist's Toolkit: Key Research Reagents and Solutions

Table 2: Essential Resources for Synthesizability and FBDD Research

| Research Reagent / Tool | Function / Application | Key Considerations |
|---|---|---|
| AiZynthFinder | Open-source CASP tool for retrosynthesis planning; used to establish ground truth for SA score benchmarking [7]. | Search parameters (e.g., depth, time) must be standardized for reproducible benchmarking. |
| RDKit | Open-source cheminformatics toolkit; provides implementation of SAscore and essential functions for molecular featurization [7]. | Community-standard platform for molecular representation and calculation of descriptor-based scores. |
| ZINC/ChEMBL Databases | Sources of commercially available and bioactive molecules; used to build training sets for SA models and define "easy-to-synthesize" chemical space [7]. | Dataset bias can limit model generalizability if not carefully considered. |
| Molport Database | Database of purchasable chemicals from global suppliers; used in novel SA models like MolPrice to incorporate real-world cost and availability [6]. | Provides a pragmatic reality check for virtual molecules. |
| Surface Plasmon Resonance (SPR) | Label-free biophysical technique for detecting fragment binding; provides kinetic data (kon, koff) [10]. | Requires protein immobilization and can be prone to artifacts if not carefully controlled. |
| Nuclear Magnetic Resonance (NMR) | High-sensitivity method for fragment screening; can identify very weak binders and map binding sites [9] [10]. | Requires isotopic labeling for protein-observed methods and significant expertise for data interpretation. |

The limitations of traditional SA scores and FBDD methods present significant challenges but also clear directions for future research. For SA scoring, the next generation of tools is moving beyond simple structural rules or black-box predictions towards cost-aware models that incorporate market data from supplier databases [6] and methods that provide more interpretable and actionable feedback to chemists. For FBDD, the integration of generative AI and active learning cycles shows promise in addressing the reconstruction problem by exploring novel chemical spaces more efficiently [12]. Furthermore, the application of machine learning to quantify molecular complexity provides a more nuanced foundation for predicting synthetic challenges during lead optimization [11]. When benchmarking machine learning architectures for synthesizability, it is therefore critical to move beyond simple correlation with traditional scores and instead validate against real-world outcomes—successful synthesis routes, cost of goods, and the experimental success of designed compounds in bioassays.

A significant challenge in wet lab experiments with current drug design generative models is the fundamental trade-off between pharmacological properties and synthesizability. Molecules predicted to have highly desirable properties are often difficult or impossible to synthesize, while those that are easily synthesizable tend to exhibit less favorable properties [13] [14]. This synthesis gap represents a critical bottleneck in computational drug discovery, as molecules proposed by generative models may be challenging or infeasible to synthesize in practice [15]. The ability to synthesize designed molecules on a large scale remains crucial for drug development, yet evaluating synthesizability in general drug design scenarios continues to pose significant challenges for the field of drug discovery [13] [14].

Traditional approaches to assessing synthesizability, particularly the widely used Synthetic Accessibility (SA) score, evaluate ease of synthesis primarily through structural features and fragment contributions with complexity penalties [14]. However, this metric falls short of guaranteeing that actual synthetic routes can be found or executed in laboratory settings [13] [14]. The limitations of traditional scores have prompted a paradigm shift toward data-driven approaches that directly assess the feasibility of synthetic routes through retrosynthetic planning [16] [14]. This article examines how modern machine learning architectures are redefining synthesizability assessment through retrosynthetic planning, establishing a new gold standard for evaluating computationally generated molecules in drug discovery pipelines.

The Data-Driven Paradigm Shift in Synthesizability Assessment

Redefining Synthesizability: From Structural Features to Practical Routes

The data-driven perspective redefines molecule synthesizability from a practical standpoint: a molecule is considered synthesizable if retrosynthetic planners, trained on extensive reaction databases, can predict a feasible synthetic route for it [14]. This approach shifts focus from theoretical structural compatibility to practical synthetic pathway existence, better aligning computational assessments with real-world laboratory constraints.

This paradigm leverages the synergistic duality between retrosynthetic planners and reaction predictors, both trained on extensive reaction datasets [13]. The core innovation lies in creating a closed-loop validation system where drug design models generate new drug molecules, retrosynthetic planners predict their synthetic routes, and reaction prediction models attempt to reproduce both the predicted routes and the generated molecules [14]. This integrated framework enables a more realistic assessment of synthesizability that accounts for the practical executability of proposed synthetic routes in laboratory settings [16].

Key Limitations of Traditional Synthesizability Metrics

Traditional synthesizability assessment methods, particularly the SA score, face several fundamental limitations that the data-driven approach aims to address:

- Structural Focus Over Practical Viability: SA score evaluates synthesizability based primarily on structural features and fragment contributions, failing to account for the practical challenges of developing actual synthetic routes [14].

- Inadequate Reaction Pathway Consideration: The metric does not guarantee that synthetic routes can actually be found or that identified reactions will succeed in laboratory conditions [13].

- Limited Generalization for Novel Structures: For molecules significantly different from known chemical space, structural metrics provide insufficient guidance about synthetic feasibility [14].

Benchmarking Retrosynthetic Planning Architectures

Performance Metrics for Retrosynthetic Planning

Evaluating retrosynthetic planning strategies requires multiple metrics that capture both route-finding capability and practical viability. Traditional evaluation has primarily focused on solvability—the ability to successfully find a complete route whose leaf nodes consist of commercially available molecules [16]. However, empirical evidence demonstrates that solvability does not necessarily imply feasibility, prompting the development of more nuanced evaluation frameworks [16].

Table 1: Key Metrics for Evaluating Retrosynthetic Planning Performance

| Metric | Definition | Interpretation | Limitations |
|---|---|---|---|
| Solvability | Ability to find a complete route to commercially available molecules [16] | Measures route existence | Does not guarantee practical feasibility |
| Route Feasibility | Average of single step-wise feasibility scores reflecting practical executability [16] | Assesses laboratory viability | Requires extensive reaction data for accurate scoring |
| Round-Trip Score | Tanimoto similarity between reproduced and original molecule via predicted route [14] | Validates route correctness through forward simulation | Computationally intensive; depends on reaction predictor quality |
| Search Efficiency | Planning cycles or time required to find viable routes [15] | Measures computational performance | Does not correlate with route quality |
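
The difference between solvability and route feasibility is easy to see in code. The sketch below follows the table's definitions: solvability is the fraction of targets with a complete route to in-stock molecules, and route feasibility is the mean of per-step feasibility scores. The `Route` class, target names, and scores are hypothetical illustration data; how the per-step scores are obtained is outside the scope of this sketch.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Optional

@dataclass
class Route:
    step_feasibility: List[float]  # one score in [0, 1] per reaction step
    leaves_in_stock: bool          # all leaf molecules purchasable?

def solvability(routes: Dict[str, Optional[Route]]) -> float:
    """Fraction of targets for which a complete route was found."""
    solved = sum(1 for r in routes.values()
                 if r is not None and r.leaves_in_stock)
    return solved / len(routes)

def route_feasibility(route: Route) -> float:
    """Average of the step-wise feasibility scores along one route."""
    return mean(route.step_feasibility)

# Hypothetical planner output for three targets.
routes = {
    "mol_1": Route([0.9, 0.8], leaves_in_stock=True),
    "mol_2": Route([0.9, 0.1, 0.2], leaves_in_stock=True),
    "mol_3": None,  # planner found no route
}

print(f"solvability: {solvability(routes):.2f}")
for name, route in routes.items():
    if route is not None:
        print(f"{name}: feasibility {route_feasibility(route):.2f}")
```

Here `mol_2` counts as solved, yet its low average step score flags a route that is unlikely to work at the bench, which is the gap between the two metrics that the table describes.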

Comparative Performance of Planning Algorithms

Recent comprehensive evaluations have benchmarked various planning algorithm and single-step retrosynthesis prediction model (SRPM) combinations across multiple datasets. These studies reveal that the model combination with the highest solvability does not always produce the most feasible routes, underscoring the need for multi-faceted evaluation [16].

Table 2: Performance Comparison of Retrosynthetic Planning Architectures

| Planning Algorithm | SRPM | Solvability (%) | Route Feasibility | Key Strengths | Dataset |
|---|---|---|---|---|---|
| MEEA* | Default | ~95 | Moderate | Superior route finding capability [16] | Multiple benchmarks |
| Retro* | Default | ~85 | High | Balanced performance on both metrics [16] | Multiple benchmarks |
| EG-MCTS | Default | ~80 | Moderate | Exploration-exploitation balance [16] | Multiple benchmarks |
| AND-OR Search | Various | 60-75 | Variable | Established baseline performance [15] | Retro*-190 |
| Neuro-symbolic | Evolutionary | ~90 | High | Progressive efficiency improvement [15] | Grouped similar molecules |

Retro* demonstrates particularly strong performance, selecting child nodes by considering both accumulated synthetic cost and estimated future synthetic cost predicted by a trained value network [16]. Meanwhile, emerging neuro-symbolic approaches show remarkable efficiency gains when processing groups of similar molecules, substantially reducing inference time—a crucial advantage for validating molecules from generative models [15].

Single-Step Retrosynthesis Prediction Models

The performance of multi-step planning algorithms fundamentally depends on the accuracy of underlying single-step retrosynthesis prediction models. Both template-based and template-free approaches offer distinct advantages:

- Template-based models (e.g., AiZynthFinder, LocalRetro) generate reactants by selecting suitable reaction templates from predefined sets, ensuring chemical plausibility [16].

- Template-free models (e.g., Chemformer, ReactionT5) directly predict reactants without template reliance, offering greater flexibility for novel reactions [16].

Recent innovations like RetroTRAE represent molecules using sets of atom environments (AEs) as chemically meaningful building blocks, achieving top-1 accuracy of 58.3% on USPTO test datasets, increasing to 61.6% with highly similar analogs [17]. This approach outperforms other neural machine translation-based methods while avoiding issues associated with SMILES representations [17].

Experimental Protocols and Methodologies

The Round-Trip Validation Framework

The round-trip score methodology establishes an integrated framework for synthesizability assessment through several methodical steps:

- Molecule Generation: Drug design models generate candidate ligand molecules for specific protein binding sites [14].

- Retrosynthetic Planning: Retrosynthetic planners predict synthetic routes for generated molecules through recursive decomposition [14].

- Forward Reaction Prediction: Reaction prediction models simulate the synthesis process using the predicted route's starting materials [14].

- Similarity Calculation: The round-trip score computes Tanimoto similarity between the reproduced molecule and the originally generated molecule [14].

This approach correlates strongly with practical synthesizability, as molecules with feasible synthetic routes consistently achieve higher round-trip scores compared to those lacking viable routes [14].
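
The four steps above can be sketched as a single function. The example below is a minimal illustration, not the implementation from [14]: the planner, the forward predictor, and the fingerprint are passed in as callables, and the stand-ins used here are toy string lookups with no chemical validity. Returning 0.0 when no route is found is an assumption made for this sketch.

```python
from typing import Callable, FrozenSet, Optional, Sequence

def tanimoto(a: FrozenSet[str], b: FrozenSet[str]) -> float:
    """Tanimoto similarity: shared features over total distinct features."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def round_trip_score(
    target: str,
    plan_route: Callable[[str], Optional[Sequence[str]]],
    predict_product: Callable[[Sequence[str]], str],
    fingerprint: Callable[[str], FrozenSet[str]],
) -> float:
    """Plan a route, replay it forward, and compare the result to the target."""
    starting_materials = plan_route(target)
    if starting_materials is None:
        return 0.0  # assumption: an unsolved target scores zero
    reproduced = predict_product(starting_materials)
    return tanimoto(fingerprint(target), fingerprint(reproduced))

# Toy stand-ins (hypothetical, not real chemistry or real models).
toy_routes = {"CCO": ("CC", "O"), "CCN": ("CC", "N")}
toy_products = {("CC", "O"): "CCO", ("CC", "N"): "CCO"}  # second is wrong on purpose

def toy_plan(target: str) -> Optional[Sequence[str]]:
    return toy_routes.get(target)

def toy_predict(materials: Sequence[str]) -> str:
    return toy_products[tuple(materials)]

def toy_fingerprint(smiles: str) -> FrozenSet[str]:
    # Character bigrams as a crude placeholder for a structural fingerprint.
    return frozenset(smiles[i:i + 2] for i in range(len(smiles) - 1))

for target in ("CCO", "CCN", "CCCl"):
    score = round_trip_score(target, toy_plan, toy_predict, toy_fingerprint)
    print(f"{target}: round-trip score {score:.2f}")
```

In a real pipeline the fingerprint would typically be a circular fingerprint from a cheminformatics toolkit, and the two callables would wrap a trained retrosynthetic planner and reaction predictor.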

Diagram 1: Round-trip validation workflow for synthesizability assessment

Multi-Step Retrosynthetic Planning Framework

The multi-step retrosynthetic planning framework (MRPF) follows a systematic search process:

- Initialization: Begin with target molecule at root node [16].

- Expansion: At each step, SRPMs predict possible reactants as child nodes [16].

- Cost Calculation: Apply negative log-likelihood to reaction probabilities, where higher probabilities yield lower costs [16].

- Selection: Choose child node with minimal cost for expansion [16].

- Termination: Continue iteratively until all leaf nodes correspond to commercially available starting molecules [16].

Planning algorithms employ different strategies for balancing exploration and exploitation. Retro* uses neural network-guided A* search prioritizing promising routes, while EG-MCTS leverages Monte Carlo Tree Search to balance high-potential and uncertain pathways [16]. MEEA* combines MCTS exploration with A* optimality, incorporating look-ahead search to evaluate future states [16].
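
The cost calculation and termination criteria described above can be illustrated with a toy search. The sketch below is deliberately simpler than Retro*, EG-MCTS, or MEEA*: it uses exhaustive, depth-limited recursion rather than guided search, and the single-step model is a hypothetical lookup table of (probability, reactants) pairs. It shows only how negative log-likelihood costs add up along a route and how the cheapest complete route is chosen.

```python
import math
from typing import Dict, List, Sequence, Set, Tuple

Expansion = Tuple[float, Sequence[str]]   # (reaction probability, reactants)
Step = Tuple[str, Sequence[str]]          # (product, reactants)

def step_cost(probability: float) -> float:
    """Negative log-likelihood: higher probability gives lower cost."""
    return -math.log(probability)

def cheapest_route(target: str,
                   expansions: Dict[str, List[Expansion]],
                   stock: Set[str],
                   max_depth: int = 5) -> Tuple[float, List[Step]]:
    """Return (total cost, reaction steps) of the cheapest complete route,
    or (inf, []) if none exists within the depth limit."""
    if target in stock:
        return 0.0, []            # leaf node: commercially available
    if max_depth == 0:
        return math.inf, []
    best_cost, best_route = math.inf, []
    for probability, reactants in expansions.get(target, []):
        cost = step_cost(probability)
        route: List[Step] = [(target, reactants)]
        for reactant in reactants:  # AND node: every reactant must be solved
            sub_cost, sub_route = cheapest_route(
                reactant, expansions, stock, max_depth - 1)
            cost += sub_cost
            route += sub_route
        if cost < best_cost:        # OR node: keep the cheapest alternative
            best_cost, best_route = cost, route
    return best_cost, best_route

# Hypothetical single-step predictions and stock list.
expansions = {
    "T": [(0.9, ("A", "B")), (0.5, ("C",))],
    "B": [(0.8, ("D",))],
}
stock = {"A", "C", "D"}

cost, route = cheapest_route("T", expansions, stock)
print(f"total cost: {cost:.3f}")
for product, reactants in route:
    print(f"{product} <- {' + '.join(reactants)}")
```

The two-step route wins here because its combined probability (0.9 × 0.8) exceeds the 0.5 of the one-step alternative. Guided planners avoid the exhaustive enumeration by prioritizing which node to expand next, for example with a learned estimate of remaining cost.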

Advanced neurosymbolic approaches implement a continuous learning cycle inspired by human knowledge acquisition:

- Wake Phase: Construct AND-OR search graphs during retrosynthetic planning, recording successful routes and failures [15].

- Abstraction Phase: Extract reusable multi-step reaction patterns (cascade chains for consecutive transformations, complementary chains for interacting reactions) [15].

- Dream Phase: Generate synthetic retrosynthesis data (fantasies) to refine neural models through simulated experiences [15].

This evolutionary process enables the system to discover compositional strategies beyond fundamental reaction rules, progressively improving retrosynthetic planning efficiency, particularly for groups of similar molecules [15].

Diagram 2: Neurosymbolic learning cycle for continuous improvement

The Scientist's Toolkit: Essential Research Reagents

Implementing data-driven synthesizability assessment requires specialized computational tools and datasets. The following resources represent essential components of the modern computational chemist's toolkit for retrosynthetic planning research:

Table 3: Essential Research Reagents for Data-Driven Synthesizability Research

| Resource Category | Specific Tools/Resources | Function | Key Applications |
|---|---|---|---|
| Retrosynthetic Planners | ASKCOS [16], Synthia [16], AiZynthFinder [16] | Multi-step synthetic route prediction | De novo route design, synthesizability assessment |
| Reaction Prediction Models | Molecular Transformer [17], Template-free predictors [16] | Forward prediction of reaction outcomes | Route validation, round-trip scoring |
| Benchmark Datasets | USPTO [17], Retro*-190 [15], Custom SBDD benchmarks [14] | Performance evaluation and comparison | Algorithm validation, model training |
| Molecular Representations | Atom Environments [17], SMILES, SELFIES [17] | Chemical structure encoding | Model input representation |
| Specialized Libraries | RDKit, SDV (Synthetic Data Vault) [18] | Cheminformatics operations, synthetic data generation | Molecular manipulation, data augmentation |

The emergence of data-driven synthesizability assessment via retrosynthetic planning represents a fundamental shift in computational drug discovery. By moving beyond structural metrics to practical route evaluation, these approaches better align computational predictions with laboratory realities. The integrated framework of retrosynthetic planning, reaction prediction, and round-trip validation establishes a more rigorous standard for evaluating computationally generated molecules.

Performance benchmarks reveal that optimal algorithm selection depends on specific research goals—while MEEA* demonstrates superior solvability, Retro* provides better balanced performance considering both route existence and feasibility [16]. Emerging neurosymbolic approaches show particular promise for efficient validation of AI-generated molecular libraries, with their ability to reuse synthesis patterns and progressively decrease inference time [15].

As these methodologies continue evolving, data-driven synthesizability assessment will play an increasingly crucial role in bridging the gap between computational design and practical synthesis. By enabling more accurate identification of synthesizable drug candidates early in the discovery pipeline, these approaches have the potential to significantly reduce development costs and timelines, accelerating the delivery of novel therapeutics to patients.

The integration of machine learning (ML) into chemistry has catalyzed a paradigm shift in the discovery and development of molecules and materials. For researchers in drug development and synthetic chemistry, benchmarking the performance of diverse ML architectures is crucial for navigating this rapidly evolving landscape. This guide provides an objective comparison of core ML tasks—structure-based drug design (SBDD), reaction prediction, and retrosynthesis analysis—within the broader context of benchmarking for synthesizability research. It synthesizes current experimental data and detailed methodologies to offer a clear reference for scientists selecting tools and approaches for their work.

Structure-Based Drug Design

Structure-based drug design (SBDD) leverages the three-dimensional structure of a target protein to identify and optimize potential drug molecules. Recent benchmarking studies reveal surprising insights about the performance of various algorithmic approaches.

Performance Benchmarking of SBDD Algorithms

A comprehensive benchmark evaluated sixteen models across three dominant algorithmic categories: search-based algorithms, deep generative models, and reinforcement learning. The assessment focused on the pharmaceutical properties of generated molecules and their docking affinities with target proteins [19].

Table 1: Performance Comparison of SBDD Algorithm Types

| Algorithm Type | Representative Models | Key Strengths | Notable Performance Findings |

|---|---|---|---|

| Search-based Algorithms | AutoGrow4 | Strong optimization ability, competitive docking performance | Dominates in optimization ability [19] |

| 1D/2D Ligand-centric Methods | (Various) | Can use docking as a black-box oracle | Achieves competitive performance vs. 3D methods [19] |

| 3D Structure-based Methods | (Various) | Explicitly uses 3D protein structure | Does not definitively dominate other approaches [19] |

The benchmark demonstrated that AutoGrow4, a 2D molecular graph-based genetic algorithm, currently dominates SBDD in terms of optimization ability [19]. Contrary to conventional wisdom, the study also highlighted that 1D/2D ligand-centric methods can be effectively applied in SBDD by treating the docking function as a black-box oracle. These methods achieved competitive performance compared with 3D-based approaches that explicitly use the target protein's structure [19].

Experimental Protocol for SBDD Benchmarking

To ensure reproducible benchmarking of SBDD methods, the following experimental protocol was utilized in the cited study [19]:

- Model Selection: Include representative models from all major algorithmic categories (search-based, deep generative models, reinforcement learning).

- Evaluation Metrics: Assess both the pharmaceutical properties of generated molecules (e.g., drug-likeness, synthetic accessibility) and their binding affinities via molecular docking.

- Docking Procedure: Use standardized docking software and configurations to evaluate the binding affinity of generated molecules against specified target proteins.

- Comparison Framework: Treat the docking function as a black-box oracle to enable fair comparison between 1D/2D and 3D methods.
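The black-box-oracle idea in the last step can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the code of the cited benchmark: `BudgetedOracle`, `toy_docking_score`, and the notion of a fixed call budget are hypothetical, and the scoring function is only a stand-in for a real docking program.

```python
from typing import Callable, Dict


class BudgetedOracle:
    """Wraps a scoring function so every generator sees the same interface.

    A generator only submits a molecule string and receives a score, so a
    1D/2D method and a 3D method can be compared under an equal call budget.
    """

    def __init__(self, score_fn: Callable[[str], float], max_calls: int):
        self.score_fn = score_fn
        self.max_calls = max_calls
        self.cache: Dict[str, float] = {}

    @property
    def calls_used(self) -> int:
        # Only unique molecules count against the budget.
        return len(self.cache)

    def __call__(self, smiles: str) -> float:
        if smiles in self.cache:
            return self.cache[smiles]
        if self.calls_used >= self.max_calls:
            raise RuntimeError("oracle budget exhausted")
        score = self.score_fn(smiles)
        self.cache[smiles] = score
        return score


def toy_docking_score(smiles: str) -> float:
    """Hypothetical stand-in for a docking run (lower is better)."""
    return -0.1 * len(smiles)
```

Because the generator never sees how the score is produced, swapping the toy function for an actual docking call would not change the comparison logic.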

Diagram 1: Workflow for benchmarking SBDD algorithms.

Reaction Prediction

Reaction prediction involves forecasting the outcomes of chemical reactions, including products, yields, and stereoselectivity. Accurate prediction requires models that understand complex electronic and steric influences.

Knowledge-Based Graph Models for Reaction Performance

The SEMG-MIGNN (Steric- and Electronics-embedded Molecular Graph with Molecular Interaction Graph Neural Network) architecture represents a significant advance for reaction performance prediction [20]. This model embeds digitalized steric and electronic information of the atomic environment and incorporates a molecular interaction module to capture synergistic effects between reaction components [20].

Table 2: Comparison of Reaction Prediction Models and Performance

| Model Name | Architecture Type | Key Features | Performance Highlights |

|---|---|---|---|

| SEMG-MIGNN | Knowledge-based Graph Neural Network | Embeds local steric/electronic environments; Molecular interaction module | Excellent predictions of reaction yield and stereoselectivity; Strong extrapolative ability for new catalysts [20] |

| QM-GNN | Fusion Graph Neural Network | Combines GNN with quantum chemical descriptors of reaction sites | Improved predictive ability for regioselectivity and reactivity [20] |

| ChemTorch | Standardized Framework | Modular pipelines for benchmarking | Highlights advantage of structurally-informed models; Shows performance drops under out-of-distribution conditions [21] |

Experimental Protocol for Reaction Prediction Benchmarking

The experimental methodology for developing and evaluating the SEMG-MIGNN model involved [20]:

- Molecular Graph Construction: Generate molecular graphs with empty vertices from SMILES strings.

- Steric Information Embedding: Optimize the molecular geometry at the GFN2-xTB level of theory and map the steric environment using Spherical Projection of Molecular Stereostructure (SPMS), creating a 2D distance matrix for each atom.

- Electronic Information Embedding: Compute electron density at the B3LYP/def2-SVP level and record values in a 7×7×7 grid around each atom as an electronic environment tensor.

- Model Training: Process the Steric- and Electronics-embedded Molecular Graphs (SEMG) through the Molecular Interaction GNN (MIGNN) with its specialized interaction module for information exchange between reaction components.

- Validation: Test model performance on benchmark datasets for reaction yield and enantioselectivity prediction, using scaffold-based data splitting to verify extrapolative ability.
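Scaffold-based splitting, mentioned in the validation step, keeps all molecules that share a scaffold on the same side of the split, so the test set probes extrapolation. The sketch below assumes scaffold keys have already been computed by a cheminformatics toolkit; the function name, the 80/20 default, and the largest-group-first heuristic are illustrative choices rather than details taken from [20].

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def scaffold_split(
    scaffolds: Sequence[str], train_fraction: float = 0.8
) -> Tuple[List[int], List[int]]:
    """Split sample indices so no scaffold appears in both train and test."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)

    # Largest scaffold groups are considered first, so rare scaffolds
    # tend to end up in the test set.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    train_cutoff = train_fraction * len(scaffolds)

    train: List[int] = []
    test: List[int] = []
    for group in ordered:
        if len(train) + len(group) <= train_cutoff:
            train.extend(group)
        else:
            test.extend(group)
    return train, test
```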

Retrosynthesis Analysis

Retrosynthesis analysis involves deconstructing target molecules into simpler precursors, a fundamental task in synthetic chemistry. ML approaches have dramatically accelerated this process, with template-based, semi-template-based, and template-free methods representing the primary architectures.

Performance Benchmarking of Retrosynthesis Models

Recent breakthroughs in retrosynthesis planning have been driven by large-scale data generation and advanced transformer architectures. The RSGPT model exemplifies this trend, achieving state-of-the-art performance through pre-training on 10 billion synthetically generated reaction data points [22].

Table 3: Retrosynthesis Model Performance on Standard Benchmarks

| Model Name | Algorithm Type | USPTO-50k Top-1 Accuracy | Key Innovations |

|---|---|---|---|

| RSGPT | Template-free, Generative Pretrained Transformer | 63.4% | Pre-training on 10B synthetic reactions; Reinforcement Learning from AI Feedback (RLAIF) [22] |

| RetroExplainer | Template-free, Graph-based | ~55% | Formulates retrosynthesis as molecular assembly; enables quantitative interpretation [22] |

| NAG2G | Template-free, Graph-based | ~55% | Combines 2D molecular graphs with 3D conformations; incorporates atomic mapping [22] |

| Energy-Based Reranking | Reranking Framework | Improves various base models | Applied to RetroSim: 35.7% → 51.8%; NeuralSym: 45.7% → 51.3% [23] |
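The top-1 accuracies in the table count a prediction as correct when the ground-truth reactant set is the model's first suggestion; top-k relaxes this to the first k suggestions. A minimal sketch, assuming the SMILES strings have already been canonicalized by a cheminformatics toolkit (this code compares strings only); the helper names are hypothetical.

```python
from typing import FrozenSet, List, Sequence


def reactant_set(smiles: str) -> FrozenSet[str]:
    """Treat 'A.B' and 'B.A' as the same set of reactants."""
    return frozenset(part for part in smiles.split(".") if part)


def top_k_accuracy(
    predictions: Sequence[List[str]], references: Sequence[str], k: int
) -> float:
    """Fraction of targets whose reference reactants appear in the top k."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must align")
    hits = 0
    for ranked, reference in zip(predictions, references):
        truth = reactant_set(reference)
        if any(reactant_set(candidate) == truth for candidate in ranked[:k]):
            hits += 1
    return hits / len(references) if references else 0.0
```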

For multi-step retrosynthesis route prediction, the PaRoutes framework provides a standardized benchmarking approach. Using this framework, studies have compared search algorithms like Monte Carlo Tree Search (MCTS), Retro*, and Depth-First Proof-Number Search (DFPN), finding that MCTS outperforms others in route quality and diversity [24].

Experimental Protocol for Retrosynthesis Benchmarking

Single-Step Retrosynthesis

The training protocol for state-of-the-art models like RSGPT involves a multi-stage process [22]:

- Synthetic Data Generation: Use template-based algorithms (e.g., RDChiral) applied to molecular fragment libraries to generate billions of synthetic reaction data points for pre-training.

- Model Pre-training: Pre-train transformer architectures on large-scale synthetic reaction data using language modeling objectives.

- Reinforcement Learning from AI Feedback (RLAIF): Employ AI-generated feedback to refine the model through a reward mechanism, validating generated reactants and templates with cheminformatics tools.

- Task-Specific Fine-tuning: Fine-tune the pre-trained model on specific benchmark datasets (e.g., USPTO-50k, USPTO-MIT, USPTO-FULL) to optimize performance for particular reaction types.

Diagram 2: Training workflow for advanced retrosynthesis models.

Multi-Step Retrosynthesis Route Prediction

The PaRoutes framework establishes the following standardized protocol for benchmarking multi-step retrosynthesis methods [24]:

- Dataset Curation: Extract synthetic routes from patent literature (e.g., USPTO), ensuring non-overlapping leaf molecules and target molecules across routes.

- Route Quality Assessment: Compute metrics such as route length, convergence, and starting material availability in stock databases.

- Route Diversity Evaluation: Calculate pair-wise distance between routes using molecular representation approaches to ensure diverse solution sets.

- Algorithm Comparison: Test different search algorithms (MCTS, Retro*, DFPN) on their ability to recover reference routes and generate diverse, high-quality synthetic pathways.
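The route-quality metrics in the list above can be illustrated on a toy route tree. This sketch assumes a simplified, hypothetical route format (nested dictionaries in which each molecule lists its precursors under `children`); it is not the PaRoutes data format, and the function names are illustrative.

```python
from typing import Dict, List, Set

Route = Dict[str, object]  # {"smiles": str, "children": [Route, ...]}


def leaf_molecules(route: Route) -> List[object]:
    """Starting materials: nodes that have no precursors."""
    children = route.get("children", [])
    if not children:
        return [route["smiles"]]
    found: List[object] = []
    for child in children:
        found.extend(leaf_molecules(child))
    return found


def longest_linear_sequence(route: Route) -> int:
    """Number of reaction steps on the longest path from target to a leaf."""
    children = route.get("children", [])
    if not children:
        return 0
    return 1 + max(longest_linear_sequence(child) for child in children)


def fraction_in_stock(route: Route, stock: Set[str]) -> float:
    """Share of starting materials found in a stock collection."""
    leaves = leaf_molecules(route)
    return sum(leaf in stock for leaf in leaves) / len(leaves)
```

For a target made from `A` and an intermediate that is itself made from `B` and `C`, the longest linear sequence is two steps and the starting materials are `A`, `B`, and `C`.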

The Scientist's Toolkit

This section details essential computational tools and resources used in benchmarking experiments across the featured studies.

Table 4: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Primary Function | Relevant Domain |

|---|---|---|---|

| AutoGrow4 | Search-based Algorithm | Molecular optimization using genetic algorithms | Structure-Based Drug Design [19] |

| AutoDock Vina | Docking Software | Molecular docking and virtual screening | Structure-Based Drug Design [25] |

| RDChiral | Cheminformatics Tool | Template extraction and reaction application | Retrosynthesis Analysis [22] |

| PaRoutes | Benchmarking Framework | Evaluation of multi-step retrosynthesis route predictions | Retrosynthesis Analysis [24] |

| DEKOIS 2.0 | Benchmarking Set | Protein-specific active compounds and decoys for docking evaluation | Structure-Based Drug Design [25] |

| USPTO Datasets | Reaction Database | Curated chemical reactions extracted from U.S. patents | Reaction Prediction & Retrosynthesis [24] [22] |

| ChemTorch | Deep Learning Framework | Standardized benchmarking for chemical reaction property prediction | Reaction Prediction [21] |

This comparison guide synthesizes performance data and methodologies across three core ML tasks in synthesizability research. Key findings indicate that:

- In SBDD, simpler approaches like 1D/2D ligand-centric methods remain highly competitive with advanced 3D-aware models [19].

- For reaction prediction, incorporating explicit chemical knowledge (steric and electronic effects) significantly enhances model performance and interpretability [20].

- In retrosynthesis, the combination of large-scale synthetic data generation and advanced architectures like transformers has dramatically pushed forward state-of-the-art accuracy [22].

These insights provide researchers with evidence-based guidance for selecting appropriate ML architectures for their specific challenges in drug development and synthetic chemistry. As the field evolves, standardized benchmarking frameworks will continue to be essential for objectively measuring progress and identifying the most promising directions for future research.

The Critical Challenge of Molecular Synthesizability in Drug Discovery

A significant and persistent challenge in modern drug discovery is the fundamental trade-off between a molecule's predicted pharmacological properties and its synthesizability. Computational models frequently propose drug candidates with highly desirable properties that, when advanced to wet lab experiments, prove to be impractical or impossible to synthesize. Conversely, molecules that are easily synthesizable often exhibit less favorable binding affinities, pharmacokinetics, or other key therapeutic properties [14] [26]. This synthesis gap represents a major bottleneck in the drug development pipeline, leading to wasted resources and slowed progress.

The field has traditionally relied on the Synthetic Accessibility (SA) score to evaluate this critical characteristic [14] [27]. However, this metric has a profound limitation: it assesses synthesizability based primarily on structural features and fragment contributions, failing to guarantee that a practical, step-by-step synthetic route can actually be discovered or executed in a laboratory [14] [26]. Consequently, there is a pressing need for a more rigorous, data-driven benchmark that can accurately assess the practical synthesizability of computationally generated molecules, thereby bridging the gap between in silico predictions and in vitro synthesis. It is within this context that SDDBench and its novel round-trip score have been developed, offering a new paradigm for evaluating drug design models [14] [28].

SDDBench: A Novel Benchmark for Synthesizable Drug Design

SDDBench is a benchmarking framework introduced to directly address the synthesizability problem. It proposes a fundamental redefinition of molecular synthesizability from a data-centric perspective. Under this new paradigm, a molecule is considered synthesizable if data-driven retrosynthetic planners, trained on extensive repositories of known chemical reactions, can predict a feasible synthetic route for it [14]. This approach moves beyond simplistic structural analysis to a more practical assessment grounded in the realities of synthetic organic chemistry.

The core innovation of SDDBench is its round-trip score, a novel metric that leverages the synergistic duality between retrosynthetic planning and forward reaction prediction [14] [28]. This metric is inspired by evaluation methods in other fields, such as the CLIP score in image generation, which assesses the alignment between generated images and their text prompts [14]. Similarly, the round-trip score evaluates the alignment between a generated molecule and its proposed synthetic pathway.

The Round-Trip Score Workflow

The calculation of the round-trip score involves a three-stage process that integrates multiple components of AI-driven chemistry, creating a comprehensive simulation of the synthetic planning and execution cycle. The following diagram illustrates this workflow:

Figure 1: The round-trip score workflow integrates retrosynthetic planning and reaction prediction.

The workflow proceeds in three stages:

Retrosynthetic Planning: A generated molecule \( m \) is fed into a retrosynthetic planner \( g_\Phi \), which predicts a complete synthetic route. The route decomposes the target molecule into progressively simpler precursors until it reaches commercially available starting materials [26] [28]. The planner used in the benchmark (for example, NeuralSym) employs beam search to generate multiple candidate pathways [28].

Reaction Prediction Simulation: The predicted synthetic route is then passed to a forward reaction prediction model \( f_\Theta \). This model acts as a simulation agent for wet lab experiments, attempting to reconstruct the target molecule by applying the predicted reaction sequence to the identified starting materials [26].

Similarity Calculation and Scoring: The final product of the forward simulation, \( m' \), is compared to the original generated molecule \( m \). The round-trip score \( S(m) \) is computed as the Tanimoto similarity between \( m \) and \( m' \). A high score indicates that the proposed synthetic route is feasible and reliably reproduces the target, while a low score suggests the route is likely flawed or impractical [14] [28].

Formally, the round-trip score is defined as \[ S(m) = \text{Sim}\bigl(m, f_{\Theta}(g_{\Phi}(m))\bigr) = \text{Sim}(m, m') \] where \( g_{\Phi} \) is the retrosynthetic planner, \( f_{\Theta} \) is the forward prediction model, and \( m' = f_{\Theta}(g_{\Phi}(m)) \) is the reconstructed molecule [28].
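The definition can be made concrete with a small Python sketch. It is a toy under stated assumptions: the planner and forward model are passed in as plain callables, `toy_fingerprint` uses character bigrams as a hypothetical stand-in for a real structural fingerprint, and returning 0 when no route is found is an assumption of this sketch rather than a rule stated by the benchmark.

```python
from typing import Callable, List, Optional, Set


def toy_fingerprint(smiles: str) -> Set[str]:
    """Hypothetical stand-in for a structural fingerprint: character bigrams."""
    return {smiles[i:i + 2] for i in range(len(smiles) - 1)} or {smiles}


def tanimoto(a: Set[str], b: Set[str]) -> float:
    """Tanimoto (Jaccard) similarity between two feature sets."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def round_trip_score(
    m: str,
    planner: Callable[[str], Optional[List[str]]],
    forward_model: Callable[[List[str]], str],
) -> float:
    """S(m) = Sim(m, f(g(m))): plan a route, replay it, compare the product."""
    route = planner(m)            # g(m): starting materials, or None
    if route is None:
        return 0.0                # assumption: no route means score 0
    m_prime = forward_model(route)  # f(g(m)): reconstructed molecule m'
    return tanimoto(toy_fingerprint(m), toy_fingerprint(m_prime))
```

If the forward model reproduces the target exactly, the score is 1; a product that shares only part of the structure yields a value strictly between 0 and 1.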

Essential Research Reagents for SDDBench Implementation

The experimental framework of SDDBench relies on several key computational tools and data resources, each playing a critical role in the benchmarking process.

Table 1: Key Research Reagents and Resources in SDDBench

| Resource Name | Type | Primary Function in the Benchmark | Key Features |

|---|---|---|---|

| Retrosynthetic Planner | Software Model | Predicts synthetic routes for target molecules [28]. | Trained on USPTO; uses beam search [28]. |

| Reaction Prediction Model | Software Model | Simulates chemical reactions from reactants to products [14]. | Transformer architecture; validates route feasibility [28]. |

| USPTO Database | Chemical Dataset | Provides reaction data for training models [14] [26]. | Large-scale, curated reaction data from patents [26]. |

| ZINC Database | Chemical Database | Defines commercially available starting materials [26]. | Source of purchasable compounds for synthetic routes [26]. |

| Structure-Based Drug Design (SBDD) Models | Generative Models | Generate candidate ligand molecules for a protein target [14]. | Models include Pocket2Mol, FLAG, DecompDiff [28]. |

Comparative Analysis: SDDBench Against Traditional and Contemporary Benchmarks

To properly contextualize SDDBench's role in the landscape of computational drug discovery, it is essential to compare it with both traditional synthesizability metrics and other modern benchmarks designed to address various aspects of the drug discovery pipeline.

Comparison with Synthesizability Metrics

SDDBench's round-trip score introduces a fundamentally different approach to evaluating synthesizability compared to existing scores.

Table 2: SDDBench vs. Traditional Synthesizability Metrics

| Metric | Basis of Evaluation | Key Advantages | Key Limitations |

|---|---|---|---|

| Round-Trip Score (SDDBench) | Data-driven route feasibility & chemical simulation [14] [26] | Directly assesses practical route feasibility; integrates both retrosynthetic and forward prediction [26]. | Computationally intensive; depends on quality of training data [14]. |

| Synthetic Accessibility (SA) Score | Structural fragments & complexity penalty [14] [27] | Fast to compute; simple to interpret [14]. | Does not guarantee a route exists; based on heuristics [14] [26]. |

| SCScore | Historical reaction data complexity trends [27] | Contextualizes complexity within known chemical space [27]. | Does not propose or validate specific synthetic routes [27]. |

| RAScore | AI-driven retrosynthetic planning [27] | Leverages modern AI planners for classification [27]. | Primarily a classifier; may not validate route execution [26]. |

Comparison with Broader Drug Discovery Benchmarks

Beyond synthesizability-specific metrics, several benchmarks have been developed to address other critical stages in the drug discovery process. The following table places SDDBench alongside these initiatives, highlighting its unique focus.

Table 3: SDDBench in the Context of General Drug Discovery Benchmarks

| Benchmark | Primary Focus | Relevance to Practical Drug Discovery | Key Differentiator of SDDBench |

|---|---|---|---|

| SDDBench | Synthesizability of generated molecules [14] | Directly addresses the synthesis gap in wet-lab translation [14]. | Focus on end-to-end synthetic route validation via the round-trip score [26]. |

| MoleculeNet | Broad molecular property prediction [29] | Consolidates many tasks but has documented flaws [29]. | Targeted problem focus vs. MoleculeNet's general scope [29]. |

| Lo-Hi | Practical property prediction (Hit ID & Lead Optimization) [30] | Aligns tasks with real-world drug discovery stages [30]. | Focus on synthesizability rather than activity/binding prediction [30]. |

| CARA | Compound activity prediction for real-world applications [31] | Carefully designs data splits for virtual screening & lead optimization [31]. | Focus on synthesizability rather than activity/binding prediction [31]. |

| Polaris | Platform for sharing datasets & benchmarks [5] | Aims to be a central hub for the community [5]. | SDDBench is a specific benchmark; Polaris is a platform for hosting many [5]. |

Experimental Insights and Performance Evaluation

The efficacy of SDDBench is demonstrated through comprehensive evaluations of various Structure-Based Drug Design (SBDD) models. These experiments reveal critical insights into the relationship between drug generation and synthesizability.

Key Experimental Protocols in SDDBench

The standard experimental protocol under SDDBench involves several methodical steps:

Molecule Generation: Multiple SBDD generative models, including LiGAN, AR, Pocket2Mol, FLAG, DrugGPS, and DecompDiff, are used to generate ligand molecules for given protein binding sites [28]. This creates a diverse set of candidate molecules for synthesizability assessment.

Retrosynthetic Analysis: The generated molecules are processed by a retrosynthetic planner (e.g., NeuralSym). The planner's performance is measured by its search success rate: the percentage of molecules for which it can find at least one synthetic route ending in commercially available starting materials [28].

Route Validation and Scoring: For molecules with successful routes, the round-trip score is calculated. The benchmark also tracks the top-k route quality, defined as the percentage of molecules for which at least one proposed route achieves a high round-trip score (e.g., >0.9) [28], indicating a high degree of confidence in the route's feasibility.
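Both aggregate metrics follow directly from per-route round-trip scores. The sketch below assumes a hypothetical input layout (a dictionary mapping each molecule to the ranked scores of its found routes, with an empty list when the search failed) and takes all generated molecules as the denominator, which is an assumption of this example.

```python
from typing import Dict, List


def search_success_rate(route_scores: Dict[str, List[float]]) -> float:
    """Percentage of molecules for which at least one route was found."""
    if not route_scores:
        return 0.0
    solved = sum(1 for scores in route_scores.values() if scores)
    return 100.0 * solved / len(route_scores)


def top_k_route_quality(
    route_scores: Dict[str, List[float]], k: int, threshold: float = 0.9
) -> float:
    """Percentage of molecules with a top-k route scoring above threshold."""
    if not route_scores:
        return 0.0
    good = sum(
        1
        for scores in route_scores.values()
        if any(score > threshold for score in scores[:k])
    )
    return 100.0 * good / len(route_scores)
```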

Performance Comparison of Generative Models

SDDBench experiments report substantial variation in the ability of different SBDD models to generate synthesizable molecules [28]. The values in the following table are hypothetical; they illustrate the kind of differences discussed in the literature and are not measured results:

Table 4: Comparative Performance of SBDD Models on SDDBench Metrics

| Generative Model | Search Success Rate (%) | Top-k Route Quality (% with Score >0.9) | Inference Speed (Mols/Sec) |

|---|---|---|---|

| Pocket2Mol | 75.4 | 68.2 | 2.1 |

| FLAG | 71.1 | 62.5 | 1.8 |

| DecompDiff | 68.9 | 59.7 | 0.9 |

| AR | 65.3 | 55.1 | 3.4 |

| LiGAN | 58.7 | 48.3 | 5.2 |

These illustrative figures point to a critical trade-off: a model that excels in traditional metrics such as binding affinity or generation speed may still lag in producing practically synthesizable candidates. Pocket2Mol, for instance, has been identified as a leading performer in generating synthesizable candidates, balancing high search success rates with high-quality routes [28]. This type of analysis is invaluable for guiding the future development of drug generation models toward more practical and economically viable outputs.

SDDBench, with its innovative round-trip score, represents a paradigm shift in how the computational drug discovery community evaluates the output of generative models. By moving beyond superficial structural metrics to a functional test of synthetic feasibility, it directly addresses one of the most costly bottlenecks in drug development: the synthesis gap. The benchmark provides a much-needed, rigorous tool for objectively comparing the practical utility of different drug design architectures, pushing the field toward models that generate not just theoretically active compounds, but actionable drug candidates.

The development and adoption of focused, high-quality benchmarks like SDDBench, Lo-Hi, and CARA signal a maturation of the field. As these benchmarks become standard, they will drive progress in machine learning for drug discovery toward more reliable and practical applications, ultimately accelerating the journey from a digital design to a real-world therapeutic. Future work will likely focus on expanding the chemical reaction data underlying the retrosynthetic planners, refining the accuracy of forward reaction predictors, and integrating synthesizability assessment directly into the generative process itself.

Architectures in Practice: Implementing ML Models for Synthesizability Prediction

The accurate representation of molecules is a foundational step in applying machine learning (ML) to structure-based drug design (SBDD). The choice of representation directly influences a model's ability to learn the complex structure-activity relationships that dictate binding affinity, specificity, and ultimately, therapeutic efficacy. Traditional descriptor-based methods have increasingly been supplemented or replaced by more expressive data-driven representations, including molecular graphs, SMILES strings, and 3D geometric structures. Each paradigm offers a distinct set of trade-offs between computational efficiency, ease of use, and the richness of encoded chemical information. This guide provides an objective comparison of these dominant molecular representation schemes, synthesizing recent benchmarking studies and performance data to offer practical insights for researchers and drug development professionals engaged in synthesizability research.

Molecular Graphs

Molecular graphs provide a natural and intuitive representation by encoding atoms as nodes and bonds as edges within a graph structure [32]. This format is particularly amenable to processing with graph neural networks (GNNs), which learn features through message-passing mechanisms that aggregate information from local atomic environments [33] [32]. The key advantage of graph representations lies in their explicit encoding of molecular topology, allowing models to directly learn from connectivity patterns that define chemical functionality. Molecular graphs can be further categorized into 2D and 3D representations, with 2D graphs capturing topological connectivity and 3D graphs incorporating spatial atomic coordinates to convey geometric shape and conformation [32].
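To make the node/edge encoding and the message-passing idea concrete, here is a minimal sketch in plain Python. It shows one round of unweighted sum aggregation only; real GNN layers add learned weights and nonlinearities, and the function names and the one-hot features are illustrative assumptions.

```python
from typing import Dict, List, Tuple


def build_adjacency(
    num_atoms: int, bonds: List[Tuple[int, int]]
) -> Dict[int, List[int]]:
    """Undirected adjacency list: atoms are nodes, bonds are edges."""
    adjacency: Dict[int, List[int]] = {i: [] for i in range(num_atoms)}
    for a, b in bonds:
        adjacency[a].append(b)
        adjacency[b].append(a)
    return adjacency


def message_passing_step(
    features: List[List[float]], adjacency: Dict[int, List[int]]
) -> List[List[float]]:
    """One round of sum aggregation: each atom adds its neighbors' features.

    Learned weights and activation functions are omitted to keep the
    aggregation itself visible.
    """
    updated = []
    for atom, own in enumerate(features):
        total = list(own)
        for neighbor in adjacency[atom]:
            total = [t + f for t, f in zip(total, features[neighbor])]
        updated.append(total)
    return updated


# Ethanol heavy atoms C-C-O with one-hot features [is_carbon, is_oxygen].
ETHANOL_FEATURES = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
ETHANOL_ADJACENCY = build_adjacency(3, [(0, 1), (1, 2)])
```

After one step, the central carbon's vector reflects both a carbon and an oxygen neighbor, which is how local atomic environments enter the learned representation.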

SMILES and Sequence-Based Representations

The Simplified Molecular-Input Line-Entry System (SMILES) represents molecules as linear strings of ASCII characters using a grammar that describes molecular structure [34]. This sequential representation allows the application of powerful natural language processing (NLP) architectures, particularly Transformer-based models like BERT and GPT, which treat SMILES strings as a chemical "language" [34]. These models can be pre-trained on vast unlabeled molecular datasets to learn fundamental chemical principles before being fine-tuned for specific predictive tasks. However, a significant limitation of SMILES is that minor syntactic changes can correspond to dramatically different molecular structures, and the representation does not natively capture 3D spatial information [32] [34].

3D Geometries and Equivariant Networks

3D geometric representations explicitly encode the spatial coordinates of atoms within a molecule, capturing critical information about molecular shape, steric effects, and conformational preferences that directly influence protein-ligand interactions [32] [35]. Recent advances in E(3)-equivariant neural networks ensure that model predictions remain consistent with respect to rotations and translations of the input molecular structure, a crucial property for physics-aware learning in SBDD [36] [32]. The primary challenges with 3D representations are their dependence on accurate conformations, which are not always available, and their higher computational cost compared with 2D methods [37].

Table 1: Core Characteristics of Molecular Representation Schemes

| Representation | Data Structure | Key Features | Primary ML Architectures | Domain Knowledge Integration |

|---|---|---|---|---|

| Molecular Graphs (2D) | Graph (Nodes + Edges) | Atom/bond types, molecular topology | GCN, GAT, MPNN, D-MPNN [33] [32] | Functional groups, molecular weight [32] |

| SMILES/Sequences | Linear String | Molecular syntax, atomic composition | Transformer, BERT, GPT [34] | Learned from large-scale pre-training [34] |

| 3D Geometries | 3D Coordinates + Features | Spatial coordinates, molecular shape, chirality | E(3)-Equivariant GNNs, Diffusion Models [36] [32] | Bond lengths, angles, torsions, steric constraints [36] |

Benchmarking Performance Across Applications

Predictive Accuracy on Standardized Tasks

Comprehensive benchmarking reveals that the performance of representation schemes varies significantly across different prediction tasks and datasets. A systematic evaluation of eight ML algorithms across 11 public datasets provides insightful performance comparisons between descriptor-based and graph-based models [33].

Table 2: Performance Comparison Across Representation Types on Benchmark Tasks

| Task Type | Dataset | Best Performing Model | Key Metric | Performance | Representation Category |

|---|---|---|---|---|---|

| Regression | ESOL | Attentive FP [33] | RMSE | 0.503 ± 0.076 | Graph-based |

| Regression | FreeSolv | Attentive FP [33] | RMSE | 0.736 ± 0.037 | Graph-based |

| Classification | BACE | Attentive FP [33] | AUC-ROC | 0.850 ± 0.012 | Graph-based |

| Classification | BBBP | Attentive FP [33] | AUC-ROC | 0.920 ± 0.015 | Graph-based |

| Virtual Screening | CARA Benchmark | Meta-learning & Multi-task Training [31] | Multiple metrics | Varies by assay type | Graph-based with specialized training |

Notably, the study found that traditional descriptor-based models including Support Vector Machines (SVM) and Extreme Gradient Boosting (XGBoost) often matched or exceeded the performance of graph-based models on many benchmark tasks, while offering superior computational efficiency [33]. For instance, SVM generally achieved the best predictions for regression tasks, while Random Forest (RF) and XGBoost delivered reliable performance for classification tasks [33]. However, certain graph-based models like Attentive FP and GCN demonstrated outstanding performance on larger or multi-task datasets [33].

Universal Fingerprints: Bridging Small and Large Molecules

The MAP4 (MinHashed Atom-Pair fingerprint up to a diameter of four bonds) fingerprint represents an innovative approach that combines substructure and atom-pair concepts to create a universal fingerprint suitable for both small molecules and biomacromolecules [38] [39]. MAP4 encodes circular substructures around each atom in an atom-pair, written as SMILES strings and combined with the topological distance separating the two central atoms [38] [39]. These atom-pair molecular shingles are hashed and MinHashed to form the final fingerprint representation.
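The final hashing step can be sketched generically. The code below is a plain MinHash over string shingles, not the MAP4 implementation: the shingle strings are assumed to be produced elsewhere, and the use of salted SHA-1 as the hash family and 128 hash functions are assumptions made for illustration.

```python
import hashlib
from typing import Iterable, List


def _hash(shingle: str, seed: int) -> int:
    """Deterministic 64-bit hash of a shingle under a given seed."""
    digest = hashlib.sha1(f"{seed}:{shingle}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def minhash(shingles: Iterable[str], num_hashes: int = 128) -> List[int]:
    """Signature: for each seeded hash function, keep the minimum hash value."""
    items = set(shingles)
    if not items:
        raise ValueError("at least one shingle is required")
    return [min(_hash(s, seed) for s in items) for seed in range(num_hashes)]


def estimated_jaccard(sig_a: List[int], sig_b: List[int]) -> float:
    """Fraction of matching positions estimates the Jaccard similarity."""
    if len(sig_a) != len(sig_b):
        raise ValueError("signatures must have the same length")
    matches = sum(x == y for x, y in zip(sig_a, sig_b))
    return matches / len(sig_a)
```

Two molecules with identical shingle sets receive identical signatures, and the share of agreeing positions approximates the overlap of their shingle sets without comparing the sets directly.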

In benchmark evaluations, MAP4 significantly outperformed other fingerprints on an extended benchmark combining small molecule and peptide datasets [38] [39]. It was better at recovering BLAST analogs from sets of scrambled or point-mutated sequences, demonstrating particular strength for biomolecules [38] [39]. Additionally, MAP4 produced well-organized chemical space tree-maps (TMAPs) for diverse databases including DrugBank, ChEMBL, SwissProt, and the Human Metabolome Database, successfully differentiating between metabolites that were indistinguishable using substructure fingerprints [38] [39].

Experimental Protocols for Benchmarking

The CARA Benchmark for Real-World Drug Discovery

The Compound Activity benchmark for Real-world Applications (CARA) addresses critical gaps in existing benchmarks by carefully considering the biased distribution of real-world compound activity data [31]. Key aspects of its design include:

- Assay Type Distinction: CARA explicitly distinguishes between Virtual Screening (VS) assays, which contain compounds with diffused distribution patterns and lower pairwise similarities, and Lead Optimization (LO) assays, which contain congeneric compounds with aggregated distribution patterns and high structural similarities [31].

- Data Splitting Schemes: The benchmark implements tailored train-test splitting schemes specifically designed for VS and LO tasks to prevent data leakage and overestimation of model performance [31].

- Evaluation Scenarios: CARA considers both few-shot scenarios (when a few samples are already measured) and zero-shot scenarios (when no task-related data are available) to reflect different real-world application settings [31].

- Evaluation Metrics: The benchmark employs multiple metrics including AUC, EF1, EF5, BEDROC, and RIE to provide a comprehensive assessment of model performance across different aspects of prediction quality [31].
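As an illustration of the enrichment-factor metrics listed above, the following sketch computes EF at a chosen fraction of a ranked list. It assumes the common definition (hit rate in the top fraction divided by the hit rate in the whole set); the function name, the rounding of the top-fraction size, and the toy data are assumptions, not CARA's implementation.

```python
def enrichment_factor(scores, labels, fraction):
    """EF at `fraction`: hit rate among top-ranked compounds / overall hit rate."""
    if len(scores) != len(labels) or not scores:
        raise ValueError("scores and labels must be non-empty and equally long")
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    total_hits = sum(labels)
    if total_hits == 0:
        raise ValueError("at least one active compound is required")
    n = len(scores)
    n_top = max(1, round(n * fraction))  # assumed rounding rule for the cutoff
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    return (hits_top / n_top) / (total_hits / n)


# Toy screen: 20 compounds, 4 actives (label 1), scores already in descending order.
labels = [1, 1, 0, 1, 0] + [0] * 14 + [1]
scores = [1.0 - 0.05 * i for i in range(20)]

print(enrichment_factor(scores, labels, 0.05))  # corresponds to EF1-style early enrichment
print(enrichment_factor(scores, labels, 0.25))
```

An EF above 1 means the model ranks actives earlier than random ordering would, which is why EF1 and EF5 are reported alongside AUC for virtual screening.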

Experimental results from CARA demonstrated that while current models can make successful predictions for certain proportions of assays, their performances varied substantially across different assays [31]. The benchmark also revealed that different few-shot training strategies showed distinct performance patterns related to task types, with meta-learning and multi-task learning being particularly effective for VS tasks [31].

Structure-Based Generative Model Evaluation

For 3D structure-based generative models like DiffGui, comprehensive evaluation protocols assess multiple aspects of generated molecules [36]:

- Structural Quality: Evaluated using Jensen-Shannon (JS) divergence between distributions of bonds, angles, and dihedrals for reference and generated ligands, plus RMSD values between generated geometries and optimized conformations [36].

- Molecular Metrics: Include atom stability, molecular stability, PoseBusters validity, RDKit validity, novelty, uniqueness, and similarity with reference ligands [36].

- Molecular Properties: Assess binding affinity (Vina Score), quantitative estimate of drug-likeness (QED), synthetic accessibility (SA), octanol-water partition coefficient (LogP), and topological polar surface area (TPSA) [36].
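The structural-quality comparison above relies on the Jensen-Shannon divergence between two distributions. A minimal sketch over binned histograms (for example, bond-length counts) is shown below; the bin counts are made up, and the base-2 logarithm is an assumption chosen so that the result lies between 0 and 1. Some studies report the square root of this quantity (the JS distance) instead.

```python
import math


def normalize(counts):
    """Turn raw histogram counts into a probability distribution."""
    total = sum(counts)
    if total <= 0:
        raise ValueError("histogram must contain at least one count")
    return [c / total for c in counts]


def kl_divergence(p, q):
    """KL(p || q) in bits; terms with p_i == 0 contribute nothing."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(counts_p, counts_q):
    """Jensen-Shannon divergence between two histograms over the same bins."""
    if len(counts_p) != len(counts_q):
        raise ValueError("histograms must share the same bins")
    p, q = normalize(counts_p), normalize(counts_q)
    m = [(a + b) / 2 for a, b in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


# Hypothetical bond-length histograms for reference and generated ligands.
reference = [5, 40, 120, 30, 5]
generated = [10, 55, 90, 35, 10]
print(js_divergence(reference, generated))
```

Lower values indicate that the generated geometries follow the reference distribution more closely; identical histograms give 0.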

DiffGui incorporates bond diffusion and property guidance to address challenges in 3D molecular generation, explicitly modeling both atoms and bonds while incorporating binding affinity and drug-like properties into training and sampling processes [36]. This approach has demonstrated state-of-the-art performance on the PDBbind dataset and competitive results on CrossDocked, with ablation studies confirming the importance of both bond diffusion and property guidance modules [36].

Visualization of Representation Learning Workflows

Diagram: Molecular Representation Learning Workflow for SBDD

Table 3: Key Research Reagents and Computational Tools

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| RDKit | Cheminformatics Toolkit | Molecule I/O, descriptor calculation, fingerprint generation | Fundamental processing for all representation types [38] [32] |
| MAP4 Fingerprint | Molecular Fingerprint | Unified representation for small molecules and biomacromolecules | Virtual screening across diverse chemical spaces [38] [39] |
| CARA Benchmark | Benchmark Dataset | Evaluating compound activity prediction methods | Real-world drug discovery applications [31] |
| PDBbind | Curated Database | Protein-ligand complexes with binding affinities | Structure-based model training and validation [36] |
| DiffGui | Generative Model | Target-aware 3D molecular generation with property guidance | De novo drug design and lead optimization [36] |
| Attentive FP | Graph Neural Network | Molecular property prediction with attention mechanism | Property prediction for small molecules [33] |
| Transformer Models | Neural Architecture | SMILES-based molecular representation learning | Chemical language processing and property prediction [34] |

The benchmarking data and experimental comparisons presented in this guide demonstrate that no single molecular representation universally dominates all applications in structure-based drug design. Graph-based representations offer a balanced approach for general molecular property prediction, particularly when enhanced with attention mechanisms as in Attentive FP. SMILES-based Transformer models excel in leveraging large-scale pre-training for chemical language understanding. 3D geometric representations provide critical spatial information for structure-based design tasks but require more sophisticated architectures and computational resources. Emerging universal fingerprints like MAP4 show promise for applications spanning traditional small molecules and larger biomolecules. The choice of representation must ultimately align with the specific requirements of the drug discovery stage, considering factors such as data availability, computational constraints, and the critical molecular features governing the target activity relationship.

The acceleration of drug discovery hinges on the ability of computational models to not only design therapeutically effective molecules but also to ensure these molecules are synthesizable in a laboratory. Within this context, synthesizability research focuses on benchmarking machine learning architectures for their proficiency in generating viable molecular structures and predicting their complex properties. Two deep learning architectures have emerged as frontrunners: Graph Neural Networks (GNNs), which naturally model molecular structure, and Transformers, which excel at processing sequential and symbolic data. This guide provides an objective comparison of GNNs and Transformers, evaluating their performance, experimental protocols, and specific applicability to the critical challenge of synthesizability in molecular design. The aim is to offer researchers a clear, data-driven foundation for selecting and implementing these architectures in drug discovery pipelines.

Graph Neural Networks (GNNs): The Molecular Graph Specialist

GNNs are a class of neural networks specifically designed to operate on graph-structured data, making them intrinsically suited for molecular machine learning. In a molecular graph, atoms are represented as nodes and chemical bonds as edges [40].

The core operation of a GNN is message passing, where each node aggregates information from its neighboring nodes and edges. This process, often referred to as graph convolution, allows the network to capture the local chemical environment of each atom and learn complex structural patterns [41] [40]. By stacking multiple layers, a GNN can learn representations that encompass increasingly larger substructures of the molecule, ultimately leading to a holistic molecular embedding that can be used for property prediction [40].
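A minimal sketch of message passing, assuming plain sum aggregation with no learned weights or nonlinearities (a real GNN layer would include both). The molecule is the heavy-atom graph of ethanol, and the two-element one-hot atom features are an illustrative choice.

```python
def message_passing_step(features, edges):
    """One round: each node's new vector is its own vector plus its neighbours' vectors."""
    neighbours = {node: [] for node in features}
    for a, b in edges:  # bonds are undirected, so register both directions
        neighbours[a].append(b)
        neighbours[b].append(a)
    updated = {}
    for node, vec in features.items():
        aggregated = list(vec)
        for nb in neighbours[node]:
            aggregated = [x + y for x, y in zip(aggregated, features[nb])]
        updated[node] = aggregated
    return updated


def readout(features):
    """Sum all node vectors into a single molecule-level embedding."""
    dim = len(next(iter(features.values())))
    return [sum(vec[i] for vec in features.values()) for i in range(dim)]


# Ethanol heavy atoms: C0 - C1 - O2, features are [is_carbon, is_oxygen].
features = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}
edges = [(0, 1), (1, 2)]

h1 = message_passing_step(features, edges)
h2 = message_passing_step(h1, edges)
print(h1[0], h2[0])
print(readout(h2))
```

After one round, the terminal carbon (node 0) only reflects its direct neighbour; after two rounds its vector also carries a contribution from the oxygen two bonds away, which is the sense in which stacked layers cover larger substructures.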

Recent advancements have made GNNs more chemically intuitive. For instance, the MolNet architecture goes beyond simple 2D graph connectivity by incorporating a noncovalent adjacency matrix to account for "through-space" interactions (e.g., van der Waals forces) and a weighted bond matrix to differentiate between bond types (single, double, triple, aromatic) [42].

Transformers: The Sequential Data Powerhouse

Transformers, originally designed for natural language processing, have been co-opted for molecular applications by treating molecular representations like Simplified Molecular-Input Line-Entry System (SMILES) strings as sequences of characters [43] [44].
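In practice, SMILES strings are usually split into chemically meaningful tokens rather than raw characters, so that two-letter elements and bracket atoms stay intact. The sketch below shows one way to do this with a regular expression; the pattern is a simplified assumption, not the tokenizer of any particular model, and it does not validate the chemistry.

```python
import re

# Order matters: bracket atoms and two-letter halogens must match before single letters.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-+\\/().:~@*$]")


def tokenize(smiles):
    """Split a SMILES string into tokens and fail loudly on unrecognized characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"could not tokenize {smiles!r}")
    return tokens


print(tokenize("CC(=O)Cl"))
print(tokenize("c1ccccc1Br"))
print(tokenize("C[NH3+]"))
```

A purely character-level split would turn `Cl` into a carbon followed by an unknown `l`, which is why token-level splitting is the more common choice.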

The Transformer's fundamental mechanism is self-attention, which lets the model weigh the importance of every other element in a sequence when encoding a particular element. For a SMILES string, this means the model can learn long-range dependencies between atoms that are distant in the sequence but critical to the molecule's overall properties [43]. Sequence-based language models such as Saturn apply this string-level treatment to autoregressive molecular generation and optimization, demonstrating state-of-the-art sample efficiency in goal-directed design [44].
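To make the self-attention mechanism concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It assumes the queries, keys, and values are all the raw token embeddings (a real Transformer applies learned projections, multiple heads, and positional encodings), and the two-dimensional embeddings are toy values.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(embeddings):
    """Return (outputs, weights) for single-head attention with Q = K = V = embeddings."""
    dim = len(embeddings[0])
    outputs, weights = [], []
    for query in embeddings:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in embeddings]
        row = softmax(scores)
        weights.append(row)
        outputs.append([sum(w * value[i] for w, value in zip(row, embeddings))
                        for i in range(dim)])
    return outputs, weights


# Toy embeddings for the tokens of "CCO" (ethanol).
token_embeddings = {"C": [1.0, 0.0], "O": [0.0, 1.0]}
tokens = ["C", "C", "O"]
outputs, weights = self_attention([token_embeddings[t] for t in tokens])
for token, row in zip(tokens, weights):
    print(token, [round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and tells how strongly one token attends to every position, including positions far away in the string; this is how distant atoms can influence each other's encoding.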

Their prowess in processing sequential data has made Transformers pivotal in diverse drug discovery tasks, including protein design, molecular dynamics, and drug target identification [43].

Performance Comparison in Molecular Tasks

Direct, apples-to-apples comparisons between GNNs and Transformers can be challenging due to differing molecular representations (graphs vs. sequences) and task specifics. However, benchmarks on public datasets and published studies reveal distinct performance trends.

Table 1: Performance Comparison on Key Molecular Tasks

| Model Architecture | Molecular Representation | Sample Efficiency | Key Benchmark Results | Interpretability |
|---|---|---|---|---|
| Graph Neural Network (GNN) | Molecular Graph (Nodes & Edges) | Moderate | XGDP: Outperformed pioneering models in drug response prediction [41]; MolNet: State-of-the-art on BACE classification & ESOL regression [42] | High (Can identify functional groups & significant genes) [41] |
| Transformer | SMILES/String | High | Saturn: Demonstrated state-of-the-art sample efficiency vs. 22 existing models [44] | Moderate (Primarily at sequence level) |

The XGDP framework exemplifies a modern GNN application, using a Graph Neural Network module on molecular graphs alongside a CNN for gene expression data to achieve precise drug response prediction [41]. Furthermore, its use of explainability algorithms like GNNExplainer allows it to capture salient functional groups of drugs and their interactions with significant genes in cancer cells, providing crucial mechanistic insights [41].

Sequence-based models, on the other hand, excel in sample efficiency. Saturn, built on the Mamba architecture (a selective state-space model that, like a Transformer, operates on tokenized SMILES), has shown remarkable efficiency under heavily constrained computational budgets (e.g., 1000 oracle evaluations), enabling effective multi-parameter optimization that includes synthesizability via retrosynthesis models [44].

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons, benchmarking studies follow rigorous experimental protocols. Key methodological details include dataset selection, model training, and evaluation metrics, with a specific focus on synthesizability assessment.

Data Preparation and Model Training

- Data Acquisition: Publicly available datasets are standard. For property prediction, sources like the ChEMBL database (e.g., Lipophilicity dataset with ~4200 compounds) are common [40]. For drug response, combined datasets like Genomics of Drug Sensitivity in Cancer (GDSC) and Cancer Cell Line Encyclopedia (CCLE) are used [41].

- Feature Engineering: For GNNs, node and edge features are critical. Advanced feature sets, such as circular atomic features inspired by Extended-Connectivity Fingerprints (ECFPs), which incorporate atomic properties and neighborhood information, have been shown to enhance predictive power [41]. For Transformers, SMILES strings are tokenized as the input sequence [44].

- Training and Evaluation: Models are typically implemented in deep learning frameworks like PyTorch, with specific libraries such as PyTorch Geometric for GNNs [40]. Performance is evaluated using standard metrics like Root Mean Square Error (RMSE) for regression tasks and Area Under the Curve (AUC) for classification, with careful dataset splitting into training, validation, and test sets.
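The two evaluation metrics named above can be written in a few lines. This is a dependency-free sketch for illustration; the function names are assumptions, and the AUC uses the pairwise-ranking definition (the probability that a random positive is scored above a random negative, with ties counted as one half).

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error for regression predictions."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and equally long")
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def roc_auc(labels, scores):
    """ROC AUC via pairwise comparison of positive and negative scores."""
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    if not positives or not negatives:
        raise ValueError("both classes must be present")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


# Toy held-out test set.
print(rmse([1.2, 0.4, 2.0], [1.0, 0.5, 2.3]))
print(roc_auc([1, 0, 1, 0], [0.9, 0.8, 0.3, 0.1]))
```

The pairwise AUC is quadratic in the number of samples, which is fine for a sketch; library implementations use a sort-based method for large test sets.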

Synthesizability-Specific Evaluation

Evaluating a model's utility for synthesizability research often involves specialized protocols that move beyond simple property prediction to assess the practical feasibility of generated molecules.

- Retrosynthesis Models as Oracles: The most rigorous assessment uses dedicated retrosynthesis tools, such as AiZynthFinder, IBM RXN, or SYNTHIA, to determine whether a proposed molecule has a predicted synthetic pathway [44]. These models can be integrated into the optimization loop of a generative model, as demonstrated with Saturn, so that synthesizability is optimized directly [44].

- Heuristic Metrics: Faster, heuristic synthesizability scores like the Synthetic Accessibility (SA) score or SYBA are often used as proxies. These are based on the frequency of molecular substructures in known compounds [44]. While they are correlated with retrosynthesis model success for drug-like molecules, this correlation diminishes for other chemical spaces, such as functional materials [44].

- Multi-Parameter Optimization (MPO): Real-world benchmarking involves optimizing for multiple objectives simultaneously. A typical MPO task might require a model to generate molecules that satisfy target properties (e.g., binding affinity from docking simulations, specific quantum-mechanical properties) while also being deemed synthesizable by a retrosynthesis oracle, all under a constrained computational budget [44].
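The budget-constrained MPO setting described above can be sketched as a loop around a call-counting oracle. Everything here is hypothetical: `mock_property` and `mock_is_solved` are placeholders standing in for a docking score and a retrosynthesis tool, and gating the property score by the synthesizability flag is only one possible way to combine the objectives.

```python
class BudgetedOracle:
    """Wraps a scoring function and refuses calls once the budget is spent."""

    def __init__(self, score_fn, budget):
        self.score_fn = score_fn
        self.budget = budget
        self.calls = 0

    @property
    def remaining(self):
        return self.budget - self.calls

    def __call__(self, smiles):
        if self.remaining <= 0:
            raise RuntimeError("oracle budget exhausted")
        self.calls += 1
        return self.score_fn(smiles)


def mock_property(smiles):
    """Placeholder for a property oracle (e.g., docking); returns a value in [0, 1]."""
    return min(len(smiles) / 10, 1.0)


def mock_is_solved(smiles):
    """Placeholder for a retrosynthesis call; NOT a real synthesizability rule."""
    return "#" not in smiles


def mpo_score(smiles):
    """Assumed aggregation: property score counts only if a route is 'found'."""
    return mock_property(smiles) * (1.0 if mock_is_solved(smiles) else 0.0)


candidates = ["CCO", "CC#N", "CCOC(=O)C", "c1ccccc1O", "CCN"]
oracle = BudgetedOracle(mpo_score, budget=3)

best_smiles, best_score = None, float("-inf")
for smiles in candidates:
    if oracle.remaining == 0:  # stop proposing once the budget is used up
        break
    score = oracle(smiles)
    if score > best_score:
        best_smiles, best_score = smiles, score

print(best_smiles, best_score, oracle.calls)
```

Counting every oracle call is what makes sample efficiency measurable: two generative models can be compared by the best score each reaches before the same budget runs out.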

Diagram 1: GNN and Transformer Workflow for Molecular Tasks

The Scientist's Toolkit: Key Research Reagents & Software

Implementing and benchmarking GNNs and Transformers requires a suite of software libraries and computational tools. The following table details essential "research reagents" for the field.