Bayesian Optimization vs. Genetic Algorithms: An AI Strategy Guide for Accelerated Materials Discovery

This article provides a comprehensive comparison of Bayesian Optimization (BO) and Genetic Algorithms (GAs) for materials discovery, tailored for researchers and professionals in biomedicine and drug development.


Abstract

This article provides a comprehensive comparison of Bayesian Optimization (BO) and Genetic Algorithms (GAs) for materials discovery, tailored for researchers and professionals in biomedicine and drug development. It covers the foundational principles of both algorithms, explores advanced methodological adaptations for complex material design goals like multi-objective and target-specific optimization, and addresses practical troubleshooting for industrial-scale challenges. Through validation against real-world case studies and comparative performance metrics, the article offers actionable insights for selecting and implementing the right AI strategy to drastically reduce experimental iterations and accelerate the development of novel materials, from high-entropy alloys to therapeutic molecules.

The Core Engines of AI-Driven Discovery: Understanding Bayesian Optimization and Genetic Algorithms

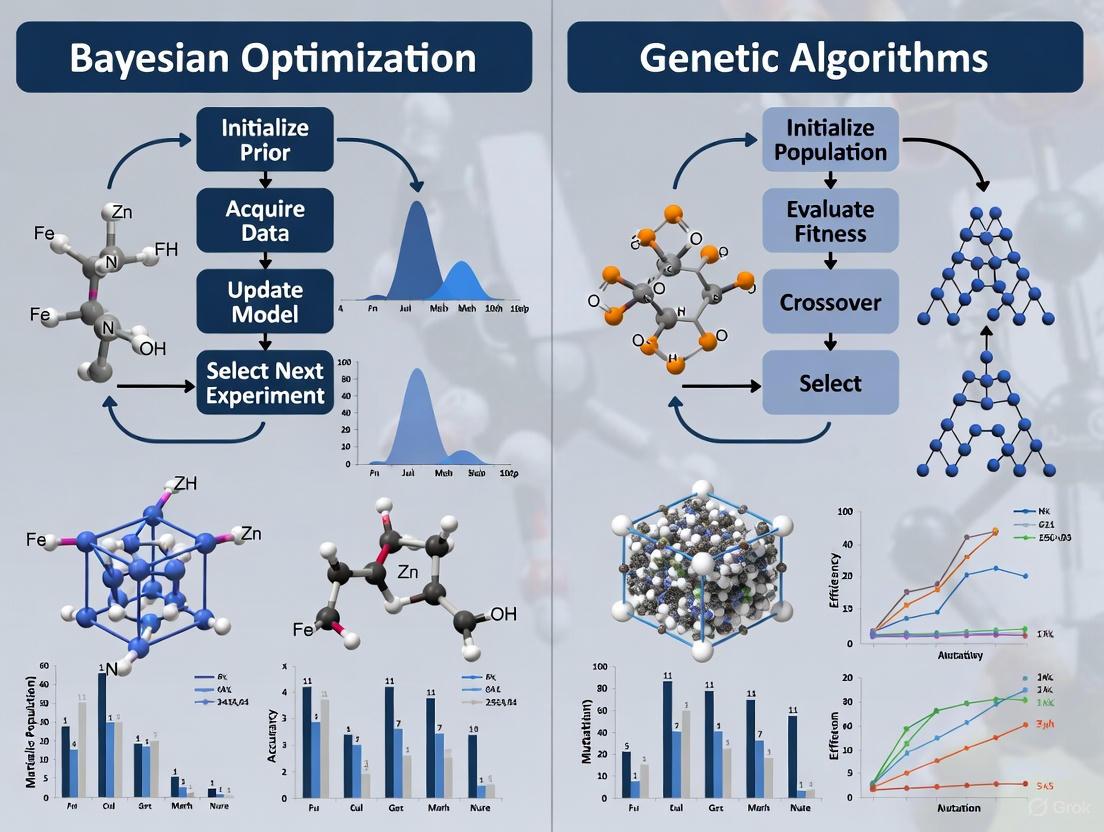

The acceleration of materials discovery is a critical goal in scientific and industrial research, driven by pressing societal needs and the limitations of traditional, trial-and-error experimental methods. In this context, Bayesian Optimization (BO) and Genetic Algorithms (GAs) have emerged as two powerful computational strategies for navigating the complex, high-dimensional design spaces typical of materials science. While both are applied to optimization problems, their underlying philosophies and operational mechanisms are fundamentally distinct. BO is a sequential, model-based strategy: it fits a probabilistic surrogate model to the data collected so far and uses an acquisition function to decide, in a data-efficient way, which experiment to perform next. In contrast, GAs are population-based heuristics inspired by natural selection, which evolve a set of candidate solutions through operations like crossover, mutation, and selection. This guide provides an objective comparison of these two paradigms, framing their performance, experimental protocols, and suitability for different research scenarios within the field of materials discovery.

Theoretical Foundations and Operational Mechanisms

Core Principles of Bayesian Optimization

Bayesian Optimization (BO) is a sample-efficient strategy designed for the global optimization of expensive-to-evaluate "black-box" functions. Its strength lies in its iterative, sequential use of a probabilistic model to balance exploration (probing uncertain regions) and exploitation (refining known promising areas). The modern materials discovery process often involves searching large regions of multi-dimensional processing or synthesis conditions to find candidate materials that achieve specific desired properties [1]. The rate of discovery is often limited by the speed and cost of experiments, making data-efficient algorithms critical [1].

The BO framework consists of two primary components [1]:

- A Probabilistic Surrogate Model: Typically a Gaussian Process (GP), which is used to model the unknown function mapping design parameters (e.g., composition, processing conditions) to a material property of interest. The GP provides not only a prediction of the property at any point in the design space but also a quantitative measure of the uncertainty (variance) of that prediction.

- An Acquisition Function: This function uses the surrogate model's predictions and uncertainties to assign a utility score to each point in the design space. The next experiment is conducted at the point that maximizes this function. Common acquisition functions include Expected Improvement (EI), Upper Confidence Bound (UCB), and Probability of Improvement (PI) [1]. For multi-objective problems, Expected Hypervolume Improvement (EHVI) is often used [2].
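
To make the multi-objective case more concrete, the sketch below computes the hypervolume of a two-objective Pareto front and the deterministic improvement a candidate point would add. It is only an illustration under stated assumptions: both objectives are maximized, the reference point `ref` and the example points are hypothetical, and the expectation over the surrogate's predictive distribution that EHVI takes is not shown.

```python
def pareto_front(points):
    """Return the non-dominated points when both objectives are maximized."""
    front = []
    for p in points:
        dominated = any(q[0] >= p[0] and q[1] >= p[1] and q != p for q in points)
        if not dominated:
            front.append(p)
    return sorted(set(front))


def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded below by the reference point."""
    inside = [p for p in points if p[0] > ref[0] and p[1] > ref[1]]
    area, prev_y = 0.0, ref[1]
    # Sweep from the largest x to the smallest; y rises along the front.
    for x, y in sorted(pareto_front(inside), reverse=True):
        area += (x - ref[0]) * (y - prev_y)
        prev_y = y
    return area


def hypervolume_improvement(candidate, points, ref):
    """Hypervolume gained by adding one candidate to the observed points."""
    return hypervolume_2d(points + [candidate], ref) - hypervolume_2d(points, ref)


# Hypothetical observations of two properties, both to be maximized.
observed = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
gain = hypervolume_improvement((2.5, 2.5), observed, ref=(0.0, 0.0))
```

A candidate that is dominated by the existing front adds zero hypervolume, which is why this kind of score steers sampling toward points that extend or fill in the Pareto front.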

A key advancement is the Bayesian Algorithm Execution (BAX) framework, which allows users to define complex experimental goals (e.g., finding a specific subset of the design space that meets property criteria) via a simple filtering algorithm. This framework automatically generates efficient, parameter-free data collection strategies like InfoBAX, MeanBAX, and SwitchBAX, bypassing the need for custom acquisition function design [1].
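
As a rough illustration of what a "simple filtering algorithm" might look like, the sketch below defines a user filter that returns the design points whose property falls inside a target window. The function name, the property values, and the window are hypothetical. The BAX strategies themselves, which run such a filter on the surrogate's posterior rather than on ground truth, are not implemented here.

```python
def target_subset(property_values, low, high):
    """User-defined filter: design points whose property lies in [low, high]."""
    return [x for x, y in property_values.items() if low <= y <= high]


# Hypothetical model predictions: synthesis temperature (C) -> particle size (nm).
predicted_size = {60: 4.2, 80: 6.8, 100: 9.5, 120: 13.1, 140: 18.0}

# The experimental goal is expressed only through the filter and its window.
predicted_targets = target_subset(predicted_size, low=6.0, high=14.0)
```

The point of the design is that the researcher states the goal as an ordinary function over property values and leaves the choice of where to measure next to the framework.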

Core Principles of Genetic Algorithms

Genetic Algorithms (GAs) are a class of population-based, heuristic optimization techniques inspired by the process of natural evolution. Unlike the sequential, model-based approach of BO, GAs maintain and iteratively transform a population of potential solutions.

The core operational cycle of a GA involves [3]:

- Initialization: Generating an initial population of candidate solutions (often randomly).

- Evaluation: Calculating the "fitness" (i.e., the objective function value) of each candidate in the population.

- Selection: Preferentially selecting fitter individuals to be "parents" for the next generation.

- Crossover (Recombination): Combining the parameters of two or more parents to create one or more "offspring" solutions. This operation exploits existing genetic material.

- Mutation: Randomly altering some parameters of the offspring to introduce new genetic material, maintaining diversity and enabling exploration of the search space.

This generational process continues until a termination criterion is met (e.g., a maximum number of generations or sufficient fitness is achieved). A significant strength of GAs is their flexibility, allowing for hybridization with surrogate models to reduce computational cost. For instance, a hybrid GA can use an "interpretable surrogate model" to rapidly predict material properties during the evaluation step, drastically reducing the need for expensive simulations or experiments [3].
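
The cycle above can be sketched in a few lines of Python. This is a minimal, real-valued GA under assumptions that are not taken from the cited work: binary tournament selection, single-point crossover, clipped Gaussian mutation, and one elite individual copied unchanged into each new generation. All parameter names and default values are illustrative.

```python
import random


def run_ga(fitness, n_genes, bounds=(0.0, 1.0), pop_size=30, generations=40,
           crossover_rate=0.9, mutation_rate=0.1, seed=0):
    """Maximize `fitness` over real-valued vectors of length `n_genes`."""
    rng = random.Random(seed)
    lo, hi = bounds
    # Initialization: random candidates inside the bounds.
    pop = [[rng.uniform(lo, hi) for _ in range(n_genes)] for _ in range(pop_size)]
    for _ in range(generations):
        # Evaluation: one fitness call per individual.
        scores = [fitness(ind) for ind in pop]

        def select():
            # Selection: binary tournament between two random individuals.
            a, b = rng.sample(range(pop_size), 2)
            return pop[a] if scores[a] >= scores[b] else pop[b]

        best = max(range(pop_size), key=scores.__getitem__)
        children = [pop[best][:]]  # elitism (an added assumption)
        while len(children) < pop_size:
            p1, p2 = select(), select()
            child = p1[:]
            # Crossover: splice the two parents at a random cut point.
            if n_genes > 1 and rng.random() < crossover_rate:
                cut = rng.randrange(1, n_genes)
                child = p1[:cut] + p2[cut:]
            # Mutation: perturb each gene with low probability, then clip.
            for i in range(n_genes):
                if rng.random() < mutation_rate:
                    step = rng.gauss(0.0, 0.1 * (hi - lo))
                    child[i] = min(hi, max(lo, child[i] + step))
            children.append(child)
        pop = children
    scores = [fitness(ind) for ind in pop]
    best = max(range(pop_size), key=scores.__getitem__)
    return pop[best], scores[best]


# Hypothetical objective: get every gene as close to 0.3 as possible.
best_x, best_score = run_ga(lambda g: -sum((v - 0.3) ** 2 for v in g), n_genes=3)
```

Note that every generation costs `pop_size` fitness evaluations, which is why the evaluation step is the natural place to substitute a cheap surrogate when the true objective is an experiment or a simulation.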

Table 1: Core Operational Mechanisms at a Glance

| Feature | Bayesian Optimization (BO) | Genetic Algorithms (GA) |
|---|---|---|
| Core Paradigm | Sequential probabilistic model | Population-based evolutionary heuristic |
| Primary Mechanism | Surrogate model (e.g., Gaussian Process) & acquisition function | Selection, crossover, and mutation on a population |
| Key Strength | Data efficiency, uncertainty quantification, handles noise | Global search capability, robustness, no gradient needed |
| Exploration/Exploitation | Explicitly balanced via acquisition function | Governed by population diversity, selection pressure, and mutation rate |
| Typical Use Case | Optimizing very expensive black-box functions (e.g., experiments) | Complex, non-convex, or discrete spaces where gradients are unavailable |

Performance Comparison in Materials Discovery Applications

Empirical studies across various domains of materials science provide quantitative data on the performance of BO and GA strategies. The following table summarizes key findings from recent experimental campaigns and benchmark studies.

Table 2: Experimental Performance Benchmarks in Materials Research

| Application Domain | Algorithm(s) Tested | Key Performance Metric & Results | Citation |
|---|---|---|---|
| Short-Fiber Reinforced Composites (Inverse Design) | Hybrid GA with Interpretable Surrogate | Single-objective task: Relative error reduced from 9.26% to 2.91%. Multi-objective task: Relative error reduced from 12.04% to 1.46%. | [3] |
| Additive Manufacturing (Multi-objective Print Optimization) | Multi-Objective BO (MOBO/EHVI) | Outperformed Multi-Objective Random Search (MORS) and Multi-Objective Simulated Annealing (MOSA) in achieving target print objectives. | [2] |
| Self-Driving Lab Benchmarking | Bayesian Optimization (Various) | Median Acceleration Factor (AF) of 6x compared to reference strategies (e.g., random search). AF tends to increase with the dimensionality of the search space. | [4] |
| High-Entropy Alloy Design | Hierarchical BO (MTGP-BO, DGP-BO) | Outperformed conventional GP-BO by effectively exploiting correlations between multiple material properties (e.g., thermal expansion and bulk modulus). | [5] |

Analysis of Comparative Performance

The data indicates that both paradigms can deliver significant performance enhancements over naive search strategies like random sampling. The choice between them, however, is highly context-dependent.

- Data Efficiency and Sample Cost: BO is explicitly designed for situations where each function evaluation (e.g., a material synthesis and characterization cycle) is costly and time-consuming. Its probabilistic framework actively manages uncertainty, leading to high data efficiency. This is evidenced by the median 6x acceleration factor reported for self-driving labs (SDLs) using BO [4]. GAs, while powerful, often require a larger number of evaluations to converge, as they rely on generational improvement across a population.

- Multi-objective Optimization: Both methods have robust extensions for multi-objective problems. BO employs strategies like EHVI to map the Pareto front [2], while multi-objective GAs (e.g., NSGA-II) are also widely used. Advanced BO variants show promise in leveraging correlations between material properties to further accelerate discovery [5].

- Handling Complexity and Constraints: The hybrid GA demonstrates exceptional performance in complex inverse design tasks for composites, achieving high accuracy by combining the global search of a GA with the computational speed of a surrogate model [3]. GAs naturally handle various types of constraints through their encoding and fitness functions.
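
To show how an acceleration factor can be read off two optimization traces, the sketch below assumes one common definition: the number of experiments a reference strategy needs to reach a target performance, divided by the number the active strategy needs. The traces are hypothetical running-best values, not data from the cited benchmark, and the exact definition used in [4] may differ in detail.

```python
def experiments_to_reach(best_so_far, target):
    """1-based index of the first experiment whose running best meets the target."""
    for n, value in enumerate(best_so_far, start=1):
        if value >= target:
            return n
    return None  # target never reached within the campaign


def acceleration_factor(reference_trace, active_trace, target):
    """Ratio n_reference / n_active; None if either campaign misses the target."""
    n_ref = experiments_to_reach(reference_trace, target)
    n_act = experiments_to_reach(active_trace, target)
    if n_ref is None or n_act is None:
        return None
    return n_ref / n_act


# Hypothetical running-best traces (higher is better).
random_search = [0.2, 0.3, 0.3, 0.5, 0.5, 0.6, 0.6, 0.7, 0.7, 0.7, 0.8, 0.9]
bayes_opt = [0.3, 0.6, 0.9]
af = acceleration_factor(random_search, bayes_opt, target=0.9)
```

Because the ratio depends on the chosen target, it is worth reporting the target alongside any acceleration factor.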

Experimental Protocols and Workflows

Standard Protocol for a Bayesian Optimization Campaign

The workflow for a closed-loop autonomous experimentation system using BO follows a rigorous, iterative sequence [2]. This protocol is foundational to Self-Driving Labs (SDLs).

Diagram 1: BO for Autonomous Experimentation

Step-by-Step Protocol:

- Initialize: The human researcher defines the research objectives (e.g., maximize Young's modulus), specifies experimental constraints (e.g., compositional bounds), and can provide any prior knowledge [2].

- Plan: The most up-to-date knowledge base (prior experimental data) is used by the BO planner. The surrogate model (e.g., Gaussian Process) is updated, and the acquisition function (e.g., EI, UCB) calculates the utility of all candidate experiments. The system selects the parameter set for the next experiment that maximizes this function [1] [2].

- Experiment: A research robot or automated system carries out the specified experiment. For example, in additive manufacturing, this involves generating machine code to print a specimen with the specified parameters [2].

- Analyze: The system automatically characterizes the resulting material properties. In an AM system, this might involve using onboard machine vision to analyze print quality [2].

- Update: The results (parameters and measured properties) are added to the knowledge base. The loop returns to the "Plan" step.

- Conclude: The iterative process terminates when a predefined condition is met, such as achieving a target performance, exhausting the experimental budget, or converging on an optimum [2].
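
The loop above can be sketched end to end on a one-dimensional toy problem. Everything specific here is an assumption made for illustration: `run_experiment` is a hypothetical stand-in for the robotic experiment and its analysis, the surrogate is a zero-mean Gaussian Process with a fixed RBF kernel (no hyperparameter fitting), candidates come from a fixed grid, and the campaign stops only when the budget is exhausted.

```python
import math

import numpy as np


def rbf(a, b, length=0.15):
    """Squared-exponential kernel between two 1-D arrays of inputs."""
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length) ** 2)


def gp_posterior(x_obs, y_obs, x_query, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP."""
    K = rbf(x_obs, x_obs) + noise * np.eye(len(x_obs))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_obs))
    Ks = rbf(x_obs, x_query)
    mean = Ks.T @ alpha
    v = np.linalg.solve(L, Ks)
    var = np.clip(1.0 - np.sum(v * v, axis=0), 1e-12, None)
    return mean, np.sqrt(var)


def expected_improvement(mean, std, best):
    """Closed-form EI for maximization under a Gaussian posterior."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf


def run_experiment(x):
    """Hypothetical experiment: a property with a main peak near x = 0.7."""
    return math.exp(-((x - 0.7) ** 2) / 0.02) + 0.5 * math.exp(-((x - 0.2) ** 2) / 0.01)


def bo_campaign(budget=15):
    grid = np.linspace(0.0, 1.0, 201)            # candidate experiments
    x_obs = np.array([0.1, 0.5, 0.9])            # Initialize: seed experiments
    y_obs = np.array([run_experiment(x) for x in x_obs])
    for _ in range(budget):                      # Conclude: budget exhausted
        mean, std = gp_posterior(x_obs, y_obs, grid)        # Plan: update model
        ei = expected_improvement(mean, std, y_obs.max())
        x_next = grid[int(np.argmax(ei))]                   # Plan: pick next point
        y_next = run_experiment(x_next)                     # Experiment + Analyze
        x_obs = np.append(x_obs, x_next)                    # Update knowledge base
        y_obs = np.append(y_obs, y_next)
    return x_obs, y_obs


xs, ys = bo_campaign()
best_setting = xs[int(np.argmax(ys))]
```

In a real campaign the kernel hyperparameters would normally be refit at each iteration and the grid replaced by a proper optimizer over the acquisition function. Libraries such as those listed later in this article handle both.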

Standard Protocol for a Genetic Algorithm Campaign

The workflow for a GA, particularly one hybridized with a surrogate model for materials design, follows an evolutionary cycle.

Diagram 2: Genetic Algorithm Workflow

Step-by-Step Protocol:

- Initialization: Define the genetic representation (encoding) of the material parameters (e.g., a vector of compositions). Generate an initial population of candidate solutions, often randomly within the defined bounds [3].

- Fitness Evaluation: Calculate the fitness (objective function) for each individual in the population. In a materials context, this could involve a costly finite element simulation or a fast interpretable surrogate model trained on existing data. For example, an ensemble surrogate model can predict homogenized elastic properties with less than 5% error, validating its use for rapid fitness evaluation [3].

- Selection: Apply a selection method (e.g., tournament selection, roulette wheel) to choose the fittest individuals to become parents for the next generation.

- Genetic Operations:

  - Crossover: Combine parameters from pairs of parents to create offspring, exploring new combinations of existing traits.

  - Mutation: Randomly alter a small subset of parameters in the offspring with a low probability, introducing new genetic material and helping to avoid local optima.

- Population Replacement: Form a new population from the offspring (and potentially a subset of elite parents carried over from the previous generation).

- Termination: Repeat the fitness evaluation, selection, genetic operations, and population replacement steps until a termination condition is satisfied, such as reaching a maximum number of generations or stagnation of the best fitness [3].
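
One way to picture the hybrid variant of this protocol is to let a cheap surrogate rank many offspring and spend the expensive evaluation only on the few that look best. The sketch below is an assumption-heavy illustration, not the method of [3]: the surrogate is a plain k-nearest-neighbour average standing in for the interpretable ensemble model, genes live in [0, 1], crossover is uniform, and every name and default is hypothetical.

```python
import random


def knn_surrogate(archive, k=3):
    """Cheap stand-in surrogate: mean fitness of the k nearest evaluated points."""
    def predict(x):
        def sq_dist(rec):
            return sum((a - b) ** 2 for a, b in zip(rec[0], x))
        nearest = sorted(archive, key=sq_dist)[:k]
        return sum(y for _, y in nearest) / len(nearest)
    return predict


def surrogate_assisted_ga(expensive_fitness, n_genes, generations=20, pop_size=10,
                          n_offspring=40, n_true_evals=3, seed=0):
    rng = random.Random(seed)
    archive = []  # every (candidate, true fitness) pair measured so far
    for _ in range(pop_size):
        x = [rng.random() for _ in range(n_genes)]
        archive.append((x, expensive_fitness(x)))
    for _ in range(generations):
        # Selection: the best measured candidates act as parents.
        parents = sorted(archive, key=lambda rec: rec[1], reverse=True)[:pop_size]
        predict = knn_surrogate(archive)
        offspring = []
        for _ in range(n_offspring):
            p1, p2 = (rec[0] for rec in rng.sample(parents, 2))
            # Uniform crossover followed by clipped Gaussian mutation.
            child = [a if rng.random() < 0.5 else b for a, b in zip(p1, p2)]
            child = [min(1.0, max(0.0, g + rng.gauss(0.0, 0.05))) for g in child]
            offspring.append(child)
        # Fitness evaluation: rank with the surrogate, measure only the top few.
        offspring.sort(key=predict, reverse=True)
        for child in offspring[:n_true_evals]:
            archive.append((child, expensive_fitness(child)))
    return max(archive, key=lambda rec: rec[1]), len(archive)


# Hypothetical expensive objective with its optimum at 0.6 in every gene.
(best_x, best_y), n_evals = surrogate_assisted_ga(
    lambda x: -sum((g - 0.6) ** 2 for g in x), n_genes=2)
```

With these defaults the loop screens 800 offspring but performs only 70 expensive evaluations, which is the trade the hybrid approach is designed to make.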

Essential Research Reagents and Computational Tools

The experimental and computational workflows for BO and GAs rely on a suite of software tools and conceptual "reagents."

Table 3: The Scientist's Toolkit for Optimization-Driven Materials Discovery

| Tool / Solution | Function | Typical Use Case |
|---|---|---|
| Gaussian Process (GP) Surrogate Model | Models the relationship between material parameters and properties; provides predictions with uncertainty quantification. | Core of BO; essential for probabilistic planning. |
| Acquisition Function (e.g., EI, UCB, EHVI) | Quantifies the utility of conducting a new experiment at any given point, balancing exploration and exploitation. | Decision-making engine in BO. |
| Interpretable Surrogate Model (e.g., Ensemble Model) | A fast, approximate model trained on simulation/experimental data to predict material properties, enabling rapid fitness evaluation in a hybrid GA [3]. | Replaces costly simulations in the GA evaluation step. |
| SHAP (SHapley Additive exPlanations) Analysis | An explainable AI (XAI) technique used to interpret model predictions and understand the influence of different input parameters [6]. | Provides scientific insight into BO or GA surrogate models. |
| Software Frameworks (e.g., Ax, BoTorch, BayBE) | Open-source libraries that provide robust, state-of-the-art implementations of BO and related algorithms [7]. | Accelerates the implementation of BO in research. |
| Self-Driving Lab (SDL) Infrastructure | Integrated systems combining AI planners with automated robotics for physical material synthesis and characterization [4] [2]. | Enables full closed-loop autonomous experimentation. |

Implementation Considerations and Practical Challenges

Successful implementation of either paradigm requires an understanding of their potential pitfalls.

- Bayesian Optimization Challenges: BO's performance is highly dependent on the choice and initialization of the surrogate model. A common pitfall is the inadvertent introduction of uninformative or high-dimensional features based on expert knowledge, which can complicate the model and degrade performance [7]. BO can also struggle with very high-dimensional problems (>20 dimensions) and discrete/categorical variables, though advanced methods exist to address these issues.

- Genetic Algorithm Challenges: GAs require careful tuning of "hyperparameters" like population size, mutation rate, and crossover rate. They can be computationally expensive if each fitness evaluation requires a costly experiment or simulation, a problem mitigated by surrogate modeling [3]. GAs also provide no native uncertainty estimates for their solutions, unlike BO.

Bayesian Optimization and Genetic Algorithms are complementary, not competing, tools in the modern materials researcher's toolkit. The choice between them should be guided by the specific research problem's constraints and goals.

- Choose Bayesian Optimization when the primary constraint is a severely limited experimental or computational budget. Its sequential, probabilistic nature makes it ideally suited for autonomously guiding costly campaigns in self-driving labs, where data efficiency is paramount [4] [2].

- Choose a Genetic Algorithm (often hybridized with a surrogate model) for complex inverse design problems and high-dimensional spaces where a global search is critical, and a reasonably fast fitness function is available [3]. Their population-based approach is robust and can escape local optima.

The future of materials discovery lies in the continued development and intelligent application of these algorithms. Promising directions include the creation of more flexible BO frameworks like BAX for complex goals [1], the advancement of hierarchical and multi-task GPs to better capture material property correlations [5], and the wider adoption of explainable AI to build trust and provide deeper scientific insights from both BO and GA workflows [6] [8].

The acceleration of materials discovery is a cornerstone of technological advancement, impacting fields from renewable energy to medical devices. In this pursuit, Bayesian optimization (BO) has emerged as a powerful, data-efficient strategy for navigating complex experimental landscapes. BO is particularly valuable when prior knowledge is limited, functional relationships are complex or unknown, and the cost of querying the materials space is significant—conditions that define much of materials science research [5]. This adaptive experimentation method excels at balancing exploration, which involves learning how new parameterizations perform, and exploitation, which refines parameterizations previously observed to be good [9]. As researchers increasingly compare BO against alternative optimization methods like genetic algorithms (GAs) for materials design, understanding BO's core components—surrogate models and acquisition functions—becomes essential. This guide provides an objective comparison of these components, supported by experimental data and protocols from recent materials research, to inform selection decisions for discovery pipelines.

Core Components of Bayesian Optimization

The effectiveness of Bayesian optimization stems from its two fundamental components: the surrogate model, which approximates the target function, and the acquisition function, which guides the selection of subsequent experiments.

Surrogate Models

Because the objective function in materials discovery is typically a black-box process—where the formula is unknown and evaluations are expensive—BO treats it as a random function and places a prior over it. This prior is updated with observed data to form a posterior distribution [9]. The Gaussian Process (GP) is the most commonly used surrogate model in BO, providing a probabilistic model that defines a probability distribution over possible functions that fit a set of points [9] [10].

- Gaussian Process Fundamentals: A GP can map points in input space (the parameters to tune) to distributions in output space (the objectives to optimize). It offers both predictions (mean values) and uncertainty estimates (variance) for unobserved parameterizations, intuitively yielding tight uncertainty bands in well-explored regions and wider bands in unexplored areas [9].

- Advanced GP Variants: For multi-objective optimization scenarios common in materials science, conventional GPs often fall short as they cannot exploit correlations between distinct material properties. Multi-Task Gaussian Processes (MTGPs) and Deep Gaussian Processes (DGPs) address this limitation using advanced kernel structures to capture multi-dimensional property correlations. This allows information about one property to inform predictions about others, significantly accelerating discovery processes [5].
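
The information sharing that a multi-task GP provides can be illustrated with one common construction, the intrinsic coregionalization model, in which the covariance between two (input, property) pairs is a task-covariance entry times an ordinary input kernel. The sketch assumes this construction, a fixed RBF kernel, and hypothetical task-covariance matrices. It is not the specific kernel structure used in [5].

```python
import numpy as np


def rbf(a, b, length=0.3):
    """Squared-exponential kernel between two sets of 1-D inputs."""
    d = np.asarray(a, dtype=float)[:, None] - np.asarray(b, dtype=float)[None, :]
    return np.exp(-0.5 * (d / length) ** 2)


def icm_kernel(x1, t1, x2, t2, B):
    """Covariance of (x1, task t1) with (x2, task t2): B[t1, t2] * k(x1, x2)."""
    task_cov = B[np.asarray(t1)[:, None], np.asarray(t2)[None, :]]
    return task_cov * rbf(x1, x2)


def mtgp_mean(x_obs, t_obs, y_obs, x_new, t_new, B, noise=1e-6):
    """Posterior mean of a zero-mean multi-task GP at (x_new, t_new)."""
    K = icm_kernel(x_obs, t_obs, x_obs, t_obs, B) + noise * np.eye(len(x_obs))
    Ks = icm_kernel(x_new, t_new, x_obs, t_obs, B)
    return Ks @ np.linalg.solve(K, np.asarray(y_obs, dtype=float))


# Hypothetical task covariances for two properties (task 0 and task 1).
B_correlated = np.array([[1.0, 0.9], [0.9, 1.0]])
B_independent = np.eye(2)

# One measurement of property 0 at x = 0.5; predict property 1 at the same x.
shared = mtgp_mean([0.5], [0], [1.0], [0.5], [1], B_correlated)
isolated = mtgp_mean([0.5], [0], [1.0], [0.5], [1], B_independent)
```

With correlated tasks, a single measurement of one property moves the prediction for the other property to about 0.9. With independent tasks the prediction stays at the prior mean of zero, which is the behaviour of separate conventional GPs.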

Table 1: Comparison of Surrogate Models in Bayesian Optimization

| Model Type | Key Characteristics | Optimal Use Cases | Performance Advantages |
|---|---|---|---|
| Conventional GP | Single-task; models single objective functions; quantifies uncertainty | Single-objective optimization with limited data | Mathematical rigor; flexibility; well-understood uncertainty quantification [5] |
| Multi-Task GP (MTGP) | Models correlations between related tasks/properties; shares information across tasks | Multi-objective optimization with correlated properties (e.g., strength-ductility tradeoff) | Improved prediction quality; identifies outliers; enhances generalization [5] |
| Deep GP (DGP) | Hierarchical extension of GPs; captures complex, non-linear relationships | Highly complex, non-linear material property relationships | Combines flexibility of neural networks with uncertainty quantification of GPs [5] |

Acquisition Functions

The acquisition function is the decision-making engine of BO, quantifying the utility of evaluating a point based on the current surrogate model. It automatically balances exploration and exploitation by assigning a numerical score to each point in the design space [9] [1]. The point with the highest score is selected for the next evaluation.

- Probability of Improvement (PI): This acquisition function selects the next point with the highest probability of improvement over the current best observation. Mathematically, for a current best value \(f(x^+)\), PI is defined as \(\alpha_{\text{PI}}(x) = P(f(x) \geq f(x^+) + \epsilon)\), where \(\epsilon\) is a small positive number that controls exploration [11]. A higher \(\epsilon\) promotes more exploration.

- Expected Improvement (EI): Unlike PI, which only considers the probability of improvement, EI factors in the magnitude of potential improvement. It is defined as the expected value of improvement over the current best: \(\alpha_{\text{EI}}(x) = \mathbb{E}[\max(f(x) - f(x^+), 0)]\) [9] [11]. EI has become popular due to its well-balanced exploration-exploitation tradeoff, straightforward analytic form, and overall strong practical performance [9].

- Upper Confidence Bound (UCB): This acquisition function employs an explicit exploration-exploitation parameter \(\lambda\), with the form \(\alpha_{\text{UCB}}(x) = \mu(x) + \lambda \sigma(x)\), where \(\mu(x)\) is the predicted mean and \(\sigma(x)\) is the uncertainty [11]. Small \(\lambda\) values favor exploitation (high \(\mu(x)\)), while large values promote exploration (high \(\sigma(x)\)) [11].
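
For a Gaussian posterior with mean `mu` and standard deviation `sigma` at a candidate point, all three acquisition functions have simple closed forms. The sketch below evaluates them for two hypothetical candidates, using only the Python standard library. The argument names, the default `eps` and `lam` values, and the example numbers are illustrative, and `sigma` is assumed to be strictly positive.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal, used for its cdf and pdf


def probability_of_improvement(mu, sigma, best, eps=0.01):
    """P(f(x) >= best + eps) under a Gaussian posterior."""
    return _STD_NORMAL.cdf((mu - best - eps) / sigma)


def expected_improvement(mu, sigma, best):
    """E[max(f(x) - best, 0)] under a Gaussian posterior."""
    z = (mu - best) / sigma
    return (mu - best) * _STD_NORMAL.cdf(z) + sigma * _STD_NORMAL.pdf(z)


def upper_confidence_bound(mu, sigma, lam=2.0):
    """Posterior mean plus lam posterior standard deviations."""
    return mu + lam * sigma


best_observed = 1.0
# Candidate A: well-explored region, prediction close to the current best.
# Candidate B: poorly explored region, lower prediction but high uncertainty.
candidates = {"A": (0.95, 0.02), "B": (0.70, 0.30)}

ei = {k: expected_improvement(m, s, best_observed) for k, (m, s) in candidates.items()}
ucb_explore = {k: upper_confidence_bound(m, s, lam=2.0) for k, (m, s) in candidates.items()}
ucb_exploit = {k: upper_confidence_bound(m, s, lam=0.1) for k, (m, s) in candidates.items()}
```

In this example EI and UCB with a large exploration weight both prefer the uncertain candidate B, while UCB with a small weight prefers the safer candidate A, which mirrors the role of the exploration parameter described above.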

Table 2: Quantitative Comparison of Acquisition Functions

| Acquisition Function | Mathematical Formulation | Exploration-Exploitation Control | Performance in Materials Studies |
|---|---|---|---|
| Probability of Improvement (PI) | \(\alpha_{\text{PI}}(x) = \Phi\left(\frac{\mu(x) - f(x^+) - \epsilon}{\sigma(x)}\right)\) | Controlled by \(\epsilon\) parameter; higher values increase exploration [11] | Can get stuck in local optima if exploration is insufficient; sensitive to parameter tuning [10] |
| Expected Improvement (EI) | \(\alpha_{\text{EI}}(x) = (\mu(x) - f(x^+))\,\Phi\left(\frac{\mu(x) - f(x^+)}{\sigma(x)}\right) + \sigma(x)\,\varphi\left(\frac{\mu(x) - f(x^+)}{\sigma(x)}\right)\) | Automatic balance; no parameters needed; naturally transitions from exploration to exploitation [9] | Well-balanced performance; used in tuning AlphaGo and materials discovery; generally good practical performance [9] |
| Upper Confidence Bound (UCB) | \(\alpha_{\text{UCB}}(x) = \mu(x) + \lambda \sigma(x)\) | Explicit parameter \(\lambda\) directly weights exploration vs. exploitation [11] | Simple to interpret; performs well with appropriate \(\lambda\); can be conservative with small \(\lambda\) [11] |

Here \(f(x^+)\) is the current best observed value, \(\mu(x)\) and \(\sigma(x)\) are the surrogate's posterior mean and standard deviation, and \(\Phi\) and \(\varphi\) are the standard normal cumulative distribution and density functions.

The Exploration-Exploitation Trade-off: Mechanisms and Visualization

The fundamental challenge in adaptive experimentation is balancing exploration (trying out parameterizations with high uncertainty) against exploitation (converging on configurations likely to be good). BO addresses this through its acquisition functions, which naturally encode this balance [9].

Initially, when data is scarce, uncertainty estimates are high in unexplored regions, causing acquisition functions like EI to favor exploration. As the algorithm progresses and certain regions are sampled, their uncertainty decreases. If these regions show promising results, the acquisition function automatically shifts toward exploitation, refining solutions in high-performing areas [10]. This dynamic adjustment enables BO to find better configurations with fewer evaluations than grid search or other global optimization techniques [9].

Within the iterative BO loop, the exploration-exploitation tradeoff is resolved when the acquisition function is optimized: this step uses the surrogate model's predictions and uncertainty estimates to select the most promising next experiment.

Experimental Protocols and Case Studies in Materials Discovery

Bayesian Optimization for High-Entropy Alloys

Research Objective: To discover High-Entropy Alloys (HEAs) in the FeCrNiCoCu system with optimal combinations of coefficient of thermal expansion (CTE) and bulk modulus (BM) [5].

Experimental Protocol:

- Design Space: Discrete compositional space of FeCrNiCoCu HEAs.

- Surrogate Models: Compared Conventional GP (cGP), Multi-Task GP (MTGP), and Deep GPs (DGPs).

- Acquisition Function: Expected Improvement for multi-objective optimization.

- Evaluation Metric: Number of iterations required to find compositions with both low CTE-high BM and high CTE-high BM combinations.

- Validation: High-throughput atomistic simulations.

Results: MTGP-BO and DGP-BO significantly outperformed cGP-BO by leveraging correlations between CTE and BM properties. The advanced kernel structures in MTGP and DGP shared information across properties, reducing required experiments by 30-40% compared to conventional approaches [5].

Targeted Materials Discovery with BAX Framework

Research Objective: Find specific subsets of materials design space meeting user-defined criteria for TiO₂ nanoparticle synthesis and magnetic materials [1].

Experimental Protocol:

- Framework: Bayesian Algorithm Execution (BAX), including SwitchBAX, InfoBAX, and MeanBAX strategies.

- Key Innovation: User-defined filtering algorithms automatically converted to acquisition functions, eliminating manual design.

- Comparison Baseline: Standard BO approaches with traditional acquisition functions.

- Metrics: Efficiency in locating target subsets with limited experimental budgets.

Results: The BAX framework demonstrated significantly higher efficiency than state-of-the-art BO approaches, particularly for complex experimental goals beyond simple optimization, such as level-set estimation and mapping specific property regions [1].

Metallic Materials Development with Explainable AI

Research Objective: Develop Multiple Principal Element Alloys (MPEAs) with superior mechanical properties [6].

Experimental Protocol:

- Approach: Data-driven framework combining machine learning, evolutionary algorithms, and experimental validation.

- AI Component: Explainable AI with SHAP (SHapley Additive exPlanations) analysis for model interpretation.

- Workflow: AI predicted properties of new MPEAs based on composition; evolutionary algorithms helped optimize combinations.

- Validation: Synthesis and mechanical testing of proposed alloys.

Results: The integrated approach successfully designed a new MPEA with verified superior mechanical properties, transforming traditional trial-and-error materials design into a predictive, insightful process [6].

Table 3: Experimental Data from Materials Discovery Studies

| Study Focus | Optimization Method | Key Performance Metrics | Comparative Results |
|---|---|---|---|
| High-Entropy Alloys [5] | MTGP-BO vs. DGP-BO vs. cGP-BO | Iterations to find target CTE-BM combinations | MTGP/DGP-BO: 30-40% fewer experiments than cGP-BO |
| TiO₂ Nanoparticles & Magnetic Materials [1] | BAX Framework vs. Standard BO | Efficiency in locating target subsets | BAX: Significantly more efficient for complex goals |
| Multiple Principal Element Alloys [6] | Explainable AI + Evolutionary Algorithms | Success in designing superior alloys | Developed new verified MPEA; accelerated discovery cycle |

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 4: Essential "Research Reagent Solutions" for Optimization-Driven Materials Discovery

| Tool/Resource | Function | Example Applications |
|---|---|---|
| Gaussian Process Libraries | Probabilistic surrogate modeling; uncertainty quantification | BoTorch (used in Ax), GPy, GPflow [9] |
| Bayesian Optimization Platforms | Complete BO implementation; experiment management | Ax (from Facebook Research), Scikit-Optimize [9] |
| Explainable AI Tools | Model interpretation; insight into structure-property relationships | SHAP (SHapley Additive exPlanations) [6] |
| High-Throughput Simulation | Rapid virtual screening of candidate materials | Atomistic simulations for HEA properties [5] |
| Multi-Objective Optimization Algorithms | Handling conflicting objectives; Pareto front identification | Expected Hypervolume Improvement (EHVI), ParEGO [12] |

Bayesian optimization represents a powerful framework for materials discovery, with its surrogate models and acquisition functions providing a systematic approach to navigating complex experimental spaces. The experimental data presented demonstrates BO's effectiveness across diverse materials systems, from high-entropy alloys to nanoparticles. When compared with genetic algorithms, BO typically offers superior data efficiency—a critical advantage when experimental resources are limited. However, the choice between these methods should be guided by specific research goals: BO excels in expensive evaluation contexts with limited variables, while GAs may be more effective for highly combinatorial problems or when seeking diverse solution sets. As materials research continues to embrace autonomous experimentation, understanding these core components enables more informed selection and implementation of optimization strategies for accelerated discovery.

In the rapidly evolving field of computational materials discovery, two powerful optimization strategies have emerged as particularly influential: Bayesian optimization and genetic algorithms. While Bayesian optimization uses probabilistic surrogate models to guide the search for optimal materials, genetic algorithms (GAs) emulate natural selection processes to evolve solutions over successive generations. Understanding the evolutionary mechanics of genetic algorithms—their core components of selection, crossover, mutation, and fitness evaluation—provides critical insights for researchers navigating the complex landscape of materials design and drug development. This guide offers a detailed comparison of these approaches, supported by experimental data and methodological protocols from recent studies.

Core Operational Mechanics

The Genetic Algorithm Workflow

Genetic algorithms operate on principles inspired by biological evolution, treating potential solutions to optimization problems as individuals in a population that evolves over time. The algorithm begins with an initial population of candidate solutions, typically generated randomly within the predefined search space. Each candidate, referred to as a chromosome, comprises genes representing the optimization variables. In materials science contexts, these genes might encode compositional elements, processing parameters, or structural features [13].

The evolutionary cycle proceeds through several meticulously defined stages. First, a fitness function evaluates each candidate solution, quantifying how well it performs against the optimization objectives. For materials discovery, this might involve predicting properties like formation energy, mechanical strength, or optical characteristics. Subsequently, a selection process identifies the most promising candidates based on their fitness scores, with better-performing individuals having higher probabilities of being selected. Common selection techniques include tournament selection, roulette wheel selection, and rank-based selection [14].
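
The three selection techniques named above differ mainly in how fitness is turned into a selection probability. The sketch below shows one plausible form of each, using only the standard library. The function names and the tournament size are illustrative, higher fitness is assumed to be better, and roulette wheel selection additionally assumes non-negative fitness values that are not all zero.

```python
import random


def tournament_select(population, fitnesses, rng, k=3):
    """Pick k distinct individuals at random and return the fittest of them."""
    contenders = rng.sample(range(len(population)), k)
    return population[max(contenders, key=lambda i: fitnesses[i])]


def roulette_select(population, fitnesses, rng):
    """Selection probability proportional to (non-negative) fitness."""
    return rng.choices(population, weights=fitnesses, k=1)[0]


def rank_select(population, fitnesses, rng):
    """Selection probability proportional to rank (worst = 1, best = n)."""
    order = sorted(range(len(population)), key=lambda i: fitnesses[i])
    ranks = [0] * len(population)
    for rank, i in enumerate(order, start=1):
        ranks[i] = rank
    return rng.choices(population, weights=ranks, k=1)[0]


# Hypothetical population of four candidate compositions and their fitness.
rng = random.Random(0)
population = ["alloy_a", "alloy_b", "alloy_c", "alloy_d"]
fitnesses = [0.1, 0.2, 0.3, 10.0]
parent = rank_select(population, fitnesses, rng)
```

Rank-based selection ignores how large the fitness gaps are, so the outlier `alloy_d` is chosen 40% of the time in expectation rather than almost always, as it would be under roulette wheel selection. This is one way to limit selection pressure and delay premature convergence.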

Selected chromosomes then undergo crossover (recombination), where pairs of parent solutions exchange genetic information to produce offspring. This operator exploits existing genetic material by combining beneficial traits from different parents. Crossover methodologies vary from single-point and double-point crossover to uniform crossover, each with distinct implications for genetic diversity and convergence behavior [13].

The mutation operator introduces random modifications to offspring genes with low probability, maintaining population diversity and enabling exploration of new regions in the search space. Mutation serves as a crucial mechanism against premature convergence, ensuring the algorithm does not become trapped in local optima. The algorithm iterates through these steps—fitness evaluation, selection, crossover, and mutation—across multiple generations until meeting termination criteria such as convergence stability, computational budget exhaustion, or achieving a target fitness threshold [14].
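The cycle described above can be sketched in a few dozen lines. This is a minimal illustration, not the implementation used in any cited study: it assumes real-valued genes, tournament selection, uniform crossover, Gaussian mutation, and simple elitism, and the function names, default rates, and toy fitness function are all hypothetical.

```python
import random

def tournament_select(population, fitnesses, k=3, rng=random):
    """Return the fittest of k randomly drawn individuals (higher is better)."""
    contenders = rng.sample(range(len(population)), k)
    best = max(contenders, key=lambda i: fitnesses[i])
    return population[best]

def uniform_crossover(parent_a, parent_b, rng=random):
    """Take each gene from either parent with equal probability."""
    return [a if rng.random() < 0.5 else b for a, b in zip(parent_a, parent_b)]

def gaussian_mutation(chromosome, bounds, rate=0.1, sigma=0.1, rng=random):
    """Perturb each gene with probability `rate`, then clip to the bounds."""
    mutated = []
    for gene, (lo, hi) in zip(chromosome, bounds):
        if rng.random() < rate:
            gene += rng.gauss(0.0, sigma * (hi - lo))
        mutated.append(min(hi, max(lo, gene)))
    return mutated

def run_ga(fitness, bounds, pop_size=30, generations=40, seed=0):
    """Maximize `fitness` inside box `bounds`; returns (best_chromosome, best_fitness)."""
    rng = random.Random(seed)
    population = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    best, best_fit = None, float("-inf")
    for _ in range(generations):
        # Fitness evaluation of the whole population
        fitnesses = [fitness(ind) for ind in population]
        for ind, fit in zip(population, fitnesses):
            if fit > best_fit:
                best, best_fit = ind, fit
        # Elitism (an added assumption): carry the best individual forward unchanged
        offspring = [best]
        while len(offspring) < pop_size:
            a = tournament_select(population, fitnesses, rng=rng)
            b = tournament_select(population, fitnesses, rng=rng)
            child = gaussian_mutation(uniform_crossover(a, b, rng), bounds, rng=rng)
            offspring.append(child)
        population = offspring
    return best, best_fit

def toy_fitness(x):
    """Hypothetical stand-in for a property model; peak at (0.3, 0.3)."""
    return -sum((g - 0.3) ** 2 for g in x)

best, best_fit = run_ga(toy_fitness, bounds=[(0.0, 1.0), (0.0, 1.0)])
```

The termination criterion here is a fixed generation count; a convergence check or target fitness threshold could replace it without changing the rest of the loop.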

Bayesian Optimization Fundamentals

In contrast to the population-based evolutionary approach of GAs, Bayesian optimization (BO) constructs a probabilistic model of the objective function and uses an acquisition function to strategically select the most informative evaluation points. The process begins with an initial set of observations, based on which a surrogate model—typically a Gaussian Process (GP)—approximates the underlying function while quantifying prediction uncertainty [1] [5].

An acquisition function, such as Expected Improvement or Upper Confidence Bound, then balances exploration of uncertain regions against exploitation of promising areas based on the current model. After evaluating the selected point, the algorithm updates the surrogate model and repeats the process. This sequential design strategy makes BO particularly sample-efficient for expensive-to-evaluate functions, a characteristic advantage in resource-intensive materials research where experiments or simulations incur substantial computational or temporal costs [15] [16].
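To make the exploration and exploitation trade-off concrete, here is a small sketch of the two acquisition functions named above, written for a maximization problem. It assumes the surrogate returns a Gaussian predictive mean and standard deviation at the candidate point; the `xi` and `kappa` parameters are common tuning knobs and their defaults are illustrative only.

```python
import math

def _norm_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def _norm_cdf(z):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mean, std, best_so_far, xi=0.0):
    """Expected Improvement over `best_so_far` for a Gaussian prediction."""
    gain = mean - best_so_far - xi
    if std <= 0.0:
        # No uncertainty left: improvement is deterministic
        return max(0.0, gain)
    z = gain / std
    return gain * _norm_cdf(z) + std * _norm_pdf(z)

def upper_confidence_bound(mean, std, kappa=2.0):
    """Optimistic estimate: larger kappa favours uncertain regions."""
    return mean + kappa * std
```

With equal predicted means, both functions score the more uncertain candidate higher, which is what drives exploration; with equal uncertainty, the higher mean wins, which is exploitation.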

Experimental Performance Comparison

Quantitative Performance Metrics

Recent studies directly comparing Bayesian optimization and genetic algorithms across materials discovery benchmarks reveal distinct performance patterns. The following table synthesizes quantitative findings from multiple experimental investigations:

Table 1: Performance Comparison in Materials Discovery Tasks

| Materials System | Optimization Target | Algorithm | Performance Metrics | Key Findings |
|---|---|---|---|---|
| Liquid Crystal Polymers [17] | Optical transparency & refractive index | Genetic Algorithm | Discovery efficiency | Rapid identification of reactive mesogens meeting target specifications; provided molecular design insights |
| Transition Metal Borides/Carbides [15] | Formation energy & elastic moduli | Bayesian Optimization | Prediction accuracy | Identified novel ultra-incompressible hard MoWC₂ and ReWB materials; successfully synthesized |
| High Entropy Alloys (FeCrNiCoCu) [5] | Thermal expansion coefficient & bulk modulus | Multi-task Gaussian Process BO | Optimization efficiency | Outperformed conventional GP-BO by leveraging correlations between material properties |
| Hyperparameter Optimization [13] | Model accuracy | Genetic Algorithm (TPOT) | Final performance | Achieved competitive accuracy but required more evaluations than Bayesian optimization |
| Hyperparameter Optimization [13] | Model accuracy | Bayesian Optimization (Hyperopt) | Convergence speed | Reached comparable accuracy with fewer function evaluations due to guided search |

Analysis of Comparative Performance

The experimental data indicates that each algorithm class demonstrates distinct advantages depending on the materials discovery context. Bayesian optimization consistently achieves superior sample efficiency, making it particularly valuable when individual evaluations are computationally expensive or time-consuming. This advantage is especially pronounced in high-dimensional materials spaces with complex property correlations, where advanced BO variants like Multi-Task Gaussian Processes (MTGP-BO) and Deep Gaussian Processes (DGP-BO) successfully leverage inter-property relationships to accelerate discovery [5].

Genetic algorithms excel in scenarios requiring extensive global exploration and when the optimization landscape contains multiple local optima. Their population-based approach maintains diversity throughout the search process, reducing susceptibility to premature convergence. Furthermore, GAs generate multiple high-performing candidate solutions simultaneously, providing researchers with alternative materials options for further investigation—a valuable feature for practical materials development pipelines [17].

Experimental Protocols and Methodologies

Genetic Algorithm Implementation Framework

The standard implementation protocol for genetic algorithms in materials discovery encompasses several methodical stages:

Table 2: Genetic Algorithm Implementation Framework

| Stage | Key Operations | Common Techniques | Materials Science Considerations |
|---|---|---|---|
| Problem Encoding | Represent materials as chromosomes | Binary, integer, or real-valued representations | Compositional spaces, processing parameters, structural features |
| Initialization | Generate initial population | Random sampling, space-filling designs | Incorporating domain knowledge for promising regions |
| Fitness Evaluation | Assess candidate quality | Property prediction models, experiments, simulations | DFT calculations, ML surrogates, experimental validation |
| Selection | Choose parents for reproduction | Tournament, roulette wheel, rank-based | Maintaining diversity while emphasizing performance |
| Crossover | Create offspring solutions | Single-point, double-point, uniform | Respecting chemical validity constraints |
| Mutation | Introduce genetic variations | Bit-flip, Gaussian noise, boundary | Ensuring syntactically valid materials representations |
| Termination | Stop evolutionary process | Generation count, convergence metrics | Balancing computational budget with solution quality |
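The validity constraints in the Crossover and Mutation rows can be illustrated with an alloy composition encoded as atomic fractions that must stay non-negative and sum to one. The operators below are one possible choice, assumed here for illustration: a blend crossover (a convex combination of two valid compositions is itself valid) and a mutation that shifts a small fraction from one element to another. The element list is taken from the FeCrNiCoCu example; the function names and step size are hypothetical.

```python
import random

ELEMENTS = ["Fe", "Cr", "Ni", "Co", "Cu"]

def blend_crossover(parent_a, parent_b, rng=random):
    """Mix two compositions with a random weight; the result still sums to one."""
    w = rng.random()
    return [w * a + (1.0 - w) * b for a, b in zip(parent_a, parent_b)]

def shift_mutation(composition, step=0.05, rng=random):
    """Move at most `step` of atomic fraction from one element to another."""
    child = list(composition)
    donor, receiver = rng.sample(range(len(child)), 2)
    # Never take more than the donor holds, so fractions stay non-negative
    delta = min(step * rng.random(), child[donor])
    child[donor] -= delta
    child[receiver] += delta
    return child

rng = random.Random(0)
equiatomic = [0.2, 0.2, 0.2, 0.2, 0.2]
fe_rich = [0.4, 0.3, 0.1, 0.1, 0.1]
offspring = shift_mutation(blend_crossover(equiatomic, fe_rich, rng), rng=rng)
```

Building the constraint into the operators avoids a separate repair or rejection step after each generation.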

Bayesian Optimization Experimental Protocol

Bayesian optimization follows a distinctly different methodological approach:

- Experimental Design: Select initial design points using space-filling methods such as Latin Hypercube Sampling to build a preliminary surrogate model.

- Surrogate Modeling: Employ Gaussian Processes to model the objective function, specifying mean functions and covariance kernels appropriate for the materials domain. Advanced implementations may incorporate Multi-Task GPs to capture correlations between multiple material properties [5].

- Acquisition Optimization: Maximize an acquisition function (Expected Improvement, Probability of Improvement, or Upper Confidence Bound) to identify the most promising subsequent evaluation point. For materials goals beyond simple optimization, the BAX framework (Bayesian Algorithm Execution) can convert user-defined filtering algorithms into custom acquisition functions [1].

- Parallel Evaluation (optional): In high-throughput computational or experimental settings, select a batch of points using techniques like Thompson sampling or penalization to enable parallel resource utilization.

- Iteration: Update the surrogate model with new results and repeat the acquisition-observation cycle until meeting convergence criteria or exhausting experimental resources.
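The first step of the protocol above, a space-filling initial design, can be sketched with a basic Latin Hypercube sampler. This is a plain-Python illustration under simple assumptions (independent continuous variables with box bounds); the variable names and example bounds are hypothetical, and dedicated libraries offer more refined variants.

```python
import random

def latin_hypercube(n_samples, bounds, seed=0):
    """Draw n_samples points so that each variable's range is split into
    n_samples equal strata and every stratum is sampled exactly once."""
    rng = random.Random(seed)
    columns = []
    for lo, hi in bounds:
        # One uniform draw inside each stratum, then shuffle the order
        strata = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(strata)
        columns.append([lo + u * (hi - lo) for u in strata])
    # Transpose per-variable columns into a list of design points
    return [list(point) for point in zip(*columns)]

# Hypothetical example: a composition fraction and an annealing temperature
initial_design = latin_hypercube(8, bounds=[(0.0, 1.0), (300.0, 900.0)])
```

Compared with plain random sampling, this guarantees that no region of any single variable is left unsampled in the initial design.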

Workflow Visualization

Genetic Algorithm Evolutionary Process

Bayesian Optimization Sequential Design

Essential Research Reagents and Computational Tools

Key Software and Computational Frameworks

Table 3: Essential Research Tools for Optimization Algorithms

| Tool/Framework | Algorithm Type | Primary Function | Application Context |
|---|---|---|---|
| TPOT [13] | Genetic Algorithm | Automated machine learning pipeline optimization | Hyperparameter tuning for property prediction models |
| BOWSR [15] | Bayesian Optimization | Crystal structure relaxation with symmetry constraints | DFT-free relaxation for accelerated materials screening |
| Hyperopt [13] | Bayesian Optimization | Distributed hyperparameter optimization | Training deep learning models for materials informatics |
| MEGNet [15] | Graph Neural Network | Materials property prediction | Pre-trained models for formation energy and property estimation |
| spglib [15] | Symmetry Analysis | Space group identification | Symmetry constraint implementation in structure relaxation |
| MTGP/DGP [5] | Bayesian Optimization | Multi-task Gaussian Processes | Leveraging correlated properties in HEA optimization |

The evolutionary mechanics of genetic algorithms—selection, crossover, mutation, and fitness evaluation—provide a robust, population-based optimization methodology with particular strengths in global exploration and maintaining solution diversity. Bayesian optimization offers a complementary approach with superior sample efficiency and strategic guidance through probabilistic modeling. The choice between these methodologies depends critically on specific research constraints, particularly the balance between evaluation cost and the need for extensive exploration. Emerging frameworks that integrate both approaches, along with explainable AI techniques [6], represent promising directions for next-generation materials discovery platforms. As computational resources expand and algorithms evolve, both genetic algorithms and Bayesian optimization will continue to play pivotal roles in accelerating the design of novel materials and therapeutic compounds.

Table of Contents

- Introduction

- Core Concepts and Comparison Framework

- Bayesian Optimization: The Data-Efficient Navigator

- Genetic Algorithms: The Robust and Parallel Explorer

- Direct Performance Comparison and Experimental Data

- Decision Framework and Research Reagents

- Conclusion

The quest for new materials with tailored properties is a fundamental driver of innovation in fields ranging from renewable energy to pharmaceuticals. This discovery process often involves navigating vast, complex design spaces where experiments or simulations are costly and time-consuming. Two powerful computational strategies have emerged to guide this search: Bayesian Optimization (BO) and Genetic Algorithms (GAs). While both are used for optimization, their underlying philosophies and strengths differ significantly. This guide provides an objective comparison for researchers and scientists, framing the choice between BO and GAs within the broader thesis of materials discovery. We will dissect their ideal use-cases, supporting our analysis with experimental data, detailed methodologies, and a clear decision framework to help you select the right tool for your research challenge.

Core Concepts and Comparison Framework

At their core, both BO and GAs are iterative optimization methods designed to find the best solution from a set of candidates without requiring gradient information. However, their approaches are distinct.

Bayesian Optimization (BO) is a sequential, model-based strategy. It builds a probabilistic surrogate model (e.g., using Gaussian Processes) of the expensive-to-evaluate objective function. It then uses an acquisition function to intelligently select the next most promising data point to evaluate by balancing exploration (probing uncertain regions) and exploitation (refining known good regions) [18]. Its primary strength is data efficiency.

Genetic Algorithms (GAs) are population-based, inspired by natural evolution. They maintain a pool of candidate solutions that are iteratively modified using genetic operators like crossover (mixing solutions) and mutation (random perturbations). The fittest candidates, as determined by a direct evaluation of the objective function, are selected to propagate to the next generation [19]. Their key strengths are robustness to complex landscapes and inherent parallelism.

The diagram below illustrates the fundamental difference in their workflows.

Bayesian Optimization: The Data-Efficient Navigator

Ideal Use-Cases

BO shines in scenarios where a single evaluation of the objective function is exceptionally costly, either in terms of computational resources (e.g., Density Functional Theory calculations) or experimental time and materials. Its data efficiency is paramount when the experimental budget is severely limited, often to fewer than 100-200 evaluations [19] [18].

- Optimizing Black-Box Functions: When you have limited knowledge of the underlying functional form, and the response surface is assumed to be relatively smooth.

- Low-to-Moderate Dimensional Problems: Typically, problems with fewer than 20 input parameters, as BO's surrogate model becomes increasingly difficult to fit in very high-dimensional spaces [20].

- Noise Handling: BO naturally accommodates noisy observations, making it suitable for guiding real-world laboratory experiments where measurement error is present [18].

Supporting Experimental Data

BO's effectiveness is well-documented across diverse materials domains. A broad benchmarking study across five experimental materials systems quantified its performance using Acceleration Factor (AF) and Enhancement Factor (EF) [18].

- Acceleration Factor (AF): The ratio of the number of experiments a baseline method (such as random search) needs to the number BO needs to reach the same performance target. An AF of 6 means BO reaches the target with one-sixth as many experiments.

- Enhancement Factor (EF): The improvement in performance after a fixed number of experiments.
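Both metrics can be computed from best-so-far traces of a BO run and a baseline run. The sketch below is an assumption-laden illustration: it treats EF as the ratio of BO performance to baseline performance at a fixed experiment count (the text above only says "improvement", so a difference would be an equally valid reading), and the two traces are made-up numbers, not data from the cited study.

```python
def experiments_to_target(best_so_far, target):
    """1-based count of experiments at which the trace first reaches
    `target`, or None if it never does."""
    for n, value in enumerate(best_so_far, start=1):
        if value >= target:
            return n
    return None

def acceleration_factor(baseline_trace, bo_trace, target):
    """Experiments needed by the baseline divided by experiments needed by BO."""
    n_base = experiments_to_target(baseline_trace, target)
    n_bo = experiments_to_target(bo_trace, target)
    if n_base is None or n_bo is None:
        return None
    return n_base / n_bo

def enhancement_factor(baseline_trace, bo_trace, n_experiments):
    """BO performance relative to the baseline after a fixed budget
    (ratio form, assumed here)."""
    return bo_trace[n_experiments - 1] / baseline_trace[n_experiments - 1]

# Hypothetical best-so-far traces (higher is better)
baseline = [1, 1, 2, 2, 3, 3, 4, 4, 5, 5, 5, 6]
bo = [2, 6, 6, 7, 7, 7, 8, 8, 8, 8, 8, 8]

af = acceleration_factor(baseline, bo, target=6)   # 12 / 2
ef = enhancement_factor(baseline, bo, n_experiments=4)  # 7 / 2
```

Because both metrics depend on the chosen target and budget, they are usually reported as curves or medians over many runs rather than as single numbers.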

The table below summarizes the median performance gains reported in the study.

| Metric | Median Value | Interpretation |
|---|---|---|
| Acceleration Factor (AF) | 6x | BO reaches a given performance target with about 6 times fewer experiments than random sampling. |
| Enhancement Factor (EF) | Peaks at 10-20 experiments per dimension | BO achieves the most significant performance improvement early in the campaign. |

Furthermore, BO has been successfully deployed in complex discovery tasks. One study used a method called Bayesian Optimization With Symmetry Relaxation (BOWSR) to predict the properties of hypothetical crystals without expensive DFT relaxations, leading to the identification and successful synthesis of two novel, ultra-incompressible materials (MoWC₂ and ReWB) from a search space of nearly 400,000 candidates [15].

Detailed Experimental Protocol: A Typical BO Campaign

The workflow follows the logic of the diagram above and is implemented as a pool-based active learning framework in benchmarking studies [18].

- Initialization: A small set of initial experiments (e.g., 5-10 points) is selected, typically via Latin Hypercube Sampling (LHS) to ensure good space-filling properties.

- Surrogate Model Training: A Gaussian Process (GP) model with an anisotropic kernel (or a Random Forest) is trained on all data collected so far. The anisotropic kernel allows the model to learn the sensitivity of the objective to each input dimension, which is crucial for robustness [18].

- Acquisition Function Optimization: An acquisition function (e.g., Expected Improvement - EI) is computed over the entire design space using the trained surrogate model's predictions (mean and uncertainty).

- Next Experiment Selection: The point in the design space that maximizes the acquisition function is selected as the next experiment to run.

- Evaluation and Iteration: The selected experiment is performed (or simulated), its result is added to the dataset, and the loop repeats from the surrogate model training step until a convergence criterion (e.g., diminishing returns) is met or the experimental budget is exhausted.
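The campaign steps above can be sketched end to end as a pool-based loop. This is a deliberately small, dependency-free illustration, not the benchmark code from [18]: it assumes a one-dimensional design space, a zero-mean GP with a fixed-length-scale squared-exponential kernel (no anisotropic kernel or hyperparameter fitting), a fixed three-point initial design instead of LHS, and a toy objective. All names and default values are hypothetical.

```python
import math

def rbf(a, b, length=0.2):
    """Squared-exponential kernel between two scalar inputs."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def cholesky(A):
    """Lower-triangular Cholesky factor of a symmetric positive-definite matrix."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L

def forward_solve(L, b):
    """Solve L x = b for lower-triangular L."""
    x = []
    for i in range(len(b)):
        x.append((b[i] - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x

def backward_solve(L, b):
    """Solve L^T x = b for lower-triangular L."""
    n = len(b)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (b[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def fit_gp(X, y, jitter=1e-6):
    """Factor the kernel matrix once and precompute K^-1 y."""
    K = [[rbf(a, b) + (jitter if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    L = cholesky(K)
    return L, backward_solve(L, forward_solve(L, y))

def predict(X, L, alpha, x_new):
    """Posterior mean and standard deviation at x_new."""
    k_star = [rbf(a, x_new) for a in X]
    mean = sum(k * a for k, a in zip(k_star, alpha))
    v = forward_solve(L, k_star)
    var = max(1.0 - sum(vi * vi for vi in v), 0.0)
    return mean, math.sqrt(var)

def expected_improvement(mean, std, best):
    """Expected Improvement for maximization."""
    if std == 0.0:
        return max(mean - best, 0.0)
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

def bayes_opt(objective, pool, initial, n_iter=10):
    """Pool-based BO: returns (best_x, best_y, all evaluated points)."""
    X = list(initial)
    y = [objective(x) for x in X]
    for _ in range(n_iter):
        offset = sum(y) / len(y)              # centre targets for the zero-mean GP
        centred = [v - offset for v in y]
        L, alpha = fit_gp(X, centred)         # surrogate model training
        candidates = [x for x in pool if x not in X]
        scores = [expected_improvement(*predict(X, L, alpha, x), max(centred))
                  for x in candidates]        # acquisition over the remaining pool
        x_next = candidates[scores.index(max(scores))]
        X.append(x_next)                      # run the selected experiment
        y.append(objective(x_next))
    i_best = y.index(max(y))
    return X[i_best], y[i_best], X

def toy_objective(x):
    """Hypothetical stand-in for an expensive experiment; peak at x = 0.7."""
    return -(x - 0.7) ** 2

pool = [i / 50 for i in range(51)]
best_x, best_y, evaluated = bayes_opt(toy_objective, pool, initial=[0.0, 0.5, 1.0])
```

The stopping rule here is a fixed iteration budget; a diminishing-returns check on the maximum acquisition value is a common alternative.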

Genetic Algorithms: The Robust and Parallel Explorer

Ideal Use-Cases

GAs are the tool of choice when the problem's landscape is expected to be highly complex, multi-modal (with many local optima), discontinuous, or when the evaluation of candidates can be trivially parallelized.

- Combinatorial and Mixed Search Spaces: Excellent for problems involving integer or categorical variables, such as searching for specific atomic compositions or molecular structures [19].

- Rugged Energy Landscapes: Their population-based approach makes them less likely to get stuck in local minima compared to greedy, sequential methods, offering superior robustness.

- High-Throughput Computing Environments: When thousands of evaluations can be run simultaneously on a computing cluster, a GA can fully leverage this parallelism to rapidly explore the search space.

Supporting Experimental Data

The robustness of GAs is well-established in computational materials science, particularly in searching for stable nanoparticle alloys. However, their traditional weakness has been the high number of required energy evaluations. This limitation is being overcome by hybrid approaches.

A landmark study introduced a Machine Learning Accelerated Genetic Algorithm (MLaGA) [19]. In this hybrid, a machine learning model (e.g., a Gaussian Process) is trained on-the-fly to act as a computationally cheap surrogate for the fitness function (e.g., energy of a nanoparticle). This surrogate is then used to screen a large number of candidate offspring within the GA, while only the most promising ones are evaluated with the expensive, ground-truth calculator (like DFT).

The performance gains are dramatic, as shown in the table below, which summarizes the results from optimizing 147-atom Pt-Au nanoalloys [19].

| Optimization Method | Number of Energy Calculations Required | Performance Gain |
|---|---|---|
| Traditional GA (Brute-Force) | ~16,000 | Baseline |
| ML-Accelerated GA (MLaGA) | ~280 - 1,200 | ~13x to 50x reduction in cost |
| Theoretical Total Homotops | 1.78 × 10⁴⁴ | Highlights infeasibility of brute-force search |

This hybrid approach marries the robustness of the GA with the data efficiency of ML, making previously intractable searches feasible. The study confirmed the validity of the structures found by the MLaGA by verifying their stability with direct DFT calculations [19].
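The figures in the table can be checked with a few lines of arithmetic. Two points are worth hedging: the rounded counts give an upper reduction of roughly 57x, the same order as the ~50x quoted, and the homotop total matches 2^147, which is what one gets if each of the 147 sites is independently Pt or Au with symmetry ignored. That combinatorial reading is an assumption made here, not something the text states.

```python
# Rounded counts taken from the table above
traditional_ga = 16_000
mlaga_low, mlaga_high = 280, 1_200

# Cost reduction relative to the traditional GA
reduction_min = traditional_ga / mlaga_high   # about 13.3
reduction_max = traditional_ga / mlaga_low    # about 57.1

# Assumed counting: two possible species on each of 147 sites
homotops = 2 ** 147
homotops_text = f"{homotops:.2e}"             # scientific notation

print(round(reduction_min, 1), round(reduction_max, 1), homotops_text)
```

Even the traditional GA's ~16,000 calculations sample a vanishing fraction of that space, which is why guided search rather than enumeration is the only practical option.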

Detailed Experimental Protocol: A Traditional and ML-Accelerated GA

The workflow follows the population-based loop illustrated in the earlier diagram.

- Initialization: A first generation of candidate solutions (the population) is created randomly.

- Fitness Evaluation: The entire population is evaluated using the objective function (e.g., DFT calculation). In MLaGA, a surrogate model is trained on these results [19].

- Selection: The candidates with the best fitness scores are selected as parents for the next generation.

- Procreation (Crossover & Mutation): New offspring candidates are created by combining traits from parents (crossover) and introducing random changes (mutation).

- In MLaGA: A "nested GA" uses the surrogate model to evaluate thousands of offspring, selecting only the best for actual DFT evaluation [19].

- Replacement: The new generation of offspring replaces the old one, and the loop repeats from the fitness evaluation step until convergence (e.g., no improvement over multiple generations).
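The surrogate screening idea in the MLaGA step can be sketched as follows. This is a simplified stand-in, not the method of [19]: it uses a single screening pass rather than a nested GA, and a k-nearest-neighbour average replaces the Gaussian Process surrogate to keep the example dependency-free. The function names, gene bounds of [0, 1], and default counts are hypothetical.

```python
import random

def knn_surrogate(archive, k=3):
    """Cheap predictor: average fitness of the k nearest evaluated points.
    `archive` is a list of (chromosome, fitness) pairs."""
    def predict(x):
        nearest = sorted(
            archive,
            key=lambda item: sum((a - b) ** 2 for a, b in zip(item[0], x)),
        )[:k]
        return sum(fit for _, fit in nearest) / len(nearest)
    return predict

def screen_offspring(parents, archive, expensive_fitness,
                     n_candidates=200, n_evaluate=5, sigma=0.1, rng=random):
    """Generate many offspring, rank them with the surrogate, and spend
    expensive evaluations only on the top few."""
    predict = knn_surrogate(archive)
    candidates = []
    for _ in range(n_candidates):
        a, b = rng.sample(parents, 2)
        # Uniform crossover followed by Gaussian mutation, clipped to [0, 1]
        child = [min(1.0, max(0.0, rng.choice(pair) + rng.gauss(0.0, sigma)))
                 for pair in zip(a, b)]
        candidates.append(child)
    candidates.sort(key=predict, reverse=True)
    evaluated = [(c, expensive_fitness(c)) for c in candidates[:n_evaluate]]
    archive.extend(evaluated)   # new ground-truth data improves the next surrogate
    return evaluated
```

In this sketch 200 offspring cost only five expensive evaluations per generation, which is the mechanism behind the reduction in energy calculations described above.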

Direct Performance Comparison and Experimental Data

The following table synthesizes information from multiple studies to provide a direct, at-a-glance comparison.

| Feature | Bayesian Optimization (BO) | Genetic Algorithms (GAs) |
|---|---|---|
| Primary Strength | Extreme data efficiency | Robustness & parallelism |
| Search Strategy | Sequential, model-based | Population-based, evolutionary |
| Typical Experiment Budget | Low (e.g., < 200) [19] [18] | High (e.g., 1,000+) [19] |
| Data Requirements | Can start with very little data | Requires a full population initially |
| Handling High Dimensions | Struggles; performance degrades [20] | More naturally scalable |
| Multi-Objective Optimization | Possible but adds complexity (MOBO) [20] [21] | Native support is straightforward |
| Interpretability | Lower; often treated as a black box [20] | Higher; the population's evolution can be analyzed |
| Ideal for | Expensive, low-dimensional, smooth(ish) landscapes | Complex, rugged, combinatorial, or highly parallelizable problems |

Decision Framework and Research Reagents

To select the right algorithm for your project, consider the following key questions:

- How expensive is a single experiment? If it's very high, BO's data efficiency is a primary advantage.

- What is the nature of your search space? Is it smooth and continuous (favors BO) or discrete and combinatorial (favors GAs)?

- What is your experimental or computational budget? A low budget pushes you towards BO or an ML-accelerated GA.

- Can you run experiments in parallel? If you can evaluate hundreds of candidates at once, a GA can rapidly exploit this.
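The questions above can be encoded as a rough rule-of-thumb helper. The thresholds (fewer than 200 evaluations, fewer than 20 dimensions, at least 100 parallel slots) are taken loosely from figures quoted in this guide and should be read as illustrative, not prescriptive; the simple voting scheme and the function name are assumptions made for this sketch, and evaluation cost is folded into the budget argument.

```python
def suggest_optimizer(budget, n_dimensions, combinatorial, parallel_slots=1):
    """Return a coarse recommendation from four yes/no heuristics."""
    bo_votes, ga_votes = 0, 0

    # Small evaluation budgets favour data-efficient BO
    if budget < 200:
        bo_votes += 1
    else:
        ga_votes += 1

    # GP surrogates become harder to fit as dimensionality grows
    if n_dimensions < 20:
        bo_votes += 1
    else:
        ga_votes += 1

    # Discrete or combinatorial spaces suit genetic operators
    if combinatorial:
        ga_votes += 1
    else:
        bo_votes += 1

    # Massive parallelism suits population-based search
    if parallel_slots >= 100:
        ga_votes += 1
    else:
        bo_votes += 1

    if ga_votes > bo_votes:
        # A GA-shaped problem on a tight budget points to the hybrid
        return "ML-accelerated genetic algorithm" if budget < 200 else "genetic algorithm"
    if bo_votes > ga_votes:
        return "Bayesian optimization"
    return "no clear preference"
```

For example, `suggest_optimizer(budget=50, n_dimensions=5, combinatorial=False)` returns `"Bayesian optimization"`. A tie is a prompt to weigh the questions by hand rather than a recommendation in itself.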

For a typical research setting, the following "reagent" solutions are essential.

Research Reagent Solutions

| Reagent / Tool | Function in Optimization | Examples / Notes |
|---|---|---|
| Gaussian Process (GP) Regression | Surrogate model for BO; predicts objective and uncertainty. | Use anisotropic kernels (e.g., Matérn 5/2 with ARD) for robustness [18]. |
| Random Forest (RF) | An alternative surrogate model for BO or MLaGA. | Fewer assumptions than GP; good for high-dimensional, mixed data types [18]. |
| Expected Improvement (EI) | Acquisition function for BO; balances exploration/exploitation. | A standard, high-performing choice [18]. |
| Density Functional Theory (DFT) | High-fidelity "energy calculator" for validating computational discoveries. | Used as the ground-truth fitness evaluator in GA searches [19] [15]. |
| Effective-Medium Theory (EMT) | Lower-fidelity, faster potential for initial screening. | Used for rapid fitness evaluations in initial benchmarking [19]. |

The choice between Bayesian Optimization and Genetic Algorithms is not about finding a universally superior algorithm, but about matching the tool to the task. Bayesian Optimization is your go-to when data efficiency is paramount—when each experiment is precious, and you must extract the maximum knowledge from every single data point. Genetic Algorithms, particularly their modern ML-accelerated variants, are advantageous when robustness and parallelism are key—when facing complex, multi-modal landscapes and when you have the computational resources to evaluate many candidates at once.

The future of materials discovery lies not only in choosing between these powerful tools but also in their intelligent integration. The success of the Machine Learning Accelerated GA demonstrates that combining the sequential, model-based reasoning of BO with the population-based, robust exploration of GAs can create a hybrid strategy that is greater than the sum of its parts, dramatically accelerating the journey from concept to breakthrough material.

The acceleration of materials discovery is a cornerstone of technological advancement, driving innovations in sustainable energy, advanced electronics, and drug development. In this pursuit, computational optimization methods have become indispensable for navigating the vast, complex spaces of possible materials. Two dominant algorithmic families have emerged: Bayesian optimization (BO) and genetic algorithms (GAs). While both aim to identify promising candidates efficiently, they embody fundamentally different philosophies and are suited to distinct problem architectures. BO excels at data-efficient navigation of design spaces where each experiment is costly, leveraging probabilistic models to make informed decisions with limited samples. In contrast, GAs demonstrate remarkable capability in exploring vast combinatorial landscapes through mechanisms inspired by biological evolution, making them ideal for searching enormous discrete libraries. This guide provides an objective comparison of their performance, supported by experimental data and detailed methodologies, to help researchers select the appropriate tool for their specific materials discovery challenge.

Core Principles and Methodologies

Bayesian Optimization: A Model-Driven Approach

Bayesian optimization is a sequential design strategy for global optimization of black-box functions that are expensive to evaluate. Its power derives from a two-component system: a probabilistic surrogate model that approximates the target function, and an acquisition function that guides the selection of future evaluation points.

Surrogate Model: Typically, a Gaussian Process (GP) is used to model the underlying function, providing both a predicted value and an uncertainty estimate at any point in the space. The GP is defined by a mean function and a kernel function that captures correlations between data points. Advanced variants include Multi-Task GPs (MTGPs) and Deep GPs (DGPs) that can exploit correlations between different material properties, significantly accelerating discovery when properties are interrelated [5] [22].

Acquisition Function: This function leverages the surrogate model's predictions to balance exploration (sampling regions of high uncertainty) and exploitation (sampling regions likely to contain the optimum). Common acquisition functions include Expected Improvement (EI), Probability of Improvement (PI), and Upper Confidence Bound (UCB). For more complex goals beyond simple optimization, such as finding specific target subsets of the design space, frameworks like Bayesian Algorithm Execution (BAX) can automatically generate custom acquisition functions from user-defined filtering algorithms [1].
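For reference, the three acquisition functions named above have standard closed forms under a Gaussian posterior. The notation is introduced here and is not from the cited sources: \mu(x) and \sigma(x) are the surrogate's predictive mean and standard deviation (with \sigma(x) > 0), f^{+} is the best value observed so far in a maximization problem, \Phi and \varphi are the standard normal distribution and density functions, and \kappa \ge 0 is a user-chosen exploration weight.

```latex
\begin{aligned}
z(x) &= \frac{\mu(x) - f^{+}}{\sigma(x)} \\
\mathrm{PI}(x) &= \Phi\big(z(x)\big) \\
\mathrm{EI}(x) &= \big(\mu(x) - f^{+}\big)\,\Phi\big(z(x)\big) + \sigma(x)\,\varphi\big(z(x)\big) \\
\mathrm{UCB}(x) &= \mu(x) + \kappa\,\sigma(x)
\end{aligned}
```

PI rewards only the chance of beating f^{+}, EI also weighs by how much, and UCB makes the exploration and exploitation balance explicit through \kappa.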

A key development is the BOWSR (Bayesian Optimization With Symmetry Relaxation) algorithm, which addresses the critical bottleneck of obtaining equilibrium crystal structures for accurate machine learning property predictions without expensive density functional theory (DFT) calculations. By coupling an accurate graph network energy model with Bayesian optimization of symmetry-constrained parameters, BOWSR can approximate equilibrium structures efficiently, enabling high-throughput screening of hypothetical materials [15].

Genetic Algorithms: A Population-Based Evolutionary Approach

Genetic algorithms are population-based metaheuristic optimization techniques inspired by the process of natural selection. They operate on a population of candidate solutions, applying evolutionary principles to iteratively improve solution quality over generations.

Representation: Each candidate solution (individual) is encoded as a chromosome, typically a string of genes representing parameters. In materials science, this could be a composition, crystal structure, or molecular configuration.

Evolutionary Operators: The algorithm applies selection, crossover (recombination), and mutation operators to create new generations. Selection chooses the fittest individuals to reproduce, crossover combines genetic material from parents, and mutation introduces random changes to maintain diversity.

Fitness Evaluation: Each candidate is evaluated using an objective function, which for materials could be a target property like formation energy, catalytic activity, or binding affinity. The REvoLd (RosettaEvolutionaryLigand) algorithm exemplifies this approach for ultra-large make-on-demand compound libraries in drug discovery. It explores the vast search space of combinatorial libraries for protein-ligand docking with full ligand and receptor flexibility, screening billions of readily available compounds without enumerating all molecules [23].

The evolutionary process continues for multiple generations, progressively refining the population toward better solutions while maintaining genetic diversity to avoid premature convergence on local optima.

Performance Comparison: Quantitative Experimental Data

The following tables synthesize quantitative performance data from multiple studies, enabling direct comparison of BO and GA approaches across key metrics and application domains.

Table 1: Comparative Performance in Materials Discovery Applications

| Method | Application Domain | Performance Metric | Result | Experimental Budget | Source |
|---|---|---|---|---|---|
| BO with BAX Framework | TiO₂ nanoparticle synthesis & magnetic materials | Discovery efficiency for target subsets | Significantly more efficient than state-of-the-art approaches | Short experimental horizons | [1] |
| MTGP-BO / DGP-BO | FeCrNiCoCu High-Entropy Alloys (CTE & BM optimization) | Performance vs. conventional GP-BO | Outperformed conventional BO; leveraged property correlations | Standard BO iterations | [5] [22] |
| REvoLd (GA) | Ultra-large library screening (5 drug targets) | Hit rate enrichment vs. random selection | 869x to 1622x improvement | 49,000-76,000 molecules docked per target | [23] |
| Genetic Algorithm | Binary nano-alloys (composition-homotop space) | DFT evaluations required | ~1,600 evaluations (~40 individuals × ~40 generations) | Standard GA run | [24] |
| Combinatorial BO | Catalyst search (~400,000 candidates) | DFT evaluations required | ~600 evaluations | Standard BO run | [24] |
| Effective Atom Theory | Co-Cr-Ni-V oxides (OER) | Energy evaluations to convergence | ~50 evaluations | Single optimization | [24] |

Table 2: Characteristic Strengths and Limitations

| Aspect | Bayesian Optimization (BO) | Genetic Algorithms (GAs) |
|---|---|---|
| Primary Strength | High sample efficiency with expensive evaluations [1] [5] | Effective exploration of vast, combinatorial discrete spaces [23] |
| Uncertainty Quantification | Native (via surrogate model) [1] | Not inherent; requires extensions |
| Typical Problem Context | Limited data, high-cost experiments [4] | Large populations over many generations [23] |
| Scalability Challenge | Kernel design in very high dimensions [7] | Fitness evaluations in ultra-large spaces [23] |
| Optimal Application Domain | High-dimensional continuous spaces, multi-objective with correlated properties [5] | Combinatorial molecular spaces, complex feature interactions [23] [24] |
| Representative Algorithm | BAX, MTGP-BO, DGP-BO [1] [5] | REvoLd, SpaceGA [23] |

Experimental Protocols and Methodologies

Benchmarking BO in High-Entropy Alloy Design

A comprehensive study compared conventional Gaussian Process BO (cGP-BO) against advanced hierarchical methods including Multi-Task GP BO (MTGP-BO) and deep GP BO (DGP-BO) for designing FeCrNiCoCu high-entropy alloys [5] [22].

Objective: Optimize compositions for two distinct multi-objective goals: (1) minimize coefficient of thermal expansion (CTE) while maximizing bulk modulus (BM), and (2) maximize both CTE and BM.

Data Generation: High-throughput atomistic simulations computed CTE and BM across the HEA composition space, providing ground-truth data for benchmarking.

Algorithm Implementation: Each BO variant used Gaussian Processes with different kernel structures. cGP-BO modeled each property independently, while MTGP-BO and DGP-BO employed advanced kernels to capture and exploit correlations between CTE and BM.

Performance Measurement: Algorithms were compared on their efficiency in identifying Pareto-optimal compositions (best trade-off solutions) and their convergence speed to the true Pareto front.

Results demonstrated that MTGP-BO and DGP-BO significantly outperformed cGP-BO by leveraging correlations between material properties, confirming that exploiting mutual information across different properties creates more robust and efficient discovery processes [5].
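The notion of Pareto-optimal compositions used in the performance measurement can be made concrete with a small non-dominated filter for the first goal (minimize CTE, maximize BM). This is a generic sketch, not code from the study, and the candidate values are invented purely for illustration.

```python
def dominates(a, b):
    """True if candidate a = (cte, bm) is at least as good as b on both
    objectives (lower CTE, higher BM) and strictly better on one."""
    no_worse = a[0] <= b[0] and a[1] >= b[1]
    strictly_better = a[0] < b[0] or a[1] > b[1]
    return no_worse and strictly_better

def pareto_front(points):
    """Keep every point that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical (CTE, BM) pairs for five candidate compositions
candidates = [
    (10.0, 150.0),
    (12.0, 170.0),
    (11.0, 140.0),   # dominated by (10.0, 150.0)
    (14.0, 165.0),   # dominated by (12.0, 170.0)
    (9.0, 120.0),
]
front = pareto_front(candidates)
```

For the second goal (maximize both properties), only the comparison on the first coordinate in `dominates` would flip.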

Evolutionary Screening of Ultra-Large Chemical Libraries

The REvoLd evolutionary algorithm was benchmarked on five diverse drug targets to evaluate its performance in screening the Enamine REAL space containing over 20 billion make-on-demand compounds [23].

Library Construction: The Enamine REAL library was constructed from lists of substrates and chemical reactions, creating a vast combinatorial space of synthetically accessible molecules.

Algorithm Parameters: After hyperparameter optimization, REvoLd used a population size of 200 initially created ligands, with 50 individuals allowed to advance to each subsequent generation, running for 30 generations.

Docking Protocol: Full flexible docking was performed using RosettaLigand, accounting for both ligand and receptor flexibility rather than using rigid docking approximations.

Evaluation Metrics: Performance was measured by hit rate enrichment compared to random selection, the diversity of discovered scaffolds, and the total number of docking calculations required.

REvoLd achieved enrichment factors between 869 and 1622 compared to random selection, while docking only 49,000-76,000 unique molecules per target instead of billions, demonstrating extraordinary efficiency in exploring vast combinatorial landscapes [23].
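To make the reported parameters concrete, the following is a minimal sketch of a generational loop with a population of 200, 50 advancing individuals, and 30 generations. It is not REvoLd's implementation: the two-substrate encoding, `N_SUBSTRATES`, `toy_score` (a stand-in for a docking score), the crossover and mutation rules, and the 0.3 mutation rate are all hypothetical choices made for illustration.

```python
import random

# Values reported for REvoLd after hyperparameter optimization [23].
POP_SIZE, N_ADVANCE, N_GENERATIONS = 200, 50, 30
N_SUBSTRATES = 1000  # hypothetical size of each substrate list


def toy_score(molecule):
    """Stand-in for a docking score (lower is better); not RosettaLigand."""
    a, b = molecule
    return abs(a - 123) + abs(b - 877)


def run_ga(seed=0):
    rng = random.Random(seed)
    cache = {}  # every unique molecule scored so far

    def score(molecule):
        if molecule not in cache:
            cache[molecule] = toy_score(molecule)
        return cache[molecule]

    def random_molecule():
        return (rng.randrange(N_SUBSTRATES), rng.randrange(N_SUBSTRATES))

    population = [random_molecule() for _ in range(POP_SIZE)]
    history = []  # best score seen at the start of each generation
    for _ in range(N_GENERATIONS):
        # Selection: the best N_ADVANCE individuals advance unchanged.
        advancing = sorted(population, key=score)[:N_ADVANCE]
        history.append(score(advancing[0]))
        children = []
        while len(advancing) + len(children) < POP_SIZE:
            p1, p2 = rng.sample(advancing, 2)
            child = (p1[0], p2[1])  # crossover: one substrate from each parent
            if rng.random() < 0.3:  # mutation: replace one substrate at random
                fresh = random_molecule()
                if rng.random() < 0.5:
                    child = (fresh[0], child[1])
                else:
                    child = (child[0], fresh[1])
            children.append(child)
        population = advancing + children
    best = min(population, key=score)
    return best, score(best), history, len(cache)


best, best_score, history, n_scored = run_ga(seed=0)
print(f"best={best} score={best_score} unique molecules scored={n_scored}")
```

Because only unique molecules are scored, the evaluation count is bounded by the initial population plus the offspring created per generation (here at most 200 + 30 × 150 = 4,700), which is tiny compared with the one million combinations in this toy space. The same logic, at a far larger scale, is why an evolutionary search can dock tens of thousands of molecules rather than billions.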

Workflow Visualization

The following diagrams illustrate the distinct logical workflows and decision processes characteristic of Bayesian optimization and genetic algorithms.

[Figure: Bayesian Optimization Workflow]

[Figure: Genetic Algorithm Workflow]

Essential Research Reagent Solutions

The following table details key computational tools and resources referenced in the studies, essential for implementing BO and GA approaches in materials discovery and drug development.

Table 3: Key Research Reagents and Computational Tools

| Resource/Tool | Type | Function in Research | Application Context |
|---|---|---|---|
| Enamine REAL Library | Chemical Library | Billion-scale make-on-demand compound source for virtual screening | Ultra-large library screening with GAs [23] |
| Gaussian Process (GP) | Statistical Model | Surrogate model for predicting material properties with uncertainty | Bayesian optimization [1] [5] |
| Multi-Task GP (MTGP) | Advanced Statistical Model | Captures correlations between multiple material properties | Multi-objective BO [5] [22] |
| Deep GP (DGP) | Hierarchical Statistical Model | Models complex, non-linear relationships in material properties | Advanced BO for complex spaces [5] |
| RosettaLigand | Software Tool | Flexible protein-ligand docking with full receptor flexibility | Fitness evaluation in GA-based drug discovery [23] |
| BOWSR Algorithm | Computational Method | DFT-free crystal structure relaxation using BO with symmetry constraints | Accelerated materials property prediction [15] |
| Effective Atom Theory | Computational Method | Transforms combinatorial design to continuous optimization | Gradient-driven materials discovery [24] |

The experimental data and performance comparisons reveal a clear strategic alignment between optimization methodologies and problem characteristics. Bayesian optimization demonstrates superior efficiency when experimental resources are limited and the cost of evaluation is high, particularly in high-dimensional continuous spaces and multi-objective problems with correlated properties. The BAX framework extends its utility to complex target discovery goals beyond simple optimization [1]. In contrast, genetic algorithms excel in exploring vast combinatorial landscapes where the evaluation cost, while non-trivial, permits population-level assessment, as demonstrated by REvoLd's enrichment factors of 869 to 1622 over random selection in a library of more than 20 billion compounds [23].

The emerging trend involves hybrid approaches that combine strengths from both paradigms. Effective Atom Theory, for instance, transforms combinatorial search into continuous optimization solvable with gradient-based methods, achieving convergence in approximately 50 energy evaluations—far fewer than either traditional GAs or combinatorial BO [24]. For researchers, the optimal choice depends critically on the problem structure: BO for data-efficient navigation of complex high-dimensional spaces, and GAs for comprehensive exploration of vast combinatorial landscapes. Understanding these complementary strengths enables more strategic deployment of computational resources, ultimately accelerating the discovery of novel materials and therapeutics.

From Theory to Lab Bench: Advanced Algorithms for Real-World Materials Design

The discovery and development of new materials are fundamental to advancements in industries ranging from pharmaceuticals to renewable energy. However, the process of optimizing multiple material properties simultaneously—such as a drug candidate's efficacy and safety profile—presents a significant scientific challenge. Researchers must navigate complex, high-dimensional parameter spaces where objectives often compete, making traditional one-factor-at-a-time approaches inefficient and inadequate. In this context, multi-objective optimization (MOO) computational strategies have become indispensable for accelerating discovery timelines and reducing experimental costs [25].

Two dominant algorithmic families for tackling these problems are Multi-Objective Bayesian Optimization (MOBO) and Multi-Objective Genetic Algorithms (MOGA). While both aim to identify optimal trade-offs between competing objectives—represented as the Pareto Front—their underlying mechanisms, strengths, and ideal applications differ substantially. MOBO, a model-based sequential approach, is designed for extreme data efficiency when experiments are costly or time-consuming. In contrast, MOGA, a population-based evolutionary approach, is renowned for its global search capabilities and ability to handle complex, non-linear landscapes without requiring gradient information [2] [25] [26].

This guide provides a comparative analysis of MOBO and MOGA, focusing on their application in materials discovery and drug development. It synthesizes recent experimental findings, detailed methodologies, and performance data to help researchers select the most appropriate strategy for their specific research constraints and goals.

Core Conceptual Frameworks

The Pareto Front in Multi-Objective Optimization

The solution to a multi-objective optimization problem is typically not a single point but a set of non-dominated solutions known as the Pareto Front. A solution is considered "non-dominated" if no other feasible solution is at least as good in every objective and strictly better in at least one. Formally, for a minimization problem across multiple objectives, a solution x_a dominates another solution x_b if f_j(x_a) ≤ f_j(x_b) for all objectives j and f_l(x_a) < f_l(x_b) for at least one objective l [2]. This front visually represents the best possible trade-offs between the competing objectives, providing decision-makers with a spectrum of optimal choices.
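The dominance test translates directly into code. The sketch below assumes all objectives are minimized; the function names and the four candidate points are illustrative only.

```python
from typing import List, Sequence, Tuple


def dominates(fa: Sequence[float], fb: Sequence[float]) -> bool:
    """True if objective vector fa dominates fb (all objectives minimized)."""
    no_worse_anywhere = all(a <= b for a, b in zip(fa, fb))
    strictly_better_somewhere = any(a < b for a, b in zip(fa, fb))
    return no_worse_anywhere and strictly_better_somewhere


def pareto_front(points: List[Tuple[float, ...]]) -> List[Tuple[float, ...]]:
    """Return the non-dominated subset of points using a simple O(n^2) scan."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical (objective 1, objective 2) values for four candidates.
candidates = [(1.0, 5.0), (2.0, 3.0), (3.0, 4.0), (4.0, 1.0)]
front = pareto_front(candidates)
print(front)  # (3.0, 4.0) is dropped because (2.0, 3.0) dominates it
```

For a maximized objective, negating its values before the comparison keeps the same code usable.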

Algorithmic Philosophies: MOBO vs. MOGA

The fundamental difference between MOBO and MOGA lies in their search philosophy. MOBO uses a surrogate-assisted approach. It builds probabilistic models (e.g., Gaussian Processes) of the objective functions to predict promising areas of the search space. It employs an acquisition function, such as Expected Hypervolume Improvement (EHVI), to strategically select the next data points for evaluation, balancing exploration of uncertain regions with exploitation of known promising areas [2] [25] [26].

MOGA, and its variants like NSGA-II, are population-based evolutionary algorithms. They maintain a population of candidate solutions that are iteratively evolved through genetic operators—selection, crossover, and mutation. "Fitness" is based on Pareto dominance relationships, and mechanisms like crowding distance are used to maintain diversity within the population, gradually pushing the population toward the true Pareto Front over many generations [25] [26].

Methodologies and Experimental Protocols

Standard MOBO Workflow

A typical closed-loop MOBO workflow for autonomous experimentation consists of four key stages, as demonstrated in applications like the Additive Manufacturing Autonomous Research System (AM-ARES) [2] and MeV-ultrafast electron diffraction tuning [26]:

- Initialize & Plan: The researcher defines the objectives, constraints, and any prior knowledge. The MOBO algorithm uses its surrogate model and acquisition function (e.g., EHVI) to propose the most informative next experiment.

- Experiment: An autonomous research system or human researcher executes the proposed experiment with the specified parameters.

- Analyze: The results of the experiment are characterized and the objective values are computed.

- Update: The new data point (parameters and results) is added to the dataset, and the surrogate model is updated. The loop repeats from the Plan step until a stopping criterion is met.
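The four stages above can be sketched as a short loop. This is a simplified illustration under stated assumptions, not the AM-ARES or beamline implementation: `objectives` is a toy stand-in for a real experiment, the surrogate is a basic NumPy Gaussian Process with a fixed RBF length scale, and, to keep the example short, EHVI is swapped for a simpler acquisition (a randomly weighted lower confidence bound, in the spirit of scalarization methods).

```python
import numpy as np


def objectives(x):
    """Toy stand-in for an experiment: two competing objectives to minimize."""
    return np.array([x**2, (x - 1.0) ** 2])


def rbf(a, b, length=0.5):
    """Squared-exponential kernel between 1-D point sets a and b."""
    return np.exp(-0.5 * ((a[:, None] - b[None, :]) / length) ** 2)


def gp_posterior(x_train, y_train, x_test, noise=1e-4):
    """GP posterior mean and standard deviation at x_test (targets standardized)."""
    mu, sd = y_train.mean(), y_train.std() + 1e-9
    z = (y_train - mu) / sd
    K = rbf(x_train, x_train) + noise * np.eye(len(x_train))
    Ks = rbf(x_train, x_test)
    mean = Ks.T @ np.linalg.solve(K, z)
    var = 1.0 - np.sum(Ks * np.linalg.solve(K, Ks), axis=0)
    return mean * sd + mu, np.sqrt(np.clip(var, 0.0, None)) * sd


def run_mobo(n_init=4, n_iter=15, seed=0):
    rng = np.random.default_rng(seed)
    grid = np.linspace(-1.0, 2.0, 301)          # candidate experiments
    X = rng.uniform(-1.0, 2.0, size=n_init)     # Initialize: a few random runs
    Y = np.array([objectives(x) for x in X])
    for _ in range(n_iter):
        # Plan: score candidates with a randomly weighted lower confidence bound.
        weights = rng.dirichlet([1.0, 1.0])
        acq = np.zeros_like(grid)
        for j in range(Y.shape[1]):
            mean, std = gp_posterior(X, Y[:, j], grid)
            acq += weights[j] * (mean - 2.0 * std)
        acq[np.isin(grid, X)] = np.inf          # do not repeat an experiment
        x_next = grid[np.argmin(acq)]
        y_next = objectives(x_next)             # Experiment + Analyze
        X = np.append(X, x_next)                # Update the dataset
        Y = np.vstack([Y, y_next])
    return X, Y


X, Y = run_mobo()
print(f"{len(X)} experiments run; last proposed x = {X[-1]:.2f}")
```

The surrogate is refit from scratch on every pass, which is affordable because the dataset stays small; that is the regime where sequential model-based planning is most attractive.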

Standard MOGA Workflow

The MOGA workflow, exemplified by algorithms like NSGA-II, follows a generational evolutionary process [25]:

- Initialization: An initial population of candidate solutions is generated randomly or via a design-of-experiments method.

- Evaluation: The entire population is evaluated against all objective functions.

- Non-Dominated Sorting: The population is ranked into Pareto fronts (Front 1, Front 2, etc.) based on dominance relationships.

- Selection & Diversity Preservation: Candidates are selected for breeding, prioritizing higher-ranked fronts. Within a front, individuals in less crowded regions (i.e., those with a higher crowding distance) are favored to maintain diversity.

- Genetic Operations: A new offspring population is created by applying crossover (recombination) and mutation to the selected parents.

- Replacement: The parent and offspring populations are combined, and the best individuals are selected to form the next generation. The process repeats from the Evaluation step.
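The ranking and diversity steps are the core of this workflow, and a compact sketch may help. The code below assumes minimization and uses a simple front-peeling sort rather than the faster bookkeeping of the published NSGA-II procedure; the function names and sample objective values are illustrative.

```python
def dominates(fa, fb):
    """True if fa dominates fb (all objectives minimized)."""
    return all(a <= b for a, b in zip(fa, fb)) and any(a < b for a, b in zip(fa, fb))


def non_dominated_sort(objs):
    """Return a list of fronts (Front 1 first), each a sorted list of indices."""
    remaining = set(range(len(objs)))
    fronts = []
    while remaining:
        front = [i for i in remaining
                 if not any(dominates(objs[j], objs[i]) for j in remaining if j != i)]
        fronts.append(sorted(front))
        remaining -= set(front)
    return fronts


def crowding_distance(objs, front):
    """Crowding distance per index in front; boundary points get infinity."""
    dist = {i: 0.0 for i in front}
    for m in range(len(objs[front[0]])):
        ordered = sorted(front, key=lambda i: objs[i][m])
        lo, hi = objs[ordered[0]][m], objs[ordered[-1]][m]
        dist[ordered[0]] = dist[ordered[-1]] = float("inf")
        if hi == lo:
            continue
        for k in range(1, len(ordered) - 1):
            gap = objs[ordered[k + 1]][m] - objs[ordered[k - 1]][m]
            dist[ordered[k]] += gap / (hi - lo)
    return dist


def select_survivors(objs, n_keep):
    """Fill the next generation front by front; split the last front by crowding."""
    chosen = []
    for front in non_dominated_sort(objs):
        if len(chosen) + len(front) <= n_keep:
            chosen.extend(front)
        else:
            dist = crowding_distance(objs, front)
            by_spread = sorted(front, key=lambda i: dist[i], reverse=True)
            chosen.extend(by_spread[: n_keep - len(chosen)])
            break
    return chosen


# Hypothetical objective values for a combined parent + offspring population.
objs = [(1, 5), (2, 3), (4, 1), (3, 4), (5, 5), (2.5, 2.9)]
print(non_dominated_sort(objs))
print(select_survivors(objs, 3))
```

In this example Front 1 holds four individuals but only three slots are available, so the two boundary points are kept first and the remaining slot goes to the interior point with the larger crowding distance.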

Key Research Reagents and Computational Tools

The following table details key computational and methodological "reagents" essential for implementing MOBO and MOGA in an experimental research context.

Table 1: Essential Research Reagents and Tools for Multi-Objective Optimization

| Reagent/Tool | Function | Example Use Case |
|---|---|---|
| Gaussian Process (GP) Surrogate Model | A probabilistic model that approximates the unknown objective function, providing both a mean prediction and uncertainty estimate at any point in the design space [25] [26]. | Core component of MOBO; used to predict material properties based on process parameters without costly experiments. |
| Expected Hypervolume Improvement (EHVI) | An acquisition function that quantifies the potential of a candidate point to improve the dominated volume in the objective space, guiding the selection of the next experiment in MOBO [2] [26]. | Balances exploration and exploitation in MOBO for autonomous materials extrusion and beamline tuning. |
| Non-Dominated Sorting | A ranking procedure that classifies a population of solutions into successive Pareto fronts based on their dominance relationships [25]. | Used in NSGA-II and other MOGAs to assign fitness and select parents for the next generation. |
| Crowding Distance | A density estimation metric that measures how close a solution is to its neighbors in the objective space, used to preserve diversity in the Pareto Front approximation [25]. | Prevents convergence to a single region of the Pareto Front in MOGA, ensuring a wide spread of optimal solutions. |
| V-shaped Transfer Function | A mapping function that converts a continuous search space into a binary one, enabling the application of continuous optimizers to discrete problems [27]. | Allows algorithms like the Binary Multi-Objective Bonobo Optimizer (BMOBO) to solve topology planning in Wireless Mesh Networks. |

Performance Comparison: Experimental Data and Analysis

Direct comparisons in recent literature consistently highlight a trade-off between the exceptional data efficiency of MOBO and the robust global search capabilities of MOGA.

Quantitative Performance Metrics

Performance is typically evaluated using metrics that assess the quality of the approximated Pareto Front [25]:

- Hypervolume (HV): Measures the volume of the objective space dominated by the approximated front, capturing both convergence and diversity. Higher is better.

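- Inverted Generational Distance (IGD): Measures the average distance from each point on the true Pareto Front to its nearest point on the approximated front, so it penalizes both poor convergence and gaps in coverage. Lower is better.

- Generational Distance (GD): Measures the average distance from each point on the approximated front to its nearest point on the true Pareto Front, so it reflects convergence only. Lower is better.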

- Data Usage (D): The number of experimental evaluations required to achieve a target performance level. Lower is better for resource-constrained scenarios.
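The first three metrics can be computed in a few lines for a two-objective minimization problem. The sketch below uses plain averages of Euclidean distances for GD and IGD (other conventions exist in the literature) and a sweep over sorted points for the 2-D hypervolume; the fronts and the reference point are made-up values for illustration.

```python
import math


def _nearest(p, pts):
    """Euclidean distance from p to its closest point in pts."""
    return min(math.dist(p, q) for q in pts)


def generational_distance(approx, true_front):
    """Average distance from each approximated point to the true front."""
    return sum(_nearest(p, true_front) for p in approx) / len(approx)


def inverted_generational_distance(approx, true_front):
    """Average distance from each true-front point to the approximated front."""
    return sum(_nearest(p, approx) for p in true_front) / len(true_front)


def hypervolume_2d(front, ref):
    """Area dominated by a 2-objective minimization front, bounded by ref."""
    pts = sorted(p for p in front if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        if f2 < best_f2:  # point adds a new strip below the current staircase
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area


# Hypothetical fronts and reference point.
true_front = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
approx = [(0.1, 1.0), (0.6, 0.6)]
ref = (2.0, 2.0)

print("HV true  :", hypervolume_2d(true_front, ref))
print("HV approx:", hypervolume_2d(approx, ref))
print("GD       :", generational_distance(approx, true_front))
print("IGD      :", inverted_generational_distance(approx, true_front))
```

Here GD stays small because both approximated points lie close to the true front, while IGD is noticeably larger because the region near (1.0, 0.0) is not covered at all. This is why the two distances are usually reported together.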

The table below synthesizes findings from multiple studies comparing MOBO and MOGA across different domains.

Table 2: Comparative Performance of MOBO vs. MOGA in Recent Studies

| Application Domain | Key Performance Findings | Citation |

|---|---|---|

| MeV-Ultrafast Electron Diffraction | MOBO achieved performance comparable to expert human operators and converged to the Pareto Front at least an order of magnitude faster than MOGA, requiring far fewer experimental measurements. | [26] |

| Additive Manufacturing (Material Extrusion) | MOBO demonstrated high generality and robustness in optimizing multiple print objectives. It was shown to be highly effective for autonomous experimentation where the number of experiments must be minimized. | [2] |

| Smart Manufacturing & Material Discovery | A Bayesian sequential decision-making framework (BMSDM) comprehensively outperformed traditional methods, including MOGAs, across multiple performance metrics (GD, IGD, HV) on a real manufacturing dataset. | [25] |