Balancing Exploration and Exploitation in Materials Search Algorithms: Strategies for Accelerated Drug Discovery

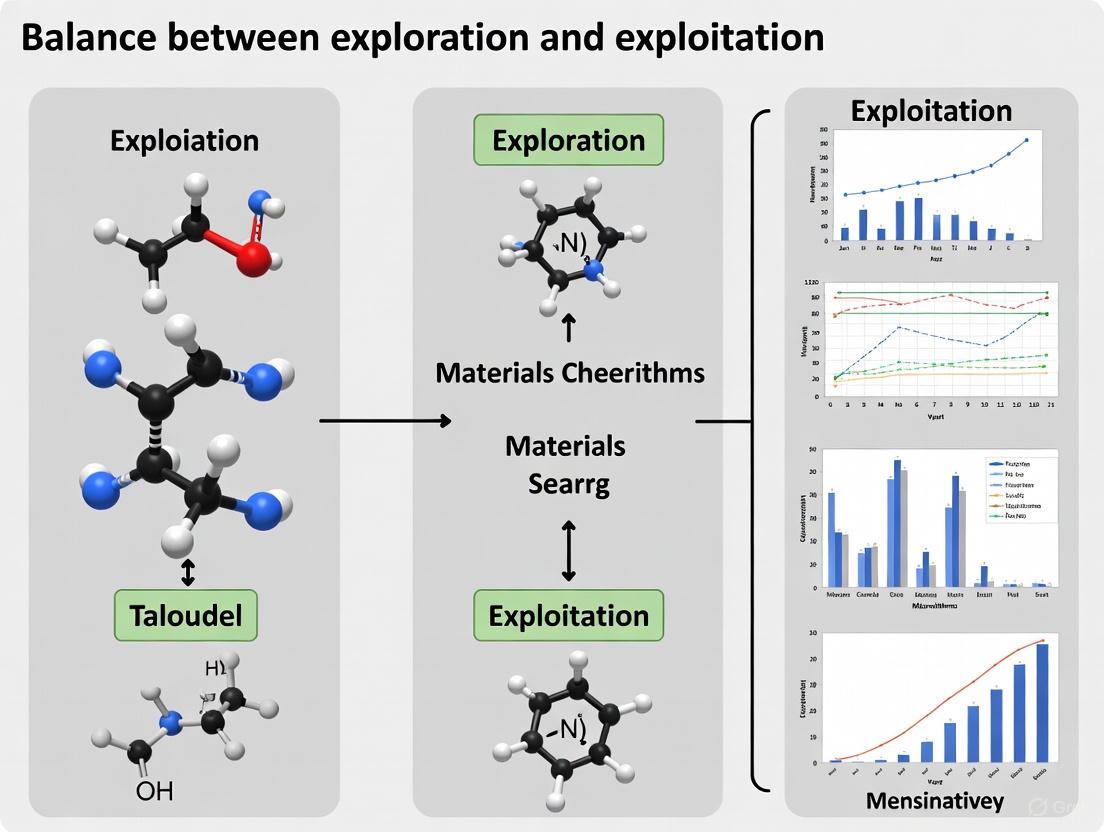

This article provides a comprehensive analysis of the exploration-exploitation trade-off, a critical challenge in optimizing materials search algorithms for drug development.

Abstract

This article provides a comprehensive analysis of the exploration-exploitation trade-off, a critical challenge in optimizing materials search algorithms for drug development. Tailored for researchers and pharmaceutical professionals, it covers foundational theory, modern algorithmic strategies like adaptive metaheuristics and multi-armed bandits, and practical solutions for overcoming local optima and premature convergence. It further details rigorous validation frameworks using benchmark functions and real-world case studies, offering a holistic guide for enhancing the efficiency and success rates of computational materials and drug candidate discovery.

The Fundamental Trade-Off: Understanding Exploration and Exploitation in Optimization

Frequently Asked Questions

1. What are the most common signs that my optimization algorithm is over-exploiting? A common sign is premature convergence, where the algorithm becomes trapped in a local optimum and the population of candidate solutions loses diversity. This is often characterized by a high number of duplicate solutions being evaluated and a stagnation in the improvement of the fitness score [1].

2. How can I balance exploration and exploitation in a multi-objective materials search? Balancing this trade-off is a central challenge. The Expected Hypervolume Improvement (EHVI) method has been demonstrated as an effective strategy. It actively manages the trade-off by selecting new data points that promise to increase the volume of the objective space dominated by the current Pareto front, thereby balancing the gain of new information (exploration) with the improvement of existing solutions (exploitation) [2].

3. My algorithm seems to be exploring randomly without improving. What should I check? This can indicate ineffective exploration. Investigate whether your algorithm's search mechanism is suited for the problem's fitness landscape. For deceptive landscapes with "blind spots" (global optima that are hard to find), algorithms may require enhanced exploration strategies, such as those incorporating Levy flight dynamics or memory mechanisms to avoid revisiting unproductive regions [3] [1].

4. What is a surrogate model and why is it used in materials informatics? A surrogate model is a machine learning model trained on existing data to rapidly predict material properties, acting as a stand-in for slower, more expensive experiments or simulations [4]. In an active learning loop, it is used to guide the search for new materials by identifying the most promising candidates to evaluate next, thus optimizing the use of resources [2].

Troubleshooting Guides

Problem: Algorithm Trapped in a Local Optimum (Over-Exploitation)

Description The algorithm converges too quickly on a sub-optimal solution, failing to discover better materials that may exist in other regions of the search space.

Diagnosis and Resolution Steps

- Quantify Population Diversity: Implement a method to track the diversity of your candidate solutions. A sharp decline in diversity is a key indicator of over-exploitation [1].

- Integrate a Memory Archive: Use a meta-approach like Long-Term Memory Assistance Plus (LTMA+). This technique maintains an archive of unique, non-revisited solutions and uses it to dynamically shift the search away from over-exploited regions and toward unexplored areas when stagnation is detected [1].

- Employ Advanced Search Strategies: Consider switching to or incorporating algorithms that use dynamic search patterns. For example, the Hare Escape Optimization (HEO) algorithm uses Levy flights and adaptive directional shifts to escape local optima effectively [3].

- Adjust Acquisition Function: If using Bayesian optimization, review your acquisition function. For a better exploration-exploitation balance, use a function like Expected Hypervolume Improvement (EHVI) instead of pure exploitation-based methods [2].
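The first diagnostic step above (tracking diversity and duplicates) can be sketched as follows. This is a minimal illustration, assuming candidates are fixed-length numeric vectors; the function names `mean_pairwise_distance` and `duplicate_fraction` and the rounding tolerance are hypothetical choices, not taken from the cited work.

```python
import math
from itertools import combinations


def mean_pairwise_distance(population):
    """Average Euclidean distance over all pairs of candidate vectors."""
    pairs = list(combinations(population, 2))
    if not pairs:
        return 0.0
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)


def duplicate_fraction(population, decimals=6):
    """Fraction of candidates that repeat an earlier one after rounding."""
    if not population:
        return 0.0
    seen = set()
    duplicates = 0
    for candidate in population:
        key = tuple(round(v, decimals) for v in candidate)
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)
    return duplicates / len(population)


# Toy populations (assumed data): one well spread, one nearly collapsed.
spread = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
collapsed = [(0.5, 0.5), (0.5, 0.5), (0.5, 0.5), (0.51, 0.5)]

for name, pop in (("spread", spread), ("collapsed", collapsed)):
    print(name, mean_pairwise_distance(pop), duplicate_fraction(pop))
```

Logging both values once per generation makes a sharp drop in distance or a rising duplicate fraction visible early, before the fitness curve has fully flattened.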

Problem: Inefficient Search in High-Dimensional Spaces (Poor Exploration)

Description The search process is slow, fails to find good solutions in a reasonable time, or misses the global optimum in a complex fitness landscape.

Diagnosis and Resolution Steps

- Benchmark on Deceptive Problems: Test your algorithm on specialized benchmark suites like the "Blind Spot" benchmark or functions from CEC 2015/2020. If performance is poor, it indicates a weakness in handling deceptive landscapes [1] [3].

- Enhance with Hybrid Strategies: Augment your base algorithm with a meta-layer like LTMA+. This helps improve robustness and success rates on problems where the global optimum is difficult to locate [1].

- Adopt a Modern Metaheuristic: Implement a recently developed algorithm designed for robust exploration, such as the Hare Escape Optimization (HEO), which has been validated on complex benchmarks and engineering problems [3].

- Validate with Multi-Objective Active Learning: For materials discovery, set up an active learning loop using multi-objective Bayesian optimization with EHVI. In the cited 2D materials study, this approach recovered the optimal Pareto front after sampling only 16-23% of the search space [2].

Experimental Protocols & Performance Data

Protocol 1: Active Learning with Multi-Objective Bayesian Optimization

This methodology is used for efficiently discovering materials that optimally satisfy multiple target properties [2].

- Database Construction: Compile a dataset of materials with the properties of interest. Example databases include the Computational 2D Materials Database (C2DB) or JARVIS-DFT.

- Feature Generation: Generate a numerical fingerprint or descriptor for each material in the dataset [4].

- Model Training: Train a separate surrogate model (e.g., a machine learning model) for each target property using the initial training data.

- Active Learning Loop:

  - Using the surrogate models, identify the next most promising material candidate to evaluate. This is done by optimizing an acquisition function like EHVI.

  - The new candidate is "sampled" (its properties are obtained via a high-fidelity calculation or experiment).

  - This new data is added to the training set, and the surrogate models are retrained.

  - The loop repeats until a stopping criterion is met (e.g., a performance target is reached).
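The candidate-selection step of the loop can be illustrated with a simplified two-objective hypervolume calculation. The sketch below is not full EHVI: EHVI takes the expectation of the hypervolume improvement over the surrogate's predictive distribution, whereas this example scores each candidate only at its predicted mean. It assumes both objectives are maximized, and the reference point, function names, and candidate values are all hypothetical.

```python
def pareto_front(points):
    """Non-dominated subset of 2-objective points (both maximized)."""
    unique = set(points)
    return [p for p in unique
            if not any(q != p and q[0] >= p[0] and q[1] >= p[1] for q in unique)]


def hypervolume_2d(points, ref):
    """Area dominated by the Pareto front, measured from reference point `ref`."""
    inside = [p for p in points if p[0] > ref[0] and p[1] > ref[1]]
    front = sorted(pareto_front(inside), key=lambda p: p[0], reverse=True)
    volume, prev_f2 = 0.0, ref[1]
    for f1, f2 in front:
        # Each front point adds a horizontal slab above the previous one.
        volume += (f1 - ref[0]) * (f2 - prev_f2)
        prev_f2 = f2
    return volume


def hypervolume_improvement(candidate, points, ref):
    """Gain in dominated area if `candidate` were added to `points`."""
    return hypervolume_2d(list(points) + [candidate], ref) - hypervolume_2d(points, ref)


# Already evaluated materials and surrogate mean predictions (assumed values).
evaluated = [(3.0, 1.0), (2.0, 2.0), (1.0, 3.0)]
predicted = {"A": (2.5, 2.5), "B": (1.0, 1.0), "C": (3.5, 0.5)}
ref = (0.0, 0.0)

scores = {name: hypervolume_improvement(p, evaluated, ref) for name, p in predicted.items()}
next_candidate = max(scores, key=scores.get)
print(scores, next_candidate)
```

In a real loop the selected candidate would be evaluated at high fidelity, appended to `evaluated`, and the surrogates retrained before the next selection.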

Performance Data: The table below summarizes the efficiency of the EHVI method on a 2D materials database [2].

| Initial Training Data Ratio | Sampling of Search Space to Find Optimal Pareto Front |
|---|---|
| 0.5% | 16% |
| 1% | 19% |
| 5% | 23% |

Protocol 2: Benchmarking Algorithm Robustness with LTMA+

This protocol is used to evaluate and enhance an algorithm's ability to avoid premature convergence on difficult problems [1].

- Problem Selection: Use a benchmark designed to expose algorithmic weaknesses, such as the Blind Spot benchmark.

- Algorithm Setup: Configure the metaheuristic algorithm you wish to test.

- Integration with LTMA+: Enhance the algorithm with the LTMA+ meta-approach. This involves:

  - Maintaining a long-term memory archive of unique solutions.

  - Monitoring for duplicate solution evaluations.

  - Using the frequency of duplicates to dynamically guide the search toward unexplored regions when diversity is low.

- Evaluation: Run the standard algorithm and the LTMA+-enhanced version on the benchmark. Compare success rates, solution accuracy, and convergence speed.
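The three integration components listed above (archive, duplicate monitoring, duplicate-driven diversification) can be sketched as a thin wrapper around the objective function. This is a simplified illustration in the spirit of a long-term memory archive, not the published LTMA+ implementation; the class name `MemoryArchive`, the rounding tolerance, and the 0.3 threshold are assumptions made for the example.

```python
class MemoryArchive:
    """Caches evaluated solutions and tracks how often duplicates are requested."""

    def __init__(self, objective, decimals=6):
        self.objective = objective
        self.decimals = decimals      # rounding used to decide "same solution"
        self.archive = {}             # rounded solution -> cached score
        self.evaluations = 0          # true (expensive) evaluations
        self.duplicates = 0           # requests answered from the archive

    def evaluate(self, solution):
        key = tuple(round(v, self.decimals) for v in solution)
        if key in self.archive:
            self.duplicates += 1
            return self.archive[key]
        self.evaluations += 1
        score = self.objective(solution)
        self.archive[key] = score
        return score

    def duplicate_rate(self):
        total = self.evaluations + self.duplicates
        return self.duplicates / total if total else 0.0

    def should_diversify(self, threshold=0.3):
        """Signal the host algorithm to shift toward unexplored regions."""
        return self.duplicate_rate() > threshold


def sphere(x):
    """Toy objective standing in for an expensive simulation."""
    return sum(v * v for v in x)


memory = MemoryArchive(sphere)
for candidate in [(1.0, 2.0), (1.0, 2.0), (3.0, 4.0)]:
    memory.evaluate(candidate)
print(memory.evaluations, memory.duplicates, memory.should_diversify())
```

Because duplicates are served from the cache, the expensive objective is called only for new solutions; how the host metaheuristic reacts when `should_diversify()` returns true (for example, larger perturbations or partial re-initialization) is left to the specific algorithm.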

Performance Data: The table below shows the percentage speedup achieved by the original LTMA on different problem types [1].

| Problem Type | Speedup by LTMA |
|---|---|
| Low Computational Cost | ≥10% |
| Soil Model Optimization | ≥59% (35% duplicates generated without LTMA) |

The Scientist's Toolkit: Essential Research Reagents

In computational materials science, the "reagents" are the data, software, and algorithmic tools used to conduct research.

| Item Name | Function |
|---|---|
| High-Throughput Data Repositories (e.g., Materials Project, NOMAD) | Provides reliable, large-scale data on material properties for training surrogate models and benchmarking [4]. |
| Numerical Fingerprints/Descriptors | Converts a material's chemical structure into a numerical string, enabling machine learning [4]. |
| Surrogate Machine Learning Models | Enables rapid prediction of material properties without expensive simulations, accelerating the search loop [4] [2]. |
| Acquisition Functions (e.g., EHVI) | In Bayesian optimization, this function decides the next experiment to run by balancing exploration and exploitation [2]. |
| Specialized Benchmarks (e.g., CEC suites, Blind Spot) | Provides standardized test problems to evaluate, validate, and compare the performance of optimization algorithms [3] [1]. |

Workflow and Relationship Diagrams

Diagram 1: Active Learning Loop in Materials Search

Diagram 2: Troubleshooting Over-Exploitation with LTMA+

For researchers in materials science and drug development, computational search algorithms are indispensable for navigating vast, complex search spaces to discover new compounds or optimize molecular structures. The performance of these algorithms hinges on a fundamental trade-off: exploration, the broad investigation of new regions of the search space, and exploitation, the intensive refinement of known promising areas [5]. An imbalance can lead to excessive computational costs or premature convergence on suboptimal solutions. This technical support center provides practical guides and FAQs to help you diagnose and resolve common issues related to this critical balance in your experiments.

Troubleshooting Guides

Guide 1: Diagnosing and Correcting Search Stagnation

Problem: Your algorithm's performance has plateaued, and it is no longer finding improved solutions.

Diagnostic Steps:

- Check Population Diversity: Monitor the diversity of your candidate solutions in both the search (e.g., genetic sequences, chemical descriptors) and objective (e.g., binding affinity, material property) spaces over generations. A rapid decline indicates excessive exploitation [6].

- Analyze Acceptance Rate: Track the rate at which new, worse-performing solutions are accepted. A rate that drops to zero too early suggests the algorithm cannot escape local optima [5].

Solutions:

- For Excessive Exploitation (Stuck in Local Optima):

  - Increase Exploration Parameters: In Simulated Annealing, increase the initial temperature or slow the cooling rate. In Tabu Search, increase the tabu list size [5].

  - Introduce a Hybrid Operator: If using a multi-operator algorithm, dynamically increase the usage of exploratory operators (e.g., DE/rand/1/bin) when stagnation is detected [6].

  - Implement Random Restarts: Periodically re-initialize part of the population from random points in the search space to rediscover diversity [5].

- For Excessive Exploration (Slow Convergence):

  - Increase Exploitation Parameters: Lower the initial temperature in Simulated Annealing or use a more aggressive cooling schedule. In population-based algorithms, reduce the mutation rate [5].

  - Switch to Local Search: After an initial exploratory phase, hybridize your algorithm with a local search method to intensively exploit the best regions found [5].

Guide 2: Tuning Algorithm Parameters for Specific Search Landscapes

Problem: Your algorithm performs well on standard test functions but fails on your specific research problem.

Diagnostic Steps:

- Characterize Your Landscape: Analyze your problem's fitness landscape. Is it smooth or rugged? Are there many local optima? This understanding guides parameter selection.

- Perform Sensitivity Analysis: Systematically run your algorithm while varying one key parameter (e.g., mutation rate, cooling rate) at a time to observe its impact on final solution quality and convergence speed [6].

Solutions:

- For Rugged Landscapes (Many local optima): Prioritize exploration. Use higher mutation rates, higher initial temperatures, and consider algorithms like Tabu Search that explicitly forbid revisiting recent solutions [5].

- For Smooth Landscapes: Prioritize exploitation. Use lower mutation rates and algorithms like Hill Climbing that can efficiently refine a good solution [5].

- Implement Adaptive Tuning: Use a strategy that dynamically adjusts parameters based on search progress, such as reducing the mutation rate as the population converges or using survival analysis to choose between exploratory and exploitative operators [6].

Frequently Asked Questions (FAQs)

Q1: How can I quantitatively measure the exploration-exploitation balance in my algorithm during a run? A1: Direct measurement is challenging, but effective proxies exist. You can track the population diversity in the search space (e.g., average Hamming distance between genotypes, variance in continuous parameters) – high diversity suggests exploration. Conversely, monitoring the rate of improvement in fitness can indicate exploitation. Some advanced methods propose indicators like "Survival length in Position (SP)" to guide this balance adaptively [6].

Q2: My multiobjective evolutionary algorithm (MOEA) finds a diverse Pareto front, but the solutions are not close to the true optimum. Is this an exploration or exploitation problem? A2: This is typically an exploitation problem. The algorithm is successfully exploring different regions of the objective space (good diversity) but failing to refine the solutions within those regions to push them closer to the true Pareto front. To address this, enhance exploitation by incorporating local search operators around promising solutions on the front, or by using recombination operators that favor small refinements [6].

Q3: In Simulated Annealing, what is a good rule of thumb for setting the initial temperature and cooling rate? A3: While problem-specific, a common methodology is to choose an initial temperature that allows for a high probability (e.g., 80%) of accepting a worse solution of a typical magnitude early in the search. The cooling rate is often set between 0.95 and 0.99, applied multiplicatively each iteration, providing a gradual shift from exploration to exploitation. The exact values should be determined empirically for your problem [5].
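The rule of thumb in A3 follows from the Metropolis acceptance rule $P = \exp(-\Delta / T_0)$, which rearranges to $T_0 = -\Delta / \ln P$ for a typical worsening $\Delta$. The sketch below shows that arithmetic; the helper names and the example value of $\Delta$ are hypothetical, and in practice $\Delta$ would be estimated from a short random walk on your own objective.

```python
import math


def initial_temperature(typical_worsening, accept_prob=0.8):
    """Temperature at which a worsening of the given size is accepted with accept_prob."""
    if typical_worsening <= 0 or not 0.0 < accept_prob < 1.0:
        raise ValueError("need typical_worsening > 0 and 0 < accept_prob < 1")
    return -typical_worsening / math.log(accept_prob)


def temperature_after(initial_temp, alpha, iterations):
    """Temperature after applying a multiplicative cooling factor alpha each iteration."""
    return initial_temp * alpha ** iterations


t0 = initial_temperature(typical_worsening=1.0, accept_prob=0.8)
print(round(t0, 3))                              # initial temperature for delta = 1
print(round(math.exp(-1.0 / t0), 3))             # recovers the 0.8 acceptance probability
print(round(temperature_after(t0, 0.95, 100), 5))
```

Comparing `temperature_after` for factors of 0.95 and 0.99 shows how strongly the choice affects the number of iterations spent in the exploratory regime.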

Q4: How does the trade-off differ between single-objective and multiobjective optimization? A4: In single-objective optimization, population diversity in the search space is typically allowed to decrease over time to converge on a single optimum. In multiobjective optimization, diversity must be maintained throughout the search in the objective space to capture a representative Pareto front, even while exploitation is used to improve the convergence of each solution on the front [6].

Experimental Protocols & Data

Table 1: Characteristics and trade-offs of common local search algorithms.

| Algorithm | Primary Strength | Mechanism for Exploration | Mechanism for Exploitation | Best Suited For |
|---|---|---|---|---|
| Hill Climbing | Simplicity, fast convergence | None (greedy) | Always moving to a better neighbor | Smooth, unimodal landscapes [5] |
| Simulated Annealing | Escaping local optima | Accepting worse moves at high temperature | Greedy acceptance at low temperature | Rugged landscapes with many local optima [5] |
| Tabu Search | Avoiding cycles | Tabu list forbids recent moves | Intensive local search on current solution | Complex constraints and path-based problems [5] |
| Multiobjective EA (EMEA) | Balanced Pareto front discovery | DE recombination operator | Clustering-based advanced sampling | Problems requiring a diverse, high-quality solution set [6] |

Detailed Methodology: Simulated Annealing for Materials Search

This protocol outlines the implementation of a Simulated Annealing algorithm to find a material composition with a target property [5].

1. Initialization:

- Define an objective function, `F(x)`, that quantifies the performance of a material composition `x` (e.g., superconducting critical temperature).

- Initialize a random starting solution `current_x`.

- Set parameters: `initial_temp = 1000`, `cooling_rate = 0.003`, and `max_iterations = 5000`.

2. Iterative Search:

For each iteration up to `max_iterations`:

- Generate Neighbor: Create a new solution `neighbor_x` by perturbing `current_x` (e.g., slightly altering elemental dopant concentrations).

- Evaluate: Calculate `current_score = F(current_x)` and `neighbor_score = F(neighbor_x)`.

- Acceptance Probability: Decide whether to move to the neighbor:

  - If `neighbor_score` is better, always accept.

  - If `neighbor_score` is worse, accept with probability `P = exp((current_score - neighbor_score) / current_temp)`. This form assumes `F` is minimized, so the exponent is negative for a worse neighbor; when maximizing a property, swap the two scores so that `P` stays below 1.

- Update Best: If `current_score` is the best found so far, record it.

- Cool Down: Update the temperature: `current_temp = current_temp * (1 - cooling_rate)`, i.e., a multiplicative factor of 0.997 per iteration.

3. Output: Return the best solution found during the search.
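The protocol above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the objective is minimized (which matches the acceptance formula as written), the "material" is a single dopant fraction in [0, 1], and the toy objective, the target value 0.3, and the `perturb` step size are hypothetical stand-ins for a real property model.

```python
import math
import random


def simulated_annealing(objective, start, perturb, initial_temp=1000.0,
                        cooling_rate=0.003, max_iterations=5000, seed=0):
    """Minimize `objective` with the protocol's parameters and cooling rule."""
    rng = random.Random(seed)
    current_x = start
    current_score = objective(current_x)
    best_x, best_score = current_x, current_score
    current_temp = initial_temp
    for _ in range(max_iterations):
        neighbor_x = perturb(current_x, rng)
        neighbor_score = objective(neighbor_x)
        delta = neighbor_score - current_score      # > 0 means the neighbor is worse
        # Always accept improvements; accept worse moves with P = exp(-delta / T).
        if delta <= 0 or rng.random() < math.exp(-delta / current_temp):
            current_x, current_score = neighbor_x, neighbor_score
        if current_score < best_score:
            best_x, best_score = current_x, current_score
        current_temp *= (1.0 - cooling_rate)
    return best_x, best_score


def deviation_from_target(x, target=0.3):
    """Toy objective: squared distance of a dopant fraction from an assumed target."""
    return (x - target) ** 2


def perturb(x, rng, step=0.05):
    """Small Gaussian change to the dopant fraction, clamped to [0, 1]."""
    return min(1.0, max(0.0, x + rng.gauss(0.0, step)))


best_x, best_score = simulated_annealing(deviation_from_target, start=0.9, perturb=perturb)
print(best_x, best_score)
```

With `initial_temp = 1000` and score differences below 1, almost every move is accepted for most of the run, so this toy problem behaves like a random walk until the final iterations; scaling the initial temperature to the typical score difference of your own objective is usually worthwhile.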

Workflow Visualization

The following diagram illustrates the logical flow and balancing mechanism of the Simulated Annealing protocol.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential computational "reagents" for search algorithm experiments.

| Item / Concept | Function in the Experiment |
|---|---|
| Objective Function | The "assay" that quantifies the quality of a candidate solution (e.g., predicted binding affinity, material density) [5]. |
| Recombination Operator (e.g., DE/rand/1/bin) | An exploratory operator that creates new solutions by combining parts of existing ones, promoting genetic diversity in the population [6]. |
| Local Search Operator (e.g., Hill Climbing) | An exploitative operator that refines a single solution by searching its immediate neighborhood for incremental improvements [5]. |
| Temperature Parameter (Simulated Annealing) | A dynamic control knob that explicitly manages the trade-off. High values favor exploration (accepting worse moves), low values favor exploitation [5]. |
| Tabu List (Tabu Search) | A memory structure that prevents the algorithm from revisiting recently explored solutions, forcing exploration of new regions [5]. |
| Survival Analysis Indicator | A probabilistic metric used in adaptive algorithms to decide whether to invoke an exploratory or exploitative operator based on recent search progress [6]. |

FAQs: Multi-Armed Bandit Implementation for Materials Research

Q1: What is the core exploration-exploitation dilemma in the context of materials science? The Multi-Armed Bandit (MAB) problem models the challenge of choosing between exploring new options with uncertain rewards and exploiting the best-known option. In materials science, this translates to the challenge of balancing research efforts between testing new, unexplored material compositions (exploration) and further investigating the most promising known candidates (exploitation) to maximize the discovery of materials with desired properties within a limited research budget [7] [8].

Q2: How do I choose between algorithms like ε-Greedy, UCB, and Thompson Sampling for my search? The choice of algorithm depends on your specific project's needs for simplicity, performance, and handling of uncertainty. The following table summarizes the key characteristics:

| Algorithm | Core Mechanism | Best For | Key Considerations |
|---|---|---|---|
| ε-Greedy [8] [9] | Selects random action with probability ε, otherwise greedy action. | Simple, easy-to-implement baselines. | Fixed exploration rate can be inefficient; performance sensitive to ε value. |
| Upper Confidence Bound (UCB) [8] [9] | Selects action with highest upper confidence bound on reward. | Scenarios favoring optimism under uncertainty. | Deterministic; requires tracking all arms. UCB1 form: $Q(a) + \sqrt{\frac{2 \log t}{N_t(a)}}$ [8]. |
| Thompson Sampling [8] [9] | Draws reward samples from posterior (e.g., Beta) distributions, picks best. | Efficiently balancing exploration/exploitation; Bernoulli rewards. | Probabilistic; often delivers superior empirical performance. Beta posterior update [8]. |
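The ε-Greedy and UCB1 selection rules from the table can be written in a few lines. The sketch below assumes the caller maintains the running mean reward `q_values[a]` and pull count `counts[a]` for each arm; the function names are hypothetical, and pulling every untried arm once before applying the UCB1 formula is a common convention rather than something specified in the table.

```python
import math
import random


def epsilon_greedy_select(q_values, epsilon, rng):
    """With probability epsilon pick a random arm, otherwise the best-known arm."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=q_values.__getitem__)


def ucb1_select(q_values, counts, t):
    """Pick the arm maximizing Q(a) + sqrt(2 ln t / N_t(a)); untried arms go first."""
    for arm, n in enumerate(counts):
        if n == 0:
            return arm
    scores = [q + math.sqrt(2.0 * math.log(t) / n) for q, n in zip(q_values, counts)]
    return max(range(len(scores)), key=scores.__getitem__)


rng = random.Random(0)
print(epsilon_greedy_select([0.1, 0.9, 0.3], epsilon=0.0, rng=rng))  # purely greedy
print(ucb1_select([0.5, 0.6], counts=[10, 10], t=20))   # equal bonuses: higher mean wins
print(ucb1_select([0.5, 0.6], counts=[1, 100], t=101))  # rarely tried arm gets a large bonus
```

The last call shows the exploration bonus at work: the arm with the lower mean is still chosen because it has been tried only once.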

Q3: My material search space is vast and high-dimensional. Can MAB methods handle this? Standard MABs treat each "arm" as independent, which is inefficient for vast chemical spaces. For these problems, Contextual Bandits are more suitable. They incorporate feature vectors (e.g., molecular descriptors, elemental properties) into the decision-making process, allowing the algorithm to generalize learning from one material to other, structurally similar materials. This enables a more intelligent search across the entire space [10] [11]. Advanced methods like the Mendelevian Search (MendS) algorithm use a double evolutionary search through this abstract chemical space to find optimal compounds [12].

Q4: What are the common pitfalls when applying a MAB framework to clinical dose-finding trials? A key challenge in dose-finding is the small sample size, which can lead to high variability in reward estimates. For instance, in Thompson Sampling, the heavy tails of the posterior distribution can cause erratic dose selection. Mitigation strategies include Regularized Thompson Sampling or switching to a greedy algorithm that selects based on the posterior mean rather than a random sample, which can improve stability and performance in these limited-data environments [13].

Q5: How can I address the computational cost of MAB algorithms with many material options? Scalability is a known challenge. Strategies to manage this include:

- Algorithm Choice: Simpler algorithms like ε-Greedy have lower computational overhead than Thompson Sampling [9].

- Feature-Based Approaches: Using Contextual Bandits to generalize rather than treat each arm independently [11].

- Parallelization: Where possible, design experiments to allow for parallel evaluation of multiple candidates to accelerate data collection.

Troubleshooting Guides

Guide 1: Resolving Premature Convergence to a Suboptimal Material

Problem: Your algorithm is repeatedly selecting the same material candidate early in the search process, potentially missing better options.

Solution Steps:

- Diagnose the Exploration Rate:

  - For ε-Greedy, check if the value of ε is too low. A very small ε (e.g., <0.01) leads to minimal exploration [9].

  - For Thompson Sampling, examine the posterior distributions. If they prematurely converge with very low variance, the algorithm will stop exploring.

- Adjust Algorithm Parameters:

- Validate the Reward Function: Ensure your reward function (e.g., a measure of material hardness) is correctly calibrated and provides a meaningful signal for the property you are optimizing [10].

Guide 2: Managing Noisy or Sparse Material Property Data

Problem: Experimental data for material properties can be noisy, sparse, and high-dimensional, which undermines the algorithm's ability to learn accurate reward models [10].

Solution Steps:

- Incorporate Domain Knowledge: Use feature representations (descriptors) for your materials that are informed by domain expertise. This helps the algorithm reason about similarities between different material compositions [10] [11].

- Leverage Probabilistic Models: Algorithms like Thompson Sampling are naturally suited for noisy environments because they maintain a distribution over reward estimates, explicitly modeling uncertainty [8] [9].

- Implement Bayesian Optimization: For inverse materials design (finding materials given desired properties), Bayesian optimization, which can use Thompson Sampling as one of its acquisition strategies, is a standard and powerful tool for navigating noisy, expensive-to-evaluate objective functions [10].

Experimental Protocols & Workflows

Protocol 1: Implementing a Thompson Sampling Bandit for Compound Screening

Objective: To identify the compound with the highest success probability (e.g., binding affinity, catalytic activity) from a library of K candidates.

Methodology:

- Initialization: Assign each arm $k$ a Beta($\alpha_k$, $\beta_k$) prior over its success probability. A uniform Beta(1, 1) prior ($\alpha_k = \beta_k = 1$) is a common default when no prior data are available.

- Iterative Experimentation (for each round $t$):

  - Sample: For each arm $k$, draw a sample $\theta_k$ from its current Beta($\alpha_k$, $\beta_k$) distribution [9].

  - Select: Choose the arm $a_t$ with the largest sampled value $\theta_k$ [9].

  - Experiment: Perform the experiment (e.g., assay) on the selected compound $a_t$.

  - Observe Reward: Record the outcome $r_t$, where $r_t = 1$ for a success and $r_t = 0$ for a failure [9].

  - Update: Update the parameters for the selected arm $a_t$ using the conjugate Beta-Bernoulli rule: $\alpha_{a_t} \leftarrow \alpha_{a_t} + r_t$ and $\beta_{a_t} \leftarrow \beta_{a_t} + (1 - r_t)$.

- Termination: The process is repeated until a predetermined budget (number of experiments) is exhausted. The arm with the highest posterior mean success probability, $\alpha_k / (\alpha_k + \beta_k)$, is reported as the best candidate.
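A minimal simulation of this protocol is sketched below. It assumes uniform Beta(1, 1) priors and the standard Beta-Bernoulli update (add the reward to $\alpha$ and one minus the reward to $\beta$ for the selected arm); the "assay" is simulated with hidden success probabilities that are invented for the example, and the function name is hypothetical.

```python
import random


def thompson_sampling(success_probs, rounds, seed=0):
    """Run Beta-Bernoulli Thompson Sampling against simulated compounds."""
    rng = random.Random(seed)
    k = len(success_probs)
    alpha = [1.0] * k   # prior pseudo-count of successes per arm (assumed Beta(1, 1))
    beta = [1.0] * k    # prior pseudo-count of failures per arm
    for _ in range(rounds):
        # Sample a plausible success probability for each arm from its posterior.
        samples = [rng.betavariate(alpha[i], beta[i]) for i in range(k)]
        arm = max(range(k), key=samples.__getitem__)
        # Simulated experiment: success with the arm's hidden probability.
        reward = 1 if rng.random() < success_probs[arm] else 0
        alpha[arm] += reward
        beta[arm] += 1 - reward
    posterior_means = [a / (a + b) for a, b in zip(alpha, beta)]
    best = max(range(k), key=posterior_means.__getitem__)
    return best, alpha, beta


# Hidden success probabilities of three hypothetical compounds.
best, alpha, beta = thompson_sampling([0.1, 0.3, 0.8], rounds=2000)
pulls = [int(a + b - 2) for a, b in zip(alpha, beta)]
print(best, pulls)
```

Inspecting `pulls` shows how the budget concentrates on the strongest compound while the weaker ones receive only enough experiments to rule them out.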

The workflow for this protocol is illustrated in the following diagram:

Protocol 2: Mendelevian Search for Inverse Materials Design

Objective: To discover the compound and crystal structure with the optimal value for a target property (e.g., hardness, magnetization) across all possible combinations of chemical elements [12].

Methodology:

- Define Chemical Space: Construct an abstract chemical space where proximity correlates with similarity in material properties [12].

- Double Evolutionary Search:

  - Inner Loop (Structure Prediction): For a given point in chemical space (a composition), an evolutionary algorithm searches for the most stable crystal structure [12].

  - Outer Loop (Composition Search): The discovered compounds compete, "mate," and "mutate" within the chemical space, evolving towards regions with superior target properties [12].

- Selection: The algorithm selects the best material based on the evolutionary process, effectively solving the inverse design problem.

The Scientist's Toolkit: Key Research Reagents & Computational Solutions

This table details essential computational "reagents" for implementing bandit-based search strategies.

| Item / Solution | Function / Explanation | Application Example |
|---|---|---|
| Beta Distribution | A continuous probability distribution on [0, 1], used as a conjugate prior for Bernoulli rewards in Bayesian analysis. | Modeling the posterior distribution of a success probability (e.g., drug efficacy, reaction yield) in Thompson Sampling [8] [9]. |
| Markov Decision Process (MDP) | A mathematical framework for modeling sequential decision-making under uncertainty where outcomes are partly random and partly under control. | Formally defining the state, actions, and transition dynamics of a bandit problem, especially for more complex variants like restless bandits [7]. |
| Gittins Index | A dynamic allocation index that provides the optimal solution for the infinite-horizon discounted Bayesian MAB problem [7] [14]. | Optimizing resource allocation in theoretical models, though rarely used in clinical trials due to low statistical power for hypothesis testing [14]. |
| Contextual Feature Vector | A set of numerical descriptors representing the features of a candidate (e.g., molecular weight, atomic radius, electronic band gap). | Enabling Contextual Bandits to generalize learning across the material search space by relating arm choice to observable features [11]. |
| High-Throughput Virtual Screening (HTVS) | A computational method to rapidly screen vast libraries of material candidates using simulations to generate data [10]. | Generating initial data on material properties to train or provide a prior for bandit algorithms, reducing the number of physical experiments needed [10]. |

Core Concepts: Exploration vs. Exploitation in Drug Discovery

In the context of drug discovery, the challenge of exploration (searching for new, promising candidate molecules) versus exploitation (optimizing known, high-value leads) is a central computational problem [15]. AI-driven methods have transformed this search process, enabling researchers to navigate the vast chemical space of potential drug candidates more efficiently than ever before [16] [17].

Exploration is the process of choosing actions with the objective of learning about the environment. In drug discovery, this involves screening vast chemical libraries or using generative AI to design novel molecular structures to identify initial "hit" compounds [16] [18]. Exploitation, on the other hand, is the process of using previously obtained information to acquire rewards. This translates to optimizing the chemical structure of a confirmed "hit" or "lead" compound to enhance its potency, selectivity, and safety profile [16]. Optimal strategies will combine these two objectives appropriately [15].

The table below summarizes how this balance manifests in key stages of the drug discovery pipeline.

| Discovery Stage | Exploration (Broad Search) | Exploitation (Focused Optimization) |
|---|---|---|
| Target Identification | Identifying novel biological targets (e.g., proteins, genes) for a disease [16]. | Validating and deepening understanding of a known, high-value target [16]. |
| Hit Identification | Virtual screening of ultra-large libraries (billions of compounds) to find initial "Hits" [17]. | Iterative testing and confirmation of a small set of promising candidate "Hits" [16]. |
| Lead Optimization | Generating diverse analog structures around a Lead compound to explore chemical space [16]. | Fine-tuning the Lead's structure to improve specific properties like potency and metabolic stability [16]. |

Troubleshooting Guides & FAQs

This section addresses common operational challenges when implementing algorithmic search strategies in a drug discovery environment.

FAQ: Algorithmic Search & Balance

What does "balancing exploration and exploitation" mean in a practical screening campaign? It means strategically allocating computational and experimental resources. For example, an initial campaign might use fast, lower-fidelity filters (e.g., simple docking) to explore a billion-compound library (exploration). Promising hits from this round are then fed into a more computationally expensive, high-fidelity simulation (exploitation) to select the best few hundred compounds for synthesis and testing [17]. The optimal strategy combines these two objectives to be both informative and rewarding [15].

How can I tell if my screening algorithm is over-exploiting? A key sign is a lack of chemical diversity in the final output. If all your top-ranked compounds are structurally very similar, your algorithm may be stuck in a local optimum and failing to explore other promising regions of chemical space. Before drawing that conclusion, rule out poor data quality with the Z'-factor: an assay with a large window but high noise (poor Z'-factor) produces unstable rankings that can mimic or mask ineffective exploration [19].
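One way to put a number on "structurally very similar" is the mean pairwise Tanimoto similarity of the top-ranked compounds. The sketch below is an assumption-laden illustration: the Tanimoto coefficient is a standard cheminformatics measure but is not named in the text above, each compound is represented as a hypothetical set of "on" fingerprint bit indices, and in practice the fingerprints would come from a cheminformatics toolkit.

```python
from itertools import combinations


def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity of two fingerprints given as sets of on-bits."""
    a, b = set(a), set(b)
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def mean_pairwise_similarity(fingerprints):
    """Average Tanimoto similarity over all pairs; values near 1 mean low diversity."""
    pairs = list(combinations(fingerprints, 2))
    if not pairs:
        return 0.0
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)


# Toy fingerprints (assumed data): near-identical analogs vs. distinct scaffolds.
analogs = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 3, 6}]
scaffolds = [{1, 2, 3}, {10, 11, 12}, {20, 21, 22}]
print(mean_pairwise_similarity(analogs), mean_pairwise_similarity(scaffolds))
```

A top-ranked list whose mean similarity stays close to 1 across successive screening rounds is a reasonable trigger for widening the search.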

We use AI for molecular design. How does exploration/exploitation apply? Generative AI models, like Generative Adversarial Networks (GANs), directly embody this balance [16]. The generator creates new molecular structures (exploration), while the discriminator evaluates them against known desirable properties (exploitation). This adversarial process continues until the generator produces optimized, novel compounds [16].

Troubleshooting Common Experimental Issues

Problem: No Assay Window in a TR-FRET-Based Screening Assay

- Description: The assay fails to show a meaningful signal difference between positive and negative controls.

- Solution:

  - Verify Instrument Setup: The most common reason is an incorrect instrument configuration. Confirm that the exact recommended emission and excitation filters for your TR-FRET assay are used [19].

  - Check Reagent Quality: Ensure assay reagents, such as the Terbium (Tb) or Europium (Eu) donors, have not degraded and are pipetted accurately [19].

  - Test Reader Setup: Use purchased reagents to perform a plate reader validation test before running your actual assay [19].

Problem: High Variation (Noise) in Screening Data

- Description: Data points show large standard deviations, making it difficult to distinguish true hits from background noise.

- Solution:

- Use Ratiometric Data Analysis: For TR-FRET assays, always use the emission ratio (Acceptor Signal / Donor Signal, e.g., 520 nm/495 nm for Tb) instead of raw RFU values. This accounts for pipetting variances and lot-to-lot reagent variability [19].

- Calculate the Z'-Factor: Assess assay robustness with the Z'-factor. A value >0.5 is considered suitable for screening. This metric considers both the assay window and the data variation [19].

- The formula is:

Z' = 1 - [ (3 * SD_positive + 3 * SD_negative) / |Mean_positive - Mean_negative| ][19].
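The Z'-factor formula above can be checked with a few lines of code. The following is a minimal sketch: the function name `z_prime` and the control-well values are hypothetical, and the sample standard deviation (n - 1 denominator) is an assumption, since the formula does not say which estimator to use.

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z' = 1 - 3 * (SD_positive + SD_negative) / |Mean_positive - Mean_negative|."""
    window = abs(mean(positive) - mean(negative))
    if window == 0:
        raise ValueError("controls have identical means; there is no assay window")
    # stdev() is the sample standard deviation (assumption, see lead-in).
    return 1.0 - 3.0 * (stdev(positive) + stdev(negative)) / window


# Hypothetical TR-FRET emission ratios (acceptor / donor) from control wells.
positive_controls = [2.10, 2.05, 2.15, 2.08, 2.12]
negative_controls = [0.52, 0.50, 0.55, 0.49, 0.54]

score = z_prime(positive_controls, negative_controls)
print(f"Z' = {score:.2f} ({'suitable' if score > 0.5 else 'not suitable'} for screening)")
```

Using emission ratios rather than raw RFU values as input keeps the calculation consistent with the ratiometric analysis recommended above.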

Problem: Inconsistent EC50/IC50 Values Between Labs

- Description: Different laboratories obtain differing potency values for the same compound.

- Solution:

- Audit Stock Solutions: The primary reason is differences in the preparation of compound stock solutions (e.g., at 1 mM). Verify solubility, concentration, and storage conditions [19].

- Consider Biological Context: In cell-based assays, the compound may not effectively cross the cell membrane or may be affecting an upstream/downstream target. Confirm the assay is measuring the intended interaction [19].

Experimental Protocols

Protocol 1: Iterative Ultra-Large Virtual Screening

This methodology accelerates the discovery of novel hit compounds from gigascale chemical libraries by balancing broad exploration with focused exploitation [17].

Library Preparation (Exploration Setup):

- Start with an ultra-large virtual chemical library (e.g., ZINC20, Enamine REAL) containing billions of readily synthesizable molecules [17].

- Prepare the library for docking by generating 3D conformational structures and applying standard drug-like filters.

Initial Broad Docking (Exploration Phase):

- Perform a fast, first-pass molecular docking screen against your protein target's 3D structure for the entire library. This step prioritizes breadth over precision [17].

Iterative Refinement & Screening (Exploitation Phase):

- Select the top 1-10 million compounds from the initial screen.

- Re-dock this subset using more sophisticated, computationally expensive methods (e.g., more precise scoring functions, molecular dynamics simulations) [17].

- Further refine the selection through multiple iterative rounds, potentially incorporating active learning, where the model prioritizes compounds it is most uncertain about, balancing exploration and exploitation [17].

Final Selection & Validation:

- Select a few hundred to a thousand top-ranking compounds for in vitro experimental testing.

- Confirm "hits" through dose-response assays to determine IC50/EC50 values.
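The two-tier logic of Protocol 1 can be illustrated with a toy simulation. This is not a docking pipeline: `screening_funnel`, the synthetic affinities, and the Gaussian noise levels are all illustrative assumptions that stand in for a fast first-pass screen and a high-fidelity rescoring step.

```python
import random


def screening_funnel(true_affinity, keep_fraction=0.01, final_n=10, seed=0):
    """Return indices of the final_n compounds chosen by a two-stage funnel."""
    rng = random.Random(seed)
    n = len(true_affinity)

    # Exploration: a cheap, noisy score for every compound in the library.
    cheap = [a + rng.gauss(0.0, 1.0) for a in true_affinity]
    n_keep = max(final_n, int(n * keep_fraction))
    shortlist = sorted(range(n), key=lambda i: cheap[i], reverse=True)[:n_keep]

    # Exploitation: a costly, low-noise score computed only for the shortlist.
    precise = {i: true_affinity[i] + rng.gauss(0.0, 0.1) for i in shortlist}
    return sorted(shortlist, key=lambda i: precise[i], reverse=True)[:final_n]


# Synthetic library: each entry is a hidden "true" affinity (higher is better).
library_rng = random.Random(42)
library = [library_rng.gauss(0.0, 1.0) for _ in range(20000)]
hits = screening_funnel(library, keep_fraction=0.01, final_n=10)
print("mean affinity, library:", round(sum(library) / len(library), 3))
print("mean affinity, hits:   ", round(sum(library[i] for i in hits) / len(hits), 3))
```

The expensive score is evaluated on only `keep_fraction` of the library, which is the point of the design: breadth is bought cheaply, precision is spent where the first pass suggests it will pay off.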

Protocol 2: AI-Driven de Novo Molecular Design

This protocol uses generative models to create novel, optimized drug candidates from scratch [16].

Model Training (Learning the Chemical Space):

- Train a deep learning model, such as a Generative Adversarial Network (GAN) or a variational autoencoder (VAE), on a large dataset of known drug-like molecules and their properties [16].

- The generator learns to produce novel molecular structures, while the discriminator learns to distinguish them from real ones [16].

Conditional Generation (Guided Exploitation):

Molecular Generation & Filtering (Exploration Phase):

- Use the trained generator to produce a large library of novel candidate molecules.

- Filter this generated library using predictive AI models (e.g., for toxicity, synthetic accessibility) to remove unrealistic or undesirable compounds [16].

Optimization & Selection (Exploitation Phase):

- Employ reinforcement learning or Bayesian optimization to iteratively improve the generated structures against a multi-parameter optimization goal (e.g., potency + selectivity + solubility) [16].

- Select the final, top-ranked molecules for synthesis and biological testing.

Workflow & Pathway Diagrams

AI-Driven Drug Discovery Workflow

Exploration vs. Exploitation Balance

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and experimental resources essential for implementing AI-driven discovery campaigns.

| Tool / Reagent | Function / Description | Role in Exploration/Exploitation |

|---|---|---|

| ZINC20 / Enamine REAL | Free and commercial ultralarge databases of readily synthesizable compounds for virtual screening [17]. | Exploration: Provides the chemical space for initial broad screening. |

| Generative Adversarial Network (GAN) | A deep learning model consisting of a generator (creates molecules) and a discriminator (evaluates them) [16]. | Core Balance: The generator explores, the discriminator exploits. |

| AlphaFold | AI system that predicts the 3D structure of proteins from their amino acid sequence [18]. | Enabler: Provides structural data for structure-based screening (both exploration and exploitation). |

| LanthaScreen TR-FRET Assays | Homogeneous assays used for high-throughput screening and profiling compound activity (e.g., kinase inhibition) [19]. | Exploitation: Provides high-quality experimental data for validating and optimizing hits/leads. |

| Quantitative Structure-Activity Relationship (QSAR) | Modeling approach that relates a compound's molecular descriptors to its biological activity [16]. | Exploitation: Uses known data to predict and optimize the activity of new analogs. |

Algorithmic Strategies for Dynamic Balance: From Epsilon-Greedy to Adaptive Metaheuristics

Frequently Asked Questions

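Q1: My Epsilon-Greedy algorithm converges to a sub-optimal arm. What is the most likely cause and how can I fix it? A1: The most likely cause is too little exploration: if ε is very small, or decays too quickly, noisy early rewards can make a sub-optimal arm look best, and the alternatives are then sampled too rarely to correct the estimate. The opposite failure, an ε that is set too high, does not lock onto the wrong arm but keeps spending a fixed fraction of trials on random arms long after the optimal arm is identified, which holds the average reward below the optimum [20]. To fix this: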

- For stationary problems (where reward distributions do not change), use a decaying ε schedule or an annealing strategy to reduce exploration over time [21] [22].

- For non-stationary problems, a constant ε may be necessary; tune its value carefully via simulation to balance exploration and exploitation for your specific environment [23].

Q2: How does the UCB algorithm avoid the need for a manually set exploration parameter like epsilon? A2: UCB automatically balances exploration and exploitation by constructing a confidence bound for each arm's reward estimate. It selects the arm that maximizes the sum of the estimated reward (exploitation) and a confidence term (exploration). This confidence term is large for arms that have been sampled infrequently, and it grows slowly (logarithmically) with the total number of trials, so neglected arms are eventually revisited. The exploration bonus for an arm shrinks as that arm is pulled more often [24] [25] [20].

Q3: In a materials search with a limited experimental budget, which algorithm typically finds the highest-performing candidate faster? A3: For problems with a large number of arms (candidates) and a limited budget, UCB or Thompson Sampling often outperform the standard Epsilon-Greedy algorithm. UCB's targeted exploration is more efficient than Epsilon-Greedy's random exploration, which wastes trials on clearly suboptimal arms [26]. The following table summarizes a comparative experiment highlighting this performance difference. In that experiment each arm is a power socket, and performance is measured as the number of time steps needed to reach a target charge (fewer is better).

| Algorithm | Number of Sockets | Mean Reward Spread | Time Steps to Reach Target Charge | Key Observation |

|---|---|---|---|---|

| Epsilon-Greedy | 5 | 2.0 | ~320 | Performs adequately with few, distinct options [26]. |

| UCB | 5 | 2.0 | ~300 | Quickly identifies and exploits the best arm [26]. |

| Epsilon-Greedy | 100 | 0.1 | >500 (Did not finish in time) | Struggles with many similar options due to inefficient random exploration [26]. |

| UCB | 100 | 0.1 | ~400 | More efficient than Epsilon-Greedy, but slowed by initial "priming rounds" [26]. |

| Thompson Sampling | 100 | 0.1 | ~350 | Best performance in complex scenarios, requiring no parameter tuning [26]. |

Q4: What is a major drawback of the purely Greedy algorithm in a research context? A4: The purely Greedy algorithm often converges to a local optimum (sub-optimal material) because it exploits the first arm that appears good and never explores alternatives. Its performance is highly sensitive to initial reward estimates, which can be problematic with no prior knowledge [27] [20].

Experimental Protocols & Methodologies

Below is a detailed protocol for comparing Epsilon-Greedy and UCB1 algorithms, based on established testing frameworks [27] [26].

1. Objective: To empirically evaluate and compare the performance of the Epsilon-Greedy and UCB1 algorithms on a stochastic multi-armed bandit problem, in terms of cumulative regret and optimal action identification.

2. Materials & Setup (The Researcher's Toolkit): The core components required to implement this experimental protocol are software-based.

| Research Reagent / Tool | Function / Description |

|---|---|

| k-Armed Bandit Testbed | A simulated environment with a set of 'k' arms (e.g., material candidates). Each arm returns a reward from a fixed probability distribution when pulled [23]. |

| Reward Distribution (Normal) | Used to model stochastic rewards for each arm. The mean (μ) represents the arm's true performance, and the standard deviation (σ) represents noise or measurement error [24] [23]. |

| Algorithm Implementations | Code for the Epsilon-Greedy and UCB1 selection policies. This includes the logic for updating reward estimates and selecting the next arm [27]. |

| Performance Metric: Cumulative Regret | The primary metric, calculated as the sum over time of the difference between the reward of the optimal arm and the reward of the arm selected by the algorithm [24]. |

3. Procedure

- Initialize the Bandit Problem:

Initialize the Algorithms:

- Epsilon-Greedy: Set the exploration parameter ε (e.g., ε=0.1). Initialize the estimate ( Q(a) ) for each arm to 0 and the count ( N(a) ) to 0 [27] [23].

- UCB1: Set the confidence level parameter c (a common value is c=√2). Initialize ( N(a) ) to 0. Perform a priming round by pulling each arm once to obtain initial ( Q(a) ) estimates [27] [24].

Run the Experiment for T Time Steps:

- For t = 1 to T (e.g., T=1000):

  - Epsilon-Greedy:

    - With probability (1-ε), select the greedy arm: ( A_t = \arg\max_a Q(a) ).

    - With probability ε, select a random arm uniformly.

  - UCB1:

    - Select the arm that maximizes: ( Q(a) + c \times \sqrt{\frac{\log(t)}{N(a)}} ).

  - For the selected arm ( A_t ), receive a reward ( R_t ) from the environment.

  - Update the estimates for ( A_t ):

    - Increment the count: ( N(A_t) = N(A_t) + 1 ).

    - Update the value estimate incrementally: ( Q(A_t) = Q(A_t) + \frac{1}{N(A_t)} (R_t - Q(A_t)) ) [23].

  - Record the reward and whether the optimal arm was selected at each step.

Repeat and Average:

- Repeat the entire experiment for a large number of independent runs (e.g., 1000) with different randomly generated bandit problems to obtain statistically significant results [23].

- Calculate the average reward and percentage of time the optimal arm was selected across all runs for each time step.
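The procedure above can be sketched as a small simulation. Several choices here are assumptions rather than part of the protocol: arm means are drawn from a standard normal distribution, reward noise has σ = 1, ties are broken by lowest arm index, and regret is computed from the true arm means (expected regret) rather than from sampled rewards. The names `run_bandit` and `compare` are hypothetical.

```python
import math
import random


def run_bandit(policy, means, steps, rng, epsilon=0.1, c=math.sqrt(2)):
    """Run one policy on one bandit; return (cumulative regret, fraction of optimal pulls)."""
    k = len(means)
    q = [0.0] * k  # value estimates Q(a)
    n = [0] * k    # pull counts N(a)
    best = max(range(k), key=lambda a: means[a])
    regret, optimal_pulls = 0.0, 0
    for t in range(1, steps + 1):
        if policy == "ucb1":
            untried = [a for a in range(k) if n[a] == 0]
            if untried:
                arm = untried[0]  # priming round: pull every arm once
            else:
                arm = max(range(k),
                          key=lambda a: q[a] + c * math.sqrt(math.log(t) / n[a]))
        elif policy == "epsilon_greedy":
            if rng.random() < epsilon:
                arm = rng.randrange(k)                # explore
            else:
                arm = max(range(k), key=lambda a: q[a])  # exploit
        else:
            raise ValueError(f"unknown policy: {policy}")
        reward = rng.gauss(means[arm], 1.0)
        n[arm] += 1
        q[arm] += (reward - q[arm]) / n[arm]  # incremental sample average
        regret += means[best] - means[arm]
        optimal_pulls += int(arm == best)
    return regret, optimal_pulls / steps


def compare(runs=50, k=10, steps=1000, seed=0):
    """Average both policies over freshly generated bandit problems."""
    rng = random.Random(seed)
    results = {"epsilon_greedy": [0.0, 0.0], "ucb1": [0.0, 0.0]}
    for _ in range(runs):
        means = [rng.gauss(0.0, 1.0) for _ in range(k)]
        for policy, totals in results.items():
            regret, frac = run_bandit(policy, means, steps, rng)
            totals[0] += regret / runs
            totals[1] += frac / runs
    return results


for name, (avg_regret, avg_optimal) in compare().items():
    print(f"{name:15s} regret={avg_regret:7.1f}  optimal pulls={avg_optimal:.1%}")
```

To reproduce the plots described in the next section, record the reward and the optimal-action flag per time step instead of only accumulating totals.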

4. Expected Results & Analysis

- The Epsilon-Greedy algorithm with a constant ε will show linear cumulative regret, ( O(T) ), because it continues to explore at a fixed rate [24].

- The UCB1 algorithm will show logarithmic cumulative regret, ( O(\log T) ), as it efficiently reduces exploration over time, leading to better long-term performance [24].

- You should generate a plot of Average Reward vs. Steps and % Optimal Action vs. Steps. The UCB1 curve will typically rise faster and stabilize at a higher level than the Epsilon-Greedy curve, especially with well-tuned parameters [23].

Algorithm Logic and Workflow

The following diagram illustrates the core decision-making logic of the UCB1 algorithm, which embodies the principle of "optimism in the face of uncertainty."

UCB1 Algorithm Decision Flow

Troubleshooting Guide

Problem: UCB1 performs poorly in early stages with many arms. Solution: This is due to the mandatory "priming rounds" where each of the k arms is pulled once. For a very large k, this initial exploration phase is long. Consider algorithms like Thompson Sampling that do not have this requirement and can start exploiting promising arms more quickly [26].
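For readers who want to try the Thompson Sampling alternative mentioned above, here is a minimal sketch for the simplest case of binary (success/failure) rewards. The Beta(1, 1) prior, the function name `thompson_bernoulli`, and the example success probabilities are assumptions; continuous rewards would need a different posterior.

```python
import random


def thompson_bernoulli(success_probs, steps, seed=0):
    """Beta-Bernoulli Thompson Sampling; returns the number of pulls per arm."""
    rng = random.Random(seed)
    k = len(success_probs)
    wins = [1] * k    # Beta alpha parameters (uniform prior)
    losses = [1] * k  # Beta beta parameters (uniform prior)
    pulls = [0] * k
    for _ in range(steps):
        # Draw one plausible success rate per arm from its posterior,
        # then act greedily with respect to the draws.
        samples = [rng.betavariate(wins[a], losses[a]) for a in range(k)]
        arm = max(range(k), key=lambda a: samples[a])
        if rng.random() < success_probs[arm]:
            wins[arm] += 1
        else:
            losses[arm] += 1
        pulls[arm] += 1
    return pulls


print(thompson_bernoulli([0.2, 0.5, 0.8], steps=2000))
```

No priming round is needed: the prior already gives every arm a wide posterior, so arms that have never been pulled are sampled with high variance and are tried naturally.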

Problem: Choosing the right parameters (ε for Epsilon-Greedy, c for UCB1) is difficult. Solution:

- For Epsilon-Greedy, a common starting point is ε=0.1. For a more robust approach, implement epsilon annealing, where ε starts higher and decays over time (e.g., ε = 1/√t) [21].

- For UCB1, the theoretical value ( c = \sqrt{2} ) is a good default. If performance is suboptimal, perform a parameter sweep on a simulated version of your problem to find a better value [25].
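The annealing schedule ε = 1/√t mentioned above takes only a few lines. The floor `eps_min` is an added assumption (it is not part of the schedule as stated) that keeps a small amount of exploration alive at large t; set it to 0 to recover the plain schedule.

```python
import math


def annealed_epsilon(t, eps_min=0.01):
    """Exploration rate at time step t (t >= 1): 1/sqrt(t), floored at eps_min."""
    if t < 1:
        raise ValueError("t must be >= 1")
    return max(eps_min, 1.0 / math.sqrt(t))


for step in (1, 4, 100, 10_000, 1_000_000):
    print(step, annealed_epsilon(step))
```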

Problem: My reward distributions are changing over time (non-stationary). Solution: Both basic algorithms assume stationary distributions. To handle non-stationarity:

- Use a constant step-size parameter α instead of 1/N(a) when updating Q(a). This gives more weight to recent rewards, allowing the algorithm to forget old data and adapt to changes [23].

- Explore more advanced bandit algorithms specifically designed for non-stationary environments.
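The effect of a constant step size can be seen on a reward stream whose mean jumps partway through. This is a sketch under simple assumptions: rewards are noise-free, the jump from 0 to 1 happens after 100 steps, and `track` is a hypothetical helper name.

```python
def track(rewards, alpha=0.1):
    """Return (sample-average estimate, constant-step-size estimate) after all rewards."""
    q_avg, n, q_const = 0.0, 0, 0.0
    for r in rewards:
        n += 1
        q_avg += (r - q_avg) / n          # step size 1/N(a): every reward weighted equally
        q_const += alpha * (r - q_const)  # constant alpha: recent rewards weighted more
    return q_avg, q_const


# The arm's reward changes from 0 to 1 after 100 pulls (non-stationary).
rewards = [0.0] * 100 + [1.0] * 100
q_avg, q_const = track(rewards)
print(f"sample average: {q_avg:.3f}   constant alpha: {q_const:.3f}")
```

The sample average ends halfway between the old and new means, while the constant-α estimate has almost fully adapted; the price is that it never settles completely when rewards are noisy.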

Frequently Asked Questions (FAQ)

General Algorithm Concepts

What is the difference between exploration and exploitation in metaheuristics?

Exploration is the process of discovering diverse solutions in different regions of the search space to identify promising areas, while exploitation intensifies the search within these promising areas to refine solutions and accelerate convergence [28]. Maintaining the right balance is crucial, as excessive exploration slows convergence, while predominant exploitation leads to local optima [28].

How does the Raindrop Algorithm (RD) balance exploration and exploitation?

The Raindrop Algorithm comprises two distinct phases. During the exploration phase, it employs mechanisms like splash, diversion, and evaporation to enhance global search capabilities. In the exploitation phase, it simulates raindrop convergence and overflow behaviors to improve local search performance [29].

Are there hybrid strategies to improve the exploration-exploitation balance?

Yes, hybrid strategies combine global and local search methods. For example, the G-CLPSO strategy combines the global search characteristics of Comprehensive Learning Particle Swarm Optimization (CLPSO) with the exploitation capability of the Marquardt-Levenberg method, demonstrating superior performance in accuracy and convergence [30].

Implementation and Tuning

What does the convergence characteristic of the Raindrop Algorithm look like?

The Raindrop Algorithm demonstrates rapid convergence characteristics, typically achieving optimal solutions within 500 iterations while maintaining computational efficiency [29].

How do Competitive Swarm Optimizer (CSO) variants enhance performance?

Competitive Swarm Optimizer with Mutated Agents (CSO-MA) adds a mutation step to the standard CSO. It randomly chooses a loser particle, picks a variable, and changes its value to a boundary value. This increases solution diversity and helps prevent premature convergence to local optima [31].
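The mutation step described above can be sketched as follows. Only the mutation is shown, not the full CSO-MA loop, and one detail is an assumption: the text says the variable is set to "a boundary value", so the sketch picks the lower or upper bound with equal probability. `mutate_loser` is a hypothetical name.

```python
import random


def mutate_loser(losers, lower, upper, rng):
    """Set one random variable of one random loser particle to a bound (in place).

    Returns the (particle index, variable index) that was mutated.
    """
    i = rng.randrange(len(losers))  # pick a loser particle at random
    j = rng.randrange(len(lower))   # pick one of its variables at random
    losers[i][j] = rng.choice((lower[j], upper[j]))  # assumption: either bound, 50/50
    return i, j


rng = random.Random(0)
losers = [[0.5, 0.5, 0.5] for _ in range(4)]
lower, upper = [0.0, 0.0, 0.0], [1.0, 2.0, 3.0]
print(mutate_loser(losers, lower, upper, rng), losers)
```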

Can you provide a visual representation of a hybrid optimization workflow?

The following diagram illustrates the workflow of a hybrid global-local optimization strategy, such as G-CLPSO, which combines stochastic global search with deterministic local exploitation:

Troubleshooting Common Experimental Issues

Algorithm Performance Problems

| Problem | Possible Causes | Solutions |

|---|---|---|

| Premature Convergence | Over-emphasis on exploitation, insufficient population diversity [28] | Increase exploration mechanisms (e.g., mutation rate in CSO-MA [31]), use hybrid strategies [30] |

| Slow Convergence | Excessive exploration, poor parameter tuning [28] | Balance phases (e.g., fine-tune social factor φ in CSO [31]), implement local search exploitation [30] |

| High Computational Cost | Large population size, complex fitness evaluation | Limit iterations (e.g., Raindrop Algorithm typically converges in <500 iterations [29]), use efficient sampling |

Implementation and Validation

| Problem | Possible Causes | Solutions |

|---|---|---|

| Poor Solution Quality | Inadequate balance between exploration and exploitation [28] | Validate on benchmarks (e.g., CEC-BC-2020 [29]), use statistical tests (e.g., Wilcoxon rank-sum [29]) |

| Parameter Sensitivity | Over-fitting to specific problems | Test on diverse functions (separable/non-separable, unimodal/multimodal [31] [30]) |

| Performance Inconsistency | Stochastic nature of algorithms | Conduct multiple independent runs, report statistical significance [29] |

Experimental Protocols and Methodologies

Benchmarking Algorithm Performance

Objective: Evaluate the performance of nature-inspired metaheuristics on standard test functions.

Procedure:

- Select Benchmark Functions: Choose a diverse set of test problems, such as the 23 classical benchmark functions and the CEC-BC-2020 benchmark suite [29]

- Configure Algorithms: Set population size, iteration limits, and algorithm-specific parameters

- Execute Multiple Runs: Perform independent runs to account for stochastic variations

- Collect Data: Record convergence curves, final solution quality, and computational time

- Statistical Analysis: Apply Wilcoxon rank-sum tests to validate statistical significance [29]

Expected Outcomes: Quantitative comparison of solution quality, convergence speed, and robustness across different problem types.
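The statistical analysis step can be illustrated with a self-contained Wilcoxon rank-sum test. This sketch uses the large-sample normal approximation without tie or continuity corrections; in practice a library routine such as `scipy.stats.ranksums` would normally be used. The fitness values are made up for illustration.

```python
import math


def rank_sum_test(x, y):
    """Two-sided Wilcoxon rank-sum test; returns (z statistic, p-value)."""
    combined = sorted((value, group) for group, sample in enumerate((x, y))
                      for value in sample)
    ranks = [0.0] * len(combined)
    i = 0
    while i < len(combined):
        j = i
        while j + 1 < len(combined) and combined[j + 1][0] == combined[i][0]:
            j += 1
        for pos in range(i, j + 1):
            ranks[pos] = (i + j) / 2 + 1  # tied values share the average rank
        i = j + 1
    w = sum(r for r, (_, group) in zip(ranks, combined) if group == 0)
    n1, n2 = len(x), len(y)
    mean_w = n1 * (n1 + n2 + 1) / 2
    sd_w = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mean_w) / sd_w
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return z, p


# Hypothetical final best-fitness values (lower is better) from independent runs.
algo_a = [0.012, 0.015, 0.011, 0.014, 0.013, 0.010, 0.016, 0.012]
algo_b = [0.021, 0.019, 0.025, 0.018, 0.022, 0.020, 0.024, 0.023]
z, p = rank_sum_test(algo_a, algo_b)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A negative z here means the first sample tends to have smaller values; with minimization benchmarks that indicates the first algorithm found better solutions.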

Engineering Application Validation

Objective: Validate algorithm performance on real-world engineering optimization problems.

Procedure:

- Problem Selection: Identify complex, nonlinear constrained optimization problems [29]

- Algorithm Implementation: Apply metaheuristics (e.g., Raindrop Algorithm) to problem domain

- Performance Metrics: Measure solution quality, computational efficiency, and improvement over baseline methods

- Comparative Analysis: Benchmark against conventional optimization approaches

Application Example: In robotic engineering, the Raindrop Algorithm achieved an 18.5% reduction in position estimation error and 7.1% improvement in overall filtering accuracy compared to conventional methods [29].

Quantitative Performance Data

Algorithm Convergence Characteristics

| Algorithm | Typical Convergence Iterations | Key Strengths | Benchmark Performance |

|---|---|---|---|

| Raindrop Algorithm (RD) | <500 iterations [29] | Rapid convergence, balanced phases | First-place in 76% of test cases; statistically superior in 94.55% of CEC-BC-2020 cases [29] |

| CSO-MA | Varies by dimension [31] | Mutation prevents local optima | Competitive on high-dimensional problems (up to 5000 dimensions) [31] |

| G-CLPSO | Faster than CLPSO [30] | Hybrid global-local search | Superior accuracy and convergence in non-separable functions [30] |

Engineering Application Results

| Application Domain | Algorithm | Performance Improvement |

|---|---|---|

| Robotic Engineering | Raindrop Algorithm | 18.5% reduction in position estimation error; 7.1% improvement in filtering accuracy [29] |

| Statistical Modeling | CSO-MA | Effective for maximum likelihood estimation, Rasch models, M-estimation [31] |

| Hydrological Modeling | G-CLPSO | Outperformed gradient-based and stochastic algorithms in inverse estimation [30] |

The Scientist's Toolkit: Research Reagent Solutions

Essential Algorithmic Components

| Research Reagent | Function in Optimization | Example Implementation |

|---|---|---|

| Exploration Mechanisms | Discover promising regions in search space | Splash, diversion, evaporation in Raindrop Algorithm [29] |

| Exploitation Mechanisms | Refine solutions in promising regions | Convergence, overflow in Raindrop Algorithm [29] |

| Hybridization Strategies | Combine global exploration with local exploitation | G-CLPSO: CLPSO + Marquardt-Levenberg method [30] |

| Mutation Operators | Maintain population diversity | CSO-MA boundary mutation [31] |

| Benchmark Suites | Validate algorithm performance | 23 benchmark functions, CEC-BC-2020 suite [29] |

Algorithm Phase Transition Logic

The following diagram illustrates the decision process for transitioning between exploration and exploitation phases in nature-inspired metaheuristics, crucial for maintaining balance throughout the optimization process:

Frequently Asked Questions

Q1: What does "premature convergence" mean in practice, and how can I detect it in my optimization runs? Premature convergence occurs when an algorithm becomes trapped in a local optimum, stalling the search for a globally superior solution. You can detect it by monitoring population diversity, which is the degree of dispersion of individuals in the search space [32]. A consistently low diversity value indicates that the population is too concentrated and likely stagnating [32]. Similarly, a lack of improvement in the fitness of the best solution over successive iterations is a key indicator.
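Both indicators can be monitored with very little code. The sketch below makes two assumptions: diversity is taken to be the mean Euclidean distance of individuals from the population centroid (one common choice; the cited work may define it differently), and the thresholds in `is_stagnating` are placeholders to be tuned per problem.

```python
import math


def population_diversity(population):
    """Mean Euclidean distance of individuals from the population centroid."""
    size, dim = len(population), len(population[0])
    centroid = [sum(ind[d] for ind in population) / size for d in range(dim)]
    return sum(math.dist(ind, centroid) for ind in population) / size


def is_stagnating(best_history, diversity, window=20, tol=1e-8, min_diversity=1e-3):
    """Flag a run whose best fitness has stalled while the population has collapsed."""
    if len(best_history) <= window:
        return False  # not enough history to judge
    improvement = abs(best_history[-1] - best_history[-1 - window])
    return improvement < tol and diversity < min_diversity


population = [[0.0, 0.0], [2.0, 0.0], [1.0, 1.0], [1.0, -1.0]]
print(population_diversity(population))
```

Normalizing the diversity value by the diagonal of the search box makes thresholds easier to transfer between problems with different variable ranges.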

Q2: My algorithm is not exploring the search space effectively. How can I encourage more exploration? To enhance exploration, you can:

- Implement an adaptive niching method that divides the population into subpopulations (niches) to maintain diversity and explore multiple regions of the fitness landscape simultaneously [32].

- Use a diversity-controlled mutation strategy that selects mutation operators favoring exploration when population diversity is low [32].

- Dynamically adjust the step-size parameter based on the current solution's fitness, allowing for larger jumps in the search space during the early stages of optimization [33].

Q3: How can I balance the trade-off between exploration and exploitation automatically? An effective method is to use a framework for Adaptive Strategy Management (ASM). This framework dynamically switches between different solution-generation strategies based on real-time performance feedback [34]. The core steps are:

- Filtering: Deciding which candidate solutions to consider.

- Switching: Choosing the most appropriate search strategy (e.g., exploration- or exploitation-focused).

- Updating: Refining the model and strategies based on new results [34].

Population diversity can serve as the primary metric for this trade-off, with high diversity triggering exploitative behaviors and low diversity triggering exploratory behaviors [32].
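The diversity-triggered rule described in this answer can be sketched as a simple threshold switch. This is only an illustration of the rule, not the ASM framework itself: the thresholds, the hysteresis band between them, and the name `choose_strategy` are all assumptions.

```python
def choose_strategy(diversity, current="explore", low=0.05, high=0.5):
    """Pick the next search mode from a normalized population diversity value."""
    if diversity < low:
        return "explore"  # population has collapsed: spread out again
    if diversity > high:
        return "exploit"  # population is widely spread: refine the best regions
    return current        # inside the band: keep the current mode to avoid flip-flopping


mode = "explore"
for d in (0.8, 0.4, 0.2, 0.03, 0.3, 0.6):
    mode = choose_strategy(d, current=mode)
    print(f"diversity={d:.2f} -> {mode}")
```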

Q4: What is a practical way to handle parameters that are sensitive or difficult to set? A robust approach is to reduce the algorithm's dependence on pre-defined parameters. For example, you can employ a diversity-based niching method that is not sensitive to the choice of parameters, as it adaptively partitions the population based on the current distribution of individuals rather than a fixed radius [32]. Another strategy is to refine the algorithm's core equations to reduce the number of hyperparameters required [33].

Troubleshooting Guides

Problem: Algorithm Convergence to Local Optima

Description: The optimizer repeatedly returns a suboptimal solution, failing to find the global best.

Solution Steps:

- Integrate a Local Optima Processing Strategy: Employ a tabu archive to record previously discovered optima. When a subpopulation is identified as prematurely convergent (its diversity falls below a threshold for several iterations), reinitialize its individuals while using the archive to avoid re-exploring the same regions [32].

- Adopt a Dynamically Scaled Step-Size: Incorporate an adaptive method that adjusts the step-size parameter based on the current solution's fitness. This helps the algorithm escape local optima by taking larger steps when needed [33].

- Verify Strategy Switching: If using an Adaptive Strategy Management (ASM) framework, ensure the switching mechanism is guided by knowledge of the global best solution to help direct the search away from less promising areas [34].

Problem: Poor Balance Between Exploration and Exploitation

Description: The algorithm either wanders randomly without converging or converges too quickly.

Solution Steps:

- Monitor Population Diversity: Track diversity metrics throughout the search process. A high diversity value implies exploration, while a low value reflects exploitation [32].

- Implement Adaptive Mutation Selection: Design a mechanism that allows each subpopulation to select a mutation operator adaptively at each iteration. The choice should be based on factors like problem dimensionality and the subpopulation's current diversity, enabling a better balance [32].

- Apply a Filtering and Switching Framework: Use the ASM framework to systematically alternate between exploratory and exploitative strategies. Methods like "ASM-Close Global Best," which combine proximity filtering with global best knowledge, have demonstrated robust convergence and high-quality solutions [34].

Problem: High Computational Cost for Large-Scale Problems

Description: The optimization process is too slow or computationally expensive, especially for very large-scale design problems.

Solution Steps:

- Utilize an Adaptive Strategy Management Framework: The ASM framework is specifically designed to enhance the efficiency of computationally expensive processes by dynamically deciding which solutions to evaluate, thus reducing unnecessary computations [34].

- Employ a Surrogate Model with Active Learning: In an active learning loop, use a surrogate model (e.g., a machine learning model) to make predictions. Guide experiments or calculations by maximizing a utility function (like expected improvement) to prioritize the most informative data points, reducing the number of costly evaluations required [35].
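The expected improvement utility mentioned in the last step has a closed form when the surrogate's prediction at a candidate is Gaussian with mean μ and standard deviation σ. The sketch below assumes maximization and takes the surrogate outputs as given; the candidate names and numbers are hypothetical, and `xi` is an optional margin that encourages extra exploration.

```python
import math


def expected_improvement(mu, sigma, best, xi=0.0):
    """Expected improvement over `best` for a Gaussian prediction (maximization)."""
    gain = mu - best - xi
    if sigma <= 0.0:
        return max(0.0, gain)  # no uncertainty: improvement is deterministic
    z = gain / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return gain * cdf + sigma * pdf


# Hypothetical surrogate predictions (mean, std) for two untested candidates.
candidates = {"A": (0.90, 0.01), "B": (0.85, 0.20)}
best_so_far = 0.92
scores = {name: expected_improvement(m, s, best_so_far)
          for name, (m, s) in candidates.items()}
print(scores, "-> evaluate", max(scores, key=scores.get))
```

Candidate B has the lower predicted mean but is selected because its uncertainty leaves real room for improvement, which is how the acquisition function trades exploitation (high μ) against exploration (high σ).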

Experimental Protocols for Cited Methods

1. Protocol for Implementing Adaptive Strategy Management (ASM) This protocol is based on the ASM framework for large-scale structural optimization [34].

- Objective: To dynamically switch between multiple solution-generation strategies to improve optimization efficiency.

- Core Components:

- Filtering: Decide which candidate solutions to evaluate. Methods can include proximity-based filtering to ensure feasibility and stability.

- Switching: Adaptively decide when to switch strategies based on real-time performance feedback. Switching can be guided by the current best or global best solutions.

- Updating: Continuously update the strategy performance models and the population of solutions.

- Methodology:

- Select a core optimizer (e.g., Chaos Game Optimization was used in the original study).

- Define a set of solution-generation strategies.

- At each iteration, execute the ASM cycle (Filtering → Switching → Updating).

- Evaluate new solutions and update the global best.

- Validation: Evaluate the performance on benchmark structural problems and compare against state-of-the-art optimizers using convergence speed and solution quality metrics.

2. Protocol for Diversity-Based Adaptive Differential Evolution (DADE) This protocol outlines the use of the DADE algorithm for multimodal optimization problems [32].

- Objective: To locate as many global optima as possible in a multimodal landscape.

- Core Components:

- Diversity-Based Niching: Adaptively divide the population into subpopulations (niches) using a modified diversity measurement to determine niche membership.

- Adaptive Mutation Selection: For each niche, adaptively choose a mutation operator based on problem dimensionality and the niche's current diversity.

- Local Optima Processing: Use a tabu archive (elite set and tabu regions) to reinitialize niches that show premature convergence.

- Methodology:

- Initialize the population and parameters.

- Loop until termination:

  a. Measure population diversity and perform niching.

  b. For each niche, select a mutation strategy and generate offspring.

  c. Identify stagnating niches and reinitialize them using the tabu archive.

  d. Evaluate new individuals and update the population.

- Validation: Test the algorithm on a suite of multimodal benchmark functions (e.g., from CEC2013) and compare its performance (number of optima found, convergence rate) against other state-of-the-art multimodal algorithms.

Research Reagent Solutions

The table below lists key algorithmic components and their functions in adaptive control methods.

| Research Reagent | Function & Purpose |

|---|---|

| Diversity Metric [32] | A measure of the dispersion of individuals in the population; used to quantify the balance between exploration and exploitation and trigger adaptive responses. |

| Niching Method [32] | A technique to subdivide the population into distinct subpopulations (niches), enabling the simultaneous discovery of multiple optimal solutions. |

| Tabu Archive [32] | A memory structure that stores previously discovered optima; used to help the algorithm escape local optima and avoid re-exploring the same regions. |

| Utility/Acquisition Function [35] | A function (e.g., Expected Improvement) used in Bayesian optimization to decide the next most promising data point to evaluate, guiding the search efficiently. |

| Surrogate Model [35] | A machine learning model that approximates a computationally expensive objective function; used to make predictions during the optimization process. |

| Strategy Switching Mechanism [34] | A component within the Adaptive Strategy Management framework that dynamically alternates between different search strategies based on performance feedback. |

| Control Systems Controller [36] | A decision-making algorithm that uses personalized dynamic models to enable daily, perpetual adaptation of intervention parameters, such as goal settings. |

Workflow and System Diagrams

The following diagram illustrates the high-level logical flow of an adaptive control process that balances exploration and exploitation, integrating concepts from the cited research.

Adaptive Control Process Flow

The diagram below provides a more detailed look at the Adaptive Strategy Management (ASM) framework, which is a specific method for dynamic strategy switching.

Adaptive Strategy Management (ASM) Cycle

Troubleshooting Guides

Guide 1: Resolving Premature Convergence in IFOX

Problem: The IFOX algorithm converges too quickly to suboptimal solutions during the molecular search space exploration, leading to poor property prediction accuracy.

Explanation: Premature convergence occurs when the algorithm's exploitation phase dominates too early, preventing a thorough exploration of the molecular configuration space. This is particularly problematic in materials science where optimal molecular configurations may exist in narrow regions of the search space [37].

Solution Steps:

- Implement Adaptive Step-Size Parameter: Modify the dynamic scaling of the step-size parameter based on current solution fitness, allowing the algorithm to automatically adjust between exploration and exploitation phases [38].

- Verify Parameter Settings: Ensure the removal of four redundant hyperparameters (C1, C2, a, Mint) has been properly implemented, as these often contribute to premature convergence in the original FOX algorithm [37] [38].

- Introduce Fitness-Based Adaptation: Implement the fitness-based adaptive method that scales exploration intensity according to solution quality, giving poorer solutions more exploration capability [38].

Validation: Monitor the population diversity metric throughout the iterations. A gradual decrease, rather than a sharp drop, indicates a proper balance between exploration and exploitation.

Guide 2: Handling High-Dimensional Molecular Feature Spaces

Problem: Performance degradation when processing high-dimensional molecular descriptors and fingerprints in property prediction tasks.

Explanation: Molecular property prediction often involves processing numerous descriptors including topological, electronic, constitutional, and physicochemical features, leading to the "curse of dimensionality" [39].

Solution Steps:

- Apply Feature Selection Integration: Integrate ReliefF and Copula entropy during the initialization phase to handle high-dimensional data, as demonstrated in improved grey wolf optimization variants [38].

- Implement Competitive Guidance Strategy: Utilize a competitive guidance strategy for flexible search in high-dimensional spaces [38].

- Employ Differential Evolution Mechanisms: Apply differential evolution-based methods to enhance leader positioning and avoid local optima in high-dimensional scenarios [38].

Validation: Compare solution quality across several feature subsets; a well-configured run should deliver consistent performance regardless of which subset is used.
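
As a rough illustration of the differential evolution mechanism mentioned in the solution steps, the sketch below implements the classic DE/rand/1/bin operator. It is a generic textbook form, not the specific leader-positioning variant referenced in [38]. The function name and the default values of `f_weight` and `cr` are assumptions, and the population must contain at least four vectors.

```python
import random


def de_rand_1_bin(population, target_idx, f_weight=0.5, cr=0.9, rng=random):
    """Build one DE/rand/1/bin trial vector for population[target_idx]."""
    target = population[target_idx]
    n_dims = len(target)
    # Pick three distinct individuals, none of which is the target.
    others = [i for i in range(len(population)) if i != target_idx]
    a, b, c = (population[i] for i in rng.sample(others, 3))
    # Mutation: base vector plus a scaled difference vector.
    mutant = [a[d] + f_weight * (b[d] - c[d]) for d in range(n_dims)]
    # Binomial crossover; one forced index guarantees the trial inherits
    # at least one component from the mutant.
    forced = rng.randrange(n_dims)
    return [mutant[d] if (d == forced or rng.random() < cr) else target[d]
            for d in range(n_dims)]


population = [[float(i + d) for d in range(4)] for i in range(5)]
trial = de_rand_1_bin(population, 0, rng=random.Random(0))
```

In a full DE loop the trial vector would replace the target only if its fitness is at least as good, which keeps the step greedy while the difference vectors supply the exploration.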

Frequently Asked Questions

FAQ 1: How does IFOX specifically address the exploration-exploitation balance in molecular search spaces?

IFOX incorporates a novel fitness-based adaptive method that uses a dynamically scaled step-size parameter to autonomously balance exploration and exploitation based on the current solution's fitness value [38]. Unlike the original FOX algorithm, which used a static 50/50 ratio between phases [37], IFOX adjusts this balance continuously throughout the optimization process. For molecular property prediction, this means the algorithm can spend more time exploring complex molecular configuration spaces early in the process, then gradually shift toward exploiting promising regions where optimal molecular structures are likely to be found [38].

FAQ 2: What molecular representations work best with IFOX for property prediction?

Based on current research, multiple molecular representations can be effectively utilized with IFOX:

Table: Molecular Representations for IFOX Integration

| Representation Type | Best Use Cases | IFOX Compatibility |
|---|---|---|
| Graph-based Representations (GNN) | Capturing topological relationships between atoms [39] [40] | High - aligns with IFOX's pattern recognition |
| Molecular Fingerprints (ECFP) | Structural similarity assessment and virtual screening [39] [40] | Medium - requires feature dimension optimization |
| SMILES Sequences | Sequential molecular data processing [39] | Low - less optimal for IFOX's operational mechanics |
| Multimodal Approaches | Complex property prediction combining multiple data types [39] | High - benefits from IFOX's adaptive capabilities |

The most effective approach often involves combining graph-based representations for intra-molecule information with fingerprint-based methods for inter-molecule relationships [40].

FAQ 3: What metrics should I use to evaluate IFOX performance in molecular optimization?

Table: Performance Metrics for IFOX in Molecular Property Prediction

| Metric Category | Specific Metrics | Reported Reference Values |
|---|---|---|
| Solution Quality | Mean Best Fitness, Standard Deviation [37] [38] | 40% improvement over baseline FOX [38] |
| Convergence Behavior | Convergence Speed, Success Rate [37] | 880 wins, 228 ties, 348 losses against 16 algorithms [38] |
| Statistical Significance | Friedman Test, Wilcoxon Signed-Rank Test [37] [38] | Average rank of 5.92 among 17 algorithms [38] |
| Computational Efficiency | Function Evaluations, Processing Time [37] | Equivalent or better than original FOX with improved results [38] |
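
The win/tie/loss figures in the table are pairwise tallies. A tally of this kind could be computed as in the sketch below. The function name, the tie tolerance `tol`, and the assumption that lower scores are better are illustrative choices, not details taken from [38].

```python
def win_tie_loss(ours, theirs, tol=1e-12):
    """Tally wins, ties and losses of `ours` against `theirs`.

    Both arguments hold one score per test problem, lower being better.
    Scores closer than `tol` are counted as ties.
    """
    wins = ties = losses = 0
    for a, b in zip(ours, theirs):
        if abs(a - b) <= tol:
            ties += 1
        elif a < b:
            wins += 1
        else:
            losses += 1
    return wins, ties, losses


# Hypothetical mean best-fitness values on three problems.
tally = win_tie_loss([1.0, 2.0, 3.0], [2.0, 2.0, 1.0])
```

Summing such tallies over every competitor and test problem gives an aggregate record; the reported 880 wins, 228 ties, and 348 losses add up to 1,456 pairwise comparisons.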

Experimental Protocols

Protocol 1: Benchmark Testing for Algorithm Validation

Objective: Validate IFOX performance against standard benchmark functions before molecular application.

Methodology:

- Test Suite Configuration: Utilize 20 classical benchmark functions (10 unimodal, 10 multimodal) and 61 CEC functions from CEC 2017, 2019, 2021, and 2022 [37] [38].

- Parameter Settings: Implement the reduced parameter set excluding C1, C2, a, and Mint as described in IFOX specifications [38].

- Comparison Framework: Test against 16 state-of-the-art optimization algorithms including LSHADE and NRO [38].

- Statistical Analysis: Apply Friedman and Wilcoxon signed-rank tests for performance validation [37] [38].

Success Indicators: Achieving competitive performance with an average rank of 5.92 among 17 algorithms and significant improvement over basic FOX [38].
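
The average rank used as a success indicator is the quantity on which the Friedman test is built. The sketch below shows one way to compute it, assuming a results matrix in which `results[p][a]` is the score of algorithm `a` on problem `p` and lower is better. The function name and data layout are assumptions. Where SciPy is available, `scipy.stats.friedmanchisquare` and `scipy.stats.wilcoxon` provide the corresponding significance tests.

```python
def average_ranks(results):
    """Return the mean rank of each algorithm over all problems.

    Tied scores on a problem share the mean of the ranks they occupy,
    which is the usual convention for Friedman-style ranking.
    """
    n_algos = len(results[0])
    totals = [0.0] * n_algos
    for row in results:
        order = sorted(range(n_algos), key=lambda a: row[a])
        i = 0
        while i < n_algos:
            # Extend j to cover the whole group tied with position i.
            j = i
            while j + 1 < n_algos and row[order[j + 1]] == row[order[i]]:
                j += 1
            shared_rank = (i + j) / 2 + 1  # mean of 1-based ranks i+1..j+1
            for k in range(i, j + 1):
                totals[order[k]] += shared_rank
            i = j + 1
    return [total / len(results) for total in totals]


# Hypothetical scores: three problems (rows), three algorithms (columns).
ranks = average_ranks([[1.0, 2.0, 3.0], [1.0, 3.0, 2.0], [2.0, 2.0, 5.0]])
```

A lower average rank means the algorithm placed nearer the top across the suite, so the values are only meaningful relative to the number of algorithms compared.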

Protocol 2: Molecular Property Prediction Workflow

Objective: Implement IFOX for accurate molecular property prediction in drug discovery applications.

Methodology:

- Data Preparation: Convert molecular structures to graph representations and calculate molecular fingerprints (ECFP) [40].

- IFOX Configuration: Initialize with adaptive exploration-exploitation parameters specific to molecular space characteristics.

- Optimization Cycle: Execute IFOX with fitness function based on property prediction accuracy.

- Validation: Test optimized molecular representations on held-out test sets using standard MPP metrics [39] [40].

The Scientist's Toolkit

Table: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function | Application in IFOX-MPP |
|---|---|---|
| Graph Neural Networks (GNN) | Atom-level molecular graph representation [40] | Extracts structural features for fitness evaluation |
| Extended Connectivity Fingerprints (ECFP) | Molecular structural representation [40] | Provides similarity metrics for inter-molecule relationships |
| Tanimoto Coefficient | Similarity calculation between molecular fingerprints [40] | Enables construction of molecular similarity graphs |
| Graph Structure Learning (GSL) | Learning relationships between molecules [40] | Enhances molecular embeddings using inter-molecule information |
| Two-Level Graph Framework | Combines atom-level and molecule-level representations [40] | Provides comprehensive molecular representation for IFOX optimization |
| Benchmark Molecular Datasets | Standardized performance evaluation [39] | Validates IFOX performance against established baselines |
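
To make the Tanimoto coefficient and the similarity graph in the table concrete, the sketch below treats each fingerprint as a set of on-bit indices. In practice the fingerprints would come from a cheminformatics toolkit. The function names, the `threshold` value, and the convention that two empty fingerprints have similarity 0.0 are assumptions made for illustration.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient: shared on-bits divided by total distinct on-bits."""
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def similarity_graph(fingerprints, threshold=0.5):
    """Return edges (i, j, similarity) for molecule pairs at or above threshold."""
    edges = []
    for i in range(len(fingerprints)):
        for j in range(i + 1, len(fingerprints)):
            sim = tanimoto(fingerprints[i], fingerprints[j])
            if sim >= threshold:
                edges.append((i, j, sim))
    return edges


# Hypothetical fingerprints given as sets of on-bit indices.
fps = [{1, 2, 3}, {2, 3, 4}, {9}]
edges = similarity_graph(fps, threshold=0.5)
```

The pairwise loop is quadratic in the number of molecules, which is acceptable for small benchmark sets but would need an approximate neighbor search for large libraries.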

Overcoming Common Pitfalls: Premature Convergence and Local Optima Traps

This technical support guide provides researchers and scientists with practical methodologies for diagnosing and addressing premature convergence and over-exploration in evolutionary and materials search algorithms.

Frequently Asked Questions (FAQs)

What are the primary indicators of premature convergence in an optimization run?

Premature convergence is typically indicated by a rapid loss of population diversity and the algorithm getting trapped in a suboptimal solution. Key signs include [41] [42]:

- Rapid Decrease in Population Diversity: A sharp, early drop in genotypic or phenotypic diversity within the population.

- Stagnation of Fitness: The best or average fitness of the population stops improving long before the search is complete, and this plateau persists for a significant number of generations [41].

- Population Homologization: The population of candidate solutions becomes overly similar, clustering tightly around a single point in the search space that does not represent a global optimum [43].

- Dominance of Suboptimal Traits: A particular set of genes (solution components) begins to dominate the population early in the search process, preventing the exploration of other promising regions [42].

How can I distinguish between healthy exploitation and problematic over-exploitation?

The distinction lies in the balance and timing of the search process. Exploitation is healthy when it refines promising solutions discovered during a prior phase of broad exploration. Over-exploitation, which leads to premature convergence, occurs when this refinement happens too soon, cutting off the discovery of potentially better regions. A process that converges to a stable point too early, often close to the starting point of the search and with a worse evaluation than the global optimum, is a hallmark of premature convergence [41]. Configuring an algorithm to be less greedy (e.g., via a lower selective pressure) can help overcome this issue [41].

What are the common algorithmic factors that contribute to premature convergence?

Several factors inherent to algorithm design can trigger premature convergence [42]:

- Excessive Selective Pressure: Overly favoring the most-fit individuals in the population rapidly reduces diversity [41] [42].

- Insufficient Genetic Variation: Weak mutation rates or ineffective crossover operators fail to introduce enough new genetic material to escape local optima [44] [42].

- Small Population Size: A small population provides a limited sampling of the search space, making it easier for a suboptimal solution to dominate.
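
Selective pressure is often easiest to reason about through tournament selection, where the tournament size acts as the control knob. The sketch below is a generic illustration, not a method taken from the cited sources. The function name is an assumption, and lower fitness is treated as better.

```python
import random


def tournament_select(fitnesses, tournament_size, rng=random):
    """Return the index of the fittest among randomly drawn contestants.

    A tournament of size 1 is pure random selection (no pressure), while a
    tournament covering the whole population always returns the single best
    individual (maximum pressure).
    """
    contestants = rng.sample(range(len(fitnesses)), tournament_size)
    return min(contestants, key=lambda i: fitnesses[i])


fitnesses = [5.0, 1.0, 3.0, 4.0]
rng = random.Random(0)
gentle = tournament_select(fitnesses, 2, rng)  # low selective pressure
greedy = tournament_select(fitnesses, 4, rng)  # always picks index 1
```

Reducing the tournament size is one simple way to make an algorithm less greedy when diversity collapses early.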

My algorithm explores constantly but fails to find good solutions. Is this over-exploration?

Yes, this is a classic sign of over-exploration or a lack of exploitation. The algorithm is spending too many resources sampling new, random areas of the search space without effectively refining and converging on the promising solutions it has already found. This results in slow convergence and an inability to locate a precise, high-quality optimum, manifesting as a failure to improve the best-found solution over time [30].

Diagnostic Tables for Common Problems

Table 1: Signs of Premature Convergence vs. Over-Exploration

| Symptom | Premature Convergence | Over-Exploration |
|---|---|---|
| Population Diversity | Rapidly decreases and remains low [43] [42] | Remains high throughout the run |
| Fitness Progress | Stagnates early at a suboptimal level [41] | Improves slowly or erratically without stabilizing |
| Best Solution Quality | Suboptimal local optimum | Poor, fails to refine |
| Primary Cause | Excessive selective pressure; insufficient mutation [41] [42] | Weak selective pressure; excessive random search |

Table 2: Quantitative Metrics for Diagnosis

| Metric | How to Measure It | Interpretation |
|---|---|---|
| Genotypic Diversity | Mean Hamming distance between individuals in the population [43]. | A consistently low value suggests premature convergence. |
| Fitness Stagnation Counter | Number of generations without improvement in best fitness [41]. | A high and steadily increasing count indicates stagnation; if the best fitness is still suboptimal, this points to premature convergence. |
| Selection Pressure | Rate at which population fitness variance decreases [42]. | A very high rate suggests risk of premature convergence. |
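
The fitness stagnation counter from the table can be tracked with a few lines of code. The sketch below assumes minimization and a recorded history of best-fitness values, one per generation. The function name and the `min_delta` improvement threshold are illustrative assumptions.

```python
def stagnation_counts(best_fitness_history, min_delta=0.0):
    """Return, per generation, how many consecutive generations have passed
    without the best fitness improving by more than min_delta."""
    counts = []
    best_so_far = float("inf")
    stalled = 0
    for value in best_fitness_history:
        if value < best_so_far - min_delta:
            best_so_far = value
            stalled = 0  # improvement resets the counter
        else:
            stalled += 1
        counts.append(stalled)
    return counts


# Hypothetical best-fitness trace over six generations.
counts = stagnation_counts([5.0, 4.0, 4.0, 4.0, 3.0, 3.0])
```

A counter that keeps climbing while the diversity metric is also low is the combination that most strongly suggests premature convergence rather than genuine arrival at an optimum.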

Experimental Protocols for Diagnosis

Protocol 1: Tracking Population Diversity

Objective: To quantitatively monitor the loss of diversity during an evolutionary run.

Materials: Optimization algorithm, population data logger, distance metric (e.g., Hamming distance for genotypes, Euclidean distance for parameters).

Methodology:

- At each generation (or at regular intervals), calculate the average distance between all pairs of individuals in the population.

- Plot this average distance over the course of the run.

- A sharp, exponential decay in the diversity plot is a clear indicator of premature convergence [43].
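
The diversity measurement in this protocol can be sketched as follows. The example uses Hamming distance on equal-length genotypes. The function names are assumptions, and any other distance function (for example Euclidean distance for real-valued parameters) can be passed in instead.

```python
from itertools import combinations


def hamming(a, b):
    """Number of positions at which two equal-length genotypes differ."""
    return sum(x != y for x, y in zip(a, b))


def mean_pairwise_distance(population, distance=hamming):
    """Average distance over all unordered pairs of individuals."""
    pairs = list(combinations(population, 2))
    if not pairs:
        return 0.0  # fewer than two individuals: no diversity to measure
    return sum(distance(a, b) for a, b in pairs) / len(pairs)


# Hypothetical bit-string population logged at one generation.
diversity = mean_pairwise_distance(["0000", "0011", "1111"])
```

Logging this value once per generation gives the curve to plot. Because the cost grows quadratically with population size, large populations may need to be subsampled before measuring.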

Protocol 2: Fitness Landscape Ruggedness Analysis

Objective: To understand the multi-modal nature of the problem, which influences convergence behavior.

Materials: Sampling algorithm (e.g., random walk), local search operator.

Methodology:

- Perform a broad, random sampling of the search space.

- From multiple starting points, perform a simple hill-climbing search.

- If the local searches consistently converge to many different fitness peaks, the landscape is highly multi-modal (rugged). Algorithms prone to premature convergence will perform poorly on such landscapes [41].
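
A toy version of this protocol is sketched below on a one-dimensional discrete landscape, where each index is a candidate solution and its neighbors are the adjacent indices. The landscape values, the function names, and the choice of maximization are assumptions made purely for illustration. A real molecular search space would need a problem-specific neighborhood.

```python
import random


def hill_climb(landscape, start):
    """Steepest-ascent hill climbing; returns the index of the peak reached."""
    current = start
    while True:
        neighbors = [i for i in (current - 1, current + 1)
                     if 0 <= i < len(landscape)]
        if not neighbors:
            return current
        best = max(neighbors, key=lambda i: landscape[i])
        if landscape[best] <= landscape[current]:
            return current  # no neighbor is strictly better: local optimum
        current = best


def count_distinct_peaks(landscape, n_starts, rng=random):
    """Run hill climbing from random starts and count the distinct peaks found."""
    starts = [rng.randrange(len(landscape)) for _ in range(n_starts)]
    return len({hill_climb(landscape, s) for s in starts})


rugged = [1, 3, 2, 5, 4, 6, 0]  # hypothetical landscape with three peaks
smooth = [1, 2, 3, 4, 5]        # hypothetical landscape with a single peak
n_rugged = count_distinct_peaks(rugged, 50, random.Random(0))
n_smooth = count_distinct_peaks(smooth, 50, random.Random(0))
```

The more distinct peaks the local searches reach relative to the number of starts, the more rugged the landscape and the more diversity preservation the main algorithm is likely to need.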

Workflow Diagrams

Diagram: Diagnostic & Remedial Workflow

Research Reagent Solutions

Table 3: Algorithmic "Reagents" for Balancing Search

| Research "Reagent" (Technique) | Function | Primary Use Case |
|---|---|---|
| Niching Methods [43] | Preserves sub-populations around different optima to maintain diversity. | Preventing premature convergence on multi-modal fitness landscapes. |
| Island Models [43] | Isolates sub-populations to encourage independent exploration, with periodic migration. | Maintaining high-level diversity and exploring multiple search regions in parallel. |
| Adaptive Mutation Rates [42] | Dynamically adjusts mutation probability based on population diversity metrics. | Reactively injecting diversity when the population becomes too homogeneous. |
| Hybrid Global-Local Strategies [30] | Combines global explorative algorithms (e.g., PSO) with local exploitative methods (e.g., gradient descent). | Overcoming stagnation by adding directed local search after a broad global exploration. |
| Fitness Sharing [43] | Reduces the effective fitness of individuals in crowded regions of the search space. | Promoting exploration of less crowded, and potentially promising, regions. |
| Tabu Search [42] | Maintains a memory of recently visited solutions to avoid cycling back to them. | Forcing the algorithm to explore new regions by explicitly forbidding a return to recent areas. |
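
As one concrete example from the table, fitness sharing can be sketched with the standard triangular sharing function. The code assumes maximization with non-negative raw fitness and real-valued positions. The function name and the parameters `sigma_share` (niche radius) and `alpha` (sharing shape) are conventional but should be treated as assumptions here.

```python
import math


def shared_fitness(fitnesses, positions, sigma_share, alpha=1.0):
    """Divide each raw fitness by its niche count.

    The niche count sums sh(d) = 1 - (d / sigma_share) ** alpha over all
    individuals closer than sigma_share, including the individual itself,
    so it is always at least 1.
    """
    shared = []
    for i, raw in enumerate(fitnesses):
        niche_count = 0.0
        for other in positions:
            d = math.dist(positions[i], other)
            if d < sigma_share:
                niche_count += 1.0 - (d / sigma_share) ** alpha
        shared.append(raw / niche_count)
    return shared


# Two individuals crowd the same point; the third sits alone.
result = shared_fitness([4.0, 4.0, 4.0],
                        [(0.0, 0.0), (0.0, 0.0), (10.0, 10.0)],
                        sigma_share=1.0)
```

Selection then operates on the shared values, so the isolated individual is favored over the crowded pair even though all three have the same raw fitness.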