Automated Procedures for Thermodynamic Stability and Chemical Potentials: A Guide for Materials and Drug Development

Abstract

This article provides a comprehensive overview of automated computational and experimental procedures for determining material thermodynamic stability and synthesizable chemical potential ranges. It covers foundational algorithms like the Chemical Potential Limits Analysis Program (CPLAP) for stability testing against competing phases and explores their critical applications in predicting defect behavior in optoelectronics and optimizing developability in biologics. The content details advanced methodologies, including automated scientific workflows and closed-loop self-optimizing systems, and addresses key troubleshooting aspects for complex systems. Finally, it examines validation strategies through case studies in polymorphic perovskite alloys and antibody engineering, highlighting the transformative impact of these automated approaches on accelerating the discovery and development of stable materials and therapeutics.

Why Automate Stability Analysis? Core Concepts and Critical Need

In the research and development of new materials, particularly for applications in energy harvesting and optoelectronics, a fundamental challenge is ensuring that a proposed material is thermodynamically stable and identifying the specific chemical conditions required for its successful synthesis [1] [2]. The formation of any multi-element material is always in competition with the formation of other, often simpler, solid phases composed of subsets of its constituent elements [2]. An automated, computational procedure to determine thermodynamic stability addresses this challenge by providing a fast, reliable method to map the precise range of elemental chemical potentials necessary for a target material's formation relative to all competing phases [1]. This analysis is not just a theoretical exercise; it is a critical prerequisite for predicting defect properties and tailoring materials for specific technological applications, ensuring that research efforts are directed toward compounds that are synthesizable and stable [2].

Theoretical Foundation

The core principle underlying stability analysis is the condition of thermodynamic equilibrium in a system open to mass exchange [2]. The stability of a target material is determined by comparing its free energy of formation with the free energies of all possible competing phases within the same chemical system.

For a material with the general formula \( A_xB_yC_z \), the formation reaction from the elemental standard states can be written as \( xA + yB + zC \rightarrow A_xB_yC_z \). The Gibbs free energy of formation for this reaction is \( \Delta G_f = G(A_xB_yC_z) - x\mu_A^0 - y\mu_B^0 - z\mu_C^0 \), where \( G(A_xB_yC_z) \) is the free energy of the material and \( \mu_i^0 \) is the chemical potential of element \( i \) in its standard state. Measuring each elemental chemical potential relative to its standard state, \( \Delta\mu_i = \mu_i - \mu_i^0 \), the condition that the target material is in equilibrium with its elemental reservoirs is \( x\Delta\mu_A + y\Delta\mu_B + z\Delta\mu_C = \Delta G_f(A_xB_yC_z) \).

For the material to be stable, two primary conditions must be satisfied:

- Stability against Elements: The free energy of formation must be negative, \( \Delta G_f < 0 \), indicating that the compound is stable with respect to its constituent elements in their standard states [2]. In addition, no element may be richer than its standard state, \( \Delta\mu_i \le 0 \); otherwise the pure element would precipitate.

- Stability against Competing Phases: The material must be stable against every other possible compound in the system. For each competing phase \( D = A_pB_qC_r \) this gives a linear inequality: \( p\Delta\mu_A + q\Delta\mu_B + r\Delta\mu_C < \Delta G_f(D) \).

The set of all such conditions defines a region in an (n-1)-dimensional chemical potential space (where \( n \) is the number of elemental species; the equilibrium condition above removes one degree of freedom), within which the target material is the thermodynamically most stable phase [1] [2]. The boundaries of this region are hyperplanes (lines in 2-D, planes in 3-D), each corresponding to equilibrium between the target material and one competing phase.

Automated Computational Protocol

The algorithm automates the process of testing thermodynamic stability and determining the stable chemical potential range. The following workflow and protocol detail the step-by-step procedure.

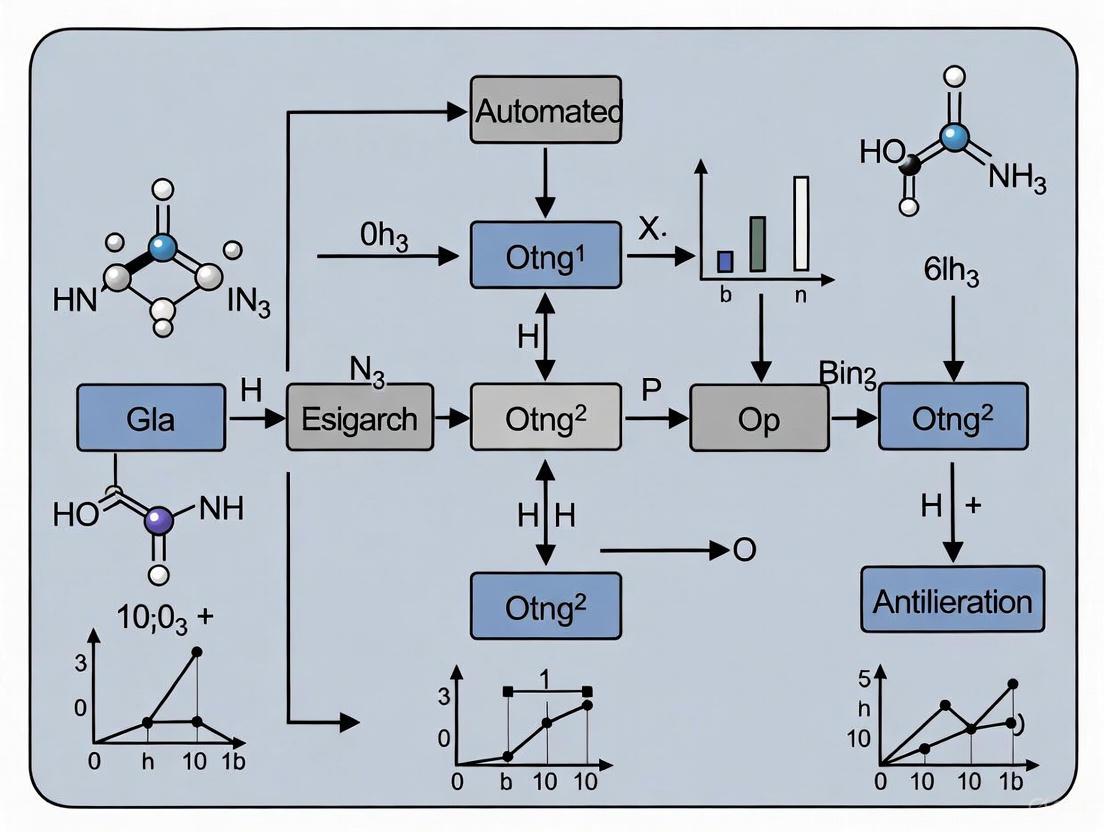

Workflow Diagram

The following diagram illustrates the logical flow of the automated algorithm for determining thermodynamic stability.

Step-by-Step Experimental Protocol

Protocol 1: Determining Thermodynamic Stability Using CPLAP

Objective: To test the thermodynamic stability of a target material and determine the range of elemental chemical potentials for its formation relative to all competing phases.

Software: Chemical Potential Limits Analysis Program (CPLAP) [1] [2].

Input Requirements:

- Free energy of formation of the target material.

- Free energies of formation for all known competing phases and elemental standard states.

- All energies must be calculated using the same level of theory and computational parameters.

Procedure:

Input Preparation:

- Specify the number of atomic species in the target compound.

- Input the names and stoichiometry of each species.

- Provide the free energy of formation of the target compound.

- Input the total number of competing phases.

- For each competing phase, provide the number of species, names, stoichiometry, and free energy of formation.

- This input can be provided via a file or interactively.

System Setup:

- The algorithm constructs a system of m linear equations with n unknowns, where m is the number of conditions from competing phases and n is the number of independent chemical potentials [1] [2].

- The condition of stability with respect to the elemental standard states (the target material's equilibrium with its elemental reservoirs) fixes one chemical potential in terms of the others, so n is one fewer than the number of elemental species [2].

Solve Intersection Points:

- The algorithm solves all possible combinations of n linear equations drawn from the set of m equations.

- Each solved combination provides a candidate intersection point in the chemical potential space.

Compatibility Check:

- Each candidate intersection point is tested against the full set of stability conditions (inequalities).

- Points that violate any condition are discarded.

Result Interpretation:

- Unstable Material: If no compatible intersection points are found, the material is declared thermodynamically unstable within the provided chemical environment [1].

- Stable Material: If compatible points are found, they define the corner points (boundaries) of the stability region in the chemical potential space.

Output and Visualization:

- The program outputs the stability result and the coordinates of the boundary points.

- For 2-D and 3-D chemical potential spaces, the program generates files for visualization with tools like GNUPLOT or MATHEMATICA [1].

Restrictions: The algorithm assumes the material growth environment is in thermal and diffusive equilibrium [1].
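The intersection-and-check procedure above can be sketched in a few lines of Python. This is not the CPLAP source (CPLAP is written in Fortran 90); it is a minimal illustration assuming NumPy, in which the hypothetical helper `build_constraints` eliminates the last element's chemical potential using the target's equilibrium condition, and `stability_vertices` solves every combination of n equations and keeps only the compatible points. Elemental standard states are passed in as "phases" with unit stoichiometry and \( \Delta G_f = 0 \), which reproduces the \( \Delta\mu_i \le 0 \) bounds. The energies in the example are hypothetical.

```python
import itertools

import numpy as np


def build_constraints(target, dg_target, phases):
    """Convert sum_i s_i * dmu_i <= dG_f(phase) into A @ x <= b.

    target:    stoichiometry of the target, e.g. [1, 1, 3] for BaSnO3.
    dg_target: its free energy of formation (eV per formula unit).
    phases:    list of (stoichiometry, dG_f) for competing phases; include each
               element as (unit vector, 0.0) to impose dmu_i <= 0.
    The last element's dmu is eliminated with the equilibrium condition
    sum_i t_i * dmu_i = dg_target, so x holds the first n-1 chemical potentials.
    """
    t = np.asarray(target, dtype=float)
    if t[-1] == 0:
        raise ValueError("the eliminated (last) element must occur in the target")
    rows, rhs = [], []
    for stoich, dg in phases:
        s = np.asarray(stoich, dtype=float)
        ratio = s[-1] / t[-1]
        rows.append(s[:-1] - ratio * t[:-1])
        rhs.append(dg - ratio * dg_target)
    return np.array(rows), np.array(rhs)


def stability_vertices(A, b, tol=1e-9):
    """Corner points of {x : A @ x <= b}; an empty list means 'unstable'."""
    m, n = A.shape
    vertices = []
    for idx in itertools.combinations(range(m), n):
        sub_A, sub_b = A[list(idx)], b[list(idx)]
        if abs(np.linalg.det(sub_A)) < tol:
            continue  # parallel/degenerate equations: no unique intersection
        x = np.linalg.solve(sub_A, sub_b)
        # Compatibility check: the point must satisfy every inequality.
        if np.all(A @ x <= b + tol) and not any(np.allclose(x, v) for v in vertices):
            vertices.append(x)
    return vertices


# Hypothetical ternary ABC with dG_f = -3 eV and one competing phase AB (-1 eV).
phases = [([1, 0, 0], 0.0), ([0, 1, 0], 0.0), ([0, 0, 1], 0.0), ([1, 1, 0], -1.0)]
A, b = build_constraints([1, 1, 1], -3.0, phases)
for v in stability_vertices(A, b):
    print(v)  # corner points in the (dmu_A, dmu_B) plane
```

In this toy case the region is the band between the lines \( \Delta\mu_A + \Delta\mu_B = -1 \) (set by AB) and \( \Delta\mu_A + \Delta\mu_B = -3 \) (set by the C standard state), with four corners; making AB more stable than \( -3 \) eV empties the list, which corresponds to the "unstable material" outcome. Brute-force enumeration grows combinatorially with the number of phases, which is acceptable for the small systems considered here.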

Data Presentation and Analysis

Algorithm Parameters and Specifications

Table 1: CPLAP Program Summary and Technical Specifications [1] [2].

| Parameter | Specification | Description |
|---|---|---|
| Program Title | CPLAP | Chemical Potential Limits Analysis Program |
| Catalogue Identifier | AEQO_v1_0 | Identifier in the CPC Program Library |
| Programming Language | FORTRAN 90 | Core language for computational efficiency |
| Lines of Code | ~4301 | Including test data |
| RAM | 2 Megabytes | Minimal memory requirement |
| Running Time | < 1 Second | For typical problems |
| Input | Free energies, stoichiometries | Of target material and all competing phases |
| Primary Output | Stability result, boundary points | Range of chemical potentials for stable materials |

Example Analysis of a Ternary System

The application of this protocol is illustrated using the ternary system BaSnO₃, a transparent conducting oxide. The formation of cubic perovskite BaSnO₃ competes with phases like BaO, SnO, SnO₂, and BaSn₂ [2].

Table 2: Competing Phases and Stability Conditions for BaSnO₃ [2].

| Competing Phase | Stability Condition | Role in Defining Stability Region |
|---|---|---|
| BaO | \( \Delta\mu_{Ba} + \Delta\mu_{O} \leq \Delta G_f(\mathrm{BaO}) \) | Prevents precipitation of BaO. |
| SnO | \( \Delta\mu_{Sn} + \Delta\mu_{O} \leq \Delta G_f(\mathrm{SnO}) \) | Prevents precipitation of SnO. |
| SnO₂ | \( \Delta\mu_{Sn} + 2\Delta\mu_{O} \leq \Delta G_f(\mathrm{SnO_2}) \) | Prevents precipitation of SnO₂. |
| BaSn₂ | \( \Delta\mu_{Ba} + 2\Delta\mu_{Sn} \leq \Delta G_f(\mathrm{BaSn_2}) \) | Prevents formation of the Ba-Sn intermetallic. |
| O₂ gas (Standard State) | \( \Delta\mu_{O} \leq 0 \) | Upper limit for oxygen chemical potential. |
| Ba solid (Standard State) | \( \Delta\mu_{Ba} \leq 0 \) | Upper limit for barium chemical potential. |
| Sn solid (Standard State) | \( \Delta\mu_{Sn} \leq 0 \) | Upper limit for tin chemical potential. |

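The intersection of the conditions derived from these competing phases yields a two-dimensional stability region for BaSnO₃ in the \( (\Delta\mu_{Ba}, \Delta\mu_{Sn}) \) plane, with \( \Delta\mu_{O} \) fixed by the equilibrium condition \( \Delta\mu_{Ba} + \Delta\mu_{Sn} + 3\Delta\mu_{O} = \Delta G_f(\mathrm{BaSnO_3}) \). Each boundary of this region is a line along which BaSnO₃ coexists in equilibrium with one competing phase; for example, along the BaSn₂ boundary \( \Delta\mu_{Ba} + 2\Delta\mu_{Sn} = \Delta G_f(\mathrm{BaSn_2}) \) [2].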
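To see how each oxygen-containing condition becomes a straight line in the \( (\Delta\mu_{Ba}, \Delta\mu_{Sn}) \) plane, one can substitute for \( \Delta\mu_O \) using the BaSnO₃ equilibrium condition \( \Delta\mu_{Ba} + \Delta\mu_{Sn} + 3\Delta\mu_O = \Delta G_f(\mathrm{BaSnO_3}) \) and multiply through by 3. The derivation below is a worked illustration of that substitution; BaSn₂ and the Ba and Sn standard-state bounds contain no oxygen and are unchanged.

```latex
\begin{aligned}
\Delta\mu_{O} &= \tfrac{1}{3}\left[\Delta G_f(\mathrm{BaSnO_3}) - \Delta\mu_{Ba} - \Delta\mu_{Sn}\right] \\[4pt]
\text{BaO:}\quad \Delta\mu_{Ba} + \Delta\mu_{O} \le \Delta G_f(\mathrm{BaO})
  &\;\Longrightarrow\; 2\Delta\mu_{Ba} - \Delta\mu_{Sn} \le 3\Delta G_f(\mathrm{BaO}) - \Delta G_f(\mathrm{BaSnO_3}) \\
\text{SnO:}\quad \Delta\mu_{Sn} + \Delta\mu_{O} \le \Delta G_f(\mathrm{SnO})
  &\;\Longrightarrow\; -\Delta\mu_{Ba} + 2\Delta\mu_{Sn} \le 3\Delta G_f(\mathrm{SnO}) - \Delta G_f(\mathrm{BaSnO_3}) \\
\text{SnO}_2\text{:}\quad \Delta\mu_{Sn} + 2\Delta\mu_{O} \le \Delta G_f(\mathrm{SnO_2})
  &\;\Longrightarrow\; -2\Delta\mu_{Ba} + \Delta\mu_{Sn} \le 3\Delta G_f(\mathrm{SnO_2}) - 2\Delta G_f(\mathrm{BaSnO_3}) \\
\text{O}_2\text{:}\quad \Delta\mu_{O} \le 0
  &\;\Longrightarrow\; \Delta\mu_{Ba} + \Delta\mu_{Sn} \ge \Delta G_f(\mathrm{BaSnO_3})
\end{aligned}
```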

The Scientist's Toolkit

Research Reagent Solutions

Table 3: Essential Computational Tools and Inputs for Thermodynamic Stability Analysis.

| Item / Reagent | Function / Role in Analysis |
|---|---|
| First-Principles Code (e.g., DFT) | Calculates the absolute free energy of the target material and competing phases, serving as the primary input for the stability algorithm [2]. |
| Crystal Structure Database (e.g., ICSD) | Provides a comprehensive list of known competing phases within the chemical system of interest to ensure all relevant compounds are considered [2]. |
| Chemical Potential Limits Analysis Program (CPLAP) | The core algorithm that automates the solution of linear inequalities and identifies the stability region in chemical potential space [1] [2]. |
| Visualization Software (e.g., GNUPLOT) | Generates 2D or 3D plots from the CPLAP output files, providing an intuitive visual representation of the stability region [1]. |
| Elemental Chemical Potentials (\( \mu_i \)) | The key variables of the analysis. Their allowable range defines the synthesis conditions (e.g., oxygen partial pressure, metal activity) under which the target material is stable [2]. |

Chemical Potentials as a Map for Synthesis

In the pursuit of novel materials for technological applications, from energy harvesting to optoelectronics, researchers face the fundamental challenge of determining which phases are thermodynamically stable and under what synthesis conditions they can form. The chemical potential (μ), which represents the change in free energy of a system when particles are added or removed, provides an essential map for navigating this complex synthesis landscape [3]. Within the context of automated procedures for assessing material thermodynamic stability, chemical potentials serve as crucial independent variables that define the necessary chemical environment for target material formation relative to competing phases [4]. This application note establishes how chemical potential analysis forms the foundation for predicting synthesis feasibility and optimizing growth conditions across diverse material systems.

The chemical potential is formally defined as the partial derivative of the Gibbs free energy with respect to particle number at constant temperature and pressure: \( \mu_i = (\partial G/\partial N_i)_{T,P,N_{j\neq i}} \) [5]. This definition positions chemical potential as the molar Gibbs free energy for pure substances and the partial molar Gibbs free energy for mixtures [3]. In practical terms, particles naturally move from regions of higher chemical potential to lower chemical potential, analogous to objects moving from higher to lower gravitational potential [3]. This directional tendency drives diffusion, phase transitions, and chemical reactions, making chemical potential analysis indispensable for predicting material behavior during synthesis.
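A quick numerical check makes the definition concrete. The sketch below assumes an ideal mixture, \( G = \sum_i n_i(\mu_i^\circ + RT\ln x_i) \) at fixed T and P (a textbook model chosen for illustration, with hypothetical \( \mu_i^\circ \) values), and differentiates G numerically with respect to one mole number while holding the others fixed; the result reproduces the partial molar Gibbs free energy \( \mu_i^\circ + RT\ln x_i \).

```python
import math

R = 8.314462618  # gas constant, J/(mol K)


def gibbs_ideal_mixture(n, mu0, T):
    """G (J) of an ideal mixture: sum_i n_i * (mu0_i + R T ln x_i), T and P fixed."""
    total = sum(n)
    return sum(ni * (m0 + R * T * math.log(ni / total)) for ni, m0 in zip(n, mu0))


def mu_finite_difference(n, mu0, T, i, h=1e-5):
    """Central difference of G with respect to n_i, all other n_j held fixed."""
    up, down = list(n), list(n)
    up[i] += h
    down[i] -= h
    return (gibbs_ideal_mixture(up, mu0, T) - gibbs_ideal_mixture(down, mu0, T)) / (2 * h)


n, mu0, T = [1.0, 3.0], [-1000.0, -2000.0], 300.0  # hypothetical values
x0 = n[0] / sum(n)
print(mu_finite_difference(n, mu0, T, 0), mu0[0] + R * T * math.log(x0))
```

The two printed numbers agree to within the finite-difference error, which is the numerical counterpart of \( \mu_i = (\partial G/\partial N_i)_{T,P,N_{j\neq i}} \).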

Theoretical Foundation

Fundamental Thermodynamic Relationships

The chemical potential appears in the fundamental equations for all major thermodynamic potentials [5]. For the internal energy \( U \), enthalpy \( H \), Helmholtz free energy \( A \), and Gibbs free energy \( G \), the differential forms are:

- \( dU = T\,dS - P\,dV + \sum_i \mu_i\,dN_i \)

- \( dH = T\,dS + V\,dP + \sum_i \mu_i\,dN_i \)

- \( dA = -S\,dT - P\,dV + \sum_i \mu_i\,dN_i \)

- \( dG = -S\,dT + V\,dP + \sum_i \mu_i\,dN_i \)

These relationships highlight that the chemical potential can be defined through multiple pathways: \( \mu_i = (\partial U/\partial N_i)_{S,V,N_{j\neq i}} = (\partial H/\partial N_i)_{S,P,N_{j\neq i}} = (\partial A/\partial N_i)_{T,V,N_{j\neq i}} = (\partial G/\partial N_i)_{T,P,N_{j\neq i}} \) [5]. For synthesis applications where processes occur at constant temperature and pressure, the definition based on the Gibbs free energy is most practically useful.

For an ideal gas, the chemical potential depends logarithmically on pressure: \( \mu = \mu^\circ + RT\ln(P/P^\circ) \), where \( \mu^\circ \) is the chemical potential at the standard pressure \( P^\circ \) [5]. For condensed phases under pressure, the chemical potential includes a pressure-volume work term, \( \mu = \mu^\circ + V(P - P^\circ) \), where \( V \) is the molar volume and the phase is assumed to be effectively incompressible [5].
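In oxide synthesis, the ideal-gas expression is what links the oxygen chemical potential to a controllable partial pressure. The sketch below evaluates only the pressure term, converted from \( RT \) per mole to \( k_BT \) per molecule in eV and halved because \( \mu_O = \tfrac{1}{2}\mu_{O_2} \). It assumes ideal-gas O₂ and deliberately omits the temperature-dependent standard-state term \( \mu^\circ(T) \), which would have to be taken from thermochemical tables before comparing with a computed stability region.

```python
import math

K_B_EV = 8.617333262e-5  # Boltzmann constant, eV/K


def delta_mu_o_pressure_term(p_o2, temperature, p_ref=1.0):
    """Pressure part of the oxygen chemical potential, in eV per O atom.

    From mu = mu0 + kT ln(P/P0) for the O2 molecule, halved because
    mu_O = mu_O2 / 2. p_o2 and p_ref must use the same units.
    The standard-state term mu0(T) is NOT included.
    """
    return 0.5 * K_B_EV * temperature * math.log(p_o2 / p_ref)


for p in (1.0, 1e-5, 1e-10):  # partial pressures in units of p_ref
    print(p, round(delta_mu_o_pressure_term(p, 1000.0), 4))
```

At 1000 K, lowering the O₂ partial pressure by ten orders of magnitude shifts \( \mu_O \) down by roughly 1 eV, which shows why annealing atmosphere is an effective handle for moving within a chemical potential stability region.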

Equilibrium Conditions and Phase Stability

At thermodynamic equilibrium, the chemical potential of each species is uniform throughout the system [3]. For systems with multiple phases, this means \( \mu_i^\alpha = \mu_i^\beta \) for all phases \( \alpha \) and \( \beta \) containing species \( i \) [3]. This equilibrium condition forms the basis for predicting phase stability and transformations.

In the context of material synthesis, a target material is thermodynamically stable relative to competing phases when its Gibbs free energy of formation is lower than the weighted sum of the free energies of all possible decomposition products [4]. This condition can be expressed through inequalities relating the chemical potentials of the constituent elements.

Table 1: Key Thermodynamic Relations Involving Chemical Potential

| Relation | Mathematical Expression | Application Context |
|---|---|---|
| Fundamental Definition | \( \mu_i = (\partial G/\partial N_i)_{T,P,N_{j\neq i}} \) | General material systems |
| Ideal Gas Behavior | \( \mu = \mu^\circ + RT\ln(P/P^\circ) \) | Gas-phase precursors |
| Condensed Phase | \( \mu = \mu^\circ + V(P - P^\circ) \) | Solids, liquids under pressure |
| Equilibrium Condition | \( \mu_i^\alpha = \mu_i^\beta \) for all phases \( \alpha, \beta \) | Phase coexistence |
| Electrochemical Potential | \( \tilde{\mu} = \mu + zF\phi \) | Electrochemical synthesis |

Computational Framework for Stability Analysis

Automated Stability Assessment Algorithm

The determination of a material's thermodynamic stability requires comparing its free energy of formation against all competing phases and compounds formed from its constituent elements [4]. For a material with n atomic species, the stability region exists in an (n-1)-dimensional chemical potential space, because the stability condition of the target with respect to its elemental reservoirs (\( \sum_i x_i\Delta\mu_i = \Delta G_f \), with \( x_i \) the target stoichiometry) fixes one chemical potential in terms of the others [4].

The automated algorithm for stability assessment implemented in tools such as the Chemical Potential Limits Analysis Program (CPLAP) follows these key steps [4]:

- Input Preparation: Gather the free energies of formation for the target material and all competing phases, calculated using consistent theoretical methods.

- Constraint Formulation: For each competing phase, formulate linear inequalities based on the condition that the target material is more stable.

- Intersection Calculation: Solve all combinations of (n-1) equations to find intersection points in chemical potential space.

- Feasibility Check: Verify which intersection points satisfy all inequality constraints.

- Stability Region Definition: The compatible solutions define the corner points of the stability region.

If no feasible solutions satisfy all constraints, the material is thermodynamically unstable relative to the competing phases considered [4].

Diagram 1: Automated stability assessment workflow

Case Study: BaSnO₃ Stability Analysis

The application of this automated procedure can be illustrated with the ternary system BaSnO₃, an indium-free transparent conducting oxide [4]. The formation of cubic perovskite BaSnO₃ competes with phases including BaO, SnO, SnO₂, and other binary compounds [4].

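The stability condition for BaSnO₃ relative to a competing phase \( \mathrm{Ba}_a\mathrm{Sn}_b\mathrm{O}_c \) can be expressed as:

\( a\Delta\mu_{Ba} + b\Delta\mu_{Sn} + c\Delta\mu_{O} < \Delta G_f(\mathrm{Ba}_a\mathrm{Sn}_b\mathrm{O}_c) \)

where a, b, and c are the stoichiometric coefficients of the competing phase, and \( \Delta\mu_{Ba} \), \( \Delta\mu_{Sn} \), and \( \Delta\mu_{O} \) are the chemical potentials of barium, tin, and oxygen relative to their elemental standard states [4]. By applying such constraints for all competing phases, the stable region of BaSnO₃ in the \( (\Delta\mu_{Ba}, \Delta\mu_{Sn}) \) plane can be precisely determined, with \( \Delta\mu_{O} \) fixed by the equilibrium condition \( \Delta\mu_{Ba} + \Delta\mu_{Sn} + 3\Delta\mu_{O} = \Delta G_f(\mathrm{BaSnO_3}) \) [4].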

Experimental Protocols

Protocol 1: Computational Determination of Chemical Potential Limits

This protocol details the procedure for determining the range of elemental chemical potentials over which a target material is thermodynamically stable [4].

Research Reagent Solutions

Table 2: Essential Computational Tools for Stability Analysis

| Tool/Resource | Function | Application Notes |
|---|---|---|
| First-Principles Code (e.g., DFT) | Calculate free energies of formation | Use consistent functional and parameters |
| Crystal Structure Database (e.g., ICSD) | Identify competing phases | Comprehensive coverage is critical |
| Stability Analysis Code (e.g., CPLAP) | Solve constraint equations | Handles multidimensional optimization |
| Visualization Software (e.g., GNUPLOT) | Plot stability regions | Essential for 2D/3D chemical potential spaces |

Step-by-Step Procedure

Identify Competing Phases

- Search crystal structure databases for all compounds containing subsets of elements in the target material.

- Include elemental phases as references (\( \Delta\mu_i = 0 \) defines the elemental standard state of element \( i \)).

Calculate Free Energies

- Compute free energies of formation for target material and all competing phases using consistent computational parameters.

- Account for temperature effects through vibrational contributions where necessary.

Formulate Stability Constraints

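- Impose the equilibrium condition for the target, \( \sum_i x_i\Delta\mu_i = \Delta G_f(\text{target}) \), which removes one degree of freedom.

- For each competing phase, write the inequality \( \sum_i \nu_i\Delta\mu_i < \Delta G_f(\text{competing phase}) \), where \( \nu_i \) are the stoichiometric coefficients of the competing phase and \( \Delta\mu_i \) are the elemental chemical potentials relative to their standard states.

- Include elemental boundary conditions: \( \Delta\mu_i \le 0 \) (chemical potentials cannot exceed their elemental reference states).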

Solve Constraint System

- Input constraints into stability analysis program.

- Identify vertices of stability region by solving intersections of (n-1) constraint equations.

Validate and Interpret Results

- Verify that all vertices satisfy complete constraint set.

- Identify which competing phases define each boundary of the stability region.

- For quaternary or higher systems, consider fixing one chemical potential to reduce dimensionality.

Protocol 2: FMAP Method for Chemical Potential Calculation in Solutions

The FMAP (FFT-based Modeling of Atomistic Protein-crowder interactions) method provides an efficient approach for calculating excess chemical potentials in macromolecular solutions, which is essential for understanding liquid-liquid phase separation relevant to biomaterial synthesis and drug formulation [6].

Principle

FMAP implements Widom's particle insertion method but achieves computational efficiency by expressing intermolecular interactions as correlation functions evaluated via fast Fourier transform (FFT) [6]. The excess chemical potential is calculated as:

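\( \mu_{ex} = -k_BT \ln\langle \exp(-U_{int}/k_BT) \rangle \)

where \( U_{int} \) is the interaction energy of a test particle inserted into the system and the angle brackets denote an average over insertion positions and solution configurations [6].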
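Before any FFT acceleration, the Widom estimator itself is an exponential average. The minimal sketch below assumes a list of insertion energies has already been collected (by whatever sampling scheme is used) and applies a log-sum-exp shift so that strongly attractive or uniformly repulsive insertions do not overflow or underflow `exp()`. It illustrates the formula only; it is not part of the FMAP code.

```python
import math


def widom_excess_mu(energies, kT):
    """Excess chemical potential from test-particle insertion energies.

    mu_ex = -kT ln < exp(-U/kT) >, evaluated with a log-sum-exp shift.
    energies and kT must be in the same units.
    """
    if not energies:
        raise ValueError("need at least one insertion energy")
    scaled = [-u / kT for u in energies]
    shift = max(scaled)
    mean_boltzmann = sum(math.exp(s - shift) for s in scaled) / len(scaled)
    return -kT * (shift + math.log(mean_boltzmann))


# A hard overlap (huge U) contributes ~0 to the average, so half of the
# insertions being blocked gives mu_ex = kT ln 2.
print(widom_excess_mu([0.0, 1e6], kT=1.0))
```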

Step-by-Step Procedure

System Preparation

- Generate equilibrium configurations of the solution phase using molecular dynamics simulations.

- Ensure proper system size to minimize finite-size effects.

Interaction Grid Construction

- Discretize the simulation box into a uniform grid.

- Precalculate interaction potentials between the inserted particle and solution molecules.

FFT-Based Energy Calculation

- Express intermolecular interactions as correlation functions.

- Use FFT to efficiently compute insertion energies at all grid points.

Excess Chemical Potential Evaluation

- Average Boltzmann factors of insertion energies across all grid points and configurations.

- Apply overlap sampling or Bennett acceptance ratio methods for improved accuracy.

Phase Coexistence Determination

- Calculate chemical potentials over a range of densities.

- Apply the Maxwell equal-area construction to locate coexistence conditions, where both the chemical potential and the pressure are equal in the two phases.
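The FFT step at the heart of this procedure can be illustrated with a simplified point-particle model. In the sketch below (NumPy assumed; this is not the FMAP implementation, which handles atomistic protein-crowder interactions), the insertion energy at every grid point, \( U(\mathbf{r}) = \sum_{\mathbf{r}'} \rho(\mathbf{r}')\,u(\mathbf{r}-\mathbf{r}') \), is a circular convolution on a periodic grid (identical to the correlation form for a symmetric pair potential). It is therefore obtained as an inverse FFT of a product of FFTs; a direct double loop is included only to check the result. The density and potential grids are hypothetical random data.

```python
import numpy as np


def insertion_energies_fft(density, pair_potential):
    """U(r) = sum_{r'} rho(r') u(r - r') on a periodic grid, via the convolution theorem.

    pair_potential[k] must hold u for the grid displacement k, with the origin at
    index (0, 0, 0) and negative displacements wrapped to the end of each axis.
    """
    return np.real(np.fft.ifftn(np.fft.fftn(density) * np.fft.fftn(pair_potential)))


def insertion_energies_direct(density, pair_potential):
    """O(N^2) reference implementation of the same periodic sum."""
    shape = density.shape
    out = np.zeros(shape)
    for r in np.ndindex(*shape):
        for rp in np.ndindex(*shape):
            disp = tuple((a - b) % s for a, b, s in zip(r, rp, shape))
            out[r] += density[rp] * pair_potential[disp]
    return out


rng = np.random.default_rng(0)
rho = rng.random((4, 4, 4))  # hypothetical solution density on a 4x4x4 grid
u = rng.random((4, 4, 4))    # hypothetical tabulated pair potential
U_fft = insertion_energies_fft(rho, u)
print(np.allclose(U_fft, insertion_energies_direct(rho, u)))
```

The resulting grid of insertion energies is exactly what the Widom average over grid points and configurations consumes.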

Diagram 2: FMAP computational workflow

Advanced Applications

Defect Engineering and Dopant Incorporation

Chemical potential control extends beyond phase stability to precisely engineer defect populations and dopant incorporation in functional materials [4]. The formation energy of a defect or dopant D in charge state q is given by:

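\( \Delta E_f(D^q) = E_{tot}(D^q) - E_{tot}(\mathrm{bulk}) - \sum_i \Delta n_i\mu_i + q(E_F + E_v) + E_{corr} \)

where \( \Delta n_i \) is the change in the number of atoms of type \( i \) (positive when atoms are added, negative when removed), \( \mu_i \) is their chemical potential, \( E_F \) is the Fermi level referenced to the valence band maximum \( E_v \), and \( E_{corr} \) is a correction term, commonly for finite-size electrostatic effects in charged supercells [4]. By synthesizing materials at different points within the stability region (different elemental chemical potential ratios), researchers can preferentially enhance or suppress specific defects, enabling controlled doping for electronic and optoelectronic applications.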
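Once the total energies are known, the formation-energy expression is simple bookkeeping. A minimal sketch, assuming all energies are in eV and that the Fermi level is measured from the VBM; the supercell energies and chemical potentials in the example are hypothetical and serve only to show how choosing a different point in the stability region changes the result for an oxygen vacancy.

```python
def defect_formation_energy(e_defect, e_bulk, delta_n, mu, q, e_fermi, e_vbm, e_corr=0.0):
    """dE_f(D^q) = E_tot(D^q) - E_tot(bulk) - sum_i dn_i * mu_i + q * (E_F + E_v) + E_corr.

    delta_n: {species: change in atom count}; > 0 for added atoms, < 0 for removed.
    mu:      {species: chemical potential in eV}, taken from inside the stability region.
    e_fermi: Fermi level measured from the valence band maximum e_vbm (eV).
    """
    exchange = sum(dn * mu[species] for species, dn in delta_n.items())
    return e_defect - e_bulk - exchange + q * (e_fermi + e_vbm) + e_corr


# Hypothetical +2 oxygen vacancy: one O atom removed from the supercell.
for label, mu_o in [("O-poor", -7.0), ("O-rich", -5.0)]:
    e_f = defect_formation_energy(-92.0, -100.0, {"O": -1}, {"O": mu_o},
                                  q=2, e_fermi=0.5, e_vbm=1.0)
    print(label, e_f)
```

With these placeholder numbers the vacancy is 2 eV cheaper under the O-poor choice, matching the qualitative rule that lowering an element's chemical potential favors defects that remove it.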

Electrochemical Synthesis and Battery Materials

In electrochemical systems, the electrochemical potential μ~ = μ + zFφ combines the chemical potential with the electrostatic potential, providing the relevant free energy for ion insertion and extraction in battery electrodes [3] [7]. For tungsten-based materials in metal-ion batteries, controlling the chemical potential landscape during synthesis determines crystallographic phase, morphology, and ultimately electrochemical performance [7]. Computational analysis of lithium/sodium/potassium chemical potential ranges during synthesis guides the development of stable electrode materials with enhanced capacity retention.

Data Presentation and Analysis

Stability Region Documentation

For effective communication of synthesis guidelines, stability regions should be presented with complete boundary specifications. The following table template provides a standardized format for reporting chemical potential limits:

Table 3: Chemical Potential Stability Range Documentation

| Material System | Competing Boundary Phases | Chemical Potential Limits | Stability Region Volume |
|---|---|---|---|
| BaSnO₃ | BaO, SnO₂, BaSn₂O₄ | \( \mu_{Ba} \): [-2.5, -1.8] eV, \( \mu_{Sn} \): [-3.1, -2.4] eV | 0.28 eV² |
| Example Quaternary | AB, AC, AD, BC, ABC | \( \mu_{A} \): [-X.X, -X.X] eV, \( \mu_{B} \): [-X.X, -X.X] eV | X.XX eV³ |
| High-Entropy Alloy | Multiple intermetallics | Elemental ranges with mutual constraints | Multidimensional volume |
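The "Stability Region Volume" column can be computed directly from the corner points returned by a stability analysis. Because the region is an intersection of half-planes it is convex, so in 2-D the corners can be ordered by angle about their centroid and passed to the shoelace formula. A minimal sketch, assuming the corner points are already de-duplicated; for 3-D regions, `scipy.spatial.ConvexHull(points).volume` gives the analogous volume.

```python
import math


def stability_region_area(vertices):
    """Area (e.g. eV^2) of a convex 2-D stability region from unordered corner points."""
    if len(vertices) < 3:
        return 0.0  # a point or a segment has no area
    cx = sum(x for x, _ in vertices) / len(vertices)
    cy = sum(y for _, y in vertices) / len(vertices)
    # Sort corners counter-clockwise around the centroid (valid for convex regions).
    ordered = sorted(vertices, key=lambda p: math.atan2(p[1] - cy, p[0] - cx))
    twice_area = 0.0
    for (x1, y1), (x2, y2) in zip(ordered, ordered[1:] + ordered[:1]):
        twice_area += x1 * y2 - x2 * y1  # shoelace formula
    return abs(twice_area) / 2.0


# Hypothetical corner points (eV) of a band-shaped region in the (dmu_A, dmu_B) plane.
print(stability_region_area([(0.0, -1.0), (-1.0, 0.0), (0.0, -3.0), (-3.0, 0.0)]))
```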

Validation with Experimental Synthesis

Computationally predicted stability regions must be validated through targeted synthesis experiments. The following protocol outlines this validation process:

- Select Synthesis Points: Choose representative points within, on the boundary of, and outside the predicted stability region.

- Design Synthesis Routes: Develop appropriate synthesis protocols (solid-state reaction, vapor deposition, solution processing) capable of controlling elemental chemical potentials.

- Characterize Products: Use XRD, electron microscopy, and elemental analysis to identify phase purity and composition.

- Refine Computational Parameters: Adjust initial computational parameters based on experimental discrepancies to improve predictive accuracy.

Chemical potential analysis provides an essential conceptual and computational framework for guiding material synthesis. By mapping stability regions in multidimensional chemical potential space, researchers can predict synthesis feasibility, select appropriate growth conditions, and strategically engineer defects and dopants. The automated procedures and protocols outlined in this application note establish a standardized methodology for incorporating chemical potential analysis into materials design workflows, accelerating the development of novel functional materials for energy, electronic, and biomedical applications.

Application Note: Predicting Thermodynamic Defects in Solid-State Materials

Background and Principle

The thermodynamic stability of a material is governed by its formation energy relative to all competing phases in a chemical system. The Chemical Potential Limits Analysis Program (CPLAP) implements an automated algorithm to determine this stability and the precise range of elemental chemical potentials required for a material's formation [2]. This analysis is fundamental for predicting intrinsic defect stability, as defect formation energies directly depend on these chemical potentials. When the chemical potential of an element is high, defects involving deficiencies of that element become less favorable, while excess-type defects become more likely. Accurately establishing this stability region prevents unphysical predictions of defect properties and guides experimental synthesis conditions [2].

Quantitative Stability Data

Table 1: Formation Energies and Competing Phases for a Model Ternary System (e.g., BaSnO₃)

| Material/Phase | Composition | Formation Energy (eV/atom) | Reference |
|---|---|---|---|
| Target Material | BaSnO₃ | -4.2 | Calculated |
| Competing Phase 1 | BaO | -2.1 | [2] |
| Competing Phase 2 | SnO₂ | -1.8 | [2] |
| Competing Phase 3 | BaSn₂ | -3.1 | [2] |

Table 2: Calculated Chemical Potential Limits for Stable BaSnO₃ Formation

| Element | Lower Bound (μ_min, eV) | Upper Bound (μ_max, eV) | Constraining Phase |
|---|---|---|---|
| Barium (Ba) | -1.5 | 0.0 (Std. State) | BaO |
| Tin (Sn) | -2.1 | 0.0 (Std. State) | SnO₂ |
| Oxygen (O) | -3.8 | -2.5 | BaO, SnO₂ |

Experimental Protocol: Stability and Defect Analysis via CPLAP

Methodology: This protocol outlines the procedure for using the CPLAP program to determine the thermodynamic stability region of a material and calculate the formation energy of a specific defect within that region [2].

Step-by-Step Procedure:

- Input Preparation: Compile the formation energies of the target material and all competing binary and ternary phases in the system. These energies must be calculated using a consistent theoretical framework (e.g., the same DFT functional).

- Program Execution: Run CPLAP, providing the required input files. Specify the number of elemental species and the independent chemical potential variable to be fixed (e.g., fixing μ_O to simulate oxygen-rich or oxygen-poor conditions).

- Stability Check: The algorithm automatically tests all linear combinations of stability conditions. The output will confirm whether the material is thermodynamically stable.

- Stability Region Visualization: If stable, the program generates output files containing the intersection points of the hypersurfaces that define the stable region. For 2D or 3D spaces, these files can be visualized with tools like GNUPLOT [2].

- Defect Formation Energy Calculation: Within the stable chemical potential region, select a specific point (a set of elemental chemical potentials) to evaluate the formation energy (ΔH) of a defect using the standard formula:

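\( \Delta H = E_{defect} - E_{pristine} - \sum_i n_i\mu_i + q(E_{VBM} + \mu_e) \)

where \( E \) is the total energy, \( n_i \) is the number of atoms of species \( i \) added (positive) or removed (negative), \( \mu_i \) is the chemical potential, \( q \) is the defect charge, and \( \mu_e \) is the electron chemical potential (Fermi level) referenced to the VBM.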

Workflow Visualization

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item/Solution | Function/Description |
|---|---|
| CPLAP Code | Fortran 90 program for automated thermodynamic stability and chemical potential limit analysis [2]. |
| DFT Software (VASP, Quantum ESPRESSO) | First-principles software for calculating the formation energy of the target material and all competing phases. |
| ICSD Database | Inorganic Crystal Structure Database; used to identify all known competing phases in the system [2]. |
| Visualization Tool (GNUPLOT) | Used to plot the calculated stability region in 2D or 3D chemical potential space [2]. |

Application Note: Optimizing Biologic Formulation Stability

Background and Principle

The stability of biologic drug products—including monoclonal antibodies, bispecifics, and antibody-drug conjugates (ADCs)—is a critical determinant of their efficacy and shelf life [8]. Instability manifests as aggregation, denaturation, oxidation, and deamidation, which can reduce bioactivity and increase immunogenicity. The transition from trial-and-error formulation to a data-driven approach using predictive stability modeling is key to accelerating development. This involves using machine learning (ML) and biophysical modeling to identify a stable formulation by understanding the molecule's behavior in different excipient environments before extensive lab testing [8].

Quantitative Stability Challenges and Solutions

Table 4: Common Biologic Stability Challenges and Predictive Modeling Solutions

| Challenge | Impact on Drug Product | ML-Predictive Solution |
|---|---|---|
| High-Concentration Formulation | Increased viscosity, aggregation risk [8]. | Models to predict protein-protein interactions and identify viscosity-reducing excipients. |
| Lyophilization (Freeze-Drying) | Protein damage upon freezing/reconstitution [8]. | Prediction of optimal cryoprotectants and lyophilization cycle parameters. |
| New Modalities (e.g., mRNA, Viral Vectors) | Increased sensitivity to enzymatic degradation [8]. | Predictive stability modeling for novel degradation pathways. |

Table 5: Key Excipients for Biologic Stabilization and Their Functions

| Excipient Category | Example Compounds | Stabilizing Function |
|---|---|---|
| Buffers | Histidine, Succinate, Phosphate | Control pH to minimize chemical degradation. |
| Surfactants | Polysorbate 20, Polysorbate 80 | Reduce interfacial-induced aggregation. |
| Sugars & Polyols | Sucrose, Trehalose, Sorbitol | Act as cryoprotectants and lyoprotectants. |
| Amino Acids | Arginine, Glycine, Proline | Suppress aggregation and reduce viscosity. |
| Antioxidants | Methionine, EDTA | Inhibit oxidation of methionine and other residues. |

Experimental Protocol: Predictive High-Concentration Formulation

Methodology: This protocol uses a combination of biophysical modeling and machine learning to efficiently develop a stable, high-concentration formulation for a monoclonal antibody, minimizing material consumption and development time [8].

Step-by-Step Procedure:

- Dataset Creation: Compile a historical dataset of formulation conditions (e.g., buffer type, pH, excipients) and corresponding stability metrics (aggregation rate, viscosity) for similar molecules.

- Feature Engineering: Define molecular descriptors and formulation parameters as input features for the ML model.

- Model Training: Train a machine learning model (e.g., Random Forest or Gradient Boosting Machine [9]) on the historical dataset to predict stability outcomes based on formulation inputs.

- In-silico Screening: Use the trained model to screen thousands of virtual formulation compositions, ranking them by predicted stability.

- Experimental Validation: Select the top 5-10 predicted formulations for laboratory testing. This includes:
  - Sample Preparation: Prepare the biologic at the target concentration (e.g., >100 mg/mL) in the selected buffer/excipient conditions.
  - Stability Monitoring: Hold the samples at the intended storage temperature (e.g., 4°C) and under accelerated conditions (e.g., 25°C, 40°C), and monitor aggregates via Size Exclusion Chromatography (SEC-HPLC) and sub-visible particles via Micro-Flow Imaging.
  - Viscosity Measurement: Measure viscosity using a micro-viscometer.

- Model Refinement: Use the experimental results to refine and retrain the predictive model, improving its accuracy for future projects.
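The in-silico screening step amounts to enumerating a grid of candidate formulations and ranking them with the trained model. A minimal sketch using only the Python standard library: `predict` stands in for any trained model (for example, a wrapper around a random forest's prediction), and the parameter grid and toy scoring function in the example are hypothetical placeholders, not recommended formulation rules.

```python
import itertools


def screen_formulations(grid, predict, top_k=5):
    """Enumerate every combination in `grid` and rank by predicted stability.

    grid:    dict mapping a formulation parameter to its candidate values.
    predict: callable taking one formulation dict and returning a score where
             HIGHER means more stable (e.g. a wrapped ML model prediction).
    """
    names = list(grid)
    candidates = [dict(zip(names, combo))
                  for combo in itertools.product(*(grid[name] for name in names))]
    return sorted(candidates, key=predict, reverse=True)[:top_k]


grid = {"pH": [5.0, 5.5, 6.0], "buffer": ["histidine", "succinate"], "sucrose_pct": [0, 5, 10]}


def toy_score(f):  # hypothetical stand-in for a trained model
    return -abs(f["pH"] - 5.5) + f["sucrose_pct"] / 100 + (0.1 if f["buffer"] == "histidine" else 0.0)


for formulation in screen_formulations(grid, toy_score, top_k=3):
    print(formulation)
```

A full grid grows multiplicatively with each added parameter, so real screens of thousands of compositions typically rely on a fast model prediction call rather than on anything clever in the enumeration itself.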

Workflow Visualization

The Scientist's Toolkit

Table 6: Essential Tools for Advanced Biologics Formulation

| Item/Solution | Function/Description |
|---|---|
| Predictive Stability Platform | AI/ML-driven software (e.g., from specialized CROs) for in-silico formulation screening [8]. |
| Size Exclusion Chromatography (SEC-HPLC) | Analytical technique to quantify soluble aggregates and fragments in a biologic sample. |
| Dynamic Light Scattering (DLS) | Technique to measure hydrodynamic radius and detect sub-micron particles. |
| Micro-Flow Imaging | Counts and images sub-visible particles. |
| Forced Degradation Study Materials | Equipment and reagents for exposing the biologic to stress conditions (heat, light, agitation) to rapidly assess stability. |

A fundamental challenge in materials science and drug development is predicting whether a new compound will be thermodynamically stable under specific synthesis conditions. The thermodynamic stability of a material dictates whether its formation is favorable compared to other competing phases and compounds composed of the same elements [2]. This analysis is crucial for guiding the synthesis of novel materials for applications ranging from energy harvesting and transparent electronics to pharmaceutical solid forms, as stable materials present far fewer technological challenges when incorporated into devices or formulations [2].

The core of this analysis involves comparing the free energy of formation of the target material with that of all possible competing phases. Assuming thermodynamic equilibrium, the condition for stability produces a set of linear inequalities that constrain the allowable chemical potentials of the constituent elements [2]. For binary systems, this is straightforward, but for ternary, quaternary, or higher-order systems—which are of increasing interest for tuning functional properties—the calculation becomes combinatorially complex [2] [10]. Manual determination of the stability region is therefore tedious and error-prone. Automated algorithms like the Chemical Potential Limits Analysis Program (CPLAP) are designed to perform this essential analysis accurately and efficiently [2].

The CPLAP Algorithm: Core Principles and Operation

CPLAP is a Fortran 90 program that implements a simple and fast algorithm to test the thermodynamic stability of a multi-ternary material and determine the necessary chemical environment (range of elemental chemical potentials) for its formation relative to all competing phases [2].

Theoretical Foundation

The algorithm is based on the assumption that the growth environment is in thermal and diffusive equilibrium. The formation of a material, for instance a binary compound \(A_mB_n\), occurs via the reaction \(mA + nB \leftrightarrow A_mB_n\). This formation competes with the formation of other phases, such as \(A_pB_q\), and the pure elemental standard states.

The fundamental condition for the stability of \(A_mB_n\) is that its formation free energy, \(\Delta G_f(A_mB_n)\), must be negative and lower in energy than any combination of other phases that could be formed from the same elements. This translates to a set of linear inequalities involving the chemical potentials (\(\mu\)) of the constituent elements. For a compound with \(n\) atomic species, the stability region is bounded within an \((n-1)\)-dimensional chemical potential space [2]. Each competing phase defines a hypersurface in this space, and the region bounded by these hypersurfaces corresponds to the range of chemical potentials where the target material is stable.

Algorithmic Workflow

The CPLAP algorithm automates the process of finding this bounded region through the following logical steps [2]:

- Input: The user provides the number of elemental species, their names and stoichiometry in the target material, and its free energy of formation. The user must also input the total number of competing phases and, for each one, its stoichiometry and free energy of formation. It is critical that all energies are obtained at the same level of theory.

- Equation Formulation: The algorithm constructs a system of \(m\) linear equations in \(n\) unknowns (the chemical potentials of the \(n\) elements). These equations are derived from the conditions that the target material is stable and that no competing phase is more stable.

- Solving Combinations: The algorithm solves all possible combinations of \(n\) equations from the total set of \(m\) equations to find their intersection points.

- Solution Validation: Each intersection point is checked to determine if it satisfies all the original inequality conditions. Points that fail are discarded.

- Output: If no valid solutions are found, the material is declared thermodynamically unstable. If valid points exist, they define the corner points (vertices) of the stability region. For 2D and 3D chemical potential spaces, the program can generate files for visualization with tools like GNUPLOT or Mathematica.

The following diagram illustrates this workflow:

Figure 1: Logical workflow of the CPLAP algorithm for determining thermodynamic stability.
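To make the vertex enumeration concrete, the workflow can be sketched in Python. This is a minimal re-implementation of the idea under stated assumptions, not CPLAP's Fortran source. Chemical potentials are taken relative to the elemental standard states, so each element is capped at 0, and formation energies are given per formula unit. Each candidate corner solves \(n\) equations (the target's equality plus \(n-1\) boundary equalities) and is then checked against every inequality. The function names, compound names, and energies in the usage example are hypothetical.

```python
import itertools


def solve_linear(a, b, tol=1e-12):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting.

    Returns None when the system is singular, i.e. the chosen hyperplanes do not
    meet in a single point.
    """
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[piv][col]) < tol:
            return None
        m[col], m[piv] = m[piv], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= f * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def stability_region(species, target, dh_target, competing, tol=1e-9):
    """Return [(dmu, active_boundaries)] for each corner, or [] if unstable.

    species:   element symbols, e.g. ["A", "B", "O"]
    target:    stoichiometry of the target, e.g. {"A": 1, "B": 1, "O": 3}
    dh_target: formation energy of the target (eV per formula unit)
    competing: {name: (stoichiometry, formation energy per formula unit)}
    dmu values are chemical potentials relative to the elemental standard states.
    """
    def coeffs(stoich):
        return [float(stoich.get(s, 0)) for s in species]

    n = len(species)
    # Inequalities a . dmu <= b: no competing phase forms, no element precipitates.
    bounds = [(name, coeffs(st), dh) for name, (st, dh) in competing.items()]
    bounds += [(f"{s} (element)", [1.0 if j == i else 0.0 for j in range(n)], 0.0)
               for i, s in enumerate(species)]
    target_row = coeffs(target)

    corners = []
    # Each candidate corner: the target's equality plus n-1 boundary equalities.
    for combo in itertools.combinations(bounds, n - 1):
        dmu = solve_linear([target_row] + [row for _, row, _ in combo],
                           [dh_target] + [rhs for _, _, rhs in combo])
        if dmu is None:
            continue
        residuals = [sum(ai * mi for ai, mi in zip(row, dmu)) - rhs for _, row, rhs in bounds]
        if any(r > tol for r in residuals):
            continue  # violates at least one inequality: discard
        if any(max(abs(x - y) for x, y in zip(dmu, c)) < 1e-6 for c, _ in corners):
            continue  # same corner reached through a different combination
        active = [name for (name, _, _), r in zip(bounds, residuals) if abs(r) <= tol]
        corners.append((dmu, active))
    return corners


# Hypothetical ternary ABO3 with two competing oxides (illustrative energies in eV).
competing = {"AO": ({"A": 1, "O": 1}, -5.0), "BO2": ({"B": 1, "O": 2}, -4.5)}
region = stability_region(["A", "B", "O"], {"A": 1, "B": 1, "O": 3}, -10.0, competing)
if not region:
    print("unstable with respect to the listed phases")
for dmu, active in region:
    print([round(v, 3) for v in dmu], "bounded by", active)
```

With these made-up inputs, the sketch finds four corners. Each corner is bounded by AO or BO2 together with a B-rich or O-rich elemental limit. Raising the target's formation energy to -9.0 eV (less stable than AO + BO2 combined) returns an empty list, which is the "unstable" outcome. Brute-force enumeration scales combinatorially with the number of competing phases, but for the problem sizes described here it remains fast.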

Essential Research Reagent Solutions

The following table details the key "reagents" or essential inputs required to execute a thermodynamic stability analysis using an algorithm like CPLAP.

Table 1: Essential Research Reagent Solutions for CPLAP Analysis

| Research Reagent | Function in the Analysis |
|---|---|
| Target Material Free Energy of Formation (\(\Delta G_f\)) | The fundamental thermodynamic quantity for the compound whose stability is being investigated. It serves as the baseline for all comparisons with competing phases [2]. |
| Competing Phases Free Energies (\(\Delta G_{f,\text{comp}}\)) | The free energies of formation for all other possible compounds (e.g., binaries, ternaries) and elemental standard states that can be formed from the constituent elements. A comprehensive and accurate set is crucial for a correct stability assessment [2]. |
| Consistent Computational Method | A uniform level of theory (e.g., a specific DFT functional, dispersion correction) must be used to calculate all free energies. This ensures internal consistency and avoids unphysical predictions arising from mixing data of different accuracies [2] [10]. |
| Elemental Chemical Potentials (\(\mu_i\)) | The variables of the analysis. They represent the energy state of each elemental component in the growth environment. The algorithm's goal is to find the range of these values where the target material is stable [2]. |

Application Notes and Protocols

This section provides a detailed methodology for applying CPLAP to determine the thermodynamic stability of a material, using a ternary system as an example.

Protocol: Determining Thermodynamic Stability with CPLAP

Objective: To computationally determine the thermodynamic stability of a ternary material (e.g., BaSnO₃) and the range of elemental chemical potentials (Ba, Sn, O) required for its successful synthesis.

I. Pre-Analysis Phase: Input Generation

Identify Competing Phases:

- Conduct a thorough search of chemical databases (e.g., the Inorganic Crystal Structure Database) to identify all known solid phases in the Ba-Sn-O system and in each of its binary subsystems (Ba-O, Sn-O, Ba-Sn) [2].

- For BaSnO₃, key competing phases include BaO, SnO, SnO₂, and the elemental standard states (Ba, Sn, O₂) [2].

Calculate Free Energies of Formation:

- Using a consistent computational framework (e.g., Density Functional Theory with a specific functional and pseudopotential), calculate the free energy of formation at the athermal limit (\(T = 0\) K, approximating the free energy by the internal energy) for:

- The target material: BaSnO₃.

- All identified competing phases.

- Critical Note: The accuracy of the final result is entirely dependent on the consistency and completeness of this input data [2].

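In this step, each formation energy follows from the total energy of the compound minus the energies of its constituent elements in their standard states. The minimal sketch below assumes per-atom reference energies and per-formula-unit compound energies, both from one consistent calculation setup. The function name and all energies are placeholders, not results for BaSnO₃.

```python
def formation_energy(e_compound, stoich, e_ref_per_atom):
    """Formation energy per formula unit at the athermal (T = 0 K) limit.

    e_compound:     total energy of one formula unit of the compound (eV)
    stoich:         {element: atoms per formula unit}
    e_ref_per_atom: total energy per atom of each element in its standard state (eV);
                    for oxygen this is half the energy of an isolated O2 molecule
    """
    return e_compound - sum(n * e_ref_per_atom[el] for el, n in stoich.items())


# Placeholder energies (eV) for illustration; real values come from one consistent DFT setup.
refs = {"Ba": -1.9, "Sn": -3.8, "O": -4.9}
stoich = {"Ba": 1, "Sn": 1, "O": 3}
dh = formation_energy(-32.4, stoich, refs)
print(f"{dh:.2f} eV per formula unit, {dh / sum(stoich.values()):.2f} eV per atom")
```

The same function applies unchanged to every competing phase, which helps keep the whole input set on one energy reference.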

II. Execution Phase: Running CPLAP

Prepare Input File:

- Format the input data as required by CPLAP. This includes:

- Number of species in the target compound (e.g., 3 for Ba, Sn, O).

- Names and stoichiometry of these species.

- Free energy of formation of the target compound.

- Total number of competing phases.

- For each competing phase, its stoichiometry and free energy of formation.


Execute the Program:

- Run the CPLAP executable. The program will automatically perform the algorithm outlined in the Algorithmic Workflow section above.

III. Post-Analysis Phase: Interpreting Output

Stability Result:

- The primary output will state whether the material is thermodynamically stable or unstable relative to the provided set of competing phases.

Stability Region Data:

- If stable, CPLAP outputs the intersection points that define the vertices of the stability region in the \((n-1)\)-dimensional chemical potential space. For a ternary system like BaSnO₃, this is a 2D space (e.g., \(\Delta\mu_{\text{Ba}}\) vs \(\Delta\mu_{\text{Sn}}\), with \(\Delta\mu_{\text{O}}\) determined by the stability condition).

- The output also specifies which competing phase (or element) is responsible for each bounding surface of the stability region.

Visualization (Optional):

- For 2D and 3D spaces, CPLAP generates data files compatible with visualization tools like GNUPLOT. This allows for the creation of a phase diagram that clearly shows the stable region.

Visualization of the Chemical Potential Space

The output of a CPLAP analysis for a stable ternary material can be visualized as a bounded region in a 2D chemical potential space, as shown below. The axes represent the chemical potentials of two elements relative to their standard states (e.g., \(\Delta\mu_{\text{Ba}} = \mu_{\text{Ba}} - \mu_{\text{Ba}}^{\text{standard}}\)).

Figure 2: Schematic representation of the stability region for a ternary material like BaSnO₃. The shaded polygon represents the combination of Ba and Sn chemical potentials for which BaSnO₃ is stable. Each edge of the polygon corresponds to equilibrium with a different competing phase (e.g., BaO, SnO₂).

Table 2: Key Output Metrics from a CPLAP Analysis of a Hypothetical Ternary Material

| Output Metric | Description | Significance for Synthesis |
|---|---|---|
| Maximum \(\Delta\mu_A\) | The highest permissible chemical potential for element A, reached when elemental A would precipitate (\(\Delta\mu_A = 0\)) or an A-rich competing phase such as \(A_pB_q\) becomes more stable. | Defines the A-rich limit of the synthesis window. |
| Minimum \(\Delta\mu_A\) | The lowest permissible chemical potential for element A, reached when a phase poorer in A (or elemental B) becomes more stable. | Defines the A-poor limit of the synthesis window. |
| Stability Region Area | The size of the bounded region in chemical potential space. | A larger area indicates a more robust material that can be synthesized under a wider range of conditions. |
| Bounding Competing Phases | The list of phases that form the boundaries of the stability region. | Identifies the most likely impurities to form if synthesis conditions deviate from the optimal range. |
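The geometric metrics in this table can be read directly off the vertex list. The minimal sketch below assumes the vertices have already been projected onto two chemical potential axes (e.g., \(\Delta\mu_{\text{Ba}}\), \(\Delta\mu_{\text{Sn}}\)). Because the stability region is an intersection of half-planes, it is convex. Sorting the corners by angle around their centroid therefore gives a valid order for the shoelace area formula. The function name and corner coordinates are hypothetical.

```python
import math


def region_metrics(corners):
    """Area and axis ranges of a convex 2D stability region.

    corners: (x, y) vertices in any order, e.g. (dmu_Ba, dmu_Sn) pairs in eV.
    Returns (area in eV^2, (x_min, x_max), (y_min, y_max)).
    """
    cx = sum(x for x, _ in corners) / len(corners)
    cy = sum(y for _, y in corners) / len(corners)
    # Order corners counter-clockwise around the centroid (valid for convex regions).
    ordered = sorted(corners, key=lambda p: math.atan2(p[1] - cy, p[0] - cx))
    # Shoelace formula over consecutive edges, closing the loop back to the first corner.
    twice_area = sum(x1 * y2 - x2 * y1
                     for (x1, y1), (x2, y2) in zip(ordered, ordered[1:] + ordered[:1]))
    xs = [x for x, _ in corners]
    ys = [y for _, y in corners]
    return abs(twice_area) / 2.0, (min(xs), max(xs)), (min(ys), max(ys))


# Hypothetical corners (eV), deliberately listed out of order.
area, (a_min, a_max), (b_min, b_max) = region_metrics(
    [(-3.0, -1.0), (-2.0, -2.5), (-3.5, -2.0), (-2.2, -0.8)])
print(f"area {area:.3f} eV^2; dmu_A in [{a_min}, {a_max}]; dmu_B in [{b_min}, {b_max}]")
```

The area depends on which pair of axes is used for the projection. It is therefore only meaningful for comparing regions plotted on the same axes.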

Advanced Applications and Integration in Broader Workflows

The power of automated stability analysis extends beyond simple ternary compounds. Recent research highlights its integration into larger, automated computational workflows. For instance, the SimStack framework employs an automated workflow to model polymorphic features and thermodynamic stability in complex metal halide perovskite alloys (e.g., MA₁₋ₓCsₓPbI₃ and FA₁₋ₓCsₓPbI₃) [10]. This workflow seamlessly integrates cluster expansion with the generalized quasichemical approximation (GQCA) to handle configurational disorder and calculate phase diagrams, incorporating sophisticated relativistic effects like spin-orbit coupling [10].

Furthermore, the determination of an accurate stability region is a critical prerequisite for modeling point defects in materials. The formation energy of a defect depends directly on the chemical potentials of the constituent elements [2]. Knowledge of the full stability range is therefore essential for predicting which defects will form preferentially under given synthesis conditions, enabling the rational design of materials with specific electronic or optical properties, such as p-type transparent conductors [2].
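For reference, the widely used supercell expression for the formation energy of a defect \(X\) in charge state \(q\) makes the dependence on chemical potential explicit. This is the standard form from the defect-modelling literature rather than an equation quoted from [2]. Here, \(n_i\) is the number of atoms of species \(i\) added to (\(n_i > 0\)) or removed from (\(n_i < 0\)) the host, and \(\mu_i^0 + \Delta\mu_i\) is that species' chemical potential, with \(\Delta\mu_i\) taken from within the stability region. \(E_F\) is the Fermi level measured from the valence-band maximum \(E_{\text{VBM}}\), and \(E_{\text{corr}}\) collects finite-size corrections:

\[ E_f[X^q] = E_{\text{tot}}[X^q] - E_{\text{tot}}[\text{host}] - \sum_i n_i\left(\mu_i^0 + \Delta\mu_i\right) + q\left(E_{\text{VBM}} + E_F\right) + E_{\text{corr}} \]

Moving across the stability region shifts each defect's formation energy by \(-\sum_i n_i\,\Delta\mu_i\). For example, an oxygen vacancy (\(n_{\text{O}} = -1\)) becomes cheaper to form as \(\Delta\mu_{\text{O}}\) decreases toward O-poor conditions.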

How It Works: Automated Algorithms and Workflows in Action

The Chemical Potential Limits Analysis Program (CPLAP) is an automated algorithm designed to determine the thermodynamic stability of a material and the precise range of chemical potentials required for its synthesis relative to competing phases [2]. This tool addresses a fundamental challenge in materials science, particularly for multi-component systems where manual stability analysis becomes prohibitively complex [2].

The algorithm is especially valuable for theoretical and computational studies of advanced materials for technological applications such as energy harvesting, transparent electronics, and optoelectronics [2]. For ternary systems, the stability calculation, while straightforward, becomes tedious with many competing phases, and for quaternary or higher-order systems, the process becomes substantially more complicated due to the large number of competing phases and independent variables [2].

Theoretical Foundation

Core Thermodynamic Principle

The fundamental assumption underlying CPLAP's analysis is that the growth environment is in thermal and diffusive equilibrium [2]. The algorithm tests whether the formation of a target material is thermodynamically favorable compared to all possible competing phases and the elemental standard states.

For a binary compound \(A_mB_n\) forming via the reaction \(mA + nB \leftrightarrow A_mB_n\), the formation energy is:

\[ \Delta G_f(A_mB_n) = G(A_mB_n) - m\mu_A - n\mu_B \]

where \(G(A_mB_n)\) is the free energy of the compound, and \(\mu_A\) and \(\mu_B\) are the chemical potentials of elements A and B, respectively [2].

Stability Conditions

For the target material to be stable, two conditions must be satisfied:

Stability against elements: The formation energy must be negative:

\[ \Delta G_f(A_mB_n) < 0 \]

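Stability against competing phases: The formation energy must be lower than that of any decomposition pathway into competing phases. Let \(\Delta\mu_i\) denote the chemical potential of element \(i\) relative to its standard state. Equilibrium with the target then fixes \(m\,\Delta\mu_A + n\,\Delta\mu_B = \Delta G_f(A_mB_n)\), and each competing phase \(C\) imposes a linear inequality:

\[ a\,\Delta\mu_A + b\,\Delta\mu_B + \cdots < \Delta G_f(C) \]

where \(a, b, \ldots\) are the stoichiometric coefficients of \(C\) [2].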

The chemical potentials are referenced to their standard states, with the energy per atom in its standard state set as the zero of chemical potential for that element [2].
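A worked binary case shows how these stability conditions become explicit limits. Consider a target \(A_mB_n\) and a single competing phase \(A_pB_q\), both generic. Write \(\Delta\mu_i\) for chemical potentials relative to the standard states, and eliminate \(\Delta\mu_B\) using the target's equality:

\[
\begin{aligned}
m\,\Delta\mu_A + n\,\Delta\mu_B &= \Delta G_f(A_mB_n), \qquad \Delta\mu_A \le 0,\ \Delta\mu_B \le 0\\
\Rightarrow\quad \frac{\Delta G_f(A_mB_n)}{m} &\le \Delta\mu_A \le 0,\\
p\,\Delta\mu_A + q\,\Delta\mu_B &< \Delta G_f(A_pB_q)\\
\Rightarrow\quad (np - mq)\,\Delta\mu_A &< n\,\Delta G_f(A_pB_q) - q\,\Delta G_f(A_mB_n).
\end{aligned}
\]

If \(np - mq > 0\), the competing phase is richer in A and caps \(\Delta\mu_A\) from above. If \(np - mq < 0\), it raises the lower bound instead. As an illustration with made-up numbers, take \(\Delta G_f(AB) = -2.0\) eV and a competing \(AB_2\) with \(\Delta G_f = -2.5\) eV. Then \(np - mq = -1\), and the condition becomes \(\Delta\mu_A > -1.5\) eV, narrowing the window from \([-2.0, 0]\) to \((-1.5, 0]\) eV.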

The CPLAP Algorithm: A Step-by-Step Breakdown

Input Requirements

The algorithm requires the following input data, which must be calculated or measured prior to execution using a consistent level of theory [2]:

Table 1: CPLAP Input Requirements

| Input Parameter | Description | Format |
|---|---|---|
| Number of Species | Atomic species in the target material | Integer |
| Species Names & Stoichiometry | Element symbols and their proportions in the material | Chemical formula |
| Free Energy of Formation | Free energy of formation of the target material | Numerical value (e.g., eV per formula unit, matching the stoichiometry given) |
| Competing Phases Data | Number, stoichiometry, and free energy of formation of all competing phases | List of compounds with energies |

The user must extensively search chemical databases and calculate all competing phase energies using the same computational parameters as for the target material to ensure consistency [2].

Algorithm Workflow

The CPLAP algorithm implements the following computational workflow:

Figure 1: CPLAP Algorithm Workflow

Core Computational Procedure

The algorithm executes these specific mathematical operations:

Dimensionality Reduction: The condition that the target material is stable constrains the elemental chemical potentials, reducing the space spanned by the chemical potentials to (n-1) dimensions, where n is the number of atomic species [2].

Hypersurface Intersection: Each competing phase and standard state defines a hypersurface in the (n-1)-dimensional chemical potential space. The algorithm finds all intersection points of these hypersurfaces by solving all combinations of n linear equations from the m available equations (where m > n) [2].

Solution Validation: Each intersection point is tested against all constraint inequalities to determine if it satisfies every condition. If no valid solutions exist, the material is declared thermodynamically unstable [2].

Stability Region Definition: Valid intersection points form the vertices of the stability region polygon/polyhedron in chemical potential space. The region bounded by these points contains all chemical potential values for which the target material is stable [2].

Output Interpretation

CPLAP generates several output components:

Table 2: CPLAP Output Components

| Output Component | Description | Utility |
|---|---|---|
| Stability Result | Binary determination of material stability | Immediate go/no-go decision |
| Intersection Points | Chemical potential values at stability region boundaries | Quantitative stability limits |
| Phase Associations | Which competing phase defines each boundary point | Guides synthesis conditions |
| Visualization Files | Data files for GNUPLOT or MATHEMATICA | Enables 2D/3D visualization of stability region |

For two- and three-dimensional chemical potential spaces, the program generates files for visualization of the stability region using standard plotting tools [2].

Application Example: BaSnO₃ Stability Analysis

Experimental Setup

To demonstrate CPLAP's application, consider the ternary system BaSnO₃ (cubic perovskite), an indium-free transparent conducting oxide, competing with phases BaO, SnO, SnO₂, and BaSn₂ [2].

Table 3: Research Reagent Solutions for Stability Analysis

| Material/Reagent | Function in Analysis | Theoretical Treatment |
|---|---|---|
| BaSnO₃ Target Phase | Primary material whose stability is being assessed | DFT calculation of formation energy |
| Competing Phases (BaO, SnO, SnO₂, BaSn₂) | Reference states for stability comparison | Database energies or DFT calculations |
| Elemental Standards (Ba, Sn, O) | Reference states for chemical potential zero points | Energies of the elemental standard states (Ba and Sn metals, the O₂ molecule) |
| DFT Calculation Package | Electronic structure calculations | VASP, Quantum ESPRESSO, or similar |
| Crystal Structure Database | Source of competing phase structures | Inorganic Crystal Structure Database (ICSD) |

Chemical Potential Space Visualization

For the ternary system BaSnO₃, the chemical potential space is two-dimensional after accounting for the stoichiometric constraint. The visualization would show the stability region polygon bounded by lines representing each competing phase.

Figure 2: BaSnO₃ Chemical Potential Space Construction

Protocol for Stability Determination

Protocol 1: Complete Thermodynamic Stability Analysis

System Preparation

- Identify all constituent elements of the target material (e.g., Ba, Sn, O for BaSnO₃)

- Define the target material's crystal structure and stoichiometry

Competing Phase Enumeration

- Search crystal structure databases (ICSD) for all known phases in the constituent systems

- Include all binary and ternary compounds formed from subsets of the elements

- Include elemental standard states (pure elements in their reference structures)

Energy Calculation

- Calculate formation energies for target material and all competing phases

- Use consistent computational parameters (DFT functional, k-points, cutoff energy)

- Ensure all calculations are performed at the same level of theory

CPLAP Execution

- Prepare input file with all formation energies and stoichiometries

- Execute CPLAP algorithm to determine stability and chemical potential ranges

- For unstable materials, identify the competing phases that cause instability

Results Interpretation

- Analyze the stability region polygon vertices and boundaries

- Identify the competing phase associated with each stability boundary

- Extract practical synthesis conditions from chemical potential ranges

Advanced Applications

Defect Formation Energy Prediction

Beyond intrinsic stability, CPLAP's chemical potential ranges are crucial for predicting defect behavior. Defect formation energies depend on chemical potentials, and knowledge of the full stability range is essential for predicting which defects form favorably under specific synthesis conditions [2]. This enables targeted materials design, such as producing p-type materials by identifying chemical environments that favor acceptor defect formation [2].
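The following minimal sketch shows how a vertex list feeds into defect modelling. It assumes the standard supercell expression \(E_f = E_{\text{defect}} - E_{\text{host}} - \sum_i n_i(\mu_i^0 + \Delta\mu_i) + q(E_{\text{VBM}} + E_F) + E_{\text{corr}}\), where \(n_i\) counts atoms added (positive) or removed (negative). Every energy, the vertex labels, and the function name are placeholders.

```python
def defect_formation_energy(e_defect, e_host, added, mu_ref, dmu,
                            charge=0, e_vbm=0.0, e_fermi=0.0, e_corr=0.0):
    """Formation energy (eV) of a point defect in charge state `charge`.

    added:  {element: atoms added to the host (+) or removed from it (-)}
    mu_ref: standard-state chemical potential of each element (eV/atom)
    dmu:    chemical potential relative to the standard state, e.g. taken
            from one vertex of the stability region (eV/atom)
    e_fermi is measured from the valence-band maximum e_vbm.
    """
    reservoir = sum(n * (mu_ref[el] + dmu[el]) for el, n in added.items())
    return e_defect - e_host - reservoir + charge * (e_vbm + e_fermi) + e_corr


# Placeholder inputs for a neutral oxygen vacancy evaluated at two hypothetical vertices.
mu_ref = {"O": -4.9}
vertices = {"O-rich": {"O": 0.0}, "O-poor": {"O": -2.5}}
energies = {
    label: defect_formation_energy(-390.0, -400.0, {"O": -1}, mu_ref, dmu)
    for label, dmu in vertices.items()
}
for label, e in energies.items():
    print(f"V_O at {label} vertex: {e:.2f} eV")
print("lowest-cost environment:", min(energies, key=energies.get))
```

Because the expression is linear in \(\Delta\mu_i\), its extreme values over the stability region always occur at vertices. Evaluating the corner points is therefore enough to bracket each defect's formation energy.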

Higher-Order Systems

While the BaSnO₃ example demonstrates a ternary system, CPLAP's principal advantage emerges with higher-order systems. For quaternary, quinternary, and more complex materials, manual stability analysis becomes intractable, while CPLAP systematically handles the increasing complexity through its automated intersection-finding algorithm [2].

Technical Specifications

CPLAP is implemented in FORTRAN 90 and is distributed with a standard CPC license [2]. The program requires minimal computational resources (approximately 2 MB RAM) and executes in less than one second for typical problems [2]. The source code is available through the CPC Program Library and GitHub repository [2] [11].

Leveraging Automated Scientific Workflows (e.g., SimStack)

The growing complexity of computational materials science necessitates robust frameworks that can streamline multiscale simulations. SimStack is an intuitive workflow framework designed to facilitate the efficient implementation, adoption, and execution of complex simulation workflows, enabling fast uptake of modeling techniques for advanced functional materials and nanomaterials by industry [12]. This platform addresses key challenges in computational research by providing automation, reproducibility, reusability, and transferability of simulation protocols, dramatically reducing the time and effort required to set up new or existing workflows while hiding the complexity of high-performance computing (HPC) resources [13] [14].

SimStack enables rapid prototyping of complex multiscale workflows for materials design through several key features. New modules from any source (academic or commercial) can be incorporated within minutes without advanced coding knowledge, as a graphical user interface (GUI) is automatically generated when a new module is incorporated [15]. The drag-and-drop development environment allows researchers to adapt simulation workflows easily, with parameters and files automatically transferred between individual modules upon execution. The specialized client-server setup enables one-click execution on remote resources and convenient job monitoring, eliminating the need for direct SSH access for workflow developers and end-users [15].

SimStack Architecture and Core Components

Client-Server Architecture

The SimStack workflow framework operates on a lightweight client-server concept connected via the secure shell (SSH) protocol [14]. The client provides a GUI for the end-user to construct, modify, and configure workflows, submit them to the server component on remote HPC resources, monitor submitted workflows, and browse and retrieve generated data [14]. This architecture effectively hides the complexity of job submission, monitoring, and file transfer, making advanced computational methods accessible to non-experts [12].

The server component, deployed on remote computational resources (either in-house or in the cloud for on-demand SaaS), handles the actual execution. This setup allows for on-demand scaling and pay-per-use of cloud resources, eliminating up-front costs and reducing the time and expense associated with setting up modeling solutions [12]. Since only the SimStack Client is installed at the end-user side while complex software modules reside on the remote resource, maintenance overhead is significantly reduced [12].

Workflow Active Nodes (WaNos)

The fundamental building blocks of SimStack workflows are Workflow Active Nodes (WaNos). Each WaNo represents a discrete step in the workflow execution and contains an XML file describing the expected input, configurable parameters, the output generated by the WaNo, and the code to be executed [14]. SimStack employs the Jinja templating engine to incorporate user input, allowing specific parameters to be easily exposed via the GUI and included as command line parameters or in script and input file templates [14]. This templating approach transforms static scripts into user-configurable building blocks with graphical interfaces within minutes, enabling simple incorporation of any arbitrary software or script routinely used on HPC resources [14].
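The templating idea can be illustrated with a minimal sketch that uses Python's standard-library `string.Template` as a stand-in for Jinja. It does not reproduce SimStack's WaNo XML format, and the template fields (`functional`, `cutoff`, `kx`/`ky`/`kz`) and function name are hypothetical. User-set parameters are substituted into a fixed input-file skeleton, so every compound in a stability study runs with the same settings unless a parameter is deliberately changed.

```python
from string import Template

# Hypothetical input-file skeleton; in SimStack the placeholders would be Jinja
# expressions bound to parameters exposed in the WaNo's GUI.
INPUT_TEMPLATE = Template(
    "functional = $functional\n"
    "cutoff_eV  = $cutoff\n"
    "kpoints    = $kx $ky $kz\n"
)


def render_input(params):
    """Fill the skeleton; substitute() raises KeyError if a parameter is missing,
    so an incompletely configured step fails before any job is submitted."""
    return INPUT_TEMPLATE.substitute(params)


# Shared settings keep the level of theory identical across all phases.
shared = {"functional": "PBEsol", "cutoff": 520}
for name, kmesh in {"BaO": (8, 8, 8), "SnO2": (6, 6, 8)}.items():
    text = render_input({**shared, "kx": kmesh[0], "ky": kmesh[1], "kz": kmesh[2]})
    print(f"# {name}\n{text}")
```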

Table: Core Components of the SimStack Framework

| Component | Function | Key Features |
|---|---|---|
| SimStack Client | Graphical interface for workflow design and monitoring | Drag-and-drop environment, automated GUI generation, connection to remote resources |
| SimStack Server | Backend execution on HPC resources | Handles job submission, data transfer, and workflow coordination on remote systems |
| WaNo (Workflow Active Node) | Basic workflow building block | XML-based definition, configurable parameters, template-based code execution |
| WaNo Architect | Intelligent graphical editor for WaNo development | Assists software incorporation, maximizes developer productivity |

Application Note: Thermodynamic Stability Analysis of Perovskite Alloys

A compelling demonstration of SimStack's capabilities in thermodynamic stability research can be found in the automated workflow for analyzing thermodynamic stability in polymorphic perovskite alloys, as documented in npj Computational Materials [10]. This study explored the polymorphic features of pseudo-cubic A₁₋ₓCsₓPbI₃ (where A = MA, FA) alloys, focusing on how mixing organic and inorganic cations affects their structural and electronic properties, configurational disorder, and thermodynamic stability [10].

The research employed an automated cluster expansion within the generalized quasichemical approximation (GQCA) to investigate these complex systems. Results revealed how the effective radius of the organic cation (2.15 Å for MA, 2.53 Å for FA) and its dipole moment (2.15 D for MA, 0.25 D for FA) influence Glazer's rotations in the perovskite sublattice [10]. The MA-based alloy exhibited a higher critical temperature (527 K) and was stable for x > 0.60 above 200 K, while its FA analog had a lower critical temperature (427.7 K) and was stable for x < 0.15 above 100 K [10].

Key Findings and Significance

The SimStack-enabled methodology provided significant insights into the thermodynamic behavior of these complex systems. The workflow allowed comprehensive calculations of thermodynamic properties, phase diagrams, optoelectronic properties, and power conversion efficiencies while meticulously incorporating crucial relativistic effects like spin-orbit coupling (SOC) and quasi-particle corrections [10]. This structured approach predicted high power conversion efficiencies of about 28% for MA₁₋ₓCsₓPbI₃ with 0.50 < x < 1.00 and 31-32% for FA₁₋ₓCsₓPbI₃ with 0.0 < x < 0.20, the compositions identified as thermodynamically stable at room temperature [10].

The study underscored the pivotal role of composition and polymorphic degrees in determining the stability and optoelectronic properties of metal halide perovskite (MHP) alloys, demonstrating SimStack's effectiveness in advancing our understanding of these materials [10]. By automating the complex simulation protocol, the workflow enabled researchers to efficiently map the composition dependence of properties that would otherwise have to be established experimentally, at considerable cost in reagents and material characterization [10].

Experimental Protocols and Workflow Design

Automated Workflow for Thermodynamic Stability Analysis

The automated workflow for thermodynamic stability analysis implemented in SimStack followed a structured protocol to ensure comprehensive characterization of the perovskite alloy systems. The methodology leveraged first-principles investigations combined with cluster expansion techniques within the generalized quasichemical approximation to capture the configurational semi-local disorder in MHP alloys from a statistical ensemble approach [10].

The workflow maintained accuracy at the ab initio level while incorporating the relativistic corrections that are crucial for metal halide perovskites, including the GW approximation and spin-orbit coupling for accurate band gap mapping [10]. This approach enabled the research team to construct a reliable statistical ensemble for mixed metal halide perovskites that properly accounted for polymorphic contributions, something that is difficult to achieve with traditional computational approaches [10].
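The statistical step at the heart of a GQCA treatment can be sketched compactly. This is a minimal illustration, not the implementation used in [10]. It assumes the commonly used GQCA form, in which the probability of cluster class \(j\) (with \(n_j\) substituted sites, degeneracy \(g_j\), and excess energy \(\varepsilon_j\)) is proportional to \(g_j\,\eta^{n_j}\exp(-\varepsilon_j/k_BT)\). The parameter \(\eta\) is fixed by requiring the ensemble-average composition to equal \(x\). The function name and inputs are hypothetical; in a real workflow, \(\varepsilon_j\) would come from the first-principles cluster calculations.

```python
import math

K_B = 8.617333262e-5  # Boltzmann constant (eV/K)


def gqca_probabilities(n_sites, counts, degeneracies, excess_energies, x, temperature,
                       iters=200):
    """Cluster-class probabilities whose average substituted fraction equals x (0 < x < 1).

    counts[j]:          number of substituted sites n_j in cluster class j
    degeneracies[j]:    number of equivalent configurations g_j in class j
    excess_energies[j]: excess energy eps_j of class j (eV)
    """
    beta = 1.0 / (K_B * temperature)

    def probs(log_eta):
        # Work in log space and subtract the maximum to avoid overflow.
        logw = [math.log(g) - beta * e + n * log_eta
                for n, g, e in zip(counts, degeneracies, excess_energies)]
        top = max(logw)
        w = [math.exp(v - top) for v in logw]
        z = sum(w)
        return [v / z for v in w]

    # The mean composition increases monotonically with log(eta), so bisect on it.
    target = n_sites * x
    lo, hi = -60.0, 60.0
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        mean = sum(n * p for n, p in zip(counts, probs(mid)))
        if mean < target:
            lo = mid
        else:
            hi = mid
    return probs(0.5 * (lo + hi))


# Hypothetical 4-site clusters: class j has j substituted sites and g_j = C(4, j).
counts, degen = [0, 1, 2, 3, 4], [1, 4, 6, 4, 1]
print(gqca_probabilities(4, counts, degen, [0.0] * 5, 0.25, 300.0))
print(gqca_probabilities(4, counts, degen, [0.0, 0.05, 0.08, 0.05, 0.0], 0.5, 300.0))
```

With all \(\varepsilon_j = 0\), the probabilities reduce to the binomial distribution of a random alloy, which is a useful sanity check. Positive excess energies for mixed clusters shift weight toward the end-member clusters. In the full free-energy treatment, this is the tendency that produces a miscibility gap and the critical temperatures quoted above.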

Key Methodological Steps

Table: Key Methodological Steps in the Perovskite Thermodynamic Stability Workflow

| Step | Method/Technique | Purpose | Key Parameters |
|---|---|---|---|
| Structural Optimization | Density Functional Theory (DFT) | Determine equilibrium geometry of polymorphic structures | Lattice constants, Pb-I distances, Pb-I-Pb angles |
| Cluster Expansion | Automated cluster expansion within GQCA | Model configurational disorder in alloys | Effective cation radius, dipole moments |
| Electronic Structure Analysis | DFT with spin-orbit coupling (SOC) | Calculate electronic properties with relativistic effects | Band gap, density of states |
| Thermodynamic Property Calculation | Generalized quasichemical approximation | Determine thermodynamic stability and phase behavior | Critical temperature, stability ranges |
| Efficiency Prediction | Spectroscopic limited maximum efficiency (SLME) model | Predict power conversion efficiency for solar applications | Power conversion efficiency (PCE) |

Visualization of Workflow Architecture

SimStack Client-Server Architecture Diagram

Thermodynamic Stability Workflow Diagram

Research Reagent Solutions and Computational Tools

Table: Essential Computational Tools for Automated Thermodynamic Stability Workflows

| Tool/Category | Specific Examples | Function in Workflow |
|---|---|---|
| Electronic Structure Codes | VASP, Quantum ESPRESSO, ABINIT | First-principles calculation of structural and electronic properties |
| Molecular Dynamics Engines | LAMMPS, GROMACS, NAMD | Simulation of dynamic processes and thermal behavior |
| Cluster Expansion Tools | ATAT, CASM | Modeling of configurational disorder in alloy systems |
| Thermodynamic Analysis | Custom GQCA implementations, pymatgen | Calculation of phase diagrams and stability ranges |
| Workflow Management | SimStack WaNos, AiiDA, FireWorks | Orchestration of multiscale simulation protocols |
| Relativistic Corrections | SOC implementations, GW codes | Accurate electronic structure treatment for heavy elements |
| Data Analysis | Python, Jupyter notebooks | Processing of simulation results and efficiency calculations |

Implementation Considerations

Deployment and Module Integration

Implementing SimStack for thermodynamic stability research requires careful consideration of deployment strategies. The SimStack server must be made available on remote high-performance computing resources, typically institute clusters or cloud-based HPC facilities [15]. Researchers then install the SimStack client locally on their laptop or desktop computers, which is available through the official SimStack distribution channels [15].

A key advantage of SimStack is its flexibility in incorporating new computational modules. To integrate a new simulation tool, researchers create a Workflow Active Node (WaNo) consisting of XML files combined with scripts that define the execution command, expected input and output, along with essential adjustable parameters [15]. This process enables computational experts and non-experts to provide a GUI for a particular application quickly, making advanced simulation methods more accessible to the broader research community [14].

Advantages for Thermodynamic Stability Research

The application of SimStack to thermodynamic stability studies of materials offers several significant advantages. By formalizing complex simulation protocols into reusable workflows, SimStack ensures correct usage and consistency across identical and similar simulations, addressing a common source of simulation errors: incorrect use of the underlying codes [14]. Because the full simulation process is captured in a formalized workflow, results are also easier to reproduce, and reproducibility has been identified as a major challenge across scientific fields [14].

For thermodynamic stability investigations specifically, SimStack makes it practical to include the relevant relativistic effects, such as spin-orbit coupling and quasi-particle corrections, systematically while managing the many calculations required for complex alloy systems [10]. This structured approach gives a more accurate description of material behavior under realistic conditions, supporting the rational design of thermodynamically stable compositions for applications such as photovoltaics [10].

Integrating First-Principles Calculations with Cluster Expansion

First-principles calculations, primarily based on density functional theory (DFT), provide a foundational approach for computing the electronic structure and energy of materials from quantum mechanical principles. For complex systems with configurational disorder, such as alloys, directly applying DFT to every possible atomic arrangement is computationally intractable. The cluster expansion (CE) method addresses this by creating a mathematically rigorous surrogate model that maps the configuration-dependent energy of a system onto a polynomial function of occupation variables. Integrating these two methods enables the accurate and efficient prediction of thermodynamic stability in materials, forming a powerful toolkit for automated materials research. This integration is pivotal for high-throughput screening and the design of novel materials, from high-entropy alloys to energy storage compounds, by providing access to finite-temperature properties and phase stability across vast compositional spaces [16] [17].

Theoretical Foundation

First-Principles Calculations

Density functional theory serves as the primary engine for first-principles calculations in materials science. It replaces the interacting many-electron problem with an auxiliary system of non-interacting electrons moving in an effective potential, which makes computational studies of complex materials feasible [18]. The accuracy of DFT depends critically on the approximation used for the exchange-correlation (xc) functional. Semi-local functionals such as the Local-Density Approximation (LDA) or the Generalized-Gradient Approximation (GGA) are computationally efficient but often fail for systems with strongly localized d or f electrons because of electron self-interaction errors [18]. Hubbard-corrected DFT (DFT+U+V) addresses this by adding corrective terms: the onsite U term penalizes fractional occupation of orbitals on atomic sites, while the intersite V term stabilizes hybridized states shared between neighboring atoms [18]. The total energy in DFT+U+V is given by:

\[ E_{\text{DFT}+U+V} = E_{\text{DFT}} + E_{U+V} \]
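To make the role of the onsite U term concrete, the sketch below evaluates it in the simplified rotationally invariant (Dudarev-type) form, \(E_U = \frac{U}{2}\sum_\sigma \mathrm{Tr}[\mathbf{n}^\sigma(\mathbf{1}-\mathbf{n}^\sigma)]\), for a single Hubbard site with spin-resolved occupation matrices \(\mathbf{n}^\sigma\). The function name `hubbard_u_energy`, the value U = 4 eV, and the occupation matrices are illustrative assumptions; the intersite V term is omitted, and this is not the aiida-hubbard implementation.

```python
import numpy as np

def hubbard_u_energy(occupations, U):
    """Onsite Hubbard energy E_U = (U/2) * sum_sigma Tr[n^sigma (1 - n^sigma)].

    occupations: array of shape (n_spin, n_orb, n_orb), one occupation
    matrix per spin channel for a single Hubbard site (hypothetical input).
    U: Hubbard parameter in eV (illustrative value below).
    """
    energy = 0.0
    for n_sigma in np.asarray(occupations, dtype=float):
        identity = np.eye(n_sigma.shape[0])
        energy += 0.5 * U * np.trace(n_sigma @ (identity - n_sigma))
    return float(energy)

integer = np.array([np.diag([1.0, 0.0])])      # one spin channel, two orbitals
fractional = np.array([np.diag([0.5, 0.5])])   # both orbitals half filled

print(hubbard_u_energy(integer, U=4.0))     # 0.0
print(hubbard_u_energy(fractional, U=4.0))  # 1.0
```

Integer occupations carry no penalty, while half-filled orbitals carry the largest one, which is why the +U correction pushes localized d or f states toward integer filling.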

The first-principles determination of Hubbard parameters (U, V) is essential for accuracy and can be automated using frameworks like aiida-hubbard, which employs density-functional perturbation theory (DFPT) for efficient computation [18].

Cluster Expansion Formalism

The cluster expansion is a surrogate model that describes the configuration-dependent energy of a multi-component crystal. For a binary alloy with components A and B, each crystal site \(i\) is assigned an occupation variable \(s_i = +1\) for A and \(s_i = -1\) for B. A specific atomic arrangement is described by the vector \(\vec{s} = (s_1, \dots, s_N)\) [16].

The cluster expansion expresses the energy of a configuration as a sum over clusters of sites [16]:

\[ E(\vec{s}) = \sum_{c} V_c \Phi_c(\vec{s}) \]

Here, \( \Phi_c(\vec{s}) = \prod_{i \in c} s_i \) is the cluster basis function (a product of occupation variables for the sites in cluster \(c\)), and \( V_c \) is the Effective Cluster Interaction (ECI) for that cluster.
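As a toy illustration of the sum over clusters, the sketch below evaluates \(E(\vec{s})\) on a periodic one-dimensional ring, keeping only single-site clusters and nearest-neighbor pair clusters, each type sharing one ECI by symmetry. The ring geometry, the function name `ring_cluster_energy`, and the ECI values are assumptions chosen for illustration; codes such as ATAT or CASM handle 3D lattices and many more cluster types.

```python
import numpy as np

def ring_cluster_energy(spins, v_point, v_pair):
    """E(s) = sum_c V_c * Phi_c(s) on a periodic 1D ring (toy model).

    spins: occupation variables, +1 for A and -1 for B.
    v_point: ECI shared by every single-site cluster (Phi = s_i).
    v_pair: ECI shared by every nearest-neighbor pair (Phi = s_i * s_{i+1}).
    The constant empty-cluster term is omitted.
    """
    s = np.asarray(spins, dtype=float)
    point_sum = np.sum(s)                    # sum over all point clusters
    pair_sum = np.sum(s * np.roll(s, -1))    # pairs (i, i+1) with periodic wrap
    return float(v_point * point_sum + v_pair * pair_sum)

print(ring_cluster_energy([1, 1, 1, 1], v_point=0.5, v_pair=-1.0))    # -2.0
print(ring_cluster_energy([1, -1, 1, -1], v_point=0.5, v_pair=-1.0))  # 4.0
```

With a negative pair ECI, like neighbors (\(s_i s_{i+1} = +1\)) lower the energy, so the alternating AB arrangement is penalized.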

Leveraging crystal symmetry, the expansion is typically written per unit cell as a sum over orbits of symmetrically equivalent clusters \(\Omega_c\) [16]:

\[ e(\vec{s}) = \sum_{\Omega_c} w_c \xi_c(\vec{s}) \]

Here, \( \xi_c(\vec{s}) \) is the correlation function for orbit \( \Omega_c \), and \( w_c \) is the ECI incorporating the multiplicity per unit cell.
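As a toy illustration of the symmetry-reduced form, the sketch below computes orbit-averaged correlation functions \(\xi_c\) (the average of \(\Phi_c\) over all clusters in the orbit) for a periodic 1D ring with one site per unit cell, and then evaluates \(e(\vec{s})\). On this lattice each orbit (empty, point, nearest-neighbor pair) contains exactly one cluster per cell, so the multiplicity is 1 and \(w_c\) coincides with \(V_c\). The function names and ECI values are illustrative assumptions.

```python
import numpy as np

def correlation_functions(spins):
    """Orbit-averaged correlation functions xi_c for a periodic 1D ring."""
    s = np.asarray(spins, dtype=float)
    return {
        "empty": 1.0,                                    # Phi = 1 by definition
        "point": float(np.mean(s)),                      # <s_i>
        "nn_pair": float(np.mean(s * np.roll(s, -1))),   # <s_i s_{i+1}>
    }

def energy_per_cell(spins, w):
    """e(s) = sum over orbits of w_c * xi_c(s)."""
    xi = correlation_functions(spins)
    return sum(w[orbit] * xi[orbit] for orbit in w)

w = {"empty": -0.25, "point": 0.5, "nn_pair": -1.0}   # hypothetical ECIs
print(energy_per_cell([1, 1, 1, 1], w))    # -0.75
print(energy_per_cell([1, -1, 1, -1], w))  # 0.75
```

Multiplying \(e(\vec{s})\) by the number of unit cells recovers the total energy, and the correlation functions are also the columns of the design matrix used when fitting the ECIs.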

The CE Hamiltonian can be parameterized from a limited set of DFT calculations. To capture long-ranged strain interactions that decay slowly in real space, the Mixed-Space Cluster Expansion (MSCE) was developed. In MSCE, short-ranged chemical interactions are modeled in real space, while long-ranged strain interactions are treated in reciprocal space (k-space), enabling accurate modeling of size-mismatched alloys [17].

Integrated Computational Workflow

The integration of first-principles calculations and cluster expansion follows a systematic workflow to transition from fundamental quantum mechanics to predictive thermodynamic models. The schematic below illustrates this multi-stage process.

Figure 1. Integrated workflow from first-principles calculations to thermodynamic property prediction. The process begins with foundational DFT calculations, progresses through cluster expansion parameterization, and culminates in statistical mechanics simulations for property prediction.

Workflow Description

The integrated workflow consists of three primary stages:

First-Principles Foundation: This stage involves performing accurate DFT calculations. Key considerations include the choice of pseudopotentials (e.g., the Projector Augmented-Wave method) and exchange-correlation functional. For systems with localized electrons, self-consistent calculation of Hubbard U and V parameters is crucial, achievable through automated workflows like aiida-hubbard [18]. The outputs are total energies, atomic forces, and electronic structures for a set of input configurations.

Cluster Expansion Parameterization: A set of training structures representing different atomic orderings and compositions is generated. The energies from DFT calculations are used to fit the Effective Cluster Interactions (ECIs). To ensure the model is robust and predictive, Bayesian methods can be employed for uncertainty quantification and to enforce the reproduction of the correct ground-state structures [16]. The MSCE approach is applied if long-ranged strain interactions are significant [17].

Statistical Mechanics & Prediction: The parameterized CE Hamiltonian serves as an efficient surrogate for evaluating the energy of any configuration. It is coupled with statistical mechanics techniques like Monte Carlo (MC) simulations to sample the configurational space and compute thermodynamic averages. This enables the prediction of finite-temperature properties, such as free energies, phase diagrams, and short-range order parameters [19] [16].
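The Monte Carlo stage can be sketched as canonical (fixed-composition) Metropolis sampling driven by a CE Hamiltonian. The example below assumes a toy periodic 1D ring with only a nearest-neighbor pair ECI; each trial swaps one A site (+1) with one B site (-1) and is accepted with probability \(\min(1, e^{-\Delta E / k_B T})\). The function names, the ECI, and the temperature are hypothetical, and this is not the implementation used in [19] or [16].

```python
import numpy as np

def ring_energy(spins, v_pair):
    """CE energy of a periodic 1D ring with only a nearest-neighbor pair ECI.
    Point terms are constant at fixed composition, so they are dropped."""
    return float(v_pair * np.sum(spins * np.roll(spins, -1)))

def canonical_metropolis(spins, v_pair, kT, n_steps, seed=0):
    """Metropolis Monte Carlo at fixed composition (canonical ensemble).

    Each trial swaps one A site (+1) with one B site (-1); both species
    must be present. Returns the final configuration and the energy after
    every step (including the starting energy).
    """
    rng = np.random.default_rng(seed)
    s = np.array(spins, dtype=float)           # work on a copy
    energy = ring_energy(s, v_pair)
    energies = [energy]
    for _ in range(n_steps):
        i = rng.choice(np.flatnonzero(s > 0))  # random A site
        j = rng.choice(np.flatnonzero(s < 0))  # random B site
        s[i], s[j] = s[j], s[i]                # trial swap
        trial = ring_energy(s, v_pair)
        delta = trial - energy
        if delta <= 0 or rng.random() < np.exp(-delta / kT):
            energy = trial                     # accept the swap
        else:
            s[i], s[j] = s[j], s[i]            # reject: undo the swap
        energies.append(energy)
    return s, energies

start = np.array([1.0, -1.0] * 16)             # ordered AB chain, 32 sites
final, energies = canonical_metropolis(start, v_pair=-1.0, kT=0.5, n_steps=5000)
print(np.mean(energies[1000:]))                # thermal average after burn-in
```

Averaging the recorded energies after a burn-in period gives the canonical thermal average at that temperature. For clarity the sketch re-evaluates the whole lattice at every step; a production code would compute only the local energy change of each swap.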

Research Applications and Case Studies

Case Study: Thermodynamic Stability of Pseudo-binary Y\(_{1-x}\)V\(_x\)B\(_2\) Alloys

This study exemplifies the application of the integrated workflow to predict the stability and mechanical properties of transition metal diborides [20].

- Objective: To explore the thermodynamic stability and mechanical properties of AlB\(_2\)-type Y\(_{1-x}\)V\(_x\)B\(_2\) pseudo-binary alloys.

- Methods: A cluster-expansion model was built using 20,419 primitive supercells. DFT calculations provided the training data. The quasiharmonic approximation was used to account for lattice vibration effects on Gibbs free energy at various pressures (0–15 GPa) and temperatures (0–1200 K) [20].

- Key Findings:

  - The Y\(_{0.5}\)V\(_{0.5}\)B\(_2\) configuration, a superlattice of alternating YB\(_2\) and VB\(_2\) layers, was identified as the only thermodynamically stable ordered phase in the system.

  - The stabilization was attributed to a band-filling effect, in which alloying optimizes the electronic density of states.

  - The hardness of the Y\(_{0.5}\)V\(_{0.5}\)B\(_2\) superlattice was predicted to be ~40 GPa, indicating potential as a superhard material. Its shear strength and stiffness showed positive deviations of ~8% and ~5%, respectively, from the Vegard's law estimate [20].
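The Vegard's law deviations quoted above are measured against a linear interpolation between the end members YB\(_2\) (x = 0) and VB\(_2\) (x = 1). The sketch below shows that arithmetic; the function names and the property values are hypothetical and are not the numbers reported in [20].

```python
def vegard_estimate(x, prop_end_0, prop_end_1):
    """Vegard's law: linear interpolation between the end members at x = 0 and x = 1."""
    return (1.0 - x) * prop_end_0 + x * prop_end_1

def percent_deviation_from_vegard(x, prop_alloy, prop_end_0, prop_end_1):
    """Relative deviation (%) of a computed alloy property from the Vegard estimate."""
    linear = vegard_estimate(x, prop_end_0, prop_end_1)
    return 100.0 * (prop_alloy - linear) / linear

# Hypothetical shear moduli in GPa, NOT values from the cited study.
print(percent_deviation_from_vegard(0.5, prop_alloy=270.0, prop_end_0=200.0, prop_end_1=300.0))  # 8.0
```

A positive value means the ordered alloy outperforms a simple composition-weighted average of its end members.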

Table 1: Key Predicted Properties of Y\(_{0.5}\)V\(_{0.5}\)B\(_2\) from First-Principles Cluster Expansion [20]

| Property | Predicted Value | Deviation from Vegard's Law |
|---|---|---|
| Hardness | ~40 GPa | +25% |
| Shear Strength | -- | +8% |
| Stiffness | -- | +5% |
| Stable Structure | Superlattice (YB\(_2\)/VB\(_2\) layers) | N/A |

Other Noteworthy Applications

- Surface Structure of PdPtAg Ternary Alloys: A CE model parameterized with DFT data was used to explore the surface segregation and atomic ordering of a Pd/Pt/Ag(111) ternary alloy across its compositional space. Monte Carlo simulations revealed that Ag segregates to the outermost surface layer, while Pd concentration peaks in the second layer. This atomic-scale understanding is critical for designing catalysts for reactions like the oxygen reduction reaction [19].

- Interstitial Clustering in BCC High-Entropy Alloys: First-principles calculations were used to study the formation of interstitial solute (C, N, O) clusters. The analysis provided insights into the thermodynamic and kinetic factors favoring cluster formation, which is key to overcoming the strength-ductility trade-off in these alloys [21].

- Li-alloys for Batteries: Bayesian frameworks have been applied to construct cluster expansions for Li\(_x\)Mg\(_{1-x}\) and Li\(_x\)Al\(_{1-x}\) alloys, which are relevant for solid-state Li batteries. This approach formally quantifies the uncertainty in the surrogate model, improving the reliability of predicted thermodynamic properties [16].

Experimental Protocols

Protocol: Parameterizing a Cluster Expansion for a Binary Alloy

This protocol details the steps for constructing a cluster expansion for a binary alloy system A\(_x\)B\(_{1-x}\) using first-principles calculations.

Objective: To develop an accurate and predictive CE Hamiltonian for a binary alloy that enables Monte Carlo simulation of its phase stability.

Materials/Software Requirements:

- DFT software (e.g., VASP, Quantum ESPRESSO)

- Cluster expansion code (e.g., ATAT, CASM)

- High-performance computing (HPC) resources

Procedure:

Define the Parent Lattice: Identify the underlying crystal structure (e.g., FCC, BCC, HCP) and its lattice parameters.

Generate Training Structures:

- Use algorithms (e.g., in ATAT) to create a diverse set of supercells with different atomic orderings, compositions, and sizes (typically up to 20-50 atoms for initial training) [20] [16].

- The set should include prototypes like pure A, pure B, and various ordered structures at compositions A\(_3\)B, AB, AB\(_3\), etc.

- For the Mixed-Space CE (MSCE), also compute the constituent strain energy (CSE) for the system [17].
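As a toy analogue of the structure enumeration that codes like ATAT or CASM perform on 3D lattices, the sketch below lists all symmetry-distinct, primitive binary orderings on a periodic 1D ring for supercells of 1 to `max_sites` sites. Two orderings are treated as equivalent if they differ by a translation or a reflection, and cells that merely repeat a smaller cell are skipped. The 1D lattice and the function names are illustrative assumptions; real enumerators account for the full 3D space-group symmetry and supercell shapes.

```python
from itertools import product

def canonical_form(config):
    """Lexicographically smallest image of a periodic 1D ordering under
    all translations and the reflection."""
    n = len(config)
    images = []
    for seq in (config, config[::-1]):
        for shift in range(n):
            images.append(seq[shift:] + seq[:shift])
    return min(images)

def is_primitive(config):
    """True if the ordering is not a repetition of a smaller periodic cell."""
    n = len(config)
    return all(config != config[:p] * (n // p) for p in range(1, n) if n % p == 0)

def enumerate_structures(max_sites):
    """Symmetry-distinct primitive orderings (+1 = A, -1 = B) up to max_sites."""
    seen = set()
    structures = []
    for n in range(1, max_sites + 1):
        for config in product((1, -1), repeat=n):
            if not is_primitive(config):
                continue
            key = canonical_form(config)
            if key not in seen:
                seen.add(key)
                structures.append(key)
    return structures

for structure in enumerate_structures(4):
    print(structure)
```

Each printed tuple is a candidate training structure; its composition follows from the fraction of +1 entries.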

Perform First-Principles Calculations:

- For each training structure, perform a DFT calculation to obtain the fully relaxed total energy.

- DFT Settings [20] [19]:

  - Pseudopotential: Projector Augmented-Wave (PAW).

  - Exchange-Correlation Functional: PBE-GGA, or RPBE for surfaces.

  - Plane-Wave Cutoff Energy: 500 eV or higher.

  - k-point Mesh: Use a Γ-centered grid with a density of ~2000 k-points per reciprocal atom, or a 25×25×25 mesh for bulk calculations.

  - Convergence Criteria: Energy convergence to 1×10\(^{-5}\) eV and forces to 0.02 eV/Å.
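A density of ~2000 k-points per reciprocal atom means that the number of k-points times the number of atoms in the cell should be about 2000. The sketch below shows one common way to turn that into a Γ-centered mesh: choose divisions proportional to the reciprocal-lattice vector lengths and round up. The helper `kpoint_mesh` is hypothetical; pymatgen's `Kpoints.automatic_density` serves a similar purpose but uses its own rounding rules.

```python
import numpy as np

def kpoint_mesh(lattice, n_atoms, kppra=2000):
    """Gamma-centred mesh with at least `kppra` k-points per reciprocal atom.

    lattice: 3x3 array whose rows are the real-space lattice vectors (Angstrom).
    Divisions are proportional to the reciprocal-vector lengths and rounded up,
    so n1 * n2 * n3 * n_atoms >= kppra.
    """
    lattice = np.asarray(lattice, dtype=float)
    recip = 2.0 * np.pi * np.linalg.inv(lattice).T   # rows are b1, b2, b3
    lengths = np.linalg.norm(recip, axis=1)
    target = kppra / n_atoms                          # total k-points needed
    scale = (target / np.prod(lengths)) ** (1.0 / 3.0)
    mesh = np.maximum(1, np.ceil(scale * lengths)).astype(int)
    return tuple(int(n) for n in mesh)

# Usage: simple cubic cell with a = 4 Angstrom and 4 atoms
print(kpoint_mesh(np.eye(3) * 4.0, n_atoms=4))   # (8, 8, 8)
```

Rounding up guarantees the target density is met; longer real-space axes automatically receive fewer divisions.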

Fit the Effective Cluster Interactions (ECIs):

- The code fits the ECIs \(w_c\) that minimize the difference between CE-predicted and DFT-calculated energies for the training set, using least squares or a Bayesian approach such as Bayesian compressive sensing.

- Use cross-validation to select the optimal set of clusters and avoid overfitting. The cross-validation score is a good indicator of the model's predictive power [16].
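The fitting and model-selection steps can be sketched with ordinary least squares and an exact leave-one-out (LOO) cross-validation score. For linear least squares, the LOO residual of structure \(i\) equals its ordinary residual divided by \(1 - H_{ii}\), where \(H\) is the hat matrix, so no refitting is needed. This is plain least squares, not Bayesian compressive sensing, and the function name `fit_eci` and the synthetic data are assumptions for illustration.

```python
import numpy as np

def fit_eci(correlations, energies):
    """Ordinary least-squares ECIs and the leave-one-out (LOO) CV score.

    correlations: (n_structures, n_orbits) matrix of correlation functions xi_c.
    energies: (n_structures,) DFT energies per unit cell.
    Returns (eci, cv) where cv is the root-mean-square LOO prediction error.
    """
    X = np.asarray(correlations, dtype=float)
    y = np.asarray(energies, dtype=float)
    eci, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ eci
    # Diagonal of the hat matrix H = X X^+ (assumes full column rank)
    hat_diag = np.einsum("ij,ji->i", X, np.linalg.pinv(X))
    loo_residuals = residuals / (1.0 - hat_diag)
    cv = float(np.sqrt(np.mean(loo_residuals ** 2)))
    return eci, cv

# Synthetic example: 20 "structures", empty cluster plus 3 hypothetical orbits
rng = np.random.default_rng(0)
X = np.column_stack([np.ones(20), rng.uniform(-1, 1, size=(20, 3))])
y = X @ np.array([-0.2, 0.05, -0.1, 0.03])
for n_orbits in (2, 3, 4):
    _, cv = fit_eci(X[:, :n_orbits], y)
    print(n_orbits, cv)
```

Comparing the CV score across candidate cluster sets and keeping the lowest one is the selection rule described above; adding clusters always lowers the training error but not necessarily the CV score.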

Validate the Model:

- Check that the CE correctly reproduces the ground-state line: every structure on the DFT convex hull should also be on the CE hull, and the CE should not predict spurious ground states that DFT does not.

- Calculate the energy of a few additional structures not in the training set (a test set) to validate predictive accuracy.
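The ground-state check can be sketched by building the lower convex hull of formation energy versus composition for both DFT and CE energies and comparing the compositions of the hull vertices. This simplified version compares compositions rather than individual structures, keeps only the lowest energy at each composition, and does not count points lying exactly on a hull edge as ground states; the function names and energies are hypothetical.

```python
def lower_hull(compositions, energies):
    """Compositions of the vertices of the lower convex hull (ground-state line)."""
    lowest = {}
    for x, e in zip(compositions, energies):
        lowest[x] = min(e, lowest.get(x, e))   # lowest energy per composition
    hull = []
    for p in sorted(lowest.items()):
        # Drop the last vertex while it does not make a strict upward (convex) turn
        while len(hull) >= 2:
            (x0, e0), (x1, e1) = hull[-2], hull[-1]
            cross = (x1 - x0) * (p[1] - e0) - (e1 - e0) * (p[0] - x0)
            if cross <= 0:
                hull.pop()
            else:
                break
        hull.append(p)
    return {x for x, _ in hull}

def ground_states_match(compositions, e_dft, e_ce):
    """True if DFT and CE predict the same set of ground-state compositions."""
    return lower_hull(compositions, e_dft) == lower_hull(compositions, e_ce)

x = [0.0, 0.25, 0.5, 0.75, 1.0]
e_dft = [0.0, -0.10, -0.30, -0.10, 0.0]        # hypothetical formation energies (eV/atom)
print(lower_hull(x, e_dft))                     # {0.0, 0.5, 1.0}
print(ground_states_match(x, e_dft, [0.0, -0.20, -0.30, -0.10, 0.0]))  # False
```

In the second call the CE wrongly stabilizes the x = 0.25 structure, which is exactly the kind of spurious ground state this validation step is meant to catch.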

Protocol: Self-Consistent Calculation of Hubbard U and V

This protocol describes the use of an automated workflow for computing Hubbard parameters.

Objective: To determine self-consistent onsite U and intersite V parameters for a transition metal compound using DFPT.

Procedure [18]:

Initialization: Start with an initial structure and a base xc functional. An initial DFT calculation without Hubbard corrections (U=0, V=0) can be used as a starting point.

Self-Consistent Cycle:

- Step A: From the current corrected ground state, the