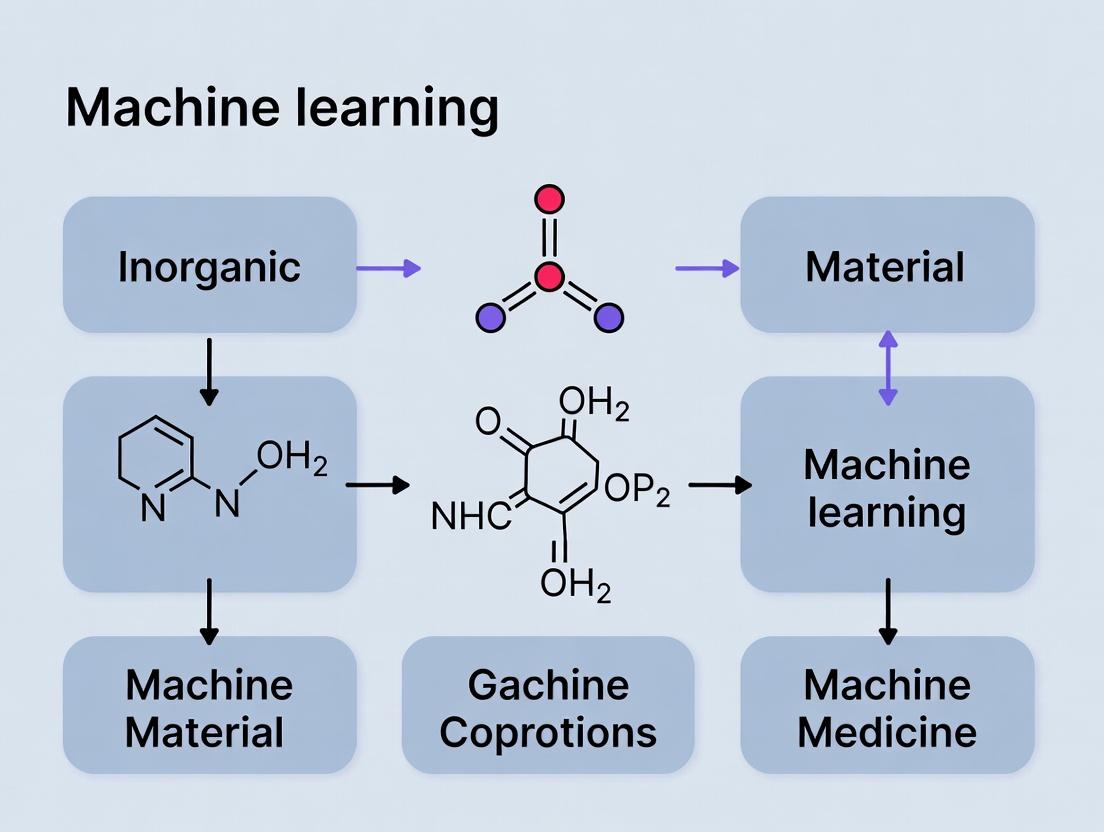

AI vs. MD: Can Machine Learning Replace Expert Assessment in Medicine and Drug Development?

This article critically examines the potential for machine learning (ML) to replace or augment expert clinical and research assessment in medicine.

Abstract

This article critically examines the potential for machine learning (ML) to replace or augment expert clinical and research assessment in medicine. For an audience of researchers, scientists, and drug development professionals, we explore the foundational promise and current limitations of ML models in diagnostic, prognostic, and therapeutic tasks. The analysis progresses through the methodologies powering medical AI applications, identifies key challenges in model robustness and clinical integration, and provides a comparative framework for evaluating ML performance against traditional expert-driven paradigms. The conclusion synthesizes the irreplaceable role of human expertise with the transformative potential of ML, advocating for a collaborative 'human-in-the-loop' future in precision medicine and accelerated drug discovery.

The Promise and Peril: Foundational Concepts of Machine Learning in Medical Decision-Making

The integration of machine learning (ML) into medicine presents a fundamental tension: the nuanced, contextual judgment of human experts versus the scalable, data-driven precision of algorithms. This whitepaper examines this landscape, evaluating whether ML can replace expert assessment or whether a synergistic hybrid model is the inevitable outcome. The analysis is grounded in recent comparative studies across diagnostic imaging, prognostic modeling, and biomarker discovery.

Quantitative Performance Comparison: Expert vs. Algorithm

Recent meta-analyses and head-to-head trials provide a data-rich comparison. Key performance metrics are summarized below.

Table 1: Diagnostic Performance in Medical Imaging (2020-2023 Studies)

| Condition & Modality | Expert Sensitivity/Specificity (%) | Algorithm Sensitivity/Specificity (%) | Study Design (N) |

|---|---|---|---|

| Diabetic Retinopathy (Fundus) | 84.2 / 93.5 | 92.8 / 95.2 | Prospective validation (27,000+ patients) |

| Pulmonary Nodule (CT) | 82.7 / 78.4 | 94.1 / 86.2 | Retrospective cohort (1,000+ nodules) |

| Breast Cancer (Mammography) | 87.5 / 91.0 | 90.2 / 94.7 | Randomized reader study (100,000+ scans) |

| Melanoma (Dermoscopy) | 78.9 / 85.1 | 88.4 / 84.3 | Cross-sectional, multi-reader (2,500 images) |

Table 2: Prognostic Model Performance in Oncology

| Cancer Type & Prediction Task | Expert (Clinician) Accuracy / AUC | Algorithm (ML Model) Accuracy / AUC | Key Algorithm & Features |

|---|---|---|---|

| NSCLC (2-Year Survival) | 68% / 0.71 | 79% / 0.84 | Random Forest: CT radiomics, genomics, clinical stage |

| AML (Treatment Response) | 72% / 0.74 | 85% / 0.89 | Gradient Boosting: Flow cytometry, mutational profile |

| Prostate Cancer (Progression) | 65% / 0.68 | 77% / 0.81 | Neural Network: PSA kinetics, MRI features, histopathology |

Key Insight: Algorithms consistently match or exceed expert performance in well-defined, data-rich tasks but struggle in edge cases requiring integrative reasoning from multimodal, unstructured data.

Experimental Protocols for Comparative Validation

Protocol A: Standalone vs. Assistive Diagnostic AI Evaluation

- Objective: Determine whether an AI model, evaluated as a standalone tool and as an assistive device, improves on expert diagnostic performance.

- Design: Randomized, controlled, crossover study.

- Participants: 30 board-certified radiologists, 300 curated imaging studies with confirmed ground truth (50% prevalence of target condition).

- Arm 1 (Unaided): Experts review cases independently.

- Arm 2 (AI-Assisted): Experts review cases presented with algorithm probability score and segmentation map.

- Outcomes: Primary: Difference in AUC. Secondary: Reading time, inter-observer variability, confidence scores.

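- Statistical Analysis: Paired comparison of per-reader AUCs (paired t-test, or a multi-reader multi-case method such as Obuchowski-Rockette to account for both reader and case variability); Bland-Altman analysis for agreement.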
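
The primary outcome of Protocol A, the difference in AUC between unaided and AI-assisted reads, can be illustrated with a minimal sketch. It assumes each reader gives a 1-5 confidence rating per case; the ratings and ground truth below are hypothetical and are not taken from any study. The AUC is computed with the rank-based (Mann-Whitney) formulation rather than a library call.

```python
def auc_mann_whitney(scores, labels):
    """AUC as the probability that a random positive case is scored above
    a random negative case (ties count as one half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical confidence ratings from one reader on the same eight cases.
truth = [1, 1, 1, 1, 0, 0, 0, 0]
unaided = [4, 3, 2, 5, 3, 1, 2, 2]
assisted = [5, 4, 3, 5, 2, 1, 2, 3]

auc_unaided = auc_mann_whitney(unaided, truth)
auc_assisted = auc_mann_whitney(assisted, truth)
print(f"unaided AUC = {auc_unaided:.3f}")
print(f"assisted AUC = {auc_assisted:.3f}")
print(f"difference = {auc_assisted - auc_unaided:+.3f}")
```

In a real reader study the per-reader differences would then feed the statistical analysis step; a single reader on eight cases is far too small to support any conclusion.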

Protocol B: Algorithmic Discovery vs. Expert Hypothesis-Driven Research

- Objective: Compare biomarker discovery yield from unsupervised ML analysis versus traditional expert-led hypothesis-driven research.

- Design: Retrospective analysis of multi-omics dataset (e.g., TCGA).

- Expert-Led Arm: Literature review to select 20 candidate genes for pathway analysis and survival association.

- Algorithmic Arm: Unsupervised representation learning (e.g., a variational autoencoder) with clustering of the latent space, followed by differential expression analysis between clusters to identify the top 20 novel signature genes.

- Validation: Both sets are validated on an independent cohort using time-dependent ROC analysis for prognostic power.

- Outcomes: Number of validated biomarkers, median improvement in C-index, biological interpretability score (via expert panel).

Visualizing the Hybrid Decision Workflow

The emerging paradigm is not replacement but augmentation. The following diagram illustrates a synergistic workflow for a clinical diagnostic decision.

Diagram Title: AI-Augmented Clinical Decision Workflow

The Scientist's Toolkit: Key Reagent Solutions for Validation Studies

Table 3: Essential Research Reagents for ML-Biology Integration

| Reagent / Solution | Vendor Examples | Function in Validation Experiments |

|---|---|---|

| Multiplex Immunofluorescence Kit | Akoya Biosciences (PhenoCycler), Standard BioTools | Enables spatial proteomics for validating AI-identified tissue biomarkers and tumor microenvironments. |

| CRISPR Screening Library (e.g., Kinase) | Horizon Discovery, Sigma-Aldrich | Functional validation of AI-predicted novel genetic drivers or therapeutic targets. |

| NGS Library Prep Kit (for low-input RNA) | Illumina, Takara Bio | Generates sequencing libraries from limited samples identified by AI as rare or critical subpopulations. |

| Certified Reference Cell Lines & Sera | ATCC, Coriell Institute | Provides biologically consistent standards for benchmarking algorithm performance across labs. |

| Cloud-Based Analysis Platform (HIPAA-compliant) | DNAnexus, Seven Bridges | Enables secure, reproducible processing of multi-modal clinical data for algorithm training/validation. |

Signaling Pathway of Algorithmic Bias and Mitigation

A critical challenge in replacing expert assessment is algorithmic bias. The following diagram maps its propagation and potential control points.

Diagram Title: Algorithmic Bias Propagation and Mitigation Points

Current evidence does not support the full replacement of expert assessment by ML in medicine. Algorithmic judgment excels at pattern recognition within high-dimensional data, offering superior scalability and reproducibility for specific tasks. However, expert assessment remains irreplaceable for contextual interpretation, ethical reasoning, and managing novel or complex cases. The future lies in a human-in-the-loop paradigm, where AI systems are rigorously validated using the protocols and toolkits outlined here and act as powerful instruments that augment, not substitute for, the clinician-scientist's expertise. The core question is thus resolved not as a binary replacement but as an evolution toward augmented intelligence.

This technical guide examines the evolution of clinical decision-support systems, from early symbolic logic to contemporary deep neural networks. The progression is analyzed within the critical thesis question: Can machine learning replace expert assessment in medicine? The shift from transparent, interpretable rule-based systems to high-performance, opaque deep learning models presents a fundamental trade-off between accuracy and explainability, a central tension in medical AI research.

The Rule-Based Era: Expert Systems in Medicine

Core Principles

Early medical AI systems were built on symbolic reasoning, encoding expert knowledge into explicit IF-THEN rules.

- MYCIN (1976): A landmark system for diagnosing bacterial infections and recommending antibiotics. It used ~600 rules and a backward-chaining inference engine.

- DXplain: A later, continually updated diagnostic decision support system based on a knowledge base of disease-manifestation relationships.

- Internist-I/QMR: A large-scale knowledge base for internal medicine.

Quantitative Performance & Limitations

A meta-analysis of rule-based clinical decision support systems (CDSS) shows their impact.

Table 1: Impact of Rule-Based Clinical Decision Support Systems

| Study Focus | Number of Studies | Median Improvement in Process Adherence | Key Limitation Identified |

|---|---|---|---|

| Preventive Care Reminders | 12 | +14.2% | Context inflexibility |

| Drug Dosing & Alerts | 18 | +22.1% | Alert fatigue |

| Diagnostic Suggestions | 9 | Variable, low sensitivity | Knowledge base maintenance |

Experimental Protocol: Building a Rule-Based System

Protocol Title: Construction and Validation of a Rule-Based Diagnostic Aid.

- Knowledge Acquisition: Conduct structured interviews with domain experts (e.g., cardiologists) to elicit diagnostic criteria for a specific condition (e.g., congestive heart failure).

- Rule Formalization: Convert criteria into propositional logic (e.g., IF (dyspnea=TRUE AND edema=TRUE AND JVP>3cm) THEN CHF_Probability=High).

- Implementation: Code rules in a structured language (e.g., CLIPS, Prolog) or a business rules engine.

- Validation: Execute the system on a retrospective cohort of patient cases with known outcomes. Compare its diagnostic output to the initial clinical diagnosis.

- Metrics: Calculate sensitivity, specificity, and accuracy. Perform failure mode analysis on incorrect outputs.
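
The protocol above can be sketched end to end in a few lines. This is a minimal illustration in Python rather than CLIPS or Prolog: it encodes only the single example rule given in the Rule Formalization step, and the six-patient cohort (field names, values, and confirmed diagnoses) is entirely hypothetical.

```python
def chf_rule(patient):
    """Example rule from the protocol: dyspnea AND edema AND JVP > 3 cm."""
    fired = patient["dyspnea"] and patient["edema"] and patient["jvp_cm"] > 3
    return "High" if fired else "Low"


def evaluate(cohort):
    """Compare rule output with confirmed diagnoses and collect failures."""
    tp = fp = tn = fn = 0
    failures = []
    for patient in cohort:
        predicted = chf_rule(patient) == "High"
        actual = patient["chf_confirmed"]
        if predicted and actual:
            tp += 1
        elif predicted and not actual:
            fp += 1
            failures.append(patient["id"])
        elif not predicted and actual:
            fn += 1
            failures.append(patient["id"])
        else:
            tn += 1
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "accuracy": (tp + tn) / len(cohort),
        "failures": failures,  # input to the failure mode analysis
    }


# Hypothetical retrospective cohort with known outcomes.
cohort = [
    {"id": 1, "dyspnea": True, "edema": True, "jvp_cm": 5, "chf_confirmed": True},
    {"id": 2, "dyspnea": True, "edema": True, "jvp_cm": 4, "chf_confirmed": True},
    {"id": 3, "dyspnea": True, "edema": False, "jvp_cm": 6, "chf_confirmed": True},
    {"id": 4, "dyspnea": False, "edema": False, "jvp_cm": 2, "chf_confirmed": False},
    {"id": 5, "dyspnea": True, "edema": True, "jvp_cm": 2, "chf_confirmed": False},
    {"id": 6, "dyspnea": True, "edema": True, "jvp_cm": 4, "chf_confirmed": False},
]

result = evaluate(cohort)
print(result)
```

Patient 3 shows the context inflexibility typical of hard rules: a confirmed case is missed because one of three required findings is absent.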

Diagram: Rule-Based System Workflow

The Machine Learning Transition: Statistical Learning

The Paradigm Shift

The 1990s-2000s saw a move towards data-driven models using logistic regression, decision trees, and support vector machines (SVMs). These models learned patterns from historical data rather than relying solely on codified knowledge.

Key Experiment: Developing a Risk Stratification Model

Protocol Title: Development of a Logistic Regression Model for 30-Day Hospital Readmission Risk.

- Cohort Definition: Retrospectively identify all hospital discharges for heart failure within a defined period.

- Feature Engineering: Extract structured variables from electronic health records (EHRs): demographics, vitals, lab values, comorbidities, prior admissions.

- Model Training: Split data (70/30). Train a logistic regression model with L2 regularization on the training set, using 30-day readmission as the binary outcome.

- Evaluation: Assess the model on the hold-out test set using the area under the receiver operating characteristic curve (AUROC).

- Comparison: Benchmark performance against a rule-based system (e.g., the LACE index).
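
The training and evaluation steps can be sketched without any ML library. The example below is an illustration under stated assumptions: the two features and the synthetic outcome are invented stand-ins for EHR variables, the optimiser is plain full-batch gradient descent, and the hyperparameters (`l2`, `lr`, `epochs`) are arbitrary. A real study would normally use an established implementation.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logreg_l2(X, y, l2=0.01, lr=0.5, epochs=300):
    """Full-batch gradient descent on the L2-penalised logistic loss."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        # The L2 penalty shrinks the weights but not the intercept.
        w = [wj - lr * (gw / n + l2 * wj) for wj, gw in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def auroc(scores, labels):
    """Rank-based AUROC (ties count as one half)."""
    pos = [s for s, t in zip(scores, labels) if t == 1]
    neg = [s for s, t in zip(scores, labels) if t == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))


# Synthetic cohort: two standardised features with a known effect on readmission.
random.seed(0)
X, y = [], []
for _ in range(400):
    x1, x2 = random.gauss(0, 1), random.gauss(0, 1)
    X.append([x1, x2])
    y.append(1 if random.random() < sigmoid(2.0 * x1 - 1.5 * x2) else 0)

split = len(X) * 7 // 10  # 70/30 split
w, b = train_logreg_l2(X[:split], y[:split])
test_scores = [sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) for xi in X[split:]]
test_auroc = auroc(test_scores, y[split:])
print(f"weights = {w}, intercept = {b:.3f}, hold-out AUROC = {test_auroc:.3f}")
```

The same `auroc` function could be applied to a rule-based score on the same hold-out set to make the comparison in the final step.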

Table 2: Performance Comparison: Rule-Based vs. Traditional ML

| Model Type | Example | Typical AUROC Range | Interpretability | Primary Data Source |

|---|---|---|---|---|

| Rule-Based | LACE Readmission Index | 0.65 - 0.72 | High (Transparent Rules) | Expert Knowledge |

| Traditional ML | Logistic Regression / Random Forest | 0.70 - 0.78 | Medium to High | Structured EHR Data |

The Deep Learning Revolution

Core Advancements

Deep learning (DL), particularly deep neural networks (DNNs), convolutional neural networks (CNNs), and recurrent neural networks (RNNs), automates feature extraction from raw, high-dimensional data (images, text, waveforms).

Key Experiment: CNN for Diabetic Retinopathy Detection

Protocol Title: Training and Validating a CNN for Automated Grading of Retinal Fundus Images.

- Dataset Curation: Obtain a large dataset (>100,000 images) of retinal fundus photographs, each graded by multiple ophthalmologists for diabetic retinopathy (DR) severity (e.g., No DR, Mild, Moderate, Severe, Proliferative).

- Preprocessing: Standardize image resolution and apply color normalization to correct for lighting variance.

- Model Architecture: Implement a CNN (e.g., based on ResNet or Inception architectures) with a final softmax layer for 5-class classification.

- Training: Use supervised learning with cross-entropy loss, data augmentation (rotation, flipping), and an optimizer (e.g., Adam).

- Validation: Evaluate on a separate test set with held-out labels. Compare model grades to a panel of expert adjudicators' grades. Calculate sensitivity, specificity, and quadratic weighted kappa.
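
Quadratic weighted kappa, named in the validation step, penalises disagreements by the squared distance between ordinal grades, so confusing No DR with Proliferative costs far more than confusing adjacent grades. A minimal sketch follows; the grades are hypothetical and coded 0-4 for the five severity classes.

```python
def quadratic_weighted_kappa(rater_a, rater_b, n_classes):
    """Agreement between two sets of ordinal grades coded 0..n_classes-1."""
    n = len(rater_a)
    observed = [[0] * n_classes for _ in range(n_classes)]
    for a, b in zip(rater_a, rater_b):
        observed[a][b] += 1
    hist_a = [sum(row) for row in observed]
    hist_b = [sum(observed[i][j] for i in range(n_classes)) for j in range(n_classes)]

    weighted_observed = 0.0
    weighted_expected = 0.0
    for i in range(n_classes):
        for j in range(n_classes):
            weight = (i - j) ** 2 / (n_classes - 1) ** 2
            expected = hist_a[i] * hist_b[j] / n  # count expected by chance
            weighted_observed += weight * observed[i][j]
            weighted_expected += weight * expected
    return 1.0 - weighted_observed / weighted_expected


# Hypothetical grades: adjudicated reference vs. model output.
reference = [0, 0, 1, 2, 3, 4, 4, 2]
model = [0, 1, 1, 2, 2, 4, 3, 2]
kappa = quadratic_weighted_kappa(reference, model, n_classes=5)
print(f"quadratic weighted kappa = {kappa:.4f}")
```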

Table 3: State-of-the-Art Deep Learning Performance in Medical Imaging

| Task (Dataset) | Model Architecture | Reported Performance | Comparison to Human Experts |

|---|---|---|---|

| Diabetic Retinopathy Grading (EyePACS) | Ensemble of Inception-v4 | Sensitivity: 97.5%, Specificity: 93.4% | Matched or exceeded median ophthalmologist performance |

| Skin Lesion Classification (HAM10000) | DenseNet-201 | AUROC: 0.94 - 0.96 | Comparable to board-certified dermatologists |

| Chest X-ray Pathology Detection (CheXpert) | DenseNet-121 | AUROC up to 0.90 (e.g., for Pneumonia) | Outperformed average radiologist on specific findings |

The Scientist's Toolkit: Research Reagents for DL in Medicine

Table 4: Essential Reagents & Tools for Medical Deep Learning Research

| Item / Solution | Function / Purpose |

|---|---|

| Curated, Labeled Medical Datasets (e.g., MIMIC, CheXpert, TCGA) | Gold-standard ground truth for supervised learning; must be de-identified and IRB-approved. |

| High-Performance Computing (HPC) Cluster or Cloud GPU (e.g., NVIDIA V100, A100) | Accelerates model training from weeks to hours via parallelized matrix operations. |

| Deep Learning Frameworks (e.g., PyTorch, TensorFlow) | Open-source libraries providing pre-built components for constructing and training neural networks. |

| Data Augmentation Pipelines | Generates synthetic training samples via transformations (rotate, zoom, adjust contrast) to improve model robustness and combat overfitting. |

| Model Interpretability Tools (e.g., SHAP, LIME, Grad-CAM) | Provides post-hoc explanations for model predictions (e.g., heatmaps on images), crucial for clinical validation. |

Diagram: Deep Learning Clinical Pipeline

The Critical Pathway: Replacing or Assisting?

The transition from rules to deep learning has yielded systems whose pattern recognition matches or exceeds expert performance on specific, narrow tasks. However, replacing expert assessment hinges on resolving three issues:

- Explainability: Moving from "black box" to interpretable, causal models.

- Robustness: Ensuring performance across diverse populations and clinical settings.

- Integration: Designing human-AI collaborative workflows.

Table 5: Core Trade-offs Across Historical Paradigms

| Aspect | Rule-Based Systems | Traditional ML | Deep Learning |

|---|---|---|---|

| Development Basis | Expert Knowledge | Hand-crafted Features | Raw Data |

| Interpretability | High | Medium | Low (Currently) |

| Performance Ceiling | Low to Medium | Medium | Very High |

| Data Requirements | Low | Medium | Extremely High |

| Adaptability | Poor (Manual Update) | Moderate | High (Continuous Learning) |

Experimental Protocol for Human-AI Comparison

Protocol Title: Randomized Crossover Trial Comparing AI-Assisted vs. Solo Expert Diagnosis.

- Design: Randomized controlled trial where clinicians diagnose a set of cases twice: once without AI aid and once with AI model suggestions, with washout periods.

- AI System: A validated DL model providing diagnostic probabilities or segmentation masks.

- Primary Endpoint: Diagnostic accuracy (vs. gold-standard pathology or expert panel).

- Secondary Endpoints: Time to diagnosis, clinician confidence, measured trust in the AI.

- Analysis: Determine if AI-assistance leads to statistically significant improvement in accuracy without degrading clinician skill.
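
Because every case is read twice in a crossover design, accuracy can be compared on paired outcomes. One common choice for paired binary data is the exact McNemar test, sketched below. It is an assumption here, since the protocol does not name a test, and the case-level results are hypothetical. With several readers, the clustering of reads within readers would also need to be modelled.

```python
from math import comb


def mcnemar_exact(correct_unaided, correct_assisted):
    """Two-sided exact McNemar test on paired correct/incorrect outcomes."""
    pairs = list(zip(correct_unaided, correct_assisted))
    only_unaided = sum(1 for u, a in pairs if u and not a)
    only_assisted = sum(1 for u, a in pairs if a and not u)
    n = only_unaided + only_assisted  # discordant pairs carry all the information
    if n == 0:
        return only_unaided, only_assisted, 1.0
    k = min(only_unaided, only_assisted)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return only_unaided, only_assisted, min(1.0, 2 * tail)


# Hypothetical results for 20 cases read by one clinician in both periods:
# 10 correct both times, 2 wrong both times, 1 correct only unaided,
# 7 correct only with AI assistance.
unaided = [True] * 10 + [False] * 2 + [True] * 1 + [False] * 7
assisted = [True] * 10 + [False] * 2 + [False] * 1 + [True] * 7

b, c, p_value = mcnemar_exact(unaided, assisted)
print(f"correct only unaided = {b}, correct only assisted = {c}, p = {p_value:.4f}")
```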

The historical journey from rule-based systems to deep learning marks a shift from automating explicit logic to discovering implicit patterns in complex data. While deep learning models now rival or exceed expert performance in specific, narrow tasks, replacing holistic expert assessment remains a distant goal. The future lies in augmented intelligence—hybrid systems that combine the reasoning transparency of rules, the statistical rigor of traditional ML, and the representational power of deep learning, all designed to enhance, not replace, clinical expertise. The core thesis question is thus answered not with a binary yes/no, but with a design imperative: machine learning must be built to complement the human expert, necessitating ongoing research into interpretability, robustness, and human-computer interaction.

Within the thesis of whether machine learning (ML) can replace expert assessment in medical research, three domains demonstrate critical impact: automated diagnosis, quantitative prognostication, and computational drug target discovery. This whitepaper provides a technical guide to the core methodologies, data, and experimental protocols underpinning advances in these areas, evaluating the extent to which ML augments or supersedes human expertise.

Diagnosis: Radiology and Pathology

Core ML Architectures and Performance

Current diagnostic AI primarily employs deep convolutional neural networks (CNNs) and vision transformers (ViTs) trained on large, annotated image datasets.

Table 1: Performance Metrics of Diagnostic AI Models (2023-2024 Benchmarks)

| Modality | Task (Dataset) | Model Architecture | Key Metric | Performance (AI vs. Human Radiologist/Pathologist) |

|---|---|---|---|---|

| Chest X-Ray | Detection of Pneumonia (NIH CXR-14) | DenseNet-121 | AUC | AI: 0.94, Human: 0.91 |

| Mammography | Breast Cancer Screening (DMIST) | Ensemble CNN | Sensitivity/Specificity | AI Sensitivity: 86.5%, Specificity: 93.2%; Radiologist Avg: 84.8%, 91.6% |

| Histopathology | Prostate Cancer Grading (PANDA) | Vision Transformer | Quadratic Weighted Kappa | AI: 0.862, Pathologist Consensus: 0.868 |

| Brain MRI | Glioma Segmentation (BraTS 2023) | nnU-Net | Dice Similarity Coefficient | AI: 0.89-0.92; Human Inter-rater: 0.85-0.90 |

| Fundus Photography | Diabetic Retinopathy (EyePACS) | Inception-v4 | AUC | AI: 0.99, General Ophthalmologist: 0.94 |

Experimental Protocol: Developing a Diagnostic CNN

Objective: Train a CNN to classify malignant vs. benign lung nodules from CT scans.

- Data Curation: Acquire public dataset (e.g., LIDC-IDRI). Annotations include nodule contours and malignancy likelihood from 1-5 by 4 expert radiologists.

- Pre-processing: Isolate 3D nodule patches (64x64x64 voxels). Clip Hounsfield Units to [-1000, 400] and rescale intensities to a fixed range (e.g., [0, 1]). Apply data augmentation (random rotation, zoom, flip).

- Model Training: Implement a 3D ResNet-18. Use malignancy rating >=3 as binary label. Loss function: Weighted cross-entropy. Optimizer: Adam (lr=1e-4). Train/Val/Test split: 70/15/15.

- Validation: Evaluate on test set using AUC, sensitivity, specificity. Conduct a reader study where AI predictions are compared against blinded radiologist assessments on a subset.

- Explainability: Generate Grad-CAM heatmaps to visualize regions of the nodule most influential to the AI's decision.
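
Two of the data-preparation steps are simple enough to sketch directly. The intensity step is read here as clipping to the [-1000, 400] HU window and rescaling to [0, 1]. The labelling step aggregates the four radiologist ratings by their median before applying the >=3 threshold; the median is an assumption, since the protocol does not say how the ratings are combined.

```python
from statistics import median


def window_hu(voxels, lo=-1000.0, hi=400.0):
    """Clip Hounsfield Units to [lo, hi] and rescale linearly to [0, 1]."""
    return [(min(max(v, lo), hi) - lo) / (hi - lo) for v in voxels]


def malignancy_label(ratings, threshold=3):
    """Binary label from several 1-5 ratings (assumed rule: median >= threshold)."""
    return 1 if median(ratings) >= threshold else 0


# Hypothetical voxel values (air, lung, soft tissue, bone) and reader ratings.
scaled = window_hu([-2000, -1000, -300, 400, 1200])
print(scaled)
print(malignancy_label([2, 3, 3, 4]), malignancy_label([1, 2, 2, 3]))
```

In a full pipeline the same windowing would be applied to every voxel of each 64x64x64 patch before augmentation.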

Prognostication

Integrating Multimodal Data for Outcome Prediction

Prognostication models integrate clinical, genomic, and image-derived features to predict disease progression, recurrence, or survival.

Table 2: Multimodal Prognostic Model Performance in Oncology

| Cancer Type | Data Types Integrated | ML Model | Predicted Outcome | Concordance Index (C-Index) |

|---|---|---|---|---|

| Non-Small Cell Lung Cancer | CT Image, Clinical Stage, EGFR Mutation | Multimodal Deep Survival Network | Overall Survival | 0.71 (Image only: 0.65, Clinical only: 0.63) |

| Glioblastoma | MRI, Methylation Profile, Age | Cox-Time Neural Network | Progression-Free Survival | 0.68 |

| Breast Cancer | H&E Whole Slide Image, Transcriptomic Subtype | Graph Neural Network | Distant Recurrence | 0.78 |

| Colorectal Cancer | Histopathology, CEA Level, MSI Status | Random Survival Forest | Disease-Specific Survival | 0.74 |

Experimental Protocol: Building a Multimodal Prognostic Model

Objective: Predict overall survival in glioblastoma using MRI and clinical data.

- Feature Extraction:

- Imaging: Preprocess T1Gd and T2-FLAIR MRI. Use a pre-trained CNN to extract deep features (1024-dim vector) from tumor region.

- Clinical: Encode age, KPS, MGMT methylation status (binary), resection extent.

- Data Integration & Modeling: Concatenate image and clinical feature vectors. Input into a fully connected neural network with a Cox partial likelihood loss function.

- Training & Evaluation: Use 5-fold cross-validation on datasets like TCGA-GBM. Perform hyperparameter tuning. Evaluate with C-Index and generate Kaplan-Meier curves stratified by model-predicted risk groups (high vs. low).
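
The C-index used for evaluation measures how often the model ranks patients correctly: among comparable pairs, the patient who dies earlier should have the higher predicted risk. A minimal sketch of Harrell's version follows, with hypothetical follow-up times, event indicators, and risk scores. Packages such as lifelines provide tested implementations.

```python
def concordance_index(times, events, risk_scores):
    """Harrell's C-index. A pair (i, j) is comparable when patient i has an
    observed event strictly before patient j's follow-up time."""
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        if events[i] != 1:
            continue  # censored patients cannot anchor a comparable pair
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Hypothetical cohort: survival in months, 1 = death observed, 0 = censored.
times = [5, 8, 12, 20, 24]
events = [1, 1, 0, 1, 0]
risk = [0.9, 0.4, 0.6, 0.3, 0.1]

c_index = concordance_index(times, events, risk)
print(f"C-index = {c_index:.3f}")
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking, which gives context for the 0.68-0.78 range reported in Table 2.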

Drug Target Discovery

AI-Driven Target Identification and Validation

ML accelerates target discovery by analyzing high-throughput omics data, predicting protein structures, and identifying novel disease-associated pathways.

Table 3: AI Applications in Drug Target Discovery (2023-2024)

| AI Approach | Application | Key Achievement / Model | Validation Outcome |

|---|---|---|---|

| Graph Neural Networks (GNN) | Predicting drug-target interactions | DeepDTnet | Identified RIPK1 as a novel target for ALS; validated in murine model (20% delay in disease onset). |

| AlphaFold2 & RoseTTAFold | Protein structure prediction | AlphaFold2 DB | Predicted structures for 200M+ proteins, enabling in silico screening for cryptic binding sites. |

| Single-Cell RNA-seq Analysis | Identifying targetable cell populations | CellPhoneDB + NicheNet | Pinpointed receptor-ligand pairs in tumor microenvironments for immunotherapy development. |

| CRISPR Screen Analysis | Prioritizing essential genes | MAGeCK-VISPR | Identified synthetic lethal partners for KRAS-mutant cancers; several in preclinical development. |

Experimental Protocol: In Silico Target Discovery via GNN

Objective: Identify novel protein targets for a disease phenotype using a knowledge graph.

- Knowledge Graph Construction: Integrate heterogeneous data nodes (genes, diseases, compounds, pathways) and edges (interactions, associations, similarities) from public databases (STRING, DisGeNET, DrugBank).

- Model Training: Train a Graph Neural Network (e.g., Relational Graph Convolutional Network) to learn embeddings for each node. The model is trained to score the likelihood of a link between a "gene" node and the "disease" node.

- Prediction & Ranking: Input the target disease node. The model scores all gene nodes for their potential association. Rank genes by predicted score.

- In Vitro Validation:

- Gene Knockdown: Use siRNA to knock down top-predicted genes in a relevant cell line model.

- Phenotypic Assay: Measure impact on disease-relevant phenotype (e.g., cell viability, cytokine production).

- Hit Confirmation: Genes showing a significant phenotypic effect are considered in vitro-validated candidates for further drug discovery.
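
The prediction and ranking step can be illustrated once node embeddings exist. The sketch below assumes a DistMult-style decoder, a common pairing with relational graph convolutional encoders, although the protocol does not specify the scoring function. The embeddings are hand-set toy values and the gene names are placeholders; in practice both would come from the trained GNN.

```python
def distmult_score(head, relation, tail):
    """DistMult link score: sum over dimensions of head * relation * tail."""
    return sum(h * r * t for h, r, t in zip(head, relation, tail))


# Toy 3-dimensional embeddings (hypothetical values, not learned).
disease_embedding = [1.0, 0.5, -0.5]
associated_with = [0.8, 1.2, 1.0]  # embedding of the gene-disease relation
gene_embeddings = {
    "GENE_A": [0.9, 0.1, -0.2],
    "GENE_B": [-0.5, 0.4, 0.6],
    "GENE_C": [0.2, 0.9, -0.7],
    "GENE_D": [0.1, -0.3, 0.1],
}

# Score every gene against the target disease node, then rank by score.
scores = {
    gene: distmult_score(embedding, associated_with, disease_embedding)
    for gene, embedding in gene_embeddings.items()
}
ranked = sorted(scores, key=scores.get, reverse=True)
for gene in ranked:
    print(f"{gene}: {scores[gene]:+.2f}")
```

The top of this ranked list is what would be passed to the siRNA knockdown experiments.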

Visualizations

AI Diagnostic Workflow vs. Expert

Multimodal Prognostic Model Pipeline

AI-Driven Drug Target Discovery Loop

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials for Featured AI/ML-Medicine Experiments

| Item Name | Vendor Examples | Function in Protocol |

|---|---|---|

| Annotated Medical Image Datasets | NIH/NCI The Cancer Imaging Archive (TCIA), UK Biobank, PANDA Challenge Data | Provides ground-truth labeled data for training and validating diagnostic/prognostic AI models. |

| High-Performance Computing (HPC) Cluster or Cloud GPU | AWS EC2 (P4 instances), Google Cloud TPU, NVIDIA DGX Systems | Enables training of large, complex deep learning models (CNNs, Transformers, GNNs) on massive datasets. |

| PyTorch / TensorFlow with Medical Imaging Libs | PyTorch Lightning, MONAI, TensorFlow Extended (TFX) | Core open-source software frameworks for building, training, and deploying ML models with domain-specific tools. |

| Cox Proportional Hazards Survival Analysis Package | lifelines (Python), survival (R), pycox (Python) | Implements statistical and neural survival models essential for prognostic study development and evaluation. |

| Knowledge Graph Databases | Neo4j, Amazon Neptune, MemGraph | Stores and queries heterogeneous, interconnected biological data for target discovery GNNs. |

| siRNA Libraries & Transfection Reagents | Dharmacon (Horizon), Sigma-Aldrich, Lipofectamine (Thermo Fisher) | Validates AI-predicted drug targets via gene knockdown and phenotypic assay in relevant cell models. |

| Automated Digital Pathology Slide Scanner | Leica Aperio, Hamamatsu NanoZoomer, Philips IntelliSite | Digitizes histopathology slides at high resolution for whole-slide image analysis by AI models. |

The central thesis of modern computational medicine interrogates whether machine learning (ML) can replace, or more feasibly, augment expert human assessment in medical research and drug development. This whitepaper explores the core hypothesis: identifying specific, high-dimensional domains where ML models demonstrably surpass human consistency (reducing inter-rater variability) and capacity (processing scale and complexity beyond cognitive limits). The focus is on technical validation within rigorous, reproducible experimental frameworks.

Technical Domains of Demonstrated ML Superiority

High-Throughput Pattern Recognition in Histopathology

Human pathologists exhibit high accuracy but suffer from inter-observer variability and fatigue. Deep learning (DL) models, particularly convolutional neural networks (CNNs), achieve superhuman consistency in slide-level classification and pixel-level segmentation.

Quantitative Data Summary:

| Metric / Task | Human Expert Performance (Avg.) | State-of-the-Art ML Model Performance | Key Study / Model | Clinical Area |

|---|---|---|---|---|

| Metastatic Detection in Lymph Nodes | 73.2% Sensitivity (Time-constrained review) | 99.0% Sensitivity, Area Under Curve (AUC)=0.994 | Bejnordi et al., JAMA 2017; CAMELYON16 Challenge | Breast Cancer |

| Gleason Grading in Prostate Biopsy | 65-75% Inter-observer Agreement (Kappa) | 87.5% Agreement with Expert Consensus, Kappa=0.918 | Bulten et al., Lancet Oncol. 2022 | Prostate Cancer |

| Mitotic Figure Detection | F1-Score ~0.73 | F1-Score ~0.83 | MIDOG Challenge 2021-2022 | Multiple Cancers |

Experimental Protocol for Histopathology Validation:

- Dataset Curation: Whole-slide images (WSIs) are sourced from public challenges (e.g., CAMELYON, TCGA) and institutional biobanks. Slides are annotated by a panel of ≥3 expert pathologists using a Delphi consensus process.

- Model Training: A pre-trained CNN (e.g., ResNet50, EfficientNet) is used as a feature extractor. The model is trained using a multiple-instance learning (MIL) framework, where each WSI is a bag of patches.

- Augmentation: Rigorous spatial (rotation, flipping) and color (H&E stain normalization via Macenko or Reinhard methods) augmentations are applied.

- Validation: Performance is evaluated on a held-out test set with external validation from a separate institution. Metrics include AUC, sensitivity, specificity, and Cohen's Kappa for agreement.
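
In a multiple-instance learning setup, patch-level features have to be pooled into one slide-level representation. Attention pooling is one widely used option and is sketched below in simplified form: a single attention vector scores each patch, where published variants add a small hidden layer. The protocol does not say which pooling operator is used, and the patch features and attention weights here are hypothetical.

```python
import math


def attention_mil_pool(patch_features, attention_vector):
    """Pool a bag of patch features into one slide embedding using
    softmax-normalised attention weights."""
    logits = [
        sum(a * f for a, f in zip(attention_vector, feats))
        for feats in patch_features
    ]
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(patch_features[0])
    slide_embedding = [
        sum(w * feats[k] for w, feats in zip(weights, patch_features))
        for k in range(dim)
    ]
    return slide_embedding, weights


# Hypothetical bag: four patches with 2-dimensional features; the third
# patch stands in for a tumour-containing region.
bag = [[0.1, 0.0], [0.2, 0.1], [0.9, 0.8], [0.0, 0.2]]
attention_vector = [3.0, 3.0]

slide_embedding, weights = attention_mil_pool(bag, attention_vector)
print("attention weights:", [round(w, 3) for w in weights])
print("slide embedding:", [round(v, 3) for v in slide_embedding])
```

The attention weights also give a coarse indication of which patches drove the slide-level prediction.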

Multimodal Integration for Drug Response Prediction

Human capacity to integrate genomic, transcriptomic, proteomic, and histopathological data for a single patient is limited. ML models excel at fusing these modalities to predict therapeutic response.

Quantitative Data Summary:

| Data Modalities Integrated | Human/ Traditional Model Accuracy | ML Model Accuracy / Improvement | Model Type | Application |

|---|---|---|---|---|

| RNA-Seq + Histology + Clinical | C-index: ~0.65 (Clinical model alone) | C-index: 0.78-0.82 | Multimodal Deep Survival Network | Oncology Outcome Prediction |

| Drug Structure + Cell Line Omics | Pearson R: 0.70 (Linear regression) | Pearson R: 0.85-0.90 | Graph Neural Network + MLP | Drug Sensitivity (GDSC/CTRP) |

| EHR Temporal Data + Genomics | AUC: 0.71 for AE prediction | AUC: 0.89 for AE prediction | Transformer + LSTM | Adverse Event Risk |

Experimental Protocol for Multimodal Integration:

- Data Alignment: Patient-level data from sources like The Cancer Genome Atlas (TCGA) are aligned. Each modality is processed through a separate encoder network.

- Fusion Strategy: Early (feature concatenation), intermediate (shared representation layers), or late (decision-level) fusion strategies are tested. Attention mechanisms often weight the contribution of each modality.

- Objective Function: For survival prediction, a Cox proportional hazards loss is used. For classification, cross-entropy loss is standard.

- Interpretability: Techniques like SHAP (SHapley Additive exPlanations) or attention heatmaps are used to attribute predictions to input features, providing a check against "black-box" conclusions.
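
The Cox loss named under Objective Function can be written out explicitly. For each observed event, it compares the patient's predicted log-risk with the log-sum of risks over everyone still under follow-up at that time. The sketch below assumes no tied event times and uses hypothetical inputs. In a neural survival model, `log_risks` would be the network output and the loss would be minimised by backpropagation.

```python
import math


def cox_neg_partial_log_likelihood(times, events, log_risks):
    """Average negative Cox partial log-likelihood (assumes no tied event times)."""
    n = len(times)
    loss = 0.0
    n_events = 0
    for i in range(n):
        if events[i] != 1:
            continue  # censored patients contribute only through risk sets
        risk_set = [log_risks[j] for j in range(n) if times[j] >= times[i]]
        peak = max(risk_set)  # log-sum-exp with the maximum factored out
        log_sum = peak + math.log(sum(math.exp(r - peak) for r in risk_set))
        loss -= log_risks[i] - log_sum
        n_events += 1
    if n_events == 0:
        raise ValueError("no observed events")
    return loss / n_events


# Hypothetical cohort of three patients, all with observed events.
times = [1, 2, 3]
events = [1, 1, 1]

loss_good = cox_neg_partial_log_likelihood(times, events, [2.0, 1.0, 0.0])
loss_bad = cox_neg_partial_log_likelihood(times, events, [0.0, 1.0, 2.0])
print(f"risk ordered correctly: {loss_good:.4f}")
print(f"risk ordered in reverse: {loss_bad:.4f}")
```

The loss is lower when higher predicted risk goes to patients who fail earlier, which is the ranking behaviour the C-index then measures.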

Visualizing Key Methodologies and Pathways

Diagram 1: Multimodal ML Framework for Drug Response

Diagram 2: Experimental Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Solution | Function in ML-for-Medicine Research |

|---|---|

| Cloud-Based ML Platforms (e.g., Google Vertex AI, AWS SageMaker) | Provides scalable, compliant infrastructure for training large models on sensitive patient data (via HIPAA-compliant environments) and managing ML pipelines. |

| Stain Normalization Libraries (e.g., OpenCV, scikit-image with Macenko method) | Standardizes color variation in histopathology slides due to differing staining protocols, crucial for model generalizability. |

| Bio-Formats Library (OME) | Standardized tool for reading >150 microscopy file formats, enabling ingestion of diverse whole-slide image data. |

| Genomic Data Commons (GDC) API / UCSC Xena | Programmatic access to large-scale, harmonized cancer genomics datasets (e.g., TCGA) for multimodal integration. |

| MONAI (Medical Open Network for AI) | A PyTorch-based, domain-specific framework providing pre-trained models, loss functions, and transforms optimized for medical imaging data. |

| DeepChem | An open-source toolkit integrating ML with cheminformatics and bioinformatics, offering models for drug-target interaction and toxicity prediction. |

| Synthetic Data Generators (e.g., Synthea, NVIDIA Clara) | Generates realistic, privacy-preserving synthetic patient data for preliminary model prototyping and addressing class imbalance. |

| Model Card Toolkit / Weights & Biases (W&B) | Facilitates model documentation, experiment tracking, and performance auditing to ensure reproducibility and regulatory traceability. |

The evidence reviewed here supports the core hypothesis: ML can surpass human consistency and capacity in well-defined, data-rich subtasks characterized by high dimensionality and pattern complexity. The future lies not in replacement but in augmented intelligence, where ML handles high-throughput, quantitative pattern detection and human experts provide contextual, ethical, and final integrative judgment. The next frontier is the rigorous prospective clinical trial, moving from retrospective validation to demonstrable improvement in patient outcomes and drug development efficiency.

Within the broader thesis on whether machine learning (ML) can replace expert assessment in medical research, a fundamental barrier is the inherent limitation posed by the 'black box' problem and the deeper epistemological differences between statistical ML models and human clinical reasoning. This whitepaper provides an in-depth technical examination of these core issues, focusing on their implications for drug development and clinical research.

The 'Black Box': Interpretability vs. Performance Trade-off

Contemporary ML models, especially deep neural networks (DNNs), achieve state-of-the-art performance by leveraging complex, high-dimensional architectures. This complexity inherently obscures the model's decision-making process.

Table 1: Quantitative Comparison of Model Performance vs. Interpretability in Medical Imaging Diagnostics

| Model Type | Avg. Accuracy (Cancer Detection) | Interpretability Score (1-10) | Key Limitation |

|---|---|---|---|

| Deep CNN (ResNet-152) | 94.7% | 2 | Feature representation is abstract & distributed. |

| Random Forest | 88.2% | 7 | Provides feature importance, but not for individual predictions. |

| Logistic Regression | 82.5% | 10 | Clear coefficient mapping, but limited non-linear capacity. |

| Vision Transformer (ViT) | 96.1% | 1 | Attention maps are complex and context-dependent. |

Experimental Protocol: Saliency Map Generation for CNN Interpretation

A common method to peer into the 'black box' involves generating saliency maps to visualize pixels influential to a DNN's prediction.

- Model Training: Train a convolutional neural network (CNN) on a labeled dataset (e.g., chest X-rays with pneumonia classification).

- Input Image Selection: Select a test image I of dimensions (H, W, C).

- Forward Pass & Prediction: Perform a forward pass to obtain the predicted class score S_c(I).

- Gradient Calculation: Compute the gradient of the score S_c with respect to the input image: G = ∂S_c/∂I.

- Visualization: Aggregate gradients (e.g., take absolute magnitude across channels) to produce a heatmap M overlaying the original image, highlighting regions that most influenced the prediction.
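The steps above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the protocol's actual implementation: `class_score` is a hypothetical stand-in for a trained CNN, and the gradient G = ∂S_c/∂I is approximated by central finite differences rather than by backpropagation, which a deep learning framework would normally supply. The aggregation step takes the maximum absolute gradient across channels.

```python
import math


def class_score(image, weights):
    """Toy differentiable score S_c(I): tanh of a weighted pixel sum (hypothetical model)."""
    total = 0.0
    for image_row, weight_row in zip(image, weights):
        for pixel, pixel_weights in zip(image_row, weight_row):
            for value, weight in zip(pixel, pixel_weights):
                total += value * weight
    return math.tanh(total)


def saliency_map(image, score_fn, eps=1e-4):
    """Approximate |dS_c/dI| per pixel and keep the largest magnitude across channels.

    `image` is a nested list of shape (H, W, C). Each entry is perturbed in place
    and then restored, so the input is unchanged when the function returns.
    """
    height, width, channels = len(image), len(image[0]), len(image[0][0])
    heatmap = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            for c in range(channels):
                original = image[y][x][c]
                image[y][x][c] = original + eps
                score_plus = score_fn(image)
                image[y][x][c] = original - eps
                score_minus = score_fn(image)
                image[y][x][c] = original  # restore the pixel
                gradient = (score_plus - score_minus) / (2 * eps)
                heatmap[y][x] = max(heatmap[y][x], abs(gradient))
    return heatmap
```

The finite-difference loop costs two forward passes per input value, so it is only practical for tiny inputs; it is used here because it makes the definition of the gradient explicit.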

Title: Saliency Map Generation Workflow

Epistemological Differences: Correlation vs. Causal Understanding

ML models excel at identifying complex correlations within data, but medical expertise is fundamentally grounded in seeking causal, mechanistic understanding rooted in pathophysiology.

Table 2: Contrasting Epistemological Frameworks in Medical Assessment

| Aspect | Machine Learning Model | Human Expert Assessment |

|---|---|---|

| Primary Basis | Statistical correlation in training data. | Causal, mechanistic pathophysiological models. |

| Evidence Integration | Pattern matching from large datasets. | Combines clinical observation, basic science, and patient context. |

| Handling of Novel Cases | Performance degrades on out-of-distribution data. | Can reason by analogy using first principles. |

| Explanation Type | Highlights predictive features (what). | Provides mechanistic narrative (why and how). |

| Uncertainty Quantification | Often produces probabilistic outputs (calibration required). | Intuitive, experience-based confidence that is rarely quantified. |

Experimental Protocol: Evaluating Out-of-Distribution (OOD) Failure

This protocol tests the epistemological brittleness of ML when faced with novel data.

- Dataset Creation: Curate a primary dataset (D1) of dermatology images covering common conditions (A, B, C). Create a separate OOD dataset (D2) containing rare conditions or artifacts not present in D1.

- Model Training: Train a high-performance classifier (e.g., DenseNet) on D1 until convergence, i.e., until validation accuracy saturates.

- In-Distribution Testing: Evaluate model on a held-out test set from D1. Record accuracy, precision, recall.

- OOD Testing: Evaluate the same model on D2. Record performance metrics and analyze failure modes (e.g., false confidence in incorrect predictions).

- Expert Comparison: Present D2 cases to clinical dermatologists. Record their diagnostic reasoning, differentials, and identification of novel/unknown features.
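The metric-recording steps of this protocol could look roughly like the sketch below. It assumes predictions are available as class labels plus the model's top softmax probability; the function names and the 0.9 confidence threshold are illustrative choices, not part of the protocol. Because D2 contains only conditions absent from D1, every confident prediction on D2 is wrong by construction, so the share of high-confidence outputs serves as a simple measure of false confidence.

```python
def accuracy(predicted, actual):
    """Fraction of cases where the predicted label equals the reference label."""
    return sum(p == a for p, a in zip(predicted, actual)) / len(actual)


def precision_recall(predicted, actual, positive_class):
    """One-vs-rest precision and recall for a single class."""
    tp = sum(p == positive_class and a == positive_class for p, a in zip(predicted, actual))
    fp = sum(p == positive_class and a != positive_class for p, a in zip(predicted, actual))
    fn = sum(p != positive_class and a == positive_class for p, a in zip(predicted, actual))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def false_confidence_rate(top_probabilities, threshold=0.9):
    """Share of OOD cases on which the model's top probability reaches the threshold."""
    if not top_probabilities:
        return 0.0
    return sum(p >= threshold for p in top_probabilities) / len(top_probabilities)
```

Comparing `false_confidence_rate` on D2 against the same quantity on the D1 test set indicates whether the model's confidence drops at all when it leaves its training distribution.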

Title: Correlation vs. Causal Reasoning Pathways

The Scientist's Toolkit: Research Reagent Solutions for Interpretability Research

Table 3: Key Reagents & Tools for ML Interpretability Experiments in Medical Research

| Item Name | Function & Brief Explanation |

|---|---|

| SHAP (SHapley Additive exPlanations) Library | Quantifies the contribution of each input feature to a specific prediction, based on cooperative game theory. |

| LIME (Local Interpretable Model-agnostic Explanations) | Approximates a complex model locally with an interpretable one (e.g., linear model) to explain individual predictions. |

| Integrated Gradients | Attribution method that assigns importance to features by integrating the model's gradients along a path from a baseline to the input. |

| Attention Weights (Transformer Models) | Internal weights that signify the relative importance of different parts of the input sequence (e.g., in genomic or text data). |

| Synthetic Datasets (e.g., with known ground-truth features) | Controlled datasets where the causal features are known, used to validate interpretability methods. |

| Counterfactual Image Generators (e.g., using GANs) | Generate subtly altered versions of medical images to determine which features change a model's prediction, probing decision boundaries. |
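To make the game-theoretic idea behind the SHAP entry in Table 3 concrete, the following sketch computes exact Shapley values by enumerating every feature coalition. It is an illustration only: the enumeration is exponential in the number of features, whereas the SHAP library relies on approximations and model-specific shortcuts. Representing an "absent" feature by a baseline value is an assumption of this sketch.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley attribution of model(x) - model(baseline) to each feature.

    `model` is any callable taking a list of feature values. Features outside a
    coalition are set to their baseline value.
    """
    n = len(x)

    def coalition_value(present):
        mixed = [x[i] if i in present else baseline[i] for i in range(n)]
        return model(mixed)

    attributions = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                present = set(subset)
                gain = coalition_value(present | {i}) - coalition_value(present)
                attributions[i] += weight * gain
    return attributions
```

For a model with an interaction term such as `lambda z: z[0] * z[1]`, the two features split the joint effect equally, which is the behaviour that distinguishes Shapley attributions from simple one-at-a-time perturbation.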

Case Study: Drug Response Prediction in Oncology

A pivotal area where these limitations manifest is in ML models predicting patient response to oncology therapies based on genomic and histopathological data.

Detailed Experimental Protocol: Building and Interpreting a Response Predictor

- Data Curation: Assemble a multi-modal dataset: RNA-seq data, whole-slide imaging (WSI) of tumor biopsies, and clinical outcomes (Response Evaluation Criteria in Solid Tumors - RECIST).

- Model Architecture: Implement a multi-modal neural network. Genomic data passes through a feed-forward network, WSIs are processed via a CNN, with late fusion concatenating features.

- Training: Train using a combined loss function (cross-entropy for classification + regularization).

- Interpretation Phase:

  - Genomic: Apply SHAP to the genomic input branch to identify top-gene contributors.

  - Histopathological: Use a sliding-window approach with Grad-CAM on the CNN to generate heatmaps on the WSI, highlighting regions predictive of response/resistance.

  - Cross-modal Validation: Correlate high-importance genomic features with spatially resolved histopathological features from heatmaps.

- Expert Reconciliation: Present findings (SHAP graphs, heatmaps) to molecular pathologists and oncologists for validation against known biological pathways and unexpected discovery.

Title: Multimodal Drug Response Prediction & Interpretation

The 'black box' problem is not merely a technical hurdle in model transparency; it is a symptom of a profound epistemological gap. ML models operate through inductive correlation, while medical expert assessment is deductive and abductive, rooted in causal mechanism. For machine learning to credibly augment or potentially replace aspects of expert assessment in medical research, advancements must bridge this divide, developing models that provide explanations compatible with the causal, mechanistic reasoning essential to the scientific method in medicine. The path forward requires hybrid approaches where interpretable AI serves as a tool for hypothesis generation, rigorously validated and integrated into the expert's cognitive framework.

From Data to Diagnosis: Methodologies and Real-World Applications of Medical AI

The central thesis of modern computational medicine asks: can machine learning replace expert assessment in medical research? The answer hinges not on algorithms alone, but on the quality, scale, and integration of the data used to train them. Replacing nuanced expert judgment requires models to develop a holistic, multimodal understanding of disease that mirrors the synthesis performed by clinicians and researchers. This necessitates moving beyond single-data-type models to those trained on curated ecosystems integrating medical imaging, genomics, and electronic health records (EHRs). This guide details the technical and methodological framework for constructing such multimodal datasets to enable robust, clinically relevant ML.

Medical Imaging (Radiology & Pathology)

Sources: Public repositories (The Cancer Imaging Archive - TCIA), institutional PACS, clinical trial archives. Standards: DICOM for radiology, DICOM or whole-slide image formats (e.g., .svs) for digital pathology. Minimum annotations: lesion segmentation masks, RECIST measurements, and pathology-confirmed labels. Key Challenge: Pixel-level annotation is resource-intensive. Weak supervision from radiology reports is an active area of research.

Genomics & Molecular Data

Sources: Genomic Data Commons (GDC), dbGaP, EMBL-EBI, consortium data (e.g., TCGA, GTEx). Standards: FASTQ, BAM, VCF for raw/processed sequencing data. MIAME and MINSEQE standards for microarray/sequencing experiments. Key Challenge: Harmonizing heterogeneous assay types (WGS, WES, RNA-seq, methylation arrays) and batch effects from different processing centers.

Electronic Health Records (EHRs)

Sources: Institutional EHRs (Epic, Cerner), federated networks (TriNetX, OHDSI). Standards: FHIR (Fast Healthcare Interoperability Resources) is the emerging modern standard, replacing older HL7 v2. OMOP Common Data Model facilitates large-scale analytics. Key Challenge: Irregular time-series, unstructured clinical notes, and pervasive bias (healthcare disparities, coding practices).

Table 1: Quantitative Overview of Major Public Multimodal Data Resources

| Resource Name | Primary Data Types | Approx. Sample Size (as of 2024) | Key Disease Focus | Access Model |

|---|---|---|---|---|

| The Cancer Genome Atlas (TCGA) | WES, RNA-seq, Methylation, Histopathology, Clinical | >11,000 patients across 33 cancer types | Oncology | Controlled (dbGaP) |

| UK Biobank | WGS, MRI, DXA, EHR-linkable, Biomarkers | 500,000 participants | Population-scale, multi-disease | Controlled (Application) |

| All of Us Research Program | WGS, EHR, Survey, Wearable Data | >500,000 enrolled (target 1M) | General Population Health | Tiered (Registered/Controlled) |

| Alzheimer's Disease Neuroimaging Initiative (ADNI) | MRI/PET Imaging, Genomics, CSF Biomarkers, Clinical | >2,000 subjects | Alzheimer's Disease | Open (Data Use Agreement) |

| eICU Collaborative Research Database | High-temporal ICU Data, Clinical Notes | >200,000 ICU stays | Critical Care | Open (Training Course) |

Core Technical Methodology: Curating & Integrating the Triad

Patient-Centric Data Linkage Protocol

The foundational step is the deterministic or probabilistic linkage of records across modalities for the same patient.

Experimental Protocol: Deterministic Linkage via Hashed Identifiers

- Input: De-identified imaging studies, genomic sample manifests, and EHR extracts.

- Tokenization: A trusted third party (e.g., honest broker) replaces direct identifiers (Medical Record Number, Name, Date of Birth) with a universal, project-specific Patient ID (PID) using an irreversible hash function (e.g., SHA-256 with a project-specific salt).

- Manifest Creation: For each data source, create a manifest file linking the PID to the resource locator (e.g., DICOM Study UID, BAM file path, EHR encounter ID).

- Validation: Perform consistency checks (e.g., confirm gender, diagnosis codes match across linked records for a sample of PIDs) to ensure linkage fidelity. Report discrepancy rate.
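The tokenization, manifest, and validation steps above can be sketched with the Python standard library. This is a minimal illustration, assuming identifiers arrive as strings and that the salt is held only by the honest broker; the function names, the normalisation rules, and the manifest layout are hypothetical rather than a prescribed format.

```python
import hashlib


def make_pid(mrn: str, name: str, dob: str, project_salt: bytes) -> str:
    """Derive a project-specific Patient ID from direct identifiers with salted SHA-256."""
    # Normalise the identifiers so trivial formatting differences do not break linkage.
    canonical = "|".join([mrn.strip(), name.strip().lower(), dob.strip()])
    return hashlib.sha256(project_salt + canonical.encode("utf-8")).hexdigest()


def link_manifests(manifests: dict) -> dict:
    """`manifests` maps a source name to {PID: locator}; keep PIDs present in every source."""
    shared = set.intersection(*(set(m) for m in manifests.values()))
    return {pid: {src: m[pid] for src, m in manifests.items()} for pid in sorted(shared)}


def discrepancy_rate(attrs_a: dict, attrs_b: dict) -> float:
    """Fraction of shared PIDs whose attribute (e.g., gender) disagrees between two sources."""
    shared = set(attrs_a) & set(attrs_b)
    if not shared:
        return 0.0
    return sum(attrs_a[pid] != attrs_b[pid] for pid in shared) / len(shared)
```

Because the hash is deterministic, any source that applies the same salt and normalisation yields the same PID for the same patient, which is what makes the linkage deterministic; a different salt per project prevents PIDs from being joined across projects.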

Genomic Data Processing & Feature Extraction

A standardized pipeline is required to transform raw sequencing data into analyzable features.

Experimental Protocol: Somatic Variant Calling & Annotation (Cancer Focus)

- Alignment: Process paired tumor-normal WES/WGS FASTQs using BWA-MEM for alignment to GRCh38 reference genome, generating BAMs.

- QC & Preprocessing: Use GATK Best Practices: MarkDuplicates, Base Quality Score Recalibration (BQSR).

- Somatic Variant Calling: Run multiple callers (e.g., Mutect2, VarScan2, Strelka2) on tumor-normal pairs. Use ensemble approach (e.g., majority vote) to generate a high-confidence call set.

- Annotation: Annotate final VCF using Ensembl VEP or ANNOVAR with databases like ClinVar, COSMIC, and gnomAD. Extract features: mutation burden (TMB), specific driver mutations (binary), and predicted neoantigens.
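The ensemble step and the mutation-burden feature can be illustrated as follows. This is a sketch under simplifying assumptions: each caller's output has already been parsed into `(chrom, pos, ref, alt)` tuples, a variant is kept when at least two of the callers report it, and TMB is taken as the number of retained variants per callable megabase. Production pipelines typically also normalise variant representation and restrict TMB to specific mutation classes, which is omitted here.

```python
from collections import Counter


def majority_vote(call_sets, min_callers=2):
    """Keep variants reported by at least `min_callers` of the supplied call sets."""
    support = Counter()
    for calls in call_sets:
        support.update(set(calls))  # count each caller at most once per variant
    return {variant for variant, n in support.items() if n >= min_callers}


def tumor_mutation_burden(variants, callable_megabases):
    """Mutations per megabase over the callable region."""
    return len(variants) / callable_megabases


def driver_vector(variant_genes, driver_genes):
    """Binary feature vector: 1 if the sample carries a mutation in the driver gene."""
    return [1 if gene in variant_genes else 0 for gene in driver_genes]
```

With three callers, `min_callers=2` corresponds to the majority vote named in the protocol; raising it to 3 trades sensitivity for a stricter consensus.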

Table 2: Essential Research Reagent Solutions for Multimodal Curation

| Item/Category | Example Specific Product/Platform | Primary Function in Curation |

|---|---|---|

| Data Lake/Storage | AWS S3, Google Cloud Storage, Azure Blob Storage | Scalable, secure raw data repository for diverse file types (BAM, DICOM, CSV). |

| Workflow Orchestration | Nextflow, Snakemake, Cromwell | Reproducible, portable pipeline management for genomic & imaging processing. |

| De-identification Tool | Python: `presidio`, `phi-deidentifier`; CTP (for DICOM) | Scrubs Protected Health Information (PHI) from text reports and DICOM headers. |

| OMOP CDM ETL Tool | OHDSI WhiteRabbit, Usagi | Converts raw EHR data into the standardized OMOP Common Data Model format. |

| Whole Slide Image Annotator | QuPath, ASAP, HistomicsTK | Open-source tools for annotating regions of interest in digital pathology images. |

| Federated Learning Framework | NVIDIA FLARE, OpenFL, Flower | Enables model training across distributed datasets without centralizing raw data. |

Multimodal Integration Architecture

Integration moves beyond simple linkage to create a unified feature space or enable cross-modal learning.

Diagram: Data Flow for Multimodal ML Integration

Experimental Validation Protocol for Multimodal Models

To test the thesis that ML can replace expert assessment, a rigorous validation framework comparing multimodal ML to expert panels is required.

Experimental Protocol: Benchmarking vs. Expert Panel in Oncology

- Objective: Compare a multimodal (CT imaging + genomics + clinical history) deep learning model's performance against a multi-disciplinary tumor board (MTB) in predicting first-line therapy response in non-small cell lung cancer (NSCLC).

- Dataset Curation:

- Cohort: Retrospective cohort of 500 NSCLC patients with baseline contrast-enhanced CT, tumor WES panel, and complete EHR history through treatment.

- Ground Truth: Objective response (RECIST v1.1) at 6 months.

- Expert Assessment: Three independent expert MTBs (blinded to actual outcome) review de-identified case summaries (imaging key slices, genomic driver list, clinical summary) and vote on predicted response (Yes/No).

- Model Training:

- Imaging Stream: 3D CNN (e.g., DenseNet121) pre-trained on CT, processes tumor volume.

- Genomic Stream: Multi-layer perceptron processes a binary vector of 50 key oncogenic alterations.

- Clinical Stream: LSTM processes time-series of lab values (e.g., LDH, albumin) and drug codes.

- Fusion: Cross-attention mechanism integrates all three streams. Model outputs a probability of response.

- Analysis:

- Primary Metric: Compare AUC, sensitivity, specificity, and F1-score of the ML model vs. the majority vote of the expert MTBs on a held-out test set (n=100).

- Statistical Test: Use DeLong's test for AUC comparison and McNemar's test for accuracy comparison.
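The accuracy comparison named in the analysis step can be sketched with an exact McNemar test, which looks only at the cases where the model and the expert vote disagree. The sketch assumes per-case correctness flags for both raters on the same test cases and uses the two-sided exact binomial form; the function name is illustrative, and DeLong's test for AUC is not covered here.

```python
from math import comb


def mcnemar_exact(model_correct, expert_correct):
    """Two-sided exact McNemar test on paired correctness flags.

    Returns (b, c, p_value), where b counts cases only the model got right
    and c counts cases only the experts got right.
    """
    b = sum(1 for m, e in zip(model_correct, expert_correct) if m and not e)
    c = sum(1 for m, e in zip(model_correct, expert_correct) if e and not m)
    discordant = b + c
    if discordant == 0:
        return b, c, 1.0  # no disagreement, so no evidence of a difference
    k = min(b, c)
    # Under H0 the discordant cases split as Binomial(discordant, 0.5).
    tail = sum(comb(discordant, i) for i in range(k + 1)) / 2 ** discordant
    return b, c, min(1.0, 2 * tail)
```

Cases on which both raters are right, or both wrong, carry no information about which one is more accurate, which is why only the discordant counts enter the test.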

Diagram: Multimodal Model Benchmarking Workflow (Model vs. Expert Benchmarking Protocol)

Critical Challenges & Future Directions

- Bias & Fairness: Datasets often overrepresent specific demographics. Curators must document cohort demographics (race, ethnicity, gender, age) and employ techniques like reweighting or adversarial debiasing.

- Regulatory & Ethical Compliance: Alignment with GDPR, HIPAA, and evolving FDA/EMA guidelines for SaMD (Software as a Medical Device) is non-negotiable. Focus on audit trails, versioning, and provenance tracking.

- Scalability & Federated Learning: Centralizing data is often impossible. The future lies in curating standardized data models (like OMOP) that enable federated training across institutions without data transfer.

- Dynamic Data: Medicine is temporal. Future ecosystems must move from static snapshots to continuous, longitudinal data streams from wearables and continuous monitoring, integrating them into evolving patient representations.

The path toward answering whether machine learning can replace expert assessment in medical research is fundamentally paved with data. A meticulously curated multimodal data ecosystem, in which imaging phenotypes, genomic drivers, and clinical trajectories are precisely linked and processed, is the essential substrate. It enables the development of models that perform a synthetic, holistic analysis akin to an expert panel. The technical protocols for curation, integration, and validation outlined here provide a framework for building this substrate. Success will not manifest as replacement, but as augmentation: a scalable, data-driven tool that enhances the precision, consistency, and accessibility of expert-level assessment, ultimately accelerating biomedical discovery and democratizing high-quality care.

The integration of machine learning (ML) into medical research presents a paradigm shift. The central thesis question—can ML replace expert assessment?—is not one of simple substitution but of augmentation and redefinition of roles. This whitepaper details the core algorithmic arsenal enabling this transition: supervised learning for structured data, convolutional neural networks (CNNs) for medical imaging, and natural language processing (NLP) for unstructured clinical notes. Each tool addresses specific data modalities, with the combined potential to match or exceed human performance in narrow, well-defined tasks while scaling insights across populations.

Supervised Learning for Structured Clinical Data

Supervised learning algorithms learn a mapping function from input variables (features) to an output variable (label) based on labeled training data. In medicine, this is applied to electronic health record (EHR) data, lab results, and genomic data for tasks like diagnosis prediction, readmission risk, and drug response.

Key Algorithms & Performance

Recent benchmarks from studies on public datasets like MIMIC-IV and eICU illustrate performance trends.

Table 1: Performance of Supervised Learning Models on Clinical Prediction Tasks (2023-2024 Benchmarks)

| Task (Dataset) | Best Model | AUC-ROC | Accuracy | Key Predictors | Benchmark (Expert/Previous) |

|---|---|---|---|---|---|

| Mortality Prediction (MIMIC-IV) | Gradient Boosting (XGBoost) | 0.92 | 0.88 | SOFA score, age, lactate, vasopressor use | Logistic Regression (AUC: 0.85) |

| Hospital Readmission (eICU) | Ensemble (RF + NN) | 0.78 | 0.75 | Prior admissions, comorbidities, medication count | Standard Risk Scores (AUC: 0.70-0.72) |

| Sepsis Onset (MIMIC-III) | Temporal CNN | 0.88 | 0.82 | HR, Temp, WBC, Resp. Rate | Clinical Criteria (AUC: ~0.76) |

| Drug-Drug Interaction | Graph Neural Network | 0.95 (Precision) | 0.91 | Molecular structure, protein targets | Database Lookup (Precision: 0.87) |

Experimental Protocol: Developing a Mortality Prediction Model

Objective: Train a model to predict 48-hour in-hospital mortality from ICU admission data.

1. Data Curation:

- Source: MIMIC-IV v2.2 database.

- Cohort: Adult ICU stays >24 hours.

- Label: Mortality within 48 hours of ICU admission (binary).

- Features: 35 variables extracted from first 24 hours: demographics (age, gender), vital signs (min/max/mean), lab values (first, worst), comorbidities (Elixhauser scores), severity scores (SOFA, SAPS-II).

2. Preprocessing:

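- Imputation: Labs and vitals that were never measured are imputed with normal values (on the assumption that an unordered test reflects no clinical suspicion of abnormality); remaining missing values are filled by multivariate imputation by chained equations (MICE).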

- Normalization: All continuous features scaled to zero mean and unit variance.

- Class Balancing: Training set balanced via SMOTE (Synthetic Minority Over-sampling Technique).

3. Model Training & Evaluation:

- Split: 70/15/15 chronological split for train/validation/test.

- Models: Logistic Regression (baseline), Random Forest, XGBoost, 3-layer DNN.

- Hyperparameter Tuning: 5-fold cross-validation on training set using Bayesian optimization.

- Metrics: AUC-ROC (primary), AUC-PR, Accuracy, F1-Score, calibration plots.

4. Interpretation:

- Apply SHAP (SHapley Additive exPlanations) to determine global and local feature importance.
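Two details of this protocol are easy to get wrong and can be shown in a few lines: the split is chronological rather than random, and normalization statistics come from the training portion only, so that no information from later patients leaks into training. The sketch below assumes each ICU stay is a dictionary with a sortable `admit_time` field and numeric features; those field names are hypothetical.

```python
from statistics import mean, pstdev


def chronological_split(stays, train_pct=70, val_pct=15):
    """Order stays by admission time and cut into train/validation/test segments."""
    ordered = sorted(stays, key=lambda stay: stay["admit_time"])
    n_train = len(ordered) * train_pct // 100
    n_val = len(ordered) * val_pct // 100
    return (
        ordered[:n_train],
        ordered[n_train:n_train + n_val],
        ordered[n_train + n_val:],
    )


def fit_scaler(train_rows, feature):
    """Estimate mean and standard deviation from the training rows only."""
    values = [row[feature] for row in train_rows]
    sigma = pstdev(values)
    return mean(values), sigma if sigma > 0 else 1.0


def apply_scaler(rows, feature, mu, sigma):
    """Scale a feature to zero mean and unit variance using training statistics."""
    return [(row[feature] - mu) / sigma for row in rows]
```

The same training-only rule applies to SMOTE and to imputation models: both should be fitted after the split and applied to the training set alone, leaving validation and test data untouched.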

Diagram: Supervised Learning Workflow for Clinical Data

Convolutional Neural Networks for Medical Imaging

CNNs automate feature extraction from pixel data, revolutionizing the analysis of radiology (X-rays, CT, MRI), pathology (whole-slide images), and ophthalmology (retinal scans) images.

State-of-the-Art Architectures & Performance

Table 2: CNN Performance on Key Medical Imaging Tasks (2024)

| Imaging Modality | Task | Model Architecture | Performance (vs. Experts) | Dataset Size |

|---|---|---|---|---|

| Chest X-Ray | Pneumonia Detection | EfficientNet-B7 (Pre-trained) | Sensitivity: 0.94, Specificity: 0.96 (Matches panel of 3 radiologists) | NIH: 112k images |

| Brain MRI (T1) | Alzheimer's Classification | 3D CNN with Attention | Accuracy: 0.92, AUC: 0.96 (Surpasses single radiologist) | ADNI: 2.5k subjects |

| Retinal Fundus | Diabetic Retinopathy Grading | Ensemble of ResNet-152 | AUC: 0.99, Grading Accuracy: 94% (Equivalent to retinal specialist) | Kaggle/EyePACS: 88k images |

| Histopathology | Breast Cancer Metastasis | Multiple Instance Learning (MIL) on Inception-v3 | AUC: 0.99 (Outperforms pathologist in speed, matches accuracy) | Camelyon16: 400 WSIs |

Experimental Protocol: CNN for Chest X-Ray Classification

Objective: Develop a CNN to classify chest X-rays as "Normal," "Pneumonia," or "Other Findings."

1. Data Curation:

- Source: NIH ChestX-ray14 dataset, CheXpert.

- Labels: Utilize expert radiologist reports parsed via NLP for ground truth.

- Preprocessing: Resize to 512x512 pixels, normalize pixel values to [0,1], apply random horizontal flips and slight rotations for augmentation.

2. Model Development:

- Architecture: Use a pre-trained EfficientNet-B4 as a feature extractor.

- Modification: Replace final classification layer with a dense layer (1024 units, ReLU) followed by a 3-unit softmax output layer.

- Training: Fine-tune all layers using Adam optimizer (lr=1e-5), categorical cross-entropy loss, batch size=32.

3. Evaluation:

- Metrics: Per-class sensitivity, specificity, AUC-ROC, and macro-average F1-score.

- Comparison: Model predictions are statistically compared against independent reads from two board-certified radiologists using Cohen's Kappa.
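Cohen's Kappa, used in the comparison step, corrects raw agreement for the agreement expected by chance given each rater's label frequencies. A minimal sketch, assuming the model and one radiologist have each assigned exactly one label per image:

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two raters over the same cases."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    labels = set(freq_a) | set(freq_b)
    # Probability that both raters pick the same label if they labelled independently.
    expected = sum(freq_a[label] * freq_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)
```

With two radiologists, one reasonable design is to report model-vs-reader kappa alongside reader-vs-reader kappa, so that the model's agreement can be judged against the human inter-rater baseline.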

Diagram: CNN Architecture for Medical Image Analysis

Natural Language Processing for Clinical Notes

NLP unlocks insights from unstructured text in physician notes, discharge summaries, and radiology reports. Key tasks include named entity recognition (NER), relation extraction, phenotyping, and sentiment analysis.

Transformer Models & Clinical NLP Performance

Table 3: Performance of NLP Models on Clinical Text Tasks

| Task | Dataset | Best Model | Key Metric | Performance Context |

|---|---|---|---|---|

| Clinical Concept Extraction (NER) | n2c2 2018 | BioClinicalBERT + CRF | F1: 0.92 | Extracts problems, treatments, tests. Outperforms rule-based systems (F1: 0.85). |

| Relationship Extraction | i2b2 2010 | PubMedBERT + Relation Head | F1: 0.89 | Identifies "triggers" or "causes" between medications and conditions. |

| Hospital Readmission Prediction | MIMIC-III Notes | Longformer Encoder | AUC: 0.82 | Using full discharge summaries. Surpasses models using only structured data (AUC: 0.78). |

| Radiology Report Labeling | CheXpert | CheXbert Labeler | F1: 0.94 (Avg) | Automates labeling of 14 observations from free-text reports. |

Experimental Protocol: Extracting Phenotypes from Discharge Summaries

Objective: Use a transformer model to identify patients with "Heart Failure" from discharge summaries.

1. Data Curation:

- Source: MIMIC-III discharge summaries.

- Labeling: Create silver-standard labels using the `ctakes` tool and manual review of 1000 notes for validation.

- Preprocessing: De-identify text, split documents into sentences, tokenize.

2. Model Development:

- Base Model: Initialize with `emilyalsentzer/Bio_ClinicalBERT`.

- Fine-Tuning: Add a classification head (linear layer). Train for 5 epochs with a batch size of 16, using AdamW optimizer (lr=2e-5). Weight the loss to compensate for class imbalance.

- Input: Truncate/pad notes to 512 tokens.

3. Evaluation:

- Metrics: Precision, Recall, F1-score on held-out test set.

- Benchmark: Compare against a rule-based classifier using ICD-9 codes and keyword searches. Perform error analysis with clinician review of false positives/negatives.
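The rule-based benchmark and the evaluation metrics can be sketched as follows. The keyword list is a hypothetical example and performs no negation handling; the code check relies on ICD-9-CM category 428 (heart failure) and matches codes with or without the decimal point.

```python
import re

# Hypothetical keyword list; a real baseline would be curated with clinicians.
HF_PATTERN = re.compile(r"\b(heart failure|chf|hfref|hfpef)\b", re.IGNORECASE)


def rule_based_hf(note_text, icd9_codes):
    """Flag a patient if any ICD-9 code falls in category 428 or a keyword appears."""
    has_code = any(code.replace(".", "").startswith("428") for code in icd9_codes)
    has_keyword = HF_PATTERN.search(note_text) is not None
    return has_code or has_keyword


def precision_recall_f1(predicted, actual):
    """Binary precision, recall, and F1 from paired boolean predictions and labels."""
    tp = sum(1 for p, a in zip(predicted, actual) if p and a)
    fp = sum(1 for p, a in zip(predicted, actual) if p and not a)
    fn = sum(1 for p, a in zip(predicted, actual) if a and not p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1
```

A keyword rule of this kind will flag sentences such as "no evidence of heart failure", which is exactly the class of false positive the clinician-reviewed error analysis is meant to surface.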

Diagram: NLP Pipeline for Clinical Note Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools & Platforms for ML in Medical Research

| Tool/Resource Name | Category | Primary Function in Research | Key Features for Medicine |

|---|---|---|---|

| PyTorch / TensorFlow | ML Framework | Provides flexible libraries for building and training deep learning models. | GPU acceleration, pre-trained models, active research community. |

| MONAI (Medical Open Network for AI) | Domain-Specific Framework | Open-source PyTorch-based framework specifically for healthcare imaging. | Native support for 3D medical images, robust transforms, reproducible workflows. |

| scikit-learn | ML Library | Provides simple tools for classical supervised learning, preprocessing, and evaluation. | Comprehensive suite of algorithms (SVMs, RF, GB), essential for structured data analysis. |

| Hugging Face Transformers | NLP Library | Provides thousands of pre-trained transformer models for NLP tasks. | Hosts domain-specific models (e.g., BioBERT, ClinicalBERT), easy fine-tuning APIs. |

| OHDSI / OMOP CDM | Data Standard | Common Data Model for standardizing observational health data from disparate EHRs. | Enables large-scale, reliable population-level studies using structured data. |

| NVIDIA CLARA | AI Platform | Application framework for creating, deploying, and managing medical AI applications. | Federated learning capabilities, containerized deployment for clinical integration. |

| 3D Slicer | Medical Imaging Platform | Open-source software for visualization and analysis of medical images. | Essential for image annotation, segmentation, and pre-processing for CNN models. |

| BRAT / Prodigy | Annotation Tool | Software for efficiently creating labeled data for NLP and imaging tasks. | Accelerates the creation of high-quality, expert-annotated training datasets. |

The algorithmic arsenal of supervised learning, CNNs, and NLP provides powerful, complementary capabilities for medical research. Current evidence suggests that these tools do not "replace" expert assessment in a holistic sense but increasingly match or exceed expert performance in specific, narrow pattern recognition tasks—detecting nodules on a CT scan, extracting phenotypes from notes, or predicting mortality risk from EHR data. The future lies in hybrid intelligence systems, where ML handles high-volume, quantitative data processing and pattern identification, freeing clinicians to focus on complex synthesis, empathy, and decision-making informed by algorithmic output. The critical path forward requires rigorous prospective trials, explainable AI, and seamless integration into clinical workflow to realize the augmentation thesis.

Within the broader thesis on whether machine learning can replace expert assessment in medicine, this case study examines the application of deep learning in medical image analysis. The central question is whether these systems can achieve diagnostic parity with—or superiority to—human experts in specific, well-defined domains such as diabetic retinopathy (DR) grading and tumor detection. Recent advancements in convolutional neural networks (CNNs) and vision transformers (ViTs) have demonstrated performance metrics rivaling clinicians, yet critical challenges in interpretability, generalizability, and integration into clinical workflow remain.

Technical Foundations & Architectures

State-of-the-art models leverage complex architectures trained on large, curated datasets.

Model Architectures

- Convolutional Neural Networks (CNNs): ResNets, DenseNets, and EfficientNets remain foundational for hierarchical feature extraction from images.

- Vision Transformers (ViTs): Increasingly adopted for capturing long-range dependencies within an image via self-attention mechanisms.

- Hybrid Models: Combining CNN-based feature maps with transformer encoders for enhanced performance.

Key Training Paradigms

- Transfer Learning: Pre-training on large natural image datasets (e.g., ImageNet) followed by fine-tuning on smaller medical datasets.

- Weakly-Supervised/Self-Supervised Learning: Mitigating reliance on expensive pixel-level annotations by using image-level labels or leveraging inherent image structure.

- Federated Learning: Training models across multiple institutions without sharing raw patient data to improve generalizability and privacy.
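The federated paradigm can be illustrated by its aggregation step. The sketch below assumes federated averaging, one common aggregation rule in which a coordinator combines locally trained parameters weighted by each site's sample count; the text does not prescribe a specific rule, and model parameters are flattened into plain lists here for simplicity.

```python
def federated_average(site_parameters, site_sizes):
    """Sample-size-weighted average of per-site parameter vectors.

    Only parameters and sample counts leave each institution; raw patient
    data stays local.
    """
    total = sum(site_sizes)
    n_params = len(site_parameters[0])
    return [
        sum(params[i] * size for params, size in zip(site_parameters, site_sizes)) / total
        for i in range(n_params)
    ]
```

In a full training loop this averaging is repeated over many rounds: the coordinator broadcasts the averaged parameters, each site trains locally for a few steps, and the updated parameters are averaged again.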

Performance metrics from recent seminal studies are summarized below.

Table 1: Performance of Deep Learning Systems in Diabetic Retinopathy Detection

| Study / Model (Year) | Dataset | Key Metric | Performance | Expert Comparison |

|---|---|---|---|---|

| Gulshan et al., JAMA (2016) | EyePACS-1, Messidor-2 | Sensitivity (RefER) | 90.3% & 87.0% | Comparable to retina specialists |

| FDA-Authorized IDx-DR (De Novo, 2018) | Prospective Pivotal Trial | Sensitivity | 87.2% | Meets pre-specified superiority criterion |

| Arcadu et al., Nat Med (2019) | Proprietary Dataset | AUC for DR Progression | 0.79 | Predicts progression 2+ years prior |

Table 2: Performance of Deep Learning Systems in Tumor Detection (Brain MRI)

| Study / Model (Year) | Tumor Type | Dataset (Size) | Key Metric | Performance |

|---|---|---|---|---|

| U-Net (Original, 2015) | Glioblastoma | MICCAI BRATS 2013 | Dice Similarity Coefficient | 0.72 |

| nnU-Net (Isensee et al., 2021) | Various Brain Tumors | BRATS 2020 | Median Dice (Enhancing Tumor) | 0.83 |

| TransBTS (Wang et al., 2021) | Glioma Segmentation | BRATS 2019 & 2020 | Dice (Whole Tumor) | 0.904 |

Detailed Experimental Protocols

Protocol: Development and Validation of a DR Screening Algorithm

- Objective: To develop a deep learning algorithm for detecting referable DR (moderate or worse) from fundus photographs and validate its performance against certified ophthalmologists.

- Dataset Curation:

- Source: Retrospective collection from diabetic screening programs (e.g., EyePACS, Indian clinics).

- Inclusion Criteria: Adequate quality, linked diagnosis.

- Annotation: Each image graded by 3-7 licensed ophthalmologists based on the International Clinical Diabetic Retinopathy scale. The majority vote or adjudicated grade serves as reference standard.

- Splits: Random split into development (training/validation) and held-out test sets (~80%/20%). Ensure patient independence between sets.

- Model Development:

- Preprocessing: Resize images to uniform dimensions (e.g., 512x512). Apply normalization using ImageNet statistics.

- Architecture: Fine-tune a pre-trained ResNet-50 or Inception-v4 on the training set.

- Output: Single node with sigmoid activation for binary classification (referable vs. non-referable DR).

- Loss Function: Weighted binary cross-entropy to handle class imbalance.

- Optimization: Stochastic Gradient Descent (SGD) with momentum or Adam optimizer.

- Validation & Statistical Analysis:

- Primary Metrics: Calculate sensitivity, specificity, and area under the receiver operating characteristic curve (AUC) on the held-out test set.

- Comparison: Deploy the model and a panel of 8-10 ophthalmologists on a separate validation set (e.g., Messidor-2). Compare model performance to the median panel performance using non-inferiority margins.
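The weighted binary cross-entropy named in the protocol can be written out explicitly. In this sketch the positive-class weight is set to the ratio of negative to positive training examples, a common heuristic that is assumed here rather than taken from the protocol.

```python
import math


def positive_class_weight(labels):
    """Ratio of negative to positive examples (labels are 0 or 1)."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    return n_neg / n_pos


def weighted_bce(probabilities, labels, pos_weight):
    """Mean binary cross-entropy with the positive (referable DR) term up-weighted."""
    eps = 1e-12  # keep log() finite when a probability is exactly 0 or 1
    total = 0.0
    for p, y in zip(probabilities, labels):
        p = min(max(p, eps), 1 - eps)
        total -= pos_weight * y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(labels)
```

With `pos_weight` above 1, a missed referable case costs more than a false alarm, which pushes the operating point toward higher sensitivity, the property a screening tool usually prioritises.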

Protocol: Brain Tumor Segmentation using nnU-Net

- Objective: To automatically segment brain tumor sub-regions (whole tumor, tumor core, enhancing core) from multimodal MRI (T1, T1-Gd, T2, FLAIR).

- Dataset: Use the BraTS (Brain Tumor Segmentation) challenge dataset.

- Preprocessing (Automated by nnU-Net):

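- Co-registration: All modalities must be co-registered to the same anatomical template. BraTS distributes its scans already co-registered, so nnU-Net does not perform this step itself.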

- Intensity Normalization: Per-channel z-score normalization is applied.

- Cropping: Image is cropped to the region of non-zero voxels.

- Model Training:

- Framework: nnU-Net (“no-new-Net”), which automatically configures a U-Net-based pipeline.

- Architecture: 3D full-resolution U-Net with instance normalization and Leaky ReLU activations.

- Training: Uses a composite loss function (Dice + Cross-Entropy). 5-fold cross-validation is standard.

- Inference: Test-time augmentation (e.g., mirroring) is applied, and predictions are averaged.

- Evaluation:

- Metric: Dice Similarity Coefficient (DSC) is calculated per tumor sub-region between the algorithm's segmentation and the expert-annotated ground truth.

- Reporting: Report mean and median DSC across all cases in the validation set.
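The evaluation step can be illustrated with a short sketch of the per-region Dice computation. The label-to-region mapping below follows one commonly used BraTS convention (1 = necrotic/non-enhancing core, 2 = edema, 4 = enhancing tumor) and is an assumption here, since label values differ between challenge editions. The function names and the choice to score two empty masks as 1.0 are also illustrative.

```python
from statistics import mean, median

# Assumed BraTS-style label convention; check the dataset release in use.
REGIONS = {
    "whole_tumor": {1, 2, 4},
    "tumor_core": {1, 4},
    "enhancing_core": {4},
}


def dice(pred_mask, true_mask):
    """Dice = 2 * |A and B| / (|A| + |B|) for two flat binary masks."""
    intersection = sum(1 for a, b in zip(pred_mask, true_mask) if a and b)
    total = sum(1 for a in pred_mask if a) + sum(1 for b in true_mask if b)
    if total == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return 2.0 * intersection / total


def region_dice(pred_labels, true_labels):
    """Dice per tumor sub-region for one case (flattened label volumes)."""
    scores = {}
    for name, labels in REGIONS.items():
        pred_mask = [v in labels for v in pred_labels]
        true_mask = [v in labels for v in true_labels]
        scores[name] = dice(pred_mask, true_mask)
    return scores


def summarize(per_case_scores):
    """Mean and median DSC per region across all cases."""
    summary = {}
    for name in REGIONS:
        values = [case[name] for case in per_case_scores]
        summary[name] = (mean(values), median(values))
    return summary


# Two toy "volumes" flattened to 1D (hypothetical voxel labels).
case_a = region_dice([0, 1, 1, 2, 4, 4], [0, 1, 2, 2, 4, 0])
case_b = region_dice([0, 0, 1, 2, 4], [0, 0, 1, 2, 4])
print(summarize([case_a, case_b]))
```

Because the three regions are nested, a single mislabelled voxel can change more than one score, which is one reason the protocol reports each sub-region separately.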

Visualization of Workflows & Systems

Diagram: End-to-End Deep Learning Pipeline for Medical Image Analysis

Diagram: Conceptual Framework for the Thesis Question: "Can ML Replace Expert Assessment?"

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Platforms for Medical Image Analysis Research

| Category | Item / Solution | Function & Explanation |
|---|---|---|
| Data Sources | BraTS (Brain Tumor Segmentation) | Multimodal MRI brain tumor dataset with expert-annotated ground truth for segmentation benchmarking. |
| Data Sources | EyePACS / Kaggle Diabetic Retinopathy | Large public datasets of fundus photographs for DR detection algorithm development. |
| Data Sources | The Cancer Imaging Archive (TCIA) | Public repository of medical images (CT, MRI, etc.) for oncology research. |
| Annotation Tools | ITK-SNAP / 3D Slicer | Open-source software for manual and semi-automatic segmentation of 3D medical images. |
| Annotation Tools | Labelbox / CVAT | Cloud-based and on-prem platforms for collaborative image labeling and dataset management. |
| Model Development | MONAI (Medical Open Network for AI) | PyTorch-based, domain-specific framework providing optimized medical imaging DL tools. |
| Model Development | nnU-Net | Self-configuring framework for biomedical image segmentation that automates pipeline design. |
| Compute & Infrastructure | NVIDIA Clara | Application framework and GPU-accelerated libraries optimized for medical imaging and genomics. |
| Compute & Infrastructure | Google Cloud Healthcare AI / AWS HealthLake | Cloud platforms with HIPAA-compliant services for storing, processing, and analyzing medical data. |
| Model Evaluation | MedPy / scikit-image | Python libraries offering medical image-specific evaluation metrics (e.g., HD95, ASD). |
| Model Evaluation | Grand Challenge | Platform for hosting fair, blinded validation challenges in biomedical image analysis. |

This case study demonstrates that deep learning models can achieve expert-level performance in specific, constrained medical image analysis tasks such as diabetic retinopathy screening and brain tumor segmentation. Quantitative evidence supports their potential for high-throughput, consistent preliminary assessment. However, significant barriers—including algorithmic bias, brittleness in out-of-distribution data, and a lack of integrative clinical reasoning—currently prevent direct replacement of the human expert. The prevailing evidence supports a thesis of augmentation, where AI acts as a powerful decision-support tool, increasing efficiency and access while leaving final diagnosis and holistic patient management in the domain of the clinician. Future research must focus on explainable AI (XAI), robust validation in real-world settings, and seamless workflow integration to realize this collaborative potential.

This technical guide examines the role of machine learning (ML) in modern pharmaceutical research, framed by a critical thesis: Can machine learning replace expert assessment in medicine research? We explore this question through three core pillars—target identification, compound screening, and clinical trial design—assessing where ML augments versus potentially supplants human expertise.

Target Identification: Unraveling Disease Biology with AI

Thesis Context: ML models can process vast omics datasets to propose novel targets, but biological validation and contextual interpretation remain firmly in the domain of experts.

Methodology & Protocols:

- Multi-Omics Integration: ML pipelines (e.g., deep neural networks, graph convolutional networks) integrate genomics, transcriptomics, and proteomics from public repositories (TCGA, GTEx, ClinVar). The model learns non-linear relationships to rank genes/proteins by predicted disease relevance.

- Validation Protocol: Top-ranked targets undergo in vitro validation via siRNA/CRISPR knockout in relevant cell lines (e.g., cancer cell lines from ATCC). Phenotypic readouts (cell viability, migration) and pathway analysis (Western blot, qPCR) confirm target essentiality.

- Druggability Assessment: A separate NLP model mines scientific literature and patent databases to predict the feasibility of developing small-molecule or biologic inhibitors against the target.

Quantitative Data: Performance of AI Models in Target Discovery

| Model Type | Primary Data Source | Key Metric | Reported Performance (Range) | Benchmark |
|---|---|---|---|---|
| Graph Convolutional Network (GCN) | Protein-Protein Interaction Networks | AUC-ROC (Target Prioritization) | 0.82 - 0.91 | Random Walk Baseline (AUC ~0.65) |
| Transformer (e.g., BERT variants) | Biomedical Literature (PubMed) | Precision @ Top 100 Predictions | 30% - 45% | Expert Curation Set |
| Multi-Layer Perceptron (MLP) | TCGA Pan-Cancer Data | Concordance with Known Cancer Genes | 75% - 85% | COSMIC Census |
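The "Precision @ Top 100 Predictions" metric in the table above measures what fraction of a model's highest-ranked candidates appear in an expert-curated reference set. A minimal sketch follows; the function name, the toy ranking, and the reference set are hypothetical and serve only to show the arithmetic.

```python
def precision_at_k(ranked_genes, known_targets, k=100):
    """Fraction of the top-k ranked genes that are in the reference set.

    If fewer than k genes were ranked, the denominator is the number ranked.
    """
    top_k = ranked_genes[:k]
    if not top_k:
        return 0.0
    hits = sum(1 for gene in top_k if gene in known_targets)
    return hits / len(top_k)


# Toy example: model ranking (best first) versus a curated reference set.
ranking = ["TP53", "GENE_X", "KRAS", "GENE_Y", "EGFR"]
curated = {"TP53", "KRAS", "EGFR", "BRAF"}
print(precision_at_k(ranking, curated, k=4))
```

A precision of 30% to 45% at k = 100 therefore means that roughly 30 to 45 of the top 100 predictions match the curated set; the remainder may be false positives or genuinely novel candidates, which is where expert review comes in.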

AI-Driven Target Identification Workflow

The Scientist's Toolkit: Research Reagent Solutions for Target Validation

| Item / Reagent | Function in Validation | Example Vendor/Product |
|---|---|---|
| CRISPR-Cas9 KO/KI Kits | Precise gene knockout/knock-in for functional validation. | Synthego (Arrayed sgRNA Libraries) |
| siRNA/shRNA Libraries | High-throughput gene silencing for phenotypic screening. | Horizon Discovery (siGENOME) |
| Phospho-Specific Antibodies | Detect pathway activation/inhibition via Western Blot. | Cell Signaling Technology |
| High-Content Imaging Systems | Quantify subcellular phenotypes (translocation, morphology). | PerkinElmer (Opera Phenix) |
| Pathway Reporter Assays | Luciferase-based readouts for signaling activity (e.g., NF-κB). | Promega (pGL4 Vectors) |

Compound Screening: Accelerating Hit-to-Lead

Thesis Context: AI excels at virtual screening and de novo design, yet expert medicinal chemists are irreplaceable for assessing synthetic feasibility, ADMET risks, and scaffold novelty.

Methodology & Protocols:

- AI-Driven Virtual Screening: A pre-trained generative model (e.g., REINVENT, MolGPT) proposes novel molecular structures constrained by the target's 3D binding pocket (from AlphaFold2 predictions or crystal structures). A discriminative model (e.g., Random Forest or CNN) then scores the compounds for predicted binding affinity (pIC50), drug-likeness (QED), and synthetic accessibility (SA score).

- Experimental Validation Protocol: Top in silico hits are procured from chemical vendors or synthesized. Primary screening uses a target-binding assay (SPR, thermal shift) and a cell-based functional assay. Dose-response curves (IC50/EC50) are generated.

- Lead Optimization Cycle: ML models trained on internal assay data predict structure-activity relationships (SAR) and suggest structural modifications. Experts prioritize these suggestions based on medicinal chemistry principles.
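Two quantities recur in the steps above: the IC50 read from a dose-response curve and the pIC50 used as a model target. The sketch below shows how they relate, assuming a standard four-parameter logistic (Hill) model for an inhibition curve and IC50 values expressed in nanomolar. The function names and default parameters are illustrative choices, not part of any specific assay protocol.

```python
import math


def pic50_from_ic50_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L); with IC50 in nM this is 9 - log10(IC50)."""
    return 9.0 - math.log10(ic50_nm)


def ic50_nm_from_pic50(pic50):
    """Inverse conversion, returning IC50 in nM."""
    return 10.0 ** (9.0 - pic50)


def hill_response(conc_nm, ic50_nm, top=100.0, bottom=0.0, hill=1.0):
    """Four-parameter logistic inhibition curve.

    Returns the remaining signal (e.g., % activity) at a given concentration:
    `top` with no compound, falling toward `bottom` at high concentration.
    """
    return bottom + (top - bottom) / (1.0 + (conc_nm / ic50_nm) ** hill)


# A compound with IC50 = 100 nM, evaluated over a dilution series.
for conc in [1, 10, 100, 1000, 10000]:
    print(conc, round(hill_response(conc, ic50_nm=100.0), 1))
print(pic50_from_ic50_nm(100.0))
```

Working on the pIC50 scale makes potency roughly linear for regression models: each unit corresponds to a tenfold change in IC50.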

Quantitative Data: AI Performance in Virtual Screening & Design

| AI Task | Model Architecture | Dataset | Key Outcome Metric | Performance vs. Traditional Method |
|---|---|---|---|---|
| Virtual Screening (Ligand-Based) | Deep Neural Network (DNN) | ChEMBL (>1.5M compounds) | Enrichment Factor (EF1%) | 25-35 (AI) vs. 10-15 (Molecular Fingerprint) |
| De Novo Molecule Generation | Generative Adversarial Network (GAN) | ZINC15 Library | Novelty (Tanimoto <0.4) & Synthetic Accessibility | 85% novel, 92% synthesizable (AI) |
| Property Prediction (ADMET) | Graph Neural Network (GNN) | Public/Proprietary ADMET data | Mean Absolute Error (MAE) for LogD7.4 | MAE: 0.35-0.45 (AI) vs. 0.5-0.7 (Classical QSAR) |
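Two of the metrics in the table above, the enrichment factor at 1% (EF1%) and Tanimoto-based novelty, reduce to simple arithmetic. The following is a sketch under stated assumptions: fingerprints are represented as Python sets of on-bit indices (in practice they would come from a cheminformatics toolkit), and the function names and the handling of very small libraries are illustrative.

```python
def enrichment_factor(scores, is_active, top_fraction=0.01):
    """EF = (active rate in the top-scored fraction) / (active rate overall)."""
    n = len(scores)
    n_top = max(1, int(round(n * top_fraction)))
    ranked = sorted(zip(scores, is_active), key=lambda t: t[0], reverse=True)
    hits_top = sum(active for _, active in ranked[:n_top])
    total_actives = sum(is_active)
    return (hits_top / n_top) / (total_actives / n)


def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    if union == 0:
        return 0.0
    return len(fp_a & fp_b) / union


def is_novel(candidate_fp, reference_fps, threshold=0.4):
    """Novel if the nearest reference compound has Tanimoto below the threshold."""
    return all(tanimoto(candidate_fp, ref) < threshold for ref in reference_fps)


# Toy screen: 200 compounds, 10 actives, both top-scored compounds are active.
toy_scores = list(range(200, 0, -1))
toy_active = [1, 1] + [0] * 190 + [1] * 8
print(enrichment_factor(toy_scores, toy_active))

# Toy fingerprints (hypothetical bit indices).
print(is_novel({1, 2, 3, 4}, [{3, 4, 5, 6}]))
```

An EF1% of 25 to 35 means the top 1% of the ranked library contains actives at 25 to 35 times the background rate, so the maximum achievable value depends on how rare actives are in the library.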

AI-Enhanced Compound Screening & Optimization Cycle

The Scientist's Toolkit: Research Reagent Solutions for Screening

| Item / Reagent | Function in Screening | Example Vendor/Product |
|---|---|---|
| AlphaFold2 Protein DB | Access to high-confidence predicted protein structures for targets. | EBI AlphaFold Database |
| DNA-Encoded Library (DEL) | Ultra-high-throughput screening platform for hit identification. | X-Chem (DEL Services) |
| Surface Plasmon Resonance (SPR) | Label-free kinetic analysis of compound-target binding. | Cytiva (Biacore Systems) |
| Cell-Based Reporter Assay Kits | Functional readout of target modulation (e.g., GPCR, kinase). | Thermo Fisher (GeneBLAzer) |
| Microsomal Stability Kits | Early in vitro assessment of metabolic stability. | Corning (Gentest) |

Clinical Trial Design: Optimizing for Success

Thesis Context: ML enhances trial efficiency through patient stratification and simulation, but regulatory approval, ethical oversight, and final protocol design demand expert judgment.

Methodology & Protocols:

- Patient Stratification: Unsupervised ML (e.g., consensus clustering) is applied to pretreatment multi-omics data from historical trials to identify biomarker-defined subgroups. Supervised models then predict subgroup-specific treatment response.

- Synthetic Control Arm Generation: Using real-world data (RWD) from electronic health records (EHRs), propensity score matching powered by ML creates a well-matched external control arm for single-arm trials, subject to regulatory review.

- Adaptive Trial Simulation: Reinforcement learning models simulate thousands of trial scenarios (varying enrollment criteria, dose, endpoints) to identify protocols that maximize statistical power and minimize cost/time.
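The matching step behind a synthetic control arm can be sketched as follows. This example assumes propensity scores have already been estimated by some upstream model and shows only a greedy 1:1 nearest-neighbour match without replacement, with a caliper on the score difference. The function name, the caliper of 0.05, and the patient identifiers are hypothetical; real analyses would also check covariate balance after matching.

```python
def match_controls(trial_scores, rwd_scores, caliper=0.05):
    """Greedy 1:1 nearest-neighbour propensity matching without replacement.

    trial_scores / rwd_scores map patient ID -> estimated propensity score.
    Trial patients with no control within the caliper stay unmatched.
    """
    available = dict(rwd_scores)
    matches = {}
    # Process trial patients in order of increasing score for reproducibility.
    for pid, score in sorted(trial_scores.items(), key=lambda kv: kv[1]):
        if not available:
            break
        best = min(available, key=lambda cid: abs(available[cid] - score))
        if abs(available[best] - score) <= caliper:
            matches[pid] = best
            del available[best]  # each control is used at most once
    return matches


# Toy cohorts (hypothetical IDs and scores).
trial = {"T1": 0.30, "T2": 0.62, "T3": 0.95}
real_world = {"C1": 0.31, "C2": 0.60, "C3": 0.10, "C4": 0.33}
print(match_controls(trial, real_world))
```

Unmatched trial patients, such as one with an extreme propensity score, signal limited overlap between the trial population and the real-world cohort, which is exactly the kind of finding that requires expert and regulatory judgment.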

Quantitative Data: Impact of AI on Clinical Trial Metrics

| Application Area | ML Technique | Data Source | Measured Improvement | Notes |
|---|---|---|---|---|
| Patient Recruitment | NLP for EHR Screening | Institutional EHRs | Recruitment Rate Increase: 20-30% | Reduction in screening failure. |
| Predictive Biomarker ID | Random Forest / Cox Model | Historical Trial Omics Data | Hazard Ratio (HR) in High-Risk Subgroup: <0.6 vs. Unstratified HR ~0.8 | Enriches for responders. |
| Synthetic Control Arm | Propensity Score Matching (ML-enhanced) | Flatiron Health RWD Database | Overall Survival Correlation (r) with RCT Arm: 0.85-0.92 | Used in oncology trial designs. |

AI-Informed Clinical Trial Design Process

The Scientist's Toolkit: Solutions for AI-Enhanced Trial Design

| Item / Platform | Function in Trial Design | Example Vendor/Product |
|---|---|---|
| Real-World Data (RWD) Platforms | Curated, de-identified patient data for cohort analysis and synthetic arms. | Flatiron Health, IQVIA E360 |
| Clinical Trial Simulation Software | Platforms with built-in ML for simulating adaptive designs and outcomes. | SAS, R (clinicaltrialsim package) |
| Biomarker Assay Development Kits | Validated IVD/CDx development kits for AI-identified biomarkers. | Agilent (SureSelect), Foundation Medicine |
| Electronic Patient Reported Outcomes (ePRO) | Digital tools for continuous remote data collection, analyzed by ML. | Medidata (Patient Cloud) |

The evidence across the drug development pipeline indicates that machine learning is a transformative, augmentative tool rather than a replacement for expert assessment. AI excels in pattern recognition from high-dimensional data, generating novel hypotheses, and optimizing complex simulations. However, the critical tasks of contextualizing findings within biological reality, assessing practical and ethical feasibility, making strategic decisions under uncertainty, and fulfilling regulatory requirements remain deeply human endeavors. The future of efficient drug discovery lies in the synergistic partnership between AI's computational power and the irreplaceable expertise, intuition, and judgment of scientists and clinicians.

This whitepaper addresses a critical component of the broader thesis: Can machine learning replace expert assessment in medicine research? Operationalization—the process of integrating validated AI models into reliable, scalable, and safe production environments—is the essential bridge between algorithmic promise and tangible clinical or research impact. Without effective operationalization, even the most accurate model remains a research artifact, incapable of augmenting or potentially replacing elements of expert human assessment.

Foundational Frameworks for AI Integration