Advanced Techniques for Measuring Nucleation Rate: Experimental Validation and Applications in Pharmaceutical Development

Abstract

This article provides a comprehensive overview of modern experimental techniques for measuring nucleation rates, a critical parameter in crystallization processes for pharmaceutical development. It covers foundational principles of classical nucleation theory and the inherent stochastic nature of the process. The content explores methodological advances including constant cooling rate analysis, metastable zone width (MSZW) determination, and droplet freezing techniques. It addresses key troubleshooting challenges such as instrument resolution limits and statistical biases in parameter estimation. Finally, the article presents rigorous validation frameworks through method intercomparison studies and statistical analysis, offering researchers and drug development professionals practical insights for optimizing crystallization processes and ensuring reproducible results in API manufacturing.

Understanding Nucleation Fundamentals: From Theory to Experimental Challenges

Classical Nucleation Theory (CNT) provides the fundamental framework for quantifying the rate at which first-order phase transitions occur, such as the formation of liquid droplets from a supersaturated vapor or of solid crystals from a supersaturated solution or melt. The process is thermally activated and stochastic: a stable nucleus of the new phase can form only by overcoming a free energy barrier. The CNT formalism describes this nucleation barrier as a balance between the favorable bulk free energy change and the unfavorable surface free energy penalty associated with creating an interface between the new and parent phases. The critical nucleus size represents the unstable equilibrium point where the free energy is maximum; clusters smaller than this tend to redissolve, while larger clusters are likely to grow spontaneously. The nucleation rate, which quantifies the number of nucleation events per unit volume per unit time, depends exponentially on the height of this free energy barrier.

The standard CNT expression for the nucleation rate J is J = kn exp(-ΔG/RT), where kn is the kinetic constant, ΔG is the Gibbs free energy of nucleation, R is the gas constant, and T is the temperature [1]. Accurately quantifying this rate is crucial for applications ranging from atmospheric science (predicting ice cloud formation) to pharmaceutical manufacturing (controlling crystal polymorphism). However, direct experimental validation of CNT predictions is hampered by the nanoscale dimensions of critical nuclei and the rarity of nucleation events, which has driven the development of advanced computational and experimental methods to test and refine the theory's foundational assumptions [2] [3].

Recent Theoretical and Computational Advances

Recent research has focused on extending CNT's basic framework to account for physical realities that become significant at the nanoscale, and on using advanced computational methods to rigorously test its limits.

Extensions to the Classical Framework

A key advancement involves incorporating curvature-dependent surface tension, known as the Tolman correction, which becomes significant for nuclei with radii below approximately 10 nm [4]. For such small nuclei, the assumption of a constant surface tension (valid for flat interfaces) breaks down. Explicitly including this correction, along with real-gas behavior via a Van der Waals correction, has yielded a more accurate CNT formulation for predicting cavitation inception at nanoscale gaseous nuclei. This refined model predicts lower cavitation pressures than the traditional Blake threshold, showing closer agreement with molecular dynamics simulations [4].

Computational Validation and Limits of Applicability

Molecular dynamics (MD) simulations have become an indispensable tool for studying nucleation, providing atomic-level insight into a process that is difficult to observe directly in experiments. Seeding methods are a powerful computational technique where a pre-formed nucleus is inserted into a metastable system to study its evolution [2]. This approach is particularly effective in the canonical (NVT) ensemble for determining stable cluster properties and provides a stringent test for CNT. Simulations of Lennard-Jones condensation have demonstrated that CNT can accurately predict stable cluster radii across a wide range of conditions, with even simple thermodynamic models like the ideal gas approximation proving useful for initializing simulations [2].

However, sophisticated free energy calculations for Lennard-Jones and water systems have delineated the boundaries of CNT's validity. The theory, along with the Tolman equation, receives strong support from simulations for large clusters containing a few hundred particles. Conversely, these theories break down for very small clusters, where the capillary approximation of a sharp interface becomes unrealistic [3]. This establishes a lower size limit for the reliable application of classical approaches.

Experimental Methodologies for Rate Quantification

Quantifying nucleation rates experimentally requires ingenious methods to detect the stochastic formation of critical nuclei. The following table summarizes key experimental protocols and their applications.

Table 1: Experimental Methods for Nucleation Rate Quantification

| Method | Fundamental Principle | Primary Application Domain | Key Measurable Output |
|---|---|---|---|
| Polythermal Method (MSZW) [1] | Cooling a solution from saturation temperature at a constant rate until nucleation is detected. | Solution crystallization (Pharmaceuticals, Inorganics, Biomolecules) | Metastable Zone Width (MSZW), Nucleation Temperature (Tnuc) |
| Constant Cooling Rate [5] | Supercooling a liquid at a constant rate until freezing is detected. | Ice nucleation (Thermal Energy Storage, Atmospheric Science) | Distribution of Freezing Temperatures |
| Seeded MD Simulations [2] | Inserting a pre-formed nucleus into a metastable system in NVT or NPT ensemble. | Computational studies of phase transitions (Validation of CNT) | Critical cluster size, Nucleation free energy barrier |
| Ice Nucleation Instruments [6] | Measuring ice-nucleating particles (INPs) via immersion freezing or deposition nucleation in field or lab. | Atmospheric Science & Cloud Physics | INP concentration vs. temperature |

The Polythermal Method and Metastable Zone Width (MSZW)

The polythermal method is widely used in crystallization studies. An initially undersaturated solution is cooled at a fixed rate from a known saturation temperature (T*). The temperature at which the first crystals are detected is the nucleation temperature (Tnuc). The difference ΔTmax = T* - Tnuc is the Metastable Zone Width (MSZW), which defines the supersaturation window where the solution is metastable and crystal growth can occur without spontaneous nucleation [1].

A recent model enables the extraction of nucleation rates and Gibbs free energy directly from MSZW data obtained at different cooling rates [1]. The nucleation rate is related to experimental parameters by: J = (dc*/dT) × (dT/dt) × (Δcmax/ΔTmax), where dc*/dT is the slope of the solubility curve, dT/dt is the cooling rate, and Δcmax is the supersaturation at the nucleation point. A linearized plot of ln(Δcmax/ΔTmax) versus 1/Tnuc allows for the determination of the nucleation kinetic constant kn and the Gibbs free energy of nucleation ΔG [1]. This methodology has been successfully validated across a diverse set of 22 solute-solvent systems, including active pharmaceutical ingredients (APIs), inorganic compounds, and large biomolecules like lysozyme [1].
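The linearization described above can be sketched as a straight-line fit. This is a minimal illustration rather than the procedure of [1]: it assumes the slope of ln(Δcmax/ΔTmax) versus 1/Tnuc equals -ΔG/R, as implied by the Arrhenius form J = kn exp(-ΔG/RT). The function name and the numbers are hypothetical, and the intercept is returned as-is because converting it to kn depends on the prefactor terms of the model in [1].

```python
import numpy as np

R_GAS = 8.314462618  # gas constant, J mol^-1 K^-1


def fit_mszw_linearization(delta_c_max, delta_t_max, t_nuc):
    """Fit ln(dc_max/dT_max) against 1/T_nuc with a straight line.

    Returns (delta_g, intercept), where delta_g (J/mol) is taken from
    slope = -delta_g / R (an assumption based on the Arrhenius form).
    The intercept is returned unchanged; mapping it to kn is model-specific.
    """
    y = np.log(np.asarray(delta_c_max, float) / np.asarray(delta_t_max, float))
    x = 1.0 / np.asarray(t_nuc, float)
    slope, intercept = np.polyfit(x, y, 1)  # highest power first
    return -slope * R_GAS, intercept


if __name__ == "__main__":
    # Synthetic (made-up) data generated from a known barrier of 17.4 kJ/mol.
    t_nuc = np.array([300.0, 305.0, 310.0, 315.0])      # K
    delta_t_max = np.array([8.0, 7.0, 6.0, 5.0])        # K
    ratio = np.exp(5.0 - 17400.0 / (R_GAS * t_nuc))
    delta_g, intercept = fit_mszw_linearization(ratio * delta_t_max,
                                                delta_t_max, t_nuc)
    print(f"Delta G = {delta_g / 1000:.2f} kJ/mol, intercept = {intercept:.2f}")
```

With real data, each point would come from one cooling rate, and scatter in Tnuc would call for repeated runs per rate before fitting.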

Statistical Analysis of Stochastic Ice Nucleation

In experiments measuring the freezing of supercooled water, the stochastic nature of nucleation means that repeated experiments at a constant cooling rate yield a distribution of freezing temperatures. Advanced statistical methods are required to reliably extract the nucleation rate parameters, k and ΔG, from such datasets. Traditional binning methods are model-free but lack accuracy. Recent advances introduce bias-corrected maximum likelihood estimation (BC MLE) and Bayesian analysis with reference priors [5]. These methods provide more accurate parameter estimation, effectively address systematic biases, and offer a robust framework for quantifying uncertainty, which is crucial for engineering applications like designing supercooling-based thermal energy storage systems [5].
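To make the binning idea concrete, the sketch below estimates a per-sample nucleation rate in each temperature bin from the number of samples still liquid at the bin edges. It is a generic survival-based estimator under the assumption of nominally identical samples cooled at one constant rate, not the specific implementation in [5]; the function name and inputs are hypothetical.

```python
import numpy as np


def binned_nucleation_rate(freezing_temps, cooling_rate, bin_edges):
    """Per-sample nucleation rate (s^-1) in each temperature bin.

    freezing_temps: freezing temperature of each sample (K or deg C).
    cooling_rate:   positive cooling rate in K/s.
    bin_edges:      increasing temperature bin edges.

    During cooling, a bin [lo, hi] is crossed in (hi - lo) / cooling_rate
    seconds, so rate = cooling_rate / (hi - lo) * ln(N_liquid(hi) / N_liquid(lo)).
    Bins with no liquid samples at either edge return NaN.
    """
    temps = np.asarray(freezing_temps, float)
    edges = np.asarray(bin_edges, float)
    rates = []
    for lo, hi in zip(edges[:-1], edges[1:]):
        n_start = np.sum(temps < hi)  # still liquid when cooling reaches hi
        n_end = np.sum(temps < lo)    # still liquid when cooling reaches lo
        if n_start == 0 or n_end == 0:
            rates.append(np.nan)
            continue
        rates.append(cooling_rate / (hi - lo) * np.log(n_start / n_end))
    return np.array(rates)


if __name__ == "__main__":
    temps = [-18.5, -18.5, -18.5, -18.5, -19.5, -19.5, -20.5, -20.5]
    print(binned_nucleation_rate(temps, 1.0 / 60.0, [-20.0, -19.0, -18.0]))
```

Dividing the per-sample rate by the sample volume (or nucleating surface area) gives a rate in the usual units. The estimate is sensitive to bin width and sample count, which is one reason likelihood-based and Bayesian methods are preferred for parameter estimation.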

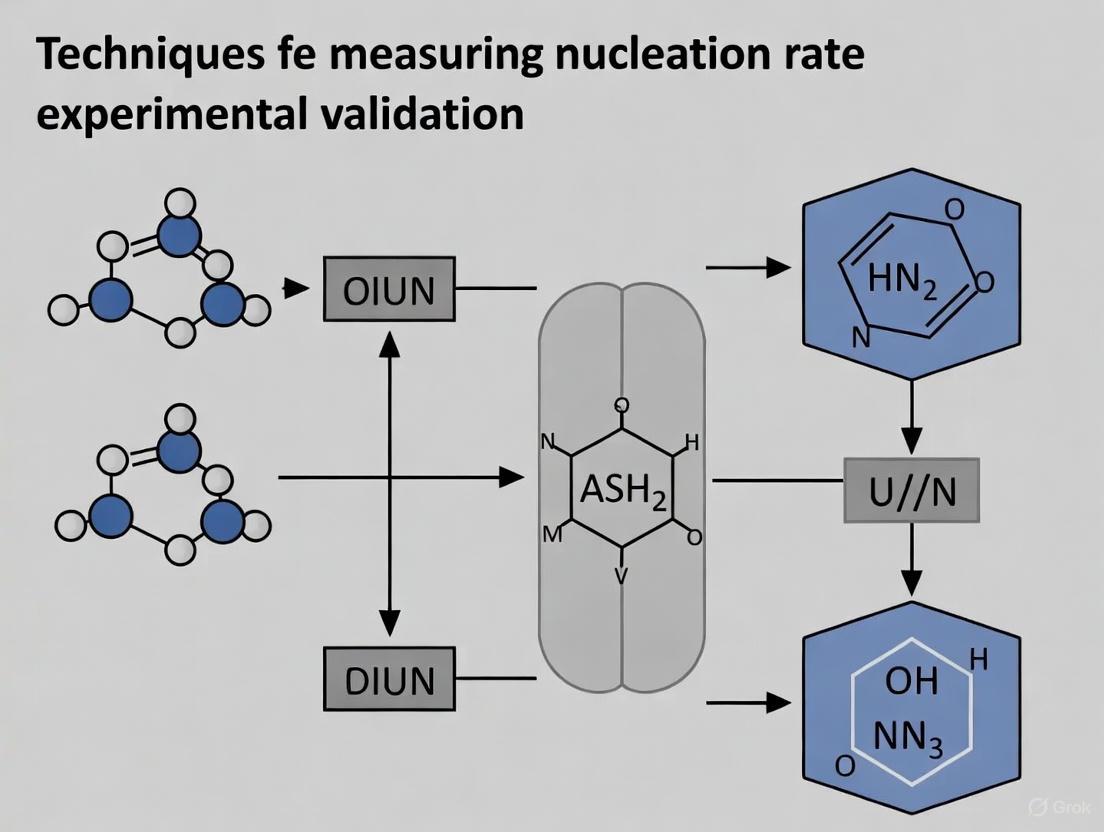

The following diagram illustrates the logical relationship between different experimental and computational methods used to quantify nucleation rates and validate CNT.

Diagram 1: CNT Validation Methods and Applications. This diagram shows the relationship between Classical Nucleation Theory (CNT), the primary methods used for its experimental and computational validation, and its key application domains.

CNT in Pharmaceutical and Industrial Applications

The quantification of nucleation is critical in industrial applications, particularly in the pharmaceutical industry, where the crystalline form of an Active Pharmaceutical Ingredient (API) impacts stability, solubility, and bioavailability.

Quantitative Analysis of API Nucleation

The application of the MSZW model to 10 different API/solvent systems has provided quantitative nucleation parameters [1]. The calculated nucleation rates for APIs ranged from 10²⁰ to 10²⁴ molecules per m³·s, while the Gibbs free energy of nucleation (ΔG) varied from 4 to 49 kJ mol⁻¹ [1]. For the large biomolecule lysozyme, the nucleation rate was significantly higher (up to 10³⁴ molecules per m³·s), with a correspondingly larger ΔG of 87 kJ mol⁻¹, highlighting the energetic challenges in nucleating larger, more complex molecules [1]. Beyond the rate and ΔG, the model enables the calculation of other crucial thermodynamic parameters, including the surface free energy (interfacial tension), the radius of the critical nucleus, and the number of unit cells within it, based solely on experimentally accessible MSZW data [1].

Table 2: Experimentally Determined Nucleation Parameters for Selected Systems [1]

| Compound | Solvent | Nucleation Rate, J (molecules m⁻³ s⁻¹) | Gibbs Free Energy, ΔG (kJ mol⁻¹) | Nucleation Kinetic Constant, kn |
|---|---|---|---|---|
| Lysozyme | NaCl Solution | Up to 10³⁴ | 87.0 | - |
| Paracetamol | Water | 10²⁰ - 10²⁴ | 17.4 | 2.04 × 10¹¹ |
| Glycine (Amino Acid) | Water | 10²⁰ - 10²⁴ | 15.0 | 1.59 × 10¹¹ |
| L-Arabinose (API Intermediate) | Water | 10²⁰ - 10²⁴ | 4.2 | 2.83 × 10⁸ |

The Scientist's Toolkit: Key Research Reagents and Materials

The experimental and computational studies cited rely on a core set of reagents, materials, and software tools.

Table 3: Essential Research Tools for Nucleation Rate Quantification

| Tool / Reagent | Function / Description | Field of Use |
|---|---|---|
| Lennard-Jones Potential | A simple model potential for van der Waals interactions used in MD simulations to study fundamental nucleation behavior. | Computational Physics/Chemistry [2] [3] |
| TIP4P/2005 Water Model | A highly accurate empirical model for water molecules used in MD simulations to study ice nucleation. | Computational Physics [3] |
| LAMMPS | An open-source molecular dynamics simulation package used for performing seeded and brute-force nucleation simulations. | Computational Materials Science [2] |
| Active Pharmaceutical Ingredients (APIs) | The active components in pharmaceuticals studied to control crystallization kinetics and polymorph selection. | Pharmaceutical Science [1] |
| Silicone/Fluorocarbon Coatings | Surface treatments used to suppress heterogeneous ice nucleation in thermal storage systems. | Engineering & Materials Science [5] |
| Ice Nucleating Particles (INPs) | Atmospheric aerosols (e.g., mineral dust, biological particles) studied for their role in cloud glaciation. | Atmospheric Science [6] |

Classical Nucleation Theory remains an indispensable, though evolving, framework for quantifying nucleation rates across diverse scientific and engineering disciplines. While its core equation provides a robust starting point, recent work has been focused on defining its limits and enhancing its accuracy through nanoscale corrections like the Tolman equation [4] and sophisticated computational validation [2] [3]. Experimentally, the development of models that extract quantitative parameters like Gibbs free energy and nucleation rates from standard measurements like MSZW represents a significant advance, especially for pharmaceutical applications [1]. Concurrently, improved statistical methods for analyzing stochastic nucleation events are increasing the reliability of empirical predictions for technologies like ice thermal storage [5]. The continued integration of theoretical, computational, and experimental approaches ensures that CNT will remain a vital tool for quantifying and controlling phase transitions in research and industry.

Nucleation, the initial formation of a new thermodynamic phase from a parent phase, represents a fundamental process in materials science, pharmaceutical development, and climate science. The inherently stochastic nature of nucleation poses significant challenges for its quantitative study and accurate measurement. This stochasticity arises from the fact that nucleation is an activated process, where an energy barrier must be overcome through random molecular fluctuations to form a critical nucleus capable of subsequent growth [7] [8].

The Classical Nucleation Theory (CNT) provides the foundational framework for understanding this process, describing how the nucleation frequency depends on system-specific energy barriers and kinetic factors [9] [7]. However, CNT has significant limitations, particularly its restriction to conditions near solubility limits where nucleation rates become impractically low for experimental measurement [9]. This theoretical constraint, combined with the practical challenges of detecting nanoscale nuclei and competing processes like crystal growth and secondary nucleation, makes accurate experimental determination of nucleation rates particularly difficult [7] [10].

This article examines current methodologies for measuring nucleation rates, comparing deterministic and stochastic approaches while providing detailed experimental protocols and their underlying principles. By framing this discussion within the context of experimental validation research, we aim to provide scientists with a comprehensive toolkit for designing robust nucleation studies across diverse applications from pharmaceutical development to atmospheric science.

Theoretical Framework: Reconciling Stochastic Theory with Experimental Reality

The Fundamental Challenge of Stochasticity

Nucleation is fundamentally a stochastic process due to its dependence on random molecular fluctuations that overcome an energy barrier [7] [8]. This stochastic nature means that even under identical conditions, the timing of nucleation events will vary significantly across experimental replicates. The nucleation rate (J), defined as the number of nuclei formed per unit volume or surface area per unit time, provides the key quantitative measure for comparing nucleation propensity across different systems and conditions [8].

The mathematical description of this process follows an exponential decay model for the survival probability of the metastable phase. For a system with uniform nucleation properties, the fraction of unfrozen/untransformed material (UnF) decreases exponentially with time according to:

$$\mathrm{UnF}(T) = \frac{N_{\mathrm{ufz}}(T)}{N_{\mathrm{tot}}} = e^{-J_{\mathrm{het}}(T)\,A\,t}$$ [8]

where Nufz is the number of unfrozen droplets or untransformed volumes, Ntot is the total number examined, A is the surface area or volume available for nucleation, and t is the time. This relationship predicts a straight line when plotting ln(Nufz/Ntot) versus time, with a slope equal to -Jhet·A, so the nucleation rate follows directly from the fitted slope [8].
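The straight-line relationship can be turned into a rate estimate with a least-squares fit through the origin. The sketch below is illustrative only: the function name, the units, and the synthetic counts are assumptions, and it presumes isothermal data with a single uniform rate and a known A per droplet.

```python
import numpy as np


def rate_from_unfrozen_fraction(times, n_unfrozen, n_total, area):
    """Estimate J_het from the slope of ln(N_ufz/N_tot) versus time.

    The fitted line is forced through the origin because UnF = 1 at t = 0.
    `area` is the nucleating surface area (or volume) per droplet, so the
    returned rate has units of 1 / (area unit * time unit).
    All n_unfrozen values must be positive.
    """
    t = np.asarray(times, float)
    y = np.log(np.asarray(n_unfrozen, float) / float(n_total))
    slope = np.sum(t * y) / np.sum(t * t)  # least squares through origin
    return -slope / area


if __name__ == "__main__":
    # Synthetic decay generated with J_het * A = 0.02 s^-1 (made-up numbers).
    t = np.array([10.0, 20.0, 30.0, 40.0])            # s
    n_ufz = 1000.0 * np.exp(-0.02 * t)
    print(rate_from_unfrozen_fraction(t, n_ufz, 1000, area=1.0e-5))
```

Curvature in the ln(Nufz/Ntot) plot would indicate that the single-rate assumption does not hold, for example because A varies between droplets.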

Limitations of Classical Nucleation Theory

While CNT provides a valuable theoretical framework, it faces significant limitations in practical applications. Tissot et al. confirmed that CNT cannot be applied to describe critical precipitates except near solubility limits, where nucleation rates are very low, making experimental measurements difficult [9]. This restriction poses problems for studying many real-world systems where nucleation occurs farther from equilibrium.

To address these limitations, alternative approaches have been developed. Phase Field (PF) methods within the Cahn-Hilliard-Cook (CHC) equation framework offer a unified approach for modeling decomposition everywhere inside the miscibility gap using few free parameters [9]. These methods replace the exact knowledge of kinetic pathways with identification of the index 1 saddle point associated with an effective Hamiltonian, providing a more robust foundation for predicting nucleation behavior across a wider range of conditions [9].

Methodological Comparison: Stochastic versus Deterministic Approaches

Fundamental Principles and Applications

Experimental approaches for measuring nucleation rates fall into two broad categories: deterministic methods based on population evolution, and stochastic methods based on induction time statistics. The table below compares their fundamental characteristics, applications, and limitations.

Table 1: Comparison of Deterministic and Stochastic Approaches to Nucleation Rate Measurement

| Aspect | Deterministic Methods | Stochastic Methods |
|---|---|---|
| Theoretical Basis | Population balance equations modeling crystal size distribution evolution [7] | Statistics of induction times based on probability distributions [7] [10] |
| Primary Output | Nucleation rate as function of supersaturation [7] | Nucleation rate from cumulative probability distributions [7] [10] |
| Experimental Focus | Evolution of crystal population attributes (count, size distribution) [7] | Timing of initial nucleation event across multiple replicates [7] [10] |
| Data Requirements | Continuous monitoring of crystal population | Multiple identical experiments to establish statistics |
| Strengths | Direct connection to process-scale attributes; established methodology | Directly accounts for inherent randomness of nucleation |
| Limitations | Overpredicts rates when secondary nucleation present; assumes deterministic behavior [7] | Underestimates rates when many primary nuclei form; requires numerous replicates [7] |
| Ideal Applications | Systems with dominant primary nucleation; process optimization | Fundamental studies; systems with significant stochasticity |

Comparative Performance and Accuracy

Recent validation studies have revealed significant systematic differences between these approaches. In a conceptual validation study, deterministic methods were shown to overpredict nucleation rates in the presence of secondary nucleation, while stochastic methods could underestimate rates when a large number of primary nuclei form [7]. This systematic bias stems from fundamental methodological differences: deterministic methods extract rates from population attributes that are sensitive to all nucleation events, while stochastic methods focus specifically on the timing of initial nucleation [7].

The magnitude of these discrepancies can be substantial. For p-aminobenzoic acid in ethanol-water mixtures, deterministic methods overpredicted nucleation rates by 5-6 orders of magnitude compared to stochastic methods, while for paracetamol in ethanol, the overprediction was 2-3 orders of magnitude [7]. These dramatic differences highlight the critical importance of methodological selection for accurate nucleation rate measurement.

Hybrid and Advanced Approaches

To overcome the limitations of both approaches, researchers have developed hybrid methodologies that combine deterministic and stochastic considerations. For instance, the Phase Field approach with CHC equation provides a self-consistent framework that can describe nucleation, growth, and spinodal decomposition in a unified manner across the miscibility gap [9]. This method identifies two characteristic times for nucleation and diffusion, with their ratio determining whether nucleation is modeled by a source term or an initial condition [9].

Similarly, microfluidic platforms that tightly control droplet volumes and hold conditions identical across hundreds of replicates constrain ice nucleation rates more tightly than was previously possible [8].

Experimental Protocols for Nucleation Rate Measurement

Induction Time Measurements with Automated Detection

The induction time approach measures the time between achieving supersaturation and the first detection of crystals, leveraging the inherent stochasticity of nucleation across multiple identical experiments [10]. The detailed methodology involves:

Sample Preparation: Prepare multiple identical stirred solutions with precisely controlled supersaturation in small volumes (typically 1-2 mL). For pharmaceutical compounds like diprophylline polymorphs, use temperature cycling between 60°C and 25°C, holding at the crystallization temperature until detection [10].

Nucleation Detection: Monitor transmissivity using in-situ analytics such as laser-based transmission probes. A sharp drop in transmissivity indicates crystal formation and defines the induction time [10] [11].

Data Collection: For each condition, conduct 50-100 identical replicates to establish reliable statistics on induction time distributions [10].

Feedback Control Implementation: Utilize automated feedback control to dramatically reduce experimental time. Systems like Crystal16 can detect dissolution (clear point) and crystallization (cloud point) events, automatically triggering the next temperature step. This can reduce experiment time from 70 hours to 15 hours for complete datasets [10].

Data Analysis: Plot cumulative probability distributions of induction times and extract nucleation rates from the distribution fitting. The nucleation rate J can be calculated from the slope of the cumulative distribution function [10].
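The data analysis step can be sketched as follows. This is a hedged illustration, not the fitting routine of [10]: it assumes the commonly used single-nucleus Poisson model P(t) = 1 - exp(-J·V·t) with no growth or detection delay, so that -ln(1 - P) plotted against t is a line through the origin with slope J·V. The function name and the numbers are hypothetical.

```python
import numpy as np


def rate_from_induction_times(induction_times, volume):
    """Estimate J from replicate induction times in vials of equal volume.

    Builds the empirical cumulative probability with midpoint plotting
    positions, linearizes it as -ln(1 - P) = J * V * t, and fits the slope
    through the origin. Assumes no detection delay (an idealization).
    """
    t = np.sort(np.asarray(induction_times, float))
    n = t.size
    p = (np.arange(1, n + 1) - 0.5) / n      # empirical CDF values
    y = -np.log(1.0 - p)
    slope = np.sum(t * y) / np.sum(t * t)    # equals J * V under the model
    return slope / volume


if __name__ == "__main__":
    # Synthetic induction times for 50 replicates of 1 mL (1e-6 m^3),
    # placed at the model quantiles for J = 1000 m^-3 s^-1 (made-up values).
    n = 50
    p = (np.arange(1, n + 1) - 0.5) / n
    times = -np.log(1.0 - p) / (1000.0 * 1.0e-6)
    print(rate_from_induction_times(times, volume=1.0e-6))
```

In practice a delay term for growth to detectable size is often added to the model, and a systematic offset of the earliest induction times from the fitted line is a sign that it is needed.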

Figure 1: Induction Time Measurement Workflow

Evaporative Crystallization Methodology

For compounds with temperature-insensitive solubility, evaporative crystallization provides an alternative approach for nucleation kinetics measurement [11]:

Experimental Setup: Utilize parallel reactors (e.g., 8 mL glass vials) with independent temperature control, magnetic stirring, and in-situ laser-based transmissivity monitoring. Deliver dry air to each vial through thermal Mass Flow Controllers to precisely regulate evaporation rates [11].

Parameter Variation: Test solutions at different temperatures (20-60°C), airflow rates (1-2 Ln/min), and initial concentrations (saturation ratios 0.8-0.9) to probe different supersaturation generation rates [11].

Nucleation Detection: Identify nucleation events as sharp drops in transmissivity, recording the precise time for each event [11].

Stochastic Modeling: Analyze nucleation times using a stochastic framework based on Classical Nucleation Theory. Plot cumulative distribution functions (CDFs) of nucleation times and supersaturation values at nucleation [11].

Variance Analysis: Distinguish between intrinsic stochastic variability and experimental inconsistencies through statistical analysis of the results [11].

Differential Thermal Analysis (DTA) Method

For glass and material science applications, DTA provides an alternative methodology for measuring crystal nucleation rates [12]:

Sample Preparation: Use small amounts of material (40-60 mg) with relatively large particle size (>400 μm) to minimize surface effects [12].

Thermal Treatment:

- Heat isothermally at nucleation temperature (TN) for time tN

- Heat at higher temperature (TG) for short time tG to allow crystal growth

- Perform non-isothermal heating at high heating rate to measure crystallization enthalpy [12]

Data Analysis: Calculate nucleation rates using analytical equations that relate the number of quenched-in nuclei and the temperature corresponding to the DTA peak maximum to the nucleation rate [12].

Validation: Compare with numerically simulated DTA curves generated from models with known nucleation rates to validate the methodology [12].

The Scientist's Toolkit: Essential Research Reagents and Equipment

Table 2: Essential Research Tools for Nucleation Rate Measurement

| Tool/Reagent | Function | Application Examples |
|---|---|---|
| Crystallization Platforms (Crystal16, Crystalline) | Provide small-scale parallel reactors with temperature control and in-situ transmissivity monitoring | Automated induction time measurements; evaporative crystallization studies [10] [11] |
| Microfluidic Devices | Enable precise control of droplet volumes and identical conditions across hundreds of replicates | Investigation of immersion freezing; study of intrinsic stochasticity [8] |
| Differential Thermal Analysis | Measure thermal events associated with crystallization | Determination of nucleation rates in glasses; quantification of quenched-in nuclei [12] |
| Model Compounds (e.g., lithium disilicate, sodium chloride, diprophylline) | Well-characterized reference materials for method validation | Protocol development; interlaboratory comparisons [12] [11] |
| Mass Flow Controllers | Precisely regulate evaporation rates in evaporative crystallization | Controlled supersaturation generation [11] |

Implications for Experimental Design Across Disciplines

Pharmaceutical Development

In pharmaceutical development, understanding nucleation kinetics is crucial for controlling polymorphism, crystal morphology, and particle size distribution [10]. The stochastic nature of nucleation means that robust process design must account for inherent variability rather than treating it as experimental error. The induction time method with automated detection enables efficient screening of nucleation behavior for different polymorphs, as demonstrated for diprophylline where Form RII showed much higher nucleation rates than Form RI in different solvents [10].

Atmospheric Science

In atmospheric science, immersion freezing - where an ice nucleating particle is immersed in a supercooled water droplet - represents a dominant ice formation pathway impacting climate [8]. The traditional concept of ice nucleation active site (INAS) densities fails to account for the time-dependent nature of freezing, leading to large predictive uncertainties [8]. A stochastic approach that accounts for uncertainties in ice nucleating particle surface area provides a more consistent description aligned with nucleation theory [8].

Materials Science

In materials science, predicting microstructure evolution during phase separation, such as the α-α' decomposition in FeCr alloys, requires accurate modeling of nucleation and growth processes [9]. The Phase Field approach with CHC equation enables direct comparison between simulated 3D microstructures and experimental measurements from Atom Probe Tomography, validating the predictive capability of the models [9].

The stochastic nature of nucleation fundamentally influences experimental design across scientific disciplines. Methodological selection between deterministic and stochastic approaches carries significant implications for accuracy, with each exhibiting systematic biases under different conditions. The development of hybrid methods and advanced instrumentation platforms continues to improve our ability to measure and predict nucleation rates across diverse applications.

Future directions in nucleation research will likely focus on developing more unified frameworks that bridge atomic-scale stochastic events with macroscopic observable phenomena, ultimately enabling more predictive control of crystallization processes in materials synthesis, pharmaceutical development, and climate modeling.

In the study of crystallization, a fundamental process in pharmaceutical and materials science, two parameters are paramount for understanding and controlling the initial formation of a new phase: the nucleation rate constant and the Gibbs free energy of nucleation. The nucleation rate constant, often denoted as k_n, is a kinetic parameter that represents the frequency of successful molecular collisions leading to the formation of a stable nucleus. The Gibbs free energy of nucleation (ΔG), a thermodynamic parameter, defines the energy barrier that must be overcome for a nucleus to achieve a critical, stable size. Accurately measuring these parameters is essential for predicting crystallization behavior, controlling crystal size and polymorphism, and optimizing industrial processes in drug development. This guide objectively compares contemporary techniques for their experimental determination, framing the discussion within the broader thesis of advancing measurement validation in nucleation research.

Theoretical Foundations and Measurement Principles

Classical Nucleation Theory (CNT) Fundamentals

Classical Nucleation Theory (CNT) provides the fundamental framework for quantifying nucleation, describing it as a thermally activated process where the nucleation rate J (number of nuclei per unit volume per second) is governed by an Arrhenius-type relationship [13]:

J = k_n * exp(-ΔG / (k_B * T)) ...(1)

where k_B is the Boltzmann constant and T is the absolute temperature.

The Gibbs free energy barrier (ΔG) for the formation of a spherical critical nucleus is given by [14] [13]:

ΔG = (16πγ³υ²) / (3(k_B T ln S)²) ...(2)

where γ is the surface tension (interfacial energy), υ is the molecular volume, and S is the supersaturation ratio. These equations show the profound interdependence of kinetics and thermodynamics in nucleation; the rate J is exponentially sensitive to the energy barrier ΔG, which itself is a strong function of supersaturation and temperature.

Core Parameter Relationships and Definitions

The parameters k_n and ΔG are not merely abstract values but have concrete physical interpretations. The nucleation rate constant (k_n) encompasses the pre-exponential factors in CNT, including the concentration of nucleation sites, the Zeldovich factor (accounting for the probability that a critical nucleus will grow), and the molecular attachment frequency [13]. The Gibbs free energy of nucleation (ΔG) represents the maximum work required to form a critical nucleus—a cluster of molecules that has an equal probability of growing or dissolving. From ΔG, other critical properties like the radius of the critical nucleus (r*) and the number of molecules within it can be derived [1].
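Equations (1) and (2) can be evaluated directly, which helps show how sharply the barrier responds to supersaturation. The sketch below is illustrative: the function names and input values are hypothetical, and the critical-radius expression r* = 2γυ / (k_B T ln S) is the standard CNT result for a spherical nucleus, which the text above references but does not write out.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def cnt_barrier(gamma, mol_volume, supersaturation, temperature):
    """Eq. (2): free energy barrier (J) for a spherical critical nucleus."""
    kt_ln_s = K_B * temperature * math.log(supersaturation)
    return 16.0 * math.pi * gamma**3 * mol_volume**2 / (3.0 * kt_ln_s**2)


def critical_radius(gamma, mol_volume, supersaturation, temperature):
    """Standard CNT critical radius (m); not stated explicitly in the text."""
    return 2.0 * gamma * mol_volume / (
        K_B * temperature * math.log(supersaturation))


def nucleation_rate(k_n, delta_g, temperature):
    """Eq. (1): J = k_n * exp(-delta_g / (k_B * T)), in the units of k_n."""
    return k_n * math.exp(-delta_g / (K_B * temperature))


if __name__ == "__main__":
    # Made-up inputs: gamma in J/m^2, molecular volume in m^3, T in K.
    gamma, v, temp = 0.02, 1.0e-28, 300.0
    for s in (2.0, 3.0, 4.0):
        dg = cnt_barrier(gamma, v, s, temp)
        r = critical_radius(gamma, v, s, temp)
        print(f"S={s}: barrier={dg / (K_B * temp):.1f} kT, r*={r * 1e9:.2f} nm")
```

A useful internal check is that the barrier equals (4/3)πγr*², which follows from substituting the critical radius into Eq. (2).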

Comparative Experimental Methodologies

A variety of experimental protocols are employed to measure the Metastable Zone Width (MSZW) and extract the key parameters k_n and ΔG. The table below compares the core principles and applications of three prevalent techniques.

Table 1: Comparison of Key Experimental Methodologies for Nucleation Studies

| Methodology | Fundamental Principle | Primary Measured Output | Key Advantages | Typical Systems/Applications |
|---|---|---|---|---|
| Polythermal (Cooling) Crystallization [1] [15] | A solution at a known saturation temperature (T_0) is cooled at a constant rate until nucleation is detected at T_nuc. The MSZW is ΔT_max = T_0 - T_nuc. | Metastable Zone Width (ΔT_max), Nucleation Temperature (T_nuc). | Experimentally simple, widely applicable, mimics industrial cooling crystallization processes. | APIs, Inorganic salts (e.g., Li₂CO₃), Amino acids (e.g., Glycine). |
| Droplet Freezing Technique (DFT) [16] | Numerous individual droplets of a solution or suspension are cooled on a cold stage. The frozen fraction of droplets is monitored versus temperature. | Frozen fraction curves, Cumulative Ice Nucleating Particle (INP) spectra. | High sensitivity, allows statistical treatment of stochastic nucleation events, measures very low INP concentrations. | Atmospheric science, Ice nucleation in water, Biological INPs (e.g., Snomax). |
| Induction Time Measurement [15] | A solution is rapidly brought to a constant supersaturation, and the time elapsed until the first detectable nuclei appear (t_ind) is measured. | Induction Time (t_ind). | Provides direct insight into nucleation kinetics at a fixed driving force, can decouple nucleation from growth. | Fundamental kinetic studies, effect of impurities on nucleation. |

Detailed Experimental Protocol: Polythermal Method with Laser Detection

The following workflow details a standardized protocol for measuring MSZW, as applied in studies like the crystallization of Li₂CO₃ [15].

Step-by-Step Procedure [15]:

- Solution Preparation: A saturated solution of the solute (e.g., Li₂CO₃) is prepared in a jacketed crystallizer equipped with precise temperature control. The solution is maintained at a known saturation temperature (T_0) with continuous stirring for a sufficient time to ensure complete dissolution and homogeneity.

- Cooling and Detection: The solution is cooled from T_0 at a predefined, constant cooling rate (R). The onset of nucleation is detected in real-time using a laser turbidimeter, which measures the sudden increase in light scattering caused by the formation of the first crystal nuclei.

- Data Recording: The temperature at which this nucleation event is detected is recorded as the nucleation temperature (T_nuc). The Metastable Zone Width (MSZW) is calculated as ΔT_max = T_0 - T_nuc.

- Repetition and Modeling: The experiment is repeated at different saturation temperatures (T_0) and cooling rates (R). The collected data (ΔT_max, T_nuc, R) is then used with a model, such as the one proposed by Vashishtha and Kumar (2025), to calculate the nucleation rate constant (k_n) and Gibbs free energy of nucleation (ΔG) [1].
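The detection and data-recording steps can be sketched in a few lines. This is a minimal illustration, not the procedure used in [15]: the threshold rule (turbidity exceeding 1.5 times the initial baseline), the function names, and the synthetic trace are all assumptions.

```python
from statistics import mean


def detect_t_nuc(temperatures, turbidity, n_baseline=5, rise_factor=1.5):
    """Return the first temperature at which turbidity exceeds rise_factor x baseline.

    The baseline is the mean of the first n_baseline readings (clear solution).
    Returns None if no onset is found. Both parameters are assumed defaults.
    """
    baseline = mean(turbidity[:n_baseline])
    for temp, signal in zip(temperatures[n_baseline:], turbidity[n_baseline:]):
        if signal > rise_factor * baseline:
            return temp
    return None


def mszw(t_sat, t_nuc):
    """Metastable zone width: dT_max = T_0 - T_nuc."""
    return t_sat - t_nuc


# Synthetic cooling run from T_0 = 40 C in 0.5 C steps (illustrative data only)
T_SAT = 40.0
temps = [T_SAT - 0.5 * i for i in range(21)]
trace = [1.0] * 13 + [1.2, 2.5, 6.0, 9.0, 9.5, 9.8, 9.9, 10.0]

t_nuc = detect_t_nuc(temps, trace)
print("T_nuc =", t_nuc, "C; MSZW =", mszw(T_SAT, t_nuc), "C")
```

In practice the threshold would need tuning to the instrument's noise level, since detection sensitivity shifts the apparent T_nuc.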

Detailed Experimental Protocol: Droplet Freezing Technique (FINDA-WLU)

For systems like ice nucleation, the Droplet Freezing Technique is the gold standard. The following protocol is based on the improved Freezing Ice Nucleation Detection Analyzer (FINDA-WLU) [16].

Step-by-Step Procedure [16]:

- Droplet Array Preparation: A suspension containing the material of interest (e.g., Arizona Test Dust, Snomax, or a solution) is prepared. Using a pipette or a microfluidic device, several dozen microliter-sized droplets are dispensed into the wells of a 96-well PCR plate.

- Temperature-Controlled Freezing: The PCR plate is placed onto a custom-made, temperature-controlled aluminum cold stage, which is sealed with an insulated lid to prevent frost. The stage is cooled at a constant, programmable rate (e.g., 0.1–1.0 °C/min).

- Automated Freezing Detection: A CCD camera positioned above the plate continuously monitors the droplets. When a droplet freezes, it becomes opaque due to the formation of micro-crystals. This change in optical appearance is automatically detected by the instrument's software.

- Data Analysis and INP Spectra: The temperature at which each individual droplet freezes is recorded. The data from the entire droplet array is used to generate a frozen fraction curve as a function of temperature. This curve is then statistically analyzed to determine the cumulative concentration of ice-nucleating particles (INPs) and the nucleation rate.
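The analysis step can be sketched as follows. The cumulative INP concentration uses the widely used expression K(T) = -ln(1 - f_ice(T)) / V_drop (Vali, 1971); whether FINDA-WLU applies exactly this form is an assumption here, as are the function names, the 50 µL droplet volume, and the synthetic freezing temperatures.

```python
import math


def frozen_fraction(freeze_temps, grid):
    """Fraction of droplets already frozen at each grid temperature during cooling."""
    n = len(freeze_temps)
    return [sum(1 for tf in freeze_temps if tf >= t) / n for t in grid]


def cumulative_inp(f_ice, droplet_volume_l):
    """Cumulative INP concentration per litre of suspension: -ln(1 - f_ice) / V."""
    if f_ice >= 1.0:
        return math.inf  # all droplets frozen: only a lower bound can be stated
    return -math.log(1.0 - f_ice) / droplet_volume_l


# Synthetic freezing temperatures (deg C) for eight droplets; illustrative only
freeze_temps = [-8.0, -10.5, -12.0, -12.5, -15.0, -18.0, -20.0, -22.5]
grid = [-10.0, -15.0, -20.0, -25.0]
DROPLET_VOLUME_L = 50e-6  # assumed 50 microlitre droplets

fractions = frozen_fraction(freeze_temps, grid)
for t, f in zip(grid, fractions):
    print(f"T = {t:6.1f} C  f_ice = {f:.3f}  K = {cumulative_inp(f, DROPLET_VOLUME_L):.3g} per L")
```

Once every droplet is frozen the expression diverges, which is why the usable range of a spectrum ends before f_ice reaches 1.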

Quantitative Data Comparison Across Material Classes

Recent research provides direct quantitative comparisons of k_n and ΔG across a wide range of materials. A 2025 study developed a model using MSZW data to directly estimate these parameters, yielding the following comparative data [1].

Table 2: Experimentally Determined Nucleation Parameters for Various Material Classes [1]

| Material Class | Example Compound | Nucleation Rate Constant (k_n, molecules m⁻³ s⁻¹) | Gibbs Free Energy of Nucleation (ΔG, kJ mol⁻¹) |
|---|---|---|---|
| Active Pharmaceutical Ingredients (APIs) | Various (10 systems) | 10²⁰ – 10²⁴ | 4 – 49 |
| Large Biomolecule | Lysozyme | Up to 10³⁴ | 87 |
| Amino Acid | Glycine | Reported in study | Reported in study |
| Inorganic Compounds | Various (8 systems) | Reported in study | 4 – 49 (for most) |
| API Intermediate | L-Arabinose | Reported in study | Reported in study |

Key Insights from Comparative Data:

- APIs and Inorganics Show Similar Energetics: The Gibbs free energy barrier for most APIs and inorganic compounds falls within a relatively narrow range (4–49 kJ mol⁻¹), suggesting some universality in the nucleation thermodynamics for small molecules, despite significant chemical differences [1].

- Biomolecules Exhibit High Barriers: The large biomolecule lysozyme exhibits a significantly higher Gibbs free energy (87 kJ mol⁻¹). This reflects the greater complexity and lower conformational flexibility of large molecules, which must organize into a critical nucleus, resulting in a much higher energy barrier [1].

- Nucleation Rate Constants Vary Dramatically: The nucleation rate constant k_n spans over 14 orders of magnitude across the studied systems. Lysozyme's extremely high k_n (up to 10³⁴) compensates for its very high ΔG in the nucleation rate equation, allowing nucleation to occur on practical timescales under the right conditions [1].
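The compensation between k_n and ΔG can be checked numerically with the molar form of the rate equation, J = k_n · exp(-ΔG / (R T)). In the sketch below the temperature (298.15 K) is assumed, and the pairing of k_n with ΔG at the ends of the API ranges is an assumption made for illustration; Table 2 reports ranges, not matched pairs.

```python
import math

R = 8.314462618  # gas constant, J mol^-1 K^-1


def nucleation_rate(k_n, delta_g_kj_mol, temperature_k):
    """J = k_n * exp(-dG / (R T)), with dG given in kJ/mol."""
    return k_n * math.exp(-delta_g_kj_mol * 1.0e3 / (R * temperature_k))


T_ASSUMED = 298.15  # K, assumed for all cases

# (k_n in molecules m^-3 s^-1, dG in kJ/mol); pairings are illustrative assumptions
cases = {
    "API, low end": (1e20, 4.0),
    "API, high end": (1e24, 49.0),
    "Lysozyme": (1e34, 87.0),
}

rates = {label: nucleation_rate(k_n, dg, T_ASSUMED) for label, (k_n, dg) in cases.items()}
for label, j in rates.items():
    print(f"{label}: J ~ {j:.1e} molecules m^-3 s^-1")
```

Under these assumptions the lysozyme rate falls between the two API cases, even though its pre-exponential factor is at least ten orders of magnitude larger.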

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful experimental determination of nucleation parameters relies on specific reagents and instrumentation.

Table 3: Essential Research Reagents and Solutions for Nucleation Studies

| Item / Reagent | Function in Experiment | Example from Research |
|---|---|---|
| High-Purity Solutes & Solvents | To minimize the impact of unknown impurities that can act as unintended nucleation sites, thereby ensuring reproducible and homogeneous nucleation kinetics. | Analytical grade LiCl, Na₂CO₃ used in Li₂CO₃ crystallization [15]. |
| Reference Nucleating Materials | To act as a calibrated standard for validating and benchmarking new experimental setups and methodologies. | Arizona Test Dust (ATD) and Snomax used to calibrate the FINDA-WLU instrument [16]. |
| Temperature Calibration Standards | To ensure accurate and precise temperature measurement of the cold stage or crystallizer, which is critical for determining ΔT_max and T_nuc. | Pt100 sensors with ±0.15 °C accuracy, sealed in thermally conductive epoxy in FINDA-WLU [16]. |
| Polymerase Chain Reaction (PCR) Plates | To act as a multi-well sample holder for high-throughput droplet freezing experiments, allowing dozens of replicate measurements simultaneously. | 96-well PCR plates used in FINDA-WLU and other DFT setups [16]. |

Interplay of Kinetics and Thermodynamics in Experimental Design

The relationship between the nucleation rate constant (k_n) and the Gibbs free energy (ΔG) is not merely theoretical but has direct consequences for experimental design and data interpretation. Because the nucleation rate J depends exponentially on ΔG, small errors in measuring the supersaturation (S) or temperature (T) can produce order-of-magnitude errors in the predicted J [13]. Furthermore, the choice of experimental method often involves a trade-off between measuring a parameter directly and practical feasibility.
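The size of this error amplification can be estimated by differentiating Equations (1) and (2). The derivation below is a sketch that assumes k_n, γ, and υ are constant and treats S and T as independent variables; the numerical example uses assumed values.

```latex
\ln J = \ln k_n - \frac{\Delta G}{k_B T},
\qquad
\Delta G = \frac{16\pi\gamma^{3}\upsilon^{2}}{3\,(k_B T \ln S)^{2}}

\frac{\partial \ln J}{\partial \ln S} = \frac{2\,\Delta G}{k_B T \ln S},
\qquad
\left.\frac{\partial \ln J}{\partial \ln T}\right|_{S} = \frac{3\,\Delta G}{k_B T}

% Example with assumed values \Delta G/(k_B T) = 20 and S = 2:
\frac{\delta J}{J} \approx \frac{2 \times 20}{\ln 2}\,\frac{\delta S}{S}
                  \approx 58\,\frac{\delta S}{S}
```

In this example a 1% error in S translates into an error of roughly 58% in J, before any uncertainty in k_n is considered.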

This conceptual diagram illustrates the logical chain from controlled experimental conditions to the final measured output. The conditions of supersaturation and temperature directly set the thermodynamic energy barrier (ΔG). This barrier, in turn, exerts exponential control over the kinetic nucleation rate (J). Finally, this rate manifests in the laboratory as an experimentally observable quantity, such as the width of the metastable zone (ΔT_max) or the induction time (t_ind). This cascade highlights why precise control and measurement of S and T are non-negotiable for accurate validation of nucleation theories.

The accurate measurement of nucleation rates represents a cornerstone in the development and optimization of crystalline materials, particularly for Active Pharmaceutical Ingredients (APIs). Experimental metastability refers to the persistence of a supersaturated state before the spontaneous formation of nuclei, with the Metastable Zone Width (MSZW) serving as a critical parameter for determining nucleation kinetics. [17] Understanding this phenomenon is not merely academic; it directly enables the control of crystal size distribution, polymorphism, and purity in industrial processes. For researchers and drug development professionals, bridging theoretical models with practical measurement techniques is essential for advancing from empirical observations to predictive crystallization design.

The core challenge in this field lies in the direct experimental observation of nucleation events, as critical nuclei are transient, nanoscale entities. Consequently, the scientific community has developed sophisticated indirect methods to quantify nucleation rates by analyzing how metastable zones respond to controlled experimental conditions such as cooling rates and concentration changes. [17] This guide provides a comparative analysis of the predominant experimental techniques used to measure nucleation rates, evaluates their underlying protocols, and presents a structured framework for their application in pharmaceutical research and development.

Comparative Analysis of Measurement Techniques

The following section objectively compares two prominent methodological approaches for determining nucleation rates, highlighting their operational principles, data requirements, and suitability for different research scenarios.

Comparative Data Table

| Technique | Core Principle | Measured Nucleation Rate Range (molecules m⁻³ s⁻¹) | Key Measurable Parameters | Typical Systems Applicable |

|---|---|---|---|---|

| MSZW-based Model [17] | Correlates cooling rate with the onset of nucleation to deduce kinetics. | APIs: 10²⁰ to 10²⁴; large molecules (e.g., lysozyme): up to 10³⁴ | Gibbs Free Energy of Nucleation (ΔG), Surface Free Energy, Critical Nucleus Size, Induction Time | APIs, API Intermediates, Amino Acids (e.g., Glycine), Inorganic Compounds in solution |

| Gradient Annealing with Microanalysis [18] | Quenches partially melted samples to preserve early-stage nuclei for ex-post-facto size/distribution analysis. | ~10¹³ (for liquid droplets in Al-Cu alloy) | Nucleation Rate as a function of time, Identification of bimodal nucleation site types | Metallic alloys, Solid solution systems undergoing melting |

Applicability and Throughput: The MSZW-based model is highly suitable for solution-based crystallization, which is the standard for pharmaceutical API development. It allows for high-throughput screening using readily available solubility and MSZW data. [17] In contrast, the Gradient Annealing technique is a specialized method primarily for material science, specifically for studying melting in solid solutions, and requires meticulous post-experiment microstructural analysis. [18]

Output and Insight: A key advantage of the newer MSZW model is its ability to extract fundamental thermodynamic parameters like Gibbs free energy of nucleation (reported from 4 to 49 kJ mol⁻¹ for most compounds, and up to 87 kJ mol⁻¹ for lysozyme) directly from cooling curve experiments. [17] The Gradient Annealing method excels at providing temporally resolved nucleation rates and can identify heterogeneous nucleation mechanisms based on distinct particle size distributions. [18]

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for researchers, this section details the standard operating procedures for the featured techniques.

This protocol outlines the procedure for determining nucleation kinetics using cooling crystallization experiments.

Step 1: Solubility Determination. First, establish the fundamental solubility curve of the solute in the chosen solvent. This is typically done by equilibrating suspensions at various temperatures and analytically determining the saturation concentration (e.g., via HPLC or gravimetric analysis).

Step 2: Metastable Zone Width (MSZW) Measurement. Prepare a saturated solution at a known temperature. Using a controlled crystallizer equipped with temperature control and a detection method (e.g., Focused Beam Reflectance Measurement (FBRM), particle vision microscope (PVM), or turbidity probe), cool the solution at a constant, predefined cooling rate (e.g., 0.1 to 10 °C/min). Record the temperature at which a sudden change in particle count or turbidity is detected, indicating the onset of nucleation. This temperature defines the limit of the metastable zone for that cooling rate. Repeat this experiment for at least 3-5 different cooling rates.

Step 3: Data Analysis with Mathematical Model. Apply the new mathematical model based on Classical Nucleation Theory, as described by Vashishtha and Kumar. [17] The model uses the MSZW and solubility data at different cooling rates to directly calculate the nucleation rate, kinetic constant, and Gibbs free energy of nucleation. It further allows for the prediction of induction times.

This protocol describes a method for investigating nucleation during melting, as applied to an Al-Cu alloy.

Step 1: Sample Preparation and Homogenization. Prepare a homogeneous, coarse-grained sample of the material. For the referenced Al-Cu alloy, this involved casting, machining to a defined diameter (4mm), and prolonged annealing (100 hours at 813 K) to ensure a uniform microstructure. [18]

Step 2: Gradient Heat Treatment. Expose the prepared sample to a steep temperature gradient for a short, precise duration. In the referenced study, this was achieved using a middle-frequency induction coil, generating a gradient of ~80 K/mm with a maximum temperature exceeding the solidus temperature. [18] This results in a single sample containing regions that experienced different maximum temperatures.

Step 3: Quenching and Metallographic Preparation. Rapidly quench the sample to room temperature to preserve the microstructures developed at the high temperatures. Section the sample longitudinally, prepare metallographic specimens (grinding, polishing), and use etching to reveal the microstructure.

Step 4: Microstructural Analysis and Simulation. Analyze the quenched samples using scanning electron microscopy (SEM) or similar techniques. Identify and measure the size of at least 200 secondary-phase particles (e.g., spherical θ-phase in Al-Cu) that formed from nucleated liquid droplets. [18] The final nucleation rate is determined by combining these experimental size distributions with numerical simulations of droplet growth to back-calculate the nucleation kinetics.

Conceptual Framework and Workflow Visualization

The following diagrams map the logical relationships and experimental workflows central to understanding and measuring nucleation.

Diagram: Theoretical-Experimental Bridge

Diagram: MSZW Experimental Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful experimentation in nucleation rate measurement requires specific materials and analytical tools. The following table details key items and their functions in the context of the discussed protocols.

Research Reagent Solutions

| Item | Function in Experiment | Example / Specification |

|---|---|---|

| APIs & High-Purity Solutes | The model compound of interest for nucleation studies; purity is critical for reproducible results. | Various APIs, API intermediates, amino acids (e.g., Glycine), lysozyme. [17] |

| Inorganic Salts | Used as model systems for fundamental nucleation studies or in specific industrial applications. | 8 different inorganic compounds were validated in the MSZW model study. [17] |

| Al–Cu Alloy | A model metallic system for studying nucleation of melt in a solid solution via gradient annealing. | Al–3.7 wt.% Cu, homogenized and machined to specific dimensions (e.g., 4mm rod). [18] |

| Analytical Solvents | To create the solution for crystallization and for solubility determination; must be of appropriate purity. | Solvents chosen based on solute solubility and compatibility with detection methods. |

| Controlled Crystallizer | Provides a well-mixed, temperature-controlled environment for performing MSZW experiments. | Jacketed glass reactor with programmable thermostat and agitation. |

| Induction Heating System | To apply a controlled, localized, and rapid heat treatment for gradient annealing experiments. | Middle-frequency induction coil capable of generating steep thermal gradients (~80 K/mm). [18] |

| In-situ Particle Analyzer | To detect the onset of nucleation in real-time during MSZW experiments. | FBRM (Focused Beam Reflectance Measurement), PVM (Particle Vision Microscope), or turbidity probe. [17] |

| Scanning Electron Microscope (SEM) | For high-resolution imaging and analysis of quenched microstructures or crystal morphologies. | Used to measure size distributions of secondary-phase particles or nuclei. [18] |

The experimental techniques compared in this guide—the modern MSZW-based model and the Gradient Annealing method—provide robust, complementary pathways for quantifying nucleation rates. The MSZW approach offers a direct, high-throughput route highly relevant to pharmaceutical crystallization, yielding essential thermodynamic parameters. [17] The Gradient Annealing method, while more specialized, provides unique insights into time-resolved kinetics and heterogeneous nucleation mechanisms. [18] Mastery of these techniques, along with the associated toolkit, empowers scientists to transform the abstract concept of metastability into concrete, actionable data, thereby enabling more predictable and controlled manufacturing processes for advanced materials and life-saving drugs.

Special Considerations for Pharmaceutical Systems and APIs

In the development and manufacturing of Active Pharmaceutical Ingredients (APIs), controlling the crystallization process is paramount. The initial step of this process, nucleation, fundamentally dictates critical particle properties such as crystal size distribution, morphology, and polymorphic form. These properties, in turn, directly influence the bioavailability, stability, and processability of the final drug product. Consequently, the accurate measurement of nucleation rates is not merely an academic exercise but a crucial industrial requirement for ensuring consistent product quality and meeting stringent regulatory standards.

Pharmaceutical systems present unique challenges for nucleation studies. APIs are often complex organic molecules that can exist in multiple solid forms (polymorphs), each with distinct therapeutic implications. The drive towards more sustainable processes has also introduced novel solvent systems, such as Ionic Liquids (ILs), which exhibit different nucleation behaviors than traditional solvents. This guide provides a comparative analysis of the primary experimental techniques used to measure nucleation rates, focusing on their application to pharmaceutical systems and APIs. It details the underlying protocols, summarizes quantitative performance data, and outlines the essential toolkit required for researchers in this field.

Comparative Analysis of Measurement Techniques

Two primary methodologies dominate the experimental measurement of nucleation rates for APIs: the Induction Time Method and the Metastable Zone Width (MSZW) Method. A third, the Evaporative Crystallization Method, is particularly valuable for systems with temperature-independent solubility. The table below provides a structured comparison of these key techniques.

Table 1: Comparison of Nucleation Rate Measurement Techniques for Pharmaceutical Applications

| Feature | Induction Time Method [10] [19] | MSZW Method (Polythermal) [1] [20] | Evaporative Crystallization Method [20] |

|---|---|---|---|

| Core Principle | Measures stochastic time interval between supersaturation creation and crystal detection at a constant temperature. | Measures the maximum undercooling (ΔT_max) a solution can withstand before nucleating at a defined cooling rate. | Induces supersaturation through solvent evaporation at a constant temperature, measuring nucleation time. |

| Governing Equation | \( J = \frac{1}{V \bar{t}} \) (J: nucleation rate, V: volume, \( \bar{t} \): mean induction time) [19] | \( J = k_n \exp(-\Delta G / (R T_{\text{nuc}})) \), derived from MSZW data at different cooling rates [1] | Utilizes CNT-based rate expressions with evaporation-driven supersaturation profiles [20]. |

| Typical Nucleation Rates for APIs | Varies with system; e.g., Ibuprofen in IL: ~10⁹ to 10¹¹ m⁻³s⁻¹ [19] | Reported range for various APIs: 10²⁰ to 10²⁴ molecules·m⁻³s⁻¹ [1] | Demonstrated for NaCl; applicable to APIs with similar solubility challenges. |

| Key Experimental Output | Cumulative probability distribution of induction times; nucleation rate constant. | Nucleation rate kinetic constant (\( k_n \)), Gibbs free energy of nucleation (\( \Delta G \)). | Nucleation parameters (kinetic constant, energy barrier) from nucleation time distributions. |

| Best Suited For | Small-volume, high-throughput studies; expensive APIs; polymorph screening. | Continuous or semi-batch crystallization design; studying cooling rate effects. | Compounds with temperature-independent solubility (e.g., NaCl, some APIs). |

| Primary Advantage | High accuracy; low material consumption; direct probing of stochastic nature. | Directly relevant to common industrial cooling crystallization processes. | Enables study of nucleation driven purely by solvent removal. |

| Primary Limitation | Can be time-consuming without automation; requires many replicates. | Relies on model fitting; results can be influenced by detection sensitivity. | More complex setup; discontinuous due to solvent depletion. |

Detailed Experimental Protocols

Induction Time Method

The induction time method leverages the stochastic nature of nucleation in small, well-controlled volumes. The following diagram illustrates the core workflow for this method.

Diagram 1: Workflow for the Induction Time Method

Step-by-Step Protocol [10] [19]:

- Solution Preparation: A saturated solution of the API is prepared in the chosen solvent (e.g., organic solvent or Ionic Liquid) at a known temperature. The solution is often filtered (e.g., using a 0.22 μm hydrophilic PTFE syringe filter) to remove any undissolved solids or particulate impurities that could act as heterogeneous nucleation sites [20].

- Supersaturation Generation: The solution is dispensed into multiple small-volume vials (e.g., 1-10 mL) in an automated parallel crystallizer (e.g., Crystal16 or Crystalline systems). Supersaturation is induced by rapidly cooling the solution to a predetermined target temperature. The use of parallel reactors is critical for obtaining statistical significance.

- Nucleation Detection: Each vial is stirred continuously, and nucleation is detected in real-time using non-invasive analytics. The most common method is a decrease in laser transmissivity (cloud point detection). The induction time (\( t_i \)) for each vial is recorded as the time elapsed between reaching the target temperature and the detected nucleation event.

- Data Collection & Repetition: Due to the inherent stochasticity, the experiment must be repeated numerous times (typically 50-100 replicates) under identical conditions of supersaturation and temperature to build a robust distribution of induction times [19].

- Data Analysis: The cumulative probability of nucleation versus time is plotted. For a system where a single nucleus causes detection, the nucleation rate (\( J \)) is calculated from the mean induction time (\( \bar{t} \)) and the volume of the solution (\( V \)) using the relationship \( J = \frac{1}{V \bar{t}} \) [19]. This process is repeated at different supersaturation levels to model the dependence of the nucleation rate on the driving force.
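The data-analysis step above can be sketched as follows. The rate estimate uses J = 1/(V · t̄) as stated; the comparison curve P(t) = 1 - exp(-J V t) is the standard single-nucleus Poisson form consistent with that relationship, and its use here is an assumption rather than the exact treatment of [19]. Function names, the vial volume, and the induction times are illustrative.

```python
import math
from statistics import mean


def nucleation_rate(induction_times_s, volume_m3):
    """J = 1 / (V * mean induction time), in m^-3 s^-1."""
    return 1.0 / (volume_m3 * mean(induction_times_s))


def empirical_cdf(induction_times_s):
    """Sorted (time, cumulative fraction nucleated) pairs from replicate vials."""
    ordered = sorted(induction_times_s)
    n = len(ordered)
    return [(t, (i + 1) / n) for i, t in enumerate(ordered)]


def poisson_cdf(t, rate, volume_m3):
    """Single-nucleus model: P(t) = 1 - exp(-J V t)."""
    return 1.0 - math.exp(-rate * volume_m3 * t)


# Illustrative replicate induction times (s) in assumed 1 mL vials
times = [120.0, 300.0, 450.0, 600.0, 900.0, 1230.0]
VOLUME_M3 = 1.0e-6

J = nucleation_rate(times, VOLUME_M3)
print(f"J = {J:.3e} m^-3 s^-1")
for t, p_obs in empirical_cdf(times):
    print(f"t = {t:6.0f} s  observed P = {p_obs:.2f}  model P = {poisson_cdf(t, J, VOLUME_M3):.2f}")
```

With only six replicates the observed and model curves will differ noticeably, which is one reason the protocol calls for 50-100 repeats.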

Metastable Zone Width (MSZW) Method

The MSZW method is a polythermal technique that leverages different cooling rates to extract nucleation kinetics. The following diagram outlines its standard procedure.

Diagram 2: Workflow for the Metastable Zone Width (MSZW) Method

Step-by-Step Protocol [1] [20]:

- Initial Saturation: A solution is brought to a known saturation temperature (\( T^* \)) and held until fully dissolved.

- Linear Cooling: The solution is cooled linearly at a fixed, controlled cooling rate (\( dT^*/dt \)).

- Nucleation Detection: As the temperature decreases, the solution becomes supersaturated. The temperature at which the first crystals are detected (\( T_{\text{nuc}} \)) is identified, typically by a sharp change in transmissivity.

- MSZW Determination: The metastable zone width is calculated as \( \Delta T_{\text{max}} = T^* - T_{\text{nuc}} \).

- Multiple Experiments: Steps 1-4 are repeated for the same solute-solvent system using several different cooling rates. This generates a dataset of \( \Delta T_{\text{max}} \) values as a function of cooling rate and saturation temperature.

- Model Fitting & Analysis: The data is fitted to a nucleation model. A recently developed model based on Classical Nucleation Theory (CNT) allows for the direct estimation of the nucleation rate (\( J \)), the nucleation rate constant (\( k_n \)), and the Gibbs free energy of nucleation (\( \Delta G \)) from this MSZW data [1]. The model linearizes the relationship as \( \ln(\Delta C_{\text{max}} / \Delta T_{\text{max}}) = \ln(k_n) - \frac{\Delta G}{R T_{\text{nuc}}} \), where \( \Delta C_{\text{max}} \) is the supersaturation at the point of nucleation. A plot of \( \ln(\Delta C_{\text{max}} / \Delta T_{\text{max}}) \) versus \( 1/T_{\text{nuc}} \) yields a straight line with a slope of \( -\Delta G/R \) and an intercept of \( \ln(k_n) \), from which \( J \) can be computed.
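The linear fit in the final step can be sketched with an ordinary least-squares regression. The example below is a minimal illustration of the linearized form quoted above, not the authors' implementation: the function names are hypothetical, the data are synthetic values generated from assumed parameters (k_n = 10²², ΔG = 30 kJ mol⁻¹), and unit handling for ΔC_max is left to the dataset.

```python
import math

R = 8.314462618  # gas constant, J mol^-1 K^-1


def fit_mszw(dc_max, dt_max, t_nuc):
    """Fit ln(dC_max/dT_max) = ln(k_n) - dG/(R*T_nuc); return (k_n, dG in J/mol)."""
    x = [1.0 / t for t in t_nuc]
    y = [math.log(c / d) for c, d in zip(dc_max, dt_max)]
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx                   # equals -dG/R
    intercept = y_bar - slope * x_bar   # equals ln(k_n)
    return math.exp(intercept), -slope * R


def rate_at(k_n, delta_g, t_nuc):
    """J = k_n * exp(-dG / (R * T_nuc))."""
    return k_n * math.exp(-delta_g / (R * t_nuc))


# Synthetic, noise-free data built from assumed "true" parameters
K_N_TRUE, DG_TRUE = 1.0e22, 30.0e3
t_nuc = [300.0, 305.0, 310.0, 315.0]   # K
dt_max = [10.0, 8.0, 6.0, 5.0]          # K
dc_max = [rate_at(K_N_TRUE, DG_TRUE, t) * d for t, d in zip(t_nuc, dt_max)]

k_n_fit, dg_fit = fit_mszw(dc_max, dt_max, t_nuc)
print(f"k_n = {k_n_fit:.3e}, dG = {dg_fit / 1e3:.2f} kJ/mol")
print(f"J at {t_nuc[0]:.0f} K = {rate_at(k_n_fit, dg_fit, t_nuc[0]):.3e}")
```

Because the intercept is extrapolated to 1/T = 0, small scatter in real data can shift k_n by orders of magnitude, so fitted values are best reported with confidence intervals.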

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful experimental validation of nucleation rates requires specific instrumentation, software, and reagents. The following table details the key components of a modern nucleation research toolkit.

Table 2: Essential Research Reagents and Solutions for Nucleation Studies

| Tool / Reagent | Function / Purpose | Example Specifications / Notes |

|---|---|---|

| Parallel Crystallizer [10] [20] | Enables high-throughput, statistically significant data collection by running multiple experiments (e.g., 8-16 reactors) simultaneously under tightly controlled conditions. | e.g., Crystal16 or Crystalline systems (Technobis). Includes individual temperature control, stirring, and transmissivity monitoring for each reactor. |

| Non-Invasive Analytics [10] [21] | Detects the onset of nucleation without disturbing the solution, allowing for accurate induction time measurement. | Laser transmissivity probes are standard. In-situ particle imaging (e.g., PVM) and particle counting (e.g., FBRM) provide additional crystal characterization. |

| Feedback Control Software [10] [21] | Automates experimental workflows (e.g., temperature cycling) to dramatically reduce the time required to collect nucleation data. | Can reduce total experiment time from weeks to a few hours by automatically triggering the next step upon dissolution or crystallization. |

| Model Compounds | Used for method validation and fundamental studies due to their well-characterized behavior. | e.g., Glycine (amino acid) [1], L-Glutamic Acid (polymorphs) [22], Sodium Chloride (inorganic) [20]. |

| Pharmaceutical Systems | The target systems for which nucleation kinetics are critical for process control. | e.g., Ibuprofen [19], various other APIs [1], Diprophylline (polymorphs) [10]. |

| Alternative Solvents | Green chemistry applications and studying solvent-specific effects on nucleation kinetics. | Ionic Liquids (e.g., BmimPF₆) [19]. |

| Mass Flow Controllers [20] | Provides precise control of gas flow in evaporative crystallization experiments for reproducible supersaturation generation. | Critical for ensuring a constant evaporation rate when studying systems with temperature-independent solubility. |

The choice of technique for measuring nucleation rates in pharmaceutical systems is dictated by the specific research or development goal. The Induction Time Method is unparalleled for fundamental, high-resolution studies of nucleation kinetics, especially for expensive APIs and polymorph screening, due to its statistical rigor and minimal material consumption. In contrast, the MSZW Method provides data that is directly transferable to the design and optimization of industrial cooling crystallization processes. For APIs with challenging solubility profiles, the Evaporative Crystallization Method offers a robust alternative.

The experimental data and protocols summarized in this guide underscore that modern, automated parallel crystallizers coupled with sophisticated data analysis models have made the reliable determination of nucleation rates more accessible than ever. This capability is a critical enabler for the rational design of crystallization processes, ultimately ensuring the consistent quality and performance of Active Pharmaceutical Ingredients.

Experimental Techniques for Nucleation Rate Measurement: From MSZW to Droplet Analysis

The Constant Cooling Rate (CCR) method serves as a critical experimental technique in materials science and pharmaceutical development for quantifying nucleation kinetics. This guide objectively compares the CCR method against other established techniques for measuring nucleation rates, such as induction time and droplet methods, supported by experimental data. Framed within the broader thesis of experimental validation research, this analysis highlights the distinct advantages of the CCR method in simulating industrial processing conditions and efficiently generating data over a wide temperature range. The following sections detail the fundamental principles, provide a direct performance comparison with alternative methods, outline standard experimental protocols, and present essential research tools for implementation.

Nucleation, the initial formation of a new thermodynamic phase from a parent phase, is a fundamental process governing the crystallization of materials, from metallic alloys to active pharmaceutical ingredients (APIs). The nucleation rate, defined as the number of nuclei formed per unit volume per unit time (typically expressed in m⁻³s⁻¹), is a key kinetic parameter that dictates critical material properties, including polymorphism, crystal size distribution, and final product morphology [10] [13]. Accurately measuring this rate is therefore essential for optimizing processes in drug development and materials engineering. However, direct measurement is notoriously complex due to the stochastic nature of nucleation, the sub-microscopic size of critical nuclei, and the interference of simultaneous processes like crystal growth and agglomeration [10].

The Constant Cooling Rate Method is one of several techniques developed to overcome these challenges. Its principle involves subjecting a sample to a controlled, linear decrease in temperature, which gradually increases supersaturation until nucleation occurs. The resulting data from multiple cooling rates can be analyzed to extract nucleation kinetics. This method is particularly valued for its close resemblance to industrial cooling crystallization processes, providing data that is directly applicable to scaling up and optimizing manufacturing operations [13].

Principles of the Constant Cooling Rate Method

The theoretical foundation of the CCR method is deeply rooted in Classical Nucleation Theory (CNT). According to CNT, nucleation is an activated process where a system must overcome a free energy barrier to form a stable nucleus. The nucleation rate, J, is commonly expressed in an Arrhenius-type equation:

J = A · exp(−ΔG_crit / (k_B · T))

where A is a pre-exponential factor, ΔG_crit is the Gibbs free energy barrier for the formation of a critical nucleus, k_B is the Boltzmann constant, and T is the absolute temperature [13]. During a CCR experiment, the cooling rate directly influences the supersaturation profile, which in turn governs the temporal evolution of the nucleation rate.
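As a minimal sketch of how strongly the Arrhenius-type expression responds to the barrier height, the snippet below evaluates J for a few barriers expressed in multiples of k_B·T. The function name `cnt_nucleation_rate` and the numerical values of A and T are illustrative assumptions, not values taken from the cited studies.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K


def cnt_nucleation_rate(pre_factor, barrier_joule, temperature_k):
    """Return J = A * exp(-dG_crit / (k_B * T)) in the units of pre_factor."""
    if temperature_k <= 0:
        raise ValueError("temperature must be a positive absolute temperature")
    return pre_factor * math.exp(-barrier_joule / (K_B * temperature_k))


if __name__ == "__main__":
    A = 1e30   # hypothetical pre-exponential factor, m^-3 s^-1
    T = 300.0  # hypothetical temperature, K
    for multiple in (20, 40, 60):
        barrier = multiple * K_B * T  # barrier height of `multiple` k_B*T
        rate = cnt_nucleation_rate(A, barrier, T)
        print(f"barrier = {multiple} kT -> J = {rate:.3e} m^-3 s^-1")
```

Because the barrier sits in the exponent, each additional k_B·T of barrier height lowers J by a factor of e, which is why small changes in supersaturation during a cooling ramp can shift the observed nucleation rate by orders of magnitude.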

A significant advantage of the CCR method is its capacity to probe athermal nucleation mechanisms. Unlike thermal nucleation, which occurs isothermally after an incubation period, athermal nucleation happens during continuous cooling. In this regime, clusters retained from higher temperatures may exceed the critical size as the temperature drops, becoming viable nuclei without a distinct incubation time. This mechanism is particularly effective during rapid quenching, making CCR vital for studying processes like metal solidification [18]. The method's ability to generate a population of crystals over a range of undercoolings in a single experiment allows for the deconvolution of the nucleation rate from growth kinetics through microstructural analysis and numerical simulation [18].

[Figure: Logical workflow of a CCR experiment, from sample preparation to data analysis.]

Comparative Analysis of Nucleation Rate Measurement Methods

Selecting the appropriate method for measuring nucleation rates depends on the specific research goals, material system, and required data precision. The following table summarizes the key characteristics of the CCR method alongside other prominent techniques.

Table 1: Comparison of Nucleation Rate Measurement Methods

| Method | Fundamental Principle | Typical Experimental Scale | Key Measurable Outputs | Primary Advantages | Primary Limitations |
|---|---|---|---|---|---|
| Constant Cooling Rate (CCR) | Linear temperature decrease induces supersaturation, leading to nucleation. | Bulk solution (mL scale) [18] | Nucleation rate as a function of temperature or undercooling. | Directly simulates industrial cooling crystallization; efficient data generation over a temperature range [18]. | Requires decoupling of nucleation and growth; may require quenching and simulation. |
| Induction Time | Measures stochastic time lag between achieving supersaturation and the first detection of a crystal. | Multiple small-scale solutions (e.g., 1-16 vials of ~1-2 mL) [10] | Probability distribution of induction times from which nucleation rate is calculated. | Statistically robust; can be highly automated with modern instrumentation [10]. | Time-consuming; measures a combined rate of nucleation and growth. |
| Droplet/Emulsion | System is dispersed into numerous small droplets to isolate nucleation events. | Numerous nano- or micro-liter droplets [10] | Number of crystallized droplets vs. time, giving nucleation rate. | Minimizes heterogeneous nucleation; allows study of homogeneous nucleation. | Technically challenging setup; potential for cross-contamination. |
| Gradient Annealing | A sample is subjected to a spatial temperature gradient, creating different microstructures. | Single solid sample (e.g., 4 mm rod) [18] | Spatial distribution of nucleated features (e.g., droplets) after quenching. | Captures multiple stages of transformation in one experiment; reveals nucleation timeline. | Complex post-experiment analysis and simulation required [18]. |

Performance and Experimental Data Comparison

A direct comparison of performance metrics further elucidates the trade-offs between these methods. For instance, a study on an Al-Cu alloy using a gradient annealing method (conceptually similar to CCR) successfully determined a nucleation rate on the order of 10¹³ m⁻³s⁻¹ for liquid droplets in a solid solution, a value consistent with in-situ observations [18]. In contrast, induction time methods in solution crystallization, while powerful, can be prohibitively time-consuming. One study noted that a complete data set could take weeks to collect, though this can be reduced to a few hours using modern automated crystallizers with feedback control [10].

The choice of method often hinges on the state of the material (solution vs. melt) and the nucleation mechanism of interest (homogeneous vs. heterogeneous). For solution-based systems like API development, the induction time method is often preferred for its statistical rigor when coupled with automation. For metallurgical melts or studies of athermal nucleation, the CCR method and its variants are more applicable. The droplet method remains the gold standard for probing homogeneous nucleation kinetics by effectively eliminating heterogeneous sites.
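To make the induction time approach concrete, the sketch below estimates a nucleation rate from a set of measured induction times. It assumes, as a simplification not stated in the source, that nucleation in each vial is a Poisson process with a constant rate J, so that the detection probability is P(t) = 1 − exp(−J·V·(t − t_g)), where V is the vial volume and t_g is an optional growth delay before a nucleus becomes detectable. The function name and the example values are hypothetical.

```python
import math


def nucleation_rate_from_induction_times(times_s, volume_m3, growth_delay_s=0.0):
    """Maximum-likelihood estimate of J (m^-3 s^-1) for exponentially
    distributed induction times, assuming every vial nucleated (no censoring)."""
    if volume_m3 <= 0:
        raise ValueError("volume must be positive")
    shifted = [t - growth_delay_s for t in times_s]
    if not shifted or any(t <= 0 for t in shifted):
        raise ValueError("each induction time must exceed the growth delay")
    # For an exponential distribution with rate J*V, the MLE is n / sum(t).
    return len(shifted) / (volume_m3 * sum(shifted))


def detection_probability(t_s, rate, volume_m3, growth_delay_s=0.0):
    """P(t) = 1 - exp(-J * V * (t - t_g)); zero before the growth delay."""
    if t_s <= growth_delay_s:
        return 0.0
    return 1.0 - math.exp(-rate * volume_m3 * (t_s - growth_delay_s))


if __name__ == "__main__":
    times = [100.0, 200.0, 300.0]  # hypothetical induction times, s
    volume = 1e-6                  # 1 mL expressed in m^3
    J = nucleation_rate_from_induction_times(times, volume)
    print(f"J = {J:.1f} m^-3 s^-1")
    print(f"P(200 s) = {detection_probability(200.0, J, volume):.3f}")
```

In practice some vials may not nucleate within the observation window; those censored measurements would need separate treatment, which this sketch omits.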

Experimental Protocol for the Constant Cooling Rate Method

The following protocol outlines a generalized procedure for determining nucleation rates using the CCR method, adaptable for both solution and melt systems.

Materials and Preparation

- Sample Material: A homogeneous, representative sample is crucial. For solutions, this involves preparing a saturated or slightly undersaturated solution and filtering to remove dust or impurities that can act as unintended nucleation sites. For metal alloys, a homogenization anneal (e.g., 100 hours at 813 K for an Al-3.7wt% Cu alloy) is performed to create a uniform, coarse-grained structure [18].

- Equipment Setup: The core setup includes a temperature-controlled vessel (e.g., jacketed crystallizer, induction furnace) capable of a precise, linear cooling ramp. A reliable temperature probe (e.g., PRT, thermocouple) and a means of detecting nucleation (e.g., in-situ turbidity probe, laser backscattering, visual observation) are required. For post-analysis, equipment like Scanning Electron Microscopy (SEM) or automated particle size analyzers is needed.

Step-by-Step Procedure

- Loading and Stabilization: Place the prepared sample in the temperature control unit. Heat the sample to a temperature sufficiently above its saturation or melting point to ensure a completely dissolved or molten state, and hold isothermally to establish a uniform initial condition.

- Initiate Cooling: Program the thermostat to initiate a constant cooling rate. The selected rate (e.g., °C/min) should be slow enough to assume a uniform temperature within the sample but fast enough to avoid excessive time at low undercoolings. Multiple experiments at different cooling rates are typically performed.

- Nucleation Detection and Quenching: Monitor the system for the onset of nucleation. In transparent solutions, this can be seen as cloudiness (cloud point). Upon detection, or after a predetermined temperature is reached, rapidly quench the sample to "freeze" the microstructure and arrest further nucleation and growth. In the Al-Cu study, this was achieved by dropping the sample into water [18].

- Microstructural Analysis: Prepare the quenched sample for metallographic or microscopic examination. Identify and quantify the characteristics of the nucleated phase. In the Al-Cu experiment, researchers measured the size distribution of spherical θ-phase particles in various cross-sections, each corresponding to a different maximum temperature experienced in the gradient [18].

- Data Analysis and Simulation: The measured particle size distribution is a result of both nucleation and growth. To back-calculate the true nucleation rate, numerical simulations are employed. These models simulate the coupled processes of nucleation and diffusion-limited growth under the experimental thermal history. The model parameters for the nucleation rate law are iteratively adjusted until the simulated microstructure matches the experimentally observed one [18].
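The iterative fitting idea in the last step can be sketched in a heavily simplified form. The example below is not the coupled nucleation and diffusion-limited growth model used in [18]; it assumes a hypothetical rate law J(ΔT) = A·exp(−B/ΔT²), integrates it along a linear cooling ramp to obtain a nuclei number density, and adjusts the single parameter B by bisection until the simulated density matches a target value. All names and numbers are illustrative assumptions.

```python
import math


def nuclei_density(A, B, cooling_rate, dT_end, steps=2000):
    """Nuclei per m^3 formed while undercooling rises linearly from 0 to dT_end.

    Uses the assumed law J(dT) = A * exp(-B / dT**2) and dt = d(dT) / cooling_rate.
    """
    h = dT_end / steps
    total = 0.0
    for i in range(steps):
        dT = (i + 0.5) * h  # midpoint rule, never evaluates dT = 0
        total += A * math.exp(-B / dT**2) * h
    return total / cooling_rate


def fit_barrier_parameter(target_density, A, cooling_rate, dT_end,
                          lo=1e-3, hi=1e6, iterations=100):
    """Bisection on a log scale; density decreases monotonically with B."""
    for _ in range(iterations):
        mid = math.sqrt(lo * hi)
        if nuclei_density(A, mid, cooling_rate, dT_end) > target_density:
            lo = mid  # too many nuclei simulated, barrier parameter too small
        else:
            hi = mid
    return math.sqrt(lo * hi)


if __name__ == "__main__":
    A, rate, dT_end = 1e12, 0.1, 20.0  # hypothetical: m^-3 s^-1, K/s, K
    observed = nuclei_density(A, 500.0, rate, dT_end)  # synthetic "measurement"
    print(f"recovered B = {fit_barrier_parameter(observed, A, rate, dT_end):.3f} K^2")
```

A real analysis would compare full particle size distributions rather than a single number density, and would typically fit several parameters at once, but the adjust-simulate-compare loop has the same shape.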

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of the CCR method and other nucleation studies relies on specific instrumentation and materials. The following table details key solutions and tools used in this field.

Table 2: Key Research Reagent Solutions and Essential Materials

| Item Name | Function/Brief Explanation | Example Use Case |
|---|---|---|
| Automated Crystallization Workstation (e.g., Crystal16) | Performs multiple parallel cooling and induction time experiments with integrated turbidity measurement for nucleation detection. | High-throughput measurement of nucleation rates from induction times in pharmaceutical solutions [10]. |
| Homogenized Alloy Rod | A solid sample with uniform chemical composition and microstructure, serving as the starting material for nucleation studies in melts. | Used in gradient annealing experiments to study the nucleation of liquid droplets in a solid Al-Cu matrix [18]. |
| Temperature Gradient Furnace | Applies a precise spatial temperature gradient to a single sample, creating regions with varying levels of undercooling. | Allows for the analysis of different stages of melting/nucleation in a single experiment, as demonstrated in [18]. |
| Quenching Medium (e.g., water bath) | A medium used to rapidly lower the temperature of a sample to preserve its high-temperature microstructure for post-analysis. | Critical step in the CCR and gradient annealing methods to halt nucleation and growth after the heat treatment [18]. |
| Numerical Simulation Software | Custom or commercial software used to model nucleation and growth kinetics, fitting model outputs to experimental data. | Essential for deconvoluting the nucleation rate from the final particle size distribution in CCR experiments [18]. |

The Constant Cooling Rate method is a powerful technique for quantifying nucleation kinetics, particularly in scenarios that mimic industrial cooling processes or involve athermal nucleation mechanisms. While its requirement for post-experiment analysis and simulation introduces complexity, its ability to efficiently provide data over a wide temperature range is a significant advantage. As with any analytical technique, the choice to use CCR must be informed by the specific research context. For studies of melt solidification or where process relevance is key, CCR is an excellent choice. For studies of solution-based nucleation that require high statistical resolution, automated induction time methods may be more appropriate. The ongoing development of automated instrumentation and sophisticated simulation tools continues to enhance the accuracy and accessibility of all nucleation rate measurement methods, helping researchers better control crystallization outcomes in drug development and materials science.

Metastable Zone Width (MSZW) Analysis for Kinetic Parameter Extraction

The Metastable Zone Width (MSZW) is the range of supersaturation between the saturation (solubility) curve and the supersolubility curve, within which a solution remains metastable and spontaneous nucleation has not yet occurred [23]. In industrial crystallization, particularly for Active Pharmaceutical Ingredients (APIs), MSZW serves as a crucial parameter for process design and optimization, ensuring consistent crystal quality, purity, and polymorphic form [23] [24]. Operating within this zone enables controlled crystal growth while avoiding undesirable primary nucleation that can lead to inconsistent particle size distribution, agglomeration, or unwanted polymorphs [24]. The width of this metastable region is not an intrinsic property of the system; it varies with process conditions including cooling rate, agitation speed, saturation temperature, solvent composition, and the presence of impurities or external fields like ultrasound [23] [15]. This guide provides a comparative analysis of the predominant theoretical models and experimental protocols for extracting nucleation kinetics from MSZW data, in support of experimental validation of nucleation rates.

Theoretical Models for MSZW Analysis

The analysis of MSZW data enables researchers to extract critical nucleation kinetics and thermodynamic parameters. Several theoretical frameworks have been developed for this purpose, each with distinct foundations, applications, and limitations.

Table 1: Comparison of Primary Theoretical Models for MSZW Analysis

| Model Name | Theoretical Basis | Key Extractable Parameters | Primary Applications | Notable Limitations |
|---|---|---|---|---|
| Nývlt's Model [25] | Empirical / Semi-empirical | Apparent nucleation order (m), Nucleation rate constant (K) | Initial screening, basic nucleation kinetics [25] | Does not explicitly account for 3D nucleation thermodynamics [23] |
| Sangwal's Self-Consistent Model [23] [26] | Extension of Nývlt's theory | Nucleation order (m), Dissolution enthalpy (ΔHd), Constant (fK) | Improved correlation of MSZW with saturation temperature and cooling rate [26] | More complex than original Nývlt model |
| Kubota's Model [25] | Considers solution molecule number density | Nucleation parameters based on molecule density in solution | Systems where solution molecular properties are significant [25] | Less commonly applied compared to Nývlt and Sangwal approaches |
| Classical Nucleation Theory (CNT) Models [23] [1] | Classical 3D nucleation theory | Interfacial energy (γ), Pre-exponential factor (AJ), Gibbs free energy of nucleation (ΔG) | Fundamental nucleation studies, prediction of nucleation rates across cooling rates [1] | Requires more complex mathematical treatment |
| Simplified Linear Integral Model [23] [27] | Linearized integral based on CNT | Interfacial energy (γ), Pre-exponential factor (AJ) | Direct determination of γ and AJ from linear plots [27] | Relies on approximations in the integration method |
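As an illustration of how the simplest of these models is applied, the sketch below estimates the apparent nucleation order m from MSZW data using a Nývlt-type analysis. It assumes the commonly used linear form ln(ΔT_max) = c + (1/m)·ln(R), where ΔT_max is the measured MSZW, R is the cooling rate, and c lumps together the rate constant and solubility terms. The function name and the synthetic data are hypothetical.

```python
import math


def nyvlt_nucleation_order(cooling_rates, mszw_values):
    """Least-squares fit of ln(MSZW) against ln(cooling rate).

    Returns (m, slope, intercept), where slope = 1/m under the assumed form.
    """
    if len(cooling_rates) != len(mszw_values) or len(cooling_rates) < 2:
        raise ValueError("need at least two paired measurements")
    xs = [math.log(r) for r in cooling_rates]
    ys = [math.log(d) for d in mszw_values]
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    if sxx == 0:
        raise ValueError("cooling rates must not all be identical")
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return 1.0 / slope, slope, intercept


if __name__ == "__main__":
    rates = [0.1, 0.2, 0.5, 1.0]               # hypothetical cooling rates, K/min
    mszw = [5.0 * r ** (1 / 3) for r in rates]  # synthetic MSZW values, K
    m, slope, intercept = nyvlt_nucleation_order(rates, mszw)
    print(f"slope = {slope:.4f}, apparent nucleation order m = {m:.3f}")
```

With real data the fit quality should be checked before interpreting m, since scatter in the measured MSZW at each cooling rate directly affects the slope.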

Recent Advancements in CNT-Based Models