Active Learning Strategies for Efficient Materials Experimentation: Accelerating Discovery with AI


Abstract

This article provides a comprehensive guide to active learning (AL) strategies that are revolutionizing efficient materials experimentation. Aimed at researchers and scientists, it covers the foundational principles of AL and Bayesian optimization that enable smarter navigation of vast experimental spaces. The piece details practical methodologies, from uncertainty sampling to multi-objective optimization, and showcases their successful application in both computational and real-world laboratory settings, including autonomous research systems. It further addresses common implementation challenges and presents rigorous benchmarking studies that validate the superior data efficiency of AL over traditional approaches, concluding with the transformative implications of these strategies for accelerating innovation in materials science and drug development.

What is Active Learning? The Core Principles Revolutionizing Materials Science

Active Learning (AL) represents a paradigm shift in machine learning, moving from passive data collection to an iterative, intelligent experiment design process. In scientific fields like materials science and drug discovery, where data acquisition is costly and time-consuming, AL minimizes labeling costs while maintaining or enhancing model accuracy by strategically selecting the most informative data points for experimentation [1]. This protocol outlines the core concepts, methodologies, and practical applications of AL, providing a framework for researchers to implement these strategies for efficient materials experimentation.

The traditional approach to data-driven discovery often relies on high-throughput methods that fully populate a material's phase space, an inefficient strategy for navigating vast search spaces [2]. In contrast, AL enables models to achieve better performance with fewer labeled examples by intelligently selecting which data points to label [1]. The selection is formalized as an iterative loop of adaptive sampling and Bayesian optimization that prioritizes the experiments expected to provide the maximum information gain or to most improve a surrogate model for a given objective [2]. This approach is particularly powerful in domains like materials science and drug development, where each new data point from computation or experiment requires significant resources [3].

Core Conceptual Framework

The AL cycle is built upon a feedback loop between a predictive model and an acquisition function that guides data selection.

The Active Learning Cycle

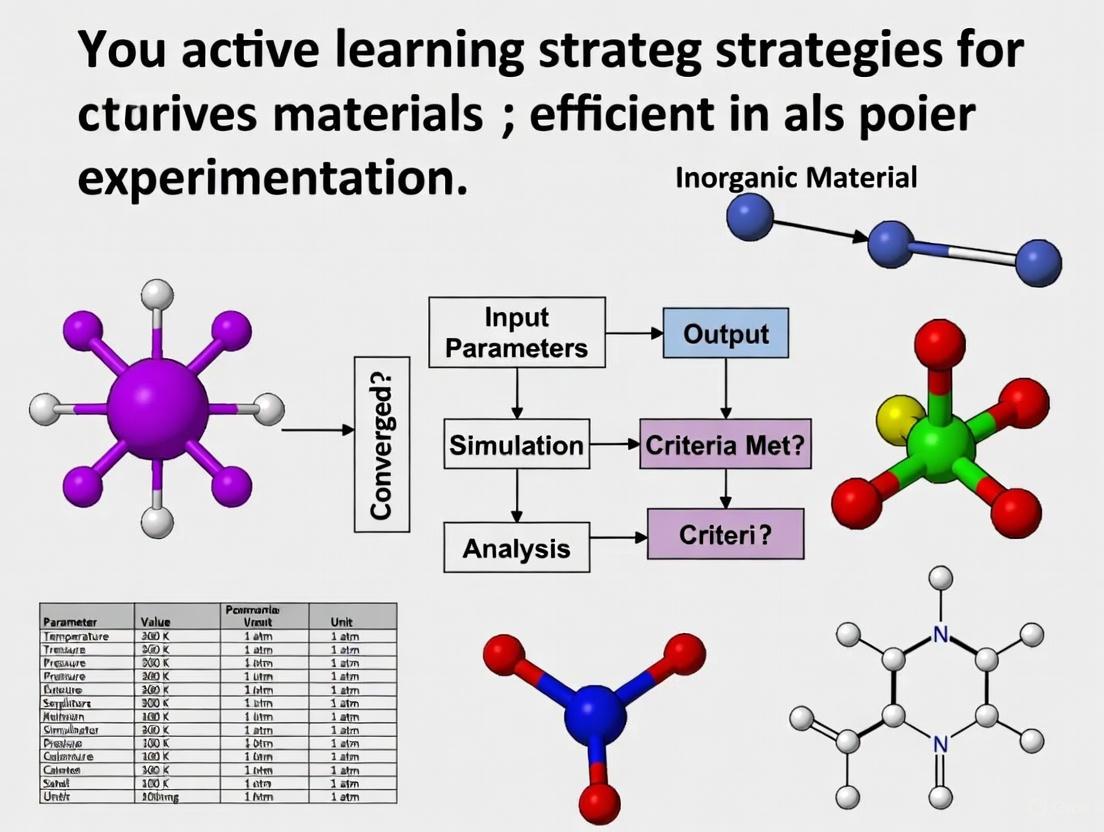

The following diagram illustrates the core, iterative workflow of an Active Learning system.

Key Components of the Framework

- Initial Labeled Dataset (LDB): A small starting set of labeled samples, \( L = \{(x_i, y_i)\}_{i=1}^{l} \), used to train the initial surrogate model [3].

- Unlabeled Data Pool (UDB): A large collection of unlabeled candidates, \( U = \{x_i\}_{i=l+1}^{n} \), from which the AL strategy will select samples for labeling [3].

- Surrogate Model: A machine learning model (e.g., regression or classification) that predicts properties or objectives. In advanced workflows, this can be an AutoML system that automatically selects the best model family and hyperparameters [3].

- Utility/Acquisition Function: A critical decision-making function that uses predictions from the surrogate model to quantify the potential usefulness of an unlabeled sample, thereby guiding the selection of the next experiment [2].

- Targeted Experiment/Calculation: The physical experiment or computational simulation performed on the selected sample to obtain its label, \( y^* \) [3].

Key Utility Functions and Sampling Strategies

The utility function is the core of the AL decision-making engine. Different functions are designed to optimize for different goals, such as reducing model uncertainty or error.

Quantitative Comparison of Common AL Strategies

Table 1: Summary of primary Active Learning strategies and their characteristics, based on benchmark studies [3].

| Strategy Category | Example Methods | Primary Principle | Key Advantage | Performance in Early AL Stages |
|---|---|---|---|---|
| Uncertainty Estimation | LCMD, Tree-based-R | Selects data points where the model's prediction is most uncertain. | Rapidly reduces model uncertainty; highly data-efficient initially. | Outperforms random sampling and geometry-based methods. |
| Diversity-Hybrid | RD-GS | Combines uncertainty with a measure of data diversity. | Prevents selection of clustered, redundant samples. | Clearly outperforms random sampling. |
| Geometry-Only | GSx, EGAL | Selects samples to cover the feature space geometry. | Ensures broad exploration of the design space. | Underperforms compared to uncertainty-driven methods. |
| Expected Model Change | EMCM | Selects data points that would cause the largest change in the model. | Aims for maximal impact on model parameters. | Varies; can be effective but computationally intensive. |
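As a rough illustration of the uncertainty-estimation row, the sketch below picks the candidate on which an ensemble disagrees most. It assumes uncertainty is measured as the spread of ensemble predictions, which is only one common choice; LCMD and Tree-based-R use their own estimators and are not reproduced here. The function name and the data layout are hypothetical.

```python
from statistics import pstdev

def select_most_uncertain(ensemble_predictions):
    """Return the index of the candidate whose ensemble predictions disagree most.

    ensemble_predictions[i] holds the predictions for candidate i,
    one value per ensemble member (hypothetical data layout).
    """
    spreads = [pstdev(preds) for preds in ensemble_predictions]
    return max(range(len(spreads)), key=spreads.__getitem__)

# Three candidates, each predicted by a four-member ensemble (made-up numbers).
pool_predictions = [
    [1.0, 1.1, 0.9, 1.0],   # members agree: low uncertainty
    [2.0, 3.5, 0.5, 2.0],   # members disagree: high uncertainty
    [4.0, 4.2, 3.9, 4.1],
]
next_index = select_most_uncertain(pool_predictions)
```

Here the second candidate is selected because its predictions vary most across the ensemble.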

Detailed Experimental Protocol for Pool-Based Active Learning

This protocol provides a step-by-step methodology for implementing a pool-based AL cycle in a materials science or drug discovery context, suitable for regression tasks like predicting material properties or binding affinities.

Pre-experiment Planning and Setup

- Step 1: Problem Formulation

- Objective: Define the target property to be optimized (e.g., band gap, yield strength, binding affinity).

- Design Space: Identify the relevant materials descriptors or features (e.g., elemental properties, chemical composition, structural parameters) [2].

- Step 2: Data Curation and Initialization

- Create Unlabeled Pool (U): Compile a database of candidate materials or compounds represented by their feature vectors. This can be derived from existing databases or generated via combinatorial design [3].

- Establish Initial Labeled Set (L): Randomly select a small number of candidates (\( n_{init} \), e.g., 5-10% of the pool) from U, perform experiments/computations to obtain their target property values, and add them to L [3].

- Step 3: Surrogate Model Configuration

- Model Selection: Choose a machine learning model. For robustness, consider using an AutoML framework to automatically search and optimize across model families (e.g., linear regressors, tree-based ensembles, neural networks) and their hyperparameters [3].

- Validation Scheme: Configure a cross-validation method (e.g., 5-fold cross-validation) to be used internally by the AutoML system for reliable performance estimation [3].

Iterative Active Learning Cycle

- Step 4: Model Training and Evaluation

- Train the surrogate model (or run the AutoML optimizer) on the current labeled set L.

- Evaluate the model's performance (e.g., using Mean Absolute Error - MAE, or Coefficient of Determination - R²) on a held-out test set [3].

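- Step 5: Sample Selection via Utility Function

- Apply the chosen utility function to the surrogate model's predictions for every candidate in U and select the highest-scoring candidate \( x^* \) for labeling.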

- Step 6: Targeted Experimentation and Data Augmentation

- Perform the physical experiment or high-fidelity computation on the selected candidate \( x^* \) to obtain its true label \( y^* \).

- Update Datasets:

- \( L = L \cup \{(x^*, y^*)\} \)

- \( U = U \setminus \{x^*\} \) [3]

- Step 7: Loop Termination Check

- Continue: If the performance target has not been met and the budget allows, return to Step 4.

- Terminate: If the model performance has converged, a desired property threshold has been reached, or the experimental budget is exhausted [2].
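The protocol above can be sketched end to end on a toy problem. This is a minimal illustration under stated assumptions, not the benchmarked pipeline: the `oracle` function stands in for an experiment, the surrogate is a bootstrap ensemble of nearest-neighbor predictors rather than an AutoML system, the utility function is plain ensemble spread, and termination is by budget only. All names and parameter values are hypothetical.

```python
import math
import random
from statistics import mean, pstdev

def oracle(x):
    """Hypothetical stand-in for an experiment or simulation."""
    return math.sin(3.0 * x)

def nearest_neighbor_predict(train, x):
    """Predict y at x from the closest labeled point (a deliberately simple surrogate)."""
    return min(train, key=lambda pair: abs(pair[0] - x))[1]

def ensemble_predict(labeled, x, rng, n_members=20):
    """Mean and spread of nearest-neighbor predictions over bootstrap resamples."""
    preds = []
    for _ in range(n_members):
        resample = [rng.choice(labeled) for _ in labeled]
        preds.append(nearest_neighbor_predict(resample, x))
    return mean(preds), pstdev(preds)

def run_active_learning(pool, n_init=3, budget=10, seed=0):
    rng = random.Random(seed)
    unlabeled = list(pool)
    rng.shuffle(unlabeled)
    # Step 2: label a small random initial set.
    labeled = [(x, oracle(x)) for x in unlabeled[:n_init]]
    unlabeled = unlabeled[n_init:]
    for _ in range(budget):  # Step 7: stop when the budget is spent
        if not unlabeled:
            break
        # Steps 4-5: score every candidate by ensemble spread and pick the largest.
        scores = [ensemble_predict(labeled, x, rng)[1] for x in unlabeled]
        best = max(range(len(unlabeled)), key=scores.__getitem__)
        x_star = unlabeled.pop(best)              # U = U \ {x*}
        labeled.append((x_star, oracle(x_star)))  # L = L ∪ {(x*, y*)}
    return labeled, unlabeled

pool = [i / 50 for i in range(51)]
labeled, unlabeled = run_active_learning(pool)
```

A performance-based stopping rule (for example, MAE on a held-out set falling below a target) would slot into the loop where the budget check sits.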

Strategy Selection and Decision Logic

Choosing the right AL strategy depends on the specific context of the research problem. The following flowchart provides a guideline for this decision-making process.

The Scientist's Toolkit: Essential Research Reagents and Methods

This table details key computational and methodological "reagents" essential for implementing an effective Active Learning pipeline in a research environment.

Table 2: Essential components and their functions in an Active Learning workflow for materials science.

| Tool/Method Category | Specific Examples | Function in the AL Workflow |
|---|---|---|
| Surrogate Models | Gaussian Process Regression, Support Vector Machines, Random Forests, Neural Networks [3] | Acts as the predictive engine; maps material descriptors to target properties and provides uncertainty estimates. |
| Uncertainty Estimation Techniques | Monte Carlo Dropout [3] [1], Ensemble Methods, Bayesian Neural Networks [1] | Quantifies the model's uncertainty for its own predictions, which is the foundation for many acquisition functions. |
| Automated Machine Learning (AutoML) | AutoML frameworks [3] | Automates the selection and hyperparameter tuning of the surrogate model, ensuring robust performance even with a dynamically changing training set. |
| Acquisition Functions | Expected Improvement (EI) [2], Uncertainty Sampling (LCMD), Query-by-Committee [3] | The core decision-making function that scores and prioritizes unlabeled samples for the next experiment. |
| High-Throughput Computation/Experiment | Density Functional Theory (DFT) [2], Robotic Synthesis Platforms (e.g., A-Lab [3]) | Provides the high-fidelity "ground truth" data (labels) for the samples selected by the AL loop, thereby expanding the labeled dataset. |

Application Notes and Impact

The implementation of AL has demonstrated significant impact across various scientific domains:

- Accelerated Materials Discovery: AL has been successfully applied to reduce experimental campaigns in alloy design by more than 60% compared to traditional approaches [3]. In some computational studies, AL achieved performance parity with full-data baselines while using only 10-30% of the data, equivalent to a 70-90% savings in computational resources [3].

- Integration with Autonomous Laboratories: The "A-Lab" platform leveraged AL to autonomously synthesize 41 previously unreported inorganic compounds within 17 days, showcasing the power of closing the loop between computation and synthesis [3].

- Multi-fidelity and Multi-objective Optimization: AL frameworks can be generalized to incorporate data from multiple sources with different levels of accuracy (multi-fidelity) and to optimize for several properties simultaneously (multi-objective), making them highly adaptable to complex real-world design challenges [2].

Table 1: 2025 U.S. Construction Material Cost Increases Driven by Tariffs and Supply Chain Pressures [4]

| Material | Price Increase (Since Jan 2025) | Key Driver(s) |
|---|---|---|
| Steel | 15% - 25% | Reinstated 25% global tariff (March 2025) |
| Aluminum | 8% - 10% | Reinstated 10% global tariff (March 2025) |
| Lumber | 17.2% (YoY) | Canadian lumber tariffs at 34.5% |
| Concrete Products | 41.4% (Over 5 years) | Cumulative supply chain and energy costs |
| Appliances | Subject to 10% blanket tariff + 104% on Chinese imports | Layered import tariffs |

Table 2: Cross-Industry Survey on Economic and Operational Challenges in 2025 [5]

| Challenge | Percentage of Organizations Reporting | Impact on R&D |
|---|---|---|
| Cost Volatility (Top Operational Threat) | 65% | Unpredictable budgets for material procurements |
| Data Accessibility Issues | 68% | Delays in executive decision-making and experiment planning |
| Lack of System Integration (Fully Siloed) | 11% | Hinders data sharing and collaborative research |
| Organizations Planning AI Adoption for Forecasting | 41% | Indicates shift towards data-driven methods |

Experimental Protocols

Protocol 1: Implementing Density-Aware Greedy Sampling (DAGS) for Materials Discovery

1. Objective: To train effective regression models for predicting material properties using a minimal number of experimental data points by actively selecting the most informative samples [6].

2. Reagents and Equipment:

- Data Pool: A large, unlabeled dataset of possible material compositions or structures.

- Oracle: The means of obtaining a true label (e.g., a scientist conducting a physical experiment or a high-fidelity simulation).

- Regression Model: A machine learning model (e.g., Gaussian process regression, neural network).

- Computational Environment: Software for running the DAGS algorithm, which integrates uncertainty estimation with data density metrics [6].

3. Procedure:

   1. Initialization: Start with a small, randomly selected subset of the data pool to form the initial training set. Acquire labels for this set from the oracle.

   2. Model Training: Train the initial regression model on the labeled training set.

   3. Iterative Active Learning Loop:

      - Step 1: Prediction & Uncertainty Estimation: Use the current model to predict outcomes for all remaining points in the unlabeled data pool. Calculate the uncertainty of each prediction.

      - Step 2: Density Calculation: Compute the representativeness of each unlabeled point by evaluating its density within the entire data pool or its similarity to the current training set.

      - Step 3: DAGS Selection: Rank the unlabeled data points using a criterion that balances high uncertainty with high density. Select the top-ranked point(s).

      - Step 4: Oracle Query & Update: Present the selected point(s) to the oracle for labeling. Add the newly labeled data to the training set.

      - Step 5: Model Retraining: Update the regression model with the expanded training set.

   4. Termination: Repeat the loop until a predefined performance threshold is met or the experimental budget is exhausted.

4. Analysis: The performance of the model is evaluated on a held-out test set. The efficiency of DAGS is benchmarked against random sampling and other active learning techniques by comparing the learning curves (model performance vs. number of data points queried) [6].
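The selection step that balances uncertainty against density can be illustrated as follows. The exact DAGS ranking criterion is not given in this protocol, so the sketch assumes a simple product of prediction uncertainty and a Gaussian-kernel density estimate; the function names, the bandwidth, and the example numbers are all hypothetical.

```python
import math

def gaussian_density(points, x, bandwidth=0.5):
    """Average Gaussian-kernel similarity between x and every point in the pool."""
    total = 0.0
    for p in points:
        sq_dist = sum((a - b) ** 2 for a, b in zip(p, x))
        total += math.exp(-sq_dist / (2.0 * bandwidth ** 2))
    return total / len(points)

def density_weighted_choice(pool, uncertainties, bandwidth=0.5):
    """Index of the pool point maximizing uncertainty * density (illustrative criterion)."""
    scores = [
        u * gaussian_density(pool, x, bandwidth)
        for x, u in zip(pool, uncertainties)
    ]
    return max(range(len(pool)), key=scores.__getitem__)

# A dense cluster near the origin and one isolated outlier.
pool = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1), (5.0, 5.0)]
# The outlier is the most uncertain, but it represents almost none of the pool.
uncertainties = [0.8, 0.9, 0.7, 0.8, 1.0]
chosen = density_weighted_choice(pool, uncertainties)
```

Pure uncertainty sampling would query the outlier; weighting by density instead picks the most uncertain point inside the cluster, which is the behavior Step 3 describes.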

Protocol 2: Validating an AI-Driven Materials Discovery Workflow Using the Nested Model

1. Objective: To ensure that an AI system for autonomous materials discovery and testing is clinically relevant, ethically sound, and compliant with regulatory standards [7].

2. Reagents and Equipment:

- AI System: A platform like CRESt (Copilot for Real-world Experimental Scientists), which includes robotic synthesizers, automated electrochemical testers, and characterization equipment (e.g., electron microscopy) [8].

- Multidisciplinary Team: AI experts, materials scientists, legal counsel, and regulatory specialists.

- Validation Framework: The Nested Model online tool for navigating regulatory questions [7].

3. Procedure:

   1. Regulation Layer:

      - Identify the relevant regulations (e.g., EU Requirements for Trustworthy AI).

      - Categorize key requirements into ethical (privacy, data governance, societal well-being) and technical (robustness, transparency, fairness) ones [7].

      - Define all stakeholders (domain experts, regulators, end-users).

   2. Domain Layer:

      - Formulate the core materials science problem with domain experts (e.g., "Discover a low-cost, high-activity fuel cell catalyst").

      - Define success metrics and clinical/market utility.

   3. Data Layer:

      - Establish data governance and provenance protocols.

      - Address privacy using techniques like federated learning, where model training is decentralized and raw data is not shared [7].

      - Implement bias detection and mitigation.

   4. Model Layer:

      - Develop the AI model (e.g., a multimodal active learning system) with a focus on explainability (XAI).

      - Integrate human-in-the-loop feedback for critical oversight [7].

      - Employ transfer learning and continuous learning to improve performance over time [7].

   5. Prediction Layer:

      - Deploy the model within the CRESt system to autonomously design experiments, synthesize materials (e.g., via carbothermal shock), and run performance tests [8].

      - Use computer vision to monitor experiments for reproducibility issues and suggest corrections [8].

      - Validate model predictions against held-out experimental results.

4. Analysis: The final discovered material (e.g., a multielement fuel cell catalyst) is evaluated against the initial objectives and regulatory requirements. The process is documented for auditability, demonstrating how each layer of the nested model was addressed [7].

Workflow and System Visualization

Diagram 1: DAGS Active Learning Cycle

Diagram 2: CRESt AI-Driven Materials Discovery Platform

Diagram 3: Nested Model for AI Validation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for an Automated Materials Discovery Lab [9] [8]

| Item | Function |
|---|---|
| Liquid-Handling Robot | Precisely dispenses precursor solutions for consistent and high-throughput synthesis of material libraries [8]. |
| Carbothermal Shock System | Enables rapid synthesis of nanomaterials (e.g., multielement catalysts) by subjecting precursors to extremely high temperatures for short durations [8]. |
| Automated Electrochemical Workstation | Performs high-volume, standardized tests to characterize key material properties like catalytic activity and stability [8]. |
| Automated Electron Microscope | Provides high-throughput microstructural imaging and analysis, crucial for understanding structure-property relationships [8]. |
| Federated Learning Platform | A privacy-enhancing software platform that allows training machine learning models on distributed datasets without centralizing the data, addressing key ethical requirements [7]. |
| Application Programming Interface (API) | Enables digital data flow by allowing different systems (e.g., design, inventory, testing) to automatically share and consume data, reducing manual entry and error [10]. |

Active learning (AL) represents a paradigm shift in materials experimentation, offering a data-efficient framework for sequential optimization that dramatically reduces the number of experiments or simulations required for discovery and optimization tasks. By strategically selecting the most informative data points for experimental validation, AL systems can navigate complex, high-dimensional design spaces with significantly reduced resource investment compared to traditional approaches. This methodology is particularly valuable in materials science and drug discovery, where experimental characterization demands substantial time, specialized equipment, and expert knowledge [3] [11]. The core architecture of an active learning loop integrates three fundamental components: surrogate models that approximate system behavior, acquisition functions that guide data selection, and iterative learning processes that refine predictions through cycles of experimentation and model updating. When implemented effectively, this approach has demonstrated order-of-magnitude improvements in optimization efficiency, enabling researchers to achieve research objectives with far fewer experimental iterations [11]. This protocol examines the key components of active learning loops, providing detailed application notes and experimental frameworks tailored to materials research applications.

Core Components of an Active Learning Loop

Surrogate Models

Surrogate models, also known as metamodels or emulators, serve as computationally efficient approximations of complex physical systems or expensive experimental processes. These models learn the relationship between input parameters (e.g., material composition, processing conditions) and output properties (e.g., melting temperature, fluorescence intensity) from limited initial data, enabling rapid exploration of the design space without constant recourse to costly experiments or simulations [12] [3].

Table 1: Common Surrogate Modeling Techniques in Materials Research

| Model Type | Key Characteristics | Best-Suited Applications | Representative References |
|---|---|---|---|
| Kriging/Gaussian Process | Provides uncertainty estimates alongside predictions; interpolates data exactly | Time- and space-dependent reliability analysis; problems requiring uncertainty quantification | [12] [13] |
| Neural Networks | High flexibility for complex, nonlinear relationships; requires substantial data | Gene regulatory network inference; DNA sequence design | [14] [15] |
| Transformer Models | Captures complex long-range dependencies in sequential data | Biological sequence-to-expression prediction for regulatory DNA | [14] |
| Random Forests | Handles mixed data types; provides feature importance metrics | Melting temperature prediction for multi-principal component alloys | [11] |
| Bayesian Neural Networks | Quantifies predictive uncertainty; robust to overfitting | Plasma turbulent transport surrogate modeling | [16] |

In materials science applications, Kriging models have gained particular prominence due to their ability to provide uncertainty estimates alongside predictions, a crucial feature for guiding active learning processes [12] [13]. For systems with multiple failure modes or performance metrics, constructing separate surrogate models for each failure mode has proven effective, though the focus should remain on accurately modeling regions where failure modes determine system failure [12]. Recent advances integrate specialized architectures for enhanced uncertainty quantification, such as Spectral-normalized Neural Gaussian Process (SNGP) for classification tasks and Bayesian Neural Networks with Normalizing Calibration Priors (BNN-NCP) for regression problems, particularly valuable when dealing with small datasets [16].

Acquisition Functions

Acquisition functions serve as the decision-making engine of active learning systems, quantifying the potential utility of candidate data points and guiding the selection of which experiments to perform next. These functions strategically balance exploration (sampling regions of high uncertainty) and exploitation (sampling regions likely to improve target properties) to maximize learning efficiency [3] [13] [14].

Table 2: Acquisition Functions for Materials Experimentation

| Acquisition Function | Mechanism | Advantages | Limitations |
|---|---|---|---|
| Upper Confidence Bound (UCB) | Combines predicted mean and uncertainty: \(J_i = (1-\alpha) \times \text{mean} + \alpha \times \text{std. dev.}\) | Explicitly tunable exploration-exploitation balance | Requires careful parameter (α) tuning |
| Expected Predictive Information Gain (EPIG) | Selects samples that maximize reduction in predictive uncertainty | Prediction-oriented improvement; effective for molecule generation | Computationally intensive for large candidate sets |
| Uncertainty Sampling (LCMD) | Prioritizes samples with highest predictive variance | Simple implementation; effective early in AL process | May overlook promising regions with moderate uncertainty |
| Diversity-based (RD-GS) | Selects diverse samples covering input space | Prevents clustering of similar samples | May select uninformative samples in well-characterized regions |
| Expected Model Change | Prioritizes samples that would most alter the model | Maximizes learning per sample | Computationally expensive; requires model retraining for evaluation |

The Upper Confidence Bound (UCB) function exemplifies the exploration-exploitation balance, mathematically expressed as:

\[ J_i = (1-\alpha) \times \frac{1}{r}\sum_{j=1}^{r} y_{ij} + \alpha \times \left(\frac{1}{r}\sum_{j=1}^{r}\left(y_{ij} - \frac{1}{r}\sum_{j=1}^{r} y_{ij}\right)^2\right)^{\frac{1}{2}} \]

where \(y_{ij}\) is the prediction for sample \(i\) by model \(j\) in an ensemble of \(r\) models, and \(\alpha\) controls the balance between mean performance (exploitation) and uncertainty (exploration) [14].
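The UCB score can be computed directly from the ensemble predictions for one candidate. The sketch below follows the formula as written, using the population standard deviation (the 1/r form); the function name and the example predictions are invented for illustration.

```python
from statistics import mean, pstdev

def ucb_score(predictions, alpha):
    """J_i = (1 - alpha) * ensemble mean + alpha * ensemble standard deviation."""
    return (1.0 - alpha) * mean(predictions) + alpha * pstdev(predictions)

# Predictions for two candidates from an ensemble of r = 4 models (made-up numbers).
safe_candidate = [10.0, 10.0, 10.0, 10.0]   # mean 10, std 0
risky_candidate = [6.0, 14.0, 6.0, 14.0]    # mean 10, std 4

safe_score = ucb_score(safe_candidate, alpha=0.5)    # 0.5 * 10 + 0.5 * 0 = 5.0
risky_score = ucb_score(risky_candidate, alpha=0.5)  # 0.5 * 10 + 0.5 * 4 = 7.0
```

Both candidates have the same mean, so with α = 0 they tie; any α > 0 favors the candidate the ensemble is less sure about.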

For gene regulatory network inference, novel acquisition functions including Equivalence Class Entropy Sampling (ECES) and Equivalence Class BALD Sampling (EBALD) have shown particular promise by leveraging Bayesian active learning principles to optimize intervention selection [15].

Iterative Learning Processes

The iterative learning process integrates surrogate models and acquisition functions into a cyclic framework of sequential experimentation and model refinement. This process begins with an initial dataset—often small—then progresses through repeated cycles of model training, candidate selection via acquisition functions, experimental validation, and model updating [3] [11] [14].

A key enhancement in advanced AL implementations is the incorporation of parallel updating strategies, which select multiple training samples simultaneously rather than single points per iteration. This approach substantially reduces total computational time by leveraging distributed computing resources, with methods including k-means clustering and correlation-based selection ensuring diverse sample selection [12]. For time- and space-dependent reliability analysis, specialized stopping criteria based on prediction probabilities of sample signs have been developed to balance accuracy and efficiency by terminating the updating process appropriately [12].
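One simple way to pick several points per iteration without selecting near-duplicates is sketched below. It is not the k-means or correlation-based method cited above; it substitutes a greedy distance filter over the acquisition ranking, chosen only because it fits in a few lines. The function name, the distance threshold, and the example data are hypothetical.

```python
import math

def select_diverse_batch(candidates, scores, batch_size, min_distance):
    """Greedily take the highest-scoring candidates, skipping any that lie
    within min_distance of a point already in the batch."""
    order = sorted(range(len(candidates)), key=scores.__getitem__, reverse=True)
    batch = []
    for i in order:
        if all(math.dist(candidates[i], candidates[j]) >= min_distance for j in batch):
            batch.append(i)
        if len(batch) == batch_size:
            break
    return batch

# Two tight pairs of near-duplicates plus one low-scoring distant point.
candidates = [(0.0, 0.0), (0.05, 0.0), (1.0, 1.0), (1.0, 1.05), (2.0, 0.0)]
scores = [0.9, 0.85, 0.8, 0.7, 0.1]
batch = select_diverse_batch(candidates, scores, batch_size=3, min_distance=0.5)
```

The batch contains one point from each pair and the distant point, so the three experiments can run in parallel without duplicating information.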

Diagram 1: Active Learning Workflow. The iterative process cycles through model training, candidate selection, experimental validation, and dataset updating until stopping criteria are met.

Application Notes for Materials Research

FAIR Data Principles and Workflow Infrastructure

The implementation of Findable, Accessible, Interoperable, and Reusable (FAIR) data principles within active learning frameworks dramatically accelerates materials discovery by enabling data reuse across optimization campaigns. Research demonstrates that leveraging FAIR data and workflows can yield a 10-fold increase in optimization speed compared to approaches without such infrastructure [11]. This infrastructure ensures that results from each workflow execution are automatically stored in structured databases, creating a growing knowledge base that benefits subsequent optimization tasks.

In practice, nanoHUB's Sim2L infrastructure provides an exemplary implementation of FAIR workflows for materials science, automatically indexing all input-output pairs from simulations into queryable databases [11]. This approach allows sequential optimizations to build upon previously acquired data, substantially reducing the number of experiments required to discover materials with optimal properties. For instance, initial work identifying high-melting-temperature alloys required testing approximately 15 compositions, while subsequent reuse of FAIR data enabled identification of optimal alloys with only 3 compositions tested [11].

Domain-Specific Adaptations

Successful implementation of active learning requires careful adaptation to domain-specific constraints and opportunities across materials research applications:

Regulatory DNA Optimization: For designing DNA sequences with improved protein expression, active learning outperforms one-shot optimization approaches, particularly in complex landscapes with high epistasis. Implementation typically employs ensemble neural networks with directed evolution-inspired sampling, in which new sequences are generated by targeted mutation of promising candidates from previous iterations [14].

Small Molecule Discovery: In drug discovery, human-in-the-loop active learning integrates domain expertise to refine property predictors, with experts confirming or refuting model predictions to address generalization limitations. The Expected Predictive Information Gain (EPIG) criterion effectively selects molecules for expert evaluation, maximizing uncertainty reduction while leveraging chemical intuition [17].

PDE Surrogate Modeling: For simulating physical systems governed by partial differential equations, selective time-step acquisition strategies dramatically reduce computational costs by identifying critical time points for precise simulation while using surrogate predictions for less informative periods. This approach has demonstrated significant error reduction across Burgers' equation and Navier-Stokes equations while using fewer computational resources [18].

Experimental Protocols

Protocol: Active Learning for Alloy Melting Temperature Optimization

This protocol details the procedure for optimizing alloy melting temperatures using active learning with molecular dynamics simulations, based on the methodology demonstrating 10× acceleration through FAIR data reuse [11].

Research Reagent Solutions:

Table 3: Essential Materials for Alloy Melting Temperature Optimization

| Resource | Specification | Function/Purpose |
|---|---|---|
| nanoHUB Sim2L | FAIR workflow infrastructure | Provides molecular dynamics simulation platform and automated data capture |
| Initial Dataset | 100-1000 previously characterized alloys | Provides initial training data for surrogate model |
| Molecular Dynamics Simulator | LAMMPS or similar package | Calculates melting temperatures for candidate compositions |
| Random Forest Regressor | Scikit-learn implementation | Predicts melting temperatures and associated uncertainties |
| FAIR Database | Structured repository of input-output pairs | Enables data reuse across optimization campaigns |

Procedure:

Initialization Phase:

- Curate initial training dataset from existing FAIR data repository of alloy compositions with known melting temperatures (100-1000 samples recommended).

- Train random forest surrogate model using composition descriptors (elemental percentages, atomic radii, electronegativities) as features and melting temperatures as targets.

- Define candidate pool of 500+ alloy compositions within specified compositional ranges.

Active Learning Cycle:

- Use trained random forest to predict melting temperatures and uncertainty estimates for all candidates in the pool.

- Apply Upper Confidence Bound acquisition function (α = 0.2-0.5) to identify 3-5 most promising compositions balancing predicted performance and uncertainty.

- Execute molecular dynamics simulations for selected compositions using nanoHUB Sim2L workflow:

- Initialize simulation cells with random atom placement at candidate composition.

- Use solid-liquid coexistence method with automated Tsol and Tliq estimation.

- Run simulation until melting/freezing equilibrium established.

- Automatically store simulation results (composition, parameters, melting temperature) in FAIR database.

- Update training dataset with the newly simulated melting temperatures.

- Retrain random forest model on expanded dataset.

Termination:

- Continue iterations until melting temperature convergence achieved (successive improvements < 2%).

- Typically requires 3-15 cycles depending on complexity of composition space.
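The convergence criterion can be checked with a small helper. This sketch assumes "successive improvements < 2%" means the relative change between the best melting temperatures of the last two cycles; the function name and the example history are hypothetical.

```python
def has_converged(best_values, rel_tol=0.02):
    """True when the latest best value changed by less than rel_tol
    relative to the previous one. Assumes nonzero values (e.g. temperatures in K)."""
    if len(best_values) < 2:
        return False
    previous, current = best_values[-2], best_values[-1]
    return abs(current - previous) / abs(previous) < rel_tol

# Best melting temperature (K) found after each cycle; values are invented.
history = [2900.0, 3100.0, 3250.0, 3280.0]
stop_now = has_converged(history)           # 30 / 3250 is about 0.9%
stop_earlier = has_converged(history[:3])   # 150 / 3100 is about 4.8%
```

In practice one might require the criterion to hold for two or three consecutive cycles before stopping, since a single flat step can occur by chance.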

Validation:

- Compare final optimal melting temperature with literature values if available.

- Perform additional molecular dynamics simulations with different initial conditions to confirm stability of predictions.

Protocol: Covalent Organic Framework Discovery for Fluorescence

This protocol outlines the AI-assisted iterative experiment-learning approach for discovering highly fluorescent covalent organic frameworks (COFs), which identified a material with 41.3% photoluminescence quantum yield after testing only 11 of 520 possible building block combinations [19].

Procedure:

Building Block Library Preparation:

- Assemble library of 20 amine and 26 aldehyde building blocks with diverse electronic properties.

- Calculate molecular descriptors for each building block (HOMO-LUMO energies, dipole moments, aromatic ring counts).

- Define candidate set of 520 possible COFs from all pairwise combinations.
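The candidate set is the Cartesian product of the two building block lists, which can be enumerated as below. The identifiers are placeholders, since the actual building blocks are not listed here.

```python
from itertools import product

# Placeholder identifiers; the actual building blocks are not listed in the text.
amines = [f"amine_{i:02d}" for i in range(1, 21)]        # 20 amines
aldehydes = [f"aldehyde_{j:02d}" for j in range(1, 27)]  # 26 aldehydes

# Every amine paired with every aldehyde: 20 * 26 = 520 candidates.
candidate_cofs = list(product(amines, aldehydes))
```

Each candidate's feature vector can then be built by concatenating the descriptors of its two building blocks.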

Initial Model Training:

- Start with 3-5 experimentally characterized COFs covering diverse electronic configurations.

- Train gradient boosting model using building block features to predict photoluminescence quantum yield.

Active Learning Cycle:

- Use trained model to predict fluorescence for all candidate COFs.

- Identify top candidates using expected improvement acquisition function.

- Synthesize selected COFs via solvothermal synthesis:

- Combine amine and aldehyde building blocks in appropriate stoichiometry.

- Conduct reaction in mixed solvent system (typically dioxane/mesitylene) with acetic acid catalyst.

- Heat at 120°C for 72 hours in sealed Pyrex tube.

- Characterize synthesized COFs for crystallinity (PXRD), porosity (BET surface area), and fluorescence (photoluminescence quantum yield measurement).

- Update training dataset with characterization results.

- Retrain model, incorporating electronic structure insights from quantum chemical calculations if available.

Termination:

- Continue until fluorescence target achieved (>40% quantum yield) or diminishing returns observed.

- Typically requires 5-15 iterations.

Validation:

- Confirm reproducibility of top-performing COFs with repeated synthesis and characterization.

- Perform theoretical modeling of charge transfer processes to verify fluorescence mechanisms.

Performance Benchmarking and Data Analysis

Comprehensive benchmarking of active learning strategies provides critical insights for selecting appropriate methods across different materials research applications.

Table 4: Performance Comparison of Active Learning Strategies

| Application Domain | Optimal Strategy | Performance Gain | Key Efficiency Metric |

|---|---|---|---|

| Alloy Melting Temperature | Random Forest with UCB | 10× reduction in simulations | 3 compositions tested vs. 30 in prior work |

| DNA Sequence Design | Ensemble NN with Directed Evolution | 60% reduction in experimental cycles | Effective in landscapes with high epistasis |

| COF Fluorescence | Gradient Boosting with Expected Improvement | 98% reduction in experiments tested | 11 of 520 combinations tested to find optimal |

| Gene Regulatory Networks | ECES/EBALD Acquisition | Significant improvement in network inference | Enhanced prediction accuracy with fewer interventions |

| PDE Surrogate Modeling | Selective Time-Step Acquisition | Error reduced by a large margin | Improved 99%, 95%, and 50% error quantiles |

Critical success factors emerging from performance analysis include:

- Early Phase Strategy: Uncertainty-driven (LCMD, Tree-based-R) and diversity-hybrid (RD-GS) acquisition functions significantly outperform random sampling and geometry-only heuristics during initial cycles when labeled data is scarce [3].

- Convergence Behavior: As labeled sets grow, performance gaps between strategies narrow, with all methods eventually converging, indicating diminishing returns from active learning under fixed model frameworks [3].

- Parallelization Impact: Parallel updating strategies that select multiple samples per iteration can reduce total computational time by 30-50% compared to sequential approaches, particularly beneficial for distributed computing environments [12].

Diagram 2: Acquisition Function Logic. Acquisition functions balance high predicted performance against high uncertainty to select the most informative candidates for experimental validation.

The integration of surrogate models, acquisition functions, and iterative learning processes creates a powerful framework for accelerating materials discovery and optimization. The protocols and application notes presented provide actionable guidance for implementing active learning in diverse materials research contexts, from alloy development to molecular discovery. Key principles emerging from successful implementations include the strategic reuse of FAIR data, domain-specific adaptation of acquisition functions, and appropriate balancing of exploration and exploitation throughout the optimization campaign. When properly implemented, active learning strategies can deliver large gains in experimental efficiency, reaching an order of magnitude or more in the alloy and COF cases summarized in Table 4, enabling researchers to navigate complex design spaces with significantly reduced resource investment. As materials research continues to face challenges of increasing complexity and resource constraints, the systematic application of these active learning components will play an increasingly vital role in accelerating the discovery and development of novel materials with tailored properties.

Bayesian Optimization (BO) is a powerful strategy for globally optimizing black-box functions that are expensive to evaluate, a scenario frequently encountered in materials science and drug development research. Within an active learning paradigm, BO operates through a sequential model-based approach to minimize the number of experiments required to find optimal conditions. The core strength of BO lies in its principled mathematical framework for balancing exploration (probing regions of high uncertainty) and exploitation (refining known promising regions). This balance is governed by its acquisition function, which quantifies the utility of evaluating unknown points in the parameter space based on a surrogate model, typically a Gaussian Process (GP). This makes BO exceptionally well-suited for accelerating materials discovery and experimental design where each data point comes from time-consuming or costly processes such as synthesis, characterization, or biological testing [20] [21] [22].

Theoretical Foundations

The Bayesian Optimization Loop

The BO algorithm can be abstracted into a recursive loop with four key stages [22] [23]:

- Surrogate Model Training: A probabilistic model (usually a GP) is trained on all data collected from previous evaluations of the black-box function.

- Acquisition Function Maximization: An acquisition function, computed using the surrogate model's predictions, is maximized to identify the most informative point(s) to evaluate next.

- Function Evaluation: The black-box function is evaluated at the proposed point(s). In materials research, this constitutes running a physical experiment or simulation.

- Data Augmentation: The new input-output pair is added to the dataset, and the loop repeats.

This process is visualized in the following workflow, which maps the logical relationships between the core components.

Key Components and Mathematical Formalism

Gaussian Process Surrogate

A Gaussian Process defines a distribution over functions and is fully specified by a mean function ( m(\mathbf{x}) ) and a covariance (kernel) function ( k(\mathbf{x}, \mathbf{x}') ). For a dataset ( \mathcal{D} = \{(\mathbf{x}_i, y_i)\}_{i=1}^n ), the GP posterior predictive distribution at a new point ( \mathbf{x} ) is Gaussian with mean ( \mu(\mathbf{x}) ) and variance ( \sigma^2(\mathbf{x}) ), representing the model's prediction and associated uncertainty, respectively [23].
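To make the posterior mean and variance concrete, here is a minimal standard-library sketch for scalar inputs. It assumes a zero mean function, a squared-exponential kernel, and a small noise term on the diagonal for numerical stability; these choices, the function names, and the toy data are illustrative and not taken from the cited works. In practice a library such as GPyTorch or BoTorch would handle this step.

```python
import math

def rbf(a, b, length_scale=1.0):
    """Squared-exponential kernel k(a, b) for scalar inputs."""
    return math.exp(-0.5 * ((a - b) / length_scale) ** 2)

def solve(A, b):
    """Solve A z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    z = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        tail = sum(M[i][j] * z[j] for j in range(i + 1, n))
        z[i] = (M[i][n] - tail) / M[i][i]
    return z

def gp_posterior(x_train, y_train, x_new, length_scale=1.0, noise=1e-6):
    """Posterior mean and variance of a zero-mean GP at x_new."""
    n = len(x_train)
    K = [[rbf(x_train[i], x_train[j], length_scale) + (noise if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    k_star = [rbf(x, x_new, length_scale) for x in x_train]
    alpha = solve(K, y_train)  # K^{-1} y
    v = solve(K, k_star)       # K^{-1} k_*
    mu = sum(k * a for k, a in zip(k_star, alpha))
    var = rbf(x_new, x_new, length_scale) - sum(k * w for k, w in zip(k_star, v))
    return mu, max(var, 0.0)   # clip tiny negative values from round-off

# Toy data: three observations of a hypothetical property.
x_train = [0.0, 0.5, 1.0]
y_train = [0.0, 1.0, 0.0]

print(gp_posterior(x_train, y_train, 0.5, length_scale=0.5))  # near an observation
print(gp_posterior(x_train, y_train, 5.0, length_scale=0.5))  # far from all data
```

Near an observed point the variance collapses toward the noise level, while far from the data it returns to the prior variance of 1. This is the behavior that acquisition functions exploit.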

Acquisition Functions for Exploration-Exploitation

The acquisition function ( \alpha(\mathbf{x}) ) is the mechanism for the exploration-exploitation trade-off. The following table summarizes prominent acquisition functions and their mathematical expressions [20] [23].

Table 1: Key Acquisition Functions in Bayesian Optimization

| Acquisition Function | Mathematical Formulation | Exploration Bias |

|---|---|---|

| Probability of Improvement (PI) | ( \text{PI}(\mathbf{x}) = \Phi\left(\frac{\mu(\mathbf{x}) - f(\mathbf{x}^+)}{\sigma(\mathbf{x})}\right) ) | Low |

| Expected Improvement (EI) | ( \text{EI}(\mathbf{x}) = \mathbb{E}[\max(0, Y - f(\mathbf{x}^+))] ) | Medium |

| Upper Confidence Bound (UCB) | ( \text{UCB}(\mathbf{x}) = \mu(\mathbf{x}) + \kappa \sigma(\mathbf{x}) ) | Tunable (via ( \kappa )) |

| Target-Oriented EI (t-EI) | ( \text{t-EI}(\mathbf{x}) = \mathbb{E}[\max(0, \lvert y_{t.min} - t \rvert - \lvert Y - t \rvert)] ) | Target-driven |

Where:

- ( \mathbf{x}^+ ) is the best-observed point.

- ( Y ) is the random variable of the GP prediction at ( \mathbf{x} ), with ( Y \sim \mathcal{N}(\mu(\mathbf{x}), \sigma^2(\mathbf{x})) ).

- ( \Phi ) is the cumulative distribution function of the standard normal distribution.

- ( \kappa ) is a parameter controlling the exploration-exploitation balance.

- ( t ) is a target value, and ( y_{t.min} ) is the current observation closest to the target [20].
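For a Gaussian posterior, PI, EI, and UCB can all be evaluated in closed form from the predicted mean and standard deviation. The sketch below reads the EI expectation as taken over the GP prediction ( Y \sim \mathcal{N}(\mu, \sigma^2) ), assumes a maximization problem with ( \sigma > 0 ), and uses illustrative function names and numbers.

```python
import math

def norm_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def norm_cdf(z):
    """Standard normal cumulative distribution function (Phi)."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def probability_of_improvement(mu, sigma, f_best):
    """P(Y > f_best) for Y ~ N(mu, sigma^2)."""
    return norm_cdf((mu - f_best) / sigma)

def expected_improvement(mu, sigma, f_best):
    """Closed form of E[max(0, Y - f_best)] for Y ~ N(mu, sigma^2)."""
    z = (mu - f_best) / sigma
    return (mu - f_best) * norm_cdf(z) + sigma * norm_pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    """Optimistic estimate mu + kappa * sigma."""
    return mu + kappa * sigma

# Two hypothetical candidates scored against a best observation of 1.0.
f_best = 1.0
confident = {"mu": 1.05, "sigma": 0.02}  # slightly better, well known
uncertain = {"mu": 0.90, "sigma": 0.50}  # worse on average, poorly known

for name, c in (("confident", confident), ("uncertain", uncertain)):
    print(name,
          round(probability_of_improvement(c["mu"], c["sigma"], f_best), 3),
          round(expected_improvement(c["mu"], c["sigma"], f_best), 3),
          round(upper_confidence_bound(c["mu"], c["sigma"]), 3))
```

In this toy comparison PI favors the confident candidate while EI and UCB favor the uncertain one, which is consistent with the exploration bias column of the table.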

Target-oriented strategies like t-EI are particularly valuable in materials design, where the goal is often to achieve a specific property value (e.g., a transition temperature of 440°C for a shape memory alloy) rather than finding a global maximum or minimum [20].

Applications in Materials Experimentation

BO has demonstrated significant success in accelerating materials research by efficiently guiding high-throughput experimental cycles. The table below summarizes key applications and outcomes documented in recent literature.

Table 2: Documented Applications of Bayesian Optimization in Materials Science

| Material Class | Optimization Target | Key Outcome | Citation |

|---|---|---|---|

| Shape Memory Alloy (Ti-Ni-Cu-Hf-Zr) | Phase Transformation Temperature (Target: 440°C) | Discovered ( \text{Ti}_{0.20}\text{Ni}_{0.36}\text{Cu}_{0.12}\text{Hf}_{0.24}\text{Zr}_{0.08} ) with a difference of only 2.66°C from target in 3 iterations. | [20] |

| Hydrogen Evolution Reaction (HER) Catalyst | Hydrogen Adsorption Free Energy (Target: 0 eV) | Efficient search for optimal catalyst materials in a 2D layered ( \text{MA}_2\text{Z}_4 ) database. | [20] |

| General Materials Discovery | Property (e.g., band gap) matching a predefined value. | Target-oriented BO (t-EGO) showed superior performance, requiring 1-2 times fewer iterations than standard methods. | [20] |

Experimental Protocols for Materials Research

This section provides detailed, actionable protocols for implementing BO in a materials experimentation workflow.

Protocol 1: Standard Bayesian Optimization for Property Maximization

Objective: To maximize a target material property (e.g., hardness, catalytic activity) with a minimal number of synthesis and characterization cycles.

Materials and Reagents:

- Precursor Materials: As required by the material system (e.g., metal salts, ligands).

- Synthesis Equipment: Depending on the method (e.g., ball mill, furnace, autoclave).

- Characterization Tools: Relevant for the target property (e.g., nanoindenter for hardness, electrochemical station for activity).

- Computational Resources: Computer with Python and BO libraries (e.g., BoTorch, Scikit-Optimize).

Procedure:

- Define the Design Space: Identify the compositional or processing parameters to be optimized (e.g., elemental ratios, annealing temperature). Normalize each parameter to the range [0, 1].

- Initialize with Space-Filling Design: Conduct between 2 × D and 5 × D initial experiments (where D is the number of parameters) using a space-filling design like Latin Hypercube Sampling (LHS) or Sobol sequences to build an initial dataset [22].

- Commence the BO Loop: a. Model Training: Fit a Gaussian Process surrogate model to the current dataset of parameters and corresponding property values. b. Candidate Selection: Maximize an acquisition function (e.g., Expected Improvement) to propose the next experimental condition. c. Experiment Execution: Synthesize and characterize the material at the proposed condition. d. Data Augmentation: Add the new parameter-property pair to the dataset.

- Iterate: Repeat step 3 until a stopping criterion is met (e.g., a performance threshold is achieved, a maximum number of iterations is reached, or the improvement between cycles becomes negligible).

- Validation: Synthesize and test the final recommended optimal material configuration to confirm performance.
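The initialization step of this protocol can be sketched with a small Latin Hypercube sampler. This is a standard-library illustration only (SciPy's `scipy.stats.qmc` module offers production implementations); the two parameters, their bounds, and the choice of 3 × D points are assumptions made for the example.

```python
import random

def latin_hypercube(n_samples, n_dims, seed=0):
    """Return n_samples points in [0, 1)^n_dims, one per stratum in each dimension."""
    rng = random.Random(seed)
    columns = []
    for _ in range(n_dims):
        # One random point inside each of n_samples equal-width strata,
        # then shuffled so the dimensions are paired at random.
        strata = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(strata)
        columns.append(strata)
    return [tuple(col[i] for col in columns) for i in range(n_samples)]

def denormalize(point, bounds):
    """Map a point from the unit cube back to physical parameter ranges."""
    return tuple(lo + u * (hi - lo) for u, (lo, hi) in zip(point, bounds))

# Hypothetical design space: dopant fraction and annealing temperature (°C).
bounds = [(0.0, 0.5), (400.0, 900.0)]
n_dims = len(bounds)

design = latin_hypercube(n_samples=3 * n_dims, n_dims=n_dims)  # 3 x D points
experiments = [denormalize(p, bounds) for p in design]

for exp in experiments:
    print(tuple(round(v, 3) for v in exp))
```

Working in the normalized unit cube and converting back only when an experiment is issued keeps the surrogate model's inputs on a common scale, as the first step of the procedure recommends.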

Protocol 2: Target-Oriented Optimization for Specific Properties

Objective: To discover a material with a property as close as possible to a specific target value (e.g., a band gap of 1.5 eV for photovoltaics, a transformation temperature of 37°C for biomedical implants).

Procedure:

- Define Target and Design Space: Specify the target property value ( t ) and the relevant material parameter space.

- Initialization: Perform a small set of initial experiments using LHS.

- Target-Oriented BO Loop: a. Model Training: Fit a GP model using the raw property values ( y ), not the absolute distance from the target [20]. b. Candidate Selection: Maximize the target-oriented Expected Improvement (t-EI) acquisition function [20]: ( \text{t-EI}(\mathbf{x}) = \mathbb{E}[\max(0, \lvert y_{t.min} - t \rvert - \lvert Y - t \rvert)] ) where ( Y ) is the random variable of the GP prediction at ( \mathbf{x} ), and ( y_{t.min} ) is the current best (closest) observation. c. Experiment and Update: Conduct the experiment and update the dataset as in Protocol 1.

- Iterate until the property value is sufficiently close to the target or resources are exhausted.
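The t-EI expectation can be approximated by sampling from the GP prediction at a candidate point. The sketch below uses plain Monte Carlo rather than any closed form from [20]; the target of 440 echoes the shape memory alloy example, while the candidate means, standard deviations, and the current closest observation are invented for illustration.

```python
import random

def target_ei_mc(mu, sigma, target, y_closest, n_draws=20000, seed=0):
    """Monte Carlo estimate of E[max(0, |y_closest - t| - |Y - t|)], Y ~ N(mu, sigma^2)."""
    rng = random.Random(seed)
    best_gap = abs(y_closest - target)  # distance of the closest observation so far
    total = 0.0
    for _ in range(n_draws):
        y = rng.gauss(mu, sigma)        # one draw of the GP prediction
        total += max(0.0, best_gap - abs(y - target))
    return total / n_draws

target = 440.0     # desired property value
y_closest = 455.0  # hypothetical closest observation so far

near = target_ei_mc(mu=442.0, sigma=5.0, target=target, y_closest=y_closest)
far = target_ei_mc(mu=480.0, sigma=5.0, target=target, y_closest=y_closest)
print(round(near, 2), round(far, 4))
```

A candidate predicted close to the target scores high, and one predicted far away scores near zero even if its raw property value is larger, which is the behavior that distinguishes t-EI from standard EI.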

Human-in-the-Loop and Preferential Bayesian Optimization

Many material design choices involve subjective human judgment, such as the visual quality of a coating or the tactile feel of a polymer. Preferential Bayesian Optimization (PBO) is designed for such scenarios. It operates on pairwise comparisons ("Is sample A better than sample B?") rather than quantitative measurements [24]. A specialized variant, Constrained PBO (CPBO), further incorporates inequality constraints (e.g., "maximize subjective appeal while ensuring hardness > X GPa") [24]. The workflow for such interactive systems is shown below.

The Scientist's Toolkit: Research Reagent Solutions

This table details essential computational "reagents" and their functions for setting up a Bayesian Optimization campaign in materials research.

Table 3: Essential Tools and Libraries for Implementing Bayesian Optimization

| Tool/Library | Language | Primary Function | Application Note |

|---|---|---|---|

| BoTorch | Python | A flexible library for Bayesian Optimization research and deployment, built on PyTorch. | Supports state-of-the-art acquisition functions, including Monte Carlo variants, and is ideal for high-dimensional problems [23] [25]. |

| Scikit-Optimize | Python | A simpler, easy-to-use library for sequential model-based optimization. | Excellent for getting started with standard BO, providing implementations of EI, GP, and space-filling sampling [22]. |

| GPyTorch | Python | A Gaussian Process library built on PyTorch for flexible and scalable GP modeling. | Often used in conjunction with BoTorch to define custom surrogate models when default GPs are insufficient [23]. |

| Sobol Sequence | N/A | A quasi-random number sequence for generating space-filling initial designs. | Superior to random sampling for covering the design space evenly; available in SciPy (scipy.stats.qmc.Sobol) [22]. |

Bayesian Optimization provides a rigorous and effective theoretical framework for navigating the critical trade-off between exploration and exploitation in experimental research. Its adaptability—from maximizing properties and hitting specific targets to incorporating human expertise—makes it an indispensable component of the modern materials scientist's toolkit. By leveraging the protocols, tools, and strategies outlined in this document, researchers can significantly accelerate the design and discovery of novel materials and drugs, dramatically reducing the time and cost associated with traditional empirical methods.

The Synergy Between Active Learning and the Materials Genome Initiative for Accelerated Discovery

The discovery and deployment of advanced materials are fundamental to technological progress across sectors from healthcare to energy. Traditional materials research, often reliant on trial-and-error, is increasingly challenged by the vastness of the possible design space and the high cost of experiments and computations. Two transformative paradigms—the Materials Genome Initiative (MGI) and Active Learning (AL)—have emerged to address this challenge. The MGI is a multi-agency initiative aiming to discover, manufacture, and deploy advanced materials twice as fast and at a fraction of the cost compared to traditional methods [26]. Active learning, a subfield of machine learning, accelerates this discovery by intelligently guiding experiments and computations, prioritizing the most informative data points to be acquired next [2] [27]. This article details the powerful synergy between these two fields, providing application notes and experimental protocols for researchers seeking to implement these strategies for efficient materials experimentation.

Quantitative Evidence of Efficacy

The integration of Active Learning within the MGI framework has demonstrated significant quantitative improvements in the efficiency of materials discovery across diverse applications. The following table summarizes key performance metrics from recent studies.

Table 1: Quantitative Performance of Active Learning in Materials Discovery Applications

| Application Domain | AL Strategy | Key Performance Metric | Result | Citation |

|---|---|---|---|---|

| General Materials Discovery | LLM-based AL | Data reduction to reach top candidates | >70% reduction | [28] |

| Functionalized Nanoporous Materials | Density-Aware Greedy Sampling (DAGS) | Model accuracy vs. state-of-the-art AL | Consistent outperformance | [6] |

| Small-Sample Regression (AutoML) | Uncertainty-driven (LCMD, Tree-based-R) | Early-stage model accuracy | Clear outperformance vs. baseline | [3] |

| Autonomous Laboratory Synthesis | AL-guided workflows | Novel inorganic compounds synthesized | 41 compounds in 17 days | [27] [3] |

| Alloy Design | Uncertainty-driven AL | Reduction in experimental campaigns | >60% reduction | [3] |

| Ternary Phase-Diagram Regression | AL-guided sampling | Data required for state-of-the-art accuracy | ~30% of typical data | [3] |

Table 2: Benchmarking of Active Learning Strategies within an AutoML Framework. Data sourced from a comprehensive benchmark study on small-sample regression in materials science [3].

| AL Strategy Type | Example Strategies | Performance in Data-Scarce Phase | Performance as Data Grows |

|---|---|---|---|

| Uncertainty-Driven | LCMD, Tree-based-R | Clearly outperforms random sampling baseline | Converges with other methods |

| Diversity-Hybrid | RD-GS | Clearly outperforms random sampling baseline | Converges with other methods |

| Geometry-Only | GSx, EGAL | Outperformed by uncertainty and hybrid methods | Converges with other methods |

Detailed Experimental Protocols

Implementing a closed-loop active learning system is central to accelerating discovery. The following protocols provide a roadmap for setting up both computational and experimental AL workflows.

Protocol 1: Computational Active Learning for Property Prediction

This protocol is designed for using AL to efficiently build a surrogate machine learning model for predicting material properties, minimizing the number of expensive ab initio calculations required.

- Objective: To train an accurate data-driven model for a target material property (e.g., band gap, yield strength, gas uptake) with a minimal number of data points from a large candidate pool.

- Materials and Software:

- Candidate Pool (U): A large set of unlabeled candidate materials (e.g., compositions, crystal structures). Features must be precomputed.

- Initial Labeled Set (L): A small, randomly selected subset of the candidate pool, with target properties calculated via DFT or other methods.

- Surrogate Model: Gaussian Process Regression (GPR), Bayesian Neural Network (BNN), or an AutoML framework.

- Utility Function: A function to evaluate the "informativeness" of unlabeled samples (e.g., Expected Improvement, predictive uncertainty).

- Procedure:

- Initialization: Begin with a small labeled dataset (L = \{(x_i, y_i)\}_{i=1}^{l}) and a large unlabeled pool (U = \{x_i\}_{i=l+1}^{n}) [3].

- Model Training: Train the surrogate model on the current labeled set (L).

- Candidate Selection:

- Use the trained model to predict the property and its associated uncertainty for all candidates in (U).

- Apply the chosen utility function to rank all candidates in (U) by their potential informativeness.

- Select the top candidate (x^*) that maximizes the utility function.

- Labeling: Obtain the true property value (y^*) for the selected candidate (x^*) through a high-fidelity calculation (e.g., DFT).

- Data Augmentation: Update the datasets: (L = L \cup \{(x^*, y^*)\}), (U = U \setminus \{x^*\}).

- Iteration: Repeat steps 2-5 until a stopping criterion is met (e.g., performance plateaus, budget exhausted).

- Troubleshooting:

- Cold-Start Problem: If initial performance is poor, consider a small initial random batch instead of a single point.

- Model Drift: When using AutoML, the surrogate model may change between iterations. Ensure the utility function is adaptable or use model-agnostic strategies [3].
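The bookkeeping of this pool-based loop can be sketched in a few lines. Everything model-specific here is a stand-in chosen to keep the example self-contained: the surrogate is a bootstrap committee of 1-nearest-neighbour regressors, the utility is the committee's predictive variance, and `oracle` is a cheap analytic function in place of a DFT calculation.

```python
import random
from statistics import pvariance

def fit_committee(labeled, n_members=10, seed=0):
    """Bootstrap committee of 1-nearest-neighbour regressors (toy surrogate)."""
    rng = random.Random(seed)
    return [[rng.choice(labeled) for _ in labeled] for _ in range(n_members)]

def predict(member, x):
    """Return the label of the member's training point closest to x."""
    return min(member, key=lambda pair: abs(pair[0] - x))[1]

def utility(committee, x):
    """Predictive variance across committee members (uncertainty sampling)."""
    return pvariance([predict(m, x) for m in committee])

def active_learning(labeled, pool, oracle, budget):
    """Run `budget` query iterations and return the updated (L, U)."""
    labeled, pool = list(labeled), list(pool)
    for step in range(budget):
        if not pool:
            break
        committee = fit_committee(labeled, seed=step)            # model training
        x_star = max(pool, key=lambda x: utility(committee, x))  # candidate selection
        y_star = oracle(x_star)                                  # labeling
        labeled.append((x_star, y_star))                         # L = L ∪ {(x*, y*)}
        pool.remove(x_star)                                      # U = U \ {x*}
    return labeled, pool

def oracle(x):
    """Stand-in for an expensive high-fidelity calculation."""
    return (x - 0.3) ** 2

grid = [i / 20 for i in range(21)]
initial_x = [0.0, 0.5, 1.0]
labeled = [(x, oracle(x)) for x in initial_x]
pool = [x for x in grid if x not in initial_x]

labeled, pool = active_learning(labeled, pool, oracle, budget=5)
print(len(labeled), len(pool))  # 8 13
```

Because the loop only calls `fit_committee` and `utility`, either can be swapped for a different surrogate or utility function without touching the data-handling logic, which is one way to keep the strategy model-agnostic when the underlying model changes between iterations.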

Protocol 2: Experimental Active Learning for Formulation Optimization

This protocol guides the use of AL in an experimental setting, such as a self-driving laboratory, to optimize synthesis conditions or material compositions.

- Objective: To discover a material formulation or processing condition that optimizes a target property (e.g., catalytic activity, tensile strength) with minimal experimental trials.

- Materials and Equipment:

- Automated Synthesis Platform: Robotic systems for precise dispensing and reaction control.

- High-Throughput Characterization: Automated equipment for rapid property measurement (e.g., spectrophotometer, mechanical tester).

- Oracle: The automated experimental system that provides the ground-truth measurement for a proposed experiment [6].

- Procedure:

- Define Search Space: Establish the bounds of the experimental variables (e.g., composition ratios, temperature, time).

- Initial Design: Perform a small set of initial experiments (e.g., via random sampling or space-filling design) to seed the model.

- Model Training: Train a surrogate model (commonly GPR for its native uncertainty estimation) on all data collected so far.

- Propose Next Experiment:

- The model proposes one or several candidate experiments from the search space that are predicted to maximize the target property or improve the model (e.g., via Expected Improvement) [2].

- In some cases, a human expert may approve or slightly modify the proposal based on domain knowledge.

- Execute and Characterize: The automated platform executes the proposed experiment(s) and characterizes the resulting property.

- Close the Loop: The new data is automatically added to the training set. The process loops back to Step 3 until a performance target is met or the budget is depleted.

- Safety and Compliance:

- Human-in-the-Loop: For safety-critical experiments, implement a mandatory review step before execution.

- Electronic Lab Notebooks: Use ELNs to automatically record all experimental parameters and outcomes, ensuring data is structured for machine readability and future reuse [29].

Workflow Visualization

The following diagram illustrates the core iterative feedback loop that underpins the synergy between Active Learning and the MGI.

Diagram 1: The AL-MGI closed-loop cycle for accelerated discovery.

The Scientist's Toolkit: Essential Research Reagents & Infrastructure

Successful implementation of the AL-MGI paradigm requires a foundation of specific tools and infrastructure. The following table catalogs key components.

Table 3: Essential Resources for Implementing AL within the MGI Framework

| Category | Item | Function & Importance | Examples / Notes |

|---|---|---|---|

| Data Infrastructure | FAIR Data Repositories | Hosts findable, accessible, interoperable, and reusable materials data; enables model training and validation. | Materials Project [29], AFLOW [29], OpenKIM [29], Materials Data Facility (MDF) [29]. |

| Data Infrastructure | Electronic Lab Notebooks (ELNs) | Captures experimental data and metadata in structured, machine-readable format at the source. | Critical for automating data submission to repositories [29]. |

| Surrogate Models | Gaussian Process Regression (GPR) | Provides property predictions with native uncertainty estimates, crucial for many utility functions. | Ideal for continuous, low-to-medium dimensional parameter spaces [2] [28]. |

| Surrogate Models | Tree-Based Ensembles (XGBoost, RFR) | Powerful for tabular data; often requires ensemble methods (e.g., Query-by-Committee) for uncertainty estimation. | Commonly used in materials informatics [3] [28]. |

| Surrogate Models | Automated Machine Learning (AutoML) | Automates model and hyperparameter selection, reducing expert tuning time and maintaining robust performance. | Integrates with AL for a fully automated modeling pipeline [3]. |

| Surrogate Models | Large Language Models (LLMs) | Acts as a generalizable, tuning-free surrogate model using textual prompts; mitigates cold-start problems. | Emerging tool for AL; uses in-context learning [28]. |

| Utility Functions | Expected Improvement (EI) | Balances exploration (high uncertainty) and exploitation (high predicted value). | Common choice for global optimization [2] [27]. |

| Utility Functions | Uncertainty Sampling | Selects points where the model is most uncertain, improving global model accuracy. | e.g., Predictive Variance, Monte Carlo Dropout [2] [3]. |

| Utility Functions | Diversity-Based Methods | Ensures selected samples are representative of the overall data distribution. | Can be hybridized with uncertainty methods [6] [3]. |

| Experimental Infrastructure | Self-Driving Laboratories (SDLs) | Automated platforms that physically execute the experiments proposed by the AL algorithm. | Core for closing the experimental loop [27] [28]. |

| Experimental Infrastructure | High-Throughput Synthesis | Rapidly produces many candidate material samples in parallel. | Enables rapid data generation for the AL cycle. |

| Experimental Infrastructure | Autonomous Characterization | Automated measurement of material properties from synthesized samples. | Provides the "oracle" function for experimental AL [6]. |

The structural integration of Active Learning within the Materials Genome Initiative creates a powerful, synergistic framework for accelerating materials discovery. As evidenced by the quantitative benchmarks above, this approach has reduced the number of required experiments and computations by more than 60% in alloy design and by more than 70% in LLM-guided discovery, directly supporting the MGI's core mission [3] [28]. The provided protocols and toolkit offer researchers a concrete path to implement these strategies, enabling a shift from traditional, linear research to an agile, data-driven, and iterative paradigm. By embracing this integrated approach, the materials science community can significantly shorten the development timeline for advanced materials needed to address critical global challenges.

Implementing Active Learning: Key Strategies and Real-World Success Stories

In materials science, where a single data point can require expensive synthesis and characterization, the efficient use of data is paramount. Uncertainty sampling, a core technique in active learning (AL), directly addresses this challenge by strategically selecting the most informative data points for a model to learn from, thereby accelerating discovery while minimizing experimental costs [30] [27]. This approach is founded on the principle that a machine learning model's own uncertainty is a powerful guide for its improvement. By iteratively querying the labels for data points where the model's prediction is most uncertain, the learning process is focused on the most challenging aspects of the problem, leading to more rapid performance gains compared to learning from random data [30] [31]. This Application Note details the protocols and quantitative benefits of applying uncertainty sampling to efficiently guide materials experimentation.

Core Uncertainty Sampling Strategies

Uncertainty sampling encompasses several specific strategies for quantifying and leveraging model uncertainty. The choice of strategy can depend on the model's output format and the specific goal of the learning task.

Table 1: Key Uncertainty Sampling Strategies

| Strategy Name | Description | Key Metric | Best-Suited For |

|---|---|---|---|

| Least Confidence [30] [31] | Queries the instance for which the model has the lowest confidence in its most likely prediction. | ( 1 - P(\hat{y} \mid \mathbf{x}) ), where ( \hat{y} = \arg \max P(y \mid \mathbf{x}) ) | Quick identification of the most ambiguous individual predictions. |

| Margin Sampling [30] [31] | Queries the instance with the smallest difference between the two highest predicted probabilities. | ( P(y_m \mid \mathbf{x}) - P(y_n \mid \mathbf{x}) ), where ( y_m ) and ( y_n ) are the first and second most probable classes. | Distinguishing between strong candidate classes in multi-class settings. |

| Entropy Sampling [32] [31] | Queries the instance with the highest predictive entropy, indicating overall uncertainty across all classes. | ( - \sum_{y \in \mathcal{Y}} P(y \mid \mathbf{x}) \log P(y \mid \mathbf{x}) ) | Comprehensive uncertainty measurement when the probability distribution is flat. |

These strategies are primarily designed for classification tasks. For regression tasks common in materials property prediction (e.g., predicting melting points or band gaps), estimating uncertainty is more complex. Common approaches include using the variance of predictions from an ensemble of models [3] [32] or techniques like Monte Carlo Dropout, which performs multiple stochastic forward passes to generate a distribution of predictions from a single model [3] [32].
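The three classification metrics in Table 1 can be computed directly from a vector of predicted class probabilities. The sketch below uses made-up probabilities for three unlabeled samples to show that the strategies do not always agree on which sample to query.

```python
import math

def least_confidence(probs):
    """1 - P(most likely class); larger means more uncertain."""
    return 1.0 - max(probs)

def margin(probs):
    """Gap between the two most probable classes; smaller means more uncertain."""
    top_two = sorted(probs, reverse=True)[:2]
    return top_two[0] - top_two[1]

def entropy(probs):
    """Shannon entropy of the predictive distribution; larger means more uncertain."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

# Hypothetical predicted class probabilities for three unlabeled samples.
pool = {
    "sample_1": [0.90, 0.05, 0.05],
    "sample_2": [0.40, 0.35, 0.25],
    "sample_3": [0.50, 0.49, 0.01],
}

query_lc = max(pool, key=lambda s: least_confidence(pool[s]))
query_margin = min(pool, key=lambda s: margin(pool[s]))
query_entropy = max(pool, key=lambda s: entropy(pool[s]))

print(query_lc, query_margin, query_entropy)
```

Here least confidence and entropy both select `sample_2`, whose probability mass is spread over all classes, while margin sampling selects `sample_3`, where the top two classes are nearly tied.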

Advanced Frameworks and Hybrid Methods

Pure uncertainty sampling can sometimes lead to the selection of outliers or noisy data points. To enhance robustness, advanced frameworks and hybrid methods that combine uncertainty with other data characteristics have been developed.

- Evidential Uncertainty Sampling: This framework moves beyond standard probabilities to distinguish between epistemic uncertainty (reducible uncertainty due to a lack of knowledge) and aleatoric uncertainty (irreducible uncertainty due to data noise) [33] [31]. By specifically targeting points with high epistemic uncertainty, the model focuses on areas where new data can most effectively improve its knowledge [31].

- Density-Aware and Hybrid Methods: These methods mitigate the outlier risk by weighting a point's uncertainty with its representativeness. The Density-Aware Greedy Sampling (DAGS) method, for instance, integrates uncertainty estimation with data density and has been shown to consistently outperform random sampling and other AL techniques in training regression models for nanoporous materials [6]. Similarly, other hybrid strategies combine uncertainty with diversity metrics to ensure the selected data points are both challenging and cover a broad region of the design space [3] [32].
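The general idea of weighting uncertainty by representativeness can be illustrated with a simple information-density score. This sketch is not the DAGS algorithm from [6]; the Gaussian similarity, the `bandwidth` and `beta` parameters, and the one-dimensional toy pool are all assumptions made for illustration.

```python
import math

def density(x, pool, bandwidth=0.1):
    """Average Gaussian similarity between x and all pool points (including itself)."""
    return sum(math.exp(-0.5 * ((x - p) / bandwidth) ** 2) for p in pool) / len(pool)

def density_weighted_scores(pool, uncertainty, beta=1.0):
    """Score = uncertainty * density^beta; beta controls how strongly density counts."""
    return {x: uncertainty[x] * density(x, pool) ** beta for x in pool}

# Toy 1D feature values: a cluster of four candidates and one isolated outlier.
pool = [0.10, 0.12, 0.14, 0.16, 0.90]
# Hypothetical model uncertainties; the outlier has the largest one.
uncertainty = {0.10: 0.5, 0.12: 0.6, 0.14: 0.7, 0.16: 0.5, 0.90: 0.9}

best_pure = max(pool, key=lambda x: uncertainty[x])
scores = density_weighted_scores(pool, uncertainty)
best_hybrid = max(pool, key=lambda x: scores[x])

print(best_pure, best_hybrid)
```

Pure uncertainty sampling picks the isolated point at 0.90, whereas the density-weighted score prefers a point inside the cluster, where a new label informs predictions for several neighbors at once.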

Application Notes & Case Studies in Materials Science

The following case studies demonstrate the practical efficacy and quantitative benefits of uncertainty sampling in real-world materials research.

Table 2: Quantitative Performance of Active Learning in Materials Discovery

| Application Domain | Baseline Method | AL Strategy | Performance Improvement | Reference |

|---|---|---|---|---|

| Alloy Melting Temperature Optimization | Standard workflow (15 compositions tested) | AL with FAIR data & workflows | 10x speedup; optimal alloy found with only ~3 compositions tested [11] | [11] |

| General Materials Science Regression | Random Sampling | Uncertainty-driven (LCMD, Tree-based-R) & Diversity-hybrid (RD-GS) | Clear outperformance in early acquisition stages; all methods converge as data grows [3] | [3] |

| Functionalized Nanoporous Materials | Random Sampling & State-of-the-Art AL | Density-Aware Greedy Sampling (DAGS) | Consistently superior performance in training regression models with limited data [6] | [6] |

| LLM-guided Materials Discovery | Traditional ML models (RFR, XGBoost, GPR) | LLM-based Active Learning (LLM-AL) | >70% reduction in experiments needed to find top candidates [28] | [28] |

Case Study: Accelerating Alloy Discovery with FAIR Data and Active Learning

Objective: To identify multi-principal component alloys with the highest melting temperature using molecular dynamics (MD) simulations, while minimizing the number of computationally expensive simulations required [11].

Experimental Protocol:

- Initialization & FAIR Data: Begin with an existing repository of FAIR (Findable, Accessible, Interoperable, and Reusable) data from previous MD simulation workflows. This data is used to train an initial machine learning model (e.g., Random Forest) to predict melting temperatures [11].

- Uncertainty Estimation: For all unexplored alloy compositions in the design space, use the trained model to predict the melting temperature. Calculate the prediction uncertainty (e.g., using the variance of predictions from an ensemble or the model's internal uncertainty estimate) [11].

- Query Function: Employ an acquisition function (e.g., upper confidence bound) that balances the predicted melting temperature (exploitation) and the associated uncertainty (exploration). Select the alloy composition that maximizes this function [11].

- Simulation & Labeling: Perform a molecular dynamics simulation to determine the true melting temperature of the selected alloy composition [11].

- Model Update: Add the new (composition, melting temperature) data point to the training set and retrain the machine learning model [11].

- Iteration: Repeat the four preceding steps (uncertainty estimation, query, simulation and labeling, model update) until a convergence criterion is met (e.g., the model identifies a candidate with a melting temperature above a desired threshold and with low uncertainty) [11].

Diagram 1: This workflow illustrates the iterative cycle of an uncertainty sampling-driven active learning process for materials discovery.
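The loop described in the protocol above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the workflow of [11]: `toy_simulation` is a hypothetical stand-in for the MD melting-temperature calculation, a bootstrap ensemble of polynomial fits replaces the Random Forest, the initial set is drawn at random rather than from a FAIR repository, and the loop stops after a fixed budget instead of a convergence test.

```python
import numpy as np

def toy_simulation(x):
    """Hypothetical stand-in for an expensive MD melting-temperature run (K)."""
    return 1500.0 + 400.0 * np.sin(3.0 * x) + 200.0 * x

def ensemble_predict(x_train, y_train, x_query, rng, n_models=30, degree=2):
    """Bootstrap ensemble of polynomial fits: mean and spread per query point."""
    preds = []
    for _ in range(n_models):
        idx = rng.integers(0, len(x_train), size=len(x_train))  # resample with replacement
        deg = min(degree, len(np.unique(x_train[idx])) - 1)      # keep the fit well-posed
        coeffs = np.polyfit(x_train[idx], y_train[idx], deg)
        preds.append(np.polyval(coeffs, x_query))
    preds = np.asarray(preds)
    return preds.mean(axis=0), preds.std(axis=0)

def active_learning_loop(candidates, n_init=4, n_iter=8, beta=2.0, seed=0):
    rng = np.random.default_rng(seed)
    # Initialization: a small labeled set (random here; the protocol uses FAIR data).
    labeled = [int(i) for i in rng.choice(len(candidates), size=n_init, replace=False)]
    results = {i: float(toy_simulation(candidates[i])) for i in labeled}
    for _ in range(n_iter):
        unlabeled = [i for i in range(len(candidates)) if i not in results]
        x_train = candidates[labeled]
        y_train = np.array([results[i] for i in labeled])
        # Uncertainty estimation: ensemble mean and standard deviation.
        mean, std = ensemble_predict(x_train, y_train, candidates[unlabeled], rng)
        # Query function: upper confidence bound, mean + beta * std.
        pick = unlabeled[int(np.argmax(mean + beta * std))]
        # Simulation and model update: label the pick and grow the training set.
        results[pick] = float(toy_simulation(candidates[pick]))
        labeled.append(pick)
    best = max(results, key=results.get)
    return best, results

compositions = np.linspace(0.0, 1.0, 50)  # hypothetical 1-D composition axis
best_index, evaluated = active_learning_loop(compositions)
print(compositions[best_index], evaluated[best_index])
```

The weight `beta` plays the role of the exploration parameter in the acquisition function; larger values send more of the simulation budget to compositions where the ensemble members disagree.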

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Uncertainty Sampling in Materials Science

| Tool / Resource | Type | Function in Uncertainty Sampling |

|---|---|---|

| FAIR Data Repositories [11] | Data | Provides findable, accessible, interoperable, and reusable initial data to pre-train models and mitigate the "cold start" problem. |

| Automated Machine Learning (AutoML) [3] | Software | Automates model and hyperparameter selection, creating a robust and dynamic surrogate model for uncertainty estimation within the AL loop. |

| Gaussian Process Regression (GPR) [28] | Model | A probabilistic model that natively provides uncertainty estimates (variance) alongside predictions, making it a natural choice for AL. |

| Ensemble Methods (e.g., Random Forest) [28] [11] | Model | The variance in predictions across a committee of models serves as a reliable estimate of predictive uncertainty. |

| Large Language Models (LLMs) [28] | Model | Acts as a generalizable, tuning-free surrogate model using in-context learning to propose experiments, reducing dependency on feature engineering. |

Protocol: Implementing a Density-Aware Uncertainty Sampling Strategy

This protocol outlines the steps for implementing a robust, density-aware uncertainty sampling strategy for a regression task, such as predicting the adsorption capacity of metal-organic frameworks (MOFs).

Objective: To efficiently train a high-performance regression model with a minimal number of experimentally measured data points by selecting the most informative and representative samples.

Step-by-Step Methodology:

1. Problem Formulation & Data Pool Preparation:

- Define the design space (e.g., the set of candidate MOF structures and their feature representations).

- Assemble the pool of unlabeled data \( U \), which contains the feature vectors for all candidate materials.

- Prepare a small, initially labeled set \( L \) via random sampling from \( U \), or leverage an existing FAIR dataset [11].

2. Model Selection and Uncertainty Quantification:

- Select a model capable of uncertainty estimation for regression. Recommended models include Gaussian Process Regression and ensemble methods such as Random Forest (see Table 3).

- Train the chosen model on the current labeled set \( L \).

3. Density-Aware Query Strategy:

- For each candidate material \( \mathbf{x}_i \) in the unlabeled pool \( U \), calculate two scores: an uncertainty score \( s_u(\mathbf{x}_i) \), taken from the model's predictive uncertainty, and a density score \( s_d(\mathbf{x}_i) \), which measures how representative \( \mathbf{x}_i \) is of the pool.

- Combine these scores into a final utility score. A common approach is a weighted sum:

\( \text{Utility}(\mathbf{x}_i) = \alpha \cdot s_u(\mathbf{x}_i) + (1-\alpha) \cdot s_d(\mathbf{x}_i) \)

where \( \alpha \in [0, 1] \) is a parameter that balances exploration (high uncertainty) against representativeness (high density).

4. Query and Annotation:

- Select the material \( \mathbf{x}^* \) with the highest utility score.

- Query the oracle (e.g., perform a laboratory experiment or high-fidelity simulation) to obtain the true target value \( y^* \) (e.g., adsorption capacity) [6].

5. Iterative Learning Loop:

- Augment the labeled set: \( L = L \cup \{ (\mathbf{x}^*, y^*) \} \).

- Remove \( \mathbf{x}^* \) from the unlabeled pool: \( U = U \setminus \{ \mathbf{x}^* \} \).

- Retrain the model on the updated labeled set \( L \).

- Repeat steps 3-5 until the experimental budget is exhausted or model performance plateaus.
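The weighted-sum utility in the query step can be illustrated with a small sketch. It is not the DAGS implementation of [6]: the Gaussian-kernel density estimate, the min-max normalization, and the function names (`density_scores`, `min_max`, `utility`) are assumptions chosen for illustration, and the uncertainty values are made up rather than produced by a trained model.

```python
import numpy as np

def density_scores(X, bandwidth=1.0):
    """Mean Gaussian-kernel similarity of each point to every point in the pool."""
    sq_dists = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dists / (2.0 * bandwidth ** 2)).mean(axis=1)

def min_max(v):
    """Rescale scores to [0, 1] so the two terms are comparable."""
    span = v.max() - v.min()
    return np.zeros_like(v) if span == 0 else (v - v.min()) / span

def utility(uncertainty, density, alpha=0.5):
    """Weighted sum: alpha * s_u + (1 - alpha) * s_d on normalized scores."""
    return alpha * min_max(uncertainty) + (1.0 - alpha) * min_max(density)

# Four clustered candidates and one isolated outlier (hypothetical features).
pool = np.array([[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [0.1, 0.1], [5.0, 5.0]])
s_u = np.array([0.5, 0.6, 0.55, 0.9, 1.0])  # made-up predictive uncertainties
s_d = density_scores(pool)

pure_uncertainty_pick = int(np.argmax(utility(s_u, s_d, alpha=1.0)))
density_aware_pick = int(np.argmax(utility(s_u, s_d, alpha=0.5)))
print(pure_uncertainty_pick, density_aware_pick)
```

With `alpha=1.0` the isolated outlier is selected because it has the largest uncertainty; with `alpha=0.5` the selection moves to the most uncertain point inside the cluster, which is the behavior the density term is meant to produce.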

Uncertainty sampling has proven to be a transformative strategy for accelerating materials discovery. By enabling models to identify and query the most informative data points, it drastically reduces the number of costly and time-consuming experiments required, achieving up to a 10-fold speedup in optimization tasks as demonstrated in alloy design [11]. The integration of advanced techniques—such as distinguishing between epistemic and aleatoric uncertainty, combining uncertainty with density metrics, and leveraging the power of FAIR data and LLMs—further enhances the robustness and generalizability of these approaches. For researchers in materials science and drug development, adopting the structured protocols and strategies outlined in this note provides a clear pathway to maximizing research efficiency and achieving breakthroughs with constrained resources.

Expected Improvement and Other Acquisition Functions for Targeted Property Optimization

The discovery and development of new materials and molecular compounds are fundamental to progress in fields ranging from renewable energy to pharmaceuticals. However, this process often involves navigating vast, complex design spaces using experiments that are costly, time-consuming, and resource-intensive. Within this challenging context, active learning strategies have emerged as a powerful paradigm for accelerating research by intelligently and iteratively guiding the selection of experiments [2].

A cornerstone of many active learning frameworks for optimization is Bayesian Optimization (BO), a suite of techniques designed to find the global optimum of "black-box" functions that are expensive to evaluate [34]. BO is particularly effective because it strategically balances exploration (probing regions of high uncertainty) and exploitation (concentrating on areas known to yield high performance) [35]. This balance is critically governed by a component called the acquisition function. This article provides a detailed examination of key acquisition functions—Expected Improvement, Probability of Improvement, and Upper Confidence Bound—and offers structured protocols for their application in targeted property optimization.

Core Principles of Bayesian Optimization

Bayesian Optimization is a sequential design strategy for optimizing black-box functions. It operates through a two-component iterative cycle [34] [35]:

- Surrogate Model: A probabilistic model, typically a Gaussian Process (GP), is used to approximate the unknown objective function. The GP provides a posterior distribution for the function's value at any point in the design space, characterized by a mean, μ(x), which predicts the function's value, and a standard deviation, σ(x), which quantifies the uncertainty in the prediction [35].

- Acquisition Function: This function leverages the surrogate model's predictions to determine the next most promising point to evaluate. By maximizing the acquisition function, the algorithm decides where to sample next, effectively managing the exploration-exploitation trade-off [34] [36].
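As a rough illustration of the two components, the sketch below computes μ(x) and σ(x) from a zero-mean GP with a squared-exponential kernel and then repeatedly evaluates the grid point that maximizes μ(x) + β·σ(x). The kernel, its length scale, the jitter term, the `objective` function, and the 1-D grid are all assumptions made for the example; a production workflow would normally rely on an established GP library and fitted hyperparameters.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=0.2):
    """Squared-exponential kernel with unit prior variance."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)

def gp_posterior(x_train, y_train, x_query, jitter=1e-6):
    """Posterior mean mu(x) and standard deviation sigma(x) of a zero-mean GP."""
    K = rbf_kernel(x_train, x_train) + jitter * np.eye(len(x_train))
    K_s = rbf_kernel(x_train, x_query)
    L = np.linalg.cholesky(K)
    weights = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mu = K_s.T @ weights
    v = np.linalg.solve(L, K_s)
    var = 1.0 - np.sum(v ** 2, axis=0)  # prior variance is 1 for this kernel
    return mu, np.sqrt(np.clip(var, 0.0, None))

def objective(x):
    """Hypothetical black-box property; cheap here only for illustration."""
    return x * np.sin(6.0 * x)

def run_bo(n_iter=5, beta=2.0):
    grid = np.linspace(0.0, 1.0, 101)  # discretized design space
    x_obs = np.array([0.1, 0.5, 0.9])  # initial experiments
    y_obs = objective(x_obs)
    for _ in range(n_iter):
        mu, sigma = gp_posterior(x_obs, y_obs, grid)   # surrogate model
        x_next = grid[np.argmax(mu + beta * sigma)]    # acquisition maximization
        x_obs = np.append(x_obs, x_next)
        y_obs = np.append(y_obs, objective(x_next))    # run the "experiment"
    return x_obs, y_obs

x_obs, y_obs = run_bo()
print(x_obs[np.argmax(y_obs)], y_obs.max())
```

At points that have already been measured, σ(x) collapses to nearly zero and μ(x) reproduces the observation, so the acquisition value there is driven by the mean alone; far from any data the posterior reverts to the prior (μ = 0, σ = 1).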

The following diagram illustrates the logical workflow of the Bayesian Optimization cycle.

A Comparative Analysis of Key Acquisition Functions

The choice of acquisition function is pivotal, as it directly influences the efficiency and outcome of the optimization process. The table below summarizes the key characteristics of three widely used acquisition functions.

Table 1: Comparison of Common Acquisition Functions

| Acquisition Function | Mathematical Formulation | Key Mechanism | Exploration vs. Exploitation Balance | Primary Use Case |

|---|---|---|---|---|

| Probability of Improvement (PI) [34] [37] | PI(x) = Φ( (μ(x) - f(x⁺) - ξ) / σ(x) ) | Probability that a point x will outperform the current best f(x⁺). | Controlled by ξ parameter. Low ξ favors exploitation. | Simple optimization tasks where the goal is to find a better solution quickly. |

| Expected Improvement (EI) [35] [36] [37] | EI(x) = (μ(x) - f(x⁺) - ξ) Φ(Z) + σ(x) φ(Z), where Z = (μ(x) - f(x⁺) - ξ) / σ(x) | Expected value of improvement over f(x⁺), considering both probability and magnitude. | Naturally balanced; can be tuned with ξ. | General-purpose global optimization; considered a robust default choice. |

| Upper Confidence Bound (UCB) [36] [37] | UCB(x) = μ(x) + β * σ(x) | Optimistic estimate of performance at x. | Explicitly balanced by β parameter. | Problems where a clear preference for exploration or exploitation is known. |

In-Depth Mathematical and Practical Insights

Probability of Improvement (PI) is one of the earliest acquisition functions. It computes the probability that evaluating a candidate point x will yield an improvement over the current best observation, f(x⁺) [36]. The ξ parameter is a small positive tolerance that can be introduced to encourage more exploration; a higher ξ value makes it harder to achieve an improvement, thus pushing the algorithm to explore more uncertain regions [34]. A key limitation of PI is that it only considers the likelihood of improvement, not its potential magnitude. This can lead to a greedy convergence to local optima, as it may favor points with a high probability of only a minuscule improvement [34] [36].

Expected Improvement (EI) was developed to overcome the shortcomings of PI. Instead of just the probability, EI calculates the expected value of the improvement I(x) = max(f(x) - f(x⁺), 0) under the surrogate's posterior [35] [36]. Its analytical form under a Gaussian Process surrogate is EI(x) = (μ(x) - f(x⁺) - ξ) * Φ(Z) + σ(x) * φ(Z), with Z = (μ(x) - f(x⁺) - ξ) / σ(x), where Φ and φ are the cumulative distribution function and the probability density function of the standard normal distribution, respectively [36] [37]. Setting ξ = 0 recovers the exact expectation of I(x). The first term favors points with high predicted values (exploitation), while the second term favors points with high uncertainty (exploration). This intrinsic balance makes EI a highly effective and widely used acquisition function in practice [35].

Upper Confidence Bound (UCB) takes a different approach by forming an optimistic guess of the function's value. The acquisition function is simply UCB(x) = μ(x) + β * σ(x), where β is a hyperparameter that explicitly controls the trade-off [36] [37]. A higher β value weights uncertainty more heavily, leading to more exploratory behavior, while β = 0 reduces the rule to pure exploitation of the predicted mean. Theoretical guarantees, in the form of regret bounds, exist for UCB-type strategies, making the approach popular in both optimal design and multi-armed bandit problems.
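The three formulas can be compared directly with a short, standard-library-only sketch. The function names and the two example candidates are illustrative assumptions; the σ = 0 branches use the limiting values of the formulas rather than anything stated in the cited sources.

```python
import math

def norm_pdf(z):
    """Standard normal probability density function, phi(z)."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def norm_cdf(z):
    """Standard normal cumulative distribution function, Phi(z)."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def probability_of_improvement(mu, sigma, f_best, xi=0.0):
    delta = mu - f_best - xi
    if sigma <= 0.0:  # no uncertainty: improvement is certain or impossible
        return 1.0 if delta > 0.0 else 0.0
    return norm_cdf(delta / sigma)

def expected_improvement(mu, sigma, f_best, xi=0.0):
    delta = mu - f_best - xi
    if sigma <= 0.0:  # limit of the closed form as sigma -> 0
        return max(delta, 0.0)
    z = delta / sigma
    return delta * norm_cdf(z) + sigma * norm_pdf(z)

def upper_confidence_bound(mu, sigma, beta=2.0):
    return mu + beta * sigma

f_best = 1.0
safe = {"mu": 1.05, "sigma": 0.01}   # tiny but almost certain gain
risky = {"mu": 0.90, "sigma": 0.50}  # lower mean, large uncertainty

for name, c in (("safe", safe), ("risky", risky)):
    print(name,
          round(probability_of_improvement(c["mu"], c["sigma"], f_best), 4),
          round(expected_improvement(c["mu"], c["sigma"], f_best), 4),
          round(upper_confidence_bound(c["mu"], c["sigma"]), 4))
```

In this example PI ranks the "safe" candidate first because its improvement is nearly certain, whereas EI and UCB both rank the "risky" candidate first because its possible gain is much larger. This is the greedy tendency of PI described above, shown on concrete numbers.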

Advanced Targeted Discovery with Bayesian Algorithm Execution

While EI, PI, and UCB excel at finding a single global optimum, many scientific goals are more complex. Materials discovery, for instance, often requires finding a specific subset of the design space that meets user-defined criteria, such as all formulations that yield a nanoparticle size within a target range or all processing conditions that result in a material with multiple desired properties [38] [39].

The Bayesian Algorithm Execution (BAX) framework was developed to address these complex goals. In BAX, the user defines their experimental goal not as an optimization objective, but as an algorithm—a simple computer program that would return the target subset if the underlying function were perfectly known [38] [39]. The BAX framework then automatically constructs a tailored data acquisition strategy by simulating the algorithm on posterior samples of the surrogate model. Key strategies derived from this framework include:

- InfoBAX: Selects points that are expected to provide the most information about the algorithm's output (the target subset).

- MeanBAX: An exploration strategy that uses the model posterior, particularly effective with small datasets.

- SwitchBAX: A parameter-free strategy that dynamically switches between InfoBAX and MeanBAX for robust performance across different data regimes [38] [39].

This framework provides a practical and powerful solution for targeting non-trivial experimental goals without requiring the difficult task of designing a custom acquisition function from scratch.
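The central idea, expressing the goal as an algorithm and executing it on posterior samples, can be illustrated with a deliberately simplified sketch. It is not InfoBAX, MeanBAX, or SwitchBAX: the posterior draws are taken from independent Gaussians per candidate (a real GP sample is correlated across the design space), and the final selection rule, picking the candidate whose membership in the target subset is most ambiguous, is a heuristic assumed here for illustration. The names `target_band` and `membership_probability` and all numbers are hypothetical.

```python
import random

def target_band(values, low, high):
    """User-defined 'algorithm': indices whose value lies within [low, high]."""
    return {i for i, v in enumerate(values) if low <= v <= high}

def membership_probability(mu, sigma, low, high, n_samples=2000, seed=0):
    """Fraction of posterior draws in which each candidate lands in the target subset."""
    rng = random.Random(seed)
    counts = [0] * len(mu)
    for _ in range(n_samples):
        # One simplified posterior draw: independent Gaussian per candidate.
        draw = [rng.gauss(m, s) for m, s in zip(mu, sigma)]
        for i in target_band(draw, low, high):
            counts[i] += 1
    return [c / n_samples for c in counts]

# Hypothetical posterior over nanoparticle size (nm) for four formulations.
mu = [10.0, 50.0, 58.0, 90.0]
sigma = [1.0, 1.0, 5.0, 1.0]
probs = membership_probability(mu, sigma, low=40.0, high=60.0)

# Heuristic next experiment: the candidate whose membership is least certain.
next_index = min(range(len(probs)), key=lambda i: abs(probs[i] - 0.5))
print(probs, next_index)
```

Candidates that are clearly inside or outside the target range in every draw carry little information about the subset, so the heuristic directs the next measurement to the formulation near the boundary of the band.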

Experimental Protocol for Implementing Bayesian Optimization

This protocol outlines the steps for using Bayesian Optimization with the Expected Improvement acquisition function to optimize a target property, such as the efficiency of a photovoltaic material or the binding affinity of a drug candidate.

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Function/Description |

|---|---|

| Gaussian Process (GP) Surrogate Model | A probabilistic model that serves as a surrogate for the expensive black-box function, providing predictions and uncertainty estimates across the parameter space [35]. |