Accelerating Discovery: Machine Learning and High-Throughput Strategies for Thermodynamic Stability of New Materials

Abstract

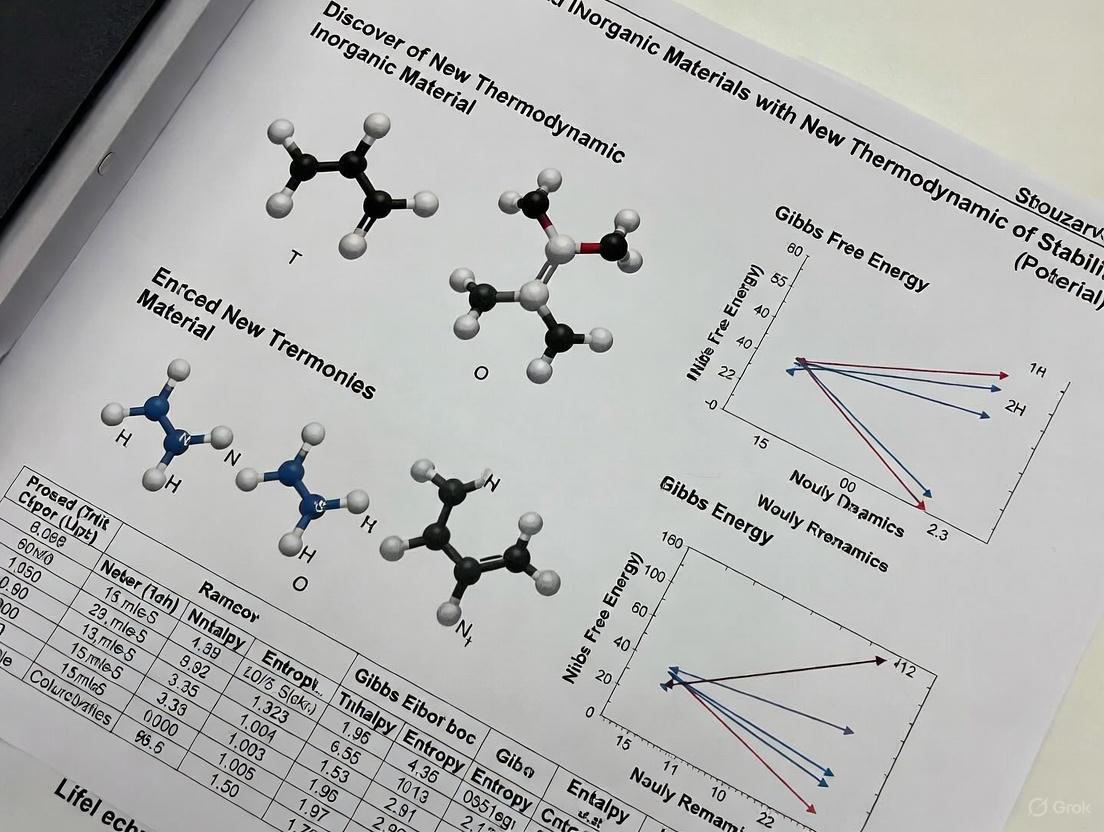

The discovery of new inorganic materials with targeted properties is a cornerstone of advancements in energy, electronics, and catalysis. However, the vast compositional space makes experimental exploration inefficient. This article explores the transformative role of computational and data-driven approaches in predicting thermodynamic stability—the foundational criterion for a material's synthesizability. We cover the fundamentals of stability assessment, highlight cutting-edge machine learning frameworks that dramatically accelerate discovery, address challenges like data and computational bottlenecks, and validate these new methods against established experimental and first-principles techniques. Aimed at researchers and scientists, this review synthesizes how integrated computational-experimental workflows are streamlining the path to novel, stable materials.

The Bedrock of Materials Discovery: Understanding Thermodynamic Stability

In the accelerated discovery of new inorganic materials, accurately predicting thermodynamic stability is a fundamental challenge. The vastness of compositional space, often described as a search for a needle in a haystack, makes the experimental or computational characterization of all potential compounds intractable [1]. Within this research context, two computational concepts have become cornerstone metrics for assessing stability: the decomposition energy (ΔHd) and the convex hull construction from which it is derived [1] [2]. These concepts allow researchers to quickly evaluate whether a proposed compound will remain stable or spontaneously decompose into other, more thermodynamically favorable phases.

This technical guide provides an in-depth examination of these core concepts. It details the underlying theory, outlines standard computational methodologies employed by major materials databases, and explores advanced machine-learning approaches that are reshaping the high-throughput screening landscape. The focus is placed squarely on stability evaluation within the framework of inorganic materials research.

Core Theoretical Concepts

Decomposition Energy (ΔHd)

The decomposition energy (ΔHd), often reported as the energy above the convex hull (E_hull), is the primary quantitative measure of a compound's thermodynamic stability [1] [3]. The two quantities coincide for compounds that lie above the hull. The decomposition energy is defined as the energy difference, per atom, between a given compound and the linear combination of competing phases from the same chemical system that has the lowest combined energy at that composition.

Formation Energy (ΔEf) vs. Decomposition Energy (ΔHd): It is crucial to distinguish the formation energy from the decomposition energy. The formation energy, ΔEf, represents the energy change when a compound forms from its constituent elements in their standard states [2] [3]. In contrast, the decomposition energy, ΔHd, represents the energy difference between the compound and the most stable combination of other compounds in its chemical space [1]. A negative ΔEf indicates stability relative to the elements only. A compound can have a negative ΔEf and still have a positive ΔHd, which means it is unstable relative to other compounds.

Interpretation: An energy above hull of zero (E_hull = 0) signifies that the compound lies directly on the convex hull and is thermodynamically stable. A positive value (E_hull > 0) indicates that the compound is thermodynamically unstable. It is driven to decompose into the set of stable phases that define the hull at that composition, although kinetic barriers may let it persist as a metastable phase. The magnitude of this positive value indicates the degree of instability [2] [3].
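For reference, both quantities can be written compactly. The notation below is introduced here for illustration:

- E_tot is the DFT total energy of one formula unit containing n_i atoms of element i.
- μ_i is the per-atom energy of element i in its reference state.
- **x** is the compound's composition.
- ΔE_f^hull(**x**) is the lowest formation energy per atom attainable at **x** by any combination of competing phases.

$$\Delta E_f = \frac{E_{\text{tot}} - \sum_i n_i\,\mu_i}{\sum_i n_i}$$

$$E_{\text{hull}} = \Delta E_f - \Delta E_f^{\text{hull}}(\mathbf{x})$$

If the compound itself is included when the hull is built, E_hull ≥ 0 by construction. If the hull is built without it, the same difference becomes a signed quantity. It is negative for a stable compound, giving how far the compound lies below its competitors, and equal to E_hull for an unstable one. This signed form corresponds to the stability reported by OQMD's compute_stabilities() method, described later in this document.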

The Convex Hull

The convex hull is a geometric construction used to identify the set of thermodynamically stable compounds in a multi-component system at a given temperature and pressure (typically 0 K and 0 atm for computational studies) [2].

- Geometric Construction: The hull is built by plotting the formation energy per atom against composition for all known compounds in a chemical system (e.g., Li-Fe-O). The convex hull is then the lower envelope of this multi-dimensional energy-composition space. Any phase that lies on this hull is considered stable, while phases above it are unstable [2].

- Higher-Dimensional Systems: While easily visualized in binary (2D) and ternary (3D) systems, the convex hull concept extends to quaternary and higher-order systems. For an N-component system, the composition space is (N-1)-dimensional, so the energy-composition space is N-dimensional. The lower envelope then becomes a piecewise-linear surface made of (N-1)-dimensional simplices (facets) rather than a single hyperplane [3]. The decomposition energy remains the vertical distance (in energy) from a compound's data point down to this hull surface.

The following diagram illustrates the relationship between formation energy, the convex hull, and decomposition energy in a binary system.

Figure 1: A schematic binary phase diagram. The blue circles represent stable compounds lying on the convex hull (yellow line). The red circle represents an unstable compound, whose decomposition energy (ΔHd) is the vertical distance to the hull.
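The construction in Figure 1 can be sketched for a binary A-B system in a few lines of Python. This is a minimal illustration and not pymatgen's implementation. It assumes Andrew's monotone-chain algorithm, a simple 2D alternative to Quickhull, and uses made-up formation energies for hypothetical phases A3B, AB and AB3.

```python
def cross(o, a, b):
    """z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def lower_hull(points):
    """Vertices of the lower convex hull of (x, energy) points, sorted by x."""
    hull = []
    for p in sorted(points):
        if hull and p[0] == hull[-1][0]:
            continue  # same composition, equal or higher energy: cannot lower the hull
        # Drop the last vertex while it lies on or above the segment hull[-2] -> p.
        while len(hull) >= 2 and cross(hull[-2], hull[-1], p) <= 0:
            hull.pop()
        hull.append(p)
    return hull


def energy_above_hull(phases):
    """phases: name -> (x_B, formation energy in eV/atom); elements A and B must be included."""
    hull = lower_hull(list(phases.values()))
    result = {}
    for name, (x, e) in phases.items():
        # Find the hull segment containing x (assumes 0 <= x <= 1) and interpolate on it.
        for (x1, e1), (x2, e2) in zip(hull, hull[1:]):
            if x1 <= x <= x2:
                hull_e = e1 + (e2 - e1) * (x - x1) / (x2 - x1)
                break
        result[name] = e - hull_e
    return result


# Hypothetical A-B system (x_B = atomic fraction of B; energies are illustrative only).
phases = {
    "A": (0.0, 0.0),
    "A3B": (0.25, -0.30),
    "AB": (0.5, -0.50),
    "AB3": (0.75, -0.20),
    "B": (1.0, 0.0),
}
e_hull = energy_above_hull(phases)
stable = sorted(n for n, v in e_hull.items() if v < 1e-9)
print(stable)                   # ['A', 'A3B', 'AB', 'B']
print(round(e_hull["AB3"], 3))  # 0.05
```

Here AB3 sits 0.05 eV/atom above the AB-B tie-line, so it plays the role of the red circle in Figure 1.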

Computational Methodology

The methodology for determining thermodynamic stability using density functional theory (DFT) has been standardized by large-scale computational efforts such as the Materials Project and OQMD. It is implemented in open-source packages such as pymatgen.

Workflow for Stability Assessment

A standard workflow for calculating the decomposition energy of a new inorganic compound involves several key steps, integrating both quantum mechanical calculations and geometric analysis.

Figure 2: The standard computational workflow for assessing the thermodynamic stability of a new inorganic compound.

Constructing the Phase Diagram

The phase diagram is constructed from the calculated energies of all known compounds within a chemical system.

- Input Data: The process requires the calculated formation energies per atom and the compositions of all relevant compounds in the chemical system of interest [2].

- Convex Hull Algorithm: The convex hull is computed using algorithms like the Quickhull algorithm. This geometric operation identifies the set of points (compounds) that form the lowest-energy envelope in the energy-composition space [2] [3].

- Stability Evaluation: Each compound's energy is compared to the hull. If a compound's energy is on the hull, it is stable. If it is above the hull, its decomposition energy is calculated as the vertical distance to the hull surface [2] [3].
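For ternary and higher-order systems the same evaluation applies. For small examples, the hull energy can even be found by brute force. At fixed temperature and pressure, the lowest-energy mixture at any composition of a ternary involves at most three phases. One can therefore test every triple of phases whose composition triangle contains the target and keep the lowest interpolated energy. The sketch below does this for a hypothetical A-B-C system and also returns the decomposition products. It scales poorly and only makes the geometry concrete. Production tools instead compute the hull once with a Quickhull-type routine.

```python
from itertools import combinations

TOL = 1e-9


def barycentric(t, p1, p2, p3):
    """Weights (w1, w2, w3), summing to 1, with w1*p1 + w2*p2 + w3*p3 == t.

    Compositions are (x_A, x_B, x_C) atomic fractions; only (x_B, x_C) are used
    as 2D coordinates because x_A = 1 - x_B - x_C. Returns None for collinear triples.
    """
    ax, ay = p1[1] - p3[1], p1[2] - p3[2]
    bx, by = p2[1] - p3[1], p2[2] - p3[2]
    rx, ry = t[1] - p3[1], t[2] - p3[2]
    det = ax * by - bx * ay
    if abs(det) < 1e-12:
        return None
    w1 = (rx * by - bx * ry) / det
    w2 = (ax * ry - rx * ay) / det
    return w1, w2, 1.0 - w1 - w2


def hull_energy(target, phases):
    """Lowest-energy mixture at `target`: returns (energy, {phase: atomic fraction})."""
    best_e, best_mix = float("inf"), None
    for trio in combinations(phases, 3):
        w = barycentric(target, *(phases[n][0] for n in trio))
        if w is None or min(w) < -TOL:
            continue  # degenerate triangle, or target lies outside it
        e = sum(wi * phases[n][1] for wi, n in zip(w, trio))
        if e < best_e - TOL:
            best_e, best_mix = e, {n: wi for wi, n in zip(w, trio) if wi > TOL}
    return best_e, best_mix


def energy_above_hull(name, phases):
    comp, e = phases[name]
    hull_e, products = hull_energy(comp, phases)
    return e - hull_e, products


# Hypothetical A-B-C system: formation energies in eV/atom, illustrative only.
phases = {
    "A": ((1.0, 0.0, 0.0), 0.0),
    "B": ((0.0, 1.0, 0.0), 0.0),
    "C": ((0.0, 0.0, 1.0), 0.0),
    "AB": ((0.5, 0.5, 0.0), -1.0),
    "ABC": ((1 / 3, 1 / 3, 1 / 3), -0.5),
}
e, products = energy_above_hull("ABC", phases)
print(round(e, 3), sorted((k, round(v, 3)) for k, v in products.items()))
# 0.167 [('AB', 0.667), ('C', 0.333)]
```

The hypothetical ABC compound lies 1/6 eV/atom above the hull and would decompose into AB and C in a 2:1 atomic ratio.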

Calculating Decomposition Energy and Products

For a compound with a positive E_hull, the specific decomposition reaction and its energy are determined by the geometry of the hull.

- Decomposition Reaction: The unstable compound decomposes into a mixture of the stable phases that form the facet of the hull directly beneath it. The stoichiometry of the decomposition reaction is determined by the location of the target composition within the simplex formed by the decomposition products [3].

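- Example Calculation: Consider BaTaNO₂, calculated to be 32 meV/atom above the hull. It may decompose into a mixture of phases: BaTaNO₂ → 2/3 Ba₄Ta₂O₉ + 7/45 Ba(TaN₂)₂ + 8/45 Ta₃N₅. These coefficients are atomic fractions (fractions of the total number of atoms), not moles. Per formula unit, the same balanced reaction reads BaTaNO₂ → 2/9 Ba₄Ta₂O₉ + 1/9 Ba(TaN₂)₂ + 1/9 Ta₃N₅. The E_hull is calculated from the normalized (eV/atom) energies and the atomic-fraction weights [3]:

$$E_{\text{hull}} = E_{\text{BaTaNO}_2} - \left(\tfrac{2}{3}\,E_{\text{Ba}_4\text{Ta}_2\text{O}_9} + \tfrac{7}{45}\,E_{\text{Ba(TaN}_2\text{)}_2} + \tfrac{8}{45}\,E_{\text{Ta}_3\text{N}_5}\right)$$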
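The atomic-fraction bookkeeping in this example can be checked with exact rational arithmetic. The sketch below, in plain Python, takes the coefficients of the BaTaNO₂ decomposition and does three things:

- confirms that the weights sum to one and reproduce the BaTaNO₂ composition;
- converts them to moles of product per formula unit;
- evaluates E_hull.

The per-atom energies in the last line are hypothetical placeholders, not DFT values.

```python
from fractions import Fraction as F


def atom_fractions(comp):
    """Element -> fraction of atoms, e.g. BaTaNO2 -> Ba 1/5, Ta 1/5, N 1/5, O 2/5."""
    total = sum(comp.values())
    return {el: F(n, total) for el, n in comp.items()}


target = {"Ba": 1, "Ta": 1, "N": 1, "O": 2}  # BaTaNO2
# product name -> (composition, atomic-fraction weight in the decomposition)
products = {
    "Ba4Ta2O9": ({"Ba": 4, "Ta": 2, "O": 9}, F(2, 3)),
    "Ba(TaN2)2": ({"Ba": 1, "Ta": 2, "N": 4}, F(7, 45)),
    "Ta3N5": ({"Ta": 3, "N": 5}, F(8, 45)),
}


def mixture_composition(products):
    """Atom fractions of the weighted product mixture."""
    mix = {}
    for comp, w in products.values():
        for el, f in atom_fractions(comp).items():
            mix[el] = mix.get(el, 0) + w * f
    return mix


def moles_per_formula_unit(target, products):
    """Convert atomic-fraction weights to moles of each product per formula unit of target."""
    n_target = sum(target.values())
    return {name: w * n_target / sum(comp.values()) for name, (comp, w) in products.items()}


def e_hull(e_target, e_products, products):
    """E_hull from per-atom energies (eV/atom), weighted by atomic fractions."""
    return e_target - sum(w * e_products[name] for name, (_, w) in products.items())


assert sum(w for _, w in products.values()) == 1
assert mixture_composition(products) == atom_fractions(target)
print(moles_per_formula_unit(target, products))
# {'Ba4Ta2O9': Fraction(2, 9), 'Ba(TaN2)2': Fraction(1, 9), 'Ta3N5': Fraction(1, 9)}
# Hypothetical per-atom energies (eV/atom), for illustration only:
print(round(e_hull(-2.5, {"Ba4Ta2O9": -3.0, "Ba(TaN2)2": -2.0, "Ta3N5": -1.5}, products), 4))  # 0.0778
```

Weighting per-atom energies by atomic fractions, as in the equation, is what keeps E_hull expressed in eV/atom.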

Advanced Modeling: The Phase Stability Network

A novel paradigm for understanding thermodynamic stability uses complex network theory. In this model, the global phase diagram is represented as a network where nodes are stable compounds and edges (tie-lines) represent stable two-phase equilibria between them [4].

- Network Properties: The phase stability network of all inorganic materials is remarkably dense and interconnected, with ~21,300 nodes (stable compounds) and ~41 million edges. The average number of tie-lines per node (mean degree, 〈k〉) is ~3850, meaning each stable compound can coexist in equilibrium with thousands of others [4].

- Implications for Discovery: This network exhibits a hierarchical structure, where the mean degree 〈k〉 decreases as the number of components (N) in a compound increases. This reveals an inherent competition for stability: higher-component compounds must have lower formation energies to "survive" against the plethora of stable lower-component compounds in their chemical space [4]. This competition helps explain why the number of known stable compounds peaks at ternaries (N=3).
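The tie-line network can be built directly from hull facets. Two stable phases share a tie-line exactly when they are vertices of a common facet of the lower convex hull. The sketch below uses a hypothetical A-B-C system whose hull facets are assumed to be (A, AB, C) and (AB, B, C). For an undirected graph the mean degree is 〈k〉 = 2E/N. The last line uses this to check that the quoted node and edge counts are consistent with the quoted mean degree.

```python
from itertools import combinations


def tie_line_network(facets):
    """Nodes and undirected edges (tie-lines) from hull facets (tuples of stable phases)."""
    nodes, edges = set(), set()
    for facet in facets:
        nodes.update(facet)
        # Every pair of vertices in a facet can coexist in equilibrium.
        edges.update(combinations(sorted(facet), 2))
    return nodes, edges


def mean_degree(nodes, edges):
    """Average number of tie-lines per node: <k> = 2E / N."""
    return 2 * len(edges) / len(nodes)


# Hypothetical ternary A-B-C with one stable binary compound AB.
nodes, edges = tie_line_network([("A", "AB", "C"), ("AB", "B", "C")])
print(sorted(edges))  # [('A', 'AB'), ('A', 'C'), ('AB', 'B'), ('AB', 'C'), ('B', 'C')]
print(mean_degree(nodes, edges))  # 2.5

# Consistency check of the figures quoted for the full network [4]:
print(round(2 * 41e6 / 21300))  # 3850
```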

Machine Learning for High-Throughput Stability Prediction

The high computational cost of DFT has spurred the development of machine learning (ML) models to predict stability directly from composition or structure.

Machine Learning Workflow

Advanced ML frameworks for stability prediction integrate multiple models to improve accuracy and reduce bias.

Figure 3: An ensemble machine learning framework (e.g., ECSG) that combines base models built on different physical principles (atomic statistics, graph networks, electron configuration) through a meta-learner to achieve high-accuracy stability prediction [1].

Quantitative Comparison of Stability Prediction Methods

The table below summarizes key computational and data-driven approaches for evaluating thermodynamic stability.

Table 1: Comparison of Methods for Assessing Thermodynamic Stability

| Method | Key Input | Underlying Principle | Key Metric / Output | Performance / Notes |
| :--- | :--- | :--- | :--- | :--- |
| DFT + Convex Hull [2] [3] | Atomic Structure | Quantum Mechanics | Decomposition Energy (E_hull) | High accuracy but computationally expensive; considered the benchmark. |
| Ensemble ML (ECSG) [1] | Chemical Formula | Ensemble Machine Learning | Stability Classification / E_hull | AUC = 0.988; high sample efficiency (same performance with 1/7 of the data). |
| Graph Neural Network [5] | Crystal Structure | Graph Neural Networks | Total Energy / Formation Enthalpy | MAE ~0.04 eV/atom; requires balanced training data of ground-state and high-energy structures. |
| Atomic-Number Encoding [6] | Chemical Formula | Atomic Number Descriptor | Stability Classification | High accuracy for perovskites; enables rapid screening from composition alone. |

The Researcher's Toolkit

This section details essential computational and experimental resources for conducting stability analysis.

Table 2: Key Research Reagents and Tools for Stability Analysis

| Item / Resource | Type | Function / Application | Example / Source |
|---|---|---|---|
| DFT Software | Computational | Calculating the ground-state total energy of crystal structures. | VASP, Quantum ESPRESSO |
| pymatgen | Software Library | Analyzing phase diagrams, constructing convex hulls, and processing crystal structures. | Python Package [2] |
| Materials Project API | Database & Tool | Accessing pre-computed DFT data (formation energies, E_hull) for hundreds of thousands of materials. | materialsproject.org [2] |
| Machine Learning Models | Computational | Fast, high-throughput screening for stable materials based on composition or structure. | ECSG [1], CGCNN [5] |
| Precursors | Experimental | Source materials for solid-state synthesis of target inorganic compounds. | e.g., BaCO₃, TiO₂ for BaTiO₃ [7] |

The concepts of decomposition energy and the convex hull provide a rigorous and computationally accessible foundation for determining the thermodynamic stability of inorganic materials. While DFT-based hull construction remains the gold standard, the field is rapidly evolving with the integration of network-based analysis and sophisticated machine-learning models. These data-driven approaches, particularly those using ensemble methods and graph neural networks, are dramatically accelerating the discovery of new materials by enabling the rapid screening of vast compositional spaces. However, a critical challenge remains: thermodynamic stability is a necessary but not sufficient condition for successful material synthesis [7]. Kinetic barriers, precursor selection, and reaction pathways often dictate whether a theoretically stable compound can be realized in the laboratory. Future research will likely focus on integrating stability prediction with models that can also assess synthesizability, thereby closing the loop between computational design and experimental realization.

The discovery of new inorganic materials with desired thermodynamic stability is a fundamental pursuit in materials science, crucial for applications ranging from electronics and catalysis to energy storage. The traditional pipeline for this discovery has long relied on two core methodologies: experimental trial-and-error and computational modeling via first-principles calculations, primarily based on Density Functional Theory (DFT). While powerful, these approaches present significant bottlenecks that slow the pace of innovation. Experimental synthesis is often resource-intensive and plagued by challenges in achieving phase-pure materials. DFT calculations provide atomic-level insights but are computationally prohibitive for exploring vast chemical spaces or simulating real-world synthesis conditions at scale [8] [7]. This guide examines these core bottlenecks and details the modern computational and data-driven methodologies emerging to overcome them, all within the critical context of thermodynamic stability assessment.

Core Bottlenecks in Traditional Methodologies

The High-Cost of First-Principles Calculations

First-principles calculations, particularly DFT, serve as the computational ground truth for determining key properties like formation energy and phase stability [8]. However, their application is constrained by profound computational demands. Table 1 summarizes the primary bottlenecks associated with DFT.

Table 1: Bottlenecks of Traditional First-Principles Calculations (DFT)

| Bottleneck Aspect | Specific Challenge | Impact on Discovery |
|---|---|---|
| Computational Cost | High computational resource requirements for complex systems [8]. | Limits high-throughput screening of vast chemical spaces [1]. |
| Spatial & Temporal Scale | Inability to simulate the vast atom counts (e.g., a grain of sand has ~10²⁰ atoms) or long timescales of real synthesis processes [7]. | Fails to capture kinetic phenomena and non-equilibrium conditions critical to synthesis [7]. |
| Synthesizability Gap | Identifies thermodynamic stability but does not predict synthesizability or provide viable synthesis pathways [7] [9]. | A material predicted to be stable may never be successfully made in the lab [7]. |

The Experimental Trial-and-Error Quagmire

The experimental path to new materials is fraught with challenges. A central difficulty is that thermodynamic stability does not guarantee synthesizability. Synthesis is a pathway problem: kinetic competitors and low-energy intermediates can trap reactions and prevent the formation of the target phase [7] [10]. For instance, the synthesis of BiFeO₃ frequently results in impurities like Bi₂Fe₄O₉ because the target phase is only stable within a narrow window of conditions, and competing phases are kinetically favorable [7]. This problem is exacerbated in multi-component inorganic materials. Their high-dimensional phase diagrams contain many potential by-product phases that can consume both the reactants and the thermodynamic driving force needed to form the target [10]. The vastness of possible precursor combinations and reaction conditions makes exhaustive experimental exploration intractable, creating a primary bottleneck in translating predicted materials into realized ones [7].

Modern Computational Approaches Overcoming Traditional Bottlenecks

Machine learning (ML) and AI-driven frameworks are now being deployed to navigate around these traditional limitations, creating a more efficient and predictive discovery paradigm.

Machine Learning for Accelerated Stability Prediction

ML models can dramatically accelerate the prediction of thermodynamic stability while mitigating the data inefficiency of traditional approaches. A key advancement is the move towards ensemble models and descriptors that incorporate deeper physical insights. For example, the ECSG (Electron Configuration models with Stacked Generalization) framework integrates three models based on distinct knowledge domains to create a super learner [1]:

- Magpie (atomic properties);
- Roost (interatomic interactions);
- a novel Electron Configuration Convolutional Neural Network (ECCNN).

This ensemble approach reduces inductive bias and achieves an Area Under the Curve (AUC) score of 0.988 for predicting compound stability within the JARVIS database. It also matches the performance of existing models while using only one-seventh of their training data [1].
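Stacked generalization itself can be illustrated without any ML library. The sketch below is not the ECSG implementation. It assumes a linear least-squares meta-learner, one common choice, that learns how to weight three base models from their out-of-fold predictions. All numbers are hypothetical, and the targets are built as an exact blend so the recovered weights can be checked.

```python
def solve(a, b):
    """Solve the square system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def fit_meta_learner(base_preds, targets):
    """Least-squares weights [intercept, w1, w2, ...] via the normal equations.

    base_preds[i] should hold each base model's out-of-fold prediction for sample i,
    so the meta-learner never sees predictions made on a base model's own training data.
    """
    rows = [[1.0] + list(p) for p in base_preds]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * t for r, t in zip(rows, targets)) for i in range(k)]
    return solve(xtx, xty)


def predict(weights, base_pred):
    """Meta-learner output for one sample's base-model predictions."""
    return weights[0] + sum(w * p for w, p in zip(weights[1:], base_pred))


# Hypothetical out-of-fold predictions (eV/atom) from three base models,
# e.g. elemental-statistics, graph-based and electron-configuration models.
base_preds = [
    (0.1, 0.2, 0.0),
    (0.3, 0.1, 0.2),
    (0.0, 0.4, 0.1),
    (0.2, 0.2, 0.3),
    (0.5, 0.0, 0.1),
]
targets = [0.01 + 0.5 * a + 0.3 * b + 0.2 * c for a, b, c in base_preds]
weights = fit_meta_learner(base_preds, targets)
print([round(w, 6) for w in weights])  # [0.01, 0.5, 0.3, 0.2]
```

A classification variant would swap the least-squares fit for, say, logistic regression on predicted stability probabilities. The key design point is unchanged: the meta-learner is trained only on held-out base-model outputs.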

Table 2: Performance of Modern ML Frameworks for Stability and Property Prediction

| Framework/Model | Primary Approach | Key Performance Metric | Application in Discovery |
|---|---|---|---|
| ECSG (Ensemble) [1] | Stacked generalization using electron configuration, elemental statistics, and graph networks. | AUC = 0.988; high data efficiency. | Predicting thermodynamic stability of inorganic compounds. |
| Few-Shot Learning for Perovskites [11] | Physics-driven few-shot learning with atomic orbital descriptors and synthetic data. | MAE of 0.382 eV on a validation set of 52 ABO₃ samples; 36% accuracy improvement. | Bandgap prediction and inverse design of photocatalysts. |
| Aethorix v1.0 AI Agent [8] | Integrated AI agent with generative design and physics-embedded prediction. | Accelerated property prediction with ab initio fidelity at industrial speeds. | Zero-shot inverse design and optimization of inorganic materials. |

Generative AI and Inverse Design

The field is rapidly evolving from passive screening to active, goal-directed inverse design [8]. Generative models, such as diffusion models and variational autoencoders, can propose novel candidate crystal structures tailored to target properties, moving beyond the brute-force tweaking of known structures [8] [12]. Benchmarking studies show that traditional methods like ion exchange are effective at generating novel stable compounds that resemble known ones. Generative AI models, by contrast, excel at proposing novel structural frameworks and targeting specific properties when sufficient training data is available [12]. A critical enhancement for all generation methods is a post-generation screening step using pre-trained ML models and universal interatomic potentials, which significantly boosts the success rate of identifying viable, stable materials [12].

Experimental Protocols: From Prediction to Synthesis

Overcoming the synthesis bottleneck requires not just predicting what to make, but also determining how to make it. The following protocol, derived from recent high-impact research, provides a roadmap.

A Thermodynamic Protocol for Precursor Selection

This methodology uses thermodynamic data to select precursors that maximize phase purity and yield for a target multicomponent inorganic material [10].

Objective: To identify an optimal precursor pair for a target quaternary oxide, minimizing kinetically trapped by-products and maximizing reaction driving force.

Primary Reagents & Materials:

- Computational Database: The Materials Project database for accessing DFT-calculated formation energies of the target and all competing phases in the chemical space [10].

- Precursor Candidates: Common inorganic salts, oxides, or pre-synthesized intermediate compounds (e.g., carbonates, phosphates).

Experimental Procedure:

Construct the Phase Diagram: Using the target's composition (e.g., LiBaBO₃) as a reference, construct the relevant pseudo-ternary or multi-component phase diagram from the DFT-calculated formation energies of all known phases in that chemical space. This forms the convex hull [10].

Identify Precursor Pairs: Enumerate all possible pairs of precursor compositions that can stoichiometrically combine to form the target material.

Evaluate Pairs Against Selection Principles: Rank the precursor pairs based on the following principles [10]:

- Principle 1 (Minimize Simultaneous Reactions): Prefer two-precursor reactions over those involving three or more precursors, which can undergo several pairwise reactions simultaneously.

- Principle 2 (Maximize Driving Force): Precursors should be relatively high-energy (unstable) to maximize the thermodynamic driving force (ΔE) for the reaction.

- Principle 3 (Target as Deepest Point): The target material should be the lowest energy (deepest) point on the convex hull along the line (isopleth) connecting the two precursors.

- Principle 4 (Avoid Competing Phases): The reaction isopleth should intersect as few other stable competing phases as possible.

- Principle 5 (Large Inverse Hull Energy): If competitors are unavoidable, the target should have a large "inverse hull energy" (energy below its neighboring stable phases), ensuring a strong driving force for its nucleation over impurities.
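Principles 1 and 2 lend themselves to a short script. The sketch below enumerates precursor pairs whose atomic-fraction mixture reproduces the target composition exactly. It then ranks them by the reaction driving force per atom, ΔE = E_target − [(1 − x)·E_P + x·E_Q], where x is the atomic fraction of precursor Q. The formation energies are invented placeholders, not Materials Project values. Principles 3 to 5 need the full phase diagram and are not implemented here.

```python
from fractions import Fraction
from itertools import combinations


def atom_fractions(comp):
    total = sum(comp.values())
    return {el: Fraction(n, total) for el, n in comp.items()}


def mixing_fraction(target, p, q):
    """Atomic fraction x of q with (1 - x)*p + x*q == target exactly, else None."""
    ct, cp, cq = (atom_fractions(c) for c in (target, p, q))
    els = set(ct) | set(cp) | set(cq)
    d = {e: cq.get(e, 0) - cp.get(e, 0) for e in els}
    dd = sum(v * v for v in d.values())
    if dd == 0:
        return None
    # Least-squares x along the p -> q line, then an exact check that it hits the target.
    x = sum((ct.get(e, 0) - cp.get(e, 0)) * d[e] for e in els) / dd
    if not 0 < x < 1:
        return None
    if any((1 - x) * cp.get(e, 0) + x * cq.get(e, 0) != ct.get(e, 0) for e in els):
        return None
    return x


def rank_precursor_pairs(target, e_target, precursors):
    """precursors: name -> (composition, formation energy in eV/atom).

    Returns (driving force, P, Q, x), sorted from most to least negative driving force.
    """
    ranked = []
    for a, b in combinations(precursors, 2):
        (ca, ea), (cb, eb) = precursors[a], precursors[b]
        x = mixing_fraction(target, ca, cb)
        if x is not None:
            ranked.append((e_target - ((1 - x) * ea + x * eb), a, b, x))
    return sorted(ranked)


# Hypothetical formation energies (eV/atom), for illustration only.
precursors = {
    "LiBO2": ({"Li": 1, "B": 1, "O": 2}, -2.00),
    "BaO": ({"Ba": 1, "O": 1}, -2.80),
    "Li3BO3": ({"Li": 3, "B": 1, "O": 3}, -2.10),
    "Ba3B2O6": ({"Ba": 3, "B": 2, "O": 6}, -2.35),
    "B2O3": ({"B": 2, "O": 3}, -2.50),
    "Li2O": ({"Li": 2, "O": 1}, -2.05),
}
target = {"Li": 1, "Ba": 1, "B": 1, "O": 3}  # LiBaBO3
for de, a, b, x in rank_precursor_pairs(target, -2.40, precursors):
    print(f"{a} + {b}: x = {x}, dE = {de:.3f} eV/atom")
# Li3BO3 + Ba3B2O6: x = 11/18, dE = -0.147 eV/atom
# LiBO2 + BaO: x = 1/3, dE = -0.133 eV/atom
```

Exact fractions avoid false matches from floating-point round-off when testing whether a pair lies on the target's composition line.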

Synthesis and Validation:

- Lab-Scale Synthesis: The top-ranked precursor pair is mixed, ground, and calcined in a furnace. The process is typically repeated with varying temperatures and dwell times to map reaction progression.

- Phase Purity Analysis: The reaction products are characterized using X-ray Diffraction (XRD). Rietveld refinement of the XRD patterns quantifies the weight fraction of the target phase versus impurity phases.

- Robotic Validation (High-Throughput): For large-scale validation, a robotic inorganic materials synthesis laboratory (e.g., Samsung ASTRAL) can be employed to execute hundreds of reactions in parallel, systematically testing the thermodynamic strategy against traditional precursors [13] [10].

Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Advanced Inorganic Synthesis Research

| Research Reagent / Material | Function in Research & Development |
|---|---|
| High-Purity Precursor Powders | Base starting materials for solid-state reactions (e.g., Li₂CO₃, TiO₂, B₂O₃). Purity is critical to avoid unintended impurities [13]. |
| Pre-Synthesized Intermediate Phases | High-energy intermediate compounds (e.g., LiBO₂) used as precursors to circumvent low-energy by-products and retain high driving force [10]. |
| Robotic Synthesis Laboratory | Automated platform for high-throughput, reproducible powder preparation, ball milling, oven firing, and characterization, enabling large-scale hypothesis testing [13] [10]. |
| Microfluidic Reactors | Automated systems for nanomaterial synthesis enabling high-throughput parameter screening, real-time optical monitoring, and superior reproducibility [14]. |

Integrated Workflow for Modern Thermodynamic Stability Discovery

The modern approach to discovering thermodynamically stable inorganic materials integrates computational and experimental cycles into a cohesive, AI-driven workflow. The following diagram illustrates this closed-loop paradigm, as implemented by state-of-the-art scientific AI agents.

Diagram 1: Integrated AI-Agent Workflow for Material Discovery. This workflow showcases the closed-loop, iterative process for the discovery and synthesis of new inorganic materials, combining generative AI, high-fidelity simulation, and robotic experimentation [8].

The process begins with defining the target properties and operational constraints. The AI agent then decomposes the problem and uses its reasoning capabilities to establish design principles [8]. The core of the discovery engine involves generative inverse design to propose novel crystal structures, which are subsequently optimized and screened using accelerated property predictors that approach the accuracy of first-principles calculations at a fraction of the cost [8]. Successful candidates proceed to the critical synthesis planning stage, where thermodynamic principles guide precursor selection [10]. This is followed by experimental validation, increasingly performed by robotic labs for high-throughput testing [13]. Failure at any stage triggers a causal analysis and refinement loop, allowing the system to learn from setbacks and recursively improve its proposals until a stable, synthesizable material is identified [8].

The traditional bottlenecks of experimental and first-principles calculations are being systematically dismantled by a new paradigm. This paradigm is characterized by the integration of AI-driven generative design, machine learning models with high physical fidelity and data efficiency, and thermodynamic principles for predictive synthesis. While first-principles calculations remain the foundational source of truth, and experimental validation the ultimate arbiter, their roles are evolving within an increasingly automated and intelligent workflow. The future of inorganic materials discovery lies not in choosing between computation and experiment, but in leveraging their deeply integrated synergy to navigate the vast chemical space efficiently and bring transformative materials from conception to reality.

The discovery and development of new inorganic materials with specific properties has long posed a significant challenge in materials science. A major hurdle is the sheer size of compositional space: the compounds that can feasibly be synthesized in a laboratory represent only a minute fraction of it [1]. This predicament, often likened to finding a needle in a haystack, calls for effective strategies to narrow the search. Evaluating thermodynamic stability makes it possible to filter out a large proportion of compounds that are unlikely to be synthesizable, substantially improving the efficiency of materials development [1].

The paradigm of materials research has been transformed by the advent of high-throughput density functional theory (HT-DFT) calculations and the extensive databases they populate. The Materials Project (MP), the Open Quantum Materials Database (OQMD), and other similar initiatives have created large-scale, publicly accessible repositories of calculated material properties, serving as the foundational data layer for accelerated materials discovery [15] [16]. These databases provide researchers with immediate access to pre-computed quantum mechanical calculations, enabling rapid screening and identification of promising candidate materials before ever entering the laboratory. This technical guide examines the core infrastructure, data methodologies, and practical applications of these critical resources within the context of thermodynamic stability discovery for new inorganic materials.

Database Fundamentals: MP and OQMD

The Open Quantum Materials Database (OQMD)

The OQMD is a high-throughput database of DFT-calculated thermodynamic and structural properties of 1,317,811 materials, created in Chris Wolverton's group at Northwestern University [17]. As of its 2015 publication, the database contained nearly 300,000 DFT total energy calculations of compounds from the Inorganic Crystal Structure Database (ICSD) and decorations of commonly occurring crystal structures [15]. A critical design philosophy of the OQMD is its commitment to being freely available without restrictions, supporting the open science goals of the Materials Genome Initiative [15].

The OQMD infrastructure is built on qmpy, a Python framework using the Django web framework as an interface to a MySQL database [15]. This decentralized model allows any research group to download and use qmpy to build their own databases, with simple programmatic access to calculations. The database contains two primary classes of structures: experimentally determined crystals from the ICSD and hypothetical compounds based on prototype structure decorations [15].

Table 1: Key Specifications of Major Materials Databases

| Aspect | OQMD | Materials Project (Legacy) | Materials Project (Newer) |
|---|---|---|---|
| Primary DFT Functional | PBE (GGA) and GGA+U | PBE (GGA) and GGA+U | r²SCAN (meta-GGA) |
| Database Size | ~300,000 (2015); ~1.3M (current) | Extensive calculated materials | Extensive calculated materials |
| Magnetic Moment Accuracy | Good | Closer to experiment for metals | Overestimated in transition metals |
| Thermodynamic Accuracy | Good | Good | Improved |
| Accessibility | Full database download | API access | API access |

The Materials Project (MP)

The Materials Project provides a comprehensive web-based platform for accessing computed materials data. A significant evolution in MP's methodology has been the transition from the PBE Generalized Gradient Approximation (GGA) functional to the r²SCAN meta-GGA functional in newer calculations [18]. This higher-level theory generally provides improved accuracy for many material properties, including magnetic moments in oxides, magnetic ordering in antiferromagnets, and overall thermodynamic stability [18].

When retrieving structures from the MP database, researchers should note that the default returned cell may not match conventional or primitive unit cells from textbooks. The database does not store or return the primitive or conventional unit cell by default, and the stored cell may contain multiple repeat units in a "non-standard" representation [18]. This design choice reflects the origin of structures from experimental sources or prototype substitutions, typically without symmetry reduction before calculations.

Comparative Analysis of HT-DFT Data Reproducibility

The variance between calculated properties across different high-throughput DFT databases is substantial and warrants careful consideration when leveraging these resources. A comprehensive comparison of AFLOW, Materials Project, and OQMD revealed that HT-DFT formation energies and volumes are generally more reproducible than band gaps and total magnetizations [16]. Notably, a significant fraction of records disagree on whether a material is metallic (up to 7%) or magnetic (up to 15%) [16].

The quantitative variance between calculated properties is as high as 0.105 eV/atom (median relative absolute difference of 6%) for formation energy, 0.65 ų/atom (MRAD of 4%) for volume, 0.21 eV (MRAD of 9%) for band gap, and 0.15 μB/formula unit (MRAD of 8%) for total magnetization [16]. These differences are comparable in magnitude to the discrepancies often observed between DFT and experimental measurements, highlighting the importance of understanding the source of these variations.

Table 2: Property Reproducibility Across HT-DFT Databases

| Property | Variance Between Databases | Median Relative Absolute Difference | Key Sources of Discrepancy |
|---|---|---|---|
| Formation Energy | 0.105 eV/atom | 6% | Chemical potential references, DFT+U parameters |
| Volume | 0.65 ų/atom | 4% | Pseudopotentials, convergence criteria |
| Band Gap | 0.21 eV | 9% | DFT functionals, k-point sampling |
| Total Magnetization | 0.15 μB/formula unit | 8% | Magnetic ordering assumptions, DFT+U |
| Metallic vs. Insulating | Up to 7% disagreement | - | Band gap thresholds, smearing methods |

The larger discrepancies can be traced to specific methodological choices involving pseudopotentials, the DFT+U formalism, and elemental reference states [16]. These findings suggest that further standardization of HT-DFT would be beneficial to reproducibility, though the current databases remain immensely valuable when their specific methodologies are understood and accounted for.

Thermodynamic Stability Assessment

Fundamentals of Stability Evaluation

The thermodynamic stability of materials is typically represented by the decomposition energy (ΔHd), defined as the total energy difference between a given compound and competing compounds in a specific chemical space [1]. It is obtained by constructing a convex hull from the formation energies of the compound and all other relevant phases in the same phase diagram [1]. Conventionally, building this hull requires experimental measurements or DFT calculations of the energies of all compounds in the phase diagram. This consumes substantial resources and makes the exploration of new compounds slow [1].

The OQMD implements sophisticated phase diagram analysis through its qmpy framework, with the PhaseSpace class representing regions of compositional space and enabling computation of formation energies and stabilities with respect to defined chemical potentials [19]. The compute_stabilities() method calculates the stability for every phase by evaluating the energy difference between the formation energy of a phase and the energy of the convex hull in its absence [19].
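The same quantity generalizes to any number of elements if the hull energy is posed as a linear program: find the non-negative mixture of competing phases that reproduces the target composition at the lowest energy. The sketch below loops over every phase in the spirit of `compute_stabilities()`, but it is not qmpy code. It uses SciPy's `linprog` and assumes per-atom formation energies with elemental references at zero. The helper names and the ternary A-B-C data are hypothetical.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical ternary A-B-C data: (atomic fractions of A, B, C), formation energy in eV/atom
PHASES = {
    "AB": ([0.5, 0.5, 0.0], -0.40),
    "BC": ([0.0, 0.5, 0.5], -0.30),
    "ABC": ([1 / 3, 1 / 3, 1 / 3], -0.25),
}


def hull_energy(target, compositions, energies):
    """Lowest energy of any non-negative mixture of phases with the target composition."""
    a_eq = np.asarray(compositions, dtype=float).T  # rows: elements, columns: phases
    res = linprog(c=energies, A_eq=a_eq, b_eq=target, bounds=(0, None), method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return res.fun


def stabilities_by_lp(phases):
    """Stability of each phase = its energy minus the hull energy computed without it."""
    n_elem = len(next(iter(phases.values()))[0])
    elements = [list(row) for row in np.eye(n_elem)]  # elemental references, E = 0
    result = {}
    for name, (comp, energy) in phases.items():
        others = [v for k, v in phases.items() if k != name]
        comps = [c for c, _ in others] + elements
        energies = [e for _, e in others] + [0.0] * n_elem
        result[name] = energy - hull_energy(comp, comps, energies)
    return result


for name, stab in stabilities_by_lp(PHASES).items():
    print(f"{name}: {stab:+.3f} eV/atom")
```

Here ABC comes out slightly positive because a 2:1 mixture of AB and C is lower in energy. AB and BC are stable by their full formation energies, since no other phase can reach their compositions.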

DFT Methodology and Accuracy Assessment

The OQMD employs carefully tested calculation settings to ensure converged results in an efficient manner for a variety of material classes. Extensive testing on a sample of ICSD structures has established calculation parameters that ensure consistency across all calculations, enabling direct comparison of results between different compounds [15]. The database uses both standard DFT and DFT+U calculations with consistent parameters to handle strongly correlated electrons.

The accuracy of DFT formation energies has been rigorously assessed by comparing OQMD predictions with experimental measurements. The apparent mean absolute error between experimental measurements and DFT calculations is 0.096 eV/atom [15]. However, when examining deviations between different experimental measurements themselves where multiple sources are available, there is a surprisingly large mean absolute error of 0.082 eV/atom [15]. This suggests that a significant fraction of the error between DFT and experimental formation energies may be attributed to experimental uncertainties rather than computational inaccuracies.

Machine Learning for Accelerated Stability Prediction

Ensemble Machine Learning Framework

Machine learning offers a promising avenue for expediting the discovery of new compounds by accurately predicting their thermodynamic stability, providing significant advantages in terms of time and resource efficiency compared to traditional experimental and DFT methods [1]. Recent advances include an ensemble framework based on stacked generalization (SG) that amalgamates models rooted in distinct domains of knowledge [1]. This approach integrates three models: Magpie (utilizing statistical features of elemental properties), Roost (conceptualizing chemical formulas as graphs of elements), and the Electron Configuration Convolutional Neural Network (ECCNN) - a novel model developed to address the limited understanding of electronic internal structure in existing models [1].

The resulting super learner, designated Electron Configuration models with Stacked Generalization (ECSG), mitigates the limitations of individual models and harnesses a synergy that diminishes inductive biases, ultimately enhancing predictive performance [1]. In benchmark tests, this approach achieved an Area Under the Curve (AUC) score of 0.988 in predicting compound stability within the JARVIS database [1].
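AUC has a convenient probabilistic reading. It is the probability that a randomly chosen stable compound receives a higher stability score than a randomly chosen unstable one, with ties counted as one half. The pure-Python sketch below computes AUC directly from that definition. The labels and scores are invented for illustration, and in practice a library routine such as scikit-learn's `roc_auc_score` would be used.

```python
from itertools import product
from typing import Sequence


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """ROC AUC as P(score of a positive > score of a negative), ties counted as 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one stable and one unstable example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p, n in product(pos, neg))
    return wins / (len(pos) * len(neg))


# 1 = stable (on the hull), 0 = unstable; scores are hypothetical model outputs
labels = [1, 1, 1, 0, 0, 0]
scores = [0.95, 0.80, 0.40, 0.60, 0.30, 0.10]
print(auc(labels, scores))
```

This pairwise version is O(n²) and is meant only to expose the definition. Rank-based formulas give the same value in O(n log n).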

Composition-Based versus Structure-Based Models

Machine learning models for predicting material properties are primarily of two types: structure-based models and composition-based models [1]. Structure-based models contain more extensive information, including the proportions of each element and the geometric arrangements of atoms, but determining precise structures of compounds can be challenging [1]. Composition-based models, while lacking structural information, have demonstrated remarkable capability in accurately predicting material properties such as energy and bandgap [1].

In the discovery of novel materials, composition-based models offer a significant practical advantage. Because composition information is known a priori, they can be applied before any structure has been determined, which greatly improves the efficiency of developing new materials [1]. This is particularly valuable when exploring uncharted compositional spaces where structural information is unavailable.
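Because a composition-based model needs only the chemical formula, its input can be built before any structure is known. The sketch below computes Magpie-style statistics of one elemental property: the fraction-weighted mean, mean absolute deviation, minimum, maximum, and range. It is a simplified illustration rather than the actual Magpie feature set. The parser handles only simple formulas without parentheses, and only four Pauling electronegativities are tabulated.

```python
import re
from typing import Dict

# Pauling electronegativities for a few elements (illustrative subset only)
ELECTRONEGATIVITY = {"Na": 0.93, "Cl": 3.16, "Fe": 1.83, "O": 3.44}


def parse_formula(formula: str) -> Dict[str, float]:
    """Atomic fractions from a simple formula such as 'Fe2O3' (no parentheses)."""
    counts: Dict[str, float] = {}
    for element, amount in re.findall(r"([A-Z][a-z]?)(\d*\.?\d*)", formula):
        counts[element] = counts.get(element, 0.0) + float(amount or 1)
    total = sum(counts.values())
    return {el: n / total for el, n in counts.items()}


def property_stats(formula: str, table: Dict[str, float]) -> Dict[str, float]:
    """Fraction-weighted statistics of one elemental property for a composition."""
    fractions = parse_formula(formula)
    values = {el: table[el] for el in fractions}
    mean = sum(fractions[el] * v for el, v in values.items())
    avg_dev = sum(fractions[el] * abs(v - mean) for el, v in values.items())
    return {
        "mean": mean,
        "avg_dev": avg_dev,
        "min": min(values.values()),
        "max": max(values.values()),
        "range": max(values.values()) - min(values.values()),
    }


print(property_stats("Fe2O3", ELECTRONEGATIVITY))
```

A full featurizer repeats this for dozens of elemental properties and concatenates the results into one vector per composition.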

Table 3: Essential Research Tools and Resources

| Resource | Type | Primary Function | Application in Stability Research |
|---|---|---|---|
| OQMD Database | Computational Database | Provides DFT-calculated formation energies and structures | Foundation for convex hull construction and stability analysis |
| Materials Project API | Programming Interface | Enables automated retrieval of computed materials data | Access to updated DFT calculations with r²SCAN functional |
| qmpy | Python Framework | Database management and analysis for high-throughput DFT | Phase diagram analysis and stability computation |
| ECSG Model | Machine Learning Model | Predicts compound stability from composition | Rapid screening of uncharted compositional spaces |
| pymatgen | Python Library | Materials analysis and crystal manipulation | Structure conversion and materials informatics |
| DFT+U Parameters | Computational Parameters | Corrections for strongly correlated electrons | Improved accuracy for transition metal oxides |

Materials databases like the OQMD and Materials Project have established an indispensable data foundation for modern inorganic materials research, particularly in the domain of thermodynamic stability assessment. While variations exist between different databases due to methodological choices, the consolidated resources provide researchers with unprecedented access to calculated material properties at scale. The integration of machine learning approaches, especially ensemble methods that leverage multiple representations of materials, demonstrates remarkable potential for accelerating the discovery of stable compounds beyond the capabilities of DFT alone. As these resources continue to grow and evolve, they will undoubtedly remain cornerstone technologies in the quest to efficiently navigate the vast compositional space of inorganic materials and identify promising candidates for synthesis and application.

The pursuit of new functional materials has traditionally operated on a bottom-up paradigm, where macroscopic material properties are deduced from fundamental principles involving atomic arrangements and bonding characteristics. While this approach has yielded significant successes, it faces inherent limitations when navigating the vast compositional space of potential inorganic compounds. A transformative complementary approach has emerged: viewing the entire landscape of inorganic materials as an interconnected network. This paradigm shift, first articulated by Hegde et al., reconceptualizes materials discovery by analyzing the organizational structure of materials networks based on interactions between the materials themselves rather than solely their atomic constituents [20] [21].

This section elaborates on this network-centric paradigm, which characterizes the complete "phase stability network of all inorganic materials" as a complex system of 21,000 thermodynamically stable compounds (nodes) interconnected by 41 million tie-lines (edges) that define their two-phase equilibria [20] [21]. By applying network theory to materials science, researchers can uncover fundamental relationships and material characteristics that remain inaccessible through traditional atoms-to-materials approaches. This framework has already enabled the derivation of novel, data-driven metrics for material reactivity, such as the "nobility index," which quantitatively identifies the most noble (least reactive) materials in nature based solely on their connectivity within the phase stability network [20] [21].

The integration of this network-based approach with advanced computational methods, including high-throughput density functional theory (DFT) calculations and machine learning, establishes a powerful new foundation for thermodynamic stability discovery in inorganic materials research [20] [1]. This section examines the core principles, methodologies, and applications of this paradigm, providing researchers with the conceptual framework and computational protocols needed to leverage network analysis in accelerating materials discovery.

Quantitative Characterization of the Phase Stability Network

The phase stability network constitutes a comprehensive map of thermodynamic relationships between inorganic compounds. Through systematic analysis of this network, researchers have identified distinct topological features and quantitative relationships that offer predictive capabilities for materials behavior and stability.

Table 1: Key Quantitative Characteristics of the Phase Stability Network

| Network Metric | Value/Characteristics | Implications for Materials Discovery |
|---|---|---|
| Node Count | ~21,000 stable compounds | Represents the known thermodynamic landscape from high-throughput DFT [20] [21] |
| Edge Count | ~41 million tie-lines | Dense connectivity reflecting extensive two-phase equilibria [20] [21] |
| Node Connectivity Distribution | Lognormal distribution | Most materials have few connections, while few materials have many connections [21] |
| Connectivity vs. Composition | Decreases with number of elemental constituents | Binary compounds are more connected than ternary or quaternary systems [21] |
| Data-Driven Reactivity Metric | "Nobility Index" | Quantifies material reactivity based on network connectivity [20] [21] |

The topological analysis of this network reveals that node connectivity follows a lognormal distribution, indicating that while most materials exhibit limited connections within the network, a select few function as highly connected hubs [21]. This connectivity pattern mirrors features observed in other complex networks, including social and biological systems. Furthermore, researchers have established an inverse relationship between connectivity and compositional complexity: materials with fewer elemental constituents generally demonstrate higher connectivity within the network [21]. This fundamental insight provides crucial guidance for targeting specific regions of materials space when designing discovery campaigns.
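Node connectivity is simply the degree of each compound in the tie-line graph. Checking for a lognormal shape therefore means examining the distribution of log-degrees. The sketch below counts degrees from an edge list and fits a lognormal by maximum likelihood, using the mean and population standard deviation of ln(degree). The edge list is a toy example with generic node names, not data from [20] or [21]. A real analysis would also compare this fit against alternative distributions rather than assume it.

```python
import math
from collections import Counter
from statistics import mean, pstdev
from typing import Iterable, Tuple


def degrees(edges: Iterable[Tuple[str, str]]) -> Counter:
    """Number of distinct tie-lines incident on each compound (node degree)."""
    deg: Counter = Counter()
    for u, v in set(tuple(sorted(e)) for e in edges):  # ignore duplicate tie-lines
        deg[u] += 1
        deg[v] += 1
    return deg


def lognormal_fit(deg: Counter) -> Tuple[float, float]:
    """Maximum-likelihood lognormal parameters (mu, sigma) of the degree values."""
    logs = [math.log(k) for k in deg.values()]
    return mean(logs), pstdev(logs)


# Toy tie-line list; P0 acts as a hub, and the last edge duplicates the first
edges = [("P0", "P1"), ("P0", "P2"), ("P0", "P3"), ("P0", "P4"),
         ("P1", "P2"), ("P3", "P4"), ("P1", "P0")]
deg = degrees(edges)
print(deg["P0"], lognormal_fit(deg))
```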

The phase stability network enables the calculation of the nobility index, a data-driven metric that quantifies material reactivity based solely on network connectivity [20] [21]. This index identifies known noble materials while also predicting previously unrecognized candidates with exceptionally low reactivity. This demonstrates how network topology can reveal material properties without computing reactivity explicitly for each compound.

Computational Methodologies and Experimental Protocols

Implementing the phase stability network paradigm requires integrating multiple computational approaches, from first-principles calculations to machine learning frameworks. This section details the essential methodologies and protocols for constructing and analyzing materials networks.

High-Throughput DFT Workflow

The foundation of the phase stability network rests on accurate thermodynamic data derived from high-throughput density functional theory calculations. The established protocol involves:

Structure Selection and Preparation: Curate known crystal structures from inorganic crystallographic databases (e.g., ICSD) and generate hypothetical compounds through symmetric decoration of common prototype structures.

DFT Calculation Parameters: Employ standardized DFT settings across all calculations: plane-wave basis sets with projector augmented-wave (PAW) pseudopotentials, Perdew-Burke-Ernzerhof (PBE) exchange-correlation functional, and kinetic energy cutoffs of 520 eV for plane waves.

Convex Hull Construction: For each compositional system, compute the formation energy (ΔHf) of all compounds and construct the phase diagram by determining the lower convex hull. Compounds lying on the hull are classified as thermodynamically stable.

Tie-Line Establishment: Identify all stable two-phase equilibria between compounds, which form the edges (tie-lines) in the phase stability network. This generates the complete network connectivity map.
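Once the stable phases are known, the tie-lines follow from the hull geometry. In a binary system, neighbouring stable phases along the composition axis are in two-phase equilibrium. In a ternary system, every edge of a three-phase (Gibbs) triangle is a tie-line. The sketch below assembles network edges from both cases. The phase names and triangles are hypothetical inputs that, in practice, would come from the convex hull facets.

```python
from itertools import combinations
from typing import Iterable, List, Set, Tuple

Edge = Tuple[str, str]


def binary_tie_lines(stable_phases: List[Tuple[float, str]]) -> Set[Edge]:
    """Tie-lines between neighbouring stable phases of a binary system.

    `stable_phases` holds (composition, name) pairs for every phase on the
    hull, including the two elements.
    """
    ordered = [name for _, name in sorted(stable_phases)]
    return {tuple(sorted(pair)) for pair in zip(ordered, ordered[1:])}


def ternary_tie_lines(triangles: Iterable[Tuple[str, str, str]]) -> Set[Edge]:
    """Every edge of every three-phase triangle is a two-phase tie-line."""
    return {tuple(sorted(pair)) for tri in triangles for pair in combinations(tri, 2)}


# Hypothetical A-B binary hull and A-B-C ternary Gibbs triangles
edges = binary_tie_lines([(0.0, "A"), (0.5, "AB"), (1.0, "B")])
edges |= ternary_tie_lines([("A", "AB", "C"), ("AB", "B", "C")])
print(sorted(edges))
```

Note that A and B are not connected: the intermediate compound AB splits that edge. Pooling such edge sets over all chemical systems yields the full network.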

Machine Learning for Stability Prediction

Recent advances in machine learning offer complementary approaches to DFT for predicting thermodynamic stability. The ECSG (Electron Configuration models with Stacked Generalization) framework demonstrates how ensemble methods can achieve exceptional accuracy while reducing computational burden [1].

Table 2: Machine Learning Approaches for Stability Prediction

| Model | Architecture | Input Features | Key Advantages |
|---|---|---|---|
| ECSG (Ensemble) | Stacked generalization combining multiple models | Electron configuration, elemental properties, interatomic interactions | Mitigates individual model biases; AUC = 0.988 [1] |
| ECCNN | Convolutional Neural Network | Electron configuration matrices | Incorporates electronic structure information; reduces manual feature engineering [1] |
| Roost | Graph Neural Network | Elemental compositions represented as complete graphs | Captures interatomic interactions through message passing [1] |
| Magpie | Gradient Boosted Regression Trees | Statistical features of elemental properties | Provides diverse elemental property representation [1] |

The ECSG framework exemplifies state-of-the-art methodology, achieving an Area Under the Curve (AUC) score of 0.988 in predicting compound stability within the JARVIS database [1]. Notably, this ensemble approach is highly sample efficient, requiring only one-seventh of the data used by existing models to achieve equivalent performance [1]. This data efficiency can substantially accelerate materials screening campaigns.

Generative AI and Baseline Comparisons

Generative artificial intelligence represents the frontier of computational materials discovery. Recent benchmarking studies have evaluated generative models against traditional baseline methods for discovering new inorganic crystals [12]. The benchmarking protocol involves:

Method Comparison: Benchmark four generative techniques, spanning diffusion models, variational autoencoders, and large language models, against two baseline approaches: random enumeration of charge-balanced prototypes and data-driven ion exchange of known compounds [12].

Generation and Evaluation: Each method generates candidate structures, which are then evaluated for stability and properties using pre-trained machine learning models and universal interatomic potentials [12].

Performance Metrics: Quantify success rates based on novelty, stability, and ability to target specific properties like electronic band gap and bulk modulus [12].
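The random-enumeration baseline rests on a simple filter: a composition is kept only if some assignment of common oxidation states makes it charge neutral. The sketch below enumerates small Li-Fe-O formulas and applies that test. The oxidation-state table and the coefficient limit are assumptions for illustration. The sketch allows only one oxidation state per element and does not reduce multiples. The baseline in [12] also places compositions into prototype structures, which is not shown.

```python
from itertools import product
from typing import Dict, List, Tuple

# Assumed common oxidation states for a few elements (illustrative only)
OXIDATION_STATES: Dict[str, List[int]] = {"Li": [1], "Fe": [2, 3], "O": [-2]}


def is_charge_balanced(composition: Dict[str, int]) -> bool:
    """True if some choice of one oxidation state per element sums to zero."""
    elements = list(composition)
    for states in product(*(OXIDATION_STATES[el] for el in elements)):
        if sum(q * composition[el] for q, el in zip(states, elements)) == 0:
            return True
    return False


def enumerate_compositions(elements: Tuple[str, ...], max_coeff: int = 4) -> List[Dict[str, int]]:
    """All charge-balanced formulas with stoichiometric coefficients 1..max_coeff."""
    hits = []
    for coeffs in product(range(1, max_coeff + 1), repeat=len(elements)):
        comp = dict(zip(elements, coeffs))
        if is_charge_balanced(comp):
            hits.append(comp)
    return hits


for comp in enumerate_compositions(("Li", "Fe", "O")):
    print("".join(f"{el}{n if n > 1 else ''}" for el, n in comp.items()))
```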

Results indicate that while traditional methods like ion exchange excel at generating novel materials that are stable (though often resembling known compounds), generative models show superior capability in proposing novel structural frameworks and, with sufficient training data, more effectively target specific properties [12]. A critical finding is that a post-generation screening step using pre-trained ML models substantially improves success rates across all methods while maintaining computational efficiency [12].

Diagram 1: MatDiscovery Workflow

Implementing the phase stability network paradigm requires specific computational tools and resources. This section details the essential components of the research infrastructure needed for network-based materials discovery.

Table 3: Essential Research Resources for Phase Stability Network Analysis

| Resource Category | Specific Tools/Databases | Function/Purpose |
|---|---|---|
| Computational Databases | Materials Project (MP), Open Quantum Materials Database (OQMD), JARVIS | Provide foundational thermodynamic data and crystal structures for network construction [1] |
| First-Principles Codes | VASP, Quantum ESPRESSO, ABINIT | Perform density functional theory calculations for formation energies and electronic properties |
| Network Analysis Tools | NetworkX, Graph-tool, Gephi | Analyze topological properties, calculate connectivity metrics, and visualize materials networks |
| Machine Learning Frameworks | PyTorch, TensorFlow, Scikit-learn | Implement and train stability prediction models (ECSG, ECCNN, Roost, Magpie) [1] |
| High-Throughput Platforms | Atomate, AFLOW, FireWorks | Automate computational workflows and manage large-scale materials screening campaigns |

The integration of these resources creates a powerful infrastructure for network-driven materials discovery. The computational databases serve as the foundational source of thermodynamically validated compounds, while first-principles codes enable the calculation of new candidates. The network analysis tools provide the crucial capability to map and interrogate the topological relationships between materials, and machine learning frameworks dramatically accelerate the screening process. Finally, high-throughput platforms orchestrate these components into efficient, scalable discovery pipelines.

Applications and Validation Case Studies

The phase stability network paradigm has demonstrated practical utility across multiple materials domains. Validation studies confirm its effectiveness in discovering new materials with targeted properties.

Two-Dimensional Wide Bandgap Semiconductors

In one application, researchers used the ECSG framework to explore new two-dimensional wide bandgap semiconductors [1]. They screened compositional spaces for stable two-dimensional materials with predicted band gaps exceeding 3 eV. First-principles calculations validated that the identified candidates were indeed thermodynamically stable and exhibited the targeted electronic properties [1]. This case study demonstrates how composition-based stability prediction enables efficient navigation of unexplored composition space for specific application needs.

Double Perovskite Oxides Exploration

Another successful application involved the discovery of novel double perovskite oxides [1]. The ML framework identified numerous previously unrecognized perovskite structures with predicted stability. Subsequent DFT calculations confirmed the stability of the identified compounds, supporting the accuracy of the method [1]. This example highlights the approach's strength in finding complex, multi-element compounds that might be overlooked through traditional trial-and-error approaches.

Novel Compound Generation and Screening

Generative AI opens further pathways for discovery. Recent benchmarking studies show that combining generative models with post-generation screening by pre-trained ML models creates a highly effective discovery pipeline [12]. This approach identifies novel structural frameworks while maintaining thermodynamic stability, addressing one of the fundamental challenges in generative materials design.

Diagram 2: NetworkDiscovery Pipeline

The paradigm of viewing materials as a phase stability network represents a fundamental shift in materials discovery methodology. By complementing traditional bottom-up approaches with network science principles, this framework unlocks new knowledge inaccessible through conventional atoms-to-materials investigations. The demonstrated capabilities—from deriving data-driven reactivity metrics to accelerating the discovery of functional materials—underscore the transformative potential of this approach.

Future advancements will likely focus on several key areas: enhancing the integration of generative AI with network-based validation, expanding the scope to include dynamic and kinetic properties, and developing more sophisticated multi-property optimization frameworks. As these methodologies mature, the phase stability network paradigm promises to dramatically accelerate the discovery and development of next-generation inorganic materials with tailored properties for specific technological applications. The continued benchmarking of new approaches against established baselines, as exemplified by recent comparative studies, will ensure rigorous advancement of the field while maintaining computational efficiency and practical utility for researchers [12].

Next-Generation Tools: High-Throughput and Machine Learning Workflows

In the relentless pursuit of discovering new inorganic materials with targeted properties, researchers face a formidable challenge: the immense scale of possible compositional space. This process is often likened to finding a needle in a haystack, where the number of compounds that can be feasibly synthesized represents only a minute fraction of the total possibilities [1]. Within this context, accurately predicting a material's thermodynamic stability, typically represented by its decomposition energy (ΔHd), is a critical first step for constraining the exploration space and accelerating materials development [1]. Traditional methods for determining stability, primarily based on Density Functional Theory (DFT), are computationally intensive and resource-heavy, creating a bottleneck for high-throughput discovery.

Ensemble machine learning offers a powerful alternative, providing a framework to build highly accurate and robust predictive models that can rapidly assess material stability. Ensemble learning is a method where multiple models, which may be weak on their own, are combined to produce a single, superior predictive model [22] [23]. The core principle is that a collectivity of learners yields greater overall accuracy than any individual learner could achieve alone [24]. For materials scientists, this technique translates into the ability to create models that significantly reduce the variance and bias inherent in single-model approaches, leading to more reliable predictions of stability and enabling the efficient identification of promising candidate materials for laboratory synthesis [22] [25].

Core Principles of Ensemble Learning

The Bias-Variance Tradeoff and the "Wisdom of the Crowd"

The theoretical foundation of ensemble learning is deeply rooted in managing the bias-variance tradeoff, a fundamental dilemma in machine learning. Bias measures the average difference between a model's predicted values and the true values, representing errors from overly simplistic assumptions. Variance measures the inconsistency of model predictions across different datasets, representing sensitivity to fluctuations in the training data [24].

Any single model training algorithm involves numerous variables, including training data, hyperparameters, and architectural choices, that collectively determine the model's total error. That error is composed of bias, variance, and irreducible error [24]. Ensemble methods leverage the "wisdom of the crowd" effect by combining multiple diverse models, allowing them to compensate for each other's weaknesses. This aggregation often yields a lower overall error rate than any constituent model achieves alone [24] [23]. The geometric framework for ensemble learning visualizes each classifier's output as a point in a multi-dimensional space, with the ideal prediction as a target "ideal point." By averaging the outputs of base models, the ensemble's collective prediction is often closer to this ideal point than any individual model's output [23].
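The geometric picture can be checked numerically for regression with squared error. By convexity, the squared error of the averaged prediction never exceeds the average squared error of the individual models, although it need not beat the best single model. The NumPy sketch below simulates three hypothetical models whose errors are partly shared and partly independent. The noise levels are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(0)
y_true = rng.normal(size=1000)  # stand-in for true target values

# Three hypothetical models: truth + an error term they share + independent noise
shared = 0.3 * rng.normal(size=y_true.size)
preds = np.stack([y_true + shared + 0.5 * rng.normal(size=y_true.size) for _ in range(3)])

individual_mse = ((preds - y_true) ** 2).mean(axis=1)  # one MSE per model
ensemble_mse = ((preds.mean(axis=0) - y_true) ** 2).mean()  # MSE of the averaged output

print("individual MSE:", individual_mse.round(3))
print("ensemble MSE:", round(float(ensemble_mse), 3))
```

Averaging removes much of the independent noise but none of the shared error. This is why diversity among base models matters.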

Key Ensemble Methodologies

Three primary techniques dominate the ensemble learning landscape, each with distinct mechanisms for combining models and optimizing performance.

Bagging (Bootstrap Aggregating)

Bagging is a parallel ensemble method that reduces variance and mitigates overfitting. It creates multiple diverse datasets from the original training data through bootstrap sampling—randomly selecting subsets of data with replacement [22] [24]. Each bootstrap sample is used to train a separate base learner (e.g., a decision tree) independently. During prediction, the outputs of all base learners are aggregated, typically through majority voting for classification or averaging for regression [22] [23]. A key advantage is Out-of-Bag (OOB) Evaluation, where data points not included in a model's bootstrap sample can be used for validation without separate cross-validation [22].
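Bootstrap sampling and the out-of-bag set are easy to reproduce directly. In the sketch below, each bootstrap sample draws n indices with replacement. On average about 1 - 1/e ≈ 63.2% of distinct points are drawn, which leaves roughly 36.8% out of bag for validation. The dataset size and number of bags are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(42)
n_samples, n_bags = 2000, 50

oob_fractions = []
for _ in range(n_bags):
    in_bag = rng.integers(0, n_samples, size=n_samples)  # indices drawn with replacement
    oob_mask = np.ones(n_samples, dtype=bool)
    oob_mask[in_bag] = False  # points never drawn form the out-of-bag set
    oob_fractions.append(oob_mask.mean())

print(f"mean OOB fraction: {np.mean(oob_fractions):.3f} (theory: {np.exp(-1):.3f})")
```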

Table 1: Key Characteristics of Bagging

| Feature | Description |
|---|---|
| Training Mode | Parallel: Models are trained independently [24] |
| Primary Goal | Reduce variance and prevent overfitting [22] |
| Sampling Method | Bootstrap sampling (random selection with replacement) [22] |
| Base Learner Diversity | Often uses the same learning algorithm (homogeneous) [24] |
| Aggregation Method | Voting (classification) or Averaging (regression) [22] |
| Exemplar Algorithm | Random Forest [22] |

Boosting

Boosting is a sequential ensemble method designed primarily to reduce bias. It trains base learners one after another, with each new model focusing on correcting the errors made by its predecessors [22] [26]. In AdaBoost, for example, the algorithm assigns weights to training data points and increases the weight of misclassified examples so that subsequent models pay more attention to them [22] [26]. Gradient boosting instead fits each new model to the residual errors of the current ensemble [24]. Unlike bagging, which combines independent models, boosting creates an additive model where each new learner incrementally improves the overall performance. The final prediction is a weighted combination of all models [22].

Table 2: Comparison of Popular Boosting Algorithms

| Algorithm | Core Mechanism | Key Features |
|---|---|---|
| AdaBoost | Adaptively re-weights misclassified instances after each iteration [22] [26] | Historically significant, intuitive, can be sensitive to noisy data [26] |
| Gradient Boosting | Uses gradient descent to minimize loss function; trains on residual errors of previous model [24] | Often achieves higher accuracy, more complex implementation [24] |
| XGBoost | Optimized version of gradient boosting with tree pruning and regularization [22] | High performance, regularization prevents overfitting, parallel processing [22] |
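The residual-fitting idea behind gradient boosting can be written out in a few lines for squared-error loss, where the negative gradient is simply the residual. This sketch uses scikit-learn decision stumps as weak learners on synthetic data. The learning rate and number of rounds are arbitrary, and the sketch omits the regularization that XGBoost adds.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(300, 1))
y = np.sin(X[:, 0]) + 0.1 * rng.normal(size=300)  # synthetic target

learning_rate, n_rounds = 0.1, 100
prediction = np.full_like(y, y.mean())  # start from a constant model
history = [np.mean((y - prediction) ** 2)]

for _ in range(n_rounds):
    residual = y - prediction  # negative gradient of 0.5 * squared error
    stump = DecisionTreeRegressor(max_depth=1).fit(X, residual)
    prediction += learning_rate * stump.predict(X)  # additive, shrunken update
    history.append(np.mean((y - prediction) ** 2))

print(f"training MSE: {history[0]:.3f} -> {history[-1]:.3f}")
```

For squared error with a learning rate of at most 1, each round cannot increase the training error. Held-out data, rather than training MSE, should guide the number of rounds.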

Stacking (Stacked Generalization)

Stacking is a heterogeneous parallel method that combines different types of models using a meta-learner. First, multiple diverse base models (e.g., neural networks, decision trees, support vector machines) are trained on the same dataset [22] [24]. Their predictions then become the input features for a final meta-model, which learns how to best combine these predictions [22]. This approach is particularly powerful when base models are built from different domains of knowledge, as it can capture complementary patterns and mitigate the inductive biases of any single model or feature set [1]. Crucially, to prevent overfitting, the meta-learner should be trained on predictions generated from data not used in training the base learners, often achieved through cross-validation [24].
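The out-of-fold requirement is the step most often gotten wrong, so it is worth spelling out. The sketch below builds the meta-learner's training set with `cross_val_predict`. Each base-model prediction for a sample therefore comes from a model that never saw that sample. A logistic-regression meta-learner is then fit on those predictions. The synthetic data and the choice of base models are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import cross_val_predict, train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.svm import SVC

X, y = make_classification(n_samples=600, n_features=20, n_informative=6, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25, random_state=0)

# Heterogeneous base learners (an arbitrary, illustrative choice)
base_models = [
    RandomForestClassifier(n_estimators=200, random_state=0),
    KNeighborsClassifier(n_neighbors=15),
    SVC(probability=True, random_state=0),
]

# Level-1 training features: out-of-fold P(class 1) from each base model
meta_train = np.column_stack([
    cross_val_predict(m, X_train, y_train, cv=5, method="predict_proba")[:, 1]
    for m in base_models
])
meta_model = LogisticRegression().fit(meta_train, y_train)

# For new data, refit each base model on the full training set, then stack
meta_test = np.column_stack([
    m.fit(X_train, y_train).predict_proba(X_test)[:, 1] for m in base_models
])
stacked_auc = roc_auc_score(y_test, meta_model.predict_proba(meta_test)[:, 1])
print(f"stacked AUC: {stacked_auc:.3f}")
```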

Ensemble Learning for Thermodynamic Stability Prediction

The Critical Need for Accurate Stability Prediction

The thermodynamic stability of materials, quantified by the decomposition energy (ΔHd), represents the energy difference between a compound and its competing phases in a specific chemical space [1]. Establishing the convex hull using formation energies of all relevant materials in a phase diagram is the conventional approach for determining stability, but this requires extensive DFT calculations or experimental investigation, consuming substantial computational resources and time [1]. Machine learning offers a promising alternative by learning the relationship between material composition/structure and stability from existing databases, enabling rapid screening of candidate materials.

However, standard machine learning approaches for predicting compound stability often suffer from significant limitations. A major issue is the inductive bias introduced by models relying on a single hypothesis or limited domain knowledge [1]. When models are built on idealized scenarios or incomplete understandings of chemical mechanisms, the ground truth may lie outside the model's parameter space, diminishing prediction accuracy [1]. Ensemble methods, particularly stacking, have emerged as a powerful solution to this problem by amalgamating models rooted in distinct knowledge domains.

Case Study: The ECSG Framework for Stability Prediction

A compelling example of ensemble learning in materials science is the Electron Configuration models with Stacked Generalization (ECSG) framework, developed to predict thermodynamic stability of inorganic compounds [1]. This approach integrates three distinct models based on different physical principles:

- Magpie: Utilizes statistical features (mean, deviation, range) of various elemental properties (atomic number, mass, radius) and employs gradient-boosted regression trees (XGBoost) [1].

- Roost: Conceptualizes the chemical formula as a complete graph of elements, using graph neural networks with attention mechanisms to capture interatomic interactions [1].

- ECCNN (Electron Configuration Convolutional Neural Network): A novel model that uses electron configuration—an intrinsic atomic characteristic—as direct input, processed through convolutional layers to extract relevant features [1].
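ECCNN's distinguishing input is the electron configuration. The sketch below shows one simple way to turn an element into a subshell-occupancy vector by filling orbitals in Madelung (aufbau) order. It then stacks those vectors, scaled by atomic fraction, into a matrix for a compound. This encoding is an assumption for illustration, not the published ECCNN input format. The aufbau rule also misses known exceptions such as Cr and Cu.

```python
from typing import Dict, List

# Subshells in Madelung filling order; capacities depend on the orbital letter
SUBSHELLS = ["1s", "2s", "2p", "3s", "3p", "4s", "3d", "4p", "5s", "4d", "5p",
             "6s", "4f", "5d", "6p", "7s", "5f", "6d", "7p"]
CAPACITY = {"s": 2, "p": 6, "d": 10, "f": 14}
ATOMIC_NUMBER = {"O": 8, "Fe": 26}  # illustrative subset


def occupancy(z: int) -> List[int]:
    """Electrons per subshell for atomic number z under the aufbau rule."""
    occ, remaining = [], z
    for sub in SUBSHELLS:
        n = min(remaining, CAPACITY[sub[-1]])
        occ.append(n)
        remaining -= n
    return occ


def composition_matrix(fractions: Dict[str, float]) -> List[List[float]]:
    """One row per element: its occupancy vector scaled by its atomic fraction."""
    return [[f * n for n in occupancy(ATOMIC_NUMBER[el])] for el, f in fractions.items()]


print(dict(zip(SUBSHELLS, occupancy(26))))  # Fe
print(composition_matrix({"Fe": 0.4, "O": 0.6})[1][:3])  # scaled O row, first subshells
```

A convolutional network can then slide filters over such matrices, as ECCNN does with its own encoding.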

The ECSG framework employs stacked generalization to combine these diverse models. The predictions from Magpie, Roost, and ECCNN serve as inputs to a meta-learner, which learns the optimal way to integrate them into a final, more accurate stability prediction [1]. This approach mitigates the limitations of individual models by leveraging a synergy that diminishes inductive biases. Experimental results validated the efficacy of this ensemble approach, achieving an Area Under the Curve (AUC) score of 0.988 in predicting compound stability within the JARVIS database—surpassing the performance of any individual model [1].
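The key design choice in ECSG is that each base learner sees the material through a different representation. The scikit-learn sketch below imitates that structure with `StackingClassifier`. Three hypothetical base models each receive a different column subset of a synthetic feature matrix, standing in for elemental-property statistics, graph-derived features, and electron-configuration features. A logistic-regression meta-learner is trained on their cross-validated predictions. The sketch does not reproduce Magpie, Roost, or ECCNN, and the column split is arbitrary.

```python
from sklearn.compose import ColumnTransformer
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in: 30 columns split into three hypothetical feature "views"
X, y = make_classification(n_samples=800, n_features=30, n_informative=12, random_state=1)
X_train, X_test, y_train, y_test = train_test_split(X, y, stratify=y, random_state=1)


def view(columns, *steps):
    """Base learner that only sees one column subset (one 'knowledge domain')."""
    select = ColumnTransformer([("view", "passthrough", list(columns))], remainder="drop")
    return make_pipeline(select, *steps)


stack = StackingClassifier(
    estimators=[
        ("elemental_stats", view(range(0, 10), GradientBoostingClassifier(random_state=0))),
        ("graph_like", view(range(10, 20), RandomForestClassifier(random_state=0))),
        ("electron_config", view(range(20, 30), StandardScaler(),
                                 MLPClassifier(max_iter=1000, random_state=0))),
    ],
    final_estimator=LogisticRegression(),
    cv=5,  # meta-learner is fit on out-of-fold base-model predictions
)
stack.fit(X_train, y_train)
stacked_auc = roc_auc_score(y_test, stack.predict_proba(X_test)[:, 1])
print(f"stacked AUC: {stacked_auc:.3f}")
```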

Diagram 1: ECSG Stacked Generalization Workflow for predicting thermodynamic stability.

Case Study: Ensemble Learning for Carbon Allotropes

Another significant application of ensemble learning involves predicting the formation energy and elastic constants of carbon allotropes using regression-tree-based ensembles [25]. This approach addresses the limitations of both DFT (computationally demanding) and molecular dynamics with classical interatomic potentials (limited accuracy) by using properties calculated from nine different classical potentials as input features for ensemble models [25].

Researchers extracted carbon structures from the Materials Project database and computed their formation energy and elastic constants using MD simulations with nine different classical interatomic potentials (ABOP, AIREBO, LJ, etc.) [25]. These computed properties served as features, with corresponding DFT references as targets, to train multiple ensemble models including Random Forest (RF), AdaBoost (AB), Gradient Boosting (GB), and XGBoost (XGB) [25]. The results demonstrated that ensemble models consistently outperformed individual classical potentials, with the ensemble's mean absolute error (MAE) lower than that of the most accurate individual potential (LCBOP) [25]. This showcases ensemble learning's ability to identify and leverage the most accurate features from a pool of inputs to improve prediction precision.

Table 3: Performance of Ensemble Methods for Carbon Allotropes Formation Energy Prediction

| Method | Description | Key Finding |
|---|---|---|
| Random Forest (RF) | Bagging ensemble of decision trees | Outperformed individual classical potentials [25] |
| AdaBoost (AB) | Sequential boosting with re-weighting | Improved accuracy over base estimators [25] |
| Gradient Boosting (GB) | Sequential boosting with gradient descent | Captured complex non-linear relationships in data [25] |
| XGBoost (XGB) | Optimized gradient boosting | High predictive accuracy with regularization [25] |
| Voting Regressor (VR) | Combined RF, AB, and GB predictions | Mitigated overall error through averaging [25] |
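The carbon-allotrope setup can be mimicked with synthetic data. Each feature plays the role of one classical potential's estimate of the DFT target, with its own systematic bias and noise, and the ensemble learns to map these estimates onto the reference. The sketch below trains the RF, AdaBoost, and gradient-boosting models from Table 3 and combines them with a `VotingRegressor`. The nine noisy features and their error levels are invented, and no real potential data are used.

```python
import numpy as np
from sklearn.ensemble import AdaBoostRegressor, GradientBoostingRegressor, RandomForestRegressor, VotingRegressor
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n_structures, n_potentials = 400, 9
e_dft = rng.uniform(0.0, 1.5, size=n_structures)  # hypothetical DFT reference (eV/atom)

# Each hypothetical "potential" = scaled and shifted DFT value plus its own noise level
scale = rng.uniform(0.7, 1.3, size=n_potentials)
shift = rng.uniform(-0.3, 0.3, size=n_potentials)
noise = rng.uniform(0.05, 0.3, size=n_potentials)
X = e_dft[:, None] * scale + shift + rng.normal(size=(n_structures, n_potentials)) * noise

X_train, X_test, y_train, y_test = train_test_split(X, e_dft, test_size=0.25, random_state=0)
vote = VotingRegressor([
    ("rf", RandomForestRegressor(n_estimators=200, random_state=0)),
    ("ab", AdaBoostRegressor(random_state=0)),
    ("gb", GradientBoostingRegressor(random_state=0)),
]).fit(X_train, y_train)

# Compare with using any single "potential" directly as the prediction
best_single = min(mean_absolute_error(y_test, X_test[:, j]) for j in range(n_potentials))
ensemble_mae = mean_absolute_error(y_test, vote.predict(X_test))
print(f"best single-potential MAE: {best_single:.3f} eV/atom")
print(f"voting ensemble MAE: {ensemble_mae:.3f} eV/atom")
```

Whether the ensemble beats the best single feature here depends on the invented error levels. The claim in [25] concerns their real data, where the ensemble outperformed LCBOP.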

Experimental Protocols and Implementation

Implementation of Bagging for Material Classification

The following protocol outlines the implementation of a bagging classifier using decision trees as base estimators, applicable to material classification tasks such as crystal system prediction.

Protocol 1: Bagging Classifier Implementation

- Import Libraries and Load Data
- Split Dataset into Training and Testing Sets
- Create Base Classifier
- Initialize and Train Bagging Classifier
- Make Predictions and Evaluate Accuracy

Implementation of Boosting for Regression Tasks

This protocol details the implementation of the AdaBoost algorithm for regression tasks, such as predicting formation energies of materials.

Protocol 2: AdaBoost Regressor Implementation

- Import Necessary Libraries
- Prepare Dataset and Split into Training/Testing Sets
- Define Weak Learner
- Create and Train AdaBoost Regressor
- Make Predictions and Calculate Error

Research Reagent Solutions: Computational Tools for Ensemble Materials Discovery

Table 4: Essential Computational Tools for Ensemble Learning in Materials Research

| Tool/Resource | Type | Function in Research |
|---|---|---|
| Scikit-learn | Python Library | Provides implementations of Bagging, Random Forest, AdaBoost, and stacking ensembles [22] [25] |
| XGBoost | Optimized Boosting Library | Implements gradient boosting with regularization and parallel processing for enhanced performance [22] |
| Materials Project | Materials Database | Source of crystal structures and calculated properties for training and validation [1] [25] |
| JARVIS-FF | Force-Field Database | Contains properties calculated by classical potentials, used as features for ensemble models [25] |
| LAMMPS | Molecular Dynamics Simulator | Calculates material properties using classical interatomic potentials for feature generation [25] |

Diagram 2: Ensemble Learning Workflow for Materials Discovery.

Ensemble machine learning represents a paradigm shift in computational materials science, offering a robust framework for addressing the complex challenge of thermodynamic stability prediction. By strategically combining multiple models through bagging, boosting, or stacking, researchers can create super-learners that mitigate the biases and limitations of individual approaches [22] [1]. Ensemble methods have been reported to predict stability efficiently, reaching state-of-the-art performance with only a fraction of the training data required by conventional models. This result underscores their potential to accelerate materials discovery [1].

The implementation of these techniques within the materials science workflow, from feature extraction from diverse databases to the application of sophisticated ensemble algorithms, provides a scalable and effective approach for navigating the vast compositional space of inorganic compounds [1] [25]. As the field progresses, the integration of ensemble learning with generative models and high-throughput experimental validation will likely play a pivotal role in bridging the gap between computational prediction and laboratory synthesis, ultimately enabling the discovery of novel materials with tailored properties for specific applications [12] [7].

The discovery of new inorganic materials with tailored properties is a central goal in materials science, driving innovations in catalysis, energy storage, and electronics. A critical challenge in this pursuit is the efficient identification of materials that are not only functionally superior but also thermodynamically stable and synthesizable. Traditional experimental approaches, reliant on trial-and-error, are time-consuming and resource-intensive. Similarly, computational screening via density functional theory (DFT), while powerful, remains computationally expensive for exploring vast compositional and structural spaces [7].

Descriptor-driven screening has emerged as a powerful paradigm to overcome these limitations. This approach leverages key physicochemical properties—descriptors—that act as proxies for complex material behaviors, enabling rapid prediction and prioritization of candidate materials. The evolution of these descriptors has progressed from single-value electronic properties, such as the d-band center, to comprehensive patterns like the full electronic Density of States (DOS). The d-band center, representing the average energy of the d-electron states relative to the Fermi level, has been exceptionally successful in predicting adsorption strengths and catalytic activity in transition-metal systems [27] [28]. However, capturing the complete electronic landscape requires analysis of the full DOS pattern, which provides a richer description of electronic structure but presents greater challenges for computation and machine learning [29].

Framed within the broader context of thermodynamic stability discovery, accurate descriptors allow researchers to navigate the high-dimensional space of inorganic materials by linking electronic structure to phase stability. This guide provides an in-depth technical examination of descriptor-driven screening, from foundational concepts to cutting-edge methodologies that integrate machine learning and generative models for the inverse design of stable inorganic materials.

Core Descriptor Concepts and Quantitative Foundations

The d-Band Center Theory

The d-band center (εd) is a cornerstone electronic descriptor in the catalysis of transition metals and their compounds. It is defined as the first moment of the d-projected density of states relative to the Fermi level, calculated using the formula:

εd = ∫ E ρd(E) dE / ∫ ρd(E) dE [28]

Here, E is the energy measured relative to the Fermi level, and ρd(E) is the density of states projected onto the d-orbitals. The position of the d-band center relative to the Fermi level provides a powerful rule of thumb. A higher d-band center (closer to the Fermi level) generally leads to stronger adsorbate bonding, because the anti-bonding states are pushed higher in energy and remain less filled. Conversely, a lower d-band center results in weaker adsorption due to increased population of anti-bonding states [27] [28]. This principle has been extensively applied to explain and predict catalytic activity for reactions such as the oxygen evolution reaction (OER), carbon dioxide reduction reaction (CO₂RR), and hydrogen evolution reaction (HER) [27].
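Numerically, the d-band center is a ratio of two integrals over a sampled PDOS. The NumPy sketch below evaluates it with the trapezoidal rule. The function name `d_band_center` and the toy Gaussian PDOS are hypothetical. The optional `window` argument reflects a common practical choice, since some studies integrate only over a finite energy range. It is an assumption here and is not part of the formula above.

```python
import numpy as np


def _trapezoid(y, x):
    """Trapezoidal integral of y(x), written out to avoid NumPy version differences."""
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(x)))


def d_band_center(energies, pdos_d, e_fermi, window=None):
    """First moment of the d-projected DOS, with energies referenced to E_F."""
    e = np.asarray(energies, dtype=float) - e_fermi
    rho = np.asarray(pdos_d, dtype=float)
    if window is not None:  # optional (e_min, e_max) relative to E_F
        keep = (e >= window[0]) & (e <= window[1])
        e, rho = e[keep], rho[keep]
    return _trapezoid(e * rho, e) / _trapezoid(rho, e)


# Hypothetical d-PDOS: a Gaussian band centred 2 eV below a Fermi level at 5 eV.
energies = np.linspace(-5.0, 10.0, 3001)
e_fermi = 5.0
pdos_d = np.exp(-0.5 * ((energies - 3.0) / 1.0) ** 2)

eps_d = d_band_center(energies, pdos_d, e_fermi)
print(f"d-band center: {eps_d:.3f} eV")  # about -2.000 eV for this symmetric toy band
```

With real calculations, `energies` and `pdos_d` would come from the projected DOS written by the DFT code, summed over the d-orbitals of the atoms of interest.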

Full Electronic Density of States (DOS) Patterns

While the d-band center offers a simplified, single-value metric, the full electronic Density of States (DOS) provides a complete energy-dependent distribution of electronic states. The DOS pattern captures complex features beyond a single energy level, including bonding/anti-bonding character, band gaps, and orbital hybridization effects, which are critical for understanding a material's total energy, stability, and multifaceted properties [29] [30].

In solid-state physics, the DOS, g(E), is defined as the number of electronic states per unit volume per unit energy. At thermodynamic equilibrium, the occupation of these states is governed by the Fermi-Dirac distribution, f(E) = 1 / (1 + exp((E − μ)/k_BT)). Here μ is the chemical potential (the Fermi level), k_B is Boltzmann's constant, and T is the temperature [30]. The fundamental challenge lies in the computational cost of obtaining accurate DOS patterns from first-principles DFT calculations, which scales as O(N³) with the number of electrons, N [29].
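The link between g(E), f(E), and μ can be made concrete. For a fixed number of electrons N, the Fermi level is the μ that satisfies ∫ g(E) f(E) dE = N. The sketch below finds μ by bisection, which works because the integral increases monotonically with μ. The flat DOS of 2 states/eV is a hypothetical toy input, and the helper names are illustrative.

```python
import numpy as np

K_B = 8.617333262e-5  # Boltzmann constant (eV/K)


def fermi_dirac(e, mu, temperature):
    """f(E) = 1 / (1 + exp((E - mu) / k_B T)), written with tanh to avoid overflow."""
    x = (e - mu) / (K_B * temperature)
    return 0.5 * (1.0 - np.tanh(0.5 * x))


def electron_count(e, g, mu, temperature):
    """N(mu) = integral of g(E) f(E) dE, using the trapezoidal rule."""
    y = g * fermi_dirac(e, mu, temperature)
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(e)))


def find_fermi_level(e, g, n_electrons, temperature, tol=1e-8):
    """Bisection on mu within the energy grid; N(mu) is monotonic in mu."""
    lo, hi = float(e[0]), float(e[-1])
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if electron_count(e, g, mid, temperature) < n_electrons:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Hypothetical flat DOS: 2 states/eV between -5 and 5 eV, holding 12 electrons.
e = np.linspace(-5.0, 5.0, 10001)
g = np.full_like(e, 2.0)
mu = find_fermi_level(e, g, n_electrons=12.0, temperature=300.0)
print(f"Fermi level: {mu:.4f} eV")  # 1.0000 eV: 6 eV of band below mu at 2 states/eV
```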

Table 1: Comparison of Key Electronic Descriptors

| Descriptor | Mathematical Definition | Physical Interpretation | Computational Cost | Primary Applications |
|---|---|---|---|---|
| d-band Center | εd = ∫ E ρd(E) dE / ∫ ρd(E) dE | Average energy of d-electron states; governs adsorbate bonding strength | Medium (requires PDOS) | Catalysis, adsorption strength prediction [27] [28] |
| DOS at Fermi level | g(E_F) | Number of states available for electronic excitation | Medium | Metallic behavior, superconductivity [29] |
| Full DOS Pattern | g(E) for E ∈ [E_min, E_max] | Complete electronic structure landscape | High (O(N³) via DFT) | Thermodynamic stability, band gap, mechanical properties [29] [30] |
| Formation Energy | ΔE_f = E_total − Σ_i n_i μ_i (μ_i: energy per atom of element i in its elemental reference phase), usually normalized per atom | Energy change on forming the compound from its elements (negative values are favorable) | High | Thermodynamic stability assessment [1] |
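The formation-energy definition reduces to simple bookkeeping once the total energy and elemental reference energies are known. The sketch below normalizes ΔE_f per atom. It assumes the references μ_i are per-atom energies of the elemental ground-state phases computed with the same DFT settings. All numbers are hypothetical placeholders, not calculated values.

```python
def formation_energy_per_atom(e_total, composition, mu_elements):
    """Delta E_f per atom = (E_total - sum_i n_i * mu_i) / sum_i n_i.

    e_total: total energy of the compound cell (eV)
    composition: element -> number of atoms in that cell
    mu_elements: element -> reference energy per atom of the elemental phase (eV/atom)
    """
    n_atoms = sum(composition.values())
    e_ref = sum(n * mu_elements[el] for el, n in composition.items())
    return (e_total - e_ref) / n_atoms


# Hypothetical Li2O-like cell; all energies are illustrative placeholders.
e_f = formation_energy_per_atom(
    e_total=-14.0,
    composition={"Li": 2, "O": 1},
    mu_elements={"Li": -1.9, "O": -4.9},
)
print(f"Formation energy: {e_f:.4f} eV/atom")
```

A negative value means the compound is lower in energy than its elements. Stability against all competing phases is a separate question, answered by the convex-hull construction.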

Experimental and Computational Methodologies

DFT Protocol for Descriptor Calculation

Objective: To calculate the d-band center and full DOS pattern of an inorganic crystalline material using Density Functional Theory.

Workflow Overview:

- Structure Acquisition: Obtain a crystal structure file (e.g., CIF) from a database such as the Materials Project [27], or generate a candidate structure.

- DFT Calculation Setup:
  - Software: The Vienna Ab initio Simulation Package (VASP) is the standard choice [27].
  - Functional: Use the Generalized Gradient Approximation (GGA) with the Perdew-Burke-Ernzerhof (PBE) parameterization. For systems with strongly correlated electrons (e.g., transition-metal oxides), apply the DFT+U method with a Hubbard U parameter [27].
  - Plane-Wave Cutoff Energy: Set to 520 eV for most inorganic solids.
  - k-point Sampling: Use a Γ-centered k-point grid with a spacing of 2π × 0.03 Å⁻¹. A 6×6×6 grid is typical for a small cubic cell.
  - Convergence Criteria: Set the electronic energy convergence to 10⁻⁶ eV and, for any structural relaxation, the ionic force convergence to 0.01 eV/Å.

- Self-Consistent Field (SCF) Calculation: Run an SCF calculation to obtain the converged charge density.

- DOS Calculation: Run a non-self-consistent field (NSCF) calculation that reuses the converged charge density on a denser k-point grid (e.g., spacing of 2π × 0.01 Å⁻¹) to obtain a smooth total DOS and projected DOS (PDOS).

- Post-Processing:
  - d-band Center: Extract the d-orbital projected DOS (PDOS) from the output and compute the d-band center by integrating it according to the εd equation given above. Many DFT post-processing tools (e.g., pymatgen, VASPKIT) can automate this [27].
  - Full DOS Pattern: The total DOS and orbital-projected DOS are direct outputs of the calculation.
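The numerical settings above can be turned into VASP input text with a few lines of Python. In the sketch below, the k-point spacing is converted into grid subdivisions as n_i = ⌈|b_i| / Δk⌉, where the reciprocal vectors b_i include the 2π factor. The sketch then writes a Γ-centered KPOINTS file and INCAR tag lists for the SCF and NSCF steps. The tags are standard VASP tags: ENCUT, EDIFF, EDIFFG, ICHARG, LORBIT, NEDOS, ISMEAR, and SIGMA. The particular smearing choices, NEDOS value, and the 5.6 Å cubic cell are illustrative assumptions, and DFT+U tags are omitted.

```python
import math

import numpy as np


def kpoint_grid(lattice, spacing):
    """Subdivisions n_i = ceil(|b_i| / spacing); |b_i| includes the 2*pi factor."""
    a = np.asarray(lattice, dtype=float)      # rows: real-space lattice vectors (Å)
    b = 2.0 * math.pi * np.linalg.inv(a).T    # rows: reciprocal vectors, a_i . b_j = 2*pi*delta_ij
    return [max(1, math.ceil(np.linalg.norm(b_i) / spacing - 1e-9)) for b_i in b]


def kpoints_file(grid):
    """Automatic Gamma-centred mesh in VASP KPOINTS format."""
    return "Gamma-centred mesh\n0\nGamma\n{} {} {}\n0 0 0\n".format(*grid)


def incar_text(tags):
    return "\n".join(f"{key} = {value}" for key, value in tags.items()) + "\n"


scf_tags = {
    "GGA": "PE",      # PBE exchange-correlation functional
    "ENCUT": 520,     # plane-wave cutoff (eV)
    "EDIFF": 1e-6,    # electronic convergence (eV)
    "EDIFFG": -0.01,  # negative: force criterion (eV/Å); only used when relaxing (NSW > 0)
    "ISMEAR": 0,      # Gaussian smearing (assumed choice)
    "SIGMA": 0.05,
}
nscf_tags = {**scf_tags,
             "ICHARG": 11,   # non-self-consistent: read CHGCAR and keep it fixed
             "LORBIT": 11,   # write lm-decomposed projected DOS
             "NEDOS": 3001,  # number of DOS grid points (assumed)
             "ISMEAR": -5}   # tetrahedron method with Blöchl corrections

lattice = 5.6 * np.eye(3)  # hypothetical cubic cell, a = 5.6 Å
scf_grid = kpoint_grid(lattice, 2 * math.pi * 0.03)
nscf_grid = kpoint_grid(lattice, 2 * math.pi * 0.01)
print(scf_grid, nscf_grid)  # [6, 6, 6] [18, 18, 18]
print(kpoints_file(scf_grid))
print(incar_text(nscf_tags))
```

Tools such as pymatgen provide input-set classes that generate equivalent files. This explicit version is meant only to show how the protocol's numbers map onto input parameters.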

Machine Learning for DOS Pattern Prediction

To circumvent the high cost of DFT, machine learning (ML) models can be trained to predict DOS patterns directly from material composition or structure.

Pattern Learning (PL) Method via Principal Component Analysis (PCA) [29]:

- Data Preparation:
  - Source: Collect a dataset of pre-computed DOS patterns from DFT for a range of materials (e.g., from the Materials Project).
  - Digitization: Represent each continuous DOS curve as a vector x by digitizing it within a fixed energy window (e.g., −10 eV to 5 eV) and DOS range (e.g., 0 to 3 states/eV). This creates a matrix X in which each row is a material's DOS vector.

- Dimensionality Reduction:
  - Standardize the DOS vectors to create matrix Y.
  - Perform PCA on Y to obtain the principal components (PCs, eigenvectors u_p) and their corresponding eigenvalues λ_p. The original DOS vector can be approximated as x ≈ Σ α_p u_p, where α_p are the coefficients for the first P PCs.

- Prediction for a New Material:
  - For a new test material, identify the most similar training materials based on features such as the d-orbital occupation ratio (n_d), coordination number (CN), and mixing factor.
  - Estimate the new coefficients α'_p for the test material by linearly interpolating the α_p of the similar training materials.
  - Reconstruct the predicted DOS pattern using the learned PCs: x' = Σ α'_p u_p.
  - Transform x' into a DOS probability matrix and obtain the final predicted DOS, ρ' [29].

This method has demonstrated a pattern similarity of 91–98% relative to DFT calculations. Because its cost does not depend on the number of electrons, it avoids the O(N³) scaling of DFT [29].
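The following NumPy sketch compresses the pattern-learning steps into standardization, PCA via SVD, linear interpolation of the coefficients α_p, and reconstruction. It is a simplified illustration and not the implementation of [29]. The "DOS curves" are hypothetical single Gaussian bands kept as 1D vectors rather than digitized 2D grids. A single made-up scalar feature `f` replaces n_d, CN, and the mixing factor. The final conversion to a DOS probability matrix is omitted, and cosine similarity serves as an assumed similarity metric.

```python
import numpy as np

energy = np.linspace(-10.0, 5.0, 301)  # fixed energy window (eV)


def toy_dos(f):
    """Hypothetical DOS whose band centre and width vary with a scalar feature f."""
    center, width = -6.0 + 4.0 * f, 1.0 + 0.5 * f
    return np.exp(-0.5 * ((energy - center) / width) ** 2)


# Training set: matrix X, one DOS vector per (hypothetical) material.
features = np.linspace(0.0, 1.0, 21)
X = np.array([toy_dos(f) for f in features])

# Standardize columns to obtain Y, then PCA via SVD of Y.
mean, std = X.mean(axis=0), X.std(axis=0) + 1e-12
Y = (X - mean) / std
_, s, Vt = np.linalg.svd(Y, full_matrices=False)
explained = s**2 / np.sum(s**2)
P = min(len(s), int(np.searchsorted(np.cumsum(explained), 0.9999)) + 1)
U = Vt[:P]                    # rows: principal components u_p
eigvals = s[:P] ** 2 / len(Y)  # eigenvalues lambda_p of the covariance of Y
alpha = Y @ U.T               # coefficients alpha_p of each training material


def predict_dos(f_new):
    """Interpolate alpha_p linearly in feature space, then reconstruct the DOS."""
    alpha_new = np.array([np.interp(f_new, features, alpha[:, p]) for p in range(P)])
    y_new = alpha_new @ U       # x' = sum_p alpha'_p u_p (standardized units)
    return y_new * std + mean   # undo the standardization


f_test = 0.37
pred, ref = predict_dos(f_test), toy_dos(f_test)
similarity = pred @ ref / (np.linalg.norm(pred) * np.linalg.norm(ref))
print(f"P = {P} components, cosine similarity = {similarity:.4f}")
```

Once the PCs are learned, the cost of a prediction depends only on the number of retained components and the energy grid, not on the number of electrons. This is the property the method relies on to avoid DFT's cubic scaling.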

Advanced Generative Models for Inverse Design

The ultimate application of descriptors is in inverse design, where materials are generated to meet specific descriptor targets. Generative AI models have recently been developed that use descriptors as conditional inputs to create novel, stable crystal structures.

dBandDiff: A Diffusion Model for d-band Center Targeting

dBandDiff is a diffusion-based generative model that creates crystal structures conditioned on a target d-band center value and space group symmetry [27].

Architecture and Workflow:

- Conditional Inputs: The model accepts two primary conditions: a target d-band center value (e.g., 0 eV for strong adsorption) and a space group number.

- Diffusion Process: The model is based on a Denoising Diffusion Probabilistic Model (DDPM) framework.
  - Forward Process: Noise is gradually added to crystal structures (lattice, atomic types, coordinates) in the training dataset.
  - Reverse Process: A learned denoiser (a periodic feature-enhanced Graph Neural Network) progressively removes noise to generate new structures, guided by the conditional inputs.

- Symmetry Enforcement: Space group constraints are incorporated into both the noise initialization and reconstruction during inference, ensuring the generated structures adhere to the target symmetry via Wyckoff position constraints.

- Training: The model is trained end-to-end on structures from the Materials Project containing transition metals and their corresponding DFT-calculated d-band centers.
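The forward process of a generic DDPM has a closed form: x_t = √ᾱ_t x_0 + √(1 − ᾱ_t) ε, where ε ~ N(0, I) and ᾱ_t is the cumulative product of (1 − β_t). The sketch below applies this form to a toy lattice matrix and to fractional coordinates. It is not dBandDiff's noise model. The linear β schedule is taken from the original DDPM formulation, and the toy crystal is hypothetical. Wrapping noisy fractional coordinates back into [0, 1) is a crude stand-in for the periodic (wrapped) noise distributions that crystal diffusion models typically use. Atom types, which are categorical, are left out.

```python
import numpy as np

# Linear noise schedule (original DDPM choice; an assumption here).
T = 1000
betas = np.linspace(1e-4, 0.02, T)
alpha_bar = np.cumprod(1.0 - betas)  # alpha_bar_t = prod_{s<=t} (1 - beta_s)


def q_sample(x0, t, rng):
    """Closed-form forward step: x_t = sqrt(ab_t) * x0 + sqrt(1 - ab_t) * eps."""
    eps = rng.standard_normal(np.shape(x0))
    return np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps, eps


rng = np.random.default_rng(0)
# Hypothetical toy crystal: cubic 4.0 Å cell with two atoms.
lattice0 = 4.0 * np.eye(3)
frac0 = np.array([[0.0, 0.0, 0.0], [0.5, 0.5, 0.5]])

for t in (0, 250, T - 1):
    lattice_t, _ = q_sample(lattice0, t, rng)
    frac_t, _ = q_sample(frac0, t, rng)
    frac_t = np.mod(frac_t, 1.0)  # wrap into the unit cell (simplification)
    print(t, round(float(alpha_bar[t]), 4), np.round(lattice_t.diagonal(), 2))
```

During training, the denoiser learns to predict `eps` from the noisy structure, the timestep, and the conditions (target d-band center and space group). Generation then runs the reverse chain from pure noise.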

In evaluation, 98.7% of structures generated by dBandDiff conformed to the target space group, and high-throughput DFT found 72.8% to be structurally reasonable. Their d-band center errors were also significantly lower than those of randomly generated structures [27]. In a case study targeting a d-band center of 0 eV, dBandDiff identified 17 theoretically reasonable compounds from just 90 generated candidates [27].

MatterGen: A Foundational Generative Model