A Comparative Guide to Particle Size Analysis Techniques for Solid-State Products in Pharmaceutical Development

Abstract

This article provides a comprehensive comparative analysis of particle size analysis techniques essential for researchers and professionals in drug development. It explores the foundational principles of prevalent methods, including laser diffraction, dynamic light scattering, dynamic image analysis, and sieving, detailing their specific applications for solid-state products. The content addresses common troubleshooting scenarios and optimization strategies for challenging samples, such as non-spherical crystals and agglomerates. By synthesizing validation data and comparative performance metrics across different techniques, this guide aims to inform robust analytical method selection to enhance product quality, process control, and regulatory compliance in pharmaceutical development.

Understanding Particle Size Analysis: Core Principles and Critical Parameters for Solid-State Products

In pharmaceutical development, the particle size and shape of an Active Pharmaceutical Ingredient (API) are not merely physical attributes but are critical quality attributes that directly influence a drug's safety, efficacy, and manufacturability. The foundational principles governing this relationship are rooted in classical physical chemistry. The Noyes-Whitney equation describes the dissolution rate as being directly proportional to the available surface area of the solid particle, implying that reduced particle size can enhance dissolution [1] [2]. Furthermore, the Ostwald-Freundlich equation establishes that saturation solubility itself can increase for particles in the nanometer range, providing an additional thermodynamic driver for absorption beyond just kinetics [1]. For suspensions, Stokes' law relates particle size to settling velocity, a key factor in ensuring dose uniformity and physical stability of the product [2]. Together, these principles provide a scientific basis for the meticulous control of particle characteristics across all stages of drug product development, from initial formulation to final manufacturing. The goal is to optimize bioavailability—the degree and rate at which a drug is absorbed into the systemic circulation—while ensuring the product can be consistently and reliably manufactured [3] [4].
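
For reference, the three relationships named above are commonly written as shown below. This is a sketch in standard textbook notation, not the notation of the cited sources: every symbol is an assumption introduced here, and the dissolution expression is given in its Nernst-Brunner form, which makes the surface-area dependence explicit.

```latex
% Noyes-Whitney equation (Nernst-Brunner form): dissolution rate
\[ \frac{dC}{dt} = \frac{D\,A}{V\,h}\,\left(C_s - C\right) \]

% Ostwald-Freundlich equation: solubility of a particle of radius r
\[ \ln\frac{S(r)}{S_\infty} = \frac{2\,\gamma\,V_m}{r\,R\,T} \]

% Stokes' law: terminal settling velocity of a sphere of diameter d
\[ v = \frac{\left(\rho_p - \rho_f\right)\,g\,d^{2}}{18\,\mu} \]
```

Here \( D \) is the diffusion coefficient, \( A \) the wetted surface area, \( V \) the volume of the dissolution medium, \( h \) the diffusion layer thickness, \( C_s \) the saturation solubility, \( C \) the bulk concentration, \( S(r) \) and \( S_\infty \) the solubility of a particle of radius \( r \) and of a flat surface, \( \gamma \) the interfacial tension, \( V_m \) the molar volume, \( R \) the gas constant, \( T \) the absolute temperature, \( \rho_p \) and \( \rho_f \) the particle and fluid densities, \( g \) the gravitational acceleration, and \( \mu \) the dynamic viscosity of the fluid.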

The Impact of Particle Size on Drug Performance

Solubility and Dissolution Enhancement

Particle size reduction is a primary strategy for improving the performance of poorly soluble drugs (BCS Class II/IV). Reducing particle size increases the specific surface area (surface area per unit mass), which directly enhances the dissolution rate as described by the Noyes-Whitney equation [5]. This relationship is powerfully illustrated by dissolution studies. For example, research on esomeprazole demonstrated that a formulation with a median particle size (X50) of 494 µm had a median dissolution time (T50) of approximately 38 minutes, whereas a larger particle size of 648 µm resulted in a significantly longer T50 of about 61 minutes [5]. This inverse relationship between particle size and dissolution rate is a cornerstone of formulation science.
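
The link between particle size and specific surface area can be made concrete with a short calculation. The sketch below assumes smooth, monodisperse spheres, for which the specific surface area is 6 / (density × diameter); the function name and the density value are illustrative assumptions, not data from the cited studies.

```python
def specific_surface_area_m2_per_g(diameter_um: float, density_g_cm3: float) -> float:
    """Specific surface area of smooth, monodisperse spheres: SSA = 6 / (rho * d)."""
    if diameter_um <= 0 or density_g_cm3 <= 0:
        raise ValueError("diameter and density must be positive")
    diameter_m = diameter_um * 1e-6      # µm -> m
    density_g_m3 = density_g_cm3 * 1e6   # g/cm^3 -> g/m^3
    return 6.0 / (density_g_m3 * diameter_m)


# Hypothetical API with a true density of 1.3 g/cm^3 (illustrative value only).
for d_um in (50.0, 5.0, 0.5):
    ssa = specific_surface_area_m2_per_g(d_um, 1.3)
    print(f"{d_um:>5} µm -> {ssa:.3f} m^2/g")
```

Under these assumptions, each tenfold reduction in diameter gives a tenfold increase in specific surface area. Real powders deviate from this because of shape, roughness, and polydispersity.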

The following table summarizes key experimental findings from the literature demonstrating the impact of particle size on dissolution and solubility:

Table 1: Experimental Evidence of Particle Size Impact on Dissolution and Solubility

| Drug Substance | Particle Size | Experimental Findings | Source |

|---|---|---|---|

| Coenzyme Q10 Nanocrystals | 80 - 700 nm | Increased kinetic solubility in various dissolution media; dissolution velocity increased as particle size decreased. | [1] |

| Esomeprazole | 494 µm vs 648 µm | Smaller particles (494 µm) reduced median dissolution time (T50) to ~38 min vs ~61 min for larger particles. | [5] |

| General API (from review) | Nanoscale | Smaller particles provide larger specific surface area, promoting dissolution and interaction with cell membranes. | [5] |

Bioavailability and Absorption

The ultimate goal of enhancing dissolution is to improve oral bioavailability. The absorption of a drug involves not just dissolving in the gastrointestinal fluid, but also traversing the intestinal mucosa. Smaller particles, particularly nanoparticles, can leverage different absorption pathways. They can extend residence time in the mucus layer (with pore sizes of 10-200 nm) and enhance penetration through the intestinal wall via persorption, transcellular uptake, and paracellular uptake [5].

Multiple in vivo studies confirm this principle. In beagle dogs, a 0.12 µm formulation of aprepitant achieved a Cmax four times higher than a 5.5 µm formulation [5]. Similarly, rosuvastatin calcium nanoparticles in rabbits showed twice the Cmax and a 1.5-fold increase in AUC (Area Under the Curve, a measure of total exposure) compared to untreated drug [5]. A study on candesartan cilexetil in rats found that 127 nm nanoparticles increased AUC by 2.5-fold and Cmax by 1.7-fold compared to micronized suspensions, also reducing the time to peak concentration (Tmax) [5]. For coenzyme Q10, reducing particle size to 700 nm increased bioavailability (AUC) by 4.4-fold compared to coarse suspensions, with an 80 nm formulation boosting it by 7.3-fold [1].

Table 2: Experimental Evidence of Particle Size Impact on Bioavailability

| Drug Substance | Animal Model | Particle Size & Performance Results | Source |

|---|---|---|---|

| Aprepitant | Beagle Dogs | 0.12 µm formulation achieved a 4x higher Cmax than a 5.5 µm formulation. | [5] |

| Rosuvastatin Calcium | Rabbits | Nanoparticles showed 2x Cmax and 1.5x AUC vs. untreated drug. | [5] |

| Candesartan Cilexetil | Rats | 127 nm nanoparticles increased AUC by 2.5x and Cmax by 1.7x vs micronized suspensions. | [5] |

| Coenzyme Q10 | Beagle Dogs | 700 nm particles: 4.4x AUC vs coarse; 80 nm particles: 7.3x AUC vs coarse. | [1] |

Performance in Long-Acting Injectables (LAIs)

Particle size plays a uniquely critical role in the performance of long-acting injectable (LAI) crystalline aqueous suspensions. For these formulations, which are used to treat chronic diseases like HIV and neurological disorders, the drug absorption is often dissolution-rate limited [6] [7]. A larger particle size dissolves more slowly, providing sustained release over weeks or months. However, this requires a careful balance. While larger particles prolong release, they also increase sedimentation rates, raise the risk of needle clogging, and can cause injection pain due to higher back pressure [6]. Consequently, identifying the optimal particle size distribution (PSD) is a multidimensional challenge that balances pharmacokinetics with injectability, stability, and patient tolerance [6] [7].
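
The sedimentation side of this trade-off can be estimated with Stokes' law. The following is a minimal sketch that assumes dilute, non-interacting spherical particles in a Newtonian vehicle; the function name and all numerical values are hypothetical and serve only to show the quadratic dependence on diameter.

```python
G = 9.81  # gravitational acceleration, m/s^2


def stokes_settling_velocity(diameter_um: float, rho_particle: float,
                             rho_fluid: float, viscosity_pa_s: float) -> float:
    """Terminal settling velocity (m/s) of a sphere; densities in kg/m^3."""
    if diameter_um <= 0 or viscosity_pa_s <= 0:
        raise ValueError("diameter and viscosity must be positive")
    d_m = diameter_um * 1e-6  # µm -> m
    return (rho_particle - rho_fluid) * G * d_m ** 2 / (18.0 * viscosity_pa_s)


# Illustrative values: crystals of 1300 kg/m^3 in a water-like vehicle (1 mPa*s).
for d_um in (1.0, 5.0, 20.0):
    v = stokes_settling_velocity(d_um, 1300.0, 1000.0, 1.0e-3)
    print(f"{d_um:>5} µm -> {v * 3600 * 1000:.3f} mm/h")
```

Because velocity scales with the square of the diameter, doubling the particle size quadruples the settling rate, which is why coarse LAI suspensions are more prone to sedimentation.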

Processability and Manufacturing

The influence of particle size extends beyond bioperformance into the practical realm of manufacturing and processability. Powder flowability is crucial for efficient tableting, and smaller particles generally flow less efficiently than larger, more uniform ones [4]. Poor flow can lead to variations in tablet weight and content uniformity. Furthermore, particle compressibility is affected by size; very fine particles may lack the ability to lock together effectively during compression, leading to defects such as capping (horizontal separation of the top or bottom of a tablet) or lamination (layer separation within the tablet) [4]. The presence of excessive fines (small, dusty particles) also reduces overall yield, increases cleaning costs, and accelerates machine wear [4]. Therefore, controlling particle size distribution is essential for robust, cost-effective, and high-quality pharmaceutical manufacturing.

The Critical Role of Particle Shape

While particle size is often the primary focus, particle shape is an equally critical parameter that can profoundly influence product performance and processing. Laser diffraction, a common sizing technique, assumes spherical particles, but real-world API crystals are rarely perfect spheres [2]. The shape of a particle directly affects its surface roughness, which in turn influences the actual surface area available for dissolution—a fact that can explain why smaller particles do not always dissolve faster than larger ones with a rougher surface morphology [2].

Shape also dictates powder flow and compaction behavior. In direct compression tableting, particle shape influences segregation behavior and compressibility, which affects the consistency of tablet weight, composition, and the mechanical properties of the final tablet [2]. For suspensions, particle shape, in conjunction with size distribution and zeta potential, impacts the stability of the dispersion and the rate of settling or aggregation [2]. Modern automated imaging techniques allow for the quantitative analysis of shape descriptors such as circularity, convexity, and elongation, providing a more complete material characterization than size analysis alone [2].
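
As an illustration of how such shape descriptors are computed, the sketch below uses commonly quoted definitions. Definitions differ between instrument vendors and standards (some report the square root of the circularity used here, for example), so the formulas and function names are assumptions for illustration rather than the definitions used in the cited work.

```python
import math


def circularity(area: float, perimeter: float) -> float:
    """4*pi*A / P^2: equals 1 for a circle and falls below 1 for irregular outlines."""
    return 4.0 * math.pi * area / perimeter ** 2


def aspect_ratio(width: float, length: float) -> float:
    """Width divided by length, so values lie between 0 and 1."""
    return width / length


def elongation(width: float, length: float) -> float:
    """1 - aspect ratio: 0 for equant particles, approaching 1 for needles."""
    return 1.0 - aspect_ratio(width, length)


# A circle of radius 3 versus a 20 x 400 rectangle (arbitrary length units).
print(circularity(math.pi * 3 ** 2, 2 * math.pi * 3))
print(circularity(20 * 400, 2 * (20 + 400)))
print(elongation(20, 400))
```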

Analytical Techniques for Particle Characterization

A variety of analytical techniques are available for particle size and shape analysis, each with its own principles, advantages, and limitations. The choice of method depends on the sample's properties, the required size range, and the information needed (size vs. size and shape).

Table 3: Comparison of Particle Size and Shape Analysis Techniques

| Technique | Measured Parameter | Typical Size Range | Key Advantages | Key Limitations |

|---|---|---|---|---|

| Laser Diffraction (LD) [8] [9] | Equivalent Spherical Diameter (Volume-based) | 0.01 µm - 2 mm | Wide size range, fast, high repeatability, suitable for dry powders and dispersions. | Assumes spherical particles; sampling errors can affect results. |

| Dynamic Light Scattering (DLS) [8] [9] | Hydrodynamic Diameter | 0.3 nm - 10 µm | Fast, good for proteins and nanoparticles in suspension. | Assumes spherical particles; struggles with polydisperse samples; sensitive to temperature. |

| Dynamic Image Analysis (DIA) [8] [9] | Size and Shape (e.g., aspect ratio, circularity) | 2 µm - 3 mm | Provides direct shape and size data for individual particles. | Not suitable for nanoparticles; sample preparation can be complex. |

| Sieving [8] [9] | Particle Size (Mass-based) | 30 µm - 120 mm | Low cost, robust, widely accepted. | Time-consuming; assumes spherical particles for size classification. |

| Scanning Electron Microscopy (SEM) [8] | Size, Shape, Surface Morphology | > 10 nm | High-resolution images, detailed surface and shape information. | Sample must be dry and often coated; analysis is slow and not statistical. |

| Nanoparticle Tracking Analysis (NTA) [9] | Hydrodynamic Diameter (Number-based) | 30 nm - 1 µm | Measures concentration; good for polydisperse samples. | Less reproducible than DLS; time-consuming; requires experienced users. |

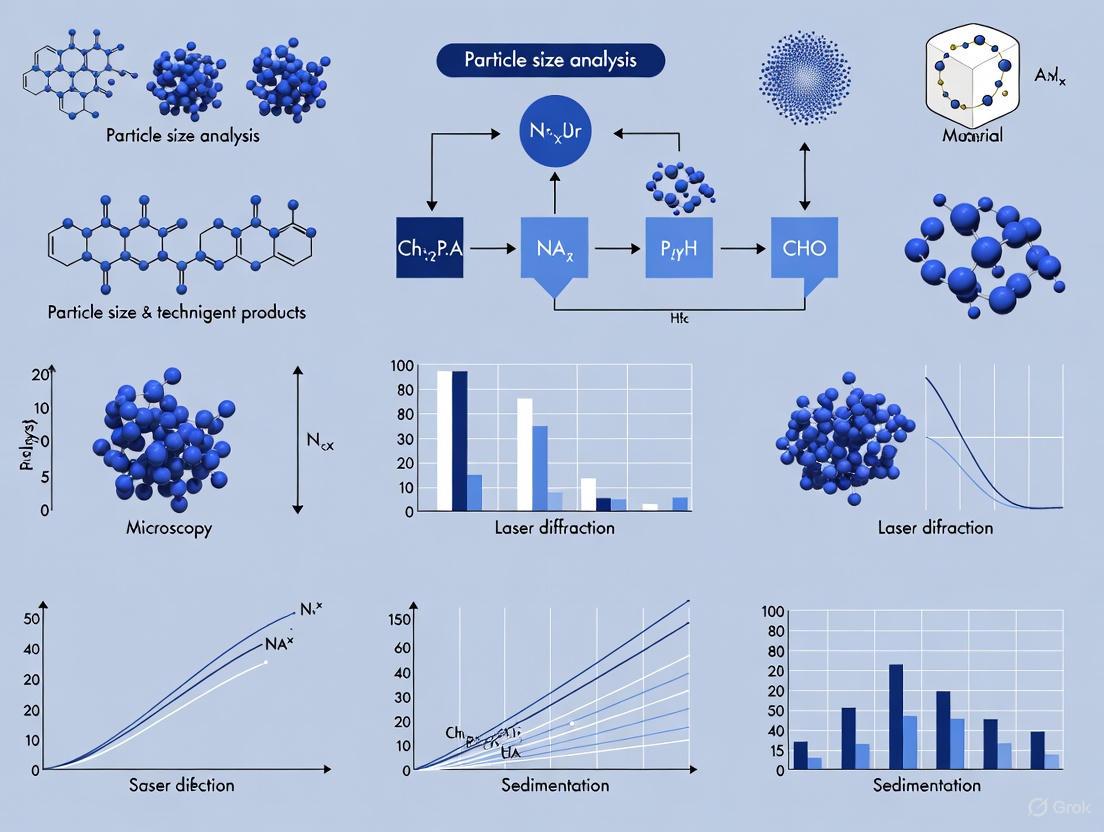

The following workflow diagram illustrates the decision-making process for selecting an appropriate characterization technique based on the primary analytical need:

It is crucial to note that different techniques can yield different results for the same sample, as they are based on different measurement principles (e.g., volume-based vs. number-based distributions) and make different assumptions about particle shape [2] [9]. Therefore, comparing results from different methods should be done with caution, and it is often beneficial to use techniques like imaging to complement and validate data from laser diffraction or DLS [2].
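
The difference between number-based and volume-based distributions can be seen in a small worked example. The sketch below re-weights particle counts by the cube of the diameter, assuming spherical particles of equal density; the function name and the sample data are hypothetical.

```python
def number_to_volume_fractions(diameters_um, counts):
    """Convert counts per size class to volume fractions (weight = n * d^3)."""
    if len(diameters_um) != len(counts):
        raise ValueError("diameters and counts must have the same length")
    weights = [n * d ** 3 for d, n in zip(diameters_um, counts)]
    total = sum(weights)
    return [w / total for w in weights]


# Hypothetical sample: 1000 particles of 1 µm and a single 10 µm particle.
diameters = [1.0, 10.0]
counts = [1000, 1]
number_fractions = [n / sum(counts) for n in counts]
volume_fractions = number_to_volume_fractions(diameters, counts)
print(number_fractions)  # the fines dominate by number
print(volume_fractions)  # the single coarse particle holds half the volume
```

In this example the 10 µm particle is about 0.1% of the population by number but 50% by volume, which is why a number-based imaging result and a volume-based laser diffraction result for the same sample should not be compared directly.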

Experimental Protocols for Particle Size Studies

Protocol 1: Nanoparticle Formation via Liquid Antisolvent Crystallization with Focused Ultrasonication

This protocol is used to produce nanoscale drug particles, such as a Cinnarizine formulation with a target particle size below 200 nm [5].

- Preparation: Dissolve the API in a suitable organic solvent (e.g., ethanol) to form the organic phase. Heat may be applied (e.g., 60°C water bath) to facilitate dissolution.

- Precipitation: Rapidly inject the organic phase into a nonsolvent (e.g., distilled water) under controlled conditions. Key parameters include:

  - Injection rate: Varies (e.g., 30 mL/min for 400 nm particles, 15 mL/min for 700 nm particles).

  - Stirring speed: Varies (e.g., 800 rpm for 400 nm particles, 400 rpm for 700 nm particles).

- Size Reduction: Subject the resulting suspension to focused ultrasonication using a device like a Covaris instrument with Adaptive Focused Acoustics (AFA) technology.

  - Duration: ~4500 seconds.

  - Bath Temperature: 10°C.

  - Power Mode: Frequency sweeping.

  - Degassing Mode: Continuous.

- Concentration (Optional): Concentrate the final nanosuspension using methods like ultrafiltration if needed.

Protocol 2: In Vivo Bioavailability Study in Beagle Dogs

This protocol assesses the impact of particle size on oral absorption [1].

- Formulation Preparation: Prepare suspensions of the drug with different, well-characterized particle sizes (e.g., coarse suspension vs. 80 nm, 120 nm, 400 nm, and 700 nm nanocrystals). Adjust drug content to the desired concentration (e.g., ~1 mg/mL).

- Animal Dosing: Use beagle dogs as the model organism. Administer the formulations orally in a cross-over study design.

- Blood Sampling: Collect blood samples at predetermined time points post-administration over a specified period (e.g., 0-48 hours).

- Bioanalysis: Process plasma samples and quantify drug concentration using a validated analytical method, typically reverse-phase High-Performance Liquid Chromatography (HPLC).

- Pharmacokinetic Analysis: Calculate key pharmacokinetic parameters from the plasma concentration-time profile, including:

  - AUC0-48: Area under the curve, indicating total drug exposure.

  - Cmax: Maximum plasma concentration.

  - Tmax: Time to reach Cmax.
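
The pharmacokinetic parameters listed above can be derived from a concentration-time profile as sketched below. The example assumes the linear trapezoidal rule, a common non-compartmental approach that the source does not specify; the function name and the data are hypothetical.

```python
def pk_parameters(times_h, concentrations):
    """Return (AUC, Cmax, Tmax) using the linear trapezoidal rule."""
    if len(times_h) != len(concentrations) or len(times_h) < 2:
        raise ValueError("need at least two matching time/concentration points")
    if any(t1 <= t0 for t0, t1 in zip(times_h, times_h[1:])):
        raise ValueError("time points must be strictly increasing")
    # Sum the trapezoid areas between consecutive sampling points.
    auc = sum(
        (t1 - t0) * (c0 + c1) / 2.0
        for t0, t1, c0, c1 in zip(times_h, times_h[1:],
                                  concentrations, concentrations[1:])
    )
    cmax = max(concentrations)
    tmax = times_h[concentrations.index(cmax)]  # first time Cmax is observed
    return auc, cmax, tmax


# Hypothetical plasma profile (time in h, concentration in ng/mL).
times = [0, 1, 2, 4, 8, 24, 48]
conc = [0.0, 12.0, 20.0, 16.0, 9.0, 2.0, 0.0]
auc, cmax, tmax = pk_parameters(times, conc)
print(f"AUC0-48 = {auc:.1f} ng*h/mL, Cmax = {cmax} ng/mL, Tmax = {tmax} h")
```

Relative bioavailability between two formulations is then typically expressed as the ratio of their AUC values at equal dose.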

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Key Research Reagents and Materials for Particle Size Studies

| Item | Function/Application | Examples & Notes |

|---|---|---|

| Solvents & Antisolvents | Used in liquid antisolvent crystallization to precipitate nanoparticles. | Ethanol, isopropanol, water. Must not dissolve the final particles [1] [5]. |

| Stabilizers & Surfactants | Prevent aggregation and Ostwald ripening in nanosuspensions. | Tween 20, various polymers. Critical for long-term stability [1]. |

| Dispersion Media | Medium for particle size analysis of dispersions (LD, DLS). | Aqueous or organic solvents that do not interact with or dissolve the particles [8]. |

| HPLC-grade Solvents | For bioanalysis of in vivo samples to determine drug concentration in plasma. | Methanol, ethanol; used in reverse-phase HPLC [1]. |

| Standard Sieves | For traditional sieve analysis to determine particle size distribution by mass. | Assembled into a stack with decreasing mesh size according to ASTM/ISO standards [8] [9]. |

| Focused Ultrasonicator | Equipment for precise nanoparticle size reduction. | Covaris instrument with Adaptive Focused Acoustics (AFA) [5]. |

| Laser Diffraction Analyzer | Instrument for rapid, volume-based particle size distribution analysis. | Malvern analyzers; complies with ISO 13320 and USP <429> [8] [2]. |

The following diagram summarizes the logical relationships and workflows involved in a particle size reduction and characterization study as discussed in the protocols:

Particle size and shape are foundational material characteristics that exert a profound influence on the critical quality attributes of a pharmaceutical product. A deep understanding of their impact on solubility, dissolution, bioavailability, and processability is non-negotiable for successful drug development. Selecting the appropriate analytical technique is paramount, as different methods provide complementary information and can sometimes yield conflicting results. The chosen strategy for particle engineering—whether through micronization, nanonization, or controlled crystallization—must be a carefully balanced decision that aligns with the Target Product Profile (TPP). This decision must holistically consider the desired pharmacokinetics, stability, manufacturability, and patient experience. As pharmaceutical science continues to tackle increasingly complex and poorly soluble drug molecules, the precise control and thorough characterization of particle properties will remain a cornerstone of developing safe, effective, and high-quality medicines.

In the field of solid-state products research, particularly in pharmaceutical development, the precise characterization of materials is fundamental to ensuring product quality, performance, and stability. Particle properties such as size, shape, and the permeability of porous matrices directly influence critical parameters including dissolution rates, bioavailability, compressibility, and flow characteristics [10] [11] [12]. This guide provides an objective comparison of three pivotal analytical technique categories: light scattering, image analysis, and permeability measurement. By outlining the core principles, applicable standards, experimental protocols, and relative strengths and limitations of each method, this document serves to inform researchers and scientists in selecting the most appropriate characterization strategy for their specific application needs.

Core Principles and Techniques

Light Scattering

Light scattering techniques operate on the principle of measuring the interaction between a beam of light and dispersed particles to extract information about their size and distribution [12].

Static Light Scattering (SLS) / Laser Diffraction (LD): This method analyzes the time-averaged angular dependence of scattered light intensity. When a laser illuminates a sample, larger particles scatter light at smaller angles with higher intensity, while smaller particles scatter light at wider angles with lower intensity [13] [12]. The resulting scattering pattern is analyzed using algorithms based on Mie theory or the Fraunhofer approximation to calculate a volume-based particle size distribution [11] [13]. Laser diffraction is a rapid, high-throughput method covering a broad size range from sub-micron to several millimeters, making it a versatile tool for quality control [8] [11] [12].

Dynamic Light Scattering (DLS): Used primarily for nano-scale particles, DLS analyzes the time-dependent fluctuation in scattering intensity caused by the Brownian motion of particles in a dispersion [12]. Smaller particles diffuse more rapidly, causing faster intensity fluctuations, while larger particles move more slowly and cause slower fluctuations [8] [14]. An autocorrelation analysis of these fluctuations yields the diffusion coefficient, from which a hydrodynamic diameter is calculated via the Stokes-Einstein equation [14] [15]. DLS is ideal for proteins, nanoparticles, and microemulsions in the size range of 0.3 nm to 10 μm [8] [14].
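
The final step of a DLS analysis, converting a diffusion coefficient into a hydrodynamic diameter with the Stokes-Einstein equation, can be sketched as follows. The function name and the input values (water-like viscosity at 25 °C and an arbitrary diffusion coefficient) are assumptions for illustration only.

```python
import math

BOLTZMANN = 1.380649e-23  # J/K


def hydrodynamic_diameter_m(diffusion_m2_s: float, temperature_k: float,
                            viscosity_pa_s: float) -> float:
    """Stokes-Einstein: d_H = k_B * T / (3 * pi * eta * D)."""
    if diffusion_m2_s <= 0 or viscosity_pa_s <= 0 or temperature_k <= 0:
        raise ValueError("all inputs must be positive")
    return BOLTZMANN * temperature_k / (3.0 * math.pi * viscosity_pa_s * diffusion_m2_s)


# Illustrative input: D = 4.9e-12 m^2/s in water at 25 °C (eta about 0.89 mPa*s).
d_h = hydrodynamic_diameter_m(4.9e-12, 298.15, 0.89e-3)
print(f"hydrodynamic diameter = {d_h * 1e9:.0f} nm")
```

Because temperature and viscosity enter the equation directly, an error in either one carries straight through to the reported size, which is why DLS measurements need good temperature control.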

Figure 1: Core light scattering measurement workflow.

Image Analysis

Image analysis provides a direct method for determining particle size and shape by capturing and analyzing digital images of individual particles [10] [16]. This technique does not assume spherical geometry, making it uniquely powerful for characterizing irregularly shaped particles such as rods or fibers [16].

The process involves four key steps [16]:

- Image Taking: A digital camera, often coupled with a microscope, captures images of dispersed particles, either stationary on a substrate or in motion.

- Image Processing: Software enhances image quality by eliminating noise, correcting brightness variations, and separating connected particles.

- Object Detection: Through "thresholding," each pixel is assigned to either a particle or the background, allowing the software to identify individual particles.

- Classification: Detected particles are classified based on extracted size and shape parameters (e.g., equivalent circular area diameter, length, width, aspect ratio) and grouped into distribution classes [10] [16].
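
The detection and classification steps above can be illustrated with a small, self-contained sketch. It assumes dark particles on a bright background, a fixed global threshold, and 4-connected pixels; commercial software uses more elaborate algorithms, and all names, the test image, and the pixel size are hypothetical.

```python
import math
from collections import deque


def detect_particles(image, threshold, pixel_size_um):
    """Threshold a grey-scale image and measure each connected dark region."""
    rows, cols = len(image), len(image[0])
    mask = [[px < threshold for px in row] for row in image]  # True = particle
    seen = [[False] * cols for _ in range(rows)]
    particles = []
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first flood fill collects all pixels of one particle.
            queue, pixels = deque([(r, c)]), []
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            ys = [p[0] for p in pixels]
            xs = [p[1] for p in pixels]
            height = (max(ys) - min(ys) + 1) * pixel_size_um
            width = (max(xs) - min(xs) + 1) * pixel_size_um
            area = len(pixels) * pixel_size_um ** 2
            particles.append({
                "area_um2": area,
                # Diameter of the circle with the same projected area.
                "ecd_um": 2.0 * math.sqrt(area / math.pi),
                # Axis-aligned bounding-box ratio (a simplification).
                "aspect_ratio": min(height, width) / max(height, width),
            })
    return particles


image = [
    [255, 255, 255, 255, 255, 255],
    [255,  10,  10, 255, 255, 255],
    [255,  10,  10, 255,  20, 255],
    [255, 255, 255, 255,  20, 255],
    [255, 255, 255, 255,  20, 255],
]
particles = detect_particles(image, threshold=128, pixel_size_um=2.0)
for p in particles:
    print(p)
```

The resulting per-particle records can then be binned into number-based size and shape distributions.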

Image analysis can be performed in static or dynamic mode. Static Image Analysis (SIA) examines particles on a static substrate, while Dynamic Image Analysis (DIA) captures images of particles flowing past a camera, enabling the analysis of a larger, more statistically significant number of particles in a random orientation [11] [14].

Figure 2: Image analysis workflow for particle characterization.

Permeability

Permeability measurement quantifies the ability of a fluid to flow through a porous medium, such as a packed powder bed or a reservoir rock core sample [17]. The standard methodology is based on Darcy's Law, which for a linear, incompressible flow is expressed as [17]:

\[ Q = \frac{K A \Delta P}{\mu L} \]

Where:

- \( Q \) = volumetric flow rate

- \( K \) = permeability of the medium

- \( A \) = cross-sectional area to flow

- \( \Delta P \) = pressure drop across the medium

- \( \mu \) = dynamic viscosity of the fluid

- \( L \) = length of the medium
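
Rearranging Darcy's Law for the permeability gives K = Q μ L / (A ΔP). The sketch below applies this to a single steady-state liquid measurement in SI units; the function name and the input values are hypothetical, and the conversion factor to darcy units is the standard one (1 darcy ≈ 9.869 × 10⁻¹³ m²).

```python
M2_PER_DARCY = 9.869233e-13  # 1 darcy expressed in m^2


def darcy_permeability_m2(flow_m3_s: float, viscosity_pa_s: float,
                          length_m: float, area_m2: float,
                          pressure_drop_pa: float) -> float:
    """Permeability from Darcy's Law for linear, incompressible flow."""
    if area_m2 <= 0 or pressure_drop_pa <= 0:
        raise ValueError("area and pressure drop must be positive")
    return flow_m3_s * viscosity_pa_s * length_m / (area_m2 * pressure_drop_pa)


# Illustrative measurement: 1 mL/s of water through a 5 cm plug of 10 cm^2
# cross-section under a pressure drop of 1 bar.
k = darcy_permeability_m2(1.0e-6, 1.0e-3, 0.05, 1.0e-3, 1.0e5)
print(f"K = {k:.2e} m^2 = {k / M2_PER_DARCY:.2f} darcy")
```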

Two common experimental methods for measuring liquid permeability are [18]:

- Constant Head Method (CHM): Maintains a constant pressure differential across the sample during measurement. This method is fast, has low standard deviation, and is recommended for standardization, particularly for samples with pore sizes below 30 µm that require laminar flow conditions [18].

- Falling Head Method (FHM): Uses a declining pressure differential and is recommended for highly permeable samples where turbulent flow is present [18].

A critical consideration is the Klinkenberg Effect, which occurs when gases are used as the testing fluid. Due to gas molecule slippage along pore walls at low pressures, the measured gas permeability is higher than the intrinsic (liquid) permeability. This effect is significant for low-permeability materials and fine powders, and requires data extrapolation from multiple pressure measurements to determine the true absolute permeability [17].

Comparative Analysis of Techniques

Technical Comparison Table

Table 1: Comparative overview of key particle characterization techniques.

| Parameter | Laser Diffraction (LD) | Dynamic Light Scattering (DLS) | Image Analysis (DIA/SIA) | Permeability Measurement |

|---|---|---|---|---|

| Measured Property | Particle size distribution (Volume-based) [11] | Hydrodynamic diameter (Size distribution) [8] [14] | Particle size & shape distributions (Number-based) [10] [11] | Permeability of a porous medium [17] |

| Principle | Angle & intensity of scattered light [13] | Brownian motion & fluctuation of scattered light [12] | Direct imaging & digital analysis [16] | Fluid flow through a porous sample (Darcy's Law) [17] |

| Typical Size Range | 0.01 µm – 2000 µm [8] | 0.3 nm – 10 µm [8] | 0.5 µm – 3000 µm [8] [10] | N/A (Property of a packed bed or solid) |

| Sample Matrix | Dry powders or liquid dispersions [8] | Liquid dispersions only [8] | Dry powders, liquid suspensions, filters [10] | Core samples (e.g., compressed powder) [17] |

| Shape Sensitivity | Assumes spherical particles [8] | Assumes spherical particles [8] | Measures shape directly (e.g., aspect ratio, circularity) [10] [16] | Indirectly inferred from flow resistance |

| Throughput | High (Rapid analysis) [11] | Medium to High [12] | Low to Medium (Longer analysis times) [10] [11] | Medium (Requires sample preparation) [17] |

| Key Advantage | Wide size range, speed, high throughput [11] | Small particle sensitivity, measures in native solution [8] [12] | Direct visualization, no shape assumption, detects outliers [10] [14] | Directly measures a critical performance property [17] |

| Key Limitation | Inaccurate for non-spherical particles [8] [11] | Limited to nanoscale/submicron particles [14] | Low throughput, not for nanoparticles [10] [11] | Klinkenberg effect (if using gas) [17] |

Performance Data and Experimental Comparisons

Independent studies and technical reviews provide critical data for comparing the performance of these techniques in practical scenarios.

Table 2: Experimental findings and performance characteristics.

| Technique Comparison | Experimental Context | Key Findings & Performance Data |

|---|---|---|

| Laser Diffraction vs. Image Analysis | Analysis of ground coffee and cellulose fibers [14] | • LD results correspond to the area-equivalent diameter from DIA, but distributions appear broader as all particle dimensions are included and related to spheres [14]. • For fibers, DIA differentiates between fiber width (~20 µm) and length (~400 µm), while LD produces a single, broad distribution that runs parallel to the width measurement before approaching the fiber length, failing to resolve the two dimensions [14]. |

| Permeability Methods (CHM vs. FHM) | Water permeability of woven filter meshes [18] | • Constant Head Method (CHM): Recommended for standardization. It was the fastest method with the lowest standard deviation and could provide laminar flow conditions for samples with pore sizes below 30 µm [18]. • Falling Head Method (FHM): Operated only under turbulent flow and was thus recommended only for highly permeable samples [18]. • All techniques (CHM, FHM, simulation) showed good agreement when working under a turbulent regime (pore size > 30 µm) [18]. |

| Detection Sensitivity | General capability of various techniques [14] | • Dynamic Image Analysis (DIA): Excellent sensitivity for oversized particles, with a detection limit as low as 0.01% [14]. • Laser Diffraction (SLS): Relatively low sensitivity; modern analyzers can detect oversized grains only from approximately 2% by volume [14]. |

Detailed Experimental Protocols

Laser Diffraction (LD) Protocol

- Sample Preparation: For dry powders, use a vibrating feeder or air dispersion to ensure a steady, agglomerate-free stream of particles through the measurement zone. For liquid dispersions, select a suitable solvent that does not dissolve or react with the particles, and use sonication or stirring to achieve a homogeneous dispersion [8].

- Instrument Setup: Input the optical properties of the material, specifically the real and imaginary parts of the refractive index, for accurate application of Mie theory, which is crucial for particles below 20 µm [13].

- Measurement: Pass the dispersed sample through the laser beam. The optical system, comprising multiple light sources and a wide array of detectors, captures the scattered light pattern over a broad angular range [13] [12].

- Data Analysis: The instrument software inverts the scattered light data using Mie theory or the Fraunhofer approximation to compute a volume-based particle size distribution. Key percentiles such as D10, D50, and D90 are reported [11] [13].
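
The percentile values reported in the last step can be read from a cumulative undersize curve as sketched below. The example assumes linear interpolation between tabulated points (instrument software may interpolate on a logarithmic size axis instead); the function name and the distribution data are hypothetical.

```python
def percentile_diameter(sizes_um, cumulative_pct, target_pct):
    """Interpolate the size below which target_pct of the volume lies."""
    if len(sizes_um) != len(cumulative_pct):
        raise ValueError("sizes and cumulative values must have the same length")
    points = list(zip(sizes_um, cumulative_pct))
    for (s0, p0), (s1, p1) in zip(points, points[1:]):
        if p0 <= target_pct <= p1:
            if p1 == p0:  # flat segment: no volume between s0 and s1
                return s0
            return s0 + (target_pct - p0) / (p1 - p0) * (s1 - s0)
    raise ValueError("target percentile is outside the tabulated range")


# Hypothetical cumulative volume distribution (size in µm, % undersize).
sizes = [1.0, 5.0, 10.0, 50.0, 100.0]
cumulative = [0.0, 20.0, 50.0, 90.0, 100.0]
d10 = percentile_diameter(sizes, cumulative, 10.0)
d50 = percentile_diameter(sizes, cumulative, 50.0)
d90 = percentile_diameter(sizes, cumulative, 90.0)
span = (d90 - d10) / d50  # a common measure of distribution width
print(d10, d50, d90, span)
```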

Dynamic Image Analysis (DIA) Protocol

- Sample Dispersion: Disperse the sample in a suitable dry or wet state. For dry powders, a vibrating feeder or compressed air can be used to separate and convey particles. For liquids, disperse the powder in a solvent and pump the suspension through a flow cell [10] [16].

- Image Acquisition: As particles pass in a continuous flow through the detection zone, a pulsed light source (e.g., LED) and a high-speed camera capture high-resolution images of each particle [14].

- Image Processing and Analysis: The software performs thresholding to distinguish particles from the background. It then analyzes each particle image to determine a suite of size (e.g., length, width, equivalent circular area diameter) and shape (e.g., aspect ratio, circularity, convexity) parameters [10] [14].

- Data Reporting: Results are compiled into number-based distributions for size and shape. The system can analyze hundreds of thousands of particles to ensure statistical significance, and images are available for visual verification [10] [11].

Gas Permeability Protocol (with Klinkenberg Correction)

- Sample Preparation: For powdered materials, compress into a solid compact or core plug of known dimensions (length and diameter). The sample must be dried to remove any residual fluids [17].

- Core Holder Setup: Place the core sample in a holder and apply a confining pressure to simulate overburden stress and prevent fluid bypass [17].

- Flow Experiment: Flow a gas (e.g., air, nitrogen) through the sample at multiple, controlled flow rates. Precisely measure the flow rate (Q) and the upstream and downstream pressures (P1, P2) for each flow rate [17].

- Data Processing & Klinkenberg Correction:

  - Calculate the apparent gas permeability (Kg) for each data point using Darcy's law.

  - Plot the apparent gas permeability (Kg) against the inverse of the mean pressure (1/Pm).

  - Fit a straight line through the data points. The intercept of this line at infinite pressure (where 1/Pm = 0) yields the absolute liquid permeability (KL) [17].
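
The data-processing steps above can be sketched in a few lines. The example makes two assumptions that the source does not spell out: the apparent gas permeability is computed with the compressible-flow form of Darcy's law, with the flow rate measured at the outlet pressure, and the Klinkenberg relation is taken in its usual linear form Kg = KL (1 + b/Pm). All function names and data are hypothetical.

```python
def apparent_gas_permeability(q_out, p_in, p_out, viscosity, length, area):
    """Darcy's law for an ideal gas; q_out is the flow rate at outlet pressure."""
    return 2.0 * viscosity * q_out * p_out * length / (area * (p_in ** 2 - p_out ** 2))


def klinkenberg_fit(mean_pressures, apparent_perms):
    """Least-squares line of Kg versus 1/Pm; returns (KL, slip factor b)."""
    if len(mean_pressures) != len(apparent_perms) or len(mean_pressures) < 2:
        raise ValueError("need at least two matching data points")
    x = [1.0 / p for p in mean_pressures]
    y = list(apparent_perms)
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    k_liquid = mean_y - slope * mean_x  # intercept at 1/Pm = 0
    return k_liquid, slope / k_liquid


# Synthetic data generated with KL = 2.0 mD and b = 0.5 atm (illustration only).
pressures_atm = [1.0, 2.0, 4.0]
kg_md = [3.0, 2.5, 2.25]
k_l, b = klinkenberg_fit(pressures_atm, kg_md)
print(f"KL = {k_l:.2f} mD, b = {b:.2f} atm")
```

Real measurements scatter around the line, so several mean pressures spread over as wide a range as the apparatus allows give a more reliable intercept.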

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key reagents, materials, and equipment for particle characterization experiments.

| Item Name | Function/Application | Technical Notes |

|---|---|---|

| Dispersion Solvents | Liquid medium for dispersing powder samples in LD, DLS, and DIA [8]. | Must not dissolve or interact with the particles. Common choices include water, isopropanol, and cyclohexane. Salinity can be adjusted to prevent clay swelling in certain samples [8] [17]. |

| Standard Sieves | For pre-fractionating or comparative sieve analysis of coarse powders [8]. | Used according to ASTM or ISO standards. A stack with gradually decreasing apertures (30 µm to 120 mm) is assembled for gravimetric analysis [8]. |

| Refractive Index (RI) Standards | Verification of instrument alignment and accuracy in light scattering [13]. | Materials with known and stable RI are used to validate the performance of laser diffraction analyzers. |

| CZR Resin (Cyclohexanol) | A common solvent for preparing sample suspensions, particularly where water reactivity is a concern. | Ensures particles do not dissolve or undergo morphological changes during analysis in LD or DIA [8]. |

| Core Holder & Permeameter | Assembly for housing and testing the permeability of core samples or powder compacts [17]. | Applies confining pressure and allows for precise application of fluid pressure gradients and measurement of flow rates. |

| Metal or Carbon Coating | Preparation of non-conductive samples for Scanning Electron Microscopy (SEM) [8]. | A thin, conductive layer is applied to prevent charging and improve image quality for detailed shape and surface morphology analysis. |

| Soxhlet Extractor | Laboratory setup for thorough cleaning and drying of core samples before permeability testing [17]. | Removes residual fluids (e.g., water, oil) to ensure the core is 100% saturated with air before measurement. |

The selection of an appropriate characterization technique is paramount in solid-state research and drug development. Laser Diffraction stands out for its speed and wide size range, making it ideal for quality control where high throughput is essential, though its assumption of sphericity is a key limitation. Dynamic Light Scattering is the technique of choice for sub-micron and nano-scale particles in suspension, providing critical size information for proteins and nanomedicines. Image Analysis is unparalleled when particle shape is a critical performance attribute, offering direct visualization and quantification of morphology without shape assumptions, despite its lower throughput. Finally, Permeability Measurement provides unique insights into the bulk fluid transport properties of porous matrices, which is vital for understanding dissolution and filtration.

No single technique provides a complete picture for all materials and applications. The synergistic use of these methods—for instance, using LD for routine quality control and DIA for investigating process-induced shape changes—often yields the most comprehensive understanding of particle properties, ultimately guiding the development of more effective and reliable solid-state products.

Particle size analysis is a fundamental aspect of solid-state research, influencing everything from drug bioavailability to the mechanical properties of materials. However, most analytical techniques do not measure size directly but instead report an Equivalent Spherical Diameter (ESD), the diameter of a sphere that would behave identically to the particle under a specific measurement condition [19] [20]. This guide provides a comparative analysis of major particle sizing techniques, detailing their operating principles, reported ESDs, and the critical role of shape descriptors to equip researchers with the knowledge to accurately interpret data and select the optimal methodology.

Fundamentals of Equivalent Spherical Diameter

The ESD is a foundational concept in particle size analysis because it provides a standardized way to describe non-spherical, irregular particles using a single, comparable parameter [19]. The specific ESD reported varies drastically with the measurement principle, meaning that a single particle can have different "sizes" depending on the technique used [20]. The table below summarizes the most common types of ESDs.

Table 1: Common Types of Equivalent Spherical Diameters (ESD)

| Equivalent Spherical Diameter (ESD) Type | Definition | Primary Measurement Technique(s) |

|---|---|---|

| Volume-equivalent Diameter | The diameter of a sphere having the same volume as the particle [19] [20]. | Laser Diffraction [19] [20]. |

| Area-equivalent Diameter | The diameter of a sphere having the same projected area as the particle [19] [20]. | Static and Dynamic Image Analysis [19] [20]. |

| Sieve-equivalent Diameter | The diameter of a sphere that passes through the same sieve aperture as the particle [19] [20]. | Sieve Analysis [19] [20]. |

| Stokes Diameter | The diameter of a sphere having the same density and settling velocity as the particle [19] [20]. | Sedimentation Analysis [19] [20]. |

| Hydrodynamic Diameter | The diameter of a sphere that diffuses at the same rate as the particle in a specific fluid [9] [20]. | Dynamic Light Scattering (DLS), Nanoparticle Tracking Analysis (NTA) [9] [20]. |
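
To make the point that one particle can have several "sizes" concrete, the sketch below computes two of the ESDs in Table 1 for a single needle-like particle. The cylinder dimensions are hypothetical and the function names are illustrative, not taken from any instrument software.

```python
import math

def volume_equivalent_diameter(volume):
    """Diameter of the sphere with the same volume: d = (6V/pi)^(1/3)."""
    return (6.0 * volume / math.pi) ** (1.0 / 3.0)

def area_equivalent_diameter(projected_area):
    """Diameter of the circle with the same projected area: d = sqrt(4A/pi)."""
    return math.sqrt(4.0 * projected_area / math.pi)

# Hypothetical needle-like particle: a cylinder 10 um wide and 100 um long.
width_um, length_um = 10.0, 100.0
volume_um3 = math.pi * (width_um / 2.0) ** 2 * length_um
side_view_area_um2 = width_um * length_um               # particle lying flat
end_view_area_um2 = math.pi * (width_um / 2.0) ** 2     # particle seen end-on

d_volume = volume_equivalent_diameter(volume_um3)
d_area_side = area_equivalent_diameter(side_view_area_um2)
d_area_end = area_equivalent_diameter(end_view_area_um2)

print(f"Volume-equivalent diameter:      {d_volume:.1f} um")
print(f"Area-equivalent (side view):     {d_area_side:.1f} um")
print(f"Area-equivalent (end-on view):   {d_area_end:.1f} um")
```

The same particle yields three different diameters, and the imaging result additionally depends on how the particle is oriented when it is photographed.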

Comparative Analysis of Particle Sizing Techniques

Different particle sizing techniques are suited for different size ranges, sample types, and provide distinct ESDs. The following table offers a direct comparison of the most prevalent methods.

Table 2: Comparison of Common Particle Size Analysis Techniques

| Method | Measurement Principle | Measured ESD | Typical Size Range | Key Advantages | Key Limitations |

|---|---|---|---|---|---|

| Laser Diffraction (LD) | Analyzes the scattering pattern of laser light by particles [9] [21]. | Volume-equivalent diameter [19] [20]. | ~0.01 µm to 2000 µm [9] [8]. | High throughput, broad size range, suitable for wet or dry dispersion [9] [21]. | Assumes spherical particles; results are approximations for non-spherical ones [9] [8]. |

| Dynamic Light Scattering (DLS) | Measures Brownian motion to determine diffusion coefficient [9] [21]. | Hydrodynamic diameter [9] [20]. | ~0.3 nm to 10 µm [9] [8]. | Fast, calibration-free, ideal for proteins and nanoparticles in suspension [9]. | Low resolution for polydisperse samples; sensitive to aggregates and temperature [9] [21]. |

| Dynamic Image Analysis (DIA) | Captures and analyzes images of individual particles in flow [9]. | Area-equivalent diameter, Feret diameters [9] [20]. | ~2 µm to 3000 µm [8]. | Provides direct shape and morphological data (e.g., aspect ratio, circularity) [9]. | Not suitable for nanoparticles; lower throughput than LD or DLS [9] [8]. |

| Sieving | Separates particles by size via mechanical vibration through mesh screens [9] [22]. | Sieve-equivalent diameter [19] [20]. | ~20 µm to 120 mm [9] [8]. | Simple, robust, low-cost, and widely accepted [9]. | Time-consuming; low resolution for fine particles; results sensitive to particle orientation [9] [22]. |

| Sedimentation | Determines size from settling velocity under gravity or centrifugal force using Stokes' law [9] [22]. | Stokes diameter [19] [20]. | ~1 µm to 100 µm [9]. | High accuracy and repeatability for spherical particles in its range [9]. | Slow for small particles; biased by density differences and Brownian motion [9]. |

| Nanoparticle Tracking Analysis (NTA) | Tracks and analyzes the Brownian motion of individual particles via light scattering [9] [23]. | Hydrodynamic diameter [9] [20]. | ~30 nm to 1000 nm [9]. | Provides number-based distribution and concentration data for polydisperse nano-suspensions [9] [23]. | Less reproducible and more time-consuming than DLS; requires experienced users [9]. |
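
The Stokes diameter in the sedimentation row can be illustrated with a short calculation. This is a minimal sketch that assumes a smooth sphere settling at low Reynolds number under gravity; the particle density, fluid properties, and function names are assumptions chosen for illustration.

```python
import math

def stokes_velocity(diameter_m, particle_density, fluid_density, viscosity, g=9.81):
    """Terminal settling velocity (m/s) from Stokes' law: v = (rho_p - rho_f) g d^2 / (18 mu)."""
    return (particle_density - fluid_density) * g * diameter_m ** 2 / (18.0 * viscosity)

def stokes_diameter(velocity_m_s, particle_density, fluid_density, viscosity, g=9.81):
    """Invert Stokes' law to obtain the Stokes diameter (m) from a measured settling velocity."""
    delta_rho = particle_density - fluid_density
    if delta_rho <= 0:
        raise ValueError("particle must be denser than the fluid to settle")
    return math.sqrt(18.0 * viscosity * velocity_m_s / (delta_rho * g))

# Assumed example: a 10 um particle of density 2650 kg/m^3 settling in water
# (density 1000 kg/m^3, viscosity 1.0e-3 Pa s).
velocity = stokes_velocity(10e-6, 2650.0, 1000.0, 1.0e-3)
recovered = stokes_diameter(velocity, 2650.0, 1000.0, 1.0e-3)
print(f"Settling velocity: {velocity * 1e6:.1f} um/s")
print(f"Recovered Stokes diameter: {recovered * 1e6:.2f} um")
```

Because velocity scales with the square of diameter, halving the particle size quarters the settling speed, which is why the table lists sedimentation as slow for small particles.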

Experimental Protocols and Methodologies

Laser Diffraction (ISO 13320)

Laser diffraction is a high-throughput technique favored in quality control for its speed and broad dynamic range [9] [21].

Detailed Protocol:

- Sample Dispersion: The solid sample must be fully dispersed in a suitable medium (e.g., water, organic solvents) that does not dissolve or react with the particles. Surfactants may be added to break agglomerates [19]. Dry powder dispersion via air pressure is also common [19].

- Background Measurement: A measurement of the pure dispersion medium is first taken to establish a baseline "background" signal [19].

- Sample Measurement: The dispersed sample is passed through the laser beam, and detectors measure the intensity of light scattered at various angles [9] [21].

- Data Analysis: The instrument's software uses Mie theory or the Fraunhofer approximation to calculate a volume-based particle size distribution from the scattering pattern. The refractive indices of both the particle and the dispersion medium are critical inputs for this calculation [19] [9]. The results are expressed as a volume-weighted distribution, and key parameters like Dv10, Dv50, Dv90, and the De Brouckere mean diameter D[4,3] are reported [9].
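
The percentile values named in the data analysis step can be read off a binned volume distribution by interpolation. The sketch below is one simple way to do this, assuming log-linear interpolation within each size class; the bin edges and volume percentages are invented example data, and instrument software may use a different interpolation scheme.

```python
import math

def volume_percentile(bin_edges_um, volume_percent, percentile):
    """Return the size (um) below which `percentile` % of the sample volume lies."""
    total = sum(volume_percent)
    cumulative = 0.0
    for lower, upper, amount in zip(bin_edges_um[:-1], bin_edges_um[1:], volume_percent):
        share = 100.0 * amount / total
        if share > 0 and cumulative + share >= percentile:
            # Interpolate on a logarithmic size axis inside this size class.
            t = (percentile - cumulative) / share
            return math.exp(math.log(lower) + t * (math.log(upper) - math.log(lower)))
        cumulative += share
    return float(bin_edges_um[-1])

# Hypothetical distribution: five size classes between 1 and 32 um.
edges_um = [1.0, 2.0, 4.0, 8.0, 16.0, 32.0]
volume_pct = [10.0, 20.0, 40.0, 20.0, 10.0]

dv10 = volume_percentile(edges_um, volume_pct, 10)
dv50 = volume_percentile(edges_um, volume_pct, 50)
dv90 = volume_percentile(edges_um, volume_pct, 90)
print(f"Dv10 = {dv10:.2f} um, Dv50 = {dv50:.2f} um, Dv90 = {dv90:.2f} um")
```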

Dynamic Image Analysis (ISO 13322-2)

DIA is used when particle shape is a critical attribute, such as in catalyst or granule analysis [9].

Detailed Protocol:

- Sample Presentation: Particles are dispersed in a liquid or air and passed as a thin stream in front of a high-speed camera and a pulsed light source to "freeze" motion [9].

- Image Acquisition & Thresholding: The system captures thousands of images. Software distinguishes particles from the background based on contrast (thresholding) [9].

- Particle Measurement: For each detected particle, multiple size and shape parameters are measured [9] [20]:

- Area-equivalent diameter: Calculated from the pixel count of the particle's projection.

- Feret Diameters: The distance between parallel tangents at different angles (e.g., Max Feret, Min Feret).

- Shape Descriptors: Parameters like Aspect Ratio (Min Feret / Max Feret), Circularity, and Roundness are calculated.

- Data Reporting: Results are typically number-based distributions. The analysis provides a comprehensive dataset linking individual particle size to its specific shape morphology [9].
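
The per-particle measurements listed above reduce to a few lines of arithmetic once the image has been segmented. The sketch below assumes the pixel count, perimeter, and Feret diameters have already been extracted by the imaging software. Circularity is computed as 4πA/P², which is one common definition (others exist), and all input values are hypothetical.

```python
import math

def shape_descriptors(pixel_count, perimeter_px, max_feret_px, min_feret_px, pixel_size_um):
    """Size and shape parameters for one segmented particle projection."""
    area_um2 = pixel_count * pixel_size_um ** 2
    perimeter_um = perimeter_px * pixel_size_um
    return {
        "area_equivalent_diameter_um": math.sqrt(4.0 * area_um2 / math.pi),
        "aspect_ratio": min_feret_px / max_feret_px,                    # Min Feret / Max Feret
        "circularity": 4.0 * math.pi * area_um2 / perimeter_um ** 2,    # 1.0 for a perfect circle
    }

# Hypothetical projections at 2 um per pixel: an ideal circle (radius 50 px)
# and an elongated 200 x 20 px rectangle.
circle = shape_descriptors(math.pi * 50 ** 2, 2 * math.pi * 50, 100, 100, 2.0)
needle = shape_descriptors(200 * 20, 2 * (200 + 20), math.hypot(200, 20), 20, 2.0)

for name, result in (("circle", circle), ("needle", needle)):
    print(name, {key: round(value, 3) for key, value in result.items()})
```

The circle returns an aspect ratio and circularity of 1, while the elongated particle scores far lower on both, which is the kind of contrast DIA is used to detect.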

Visual Workflow for Technique Selection

[Figure: decision process for selecting a particle sizing technique based on key sample and research criteria]

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful particle size analysis requires not only the right instrument but also the appropriate consumables and reagents to ensure a representative and stable measurement.

Table 3: Essential Materials for Particle Size Analysis

| Item | Function | Key Considerations |

|---|---|---|

| Dispersion Media | Liquid in which solid particles are suspended for wet measurement [19] [8]. | Must not dissolve or chemically react with the sample. Common choices are water, isopropanol, or cyclohexane [8]. |

| Surfactants | Chemicals added to a dispersion medium to break apart agglomerates and improve particle separation [19]. | Critical for measuring fine powders. Type (ionic/non-ionic) and concentration must be optimized to avoid altering the native particle state [19]. |

| Standard Sieve Stack | A set of sieves with precisely calibrated mesh sizes for sieve analysis [9] [8]. | Sieves must conform to ASTM or ISO standards. The stack is assembled with the largest mesh at the top and the smallest at the bottom [9] [8]. |

| Refractive Index (RI) | An optical property of both the particle and dispersion medium [19]. | Accurate RI values for the sample and medium are mandatory for correct analysis in laser diffraction using Mie theory [19] [9]. |

| Certified Reference Materials | Particles with a known, certified size distribution [24]. | Used for method development and regular instrument qualification/calibration to ensure data accuracy and compliance [24]. |

Selecting the right particle size analysis technique requires a clear understanding that different methods report different Equivalent Spherical Diameters. Laser diffraction offers high-throughput, volume-based data ideal for quality control, while image analysis provides invaluable shape descriptors for understanding particle behavior. Techniques like DLS and NTA are essential for the nano-regime. The choice is not about finding the one "true" size, but about applying the correct tool to obtain the most relevant ESD for your specific application, whether it is optimizing drug bioavailability, ensuring powder flowability, or controlling product stability.

Selecting the optimal particle size analysis technique is a critical step in solid-state research, as the choice directly influences the accuracy and relevance of the data obtained. No single method is universally superior; instead, the optimal selection is dictated by an interplay of three core parameters: the expected particle size range, the particle shape, and the nature of the sample matrix. This guide provides a comparative analysis of common techniques to inform researchers and development professionals in making data-driven method selection decisions.

Comparative Analysis of Particle Sizing Techniques

The table below summarizes the fundamental characteristics of common particle size analysis techniques, providing a high-level overview for initial method screening.

Table 1: Key Characteristics of Common Particle Sizing Techniques

| Method | Suitable Particle Shapes | Typical Size Range | Sample Matrix | Method Principle |

|---|---|---|---|---|

| Laser Diffraction (LD) [8] | Spherical [8] | 0.01 µm - 2,600 µm (up to 3,500 µm with imaging) [25] [8] | Dry powders or liquid dispersions [8] | Scattering/diffraction pattern of laser light [9] [8] |

| Dynamic Light Scattering (DLS) [8] | Spherical [8] | 0.3 nm - 10 μm [8] | Liquid dispersions [8] | Brownian motion (Hydrodynamic diameter) [9] [8] |

| Dynamic Image Analysis (DIA) [9] | All shapes [8] | 30 μm - 10,000 μm [9] [26] | Dry powders [9] [26] | Optical imaging of flowing particles [9] |

| Static Image Analysis | All shapes | 0.3 μm - 10,000 μm [26] | Dry & Wet dispersions [26] | Optical imaging of static particles [26] |

| Scanning Electron Microscopy (SEM) [8] | All shapes [8] | > 10 nm [8] | Dry powders (requires conductive coating) [8] | High-resolution electron imaging [21] [8] |

| Sieve Analysis [8] | All shapes [8] | 30 µm - 120 mm [8] | Dry powders [8] | Gravimetric separation by mesh size [9] [8] |

| X-ray Computed Tomography (XCT) [24] | All shapes (3D data) | Not specified (3D volumetric technique) | Solid volume | 3D X-ray imaging [24] |
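
For initial screening, the table above can be encoded as a simple lookup. The sketch below is illustrative only: the size limits are copied from Table 1, the sample matrix column is simplified to "dry" or "liquid", XCT is omitted because no size range is specified, and the function name is hypothetical. It narrows the candidate list and is no substitute for method development.

```python
import math

# (name, handles non-spherical shapes, min size um, max size um, sample matrices)
TECHNIQUES = [
    ("Laser Diffraction", False, 0.01, 2600.0, {"dry", "liquid"}),
    ("Dynamic Light Scattering", False, 0.0003, 10.0, {"liquid"}),
    ("Dynamic Image Analysis", True, 30.0, 10000.0, {"dry"}),
    ("Static Image Analysis", True, 0.3, 10000.0, {"dry", "liquid"}),
    ("Scanning Electron Microscopy", True, 0.01, math.inf, {"dry"}),
    ("Sieve Analysis", True, 30.0, 120000.0, {"dry"}),
]

def screen_techniques(size_um, matrix, shape_matters):
    """List techniques whose tabulated range, matrix, and shape suitability fit the sample."""
    return [
        name
        for name, all_shapes, smallest, largest, matrices in TECHNIQUES
        if smallest <= size_um <= largest
        and matrix in matrices
        and (all_shapes or not shape_matters)
    ]

nano_suspension = screen_techniques(0.05, "liquid", shape_matters=False)
coarse_granules = screen_techniques(500.0, "dry", shape_matters=True)
print("50 nm suspension:", nano_suspension)
print("500 um irregular granules:", coarse_granules)
```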

Detailed Methodologies and Experimental Protocols

Laser Diffraction (LD)

- Principle: A laser beam passes through a dispersed sample, and particles scatter light at angles inversely proportional to their size. Detectors measure the intensity pattern, and complex algorithms based on Mie theory compare these measurements to theoretical values to calculate a volume-based particle size distribution (PSD) [9] [21].

- Experimental Protocol: The sample is dispersed in a suitable dry or liquid medium to ensure a representative concentration that avoids multiple scattering. The obscuration level is checked to fall within the instrument's recommended range. The measurement is rapid, taking seconds to minutes, and results are typically presented as D-values (D10, D50, D90) and a distribution graph [9] [21].

Dynamic Light Scattering (DLS)

- Principle: Also known as Photon Correlation Spectroscopy, DLS measures the random Brownian motion of particles suspended in a liquid. Smaller particles move faster than larger ones. A laser beam hits the particles, and the fluctuations in scattered light intensity are detected. These fluctuations are analyzed via an autocorrelation function, and the translational diffusion coefficient is used in the Stokes-Einstein equation to calculate the hydrodynamic diameter [9].

- Experimental Protocol: A dilute, dust-free suspension is essential. The sample is loaded into a cuvette, and the temperature is precisely controlled, as viscosity is a key parameter. Measurement times are typically a few minutes. Results include a hydrodynamic diameter and a polydispersity index (PDI) indicating the breadth of the distribution [9].
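
The final step of the DLS principle, converting a diffusion coefficient into a hydrodynamic diameter, follows directly from the Stokes-Einstein equation, d_H = k_B T / (3 π η D). The sketch below assumes the diffusion coefficient has already been obtained from the autocorrelation analysis; the numerical inputs are illustrative values for a particle in water at 25 °C.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23

def hydrodynamic_diameter(diffusion_coefficient, temperature_k, viscosity_pa_s):
    """Stokes-Einstein: d_H = k_B T / (3 pi eta D), returned in metres."""
    return BOLTZMANN_J_PER_K * temperature_k / (
        3.0 * math.pi * viscosity_pa_s * diffusion_coefficient
    )

# Assumed inputs: D = 4.29e-12 m^2/s, T = 298.15 K, water viscosity 0.89e-3 Pa s.
d_h = hydrodynamic_diameter(4.29e-12, 298.15, 0.89e-3)
print(f"Hydrodynamic diameter: {d_h * 1e9:.0f} nm")

# A 2 % error in the assumed viscosity propagates directly into the reported size,
# which is why temperature control matters.
d_h_biased = hydrodynamic_diameter(4.29e-12, 298.15, 0.89e-3 * 1.02)
print(f"With 2 % higher viscosity: {d_h_biased * 1e9:.0f} nm")
```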

Image Analysis (Static and Dynamic)

- Principle: This technique captures two-dimensional projections of individual particles. For each particle, software calculates numerous size and shape parameters [26]. Static image analysis observes particles on a stationary substrate, while dynamic image analysis captures images of particles as they flow past a camera [9] [26].

- Experimental Protocol: The sample must be well-dispersed to prevent particle overlap. For dynamic analysis, a representative sample is fed through a flow cell. The software analyzes thousands of particles to build a number-based distribution. Measurable parameters include Feret diameters (max and min), aspect ratio, circularity, and length/width ratio [26].

X-ray Computed Tomography (XCT)

- Principle: XCT is a non-destructive technique that generates 3D volumetric data of a sample by collecting a series of 2D X-ray images from different angles. These projections are computationally reconstructed into a 3D model, allowing for the analysis of internal and external structures without physical sectioning [24].

- Experimental Protocol: A solid sample is mounted on a stage that rotates within the X-ray beam. The scan parameters (energy, resolution, exposure time) are set based on the material's density and the required resolution. The 3D data set can be segmented to identify individual particles, allowing for the measurement of true 3D size, shape, orientation, and even intraparticle porosity [24].

Experimental Data and Performance Comparison

A comparative study of laboratory-based techniques using spherical silica particles with known size ranges provides critical insights into method-specific biases [24].

Table 2: Experimental Findings from a Geoscience Study on Silica Spheres [24]

| Method | Reported Accuracy for <150 μm | Reported Accuracy for >150 μm | Key Limitation / Cause of Error |

|---|---|---|---|

| Laser Particle Size Analysis (LPSA) | Agrees with other techniques | Overestimates particle size | Calculation limitation of the technique |

| Optical Point Counting | Agrees with other techniques | Underestimates particle size | Stereology (effect of slicing particles) |

| 2D Automated Image Analysis | Agrees with other techniques | Underestimates particle diameter | Stereology (effect of slicing particles) |

| X-ray Computed Tomography (XCT) | Agrees with other techniques | Most accurate; lowest sorting values | 3D volumetric analysis avoids stereological errors |

The study concluded that XCT was the most accurate method for determining grain size distribution in sediments, as it is the only 3D analysis method that avoids the stereological errors inherent in 2D techniques [24].
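
The stereological bias noted for the 2D techniques can be reproduced with a small Monte Carlo experiment. The sketch below assumes identical spheres cut by planes at uniformly random distances from the centre, which is a textbook idealisation and not the procedure of the cited study. Under that assumption the mean section diameter is π/4 (about 79%) of the true diameter.

```python
import math
import random

def mean_section_diameter(sphere_diameter, n_cuts=100_000, seed=0):
    """Average diameter of the circles exposed by random planar cuts through a sphere."""
    rng = random.Random(seed)
    radius = sphere_diameter / 2.0
    total = 0.0
    for _ in range(n_cuts):
        offset = rng.uniform(0.0, radius)  # distance of the cut plane from the centre
        total += 2.0 * math.sqrt(radius ** 2 - offset ** 2)
    return total / n_cuts

true_diameter_um = 200.0
apparent_um = mean_section_diameter(true_diameter_um)
print(f"True diameter:         {true_diameter_um:.0f} um")
print(f"Mean section diameter: {apparent_um:.0f} um")
print(f"Ratio:                 {apparent_um / true_diameter_um:.3f} (theory: {math.pi / 4:.3f})")
```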

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Materials and Reagents for Particle Size Analysis

| Item | Function / Application |

|---|---|

| Spherical Silica Particles [24] | Reference materials for method calibration and validation of particle sizing techniques. |

| Isotope-labelled Internal Standards [27] [28] | Used in mass spectrometry to correct for matrix effects and ensure accurate quantitation. |

| Electrolyte Solutions [9] | Required for particle size analysis based on the Coulter principle, which relies on electrical conductivity. |

| Aqueous & Non-aqueous Dispersion Media [8] | Liquids (e.g., water, surfactants, organic solvents) used to create stable suspensions for laser diffraction, DLS, and image analysis. |

| Matrix-Matched Standards [29] [27] | Calibration standards with a composition similar to the sample, used to compensate for matrix effects in techniques like XRF and SIMS. |

Decision Framework and Visual Workflow

[Figure: workflow for selecting a particle size analysis method based on the three critical parameters]

Particle size analysis is a foundational characterization in solid-state product research. The comparative data and frameworks presented here underscore that a deliberate, parameter-driven selection process—prioritizing particle size range, shape, and sample matrix—is essential for generating reliable and meaningful data to guide research and development.

A Practical Guide to Particle Sizing Techniques: From Laser Diffraction to Image Analysis

Laser Diffraction (LD) has become one of the most widely used particle sizing techniques across numerous industries, including pharmaceuticals, chemicals, and materials science. As an ensemble technique that measures particle size distributions by analyzing the angular variation of scattered light, LD offers rapid analysis for materials ranging from hundreds of nanometers to several millimeters [30]. The technique's widespread adoption is supported by international standardization, most notably ISO 13320:2020, which provides comprehensive guidance on instrument qualification and size distribution measurement of particles in two-phase systems such as powders, sprays, aerosols, suspensions, and emulsions [31].

For researchers and drug development professionals, understanding the operational principles, regulatory compliance, and practical applicability of LD is crucial for obtaining reliable particle size data. Particle size is a critical quality attribute that profoundly impacts material performance and properties, influencing everything from the dissolution rate of pharmaceutical ingredients to the texture of food products and the efficiency of industrial catalysts [32]. This guide objectively examines LD technology within the context of particle size analysis techniques for solid-state research, comparing its performance with alternative methods and providing supporting experimental data.

Operational Principles of Laser Diffraction

Fundamental Light Scattering Theory

The underlying principle of laser diffraction particle sizing is based on the relationship between particle size and light scattering patterns. When a laser beam passes through a dispersed particulate sample, particles scatter light at angles inversely proportional to their size [33]. Large particles scatter light at small angles relative to the laser beam, while small particles scatter light at wide angles [30]. The angular scattering intensity data is collected by a detector array and analyzed through appropriate optical models to calculate particle size distribution.

The measurement principle leverages the definite mathematical relationship between scattered light intensity distribution and particle size [34]. Modern LD instruments capture this angular distribution data and calculate size distribution using computational algorithms that compare measured data to theoretical models [33]. The entire process from scattering pattern to size distribution involves sophisticated mathematical deconvolution to determine the proportion of different size classes that would produce the observed scattering pattern [30].
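
The inverse relationship between particle size and scattering angle can be made tangible with the classic diffraction result for a circular obstacle, where the first intensity minimum lies at sin θ = 1.22 λ / d. The sketch below applies only this simple Fraunhofer picture; the 633 nm wavelength is an assumed red laser line, and real instruments fit the whole angular pattern and do not locate a single minimum.

```python
import math

def first_minimum_angle_deg(diameter_um, wavelength_um=0.633):
    """Angle of the first diffraction minimum for a circular disc of the given diameter."""
    ratio = 1.22 * wavelength_um / diameter_um
    if ratio > 1.0:
        raise ValueError("particle too small for this simple diffraction picture")
    return math.degrees(math.asin(ratio))

for diameter_um in (1.0, 10.0, 100.0, 1000.0):
    angle = first_minimum_angle_deg(diameter_um)
    print(f"d = {diameter_um:7.1f} um -> first minimum at {angle:7.3f} degrees")
```

Each tenfold increase in diameter compresses the pattern toward the beam axis by roughly a factor of ten, which is why coarse particles demand detectors at very small angles and fine particles demand wide-angle detection.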

Optical Models: Mie Theory vs. Fraunhofer Approximation

Laser diffraction instruments employ two primary theoretical models for data analysis:

Mie Theory: This comprehensive light scattering solution accounts for diffraction, refraction, reflection, and absorption phenomena [34]. Mie theory requires knowledge of the optical properties (the real refractive index and its imaginary, absorptive component) of both the sample and the dispersant medium [30]. It provides accurate results across the entire measurement range (0.1 μm to 3 mm), particularly for particles smaller than 50 μm, where the Fraunhofer approximation becomes less reliable [35]. ISO 13320:2020 recommends Mie theory as the preferred method, especially for measurements across wide dynamic ranges [35].

Fraunhofer Approximation: This simplified approach treats particles as opaque discs that only diffract light [34]. It does not require input of particle refractive index parameters, making it computationally simpler [33]. However, it is primarily suitable for large (>50 μm), opaque particles, and may produce unpredictable inaccuracies for finer or transparent materials [35].

[Figure: laser diffraction measurement workflow, from sample preparation to result interpretation]

Equivalent Spherical Diameter and Data Representation

A fundamental concept in laser diffraction is the Equivalent Spherical Diameter (ESD). Since the technique's optical models assume spherical particles, it reports particle size as the diameter of a sphere that would produce the same scattering pattern as the measured particle [32]. For non-spherical particles, this means the resulting particle size distribution differs from that obtained by methods based on other physical principles such as sedimentation or sieving [31].

LD typically reports results as volume-based distributions, providing several characteristic parameters:

- D-values: These percentile values describe the particle size distribution, where D50 represents the median diameter below which 50% of the sample volume exists [36]. D10 and D90 values define the fine and coarse ends of the distribution, respectively [33].

- Mean diameters: The volume-weighted mean diameter D[4,3] (De Brouckere Mean Diameter) is commonly used in LD because of the technique's emphasis on particle volume [36]. This parameter is particularly sensitive to large particles in the distribution [32].

- Distribution width: Additional parameters such as span or specific ratios of D-values provide information about distribution polydispersity [36].
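
The sensitivity of D[4,3] to coarse particles, and the span as a width measure, can be checked with a few lines of arithmetic. The sketch below uses the moment-ratio definition D[p,q] = Σd^p / Σd^q over individual particles and the commonly used span formula (D90 − D10) / D50. The particle population is hypothetical.

```python
def moment_mean(diameters, p, q):
    """Moment-ratio mean D[p,q] = sum(d^p) / sum(d^q) over individual particles."""
    return sum(d ** p for d in diameters) / sum(d ** q for d in diameters)

def span(d10, d50, d90):
    """Dimensionless distribution width: (D90 - D10) / D50."""
    return (d90 - d10) / d50

# Hypothetical population: 1000 particles of 10 um plus a single 100 um particle.
population_um = [10.0] * 1000 + [100.0]

number_mean = moment_mean(population_um, 1, 0)   # D[1,0], arithmetic mean by number
de_brouckere = moment_mean(population_um, 4, 3)  # D[4,3], volume-weighted mean

print(f"D[1,0] = {number_mean:.2f} um")
print(f"D[4,3] = {de_brouckere:.2f} um")
print(f"Span for D10=10, D50=50, D90=90 um: {span(10.0, 50.0, 90.0):.2f}")
```

One coarse particle among a thousand barely moves the number mean but pulls D[4,3] to 55 µm, because that single particle holds as much volume as all the fine particles combined.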

ISO 13320:2020 Compliance Framework

ISO 13320:2020, titled "Particle size analysis — Laser diffraction methods," serves as the global technical standard for LD measurements, providing a standardized approach to ensure comparability of results across different instruments and laboratories [34]. The current 2020 version represents the latest evolution of the standard, incorporating significant updates from the previous 2009 version, particularly in the areas of instrument qualification assessment, measurement accuracy evaluation, and technical guidance for fine particle measurement [34].

The standard defines the applicable size range as approximately 0.1 μm to 3 mm, though it acknowledges that special instrumentation and conditions can extend this range in both directions [31]. It provides guidance for particle size distribution measurement of many two-phase systems, including powders, sprays, aerosols, suspensions, emulsions, and gas bubbles in liquids. It explicitly does not address requirements particular to individual materials, which may call for supplementary industry-specific standards [31] [34].

Key Technical Requirements

ISO 13320:2020 establishes several critical technical requirements that ensure measurement reliability:

Instrument Qualification: A core addition in the 2020 version is the requirement for systematic instrument qualification, including calibration verification using Certified Reference Materials (CRM), performance verification through intermediate precision testing, and applicability evaluation to ensure reliability across the entire measurement range [34].

Optical Model Selection: The standard provides guidance on appropriate use of Mie theory versus Fraunhofer approximation, emphasizing Mie theory for wide dynamic ranges and accurate fine particle measurement [34] [35]. When using Mie theory, accurate determination of the complex refractive index (N = n - ik, where n is the real refractive index and k is the imaginary absorption component) for both particles and dispersion medium is essential [34].

Measurement Parameter Control: Proper control of measurement conditions is critical, including obscuration (typically maintained between 3%-15% to avoid multiple scattering effects), dispersion stability, and sample concentration optimization [34] [35].

Result Expression: The standard references the ISO 9276 series for appropriate result expression, requiring both graphical representation (particle size distribution curves) and characteristic parameters (D-values), along with documentation of measurement uncertainty sources [34].

Method Validation and Performance Verification

For pharmaceutical and other regulated applications, ISO 13320:2020 emphasizes the importance of method validation and regular performance verification. This includes:

- Repeatability Assessment: Evaluation of relative standard deviation (RSD) for repeated measurements, typically expecting <5% for D-values [36].

- Intermediate Precision: Testing variation under different conditions, operators, or instruments [34].

- Accuracy Determination: Through analysis of certified reference materials [34].

- Robustness Testing: Evaluating method resilience to small, deliberate parameter changes [35].
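
The repeatability check above amounts to computing the relative standard deviation of replicate results. The sketch below uses invented replicate D50 values and treats the 5% figure quoted above as the acceptance limit; actual limits should come from the applicable standard or internal procedure.

```python
import statistics

def relative_standard_deviation(values):
    """RSD in percent: sample standard deviation divided by the mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

# Hypothetical D50 results (um) from six repeated measurements of one sample.
d50_replicates_um = [24.8, 25.1, 25.3, 24.9, 25.0, 25.2]

rsd = relative_standard_deviation(d50_replicates_um)
acceptance_limit_percent = 5.0  # assumption: the "<5%" expectation cited in the text

print(f"Mean D50: {statistics.mean(d50_replicates_um):.2f} um")
print(f"RSD:      {rsd:.2f} %")
print("Repeatability acceptable" if rsd < acceptance_limit_percent else "Repeatability out of limit")
```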

The standard also addresses specific considerations for non-spherical particles, noting that while LD assumes spherical particles in its optical model, the consistent nature of shape-induced errors makes the technique valuable for quality control even for irregular particles [31] [33].

Practical Application for Powders and Dispersions

Dispersion Techniques and Method Development

Successful laser diffraction analysis requires appropriate sample dispersion to ensure particles are measured as individual entities rather than agglomerates. The choice between wet and dry dispersion depends on the sample's natural state, application context, and material properties:

Wet Dispersion: Preferred for cohesive fine particles (<20 μm), toxic materials, and friable samples that might break under aggressive dry dispersion [35]. Wet dispersion requires selection of an appropriate dispersant that is transparent to the measurement wavelength, chemically compatible with instrument materials, non-dissolving for the particles, and capable of effective wetting [35]. Proper wetting can be assessed by mixing sample and dispersant and observing whether a uniform suspension forms or if sedimentation occurs [35].

Dry Dispersion: Suitable for free-flowing powders where dry state reflects the application context. Dry dispersion uses compressed air or gravity to create particle flow, with de-agglomeration occurring through particle-particle and particle-wall collisions [33]. Optimization of dispersion energy (air pressure) is critical to break agglomerates without fracturing primary particles [35].

The development of a robust method requires systematic optimization of dispersion parameters, including dispersant selection, surfactant use, energy input (stirrer speed, sonication), and sample concentration [35]. ISO 13320:2020 and pharmacopeial guidelines highlight microscopy as a valuable tool for verifying appropriate dispersion conditions [35].

Critical Method Parameters

Several parameters require careful optimization during method development:

Sample Concentration: Controlled through obscuration measurement, which indicates the percentage of emitted laser light lost by scattering or absorption [35]. Ideal concentration provides sufficient signal while avoiding multiple scattering. Obscuration titration helps identify the optimal concentration range, with submicron samples typically more susceptible to multiple scattering effects at higher obscurations [35].

Dispersion Energy: Must be sufficient to de-agglomerate particles without causing fragmentation. Sonication time and power, stir speed, and pump settings require optimization through stability testing [35].

Optical Parameters: For Mie theory, accurate refractive index values for both particle and dispersant are essential. Errors in refractive index can lead to significant measurement inaccuracies, potentially exceeding 10% [34].

Measurement Duration: Sufficient measurements must be taken to ensure representative sampling and stability assessment [35].
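
Obscuration is simple to compute from the intensity of the central laser beam before and after the sample is added. The sketch below assumes two detector readings are available and checks them against the 3%-15% window quoted earlier in this guide; the readings and function names are hypothetical, and the appropriate window depends on the instrument and sample.

```python
def obscuration_percent(background_intensity, sample_intensity):
    """Percentage of the incident beam lost to scattering or absorption."""
    return 100.0 * (1.0 - sample_intensity / background_intensity)

def within_window(obscuration, low=3.0, high=15.0):
    """True if the obscuration lies inside the target range (defaults: 3-15 %)."""
    return low <= obscuration <= high

# Hypothetical titration: central-detector signal as more sample is added.
background = 1000.0
for reading in (990.0, 950.0, 900.0, 800.0):
    obscuration = obscuration_percent(background, reading)
    status = "ok" if within_window(obscuration) else "outside window"
    print(f"signal {reading:6.1f} -> obscuration {obscuration:4.1f} % ({status})")
```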

The following table summarizes essential research reagents and materials for laser diffraction analysis:

Table 1: Research Reagent Solutions for Laser Diffraction Analysis

| Reagent/Material | Function | Application Notes |

|---|---|---|

| Aqueous Dispersants (Water) | Liquid dispersion medium | Polarity can be modified with surfactants; pH adjustment may be necessary for charged particles [35] |

| Organic Dispersants (Ethanol, Isopropanol, Hexane) | Liquid dispersion medium | Selected based on sample solubility and compatibility; range from polar to nonpolar [35] |

| Surfactants (SDS, Triton X-100) | Improve wetting and dispersion stability | Reduce surface tension between particles and dispersant; concentration requires optimization [35] |

| Certified Reference Materials | Instrument qualification and method validation | Polystyrene latex, glass beads, or other materials with certified size distributions [34] |

| Dispersant Additives (Salts, pH Modifiers) | Stabilize dispersion | Prevent flocculation in charged systems by modifying ionic strength or pH [35] |

Applications in Pharmaceutical and Material Research

Laser diffraction finds extensive applications across pharmaceutical and materials research:

Pharmaceutical Industry: Characterization of drug particles, excipients, and formulations to ensure uniformity and stability [30]. Particle size distribution of active pharmaceutical ingredients (APIs) directly influences dissolution rate and bioavailability [35]. LD also analyzes spray particle size in inhalation drug delivery systems [30].

Powder Metallurgy and Additive Manufacturing: Monitoring particle size distribution of metal powders to ensure density uniformity of sintered parts [34]. Specific tolerances on feedstock powder are critical for successful AM processes [37].

Food and Beverage: Assessment of particle size distribution in ingredients like flour, sugar, and spices to control product texture [30]. Analysis of emulsion droplet size for stability and shelf life optimization [30].

Environmental Monitoring: Analysis of particulate pollutants, aerosols, and sediments for air and water quality assessment [30].

Comparative Analysis with Alternative Techniques

Method Comparison: Laser Diffraction vs. Dynamic Light Scattering

Laser diffraction and dynamic light scattering (DLS) represent two established particle sizing methods with distinct principles and applications:

Table 2: Comparison of Laser Diffraction and Dynamic Light Scattering

| Characteristic | Laser Diffraction (LD) | Dynamic Light Scattering (DLS) |

|---|---|---|

| Size Range | 10 nm to 3500 μm [36] | 0.3 nm to 10 μm [36] |

| Measurement Principle | Angular variation of scattered light intensity [36] | Intensity fluctuations from Brownian motion [36] |

| Equivalent Diameter | Volume equivalent sphere diameter [30] | Hydrodynamic diameter [36] |

| Weighting Model | Volume-based [36] | Intensity-based [36] |

| Sample Concentration | Typically higher (obscuration 3-15%) [34] | Lower concentrations to avoid multiple scattering [36] |

| Theoretical Basis | Mie theory or Fraunhofer approximation [30] | Stokes-Einstein equation [36] |

| ISO Standard | ISO 13320 [36] | ISO 22412 [36] |

| Typical Output | D-values (D10, D50, D90), volume distribution [36] | Hydrodynamic mean diameter, polydispersity index [36] |

| Optical Parameters Required | Refractive index (for Mie theory) [30] | Refractive index and viscosity for conversion [36] |
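
The D-values listed as the typical laser diffraction output can be read off a cumulative volume distribution by interpolation. The following is a minimal sketch, assuming a binned volume distribution with logarithmically spaced bins; the bin edges and percentages are hypothetical illustration values, not measured data, and the span formula is a common convention rather than something taken from the cited sources.

```python
import numpy as np

def d_values(bin_upper_um, volume_pct, percentiles=(10, 50, 90)):
    """Interpolate D-values from a binned volume distribution.

    bin_upper_um: upper edge of each size bin (ascending), in micrometres.
    volume_pct:   volume percentage found in each bin.
    """
    sizes = np.asarray(bin_upper_um, dtype=float)
    vol = np.asarray(volume_pct, dtype=float)
    cumulative = 100.0 * np.cumsum(vol) / vol.sum()
    # Interpolate on log(size) because the bins are assumed to be log-spaced.
    log_d = np.interp(percentiles, cumulative, np.log(sizes))
    return {f"D{p}": float(np.exp(v)) for p, v in zip(percentiles, log_d)}

# Hypothetical distribution for illustration only.
bin_upper_um = [1, 2, 5, 10, 20, 50, 100]
volume_percent = [2, 8, 20, 30, 25, 12, 3]

result = d_values(bin_upper_um, volume_percent)
# Span is a commonly used width measure: (D90 - D10) / D50.
span = (result["D90"] - result["D10"]) / result["D50"]
print(result, f"span = {span:.2f}")
```

Because the result is volume-weighted, a small number of coarse particles can shift D90 noticeably, which is one reason these values are not directly comparable with the intensity-weighted DLS output.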

The following diagram illustrates the conceptual differences in how various particle sizing techniques measure and interpret particle size, particularly for non-spherical particles:

Experimental Comparison Data

Independent studies comparing particle sizing techniques reveal how different methods produce varying results depending on particle shape and measurement principles:

Table 3: Experimental Comparison of Particle Sizing Techniques for Different Particle Shapes [38]

| Sample Type | Laser Diffraction D50 (μm) | Dynamic Image Analysis D50 (μm) | Sedimentation D50 (μm) | Electrical Sensing Zone D50 (μm) |

|---|---|---|---|---|

| Glass Beads (Spherical) | 50 | 50 | 50 | 50 |

| Garnet (Irregular) | 50 | 50 | 38 | 35 |

| Wollastonite (Needle-like) | 50 | 65 | 20 | 15 |

The data demonstrate that the techniques agree for spherical particles, but significant discrepancies emerge for non-spherical ones. Relative to sedimentation and electrical sensing zone methods, laser diffraction tends to report larger sizes for anisotropic particles because it is sensitive to the largest particle dimension [38]; dynamic image analysis reports an even larger D50 for the needle-like wollastonite (65 μm versus 50 μm). Sedimentation and electrical sensing zone methods, in contrast, report smaller equivalent spherical diameters because they rest on different physical principles [38].
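
To make the size of these discrepancies explicit, the D50 values from Table 3 can be expressed as percentage deviations from the laser diffraction result. This is a small illustrative sketch; the dictionary layout and the abbreviations `LD`, `DIA`, and `ESZ` are naming choices made here, while the numbers are transcribed from the table.

```python
# D50 values in micrometres, transcribed from Table 3.
d50_um = {
    "Glass Beads (Spherical)": {"LD": 50, "DIA": 50, "Sedimentation": 50, "ESZ": 50},
    "Garnet (Irregular)": {"LD": 50, "DIA": 50, "Sedimentation": 38, "ESZ": 35},
    "Wollastonite (Needle-like)": {"LD": 50, "DIA": 65, "Sedimentation": 20, "ESZ": 15},
}

def deviation_from_ld(row):
    """Percentage deviation of each technique's D50 from the LD value."""
    reference = row["LD"]
    return {
        technique: 100.0 * (value - reference) / reference
        for technique, value in row.items()
        if technique != "LD"
    }

deviations = {sample: deviation_from_ld(row) for sample, row in d50_um.items()}

for sample, devs in deviations.items():
    summary = ", ".join(f"{tech}: {dev:+.0f}%" for tech, dev in devs.items())
    print(f"{sample}: {summary}")
```

For the spherical glass beads every deviation is zero, while for wollastonite the deviations range from +30% (image analysis) to -70% (electrical sensing zone).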

Advantages and Limitations in Solid-State Research

Laser diffraction offers several distinct advantages for solid-state product research:

- Wide Dynamic Range: Capability to measure from nanometers to millimeters without method modification [30]

- High Throughput: Rapid measurements (typically under one minute) enable hundreds of measurements per day [30]

- Statistical Relevance: Large numbers of particles sampled in each measurement ensure good representation [30]

- Non-Destructive: Samples can typically be recovered for additional testing [36]

- Well-Established: Comprehensive standardization through ISO 13320 and pharmacopeial methods [30]

However, the technique also presents certain limitations:

- Spherical Assumption: The equivalent spherical diameter may not fully represent non-spherical particles [31]

- Refractive Index Dependency: Mie theory requires accurate optical parameters [30]

- Ensemble Technique: Provides population statistics rather than individual particle data [35]

- Limited Morphology Information: Unlike image analysis, LD does not provide shape parameters [37]

For non-spherical particles in particular, research indicates that combining laser diffraction with image analysis gives the most complete picture of powder characteristics. This matters most in applications such as additive manufacturing, where both size and shape critically influence process performance [37].

Laser diffraction remains a cornerstone technique for particle size analysis in solid-state research, offering an optimal balance of speed, reproducibility, and wide dynamic range. Compliance with ISO 13320:2020 ensures methodological rigor and inter-laboratory comparability, essential for pharmaceutical and advanced material applications. While the technique assumes spherical particles, producing equivalent spherical diameters that may differ from results obtained by sedimentation, sieving, or image analysis, its standardized methodology provides consistent data valuable for quality control and formulation development.

For comprehensive material characterization, particularly with irregularly shaped particles, researchers should consider supplementing LD data with complementary techniques such as dynamic image analysis to obtain both size and morphological information. Understanding the principles, capabilities, and limitations of laser diffraction enables researchers and drug development professionals to make informed decisions about particle characterization strategies, ultimately supporting the development of higher quality solid-state products.

Dynamic Light Scattering (DLS), also known as Photon Correlation Spectroscopy or Quasi-Elastic Light Scattering, is a widely adopted analytical technique for characterizing the size distribution of particles in suspension within the nanometer to submicron range (typically 1 nm to 1 μm) [39]. This non-invasive method leverages the phenomenon of light scattering from particles undergoing Brownian motion to determine their hydrodynamic size, making it indispensable in pharmaceutical development, biologics characterization, and nanomaterial science [40] [41] [42]. For solid-state product researchers, DLS provides a critical tool for assessing the colloidal stability of nano-formulations, a key factor influencing drug product shelf-life, efficacy, and safety profiles.

The fundamental principle of DLS involves illuminating a sample with a monochromatic laser beam and analyzing the fluctuating intensity of the light scattered by the particles in solution [41] [39]. These intensity fluctuations arise from constructive and destructive interference caused by the relative motion of particles as they undergo random Brownian motion. The velocity of this motion is inversely related to particle size; smaller particles diffuse rapidly, causing intensity to fluctuate quickly, while larger particles move more slowly, resulting in slower fluctuations [43] [39]. The core outcome of a DLS measurement is the hydrodynamic diameter (Dh), which represents the diameter of a sphere that diffuses at the same rate as the particle being measured. This includes the core particle itself along with any solvation layer or surface constituents attached to it in solution [39].

DLS in the Context of Particle Sizing Techniques

While several analytical methods are available for particle size analysis, DLS holds a distinct position, particularly for nano-range suspensions in solid-state and pharmaceutical research. The following table provides a comparative overview of DLS against other common techniques.

Table 1: Comparison of DLS with Other Particle Sizing Techniques

| Technique | Measurement Principle | Typical Size Range | Sample Condition | Key Outputs | Primary Advantages | Key Limitations |

|---|---|---|---|---|---|---|

| Dynamic Light Scattering (DLS) | Brownian motion analysis via scattered light intensity fluctuations [39]. | ~1 nm – 1 μm [39] | Native, hydrated state [42]. | Hydrodynamic diameter, Polydispersity Index (PDI) [43]. | Measures in native state, fast analysis, high sensitivity to aggregation [42]. | Intensity-based weighting biases toward larger particles; assumes sphericity [42] [44]. |

| Transmission Electron Microscopy (TEM) | High-resolution imaging of particles [42]. | <1 nm upwards | Dry, under vacuum (requires sample staining) [44]. | Core particle size, detailed morphology [42]. | Provides direct visual data on size and shape [42]. | Sample preparation may alter particles; no hydrodynamic information [44]. |

| Nanoparticle Tracking Analysis (NTA) | Tracks and analyzes Brownian motion of individual particles [42]. | ~10 nm – 1 μm | Solution-based, but often requires low concentration [42]. | Size distribution, particle concentration [42]. | Provides concentration data; good for polydisperse samples [42]. | Lower throughput than DLS; requires optimal concentration [42]. |

| DOSY-NMR | Measures diffusion coefficient via NMR signal decay [45]. | Atomic resolution upwards | Native, liquid state [45]. | Hydrodynamic radius, information on fast-exchanging species [45]. | Probes fast-exchanging oligomers; chemical specificity [45]. | Lower sensitivity to large aggregates; requires high sample concentration [45]. |

| Laser Diffraction | Angular dependence of scattered light intensity [46]. | ~0.1 μm – 3 mm | Liquid or dry dispersion [46]. | Volume-based size distribution [46]. | Very wide size range; robust for QC [46]. | Limited resolution for nano-range; assumes particle sphericity [46]. |

Orthogonality and Data Correlation with Other Methods

DLS and TEM are often used complementarily. TEM provides high-resolution information on the core particle size and morphology, while DLS characterizes the particle's size in its functional, hydrated state [44]. A common observation is that the hydrodynamic diameter from DLS is larger than the core diameter measured by TEM. While this is frequently attributed simply to a hydration shell, the discrepancy often stems from the different physical principles of the techniques: DLS reports an intensity-weighted harmonic mean size (Z-average) that is highly sensitive to larger aggregates, whereas TEM provides a number-based, direct visualization of the particle core [44]. Proper experimental procedure and data interpretation are essential to reconcile results from these techniques [44].

Similarly, DLS and DOSY-NMR provide orthogonal diffusion data. DLS scattering intensity is proportional to the sixth power of the radius, heavily weighting larger species in a mixture. In contrast, DOSY-NMR signal intensity often favors smaller molecules that produce sharper spectral lines [45]. This was demonstrated in a study of insulin drug products, where DLS resolved distinct oligomeric species (dimer, hexamer, dodecamer), while DOSY-NMR provided an averaged diffusion coefficient across fast-exchanging oligomers [45].
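
The effect of the sixth-power weighting can be illustrated numerically. The sketch below takes the r^6 relation from the text at face value (it strictly holds only for particles much smaller than the laser wavelength) and uses a hypothetical two-component mixture; the radii and number fractions are invented for illustration.

```python
def intensity_fractions(radii_nm, number_fractions):
    """Share of scattered intensity per species, assuming intensity ~ n * r**6."""
    weights = [n * r**6 for r, n in zip(radii_nm, number_fractions)]
    total = sum(weights)
    return [w / total for w in weights]

# Hypothetical mixture: 99.9% small particles, 0.1% aggregates (by number).
radii_nm = [5.0, 50.0]
number_fractions = [0.999, 0.001]

fractions = intensity_fractions(radii_nm, number_fractions)
for r, n, f in zip(radii_nm, number_fractions, fractions):
    print(f"r = {r:5.1f} nm: {100 * n:6.2f}% by number, {100 * f:6.2f}% of intensity")
```

In this example the aggregates make up one particle in a thousand yet contribute more than 99% of the scattered intensity, which is why DLS is so sensitive to trace aggregation.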

DLS Methodology and Experimental Protocols

Core Theoretical Foundation

The analytical pipeline of DLS begins with measuring the time-dependent scattering intensity, which is processed into an autocorrelation function (ACF) [43] [39]. The ACF decays over time, and the rate of this decay is governed by the diffusion coefficient of the particles. For monodisperse samples, the ACF is a single exponential decay. For polydisperse samples, it is a sum of contributions from all species present [39].
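
The difference between the monodisperse and polydisperse cases can be sketched as follows. The example is written in terms of the field autocorrelation function g1, which is an assumption about notation since the text does not specify which correlation function is meant; the decay rates and weights are hypothetical values chosen for illustration.

```python
import numpy as np

def field_acf(tau_s, decay_rates, weights=None):
    """g1(tau) as a weighted sum of exponential decays, one per species."""
    tau_s = np.asarray(tau_s, dtype=float)
    rates = np.atleast_1d(np.asarray(decay_rates, dtype=float))
    w = np.ones_like(rates) if weights is None else np.asarray(weights, dtype=float)
    w = w / w.sum()  # normalise so that g1(0) = 1
    return (w[:, None] * np.exp(-rates[:, None] * tau_s[None, :])).sum(axis=0)

tau = np.logspace(-6, -3, 60)  # lag times in seconds

# Monodisperse: a single decay rate (hypothetical value, in 1/s).
mono = field_acf(tau, [2000.0])
# Polydisperse: two species with different decay rates and equal weights.
poly = field_acf(tau, [2000.0, 200.0], weights=[0.5, 0.5])

# For a single exponential, ln g1 is a straight line with slope -decay rate.
slope, intercept = np.polyfit(tau, np.log(mono), 1)
fitted_rate = -slope
print(f"recovered decay rate: {fitted_rate:.1f} 1/s")
```

A straight-line fit recovers the decay rate only in the monodisperse case; for the mixture, ln g1 is curved and a distribution-aware analysis is needed.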

The diffusion coefficient (Dt) is extracted from the ACF and inserted into the Stokes-Einstein equation to calculate the hydrodynamic diameter (Dh) [39]:

Dh = kBT / (3 π η Dt)

Where:

- kB is Boltzmann's constant

- T is the absolute temperature (in Kelvin)

- η is the dynamic viscosity of the solvent

- Dt is the translational diffusion coefficient [39]
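
As a worked example of the equation above, the sketch below converts a diffusion coefficient into a hydrodynamic diameter. The default temperature and viscosity are assumed values for water at 25 °C, and the diffusion coefficient is a hypothetical input rather than a measured one.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K

def hydrodynamic_diameter_nm(diff_coeff_m2_s, temperature_k=298.15,
                             viscosity_pa_s=0.00089):
    """Stokes-Einstein: Dh = kB * T / (3 * pi * eta * Dt), returned in nm.

    Defaults are assumed values for water at 25 degrees C.
    """
    d_h_m = K_B * temperature_k / (3 * math.pi * viscosity_pa_s * diff_coeff_m2_s)
    return d_h_m * 1e9

# Hypothetical diffusion coefficient, chosen to give roughly 100 nm.
d_t = 4.9e-12  # m^2/s
print(f"Dh = {hydrodynamic_diameter_nm(d_t):.1f} nm")
```

Since the diameter is inversely proportional to both viscosity and diffusion coefficient, an error in the assumed viscosity or temperature carries directly into the reported size.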

The result is typically expressed as the Z-average diameter, an intensity-weighted harmonic mean size, and the Polydispersity Index (PDI), a dimensionless measure of the breadth of the size distribution [43] [39]. A PDI below 0.1 indicates a highly monodisperse sample, while values above 0.5 suggest a very broad distribution or the presence of aggregates [42].
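
One common way to obtain the mean decay rate and PDI is a second-order cumulant fit to the logarithm of the autocorrelation function. The sketch below assumes the field autocorrelation function g1 and the usual definition PDI = mu2 / Gamma^2; the synthetic data, function names, and the "intermediate" label for the range not described in the text are illustrative assumptions.

```python
import numpy as np

def cumulant_fit(tau_s, g1):
    """Fit ln g1 = -Gamma * tau + (mu2 / 2) * tau**2; return (Gamma, PDI)."""
    c2, c1, _c0 = np.polyfit(tau_s, np.log(g1), 2)
    mean_rate = -c1        # intensity-weighted mean decay rate (1/s)
    mu2 = 2.0 * c2         # second cumulant (variance of decay rates)
    return mean_rate, mu2 / mean_rate**2

def describe_pdi(pdi):
    """Interpretation using the thresholds quoted in the text."""
    if pdi < 0.1:
        return "highly monodisperse"
    if pdi > 0.5:
        return "very broad distribution or aggregates"
    return "intermediate"  # label chosen here; the text gives no term

# Synthetic, noise-free data with a known decay rate and PDI.
true_rate, true_pdi = 1000.0, 0.2
tau = np.linspace(1e-5, 1e-3, 50)
g1 = np.exp(-true_rate * tau + 0.5 * true_pdi * true_rate**2 * tau**2)

rate, pdi = cumulant_fit(tau, g1)
print(f"Gamma = {rate:.1f} 1/s, PDI = {pdi:.3f} ({describe_pdi(pdi)})")
```

The Z-average diameter then follows from the mean decay rate via the diffusion coefficient and the Stokes-Einstein equation. Real data contain noise, so the fit is normally restricted to the early part of the decay.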

Key Experimental Workflow

The diagram below outlines the standard workflow for a reliable DLS experiment, from sample preparation to data interpretation.

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for DLS Experiments

| Item | Function/Application | Critical Considerations |

|---|---|---|

| High-Purity Solvents/Buffers | Dispersing medium for nanoparticles (e.g., water, PBS, specific buffer formulations). | Impurities can cause spurious scattering; use high-purity grades and filtration [42]. |

| Filtration Units (0.22 μm) | Removal of dust and large particulate contaminants from the sample prior to measurement. | Verify filter membrane compatibility with sample to avoid adsorption or degradation [42]. |

| Stabilizers & Surfactants (e.g., Polysorbates) | Excipients to prevent nanoparticle aggregation and ensure colloidal stability during measurement and storage. | Concentration must be optimized to avoid micelle formation that interferes with sizing [42]. |

| Viscosity Standards | Calibration and verification of solvent viscosity for accurate input into the Stokes-Einstein equation. | Essential for measurements in non-aqueous or viscous dispersion media [42]. |

| Reference Nanoparticles (e.g., latex beads) | System suitability testing and validation of instrument performance. | Use certified standards with known size and low polydispersity [39]. |

Experimental Data and Performance Comparison

Quantitative Instrument Comparison

DLS instruments differ in specifications such as laser wavelength and detection angle, and these differences can influence the measured results. The following table synthesizes data from a comparison of different instrumental setups.

Table 3: Impact of Instrument Specifications on DLS Measurements [47]

| Instrument Specification | Example Configuration | Impact on Measurement & Data Interpretation |

|---|---|---|

| Detection Angle (θ) | 90° (Right-angle) | Standard angle; can be dominated by large particle scattering in polydisperse samples [47]. |

| Detection Angle (θ) | 173° (Backscatter) | Reduces bias from large particles; provides better resolution for polydisperse samples [47]. |

| Laser Wavelength (λ) | 633 nm (Visible, He-Ne) | Standard wavelength; Mie scattering resonances can complicate analysis for particles > ~100 nm [47]. |

| Laser Wavelength (λ) | 1300 nm (Near-Infrared, NIR) | Penetrates turbid samples better; delays onset of Mie resonances, simplifying analysis for larger nanoparticles [47]. |
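
Detection angle and wavelength enter the analysis through the scattering vector q, which sets how fast the autocorrelation function decays for a given diffusion coefficient (decay rate = Dt * q^2). The sketch below uses the standard relation q = (4 * pi * n / lambda) * sin(theta / 2) for the configurations in Table 3. The refractive index of 1.33 (water) is an assumption and is held constant across wavelengths for simplicity; the diffusion coefficient is a hypothetical value.

```python
import math

def scattering_vector_per_m(wavelength_nm, angle_deg, refractive_index=1.33):
    """q = (4 * pi * n / lambda) * sin(theta / 2), in 1/m."""
    wavelength_m = wavelength_nm * 1e-9
    half_angle = math.radians(angle_deg) / 2
    return 4 * math.pi * refractive_index / wavelength_m * math.sin(half_angle)

def decay_rate_per_s(diff_coeff_m2_s, q_per_m):
    """Decay rate of the field autocorrelation function: Gamma = Dt * q**2."""
    return diff_coeff_m2_s * q_per_m**2

d_t = 4.9e-12  # m^2/s, hypothetical diffusion coefficient
configs = [(633, 90), (633, 173), (1300, 173)]  # (wavelength in nm, angle in degrees)

rates = {}
for wavelength_nm, angle_deg in configs:
    q = scattering_vector_per_m(wavelength_nm, angle_deg)
    rates[(wavelength_nm, angle_deg)] = decay_rate_per_s(d_t, q)
    print(f"{wavelength_nm} nm, {angle_deg} deg: q = {q:.3e} 1/m, "
          f"Gamma = {rates[(wavelength_nm, angle_deg)]:.0f} 1/s")
```

The same particles therefore produce different correlation time scales on different instruments, so the correct wavelength, angle, and refractive index must be used when converting decay rates into sizes.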

Case Study: DLS vs. DOSY-NMR for Protein Analysis