High-Throughput Solid-State Synthesis: Automated Workflows, AI Planning, and Experimental Optimization

Abstract

This article provides a comprehensive overview of modern high-throughput solid-state synthesis, a field critical for accelerating the discovery of new functional materials for applications ranging from drug development to clean energy. It covers the foundational principles of sub-solidus synthesis and the challenges of exploring vast chemical spaces. The article details cutting-edge automated workflows that combine robotic liquid handling with traditional ceramic processing, as well as AI-driven platforms for autonomous synthesis planning and precursor selection. Furthermore, it addresses common troubleshooting pitfalls, such as the formation of stable intermediate phases and the critical validation of synthesis outcomes. Finally, it presents a comparative analysis of different optimization algorithms, benchmarking their performance against experimental datasets. This resource is tailored for researchers, scientists, and development professionals seeking to implement efficient, data-driven materials discovery pipelines.

The Foundations of High-Throughput Synthesis: From Traditional Methods to Automated Exploration

The discovery of new inorganic solid-state materials is a critical driver of innovation in fields ranging from energy storage to catalysis. However, the path from a computationally predicted compound to a synthesized material is fraught with challenges. The primary obstacle lies in the exponential expansion of the compositional and synthetic parameter space; even for ternary systems, the number of potential combinations of elements, precursors, and processing conditions is vast and effectively impossible to explore exhaustively through traditional, labor-intensive trial-and-error methods [1] [2]. This process is further complicated by the fact that thermodynamic stability, often approximated by the energy above the convex hull (E_hull), is a necessary but insufficient condition for synthesizability, as kinetic barriers and the formation of stable intermediate phases can prevent the realization of a target material [3] [4].

The solid-state synthesis process is inherently complex. Unlike molecular synthesis, it involves concerted reactions and phase transformations between many solid precursors, making outcomes difficult to predict [3]. Consequently, the experimental validation of new materials has become a major bottleneck in materials discovery pipelines [2] [4]. This application note details how the integration of autonomous laboratories, machine learning, and active learning algorithms is creating a new paradigm for high-throughput solid-state synthesis, directly addressing these long-standing challenges.

Quantitative Landscape of the Challenge

The scale of the solid-state synthesis challenge can be quantified by examining both the computational screening rates and the experimental success rates of autonomous systems.

Table 1: Throughput Comparison: Computational Screening vs. Experimental Realization

| Metric | Traditional / Manual Synthesis | A-Lab (Autonomous Laboratory) |
|---|---|---|
| Experimental Throughput | Low (manual, time-consuming iterations) | High (41 novel compounds synthesized in 17 days) [2] |
| Overall Success Rate | Not systematically reported | 71% (41 out of 58 targets) [2] |
| Successes from Literature-Inspired Recipes | N/A | 35 of the 41 successful syntheses [2] |
| Successes from Active-Learning-Optimized Recipes | N/A | 6 of the 41 successful syntheses [2] |
| Primary Limitation | Labor-intensive experimentation, human bandwidth | Synthetic kinetics, precursor volatility, computational inaccuracy [2] |

Table 2: Analysis of Synthesis Failures in Autonomous Workflows

| Failure Mode | Description | Number of Affected Targets |
|---|---|---|
| Slow Reaction Kinetics | Reaction steps with low driving forces (<50 meV per atom) hinder target formation [2]. | 11 [2] |
| Precursor Volatility | Loss of precursor material during heating alters stoichiometry [2]. | 3 [2] |
| Amorphization | Failure of the product to crystallize, preventing identification by XRD [2]. | 2 [2] |
| Computational Inaccuracy | Incorrect stability prediction from the ab initio database [2]. | 1 [2] |

Core Protocols for High-Throughput Synthesis

This section outlines the key experimental and computational methodologies that form the foundation of modern, accelerated solid-state synthesis.

Protocol: Operation of an Autonomous Synthesis Laboratory (A-Lab)

The A-Lab represents a complete integration of computation, robotics, and machine learning for powder synthesis [2].

1. Goal Identification and Target Selection:

- Input: Targets are identified from large-scale ab initio data (e.g., the Materials Project and predictions from Google DeepMind) as compounds predicted to lie on or near the thermodynamic convex hull (within 10 meV per atom) [2].

- Criterion: Targets must be predicted to be stable in open air, not reacting with O₂, CO₂, or H₂O [2].

2. Initial Recipe Proposal:

- Precursor Selection: A machine learning model, trained on a large database of literature syntheses text-mined with natural-language processing, proposes up to five initial precursor sets. It assesses "target similarity" so that its selections are based on syntheses of known, related materials [2] [3].

- Temperature Selection: A second ML model, trained on literature heating data, proposes an initial synthesis temperature [2].

3. Robotic Experimentation:

- Sample Preparation: A robotic station dispenses and mixes precursor powders before transferring them into alumina crucibles [2].

- Heating: A robotic arm loads crucibles into one of four box furnaces for heating [2].

- Characterization: After cooling, samples are robotically transferred to a station where they are ground and measured by X-ray diffraction (XRD) [2].

4. Phase Analysis:

- The XRD patterns are analyzed by probabilistic machine learning models to identify phases and determine their weight fractions [2].

- For novel targets with no experimental pattern, the XRD reference is simulated from the computed structure and corrected for density functional theory (DFT) errors [2].

- Results are confirmed with automated Rietveld refinement [2].

5. Active Learning Loop:

- If the target yield is below a set threshold (e.g., 50%), an active learning algorithm (ARROWS3) is engaged to propose new, optimized synthesis routes based on the experimental outcomes [2].
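The decision logic in steps 1 and 5 can be sketched in Python. This is a minimal illustration under stated assumptions and not the A-Lab's actual code. The helpers `select_targets` and `run_campaign`, the candidate-record fields, and the `run_experiment`/`propose_recipes` hooks are all hypothetical. Trying the literature recipes before the active learner is invoked is also an assumed ordering.

```python
from __future__ import annotations

from typing import Callable, Iterable

# Example values taken from the protocol text; a real lab would tune these.
YIELD_THRESHOLD = 0.50      # target weight fraction counted as a success
HULL_TOLERANCE_MEV = 10.0   # maximum energy above hull, meV per atom


def select_targets(candidates: Iterable[dict]) -> list[str]:
    """Keep candidates on or near the hull that are also predicted to be air-stable."""
    return [
        c["formula"]
        for c in candidates
        if c["e_above_hull_mev"] <= HULL_TOLERANCE_MEV and c["air_stable"]
    ]


def run_campaign(
    target: str,
    literature_recipes: list[str],
    run_experiment: Callable[[str], float],
    propose_recipes: Callable[[str, dict[str, float]], list[str]],
    max_literature: int = 5,
) -> tuple[str | None, dict[str, float]]:
    """Try literature-inspired recipes first, then recipes from an active learner.

    run_experiment: hypothetical hook returning the XRD-derived target yield (0-1).
    propose_recipes: hypothetical stand-in for an ARROWS3-style proposer that
    receives every outcome recorded so far.
    """
    outcomes: dict[str, float] = {}

    def attempt(recipe: str) -> bool:
        # Record the measured yield and report whether it clears the threshold.
        outcomes[recipe] = run_experiment(recipe)
        return outcomes[recipe] >= YIELD_THRESHOLD

    for recipe in literature_recipes[:max_literature]:
        if attempt(recipe):
            return recipe, outcomes
    for recipe in propose_recipes(target, outcomes):
        # Skip recipes that have already been tested.
        if recipe not in outcomes and attempt(recipe):
            return recipe, outcomes
    return None, outcomes
```

In a real system the proposer would be queried again after every experiment, so that each new XRD result updates its recommendations. The single call here keeps the sketch short.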

Protocol: The ARROWS3 Active Learning Algorithm

ARROWS3 is a key algorithm for autonomously selecting optimal precursors by learning from experimental outcomes [3].

1. Input and Initialization:

- Input: The target material's composition and structure, plus a list of available precursors [3].

- The algorithm generates all stoichiometrically balanced precursor sets that can yield the target.

- Initial Ranking: In the absence of experimental data, precursor sets are ranked by the thermodynamic driving force (most negative ΔG) to form the target, as calculated using data from the Materials Project [3].

2. Experimental Testing and Pathway Analysis:

- Top-ranked precursor sets are tested experimentally at multiple temperatures [3].

- The intermediates formed at each temperature are identified via XRD and machine learning analysis [3].

- The algorithm determines which pairwise reactions between phases led to the formation of each observed intermediate [3].

3. Knowledge Integration and Re-ranking:

- ARROWS3 uses the observed pairwise reactions to predict the intermediates that would form in untested precursor sets [3].

- The ranking of precursor sets is then updated to prioritize sets predicted to avoid intermediates that consume a large share of the available free energy. The new criterion is the driving force that remains for the final step of forming the target from the intermediates (ΔG'). Sets with the most negative ΔG' are ranked highest [3].

4. Iteration:

- Steps 2 and 3 are repeated until the target is synthesized with high yield or all precursor sets are exhausted [3].

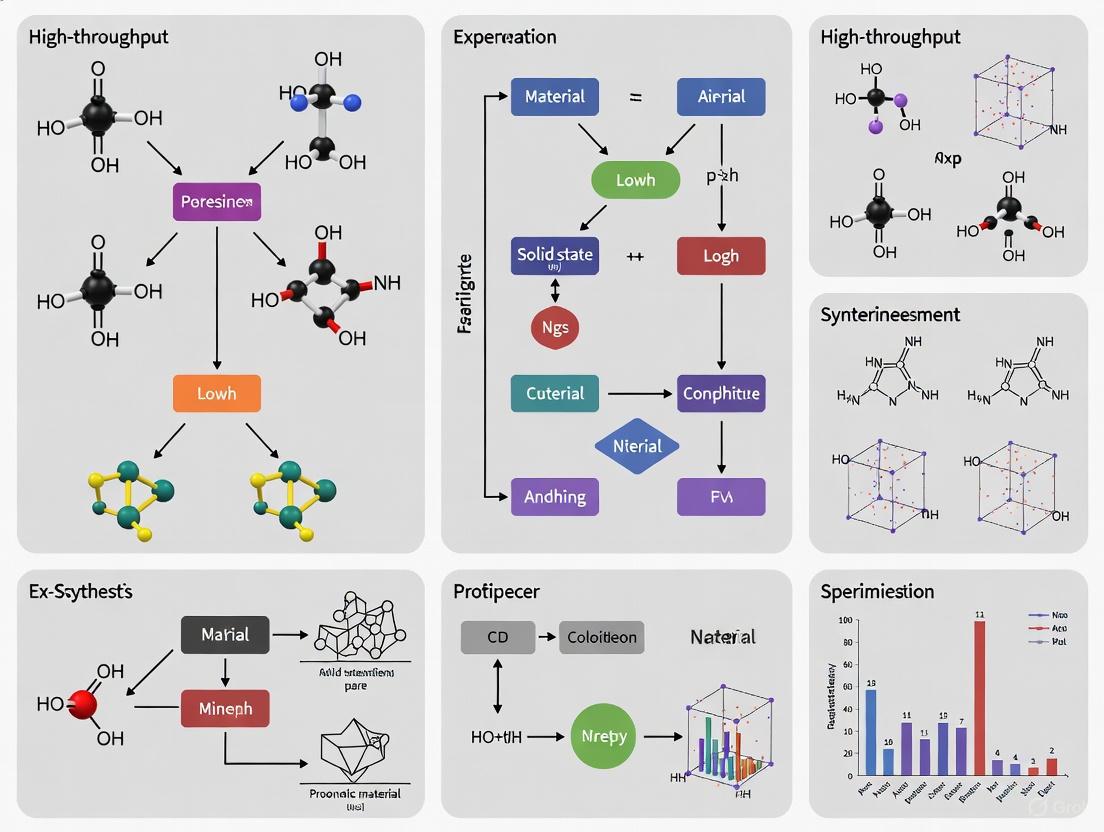

ARROWS3 Algorithm Flow: This workflow illustrates the active learning loop for autonomous precursor selection, integrating computational thermodynamics with experimental feedback [3].
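The ranking logic in steps 1 and 3 is shown below as a toy example. It is not the published ARROWS3 implementation. The precursor labels P1 to P4 and every energy value are hypothetical. The rule used to estimate ΔG' is a deliberate simplification of the thermodynamic bookkeeping described above: it subtracts the energy released by the most exergonic pairwise reaction observed so far.

```python
from __future__ import annotations

from itertools import combinations


def initial_ranking(dg_target: dict[tuple[str, ...], float]) -> list[tuple[str, ...]]:
    """No data yet: rank precursor sets by driving force to the target (most negative first)."""
    return sorted(dg_target, key=dg_target.get)


def remaining_driving_force(
    precursors: tuple[str, ...],
    dg_total: float,
    pair_energies: dict[frozenset[str], float],
) -> float:
    """Estimate dG' (meV/atom) for the final target-forming step.

    pair_energies maps a pair of phases observed to react to the (negative)
    energy released by the intermediate it forms. Simplifying assumption: only
    the most exergonic known pairwise reaction occurs, and the energy it
    releases is no longer available to form the target.
    """
    released = [
        pair_energies[frozenset(pair)]
        for pair in combinations(precursors, 2)
        if frozenset(pair) in pair_energies
    ]
    if not released:
        return dg_total                # no known intermediate: full driving force remains
    return dg_total - min(released)    # min() picks the most negative (largest) release


def rerank(
    dg_target: dict[tuple[str, ...], float],
    pair_energies: dict[frozenset[str], float],
) -> list[tuple[str, ...]]:
    """Re-rank every set, tested or not, by predicted dG' (most negative first)."""
    return sorted(
        dg_target,
        key=lambda s: remaining_driving_force(s, dg_target[s], pair_energies),
    )


# Hypothetical precursor sets with made-up total driving forces (meV/atom)
dg = {("P1", "P2"): -120.0, ("P1", "P3"): -90.0, ("P2", "P4"): -60.0}
print(initial_ranking(dg))    # P1 + P2 leads on overall driving force
# Suppose XRD showed P1 + P2 forming a stable intermediate releasing -100 meV/atom
observed = {frozenset({"P1", "P2"}): -100.0}
print(rerank(dg, observed))   # P1 + P2 falls to last, since only -20 meV/atom remains
```

In this toy example, the set that looks best on total ΔG drops to the bottom of the ranking once the experiment reveals an energy-consuming intermediate. The shift is the behaviour that step 3 describes.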

The Scientist's Toolkit: Research Reagent Solutions

The transition to high-throughput solid-state synthesis relies on a suite of computational and experimental tools.

Table 3: Essential Research Reagent Solutions for High-Throughput Solid-State Synthesis

| Tool / Solution | Function | Role in High-Throughput Synthesis |
|---|---|---|
| Ab Initio Databases (e.g., Materials Project) | Provide calculated thermodynamic data (formation energies, phase stability) for millions of known and hypothetical compounds [2]. | Used for initial target identification and stability screening, and to calculate thermodynamic driving forces for reactions [2] [3]. |
| Text-Mined Synthesis Databases | Large datasets of synthesis procedures extracted from scientific literature using natural language processing (NLP) [4] [5]. | Train ML models to propose initial synthesis recipes (precursors, temperatures) by analogy to previously reported syntheses [2]. |
| Active Learning Algorithms (e.g., ARROWS3) | Optimization algorithms that learn from experimental outcomes to propose improved synthesis routes [3]. | Dynamically guide precursor selection and avoid kinetic traps, drastically reducing the number of experiments needed [2] [3]. |
| Robotic Synthesis Platforms | Automated workstations for dispensing, mixing, and heating powder samples [2]. | Execute synthesis experiments with high reproducibility and continuous 24/7 operation, enabling rapid data generation [2]. |
| Inline X-ray Diffraction (XRD) with ML Analysis | Automated characterization of synthesis products to identify crystalline phases and quantify their amounts [2]. | Provides the critical feedback data on reaction outcomes that drives active learning loops and autonomous decision-making [2] [3]. |

Implementation and Best Practices

Successfully implementing a high-throughput synthesis workflow requires attention to data quality and process design.

- Data Quality is Critical: The performance of models trained on text-mined data is limited by the quality of the underlying datasets. One analysis found that only about 15% of outlier entries in a text-mined dataset were extracted correctly, highlighting the value of human-curated data for validation and model training [4].

- Standardize Synthesis Reporting: The lack of standardization in protocol reporting severely hampers machine-reading capabilities. Adopting clear, consistent guidelines for writing synthesis procedures can significantly improve machine-readability and the effectiveness of text-mining tools [5].

- Focus on the Driving Force: The ARROWS3 algorithm demonstrates that prioritizing precursor sets that avoid highly stable intermediates, and therefore retain a large thermodynamic driving force (ΔG') for the final target-forming step, is a highly effective strategy for synthesis planning [3].

- Plan for Failure Modes: Understanding common failure modes, such as sluggish kinetics from low driving forces or precursor volatility, allows for better initial target selection and the design of more robust synthetic workarounds [2].
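A simple screen for the kinetic failure mode checks the weakest step of each predicted pathway against the 50 meV per atom level cited in the failure analysis. The function name and input format below are hypothetical. Treating a single cutoff as decisive is an assumption, so the screen only flags candidates for closer review.

```python
LOW_DRIVING_FORCE_MEV = 50.0  # level associated with sluggish kinetics in the failure-mode table


def slow_kinetics_risk(step_driving_forces: dict[str, list[float]],
                       cutoff_mev: float = LOW_DRIVING_FORCE_MEV) -> dict[str, bool]:
    """Flag targets whose weakest predicted reaction step falls below the cutoff.

    Values are driving-force magnitudes (-dG, meV per atom) for each step of a
    predicted pathway; a target with no listed steps is flagged for review.
    """
    return {
        target: (not steps) or min(steps) < cutoff_mev
        for target, steps in step_driving_forces.items()
    }


# Hypothetical pathway data for three candidate targets
print(slow_kinetics_risk({"T1": [200.0, 35.0], "T2": [120.0, 80.0], "T3": []}))
```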

Sub-solidus reaction pathways represent a fundamental class of ceramic processing techniques conducted entirely in the solid state, at temperatures below the melting point (solidus) of any constituent phase or product. This method forms the backbone of the functional ceramics and electroceramics industries. It enables the production of advanced oxides and other inorganic materials through thermally activated solid-state diffusion and reaction [6] [7]. Unlike liquid-phase or vapor-phase synthesis routes, sub-solidus processing avoids melting. Instead, it relies on atomic migration across particle boundaries to form new compound phases from mixed precursor powders.

The versatility of sub-solidus methods makes them particularly valuable for synthesizing multi-cation oxides where precise stoichiometric control is essential for functional properties. These pathways are inherently suited for high-throughput materials discovery because they enable systematic exploration of compositional spaces without the complications of solvent interactions or precursor decomposition that plague wet-chemical methods [7]. Recent advancements have demonstrated the applicability of sub-solidus synthesis to diverse material systems, from polyanion-based compositions beyond oxides to complex perovskite solid solutions [7].

Fundamental Principles of Sub-Solidus Reactions

Thermodynamic and Kinetic Foundations

Sub-solidus reactions are governed by thermodynamic driving forces and kinetic limitations. The fundamental principle involves heating intimately mixed solid precursors at temperatures sufficient to enable cation interdiffusion but insufficient to cause melting. The reaction proceeds through nucleation and growth mechanisms, where the product phase forms at the interfaces between reactant particles and gradually consumes the starting materials through continued atomic migration.

The kinetics of these solid-state reactions are predominantly controlled by diffusion rates, which follow an Arrhenius temperature dependence. Consequently, relatively small increases in processing temperature can significantly accelerate reaction rates. However, there are practical limits. Excessive temperatures may trigger undesirable phase transformations, promote exaggerated grain growth, or, in specific material systems such as halide perovskites, cause thermal decomposition [8]. In traditional oxide ceramics, sub-solidus sintering often requires very high temperatures (typically above 1000°C). Softer material systems such as halide perovskites can be processed at much lower temperatures (e.g., 200°C) [8].
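A short worked example makes the Arrhenius argument concrete. The pre-exponential factor D₀ cancels in a ratio, so only the activation energy and the two temperatures are needed. The 3 eV activation energy is an assumed, illustrative value and is not a measured value for any system discussed here.

```python
import math

K_B_EV_PER_K = 8.617333262e-5  # Boltzmann constant, eV/K


def diffusivity_ratio(ea_ev: float, t1_k: float, t2_k: float) -> float:
    """D(T2)/D(T1) for Arrhenius diffusion, D = D0 * exp(-Ea / (kB * T)).

    D0 cancels, so only the activation energy and the two temperatures matter.
    """
    return math.exp(ea_ev / K_B_EV_PER_K * (1.0 / t1_k - 1.0 / t2_k))


# Assumed activation energy of 3 eV; dwell raised from 1000 °C to 1100 °C.
ratio = diffusivity_ratio(3.0, 1273.15, 1373.15)
# For a fixed diffusion distance x ~ sqrt(D * t), the dwell time shrinks by the same factor.
print(f"D rises ~{ratio:.1f}x; a 12 h dwell shortens to ~{12 / ratio:.1f} h")
```

With these assumed numbers, a 100°C increase raises the diffusivity roughly sevenfold. This exponential sensitivity is also why temperature must be capped wherever decomposition or grain growth sets in.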

Microstructural Evolution and Phase Transformations

The evolution of microstructures during sub-solidus processing follows predictable pathways that can be manipulated through processing parameters. In oceanic gabbro systems, for example, Fe-Ti oxide micro-inclusions undergo a specific sequence of transformations. They first form as oriented, needle-shaped titanomagnetite by precipitation from Fe- and Ti-bearing plagioclase. They then evolve into magnetite-ilmenite intergrowths through high-temperature oxidation above the Curie temperature of magnetite [9]. Further evolution occurs during lower-temperature hydrothermal alteration, where intensive alteration under relatively reducing conditions can cause substantial recrystallization and the formation of magnetite-ulvospinel micro-inclusions [9].

Table 1: Phase Transformation Pathways in Sub-Solidus Processing

| Initial Phase | Processing Conditions | Resultant Phase | Transformation Mechanism |
|---|---|---|---|
| Fe- and Ti-bearing plagioclase | Solid-state precipitation (>600°C) | Needle-shaped titanomagnetite | Precipitation from host lattice |
| Titanomagnetite | High-temperature oxidation (above the Curie temperature of magnetite) | Magnetite-ilmenite intergrowths | Oxidation and exsolution |
| Magnetite-ulvospinel | Hydrothermal alteration (lower temperature) | Magnetite-ilmenite ± ulvospinel aggregates | Dissolution and recrystallization |
| Powder precursors (PbI₂ + MAI) | FAST (200°C, 50 MPa) | MAPbI₃ perovskite | Electric and mechanical field-assisted sintering |

High-Throughput Implementation of Sub-Solidus Methods

Workflow Automation and Parallel Processing

The labor-intensive nature of conventional sub-solidus synthesis has historically hindered its use in high-throughput materials discovery. Recent work addresses this limitation with an optimized workflow that combines manual steps performed on many samples at once with hands-free automated processes [7]. This integrated approach significantly increases throughput while maintaining the phase purity essential for reliable materials characterization.

The high-throughput sub-solidus synthesis workflow enables rapid screening of oxide chemical space by expanding the explored compositions and the explored synthetic conditions at the same time [7]. The methodology has been demonstrated in two ways. It extended the BaYₓSn₁₋ₓO₃₋ₓ/₂ solid solution beyond previously reported limits, and it explored the Nb-Al-P-O composition space, which shows that it applies to polyanion-based compositions beyond oxides [7]. The workflow's versatility stems from its reliance on powder precursors that react entirely in the solid state. This avoids solution-chemistry complications and enables precise stoichiometric control across compositional gradients.

Diagram 1: High-throughput sub-solidus synthesis workflow

Advanced Sintering Techniques

Field-assisted sintering techniques represent significant advancements in sub-solidus processing, particularly for challenging material systems. The Electrical and Mechanical Field-Assisted Sintering Technique (EM-FAST) simultaneously applies electric and mechanical stress fields during synthesis, enabling ultrahigh yield, fast processing, and solvent-free production of bulk crystals with quality approaching single crystals [8]. This technique leverages the semiconducting nature and soft lattice characteristics of materials like halide perovskites, where applied pressure (approximately 50 MPa) enhances particle contact while pulsed electric current induces internal Joule heating concentrated at particle necks [8].

The FAST process is highly efficient. It produces bulk crystals with diameters up to 12.7 cm and thicknesses of approximately 0.2 cm within 10 minutes, which is much faster than typical solution-based synthesis (<1 cm³ day⁻¹) [8]. Densification proceeds through several mass transport pathways. Compressive pressure improves contact between particles and creates enlarged localized pressure, which drives densification by grain boundary diffusion, lattice diffusion, and plastic deformation or grain boundary sliding [8]. At the same time, the pulsed electric current generates internal heating concentrated at particle necks, triggering mass transfer and grain merging between neighboring particles.
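If the quoted dimensions are taken at face value, the throughput comparison reduces to simple arithmetic. The sketch assumes that each sample is a solid cylinder and that runs follow one another with no loading, cooling, or cleaning time. The daily figure is therefore an idealized upper bound rather than a reported result.

```python
import math


def disc_volume_cm3(diameter_cm: float, thickness_cm: float) -> float:
    """Volume of a cylindrical disc, V = pi * (d/2)^2 * h."""
    return math.pi * (diameter_cm / 2) ** 2 * thickness_cm


def idealized_daily_volume_cm3(volume_per_run_cm3: float, minutes_per_run: float) -> float:
    """Upper bound assuming back-to-back runs with no loading, cooling or cleaning time."""
    return volume_per_run_cm3 * (24 * 60) / minutes_per_run


run_volume = disc_volume_cm3(12.7, 0.2)                # dimensions quoted in the text
daily = idealized_daily_volume_cm3(run_volume, 10.0)   # 10 min per run, as quoted
print(f"~{run_volume:.0f} cm^3 per run, at most ~{daily:.0f} cm^3 per day")
```

Real cycle times could be several times longer than 10 minutes, and the estimate would still sit orders of magnitude above the <1 cm³ day⁻¹ cited for solution growth.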

Experimental Protocols for Sub-Solidus Synthesis

Conventional Powder Processing Method

Materials and Equipment:

- High-purity oxide and carbonate precursor powders (e.g., BaCO₃, Y₂O₃, SnO₂)

- Mortar and pestle or ball milling apparatus

- Die set for powder compaction

- High-temperature furnace with controlled atmosphere

- Analytical balance (±0.1 mg precision)

Procedure:

- Formulation and Weighing: Calculate stoichiometric quantities of precursor powders based on target composition. Pre-dry hygroscopic powders at 200°C for 2 hours. Accurately weigh components using analytical balance.

- Mixing and Homogenization: Transfer powders to mixing container. For mortar and pestle mixing, grind continuously for 30-45 minutes until homogeneous. Alternatively, use ball milling with zirconia media for 2-4 hours at 200 RPM.

- Pelletization: Load mixed powder into die set. Apply uniaxial pressure of 50-100 MPa for 1-2 minutes to form green pellets of approximately 50-60% of theoretical density.

- Calcination: Place pellets in alumina crucible with powder bed of same composition to prevent contamination. Heat in furnace with controlled ramp rate (3-5°C/min) to intermediate temperature (typically 800-1000°C for oxides). Hold for 6-12 hours.

- Reaction Sintering: Regrind calcined pellets and repeat pelletization. Sinter at final temperature (typically 1100-1400°C for oxides) for 12-48 hours with controlled cooling rate (1-3°C/min).

- Characterization: Analyze phase purity by X-ray diffraction, microstructure by scanning electron microscopy, and functional properties as required.
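The formulation step can be scripted. The sketch below computes precursor masses from BaCO₃, Y₂O₃ and SnO₂ for the BaYₓSn₁₋ₓO₃₋ₓ/₂ system, which serves as the example in this section. It assumes that BaCO₃ loses all of its CO₂ during calcination. It ignores corrections for purity, moisture, and volatility. The function names are hypothetical.

```python
from __future__ import annotations

ATOMIC_MASS = {"Ba": 137.327, "Y": 88.906, "Sn": 118.71, "C": 12.011, "O": 15.999}  # g/mol

PRECURSORS = {  # formula units written as element counts
    "BaCO3": {"Ba": 1, "C": 1, "O": 3},
    "Y2O3": {"Y": 2, "O": 3},
    "SnO2": {"Sn": 1, "O": 2},
}


def molar_mass(formula: dict[str, float]) -> float:
    """Sum of atomic masses weighted by element counts."""
    return sum(ATOMIC_MASS[el] * n for el, n in formula.items())


def batch_masses(x: float, target_mass_g: float) -> dict[str, float]:
    """Precursor masses (g) for target_mass_g of BaY_xSn_(1-x)O_(3-x/2).

    Assumes BaCO3 loses all CO2 on calcination; no purity or moisture correction.
    """
    if not 0.0 <= x <= 1.0:
        raise ValueError("x must lie between 0 and 1")
    target = {"Ba": 1.0, "Y": x, "Sn": 1.0 - x, "O": 3.0 - x / 2}
    n_target = target_mass_g / molar_mass(target)
    # Moles of each precursor per mole of target, balanced on the cations.
    per_mole = {"BaCO3": 1.0, "Y2O3": x / 2, "SnO2": 1.0 - x}
    return {name: n_target * k * molar_mass(PRECURSORS[name]) for name, k in per_mole.items()}


for name, grams in batch_masses(0.2, 5.0).items():
    print(f"{name}: {grams:.4f} g")
```

Because the masses are balanced on the cations, the oxygen content of the product follows automatically. Any carbonate or hydrate precursor needs its own mass-loss assumption recorded alongside the recipe.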

Field-Assisted Sintering Technique (FAST) Protocol

Materials and Specialized Equipment:

- Precursor powders (e.g., PbI₂ and MAI for perovskites)

- Lab-customized FAST apparatus with mechanical loading system and high-power electrical circuit

- Controlled atmosphere chamber (argon or nitrogen)

- Graphite die and plungers

Procedure:

- Powder Preparation: Synthesize or obtain high-purity precursor powders. Optionally pre-react using ball milling (BM) process to obtain initial compound particles.

- Die Loading: Load powder into graphite die assembly. Apply minimal pre-pressure (∼10 MPa) to ensure powder contact.

- FAST Processing: Apply unidirectional mechanical stress (∼50 MPa) statically and uniformly to plunger head. Simultaneously apply low voltage but high pulse current (1-10 kA) to induce sufficient Joule heat. Quickly heat to target temperature (e.g., 200°C for MAPbI₃) within 1 minute and maintain for 2-5 minutes.

- Controlled Cooling: Implement controlled cooling ramp under maintained pressure to prevent cracking.

- Sample Extraction: Carefully remove sintered pellet from die assembly after cooling to room temperature.

- Post-Processing: Optionally anneal in controlled atmosphere to optimize properties. Characterize density, microstructure, and functional performance.
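The procedure gives pressures rather than loads, so the press force has to be scaled to the die size (F = P·A). The 20 mm die diameter below is a hypothetical example and should be replaced with the actual punch diameter of the apparatus.

```python
import math


def press_force_kn(pressure_mpa: float, punch_diameter_mm: float) -> float:
    """Axial load F = P * A needed on a circular punch to reach the target pressure."""
    area_m2 = math.pi * (punch_diameter_mm / 1000.0 / 2.0) ** 2
    return pressure_mpa * 1e6 * area_m2 / 1000.0  # N -> kN


# Hypothetical 20 mm graphite die; pressures from the procedure above.
for stage, mpa in (("pre-press", 10.0), ("sintering", 50.0)):
    print(f"{stage}: {press_force_kn(mpa, 20.0):.1f} kN")
```

Force grows with the square of the diameter. Doubling the die size at 50 MPa therefore needs four times the load, and for large samples this quickly becomes the limiting factor.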

Table 2: Comparison of Sub-Solidus Synthesis Parameters for Different Material Systems

| Parameter | Conventional Oxide Ceramics | Halide Perovskites (FAST) | Geological Systems (Gabbro) |
|---|---|---|---|
| Temperature Range | 1100-1400°C | 150-250°C | 400-600°C (sub-solidus) |
| Processing Time | 12-48 hours | 2-10 minutes | Geological timescales |
| Pressure | 0.1 MPa (ambient) | ~50 MPa | Lithostatic pressure |
| Driving Force | Thermal energy | Electric field + mechanical stress | Hydrothermal alteration |
| Key Limitations | Slow diffusion rates | Thermal decomposition sensitivity | Redox condition dependency |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Sub-Solidus Ceramic Processing

| Material/Equipment | Function in Sub-Solidus Processing | Application Examples |
|---|---|---|
| High-Purity Oxide and Carbonate Powders | Primary reactants for solid-state reactions | BaCO₃, Y₂O₃, SnO₂ for perovskite synthesis |
| Zirconia Milling Media | Homogenization of precursor mixtures | Ball milling for particle size reduction |
| Graphite Dies | Containment and pressure application | FAST processing under mechanical stress |
| Controlled Atmosphere Furnace | Thermal treatment without contamination | Oxidation-sensitive material synthesis |
| Analytical Balance | Precise stoichiometric control | Formulation of complex compositions |
| Hydraulic Press | Green body formation | Uniaxial pressing of powder pellets |

Characterization and Quality Control

The efficacy of sub-solidus processing must be verified through comprehensive materials characterization. X-ray powder diffraction (XRD) reveals crystallinity and phase purity, with successful reactions displaying characteristic product peaks without residual precursor signatures [8]. Microstructural analysis via scanning electron microscopy (SEM) and transmission electron microscopy (TEM) provides critical information about grain size, distribution, and internal microstructure, including the presence of oriented intergrowths and exsolution features [9] [8].
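The phase-purity criterion (product peaks present, no residual precursor peaks) can be illustrated with a naive check of peak positions based on Bragg's law. This is much simpler than the probabilistic ML phase identification and Rietveld refinement used in autonomous labs. It ignores intensities, Kα₂ splitting, and sample displacement. The reference d-spacings in the example are hypothetical.

```python
from __future__ import annotations

import math

CU_KALPHA1_ANGSTROM = 1.5406  # common laboratory X-ray wavelength


def two_theta_deg(d_angstrom: float, wavelength: float = CU_KALPHA1_ANGSTROM) -> float:
    """First-order Bragg angle 2-theta (degrees) from lambda = 2 d sin(theta)."""
    s = wavelength / (2.0 * d_angstrom)
    if s > 1.0:
        raise ValueError("reflection not accessible at this wavelength")
    return 2.0 * math.degrees(math.asin(s))


def assign_peaks(observed: list[float], reference_d: dict[str, list[float]],
                 tol_deg: float = 0.1) -> dict[float, list[str]]:
    """List every reference phase with a reflection within tol_deg of each observed peak."""
    ref = {phase: [two_theta_deg(d) for d in ds] for phase, ds in reference_d.items()}
    return {
        peak: [phase for phase, lines in ref.items() if any(abs(peak - p) <= tol_deg for p in lines)]
        for peak in observed
    }


def unexplained_by_target(assignments: dict[float, list[str]], target: str) -> list[float]:
    """Peaks the target cannot account for: residual precursors or unknown phases."""
    return [peak for peak, phases in assignments.items() if target not in phases]


# Hypothetical reference d-spacings (angstrom) and two observed peaks
refs = {"target": [2.92, 2.06], "precursor": [3.72]}
peaks = [two_theta_deg(2.92) + 0.03, two_theta_deg(3.72)]
print(unexplained_by_target(assign_peaks(peaks, refs), "target"))  # non-empty: not phase-pure
```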

In functional materials, property measurements validate the success of synthesis protocols. For electronic ceramics, dielectric constant, loss tangent, and resistivity measurements confirm target properties. In magnetic systems like the oceanic gabbro with Fe-Ti oxide inclusions, paleomagnetic measurements provide insights into the remanent magnetization behavior, which correlates with the microstructural evolution during sub-solidus processing [9]. These characterization methodologies form essential feedback for optimizing sub-solidus reaction parameters in high-throughput experimentation.

Diagram 2: Quality control protocol for sub-solidus synthesis

Applications in Advanced Materials Development

Sub-solidus reaction pathways enable the synthesis of diverse functional materials across multiple technology domains. In electronic ceramics, these methods produce dielectric, piezoelectric, and ferroelectric components for sensors, actuators, and memory devices [6]. The exploration of Nb-Al-P-O composition space demonstrates the applicability of high-throughput sub-solidus synthesis to polyanion-based systems beyond simple oxides [7]. For energy applications, halide perovskites synthesized via FAST exhibit exceptional performance in photodetection and thermoelectric applications [8].

In geological systems, understanding sub-solidus evolution pathways provides crucial insights into paleomagnetic signals carried by Fe-Ti oxide micro-inclusions in oceanic gabbros [9]. The transformation of these inclusions from primary titanomagnetite to magnetite-ilmenite intergrowths through high-temperature oxidation, followed by further modification during hydrothermal alteration, creates distinct magnetic signatures that can be interpreted to understand the thermal and alteration history of the rock [9]. This principle demonstrates how sub-solidus reaction pathways create permanent records of material history that can be decoded through appropriate characterization techniques.

The integration of artificial intelligence (AI), robotics, and data science is fundamentally reshaping the discovery pipeline for both functional materials and pharmaceuticals. This paradigm shift toward automated and autonomous research addresses critical bottlenecks in traditional laboratory workflows, enabling rapid exploration of exponentially large chemical spaces with unprecedented efficiency. In functional materials science, this approach accelerates the development of advanced compounds for applications in energy storage, electronics, and catalysis. Similarly, within pharmaceutical research, automation compresses the timeline from target identification to clinical candidate, leveraging AI-driven design and high-throughput experimentation. These automated platforms are not merely about speed; they represent a fundamental transformation in how scientific inquiry is conducted, facilitating closed-loop systems where machine learning algorithms continuously refine experimental approaches based on real-time data. This document provides detailed application notes and protocols for implementing these technologies within the specific context of high-throughput solid-state synthesis, offering researchers practical methodologies for integrating automation into their discovery workflows.

Key Automated Platforms and Technologies

Materials Discovery Platforms

| Platform Name | Core Functionality | Primary Output | Throughput & Scale | Key Enabling Technologies |
|---|---|---|---|---|
| A-Lab (Berkeley Lab) [10] [2] | Autonomous solid-state synthesis of inorganic powders | Novel, synthesizable inorganic compounds | 41 novel compounds from 58 targets in 17 days [2] | Robotics, NLP for recipe proposal, active learning (ARROWS3), automated XRD analysis |
| Self-Driving Lab (NC State) [11] | Nanomaterial synthesis and optimization | Optimized quantum dots and nanomaterials | ≥10x more data than steady-state systems [11] | Dynamic flow experiments, real-time in situ characterization, machine learning |
| High-Throughput Sub-Solidus Workflow [12] | Solid-state synthesis of oxide materials | Free-standing pellets of oxide materials | 100-250 mg samples in multi-well formats [12] | Robotic liquid handling of slurries, freeze-drying, isopressing |

Pharmaceutical Discovery Platforms

| Platform/Company | Core AI & Automation Approach | Clinical Stage & Pipeline | Reported Efficiency Gains | Key Enabling Technologies |
|---|---|---|---|---|
| Exscientia [13] | Generative AI for small-molecule design, automated precision chemistry | Multiple Phase I/II candidates (e.g., CDK7, LSD1 inhibitors) [13] | Design cycles ~70% faster, 10x fewer synthesized compounds [13] | "Centaur Chemist" approach, patient-derived biology, AutomationStudio robotics |
| Insilico Medicine [13] | Generative AI for target discovery and molecular design | Phase IIa for idiopathic pulmonary fibrosis (ISM001-055) [13] | Target to Phase I in 18 months [13] | Generative chemistry, biomarker discovery |
| Schrödinger [13] | Physics-based simulation and machine learning | Phase III for TYK2 inhibitor (zasocitinib) [13] | Not specified | Physics-enabled design platform |
| Nuclera eProtein Discovery System [14] | Automated protein expression and purification | Soluble, active protein for R&D | DNA to protein in <48 hours (vs. weeks) [14] | Cartridge-based screening, cloud-based software, 24/7 operation |

Application Notes & Protocols

Protocol 1: High-Throughput Solid-State Synthesis of Oxides

This protocol, adapted from a published high-throughput workflow, details the synthesis of oxide materials using aqueous precursor slurries and automation, producing free-standing pellets suitable for characterization [12].

3.1.1 Research Reagent Solutions & Essential Materials

| Item | Function/Application in Protocol |
|---|---|
| Insoluble Raw Materials (Oxides, Carbonates, Oxalates) | Provide the cationic precursors for the target oxide material. |
| Zirconia Milling Media | Ensures effective wet milling of precursor suspensions to reduce particle size and enhance reactivity. |
| Ammonium Polyacrylate Dispersant | Reduces suspension viscosity to facilitate robotic liquid handling. |
| Water-Based Acrylic Emulsion Binder | Increases the mechanical strength of dried discs for handling and isopressing. |
| Sacrificial PET Trays | Vacuum-formed, transparent trays that hold individual samples; burn away cleanly during calcination. |
| Custom Silicone Holders | Protect the array of samples during isopressing within vacuum bags. |

3.1.2 Step-by-Step Workflow

Wet Milling (Manual Process):

- Input: Weigh out insoluble raw materials (oxides, carbonates, oxalates).

- Procedure: Mill raw materials in deionised water using a planetary mill (e.g., Fritsch Pulverisette 7) with zirconia media.

- Additives: Include ammonium polyacrylate dispersant and a water-based acrylic emulsion binder in the suspension.

- Quality Control: Check solids content by drying a 1 cm³ sample at 80°C overnight. Use this measured value to calculate molarity and dispensing volumes. Keep suspensions on a sample rotator to prevent sedimentation [12].
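The sketch below turns the solids-content check into dispensing volumes. The `Suspension` record is hypothetical, and so is the `organic_fraction` correction, which is the share of the dried residue attributed to dispersant and binder. The example dried masses are illustrative and are not measured values.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Suspension:
    """Milled precursor suspension characterized by the drying test (hypothetical record)."""
    precursor: str
    dried_mass_g: float            # residue left after drying the aliquot at 80 °C
    aliquot_cm3: float             # volume dried, e.g. 1 cm3 as in the protocol
    molar_mass_g_mol: float        # molar mass of the precursor formula unit
    cations_per_formula: int       # e.g. 2 for Y2O3
    organic_fraction: float = 0.0  # assumed share of residue that is dispersant/binder

    def cation_conc_mol_cm3(self) -> float:
        """Moles of the cation of interest per cm3 of suspension."""
        solids_g_per_cm3 = self.dried_mass_g / self.aliquot_cm3 * (1.0 - self.organic_fraction)
        return solids_g_per_cm3 / self.molar_mass_g_mol * self.cations_per_formula


def dispense_volumes(cation_mol: dict[str, float],
                     suspensions: dict[str, Suspension]) -> dict[str, float]:
    """Volume (cm3) of each suspension that delivers the requested moles of its cation."""
    return {key: mol / suspensions[key].cation_conc_mol_cm3() for key, mol in cation_mol.items()}


# Illustrative drying-test results (not measured data)
stocks = {
    "Ba": Suspension("BaCO3", 0.40, 1.0, 197.335, 1),
    "Y": Suspension("Y2O3", 0.30, 1.0, 225.809, 2),
    "Sn": Suspension("SnO2", 0.35, 1.0, 150.708, 1),
}
# 1 mmol of a BaY0.2Sn0.8O2.9 formulation
volumes = dispense_volumes({"Ba": 1.0e-3, "Y": 0.2e-3, "Sn": 0.8e-3}, stocks)
print({k: round(v, 3) for k, v in volumes.items()})
```

To convert a concentration in mol cm⁻³ to a molarity in mol L⁻¹, multiply it by 1000.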

Wet Mixing (Automated Process):

- Equipment: Use an automated liquid handling station (e.g., Eppendorf epMotion 5075).

- Setup: Place starting material suspensions in vials on a custom low-profile magnetic stirrer to maintain homogeneity.

- Dispensing: Program the liquid handler to aspirate calculated volumes from different precursor vials and dispense them into a designated glass vial for each target formulation.

- Mixing: The handler mixes each formulation by repeated aspiration and dispensing. Adjust dispensing parameters for the density and viscosity of the suspensions [12].

Dispensing (Automated Process):

- Containers: Use specially designed, vacuum-formed transparent PET trays.

- Process: The liquid handler dispenses small aliquots (e.g., 0.2 cm³) of each mixture into individual wells of the PET trays.

- Handling: Trays are held on custom 3D-printed holders that mimic a standard 96-well plate footprint for compatibility [12].
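The holders mimic a 96-well footprint, which means formulations can be tracked with standard well labels. The sketch assumes an 8 × 12 layout filled row by row. The function names and composition labels are hypothetical.

```python
from __future__ import annotations

from string import ascii_uppercase


def well_ids(rows: int = 8, cols: int = 12) -> list[str]:
    """Row-major well labels: A1, A2, ..., H12 for an 8 x 12 plate."""
    return [f"{ascii_uppercase[r]}{c + 1}" for r in range(rows) for c in range(cols)]


def plate_map(formulations: list[str], rows: int = 8, cols: int = 12) -> dict[str, str]:
    """Assign formulations to wells in order; refuse to over-fill the plate."""
    wells = well_ids(rows, cols)
    if len(formulations) > len(wells):
        raise ValueError(f"{len(formulations)} formulations exceed {len(wells)} wells")
    return dict(zip(wells, formulations))


# Hypothetical composition series x = 0.00, 0.05, ..., 0.50
series = [f"x={i * 0.05:.2f}" for i in range(11)]
layout = plate_map(series)
print(layout["A1"], layout["A11"])
```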

Freeze Drying (Batch Manual Process):

- Freezing: Manually transfer trays to a freezer at -20°C overnight.

- Drying: Transfer frozen trays to a freeze drier (e.g., Labconco). Insulate trays from metal shelves with open-cell polymer foam.

- Output: Porous, flat-bottomed discs of precursor mixture [12].

Isopressing (Batch Manual Process):

- Preparation: Place the dried trays in custom silicone holders, cover with a silicone sheet, and vacuum-seal in nylon bags.

- Pressing: Isopress the sealed bags at 105–210 MPa (15,000–30,000 psi) to increase disc density and strength.

- Output: Dense, free-standing pellets ready for calcination [12].

Calcination (Batch Process):

- Transfer: Invert trays to eject pellets onto refractory batts.

- Heating: Fire pellets in a high-temperature furnace according to the desired thermal profile for the target material.

- Output: Final, sintered oxide pellets for characterization (e.g., XRD) [12].

3.1.3 Workflow Visualization

Protocol 2: Autonomous Synthesis in a Self-Driving Materials Lab

This protocol outlines the operation of a fully autonomous laboratory, as exemplified by the A-Lab, for the discovery of novel inorganic powders [2].

3.2.1 Research Reagent Solutions & Essential Materials

| Item | Function/Application in Protocol |
|---|---|
| Precursor Powders | Broad library of solid-state inorganic precursors (oxides, phosphates, etc.). |
| Alumina Crucibles | Contain precursor mixtures during high-temperature reactions. |
| Robotic Arms & Grippers | Handle samples and labware between stations without human intervention. |
| Box Furnaces | Provide controlled high-temperature environment for solid-state reactions. |
| X-ray Diffractometer (XRD) | Provides primary characterization data for reaction products. |

3.2.2 Step-by-Step Workflow

Target Identification & Recipe Proposal (Computational):

- Input: A list of target materials predicted to be stable via ab initio computations (e.g., from the Materials Project) [2].

- Recipe Generation: Initial synthesis recipes are proposed by natural language processing (NLP) models trained on historical literature data. A second ML model suggests a synthesis temperature [2].

- Active Learning Backend: The ARROWS³ algorithm, which combines computed reaction energies with observed outcomes, is held in reserve to re-plan synthesis routes for targets whose initial attempts fail [2].

Sample Preparation & Mixing (Robotic):

- Process: A robotic station automatically dispenses and mixes precise masses of precursor powders.

- Output: Homogeneous powder mixtures are transferred into alumina crucibles [2].

Heating (Robotic):

- Transfer: A robotic arm loads crucibles into one of multiple available box furnaces [2].

- Reaction: The furnace executes the programmed thermal profile (temperature, time, atmosphere) [2].

Characterization (Robotic):

- Transfer & Preparation: After cooling, a robotic arm transfers the crucible to a station where the sample is ground into a fine powder.

- Analysis: The powder is presented to an X-ray diffractometer (XRD) for phase analysis [2].

Data Analysis & Decision Making (AI):

- Phase Identification: The XRD pattern is analyzed by probabilistic ML models to identify phases and determine target yield (weight fraction) via automated Rietveld refinement [2].

- Decision Logic:

  - If yield >50%: The synthesis is deemed successful. The result is logged, and the lab proceeds to the next target [2].

  - If yield ≤50%: The result, including any identified intermediate phases, is fed to the active learning algorithm (ARROWS³). This algorithm uses thermodynamic data and accumulated knowledge of pairwise reactions to propose a new, optimized synthesis recipe (e.g., different precursors, temperature, or milling), and the loop from Sample Preparation & Mixing through Data Analysis & Decision Making repeats [2].
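The decision logic amounts to a bounded retry loop around a 50% yield threshold. The sketch below is illustrative only. `run_experiment` and `propose_next` are hypothetical callables standing in for the robotic synthesis/XRD pipeline and for ARROWS³ (whose actual algorithm is not reproduced here), and the `max_attempts` cap is an assumption, not a documented A-Lab setting.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

YIELD_THRESHOLD = 0.50  # yield above 50 % counts as a successful synthesis


@dataclass
class Recipe:
    precursors: tuple[str, ...]
    temperature_c: float


@dataclass
class Outcome:
    target_yield: float  # target weight fraction from automated refinement
    intermediates: list[str] = field(default_factory=list)


def pursue_target(
    initial: Recipe,
    run_experiment: Callable[[Recipe], Outcome],
    propose_next: Callable[[Recipe, Outcome, list], Optional[Recipe]],
    max_attempts: int = 5,
) -> tuple[bool, list[tuple[Recipe, Outcome]]]:
    """Run recipes until one exceeds the yield threshold or attempts run out."""
    history: list[tuple[Recipe, Outcome]] = []
    recipe = initial
    for _ in range(max_attempts):
        outcome = run_experiment(recipe)
        history.append((recipe, outcome))
        if outcome.target_yield > YIELD_THRESHOLD:
            return True, history  # success: log and move on to the next target
        # Yield <= 50 %: pass the outcome (incl. intermediates) to the planner.
        next_recipe = propose_next(recipe, outcome, history)
        if next_recipe is None:  # planner has nothing further to suggest
            break
        recipe = next_recipe
    return False, history


# Toy stand-ins (hypothetical): the target only forms at 900 °C or above.
def fake_run(r: Recipe) -> Outcome:
    if r.temperature_c >= 900:
        return Outcome(0.72)
    return Outcome(0.31, ["intermediate phase"])


def fake_next(r: Recipe, o: Outcome, h: list) -> Optional[Recipe]:
    return Recipe(r.precursors, r.temperature_c + 100)


ok, history = pursue_target(Recipe(("Li2CO3", "MnO2"), 800.0), fake_run, fake_next)
print(ok, len(history))
```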

3.2.3 Workflow Visualization

Protocol 3: Dynamic Flow Experimentation for Nanomaterials

This protocol describes a self-driving lab approach that uses dynamic flow experiments for the high-throughput synthesis and optimization of nanomaterials like colloidal quantum dots [11].

3.3.1 Research Reagent Solutions & Essential Materials

| Item | Function/Application in Protocol |
|---|---|
| Liquid Precursors | Chemical solutions containing molecular or ionic precursors to the target nanomaterial. |
| Microfluidic Continuous Flow Reactor | A system where chemical mixtures are continuously varied and reactions occur in a microchannel. |
| In-line/In situ Sensors | Probes (e.g., for absorbance, photoluminescence) that characterize material properties in real-time as reactions occur. |

3.3.2 Step-by-Step Workflow

System Priming & Parameter Definition:

- Setup: Prime the continuous flow reactor system with liquid precursors.

- Objective: Define the goal for the nanomaterial (e.g., target bandgap, particle size, photoluminescence peak).

- Machine Learning: Initialize the machine learning algorithm that will control the experiment [11].

Dynamic Flow Experimentation:

- Process: Instead of running discrete, steady-state reactions, the system continuously varies chemical mixtures and reaction conditions (e.g., flow rate, temperature, precursor ratios) in the microchannel [11].

- Key Feature: The system does not sit idle waiting for reactions to complete. The composition is dynamically changing [11].

Real-Time, In Situ Characterization:

- Monitoring: A suite of in-line sensors continuously monitors the output stream of the reactor, collecting data on the properties of the formed nanomaterial (e.g., optical properties) every half-second [11].

- Data Output: This provides a high-resolution "movie" of the reaction outcome as a function of changing conditions, generating at least 10x more data than steady-state approaches [11].

Closed-Loop Optimization:

- Learning: The rich, real-time data stream is fed to the machine learning algorithm, which uses it to build a more accurate model of the synthesis landscape.

- Decision & Action: The algorithm makes intelligent predictions about the next best set of conditions to test in order to approach the defined objective and immediately adjusts the flow parameters accordingly [11].

- Completion: The loop continues until an optimal material is identified or a stopping criterion is met.
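The closed loop can be illustrated with a deliberately simple optimizer. This sketch swaps in stochastic hill climbing in place of the machine learning model used by the NC State platform [11]. `simulated_peak_nm` is a made-up linear response surface standing in for an in-line photoluminescence reading, and the parameter bounds, target, tolerance and evaluation budget are likewise hypothetical.

```python
import random


def simulated_peak_nm(temp_c: float, ratio: float) -> float:
    """Hypothetical emission peak (nm) versus temperature and precursor ratio."""
    return 480.0 + 0.5 * (temp_c - 200.0) + 40.0 * (ratio - 1.0)


def closed_loop(target_nm: float, tol_nm: float = 1.0, budget: int = 200, seed: int = 0):
    """Stochastic hill climbing: perturb the best conditions, keep improvements,
    stop when the peak is within tol_nm of the target or the budget runs out."""
    rng = random.Random(seed)
    bounds = {"temp_c": (150.0, 300.0), "ratio": (0.5, 2.0)}
    best = {"temp_c": 200.0, "ratio": 1.0}
    best_err = abs(simulated_peak_nm(**best) - target_nm)
    evaluations = 0
    while evaluations < budget and best_err > tol_nm:
        # Propose new conditions: Gaussian step, clipped to the allowed range.
        cand = {
            k: min(hi, max(lo, best[k] + rng.gauss(0.0, 0.1 * (hi - lo))))
            for k, (lo, hi) in bounds.items()
        }
        err = abs(simulated_peak_nm(**cand) - target_nm)
        evaluations += 1
        if err < best_err:  # keep only improvements
            best, best_err = cand, err
    return best, best_err, evaluations


best, err, n_eval = closed_loop(target_nm=520.0)
print(best, round(err, 2), n_eval)
```

A real platform would replace the toy function with live sensor readings and the hill climber with a model-based optimizer; the part being illustrated is the loop structure (propose, measure, update, check the stopping criterion).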

3.3.3 Workflow Visualization

Quantitative Performance Data

Efficiency Gains in Automated Discovery

| Metric | Traditional Workflow | Automated/AI Workflow | Improvement Factor | Source |
|---|---|---|---|---|
| Materials Discovery Speed | Years/Months | 41 novel compounds in 17 days [2] | >10x acceleration [10] | Berkeley Lab A-Lab [2] |
| Pharmaceutical Discovery (Early) | ~5 years (target to clinic) | 18 months (target to Phase I) [13] | ~3-4x acceleration | Insilico Medicine [13] |
| Lead Optimization Cycles | Industry standard | ~70% faster design cycles [13] | Significant compression | Exscientia [13] |
| Chemical Consumption & Waste | Standard amount | "Dramatic" reduction, "far less waste" [11] | Not quantified | Self-Driving Lab (NC State) [11] |
| Data Acquisition Efficiency | Steady-state experiments | Dynamic flow experiments [11] | >10x more data [11] | Self-Driving Lab (NC State) [11] |

Analysis of Autonomous Synthesis Outcomes (A-Lab)

| Synthesis Outcome | Number of Targets | Share of All 58 Targets | Key Contributing Factors |
|---|---|---|---|
| Successfully Synthesized | 41 | 71% | Effective initial recipe proposal by NLP; successful active learning optimization [2] |
| of which via literature-inspired recipes | 35 | 60% | High similarity between target and known materials [2] |
| of which via active learning (ARROWS³) | 6 | 10% | Avoidance of low-driving-force intermediates [2] |
| Not Synthesized | 17 | 29% | Multiple failure modes identified [2] |
| of which due to slow kinetics | 11 | 19% | Reaction steps with low driving force (<50 meV/atom) [2] |
| of which due to precursor volatility | 2 | 3% | Evaporation of precursor during heating [2] |
| of which due to amorphization | 2 | 3% | Failure to crystallize [2] |
| of which due to computational inaccuracy | 2 | 3% | Incorrect stability prediction [2] |
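Because the sub-rows are reported as shares of all targets rather than of their parent row, a quick tally helps confirm the table is internally consistent. The counts below are taken directly from the table; the code only sums and rounds them.

```python
successes = {"literature-inspired recipes": 35, "active learning (ARROWS3)": 6}
failures = {
    "slow kinetics": 11,
    "precursor volatility": 2,
    "amorphization": 2,
    "computational inaccuracy": 2,
}

n_success = sum(successes.values())  # 41
n_fail = sum(failures.values())      # 17
n_total = n_success + n_fail         # 58 targets


def pct(n: int) -> int:
    """Share of all targets, rounded to the nearest whole percent."""
    return round(100 * n / n_total)


summary = {"successful": pct(n_success), "not synthesized": pct(n_fail)}
summary.update({k: pct(v) for k, v in {**successes, **failures}.items()})
for label, value in summary.items():
    print(f"{label}: {value}%")
```

Rounding explains why the sub-rows do not add exactly to their parents (60% + 10% = 70% versus 71%; 19% + 3% + 3% + 3% = 28% versus 29%).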

The pursuit of novel materials for batteries, catalysts, and other advanced technologies is limited by the traditional, labor-intensive methods of solid-state synthesis [12]. Accelerating discovery requires high-throughput techniques. However, full automation is not always appropriate or proportionate, particularly in discovery-oriented research where the flexibility to intervene is crucial [12]. This document outlines a hybrid workflow for high-throughput solid-state synthesis that keeps human expertise at key decision points and automates repetitive, hands-free steps. The approach aims to reduce researcher time per sample while enabling the exploration of vast compositional spaces [12].

The core of the hybrid workflow is the synergistic division of labor between a scientist and an automated platform. The researcher provides critical inputs—target selection, precursor preparation, and data interpretation—while the automated system executes repetitive, precise, and scalable tasks such as dispensing, mixing, and heat treatment. An active learning loop, driven by automated characterization and computational analysis, closes the cycle by informing subsequent experimental iterations [2].

The following diagram illustrates the integrated workflow, highlighting the seamless handoffs between manual and automated operations:

Detailed Experimental Protocols

This section provides a step-by-step breakdown of the key procedures within the hybrid workflow, from initial precursor preparation to final data collection.

Manual Precursor Preparation and Wet Milling

This initial manual stage ensures precursor materials are optimally prepared for downstream automation [12].

- Objective: To reduce particle size and mix insoluble raw materials (oxides, carbonates, oxalates) uniformly in an aqueous suspension.

- Materials:

  - Insoluble raw material powders.

  - Deionized water.

  - Zirconia milling media.

  - Ammonium polyacrylate dispersant (to reduce suspension viscosity).

  - Water-based acrylic emulsion binder (to increase green strength of discs).

- Equipment: Planetary mill (e.g., Fritsch Pulverisette 7).

- Procedure:

  - Combine raw materials, deionized water, dispersant, and binder in the milling container.

  - Add zirconia milling media.

  - Mill for a predetermined duration to achieve a homogeneous suspension with fine particle size.

  - Extract a small sample (e.g., 1 cm³) and dry it overnight at 80°C to determine the solids content.

  - Use the measured solids content to calculate the molar concentration of each inorganic precursor (moles per cm³ of suspension) for subsequent automated dispensing.

  - Place the final suspension on a disc-type sample rotator to prevent sedimentation prior to automated handling.

Automated Wet Mixing and Dispensing

This automated stage enables the precise and rapid formulation of numerous compositional variations without manual intervention [12].

- Objective: To accurately combine different precursor suspensions into discrete arrays of samples based on a predefined experimental design.

- Materials:

  - Precursor suspensions from the manual wet-milling stage.

  - Custom-designed vacuum-formed PET trays.

- Equipment: Automated liquid handling station (e.g., Eppendorf epMotion 5075), custom low-profile multi-position magnetic stirrer.

- Procedure:

  - Place precursor suspension vials on the custom magnetic stirrer to maintain homogeneity during dispensing.

  - The liquid handler aspirates calculated volumes from the respective precursor vials and dispenses them into a designated glass mixing vial, creating one formulation per vial [12].

  - The handler mixes each formulation by repeated aspiration and dispensing.

  - Small aliquots (e.g., 0.2 cm³) of each mixture are then dispensed into the wells of the sacrificial PET trays. The tray design (e.g., 12 wells per tray) allows multiple samples to be processed simultaneously.
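Filling trays from an experimental design is a matter of enumerating compositions and assigning them to wells in order. A minimal sketch, assuming a ternary design on a regular grid (the 0.25 step and the function names are hypothetical) and the 12-well trays mentioned above. The fractions could represent molar ratios of three end-member formulations, converted to volumes using the concentrations measured during milling.

```python
def ternary_grid(divisions: int) -> list[tuple[float, float, float]]:
    """All (a, b, c) fractions on a regular grid with a + b + c = 1."""
    points = []
    for i in range(divisions + 1):
        for j in range(divisions + 1 - i):
            k = divisions - i - j
            points.append((i / divisions, j / divisions, k / divisions))
    return points


def assign_wells(compositions, wells_per_tray: int = 12):
    """Map each composition, in order, to a 1-indexed (tray, well) position."""
    return [
        (idx // wells_per_tray + 1, idx % wells_per_tray + 1, comp)
        for idx, comp in enumerate(compositions)
    ]


grid = ternary_grid(4)  # step of 0.25 in each end-member fraction
layout = assign_wells(grid)
n_trays = layout[-1][0]
print(len(grid), "compositions on", n_trays, "trays")
```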

Hybrid Sample Processing and Curing

This phase involves a handoff from automation back to manual handling for strategic steps, then returns to automated processing.

- Objective: To convert the liquid aliquots into solid, dense pellets suitable for high-temperature reaction and analysis.

- Materials: PET trays with dispensed samples, silicone holders, nylon bags, refractory batts.

- Equipment: Freezer, freeze drier (e.g., Labconco), laboratory isopress (e.g., Autoclave Engineers), box furnaces.

- Procedure:

  - Manual Transfer & Freeze-Drying: Manually transfer the trays to a freezer (-20°C), then to a freeze drier. The samples freeze and are lyophilized to form porous discs [12].

  - Manual Isopressing: Place the dried trays in custom silicone holders, cover with a silicone sheet, and vacuum-seal in nylon bags. The packages are then isopressed at high pressure (105–210 MPa) to densify the discs [12].

  - Heat Treatment: Transfer the pressed pellets to refractory batts (or, on robotic platforms such as the A-Lab, to crucibles loaded by a robotic arm) and calcine them in box furnaces. Recipes, including temperature and atmosphere, can be adjusted based on prior learning [12] [2].

Automated Characterization and Data Analysis

The final stage involves automated data collection paired with expert-led interpretation to guide the next research cycle.

- Objective: To identify the phases present in the synthesized products and determine target yield.

- Materials: Synthesized powder samples.

- Equipment: X-ray Diffractometer (XRD), robotic sample handling.

- Procedure:

  - An automated system grinds the sintered pellets into a fine powder and presents them to the XRD for measurement [2].

  - Machine learning models analyze the XRD patterns to extract phase and weight fractions. For novel materials without experimental patterns, computed structures are used for identification [2].

  - Results from automated Rietveld refinement are reported. A human researcher interprets these outcomes, making strategic decisions on the next steps, such as adjusting synthesis parameters or initiating new target searches [12] [2].

Table 1: Summary of Hybrid Workflow Stages and Their Key Features

| Workflow Stage | Primary Actor | Key Actions | Output |
|---|---|---|---|
| 1. Precursor Preparation | Manual | Wet milling, solids content verification | Homogeneous precursor suspensions |
| 2. Formulation | Automated | Robotic liquid mixing & dispensing | Array of samples in trays |
| 3. Sample Processing | Hybrid (Manual & Automated) | Freeze-drying, isopressing, heat treatment | Dense, solid pellets |
| 4. Analysis & Learning | Hybrid (Automated & Manual) | XRD, ML analysis, expert interpretation | Phase identification, next experiments |

The Scientist's Toolkit: Key Research Reagent Solutions

The following reagents and materials are essential for executing the described hybrid synthesis workflow.

Table 2: Essential Research Reagents and Materials

| Item | Function / Purpose | Specific Example / Note |
|---|---|---|
| Precursor Powders | Source of inorganic cations for reactions | Oxides, carbonates, oxalates [12] |
| Ammonium Polyacrylate | Dispersant to reduce suspension viscosity | Prevents agglomeration, ensures homogeneity [12] |
| Acrylic Emulsion Binder | Provides mechanical strength to dried discs | Enables isopressing without sample failure [12] |
| Sacrificial PET Trays | Sample holder for aliquots during dispensing/drying | Vacuum-formed, burns away cleanly during calcination [12] |
| Zirconia Milling Media | Particle size reduction and mixing | Used in planetary ball milling [12] |
| Alumina Crucibles | Container for samples during high-temperature treatment | Withstands repeated heating cycles [2] |

Implementation Considerations

Successfully implementing this hybrid model requires more than just equipment. Key considerations include:

- Computational Integration: The workflow is enhanced by integrating computational screening (e.g., using ab initio data from sources like the Materials Project) to identify stable target materials [2]. Active learning algorithms can use observed reaction data to propose improved synthesis routes with higher yield, creating a closed-loop discovery system [2].

- Flexibility Over Full Automation: Retaining manual intervention at key points provides resilience and flexibility. It allows researchers to make on-the-fly adjustments to calcination temperatures, atmospheres, or other parameters based on intermediate results, which is vital for exploring uncharted chemical spaces [12].

- Data-Driven Iteration: The power of the workflow is fully realized when characterization data (e.g., XRD patterns analyzed by ML models) is directly fed back to inform subsequent experimental cycles. This data-driven approach continuously refines synthesis hypotheses and accelerates the path to successful material discovery [2].

Methodologies in Action: Automated Workflows and AI-Driven Synthesis Planning

The discovery and development of new inorganic solid-state materials are fundamentally limited by traditional synthesis methods, which are labor-intensive, low-throughput, and poorly suited to exploring vast compositional spaces. Slurry-based high-throughput synthesis presents a transformative approach that combines the versatility of traditional solid-state reactions with the efficiency of automation. This methodology enables researchers to systematically investigate complex multi-component oxide systems, accelerating the discovery of novel functional materials for applications ranging from solid-state batteries to electroceramics.

Traditional sub-solidus synthesis methods, while versatile, process only one formulation at a time through repeated cycles of manual grinding and calcination [12]. As technological demands increase for higher-performance materials in batteries, catalysts, and other functional applications, these conventional approaches are no longer adequate for efficiently exploring the exponentially growing compositional space [12]. The workflow described in this application note addresses these limitations by implementing a parallel processing approach that maintains the accessibility of solid-state reagent systems while dramatically increasing throughput.

Experimental Design and Workflow Architecture

The overarching goal of this slurry-based workflow is to transform solid precursor handling from a serial, labor-intensive process into a parallelized, automated pipeline suitable for rapid materials discovery. The design philosophy emphasizes replacing "one-by-one" sample handling with batch processing of multiple samples, while strategically integrating automation where it provides maximum benefit [12]. This hybrid approach retains researcher oversight at critical decision points while eliminating bottlenecks in repetitive tasks.

The diagram below illustrates the complete slurry-based synthesis workflow, integrating both manual and automated processes from precursor preparation to final characterization:

Key Design Considerations

This workflow architecture incorporates several critical design elements that enable successful high-throughput solid-state synthesis:

- Flexibility in Composition Space: The use of multiple precursor suspensions allows investigation of ternary and higher-order oxide systems, with the current equipment supporting at least quaternary compositions [12].

- Sample Form Factor: The process generates free-standing pellets (100-250 mg) suitable for various characterization techniques, overcoming limitations of thin-film or substrate-supported approaches where substrate interference can complicate analysis [12].

- Process Resilience: Strategic retention of manual handling at intermediate stages provides opportunities for inspection and intervention, allowing researchers to adjust calcination parameters or other conditions based on observed results [12].

Materials and Reagent Solutions

Research Reagent Solutions

Table 1: Essential reagents and materials for slurry-based high-throughput synthesis

| Reagent/Material | Function | Examples & Specifications |
|---|---|---|
| Solid Precursors | Source of metal cations | Oxides, carbonates, oxalates (e.g., AKP-20 α-Al₂O₃, 0.5 μm) [15] |
| Dispersant | Prevents particle aggregation, reduces viscosity | Ammonium polyacrylate (e.g., Aron A6114) [12] [15] |
| Binder | Provides mechanical strength after drying | Acrylic emulsion [12] or PVDF [16] |
| Milling Media | Particle size reduction and mixing | Zirconia grinding media [12] |
| Abrasive Particles | Precision polishing for characterization | Alumina, ceria, silica (10 nm-1.0 μm) [17] |
| Surface Treatment | Improves powder-binder bonding | Silane coupling agents (e.g., 2-(3,4-epoxycyclohexyl)ethyltrimethoxysilane) [18] |

Equipment and Consumables

Table 2: Essential equipment for implementing the high-throughput workflow

| Equipment Category | Specific Examples | Application Notes |
|---|---|---|
| Milling Equipment | Planetary mill (e.g., Fritsch Pulverisette 7) [12] | Wet milling with zirconia media |
| Liquid Handling | Automated workstation (e.g., Eppendorf epMotion 5075) [12] | Custom stirrer for viscous suspensions |
| Drying Equipment | Freeze dryer (e.g., Labconco cabinet) [12] | Shelves insulated with open-cell polymer foam |
| Forming Equipment | Laboratory isopress (e.g., Autoclave Engineers) [12] | 105-210 MPa pressure range |
| Consumables | Custom PET trays [12] | Vacuum-formed, transparent, sacrificial |

Detailed Experimental Protocols

Phase 1: Slurry Preparation and Optimization

Wet Milling and Suspension Preparation

Objective: Create stable, homogeneous suspensions of precursor materials with controlled particle size and known solids content.

Procedure:

- Weighing: Accurately weigh insoluble raw materials (oxides, carbonates, oxalates) based on target compositions.

- Suspension Formulation: Combine solids with deionized water in appropriate containers. Include 0.12-0.15% ammonium polyacrylate dispersant (based on solids weight) to reduce viscosity and prevent sedimentation [12] [15].

- Wet Milling: Process suspensions using zirconia media in a planetary mill (e.g., Fritsch Pulverisette 7). Mill for sufficient duration to achieve desired particle size distribution while minimizing contamination from milling media.

- Binder Addition: Introduce acrylic emulsion binder (typically 1-3% by weight) to increase mechanical strength of resulting discs [12].

- Solids Content Verification: Extract a 1 cm³ sample from each mill and dry it overnight at 80°C. Calculate the exact solids content to enable precise volumetric dispensing in subsequent steps.

- Suspension Maintenance: Place completed suspensions on sample rotators to prevent sedimentation and ensure homogeneity during dispensing operations.
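The additive levels above are specified relative to the solids, so batch quantities scale directly with the weighed powder. A minimal sketch under stated assumptions: the 0.135 wt% and 2 wt% defaults are mid-range picks from the quoted ranges; the binder percentage is assumed to be relative to solids, since the text does not state its basis; and the `*_active` parameters are a hypothetical correction for additives supplied as solutions or emulsions, whose active content should be taken from the supplier's data sheet.

```python
def additive_masses(
    solids_g: float,
    dispersant_pct: float = 0.135,   # within the 0.12-0.15 wt% range (of solids)
    binder_pct: float = 2.0,         # within the 1-3 wt% range; basis assumed = solids
    dispersant_active: float = 1.0,  # active fraction of the dispersant as supplied
    binder_active: float = 1.0,      # active fraction of the binder emulsion as supplied
) -> tuple[float, float]:
    """Return (dispersant product mass, binder product mass) in grams."""
    if solids_g <= 0:
        raise ValueError("solids mass must be positive")
    dispersant_g = solids_g * dispersant_pct / 100.0 / dispersant_active
    binder_g = solids_g * binder_pct / 100.0 / binder_active
    return dispersant_g, binder_g


# Hypothetical batch: 50 g of mixed powder, binder emulsion at 50 % solids.
dispersant_g, binder_g = additive_masses(50.0, binder_active=0.5)
print(f"dispersant {dispersant_g:.4f} g, binder emulsion {binder_g:.2f} g")
```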

Critical Parameters:

- Particle Size: Directly affects reactivity and sintering behavior

- Viscosity: Must be optimized for liquid handling equipment (typically <10 mPa·s for wet-jet milled slurries) [15]

- Stability: Suspensions should remain homogeneous without settling during processing

Slurry Formulation Optimization

For specialized applications such as solid-state battery electrolytes, additional slurry optimization may be required:

Surface Treatment:

- Apply 1 wt% silane coupling agent to powder surfaces

- Stabilize at room temperature for 24 hours to ensure complete reaction [18]

Dispersion Enhancement:

- Utilize inline mixers (150 rpm for 3 hours) followed by bead milling (4500 rpm for 3 hours) [18]

- Monitor viscosity continuously to ensure proper dispersion

- Target hydrophilic surfaces with contact angles ~25° for improved binder adhesion [18]

Phase 2: Automated Liquid Handling and Sample Formation

Automated Liquid Handling Setup

Objective: Precisely dispense and mix multiple precursor suspensions to create combinatorial composition libraries.

Procedure:

- Equipment Preparation: Configure automated liquid handling station (e.g., Eppendorf epMotion 5075) with custom low-profile magnetic stirrer to maintain suspension homogeneity.

- Suspension Loading: Transfer precursor suspensions to glass vials on stirring stations. Use custom 3D-printed stirrers with center holes to minimize dead volume.

- Volumetric Dispensing: Program liquid handler to dispense calculated volumes of each suspension into mixing vials based on target compositions. Adjust dispensing parameters to account for increased density and viscosity compared to standard solutions.

- Mixing Protocol: Implement repeated aspiration and dispensing cycles (typically 5-10 cycles) to ensure complete homogenization of mixtures.

- Aliquot Dispensing: Transfer 0.2 mL aliquots of each mixture into custom vacuum-formed PET trays using specialized tips capable of handling particulate suspensions.

Critical Parameters:

- Stirring Speed: 240-960 rpm, optimized for each suspension type [12]

- Dispensing Accuracy: Regular verification through weight measurements

- Cross-contamination Prevention: Adequate tip cleaning between different compositions
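Gravimetric verification compares dispensed masses with the mass expected from the nominal volume and the slurry density. A minimal sketch under stated assumptions: the slurry density is measured separately, the replicate masses are invented for illustration, and the 2% limits on bias and coefficient of variation are placeholder acceptance criteria, not values from the source.

```python
from statistics import mean, stdev


def dispensing_check(
    measured_g: list[float],
    nominal_cm3: float,
    slurry_density_g_per_cm3: float,
    max_bias_pct: float = 2.0,  # placeholder acceptance limit
    max_cv_pct: float = 2.0,    # placeholder acceptance limit
) -> tuple[float, float, bool]:
    """Return (bias %, coefficient of variation %, pass/fail) for replicate dispenses."""
    expected_g = nominal_cm3 * slurry_density_g_per_cm3
    mean_g = mean(measured_g)
    bias_pct = 100.0 * (mean_g - expected_g) / expected_g
    cv_pct = 100.0 * stdev(measured_g) / mean_g
    passed = abs(bias_pct) <= max_bias_pct and cv_pct <= max_cv_pct
    return bias_pct, cv_pct, passed


# Hypothetical: four 0.2 cm^3 dispenses of a slurry measured at 1.5 g/cm^3.
bias, cv, passed = dispensing_check([0.297, 0.301, 0.299, 0.303], 0.2, 1.5)
print(f"bias {bias:+.2f} %, CV {cv:.2f} %, pass: {passed}")
```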

Freeze-Drying Protocol

Objective: Convert liquid aliquots into porous solid discs suitable for subsequent processing.

Procedure:

- Freezing: Manually transfer filled trays to -20°C freezer for a minimum of 12 hours to ensure complete freezing.

- Freeze-Drying Setup: Place frozen trays on open-cell polymer foam-covered shelves in freeze-dryer cabinet to insulate from heat transfer and maintain frozen state during initial vacuum application.

- Drying Cycle: Apply vacuum and maintain until complete sublimation is achieved (typically 24-48 hours depending on sample volume and equipment).

- Quality Assessment: Verify formation of porous discs with flat-bottomed faces suitable for subsequent pressing and XRD analysis.

Phase 3: Pellet Formation and Thermal Processing

Isopressing and Green Body Formation

Objective: Convert porous freeze-dried discs into dense, robust green bodies suitable for high-temperature processing.

Procedure:

- Tray Preparation: Position dried sample trays in custom silicone holders containing metal inserts to improve disc flatness during pressing.

- Bag Sealing: Cover trays with 2 mm silicone sheet and vacuum seal in nylon bags using standard laboratory bag press.

- Isopressing: Process sealed bags in laboratory isopress at 105-210 MPa (15,000-30,000 psi) for sufficient duration to achieve desired density.

- Pellet Extraction: Carefully remove pressed discs from trays and inspect for structural integrity.

Critical Parameters:

- Pressure: Optimized for each materials system (105-210 MPa typical range) [12]

- Green Density: Typically >65% relative density for wet-jet milled slurries [15]

- Handling: Pressed discs must maintain structural integrity for transfer to calcination setup
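Relative green density can be estimated geometrically from a disc's mass and dimensions. For a green body of unreacted precursors, the reference (theoretical) density is that of the precursor mixture, which for a simple mixture can be approximated by the inverse rule of mixtures, 1/ρ = Σ wᵢ/ρᵢ (ignoring the small binder fraction). The sketch below uses hypothetical dimensions and densities.

```python
import math


def mixture_density(mass_fractions: dict[str, float], densities: dict[str, float]) -> float:
    """Theoretical density of a powder mixture: 1 / sum(w_i / rho_i)."""
    total = sum(mass_fractions.values())
    return 1.0 / sum((w / total) / densities[name] for name, w in mass_fractions.items())


def relative_green_density(mass_g, diameter_mm, thickness_mm, theoretical_g_per_cm3):
    """Bulk density of a cylindrical disc divided by the theoretical density."""
    radius_cm = diameter_mm / 20.0  # mm -> cm, then halve the diameter
    volume_cm3 = math.pi * radius_cm**2 * (thickness_mm / 10.0)
    return (mass_g / volume_cm3) / theoretical_g_per_cm3


# Hypothetical 120 mg disc, 6.0 mm diameter, 1.2 mm thick, theoretical density 5.0 g/cm^3.
rel = relative_green_density(0.120, 6.0, 1.2, 5.0)
print(f"relative green density: {rel:.1%}")
print("meets >65 % target:", rel > 0.65)
```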

Thermal Treatment and Characterization

Objective: Convert green bodies into fully reacted materials and characterize structural properties.

Procedure:

- Calcination Setup: Transfer pressed pellets to appropriate refractory supports (e.g., alumina crucibles or batt).

- Thermal Profile: Implement an optimized temperature program for the target material, including a controlled binder-burnout stage and heating rates chosen to avoid cracking (see Table 4).

- Characterization: Present calcined pellets to automated characterization systems:

  - X-ray diffraction for phase identification

  - Scanning electron microscopy for microstructure analysis

  - Impedance spectroscopy for functional properties [18]

Data Analysis and Quality Control

Compositional and Structural Characterization

X-ray Diffraction Analysis:

- Acquire patterns from flat face of pellets

- Implement automated phase identification algorithms

- Calculate lattice parameters and identify solid solution formation
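For a cubic phase, lattice parameters follow from peak positions via Bragg's law, d = λ / (2 sin θ), with a = d·√(h² + k² + l²). Plotting a against composition and checking for linear (Vegard-like) behavior is one common way to judge whether a solid solution has formed; Vegard's law is only an empirical approximation, so a departure from linearity warrants closer inspection rather than proving a phase boundary. The sketch assumes Cu Kα₁ radiation (λ = 1.5406 Å) and synthesizes hypothetical peak positions from assumed lattice parameters.

```python
import math

CU_KA1_ANGSTROM = 1.5406  # assumed X-ray wavelength (Å)


def two_theta_deg(a: float, hkl: tuple[int, int, int], wavelength: float = CU_KA1_ANGSTROM) -> float:
    """Peak position 2θ (degrees) of reflection hkl for a cubic cell with parameter a (Å)."""
    d = a / math.sqrt(sum(i * i for i in hkl))
    return 2.0 * math.degrees(math.asin(wavelength / (2.0 * d)))


def cubic_a_from_peak(two_theta: float, hkl: tuple[int, int, int], wavelength: float = CU_KA1_ANGSTROM) -> float:
    """Lattice parameter a (Å) from a measured 2θ (degrees) via Bragg's law."""
    d = wavelength / (2.0 * math.sin(math.radians(two_theta / 2.0)))
    return d * math.sqrt(sum(i * i for i in hkl))


def linear_fit(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Least-squares y = intercept + slope * x; also returns the largest absolute residual."""
    n = len(x)
    xm, ym = sum(x) / n, sum(y) / n
    sxx = sum((xi - xm) ** 2 for xi in x)
    sxy = sum((xi - xm) * (yi - ym) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = ym - slope * xm
    max_resid = max(abs(yi - (intercept + slope * xi)) for xi, yi in zip(x, y))
    return intercept, slope, max_resid


# Hypothetical (110) peak positions generated from assumed lattice parameters.
x = [0.0, 0.1, 0.2, 0.3]
peaks = [two_theta_deg(a, (1, 1, 0)) for a in (4.100, 4.110, 4.120, 4.130)]
a_values = [cubic_a_from_peak(tt, (1, 1, 0)) for tt in peaks]
intercept, slope, max_resid = linear_fit(x, a_values)
print(f"a(x) = {intercept:.4f} + {slope:.4f} x, max residual {max_resid:.2e} Å")
```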

Microstructural Characterization:

- Evaluate particle morphology and size distribution via SEM

- Assess sintered density through image analysis or Archimedes method

- Identify secondary phases or inhomogeneities
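The Archimedes method infers bulk density from three weighings: dry, water-saturated, and suspended in water. A minimal sketch of the standard relations, bulk density = m_dry·ρ_liquid / (m_sat − m_susp) and open porosity = (m_sat − m_dry) / (m_sat − m_susp), using invented weighings, a hypothetical theoretical density, and an assumed water density of 0.997 g/cm³ (roughly its value near 25 °C):

```python
def archimedes(m_dry_g: float, m_sat_g: float, m_susp_g: float, rho_liquid: float = 0.997):
    """Return (bulk density in g/cm^3, open porosity as a fraction)."""
    if not m_sat_g > m_susp_g:
        raise ValueError("saturated mass must exceed suspended mass")
    exterior_volume_cm3 = (m_sat_g - m_susp_g) / rho_liquid  # displaced liquid volume
    bulk_density = m_dry_g / exterior_volume_cm3
    open_porosity = (m_sat_g - m_dry_g) / (m_sat_g - m_susp_g)
    return bulk_density, open_porosity


# Hypothetical sintered pellet and theoretical density.
rho_bulk, porosity = archimedes(0.200, 0.205, 0.170)
THEORETICAL = 6.5  # g/cm^3, hypothetical
print(f"bulk {rho_bulk:.3f} g/cm^3, open porosity {porosity:.1%}, relative {rho_bulk / THEORETICAL:.1%}")
```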

Performance Metrics and Quality Control

Table 3: Key quality control parameters and their target values

| Parameter | Target Value | Measurement Technique | Significance |
|---|---|---|---|
| Slurry Viscosity | <10 mPa·s [15] | Rotational viscometer | Dispersion quality, handling properties |
| Green Density | >65% theoretical [15] | Geometric measurement | Sintering behavior prediction |
| Shrinkage Uniformity | Consistent across compositions (~11% linear) [15] | Dilatometry or dimensional measurement | Process control indicator |
| Crystallite Size | System-dependent | XRD line broadening | Reactive surface area |
| Ionic Conductivity | >2.33×10⁻⁷ S/cm (LBA solid electrolyte) [18] | Impedance spectroscopy | Functional performance |
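The conductivity target above is evaluated from impedance data as σ = t / (R·A), where R is the resistance read from the impedance spectrum, t the sample thickness, and A the electrode area. A minimal sketch: the 21 μm thickness matches the LBA sheets reported in [18], while the resistance and electrode area are hypothetical.

```python
def ionic_conductivity_s_per_cm(resistance_ohm: float, thickness_cm: float, area_cm2: float) -> float:
    """sigma = t / (R * A), in S/cm when t is in cm and A in cm^2."""
    if resistance_ohm <= 0 or thickness_cm <= 0 or area_cm2 <= 0:
        raise ValueError("inputs must be positive")
    return thickness_cm / (resistance_ohm * area_cm2)


TARGET_S_PER_CM = 2.33e-7  # LBA threshold from the quality-control table

# Hypothetical measurement: 21 um sheet, 1 cm^2 electrodes, 9.0 kOhm resistance.
sigma = ionic_conductivity_s_per_cm(9.0e3, 21e-4, 1.0)
print(f"sigma = {sigma:.3e} S/cm, meets target: {sigma > TARGET_S_PER_CM}")
```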

High-Throughput Data Management

For effective screening of large compositional libraries, implement automated data processing pipelines:

- Cluster Analysis: Apply ANOVA-based methods (e.g., CASANOVA) to identify consistent response patterns across replicate samples [19]

- Potency Estimation: Calculate AC₅₀ values (half-maximal activity concentrations, as used in quantitative high-throughput screening) for functional properties where applicable

- Hit Identification: Establish statistically significant thresholds for designating promising compositions
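One simple, widely used way to set a hit threshold is to flag compositions whose replicate mean exceeds the mean of reference (control) samples by three standard deviations. The sketch below illustrates that rule only; it is not the CASANOVA method, and the control and candidate values are invented.

```python
from statistics import mean, stdev


def find_hits(controls: list[float], candidates: dict[str, list[float]], k: float = 3.0):
    """Return the threshold and the names of candidates whose replicate mean exceeds it."""
    threshold = mean(controls) + k * stdev(controls)
    hits = [name for name, reps in candidates.items() if mean(reps) > threshold]
    return threshold, hits


controls = [1.0, 1.2, 0.9, 1.1, 1.0]  # hypothetical reference responses
candidates = {"A": [1.5, 1.6, 1.55], "B": [1.2, 1.3, 1.25]}
threshold, hits = find_hits(controls, candidates)
print(f"threshold {threshold:.3f}; hits: {hits}")
```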

Applications and Case Studies

Extended Solid Solution Discovery

This workflow has identified previously unreported composition ranges in the BaYₓSn₁₋ₓO₍₃₋ₓ/₂₎ solid solution, demonstrating its capability to extend known compositional boundaries in complex oxide systems [12]. Systematically probing composition space with precise stoichiometric control makes it practical to map metastable phases and extended solid solutions that would be prohibitively slow to locate with traditional one-at-a-time methods.

Niobium-Aluminum-Phosphate-Oxygen System Exploration

The methodology has been applied to polyanion-based systems beyond simple oxides, specifically the Nb-Al-P-O composition space, highlighting its versatility for investigating diverse chemistries [12]. This demonstrates the workflow's applicability to phosphates and other complex anion systems relevant to battery materials and catalysts.

Solid-State Battery Electrolyte Development

Optimized slurry formulations have enabled production of thin-film sheets (21 μm thickness) of Li₂O-B₂O₃-Al₂O₃ (LBA) solid electrolytes for multilayer solid-state batteries [18]. The workflow facilitates optimization of dispersibility and low-temperature sintering behavior critical for practical solid-state battery manufacturing.

Troubleshooting and Optimization Guidelines

Common Challenges and Solutions

Table 4: Troubleshooting guide for slurry-based high-throughput synthesis

| Issue | Potential Causes | Solutions |
|---|---|---|
| High slurry viscosity | Particle aggregation, insufficient dispersant | Optimize dispersant concentration; implement wet-jet milling [15] |
| Sedimentation during dispensing | Inadequate mixing, particle size too large | Use sample rotators; optimize stirrer design [12] |
| Low green density | Inadequate pressing parameters, poor particle packing | Increase isopressing pressure; optimize particle size distribution [15] |
| Cracking during calcination | Rapid heating, binder removal issues | Implement controlled burnout stage; optimize heating rates [18] |
| Compositional inhomogeneity | Incomplete mixing, sedimentation | Extend mixing time; verify suspension stability [12] |

Process Optimization Strategies

- Dispensing Parameters: Systematically calibrate liquid handling parameters for each suspension type, accounting for density and viscosity variations

- Milling Efficiency: Compare planetary milling with wet-jet milling alternatives, which can provide superior stability and lower viscosity for certain systems [15]

- Binder Selection: Tailor binder chemistry to specific powder surfaces, utilizing surface treatment with coupling agents when necessary [18]

- Atmosphere Control: Implement specialized calcination atmospheres for oxygen-sensitive materials or reduction-prone cations

This integrated workflow represents a significant advancement in high-throughput materials discovery, providing researchers with a robust framework for accelerating the development of next-generation solid-state materials.

The chemical space of inorganic materials grows exponentially with the number of constituent elements, making traditional, manual sub-solidus synthesis methods a bottleneck in the discovery of new functional oxides for applications in batteries, solar cells, and catalysts [12]. High-throughput (HT) methodologies are essential for accelerating this research, but automating solid-state synthesis has historically been challenging because solid reagents are difficult to dispense and mix robotically [12]. The development of a slurry-based workflow, which adapts robotic liquid handling for the precise dispensing and mixing of precursor slurries, represents a significant advancement. This approach combines the versatility of traditional solid-state synthesis with the speed, reproducibility, and minimal manual intervention of laboratory automation [12]. This application note details the protocols and benefits of using automated liquid handling systems for the preparation of precursor slurries, framed within the context of a high-throughput solid-state synthesis research program.

The Role of Automated Liquid Handling in HT Solid-State Synthesis

Automated liquid handling robots are central to modern high-throughput experimentation, capable of performing repetitive pipetting tasks with superior accuracy and reproducibility, thereby freeing skilled researchers for more complex work [20] [21]. While initially developed for pharmaceutical and life science applications, these principles are directly transferable to materials science for handling precursor slurries.

The core challenge in automating solid-state synthesis is the transition from handling individual solid powders to managing them as stable aqueous suspensions that a liquid handler can dispense. A successful automated workflow comprises several stages, from initial powder preparation to the final heat treatment of samples. Robotic liquid handling is central to the intermediate mixing and dispensing stages.

The diagram below illustrates the logical sequence and decision points within a high-throughput slurry dispensing workflow.

Quantitative Performance Data

The implementation of automated liquid handling for slurry-based synthesis directly addresses critical inefficiencies in traditional methods. The table below summarizes key performance metrics and advantages.

Table 1: Performance Metrics of Automated Slurry Handling vs. Manual Solid-State Synthesis

| Performance Aspect | Traditional Manual Method | Automated Slurry Workflow | Improvement / Outcome |
|---|---|---|---|
| Throughput | Processing of one formulation at a time [12] | Dozens of compositions handled in parallel (e.g., 12-well trays) [12] | Many more compositions explored per processing cycle |
| Researcher Time | Labor-intensive, repetitive cycles of hand grinding and calcination [12] | Significant reduction; each manual action applies to many samples at once [12] | Researchers freed for more complex tasks; reduced weekly hands-on time |
| Sample Output | Single, custom-sized samples | Typically 100-250 mg free-standing pellets in standardized formats [12] | Suitable for a wide range of subsequent automated characterization |
| Error Reduction | Prone to human error and inconsistency during manual pipetting and mixing [21] | Standardized protocol execution ensures uniformity across users and experiments [21] | Improved reproducibility and data quality |
| Liquid Handling Time | N/A (manual process) | Up to 37% reduction in execution time for liquid transfers via optimized scheduling [22] | Faster overall workflow execution |

Detailed Experimental Protocol

This protocol describes a high-throughput workflow for synthesizing oxide materials from precursor slurries using an Eppendorf epMotion 5075 automated liquid handling station or equivalent. The goal is to produce arrays of free-standing pellets for subsequent calcination and characterization.

Materials and Equipment

Table 2: Research Reagent Solutions and Essential Materials

| Item | Function / Description | Example Specifications |
|---|---|---|
| Precursor Powders | Source of metal cations (e.g., oxides, carbonates, oxalates). | High purity (>99%), insoluble in water. |
| Zirconia Milling Media | For wet milling raw materials to a consistent particle size. | 5 mm diameter spheres. |
| Ammonium Polyacrylate | Dispersant to reduce suspension viscosity and prevent sedimentation. | - |
| Acrylic Emulsion Binder | Aqueous binder to increase mechanical strength of discs after drying. | - |
| Custom PET Trays | Vacuum-formed trays to hold individual slurry aliquots. | 12 wells, 10 mm diameter, ~0.2 mL capacity [12]. |
| Eppendorf epMotion 5075 | Automated liquid handling station for precise slurry transfer and mixing. | With custom low-profile magnetic stirrer. |
| Laboratory Freeze Dryer | For removing water from aliquots to form porous discs. | Labconco vacuum cabinet model or equivalent. |
| Laboratory Isopress | To compact dried discs, increasing density and strength. | Autoclave Engineers, 75 mm diameter, capable of 105-210 MPa. |

Step-by-Step Procedure

Wet Milling of Raw Materials (Manual Preparation)

- Combine insoluble raw materials (e.g., oxides, carbonates) with deionized water, zirconia milling media, ammonium polyacrylate dispersant, and an acrylic emulsion binder in a planetary mill jar.

- Mill the suspension to achieve a homogeneous mixture with a consistent particle size.

- Keep the resulting suspensions on a sample rotator to prevent sedimentation and separation before dispensing.
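Volumetric dosing of these suspensions later requires knowing how many moles of each cation a mL of slurry carries. The sketch below is a minimal estimate that assumes the full powder mass ends up homogeneously suspended in a known final volume. It ignores milling and transfer losses, so measured values should take precedence where available. The `Stock` class and the batch sizes are hypothetical.

```python
from dataclasses import dataclass

# Standard atomic weights (g/mol), rounded to three decimals.
ATOMIC_MASS = {"O": 15.999, "C": 12.011, "Ca": 40.078, "Ti": 47.867, "Fe": 55.845}


@dataclass
class Stock:
    """One milled precursor suspension (hypothetical bookkeeping class)."""

    name: str
    composition: dict[str, int]  # element -> atoms per formula unit
    powder_g: float              # precursor powder charged to the mill jar
    volume_ml: float             # final suspension volume

    def molar_mass(self) -> float:
        return sum(ATOMIC_MASS[el] * n for el, n in self.composition.items())

    def cation_mmol_per_ml(self, cation: str) -> float:
        """Cation concentration, assuming all powder is homogeneously suspended."""
        mol_formula_units = self.powder_g / self.molar_mass()
        return 1000.0 * mol_formula_units * self.composition[cation] / self.volume_ml


stocks = [
    Stock("Fe2O3", {"Fe": 2, "O": 3}, powder_g=10.0, volume_ml=50.0),
    Stock("TiO2", {"Ti": 1, "O": 2}, powder_g=8.0, volume_ml=50.0),
]
for s in stocks:
    cation = next(el for el in s.composition if el != "O")
    print(s.name, cation, round(s.cation_mmol_per_ml(cation), 3))
```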

Automated Liquid Handling: Slurry Mixing and Dispensing

- Setup: Load the precursor suspensions into glass vials placed on a custom, low-profile multi-position magnetic stirrer integrated into the liquid handler's deck. This prevents settling during aspiration.

- Programming: Using the robot's software (e.g., VIALAB for INTEGRA systems [21]), program a protocol to:

  - Aspirate specified volumes from different precursor vials to achieve the target molar ratios for each composition.

  - Dispense these volumes into a designated mixing vial (one per formulation).

  - Mix the combined slurries thoroughly by repeated cycles of aspiration and dispensing. Adjust the pipetting parameters (speed, flow rate) to account for the higher density and viscosity of the suspensions compared to water [12].

- Dispensing: Following mixing, the liquid handler dispenses a small aliquot (e.g., 0.2 mL) of each mixture into the wells of custom, vacuum-formed PET trays. These trays are held in custom 3D-printed holders that mimic the footprint of a standard microplate.
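The aspiration step reduces to a small calculation. Suppose stock i carries c_i mmol of its cation per mL and the target cation mole fraction is x_i. Then the volume V_i drawn from that stock is proportional to x_i / c_i, scaled so that the volumes sum to the desired mix volume. The sketch below is a minimal version of this calculation and assumes one cation per stock. The function name, the 300 µL mix volume, and the 10 µL lower pipetting limit are hypothetical.

```python
def aspiration_volumes(fractions, conc_mmol_per_ml, total_ul, min_ul=10.0):
    """Volumes (µL) of each stock giving the target cation mole fractions.

    fractions: cation -> target mole fraction (must sum to 1)
    conc_mmol_per_ml: cation -> concentration in its stock suspension
    """
    if abs(sum(fractions.values()) - 1.0) > 1e-9:
        raise ValueError("cation fractions must sum to 1")
    # V_i is proportional to x_i / c_i; zero-fraction stocks are skipped.
    weights = {k: x / conc_mmol_per_ml[k] for k, x in fractions.items() if x > 0}
    scale = total_ul / sum(weights.values())
    volumes = {k: w * scale for k, w in weights.items()}
    too_small = sorted(k for k, v in volumes.items() if v < min_ul)
    if too_small:
        raise ValueError(f"volume below pipetting limit for: {too_small}")
    return volumes


# Equimolar Fe:Ti mix from stocks at 2.0 and 1.0 mmol/mL (hypothetical values).
vols = aspiration_volumes({"Fe": 0.5, "Ti": 0.5}, {"Fe": 2.0, "Ti": 1.0}, total_ul=300.0)
print(vols)  # {'Fe': 100.0, 'Ti': 200.0}
```

If a required volume falls below the pipetting limit, use a more dilute stock or a larger mix volume.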

Freeze-Drying

- Manually transfer the filled trays to a freezer at -20°C overnight.

- The following day, place the trays in a freeze dryer until the aliquots are completely dry, forming porous, solid discs.

Isopressing

- Place the trays of dried discs into custom silicone holders.

- Cover with a silicone sheet and vacuum-seal the entire assembly in a nylon bag.

- Isopress the sealed bag at a pressure between 105 and 210 MPa to increase the density and mechanical strength of the discs, creating robust pellets for calcination.

Calcination

- Remove the pressed pellets from the trays and transfer them to a refractory batt.

- Fire the pellets in a furnace at the required temperature and atmosphere to facilitate solid-state reaction and crystal formation. Multiple arrays can be processed at different temperatures or atmospheres to expand the experimental parameter space.
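Planning such arrays can be scripted. The sketch below enumerates cation fractions for a three-cation system on a regular simplex grid and assigns them to 12-well trays. It then repeats the layout for each calcination temperature, because every firing consumes its own pellet array. The grid spacing, temperatures, and function names are hypothetical choices, not values from the cited work.

```python
def simplex_grid(divisions):
    """All (x_a, x_b, x_c) with x_a + x_b + x_c = 1 on a grid of spacing 1/divisions."""
    points = []
    for i in range(divisions + 1):
        for j in range(divisions + 1 - i):
            k = divisions - i - j
            points.append((i / divisions, j / divisions, k / divisions))
    return points


def build_plan(compositions, temperatures_c, wells_per_tray=12):
    """One entry per pellet: which tray and well it occupies and how it is fired."""
    plan = []
    for temp in temperatures_c:
        for idx, comp in enumerate(compositions):
            tray, well = divmod(idx, wells_per_tray)
            plan.append({"temp_c": temp, "tray": tray, "well": well, "fractions": comp})
    return plan


compositions = simplex_grid(10)  # 66 compositions at 0.1 spacing
plan = build_plan(compositions, temperatures_c=[900, 1000, 1100])
trays_per_firing = max(p["tray"] for p in plan) + 1
print(len(compositions), trays_per_firing, len(plan))  # 66 6 198
```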

The Scientist's Toolkit

Table 3: Essential Reagents and Materials for Slurry-Based Synthesis

| Category | Item | Critical Function |
|---|---|---|
| Precursors & Chemicals | High-Purity Precursor Powders (Oxides, Carbonates) | Provides the elemental composition for the target material. |
| | Dispersant (e.g., Ammonium Polyacrylate) | Prevents particle agglomeration, ensures a stable and homogeneous slurry. |
| | Aqueous Binder (Acrylic Emulsion) | Imparts green strength to the dried pellet for handling and pressing. |
| Consumables & Hardware | Zirconia Milling Media | Efficiently reduces particle size and mixes precursors during wet milling. |
| | Custom PET or Bioplastic Trays | Acts as a sacrificial scaffold for forming individual sample discs. |
| | Wide-Bore Pipette Tips | Facilitates reliable aspiration and dispensing of particulate-laden slurries. |

Integrating robotic liquid handling for dispensing and mixing precursor slurries is a practical and powerful way to accelerate high-throughput solid-state synthesis. The workflow addresses the key limitations of traditional methods in three ways: it enables parallel processing of dozens of compositions, sharply reduces researcher hands-on time, and improves reproducibility through standardized protocols. Once solid powders are converted into dispensable suspensions, researchers can use the precision and consistency of laboratory automation to explore large inorganic composition spaces far more efficiently than manual methods allow. This accelerates the discovery of next-generation functional materials.

The discovery and synthesis of novel inorganic materials are pivotal for developing next-generation technologies in energy storage, conversion, and beyond. However, the transition from computationally predicted materials to physically realized compounds remains a major bottleneck, often requiring many months or even years of experimental iterations. Solid-state synthesis, in particular, is complicated by the frequent formation of stable intermediate phases that consume the thermodynamic driving force necessary to form the desired target material, often leading to failed synthesis attempts [3]. Traditionally, precursor selection and synthesis optimization have relied heavily on researcher intuition, domain expertise, and laborious trial-and-error processes.

The integration of Artificial Intelligence (AI) and active learning into materials science is transforming this paradigm, enabling more intelligent and efficient experimental planning. This document details the application of the ARROWS3 algorithm (Autonomous Reaction Route Optimization for Solid-State Synthesis). ARROWS3 autonomously selects optimal precursors for solid-state reactions by actively learning from experimental outcomes [3] [23]. These application notes and protocols are framed within a broader thesis on high-throughput solid-state synthesis. They give researchers a detailed guide to implementing this AI-driven approach, which has been shown to identify effective precursor sets with substantially fewer experimental iterations than black-box optimization methods [3] [24].

The ARROWS3 algorithm is engineered to automate and optimize the selection of precursor sets for synthesizing a target inorganic material. Its core operational principle is based on leveraging thermodynamic domain knowledge and iterative active learning to maximize the thermodynamic driving force for the target material's formation by avoiding reactions that lead to highly stable, inert intermediates [3] [23].

The algorithm's logic flow can be broken down into several key stages, illustrated in the workflow diagram below.

Initial Precursor Ranking: Given a target material, ARROWS3 first generates a list of precursor sets that can be stoichiometrically balanced to yield the target's composition. In the absence of prior experimental data, these precursor sets are initially ranked by the calculated thermodynamic driving force (ΔG) to form the target. The formation energies come from sources such as the Materials Project database [3] [24]. Precursor sets with the most negative ΔG, and hence the largest driving force, are prioritized for initial testing.
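The initial ranking step can be illustrated with a toy calculation. For a balanced reaction, ΔG = Σ ν·G_f(products) − Σ ν·G_f(precursors), and precursor sets are sorted from the most negative ΔG upward. The sketch below is a minimal version that assumes the reactions are already balanced. It is not the ARROWS3 code. The compounds use placeholder cations A and B, and every formation energy is an invented number for illustration, not a Materials Project value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reaction:
    name: str
    precursors: tuple[tuple[str, float], ...]  # (formula, stoichiometric coefficient)
    products: tuple[tuple[str, float], ...]    # target plus gaseous by-products


def reaction_energy(rxn, g_form):
    """ΔG_rxn = sum(coef * G_f over products) - sum(coef * G_f over precursors)."""
    g_prod = sum(c * g_form[f] for f, c in rxn.products)
    g_prec = sum(c * g_form[f] for f, c in rxn.precursors)
    return g_prod - g_prec


def rank_by_driving_force(reactions, g_form):
    """Most negative ΔG (largest driving force) first."""
    return sorted(reactions, key=lambda r: reaction_energy(r, g_form))


# Invented formation energies (eV per formula unit) for placeholder compounds.
G_F = {"AO": -6.0, "BO2": -10.0, "ACO3": -10.4, "CO2": -4.1,
       "A(OH)2": -9.0, "H2O": -2.5, "ABO3": -16.8}

reactions = [
    Reaction("ACO3+BO2", (("ACO3", 1), ("BO2", 1)), (("ABO3", 1), ("CO2", 1))),
    Reaction("A(OH)2+BO2", (("A(OH)2", 1), ("BO2", 1)), (("ABO3", 1), ("H2O", 1))),
    Reaction("AO+BO2", (("AO", 1), ("BO2", 1)), (("ABO3", 1),)),
]
for r in rank_by_driving_force(reactions, G_F):
    print(r.name, round(reaction_energy(r, G_F), 2))
```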

Experimental Phase Analysis: The top-ranked precursor sets are tested experimentally across a range of temperatures. The products at each temperature are characterized using X-ray diffraction (XRD), and the resulting patterns are analyzed using machine learning models (e.g., probabilistic deep learning) to identify the crystalline phases present [3] [24]. This provides snapshots of the reaction pathway.

Pairwise Reaction Identification: The algorithm then determines which pairwise reactions between solid phases led to the formation of each observed intermediate [3]. This step is crucial for understanding the step-by-step evolution of the solid-state reaction.
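A simplified version of this bookkeeping compares the phase sets observed at consecutive temperatures. For each newly observed phase, it lists the pairs of previously present phases whose elements could together have formed it. This element-coverage rule is a hypothetical heuristic for illustration only. It often returns several candidate pairs, and resolving that ambiguity is not shown. The sketch also assumes that phase identification from XRD has already been done.

```python
from itertools import combinations


def candidate_pairwise_reactions(snapshots, elements):
    """Propose (reactant pair, new phase, temperature) for each newly observed phase.

    snapshots: [(temperature, set_of_phases), ...] sorted by temperature
    elements:  phase -> set of elements it contains
    """
    found = []
    for (_, previous), (temp, current) in zip(snapshots, snapshots[1:]):
        for new_phase in sorted(current - previous):
            for a, b in combinations(sorted(previous), 2):
                # Keep the pair only if together it supplies every element of the new phase.
                if elements[new_phase] <= elements[a] | elements[b]:
                    found.append(((a, b), new_phase, temp))
    return found


# Placeholder phases built from hypothetical cations A and B.
ELEMENTS = {"ACO3": {"A", "C", "O"}, "BO2": {"B", "O"}, "A2BO4": {"A", "B", "O"}}
snapshots = [(600, {"ACO3", "BO2"}), (700, {"ACO3", "BO2", "A2BO4"})]
print(candidate_pairwise_reactions(snapshots, ELEMENTS))
# [(('ACO3', 'BO2'), 'A2BO4', 700)]
```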

Active Learning and Model Update: When experiments fail to produce the target, ARROWS3 learns from the outcomes. It identifies which intermediate phases are highly stable and consume a large portion of the available free energy, thereby inhibiting the target's formation. This information is used to predict the intermediates that would form in precursor sets that have not yet been tested [3] [23].
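The simplest way to carry an observed pairwise reaction over to untested precursor sets is to assume that the same pair reacts the same way wherever it appears. The sketch below encodes only that assumption and is not the ARROWS3 implementation. The `learned` dictionary and the phase names are hypothetical.

```python
from itertools import combinations


def predict_intermediates(precursor_set, learned):
    """Intermediates expected in an untested set, given pairwise reactions seen so far.

    learned: {(phase_a, phase_b): intermediate} with each key pair sorted alphabetically.
    """
    predicted = set()
    for pair in combinations(sorted(precursor_set), 2):
        if pair in learned:
            predicted.add(learned[pair])
    return predicted


learned = {("ACO3", "BO2"): "A2BO4"}  # e.g. from an earlier temperature series
print(predict_intermediates({"ACO3", "BO2"}, learned))  # {'A2BO4'}
print(predict_intermediates({"AO", "BO2"}, learned))    # set()
```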

Iterative Reprioritization: The algorithm subsequently proposes new experiments using precursors predicted to avoid these unfavorable intermediates. The new ranking prioritizes precursor sets that maintain a large thermodynamic driving force at the target-forming step (ΔG′), even after accounting for the formation of intermediates [3]. This closed-loop process continues until the target is synthesized with high yield or all precursor options are exhausted.
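Free energy is a state function, so the driving force left for the target-forming step is ΔG′ = ΔG − ΔG_int. Here ΔG is the overall precursor-to-target reaction energy and ΔG_int is the energy already released in forming the predicted intermediates. Both are negative when favorable and are expressed per the same amount of target. The sketch below ranks candidates by ΔG′ and wraps that ranking in a closed loop. `run_experiment` is a hypothetical callback standing in for synthesis, XRD, and phase analysis, and all energies are invented for illustration.

```python
def remaining_driving_force(dg_total, dg_intermediates):
    """ΔG' = ΔG - ΔG_int: energy still available for the target-forming step."""
    return dg_total - dg_intermediates


def rerank(candidates):
    """candidates: name -> (ΔG, ΔG_int). Most negative ΔG' first."""
    score = {name: remaining_driving_force(*dg) for name, dg in candidates.items()}
    return sorted(score, key=score.get)


def closed_loop(candidates, run_experiment):
    """Test the best-ranked set until one succeeds or all options are exhausted.

    run_experiment(name) -> (success, updates), where updates maps untested
    names to revised (ΔG, ΔG_int) estimates learned from the experiment.
    """
    remaining = dict(candidates)
    tested = []
    while remaining:
        best = rerank(remaining)[0]
        tested.append(best)
        success, updates = run_experiment(best)
        if success:
            return best, tested
        del remaining[best]
        for name, dg in updates.items():
            if name in remaining:
                remaining[name] = dg
    return None, tested


# Invented (ΔG, ΔG_int) values in eV per formula unit of target.
CANDIDATES = {"AO+BO2": (-0.8, -0.7), "ACO3+BO2": (-0.5, -0.3), "A(OH)2+BO2": (-0.3, 0.0)}
print(rerank(CANDIDATES))  # ['A(OH)2+BO2', 'ACO3+BO2', 'AO+BO2']
```

In this toy case, the set with the largest overall ΔG drops to last place once a stable intermediate is predicted to consume most of its driving force. This is the behavior the reprioritization step is meant to capture.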

Key Research Reagents and Materials